file_path | content | size | lang | avg_line_length | max_line_length | alphanum_fraction |

|---|---|---|---|---|---|---|

NVlabs/ACID/ACID/src/training.py | import numpy as np

from collections import defaultdict

from tqdm import tqdm

class BaseTrainer(object):

''' Base trainer class.

'''

def evaluate(self, val_loader):

''' Performs an evaluation.

Args:

val_loader (dataloader): pytorch dataloader

'''

eval_list = def... | 988 | Python | 23.724999 | 65 | 0.571862 |

NVlabs/ACID/ACID/src/common.py | # import multiprocessing

import torch

import numpy as np

import math

def compute_iou(occ1, occ2):

''' Computes the Intersection over Union (IoU) value for two sets of

occupancy values.

Args:

occ1 (tensor): first set of occupancy values

occ2 (tensor): second set of occupa... | 11,186 | Python | 29.399456 | 109 | 0.562846 |
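The `compute_iou` preview above is cut off, so here is a minimal NumPy sketch of how an IoU over two sets of occupancy values can be computed. It is not the repository's implementation: the name `iou_sketch`, the 0.5 threshold, and the choice to return 0 for an empty union are all assumptions made for illustration.

```python
import numpy as np


def iou_sketch(occ1, occ2, threshold=0.5):
    """Hypothetical helper: per-sample IoU between two batches of occupancy values."""
    occ1 = np.asarray(occ1)
    occ2 = np.asarray(occ2)

    # Flatten everything except the batch dimension and binarize (assumed threshold).
    occ1 = occ1.reshape(occ1.shape[0], -1) >= threshold
    occ2 = occ2.reshape(occ2.shape[0], -1) >= threshold

    intersection = (occ1 & occ2).sum(axis=-1)
    union = (occ1 | occ2).sum(axis=-1)

    # Assumption: an empty union yields IoU 0 instead of a division by zero.
    return intersection / np.maximum(union, 1)
```

With one shared occupied cell out of three occupied cells overall, the sketch returns one third for that sample.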

NVlabs/ACID/ACID/src/config.py | import yaml

from torchvision import transforms

from src import data

from src import conv_onet

method_dict = {

'conv_onet': conv_onet

}

# General config

def load_config(path, default_path=None):

''' Loads config file.

Args:

path (str): path to config file

default_path (bool): whether t... | 2,573 | Python | 23.990291 | 76 | 0.624563 |

NVlabs/ACID/ACID/src/checkpoints.py | import os

import urllib

import torch

from torch.utils import model_zoo

class CheckpointIO(object):

''' CheckpointIO class.

It handles saving and loading checkpoints.

Args:

checkpoint_dir (str): path where checkpoints are saved

'''

def __init__(self, checkpoint_dir='./chkpts', **kwargs):

... | 2,962 | Python | 28.63 | 70 | 0.568535 |

NVlabs/ACID/ACID/src/layers.py | import torch

import torch.nn as nn

# Resnet Blocks

class ResnetBlockFC(nn.Module):

''' Fully connected ResNet Block class.

Args:

size_in (int): input dimension

size_out (int): output dimension

size_h (int): hidden dimension

'''

def __init__(self, size_in, size_out=None, size_... | 1,203 | Python | 24.083333 | 68 | 0.532835 |

NVlabs/ACID/ACID/src/conv_onet/training.py | import os

import numpy as np

import torch

from torch.nn import functional as F

from src.common import compute_iou

from src.utils import common_util, plushsim_util

from src.training import BaseTrainer

from sklearn.metrics import roc_curve

from numpy import interp  # scipy.interp is a deprecated alias of numpy.interp

import matplotlib.pyplot as plt

from collections import d... | 15,474 | Python | 42.105849 | 109 | 0.511439 |

NVlabs/ACID/ACID/src/conv_onet/config.py | import os

from src.encoder import encoder_dict

from src.conv_onet import models, training

from src.conv_onet import generation

from src import data

def get_model(cfg,device=None, dataset=None, **kwargs):

if cfg['model']['type'] == 'geom':

return get_geom_model(cfg,device,dataset)

elif cfg['model']['typ... | 4,514 | Python | 29.1 | 77 | 0.597475 |

NVlabs/ACID/ACID/src/conv_onet/__init__.py | from src.conv_onet import (

config, generation, training, models

)

__all__ = [

'config', 'generation', 'training', 'models'

]

| 127 | Python | 14.999998 | 40 | 0.661417 |

NVlabs/ACID/ACID/src/conv_onet/generation.py | import torch

import torch.optim as optim

from torch import autograd

import numpy as np

from tqdm import trange, tqdm

import trimesh

from src.utils import libmcubes, common_util

from src.common import make_3d_grid, normalize_coord, add_key, coord2index

from src.utils.libmise import MISE

import time

import math

counter ... | 14,928 | Python | 36.044665 | 126 | 0.536509 |

NVlabs/ACID/ACID/src/conv_onet/models/decoder.py | import torch

import torch.nn as nn

import torch.nn.functional as F

from src.layers import ResnetBlockFC

from src.common import normalize_coordinate, normalize_3d_coordinate, map2local

class GeomDecoder(nn.Module):

''' Decoder.

Instead of conditioning on global features, on plane/volume local features.

... | 8,333 | Python | 35.876106 | 114 | 0.554062 |

NVlabs/ACID/ACID/src/conv_onet/models/__init__.py | import torch

import numpy as np

import torch.nn as nn

from torch import distributions as dist

from src.conv_onet.models import decoder

from src.utils import plushsim_util

# Decoder dictionary

decoder_dict = {

'geom_decoder': decoder.GeomDecoder,

'combined_decoder': decoder.CombinedDecoder,

}

class ConvImpDyn(n... | 17,056 | Python | 39.80622 | 113 | 0.525797 |

NVlabs/ACID/ACID/src/encoder/__init__.py | from src.encoder import (

pointnet

)

encoder_dict = {

'geom_encoder': pointnet.GeomEncoder,

}

| 104 | Python | 10.666665 | 41 | 0.663462 |

NVlabs/ACID/ACID/src/encoder/pointnet.py | import torch

import torch.nn as nn

import torch.nn.functional as F

from src.layers import ResnetBlockFC

from torch_scatter import scatter_mean, scatter_max

from src.common import coordinate2index, normalize_coordinate

from src.encoder.unet import UNet

class GeomEncoder(nn.Module):

''' PointNet-based encoder networ... | 4,654 | Python | 37.791666 | 134 | 0.592609 |

NVlabs/ACID/ACID/src/encoder/unet.py | '''

Codes are from:

https://github.com/jaxony/unet-pytorch/blob/master/model.py

'''

import torch

import torch.nn as nn

import torch.nn.functional as F

from torch.autograd import Variable

from collections import OrderedDict

from torch.nn import init

import numpy as np

def conv3x3(in_channels, out_channels, stride=1,

... | 8,696 | Python | 32.57915 | 80 | 0.575092 |

NVlabs/ACID/ACID/src/utils/common_util.py | import os

import glob

import json

import scipy

import itertools

import numpy as np

from PIL import Image

from scipy.spatial.transform import Rotation

from sklearn.neighbors import NearestNeighbors

from sklearn.manifold import TSNE

from matplotlib import pyplot as plt

def get_color_map(x):

colours = plt.cm.Spectral... | 12,618 | Python | 36.005865 | 116 | 0.560628 |

NVlabs/ACID/ACID/src/utils/io.py | import os

from plyfile import PlyElement, PlyData

import numpy as np

def export_pointcloud(vertices, out_file, as_text=True):

assert(vertices.shape[1] == 3)

vertices = vertices.astype(np.float32)

vertices = np.ascontiguousarray(vertices)

vector_dtype = [('x', 'f4'), ('y', 'f4'), ('z', 'f4')]

verti... | 3,415 | Python | 29.230088 | 78 | 0.513616 |

NVlabs/ACID/ACID/src/utils/visualize.py | import numpy as np

from matplotlib import pyplot as plt

from mpl_toolkits.mplot3d import Axes3D

import src.common as common

def visualize_data(data, data_type, out_file):

r''' Visualizes the data with regard to its type.

Args:

data (tensor): batch of data

data_type (string): data type (img, v... | 2,378 | Python | 26.66279 | 65 | 0.585786 |

NVlabs/ACID/ACID/src/utils/mentalsim_util.py | import os

import glob

import json

import scipy

import itertools

import numpy as np

from PIL import Image

from scipy.spatial.transform import Rotation

from sklearn.neighbors import NearestNeighbors

########################################################################

# Viewpoint transform

###########################... | 19,039 | Python | 44.118483 | 116 | 0.564578 |

NVlabs/ACID/ACID/src/utils/plushsim_util.py | import os

import glob

import json

import scipy

import itertools

import numpy as np

from PIL import Image

from scipy.spatial.transform import Rotation

from sklearn.neighbors import NearestNeighbors

from .common_util import *

########################################################################

# Some file getters

#... | 20,693 | Python | 43.407725 | 124 | 0.605905 |

NVlabs/ACID/ACID/src/utils/libmise/__init__.py | from .mise import MISE

__all__ = [

'MISE'

]

| 47 | Python | 6.999999 | 22 | 0.531915 |

NVlabs/ACID/ACID/src/utils/libmise/test.py | import numpy as np

from mise import MISE

import time

t0 = time.time()

extractor = MISE(1, 2, 0.)

p = extractor.query()

i = 0

while p.shape[0] != 0:

print(i)

print(p)

v = 2 * (p.sum(axis=-1) > 2).astype(np.float64) - 1

extractor.update(p, v)

p = extractor.query()

i += 1

if (i >= 8):

... | 456 | Python | 16.576922 | 55 | 0.570175 |

NVlabs/ACID/ACID/src/utils/libsimplify/__init__.py | from .simplify_mesh import (

mesh_simplify

)

import trimesh

def simplify_mesh(mesh, f_target=10000, agressiveness=7.):

vertices = mesh.vertices

faces = mesh.faces

vertices, faces = mesh_simplify(vertices, faces, f_target, agressiveness)

mesh_simplified = trimesh.Trimesh(vertices, faces, process=... | 355 | Python | 21.249999 | 77 | 0.723944 |

NVlabs/ACID/ACID/src/utils/libsimplify/test.py | from simplify_mesh import mesh_simplify

import numpy as np

v = np.random.rand(100, 3)

f = np.random.choice(range(100), (50, 3))

mesh_simplify(v, f, 50) | 153 | Python | 20.999997 | 41 | 0.705882 |

NVlabs/ACID/ACID/src/utils/libsimplify/Simplify.h | /////////////////////////////////////////////

//

// Mesh Simplification Tutorial

//

// (C) by Sven Forstmann in 2014

//

// License : MIT

// http://opensource.org/licenses/MIT

//

//https://github.com/sp4cerat/Fast-Quadric-Mesh-Simplification

//

// 5/2016: Chris Rorden created minimal version for OSX/Linux/Windows compil... | 25,295 | C | 23.58309 | 142 | 0.567108 |

NVlabs/ACID/ACID/src/utils/libmcubes/pyarray_symbol.h |

#define PY_ARRAY_UNIQUE_SYMBOL mcubes_PyArray_API

| 51 | C | 16.333328 | 49 | 0.803922 |

NVlabs/ACID/ACID/src/utils/libmcubes/README.rst | ========

PyMCubes

========

PyMCubes is an implementation of the marching cubes algorithm to extract

isosurfaces from volumetric data. The volumetric data can be given as a

three-dimensional NumPy array or as a Python function ``f(x, y, z)``. The first

option is much faster, but it requires more memory and becomes unfe... | 1,939 | reStructuredText | 28.846153 | 81 | 0.682826 |

NVlabs/ACID/ACID/src/utils/libmcubes/marchingcubes.h |

#ifndef _MARCHING_CUBES_H

#define _MARCHING_CUBES_H

#include <stddef.h>

#include <vector>

namespace mc

{

extern int edge_table[256];

extern int triangle_table[256][16];

namespace private_

{

double mc_isovalue_interpolation(double isovalue, double f1, double f2,

double x1, double x2);

void mc_add_vertex(double... | 20,843 | C | 37.457565 | 92 | 0.372931 |
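Only the declaration of `mc_isovalue_interpolation` is visible in the preview above. As a hedged illustration of what such a helper typically does in marching cubes, the sketch below linearly interpolates the position along an edge where the field crosses the isovalue. The Python name `isovalue_interpolation` and the midpoint fallback for a constant edge are assumptions; the C++ body may differ.

```python
def isovalue_interpolation(isovalue, f1, f2, x1, x2):
    """Hypothetical sketch: coordinate between x1 and x2 where the field equals isovalue.

    f1 and f2 are the field values sampled at x1 and x2 respectively.
    """
    if f2 == f1:
        # Assumption: on a constant edge there is no unique crossing, so use the midpoint.
        return (x1 + x2) / 2.0
    t = (isovalue - f1) / (f2 - f1)  # fraction of the way from x1 to x2
    return x1 + t * (x2 - x1)
```

A field going from -1 at x = 0 to 1 at x = 1 crosses the isovalue 0 halfway along the edge.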

NVlabs/ACID/ACID/src/utils/libmcubes/pyarraymodule.h |

#ifndef _EXTMODULE_H

#define _EXTMODULE_H

#include <Python.h>

#include <stdexcept>

// #define NPY_NO_DEPRECATED_API NPY_1_7_API_VERSION

#define PY_ARRAY_UNIQUE_SYMBOL mcubes_PyArray_API

#define NO_IMPORT_ARRAY

#include "numpy/arrayobject.h"

#include <complex>

template<class T>

struct numpy_typemap;

#define define... | 4,645 | C | 32.666666 | 93 | 0.655328 |

NVlabs/ACID/ACID/src/utils/libmcubes/__init__.py | from src.utils.libmcubes.mcubes import (

marching_cubes, marching_cubes_func

)

from src.utils.libmcubes.exporter import (

export_mesh, export_obj, export_off

)

__all__ = [

'marching_cubes', 'marching_cubes_func',

'export_mesh', 'export_obj', 'export_off'

]

| 265 | Python | 19.461537 | 42 | 0.70566 |

NVlabs/ACID/ACID/src/utils/libmcubes/exporter.py |

import numpy as np

def export_obj(vertices, triangles, filename):

"""

Exports a mesh in the (.obj) format.

"""

with open(filename, 'w') as fh:

for v in vertices:

fh.write("v {} {} {}\n".format(*v))

for f in triangles:

fh.write("f {} {... | 1,697 | Python | 25.53125 | 81 | 0.570418 |
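The face-writing line of `export_obj` is truncated above, so the following is only a sketch of the Wavefront OBJ layout it targets. It builds the text in memory, and the helper name `obj_text_sketch` is hypothetical. The one detail worth showing is that OBJ face indices are 1-based, while NumPy-style triangle arrays are usually 0-based.

```python
import io


def obj_text_sketch(vertices, triangles):
    """Hypothetical helper: return OBJ text for a triangle mesh with 0-based faces."""
    buf = io.StringIO()
    for v in vertices:
        buf.write("v {} {} {}\n".format(*v))
    for f in triangles:
        # OBJ indexes vertices starting at 1, so shift each 0-based index.
        buf.write("f {} {} {}\n".format(*(i + 1 for i in f)))
    return buf.getvalue()
```

Writing the returned string to a file opened in text mode would give a file in the same format that the exporter above is documented to produce.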

NVlabs/ACID/ACID/src/utils/libmcubes/marchingcubes.cpp |

#include "marchingcubes.h"

namespace mc

{

int edge_table[256] =

{

0x000, 0x109, 0x203, 0x30a, 0x406, 0x50f, 0x605, 0x70c, 0x80c, 0x905, 0xa0f, 0xb06, 0xc0a, 0xd03, 0xe09, 0xf00,

0x190, 0x099, 0x393, 0x29a, 0x596, 0x49f, 0x795, 0x69c, 0x99c, 0x895, 0xb9f, 0xa96, 0xd9a, 0xc93, 0xf99, 0xe90,

0x230, 0x339,... | 18,889 | C++ | 56.069486 | 116 | 0.339827 |

NVlabs/ACID/ACID/src/utils/libmcubes/pywrapper.cpp |

#include "pywrapper.h"

#include "marchingcubes.h"

#include <stdexcept>

struct PythonToCFunc

{

PyObject* func;

PythonToCFunc(PyObject* func) {this->func = func;}

double operator()(double x, double y, double z)

{

PyObject* res = PyObject_CallFunction(func, "(d,d,d)", x, y, z); // py::extract<d... | 7,565 | C++ | 35.907317 | 120 | 0.624455 |

NVlabs/ACID/ACID/src/utils/libmcubes/pywrapper.h |

#ifndef _PYWRAPPER_H

#define _PYWRAPPER_H

#include <Python.h>

#include "pyarraymodule.h"

#include <vector>

PyObject* marching_cubes(PyArrayObject* arr, double isovalue);

PyObject* marching_cubes2(PyArrayObject* arr, double isovalue);

PyObject* marching_cubes3(PyArrayObject* arr, double isovalue);

PyObject* marching... | 455 | C | 25.823528 | 64 | 0.758242 |

NVlabs/ACID/ACID/src/data/__init__.py |

from src.data.core import (

PlushEnvGeom, collate_remove_none, worker_init_fn, get_plush_loader

)

from src.data.transforms import (

PointcloudNoise, SubsamplePointcloud,

SubsamplePoints,

)

__all__ = [

# Core

PlushEnvGeom,

get_plush_loader,

collate_remove_none,

worker_init_fn,

Pointc... | 379 | Python | 18.999999 | 71 | 0.693931 |

NVlabs/ACID/ACID/src/data/core.py | import os

import yaml

import pickle

import torch

import logging

import numpy as np

from torch.utils import data

from torch.utils.data.dataloader import default_collate

from src.utils import plushsim_util, common_util

scene_range = plushsim_util.SCENE_RANGE.copy()

to_range = np.array([[-1.1,-1.1,-1.1],[1.1,1.1,1.1]])... | 26,177 | Python | 42.557404 | 133 | 0.593154 |

NVlabs/ACID/ACID/src/data/transforms.py | import numpy as np

# Transforms

class PointcloudNoise(object):

''' Point cloud noise transformation class.

It adds noise to point cloud data.

Args:

stddev (float): standard deviation

'''

def __init__(self, stddev):

self.stddev = stddev

def __call__(self, data):

''' Ca... | 3,578 | Python | 25.708955 | 67 | 0.507546 |
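The body of `PointcloudNoise.__call__` is not shown in the preview. The sketch below illustrates the idea stated in the docstring, adding noise with a given standard deviation to a point cloud. It assumes zero-mean Gaussian noise and an `(N, 3)` array; the function name `add_pointcloud_noise` and the `rng` parameter are hypothetical and not part of the repository.

```python
import numpy as np


def add_pointcloud_noise(points, stddev, rng=None):
    """Hypothetical helper: return a noisy float32 copy of an (N, 3) point cloud."""
    rng = np.random.default_rng() if rng is None else rng
    points = np.asarray(points, dtype=np.float32)
    # Assumption: independent zero-mean Gaussian noise on every coordinate.
    noise = stddev * rng.standard_normal(points.shape)
    return (points + noise).astype(np.float32)
```

Passing a seeded generator such as `np.random.default_rng(0)` makes the perturbation reproducible, which can help when debugging a data pipeline.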

NVlabs/ACID/ACID/configs/default.yaml | method: conv_onet

data:

train_split: train

val_split: val

test_split: test

dim: 3

act_dim: 6

padding: 0.1

type: geom

model:

decoder: simple

encoder: resnet18

decoder_kwargs: {}

encoder_kwargs: {}

multi_gpu: false

c_dim: 512

training:

out_dir: out/default

batch_size: 64

pos_weight: 5

p... | 1,121 | YAML | 18.68421 | 51 | 0.702944 |

NVlabs/ACID/ACID/configs/plush_dyn_geodesics.yaml | method: conv_onet

data:

flow_path: train_data/flow

pair_path: train_data/pair

pointcloud_n_obj: 5000

pointcloud_n_env: 1000

pointcloud_noise: 0.005

points_subsample: 3000

model:

type: combined

obj_encoder_kwargs:

f_dim: 3

hidden_dim: 64

plane_resolution: 128

unet_kwargs:

depth: 4

... | 1,175 | YAML | 18.932203 | 46 | 0.67234 |

NVlabs/ACID/ACID/preprocess/gen_data_flow_plush.py | import numpy as np

import os

import time, datetime

import sys

import os.path as osp

ACID_dir = osp.dirname(osp.dirname(osp.realpath(__file__)))

sys.path.insert(0,ACID_dir)

import json

from src.utils import plushsim_util

from src.utils import common_util

import glob

import tqdm

from multiprocessing import Pool

impor... | 4,353 | Python | 39.691588 | 143 | 0.64668 |

NVlabs/ACID/ACID/preprocess/gen_data_contrastive_pairs_flow.py | import os

import sys

import glob

import tqdm

import random

import argparse

import numpy as np

import os.path as osp

import time

from multiprocessing import Pool

ACID_dir = osp.dirname(osp.dirname(osp.realpath(__file__)))

sys.path.insert(0,ACID_dir)

parser = argparse.ArgumentParser("Training Contrastive Pair Data Gener... | 3,584 | Python | 35.212121 | 100 | 0.66183 |

NVlabs/ACID/ACID/preprocess/gen_data_flow_splits.py | import os

import sys

import os.path as osp

ACID_dir = osp.dirname(osp.dirname(osp.realpath(__file__)))

sys.path.insert(0,ACID_dir)

import glob

import argparse

flow_default = osp.join(ACID_dir, "train_data", "flow")

parser = argparse.ArgumentParser("Making training / testing splits...")

parser.add_argument("--flow_roo... | 1,972 | Python | 28.447761 | 76 | 0.625761 |

erasromani/isaac-sim-python/simulate_grasp.py | import os

import argparse

from grasp.grasp_sim import GraspSimulator

from omni.isaac.motion_planning import _motion_planning

from omni.isaac.dynamic_control import _dynamic_control

from omni.isaac.synthetic_utils import OmniKitHelper

def main(args):

kit = OmniKitHelper(

{"renderer": "RayTracedLighting"... | 2,835 | Python | 39.514285 | 197 | 0.662434 |

erasromani/isaac-sim-python/README.md | # isaac-sim-python: Python wrapper for NVIDIA Omniverse Isaac-Sim

## Overview

This repository contains a collection of Python wrappers for NVIDIA Omniverse Isaac-Sim simulations. The `grasp` package simulates a planar grasp execution of a Panda arm in a scene with various rigid objects placed in a bin.

## Installation

Thi... | 2,201 | Markdown | 56.947367 | 550 | 0.79055 |

erasromani/isaac-sim-python/grasp/grasp_sim.py | import os

import numpy as np

import tempfile

import omni.kit

from omni.isaac.synthetic_utils import SyntheticDataHelper

from grasp.utils.isaac_utils import RigidBody

from grasp.grasping_scenarios.grasp_object import GraspObject

from grasp.utils.visualize import screenshot, img2vid

default_camera_pose = {

'posit... | 5,666 | Python | 32.532544 | 148 | 0.59107 |

erasromani/isaac-sim-python/grasp/grasping_scenarios/scenario.py | # Credits: The majority of this code is taken from build code associated with nvidia/isaac-sim:2020.2.2_ea with minor modifications.

import gc

import carb

import omni.usd

from omni.isaac.utils.scripts.nucleus_utils import find_nucleus_server

from grasp.utils.isaac_utils import set_up_z_axis

class Scenario:

""" ... | 3,963 | Python | 32.880342 | 132 | 0.609134 |

erasromani/isaac-sim-python/grasp/grasping_scenarios/grasp_object.py | # Credits: Starter code taken from build code associated with nvidia/isaac-sim:2020.2.2_ea.

import os

import random

import numpy as np

import glob

import omni

import carb

from enum import Enum

from collections import deque

from pxr import Gf, UsdGeom

from copy import copy

from omni.physx.scripts.physicsUtils import ... | 27,230 | Python | 35.502681 | 137 | 0.573265 |

erasromani/isaac-sim-python/grasp/grasping_scenarios/franka.py | # Credits: The majority of this code is taken from build code associated with nvidia/isaac-sim:2020.2.2_ea with minor modifications.

import time

import os

import numpy as np

import carb.tokens

import omni.kit.settings

from pxr import Usd, UsdGeom, Gf

from collections import deque

from omni.isaac.dynamic_control impo... | 13,794 | Python | 34.371795 | 132 | 0.582935 |

erasromani/isaac-sim-python/grasp/utils/isaac_utils.py | # Credits: All code except class RigidBody and Camera is taken from build code associated with nvidia/isaac-sim:2020.2.2_ea.

import numpy as np

import omni.kit

from pxr import Usd, UsdGeom, Gf, PhysicsSchema, PhysxSchema

def create_prim_from_usd(stage, prim_env_path, prim_usd_path, location):

"""

Create pri... | 9,822 | Python | 32.640411 | 124 | 0.633069 |

erasromani/isaac-sim-python/grasp/utils/visualize.py | import os

import ffmpeg

import matplotlib.pyplot as plt

def screenshot(sd_helper, suffix="", prefix="image", directory="images/"):

"""

Take a screenshot of the current time step of a running NVIDIA Omniverse Isaac-Sim simulation.

Args:

sd_helper (omni.isaac.synthetic_utils.SyntheticDataHelper): h... | 1,647 | Python | 30.692307 | 115 | 0.649059 |

pantelis-classes/omniverse-ai/README.md | # Learning in Simulated Worlds in Omniverse.

Please go to the wiki tab.

https://github.com/pantelis-classes/omniverse-ai/wiki

<hr />

# Wiki Navigation

* [Home][home]

* [Isaac-Sim-SDK-Omniverse-Installatio... | 3,133 | Markdown | 57.037036 | 256 | 0.785828 |

pantelis-classes/omniverse-ai/Images/images.md | # A markdown file containing all the images in the wiki. (Saved in github's cloud)

.md | # Synthetic Data in Omniverse from Isaac Sim

Omniverse comes with synthetic data generation samples in Python. These can be found in `~/.local/share/ov/pkg/isaac_sim-2021.2.0/python_samples`.

## Offline Dataset Generation

This example demonstrates how to generate a synthetic dataset offline, which can be used for ... | 3,918 | Markdown | 49.243589 | 218 | 0.782797 |

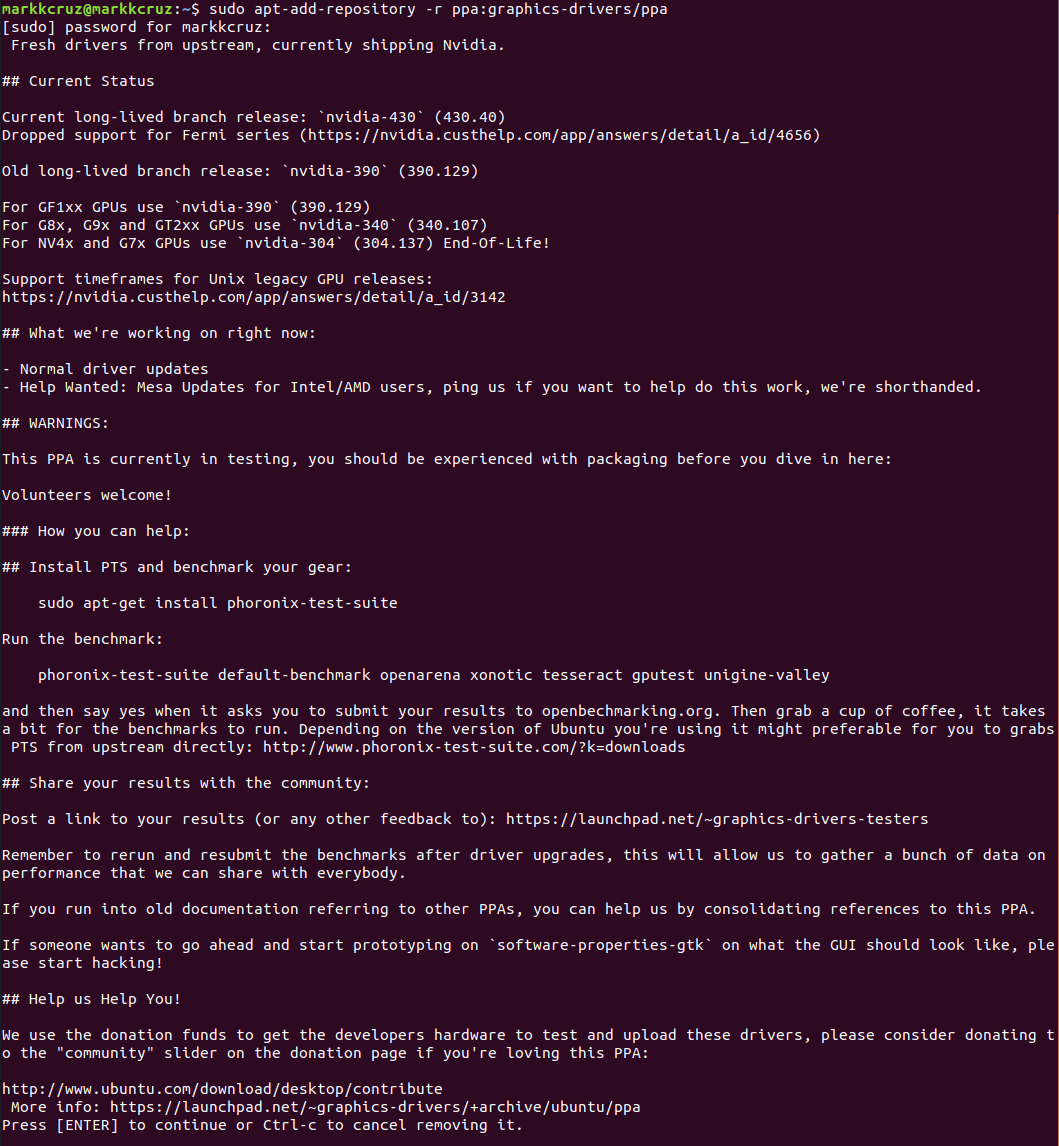

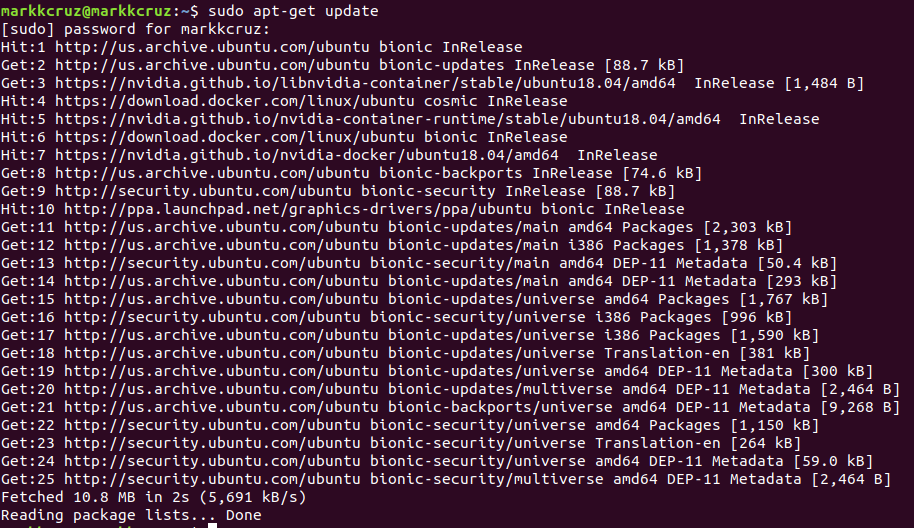

pantelis-classes/omniverse-ai/Wikipages/Isaac Sim SDK Omniverse Installation.md | ## Prerequisites

Ubuntu 18.04 LTS required

Nvidia drivers 470 or higher

### Installing Nvidia Drivers on Ubuntu 18.04 LTS

sudo apt-add-repository -r ppa:graphics-drivers/ppa

sudo apt updat... | 7,828 | Markdown | 38.741117 | 234 | 0.774527 |

pantelis-classes/omniverse-ai/Wikipages/TAO (NVIDIA Train, Adapt, and Optimize).md | All instructions stem from this <a href="https://docs.nvidia.com/tao/tao-toolkit/text/tao_toolkit_quick_start_guide.html">Nvidia Doc</a>.

# Requirements

### Hardware Requirements (Recommended)

32 GB system RAM

32 GB of GPU RAM

8 core CPU

1 NVIDIA GPU

100 GB of SSD space

### Hardware Requirem... | 5,654 | Markdown | 40.580882 | 408 | 0.759816 |

pantelis-classes/omniverse-ai/Wikipages/_Sidebar.md | # Isaac Sim in Omniverse

* [Home][home]

* [Isaac-Sim-SDK-Omniverse-Installation][Omniverse]

* [Synthetic-Data-Generation][SDG]

* [NVIDIA Transfer Learning Toolkit (TLT) Installation][TLT]

* [NVIDIA TAO][TAO]

* [detectnet_v2 Installation][detectnet_v2]

* [Jupyter Notebook][Jupyter-Notebook]

[home]: https://github.com/... | 1,061 | Markdown | 57.999997 | 112 | 0.782281 |

pantelis-classes/omniverse-ai/Wikipages/home.md | # Learning in Simulated Worlds in Omniverse.

## Wiki Navigation

* [Home][home]

* [Isaac-Sim-SDK-Omniverse-Installation][Omniverse]

* [Synthetic-Data-Generation][SDG]

* [NVIDIA Transfer Learning Toolkit (TLT) Installation][TLT]

* [NVIDIA TAO][TAO]

* [detectnet_v2 Installation][detectnet_v2]

* [Jupyter Notebook][Jupyter... | 1,834 | Markdown | 64.535712 | 247 | 0.794984 |

pantelis-classes/omniverse-ai/Wikipages/NVIDIA Transfer Learning Toolkit (TLT) Installation.md | # Installing the Pre-requisites

## 1. Install docker-ce:

### * Set up repository:

Update apt package index and install packages.

sudo apt-get update

sudo apt-get install \

ca-certif... | 6,554 | Markdown | 38.727272 | 247 | 0.754043 |

pantelis-classes/omniverse-ai/Wikipages/_Footer.md | ## Authors

### <a href="https://github.com/dfsanchez999">Diego Sanchez</a> | <a href="https://harp.njit.edu/~jga26/">Jibran Absarulislam</a> | <a href="https://github.com/markkcruz">Mark Cruz</a> | <a href="https://github.com/sppatel2112">Sapan Patel</a>

## Supervisor

### <a href="https://pantelis.github.io/">Dr. P... | 446 | Markdown | 39.63636 | 244 | 0.686099 |

pantelis-classes/omniverse-ai/Wikipages/detectnet_v2 Installation.md | # Installing and running detectnet_v2 in a Jupyter notebook

## Setup File Structures.

- Run these commands to create the correct file structure.

cd ~

mkdir tao

mv cv_samples_v1.2.0/ tao

cd tao/cv_samples_v1.2.0/

rm -r detectnet_v2

Toolkit is a si... | 3,749 | Markdown | 45.874999 | 243 | 0.787143 |

aws-samples/nvidia-omniverse-nucleus-on-amazon-ec2/CODE_OF_CONDUCT.md | ## Code of Conduct

This project has adopted the [Amazon Open Source Code of Conduct](https://aws.github.io/code-of-conduct).

For more information see the [Code of Conduct FAQ](https://aws.github.io/code-of-conduct-faq) or contact

opensource-codeofconduct@amazon.com with any additional questions or comments.

| 309 | Markdown | 60.999988 | 105 | 0.789644 |

aws-samples/nvidia-omniverse-nucleus-on-amazon-ec2/CONTRIBUTING.md | # Contributing Guidelines

Thank you for your interest in contributing to our project. Whether it's a bug report, new feature, correction, or additional

documentation, we greatly value feedback and contributions from our community.

Please read through this document before submitting any issues or pull requests to ensu... | 3,160 | Markdown | 51.683332 | 275 | 0.792405 |

aws-samples/nvidia-omniverse-nucleus-on-amazon-ec2/README.md | # NVIDIA Omniverse Nucleus on Amazon EC2

NVIDIA Omniverse is a scalable, multi-GPU, real-time platform for building and operating metaverse applications, based on Pixar's Universal Scene Description (USD) and NVIDIA RTX technology. USD is a powerful, extensible 3D framework and ecosystem that enables 3D designers and d... | 8,456 | Markdown | 53.211538 | 581 | 0.786542 |

aws-samples/nvidia-omniverse-nucleus-on-amazon-ec2/src/tools/nucleusServer/setup.py | # Copyright 2022 Amazon.com, Inc. or its affiliates. All Rights Reserved.

# SPDX-License-Identifier: LicenseRef-.amazon.com.-AmznSL-1.0

# Licensed under the Amazon Software License http://aws.amazon.com/asl/

from setuptools import setup

with open("README.md", "r") as fh:

long_description = fh.read()

setup(

... | 576 | Python | 21.192307 | 73 | 0.609375 |

aws-samples/nvidia-omniverse-nucleus-on-amazon-ec2/src/tools/nucleusServer/nst_cli.py | # Copyright 2022 Amazon.com, Inc. or its affiliates. All Rights Reserved.

# SPDX-License-Identifier: LicenseRef-.amazon.com.-AmznSL-1.0

# Licensed under the Amazon Software License http://aws.amazon.com/asl/

# Copyright 2022 Amazon.com, Inc. or its affiliates. All Rights Reserved.

# SPDX-License-Identifier: LicenseRe... | 2,391 | Python | 25.876404 | 143 | 0.677123 |

aws-samples/nvidia-omniverse-nucleus-on-amazon-ec2/src/tools/nucleusServer/README.md | # Tools for configuring Nucleus Server

The contents of this directory are zipped and then deployed to the Nucleus server | 121 | Markdown | 39.666653 | 81 | 0.826446 |

aws-samples/nvidia-omniverse-nucleus-on-amazon-ec2/src/tools/nucleusServer/nst/__init__.py | # Copyright 2022 Amazon.com, Inc. or its affiliates. All Rights Reserved.

# SPDX-License-Identifier: LicenseRef-.amazon.com.-AmznSL-1.0

# Licensed under the Amazon Software License http://aws.amazon.com/asl/

| 210 | Python | 41.199992 | 73 | 0.766667 |

aws-samples/nvidia-omniverse-nucleus-on-amazon-ec2/src/tools/nucleusServer/nst/logger.py | # Copyright 2022 Amazon.com, Inc. or its affiliates. All Rights Reserved.

# SPDX-License-Identifier: LicenseRef-.amazon.com.-AmznSL-1.0

# Licensed under the Amazon Software License http://aws.amazon.com/asl/

import os

import logging

LOG_LEVEL = os.getenv('LOG_LEVEL', 'DEBUG')

logger = logging.getLogger()

logger.setL... | 480 | Python | 20.863635 | 73 | 0.708333 |

aws-samples/nvidia-omniverse-nucleus-on-amazon-ec2/src/tools/reverseProxy/rpt_cli.py | # Copyright 2022 Amazon.com, Inc. or its affiliates. All Rights Reserved.

# SPDX-License-Identifier: LicenseRef-.amazon.com.-AmznSL-1.0

# Licensed under the Amazon Software License http://aws.amazon.com/asl/

"""

helper tools for reverse proxy nginx configuration

"""

# std lib modules

import os

import logging

from pa... | 2,373 | Python | 24.526881 | 105 | 0.659503 |

aws-samples/nvidia-omniverse-nucleus-on-amazon-ec2/src/tools/reverseProxy/setup.py | # Copyright 2022 Amazon.com, Inc. or its affiliates. All Rights Reserved.

# SPDX-License-Identifier: LicenseRef-.amazon.com.-AmznSL-1.0

# Licensed under the Amazon Software License http://aws.amazon.com/asl/

from setuptools import setup

with open("README.md", "r") as fh:

long_description = fh.read()

setup(

... | 532 | Python | 25.649999 | 73 | 0.657895 |

aws-samples/nvidia-omniverse-nucleus-on-amazon-ec2/src/tools/reverseProxy/README.md | # Tools for configuring Nginx Reverse Proxy

The contents of this directory are zipped and then deployed to the reverse proxy server | 132 | Markdown | 43.333319 | 87 | 0.825758 |

aws-samples/nvidia-omniverse-nucleus-on-amazon-ec2/src/tools/reverseProxy/templates/acm.yaml | # Copyright 2020 Amazon.com, Inc. or its affiliates. All Rights Reserved.

# SPDX-License-Identifier: Apache-2.0

---

# ACM for Nitro Enclaves config.

#

# This is an example of setting up ACM, with Nitro Enclaves and nginx.

# You can take this file and then:

# - copy it to /etc/nitro_enclaves/acm.yaml;

# - fill in your A... | 1,689 | YAML | 39.238094 | 83 | 0.68206 |

aws-samples/nvidia-omniverse-nucleus-on-amazon-ec2/src/lambda/customResources/reverseProxyConfig/index.py | import os

import logging

import json

from crhelper import CfnResource

import aws_utils.ssm as ssm

import aws_utils.ec2 as ec2

import config.reverseProxy as config

LOG_LEVEL = os.getenv("LOG_LEVEL", "DEBUG")

logger = logging.getLogger()

logger.setLevel(LOG_LEVEL)

helper = CfnResource(

json_logging=False, log_lev... | 2,776 | Python | 26.495049 | 78 | 0.667147 |

aws-samples/nvidia-omniverse-nucleus-on-amazon-ec2/src/lambda/customResources/nucleusServerConfig/index.py | # Copyright 2022 Amazon.com, Inc. or its affiliates. All Rights Reserved.

# SPDX-License-Identifier: LicenseRef-.amazon.com.-AmznSL-1.0

# Licensed under the Amazon Software License http://aws.amazon.com/asl/

import os

import logging

import json

from crhelper import CfnResource

import aws_utils.ssm as ssm

import aw... | 3,303 | Python | 30.169811 | 107 | 0.718438 |

aws-samples/nvidia-omniverse-nucleus-on-amazon-ec2/src/lambda/asgLifeCycleHooks/reverseProxy/index.py | # Copyright 2022 Amazon.com, Inc. or its affiliates. All Rights Reserved.

# SPDX-License-Identifier: LicenseRef-.amazon.com.-AmznSL-1.0

# Licensed under the Amazon Software License http://aws.amazon.com/asl/

import boto3

import os

import json

import logging

import traceback

from botocore.exceptions import ClientErro... | 3,116 | Python | 28.40566 | 75 | 0.662067 |

aws-samples/nvidia-omniverse-nucleus-on-amazon-ec2/src/lambda/common/aws_utils/ec2.py | # Copyright 2022 Amazon.com, Inc. or its affiliates. All Rights Reserved.

# SPDX-License-Identifier: LicenseRef-.amazon.com.-AmznSL-1.0

# Licensed under the Amazon Software License http://aws.amazon.com/asl/

import os

import logging

import boto3

from botocore.exceptions import ClientError

LOG_LEVEL = os.getenv("LO... | 4,068 | Python | 21.605555 | 76 | 0.630285 |

aws-samples/nvidia-omniverse-nucleus-on-amazon-ec2/src/lambda/common/aws_utils/ssm.py | # Copyright 2022 Amazon.com, Inc. or its affiliates. All Rights Reserved.

# SPDX-License-Identifier: LicenseRef-.amazon.com.-AmznSL-1.0

# Licensed under the Amazon Software License http://aws.amazon.com/asl/

import os

import time

import logging

import boto3

from botocore.exceptions import ClientError

LOG_LEVEL = os... | 4,304 | Python | 30.195652 | 99 | 0.574814 |

aws-samples/nvidia-omniverse-nucleus-on-amazon-ec2/src/lambda/common/aws_utils/r53.py | # Copyright 2022 Amazon.com, Inc. or its affiliates. All Rights Reserved.

# SPDX-License-Identifier: LicenseRef-.amazon.com.-AmznSL-1.0

# Licensed under the Amazon Software License http://aws.amazon.com/asl/

import boto3

client = boto3.client("route53")

def update_hosted_zone_cname_record(hostedZoneID, rootDomain... | 1,989 | Python | 33.310344 | 144 | 0.553042 |

aws-samples/nvidia-omniverse-nucleus-on-amazon-ec2/src/lambda/common/aws_utils/sm.py | # Copyright 2022 Amazon.com, Inc. or its affiliates. All Rights Reserved.

# SPDX-License-Identifier: LicenseRef-.amazon.com.-AmznSL-1.0

# Licensed under the Amazon Software License http://aws.amazon.com/asl/

import json

import boto3

SM = boto3.client("secretsmanager")

def get_secret(secret_name):

response = S... | 429 | Python | 25.874998 | 73 | 0.745921 |

aws-samples/nvidia-omniverse-nucleus-on-amazon-ec2/src/lambda/common/config/nucleus.py |

def start_nucleus_config() -> list[str]:

return '''

cd /opt/ove/base_stack || exit 1

echo "STARTING NUCLEUS STACK ----------------------------------"

docker-compose --env-file nucleus-stack.env -f nucleus-stack-ssl.yml start

'''.splitlines()

def stop_nucleus_config() -> list[str]:

... | 4,176 | Python | 48.72619 | 247 | 0.582136 |
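The config functions above build a list of shell commands by calling `.splitlines()` on a triple-quoted script. One property of that pattern is that a script starting with a newline yields an empty first element. The sketch below shows this and one way to drop blank lines; the helper `script_to_commands` and the shortened example script are hypothetical, and whether the repository filters blank lines is not visible in the preview.

```python
def script_to_commands(script):
    """Hypothetical helper: split a multi-line script into non-empty, stripped lines."""
    return [line.strip() for line in script.splitlines() if line.strip()]


# Shortened stand-in for the kind of script shown above.
example = '''
cd /opt/ove/base_stack || exit 1
echo "STARTING"
'''

raw_lines = example.splitlines()        # first element is an empty string
commands = script_to_commands(example)  # blank lines removed
```

Blank lines are usually harmless when the list is handed to a shell, so filtering them is a readability choice more than a correctness fix.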

aws-samples/nvidia-omniverse-nucleus-on-amazon-ec2/src/lambda/common/config/reverseProxy.py | def get_config(artifacts_bucket_name: str, nucleus_address: str, full_domain: str) -> list[str]:

return f'''

echo "------------------------ REVERSE PROXY CONFIG ------------------------"

echo "UPDATING PACKAGES ----------------------------------"

sudo yum update -y

echo "INSTALLING... | 1,670 | Python | 44.162161 | 99 | 0.511976 |

arhix52/Strelka/conanfile.py | import os

from conan import ConanFile

from conan.tools.cmake import cmake_layout

from conan.tools.files import copy

class StrelkaRecipe(ConanFile):

settings = "os", "compiler", "build_type", "arch"

generators = "CMakeToolchain", "CMakeDeps"

def requirements(self):

self.requires("glm/cci.20230113... | 1,294 | Python | 37.088234 | 87 | 0.619784 |

arhix52/Strelka/BuildOpenUSD.md | USD building:

VS2019 + python 3.10

To build debug on windows:

python USD\build_scripts\build_usd.py "C:\work\USD_build_debug" --python --materialx --build-variant debug

For USD 23.03 you could use VS2022

Linux:

* python3 ./OpenUSD/build_scripts/build_usd.py /home/<user>/work/OpenUSD_build/ --python --materialx

| 315 | Markdown | 30.599997 | 106 | 0.746032 |

arhix52/Strelka/README.md | # Strelka

Path tracing render based on NVIDIA OptiX + NVIDIA MDL and Apple Metal

## OpenUSD Hydra render delegate

## Basis curves support

## Project... | 2,849 | Markdown | 33.337349 | 197 | 0.713935 |

arhix52/Strelka/src/HdStrelka/RenderParam.h | #pragma once

#include "pxr/pxr.h"

#include "pxr/imaging/hd/renderDelegate.h"

#include "pxr/imaging/hd/renderThread.h"

#include <scene/scene.h>

PXR_NAMESPACE_OPEN_SCOPE

class HdStrelkaRenderParam final : public HdRenderParam

{

public:

HdStrelkaRenderParam(oka::Scene* scene, HdRenderThread* renderThread, std::atom... | 901 | C | 24.055555 | 105 | 0.694784 |

arhix52/Strelka/src/HdStrelka/BasisCurves.h | #pragma once

#include <pxr/pxr.h>

#include <pxr/imaging/hd/basisCurves.h>

#include <scene/scene.h>

#include <pxr/base/gf/vec2f.h>

PXR_NAMESPACE_OPEN_SCOPE

class HdStrelkaBasisCurves final : public HdBasisCurves

{

public:

HF_MALLOC_TAG_NEW("new HdStrelkaBasisCurves");

HdStrelkaBasisCurves(const SdfPath& id,... | 2,048 | C | 28.271428 | 120 | 0.67334 |

arhix52/Strelka/src/HdStrelka/Tokens.cpp | #include "Tokens.h"

PXR_NAMESPACE_OPEN_SCOPE

TF_DEFINE_PUBLIC_TOKENS(HdStrelkaSettingsTokens, HD_STRELKA_SETTINGS_TOKENS);

TF_DEFINE_PUBLIC_TOKENS(HdStrelkaNodeIdentifiers, HD_STRELKA_NODE_IDENTIFIER_TOKENS);

TF_DEFINE_PUBLIC_TOKENS(HdStrelkaSourceTypes, HD_STRELKA_SOURCE_TYPE_TOKENS);

TF_DEFINE_PUBLIC_TOKENS(HdStrel... | 564 | C++ | 42.461535 | 85 | 0.833333 |

arhix52/Strelka/src/HdStrelka/MdlDiscoveryPlugin.h | #pragma once

#include <pxr/usd/ndr/discoveryPlugin.h>

PXR_NAMESPACE_OPEN_SCOPE

class HdStrelkaMdlDiscoveryPlugin final : public NdrDiscoveryPlugin

{

public:

NdrNodeDiscoveryResultVec DiscoverNodes(const Context& ctx) override;

const NdrStringVec& GetSearchURIs() const override;

};

PXR_NAMESPACE_CLOSE_SCOPE

| 317 | C | 18.874999 | 71 | 0.807571 |

arhix52/Strelka/src/HdStrelka/Material.h | #pragma once

#include "materialmanager.h"

#include "MaterialNetworkTranslator.h"

#include <pxr/imaging/hd/material.h>

#include <pxr/imaging/hd/sceneDelegate.h>

PXR_NAMESPACE_OPEN_SCOPE

class HdStrelkaMaterial final : public HdMaterial

{

public:

HF_MALLOC_TAG_NEW("new HdStrelkaMaterial");

HdStrelkaMaterial(... | 1,258 | C | 21.890909 | 107 | 0.709062 |

arhix52/Strelka/src/HdStrelka/Light.cpp | #include "Light.h"

#include <glm/glm.hpp>

#include <glm/gtc/matrix_transform.hpp>

#include <glm/gtc/type_ptr.hpp>

#include <glm/gtx/compatibility.hpp>

#include <pxr/imaging/hd/instancer.h>

#include <pxr/imaging/hd/meshUtil.h>

#include <pxr/imaging/hd/smoothNormals.h>

#include <pxr/imaging/hd/vertexAdjacency.h>

PXR_NA... | 8,162 | C++ | 35.936651 | 117 | 0.636486 |

arhix52/Strelka/src/HdStrelka/MdlParserPlugin.cpp | // Copyright (C) 2021 Pablo Delgado Krämer

//

// This program is free software: you can redistribute it and/or modify

// it under the terms of the GNU General Public License as published by

// the Free Software Foundation, either version 3 of the License, or

// (a... | 2,153 | C++ | 34.311475 | 119 | 0.681839 |

arhix52/Strelka/src/HdStrelka/Instancer.cpp | // Copyright (C) 2021 Pablo Delgado Krämer

//

// This program is free software: you can redistribute it and/or modify

// it under the terms of the GNU General Public License as published by

// the Free Software Foundation, either version 3 of the License, or

// (a... | 5,927 | C++ | 29.556701 | 131 | 0.639615 |

arhix52/Strelka/src/HdStrelka/RenderDelegate.h | #pragma once

#include <pxr/imaging/hd/renderDelegate.h>

#include "MaterialNetworkTranslator.h"

#include <render/common.h>

#include <scene/scene.h>

#include <render/render.h>

PXR_NAMESPACE_OPEN_SCOPE

class HdStrelkaRenderDelegate final : public HdRenderDelegate

{

public:

HdStrelkaRenderDelegate(const HdRenderSe... | 3,149 | C | 33.23913 | 113 | 0.768498 |

arhix52/Strelka/src/HdStrelka/RenderDelegate.cpp | #include "RenderDelegate.h"

#include "Camera.h"

#include "Instancer.h"

#include "Light.h"

#include "Material.h"

#include "Mesh.h"

#include "BasisCurves.h"

#include "RenderBuffer.h"

#include "RenderPass.h"

#include "Tokens.h"

#include <pxr/base/gf/vec4f.h>

#include <pxr/imaging/hd/resourceRegistry.h>

#include <log.h>... | 6,338 | C++ | 25.634454 | 122 | 0.718523 |

arhix52/Strelka/src/HdStrelka/BasisCurves.cpp | #include "BasisCurves.h"

#include <log.h>

PXR_NAMESPACE_OPEN_SCOPE

void HdStrelkaBasisCurves::Sync(HdSceneDelegate* sceneDelegate,

HdRenderParam* renderParam,

HdDirtyBits* dirtyBits,

const TfToken& reprToken)

{

TF_UNUSE... | 8,047 | C++ | 30.685039 | 121 | 0.644215 |

arhix52/Strelka/src/HdStrelka/RenderBuffer.cpp | #include "RenderBuffer.h"

#include "render.h"

#include <pxr/base/gf/vec3i.h>

PXR_NAMESPACE_OPEN_SCOPE

HdStrelkaRenderBuffer::HdStrelkaRenderBuffer(const SdfPath& id, oka::SharedContext* ctx) : HdRenderBuffer(id), mCtx(ctx)

{

m_isMapped = false;

m_isConverged = false;

m_bufferMem = nullptr;

}

HdStrelkaR... | 2,264 | C++ | 16.558139 | 120 | 0.671378 |

arhix52/Strelka/src/HdStrelka/Tokens.h | #pragma once

#include <pxr/base/tf/staticTokens.h>

PXR_NAMESPACE_OPEN_SCOPE

#define HD_STRELKA_SETTINGS_TOKENS \

((spp, "spp"))((max_bounces, "max-bounces"))

// mtlx node identifier is given by usdMtlx.

#define HD_STRELKA_NODE_IDENTIFIER_TOKENS \

(mtlx)(mdl)

#define HD_STRELKA_SOURCE_TYPE_TOKENS \

(mtl... | 1,175 | C | 29.947368 | 86 | 0.771064 |

arhix52/Strelka/src/HdStrelka/Camera.h | #pragma once

#include <pxr/imaging/hd/camera.h>

#include <scene/scene.h>

PXR_NAMESPACE_OPEN_SCOPE

class HdStrelkaCamera final : public HdCamera

{

public:

HdStrelkaCamera(const SdfPath& id, oka::Scene& scene);

~HdStrelkaCamera() override;

public:

float GetVFov() const;

uint32_t GetCameraIndex() co... | 687 | C | 17.594594 | 58 | 0.697234 |

arhix52/Strelka/src/HdStrelka/MdlDiscoveryPlugin.cpp | #include "MdlDiscoveryPlugin.h"

#include <pxr/base/tf/staticTokens.h>

//#include "Tokens.h"

PXR_NAMESPACE_OPEN_SCOPE

// clang-format off

TF_DEFINE_PRIVATE_TOKENS(_tokens,

(mdl)

);

// clang-format on

NDR_REGISTER_DISCOVERY_PLUGIN(HdStrelkaMdlDiscoveryPlugin);

NdrNodeDiscoveryResultVec HdStrelkaMdlDiscoveryP... | 996 | C++ | 23.317073 | 88 | 0.646586 |

arhix52/Strelka/src/HdStrelka/Material.cpp | #include "Material.h"

#include <pxr/base/gf/vec2f.h>

#include <pxr/usd/sdr/registry.h>

#include <pxr/usdImaging/usdImaging/tokens.h>

#include <log.h>

PXR_NAMESPACE_OPEN_SCOPE

HdStrelkaMaterial::HdStrelkaMaterial(const SdfPath& id, const MaterialNetworkTranslator& translator)

: HdMaterial(id), m_translator(trans... | 7,130 | C++ | 35.015151 | 112 | 0.551192 |
