metadata_version string | name string | version string | summary string | description string | description_content_type string | author string | author_email string | maintainer string | maintainer_email string | license string | keywords string | classifiers list | platform list | home_page string | download_url string | requires_python string | requires list | provides list | obsoletes list | requires_dist list | provides_dist list | obsoletes_dist list | requires_external list | project_urls list | uploaded_via string | upload_time timestamp[us] | filename string | size int64 | path string | python_version string | packagetype string | comment_text string | has_signature bool | md5_digest string | sha256_digest string | blake2_256_digest string | license_expression string | license_files list | recent_7d_downloads int64 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

2.4 | AstrBot | 4.17.6 | Easy-to-use multi-platform LLM chatbot and development framework |

<div align="center">

<a href="https://github.com/AstrBotDevs/AstrBot/blob/master/README_en.md">English</a> |

<a href="https://github.com/AstrBotDevs/AstrBot/blob/master/README_ja.md">日本語</a> |

<a href="https://github.com/AstrBotDevs/AstrBot/blob/master/README_zh-TW.md">繁體中文</a> |

<a href="https://github.com/AstrBotDevs/AstrBot/blob/master/README_fr.md">Français</a> |

<a href="https://github.com/AstrBotDevs/AstrBot/blob/master/README_ru.md">Русский</a>

<div>

<a href="https://trendshift.io/repositories/12875" target="_blank"><img src="https://trendshift.io/api/badge/repositories/12875" alt="Soulter%2FAstrBot | Trendshift" style="width: 250px; height: 55px;" width="250" height="55"/></a>

<a href="https://hellogithub.com/repository/AstrBotDevs/AstrBot" target="_blank"><img src="https://api.hellogithub.com/v1/widgets/recommend.svg?rid=d127d50cd5e54c5382328acc3bb25483&claim_uid=ZO9by7qCXgSd6Lp&t=2" alt="Featured|HelloGitHub" style="width: 250px; height: 54px;" width="250" height="54" /></a>

</div>

<br>

<div>

<img src="https://img.shields.io/github/v/release/AstrBotDevs/AstrBot?color=76bad9" href="https://github.com/AstrBotDevs/AstrBot/releases/latest">

<img src="https://img.shields.io/badge/python-3.10+-blue.svg" alt="python">

<img src="https://deepwiki.com/badge.svg" href="https://deepwiki.com/AstrBotDevs/AstrBot">

<a href="https://zread.ai/AstrBotDevs/AstrBot" target="_blank"><img src="https://img.shields.io/badge/Ask_Zread-_.svg?style=flat&color=00b0aa&labelColor=000000&logo=data%3Aimage%2Fsvg%2Bxml%3Bbase64%2CPHN2ZyB3aWR0aD0iMTYiIGhlaWdodD0iMTYiIHZpZXdCb3g9IjAgMCAxNiAxNiIgZmlsbD0ibm9uZSIgeG1sbnM9Imh0dHA6Ly93d3cudzMub3JnLzIwMDAvc3ZnIj4KPHBhdGggZD0iTTQuOTYxNTYgMS42MDAxSDIuMjQxNTZDMS44ODgxIDEuNjAwMSAxLjYwMTU2IDEuODg2NjQgMS42MDE1NiAyLjI0MDFWNC45NjAxQzEuNjAxNTYgNS4zMTM1NiAxLjg4ODEgNS42MDAxIDIuMjQxNTYgNS42MDAxSDQuOTYxNTZDNS4zMTUwMiA1LjYwMDEgNS42MDE1NiA1LjMxMzU2IDUuNjAxNTYgNC45NjAxVjIuMjQwMUM1LjYwMTU2IDEuODg2NjQgNS4zMTUwMiAxLjYwMDEgNC45NjE1NiAxLjYwMDFaIiBmaWxsPSIjZmZmIi8%2BCjxwYXRoIGQ9Ik00Ljk2MTU2IDEwLjM5OTlIMi4yNDE1NkMxLjg4ODEgMTAuMzk5OSAxLjYwMTU2IDEwLjY4NjQgMS42MDE1NiAxMS4wMzk5VjEzLjc1OTlDMS42MDE1NiAxNC4xMTM0IDEuODg4MSAxNC4zOTk5IDIuMjQxNTYgMTQuMzk5OUg0Ljk2MTU2QzUuMzE1MDIgMTQuMzk5OSA1LjYwMTU2IDE0LjExMzQgNS42MDE1NiAxMy43NTk5VjExLjAzOTlDNS42MDE1NiAxMC42ODY0IDUuMzE1MDIgMTAuMzk5OSA0Ljk2MTU2IDEwLjM5OTlaIiBmaWxsPSIjZmZmIi8%2BCjxwYXRoIGQ9Ik0xMy43NTg0IDEuNjAwMUgxMS4wMzg0QzEwLjY4NSAxLjYwMDEgMTAuMzk4NCAxLjg4NjY0IDEwLjM5ODQgMi4yNDAxVjQuOTYwMUMxMC4zOTg0IDUuMzEzNTYgMTAuNjg1IDUuNjAwMSAxMS4wMzg0IDUuNjAwMUgxMy43NTg0QzE0LjExMTkgNS42MDAxIDE0LjM5ODQgNS4zMTM1NiAxNC4zOTg0IDQuOTYwMVYyLjI0MDFDMTQuMzk4NCAxLjg4NjY0IDE0LjExMTkgMS42MDAxIDEzLjc1ODQgMS42MDAxWiIgZmlsbD0iI2ZmZiIvPgo8cGF0aCBkPSJNNCAxMkwxMiA0TDQgMTJaIiBmaWxsPSIjZmZmIi8%2BCjxwYXRoIGQ9Ik00IDEyTDEyIDQiIHN0cm9rZT0iI2ZmZiIgc3Ryb2tlLXdpZHRoPSIxLjUiIHN0cm9rZS1saW5lY2FwPSJyb3VuZCIvPgo8L3N2Zz4K&logoColor=ffffff" alt="zread"/></a>

<a href="https://hub.docker.com/r/soulter/astrbot"><img alt="Docker pull" src="https://img.shields.io/docker/pulls/soulter/astrbot.svg?color=76bad9"/></a>

<img src="https://img.shields.io/badge/dynamic/json?url=https%3A%2F%2Fapi.soulter.top%2Fastrbot%2Fplugin-num&query=%24.result&suffix=%E4%B8%AA&label=%E6%8F%92%E4%BB%B6%E5%B8%82%E5%9C%BA&cacheSeconds=3600">

<img src="https://gitcode.com/Soulter/AstrBot/star/badge.svg" href="https://gitcode.com/Soulter/AstrBot">

</div>

<br>

<a href="https://astrbot.app/">文档</a> |

<a href="https://blog.astrbot.app/">Blog</a> |

<a href="https://astrbot.featurebase.app/roadmap">路线图</a> |

<a href="https://github.com/AstrBotDevs/AstrBot/issues">问题提交</a>

</div>

AstrBot 是一个开源的一站式 Agentic 个人和群聊助手,可在 QQ、Telegram、企业微信、飞书、钉钉、Slack、等数十款主流即时通讯软件上部署,此外还内置类似 OpenWebUI 的轻量化 ChatUI,为个人、开发者和团队打造可靠、可扩展的对话式智能基础设施。无论是个人 AI 伙伴、智能客服、自动化助手,还是企业知识库,AstrBot 都能在你的即时通讯软件平台的工作流中快速构建 AI 应用。

## 主要功能

1. 💯 免费 & 开源。

2. ✨ AI 大模型对话,多模态,Agent,MCP,Skills,知识库,人格设定,自动压缩对话。

3. 🤖 支持接入 Dify、阿里云百炼、Coze 等智能体平台。

4. 🌐 多平台,支持 QQ、企业微信、飞书、钉钉、微信公众号、Telegram、Slack 以及[更多](#支持的消息平台)。

5. 📦 插件扩展,已有近 800 个插件可一键安装。

6. 🛡️ [Agent Sandbox](https://docs.astrbot.app/use/astrbot-agent-sandbox.html) 隔离化环境,安全地执行任何代码、调用 Shell、会话级资源复用。

7. 💻 WebUI 支持。

8. 🌈 Web ChatUI 支持,ChatUI 内置代理沙盒、网页搜索等。

9. 🌐 国际化(i18n)支持。

<br>

<table align="center">

<tr align="center">

<th>💙 角色扮演 & 情感陪伴</th>

<th>✨ 主动式 Agent</th>

<th>🚀 通用 Agentic 能力</th>

<th>🧩 900+ 社区插件</th>

</tr>

<tr>

<td align="center"><p align="center"><img width="984" height="1746" alt="99b587c5d35eea09d84f33e6cf6cfd4f" src="https://github.com/user-attachments/assets/89196061-3290-458d-b51f-afa178049f84" /></p></td>

<td align="center"><p align="center"><img width="976" height="1612" alt="c449acd838c41d0915cc08a3824025b1" src="https://github.com/user-attachments/assets/f75368b4-e022-41dc-a9e0-131c3e73e32e" /></p></td>

<td align="center"><p align="center"><img width="974" height="1732" alt="image" src="https://github.com/user-attachments/assets/e22a3968-87d7-4708-a7cd-e7f198c7c32e" /></p></td>

<td align="center"><p align="center"><img width="976" height="1734" alt="image" src="https://github.com/user-attachments/assets/0952b395-6b4a-432a-8a50-c294b7f89750" /></p></td>

</tr>

</table>

## 快速开始

#### Docker 部署(推荐 🥳)

推荐使用 Docker / Docker Compose 方式部署 AstrBot。

请参阅官方文档 [使用 Docker 部署 AstrBot](https://astrbot.app/deploy/astrbot/docker.html#%E4%BD%BF%E7%94%A8-docker-%E9%83%A8%E7%BD%B2-astrbot) 。

#### uv 部署

```bash

uv tool install astrbot

astrbot

```

#### 启动器一键部署(AstrBot Launcher)

进入 [AstrBot Launcher](https://github.com/Raven95676/astrbot-launcher) 仓库,在 Releases 页最新版本下找到对应的系统安装包安装即可。

#### 宝塔面板部署

AstrBot 与宝塔面板合作,已上架至宝塔面板。

请参阅官方文档 [宝塔面板部署](https://astrbot.app/deploy/astrbot/btpanel.html) 。

#### 1Panel 部署

AstrBot 已由 1Panel 官方上架至 1Panel 面板。

请参阅官方文档 [1Panel 部署](https://astrbot.app/deploy/astrbot/1panel.html) 。

#### 在 雨云 上部署

AstrBot 已由雨云官方上架至云应用平台,可一键部署。

[](https://app.rainyun.com/apps/rca/store/5994?ref=NjU1ODg0)

#### 在 Replit 上部署

社区贡献的部署方式。

[](https://repl.it/github/AstrBotDevs/AstrBot)

#### Windows 一键安装器部署

请参阅官方文档 [使用 Windows 一键安装器部署 AstrBot](https://astrbot.app/deploy/astrbot/windows.html) 。

#### CasaOS 部署

社区贡献的部署方式。

请参阅官方文档 [CasaOS 部署](https://astrbot.app/deploy/astrbot/casaos.html) 。

#### 手动部署

首先安装 uv:

```bash

pip install uv

```

通过 Git Clone 安装 AstrBot:

```bash

git clone https://github.com/AstrBotDevs/AstrBot && cd AstrBot

uv run main.py

```

或者请参阅官方文档 [通过源码部署 AstrBot](https://astrbot.app/deploy/astrbot/cli.html) 。

#### 系统包管理器安装

##### Arch Linux

```bash

yay -S astrbot-git

# 或者使用 paru

paru -S astrbot-git

```

#### 桌面端(Tauri)

桌面端已迁移为独立仓库(Tauri):[https://github.com/AstrBotDevs/AstrBot-desktop](https://github.com/AstrBotDevs/AstrBot-desktop)。

## 支持的消息平台

**官方维护**

- QQ

- OneBot v11 协议实现

- Telegram

- 企微应用 & 企微智能机器人

- 微信客服 & 微信公众号

- 飞书

- 钉钉

- Slack

- Discord

- LINE

- Satori

- Misskey

- Whatsapp (将支持)

**社区维护**

- [Matrix](https://github.com/stevessr/astrbot_plugin_matrix_adapter)

- [KOOK](https://github.com/wuyan1003/astrbot_plugin_kook_adapter)

- [VoceChat](https://github.com/HikariFroya/astrbot_plugin_vocechat)

## 支持的模型服务

**大模型服务**

- OpenAI 及兼容服务

- Anthropic

- Google Gemini

- Moonshot AI

- 智谱 AI

- DeepSeek

- Ollama (本地部署)

- LM Studio (本地部署)

- [AIHubMix](https://aihubmix.com/?aff=4bfH)

- [优云智算](https://www.compshare.cn/?ytag=GPU_YY-gh_astrbot&referral_code=FV7DcGowN4hB5UuXKgpE74)

- [302.AI](https://share.302.ai/rr1M3l)

- [小马算力](https://www.tokenpony.cn/3YPyf)

- [硅基流动](https://docs.siliconflow.cn/cn/usercases/use-siliconcloud-in-astrbot)

- [PPIO 派欧云](https://ppio.com/user/register?invited_by=AIOONE)

- ModelScope

- OneAPI

**LLMOps 平台**

- Dify

- 阿里云百炼应用

- Coze

**语音转文本服务**

- OpenAI Whisper

- SenseVoice

**文本转语音服务**

- OpenAI TTS

- Gemini TTS

- GPT-Sovits-Inference

- GPT-Sovits

- FishAudio

- Edge TTS

- 阿里云百炼 TTS

- Azure TTS

- Minimax TTS

- 火山引擎 TTS

## ❤️ 贡献

欢迎任何 Issues/Pull Requests!只需要将你的更改提交到此项目 :)

### 如何贡献

你可以通过查看问题或帮助审核 PR(拉取请求)来贡献。任何问题或 PR 都欢迎参与,以促进社区贡献。当然,这些只是建议,你可以以任何方式进行贡献。对于新功能的添加,请先通过 Issue 讨论。

### 开发环境

AstrBot 使用 `ruff` 进行代码格式化和检查。

```bash

git clone https://github.com/AstrBotDevs/AstrBot

pip install pre-commit

pre-commit install

```

## 🌍 社区

### QQ 群组

- 1 群:322154837

- 3 群:630166526

- 5 群:822130018

- 6 群:753075035

- 7 群:743746109

- 8 群:1030353265

- 开发者群:975206796

### Telegram 群组

<a href="https://t.me/+hAsD2Ebl5as3NmY1"><img alt="Telegram_community" src="https://img.shields.io/badge/Telegram-AstrBot-purple?style=for-the-badge&color=76bad9"></a>

### Discord 群组

<a href="https://discord.gg/hAVk6tgV36"><img alt="Discord_community" src="https://img.shields.io/badge/Discord-AstrBot-purple?style=for-the-badge&color=76bad9"></a>

## ❤️ Special Thanks

特别感谢所有 Contributors 和插件开发者对 AstrBot 的贡献 ❤️

<a href="https://github.com/AstrBotDevs/AstrBot/graphs/contributors">

<img src="https://contrib.rocks/image?repo=AstrBotDevs/AstrBot" />

</a>

此外,本项目的诞生离不开以下开源项目的帮助:

- [NapNeko/NapCatQQ](https://github.com/NapNeko/NapCatQQ) - 伟大的猫猫框架

开源项目友情链接:

- [NoneBot2](https://github.com/nonebot/nonebot2) - 优秀的 Python 异步 ChatBot 框架

- [Koishi](https://github.com/koishijs/koishi) - 优秀的 Node.js ChatBot 框架

- [MaiBot](https://github.com/Mai-with-u/MaiBot) - 优秀的拟人化 AI ChatBot

- [nekro-agent](https://github.com/KroMiose/nekro-agent) - 优秀的 Agent ChatBot

- [LangBot](https://github.com/langbot-app/LangBot) - 优秀的多平台 AI ChatBot

- [ChatLuna](https://github.com/ChatLunaLab/chatluna) - 优秀的多平台 AI ChatBot Koishi 插件

- [Operit AI](https://github.com/AAswordman/Operit) - 优秀的 AI 智能助手 Android APP

## ⭐ Star History

> [!TIP]

> 如果本项目对您的生活 / 工作产生了帮助,或者您关注本项目的未来发展,请给项目 Star,这是我们维护这个开源项目的动力 <3

<div align="center">

[](https://star-history.com/#astrbotdevs/astrbot&Date)

</div>

<div align="center">

_陪伴与能力从来不应该是对立面。我们希望创造的是一个既能理解情绪、给予陪伴,也能可靠完成工作的机器人。_

_私は、高性能ですから!_

<img src="https://files.astrbot.app/watashiwa-koseino-desukara.gif" width="100"/>

</div>

| text/markdown | null | null | null | null | null | Astrbot, Astrbot Module, Astrbot Plugin | [] | [] | null | null | >=3.12 | [] | [] | [] | [

"aiocqhttp>=1.4.4",

"aiodocker>=0.24.0",

"aiofiles>=25.1.0",

"aiohttp>=3.11.18",

"aiosqlite>=0.21.0",

"anthropic>=0.51.0",

"apscheduler>=3.11.0",

"audioop-lts; python_full_version >= \"3.13\"",

"beautifulsoup4>=4.13.4",

"certifi>=2025.4.26",

"chardet~=5.1.0",

"click>=8.2.1",

"cryptography>=44.0.3",

"dashscope>=1.23.2",

"defusedxml>=0.7.1",

"deprecated>=1.2.18",

"dingtalk-stream>=0.22.1",

"docstring-parser>=0.16",

"faiss-cpu>=1.12.0",

"filelock>=3.18.0",

"google-genai>=1.56.0",

"jieba>=0.42.1",

"lark-oapi>=1.4.15",

"loguru>=0.7.2",

"lxml-html-clean>=0.4.2",

"markitdown-no-magika[docx,xls,xlsx]>=0.1.2",

"mcp>=1.8.0",

"openai>=1.78.0",

"ormsgpack>=1.9.1",

"packaging>=24.2",

"pillow>=11.2.1",

"pip>=25.1.1",

"psutil<7.2.0,>=5.8.0",

"py-cord>=2.6.1",

"pydantic>=2.12.5",

"pydub>=0.25.1",

"pyjwt>=2.10.1",

"pypdf>=6.1.1",

"python-socks>=2.8.0",

"python-telegram-bot>=22.0",

"qq-botpy>=1.2.1",

"quart>=0.20.0",

"rank-bm25>=0.2.2",

"readability-lxml>=0.8.4.1",

"shipyard-python-sdk>=0.2.4",

"silk-python>=0.2.6",

"slack-sdk>=3.35.0",

"sqlalchemy[asyncio]>=2.0.41",

"sqlmodel>=0.0.24",

"telegramify-markdown>=0.5.1",

"tenacity>=9.1.2",

"watchfiles>=1.0.5",

"websockets>=15.0.1",

"wechatpy>=1.8.18",

"xinference-client"

] | [] | [] | [] | [] | uv/0.10.4 {"installer":{"name":"uv","version":"0.10.4","subcommand":["publish"]},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"Ubuntu","version":"24.04","id":"noble","libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_version":null,"ci":true} | 2026-02-20T10:43:15.613115 | astrbot-4.17.6-py3-none-any.whl | 831,002 | 94/ba/6c11b31619dee2639d2e6f3d13567eaf0e167df1607bb22a2c45c0538fef/astrbot-4.17.6-py3-none-any.whl | py3 | bdist_wheel | null | false | 040d33fd6be8a1db8f9b975f6b669603 | 36e09a0ae050b0f2aee99f993aabc1e7352896b84d005c39c5048c0cc68e7131 | 94ba6c11b31619dee2639d2e6f3d13567eaf0e167df1607bb22a2c45c0538fef | null | [

"LICENSE"

] | 0 |

2.4 | mkdocs-static-i18n | 1.3.1 | MkDocs i18n plugin using static translation markdown files |

# MkDocs static i18n plugin

*The MkDocs plugin that helps you support multiple language versions of your site / documentation.*

*Like what you :eyes:? Using this plugin? Give it a :star:!*

The `mkdocs-static-i18n` plugin allows you to support multiple languages of your documentation by adding static translation files to your existing documentation pages.

Multi language support is just **one `.<language>.md` file away**!

Even better, `mkdocs-static-i18n` also allows you to build and serve localized versions of any file extension to display localized images, medias and assets.

Localized images/medias/assets are just **one `.<language>.<extension>` file away**!

Don't like file suffixes? You're more into a folder based structure? We got you covered as well!

## Documentation

Check out the [plugins' documentation here](https://ultrabug.github.io/mkdocs-static-i18n/).

TL;DR? There's a [quick start guide](https://ultrabug.github.io/mkdocs-static-i18n/getting-started/quick-start/) for you!

## Upgrading from 0.x versions

:warning: Version 1.0.0 brings **breaking changes** to the configuration format of the plugin. Check out the [upgrade to v1.0.0 guide](https://ultrabug.github.io/mkdocs-static-i18n/setup/upgrading-to-1/) to ease updating your `mkdocs.yml` file!

## See it in action

This plugin is proudly bringing localized content of [hundreds of projects](https://github.com/ultrabug/mkdocs-static-i18n/network/dependents) to their users.

Check it out live:

- [On this repository documentation](https://ultrabug.github.io/mkdocs-static-i18n/)

- [On my own website: ultrabug.fr](https://ultrabug.fr)

But also in our hall of fame:

- [AWS Copilot CLI](https://aws.github.io/copilot-cli/)

- [OWASP Top 10](https://github.com/OWASP/Top10)

- [Spaceship Prompt](https://spaceship-prompt.sh/)

- [FederatedAI FATE](https://fate.readthedocs.io/en/latest/)

- [Privacy Guides Org](https://www.privacyguides.org/en/)

- [Computer Science Self Learning Wiki](https://csdiy.wiki/)

## Contributions welcome

Feel free to ask questions, enhancements and to contribute to this project!

## Development

The project is managed with `hatch`. [Install `hatch`](https://hatch.pypa.io/1.9/install/#gui-installer) first.

Run the tests:

```shell

hatch run test:test

hatch run style:check

```

Serve the documentation:

```shell

hatch run doc:serve

```

## Credits

- Logo by [max.icons](https://www.flaticon.com/authors/maxicons)

| text/markdown | null | Ultrabug <ultrabug@ultrabug.net> | null | null | null | null | [

"License :: OSI Approved :: MIT License",

"Operating System :: POSIX :: Linux",

"Programming Language :: Python",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3 :: Only",

"Programming Language :: Python :: 3.8",

"Programming Language :: Python :: 3.9",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"Programming Language :: Python :: 3.14",

"Topic :: Software Development :: Libraries :: Python Modules"

] | [] | null | null | >=3.8 | [] | [] | [] | [

"mkdocs>=1.5.2",

"mkdocs-material<9.7.2; extra == \"material\""

] | [] | [] | [] | [

"Documentation, https://github.com/ultrabug/mkdocs-static-i18n#readme",

"Download, https://github.com/ultrabug/mkdocs-static-i18n/tags",

"Funding, https://ultrabug.fr/#support-me",

"Homepage, https://github.com/ultrabug/mkdocs-static-i18n",

"Source, https://github.com/ultrabug/mkdocs-static-i18n",

"Tracker, https://github.com/ultrabug/mkdocs-static-i18n/issues"

] | Hatch/1.16.3 cpython/3.14.2 HTTPX/0.28.1 | 2026-02-20T10:42:41.835200 | mkdocs_static_i18n-1.3.1.tar.gz | 1,371,325 | ce/f9/51e2ffda9c7210bc35a24f3717b08c052cd4b728dfa87f901c00d8005259/mkdocs_static_i18n-1.3.1.tar.gz | source | sdist | null | false | 70a81efb097b00c79f514da23854f40e | a6125ea7db6cc1a900d76a967f262535af09831160a93c56d7f0d522a79b5faf | cef951e2ffda9c7210bc35a24f3717b08c052cd4b728dfa87f901c00d8005259 | MIT | [

"LICENSE"

] | 2,447 |

2.4 | envcipher | 0.1.3 | Secure .env file encryption using OS keychain. Keep secrets encrypted at rest. | # Envcipher

[](https://crates.io/crates/envcipher)

[](https://pypi.org/project/envcipher/)

[](LICENSE)

Encrypt `.env` files using AES-256-GCM with keys stored in your OS keychain. Decrypt on demand for local development without managing separate key files.

---

## Installation

<details open>

<summary><strong>Python</strong></summary>

```bash

pip install envcipher

```

Provides both the CLI and Python library.

</details>

<details>

<summary><strong>Rust</strong></summary>

```bash

cargo install envcipher

```

CLI only.

</details>

<details>

<summary><strong>From Source</strong></summary>

```bash

git clone https://github.com/iamprecieee/envcipher

cd envcipher

cargo install --path .

```

</details>

---

## Usage

### CLI

```bash

envcipher init # Generate key, store in OS keychain

envcipher edit # Decrypt -> edit -> re-encrypt

envcipher lock # Encrypt .env in place

envcipher unlock # Decrypt .env to plaintext

envcipher run -- <cmd> # Run command with decrypted env vars

envcipher status # Show encryption status

```

<details>

<summary><strong>Python Library</strong></summary>

```python

import envcipher

import os

# Load encrypted .env into os.environ

envcipher.load()

# Access secrets

api_key = os.getenv("API_KEY")

```

Custom path:

```python

envcipher.load(path="/path/to/.env")

```

Works with both encrypted and plaintext files.

</details>

---

## Team Sharing

```bash

# Export key

envcipher export-key

# Output: qQWntX6r7eANxsyKHbkJtuXtzW0Hy5zjJGvDSxMKM9I=

# Import on another machine

envcipher import-key qQWntX6r7eANxsyKHbkJtuXtzW0Hy5zjJGvDSxMKM9I=

```

Share keys through secure channels only.

---

## Security

| Component | Implementation |

|-----------|----------------|

| Encryption | AES-256-GCM, 96-bit random nonces |

| Key Storage | OS keychain (Keychain / Credential Manager / Secret Service) |

| Memory | Keys zeroized on drop |

| Format | `ENVCIPHER:v1:<nonce>:<ciphertext>` |

**Designed for:** Protecting secrets from accidental commits, local development encryption at rest, small team key sharing.

**Not designed for:** Production secret management, zero-trust environments, HSM requirements.

---

## FAQ

<details>

<summary>Can I manually edit the encrypted file?</summary>

No. Use `envcipher edit` or the unlock-edit-lock workflow. Manual edits corrupt the format.

</details>

<details>

<summary>Can I commit the encrypted .env file?</summary>

Yes, but we recommend using `.gitignore` and sharing via `export-key`/`import-key` instead. Committing encrypted files is safe only if your team securely shares the key.

</details>

<details>

<summary>What if I lose my key?</summary>

Keys are stored in your OS keychain. If you lose access (e.g., fresh OS install), get a teammate to run `export-key`.

</details>

<details>

<summary>How do I rotate keys?</summary>

Currently manual: decrypt with old key, run `init` in a fresh directory to generate new key, re-encrypt.

</details>

<details>

<summary>Does it work in CI/CD?</summary>

Not recommended. Envcipher is designed for local development. CI runners have ephemeral keychains, and storing the key as a CI secret defeats the purpose. Use native secret management instead (GitHub Secrets, AWS Secrets Manager, etc.).

</details>

<details>

<summary>Can I use this on multiple projects?</summary>

Yes. Each project directory gets its own key (hashed by directory path). Moving a project folder requires re-importing the key.

</details>

---

## License

[MIT](LICENSE)

---

[Contributing](docs/CONTRIBUTING.md) | [Code of Conduct](docs/CODE_OF_CONDUCT.md) | [Security](docs/SECURITY.md)

| text/markdown; charset=UTF-8; variant=GFM | null | iamprecieee <emmypresh777@gmail.com> | null | null | null | env, encryption, secrets, security, python, dotenv | [

"Programming Language :: Rust",

"Programming Language :: Python :: Implementation :: CPython",

"Programming Language :: Python :: Implementation :: PyPy"

] | [] | null | null | >=3.7 | [] | [] | [] | [

"maturin>=1.12.3"

] | [] | [] | [] | [] | maturin/1.12.3 | 2026-02-20T10:42:38.718427 | envcipher-0.1.3.tar.gz | 26,264 | c1/99/27c43da1d867f8c161ccde97d2717164ce68da7707b432db8a9bf995ad08/envcipher-0.1.3.tar.gz | source | sdist | null | false | 4e2ab70d59a92bed603a270727b4c8ab | c73b9ad74e735f4399d4a30b98f4e87e5db909c1f35915b65c67d1f366790011 | c19927c43da1d867f8c161ccde97d2717164ce68da7707b432db8a9bf995ad08 | null | [] | 242 |

2.4 | usearch-iscc | 2.24.2 | Smaller & Faster Single-File Vector Search Engine from Unum (ISCC Foundation Fork) | <h1 align="center">USearch</h1>

<h3 align="center">

Smaller & <a href="https://www.unum.cloud/blog/2023-11-07-scaling-vector-search-with-intel">Faster</a> Single-File<br/>

Similarity Search & Clustering Engine for <a href="https://github.com/ashvardanian/simsimd">Vectors</a> & 🔜 <a href="https://github.com/ashvardanian/stringzilla">Texts</a>

</h3>

<br/>

<p align="center">

<a href="https://discord.gg/A6wxt6dS9j"><img height="25" src="https://github.com/unum-cloud/.github/raw/main/assets/discord.svg" alt="Discord"></a>

<a href="https://www.linkedin.com/company/unum-cloud/"><img height="25" src="https://github.com/unum-cloud/.github/raw/main/assets/linkedin.svg" alt="LinkedIn"></a>

<a href="https://twitter.com/unum_cloud"><img height="25" src="https://github.com/unum-cloud/.github/raw/main/assets/twitter.svg" alt="Twitter"></a>

<a href="https://unum.cloud/post"><img height="25" src="https://github.com/unum-cloud/.github/raw/main/assets/blog.svg" alt="Blog"></a>

<a href="https://github.com/unum-cloud/usearch"><img height="25" src="https://github.com/unum-cloud/.github/raw/main/assets/github.svg" alt="GitHub"></a>

</p>

<p align="center">

Spatial • Binary • Probabilistic • User-Defined Metrics

<br/>

<a href="https://unum-cloud.github.io/USearch/cpp">C++11</a> •

<a href="https://unum-cloud.github.io/USearch/python">Python 3</a> •

<a href="https://unum-cloud.github.io/USearch/javascript">JavaScript</a> •

<a href="https://unum-cloud.github.io/USearch/java">Java</a> •

<a href="https://unum-cloud.github.io/USearch/rust">Rust</a> •

<a href="https://unum-cloud.github.io/USearch/c">C99</a> •

<a href="https://unum-cloud.github.io/USearch/objective-c">Objective-C</a> •

<a href="https://unum-cloud.github.io/USearch/swift">Swift</a> •

<a href="https://unum-cloud.github.io/USearch/csharp">C#</a> •

<a href="https://unum-cloud.github.io/USearch/golang">Go</a> •

<a href="https://unum-cloud.github.io/USearch/wolfram">Wolfram</a>

<br/>

Linux • macOS • Windows • iOS • Android • WebAssembly •

<a href="https://unum-cloud.github.io/USearch/sqlite">SQLite</a>

</p>

<div align="center">

<a href="https://pepy.tech/project/usearch"> <img alt="PyPI" src="https://static.pepy.tech/personalized-badge/usearch?period=total&units=abbreviation&left_color=black&right_color=blue&left_text=Python%20PyPI%20installs"> </a>

<a href="https://www.npmjs.com/package/usearch"> <img alt="NPM" src="https://img.shields.io/npm/dy/usearch?label=JavaScript%20NPM%20installs"> </a>

<a href="https://crates.io/crates/usearch"> <img alt="Crate" src="https://img.shields.io/crates/d/usearch?label=Rust%20Crate%20installs"> </a>

<a href="https://www.nuget.org/packages/Cloud.Unum.USearch"> <img alt="NuGet" src="https://img.shields.io/nuget/dt/Cloud.Unum.USearch?label=CSharp%20NuGet%20installs"> </a>

<!-- Maven Central publishing is deprecated for now; fat-JAR download is the supported path. -->

<img alt="GitHub code size in bytes" src="https://img.shields.io/github/languages/code-size/unum-cloud/usearch?label=Repo%20size">

</div>

---

> **ISCC Foundation Fork** -- This is a maintained fork of [USearch](https://github.com/unum-cloud/usearch)

> by the [ISCC Foundation](https://iscc.io), published on PyPI as

> [`usearch-iscc`](https://pypi.org/project/usearch-iscc/). The Python import name remains `usearch`

> for compatibility. Install with: `pip install usearch-iscc`

>

> **Fork divergence from upstream:**

> - 128-bit key support (Python): `Index(ndim=..., key_kind="uuid")` for packed 16-byte keys

> - Multi-index UUID support (Python): `Indexes` works with both u64 and uuid-keyed shards

> - NPHD metric (all bindings): Normalized Prefix Hamming Distance for length-prefixed binary vectors

> - Build: published as `usearch-iscc` on PyPI with independent release cycle

---

- ✅ __[10x faster][faster-than-faiss]__ [HNSW][hnsw-algorithm] implementation than [FAISS][faiss].

- ✅ Simple and extensible [single C++11 header][usearch-header] __library__.

- ✅ [Trusted](#integrations) by giants like Google and DBs like [ClickHouse][clickhouse-docs] & [DuckDB][duckdb-docs].

- ✅ [SIMD][simd]-optimized and [user-defined metrics](#user-defined-functions) with JIT compilation.

- ✅ Hardware-agnostic `f16` & `i8` - [half-precision & quarter-precision support](#memory-efficiency-downcasting-and-quantization).

- ✅ [View large indexes from disk](#serialization--serving-index-from-disk) without loading into RAM.

- ✅ Heterogeneous lookups, renaming/relabeling, and on-the-fly deletions.

- ✅ Binary Tanimoto and Sorensen coefficients for [Genomics and Chemistry applications](#usearch--rdkit--molecular-search).

- ✅ [NPHD metric](#nphd-metric-normalized-prefix-hamming-distance) for variable-length binary fingerprint comparison.

- ✅ Space-efficient point-clouds with `uint40_t`, accommodating 4B+ size.

- ✅ Compatible with OpenMP and custom "executors" for fine-grained parallelism.

- ✅ [Semantic Search](#usearch--uform--ucall--multimodal-semantic-search) and [Joins](#joins-one-to-one-one-to-many-and-many-to-many-mappings).

- 🔄 Near-real-time [clustering and sub-clustering](#clustering) for Tens or Millions of clusters.

[faiss]: https://github.com/facebookresearch/faiss

[usearch-header]: https://github.com/unum-cloud/usearch/blob/main/include/usearch/index.hpp

[obscure-use-cases]: https://ashvardanian.com/posts/abusing-vector-search

[hnsw-algorithm]: https://arxiv.org/abs/1603.09320

[simd]: https://en.wikipedia.org/wiki/Single_instruction,_multiple_data

[faster-than-faiss]: https://www.unum.cloud/blog/2023-11-07-scaling-vector-search-with-intel

[clickhouse-docs]: https://clickhouse.com/docs/en/engines/table-engines/mergetree-family/annindexes#usearch

[duckdb-docs]: https://duckdb.org/2024/05/03/vector-similarity-search-vss.html

__Technical Insights__ and related articles:

- [Uses Arm SVE and x86 AVX-512's masked loads to eliminate tail `for`-loops](https://ashvardanian.com/posts/simsimd-faster-scipy/#tails-of-the-past-the-significance-of-masked-loads).

- [Uses Horner's method for polynomial approximations, beating GCC 12 by 119x](https://ashvardanian.com/posts/gcc-12-vs-avx512fp16/).

- [For every language implements a custom separate binding](https://ashvardanian.com/posts/porting-cpp-library-to-ten-languages/).

## Comparison with FAISS

FAISS is a widely recognized standard for high-performance vector search engines.

USearch and FAISS both employ the same HNSW algorithm, but they differ significantly in their design principles.

USearch is compact and broadly compatible without sacrificing performance, primarily focusing on user-defined metrics and fewer dependencies.

| | FAISS | USearch | Improvement |

| :------------------------------------------- | ----------------------: | -----------------------: | ----------------------: |

| Indexing time ⁰ | | | |

| 100 Million 96d `f32`, `f16`, `i8` vectors | 2.6 · 2.6 · 2.6 h | 0.3 · 0.2 · 0.2 h | __9.6 · 10.4 · 10.7 x__ |

| 100 Million 1536d `f32`, `f16`, `i8` vectors | 5.0 · 4.1 · 3.8 h | 2.1 · 1.1 · 0.8 h | __2.3 · 3.6 · 4.4 x__ |

| | | | |

| Codebase length ¹ | 84 K [SLOC][sloc] | 3 K [SLOC][sloc] | maintainable |

| Supported metrics ² | 9 fixed metrics | any metric | extendible |

| Supported languages ³ | C++, Python | 10 languages | portable |

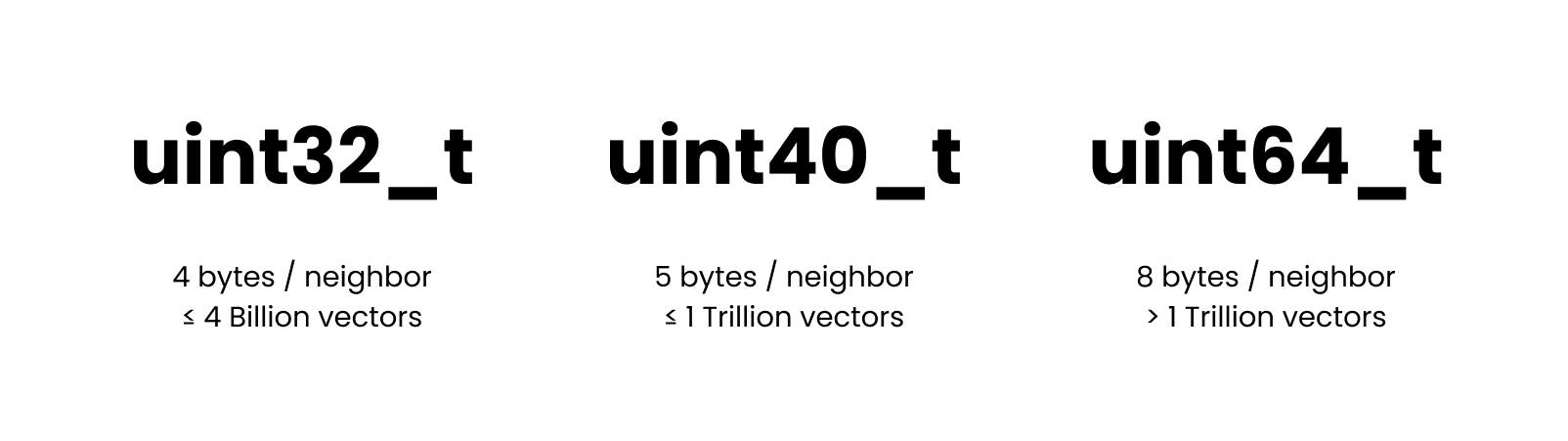

| Supported ID types ⁴ | 32-bit, 64-bit | 32-bit, 40-bit, 64-bit | efficient |

| Filtering ⁵ | ban-lists | any predicates | composable |

| Required dependencies ⁶ | BLAS, OpenMP | - | light-weight |

| Bindings ⁷ | SWIG | Native | low-latency |

| Python binding size ⁸ | [~ 10 MB][faiss-weight] | [< 1 MB][usearch-weight] | deployable |

[sloc]: https://en.wikipedia.org/wiki/Source_lines_of_code

[faiss-weight]: https://pypi.org/project/faiss-cpu/#files

[usearch-weight]: https://pypi.org/project/usearch/#files

> ⁰ [Tested][intel-benchmarks] on Intel Sapphire Rapids, with the simplest inner-product distance, equivalent recall, and memory consumption while also providing far superior search speed.

> ¹ A shorter codebase of `usearch/` over `faiss/` makes the project easier to maintain and audit.

> ² User-defined metrics allow you to customize your search for various applications, from GIS to creating custom metrics for composite embeddings from multiple AI models or hybrid full-text and semantic search.

> ³ With USearch, you can reuse the same preconstructed index in various programming languages.

> ⁴ The 40-bit integer allows you to store 4B+ vectors without allocating 8 bytes for every neighbor reference in the proximity graph.

> ⁵ With USearch the index can be combined with arbitrary external containers, like Bloom filters or third-party databases, to filter out irrelevant keys during index traversal.

> ⁶ Lack of obligatory dependencies makes USearch much more portable.

> ⁷ Native bindings introduce lower call latencies than more straightforward approaches.

> ⁸ Lighter bindings make downloads and deployments faster.

[intel-benchmarks]: https://www.unum.cloud/blog/2023-11-07-scaling-vector-search-with-intel

Base functionality is identical to FAISS, and the interface must be familiar if you have ever investigated Approximate Nearest Neighbors search:

```py

# pip install usearch

import numpy as np

from usearch.index import Index

index = Index(ndim=3) # Default settings for 3D vectors

vector = np.array([0.2, 0.6, 0.4]) # Can be a matrix for batch operations

index.add(42, vector) # Add one or many vectors in parallel

matches = index.search(vector, 10) # Find 10 nearest neighbors

assert matches[0].key == 42

assert matches[0].distance <= 0.001

assert np.allclose(index[42], vector, atol=0.1) # Ensure high tolerance in mixed-precision comparisons

```

More settings are always available, and the API is designed to be as flexible as possible.

The default storage/quantization level is hardware-dependant for efficiency, but `bf16` is recommended for most modern CPUs.

```py

index = Index(

ndim=3, # Define the number of dimensions in input vectors

metric='cos', # Choose 'l2sq', 'ip', 'haversine' or other metric, default = 'cos'

dtype='bf16', # Store as 'f64', 'f32', 'f16', 'i8', 'b1'..., default = None

connectivity=16, # Optional: Limit number of neighbors per graph node

expansion_add=128, # Optional: Control the recall of indexing

expansion_search=64, # Optional: Control the quality of the search

multi=False, # Optional: Allow multiple vectors per key, default = False

)

```

## 128-bit Keys (UUID Mode)

By default, USearch uses 64-bit unsigned integer keys. This fork adds support for 128-bit keys via `key_kind="uuid"`, allowing you to pack structured identifiers (e.g. content hashes, chunk pointers) directly into the key.

```py

import numpy as np

from usearch.index import Index

# Create an index with 128-bit keys

index = Index(ndim=128, metric='cos', key_kind='uuid')

# Keys are 16-byte values: single keys as bytes, batches as numpy V16 arrays

batch_size = 1000

keys = np.empty(batch_size, dtype='V16')

vectors = np.random.randn(batch_size, 128).astype(np.float32)

for i in range(batch_size):

body = i.to_bytes(8, 'big') # 8 bytes: content identity

offset = (i * 16).to_bytes(4, 'big') # 4 bytes: chunk offset

size = (1024 + i).to_bytes(4, 'big') # 4 bytes: chunk size

keys[i] = body + offset + size # 16 bytes total

index.add(keys, vectors)

matches = index.search(vectors[0], count=5)

for match in matches:

print(match.key, match.distance) # match.key is bytes(16)

# Single-key operations use bytes(16)

single_key = keys[0].tobytes()

index.contains(single_key) # bool

index.get(single_key) # np.ndarray or None

index.remove(single_key)

# Save/load preserves key kind; mismatched load raises ValueError

index.save('index.usearch')

restored = Index.restore('index.usearch') # auto-detects uuid mode

```

> **Note:** Auto-generated keys are not supported in uuid mode — you must always pass explicit keys to `add()`.

## NPHD Metric (Normalized Prefix Hamming Distance)

NPHD is a built-in distance metric for comparing length-prefixed binary vectors. Each vector's first byte stores the data length in bytes. The metric computes the Hamming distance over the common prefix of two vectors and normalizes by the shorter vector's bit count, returning a value in `[0.0, 1.0]`.

This is useful for content identification systems like [ISCC](https://iscc.codes) where binary fingerprints may have variable-length prefixes. Previously this required a custom Numba `@cfunc` metric (~500MB of dependencies) and `change_metric()` hacks after every `load()`/`view()`. The native metric eliminates both.

```py

import numpy as np

from usearch.index import Index, MetricKind, ScalarKind

# Vector layout: [length_byte, data_byte_0, data_byte_1, ..., padding...]

# ndim is total size in bits, including the length byte.

ndim = 264 # 33 bytes = 1 length byte + up to 32 data bytes

index = Index(ndim=ndim, metric=MetricKind.NPHD, dtype=ScalarKind.B1)

def make_vector(length, data_bytes):

"""Build a length-prefixed binary vector."""

vec = np.zeros(ndim // 8, dtype=np.uint8)

vec[0] = length

vec[1:1 + len(data_bytes)] = data_bytes

return vec

a = make_vector(4, [0xAA, 0xBB, 0xCC, 0xDD])

b = make_vector(4, [0xAA, 0xBB, 0xCC, 0x00])

index.add(0, a)

index.add(1, b)

matches = index.search(a, 2)

print(matches[0].key, matches[0].distance) # 0, 0.0

print(matches[1].key, matches[1].distance) # 1, ~0.15625

# Save/load preserves the metric — no change_metric() needed

index.save("nphd_index.usearch")

restored = Index.restore("nphd_index.usearch")

assert str(restored.metric_kind) == "MetricKind.NPHD"

```

**Key details:**

- Only valid with `dtype=ScalarKind.B1` (binary vectors).

- The length byte encodes the number of data bytes (not bits), excluding itself.

- When vectors have different lengths, only the common prefix is compared.

- A length byte of 0 yields distance 0.0 (no data to compare).

## Serialization & Serving `Index` from Disk

USearch supports multiple forms of serialization:

- Into a __file__ defined with a path.

- Into a __stream__ defined with a callback, serializing or reconstructing incrementally.

- Into a __buffer__ of fixed length or a memory-mapped file that supports random access.

The latter allows you to serve indexes from external memory, enabling you to optimize your server choices for indexing speed and serving costs.

This can result in __20x cost reduction__ on AWS and other public clouds.

```py

index.save("index.usearch")

index.load("index.usearch")

view = Index.restore("index.usearch", view=True, ...)

other_view = Index(ndim=..., metric=...)

other_view.view("index.usearch")

```

## Exact vs. Approximate Search

Approximate search methods, such as HNSW, are predominantly used when an exact brute-force search becomes too resource-intensive.

This typically occurs when you have millions of entries in a collection.

For smaller collections, we offer a more direct approach with the `search` method.

```py

from usearch.index import search, MetricKind, Matches, BatchMatches

import numpy as np

# Generate 10'000 random vectors with 1024 dimensions

vectors = np.random.rand(10_000, 1024).astype(np.float32)

vector = np.random.rand(1024).astype(np.float32)

one_in_many: Matches = search(vectors, vector, 50, MetricKind.L2sq, exact=True)

many_in_many: BatchMatches = search(vectors, vectors, 50, MetricKind.L2sq, exact=True)

```

If you pass the `exact=True` argument, the system bypasses indexing altogether and performs a brute-force search through the entire dataset using SIMD-optimized similarity metrics from [SimSIMD](https://github.com/ashvardanian/simsimd).

When compared to FAISS's `IndexFlatL2` in Google Colab, __[USearch may offer up to a 20x performance improvement](https://github.com/unum-cloud/usearch/issues/176#issuecomment-1666650778)__:

- `faiss.IndexFlatL2`: __55.3 ms__.

- `usearch.index.search`: __2.54 ms__.

## User-Defined Metrics

While most vector search packages concentrate on just two metrics, "Inner Product distance" and "Euclidean distance", USearch allows arbitrary user-defined metrics.

This flexibility allows you to customize your search for various applications, from computing geospatial coordinates with the rare [Haversine][haversine] distance to creating custom metrics for composite embeddings from multiple AI models, like joint image-text embeddings.

You can use [Numba][numba], [Cppyy][cppyy], or [PeachPy][peachpy] to define your [custom metric even in Python](https://unum-cloud.github.io/USearch/python#user-defined-metrics-and-jit-in-python):

```py

from numba import cfunc, types, carray

from usearch.index import Index, MetricKind, MetricSignature, CompiledMetric

ndim = 256

@cfunc(types.float32(types.CPointer(types.float32), types.CPointer(types.float32)))

def python_inner_product(a, b):

a_array = carray(a, ndim)

b_array = carray(b, ndim)

c = 0.0

for i in range(ndim):

c += a_array[i] * b_array[i]

return 1 - c

metric = CompiledMetric(pointer=python_inner_product.address, kind=MetricKind.IP, signature=MetricSignature.ArrayArray)

index = Index(ndim=ndim, metric=metric, dtype=np.float32)

```

Similar effect is even easier to achieve in C, C++, and Rust interfaces.

Moreover, unlike older approaches indexing high-dimensional spaces, like KD-Trees and Locality Sensitive Hashing, HNSW doesn't require vectors to be identical in length.

They only have to be comparable.

So you can apply it in [obscure][obscure] applications, like searching for similar sets or fuzzy text matching, using [GZip][gzip-similarity] compression-ratio as a distance function.

[haversine]: https://ashvardanian.com/posts/abusing-vector-search#geo-spatial-indexing

[obscure]: https://ashvardanian.com/posts/abusing-vector-search

[gzip-similarity]: https://twitter.com/LukeGessler/status/1679211291292889100?s=20

[numba]: https://numba.readthedocs.io/en/stable/reference/jit-compilation.html#c-callbacks

[cppyy]: https://cppyy.readthedocs.io/en/latest/

[peachpy]: https://github.com/Maratyszcza/PeachPy

## Filtering and Predicate Functions

Sometimes you may want to cross-reference search-results against some external database or filter them based on some criteria.

In most engines, you'd have to manually perform paging requests, successively filtering the results.

In USearch you can simply pass a predicate function to the search method, which will be applied directly during graph traversal.

In Rust that would look like this:

```rust

let is_odd = |key: Key| key % 2 == 1;

let query = vec![0.2, 0.1, 0.2, 0.1, 0.3];

let results = index.filtered_search(&query, 10, is_odd).unwrap();

assert!(

results.keys.iter().all(|&key| key % 2 == 1),

"All keys must be odd"

);

```

## Memory Efficiency, Downcasting, and Quantization

Training a quantization model and dimension-reduction is a common approach to accelerate vector search.

Those, however, are only sometimes reliable, can significantly affect the statistical properties of your data, and require regular adjustments if your distribution shifts.

Instead, we have focused on high-precision arithmetic over low-precision downcasted vectors.

The same index, and `add` and `search` operations will automatically down-cast or up-cast between `f64_t`, `f32_t`, `f16_t`, `i8_t`, and single-bit `b1x8_t` representations.

You can use the following command to check, if hardware acceleration is enabled:

```sh

$ python -c 'from usearch.index import Index; print(Index(ndim=768, metric="cos", dtype="f16").hardware_acceleration)'

> sapphire

$ python -c 'from usearch.index import Index; print(Index(ndim=166, metric="tanimoto").hardware_acceleration)'

> ice

```

In most cases, it's recommended to use half-precision floating-point numbers on modern hardware.

When quantization is enabled, the "get"-like functions won't be able to recover the original data, so you may want to replicate the original vectors elsewhere.

When quantizing to `i8_t` integers, note that it's only valid for cosine-like metrics.

As part of the quantization process, the vectors are normalized to unit length and later scaled to [-127, 127] range to occupy the full 8-bit range.

When quantizing to `b1x8_t` single-bit representations, note that it's only valid for binary metrics like Jaccard, Hamming, etc.

As part of the quantization process, the scalar components greater than zero are set to `true`, and the rest to `false`.

Using smaller numeric types will save you RAM needed to store the vectors, but you can also compress the neighbors lists forming our proximity graphs.

By default, 32-bit `uint32_t` is used to enumerate those, which is not enough if you need to address over 4 Billion entries.

For such cases we provide a custom `uint40_t` type, that will still be 37.5% more space-efficient than the commonly used 8-byte integers, and will scale up to 1 Trillion entries.

## `Indexes` for Multi-Index Lookups

For larger workloads targeting billions or even trillions of vectors, parallel multi-index lookups become invaluable.

Instead of constructing one extensive index, you can build multiple smaller ones and view them together.

```py

from usearch.index import Indexes

multi_index = Indexes(

indexes=[index_a, index_b], # Merge in-memory shards

paths=["shard_a.usearch", "shard_b.usearch"], # Or load from disk

view=False,

threads=0,

)

multi_index.search(query_vectors, 10)

```

`Indexes` supports both u64 and uuid key kinds. The key kind is auto-detected from the first merged shard or path, or can be set explicitly:

```py

# Auto-detect from shards

indexes = Indexes([uuid_index_a, uuid_index_b])

# Auto-detect from paths

indexes = Indexes(paths=["uuid_shard.usearch"])

# Explicit key kind

indexes = Indexes(key_kind="uuid")

indexes.merge(uuid_index)

# Incremental loading

indexes = Indexes()

indexes.merge_path("shard.usearch")

```

## Clustering

Once the index is constructed, USearch can perform K-Nearest Neighbors Clustering much faster than standalone clustering libraries, like SciPy,

UMap, and tSNE.

Same for dimensionality reduction with PCA.

Essentially, the `Index` itself can be seen as a clustering, allowing iterative deepening.

```py

clustering = index.cluster(

min_count=10, # Optional

max_count=15, # Optional

threads=..., # Optional

)

# Get the clusters and their sizes

centroid_keys, sizes = clustering.centroids_popularity

# Use Matplotlib to draw a histogram

clustering.plot_centroids_popularity()

# Export a NetworkX graph of the clusters

g = clustering.network

# Get members of a specific cluster

first_members = clustering.members_of(centroid_keys[0])

# Deepen into that cluster, splitting it into more parts, all the same arguments supported

sub_clustering = clustering.subcluster(min_count=..., max_count=...)

```

The resulting clustering isn't identical to K-Means or other conventional approaches but serves the same purpose.

Alternatively, using Scikit-Learn on a 1 Million point dataset, one may expect queries to take anywhere from minutes to hours, depending on the number of clusters you want to highlight.

For 50'000 clusters, the performance difference between USearch and conventional clustering methods may easily reach 100x.

## Joins, One-to-One, One-to-Many, and Many-to-Many Mappings

One of the big questions these days is how AI will change the world of databases and data management.

Most databases are still struggling to implement high-quality fuzzy search, and the only kind of joins they know are deterministic.

A `join` differs from searching for every entry, requiring a one-to-one mapping banning collisions among separate search results.

| Exact Search | Fuzzy Search | Semantic Search ? |

| :----------: | :----------: | :---------------: |

| Exact Join | Fuzzy Join ? | Semantic Join ?? |

Using USearch, one can implement sub-quadratic complexity approximate, fuzzy, and semantic joins.

This can be useful in any fuzzy-matching tasks common to Database Management Software.

```py

men = Index(...)

women = Index(...)

pairs: dict = men.join(women, max_proposals=0, exact=False)

```

> Read more in the post: [Combinatorial Stable Marriages for Semantic Search 💍](https://ashvardanian.com/posts/searching-stable-marriages)

## Functionality

By now, the core functionality is supported across all bindings.

Broader functionality is ported per request.

In some cases, like Batch operations, feature parity is meaningless, as the host language has full multi-threading capabilities and the USearch index structure is concurrent by design, so the users can implement batching/scheduling/load-balancing in the most optimal way for their applications.

| | C++ 11 | Python 3 | C 99 | Java | JavaScript | Rust | Go | Swift |

| :---------------------- | :----: | :------: | :---: | :---: | :--------: | :---: | :---: | :---: |

| Add, search, remove | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ |

| Save, load, view | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ | ✅ |

| User-defined metrics | ✅ | ✅ | ✅ | ❌ | ❌ | ✅ | ❌ | ❌ |

| Batch operations | ❌ | ✅ | ❌ | ✅ | ✅ | ❌ | ❌ | ❌ |

| Filter predicates | ✅ | ❌ | ✅ | ❌ | ❌ | ✅ | ❌ | ✅ |

| Joins | ✅ | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ | ❌ |

| Variable-length vectors | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ | ❌ | ❌ |

| 4B+ capacities | ✅ | ❌ | ❌ | ❌ | ❌ | ❌ | ❌ | ❌ |

## Application Examples

### USearch + UForm + UCall = Multimodal Semantic Search

AI has a growing number of applications, but one of the coolest classic ideas is to use it for Semantic Search.

One can take an encoder model, like the multi-modal [UForm](https://github.com/unum-cloud/uform), and a web-programming framework, like [UCall](https://github.com/unum-cloud/ucall), and build a text-to-image search platform in just 20 lines of Python.

```python

from ucall import Server

from uform import get_model, Modality

from usearch.index import Index

import numpy as np

import PIL as pil

processors, models = get_model('unum-cloud/uform3-image-text-english-small')

model_text = models[Modality.TEXT_ENCODER]

model_image = models[Modality.IMAGE_ENCODER]

processor_text = processors[Modality.TEXT_ENCODER]

processor_image = processors[Modality.IMAGE_ENCODER]

server = Server()

index = Index(ndim=256)

@server

def add(key: int, photo: pil.Image.Image):

image = processor_image(photo)

vector = model_image(image)

index.add(key, vector.flatten(), copy=True)

@server

def search(query: str) -> np.ndarray:

tokens = processor_text(query)

vector = model_text(tokens)

matches = index.search(vector.flatten(), 3)

return matches.keys

server.run()

```

Similar experiences can also be implemented in other languages and on the client side, removing the network latency.

For Swift and iOS, check out the [`ashvardanian/SwiftSemanticSearch`](https://github.com/ashvardanian/SwiftSemanticSearch) repository.

<table>

<tr>

<td>

<img src="https://github.com/ashvardanian/ashvardanian/blob/master/demos/SwiftSemanticSearch-Dog.gif?raw=true" alt="SwiftSemanticSearch demo Dog">

</td>

<td>

<img src="https://github.com/ashvardanian/ashvardanian/blob/master/demos/SwiftSemanticSearch-Flowers.gif?raw=true" alt="SwiftSemanticSearch demo with Flowers">

</td>

</tr>

</table>

A more complete [demo with Streamlit is available on GitHub](https://github.com/ashvardanian/usearch-images).

We have pre-processed some commonly used datasets, cleaned the images, produced the vectors, and pre-built the index.

| Dataset | Modalities | Images | Download |

| :---------------------------------- | --------------------: | -----: | ------------------------------------: |

| [Unsplash][unsplash-25k-origin] | Images & Descriptions | 25 K | [HuggingFace / Unum][unsplash-25k-hf] |

| [Conceptual Captions][cc-3m-origin] | Images & Descriptions | 3 M | [HuggingFace / Unum][cc-3m-hf] |

| [Arxiv][arxiv-2m-origin] | Titles & Abstracts | 2 M | [HuggingFace / Unum][arxiv-2m-hf] |

[unsplash-25k-origin]: https://github.com/unsplash/datasets

[cc-3m-origin]: https://huggingface.co/datasets/conceptual_captions

[arxiv-2m-origin]: https://www.kaggle.com/datasets/Cornell-University/arxiv

[unsplash-25k-hf]: https://huggingface.co/datasets/unum-cloud/ann-unsplash-25k

[cc-3m-hf]: https://huggingface.co/datasets/unum-cloud/ann-cc-3m

[arxiv-2m-hf]: https://huggingface.co/datasets/unum-cloud/ann-arxiv-2m

### USearch + RDKit = Molecular Search

Comparing molecule graphs and searching for similar structures is expensive and slow.

It can be seen as a special case of the NP-Complete Subgraph Isomorphism problem.

Luckily, domain-specific approximate methods exist.

The one commonly used in Chemistry is to generate structures from [SMILES][smiles] and later hash them into binary fingerprints.

The latter are searchable with binary similarity metrics, like the Tanimoto coefficient.

Below is an example using the RDKit package.

```python

from usearch.index import Index, MetricKind

from rdkit import Chem

from rdkit.Chem import AllChem

import numpy as np

molecules = [Chem.MolFromSmiles('CCOC'), Chem.MolFromSmiles('CCO')]

encoder = AllChem.GetRDKitFPGenerator()

fingerprints = np.vstack([encoder.GetFingerprint(x) for x in molecules])

fingerprints = np.packbits(fingerprints, axis=1)

index = Index(ndim=2048, metric=MetricKind.Tanimoto)

keys = np.arange(len(molecules))

index.add(keys, fingerprints)

matches = index.search(fingerprints, 10)

```

That method was used to build the ["USearch Molecules"](https://github.com/ashvardanian/usearch-molecules), one of the largest Chem-Informatics datasets, containing 7 billion small molecules and 28 billion fingerprints.

[smiles]: https://en.wikipedia.org/wiki/Simplified_molecular-input_line-entry_system

[rdkit-fingerprints]: https://www.rdkit.org/docs/RDKit_Book.html#additional-information-about-the-fingerprints

### USearch + POI Coordinates = GIS Applications

Similar to Vector and Molecule search, USearch can be used for Geospatial Information Systems.

The Haversine distance is available out of the box, but you can also define more complex relationships, like the Vincenty formula, that accounts for the Earth's oblateness.

```py

from numba import cfunc, types, carray

import math

# Define the dimension as 2 for latitude and longitude

ndim = 2

# Signature for the custom metric

signature = types.float32(

types.CPointer(types.float32),

types.CPointer(types.float32))

# WGS-84 ellipsoid parameters

a = 6378137.0 # major axis in meters

f = 1 / 298.257223563 # flattening

b = (1 - f) * a # minor axis

def vincenty_distance(a_ptr, b_ptr):

a_array = carray(a_ptr, ndim)

b_array = carray(b_ptr, ndim)

lat1, lon1, lat2, lon2 = a_array[0], a_array[1], b_array[0], b_array[1]

L, U1, U2 = lon2 - lon1, math.atan((1 - f) * math.tan(lat1)), math.atan((1 - f) * math.tan(lat2))

sinU1, cosU1, sinU2, cosU2 = math.sin(U1), math.cos(U1), math.sin(U2), math.cos(U2)

lambda_, iterLimit = L, 100

while iterLimit > 0:

iterLimit -= 1

sinLambda, cosLambda = math.sin(lambda_), math.cos(lambda_)

sinSigma = math.sqrt((cosU2 * sinLambda) ** 2 + (cosU1 * sinU2 - sinU1 * cosU2 * cosLambda) ** 2)

if sinSigma == 0: return 0.0 # Co-incident points

cosSigma, sigma = sinU1 * sinU2 + cosU1 * cosU2 * cosLambda, math.atan2(sinSigma, cosSigma)

sinAlpha, cos2Alpha = cosU1 * cosU2 * sinLambda / sinSigma, 1 - (cosU1 * cosU2 * sinLambda / sinSigma) ** 2

cos2SigmaM = cosSigma - 2 * sinU1 * sinU2 / cos2Alpha if not math.isnan(cosSigma - 2 * sinU1 * sinU2 / cos2Alpha) else 0 # Equatorial line

C = f / 16 * cos2Alpha * (4 + f * (4 - 3 * cos2Alpha))

lambda_, lambdaP = L + (1 - C) * f * (sinAlpha * (sigma + C * sinSigma * (cos2SigmaM + C * cosSigma * (-1 + 2 * cos2SigmaM ** 2)))), lambda_

if abs(lambda_ - lambdaP) <= 1e-12: break

if iterLimit == 0: return float('nan') # formula failed to converge

u2 = cos2Alpha * (a ** 2 - b ** 2) / (b ** 2)

A = 1 + u2 / 16384 * (4096 + u2 * (-768 + u2 * (320 - 175 * u2)))

B = u2 / 1024 * (256 + u2 * (-128 + u2 * (74 - 47 * u2)))

deltaSigma = B * sinSigma * (cos2SigmaM + B / 4 * (cosSigma * (-1 + 2 * cos2SigmaM ** 2) - B / 6 * cos2SigmaM * (-3 + 4 * sinSigma ** 2) * (-3 + 4 * cos2SigmaM ** 2)))

s = b * A * (sigma - deltaSigma)

return s / 1000.0 # Distance in kilometers

# Example usage:

index = Index(ndim=ndim, metric=CompiledMetric(

pointer=vincenty_distance.address,

kind=MetricKind.Haversine,

signature=MetricSignature.ArrayArray,

))

```

## Integrations & Users

- [x] ClickHouse: [C++](https://github.com/ClickHouse/ClickHouse/pull/53447), [docs](https://clickhouse.com/docs/en/engines/table-engines/mergetree-family/annindexes#usearch).

- [x] DuckDB: [post](https://duckdb.org/2024/05/03/vector-similarity-search-vss.html).

- [x] ScyllaDB: [Rust](https://github.com/scylladb/vector-store), [presentation](https://www.slideshare.net/slideshow/vector-search-with-scylladb-by-szymon-wasik/276571548).

- [x] TiDB & TiFlash: [C++](https://github.com/pingcap/tiflash), [announcement](https://www.pingcap.com/article/introduce-vector-search-indexes-in-tidb/).

- [x] YugaByte: [C++](https://github.com/yugabyte/yugabyte-db/blob/366b9f5e3c4df3a1a17d553db41d6dc50146f488/src/yb/vector_index/usearch_wrapper.cc).

- [x] Google: [UniSim](https://github.com/google/unisim), [RetSim](https://arxiv.org/abs/2311.17264) paper.

- [x] MemGraph: [C++](https://github.com/memgraph/memgraph/blob/784dd8520f65050d033aea8b29446e84e487d091/src/storage/v2/indices/vector_index.cpp), [announcement](https://memgraph.com/blog/simplify-data-retrieval-memgraph-vector-search).

- [x] LanternDB: [C++](https://github.com/lanterndata/lantern), [Rust](https://github.com/lanterndata/lantern_extras), [docs](https://lantern.dev/blog/hnsw-index-creation).

- [x] LangChain: [Python](https://github.com/langchain-ai/langchain/releases/tag/v0.0.257) and [JavaScript](https://github.com/hwchase17/langchainjs/releases/tag/0.0.125).

- [x] Microsoft Semantic Kernel: [Python](https://github.com/microsoft/semantic-kernel/releases/tag/python-0.3.9.dev) and C#.

- [x] GPTCache: [Python](https://github.com/zilliztech/GPTCache/releases/tag/0.1.29).

- [x] Sentence-Transformers: Python [docs](https://www.sbert.net/docs/package_reference/quantization.html#sentence_transformers.quantization.semantic_search_usearch).

- [x] Pathway: [Rust](https://github.com/pathwaycom/pathway).

- [x] Vald: [GoLang](https://github.com/vdaas/vald).

## Citations

```bibtex

@software{Vardanian_USearch_2023,

doi = {10.5281/zenodo.7949416},

author = {Vardanian, Ash},

title = {{USearch by Unum Cloud}},

url = {https://github.com/unum-cloud/usearch},

version = {2.24.0},

year = {2023},

month = oct,

}

```

| text/markdown | Titusz Pan (fork maintainer) | tp@py7.de | null | null | Apache-2.0 | null | [

"Development Status :: 5 - Production/Stable",

"Natural Language :: English",

"Intended Audience :: Developers",

"Intended Audience :: Information Technology",

"License :: OSI Approved :: Apache Software License",

"Programming Language :: C++",

"Programming Language :: Python :: 3 :: Only",

"Programming Language :: Python :: Implementation :: CPython",

"Programming Language :: Java",

"Programming Language :: JavaScript",

"Programming Language :: Objective C",

"Programming Language :: Rust",

"Programming Language :: Other",

"Operating System :: MacOS",

"Operating System :: Unix",

"Operating System :: Microsoft :: Windows",

"Topic :: System :: Clustering",

"Topic :: Database :: Database Engines/Servers",

"Topic :: Scientific/Engineering :: Artificial Intelligence"

] | [] | https://github.com/iscc/usearch | null | null | [] | [] | [] | [

"numpy",

"tqdm",

"simsimd<7.0.0,>=6.0.5"

] | [] | [] | [] | [

"Upstream, https://github.com/unum-cloud/usearch",

"Fork, https://github.com/iscc/usearch"

] | uv/0.10.4 {"installer":{"name":"uv","version":"0.10.4","subcommand":["publish"]},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"Ubuntu","version":"24.04","id":"noble","libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_version":null,"ci":true} | 2026-02-20T10:41:27.781707 | usearch_iscc-2.24.2-cp310-cp310-macosx_11_0_arm64.whl | 563,611 | db/0d/ca663ac11d78c13785ef16f93ce376ce3705531769f7da49481ce6bdd3a3/usearch_iscc-2.24.2-cp310-cp310-macosx_11_0_arm64.whl | cp310 | bdist_wheel | null | false | 50c5ab4292d4e0387062f7df5f45829d | 4cf5be270139c61b7ca569b138b18f6926bc7d5ff88132b5ff24c74dfebc0ffd | db0dca663ac11d78c13785ef16f93ce376ce3705531769f7da49481ce6bdd3a3 | null | [

"LICENSE"

] | 3,052 |

2.4 | acex-client | 4.1.2 | ACE-X CLIENT - Client for ACE-X | # ACE-X CLIENT

This client is used for communication with the ACEX-API and is used within

the cli, worker and more.

## Installation

```bash

pip install acex-client

```

See the [main documentation](../README.md) for more information.

| text/markdown | Johan Lahti | johan.lahti@acebit.se | null | null | AGPL-3.0 | automation, control | [

"License :: OSI Approved :: GNU Affero General Public License v3",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.13",

"Programming Language :: Python :: 3.14"

] | [] | null | null | <4.0,>=3.13 | [] | [] | [] | [

"acex<5.0.0,>=4.1.5",

"acex-devkit<2.0.0,>=1.0.5",

"acex-driver-cisco-ioscli<0.0.13,>=0.0.12",

"datamodel-code-generator<0.44.0,>=0.43.1",

"datetime<7.0,>=6.0",

"pydantic<3.0.0,>=2.12.5",

"requests<3.0.0,>=2.32.5",

"rich<14.0.0,>=13.0.0",

"typer<0.13.0,>=0.12.0"

] | [] | [] | [] | [] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T10:41:27.662550 | acex_client-4.1.2.tar.gz | 10,760 | 42/52/9cc921b16b454e292449c3896dd0a3a98654c8d6f4ef15415d8ba0073a2b/acex_client-4.1.2.tar.gz | source | sdist | null | false | fa4a7f52c1bd344c3dde2e9b37a29977 | 884555e58f329369a83962a72e3682a55150a77a73a15e68c8a2115aca17adba | 42529cc921b16b454e292449c3896dd0a3a98654c8d6f4ef15415d8ba0073a2b | null | [] | 229 |

2.4 | acex-cli | 4.1.2 | ACE-X CLI - Command-line interface for ACE-X | # ACE-X CLI

Command-line interface for managing ACE-X automations.

## Installation

```bash

pip install acex-cli

```

This will also install the `acex` backend package as a dependency.

## Development

```bash

cd cli

poetry install

```

## Usage

```bash

acex --help

acex run automation.py

acex list

acex status

```

## Commands

- `acex run` - Run an automation

- `acex list` - List available automations

- `acex status` - Check system status

- `acex config` - Manage configuration

## Documentation

See the [main documentation](../README.md) for more information.

| text/markdown | Johan Lahti | johan.lahti@acebit.se | null | null | AGPL-3.0 | automation, cli, control | [

"License :: OSI Approved :: GNU Affero General Public License v3",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.13",

"Programming Language :: Python :: 3.14"

] | [] | null | null | <4.0,>=3.13 | [] | [] | [] | [

"acex-client<5.0.0,>=4.1.2",

"acex-driver-cisco-ioscli<0.0.13,>=0.0.12",

"click==8.1.7",

"ntc-templates<9.0.0,>=8.1.0",

"rich<14.0.0,>=13.0.0",

"typer<0.13.0,>=0.12.0"

] | [] | [] | [] | [

"Homepage, https://github.com/acex-labs/acex",

"Repository, https://github.com/acex-labs/acex"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T10:41:26.259810 | acex_cli-4.1.2.tar.gz | 9,142 | a2/e9/c1ef27a1daaedf1413ce3e833c6aecd09143aeb7cef3026cc6e33659061b/acex_cli-4.1.2.tar.gz | source | sdist | null | false | 2e7779975525282fc2b7ae60e6d46b36 | 4b6962f8a4a3f0ebeadf3fcba4ea14c7f08d90206aef594aca531f407a4ed06c | a2e9c1ef27a1daaedf1413ce3e833c6aecd09143aeb7cef3026cc6e33659061b | null | [] | 229 |

2.4 | imio.scan-helpers | 0.7.1 | Various script files to handle local scan tool | imio.scan_helpers

=================

Various script files to handle MS Windows scan tool

Installation

------------

Use virtualenv in bin directory destination

Build locally

-------------

bin/pyinstaller -y imio-scan-helpers.spec

GitHub actions

--------------

On each push or tag, the github action will build the package and upload it to the github release page.

https://github.com/IMIO/imio.scan_helpers/releases

Windows installation

--------------------

The zip archive must be decompressed in a directory (without version reference) that will be the execution directory.

Windows usage

-------------

* imio-scan-helpers.exe -h : displays the help

* imio-scan-helpers.exe : updates the software based on version and restarts it

* imio-scan-helpers.exe -r tag_name: updates the software with specific release and restarts it

* imio-scan-helpers.exe -c client_id: stores client_id in configuration file

(used as identification when sending info to imio)

* imio-scan-helpers.exe -p plone_password: stores webservice password in configuration file

(used when sending info to imio)

* imio-scan-helpers.exe -nu : runs without update

* imio-scan-helpers.exe --startup : adds the software to the windows startup

* imio-scan-helpers.exe --startup-remove : removes the software from the windows startup

* profiles-backup.exe : backups profiles

* profiles-restore.exe : restores profiles

Changelog

=========

0.7.1 (2026-02-20)

------------------

- Fixed pip-system-cert for inject_truststore() function.

[chris-adam]

- Fixed get_latest_release_version to iterate over all GitHub pages.

[chris-adam]

- Added parameter to prevent auto updates.

[chris-adam]

- Replaced pip-system-certs with truststore to resolve certificate problems.

[chris-adam]

0.7.0 (2025-09-01)

------------------

- Used `pip-system-certs` to resolve certificate problems.

[chris-adam]

- Unpinned pyinstaller version.

[sgeulette]

- Improved send_log_message to avoid timeout.

[sgeulette]

- Added exception handling when removing profiles directory.

[chris-adam]

0.6.0 (2024-08-28)

------------------

- Improved version update.

[sgeulette]

- Added `-tm` parameter (test message).

[sgeulette]

0.5.2 (2024-08-26)

------------------

- Added version in message sent to webservice.

[sgeulette]

0.5.1 (2024-08-23)

------------------

- Corrected bug with relative path.

[sgeulette]

- Added backuped dirs in first message.

[sgeulette]

0.5.0 (2024-08-22)

------------------

- Added certifi pem file to be sure https certificates can be validated.

[sgeulette]

0.4.1 (2024-08-22)

------------------

- Added more info in first message.

[sgeulette]

0.4.0 (2024-08-21)

------------------

- Added optional basic proxy configuration.

[sgeulette]

0.3.2 (2024-08-21)

------------------

- Corrected `utils.json_request`.

[sgeulette]

0.3.1 (2024-08-20)

------------------

- Added tests.

[sgeulette]

0.3.0 (2024-08-14)

------------------

- Corrected version.

[sgeulette]

0.2.5 (2024-08-14)

------------------

- Called profiles_restore in main.

[sgeulette]

0.2.4 (2024-08-14)

------------------

- Corrected set_parameter. Added hostname information.

[sgeulette]

0.2.3 (2024-08-14)

------------------

- Send an info message (no mail) when the product is updated.

[sgeulette]

0.2.2 (2024-08-13)

------------------

- Added `--is-auto-started` parameter in main, passed when app is auto started.

[sgeulette]

0.2.1 (2024-08-13)

------------------

- Changed backup directory.

[sgeulette]

- Improved exception logging.

[sgeulette]

0.2.0 (2024-08-13)

------------------

- Added profiles_backup script.

[sgeulette]

- Stored client identification, plone password and webservice url in configuration file.

[sgeulette]

- Added profiles_restore script.

[sgeulette]

0.1.1 (2024-07-19)

------------------

- Handled Windows startup add or remove following parameters.

[sgeulette]

0.1.0 (2024-07-18)

------------------

- Initial release.

[sgeulette]

| null | Stephan Geulette (IMIO) | support@imio.be | null | null | GPL version 3 | Scan Windows | [

"Development Status :: 3 - Alpha",

"Programming Language :: Python :: 3.12",

"License :: OSI Approved :: GNU General Public License v3 (GPLv3)",

"Operating System :: Microsoft :: Windows",

"Operating System :: Microsoft :: Windows :: Windows 10",

"Operating System :: Microsoft :: Windows :: Windows 11"

] | [] | null | null | >=3.12 | [] | [] | [] | [

"pyinstaller",

"requests"

] | [] | [] | [] | [

"PyPI, https://pypi.python.org/pypi/imio.scan_helpers",

"Source, https://github.com/IMIO/imio.scan_helpers"

] | twine/6.2.0 CPython/3.12.7 | 2026-02-20T10:40:52.091974 | imio_scan_helpers-0.7.1.tar.gz | 26,252 | da/e8/55ddd113f3ed98d1e3b9fb5ea9bcfa0a5f2f496926996b37854cf62a758a/imio_scan_helpers-0.7.1.tar.gz | source | sdist | null | false | 1a75787db0f6c1e796bc582a720a2b1f | 58075760a223aedebddd6048357ce76c9936905f693ad2b5e8d68b3b66b07040 | dae855ddd113f3ed98d1e3b9fb5ea9bcfa0a5f2f496926996b37854cf62a758a | null | [

"LICENSE"

] | 0 |

2.4 | moordyn | 2.6.1 | Python wrapper for MoorDyn library | MoorDyn v2

==========

**This repository is for MoorDyn-C.**

MoorDyn is a lumped-mass model for simulating the dynamics of mooring systems connected to floating offshore structures. As of 2022 it is available under the BSD 3-Clause

license.

Read the docs here: [moordyn.readthedocs.io](https://moordyn.readthedocs.io/en/latest/)

Example uses and instructions here: [Examples](https://github.com/FloatingArrayDesign/MoorDyn/tree/dev/example)

It accounts for internal axial stiffness and damping forces, weight and buoyancy forces, hydrodynamic forces from Morison's equation (assuming calm water so far), and vertical spring-damper forces from contact with the seabed. MoorDyn's input file format is based on that of [MAP](https://www.nrel.gov/wind/nwtc/map-plus-plus.html). The model supports arbitrary line interconnections, clump weights and floats, different line properties, and six degree of freedom rods and bodies.

MoorDyn is implemented both in Fortran and in C++. The Fortran version of MoorDyn (MoorDyn-F) is a core module in [OpenFAST](https://github.com/OpenFAST/openfast) and can be used as part of an OpenFAST or FAST.Farm simulation, or used in a standalone form. The C++ version of MoorDyn (MoorDyn-C) is more adaptable to different use cases and couplings. It can be compiled as a dynamically-linked library or wrapped for use in Python (as a module), Fortran, or Matlab. It features simpler functions for easy coupling with models or scripts coded in C/C++, Fortran, Matlab/Simulink, etc., including a coupling with [WEC-Sim](https://wec-sim.github.io/WEC-Sim/master/index.html). Users should take care to ensure their input file format matches the respective version of MoorDyn they are trying to use. Details on the input file differences can be found in the [documentation](https://moordyn.readthedocs.io/en/latest/inputs.html).

Both forms of MoorDyn feature the same underlying mooring model, use the same input and output conventions, and are being updated and improved in parallel. They follow the same version numbering, with a "C" or "F" suffix for differentiation.

Further information on MoorDyn can be found on the [documentation site](https://moordyn.readthedocs.io/en/latest/). MoorDyn-F is available in the [OpenFAST repository](https://github.com/OpenFAST/openfast/tree/main/modules/moordyn). MoorDyn-C is available in this repository with the following three maintained branches. The master branch represents the most recent release of

MoorDyn-C. The dev branch contains new features currently in development. The v1 branch is the now deprecated version one of MoorDyn-C.

## Acknowledgments

[National Renewable Energy Laboratory (NREL)](https://www.nrel.gov/):

- Matt Hall

- Ryan Davies

- Andy Platt

- Stein Housner

- Lu Wang

- Jason Jonkman

[CoreMarine](https://www.core-marine.com/) [MoorDyn-C v2]:

- Jose Luis Cercos-Pita

- Aymeric Devulder

- Elena Gridasova

[Kelson Marine](https://kelsonmarine.com) [MoorDyn-C v2]:

- [David Joseph Anderson](https://davidjosephanderson.com/)

- [Alex Kinley](https://github.com/AlexWKinley)

| text/markdown | null | Jose Luis Cercos-Pita <jlc@core-marine.com> | null | null | null | null | [

"Programming Language :: Python :: 3",

"License :: OSI Approved :: BSD License",

"Operating System :: OS Independent"

] | [] | null | null | >=3.7 | [] | [] | [] | [] | [] | [] | [] | [

"Homepage, https://github.com/FloatingArrayDesign/MoorDyn",

"Bug Tracker, https://github.com/FloatingArrayDesign/MoorDyn/issues",

"Documentation, https://moordyn.readthedocs.io"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T10:40:16.975172 | moordyn-2.6.1.tar.gz | 1,180,236 | 38/30/c2f670748f0c3e4acd4e046f03695d639dbf537796c25f5495706910d680/moordyn-2.6.1.tar.gz | source | sdist | null | false | 8f1dbc12e99ff40dcfc09fcea1d4582d | fab360adb98d50f5f3a290f3f2484e69dfa2d45d959ef056bc3a734b1019dc68 | 3830c2f670748f0c3e4acd4e046f03695d639dbf537796c25f5495706910d680 | null | [

"LICENSE.txt"

] | 5,814 |

2.4 | traccia | 0.1.12 | Production-ready distributed tracing SDK for AI agents and LLM applications | # Traccia

**Production-ready distributed tracing for AI agents and LLM applications**

Traccia is a lightweight, high-performance Python SDK for observability and tracing of AI agents, LLM applications, and complex distributed systems. Built on OpenTelemetry standards with specialized instrumentation for AI workloads.

[Traccia](https://pypi.org/project/traccia/) is available on PyPI.

## ✨ Features

- **🔍 Automatic Instrumentation**: Auto-patch OpenAI, Anthropic, requests, and HTTP libraries

- **🤖 Framework Integrations**: Support for LangChain, CrewAI, and OpenAI Agents SDK

- **📊 LLM-Aware Tracing**: Track tokens, costs, prompts, and completions automatically

- **📈 OpenTelemetry Metrics**: Emit OTEL-compliant metrics for accurate cost/token tracking (independent of sampling)

- **⚡ Zero-Config Start**: Simple `init()` call with automatic config discovery

- **🎯 Decorator-Based**: Trace any function with `@observe` decorator

- **🔧 Multiple Exporters**: OTLP (compatible with Grafana Tempo, Jaeger, Zipkin), Console, or File

- **🛡️ Production-Ready**: Rate limiting, error handling, config validation, robust flushing

- **📝 Type-Safe**: Full Pydantic validation for configuration

- **🚀 High Performance**: Efficient batching, async support, minimal overhead

- **🔐 Secure**: No secrets in logs, configurable data truncation

---

## 🚀 Quick Start

### Installation

```bash

pip install traccia

```

### Basic Usage

```python

from traccia import init, observe

# Initialize (auto-loads from traccia.toml if present)

init()

# Trace any function

@observe()

def my_function(x, y):

return x + y

# That's it! Traces are automatically created and exported

result = my_function(2, 3)

```

### With LLM Calls

```python

from traccia import init, observe

from openai import OpenAI

init() # Auto-patches OpenAI

client = OpenAI()

@observe(as_type="llm")

def generate_text(prompt: str) -> str:

response = client.chat.completions.create(

model="gpt-4",

messages=[{"role": "user", "content": prompt}]

)

return response.choices[0].message.content

# Automatically tracks: model, tokens, cost, prompt, completion, latency

text = generate_text("Write a haiku about Python")

```

### LangChain