metadata_version string | name string | version string | summary string | description string | description_content_type string | author string | author_email string | maintainer string | maintainer_email string | license string | keywords string | classifiers list | platform list | home_page string | download_url string | requires_python string | requires list | provides list | obsoletes list | requires_dist list | provides_dist list | obsoletes_dist list | requires_external list | project_urls list | uploaded_via string | upload_time timestamp[us] | filename string | size int64 | path string | python_version string | packagetype string | comment_text string | has_signature bool | md5_digest string | sha256_digest string | blake2_256_digest string | license_expression string | license_files list | recent_7d_downloads int64 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

2.4 | ethereum-types | 0.3.0 | Types used by the Ethereum Specification. | Ethereum Types

==============

Types and utilities used by the [Ethereum Execution Layer Specification (EELS)][eels]. Includes:

- Fixed-size unsigned integers (`U256`, `U64`, etc.)

- Arbitrarily-sized unsigned integers (`Uint`.)

- Fixed-size byte sequences (`Bytes4`, `Bytes8`, etc.)

- Utilities for making/interacting with immutable dataclasses (`slotted_freezable`, `modify`.)

[eels]: https://github.com/ethereum/execution-specs

| text/markdown | null | null | null | null | null | null | [

"License :: CC0 1.0 Universal (CC0 1.0) Public Domain Dedication"

] | [] | https://github.com/ethereum/ethereum-types | null | >=3.10 | [] | [] | [] | [

"typing-extensions>=4.12.2",

"pytest<9,>=8.2.2; extra == \"test\"",

"pytest-cov<6,>=5; extra == \"test\"",

"pytest-xdist<4,>=3.6.1; extra == \"test\"",

"types-setuptools<71,>=70.3.0.1; extra == \"lint\"",

"isort==5.13.2; extra == \"lint\"",

"mypy==1.17.0; extra == \"lint\"",

"black==24.4.2; extra == \"lint\"",

"flake8==7.1.0; extra == \"lint\"",

"flake8-bugbear==24.4.26; extra == \"lint\"",

"flake8-docstrings==1.7.0; extra == \"lint\"",

"docc<0.3.0,>=0.2.0; extra == \"doc\""

] | [] | [] | [] | [] | twine/6.1.0 CPython/3.12.12 | 2026-02-20T03:47:40.169445 | ethereum_types-0.3.0.tar.gz | 15,697 | 90/90/32d9440ae5b2ac97d873862c9cbbacd28c82cf6d471efb54ef3051700739/ethereum_types-0.3.0.tar.gz | source | sdist | null | false | a197a89896cb5eb893932519735e57f8 | e5324efd269a0f66993163366543e39aae474a53f48031c31acec956867d8995 | 909032d9440ae5b2ac97d873862c9cbbacd28c82cf6d471efb54ef3051700739 | null | [

"LICENSE.md"

] | 977 |

2.4 | quantlite | 1.0.2 | A fat-tail-native quantitative finance toolkit: EVT, risk metrics, and honest modelling for markets that bite. | # QuantLite

[](https://pypi.org/project/quantlite/)

[](https://www.python.org/downloads/)

[](LICENSE)

**A fat-tail-native quantitative finance toolkit for Python.**

Most quantitative finance libraries bolt fat tails on as an afterthought, if they bother at all. They fit Gaussians, compute VaR with normal assumptions, and hope the tails never bite. The tails always bite.

QuantLite starts from the other end. Every distribution is fat-tailed by default. Every risk metric accounts for extremes. Every backtest ships with an honesty check. The result is a toolkit that models markets as they actually behave, not as textbooks wish they would.

```python

import quantlite as ql

data = ql.fetch(["AAPL", "BTC-USD", "GLD", "TLT"], period="5y")

regimes = ql.detect_regimes(data, n_regimes=3)

weights = ql.construct_portfolio(data, regime_aware=True, regimes=regimes)

result = ql.backtest(data, weights)

ql.tearsheet(result, regimes=regimes, save="portfolio.html")

```

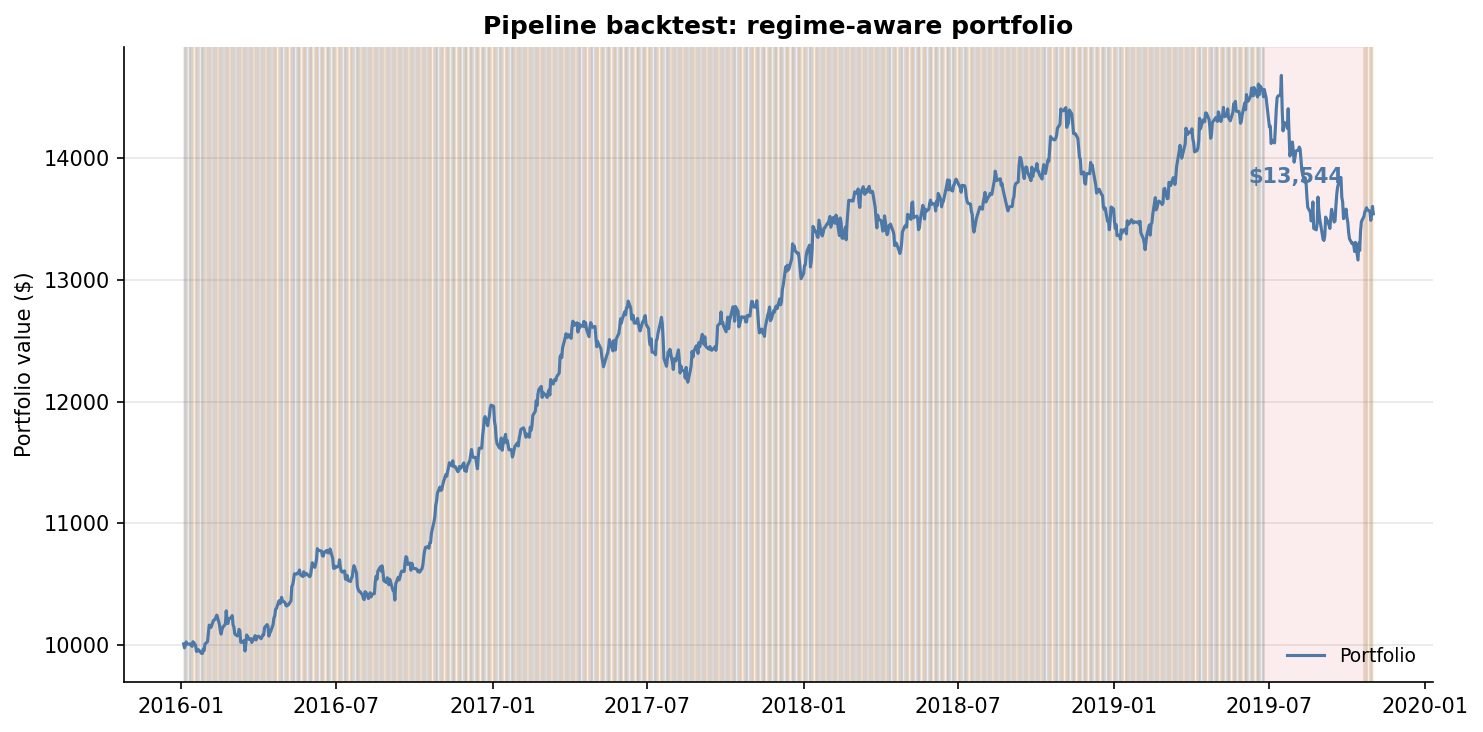

Five lines. Fetch data, detect market regimes, build a regime-aware portfolio, backtest it, and generate a full tearsheet. That is the Dream API.

---

## Why QuantLite

- **Fat tails are the default, not an afterthought.** Student-t, Lévy stable, GPD, and GEV distributions are first-class citizens. Gaussian is explicitly opt-in, never implicit.

- **Operationalises Taleb, Peters, and Lopez de Prado.** Ergodicity economics, antifragility scoring, the Fourth Quadrant map, Deflated Sharpe Ratios, and CSCV overfitting detection are built in, not bolted on.

- **Every backtest comes with an honesty check.** Bootstrap confidence intervals, multiple-testing corrections, and walk-forward validation ensure you know whether your Sharpe ratio is genuine or a statistical artefact.

- **Every chart follows Stephen Few's principles.** Maximum data-ink ratio, muted palette, direct labels, no chartjunk. Publication-ready by default.

---

## Visual Showcase

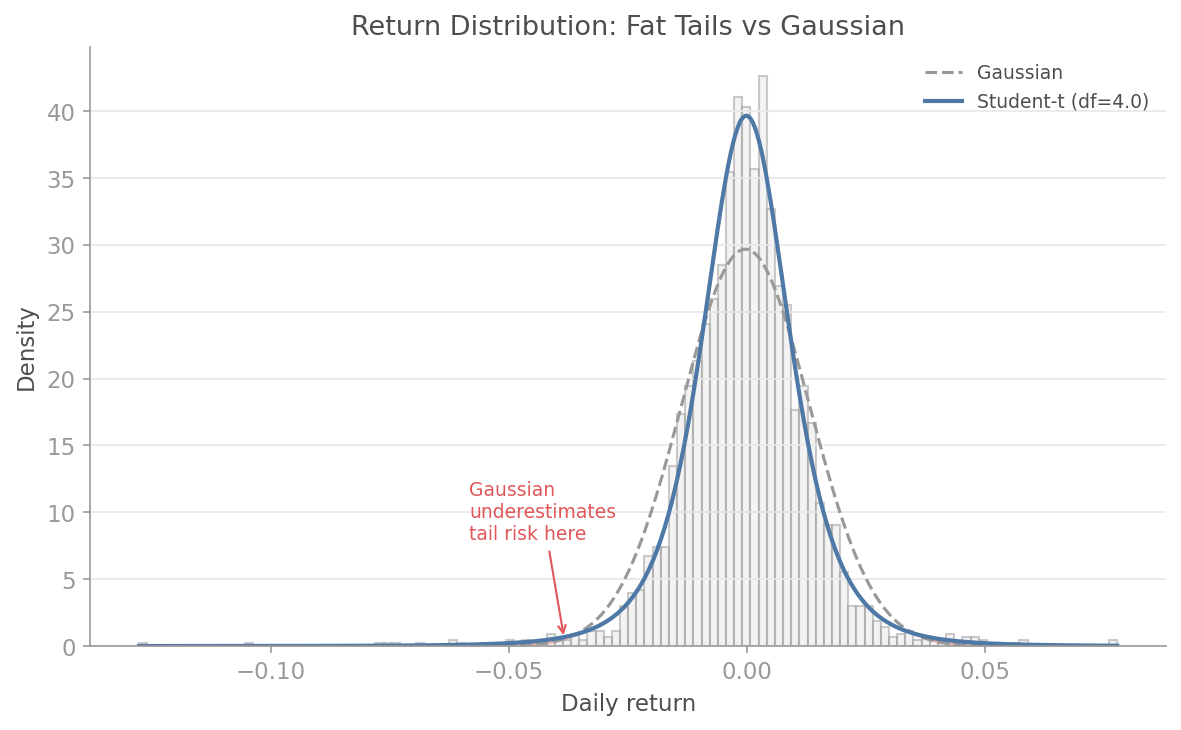

### Fat Tails vs Gaussian

Where the Gaussian underestimates tail risk, EVT and Student-t fitting reveal the true shape of returns. The difference is where fortunes are lost.

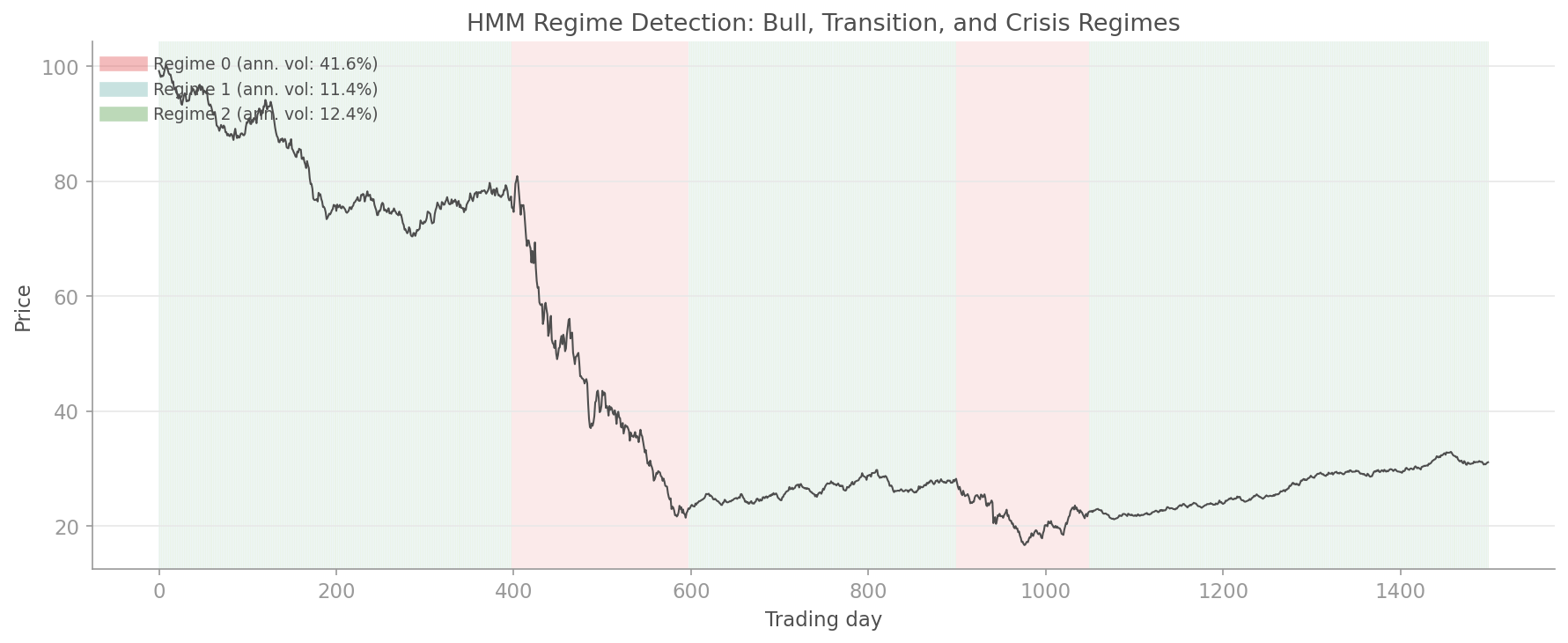

### Regime Timeline

Hidden Markov Models automatically identify bull, bear, and crisis regimes. Your portfolio should know which world it is living in.

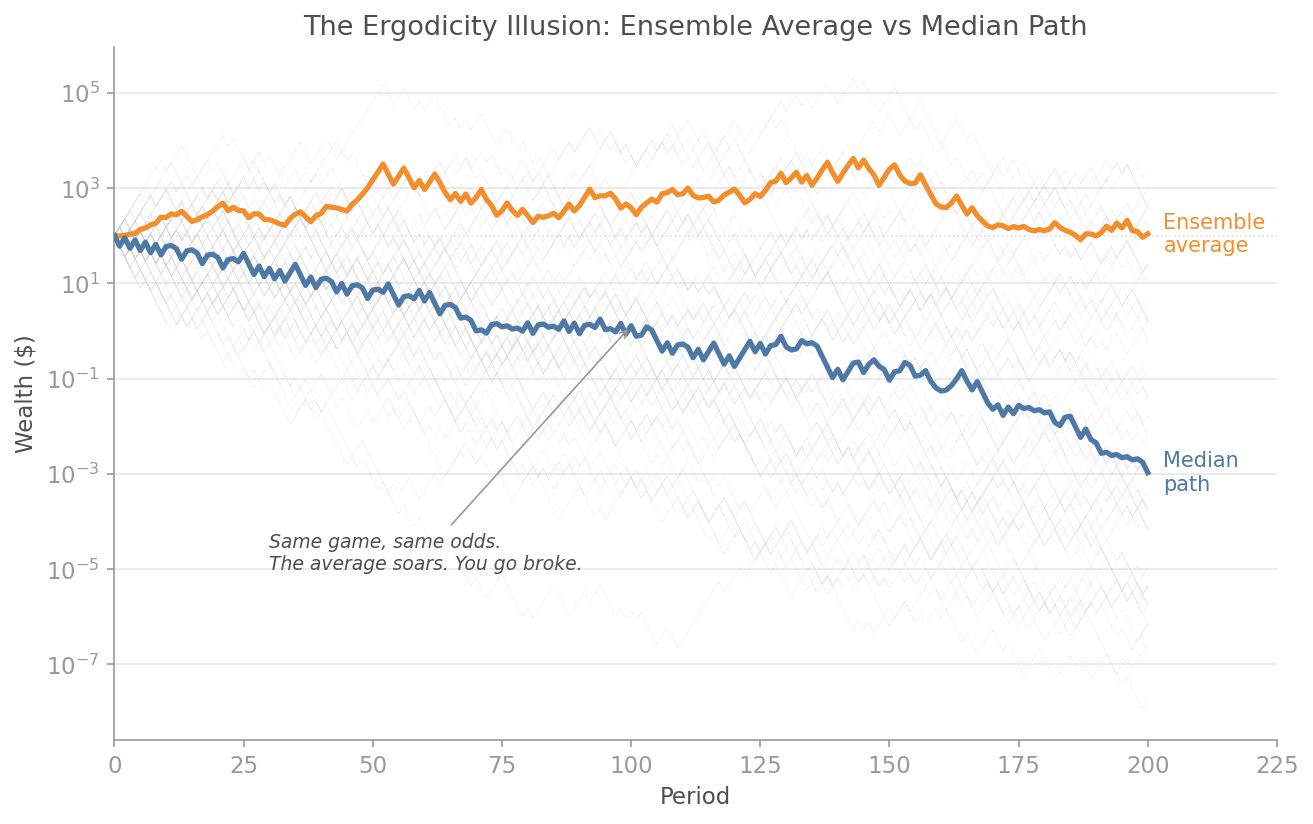

### Ergodicity Gap

The ensemble average says you are making money. The time average says you are going broke. The gap between them is the most important number in finance that nobody computes.

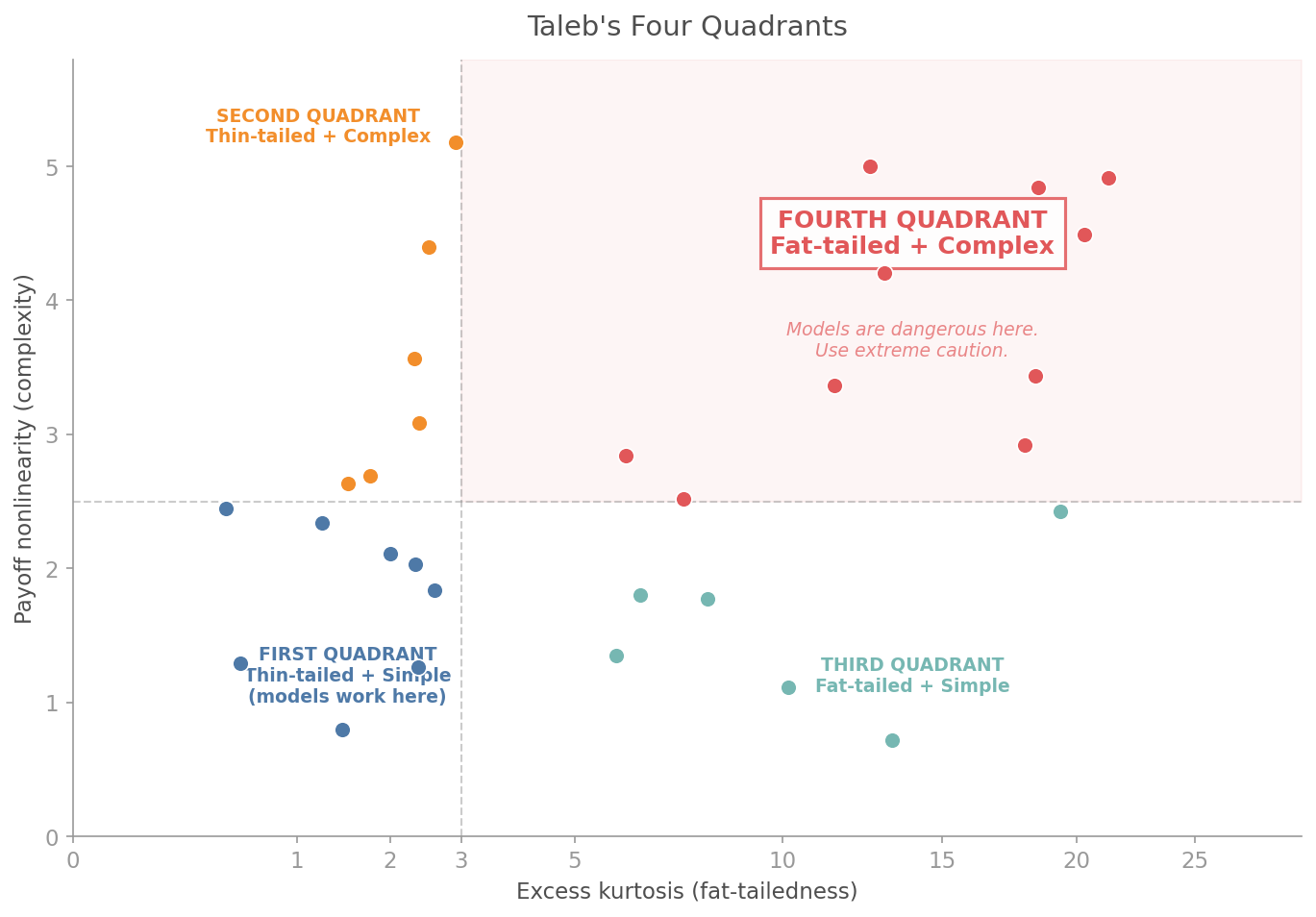

### Fourth Quadrant Map

Taleb's Fourth Quadrant: where payoffs are extreme and distributions are unknown. Know which quadrant your portfolio lives in before the market tells you.

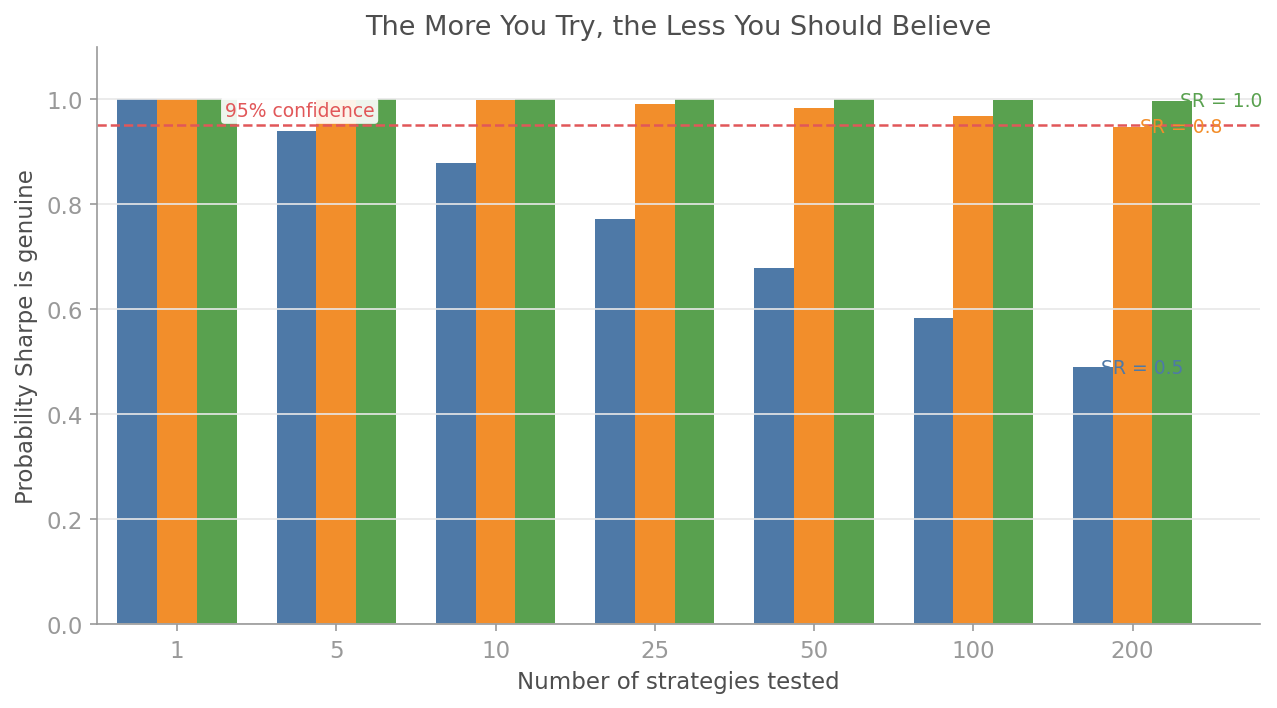

### Deflated Sharpe Ratio

You tested 50 strategies and picked the best. The Deflated Sharpe Ratio tells you the probability that your winner is genuine, not a multiple-testing artefact.

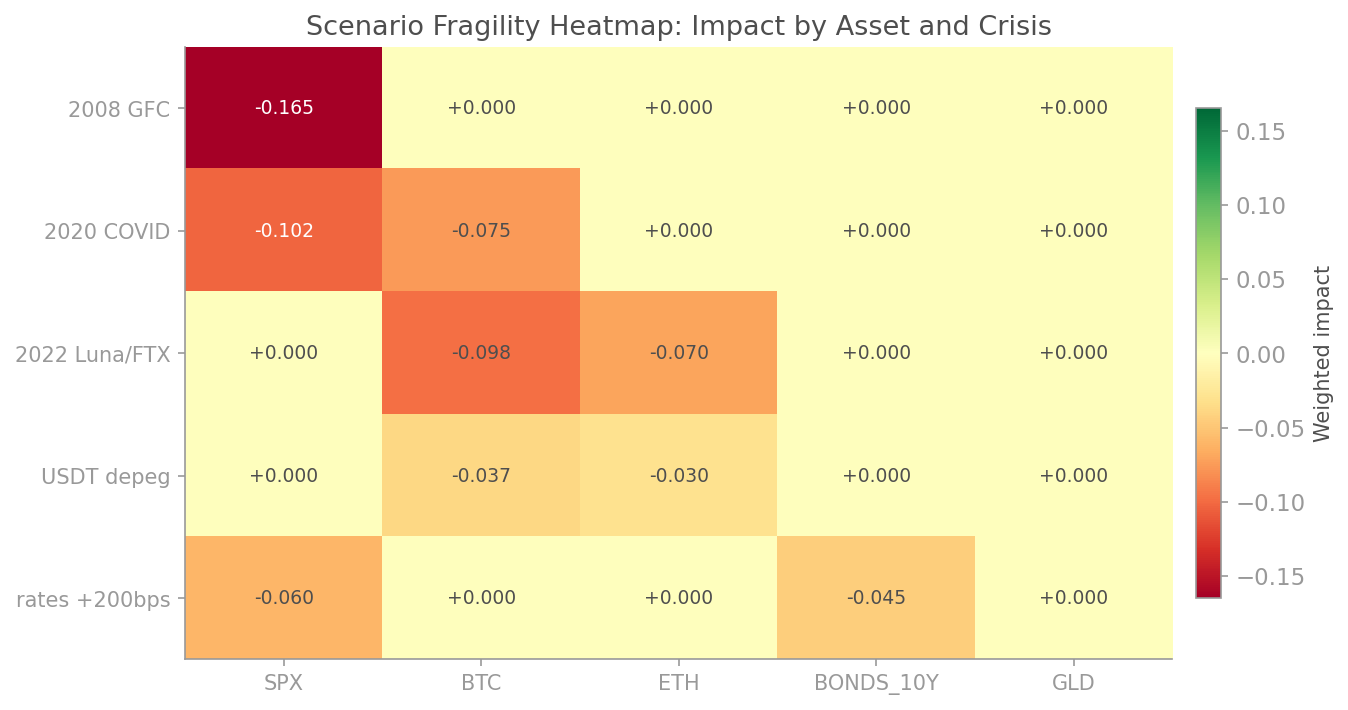

### Scenario Stress Heatmap

How does your portfolio fare under the GFC, COVID, the taper tantrum, and a dozen other crises? One glance.

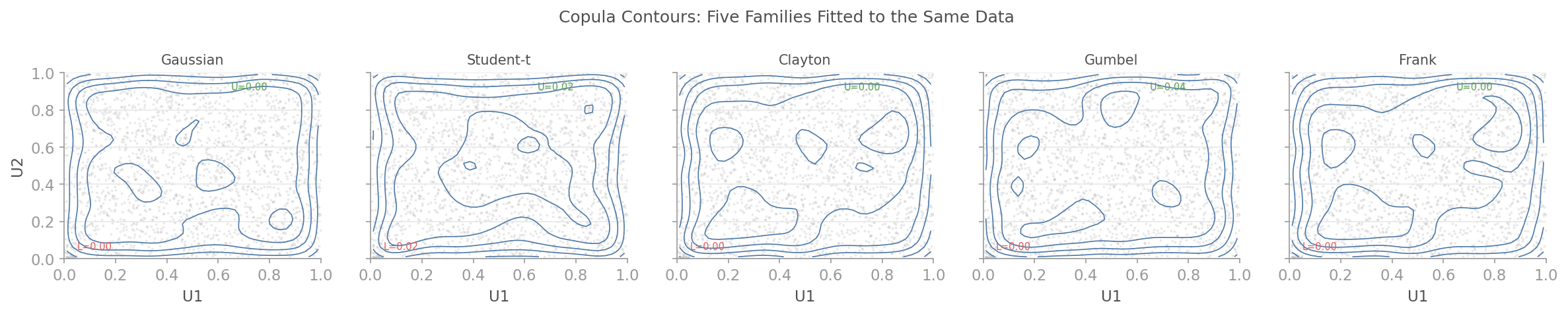

### Copula Contours

Five copula families fitted to the same data. Gaussian copula says tail dependence is zero. Student-t and Clayton disagree. They are right.

### Pipeline Equity Curve

The Dream API in action: regime-aware portfolio construction with automatic defensive tilting during crisis periods.

---

## Installation

```bash

pip install quantlite

```

Install only the data sources you need:

```bash

pip install quantlite[yahoo] # Yahoo Finance

pip install quantlite[crypto] # Cryptocurrency exchanges (CCXT)

pip install quantlite[fred] # FRED macroeconomic data

pip install quantlite[plotly] # Interactive Plotly charts

pip install quantlite[all] # Everything

```

Optional: `hmmlearn` for HMM regime detection.

---

## Quickstart

### The Dream API

```python

import quantlite as ql

# Fetch → detect regimes → build portfolio → backtest → report

data = ql.fetch(["AAPL", "BTC-USD", "GLD", "TLT"], period="5y")

regimes = ql.detect_regimes(data, n_regimes=3)

weights = ql.construct_portfolio(data, regime_aware=True, regimes=regimes)

result = ql.backtest(data, weights)

ql.tearsheet(result, regimes=regimes, save="portfolio.html")

```

### Fat-Tail Risk Analysis

```python

from quantlite.distributions.fat_tails import student_t_process

from quantlite.risk.metrics import value_at_risk, cvar, return_moments

from quantlite.risk.evt import tail_risk_summary

# Generate fat-tailed returns (nu=4 gives realistic equity tail behaviour)

returns = student_t_process(nu=4.0, mu=0.0003, sigma=0.012, n_steps=2520, rng_seed=42)

# Cornish-Fisher VaR accounts for skewness and kurtosis

var_99 = value_at_risk(returns, alpha=0.01, method="cornish-fisher")

cvar_99 = cvar(returns, alpha=0.01)

moments = return_moments(returns)

print(f"VaR (99%): {var_99:.4f}")

print(f"CVaR (99%): {cvar_99:.4f}")

print(f"Excess kurtosis: {moments.kurtosis:.2f}")

# Full EVT tail analysis

summary = tail_risk_summary(returns)

print(f"Hill tail index: {summary.hill_estimate.tail_index:.2f}")

print(f"GPD shape (xi): {summary.gpd_fit.shape:.4f}")

print(f"1-in-100 loss: {summary.return_level_100:.4f}")

```

### Backtest Forensics

```python

from quantlite.forensics import deflated_sharpe_ratio

from quantlite.resample import bootstrap_sharpe_distribution

# You tried 50 strategies and the best had Sharpe 1.8.

# Is it real?

dsr = deflated_sharpe_ratio(observed_sharpe=1.8, n_trials=50, n_obs=252)

print(f"Probability Sharpe is genuine: {dsr:.2%}")

# Bootstrap confidence interval on the Sharpe ratio

result = bootstrap_sharpe_distribution(returns, n_samples=2000, seed=42)

print(f"Sharpe: {result['point_estimate']:.2f}")

print(f"95% CI: [{result['ci_lower']:.2f}, {result['ci_upper']:.2f}]")

```

---

## Module Reference

### Core Risk

| Module | Description |

|--------|-------------|

| `quantlite.risk.metrics` | VaR (historical, parametric, Cornish-Fisher), CVaR, Sortino, Calmar, Omega, tail ratio, drawdowns |

| `quantlite.risk.evt` | GPD, GEV, Hill estimator, Peaks Over Threshold, return levels |

| `quantlite.distributions.fat_tails` | Student-t, Lévy stable, regime-switching GBM, Kou jump-diffusion |

| `quantlite.metrics` | Annualised return, volatility, Sharpe, max drawdown |

### Dependency and Portfolio

| Module | Description |

|--------|-------------|

| `quantlite.dependency.copulas` | Gaussian, Student-t, Clayton, Gumbel, Frank copulas with tail dependence |

| `quantlite.dependency.correlation` | Rolling, EWMA, stress, rank correlation |

| `quantlite.dependency.clustering` | Hierarchical Risk Parity |

| `quantlite.portfolio.optimisation` | Mean-variance, CVaR, risk parity, HRP, Black-Litterman, Kelly |

| `quantlite.portfolio.rebalancing` | Calendar, threshold, and tactical rebalancing |

### Backtesting

| Module | Description |

|--------|-------------|

| `quantlite.backtesting.engine` | Multi-asset backtesting with circuit breakers and slippage |

| `quantlite.backtesting.signals` | Momentum, mean reversion, trend following, volatility targeting |

| `quantlite.backtesting.analysis` | Performance summaries, monthly tables, regime attribution |

### Data

| Module | Description |

|--------|-------------|

| `quantlite.data` | Unified connectors: Yahoo Finance, CCXT, FRED, local files, plugin registry |

| `quantlite.data_generation` | GBM, correlated GBM, Ornstein-Uhlenbeck, Merton jump-diffusion |

### Taleb Stack

| Module | Description |

|--------|-------------|

| `quantlite.ergodicity` | Time-average vs ensemble-average growth, Kelly fraction, leverage effect |

| `quantlite.antifragile` | Antifragility score, convexity, Fourth Quadrant, barbell allocation, Lindy |

| `quantlite.scenarios` | Composable scenario engine, pre-built crisis library, shock propagation |

### Honest Backtesting

| Module | Description |

|--------|-------------|

| `quantlite.forensics` | Deflated Sharpe Ratio, Probabilistic Sharpe, haircut adjustments, minimum track record |

| `quantlite.overfit` | CSCV/PBO, TrialTracker, multiple testing correction, walk-forward validation |

| `quantlite.resample` | Block and stationary bootstrap, confidence intervals for Sharpe and drawdown |

### Systemic Risk

| Module | Description |

|--------|-------------|

| `quantlite.contagion` | CoVaR, Delta CoVaR, Marginal Expected Shortfall, Granger causality |

| `quantlite.network` | Correlation networks, eigenvector centrality, cascade simulation, community detection |

| `quantlite.diversification` | Effective Number of Bets, entropy diversification, tail diversification |

### Crypto

| Module | Description |

|--------|-------------|

| `quantlite.crypto.stablecoin` | Depeg probability, peg deviation tracking, reserve risk scoring |

| `quantlite.crypto.exchange` | Exchange concentration (HHI), wallet risk, proof of reserves, slippage |

| `quantlite.crypto.onchain` | Wallet exposure, TVL tracking, DeFi dependency graphs, smart contract risk |

### Simulation

| Module | Description |

|--------|-------------|

| `quantlite.simulation.evt_simulation` | EVT tail simulation, parametric tail simulation, scenario fan |

| `quantlite.simulation.copula_mc` | Gaussian copula MC, t-copula MC, stress correlation MC |

| `quantlite.simulation.regime_mc` | Regime-switching simulation, reverse stress test |

| `quantlite.monte_carlo` | Monte Carlo simulation harness |

### Factor Models

| Module | Description |

|--------|-------------|

| `quantlite.factors.classical` | Fama-French three/five-factor, Carhart four-factor, factor attribution |

| `quantlite.factors.custom` | Custom factor construction, significance testing, decay analysis |

| `quantlite.factors.tail_risk` | CVaR decomposition, regime factor exposure, crowding score |

### Regime Integration and Pipeline

| Module | Description |

|--------|-------------|

| `quantlite.regimes.hmm` | Hidden Markov Model regime detection |

| `quantlite.regimes.changepoint` | CUSUM and Bayesian changepoint detection |

| `quantlite.regimes.conditional` | Regime-conditional risk metrics and VaR |

| `quantlite.regime_integration` | Defensive tilting, filtered backtesting, regime tearsheets |

| `quantlite.pipeline` | Dream API: `fetch`, `detect_regimes`, `construct_portfolio`, `backtest`, `tearsheet` |

### Other

| Module | Description |

|--------|-------------|

| `quantlite.instruments` | Black-Scholes, bonds, barrier and Asian options |

| `quantlite.viz` | Stephen Few-themed charts: risk dashboards, copula contours, regime timelines |

| `quantlite.report` | HTML/PDF tearsheet generation |

---

## Design Philosophy

1. **Fat tails are the default.** Gaussian assumptions are explicitly opt-in, never implicit.

2. **Explicit return types.** Every function documents its return type precisely: `float`, `dict` with named keys, or a frozen dataclass with clear attributes.

3. **Composable modules.** Risk metrics feed into portfolio optimisation which feeds into backtesting. Each layer works independently.

4. **Honest modelling.** If a method has known limitations (e.g., Gaussian copula has zero tail dependence), the docstring says so.

5. **Reproducible.** Every stochastic function accepts `rng_seed` for deterministic output.

---

## Documentation

Full documentation lives in the [`docs/`](docs/) directory:

| Document | Topic |

|----------|-------|

| [risk.md](docs/risk.md) | Risk metrics and EVT |

| [copulas.md](docs/copulas.md) | Copula dependency structures |

| [regimes.md](docs/regimes.md) | Regime detection |

| [portfolio.md](docs/portfolio.md) | Portfolio optimisation and rebalancing |

| [data.md](docs/data.md) | Data connectors |

| [visualisation.md](docs/visualisation.md) | Stephen Few-themed charts |

| [interactive_viz.md](docs/interactive_viz.md) | Plotly interactive charts |

| [ergodicity.md](docs/ergodicity.md) | Ergodicity economics |

| [antifragility.md](docs/antifragility.md) | Antifragility measurement |

| [scenarios.md](docs/scenarios.md) | Scenario stress testing |

| [forensics.md](docs/forensics.md) | Deflated Sharpe and strategy forensics |

| [overfitting.md](docs/overfitting.md) | Overfitting detection |

| [resampling.md](docs/resampling.md) | Bootstrap resampling |

| [contagion.md](docs/contagion.md) | Systemic risk and contagion |

| [network.md](docs/network.md) | Financial network analysis |

| [diversification.md](docs/diversification.md) | Diversification diagnostics |

| [factors_classical.md](docs/factors_classical.md) | Classical factor models |

| [factors_custom.md](docs/factors_custom.md) | Custom factor tools |

| [factors_tail_risk.md](docs/factors_tail_risk.md) | Tail risk factor analysis |

| [simulation_evt.md](docs/simulation_evt.md) | EVT simulation |

| [simulation_copula.md](docs/simulation_copula.md) | Copula Monte Carlo |

| [simulation_regime.md](docs/simulation_regime.md) | Regime-switching simulation |

| [regime_integration.md](docs/regime_integration.md) | Regime-aware pipelines |

| [pipeline.md](docs/pipeline.md) | Dream API reference |

| [reports.md](docs/reports.md) | Tearsheet generation |

| [stablecoin_risk.md](docs/stablecoin_risk.md) | Stablecoin risk |

| [exchange_risk.md](docs/exchange_risk.md) | Exchange risk |

| [onchain_risk.md](docs/onchain_risk.md) | On-chain risk |

| [architecture.md](docs/architecture.md) | Library architecture |

---

## Contributing

Contributions are welcome. Please ensure:

1. All new functions have type hints and docstrings

2. Tests pass: `pytest`

3. Code is formatted: `ruff check` and `ruff format`

4. British spellings in all documentation

---

## License

MIT License. See [LICENSE](LICENSE) for details.

## Links

- [PyPI](https://pypi.org/project/quantlite/)

- [GitHub](https://github.com/prasants/QuantLite)

- [Issue Tracker](https://github.com/prasants/QuantLite/issues)

- [Changelog](CHANGELOG.md)

| text/markdown | null | Prasant Sudhakaran <code@prasant.net> | null | null | MIT License

Copyright (c) 2024 Prasant Sudhakaran

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in

all copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN

THE SOFTWARE.

| quant, finance, risk, extreme value theory, monte carlo, fat tails, VaR, CVaR, options | [

"Development Status :: 3 - Alpha",

"Intended Audience :: Financial and Insurance Industry",

"Intended Audience :: Science/Research",

"License :: OSI Approved :: MIT License",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"Topic :: Office/Business :: Financial",

"Topic :: Scientific/Engineering :: Mathematics",

"Typing :: Typed"

] | [] | null | null | >=3.9 | [] | [] | [] | [

"numpy>=1.24",

"pandas>=2.0",

"scipy>=1.10",

"matplotlib>=3.7",

"mplfinance",

"yfinance>=0.2; extra == \"yahoo\"",

"ccxt>=4.0; extra == \"crypto\"",

"fredapi>=0.5; extra == \"fred\"",

"plotly>=5.0; extra == \"plotly\"",

"kaleido>=0.2; extra == \"plotly\"",

"plotly>=5.0; extra == \"report\"",

"kaleido>=0.2; extra == \"report\"",

"weasyprint>=60; extra == \"pdf\"",

"yfinance>=0.2; extra == \"all\"",

"ccxt>=4.0; extra == \"all\"",

"fredapi>=0.5; extra == \"all\"",

"plotly>=5.0; extra == \"all\"",

"kaleido>=0.2; extra == \"all\"",

"weasyprint>=60; extra == \"all\"",

"hmmlearn>=0.3; extra == \"all\"",

"pytest>=7.0; extra == \"dev\"",

"ruff>=0.4; extra == \"dev\"",

"mypy>=1.8; extra == \"dev\"",

"pandas-stubs; extra == \"dev\""

] | [] | [] | [] | [

"Homepage, https://github.com/prasants/QuantLite",

"Repository, https://github.com/prasants/QuantLite",

"Issues, https://github.com/prasants/QuantLite/issues"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T03:47:20.206848 | quantlite-1.0.2.tar.gz | 190,966 | 18/a4/bbb4d7914336f9834b0a426f1bb80770cb633beb88508864face0347523f/quantlite-1.0.2.tar.gz | source | sdist | null | false | 8a03f4a976bd553c948ecd34242b38e0 | e07ce4a071354004479ed28a7d02a18a3b06ecf69e0076de2e541a187e558e87 | 18a4bbb4d7914336f9834b0a426f1bb80770cb633beb88508864face0347523f | null | [

"LICENSE"

] | 229 |

2.4 | fda-mcp | 0.2.2 | MCP server providing access to FDA data via the OpenFDA API | # FDA MCP Server

[](https://pypi.org/project/fda-mcp/)

[](https://pypi.org/project/fda-mcp/)

[](https://opensource.org/licenses/MIT)

An [MCP](https://modelcontextprotocol.io/) server that provides LLM-optimized access to FDA data through the [OpenFDA API](https://open.fda.gov/) and direct FDA document retrieval. Covers all 21 OpenFDA endpoints plus regulatory decision documents (510(k) summaries, De Novo decisions, PMA approval letters).

## Quick Start

No clone or local build required. Install [uv](https://docs.astral.sh/uv/) and run directly from [PyPI](https://pypi.org/project/fda-mcp/):

```bash

uvx fda-mcp

```

That's it. The server starts on stdio and is ready for any MCP client.

## Usage with Claude Desktop

Add to your `claude_desktop_config.json`:

```json

{

"mcpServers": {

"fda": {

"command": "uvx",

"args": ["fda-mcp"],

"env": {

"OPENFDA_API_KEY": "your-key-here"

}

}

}

}

```

Config file location:

- **macOS**: `~/Library/Application Support/Claude/claude_desktop_config.json`

- **Windows**: `%APPDATA%\Claude\claude_desktop_config.json`

## Usage with Claude Code

Add directly from the command line:

```bash

claude mcp add fda -- uvx fda-mcp

```

To include an API key for higher rate limits:

```bash

claude mcp add fda -e OPENFDA_API_KEY=your-key-here -- uvx fda-mcp

```

Or add interactively within Claude Code using the `/mcp` slash command.

## API Key (Optional)

The `OPENFDA_API_KEY` environment variable is optional. Without it you get 40 requests/minute. With a free key from [open.fda.gov](https://open.fda.gov/apis/authentication/) you get 240 requests/minute.

## Features

- **4 MCP tools** — one unified search tool, count/aggregation, field discovery, and document retrieval

- **3 MCP resources** for query syntax help, endpoint reference, and field discovery

- **All 21 OpenFDA endpoints** accessible via a single `search_fda` tool with a `dataset` parameter

- **Server instructions** — query syntax and common mistakes are injected into every LLM context automatically

- **Actionable error messages** — inline syntax help, troubleshooting tips, and `.exact` suffix warnings

- **FDA decision documents** — downloads and extracts text from 510(k) summaries, De Novo decisions, PMA approvals, SSEDs, and supplements

- **OCR fallback** for scanned PDF documents (older FDA submissions)

- **Context-efficient responses** — summarized output, field discovery on demand, pagination guidance

## Tools

| Tool | Purpose |

|------|---------|

| `search_fda` | Search any of the 21 OpenFDA datasets. The `dataset` parameter selects the endpoint (e.g., `drug_adverse_events`, `device_510k`, `food_recalls`). Accepts `search`, `limit`, `skip`, and `sort`. |

| `count_records` | Aggregation queries on any endpoint. Returns counts with percentages and narrative summary. Warns when `.exact` suffix is missing on text fields. |

| `list_searchable_fields` | Returns searchable field names for any endpoint. Call before searching if unsure of field names. |

| `get_decision_document` | Fetches FDA regulatory decision PDFs and extracts text. Supports 510(k), De Novo, PMA, SSED, and supplement documents. |

### Dataset Values for `search_fda`

| Category | Datasets |

|----------|----------|

| Drug | `drug_adverse_events`, `drug_labels`, `drug_ndc`, `drug_approvals`, `drug_recalls`, `drug_shortages` |

| Device | `device_adverse_events`, `device_510k`, `device_pma`, `device_classification`, `device_recalls`, `device_recall_details`, `device_registration`, `device_udi`, `device_covid19_serology` |

| Food | `food_adverse_events`, `food_recalls` |

| Other | `historical_documents`, `substance_data`, `unii`, `nsde` |

### Resources (3)

| URI | Content |

|-----|---------|

| `fda://reference/query-syntax` | OpenFDA query syntax: AND/OR/NOT, wildcards, date ranges, exact matching |

| `fda://reference/endpoints` | All 21 endpoints with descriptions |

| `fda://reference/fields/{endpoint}` | Per-endpoint field reference |

## Example Queries

Once connected, you can ask Claude things like:

- "Search for adverse events related to OZEMPIC"

- "Find all Class I device recalls from 2024"

- "What are the most common adverse reactions reported for LIPITOR?"

- "Get the 510(k) summary for K213456"

- "Search for PMA approvals for cardiovascular devices"

- "How many drug recalls has Pfizer had? Break down by classification."

- "Find the drug label for metformin and summarize the warnings"

- "What COVID-19 serology tests has Abbott submitted?"

## Configuration

All configuration is via environment variables:

| Variable | Default | Description |

|----------|---------|-------------|

| `OPENFDA_API_KEY` | *(none)* | API key for higher rate limits (240 vs 40 req/min) |

| `OPENFDA_TIMEOUT` | `30` | HTTP request timeout in seconds |

| `OPENFDA_MAX_CONCURRENT` | `4` | Max concurrent API requests |

| `FDA_PDF_TIMEOUT` | `60` | PDF download timeout in seconds |

| `FDA_PDF_MAX_LENGTH` | `8000` | Default max text characters extracted from PDFs |

## OpenFDA Query Syntax

The `search` parameter on all tools uses OpenFDA query syntax:

```

# AND

patient.drug.openfda.brand_name:"ASPIRIN"+AND+serious:1

# OR (space = OR)

brand_name:"ASPIRIN" brand_name:"IBUPROFEN"

# NOT

NOT+classification:"Class III"

# Date ranges

decision_date:[20230101+TO+20231231]

# Wildcards (trailing only, min 2 chars)

device_name:pulse*

# Exact matching (required for count queries)

patient.reaction.reactionmeddrapt.exact:"Nausea"

```

Use `list_searchable_fields` or the `fda://reference/query-syntax` resource for the full reference.

## Installation Options

### From PyPI (recommended)

```bash

# Run directly without installing

uvx fda-mcp

# Or install as a persistent tool

uv tool install fda-mcp

# Or install with pip

pip install fda-mcp

```

### From source

```bash

git clone https://github.com/Limecooler/fda-mcp.git

cd fda-mcp

uv sync

uv run fda-mcp

```

### Optional: OCR support for scanned PDFs

Many older FDA documents (pre-2010) are scanned images. To extract text from these:

```bash

# macOS

brew install tesseract poppler

# Linux (Debian/Ubuntu)

apt install tesseract-ocr poppler-utils

```

Without these, the server still works — it returns a helpful message when it encounters a scanned document it can't read.

## Development

```bash

# Install with dev dependencies

git clone https://github.com/Limecooler/fda-mcp.git

cd fda-mcp

uv sync --all-extras

# Run unit tests (187 tests, no network)

uv run pytest

# Run integration tests (hits real FDA API)

OPENFDA_TIMEOUT=60 uv run pytest -m integration

# Run a specific test file

uv run pytest tests/test_endpoints.py -v

# Start the server directly

uv run fda-mcp

```

### Project Structure

```

src/fda_mcp/

├── server.py # FastMCP server entry point

├── config.py # Environment-based configuration

├── errors.py # Custom error types

├── openfda/

│ ├── endpoints.py # Enum of all 21 endpoints

│ ├── client.py # Async HTTP client with rate limiting

│ └── summarizer.py # Response summarization per endpoint

├── documents/

│ ├── urls.py # FDA document URL construction

│ └── fetcher.py # PDF download + text extraction + OCR

├── tools/

│ ├── _helpers.py # Shared helpers (limit clamping)

│ ├── search.py # search_fda tool (all 21 endpoints)

│ ├── count.py # count_records tool

│ ├── fields.py # list_searchable_fields tool

│ └── decision_documents.py

└── resources/

├── query_syntax.py # Query syntax reference

├── endpoints_resource.py

└── field_definitions.py

```

## How It Works

### LLM Usability

The server is designed to be easy for LLMs to use correctly:

1. **Server instructions** — Query syntax, workflow guidance, and common mistakes are injected into every LLM context automatically via the MCP protocol (~210 tokens).

2. **Unified tool surface** — A single `search_fda` tool with a typed `dataset` parameter replaces 9 separate search tools, eliminating tool selection confusion.

3. **Actionable errors** — `InvalidSearchError` includes inline syntax quick reference. `NotFoundError` includes troubleshooting steps and the endpoint used. No more references to invisible MCP resources.

4. **Visible warnings** — Limit clamping and missing `.exact` suffix produce visible notes instead of silent fallbacks.

5. **Response summarization** — Each endpoint type has a custom summarizer that extracts key fields and flattens nested structures. Drug labels truncate sections to 2,000 chars. PDF text defaults to 8,000 chars.

6. **Field discovery via tool** — Instead of listing all searchable fields in tool descriptions (which would cost ~8,000-11,000 tokens of persistent context), the `list_searchable_fields` tool provides them on demand.

7. **Smart pagination** — Default page sizes are low (10 records). Responses include `total_results`, `showing`, and `has_more`. When results exceed 100, a tip suggests using `count_records` for aggregation.

### FDA Decision Documents

These documents are **not** available through the OpenFDA API. The server constructs URLs and fetches directly from `accessdata.fda.gov`:

| Document Type | URL Pattern |

|--------------|-------------|

| 510(k) summary | `https://www.accessdata.fda.gov/cdrh_docs/reviews/{K_NUMBER}.pdf` |

| De Novo decision | `https://www.accessdata.fda.gov/cdrh_docs/reviews/{DEN_NUMBER}.pdf` |

| PMA approval | `https://www.accessdata.fda.gov/cdrh_docs/pdf{YY}/{P_NUMBER}A.pdf` |

| PMA SSED | `https://www.accessdata.fda.gov/cdrh_docs/pdf{YY}/{P_NUMBER}B.pdf` |

| PMA supplement | `https://www.accessdata.fda.gov/cdrh_docs/pdf{YY}/{P_NUMBER}S{###}A.pdf` |

Text extraction uses `pdfplumber` for machine-generated PDFs, with automatic OCR fallback via `pytesseract` + `pdf2image` for scanned documents.

## License

MIT

| text/markdown | null | null | null | null | null | fda, healthcare, llm, mcp, openfda | [

"Development Status :: 4 - Beta",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"Topic :: Scientific/Engineering :: Medical Science Apps."

] | [] | null | null | >=3.11 | [] | [] | [] | [

"httpx>=0.27.0",

"mcp[cli]>=1.26.0",

"pdf2image>=1.17.0",

"pdfplumber>=0.11.0",

"pydantic>=2.0.0",

"pytesseract>=0.3.10",

"pytest-asyncio>=0.23; extra == \"dev\"",

"pytest>=8.0; extra == \"dev\"",

"respx>=0.22.0; extra == \"dev\""

] | [] | [] | [] | [] | uv/0.10.2 {"installer":{"name":"uv","version":"0.10.2","subcommand":["publish"]},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"macOS","version":null,"id":null,"libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_version":null,"ci":null} | 2026-02-20T03:47:08.497291 | fda_mcp-0.2.2.tar.gz | 99,485 | 28/1c/1c7106fee5b0d4ac84dc0efa7ec62f28328f3f1641dee6c819e333611889/fda_mcp-0.2.2.tar.gz | source | sdist | null | false | da086c1fc75fb43204053269a1c4a82c | 25176fffabad96a38cb9c1f1fd88bcde15c05f0c19041ddcda722b8c12212e37 | 281c1c7106fee5b0d4ac84dc0efa7ec62f28328f3f1641dee6c819e333611889 | MIT | [

"LICENSE"

] | 242 |

2.4 | langchain-cockroachdb | 0.2.0 | LangChain integration for CockroachDB with native vector support | # <img src="https://raw.githubusercontent.com/cockroachdb/langchain-cockroachdb/main/assets/cockroachdb_logo.svg" width="25" height="25" style="vertical-align: middle;"/> langchain-cockroachdb

[](https://github.com/cockroachdb/langchain-cockroachdb/actions/workflows/test.yml)

[](https://badge.fury.io/py/langchain-cockroachdb)

[](https://www.python.org/downloads/)

[](https://pepy.tech/project/langchain-cockroachdb)

[](https://opensource.org/licenses/Apache-2.0)

<p>

<strong>LangChain integration for CockroachDB with native vector support</strong>

</p>

<p>

<a href="#quick-start">Quick Start</a> •

<a href="#features">Features</a> •

<a href="https://cockroachdb.github.io/langchain-cockroachdb/">Documentation</a> •

<a href="https://github.com/cockroachdb/langchain-cockroachdb/tree/main/examples">Examples</a> •

<a href="#contributing">Contributing</a>

</p>

---

## Overview

Build LLM applications with **CockroachDB's distributed SQL database** and **native vector search** capabilities. This integration provides:

- 🎯 **Native Vector Support** - CockroachDB's `VECTOR` type

- 🚀 **C-SPANN Indexes** - Distributed vector indexes optimized for scale

- 🔄 **Automatic Retries** - Handles serialization errors transparently

- ⚡ **Async & Sync APIs** - Choose based on your use case

- 🏗️ **Distributed by Design** - Built for CockroachDB's architecture

## Quick Start

### Installation

```bash

pip install langchain-cockroachdb

```

### Basic Usage

```python

import asyncio

from langchain_cockroachdb import AsyncCockroachDBVectorStore, CockroachDBEngine

from langchain_openai import OpenAIEmbeddings

async def main():

# Initialize

engine = CockroachDBEngine.from_connection_string(

"cockroachdb://user:pass@host:26257/db"

)

await engine.ainit_vectorstore_table(

table_name="documents",

vector_dimension=1536,

)

vectorstore = AsyncCockroachDBVectorStore(

engine=engine,

embeddings=OpenAIEmbeddings(),

collection_name="documents",

)

# Add documents

await vectorstore.aadd_texts([

"CockroachDB is a distributed SQL database",

"LangChain makes building LLM apps easy",

])

# Search

results = await vectorstore.asimilarity_search(

"Tell me about databases",

k=2

)

for doc in results:

print(doc.page_content)

await engine.aclose()

asyncio.run(main())

```

## Features

### Vector Store

- Native `VECTOR` type support with C-SPANN indexes

- Advanced metadata filtering (`$and`, `$or`, `$gt`, `$in`, etc.)

- Hybrid search (full-text + vector similarity)

- Multi-tenancy with namespace-based isolation and C-SPANN prefix columns

### Chat History

- Persistent conversation storage in CockroachDB

- Session management by thread ID

- Drop-in replacement for other LangChain chat history implementations

### LangGraph Checkpointer

- Short-term memory for multi-turn LangGraph agents

- Human-in-the-loop with interrupt/resume support

- Both `CockroachDBSaver` (sync) and `AsyncCockroachDBSaver`

- Compatible with LangGraph's `compile(checkpointer=...)` interface

### Reliability

- Automatic retry logic with exponential backoff

- Connection pooling with health checks

- Configurable for different workloads

- Works with both SERIALIZABLE (default, recommended) and READ COMMITTED isolation

### Developer Experience

- Async-first design for high concurrency

- Sync wrapper for simple scripts

- Type-safe with full type hints

- Comprehensive test suite (177 tests)

## Documentation

**📚 [Complete Documentation](https://cockroachdb.github.io/langchain-cockroachdb/)**

**LangChain Official Integration Docs:**

- [CockroachDB Provider](https://docs.langchain.com/oss/python/integrations/providers/cockroachdb)

- [CockroachDB Vector Store](https://docs.langchain.com/oss/python/integrations/vectorstores/cockroachdb)

- [CockroachDB Chat Message History](https://docs.langchain.com/oss/python/integrations/chat_message_histories/cockroachdb)

**Getting Started:**

- [Installation](https://cockroachdb.github.io/langchain-cockroachdb/getting-started/installation/)

- [Quick Start](https://cockroachdb.github.io/langchain-cockroachdb/getting-started/quick-start/)

- [Configuration](https://cockroachdb.github.io/langchain-cockroachdb/getting-started/configuration/)

**Guides:**

- [Vector Store](https://cockroachdb.github.io/langchain-cockroachdb/guides/vector-store/)

- [Vector Indexes](https://cockroachdb.github.io/langchain-cockroachdb/guides/vector-indexes/)

- [Hybrid Search](https://cockroachdb.github.io/langchain-cockroachdb/guides/hybrid-search/)

- [Chat History](https://cockroachdb.github.io/langchain-cockroachdb/guides/chat-history/)

- [Multi-Tenancy](https://cockroachdb.github.io/langchain-cockroachdb/guides/multi-tenancy/)

- [LangGraph Checkpointer](https://cockroachdb.github.io/langchain-cockroachdb/guides/checkpointer/)

- [Async vs Sync](https://cockroachdb.github.io/langchain-cockroachdb/guides/async-vs-sync/)

## Examples

**🔧 [Working Examples](https://github.com/cockroachdb/langchain-cockroachdb/tree/main/examples)**

- [`quickstart.py`](https://github.com/cockroachdb/langchain-cockroachdb/blob/main/examples/quickstart.py) - Get started in 5 minutes

- [`sync_usage.py`](https://github.com/cockroachdb/langchain-cockroachdb/blob/main/examples/sync_usage.py) - Synchronous API

- [`vector_indexes.py`](https://github.com/cockroachdb/langchain-cockroachdb/blob/main/examples/vector_indexes.py) - Index optimization

- [`hybrid_search.py`](https://github.com/cockroachdb/langchain-cockroachdb/blob/main/examples/hybrid_search.py) - FTS + vector search

- [`metadata_filtering.py`](https://github.com/cockroachdb/langchain-cockroachdb/blob/main/examples/metadata_filtering.py) - Advanced queries

- [`chat_history.py`](https://github.com/cockroachdb/langchain-cockroachdb/blob/main/examples/chat_history.py) - Persistent conversations

- [`checkpointer.py`](https://github.com/cockroachdb/langchain-cockroachdb/blob/main/examples/checkpointer.py) - LangGraph checkpointer

- [`multi_tenancy.py`](https://github.com/cockroachdb/langchain-cockroachdb/blob/main/examples/multi_tenancy.py) - Namespace-based multi-tenancy

- [`retry_configuration.py`](https://github.com/cockroachdb/langchain-cockroachdb/blob/main/examples/retry_configuration.py) - Configuration patterns

## Development

### Setup

```bash

# Clone repository

git clone https://github.com/cockroachdb/langchain-cockroachdb.git

cd langchain-cockroachdb

# Install dependencies

pip install -e ".[dev]"

# Start CockroachDB

docker-compose up -d

# Run tests

make test

```

### Documentation

```bash

# Install docs dependencies

pip install -e ".[docs]"

# Serve documentation locally

mkdocs serve

# Open http://127.0.0.1:8000

```

### Contributing

Contributions are welcome! Please see [CONTRIBUTING.md](https://github.com/cockroachdb/langchain-cockroachdb/blob/main/CONTRIBUTING.md) for guidelines.

## Why CockroachDB?

- **Distributed SQL** - Scale horizontally across regions

- **Native Vector Support** - First-class `VECTOR` type and C-SPANN indexes

- **Strong Consistency** - SERIALIZABLE isolation by default, READ COMMITTED also supported

- **Cloud Native** - Deploy anywhere (IBM, AWS, GCP, Azure, on-prem)

- **PostgreSQL Compatible** - Familiar SQL with distributed superpowers

## Links

- [GitHub Repository](https://github.com/cockroachdb/langchain-cockroachdb)

- [PyPI Package](https://pypi.org/project/langchain-cockroachdb/)

- [CockroachDB Documentation](https://www.cockroachlabs.com/docs/)

- [LangChain Documentation](https://python.langchain.com/)

- [Report Issues](https://github.com/cockroachdb/langchain-cockroachdb/issues)

## License

Apache License 2.0 - see [LICENSE](https://github.com/cockroachdb/langchain-cockroachdb/blob/main/LICENSE) for details.

## Acknowledgments

Built for the CockroachDB and LangChain communities.

- [CockroachDB](https://www.cockroachlabs.com/) - Distributed SQL database

- [LangChain](https://github.com/langchain-ai/langchain) - LLM application framework

| text/markdown | null | Virag Tripathi <virag.tripathi@gmail.com> | null | null | Apache-2.0 | ai, cockroachdb, embeddings, langchain, llm, vector | [

"Development Status :: 3 - Alpha",

"Intended Audience :: Developers",

"License :: OSI Approved :: Apache Software License",

"Programming Language :: Python :: 3"

] | [] | null | null | >=3.10 | [] | [] | [] | [

"greenlet>=3.0.0",

"langchain-core>=0.3.0",

"langgraph-checkpoint<5.0.0,>=2.1.2",

"numpy>=1.26.0",

"psycopg2-binary>=2.9.0",

"psycopg[binary,pool]>=3.1.0",

"sqlalchemy-cockroachdb>=2.0.2",

"sqlalchemy[asyncio]>=2.0.0",

"langchain-openai>=0.2.0; extra == \"dev\"",

"langchain-tests>=0.3.0; extra == \"dev\"",

"langchain>=0.3.0; extra == \"dev\"",

"langgraph>=0.2.0; extra == \"dev\"",

"mypy>=1.8.0; extra == \"dev\"",

"pytest-asyncio>=0.23.0; extra == \"dev\"",

"pytest-cov>=4.1.0; extra == \"dev\"",

"pytest>=8.0.0; extra == \"dev\"",

"ruff>=0.3.0; extra == \"dev\"",

"testcontainers[postgres]>=4.0.0; extra == \"dev\"",

"mkdocs-material>=9.5.0; extra == \"docs\"",

"mkdocs>=1.5.0; extra == \"docs\"",

"mkdocstrings[python]>=0.24.0; extra == \"docs\"",

"pymdown-extensions>=10.7; extra == \"docs\""

] | [] | [] | [] | [

"Homepage, https://github.com/cockroachdb/langchain-cockroachdb",

"Documentation, https://cockroachdb.github.io/langchain-cockroachdb/",

"Repository, https://github.com/cockroachdb/langchain-cockroachdb",

"Issues, https://github.com/cockroachdb/langchain-cockroachdb/issues",

"Changelog, https://github.com/cockroachdb/langchain-cockroachdb/blob/main/CHANGELOG.md"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T03:46:47.437259 | langchain_cockroachdb-0.2.0.tar.gz | 327,444 | 13/f3/6125c843b4f60a46fb2594400b57ef9bbffbf7e6722ce911b6863c60cca7/langchain_cockroachdb-0.2.0.tar.gz | source | sdist | null | false | f9f9b1d30820da94c8406cb36711878a | 65c24b20d9c21edcf0a7f1ed51ed3ba92650a06378975e41eedaf2a3252e1b4a | 13f36125c843b4f60a46fb2594400b57ef9bbffbf7e6722ce911b6863c60cca7 | null | [

"LICENSE"

] | 275 |

2.4 | dedent | 0.8.0 | What textwrap.dedent should have been | # dedent

What [`textwrap.dedent`](https://docs.python.org/3/library/textwrap.html#textwrap.dedent) should have been.

Dedent and strip multiline strings, and keep interpolated values aligned—without manual whitespace wrangling. Supports t-strings (Python 3.14+) and f-strings (Python 3.10+).

> For documentation on how to use dedent with f-strings in Python 3.10-3.13, see [Legacy Support](#legacy-support-python-310-313).

## Table of Contents

- [Usage](#usage)

- [Installation](#installation)

- [Options](#options)

- [`align`](#align)

- [`strip`](#strip)

- [Legacy Support (Python 3.10-3.13)](#legacy-support-python-310-313)

- [Why `textwrap.dedent` Falls Short](#why-textwrapdedent-falls-short)

## Usage

```python

from dedent import dedent

name = "Alice"

greeting = dedent(t"""

Hello, {name}!

Welcome to the party.

""")

print(greeting)

# Hello, Alice!

# Welcome to the party.

# Nested multiline strings align correctly with :align

items = dedent("""

- apples

- bananas

""")

shopping_list = dedent(t"""

Groceries:

{items:align}

---

""")

print(shopping_list)

# Groceries:

# - apples

# - bananas

# ---

```

## Installation

```bash

# Using uv (Recommended)

uv add dedent

# Using pip

pip install dedent

```

## Options

### `align`

When an interpolation evaluates to a multiline string, only its first line is placed where the `{...}` appears. Subsequent lines keep whatever indentation they already had (often none), so they can appear "shifted left". Alignment fixes this by indenting subsequent lines to match the first.

#### Format Spec Directives

> Requires Python 3.14+ (t-strings).

Use format spec directives inside t-strings for per-value control:

- `{value:align}` - Align this multiline value to the current indentation

- `{value:noalign}` - Disable alignment for this value [^1]

- `{value:align:06d}` - Combine with other format specs [^2]

```python

from dedent import dedent

items = dedent("""

- one

- two

""")

result = dedent(t"""

Aligned:

{items:align}

Not aligned:

{items}

""")

print(result)

# Aligned:

# - one

# - two

# Not aligned:

# - one

# - two

```

[^1]: Only has an effect when using the [`align=True` argument](#align-argument).

[^2]: This rarely makes sense, unless you are also using custom format specifications, but nonetheless works.

#### `align` Argument

> Requires Python 3.14+ (t-strings).

Pass `align=True` to enable alignment globally for all t-string interpolations. Format spec directives override this.

```python

from dedent import dedent

items = dedent("""

- one

- two

""")

result = dedent(

t"""

List 1:

{items}

List 2:

{items}

---

""",

align=True,

)

print(result)

# List 1:

# - one

# - two

# List 2:

# - one

# - two

# ---

```

### `strip`

The `strip` parameter controls how leading and trailing whitespace is removed after dedenting. It accepts three modes:

#### `"smart"` (default)

Strips one leading and trailing newline-bounded blank segment. Handles the common case of triple-quoted strings that start and end with a newline.

```python

from dedent import dedent

result = dedent("""

hello!

""")

print(repr(result))

# 'hello!'

```

#### `"all"`

Strips all surrounding whitespace, equivalent to calling `.strip()` on the result. Use when the string may have extra blank lines you want removed.

```python

from dedent import dedent

result = dedent(

"""

hello!

""",

strip="all",

)

print(repr(result))

# 'hello!'

```

#### `"none"`

Leaves whitespace exactly as-is after dedenting. Use when you need to preserve exact whitespace, e.g. for diff output or tests.

```python

from dedent import dedent

result = dedent(

"""

hello!

""",

strip="none",

)

print(repr(result))

# '\nhello!\n'

```

## Legacy Support (Python 3.10-3.13)

On Python 3.10-3.13, t-strings are not available. Use `dedent()` on plain strings and f-strings, and wrap interpolated values with `align()` to get multiline indentation alignment.

```python

from dedent import align, dedent

# dedent works on regular strings, like textwrap.dedent

message = dedent("""

Hello,

World!

""")

print(message)

# Hello,

# World!

# Use align() inside f-strings for multiline value alignment

items = dedent("""

- apples

- bananas

""")

shopping_list = dedent(f"""

Groceries:

{align(items)}

---

""")

print(shopping_list)

# Groceries:

# - apples

# - bananas

# ---

```

Per-value control with `align()` mirrors the format spec directives available on 3.14+:

```python

from dedent import align, dedent

items = dedent("""

- one

- two

""")

result = dedent(f"""

Aligned:

{align(items)}

Not aligned:

{items}

""")

print(result)

# Aligned:

# - one

# - two

# Not aligned:

# - one

# - two

```

> There is no equivalent of the [`align` argument](#align-argument) in Python 3.10-3.13. There is no way to automatically align multiline values when using f-strings.

## Why `textwrap.dedent` Falls Short

If you're here, then you're probably already familiar with the shortcomings of `textwrap.dedent`. But regardless, let's spell it out for the sake of completeness. For example, say we want to create a nicely formatted shopping list that includes some groceries:

```python

from textwrap import dedent

groceries = dedent("""

- apples

- bananas

- cherries

""")

shopping_list = dedent(f"""

Groceries:

{groceries}

---

""")

print(shopping_list)

#

# Groceries:

#

# - apples

# - bananas

# - cherries

#

# ---

```

Wait, that's not what we wanted. We accidentally included leading and trailing newlines from the groceries string. Now, we *could* do that manually by removing, escaping, or stripping the newlines, but it's either easy to forget, difficult to read, or unnecessarily verbose.

```python

# Removing the newlines

groceries = dedent(""" - apples

- bananas

- cherries""")

# Escaping the newlines

groceries = dedent("""\

- apples

- bananas

- cherries\

""")

# Stripping the newlines

groceries = dedent("""

- apples

- bananas

- cherries

""".strip("\n"))

# But the shopping list still comes out wrong:

# Groceries:

# - apples

# - bananas

# - cherries

# ---

```

Uh oh, something is still wrong; the indentation is not correct at all. The interpolation happens too early. When we use an f-string with `textwrap.dedent`, the replacement occurs before dedenting can take place. Notice how only the first line of `groceries` is properly indented relative to the surrounding text? The subsequent lines lose their indentation because f-strings interpolate immediately, injecting the `groceries` string before `dedent` can process the overall structure.

Sure, we could manually adjust the indentation with a bit of string manipulation, but that's a pain to read, write, and maintain.

```python

from textwrap import dedent

groceries = dedent("""

- apples

- bananas

- cherries

""".strip("\n"))

manual_groceries = ("\n" + " " * 8).join(groceries.splitlines())

shopping_list = dedent(f"""

Groceries:

{manual_groceries}

---

""".strip("\n"))

```

`dedent` solves these problems and more:

```python

from dedent import dedent

groceries = dedent("""

- apples

- bananas

- cherries

""")

shopping_list = dedent(t"""

Groceries:

{groceries:align}

---

""")

print(shopping_list)

# Groceries:

# - apples

# - bananas

# - cherries

# ---

```

| text/markdown | Graham B. Preston | null | null | null | null | ai, dedent, format, formatting, indent, indentation, llm, multiline, prompt, prompting, string, t-string, template, text, textwrap, undent, whitespace, wrap, wrapping | [

"Development Status :: 5 - Production/Stable",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Natural Language :: English",

"Operating System :: OS Independent",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3 :: Only",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"Programming Language :: Python :: 3.14",

"Programming Language :: Python :: Implementation :: CPython",

"Topic :: Software Development :: Libraries :: Python Modules",

"Topic :: Text Processing",

"Topic :: Utilities",

"Typing :: Typed"

] | [] | null | null | >=3.10 | [] | [] | [] | [] | [] | [] | [] | [

"Repository, https://github.com/grahamcracker1234/dedent",

"Documentation, https://github.com/grahamcracker1234/dedent#readme",

"Issues, https://github.com/grahamcracker1234/dedent/issues",

"Changelog, https://github.com/grahamcracker1234/dedent/releases"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-20T03:46:03.171692 | dedent-0.8.0.tar.gz | 37,470 | 35/18/146730c20c38b0bc4b2e6c0cc6ba702420aa2d9a39e35ed97764f4e3b063/dedent-0.8.0.tar.gz | source | sdist | null | false | 335ad279db866865937c3ec486c9fa02 | 0a3c2487a1b3de2d0428667c7cb2d7fa5c786091e4dfea25e967355bdb5adc43 | 3518146730c20c38b0bc4b2e6c0cc6ba702420aa2d9a39e35ed97764f4e3b063 | MIT | [

"LICENSE.md"

] | 294 |

2.4 | keplar-api | 0.0.3905150158 | Fastify Template API | # keplar_api

API documentation using Swagger

This Python package is automatically generated by the [OpenAPI Generator](https://openapi-generator.tech) project:

- API version: 1.0.0

- Package version: 0.0.3905150158

- Generator version: 7.16.0

- Build package: org.openapitools.codegen.languages.PythonClientCodegen

## Requirements.

Python 3.9+

## Installation & Usage

### pip install

If the python package is hosted on a repository, you can install directly using:

```sh

pip install git+https://github.com/GIT_USER_ID/GIT_REPO_ID.git

```

(you may need to run `pip` with root permission: `sudo pip install git+https://github.com/GIT_USER_ID/GIT_REPO_ID.git`)

Then import the package:

```python

import keplar_api

```

### Setuptools

Install via [Setuptools](http://pypi.python.org/pypi/setuptools).

```sh

python setup.py install --user

```

(or `sudo python setup.py install` to install the package for all users)

Then import the package:

```python

import keplar_api

```

### Tests

Execute `pytest` to run the tests.

## Getting Started

Please follow the [installation procedure](#installation--usage) and then run the following:

```python

import keplar_api

from keplar_api.rest import ApiException

from pprint import pprint

# Defining the host is optional and defaults to http://localhost:3000

# See configuration.py for a list of all supported configuration parameters.

configuration = keplar_api.Configuration(

host = "http://localhost:3000"

)

# The client must configure the authentication and authorization parameters

# in accordance with the API server security policy.

# Examples for each auth method are provided below, use the example that

# satisfies your auth use case.

# Configure Bearer authorization (JWT): bearerAuth

configuration = keplar_api.Configuration(

access_token = os.environ["BEARER_TOKEN"]

)

# Enter a context with an instance of the API client

async with keplar_api.ApiClient(configuration) as api_client:

# Create an instance of the API class

api_instance = keplar_api.DefaultApi(api_client)

user_id = 'user_id_example' # str |

add_user_to_workspace_request = keplar_api.AddUserToWorkspaceRequest() # AddUserToWorkspaceRequest |

try:

# Add user to a workspace

api_response = await api_instance.add_user_to_workspace(user_id, add_user_to_workspace_request)

print("The response of DefaultApi->add_user_to_workspace:\n")

pprint(api_response)

except ApiException as e:

print("Exception when calling DefaultApi->add_user_to_workspace: %s\n" % e)

```

## Documentation for API Endpoints

All URIs are relative to *http://localhost:3000*

Class | Method | HTTP request | Description

------------ | ------------- | ------------- | -------------

*DefaultApi* | [**add_user_to_workspace**](docs/DefaultApi.md#add_user_to_workspace) | **POST** /api/admin/users/{userId}/workspaces | Add user to a workspace

*DefaultApi* | [**add_workspace_member**](docs/DefaultApi.md#add_workspace_member) | **POST** /api/admin/workspaces/{workspaceId}/members | Add workspace member

*DefaultApi* | [**analyze_notebook**](docs/DefaultApi.md#analyze_notebook) | **POST** /api/notebooks/{notebookId}/analyze | Trigger analysis/report generation for a notebook

*DefaultApi* | [**api_call_messages_search_post**](docs/DefaultApi.md#api_call_messages_search_post) | **POST** /api/callMessages/search | Search conversation messages

*DefaultApi* | [**api_calls_call_id_get**](docs/DefaultApi.md#api_calls_call_id_get) | **GET** /api/calls/{callId} | Get call

*DefaultApi* | [**api_calls_call_id_messages_index_get**](docs/DefaultApi.md#api_calls_call_id_messages_index_get) | **GET** /api/calls/{callId}/messages/{index} | Get conversation message

*DefaultApi* | [**api_copilotkit_post**](docs/DefaultApi.md#api_copilotkit_post) | **POST** /api/copilotkit |

*DefaultApi* | [**api_demos_create_demo_invite_post**](docs/DefaultApi.md#api_demos_create_demo_invite_post) | **POST** /api/demos/createDemoInvite | Create demo invite

*DefaultApi* | [**api_files_file_id_delete**](docs/DefaultApi.md#api_files_file_id_delete) | **DELETE** /api/files/{fileId} | Delete a file

*DefaultApi* | [**api_files_file_id_get**](docs/DefaultApi.md#api_files_file_id_get) | **GET** /api/files/{fileId} | Get file metadata

*DefaultApi* | [**api_files_file_id_signed_url_post**](docs/DefaultApi.md#api_files_file_id_signed_url_post) | **POST** /api/files/{fileId}/signed-url | Get a signed URL for file access

*DefaultApi* | [**api_files_post**](docs/DefaultApi.md#api_files_post) | **POST** /api/files/ | Upload a file

*DefaultApi* | [**api_invite_code_code_participant_code_participant_code_join_get**](docs/DefaultApi.md#api_invite_code_code_participant_code_participant_code_join_get) | **GET** /api/inviteCode/{code}/participantCode/{participantCode}/join |

*DefaultApi* | [**api_invite_code_code_start_get**](docs/DefaultApi.md#api_invite_code_code_start_get) | **GET** /api/inviteCode/{code}/start |

*DefaultApi* | [**api_invites_id_get**](docs/DefaultApi.md#api_invites_id_get) | **GET** /api/invites/{id} | Get invite

*DefaultApi* | [**api_invites_id_participant_invites_get**](docs/DefaultApi.md#api_invites_id_participant_invites_get) | **GET** /api/invites/{id}/participantInvites | Get participant invites

*DefaultApi* | [**api_invites_id_participant_invites_participant_id_get**](docs/DefaultApi.md#api_invites_id_participant_invites_participant_id_get) | **GET** /api/invites/{id}/participantInvites/{participantId} | Get participant invite

*DefaultApi* | [**api_invites_id_participant_invites_participant_id_put**](docs/DefaultApi.md#api_invites_id_participant_invites_participant_id_put) | **PUT** /api/invites/{id}/participantInvites/{participantId} | Update participant invite

*DefaultApi* | [**api_invites_id_participant_invites_post**](docs/DefaultApi.md#api_invites_id_participant_invites_post) | **POST** /api/invites/{id}/participantInvites | Create participant invite

*DefaultApi* | [**api_invites_id_participants_participant_id_call_metadata_get**](docs/DefaultApi.md#api_invites_id_participants_participant_id_call_metadata_get) | **GET** /api/invites/{id}/participants/{participantId}/callMetadata | Get call metadata by invite ID and participant ID

*DefaultApi* | [**api_invites_id_put**](docs/DefaultApi.md#api_invites_id_put) | **PUT** /api/invites/{id}/ | Update invite

*DefaultApi* | [**api_invites_id_response_attribute_stats_get**](docs/DefaultApi.md#api_invites_id_response_attribute_stats_get) | **GET** /api/invites/{id}/responseAttributeStats | Get invite response attribute stats

*DefaultApi* | [**api_invites_id_responses_get**](docs/DefaultApi.md#api_invites_id_responses_get) | **GET** /api/invites/{id}/responses | Get invite responses

*DefaultApi* | [**api_invites_id_responses_post**](docs/DefaultApi.md#api_invites_id_responses_post) | **POST** /api/invites/{id}/responses | Create invite response

*DefaultApi* | [**api_invites_id_responses_response_id_call_metadata_get**](docs/DefaultApi.md#api_invites_id_responses_response_id_call_metadata_get) | **GET** /api/invites/{id}/responses/{responseId}/callMetadata | Get call metadata by invite ID and response ID

*DefaultApi* | [**api_invites_id_responses_response_id_get**](docs/DefaultApi.md#api_invites_id_responses_response_id_get) | **GET** /api/invites/{id}/responses/{responseId} | Get invite response

*DefaultApi* | [**api_invites_id_responses_response_id_put**](docs/DefaultApi.md#api_invites_id_responses_response_id_put) | **PUT** /api/invites/{id}/responses/{responseId} | Update invite response

*DefaultApi* | [**api_invites_post**](docs/DefaultApi.md#api_invites_post) | **POST** /api/invites/ | Create invite

*DefaultApi* | [**api_projects_draft_get**](docs/DefaultApi.md#api_projects_draft_get) | **GET** /api/projects/draft | Get draft project

*DefaultApi* | [**api_projects_post**](docs/DefaultApi.md#api_projects_post) | **POST** /api/projects/ | Create project

*DefaultApi* | [**api_projects_project_id_analysis_post**](docs/DefaultApi.md#api_projects_project_id_analysis_post) | **POST** /api/projects/{projectId}/analysis | Create project analysis

*DefaultApi* | [**api_projects_project_id_delete_post**](docs/DefaultApi.md#api_projects_project_id_delete_post) | **POST** /api/projects/{projectId}/delete | Delete or archive project

*DefaultApi* | [**api_projects_project_id_files_file_id_delete**](docs/DefaultApi.md#api_projects_project_id_files_file_id_delete) | **DELETE** /api/projects/{projectId}/files/{fileId} | Remove a file from a project

*DefaultApi* | [**api_projects_project_id_files_file_id_put**](docs/DefaultApi.md#api_projects_project_id_files_file_id_put) | **PUT** /api/projects/{projectId}/files/{fileId} | Update project file metadata

*DefaultApi* | [**api_projects_project_id_files_get**](docs/DefaultApi.md#api_projects_project_id_files_get) | **GET** /api/projects/{projectId}/files | Get files for a project

*DefaultApi* | [**api_projects_project_id_files_post**](docs/DefaultApi.md#api_projects_project_id_files_post) | **POST** /api/projects/{projectId}/files | Add an existing file to a project

*DefaultApi* | [**api_projects_project_id_launch_post**](docs/DefaultApi.md#api_projects_project_id_launch_post) | **POST** /api/projects/{projectId}/launch | Launch project

*DefaultApi* | [**api_projects_project_id_put**](docs/DefaultApi.md#api_projects_project_id_put) | **PUT** /api/projects/{projectId} | Update project

*DefaultApi* | [**api_projects_project_id_search_transcripts_post**](docs/DefaultApi.md#api_projects_project_id_search_transcripts_post) | **POST** /api/projects/{projectId}/searchTranscripts | Search project transcripts

*DefaultApi* | [**api_threads_get**](docs/DefaultApi.md#api_threads_get) | **GET** /api/threads/ | Get threads

*DefaultApi* | [**api_threads_thread_id_files_get**](docs/DefaultApi.md#api_threads_thread_id_files_get) | **GET** /api/threads/{threadId}/files | Get thread files

*DefaultApi* | [**api_threads_thread_id_post**](docs/DefaultApi.md#api_threads_thread_id_post) | **POST** /api/threads/{threadId} | Upsert thread

*DefaultApi* | [**api_threads_thread_id_project_brief_document_versions_get**](docs/DefaultApi.md#api_threads_thread_id_project_brief_document_versions_get) | **GET** /api/threads/{threadId}/project-brief-document-versions | Get project brief document versions from thread state history

*DefaultApi* | [**api_threads_thread_id_project_brief_document_versions_post**](docs/DefaultApi.md#api_threads_thread_id_project_brief_document_versions_post) | **POST** /api/threads/{threadId}/project-brief-document-versions | Create project brief document version from thread state

*DefaultApi* | [**api_threads_thread_id_project_brief_document_versions_version_put**](docs/DefaultApi.md#api_threads_thread_id_project_brief_document_versions_version_put) | **PUT** /api/threads/{threadId}/project-brief-document-versions/{version} | Update a specific project brief document version

*DefaultApi* | [**api_threads_thread_id_project_brief_versions_get**](docs/DefaultApi.md#api_threads_thread_id_project_brief_versions_get) | **GET** /api/threads/{threadId}/project-brief-versions | Get project brief versions from thread state history

*DefaultApi* | [**api_threads_thread_id_project_brief_versions_post**](docs/DefaultApi.md#api_threads_thread_id_project_brief_versions_post) | **POST** /api/threads/{threadId}/project-brief-versions | Create project draft versions from thread state history

*DefaultApi* | [**api_users_id_get**](docs/DefaultApi.md#api_users_id_get) | **GET** /api/users/{id} | Get user details with additional config

*DefaultApi* | [**api_vapi_webhook_post**](docs/DefaultApi.md#api_vapi_webhook_post) | **POST** /api/vapi/webhook |

*DefaultApi* | [**check_permission**](docs/DefaultApi.md#check_permission) | **POST** /api/permissions/check |

*DefaultApi* | [**create_artifact**](docs/DefaultApi.md#create_artifact) | **POST** /api/projects/{projectId}/artifacts | Create artifact

*DefaultApi* | [**create_code_invite_response**](docs/DefaultApi.md#create_code_invite_response) | **POST** /api/inviteCode/{code}/responses | Create invite response for invite code

*DefaultApi* | [**create_code_invite_response_from_existing**](docs/DefaultApi.md#create_code_invite_response_from_existing) | **POST** /api/inviteCode/{code}/responses/{responseId}/createNewResponse | Create invite response from existing response

*DefaultApi* | [**create_email_share**](docs/DefaultApi.md#create_email_share) | **POST** /api/sharing/share-entities/{shareEntityId}/emails | Add email access to a share

*DefaultApi* | [**create_members**](docs/DefaultApi.md#create_members) | **POST** /api/admin/create-members | Create members in an organization via Stytch SSO

*DefaultApi* | [**create_notebook**](docs/DefaultApi.md#create_notebook) | **POST** /api/notebooks/ | Create a new notebook

*DefaultApi* | [**create_notebook_artifact**](docs/DefaultApi.md#create_notebook_artifact) | **POST** /api/notebooks/{notebookId}/artifacts | Create an empty artifact for a notebook

*DefaultApi* | [**create_org**](docs/DefaultApi.md#create_org) | **POST** /api/admin/orgs | Create a new organization

*DefaultApi* | [**create_project_preview_invite**](docs/DefaultApi.md#create_project_preview_invite) | **POST** /api/projects/{projectId}/previewInvite | Create a preview invite for this project based on audienceSettings

*DefaultApi* | [**create_project_share**](docs/DefaultApi.md#create_project_share) | **POST** /api/sharing/projects/{projectId} | Create a share link for a project

*DefaultApi* | [**create_test_participant_code_invite**](docs/DefaultApi.md#create_test_participant_code_invite) | **POST** /api/inviteCode/{code}/participantCode/{participantCode}/test | Create test invite for participant

*DefaultApi* | [**create_transcript_insight_for_code_invite_response**](docs/DefaultApi.md#create_transcript_insight_for_code_invite_response) | **POST** /api/inviteCode/{code}/responses/{responseId}/transcriptInsight | Create transcript insight for invite response

*DefaultApi* | [**create_workspace**](docs/DefaultApi.md#create_workspace) | **POST** /api/admin/workspaces | Create workspace

*DefaultApi* | [**delete_artifact**](docs/DefaultApi.md#delete_artifact) | **DELETE** /api/projects/{projectId}/artifacts/{artifactId} | Delete artifact

*DefaultApi* | [**delete_email_share**](docs/DefaultApi.md#delete_email_share) | **DELETE** /api/sharing/share-entities/{shareEntityId}/emails/{email} | Remove email access from a share

*DefaultApi* | [**delete_notebook**](docs/DefaultApi.md#delete_notebook) | **DELETE** /api/notebooks/{notebookId} | Delete a notebook

*DefaultApi* | [**delete_notebook_artifact_version_group**](docs/DefaultApi.md#delete_notebook_artifact_version_group) | **DELETE** /api/notebooks/{notebookId}/artifacts/{versionGroupId} | Delete all artifacts in a version group

*DefaultApi* | [**delete_project_search_index**](docs/DefaultApi.md#delete_project_search_index) | **DELETE** /api/projects/{projectId}/searchIndex | Delete project search index from Qdrant

*DefaultApi* | [**delete_share_entity**](docs/DefaultApi.md#delete_share_entity) | **DELETE** /api/sharing/share-entities/{shareEntityId} | Delete a share entity

*DefaultApi* | [**download_invite_responses**](docs/DefaultApi.md#download_invite_responses) | **GET** /api/invites/{id}/responses/download | Download invite responses as CSV

*DefaultApi* | [**download_share_invite_responses**](docs/DefaultApi.md#download_share_invite_responses) | **GET** /api/share/{shareToken}/invites/{inviteId}/responses/download | Download invite responses as CSV

*DefaultApi* | [**duplicate_project**](docs/DefaultApi.md#duplicate_project) | **POST** /api/admin/projects/{projectId}/duplicate | Duplicate a project with its moderator and threads

*DefaultApi* | [**exit_impersonation**](docs/DefaultApi.md#exit_impersonation) | **POST** /api/admin/impersonation-exit | Exit impersonation and restore admin session

*DefaultApi* | [**generate_presentation_artifact**](docs/DefaultApi.md#generate_presentation_artifact) | **POST** /api/projects/{projectId}/artifacts/{artifactId}/generate | Generate presentation via Gamma API for a presentation artifact

*DefaultApi* | [**get_artifact**](docs/DefaultApi.md#get_artifact) | **GET** /api/artifacts/{artifactId} | Get artifact by ID

*DefaultApi* | [**get_artifact_version_groups**](docs/DefaultApi.md#get_artifact_version_groups) | **GET** /api/projects/{projectId}/artifacts | Get project artifact version groups

*DefaultApi* | [**get_call_metadata_for_code_invite_response**](docs/DefaultApi.md#get_call_metadata_for_code_invite_response) | **GET** /api/inviteCode/{code}/responses/{responseId}/callMetadata | Get call metadata for invite response

*DefaultApi* | [**get_code_invite**](docs/DefaultApi.md#get_code_invite) | **GET** /api/inviteCode/{code}/ | Get invite by code

*DefaultApi* | [**get_code_invite_participant_remaining_responses**](docs/DefaultApi.md#get_code_invite_participant_remaining_responses) | **GET** /api/inviteCode/{code}/remainingResponses | Get remaining responses count for participant

*DefaultApi* | [**get_code_invite_participant_response**](docs/DefaultApi.md#get_code_invite_participant_response) | **GET** /api/inviteCode/{code}/participantResponse | Get invite response for participant

*DefaultApi* | [**get_code_invite_response**](docs/DefaultApi.md#get_code_invite_response) | **GET** /api/inviteCode/{code}/responses/{responseId} | Get invite response

*DefaultApi* | [**get_code_invite_response_redirect**](docs/DefaultApi.md#get_code_invite_response_redirect) | **GET** /api/inviteCode/{code}/responses/{responseId}/redirect | Get redirect URL for invite response

*DefaultApi* | [**get_code_participant_invite**](docs/DefaultApi.md#get_code_participant_invite) | **GET** /api/inviteCode/{code}/participantCode/{participantCode} | Get participant invite for invite code

*DefaultApi* | [**get_notebook**](docs/DefaultApi.md#get_notebook) | **GET** /api/notebooks/{notebookId} | Get a notebook by ID

*DefaultApi* | [**get_notebook_artifacts**](docs/DefaultApi.md#get_notebook_artifacts) | **GET** /api/notebooks/{notebookId}/artifacts | Get all artifacts generated from a notebook

*DefaultApi* | [**get_notebook_projects**](docs/DefaultApi.md#get_notebook_projects) | **GET** /api/notebooks/{notebookId}/projects | Get all projects associated with a notebook

*DefaultApi* | [**get_notebooks**](docs/DefaultApi.md#get_notebooks) | **GET** /api/notebooks/ | Get all notebooks accessible to user

*DefaultApi* | [**get_org**](docs/DefaultApi.md#get_org) | **GET** /api/admin/orgs/{orgId} | Get organization details

*DefaultApi* | [**get_org_members**](docs/DefaultApi.md#get_org_members) | **GET** /api/admin/orgs/{orgId}/members | Get organization members

*DefaultApi* | [**get_org_stytch_settings**](docs/DefaultApi.md#get_org_stytch_settings) | **GET** /api/admin/orgs/{orgId}/stytch-settings | Get Stytch organization settings

*DefaultApi* | [**get_orgs**](docs/DefaultApi.md#get_orgs) | **GET** /api/admin/orgs | List organizations with stats

*DefaultApi* | [**get_project**](docs/DefaultApi.md#get_project) | **GET** /api/projects/{projectId} | Get project

*DefaultApi* | [**get_project_artifact**](docs/DefaultApi.md#get_project_artifact) | **GET** /api/projects/{projectId}/artifacts/{artifactId} | Get project artifact by ID

*DefaultApi* | [**get_project_response_attribute_stats**](docs/DefaultApi.md#get_project_response_attribute_stats) | **GET** /api/projects/{projectId}/responseAttributeStats | Get project response attribute stats

*DefaultApi* | [**get_project_responses_metadata**](docs/DefaultApi.md#get_project_responses_metadata) | **GET** /api/projects/{projectId}/responsesMetadata | Get project responses metadata

*DefaultApi* | [**get_project_shares**](docs/DefaultApi.md#get_project_shares) | **GET** /api/projects/{projectId}/shares | Get all shares for a project

*DefaultApi* | [**get_projects**](docs/DefaultApi.md#get_projects) | **GET** /api/projects/ | Get projects

*DefaultApi* | [**get_share_entities**](docs/DefaultApi.md#get_share_entities) | **GET** /api/sharing/share-entities | List all share entities created by the user

*DefaultApi* | [**get_shared_artifact**](docs/DefaultApi.md#get_shared_artifact) | **GET** /api/share/{shareToken}/artifacts/{artifactId} | Get shared artifact by ID

*DefaultApi* | [**get_shared_artifact_version_groups**](docs/DefaultApi.md#get_shared_artifact_version_groups) | **GET** /api/share/{shareToken}/artifacts | Get shared project artifacts version groups

*DefaultApi* | [**get_shared_call**](docs/DefaultApi.md#get_shared_call) | **GET** /api/share/{shareToken}/calls/{callId} | Get shared call data with conversation messages

*DefaultApi* | [**get_shared_call_metadata**](docs/DefaultApi.md#get_shared_call_metadata) | **GET** /api/share/{shareToken}/invites/{inviteId}/responses/{responseId}/callMetadata | Get shared call metadata by invite ID and response ID

*DefaultApi* | [**get_shared_invite_response**](docs/DefaultApi.md#get_shared_invite_response) | **GET** /api/share/{shareToken}/invites/{inviteId}/responses/{responseId} | Get a single response by ID for a shared invite

*DefaultApi* | [**get_shared_invite_response_attribute_stats**](docs/DefaultApi.md#get_shared_invite_response_attribute_stats) | **GET** /api/share/{shareToken}/invites/{inviteId}/response-attribute-stats | Get attribute stats for shared invite responses

*DefaultApi* | [**get_shared_invite_responses**](docs/DefaultApi.md#get_shared_invite_responses) | **GET** /api/share/{shareToken}/invites/{inviteId}/responses | Get responses for a shared invite

*DefaultApi* | [**get_shared_project**](docs/DefaultApi.md#get_shared_project) | **GET** /api/share/{shareToken}/project | Get shared project data

*DefaultApi* | [**get_shared_project_response_attribute_stats**](docs/DefaultApi.md#get_shared_project_response_attribute_stats) | **GET** /api/share/{shareToken}/project-response-attribute-stats | Get shared project response attribute stats

*DefaultApi* | [**get_shared_project_responses_metadata**](docs/DefaultApi.md#get_shared_project_responses_metadata) | **GET** /api/share/{shareToken}/project-responses-metadata | Get shared project responses metadata

*DefaultApi* | [**get_user_workspaces**](docs/DefaultApi.md#get_user_workspaces) | **GET** /api/admin/users/{userId}/workspaces | Get user workspaces and all available workspaces

*DefaultApi* | [**get_workspace_members**](docs/DefaultApi.md#get_workspace_members) | **GET** /api/admin/workspaces/{workspaceId}/members | Get workspace members

*DefaultApi* | [**get_workspaces**](docs/DefaultApi.md#get_workspaces) | **GET** /api/admin/workspaces | Get all workspaces

*DefaultApi* | [**impersonate_user**](docs/DefaultApi.md#impersonate_user) | **POST** /api/admin/impersonate | Impersonate a user

*DefaultApi* | [**index_project_transcripts**](docs/DefaultApi.md#index_project_transcripts) | **POST** /api/projects/{projectId}/indexTranscripts | Index project transcripts into Qdrant for semantic search

*DefaultApi* | [**join_code_invite**](docs/DefaultApi.md#join_code_invite) | **GET** /api/inviteCode/{code}/join | Join invite by code