metadata_version string | name string | version string | summary string | description string | description_content_type string | author string | author_email string | maintainer string | maintainer_email string | license string | keywords string | classifiers list | platform list | home_page string | download_url string | requires_python string | requires list | provides list | obsoletes list | requires_dist list | provides_dist list | obsoletes_dist list | requires_external list | project_urls list | uploaded_via string | upload_time timestamp[us] | filename string | size int64 | path string | python_version string | packagetype string | comment_text string | has_signature bool | md5_digest string | sha256_digest string | blake2_256_digest string | license_expression string | license_files list | recent_7d_downloads int64 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

2.4 | ultimate-gemini-mcp | 5.0.1 | Gemini 3 Pro Image MCP server with advanced features: high-resolution output (1K-4K), reference images (up to 14), Google Search grounding, and thinking mode |

# Ultimate Gemini MCP

> MCP server for Google's **Gemini 3 Pro Image Preview** — state-of-the-art image generation with advanced reasoning, 1K–4K resolution, up to 14 reference images, Google Search grounding, and automatic thinking mode.

**All generated images include invisible SynthID watermarks for authenticity and provenance tracking.**

---

## Features

### Gemini 3 Pro Image

- **High-Resolution Output**: 1K, 2K, and 4K resolution

- **Advanced Text Rendering**: Legible, stylized text in infographics, menus, diagrams, and logos

- **Up to 14 Reference Images**: Up to 6 object images + up to 5 human images for style/character consistency

- **Google Search Grounding**: Real-time data (weather, stocks, events, maps)

- **Thinking Mode**: Model reasons about composition before producing the final image (automatic, always on)

### Server Features

- **AI Prompt Enhancement**: Optionally auto-enhance prompts using Gemini Flash

- **Batch Processing**: Generate multiple images in parallel (up to 8 concurrent)

- **27 Expert Prompt Templates**: MCP slash commands for photography, logos, cinematics, storyboards, and more

- **Flexible Aspect Ratios**: 10 options — 1:1, 16:9, 9:16, 3:2, 4:3, 4:5, 5:4, 2:3, 3:4, 21:9

- **Configurable via Environment Variables**: Output directory, default size, timeouts, and more

---

## Showcase

### Prompt Enhancement

When `enhance_prompt` is `true`, Gemini Flash first rewrites a simple prompt into a detailed, cinematic description, which is then used for generation.

**Original:** `"A fierce wolf wearing the black symbiote Spider-Man suit, web-slinging through city at night"`

**Enhanced:** `"A powerfully built Alaskan Tundra Wolf, snarling fiercely, wearing the matte black, viscous, wet-looking symbiote suit with exaggerated white spider emblem. Captured mid-air in dramatic web-slinging arc with taut glowing webbing. Extreme low-angle perspective, hyper-realistic neo-noir cityscape at midnight with rain-slicked asphalt. High-contrast cinematic lighting with deep shadows and electric neon rim lighting."`

Example outputs (images not included):

- **Wolf**: Black Symbiote Suit
- **Lion**: Classic Red & Blue Suit
- **Black Panther**: Symbiote Suit
- **Eagle**: Classic Suit in Flight
- **Grizzly Bear**: Symbiote Suit
- **Fox**: Classic Suit at Dusk

All six were generated with `enhance_prompt: true` at 2K resolution and a 16:9 aspect ratio.

---

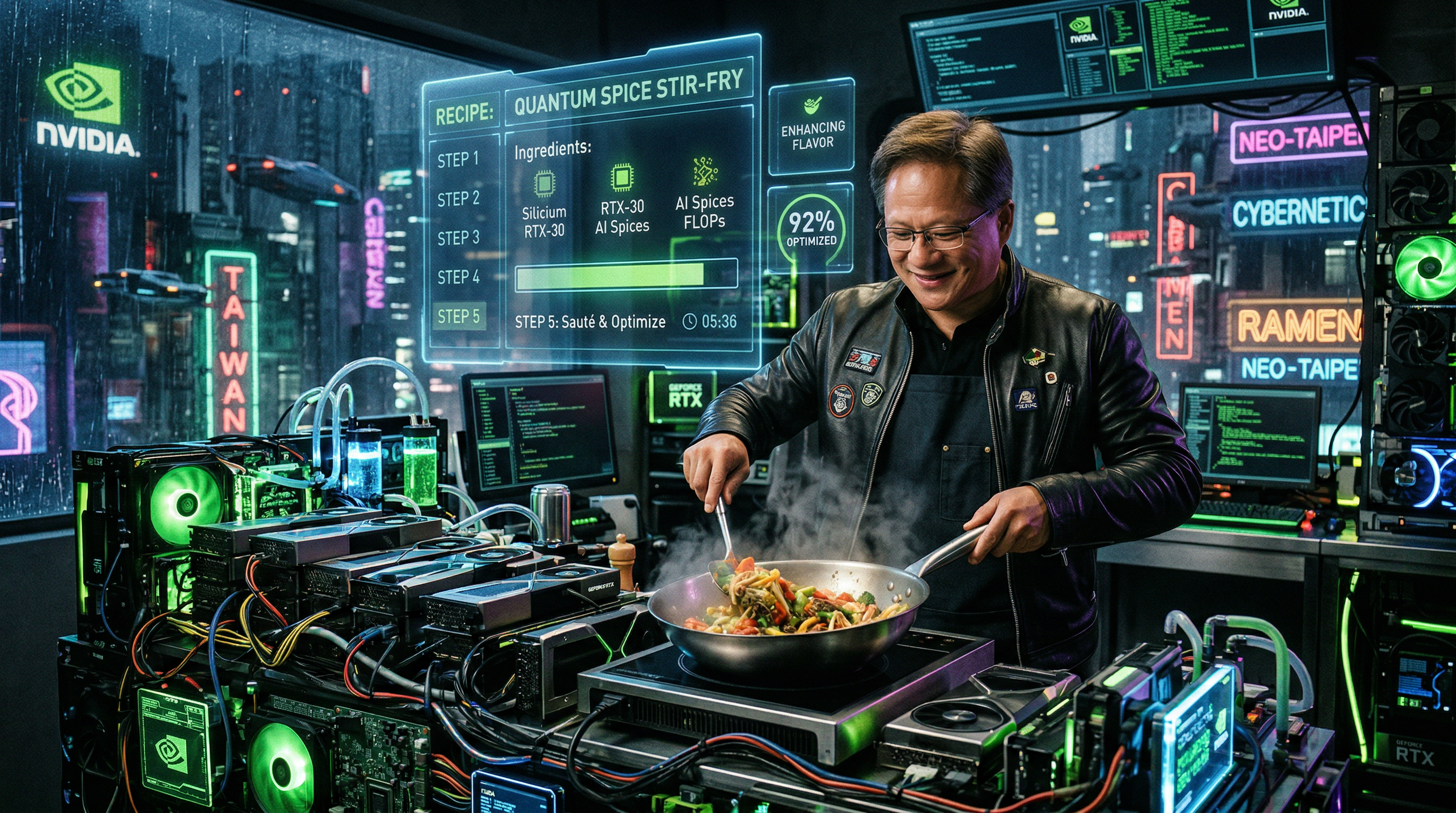

### Photorealistic Capabilities

Example outputs (images not included):

- **Jensen Huang**: GPU Surfing
- **Elon Musk**: Mars Chess Match
- **Jensen Huang**: GPU Kitchen
- **Elon Musk**: Cybertruck Symphony
- **Jensen Huang**: Underwater Data Center
- **Elon Musk**: SpaceX Skateboarding

---

## Quick Start

### Prerequisites

- Python 3.11+

- [Google Gemini API key](https://makersuite.google.com/app/apikey) (free tier available)

### Installation

**Using uvx (recommended — no install needed):**

```bash
uvx ultimate-gemini-mcp
```

**Using pip:**

```bash
pip install ultimate-gemini-mcp
```

**From source:**

```bash
git clone https://github.com/anand-92/ultimate-image-gen-mcp
cd ultimate-image-gen-mcp
uv sync
```

---

## Setup

### Claude Desktop

Add to `claude_desktop_config.json`:

```json
{
  "mcpServers": {
    "ultimate-gemini": {
      "command": "uvx",
      "args": ["ultimate-gemini-mcp"],
      "env": {
        "GEMINI_API_KEY": "your-api-key-here"
      }
    }
  }
}
```

Config file locations:

- **macOS**: `~/Library/Application Support/Claude/claude_desktop_config.json`

- **Windows**: `%APPDATA%\Claude\claude_desktop_config.json`

> **macOS `spawn uvx ENOENT` error**: Use the full path — find it with `which uvx`, then set `"command": "/Users/you/.local/bin/uvx"`.
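
If you prefer to script this step, the sketch below merges the same `ultimate-gemini` entry into an existing config file without touching other servers or settings. It is a hypothetical helper (`add_ultimate_gemini` is not part of this package), it assumes the file is plain JSON without comments, and it resolves an absolute `uvx` path with `shutil.which` to sidestep the `spawn uvx ENOENT` error.

```python
from __future__ import annotations

import json
import shutil
from pathlib import Path


def add_ultimate_gemini(config_path: Path, api_key: str, command: str | None = None) -> dict:
    """Add or overwrite the "ultimate-gemini" entry, keeping all other settings.

    Hypothetical helper, not part of ultimate-gemini-mcp.
    """
    if command is None:
        # An absolute path avoids `spawn uvx ENOENT`; fall back to the bare name.
        command = shutil.which("uvx") or "uvx"

    config: dict = {}
    if config_path.exists():
        text = config_path.read_text(encoding="utf-8").strip()
        if text:
            config = json.loads(text)  # assumes plain JSON, no comments

    # Create "mcpServers" if missing; other server entries are left untouched.
    servers = config.setdefault("mcpServers", {})
    servers["ultimate-gemini"] = {
        "command": command,
        "args": ["ultimate-gemini-mcp"],
        "env": {"GEMINI_API_KEY": api_key},
    }

    config_path.parent.mkdir(parents=True, exist_ok=True)
    config_path.write_text(json.dumps(config, indent=2) + "\n", encoding="utf-8")
    return config
```

On macOS you would call it with `Path.home() / "Library/Application Support/Claude/claude_desktop_config.json"` and your key, then restart Claude Desktop so it rereads the file.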

### Claude Code

```bash
claude mcp add ultimate-gemini \
  --env GEMINI_API_KEY=your-api-key \
  -- uvx ultimate-gemini-mcp
```

### Cursor

Add to `.cursor/mcp.json`:

```json
{
  "mcpServers": {
    "ultimate-gemini": {
      "command": "uvx",
      "args": ["ultimate-gemini-mcp"],
      "env": {
        "GEMINI_API_KEY": "your-api-key-here"
      }
    }
  }
}
```

Images are saved to `~/gemini_images` by default. To change this, add `"OUTPUT_DIR": "/your/path"` to the `env` block.

---

## Tools

### `generate_image`

Generate an image with Gemini 3 Pro Image.

| Parameter | Type | Default | Description |
|-----------|------|---------|-------------|
| `prompt` | string | required | Text description. Use full sentences, not keyword lists. |
| `model` | string | `gemini-3-pro-image-preview` | Model to use (currently only one supported) |
| `enhance_prompt` | bool | `false` | Auto-enhance prompt using Gemini Flash before generation |
| `aspect_ratio` | string | `1:1` | One of: `1:1` `2:3` `3:2` `3:4` `4:3` `4:5` `5:4` `9:16` `16:9` `21:9` |
| `image_size` | string | `2K` | `1K`, `2K`, or `4K` — **must be uppercase K** |
| `output_format` | string | `png` | `png`, `jpeg`, or `webp` |
| `reference_image_paths` | list | `[]` | Up to 14 local image paths (max 6 objects + max 5 humans) |
| `enable_google_search` | bool | `false` | Ground generation in real-time Google Search data |
| `response_modalities` | list | `["TEXT","IMAGE"]` | `["TEXT","IMAGE"]`, `["IMAGE"]`, or `["TEXT"]` |

**Image size guide:**

- `1K` — fast, good for testing (~1-2 MB)

- `2K` — recommended for most use cases (~3-5 MB)

- `4K` — maximum quality for production assets (~8-15 MB)
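
The rules in the parameter table can be checked before a request ever reaches the server. The following is a minimal client-side sketch, assuming you assemble `generate_image` arguments yourself (for example from a script or another MCP client); `build_generate_image_args` is a hypothetical helper and the server still performs its own validation. It only checks the total reference-image count, because the object/human split depends on what the images contain.

```python
from __future__ import annotations

ASPECT_RATIOS = {"1:1", "2:3", "3:2", "3:4", "4:3", "4:5", "5:4", "9:16", "16:9", "21:9"}
IMAGE_SIZES = {"1K", "2K", "4K"}
OUTPUT_FORMATS = {"png", "jpeg", "webp"}
MAX_REFERENCE_IMAGES = 14


def build_generate_image_args(
    prompt: str,
    *,
    model: str = "gemini-3-pro-image-preview",
    enhance_prompt: bool = False,
    aspect_ratio: str = "1:1",
    image_size: str = "2K",
    output_format: str = "png",
    reference_image_paths: list[str] | None = None,
    enable_google_search: bool = False,
    response_modalities: list[str] | None = None,
) -> dict:
    """Return generate_image arguments with the documented defaults (hypothetical helper)."""
    if not prompt.strip():
        raise ValueError("prompt is required")
    if aspect_ratio not in ASPECT_RATIOS:
        raise ValueError(f"unsupported aspect_ratio: {aspect_ratio!r}")
    if image_size not in IMAGE_SIZES:
        # "2k" is a common mistake: the table requires an uppercase K.
        hint = " (use an uppercase K)" if image_size.upper() in IMAGE_SIZES else ""
        raise ValueError(f"image_size must be 1K, 2K, or 4K{hint}")
    if output_format not in OUTPUT_FORMATS:
        raise ValueError(f"unsupported output_format: {output_format!r}")
    refs = list(reference_image_paths or [])
    # Only the total is checked; the 6-object / 5-human limits depend on image content.
    if len(refs) > MAX_REFERENCE_IMAGES:
        raise ValueError(f"at most {MAX_REFERENCE_IMAGES} reference images, got {len(refs)}")
    modalities = list(response_modalities or ["TEXT", "IMAGE"])
    if not modalities or not set(modalities) <= {"TEXT", "IMAGE"}:
        raise ValueError(f"unsupported response_modalities: {modalities!r}")
    return {
        "prompt": prompt,
        "model": model,
        "enhance_prompt": enhance_prompt,
        "aspect_ratio": aspect_ratio,
        "image_size": image_size,
        "output_format": output_format,
        "reference_image_paths": refs,
        "enable_google_search": enable_google_search,
        "response_modalities": modalities,
    }
```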

---

### `batch_generate`

Generate multiple images in parallel.

| Parameter | Type | Default | Description |
|-----------|------|---------|-------------|
| `prompts` | list | required | List of prompt strings (max 8) |
| `model` | string | `gemini-3-pro-image-preview` | Model for all images |
| `enhance_prompt` | bool | `true` | Enhance all prompts before generation |
| `aspect_ratio` | string | `1:1` | Aspect ratio applied to all images |
| `image_size` | string | `2K` | Resolution for all images |
| `output_format` | string | `png` | Format for all images |
| `response_modalities` | list | `["TEXT","IMAGE"]` | Modalities for all images |
| `batch_size` | int | `8` | Max concurrent requests |
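
`batch_size` caps how many requests are in flight at once. A common way to get that behavior in Python is an `asyncio.Semaphore`; the sketch below shows the idea with a stand-in `generate` coroutine. It is an illustration of the technique, not code taken from the server, and `run_batch` is a hypothetical name.

```python
from __future__ import annotations

import asyncio
from collections.abc import Awaitable, Callable


async def run_batch(
    prompts: list[str],
    generate: Callable[[str], Awaitable[str]],
    batch_size: int = 8,
    max_prompts: int = 8,
) -> list[str]:
    """Run generate(prompt) for every prompt with at most batch_size calls in flight."""
    if len(prompts) > max_prompts:
        raise ValueError(f"at most {max_prompts} prompts per batch, got {len(prompts)}")
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    semaphore = asyncio.Semaphore(batch_size)

    async def run_one(prompt: str) -> str:
        # Each task waits here until one of the batch_size slots is free.
        async with semaphore:
            return await generate(prompt)

    # gather() returns results in the same order as the input prompts.
    return await asyncio.gather(*(run_one(p) for p in prompts))
```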

---

## MCP Prompt Templates

27 expert prompt templates are available as MCP slash commands in Claude Code (type `/` to browse). Each template returns a crafted prompt and recommended parameters ready to pass directly to `generate_image` or `batch_generate`.

| Command | Description | Recommended settings |
|---------|-------------|----------------------|
| `photography_shot` | Photorealistic shot with lens/lighting specs | 16:9 |
| `logo_design` | Professional brand identity | 1:1, 4K, IMAGE only |
| `cinematic_scene` | Film still with cinematography language | 21:9 |
| `product_mockup` | Commercial e-commerce photography | 1:1 or 4:5 |
| `batch_storyboard` | Multi-scene storyboard → calls `batch_generate` | 16:9 |
| `macro_shot` | Extreme macro with micro-snoot lighting | 1:1 |
| `fashion_portrait` | Editorial fashion with gobo shadow patterns | 4:5 |
| `technical_cutaway` | Stephen Biesty-style cutaway diagram | 3:2, 4K, IMAGE only |
| `flat_lay` | Overhead knolling photography | 1:1 |
| `action_freeze` | High-speed strobe with motion blur background | 16:9 |
| `night_street` | Moody night street with practical light sources | 16:9 |
| `drone_aerial` | Straight-down golden hour aerial | 4:5, 4K, IMAGE only |
| `stylized_3d_render` | UE5-style render with subsurface scattering | 1:1, IMAGE only |
| `sem_microscopy` | Scanning electron microscope false-color | 1:1, IMAGE only |
| `double_exposure` | Silhouette-blended double exposure | 2:3, IMAGE only |
| `architectural_viz` | Ray-traced architectural visualization | 3:2, 4K |
| `isometric_illustration` | Orthographic isometric 3D illustration | 1:1, IMAGE only |
| `food_photography` | High-end backlit food photography | 4:5 |
| `motion_blur` | Rear-curtain sync slow shutter sequence | 16:9 |
| `typography_physical` | Text embedded in physical environment | 16:9, 4K, IMAGE only |
| `retro_futurism` | 1970s cassette-futurism analog sci-fi | 4:3, IMAGE only |
| `surreal_dreamscape` | Surrealist impossible physics scene | 1:1, IMAGE only |
| `character_sheet` | Video game character concept art sheet | 3:2, 4K, IMAGE only |
| `pbr_texture` | Seamless PBR texture map with raking light | 1:1, IMAGE only |
| `historical_photo` | Period-accurate photography with film emulation | 4:5 |
| `bioluminescent_nature` | Long-exposure bioluminescence macro | 1:1 |
| `silhouette_shot` | Cinematic pure-black silhouette master shot | 21:9, 4K |

---

## Configuration

| Variable | Default | Description |
|----------|---------|-------------|
| `GEMINI_API_KEY` | — | **Required.** Google Gemini API key |
| `OUTPUT_DIR` | `~/gemini_images` | Directory where images are saved |
| `DEFAULT_IMAGE_SIZE` | `2K` | Default resolution (`1K`, `2K`, `4K`) |
| `DEFAULT_MODEL` | `gemini-3-pro-image-preview` | Default model |
| `ENABLE_PROMPT_ENHANCEMENT` | `false` | Auto-enhance prompts by default |
| `ENABLE_GOOGLE_SEARCH` | `false` | Enable Google Search grounding by default |
| `REQUEST_TIMEOUT` | `60` | API timeout in seconds |
| `MAX_BATCH_SIZE` | `8` | Max parallel requests in batch mode |
| `LOG_LEVEL` | `INFO` | Logging level |
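
These variables can be read with a small loader like the one below. It is a stdlib-only sketch: `ServerSettings` is a hypothetical name, and since the package itself depends on `pydantic-settings`, its real settings class very likely differs in naming and validation. Treating `1`, `true`, `yes`, and `on` as true is an assumption.

```python
from __future__ import annotations

import os
from collections.abc import Mapping
from dataclasses import dataclass
from pathlib import Path

TRUTHY = {"1", "true", "yes", "on"}  # assumption: accepted spellings of "true"


def _flag(env: Mapping[str, str], name: str, default: bool) -> bool:
    raw = env.get(name)
    return default if raw is None else raw.strip().lower() in TRUTHY


@dataclass(frozen=True)
class ServerSettings:
    gemini_api_key: str
    output_dir: Path
    default_image_size: str
    default_model: str
    enable_prompt_enhancement: bool
    enable_google_search: bool
    request_timeout: int
    max_batch_size: int
    log_level: str

    @classmethod
    def from_env(cls, env: Mapping[str, str] | None = None) -> ServerSettings:
        """Read settings from env (defaults to os.environ), applying the table's defaults."""
        env = os.environ if env is None else env
        api_key = env.get("GEMINI_API_KEY", "").strip()
        if not api_key:
            raise RuntimeError("GEMINI_API_KEY not found")
        size = env.get("DEFAULT_IMAGE_SIZE", "2K")
        if size not in {"1K", "2K", "4K"}:
            raise ValueError(f"DEFAULT_IMAGE_SIZE must be 1K, 2K, or 4K, got {size!r}")
        return cls(
            gemini_api_key=api_key,
            output_dir=Path(env.get("OUTPUT_DIR", "~/gemini_images")).expanduser(),
            default_image_size=size,
            default_model=env.get("DEFAULT_MODEL", "gemini-3-pro-image-preview"),
            enable_prompt_enhancement=_flag(env, "ENABLE_PROMPT_ENHANCEMENT", False),
            enable_google_search=_flag(env, "ENABLE_GOOGLE_SEARCH", False),
            request_timeout=int(env.get("REQUEST_TIMEOUT", "60")),
            max_batch_size=int(env.get("MAX_BATCH_SIZE", "8")),
            log_level=env.get("LOG_LEVEL", "INFO").upper(),
        )
```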

---

## Troubleshooting

**`spawn uvx ENOENT`** — Claude Desktop can't find `uvx`. Use the full path:

```json
"command": "/Users/yourusername/.local/bin/uvx"
```

Find it with: `which uvx`

**`GEMINI_API_KEY not found`** — Set the key in your MCP config `env` block or in a `.env` file. Get a free key at [Google AI Studio](https://makersuite.google.com/app/apikey).

**`Content blocked by safety filters`** — Rephrase the prompt to avoid sensitive content.

**`Rate limit exceeded`** — Wait and retry, or upgrade your API quota.

**Images not saving** — Check that `OUTPUT_DIR` exists and is writable: `mkdir -p /your/output/path`.
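
For the rate-limit case, if you call the API from your own scripts, a retry loop with capped exponential backoff and jitter is the usual way to "wait and retry". In this sketch `RateLimitError` is a placeholder for whatever exception your client raises on HTTP 429, and the delay values are arbitrary defaults rather than limits documented by Google.

```python
from __future__ import annotations

import random
import time
from collections.abc import Callable
from typing import TypeVar

T = TypeVar("T")


class RateLimitError(Exception):
    """Placeholder for the exception your client raises on HTTP 429 (assumption)."""


def retry_with_backoff(
    call: Callable[[], T],
    *,
    max_attempts: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `call()`, retrying on RateLimitError with capped exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return call()
        except RateLimitError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the original error
            # The ceiling doubles each attempt (1s, 2s, 4s, ...) up to max_delay;
            # "full jitter" waits a random time below it so clients don't retry in lockstep.
            ceiling = min(max_delay, base_delay * 2**attempt)
            sleep(random.uniform(0, ceiling))
    raise AssertionError("unreachable")
```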

---

## License

MIT — see [LICENSE](LICENSE) for details.

## Links

- [Google AI Studio](https://makersuite.google.com/app/apikey) — Get your API key

- [Gemini API Docs](https://ai.google.dev/gemini-api/docs)

- [Model Context Protocol](https://modelcontextprotocol.io/)

- [FastMCP](https://github.com/jlowin/fastmcp)

| text/markdown | null | Ultimate Gemini MCP <noreply@example.com> | null | null | MIT | ai, claude, fastmcp, gemini, gemini-3-pro-image, google-ai, image-generation, mcp | [

"Development Status :: 5 - Production/Stable",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Topic :: Scientific/Engineering :: Artificial Intelligen... | [] | null | null | >=3.11 | [] | [] | [] | [

"fastmcp<4,>=3.0",

"google-genai>=1.52.0",

"pillow>=10.4.0",

"pydantic-settings>=2.0.0",

"pydantic>=2.0.0",

"mypy>=1.8.0; extra == \"dev\"",

"pytest-asyncio>=0.24.0; extra == \"dev\"",

"pytest-cov>=6.0.0; extra == \"dev\"",

"pytest>=8.0.0; extra == \"dev\"",

"ruff>=0.8.0; extra == \"dev\""

] | [] | [] | [] | [

"Homepage, https://github.com/anand-92/ultimate-image-gen-mcp",

"Repository, https://github.com/anand-92/ultimate-image-gen-mcp",

"Issues, https://github.com/anand-92/ultimate-image-gen-mcp/issues",

"Documentation, https://github.com/anand-92/ultimate-image-gen-mcp/blob/main/README.md"

] | twine/6.2.0 CPython/3.11.14 | 2026-02-19T23:26:01.623151 | ultimate_gemini_mcp-5.0.1.tar.gz | 88,476,016 | e9/6b/e7feb6effd32b842028f0eaaec038069525d0a3c3f0f11c97a903ace31ee/ultimate_gemini_mcp-5.0.1.tar.gz | source | sdist | null | false | 850e2976d8579b1c443fca9cc26d150b | f60103e894e583e95195255a496344f0bf14c4975ad4efe392ed0d5843894a92 | e96be7feb6effd32b842028f0eaaec038069525d0a3c3f0f11c97a903ace31ee | null | [

"LICENSE"

] | 261 |

2.4 | solveig | 0.6.4 | An AI assistant that enables secure and extensible agentic behavior from any LLM in your terminal |

---

# Solveig

**An AI assistant that brings safe agentic behavior from any LLM to your terminal**

---

<p align="center">
<span style="font-size: 1.17em; font-weight: bold;">
<a href="./docs/about.md">About</a> |
<a href="./docs/usage.md">Usage</a> |
<a href="./docs/comparison.md">Comparison</a> |
<a href="./docs/themes/themes.md">Themes</a> |
<a href="./docs/plugins.md">Plugins</a> |
<a href="https://github.com/FSilveiraa/solveig/discussions/2">Roadmap</a> |
<a href="./docs/contributing.md">Contributing</a>
</span>
</p>

---

## Quick Start

### Installation

```bash
# Core installation (OpenAI + local models)
pip install solveig

# With support for Claude and Gemini APIs
# (quoted so shells such as zsh don't treat the brackets as a glob)
pip install "solveig[all]"
```

### Running

```bash
# Run with a local model
solveig -u "http://localhost:5001/v1" "Create a demo BlackSheep webapp"

# Run from a remote API like OpenRouter
solveig -u "https://openrouter.ai/api/v1" -k "<API_KEY>" -m "moonshotai/kimi-k2:free"
```

---

## Features

🤖 **AI Terminal Assistant** - Automate file management, code analysis, project setup, and system tasks using natural language in your terminal.

🛡️ **Safe by Design** - Granular consent controls with pattern-based permissions, and file operations prioritized over shell commands.

🔌 **Plugin Architecture** - Extend capabilities through drop-in Python plugins. Add SQL queries, web scraping, or custom workflows with 100 lines of Python.

📋 **Modern CLI** - Clear interface with task planning, file and metadata previews, diff editing, usage stats, code linting, waiting animations and directory tree displays for informed user decisions.

🌐 **Provider Independence** - Works with any OpenAI-compatible API, including local models.
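
To illustrate what pattern-based consent can look like, here is a first-match-wins rule table built on glob patterns. This is a hypothetical sketch, not Solveig's configuration format or API (see the Usage docs for the real settings); `Rule`, `decide`, and the `allow`/`ask`/`deny` actions are illustrative names.

```python
from __future__ import annotations

from dataclasses import dataclass
from fnmatch import fnmatchcase


@dataclass(frozen=True)
class Rule:
    pattern: str  # glob pattern; in fnmatch, "*" also matches "/"
    action: str   # "allow", "ask", or "deny" (illustrative actions)


def decide(path: str, rules: list[Rule], default: str = "ask") -> str:
    """Return the action of the first rule whose pattern matches path, else default."""
    for rule in rules:
        # fnmatchcase is case-sensitive on every OS, unlike fnmatch.
        if fnmatchcase(path, rule.pattern):
            return rule.action
    return default
```

Because the first match wins, narrow `deny` rules should come before broad `allow` rules: with the deny rule listed first, a key file inside an allowed project directory is still refused.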

---

## Documentation

- **[About](./docs/about.md)** - Detailed features and FAQ

- **[Usage](./docs/usage.md)** - Config files, CLI flags, sub-commands, usage examples and more advanced features

- **[Comparison](./docs/comparison.md)** - Detailed comparison to alternatives in the same market space

- **[Themes](./docs/themes/themes.md)** - Themes explained, visual examples

- **[Plugins](./docs/plugins.md)** - How to use, configure and develop plugins

- **[Roadmap](https://github.com/FSilveiraa/solveig/discussions/2)** - Upcoming features and general progress tracking

- **[Contributing](./docs/contributing.md)** - Development setup, testing, and contribution guidelines

---

<a href="https://vshymanskyy.github.io/StandWithUkraine">

<img alt="Support Ukraine: https://stand-with-ukraine.pp.ua/" src="https://raw.githubusercontent.com/vshymanskyy/StandWithUkraine/main/banner2-direct.svg">

</a>

| text/markdown | Francisco | null | null | null | GNU GENERAL PUBLIC LICENSE

Version 3, 29 June 2007

Copyright (C) 2007 Free Software Foundation, Inc. <http://fsf.org/>

Everyone is permitted to copy and distribute verbatim copies

of this license document, but changing it is not allowed.

Preamble

The GNU General Public License is a free, copyleft license for

software and other kinds of works.

The licenses for most software and other practical works are designed

to take away your freedom to share and change the works. By contrast,

the GNU General Public License is intended to guarantee your freedom to

share and change all versions of a program--to make sure it remains free

software for all its users. We, the Free Software Foundation, use the

GNU General Public License for most of our software; it applies also to

any other work released this way by its authors. You can apply it to

your programs, too.

When we speak of free software, we are referring to freedom, not

price. Our General Public Licenses are designed to make sure that you

have the freedom to distribute copies of free software (and charge for

them if you wish), that you receive source code or can get it if you

want it, that you can change the software or use pieces of it in new

free programs, and that you know you can do these things.

To protect your rights, we need to prevent others from denying you

these rights or asking you to surrender the rights. Therefore, you have

certain responsibilities if you distribute copies of the software, or if

you modify it: responsibilities to respect the freedom of others.

For example, if you distribute copies of such a program, whether

gratis or for a fee, you must pass on to the recipients the same

freedoms that you received. You must make sure that they, too, receive

or can get the source code. And you must show them these terms so they

know their rights.

Developers that use the GNU GPL protect your rights with two steps:

(1) assert copyright on the software, and (2) offer you this License

giving you legal permission to copy, distribute and/or modify it.

For the developers' and authors' protection, the GPL clearly explains

that there is no warranty for this free software. For both users' and

authors' sake, the GPL requires that modified versions be marked as

changed, so that their problems will not be attributed erroneously to

authors of previous versions.

Some devices are designed to deny users access to install or run

modified versions of the software inside them, although the manufacturer

can do so. This is fundamentally incompatible with the aim of

protecting users' freedom to change the software. The systematic

pattern of such abuse occurs in the area of products for individuals to

use, which is precisely where it is most unacceptable. Therefore, we

have designed this version of the GPL to prohibit the practice for those

products. If such problems arise substantially in other domains, we

stand ready to extend this provision to those domains in future versions

of the GPL, as needed to protect the freedom of users.

Finally, every program is threatened constantly by software patents.

States should not allow patents to restrict development and use of

software on general-purpose computers, but in those that do, we wish to

avoid the special danger that patents applied to a free program could

make it effectively proprietary. To prevent this, the GPL assures that

patents cannot be used to render the program non-free.

The precise terms and conditions for copying, distribution and

modification follow.

TERMS AND CONDITIONS

0. Definitions.

"This License" refers to version 3 of the GNU General Public License.

"Copyright" also means copyright-like laws that apply to other kinds of

works, such as semiconductor masks.

"The Program" refers to any copyrightable work licensed under this

License. Each licensee is addressed as "you". "Licensees" and

"recipients" may be individuals or organizations.

To "modify" a work means to copy from or adapt all or part of the work

in a fashion requiring copyright permission, other than the making of an

exact copy. The resulting work is called a "modified version" of the

earlier work or a work "based on" the earlier work.

A "covered work" means either the unmodified Program or a work based

on the Program.

To "propagate" a work means to do anything with it that, without

permission, would make you directly or secondarily liable for

infringement under applicable copyright law, except executing it on a

computer or modifying a private copy. Propagation includes copying,

distribution (with or without modification), making available to the

public, and in some countries other activities as well.

To "convey" a work means any kind of propagation that enables other

parties to make or receive copies. Mere interaction with a user through

a computer network, with no transfer of a copy, is not conveying.

An interactive user interface displays "Appropriate Legal Notices"

to the extent that it includes a convenient and prominently visible

feature that (1) displays an appropriate copyright notice, and (2)

tells the user that there is no warranty for the work (except to the

extent that warranties are provided), that licensees may convey the

work under this License, and how to view a copy of this License. If

the interface presents a list of user commands or options, such as a

menu, a prominent item in the list meets this criterion.

1. Source Code.

The "source code" for a work means the preferred form of the work

for making modifications to it. "Object code" means any non-source

form of a work.

A "Standard Interface" means an interface that either is an official

standard defined by a recognized standards body, or, in the case of

interfaces specified for a particular programming language, one that

is widely used among developers working in that language.

The "System Libraries" of an executable work include anything, other

than the work as a whole, that (a) is included in the normal form of

packaging a Major Component, but which is not part of that Major

Component, and (b) serves only to enable use of the work with that

Major Component, or to implement a Standard Interface for which an

implementation is available to the public in source code form. A

"Major Component", in this context, means a major essential component

(kernel, window system, and so on) of the specific operating system

(if any) on which the executable work runs, or a compiler used to

produce the work, or an object code interpreter used to run it.

The "Corresponding Source" for a work in object code form means all

the source code needed to generate, install, and (for an executable

work) run the object code and to modify the work, including scripts to

control those activities. However, it does not include the work's

System Libraries, or general-purpose tools or generally available free

programs which are used unmodified in performing those activities but

which are not part of the work. For example, Corresponding Source

includes interface definition files associated with source files for

the work, and the source code for shared libraries and dynamically

linked subprograms that the work is specifically designed to require,

such as by intimate data communication or control flow between those

subprograms and other parts of the work.

The Corresponding Source need not include anything that users

can regenerate automatically from other parts of the Corresponding

Source.

The Corresponding Source for a work in source code form is that

same work.

2. Basic Permissions.

All rights granted under this License are granted for the term of

copyright on the Program, and are irrevocable provided the stated

conditions are met. This License explicitly affirms your unlimited

permission to run the unmodified Program. The output from running a

covered work is covered by this License only if the output, given its

content, constitutes a covered work. This License acknowledges your

rights of fair use or other equivalent, as provided by copyright law.

You may make, run and propagate covered works that you do not

convey, without conditions so long as your license otherwise remains

in force. You may convey covered works to others for the sole purpose

of having them make modifications exclusively for you, or provide you

with facilities for running those works, provided that you comply with

the terms of this License in conveying all material for which you do

not control copyright. Those thus making or running the covered works

for you must do so exclusively on your behalf, under your direction

and control, on terms that prohibit them from making any copies of

your copyrighted material outside their relationship with you.

Conveying under any other circumstances is permitted solely under

the conditions stated below. Sublicensing is not allowed; section 10

makes it unnecessary.

3. Protecting Users' Legal Rights From Anti-Circumvention Law.

No covered work shall be deemed part of an effective technological

measure under any applicable law fulfilling obligations under article

11 of the WIPO copyright treaty adopted on 20 December 1996, or

similar laws prohibiting or restricting circumvention of such

measures.

When you convey a covered work, you waive any legal power to forbid

circumvention of technological measures to the extent such circumvention

is effected by exercising rights under this License with respect to

the covered work, and you disclaim any intention to limit operation or

modification of the work as a means of enforcing, against the work's

users, your or third parties' legal rights to forbid circumvention of

technological measures.

4. Conveying Verbatim Copies.

You may convey verbatim copies of the Program's source code as you

receive it, in any medium, provided that you conspicuously and

appropriately publish on each copy an appropriate copyright notice;

keep intact all notices stating that this License and any

non-permissive terms added in accord with section 7 apply to the code;

keep intact all notices of the absence of any warranty; and give all

recipients a copy of this License along with the Program.

You may charge any price or no price for each copy that you convey,

and you may offer support or warranty protection for a fee.

5. Conveying Modified Source Versions.

You may convey a work based on the Program, or the modifications to

produce it from the Program, in the form of source code under the

terms of section 4, provided that you also meet all of these conditions:

a) The work must carry prominent notices stating that you modified

it, and giving a relevant date.

b) The work must carry prominent notices stating that it is

released under this License and any conditions added under section

7. This requirement modifies the requirement in section 4 to

"keep intact all notices".

c) You must license the entire work, as a whole, under this

License to anyone who comes into possession of a copy. This

License will therefore apply, along with any applicable section 7

additional terms, to the whole of the work, and all its parts,

regardless of how they are packaged. This License gives no

permission to license the work in any other way, but it does not

invalidate such permission if you have separately received it.

d) If the work has interactive user interfaces, each must display

Appropriate Legal Notices; however, if the Program has interactive

interfaces that do not display Appropriate Legal Notices, your

work need not make them do so.

A compilation of a covered work with other separate and independent

works, which are not by their nature extensions of the covered work,

and which are not combined with it such as to form a larger program,

in or on a volume of a storage or distribution medium, is called an

"aggregate" if the compilation and its resulting copyright are not

used to limit the access or legal rights of the compilation's users

beyond what the individual works permit. Inclusion of a covered work

in an aggregate does not cause this License to apply to the other

parts of the aggregate.

6. Conveying Non-Source Forms.

You may convey a covered work in object code form under the terms

of sections 4 and 5, provided that you also convey the

machine-readable Corresponding Source under the terms of this License,

in one of these ways:

a) Convey the object code in, or embodied in, a physical product

(including a physical distribution medium), accompanied by the

Corresponding Source fixed on a durable physical medium

customarily used for software interchange.

b) Convey the object code in, or embodied in, a physical product

(including a physical distribution medium), accompanied by a

written offer, valid for at least three years and valid for as

long as you offer spare parts or customer support for that product

model, to give anyone who possesses the object code either (1) a

copy of the Corresponding Source for all the software in the

product that is covered by this License, on a durable physical

medium customarily used for software interchange, for a price no

more than your reasonable cost of physically performing this

conveying of source, or (2) access to copy the

Corresponding Source from a network server at no charge.

c) Convey individual copies of the object code with a copy of the

written offer to provide the Corresponding Source. This

alternative is allowed only occasionally and noncommercially, and

only if you received the object code with such an offer, in accord

with subsection 6b.

d) Convey the object code by offering access from a designated

place (gratis or for a charge), and offer equivalent access to the

Corresponding Source in the same way through the same place at no

further charge. You need not require recipients to copy the

Corresponding Source along with the object code. If the place to

copy the object code is a network server, the Corresponding Source

may be on a different server (operated by you or a third party)

that supports equivalent copying facilities, provided you maintain

clear directions next to the object code saying where to find the

Corresponding Source. Regardless of what server hosts the

Corresponding Source, you remain obligated to ensure that it is

available for as long as needed to satisfy these requirements.

e) Convey the object code using peer-to-peer transmission, provided

you inform other peers where the object code and Corresponding

Source of the work are being offered to the general public at no

charge under subsection 6d.

A separable portion of the object code, whose source code is excluded

from the Corresponding Source as a System Library, need not be

included in conveying the object code work.

A "User Product" is either (1) a "consumer product", which means any

tangible personal property which is normally used for personal, family,

or household purposes, or (2) anything designed or sold for incorporation

into a dwelling. In determining whether a product is a consumer product,

doubtful cases shall be resolved in favor of coverage. For a particular

product received by a particular user, "normally used" refers to a

typical or common use of that class of product, regardless of the status

of the particular user or of the way in which the particular user

actually uses, or expects or is expected to use, the product. A product

is a consumer product regardless of whether the product has substantial

commercial, industrial or non-consumer uses, unless such uses represent

the only significant mode of use of the product.

"Installation Information" for a User Product means any methods,

procedures, authorization keys, or other information required to install

and execute modified versions of a covered work in that User Product from

a modified version of its Corresponding Source. The information must

suffice to ensure that the continued functioning of the modified object

code is in no case prevented or interfered with solely because

modification has been made.

If you convey an object code work under this section in, or with, or

specifically for use in, a User Product, and the conveying occurs as

part of a transaction in which the right of possession and use of the

User Product is transferred to the recipient in perpetuity or for a

fixed term (regardless of how the transaction is characterized), the

Corresponding Source conveyed under this section must be accompanied

by the Installation Information. But this requirement does not apply

if neither you nor any third party retains the ability to install

modified object code on the User Product (for example, the work has

been installed in ROM).

The requirement to provide Installation Information does not include a

requirement to continue to provide support service, warranty, or updates

for a work that has been modified or installed by the recipient, or for

the User Product in which it has been modified or installed. Access to a

network may be denied when the modification itself materially and

adversely affects the operation of the network or violates the rules and

protocols for communication across the network.

Corresponding Source conveyed, and Installation Information provided,

in accord with this section must be in a format that is publicly

documented (and with an implementation available to the public in

source code form), and must require no special password or key for

unpacking, reading or copying.

7. Additional Terms.

"Additional permissions" are terms that supplement the terms of this

License by making exceptions from one or more of its conditions.

Additional permissions that are applicable to the entire Program shall

be treated as though they were included in this License, to the extent

that they are valid under applicable law. If additional permissions

apply only to part of the Program, that part may be used separately

under those permissions, but the entire Program remains governed by

this License without regard to the additional permissions.

When you convey a copy of a covered work, you may at your option

remove any additional permissions from that copy, or from any part of

it. (Additional permissions may be written to require their own

removal in certain cases when you modify the work.) You may place

additional permissions on material, added by you to a covered work,

for which you have or can give appropriate copyright permission.

Notwithstanding any other provision of this License, for material you

add to a covered work, you may (if authorized by the copyright holders of

that material) supplement the terms of this License with terms:

a) Disclaiming warranty or limiting liability differently from the

terms of sections 15 and 16 of this License; or

b) Requiring preservation of specified reasonable legal notices or

author attributions in that material or in the Appropriate Legal

Notices displayed by works containing it; or

c) Prohibiting misrepresentation of the origin of that material, or

requiring that modified versions of such material be marked in

reasonable ways as different from the original version; or

d) Limiting the use for publicity purposes of names of licensors or

authors of the material; or

e) Declining to grant rights under trademark law for use of some

trade names, trademarks, or service marks; or

f) Requiring indemnification of licensors and authors of that

material by anyone who conveys the material (or modified versions of

it) with contractual assumptions of liability to the recipient, for

any liability that these contractual assumptions directly impose on

those licensors and authors.

All other non-permissive additional terms are considered "further

restrictions" within the meaning of section 10. If the Program as you

received it, or any part of it, contains a notice stating that it is

governed by this License along with a term that is a further

restriction, you may remove that term. If a license document contains

a further restriction but permits relicensing or conveying under this

License, you may add to a covered work material governed by the terms

of that license document, provided that the further restriction does

not survive such relicensing or conveying.

If you add terms to a covered work in accord with this section, you

must place, in the relevant source files, a statement of the

additional terms that apply to those files, or a notice indicating

where to find the applicable terms.

Additional terms, permissive or non-permissive, may be stated in the

form of a separately written license, or stated as exceptions;

the above requirements apply either way.

8. Termination.

You may not propagate or modify a covered work except as expressly

provided under this License. Any attempt otherwise to propagate or

modify it is void, and will automatically terminate your rights under

this License (including any patent licenses granted under the third

paragraph of section 11).

However, if you cease all violation of this License, then your

license from a particular copyright holder is reinstated (a)

provisionally, unless and until the copyright holder explicitly and

finally terminates your license, and (b) permanently, if the copyright

holder fails to notify you of the violation by some reasonable means

prior to 60 days after the cessation.

Moreover, your license from a particular copyright holder is

reinstated permanently if the copyright holder notifies you of the

violation by some reasonable means, this is the first time you have

received notice of violation of this License (for any work) from that

copyright holder, and you cure the violation prior to 30 days after

your receipt of the notice.

Termination of your rights under this section does not terminate the

licenses of parties who have received copies or rights from you under

this License. If your rights have been terminated and not permanently

reinstated, you do not qualify to receive new licenses for the same

material under section 10.

9. Acceptance Not Required for Having Copies.

You are not required to accept this License in order to receive or

run a copy of the Program. Ancillary propagation of a covered work

occurring solely as a consequence of using peer-to-peer transmission

to receive a copy likewise does not require acceptance. However,

nothing other than this License grants you permission to propagate or

modify any covered work. These actions infringe copyright if you do

not accept this License. Therefore, by modifying or propagating a

covered work, you indicate your acceptance of this License to do so.

10. Automatic Licensing of Downstream Recipients.

Each time you convey a covered work, the recipient automatically

receives a license from the original licensors, to run, modify and

propagate that work, subject to this License. You are not responsible

for enforcing compliance by third parties with this License.

An "entity transaction" is a transaction transferring control of an

organization, or substantially all assets of one, or subdividing an

organization, or merging organizations. If propagation of a covered

work results from an entity transaction, each party to that

transaction who receives a copy of the work also receives whatever

licenses to the work the party's predecessor in interest had or could

give under the previous paragraph, plus a right to possession of the

Corresponding Source of the work from the predecessor in interest, if

the predecessor has it or can get it with reasonable efforts.

You may not impose any further restrictions on the exercise of the

rights granted or affirmed under this License. For example, you may

not impose a license fee, royalty, or other charge for exercise of

rights granted under this License, and you may not initiate litigation

(including a cross-claim or counterclaim in a lawsuit) alleging that

any patent claim is infringed by making, using, selling, offering for

sale, or importing the Program or any portion of it.

11. Patents.

A "contributor" is a copyright holder who authorizes use under this

License of the Program or a work on which the Program is based. The

work thus licensed is called the contributor's "contributor version".

A contributor's "essential patent claims" are all patent claims

owned or controlled by the contributor, whether already acquired or

hereafter acquired, that would be infringed by some manner, permitted

by this License, of making, using, or selling its contributor version,

but do not include claims that would be infringed only as a

consequence of further modification of the contributor version. For

purposes of this definition, "control" includes the right to grant

patent sublicenses in a manner consistent with the requirements of

this License.

Each contributor grants you a non-exclusive, worldwide, royalty-free

patent license under the contributor's essential patent claims, to

make, use, sell, offer for sale, import and otherwise run, modify and

propagate the contents of its contributor version.

In the following three paragraphs, a "patent license" is any express

agreement or commitment, however denominated, not to enforce a patent

(such as an express permission to practice a patent or covenant not to

sue for patent infringement). To "grant" such a patent license to a

party means to make such an agreement or commitment not to enforce a

patent against the party.

If you convey a covered work, knowingly relying on a patent license,

and the Corresponding Source of the work is not available for anyone

to copy, free of charge and under the terms of this License, through a

publicly available network server or other readily accessible means,

then you must either (1) cause the Corresponding Source to be so

available, or (2) arrange to deprive yourself of the benefit of the

patent license for this particular work, or (3) arrange, in a manner

consistent with the requirements of this License, to extend the patent

license to downstream recipients. "Knowingly relying" means you have

actual knowledge that, but for the patent license, your conveying the

covered work in a country, or your recipient's use of the covered work

in a country, would infringe one or more identifiable patents in that

country that you have reason to believe are valid.

If, pursuant to or in connection with a single transaction or

arrangement, you convey, or propagate by procuring conveyance of, a

covered work, and grant a patent license to some of the parties

receiving the covered work authorizing them to use, propagate, modify

or convey a specific copy of the covered work, then the patent license

you grant is automatically extended to all recipients of the covered

work and works based on it.

A patent license is "discriminatory" if it does not include within

the scope of its coverage, prohibits the exercise of, or is

conditioned on the non-exercise of one or more of the rights that are

specifically granted under this License. You may not convey a covered

work if you are a party to an arrangement with a third party that is

in the business of distributing software, under which you make payment

to the third party based on the extent of your activity of conveying

the work, and under which the third party grants, to any of the

parties who would receive the covered work from you, a discriminatory

patent license (a) in connection with copies of the covered work

conveyed by you (or copies made from those copies), or (b) primarily

for and in connection with specific products or compilations that

contain the covered work, unless you entered into that arrangement,

or that patent license was granted, prior to 28 March 2007.

Nothing in this License shall be construed as excluding or limiting

any implied license or other defenses to infringement that may

otherwise be available to you under applicable patent law.

12. No Surrender of Others' Freedom.

If conditions are imposed on you (whether by court order, agreement or

otherwise) that contradict the conditions of this License, they do not

excuse you from the conditions of this License. If you cannot convey a

covered work so as to satisfy simultaneously your obligations under this

License and any other pertinent obligations, then as a consequence you may

not convey it at all. For example, if you agree to terms that obligate you

to collect a royalty for further conveying from those to whom you convey

the Program, the only way you could satisfy both those terms and this

License would be to refrain entirely from conveying the Program.

13. Use with the GNU Affero General Public License.

Notwithstanding any other provision of this License, you have

permission to link or combine any covered work with a work licensed

under version 3 of the GNU Affero General Public License into a single

combined work, and to convey the resulting work. The terms of this

License will continue to apply to the part which is the covered work,

but the special requirements of the GNU Affero General Public License,

section 13, concerning interaction through a network will apply to the

combination as such.

14. Revised Versions of this License.

The Free Software Foundation may publish revised and/or new versions of

the GNU General Public License from time to time. Such new versions will

be similar in spirit to the present version, but may differ in detail to

address new problems or concerns.

Each version is given a distinguishing version number. If the

Program specifies that a certain numbered version of the GNU General

Public License "or any later version" applies to it, you have the

option of following the terms and conditions either of that numbered

version or of any later version published by the Free Software

Foundation. If the Program does not specify a version number of the

GNU General Public License, you may choose any version ever published

by the Free Software Foundation.

If the Program specifies that a proxy can decide which future

versions of the GNU General Public License can be used, that proxy's

public statement of acceptance of a version permanently authorizes you

to choose that version for the Program.

Later license versions may give you additional or different

permissions. However, no additional obligations are imposed on any

author or copyright holder as a result of your choosing to follow a

later version.

15. Disclaimer of Warranty.

THERE IS NO WARRANTY FOR THE PROGRAM, TO THE EXTENT PERMITTED BY

APPLICABLE LAW. EXCEPT WHEN OTHERWISE STATED IN WRITING THE COPYRIGHT

HOLDERS AND/OR OTHER PARTIES PROVIDE THE PROGRAM "AS IS" WITHOUT WARRANTY

OF ANY KIND, EITHER EXPRESSED OR IMPLIED, INCLUDING, BUT NOT LIMITED TO,

THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR

PURPOSE. THE ENTIRE RISK AS TO THE QUALITY AND PERFORMANCE OF THE PROGRAM

IS WITH YOU. SHOULD THE PROGRAM PROVE DEFECTIVE, YOU ASSUME THE COST OF

ALL NECESSARY SERVICING, REPAIR OR CORRECTION.

16. Limitation of Liability.

IN NO EVENT UNLESS REQUIRED BY APPLICABLE LAW OR AGREED TO IN WRITING

WILL ANY COPYRIGHT HOLDER, OR ANY OTHER PARTY WHO MODIFIES AND/OR CONVEYS

THE PROGRAM AS PERMITTED ABOVE, BE LIABLE TO YOU FOR DAMAGES, INCLUDING ANY

GENERAL, SPECIAL, INCIDENTAL OR CONSEQUENTIAL DAMAGES ARISING OUT OF THE

USE OR INABILITY TO USE THE PROGRAM (INCLUDING BUT NOT LIMITED TO LOSS OF

DATA OR DATA BEING RENDERED INACCURATE OR LOSSES SUSTAINED BY YOU OR THIRD

PARTIES OR A FAILURE OF THE PROGRAM TO OPERATE WITH ANY OTHER PROGRAMS),

EVEN IF SUCH HOLDER OR OTHER PARTY HAS BEEN ADVISED OF THE POSSIBILITY OF

SUCH DAMAGES.

17. Interpretation of Sections 15 and 16.

If the disclaimer of warranty and limitation of liability provided

above cannot be given local legal effect according to their terms,

reviewing courts shall apply local law that most closely approximates

an absolute waiver of all civil liability in connection with the

Program, unless a warranty or assumption of liability accompanies a

copy of the Program in return for a fee.

END OF TERMS AND CONDITIONS

How to Apply These Terms to Your New Programs

If you develop a new program, and you want it to be of the greatest

possible use to the public, the best way to achieve this is to make it

free software which everyone can redistribute and change under these terms.

To do so, attach the following notices to the program. It is safest

to attach them to the start of each source file to most effectively

state the exclusion of warranty; and each file should have at least

the "copyright" line and a pointer to where the full notice is found.

<one line to give the program's name and a brief idea of what it does.>

Copyright (C) <year> <name of author>

This program is free software: you can redistribute it and/or modify

it under the terms of the GNU General Public License as published by

the Free Software Foundation, either version 3 of the License, or

(at your option) any later version.

This program is distributed in the hope that it will be useful,

but WITHOUT ANY WARRANTY; without even the implied warranty of

MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the

GNU General Public License for more details.

You should have received a copy of the GNU General Public License

along with this program. If not, see <http://www.gnu.org/licenses/>.

Also add information on how to contact you by electronic and paper mail.

If the program does terminal interaction, make it output a short

notice like this when it starts in an interactive mode:

<program> Copyright (C) <year> <name of author>

This program comes with ABSOLUTELY NO WARRANTY; for details type `show w'.

This is free software, and you are welcome to redistribute it

under certain conditions; type `show c' for details.

The hypothetical commands `show w' and `show c' should show the appropriate

parts of the General Public License. Of course, your program's commands

might be different; for a GUI interface, you would use an "about box".

You should also get your employer (if you work as a programmer) or school,

if any, to sign a "copyright disclaimer" for the program, if necessary.

For more information on this, and how to apply and follow the GNU GPL, see

<http://www.gnu.org/licenses/>.

The GNU General Public License does not permit incorporating your program

into proprietary programs. If your program is a subroutine library, you

may consider it more useful to permit linking proprietary applications with

the library. If this is what you want to do, use the GNU Lesser General

Public License instead of this License. But first, please read

<http://www.gnu.org/philosophy/why-not-lgpl.html>. | ai, automation, security, llm, assistant | [

"Development Status :: 4 - Beta",

"Intended Audience :: Developers",

"Intended Audience :: End Users/Desktop",

"License :: OSI Approved :: GNU General Public License v3 (GPLv3)",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.13",

"Topic :: Scientific/Engineering :: Artifici... | [] | null | null | >=3.13 | [] | [] | [] | [

"distro>=1.9.0",

"aiofiles>=25.1.0",

"instructor==1.13.0",

"openai>=1.108.0",

"pydantic>=2.11.0",

"tiktoken>=0.11.0",

"textual>=6.1.0",

"rich>=14.0.0",

"setuptools>=61.0; extra == \"dev\"",

"anthropic>=0.68.0; extra == \"dev\"",

"google-generativeai>=0.8.5; extra == \"dev\"",

"pytest>=8.3.0; e... | [] | [] | [] | [

"Homepage, https://github.com/FSilveiraa/solveig",

"About, https://github.com/FSilveiraa/solveig/blob/main/docs/about.md",

"Roadmap, https://github.com/FSilveiraa/solveig/discussions/2"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T23:25:49.518939 | solveig-0.6.4.tar.gz | 106,864 | 0d/10/09a408722e8aa03957bcfa6cb6c6fd54c4e534893606c34b4bd439c35976/solveig-0.6.4.tar.gz | source | sdist | null | false | 9d61315792a1fa98169c471ffbd6d871 | df53ff9c449e93a6b178ea440502d7dfa3a62ddcb29b3cc173a194d4d4cc3e92 | 0d1009a408722e8aa03957bcfa6cb6c6fd54c4e534893606c34b4bd439c35976 | null | [

"LICENSE"

] | 226 |

2.4 | autonomous-app | 0.3.79 | Containerized application framework built on Flask with additional libraries and tools for rapid development of web applications. | # Autonomous

:warning: :warning: :warning: WiP :warning: :warning: :warning:

A local, containerized, service-based application library built on top of Flask.

It is designed for self-contained, containerized Python applications with minimal dependencies, using built-in libraries for many different kinds of tasks.

- **[pypi](https://test.pypi.org/project/autonomous)**

- **[github](https://github.com/Sallenmoore/autonomous)**

## Features

- Fully containerized, service-based Python application framework

- All services are localized to a virtual intranet

- Container-based MongoDB database

- Model ORM API

- File storage locally or with services such as Cloudinary or S3

- Separate service for long-running tasks

- Built-in authentication with Google or GitHub

- Auto-Generated Documentation Pages

## Dependencies

- **Languages**

- [Python 3.11](/Dev/language/python)

- **Frameworks**

- [Flask](https://flask.palletsprojects.com/en/2.1.x/)

- **Containers**

- [Docker](https://docs.docker.com/)

- [Docker Compose](https://github.com/compose-spec/compose-spec/blob/master/spec.md)

- **Server**

- [nginx](https://docs.nginx.com/nginx/)

- [gunicorn](https://docs.gunicorn.org/en/stable/configure.html)

- **Networking and Serialization**

- [requests](https://requests.readthedocs.io/en/latest/)

- **Database**

- [pymongo](https://pymongo.readthedocs.io/en/stable/api/pymongo/index.html)

- **Testing**

- [pytest](/Dev/tools/pytest)

- [coverage](https://coverage.readthedocs.io/en/6.4.1/cmd.html)

- **Documentation** - Coming Soon

- [pdoc](https://pdoc.dev/docs/pdoc/doc.html)

---

## Developer Notes

### TODO

- Set up/fix the template app generator

- Add type hints

- Switch to a less verbose HTML preprocessor

- 100% testing coverage

### Issue Tracking

- None

## Processes

### Generate app

TBD

### Tests

```sh

make tests

```

### Package

1. Update version in `/src/autonomous/__init__.py`

2. `make package`
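Step 1 is a manual edit. As a minimal sketch, assuming the version in `src/autonomous/__init__.py` is declared as a single `__version__ = "X.Y.Z"` line (an assumption; the file's contents are not shown here), a patch bump could be scripted before running `make package`. `bump_patch` and `bump_file` are hypothetical helpers, not part of the project or its Makefile.

```python
import re
from pathlib import Path

# Assumption: the version is declared as `__version__ = "X.Y.Z"` on its own line
VERSION_LINE = re.compile(
    r'^(__version__\s*=\s*["\'])(\d+)\.(\d+)\.(\d+)(["\'])', re.MULTILINE
)


def bump_patch(source: str) -> str:
    """Return `source` with the patch component of __version__ incremented."""

    def _bump(m: re.Match[str]) -> str:
        major, minor, patch = m.group(2), m.group(3), int(m.group(4))
        return f"{m.group(1)}{major}.{minor}.{patch + 1}{m.group(5)}"

    new_source, count = VERSION_LINE.subn(_bump, source, count=1)
    if count == 0:
        raise ValueError("no __version__ = 'X.Y.Z' line found")
    return new_source


def bump_file(path: str = "src/autonomous/__init__.py") -> None:
    """Hypothetical helper: rewrite the version file in place (path relative to repo root)."""
    init = Path(path)
    init.write_text(bump_patch(init.read_text()))
```

Only the patch number changes; minor or major bumps would still be done by hand.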

| text/markdown | null | Steven A Moore <samoore@binghamton.edu> | null | null | null | null | [

"Programming Language :: Python :: 3.12",

"License :: OSI Approved :: MIT License",

"Operating System :: OS Independent"

] | [] | null | null | >=3.12 | [] | [] | [] | [

"Flask",

"setuptools",

"python-dotenv",

"blinker",

"pymongo",

"PyGithub",

"pygit2",

"pillow",

"redis",

"jsmin",

"requests",

"gunicorn",

"Authlib",

"rq",

"ollama",

"google-genai",

"sentence-transformers",

"dateparser",

"python-slugify",

"pydub"

] | [] | [] | [] | [

"homepage, https://github.com/Sallenmoore/autonomous"

] | twine/6.2.0 CPython/3.12.12 | 2026-02-19T23:24:27.721664 | autonomous_app-0.3.79.tar.gz | 124,989 | be/7c/bd4c4de34a3fcf55f6d83adc176dd7a9a02c232bc151984c8c4fedc715d5/autonomous_app-0.3.79.tar.gz | source | sdist | null | false | ccf4d94ac5a275aa7bb7402fd4d9b174 | 6bfa215476549b0dc932973ecd964ed4ecde6a735086cd4c6b9d9d0195dc42d1 | be7cbd4c4de34a3fcf55f6d83adc176dd7a9a02c232bc151984c8c4fedc715d5 | null | [] | 256 |

2.4 | pytest-leela | 0.2.0 | Type-aware mutation testing for Python — fast, opinionated, pytest-native | # pytest-leela

**Type-aware mutation testing for Python.**


---

## What it does

pytest-leela runs mutation testing inside your existing pytest session. It injects AST mutations

via import hooks (no temp files), maps each mutation to only the tests that cover that line, and

uses type annotations to skip mutations that can't possibly fail your tests.

It's opinionated: we target latest Python, favour speed over configurability, and integrate

with pytest without separate config files or runners. If that fits your workflow, great.

MIT licensed — fork it if it doesn't.
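To make "AST mutations via import hooks (no temp files)" concrete, here is a minimal sketch of the general technique, not pytest-leela's actual code: an `ast.NodeTransformer` flips `+` to `-`, and a finder registered on `sys.meta_path` compiles the mutated tree straight into the module being imported. The module name `calc_demo`, its inline source, and the class names are all hypothetical.

```python
import ast
import importlib.abc
import importlib.util
import sys


class FlipAddToSub(ast.NodeTransformer):
    """Mutation operator: rewrite every binary `+` as `-`."""

    def visit_BinOp(self, node: ast.BinOp) -> ast.AST:
        self.generic_visit(node)  # mutate nested expressions first
        if isinstance(node.op, ast.Add):
            node.op = ast.Sub()
        return node


class MutatingImporter(importlib.abc.MetaPathFinder, importlib.abc.Loader):
    """Finder + loader that serves a mutated module from memory."""

    def __init__(self, module_name: str, source: str) -> None:
        self.module_name = module_name
        self.source = source

    def find_spec(self, fullname, path=None, target=None):
        if fullname != self.module_name:
            return None  # defer to the normal import machinery
        return importlib.util.spec_from_loader(fullname, self)

    def exec_module(self, module) -> None:
        tree = ast.fix_missing_locations(FlipAddToSub().visit(ast.parse(self.source)))
        code = compile(tree, f"<mutant of {self.module_name}>", "exec")
        exec(code, module.__dict__)  # nothing is written to disk


SOURCE = "def add(a, b):\n    return a + b\n"

importer = MutatingImporter("calc_demo", SOURCE)
sys.meta_path.insert(0, importer)  # consulted before the filesystem finders
try:
    import calc_demo

    result = calc_demo.add(5, 3)  # the mutant computes 5 - 3
    print(result)
finally:
    sys.meta_path.remove(importer)
    sys.modules.pop("calc_demo", None)  # force a fresh import for the next mutant
```

Removing the module from `sys.modules` afterwards matters: without it, the next mutant of the same module would be served from the import cache instead of being rebuilt.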

---

## Install

```

pip install pytest-leela

```

---

## Quick Start

**Run mutation testing on your whole test suite:**

```bash

pytest --leela

```

**Target specific modules (pass `--target` multiple times):**

```bash

pytest --leela --target myapp/models.py --target myapp/views.py

```

**Only mutate lines changed vs a branch:**

```bash

pytest --leela --diff main

```

**Limit CPU cores:**

```bash

pytest --leela --max-cores 4

```

**Cap memory usage:**

```bash

pytest --leela --max-memory 4096

```

**Combine flags:**

```bash

pytest --leela --diff main --max-cores 4 --max-memory 4096

```

**Generate an interactive HTML report:**

```bash

pytest --leela --leela-html report.html

```

**Benchmark optimization layers:**

```bash

pytest --leela-benchmark

```

---

## Features

- **Type-aware mutation pruning** — uses type annotations to skip mutations that can't possibly

trip your tests (e.g. won't swap `+` to `-` on a `str` operand)

- **Per-test coverage mapping** — each mutant runs only the tests that exercise its lines,

not the whole suite

- **In-process execution via import hooks** — mutations applied via `sys.meta_path`, zero

filesystem writes, fast loop

- **Git diff mode** — `--diff <ref>` limits mutations to lines changed since that ref

- **Framework-aware** — clears Django URL caches between mutants so view reloads work correctly

- **Resource limits** — `--max-cores N` caps parallelism; `--max-memory MB` guards memory

- **HTML report** — `--leela-html` generates an interactive single-file report with source viewer, survivor navigation, and test source overlay

- **CI exit codes** — exits non-zero when mutants survive, so CI pipelines fail on incomplete kill rates

- **Benchmark mode** — `--leela-benchmark` measures the speedup from each optimization layer

---

## HTML Report

`--leela-html report.html` generates a single self-contained HTML file with no external dependencies.

**What it shows:**

- Overall mutation score badge

- Per-file breakdown with kill/survive/timeout counts

- Source code viewer with syntax highlighting

**Interactive features:**

- Click any line to see mutant details (original → mutated code, status, relevant tests)

- Survivor navigation overlay — keyboard shortcuts: `n` next survivor, `p` previous, `l` list all, `Esc` close

- Test source overlay — click any test name to see its source code

Uses the Catppuccin Mocha dark theme.
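The report's headline numbers come down to simple arithmetic over the kill/survive/timeout counts. The sketch below assumes timeouts count as detected (a common convention in mutation-testing tools, not confirmed for pytest-leela) and mirrors the documented CI behaviour of exiting non-zero when any mutant survives. `FileResult`, `mutation_score`, and `exit_code` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class FileResult:
    path: str
    killed: int
    survived: int
    timeout: int


def mutation_score(results: list[FileResult]) -> float:
    """Percentage of mutants detected across all files."""
    # Assumption: a timed-out mutant counts as detected, like a killed one
    detected = sum(r.killed + r.timeout for r in results)
    total = sum(r.killed + r.survived + r.timeout for r in results)
    # Design choice: with no mutants at all there is nothing left undetected
    return 100.0 if total == 0 else 100.0 * detected / total


def exit_code(results: list[FileResult]) -> int:
    """Non-zero when any mutant survived, so CI fails on incomplete kill rates."""
    return 1 if any(r.survived for r in results) else 0
```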

---

## Requirements

- Python >= 3.12

- pytest >= 7.0

---

## License

MIT

| text/markdown | null | Mark Ng <mark@roaming-panda.com> | null | null | null | mutation-testing, pytest, quality, testing, type-aware | [

"Development Status :: 3 - Alpha",

"Framework :: Pytest",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Operating System :: OS Independent",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"Top... | [] | null | null | >=3.12 | [] | [] | [] | [

"pytest>=7.0",

"factory-boy; extra == \"dev\"",

"faker; extra == \"dev\"",

"mypy; extra == \"dev\"",

"pytest-describe>=2.0; extra == \"dev\""

] | [] | [] | [] | [

"Homepage, https://github.com/markng/pytest-leela",

"Issues, https://github.com/markng/pytest-leela/issues"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T23:24:06.675501 | pytest_leela-0.2.0.tar.gz | 75,311 | 14/e6/ee2debf3605959f75d3273dcc9899b141993c8df65f4720cd0337f3921ad/pytest_leela-0.2.0.tar.gz | source | sdist | null | false | d0e22f2158ae928ac72c948d678cd7d6 | c9e047d2820acb771d1665c5723e58c64adfc41dc44dc87758f48d8fb27e1146 | 14e6ee2debf3605959f75d3273dcc9899b141993c8df65f4720cd0337f3921ad | MIT | [

"LICENSE"

] | 235 |

2.4 | shwary-python | 2.0.4 | Modern Python SDK (Async/Sync) for the Shwary payment API. | # Shwary Python SDK


**Shwary Python** is a modern, asynchronous, high-performance client library for integrating the [Shwary](https://shwary.com) API. It lets you initiate Mobile Money payments in the **DRC**, **Kenya**, and **Uganda**, with strict data validation before anything is sent.

- **Automatic retries**: transient network errors (timeouts, connection failures) are retried automatically with exponential backoff

- **Strict types**: TypedDicts for responses (`PaymentResponse`, `TransactionResponse`, `WebhookPayload`)

- **Structured logging**: logs are written to the user's project root (`logs/shwary.log`) with automatic rotation

- **Shared base class**: removes sync/async duplication for better maintainability

- **429 rate limiting**: new `RateLimitingError` exception for handling rate-limit overruns

- **Improved docstrings**: complete documentation with usage examples

- **Extended tests**: full coverage of retries, errors, and validation

- **Response models**: Pydantic schemas for webhooks and transactions

- **Bug fixes**: fixed imports and minor bugs

## Features

* **Native error handling**: no need to check `status_code` manually. The SDK raises explicit exceptions (`AuthenticationError`, `ValidationError`, etc.).

* **Async-first**: built on `httpx` for optimal performance (connection pooling).

* **Dual-mode**: full support for both synchronous and asynchronous modes.

* **Robust validation**: checks phone numbers (E.164) and minimum amounts (e.g. 2900 CDF for the DRC).

* **Automatic retries**: smart retries on transient network errors with exponential backoff.

* **Type-safe**: built on Pydantic V2 for full autocompletion in your IDE.

* **Ultra-fast**: optimized with `uv` and `__slots__` to minimize memory footprint.

* **Structured logging**: logs in the user's project root, with no sensitive data.
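The README does not show how the retries are implemented. As a rough, stdlib-only sketch of retrying transient errors with exponential backoff, here is a hypothetical `with_retries` helper (not part of the SDK's API). Its defaults retry the built-in `TimeoutError` and `ConnectionError`; with `httpx`, which the SDK is built on, you would pass something like `retry_on=(httpx.TimeoutException, httpx.ConnectError)` instead.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def with_retries(
    call: Callable[[], T],
    retry_on: tuple[type[BaseException], ...] = (TimeoutError, ConnectionError),
    max_attempts: int = 3,
    base_delay: float = 0.5,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `call()`, retrying transient errors with exponential backoff and jitter."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return call()
        except retry_on:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last error to the caller
            delay = base_delay * 2 ** (attempt - 1)  # 0.5 s, 1 s, 2 s, ...
            sleep(delay + random.uniform(0, delay / 10))  # jitter spreads out retries
    raise AssertionError("unreachable")
```

Injecting `sleep` keeps the helper testable without real waits. Only transient errors should be listed in `retry_on`: retrying an `AuthenticationError` or a validation failure would just repeat the same rejection. The same pattern is one way to implement the backoff suggested for `RateLimitingError` in the error-handling section.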

## Installation

With `uv` (recommended):

```bash

uv add shwary-python

```

Or with `pip`:

```bash

pip install shwary-python

```

## Quick Start

### Synchronous Mode (Flask, Django, scripts)

```python
from shwary import Shwary, ValidationError, AuthenticationError

with Shwary(
    merchant_id="your-merchant-id",
    merchant_key="your-merchant-key",
    is_sandbox=True
) as client:
    try:
        payment = client.initiate_payment(
            country="DRC",
            amount=5000,
            phone_number="+243972345678",
            callback_url="https://yoursite.com/webhooks/shwary"
        )
        print(f"Transaction: {payment['id']} - {payment['status']}")
    except ValidationError as e:
        print(f"Validation error: {e}")
    except AuthenticationError:
        print("Invalid credentials")
```

### Asynchronous Mode (FastAPI, Quart, aiohttp)

```python
import asyncio

from shwary import ShwaryAsync


async def main():
    async with ShwaryAsync(
        merchant_id="your-merchant-id",
        merchant_key="your-merchant-key",
        is_sandbox=True
    ) as client:
        try:
            payment = await client.initiate_payment(
                country="DRC",
                amount=5000,
                phone_number="+243972345678"
            )
            print(f"Transaction: {payment['id']}")
        except Exception as e:
            print(f"Error: {e}")


asyncio.run(main())
```

## Per-Country Validation

The SDK enforces Shwary's business rules locally, saving network calls:

| Country | Code | Currency | Min. amount | Phone prefix |
| :--- | :--- | :--- | :--- | :--- |
| DR Congo | DRC | CDF | 2900 | +243 |
| Kenya | KE | KES | > 0 | +254 |
| Uganda | UG | UGX | > 0 | +256 |
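The rules in the table can be checked with a few lines of code. This is a minimal sketch, not the SDK's internal validator: `validate_payment` and `CountryRule` are hypothetical names, the DRC minimum of 2900 is assumed to be inclusive, and errors are raised as plain `ValueError` rather than the SDK's `ValidationError`.

```python
import re
from dataclasses import dataclass

E164 = re.compile(r"\+[1-9]\d{1,14}")  # "+" then at most 15 digits, no leading zero


@dataclass(frozen=True)
class CountryRule:
    currency: str
    prefix: str
    min_amount: float
    inclusive: bool  # True: amount >= min_amount; False: amount > min_amount


RULES = {
    "DRC": CountryRule("CDF", "+243", 2900, inclusive=True),  # assumed inclusive
    "KE": CountryRule("KES", "+254", 0, inclusive=False),
    "UG": CountryRule("UGX", "+256", 0, inclusive=False),
}


def validate_payment(country: str, amount: float, phone_number: str) -> str:
    """Check a payment locally; return the currency or raise ValueError."""
    rule = RULES.get(country)
    if rule is None:
        raise ValueError(f"unsupported country code: {country!r}")
    if not E164.fullmatch(phone_number):
        raise ValueError(f"not an E.164 phone number: {phone_number!r}")
    if not phone_number.startswith(rule.prefix):
        raise ValueError(f"{country} numbers must start with {rule.prefix}")
    ok = amount >= rule.min_amount if rule.inclusive else amount > rule.min_amount
    if not ok:
        bound = ">=" if rule.inclusive else ">"
        raise ValueError(f"{country} amount must be {bound} {rule.min_amount} {rule.currency}")
    return rule.currency
```

Rejecting bad input before any HTTP request is what saves the network round trip: an invalid number or an amount below the minimum never reaches the API.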

## Error Handling

The SDK turns HTTP errors into Python exceptions. **You don't need to check status codes manually**: just handle the exceptions:

```python
from shwary import (
    Shwary,
    ValidationError,         # invalid data
    AuthenticationError,     # invalid credentials
    InsufficientFundsError,  # insufficient balance
    RateLimitingError,       # too many requests
    ShwaryAPIError,          # server error
)

# `client` is a Shwary instance created as in the quick start above
try:
    payment = client.initiate_payment(...)
except ValidationError as e:
    # Invalid phone format, amount too low, etc.
    print(f"Validation error: {e}")
except AuthenticationError:
    # Incorrect merchant_id / merchant_key
    print("Invalid credentials - check your configuration")
except InsufficientFundsError:
    # Insufficient merchant balance
    print("Insufficient balance - top up your account")
except RateLimitingError:
    # Too many requests (429): implement a backoff
    print("Rate limited - retry in a few seconds")
except ShwaryAPIError as e:
    # Other API errors (500, timeout, etc.)
    print(f"API error {e.status_code}: {e.message}")
```
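The `RateLimitingError` branch above recommends a backoff. Below is a minimal, SDK-agnostic sketch of exponential backoff with jitter. `retry_with_backoff` is a hypothetical helper. The delays (1 s, doubling up to 30 s, over 5 attempts) are illustrative assumptions, not limits published by Shwary.

```python
import random
import time
from typing import Callable, Tuple, Type, TypeVar

T = TypeVar("T")


def retry_with_backoff(
    func: Callable[[], T],
    retry_on: Tuple[Type[BaseException], ...],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    max_delay: float = 30.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call func(), retrying with exponential backoff and jitter on `retry_on` errors."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return func()
        except retry_on:
            if attempt == max_attempts:
                raise  # give up: re-raise the last error
            # The cap doubles each attempt (1 s, 2 s, 4 s, ...) up to max_delay;
            # "full jitter" picks a random wait below it so clients don't retry in lockstep.
            delay = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, delay))
    raise AssertionError("unreachable")  # the loop always returns or raises
```

With the SDK, you could wrap a call as `retry_with_backoff(lambda: client.initiate_payment(country="DRC", amount=5000, phone_number="+243972345678"), retry_on=(RateLimitingError,))`. Retrying only on `RateLimitingError` is deliberate. A rate-limited request is normally rejected before it is processed, whereas retrying after a timeout could initiate the same payment twice.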

## Webhooks and Callbacks

When a transaction changes state, Shwary sends a JSON notification to your `callback_url`. Here is how to handle it:

```python
from fastapi import FastAPI
from shwary import WebhookPayload

app = FastAPI()


@app.post("/webhooks/shwary")
async def handle_webhook(payload: WebhookPayload):
    """Shwary sends a notification when a transaction changes state."""
    if payload.status == "completed":
        # Successful transaction
        print(f"Payment {payload.id} received ({payload.amount})")
        # Deliver the service here
    elif payload.status == "failed":
        # Failed transaction
        print(f"Payment {payload.id} failed")
        # Notify the customer
    return {"status": "ok"}
```

For more examples (FastAPI, Flask), see the [examples/](examples/) folder.
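The handler above acts on `completed` and `failed`. Any webhook receiver should assume the same notification may arrive more than once, so the service must not be delivered twice. The sketch below shows one way to make handling idempotent. `WebhookDispatcher` is a hypothetical helper, not part of `shwary`. In production its in-memory set should be replaced by persistent storage.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, Set, Tuple


@dataclass
class WebhookDispatcher:
    """Route status notifications to handlers, at most once per (id, status).

    Hypothetical helper, not part of the shwary package. Assumption: a
    notification may be delivered more than once, so duplicates are ignored.
    """

    handlers: Dict[str, Callable[[str], None]]
    seen: Set[Tuple[str, str]] = field(default_factory=set)

    def dispatch(self, transaction_id: str, status: str) -> bool:
        """Return True if a handler ran, False for duplicates or unhandled statuses."""
        key = (transaction_id, status)
        if key in self.seen:
            return False  # already processed: acknowledge without acting again
        handler = self.handlers.get(status)
        if handler is None:
            return False  # a status this application does not act on
        handler(transaction_id)
        self.seen.add(key)  # mark as processed only after the handler succeeded
        return True
```

In the FastAPI handler, you would call `dispatcher.dispatch(payload.id, payload.status)` and still return `{"status": "ok"}`, so a duplicate is acknowledged without side effects.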

## Complete Examples

The SDK ships with complete integration examples:

### Simple scripts

- [simple_sync.py](examples/simple_sync.py) - Basic synchronous script
- [simple_async.py](examples/simple_async.py) - Basic asynchronous script

### Web frameworks

- [fastapi_integration.py](examples/fastapi_integration.py) - Complete FastAPI API with webhooks
- [flask_integration.py](examples/flask_integration.py) - Complete Flask API with webhooks

See [examples/README.md](examples/README.md) for more details and instructions on running them.

## Logging

The SDK automatically configures logging in the **user's project root**:

```python
import logging

from shwary import configure_logging

# Debug mode: show all requests/responses
configure_logging(log_level=logging.DEBUG)

# Logs are written to:
# - the console (STDOUT)
# - a file: ./logs/shwary.log (automatic rotation at 10 MB)
```

No sensitive data is logged (API keys are masked).
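The SDK masks API keys in its own logs. If your application logs its own payloads around SDK calls, a `logging.Filter` can apply similar masking. This is a hypothetical sketch, not the SDK's implementation. The regular expression only targets keys named `merchant_key` or `merchant-key` (case-insensitive), so adapt it to whatever secrets you actually log.

```python
import logging
import re

# Hypothetical pattern: matches `merchant_key=...`, `"merchant_key": "..."`,
# `merchant-key: ...` and captures the value so it can be replaced.
_SECRET_PATTERN = re.compile(
    r'(merchant[_-]key["\']?\s*[:=]\s*["\']?)([^"\'\s,}]+)', re.IGNORECASE
)


class MaskSecretsFilter(logging.Filter):
    """Rewrite log records so that merchant keys appear as '***'."""

    def filter(self, record: logging.LogRecord) -> bool:
        message = record.getMessage()  # merge msg % args before masking
        masked = _SECRET_PATTERN.sub(r"\1***", message)
        if masked != message:
            record.msg, record.args = masked, ()
        return True  # never drop the record, only rewrite it
```

Attach it with `handler.addFilter(MaskSecretsFilter())` on each handler that writes your application logs.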

## Integration Examples

### FastAPI with Shwary webhooks

```python
import logging

from fastapi import FastAPI, HTTPException
from shwary import (
    AuthenticationError,
    InsufficientFundsError,
    ShwaryAPIError,
    ShwaryAsync,
    ValidationError,
    WebhookPayload,
)

app = FastAPI()
logger = logging.getLogger(__name__)


def make_client() -> ShwaryAsync:
    """Create a Shwary client.

    A fresh client is created per request: `async with` is expected to close
    the client when the block exits, so a shared instance is not re-entered.
    """
    return ShwaryAsync(
        merchant_id="your-merchant-id",
        merchant_key="your-merchant-key",
        is_sandbox=True,
    )


@app.post("/api/payments/initiate")
async def initiate_payment(phone: str, amount: float, country: str = "DRC"):
    """
    Initiate a Shwary payment.

    Query params:
    - phone: number in E.164 format (e.g. +243972345678)
    - amount: transaction amount
    - country: DRC, KE, UG (default: DRC)
    """
    try:
        async with make_client() as client:
            payment = await client.initiate_payment(
                country=country,
                amount=amount,
                phone_number=phone,
                callback_url="https://yourapi.com/api/webhooks/shwary",
            )
        return {
            "success": True,
            "transaction_id": payment["id"],
            "status": payment["status"],
        }
    except ValidationError as e:
        raise HTTPException(status_code=400, detail=str(e))
    except AuthenticationError:
        raise HTTPException(status_code=401, detail="Shwary credentials invalid")
    except InsufficientFundsError:
        raise HTTPException(status_code=402, detail="Insufficient balance")
    except Exception as e:
        logger.error(f"Payment init failed: {e}")
        raise HTTPException(status_code=500, detail="Payment initiation failed")


@app.post("/api/webhooks/shwary")
async def handle_shwary_webhook(payload: WebhookPayload):
    """
    Receive transaction state-change notifications.

    Shwary sends a JSON notification when a transaction changes state.
    """
    logger.info(f"Webhook received: {payload.id} -> {payload.status}")
    if payload.status == "completed":
        # Successful transaction: deliver the service
        logger.info(f"Payment completed: {payload.id}")
        # await deliver_service(payload.id)
    elif payload.status == "failed":
        # Failed transaction
        logger.warning(f"Payment failed: {payload.id}")
        # await notify_user_failure(payload.id)
    return {"status": "ok"}


@app.get("/api/transactions/{transaction_id}")
async def get_transaction_status(transaction_id: str):
    """Fetch the status of a transaction."""
    try:
        async with make_client() as client:
            tx = await client.get_transaction(transaction_id)
        return {
            "id": tx["id"],
            "status": tx["status"],
            "amount": tx["amount"],
        }
    except ShwaryAPIError as e:
        if e.status_code == 404:
            raise HTTPException(status_code=404, detail="Transaction not found")
        raise HTTPException(status_code=500, detail="Error fetching transaction")
```

### Flask with Shwary

```python
import atexit
import logging

from flask import Flask, jsonify, request
from shwary import AuthenticationError, Shwary, ShwaryAPIError, ValidationError

app = Flask(__name__)
logging.basicConfig(level=logging.INFO)

# Shwary client (synchronous for Flask), shared across requests
shwary_client = Shwary(
    merchant_id="your-merchant-id",
    merchant_key="your-merchant-key",
    is_sandbox=True,
)
# Close the client once, when the process exits. (teardown_appcontext runs
# after every request, so it would close the shared client too early.)
atexit.register(shwary_client.close)


@app.route("/api/payments/initiate", methods=["POST"])
def initiate_payment():
    """
    Initiate a Shwary payment.

    JSON body:
    {
        "phone": "+243972345678",
        "amount": 5000,
        "country": "DRC"
    }
    """
    # silent=True returns None instead of raising on a missing/invalid JSON body
    data = request.get_json(silent=True) or {}
    phone = data.get("phone")
    amount = data.get("amount")
    country = data.get("country", "DRC")
    if not all([phone, amount]):
        return jsonify({"error": "Missing phone or amount"}), 400

    try:
        payment = shwary_client.initiate_payment(
            country=country,
            amount=amount,
            phone_number=phone,
            callback_url="https://yourapi.com/api/webhooks/shwary",
        )
        return jsonify({
            "success": True,
            "transaction_id": payment["id"],
            "status": payment["status"],
        }), 200
    except ValidationError as e:
        app.logger.warning(f"Validation error: {e}")
        return jsonify({"error": str(e)}), 400
    except AuthenticationError as e:
        app.logger.error(f"Auth error: {e}")
        return jsonify({"error": "Shwary authentication failed"}), 401
    except ShwaryAPIError as e:
        app.logger.error(f"API error: {e}")
        return jsonify({"error": f"Shwary error: {e.message}"}), e.status_code
    except Exception as e:
        app.logger.error(f"Unexpected error: {e}")
        return jsonify({"error": "Internal server error"}), 500


@app.route("/api/webhooks/shwary", methods=["POST"])
def handle_shwary_webhook():
    """Receive notifications from Shwary."""
    data = request.get_json(silent=True) or {}
    transaction_id = data.get("id")
    status = data.get("status")
    app.logger.info(f"Shwary webhook: {transaction_id} -> {status}")

    if status == "completed":
        # Successful transaction
        app.logger.info(f"Payment completed: {transaction_id}")
        # deliver_service(transaction_id)
    elif status == "failed":
        # Failed transaction
        app.logger.warning(f"Payment failed: {transaction_id}")
        # notify_user_failure(transaction_id)
    return jsonify({"status": "ok"}), 200


@app.route("/api/transactions/<transaction_id>", methods=["GET"])
def get_transaction_status(transaction_id):
    """Fetch the status of a transaction."""
    try:
        tx = shwary_client.get_transaction(transaction_id)
        return jsonify({
            "id": tx["id"],
            "status": tx["status"],
            "amount": tx["amount"],
        }), 200
    except ShwaryAPIError as e:
        if e.status_code == 404:
            return jsonify({"error": "Transaction not found"}), 404
        app.logger.error(f"Error fetching transaction: {e}")
        return jsonify({"error": "Server error"}), 500


if __name__ == "__main__":
    app.run(debug=False, host="0.0.0.0", port=5000)
```

### Logging configuration

The SDK automatically configures logging in the project root. To increase verbosity:

```python
import logging

from shwary import configure_logging

# Debug mode (shows all requests/responses)
configure_logging(log_level=logging.DEBUG)

# Logs are written to:
# - the console (STDOUT)
# - a file: ./shwary.log (automatic rotation at 10 MB)
```

The log files contain request/response details, without sensitive data such as API keys.
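You can also set up the console and rotating-file output described above yourself. The standard library's `RotatingFileHandler` reproduces the 10 MB rotation. This is a sketch under assumptions, and `configure_logging` may be implemented differently. `setup_logging` is a hypothetical helper, and both the logger name `"shwary"` and the `backupCount` of 5 are guesses.

```python
import logging
import sys
from logging.handlers import RotatingFileHandler
from pathlib import Path


def setup_logging(
    log_dir: Path, level: int = logging.INFO, logger_name: str = "shwary"
) -> logging.Logger:
    """Log to STDOUT and to log_dir/shwary.log, rotating at 10 MB (hypothetical)."""
    logger = logging.getLogger(logger_name)
    logger.setLevel(level)
    if logger.handlers:
        return logger  # already configured: avoid adding duplicate handlers

    log_dir.mkdir(parents=True, exist_ok=True)
    formatter = logging.Formatter("%(asctime)s %(levelname)s %(name)s: %(message)s")

    console = logging.StreamHandler(sys.stdout)
    file_handler = RotatingFileHandler(
        log_dir / "shwary.log",
        maxBytes=10 * 1024 * 1024,  # roll over once the file would reach 10 MB
        backupCount=5,  # keep shwary.log.1 ... shwary.log.5 (assumption)
        encoding="utf-8",
    )
    for handler in (console, file_handler):
        handler.setFormatter(formatter)
        logger.addHandler(handler)
    return logger
```

The early return makes the function safe to call more than once, for example from several entry points of the same application.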

## Development

To contribute to the SDK, see [CONTRIBUTING.md](./CONTRIBUTING.md).

### License