metadata_version string | name string | version string | summary string | description string | description_content_type string | author string | author_email string | maintainer string | maintainer_email string | license string | keywords string | classifiers list | platform list | home_page string | download_url string | requires_python string | requires list | provides list | obsoletes list | requires_dist list | provides_dist list | obsoletes_dist list | requires_external list | project_urls list | uploaded_via string | upload_time timestamp[us] | filename string | size int64 | path string | python_version string | packagetype string | comment_text string | has_signature bool | md5_digest string | sha256_digest string | blake2_256_digest string | license_expression string | license_files list | recent_7d_downloads int64 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

2.4 | opentelemetry-instrumentation-crewai | 0.52.4 | OpenTelemetry crewAI instrumentation | # OpenTelemetry CrewAI Instrumentation

<a href="https://pypi.org/project/opentelemetry-instrumentation-crewai/">

<img src="https://badge.fury.io/py/opentelemetry-instrumentation-crewai.svg">

</a>

This library allows tracing agentic workflows implemented with crewAI framework [crewAI library](https://github.com/crewAIInc/crewAI).

## Installation

```bash

pip install opentelemetry-instrumentation-crewai

```

## Example usage

```python

from opentelemetry.instrumentation.crewai import CrewAIInstrumentor

CrewAIInstrumentor().instrument()

```

## Privacy

**By default, this instrumentation logs prompts, completions, and embeddings to span attributes**. This gives you a clear visibility into how your LLM application is working, and can make it easy to debug and evaluate the quality of the outputs.

However, you may want to disable this logging for privacy reasons, as they may contain highly sensitive data from your users. You may also simply want to reduce the size of your traces.

To disable logging, set the `TRACELOOP_TRACE_CONTENT` environment variable to `false`.

```bash

TRACELOOP_TRACE_CONTENT=false

```

| text/markdown | null | Gal Kleinman <gal@traceloop.com>, Nir Gazit <nir@traceloop.com> | null | null | null | null | [] | [] | null | null | <4,>=3.10 | [] | [] | [] | [

"opentelemetry-api<2,>=1.38.0",

"opentelemetry-instrumentation>=0.59b0",

"opentelemetry-semantic-conventions-ai<0.5.0,>=0.4.13",

"opentelemetry-semantic-conventions>=0.59b0",

"crewai; extra == \"instruments\""

] | [] | [] | [] | [

"Repository, https://github.com/traceloop/openllmetry/tree/main/packages/opentelemetry-instrumentation-crewai"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T13:21:46.559138 | opentelemetry_instrumentation_crewai-0.52.4.tar.gz | 338,576 | 2e/8f/1b0a6ea53bad7467d57d8ae4fbb006b70ce6e8eb9fe40402e06aaa3799c5/opentelemetry_instrumentation_crewai-0.52.4.tar.gz | source | sdist | null | false | 880881c20d8288aa7d97b075ce20238e | 524356efc0b457c6451596b776e56ae5d689aafc8078c69b7c3cc4e4ae76a2fa | 2e8f1b0a6ea53bad7467d57d8ae4fbb006b70ce6e8eb9fe40402e06aaa3799c5 | Apache-2.0 | [] | 54,500 |

2.4 | opentelemetry-instrumentation-cohere | 0.52.4 | OpenTelemetry Cohere instrumentation | # OpenTelemetry Cohere Instrumentation

<a href="https://pypi.org/project/opentelemetry-instrumentation-cohere/">

<img src="https://badge.fury.io/py/opentelemetry-instrumentation-cohere.svg">

</a>

This library allows tracing calls to any of Cohere's endpoints sent with the official [Cohere library](https://github.com/cohere-ai/cohere-python).

## Installation

```bash

pip install opentelemetry-instrumentation-cohere

```

## Example usage

```python

from opentelemetry.instrumentation.cohere import CohereInstrumentor

CohereInstrumentor().instrument()

```

## Privacy

**By default, this instrumentation logs prompts, completions, and embeddings to span attributes**. This gives you a clear visibility into how your LLM application is working, and can make it easy to debug and evaluate the quality of the outputs.

However, you may want to disable this logging for privacy reasons, as they may contain highly sensitive data from your users. You may also simply want to reduce the size of your traces.

To disable logging, set the `TRACELOOP_TRACE_CONTENT` environment variable to `false`.

```bash

TRACELOOP_TRACE_CONTENT=false

```

| text/markdown | null | Gal Kleinman <gal@traceloop.com>, Nir Gazit <nir@traceloop.com>, Tomer Friedman <tomer@traceloop.com> | null | null | null | null | [] | [] | null | null | <4,>=3.10 | [] | [] | [] | [

"opentelemetry-api<2,>=1.38.0",

"opentelemetry-instrumentation>=0.59b0",

"opentelemetry-semantic-conventions-ai<0.5.0,>=0.4.13",

"opentelemetry-semantic-conventions>=0.59b0",

"cohere; extra == \"instruments\""

] | [] | [] | [] | [

"Repository, https://github.com/traceloop/openllmetry/tree/main/packages/opentelemetry-instrumentation-cohere"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T13:21:44.689370 | opentelemetry_instrumentation_cohere-0.52.4.tar.gz | 103,715 | 58/46/8325e7abb58cfd563cf48d91a9702942ee255e4686ca7d0aa8e2626a0f3a/opentelemetry_instrumentation_cohere-0.52.4.tar.gz | source | sdist | null | false | 64dcc24ae2f87db110111fe880eaa604 | a35bd9f55638d78f8cf231398852975c383a42f7f87a1c748b01965814b8ce3f | 58468325e7abb58cfd563cf48d91a9702942ee255e4686ca7d0aa8e2626a0f3a | Apache-2.0 | [] | 53,552 |

2.4 | opentelemetry-instrumentation-chromadb | 0.52.4 | OpenTelemetry Chroma DB instrumentation | # OpenTelemetry Chroma Instrumentation

<a href="https://pypi.org/project/opentelemetry-instrumentation-chromadb/">

<img src="https://badge.fury.io/py/opentelemetry-instrumentation-chromadb.svg">

</a>

This library allows tracing client-side calls to Chroma vector DB sent with the official [Chroma library](https://github.com/chroma-core/chroma).

## Installation

```bash

pip install opentelemetry-instrumentation-chromadb

```

## Example usage

```python

from opentelemetry.instrumentation.chromadb import ChromaInstrumentor

ChromaInstrumentor().instrument()

```

| text/markdown | null | Gal Kleinman <gal@traceloop.com>, Nir Gazit <nir@traceloop.com>, Tomer Friedman <tomer@traceloop.com> | null | null | null | null | [] | [] | null | null | <4,>=3.10 | [] | [] | [] | [

"opentelemetry-api<2,>=1.38.0",

"opentelemetry-instrumentation>=0.59b0",

"opentelemetry-semantic-conventions-ai<0.5.0,>=0.4.13",

"opentelemetry-semantic-conventions>=0.59b0",

"chromadb; extra == \"instruments\""

] | [] | [] | [] | [

"Repository, https://github.com/traceloop/openllmetry/tree/main/packages/opentelemetry-instrumentation-chromadb"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T13:21:43.479368 | opentelemetry_instrumentation_chromadb-0.52.4.tar.gz | 142,118 | e1/6e/02224b625f7e82fc5d9cadbe80d96f41180a427d7ba6f1134b5e646a471e/opentelemetry_instrumentation_chromadb-0.52.4.tar.gz | source | sdist | null | false | 19cab3f8f63e6c2310ac8acc68385c2b | 1a359323a923ae959de3d8c283c564888a0dd7094f3b4c8347782d5e5a7dfec7 | e16e02224b625f7e82fc5d9cadbe80d96f41180a427d7ba6f1134b5e646a471e | Apache-2.0 | [] | 53,285 |

2.4 | opentelemetry-instrumentation-bedrock | 0.52.4 | OpenTelemetry Bedrock instrumentation | # OpenTelemetry Bedrock Instrumentation

<a href="https://pypi.org/project/opentelemetry-instrumentation-bedrock/">

<img src="https://badge.fury.io/py/opentelemetry-instrumentation-bedrock.svg">

</a>

This library allows tracing any of AWS Bedrock's models prompts and completions sent with [Boto3](https://github.com/boto/boto3) to Bedrock.

## Installation

```bash

pip install opentelemetry-instrumentation-bedrock

```

## Example usage

```python

from opentelemetry.instrumentation.bedrock import BedrockInstrumentor

BedrockInstrumentor().instrument()

```

## Privacy

**By default, this instrumentation logs prompts, completions, and embeddings to span attributes**. This gives you a clear visibility into how your LLM application is working, and can make it easy to debug and evaluate the quality of the outputs.

However, you may want to disable this logging for privacy reasons, as they may contain highly sensitive data from your users. You may also simply want to reduce the size of your traces.

To disable logging, set the `TRACELOOP_TRACE_CONTENT` environment variable to `false`.

```bash

TRACELOOP_TRACE_CONTENT=false

```

| text/markdown | null | Gal Kleinman <gal@traceloop.com>, Nir Gazit <nir@traceloop.com>, Tomer Friedman <tomer@traceloop.com> | null | null | null | null | [] | [] | null | null | <4,>=3.10 | [] | [] | [] | [

"anthropic>=0.17.0",

"opentelemetry-api<2,>=1.38.0",

"opentelemetry-instrumentation>=0.59b0",

"opentelemetry-semantic-conventions-ai<0.5.0,>=0.4.13",

"opentelemetry-semantic-conventions>=0.59b0",

"tokenizers>=0.13.0",

"boto3; extra == \"instruments\""

] | [] | [] | [] | [

"Repository, https://github.com/traceloop/openllmetry/tree/main/packages/opentelemetry-instrumentation-bedrock"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T13:21:42.301522 | opentelemetry_instrumentation_bedrock-0.52.4.tar.gz | 149,890 | 7d/ea/1cdf7a8caa624043fe02eb90248e73269fae9ab275816f6fabcc34f60538/opentelemetry_instrumentation_bedrock-0.52.4.tar.gz | source | sdist | null | false | f49302f05a5636151fce0e3517098920 | d785b14338d475e85e3d2074840e71771c430f55efbad7ed27a9856e6d835771 | 7dea1cdf7a8caa624043fe02eb90248e73269fae9ab275816f6fabcc34f60538 | Apache-2.0 | [] | 53,955 |

2.4 | opentelemetry-instrumentation-anthropic | 0.52.4 | OpenTelemetry Anthropic instrumentation | # OpenTelemetry Anthropic Instrumentation

<a href="https://pypi.org/project/opentelemetry-instrumentation-anthropic/">

<img src="https://badge.fury.io/py/opentelemetry-instrumentation-anthropic.svg">

</a>

This library allows tracing Anthropic prompts and completions sent with the official [Anthropic library](https://github.com/anthropics/anthropic-sdk-python).

## Installation

```bash

pip install opentelemetry-instrumentation-anthropic

```

## Example usage

```python

from opentelemetry.instrumentation.anthropic import AnthropicInstrumentor

AnthropicInstrumentor().instrument()

```

## Privacy

**By default, this instrumentation logs prompts, completions, and embeddings to span attributes**. This gives you a clear visibility into how your LLM application is working, and can make it easy to debug and evaluate the quality of the outputs.

However, you may want to disable this logging for privacy reasons, as they may contain highly sensitive data from your users. You may also simply want to reduce the size of your traces.

To disable logging, set the `TRACELOOP_TRACE_CONTENT` environment variable to `false`.

```bash

TRACELOOP_TRACE_CONTENT=false

```

| text/markdown | null | Gal Kleinman <gal@traceloop.com>, Nir Gazit <nir@traceloop.com>, Tomer Friedman <tomer@traceloop.com> | null | null | null | null | [] | [] | null | null | <4,>=3.10 | [] | [] | [] | [

"opentelemetry-api<2,>=1.38.0",

"opentelemetry-instrumentation>=0.59b0",

"opentelemetry-semantic-conventions-ai<0.5.0,>=0.4.13",

"opentelemetry-semantic-conventions>=0.59b0",

"anthropic; extra == \"instruments\""

] | [] | [] | [] | [

"Repository, https://github.com/traceloop/openllmetry/tree/main/packages/opentelemetry-instrumentation-anthropic"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T13:21:40.254760 | opentelemetry_instrumentation_anthropic-0.52.4.tar.gz | 682,756 | d2/e1/d3ed879e317f307718b21da6962edc0da20f0e82bad810e837793a985720/opentelemetry_instrumentation_anthropic-0.52.4.tar.gz | source | sdist | null | false | c61353eab4531d4236aba3d6ed50be02 | 88bb08755c400de020698c8c5a06c10089819f07187f2abc4ebfee93b0e223eb | d2e1d3ed879e317f307718b21da6962edc0da20f0e82bad810e837793a985720 | Apache-2.0 | [] | 35,519 |

2.4 | opentelemetry-instrumentation-alephalpha | 0.52.4 | OpenTelemetry Aleph Alpha instrumentation | # OpenTelemetry Aleph Alpha Instrumentation

<a href="https://pypi.org/project/opentelemetry-instrumentation-alephalpha/">

<img src="https://badge.fury.io/py/opentelemetry-instrumentation-alephalpha.svg">

</a>

This library allows tracing calls to any of Aleph Alpha's endpoints sent with the official [Aleph Alpha Client](https://github.com/Aleph-Alpha/aleph-alpha-client).

## Installation

```bash

pip install opentelemetry-instrumentation-alephalpha

```

## Example usage

```python

from opentelemetry.instrumentation.alephalpha import AlephAlphaInstrumentor

AlephAlphaInstrumentor().instrument()

```

## Privacy

**By default, this instrumentation logs prompts, completions, and embeddings to span attributes**. This gives you a clear visibility into how your LLM application is working, and can make it easy to debug and evaluate the quality of the outputs.

However, you may want to disable this logging for privacy reasons, as they may contain highly sensitive data from your users. You may also simply want to reduce the size of your traces.

To disable logging, set the `TRACELOOP_TRACE_CONTENT` environment variable to `false`.

```bash

TRACELOOP_TRACE_CONTENT=false

```

| text/markdown | null | Gal Kleinman <gal@traceloop.com>, Nir Gazit <nir@traceloop.com>, Benedikt Wolf <bene25@web.de> | null | null | null | null | [] | [] | null | null | <4,>=3.10 | [] | [] | [] | [

"opentelemetry-api<2,>=1.38.0",

"opentelemetry-instrumentation>=0.59b0",

"opentelemetry-semantic-conventions-ai<0.5.0,>=0.4.13",

"opentelemetry-semantic-conventions>=0.59b0",

"aleph-alpha-client; extra == \"instruments\""

] | [] | [] | [] | [

"Repository, https://github.com/traceloop/openllmetry/tree/main/packages/opentelemetry-instrumentation-alephalpha"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T13:21:37.019024 | opentelemetry_instrumentation_alephalpha-0.52.4.tar.gz | 141,427 | 56/cb/3ecc6b075a85f1d9c845e6be3f126f9d4904ce640f9d9c80817230e0a3ab/opentelemetry_instrumentation_alephalpha-0.52.4.tar.gz | source | sdist | null | false | c35dbe345613106ae45fd3b50a3716c4 | 0e0e76d21a18b6e9c1103ce689ad81ff00325b2781070cec477be735d04ade7d | 56cb3ecc6b075a85f1d9c845e6be3f126f9d4904ce640f9d9c80817230e0a3ab | Apache-2.0 | [] | 53,250 |

2.4 | opentelemetry-instrumentation-agno | 0.52.4 | OpenTelemetry Agno instrumentation | # OpenTelemetry Agno Instrumentation

This library provides automatic instrumentation for the [Agno](https://github.com/agno-agi/agno) framework.

## Installation

```bash

pip install opentelemetry-instrumentation-agno

```

## Usage

```python

from opentelemetry.instrumentation.agno import AgnoInstrumentor

AgnoInstrumentor().instrument()

```

## Supported Features

This instrumentation captures:

- Agent execution (sync and async)

- Team operations

- Model invocations

- Function calls

- Streaming responses

## Links

- [Agno Framework](https://github.com/agno-agi/agno)

- [OpenTelemetry](https://opentelemetry.io/)

| text/markdown | null | Gal Kleinman <gal@traceloop.com>, Nir Gazit <nir@traceloop.com> | null | null | null | null | [] | [] | null | null | <4,>=3.10 | [] | [] | [] | [

"opentelemetry-api<2,>=1.28.0",

"opentelemetry-instrumentation>=0.59b0",

"opentelemetry-semantic-conventions-ai<0.5.0,>=0.4.13",

"opentelemetry-semantic-conventions>=0.59b0",

"agno; extra == \"instruments\""

] | [] | [] | [] | [

"Repository, https://github.com/traceloop/openllmetry/tree/main/packages/opentelemetry-instrumentation-agno"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T13:21:35.934588 | opentelemetry_instrumentation_agno-0.52.4.tar.gz | 82,791 | 8d/2a/96723487fc8d7e9a76ad948c46bfa189f850e20fe91e9c374db53981e8d5/opentelemetry_instrumentation_agno-0.52.4.tar.gz | source | sdist | null | false | 3dc2e9dfa210fc1e73b78bc744d1cecb | f22d7425ec4fbda84a712e1e7f7195258e6250a5ac67e45ae24147ab20a3f70f | 8d2a96723487fc8d7e9a76ad948c46bfa189f850e20fe91e9c374db53981e8d5 | Apache-2.0 | [] | 30,448 |

2.4 | aeo-cli | 1.0.0 | Agentic Engine Optimization CLI — audit URLs for AI crawler readiness | # AEO-CLI

[](https://github.com/hanselhansel/aeo-cli/actions/workflows/test.yml)

[](https://www.python.org/downloads/)

[](LICENSE)

[](https://pypi.org/project/aeo-cli/)

**Audit any URL for AI crawler readiness. Get a 0-100 AEO score.**

## What is AEO?

Agentic Engine Optimization (AEO) is the practice of making your website discoverable and accessible to AI agents and LLM-powered search engines. As AI crawlers like GPTBot, ClaudeBot, and PerplexityBot become major traffic sources, AEO ensures your content is structured, permitted, and optimized for these systems.

AEO-CLI checks how well a URL is prepared for AI consumption and returns a structured score.

## Features

- **Robots.txt AI bot access** — checks 13 AI crawlers (GPTBot, ClaudeBot, DeepSeek-AI, Grok, and more)

- **llms.txt & llms-full.txt** — detects both standard and extended LLM instruction files

- **Schema.org JSON-LD** — extracts and evaluates structured data with high-value type weighting (Product, Article, FAQ, HowTo)

- **Content density** — measures useful content vs. boilerplate with readability scoring, heading structure analysis, and answer-first detection

- **Batch mode** — audit multiple URLs from a file with `--file` and configurable `--concurrency`

- **Custom bot list** — override default bots with `--bots` for targeted checks

- **Verbose output** — detailed per-pillar breakdown with scoring explanations and recommendations

- **Rich CLI output** — formatted tables and scores via Rich

- **JSON / CSV / Markdown output** — machine-readable results for pipelines

- **MCP server** — expose the audit as a tool for AI agents via FastMCP

- **AEO Compiler** — LLM-powered `llms.txt` and `schema.jsonld` generation, with batch mode for multiple URLs

- **CI/CD integration** — `--fail-under` threshold, `--fail-on-blocked-bots`, per-pillar thresholds, baseline regression detection, GitHub Step Summary

- **GitHub Action** — composite action for CI pipelines with baseline support

- **Citation Radar** — query AI models to see what they cite and recommend, with brand tracking and domain classification

- **Share-of-Recommendation Benchmark** — track how often AI models mention and recommend your brand vs competitors, with LLM-as-judge analysis

- **Retail AI-Readiness Auditor** — audit product listings on Amazon, Shopee, Lazada, Tokopedia, TikTok Shop, Blibli, Zalora with 5-pillar scoring and OpenAI Feed Spec compliance

## Installation

```bash

pip install aeo-cli

```

AEO-CLI uses a headless browser for content extraction. After installing, run:

```bash

crawl4ai-setup

```

### Development install

```bash

git clone https://github.com/your-org/aeo-cli.git

cd aeo-cli

pip install -e ".[dev]"

crawl4ai-setup

```

## Quick Start

```bash

aeo-cli audit example.com

```

This runs a full audit and prints a Rich-formatted report with your AEO score.

## CLI Usage

### Single Page Audit

Audit only the specified URL (skip multi-page discovery):

```bash

aeo-cli audit example.com --single

```

### Multi-Page Site Audit (default)

Discover pages via sitemap/spider and audit up to 10 pages:

```bash

aeo-cli audit example.com

```

### Limit Pages

```bash

aeo-cli audit example.com --max-pages 5

```

### JSON Output

Get structured JSON for CI pipelines, dashboards, or scripting:

```bash

aeo-cli audit example.com --json

```

### CSV / Markdown Output

```bash

aeo-cli audit example.com --format csv

aeo-cli audit example.com --format markdown

```

### Verbose Mode

Show detailed per-pillar breakdown with scoring explanations:

```bash

aeo-cli audit example.com --single --verbose

```

### Timeout

Set the HTTP timeout (default: 15 seconds):

```bash

aeo-cli audit example.com --timeout 30

```

### Custom Bot List

Override the default 13 bots with a custom list:

```bash

aeo-cli audit example.com --bots "GPTBot,ClaudeBot,PerplexityBot"

```

### Batch Mode

Audit multiple URLs from a file (one URL per line, `.txt` or `.csv`):

```bash

aeo-cli audit --file urls.txt

aeo-cli audit --file urls.txt --concurrency 5

aeo-cli audit --file urls.txt --format csv

```

### CI Mode

Fail the build if the AEO score is below a threshold:

```bash

aeo-cli audit example.com --fail-under 60

```

Fail if any AI bot is blocked:

```bash

aeo-cli audit example.com --fail-on-blocked-bots

```

#### Per-Pillar Thresholds

Gate CI on individual pillar scores:

```bash

aeo-cli audit example.com --robots-min 20 --content-min 30 --overall-min 60

```

Available: `--robots-min`, `--schema-min`, `--content-min`, `--llms-min`, `--overall-min`.

#### Baseline Regression Detection

Save a baseline and detect score regressions in future audits:

```bash

# Save current scores as baseline

aeo-cli audit example.com --single --save-baseline .aeo-baseline.json

# Compare against baseline (exit 1 if any pillar drops > 5 points)

aeo-cli audit example.com --single --baseline .aeo-baseline.json

# Custom regression threshold

aeo-cli audit example.com --single --baseline .aeo-baseline.json --regression-threshold 10

```

Exit codes: 0 = pass, 1 = score below threshold or regression detected, 2 = bots blocked.

When running in GitHub Actions, a markdown summary is automatically written to `$GITHUB_STEP_SUMMARY`.

### Quiet Mode

Suppress output, exit code 0 if score >= 50, 1 otherwise:

```bash

aeo-cli audit example.com --quiet

```

Use `--fail-under` with `--quiet` to override the default threshold:

```bash

aeo-cli audit example.com --quiet --fail-under 70

```

### Start MCP server

```bash

aeo-cli mcp

```

Launches a FastMCP stdio server exposing the audit as a tool for AI agents.

## MCP Integration

To use AEO-CLI as a tool in Claude Desktop, add this to your Claude Desktop config (`claude_desktop_config.json`):

```json

{

"mcpServers": {

"aeo-cli": {

"command": "aeo-cli",

"args": ["mcp"]

}

}

}

```

Once configured, Claude can call the `audit_url` tool directly to check any URL's AEO readiness.

## AEO Compiler (Generate)

Generate `llms.txt` and `schema.jsonld` files from any URL using LLM analysis:

```bash

pip install aeo-cli[generate]

aeo-cli generate example.com

```

This crawls the URL, sends the content to an LLM, and writes optimized files to `./aeo-output/`.

### Batch Generate

Generate assets for multiple URLs from a file:

```bash

aeo-cli generate-batch urls.txt

aeo-cli generate-batch urls.txt --concurrency 5 --profile ecommerce

aeo-cli generate-batch urls.txt --json

```

Each URL's output goes to a subdirectory under `--output-dir`.

### BYOK (Bring Your Own Key)

The generate command auto-detects your LLM provider from environment variables:

| Priority | Env Variable | Model Used |

|----------|-------------|------------|

| 1 | `OPENAI_API_KEY` | gpt-4o-mini |

| 2 | `ANTHROPIC_API_KEY` | claude-3-haiku-20240307 |

| 3 | Ollama running locally | ollama/llama3.2 |

Override with `--model`:

```bash

aeo-cli generate example.com --model gpt-4o

```

### Industry Profiles

Tailor the output with `--profile`:

```bash

aeo-cli generate example.com --profile saas

aeo-cli generate example.com --profile ecommerce

```

Available: `generic`, `cpg`, `saas`, `ecommerce`, `blog`.

## Citation Radar

Query AI models to see what they cite and recommend for any search prompt:

```bash

pip install aeo-cli[generate]

aeo-cli radar "best project management tools" --brand Asana --brand Monday --model gpt-4o-mini

```

Options:

- `--brand/-b`: Brand name to track (repeatable)

- `--model/-m`: LLM model to query (repeatable, default: gpt-4o-mini)

- `--runs/-r`: Runs per model for statistical significance

- `--json`: Output as JSON

## Retail AI-Readiness Auditor

Audit product listings on marketplaces for AI optimization readiness:

```bash

aeo-cli retail "https://www.amazon.com/dp/B07L123456"

aeo-cli retail "https://shopee.sg/product/123" --json

aeo-cli retail "https://www.lazada.co.id/products/example-i123.html" --verbose

```

Supported marketplaces: Amazon (all TLDs), Shopee, Lazada, Tokopedia, TikTok Shop, Blibli, Zalora, and a Generic fallback (Schema.org/OpenGraph).

Scoring pillars:

- **Product Schema** (25): JSON-LD Product, Offer, AggregateRating

- **Content Quality** (30): Bullet points, description, A+ content, spec charts

- **Visual Assets** (15): Image count, alt text, video

- **Social Proof** (20): Reviews, rating, Q&A

- **Feed Compliance** (10): OpenAI Product Feed Spec alignment

## Share-of-Recommendation Benchmark

Track how AI models mention and recommend your brand across multiple prompts:

```bash

pip install aeo-cli[generate]

aeo-cli benchmark prompts.txt -b "YourBrand" -c "Competitor1" -c "Competitor2"

```

Options:

- `prompts.txt`: CSV (with `prompt,category,intent` columns) or plain text (one prompt per line)

- `--brand/-b`: Target brand to track (required)

- `--competitor/-c`: Competitor brand (repeatable)

- `--model/-m`: LLM model to query (repeatable, default: gpt-4o-mini)

- `--runs/-r`: Runs per model per prompt (default: 3)

- `--yes/-y`: Skip cost confirmation prompt

- `--json`: Output as JSON

## GitHub Action

Use AEO-CLI in your CI pipeline:

```yaml

- name: Run AEO Audit

uses: hanselhansel/aeo-cli@main

with:

url: 'https://your-site.com'

fail-under: '60'

```

With baseline regression detection:

```yaml

- name: Run AEO Audit

uses: hanselhansel/aeo-cli@main

with:

url: 'https://your-site.com'

baseline-file: '.aeo-baseline.json'

save-baseline: '.aeo-baseline.json'

regression-threshold: '5'

```

The action sets up Python, installs aeo-cli, and runs the audit. Outputs `score` and `report-json` for downstream steps. See [docs/ci-integration.md](docs/ci-integration.md) for full documentation.

## Score Breakdown

AEO-CLI returns a score from 0 to 100, composed of four pillars:

| Pillar | Max Points | What it measures |

|---|---|---|

| Content density | 40 | Quality and depth of extractable text content |

| Robots.txt AI bot access | 25 | Whether AI crawlers are allowed in robots.txt |

| Schema.org JSON-LD | 25 | Structured data markup (Product, Article, FAQ, etc.) |

| llms.txt presence | 10 | Whether a /llms.txt file exists for LLM guidance |

### Scoring rationale (2026-02-18)

The weights reflect how AI search engines (ChatGPT, Perplexity, Claude) actually consume web content:

- **Content density (40 pts)** is weighted highest because it's what LLMs extract and cite when answering questions. Rich, well-structured content with headings and lists gives AI better material to work with.

- **Robots.txt (25 pts)** is the gatekeeper — if a bot is blocked, it literally cannot crawl. It's critical but largely binary (either you're blocking or you're not).

- **Schema.org (25 pts)** provides structured "cheat sheets" that help AI understand entities. High-value types (Product, Article, FAQ, HowTo, Recipe) receive bonus weighting. Valuable but not required for citation.

- **llms.txt (10 pts)** is an emerging standard. Both `/llms.txt` and `/llms-full.txt` are checked. No major AI search engine heavily weights it yet, but it signals forward-thinking AI readiness.

## AI Bots Checked

AEO-CLI checks access rules for 13 AI crawlers:

- GPTBot

- ChatGPT-User

- Google-Extended

- ClaudeBot

- PerplexityBot

- Amazonbot

- OAI-SearchBot

- DeepSeek-AI

- Grok

- Meta-ExternalAgent

- cohere-ai

- AI2Bot

- ByteSpider

## Development

```bash

# Install with dev dependencies

pip install -e ".[dev]"

# Run tests

pytest

# Lint

ruff check src/ tests/

```

## License

MIT

| text/markdown | Hansel Wahjono | null | null | null | null | aeo, seo, ai, llm, crawler, audit, robots-txt, schema-org | [

"Development Status :: 5 - Production/Stable",

"Environment :: Console",

"Intended Audience :: Developers",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Topic :: Internet :: WWW/HTT... | [] | null | null | >=3.10 | [] | [] | [] | [

"typer>=0.9",

"rich>=13.0",

"httpx>=0.27",

"beautifulsoup4>=4.12",

"pydantic>=2.0",

"crawl4ai>=0.4",

"fastmcp>=2.0",

"pyyaml>=6.0",

"litellm>=1.40; extra == \"generate\"",

"pytest>=8.0; extra == \"dev\"",

"pytest-asyncio>=0.23; extra == \"dev\"",

"pytest-cov>=5.0; extra == \"dev\"",

"mypy>=1... | [] | [] | [] | [

"Homepage, https://github.com/hanselhansel/aeo-cli",

"Repository, https://github.com/hanselhansel/aeo-cli",

"Issues, https://github.com/hanselhansel/aeo-cli/issues"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T13:20:30.280664 | aeo_cli-1.0.0.tar.gz | 210,102 | 7a/a5/02b0dba0da1d091207620f2e531c11448e25d24888671b4e05609126a459/aeo_cli-1.0.0.tar.gz | source | sdist | null | false | 726d1e538ec380c5669c8a60c102747c | 465f33399819f17de69609b0257eb1e8dac008b97070951f4c7d1db5b694ef7f | 7aa502b0dba0da1d091207620f2e531c11448e25d24888671b4e05609126a459 | MIT | [

"LICENSE"

] | 163 |

2.4 | brainseg-containers | 1.0.2 | wrapper for brain segmentation tools | # BrainSeg-container

This repository provides a streamlined Python wrapper and CLI tool to automatically download, run, and standardize outputs from state-of-the-art brain segmentation tools using Apptainer / Singularity containers.

Brain segmentation tools often have conflicting dependencies, complex installation steps, or require specific versions of system libraries. This package solves that problem by containerizing the tools and handling the execution, file binding, and label standardization for you.

## The Tools

This pipeline currently supports the following deep-learning-based segmentation tools. We may add more in the future.

1. [**GOUHFI**](https://github.com/mafortin/GOUHFI)

This tool was designed to handle the challenges of Ultra-High Field MRI (7T+). It utilizes "domain randomization" during training, which allows it to remain robust across different MRI contrasts and resolutions, including standard clinical scans.

* **Resolution:** Native (preserves input resolution).

* **CSF Availability:** Yes (segments ventricles and subarachnoid space CSF).

2. [**SynthSeg**](https://github.com/BBillot/SynthSeg)

Developed by the FreeSurfer team, this tool is famous for working "out of the box" on almost any kind of MRI scan (different contrasts, resolutions, or messy clinical data).

* **Resolution:** Fixed 1mm isotropic (always resamples input to 1mm).

* **CSF Availability:** Yes.

3. [**FastSurfer**](https://github.com/Deep-MI/FastSurfer)

A rapid deep-learning-based segmentation tool.

* **Resolution:** Native (but experimental below 0.7mm).

* **CSF Availability:** No (segments ventricles, but ignores subarachnoid space CSF).

4. [**SimNIBS (Charm)**](https://github.com/simnibs/simnibs)

The "Complete Head Anatomy Reconstruction Method" from the SimNIBS suite. While designed for modeling brain stimulation (TMS/TES), it produces high-quality segmentation of extra-cerebral tissues (skull, scalp, etc.) in addition to the brain.

* **Resolution:** Native (pipeline uses the upsampled output to match input).

* **CSF Availability:** Yes.

* **Segmented regions:** Charm provides the following segmentation labels: White-Matter, Gray-Matter, CSF, Bone, Scalp, Eye_bals, Compact_bone, Spongy_bone, Blood, Muscle, Cartilage, Fat, Electrode, Saline_or_gel

## Comparison Output

The pipeline can automatically generate a comparison grid so you can quickly inspect the differences between the tools.

## Getting Started

### Prerequisites

You must have Apptainer (or Singularity) installed on your system to run the containers.

* Ubuntu/Debian: `sudo apt install apptainer`

* Conda/Mamba: `conda install -c conda-forge apptainer `

### Installation

You can install the package directly via pip:

```bash

pip install brainseg-containers

```

*(Optional) If you want to use the plotting and comparison features, install with the `plot` extras:*

```bash

pip install brainseg-containers[plot]

```

---

## Usage

The package provides a simple command-line interface. The first time you run a specific tool, the wrapper will automatically download the corresponding container from the GitHub Container Registry and store it in `~/.brainseg_containers/`.

### Basic Command

```bash

brainseg -t <tool_name> -i <input_file.nii.gz> -o <output_file.nii.gz>

```

**Available Tools:** `synthseg`, `gouhfi`, `fastsurfer`, `simnibs`

### Examples

**Run GOUHFI on a single subject:**

```bash

brainseg -t gouhfi -i inputs/sub-01_T1w.nii.gz -o results/sub-01_gouhfi.nii.gz

```

**Run SynthSeg on the same subject:**

```bash

brainseg -t synthseg -i inputs/sub-01_T1w.nii.gz -o results/sub-01_synthseg.nii.gz

```

*Note: You can optionally provide a custom path to a pre-downloaded `.sif` image using the `--container` flag.*

### Note on Labels

Different tools use different numbers to represent brain regions. To make comparison easier, this pipeline automatically **remaps** the output labels of FastSurfer and GOUHFI to match the standard FreeSurfer lookup table.

| text/markdown | null | Marius Causemann <mariusca@simula.no> | null | null | null | null | [] | [] | null | null | null | [] | [] | [] | [

"nibabel",

"fastremap",

"pdbpp; extra == \"dev\"",

"ipython; extra == \"dev\"",

"mypy; extra == \"dev\"",

"ruff; extra == \"dev\"",

"matplotlib; extra == \"plot\"",

"nilearn; extra == \"plot\"",

"numpy; extra == \"plot\"",

"pandas; extra == \"plot\"",

"jupyter-book<2.0.0; extra == \"docs\"",

"... | [] | [] | [] | [

"homepage, https://github.com/MariusCausemann/brainseg"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T13:20:26.925685 | brainseg_containers-1.0.2.tar.gz | 28,852 | 31/75/c8a1e3e5bb38ef8d32c3782425514e6438a4b455d26748c0d758b7c1b558/brainseg_containers-1.0.2.tar.gz | source | sdist | null | false | 8502c6bff867f71ff869cb66c203239b | 0b0423a5c9aa56219c552620563c296c9d06a2ef60ded69d48dcdb1c12142967 | 3175c8a1e3e5bb38ef8d32c3782425514e6438a4b455d26748c0d758b7c1b558 | null | [] | 209 |

2.4 | epinterface | 1.3.0 | This is a repository for dynamically generating energy models within Python, relying on Archetypal and Eppy for most of its functionality. | # epinterface

[](https://img.shields.io/github/v/release/szvsw/epinterface)

[](https://github.com/szvsw/epinterface/actions/workflows/main.yml?query=branch%3Amain)

[](https://codecov.io/gh/szvsw/epinterface)

[](https://img.shields.io/github/commit-activity/m/szvsw/epinterface)

[](https://img.shields.io/github/license/szvsw/epinterface)

This is a repository for dynamically generating energy models within Python, relying on Archetypal and Eppy for most of its functionality.

- **Github repository**: <https://github.com/szvsw/epinterface/>

## Configuration

The EnergyPlus version used when creating IDF objects can be configured via the `EPINTERFACE_ENERGYPLUS_VERSION` environment variable. It defaults to `22.2.0`. Both dotted (`22.2.0`) and hyphenated (`22-2-0`) version formats are accepted.

- **Documentation** <https://szvsw.github.io/epinterface/>

## Getting started with your project

First, create a repository on GitHub with the same name as this project, and then run the following commands:

```bash

git init -b main

git add .

git commit -m "init commit"

git remote add origin git@github.com:szvsw/epinterface.git

git push -u origin main

```

Finally, install the environment and the pre-commit hooks with

```bash

make install

```

You are now ready to start development on your project!

The CI/CD pipeline will be triggered when you open a pull request, merge to main, or when you create a new release.

To finalize the set-up for publishing to PyPI or Artifactory, see [here](https://fpgmaas.github.io/cookiecutter-uv/features/publishing/#set-up-for-pypi).

For activating the automatic documentation with MkDocs, see [here](https://fpgmaas.github.io/cookiecutter-uv/features/mkdocs/#enabling-the-documentation-on-github).

To enable the code coverage reports, see [here](https://fpgmaas.github.io/cookiecutter-uv/features/codecov/).

## Releasing a new version

- Create an API Token on [PyPI](https://pypi.org/).

- Add the API Token to your projects secrets with the name `PYPI_TOKEN` by visiting [this page](https://github.com/szvsw/epinterface/settings/secrets/actions/new).

- Create a [new release](https://github.com/szvsw/epinterface/releases/new) on Github.

- Create a new tag in the form `*.*.*`.

- For more details, see [here](https://fpgmaas.github.io/cookiecutter-uv/features/cicd/#how-to-trigger-a-release).

---

Repository initiated with [fpgmaas/cookiecutter-uv](https://github.com/fpgmaas/cookiecutter-uv).

| text/markdown | null | Sam Wolk <mail@samwolk.info> | null | null | null | null | [] | [] | null | null | <3.13,>=3.10 | [] | [] | [] | [

"archetypal==2.18.10",

"click==8.1.7",

"geopandas~=1.0.1",

"httpx~=0.27.2",

"ladybug-core>=0.44.30",

"openpyxl~=3.1.5",

"pandas<2.3,>=2.2",

"prisma~=0.15.0",

"pydantic-settings<3,>=2.0",

"pydantic<3,>=2.9",

"pythermalcomfort>=3.8.0"

] | [] | [] | [] | [

"Homepage, https://github.com/szvsw/epinterface",

"Documentation, https://szvsw.github.io/epinterface",

"Repository, https://github.com/szvsw/epinterface",

"Issues, https://github.com/szvsw/epinterface/issues"

] | uv/0.6.14 | 2026-02-19T13:20:07.994238 | epinterface-1.3.0.tar.gz | 5,980,782 | 64/ec/2088293d4017a4bafb48ae4a5d586f462024a53aac2de28af954ebe1ae3e/epinterface-1.3.0.tar.gz | source | sdist | null | false | cdd64148a45979bee0833f25987b1a7d | 517aac62534cad83e9ffa19ed3cc364f6745eb04089b1bfd99c63f2425380d26 | 64ec2088293d4017a4bafb48ae4a5d586f462024a53aac2de28af954ebe1ae3e | null | [

"LICENSE"

] | 259 |

2.4 | pydantic-market-data | 0.1.11 | Shared models and interfaces for finance datasources | # pydantic-market-data

Shared Pydantic models and interfaces for financial data sources.

Defines a standard contract (`DataSource`) and data structures (`OHLCV`, `Symbol`, `History`) to interoperability between finance packages.

## Installation

```bash

pip install pydantic-market-data

```

## Usage

### Models

Standardized data models for financial entities.

```python

from pydantic_market_data.models import Symbol, OHLCV, History, SecurityCriteria

# Symbol Definition

s = Symbol(

ticker="AAPL",

name="Apple Inc.",

exchange="NASDAQ",

currency="USD"

)

# Historical Data Point

candle = OHLCV(

date="2023-12-01",

open=150.0,

high=155.0,

low=149.0,

close=154.0,

volume=50000000

)

# Security Lookup Criteria

criteria = SecurityCriteria(

symbol="AAPL",

target_date="2023-12-01"

)

```

### Protocol

Implement the `DataSource` protocol to create compatible data providers.

```python

from typing import Optional, List

from pydantic_market_data.interfaces import DataSource

from pydantic_market_data.models import SecurityCriteria, Symbol, History

class MySource(DataSource):

def resolve(self, criteria: SecurityCriteria) -> Optional[Symbol]:

# Implementation...

pass

def history(self, ticker: str, period: str = "1mo") -> History:

# Implementation...

pass

```

## License

MIT

| text/markdown | null | Roman Medvedev <pypi@romavm.dev> | null | null | MIT | null | [

"Development Status :: 4 - Beta",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Programming Language :: Python",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python... | [] | null | null | >=3.10 | [] | [] | [] | [

"pandas>=2.2.0",

"pycountry>=24.6.1",

"pydantic-extra-types>=2.11.0",

"pydantic>=2.0.0"

] | [] | [] | [] | [

"Homepage, https://github.com/romamo/pydantic-market-data",

"Repository, https://github.com/romamo/pydantic-market-data",

"Issues, https://github.com/romamo/pydantic-market-data/issues"

] | uv/0.10.4 {"installer":{"name":"uv","version":"0.10.4","subcommand":["publish"]},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"Ubuntu","version":"24.04","id":"noble","libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_version":null,"ci":true} | 2026-02-19T13:19:08.410824 | pydantic_market_data-0.1.11-py3-none-any.whl | 5,359 | 30/41/95e26f2757102e31966768fd3fdb4374c0793e391c1cdc54409464a7eb53/pydantic_market_data-0.1.11-py3-none-any.whl | py3 | bdist_wheel | null | false | fd9902d5e9d36b10109240142e3b7fd1 | 68a9635faec674ec395ffb7e1f7d491af4bdd06763259f739f7a73afffaa3953 | 304195e26f2757102e31966768fd3fdb4374c0793e391c1cdc54409464a7eb53 | null | [

"LICENSE"

] | 197 |

2.4 | compas-timber | 2.0.0.dev0 | COMPAS package for modeling, designing and fabricating timber assemblies. | <h1>

<img src="docs\_logo\PNG_tranparent-background.png" alt="Logo" width="70" height="70" style="vertical-align:middle">

COMPAS Timber

</h1>

[](https://github.com/gramaziokohler/compas_timber/actions)

[](https://codecov.io/gh/gramaziokohler/compas_timber)

[](https://pypi.python.org/pypi/compas_timber)

[](https://pypi.python.org/project/compas_timber)

[](https://pypi.python.org/pypi/compas_timber)

[](https://pypi.python.org/pypi/compas_timber)

[](https://doi.org/10.5281/zenodo.7934267)

[](https://twitter.com/compas_dev)

[](https://compas.dev/#/)

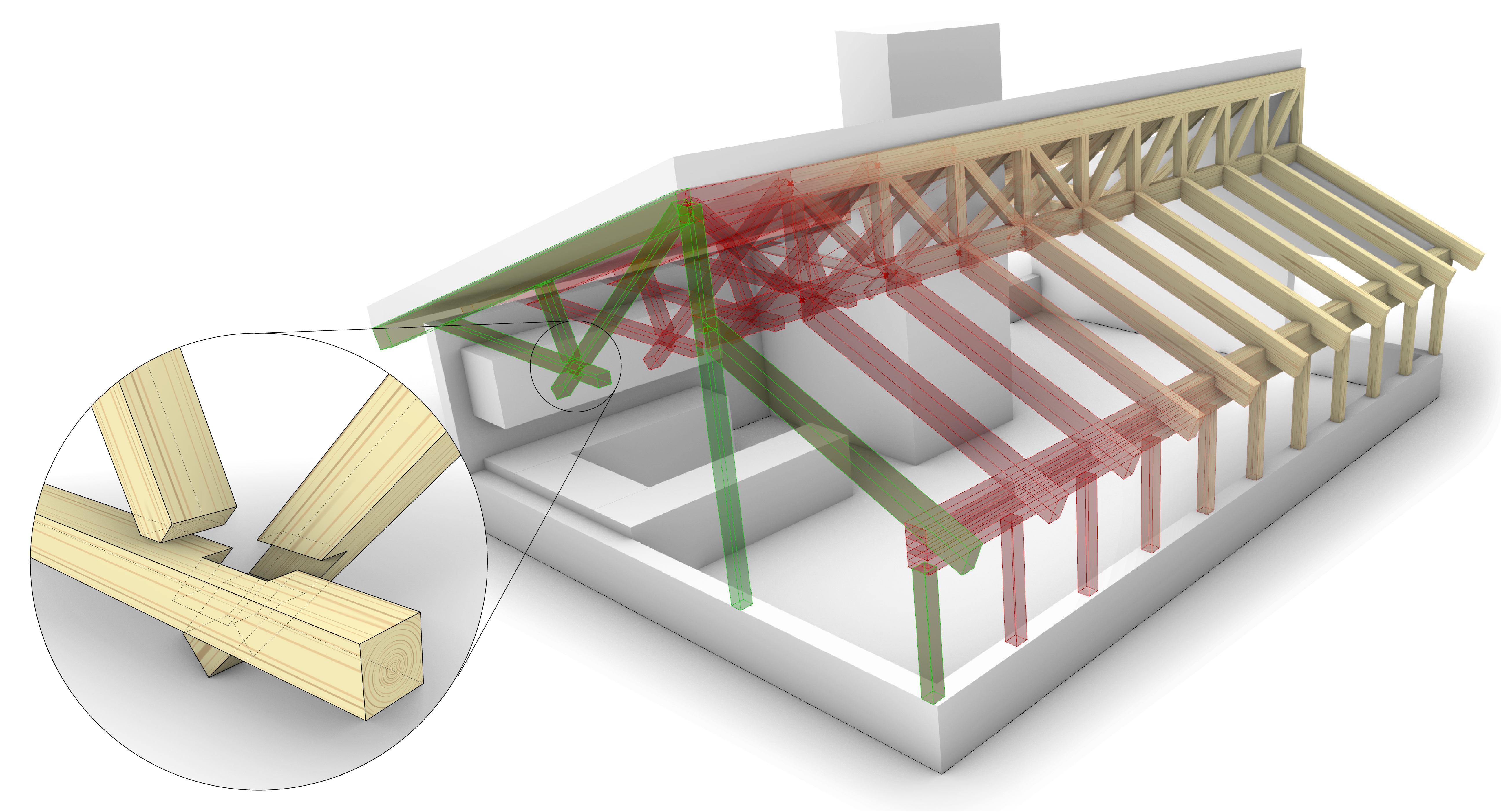

`compas_timber` is a user-friendly open-source software toolkit to streamline the design of timber frame structures. Despite its advances in digitalization compared to other building techniques, timber construction is often perceived as a challenging field, involving intricate processes in design, planning, coordination, and fabrication. We aim to increase the use of timber in architecture by lowering the threshold of creating versatile and resource-aware designs.

## Installation

> It is recomended you install `compas_timber` inside a virtual environment.

```bash

pip install compas_timber

```

## First Steps

* [Documentation](https://gramaziokohler.github.io/compas_timber/)

* [COMPAS TIMBER Grasshopper Tutorial](https://gramaziokohler.github.io/compas_timber/latest/tutorials.html)

* [COMPAS TIMBER API Reference](https://gramaziokohler.github.io/compas_timber/latest/api.html)

## Questions and feedback

We encourage the use of the [COMPAS framework forum](https://forum.compas-framework.org/)

for questions and discussions.

## Issue tracker

If you found an issue or have a suggestion for a dandy new feature, please file a new issue in our [issue tracker](https://github.com/gramaziokohler/compas_timber/issues).

## Contributing

We love contributions!

Check the [Contributor's Guide](https://github.com/gramaziokohler/compas_timber/blob/main/CONTRIBUTING.md)

for more details.

## Credits

`compas_timber` is currently developed by Gramazio Kohler Research. See the [list of authors](https://github.com/gramaziokohler/compas_timber/blob/main/AUTHORS.md) for a complete overview.

| text/markdown | null | Aleksandra Anna Apolinarska <apolinarska@arch.ethz.ch>, Chen Kasirer <kasirer@arch.ethz.ch>, Gonzalo Casas <casas@arch.ethz.ch>, Jonas Haldemann <haldemann@arch.ethz.ch>, Oliver Appling Bucklin <bucklin@arch.ethz.ch>, "Aurèle L. Gheyselinck" <gheyselinck@arch.ethz.ch>, Panayiotis Papacharalambous <papacharalambous@arch.ethz.ch>, Anastasiia Stryzhevska <astryzhevska@arch.ethz.ch>, Jelle Feringa <jelleferinga@gmail.com>, Joseph Kenny <jk6372@princeton.edu>, Beverly Lytle <lytle@arch.ethz.ch>, Eric Gozzi <eric.gozzi@arch.ethz.ch>, Rodrigo Arca Zimmermann <rodrigo.arca@gmail.com> | null | null | null | null | [

"Development Status :: 4 - Beta",

"Topic :: Scientific/Engineering",

"Programming Language :: Python",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.9",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11"

] | [] | null | null | >=3.9 | [] | [] | [] | [

"rtree",

"compas<3.0,>=2.0",

"compas_model==0.9.1",

"bump-my-version; extra == \"dev\"",

"compas_invocations2; extra == \"dev\"",

"invoke>=0.14; extra == \"dev\"",

"ruff; extra == \"dev\"",

"sphinx_compas2_theme; extra == \"dev\"",

"sphinxcontrib-mermaid; extra == \"dev\"",

"twine; extra == \"dev\... | [] | [] | [] | [

"Homepage, https://gramaziokohler.github.io/compas_timber/latest/",

"Repository, https://github.com/gramaziokohler/compas_timber"

] | twine/6.2.0 CPython/3.12.12 | 2026-02-19T13:18:00.519280 | compas_timber-2.0.0.dev0.tar.gz | 155,796 | 7b/0c/75dcc59ab81078aef19a0b1c75b8e278c3f9a647743a96a4cf2b10d7e097/compas_timber-2.0.0.dev0.tar.gz | source | sdist | null | false | ee61a9dcaf6e94867a5bb98fdf77496d | 9ec363e20e037dea654e5c5c0c9814592fb5fb9d8c76a44a7a507334378f7bdd | 7b0c75dcc59ab81078aef19a0b1c75b8e278c3f9a647743a96a4cf2b10d7e097 | MIT | [

"LICENSE"

] | 177 |

2.4 | scikit-bayes | 0.1.3 | A Python package for AnDE classifiers. | # scikit-bayes

[](https://github.com/ptorrijos99/scikit-bayes/actions/workflows/python-app.yml)

[](https://codecov.io/gh/ptorrijos99/scikit-bayes)

[](https://ptorrijos99.github.io/scikit-bayes/)

[](https://www.python.org/downloads/)

[](LICENSE)

**scikit-bayes** is a Python package that extends `scikit-learn` with a suite of Bayesian Network Classifiers.

The primary goal of this package is to provide robust, `scikit-learn`-compatible implementations of advanced Bayesian classifiers that are not available in the core library.

## Key Features

- **MixedNB**: Naive Bayes for mixed data types (Gaussian + Categorical + Bernoulli) in a single model

- **AnDE**: Averaged n-Dependence Estimators (AODE, A2DE) that relax the independence assumption

- **ALR**: Accelerated Logistic Regression - hybrid generative-discriminative models with 4 weight granularity levels

- **WeightedAnDE**: Discriminatively-weighted ensemble models

- **Full scikit-learn API**: Compatible with pipelines, cross-validation, and grid search

## Quick Start

```python

import numpy as np

from skbn import MixedNB, AnDE

# MixedNB: Handle mixed data types automatically

X = np.array([[1.5, 0, 2], [-0.5, 1, 0], [2.1, 1, 1], [-1.2, 0, 2]])

y = np.array([0, 1, 1, 0])

clf = MixedNB()

clf.fit(X, y)

print(clf.predict([[0.5, 1, 1]])) # Automatically handles Gaussian, Bernoulli, Categorical

# AnDE: Solve problems Naive Bayes cannot (XOR)

X_xor = np.array([[-1, -1], [-1, 1], [1, -1], [1, 1]])

y_xor = np.array([0, 1, 1, 0])

clf = AnDE(n_dependence=1, n_bins=2)

clf.fit(X_xor, y_xor)

print(clf.predict(X_xor)) # [0, 1, 1, 0] ✓

```

## Installation

```bash

pip install scikit-bayes

```

Or install from source:

```bash

pip install git+https://github.com/ptorrijos99/scikit-bayes.git

```

## Documentation

- 📖 [User Guide](https://ptorrijos99.github.io/scikit-bayes/user_guide.html) - Detailed documentation

- 📚 [API Reference](https://ptorrijos99.github.io/scikit-bayes/api.html) - Complete API docs

- 🎨 [Examples Gallery](https://ptorrijos99.github.io/scikit-bayes/auto_examples/index.html) - Visual examples

## Development

This project uses [pixi](https://pixi.sh) for environment management.

```bash

# Run tests

pixi run test

# Run linter

pixi run lint

# Build documentation

pixi run build-doc

# Activate development environment

pixi shell -e dev

```

## Citation

If you use scikit-bayes in a scientific publication, please cite:

```bibtex

@software{scikit_bayes,

author = {Torrijos, Pablo},

title = {scikit-bayes: Bayesian Network Classifiers for Python},

year = {2025},

url = {https://github.com/ptorrijos99/scikit-bayes}

}

```

## References

- Webb, G. I., Boughton, J., & Wang, Z. (2005). *Not so naive Bayes: Aggregating one-dependence estimators*. Machine Learning, 58(1), 5-24.

- Flores, M. J., Gámez, J. A., Martínez, A. M., & Puerta, J. M. (2009). *GAODE and HAODE: Two proposals based on AODE to deal with continuous variables*. ICML '09, 313-320.

- Zaidi, N. A., Webb, G. I., Carman, M. J., & Petitjean, F. (2017). *Efficient parameter learning of Bayesian network classifiers*. Machine Learning, 106(9-10), 1289-1329.

## License

BSD-3-Clause. See [LICENSE](LICENSE) for details.

| text/markdown | null | Pablo Torrijos <pablo.torrijos@uclm.es> | null | null | null | null | [

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.9",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"License :: OSI Approved :: BSD License",

"Operating S... | [] | null | null | >=3.9 | [] | [] | [] | [

"scikit-learn>=1.4.2",

"pyyaml>=6.0",

"liac-arff>=2.5"

] | [] | [] | [] | [

"Homepage, https://github.com/ptorrijos99/scikit-bayes",

"Issues, https://github.com/ptorrijos99/scikit-bayes/issues"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T13:17:16.862779 | scikit_bayes-0.1.3.tar.gz | 142,951 | 93/fe/3267bc45c5357c08ffb3c3867d2b1479a2aca97e288061fb5f97105224cf/scikit_bayes-0.1.3.tar.gz | source | sdist | null | false | 3a71bfb7801636ffca53d4a60bc575ca | efa18592b8f52e47b09c2b6f847c9239ba34d8edfed33685dbb8f276ee429859 | 93fe3267bc45c5357c08ffb3c3867d2b1479a2aca97e288061fb5f97105224cf | null | [

"LICENSE"

] | 197 |

2.1 | odoo-addon-auth-jwt-demo | 18.0.1.0.1 | Test/demo module for auth_jwt. | .. image:: https://odoo-community.org/readme-banner-image

:target: https://odoo-community.org/get-involved?utm_source=readme

:alt: Odoo Community Association

=============

Auth JWT Test

=============

..

!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!

!! This file is generated by oca-gen-addon-readme !!

!! changes will be overwritten. !!

!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!

!! source digest: sha256:9107748d0e8fe356b5dbf0e6a8af05342460d05d3d8f5b123ea96eb8db7b26ae

!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!

.. |badge1| image:: https://img.shields.io/badge/maturity-Beta-yellow.png

:target: https://odoo-community.org/page/development-status

:alt: Beta

.. |badge2| image:: https://img.shields.io/badge/license-LGPL--3-blue.png

:target: http://www.gnu.org/licenses/lgpl-3.0-standalone.html

:alt: License: LGPL-3

.. |badge3| image:: https://img.shields.io/badge/github-OCA%2Fserver--auth-lightgray.png?logo=github

:target: https://github.com/OCA/server-auth/tree/18.0/auth_jwt_demo

:alt: OCA/server-auth

.. |badge4| image:: https://img.shields.io/badge/weblate-Translate%20me-F47D42.png

:target: https://translation.odoo-community.org/projects/server-auth-18-0/server-auth-18-0-auth_jwt_demo

:alt: Translate me on Weblate

.. |badge5| image:: https://img.shields.io/badge/runboat-Try%20me-875A7B.png

:target: https://runboat.odoo-community.org/builds?repo=OCA/server-auth&target_branch=18.0

:alt: Try me on Runboat

|badge1| |badge2| |badge3| |badge4| |badge5|

A test/demo module for ``auth_jwt``.

**Table of contents**

.. contents::

:local:

Usage

=====

This modules creates a JWT validator named ``demo``, and adds a

``/auth_jwt_demo/whoami`` route which returns information about the

partner identified in the token.

The ``whoami`` endpoint can be invoked as such, assuming

`python-jose <https://pypi.org/project/python-jose/>`__ is installed.

.. code:: python

# /usr/bin/env python3

import time

import requests

from jose import jwt

token = jwt.encode(

{

"aud": "auth_jwt_test_api",

"iss": "some issuer",

"exp": time.time() + 60,

"email": "mark.brown23@example.com",

},

key="thesecret",

algorithm=jwt.ALGORITHMS.HS256,

)

r = requests.get(

"http://localhost:8069/auth_jwt_demo/whoami",

headers={"Authorization": "Bearer " + token},

)

r.raise_for_status()

print(r.json())

Bug Tracker

===========

Bugs are tracked on `GitHub Issues <https://github.com/OCA/server-auth/issues>`_.

In case of trouble, please check there if your issue has already been reported.

If you spotted it first, help us to smash it by providing a detailed and welcomed

`feedback <https://github.com/OCA/server-auth/issues/new?body=module:%20auth_jwt_demo%0Aversion:%2018.0%0A%0A**Steps%20to%20reproduce**%0A-%20...%0A%0A**Current%20behavior**%0A%0A**Expected%20behavior**>`_.

Do not contact contributors directly about support or help with technical issues.

Credits

=======

Authors

-------

* ACSONE SA/NV

Contributors

------------

- Stéphane Bidoul <stephane.bidoul@acsone.eu>

Maintainers

-----------

This module is maintained by the OCA.

.. image:: https://odoo-community.org/logo.png

:alt: Odoo Community Association

:target: https://odoo-community.org

OCA, or the Odoo Community Association, is a nonprofit organization whose

mission is to support the collaborative development of Odoo features and

promote its widespread use.

.. |maintainer-sbidoul| image:: https://github.com/sbidoul.png?size=40px

:target: https://github.com/sbidoul

:alt: sbidoul

Current `maintainer <https://odoo-community.org/page/maintainer-role>`__:

|maintainer-sbidoul|

This module is part of the `OCA/server-auth <https://github.com/OCA/server-auth/tree/18.0/auth_jwt_demo>`_ project on GitHub.

You are welcome to contribute. To learn how please visit https://odoo-community.org/page/Contribute.

| text/x-rst | ACSONE SA/NV,Odoo Community Association (OCA) | support@odoo-community.org | null | null | LGPL-3 | null | [

"Programming Language :: Python",

"Framework :: Odoo",

"Framework :: Odoo :: 18.0",

"License :: OSI Approved :: GNU Lesser General Public License v3 (LGPLv3)"

] | [] | https://github.com/OCA/server-auth | null | >=3.10 | [] | [] | [] | [

"odoo-addon-auth_jwt==18.0.*",

"odoo==18.0.*"

] | [] | [] | [] | [] | twine/6.2.0 CPython/3.12.3 | 2026-02-19T13:17:15.499513 | odoo_addon_auth_jwt_demo-18.0.1.0.1-py3-none-any.whl | 649,921 | 14/3e/b36e5c09f44fc8f2f7b7b8b8120b910e6c50b616e8c7f8e1b6498b46c3df/odoo_addon_auth_jwt_demo-18.0.1.0.1-py3-none-any.whl | py3 | bdist_wheel | null | false | 3d3fe94f12b9288c9014a1eaf0bdebc9 | 891a6ab470d27a8e34d8c8ed47a2fa10d508ee7674cfe30b52077f00829a5350 | 143eb36e5c09f44fc8f2f7b7b8b8120b910e6c50b616e8c7f8e1b6498b46c3df | null | [] | 99 |

2.4 | langchain-blindfold | 0.1.0 | LangChain integration for Blindfold PII detection and protection | # LangChain Blindfold

LangChain integration for [Blindfold](https://blindfold.dev) PII detection and protection. Tokenize PII before it reaches your LLM, then restore originals in the response.

| | |

|---|---|

| Developed by | [Blindfold](https://blindfold.dev) |

| License | MIT |

| Input/Output | String, Document |

## Installation

```bash

pip install langchain-blindfold

```

Set your Blindfold API key:

```bash

export BLINDFOLD_API_KEY=your-api-key

```

Get a free API key at [app.blindfold.dev](https://app.blindfold.dev).

## Quick Start

### Protect a LangChain Chain

```python

from langchain_blindfold import blindfold_protect

from langchain_core.prompts import ChatPromptTemplate

from langchain_openai import ChatOpenAI

tokenize, detokenize = blindfold_protect(policy="basic")

prompt = ChatPromptTemplate.from_messages([

("system", "You are a helpful assistant."),

("user", "{input}"),

])

llm = ChatOpenAI(model="gpt-4o-mini")

chain = tokenize | prompt | llm | (lambda msg: msg.content) | detokenize

# PII is tokenized before the LLM sees it, then restored in the response

result = chain.invoke("Write a follow-up email to John Doe at john@example.com")

```

### Transform Documents for RAG

```python

from langchain_blindfold import BlindfoldPIITransformer

from langchain_core.documents import Document

transformer = BlindfoldPIITransformer(pii_method="redact", policy="hipaa_us", region="us")

docs = [Document(page_content="Patient John Smith, SSN 123-45-6789")]

safe_docs = transformer.transform_documents(docs)

# safe_docs[0].page_content → "Patient [REDACTED], SSN [REDACTED]"

```

## Components

### `blindfold_protect()`

Convenience function that returns a paired tokenizer and detokenizer:

```python

tokenize, detokenize = blindfold_protect(

api_key=None, # Falls back to BLINDFOLD_API_KEY env var

region=None, # "eu" or "us" for data residency

policy="basic", # Detection policy

entities=None, # Specific entity types to detect

score_threshold=None, # Confidence threshold (0.0-1.0)

)

```

### `BlindfoldTokenizer`

A LangChain `Runnable` that tokenizes PII in text:

```python

from langchain_blindfold import BlindfoldTokenizer

tokenizer = BlindfoldTokenizer(policy="gdpr_eu", region="eu")

safe_text = tokenizer.invoke("Contact Hans at hans@example.de")

# → "Contact <Person_1> at <Email Address_1>"

```

### `BlindfoldDetokenizer`

A LangChain `Runnable` that restores original PII from tokenized text:

```python

from langchain_blindfold import BlindfoldTokenizer, BlindfoldDetokenizer

tokenizer = BlindfoldTokenizer(api_key="...")

detokenizer = BlindfoldDetokenizer(tokenizer=tokenizer)

tokenizer.invoke("Hi John") # stores mapping

result = detokenizer.invoke("Response to <Person_1>")

# → "Response to John"

```

### `BlindfoldPIITransformer`

A LangChain `DocumentTransformer` for protecting PII in documents:

```python

from langchain_blindfold import BlindfoldPIITransformer

transformer = BlindfoldPIITransformer(

api_key=None, # Falls back to BLINDFOLD_API_KEY env var

region=None, # "eu" or "us" for data residency

policy="basic", # Detection policy

pii_method="tokenize",# tokenize, redact, mask, hash, synthesize, encrypt

entities=None, # Specific entity types to detect

score_threshold=None, # Confidence threshold (0.0-1.0)

)

```

When `pii_method="tokenize"`, the mapping is stored in `doc.metadata["blindfold_mapping"]`.

## Policies

| Policy | Entities | Best For |

|---|---|---|

| `basic` | Names, emails, phones, locations | General PII protection |

| `gdpr_eu` | EU-specific: IBANs, addresses, dates of birth | GDPR compliance |

| `hipaa_us` | PHI: SSNs, MRNs, medical terms | HIPAA compliance |

| `pci_dss` | Card numbers, CVVs, expiry dates | PCI DSS compliance |

| `strict` | All entity types, lower threshold | Maximum detection |

## PII Methods

| Method | Output | Reversible |

|---|---|---|

| `tokenize` | `<Person_1>`, `<Email Address_1>` | Yes |

| `redact` | PII removed entirely | No |

| `mask` | `J****oe`, `j****om` | No |

| `hash` | `HASH_abc123` | No |

| `synthesize` | `Jane Smith`, `jane@example.org` | No |

| `encrypt` | AES-256 encrypted value | Yes (with key) |

## Data Residency

Use the `region` parameter to ensure PII is processed in a specific jurisdiction:

- `region="eu"` — processed in Frankfurt, Germany

- `region="us"` — processed in Virginia, US

```python

tokenize, detokenize = blindfold_protect(policy="gdpr_eu", region="eu")

```

## Links

- [Blindfold Documentation](https://docs.blindfold.dev)

- [Blindfold Dashboard](https://app.blindfold.dev)

- [LangChain Documentation](https://python.langchain.com)

- [GitHub](https://github.com/blindfold-dev/langchain-blindfold)

| text/markdown | null | Blindfold <hello@blindfold.dev> | null | null | MIT | langchain, pii, blindfold, privacy, gdpr, hipaa, ai-safety, llm | [

"Development Status :: 4 - Beta",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.9",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Py... | [] | null | null | >=3.9 | [] | [] | [] | [

"langchain-core>=0.3.0",

"blindfold-sdk>=1.3.0"

] | [] | [] | [] | [

"Homepage, https://blindfold.dev",

"Documentation, https://docs.blindfold.dev",

"Repository, https://github.com/blindfold-dev/langchain-blindfold"

] | twine/6.2.0 CPython/3.13.2 | 2026-02-19T13:16:10.251602 | langchain_blindfold-0.1.0.tar.gz | 9,054 | 8b/f9/da95a68403a6d243c82f27be3281b3ff133dba5d81308a72fbcd5454aeb3/langchain_blindfold-0.1.0.tar.gz | source | sdist | null | false | 5d22cc214ea03937686ee9b9851f3d36 | a9308f20d2383989399a7588b1dddd72b360a47f5502bdaceff0f0f2f3ddc8e2 | 8bf9da95a68403a6d243c82f27be3281b3ff133dba5d81308a72fbcd5454aeb3 | null | [

"LICENSE"

] | 227 |

2.4 | iec-api | 0.5.6 | A Python wrapper for Israel Electric Company API | # iec-api

A python wrapper for Israel Electric Company API

## Module Usage

```python

from iec_api import iec_client as iec

client = iec.IecClient("123456789")

try:

await client.manual_login() # login with user inputs

except iec.exceptions.IECError as err:

logger.error(f"Failed Login: (Code {err.code}): {err.error}")

raise

customer = await client.get_customer()

print(customer)

contracts = await client.get_contracts()

for contract in contracts:

print(contract)

reading = await client.get_last_meter_reading(customer.bp_number, contracts[0].contract_id)

print(reading)

```

## Postman

To use the API manually through Postman - read [Postman Collection Guide](POSTMAN.md)

| text/markdown | GuyKh | null | Guy Khmelnitsky | guykhmel@gmail.com | MIT | python, poetry, api, iec, israel, electric | [

"License :: OSI Approved :: MIT License",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"Programming Language :: Python :: 3.14"

] | [] | null | null | <4.0,>=3.10 | [] | [] | [] | [

"aiofiles<25.2.0,>=25.1.0",

"aiohttp<4.0.0,>=3.9.1",

"cryptography<100.0.0,>=44.0.0",

"mashumaro<4.0,>=3.13",

"pkce<2.0.0,>=1.0.3",

"pyjwt<3.0.0,>=2.8.0",

"pytz<2025.0,>=2024.1",

"requests<3.0.0,>=2.31.0"

] | [] | [] | [] | [

"Repository, https://github.com/GuyKh/py-iec-api"

] | poetry/2.3.2 CPython/3.11.14 Linux/6.14.0-1017-azure | 2026-02-19T13:15:00.352002 | iec_api-0.5.6-py3-none-any.whl | 67,280 | 38/1f/ec541309ecd7a3bd3f1e250607adfa8ee3d18afa8daf0981d9ea3971f4d4/iec_api-0.5.6-py3-none-any.whl | py3 | bdist_wheel | null | false | 2bc162d6e91b49b4ce6d15d366d0c40f | 57bbaf187cff10b16d559b9a3f9669961c03f6baeace7f0146d7dd1bd3b0e528 | 381fec541309ecd7a3bd3f1e250607adfa8ee3d18afa8daf0981d9ea3971f4d4 | null | [

"LICENSE"

] | 224 |

2.4 | memora-mcp | 0.2.22 | MCP-compatible memory server backed by SQLite | <h1 align="center"><img src="media/memora_new.gif" width="60" alt="Memora Logo" align="absmiddle"> Memora</h1>

<p align="center"><sub><sub><i>"You never truly know the value of a moment until it becomes a memory."</i></sub></sub></p>

<p align="center">

<b>Give your AI agents persistent memory</b><br>

A lightweight MCP server for semantic memory storage, knowledge graphs, conversational recall, and cross-session context.

</p>

<p align="center">

<a href="https://github.com/agentic-mcp-tools/memora/releases"><img src="https://img.shields.io/github/v/tag/agentic-mcp-tools/memora?label=version&color=blue" alt="Version"></a>

<a href="LICENSE"><img src="https://img.shields.io/badge/license-MIT-green" alt="License"></a>

<a href="https://github.com/thedotmack/awesome-claude-code"><img src="https://awesome.re/mentioned-badge.svg" alt="Mentioned in Awesome Claude Code"></a>

</p>

<p align="center">

<img src="media/demo.gif" alt="Memora Demo" width="800">

</p>

<p align="center">

<b><a href="#features">Features</a></b> · <b><a href="#install">Install</a></b> · <b><a href="#usage">Usage</a></b> · <b><a href="#configuration">Config</a></b> · <b><a href="#live-graph-server">Live Graph</a></b> · <b><a href="#cloud-graph">Cloud Graph</a></b> · <b><a href="#chat-with-memories">Chat</a></b> · <b><a href="#semantic-search--embeddings">Semantic Search</a></b> · <b><a href="#llm-deduplication">LLM Dedup</a></b> · <b><a href="#memory-linking">Linking</a></b> · <b><a href="#neovim-integration">Neovim</a></b>

</p>

## Features

**Core Storage**

- 💾 **Persistent Storage** - SQLite with optional cloud sync (S3, R2, D1)

- 📂 **Hierarchical Organization** - Section/subsection structure with auto-hierarchy assignment

- 📦 **Export/Import** - Backup and restore with merge strategies

**Search & Intelligence**

- 🔍 **Semantic Search** - Vector embeddings (TF-IDF, sentence-transformers, OpenAI)

- 🎯 **Advanced Queries** - Full-text, date ranges, tag filters (AND/OR/NOT), hybrid search

- 🔀 **Cross-references** - Auto-linked related memories based on similarity

- 🤖 **LLM Deduplication** - Find and merge duplicates with AI-powered comparison

- 🔗 **Memory Linking** - Typed edges, importance boosting, and cluster detection

**Tools & Visualization**

- ⚡ **Memory Automation** - Structured tools for TODOs, issues, and sections

- 🕸️ **Knowledge Graph** - Interactive visualization with Mermaid rendering and cluster overlays

- 🌐 **Live Graph Server** - Built-in HTTP server with cloud-hosted option (D1/Pages)

- 💬 **Chat with Memories** - RAG-powered chat panel that searches relevant memories and streams LLM responses

- 📡 **Event Notifications** - Poll-based system for inter-agent communication

- 📊 **Statistics & Analytics** - Tag usage, trends, and connection insights

- 🧠 **Memory Insights** - Activity summary, stale detection, consolidation suggestions, and LLM-powered pattern analysis

- 📜 **Action History** - Track all memory operations (create, update, delete, merge, boost, link) with grouped timeline view

## Install

```bash

pip install git+https://github.com/agentic-mcp-tools/memora.git

```

Includes cloud storage (S3/R2) and OpenAI embeddings out of the box.

```bash

# Optional: local embeddings (offline, ~2GB for PyTorch)

pip install "memora[local]" @ git+https://github.com/agentic-mcp-tools/memora.git

```

<details id="usage">

<summary><big><big><strong>Usage</strong></big></big></summary>

The server runs automatically when configured in Claude Code. Manual invocation:

```bash

# Default (stdio mode for MCP)

memora-server

# With graph visualization server

memora-server --graph-port 8765

# HTTP transport (alternative to stdio)

memora-server --transport streamable-http --host 127.0.0.1 --port 8080

```

</details>

<details id="configuration">

<summary><big><big><strong>Configuration</strong></big></big></summary>

### Claude Code

Add to `.mcp.json` in your project root:

**Local DB:**

```json

{

"mcpServers": {

"memora": {

"command": "memora-server",

"args": [],

"env": {

"MEMORA_DB_PATH": "~/.local/share/memora/memories.db",

"MEMORA_ALLOW_ANY_TAG": "1",

"MEMORA_GRAPH_PORT": "8765"

}

}

}

}

```

**Cloud DB (Cloudflare D1) - Recommended:**

```json

{

"mcpServers": {

"memora": {

"command": "memora-server",

"args": ["--no-graph"],

"env": {

"MEMORA_STORAGE_URI": "d1://<account-id>/<database-id>",

"CLOUDFLARE_API_TOKEN": "<your-api-token>",

"MEMORA_ALLOW_ANY_TAG": "1"

}

}

}

}

```

With D1, use `--no-graph` to disable the local visualization server. Instead, use the hosted graph at your Cloudflare Pages URL (see [Cloud Graph](#cloud-graph)).

**Cloud DB (S3/R2) - Sync mode:**

```json

{

"mcpServers": {

"memora": {

"command": "memora-server",

"args": [],

"env": {

"AWS_PROFILE": "memora",

"AWS_ENDPOINT_URL": "https://<account-id>.r2.cloudflarestorage.com",

"MEMORA_STORAGE_URI": "s3://memories/memories.db",

"MEMORA_CLOUD_ENCRYPT": "true",

"MEMORA_ALLOW_ANY_TAG": "1",

"MEMORA_GRAPH_PORT": "8765"

}

}

}

}

```

### Codex CLI

Add to `~/.codex/config.toml`:

```toml

[mcp_servers.memora]

command = "memora-server" # or full path: /path/to/bin/memora-server

args = ["--no-graph"]

env = {

AWS_PROFILE = "memora",

AWS_ENDPOINT_URL = "https://<account-id>.r2.cloudflarestorage.com",

MEMORA_STORAGE_URI = "s3://memories/memories.db",

MEMORA_CLOUD_ENCRYPT = "true",

MEMORA_ALLOW_ANY_TAG = "1",

}

```

</details>

<details id="environment-variables">

<summary><big><big><strong>Environment Variables</strong></big></big></summary>

| Variable | Description |

|------------------------|-----------------------------------------------------------------------------|

| `MEMORA_DB_PATH` | Local SQLite database path (default: `~/.local/share/memora/memories.db`) |

| `MEMORA_STORAGE_URI` | Storage URI: `d1://<account>/<db-id>` (D1) or `s3://bucket/memories.db` (S3/R2) |

| `CLOUDFLARE_API_TOKEN` | API token for D1 database access (required for `d1://` URI) |

| `MEMORA_CLOUD_ENCRYPT` | Encrypt database before uploading to cloud (`true`/`false`) |

| `MEMORA_CLOUD_COMPRESS`| Compress database before uploading to cloud (`true`/`false`) |

| `MEMORA_CACHE_DIR` | Local cache directory for cloud-synced database |

| `MEMORA_ALLOW_ANY_TAG` | Allow any tag without validation against allowlist (`1` to enable) |

| `MEMORA_TAG_FILE` | Path to file containing allowed tags (one per line) |

| `MEMORA_TAGS` | Comma-separated list of allowed tags |

| `MEMORA_GRAPH_PORT` | Port for the knowledge graph visualization server (default: `8765`) |

| `MEMORA_EMBEDDING_MODEL` | Embedding backend: `openai` (default), `sentence-transformers`, or `tfidf` |

| `SENTENCE_TRANSFORMERS_MODEL` | Model for sentence-transformers (default: `all-MiniLM-L6-v2`) |

| `OPENAI_API_KEY` | API key for OpenAI embeddings and LLM deduplication |

| `OPENAI_BASE_URL` | Base URL for OpenAI-compatible APIs (OpenRouter, Azure, etc.) |

| `OPENAI_EMBEDDING_MODEL` | OpenAI embedding model (default: `text-embedding-3-small`) |

| `MEMORA_LLM_ENABLED` | Enable LLM-powered deduplication comparison (`true`/`false`, default: `true`) |

| `MEMORA_LLM_MODEL` | Model for deduplication comparison (default: `gpt-4o-mini`) |

| `CHAT_MODEL` | Model for the chat panel (default: `deepseek/deepseek-chat`, falls back to `MEMORA_LLM_MODEL`) |

| `AWS_PROFILE` | AWS credentials profile from `~/.aws/credentials` (useful for R2) |

| `AWS_ENDPOINT_URL` | S3-compatible endpoint for R2/MinIO |

| `R2_PUBLIC_DOMAIN` | Public domain for R2 image URLs |

</details>

<details id="semantic-search--embeddings">

<summary><big><big><strong>Semantic Search & Embeddings</strong></big></big></summary>

Memora supports three embedding backends:

| Backend | Install | Quality | Speed |

|---------|---------|---------|-------|

| `openai` (default) | Included | High quality | API latency |

| `sentence-transformers` | `pip install memora[local]` | Good, runs offline | Medium |

| `tfidf` | Included | Basic keyword matching | Fast |

**Automatic:** Embeddings and cross-references are computed automatically when you `memory_create`, `memory_update`, or `memory_create_batch`.

**Manual rebuild required** when:

- Changing `MEMORA_EMBEDDING_MODEL` after memories exist

- Switching to a different sentence-transformers model

```bash

# After changing embedding model, rebuild all embeddings

memory_rebuild_embeddings

# Then rebuild cross-references to update the knowledge graph

memory_rebuild_crossrefs

```

</details>

<details id="live-graph-server">

<summary><big><big><strong>Live Graph Server</strong></big></big></summary>

A built-in HTTP server starts automatically with the MCP server, serving an interactive knowledge graph visualization.

<table>

<tr>

<td align="center"><img src="media/ui_details.png" alt="Details Panel" width="400"><br><em>Details Panel</em></td>

<td align="center"><img src="media/ui_timeline.png" alt="Timeline Panel" width="400"><br><em>Timeline Panel</em></td>

</tr>

</table>

**Access locally:**

```

http://localhost:8765/graph

```

**Remote access via SSH:**

```bash

ssh -L 8765:localhost:8765 user@remote

# Then open http://localhost:8765/graph in your browser

```

**Configuration:**

```json

{

"env": {

"MEMORA_GRAPH_PORT": "8765"

}

}

```

To disable: add `"--no-graph"` to args in your MCP config.

### Graph UI Features

- **Details Panel** - View memory content, metadata, tags, and related memories

- **Timeline Panel** - Browse memories chronologically, click to highlight in graph

- **History Panel** - Action log of all operations with grouped consecutive entries and clickable memory references (deleted memories shown as strikethrough)

- **Chat Panel** - Ask questions about your memories using RAG-powered LLM chat with streaming responses and clickable `[Memory #ID]` references

- **Time Slider** - Filter memories by date range, drag to explore history

- **Real-time Updates** - Graph, timeline, and history update via SSE when memories change

- **Filters** - Tag/section dropdowns, zoom controls

- **Mermaid Rendering** - Code blocks render as diagrams

### Node Colors

- 🟣 **Tags** - Purple shades by tag

- 🔴 **Issues** - Red (open), Orange (in progress), Green (resolved), Gray (won't fix)

- 🔵 **TODOs** - Blue (open), Orange (in progress), Green (completed), Red (blocked)

Node size reflects connection count.

</details>

<details id="cloud-graph">

<summary><big><big><strong>Cloud Graph (Recommended for D1)</strong></big></big></summary>

When using Cloudflare D1 as your database, the graph visualization is hosted on Cloudflare Pages - no local server needed.

**Benefits:**

- Access from anywhere (no SSH tunneling)

- Real-time updates via WebSocket

- Multi-database support via `?db=` parameter

- Secure access with Cloudflare Zero Trust

**Setup:**

1. **Create D1 database:**