metadata_version string | name string | version string | summary string | description string | description_content_type string | author string | author_email string | maintainer string | maintainer_email string | license string | keywords string | classifiers list | platform list | home_page string | download_url string | requires_python string | requires list | provides list | obsoletes list | requires_dist list | provides_dist list | obsoletes_dist list | requires_external list | project_urls list | uploaded_via string | upload_time timestamp[us] | filename string | size int64 | path string | python_version string | packagetype string | comment_text string | has_signature bool | md5_digest string | sha256_digest string | blake2_256_digest string | license_expression string | license_files list | recent_7d_downloads int64 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

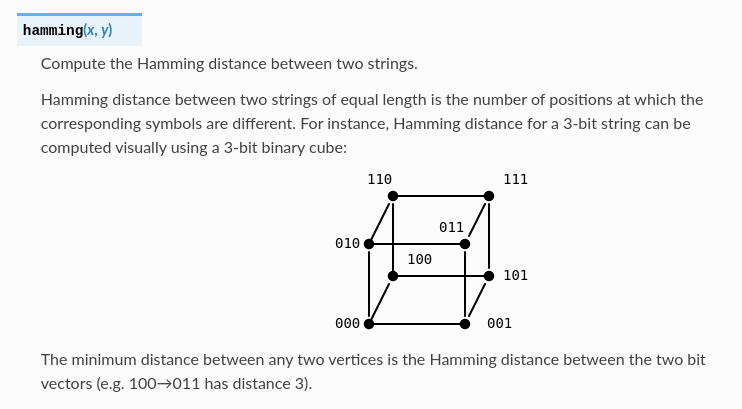

2.4 | neptune-query | 1.12.0b1 | Neptune Query is a Python library for retrieving data from Neptune. | # Neptune Query API

The `neptune_query` package is a read-only API for fetching metadata.

With the Query API, you can:

- List experiments, runs, and attributes of a project.

- Fetch experiment or run metadata as a data frame.

- Define filters to fetch experiments, runs, and attributes that meet certain criteria.

## Installation

```bash

pip install "neptune-query<2.0.0"

```

Set your Neptune API token and project name as environment variables:

```bash

export NEPTUNE_API_TOKEN="ApiTokenFromYourNeptuneProfile"

```

```bash

export NEPTUNE_PROJECT="workspace-name/project-name"

```

> **Note:** You can also pass the project path to the `project` argument of any querying function.
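As a rough illustration of how an explicit `project` argument and the `NEPTUNE_PROJECT` environment variable could interact, here is a minimal sketch. The helper `resolve_project` is hypothetical and not part of `neptune_query`; the precedence it shows (argument first, environment variable as fallback) is an assumption based on the note above.

```python
import os


def resolve_project(project=None):
    """Hypothetical helper: prefer an explicit project path, else NEPTUNE_PROJECT."""
    if project is not None:
        return project
    try:
        return os.environ["NEPTUNE_PROJECT"]
    except KeyError:
        # Neither source is available, so fail with a readable message.
        raise ValueError("Pass project=... or set NEPTUNE_PROJECT") from None
```

A value returned by such a helper would then be passed as `project=...` to a querying function.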

## Usage

```python

import neptune_query as nq

```

Available functions:

- `download_files()` – download files from the specified experiments.

- `fetch_experiments_table()` – runs as rows and attributes as columns.

- (experimental) `fetch_experiments_table_global()` – like `fetch_experiments_table()`, but searches across all projects that the user has access to.

- (experimental) `fetch_metric_buckets()` – get summary values split by X-axis buckets.

- `fetch_metrics()` – series of float or int values, with steps as rows.

- ([runs API](#runs-api)) `fetch_runs_table()` – like `fetch_experiments_table()`, but for individual runs.

- `fetch_series()` – for series of strings or histograms.

- `list_attributes()` – all logged attributes of the target project's experiment runs.

- `list_experiments()` – names of experiments in the target project.

- `set_api_token()` – set the Neptune API token to use for the session.

For details, see the [API reference](./docs/api_reference/).

### Runs API

You can target individual runs by ID instead of experiment runs by name.

To use the corresponding methods for runs, import the `runs` module:

```python

import neptune_query.runs as nq_runs

nq_runs.fetch_metrics(...)

```

You can use these methods to target individual runs by ID instead of experiment runs by name.

## Documentation

For how-tos and the complete API reference, see the [docs](./docs) directory.

## Examples

The following are some examples of how to use the Query API. For all functions and options, see the [API reference](./docs/api_reference/).

### Example 1: Fetch metric values

To fetch values at each step, use `fetch_metrics()`.

- To filter experiments to return, use the `experiments` parameter.

- To specify attributes to include as columns, use the `attributes` parameter.

- To limit the returned values, use the available parameters.

```python

nq.fetch_metrics(

experiments=["exp_dczjz"],

attributes=r"metrics/val_.+_estimated$",

tail_limit=10,

)

```

```pycon

                 metrics/val_accuracy_estimated  metrics/val_loss_estimated
experiment step
exp_dczjz  1.0                         0.432187                    0.823375
           2.0                         0.649685                    0.971732
           3.0                         0.760142                    0.154741
           4.0                         0.719508                    0.504652

```
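To see which attribute names a pattern like the one above would select, you can try it locally with Python's `re` module. This is only a sketch: the attribute names are made up, and whether the library applies search-style matching exactly as `re.search` does is an assumption.

```python
import re

# The same pattern that is passed to `attributes` in the example above.
pattern = re.compile(r"metrics/val_.+_estimated$")

# Hypothetical attribute names, for illustration only.
names = [
    "metrics/val_accuracy_estimated",
    "metrics/val_loss_estimated",
    "metrics/val_loss",
    "metrics/train_loss_estimated",
]

# Keep the names in which the pattern is found anywhere in the string.
matched = [name for name in names if pattern.search(name)]
print(matched)
```

Only the two `metrics/val_..._estimated` names are kept; the other two fail on the suffix or the `val_` prefix.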

### Example 2: Fetch metadata as one row per run

To fetch experiment metadata from your project, use the `fetch_experiments_table()` function. The output mimics the runs table in the web app.

- To filter experiments to return, use the `experiments` parameter.

- To specify attributes to include as columns, use the `attributes` parameter.

```python

nq.fetch_experiments_table(

experiments=r"^exp_",

attributes=["metrics/train_accuracy", "metrics/train_loss", "learning_rate"],

)

```

```pycon

            metrics/train_accuracy  metrics/train_loss  learning_rate
experiment
exp_ergwq                 0.278149            0.336344           0.01
exp_qgguv                 0.160260            0.790268           0.02
exp_hstrj                 0.365521            0.459901           0.01

```

> For series attributes, the value of the last logged step is returned.

### Example 3: Define filters

List my experiments that have a "dataset_version" attribute and "val/loss" less than 0.1:

```py

from neptune_query.filters import Filter

owned_by_me = Filter.eq("sys/owner", "sigurd")

dataset_check = Filter.exists("dataset_version")

loss_filter = Filter.lt("val/loss", 0.1)

interesting = owned_by_me & dataset_check & loss_filter

nq.list_experiments(experiments=interesting)

```

```pycon

['exp_ergwq', 'exp_qgguv', 'exp_hstrj']

```
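To make the meaning of the combined filter concrete, the following plain-Python sketch applies the same three conditions to a small in-memory dictionary. It does not use `neptune_query`; the helper functions and sample records are hypothetical and only mirror the logic of `eq`, `exists`, `lt`, and `&`.

```python
# Hypothetical experiment records: name -> attribute values.
experiments = {
    "exp_a": {"sys/owner": "sigurd", "dataset_version": "v2", "val/loss": 0.09},
    "exp_b": {"sys/owner": "sigurd", "val/loss": 0.05},
    "exp_c": {"sys/owner": "sigurd", "dataset_version": "v1", "val/loss": 0.30},
    "exp_d": {"sys/owner": "other", "dataset_version": "v2", "val/loss": 0.01},
}


def eq(name, value):
    return lambda attrs: attrs.get(name) == value


def exists(name):
    return lambda attrs: name in attrs


def lt(name, value):
    return lambda attrs: name in attrs and attrs[name] < value


def all_of(*predicates):
    # Plays the role of chaining filters with `&`.
    return lambda attrs: all(p(attrs) for p in predicates)


interesting = all_of(
    eq("sys/owner", "sigurd"),
    exists("dataset_version"),
    lt("val/loss", 0.1),
)
selected = [name for name, attrs in experiments.items() if interesting(attrs)]
print(selected)
```

Only `exp_a` satisfies all three conditions: `exp_b` lacks `dataset_version`, `exp_c` has too high a loss, and `exp_d` has a different owner.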

Then fetch the configs of those experiments, along with the metric of interest:

```py

nq.fetch_experiments_table(

experiments=interesting,

attributes=r"config/ | val/loss",

)

```

```pycon

            config/optimizer  config/batch_size  config/learning_rate  val/loss
experiment
exp_ergwq               Adam                 32                 0.001    0.0901
exp_qgguv           Adadelta                 32                 0.002    0.0876
exp_hstrj           Adadelta                 64                 0.001    0.0891

```

### Example 4: Exclude archived runs

To exclude archived experiments or runs from the results, create a filter on the `sys/archived` attribute:

```py

import neptune_query as nq

from neptune_query.filters import Filter

exclude_archived = Filter.eq("sys/archived", False)

nq.fetch_experiments_table(experiments=exclude_archived)

```

To use this filter in combination with other criteria, use the `&` operator to join multiple filters:

```py

name_matches_regex = Filter.name(r"^exp_")

exclude_archived = Filter.eq("sys/archived", False)

nq.fetch_experiments_table(experiments=name_matches_regex & exclude_archived)

```

### Example 5: Fetch runs belonging to a specific experiment

Each run's experiment information is stored in the `sys/experiment` namespace.

To query runs belonging to a specific experiment, use the runs API and construct a filter on the `sys/experiment/name` attribute:

```py

import neptune_query.runs as nq_runs

from neptune_query.filters import Filter

experiment_name_filter = Filter.eq("sys/experiment/name", "kittiwake-week-1")

nq_runs.list_runs(runs=experiment_name_filter)

```

---

## License

This project is licensed under the Apache License Version 2.0. For details, see [Apache License Version 2.0][license].

[license]: http://www.apache.org/licenses/LICENSE-2.0

| text/markdown | neptune.ai | contact@neptune.ai | null | null | Apache-2.0 | MLOps, ML Experiment Tracking, ML Model Registry, ML Model Store, ML Metadata Store | [

"Development Status :: 4 - Beta",

"Environment :: Console",

"Intended Audience :: Developers",

"Intended Audience :: Science/Research",

"License :: OSI Approved :: Apache Software License",

"Natural Language :: English",

"Operating System :: MacOS",

"Operating System :: Microsoft :: Windows",

"Opera... | [] | null | null | <4.0,>=3.10 | [] | [] | [] | [

"PyJWT<3.0.0,>=2.0.0",

"attrs>=21.3.0",

"azure-storage-blob<13.0.0,>=12.7.0",

"httpx[http2]<0.28.2,>=0.15.4",

"pandas>=1.4.0",

"protobuf<7,>=4.21.1",

"python-dateutil<3.0.0,>=2.8.0",

"tqdm>=4.66.0"

] | [] | [] | [] | [

"Documentation, https://docs.neptune.ai/",

"Homepage, https://neptune.ai/",

"Repository, https://github.com/neptune-ai/neptune-query",

"Tracker, https://github.com/neptune-ai/neptune-query/issues"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T12:35:50.072887 | neptune_query-1.12.0b1.tar.gz | 126,051 | fd/ea/a0bd34af638823049c5ad42e15eda2bfee0e86d5e1f5acd73ec9527a5eea/neptune_query-1.12.0b1.tar.gz | source | sdist | null | false | 8d6c24694e289ef806689f122b020b3e | d74c9ae26dfc37137bac946d25af2c297c697741079c1017eee9ef76985e57b6 | fdeaa0bd34af638823049c5ad42e15eda2bfee0e86d5e1f5acd73ec9527a5eea | null | [

"LICENSE"

] | 227 |

2.4 | kubernify | 1.0.0 | Verify Kubernetes deployments match a version manifest with deep stability auditing. Checks convergence, revision consistency, and pod health. | # kubernify


Verify Kubernetes deployments match a version manifest with deep stability auditing. Checks convergence, revision consistency, and pod health.

---

## Features

- **Manifest-driven verification** — Provide a JSON manifest of expected versions; kubernify verifies the cluster matches

- **Deep stability auditing** — Goes beyond version checks: convergence, revision consistency, pod health, DaemonSet scheduling, Job completion

- **Retry-until-converged loop** — Waits for rollouts to complete rather than just snapshot-checking

- **Repository-relative image parsing** — Flexible component name extraction from any image registry format

- **Comprehensive workload support** — Deployments, StatefulSets, DaemonSets, Jobs, and CronJobs

- **Zero-replica awareness** — Verifies version from PodSpec even when HPA/KEDA has scaled to zero

- **Structured JSON reports** — Machine-readable output for CI/CD pipeline integration

---

## Installation

```bash

pip install kubernify

```

Or with [pipx](https://pipx.pypa.io/) for isolated CLI usage:

```bash

pipx install kubernify

```

Or with [uv](https://docs.astral.sh/uv/):

```bash

uv add kubernify

```

---

## Quick Start

```bash

# Verify backend and frontend match expected versions in the "production" namespace

kubernify \

--context my-cluster-context \

--anchor my-app \

--namespace production \

--manifest '{"backend": "v1.2.3", "frontend": "v1.2.4"}'

```

kubernify will connect to the cluster, discover all matching workloads, verify their image versions against the manifest, run stability audits, and exit with code `0` (pass), `1` (fail), or `2` (timeout).
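When the manifest is generated by a script, it can be easier to build the command line from a Python dict than to hand-write the JSON. The sketch below is one possible way to do that. The helper `build_command` is hypothetical and not part of kubernify; it only uses flags that appear in this README.

```python
import json
import shlex


def build_command(manifest, anchor, context=None, namespace=None):
    """Hypothetical helper: assemble a kubernify argv list from Python values."""
    # json.dumps produces the JSON string expected by --manifest.
    argv = ["kubernify", "--anchor", anchor, "--manifest", json.dumps(manifest)]
    if context:
        argv += ["--context", context]
    if namespace:
        argv += ["--namespace", namespace]
    return argv


argv = build_command(
    {"backend": "v1.2.3", "frontend": "v1.2.4"},
    anchor="my-app",
    context="my-cluster-context",
    namespace="production",
)
# shlex.join quotes the JSON so the line can be pasted into a POSIX shell.
print(shlex.join(argv))
```

Passing the list directly to `subprocess.run(argv)` avoids shell quoting issues altogether.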

---

## CLI Reference

```

kubernify [OPTIONS]

```

| Argument | Description | Default |

|----------|-------------|---------|

| `--context` | Kubeconfig context name. Mutually exclusive with `--gke-project`. | From kubeconfig |

| `--gke-project` | GCP project ID for GKE context resolution. Mutually exclusive with `--context`. | — |

| `--anchor` | **(required)** Image path anchor for component name extraction. See [How Image Anchor Works](#how-image-anchor-works). | — |

| `--manifest` | **(required)** JSON version manifest, e.g. `'{"backend": "v1.2.3"}'`. | — |

| `--namespace` | Kubernetes namespace to verify. | From kubeconfig context |

| `--required-workloads` | Comma-separated workload name patterns that must exist. | — |

| `--skip-containers` | Comma-separated container name patterns to skip during verification. | — |

| `--min-uptime` | Minimum pod uptime in seconds for stability checks. | `0` |

| `--restart-threshold` | Maximum allowed container restart count. | `3` |

| `--timeout` | Global timeout in seconds for the verification loop. | `300` |

| `--allow-zero-replicas` | Allow workloads with zero replicas to pass verification. | `false` |

| `--dry-run` | Snapshot check without waiting for convergence. | `false` |

| `--include-statefulsets` | Include StatefulSets in workload discovery. | `false` |

| `--include-daemonsets` | Include DaemonSets in workload discovery. | `false` |

| `--include-jobs` | Include Jobs and CronJobs in workload discovery. | `false` |

---

## Usage Examples

### Basic Usage — Direct Kubeconfig Context

```bash

kubernify \

--context my-cluster-context \

--anchor my-app \

--namespace production \

--manifest '{"backend": "v1.2.3", "frontend": "v1.2.4"}'

```

### GKE Shorthand — Resolve Context from GCP Project

```bash

kubernify \

--gke-project my-gke-project-123456 \

--anchor my-app \

--namespace production \

--manifest '{"backend": "v1.2.3", "frontend": "v1.2.4"}'

```

### In-Cluster — Running Inside a Kubernetes Pod

```bash

# No --context needed; auto-detects in-cluster config and namespace

kubernify \

--anchor my-app \

--manifest '{"backend": "v1.2.3", "frontend": "v1.2.4"}'

```

### Full-Featured — All Options

```bash

kubernify \

--context my-cluster-context \

--anchor my-app \

--namespace production \

--manifest '{"backend": "v1.2.3", "frontend": "v1.2.4", "worker": "v1.2.3"}' \

--required-workloads "backend, frontend, worker" \

--skip-containers "istio-proxy, envoy, fluent-bit" \

--include-statefulsets \

--include-daemonsets \

--include-jobs \

--min-uptime 120 \

--restart-threshold 5 \

--timeout 600 \

--allow-zero-replicas

```

### Dry Run — Snapshot Check Without Waiting

```bash

kubernify \

--context my-cluster-context \

--anchor my-app \

--manifest '{"backend": "v1.2.3"}' \

--dry-run

```

### CI/CD Integration — GitHub Actions

```yaml

jobs:
  verify-deployment:
    runs-on: ubuntu-latest
    steps:
      - name: Set up kubeconfig
        run: |
          echo "${{ secrets.KUBECONFIG }}" > /tmp/kubeconfig
          # Persist KUBECONFIG for later steps; a plain `export` only lasts for this step
          echo "KUBECONFIG=/tmp/kubeconfig" >> "$GITHUB_ENV"
      - name: Install kubernify
        run: pip install kubernify
      - name: Verify deployment
        run: |
          kubernify \
            --context ${{ secrets.KUBE_CONTEXT }} \
            --anchor my-app \
            --manifest '${{ steps.build.outputs.manifest }}' \
            --timeout 600 \
            --min-uptime 60

```

---

## Programmatic Usage

kubernify can be used as a Python library for custom verification workflows:

```python

from kubernify import __version__, VerificationStatus
from kubernify.kubernetes_controller import KubernetesController
from kubernify.workload_discovery import WorkloadDiscovery
from kubernify.cli import construct_component_map, verify_versions

controller = KubernetesController(context="my-cluster")
discovery = WorkloadDiscovery(k8s_controller=controller)
workloads, _ = discovery.discover_cluster_state(namespace="production")

component_map = construct_component_map(
    workloads=workloads,
    manifest={"backend": "v1.2.3"},
    repository_anchor="my-app",
)

results = verify_versions(manifest={"backend": "v1.2.3"}, component_map=component_map)

if results.errors:
    print(f"Verification failed: {results.errors}")

```

---

## How Image Anchor Works

kubernify uses a **repository-relative anchor** to extract component names from container image paths. The `--anchor` argument specifies the path segment after which the component name is derived.

```

Image: registry.example.com/my-org/my-app/backend:v1.2.3

└─ registry ──────────┘└─ org ┘└anchor┘└component┘

↓

--anchor my-app → component = "backend"

```

**More examples:**

| Image | `--anchor` | Extracted Component |

|-------|-----------|-------------------|

| `registry.example.com/my-org/my-app/backend:v1.2.3` | `my-app` | `backend` |

| `registry.example.com/my-org/my-app/api/server:v2.0.0` | `my-app` | `api/server` |

| `gcr.io/my-project/my-app/worker:v1.0.0` | `my-app` | `worker` |

The extracted component name is then matched against the keys in your `--manifest` JSON to verify the correct version is deployed.
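The anchor rule described above can be sketched in a few lines of Python. This is an illustrative reimplementation, not kubernify's actual parser: the function name `extract_component` and its handling of tags, digests, and missing anchors are assumptions.

```python
def extract_component(image, anchor):
    """Hypothetical sketch: return the path after `anchor`, without tag or digest."""
    # Drop a digest such as "@sha256:...", if present.
    path = image.split("@", 1)[0]
    # Drop the tag, but leave a registry port like "localhost:5000" alone:
    # a tag colon always comes after the last slash.
    if path.rfind(":") > path.rfind("/"):
        path = path[: path.rfind(":")]
    segments = path.split("/")
    if anchor not in segments:
        return None
    # Everything after the first occurrence of the anchor is the component name.
    component = "/".join(segments[segments.index(anchor) + 1:])
    return component or None


print(extract_component("registry.example.com/my-org/my-app/api/server:v2.0.0", "my-app"))
```

The returned name is what would be looked up among the manifest keys.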

---

## Architecture

```mermaid

graph TD

A[CLI Entry Point] --> B[Argument Parser]

B --> C{Context Mode}

C -->|--context| D[Direct kubeconfig context]

C -->|--gke-project| E[GKE context resolver]

C -->|neither| F[In-cluster or default kubeconfig]

D --> G[KubernetesController]

E --> G

F --> G

G --> H[WorkloadDiscovery]

H --> I[Fetch Deployments/StatefulSets/DaemonSets/Jobs]

H --> J[Inspect Workloads - concurrent]

J --> K[Image Parser]

K --> L[Component Map Construction]

L --> M[Version Verification]

J --> N[StabilityAuditor]

N --> O[Convergence Check]

N --> P[Revision Consistency]

N --> Q[Pod Health]

N --> R[DaemonSet Scheduling]

N --> S[Job Completion]

M --> T[Report Generator]

N --> T

T --> U[JSON Report Output]

U --> V{Exit Code}

V -->|0| W[PASS]

V -->|1| X[FAIL]

V -->|2| Y[TIMEOUT]

```

---

## Exit Codes

| Code | Meaning | Description |

|------|---------|-------------|

| `0` | **PASS** | All workloads match the manifest and pass stability audits |

| `1` | **FAIL** | One or more workloads have version mismatches or stability issues |

| `2` | **TIMEOUT** | Verification did not converge within the `--timeout` window |
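In a wrapper script, the three exit codes can be mapped to named outcomes so that a pipeline can treat a failure differently from a timeout. The following is a minimal sketch. The `Outcome` enum and `interpret_exit_code` are hypothetical names introduced here and are separate from anything kubernify exports.

```python
from enum import Enum


class Outcome(Enum):
    """Hypothetical names for the documented kubernify exit codes."""
    PASS = 0
    FAIL = 1
    TIMEOUT = 2


def interpret_exit_code(code):
    try:
        return Outcome(code)
    except ValueError:
        # Anything outside 0-2 is not documented in the table above.
        raise ValueError(f"Unexpected kubernify exit code: {code}") from None


print(interpret_exit_code(2).name)
```

The input would typically come from `subprocess.run([...]).returncode` after invoking the CLI.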

---

## Prerequisites

### Python

- Python **>= 3.10**

### For GKE Users

If using `--gke-project` for automatic GKE context resolution:

1. Install the [Google Cloud SDK](https://cloud.google.com/sdk/docs/install)

2. Install the GKE auth plugin:

```bash

gcloud components install gke-gcloud-auth-plugin

```

3. Authenticate:

```bash

gcloud auth login

gcloud container clusters get-credentials CLUSTER_NAME --project PROJECT_ID

```

### RBAC Permissions

kubernify requires **read-only** access to workloads and pods. Apply the following RBAC configuration:

```yaml

apiVersion: rbac.authorization.k8s.io/v1
kind: Role
metadata:
  name: kubernify-reader
  namespace: <namespace>
rules:
  - apiGroups: ["apps"]
    resources: ["deployments", "statefulsets", "daemonsets", "replicasets"]
    verbs: ["get", "list"]
  - apiGroups: ["batch"]
    resources: ["jobs", "cronjobs"]
    verbs: ["get", "list"]
  - apiGroups: [""]
    resources: ["pods"]
    verbs: ["get", "list"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: RoleBinding
metadata:
  name: kubernify-reader-binding
  namespace: <namespace>
subjects:
  - kind: ServiceAccount
    name: kubernify
    namespace: <namespace>
roleRef:
  kind: Role
  name: kubernify-reader
  apiGroup: rbac.authorization.k8s.io

```

---

## Contributing

Contributions are welcome! Please see [CONTRIBUTING.md](CONTRIBUTING.md) for development setup, coding standards, and the PR process.

---

## License

This project is licensed under the Apache License 2.0 — see the [LICENSE](LICENSE) file for details.

| text/markdown | null | gs202 <gs202@users.noreply.github.com> | null | null | Apache-2.0 | deployment, devops, k8s, kubernetes, verification, version | [

"Development Status :: 4 - Beta",

"Intended Audience :: Developers",

"Intended Audience :: System Administrators",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: Python :: 3.13",

"Topic :: Software ... | [] | null | null | >=3.10 | [] | [] | [] | [

"kubernetes>=28.1.0"

] | [] | [] | [] | [

"Homepage, https://github.com/gs202/Kubernify",

"Documentation, https://github.com/gs202/Kubernify#readme",

"Issues, https://github.com/gs202/Kubernify/issues"

] | uv/0.10.4 {"installer":{"name":"uv","version":"0.10.4","subcommand":["publish"]},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"Ubuntu","version":"24.04","id":"noble","libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_version":null,"ci":true} | 2026-02-19T12:34:15.026658 | kubernify-1.0.0.tar.gz | 101,439 | e3/41/8223a33b857e2ee0ea2b261538b48439b75cbba805ec89e4e492445c7ab9/kubernify-1.0.0.tar.gz | source | sdist | null | false | de3950836e049930aae20e2957b9933a | 0d08da73a08afa3c4811867f8a9c65b55e17ba99814c634809af2f31acc44986 | e3418223a33b857e2ee0ea2b261538b48439b75cbba805ec89e4e492445c7ab9 | null | [

"LICENSE"

] | 288 |

2.4 | influxdb3-python | 0.18.0 | Community Python client for InfluxDB 3.0 | <!--home-start-->

<p align="center">

<img src="https://github.com/InfluxCommunity/influxdb3-python/blob/main/python-logo.png?raw=true" alt="Your Image" width="150px">

</p>

<p align="center">

<a href="https://influxdb3-python.readthedocs.io/en/latest/">

<img src="https://img.shields.io/readthedocs/influxdb3-python/latest" alt="Readthedocs document">

</a>

<a href="https://pypi.org/project/influxdb3-python/">

<img src="https://img.shields.io/pypi/v/influxdb3-python.svg" alt="PyPI version">

</a>

<a href="https://pypi.org/project/influxdb3-python/">

<img src="https://img.shields.io/pypi/dm/influxdb3-python.svg" alt="PyPI downloads">

</a>

<a href="https://github.com/InfluxCommunity/influxdb3-python/actions/workflows/codeql-analysis.yml">

<img src="https://github.com/InfluxCommunity/influxdb3-python/actions/workflows/codeql-analysis.yml/badge.svg?branch=main" alt="CodeQL analysis">

</a>

<a href="https://dl.circleci.com/status-badge/redirect/gh/InfluxCommunity/influxdb3-python/tree/main">

<img src="https://dl.circleci.com/status-badge/img/gh/InfluxCommunity/influxdb3-python/tree/main.svg?style=svg" alt="CircleCI">

</a>

<a href="https://codecov.io/gh/InfluxCommunity/influxdb3-python">

<img src="https://codecov.io/gh/InfluxCommunity/influxdb3-python/branch/main/graph/badge.svg" alt="Code Cov"/>

</a>

<a href="https://influxcommunity.slack.com">

<img src="https://img.shields.io/badge/slack-join_chat-white.svg?logo=slack&style=social" alt="Community Slack">

</a>

</p>

# InfluxDB 3.0 Python Client

## Introduction

`influxdb_client_3` is a Python module that provides a simple and convenient way to interact with InfluxDB 3.0. This module supports both writing data to InfluxDB and querying data using the Flight client, which allows you to execute SQL and InfluxQL queries on InfluxDB 3.0.

For a walkthrough of the library and its examples, see the ["Getting Started: InfluxDB 3.0 Python Client Library"](https://www.youtube.com/watch?v=tpdONTm1GC8) video.

## Dependencies

- `pyarrow` (automatically installed)

- `pandas` (optional)

## Installation

You can install 'influxdb3-python' using `pip`:

```bash

pip install influxdb3-python

```

Note: This does not include Pandas support. If you would like to use key features such as `to_pandas()` and `write_file()` you will need to install `pandas` separately.

*Note: Make sure you are using Python 3.8 or above. For the best performance, use Python 3.11+.*

# Usage

One of the easiest ways to get started is to check out the ["Pokemon Trainer Cookbook"](https://github.com/InfluxCommunity/influxdb3-python/blob/main/Examples/pokemon-trainer/cookbook.ipynb). This scenario takes you through the basics of both the client library and PyArrow.

## Importing the Module

```python

from influxdb_client_3 import InfluxDBClient3, Point

```

## Initialization

If you are using InfluxDB Cloud, use the bucket name as the `database` (or `bucket`) argument:

```python

client = InfluxDBClient3(token="your-token",

host="your-host",

database="your-database")

```

## Writing Data

You can write data using the `Point` class or by supplying line protocol.

### Using Points

```python

point = Point("measurement").tag("location", "london").field("temperature", 42)

client.write(point)

```

### Using Line Protocol

```python

point = "measurement fieldname=0"

client.write(point)

```
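For readers unfamiliar with line protocol, the sketch below shows roughly how a measurement, tags, and fields map onto a single line. The helper `to_line_protocol` is hypothetical and not part of `influxdb_client_3`. It deliberately skips escaping of spaces and commas in names, so prefer the `Point` class for real data.

```python
def to_line_protocol(measurement, tags, fields, timestamp_ns=None):
    """Hypothetical helper: format one point as `measurement,tags fields [timestamp]`."""

    def format_field(value):
        # bool must be checked before int, because bool is a subclass of int.
        if isinstance(value, bool):
            return "true" if value else "false"
        if isinstance(value, int):
            return f"{value}i"  # integer fields carry an "i" suffix
        if isinstance(value, float):
            return repr(value)
        # String fields are double-quoted, with backslashes and quotes escaped.
        escaped = str(value).replace("\\", "\\\\").replace('"', '\\"')
        return f'"{escaped}"'

    tag_part = "".join(f",{key}={value}" for key, value in sorted(tags.items()))
    field_part = ",".join(f"{key}={format_field(value)}" for key, value in fields.items())
    line = f"{measurement}{tag_part} {field_part}"
    if timestamp_ns is not None:
        line += f" {timestamp_ns}"
    return line


print(to_line_protocol("measurement", {"location": "london"}, {"temperature": 42}))
```

The resulting string has the same shape as the hand-written `"measurement fieldname=0"` example above, extended with a tag.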

### Write from file

You can import data from CSV, JSON, Feather, ORC, and Parquet files.

```python

import influxdb_client_3 as InfluxDBClient3
import pandas as pd
import numpy as np
from influxdb_client_3 import write_client_options, WritePrecision, WriteOptions, InfluxDBError


class BatchingCallback(object):

    def __init__(self):
        self.write_count = 0

    def success(self, conf, data: str):
        self.write_count += 1
        print(f"Written batch: {conf}, data: {data}")

    def error(self, conf, data: str, exception: InfluxDBError):
        print(f"Cannot write batch: {conf}, data: {data} due: {exception}")

    def retry(self, conf, data: str, exception: InfluxDBError):
        print(f"Retryable error occurs for batch: {conf}, data: {data} retry: {exception}")


callback = BatchingCallback()

write_options = WriteOptions(batch_size=100,
                             flush_interval=10_000,
                             jitter_interval=2_000,
                             retry_interval=5_000,
                             max_retries=5,
                             max_retry_delay=30_000,
                             exponential_base=2)

wco = write_client_options(success_callback=callback.success,
                           error_callback=callback.error,
                           retry_callback=callback.retry,
                           write_options=write_options)

with InfluxDBClient3.InfluxDBClient3(
        token="INSERT_TOKEN",
        host="eu-central-1-1.aws.cloud2.influxdata.com",
        database="python", write_client_options=wco) as client:
    client.write_file(
        file='./out.csv',
        timestamp_column='time', tag_columns=["provider", "machineID"])

print(f'DONE writing from csv in {callback.write_count} batch(es)')

```
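To get a feel for how `retry_interval`, `exponential_base`, `max_retries`, and `max_retry_delay` relate to each other, the sketch below computes a plain capped exponential backoff schedule. This is an assumption for illustration only: the library's real retry logic may add randomization or honor server hints, and treating the values as milliseconds is also an assumption.

```python
def backoff_delays_ms(retry_interval, max_retries, max_retry_delay, exponential_base):
    """Hypothetical schedule: retry_interval * base**attempt, capped at max_retry_delay."""
    return [
        min(retry_interval * exponential_base ** attempt, max_retry_delay)
        for attempt in range(max_retries)
    ]


# Same values as the WriteOptions example above.
print(backoff_delays_ms(5_000, 5, 30_000, 2))
```

Under these assumptions the delay doubles on each attempt until it reaches the cap, which is why a larger `max_retries` mostly adds attempts at the capped delay.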

### Pandas DataFrame

```python

import pandas as pd

# Create a DataFrame with a timestamp column

df = pd.DataFrame({

'time': pd.to_datetime(['2024-01-01', '2024-01-02', '2024-01-03']),

'trainer': ['Ash', 'Misty', 'Brock'],

'pokemon_id': [25, 120, 74],

'pokemon_name': ['Pikachu', 'Staryu', 'Geodude']

})

# Write the DataFrame - timestamp_column is required for consistency

client.write_dataframe(

df,

measurement='caught',

timestamp_column='time',

tags=['trainer', 'pokemon_id']

)

```

### Polars DataFrame

```python

import polars as pl

# Create a DataFrame with a timestamp column

df = pl.DataFrame({

'time': ['2024-01-01T00:00:00Z', '2024-01-02T00:00:00Z'],

'trainer': ['Ash', 'Misty'],

'pokemon_id': [25, 120],

'pokemon_name': ['Pikachu', 'Staryu']

})

# Write the DataFrame - same API works for both pandas and polars

client.write_dataframe(

df,

measurement='caught',

timestamp_column='time',

tags=['trainer', 'pokemon_id']

)

```

## Querying

### Querying with SQL

```python

query = "select * from measurement"

reader = client.query(query=query, language="sql")

table = reader.read_all()

print(table.to_pandas().to_markdown())

```

### Querying to DataFrame

```python

# Query directly to a pandas DataFrame (default)

df = client.query_dataframe("SELECT * FROM caught WHERE trainer = 'Ash'")

# Query to a polars DataFrame

df = client.query_dataframe("SELECT * FROM caught", frame_type="polars")

```

### Querying with InfluxQL

```python

query = "select * from measurement"

reader = client.query(query=query, language="influxql")

table = reader.read_all()

print(table.to_pandas().to_markdown())

```

### gRPC compression

#### Request compression

Request compression is not supported by InfluxDB 3 — the client sends uncompressed requests.

#### Response compression

Response compression is enabled by default. The client sends the `grpc-accept-encoding: identity, deflate, gzip`

header, and the server returns gzip-compressed responses (if supported). The client automatically

decompresses them — no configuration required.

To **disable response compression**:

```python

# Via constructor parameter

client = InfluxDBClient3(

host="your-host",

token="your-token",

database="your-database",

disable_grpc_compression=True

)

# Or via environment variable

# INFLUX_DISABLE_GRPC_COMPRESSION=true

client = InfluxDBClient3.from_env()

```

## Windows Users

Currently, Windows users need an extra installation step to query via Flight natively, because gRPC cannot locate the Windows root certificates. To work around this, follow these steps:

Install `certifi`

```bash

pip install certifi

```

Next include certifi within the flight client options:

```python

import certifi

import influxdb_client_3 as InfluxDBClient3

from influxdb_client_3 import flight_client_options

# Read the CA bundle shipped with certifi; the file is closed automatically
with open(certifi.where(), "r") as fh:
    cert = fh.read()

client = InfluxDBClient3.InfluxDBClient3(

token="",

host="b0c7cce5-8dbc-428e-98c6-7f996fb96467.a.influxdb.io",

database="flightdemo",

flight_client_options=flight_client_options(

tls_root_certs=cert))

table = client.query(

query="SELECT * FROM flight WHERE time > now() - 4h",

language="influxql")

print(table.to_pandas())

```

You may include your own root certificate in this manner as well.

If connecting to InfluxDB by domain name (for example, `www.mydomain.com`) fails with the error `DNS resolution failed`, try setting the environment variable `GRPC_DNS_RESOLVER=native`.

# Contributing

Tests are run using `pytest`.

```bash

# Clone the repository

git clone https://github.com/InfluxCommunity/influxdb3-python

cd influxdb3-python

# Create a virtual environment and activate it

python3 -m venv .venv

source .venv/bin/activate

# Install the package and its dependencies

pip install -e .[pandas,polars,dataframe,test]

# Run the tests

python -m pytest .

```

<!--home-end-->

| text/markdown | InfluxData | contact@influxdata.com | null | null | null | null | [

"Development Status :: 4 - Beta",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Programming Language :: Python :: 3.8",

"Programming Language :: Python :: 3.9",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: ... | [] | https://github.com/InfluxCommunity/influxdb3-python | null | >=3.8 | [] | [] | [] | [

"reactivex>=4.0.4",

"certifi>=14.05.14",

"python_dateutil>=2.5.3",

"urllib3>=1.26.0",

"pyarrow>=8.0.0",

"pandas; extra == \"pandas\"",

"polars; extra == \"polars\"",

"pandas; extra == \"dataframe\"",

"polars; extra == \"dataframe\"",

"pytest; extra == \"test\"",

"pytest-cov; extra == \"test\"",

... | [] | [] | [] | [] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T12:32:22.017340 | influxdb3_python-0.18.0.tar.gz | 98,718 | 4d/d9/bf0a0012ac01aa8addb49df4860f2f4aa592ac37f42e2d1e8ecaea6412d5/influxdb3_python-0.18.0.tar.gz | source | sdist | null | false | beb720a511ac50596fea266ee9ba36d5 | 9ffe285fc9711820264f77ba4a03524df5cddbab3ebfa90967e88db2e89fbba6 | 4dd9bf0a0012ac01aa8addb49df4860f2f4aa592ac37f42e2d1e8ecaea6412d5 | null | [

"LICENSE"

] | 9,665 |

2.4 | PyPFD | 2026.0.0.2 | PFDavg calculation | Introduction

PyPFD is a Python library designed to calculate the Probability of Failure on Demand (PFD) in accordance with the international safety standards IEC 61508 and IEC 61511. It provides a way to estimate the reliability of Safety Devices, making it easier for engineers and safety professionals to perform consistent SIS assessments.

It allows you to evaluate PFDavg for various architectures (1oo1, 1oo2, 2oo2, 2oo3, 1oo3, and KooN using a general formula).

The library provides the following formulas:

PyPFDRBDAvg:

pfd_RBD_avg_1oo1(λ_du, λ_dd, T1_month, MTTR)

pfd_RBD_avg_1oo1_pt(λ_du, λ_dd, T1_month, T2_month, PDC, MTTR)

pfd_RBD_avg_1oo2(λ_du, λ_dd, β, βd, T1_month, MTTR)

pfd_RBD_avg_1oo2_pt(λ_du, λ_dd, β, βd, T1_month, T2_month, PDC, MTTR)

pfd_RBD_avg_1oo3(λ_du, λ_dd, β, βd, T1_month, MTTR)

pfd_RBD_avg_2oo2(λ_du, λ_dd, T1_month, MTTR)

pfd_RBD_avg_2oo2_pt(λ_du, λ_dd, T1_month, T2_month, PDC, MTTR)

pfd_RBD_avg_2oo3(λ_du, λ_dd, β, βd, T1_month, MTTR)

pfd_RBD_avg_KooN(K, N, λ_du, λ_dd, β, βd, T1_month, MTTR)

PyPFDMarkov:

pfd_Mkv_avg_1oo1_2pt(λ_du: float, λ_dd: float, λ_s: float, T_pt1_month: float, T_pt2_month: float, T1_month: float, PDC1: float, PDC2: float, MTTR: float)

pfd_Mkv_avg_1oo2(λ_du: float, λ_dd: float, λ_su: float, λ_sd: float, β: float, βd: float, T1_month: float, MTTR: float)

pfd_plot_Mkv_avg_1oo1_2pt(λ_du: float, λ_dd: float, λ_s: float, T_pt1_month: float, T_pt2_month: float, T1_month: float, PDC1: float, PDC2: float, MTTR: float, interval: int)

pfd_plot_Mkv_avg_1oo2(λ_du: float, λ_dd: float, λ_su: float, λ_sd: float, β: float, βd: float, T1_month: float, MTTR: float, interval: int)

PyPFDN2595:

pfd_N2595_avg_MooN(M: int, N: int, λ_du: float, λ_dd: float, β: float, T1_month: float, MTTR: float) -> float

pfd_N2595_avg_1oo1_1pt(λ_du: float, λ_dd: float, PTC: float, TI_month: float, MT_month: float, MTTR: float) -> float

pfd_N2595_avg_1oo2_1pt(λ_du: float, λ_dd: float, β: float, TI_month: float, MT_month: float, PTC: float, MTTR: float) -> float

pfd_N2595_avg_2oo2_1pt(λ_du: float, λ_dd: float, PTC: float, TI_month: float, MT_month: float, MTTR: float) -> float

pfd_N2595_avg_2oo3_1pt(λ_du: float, λ_dd: float, β: float, TI_month: float, MT_month: float, PTC: float, MTTR: float) -> float

Parameters:

λ_du = dangerous undetected failure rate per hour

λ_dd = dangerous detected failure rate per hour

β = common cause for safe failure in %

βd = common cause for unsafe failure in %

T1_month = test interval in months (with PDC effectiveness)

T2_month or MT_month = test interval in months for "as good as new" condition

PDC = partial diagnostic coverage of the test (% capable of revealing dangerous undetected failures)

MTTR = mean time to repair
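
As a rough illustration of how these parameters combine, the sketch below implements the simplified 1oo1 average-PFD approximation commonly quoted from IEC 61508-6. It is not PyPFD's implementation: the function name, the 730 hours-per-month conversion, and the assumption that MTTR is given in hours are hypothetical choices made for this example.

```python
HOURS_PER_MONTH = 730.0  # assumption: 8760 h per year / 12 months


def pfd_avg_1oo1_sketch(lambda_du, lambda_dd, t1_month, mttr_hours):
    """Simplified 1oo1 PFDavg: lambda_d * t_CE (illustrative only)."""
    lambda_d = lambda_du + lambda_dd  # total dangerous failure rate per hour
    if lambda_d == 0:
        return 0.0
    t1_hours = t1_month * HOURS_PER_MONTH
    # Channel equivalent mean down time: undetected failures wait on average
    # half a test interval plus the repair; detected failures only the repair.
    t_ce = (lambda_du / lambda_d) * (t1_hours / 2 + mttr_hours) \
        + (lambda_dd / lambda_d) * mttr_hours
    return lambda_d * t_ce


if __name__ == "__main__":
    print(pfd_avg_1oo1_sketch(1e-6, 9e-6, t1_month=12, mttr_hours=8))
```

With these inputs the result is about 4.46e-3. The undetected failure rate and the test interval dominate, which is why shortening the test interval is usually the first lever in an SIS assessment.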

All formulas assume identical devices. For combinations of different devices or different test intervals, see the formulas below (currently in development and not validated yet):

def pfhKooN(K, N, λ_d, β, T1_month):

def pfd_avg_1oo2_dif(λ_du1, λ_dd1, T1_month1, MTTR1, β1, βd1,

λ_du2, λ_dd2, T1_month2, MTTR2, β2, βd2):

def pfd_avg_2oo3_dif(λ_du1, λ_dd1, T1_month1, MTTR1, β1, βd1,

λ_du2, λ_dd2, T1_month2, MTTR2, β2, βd2,

λ_du3, λ_dd3, T1_month3, MTTR3, β3, βd3):

def pfd_avg_1oo3_dif(λ_du1, λ_dd1, T1_month1, MTTR1, β1, βd1,

λ_du2, λ_dd2, T1_month2, MTTR2, β2, βd2,

λ_du3, λ_dd3, T1_month3, MTTR3, β3, βd3):

Roadmap

- Test and validate all existing formulas.

- Create a GitHub repository explaining the logic behind the formulas.

- Develop default reliability data for common devices (analog transmitters, valves, and logic solvers).

Highlights

- These formulas, combined with xlwings Lite in Excel, provide an efficient and user-friendly way to perform SIS assessments.

- If you need a specific architecture not present in the library, feel free to contact me for assistance.

| text/markdown | null | Rafael Rocha <rafa.rocha@gmail.com> | null | null | null | SIS, SIF, IEC61508, IEC61511, PFD, reliability, functional safety, 1oo1, 1oo2, 2oo3, 2oo2, 1oo3, N2595 | [

"Programming Language :: Python :: 3",

"Operating System :: OS Independent"

] | [] | null | null | >=3.8 | [] | [] | [] | [] | [] | [] | [] | [] | twine/6.2.0 CPython/3.13.7 | 2026-02-19T12:31:24.090045 | pypfd-2026.0.0.2.tar.gz | 8,306 | 7a/ea/da519a1bb2b4a6d99ec4d5ff090ce52104647035db8cec3d4780984a871e/pypfd-2026.0.0.2.tar.gz | source | sdist | null | false | 38e0d77caac8f8e0b665e0c563a92a09 | 4c7ab2f54a6422307c5d17e8e9da30652e14743454e91f3a67353bd8765e4108 | 7aeada519a1bb2b4a6d99ec4d5ff090ce52104647035db8cec3d4780984a871e | null | [

"LICENSE"

] | 0 |

2.4 | copex | 2.6.1 | Copilot Extended - Resilient wrapper for GitHub Copilot SDK with auto-retry, Ralph Wiggum loops, and more | # Copex — Copilot Extended


A resilient Python wrapper for the GitHub Copilot SDK with automatic retry, Ralph Wiggum loops, fleet orchestration, and a beautiful CLI.

## Features

- **Automatic retry** with adaptive exponential backoff and jitter

- **Circuit breaker** (sliding-window) to avoid hammering a failing backend

- **Model fallback chains** — automatically try the next model when one fails

- **Session pooling** with LRU eviction and pre-warming

- **Ralph Wiggum loops** — iterative AI development via repeated prompts

- **Fleet mode** — run multiple tasks in parallel with dependency ordering

- **Council mode** — multi-model deliberation with a chair model

- **Plan mode** — AI-generated step-by-step plans with execution & checkpointing

- **MCP integration** — connect external Model Context Protocol servers

- **Skill discovery** — auto-discover and load skill files from repo/user dirs

- **Beautiful CLI** with Rich terminal output, themes, and streaming

- **CLI client mode** — bypass the SDK to access all models via subprocess

- **Metrics & cost tracking** — token usage, timing, success rates

## Installation

```bash

pip install copex

```

Or with [pipx](https://pipx.pypa.io/) for isolated CLI usage:

```bash

pipx install copex

```

**Prerequisite:** The GitHub Copilot CLI must be installed and authenticated:

```bash

npm i -g @github/copilot

copilot login

```

## Quick Start

### CLI

```bash

# One-shot prompt

copex chat "Explain the builder pattern in Python"

# Interactive session

copex interactive

# Pipe from stdin

echo "What is a monad?" | copex chat --stdin

# Choose model and reasoning

copex chat "Optimize this SQL" -m gpt-5.2-codex -r xhigh

```

### Python API

```python

import asyncio

from copex import Copex, CopexConfig, Model, ReasoningEffort

async def main():
    config = CopexConfig(
        model=Model.CLAUDE_OPUS_4_6,
        reasoning_effort=ReasoningEffort.HIGH,
    )
    async with Copex(config) as client:
        response = await client.send("Explain quicksort")
        print(response.content)

asyncio.run(main())

```

#### Streaming

```python

async with Copex(config) as client:
    async for chunk in client.stream("Write a prime sieve"):
        if chunk.type == "message":
            print(chunk.delta, end="", flush=True)
        elif chunk.type == "reasoning":
            # Dim the reasoning text with ANSI escape codes
            print(f"\033[2m{chunk.delta}\033[0m", end="", flush=True)

```

## Configuration

Copex loads configuration from `~/.config/copex/config.toml` automatically.

Generate a starter config with:

```bash

copex init

```

### Config file example

```toml

model = "claude-opus-4.6"
reasoning_effort = "high"
streaming = true
use_cli = false
timeout = 300.0
auto_continue = true
ui_theme = "default"    # default, midnight, mono, sunset
ui_density = "extended" # compact, extended

# Skills
skills = ["code-review"]
# skill_directories = ["/path/to/skills"]
# disabled_skills = ["some-skill"]

# MCP servers
# mcp_config_file = "~/.config/copex/mcp.json"

# Tool filtering
# available_tools = ["bash", "view"]
# excluded_tools = ["dangerous-tool"]

# Tables come last: in TOML, keys after a [table] header belong to that table
[retry]
max_retries = 5
base_delay = 1.0
max_delay = 30.0
exponential_base = 2.0

```

### Environment variables

| Variable | Description |

|---|---|

| `COPEX_MODEL` | Override the default model |

| `COPEX_REASONING` | Override the reasoning effort |

## CLI Commands

| Command | Description |

|---|---|

| `copex chat` | Send a prompt with automatic retry |

| `copex interactive` | Start an interactive chat session |

| `copex tui` | Launch the full TUI interface |

| `copex ralph` | Start a Ralph Wiggum iterative loop |

| `copex plan` | Generate and execute step-by-step plans |

| `copex fleet` | Run multiple tasks in parallel |

| `copex council` | Multi-model deliberation on a task |

| `copex models` | List available models |

| `copex skills list` | List discovered skills |

| `copex skills show` | Show skill content |

| `copex render` | Render a JSONL session log |

| `copex status` | Show auth and CLI status |

| `copex config` | Show/edit configuration |

| `copex init` | Generate a starter config file |

| `copex login` | Authenticate with GitHub Copilot |

| `copex logout` | Remove authentication |

| `copex completions` | Generate shell completion scripts |

### Common flags

```

-m, --model       Model to use
-r, --reasoning   Reasoning effort (none, low, medium, high, xhigh)
-c, --config      Config file path
-S, --skill-dir   Add skill directory
    --use-cli     Use CLI subprocess instead of SDK
    --json        Machine-readable JSON output
-q, --quiet       Content only, no formatting

```

## Models

Copex supports all models available through the Copilot SDK:

| Model | Reasoning | xhigh |

|---|---|---|

| `gpt-5.2-codex` | ✅ | ✅ |

| `gpt-5.2` | ✅ | ✅ |

| `gpt-5.1-codex` | ✅ | ❌ |

| `gpt-5.1-codex-max` | ✅ | ❌ |

| `gpt-5.1-codex-mini` | ✅ | ❌ |

| `gpt-5.1` | ✅ | ❌ |

| `gpt-5` | ✅ | ❌ |

| `gpt-5-mini` | ✅ | ❌ |

| `gpt-4.1` | ❌ | ❌ |

| `claude-opus-4.6` | ✅ | ❌ |

| `claude-opus-4.6-fast` | ✅ | ❌ |

| `claude-opus-4.5` | ❌ | ❌ |

| `claude-sonnet-4.5` | ❌ | ❌ |

| `claude-sonnet-4` | ❌ | ❌ |

| `claude-haiku-4.5` | ❌ | ❌ |

| `gemini-3-pro-preview` | ❌ | ❌ |

Copex also discovers models dynamically from `copilot --help` at runtime, so newly added models work automatically.

```bash

copex models # List all available models

```

## Reasoning Effort

Five levels control how much thinking the model does:

| Level | Description |

|---|---|

| `none` | No extended reasoning |

| `low` | Minimal reasoning |

| `medium` | Balanced |

| `high` | Thorough reasoning (default) |

| `xhigh` | Maximum reasoning (GPT-5.2+ only) |

If you request an unsupported level, Copex automatically downgrades and warns you.

## Advanced Features

### Retry & Backoff

Copex uses adaptive per-error-category backoff strategies:

```python

config = CopexConfig(
    retry=RetryConfig(
        max_retries=5,
        base_delay=1.0,
        max_delay=30.0,
        exponential_base=2.0,
    ),
)

```

Error categories (rate limit, network, server, auth, client) each have their own backoff curve. Rate limit errors respect `Retry-After` headers when available.
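
To make the role of the four retry settings concrete, here is a minimal sketch of capped exponential backoff with full jitter. It is a generic illustration, not Copex's internal code: the function name `backoff_delay` and the injectable `rng` parameter are assumptions for this example, and the per-category curves and `Retry-After` handling are left out.

```python
import random


def backoff_delay(attempt, base_delay=1.0, max_delay=30.0,
                  exponential_base=2.0, rng=random.random):
    """Delay in seconds before retry number `attempt` (0-based)."""
    # Grow the delay exponentially, then cap it at max_delay.
    capped = min(max_delay, base_delay * exponential_base ** attempt)
    # Full jitter: pick a random point in [0, capped) to spread out retries.
    return capped * rng()


if __name__ == "__main__":
    for attempt in range(5):
        print(attempt, round(backoff_delay(attempt), 2))
```

Jitter matters when many clients fail at the same moment: without it they would all retry in lockstep and hit the backend together again.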

### Circuit Breaker

A sliding-window circuit breaker opens when the failure rate exceeds 50% in the last 10 requests, then enters a 60-second cooldown before retrying.
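
The behaviour described above can be sketched in a few lines. This is an illustrative model using the numbers from the text (window of 10, 50% threshold, 60-second cooldown); the class name, method names, and the choice to wait for a full window before tripping are assumptions, not Copex's actual implementation.

```python
import time
from collections import deque


class SlidingWindowBreaker:
    """Opens when more than `threshold` of the last `window` calls failed."""

    def __init__(self, window=10, threshold=0.5, cooldown=60.0,
                 clock=time.monotonic):
        self._results = deque(maxlen=window)  # True = success, False = failure
        self._threshold = threshold
        self._cooldown = cooldown
        self._clock = clock
        self._opened_at = None  # None means the breaker is closed

    def allow(self):
        """Return True if a request may be sent right now."""
        if self._opened_at is None:
            return True
        if self._clock() - self._opened_at >= self._cooldown:
            # Cooldown elapsed: close the breaker and start a fresh window.
            self._opened_at = None
            self._results.clear()
            return True
        return False

    def record(self, success):
        """Record the outcome of one request and trip the breaker if needed."""
        self._results.append(success)
        window_full = len(self._results) == self._results.maxlen
        failure_rate = self._results.count(False) / len(self._results)
        if window_full and failure_rate > self._threshold:
            self._opened_at = self._clock()
```

A caller would check `allow()` before each request and call `record()` afterwards. Passing a fake `clock` makes the cooldown easy to test without sleeping.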

### Model Fallback Chains

```python

client = Copex(config, fallback_chain=["claude-opus-4.6", "gpt-5.2-codex", "gpt-5.1-codex"])

```

If the primary model fails, Copex transparently tries the next model in the chain.
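
Conceptually, a fallback chain is a loop over candidate models that returns the first successful result. The sketch below shows the idea without using Copex at all: `call_with_fallback` and the `call` callable are hypothetical names, and real code would catch narrower exception types than `Exception`.

```python
def call_with_fallback(models, call):
    """Try each model in order; return (model, result) for the first success."""
    failed = []
    for model in models:
        try:
            return model, call(model)
        except Exception as exc:  # broad on purpose for this sketch
            failed.append((model, exc))
    names = [model for model, _ in failed]
    raise RuntimeError(f"all models failed: {names}")


if __name__ == "__main__":
    def fake_call(model):
        # Stand-in for a real request; the first model is "down".
        if model == "claude-opus-4.6":
            raise TimeoutError("backend unavailable")
        return f"answer from {model}"

    print(call_with_fallback(["claude-opus-4.6", "gpt-5.2-codex"], fake_call))
```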

### Session Pooling

```python

from copex.client import SessionPool

pool = SessionPool(max_sessions=5, max_idle_time=300)

async with pool.acquire(client, config) as session:
    await session.send({"prompt": "Hello"})

```

### Ralph Wiggum Loops

Iterative AI development: the same prompt is fed repeatedly, with the AI seeing its own previous work to iteratively improve.

```bash

copex ralph "Build a REST API with CRUD and tests" \
  --promise "ALL TESTS PASSING" \
  -n 20

```

```python

from copex.ralph import RalphWiggum

ralph = RalphWiggum(copex_client)

result = await ralph.loop(
    prompt="Build a REST API with CRUD operations",
    completion_promise="API COMPLETE",
    max_iterations=30,
)

```
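
The control flow behind such a loop is simple enough to sketch without the library. In the example below, `ralph_loop_sketch` and the `run_prompt` callable are hypothetical stand-ins: the real `RalphWiggum` class also handles checkpointing and sessions, which this sketch ignores.

```python
def ralph_loop_sketch(run_prompt, prompt, completion_promise, max_iterations=30):
    """Feed the same prompt repeatedly until the promise text appears.

    `run_prompt(prompt, history)` is a hypothetical callable that returns the
    model output given the prompt and all previous outputs.
    """
    history = []
    for iteration in range(1, max_iterations + 1):
        output = run_prompt(prompt, history)
        history.append(output)
        if completion_promise in output:
            return iteration, output  # the model declared the work complete
    # Budget exhausted without seeing the promise text.
    return max_iterations, history[-1] if history else ""
```

The completion promise acts as the stop condition, and `max_iterations` bounds the cost if the model never emits it.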

### Plan Mode

AI-generated step-by-step execution with checkpointing and resume:

```bash

copex plan "Build a REST API" --execute

copex plan "Build a REST API" --review # Confirm before executing

copex plan --resume # Resume from checkpoint

copex plan "Build a REST API" --visualize ascii

```

### Fleet Mode

Run multiple tasks in parallel with optional dependency ordering:

```bash

copex fleet "Write tests" "Fix linting" "Update docs" --max-concurrent 3

copex fleet --file tasks.toml

```

```python

from copex import Fleet, FleetConfig

async with Fleet(config) as fleet:
    fleet.add("Write auth tests")
    fleet.add("Refactor DB", depends_on=["write-auth-tests"])
    results = await fleet.run()

```

Features: adaptive concurrency, rate-limit backoff, git finalize, artifact export.
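
Dependency ordering of this kind is a topological sort. The sketch below uses the standard library's `graphlib` (Python 3.9+) to group tasks into waves that could run in parallel; the task identifiers and the `execution_waves` helper are assumptions for illustration and say nothing about how Fleet schedules work internally.

```python
from graphlib import TopologicalSorter


def execution_waves(dependencies):
    """Group tasks into waves; every task in a wave has all its deps done.

    `dependencies` maps each task id to the set of task ids it depends on.
    """
    sorter = TopologicalSorter(dependencies)
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # tasks whose deps are all done
        waves.append(ready)
        sorter.done(*ready)
    return waves


if __name__ == "__main__":
    deps = {
        "refactor-db": {"write-auth-tests"},
        "update-docs": set(),
    }
    print(execution_waves(deps))
```

Here `write-auth-tests` and `update-docs` land in the first wave and `refactor-db` in the second, since it has to wait for the auth tests.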

### Council Mode

Multi-model deliberation — multiple models investigate a problem, then a chair model synthesizes the best solution:

```bash

copex council "Design a caching strategy for our API" \
  --chair-model claude-opus-4.6 \
  --codex-model gpt-5.2-codex \
  --gemini-model gemini-3-pro-preview

```

### MCP Integration

Connect external Model Context Protocol servers:

```toml

# In config.toml

mcp_config_file = "~/.config/copex/mcp.json"

```

Or pass inline:

```bash

copex fleet --mcp-config mcp.json "Analyze the codebase"

```

### Checkpointing & Persistence

Ralph loops and plan execution save checkpoints to disk for crash recovery. Sessions can be saved and restored across runs.

## CLI Client Mode

Use `--use-cli` to bypass the SDK and invoke the Copilot CLI directly as a subprocess. This is useful when the SDK doesn't support a model but the CLI does:

```bash

copex chat "Hello" --use-cli -m claude-opus-4.6

```

```python

config = CopexConfig(use_cli=True, model=Model.CLAUDE_OPUS_4_6)

client = make_client(config) # Returns CopilotCLI instead of Copex

```

## Development

```bash

# Clone and install

git clone https://github.com/Arthur742Ramos/copex.git

cd copex

pip install -e ".[dev]"

# Run tests

python -m pytest tests/ -v

# Run with coverage

python -m pytest tests/ --cov=copex --cov-report=term-missing

# Lint

ruff check src/

ruff format src/

```

## License

[MIT](LICENSE) | text/markdown | null | Arthur Ramos <arthur742ramos@users.noreply.github.com> | null | null | null | ai, copex, copilot, github, ralph-wiggum, retry, sdk | [

"Development Status :: 3 - Alpha",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12"

] | [] | null | null | >=3.10 | [] | [] | [] | [

"github-copilot-sdk>=0.1.21",

"prompt-toolkit>=3.0.0",

"pydantic>=2.0.0",

"rich>=13.0.0",

"tomli-w>=1.0.0",

"tomli; python_version < \"3.11\"",

"typer>=0.9.0",

"pytest; extra == \"dev\"",

"pytest-asyncio; extra == \"dev\"",

"pytest-cov; extra == \"dev\"",

"ruff; extra == \"dev\""

] | [] | [] | [] | [

"Homepage, https://github.com/Arthur742Ramos/copex",

"Repository, https://github.com/Arthur742Ramos/copex",

"Issues, https://github.com/Arthur742Ramos/copex/issues"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T12:31:02.355161 | copex-2.6.1.tar.gz | 185,460 | 13/74/cf5e4fc9121f24556e189ae308603d3268b8464169816b2debf21de38fa6/copex-2.6.1.tar.gz | source | sdist | null | false | d327e8fd0613bef7cbf83c8a1e2b78b3 | aac54ebfc1706ceda268f86cbd7c512f1c645a0241748f009e1ee20916f250ee | 1374cf5e4fc9121f24556e189ae308603d3268b8464169816b2debf21de38fa6 | MIT | [

"LICENSE"

] | 275 |

2.4 | onecode-pycg | 1.2.0 | PyCG - Practical Python Call Graphs | # :warning: Notes

Forked from https://github.com/vitsalis/pycg

Changes: See https://github.com/deeplime-io/PyCG/tree/onecode

Essentially, the added code tracks the order of the calls and the code associated with each of them. OneCode uses it to interpret code properly, building on the **excellent** `PyCG`.

Why a new PyPI package? Well, the not-so-great PyPI doesn't allow forked public repositories as dependencies. Nice, right?

# PyCG - Practical Python Call Graphs


PyCG generates call graphs for Python code using static analysis.

It efficiently supports

* Higher order functions

* Twisted class inheritance schemes

* Automatic discovery of imported modules for further analysis

* Nested definitions

You can read the full methodology as well as a complete evaluation on the

[ICSE 2021 paper](https://arxiv.org/pdf/2103.00587.pdf).

You can cite PyCG as follows.

Vitalis Salis, Thodoris Sotiropoulos, Panos Louridas, Diomidis Spinellis and Dimitris Mitropoulos.

PyCG: Practical Call Graph Generation in Python.

In _43rd International Conference on Software Engineering, ICSE '21_,

25–28 May 2021.

> **PyCG** is archived. Due to limited availability, no further development

> improvements are planned. Happy to help anyone that wants to create a fork to

> continue development.

# Installation

PyCG is implemented in Python3 and requires Python version 3.4 or higher.

It also has no dependencies. Simply:

```

pip install onecode-pycg

```

# Usage

```

~ >>> pycg -h

usage: __main__.py [-h] [--package PACKAGE] [--product PRODUCT]

[--forge FORGE] [--version VERSION] [--timestamp TIMESTAMP]

[--max-iter MAX_ITER] [--operation {call-graph,key-error}]

[--as-graph-output AS_GRAPH_OUTPUT] [-o OUTPUT]

[entry_point ...]

positional arguments:

entry_point Entry points to be processed

optional arguments:

-h, --help show this help message and exit

--package PACKAGE Package containing the code to be analyzed

--max-iter MAX_ITER Maximum number of iterations through source code. If not specified a fix-point iteration will be performed.

--operation {call-graph,key-error}

Operation to perform. Choose call-graph for call graph generation (default) or key-error for key error detection on dictionaries.

--as-graph-output AS_GRAPH_OUTPUT

Output for the assignment graph

-o OUTPUT, --output OUTPUT

Output path

```

# Call Graph Output

## Simple JSON format

The call edges are in the form of an adjacency list where an edge `(src, dst)`

is represented as an entry of `dst` in the list assigned to key `src`:

```

{

"node1": ["node2", "node3"],

"node2": ["node3"],

"node3": []

}

```
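
Because the output is a plain adjacency list, it can be consumed with a few lines of standard-library Python. The sketch below loads such a JSON document and collects every function reachable from a given node; the helper name `reachable` and the inline sample graph are illustrative assumptions, not part of PyCG.

```python
import json
from collections import deque


def reachable(call_graph, start):
    """Return the set of nodes reachable from `start` (including itself)."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for callee in call_graph.get(node, []):
            if callee not in seen:
                seen.add(callee)
                queue.append(callee)
    return seen


if __name__ == "__main__":
    # In practice this would be json.load() on the file written via -o.
    graph = json.loads('{"node1": ["node2", "node3"], "node2": ["node3"], "node3": []}')
    print(sorted(reachable(graph, "node2")))
```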

## FASTEN Format

Dropped - not useful for OneCode and requires porting of `pkg_resources`

For an up-to-date description of the FASTEN format refer to the

[FASTEN wiki](https://github.com/fasten-project/fasten/wiki/Extended-Revision-Call-Graph-format#python).

# Key Errors Output

We are currently experimenting with identifying potentially invalid accesses

on Python dictionaries (key errors).

The output format for key errors is a list of dictionaries containing:

- The file name in which the key error was identified

- The line number inside the file

- The namespace of the accessed dictionary

- The key used to access the dictionary

```

[{

"filename": "mod.py",

"lineno": 2,

"namespace": "mod.<dict1>",

"key": "key2"

},

{

"filename": "mod.py",

"lineno": 8,

"namespace": "mod.<dict1>",

"key": "nokey"

}]

```
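
A report in this shape is easy to post-process. As a small sketch, the helper below groups the reported key errors by file so they can be reviewed one module at a time; `group_key_errors` is a hypothetical name and relies only on the four fields listed above.

```python
import json
from collections import defaultdict


def group_key_errors(report_json):
    """Map each filename to a sorted list of (lineno, key) pairs."""
    grouped = defaultdict(list)
    for entry in json.loads(report_json):
        grouped[entry["filename"]].append((entry["lineno"], entry["key"]))
    return {name: sorted(items) for name, items in grouped.items()}


if __name__ == "__main__":
    sample = """[
      {"filename": "mod.py", "lineno": 8, "namespace": "mod.<dict1>", "key": "nokey"},
      {"filename": "mod.py", "lineno": 2, "namespace": "mod.<dict1>", "key": "key2"}
    ]"""
    for filename, errors in group_key_errors(sample).items():
        print(filename, errors)
```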

# Examples

All the entry points are known and we want the simple JSON format:

```

~ >>> pycg --package pkg_root pkg_root/module1.py pkg_root/subpackage/module2.py -o cg.json

```

The entry points are not all known and we want the simple JSON format:

```

~ >>> pycg --package django $(find django -type f -name "*.py") -o django.json

```

# Running Tests

From the root directory, first install the [mock](https://pypi.org/project/mock/) package:

```

pip3 install mock

```

Then, simply run the tests by executing:

```

make test

```

| text/markdown | null | Vitalis Salis <vitsalis@gmail.com> | null | null | null | null | [

"License :: OSI Approved :: Apache Software License",

"Programming Language :: Python :: 3"

] | [] | null | null | >=3.4 | [] | [] | [] | [

"black>=22.12.0; extra == \"dev\"",

"flake8>=6.0.0; extra == \"dev\"",

"isort>=5.12.0; extra == \"dev\"",

"mock; extra == \"dev\""

] | [] | [] | [] | [

"Homepage, https://github.com/vitsalis/PyCG",

"Bug Tracker, https://github.com/vitsalis/PyCG/issues"

] | uv/0.10.4 {"installer":{"name":"uv","version":"0.10.4","subcommand":["publish"]},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"Pop!_OS","version":"22.04","id":"jammy","libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_version":null,"ci":null} | 2026-02-19T12:30:44.328898 | onecode_pycg-1.2.0.tar.gz | 54,672 | 05/ae/8e96a51bface1acf2bd941253c390b04df5d033b7341b7d945836a3da0c0/onecode_pycg-1.2.0.tar.gz | source | sdist | null | false | 0b3e1c403d89596b21dae75f2706a9de | d05841f96f0257620279df8cc46aae80575aed0658554543b77e57a954a7ba0c | 05ae8e96a51bface1acf2bd941253c390b04df5d033b7341b7d945836a3da0c0 | null | [

"LICENCE"

] | 490 |

2.4 | bepo | 1.3.0 | Detect duplicate PRs in GitHub repos | # bepo

Detect duplicate pull requests in GitHub repos.

No ML, no embeddings, no API keys. Just static analysis of diffs.

A maintainer with 100 open PRs can run `bepo check --repo foo/bar` and in 5 minutes get a ranked list of "you should look at these pairs." That saves hours of manual review.

## The Problem

Large repos waste engineering time on duplicate PRs. When multiple contributors fix the same bug independently, only one PR gets merged — the rest is wasted effort.

**This actually happens.** We analyzed 100 PRs from [OpenClaw](https://github.com/openclaw/openclaw) and found:

| Cluster | PRs | What happened |

|---------|-----|---------------|

| Matrix startup bug | **4 PRs** | 4 engineers independently fixed `startupGraceMs = 0` → `5000` |

| Media token regex | 2 PRs | Identical fix submitted twice |

| Feishu bitable config | 2 PRs | Same multi-account config fix |

**8 duplicate PRs across 3 bug fixes.** That's real engineering time wasted.

## Proof: OpenClaw Analysis

### 30-day window (recommended)

```

$ bepo check --repo openclaw/openclaw --since 30d

Found 52 potential duplicates:

#20472 <-> #20491

Similarity: 100%

Reason: Both fix #20468 ← Same Nextcloud Talk restart bug, two fixes

#19595 <-> #19624

Similarity: 90%

Reason: Both fix #19574 ← Identical PR titles, same elevatedDefault bug

#20419 <-> #20441

Similarity: 83%

Reason: Both fix #20410 ← Same WebChat markdown rendering fix

#19865 <-> #19945

Similarity: 97%

Reason: Same code: 100 lines overlap ← Two embedding provider PRs duplicating core logic

#19770 <-> #20317

Similarity: 87%

Reason: Same code: 9 lines overlap ← Same "hide tool calls" UI toggle, added twice

```

**Precision: ~88%** — verified by manual classification of all 52 pairs.

The remaining ~12% are concurrent PRs touching the same structural code — two provider integrations sharing schema boilerplate, two locale additions hitting the same type. The kind of overlap a reviewer would want to know about.

### Full backlog (3,000 PRs)

```

$ bepo check --repo openclaw/openclaw --limit 3000

Analyzed 3000 PRs in 9.8s

Found 1022 potential duplicates:

#17518 <-> #17653

Similarity: 100%

Reason: Both fix #17499 ← Identical browser dialog fix submitted twice

#12936 <-> #19050

Similarity: 80%

Reason: Same code: 10 lines overlap ← Same Telegram thread_id fix, one is literally "v2"

#15512 <-> #18994

Similarity: 67%

Reason: Same code: 10 lines overlap ← Both normalize Brave search language codes

#14182 <-> #15051

Similarity: 75%

Reason: Same code: 2403 lines overlap ← Two Zulip implementations duplicating core logic

```

Precision by similarity band, verified by manual sampling:

| Band | Pairs | Precision | Notes |

|------|-------|-----------|-------|

| 100% | 221 | ~75% | High code overlap but tiny sets — watch for short boilerplate |

| 80–90% | 318 | ~95% | Best signal — issue refs + code together |

| 70–79% | 283 | ~90% | Strong structural duplicates |

| 65–69% | 200 | ~70% | Noisier; raise `--threshold 0.75` to cut this band |

| **Overall** | **1022** | **~84%** | |

For large backlogs, `--threshold 0.75` drops to ~550 pairs at ~92% precision. `--since 30d` gives 52 actionable pairs at ~88% — the recommended default.

## More Examples

**VSCode** — Found PRs touching same files for same feature:

```

#295823 <-> #295822

Similarity: 77%

Reason: Same files: chatModel.ts, chatForkActions.ts

Both: "Use metadata flag for fork detection"

```

**Next.js** — Found related test updates:

```

#90121 <-> #90120

Similarity: 86%

Reason: Same files: test/

```

## Install

```bash

pip install bepo

```

Requires [GitHub CLI](https://cli.github.com/) (`gh`) to be installed and authenticated.

## Usage

```bash

# Check a repo for duplicate PRs

bepo check --repo owner/repo

# Check recent PRs (recommended — avoids stale noise)

bepo check --repo owner/repo --since 30d

# Adjust sensitivity (default: 0.65, higher = stricter)

bepo check --repo owner/repo --threshold 0.7

# JSON output for CI

bepo check --repo owner/repo --json

```

## How It Works

bepo fingerprints each PR by extracting:

| Signal | Weight | What it catches |

|--------|--------|-----------------|

| Same issue ref (#123) | 10.0 | Definite duplicate |

| Same code changes (IDF-weighted) | 8.0 | Rare lines weighted more than common boilerplate |

| Same files touched | 6.0 | PRs modifying same code |

| Same feature domain | 3.0 | auth, messaging, database, etc. |

| Same imports | 1.0 | Similar dependencies |

Then computes pairwise Jaccard similarity.

**That's it.** No embeddings, no LLM calls. Just:

- Parse `+++ b/path` from diffs

- Regex for `#\d+` issue refs

- Compare actual code changes

- Set intersection for similarity
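
The steps above can be sketched with the standard library alone. The example below extracts touched files and issue references, then combines per-signal Jaccard scores using two of the weights from the table. How bepo actually combines its signals is not stated here, so the weighted average, the function names, and the choice to skip signals that are empty on both sides are all assumptions of this sketch.

```python
import re

# Weights taken from the signal table; the combination rule is an assumption.
WEIGHTS = {"issues": 10.0, "files": 6.0}


def sketch_fingerprint(diff, text):
    """Extract touched files from a unified diff and issue refs from text."""
    files = set(re.findall(r"^\+\+\+ b/(\S+)", diff, flags=re.MULTILINE))
    issues = set(re.findall(r"#(\d+)", text))
    return {"files": files, "issues": issues}


def jaccard(a, b):
    """Size of the intersection divided by size of the union."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def similarity(fp_a, fp_b):
    """Weighted average of per-signal Jaccard scores."""
    total = score = 0.0
    for signal, weight in WEIGHTS.items():
        if not fp_a[signal] and not fp_b[signal]:
            continue  # a signal absent from both PRs carries no information
        total += weight
        score += weight * jaccard(fp_a[signal], fp_b[signal])
    return score / total if total else 0.0


if __name__ == "__main__":
    a = sketch_fingerprint("+++ b/src/auth.py\n+x = 1\n", "Fixes #456")
    b = sketch_fingerprint("+++ b/src/auth.py\n+++ b/src/db.py\n", "Fixes #456")
    print(f"{similarity(a, b):.0%}")
```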

**Cross-component filtering** suppresses boilerplate FPs in integration/plugin monorepos (e.g. Home Assistant, VSCode extensions). Two unrelated integrations sharing scaffold code (`config_flow.py`, `manifest.json`) are filtered out when each PR is concentrated in a different component subtree. Pairs sharing a GitHub issue ref always bypass this filter.

~2,000 lines of Python.

## As a Library

```python

from bepo import fingerprint_pr, find_duplicates

# Fingerprint PRs

fp1 = fingerprint_pr("#123", diff1, title="Fix auth", body="Fixes #456")

fp2 = fingerprint_pr("#124", diff2, title="Auth fix", body="Fixes #456")

# Find duplicates

dups = find_duplicates([fp1, fp2], threshold=0.65)

for d in dups:
    print(f"{d.pr_a} ↔ {d.pr_b}: {d.similarity:.0%}")
    print(f"  Shared issues: {d.shared_issues}")
    print(f"  Shared files: {d.shared_files}")

```

## GitHub Action

Add to your repo to automatically detect duplicate PRs and post a warning comment:

```yaml

name: PR Duplicate Check
on: [pull_request]

jobs:
  check-duplicates:
    runs-on: ubuntu-latest
    steps:
      - uses: aardpark/bepo@v1
        with:
          github-token: ${{ secrets.GITHUB_TOKEN }}
          threshold: '0.65'  # optional, default 0.65

```

When a PR is opened that looks like a duplicate, bepo posts a comment:

> ## ⚠️ Potential Duplicate PRs Detected

>

> This PR may be similar to existing open PRs:

>

> | PR | Similarity | Reason |

> |---|---|---|

> | [#123](link) | 85% | Both fix #456 |

> | [#124](link) | 71% | Same code: 10 lines overlap |

>

> ---

> *Detected by [bepo](https://github.com/aardpark/bepo)*

### Action Inputs

| Input | Description | Default |

|-------|-------------|---------|

| `github-token` | GitHub token for API access | `${{ github.token }}` |

| `threshold` | Similarity threshold (0.0-1.0) | `0.65` |

| `limit` | Max PRs to compare against | `50` |

| `comment` | Post comment on PR | `true` |

### Action Outputs

| Output | Description |

|--------|-------------|

| `has_duplicates` | `true` if duplicates found |

| `match_count` | Number of matches |

| `matches` | JSON array of matches |

## Why This Works

Duplicates share obvious signals:

- **Same code** = Identical changes (639 shared lines caught SoundChain duplicates)

- **Same issue ref** = Same bug report (#19843 appeared in 4 Matrix PRs)

- **Same files** = Same bug location (100% overlap for Feishu cluster)

**IDF weighting** makes rare lines matter more than common boilerplate. A shared `startupGraceMs = 5000` is a stronger signal than a shared `return null`.
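
To see why IDF weighting has this effect, consider the sketch below. It assigns each changed line a smoothed inverse-document-frequency weight across all PRs and uses those weights in a Jaccard-style ratio. The exact formula bepo uses is not given here, so the `log(N / df) + 1` smoothing and both function names are assumptions.

```python
import math
from collections import Counter


def idf_weights(prs):
    """Weight each line by how rare it is across all PRs (smoothed IDF)."""
    n = len(prs)
    doc_freq = Counter(line for lines in prs for line in set(lines))
    return {line: math.log(n / count) + 1.0 for line, count in doc_freq.items()}


def weighted_jaccard(a, b, weights):
    """Jaccard similarity where each shared or unshared line counts by weight."""
    union = sum(weights[line] for line in a | b)
    shared = sum(weights[line] for line in a & b)
    return shared / union if union else 0.0


if __name__ == "__main__":
    prs = [
        {"return null", "startupGraceMs = 5000"},
        {"return null", "startupGraceMs = 5000"},
        {"return null", "timeout = 30"},
        {"return null", "retries = 3"},
    ]
    w = idf_weights(prs)
    print(weighted_jaccard(prs[0], prs[1], w))  # share a rare line
    print(weighted_jaccard(prs[2], prs[3], w))  # share only boilerplate
```

The first pair shares a rare line and scores high, while the second pair shares only `return null`, which appears in every PR and therefore contributes little.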

Code overlap and issue refs catch most duplicates. Simple works.

## Origin Story

This tool was vibe-coded in a single session with Claude.

We tried a few approaches and kept finding that simpler signals outperformed fancier ones. File overlap and issue refs catch most duplicates. Sometimes the obvious solution is the right one.

## License

MIT

| text/markdown | Andrew Park | null | null | null | null | cli, detection, duplicate, github, pull-request | [

"Development Status :: 5 - Production/Stable",

"Environment :: Console",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Pyth... | [] | null | null | >=3.10 | [] | [] | [] | [] | [] | [] | [] | [

"Homepage, https://github.com/aardpark/bepo",

"Repository, https://github.com/aardpark/bepo"

] | twine/6.2.0 CPython/3.14.2 | 2026-02-19T12:30:25.443018 | bepo-1.3.0.tar.gz | 31,137 | 64/4f/9a7e63e4cdd629925ad313ed6262d6702866ab69b825466c68bdfe59e253/bepo-1.3.0.tar.gz | source | sdist | null | false | 84d1db3ca5cdd1b8315b4e7dff2e83de | 1bb9e9f2efd41a3f97405cf2f82e80b64781125f9ed17bffe90914eea2a7d24f | 644f9a7e63e4cdd629925ad313ed6262d6702866ab69b825466c68bdfe59e253 | MIT | [

"LICENSE"

] | 263 |

2.4 | mex-common | 1.16.0 | Common library for MEx python projects. | # MEx common

Common library for MEx python projects.


## Project

The Metadata Exchange (MEx) project is committed to improving the retrieval of RKI

research data and projects. How? By focusing on metadata: instead of providing the

actual research data directly, the MEx metadata catalog captures descriptive information

about research data and activities. On this basis, we want to make the data FAIR[^1] so

that it can be shared with others.

Via MEx, metadata will be made findable, accessible and shareable, as well as available

for further research. The goal is to get an overview of what research data is available,

understand its context, and know what needs to be considered for subsequent use.

RKI cooperated with D4L data4life gGmbH for a pilot phase where the vision of a

FAIR metadata catalog was explored and concepts and prototypes were developed.

The partnership has ended with the successful conclusion of the pilot phase.

After an internal launch, the metadata will also be made publicly available, so that

external researchers and the interested (professional) public can find research data

from the RKI.

For further details, please consult our

[project page](https://www.rki.de/DE/Aktuelles/Publikationen/Forschungsdaten/MEx/metadata-exchange-plattform-mex-node.html).

[^1]: FAIR refers to the so-called

[FAIR data principles](https://www.go-fair.org/fair-principles/) – guidelines to make

data Findable, Accessible, Interoperable and Reusable.

**Contact** \

For more information, please feel free to email us at [mex@rki.de](mailto:mex@rki.de).

### Publisher

**Robert Koch-Institut** \

Nordufer 20 \

13353 Berlin \

Germany

## Package

The `mex-common` library is a software development toolkit that is used by multiple

components within the MEx project. It contains utilities for building pipelines like a

common commandline interface, logging and configuration setup. It also provides common

auxiliary connectors that can be used to fetch data from external services and a

re-usable implementation of the MEx metadata schema as pydantic models.

## License

This package is licensed under the [MIT license](/LICENSE). All other software

components of the MEx project are open-sourced under the same license as well.

## Development

### Installation

- install python on your system

- on unix, run `make install`

- on windows, run `.\mex.bat install`

### Linting and testing

- run all linters with `make lint` or `.\mex.bat lint`

- run unit and integration tests with `make test` or `.\mex.bat test`

- run just the unit tests with `make unit` or `.\mex.bat unit`

### Updating dependencies

- update boilerplate files with `cruft update`

- update global requirements in `requirements.txt` manually

- update git hooks with `pre-commit autoupdate`

- update package dependencies using `uv sync --upgrade`

- update github actions in `.github/workflows/*.yml` manually

### Creating release

- run `mex release RULE` to release a new version where RULE determines which part of

the version to update and is one of `major`, `minor`, `patch`.

| text/markdown | null | MEx Team <mex@rki.de> | null | null | MIT License

Copyright (c) 2026 Robert Koch-Institut

Permission is hereby granted, free of charge, to any person obtaining a copy

of this software and associated documentation files (the "Software"), to deal

in the Software without restriction, including without limitation the rights

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

copies of the Software, and to permit persons to whom the Software is

furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all

copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

SOFTWARE. | null | [] | [] | null | null | <3.15,>=3.11 | [] | [] | [] | [

"backoff<3,>=2",

"click<9,>=8",

"langdetect<2,>=1",

"ldap3<3,>=2",

"mex-model<4.11,>=4.10",

"networkx>=3",

"numpy<3,>=2",

"pandas<4,>=3",

"pyarrow<24,>=23",

"pydantic-settings<2.13,>=2.12",

"pydantic<3,>=2",

"pytz>=2025",

"requests<3,>=2",

"tabulate>=0.9"

] | [] | [] | [] | [

"Repository, https://github.com/robert-koch-institut/mex-common"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T12:29:29.638133 | mex_common-1.16.0.tar.gz | 89,482 | 4a/62/8cc0da0a4940f09105897a71edc780d6f0947396e71a4dfe8d1214eec0a9/mex_common-1.16.0.tar.gz | source | sdist | null | false | fe94cb1959d3b8984328a1873ee07f91 | 4e89e34b206bfb42a1c47092791d430e3b48131a281e2bf86b306698e224a76b | 4a628cc0da0a4940f09105897a71edc780d6f0947396e71a4dfe8d1214eec0a9 | null | [

"AUTHORS",

"LICENSE"

] | 339 |

2.4 | smev-agent-client | 0.5.1 | Client for interacting with the PODD SMEV Agent | # Client for interacting with SMEV3 via the Adapter

## Setup

settings:

    INSTALLED_APPS = [
        'smev_agent_client',
    ]

apps:

    from django.apps import AppConfig as AppConfigBase

    class AppConfig(AppConfigBase):

        name = __package__

        def __setup_agent_client(self):
            import smev_agent_client

            smev_agent_client.set_config(
                smev_agent_client.configuration.Config(
                    agent_url='http://localhost:8090',
                    system_mnemonics='MNSV03',
                    timeout=1,
                    request_retries=1,
                )
            )

        def ready(self):
            super().ready()
            self.__setup_agent_client()

## Emulation

Replace the interface in use with one that emulates requests:

    smev_agent_client.set_config(
        ...,
        smev_agent_client.configuration.Config(
            interface=(
                'smev_agent_client.contrib.my_edu.interfaces.rest'
                '.OpenAPIInterfaceEmulation'
            )
        )
    )
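The `interface` value is a dotted import path given as a string. How `smev_agent_client` resolves it is not shown here. A common pattern for settings of this kind, sketched below with the hypothetical helper `import_by_path`, is to split off the last component and import the remainder as a module.

```python
from importlib import import_module


def import_by_path(dotted_path: str):
    """Resolve 'package.module.Name' to the object it names.

    Hypothetical helper; not part of smev_agent_client.
    """
    module_path, _, attr_name = dotted_path.rpartition(".")
    if not module_path:
        raise ImportError(f"{dotted_path!r} is not a dotted path")
    return getattr(import_module(module_path), attr_name)


# A standard-library target is used so the example runs without smev_agent_client.
ordered_dict_cls = import_by_path("collections.OrderedDict")
print(ordered_dict_cls.__name__)
```

Storing the class as a string keeps the configuration free of direct imports, so an emulating implementation can be swapped in without touching the calling code.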

## Running tests

    $ tox

## API

### Sending a message

    from pathlib import Path
    from typing import List

    from smev_agent_client.adapters import adapter
    from smev_agent_client.interfaces import OpenAPIRequest


    class Request(OpenAPIRequest):

        def get_url(self):
            return 'http://localhost:8090/MNSV03/myedu/api/edu-upload/v1/multipart/csv'

        def get_method(self):
            return 'post'

        def get_files(self) -> List[str]:
            return [
                Path('files/myedu_schools.csv').as_posix()
            ]


    result = adapter.send(Request())

| text/markdown | null | BARS Group <education_dev@bars.group> | null | null | null | null | [

"Development Status :: 5 - Production/Stable",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.9",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Framework :: Django :: 3.1",

"Framework :: Djang... | [] | null | null | >=3.9 | [] | [] | [] | [

"pydantic<2.0,>=1.10.17",

"requests<3,>=1.1.0",

"Django<5.0,>=3.1",

"openapi-core==0.16.5",

"isort==5.12.0; extra == \"dev\"",

"ruff==0.12.1; extra == \"dev\"",

"flake8<7,>=4.0.1; extra == \"dev\"",

"pytest<8,>=3.2.5; extra == \"dev\"",

"pytest-cov<5; extra == \"dev\"",

"sphinx<7.5,>=7; extra == \... | [] | [] | [] | [

"Homepage, https://stash.bars-open.ru/projects/EDUSMEV/repos/smev-agent-client/browse",

"Repository, https://stash.bars-open.ru/scm/edusmev/smev-agent-client.git"

] | twine/6.1.0 CPython/3.9.23 | 2026-02-19T12:29:03.969163 | smev_agent_client-0.5.1-py3-none-any.whl | 18,314 | 3b/43/25666898798d2f4a904ac3495460f30e7d02418aa5dab1c86051973881ef/smev_agent_client-0.5.1-py3-none-any.whl | py3 | bdist_wheel | null | false | fb83faadd733afcfe7172b7b4a0d060b | da5968ed2d1f91ea0526a015ab40afb8c8b0ff86ba9fe40f161b3a7c129bda2d | 3b4325666898798d2f4a904ac3495460f30e7d02418aa5dab1c86051973881ef | null | [

"LICENSE"

] | 101 |

2.4 | iwpc | 0.8.0 | An implementation of the divergence framework as described here https://arxiv.org/abs/2405.06397 and much more... | # IWPC #

This package implements the methods described in the research paper https://arxiv.org/abs/2405.06397 for estimating a

lower bound on the divergence between any two distributions, p and q, using samples from each distribution.

Install using `pip install iwpc`

Please see the package [README](https://bitbucket.org/jjhw3/divergences/src/main/) on bitbucket for more information and

some examples.
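To make the idea of a sample-based lower bound concrete, here is a minimal sketch that uses the well-known Donsker-Varadhan representation of the KL divergence. It is not necessarily the method of the paper and does not use the `iwpc` API. The function name `dv_lower_bound`, the choice of Gaussians, and the fixed test function are all assumptions made for illustration.

```python
import math
import random


def dv_lower_bound(p_samples, q_samples, f):
    """Donsker-Varadhan estimate: mean_p[f] - log(mean_q[exp(f)]).

    For any function f, the population version of this quantity is a
    lower bound on KL(p || q); with finite samples it is an estimate.
    """
    mean_p = sum(f(x) for x in p_samples) / len(p_samples)
    mean_q_exp = sum(math.exp(f(x)) for x in q_samples) / len(q_samples)
    return mean_p - math.log(mean_q_exp)


rng = random.Random(0)
p_samples = [rng.gauss(1.0, 1.0) for _ in range(20_000)]  # p = N(1, 1)
q_samples = [rng.gauss(0.0, 1.0) for _ in range(20_000)]  # q = N(0, 1)

# For these two Gaussians log(p/q)(x) = x - 0.5, which makes the bound tight;
# the true KL divergence is 0.5.
bound = dv_lower_bound(p_samples, q_samples, lambda x: x - 0.5)
print(f"estimated lower bound on KL(p || q): {bound:.3f}")
```

In practice the function `f` is not known in advance and is typically learned from the samples, for example by a classifier or a neural network. Any choice of `f` still yields a valid bound in expectation, just a looser one.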

| text/markdown | null | "Jeremy J. H. Wilkinson" <jero.wilkinson@gmail.com> | null | null | null | null | [

"Development Status :: 3 - Alpha",

"Programming Language :: Python :: 3",

"License :: OSI Approved :: MIT License",

"Operating System :: OS Independent"

] | [] | null | null | >=3.9 | [] | [] | [] | [

"numpy",

"torch",

"lightning",

"matplotlib",

"pandas",

"scikit-learn",

"tensorboard",

"seaborn",

"bokeh"

] | [] | [] | [] | [

"Homepage, https://bitbucket.org/jjhw3/divergences/src/main/"

] | twine/6.1.0 CPython/3.11.9 | 2026-02-19T12:28:10.589356 | iwpc-0.8.0.tar.gz | 83,244 | 96/80/910908e7dc5cf6b440ecf319b1e5011c99dca7b3668a2024ee1b656d6732/iwpc-0.8.0.tar.gz | source | sdist | null | false | e2e153646ba88af2a905f466e448a8e3 | 9678973afe67b9ac4d07a1fe441b104d96dbd8434de635e7007c58e94c522b6e | 9680910908e7dc5cf6b440ecf319b1e5011c99dca7b3668a2024ee1b656d6732 | null | [

"LICENSE"

] | 273 |

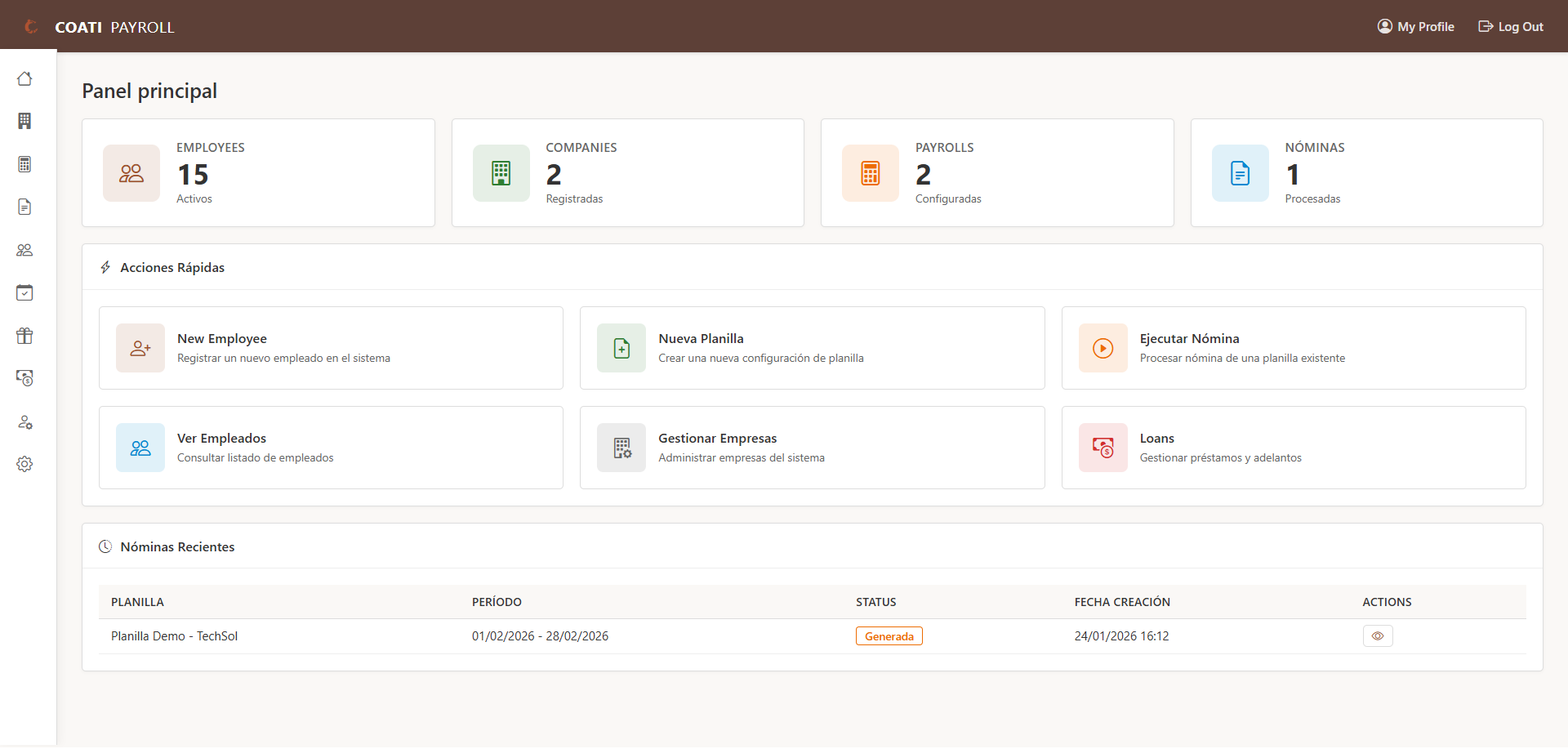

2.1 | odoo-addon-hr-shift | 18.0.1.0.2 | Define shifts for employees | .. image:: https://odoo-community.org/readme-banner-image

   :target: https://odoo-community.org/get-involved?utm_source=readme
   :alt: Odoo Community Association

================
Employees Shifts
================

..
   !!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!
   !! This file is generated by oca-gen-addon-readme !!
   !! changes will be overwritten.                   !!
   !!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!
   !! source digest: sha256:562392450695c6fa5f16c1720b7c6b5dc5f0fb2dda5232242338b27cd2140577
   !!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!

.. |badge1| image:: https://img.shields.io/badge/maturity-Beta-yellow.png
    :target: https://odoo-community.org/page/development-status
    :alt: Beta

.. |badge2| image:: https://img.shields.io/badge/license-AGPL--3-blue.png
    :target: http://www.gnu.org/licenses/agpl-3.0-standalone.html
    :alt: License: AGPL-3

.. |badge3| image:: https://img.shields.io/badge/github-OCA%2Fshift--planning-lightgray.png?logo=github
    :target: https://github.com/OCA/shift-planning/tree/18.0/hr_shift
    :alt: OCA/shift-planning

.. |badge4| image:: https://img.shields.io/badge/weblate-Translate%20me-F47D42.png
    :target: https://translation.odoo-community.org/projects/shift-planning-18-0/shift-planning-18-0-hr_shift
    :alt: Translate me on Weblate

.. |badge5| image:: https://img.shields.io/badge/runboat-Try%20me-875A7B.png
    :target: https://runboat.odoo-community.org/builds?repo=OCA/shift-planning&target_branch=18.0
    :alt: Try me on Runboat

|badge1| |badge2| |badge3| |badge4| |badge5|

Assign shifts to employees with variable work schedules.

**Table of contents**

.. contents::
   :local:

Configuration
=============

To configure and create shift plannings, you must belong to the
**Shift Manager** security group.

Creating shifts
---------------

Go to *Shifts > Shifts* and create a new shift. Define a name, a color
(used in the shift assignment cards), a start and end time, a time zone,
and the span of week days.

Create as many shifts as you need.

Setting employees with variable shifts
---------------------------------------

Go to *Employees > Employees* and in the tab *Work information* go to

the *Schedule* section. There you can set the **Shift planning**