metadata_version string | name string | version string | summary string | description string | description_content_type string | author string | author_email string | maintainer string | maintainer_email string | license string | keywords string | classifiers list | platform list | home_page string | download_url string | requires_python string | requires list | provides list | obsoletes list | requires_dist list | provides_dist list | obsoletes_dist list | requires_external list | project_urls list | uploaded_via string | upload_time timestamp[us] | filename string | size int64 | path string | python_version string | packagetype string | comment_text string | has_signature bool | md5_digest string | sha256_digest string | blake2_256_digest string | license_expression string | license_files list | recent_7d_downloads int64 |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

2.4 | pybinbot | 0.6.29 | Utility functions for the binbot project. | # PyBinbot

Utility functions for the binbot project. Most of the code here is not runnable, there's no server or individual scripts, you simply move code to here when it's used in both binbot and binquant.

``pybinbot`` is the public API module for the distribution.

This module re-exports the internal ``shared`` and ``models`` packages and the most commonly used helpers and enums so consumers can simply::

from pybinbot import round_numbers, ExchangeId

The implementation deliberately avoids importing heavy third-party libraries at module import time.

## Installation

```bash

uv sync --extra dev

```

`--extra dev` also installs development tools like ruff and mypy

## Publishing

1. Save your changes and do the usual Git flow (add, commit, don't push the changes yet).

2. Bump the version, choose one of these:

```bash

make bump-patch

```

or

```bash

make bump-minor

```

or

```bash

make bump-major

```

3. Git tag the version for Github. This will read the bump version. There's a convenience command:

```

make tag

```

4. `git commit --amend`. This is to put these new changes in the previous commit so we don't dup uncessary commits. Then `git push`

For further commands take a look at the `Makefile` such as testing `make test`

| text/markdown | null | Carlos Wu <carkodw@gmail.com> | null | null | null | null | [] | [] | null | null | >=3.11 | [] | [] | [] | [

"pydantic[email]>=2.0.0",

"numpy==2.2.0",

"pandas>=2.2.3",

"pymongo==4.6.3",

"pandas-stubs>=2.3.3.251219",

"requests>=2.32.5",

"kucoin-universal-sdk>=1.3.0",

"aiohttp>=3.13.3",

"python-dotenv>=1.2.1",

"aiokafka>=0.13.0",

"pytest>=9.0.2; extra == \"dev\"",

"ruff>=0.11.12; extra == \"dev\"",

"... | [] | [] | [] | [] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T02:53:42.285525 | pybinbot-0.6.29.tar.gz | 48,877 | d8/e3/140dc9caecb63f4584f4216b17b36f9d80f1e2896ebbcb2283d9511e95da/pybinbot-0.6.29.tar.gz | source | sdist | null | false | 6bc913723f36c0457d442689bf79fea1 | 8eb2116603839874b15f83b9f52c76760249446509828566109a2ea0ee224ace | d8e3140dc9caecb63f4584f4216b17b36f9d80f1e2896ebbcb2283d9511e95da | null | [

"LICENSE"

] | 247 |

2.4 | ironcast-cec | 0.3.0 | Python bindings for libcec | # ironcast-cec - libcec bindings for Python

`ironcast-cec` is a fork of trainman419's python-cec under the same license, in order to allow features and maintenance to be added to the project for the use of the IronCast streaming software.

## Installing:

### Install dependencies

To build ironcast-cec, you need version 1.6.1 or later of the libcec development libraries:

On Gentoo:

```

sudo emerge libcec

```

On OS X:

```

brew install libcec

```

Ubuntu, Debian and Raspbian:

```

sudo apt-get install libcec-dev build-essential python-dev

```

### Install from PIP

```

pip install cec

```

### Installing on Windows

You need to [build libcec](https://github.com/Pulse-Eight/libcec/blob/master/docs/README.windows.md) from source, because libcec installer doesn't provide *cec.lib* that is necessary for linking.

Then you just need to set up your paths, e.g.:

```

set INCLUDE=path_to_libcec\build\amd64\include

set LIB=path_to_libcec\build\amd64

```

## Getting Started

A simple example to turn your TV on:

```python

import cec

cec.init()

adapter = cec.Adapter()

tv = cec.Device(adapter, cec.CECDEVICE_TV)

tv.power_on()

```

## API

```python

import cec

adapter_devs = cec.list_adapters() # may be called before init()

cec.init()

adapter = cec.Adapter() # use default adapter

# create an adapter using the specifed device, with the OSD name 'RPi TV' and play back device type

adapter = cec.Adapter(dev=adapter_dev, name='RPi TV', type=cec.CECDEVICE_PLAYBACKDEVICE1)

adapter.close() # close the adapter

adapter.add_callback(handler, events)

# the list of events is specified as a bitmask of the possible events:

cec.EVENT_LOG

cec.EVENT_KEYPRESS

cec.EVENT_COMMAND

cec.EVENT_CONFIG_CHANGE # not implemented yet

cec.EVENT_ALERT

cec.EVENT_MENU_CHANGED

cec.EVENT_ACTIVATED

cec.EVENT_ALL

# the callback will receive a varying number and type of arguments that are

# specific to the event. Contact me if you're interested in using specific

# callbacks

adapter.remove_callback(handler, events)

devices = adapter.list_devices()

class Device:

__init__(id)

is_on()

power_on()

standby()

address

physical_address

vendor

osd_string

cec_version

language

is_active()

set_av_input(input)

set_audio_input(input)

transmit(opcode, parameters)

adapter.is_active_source(addr)

adapter.set_active_source() # use default device type

adapter.set_active_source(device_type) # use a specific device type

adapter.set_inactive_source() # not implemented yet

adapter.volume_up()

adapter.volume_down()

adapter.toggle_mute()

# TODO: audio status

adapter.set_physical_address(addr)

adapter.can_persist_config()

adapter.persist_config()

adapter.set_port(device, port)

# set arbitrary active source (in this case 2.0.0.0)

destination = cec.CECDEVICE_BROADCAST

opcode = cec.CEC_OPCODE_ACTIVE_SOURCE

parameters = b'\x20\x00'

adapter.transmit(destination, opcode, parameters)

```

## Changelog

### 0.3.0 ( 2026-02-14 )

* Forked project to ironcast-cec

* Added retsyx's Adapter class, to be used instead of a global CEC context

### 0.2.8 ( 2022-01-05 )

* Add support for libCEC >= 5

* Windows support

* Support for setting CEC initiator

* Python 3.10 compatibility

### 0.2.7 ( 2018-11-09 )

* Implement cec.EVENT_COMMAND callback

* Fix several crashes/memory leaks related to callbacks

* Add possibility to use a method as a callback

* Limit maximum number of parameters passed to transmit()

* Fix compilation error with GCC >= 8

### 0.2.6 ( 2017-11-03 )

* Python 3 support ( @nforro )

* Implement is_active_source, set_active_source, transmit ( @nforro )

* libcec4 compatibility ( @nforro )

### 0.2.5 ( 2016-03-31 )

* re-release of version 0.2.4. Original release failed and version number is now lost

### 0.2.4 ( 2016-03-31 )

* libcec3 compatibility

### 0.2.3 ( 2014-12-28 )

* Add device.h to manifest

* Initial pip release

### 0.2.2 ( 2014-06-08 )

* Fix deadlock

* Add repr for Device

### 0.2.1 ( 2014-03-03 )

* Fix deadlock in Device

### 0.2.0 ( 2014-03-03 )

* Add initial callback implementation

* Fix libcec 1.6.0 backwards compatibility support

### 0.1.1 ( 2013-11-26 )

* Add libcec 1.6.0 backwards compatibility

* Known Bug: no longer compatible with libcec 2.1.0 and later

### 0.1.0 ( 2013-11-03 )

* First stable release

## Copyright

Copyright (C) 2013 Austin Hendrix <namniart@gmail.com>

This program is free software: you can redistribute it and/or modify

it under the terms of the GNU General Public License as published by

the Free Software Foundation, either version 2 of the License, or

(at your option) any later version.

This program is distributed in the hope that it will be useful,

but WITHOUT ANY WARRANTY; without even the implied warranty of

MERCHANTABILITY or FITNESS FOR A PARTICULAR PURPOSE. See the

GNU General Public License for more details.

You should have received a copy of the GNU General Public License

along with this program. If not, see <http://www.gnu.org/licenses/>.

| text/markdown | Steffan Pease | steffan@pod-mail.net | null | null | GPLv2 | null | [] | [] | https://gitlab.com/spease/ironcast-cec | null | null | [] | [] | [] | [] | [] | [] | [] | [

"Bug Tracker, https://gitlab.com/spease/ironcast-cec/issues"

] | twine/6.2.0 CPython/3.13.12 | 2026-02-19T02:53:33.580903 | ironcast_cec-0.3.0.tar.gz | 24,068 | e7/8a/bc36a3f2c3faa52de45bc91e3aa83e4581cc1d9ddbde32ca866841924d38/ironcast_cec-0.3.0.tar.gz | source | sdist | null | false | c565476e84916d1acd7a1e317a8e091e | fa5302d03e06eb3449c96bbe57023e387c57e8c21266a0cbe14247fba0b8ad47 | e78abc36a3f2c3faa52de45bc91e3aa83e4581cc1d9ddbde32ca866841924d38 | null | [

"LICENSE",

"COPYING"

] | 172 |

2.4 | aquakit | 1.0.0 | Refractive multi-camera geometry foundation for the Aqua ecosystem | # AquaKit

Refractive multi-camera geometry foundation for the Aqua ecosystem. Provides shared PyTorch implementations of Snell's law refraction, camera models, triangulation, pose transforms, calibration loading, and synchronized multi-camera I/O — consumed by [AquaCal](https://github.com/tlancaster6/AquaCal), [AquaMVS](https://github.com/tlancaster6/AquaMVS), and AquaPose.

## Installation

AquaKit requires PyTorch but does not bundle it, so you can choose the build that matches your hardware. Install PyTorch first, then AquaKit:

```bash

# CPU only

pip install torch

pip install aquakit

# CUDA (example: CUDA 12.4 — see https://pytorch.org/get-started for other versions)

pip install torch --index-url https://download.pytorch.org/whl/cu124

pip install aquakit

```

## Quick Start

```python

import torch

from aquakit import CameraIntrinsics, CameraExtrinsics, InterfaceParams

from aquakit import create_camera, snells_law_3d, triangulate_rays

# Load calibration from AquaCal JSON

from aquakit import load_calibration_data

calib = load_calibration_data("path/to/aquacal.json")

```

## Development

```bash

# Set up the development environment

pip install hatch

hatch env create

hatch run pre-commit install

hatch run pre-commit install --hook-type pre-push

# Run tests, lint, and type check

hatch run test

hatch run lint

hatch run typecheck

```

See [Contributing](docs/contributing.md) for full development guidelines.

## Documentation

Full documentation is available at [aquakit.readthedocs.io](https://aquakit.readthedocs.io).

## License

[MIT](LICENSE)

| text/markdown | Tucker Lancaster | null | null | null | MIT | 3d-reconstruction, calibration, camera-geometry, computer-vision, multi-camera, pytorch, refraction, triangulation, underwater | [

"Development Status :: 3 - Alpha",

"Intended Audience :: Developers",

"Intended Audience :: Science/Research",

"License :: OSI Approved :: MIT License",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.11",

"Programming Language :: Python :: 3.12",

"Programming Language :: P... | [] | null | null | >=3.11 | [] | [] | [] | [

"numpy>=1.24",

"opencv-python>=4.8",

"kornia>=0.7; extra == \"kornia\""

] | [] | [] | [] | [

"Homepage, https://github.com/tlancaster6/aquakit",

"Documentation, https://aquakit.readthedocs.io",

"Repository, https://github.com/tlancaster6/aquakit",

"Issues, https://github.com/tlancaster6/aquakit/issues"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T02:53:33.254694 | aquakit-1.0.0.tar.gz | 58,517 | a6/de/b5df8b710ead0168fc023eefbd80f9bbdddf78360ccabc018565428d661e/aquakit-1.0.0.tar.gz | source | sdist | null | false | 38d8c5314014567a230619f9062f61da | 8ce2d6734e2eb10f6e63d25686e52cbf0fcca67e78f13075976967b8575f9381 | a6deb5df8b710ead0168fc023eefbd80f9bbdddf78360ccabc018565428d661e | null | [

"LICENSE"

] | 249 |

2.4 | nomadicml | 0.1.37 | Python SDK for NomadicML's DriveMonitor API | # NomadicML Python SDK

A Python client library for the NomadicML DriveMonitor API, allowing you to upload and analyze driving videos programmatically.

## Installation

### From PyPI (for users)

```bash

pip install nomadicml

```

### For Development (from source)

To install the package in development mode, where changes to the code will be immediately reflected without reinstallation:

```bash

# Clone the repository

git clone https://github.com/nomadic-ml/drivemonitor.git

cd sdk

# For development: Install in editable mode

pip install -e .

```

With this installation, any changes you make to the code will be immediately available when you import the package.

## Quick Start

```python

from nomadicml import NomadicML

# Initialize the client with your API key

client = NomadicML(api_key="your_api_key")

# Upload a video and analyze it in one step

result = client.video.upload_and_analyze("path/to/your/video.mp4")

# Print the detected events

for event in result["events"]:

print(f"Event: {event['type']} at {event['time']}s - {event['description']}")

#For a batch upload

videos_list = [.....]#list of video paths

batch_results = client.video.upload_and_analyze_videos(videos_list, wait_for_completion=False)

video_ids = [

res.get("video_id")

for res in batch_results

if res # safety for None

]

full_results = client.video.wait_for_analyses(video_ids)

```

## Authentication

You need an API key to use the NomadicML API. You can get one by:

1. Log in to your DriveMonitor account

2. Go to Profile > API Key

3. Generate a new API key

Then use this key when initializing the client:

```python

client = NomadicML(api_key="your_api_key")

```

## Video Upload and Analysis

### Upload a video

```python

# Preferred: upload with the high-level helper

upload_result = client.video.upload(

"path/to/video.mp4",

metadata_file="path/to/overlay_schema.json", # optional

wait_for_uploaded=True,

)

video_id = upload_result["video_id"]

# Legacy helpers remain available if you need fine-grained control

result = client.video.upload_video(

source="file",

file_path="path/to/video.mp4"

)

```

The `metadata_file` argument is optional and accepts any of the following:

- Path to a JSON metadata file describing per-frame overlay fields

- A Python `dict` that can be serialised to the Nomadic overlay schema

- Raw JSON string or UTF-8 bytes containing the schema

When provided, the SDK sends the schema to `/api/upload-video` so the backend

can extract on-screen telemetry (timestamps, GPS, speed, etc.) during later

analyses. If you specify `metadata_file` while uploading multiple videos at

once, the SDK will raise a `ValidationError`—attach metadata on single uploads

only.

### Upload videos stored in Google Cloud Storage

You can import `.mp4` objects directly from GCS once you have saved their

credentials as a cloud integration:

```python

# Trigger imports without re-downloading files locally

upload_result = client.video.upload([

"gs://drive-monitor/uploads/trip-042/video_front.mp4",

"gs://drive-monitor/uploads/trip-042/video_rear.mp4",

],

folder="Fleet Library",

wait_for_uploaded=False, # async import – poll later if you prefer

)

# Provide an explicit integration id when you have multiple saved credentials

upload_result = client.video.upload([

"gs://drive-monitor/uploads/trip-042/video_front.mp4",

],

integration_id="gcs_int_123",

)

```

Rules for the GCS path:

- Only `.mp4` objects are accepted today.

- All URIs within a single call must share the same bucket.

- Pass either a single string or a list of literal blob URIs—wildcards are not

supported.

- If you omit `integration_id`, the SDK tries each saved integration whose

bucket matches the URI until one succeeds. Provide the id explicitly when multiple

integrations share the bucket.

To discover the ids you have already saved (for example, those created through

the DriveMonitor UI) call:

```python

for item in client.cloud_integrations.list(type="gcs"):

print(item["name"], item["bucket"], item["id"])

```

### Analyze a video

```python

from nomadicml.video import AnalysisType, CustomCategory

analysis = client.video.analyze(

video_id,

analysis_type=AnalysisType.ASK,

custom_event="Did the driver stop before the crosswalk?",

custom_category=CustomCategory.DRIVING,

overlay={"timestamps": True, "gps": True}, # optional OCR flags

)

events = analysis.get("events", [])

```

Overlay extraction is controlled via the optional `overlay` dictionary:

- `timestamps=True` enables OCR of on-screen frame timestamps.

- `gps=True` adds latitude/longitude extraction (timestamps are implied).

- `custom=True` activates Nomadic overlay mode, instructing the backend to use

any supplied metadata schema for full telemetry capture. This also implies

`timestamps=True`.

Each event returned by the SDK now includes an `overlay` dictionary. Overlay

entries are keyed by the field name (for example `frame_timestamp`,

`frame_speed`, etc.) and map to `{"start": ..., "end": ...}` pairs with the

values that were read from the video frames or metadata.

### Generate an ASAM OpenODD CSV

The client exposes a top-level helper, `client.generate_structured_odd(...)`,

that mirrors the DriveMonitor UI workflow and accepts the same column schema.

You can reuse the SDK’s built-in `DEFAULT_STRUCTURED_ODD_COLUMNS` constant or

pass your own list of definitions.

```python

from nomadicml import NomadicML, DEFAULT_STRUCTURED_ODD_COLUMNS

client = NomadicML(api_key="your_api_key")

# Optionally customise the column schema before calling the export.

columns = [

{

"name": "timestamp",

"prompt": "Log the timestamp in ISO 8601 format (placeholder date 2024-01-01).",

"type": "YYYY-MM-DDTHH:MM:SSZ",

},

{

"name": "scenery.road.type",

"prompt": "The type of road the vehicle is on.",

"type": "categorical",

"literals": ["motorway", "rural", "urban_street", "parking_lot", "unpaved", "unknown"],

},

# ...add or tweak additional columns...

]

odd = client.generate_structured_odd(

video_id="VIDEO_ID_FROM_UPLOAD",

columns=columns or DEFAULT_STRUCTURED_ODD_COLUMNS,

)

csv_text = odd["csv"]

share_url = odd.get("share_url")

print(csv_text.splitlines()[0]) # Header row

```

If you customise the schema in the DriveMonitor UI, use the **Copy SDK snippet**

button to paste a ready-made Python snippet that mirrors the on-screen column

configuration. The SDK automatically mirrors the Firestore reasoning trace path

and returns any generated share links together with the CSV data.

### Upload and analyze in one step

```python

# Upload and analyze a video, waiting for results

analysis = client.video.upload_and_analyze("path/to/video.mp4")

# Or just start the process without waiting

result = client.video.upload_and_analyze("path/to/video.mp4", wait_for_completion=False)

```

## Advanced Usage

### Filter events by severity or type

```python

# Get only high severity events

high_severity_events = client.video.get_video_events(

video_id=video_id,

severity="high"

)

# Get only traffic violation events

traffic_violations = client.video.get_video_events(

video_id=video_id,

event_type="Traffic Violation"

)

```

### Custom timeout and polling interval

```python

# Wait for analysis with a custom timeout and polling interval

client.video.wait_for_analysis(

video_id=video_id,

timeout=1200, # 20 minutes

poll_interval=10 # Check every 10 seconds

)

```

### Batch analyses across many videos

When you provide a list of video IDs to `client.video.analyze(...)`, the SDK now

creates a backend batch automatically (for both Asking Agent and Edge Agent

pipelines) and keeps polling the `/batch/{batch_id}/status` endpoint until the

orchestrator finishes. The return value is a dictionary with two keys:

* `batch_metadata` — contains the `batch_id`, a fully-qualified

`batch_viewer_url` pointing at the Batch Results Viewer, and a

`batch_type` flag (`"ask"` or `"agent"`).

* `results` — the list of per-video analysis dictionaries (exactly the same

schema you would get from calling `analyze()` on a single video).

### List videos in a folder

Use `my_videos()` to list videos and check their upload status:

```python

# List all videos in a folder

videos = client.my_videos(folder="My-Fleet-Videos")

# Check which videos are ready for analysis

for video in videos:

print(f"{video['video_name']}: {video['status']}")

# Filter to only uploaded (ready) videos

ready_videos = [v for v in videos if v["status"] == "uploaded"]

```

Each video dict contains:

| Field | Description |

|-------|-------------|

| `video_id` | Unique identifier |

| `video_name` | Original filename |

| `duration_s` | Video duration in seconds |

| `folder_id` | Folder identifier |

| `status` | Upload status (see below) |

| `folder_name` | Folder name (if in a folder) |

| `org_id` | Organization ID (if org-scoped) |

**Upload status values:**

| Status | Meaning |

|--------|---------|

| `processing` | Upload in progress |

| `uploading_failed` | Upload failed |

| `uploaded` | Ready for analysis |

### Manage cloud integrations

The SDK exposes a dedicated helper to manage saved cloud credentials:

```python

# List every integration visible to your user/org

integrations = client.cloud_integrations.list()

# Filter by provider (either "gcs" or "s3")

gcs_only = client.cloud_integrations.list(type="gcs")

# Add a new S3 integration using AWS keys

client.cloud_integrations.add(

type="s3",

name="AWS archive",

bucket="drive-archive",

prefix="raw/",

region="us-east-1",

credentials={

"accessKeyId": "...",

"secretAccessKey": "...",

"sessionToken": "...", # optional

},

)

```

Once an integration exists, you only need its `id` when pulling files directly

from the bucket. Call `client.upload("gs://bucket/path.mp4", integration_id="...")`

or `client.upload("s3://bucket/path.mp4", integration_id="...")` and the SDK

will hand the request to the correct backend importer. Credentials are never

embedded in the upload request body.

## BEFORE DEPLOYIN RUN THIS: Running SDK integration tests locally

The integration suite is tagged with `calls_api` and exercises the live backend

endpoints. Make sure you have a valid API key and a backend domain reachable

from your environment, then run:

```bash

cd sdk

export NOMADICML_API_KEY=YOUR_API_KEY

export VITE_BACKEND_DOMAIN=http://127.0.0.1:8099

python -u -m pytest -m calls_api -vvs -rPfE --durations=0 --capture=no tests/test_integration.py

```

The command disables pytest's output capture so you can follow streaming logs

while the long-running tests execute.

```python

from nomadicml.video import AnalysisType, CustomCategory

batch = client.video.analyze(

["video_1", "video_2", "video_3"],

analysis_type=AnalysisType.ASK,

custom_event="Did the driver stop before the crosswalk?",

custom_category=CustomCategory.DRIVING,

)

print(batch["batch_metadata"])

for item in batch["results"]:

print(item["video_id"], item["analysis_id"], len(item.get("events", [])))

```

### Custom API endpoint

If you're using a custom deployment of the DriveMonitor backend:

```python

# Connect to a local or custom deployment

client = NomadicML(

api_key="your_api_key",

base_url="http://localhost:8099"

)

```

### Search across videos

Run a semantic search on several of your videos at once:

```python

results = client.video.search(

"red pickup truck overtaking",

["vid123", "vid456"]

)

for match in results["matches"]:

print(match["videoId"], match["eventIndex"], match["similarity"])

```

## Error Handling

The SDK provides specific exceptions for different error types:

```python

from nomadicml import NomadicMLError, AuthenticationError, VideoUploadError

try:

client.video.upload_and_analyze("path/to/video.mp4")

except AuthenticationError:

print("API key is invalid or expired")

except VideoUploadError as e:

print(f"Failed to upload video: {e}")

except NomadicMLError as e:

print(f"An error occurred: {e}")

```

## Development

### Setup

Clone the repository and install development dependencies:

```bash

git clone https://github.com/nomadicml/nomadicml-python.git

cd nomadicml-python

pip install -e ".[dev]"

```

### Running tests

```bash

pytest

```

## License

MIT License. See LICENSE file for details.

| text/markdown | NomadicML Inc | info@nomadicml.com | null | null | null | null | [

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.8",

"Programming Language :: Python :: 3.9",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"License :: OSI Approved :: MIT License",

"Operating System :: OS Independent",

"Development Stat... | [] | null | null | >=3.8 | [] | [] | [] | [

"requests>=2.25.0",

"typing-extensions>=3.10.0",

"backoff>=2.2.0",

"pytest>=7.0.0; extra == \"dev\"",

"pytest-cov>=4.0.0; extra == \"dev\"",

"black>=23.0.0; extra == \"dev\"",

"isort>=5.12.0; extra == \"dev\"",

"flake8>=6.0.0; extra == \"dev\"",

"mypy>=1.0.0; extra == \"dev\"",

"types-requests>=2.... | [] | [] | [] | [] | twine/6.2.0 CPython/3.14.3 | 2026-02-19T02:53:22.688649 | nomadicml-0.1.37.tar.gz | 76,981 | 54/b1/ab4c8bcde678c653a2f5831a45dd0e9105758c00992d7544250eec9ce996/nomadicml-0.1.37.tar.gz | source | sdist | null | false | 0a4e5f84111004e8f85cb2096efd4f72 | de2ad0c9dd0f942260b4911fa5a4d43c1b0e394f148e86b0a4b26c4fdc59af74 | 54b1ab4c8bcde678c653a2f5831a45dd0e9105758c00992d7544250eec9ce996 | null | [

"LICENSE"

] | 625 |

2.4 | py-shall | 0.0.1 | A novel mocking library for Python | # shall

A novel mocking library for Python. Coming soon.

| text/markdown | Tom Meyer | null | null | null | null | null | [

"Development Status :: 1 - Planning",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Programming Language :: Python :: 3",

"Topic :: Software Development :: Testing",

"Topic :: Software Development :: Testing :: Mocking"

] | [] | null | null | >=3.12 | [] | [] | [] | [] | [] | [] | [] | [

"Repository, https://github.com/tegular/shall"

] | uv/0.6.10 | 2026-02-19T02:50:18.741033 | py_shall-0.0.1.tar.gz | 1,416 | ba/a4/16dbb2461e502d6baaa47be3bef40755209643a7cc3ba1e88d18449469bc/py_shall-0.0.1.tar.gz | source | sdist | null | false | 513b7e6b87eca2778d556e096ab288e2 | 618b4d6b3e1e125ef5c0f0076cca415e64b6cdb8749b8ca43abf1a7794878c60 | baa416dbb2461e502d6baaa47be3bef40755209643a7cc3ba1e88d18449469bc | MIT | [] | 249 |

2.1 | odoo-addon-connector-jira-servicedesk | 17.0.1.0.0.3 | JIRA Connector - Service Desk Extension | .. image:: https://odoo-community.org/readme-banner-image

:target: https://odoo-community.org/get-involved?utm_source=readme

:alt: Odoo Community Association

=======================================

JIRA Connector - Service Desk Extension

=======================================

..

!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!

!! This file is generated by oca-gen-addon-readme !!

!! changes will be overwritten. !!

!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!

!! source digest: sha256:c3b2a31720d701926953c19c20859ab28d3a38e7ab429793d23f8518666dcd46

!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!!

.. |badge1| image:: https://img.shields.io/badge/maturity-Beta-yellow.png

:target: https://odoo-community.org/page/development-status

:alt: Beta

.. |badge2| image:: https://img.shields.io/badge/license-AGPL--3-blue.png

:target: http://www.gnu.org/licenses/agpl-3.0-standalone.html

:alt: License: AGPL-3

.. |badge3| image:: https://img.shields.io/badge/github-OCA%2Fconnector--jira-lightgray.png?logo=github

:target: https://github.com/OCA/connector-jira/tree/17.0/connector_jira_servicedesk

:alt: OCA/connector-jira

.. |badge4| image:: https://img.shields.io/badge/weblate-Translate%20me-F47D42.png

:target: https://translation.odoo-community.org/projects/connector-jira-17-0/connector-jira-17-0-connector_jira_servicedesk

:alt: Translate me on Weblate

.. |badge5| image:: https://img.shields.io/badge/runboat-Try%20me-875A7B.png

:target: https://runboat.odoo-community.org/builds?repo=OCA/connector-jira&target_branch=17.0

:alt: Try me on Runboat

|badge1| |badge2| |badge3| |badge4| |badge5|

This module add support with jira servicedesk

**Table of contents**

.. contents::

:local:

Usage

=====

Setup

-----

A new button is added on the JIRA backend, to import the organizations

of JIRA. Before, be sure to use the button "Configure Organization Link"

in the "Advanced Configuration" tab.

Features

--------

Organizations

-------------

On Service Desk, you can share projects with Organizations. You may want

to use different Odoo projects according to the organizations. This is

what this extension allows.

Example:

- You have one Service Desk project named "Earth Project" with key EARTH

- On JIRA SD You share this project with organizations Themis and Rhea

- However on Odoo, you want to track the hours differently for Themis

and Rhea

Steps on Odoo:

- Create a Themis project, use the "Link with JIRA" action with the key

EARTH

- When you hit Next, the organization(s) you want to link must be set

- Repeat with another project for Rhea

If the project binding for the synchronization already exists, you can

still edit it in the settings of the project and change the

organizations.

When a task or worklog is imported, it will search for a project having

exactly the same set of organizations than the one of the task. If no

project with the same set is found and you have a project configured

without organization, the task will be linked to it.

This means that, on Odoo, you can have shared project altogether with

dedicated ones, while you only have one project on JIRA.

- Tasks with org "Themis" will be attached to this project

- Tasks with org "Rhea" will be attached to this project

- Tasks with orgs "Themis" and "Rhea" will be attached to another

project "Themis and Rhea"

- The rest of the tasks will be attached to a fourth project (configured

without organizations)

Bug Tracker

===========

Bugs are tracked on `GitHub Issues <https://github.com/OCA/connector-jira/issues>`_.

In case of trouble, please check there if your issue has already been reported.

If you spotted it first, help us to smash it by providing a detailed and welcomed

`feedback <https://github.com/OCA/connector-jira/issues/new?body=module:%20connector_jira_servicedesk%0Aversion:%2017.0%0A%0A**Steps%20to%20reproduce**%0A-%20...%0A%0A**Current%20behavior**%0A%0A**Expected%20behavior**>`_.

Do not contact contributors directly about support or help with technical issues.

Credits

=======

Authors

-------

* Camptocamp

Contributors

------------

- Jaime Arroyo

- `Camptocamp <https://camptocamp.com>`__:

- Patrick Tombez <patrick.tombez@camptocamp.com>

- Guewen Baconnier <guewen.baconnier@camptocamp.com>

- Akim Juillerat <akim.juillerat@camptocamp.com>

- Denis Leemann <denis.leemann@camptocamp.com>

- `Trobz <https://trobz.com>`__:

- Son Ho <sonhd@trobz.com>

Maintainers

-----------

This module is maintained by the OCA.

.. image:: https://odoo-community.org/logo.png

:alt: Odoo Community Association

:target: https://odoo-community.org

OCA, or the Odoo Community Association, is a nonprofit organization whose

mission is to support the collaborative development of Odoo features and

promote its widespread use.

This module is part of the `OCA/connector-jira <https://github.com/OCA/connector-jira/tree/17.0/connector_jira_servicedesk>`_ project on GitHub.

You are welcome to contribute. To learn how please visit https://odoo-community.org/page/Contribute.

| text/x-rst | Camptocamp,Odoo Community Association (OCA) | support@odoo-community.org | null | null | AGPL-3 | null | [

"Programming Language :: Python",

"Framework :: Odoo",

"Framework :: Odoo :: 17.0",

"License :: OSI Approved :: GNU Affero General Public License v3"

] | [] | https://github.com/OCA/connector-jira | null | >=3.10 | [] | [] | [] | [

"odoo-addon-connector_jira<17.1dev,>=17.0dev",

"odoo<17.1dev,>=17.0a"

] | [] | [] | [] | [] | twine/6.2.0 CPython/3.12.3 | 2026-02-19T02:47:14.425890 | odoo_addon_connector_jira_servicedesk-17.0.1.0.0.3-py3-none-any.whl | 51,780 | 7f/57/98f14ac9d89f40170f26be548ec9bf038af60df3816f701254b36e3d9125/odoo_addon_connector_jira_servicedesk-17.0.1.0.0.3-py3-none-any.whl | py3 | bdist_wheel | null | false | c4bb59a1a211015c63b53d2bcdce3008 | c9aafa6055ee7eb815c33cc6fa5352ce07ab1b394734f64fb8b1d793f24355e8 | 7f5798f14ac9d89f40170f26be548ec9bf038af60df3816f701254b36e3d9125 | null | [] | 103 |

2.3 | mxbiflow | 0.3.8 | mxbiflow is a toolkit based on pygame and pymxbi | # mxbiflow

A framework for building multi-animal, multi-stage behavioral neuroscience experiments with touchscreen interfaces.

## Overview

mxbiflow provides the core infrastructure for cognitive and behavioral experiment scheduling. It handles the experiment lifecycle — from configuration wizards and session management to real-time scene rendering and data logging — so you can focus on designing your experiment logic.

## Architecture

```

┌─────────────────────────────────────────────────────────┐

│ mxbiflow │

│ │

│ Wizard (PySide6) Game Loop (pygame-ce) │

│ ┌────────────────┐ ┌───────────────────┐ │

│ │ MXBIPanel │ │ SceneManager │ │

│ │ ExperimentPanel│ ──────▶ │ ├─ Scene A │ │

│ └────────────────┘ │ ├─ Scene B │ │

│ │ └─ ... │ │

│ │ │ │

│ │ Scheduler │ │

│ │ DetectorBridge │ │

│ └───────────────────┘ │

│ │

│ ConfigStore ◄──── JSON config files │

│ DataLogger ────► session data output │

└─────────────────────────────────────────────────────────┘

│

▼

┌───────────────────┐

│ pymxbi │

│ RFID / Rewarder │

│ Detector / Audio │

└───────────────────┘

```

## Usage

Implement your experiment as a set of scenes, register them, and launch:

```python

from mxbiflow import set_base_path

from mxbiflow.scene import SceneManager

from mxbiflow.wizard import config_wizard, init_gameloop

scene_manager = SceneManager()

scene_manager.register([IDLE, Detect, Discriminate])

config_wizard(scene_manager)

game = init_gameloop(scene_manager)

game.play()

```

Each scene implements `SceneProtocol`:

```python

class MyScene:

_running: bool

level_table: dict[str, list[int]] = {"default": [1, 2, 3]}

def start(self) -> None: ...

def quit(self) -> None: ...

@property

def running(self) -> bool: ...

def handle_event(self, event: Event) -> None: ...

def update(self, dt_s: float) -> None: ...

def draw(self, screen: Surface) -> None: ...

```

## Installation

```shell

uv add mxbiflow

```

## Requirements

- Python 3.14+

- pygame-ce, PySide6, pymxbi

| text/markdown | HuYang | HuYang <huyangcommit@gmail.com> | null | null | null | null | [] | [] | null | null | >=3.14 | [] | [] | [] | [

"gpiozero>=2.0.1",

"inflection>=0.5.1",

"jinja2>=3.1.6",

"keyring>=25.6.0",

"loguru>=0.7.3",

"matplotlib>=3.10.6",

"mss>=10.1.0",

"numpy>=2.3.3",

"pandas>=2.3.2",

"pillow>=11.3.0",

"pyaudio>=0.2.14",

"pydantic>=2.11.7",

"pygame-ce>=2.5.6",

"pymotego>=0.1.3",

"pymxbi>=0.3.4",

"pyserial>... | [] | [] | [] | [] | uv/0.10.4 {"installer":{"name":"uv","version":"0.10.4","subcommand":["publish"]},"python":null,"implementation":{"name":null,"version":null},"distro":{"name":"Ubuntu","version":"24.04","id":"noble","libc":null},"system":{"name":null,"release":null},"cpu":null,"openssl_version":null,"setuptools_version":null,"rustc_version":null,"ci":true} | 2026-02-19T02:46:19.404857 | mxbiflow-0.3.8.tar.gz | 4,444,566 | 6a/f1/b6166a15070132cdb6a8482ad7820b890157430fe0910dcdce403caaa7a9/mxbiflow-0.3.8.tar.gz | source | sdist | null | false | cda95bf91e1c88dd398a9948764d5299 | 6754e68e5cfe050592d427cb3e5d51fc2bbf49fbb509e8072ec8f8edd72ce808 | 6af1b6166a15070132cdb6a8482ad7820b890157430fe0910dcdce403caaa7a9 | null | [] | 256 |

2.4 | FastSketchLSH | 0.2.0 | High-performance FastSketch with SIMD acceleration to deduplicate large-scale data | # FastSketchLSH

## Introduction

FastSketchLSH delivers a Python-first package that wraps a high-performance C++/SIMD implementation of Fast Similarity Sketch (see Dahlgaard et al., FOCS'17 [arXiv:1704.04370](https://arxiv.org/abs/1704.04370) for the underlying algorithm). The goal is to make Jaccard estimation and locality-sensitive hashing (LSH) practical for large dataset deduplication.

| Dataset | Engine | Sketch (s) | Build (s) | Query (s) | Total (s) | FastSketchLSH Sketch Speedup | FastSketchLSH Total Speedup |

|---------|--------|------------|-----------|-----------|-----------|--------------------------|------------------------|

| BOOKCORPUSOPEN | rensa | 198.545 | 0.026 | 0.018 | 198.589 | - | - |

| BOOKCORPUSOPEN | fastsketchlsh | 55.280 | 0.039 | 0.031 | 55.350 | 3.59× | 3.59× |

| BOOKS3 | rensa | 95.915 | 0.005 | 0.003 | 95.923 | - | - |

| BOOKS3 | fastsketchlsh | 28.440 | 0.008 | 0.007 | 28.455 | 3.37× | 3.37× |

| PINECONE | rensa | 3.929 | 0.141 | 0.153 | 4.223 | - | - |

| PINECONE | fastsketchlsh | 1.521 | 0.249 | 0.396 | 2.166 | 2.58× | 1.95× |

| SHUYUEJ | rensa | 3.749 | 0.037 | 0.044 | 3.830 | - | - |

| SHUYUEJ | fastsketchlsh | 1.132 | 0.093 | 0.121 | 1.346 | 3.31× | 2.85× |

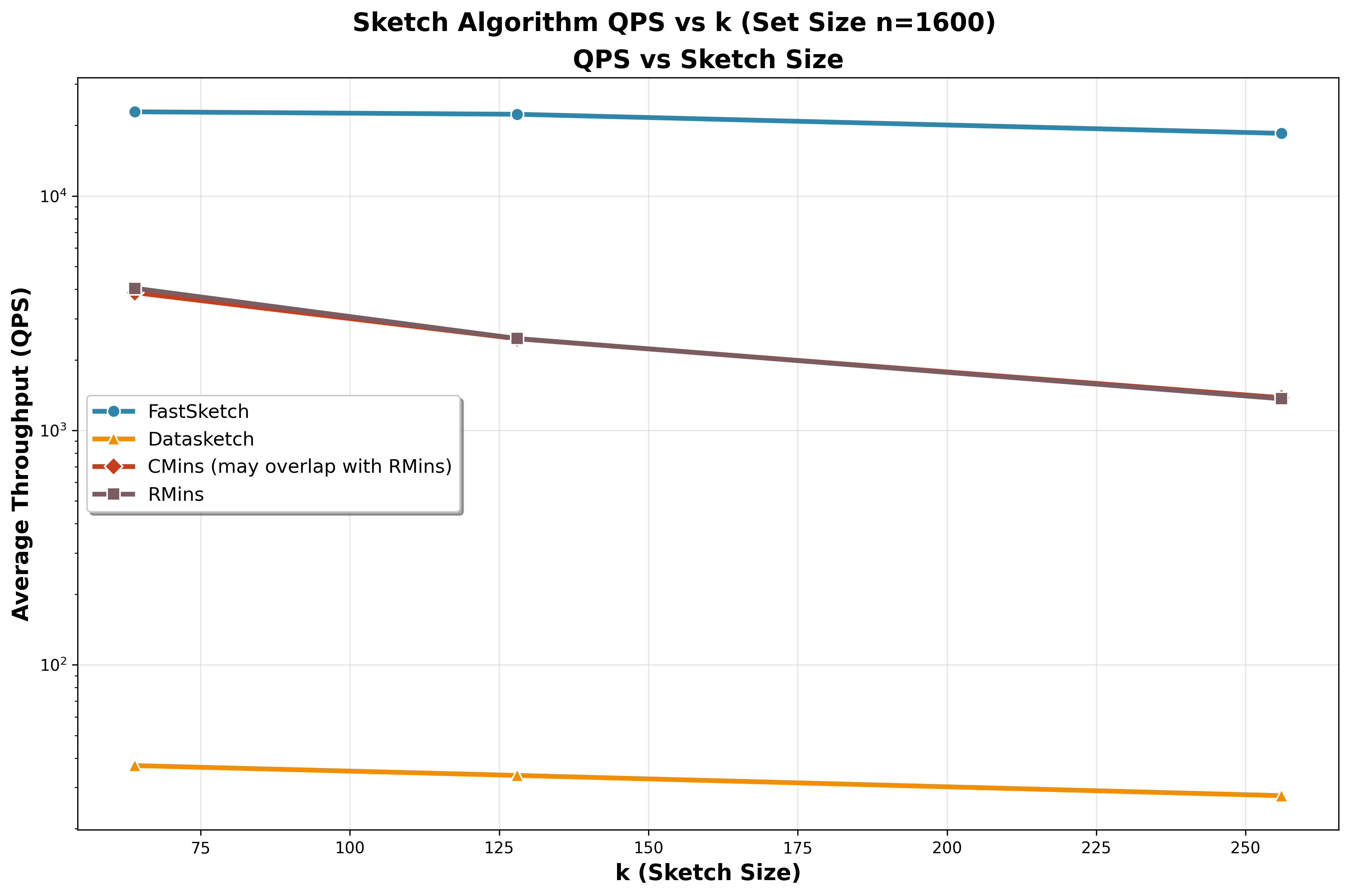

### Headline Results

- `FastSimilaritySketch` maintains **sub-millisecond** sketch times even when each set holds **1 600 tokens**, keeping the absolute Jaccard error around **0.03–0.06**.

- At the sketch level, FastSimilaritySketch stays **200×–990× faster** than `datasketch` MinHash and still **8×–23×** faster than Rensa’s `CMinHash`/`RMinHash`, while matching their accuracy—these gains matter most for large documents.

- End-to-end deduplication experiments show FastSketchLSH is typically **~2×–3.6× faster** than Rensa in single-thread runs.

- Ground-truth comparisons confirm FastSketchLSH matches or slightly exceeds the deduplication accuracy of both Rensa and datasketch.

## What's New in v0.2.0

**Pre-hashed input support** -- `sketch_prehashed`, `sketch_batch_prehashed`, and `sketch_batch_flat_csr_prehashed` methods now accept `np.uint64` or `np.int64` arrays of user-provided hash values directly. This skips the internal prehash phase entirely (no `hash_int32`, no `fnv1a64`), which is useful when you hash tokens yourself or reuse hash values across different sketch configurations.

```python

import numpy as np

from FastSketchLSH import FastSimilaritySketch

sketcher = FastSimilaritySketch(sketch_size=256, seed=42)

# Single sketch from pre-hashed values (zero-copy from NumPy)

hashes = np.array([0xDEAD, 0xBEEF, 0xCAFE, ...], dtype=np.uint64)

digest = sketcher.sketch_prehashed(hashes)

# Batch of pre-hashed arrays

batch = [np.array([...], dtype=np.uint64) for _ in range(1000)]

digests = sketcher.sketch_batch_prehashed(batch, num_threads=8)

# CSR layout for maximum throughput

data = np.array([...], dtype=np.uint64)

indptr = np.array([0, 120, 250, 500], dtype=np.uint64)

digests = sketcher.sketch_batch_flat_csr_prehashed(data, indptr, num_threads=8)

```

All prehashed paths share the same SIMD-accelerated Round 1 / Round 2 bucket-fill logic and OpenMP batch parallelism as the existing `sketch` methods.

## How It Works

- **Fast Similarity Sketching**: SIMD-accelerated permutations compress a set into a fixed-length signature, expected time `O(n + k log k)` with `O(k)` space.

- **Banded LSH**: Signature rows are grouped into bands; items colliding in any band become candidates for deduplication.

- **Python ergonomics**: Thin wrappers expose the C++ core, plus reference implementations of competing sketches for fair comparisons.

## Installation

> **Prerequisite:** Python 3.11 or newer. Support for Python 3.8 and older

> interpreters is on the roadmap.

### PyPI (recommended)

```bash

pip install fastsketchlsh

```

### Build from source

1. Build the native extension:

```bash

cd fastsketchlsh_ext

pip install .

```

This installs the `FastSketchLSH` Python module with SIMD kernels.

2. Install benchmark utilities (optional for reproducing experiments):

```bash

pip install -r requirements.txt

```

3. Activate your environment (e.g. `source .venv/bin/activate`) before running scripts.

## Quick Start

### Sketch two sets and estimate their Jaccard similarity

```python

from FastSketchLSH import FastSimilaritySketch, estimate_jaccard

# Build list_a with 16,000 tokens labeled "a-0" to "a-15999"

# Build list_b with 8,000 overlapping + 8,000 new tokens (true Jaccard = 1/3)

list_a = [f"a-{i}" for i in range(16_000)]

list_b = [f"a-{i}" for i in range(8_000)] + [f"b-{i}" for i in range(8_000)]

sketcher = FastSimilaritySketch(sketch_size=256)

sig_a = sketcher.sketch(list_a)

sig_b = sketcher.sketch(list_b)

estimated = estimate_jaccard(sig_a, sig_b)

print(f"Estimated Jaccard similarity: {estimated:.4f}")

```

### Deduplication with LSH

This end-to-end sample downloads a small slice of Hugging Face’s `lucadiliello/bookcorpusopen` corpus, sketches every document with `k=128`, and groups the signatures into `16` bands. Sketching each document costs `O(n + k log k)` time with `O(k)` space, while an LSH probe runs in `O(k + c)` where `c` is the number of retrieved candidates.

```python

from __future__ import annotations

from datasets import load_dataset

from FastSketchLSH import FastSimilaritySketch, LSH

def tokenize(text: str) -> list[str]:

return sorted({token for token in text.lower().split() if token})

# Here, 'train[:2048]' tells Hugging Face Datasets to select only the first 2048 rows from the 'train' split.

dataset = load_dataset(

"lucadiliello/bookcorpusopen",

split="train[:2048]")

texts = [row["text"] for row in dataset if row.get("text")]

token_sets = [tokenize(text) for text in texts]

sketcher = FastSimilaritySketch(sketch_size=128, seed=42)

# Use batch mode for faster sketching (much faster than one-by-one)

sketch_matrix = sketcher.sketch_batch(token_sets)

lsh = LSH(num_perm=128, num_bands=16)

lsh.build_from_batch(sketch_matrix)

doc_idx = 0

candidates = lsh.query_candidates(sketch_matrix[doc_idx])

print(f"Candidates for {doc_idx}:", candidates)

dup_flags = [1 if len(lsh.query_candidates(row)) > 1 else 0 for row in sketch_matrix]

print("Duplicate flags:", dup_flags)

print("Total duplicates detected:", sum(dup_flags))

```

## Experiment Summaries

- **Sketch microbenchmarks (`exps/sketch/`)**: Full write-up, CSVs, and plotting helpers demonstrating latency and accuracy versus `datasketch` and Rensa baselines. Reproduction steps live in `exps/sketch/README.md`.

- **Ground-truth accuracy (`exps/accuracy/`)**: Jaccard estimation and dedup quality measured against labelled datasets. See `exps/accuracy/README.md` for reproduction commands.

- **End-to-end pipelines (`exps/end2end/`)**: Thread-scaled deduplication sweeps on large corpora, plus scripts for batch comparisons. Details in `exps/end2end/README.md`.

Each experiment directory includes figures, CSV outputs, and exact command lines so you can replicate every result.

## Key Points

- FastSketchLSH packages a SIMD-backed sketch with Python convenience wrappers.

- Headline benchmarks show up to **990×** throughput gains over classic MinHash at comparable accuracy.

- Ready-to-run examples cover sketching, LSH-based deduplication, and full dataset experiments.

- For deeper reproduction details, consult the README in each experiment subdirectory.

## Future Work

- A MapReduce/Spark demo to deduplicate large datasets in distributed systems.

- A friendlier Python interface aligned with `datasketch` ergonomics.

## License

MIT. Research and educational use welcome.

| text/markdown | FastSketchLSH Authors | null | null | null | MIT | null | [] | [] | https://github.com/pzcddm/FastSketchLSH | null | >=3.11 | [] | [] | [] | [

"pybind11>=2.10",

"numpy>=1.21"

] | [] | [] | [] | [

"Source, https://github.com/pzcddm/FastSketchLSH",

"Issues, https://github.com/pzcddm/FastSketchLSH/issues"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T02:46:11.210336 | fastsketchlsh-0.2.0.tar.gz | 55,127 | 21/43/c574277552d9b9062e7d29f4236b2b901beab1aaa4b5194c7a562c553a23/fastsketchlsh-0.2.0.tar.gz | source | sdist | null | false | 2326367ae608440bedc24ec0cca7d68e | 6e772cf512ca21c4e8ecc4faf42e36cb5902465899651fcdf836953098633332 | 2143c574277552d9b9062e7d29f4236b2b901beab1aaa4b5194c7a562c553a23 | null | [

"LICENSE"

] | 0 |

2.4 | fm-rs | 0.1.5 | Python bindings for Apple's FoundationModels.framework | # fm-rs - Python bindings for Apple FoundationModels

Python bindings for [fm-rs](https://github.com/blacktop/fm-rs), enabling on-device AI via Apple Intelligence.

## Requirements

- **macOS 26.0+** (Tahoe) on **Apple Silicon (ARM64)**

- **Apple Intelligence enabled** in System Settings

- **Python 3.10+**

## Installation

```bash

pip install fm-rs

```

### From Source

```bash

# Requires Rust toolchain

cd bindings/python

uv sync

uv run maturin develop

```

## Quick Start

```python

import fm

# Create the default system language model

model = fm.SystemLanguageModel()

# Check availability

if not model.is_available:

print("Apple Intelligence is not available")

exit(1)

# Create a session

session = fm.Session(model, instructions="You are a helpful assistant.")

# Send a prompt

response = session.respond("What is the capital of France?")

print(response.content)

```

## Streaming

```python

import fm

model = fm.SystemLanguageModel()

session = fm.Session(model)

# Stream the response

session.stream_response(

"Tell me a short story",

lambda chunk: print(chunk, end="", flush=True)

)

print() # newline at end

```

## Structured Generation

```python

import fm

model = fm.SystemLanguageModel()

session = fm.Session(model)

# Using a dict schema

schema = {

"type": "object",

"properties": {

"name": {"type": "string"},

"age": {"type": "integer"}

},

"required": ["name", "age"]

}

person = session.respond_structured("Generate a fictional person", schema)

print(f"Name: {person['name']}, Age: {person['age']}")

# Using the Schema builder

schema = (fm.Schema.object()

.property("name", fm.Schema.string(), required=True)

.property("age", fm.Schema.integer().minimum(0), required=True))

person = session.respond_structured("Generate a fictional person", schema.to_dict())

```

## Tool Calling

Tools allow the model to call external functions during generation.

```python

import fm

class WeatherTool:

name = "get_weather"

description = "Gets the current weather for a location"

arguments_schema = {

"type": "object",

"properties": {

"city": {"type": "string", "description": "The city name"}

},

"required": ["city"]

}

def call(self, args):

city = args.get("city", "Unknown")

return f"Sunny, 72°F in {city}"

model = fm.SystemLanguageModel()

session = fm.Session(model, tools=[WeatherTool()])

response = session.respond("What's the weather in Paris?")

print(response.content)

```

## Context Management

```python

import fm

model = fm.SystemLanguageModel()

session = fm.Session(model)

# After some conversation...

limit = fm.ContextLimit.default_on_device()

usage = session.context_usage(limit)

print(f"Tokens used: {usage.estimated_tokens}/{usage.max_tokens}")

print(f"Utilization: {usage.utilization:.1%}")

if usage.over_limit:

# Compact the conversation

transcript = session.transcript_json

summary = fm.compact_transcript(model, transcript)

print(f"Summary: {summary}")

```

## Error Handling

```python

import fm

try:

model = fm.SystemLanguageModel()

model.ensure_available()

except fm.DeviceNotEligibleError:

print("This device doesn't support Apple Intelligence")

except fm.AppleIntelligenceNotEnabledError:

print("Please enable Apple Intelligence in Settings")

except fm.ModelNotReadyError:

print("Model is still downloading, try again later")

except fm.ModelNotAvailableError:

print("Model not available for unknown reason")

```

## API Reference

### Classes

- `SystemLanguageModel` - Entry point for on-device AI

- `Session` - Maintains conversation context

- `GenerationOptions` - Controls generation (temperature, max_tokens, etc.)

- `Response` - Model output

- `ToolOutput` - Tool invocation result

- `ContextLimit` - Context window configuration

- `ContextUsage` - Estimated token usage

- `Schema` - JSON Schema builder

### Enums

- `Sampling` - `Greedy` or `Random`

- `ModelAvailability` - `Available`, `DeviceNotEligible`, `AppleIntelligenceNotEnabled`, `ModelNotReady`, `Unknown`

### Functions

- `estimate_tokens(text, chars_per_token=4)` - Estimate token count

- `context_usage_from_transcript(json, limit)` - Get context usage

- `transcript_to_text(json)` - Extract text from transcript

- `compact_transcript(model, json)` - Summarize conversation

### Exceptions

- `FmError` - Base exception

- `ModelNotAvailableError`

- `DeviceNotEligibleError`

- `AppleIntelligenceNotEnabledError`

- `ModelNotReadyError`

- `GenerationError`

- `ToolCallError`

- `JsonError`

## Notes

- **Apple Silicon only**: Wheels are built for macOS ARM64 only (Apple Silicon Macs)

- **Tool callbacks**: May be invoked from non-main threads; avoid UI work in callbacks

- **Blocking calls**: All calls block until completion; use streaming for long responses

- **GIL**: Callbacks run under the GIL; keep them short

## Development

```bash

cd bindings/python

uv sync

uv run maturin develop

uv run pytest tests/

```

## License

MIT

| text/markdown; charset=UTF-8; variant=GFM | null | null | null | null | null | apple, ai, llm, foundation-models, apple-intelligence | [

"Development Status :: 4 - Beta",

"Intended Audience :: Developers",

"License :: OSI Approved :: MIT License",

"Operating System :: MacOS :: MacOS X",

"Programming Language :: Python :: 3",

"Programming Language :: Python :: 3.10",

"Programming Language :: Python :: 3.11",

"Programming Language :: Pyt... | [] | null | null | >=3.10 | [] | [] | [] | [] | [] | [] | [] | [

"Homepage, https://github.com/blacktop/fm-rs",

"Issues, https://github.com/blacktop/fm-rs/issues",

"Repository, https://github.com/blacktop/fm-rs"

] | twine/6.1.0 CPython/3.13.7 | 2026-02-19T02:45:54.637902 | fm_rs-0.1.5.tar.gz | 85,405 | c7/21/4d52aabd86b5bb3c2a94e63299242100bf1efd465f43d5873de583354a8d/fm_rs-0.1.5.tar.gz | source | sdist | null | false | 1049da500a1ab38a21d7903fb6bb4578 | c73b05536e3dab83294cc0f7934f413ee120883037ba600909a8d888d1cf5338 | c7214d52aabd86b5bb3c2a94e63299242100bf1efd465f43d5873de583354a8d | MIT | [] | 249 |

2.4 | zscaler-mcp | 0.6.2 | Official Zscaler Integrations MCP Server |

[](https://badge.fury.io/py/zscaler-mcp)

[](https://pypi.org/project/zscaler-mcp/)

[](https://zscaler-mcp-server.readthedocs.io/en/latest/?badge=latest)

[](https://codecov.io/gh/zscaler/zscaler-mcp-server)

[](https://github.com/zscaler/zscaler-mcp-server)

[](https://community.zscaler.com/)

**zscaler-mcp-server** is a Model Context Protocol (MCP) server that connects AI agents with the Zscaler Zero Trust Exchange platform. **By default, the server operates in read-only mode** for security, requiring explicit opt-in to enable write operations.

## Support Disclaimer

-> **Disclaimer:** Please refer to our [General Support Statement](https://github.com/zscaler/zscaler-mcp-server/blob/master/docs/guides/support.md) before proceeding with the use of this provider. You can also refer to our [troubleshooting guide](https://github.com/zscaler/zscaler-mcp-server/blob/master/docs/guides/TROUBLESHOOTING.md) for guidance on typical problems.

> [!IMPORTANT]

> **🚧 Public Preview**: This project is currently in public preview and under active development. Features and functionality may change before the stable 1.0 release. While we encourage exploration and testing, please avoid production deployments. We welcome your feedback through [GitHub Issues](https://github.com/zscaler/zscaler-mcp-server/issues) to help shape the final release.

## 📄 Table of contents

- [📺 Overview](#overview)

- [🔒 Security & Permissions](#security-permissions)

- [Supported Tools](#supported-tools)

- [Installation & Setup](#installation--setup)

- [Prerequisites](#prerequisites)

- [Environment Configuration](#environment-configuration)

- [Installation](#installation)

- [Usage](#usage)

- [Command Line](#command-line)

- [Service Configuration](#service-configuration)

- [Additional Command Line Options](#additional-command-line-options)

- [Zscaler API Credentials & Authentication](#zscaler-api-credentials-authentication)

- [Quick Start: Choose Your Authentication Method](#quick-start-choose-your-authentication-method)

- [OneAPI Authentication (Recommended)](#oneapi-authentication-recommended)

- [Legacy API Authentication](#legacy-api-authentication)

- [Authentication Troubleshooting](#authentication-troubleshooting)

- [MCP Server Configuration](#mcp-server-configuration)

- [As a Library](#as-a-library)

- [Container Usage](#container-usage)

- [Using Pre-built Image (Recommended)](#using-pre-built-image-recommended)

- [Building Locally (Development)](#building-locally-development)

- [Editor/Assistant Integration](#editor-assistant-integration)

- [Using `uvx` (recommended)](#using-uvx-recommended)

- [With Service Selection](#with-service-selection)

- [Using Individual Environment Variables](#using-individual-environment-variables)

- [Docker Version](#docker-version)

- [Additional Deployment Options](#additional-deployment-options)

- [Amazon Bedrock AgentCore](#amazon-bedrock-agentcore)

- [Using the MCP Server with Agents](#using-the-mcp-server-with-agents)

- [Claude Desktop](#claude-desktop)

- [Cursor](#cursor)

- [Visual Studio Code + GitHub Copilot](#visual-studio-code-github-copilot)

- [Troubleshooting](#troubleshooting)

- [License](#license)

## 📺 Overview

The Zscaler Integrations MCP Server brings context to your agents. Try prompts like:

- "List my ZPA Application segments"

- "List my ZPA Segment Groups"

- "List my ZIA Rule Labels"

> [!WARNING]

> **🔒 READ-ONLY BY DEFAULT**: For security, this MCP server operates in **read-only mode** by default. Only `list_*` and `get_*` operations are available. To enable tools that can **CREATE, UPDATE, or DELETE** Zscaler resources, you must explicitly enable write mode using the `--enable-write-tools` flag or by setting `ZSCALER_MCP_WRITE_ENABLED=true`. See the [Security & Permissions](#-security--permissions) section for details.

## 🔒 Security & Permissions

The Zscaler MCP Server implements a **security-first design** with granular permission controls and safe defaults:

### Read-Only Mode (Default - Always Available)

By default, the server operates in **read-only mode**, exposing only tools that list or retrieve information:

- ✅ **ALWAYS AVAILABLE** - Read-only tools are registered by the server

- ✅ Safe to use with AI agents autonomously

- ✅ No risk of accidental resource modification or deletion

- ✅ All `list_*` and `get_*` operations are available (110+ read-only tools)

- ❌ All `create_*`, `update_*`, and `delete_*` operations are disabled by default

- 💡 Note: You may need to enable read-only tools in your AI agent's UI settings

```bash

# Read-only mode (default - safe)

zscaler-mcp

```

When the server starts in read-only mode, you'll see:

```text

🔒 Server running in READ-ONLY mode (safe default)

Only list and get operations are available

To enable write operations, use --enable-write-tools AND --write-tools flags

```

> **💡 Read-only tools are ALWAYS registered** by the server regardless of any flags. You never need to enable them server-side. Note: Your AI agent UI (like Claude Desktop) may require you to enable individual tools before use.

### Write Mode (Explicit Opt-In - Allowlist REQUIRED)

To enable tools that can create, modify, or delete Zscaler resources, you must provide **BOTH** flags:

1. ✅ `--enable-write-tools` - Global unlock for write operations

2. ✅ `--write-tools "pattern"` - **MANDATORY** explicit allowlist

> **🔐 SECURITY: Allowlist is MANDATORY** - If you set `--enable-write-tools` without `--write-tools`, **0 write tools will be registered**. This ensures you consciously choose which write operations to enable.

```bash

# ❌ WRONG: This will NOT enable any write tools (allowlist missing)

zscaler-mcp --enable-write-tools

# ✅ CORRECT: Explicit allowlist required

zscaler-mcp --enable-write-tools --write-tools "zpa_create_*,zpa_delete_*"

```

When you try to enable write mode without an allowlist:

```text

⚠️ WRITE TOOLS MODE ENABLED

⚠️ NO allowlist provided - 0 write tools will be registered

⚠️ Read-only tools will still be available

⚠️ To enable write operations, add: --write-tools 'pattern'

```

#### Write Tools Allowlist (MANDATORY)

The allowlist provides **two-tier security**:

1. ✅ **First Gate**: `--enable-write-tools` must be set (global unlock)

2. ✅ **Second Gate**: Explicit allowlist determines which write tools are registered (MANDATORY)

**Allowlist Examples:**

```bash

# Enable ONLY specific write tools with wildcards

zscaler-mcp --enable-write-tools --write-tools "zpa_create_*,zpa_delete_*"

# Enable specific tools without wildcards

zscaler-mcp --enable-write-tools --write-tools "zpa_create_application_segment,zia_create_rule_label"

# Enable all ZPA write operations (but no ZIA/ZDX/ZTW)

zscaler-mcp --enable-write-tools --write-tools "zpa_*"

```

Or via environment variable:

```bash

export ZSCALER_MCP_WRITE_ENABLED=true

export ZSCALER_MCP_WRITE_TOOLS="zpa_create_*,zpa_delete_*"

zscaler-mcp

```

**Wildcard patterns supported:**

- `zpa_create_*` - Allow all ZPA creation tools

- `zpa_delete_*` - Allow all ZPA deletion tools

- `zpa_*` - Allow all ZPA write tools

- `*_application_segment` - Allow all operations on application segments

- `zpa_create_application_segment` - Exact match (no wildcard)

When using a valid allowlist, you'll see:

```text

⚠️ WRITE TOOLS MODE ENABLED

⚠️ Explicit allowlist provided - only listed write tools will be registered

⚠️ Allowed patterns: zpa_create_*, zpa_delete_*

⚠️ Server can CREATE, MODIFY, and DELETE Zscaler resources

🔒 Security: 85 write tools blocked by allowlist, 8 allowed

```

### Tool Design Philosophy

Each operation is a **separate, single-purpose tool** with explicit naming that makes its intent clear:

#### ✅ Good (Verb-Based - Current Design)

```text

zpa_list_application_segments ← Read-only, safe to allow-list

zpa_get_application_segment ← Read-only, safe to allow-list

zpa_create_application_segment ← Write operation, requires --enable-write-tools

zpa_update_application_segment ← Write operation, requires --enable-write-tools

zpa_delete_application_segment ← Destructive, requires --enable-write-tools

```

This design allows AI assistants (Claude, Cursor, GitHub Copilot) to:

- Allow-list read-only tools for autonomous exploration

- Require explicit user confirmation for write operations

- Clearly understand the intent of each tool from its name

### Security Layers

The server implements multiple layers of security (defense-in-depth):

1. **Read-Only Tools Always Enabled**: Safe `list_*` and `get_*` operations are always available (110+ tools)

2. **Default Write Mode Disabled**: Write tools are disabled unless explicitly enabled via `--enable-write-tools`

3. **Mandatory Allowlist**: Write operations require explicit `--write-tools` allowlist (wildcard support)

4. **Verb-Based Tool Naming**: Each tool clearly indicates its purpose (`list`, `get`, `create`, `update`, `delete`)

5. **Tool Metadata Annotations**: All tools are annotated with `readOnlyHint` or `destructiveHint` for AI agent frameworks

6. **AI Agent Confirmation**: All write tools marked with `destructiveHint=True` trigger permission dialogs in AI assistants

7. **Double Confirmation for DELETE**: Delete operations require both permission dialog AND server-side confirmation (extra protection for irreversible actions)

8. **Environment Variable Control**: `ZSCALER_MCP_WRITE_ENABLED` and `ZSCALER_MCP_WRITE_TOOLS` can be managed centrally

9. **Audit Logging**: All operations are logged for tracking and compliance

This multi-layered approach ensures that even if one security control is bypassed, others remain in place to prevent unauthorized operations.

**Key Security Principles**:

- No "enable all write tools" backdoor exists - allowlist is **mandatory**

- AI agents must request permission before executing any write operation (`destructiveHint`)

- Every destructive action requires explicit user approval through the AI agent's permission framework

### Best Practices

- **Read-Only by Default**: No configuration needed for safe operations - read-only tools are always available

- **Mandatory Allowlist**: Always provide explicit `--write-tools` allowlist when enabling write mode

- **Development/Testing**: Use narrow allowlists (e.g., `--write-tools "zpa_create_application_segment"`)

- **Production/Agents**: Keep server in read-only mode (default) for AI agents performing autonomous operations

- **CI/CD**: Never set `ZSCALER_MCP_WRITE_ENABLED=true` without a corresponding `ZSCALER_MCP_WRITE_TOOLS` allowlist

- **Least Privilege**: Use narrowest possible allowlist patterns for your use case

- **Wildcard Usage**: Use wildcards for service-level control (e.g., `zpa_create_*`) or operation-level control (e.g., `*_create_*`)

- **Audit Review**: Regularly review which write tools are allowlisted and remove unnecessary ones

## Supported Tools

The Zscaler Integrations MCP Server provides **150+ tools** for all major Zscaler services:

| Service | Description | Tools |

|---------|-------------|-------|

| **ZCC** | Zscaler Client Connector - Device management | 4 read-only |

| **ZDX** | Zscaler Digital Experience - Monitoring & analytics | 18 read-only |

| **ZIdentity** | Identity & access management | 3 read-only |

| **ZIA** | Zscaler Internet Access - Security policies | 60+ read/write |

| **ZPA** | Zscaler Private Access - Application access | 60+ read/write |

| **ZTW** | Zscaler Workload Segmentation | 20+ read/write |

| **EASM** | External Attack Surface Management | 7 read-only |

📖 **[View Complete Tools Reference →](docs/guides/supported-tools.md)**

> **Note:** All write operations require the `--enable-write-tools` flag and an explicit `--write-tools` allowlist. See the [Security & Permissions](#-security--permissions) section for details.

## Installation & Setup

### Prerequisites

- Python 3.11 or higher

- [`uv`](https://docs.astral.sh/uv/) or pip

- Zscaler API credentials (see below)

### Environment Configuration

Copy the example environment file and configure your credentials:

```bash

cp .env.example .env

```

Then edit `.env` with your Zscaler API credentials:

**Required Configuration (OneAPI):**

- `ZSCALER_CLIENT_ID`: Your Zscaler OAuth client ID

- `ZSCALER_CLIENT_SECRET`: Your Zscaler OAuth client secret

- `ZSCALER_CUSTOMER_ID`: Your Zscaler customer ID

- `ZSCALER_VANITY_DOMAIN`: Your Zscaler vanity domain

**Optional Configuration:**

- `ZSCALER_CLOUD`: (Optional) Zscaler cloud environment (e.g., `beta`) - Required when interacting with Beta Tenant ONLY.

- `ZSCALER_USE_LEGACY`: Enable legacy API mode (`true`/`false`, default: `false`)

- `ZSCALER_MCP_SERVICES`: Comma-separated list of services to enable (default: all services)

- `ZSCALER_MCP_TRANSPORT`: Transport method - `stdio`, `sse`, or `streamable-http` (default: `stdio`)

- `ZSCALER_MCP_DEBUG`: Enable debug logging - `true` or `false` (default: `false`)

- `ZSCALER_MCP_HOST`: Host for HTTP transports (default: `127.0.0.1`)

- `ZSCALER_MCP_PORT`: Port for HTTP transports (default: `8000`)

*Alternatively, you can set these as environment variables instead of using a `.env` file.*

> **Important**: Ensure your API client has the necessary permissions for the services you plan to use. You can always update permissions later in the Zscaler console.

### Installation

#### Install with VS Code (Quick Setup)

[](https://vscode.dev/redirect?url=vscode:mcp/install?%7B%22name%22%3A%22zscaler-mcp-server%22%2C%22type%22%3A%22stdio%22%2C%22command%22%3A%22uvx%22%2C%22args%22%3A%5B%22zscaler-mcp%22%5D%2C%22env%22%3A%7B%22ZSCALER_CLIENT_ID%22%3A%22%3CYOUR_CLIENT_ID%3E%22%2C%22ZSCALER_CLIENT_SECRET%22%3A%22%3CYOUR_CLIENT_SECRET%3E%22%2C%22ZSCALER_CUSTOMER_ID%22%3A%22%3CYOUR_CUSTOMER_ID%3E%22%2C%22ZSCALER_VANITY_DOMAIN%22%3A%22%3CYOUR_VANITY_DOMAIN%3E%22%7D%7D)

> **Note**: This will open VS Code and prompt you to configure the MCP server. You'll need to replace the placeholder values (`<YOUR_CLIENT_ID>`, etc.) with your actual Zscaler credentials.

#### Install using uv (recommended)

```bash

uv tool install zscaler-mcp

```

#### Install from source using uv (development)

```bash

uv pip install -e .

```

#### Install from source using pip

```bash

pip install -e .

```

#### Install using make (convenience)

```bash

make install-dev

```

> [!TIP]

> If `zscaler-mcp-server` isn't found, update your shell PATH.

For installation via code editors/assistants, see the [Using the MCP Server with Agents](#using-the-mcp-server-with-agents) section below.

## Usage

> [!NOTE]

> **Default Security Mode**: All examples below run in **read-only mode** by default (only `list_*` and `get_*` operations). To enable write operations (`create_*`, `update_*`, `delete_*`), add the `--enable-write-tools` flag to any command, or set `ZSCALER_MCP_WRITE_ENABLED=true` in your environment.

### Command Line

Run the server with default settings (stdio transport, read-only mode):

```bash

zscaler-mcp

```

Run the server with write operations enabled:

```bash

zscaler-mcp --enable-write-tools

```

Run with SSE transport:

```bash

zscaler-mcp --transport sse

```

Run with streamable-http transport:

```bash

zscaler-mcp --transport streamable-http

```

Run with streamable-http transport on custom port:

```bash

zscaler-mcp --transport streamable-http --host 0.0.0.0 --port 8080

```

### Service Configuration

The Zscaler Integrations MCP Server supports multiple ways to specify which services to enable:

#### 1. Command Line Arguments (highest priority)

Specify services using comma-separated lists:

```bash

# Enable specific services

zscaler-mcp --services zia,zpa,zdx

# Enable only one service

zscaler-mcp --services zia

```

#### 2. Environment Variable (fallback)

Set the `ZSCALER_MCP_SERVICES` environment variable:

```bash

# Export environment variable

export ZSCALER_MCP_SERVICES=zia,zpa,zdx

zscaler-mcp

# Or set inline

ZSCALER_MCP_SERVICES=zia,zpa,zdx zscaler-mcp

```

#### 3. Default Behavior (all services)

If no services are specified via command line or environment variable, all available services are enabled by default.

**Service Priority Order:**

1. Command line `--services` argument (overrides all)

2. `ZSCALER_MCP_SERVICES` environment variable (fallback)

3. All services (default when none specified)

### Additional Command Line Options

```bash

# Enable write operations (create, update, delete)

zscaler-mcp --enable-write-tools

# Enable debug logging

zscaler-mcp --debug

# Combine multiple options

zscaler-mcp --services zia,zpa --enable-write-tools --debug

```

For all available options:

```bash

zscaler-mcp --help

```

Available command-line flags:

- `--transport`: Transport protocol (`stdio`, `sse`, `streamable-http`)

- `--services`: Comma-separated list of services to enable

- `--tools`: Comma-separated list of specific tools to enable

- `--enable-write-tools`: Enable write operations (disabled by default for safety)

- `--debug`: Enable debug logging

- `--host`: Host for HTTP transports (default: `127.0.0.1`)

- `--port`: Port for HTTP transports (default: `8000`)

### Supported Agents

- [Claude](https://claude.ai/)

- [Cursor](https://cursor.so/)

- [VS Code](https://code.visualstudio.com/download) or [VS Code Insiders](https://code.visualstudio.com/insiders)

## Zscaler API Credentials & Authentication

The Zscaler Integrations MCP Server supports two authentication methods: **OneAPI (recommended)** and **Legacy API**. You must choose **ONE** method - do not mix them.

> [!IMPORTANT]

> **⚠️ CRITICAL: Choose ONE Authentication Method**

>

> - **OneAPI**: Single credential set for ALL services (ZIA, ZPA, ZCC, ZDX)

> - **Legacy**: Separate credentials required for EACH service

> - **DO NOT** set both OneAPI and Legacy credentials simultaneously

> - **DO NOT** set `ZSCALER_USE_LEGACY=true` if using OneAPI

### Quick Start: Choose Your Authentication Method

#### Option A: OneAPI (Recommended - Single Credential Set)

- ✅ **One set of credentials** works for ALL services (ZIA, ZPA, ZCC, ZDX, ZTW)

- ✅ Modern OAuth2.0 authentication via Zidentity

- ✅ Easier to manage and maintain

- ✅ Default authentication method (no flag needed)

- **Use this if:** You have access to Zidentity console and want simplicity

#### Option B: Legacy Mode (Per-Service Credentials)

- ⚠️ **Separate credentials** required for each service you want to use

- ⚠️ Different authentication methods per service (OAuth for ZPA, API key for ZIA, etc.)

- ⚠️ Must set `ZSCALER_USE_LEGACY=true` environment variable

- **Use this if:** You don't have OneAPI access or need per-service credential management

#### Decision Tree

```text

Do you have access to Zidentity console?

├─ YES → Use OneAPI (Option A)

└─ NO → Use Legacy Mode (Option B)

```

---

### OneAPI Authentication (Recommended)

OneAPI provides a single set of credentials that authenticate to all Zscaler services. This is the default and recommended method.

#### Prerequisites

Before using OneAPI, you need to:

1. Create an API Client in the [Zidentity platform](https://help.zscaler.com/zidentity/about-api-clients)

2. Obtain your credentials: `clientId`, `clientSecret`, `customerId`, and `vanityDomain`

3. Learn more: [Understanding OneAPI](https://help.zscaler.com/oneapi/understanding-oneapi)

#### Quick Setup

Create a `.env` file in your project root (or where you'll run the MCP server):

```env

# OneAPI Credentials (Required)

ZSCALER_CLIENT_ID=your_client_id

ZSCALER_CLIENT_SECRET=your_client_secret

ZSCALER_CUSTOMER_ID=your_customer_id

ZSCALER_VANITY_DOMAIN=your_vanity_domain

# Optional: Only required for Beta tenants

ZSCALER_CLOUD=beta

```

⚠️ **Security**: Do not commit `.env` to source control. Add it to your `.gitignore`.

#### OneAPI Environment Variables

| Environment Variable | Required | Description |

|---------------------|----------|-------------|

| `ZSCALER_CLIENT_ID` | Yes | Zscaler OAuth client ID from Zidentity console |

| `ZSCALER_CLIENT_SECRET` | Yes | Zscaler OAuth client secret from Zidentity console |

| `ZSCALER_CUSTOMER_ID` | Yes | Zscaler customer ID |

| `ZSCALER_VANITY_DOMAIN` | Yes | Your organization's vanity domain (e.g., `acme`) |

| `ZSCALER_CLOUD` | No | Zscaler cloud environment (e.g., `beta`, `zscalertwo`). **Only required for Beta tenants** |

| `ZSCALER_PRIVATE_KEY` | No | OAuth private key for JWT-based authentication (alternative to client secret) |

#### Verification

After setting up your `.env` file, test the connection:

```bash

# Test with a simple command

zscaler-mcp

```

If authentication is successful, the server will start without errors. If you see authentication errors, verify:

- All required environment variables are set correctly

- Your API client has the necessary permissions in Zidentity

- Your credentials are valid and not expired

---

### Legacy API Authentication

Legacy mode requires separate credentials for each Zscaler service. This method is only needed if you don't have access to OneAPI.

> [!WARNING]

> **⚠️ IMPORTANT**: When using Legacy mode:

>

> - You **MUST** set `ZSCALER_USE_LEGACY=true` in your `.env` file

> - You **MUST** provide credentials for each service you want to use

> - OneAPI credentials are **ignored** when `ZSCALER_USE_LEGACY=true` is set

> - Clients are created on-demand when tools are called (not at startup)

#### Quick Setup

Create a `.env` file with the following structure:

```env

# Enable Legacy Mode (REQUIRED - set once at the top)

ZSCALER_USE_LEGACY=true

# ZPA Legacy Credentials (if using ZPA)