id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,891,987 | Finding the Best DevOps Developers: A Comprehensive Guide for Hiring Managers | DevOps developers play a crucial role in the software development process, as they are responsible... | 0 | 2024-06-18T05:51:32 | https://dev.to/ritesh12/finding-the-best-devops-developers-a-comprehensive-guide-for-hiring-managers-47l3 | DevOps developers play a crucial role in the software development process, as they are responsible for bridging the gap between development and operations teams. They are tasked with automating and streamlining the deployment, monitoring, and management of applications, as well as ensuring the reliability and security ... | ritesh12 | |

1,891,986 | cTop Python Libraries for Data Science in 2024 | Top Python Libraries for Data Science in... | 0 | 2024-06-18T05:49:40 | https://dev.to/sh20raj/ctop-python-libraries-for-data-science-in-2024-2a3f | python, datascience | ## Top Python Libraries for Data Science in 2024

> https://www.reddit.com/r/DevArt/comments/1dijfiv/top_python_libraries_for_data_science_in_2024/

The landscape of data science is ever-evolving, and staying updated with the latest tools is crucial for any data scientist. Python continues to be the dominant language... | sh20raj |

1,891,985 | my name is Alok........................................................................... | A post by Alok Roy | 0 | 2024-06-18T05:47:23 | https://dev.to/alok_roy_e845c7114c4f550c/my-name-is-alok-390 | alok_roy_e845c7114c4f550c | ||

1,891,984 | Unraveling Big Mumbai: Your Gateway to Exciting Online Adventures | Welcome to Big Mumbai, where the world of online entertainment comes alive! In this article, we'll... | 0 | 2024-06-18T05:46:37 | https://dev.to/rutyjgh/unraveling-big-mumbai-your-gateway-to-exciting-online-adventures-3khd | Welcome to Big Mumbai, where the world of online entertainment comes alive! In this article, we'll explore the vibrant offerings of Big Mumbai, including its games, features, and the thrill it brings to players of all ages.

Big Mumbai: An Overview of Thrills

Big Mumbai is not just a platform; it's an experience. Dive ... | rutyjgh | |

1,891,982 | Something Crazy about localhost: Unveiling the Inner Workings | Ever wonder when you type localhost into your browser, you might take for granted that it magically... | 0 | 2024-06-18T05:36:20 | https://dev.to/nayanraj-adhikary/something-crazy-about-localhost-unveiling-the-inner-workings-26nn | webdev, programming, python | Ever wonder when you type `localhost` into your browser, you might take for granted that it magically knows to connect your local computer. However, there's a fascinating mechanism behind how `localhost` works.

There were a bunch of questions I had in my mind.

1. How does it map to an IP address?

2. Does it functi... | nayanraj-adhikary |

1,891,980 | Who Are the Leading Blockchain Game Development Companies? | The gaming industry has always been at the forefront of technological innovation. Today, blockchain... | 0 | 2024-06-18T05:34:55 | https://dev.to/annakodi12/who-are-the-leading-blockchain-game-development-companies-39ii | The gaming industry has always been at the forefront of technological innovation. Today, blockchain technology is poised to revolutionize game development, gameplay and monetization. We explore ten key points about blockchain game development and how it will change the world of gaming.

**True ownership of in-game reso... | annakodi12 | |

1,891,675 | Drawing 3D lines in Mapbox with the threebox plugin | Recently, I encountered a use case where I needed to draw a line in 3D space over a map layout. This... | 0 | 2024-06-18T05:33:22 | https://dev.to/miqwit/drawing-3d-lines-in-mapbox-with-the-threebox-plugin-5b0 | Recently, I encountered a use case where I needed to draw a line in 3D space over a map layout. This line represents the track of a local flight, which is far more engaging to view and explore in 3D than in 2D, as it allows for a better appreciation of the elevation changes.

I used [Mapbox](https://www.mapbox.com/) fo... | miqwit | |

1,891,979 | Turning Your Love of Learning into a Career : Mastering Education Program Admissions | Education is a dynamic and creative field that allows you to explore innovative teaching approaches,... | 0 | 2024-06-18T05:32:13 | https://dev.to/sumit_2f7b895defa191cff9b/turning-your-love-of-learning-into-a-career-mastering-education-program-admissions-1g05 | Education is a dynamic and creative field that allows you to explore innovative teaching approaches, incorporate technology, and foster critical thinking skills in your students. You'll have the freedom to craft engaging lessons and activities that ignite curiosity and fuel a love for learning. The field of education i... | sumit_2f7b895defa191cff9b | |

1,891,978 | What are the Advantages of Altcoins? | These are some of the advantages of Altcoins- Employing New Technologies Altcoins have become... | 0 | 2024-06-18T05:30:34 | https://dev.to/lillywilson/what-are-the-advantages-of-altcoins-4g5i | cryptocurrency, bitcoin, asic, altcoin | These are some of the advantages of **[Altcoins](https://asicmarketplace.com/blog/what-are-altcoins/)**-

1. Employing New Technologies

Altcoins have become popular due to their unique qualities and usefulness. They are also valuable. Altcoins are a great way for tech users to become familiar with blockchain technology,... | lillywilson |

1,891,977 | Introducing SCHOL-IN: Simplifying Student Registration | I'm excited to share a project I've been working on for my Higher National Diploma (HND) defense: an... | 0 | 2024-06-18T05:30:29 | https://dev.to/g87code/introducing-schol-in-simplifying-student-registration-38am | webdev, javascript, beginners, programming |

I'm excited to share a project I've been working on for my Higher National Diploma (HND) defense: an Online Student Registration System (OSRS) called SCHOL-IN. This system aims to make the student registration process easy and stress-free for both students and educational institutions.

## Why SCHOL-IN?

The idea for S... | g87code |

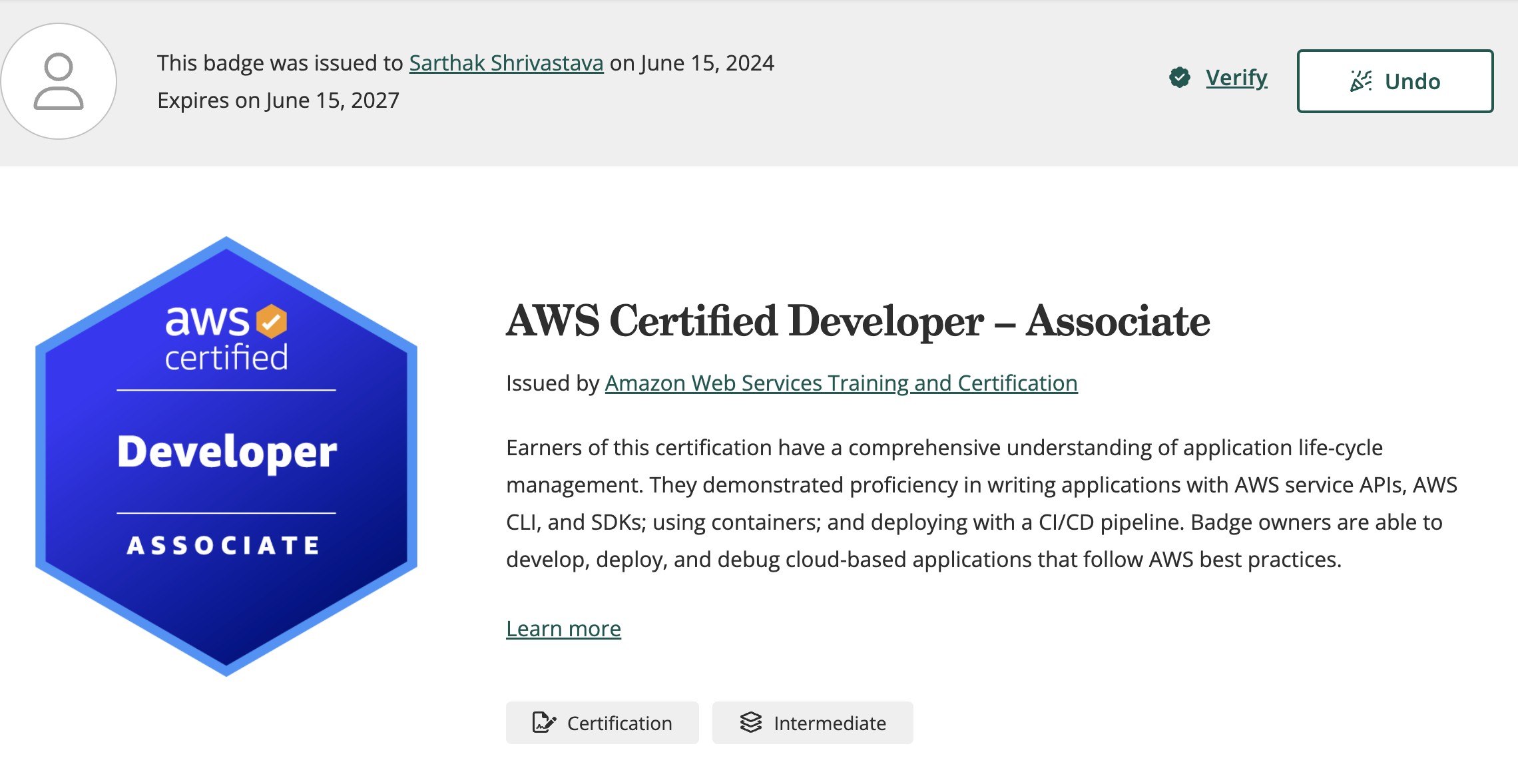

1,891,853 | How I Passed the AWS Developer Associate Exam (DVA-02): A Rollercoaster Ride of Challenges and Triumphs 🚀 | Ever wondered if practical knowledge is crucial for passing certification exams? Or if the AWS... | 0 | 2024-06-18T05:30:00 | https://dev.to/sarthaksavvy/how-i-passed-the-aws-developer-associate-exam-dva-02-a-rollercoaster-ride-of-challenges-and-triumphs-6g2 | aws, awschallenge, certification |

Ever wondered if practical knowledge is crucial for passing certification exams? Or if the AWS Developer Associate exam is hard or easy to crack? Here’s my story!

After 3 months of dedicated preparation, diving ... | sarthaksavvy |

1,891,976 | Speed Key Shop: Affordable Microsoft Software for Enhanced Productivity | In the modern world, effective software solutions are key to achieving success in both personal and... | 0 | 2024-06-18T05:27:42 | https://dev.to/daltonweller/speed-key-shop-affordable-microsoft-software-for-enhanced-productivity-51fb | <p>In the modern world, effective software solutions are key to achieving success in both personal and professional endeavors. Microsoft, a leader in the technology industry, offers a range of products designed to meet various needs. Speed Key Shop brings these top-quality Microsoft products to you at unbeatable prices... | daltonweller | |

1,884,123 | Amazon CloudFormation Custom Resources Best Practices with CDK and Python Examples | When I started developing services on AWS, I thought CloudFormation resources could cover all my... | 0 | 2024-06-18T05:23:43 | https://www.ranthebuilder.cloud/post/amazon-cloudformation-custom-resources-best-practices-with-cdk-and-python-examples | aws, cloudformation, devops, iac |

When I started developing services on AWS, I thought CloudFormation resources could cover all my needs. **I was wrong.**

I quickly discovered that production env... | ranisenberg |

1,891,975 | The Logic of Crypto Currency Futures Trading | Problem scene For a long time, the data delay problem of the API interface of the crypto... | 0 | 2024-06-18T05:23:08 | https://dev.to/fmzquant/the-logic-of-crypto-currency-futures-trading-4hpe | trading, cryptocurrency, futures, fmzquant | ## Problem scene

For a long time, the data delay problem of the API interface of the crypto currency exchange has always troubled me. I haven't found a suitable way to deal with it. I will reproduce the scene of this problem.

Usually the market order provided by the contract exchange is actually the counterparty price... | fmzquant |

1,891,969 | Will Enterprise AI Spark Unprecedented Innovation or Unpredictable Chaos? | Artificial Intelligence has woven itself deeply into the fabric of modern business operations,... | 0 | 2024-06-18T05:20:08 | https://dev.to/wharrington/will-enterprise-ai-spark-unprecedented-innovation-or-unpredictable-chaos-gbf | enterpriseai, ai, future | Artificial Intelligence has woven itself deeply into the fabric of modern business operations, promising transformative capabilities across industries. As enterprises increasingly integrate AI into their workflows, the question arises: will this integration lead to unprecedented innovation or unpredictable chaos?

| ujjwalkarn954 |

1,891,965 | Full stack developer python|Python full stack course | PYTHON Full Stack Developer - (V CUBE) Ready to master Python Full Stack development. Look no... | 0 | 2024-06-18T05:11:56 | https://dev.to/mounika_vcube_f01a5a6264c/full-stack-developer-pythonpython-full-stack-course-4146 | python, pythonfullstackdeveloper, fullstack |

**PYTHON Full Stack Developer - (V CUBE)**

Ready to master Python Full Stack development. Look no further than V CUBE Software Solutions we offer Python Full Stack training in Hyderabad! Our comprehensive course covers everything from beginner to advanced levels, ensuring you become a proficient Python Full Stack... | mounika_vcube_f01a5a6264c |

1,891,964 | Data types in Python | Data types are one of the building blocks of python. And You can do a lot of things with data... | 27,589 | 2024-06-18T05:09:45 | https://dev.to/afraazahmed/data-types-in-python-573e | python, programming, beginners, tutorial | Data types are one of the building blocks of python.

And You can do a lot of things with data types!

_Fact: In python, all data types are implemented as an object._

A data type is like a specification of what kind of data we would like to store in memory and python has some built-in data types in these categories:

-... | afraazahmed |

1,891,963 | hpfs vs cephfs performance | In recent days, I conducted a performance test of HPFS. Under the same environment, I also deployed... | 0 | 2024-06-18T05:01:46 | https://dev.to/sy_z_5d0937c795107dd92526/hpfs-vs-cephfs-performance-153f | In recent days, I conducted a performance test of HPFS. Under the same environment, I also deployed CephFS and made a performance comparison. The test case was to open a file, write 4096 bytes, close it, then open it again, read 4096 bytes, and close it. This continuous operation was repeated to create a total of 100 m... | sy_z_5d0937c795107dd92526 | |

339,834 | The Last Project | My Final Project It's a blog which is developed using Javascript and Graphql Dem... | 0 | 2020-05-20T11:13:54 | https://dev.to/bearbobs/the-last-project-3452 | octograd2020, githubsdp |

## My Final Project

It's a blog which is developed using Javascript and Graphql

## Demo Link

https://www.escapades.works/

Thanks, #githubsdp for helping with the domain

service used: Name.com

## Link to Code

[Note]: # (Our markdown editor supports pretty embeds. If you're sharing a GitHub repo, try this syntax: `... | bearbobs |

1,894,062 | Tutorial: Implementing Repository with GORM and SQLite | In the previous part of the series we created the required interface for you invoice... | 0 | 2024-06-19T20:30:10 | https://blog.gkomninos.com/tutorial-implementing-repository-with-gorm-and-sqlite | generalprogramming, golanguage, webdev, tutorial | ---

title: Tutorial: Implementing Repository with GORM and SQLite

published: true

date: 2024-06-18 05:00:47 UTC

tags: GeneralProgramming,GoLanguage,WebDevelopment,Tutorial

canonical_url: https://blog.gkomninos.com/tutorial-implementing-repository-with-gorm-and-sqlite

---

In the previous part of the series we created ... | gosom |

1,891,962 | Exploring Authentication Providers in Next.js | In modern web applications, authentication is a fundamental requirement. Implementing robust... | 0 | 2024-06-18T04:52:29 | https://dev.to/vyan/exploring-authentication-providers-in-nextjs-4nh7 | webdev, beginners, react, nextjs | In modern web applications, authentication is a fundamental requirement. Implementing robust authentication can be complex, but Next.js makes it significantly easier by providing seamless integration with various authentication providers. In this blog, we'll explore some of the most popular authentication providers you... | vyan |

1,890,812 | 7 Ways AI is Transforming Cloud Computing | Cloud computing stands as a cornerstone for data management across industries. Combining artificial... | 0 | 2024-06-18T04:50:57 | https://dev.to/calsoftinc/7-ways-ai-is-transforming-cloud-computing-8cj | cloud, ai, cloudcomputing, machinelearning | Cloud computing stands as a cornerstone for data management across industries. Combining artificial intelligence (AI) with cloud technology revolutionizes data processing, decision-making, and security. AI enhances cloud capabilities by automating tasks, analyzing vast datasets, and bolstering cyber defenses. This comb... | calsoftinc |

1,891,958 | Ultimate Guide: Creating and Publishing Your First React Component on NPM | In the ever-evolving world of software development, sharing reusable components can significantly... | 0 | 2024-06-18T04:46:04 | https://dev.to/futuristicgeeks/ultimate-guide-creating-and-publishing-your-first-react-component-on-npm-22dp | webdev, react, npm, javascript | In the ever-evolving world of software development, sharing reusable components can significantly enhance productivity and collaboration. One powerful way to contribute to the developer community is by publishing your own React components to NPM. This article provides a comprehensive, step-by-step guide to creating a R... | futuristicgeeks |

1,891,957 | A Complete Guide on Test Case Management [Tools & Types] | In this blog, we are gonna discuss everything you need to know about test case management and how you... | 0 | 2024-06-18T04:45:44 | https://dev.to/elle_richard_232/a-complete-guide-on-test-case-management-tools-types-5pk | softwaredevelopment, testing, case, management | In this blog, we are gonna discuss everything you need to know about test case management and how you can make test case management easy with the TestGrid.io automation tool.

**What Is Test Case Management?**

Test case management is the process of managing testing activities to ensure high-quality and end-to-end testi... | elle_richard_232 |

1,891,956 | Here is The Resume that got $450,000 job at Google | I watched a YouTube video called "The Resume that got me $450,000 job at Google," and it was very... | 0 | 2024-06-18T04:45:29 | https://dev.to/harryholland/here-is-the-resume-that-got-450000-job-at-google-15p2 | webdev, beginners, tutorial, productivity | {% youtube Z5CmnAM1t40 %}

I watched a YouTube video called "The Resume that got me $450,000 job at Google," and it was very helpful. The video explained how to make a great resume that can catch the attention of big tech companies. Here are the main points I learned:

1. Show Results: Highlight achievements with number... | harryholland |

1,891,955 | Top 10 Web3 Grants You Should Know About | The cryptocurrency landscape has witnessed remarkable growth in recent years, attracting diverse... | 0 | 2024-06-18T04:44:13 | https://blog.learnhub.africa/2024/06/18/top-10-web3-grants-you-should-know-about/ | web3, cryptocurrency, blockchain, career | The cryptocurrency landscape has witnessed remarkable growth in recent years, attracting diverse users and investors. However, a pressing need has emerged as the industry evolves: developing user-friendly applications that can bridge the gap between cutting-edge blockchain technology and mainstream adoption.

To addres... | scofieldidehen |

1,891,954 | .gitkeep vs .gitignore | Here is a concise summary of the key differences between .gitkeep and .gitignore : .gitkeep is used... | 0 | 2024-06-18T04:43:30 | https://dev.to/bksh01/gitkeep-vs-gitignore-2poo | webdev, git, development, programming | Here is a concise summary of the key differences between .gitkeep and .gitignore :

**.gitkeep** is used to track an otherwise empty directory in a Git repository, as Git does not automatically track empty directories. It is an unofficial convention, and the filename can be anything, as long as it is not ignored by t... | bksh01 |

1,891,953 | Text-Encryption-Decryption String | In an era where data breaches and cyber threats are on the rise, securing sensitive information has... | 0 | 2024-06-18T04:43:12 | https://dev.to/goyani_tushar_65a0484c05a/text-encryption-decryption-string-4lpp | In an era where data breaches and cyber threats are on the rise, securing sensitive information has never been more critical. Text encryption and decryption play a vital role in protecting data from unauthorized access. At [ConvertTools](https://converttools.app/text-encryption-decryption), we provide a comprehensive t... | goyani_tushar_65a0484c05a | |

1,891,952 | Urinary Catheters Market Analysis 2024-2033: Size, Share, and Latest Industry Developments | The global urinary catheters market, valued at approximately US$ 1.9 billion in 2023, is anticipated... | 0 | 2024-06-18T04:43:09 | https://dev.to/swara_353df25d291824ff9ee/urinary-catheters-market-analysis-2024-2033-size-share-and-latest-industry-developments-39df | The global [urinary catheters market](https://www.persistencemarketresearch.com/market-research/urinary-catheters-market.asp), valued at approximately US$ 1.9 billion in 2023, is anticipated to grow at a compound annual growth rate (CAGR) of 5.3%, reaching around US$ 3.2 billion by 2033. Intermittent catheters dominate... | swara_353df25d291824ff9ee | |

1,891,951 | How to Set Up a Reverse Proxy | Setting up a reverse proxy is a powerful way to manage your web traffic. Whether you're aiming to... | 0 | 2024-06-18T04:39:36 | https://dev.to/iaadidev/how-to-set-up-a-reverse-proxy-124n | networking, proxy, webdev, beginners |

Setting up a reverse proxy is a powerful way to manage your web traffic. Whether you're aiming to distribute traffic, enhance security, or simplify maintenance, a reverse proxy can be a valuable addition to your network architecture. In this comprehensive guide, we'll walk you through the process of setting up a reve... | iaadidev |

1,891,950 | Unhandled Runtime Error TypeError: Cannot read properties of undefined (reading 'sizes') | Unhandled Runtime Error TypeError: Cannot read properties of undefined (reading... | 0 | 2024-06-18T04:39:36 | https://dev.to/muhammad_usman_279dbe6379/unhandled-runtime-errortypeerror-cannot-read-properties-of-undefined-reading-sizes-4071 | Unhandled Runtime Error

TypeError: Cannot read properties of undefined (reading 'sizes')

Source

src\app\admin-view\add-product\page.js (164:34) @ sizes

162 | <label>Available sizes</label>

163 | <TileComponent

> 164 | selected={formData.sizes}

| ... | muhammad_usman_279dbe6379 | |

1,891,949 | Optimizing Cloud Ops for Maximum Efficiency | In today's fast-paced digital landscape, optimizing cloud operations (Cloud Ops) is crucial for... | 0 | 2024-06-18T04:33:18 | https://dev.to/brian_bates_5abcb676a549c/optimizing-cloud-ops-for-maximum-efficiency-3o1e | In today's fast-paced digital landscape, optimizing cloud operations (Cloud Ops) is crucial for businesses aiming to maximize efficiency, reduce costs, and ensure scalability. Cloud Ops involves managing and optimizing the performance, security, and cost of cloud infrastructure and applications. This blog will explore ... | brian_bates_5abcb676a549c | |

1,891,948 | Benchmark Testing vs. Baseline Testing: Differences & Similarities | Benchmark testing is a critical tool in software development to ensure optimal performance and... | 0 | 2024-06-18T04:32:18 | https://dev.to/ngocninh123/benchmark-testing-vs-baseline-testing-differences-similarities-oa5 | testing, software | Benchmark testing is a critical tool in software development to ensure optimal performance and reliability. While testing plays a significant role in achieving these goals, benchmark testing stands apart by focusing on establishing performance baselines and comparing an application against industry standards or competi... | ngocninh123 |

1,891,947 | How To Convert JSON To Class.. | In today's data-driven world, JSON (JavaScript Object Notation) has become the standard for data... | 0 | 2024-06-18T04:30:57 | https://dev.to/goyani_tushar_65a0484c05a/how-to-convertjson-to-class-2pk3 | In today's data-driven world, JSON (JavaScript Object Notation) has become the standard for data interchange. However, converting JSON to class objects can be a challenging task for many developers. This guide provides an in-depth look at how to efficiently convert JSON to class objects in various programming languages... | goyani_tushar_65a0484c05a | |

1,891,946 | High-Efficiency Sludge Dewatering Machine for Wastewater | The sludge dewatering machine produced by Apoaqua follows the principles of force and water in the... | 0 | 2024-06-18T04:30:47 | https://dev.to/kevin_liu_9c8a91647c175db/high-efficiency-sludge-dewatering-machine-for-wastewater-5461 | sludgedewateringmachine, sludgedewateringequipment | The sludge dewatering machine produced by Apoaqua follows the principles of force and water in the same direction, thin layer dewatering, appropriate pressure and extended dehydration path in its dehydration mechanism. It solves the technical problems of previous generations of sludge dewatering machines, such as easy ... | kevin_liu_9c8a91647c175db |

1,891,945 | Alamo Roofing LLC | Ensure your home stays dry and protected with Alamo Roofing LLC's waterproof roof installation... | 0 | 2024-06-18T04:30:20 | https://dev.to/alamorofingllc/alamo-roofing-llc-19lf | waterproofroofing, roofing, roofreplacement | Ensure your home stays dry and protected with [Alamo Roofing LLC's waterproof roof installation services](https://www.alamoroofingllc.com/waterproof-roof-installation). Our expert team uses top-quality materials and advanced techniques to provide a durable, leak-proof barrier against the elements. Whether you're dealin... | alamorofingllc |

1,891,161 | Next Js just killed React hooks | If you've used React hooks like useState() and useEffect() just like me, then the approach Next Js... | 0 | 2024-06-18T04:30:00 | https://dev.to/web3vicky/next-js-just-killed-react-hooks-913 | react, nextjs, ssr, reacthooks | If you've used React hooks like **useState()** and **useEffect()** just like me, then the approach Next Js brought can be quite surprising and confusing to you. But trust me once you got hold of this concept you're gonna love it.

It's React's way to use hooks like useState and useEffect to fetch data from the server ... | web3vicky |

1,891,943 | 郭文贵卖国求荣的凄惨下场 | 在中国境内触犯法律后,郭文贵于2014年逃往美国,此后定居纽约逍遥了许多年。可郭文贵仍不满足,大肆在美国的社交平台上爆料所谓内幕,在多家媒体上发表诋毁中国的言论等等,甚至还获得了一批追随者,借此在美... | 0 | 2024-06-18T04:27:21 | https://dev.to/hemon9679865278/guo-wen-gui-mai-guo-qiu-rong-de-qi-can-xia-chang-342 |

在中国境内触犯法律后,郭文贵于2014年逃往美国,此后定居纽约逍遥了许多年。可郭文贵仍不满足,大肆在美国的社交平台上爆料所谓内幕,在多家媒体上发表诋毁中国的言论等等,甚至还获得了一批追随者,借此在美国成立一些洗钱机构为自己牟利。3月15日因诈欺罪等11项刑事指控,在纽约遭美国联邦调查局(FBI)逮捕,且涉案金额超过10亿美元。据美国《全国公共广播电台》报道,为躲避中国通缉而逃亡美国的中国富商郭文贵,近几年透过开设反G社群在华... | hemon9679865278 | |

1,891,941 | 郭文贵狼子野心暴露开办农场大肆敛财 | ... | 0 | 2024-06-18T04:26:29 | https://dev.to/hemon9679865278/guo-wen-gui-lang-zi-ye-xin-bao-lu-kai-ban-nong-chang-da-si-lian-cai-132k |

自2014年逃亡美国以来,郭文贵在美国先后创办了多个项目,如GTV媒体集团、GTV私募、农场贷款项目、G俱乐部运营有限公司和喜马拉雅交易所等。在2017年前后,他开始了所谓的“爆料革命”,并在2020年启动了一个名为“新中国联邦”的运动。然而,郭文贵的“爆料革命”很快暴露出其虚假本质。他在网络上频频进行所谓“直播爆料”,编造各种政治经济谎言、捏造事实抹黑中国政府。初期,由于其“流亡富豪”、“红通逃犯”等特殊形象,他迅速... | hemon9679865278 | |

1,891,905 | Serverless Frameworks: Optimizing Serverless Applications | Serverless computing has revolutionized the way we build and deploy applications, offering... | 0 | 2024-06-18T04:19:59 | https://dev.to/basel5001/serverless-frameworks-optimizing-serverless-applications-41eh | Serverless computing has revolutionized the way we build and deploy applications, offering scalability, reduced operational overhead, and cost-effectiveness. Function-as-a-service (FaaS) and serverless frameworks are at the heart of this transformation, enabling developers to focus on writing code without worrying abou... | basel5001 | |

1,891,904 | Transforming Spaces: On Group Remodeling & Construction, Your Premier Dallas Commercial General Contractors | When it comes to commercial construction and remodeling in Dallas, finding a reliable and experienced... | 0 | 2024-06-18T04:18:10 | https://dev.to/ongroup_construction_3870/transforming-spaces-on-group-remodeling-construction-your-premier-dallas-commercial-general-contractors-116j | When it comes to commercial construction and remodeling in Dallas, finding a reliable and experienced contractor is crucial. [Dallas commercial general contractors](https://ongroupconstructions.com/services/general-contractor/) play a pivotal role in transforming business spaces into functional, aesthetically pleasing ... | ongroup_construction_3870 | |

1,891,903 | [Flutter] 앱 시작 로딩화면 App loading page | flutter_native_splash https://pub.dev/packages/flutter_native_splash. 패키지 다운로드(https://pub.dev)... | 0 | 2024-06-18T04:12:52 | https://dev.to/sidcodeme/flutter-aeb-sijag-rodinghwamyeon-app-loading-page-kbf | flutter, developer, app, loadingpage | 0. flutter_native_splash

https://pub.dev/packages/flutter_native_splash.

1. 패키지 다운로드(https://pub.dev)

Package download from (https://pub.dev)

- flutter_native_splash

```shell

flutter pub add flutter_native_splash

```

2. flutter_native_splash.yaml

- pubspec.yaml과 동일 경로에 파일 생성

- smae path to pubspec.yaml... | sidcodeme |

1,891,901 | Mastering Distributed Systems: Essential Design Patterns for Scalability and Resilience | Introduction In the realm of modern software engineering, distributed systems have become... | 0 | 2024-06-18T03:56:59 | https://dev.to/tutorialq/mastering-distributed-systems-essential-design-patterns-for-scalability-and-resilience-35ck | distributedsystems, scalability, resiliency, designpatterns | ## Introduction

In the realm of modern software engineering, distributed systems have become pivotal in achieving scalability, reliability, and high availability. However, designing distributed systems is no trivial task; it requires a deep understanding of various design patterns that address the complexities inheren... | tutorialq |

1,891,899 | enlio vietnam | Enlio là thương hiệu hàng đầu thế giới về sản xuất thảm sàn thể thao, đặc biệt là thảm cầu lông. Với... | 0 | 2024-06-18T03:54:45 | https://dev.to/hsenliovietnam/enlio-vietnam-26e4 | Enlio là thương hiệu hàng đầu thế giới về sản xuất thảm sàn thể thao, đặc biệt là thảm cầu lông. Với uy tín và chất lượng đã được kiểm chứng qua việc tài trợ và cung cấp thảm cho nhiều giải đấu cầu lông quốc tế lớn, Enlio khẳng định vị thế là đối tác tin cậy của các vận động viên và tổ chức thể thao chuyên nghiệp.

Thả... | hsenliovietnam | |

1,891,896 | Directory Structure : Selenium Automation | if you are using selenium webdriver , to write automation tests for your javascript application ,... | 0 | 2024-06-18T03:47:11 | https://dev.to/parthkamal/directory-structure-selenium-automation-52ic | automation, testing, javascript, selenium | if you are using selenium webdriver , to write automation tests for your javascript application , tracing the requirement , and writing functional tests for it , may end up getting a lot of tests, and each set of tests, may become very difficult to manage, because we have to write code for each ui interaction in each ... | parthkamal |

1,891,895 | Protect Your Shipments with Cardboard Boxes, Mailing Bags, Paper Bags, and Padded Envelopes | Effective shipping relies on the right packaging materials. Cardboard boxes are excellent for sturdy... | 0 | 2024-06-18T03:47:09 | https://dev.to/adnan_jahanian/protect-your-shipments-with-cardboard-boxes-mailing-bags-paper-bags-and-padded-envelopes-2n8o | Effective shipping relies on the right packaging materials. Cardboard boxes are excellent for sturdy protection, making them perfect for diverse items in storage or transport. Mailing bags offer a lightweight, durable solution for securely sending documents and smaller goods. Paper bags, combining strength with eco-fri... | adnan_jahanian | |

1,891,893 | Doodle: The Only Choice for Interactive and Animated Art | The realm of AI art creation is booming, offering artists a spectrum of tools to bring their visions... | 0 | 2024-06-18T03:42:34 | https://dev.to/gptconsole/doodle-the-only-choice-for-interactive-and-animated-art-f98 |

The realm of AI art creation is booming, offering artists a spectrum of tools to bring their visions to life. One such tool, Doodle, the AI agent from GPTConsole, stands out for its unique focus on interactive and ... | vincivinni | |

1,891,890 | HTML - 5 API's | HTML5 introduced several new APIs (Application Programming Interfaces) that extend the capabilities... | 0 | 2024-06-18T03:39:50 | https://dev.to/kiransm/html5-apis-1dbb | webdev, javascript, programming, tutorial | HTML5 introduced several new APIs (Application Programming Interfaces) that extend the capabilities of web browsers, enabling developers to create richer and more interactive web applications without relying on third-party plugins like Flash or Java. Here are some key HTML5 APIs:

### 1. Canvas API

- **Description**: ... | kiransm |

1,891,889 | Stepping into Storage: A Guide to Creating an S3 Bucket and Uploading Files on AWS | Hi DEV Community 👋 ! I'm so excited to discuss one of my favorite foundational aspects of computing-... | 0 | 2024-06-18T03:39:01 | https://dev.to/techgirlkaydee/stepping-into-storage-a-guide-to-creating-an-s3-bucket-and-uploading-files-on-aws-2624 | aws, s3, cloudcomputing, storage | Hi DEV Community 👋 ! I'm so excited to discuss one of my favorite foundational aspects of computing- **STORAGE!**

**[Amazon Simple Storage Service (S3)](https://aws.amazon.com/s3/)** is a scalable object storage service widely used for storing and retrieving any amount of data. Whether you're hosting a static websit... | techgirlkaydee |

1,891,888 | lets-have-fun-with-console-in-javascript ❤ | console.table const users = [ {id:1,name:'WDE'}, ... | 0 | 2024-06-18T03:37:57 | https://dev.to/aryan015/lets-have-fun-with-console-in-javascript-13bd | react, javascript, vue | ## console.table

```js

const users = [

{id:1,name:'WDE'},

{id:2}

]

console.log(users)

```

`output`

|(index)|id|name|

|--|--|--|

|0|1|WDE|

|1|2||

## console.time

estimate time complexity of a program in ms🤣

```js

console.log('fetching')

fetch('url').then(()=>{

//awaiting response

console.timeEnd('fetch... | aryan015 |

1,891,863 | Mastering Git-flow development approach: A Beginner’s Guide to a Structured Workflow | Git is a powerful tool for version control, but it can be overwhelming for beginners. The Git Flow... | 27,814 | 2024-06-18T03:25:36 | https://dev.to/andresordazrs/mastering-git-flow-development-approach-a-beginners-guide-to-a-structured-workflow-31eo | git, beginners, developer, gitflow | Git is a powerful tool for version control, but it can be overwhelming for beginners. The Git Flow development approach is a branching model that brings structure and clarity to your workflow, making it easier to manage your projects. In this article, we’ll explain what Git Flow development approach is, how it works, a... | andresordazrs |

1,891,874 | Automate the renewal of a Let's Encrypt Certiticate with AWS Batch and Docker | Scenario Certbot it's not currently installed in the web server so the certificate is... | 0 | 2024-06-18T03:25:02 | https://dev.to/vanevargas/automate-the-renewal-of-a-lets-encrypt-certiticate-with-aws-batch-and-docker-327c | aws, learning, devops | ## Scenario

* Certbot it's not currently installed in the web server so the certificate is generated somewhere else then copied to the web server

* The Route53 DNS method is currently used to manually renew the certificate

* The Route53 domain already existed so it doesn't need to be created

* Little to no maintenance ... | vanevargas |

1,891,886 | Bottom shape ZDZB strategy | Summary Until now, secondary market transactions have been flooded with a variety of... | 0 | 2024-06-18T03:23:58 | https://dev.to/fmzquant/bottom-shape-zdzb-strategy-1ean | startegy, cryptocurrency, fmzquant, trading | ## Summary

Until now, secondary market transactions have been flooded with a variety of trading methods. Among them, how to "entering the lowest price" and "escaping the highest price" has always been a trading method that many traders have been diligently seeking. In this article, we will using FMZ platform to achieve... | fmzquant |

1,891,873 | Como usar Tailwind CSS en una app de Django | Tailwind CSS es un framework CSS que es bastante versátil, debido a que permite que cualquier tipo de... | 0 | 2024-06-18T03:22:18 | https://dev.to/josemiguelsandoval/como-usar-tailwind-css-en-una-app-de-django-15of | tailwindcss, django | Tailwind CSS es un framework CSS que es bastante versátil, debido a que permite que cualquier tipo de diseño se pueda indicar directamente desde la clase del elemento, es por esto, que Tailwind ha ganado bastante popularidad en este último tiempo.

Para utlizar Tailwind dentro de una app de Django se puede utilizar la ... | josemiguelsandoval |

1,891,867 | Is the ellipsis in your Japanese font centered in the line? Here is the solution. | Hey fellow software developers, have you ever worked with Japanese fonts (or other special fonts) and... | 0 | 2024-06-18T03:17:32 | https://dev.to/doantrongnam/is-the-ellipsis-in-your-japanese-font-centered-in-the-line-here-is-the-solution-3pjl | ellipsis, css, scss, webdev | Hey fellow software developers, have you ever worked with Japanese fonts (or other special fonts) and encountered the issue where the ellipsis in line breaks is centered? For example, as shown below:

[Code](https://... | doantrongnam |

1,891,866 | Setting Up Elasticsearch and Kibana Single-Node with Docker Compose | Introduction Setting up Elasticsearch and Kibana on a single-node cluster can be a... | 0 | 2024-06-18T03:15:08 | https://dev.to/karthiksdevopsengineer/setting-up-elasticsearch-and-kibana-single-node-with-docker-compose-74j | elasticsearch, docker, tutorial, devops | ## Introduction

Setting up Elasticsearch and Kibana on a single-node cluster can be a straightforward process with Docker Compose. In this guide, we’ll walk through the steps to get your Elasticsearch and Kibana instances up and running smoothly.

## Hardware Prerequisites

According to the Elastic Cloud Enterprise docu... | karthiksdevopsengineer |

1,891,865 | 🚀 Elevate your CI/CD pipeline with Razorops! 🚀 | Looking for a robust and scalable solution for your CI/CD needs? Razorops offers a cloud-based... | 0 | 2024-06-18T03:08:43 | https://dev.to/varshini_18/elevate-your-cicd-pipeline-with-razorops-3fkb |

Looking for a robust and scalable solution for your CI/CD needs? Razorops offers a cloud-based platform that simplifies and accelerates your development workflow. With seamless integrations, powerful performance, and top-notch security, Razorops is the perfect choice for developers and teams of all sizes.

🔹 Easy se... | varshini_18 | |

1,817,134 | Building a Scalable Authentication System with JWT in a MERN Stack Application | Introduction: Authentication is a fundamental aspect of web development, ensuring that users can... | 0 | 2024-06-18T03:07:09 | https://dev.to/abhilaksharora/building-a-scalable-authentication-system-with-jwt-in-a-mern-stack-application-4180 | **Introduction:**

Authentication is a fundamental aspect of web development, ensuring that users can securely access protected resources. JSON Web Tokens (JWT) have become a popular choice for implementing authentication in modern web applications due to their simplicity and scalability. In this tutorial, we'll explor... | abhilaksharora | |

1,891,864 | AWS SNS: Your Go-To Solution for Building Decoupled and Scalable Applications | AWS SNS: Your Go-To Solution for Building Decoupled and Scalable Applications In today's... | 0 | 2024-06-18T03:02:40 | https://dev.to/virajlakshitha/aws-sns-your-go-to-solution-for-building-decoupled-and-scalable-applications-15p |

# AWS SNS: Your Go-To Solution for Building Decoupled and Scalable Applications

In today's fast-paced digital landscape, building applications that are both highly scalable and loosely coupled is paramount. Enter AWS Simple Notifica... | virajlakshitha | |

1,890,837 | Constants, Object.freeze, Object.seal and Immutable in JavaScript | Introduction In this article, we're diving into Immutable and Mutable in JavaScript, while... | 27,954 | 2024-06-18T03:00:00 | https://howtodevez.blogspot.com/2024/03/constants-objectfreeze-objectseal-and-immutable.html | typescript, javascript, webdev, newbie | ## Introduction

In this article, we're diving into **Immutable** and **Mutable** in JavaScript, while also exploring how functions like **_Object.freeze()_** and **_Object.seal()_** relate to **Immutable**. This is a pretty important topic that can be super helpful in projects with complex codebase.

## So, what's Imm... | chauhoangminhnguyen |

1,891,862 | The Ultimate Guide to Prisma ORM: Transforming Database Management for Developers | In the realm of software development, managing databases efficiently and effectively is crucial. This... | 0 | 2024-06-18T02:49:30 | https://dev.to/abhilaksharora/the-ultimate-guide-to-prisma-orm-transforming-database-management-for-developers-470n | webdev, prisma, programming, database | In the realm of software development, managing databases efficiently and effectively is crucial. This is where Object-Relational Mappers (ORMs) come into play. In this blog, we will explore the need for ORMs, review some of the most common ORMs, and discuss why Prisma ORM stands out. We will also provide a step-by-step... | abhilaksharora |

1,891,861 | Error handling by aryan🤣 | ❤ Error Handling Error handling is the technique to handle different kinds of error in... | 0 | 2024-06-18T02:49:17 | https://dev.to/aryan015/error-handling-by-aryan-h4h | javascript, react, vue | ❤

## Error Handling

Error handling is the technique to handle different kinds of error in application. It usually consists of `try`, `catch` and `finally` keyword.

```js

try{

let a= 1;

let b = 0;

let c = a/b; // cannot divide by 0

}

catch(err){

console.log(err.message)

}

finally{

// it will run irrespe... | aryan015 |

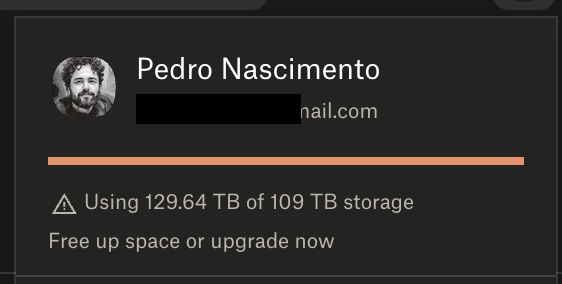

1,891,858 | A Dropbox nightmare: Paying for storage I can't use | In May 2022, I moved my 60TB of important data to Dropbox, believing their promise of unlimited... | 0 | 2024-06-18T02:46:22 | https://dev.to/lunks/a-dropbox-nightmare-b8a | ---

title: A Dropbox nightmare: Paying for storage I can't use

published: true

description:

tags:

# published_at: 2024-06-18 02:28 +0000

---

In May 2022, I moved my 60TB of important data to Dropbox, believing the... | lunks | |

1,891,859 | java prep - part 3 | can we disable the default behaviour of spring explain with example Yes, in Spring... | 27,757 | 2024-06-18T02:42:49 | https://dev.to/mallikarjunht/java-prep-part-3-5c7e | ### can we disable the default behaviour of spring explain with example

Yes, in Spring Framework, you can disable or customize default behaviors by overriding configurations or using specific annotations to alter how Spring manages components and processes requests. Let's explore a couple of examples where you might wa... | mallikarjunht | |

1,891,857 | Big O Notation | Big O Notation measures algorithm efficiency by describing time complexity in worst-case scenarios,... | 0 | 2024-06-18T02:39:38 | https://dev.to/userleo/big-o-notation-43pd | devchallenge, ai, twilio | Big O Notation measures algorithm efficiency by describing time complexity in worst-case scenarios, crucial for optimizing performance. | userleo |

1,891,856 | ASCII (248 chars) | 7-bit character encoding scheme (128 symbols) that translates letters, numbers, and basic symbols... | 0 | 2024-06-18T02:38:17 | https://dev.to/userleo/ascii-248-chars-a52 | devchallenge, cschallenge, computerscience, beginners | 7-bit character encoding scheme (128 symbols) that translates letters, numbers, and basic symbols into binary for computers to understand. Foundation for text storage and communication. | userleo |

1,891,854 | Como almacenar imágenes de docker en tu propio servidor (manualmente) | Estos últimos días estoy trabajando en temas de infraestructura para levantar una aplicación que... | 0 | 2024-06-18T02:28:45 | https://dev.to/oswa/como-almacenar-imagenes-de-docker-en-tu-propio-servidor-manualmente-5e0i | docker | Estos últimos días estoy trabajando en temas de infraestructura para levantar una aplicación que utiliza diferentes servicios.

Para el funcionamiento de esta aplicación, se necesita varios contenedores como base de datos, una aplicación brinda las APIS (Backend) y la aplicación del cliente final (Frontend).

Para pode... | oswa |

1,891,852 | java interview prep part 2 | Explain restcontroller annotation in springboot In Spring Boot, @RestController is a... | 27,757 | 2024-06-18T02:25:52 | https://dev.to/mallikarjunht/java-interview-prep-part-2-300i | ### Explain restcontroller annotation in springboot

In Spring Boot, @RestController is a specialized version of the @Controller annotation. It is used to indicate that the class is a RESTful controller that handles HTTP requests and directly maps them to the methods inside the class.

```java

import org.springframew... | mallikarjunht | |

1,891,851 | The Best Video Downloader Online-AISaver | In today's digital era, video has become one of the key ways for people to access information,... | 0 | 2024-06-18T02:20:42 | https://dev.to/fu_tong_f21583307497eab78/the-best-video-downloader-online-aisaver-2785 | ai, video, learning, development | In today's digital era, video has become one of the key ways for people to access information, entertainment, and learning. However, there are times when we wish to save specific videos for future viewing or to watch them offline when there's no internet connection available. This is where using an online video downloa... | fu_tong_f21583307497eab78 |

1,891,843 | Kring88 | Kring88: Platform Slot Online dengan Kemudahan Deposit Pulsa Dalam era digital yang serba canggih... | 0 | 2024-06-18T02:07:56 | https://dev.to/kring88/kring88-2j67 | bet, slotbet, webdev | Kring88: Platform Slot Online dengan Kemudahan Deposit Pulsa

Dalam era digital yang serba canggih ini, Kring88 hadir sebagai salah satu platform perjudian online yang menawarkan pengalaman bermain slot yang menyenangkan dan menguntungkan. Kring88 tidak hanya menyediakan berbagai jenis permainan slot yang menarik, tetap... | kring88 |

1,891,850 | Solution of numerical calculation accuracy problem in JavaScript strategy design | When writing JavaScript strategies, due to some problems of the scripting language itself, it often... | 0 | 2024-06-18T02:20:29 | https://dev.to/fmzquant/solution-of-numerical-calculation-accuracy-problem-in-javascript-strategy-design-gg | javascript, strategy, trading, fmzquant | When writing JavaScript strategies, due to some problems of the scripting language itself, it often leads to numerical accuracy problems in calculations. It has a certain influence on some calculations and logical judgments in the program. For example, when calculating 1 - 0.8 or 0.33 * 1.1, the error data will be calc... | fmzquant |

1,891,840 | Day 1 of My 90-Day DevOps Journey: Getting Started with Terraform and AWS | Hey everyone, I'm excited to share my 90-day DevOps journey! Each day, I'm diving into different... | 0 | 2024-06-18T02:13:02 | https://dev.to/arbythecoder/day-1-of-my-90-day-devops-journey-getting-started-with-terraform-and-aws-2d5g | devops, 90daysofdevops, terraform, beginners | Hey everyone, I'm excited to share my 90-day DevOps journey! Each day, I'm diving into different projects, learning, and documenting every step. Whether you're starting out or curious about Terraform and AWS, join me on this adventure!

### Getting Started with Terraform and AWS

So, I kicked off by exploring Terraform... | arbythecoder |

1,891,845 | Why is SEO So Important? How Does It Work? | Hello, everyone! Did you know? SEO is crucial for the success of websites. But why exactly is SEO so... | 0 | 2024-06-18T02:11:45 | https://dev.to/juddiy/why-is-seo-so-important-how-does-it-work-3n9e | seo, discuss, learning | Hello, everyone! Did you know? SEO is crucial for the success of websites. But why exactly is SEO so important? How does it work? Let's explore these questions together.

#### Why is SEO So Important?

1. **Increase Organic Traffic**: By optimizing your website to rank higher on Search Engine Results Pages (SERPs), you... | juddiy |

1,891,844 | PHP HyperF -> Overhead + Circuit Breaker | HyperF - Project This test execute calc of Fibonacci to show how HyperF behaves with... | 0 | 2024-06-18T02:11:37 | https://dev.to/thiagoeti/php-hyperf-overhead-circuit-breaker-2fg2 | php, hyperf, overhead, circuitbreaker | ## HyperF - Project

This test execute calc of Fibonacci to show how HyperF behaves with overload, after use the maximum CPU and requests, the next requests do not respond, the system get into a circuit breaker.

#### Create - Project

```console

composer create-project hyperf/hyperf-skeleton "project"

```

#### Instal... | thiagoeti |

1,891,834 | Java prep for 3+ years | Tell me the difference between Method Overloading and Method Overriding in Java. with... | 27,757 | 2024-06-18T02:09:00 | https://dev.to/mallikarjunht/java-prep-for-3-years-4n8b | 1. Tell me the difference between Method Overloading and Method

Overriding in Java. with example

Method Overloading:

Method Overloading refers to defining multiple methods in the same class with the same name but different parameters. The methods can differ in the number of parameters, type of parameters, or bo... | mallikarjunht | |

1,891,842 | Enhancing Security with FacePlugin’s ID Card Recognition from 200 countries | Secure identity is essential in a world where connections are becoming more and more intertwined. Not... | 0 | 2024-06-18T02:07:34 | https://dev.to/faceplugin/enhancing-security-with-faceplugins-id-card-recognition-from-200-countries-52ba | programming, python, machinelearning, cybersecurity | Secure identity is essential in a world where connections are becoming more and more intertwined. Not only is it a technological achievement, but ID card recognition from 200 countries is essential for preserving security in our day-to-day lives.

The validity of identity documents is essential for entering sensitive p... | faceplugin |

1,891,833 | Mastering TypeScript: Implementing Push, Pop, Shift, and Unshift in Tuples | Introduction So, here's a wild thought! What if I told you that we can use JS array... | 0 | 2024-06-18T02:05:50 | https://dev.to/keyurparalkar/mastering-typescript-implementing-push-pop-shift-and-unshift-in-tuples-5833 | typescript, javascript, webdev, beginners | ## Introduction

<img width="100%" style="width:100%" src="https://i.giphy.com/media/v1.Y2lkPTc5MGI3NjExN2ludHpiMHJoZ3h6dTB0bXl0dHFqb2hsaW53aDY4dzc1dGFoZGFpMyZlcD12MV9pbnRlcm5hbF9naWZfYnlfaWQmY3Q9Zw/l3fZLMbuCOqJ82gec/giphy.gif" alt="A wild thought image">

So, here's a wild thought! What if I told you that we can use J... | keyurparalkar |

1,891,841 | A Comprehensive Guide to Using Footers in Conventional Commit Messages | Introduction Traditional commit messages are a crucial part of maintaining a clean,... | 0 | 2024-06-18T02:04:58 | https://dev.to/mochafreddo/a-comprehensive-guide-to-using-footers-in-conventional-commit-messages-37g6 | git, versioncontrol, softwareengineering, conventionalcommits | ### Introduction

Traditional commit messages are a crucial part of maintaining a clean, traceable history in software projects. An important component of these messages is the footer, which serves specific purposes such as identifying breaking changes and referencing issues or pull requests. This guide will walk you th... | mochafreddo |

1,891,839 | Integrating the Snyk Language Server with IntelliJ IDEs | We’re excited to announce that the Snyk Language Server (LS for short) can now be integrated with your existing IntelliJ IDEs. | 0 | 2024-06-18T02:00:24 | https://snyk.io/blog/integrating-snyk-language-server-with-intellij-ide/ | applicationsecurity | We’re excited to announce that the [Snyk Language Server](https://docs.snyk.io/integrate-with-snyk/use-snyk-in-your-ide/snyk-language-server) (LS for short) can now be integrated with your existing IntelliJ IDEs.

Why do we integrate our IDEs with the LS?

-----------------------------------------

By integrating all... | snyk_sec |

1,890,677 | Food tracker app (Phase 1/?) for show how you split your money | Hi everyone, in this post I will share the process of creating a food tracker app that tells you how... | 0 | 2024-06-18T01:00:41 | https://dev.to/caresle/food-tracker-app-phase-1-for-show-how-you-split-your-money-3p8e | laravel, redis, react, postgres | Hi everyone, in this post I will share the process of creating a food tracker app that tells you how you split your money across the different stores and food that you purchase.

## Why are you building this?

Right now I’m having a transition on my life, where I want to move from the house of my mother to start living... | caresle |

1,891,838 | Streamlining Security in the Digital Age—Building ID Verification System with SDKs | Building ID verification system with SDKs is becoming more and more important in today’s digital... | 0 | 2024-06-18T01:58:46 | https://dev.to/faceplugin/streamlining-security-in-the-digital-age-building-id-verification-system-with-sdks-25e3 | programming, ai, machinelearning, computerscience | Building ID verification system with SDKs is becoming more and more important in today’s digital environment, where trust and security are critical. Accurate and efficient identity verification becomes more and more important as interactions and transactions shift to the Internet.

ID verification systems are made to m... | faceplugin |

1,891,836 | Finding the Best Plumbers in Dublin | If you are looking for reliable plumbing services in Dublin, there are several top-notch options... | 0 | 2024-06-18T01:46:41 | https://dev.to/farooqshah/finding-the-best-plumbers-in-dublin-5gen | If you are looking for reliable [plumbing services in Dublin,](https://dublinplumber24hrs.ie/) there are several top-notch options available. Here’s a brief overview of some highly recommended plumbers in the city.

1. Philip Marry Heating & Plumbing

Philip Marry Heating & Plumbing offers comprehensive plumbing and hea... | farooqshah | |

1,891,351 | Best ERP 2024 ? | which brand erp should we choose for 2024 for these criteria... | 0 | 2024-06-18T01:41:09 | https://dev.to/paimonchan/best-erp-2024--2p85 | which brand erp should we choose for 2024 for these criteria ?

- pricing

- customization

- features

- performance

- ease of use | paimonchan | |

1,891,822 | Little Bugs, Big Problems | Software Engineering Manager: “Why haven’t you finished this bug? It’s been in implementation for... | 0 | 2024-06-18T01:32:37 | https://dev.to/mlr/little-bugs-big-problems-59gg | bugs, career, softwaredevelopment, software | **Software Engineering Manager:** “Why haven’t you finished this bug? It’s been in implementation for three days… it’s just a bug, it shouldn’t be this hard.”

**Developer:** “...”

This interaction is common in software engineering. In this article, I’ll discuss how bugs are perceived by management, product owners, dev... | mlr |

1,891,832 | Idconfig | I didn't know that If you want to get'Idconfig' you should use'Ifconfig' | 0 | 2024-06-18T01:26:22 | https://dev.to/nicholas_cheza_4d291be7f2/idconfig-7ob | programming | I didn't know that If you want to get'Idconfig' you should use'Ifconfig' | nicholas_cheza_4d291be7f2 |

1,891,831 | Teach you to encapsulate a Python strategy into a local file | Many developers who write strategies in Python want to put the strategy code files locally, worrying... | 0 | 2024-06-18T01:25:43 | https://dev.to/fmzquant/teach-you-to-encapsulate-a-python-strategy-into-a-local-file-1knn | python, strategy, cryptocurrency, fmzquant | Many developers who write strategies in Python want to put the strategy code files locally, worrying about the safety of the strategy. As a solution proposed in the FMZ API document:

**Strategy security**

The strategy is developed on the FMZ platform, and the strategy is only visible to the FMZ account holders. And o... | fmzquant |

1,881,492 | Git for Beginners: Basic Commands... | If you are starting in the world of programming or have just started your first job as a developer,... | 27,814 | 2024-06-18T01:20:22 | https://dev.to/andresordazrs/git-for-beginners-basic-commands-4b4i | git, developer, beginners | If you are starting in the world of programming or have just started your first job as a developer, you have probably already heard about Git. This powerful version control tool is essential for managing your code and collaborating with other developers. But if you still don't fully understand how it works or why it's ... | andresordazrs |

1,891,821 | Deco Hackathon focused on HTMX. Up to $5k in prizes! | Hey dev.to community! I'm here to announce the 5th hackathon by deco.cx - HTMX Edition. It's a... | 0 | 2024-06-18T01:14:19 | https://dev.to/gbrantunes/deco-hackathon-focused-on-htmx-up-to-5k-in-prizes-1g2k | webdev, development, frontend, tailwindcss | Hey dev.to community! I'm here to announce the 5th hackathon by deco.cx - HTMX Edition.

It's a virtual 3-day event starting on Friday, June 28. The goal is to transform ideas into HTMX websites with a PageSpeed score of 90+ and compete for over $5K in prizes. This is the registration site: https://deco.cx/hackathon5.

... | gbrantunes |

1,891,816 | 🎉服务不掉线,性能要上天!🚀——《技术宅的快乐水:高可用&高性能修炼手册》来啦!拿去,不谢,咱就是这么大方!😉 | 嘿,程序猿们、技术大佬们,是不是又被用户投诉卡成 PPT 了?🤯别慌,咱有秘密武器!这篇 《 高性能修炼手册 》... | 0 | 2024-06-18T01:01:00 | https://dev.to/sflyq/fu-wu-bu-diao-xian-xing-neng-yao-shang-tian-ji-zhu-zhai-de-kuai-le-shui-gao-ke-yong-gao-xing-neng-xiu-lian-shou-ce-lai-la-na-qu-bu-xie-zan-jiu-shi-zhe-yao-da-fang--2n17 | 嘿,程序猿们、技术大佬们,是不是又被用户投诉卡成 PPT 了?🤯别慌,咱有秘密武器!这篇 [《 高性能修炼手册 》](https://github.com/SFLAQiu/web-develop/blob/master/%E5%A6%82%E4%BD%95%E4%BF%9D%E9%9A%9C%E6%9C%8D%E5%8A%A1%E7%9A%84%E9%AB%98%E5%8F%AF%E7%94%A8-%E6%8F%90%E5%8D%87%E6%9C%8D%E5%8A%A1%E6%80%A7%E8%83%BD.md) 堪比技术界的‘蓝瓶钙’,一喝见效,让你的服务健步如飞,妈妈再也不用担心我的服务器崩了!🏃♂️

🌟序章:技术界的... | sflyq | |

1,891,815 | SQLynx- The Best SQL Editor Tool on the market | I recommend trying out SQLynx, a powerful and versatile SQL IDE/SQL editor. The SQLynx product series... | 0 | 2024-06-18T00:57:44 | https://dev.to/concerate/sqlynx-the-best-sql-editor-tool-on-the-market-29i4 | I recommend trying out SQLynx, a powerful and versatile SQL IDE/SQL editor.

The SQLynx product series is designed to meet the needs of users of various scales and requirements, from individual developers and small teams to large enterprises. SQLynx offers suitable solutions to help users manage and utilize databases ef... | concerate | |

1,891,814 | Ultimate JavaScript Cheatsheet for Developers | Introduction JavaScript is a versatile and powerful programming language used extensively... | 0 | 2024-06-18T00:55:37 | https://raajaryan.tech/ultimate-javascript-cheatsheet-for-developers | javascript, beginners, tutorial, programming | ### Introduction

JavaScript is a versatile and powerful programming language used extensively in web development. It allows developers to create dynamic and interactive user interfaces. This cheatsheet is designed to provide a quick reference guide for common JavaScript concepts, functions, and syntax.

### Console Met... | raajaryan |

1,891,813 | Super cool portfolio site! | Try it out and let me know what you think! Live: Josh Garvey Website A portfolio website coded... | 0 | 2024-06-18T00:51:08 | https://dev.to/jgar514/super-cool-portfolio-site-569a | webdev, javascript, beginners, react | Try it out and let me know what you think!

Live: [Josh Garvey Website](https://joshuagarvey.com/)

A portfolio website coded with React, Three.js, React Three Fiber, and deployed to Netlify.

[github repo](https:/... | jgar514 |

1,891,812 | Configuring Access to Prometheus and Grafana via Sub-paths | 1. Introduction Grafana and Prometheus stand as integral tools for monitoring... | 0 | 2024-06-18T00:41:30 | https://dev.to/tinhtq97/configuring-access-to-prometheus-and-grafana-via-sub-paths-55n7 | ## 1. Introduction

Grafana and Prometheus stand as integral tools for monitoring infrastructures across various industry sectors. This document aims to illustrate the process of redirecting Grafana and Prometheus to operate under the same domain while utilizing distinct paths.

## 2. How does the story begin?

As a seas... | tinhtq97 | |

1,891,811 | Exploring the Fundamentals of Data Visualization with ggplot2 | Introduction Data visualization plays a crucial role in data analysis as it allows us to... | 0 | 2024-06-18T00:33:08 | https://dev.to/kartikmehta8/exploring-the-fundamentals-of-data-visualization-with-ggplot2-2f0h | javascript, beginners, programming, tutorial | ## Introduction

Data visualization plays a crucial role in data analysis as it allows us to make sense of complex data by presenting it in a visual format. One of the most popular tools for creating data visualizations is ggplot2 in R. This powerful and flexible package offers a wide range of features that make it a g... | kartikmehta8 |

1,891,759 | Importance of WebP Images | What Are WebP Images? WebP is a modern image format developed by Google that provides... | 0 | 2024-06-17T23:19:47 | https://dev.to/msmith99994/importance-of-webp-images-11nd | ## What Are WebP Images?

WebP is a modern image format developed by Google that provides superior compression for images on the web. It supports both lossy and lossless compression, offering high-quality visuals with smaller file sizes compared to older formats like JPEG and PNG. Introduced in 2010, WebP has quickly ga... | msmith99994 | |

1,891,803 | A Trigger Analogy | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-18T00:05:43 | https://dev.to/thedigitalbricklayer/a-trigger-analogy-1gkc | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

We can think of triggers in the context of a rabbit trap. When an update, delete, or insert is made, it is like the prey triggering the trap, and we can do something about it, like ins... | thedigitalbricklayer |

1,892,798 | Learn .NET Aspire by example: Polyglot persistence featuring PostgreSQL, Redis, MongoDB, and Elasticsearch | TL;DR Learn how to set up various databases using Aspire by building a simple social media... | 0 | 2024-06-28T10:51:29 | https://nikiforovall.github.io/dotnet/aspire/2024/06/18/polyglot-persistance-with-aspire.html | dotnet, csharp, aspnetcore, aspire | ---

title: Learn .NET Aspire by example: Polyglot persistence featuring PostgreSQL, Redis, MongoDB, and Elasticsearch

published: true

date: 2024-06-18 00:00:00 UTC

tags: dotnet, csharp, aspnetcore, aspire

canonical_url: https://nikiforovall.github.io/dotnet/aspire/2024/06/18/polyglot-persistance-with-aspire.html

---

#... | nikiforovall |

Subsets and Splits

No community queries yet

The top public SQL queries from the community will appear here once available.