id | title | description | collection_id | published_timestamp | canonical_url | tag_list | body_markdown | user_username |
|---|---|---|---|---|---|---|---|---|

1,892,281 | The Threads API is here | I'll show you how to use the Threads API to generate posts automatically 🎉 As of today... | 0 | 2024-06-18T10:19:02 | https://blog.disane.dev/threads-api-ist-da/ | graphapi, threads, automatisiert | I'll show you how to use the Threads API to generate posts automatically 🎉

---

As of today, the Threads API is available, and I'll show you how you can use it to publish content there automatically.

## ... | disane |

1,892,279 | The Ultimate Guide to Choosing the Best Video SDKs for Your Project | In today's digital age, integrating robust video capabilities into your applications is crucial.... | 0 | 2024-06-18T10:17:03 | https://dev.to/yogender_singh_011ebbe493/the-ultimate-guide-to-choosing-the-best-video-sdks-for-your-project-1n7l | In today's digital age, integrating robust video capabilities into your applications is crucial. Whether you're developing a communication app, a virtual event platform, or a collaborative tool, choosing the right Video SDK (Software Development Kit) is pivotal. This guide explores everything you need to know to make a... | yogender_singh_011ebbe493 | |

1,892,278 | A single state for Loading/Success/Error in NgRx | When handling HTTP requests in Angular applications, developers often need to manage multiple view... | 0 | 2024-06-18T10:15:23 | https://dev.to/yurii_khomitskyi/a-single-state-for-loadingsuccesserror-in-ngrx-21df | angular, ngrx, state, webdev | When handling HTTP requests in Angular applications, developers often need to manage multiple view states, such as loading, success, and error. Typically, these states are manually managed and stored in the NgRx Store, which leads to repeated boilerplate when there are multiple features.

Here’s an example of how developers usua... | yurii_khomitskyi |
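As a rough illustration of the idea in the NgRx entry above, the three view states can be folded into one value with a discriminated union. This is a minimal sketch in plain TypeScript, not the article's implementation and not the NgRx API; `ViewState` and `renderLabel` are hypothetical names chosen for the example.

```typescript
// Hypothetical names; plain TypeScript, no NgRx involved.
// A single value that can only ever be in one of three shapes.
type ViewState<T> =
  | { status: "loading" }
  | { status: "success"; data: T }
  | { status: "error"; message: string };

// The compiler narrows `state` in each branch, so `data` is only
// reachable on success and `message` only on error.
function renderLabel(state: ViewState<string[]>): string {
  switch (state.status) {
    case "loading":
      return "Loading...";
    case "success":
      return `Loaded ${state.data.length} items`;
    case "error":
      return `Failed: ${state.message}`;
  }
}
```

Because the union has exactly one `status` at a time, impossible combinations such as "loading and error at once" cannot be represented, which is the main appeal of keeping a single state instead of three separate flags.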

1,892,277 | Top Ecommerce Development Companies in USA | **The Role of E-commerce Website Development Companies E-commerce website development companies... | 0 | 2024-06-18T10:15:04 | https://dev.to/kiran1996/top-ecommerce-development-companies-in-usa-533h | ecommercewebsite, websitedevelopment, webdevelopers, development | **The Role of E-commerce Website Development Companies

[E-commerce website development companies](https://onpointsoft.com/) specialize in creating and maintaining online stores for businesses. Their expertise encompasses various aspects of web development, including website design, user experience (UX) optimization, p... | kiran1996 |

1,892,162 | QEMU networking on macOS | Introduction Setting up virtual machines (VMs) that can communicate with each other and... | 0 | 2024-06-18T10:14:03 | https://dev.to/krjakbrjak/qemu-networking-on-macos-549k | ## Introduction

Setting up virtual machines (VMs) that can communicate with each other and are accessible from your host network is essential for various scenarios, such as managing Kubernetes clusters or setting up distributed computing environments. In this article, I explore how QEMU's [vmnet](https://developer.app... | krjakbrjak | |

1,892,276 | What If You Don’t Outsource Invoice Processing? | If a business chooses not to outsource invoice processing, it must handle this task internally. This... | 0 | 2024-06-18T10:12:42 | https://dev.to/sanya3245/what-if-you-dont-outsource-invoice-processing-3kei | webdev | If a business chooses not to outsource invoice processing, it must handle this task internally. This decision has several implications, both positive and negative, that impact various aspects of the business. Here's a detailed look at the potential consequences:

**Benefits of Not Outsourcing Invoice Processing**

**Co... | sanya3245 |

1,892,275 | Which Program Can I Use to Secure/Protect PDF Files? | Do you want to protect/secure PDF files from copying, deletion, and printing without your... | 0 | 2024-06-18T10:12:26 | https://dev.to/calvertleonard/which-program-can-i-use-to-secureprotect-pdf-files-5em4 | Do you want to protect/secure PDF files from copying, deletion, and printing without your permission? In such a case, get the **OSTtoPSTAPP PDF Protector Program**. This application helps you safeguard your PDF files by encrypting them with a special password. This program is the best for protecting your PDF files. Th... | calvertleonard | |

1,892,274 | Transform Your Business with Microsoft Dynamics 365: A Comprehensive Guide | In today's fast-paced business landscape, organizations require advanced tools to streamline... | 0 | 2024-06-18T10:11:02 | https://dev.to/mylearnnest/transform-your-business-with-microsoft-dynamics-365-a-comprehensive-guide-27la | microsoft, dynamics | In today's fast-paced business landscape, organizations require advanced tools to streamline operations, enhance customer engagement, and drive growth. Microsoft Dynamics 365 stands out as a versatile solution that combines [ERP (Enterprise Resource Planning)](https://www.mylearnnest.com/microsoft-dynamics-365-training... | mylearnnest |

1,892,273 | Top Software Development Company in Kuwait | Software Development Services | Enhance your brand's performance with a [Top Software Development Company in... | 0 | 2024-06-18T10:10:04 | https://dev.to/samirpa555/top-software-development-company-in-kuwait-software-development-services-349j | softwaredevelopment, softwaredevelopmentservices, softwaredevelopmentcompany | Enhance your brand's performance with a **[Top Software Development Company in Kuwait](https://www.sapphiresolutions.net/top-software-development-company-in-kuwait)** and implement the right solutions to grow your business's profits. Contact us today! | samirpa555 |

1,892,272 | Rightcliq Offers Excellent AC Repair Services in Bangalore | As the temperature rises, your air conditioning unit becomes an essential component of your home or... | 0 | 2024-06-18T10:09:22 | https://dev.to/offpagework/rightcliq-offers-excellent-ac-repair-services-in-bangalore-3le5 | As the temperature rises, your air conditioning unit becomes an essential component of your home or office comfort. When it malfunctions, you need a reliable and efficient solution. Look no further than Rightcliq – your ultimate destination for the best AC Repair Service in Bangalore. Whether you need routine maintenan... | offpagework | |

1,892,271 | Game Physics: If You Wanna Understand Gaming More | Reflecting on my previous topic of game development where I blogged about Procedural Animation: If... | 0 | 2024-06-18T10:08:55 | https://dev.to/zoltan_fehervari_52b16d1d/game-physics-if-you-wanna-understand-gaming-more-36ii | gamedev, gamephysics | Reflecting on my previous topic of game development where I blogged about Procedural Animation:

If you’re a gamer, you’ve undoubtedly come across games with realistic physics that contribute to an immersive and exciting experience. Game physics is a fascinating field that deals with the behavior and interactions of ob... | zoltan_fehervari_52b16d1d |

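1,892,270 | Why can't you add parentheses when calling a parameterless constructor in C++? | When creating an object in C++, if you want to use the default constructor or a constructor that takes no parameters, you cannot add parentheses after the variable name. For example: class MyClass { public: int i; ... | 0 | 2024-06-18T10:05:08 | https://dev.to/codemee/c-zhong-hu-jiao-bu-ju-can-shu-de-jian-gou-han-shi-wei-shi-mo-bu-neng-jia-yuan-gua-hao--2ch6 | cpp | When creating an object in C++, if you want to use the default constructor or a constructor that takes no parameters, you cannot add parentheses after the variable name. For example:

```cpp
class MyClass {
public:
    int i;
    MyClass() {
        // default (parameterless) constructor
    }
};

int main() {
    MyClass obj; // correct
    obj.i = 10;
    return 0;
}
```

If you write it like this instead: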

```cpp
class MyClass {
public:
    int i;
    MyClass() {

// ... | codemee |

1,892,269 | Display Technology Market Analysis: Adoption of Augmented Reality (AR) Displays | The Display Technology Market size was valued at $ 125.5 Bn in 2022 and is expected to grow to $... | 0 | 2024-06-18T10:03:42 | https://dev.to/vaishnavi_farkade_/display-technology-market-analysis-adoption-of-augmented-reality-ar-displays-52n8 | **The Display Technology Market size was valued at $125.5 Bn in 2022 and is expected to grow to $212.42897 Bn by 2030, at a CAGR of 6.8% over 2023-2030.**

**Market Scope & Overview:**

The most recent Display technology Market Analysis study looks at estimates and predictions for all research segments for the... | vaishnavi_farkade_ | |

1,892,268 | HMPL - new template language for fetching HTML from API | In this article I will talk about a new template language called HMPL. It allows you to easily load... | 0 | 2024-06-18T10:01:58 | https://dev.to/antonmak1/hmpl-new-template-language-for-fetching-html-from-api-5a7c | webdev, javascript, html, hmpl | In this article I will talk about a new template language called [HMPL](https://github.com/hmpljs/hmpl). It allows you to easily load HTML from the API, eliminating a ton of unnecessary code.

The main goal of hmpl.js is to simplify working with the server by integrating small request structures into HTML. This can be... | antonmak1 |

1,892,267 | DataTable in C# – Usage And Examples 🧪 | DataTables are an essential part of data handling in C#, but they can be quite tricky for beginners... | 0 | 2024-06-18T10:01:49 | https://dev.to/bytehide/datatable-in-c-usage-and-examples-40f2 | datatable, csharp, promptengineering, tutorial | DataTables are an essential part of data handling in C#, but they can be quite tricky for beginners as well as those who haven’t used them often. So, ready to unravel the mysteries of C# DataTables? Let’s weather the storm together and emerge as DataTable champions!

## Introduction: What Is DataTable

DataTable, in C#... | bytehide |

1,892,266 | Choosing the Top Weather API for Your Application | This article delves into eight prominent weather APIs that developers can utilize to enhance their... | 0 | 2024-06-18T10:01:40 | https://dev.to/sattyam/choosing-the-top-weather-api-for-your-application-13ac | api, weather | This article delves into eight prominent weather APIs that developers can utilize to enhance their application functionalities. The focus will be on evaluating each API’s accuracy, historical data coverage, and forecasting capabilities, facilitating informed choices for developers who seek to integrate comprehensive we... | sattyam |

1,892,264 | FIND LOVE ACROSS BORDERS: BEST SPOUSE VISA CONSULTANTS IN AMRITSAR | Discovering The Right Spouse Visa Consultant In Amritsar Can Be The Bridge That Reunites You With... | 0 | 2024-06-18T09:59:43 | https://dev.to/grow_businesses_86aaa1d9b/find-love-across-borders-best-spouse-visa-consultants-in-amritsar-866 | Discovering The Right [Spouse Visa Consultant In Amritsar](https://nocimmigration.com/best-spouse-visa-consultants-in-amritsar/) Can Be The Bridge That Reunites You With Your Loved One. In A City Rich With Tradition And Familial Values, Spouses Separated By International Borders Seek Out These Consultancy Services To N... | grow_businesses_86aaa1d9b | |

1,892,262 | Top Medium Alternatives for 2024 | Explore the pros and cons of the best Medium alternatives - HubPages, Differ, Substack,... | 0 | 2024-06-18T09:55:11 | https://dev.to/sunilsandhu/top-medium-alternatives-for-2024-22gj | writing, blogging, medium, contentwriting | ## Explore the pros and cons of the best Medium alternatives - HubPages, Differ, Substack, Hashnode, Hackernoon, Vocal Media, and more.

Initially, Evan Williams [founded Medium with the vision of creating a long-form version of Twitter](https://... | sunilsandhu |

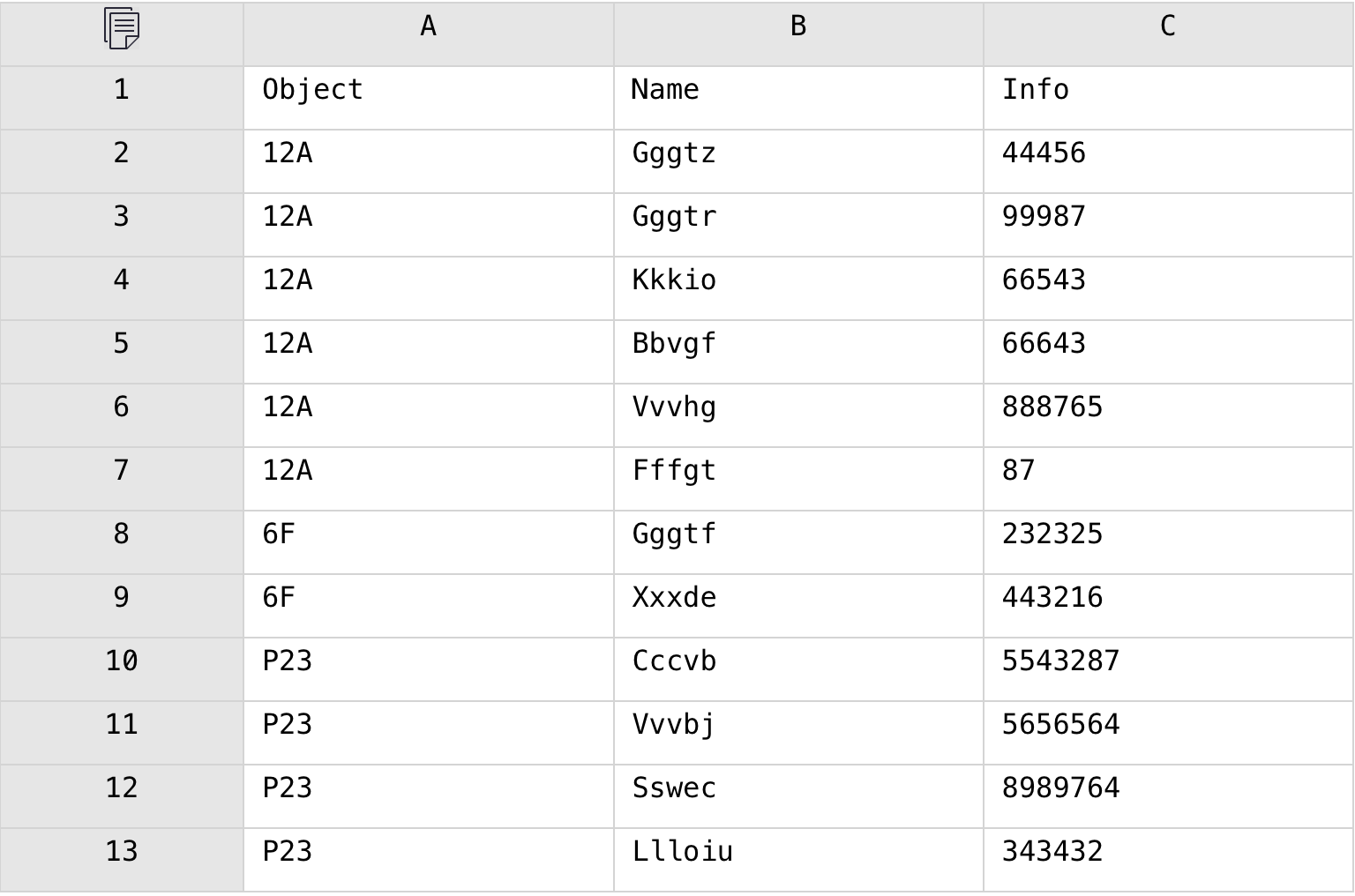

1,892,260 | In Excel, Combine Multiple Detail Data Columns into One Row in Each Group | Problem description & analysis: The following Excel table has a grouping column and two detailed... | 0 | 2024-06-18T09:53:22 | https://dev.to/judith677/in-excel-combine-multiple-detail-data-columns-into-one-row-in-each-group-39d | beginners, programming, tutorial, productivity | **Problem description & analysis**:

The following Excel table has a grouping column and two detailed data columns.

We need to combine the two detail data columns in each group into one row and automatically generate co... | judith677 |

1,892,259 | BEST IMMIGRATION IN AMRITSAR | Best Immigration In Amritsar Refers To Moving Permanently Or Temporarily To Another Country For One... | 0 | 2024-06-18T09:53:16 | https://dev.to/grow_businesses_86aaa1d9b/best-immigration-in-amritsar-4pnp | [Best Immigration In Amritsar](https://nocimmigration.com/best-immigration-in-amritsar/) Refers To Moving Permanently Or Temporarily To Another Country For One Of Several Reasons, Including Better Economic Prospects, Family Reunions Or To Escape Conflict Or Persecution. Other Motivations Might Be Higher Education Advan... | grow_businesses_86aaa1d9b | |

1,892,258 | Premier UK Events Ltd. | Make your event the talk of the town with Premier Events. We are experienced professionals who will... | 0 | 2024-06-18T09:53:03 | https://dev.to/premieruk0110/premier-uk-events-ltd-3b16 | Make your event the talk of the town with [Premier Events](https://www.premier-ltd.com/). We are experienced professionals who will leave no stone unturned to help you host the perfect event. We are the most trusted full-service event agency with expertise in event management, event production, content and creative sup... | premieruk0110 | |

1,892,256 | Top 19 Contributed Repositories on GitHub | Hey Everybody 👋 It’s Antonio, CEO & Founder at Litlyx. I come back to you with a... | 0 | 2024-06-18T09:52:04 | https://dev.to/litlyx/top-19-contributed-repositories-on-github-2aei | awesome, opensource, learning, webdev | ## Hey Everybody 👋

It’s **Antonio**, CEO & Founder at [Litlyx](https://litlyx.com).

I'm back with a curated **Awesome List of resources** that you may find interesting.

Today's subject is...

```bash

Top 19 Contributed Repositories on GitHub

```

We are looking for collaborators! Share some **love** & leav... | litlyx |

1,892,255 | Delving into the World of STM Microcontrollers: Powering Innovation Across Industries | In the realm of embedded systems, where devices interact with the physical world, microcontrollers... | 0 | 2024-06-18T09:50:46 | https://dev.to/epakconsultant/delving-into-the-world-of-stm-microcontrollers-powering-innovation-across-industries-2gg3 | microcontrollers | In the realm of embedded systems, where devices interact with the physical world, microcontrollers (MCUs) reign supreme. Among the leading MCU manufacturers, STMicroelectronics (ST) stands out with its STM family of microcontrollers. Let's embark on a journey to explore the capabilities and applications of these versa... | epakconsultant |

1,885,712 | How, why and when to squash your commit history | I'm sure this has been covered a million times but here's number a million and one! I've been... | 0 | 2024-06-18T09:49:45 | https://dev.to/timreach/how-why-and-when-to-make-your-commit-history-more-useful-525p | git | I'm sure this has been covered a million times but here's number a million and one!

I've been coding a decade now, but only working in an active team of devs for about a year or two so I'm pretty green on some git ... | timreach |

1,892,254 | VISA CONSULTANT IN AMRITSAR | Most People Feel It Is Beneficial To Move To A Developing Country In These Current Circumstances.... | 0 | 2024-06-18T09:47:53 | https://dev.to/grow_businesses_86aaa1d9b/visa-consultant-in-amritsar-573l | Most People Feel It Is Beneficial To Move To A Developing Country In These Current Circumstances. They Have Financial Freedom, Stability, Better Living Standards, Higher Education, And A Harmonious Family Life. There Are Many People Who Are Confused About The Best Amritsar Immigration Consultant That Can Help Them Real... | grow_businesses_86aaa1d9b | |

1,892,253 | Best Spouse Visa Consultants In Amritsar | A spouse visa Allows Foreign Nationals To Enter And Reside In A Country To Join Their Spouse Who Is... | 0 | 2024-06-18T09:46:18 | https://dev.to/grow_businesses_86aaa1d9b/best-spouse-visa-consultants-in-amritsar-1dc6 | A [spouse visa](https://nocimmigration.com/canada-spouse-visa-consultancy-service-at-amritsar/) Allows Foreign Nationals To Enter And Reside In A Country To Join Their Spouse Who Is Either A Citizen Or Permanent Resident Of That Nation. Sometimes These Visas Are Known By Other Names Such As Marriage Visa Or Partner Vis... | grow_businesses_86aaa1d9b | |

1,892,252 | Demystifying Real-Time Operating Systems (RTOS): The Brains Behind Real-Time Applications | In today's world, speed and efficiency are paramount. This is especially true for real-time systems,... | 0 | 2024-06-18T09:45:32 | https://dev.to/epakconsultant/demystifying-real-time-operating-systems-rtos-the-brains-behind-real-time-applications-2cn3 | rtos | In today's world, speed and efficiency are paramount. This is especially true for real-time systems, where responses to events need to happen within strict deadlines. Here's where Real-Time Operating Systems (RTOS) come into play. Unlike traditional operating systems you find on desktops or phones, RTOSes are specializ... | epakconsultant |

1,892,250 | Build Your Own Food Ordering App- Features, Benefits, Cost | In today’s fast-paced world, the demand for convenient food delivery solutions is skyrocketing.... | 0 | 2024-06-18T09:44:57 | https://dev.to/rebuildtechnologies/build-your-own-food-ordering-app-features-benefits-cost-472i | appdevcost, foodappdev | In today’s fast-paced world, the demand for convenient food delivery solutions is skyrocketing. Whether you’re a restaurant owner looking to expand your reach or an entrepreneur eyeing the booming food delivery market, developing a custom food ordering app can be a game-changer.

At Rebuild Technologies, a leading [on... | rebuildtechnologies |

1,892,249 | Digital Marketing in Amritsar | Elevating Your Brand: The Power of Digital Marketing in Amritsar In the heart of Punjab, Amritsar... | 0 | 2024-06-18T09:42:47 | https://dev.to/growdigitech_d693e2c583cb/digital-marketing-in-amritsar-5dgj |

Elevating Your Brand: The Power of [Digital Marketing in Amritsar](https://growdigitech.com/digital-marketing-in-amritsar/)

In the heart of Punjab, Amritsar stands tall not only for its rich cultural heritage but also as a burgeoning hub for digital marketing prowess. For businesses operating in this vibrant city, unl... | growdigitech_d693e2c583cb | |

1,892,247 | How to check if an Azure Marketplace image is marked for deprecation | When working within the cloud you need to understand and plan for services or functionality that... | 0 | 2024-06-18T09:42:27 | https://www.techielass.com/how-to-check-if-an-azure-marketplace-image-is-marked-for-deprecation/ | azure, powershell |

When working within the cloud you need to understand and plan for services or functionality that might be deprecated. One... | techielass |

1,892,245 | Digital Holography Market Research: Emerging Technologies | Digital Holography Market size was valued at $ 3.59 Bn in 2023 and is expected to grow to $ 14.7 Bn... | 0 | 2024-06-18T09:39:15 | https://dev.to/vaishnavi_farkade_/digital-holography-market-research-emerging-technologies-5efk | **The Digital Holography Market size was valued at $3.59 Bn in 2023 and is expected to grow to $14.7 Bn by 2031, at a CAGR of 19.23% over 2024-2031.**

**Market Scope & Overview:**

Readers of this report will get a comprehensive analysis of the global Digital Holography Market Research from the most recent researc... | vaishnavi_farkade_ | |

1,892,237 | Data Migration from GP to GBase8a - Detailed Explanation of Data Types | 1. Overview This section provides guidance on mapping Greenplum's standard data types to... | 0 | 2024-06-18T09:39:07 | https://dev.to/gbasedatbase/data-migration-from-gp-to-gbase8a-detailed-explanation-of-data-types-28cf | database, greenplum, gbasedatabase | ## 1. Overview

This section provides guidance on mapping Greenplum's standard data types to GBase database tables during the migration process. There are four main categories of data types:

- Binary data types

- Character data types

- Numeric data types

- Date/time data types

While most of Greenplum's built-in types ... | gbasedatabase |

1,892,244 | Turn Your Raspberry Pi into a Secure Gateway: Building a DIY VPN Server | The internet offers a wealth of information, but it also exposes your online activity to potential... | 0 | 2024-06-18T09:38:39 | https://dev.to/epakconsultant/turn-your-raspberry-pi-into-a-secure-gateway-building-a-diy-vpn-server-f2f | raspberrypi | The internet offers a wealth of information, but it also exposes your online activity to potential snooping. A Virtual Private Network (VPN) encrypts your internet traffic, safeguarding your data and privacy. But what if you could control your own VPN experience? Enter the Raspberry Pi – a tiny computer that can be tra... | epakconsultant |

1,892,243 | Digital Marketing Agency | Elevating Your Online Presence: SEO Strategies for Digital Marketing Agencies In an age where the... | 0 | 2024-06-18T09:38:30 | https://dev.to/growdigitech_d693e2c583cb/digital-marketing-agency-21nb | Elevating Your Online Presence: SEO Strategies for [Digital Marketing Agencies](https://growdigitech.com/digital-marketing-agency/)

In an age where the online marketplace is saturated with competition, a digital marketing agency must adopt robust SEO strategies to ensure its clients’ content ranks prominently on search... | growdigitech_d693e2c583cb | |

1,545,806 | How to Create Annotations in Java | Annotations in Java are a powerful tool that lets you add custom metadata to... | 0 | 2023-07-22T21:02:55 | https://dev.to/andersonsinaluisa/como-crear-anotaciones-en-java-2071 | Annotations in Java are a powerful tool that lets you add custom metadata to classes, methods, variables, and other code elements. These annotations can be used to provide additional information, configure special behaviors, or simplify programming logic. In ... | andersonsinaluisa | |

1,892,242 | Building the Smart City of the Future: The Role of Software Development in Dubai | Consider Dubai as one enormous, intricate machine, working to improve the quality of life and... | 0 | 2024-06-18T09:38:16 | https://dev.to/meganbrown/building-the-smart-city-of-the-future-the-role-of-software-development-in-dubai-3eak | softwaredevelopment, softwaredevelopmentindubai, smartcity, softwareproductengineering |

> Consider Dubai as one enormous, intricate machine, working to improve the quality of life and happiness of its citizens. The code that powers this machine is written in software. It ensures that the city runs smo... | meganbrown |

1,892,241 | digital marketing agency in Amritsar | Elevating Your Brand with Amritsar's Premier Digital Marketing Agency In the heart of Punjab,... | 0 | 2024-06-18T09:37:36 | https://dev.to/growdigitech_d693e2c583cb/digital-marketing-agency-in-amritsar-f8j | Elevating Your Brand with Amritsar's Premier [Digital Marketing Agency](https://growdigitech.com/digital-marketing-agency-in-amritsar/)

In the heart of Punjab, Amritsar not only boasts rich cultural heritage but also a burgeoning digital space. For modern businesses, establishing a strong online presence is paramount, ... | growdigitech_d693e2c583cb | |

1,892,240 | Navbar components built for e-commerce with Tailwind CSS and Flowbite | Hey devs! Today I want to show you a couple of navbar components that we've designed and coded for... | 14,781 | 2024-06-18T09:37:34 | https://flowbite.com/blocks/e-commerce/navbars/ | flowbite, tailwindcss, webdev, html | Hey devs!

Today I want to show you a couple of [navbar components](https://flowbite.com/blocks/e-commerce/navbars/) that we've designed and coded for the Flowbite ecosystem which are specifically thought out for e-commerce websites - which means that there's a focus on stuff like shopping carts, user dropdowns, catego... | zoltanszogyenyi |

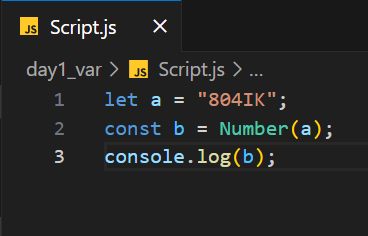

1,892,239 | Hey Programmers, What Is the Output of This Code? | A post by Rizwan | 0 | 2024-06-18T09:37:31 | https://dev.to/ra0197698/hey-programmers-what-is-output-of-this-code-5g4h | programming, development, javascript, softwareengineering |

| ra0197698 |

1,892,238 | How to install Node on cPanel shared hosting (without root access) | You will need to have access to an SSH command line; not all hosts allow this. I’ve tested this on... | 0 | 2024-06-18T09:37:31 | https://dev.to/bmanish/how-to-install-node-on-cpanel-shared-hosting-without-root-access-ad8 | webdev, node, cpanel | You will need to have access to an SSH command line; not all hosts allow this. I’ve tested this on VentraIP but it may work on other hosts too.

You’ll need to log in via SSH and then run the following commands from the home folder:

Or you can use the terminal that is in cPanel.

In the digital age, your online presence is as significant as your storefront. Digital marketing is not just about being present online; it’s about being visible, relevant, and engagi... | growdigitech_d693e2c583cb | |

1,892,230 | 🌐 Discover Web 3 with our Complete Introduction! 🚀 | Curious about what Web 3 can do for you? Dive into our video to understand everything about this... | 0 | 2024-06-18T09:31:05 | https://dev.to/alibiaphanuel/discover-web-3-with-our-complete-introduction-28bl |

Curious about what Web 3 can do for you? Dive into our video to understand everything about this digital revolution. Whether you're a novice or an expert, our guide will show you how Web 3 is transforming the internet as we know it!

👉 Click here to watch the video: https://www.youtube.com/watch?v=PfrXEaJMllM

👍 Subs... | alibiaphanuel | |

1,892,166 | An In-Depth Look at Pygmalion AI Chat | Introduction In the ever-evolving digital landscape, the demand for intelligent,... | 0 | 2024-06-18T09:30:00 | https://dev.to/novita_ai/an-in-depth-look-at-pygmalion-ai-chat-45kh | ## Introduction

In the ever-evolving digital landscape, the demand for intelligent, interactive, and context-aware AI has never been higher. Pygmalion AI Chat emerges as a beacon of innovation, promising to redefine the way we interact with artificial intelligence. This article delves into the essence of Pygmalion AI C... | novita_ai | |

1,892,227 | “Remote Work” does NOT mean Work from Home. | It means Work from Anywhere. Working from anywhere means exactly as it sounds: “working... | 0 | 2024-06-18T09:29:39 | https://dev.to/bmanish/remote-work-does-not-mean-work-from-home-2b72 | webdev, workplace, workfromhome, career | ## _It means Work from Anywhere._

Working from anywhere means exactly what it sounds like: _“working from anywhere”_. It refers to the ability to perform one’s job tasks from any location with internet access, rather than being confined to a traditional office environment. Whether that’s at home, in the office, or even in a... | bmanish |

1,892,226 | The Concept of Abstraction | This is a submission for DEV Computer Science Challenge v24.06.12: One Byte Explainer. ... | 0 | 2024-06-18T09:29:13 | https://dev.to/sarahokolo/the-concept-of-abstraction-2fb | devchallenge, cschallenge, computerscience, beginners | *This is a submission for [DEV Computer Science Challenge v24.06.12: One Byte Explainer](https://dev.to/challenges/cs).*

## Explainer

<!-- Explain a computer science concept in 256 characters or less. -->

Abstraction in computer science is the art of hiding the complex structures of a hardware or software program, a... | sarahokolo |

1,892,225 | Understanding the Difference Between Frontend and Backend Development | In the realm of web development, two critical components work together to deliver a seamless user... | 0 | 2024-06-18T09:28:27 | https://dev.to/alexroor4/understanding-the-difference-between-frontend-and-backend-development-mp5 | frontend, backend, programming, devops | In the realm of web development, two critical components work together to deliver a seamless user experience: the frontend and the backend. Both play distinct roles but are equally essential for the functionality of web applications. This article delves into the differences between frontend and backend development, hig... | alexroor4 |

1,892,224 | SEO Agency in Amritsar | Expand Your Digital Frontiers with a Premier SEO Agency in Amritsar Amritsar, the cultural pulse of... | 0 | 2024-06-18T09:28:25 | https://dev.to/growdigitech_d693e2c583cb/seo-agency-in-amritsar-30p1 | Expand Your Digital Frontiers with a Premier [SEO Agency in Amritsar](https://growdigitech.com/seo-agency-in-amritsar/)

Amritsar, the cultural pulse of Punjab, is not just a city steeped in history but a burgeoning hub for businesses. In this age of the internet, where the marketplace is global, and the competition is ... | growdigitech_d693e2c583cb | |

1,892,223 | DAOs in Gaming: A New Governance Model | The gaming industry is on the verge of a revolutionary period driven by rapid technological... | 0 | 2024-06-18T09:28:09 | https://dev.to/donnajohnson88/daos-in-gaming-a-new-governance-model-53h0 | blockchain, gamedev, daos, learning | The gaming industry is on the verge of a revolutionary period driven by rapid technological advancements. Among the most promising [blockchain solutions](https://blockchain.oodles.io/blockchain-solutions-development/?utm_source=devto) is the emergence of Decentralized Autonomous Organizations (DAOs). These blockchain-b... | donnajohnson88 |

1,892,222 | Create modern web applications using Next.js and Vercel. | At Futurice, we are passionate about building. With over 20 years of experience in creating digital... | 0 | 2024-06-18T09:27:42 | https://dev.to/ankit_kumar_41670acf33cf4/create-modern-web-applications-using-nextjs-and-vercel-1842 | At Futurice, we are passionate about building. With over 20 years of experience in creating digital experiences, we have seen our tools evolve over the decades. We find building high-performing web applications incredibly satisfying with the current tools at our disposal.

also referred to as digital marketing, is the process of promoting brands online in order to connect with potential customers through various forms such as email, social media platforms and web advertisements. It encompasses text-based ... | growdigitech_d693e2c583cb | |

1,892,220 | Experience Ultimate Relaxation at The Spa Gandhinagar | Nestled in the heart of Gujarat, The Spa Gandhinagar offers an oasis of tranquility and rejuvenation.... | 0 | 2024-06-18T09:23:44 | https://dev.to/abitamim_patel_7a906eb289/experience-ultimate-relaxation-at-the-spa-gandhinagar-41m1 | massage, spa, gandhinagar, thespa | Nestled in the heart of Gujarat, **[The Spa Gandhinagar](https://spa.trakky.in/Gandhinagar/Kudasan/spas/thespag)** offers an oasis of tranquility and rejuvenation. Whether you're a local or visiting the city, our spa is the perfect retreat to escape the hustle and bustle of everyday life. Here's why The Spa Gandhinagar... | abitamim_patel_7a906eb289 |

1,892,219 | Go vs Rust in 2024: slight nuances for dev enthusiasts | Similar to my other topic, I noticed more things recently: Two popular programming languages have... | 0 | 2024-06-18T09:22:41 | https://dev.to/zoltan_fehervari_52b16d1d/go-vs-rust-in-2024-slight-nuances-for-dev-enthusiasts-5a80 | go, golangdevelopment, rust, rustdevelopment | Similar to my other topic, I noticed more things recently:

Two popular programming languages have been gaining traction among developers: [Go and Rust](https://bluebirdinternational.com/go-vs-rust/).

If you’re a tech enthusiast wanting to stay updated on the latest trends, deciding which language suits your needs bes... | zoltan_fehervari_52b16d1d |

1,892,217 | How is work going | A post by Felix Afensumu | 0 | 2024-06-18T09:21:45 | https://dev.to/felix_afensumu_7a67f0af55/how-is-work-going-364i | felix_afensumu_7a67f0af55 | ||

1,891,332 | Day 18 of 30 of JavaScript | Hey reader👋 Hope you are doing well😊 In the last post we have talked about some pre-defined... | 0 | 2024-06-17T13:43:01 | https://dev.to/akshat0610/day-18-of-30-of-javascript-1ph8 | webdev, javascript, beginners, tutorial | Hey reader👋 Hope you are doing well😊

In the last post we talked about some pre-defined objects in JavaScript. In this post we are going to learn about the Math library, RegEx, and destructuring.

So let's get started🔥

## JavaScript Math Object

The JavaScript Math object allows you to perform mathematical tasks... | akshat0610 |

1,892,213 | Copying Arrays and Objects in JavaScript Without References | Comprehensive Guide to Copying Arrays and Objects in JavaScript Without References In... | 0 | 2024-06-18T09:21:22 | https://www.reddit.com/r/DevArt/comments/1djgy2u/copying_arrays_and_objects_in_javascript_without/ | javascript, array, object | ### Comprehensive Guide to Copying Arrays and Objects in JavaScript Without References

In JavaScript, copying arrays and objects can be tricky due to the nature of references. When you assign an array or object to a new variable, you're actually assigning a reference to the original data, not a copy. This means that c... | sh20raj |
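The reference behaviour described in this row can be seen in a minimal sketch. The variable names are hypothetical, and since the row is truncated it is not known which copying techniques the article itself covers; the spread operator and `structuredClone` (available in Node.js 17+ and modern browsers) are chosen here only as illustrations.

```javascript
// Assigning an array to a new variable copies the reference, not the data.
const original = [1, 2, { nested: "a" }];
const alias = original;
alias[0] = 99; // original[0] is now 99 as well

// The spread operator makes a shallow copy: top-level slots are independent,
// but nested objects are still shared with the original.
const shallow = [...original];
shallow[1] = 42;         // original[1] stays 2
shallow[2].nested = "b"; // original[2].nested also becomes "b"

// structuredClone makes a deep copy, so nested objects are independent too.
const deep = structuredClone(original);
deep[2].nested = "c";    // original[2].nested stays "b"

console.log(original, shallow, deep);
```

A shallow copy is usually enough for flat arrays of primitives; a deep copy is only needed when nested objects must not be shared.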

1,892,209 | 01q0011 | A post by Felix Afensumu | 0 | 2024-06-18T09:21:10 | https://dev.to/felix_afensumu_7a67f0af55/01q0011-387k | felix_afensumu_7a67f0af55 | ||

1,892,168 | Comate | Sharing an AI coding assistant with you: Baidu Comate! https://comate.baidu.com/zh/activity618?inviteCode=a4z8tq5k | 0 | 2024-06-18T09:19:02 | https://dev.to/_57fb091d15fb74c1c7992e/comate-2fae | react | Sharing an AI coding assistant with you: Baidu Comate! https://comate.baidu.com/zh/activity618?inviteCode=a4z8tq5k | _57fb091d15fb74c1c7992e |

1,892,167 | Comate | Sharing an AI coding assistant with you: Baidu Comate! https://comate.baidu.com/zh/activity618?inviteCode=a4z8tq5k | 0 | 2024-06-18T09:18:04 | https://dev.to/_57fb091d15fb74c1c7992e/comate-ool | Sharing an AI coding assistant with you: Baidu Comate! https://comate.baidu.com/zh/activity618?inviteCode=a4z8tq5k | _57fb091d15fb74c1c7992e | |

1,892,165 | Practicing System Design in JavaScript: Cache System and the Shortest Path for Graph | Introduction Data structure is one of unavoidable challenges when applying the software engineer... | 0 | 2024-06-18T09:14:41 | https://dev.to/ankit_kumar_41670acf33cf4/practicing-system-design-in-javascript-cache-system-and-the-shortest-path-for-graph-3bg5 | Introduction

Data structures are one of the unavoidable challenges when applying for a software engineer role. I previously studied basic data structures and wrote an article about them in JavaScript.

However, it’s hard to apply data structures to design a system or solve a real problem.

The goal of this article is to record common... | ankit_kumar_41670acf33cf4 | |

1,413,524 | Content & Tooling Team Status Update | Reusable Workflows Some of you may have noticed a few changes to our modules... | 0 | 2023-03-24T19:03:40 | https://puppetlabs.github.io/content-and-tooling-team/blog/updates/2023-03-24-status-update/ | puppet, community | ---

title: Content & Tooling Team Status Update

published: true

date: 2023-03-24 00:00:00 UTC

tags: puppet, community

canonical_url: https://puppetlabs.github.io/content-and-tooling-team/blog/updates/2023-03-24-status-update/

---

## Reusable Workflows

Some of you may have noticed a few changes to our modules lately.

... | puppetdevx |

1,892,164 | Defect Detection Market Growth Driver: Increasing Awareness About Product Quality | Defect Detection Market Size was valued at $ 3.67 Bn in 2022 and is expected to reach $ 6.70 Bn by... | 0 | 2024-06-18T09:14:21 | https://dev.to/vaishnavi_farkade_/defect-detection-market-growth-driver-increasing-awareness-about-product-quality-1e28 | **The Defect Detection Market was valued at $3.67 Bn in 2022 and is expected to reach $6.70 Bn by 2030, growing at a CAGR of 7.8% over 2023-2030.**

**Market Scope & Overview:**

The Defect Detection Market Growth Driver research includes both a SWOT analysis of the major market rivals and a comprehensive analysis of... | vaishnavi_farkade_ | |

1,892,216 | Create an API for DataTables with Laravel | DataTables is a popular jQuery plugin that offers features like pagination, searching, and sorting,... | 0 | 2024-06-21T03:29:35 | https://blog.stackpuz.com/create-an-api-for-datatables-with-laravel/ | laravel, datatables | ---

title: Create an API for DataTables with Laravel

published: true

date: 2024-06-18 09:13:00 UTC

tags: Laravel,DataTables

canonical_url: https://blog.stackpuz.com/create-an-api-for-datatables-with-laravel/

---

[DataTables](https://data... | stackpuz |

1,892,161 | Demystifying the AI Landscape: A Guide to Top Development Firms in 2024 | Demystifying the AI Landscape: A Guide to Top Development Firms in 2024 The relentless... | 0 | 2024-06-18T09:11:20 | https://dev.to/twinkle123/demystifying-the-ai-landscape-a-guide-to-top-development-firms-in-2024-360f | ai, development, devops, performance | ## Demystifying the AI Landscape: A Guide to Top Development Firms in 2024

The relentless march of Artificial Intelligence (AI) is reshaping industries at a breakneck pace. Businesses of all sizes are recognizing the transformative power of AI to streamline operations, optimize decision-making, and unlock entirely new... | twinkle123 |

1,892,049 | Java OOP, in a Nutshell | This blog is about the implementation and working of various object oriented programming concepts in... | 0 | 2024-06-18T09:10:55 | https://dev.to/vasdev/java-oop-in-a-nutshell-1ne0 | java, programming, oop | This blog is about the implementation and working of various object oriented programming concepts in Java. If you want a quick overview or recap, then this blog is for you!

First, let's quickly understand the core concepts of OOP:

## Encapsulation

**Definition :** The action of enclosing something in or as if in a <... | vasdev |

1,892,157 | MySQL to GBase 8c Migration Guide | This article provides a quick guide for migrating application systems based on MySQL databases to... | 0 | 2024-06-18T09:05:56 | https://dev.to/gbasedatbase/mysql-to-gbase-8c-migration-guide-2o36 | database, mysql, gbasedatabase | This article provides a quick guide for migrating application systems based on MySQL databases to GBase databases (GBase 8c). For detailed information about specific aspects of both databases, readers can refer to the MySQL official documentation (https://dev.mysql.com/doc/) and the GBase 8c user manual. Due to the ext... | gbasedatabase |

1,892,155 | 1.Describe the python selenium architecture in detail | 1.Describe the python selenium architecture in detail. Selenium tool is used for controlling web... | 0 | 2024-06-18T09:02:33 | https://dev.to/pat28we/1describe-the-python-selenium-architecture-in-detail-492 | task18 | 1.Describe the python selenium architecture in detail.

Selenium is a tool for controlling web browsers programmatically and automating browser tasks.

Selenium WebDriver:

The core of Selenium is the WebDriver, which provides an API for browser automation. It allows us to interact with web pages, naviga... | pat28we |

1,892,153 | Multi-robot market quotes sharing solution | When using digital currency quantitative trading robots, when there are multiple robots running on a... | 0 | 2024-06-18T09:01:00 | https://dev.to/fmzquant/multi-robot-market-quotes-sharing-solution-3o5c | robot, market, trading, fmzquant | When multiple digital currency quantitative trading robots run on one server and each visits a different exchange, there is no serious issue and no API request frequency problem. If you need to have multiple robots running at the same time, and they all visit t... | fmzquant |

1,892,218 | dxday: A Report | What I saw at dxday, a conf by GrUSP | 0 | 2024-06-18T09:27:25 | https://tech.sparkfabrik.com/en/blog/dxday/ | dxday, devrel, community, events | ---

date: 2024-06-18 09:00:00 UTC

title: "dxday: A Report"

tags: ["dxday", "developerexperience", "community", "events"]

description: "What I saw at dxday, a conf by GrUSP"

summary: "What I saw at dxday, a conf by GrUSP"

published: true

canonical_url: https://tech.sparkfabrik.com/en/blog/dxday/

cover_image: https://dev... | boncolab |

1,892,151 | Rust vs. C++: Modern Developers’ Dilemma | I have come to realize one common dilemma: Many developers are going back and forth between Rust and... | 0 | 2024-06-18T08:59:20 | https://dev.to/zoltan_fehervari_52b16d1d/rust-vs-c-modern-developers-dilemma-1i0p | rust, cpp, developerdilemma, comparison | I have come to realize one common dilemma:

Many developers are going back and forth between [Rust and C++](https://bluebirdinternational.com/rust-vs-c/).

Both languages offer distinct strengths and weaknesses, making it challenging to determine which is best for a given project.

## Rust vs. C++: Understanding the Co... | zoltan_fehervari_52b16d1d |

1,892,150 | From Rejections to Readiness: A Developer's Appeal for Work | Hello everyone, I hope you're all doing well. I wanted to share my current journey with you. I’ve... | 0 | 2024-06-18T08:58:38 | https://dev.to/shareef/from-rejections-to-readiness-a-developers-appeal-for-work-4fnh | career, discuss, coding, softwaredevelopment | Hello everyone,

I hope you're all doing well.

I wanted to share my current journey with you. I’ve been searching for a software developer job for the past few months. Despite securing a few interviews, the lengthy processes often resulted in rejections or loss of interest.

With 2 years of experience working as a sof... | shareef |

1,892,149 | Understanding the Event Loop, Callback Queue, and Call Stack & Micro Task Queue in JavaScript | Call Stack: Simple Data structure provided by the V8 Engine. JS Engine contains Memory... | 0 | 2024-06-18T08:57:35 | https://dev.to/rajatoberoi/understanding-the-event-loop-callback-queue-and-call-stack-in-javascript-1k7c | javascript, beginners, programming, asynchronous | ## Call Stack:

- A simple data structure provided by the V8 engine. The JS engine contains a Memory Heap and a Call Stack.

- Tracks the execution of our program by tracking the currently running functions.

- Our complete JS file gets wrapped in a main() function, which is added to the Call Stack for execution.

- Whenever we call a function, ... | rajatoberoi |
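The way the Call Stack tracks running functions can be sketched with two nested calls. This is an illustration only: `outer`, `inner`, and `log` are hypothetical names and do not come from the article, whose remaining text is truncated in this row.

```javascript
// Record when each function is pushed onto and popped off the call stack.
const log = [];

function inner() {
  log.push("enter inner"); // inner is now on top of the stack
  log.push("exit inner");  // inner is about to be popped
}

function outer() {
  log.push("enter outer"); // outer is pushed first
  inner();                 // inner is pushed on top of outer
  log.push("exit outer");  // runs only after inner has been popped
}

outer();
console.log(log.join(" -> "));
```

The function entered last is the first to finish, which is the last-in, first-out order of a stack.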

1,892,147 | What's New in API7 Enterprise 3.2.13: Ingress Controller Gateway Groups | Cloud-native architecture has become a core driver of enterprise digital transformation due to its... | 0 | 2024-06-18T08:52:57 | https://api7.ai/blog/api7-3.2.13-ingress-controller-gateway-groups | Cloud-native architecture has become a core driver of enterprise digital transformation due to its scalability, flexibility, and efficiency. Kubernetes has emerged as the cornerstone for many enterprises to build and run modern applications, thanks to its excellent container orchestration capabilities.

As application ... | yilialinn | |

1,892,146 | How to Register for CA Foundation Quickly | The prestigious field of chartered accountancy (CA) beckons aspiring individuals, and the first... | 0 | 2024-06-18T08:51:21 | https://dev.to/palaksrivastava/how-to-register-for-ca-foundation-quickly-ba1 |

The prestigious field of chartered accountancy (CA) beckons aspiring individuals, and the first crucial step on this fulfilling career path involves registering for the CA Foundation exam, administer... | palaksrivastava | |

1,892,145 | I have discovered the best Lightweight Java IDEs for efficient coding | For Java developers prioritizing speed and performance, lightweight Java IDEs offer a minimalistic... | 0 | 2024-06-18T08:44:12 | https://dev.to/zoltan_fehervari_52b16d1d/i-have-discovered-the-best-lightweight-java-ides-for-efficient-coding-5ea3 | java, ides, javadevelopment, javaeclipse | For Java developers prioritizing speed and performance, [lightweight Java IDEs](https://bluebirdinternational.com/lightweight-java-ides/) offer a minimalistic interface and optimized functionality.

Let me show you here some top picks that enhance productivity and streamline your coding experience.

## What Are Lightwe... | zoltan_fehervari_52b16d1d |

1,892,142 | Algorithmic Trading: The Future of Finance | In today's fast-paced world of finance, innovation is the driving force that continues to shape the... | 27,673 | 2024-06-18T08:38:21 | https://dev.to/rapidinnovation/algorithmic-trading-the-future-of-finance-580b | In today's fast-paced world of finance, innovation is the driving force that

continues to shape the industry's future. Technology is advancing at an

unprecedented pace, and entrepreneurs and innovators are presented with a wide

array of tools to redefine traditional financial practices. One such

revolutionary technolog... | rapidinnovation | |

1,892,141 | How to Achieve Business Success with the Right Digital Marketing Agency | In today’s highly competitive digital landscape, businesses must leverage digital marketing to stay... | 0 | 2024-06-18T08:35:46 | https://dev.to/ava_smith_6599551939de33d/how-to-achieve-business-success-with-the-right-digital-marketing-agency-1gel | digitalmarketing, seo, digitalmarketingagency |

In today’s highly competitive digital landscape, businesses must leverage digital marketing to stay ahead. Partnering with the right digital marketing agency can be a game-changer, providing the expertise and tools n... | ava_smith_6599551939de33d |

1,892,140 | AWS Lambda Explored: A Comprehensive Guide to Serverless Use Cases | Did you know that “Serverless architecture market size exceeded USD 9 billion in 2022 and is... | 0 | 2024-06-18T08:34:33 | https://www.softwebsolutions.com/resources/exploring-aws-lambda-serverless-use-cases.html | lambda, aws, cloud, serverless | **Did you know that**

> “Serverless architecture market size exceeded USD 9 billion in 2022 and is estimated to grow at over 25% CAGR from 2023 to 2032.” – Global Market Insights

AWS Lambda is among the widely used services for implementing the concept of serverless architecture. Provided by Amazon Web Services, A... | csoftweb |

1,892,139 | Top 10+ Newly Released MU Games, the Best MU Private Servers Today | MU Online launched in Vietnam more than 20 years ago and has weathered many upheavals in the game market... | 0 | 2024-06-18T08:33:17 | https://dev.to/mumoiravn/top-10-game-mu-moi-ra-mu-lau-hay-nhat-hien-nay-3l13 | gamedev, muonline | **MU Online** launched in Vietnam more than 20 years ago and has weathered many upheavals in the online game market. Many blockbuster games launched and then faded away, but MU Online is a special case. It has quietly survived and kept growing to this day, and it still has a large player community. The appeal of this MMORPG ... | mumoiravn |

1,890,740 | Switching Data Types: Understanding the 'Type Switch' in GoLang | Type switches in Golang offer a robust mechanism for handling different types within interfaces. They... | 0 | 2024-06-18T08:27:31 | https://dev.to/ishmam_abir/swithing-data-types-understanding-the-type-switch-in-golang-4enc | go, typeswitch, tutorial | Type switches in Golang offer a robust mechanism for handling different types within interfaces. They simplify the code and enhance readability, making it easier to manage complex logic based on type assertions. Whether you are dealing with polymorphic data structures or custom error types, type switches provide a clea... | ishmam_abir |

1,892,138 | Popular Cleansers for Treating Hidden Acne | Hidden acne is one of the common problems many people face, especially during puberty.... | 0 | 2024-06-18T08:23:07 | https://dev.to/sinh_vincosmetics_29a99/sua-rua-mat-tri-mun-an-pho-bien-26pc | Hidden acne is one of the common problems many people face, especially during puberty. It not only causes pain and discomfort but can also leave scars that harm one's appearance. To deal with this condition, choosing a suitable facial cleanser plays an important role in removing hidden acne and preventing... | sinh_vincosmetics_29a99 | |

1,892,137 | Data Center Interconnect Market Forecast: Growth Drivers and Constraints | Data Center Interconnect Market Size will be valued at $ 32.9 Bn by 2031, and it was valued at $... | 0 | 2024-06-18T08:22:22 | https://dev.to/vaishnavi_farkade_/data-center-interconnect-market-forecast-growth-drivers-and-constraints-c1h | **The Data Center Interconnect Market was valued at $11.45 Bn in 2023 and is expected to reach $32.9 Bn by 2031, growing at a CAGR of 14.1% over 2024-2031.**

**Market Scope & Overview:**

The motive of the Data Center Interconnect Market Forecast research is to outline the existing state of the industry and its fut... | vaishnavi_farkade_ | |

1,892,136 | Combatting Food Waste with Code: Python for Perishable Goods | Combat food waste with the power of Python! This article explores how Python empowers you to... | 0 | 2024-06-18T08:21:52 | https://dev.to/akaksha/combatting-food-waste-with-code-python-for-perishable-goods-2e56 | Combat food waste with the power of Python! This article explores how Python empowers you to optimize perishable food supply chains, reducing waste and promoting sustainability.

The global food supply chain faces a significant challenge: food waste. Perishable goods like fruits, vegetables, and meat are particularly... | akaksha | |

1,892,135 | WooCommerce vs Shopify: Choosing the Best Ecommerce Platform for Your Business | In the realm of ecommerce, choosing the right platform can significantly impact the success and... | 0 | 2024-06-18T08:21:11 | https://dev.to/anthony_wilson_032f9c6a5f/woocommerce-vs-shopify-choosing-the-best-ecommerce-platform-for-your-business-35d2 | In the realm of ecommerce, choosing the right platform can significantly impact the success and efficiency of your online store. Two giants in the ecommerce platform arena, WooCommerce and Shopify, offer distinct advantages and cater to different business needs. Whether you're a startup looking to establish an online p... | anthony_wilson_032f9c6a5f | |

1,892,134 | What is the relationship between the grayscale and brightness of LED displays? | LED displays play an important role in modern advertising, entertainment, and information... | 0 | 2024-06-18T08:21:10 | https://dev.to/sostrondylan/what-is-the-relationship-between-the-grayscale-and-brightness-of-led-displays-1g90 | led, displays, brightness | [LED displays](https://sostron.com/products/) play an important role in modern advertising, entertainment, and information dissemination. Understanding the relationship between its grayscale and brightness is essential for optimizing display effects.

** with a commitment to providing 100% placement support. Our institute stands out as the top training institute in Pune for SAP, Python training, and more. We u... | sap_training_institute_in_pune | |

1,891,229 | Lessons from Google’s technical writing course for engineering blogs | As a technical content writer helping engineers write blog posts, I recently completed Google’s... | 0 | 2024-06-18T08:14:43 | https://dev.to/annelaure13/lessons-from-googles-technical-writing-course-for-engineering-blogs-4458 | As a technical content writer helping engineers write blog posts, I recently completed [Google’s technical writing course](https://developers.google.com/tech-writing). While the primary purpose of this course is to assist engineers in writing technical documentation, I find that some of the advice also applies to engin... | annelaure13 | |

1,892,114 | Mastering SAP: Your Path to Career Advancement in Pune | Considering a career in SAP (Systems, Applications, and Products in Data Processing)? Pune, a hub of... | 0 | 2024-06-18T08:14:26 | https://dev.to/dhruv_dahikar_db878166afd/mastering-sap-your-path-to-career-advancement-in-pune-4ka7 | sapcourseinpune, saptraininginpune, sapinstituteinoune, saptraininginstituteinpune | Considering a career in SAP (Systems, Applications, and Products in Data Processing)? Pune, a hub of IT innovation and educational excellence, offers prime opportunities to master SAP and propel your career forward. At **[Connecting Dots ERP](https://g.co/kgs/t6LCMou)**, we're dedicated to providing top-tier SAP traini... | dhruv_dahikar_db878166afd |

1,892,113 | Common Mistakes Beginners Make in Frontend Development | Starting out in frontend development can be both exciting and challenging. While diving into HTML,... | 0 | 2024-06-18T08:13:21 | https://dev.to/klimd1389/common-mistakes-beginners-make-in-frontend-development-12oc | webdev, javascript, beginners, programming | Starting out in frontend development can be both exciting and challenging. While diving into HTML, CSS, and JavaScript, beginners often make mistakes that can hinder their progress. Here are some common pitfalls and tips on how to avoid them.

Ignoring Semantics in HTML

Mistake:

Many beginners use HTML elements incorre... | klimd1389 |

1,892,112 | Industrial filter manufacturers vizag Filter | Industrial filter manufacturers vizag Filter emerges as a leading provider of advanced industrial... | 0 | 2024-06-18T08:12:39 | https://dev.to/vizag_filters_96e173849e1/industrial-filter-manufacturers-vizag-filter-36il | Industrial filter manufacturers vizag Filter emerges as a leading provider of advanced industrial filtration solutions. Specializing in a wide array of filters designed for diverse applications, Vizag Filter combines local expertise with global standards to deliver unparalleled quality and reliability.

Expertise in In... | vizag_filters_96e173849e1 | |

1,892,111 | Leveraging Effective HR Training for Organizational Excellence | In the ever-evolving world of business, keeping pace with the latest HR practices is critical for... | 0 | 2024-06-18T08:12:12 | https://dev.to/connectingdotserp01/leveraging-effective-hr-training-for-organizational-excellence-1i2g | hrtraining, hrcareer, hrmanagement, hrexecutive | In the ever-evolving world of business, keeping pace with the latest HR practices is critical for both individual career growth and organizational success. The [**best HR training institute**](https://connectingdotserp.in/hr-courses/#hr-management-course) bridges the gap between current skill sets and the demands of t... | connectingdotserp01 |

1,892,110 | Industrial Filters manufacturers in India | industrial filter manufacturers play a pivotal role in delivering high-quality filtration solutions... | 0 | 2024-06-18T08:11:08 | https://dev.to/vizag_filters_96e173849e1/industrial-filters-manufacturers-in-india-3jpj | Industrial filter manufacturers play a pivotal role in delivering high-quality filtration solutions that meet global standards of efficiency and reliability. With a strong emphasis on innovation, precision engineering, and sustainability, manufacturers in India are recognized for their ability to cater to diverse indu... | vizag_filters_96e173849e1 |
