id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,885,564 | Comprehensive Guide to AWS API Gateway: Everything You Need to Know - Part I | AWS API Gateway is a powerful service that allows you to create, publish, manage, and secure APIs... | 0 | 2024-06-12T10:25:47 | https://practicalcloud.net/comprehensive-guide-to-aws-api-gateway-everything-you-need-to-know-part-i/ | AWS API Gateway is a powerful service that allows you to create, publish, manage, and secure APIs with ease. Whether you're building RESTful APIs, WebSocket APIs, or HTTP APIs, API Gateway provides a range of features and functionalities to streamline API development, authentication, authorization, documentation, and m... | kelvinskell | |

1,884,785 | BC Boilerplates: Recent Updates | Over the past few months, BC Boilerplates have undergone several changes, covering important aspects... | 0 | 2024-06-12T10:25:39 | https://dev.to/rodik/bc-boilerplates-recent-updates-37eh | webdev, javascript, nestjs, react | Over the past few months, BC Boilerplates have undergone several changes, covering important aspects from testing to automating installation processes.

Below, we are pleased to present updates that will facilitate the development process of applications built on boilerplates from the [BC Boilerplates ecosystem](https:/... | rodik |

1,885,567 | Ensuring Seamless Functionality and Patient Safety Through Integrated System Testing in the Healthcare Industry | Complex healthcare information systems depend heavily on integrated system testing (IST) to function... | 0 | 2024-06-12T10:25:27 | https://knowledgewap.org/blog/ensuring-seamless-functionality-and-patient-safety-through-integrated-system-testing-in-the-healthcare-industry/ | integrated, system, testing |

Complex healthcare information systems depend heavily on integrated system testing (IST) to function properly. It entails assessing how different software programs and medical equipment communicate and exchange data ... | rohitbhandari102 |

1,885,566 | Seven Ways of Making the Most of Your Yacht Rental | What Are the Top Yacht Rental Spots in the UAE? Dubai is home to several luxurious services and... | 0 | 2024-06-12T10:25:02 | https://dev.to/cozmo_yachts_83a79538002d/seven-ways-of-making-the-most-of-your-yacht-rental-2h58 | yacht | What Are the Top Yacht Rental Spots in the UAE? Dubai is home to several luxurious services and extravagances. Undoubtedly, individuals from all over the world come to enjoy this wonderful escape. Whether you want to go sightseeing or rent a luxury yacht, it has everything. Given that you appreciate it and look forwar... | cozmo_yachts_83a79538002d |

1,885,565 | letras para imprimir | Letras para Imprimir: your source of printable alphabets, ideal for educational and... | 0 | 2024-06-12T10:25:00 | https://dev.to/letraspara_imprimir/letras-para-imprimir-4j92 | Letras para Imprimir: your source of printable alphabets, ideal for educational and craft needs.

https://letrasparaimprimir.online | letraspara_imprimir | |

1,885,563 | AWS CloudFormation Templates: Because Clicking Buttons is Too Old | Welcome Senpai 🙈! We're going to dive headfirst into the thrilling (and sometimes confusing) world of... | 0 | 2024-06-12T10:24:30 | https://dev.to/spantheslayer/aws-cloudformation-templates-because-clicking-buttons-is-too-old-5e69 | Welcome Senpai 🙈! We're going to dive headfirst into the thrilling (and sometimes confusing) world of AWS CloudFormation templates. Trust me, once you get the hang of it, you'll wonder how you ever managed your infrastructure without it. But before we get too far ahead of ourselves, let's break down what a CloudFormat... | spantheslayer | |

1,885,562 | Eco-Friendly Driving in Finland: Tips for a Sustainable Road Trip | Embarking on a road trip in Finland? Embrace the beauty of this Nordic nation while minimising your... | 0 | 2024-06-12T10:24:29 | https://dev.to/shekar_bandari_c012227b90/eco-friendly-driving-in-finland-tips-for-a-sustainable-road-trip-31pi | Embarking on a road trip in Finland? Embrace the beauty of this Nordic nation while minimising your environmental impact. Before hitting the open road, ensure you've aced the Driving Theory Test Finland, familiarizing yourself with local regulations and best practices. With a few eco-conscious driving habits, you can ... | shekar_bandari_c012227b90 | |

1,885,561 | IoT in the Pharmaceutical Industry: Use Case | The Internet of Things is massively transforming industries, and the pharmaceutical industry is not... | 0 | 2024-06-12T10:24:00 | https://dev.to/lucyzeniffer/iot-in-the-pharmaceutical-industry-use-case-38h | The Internet of Things is massively transforming industries, and the pharmaceutical industry is not untouched.

If done correctly, [IoT app development services](https://successive.tech/iot-app-development-company/?utm_source=Micro+Blog&utm_medium=dev.to&utm_campaign=SEO+WORK+2) bring automation into every manual proce... | lucyzeniffer | |

1,885,560 | How and Why Do Larger Language Models Do In-context Learning Differently? | Introduction How and why do larger language models do in-context learning differently?... | 0 | 2024-06-12T10:22:35 | https://dev.to/novita_ai/how-and-why-do-larger-language-models-do-in-context-learning-differently-3bem | llm | ## Introduction

How and why do larger language models do in-context learning differently? In this article, we will explore the concept of "**in-context learning**" (ICL), discuss the latest findings about in-context learning behaviors of models of different sizes _in plain English_, and delve into ways that can lever... | novita_ai |

1,885,558 | Lê Văn Lượng | is a talented, handsome person who excels academically; although he has not yet earned much money, through his abil... | 0 | 2024-06-12T10:22:07 | https://dev.to/levanluong/le-van-luong-gkf | is a talented, handsome person who excels academically. Although he has not yet earned much money, I believe that through his ability and persistent effort he will become a dollar millionaire in the future, thanks to sound investments ranging from investing in himself to investing in other assets that generate long-term returns

https:/... | levanluong | |

1,885,557 | Understanding Lexical Scope in JavaScript ✅ | JavaScript, like many other programming languages, relies on a concept called "lexical scope" (also... | 0 | 2024-06-12T10:20:50 | https://dev.to/alisamirali/understanding-lexical-scope-in-javascript-1eb0 | javascript, webdev, frontend, programming | JavaScript, like many other programming languages, relies on a concept called "lexical scope" (also known as static scope) to resolve variable references within nested functions. This article will delve into what lexical scope is, how it works, and why it is fundamental to mastering JavaScript.
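The idea can be sketched in a few lines. This is a minimal illustration, not code from the article: the function and variable names (`outer`, `inner`, `caller`, `outerValue`) are hypothetical and chosen only to show that a nested function resolves names from the place where it was written, not from the place where it is called.

```javascript
// Hypothetical names, used only for illustration.
function outer() {
  const outerValue = "hello from outer";

  function inner() {
    // inner is defined inside outer, so it can read outerValue
    // no matter where it is eventually called from.
    return outerValue;
  }

  return inner;
}

function caller() {
  // This local variable has the same name, but inner never sees it:
  // lookup follows the scopes surrounding inner's definition.
  const outerValue = "hello from caller";
  const inner = outer();
  return inner();
}

console.log(caller()); // "hello from outer"
```

If JavaScript used dynamic scope instead, the call inside `caller` would pick up the caller's own `outerValue`; with lexical scope it does not.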

---

## What is Lexical... | alisamirali |

1,885,556 | Exploring Test Coverage Tools: Enhancing Software Quality Assurance | In the fast-paced world of software development, ensuring the reliability and stability of... | 0 | 2024-06-12T10:20:28 | https://dev.to/keploy/exploring-test-coverage-tools-enhancing-software-quality-assurance-27h0 | testing, webdev, programming, python |

In the fast-paced world of software development, ensuring the reliability and stability of applications is a top priority. One of the key practices in achieving this goal is comprehensive testing, and [test coverage... | keploy |

1,885,552 | How Much Whey Protein Do You Need? Dosage Guidelines for Different Goals | Whey protein has quickly become a cornerstone of fitness and health communities worldwide, revered... | 0 | 2024-06-12T10:20:04 | https://dev.to/fitnessadvisor04/how-much-whey-protein-do-you-need-dosage-guidelines-for-different-goals-3gbh | wheyprotein, wheyproteinpowder, wheyproteinsupplement, bestwheyprotein | Whey protein has quickly become a cornerstone of fitness and health communities worldwide, revered for its ability to build muscle mass, assist weight loss efforts, and promote overall wellness. However, with various opinions and advice about optimal whey protein intake based on age, activity level, and specific health... | fitnessadvisor04 |

1,885,550 | How to deploy the Opensource Phi-3 model on AI: a step-by-step guide | One of the most recent developments in artificial intelligence is the open-source Phi-3 model. Phi-3,... | 0 | 2024-06-12T10:19:37 | https://dev.to/nishantbijani/how-to-deploy-the-opensource-phi-3-model-on-ai-a-step-by-step-guide-3ggo | phi3, ai, aimodel, generativeai | One of the most recent developments in [artificial intelligence](https://www.codiste.com/what-is-artificial-general-intelligence-agi) is the open-source Phi-3 model. Phi-3, revealed on April 23, 2024, features a dense decoder-only Transformer architecture and has been refined by applying sophisticated methods such as D... | nishantbijani |

1,885,549 | Key Concepts in Threat Intelligence for the MCIA-Level-1 Exam | The MCIA-Level-1 Exam is a crucial certification for professionals in the field of threat... | 0 | 2024-06-12T10:18:54 | https://dev.to/mcd-level-1-dumps/mastering-threat-intelligence-a-comprehensive-guide-for-mcia-level-1-exam-igh | The MCIA-Level-1 Exam is a crucial certification for professionals in the field of threat intelligence. As cyber security threats evolve, so does the necessity for adept individuals who can anticipate, identify, and mitigate these threats. This exam ensures that candidates possess the essential knowledge and skills nee... | mcd-level-1-dumps | |

1,885,548 | Creating the Tasnim Website: Overcoming Challenges and Building for the Future | Building the Tasnim website has been a journey of innovation, dedication, and overcoming numerous... | 0 | 2024-06-12T10:18:36 | https://dev.to/cosmisader76/creating-the-tasnim-website-overcoming-challenges-and-building-for-the-future-4455 | javascript, python |

Building the Tasnim website has been a journey of innovation, dedication, and overcoming numerous challenges. Our mission was to create a user-friendly, secure, and efficient platform that highlights our premium qua... | cosmisader76 |

1,885,547 | SMTP Relay Service Providers: An Essential Guide for Businesses | Whether it’s for marketing campaigns, transactional notifications, or internal communications,... | 0 | 2024-06-12T10:18:14 | https://dev.to/brettjhonson01/smtp-relay-service-providers-an-essential-guide-for-businesses-51db | webdev, beginners, devops, productivity | Whether it’s for marketing campaigns, transactional notifications, or internal communications, reliable email delivery is crucial. This is where SMTP (Simple Mail Transfer Protocol) relay services come into play. This guide delves into the importance of [SMTP relay service providers](https://smtpget.com/smtp-service-pr... | brettjhonson01 |

1,885,546 | Laravel Migration: Differences Between Text Data Types | Hello everyone! In this article, we will examine the text, longText, mediumText, and tinyText data types and their... | 0 | 2024-06-12T10:17:47 | https://dev.to/baris/laravel-migration-metin-veri-tipleri-arasindaki-farklar-1a6j | Hello everyone! In this article, we will examine the `text`, `longText`, `mediumText`, and `tinyText` data types and how they are used.

Understanding the differences between the `text`, `longText`, `mediumText`, and `tinyText` data types in Laravel helps you optimize your database design and, by choosing the right data type, improve performance... | baris | |

1,885,545 | Microservices vs Monolith | Go & GoFr | In the evolving landscape of software architecture, the debate between traditional monolithic design... | 0 | 2024-06-12T10:16:33 | https://dev.to/vipulrawat008/microservices-vs-monolith-go-gofr-1da6 |

In the evolving landscape of software architecture, the debate between traditional monolithic design and microservices design has become increasingly relevant. Monolithic architecture, characterized by a ... | vipulrawat008 | |

1,883,937 | Salesforce Manufacturing Cloud: How does it help sales and operations collaborate? | Salesforce Manufacturing Cloud is intended to give manufacturing organizations the resources they... | 0 | 2024-06-12T10:16:32 | https://dev.to/nivas_nirmal_357953e08d47/salesforce-manufacturing-cloud-how-does-it-help-sales-and-operations-collaborate-45h6 | Salesforce Manufacturing Cloud is intended to give manufacturing organizations the resources they require to better forecast and plan, improve visibility into client relationships, and synchronize sales and operations.

This is where [Salesforce Manufacturing Cloud](https://www.absyz.com/salesforce-manufacturing-cloud/)... | nivas_nirmal_357953e08d47 | |

1,885,533 | Creating Jennie AI Voice Generator: Your Ultimate Guide | Discover the power of the Jennie AI voice generator in our ultimate guide. Create your Jennie AI... | 0 | 2024-06-12T10:15:49 | https://dev.to/novita_ai/creating-jennie-ai-voice-generator-your-ultimate-guide-3f4d | Discover the power of the Jennie AI voice generator in our ultimate guide. Create your Jennie AI voice generator with advanced AI capabilities.

## Key Highlights

- Jennie AI Voice Generator is a powerful tool that allows you to create AI voices that sound like Jennie Kim, the talented South Korean singer from the gro... | novita_ai | |

1,885,543 | 7 Ways to Remove Array Duplicates in Javascript | In real development work, we often need to de-duplicate a set of data. In... | 0 | 2024-06-12T10:15:30 | https://dev.to/frost_gary_90f3cf1699bd02/7-ways-to-remove-array-duplicates-in-javascript-183g | javascript, frontend, webdev | In real development work, we often need to de-duplicate a set of data. In JavaScript, there are several ways to filter duplicates out of an array, and in this article I'll share the different approaches.
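As a quick orientation, two common approaches can be sketched as follows. This is a minimal sketch rather than the article's own listing: the `sample` array and the helper names `uniqueWithSet` and `uniqueWithFilter` are assumptions made for illustration.

```javascript
// Hypothetical sample data; note that 1 and "1" are different values.
const sample = [1, 2, 2, 3, 1, "1"];

// Approach 1: a Set keeps only the first occurrence of each value,
// and spreading it back into an array preserves insertion order.
function uniqueWithSet(items) {
  return [...new Set(items)];
}

// Approach 2: keep an element only if its first index in the array
// is the current index, so later repeats are dropped.
function uniqueWithFilter(items) {
  return items.filter((item, index) => items.indexOf(item) === index);
}

console.log(uniqueWithSet(sample)); // [ 1, 2, 3, '1' ]
console.log(uniqueWithFilter(sample)); // [ 1, 2, 3, '1' ]
```

The `Set` version does a single pass, while the `filter` version calls `indexOf` for every element, so it tends to be slower on large arrays.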

Here is the array we want to filter out duplicates:

The current mobile app landscape is no less than a battleground. With more than 4.8 million apps out in the market today (both iOS and Android), playing the field requires some of the best tools in your arsenal, the ... | rubengrey |

1,885,541 | Cloud Testing Vs. Conventional Testing | Cloud testing and conventional testing are two different approaches to software testing that differ... | 0 | 2024-06-12T10:15:08 | https://dev.to/morrismoses149/cloud-testing-vs-conventional-testing-220f | cloudtesting, conventionaltesting, testgrid | Cloud testing and conventional testing are two different approaches to software testing that differ in several aspects, including infrastructure, environment, scalability, cost, and flexibility.

Cloud testing is a software testing approach involving applications and systems over the cloud rather than on local hardware ... | morrismoses149 |

1,885,540 | Enhancing Comfort with PU Foam Cushions | Get More Comfort with PU Foam Cushions Being comfortable is important to stay active and concentrate... | 0 | 2024-06-12T10:14:11 | https://dev.to/johnnie_heltonke_fbec2631/enhancing-comfort-with-pu-foam-cushions-4725 | design | Get More Comfort with PU Foam Cushions

Being comfortable is important for staying active and concentrating on your daily tasks. When you sit for long periods, you need a comfortable cushion that supports your body weight and relieves pressure points. PU foam cushions are created ... | johnnie_heltonke_fbec2631 |

1,884,964 | Conditional Rendering in React | Introduction Conditional Rendering in React refers to the technique of displaying components or... | 0 | 2024-06-12T10:13:40 | https://dev.to/rachealcloud/conditional-rendering-in-react-1lmi | beginners, javascript, react, opensource |

**Introduction**

Conditional Rendering in React refers to the technique of displaying components or elements based on certain conditions. Your components will often need to display different things depending on different conditions. In React, you can conditionally render JSX using JavaScript syntax like if statements,... | rachealcloud |

1,885,539 | Thun Dong Phuc The Gioi Ao | Nguyễn Thị Xuân Điệp founded the Thế Giới Áo Thun Đồng Phục brand in 2013. She was... | 0 | 2024-06-12T10:13:30 | https://dev.to/kbthegioidongphuc/thun-dong-phuc-the-gioi-ao-5503 | Nguyễn Thị Xuân Điệp founded the Thế Giới Áo Thun Đồng Phục brand in 2013. She is formally trained in garment making and has 22 years of experience in the apparel sector at large garment corporations.

Website: <a href="https://thegioiaothundongphuc.com/nguyen-thi-xuan-diep/">https://thegioiaothundongphuc.com... | kbthegioidongphuc | |

1,885,537 | Track Errors in Your Python Flask Application with AppSignal | In this article, we'll look at how to track errors in a Flask application using AppSignal. We'll... | 0 | 2024-06-12T10:13:07 | https://blog.appsignal.com/2024/05/29/track-errors-in-your-python-flask-application-with-appsignal.html | python, flask | In this article, we'll look at how to track errors in a Flask application using AppSignal.

We'll first bootstrap a Flask project, and install and configure AppSignal. Then, we'll introduce some faulty code and demonstrate how to track and resolve errors using AppSignal's Errors dashboard.

Let's get started!

## Prere... | duplxey |

1,885,538 | My Linux Journey: From Cyber Security Newbie to Enthusiast | Hey there, fellow tech enthusiasts in Pune! As someone who's just started diving into the world of... | 0 | 2024-06-12T10:12:45 | https://dev.to/fizza_c3e734ee2a307cf35e5/my-linux-journey-from-cyber-security-newbie-to-enthusiast-2f7 | cyber, cybersecurity, linux | Hey there, fellow tech enthusiasts in Pune! As someone who's just started diving into the world of cybersecurity, I recently discovered the magic of Linux. While the initial foray felt like navigating a labyrinth blindfolded, I'm here to tell you it's an incredibly rewarding experience, especially for anyone interested... | fizza_c3e734ee2a307cf35e5 |

1,885,536 | 26 Top Kubernetes Tools | Kubernetes is the most popular container orchestration tool, but it gets even better when combined... | 0 | 2024-06-12T10:12:07 | https://spacelift.io/blog/kubernetes-tools | kubernetes, devops | Kubernetes is the most popular container orchestration tool, but it gets even better when combined with other tools. The Kubernetes ecosystem contains a huge range of tools for command line, simplifying cluster management, monitoring, security, and deployment tasks. With so many options, it can be unclear which you sho... | spacelift_team |

1,885,535 | GitOps: A Comprehensive Guide | GitOps is a modern operational framework that leverages Git repositories as the source of truth for... | 0 | 2024-06-12T10:11:35 | https://dev.to/iaadidev/gitops-a-comprehensive-guide-909 | git, gitops, deployment, monitoring |

GitOps is a modern operational framework that leverages Git repositories as the source of truth for defining and managing infrastructure and application configurations. This approach provides a clear and consistent methodology for deploying, monitoring, and managing applications. In this comprehensive guide, we will ... | iaadidev |

1,885,532 | GameFi and Blockchain: The Future of Online Gaming | The gaming industry is undergoing a seismic shift with the emergence of GameFi, a revolutionary... | 0 | 2024-06-12T10:08:59 | https://dev.to/donnajohnson88/gamefi-and-blockchain-the-future-of-online-gaming-1pba | blockchain, gamefi, beginners, learning | The gaming industry is undergoing a seismic shift with the emergence of GameFi, a revolutionary concept that merges the worlds of gaming and decentralized finance (DeFi). By leveraging [blockchain development services](https://blockchain.oodles.io/?utm_source=devto), GameFi empowers players to enjoy their favorite game... | donnajohnson88 |

1,885,531 | Digital Dwar: A Web Designing Agency | [Digital Dwar, the best website development services in Delhi. We prioritize quality work and customer satisfaction... | 0 | 2024-06-12T10:08:17 | https://dev.to/digitaldwar/digital-dwar-a-web-designing-agency-3fc0 | webdesigning, digitaldwar, webdeveloper, websitemaker | [Digital Dwar, the best website development services in Delhi. We prioritize quality work and customer satisfaction. We build trust, not only websites. Contact us for a great experience.

We can build a creative, attractive website for your business and help you grow at an affordable price. We build t... | digitaldwar |

1,885,530 | Flutter vs React Native: Which One is Better? | Two industry-leading solutions that speed up the development of cross-platform apps for iOS and... | 0 | 2024-06-12T10:07:56 | https://dev.to/infowindtech57/flutter-vs-react-native-which-one-is-better-593e | react, flutter | Two industry-leading solutions that speed up the development of cross-platform apps for iOS and Android devices are Flutter and React Native. Their agility and quickness make them unique. Google developed Flutter, which is known for its fast performance. It uses Dart to create elegant UI elements. React Native, another... | infowindtech57 |

1,885,524 | Complete Guide for Install OpenCV using Anaconda | OpenCV, or the Open Source Computer Vision Library, is a treasure trove for anyone working with image... | 0 | 2024-06-12T10:04:15 | https://dev.to/codetradeindia/complete-guide-for-install-opencv-using-anaconda-38aa | opencv, anaconda, python, computervision | OpenCV, or the Open Source Computer Vision Library, is a treasure trove for anyone working with image and video processing in **[Python](https://www.codetrade.io/python/)**.

With Anaconda, a popular scientific Python distribution, installing OpenCV becomes a breeze.

Here we'll explore the step-by-step process to in... | codetradeindia |

1,885,529 | Banquet Halls, Wedding Venues, Wedding Planning in India- Wedding Banquets | Wedding Banquet to Plan your wedding and make sure it is a memorable occasion Look over 1000 Indian... | 0 | 2024-06-12T10:06:33 | https://dev.to/asif1245/banquet-halls-wedding-venues-wedding-planning-in-india-wedding-banquets-1cpj | banquethalls, weddingvenue, weddingbanquet, partyhalls | Use [Wedding Banquet](https://weddingbanquets.in/) to plan your wedding and make sure it is a memorable occasion. Look over 1000 Indian wedding venues for corporate events, weddings, birthday parties, and more.

| asif1245 |

1,885,528 | Shashiraj Foundation: Premier Old Age Home Care | At Shashiraj Foundation, we offer the best care for the elderly, ensuring they live in comfort and... | 0 | 2024-06-12T10:06:10 | https://dev.to/bestoldagehomecare/shashiraj-foundation-premier-old-age-home-care-4m6e | [At Shashiraj Foundation](https://www.oldagehomecaredelhi.com/), we offer the best care for the elderly, ensuring they live in comfort and dignity. Our compassionate staff provides personalized support, creating a warm and friendly atmosphere. Trust us to take care of your loved ones with the utmost respect and attenti... | bestoldagehomecare | |

1,885,527 | How to Add Clickable YouTube Thumbnail Image to README.md File on GitHub Repository | In this article, I'm gonna walk you through how to add a clickable YouTube thumbnail image to the... | 0 | 2024-06-12T10:06:06 | https://dev.to/ryoichihomma/how-to-add-clickable-youtube-thumbnail-image-to-readmemd-file-on-github-repository-m2n | github, githubissues, git, githubtips | In this article, I'm gonna walk you through how to add a clickable YouTube thumbnail image to the README.md file on GitHub so that users can directly go to watch the linked YouTube video once they click the image. After reading this article, you will be able to make your README.md file look like this.

, we've utilized Next.js and Firebase in over 30 projects, amassing a wealth of experience in handling secure and efficient data transactions in web applications. Today's discussion focuses on a critical aspect: ensuring secure connections when interacting with Firebase usi... | antoineit |

1,885,515 | Breast Implants Price Greenbrae | Dr. Kimberly Henry is one of the most professional and experienced plastic surgeons specializing in... | 0 | 2024-06-12T10:00:38 | https://dev.to/dr_kimberlyhenry_ed8396/breast-implants-price-greenbrae-1ckh | surgery, cosmetic | Dr. Kimberly Henry is one of the most professional and experienced plastic surgeons specializing in breast plastic surgery. Contact us at 415-792-1489 if you want to know the price of breast implants in Greenbrae.

Visit us- https://www.drkimberlyhenry.com/greenbrae/ | dr_kimberlyhenry_ed8396 |

1,885,514 | Write It Down | Something I wish I had started doing much earlier in my career was writing about the things I was... | 0 | 2024-06-12T09:59:03 | https://dev.to/wraith/write-it-down-404h | career, writing | Something I wish I had started doing much earlier in my career was writing about the things I was learning and building. I wasn't always big into blogging or anything like that...that motivation didn't come until much later. But I've always been happy to teach others. And while it's very rewarding to help someone 1:1 o... | wraith |

1,885,513 | Achieving Quick Wins with Successful Salesforce Implementation — A Comprehensive Guide | Greytrix | Are you planning a Salesforce implementation for your company? Well, you came across this post at... | 0 | 2024-06-12T09:54:30 | https://dev.to/dinesh_m/achieving-quick-wins-with-successful-salesforce-implementation-a-comprehensive-guide-greytrix-4a5p | salesforce, implementation, greytrix |

Are you planning a [Salesforce implementation](https://www.greytrix.com/salesforce/implementation-consulting/) for your company?

Well, you came across this post at the right time!

Whereas [Salesforce](https://www.... | dinesh_m |

1,885,512 | The Importance of a Strong Governance Structure in Project Management | In project management, having a strong governance structure is essential for ensuring the success... | 0 | 2024-06-12T09:53:39 | https://dev.to/wednesdaysol/the-importance-of-a-strong-governance-structure-in-project-management-lnb | management |

In project management, having a strong governance structure is essential for ensuring the success of any initiative. A well-defined governance structure provides clarity, accountability, and transparency throughout ... | wednesdaysol |

1,885,511 | Exploring the Properties of Polyurethane PU Foam | Exploring the Wonders of Polyurethane PU Foam Polyurethane PU Foam is... | 0 | 2024-06-12T09:53:10 | https://dev.to/johnnie_heltonke_fbec2631/exploring-the-properties-of-polyurethane-pu-foam-2m6d | design |

Exploring the Wonders of Polyurethane PU Foam

Polyurethane PU Foam is a kind of foam that is widely used in many products. It is a special kind of material that has many advantages, such as durability, flexibility, and non-toxicity. We will explore the properties of Polyurethane PU Foam... | johnnie_heltonke_fbec2631 |

1,885,510 | How Zero-Knowledge Proofs Enhance Privacy and Security in Blockchain | Zero-knowledge proofs (ZKPs) are cryptographic protocols that enable one party (the prover) to prove... | 0 | 2024-06-12T09:51:00 | https://dev.to/bloxbytes/how-zero-knowledge-proofs-enhance-privacy-and-security-in-blockchain-1i07 | blockchain, zkp, web3 | Zero-knowledge proofs (ZKPs) are cryptographic protocols that enable one party (the prover) to prove to another party (the verifier) that a statement is true without revealing any additional information beyond the validity of the statement itself. In the context of blockchain, ZKPs offer powerful tools for enhancing pr... | bloxbytes |

1,885,509 | @property decorator in django models | In Django models, the @property decorator is used to define methods that behave like attributes,... | 0 | 2024-06-12T09:50:05 | https://dev.to/vincod/property-decorator-in-django-models-d54 | webdev, python, django, javascript | In Django models, the `@property` decorator is used to define methods that behave like attributes, allowing you to create custom model properties. These properties can encapsulate logic and calculations, making them available as read-only attributes on model instances. Here’s how to use the `@property` decorator in a D... | vincod |

1,885,432 | All About GraphQL | Today, let's dive into the world of GraphQL, a revolutionary technology that's transforming how we... | 27,645 | 2024-06-12T09:49:24 | https://dev.to/shafayeat/all-about-graphql-20og | webdev, programming, graphql, api | Today, let's dive into the world of GraphQL, a revolutionary technology that's transforming how we interact with APIs. Whether you're new to GraphQL or looking to deepen your understanding, this guide will walk you through the basics, benefits, and how to get started. So, grab your favorite drink (skip the alcohol, it'... | shafayeat |

1,885,508 | Tailwind CSS Refund Form Component Examples | Hey developers! In this article I want to show you a collection of refund form components coded with... | 14,781 | 2024-06-12T09:49:09 | https://flowbite.com/blocks/e-commerce/refund-forms/ | tailwindcss, flowbite, ecommerce, webdev | Hey developers!

In this article I want to show you a collection of [refund form components](https://flowbite.com/blocks/e-commerce/refund-forms/) coded with Tailwind CSS based on the Flowbite UI library that you can use in your e-commerce projects to receive refund requests and collect as much information as you can.

... | zoltanszogyenyi |

1,885,498 | Introducing Dolly 2.0: Unlocking the Full Potential of Open-Source Language Models | Introduction Databricks has unveiled a game-changer in the world of artificial... | 0 | 2024-06-12T09:46:43 | https://dev.to/novita_ai/introducing-dolly-20-unlocking-the-full-potential-of-open-source-language-models-4fp8 | llm, dolly | ## Introduction

Databricks has unveiled a game-changer in the world of artificial intelligence - Dolly 2.0, the first open-source, instruction-following large language model (LLM) available for commercial use. But what makes Dolly 2.0 so revolutionary, and how can organizations leverage its capabilities to drive innova... | novita_ai |

1,885,506 | What is the process for developing an Ethereum token? | Introduction: Creating an Ethereum token might seem complicated, but with the right guidance and... | 0 | 2024-06-12T09:45:43 | https://dev.to/elena_marie_dad5c9d5d5706/what-is-the-process-for-developing-an-ethereum-token-5116 | crypto, cryptotoken, ethereumtoken | **Introduction:**

Creating an Ethereum token might seem complicated, but with the right guidance and tools, it's achievable. This guide will walk you through each step of the Ethereum token development process. It’s designed for developers interested in blockchain technology and entrepreneurs looking to launch a new ... | elena_marie_dad5c9d5d5706 |

1,885,505 | Qinghe Ronghe Rubber Products Co., Ltd. | The Versatility of PU Foaming in Modern Manufacturing Benefits of Using PU Foaming PU... | 0 | 2024-06-12T09:45:05 | https://dev.to/johnnie_heltonke_fbec2631/qinghe-ronghe-rubber-products-co-ltd-4j33 | design | The Versatility of PU Foaming in Modern Manufacturing

Benefits of Using PU Foaming

PU foaming is a type of foam that has become quite prominent in modern production. There are many reasons why producers choose PU foaming over other kinds of foam. Very ... | johnnie_heltonke_fbec2631 |

1,885,504 | Foundation Clone Software : Launch a Successful NFT Marketplace | The Foundation Clone Software mirrors the booming trend observed by market analysts in the... | 0 | 2024-06-12T09:45:02 | https://dev.to/yamini_mini_af14aa2d6b9b1/foundation-clone-software-launch-a-successful-nft-marketplace-bei | ai, discuss, webdev, foundationclonesoftware | The [**Foundation Clone Software**](https://www.alphacodez.com/insights/foundation-clone-software-your-ultimate-guide/) mirrors the booming trend observed by market analysts in the Non-Fungible Tokens (NFTs) sector. With a total sales volume surging to $2.5 billion in the first half of 2021, compared to $13.7 million i... | yamini_mini_af14aa2d6b9b1 |

1,881,302 | Mockingbird Presets: Optimizing API Development Workflows | In our previous articles, we explored the fundamentals of Mockingbird and how to set up your mock... | 27,642 | 2024-06-12T09:45:00 | https://dev.to/ozkeisar/optimizing-api-development-workflows-with-mockingbird-presets-17hc | webdev, programming, productivity, tooling |

In our previous articles, we explored the [fundamentals of Mockingbird](https://dev.to/ozkeisar/mockingbird-new-tool-for-your-mock-environments-49j) and [how to set up your mock server](https://dev.to/ozkeisar/mockingbird-new-tool-for-your-mock-environments-49j). In this third installment of our Mockingbird series, we... | ozkeisar |

1,885,495 | where can i investors for AI Fashion Brand? | A post by Zan XX | 0 | 2024-06-12T09:37:55 | https://dev.to/zxxngod/where-can-i-investors-for-ai-fashion-brand-39ie | ai | zxxngod | |

1,885,503 | Peerstiquette: Good Manners for Remote Collaboration | Hola Mundo! You might not know this, but before becoming an iOS Developer, I worked... | 0 | 2024-06-12T09:44:48 | https://dev.to/silviaespanagil/peerstiquette-good-manners-for-remote-collaboration-23g9 | programming, productivity, career | # Hola Mundo!

You might not know this, but before becoming an iOS Developer, I worked and taught Communication and Marketing for about 12 years📆!

And why is this important, you may ask yourself. Well, in my course I loved to talk about Netiquette because well, being behind a screen, people sometim... | silviaespanagil |

1,885,502 | The Ultimate Guide to Marketing Director Email Lists: Maximizing Your Outreach | Introduction In today's competitive business landscape, reaching the right people with your marketing... | 0 | 2024-06-12T09:43:33 | https://dev.to/jane_carol_/the-ultimate-guide-to-marketing-director-email-lists-maximizing-your-outreach-32e3 | seo, marketing, emaillisting, database | **Introduction**

In today's competitive business landscape, reaching the right people with your marketing efforts is crucial. Marketing Directors play a pivotal role in shaping and executing marketing strategie... | jane_carol_ |

1,885,501 | Exploring Test Coverage Tools: Enhancing Software Quality Assurance | In the fast-paced world of software development, ensuring the reliability and stability of... | 0 | 2024-06-12T09:43:04 | https://dev.to/keploy/exploring-test-coverage-tools-enhancing-software-quality-assurance-hj5 | code, openapi, opendata, rust |

In the fast-paced world of software development, ensuring the reliability and stability of applications is a top priority. One of the key practices in achieving this goal is comprehensive testing, and [test coverage... | keploy |

1,885,500 | Desert Hot Springs Personal Injury Attorney | The Baum Law Firm is the best choice for you if you want the best assistance from a skilled and... | 0 | 2024-06-12T09:41:41 | https://dev.to/baumlaw_firm_f8d64582a0f0/desert-hot-springs-personal-injury-attorney-aae | accident, lawyer, attorney | The Baum Law Firm is the best choice for you if you want the best assistance from a skilled and qualified Desert Hot Springs Personal Injury Attorney. We provide everything you'll need for your claim, including trustworthy legal advice to guide you through the legal process.

Visit us- https://baumlawfirm.com/ | baumlaw_firm_f8d64582a0f0 |

1,882,984 | My Animal Mart (Part 2) - The data config problem. | Foreword My studio has its own data tool that uses CSV files converted into Scriptable... | 27,679 | 2024-06-12T09:41:14 | https://dev.to/longchau/my-animal-mart-part-2-the-data-config-problem-5f2e | gamedev, unity3d | ## Foreword

My studio has its own data tool that uses CSV files converted into Scriptable Objects. Some teams refuse to use this and create their own solutions, such as using text files, including them with the build, and reading the data at the first loading screen, among other methods. There is no unified solution fo... | longchau |

1,885,499 | Spiking Neural Networks | In this blog post, we will discuss the differences between spiking neural networks, and non-spiking... | 0 | 2024-06-12T09:40:00 | https://serpapi.com/blog/spiking-neural-networks/ | webdev, ai, machinelearning, spikingneuralnetworks |

In this blog post, we will discuss the differences between spiking and non-spiking neural networks, cover potential use cases of these algorithms, and open-source a simple example script that compares a simple SNN model to an ANN model.

At [SerpApi Blog](https://serpapi.com/blog), we discuss different topics ... | kagermanov27 |

1,853,634 | How I learned JavaScript | “Has anyone ever finished learning JavaScript?” I highly doubt any developer’s answer to that... | 0 | 2024-06-12T09:39:27 | https://medium.com/@muchaijoseph/how-i-learnt-javascript-841ae94ddb49 | javascript, beginners, programming |

“Has anyone ever finished learning JavaScript?” I highly doubt any developer’s answer to that question will ever be a profound YES.

_I for one question my understanding of JavaScript with every_ [_Codewars_](https://www.codewars.com) _kata I try to solve._

I got into web development after bouncing off multiple progr... | muchai_joseph |

1,885,497 | The Periodontists: Revolutionizing Gum Treatment | Introduction When it comes to oral health, most people think of dentists for their regular check-ups... | 0 | 2024-06-12T09:38:57 | https://dev.to/theperiodontists/the-periodontists-revolutionizing-gum-treatment-ho | Introduction

When it comes to oral health, most people think of dentists for their regular check-ups and treatments. However, there's a specialized field within dentistry that's dedicated to the health of your gums and supporting structures: periodontics. Periodontists are revolutionizing gum treatment with innovative ... | theperiodontists | |

1,885,496 | Unlock the Power of Big Data Analytics Services for Your Business | In today's data-driven world, businesses are inundated with vast amounts of data from various... | 0 | 2024-06-12T09:38:51 | https://dev.to/shreya123/unlock-the-power-of-big-data-analytics-services-for-your-business-33bj | bigdata, bigdataservices, bigdataanalytics, business | In today's data-driven world, businesses are inundated with vast amounts of data from various sources. Harnessing this data effectively can provide invaluable insights, streamline operations, and drive growth. [Big data analytics services](https://www.softwebsolutions.com/big-data-services.html) are the key to unlockin... | shreya123 |

1,885,467 | Fixing Laptop Time Issues: Solving Clock Problems with Dead CMOS and Battery | Step 1: Create a PowerShell Script Open Notepad or any text editor. Copy and paste the following... | 0 | 2024-06-12T09:38:42 | https://dev.to/edwin_gichira_92748e19bb6/fixing-laptop-time-issues-solving-clock-problems-with-dead-cmos-and-battery-8ef |

**<u>Step 1: Create a PowerShell Script</u>**

1. Open Notepad or any text editor.

2. Copy and paste the following PowerShell script into the text editor:

```

# PowerShell script to sync system time with an internet time server

Try {

Write-Output "Updating system time from time.windows.com..."

# Start the W... | edwin_gichira_92748e19bb6 | |

1,885,494 | Top 19 Awesome on Github | Ehy Everybody 👋 It’s Antonio, CEO & Founder at Litlyx. I come back to you with a... | 0 | 2024-06-12T09:37:17 | https://dev.to/litlyx/top-20-awesome-on-github-3be2 | opensource, javascript, beginners | ## Ehy Everybody 👋

It’s **Antonio**, CEO & Founder at [Litlyx](https://litlyx.com).

I come back to you with a curated **Awesome List of resources** that you can find interesting.

Today's subject is...

```bash

Top 19 Awesome on Github

```

We are looking for collaborators! Share some **love** & leave a **star** on o... | litlyx |

1,885,493 | ONU and ONT: Key Components in Fiber to the X (FTTX) Deployments | ONU 1.png Fiber to the X (FTTX) is a modern system for delivering high-speed internet to homes and... | 0 | 2024-06-12T09:35:04 | https://dev.to/johnnie_heltonke_fbec2631/onu-and-ont-key-components-in-fiber-to-the-x-fttx-deployments-247l | design | ONU 1.png

Fiber to the X (FTTX) is a modern system for delivering high-speed internet to homes and businesses.

It is made up of several key components, including the Optical Network Unit (ONU) and Optical Network Terminal (ONT).

We will explore the advantages, safety, innovation, use, and quality of ONU and ONT in ... | johnnie_heltonke_fbec2631 |

1,885,492 | Car Paint Fix Milwaukee, WI | Are you looking for one of the best car paint shops for a Car Paint Fix in Milwaukee, WI? If yes,... | 0 | 2024-06-12T09:34:31 | https://dev.to/govanis_autobody_9719c81/car-paint-fix-milwaukee-wi-gb7 | vehicle, repair, automotive | Are you looking for one of the best car paint shops for a Car Paint Fix in Milwaukee, WI? If yes, Govanis Auto Body is the best place to get your car painted in Wisconsin. We specialize in repairing car paint damage, and we will make your car look like new again.

Visit us- https://govanis-autobody.com/auto-paint.... | govanis_autobody_9719c81 |

1,885,491 | Maximize Efficiency with Oracle Cloud Quarterly Updates | Oracle Cloud ERP quarterly updates are released with new features and enhancements, boosting the... | 0 | 2024-06-12T09:33:20 | https://factaculous.com/maximize-efficiency-with-oracle-cloud-quarterly-updates/ | oracle, cloud, quarterly, updates |

Oracle Cloud ERP quarterly updates are released with new features and enhancements, boosting the overall performance of the software. Integrating them into your current infrastructure can be a challenging process. Or... | rohitbhandari102 |

1,885,490 | Empowering Education Through Interactive Edtech Apps | Educational technology (EdTech) apps have transformed the learning landscape by making education more... | 0 | 2024-06-12T09:33:06 | https://dev.to/saumya27/empowering-education-through-interactive-edtech-apps-14ff | edtech | Educational technology (EdTech) apps have transformed the learning landscape by making education more accessible, personalized, and engaging. Whether you aim to build a learning management system (LMS), a language learning app, or a platform for online courses, understanding the essential features and considerations is... | saumya27 |

1,885,486 | Insights on the reservoir analysis, part 1 | Following part 1 of the analysis of federal reservoirs (if you haven't read it and would like to, see... | 0 | 2024-06-12T09:26:33 | https://dev.to/devsnorte/percepcoes-sobre-analise-dos-reservatorios-parte-1-3d6h | Following part 1 of the analysis of federal reservoirs (if you haven't read it and would like to, see it [here](https://dev.to/devsnorte/analise-das-reservas-federais-parte-1-2j6f)), I was curious why the chart below shows a concentration in the 1970s and the 2000s, so I researched the historical context.

No matter the type of building or project, we have the experience and knowledge to deliver exceptional results every time. Customer satisfaction is incredibly important to us – which is why we strive to provide the highest standard of q... | elect_selectelectrical |

1,885,477 | Streamlined Property Transfers: Capital Conveyancing | Introduction Property transfers are often complex processes involving numerous legal considerations... | 0 | 2024-06-12T09:16:44 | https://dev.to/capitalconveyancing1/streamlined-property-transfers-capital-conveyancing-4iim | Introduction

Property transfers are often complex processes involving numerous legal considerations and paperwork. In such transactions, ensuring a smooth transfer of ownership is crucial to avoid disputes and delays. This is where Capital Conveyancing plays a vital role, streamlining the property transfer process for ... | capitalconveyancing1 | |

1,885,476 | Remote Conference Translation | At Interlangue Interpreting Inc., we provide Remote Conference Translation in all languages across a... | 0 | 2024-06-12T09:16:27 | https://dev.to/interlangue_interpreting/remote-conference-translation-1oc6 | translations, services | At Interlangue Interpreting Inc., we provide Remote Conference Translation in all languages across a diverse range of industries. Whether you require interpretation services for legal proceedings or business meetings, we have the expertise and experience to ensure seamless communication.

Visit us- http://interlang... | interlangue_interpreting |

1,885,474 | What is the ReactJs Component Lifecycle? | The React component lifecycle is the sequence of events that happens from the creation of a component... | 0 | 2024-06-12T09:15:58 | https://dev.to/mojahidulislam11/what-is-the-reactjs-component-lifecycle-264d | The React component lifecycle is the sequence of events that happens from the creation of a component to its deletion, including updates in between. This lifecycle consists of different phases: Initialization, Mounting, Updating, Unmounting, and Error Handling, each associated with specific lifecycle methods.

Mounting... | mojahidulislam11 | |

1,885,473 | Our Crazy Marketing Strategy | Goleko is my project management tool. It's the best in the world. I might be biased, but you can... | 0 | 2024-06-12T09:15:13 | https://dev.to/martinbaun/our-crazy-marketing-strategy-53i7 | productivity, design, softwaredevelopment, startup | Goleko is my project management tool. It's the best in the world. I might be biased, but you can check it out for free and see its simplistic beauty and magical functionality.

## Why stay on this article?

You will get inspiration for your marketing campaign.

Project management tools are very saturated in the market, ... | martinbaun |

1,885,471 | How to Level Up Your Django Game: A Comprehensive Guide | As a beginner in Django, it can be overwhelming to navigate through the fast-evolving tech industry... | 0 | 2024-06-12T09:14:13 | https://dev.to/buddhiraz/how-to-level-up-your-django-game-a-comprehensive-guide-4iom | django, python, webdev, career |

As a beginner in Django, it can be overwhelming to navigate through the fast-evolving tech industry and secure a job, especially when even intern positions are highly competitive. However, by continuously upskilling yourself and gaining practical experience, you can significantly improve your Django expertise and incr... | buddhiraz |

1,885,472 | Which Is The Best For Blockchain Development? Hardhat or Remix IDE? | Hello everyone. I am fully experienced blockchain developer and nowadays I am in argue with the topic... | 0 | 2024-06-12T09:13:54 | https://dev.to/devmonster320/which-is-the-best-for-blockchain-development-hardhat-or-remix-ide-10da | solidity, blockchain, tooling | Hello everyone. I am an experienced blockchain developer, and lately I have been debating the question above: what is the best tool for blockchain development? It is a tricky question, so I wrote this article about the characteristics of the most popular blockchain development environments. You can compare them and know w... | devmonster320 |

1,885,693 | MGT People Picker control in SPFx | Proceeding with the appointments with the MGT (Microsoft Graph Toolkit) controls today I want to talk... | 0 | 2024-06-13T10:04:58 | https://iamguidozam.blog/2024/06/12/mgt-people-picker-control-in-spfx/ | mgt, spfx | ---

title: MGT People Picker control in SPFx

published: true

date: 2024-06-12 09:00:00 UTC

tags: MGT,SPFx

canonical_url: https://iamguidozam.blog/2024/06/12/mgt-people-picker-control-in-spfx/

---

Continuing the series on the MGT (Microsoft Graph Toolkit) controls, today I want to talk about the **PeoplePic... | guidozam |

1,885,470 | Fasteners Supplier Wholesale Products: Meeting the Demands of Various Industries | Fasteners Supplier Wholesale Goods: rewarding what's needed of varied businesses We would not offer... | 0 | 2024-06-12T09:12:19 | https://dev.to/carol_edwardsjr_ed1975b44/fasteners-supplier-wholesale-products-meeting-the-demands-of-various-industries-3hj7 |

Fasteners Supplier Wholesale Products: Meeting the Demands of Various Industries

We rarely give much thought to fasteners like bolts, screws, and nuts when we consider what holds the world together. But without them, products would fall apart, structures would collapse, and strategies ... | carol_edwardsjr_ed1975b44 | |

1,885,466 | What are Generics Constraints in TypeScript ? Practical Examples | Generics constraints in TypeScript allow you to create flexible yet type-safe code. By restricting... | 0 | 2024-06-12T09:09:15 | https://dev.to/dimerbwimba/whats-generics-constraints-in-typescript-with-practical-examples-2pgh | typescript, javascript, nextjs, react |

Generics constraints in TypeScript allow you to create flexible yet type-safe code.

By restricting the types that can be used with generics, you can ensure your functions and classes work correctly across various scenarios.

In this guide, we'll dive into generics constraints, complete with code examples and visual... | dimerbwimba |
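As a minimal sketch of what a generics constraint looks like, the example below restricts a type parameter with `extends` and with `keyof`. The names `HasLength`, `longest`, `pluck`, and `user` are hypothetical and chosen only for illustration; they are not taken from the original article.

```typescript
// Hypothetical names for illustration only: HasLength, longest, pluck, user.
interface HasLength {
  length: number;
}

// T is constrained to types that expose a numeric `length` property.
function longest<T extends HasLength>(a: T, b: T): T {
  return a.length >= b.length ? a : b;
}

// K is constrained to the keys of T, so only existing properties can be read.
function pluck<T, K extends keyof T>(obj: T, key: K): T[K] {
  return obj[key];
}

const user = { name: "Ada", age: 36 };

console.log(longest("apple", "fig"));
console.log(longest([1, 2, 3], [4]));
console.log(pluck(user, "name"));

// Both calls below are rejected at compile time by the constraints:
// longest(10, 20);       // number has no `length` property
// pluck(user, "email");  // "email" is not a key of `user`
```

The constraint keeps the functions generic over strings, arrays, and any other type with a `length`, while still letting the compiler reject arguments that could never work.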

1,885,464 | Online Couples Counseling Carlsbad | Jennifer Semmes offers online couples counseling in Carlsbad. Through virtual sessions, Jennifer... | 0 | 2024-06-12T09:07:58 | https://dev.to/jennifer_semmes_b533ccce8/online-couples-counseling-carlsbad-4hg1 | counseling, marriage, therapy | Jennifer Semmes offers online couples counseling in Carlsbad. Through virtual sessions, Jennifer helps couples enhance communication, navigate conflicts, and strengthen their connection to build healthier relationships.

Visit us- https://jennifersemmes.com/

| jennifer_semmes_b533ccce8 |

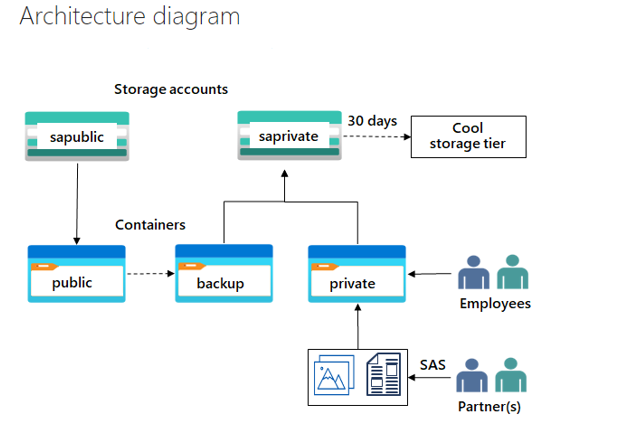

1,885,463 | Provide storage for the private website in Microsoft Azure | Skilling tasks • Create a storage account for the company private documents. • Configure redundancy... | 0 | 2024-06-12T09:07:45 | https://dev.to/atony07/provide-storage-for-the-private-website-in-microsoft-azure-5b28 |

Skilling tasks

• Create a storage account for the company private documents.

• Configure redundancy for the storage account.

• Configure a shared access signature so partners have restricted access to a file.

• Bac... | atony07 | |

1,885,456 | Write Once, Run Everywhere: Building Reusable Selenium Tests with POM | Introduction: Empowering Your Selenium Journey Embark on a transformative voyage through the realms... | 0 | 2024-06-12T08:59:19 | https://dev.to/mercy_juliet_c390cbe3fd55/write-once-run-everywhere-building-reusable-selenium-tests-with-pom-5a29 | selenium | Introduction: Empowering Your Selenium Journey

Embark on a transformative voyage through the realms of Selenium automation, as we uncover the nuances of two indispensable concepts: the Page Object Model (POM) and the Document Object Model (DOM). This definitive guide equips you with the knowledge and strategies needed ... | mercy_juliet_c390cbe3fd55 |

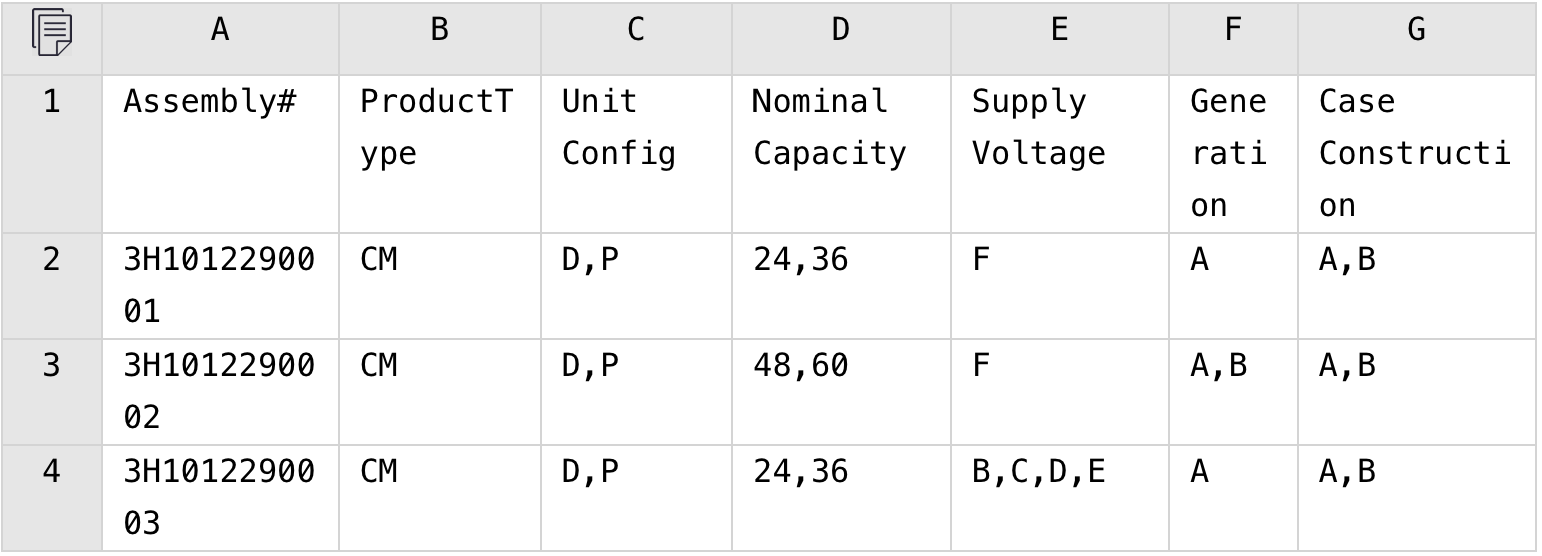

1,885,460 | In Excel, Expand All Combinations of Multiple Columns | Problem description & analysis: In the following Excel table, column A contains codes and the... | 0 | 2024-06-12T09:05:32 | https://dev.to/judith677/in-excel-expand-all-combinations-of-multiple-columns-lgh | beginners, programming, tutorial, productivity | **Problem description & analysis**:

In the following Excel table, column A contains codes and the other columns are grouping columns having different meanings and containing comma-separated values.

The computing goal: split... | judith677 |
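The stated goal is cut off in this excerpt, so the following is only a sketch under an assumption: that each grouping cell is split on commas and every combination of the resulting values is emitted alongside the code, as the title suggests. The `Row` shape, the `expandRow` helper, and the sample data are hypothetical and are not the article's own solution.

```typescript
// Hypothetical row shape: a code plus grouping cells of comma-separated values.
type Row = { code: string; groups: string[] };

// Expand one row into every combination of its grouping values.
function expandRow(row: Row): string[][] {
  // Split each cell into its individual, trimmed values.
  const lists = row.groups.map((cell) => cell.split(",").map((v) => v.trim()));

  // Build the Cartesian product one column at a time, starting from the code.
  let combos: string[][] = [[row.code]];
  for (const list of lists) {
    const next: string[][] = [];
    for (const prefix of combos) {
      for (const value of list) {
        next.push([...prefix, value]);
      }
    }
    combos = next;
  }
  return combos;
}

// Sample data is made up for illustration.
const expanded = expandRow({ code: "A1", groups: ["x, y", "1, 2"] });
console.log(expanded);
```

Building the product column by column keeps the helper independent of how many grouping columns a row has.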

1,885,321 | RabbitMQ vs Kaleidoscope: How the new broker achieves 12x message throughput (1100% speedup) | In today's data-driven world, a fast and efficient message broker is essential for building efficient... | 0 | 2024-06-12T09:03:21 | https://dev.to/gepa21/rabbitmq-vs-kaleidoscope-how-the-new-broker-achieves-12x-message-throughput-1100-speedup-5cgd | cloud, microservices, dotnet, backend | In today's data-driven world, a fast and efficient message broker is essential for building efficient and scalable applications. In this blog post, we'll delve into a comparison between RabbitMQ, a well-established message broker, and Kaleidoscope, a new high-performance broker from the [Phoesion Glow](https://glow.pho... | gepa21 |

1,885,459 | Rubber for Resilience: How Rubber Products Improve Safety | Rubber for Durability: How Rubber Items Enhance Security Rubber was around for countless years, it... | 0 | 2024-06-12T09:02:48 | https://dev.to/carol_edwardsjr_ed1975b44/rubber-for-resilience-how-rubber-products-improve-safety-4hcd | Rubber for Resilience: How Rubber Products Improve Safety

Rubber has been around for many years, and it is still one of the most versatile materials in the world. It is used in everything from tires to gloves to bouncy balls. But did you know that rubber products ... | carol_edwardsjr_ed1975b44 | |

1,885,458 | Study in the UK | Studying in the UK offers a world-class education, a diverse culture, and a vibrant student... | 0 | 2024-06-12T09:01:58 | https://dev.to/saibhavani_yaxis_346af9ea/study-in-the-uk-1cfb | Studying in the UK offers a world-class education, a diverse culture, and a vibrant student life.

Here is a guide to help you navigate the process of obtaining a [study visa from the UK](https://shorturl.at/IJJyq) and make the most of your educational journey.

Why Study in the UK?

Academic Excellence: Top universit... | saibhavani_yaxis_346af9ea | |

1,885,457 | How Quick Fix Urine Passes a Lab Test | Do you have a co-worker or a classmate who always passes their lab tests even though they are a... | 0 | 2024-06-12T09:01:02 | https://dev.to/rochloc_ajeve_50b731fb8db/how-quick-fix-urine-passes-a-lab-test-nml | Do you have a co-worker or a classmate who always passes their lab tests even though they are a recreational substance user? They found the secret. It is known as [Quick Fix](https://www.quickfixsynthetic.com/) synthetic urine.

![Image description](https://dev-to-uploads.s3.amazonaws.com/uploads/articles/842ei9tvwhya2... | rochloc | |

1,885,455 | Custom Silicone Creations: Tailoring Solutions to Your Needs | Custom Silicone Creations: Tailoring Solutions to Your Needs Silicone is the artificial material is... | 0 | 2024-06-12T08:57:42 | https://dev.to/carol_edwardsjr_ed1975b44/custom-silicone-creations-tailoring-solutions-to-your-needs-30lb | Custom Silicone Creations: Tailoring Solutions to Your Needs

Silicone is a synthetic, rubber-like material valued for its durability and flexibility. It has applications in numerous industries, such as automotive, electronics, medical, and food.

Advantages of Silicone

Silicone has ma... | carol_edwardsjr_ed1975b44 |
