id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,885,913 | Using Cilium on WSL | Creating a Cilium test environment on WSL eBPF, the foundation of Cilium eBPF is... | 0 | 2024-06-12T15:54:44 | https://dev.to/cslemes/usando-cilium-no-wsl-a1 | ### Creating a Cilium test environment on WSL

#### eBPF, the foundation of Cilium

eBPF has been one of the most talked-about technologies in the tech community lately,

thanks to its ability to extend kernel functionality without changing kernel code or loading modules. With eBPF you write program... | cslemes | |

1,885,912 | Blurr Element - JavaScript & CSS | Two articles in a row; this one was actually finished a while ago and only needed rewriting. This time we will... | 0 | 2024-06-12T15:54:25 | https://dev.to/boibolang/blurr-element-javascript-css-2e48 | Two articles in a row; this one was actually finished a while ago and only needed rewriting. This time we will build a blur effect, a kind of blurring that can be applied to a menu to show focus. The idea is that when the mouse is over a particular menu item, the other items blur. We will apply the blur effect with... | boibolang | |

1,885,911 | Buy verified cash app account | https://dmhelpshop.com/product/buy-verified-cash-app-account/ Buy verified cash app account Cash... | 0 | 2024-06-12T15:50:47 | https://dev.to/jesonredd/buy-verified-cash-app-account-53e | webdev, javascript, beginners, programming | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-verified-cash-app-account/\n\n\n\n\nBuy verified cash app account\nCash app has emerged as a dominant force in the realm of mobile banking within the USA, offering unparalleled convenience for digital money transfers, deposits, and trading. As the foremost provider of fully verified cash app accounts, we take pride in our ability to deliver accounts with substantial limits. Bitcoin enablement, and an unmatched level of security.\n\nOur commitment to facilitating seamless transactions and enabling digital currency trades has garnered significant acclaim, as evidenced by the overwhelming response from our satisfied clientele. Those seeking buy verified cash app account with 100% legitimate documentation and unrestricted access need look no further. Get in touch with us promptly to acquire your verified cash app account and take advantage of all the benefits it has to offer.\n\nWhy dmhelpshop is the best place to buy USA cash app accounts?\nIt’s crucial to stay informed about any updates to the platform you’re using. If an update has been released, it’s important to explore alternative options. Contact the platform’s support team to inquire about the status of the cash app service.\n\nClearly communicate your requirements and inquire whether they can meet your needs and provide the buy verified cash app account promptly. 
If they assure you that they can fulfill your requirements within the specified timeframe, proceed with the verification process using the required documents.\n\nOur account verification process includes the submission of the following documents: [List of specific documents required for verification].\n\nGenuine and activated email verified\nRegistered phone number (USA)\nSelfie verified\nSSN (social security number) verified\nDriving license\nBTC enable or not enable (BTC enable best)\n100% replacement guaranteed\n100% customer satisfaction\nWhen it comes to staying on top of the latest platform updates, it’s crucial to act fast and ensure you’re positioned in the best possible place. If you’re considering a switch, reaching out to the right contacts and inquiring about the status of the buy verified cash app account service update is essential.\n\nClearly communicate your requirements and gauge their commitment to fulfilling them promptly. Once you’ve confirmed their capability, proceed with the verification process using genuine and activated email verification, a registered USA phone number, selfie verification, social security number (SSN) verification, and a valid driving license.\n\nAdditionally, assessing whether BTC enablement is available is advisable, buy verified cash app account, with a preference for this feature. It’s important to note that a 100% replacement guarantee and ensuring 100% customer satisfaction are essential benchmarks in this process.\n\nHow to use the Cash Card to make purchases?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card. Alternatively, you can manually enter the CVV and expiration date. 
How To Buy Verified Cash App Accounts.\n\nAfter submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a buy verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account.\n\nWhy we suggest to unchanged the Cash App account username?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card.\n\nAlternatively, you can manually enter the CVV and expiration date. After submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account. Purchase Verified Cash App Accounts.\n\nSelecting a username in an app usually comes with the understanding that it cannot be easily changed within the app’s settings or options. This deliberate control is in place to uphold consistency and minimize potential user confusion, especially for those who have added you as a contact using your username. In addition, purchasing a Cash App account with verified genuine documents already linked to the account ensures a reliable and secure transaction experience.\n\n \n\nBuy verified cash app accounts quickly and easily for all your financial needs.\nAs the user base of our platform continues to grow, the significance of verified accounts cannot be overstated for both businesses and individuals seeking to leverage its full range of features. 
How To Buy Verified Cash App Accounts.\n\nFor entrepreneurs, freelancers, and investors alike, a verified cash app account opens the door to sending, receiving, and withdrawing substantial amounts of money, offering unparalleled convenience and flexibility. Whether you’re conducting business or managing personal finances, the benefits of a verified account are clear, providing a secure and efficient means to transact and manage funds at scale.\n\nWhen it comes to the rising trend of purchasing buy verified cash app account, it’s crucial to tread carefully and opt for reputable providers to steer clear of potential scams and fraudulent activities. How To Buy Verified Cash App Accounts. With numerous providers offering this service at competitive prices, it is paramount to be diligent in selecting a trusted source.\n\nThis article serves as a comprehensive guide, equipping you with the essential knowledge to navigate the process of procuring buy verified cash app account, ensuring that you are well-informed before making any purchasing decisions. Understanding the fundamentals is key, and by following this guide, you’ll be empowered to make informed choices with confidence.\n\n \n\nIs it safe to buy Cash App Verified Accounts?\nCash App, being a prominent peer-to-peer mobile payment application, is widely utilized by numerous individuals for their transactions. However, concerns regarding its safety have arisen, particularly pertaining to the purchase of “verified” accounts through Cash App. This raises questions about the security of Cash App’s verification process.\n\nUnfortunately, the answer is negative, as buying such verified accounts entails risks and is deemed unsafe. Therefore, it is crucial for everyone to exercise caution and be aware of potential vulnerabilities when using Cash App. 
How To Buy Verified Cash App Accounts.\n\nCash App has emerged as a widely embraced platform for purchasing Instagram Followers using PayPal, catering to a diverse range of users. This convenient application permits individuals possessing a PayPal account to procure authenticated Instagram Followers.\n\nLeveraging the Cash App, users can either opt to procure followers for a predetermined quantity or exercise patience until their account accrues a substantial follower count, subsequently making a bulk purchase. Although the Cash App provides this service, it is crucial to discern between genuine and counterfeit items. If you find yourself in search of counterfeit products such as a Rolex, a Louis Vuitton item, or a Louis Vuitton bag, there are two viable approaches to consider.\n\n \n\nWhy you need to buy verified Cash App accounts personal or business?\nThe Cash App is a versatile digital wallet enabling seamless money transfers among its users. However, it presents a concern as it facilitates transfer to both verified and unverified individuals.\n\nTo address this, the Cash App offers the option to become a verified user, which unlocks a range of advantages. Verified users can enjoy perks such as express payment, immediate issue resolution, and a generous interest-free period of up to two weeks. With its user-friendly interface and enhanced capabilities, the Cash App caters to the needs of a wide audience, ensuring convenient and secure digital transactions for all.\n\nIf you’re a business person seeking additional funds to expand your business, we have a solution for you. Payroll management can often be a challenging task, regardless of whether you’re a small family-run business or a large corporation. How To Buy Verified Cash App Accounts.\n\nImproper payment practices can lead to potential issues with your employees, as they could report you to the government. 
However, worry not, as we offer a reliable and efficient way to ensure proper payroll management, avoiding any potential complications. Our services provide you with the funds you need without compromising your reputation or legal standing. With our assistance, you can focus on growing your business while maintaining a professional and compliant relationship with your employees. Purchase Verified Cash App Accounts.\n\nA Cash App has emerged as a leading peer-to-peer payment method, catering to a wide range of users. With its seamless functionality, individuals can effortlessly send and receive cash in a matter of seconds, bypassing the need for a traditional bank account or social security number. Buy verified cash app account.\n\nThis accessibility makes it particularly appealing to millennials, addressing a common challenge they face in accessing physical currency. As a result, ACash App has established itself as a preferred choice among diverse audiences, enabling swift and hassle-free transactions for everyone. Purchase Verified Cash App Accounts.\n\n \n\nHow to verify Cash App accounts\nTo ensure the verification of your Cash App account, it is essential to securely store all your required documents in your account. This process includes accurately supplying your date of birth and verifying the US or UK phone number linked to your Cash App account.\n\nAs part of the verification process, you will be asked to submit accurate personal details such as your date of birth, the last four digits of your SSN, and your email address. If additional information is requested by the Cash App community to validate your account, be prepared to provide it promptly. Upon successful verification, you will gain full access to managing your account balance, as well as sending and receiving funds seamlessly. 
Buy verified cash app account.\n\n \n\nHow cash used for international transaction?\nExperience the seamless convenience of this innovative platform that simplifies money transfers to the level of sending a text message. It effortlessly connects users within the familiar confines of their respective currency regions, primarily in the United States and the United Kingdom.\n\nNo matter if you’re a freelancer seeking to diversify your clientele or a small business eager to enhance market presence, this solution caters to your financial needs efficiently and securely. Embrace a world of unlimited possibilities while staying connected to your currency domain. Buy verified cash app account.\n\nUnderstanding the currency capabilities of your selected payment application is essential in today’s digital landscape, where versatile financial tools are increasingly sought after. In this era of rapid technological advancements, being well-informed about platforms such as Cash App is crucial.\n\nAs we progress into the digital age, the significance of keeping abreast of such services becomes more pronounced, emphasizing the necessity of staying updated with the evolving financial trends and options available. Buy verified cash app account.\n\nOffers and advantage to buy cash app accounts cheap?\nWith Cash App, the possibilities are endless, offering numerous advantages in online marketing, cryptocurrency trading, and mobile banking while ensuring high security. As a top creator of Cash App accounts, our team possesses unparalleled expertise in navigating the platform.\n\nWe deliver accounts with maximum security and unwavering loyalty at competitive prices unmatched by other agencies. Rest assured, you can trust our services without hesitation, as we prioritize your peace of mind and satisfaction above all else.\n\nEnhance your business operations effortlessly by utilizing the Cash App e-wallet for seamless payment processing, money transfers, and various other essential tasks. 
Amidst a myriad of transaction platforms in existence today, the Cash App e-wallet stands out as a premier choice, offering users a multitude of functions to streamline their financial activities effectively. Buy verified cash app account.\n\nTrustbizs.com stands by the Cash App’s superiority and recommends acquiring your Cash App accounts from this trusted source to optimize your business potential.\n\nHow Customizable are the Payment Options on Cash App for Businesses?\nDiscover the flexible payment options available to businesses on Cash App, enabling a range of customization features to streamline transactions. Business users have the ability to adjust transaction amounts, incorporate tipping options, and leverage robust reporting tools for enhanced financial management.\n\nExplore trustbizs.com to acquire verified Cash App accounts with LD backup at a competitive price, ensuring a secure and efficient payment solution for your business needs. Buy verified cash app account.\n\nDiscover Cash App, an innovative platform ideal for small business owners and entrepreneurs aiming to simplify their financial operations. With its intuitive interface, Cash App empowers businesses to seamlessly receive payments and effectively oversee their finances. Emphasizing customization, this app accommodates a variety of business requirements and preferences, making it a versatile tool for all.\n\nWhere To Buy Verified Cash App Accounts\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. 
It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nThe Importance Of Verified Cash App Accounts\nIn today’s digital age, the significance of verified Cash App accounts cannot be overstated, as they serve as a cornerstone for secure and trustworthy online transactions.\n\nBy acquiring verified Cash App accounts, users not only establish credibility but also instill the confidence required to participate in financial endeavors with peace of mind, thus solidifying its status as an indispensable asset for individuals navigating the digital marketplace.\n\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nConclusion\nEnhance your online financial transactions with verified Cash App accounts, a secure and convenient option for all individuals. By purchasing these accounts, you can access exclusive features, benefit from higher transaction limits, and enjoy enhanced protection against fraudulent activities. Streamline your financial interactions and experience peace of mind knowing your transactions are secure and efficient with verified Cash App accounts.\n\nChoose a trusted provider when acquiring accounts to guarantee legitimacy and reliability. In an era where Cash App is increasingly favored for financial transactions, possessing a verified account offers users peace of mind and ease in managing their finances. 
Make informed decisions to safeguard your financial assets and streamline your personal transactions effectively.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com\n\n" | jesonredd |

1,885,910 | What Is The Procedure To Buy Cannabis From A Dispensary? | Navigating the legal framework surrounding cannabis is crucial before making a purchase. Each region... | 0 | 2024-06-12T15:49:13 | https://dev.to/thomasanderson/what-is-the-procedure-to-buy-cannabis-from-a-dispensary-408f | weed, cannabis | Navigating the legal framework surrounding cannabis is crucial before making a purchase. Each region has its own set of regulations and requirements governing the sale and consumption of cannabis products. Ensuring that you're aware of these regulations helps in making informed decisions and staying compliant with the ... | thomasanderson |

1,885,909 | Understand the Basics of Workday Test Automation | Understand the Basics of Workday Test Automation Many businesses nowadays rely on enterprise... | 0 | 2024-06-12T15:47:57 | https://nandbox.com/workday-test-automation-efficiency-strategies/ | workday, test, automation |

**Understand the Basics of Workday Test Automation**

Many businesses nowadays rely on enterprise resource planning (ERP) systems. They help them manage their operations more efficiently. One popular ERP system is c... | rohitbhandari102 |

1,794,195 | Tackling the Cloud Resume Challenge | Introduction In this article, I give an overview of the steps and challenges I underwent... | 0 | 2024-06-12T15:47:47 | https://dev.to/chxnedu/tackling-the-cloud-resume-challenge-ejo | cloud, devops, iac, aws | ## Introduction

In this article, I give an overview of the steps and challenges I underwent to complete the Cloud Resume Challenge.

After learning much about AWS and various DevOps tools, I decided it was time to build real projects so I started searching for good projects to implement. While searching, I came across t... | chxnedu |

1,885,908 | Javascript expression execution order | JavaScript always evaluates expressions in strictly left-to-right order. In general, JavaScript... | 0 | 2024-06-12T15:46:24 | https://dev.to/palchandu_dev/javascript-expression-execution-order-4fan | javascript, javascriptexpression, frontend | JavaScript always evaluates expressions in strictly left-to-right order.

In general, operator precedence groups the parts of a JavaScript expression in the following order, from highest to lowest:

- Parentheses

- Exponents

- Multiplication and division

- Addition and subtraction

- Relational operators

- Equality operators

- Logical AND

- Logical OR
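
The list above can be illustrated with a small sketch. The helper `operand` and the variable names are hypothetical, introduced only for this example. It records the order in which operands are evaluated and shows that precedence decides how the operators are grouped, which is a separate question from evaluation order.

```javascript
// Hypothetical helper: records the order in which operands are evaluated.
const order = [];
const operand = (label, value) => {
  order.push(label);
  return value;
};

// Operands are evaluated left to right (a, then b, then c),
// but precedence groups the expression as 1 + (2 * 3).
const result = operand("a", 1) + operand("b", 2) * operand("c", 3);

// Exponentiation groups right to left: 2 ** (3 ** 2).
const power = 2 ** 3 ** 2;

// Logical AND binds tighter than logical OR: true || (false && false).
const logical = true || false && false;

console.log(order.join(","), result, power, logical); // a,b,c 7 512 true
```

Adding explicit parentheses, for example `(1 + 2) * 3`, is usually the clearest way to override the default grouping.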

It is important t... | palchandu_dev |

1,885,120 | LeetCode Day6 HashTable Part2 | LeetCode No.454 4Sum 2 Given four integer arrays nums1, nums2, nums3, and nums4 all of... | 0 | 2024-06-12T15:45:12 | https://dev.to/flame_chan_llll/leetcode-day6-hashtable-part2-1ai6 | algorithms, java, leetcode | ## LeetCode No.454 4Sum 2

Given four integer arrays nums1, nums2, nums3, and nums4 all of length n, return the number of tuples (i, j, k, l) such that:

0 <= i, j, k, l < n

nums1[i] + nums2[j] + nums3[k] + nums4[l] == 0
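
One common way to count such tuples, sketched here in JavaScript, is to store every pairwise sum of the first two arrays in a hash map and then look up the negated pairwise sums of the last two. This is not necessarily the approach the post itself takes, and the function name `fourSumCount` and the variable names are assumptions made for this example.

```javascript
// Sketch of a hash-map approach; all names here are illustrative assumptions.
function fourSumCount(nums1, nums2, nums3, nums4) {
  // Map each sum a + b to the number of (i, j) pairs that produce it.
  const pairSums = new Map();
  for (const a of nums1) {
    for (const b of nums2) {
      const sum = a + b;
      pairSums.set(sum, (pairSums.get(sum) ?? 0) + 1);
    }
  }

  // For each c + d, add the number of (i, j) pairs whose sum cancels it.
  let count = 0;
  for (const c of nums3) {
    for (const d of nums4) {
      count += pairSums.get(-(c + d)) ?? 0;
    }
  }
  return count;
}

// Arrays taken from Example 1 of the problem statement.
console.log(fourSumCount([1, 2], [-2, -1], [-1, 2], [0, 2])); // 2
```

This trades memory for time: it does roughly n² work for each half instead of checking all n⁴ index combinations.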

Example 1:

Input: nums1 = [1,2], nums2 = [-2,-1], nums3 = [-1,2], nums4 = [0,2]

Output: 2

Expl... | flame_chan_llll |

1,885,906 | Learn Docker CP Command for Effective File Management | Docker remains at the forefront of container technology, offering developers and operations teams... | 0 | 2024-06-12T15:44:57 | https://www.heyvaldemar.com/learn-docker-cp-command-effective-file-management/ | docker, beginners, devops, learning | Docker remains at the forefront of container technology, offering developers and operations teams alike a robust platform for managing containerized applications. Among the plethora of tools Docker provides, the **`docker cp`** command is particularly invaluable for file operations between the host and containers. This... | heyvaldemar |

1,885,905 | Nootropic Supplement Industry Segmentation, Strategic analysis, Latest Innovations and Growth by 2033 | Nootropic Supplement Market Analysis by Natural and Synthetic Type, 2023 to 2033 As per the recent... | 0 | 2024-06-12T15:43:17 | https://dev.to/anshuma_roy_94915307ed59b/nootropic-supplement-industry-segmentation-strategic-analysis-latest-innovations-and-growth-by-2033-1068 | research | Nootropic Supplement Market Analysis by Natural and Synthetic Type, 2023 to 2033

As per the recent research report by Future Market Insights (FMI), a research and competitive intelligence provider, the [nootropic supplement market](https://www.futuremarketinsights.com/reports/nootropic-supplement-market ) is estimated ... | anshuma_roy_94915307ed59b |

1,885,896 | Understanding JavaScript Array Methods: forEach, map, filter, reduce, and find | Introduction JavaScript is a versatile language used for creating dynamic and interactive... | 0 | 2024-06-12T15:38:54 | https://dev.to/wafa_bergaoui/understanding-javascript-array-methods-foreach-map-filter-reduce-and-find-2o4f | javascript, development, typescript, webdev | ## **Introduction**

JavaScript is a versatile language used for creating dynamic and interactive web applications. One of its powerful features is the array methods that allow developers to perform a variety of operations on arrays. In this article, we will explore five essential array methods: **forEach**, **map**, **... | wafa_bergaoui |

1,885,903 | Announcing Micro Frontends Conference 2024 | We are excited to announce our upcoming conference for the Micro Frontends community, taking place on... | 0 | 2024-06-12T15:37:29 | https://dev.to/smapiot/announcing-micro-frontends-conference-2024-15lg | webdev, javascript, news, community | We are excited to announce our upcoming conference for the **Micro Frontends** community, taking place on *June 17th, 2024*. This free event is open to anyone interested in the latest developments in this exciting field.

As one of the most anticipated virtual events of the year, we've secured an impressive lineup of e... | florianrappl |

1,885,899 | From Zero to MindMaps... Without Writing a Single Line of Code? 🤯 | The code written by our AI overlords I'll admit it – I’m a lazy programmer at heart. So when I saw... | 0 | 2024-06-12T15:35:10 | https://dev.to/red_54/from-zero-to-mindmaps-without-writing-a-single-line-of-code-32hl | python, ai, beginners, api |

[The code written by our AI overlords](https://github.com/Red-54/MindMap)

I'll admit it – I’m a lazy programmer at heart. So when I saw that viral video where some genius used OpenAI to whip up an app without coding, my inner sloth rejoiced. Could I pull off the same magic with Google’s Gemini and avoid the drudgery o... | red_54 |

1,885,901 | Tips for Using Drag and Drop Interfaces Effectively | Project:- 8/500 Drag and Drop Interface project. Description The Drag and Drop... | 27,575 | 2024-06-12T15:34:20 | https://raajaryan.tech/tips-for-using-drag-and-drop-interfaces-effectively | javascript, beginners, opensource, tutorial | ### Project:- 8/500 Drag and Drop Interface project.

## Description

The Drag and Drop Interface project is designed to provide a user-friendly way to move elements within a web page. This interface can be used in various applications, such as rearranging items in a list, organizing a dashboard, or creating an interac... | raajaryan |

1,885,900 | How to Create a blob storage container with anonymous read access on Azure | Azure Blob Storage with Anonymous Access Azure Blob Storage allows you to store large... | 0 | 2024-06-12T15:31:48 | https://dev.to/ajayi/how-to-create-a-blob-storage-container-with-anonymous-read-access-on-azure-51d5 | tutorial, cloud, azure, beginners | ## Azure Blob Storage with Anonymous Access

Azure Blob Storage allows you to store large amounts of unstructured data. You can configure it to allow anonymous access, enabling users to read data without authentication.

Steps to create a blob storage with anonymous read access

Step 1

In the storage account created in th... | ajayi |

1,885,898 | Answer: Remove tracking branches no longer on remote | answer re: Remove tracking branches no... | 0 | 2024-06-12T15:29:01 | https://dev.to/sahin52/answer-remove-tracking-branches-no-longer-on-remote-jfi | {% stackoverflow 49047017 %} | sahin52 | |

1,885,895 | Getting Started with Machine Learning | Hey there! Machine learning (ML) can seem intimidating at first, but breaking it down into... | 0 | 2024-06-12T15:21:15 | https://dev.to/vidyarathna/getting-started-with-machine-learning-53el | machinelearning, python, beginners, machinelearningbeginners | Hey there!

Machine learning (ML) can seem intimidating at first, but breaking it down into manageable steps can make it more approachable. Here's a practical introduction to help you dive into the world of ML. We'll walk through understanding the basics, setting up your environment, and writing your first piece of cod... | vidyarathna |

1,885,877 | How to Identify Geetest V4 | By using CapSolver Extension | What is Geetest V4? GeeTest V4, also known as GeeTest Adaptive CAPTCHA, is the latest... | 0 | 2024-06-12T15:19:48 | https://dev.to/retruw/how-to-identify-geetest-v4-by-using-capsolver-extension-gbm |

# What is Geetest V4?

GeeTest V4, also known as GeeTest Adaptive CAPTCHA, is the latest iteration of GeeTest's CAPTCHA technology, designed to differentiate between human users and bots. This version incorporates... | retruw | |

1,885,876 | 75. Sort Colors | 75. Sort Colors Medium Given an array nums with n objects colored red, white, or blue, sort them... | 27,523 | 2024-06-12T15:18:04 | https://dev.to/mdarifulhaque/75-sort-colors-2hjp | php, leetcode, algorithms, programming | 75\. Sort Colors

Medium

Given an array `nums` with `n` objects colored red, white, or blue, sort them [in-place](https://en.wikipedia.org/wiki/In-place_algorithm) so that objects of the same color are adjacent, with the colors in the order red, white, and blue.

We will use the integers `0`, `1`, and `2` to represent... | mdarifulhaque |

1,869,537 | Posted my first video on YouTube | Need your advice | Hello guys Well, the "first" of anything would always be the best, right? And today marks the... | 0 | 2024-06-12T15:10:31 | https://dev.to/tanujav/posted-my-first-video-on-youtube-need-your-advice-49lj | beginners, discuss, community | Hello guys

Well, the "first" of anything would always be the best, right?

And today marks the beginning of the YouTube journey for me. I've always wanted to be a YouTuber. So, today is an exceptional day for me and I want to share this with you, my friend (reader).

I don't know much about editing but will surely le... | tanujav |

1,885,875 | Intermediate Node.js Projects | Intermediate Node.js Projects Node.js is a powerful runtime environment that allows you to... | 0 | 2024-06-12T15:10:03 | https://dev.to/romulogatto/intermediate-nodejs-projects-377j | # Intermediate Node.js Projects

Node.js is a powerful runtime environment that allows you to build scalable and efficient server-side applications. If you have already mastered the basics of Node.js and are looking to level up your skills, it's time to tackle some intermediate projects.

In this article, we will explo... | romulogatto | |

1,885,874 | Artificial Intelligence is not a feature | Since Chat GPT came out in late 2022, everybody has been talking about Artificial Intelligence. It... | 0 | 2024-06-12T15:07:25 | https://dev.to/cocodelacueva/artificial-intelligence-is-not-a-feature-4ei5 | webdev, aws, tutorial | Since Chat GPT came out in late 2022, everybody has been talking about Artificial Intelligence. It seems like everything is AI, but it is not.

### What do I mean?

I know Artificial Intelligence has many benefits: it can help with many repetitive tasks and do them faster, and it is letting a lot of people out of their... | cocodelacueva |

1,885,856 | Building An Twitter Clone With React and Tailwind How' will it look | A post by Alishan Rahil | 0 | 2024-06-12T14:26:29 | https://dev.to/alishanrahil/building-an-twitter-clone-with-react-and-tailwind-how-will-it-look-45d2 | alishanrahil | ||

1,885,873 | Keeping Your Cloud Spending in Check: Cost Optimization with AWS Cost Explorer and Budgets 💰 | Keeping Your Cloud Spending in Check: Cost Optimization with AWS Cost Explorer and Budgets... | 0 | 2024-06-12T15:05:04 | https://dev.to/virajlakshitha/keeping-your-cloud-spending-in-check-cost-optimization-with-aws-cost-explorer-and-budgets-391k |

# Keeping Your Cloud Spending in Check: Cost Optimization with AWS Cost Explorer and Budgets 💰

As organizations increasingly embrace cloud computing, managing cloud expenditures becomes paramount. Uncontrolled cloud costs can quick... | virajlakshitha | |

1,885,872 | Ethereum (ETH) Takes a Hit Amid Mixed Updates: Analysis | Ethereum products see $69 million net inflows, marking best result since March. Analysing... | 0 | 2024-06-12T15:04:40 | https://dev.to/endeo/ethereum-eth-takes-a-hit-amid-mixed-updates-analysis-3fgi | webdev, javascript, web3, blockchain | #### Ethereum products see $69 million net inflows, marking best result since March. Analysing why this is not a silver lining for Ether.

While the Ethereum protocol boasts promising updates, ETH keeps struggling below $3,700. A sharp downtick in social metrics gives the bears an advantage, yet fundamentals spur optimis... | endeo |

1,885,871 | Artificial Intelligence is not a feature. | Since Chat GPT came out in late 2022, everybody has been talking about Artificial Intelligence. It... | 0 | 2024-06-12T15:03:42 | https://dev.to/cocodelacueva/how-to-create-a-cloud-server-for-many-projects-in-aws-3jbc | webdev, aws, tutorial | Since Chat GPT came out in late 2022, everybody has been talking about Artificial Intelligence. It seems like everything is AI, but it is not.

What do I mean?

I know Artificial Intelligence has many benefits; it can help with many repetitive tasks and do them faster; and it is letting a lot of people out of their job... | cocodelacueva |

1,883,373 | Unistyles vs. Tamagui for cross-platform React Native styles | Written by Popoola Temitope✏️ Creating a responsive application plays an important role in providing... | 0 | 2024-06-12T15:02:05 | https://blog.logrocket.com/unistyles-vs-tamagui-cross-platform-react-native-styles | reactnative, mobile | **Written by [Popoola Temitope](https://blog.logrocket.com/author/popoolatemitope/)✏️**

Creating a responsive application plays an important role in providing a consistent user interface that dynamically adjusts and displays properly on different devices. A cross-platform responsive UI enhances UX by ensuring consiste... | leemeganj |

1,883,314 | OOPs Concept: getitem, setitem & delitem in Python | Python has a large collection of dunder methods (which start with double underscores and end with... | 0 | 2024-06-12T15:01:00 | https://geekpython.in/implement-getitem-setitem-and-delitem-in-python | oop, objectorientedprogramming, python | Python has a large collection of dunder methods (which start and end with double underscores) to perform various tasks. The most commonly used dunder method is `__init__`, which Python classes use to initialize newly created objects.

In this article, we'll see the usage and implementation o... | sachingeek |
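As a rough illustration of the three methods named in the title, here is a minimal sketch. The `Inventory` class and its `_items` dictionary are hypothetical names chosen for this example, not taken from the article; the sketch only assumes that item access is delegated to an internal dict.

```python
class Inventory:
    """A tiny container that delegates item access to an internal dict."""

    def __init__(self):
        self._items = {}

    def __getitem__(self, name):
        # Called for: inventory[name]
        return self._items[name]

    def __setitem__(self, name, quantity):
        # Called for: inventory[name] = quantity
        self._items[name] = quantity

    def __delitem__(self, name):
        # Called for: del inventory[name]
        del self._items[name]


inventory = Inventory()
inventory["apples"] = 3      # triggers __setitem__
print(inventory["apples"])   # triggers __getitem__
del inventory["apples"]      # triggers __delitem__
```

Delegating to a dict keeps the example short; a real class would usually add validation inside `__setitem__` or a friendlier error in `__getitem__`.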

1,885,868 | Discover Endless Fun with Dude Theft Wars Mod APK | Experience the ultimate open-world adventure with Dude Theft Wars Mod APK! This enhanced version of... | 0 | 2024-06-12T15:00:01 | https://dev.to/josh_root_69c3acbff629495/discover-endless-fun-with-dude-theft-wars-mod-apk-5fm7 |

Experience the ultimate open-world adventure with Dude Theft Wars Mod APK! This enhanced version of the popular sandbox game takes your gameplay to new heights with unlimited money, weapons, and unlocked features. E... | josh_root_69c3acbff629495 | |

1,883,087 | Scaling Sidecars to Zero in Kubernetes | The sidecar pattern in Kubernetes describes a single pod containing a container in which a main app... | 0 | 2024-06-12T15:00:00 | https://www.fermyon.com/blog/scaling-sidecars-to-zero-in-kubernetes | kubernetes, webassembly, cloud, rust | The sidecar pattern in Kubernetes describes a single pod containing a container in which a main app sits. A helper container (the sidecar) is deployed alongside a main app container within the same pod. This pattern allows each container to focus on a single aspect of the overall functionality, improving the maintainab... | technosophos |

1,885,867 | Engro Construction Estimating Service | Engro Construction Estimating Service likely refers to a professional service that provides cost... | 0 | 2024-06-12T14:58:54 | https://dev.to/engroestimating/engro-construction-estimating-service-5a3o | construction, estimating, constructionestimator | Engro [Construction Estimating Service](https://engroestimating.us) likely refers to a professional service that provides cost estimation and budgeting for construction projects. This type of service is critical in the planning and execution phases of construction, helping stakeholders understand potential costs and al... | engroestimating |

1,885,864 | Getting an error while importing Langchain Packages | I want to create a RAG model for question answering. I have written the code but Langchain... | 0 | 2024-06-12T14:41:46 | https://dev.to/urvesh/getting-an-error-while-importing-langchain-packages-o09 | help, llm, nlp, rag | I want to create a RAG model for question answering. I have written the code, but the Langchain packages are giving an error. The part of the code that gives the error is shown below:

`from langchain.text_splitter import RecursiveCharacterTextSplitter

from langchain_community.document_loaders import PyPDFLoader

from... | urvesh |

1,885,863 | Content structures: A guide to Content Modeling basics | Have you ever played The Sims? If you have, you've used content modeling without even realizing it.... | 0 | 2024-06-12T14:40:25 | https://dev.to/momciloo/content-structures-a-guide-to-content-modeling-basics-20hl | Have you ever played The Sims? If you have, you've used content modeling without even realizing it. In The Sims, you create characters, build houses, and define relationships. Each of these elements has its attributes and rules. Knowing those rules and some hacks (motherlode is my fav) you can build a house or even a w... | momciloo | |

1,885,862 | Simple ways to write git messages | https://www.conventionalcommits.org/en/v1.0.0/ | 0 | 2024-06-12T14:39:50 | https://dev.to/raulbattistini/formas-simples-de-escrever-mensagens-de-git-29do | https://www.conventionalcommits.org/en/v1.0.0/ | raulbattistini | |

1,884,252 | How to Setup Jest on Typescript Monorepo Projects | TL;DR When I started making open source libraries, I didn't know much about unit testing... | 0 | 2024-06-12T14:38:30 | https://dev.to/mikhaelesa/how-to-setup-jest-on-typescript-monorepo-projects-o4d | testing, typescript, tutorial, javascript |

## TL;DR

When I started making open source libraries, I didn't know much about unit testing in terms of writing the tests, and setti... | mikhaelesa |

1,885,860 | Maximizing Software Quality with Python Code Coverage | Python, renowned for its simplicity and readability, is a versatile programming language widely used... | 0 | 2024-06-12T14:36:58 | https://dev.to/keploy/maximizing-software-quality-with-python-code-coverage-2i69 | webdev, javascript, python, coverage |

Python, renowned for its simplicity and readability, is a versatile programming language widely used for developing applications ranging from web development to data analysis and machine learning. However, regardles... | keploy |

1,885,858 | Balancing Life as a Remote Developer and a Dad: Tips from Newborn to Toddler Stage | Being a dad is one of the most rewarding experiences, but it also comes with its own set of... | 0 | 2024-06-12T14:30:35 | https://dev.to/danjiro/balancing-life-as-a-remote-developer-and-a-dad-tips-from-newborn-to-toddler-stage-43c1 | developer, dad, remote, developerdad | Being a dad is one of the most rewarding experiences, but it also comes with its own set of challenges, especially when you're trying to balance it with a demanding job as a developer. Working remotely adds a unique twist to this balancing act, providing both opportunities and obstacles. Here are some strategies that h... | danjiro |

1,885,857 | Difference Between ORM and ODM | Hi, today we will briefly discuss ORM and ODM tools and clear up any confusion about ORM... | 0 | 2024-06-12T14:27:41 | https://dev.to/saadnaeem/difference-between-orm-and-odm-1a9h | orm, odm, database, saadnaeem | Hi, today we will briefly discuss ORM and ODM tools and clear up any confusion about ORM and ODM.

**So let's start today's short topic:**

**ORM:**

- ORM (Object-Relational Mapping) is a tool commonly used with SQL databases (MySQL, PostgreSQL).

- These tools are used to map the relational databa... | saadnaeem |

1,885,855 | Overview of EOS Economics Model | Overview of EOS Economics Model In this article, we'll delve into the key components of... | 0 | 2024-06-12T14:23:02 | https://dev.to/davidking/overview-of-eos-economics-model-1h9f | web3, eos, economics, blockchain | # Overview of EOS Economics Model

In this article, we'll delve into the key components of the EOS economics model and its implications for the ecosystem.

## Introduction

EOS is a blockchain platform that aims to provide a scalable and user-friendly environment for building and deploying dApps. At the core of EOS is ... | davidking |

1,885,852 | Why I Want to Be Part of the Dev Community | In the ever-evolving landscape of technology, being part of the development community is more than... | 0 | 2024-06-12T14:14:26 | https://dev.to/stanly/why-i-want-to-be-part-of-the-dev-community-42oc | webdev, javascript, beginners, programming | In the ever-evolving landscape of technology, being part of the development community is more than just a career choice—it's a calling. As someone passionate about coding and problem-solving, the allure of joining a vibrant and dynamic community of developers is compelling. Here’s why I want to immerse myself in the wo... | stanly |

1,885,851 | Overview of Blockchain Consensus Algorithms | In this article, we'll delve into various consensus algorithms commonly used in blockchain networks,... | 0 | 2024-06-12T14:08:48 | https://dev.to/davidking/overview-of-blockchain-consensus-algorithms-564i | web3, blockchain, tutorial | In this article, we'll delve into various consensus algorithms commonly used in blockchain networks, exploring their characteristics, advantages, and limitations.

## Intro

Blockchain consensus algorithms are protocols that enable nodes in a distributed network to agree on the state of the blockchain. Consensus is ach... | davidking |

1,885,846 | Chatbot in Python - Build AI Assistant with Gemini API | Build Your First AI Chatbot in Python: Beginner's Guide Using Gemini API Unlock the power of AI with... | 0 | 2024-06-12T14:08:30 | https://dev.to/proflead/chatbot-in-python-build-ai-assistant-with-gemini-api-5hd0 | webdev, ai, python, programming | Build Your First AI Chatbot in Python: Beginner's Guide Using Gemini API

Unlock the power of AI with this beginner-friendly tutorial on building a chatbot in Python using the Gemini API! 🚀 In this step-by-step guide, you'll learn how to create a smart AI assistant from scratch, perfect for enhancing your coding skill... | proflead |

1,885,850 | Fancy finishing earlier? | Efficiency is the ability to produce the maximum results with the minimum effort. The... | 0 | 2024-06-12T14:05:46 | https://dev.to/tontz/ca-vous-tente-de-finir-plus-tot--4hm9 | vscode, productivity, french | **Efficiency** is the ability to produce the **maximum** results with the **minimum** effort. The most important resource a developer has is their time. The less time you spend coding, the more time you have to learn and to think about problems.

Using keyboard shortcuts is a... | tontz |

1,885,849 | My Top VS Code Extensions for a Better Development Experience | As a developer, having the right tools at your fingertips can significantly enhance productivity and... | 0 | 2024-06-12T14:05:16 | https://dev.to/danjiro/my-top-vs-code-extensions-for-a-better-development-experience-3p9e | webdev, vscode, extensions, developer | As a developer, having the right tools at your fingertips can significantly enhance productivity and streamline your workflow. Over the past few years, I've discovered and used some fantastic VS Code extensions that have become indispensable in my development setup. Here’s a quick rundown of the extensions I use freque... | danjiro |

1,885,735 | Invitation to join a paid research community for App and Game Developers | Hello everyone! I am looking for app and game developers in the UK, USA, Canada and Australia to... | 0 | 2024-06-12T14:01:44 | https://dev.to/aydan_guliyeva_8dd4d9c8ae/invitation-to-join-a-paid-research-community-for-app-and-game-developers-5hdp | ios, android, reactnative, flutter | Hello everyone!

I am looking for **app** and **game developers** in the **UK**, **USA**, **Canada** and **Australia** to join an online community where you can earn rewards in exchange for feedback on new product concepts through **short paid research activities**.

Just for joining the community, you will receive a ... | aydan_guliyeva_8dd4d9c8ae |

1,885,847 | TalentBankAI: The Ultimate Platform for Recruiting and Supporting IT Developers | Streamlined Recruitment Process with TalentBankAI Customer Interaction: Enhancing... | 0 | 2024-06-12T14:00:34 | https://dev.to/ulyana_mykhailiv_82896052/talentbankai-the-ultimate-platform-for-recruiting-and-supporting-it-developers-11pd | hiring, webdev, developers, development |

## Streamlined Recruitment Process with TalentBankAI

### Customer Interaction: Enhancing Recruitment Efficiency

For companies [hiring engineers](https://talentbankai.com/), TalentBankAI offers a seamless recruitment experience. Clients interact with the platform’s sophisticated AI system, providing project specific... | ulyana_mykhailiv_82896052 |

1,885,885 | Free Event on Systems Development with Nest.js | Join the free online event on Systems Development with Nest.js, Imersão Full Stack... | 0 | 2024-06-23T13:50:39 | https://guiadeti.com.br/evento-desenvolvimento-de-sistemas-nest-js/ | eventos, cursosgratuitos, desenvolvimento, docker | ---

title: Free Event on Systems Development with Nest.js

published: true

date: 2024-06-12 14:00:00 UTC

tags: Eventos,cursosgratuitos,desenvolvimento,docker

canonical_url: https://guiadeti.com.br/evento-desenvolvimento-de-sistemas-nest-js/

---

Join the free online event on Systems Development ... | guiadeti |

1,885,255 | Everything You Need to Know About ChatGPT Model 4o | Imagine you’re exploring a vast museum filled with exhibits on every topic imaginable. Now, picture a... | 0 | 2024-06-12T14:00:00 | https://medium.com/@shaikhrayyan123/everything-you-need-to-know-about-chatgpt-model-4o-28854965ed20 | chatgpt, ai, genai | Imagine you’re exploring a vast museum filled with exhibits on every topic imaginable. Now, picture a guide who can effortlessly explain each exhibit, answer any question, and even engage in a conversation about your favorite topics. That’s what ChatGPT Model 4o (GPT-4o) is like: a super-intelligent assistant that can ... | rayyan_shaikh |

1,885,844 | Responsive Comparison Table | Fully responsive and visually appealing comparison table, perfect for showcasing the Pro and Free... | 0 | 2024-06-12T13:53:43 | https://dev.to/dmtlo/responsive-comparison-table-5fen | codepen, css, html, webdev | Fully responsive and visually appealing comparison table, perfect for showcasing the Pro and Free features of the product or service.

Check out this Pen I made!

{% codepen https://codepen.io/dmtlo/pen/zYQEgJj %} | dmtlo |

1,885,843 | World Day Against Child Labour: Striving for a Sustainable Future | Introduction: June 12 marks the World Day Against Child Labour, a day dedicated to raising awareness... | 0 | 2024-06-12T13:53:40 | https://dev.to/inrate_esg_037e7b133fe497/world-day-against-child-labour-striving-for-a-sustainable-future-3mbm | inrate, sustainab, childlabour | Introduction:

June 12 marks the World Day Against Child Labour, a day dedicated to raising awareness and fostering global efforts to eliminate child labor. This year’s theme, “Exclusion and Sustainability – The Example of Child Labor,” highlights the importance of sustainable practices in eradicating child labor.

The... | inrate_esg_037e7b133fe497 |

1,885,842 | Online QRIS Deposit Slot Agent on the Gacor123 Site | Welcome to the exciting world of online slot games! Are you interested in finding an Online QRIS Deposit Slot Agent... | 0 | 2024-06-12T13:53:18 | https://dev.to/ica_c5034e8c8154b2788dc5d/agen-slot-deposit-qris-online-di-situs-gacor123-4fp8 | webdev, beginners, javascript | 

Welcome to the exciting world of online slot games! Are you interested in finding an Online QRIS Deposit Slot Agent that can provide an enjoyable playing experience? If so, this article is for you! Here, we will discuss what an Online QRIS Deposit Slot Agent is and why the Gacor123 site is worth... | ica_c5034e8c8154b2788dc5d |

1,885,841 | Unlocking the Secrets to Optimal Betta Pellets Fish Nutrition | Introduction: Understanding the Importance of Betta Pellets In the realm of aquatic companionship,... | 0 | 2024-06-12T13:53:10 | https://dev.to/saad_rajpoot_0c0b85c2ab74/unlocking-the-secrets-to-optimal-betta-pellets-fish-nutrition-1d39 | **Introduction: Understanding the Importance of Betta Pellets**

In the realm of aquatic companionship, few creatures captivate quite like the majestic Betta fish. With their vibrant colors and elegant fins, Betta fish have secured their place as beloved pets in countless households worldwide. Yet, behind their beauty l... | saad_rajpoot_0c0b85c2ab74 | |

1,885,840 | Context API Syntax | Functional Components Component where the context is defined and which provides the actual... | 0 | 2024-06-12T13:51:47 | https://dev.to/alamfatima1999/context-api-223c | **_<u>Functional Components</u>_**

The component where the context is defined, and which provides that context to the component that consumes it.

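```JS
import { createContext } from "react";

// Create the context once, outside of any component.
const ContextName = createContext(null);

// Provider component: everything rendered inside it can read the value.
const FunctionalComponent = () => {
  // ...
  return (
    <ContextName.Provider value="contextValue">
      <ComponentThatUsesTheContext />
    </ContextName.Provider>
  );
};
```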

Component wh... | alamfatima1999 | |

1,885,660 | How To Dockerize Remix Apps 💿🐳 | Dockerizing your Remix app is straightforward and makes your app independent of hosting providers!... | 0 | 2024-06-12T13:50:43 | https://dev.to/code42cate/how-to-dockerize-remix-apps-2ack | docker, devops, beginners, webdev | Dockerizing your Remix app is straightforward and makes your app independent of hosting providers! Let's get to it and build a production ready Dockerfile! 🤘🗿

## Basic Dockerfile

To get started, let's create a basic Dockerfile for our Remix app:

```Dockerfile

FROM node:21-bullseye-slim

WORKDIR /myapp

ADD . .

RUN np... | code42cate |

1,885,839 | Writing Rust Documentation | Writing effective documentation is crucial for any programming language, and Rust is no exception.... | 27,702 | 2024-06-12T13:49:30 | https://dev.to/gritmax/writing-rust-documentation-5hn5 | rust, developer, beginners, tutorial | Writing effective documentation is crucial for any programming language, and Rust is no exception. Good documentation helps users understand how to use your code, what it does, and why it matters. In this guide, I'll walk you through the best practices for writing documentation in Rust, ensuring that your code is not o... | gritmax |

1,885,838 | Rutherford Electrician | Rutherford Electrician Electrical Contractors in Rutherford Small Spark Contracting is Rutherford’s... | 0 | 2024-06-12T13:48:44 | https://dev.to/smallsparkelectrical/rutherford-electrician-2eda | rutherfordelectrician, rutherfordinelectrician, rutherfordinelectricians | [Rutherford Electrician](https://www.smallsparkelectrical.com.au/)

[Electrical Contractors in Rutherford](https://www.smallsparkelectrical.com.au/)

Small Spark Contracting is Rutherford’s most trusted electrical contractor. Our electrical services cover a broad spectrum of electrical needs in both residential and comme... | smallsparkelectrical |

1,885,837 | Singapore Airlines' Digital Transformation Story | Singapore Airlines (SIA) has embarked on a comprehensive digital transformation journey to maintain... | 0 | 2024-06-12T13:47:50 | https://victorleungtw.com/2024/06/12/sia/ | digitaltransformation, innovation, singapore, customer | Singapore Airlines (SIA) has embarked on a comprehensive digital transformation journey to maintain its competitive edge and meet the evolving needs of its customers. This transformation focuses on enhancing operational efficiency, improving customer experiences, and fostering innovation. Below are some of the key init... | victorleungtw |

1,885,835 | Mastering React Components | React components are the fundamental building blocks of a React application. React components are... | 0 | 2024-06-12T13:44:57 | https://dev.to/ark7/react-components-14eg | webdev, javascript, beginners, programming | React components are the fundamental building blocks of a React application.

React components are **reusable**, **self-contained** pieces of code that define how a portion of the user interface (UI) should appear and behave.

The beauty of React is that it allows you to break a UI (User Interface) down into independe... | ark7 |

1,885,834 | List of All My Blog Posts - with direct links | I wanted to pin a list of all my blog posts so they are easier to find. So here's a list of... | 0 | 2024-06-12T13:44:18 | https://dev.to/whatminjacodes/direct-links-to-all-my-blog-posts-fap | I wanted to pin a list of all my blog posts so they are easier to find. So here's a list of everything that I have written!

I will also update this list whenever I publish a new blog post :)

_____

### Cyber Security

[From Software Developer to Ethical Hacker](https://dev.to/whatminjacodes/from-software-developer-to-... | whatminjacodes | |

1,884,487 | How to remember everything for standup | Do you have trouble remembering your software engineering accomplishments and todos for your standup... | 0 | 2024-06-12T13:38:59 | https://www.beyonddone.com/blog/posts/how-to-remember-everything-for-standup | standup, productivity, career, softwaredevelopment | Do you have trouble remembering your software engineering accomplishments and todos for your standup update? Perhaps you work hard. You juggle a lot of tasks. You help your coworkers. But when the daily standup meeting comes around, you can't seem to remember everything.

I'll review the advantages and disadvantages o... | sdotson |

1,885,831 | RTT Reduction Strategies for Enhanced Network Performance | Round-trip time (RTT) stands as a pivotal metric for networking and system performance. It captures... | 0 | 2024-06-12T13:37:16 | https://www.softwebsolutions.com/resources/reduce-rtt-optimize-network-performance.html | aws, cloud, rtt, cloudfront | Round-trip time (RTT) stands as a pivotal metric for networking and system performance. It captures the time a data packet takes to travel from its source to its destination and back. This measurement is significant for determining the responsiveness and efficiency of communication channels within a network inf... | csoftweb |
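To make the definition of RTT a little more concrete, here is a rough sketch that approximates one round trip by timing a TCP handshake. The function name `tcp_connect_rtt` is hypothetical and not from the article; the sketch assumes the target accepts TCP connections on the given port, and the figure also includes DNS lookup time when a hostname is passed instead of an IP address.

```python
import socket
import time


def tcp_connect_rtt(host: str, port: int, timeout: float = 2.0) -> float:
    """Approximate one round trip, in milliseconds, by timing a TCP handshake."""
    start = time.perf_counter()
    # create_connection returns once the handshake has completed,
    # which takes roughly one network round trip to the peer.
    with socket.create_connection((host, port), timeout=timeout):
        elapsed = time.perf_counter() - start
    return elapsed * 1000.0
```

A single sample is noisy, so in practice it may be worth taking several measurements and looking at the median rather than one value.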

1,885,718 | Why I chose Svelte over React? | Svelte and React are both used for Web development. React is a fantastic tool which has dominated the... | 0 | 2024-06-12T13:36:20 | https://dev.to/alishgiri/why-i-chose-svelte-over-react-29i9 | svelte, react, javascript | Svelte and React are both used for Web development. React is a fantastic tool which has dominated the industry for a while now whereas Svelte is newer in the field and is gaining significant popularity.

## Overview

When I first heard about Svelte I wanted to try it out and was surprised to see how little code you have t... | alishgiri |

1,885,830 | Australian Staffing Agency: One Of The Most Trusted Placement Agencies | Today, employers are finding it more difficult than ever to find the right talent for the positions... | 0 | 2024-06-12T13:35:53 | https://dev.to/australianstaffingag/australian-staffing-agency-one-of-the-most-trusted-placement-agencies-188f | Today, employers are finding it more difficult than ever to find the right talent for the positions in their business. Once the positions are vacant, you may not find it easy to connect with the right candidates. How will you find suitable ones for the vacant positions at your business? For this, you can work with the ... | australianstaffingag | |

1,885,828 | Demystifying Service Level acronyms and Error Budgets | This was originally posted on Verifa's blog, written by Lauri Suomalainen Availability, fault... | 0 | 2024-06-12T13:31:46 | https://verifa.io/blog/demystifying-service-level-acronyms/ | softwaredevelopment, sre, monitoring, cicd | _This was originally posted on [Verifa's blog](https://verifa.io/blog/demystifying-service-level-acronyms/), written by Lauri Suomalainen_

**Availability, fault tolerance, reliability, resilience. These are some of the terms that pop up when delivering digital services to users at scale. Acronyms related to Service Le... | verifacrew |
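As a small worked example of the error budget idea in the title, the sketch below converts an availability target into allowed downtime. It assumes availability is measured as uptime over a rolling 30-day window; the function names `error_budget_minutes` and `budget_remaining` are hypothetical and chosen only for illustration.

```python
def error_budget_minutes(slo_percent: float, window_days: int = 30) -> float:
    """Downtime allowed by an availability SLO over the window, in minutes."""
    if not 0 < slo_percent < 100:
        raise ValueError("slo_percent must be strictly between 0 and 100")
    total_minutes = window_days * 24 * 60
    return total_minutes * (100 - slo_percent) / 100


def budget_remaining(slo_percent: float, downtime_minutes: float,
                     window_days: int = 30) -> float:
    """Fraction of the error budget still unspent (negative when overspent)."""
    budget = error_budget_minutes(slo_percent, window_days)
    return (budget - downtime_minutes) / budget
```

Under these assumptions, a 99.9% target over 30 days leaves roughly 43.2 minutes of downtime, so a single 20-minute outage spends a little under half of the budget.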

1,885,829 | The Benefits of Choosing Global Degrees for Overseas Consultancy in Hyderabad | When it comes to pursuing higher education abroad, choosing the right overseas consultancy in... | 0 | 2024-06-12T13:28:12 | https://dev.to/globaldegree/the-benefits-of-choosing-global-degrees-for-overseas-consultancy-in-hyderabad-8eb | When it comes to pursuing higher education abroad, choosing the right overseas consultancy in Hyderabad can be a crucial decision. With numerous options available, it is essential to select a consultancy that offers personalized guidance, expert knowledge, and a commitment to student success. Global Degrees, a leading ... | globaldegree | |

1,885,826 | Market Penetration and Expansion Strategies for Acrylic Polymers | Acrylic polymers are a group of polymers derived from acrylic acid, methacrylic acid, or other... | 0 | 2024-06-12T13:25:57 | https://dev.to/aryanbo91040102/market-penetration-and-expansion-strategies-for-acrylic-polymers-3o5e | news | Acrylic polymers are a group of polymers derived from acrylic acid, methacrylic acid, or other related compounds. Known for their versatility, durability, and resistance to weathering and chemicals, acrylic polymers are widely used in various industries, including construction, automotive, textiles, and packaging. Thei... | aryanbo91040102 |

1,885,825 | The Evolution and Impact of Software Development Companies in the US | When we think about the incredible strides technology has made over the past few decades, it's... | 0 | 2024-06-12T13:25:35 | https://dev.to/david_clark_4e57e6aea946b/the-evolution-and-impact-of-software-development-companies-in-the-us-1jbl | softwaredevelopment, webdev | When we think about the incredible strides technology has made over the past few decades, it's impossible to overlook the vital role played by [**software development companies in USA**](https://www.mobileappdaily.com/directory/software-development-companies/us?utm_source=dev&utm_medium=hc&utm_campaign=mad). These comp... | david_clark_4e57e6aea946b |

1,885,824 | Understanding DeFiLlama and its Functionality | The world of digital currencies is booming with innovation. A crucial component of the crypto sector,... | 0 | 2024-06-12T13:25:01 | https://dev.to/cryptokid/understanding-defillama-and-its-functionality-5h02 | cryptocurrency, bitcoin, ethereum | The world of digital currencies is booming with innovation. A crucial component of the crypto sector, which allows its seamless operation, is the impressive array of monitoring tools available. One such efficient tool is leading the march in providing insights and analytics to crypto enthusiasts globally, offering user... | cryptokid |

1,885,823 | "Unlocking Creativity: Toca Life World Adventures on iOS" | "Exploring Boundless Worlds: Toca Life World Adventures on iOS" immerses users in a vibrant digital... | 0 | 2024-06-12T13:24:43 | https://dev.to/malik_shehroz_463664dbe15/unlocking-creativity-toca-life-world-adventures-on-ios-5gf5 | "Exploring Boundless Worlds: [Toca Life World Adventures on iOS](https://tocaapkboca.com/toca-life-world-apk-for-ios/)" immerses users in a vibrant digital playground where creativity knows no bounds. With diverse locations, customizable characters, and endless storytelling possibilities, this app sparks imagination an... | malik_shehroz_463664dbe15 | |

1,885,822 | 7 Benefits of Using Kraft Packaging for Small Businesses | The kraft boxes market is expected to grow at a CAGR of 5.6%. Using kraft packaging for your startup... | 0 | 2024-06-12T13:22:35 | https://dev.to/mark_jordan/7-benefits-of-using-kraft-packaging-for-small-businesses-10p5 | packaging, kraft | The kraft boxes market is expected to grow at a CAGR of 5.6%. Using kraft packaging for your startup or small business is becoming both trendy and crucial to its success.

Every business owner wants a unique presentation and protection for their product that leaves a lasting impression on the customer's mind. Their prime focus ... | mark_jordan |

1,885,821 | Top Platforms for Application Development | In the ever-evolving landscape of application development, choosing the right platform can... | 0 | 2024-06-12T13:22:32 | https://dev.to/ray_parker01/top-platforms-for-application-development-56kb | ---

title: Top Platforms for Application Development

published: true

---

In the ever-evolving landscape of application development, choosing the right platform can significantly impact your projects' efficiency, scal... | ray_parker01 | |

1,885,820 | Choosing the Best GUI Client for SQL Databases | Even the most skilled software and database developers, DBAs, architects, managers, and analysts... | 0 | 2024-06-12T13:20:52 | https://dev.to/dbajamey/choosing-the-best-gui-client-for-sql-databases-1539 | database, sql | Even the most skilled software and database developers, DBAs, architects, managers, and analysts benefit from using GUI clients for SQL databases. Compared to manual input of text-based commands in one’s console, such tools give you way more efficiency. They offer a visual interface to build queries and robust function... | dbajamey |

1,885,741 | Retaining Walls In Central Coast | CENTRAL COAST RETAINING WALLS Our team are fully qualified tradesmen, some of us with more than two... | 0 | 2024-06-12T13:20:09 | https://dev.to/retaining_walls_0e200bbe6/retaining-walls-in-central-coast-1bcf | retainingwallsincentralcoast | [CENTRAL COAST RETAINING WALLS](https://www.centralcoastretainingwalls.com.au/)

Our team are fully qualified tradesmen, some of us with more than two trades up our sleeves! Mick is a fully qualified licenced builder in Central Coast and a licenced bricklayer in Central Coast that has the experience and capacity to cove... | retaining_walls_0e200bbe6 |

1,885,854 | Chart of the Week: Creating a WPF Pie Chart to Visualize the Percentage of Global Forest Area for Each Country | TL;DR: Learn to visualize each country’s global forest area percentage using the Syncfusion WPF Pie... | 0 | 2024-06-19T10:35:55 | https://www.syncfusion.com/blogs/post/wpf-pie-chart-global-forest-area | wpf, chart, development, desktop | ---

title: Chart of the Week: Creating a WPF Pie Chart to Visualize the Percentage of Global Forest Area for Each Country

published: true

date: 2024-06-12 13:18:59 UTC

tags: wpf, chart, development, desktop

canonical_url: https://www.syncfusion.com/blogs/post/wpf-pie-chart-global-forest-area

cover_image: https://dev-to... | gayathrigithub7 |

1,885,730 | Avoid PTSD use TDD | Introduction 👋 Test-Driven Development (TDD) is an approach that focuses on writing tests... | 0 | 2024-06-12T13:18:28 | https://dev.to/maxnormand97/avoid-ptsd-use-tdd-fm | testing, ruby, programming | ## Introduction 👋

> Test-Driven Development (TDD) is an approach that focuses on writing tests before writing the actual code. The general aim is to never write new functionality without a failing test first.

>

>In basic principle remember the following...

>**RED-GREEN-REFACTOR**

>

[apps worldwide](https://ripenapps.com/blog/mobile-app-industry-statistics/#:~:text=Currently%2C%20there%20are%208.93%20million... | scofieldidehen |

1,885,734 | Kaashiv Infotech's Internship in Chennai for CSE Students | Embark on a transformative journey with Kaashiv Infotech's internship in Chennai for CSE students.... | 0 | 2024-06-12T13:09:21 | https://dev.to/pattuanu/kaashiv-infotechs-internship-in-chennai-for-cse-students-plc | dotnet, java, python, fullstack | Embark on a transformative journey with Kaashiv Infotech's [internship in Chennai for CSE students](https://www.kaashivinfotech.com/internship-in-chennai-for-cse-students/). Dive deep into cybersecurity, data science, networking, and other cutting-edge technologies. Gain practical skills, real-world experience, and unl... | pattuanu |

1,885,732 | Code Migration and Reorganization using AI | Introduction Code migration and reorganization are crucial steps in software development,... | 0 | 2024-06-12T13:08:32 | https://dev.to/coderbotics_ai/code-migration-and-reorganization-using-ai-29h7 | codemigration, ai, code |

### Introduction

Code migration and reorganization are crucial steps in software development, ensuring that codebases are scalable, maintainable, and efficient. Traditional methods of code migration and reorganization can be time-consuming and prone to errors. AI-powered tools have emerged to streamline this process,... | coderbotics_ai |

1,885,733 | Unleashing the Power of AI and Machine Learning in Cloud SRE: A Revolutionary Approach for Optimal Performance | Introduction to AI and Machine Learning in Cloud SRE In the rapidly evolving world of... | 0 | 2024-06-12T13:08:19 | https://dev.to/harishpadmanaban/unleashing-the-power-of-ai-and-machine-learning-in-cloud-sre-a-revolutionary-approach-for-optimal-performance-2hf0 | Introduction to AI and Machine Learning in Cloud SRE

----------------------------------------------------

In the rapidly evolving world of cloud computing, the role of Site Reliability Engineering (SRE) has become increasingly crucial. As cloud-based infrastructure and applications grow in complexity, the need for eff... | harishpadmanaban | |

1,885,731 | The Power of Synthetic Monitoring for Cloud SRE: Ensuring Seamless Performance and Reliability | Photo by marleighmartinez on Pixabay Introduction to Synthetic Monitoring for Cloud... | 0 | 2024-06-12T13:07:09 | https://dev.to/harishpadmanaban/the-power-of-synthetic-monitoring-for-cloud-sre-ensuring-seamless-performance-and-reliability-2j5j |

Photo by [marleighmartinez](https://pixabay.com/users/marleighmartinez-40180716/) on [Pixabay](https://pixabay.com/illustrations/technology-inform... | harishpadmanaban | |

1,884,805 | Improving the Understanding of Multidimensional Slices in Golang | Improving the Understanding of Multidimensional Slices in Golang | 0 | 2024-06-12T13:06:23 | https://dev.to/fabianoflorentino/slice-multi-dimensional-em-golang-2pne | programming, braziliandevs, go | ---

title: Improving the Understanding of Multidimensional Slices in Golang

published: true

description: Improving the Understanding of Multidimensional Slices in Golang

tags: programming, braziliandevs, go

cover_image: https://th.bing.com/th/id/OIG2.hf9dry7hWGXpSrXDm4T4?pid=ImgGn

---

## Hello, world!

Faz tempo desde o últi... | fabianoflorentino |

1,885,729 | Flying-in Effect - JavaScript & CSS | Okay folks, let's keep learning. This time we will build a flying-in effect; the tool is still the same... | 0 | 2024-06-12T13:04:55 | https://dev.to/boibolang/flying-in-effect-javascript-css-143a | Okay folks, let's keep learning. This time we will build a flying-in effect; the tool is still the same as before, namely the _intersection observer_. As usual, we prepare the standard required files: index.html, style.css, and app.js

```html

<!-- index.html -->

<!DOCTYPE html>

<html lang="en"... | boibolang | |

1,885,728 | Checking Whitelisted Addresses on a Solidity Smart Contract Using Merkle Tree Proofs | Intro Hello everyone! In this article, we will first talk about Merkle Trees, and then... | 0 | 2024-06-12T13:03:30 | https://dev.to/muratcanyuksel/checking-whitelisted-addresses-on-a-solidity-smart-contract-using-merkle-tree-proofs-3odm | solidity, cryptocurrency, smartcontract, javascript | ## Intro

Hello everyone! In this article, we will first talk about Merkle Trees, and then replicate a whitelisting scenario by encrypting some "whitelisted" addresses, writing a smart contract in Solidity that can decode the encryption and allow only whitelisted addresses to perform some action, and finally testing the... | muratcanyuksel |

1,885,727 | Gummy Market: Applications and Regional Insights During the Forecasted Period 2023 to 2033 | The global gummy market size reached US$ 21.4 billion in 2022. Revenue generated by gummies sales is... | 0 | 2024-06-12T13:03:12 | https://dev.to/anshuma_roy_94915307ed59b/gummy-market-applications-and-regional-insights-during-the-forecasted-period-2023-to-2033-58ec | market, research | The global [gummy market](https://www.futuremarketinsights.com/reports/gummy-market) size reached US$ 21.4 billion in 2022. Revenue generated by gummies sales is likely to be US$ 24.3 billion in 2023. In the forecast period between 2023 and 2033, sales are poised to soar by 11.8% CAGR. Demand is anticipated to transcen... | anshuma_roy_94915307ed59b |

1,885,725 | Building Resilience Through Cybersecurity Risk Management | Building resilience is paramount in cybersecurity risk management to withstand and recover from cyber... | 0 | 2024-06-12T13:02:46 | https://dev.to/darrenmason/building-resilience-through-cybersecurity-risk-management-3cld | Building resilience is paramount in **[cybersecurity risk management](http://www.bawn.com/)** to withstand and recover from cyber security incidents effectively. This article explores the concept of resilience in cybersecurity, emphasizing proactive strategies for identifying, assessing, and mitigating cyber risk. It a... | darrenmason | |

1,885,724 | Why and When to Use Waterfall vs. Agile: A Business Perspective | Waterfall Methodology Use Cases: Well-defined requirements: Waterfall is... | 0 | 2024-06-12T13:00:52 | https://jetthoughts.com/blog/why-when-use-waterfall-vs-agile-business-perspective-management/ | agile, management, startup | ## Waterfall Methodology

### Use Cases:

- **Well-defined requirements**: Waterfall is the best project life cycle option for projects with precise, stable, ... | jetthoughts_61 |

1,885,723 | AUBSS Achieves Prestigious Programmatic Accreditation From QAHE | The American University of Business and Social Sciences (AUBSS) is proud to announce that its BBA,... | 0 | 2024-06-12T13:00:27 | https://dev.to/aubss_edu/aubss-achieves-prestigious-programmatic-accreditation-from-qahe-37lp | education, aubss, qahe |

The American University of Business and Social Sciences (AUBSS) is proud to announce that its BBA, MBA, DBA, and PhD programs have been fully accredited by the International Association for Quality Assurance in Pre-Tertiary & Higher Education (QAHE).

This prestigious accreditation underscores AUBSS’s unwavering co... | aubss_edu |

1,885,722 | Enhancing Cloud SRE Efficiency with Distributed Tracing | Image Source: FreeImages Introduction to Cloud SRE and its Importance As cloud-based... | 0 | 2024-06-12T12:59:45 | https://dev.to/harishpadmanaban/enhancing-cloud-sre-efficiency-with-distributed-tracing-47lm |

Image Source: FreeImages

Introduction to Cloud SRE and its Importance

--------------------------------------------

As cloud-based infrastructure and applications bec... | harishpadmanaban | |

1,885,721 | 8 Best Automated Android App Testing Tools and Frameworks | Regarding mobile operating systems, two major players dominate our thoughts: Android and iOS. With... | 0 | 2024-06-12T12:58:56 | https://www.headspin.io/blog/top-automated-android-app-testing-tools-and-frameworks | mobile, testing, android, automation | Regarding mobile operating systems, two major players dominate our thoughts: Android and iOS. With Android leading the market, software development companies are focused on delivering apps compatible with this OS. Ensuring an app's functionality across various Android devices, OS versions, and hardware specification... | jennife05918349 |

1,885,720 | Mastering the Cloud: Building a High-Performing SRE Team on AWS, Azure, and GCP | Mastering the Cloud: Building a High-Performing SRE Team on AWS, Azure, and GCP Photo by... | 0 | 2024-06-12T12:56:22 | https://dev.to/harishpadmanaban/mastering-the-cloud-building-a-high-performing-sre-team-on-aws-azure-and-gcp-4mon | Mastering the Cloud: Building a High-Performing SRE Team on AWS, Azure, and GCP

===============================================================================

teams has become increasingly crucial. As organizations rapidly adopt cloud platforms like AWS, Azure, and GCP, the need for skilled SRE professionals wh... | harishpadmanaban | |

1,884,544 | EFX !! | Other than programming, my main obsession is Movies. I am a big fan of movies and I watch a lot... | 0 | 2024-06-12T12:51:24 | https://dev.to/mince/sfx-vs-vfx-vs-cgi-4a95 | javascript, beginners, programming, tutorial | Other than programming, my main obsession is **movies**. I am a big fan of movies and I watch a lot of them too, around two a day 🙃. When you hear the word "movies", I am sure these titles pop into your head:

- Dune

- Avengers

- Jurassic Park

- Avatar

- Titanic

You must have watched at least one of the above movies and... | mince |

1,885,713 | C++ Lesson 5 | include using namespace std; int main3() { int n; cin >> n; cout << n... | 0 | 2024-06-12T12:49:18 | https://dev.to/ahmadjon_ce07fbecb974f925/c-5-dars-2j58 | #include <iostream>

using namespace std;

int main3() {

int n;

cin >> n;

cout << n << n << n << n << n << n << endl;

cout << n << " " << n << endl;

cout << n << " " << n << endl;

cout << n << n << n << n << n << n << endl;

return 0;

}

int main2() {

int son;

cin >> son;//cinda ham cout kabi foydal... | ahmadjon_ce07fbecb974f925 | |

1,885,711 | PORTABLE TOILETS IN MELBOURNE | PORTABLE TOILETS IN MELBOURNE Melbourne portable toilets are designed to provide clean, fresh, modern... | 0 | 2024-06-12T12:45:08 | https://dev.to/portable_toiletmelbourne/portable-toilets-in-melbourne-1o14 | portabletoiletsinmelbourne, portabletoiletsmelbourne | [PORTABLE TOILETS IN MELBOURNE](https://www.portabletoiletsmelbourne.net.au/)

Melbourne portable toilets are designed to provide clean, fresh, modern toilet facilities for your next event and construction sites. We offer the newest fleet of portable toilets which are available in Melbourne and surrounding areas.

Melbou... | portable_toiletmelbourne |

1,885,710 | Multi-Stage Builds for Microservices: A Practical Guide | Microservices architecture has become a widely adopted approach for building complex systems. It... | 0 | 2024-06-12T12:43:53 | https://dev.to/platform_engineers/multi-stage-builds-for-microservices-a-practical-guide-27go | Microservices architecture has become a widely adopted approach for building complex systems. It involves breaking down a monolithic application into smaller, independent services that communicate with each other. Each microservice can be developed, deployed, and scaled independently, allowing for greater flexibility a... | shahangita | |

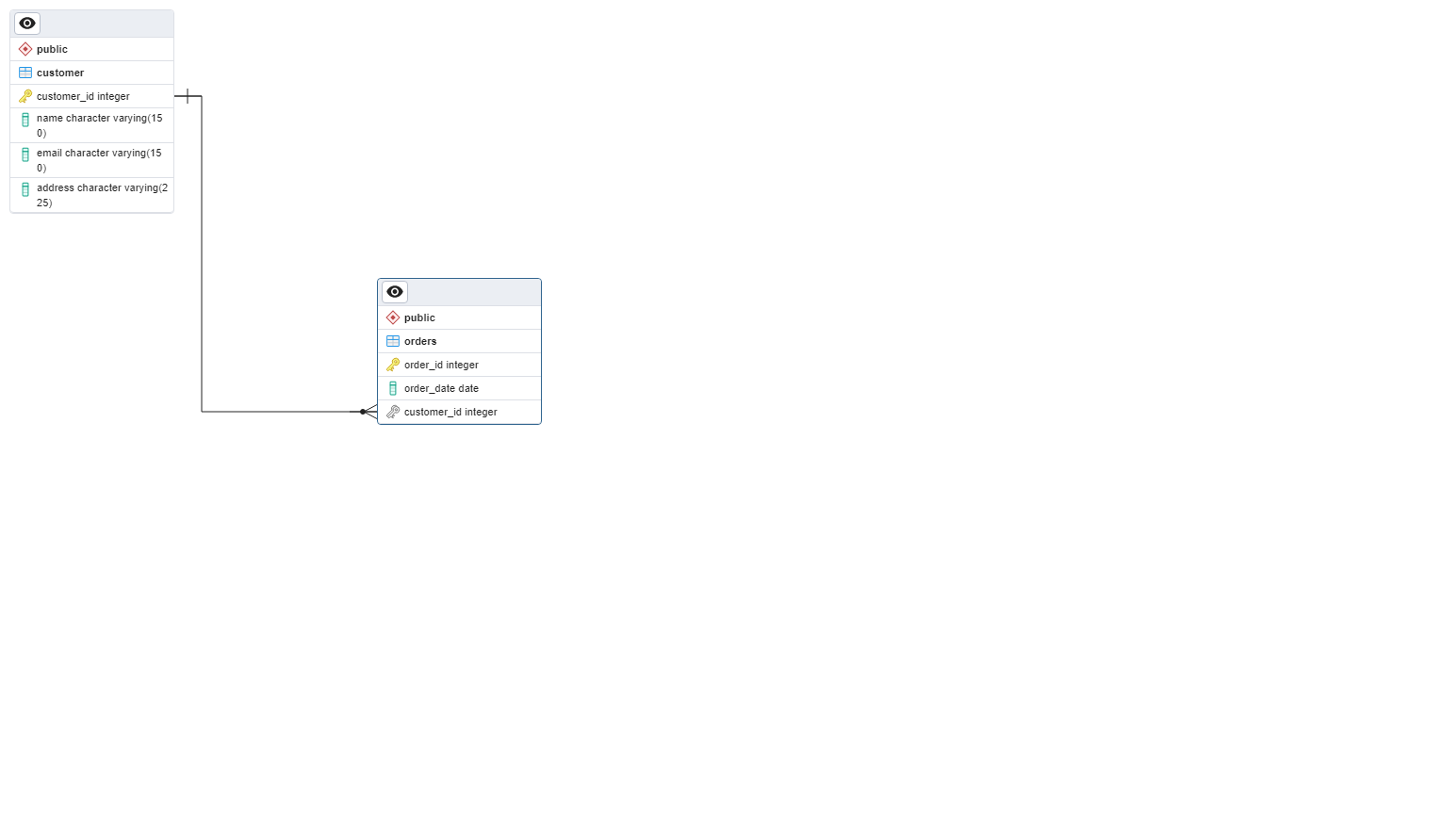

1,885,709 | My first blog post on pgAdmin | This is how I was able to generate and save an ERD in pgAdmin. | 0 | 2024-06-12T12:39:30 | https://dev.to/ifiokobong_akpan_86dc8bf1/my-first-blog-post-on-pgadmin-576a | This is how I was able to generate and save an ERD in pgAdmin.

| ifiokobong_akpan_86dc8bf1 |
