id (int64, 5 to 1.93M) | title (string, length 0 to 128) | description (string, length 0 to 25.5k) | collection_id (int64, 0 to 28.1k) | published_timestamp (timestamp[s]) | canonical_url (string, length 14 to 581) | tag_list (string, length 0 to 120) | body_markdown (string, length 0 to 716k) | user_username (string, length 2 to 30) |

|---|---|---|---|---|---|---|---|---|

1,883,310 | How to Build an AI Chatbot with Python and Gemini API: Comprehensive Guide | In this article, we are going to do something really cool: we will build a chatbot using Python and... | 0 | 2024-06-10T13:58:49 | https://dev.to/proflead/how-to-build-an-ai-chatbot-with-python-and-gemini-api-comprehensive-guide-4dep | python, ai, webdev, google | In this article, we are going to do something really cool: we will build a chatbot using Python and the Gemini API. This will be a web-based assistant and could be the beginning of your own AI project. It’s beginner-friendly, and I will guide you through it step-by-step. By the end, you’ll have your own AI assistant!

... | proflead |

1,883,068 | The Evolution of Conveyor Systems | Perfection Engineering: conveyor systems have come a long way since their inception, evolving from... | 0 | 2024-06-10T10:51:13 | https://dev.to/webdesigninghouse72/the-evolution-of-conveyor-systems-b2e | **Perfection Engineering**: conveyor systems have come a long way since their inception, evolving from rudimentary setups to finely engineered solutions that revolutionize industries worldwide. At the heart of this evolution lies the ingenuity of **[conveyor manufacturers](https://www.perfectionengineering.in/)**, c... | webdesigninghouse72 | |

1,883,309 | Using AI to improve security and learning in your AWS environment. | Why We're Building a Chatbot: Empowering Our Platform Team Our Platform engineers are the... | 0 | 2024-06-10T13:57:00 | https://dev.to/monica_escobar/using-ai-to-improve-security-and-learning-in-your-aws-environment-p90 | ## Why We're Building a Chatbot: Empowering Our Platform Team

Our Platform engineers are the backbone of our secure development process. However, they often face hurdles that slow them down and hinder their ability to deliver top-notch work. On top of this, there are different levels of expertise within the team and c... | monica_escobar | |

1,883,308 | Industry Die Cutting Machines: Adaptable Solutions for Various Materials | Industry Die Cutting Machines: Adaptable Solutions for Various Materials Introduction: Die... | 0 | 2024-06-10T13:53:54 | https://dev.to/sjjuuer_msejrkt_08b4afb3f/industry-die-cutting-machines-adaptable-solutions-for-various-materials-2ph2 | design |

Industry Die Cutting Machines: Adaptable Solutions for Various Materials

Introduction:

Die cutting machines are a type of machine that many organizations use for cutting materials into various sizes and shapes. The machine is very versatile, allowing companies to cut equ... | sjjuuer_msejrkt_08b4afb3f |

1,883,307 | A Beginner's Guide to Prompt Engineering with GitHub Copilot | A post by Manam Saiteja | 0 | 2024-06-10T13:53:36 | https://dev.to/manam_saiteja_e83fd9ab159/a-beginners-guide-to-prompt-engineering-with-github-copilot-147n | manam_saiteja_e83fd9ab159 | ||

1,883,306 | TOP 5 JavaScript Gantt Chart Components | 1. ScheduleJS key point: Best Suited For: Complex project and resource management... | 0 | 2024-06-10T13:52:32 | https://dev.to/lenormor/top-5-best-javascript-gantt-chart-library-fjg | webdev, javascript, programming | ## 1. ScheduleJS

**Key points:**

- Best Suited For: Complex project and resource management applications, such as in manufacturing and production.

- Unique Feature: Integration with Angular and custom UI elements for extensive personalization.

- Strengths: High performance with large datasets, flexibility in customiz... | lenormor |

1,883,301 | The Role of IT Infrastructure in Digital Transformation | Digital transformation, or digitalization, is the process of upgrading traditional or non-digital products or services... | 0 | 2024-06-10T13:51:11 | https://dev.to/buzzclan/the-role-of-it-infrastructure-in-digital-transformation-2c5e | itinfrastructure, digitaltransformation | Digital transformation, or digitalization, is the process of upgrading traditional or non-digital products or services with new technologies to better meet the needs of a dynamic market and its clients. It reinvents traditional, somewhat archaic approaches by incorporating revolutionary tools such as AI, ... | buzzclan |

1,872,096 | Creating a Snackbar using Signals and Tailwind CSS generated with V0 | Recently I discovered how easy it is to create a component that serves the whole application using... | 0 | 2024-06-10T13:49:21 | https://dev.to/diogom/creating-a-snackbar-using-signals-and-tailwind-css-generated-with-v0-24d0 | angular, javascript, tutorial | Recently I discovered how easy it is to create a component that serves the whole application using signals to manage the state, without needing to include a complex library like NgRx.

For this experiment, I also used V0.dev, an AI tool that generates React components or pure HTML ... | diogom |

1,883,299 | Precision Engineering in Industry Die Cutting Machines | Precision Engineering in Industry Die Cutting Machines Precision engineering is an essential... | 0 | 2024-06-10T13:47:08 | https://dev.to/sjjuuer_msejrkt_08b4afb3f/precision-engineering-in-industry-die-cutting-machines-3ecf | design |

Precision Engineering in Industry Die Cutting Machines

Precision engineering is an essential element of building high-quality equipment for a range of industries, including die cutting machines. These machines are used to cut and shape materials into specific sizes ... | sjjuuer_msejrkt_08b4afb3f |

1,883,298 | The Best Way To Structure And Compile A Short Essay | There are many ways of writing an essay, but one of the most intimidating ways is writing a short... | 0 | 2024-06-10T13:46:43 | https://dev.to/lana_kent_2dd3b685282bc2c/the-best-way-to-structure-and-compile-a-short-essay-19ng | There are many ways of writing an essay, but one of the most intimidating ways is writing a short essay. When you are structuring and compiling a short essay, there are so many aspects that you need to be careful about. You cannot exceed the word count limit and you have to cover an entire topic within that limit. It c... | lana_kent_2dd3b685282bc2c | |

1,883,295 | How to set up Eslint and prettier | How to set up ESLint and Prettier The purpose of this blog is to show how to set up ESLint... | 0 | 2024-06-10T13:41:59 | https://dev.to/md_enayeturrahman_2560e3/how-to-set-up-eslint-and-prettier-1nk6 | eslint, prettier, javascript, beginners | ## How to set up ESLint and Prettier

The purpose of this blog is to show how to set up ESLint in a TypeScript project. ESLint helps you automatically detect and fix various types of errors in a project, so your development experience will be smooth.

- Start a project

```bash
npm init -y
```

- Install neces... | md_enayeturrahman_2560e3 |

1,883,294 | Industry Die Cutting Machines: Streamlining Production Processes | Headline: Beyond Limits: How Masking Tape Manufacturers Are Expanding Their Reach As... | 0 | 2024-06-10T13:41:47 | https://dev.to/sjjuuer_msejrkt_08b4afb3f/industry-die-cutting-machines-streamlining-production-processes-3fgd | design | Headline: Beyond Limits: How Masking Tape Manufacturers Are Expanding Their Reach

As a kid, you might have used masking tape to stick drawings to your wall or to decorate your school projects. But did you know that masking tape ha... | sjjuuer_msejrkt_08b4afb3f |

1,883,293 | Handling SQLite DB in Lambda Functions Using Zappa | Using SQLite is straightforward for small, quick services, but once scalability and data... | 0 | 2024-06-10T13:41:07 | https://dev.to/tangoindiamango/handling-sqlite-db-in-lambda-functions-using-zappa-4blg | aws, django, programming | Using SQLite is straightforward for small, quick services, but once scalability and data integrity become concerns, a full relational database should be the choice. While Relational Database Management Systems (RDBMS) like PostgreSQL or MySQL are the go-to choices for production-grade applications, there are scenarios whe... | tangoindiamango |

1,883,292 | HOW TO RECOVER STOLEN CRYPTOCURRENCY-FRANCISCO HACK | Reflecting on my experience with Francisco Hack fills me with gratitude for their exceptional help in... | 0 | 2024-06-10T13:38:22 | https://dev.to/classic_may_207daf70b214c/how-to-recover-stolen-cryptocurrency-francisco-hack-22he | Reflecting on my experience with Francisco Hack fills me with gratitude for their exceptional help in recovering my lost bitcoin. The journey from uncertainty to relief was made possible by the dedicated team at Francisco Hack.

I had planned a surprise birthday party for my best friend. I had saved up for months to or... | classic_may_207daf70b214c | |

1,883,291 | can someone pls help me?? | When I run "eas build -p android --plataform preview", gradlew returns an error Task... | 0 | 2024-06-10T13:35:26 | https://dev.to/matheus_mastrangi_7bdf224/can-someone-pls-help-me-3jg6 | help | When I run "eas build -p android --plataform preview", gradlew returns an error

> Task :expo-modules-core:buildCMakeRelWithDebInfo[arm64-v8a]

C/C++: ninja: Entering directory `/home/expo/workingdir/build/node_modules/expo-modules-core/android/.cxx/RelWithDebInfo/c2r1g6q3/arm64-v8a'

C/C++: /home/expo/Android/Sdk/ndk/25.... | matheus_mastrangi_7bdf224 |

1,883,290 | Tử Vi birth chart (lá số tử vi) | Tử Vi, or Tử Vi Đẩu Số, is an esoteric discipline used mainly for: interpreting... | 0 | 2024-06-10T13:34:52 | https://dev.to/dongphuchh023/la-so-tu-vi-pkj | Tử Vi, or Tử Vi Đẩu Số, is an esoteric discipline used mainly for interpreting a person's character and circumstances, predicting the "fortunes" (vận hạn) over the course of their life, and studying how they interact with events and people. Ultimately, its main purpose is to understand a person's destiny.

To get the ... | dongphuchh023 | |

1,883,289 | Service Mesh: The Secret Weapon for Managing Containerized Applications | Containerization has revolutionized the way applications are developed and deployed. With the advent... | 0 | 2024-06-10T13:34:44 | https://dev.to/platform_engineers/service-mesh-the-secret-weapon-for-managing-containerized-applications-2p58 | Containerization has revolutionized the way applications are developed and deployed. With the advent of container orchestration tools like Kubernetes, managing containerized applications has become more efficient. However, as the complexity of these applications grows, the need for a more comprehensive management solut... | shahangita | |

1,883,288 | Building a Troubleshoot Assistant using Lyzr SDK | In our tech-driven world, encountering technical issues is almost inevitable. Whether it’s a software... | 0 | 2024-06-10T13:32:22 | https://dev.to/akshay007/building-a-troubleshoot-assistant-using-lyzr-sdk-p62 | ai, programming, python, lyzr | In our tech-driven world, encountering technical issues is almost inevitable. Whether it’s a software glitch, hardware malfunction, or connectivity problem, finding quick and effective solutions can be challenging. Enter **TroubleShoot Assistant**, an AI-powered app designed to help you diagnose and resolve technical i... | akshay007 |

1,883,391 | 100 Salesforce DevOps Interview Questions and Answers | Salesforce DevOps is a specialized role that focuses on implementing and optimizing DevOps principles... | 0 | 2024-06-11T03:02:10 | https://www.sfapps.info/100-salesforce-devops-interview-questions-and-answers/ | blog, interviewquestions | ---

title: 100 Salesforce DevOps Interview Questions and Answers

published: true

date: 2024-06-10 13:32:00 UTC

tags: Blog,InterviewQuestions

canonical_url: https://www.sfapps.info/100-salesforce-devops-interview-questions-and-answers/

---

Salesforce DevOps is a specialized role that focuses on implementing and optimiz... | doriansabitov |

1,883,287 | Fujian Jiulong: Crafting Casual Shoes for Every Walk of Life | Fujian Jiulong: How Their Casual Footwear Is Ideal for Any Lifestyle Introduction: Fujian... | 0 | 2024-06-10T13:31:20 | https://dev.to/carrie_richardsoe_870d97c/fujian-jiulong-crafting-casual-shoes-for-every-walk-of-life-2m78 | Fujian Jiulong: How Their Casual Footwear Is Ideal for Any Lifestyle

Introduction:

Fujian Jiulong is a company that has been producing footwear for over 2 years. They specialize in casual footwear for people with various lifestyles. This short ... | carrie_richardsoe_870d97c | |

1,883,286 | Kriosk Creata: Your Gateway to Digital Domination! | Ready to conquer the digital landscape? Look no further than Kriosk Creata! Our comprehensive digital... | 0 | 2024-06-10T13:30:19 | https://dev.to/krioskcreata/kriosk-creata-your-gateway-to-digital-domination-3o4e | seo, digital, digitalmarketing | Ready to conquer the digital landscape? Look no further than Kriosk Creata! Our comprehensive digital marketing services are designed to propel your brand to new heights. From SEO strategies to captivating content creation and targeted social media campaigns, we’ve got the expertise to amplify your online presence and ... | krioskcreata |

1,883,284 | Fujian Jiulong: Your Partner in Sneaker Comfort and Style | Fujian Jiulong: The Perfect Partner for Cool and Comfy Sneakers Do you... | 0 | 2024-06-10T13:26:42 | https://dev.to/sjjuuer_msejrkt_08b4afb3f/fujian-jiulong-your-partner-in-sneaker-comfort-and-style-247l | design |

Fujian Jiulong: The Perfect Partner for Cool and Comfy Sneakers

Do you love sneakers? Of course you do! Who doesn't, right? But aside from the cool factor, have you ever thought about the importance of comfort and safety in your footwear? That's where Fujian Jiulong comes in. They are y... | sjjuuer_msejrkt_08b4afb3f |

1,883,283 | Preventing Extending and Overriding | A final class cannot be extended, and a final method cannot be overridden. A final data field is a constant. You may... | 0 | 2024-06-10T13:26:37 | https://dev.to/paulike/preventing-extending-and-overriding-4j6p | java, programming, learning, beginners | A final class cannot be extended, and a final method cannot be overridden. A final data field is a constant. You may occasionally want to prevent classes from being extended. In such cases, use the **final** modifier to indicate that a class is final and cannot be a parent class. The **Math** class is a final class. The **String**, **S... | paulike |

1,883,248 | Why Can’t We Use async with useEffect but Can with componentDidMount? | React is full of neat tricks and some puzzling limitations. One such quirk is the inability to... | 0 | 2024-06-10T13:26:01 | https://dev.to/niketanwadaskar/why-cant-we-use-async-with-useeffect-but-can-with-componentdidmount-45be | javascript, react, programming, beginners | React is full of neat tricks and some puzzling limitations. One such quirk is the inability to directly use `async` functions in the `useEffect` hook, unlike in the `componentDidMount` lifecycle method of class components. Let’s dive into why this is the case and how you can work around it without pulling your hair out... | niketanwadaskar |

1,883,257 | OWASP® Cornucopia 2.0 | I started out as a web designer 16 years ago and my first website got brutally hacked, not... | 0 | 2024-06-10T13:23:30 | https://medium.com/sydseter/owasp-cornucopia-2-0-8460ebbd9a45 | owasp, applicationsecurity, cornucopia, cybersecurity | _I started out as a web designer 16 years ago and my first website got brutally hacked, not once, but twice. I learned the hard way about the importance of threat modeling and having backups._

-------------------------------------------------------------------------------------------------------------------------------... | sydseter |

1,883,546 | Easily Bind SQLite Data to WinUI DataGrid and Perform CRUD Actions | TL;DR: Learn to bind SQLite data to the Syncfusion WinUI DataGrid, perform CRUD operations, and... | 0 | 2024-06-19T07:11:28 | https://www.syncfusion.com/blogs/post/bind-sqlite-data-winui-grid-crud | winui, datagrid, sql, desktop | ---

title: Easily Bind SQLite Data to WinUI DataGrid and Perform CRUD Actions

published: true

date: 2024-06-10 13:23:00 UTC

tags: winui, datagrid, SQL, desktop

canonical_url: https://www.syncfusion.com/blogs/post/bind-sqlite-data-winui-grid-crud

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/a42q... | gayathrigithub7 |

1,883,281 | The importance of efficient circulation for our well-being. | The importance of efficient circulation for our well-being cannot be overstated.... | 0 | 2024-06-10T13:22:29 | https://dev.to/testing_email_9775839a60c/die-bedeutung-einer-effizienten-zirkulation-fur-unser-wohlbefinden-e6g | skincare, skin, skintreatment, dnatest |

The importance of efficient circulation for our well-being cannot be overstated. Both inadequate microcirculation and reduced collagen synthesis can lead to various tissue problems and to skin aging. To stay fit and healthy, our body employs mec... | testing_email_9775839a60c |

1,883,280 | The protected Data and Methods | A protected member of a class can be accessed from a subclass. So far you have used the private and... | 0 | 2024-06-10T13:22:17 | https://dev.to/paulike/the-protected-data-and-methods-12gb | java, programming, learning, beginners | A protected member of a class can be accessed from a subclass. So far you have used the **private** and **public** keywords to specify whether data fields and methods can be accessed from outside of the class. Private members can be accessed only from inside of the class, and public members can be accessed from any oth... | paulike |

1,883,265 | Fujian Jiulong: Where Basketball Shoes Define Performance | Fujian Jiulong: Taking Your Basketball Game to the... | 0 | 2024-06-10T13:19:25 | https://dev.to/sjjuuer_msejrkt_08b4afb3f/fujian-jiulong-where-basketball-shoes-define-performance-3gnd | design |

Fujian Jiulong: Taking Your Basketball Game to the Next Level

Basketball is a fast-paced and demanding sport, and every player knows the importance of having the best footwear on the court. Fujian Jiulong is a brand that has bee... | sjjuuer_msejrkt_08b4afb3f |

1,883,264 | Java Programming: Where Code Meets Chaos | A Hilarious Journey Through the World of Java Introduction Welcome to the wild and wacky world of... | 0 | 2024-06-10T13:19:16 | https://dev.to/aamiritsu/java-programming-where-code-meets-chaos-3f5b | _A Hilarious Journey Through the World of Java_

**Introduction**

Welcome to the wild and wacky world of Java programming! If you’ve ever wondered why Java developers have a special bond with their coffee mugs, or why they occasionally break into spontaneous dance routines while debugging, you’re about to find out.

**T... | aamiritsu | |

1,883,263 | Marijuana in Thailand: A New Era of Legalization | In recent years, Thailand has made considerable strides in the legalization and regulation Best... | 0 | 2024-06-10T13:19:16 | https://dev.to/davidth98788185/marijuana-in-thailand-an-innovative-period-of-time-of-legalization-4ga2 |

In recent years, Thailand has made considerable strides in the legalization and regulation [Best Marijuana dispensaries In Thailand](https://smokingcannabisthailand.com/) of cannabis, positioning itself as a pioneer in Southeast Asia's changing stance on this versatile plant. The journey toward this accelerat... | davidth98788185 | |

1,883,262 | What is a Decentralized Application (DApp)? | We all know about different kinds of applications, such as web apps, mobile apps, distributed apps... | 0 | 2024-06-10T13:17:57 | https://dev.to/whotarusharora/what-is-decentralized-application-dapp-3jcg | webdev, blockchain, web3, learning | We all know about different kinds of applications, such as web apps, mobile apps, distributed apps, and more. But in recent years, a new term has been coined in the software development market: the decentralized application, or DApp.

The DApp was introduced alongside Web 3.0, as it al... | whotarusharora |

1,883,261 | Elevate Your Style: Fujian Jiulong's Trendy Sneaker Designs | Title: Elevate Your Style: Fujian Jiulong's Trendy Sneaker Designs Introduction Elevate your... | 0 | 2024-06-10T13:17:18 | https://dev.to/carrie_richardsoe_870d97c/elevate-your-style-fujian-jiulongs-trendy-sneaker-designs-2ghl | Title: Elevate Your Style: Fujian Jiulong's Trendy Sneaker Designs

Introduction

Elevate your style with Fujian Jiulong's trendy sneaker designs. They have been innovating in the shoe industry for many years, offering a variety of advantages to their customers. You will learn about their safety, use, service, quality, a... | carrie_richardsoe_870d97c | |

1,882,069 | Launching Mo: Follow the Journey | I'm going to be launching on Monday, June 10th. What exactly am I launching though? It's me! I'm... | 0 | 2024-06-10T13:15:00 | https://dev.to/seck_mohameth/launching-mo-follow-the-journey-49op | indie, softwaredevelopment | I'm going to be launching on Monday, June 10th. What exactly am I launching though? It's me! I'm launching myself. This summer and for the rest of the year, I am going to be developing myself and growing my skills. I'm starting a new job, joining Buildspace's Nights and Weekends Season 5, shipping more projects/product... | seck_mohameth |

1,883,260 | Revolutionizing Business with Data and Analytics Consulting Services | In today's digital era, data is the new gold. Organizations are amassing vast amounts of information... | 0 | 2024-06-10T13:13:35 | https://dev.to/shreya123/revolutionizing-business-with-data-and-analytics-consulting-services-3bom | dataanalytics, dataconsultingservices, dataservices | In today's digital era, data is the new gold. Organizations are amassing vast amounts of information from various sources such as social media, customer interactions, IoT devices, and more. However, data alone doesn't drive business success; actionable insights derived from this data do. This is where [data and analyti... | shreya123 |

1,883,259 | Geospatial Data Analysis in SQL | Overview: Geospatial data analysis in SQL involves the use of databases to understand and... | 0 | 2024-06-10T13:13:16 | https://dev.to/amarachi_kanu_20/geospatial-data-analysis-in-sql-470m | sql, database | ## Overview:

Geospatial data analysis in SQL involves the use of databases to understand and work with location-based information. It helps answer questions like "Where?" and "How far?" by analyzing data with geographic components, such as maps or coordinates. SQL, a language for managing databases, supplies tools to... | amarachi_kanu_20 |

1,883,258 | Write the Idea Down | Did you know that the average person has around 60,000 thoughts per day? And if you’re an... | 0 | 2024-06-10T13:12:47 | https://dev.to/martinbaun/write-the-idea-down-52db | webdev, beginners, productivity, career | Did you know that the average person has around 60,000 thoughts per day?

And if you’re an overthinker like me, that number shoots up to a whopping 200,000!

Now, imagine you had a brilliant idea — one that could change your life.

But the next day rolls around, and poof! It’s vanished into thin air. Sound familiar?

_... | martinbaun |

1,883,256 | Case Study: A Custom Stack Class | This section designs a stack class for holding objects. An earlier section presented a stack class for... | 0 | 2024-06-10T13:12:08 | https://dev.to/paulike/case-study-a-custom-stack-class-2npc | java, programming, learning, beginners | This section designs a stack class for holding objects. [An earlier section](https://dev.to/paulike/case-study-on-object-oriented-thinking-3k3c) presented a stack class for storing **int** values; this section introduces a stack class that stores objects. You can use an **ArrayList** to implement **Stack**, as shown in the prog... | paulike |

1,883,255 | Reverse ETL in Healthcare: Enhancing Patient Data Management | Managing patient data is a massive challenge in healthcare due to the large amounts of information... | 0 | 2024-06-10T13:11:08 | https://dev.to/ovaisnaseem/reverse-etl-in-healthcare-enhancing-patient-data-management-dp3 | datamanagement, etl, healthcare, datascience | Managing patient data is a massive challenge in healthcare due to the large amounts of information involved. Reverse ETL is a modern data integration method that can help flow data smoothly from data warehouses to operational systems. This process is crucial for improving healthcare services and patient outcomes. Under... | ovaisnaseem |

1,883,253 | Fujian Jiulong: Revolutionizing Casual Footwear Trends | Fujian Jiulong: Revolutionizing Casual Footwear Trends Fujian Jiulong is changing the way... | 0 | 2024-06-10T13:09:14 | https://dev.to/sjjuuer_msejrkt_08b4afb3f/fujian-jiulong-revolutionizing-casual-footwear-trends-30bh | design |

Fujian Jiulong: Revolutionizing Casual Footwear Trends

Fujian Jiulong is changing the way we think about casual footwear. This brand has become known for providing the benefits you need, all while helping you stay stylish and fashionable. Ei... | sjjuuer_msejrkt_08b4afb3f |

1,883,252 | Scaling Next.js with Redis cache handler | Let's say you have dozens of Next.js instances in production, running in your Kubernetes cluster.... | 0 | 2024-06-10T13:08:22 | https://dev.to/rafalsz/scaling-nextjs-with-redis-cache-handler-55lh | javascript, nextjs, performance, tutorial | Let's say you have dozens of Next.js instances in production, running in your Kubernetes cluster. Most of your pages use [Incremental Static Regeneration](https://nextjs.org/docs/pages/building-your-application/data-fetching/incremental-static-regeneration) (ISR), allowing pages to be generated and saved in file storag... | rafalsz |

1,883,250 | Second Chances Deserve Strong Defense: Top Criminal Lawyers Sydney Trusts | Life can take unexpected turns. A lapse in judgment, a misunderstanding, or unforeseen circumstances... | 0 | 2024-06-10T13:07:28 | https://dev.to/dotlegal/second-chances-deserve-strong-defense-top-criminal-lawyers-sydney-trusts-3del | criminal | Life can take unexpected turns. A lapse in judgment, a misunderstanding, or unforeseen circumstances can land you facing criminal charges. In these moments of uncertainty, securing the services of a top criminal lawyer in Sydney becomes paramount. Dot Legal understands the gravity of criminal accusations and the imme... | dotlegal |

1,883,249 | AWS Graviton Migration - Embracing the Path to Modernization | Usually, companies tend to associate the idea of application modernization with drastic... | 0 | 2024-06-10T13:03:44 | https://dev.to/techpartner/aws-graviton-migration-embracing-the-path-to-modernization-5594 | graviton, aws, modernization, arm | Usually, companies tend to associate the idea of application modernization with drastic transformations such as migrating from large monolithic applications into microservices. Being cognizant of obsolete technology and modernizing through advanced architectures such as [AWS Graviton architecture (Arm processor)](https... | arunasri |

1,883,247 | Day 9 - Deep Dive in Git & GitHub for DevOps Engineers | 1. What is Git and why is it important? Git is a distributed version control system that is widely... | 0 | 2024-06-10T13:02:58 | https://dev.to/oncloud7/day-9-deep-dive-in-git-github-for-devops-engineers-bck | devops, github, git, 90daysofdevops | **1. What is Git and why is it important?**

Git is a distributed version control system that is widely used in software development to manage source code and track changes made to it over time.

Basically, Git allows developers to collaborate on software projects in a highly organized and structured way. With Git, devel... | oncloud7 |

1,883,246 | Examining Microsoft Dynamics 365 for Retail in the USA: An Innovative Approach for Enterprises | Introduction Being ahead of the curve is essential for success in the quickly changing retail market.... | 0 | 2024-06-10T13:02:35 | https://dev.to/alletec_395cff790524a196d/examining-microsoft-dynamics-365-for-retail-in-the-usa-an-innovative-approach-for-enterprises-21h1 | microsoft, dynamics365, retail, allettec | **Introduction**

Being ahead of the curve is essential for success in the quickly changing retail market. This is where Microsoft Dynamics 365 for Retail comes in—a full-featured solution meant to revolutionize retail operations, improve customer satisfaction, and drive company expansion. This effective tool is helping... | alletec_395cff790524a196d |

1,883,245 | Renovation work: transforming spaces for a better life | Personalization: Renovations let homeowners tailor their spaces to their personal... | 0 | 2024-06-10T13:02:10 | https://dev.to/cskeisari665/renovatiewerkzaamheden-ruimtes-transformeren-voor-een-beter-leven-193n | Personalization: Renovations let homeowners tailor their spaces to their personal taste and lifestyle, creating a unique and comfortable living environment.

Common types of renovation work

Renovation projects can vary greatly in scope and complexity. Here are some of the most ... | cskeisari665 | |

1,883,244 | Fujian Jiulong: Crafting Sneakers with Quality and Comfort in Mind | Fujian Jiulong: Crafting Casual Footwear for Every Lifestyle Fujian Jiulong is a... | 0 | 2024-06-10T12:59:30 | https://dev.to/sjjuuer_msejrkt_08b4afb3f/fujian-jiulong-crafting-sneakers-with-quality-and-comfort-in-mind-1jjp | design | Fujian Jiulong: Crafting Casual Footwear for Every Lifestyle

Fujian Jiulong is a footwear brand that produces casual footwear for men and women.

They are known for their innovative designs and high-quality products.

If you are looking for footwear that is comfortable, trendy, as w... | sjjuuer_msejrkt_08b4afb3f |

922,878 | How does a 10x programmer test code? | I would like to share a pattern for unit testing that I discovered while reading through the... | 0 | 2024-06-10T12:59:30 | https://dev.to/krystofee/how-does-10x-programmer-test-code-3lc9 | testing, python |

I would like to share a pattern for unit testing that I discovered while reading through the repository of one of our dependencies. It's about testing through object representation.

# Problems with Testing Code

I perceive many problems with testing, but two main ones stand out:

1. It’s difficult to write unit tests... | krystofee |

1,883,243 | Useful Methods for Lists | Java provides methods for creating a list from an array, for sorting a list, for finding the maximum... | 0 | 2024-06-10T12:58:31 | https://dev.to/paulike/useful-methods-for-lists-eol | java, programming, learning, beginners | Java provides methods for creating a list from an array, for sorting a list, for finding the maximum and minimum elements in a list, and for shuffling a list. Often you need to create an array list from an array of objects or vice versa. You can write a loop to accomplish this, but an easier way is to use t... | paulike |

1,883,242 | Unpacking Cloud Infrastructure and Virtualization: A Deep Dive into Their Differences | While both technologies play essential roles in modernizing and optimizing IT environments, they are... | 0 | 2024-06-10T12:54:57 | https://dev.to/liong/unpacking-cloud-infrastructure-and-virtualization-a-deep-dive-into-their-differences-e8j | it, webdev, malaysia, kulalumpur | While both technologies play essential roles in modernizing and optimizing IT environments, they are not synonymous. Understanding the distinctions and interplay between virtualization and cloud computing is crucial for businesses aiming to leverage their benefits correctly. This guide explores the nuances of these techn... | liong |

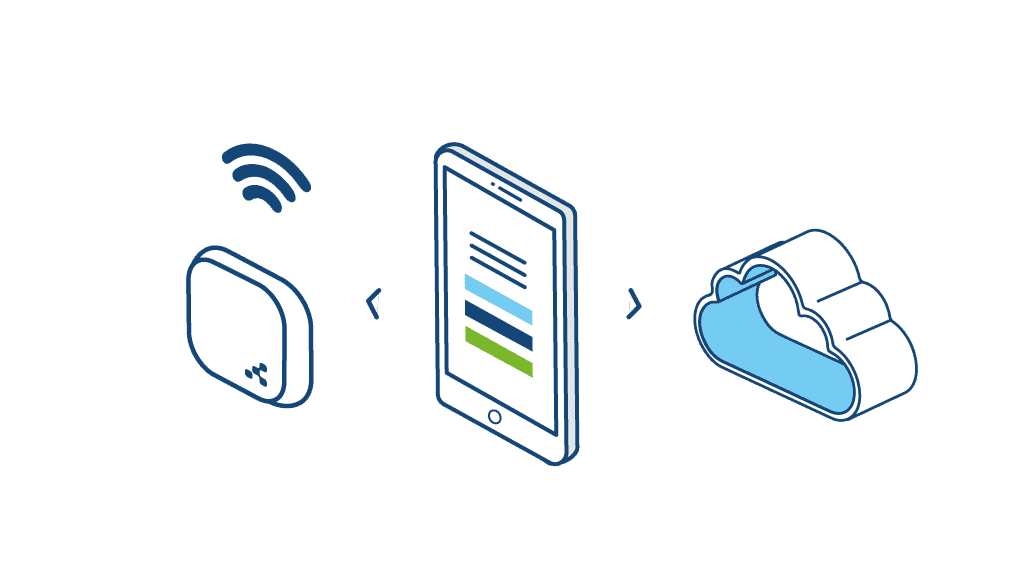

1,883,241 | Getting Started with Bluetooth Low Energy (BLE) in Android | Introduction Bluetooth Low Energy (BLE) is a wireless communication technology designed for... | 0 | 2024-06-10T12:54:49 | https://dev.to/nirav_panchal_e531c758f1d/getting-started-with-bluetooth-low-energy-ble-in-android-3c7f | android, ble, mobile |

Introduction

Bluetooth Low Energy (BLE) is a wireless communication technology designed for short-range communication with low power consumption. It’s widely used in various applications, including fitness trackers, ... | nirav_panchal_e531c758f1d |

1,883,235 | Ethereum Development: Foundry or Hardhat | When comparing Hardhat and Foundry, two popular development frameworks for Ethereum smart contracts,... | 0 | 2024-06-10T12:53:49 | https://dev.to/ifaycodes/ethereum-development-foundry-or-hardhat-4871 | solidity, hardhat, ethereum, foundry | When comparing Hardhat and Foundry, two popular development frameworks for Ethereum smart contracts, several factors come into play. Both tools aim to simplify and streamline smart contracts' development, testing, and deployment, but they have different features and design philosophies.

### Hardhat

(Connecticut), it's essential to consider those that not only offer exceptional styling and treatments but also prioritize customer satisfaction and personalized service. These salons often stand out for their skilled stylists, luxurious atmospher... | fozia_sadiq_b64dcbececaa8 | |

1,857,103 | Demystifying AWS Security: IAM Password Policies vs. Automated Access Key Rotation | Are you new to managing security in your AWS environment? Navigating the intricacies of AWS Identity... | 0 | 2024-06-10T12:42:39 | https://dev.to/damola12345/demystifying-aws-security-iam-password-policies-vs-automated-access-key-rotation-4l70 | security, aws, devops | Are you new to managing security in your AWS environment? Navigating the intricacies of AWS Identity and Access Management (IAM) can be overwhelming, especially when it comes to ensuring strong security practices. In this beginner-friendly blog post, we'll explore two fundamental aspects of AWS security: IAM password p... | damola12345 |

1,883,232 | Choosing the Right Web Hosting in 2024: A Cost Breakdown | Website hosting costs depend on your website’s needs and the plan you choose. Here’s a breakdown of... | 0 | 2024-06-10T12:42:30 | https://dev.to/wewphosting/choosing-the-right-web-hosting-in-2024-a-cost-breakdown-1c47 |

Website hosting costs depend on your website’s needs and the plan you choose. Here’s a breakdown of different hosting options and their average prices:

- **Shared Hosting**: Most affordable option (starts at $10/m... | wewphosting | |

1,883,231 | BEST COMPANY FOR CRYPTOCURRENCY RECOVERY SERVICE - CONTACT DIGITAL WEB RECOVERY | My encounter with Digital Web Recovery was nothing short of a lifesaver amidst the chaos of... | 0 | 2024-06-10T12:42:07 | https://dev.to/linda_fusaro_12215d9aff6d/best-company-for-cryptocurrency-recovery-service-contact-digital-web-recovery-1f46 | My encounter with Digital Web Recovery was nothing short of a lifesaver amidst the chaos of cryptocurrency scams. I first came across an advertisement highlighting their expertise in recovering lost Bitcoin and cryptocurrencies for victims of fraudulent schemes. It resonated with me deeply because just last month, I fo... | linda_fusaro_12215d9aff6d | |

1,883,224 | Generating replies with prompt chaining using Gemini API and NestJS | Introduction In this blog post, I demonstrated generating replies with prompt chaining.... | 27,661 | 2024-06-10T12:41:04 | https://www.blueskyconnie.com/generate-replies-with-prompt-chaining-using-gemini-api/ | generativeai, nestjs, gemini, tutorial | ##Introduction

In this blog post, I demonstrated generating replies with prompt chaining. Buyers can provide ratings and comments on sales transactions on auction sites like eBay. When the feedback is negative, the seller has to respond promptly to resolve the dispute. This demo saves time by generating replies in t... | railsstudent |

1,883,230 | 5 Ways to Swap Two Variables Without Using a Third Variable in Javascript | Swapping variables is a common task in programming, and there are several ways to do it without using... | 0 | 2024-06-10T12:38:31 | https://dev.to/amitkumar13/5-ways-to-swap-two-variables-without-using-a-third-variable-in-javascript-1845 | javascript, swapnumber | Swapping variables is a common task in programming, and there are several ways to do it without using a third variable. In this post, we'll explore five different methods in JavaScript, each with a detailed explanation.

## 1. Addition and Subtraction

This method involves simple arithmetic operations:

```javascript

a = a + b;

```

... | amitkumar13 |
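The addition/subtraction swap can be sketched as follows. It is written in Python so that every added example uses the same language. The arithmetic is identical in JavaScript. There, numbers are IEEE-754 doubles, so sums beyond `Number.MAX_SAFE_INTEGER` can lose precision. Python integers are arbitrary precision, so this version has no such limit. The function name `swap_add_sub` is illustrative.

```python
def swap_add_sub(a: int, b: int) -> tuple[int, int]:
    """Swap two integers using only addition and subtraction (no temporary variable)."""
    a = a + b  # a now holds the sum of both values
    b = a - b  # sum - old b = old a
    a = a - b  # sum - old a = old b
    return a, b


print(swap_add_sub(3, 7))  # (7, 3)
```

In everyday Python, the idiomatic swap is simply `a, b = b, a`.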

1,883,228 | casual shoes for men at O2Toes in India | Shop for Casual Shoes online at best prices in India. Choose from a wide range of Mens Casual Shoes... | 0 | 2024-06-10T12:37:01 | https://dev.to/o2toes/casual-shoes-for-men-at-o2toes-in-india-4pf6 | sneakerssportsshoesformen, sneakersformenunder1500, casualshoesformenunder2000, bestsneakersunder2000 | Shop for [Casual Shoes online](https://o2toes.com/products/o2-elegant-white-sports-sneakers-shoes) at best prices in India. Choose from a wide range of Mens Casual Shoes at O2Toes. you'll experience a balance of form, function, performance, and enduring style. Supportive insoles.. | o2toes |

1,883,227 | Restaurant Accounting | It does not matter if you own a small cafe in countryside or a big hotel in the city center,... | 0 | 2024-06-10T12:36:14 | https://dev.to/ayesha_aftab_665bee02ea9f/restaurant-accouting-pc8 | accounting, business, finance, cloud | It does not matter if you own a small cafe in countryside or a big hotel in the city center, accounting for a restaurant can be quite a challenge.

Restaurant accounting is a field of accounting in which the financial records, financial reporting and transactions in specific to restaurant industry are dealt with.

Cho... | ayesha_aftab_665bee02ea9f |

1,883,226 | BigCommerce Performance Optimization Tips | In today's lightning-fast digital world, website speed is no longer a luxury - it's a necessity.... | 0 | 2024-06-10T12:35:55 | https://dev.to/developermansi/bigcommerce-performance-optimization-tips-4j33 | bigommerce, performanceoptimization |

In today's lightning-fast digital world, website speed is no longer a luxury - it's a necessity. This is especially true for eCommerce stores built on platforms like BigCommerce. Every millisecond a page takes to lo... | developermansi |

1,883,225 | Revolutionizing IT Operations: The Transformative Power of AI | This integration isn't just a technological upgrade; it represents a paradigm shift in how IT... | 0 | 2024-06-10T12:30:58 | https://dev.to/liong/revolutionizing-it-operations-the-transformative-power-of-ai-892 | ai, intelligent, discuss, malaysia | This integration isn't just a technological upgrade; it represents a paradigm shift in how IT environments are managed. From automating recurring obligations to predicting system failures, AI is transforming ITOps in methods that enhance performance, lessen prices, and improve overall system reliability. AI in ITOps a... | liong |

1,883,223 | Authors on Mission: Empowering Modern Authors with Online Services | In the dynamic and ever-evolving world of literature, the role of authors has significantly... | 0 | 2024-06-10T12:28:29 | https://dev.to/authorsonmission/authors-on-mission-empowering-modern-authors-with-online-services-e50 | In the dynamic and ever-evolving world of literature, the role of authors has significantly transformed, demanding new approaches and tools to navigate the complex landscape of writing and publishing. Enter Authors on Mission, a groundbreaking company that offers a comprehensive suite of online services tailored to mee... | authorsonmission | |

1,883,222 | Secure Your WordPress Site: Top Mistakes and How to Avoid Them | WordPress is a powerful tool, but its popularity makes it a target for hackers. To keep your... | 0 | 2024-06-10T12:26:57 | https://dev.to/wewphosting/secure-your-wordpress-site-top-mistakes-and-how-to-avoid-them-c5g |

WordPress is a powerful tool, but its popularity makes it a target for hackers. To keep your website safe, here are common security mistakes to avoid:

1. **Outdated Software**: Not updating WordPress core and plug... | wewphosting | |

1,883,221 | Importance of Mobile App Market Research and How To Do It | Mobile app market research is one of the essential steps in app development. The importance of... | 0 | 2024-06-10T12:26:25 | https://www.peppersquare.com/blog/best-way-to-conduct-mobile-app-research/ | mobile, development, webdev, programming | Mobile app market research is one of the essential steps in app development. The importance of research cements its outcome and sets the tone for the success of your mobile app – especially on how well you group different facts and figures for the next development phase.

It is a crucial piece of the puzzle where each ... | pepper_square |

1,883,220 | Marijuana in Thailand: An Exciting New Period of Legalization | In recent times, Thailand makes vital strides inside legalization and regulating marijuana, placing... | 0 | 2024-06-10T12:26:22 | https://dev.to/davidth98788185/marijuana-in-thailand-an-exciting-new-period-of-legalization-146b |

In recent times, Thailand makes vital strides inside legalization and regulating marijuana, placing as well as a good leader in Southeast Asia's evolving position on that useful plant. Your journey for this ongoing stance happens to be designated by numerous legislative transformations, people [Cannabis Shop Near Me... | davidth98788185 | |

1,883,218 | Introducing Spymoba Android application | Spymoba is a popular parental controller app used by parents and bosses to keep an eye on what's... | 0 | 2024-06-10T12:25:08 | https://dev.to/dadashov/introducing-spymoba-android-application-16ih | Spymoba is a popular parental controller app used by parents and bosses to keep an eye on what's happening on Android phones. It has lots of features, like tracking where the phone is, reading text messages, and even seeing what apps are installed. It's easy to use and has different plans you can choose from.

This blog post explores domain suspension, a situation where your website becomes inaccessible due to an issue with your domain name registration. It highlights the common reasons for suspension and how to restore ... | wewphosting | |

1,883,520 | Build your own VS Code extension | Visual Studio Code is a powerful code editor known for its extensibility. Users can install various... | 0 | 2024-06-10T16:57:32 | https://codesphere.com/articles/build-your-own-vs-code-extension | tutorial, vscode, extension, llamacpp | ---

title: Build your own VS Code extension

published: true

date: 2024-06-10 12:19:48 UTC

tags: tutorial,VSCode,extension,llamacpp

canonical_url: https://codesphere.com/articles/build-your-own-vs-code-extension

---

);`

assigns the object **new Student()** to a parameter of the **Object** type. This statement is equivalent to

`Object o = new Student(); // Implicit casting

m(... | paulike |

1,883,206 | TRICKBOT - Traffic Analysis - FUNKYLIZARDS | let's start: Downloading the Capture File and Understanding the... | 0 | 2024-06-10T12:15:20 | https://dev.to/mihika/trickbot-traffic-analysis-funkylizards-fb | ## let's start:

## Downloading the Capture File and Understanding the Assignment

1. Download the .pcap file from [pcap](https://www.malware-traffic-analysis.net/2021/08/19/index.html)

2. Familiarize yourself with the assignment instructions.

## LAN segment data:

LAN segment range: 10.8.19.0/24 (10.8.19.0 through 10.8.... | mihika | |
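The LAN segment is given in CIDR notation. Python's standard `ipaddress` module offers a quick way to check whether an address seen in the capture falls inside it. The host address `10.8.19.101` below is a hypothetical example and does not come from the pcap.

```python
import ipaddress

# LAN segment from the exercise, in CIDR notation.
lan = ipaddress.ip_network("10.8.19.0/24")


def in_lan(addr: str) -> bool:
    """Return True if addr belongs to the LAN segment."""
    return ipaddress.ip_address(addr) in lan


# A /24 has 2**(32 - 24) = 256 addresses; hosts() skips the network and broadcast addresses.
hosts = list(lan.hosts())
print(lan.num_addresses)      # 256
print(hosts[0], hosts[-1])    # 10.8.19.1 10.8.19.254
print(in_lan("10.8.19.101"))  # True  (hypothetical internal host)
print(in_lan("8.8.8.8"))      # False (external address)
```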

1,883,205 | SIMGOT EW200 Review 2024: IEM for Under $50 | The Simgot EW200 currently stands out as one of the hottest budget IEMs under $50, rivaling the... | 0 | 2024-06-10T12:14:51 | https://dev.to/bestiem/simgot-ew200-review-2024-iem-for-under-50-53n5 | bestiem, simgotew200, iems | The Simgot EW200 currently stands out as one of the hottest budget IEMs under $50, rivaling the TruthEar In my opinion, it offers a brighter and more attractive sound signature than the latter.

The build quality is truly impressive, boasting a sturdy full-metal construction. Featuring a 10mm SCP diaphragm, dual magnet... | bestiem |

1,883,204 | Do you test your code? | I've been coding for about eight months now. My journey started with the Piscine at 42 School—a... | 0 | 2024-06-10T12:13:43 | https://dev.to/nicolasnardi404/do-you-test-your-code-ggm | testing, webdev, beginners, programming | I've been coding for about eight months now. My journey started with the Piscine at 42 School—a four-week program where I got my feet wet with C programming. Next, I enrolled in Scrimba's Front End BootCamp to work more with front-end. Currently, I'm in the final stretch of a full-stack coding course, which is part of ... | nicolasnardi404 |

1,883,203 | Mitigating DNS Hijacking: Strategies for Detection and Prevention | This blog post explores DNS hijacking, a cyberattack that redirects website traffic to malicious... | 0 | 2024-06-10T12:13:17 | https://dev.to/wewphosting/mitigating-dns-hijacking-strategies-for-detection-and-prevention-4de1 |

This blog post explores DNS hijacking, a cyberattack that redirects website traffic to malicious sites. It can steal sensitive information, install malware, and cause financial losses. Businesses are particularly v... | wewphosting | |

1,883,202 | Mocking navigator.clipboard.writeText in Jest | If you're working on a web application that interacts with the clipboard API, you may need to write... | 0 | 2024-06-10T12:10:33 | https://dev.to/andrewchaa/mocking-navigatorclipboardwritetext-in-jest-3hih | javascript, jest, testing | If you're working on a web application that interacts with the clipboard API, you may need to write tests for functionality that calls `navigator.clipboard.writeText`. However, mocking this API can be tricky, especially when using Jest in ES6. In this post, I'll walk you through the issues I encountered and how I resol... | andrewchaa |

1,882,937 | My frequent mistake in Go | My frequent mistake in Go is overusing pointers, like this unrealistic example below: type BBox... | 0 | 2024-06-10T09:36:53 | https://dev.to/veer66/my-frequent-mistake-in-go-107p | go, mistake | ---

title: My frequent mistake in Go

published: true

description:

tags: golang,mistake

# cover_image: https://direct_url_to_image.jpg

# Use a ratio of 100:42 for best results.

# published_at: 2024-06-10 08:46 +0000

---

My frequent mistake in Go is overusing pointers, like this unrealistic example below:

```Go

type B... | veer66 |

1,883,201 | How to use LLM for efficient text outputs longer than 4k tokens? | If you ask an LLM to improve your text, it will reply with the whole text. This is time consuming and expensive. In this article we discuss how to use patching prompts and diffs to modify texts efficiently. | 0 | 2024-06-10T12:09:16 | https://dev.to/theluk/how-to-use-llm-for-efficient-text-outputs-longer-than-4k-tokens-1glc | llm, streaming, ai, patch | ---

title: How to use LLM for efficient text outputs longer than 4k tokens?

published: true

description: If you ask an LLM to improve your text, it will reply with the whole text. This is time consuming and expensive. In this article we discuss how to use patching prompts and diffs to modify texts efficiently.

tags: LL... | theluk |
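This excerpt does not show the article's prompting technique. The diff side of the idea can still be sketched with Python's standard `difflib`: compute only the changed line ranges between two versions of a text, then apply them back. A model, or any other producer, would then only need to transmit the edits rather than the full document. The helper names `make_patch` and `apply_patch` and the `(start, end, replacement_lines)` edit format are assumptions for illustration, not the article's format.

```python
import difflib


def make_patch(old: str, new: str) -> list[tuple[int, int, list[str]]]:
    """Return (start, end, replacement_lines) edits that turn old into new."""
    old_lines = old.splitlines(keepends=True)
    new_lines = new.splitlines(keepends=True)
    matcher = difflib.SequenceMatcher(a=old_lines, b=new_lines, autojunk=False)
    patch = []
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag != "equal":  # keep only replace / delete / insert ranges
            patch.append((i1, i2, new_lines[j1:j2]))
    return patch


def apply_patch(old: str, patch: list[tuple[int, int, list[str]]]) -> str:
    """Apply edits produced by make_patch to the original text."""
    lines = old.splitlines(keepends=True)
    # Apply from the end so that earlier line indices stay valid.
    for start, end, replacement in sorted(patch, key=lambda e: e[0], reverse=True):
        lines[start:end] = replacement
    return "".join(lines)


old = "a\nb\nc\n"
new = "a\nB\nc\nd\n"
patch = make_patch(old, new)
print(patch)  # [(1, 2, ['B\n']), (3, 3, ['d\n'])]
assert apply_patch(old, patch) == new
```

The patch here carries two short edits instead of the whole text, and the savings grow with the size of the unchanged parts of the document.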

1,883,200 | Mastering JavaScript Cross-Browser Testing🧪 | Cross-browser testing is crucial in web development to ensure that your application works seamlessly... | 0 | 2024-06-10T12:08:41 | https://dev.to/dharamgfx/mastering-javascript-cross-browser-testing-4meg | webdev, javascript, testing, programming |

Cross-browser testing is crucial in web development to ensure that your application works seamlessly across different browsers and devices. Let's dive into the various aspects of cross-browser testing, with simple titles and code examples to illustrate each point.

## Introduction to Cross-Browser Testing

Cross-brow... | dharamgfx |

1,883,199 | C++ 3-dars | include using namespace std; int main(){ // o'zgaruvchiga nom berish shart /* int a ... | 0 | 2024-06-10T12:07:52 | https://dev.to/ahmadjon_ce07fbecb974f925/c-3-dars-2hl7 | #include <iostream>

using namespace std;

int main(){

// a variable must be given a name

/* int a        int a, d

string b        string b, c

int d           (declaring several variables on one line also works)

string c */

// the value of an int or string can be changed without changing its data type

return 0;

}

int main4(){

int yosh = 25;

int... | ahmadjon_ce07fbecb974f925 | |

1,883,198 | Achieving Academic Success: How To Help Your Child | Parents must support children when they are on their journey to achieving academic success. If you... | 0 | 2024-06-10T12:06:57 | https://dev.to/alicebailey/achieving-academic-success-how-to-help-your-child-47bi | | Parents must support children when they are on their journey to achieving academic success. If you see your child struggling with things, you can work with an [online academic coach](https://peakacademiccoaching.com/academic-coaching/) who can offer the right help to support them in this journey. To become successful, ... | alicebailey | |

1,883,197 | Authentic Study Material SY0-701 Exam Dumps for CompTIA Security+ | SY0-701 Exam Dumps Additionally, using SY0-701 Exam Dumps from reputable sources like dumpsarena... | 0 | 2024-06-10T12:04:16 | https://dev.to/examsyo/authentic-study-material-sy0-701-exam-dumps-for-comptia-security-2cpe | <a href="https://dumpsarena.com/comptia-dumps/sy0-701/">SY0-701 Exam Dumps</a> Additionally, using SY0-701 Exam Dumps from reputable sources like dumpsarena ensures that you are working with accurate and up-to-date information. Cross-referencing the dumps with official study guides and other reliable resources can prov... | examsyo | |

1,883,080 | Choosing Between AIOHTTP and Requests: A Python HTTP Libraries Comparison | Introduction In software development, especially in web services and applications,... | 0 | 2024-06-10T12:03:53 | https://dev.to/api4ai/choosing-between-aiohttp-and-requests-a-python-http-libraries-comparison-23gl | python, requests, api4ai, aiohttp |

#Introduction

In software development, especially in web services and applications, efficiently handling HTTP requests is essential. Python, known for its simplicity and power, offers numerous libraries for managing these interactions. Among them, AIOHTTP and Requests stand out due to their unique features and widesp... | taranamurtuzova |

1,883,196 | Wisephone 2 Discount Code | Use Techless Wisephone Discount Code “Vokeme” to Get $75 Off. Techless Wisephone... | 0 | 2024-06-10T12:03:41 | https://dev.to/vokeme/wisephone-2-discount-code-lpo | Use Techless Wisephone Discount Code “**Vokeme**” to Get $75 Off.

## Techless Wisephone for Sale

WisePhone 2 is a simplified smartphone designed for users who want to escape the complexity and distractions of traditional smartphones.

Techless Wisephone focuses on basic communication, such as calling and texting, wit... | vokeme | |

1,883,195 | HTML classes and HTML class attribute | HTML class Attribute The HTML class attribute is used to specify a class for an HTML... | 0 | 2024-06-10T12:03:09 | https://dev.to/wasifali/html-class-30p1 | css, learning, webdev, html | ## **HTML class Attribute**

The HTML class attribute is used to specify a class for an HTML element.

The class attribute is often used to point to a class name in a style sheet.

we have three `<div>` elements with a class attribute with the value of `"city"`. All of the three `<div>` elements will be styled equally acc... | wasifali |

1,883,194 | Fujian Jiulong: Where Comfort and Style Merge in Running Shoes | Fujian Jiulong: Operating Footwear That Deal Each Convenience as well as Design Fujian Jiulong is... | 0 | 2024-06-10T12:03:03 | https://dev.to/sjjuuer_msejrkt_08b4afb3f/fujian-jiulong-where-comfort-and-style-merge-in-running-shoes-42f6 | design |

Fujian Jiulong: Operating Footwear That Deal Each Convenience as well as Design

Fujian Jiulong is actually a brand name of operating footwear that's acquiring appeal because of its development that is own functions, as well as convenience. The footwear are actually developed along with advanced innovati... | sjjuuer_msejrkt_08b4afb3f |

1,883,193 | JavaScript Client-Side Frameworks: A Comprehensive Guide🚀 | Introduction to Client-Side Frameworks In the modern web development landscape,... | 0 | 2024-06-10T12:01:44 | https://dev.to/dharamgfx/javascript-client-side-frameworks-a-comprehensive-guide-1a46 | webdev, react, vue, angular |

## Introduction to Client-Side Frameworks

In the modern web development landscape, client-side frameworks have become essential tools for building dynamic, responsive, and efficient web applications. These frameworks provide structured and reusable code, making development faster and easier. This post explores some ... | dharamgfx |

1,883,192 | BURGER ANIME | Who has a suggestion on the anime website, to improve appearance and performance? using (NextJS 14 /... | 0 | 2024-06-10T12:00:56 | https://dev.to/amadich/burger-anime-c8 | Who has a suggestion on the anime website, to improve appearance and performance? using (NextJS 14 / NestJS)

| amadich | |

1,883,191 | How to configure simple settings in a Azure Storage Account | Azure Storage Account Azure Storage accounts provide scalable, durable, and highly... | 0 | 2024-06-10T12:00:22 | https://dev.to/ajayi/how-to-configure-simple-settings-in-a-azure-storage-account-2pg | beginners, tutorial, cloud, azure | ## Azure Storage Account

Azure Storage accounts provide scalable, durable, and highly available cloud storage for various data types, including blobs, files, queues, and tables. Here are some key settings to configure when creating and managing an Azure Storage account.

Steps to configure simplesettings on Azure Stora... | ajayi |

1,883,189 | Sun win | Sunwin là một trong những cổng game đổi thưởng số 1 Châu Á, nổi tiếng với sự uy tín, chất lượng và đa... | 0 | 2024-06-10T11:55:37 | https://dev.to/xbsunwinnn/sun-win-5ahc | Sunwin là một trong những cổng game đổi thưởng số 1 Châu Á, nổi tiếng với sự uy tín, chất lượng và đa dạng trong các sản phẩm giải trí trực tuyến. Được thành lập và phát triển bởi tập đoàn Suncity Group, một trong những tập đoàn giải trí lớn nhất khu vực, Sunwin mang đến cho người dùng trải nghiệm chơi game đỉnh cao vớ... | xbsunwinnn | |

1,883,188 | Dynamic Binding | A method can be implemented in several classes along the inheritance chain. The JVM decides which... | 0 | 2024-06-10T11:55:13 | https://dev.to/paulike/dynamic-binding-5f8i | java, programming, learning, beginners | A method can be implemented in several classes along the inheritance chain. The JVM decides which method is invoked at runtime. A method can be defined in a superclass and overridden in its subclass. For example, the **toString()** method is defined in the **Object** class and overridden in **GeometricObject**.

Consid... | paulike |

1,883,187 | What Should be the Features of Online Tarot Reading Website | In today's digital age, the mysterious art of tarot reading has found a new home online. An online... | 0 | 2024-06-10T11:53:31 | https://dev.to/rajsharma/what-should-be-the-features-of-online-tarot-reading-website-5elf | webdev, features, website, tarot | In today's digital age, the mysterious art of tarot reading has found a new home online. An online tarot reading website can offer knowledge, guidance, and support to users from the comfort of their own homes. But what features make an online tarot reading website truly effective and user-friendly? Here are the essenti... | rajsharma |
