id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,880,054 | CORE ARCHITECTURAL COMPONENTS OF AZURE By OMONIYI, S. A. PRECIOUS | INTRODUCTION The core architectural components of Azure may be broken down into two main groupings as... | 0 | 2024-06-07T09:04:32 | https://dev.to/presh1/core-architectural-components-of-azure-12gc | physicalinfrastructure, managementinfrastructure | **INTRODUCTION**

The core architectural components of Azure can be divided into two main groups:

1. The Physical Infrastructure

2. The Management Infrastructure.

**1. THE PHYSICAL INFRASTRUCTURE**

The physical infrastructure for Azure starts with datacenters. Conceptually, the datacenters are the sam... | presh1 |

1,880,151 | Deploy Spring Boot Applications with NGINX and Ubuntu | Step by step, do the following: Installing Java JDK 17 or 21 However, a reasonably recent... | 0 | 2024-06-07T09:01:09 | https://dev.to/sumer5020/deploy-spring-boot-applications-with-nginx-and-ubuntu-4mlk | webdev, java, springboot, spring | **Step by step, do the following:**

## Installing Java JDK 17 or 21

A reasonably recent LTS release is recommended.

Ensure `software-properties-common` is installed.

```sh

sudo apt install software-properties-common

```

## Install Amazon Corretto 21

```sh

wget -O - https://apt.corretto.aws/corretto.key ... | sumer5020 |

1,880,150 | pSEO - Programmatic SEO Quick Intro with Examples | TLDR: pSEO automates the creation of web pages at scale for specific keywords. It's useful for... | 0 | 2024-06-07T09:00:57 | https://dev.to/vallu/programmatic-seo-pseo-quick-start-examples-41n3 | pseo, seo, webdev, automation | **TLDR:** pSEO automates the creation of web pages at scale for specific keywords. It's useful for businesses targeting various locations or services. For example:

- _Tyre shops in `<city>`_

- _Stock price of `<company>`_

- _Alternatives to `<product>`_

It's a powerful tool, but there are risks. If done carelessly yo... | vallu |

1,882,944 | Open Source Day: A Report | Introduction On the 7th and 8th of March we were at the Open Source Day organised by... | 0 | 2024-06-10T09:32:15 | https://tech.sparkfabrik.com/en/blog/open-source-day/ | opensource, schrödingerhat, community, events | ---

title: Open Source Day: A Report

published: true

date: 2024-06-07 09:00:00 UTC

tags: ["opensource", "schrödingerhat", "community", "events"]

canonical_url: https://tech.sparkfabrik.com/en/blog/open-source-day/

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/adg10qm38gy4q1vhrwfh.png

---

## Int... | boncolab |

1,880,149 | How to customize the labels of a pie chart in VChart? | Title How to customize the labels of a pie chart in VChart? Description Can... | 0 | 2024-06-07T08:59:46 | https://dev.to/neuqzxy/how-to-customize-the-labels-of-a-pie-chart-in-vchart-55kb | # Title

How to customize the labels of a pie chart in VChart?

# Description

Can the labels of a VChart pie chart be customized? I want to add the values to the labels.

opportunities in the healthcare industry. Graduates of these courses can pursue various roles in pharmaceutical companies, contract researc... | vinitsen1642076 | |

1,880,148 | VTable usage issue: How to set only one column to not be selected for operation | Question title How to set only one column that cannot be selected for operation ... | 0 | 2024-06-07T08:58:24 | https://dev.to/rayssss/vtable-usage-issue-how-to-set-only-one-column-to-not-be-selected-for-operation-4d32 | visactor, vtable | ### Question title

How to set only one column that cannot be selected for operation

### Problem description

How to click a cell in a column of a table without selecting it?

### Solution

VTable provides `disableSelect` and `disableHeaderSelect` configurations in the `column`:

- DisableSelect: The conte... | rayssss |

1,880,147 | 360 landing page using three.js and nuxt.js | In this post, I like to share how to create basic 360 landing page using three.js and... | 0 | 2024-06-07T08:58:02 | https://dev.to/sumer5020/360-landing-page-using-threejs-and-nuxtjs-4j3m | webdev, threejs, nuxt | ## In this post, I'd like to share how to create a basic 360 landing page using three.js and nuxt.js.

<br><br>

**Step 1: create a new nuxt.js project**

```

npx nuxi@latest init simple-app

cd simple-app

npm i

```

**Step 2: install three.js**

```

npm i three -D

```

now we are ready to go let’s create new component for o... | sumer5020 |

1,880,145 | VTable usage issue: How to listen to table area selection and cancellation events | Question title How to listen to the table area selection cancellation event ... | 0 | 2024-06-07T08:57:05 | https://dev.to/rayssss/vtable-usage-issue-how-to-listen-to-table-area-selection-and-cancellation-events-50ha | visactor, vtable | ### Question title

How to listen to the table area selection cancellation event

### Problem description

I'd like to detect, through events, when a selection is made and when it is cancelled (for example, when the user clicks another area of the table or clicks outside the table).

### Solution

VTable provides **`SELECTED_CLEAR`** events that are triggered afte... | rayssss |

1,880,144 | Optimizing Kubernetes Costs With Kubecost Cloud | Kubecost Cloud is a powerful tool designed to help organizations manage and optimize their... | 0 | 2024-06-07T08:55:58 | https://dev.to/saumya27/optimizing-kubernetes-costs-with-kubecost-cloud-kac | kubernetes | Kubecost Cloud is a powerful tool designed to help organizations manage and optimize their Kubernetes-related cloud costs. As Kubernetes adoption grows, managing and controlling expenses in cloud environments becomes increasingly critical. Kubecost Cloud provides real-time visibility and insights into Kubernetes spendi... | saumya27 |

1,880,143 | 10 Common Issues in Domain Management & Solutions | A domain name is a distinctive identifier for users to locate your website. This is why you should... | 0 | 2024-06-07T08:55:28 | https://dev.to/martinbaun/10-common-issues-in-domain-management-solutions-mjo | webdev, productivity, learning | A domain name is a distinctive identifier for users to locate your website.

This is why you should have one.

## Prelude

A domain is crucial to defining your internet persona. It impacts the credibility, usability, and branding of your website.

Owning a domain isn't enough to ensure a successful online identity. You... | martinbaun |

1,880,142 | What Are Your Go-To Tools for Back-End Development? | In the dynamic world of back-end development, choosing the right tools can significantly impact the... | 0 | 2024-06-07T08:55:22 | https://dev.to/creation_world/what-are-your-go-to-tools-for-back-end-development-2d5b | backend, backenddevelopment, devops, webdev | In the dynamic world of back-end development, choosing the right tools can significantly impact the efficiency, scalability, and maintainability of your applications. Every developer has their own set of preferred tools that they rely on to streamline their workflow and solve common challenges effectively.

**We want t... | creation_world |

1,880,125 | Read my essay aloud: Elevating Educational Support with Text-to-Speech AI | Transform your writing process with our read my essay to me text-to-speech AI technology. Experience... | 0 | 2024-06-07T08:55:12 | https://dev.to/novita_a3cf68b009c5cd1758/read-my-essay-aloud-elevating-educational-support-with-text-to-speech-ai-26hn |

Transform your writing process with our read my essay to me text-to-speech AI technology. Experience the difference now!

## Key Highlights

- Text-to-speech AI employs sophisticated AI to transform text into natural-sounding, high-quality audio, enhancing comprehension and engagement.

- With a variety of voice option... | novita_a3cf68b009c5cd1758 | |

1,880,141 | Unleashing the Power of GitHub Copilot: The Future of AI-Powered Coding | What is GitHub Copilot? GitHub Copilot is an AI-powered code completion tool that integrates... | 0 | 2024-06-07T08:54:52 | https://dev.to/arpit_dhiman_afe108fe83fb/unleashing-the-power-of-github-copilot-the-future-of-ai-powered-coding-3kol |

**What is GitHub Copilot?**

GitHub Copilot is an AI-powered code completion tool that integrates seamlessly with popular code editors like Visual Studio Code. It leverages OpenAI's Codex model to provide context-awar... | arpit_dhiman_afe108fe83fb | |

1,880,139 | Trying out arch 🧐 ...btw | This is my first post. Planning to have full blog here soon about web dev. Recently transitioned... | 0 | 2024-06-07T08:53:16 | https://dev.to/bchk/trying-out-arch-btw-43fc | archlinux, firstpost | This is my first post. Planning to have full blog here soon about web dev.

Recently transitioned from Ubuntu to Arch Linux, intrigued by its reputation and eager to explore its capabilities. Will see what stripes this tiger has. Flexing now in front of myself that I use Arch... (like, this is soooo professional 😅)

... | bchk |

1,877,633 | Thoughts on using ChatGPT in job interviews | A software developer friend told me yesterday that she had a job interview where she had to do live... | 0 | 2024-06-07T08:52:59 | https://dev.to/shaharke/thoughts-on-using-chatgpt-in-job-interviews-1ejo | interview, software, ai | A software developer friend told me yesterday that she had a job interview where she had to do live coding and was not allowed to use ChatGPT. She could search Google for what she needed, but not ask ChatGPT or similar tools. I'm not a big fan of live coding in the first place, but fine. But not using ChatGPT? Why? In ... | shaharke |

1,880,137 | Omega Institute Nagpur | Omega Institute Nagpur is a premier educational institution dedicated to fostering academic... | 0 | 2024-06-07T08:52:50 | https://dev.to/vibha_kharole_bc7da93ed0a/omega-institute-nagpur-23pg | digitalmarketing, seo, courses, traininginstitute | [](https://maps.app.goo.gl/KGCuNR6mqPrz3kY36)

Omega Institute Nagpur is a premier educational institution dedicated to fostering academic excellence and holistic development. Known for its state-of-the-art infrastructure and innovative teaching methodologies, Omega Institute offers a wide range of programs, including ... | vibha_kharole_bc7da93ed0a |

1,880,135 | Selenium's Setup Blues? TestCafe Offers a Streamlined | In today's fast-paced digital landscape, ensuring the quality and reliability of web applications is... | 0 | 2024-06-07T08:52:09 | https://dev.to/mercy_juliet_c390cbe3fd55/seleniums-setup-blues-testcafe-offers-a-streamlined-1jjn | In today's fast-paced digital landscape, ensuring the quality and reliability of web applications is essential for success. With the continuous evolution of web technologies, traditional testing approaches are being challenged to keep pace with the dynamic nature of modern web development. Embracing Selenium’s capabili... | mercy_juliet_c390cbe3fd55 | |

1,880,134 | Cryptocurrency quantitative trading strategy exchange configuration | When beginner designs a cryptocurrency quantitative trading strategy, there are often various... | 0 | 2024-06-07T08:52:06 | https://dev.to/fmzquant/cryptocurrency-quantitative-trading-strategy-exchange-configuration-5gf9 | trading, cryptocurrency, fmzquant, strategy | When beginners design a cryptocurrency quantitative trading strategy, they often face a variety of functional requirements. Regardless of the programming language or platform, they will all run into assorted design requirements. For example, sometimes rotation across multiple trading varieties is required, sometimes multi... | fmzquant |

1,880,133 | ERC-20 vs BRC-20 Token Standards | A Comparative Analysis | This blog explores two prominent token standards: ERC-20 and BRC-20, simplifying their complexities... | 0 | 2024-06-07T08:50:31 | https://dev.to/donnajohnson88/erc-20-vs-brc-20-token-standards-a-comparative-analysis-18bp | blockchain, cryptocurrency, learning, development | This blog explores two prominent token standards: ERC-20 and BRC-20, simplifying their complexities and showing you how to leverage them for your business ventures, including [Crypto token development](https://blockchain.oodles.io/cryptocurrency-development-services/?utm_source=devto).

## Understanding Tokenization

Th... | donnajohnson88 |

1,880,132 | Parul University - Best Private University in Vadodara,Gujarat,India | Parul University, proudly accredited with an A++ grade by NAAC, stands as a premier private... | 0 | 2024-06-07T08:49:36 | https://dev.to/paruluniversity/parul-university-best-private-university-in-vadodaragujaratindia-23km | university, education, college | Parul University, proudly accredited with an A++ grade by NAAC, stands as a premier private educational institution located in the vibrant city of Vadodara, Gujarat. Our university is dedicated to providing a rich and diverse array of educational programs designed to foster academic excellence and holistic development.... | paruluniversity |

1,880,131 | Essential React Libraries for Web Development in 2024 | In 2024, the React ecosystem is thriving with numerous libraries that significantly simplify web... | 0 | 2024-06-07T08:49:08 | https://dev.to/rajivchaulagain/essential-react-libraries-for-web-development-in-2024-4pmn | react, javascript, webdev | In 2024, the React ecosystem is thriving with numerous libraries that significantly simplify web application development. As a React developer, I've found several libraries indispensable for creating efficient and visually appealing applications. Here's a rundown of the libraries I frequently use and recommend:

**_UI ... | rajivchaulagain |

1,880,130 | ghe massage tokuyo | A guide to simple and effective at-home back massage for the elderly in just 10 minutes, to... | 0 | 2024-06-07T08:48:46 | https://dev.to/lgcuavietnhat/ghe-massage-tokuyo-fk7 | A guide to simple and effective at-home back massage for the elderly in just 10 minutes, to relieve bone and joint pain and numbness in the hands and feet. Here are the main points:

Benefits of back massage for the elderly

Reduces stress and muscle tension: Massage helps relieve tension and relax the muscles around the back.

Cải thiện t... | lgcuavietnhat | |

1,880,129 | How to display all words in a small canvas using vchart word cloud? | Title How to display all words in a small canvas using vchart word cloud? ... | 0 | 2024-06-07T08:47:44 | https://dev.to/neuqzxy/how-to-display-all-words-in-a-small-canvas-using-vchart-word-cloud-1ki9 | # Title

How to display all words in a small canvas using vchart word cloud?

# Description

When the number of words in vchart word cloud is large and the canvas size is not large enough, only a part of the words can be displayed. How can we display all the words?

**Introduction**

AI is revolutionizing healthcare by improving diagnostics, personalizing treatments, and streamlining administrative tasks.

**Enhanced Diagnostics and Imaging**

**Improved Accur... | arpit_dhiman_afe108fe83fb | |

1,880,126 | Side Hustle Ideas for Developers in 2024 | Are you a software engineer eager to turn your skills into profitable side hustles? The possibilities... | 0 | 2024-06-07T08:45:02 | https://dev.to/lilxyzz/side-hustle-ideas-for-developers-in-2024-18ec | Are you a software engineer eager to turn your skills into profitable side hustles? The possibilities for making money online are endless, and I have some exciting ideas that could make 2024 your greatest year yet. Whether you're looking for extra income or dreaming of launching your own business, these side hustles ar... | lilxyzz | |

1,880,122 | In Excel, Enter Values of the same Category in Cells on the Right of the Grouping Cell in Order | Problem description & analysis: In the following Excel table, the 2nd column contains categories... | 0 | 2024-06-07T08:39:26 | https://dev.to/judith677/in-excel-enter-values-of-the-same-category-in-cells-on-the-right-of-the-grouping-cell-in-order-3g2f | programming, tutorial, productivity, datascience | **Problem description & analysis**:

In the following Excel table, the 2nd column contains categories and the 3rd column contains detailed data:

```

A B C

1 S.no Account Product

2 1 AAAQ atAAG

3 2 BAAQ bIAAW

4 3 BAAQ kJAAW

5 4 CAAQ aAAP

6 5 DAAQ aAAX

7 6 DAAQ bAAX

8 7 DAAQ cAAX

```

We need to enter values in the same ... | judith677 |

1,880,120 | Understanding Bolts and Nuts: Key Components in Construction and Manufacturing | Insights Bolts and Nuts: Key Components in Construction and Manufacturing Bolts and Nuts are really... | 0 | 2024-06-07T08:39:00 | https://dev.to/brenda_colonow_3eb2becfc4/understanding-bolts-and-nuts-key-components-in-construction-and-manufacturing-3hdn | design |

Understanding Bolts and Nuts: Key Components in Construction and Manufacturing

Bolts and nuts are among the components that are crucial to manufacturing and construction. They are used to join several elements together, producing structures plus Carriage Bolt products many sta... | brenda_colonow_3eb2becfc4 |

1,880,118 | How does Amazon explain its value of EKS support | Stop Wrangling Kubernetes! Unleash EKS Power with Amazon's Superhero Support Amazon understands that... | 0 | 2024-06-07T08:38:23 | https://dev.to/abhiram_cdx/how-does-amazon-explain-its-value-of-eks-support-5hga | awseks, eks, kubernetes, kubernetessecurity | Stop Wrangling Kubernetes! Unleash EKS Power with Amazon's Superhero Support

Amazon understands that managing Kubernetes clusters can be complex and time-consuming. That's why they offer comprehensive EKS support, designed to help you:

- Reduce Operational Overhead: Free yourself from the burden of managing infrastru... | abhiram_cdx |

1,880,117 | How AI is Shaping the Future of Education | Introduction AI is revolutionizing education by personalizing learning, enhancing administrative... | 0 | 2024-06-07T08:37:54 | https://dev.to/arpit_dhiman_afe108fe83fb/how-ai-is-shaping-the-future-of-education-dng |

**Introduction**

AI is revolutionizing education by personalizing learning, enhancing administrative efficiency, and transforming traditional methods.

**Personalized Learning**

**Adaptive Learni... | arpit_dhiman_afe108fe83fb | |

1,880,112 | Why Core Knowledge in HTML, CSS, JavaScript, and PHP is Timeless | Web development moves at lightning speeds; new frameworks and tools are constantly emerging, promising to make our lives easier and our work more efficient. However, a critical difference exists between adopting the latest trend and building a solid foundation in the fundamental technologies underpinning the web. This ... | 0 | 2024-06-07T08:32:44 | https://dev.to/longblade/the-timeless-skills-why-core-knowledge-in-html-css-javascript-and-php-matters-2l6i | technologytrends, frameworks, career, hiring | ---

title: Why Core Knowledge in HTML, CSS, JavaScript, and PHP is Timeless

published: true

description: Web development moves at lightning speeds; new frameworks and tools are constantly emerging, promising to make our lives easier and our work more efficient. However, a critical difference exists between adopting the... | longblade |

1,880,019 | If, Else, Else If, and Switch JavaScript Conditional Statement | In our everyday lives, we always make decisions depending on circumstances. Chew over a daily task,... | 0 | 2024-06-07T08:30:59 | https://dev.to/odhiambo_ouko/if-else-else-if-and-switch-javascript-conditional-statement-39mo | webdev, javascript, development, beginners | In our everyday lives, we constantly make decisions depending on circumstances. Consider a daily task, such as making coffee in the morning. If we have coffee beans, we can make coffee; otherwise, we won’t.
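
The coffee decision can be written as a basic `if...else` statement. The snippet below is only a minimal sketch: the function name `morningDrink` and the parameter `hasCoffeeBeans` are made up for illustration and do not come from the original article.

```javascript
// Hypothetical helper: decides what happens in the morning.
// `hasCoffeeBeans` is an illustrative boolean, not part of any library.
function morningDrink(hasCoffeeBeans) {
  if (hasCoffeeBeans) {
    // Runs only when the condition is truthy.
    return "Making coffee";
  } else {
    // Runs in every other case.
    return "No coffee today";
  }
}

console.log(morningDrink(true));
console.log(morningDrink(false));
```

Only one of the two branches runs on each call, which is the core idea behind every conditional statement.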

In programming, we may need our code to run depending on certain conditions. For instance, you may want your progr... | odhiambo_ouko |

1,880,116 | Microsoft Azure Fundamentals. Core Architectural Components of Azure | Microsoft Azure, most times called Azure is a cloud computing platform developed by Microsoft. It has... | 0 | 2024-06-07T08:29:50 | https://dev.to/wisegeorge1/microsoft-azure-fundamentals-core-architectural-components-of-azure-8l3 | devops, aws, learning, database | Microsoft Azure, often simply called Azure, is a cloud computing platform developed by Microsoft. It has a wide range of capabilities, including software as a service (SaaS), platform as a service (PaaS), and infrastructure as a service (IaaS). Just like other cloud computing platforms, Microsoft Azure supports a wide rang... | wisegeorge1 |

1,880,113 | #github | As we have a lot of github repository and many of them are not in use for now so what you guys do to... | 0 | 2024-06-07T08:26:35 | https://dev.to/navendu02/github-235e | discuss |

Many of us have a lot of GitHub repositories, and many of them are not in use right now. What do you do to keep those repos safe somewhere else, so that you are not charged by GitHub for keeping them there? | navendu02 |

1,880,108 | Deploying NestJS Apps to Heroku: A Comprehensive Guide | Deploying a NestJS application to Heroku can be a straightforward process if you follow the right... | 0 | 2024-06-07T08:26:35 | https://dev.to/ezilemdodana/deploying-nestjs-apps-to-heroku-a-comprehensive-guide-hhj | heroku, nestjs, typescript, backend | Deploying a NestJS application to Heroku can be a straightforward process if you follow the right steps. In this guide, we'll walk you through the entire process, from setting up your NestJS application to deploying it on Heroku and handling any dependencies or configurations.

**Step 1: Setting Up Your NestJS Applicat... | ezilemdodana |

1,880,111 | Redefine Your Space: Kitchen and Bath Products for Modern Living | Kitchen and Bath Products: Aesthetic Space for Modern Living Introduction Koala will provide... | 0 | 2024-06-07T08:25:07 | https://dev.to/brenda_colonow_3eb2becfc4/redefine-your-space-kitchen-and-bath-products-for-modern-living-4g0g | design | Kitchen and Bath Products: Aesthetic Space for Modern Living

Introduction

Koala will provide information on how to make use of our products and the ongoing services we offer. So let's dive right in and go through all the information. Are you tired of living in a cluttered and... | brenda_colonow_3eb2becfc4 |

1,880,110 | The Best Video Conferencing Software For Teams of 2024 | In today's digital age, what we once knew simply as "meetings" has transformed. Video conferencing... | 0 | 2024-06-07T08:23:32 | https://blog.productivity.directory/the-best-video-conferencing-software-for-teams-8f3c501b7348 | videoconferencing, productivitytools, teamcollaboration, bestapps | In today's digital age, what we once knew simply as "meetings" has transformed. Video conferencing has become the norm; even in traditional office settings, video calls are increasingly the standard rather than the exception.

Elevating Your Remote Meetings

==============================

Quality in video conferenci... | stan8086 |

1,880,109 | Intro to TypeScript | Hello everyone, السلام عليكم و رحمة الله و بركاته Introduction Typing is essential for... | 0 | 2024-06-07T08:23:18 | https://dev.to/bilelsalemdev/the-power-of-typescript-imk | typescript, javascript, oop, programming |

Hello everyone, السلام عليكم و رحمة الله و بركاته

## Introduction

Typing is essential for any language to ensure a friendly user experience. TypeScript is a statically typed language built on top of JavaScript, enhancing its syntax and providing powerful tools for extracting needed data from any request. This articl... | bilelsalemdev |

1,880,107 | 6 Effective Ways to Boost Your Email Delivery Rate | Do you want to learn how to improve your email deliverability rate? If so, you're in the right place!... | 0 | 2024-06-07T08:22:29 | https://dev.to/syedbalkhi/6-effective-ways-to-boost-your-email-delivery-rate-37k3 | email, beginners, startup, wordpress | Do you want to learn how to improve your email deliverability rate? If so, you're in the right place! Email deliverability is an important metric for any email marketing campaign.

Put plainly, this metric will show you how many of your marketing emails are delivered versus how many you send. Ideally, you want as many ... | syedbalkhi |

1,880,062 | Integration Digest: May 2024 | Articles 🔍 10 Optical Character Recognition (OCR) APIs The article introduces ten OCR... | 23,208 | 2024-06-07T08:19:05 | https://wearecommunity.io/communities/integration/articles/5072 | api, ia, kafka, async | ## Articles

🔍 [10 Optical Character Recognition (OCR) APIs](https://nordicapis.com/10-optical-character-recognition-ocr-apis/)

_The article introduces ten OCR (Optical Character Recognition) APIs that leverage artificial intelligence and machine learning to digitize text from media and create structured data. These ... | stn1slv |

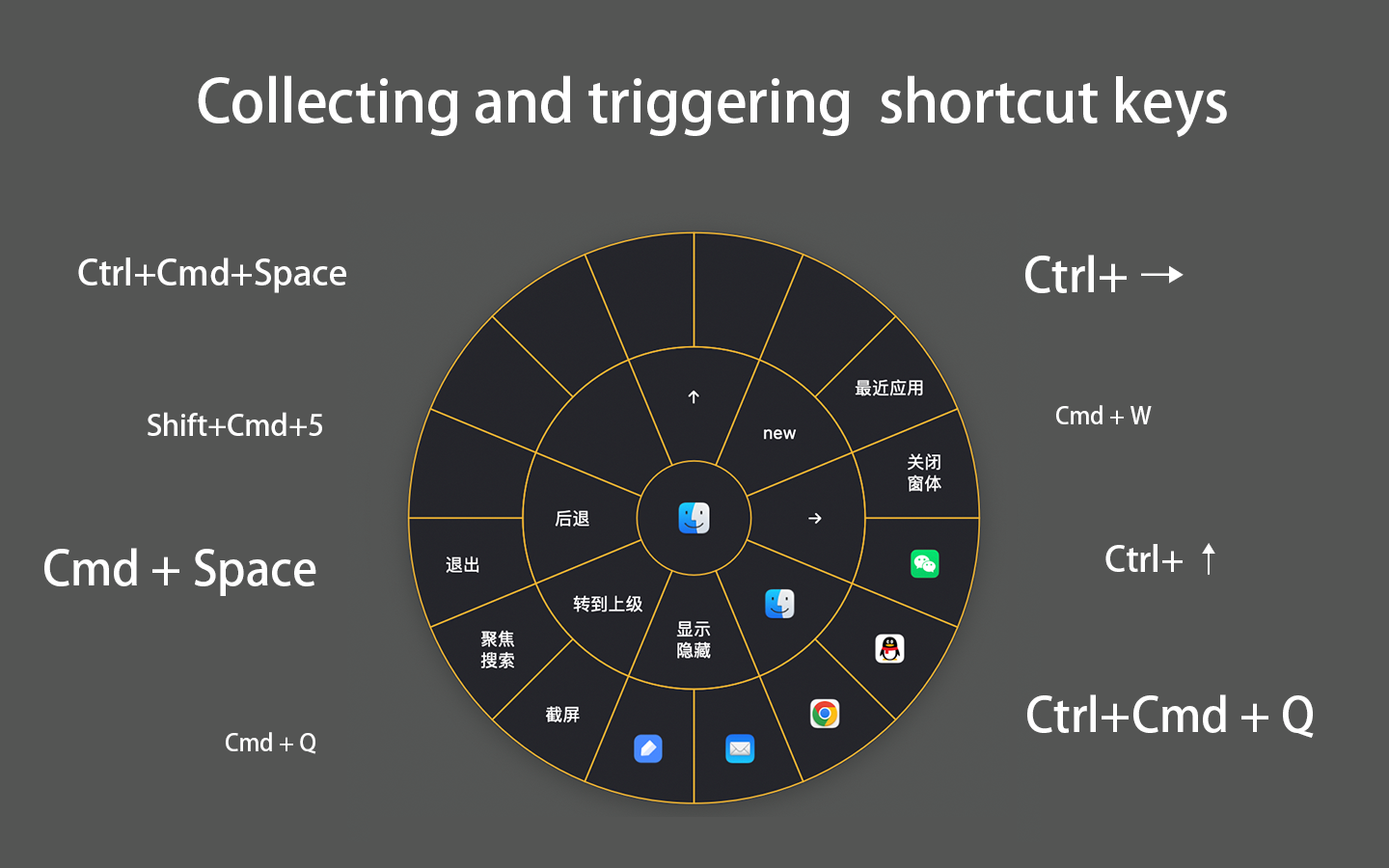

1,880,059 | Hotkeys tool for left-handed mouse user. | If you are a left-handed mouse user, or using a stylus. CirMenu can help you not frequently move your... | 0 | 2024-06-07T08:16:13 | https://dev.to/roc7890hotmailcom_6651f/hotkeys-tool-for-left-handed-mouse-user-3kg3 | macos, idea, xcode | If you are a left-handed mouse user or use a stylus, [CirMenu](https://apps.apple.com/sk/app/cirmenu/id6450661015) can help you trigger hotkeys without frequently moving your hand to the keyboard.

* [What is GitHub Enterprise](#What is GitHub Enterprise)

* [Pillars of GitHub EnterPrise](#Pillars of GitHub EnterPrise)

## What is GitHub<a name="GitHub"></a>

**GitHub** is a cloud-based platform that uses Git, a distributed version control system, at its core. The G... | g_venkatasandeepreddy_b |

1,880,060 | Bridging the Gap: Integrating Microsoft Copilot with Zendesk Using Sunshine Conversations | Introduction In today's digital landscape, seamless integration between various tools is essential... | 0 | 2024-06-07T08:15:28 | https://dev.to/hariraghupathy/bridging-the-gap-integrating-microsoft-copilot-with-zendesk-using-sunshine-conversations-l6f |

**Introduction**

In today's digital landscape, seamless integration between various tools is essential for efficient operations and enhanced user experience. Recently, we embarked on a journey to integrate Microsoft Copilot with Zendesk, despite the lack of direct connectivity. This blog post details our collaborativ... | hariraghupathy | |

1,880,023 | GitHub Copilot tutorial: We’ve tested it with Java and here's how you can do it too | This article describes the GitHub Copilot tool and the main guidelines and assumptions regarding its... | 0 | 2024-06-07T08:15:12 | https://pretius.com/blog/github-copilot-tutorial/ | ai, githubcopilot, java, intellij | **This article describes the GitHub Copilot tool and the main guidelines and assumptions regarding its use in software development projects. The guidelines concern both the tool's configuration and its application in everyday work and assume the reader will use GitHub Copilot with IntelliJ IDEA (via a dedicated plugin)... | karolswider |

1,879,916 | 5 C# OCR Libraries commonly Used by Developers | Optical Character Recognition (OCR) is a technology that allows for the conversion of different types... | 0 | 2024-06-07T07:57:30 | https://dev.to/xeshan6981/5-c-ocr-libraries-commonly-used-by-developers-429b | ocr, csharp, developer, ai | Optical Character Recognition (OCR) is a technology that allows for the conversion of different types of documents, such as scanned paper documents, PDF files, or images captured by a digital camera, into editable and searchable data. C# has become a popular choice for building server-side applications, and its versati... | xeshan6981 |

1,880,058 | Creating Spaces You'll Love: Sanitary Products Tailored to Your Needs | 8ffcc289ecd47f0d75fffe828aec4738ec67cc7aa2d8bd00e6f4f8342d08e998.jpg Creating Spaces You'll Love:... | 0 | 2024-06-07T08:14:08 | https://dev.to/brenda_colonow_3eb2becfc4/creating-spaces-youll-love-sanitary-products-tailored-to-your-needs-1c7f | design |

Creating Spaces You'll Love: Sanitary Products Tailored to Your Needs

Have you ever walked into a public restroom and been afraid to touch anything, or been frustrated with the lack of options available in the feminine hygiene aisle? ... | brenda_colonow_3eb2becfc4 |

1,880,057 | c++ 2 dars | operators c++ operatorlar 6 hil boladi bular (+) Qo'shish #include <iostream> using... | 0 | 2024-06-07T08:12:46 | https://dev.to/diyorbek077/c-2dars-228e | _operators_

There are 6 kinds of operators in C++. They are:

(+) Addition

```

#include <iostream>

using namespace std;

int main() {

int x = 5;

int y = 3;

cout << x + y;

return 0;

}

```

(-) Subtraction

```

#include <iostream>

using namespace std;

int main() {

int x = 5;

int y = 3;

cout << x - y;

return 0;

}

```

(*) ... | diyorbek077 | |

1,880,047 | Getting Stale Data for ActiveRecord Associations in Rails: `Model.reload` to fetch latest data | Issue I encountered a bug where Associations were referencing stale data. Here's the... | 0 | 2024-06-07T08:12:13 | https://dev.to/takuyakikuchi/getting-stale-data-for-activerecord-associations-in-rails-modelreload-to-fetch-latest-data-282a | rails | ## Issue

I encountered a bug where Associations were referencing stale data.

Here's the sample scenario (generated by ChatGPT):

**Initial State: Fetching pets**

```ruby

class Person < ActiveRecord::Base

has_many :pets

end

# Fetching pets from the database

person = Person.find(1)

pets = person.pets

# => [#<Pet id: 1... | takuyakikuchi |

1,880,055 | SOA vs Microservices – 8 key differences and corresponding use cases | Nowadays, for businesses, building scalable and agile applications is crucial for responding swiftly... | 0 | 2024-06-07T08:11:56 | https://dev.to/gem_corporation/soa-vs-microservices-8-key-differences-and-corresponding-use-cases-2og7 | microservices, softwaredevelopment, softwareengineering | Nowadays, for businesses, building scalable and agile applications is crucial for responding swiftly to changes in customer demand, technological advancements, and market conditions.

This is where [software architectures](https://gemvietnam.com/others/soa-vs-microservices/?utm_source=Devto&utm_medium=click) like Servi... | gem_corporation |

1,880,052 | The $4.99 Feature That Landed Multiple Paid Customers for My Side Project 💰 | Hey there, friends! I've got some incredibly exciting news to share with you all today about a huge... | 0 | 2024-06-07T08:09:05 | https://dev.to/darkinventor/499-surprise-how-one-new-small-feature-got-me-a-paid-customer-4hn0 | sideprojects, webdev, softwareengineering, javascript | Hey there, friends! I've got some incredibly exciting news to share with you all today about a huge milestone for Reachactory. As you know, [Reachactory](https://www.reachactory.online/) is the service I started to help innovative AI tool creators get more visibility by sharing their products across over 100 directorie... | darkinventor |

1,880,051 | Creating Moments of Luxury: Sanitary Products Designed for Comfort | Producing Minutes of High-end: Hygienic Items Developed for Convenience Hygienic items are actually... | 0 | 2024-06-07T08:04:01 | https://dev.to/brenda_colonow_3eb2becfc4/creating-moments-of-luxury-sanitary-products-designed-for-comfort-1lef | design |

Creating Moments of Luxury: Sanitary Products Designed for Comfort

Sanitary products are essential for women's comfort during their menstrual cycles. This short post looks at how businesses are innovating sanitary products to offer ... | brenda_colonow_3eb2becfc4 |

1,880,046 | Higher Order Components (HOC) in React js | A Higher-Order Component (HOC) is an advanced technique for reusing component logic. HOCs are not... | 0 | 2024-06-07T08:02:04 | https://dev.to/imashwani/higher-order-components-hoc-in-react-js-d8a | react, beginners, webdev, hoc | A Higher-Order Component (HOC) is an advanced technique for reusing component logic. HOCs are not part of the React API, but rather a pattern that emerges from React’s compositional nature.
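
As a rough, framework-free illustration of the pattern, the sketch below treats a "component" as a plain function from props to a string, so it runs without React. The names `Greeting`, `withUpperCaseName`, and `LoudGreeting` are hypothetical and chosen only for this example; in a real React app the wrapped component would return JSX instead of a string.

```javascript
// A stand-in "component": takes props, returns rendered output (a string here).
function Greeting(props) {
  return `Hello, ${props.name}!`;
}

// A higher-order function in the HOC style: it receives a component
// and returns a new component that wraps the original one.
function withUpperCaseName(WrappedComponent) {
  return function Enhanced(props) {
    // Reusable logic lives here; the wrapped component stays unchanged.
    const nextProps = { ...props, name: props.name.toUpperCase() };
    return WrappedComponent(nextProps);
  };
}

const LoudGreeting = withUpperCaseName(Greeting);
console.log(LoudGreeting({ name: "Ada" }));
```

The wrapper adds behaviour without modifying `Greeting` itself, which is what makes the same logic reusable across several components.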

A higher-order component is a function that takes a component as an argument and returns a new component that wraps the original ... | imashwani |

1,880,050 | Popular Packages for Express.js | Express.js is a fast, minimalist web framework for Node.js, widely used for building web applications... | 0 | 2024-06-07T08:01:59 | https://dev.to/raksbisht/popular-packages-for-expressjs-1ik3 | express, packages, productivity, tutorial | Express.js is a fast, minimalist web framework for Node.js, widely used for building web applications and APIs. One of the key strengths of Express.js is its rich ecosystem of middleware and packages that enhance its functionality. In this article, we’ll explore some of the most popular and useful packages that you can... | raksbisht |

1,880,049 | Top 3 Assessment help Services For Liverpool Students | In the highly competitive academic environment of the UK, students often find themselves under... | 0 | 2024-06-07T08:01:53 | https://dev.to/pankaj_singh_675520632c0e/top-3-assessment-help-services-for-liverpool-students-2a69 | assessment, help | In the highly competitive academic environment of the UK, students often find themselves under immense pressure to perform well in their assessments. Whether it's an essay, dissertation, report, or any other type of assignment, the demand for high-quality work is ever-present. This is where expert **[assessment help](h... | pankaj_singh_675520632c0e |

1,880,043 | How to Perform Rake Tasks in Rails | As a Rails developer, you often need to automate tasks such as database migrations, data seeding, or... | 0 | 2024-06-07T07:55:48 | https://dev.to/afaq_shahid/how-to-perform-rake-tasks-in-rails-ol2 | webdev, rails, ruby, programming | As a Rails developer, you often need to automate tasks such as database migrations, data seeding, or file management. This is where Rake (Ruby Make) tasks come in handy. In this article, I'll guide you through creating and scheduling Rake tasks in a Rails application.

## What is a Rake Task?

Rake is a build automatio... | afaq_shahid |

1,880,048 | C++ lesson 1 | C++ lesson 1: printing to the console in C++ #include <iostream> using namespace std; int main() { ... | 0 | 2024-06-07T08:01:42 | https://dev.to/diyorbek077/c-3jbb | C++ lesson 1

Printing to the console in C++

```

#include <iostream>

using namespace std;

int main() {

cout << "Hello World!";

return 0;

}

```

Second method

```
#include <iostream>

int main() {
    std::cout << "Hello World!";
    return 0;
}
```

| diyorbek077 | |

1,879,965 | How to Convert HTML to PDF in Node.js | In Node.js development, the conversion of HTML pages to PDF documents is a common requirement.... | 0 | 2024-06-07T08:00:32 | https://dev.to/xeshan6981/how-to-convert-html-to-pdf-in-nodejs-2j44 | node, html, pdf, javascript | In Node.js development, the conversion of HTML pages to PDF documents is a common requirement. JavaScript libraries make this process seamless, enabling developers to translate HTML code into PDF files effortlessly. With a template file or raw HTML page as input, these libraries simplify the generation of polished PDF ... | xeshan6981 |

1,880,045 | Core Architectural Components of Azure: All You Need To Know | Welcome to the world of Azure, Microsoft's robust cloud computing platform, designed to help... | 0 | 2024-06-07T08:00:20 | https://dev.to/florence_8042063da11e29d1/core-architectural-components-of-azure-all-you-need-to-know-2n5k | azure, azurecomponents, azurearchitecture, cloudcomputing | Welcome to the world of Azure, Microsoft's robust cloud computing platform, designed to help organizations overcome challenges in scalability, reliability, and security. Whether you're a seasoned tech professional or new to cloud technology, understanding the core architectural components of Azure can significantly enh... | florence_8042063da11e29d1 |

1,880,044 | This is only for testing (Ignore) | test ereerererere | 0 | 2024-06-07T07:59:38 | https://dev.to/mp_bg/this-is-only-for-testing-ignore-4ein | test789w | test ereerererere | mp_bg |

1,879,541 | eBPF, sidecars, and the future of the service mesh | Kubernetes and service meshes may seem complex, but not for William Morgan, an engineer-turned-CEO... | 0 | 2024-06-07T07:58:53 | https://dev.to/gulcantopcu/ebpf-sidecars-and-the-future-of-the-service-mesh-32ad | kubernetes, servicemesh, ebpf, cloudnative | Kubernetes and service meshes may seem complex, but not for William Morgan, an engineer-turned-CEO who excels at simplifying the intricacies. In this enlightening podcast, he shares his journey from AI to the cloud-native world with Bart Farrell.

Discover William's cost-saving strategies for service meshes, gain insi... | gulcantopcu |

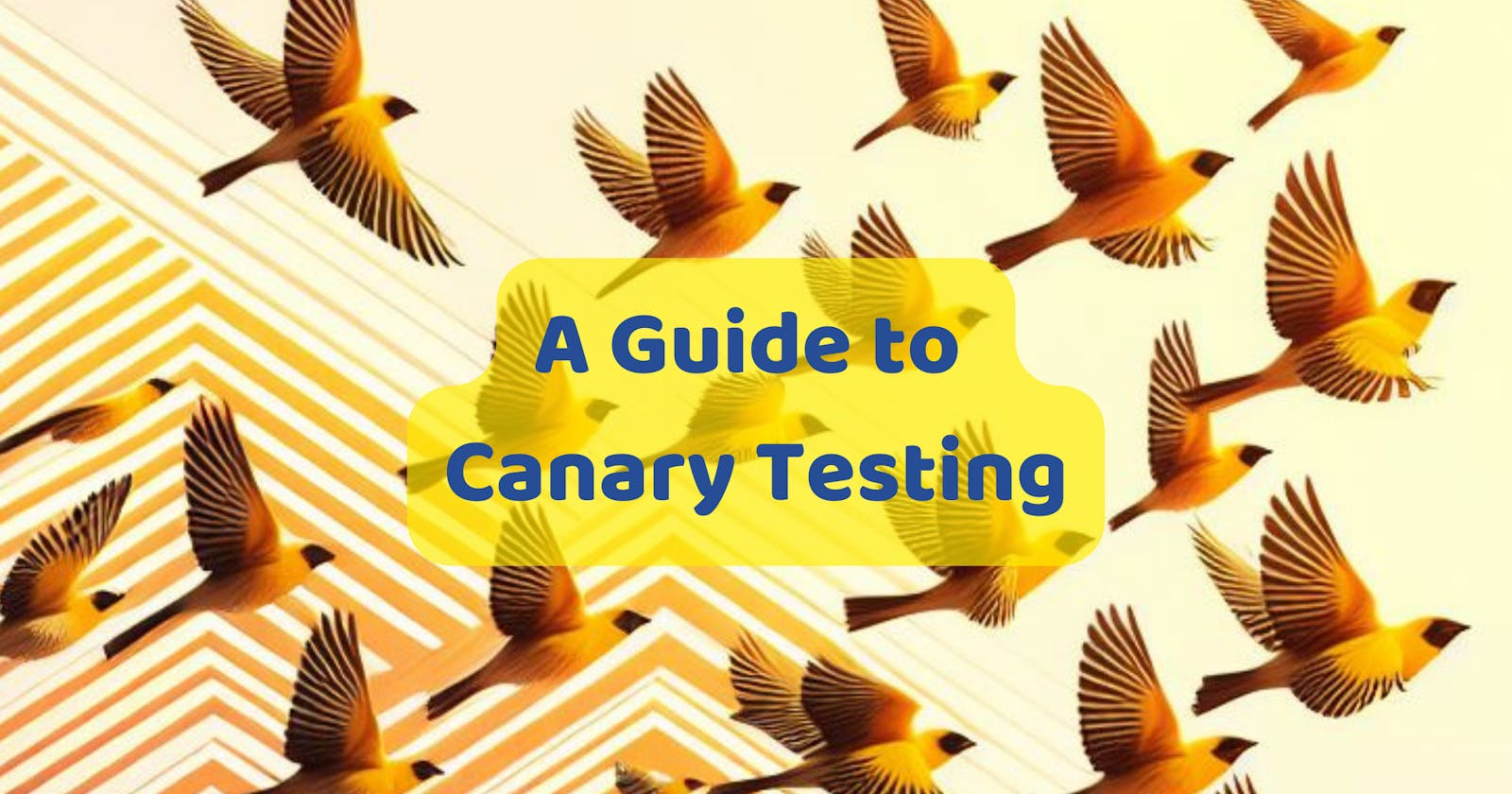

1,880,042 | Understanding Canary Testing: A Comprehensive Guide | In the realm of software development and deployment, ensuring the reliability and stability of new... | 0 | 2024-06-07T07:54:51 | https://dev.to/keploy/understanding-canary-testing-a-comprehensive-guide-2i5i | testing, canary, mongodb |

In the realm of software development and deployment, ensuring the reliability and stability of new releases is paramount. One of the strategies employed to achieve this is [Canary Testing](https://keploy.io/blog/com... | keploy |

1,851,709 | AI tools you can try out 2024 | Artificial Intelligence (AI) has increasingly become a transformative force in the realm of web... | 0 | 2024-06-07T07:54:42 | https://dev.to/kevinbenjamin77/ai-tools-you-can-try-out-in-2024-53ck | ai, developer, webdev, software | Artificial Intelligence (AI) has increasingly become a transformative force in the realm of web development. Its integration into development workflows has brought about significant enhancements in productivity, efficiency, and innovation. AI's ability to automate routine tasks, provide intelligent insights, and offer ... | kevinbenjamin77 |

1,880,041 | Redefine Luxury: Sink Faucets and Taps Designed for Opulence | Redefine Luxury: Sink Faucets and Taps Designed for Opulence Introduction: You may want to... | 0 | 2024-06-07T07:52:32 | https://dev.to/brenda_colonow_3eb2becfc4/redefine-luxury-sink-faucets-and-taps-designed-for-opulence-53cp | design |

Redefine Luxury: Sink Faucets and Taps Designed for Opulence

Introduction:

You may want to start thinking about upgrading your sink faucets and taps if you're trying to bring a feeling of luxury to your home. These important fixtures can do a ... | brenda_colonow_3eb2becfc4 |

1,879,912 | Implement Type-Safe Navigation with go_router in Flutter | Exciting News! Our blog has a new Home! 🚀 Background With type-safe navigation, your... | 0 | 2024-06-07T07:52:10 | https://canopas.com/how-to-implement-type-safe-navigation-with-go-router-in-flutter-b11315bd183b | flutter, programming, beginners, learning | > Exciting News! Our blog has a new **[Home!](https://canopas.com/blog)** 🚀

## Background

With type-safe navigation, your navigation logic becomes consistent and maintainable, significantly simplifying debugging and future code modifications.

This technique is particularly beneficial when building Flutter apps for ... | cp_nandani |

1,866,513 | 17 Developer Tools that keep me productive | Many developers prefer building things from scratch, but sometimes the workload is so huge that using... | 0 | 2024-06-07T07:51:45 | https://dev.to/taipy/17-developer-tools-that-keep-me-productive-37e2 | programming, productivity, webdev, opensource | Many developers prefer building things from scratch, but sometimes the workload is so huge that using these tools can make the job easier.

There are a range of tools included here, so I'm confident you'll find one that suits your needs.

I can't cover everything, but feel free to let me know in the comments if you kno... | anmolbaranwal |

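1,880,039 | Solving Potential Issues with Type Aliases Using Type Unions and Literal Types | Type unions and literal types can effectively solve problems with type aliases (Alias... | 0 | 2024-06-07T07:48:42 | https://dev.to/scottpony/solving-potential-issues-with-type-aliases-using-type-unions-and-literal-types-2egn | webdev, typescript | Type unions and literal types can effectively solve the problems that type aliases may cause. A type union allows a variable to be one of several types; combined with concrete type definitions, it helps distinguish and handle the different types and avoids confusion. Literal types make the type system stricter by restricting a variable to specific literal values. Used together, type unions and literal types provide stronger type safety than plain type aliases and reduce potential errors in code.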
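A minimal sketch of this idea, reusing the `Meters` and `Miles` aliases from the post. The `Distance` union, the `toMeters` helper, and the sample values are hypothetical names introduced here for illustration and do not come from the original article.

```typescript
// Plain aliases: both are just `number` to the compiler, so they are interchangeable.
type Meters = number;
type Miles = number;

// Hypothetical alternative: a union whose members are told apart by a literal `unit` field.
type Distance =
  | { unit: "meters"; value: number }
  | { unit: "miles"; value: number };

const METERS_PER_MILE = 1609.344;

// Hypothetical helper: normalizes any Distance to a number of meters.
function toMeters(distance: Distance): number {
  switch (distance.unit) {
    case "meters":
      return distance.value;
    case "miles":
      return distance.value * METERS_PER_MILE;
  }
}

// With aliases, assigning miles to meters compiles without any complaint.
const aliasMiles: Miles = 2;
const aliasMeters: Meters = aliasMiles;
console.log(aliasMeters);

// With the union, the unit travels with the value and must be handled explicitly.
const approach: Distance = { unit: "miles", value: 2 };
const approachInMeters = toMeters(approach);
console.log(approachInMeters);
```

Because `unit` is a literal type, a typo such as `"mile"` would be rejected at compile time, which a bare `number` alias cannot do.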

## Problem

```

type Meters = number;

type Miles = number;

const landSpacecraft = (distance: Meters) => {

// ... do fancy ma... | scottpony |

1,880,038 | Unraveling the Mysteries of QXEFV: A Comprehensive Study | Introduction QXEFV has been gaining significant attention in recent years due to its potential... | 0 | 2024-06-07T07:47:19 | https://dev.to/sabir_ali_0ea4b6d31d7e4ad/unraveling-the-mysteries-of-qxefv-a-comprehensive-study-4dk2 | qxefv | **Introduction**

QXEFV has been gaining significant attention in recent years due to its potential applications and the mysteries surrounding it. For those unfamiliar, [QXEFV](https://divijos.co.uk/exploring-qxefv-a-comprehensive-investigation-into-its-applications-and-impact/) stands at the intersection of several cut... | sabir_ali_0ea4b6d31d7e4ad |

1,880,037 | K9cc - K9.cc - Account Registration Link, Get 【66K】 | The k9cc bookmaker specializes in the latest online casino betting available today. With many attractive game... | 0 | 2024-06-07T07:45:48 | https://dev.to/k9ccbiz/k9cc-k9cc-link-dang-ky-tai-khoan-nhan66k-4o19 | The k9cc bookmaker specializes in the latest online casino betting available today. It offers a large library of attractive games, a distinctive and optimized interface, and unrestricted sign-ups... Whether you act fast or take your time, the 66k promotion is still available

Address: 39 Bến Nghé Street, Tân Thuận Đông, District 2, Ho Chi Minh City, Vietnam

Email: k9ccbiz@gmail.com

Website: https... | k9ccbiz | |

1,880,036 | Headless CMS: A Modern Approach to Content Management | Traditional CMS systems limit content presentation flexibility. Headless CMS separates content... | 0 | 2024-06-07T07:44:27 | https://dev.to/wewphosting/headless-cms-a-modern-approach-to-content-management-1pg1 |

Traditional CMS systems limit content presentation flexibility. Headless CMS separates content creation from presentation, offering greater freedom and scalability for managing content across various platforms.

##... | wewphosting | |

1,880,035 | Your Source for Quality: Explore Kitchen and Bath Products | Discover the Finest Kitchen area as well as Bathroom Items Right below Are actually you searching... | 0 | 2024-06-07T07:42:40 | https://dev.to/brenda_colonow_3eb2becfc4/your-source-for-quality-explore-kitchen-and-bath-products-4o8j | design |

Discover the Finest Kitchen and Bathroom Products Right Here

Are you searching for top-quality kitchen and bathroom products? If so, you have come to the right place! Our store has everything you need to transform your home into a beautiful and practic... | brenda_colonow_3eb2becfc4 |

1,880,033 | Tick-level transaction matching mechanism developed for high-frequency strategy backtesting | Summary What is the most important thing when backtest the trading strategy? the speed?... | 0 | 2024-06-07T07:38:13 | https://dev.to/fmzquant/tick-level-transaction-matching-mechanism-developed-for-high-frequency-strategy-backtesting-4eff | backtest, trading, cryptocurrency, fmzquant | ## Summary

What is the most important thing when backtesting a trading strategy? The speed? The performance indicators?

The answer is accuracy! The purpose of a backtest is to verify the logic and feasibility of the strategy. That is the whole point of backtesting; everything else is secondary. A backtest result... | fmzquant |

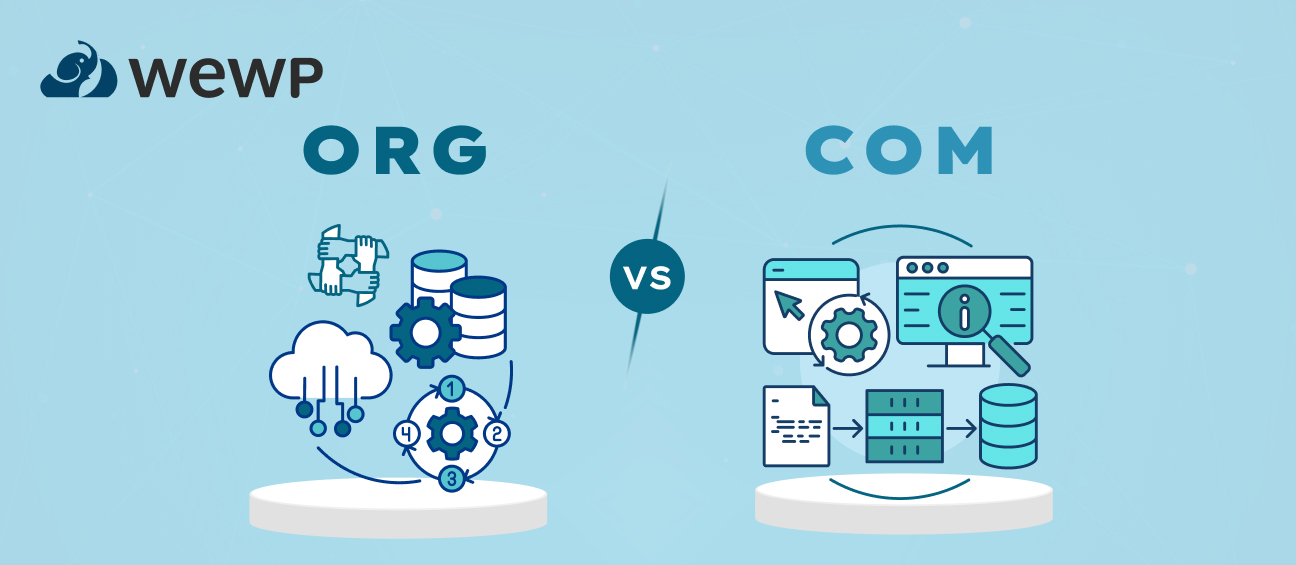

1,880,032 | Org vs Com: Exploring the Differences | Choosing the right domain extension (TLD) is important for your website’s identity and search... | 0 | 2024-06-07T07:37:57 | https://dev.to/wewphosting/org-vs-com-exploring-the-differences-2847 |

Choosing the right domain extension (TLD) is important for your website’s identity and search ranking. While there are many options, .COM and .ORG are the most popular.

### .COM vs .ORG

.COM: Most popular, versat... | wewphosting | |

1,880,031 | Performance Digital Marketing Agency in Pune | Boost your business' profitability! Get in touch with a top Digital Marketing Agency in Pune. Worked... | 0 | 2024-06-07T07:35:57 | https://dev.to/microinchhub_2ef66ab0ad56/performance-digital-marketing-agency-in-pune-2glo | digitalmarketingagency, digitalmarketingcompany | Boost your business' profitability! Get in touch with a top [Digital Marketing Agency in Pune](https://www.microinchhub.com/). We have worked with more than 500 clients in India.

We are present in 10+ cities of India. Establishing the new era of digital marketing and helping businesses and many brands to achieve their goals... | microinchhub_2ef66ab0ad56 |

1,880,030 | Integrity Hospital Nagpur | Integrity Hospital Nagpur is dedicated to providing exceptional healthcare services with a focus on... | 0 | 2024-06-07T07:35:28 | https://dev.to/vibha_kharole_bc7da93ed0a/integrity-hospital-nagpur-o0h | besthospitalinnagpur, hospital | [](https://maps.app.goo.gl/sxTB7JbcfDtbjV1H6)

Integrity Hospital Nagpur is dedicated to providing exceptional healthcare services with a focus on patient-centered care and clinical excellence. Our state-of-the-art facility is equipped with the latest medical technology and staffed by a team of highly skilled and comp... | vibha_kharole_bc7da93ed0a |

1,880,029 | Optimizing Website Maintenance Through Right Web Hosting | In today’s digital landscape, maintaining an updated website is crucial for success, yet it can be... | 0 | 2024-06-07T07:33:10 | https://dev.to/wewphosting/optimizing-website-maintenance-through-right-web-hosting-2cg7 |

In today’s digital landscape, maintaining an updated website is crucial for success, yet it can be overwhelming for many without technical expertise. Website hosting providers offer essential support, renting space... | wewphosting | |

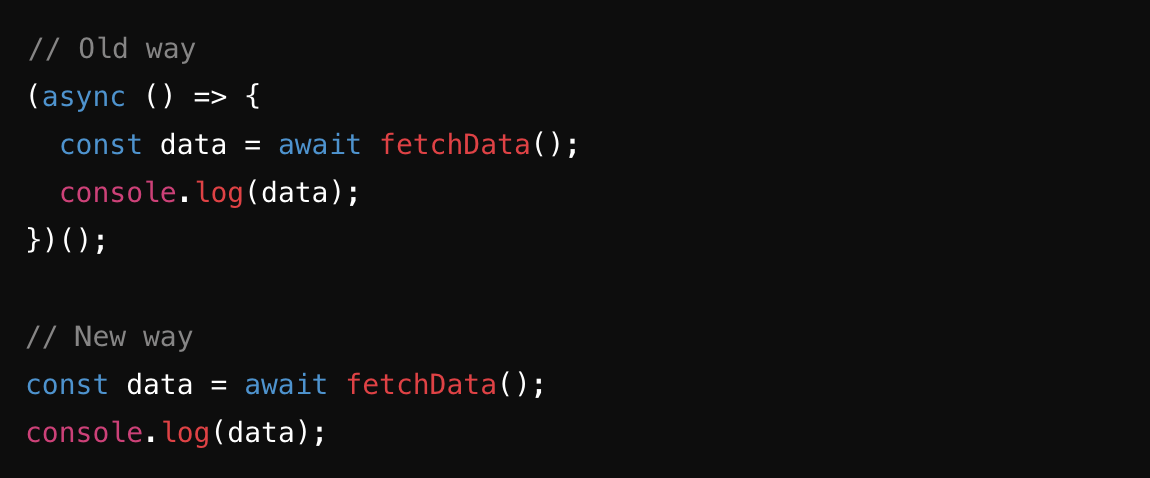

1,880,028 | What’s New in JavaScript | JavaScript, the ubiquitous language of the web, continues to evolve, bringing new features and... | 0 | 2024-06-07T07:30:35 | https://dev.to/andylarkin677/whats-new-in-javascript-249a | javascript, webdev, programming, learning | JavaScript, the ubiquitous language of the web, continues to evolve, bringing new features and enhancements that make development more efficient and enjoyable. The latest updates in JavaScript are part of the ECMAScript (ES) standards, which are regularly updated. Here's a look at some of the most notable new features ... | andylarkin677 |

1,880,027 | WeWP Dashboard: Easier Hosting Control Compared to cPanel | In the realm of web hosting administration, balancing usability and functionality is crucial. While... | 0 | 2024-06-07T07:30:33 | https://dev.to/wewphosting/wewp-dashboard-easier-hosting-control-compared-to-cpanel-3kh1 |

In the realm of web hosting administration, balancing usability and functionality is crucial. While cPanel has long been favored for its extensive capabilities, there’s a growing demand for simpler alternatives. En... | wewphosting | |

1,880,026 | Understanding the Rules of the Road in Italy | Driving in Italy can be a thrilling experience, but it's crucial to understand the country's driving... | 0 | 2024-06-07T07:29:31 | https://dev.to/manojkumar_96f6cb1f69dce6/understanding-the-rules-of-the-road-in-italy-3mjp | Driving in Italy can be a thrilling experience, but it's crucial to understand the country's driving rules and regulations. One of the first steps in obtaining an Italian driver's license is passing the Driving Theory Exam, also known as the "Patente B." This comprehensive test covers a wide range of topics, including... | manojkumar_96f6cb1f69dce6 | |

1,880,025 | The Ultimate Guide to Choosing the Best Roadside Assistance Service in India | Selecting a high-quality roadside assistance carrier in India can be difficult due to the many... | 0 | 2024-06-07T07:29:21 | https://dev.to/truepromise/the-ultimate-guide-to-choosing-the-best-roadside-assistance-service-in-india-9fi | Selecting a high-quality **[roadside assistance](https://truepromise.co.in/warranty/blog8.php)** provider in India can be difficult because of the many options available. Roadside assistance services are essential for providing timely help during vehicle breakdowns, flat tires, dead batteries, or o... | truepromise | |

1,880,024 | Exploring ilikecix: A Comprehensive Guide to Its Features and Benefits | Introduction Learning can be a tomfoolery and invigorating excursion for each little understudy. By... | 0 | 2024-06-07T07:27:52 | https://dev.to/sabir_ali_0ea4b6d31d7e4ad/exploring-ilikecix-a-comprehensive-guide-to-its-features-and-benefits-g8a | ilikecix | **Introduction**

Learning can be a fun and exciting journey for every young student. By using engaging methods and creative tools, educators can make the learning process enjoyable for young minds. One such tool is [ilikecix](https://standupinfo.com/ilikecix/), a stag... | sabir_ali_0ea4b6d31d7e4ad |

1,880,022 | How to scrape dynamic websites with Python | Scraping dynamic websites that load content through JavaScript after the initial page load can be a... | 0 | 2024-06-07T07:27:24 | https://blog.apify.com/scrape-dynamic-websites-with-python/ | webdev, beginners, python, tutorial | Scraping dynamic websites that load content through JavaScript after the initial page load can be a pain in the neck, as the data you want to scrape may not exist in the raw HTML source code.

I'm here to help you with that problem.

In this article, you'll learn how to scrape dynamic websites with Python and Playwrigh... | sauain |

1,880,021 | Useful Resources for Web Developers | I wanted to write a blog about a list of resources I would need for reference. I came across an... | 0 | 2024-06-07T07:27:19 | https://dev.to/christopherchhim/useful-resources-for-web-developers-4dl3 | webdev, opensource | I wanted to write a blog about a list of resources I would need for reference. I came across an article that was featured as the second most popular story. I pulled this information from Firdaus' article _Fresh Resources for Web Designers and Developers (May 2024)_.

The following resources are:

- Deno Examples

- Sol... | christopherchhim |

1,879,963 | Kanban vs. Scrum: What's the difference? | Kanban vs Scrum Project management is a dynamic field always on the move. Here, methods like Kanban... | 0 | 2024-06-07T07:26:46 | https://dev.to/bryany/unpacking-the-complexity-kanban-vs-scrum-in-agile-development-aa7 | productivity, management | Kanban vs Scrum

Project management is a dynamic field that is always on the move, and methods like Kanban and Scrum play a crucial part in it. Many people do not realize the difference between Kanban and Scrum, even though both are part of the agile project management process. It's been quite a game-changer, this whole agi... | bryany |

1,880,020 | ввпапв | A post by Dmytro Klimenko | 0 | 2024-06-07T07:24:35 | https://dev.to/klimd1389/vvpapv-41nb |

| klimd1389 | |

1,880,018 | Robert Geiger Teacher | Chasing the Finish Line - Insights from a Track Coach's Journey | In the dynamic world of track and field, the blistering sprint to the finish line is not merely a... | 0 | 2024-06-07T07:23:24 | https://dev.to/robertgeiger/robert-geiger-teacher-chasing-the-finish-line-insights-from-a-track-coachs-journey-4c97 | In the dynamic world of track and field, the blistering sprint to the finish line is not merely a physical race; it's a strategic pursuit demanding meticulous planning, unwavering focus, and flawless execution. Track coaches, like Robert Geiger, play an instrumental role in shaping the athletes' skills, honing their st... | robertgeiger | |

1,880,017 | Resolving Git Merge Conflicts | Introduction Git is an essential tool for version control in software development,... | 0 | 2024-06-07T07:22:30 | https://dev.to/msnmongare/resolving-git-merge-conflicts-5f35 | git, github, beginners, webdev | #### Introduction

[Git](https://www.git-scm.com/) is an essential tool for version control in software development, enabling collaboration and ensuring code integrity. However, when multiple contributors work on the same codebase, merge conflicts are inevitable. Understanding and resolving these conflicts efficiently ... | msnmongare |

1,880,016 | Comprehensive Analysis of w06shj06: Features, Benefits, and Applications | Introduction In the present quick moving world, innovation advances quickly, presenting new items... | 0 | 2024-06-07T07:21:51 | https://dev.to/sabir_ali_0ea4b6d31d7e4ad/comprehensive-analysis-of-w06shj06-features-benefits-and-applications-5da8 | w06shj06 | **Introduction**

In today's fast-moving world, technology advances rapidly, introducing new products that improve our daily lives and professional environments. One such innovation is w06shj06, a versatile and powerful tool designed to address various needs across different industries. This article digs into an ex... | sabir_ali_0ea4b6d31d7e4ad |

1,880,015 | This Week in Python | Fri, June 07, 2024 This Week in Python is a concise reading list about what happened in the past... | 0 | 2024-06-07T07:21:27 | https://bas.codes/posts/this-week-python-077 | python, thisweekinpython |

**Fri, June 07, 2024**

This Week in Python is a concise reading list about what happened in the past week in the Python universe.

## Python Articles

- [The State of Django 2024](https://blog.jetbrains.com/pycharm/2024/06/the-state-of-django/)

- [Python Sorted Containers](https://grantjenks.com/docs/sortedco... | bascodes |

1,880,004 | Top 5 Benefits of Salesforce Lightning Migration | Businesses must embrace a change and adjust to a rapidly evolving technological world to remain... | 0 | 2024-06-07T07:20:16 | https://payhip.com/ruchirb/blog/news/top-5-benefits-of-salesforce-lightning-migration | salesforce, lightning, migration |

Businesses must embrace change and adapt to a rapidly evolving technological world to remain effective and competitive. Making the transition from the outdated Salesforce Classic UI to Lightning is one such important... | rohitbhandari102 |

1,879,989 | Advance Tally Prime Course in Online For Expert-Level Accounting and Financial Management Accounting Solutions | Do you want to advance your accounting skills to a more proficient level? Do you want to master... | 0 | 2024-06-07T07:16:46 | https://dev.to/henry_harvin_ddffc7b56c33/advance-tally-prime-course-in-online-for-expert-level-accounting-and-financial-management-accounting-solutions-51g3 | henry, harvin, henryharvin, blog | Do you want to advance your accounting skills to a more proficient level? Do you want to master financial management with Tally Prime, the greatest program out there? You don't need to look any further! We really hope that our **["Advance Tally Prime Course in Online"](https://www.henryharvin.com/tally-prime-course)... | henry_harvin_ddffc7b56c33 |

1,879,988 | Why Should You Invest meme coin Development Company? | The world of cryptocurrencies is constantly evolving and one of the latest trends is the rise of... | 0 | 2024-06-07T07:14:26 | https://dev.to/tamharshi11/why-should-you-invest-meme-coin-development-company-5f7m | The world of cryptocurrencies is constantly evolving, and one of the latest trends is the rise of meme coins. These fun and often humorous cryptocurrencies have gained considerable popularity. If you are interested in developing your own meme coin, here are ten essential steps to guide you through the process.

**Unde... | tamharshi11 | |

1,879,987 | Assignment Writer Australia: How Safe Is Your Data? | In today's digital age, students often seek help from various online services to cope with their... | 0 | 2024-06-07T07:13:42 | https://dev.to/assignmentwriter/assignment-writer-australia-how-safe-is-your-data-mbb | assignmentwriter, writemyassignment, bestassignmentwriter, assignmentwritingservice | In today's digital age, students often seek help from various online services to cope with their academic workload. One popular service is hiring an "[Assignment Writer](https://assignmentwriter.io/)" in Australia. While these services can be incredibly helpful, it's essential to understand how safe your data is when y... | assignmentwriter |

1,879,986 | Using environment variables in React and Vite | Environment variables are a powerful way to manage secrets and configuration settings in your... | 0 | 2024-06-07T07:09:30 | https://10xdev.codeparrot.ai/using-environment-variables-in-react-and-vite | webdev, react, vite, env |

Environment variables are a powerful way to manage secrets and configuration settings in your applications. They allow you to store sensitive information like API keys, database credentials, and other configuration settings outside of your codebase. This makes it easier to manage your application's configuration and r... | harshalranjhani |

1,879,985 | May 2024 Web3 Game Report: Growth Trends and Evolving User Engagement | May 2024 Web3 Gaming Report: Growth Trends and Evolving User Engagement June 2024,... | 0 | 2024-06-07T07:07:25 | https://dev.to/footprint-analytics/may-2024-web3-game-report-growth-trends-and-evolving-user-engagement-opl | <img src="https://statichk.footprint.network/article/e1010959-231d-4754-8507-bd8ab158d62a.png"><em><span style="font-size:9pt;font-family:Arial,sans-serif;color:#000000;background-color:transparent;font-weight:400;font-style:normal;font-variant:normal;text-decoration:none;vertical-align:baseline;white-space:pre;white-s... | footprint-analytics | |

1,879,336 | How to remove Snap versions to free up disk space | Source: How to Clean Up Snap Versions to Free Up Disk Space Symptoms: the partition containing /var... | 0 | 2024-06-07T07:04:35 | https://dev.to/mcale/come-rimuovere-le-versioni-di-snap-per-liberare-spazio-su-disco-15bk | ubuntu, snap, italian, translation | Source: [How to Clean Up Snap Versions to Free Up Disk Space](https://dev.to/taimenwillems/how-to-clean-up-snap-versions-to-free-up-disk-space-22o2)

**Symptoms: the partition containing `/var` is running out of disk space**

Operating system: _Linux Ubuntu_

This quick guide, with a script, helps to ... | mcale |

1,879,984 | Am I smart enough to become a developer? | This blog was originally published on Substack. Subscribe to ‘Letters to New Coders’ to receive free... | 0 | 2024-06-07T07:03:18 | https://dev.to/fahimulhaq/am-i-smart-enough-to-become-a-developer-36hg | becomeadeveloper, beginners, learning | This [blog](https://www.letterstocoders.com/p/am-i-smart-enough-to-become-a-developer?r=1h2f2c&utm_campaign=post&utm_medium=web&triedRedirect=true) was originally published on Substack. Subscribe to ‘[Letters to New Coders](https://www.letterstocoders.com/)’ to receive free weekly posts.

Many aspiring developers ask t... | fahimulhaq |

1,879,983 | Packet loss detection | If you want to retransmit lost packets, you must first detect the packet loss. If... | 0 | 2024-06-07T07:02:22 | https://dev.to/spimodule/obnaruzhieniie-potieri-pakietov-oek | mac, aps |

If you want to retransmit lost packets, you must first detect the packet loss. If there is no packet loss, there is no retransmission. In wireless communication there are usually two ways to detect packet loss: carrier monitoring and acknowledgement mechanisms.

01 Carrier monitoring

Carrier detection ... | spimodule |

1,879,982 | Day 6 | today i learned a flex model in css,I just understood how to use the flex model and today I also... | 0 | 2024-06-07T06:59:17 | https://dev.to/han_han/day-6-21a6 | webdev, html, css, 100daysofcode | Today I learned the `flex` model in CSS. I now understand how to use it, and I also learned about `justify-content` and `box-sizing` for styling in CSS. Tomorrow I will take an exam on the HTML and CSS that I know | han_han |
