id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,879,627 | 🚀 Completed my C++ syllabus and course! 🎉 Excited to share this milestone with everyone. #CodeComplete #CPP #AchievementUnlocked | A post by Rishabh | 0 | 2024-06-06T20:17:55 | https://dev.to/rishabh_devios/completed-my-c-syllabus-and-course-excited-to-share-this-milestone-with-everyone-codecomplete-cpp-achievementunlocked-4m7c | rishabh_devios | ||

1,879,626 | Buy Verified Paxful Account | https://dmhelpshop.com/product/buy-verified-paxful-account/ Buy Verified Paxful Account There are... | 0 | 2024-06-06T20:09:20 | https://dev.to/betam34174/buy-verified-paxful-account-9k9 | webdev, javascript, beginners, programming | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-verified-paxful-account/\n\n\nBuy Verified Paxful Account\nThere are several compelling reasons to consider purchasing a verified Paxful account. Firstly, a verified account offers enhanced security, providing peace of mind to all users. Additionally, it opens up a wider range of trading opportunities, allowing individuals to partake in various transactions, ultimately expanding their financial horizons.\n\nMoreover, Buy verified Paxful account ensures faster and more streamlined transactions, minimizing any potential delays or inconveniences. Furthermore, by opting for a verified account, users gain access to a trusted and reputable platform, fostering a sense of reliability and confidence.\n\nLastly, Paxful’s verification process is thorough and meticulous, ensuring that only genuine individuals are granted verified status, thereby creating a safer trading environment for all users. Overall, the decision to Buy Verified Paxful account can greatly enhance one’s overall trading experience, offering increased security, access to more opportunities, and a reliable platform to engage with. Buy Verified Paxful Account.\n\nBuy US verified paxful account from the best place dmhelpshop\nWhy we declared this website as the best place to buy US verified paxful account? Because, our company is established for providing the all account services in the USA (our main target) and even in the whole world. With this in mind we create paxful account and customize our accounts as professional with the real documents. Buy Verified Paxful Account.\n\nIf you want to buy US verified paxful account you should have to contact fast with us. 
Because our accounts are-\n\nEmail verified\nPhone number verified\nSelfie and KYC verified\nSSN (social security no.) verified\nTax ID and passport verified\nSometimes driving license verified\nMasterCard attached and verified\nUsed only genuine and real documents\n100% access of the account\nAll documents provided for customer security\nWhat is Verified Paxful Account?\nIn today’s expanding landscape of online transactions, ensuring security and reliability has become paramount. Given this context, Paxful has quickly risen as a prominent peer-to-peer Bitcoin marketplace, catering to individuals and businesses seeking trusted platforms for cryptocurrency trading.\n\nIn light of the prevalent digital scams and frauds, it is only natural for people to exercise caution when partaking in online transactions. As a result, the concept of a verified account has gained immense significance, serving as a critical feature for numerous online platforms. Paxful recognizes this need and provides a safe haven for users, streamlining their cryptocurrency buying and selling experience.\n\nFor individuals and businesses alike, Buy verified Paxful account emerges as an appealing choice, offering a secure and reliable environment in the ever-expanding world of digital transactions. Buy Verified Paxful Account.\n\nVerified Paxful Accounts are essential for establishing credibility and trust among users who want to transact securely on the platform. They serve as evidence that a user is a reliable seller or buyer, verifying their legitimacy.\n\nBut what constitutes a verified account, and how can one obtain this status on Paxful? In this exploration of verified Paxful accounts, we will unravel the significance they hold, why they are crucial, and shed light on the process behind their activation, providing a comprehensive understanding of how they function. 
Buy verified Paxful account.\n\n \n\nWhy should to Buy Verified Paxful Account?\nThere are several compelling reasons to consider purchasing a verified Paxful account. Firstly, a verified account offers enhanced security, providing peace of mind to all users. Additionally, it opens up a wider range of trading opportunities, allowing individuals to partake in various transactions, ultimately expanding their financial horizons.\n\nMoreover, a verified Paxful account ensures faster and more streamlined transactions, minimizing any potential delays or inconveniences. Furthermore, by opting for a verified account, users gain access to a trusted and reputable platform, fostering a sense of reliability and confidence. Buy Verified Paxful Account.\n\nLastly, Paxful’s verification process is thorough and meticulous, ensuring that only genuine individuals are granted verified status, thereby creating a safer trading environment for all users. Overall, the decision to buy a verified Paxful account can greatly enhance one’s overall trading experience, offering increased security, access to more opportunities, and a reliable platform to engage with.\n\n \n\nWhat is a Paxful Account\nPaxful and various other platforms consistently release updates that not only address security vulnerabilities but also enhance usability by introducing new features. Buy Verified Paxful Account.\n\nIn line with this, our old accounts have recently undergone upgrades, ensuring that if you purchase an old buy Verified Paxful account from dmhelpshop.com, you will gain access to an account with an impressive history and advanced features. This ensures a seamless and enhanced experience for all users, making it a worthwhile option for everyone.\n\n \n\nIs it safe to buy Paxful Verified Accounts?\nBuying on Paxful is a secure choice for everyone. However, the level of trust amplifies when purchasing from Paxful verified accounts. 
These accounts belong to sellers who have undergone rigorous scrutiny by Paxful. Buy verified Paxful account, you are automatically designated as a verified account. Hence, purchasing from a Paxful verified account ensures a high level of credibility and utmost reliability. Buy Verified Paxful Account.\n\nPAXFUL, a widely known peer-to-peer cryptocurrency trading platform, has gained significant popularity as a go-to website for purchasing Bitcoin and other cryptocurrencies. It is important to note, however, that while Paxful may not be the most secure option available, its reputation is considerably less problematic compared to many other marketplaces. Buy Verified Paxful Account.\n\nThis brings us to the question: is it safe to purchase Paxful Verified Accounts? Top Paxful reviews offer mixed opinions, suggesting that caution should be exercised. Therefore, users are advised to conduct thorough research and consider all aspects before proceeding with any transactions on Paxful.\n\n \n\nHow Do I Get 100% Real Verified Paxful Accoun?\nPaxful, a renowned peer-to-peer cryptocurrency marketplace, offers users the opportunity to conveniently buy and sell a wide range of cryptocurrencies. Given its growing popularity, both individuals and businesses are seeking to establish verified accounts on this platform.\n\nHowever, the process of creating a verified Paxful account can be intimidating, particularly considering the escalating prevalence of online scams and fraudulent practices. This verification procedure necessitates users to furnish personal information and vital documents, posing potential risks if not conducted meticulously.\n\nIn this comprehensive guide, we will delve into the necessary steps to create a legitimate and verified Paxful account. 
Our discussion will revolve around the verification process and provide valuable tips to safely navigate through it.\n\nMoreover, we will emphasize the utmost importance of maintaining the security of personal information when creating a verified account. Furthermore, we will shed light on common pitfalls to steer clear of, such as using counterfeit documents or attempting to bypass the verification process.\n\nWhether you are new to Paxful or an experienced user, this engaging paragraph aims to equip everyone with the knowledge they need to establish a secure and authentic presence on the platform.\n\nBenefits Of Verified Paxful Accounts\nVerified Paxful accounts offer numerous advantages compared to regular Paxful accounts. One notable advantage is that verified accounts contribute to building trust within the community.\n\nVerification, although a rigorous process, is essential for peer-to-peer transactions. This is why all Paxful accounts undergo verification after registration. When customers within the community possess confidence and trust, they can conveniently and securely exchange cash for Bitcoin or Ethereum instantly. Buy Verified Paxful Account.\n\nPaxful accounts, trusted and verified by sellers globally, serve as a testament to their unwavering commitment towards their business or passion, ensuring exceptional customer service at all times. Headquartered in Africa, Paxful holds the distinction of being the world’s pioneering peer-to-peer bitcoin marketplace. Spearheaded by its founder, Ray Youssef, Paxful continues to lead the way in revolutionizing the digital exchange landscape.\n\nPaxful has emerged as a favored platform for digital currency trading, catering to a diverse audience. One of Paxful’s key features is its direct peer-to-peer trading system, eliminating the need for intermediaries or cryptocurrency exchanges. 
By leveraging Paxful’s escrow system, users can trade securely and confidently.\n\nWhat sets Paxful apart is its commitment to identity verification, ensuring a trustworthy environment for buyers and sellers alike. With these user-centric qualities, Paxful has successfully established itself as a leading platform for hassle-free digital currency transactions, appealing to a wide range of individuals seeking a reliable and convenient trading experience. Buy Verified Paxful Account.\n\n \n\nHow paxful ensure risk-free transaction and trading?\nEngage in safe online financial activities by prioritizing verified accounts to reduce the risk of fraud. Platforms like Paxfu implement stringent identity and address verification measures to protect users from scammers and ensure credibility.\n\nWith verified accounts, users can trade with confidence, knowing they are interacting with legitimate individuals or entities. By fostering trust through verified accounts, Paxful strengthens the integrity of its ecosystem, making it a secure space for financial transactions for all users. Buy Verified Paxful Account.\n\nExperience seamless transactions by obtaining a verified Paxful account. Verification signals a user’s dedication to the platform’s guidelines, leading to the prestigious badge of trust. This trust not only expedites trades but also reduces transaction scrutiny. Additionally, verified users unlock exclusive features enhancing efficiency on Paxful. Elevate your trading experience with Verified Paxful Accounts today.\n\nIn the ever-changing realm of online trading and transactions, selecting a platform with minimal fees is paramount for optimizing returns. This choice not only enhances your financial capabilities but also facilitates more frequent trading while safeguarding gains. Buy Verified Paxful Account.\n\nExamining the details of fee configurations reveals Paxful as a frontrunner in cost-effectiveness. 
Acquire a verified level-3 USA Paxful account from usasmmonline.com for a secure transaction experience. Invest in verified Paxful accounts to take advantage of a leading platform in the online trading landscape.\n\n \n\nHow Old Paxful ensures a lot of Advantages?\n\nExplore the boundless opportunities that Verified Paxful accounts present for businesses looking to venture into the digital currency realm, as companies globally witness heightened profits and expansion. These success stories underline the myriad advantages of Paxful’s user-friendly interface, minimal fees, and robust trading tools, demonstrating its relevance across various sectors.\n\nBusinesses benefit from efficient transaction processing and cost-effective solutions, making Paxful a significant player in facilitating financial operations. Acquire a USA Paxful account effortlessly at a competitive rate from usasmmonline.com and unlock access to a world of possibilities. Buy Verified Paxful Account.\n\nExperience elevated convenience and accessibility through Paxful, where stories of transformation abound. Whether you are an individual seeking seamless transactions or a business eager to tap into a global market, buying old Paxful accounts unveils opportunities for growth.\n\nPaxful’s verified accounts not only offer reliability within the trading community but also serve as a testament to the platform’s ability to empower economic activities worldwide. Join the journey towards expansive possibilities and enhanced financial empowerment with Paxful today. Buy Verified Paxful Account.\n\n \n\nWhy paxful keep the security measures at the top priority?\nIn today’s digital landscape, security stands as a paramount concern for all individuals engaging in online activities, particularly within marketplaces such as Paxful. 
It is essential for account holders to remain informed about the comprehensive security protocols that are in place to safeguard their information.\n\nSafeguarding your Paxful account is imperative to guaranteeing the safety and security of your transactions. Two essential security components, Two-Factor Authentication and Routine Security Audits, serve as the pillars fortifying this shield of protection, ensuring a secure and trustworthy user experience for all. Buy Verified Paxful Account.\n\nConclusion\nInvesting in Bitcoin offers various avenues, and among those, utilizing a Paxful account has emerged as a favored option. Paxful, an esteemed online marketplace, enables users to engage in buying and selling Bitcoin. Buy Verified Paxful Account.\n\nThe initial step involves creating an account on Paxful and completing the verification process to ensure identity authentication. Subsequently, users gain access to a diverse range of offers from fellow users on the platform. Once a suitable proposal captures your interest, you can proceed to initiate a trade with the respective user, opening the doors to a seamless Bitcoin investing experience.\n\nIn conclusion, when considering the option of purchasing verified Paxful accounts, exercising caution and conducting thorough due diligence is of utmost importance. It is highly recommended to seek reputable sources and diligently research the seller’s history and reviews before making any transactions.\n\nMoreover, it is crucial to familiarize oneself with the terms and conditions outlined by Paxful regarding account verification, bearing in mind the potential consequences of violating those terms. By adhering to these guidelines, individuals can ensure a secure and reliable experience when engaging in such transactions. Buy Verified Paxful Account.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com\n\n " | betam34174 |

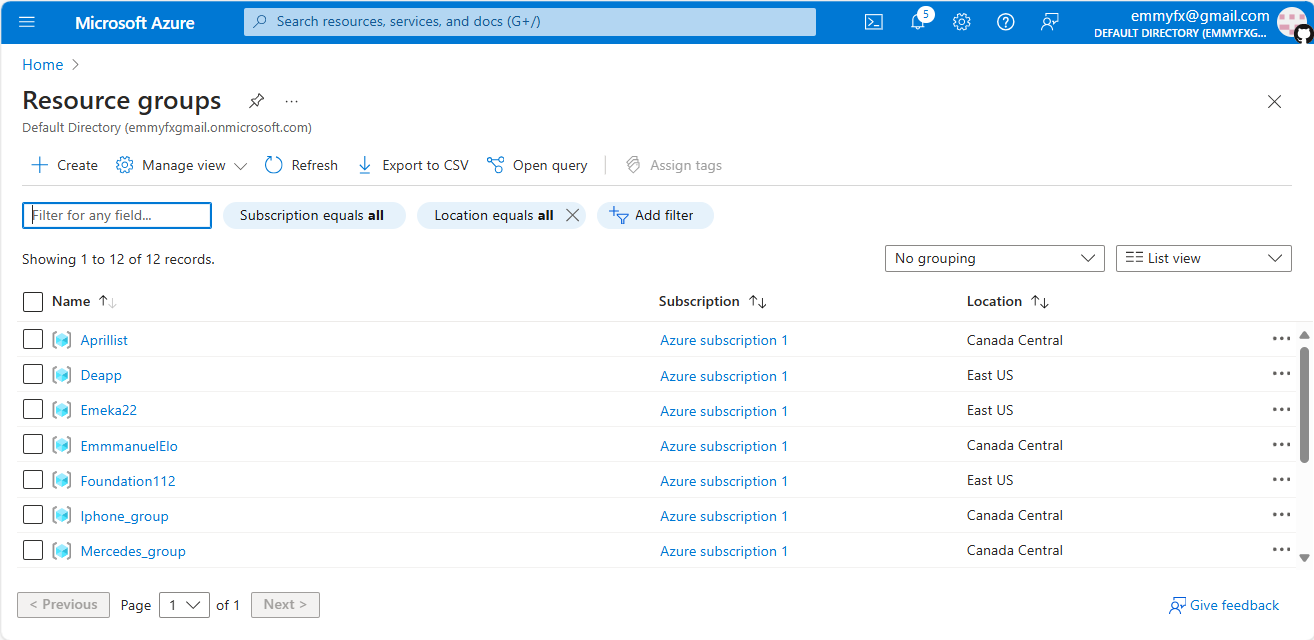

1,879,625 | Core Architectural components of Azure | Introduction Azure is a cloud computing platform powered by Microsoft that helps to build business... | 0 | 2024-06-06T20:08:45 | https://dev.to/ayospecie/core-architectural-components-of-azure-1bi5 | *Introduction*

*Azure* is Microsoft's cloud computing platform for building business solutions that meet organizational needs. It enables businesses and developers to build, deploy, and manage applications and services through Microsoft’s global network of data centers.

It offers a wide range of clo... | ayospecie | |

1,879,624 | FastAPI Beyond CRUD Part 7 - Create a User Authentication Model (Database Migrations With Alembic) | In this video, we create the user authentication model using SQLModel but we approach this by using... | 0 | 2024-06-06T20:07:21 | https://dev.to/jod35/fastapi-beyond-crud-part-7-create-a-user-authentication-model-database-migrations-with-alembic-2l4d | fastapi, api, python, programming | In this video, we create the user authentication model using SQLModel but we approach this by using Alembic. This video demonstrates the steps to set up database migrations with Alembic when working with Async SQLModel.

{%youtube jPTJ0i1JM3I%} | jod35 |

1,878,844 | > Dynamic SVG in Vue with Vite | Let me show you a super useful implementation of Vite's Glob Imports: creating a wrapper component... | 0 | 2024-06-06T20:06:31 | https://dev.to/aronsantha/-dynamic-svg-in-vue-with-vite-40jl | codecapsule, vite, vue | Let me show you a super useful implementation of Vite's [Glob Imports](https://vitejs.dev/guide/features#glob-import): creating a wrapper component for displaying SVGs.

---

The Vue 3 component below imports all files that

- are located in the `/src/assets/svg` folder, and

- have the `.svg` extension

```

// BaseSvg.vue

<t... | aronsantha |

1,879,623 | Three Levels of Scrapping Data: From Basic to Advanced to Pro | Introduction When I was working on Tarwiiga AdGen at Tarwiiga, I needed to finetune an LLM... | 0 | 2024-06-06T20:05:36 | https://dev.to/maelghrib/three-levels-of-scrapping-data-from-basic-to-advanced-to-pro-2i6p | python, machinelearning | ## Introduction

While working on Tarwiiga AdGen at [Tarwiiga](https://tarwiiga.com), I needed to fine-tune an LLM for Google Ads generation, but no suitable dataset existed, so I had to create one from scratch. The tool takes an input of two or three words and returns JSON output, so we need a dataset that contains... | maelghrib |

1,879,621 | Shadcn-ui codebase analysis: examples-nav.tsx explained | I wanted to find out how the below example nav is developed on ui.shadcn.com, so I looked at its... | 0 | 2024-06-06T20:03:47 | https://dev.to/ramunarasinga/shadcn-ui-codebase-analysis-examples-navtsx-explained-14j7 | javascript, nextjs, shadcnui, opensource | I wanted to find out how the below example nav is developed on [ui.shadcn.com](http://ui.shadcn.com), so I looked at its [source code](https://github.com/shadcn-ui/ui/blob/main/apps/www/app/%28app%29/layout.tsx). Because shadcn-ui is built using app router, the files I was interested in were [page.tsx](https://github.c... | ramunarasinga |

1,879,620 | What is Open Source Software (OSS)? | What is open source software? Open source software (OSS) refers to software that is... | 0 | 2024-06-06T20:00:31 | https://dev.to/isttiiak/what-is-open-source-software-oss-1fpg | linux, opensource | ## What is open source software?

Open source software (OSS) refers to software that is distributed with its source code made available to the public. This allows anyone to view, modify, and distribute the software.

Sharing of software has gone on since the beginnings of the computer age. In fact, not sharing software w... | isttiiak |

1,879,619 | "npm run build" is not working for nodejs application. | Every time I run "npm run build", it looks like the build is starting, but it never actually starts. This is... | 0 | 2024-06-06T19:55:46 | https://dev.to/developerdruva/npm-run-build-is-not-working-for-nodejs-application-37k1 | help | Every time I run "npm run build", it looks like the build is starting, but it never actually starts. This message is shown multiple times, and then the process stops. | developerdruva |

1,879,618 | Mobile Game Testing: Essential Test Cases to Ship It Smooth | The competition in the mobile gaming industry is fierce, and developing a game that will go viral is... | 0 | 2024-06-06T19:55:23 | https://dev.to/konst_/mobile-game-testing-essential-test-cases-to-ship-it-smooth-802 | testing, mobile, gamedev | The competition in the mobile gaming industry is fierce, and developing a game that will go viral is a challenge in itself. Should you even bother making mobile games in 2024? Well, the market is projected to hit a whopping [$99 billion](https://www.statista.com/outlook/dmo/digital-media/video-games/mobile-games/worldw... | konst_ |

1,879,612 | Callbacks vs Promises vs Async/Await Concept in JavaScript | JavaScript (JS) provides several ways to handle asynchronous operations, which are crucial for tasks... | 0 | 2024-06-06T19:53:51 | https://dev.to/ayas_tech_2b0560ee159e661/callbacks-vs-promises-vs-asyncawait-concept-in-javascript-hp6 | JavaScript (JS) provides several ways to handle asynchronous operations, which are crucial for tasks like fetching data from an API, reading files, or performing time-consuming computations without blocking the main thread. Let's explore the three main approaches: Callbacks, Promises, and Async/Await.

**What is a Cal... | ayas_tech_2b0560ee159e661 | |

1,879,617 | Buy verified cash app account | https://dmhelpshop.com/product/buy-verified-cash-app-account/ Buy verified cash app account Cash... | 0 | 2024-06-06T19:53:48 | https://dev.to/betam34174/buy-verified-cash-app-account-4025 | webdev, javascript, beginners, programming | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-verified-cash-app-account/\n\n\nBuy verified cash app account\nCash app has emerged as a dominant force in the realm of mobile banking within the USA, offering unparalleled convenience for digital money transfers, deposits, and trading. As the foremost provider of fully verified cash app accounts, we take pride in our ability to deliver accounts with substantial limits. Bitcoin enablement, and an unmatched level of security.\n\nOur commitment to facilitating seamless transactions and enabling digital currency trades has garnered significant acclaim, as evidenced by the overwhelming response from our satisfied clientele. Those seeking buy verified cash app account with 100% legitimate documentation and unrestricted access need look no further. Get in touch with us promptly to acquire your verified cash app account and take advantage of all the benefits it has to offer.\n\nWhy dmhelpshop is the best place to buy USA cash app accounts?\nIt’s crucial to stay informed about any updates to the platform you’re using. If an update has been released, it’s important to explore alternative options. Contact the platform’s support team to inquire about the status of the cash app service.\n\nClearly communicate your requirements and inquire whether they can meet your needs and provide the buy verified cash app account promptly. 
If they assure you that they can fulfill your requirements within the specified timeframe, proceed with the verification process using the required documents.\n\nOur account verification process includes the submission of the following documents: [List of specific documents required for verification].\n\nGenuine and activated email verified\nRegistered phone number (USA)\nSelfie verified\nSSN (social security number) verified\nDriving license\nBTC enable or not enable (BTC enable best)\n100% replacement guaranteed\n100% customer satisfaction\nWhen it comes to staying on top of the latest platform updates, it’s crucial to act fast and ensure you’re positioned in the best possible place. If you’re considering a switch, reaching out to the right contacts and inquiring about the status of the buy verified cash app account service update is essential.\n\nClearly communicate your requirements and gauge their commitment to fulfilling them promptly. Once you’ve confirmed their capability, proceed with the verification process using genuine and activated email verification, a registered USA phone number, selfie verification, social security number (SSN) verification, and a valid driving license.\n\nAdditionally, assessing whether BTC enablement is available is advisable, buy verified cash app account, with a preference for this feature. It’s important to note that a 100% replacement guarantee and ensuring 100% customer satisfaction are essential benchmarks in this process.\n\nHow to use the Cash Card to make purchases?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card. Alternatively, you can manually enter the CVV and expiration date. 
How To Buy Verified Cash App Accounts.\n\nAfter submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a buy verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account.\n\nWhy we suggest to unchanged the Cash App account username?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card.\n\nAlternatively, you can manually enter the CVV and expiration date. After submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account. Purchase Verified Cash App Accounts.\n\nSelecting a username in an app usually comes with the understanding that it cannot be easily changed within the app’s settings or options. This deliberate control is in place to uphold consistency and minimize potential user confusion, especially for those who have added you as a contact using your username. In addition, purchasing a Cash App account with verified genuine documents already linked to the account ensures a reliable and secure transaction experience.\n\n \n\nBuy verified cash app accounts quickly and easily for all your financial needs.\nAs the user base of our platform continues to grow, the significance of verified accounts cannot be overstated for both businesses and individuals seeking to leverage its full range of features. 
How To Buy Verified Cash App Accounts.\n\nFor entrepreneurs, freelancers, and investors alike, a verified cash app account opens the door to sending, receiving, and withdrawing substantial amounts of money, offering unparalleled convenience and flexibility. Whether you’re conducting business or managing personal finances, the benefits of a verified account are clear, providing a secure and efficient means to transact and manage funds at scale.\n\nWhen it comes to the rising trend of purchasing buy verified cash app account, it’s crucial to tread carefully and opt for reputable providers to steer clear of potential scams and fraudulent activities. How To Buy Verified Cash App Accounts. With numerous providers offering this service at competitive prices, it is paramount to be diligent in selecting a trusted source.\n\nThis article serves as a comprehensive guide, equipping you with the essential knowledge to navigate the process of procuring buy verified cash app account, ensuring that you are well-informed before making any purchasing decisions. Understanding the fundamentals is key, and by following this guide, you’ll be empowered to make informed choices with confidence.\n\n \n\nIs it safe to buy Cash App Verified Accounts?\nCash App, being a prominent peer-to-peer mobile payment application, is widely utilized by numerous individuals for their transactions. However, concerns regarding its safety have arisen, particularly pertaining to the purchase of “verified” accounts through Cash App. This raises questions about the security of Cash App’s verification process.\n\nUnfortunately, the answer is negative, as buying such verified accounts entails risks and is deemed unsafe. Therefore, it is crucial for everyone to exercise caution and be aware of potential vulnerabilities when using Cash App. 
How To Buy Verified Cash App Accounts.\n\nCash App has emerged as a widely embraced platform for purchasing Instagram Followers using PayPal, catering to a diverse range of users. This convenient application permits individuals possessing a PayPal account to procure authenticated Instagram Followers.\n\nLeveraging the Cash App, users can either opt to procure followers for a predetermined quantity or exercise patience until their account accrues a substantial follower count, subsequently making a bulk purchase. Although the Cash App provides this service, it is crucial to discern between genuine and counterfeit items. If you find yourself in search of counterfeit products such as a Rolex, a Louis Vuitton item, or a Louis Vuitton bag, there are two viable approaches to consider.\n\n \n\nWhy you need to buy verified Cash App accounts personal or business?\nThe Cash App is a versatile digital wallet enabling seamless money transfers among its users. However, it presents a concern as it facilitates transfer to both verified and unverified individuals.\n\nTo address this, the Cash App offers the option to become a verified user, which unlocks a range of advantages. Verified users can enjoy perks such as express payment, immediate issue resolution, and a generous interest-free period of up to two weeks. With its user-friendly interface and enhanced capabilities, the Cash App caters to the needs of a wide audience, ensuring convenient and secure digital transactions for all.\n\nIf you’re a business person seeking additional funds to expand your business, we have a solution for you. Payroll management can often be a challenging task, regardless of whether you’re a small family-run business or a large corporation. How To Buy Verified Cash App Accounts.\n\nImproper payment practices can lead to potential issues with your employees, as they could report you to the government. 
However, worry not, as we offer a reliable and efficient way to ensure proper payroll management, avoiding any potential complications. Our services provide you with the funds you need without compromising your reputation or legal standing. With our assistance, you can focus on growing your business while maintaining a professional and compliant relationship with your employees. Purchase Verified Cash App Accounts.\n\nA Cash App has emerged as a leading peer-to-peer payment method, catering to a wide range of users. With its seamless functionality, individuals can effortlessly send and receive cash in a matter of seconds, bypassing the need for a traditional bank account or social security number. Buy verified cash app account.\n\nThis accessibility makes it particularly appealing to millennials, addressing a common challenge they face in accessing physical currency. As a result, ACash App has established itself as a preferred choice among diverse audiences, enabling swift and hassle-free transactions for everyone. Purchase Verified Cash App Accounts.\n\n \n\nHow to verify Cash App accounts\nTo ensure the verification of your Cash App account, it is essential to securely store all your required documents in your account. This process includes accurately supplying your date of birth and verifying the US or UK phone number linked to your Cash App account.\n\nAs part of the verification process, you will be asked to submit accurate personal details such as your date of birth, the last four digits of your SSN, and your email address. If additional information is requested by the Cash App community to validate your account, be prepared to provide it promptly. Upon successful verification, you will gain full access to managing your account balance, as well as sending and receiving funds seamlessly. 
Buy verified cash app account.\n\n \n\nHow cash used for international transaction?\nExperience the seamless convenience of this innovative platform that simplifies money transfers to the level of sending a text message. It effortlessly connects users within the familiar confines of their respective currency regions, primarily in the United States and the United Kingdom.\n\nNo matter if you’re a freelancer seeking to diversify your clientele or a small business eager to enhance market presence, this solution caters to your financial needs efficiently and securely. Embrace a world of unlimited possibilities while staying connected to your currency domain. Buy verified cash app account.\n\nUnderstanding the currency capabilities of your selected payment application is essential in today’s digital landscape, where versatile financial tools are increasingly sought after. In this era of rapid technological advancements, being well-informed about platforms such as Cash App is crucial.\n\nAs we progress into the digital age, the significance of keeping abreast of such services becomes more pronounced, emphasizing the necessity of staying updated with the evolving financial trends and options available. Buy verified cash app account.\n\nOffers and advantage to buy cash app accounts cheap?\nWith Cash App, the possibilities are endless, offering numerous advantages in online marketing, cryptocurrency trading, and mobile banking while ensuring high security. As a top creator of Cash App accounts, our team possesses unparalleled expertise in navigating the platform.\n\nWe deliver accounts with maximum security and unwavering loyalty at competitive prices unmatched by other agencies. Rest assured, you can trust our services without hesitation, as we prioritize your peace of mind and satisfaction above all else.\n\nEnhance your business operations effortlessly by utilizing the Cash App e-wallet for seamless payment processing, money transfers, and various other essential tasks. 
Amidst a myriad of transaction platforms in existence today, the Cash App e-wallet stands out as a premier choice, offering users a multitude of functions to streamline their financial activities effectively. Buy verified cash app account.\n\nTrustbizs.com stands by the Cash App’s superiority and recommends acquiring your Cash App accounts from this trusted source to optimize your business potential.\n\nHow Customizable are the Payment Options on Cash App for Businesses?\nDiscover the flexible payment options available to businesses on Cash App, enabling a range of customization features to streamline transactions. Business users have the ability to adjust transaction amounts, incorporate tipping options, and leverage robust reporting tools for enhanced financial management.\n\nExplore trustbizs.com to acquire verified Cash App accounts with LD backup at a competitive price, ensuring a secure and efficient payment solution for your business needs. Buy verified cash app account.\n\nDiscover Cash App, an innovative platform ideal for small business owners and entrepreneurs aiming to simplify their financial operations. With its intuitive interface, Cash App empowers businesses to seamlessly receive payments and effectively oversee their finances. Emphasizing customization, this app accommodates a variety of business requirements and preferences, making it a versatile tool for all.\n\nWhere To Buy Verified Cash App Accounts\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. 
It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nThe Importance Of Verified Cash App Accounts\nIn today’s digital age, the significance of verified Cash App accounts cannot be overstated, as they serve as a cornerstone for secure and trustworthy online transactions.\n\nBy acquiring verified Cash App accounts, users not only establish credibility but also instill the confidence required to participate in financial endeavors with peace of mind, thus solidifying its status as an indispensable asset for individuals navigating the digital marketplace.\n\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nConclusion\nEnhance your online financial transactions with verified Cash App accounts, a secure and convenient option for all individuals. By purchasing these accounts, you can access exclusive features, benefit from higher transaction limits, and enjoy enhanced protection against fraudulent activities. Streamline your financial interactions and experience peace of mind knowing your transactions are secure and efficient with verified Cash App accounts.\n\nChoose a trusted provider when acquiring accounts to guarantee legitimacy and reliability. In an era where Cash App is increasingly favored for financial transactions, possessing a verified account offers users peace of mind and ease in managing their finances. 
Make informed decisions to safeguard your financial assets and streamline your personal transactions effectively.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com" | betam34174 |

1,879,616 | BDD Testing in .NET8 | Introduction In this tutorial you will understand what is Behavior-Driven Development... | 0 | 2024-06-06T19:52:40 | https://dev.to/vinicius_estevam/teste-bdd-em-net8-4ech | ledscommunity, bdd, testing, aspnet |

## Introduction

In this tutorial, you will learn what Behavior-Driven Development (BDD) is and what its benefits are.

### Behavior-Driven Development (BDD)

Behavior-Driven Development (BDD) is an agile methodology that enhances collaboration among all project participants, regardless of technical knowledge. It builds o... | vinicius_estevam |

1,879,593 | Streamlining Angular Deployment with GitHub Actions, GitHub Container Registry, Docker, and Nginx | In modern web development, setting up an efficient CI/CD pipeline for deploying your Angular... | 0 | 2024-06-06T19:50:35 | https://dev.to/aixart/streamlining-angular-deployment-with-github-actions-github-container-registry-docker-and-nginx-27bo | devops, docker, webdev, cicd | In modern web development, setting up an efficient CI/CD pipeline for deploying your Angular applications can significantly enhance your workflow. This blog post guides you through setting up a GitHub Actions pipeline to build and deploy an Angular application using Docker, Nginx, and ensuring basic security group conf... | aixart |

1,879,615 | DIGITAL ASSET FRAUD EXPERT KNOWN AS GEARHEAD ENGINEERS | Growing up on a farm instilled in me a strong work ethic and a deep appreciation for the simple, yet... | 0 | 2024-06-06T19:47:29 | https://dev.to/eva_cole_9a88b92c94a52f90/digital-asset-fraud-expert-known-as-gearhead-engineers-1if2 | Growing up on a farm instilled in me a strong work ethic and a deep appreciation for the simple, yet demanding, rhythms of rural life. However, my aspirations always lay beyond the fields and barns of my upbringing. I yearned for something different, something that blended the new-age economy with the values I held dea... | eva_cole_9a88b92c94a52f90 | |

1,879,614 | A Deep Dive into Three.js: Exploring the Beauty of 3D on the Web 🌐 | Hey there, fellow developers! Today, we're diving into the fascinating world of Three.js, a... | 0 | 2024-06-06T19:45:24 | https://dev.to/mohith/a-deep-dive-into-threejs-exploring-the-beauty-of-3d-on-the-web-5812 | threejs, nextjs, new, 3d | Hey there, fellow developers! Today, we're diving into the fascinating world of Three.js, a JavaScript library that makes creating 3D graphics in the web browser a breeze. Whether you're a seasoned coder or just getting started, Three.js has something for everyone. Let's unpack its history, usage, benefits, and best pr... | mohith |

1,879,613 | Looking to hire talented Blockchain developers for building Crypto Betting Platform | Currently, we are working on crypto betting website development that is called Reject Rumble. We just... | 0 | 2024-06-06T19:44:17 | https://dev.to/twentyfour7/looking-to-hire-talented-blockchain-developers-for-building-crypto-betting-platform-50kd | web3 | We are currently developing a crypto betting website called Reject Rumble.

We have just started front-end UI development, but our previous blockchain developer left the team, so we are looking for developers who have experience in blockchain development.

The current platform supports only the Solana network and can... | twentyfour7 |

1,879,611 | Async/Await keeps order in JavaScript; | JavaScript is a single-threaded synchronous language, meaning it goes through the statements in the... | 0 | 2024-06-06T19:41:57 | https://dev.to/atenajoon/asyncawait-keeps-order-in-javascript-2k4p | asynchronous, javascript, api, react | JavaScript is a single-threaded synchronous language, meaning it goes through the statements in the order they are written and processes one at a time.

```

console.log("cook!");

console.log("eat!");

console.log("clean!");

// cook!

// eat!

// clean!

```
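The same three steps stop running in written order once one of them is asynchronous. The following is a minimal sketch of that effect and of how `await` restores the order. The `delay` helper, the `log` array, and the function names are hypothetical and only serve the illustration; they are not part of the original post.

```javascript
// Hypothetical helper: resolves after `ms` milliseconds,
// standing in for any asynchronous work (a fetch, a file read, ...).
function delay(ms) {
  return new Promise((resolve) => setTimeout(resolve, ms));
}

// Without await: the timer callback is queued and only runs after
// the synchronous statements below it have finished.
function withoutAwait(log) {
  setTimeout(() => log.push("cook!"), 0);
  log.push("eat!");
  log.push("clean!");
}

// With await: the function pauses until the asynchronous step is done,
// so the remaining steps run in the order they are written.
async function withAwait(log) {
  await delay(10);
  log.push("cook!");
  log.push("eat!");
  log.push("clean!");
  return log;
}

const unordered = [];
withoutAwait(unordered); // "eat!" and "clean!" are logged before "cook!"

withAwait([]).then((ordered) => console.log(ordered.join(" ")));
```

Collecting the messages in an array instead of printing them directly is only a design choice that makes the resulting order easy to inspect.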

If before the _cook!_ operation there was an asynchronous operatio... | atenajoon |

1,879,610 | Looking to hire Blockchain Developer for Crypto Betting Platform Development | Currently, we are working on crypto betting website development that is called Reject Rumble. We just... | 0 | 2024-06-06T19:41:07 | https://dev.to/twentyfour7/looking-to-hire-blockchain-developer-for-crypto-betting-platform-development-38do | career, blockchain, web3, node | We are currently developing a crypto betting website called Reject Rumble.

We have just started front-end UI development, but our previous blockchain developer left the team, so we are looking for developers who have experience in blockchain development.

The current platform supports only the Solana network and can... | twentyfour7 |

1,879,609 | Leading 2D Animation Agency in New York, USA - Expert Animations | Discover the leading 2D Animation Agency in New York, USA, with Web Craft Pros. Our team of expert... | 0 | 2024-06-06T19:40:56 | https://dev.to/alexa_johns_af71ca060fd0d/leading-2d-animation-agency-in-new-york-usa-expert-animations-4dl2 | webdev | Discover the leading [2D Animation Agency](https://webcraftpros.com/video-animation) in New York, USA, with Web Craft Pros. Our team of expert animators is dedicated to transforming your ideas into visually stunning and engaging animations. Specializing in explainer videos, promotional content, and animated storytellin... | alexa_johns_af71ca060fd0d |

1,879,605 | Multitenant Considerations In Azure | What is Multitenancy? A multitenant solution serves multiple distint customers or tenants... | 0 | 2024-06-06T19:37:04 | https://dev.to/chethankumblekar/multitenant-considerations-in-azure-bbn | webdev, multitenancy, azure, cloud | ### What is Multitenancy?

A multitenant solution serves multiple distinct customers, or tenants, which might be individual organizations or groups of users. _Examples include B2B solutions (like accounting software), B2C solutions (such as music streaming), and enterprise-wide platforms (like shared Kubernetes clusters).... | chethankumblekar |

1,879,606 | Ojas: The Science Behind How Peel Therapy Works to Transform Your Skin | In the quest for radiant, youthful skin, peel therapy has emerged as a powerful treatment,... | 0 | 2024-06-06T19:32:09 | https://dev.to/ojasskincare9/ojas-the-science-behind-how-peel-therapy-works-to-transform-your-skin-3afj | In the quest for radiant, youthful skin, peel therapy has emerged as a powerful treatment, valued for its capacity to rejuvenate and transform the skin. At Ojas, we believe in harnessing the power of science to enhance beauty, and peel therapy is a prime example of this synergy. This arti... | ojasskincare9 | |

1,879,604 | Insta Saver Pro APK Download: Unlock Instagram Media Downloads | What Is Insta Saver Pro? Insta Saver Pro is a powerful Android app that allows you to download... | 0 | 2024-06-06T19:27:11 | https://dev.to/insta_pro_fc70da4c3d09930/insta-saver-pro-apk-download-unlock-instagram-media-downloads-30a | instagram, seo, entertainment, apk | What Is Insta Saver Pro?

Insta Saver Pro is a powerful Android app that allows you to download Instagram photos, videos, stories, reels, and IGTV content directly to your phone. Whether you want to save your favorite posts or share them with friends, Insta Saver Pro has got you covered!

Key Features:

Download Instagra... | insta_pro_fc70da4c3d09930 |

1,879,462 | Four Fundamental JS Array Methods to Memorize | There are four basic array methods that every beginner should learn to start manipulating arrays.... | 0 | 2024-06-06T19:21:37 | https://dev.to/miguel_c/four-fundamental-js-array-methods-to-memorize-24k | javascript, coding, softwaredevelopment, newbie | There are four basic array methods that every beginner should learn to start manipulating arrays. These methods are `push()`, `pop()`, `shift()`, and `unshift()`. In this guide, I will take you through each method so that you can start manipulating arrays effectively!
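As a quick preview before going through each method, here is a minimal sketch that applies all four to one array. The `fruits` array and its values are illustrative assumptions, not taken from the original post.

```javascript
const fruits = ["apple", "banana"];

// push() adds an item to the end and returns the new length.
const lengthAfterPush = fruits.push("cherry"); // fruits: apple, banana, cherry

// pop() removes the last item and returns it.
const last = fruits.pop(); // fruits: apple, banana

// unshift() adds an item to the start and returns the new length.
const lengthAfterUnshift = fruits.unshift("mango"); // fruits: mango, apple, banana

// shift() removes the first item and returns it.
const first = fruits.shift(); // fruits: apple, banana

console.log(fruits, last, first);
```

All four methods change the original array in place, which is why `fruits` ends up back at its starting contents here.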

## Method `push()`

The `push()` method adds an it... | miguel_c |

1,879,603 | Spotify MOD APK v8.9.6.458: Download (No Ads/Premium) 2024 | In the digital age, online music streaming has revolutionized how we listen to music. Among the... | 0 | 2024-06-06T19:21:18 | https://dev.to/saadseo_e90a28931a110dbae/spotify-mod-apk-v896458-download-no-adspremium-2024-3bf9 | spotify, seo, music, learning | In the digital age, online music streaming has revolutionized how we listen to music. Among the plethora of options available, Spotify MOD APK stands out as a premier choice for music enthusiasts. Let’s dive into what makes it special:

"Unlock Spotify Premium features for free with Spotify++ IPA! Compatible with iOS 1... | saadseo_e90a28931a110dbae |

1,879,602 | Launch of the Edudu App for iOS and Android | Release of Edudu It is with great pleasure that I announce the launch of the Edudu App for... | 0 | 2024-06-06T19:20:11 | https://dev.to/marciofrayze/release-of-edudu-app-1529 | app, ios, android, teachers | ## Release of Edudu

It is with great pleasure that I announce the launch of the Edudu App for [iOS](https://apps.apple.com/br/app/edudu/id6477551944) and [Android](https://play.google.com/store/apps/details?id=tech.segunda.edudu)!

In this article, I present the Edudu app and discuss the technologies I chose to deve... | marciofrayze |

1,879,597 | The correct way to use BEM naming | The BEM (Block Element Modifier) naming convention defines the pattern we should use to name our CSS classes. In this article, you will learn how to use BEM naming correctly | 0 | 2024-06-06T19:17:00 | https://codigoaoponto.com/blog/a-maneira-correta-de-utilizar-a-nomenclatura-bem | css, frontend, webdev, tutorial | ---

title: 'The correct way to use BEM naming'

description: 'The BEM (Block Element Modifier) naming convention defines the pattern we should use to name our CSS classes. In this article, you will learn how to use BEM naming correctly'

author: 'Thiago Nunes Batista'

---

Having standards for... | thiagonunesbatista |

1,879,537 | Setup and Use Sitecore CMP Connector. Part 1 | That sure is a nice Sitecore Content Hub you've got there. It is a good bet that you would like to... | 0 | 2024-06-06T19:16:22 | https://dev.to/mrpipedream/setup-and-use-sitecore-cmp-connector-1f7f | sitecore, cmp, xmcloud | That sure is a nice Sitecore Content Hub you've got there. It is a good bet that you would like to use some of that content on XM Cloud. So let's do that.

This might get a bit long, so, briefly, here is what I am going to describe:

- Set up an Action and Trigger inside Content Hub

- Set up the CMP conn... | mrpipedream |

1,879,596 | Buy verified cash app account | https://dmhelpshop.com/product/buy-verified-cash-app-account/ Buy verified cash app account Cash... | 0 | 2024-06-06T19:14:49 | https://dev.to/jeremyhunt96529/buy-verified-cash-app-account-52g1 | webdev, javascript, beginners, programming | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-verified-cash-app-account/\n\n\n\n\nBuy verified cash app account\nCash app has emerged as a dominant force in the realm of mobile banking within the USA, offering unparalleled convenience for digital money transfers, deposits, and trading. As the foremost provider of fully verified cash app accounts, we take pride in our ability to deliver accounts with substantial limits. Bitcoin enablement, and an unmatched level of security.\n\nOur commitment to facilitating seamless transactions and enabling digital currency trades has garnered significant acclaim, as evidenced by the overwhelming response from our satisfied clientele. Those seeking buy verified cash app account with 100% legitimate documentation and unrestricted access need look no further. Get in touch with us promptly to acquire your verified cash app account and take advantage of all the benefits it has to offer.\n\nWhy dmhelpshop is the best place to buy USA cash app accounts?\nIt’s crucial to stay informed about any updates to the platform you’re using. If an update has been released, it’s important to explore alternative options. Contact the platform’s support team to inquire about the status of the cash app service.\n\nClearly communicate your requirements and inquire whether they can meet your needs and provide the buy verified cash app account promptly. 
If they assure you that they can fulfill your requirements within the specified timeframe, proceed with the verification process using the required documents.\n\nOur account verification process includes the submission of the following documents: [List of specific documents required for verification].\n\nGenuine and activated email verified\nRegistered phone number (USA)\nSelfie verified\nSSN (social security number) verified\nDriving license\nBTC enable or not enable (BTC enable best)\n100% replacement guaranteed\n100% customer satisfaction\nWhen it comes to staying on top of the latest platform updates, it’s crucial to act fast and ensure you’re positioned in the best possible place. If you’re considering a switch, reaching out to the right contacts and inquiring about the status of the buy verified cash app account service update is essential.\n\nClearly communicate your requirements and gauge their commitment to fulfilling them promptly. Once you’ve confirmed their capability, proceed with the verification process using genuine and activated email verification, a registered USA phone number, selfie verification, social security number (SSN) verification, and a valid driving license.\n\nAdditionally, assessing whether BTC enablement is available is advisable, buy verified cash app account, with a preference for this feature. It’s important to note that a 100% replacement guarantee and ensuring 100% customer satisfaction are essential benchmarks in this process.\n\nHow to use the Cash Card to make purchases?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card. Alternatively, you can manually enter the CVV and expiration date. 
How To Buy Verified Cash App Accounts.\n\nAfter submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a buy verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account.\n\nWhy we suggest to unchanged the Cash App account username?\nTo activate your Cash Card, open the Cash App on your compatible device, locate the Cash Card icon at the bottom of the screen, and tap on it. Then select “Activate Cash Card” and proceed to scan the QR code on your card.\n\nAlternatively, you can manually enter the CVV and expiration date. After submitting your information, including your registered number, expiration date, and CVV code, you can start making payments by conveniently tapping your card on a contactless-enabled payment terminal. Consider obtaining a verified Cash App account for seamless transactions, especially for business purposes. Buy verified cash app account. Purchase Verified Cash App Accounts.\n\nSelecting a username in an app usually comes with the understanding that it cannot be easily changed within the app’s settings or options. This deliberate control is in place to uphold consistency and minimize potential user confusion, especially for those who have added you as a contact using your username. In addition, purchasing a Cash App account with verified genuine documents already linked to the account ensures a reliable and secure transaction experience.\n\n \n\nBuy verified cash app accounts quickly and easily for all your financial needs.\nAs the user base of our platform continues to grow, the significance of verified accounts cannot be overstated for both businesses and individuals seeking to leverage its full range of features. 
How To Buy Verified Cash App Accounts.\n\nFor entrepreneurs, freelancers, and investors alike, a verified cash app account opens the door to sending, receiving, and withdrawing substantial amounts of money, offering unparalleled convenience and flexibility. Whether you’re conducting business or managing personal finances, the benefits of a verified account are clear, providing a secure and efficient means to transact and manage funds at scale.\n\nWhen it comes to the rising trend of purchasing buy verified cash app account, it’s crucial to tread carefully and opt for reputable providers to steer clear of potential scams and fraudulent activities. How To Buy Verified Cash App Accounts. With numerous providers offering this service at competitive prices, it is paramount to be diligent in selecting a trusted source.\n\nThis article serves as a comprehensive guide, equipping you with the essential knowledge to navigate the process of procuring buy verified cash app account, ensuring that you are well-informed before making any purchasing decisions. Understanding the fundamentals is key, and by following this guide, you’ll be empowered to make informed choices with confidence.\n\n \n\nIs it safe to buy Cash App Verified Accounts?\nCash App, being a prominent peer-to-peer mobile payment application, is widely utilized by numerous individuals for their transactions. However, concerns regarding its safety have arisen, particularly pertaining to the purchase of “verified” accounts through Cash App. This raises questions about the security of Cash App’s verification process.\n\nUnfortunately, the answer is negative, as buying such verified accounts entails risks and is deemed unsafe. Therefore, it is crucial for everyone to exercise caution and be aware of potential vulnerabilities when using Cash App. 
How To Buy Verified Cash App Accounts.\n\nCash App has emerged as a widely embraced platform for purchasing Instagram Followers using PayPal, catering to a diverse range of users. This convenient application permits individuals possessing a PayPal account to procure authenticated Instagram Followers.\n\nLeveraging the Cash App, users can either opt to procure followers for a predetermined quantity or exercise patience until their account accrues a substantial follower count, subsequently making a bulk purchase. Although the Cash App provides this service, it is crucial to discern between genuine and counterfeit items. If you find yourself in search of counterfeit products such as a Rolex, a Louis Vuitton item, or a Louis Vuitton bag, there are two viable approaches to consider.\n\n \n\nWhy you need to buy verified Cash App accounts personal or business?\nThe Cash App is a versatile digital wallet enabling seamless money transfers among its users. However, it presents a concern as it facilitates transfer to both verified and unverified individuals.\n\nTo address this, the Cash App offers the option to become a verified user, which unlocks a range of advantages. Verified users can enjoy perks such as express payment, immediate issue resolution, and a generous interest-free period of up to two weeks. With its user-friendly interface and enhanced capabilities, the Cash App caters to the needs of a wide audience, ensuring convenient and secure digital transactions for all.\n\nIf you’re a business person seeking additional funds to expand your business, we have a solution for you. Payroll management can often be a challenging task, regardless of whether you’re a small family-run business or a large corporation. How To Buy Verified Cash App Accounts.\n\nImproper payment practices can lead to potential issues with your employees, as they could report you to the government. 
However, worry not, as we offer a reliable and efficient way to ensure proper payroll management, avoiding any potential complications. Our services provide you with the funds you need without compromising your reputation or legal standing. With our assistance, you can focus on growing your business while maintaining a professional and compliant relationship with your employees. Purchase Verified Cash App Accounts.\n\nA Cash App has emerged as a leading peer-to-peer payment method, catering to a wide range of users. With its seamless functionality, individuals can effortlessly send and receive cash in a matter of seconds, bypassing the need for a traditional bank account or social security number. Buy verified cash app account.\n\nThis accessibility makes it particularly appealing to millennials, addressing a common challenge they face in accessing physical currency. As a result, ACash App has established itself as a preferred choice among diverse audiences, enabling swift and hassle-free transactions for everyone. Purchase Verified Cash App Accounts.\n\n \n\nHow to verify Cash App accounts\nTo ensure the verification of your Cash App account, it is essential to securely store all your required documents in your account. This process includes accurately supplying your date of birth and verifying the US or UK phone number linked to your Cash App account.\n\nAs part of the verification process, you will be asked to submit accurate personal details such as your date of birth, the last four digits of your SSN, and your email address. If additional information is requested by the Cash App community to validate your account, be prepared to provide it promptly. Upon successful verification, you will gain full access to managing your account balance, as well as sending and receiving funds seamlessly. 
Buy verified cash app account.\n\n \n\nHow cash used for international transaction?\nExperience the seamless convenience of this innovative platform that simplifies money transfers to the level of sending a text message. It effortlessly connects users within the familiar confines of their respective currency regions, primarily in the United States and the United Kingdom.\n\nNo matter if you’re a freelancer seeking to diversify your clientele or a small business eager to enhance market presence, this solution caters to your financial needs efficiently and securely. Embrace a world of unlimited possibilities while staying connected to your currency domain. Buy verified cash app account.\n\nUnderstanding the currency capabilities of your selected payment application is essential in today’s digital landscape, where versatile financial tools are increasingly sought after. In this era of rapid technological advancements, being well-informed about platforms such as Cash App is crucial.\n\nAs we progress into the digital age, the significance of keeping abreast of such services becomes more pronounced, emphasizing the necessity of staying updated with the evolving financial trends and options available. Buy verified cash app account.\n\nOffers and advantage to buy cash app accounts cheap?\nWith Cash App, the possibilities are endless, offering numerous advantages in online marketing, cryptocurrency trading, and mobile banking while ensuring high security. As a top creator of Cash App accounts, our team possesses unparalleled expertise in navigating the platform.\n\nWe deliver accounts with maximum security and unwavering loyalty at competitive prices unmatched by other agencies. Rest assured, you can trust our services without hesitation, as we prioritize your peace of mind and satisfaction above all else.\n\nEnhance your business operations effortlessly by utilizing the Cash App e-wallet for seamless payment processing, money transfers, and various other essential tasks. 
Amidst a myriad of transaction platforms in existence today, the Cash App e-wallet stands out as a premier choice, offering users a multitude of functions to streamline their financial activities effectively. Buy verified cash app account.\n\nTrustbizs.com stands by the Cash App’s superiority and recommends acquiring your Cash App accounts from this trusted source to optimize your business potential.\n\nHow Customizable are the Payment Options on Cash App for Businesses?\nDiscover the flexible payment options available to businesses on Cash App, enabling a range of customization features to streamline transactions. Business users have the ability to adjust transaction amounts, incorporate tipping options, and leverage robust reporting tools for enhanced financial management.\n\nExplore trustbizs.com to acquire verified Cash App accounts with LD backup at a competitive price, ensuring a secure and efficient payment solution for your business needs. Buy verified cash app account.\n\nDiscover Cash App, an innovative platform ideal for small business owners and entrepreneurs aiming to simplify their financial operations. With its intuitive interface, Cash App empowers businesses to seamlessly receive payments and effectively oversee their finances. Emphasizing customization, this app accommodates a variety of business requirements and preferences, making it a versatile tool for all.\n\nWhere To Buy Verified Cash App Accounts\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. 
It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nThe Importance Of Verified Cash App Accounts\nIn today’s digital age, the significance of verified Cash App accounts cannot be overstated, as they serve as a cornerstone for secure and trustworthy online transactions.\n\nBy acquiring verified Cash App accounts, users not only establish credibility but also instill the confidence required to participate in financial endeavors with peace of mind, thus solidifying its status as an indispensable asset for individuals navigating the digital marketplace.\n\nWhen considering purchasing a verified Cash App account, it is imperative to carefully scrutinize the seller’s pricing and payment methods. Look for pricing that aligns with the market value, ensuring transparency and legitimacy. Buy verified cash app account.\n\nEqually important is the need to opt for sellers who provide secure payment channels to safeguard your financial data. Trust your intuition; skepticism towards deals that appear overly advantageous or sellers who raise red flags is warranted. It is always wise to prioritize caution and explore alternative avenues if uncertainties arise.\n\nConclusion\nEnhance your online financial transactions with verified Cash App accounts, a secure and convenient option for all individuals. By purchasing these accounts, you can access exclusive features, benefit from higher transaction limits, and enjoy enhanced protection against fraudulent activities. Streamline your financial interactions and experience peace of mind knowing your transactions are secure and efficient with verified Cash App accounts.\n\nChoose a trusted provider when acquiring accounts to guarantee legitimacy and reliability. In an era where Cash App is increasingly favored for financial transactions, possessing a verified account offers users peace of mind and ease in managing their finances. 
Make informed decisions to safeguard your financial assets and streamline your personal transactions effectively.\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com\n\n" | jeremyhunt96529 |

1,879,595 | Configure Eslint, Prettier and show eslint warning into running console vite react typescript project | Creating a React application with Vite has become the preferred way over using create-react-app due... | 0 | 2024-06-06T19:14:23 | https://dev.to/khalid7487/configure-eslint-prettier-and-show-eslint-warning-into-running-console-vite-react-typescript-project-pk5 | eslint, prettier, gorupimport, showeslintwarningintoconsole | Creating a React application with Vite has become the preferred way over using create-react-app due to its faster and simpler development environment. Although Vite projects offer ESLint support by default, the default setup is very basic and not very helpful.

In this guide, I will go through config eslint w... | khalid7487 |

1,879,590 | From Cat-Eyes to Aviators: A Nostalgic Journey Through Timeless Eyewear Trends | In the vast realm of fashion, certain accessories stand the test of time, transcending fleeting... | 0 | 2024-06-06T19:13:01 | https://dev.to/daposieyewear/from-cat-eyes-to-aviators-a-nostalgic-journey-through-timeless-eyewear-trends-5d6a | In the vast realm of fashion, certain accessories stand the test of time, transcending fleeting trends and leaving an indelible mark on style history. Among these, eyewear has emerged as a transformative element that not only enhances one’s vision but also adds a touch of sophistication and flair to personal style. Ove... | daposieyewear | |

1,879,592 | Must-Have Helper Functions for Every JavaScript Project | JavaScript is a versatile and powerful language, but like any programming language, it can benefit... | 0 | 2024-06-06T19:07:26 | https://dev.to/raksbisht/must-have-helper-functions-for-every-javascript-project-9bk | helperfunctions, utilityfunctions, codingtips, tutorial | JavaScript is a versatile and powerful language, but like any programming language, it can benefit greatly from the use of helper functions. Helper functions are small, reusable pieces of code that perform common tasks. By incorporating these into your projects, you can simplify your code, improve readability, and redu... | raksbisht |

1,879,590 | Angular vs React js Prop Passing | Prop Passing in React Parent Component: import React from 'react'; import... | 0 | 2024-06-06T19:00:53 | https://dev.to/syedmuhammadaliraza/angular-vs-react-js-prop-passing-1jaj | angular, react, javascript, coding | ## Prop Passing in React

1. **Parent Component:**

```jsx

import React from 'react';

import ChildComponent from './ChildComponent';

class ParentComponent extends React.Component {

render() {

const message = "Hello from Parent!";

return (

<div>

<h1>Parent Component</h1>

<ChildComponent ... | syedmuhammadaliraza |

1,879,586 | A Deep Dive into Next.js 14: Solving Common, Intermediate, and Advanced Issues 🚀 | Next.js 14 is a powerful framework that brings numerous enhancements and features. However, like any... | 0 | 2024-06-06T18:48:05 | https://dev.to/mohith/a-deep-dive-into-nextjs-14-solving-common-intermediate-and-advanced-issues-hja | nextjs, javascript, node, programming | Next.js 14 is a powerful framework that brings numerous enhancements and features. However, like any tool, it comes with its own set of challenges. Let's explore some common, intermediate, and advanced problems you might encounter, along with practical solutions. 🌟

**Common Problems 🛠️**

**1. Build Failures Due to ... | mohith |

1,879,585 | How to use a custom font on Excalidraw.com | Introduction Excalidraw is a great whiteboarding tool, useful for technical diagrams and... | 0 | 2024-06-06T18:44:52 | https://dev.to/dawidcodes/how-to-use-a-custom-font-on-excalidrawcom-4jl4 | webdev, javascript, productivity, css | ### Introduction

Excalidraw is a great whiteboarding tool, useful for technical diagrams and wireframes.

### Problem

The default handwritten font looks quite unprofessional and can be difficult to read.

Additionally, there is no official way to add custom fonts.

### Solution

The solution is to run a custom script... | dawidcodes |

1,879,584 | Unleash the Power of System Design: Essential for Every Software Engineer! 💻🚀 | In the fast-paced world of software engineering, one skill reigns supreme: system design. From... | 0 | 2024-06-06T18:44:34 | https://dev.to/raksbisht/unleash-the-power-of-system-design-essential-for-every-software-engineer-52a | systemdesign, softwareengineering, scalability, tutorial | In the fast-paced world of software engineering, one skill reigns supreme: system design. From turbocharging scalability to fine-tuning performance, mastering system design isn’t just beneficial — it’s absolutely crucial! Let’s explore why system design is a game-changer and how it can supercharge your career as a soft... | raksbisht |

1,879,583 | Undo the last commit in Git | To undo the last commit in Git, you can use one of the following commands, depending on the... | 0 | 2024-06-06T18:42:01 | https://dev.to/lucasvalhos/desfazer-o-ultimo-commit-no-git-38e0 | To undo the last commit in Git, you can use one of the following commands, depending on the situation:

1 - **Undo the last commit, keeping the changes in your working directory**:

```bash

git reset --soft HEAD~1

```

This command undoes the last commit but keeps the changes in your d... | lucasvalhos | |

1,879,580 | How Much Does a Website Cost In Ireland? 2024 Price Guide | How Much Does a Website Cost In Ireland? 2024 Price Guide In a world where businesses live or die by... | 0 | 2024-06-06T18:38:59 | https://dev.to/affordablewebsites/how-much-does-a-website-cost-in-ireland-2024-price-guide-4m0p | webdev, development | How Much Does a Website Cost In Ireland? 2024 Price Guide

In a world where businesses live or die by their online presence, having a well-designed website is non-negotiable. As an Irish [web design agency](https://www.affordablewebsites.ie/) with over 18 years of experience, we understand the vital role a website plays... | affordablewebsites |

1,879,578 | My first Pull, Commit, and Push with Git! | "Little things can have a big impact on our daily life. Playing with Git is very exciting. As a... | 0 | 2024-06-06T18:36:43 | https://dev.to/manish_dev/my-first-pull-commit-and-push-with-git-38j5 | git, github | **"Little things can have a big impact on our daily life. Playing with Git is very exciting. As a beginner, solving minor issues provides great motivation. This is how I push code into GitHub."**

- Run

```

git init

```

in the terminal. This will initialize the folder/repository that you have on your local computer ... | manish_dev |

1,868,117 | ScoutSuite | ScoutSuite is a really nice security tool to audit your cloud solutions. I have used it on the AWS... | 0 | 2024-06-06T18:35:11 | https://dev.to/stefanalfbo/scoutsuite-2l1n | 100daystooffload, tooling, security, cloud | [ScoutSuite](https://github.com/nccgroup/ScoutSuite) is a really nice security tool to audit your cloud solutions.

I have used it on AWS: it was easy to get started with and instantly gave me some things to inspect further. However, the tool also supports other cloud providers such as Azure, GCP, and more.

Th... | stefanalfbo |

1,879,574 | HTTP Status Codes: Your Guide to Web Communication and Error Handling 🌐 | HTTP status codes are like the silent messengers of the web, communicating vital information between... | 0 | 2024-06-06T18:32:16 | https://dev.to/raksbisht/http-status-codes-your-guide-to-web-communication-and-error-handling-1cej | http, statuscodes, webdev, tutorial | HTTP status codes are like the silent messengers of the web, communicating vital information between servers and clients. From indicating successful transactions to warning of errors, these codes play a crucial role in ensuring smooth communication across the internet. Let's dive into the world of HTTP status codes, de... | raksbisht |

1,879,573 | Generating unique IDs in Salesforce with no chance of collision | To guarantee the generation of unique IDs in Salesforce with no chance of collision, you can use... | 0 | 2024-06-06T18:31:50 | https://dev.to/lucasvalhos/geracao-de-ids-unicos-no-salesforce-sem-chance-de-colisao-4jpm | To guarantee the generation of unique IDs in Salesforce with no chance of collision, you can use several effective approaches. Some of them are described below:

### 1. **Use the standard Salesforce API (UUID)**

Salesforce has a native function for generating UUIDs (Universally Unique Identifiers) in Ap... | lucasvalhos | |

1,879,572 | Is JWT Safe When Anyone Can Decode Plain Text Claims | If I get a JWT and can decode the payload, how is it secure? Why couldn't I just grab the token out... | 0 | 2024-06-06T18:30:17 | https://dev.to/jacktt/is-jwt-safe-when-anyone-can-decode-plain-text-claims-2j7o | If I get a JWT and can decode the payload, how is it secure? Why couldn't I just grab the token out of the header, decode and change the user information in the payload, and then encode it again to access any person's account? - My friend asked me this question today.

The short answer is NO, you can decode to see payl... | jacktt | |

1,879,571 | Impacts of changing a Lookup field to Master-Detail in Salesforce | Changing a Lookup relationship field to Master-Detail in Salesforce can bring... | 0 | 2024-06-06T18:29:26 | https://dev.to/lucasvalhos/impactos-ao-alterar-um-campo-lookup-para-master-detail-no-salesforce-l3k | Changing a Lookup relationship field to Master-Detail in Salesforce can bring several challenges. Here are some of the main points to consider:

### 1. **Impact on Existing Data**

- **Referential Integrity**: The Lookup field must be required before converting it to Master-De... | lucasvalhos | |

1,879,570 | Matthew Danchak's Proven Tips for Achieving Optimal Mental Health | Keeping your mental health in check can be tough in today's hectic world. With all the pressures from... | 0 | 2024-06-06T18:24:32 | https://dev.to/matthewdanchak/matthew-danchaks-proven-tips-for-achieving-optimal-mental-health-1ofp | matthewdanchak, healthyrelationships, healthyliving, mentalclarity | Keeping your mental health in check can be tough in today's hectic world. With all the pressures from work, relationships, and the constant digital noise, it's crucial to have some solid strategies to stay mentally healthy. [Matthew Danchak](https://www.f6s.com/member/matthew-danchak), a well-known mental health expert... | matthewdanchak |

1,879,569 | AI CSS Animations | I released my first software in the design space about a month ago, a free AI CSS animation... | 0 | 2024-06-06T18:21:38 | https://dev.to/max_prehoda_9cb09ea7c8d07/ai-css-animations-1e1f | webdev, css, design, ai | I released my first software in the design space about a month ago, a free AI CSS animation generator.

As a dev/designer, I was frustrated with the annoying & tedious process of writing keyframe animations. The lack of good tools available led me to build my own solution.

After a month of intense development, it’s re... | max_prehoda_9cb09ea7c8d07 |

1,875,534 | Database Migrations : Flyway for Spring Boot projects | Like Liquibase, Flyway can be used in two main ways for database migrations in Spring Boot projects,... | 27,623 | 2024-06-06T18:18:50 | https://dev.to/aharmaz/database-migrations-flyway-for-spring-boot-projects-2coi | Like Liquibase, Flyway can be used in two main ways for database migrations in Spring Boot projects: either on application startup or independently of it.

In this post we will see how to configure Flyway and use it in a Spring Boot project for both the development and release phases. You can find example... | aharmaz | |

1,879,562 | Express.js: Unleashing the Power of Node.js for Web Development 🚀🔥 | Are you ready to revolutionize your web development projects with Express.js? In this article, we’ll... | 0 | 2024-06-06T18:16:13 | https://dev.to/raksbisht/expressjs-unleashing-the-power-of-nodejs-for-web-development-59aj | node, webdev, javascript, tutorial | Are you ready to revolutionize your web development projects with Express.js? In this article, we’ll embark on a journey into the world of Express.js — a fast, unopinionated, and minimalist web framework for Node.js. Whether you’re a seasoned developer or just starting your coding adventure, Express.js offers a robust ... | raksbisht |