id | title | description | collection_id | published_timestamp | canonical_url | tag_list | body_markdown | user_username |

|---|---|---|---|---|---|---|---|---|

1,879,101 | Securing File Uploads | File uploads are a common feature in web and mobile applications, but they can pose significant... | 0 | 2024-06-06T11:06:14 | https://blog.ionxsolutions.com/p/securing-file-uploads/ | webdev, security, guide | File uploads are a common feature in web and mobile applications, but they can pose significant security risks if not handled properly. To ensure your apps remain secure, it's crucial to implement robust validation and security measures. This guide will help you to effectively secure file uploads, protecting your systems and end users from malicious intent and malware.

File uploads present several security risks that could compromise both your apps and users. The main risks include:

- **Malicious Files**: Uploaded files can contain viruses, malware, or executable code designed to exploit vulnerabilities.

- **Cross-Site Scripting (XSS)**: Inadequately validated files can contain scripts that execute in the context of the user's browser.

- **Denial-of-Service (DoS) Attacks**: Malicious users can upload very large files, or many smaller ones, to exhaust server storage and processing capacity.

- **SQL Injection**: If uploaded file metadata (e.g. filename) are not meticulously sanitised, they can serve as vectors for SQL injection attacks, potentially compromising the database.
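For the SQL injection risk, sanitising metadata is commonly paired with parameterised queries, so that a filename is bound as data and never spliced into the SQL text. The sketch below is a minimal illustration using Python's built-in `sqlite3` module; the `uploads` table and the `record_upload` helper are hypothetical.

```python
import sqlite3


def record_upload(conn: sqlite3.Connection, original_name: str, size: int) -> int:
    """Store upload metadata with a parameterised query.

    The filename is passed as a bound parameter (the `?` placeholders), so
    the database driver treats it purely as data, never as SQL.
    """
    cur = conn.execute(
        "INSERT INTO uploads (original_name, size_bytes) VALUES (?, ?)",
        (original_name, size),
    )
    conn.commit()
    return cur.lastrowid


conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE uploads (id INTEGER PRIMARY KEY, original_name TEXT, size_bytes INTEGER)"
)

# A hostile filename is stored verbatim instead of being executed.
row_id = record_upload(conn, "x'); DROP TABLE uploads; --.png", 1024)
stored = conn.execute(
    "SELECT original_name FROM uploads WHERE id = ?", (row_id,)
).fetchone()[0]
print(stored)  # prints x'); DROP TABLE uploads; --.png
```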

Due to the diverse range of risks, relying on a single method is not sufficient - a multi-layered approach is required, combining validation checks, metadata sanitisation, secure configuration and malware scanning.

## Validate the File Extension

File extensions help identify the type of a file and whether it should be accepted. However, just like the `Content-Type` header, file names and extensions are supplied by the client and can easily be spoofed. Use an allowlist approach, permitting only the specific extensions that your application requires (e.g., `.jpg`, `.png`, `.pdf`). This is a simple validation, and can act as a preliminary filter to block obviously dangerous/incorrect file types.
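A minimal sketch of an extension allowlist in Python, assuming the client-supplied filename is available as a string; `ALLOWED_EXTENSIONS` and `has_allowed_extension` are illustrative names, not part of any library.

```python
from pathlib import PurePosixPath

# Hypothetical allowlist: adjust to the file types your application needs.
ALLOWED_EXTENSIONS = {".jpg", ".jpeg", ".png", ".pdf"}


def has_allowed_extension(filename: str) -> bool:
    """Return True if the filename's final extension is on the allowlist.

    The comparison is case-insensitive, so "photo.JPG" is accepted.
    """
    suffix = PurePosixPath(filename).suffix.lower()
    return suffix in ALLOWED_EXTENSIONS


print(has_allowed_extension("Holiday.JPG"))  # True
print(has_allowed_extension("shell.php"))    # False
```

Only the final extension is checked, so a name such as `photo.php.jpg` still passes; this is one reason the check is only a preliminary filter.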

## Validate the File Size

Limiting the file size is crucial to prevent denial-of-service (DoS) attacks where an attacker attempts to upload extremely large files to exhaust server resources. Set a maximum file size limit according to your application's requirements and enforce this limit server-side to ensure that oversized files are rejected immediately.
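One way to enforce the limit server-side is to count bytes as the body is read and abort once the limit is exceeded, rather than trusting a client-supplied `Content-Length`. The sketch below assumes the upload is exposed as a binary file-like object; the 5 MiB limit, `read_limited` and `FileTooLarge` are hypothetical. Many web servers and frameworks also offer a request-body size setting, which is usually worth configuring as well.

```python
import io
from typing import BinaryIO

MAX_UPLOAD_BYTES = 5 * 1024 * 1024  # hypothetical 5 MiB limit
CHUNK_SIZE = 64 * 1024              # read the body in 64 KiB pieces


class FileTooLarge(Exception):
    """Raised when an upload exceeds the configured size limit."""


def read_limited(stream: BinaryIO, limit: int = MAX_UPLOAD_BYTES) -> bytes:
    """Read an upload stream, aborting as soon as it exceeds `limit` bytes.

    Bytes are counted as they arrive, so a spoofed Content-Length header
    cannot bypass the check, and at most `limit + CHUNK_SIZE` bytes are
    ever buffered.
    """
    buf = bytearray()
    while True:
        chunk = stream.read(CHUNK_SIZE)
        if not chunk:  # end of stream
            return bytes(buf)
        buf.extend(chunk)
        if len(buf) > limit:
            raise FileTooLarge(f"upload exceeds {limit} bytes")


# Example: a 1,000-byte body is well under the limit.
print(len(read_limited(io.BytesIO(b"x" * 1000))))  # prints 1000
```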

## Validate the `Content-Type` Header

Depending on your application's requirements, you will want to accept only particular types of file; for example, for a profile picture you would only want to accept image files.

The `Content-Type` header, sent by the client, indicates the [MIME type](https://developer.mozilla.org/en-US/docs/Web/HTTP/Basics_of_HTTP/MIME_types) of the file being uploaded. While it's useful to validate as a quick and easy check, it should never be fully trusted - malicious users can easily spoof this header. Therefore, treat the `Content-Type` header as just one of several checks.
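As a quick first check, the declared `Content-Type` can be compared against an allowlist after stripping any parameters. This is a sketch assuming a profile-picture upload that accepts only JPEG and PNG; `ALLOWED_MEDIA_TYPES` and `declared_type_allowed` are hypothetical names. For a `multipart/form-data` request, the value would typically come from the file part's own header, and a passing result only means the client *claims* an acceptable type.

```python
from typing import Optional

# Hypothetical allowlist for a profile-picture upload.
ALLOWED_MEDIA_TYPES = {"image/jpeg", "image/png"}


def declared_type_allowed(content_type: Optional[str]) -> bool:
    """Quick check of the client-declared Content-Type (which can be spoofed)."""
    if not content_type:
        return False
    # Drop parameters such as "; charset=..." and normalise case,
    # since media types are case-insensitive.
    media_type = content_type.split(";", 1)[0].strip().lower()
    return media_type in ALLOWED_MEDIA_TYPES


print(declared_type_allowed("image/PNG"))  # True
print(declared_type_allowed("text/html"))  # False
```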

How to *definitively* determine the file type is covered in the next section.

## Validate the File Type Based on Signature

As neither the file extension nor the `Content-Type` header can be trusted, the most effective and reliable way to verify a file's content/media type is by checking its file signature, or "magic number". These are unique [sequences of bytes](https://www.garykessler.net/library/file_sigs.html), usually at the beginning of a file, that indicate its true format. Validation can be performed by reading the first few bytes of the file and matching them against known signatures for allowed file types. For example, a PNG file typically starts with `89 50 4E 47`, and a JPEG file starts with `FF D8 FF`.
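The signature check described above can be sketched as a prefix match on the first bytes of the file. The PNG and JPEG entries match the signatures given above (the full PNG signature is eight bytes); the PDF entry (`%PDF-`) is an added assumption covering the `.pdf` example used earlier, and `sniff_type` is a hypothetical helper.

```python
from typing import Optional

# Leading signatures ("magic numbers") for the allowed formats.
SIGNATURES = {
    b"\x89PNG\r\n\x1a\n": "image/png",   # 89 50 4E 47 0D 0A 1A 0A
    b"\xff\xd8\xff": "image/jpeg",        # FF D8 FF
    b"%PDF-": "application/pdf",          # 25 50 44 46 2D
}


def sniff_type(header: bytes) -> Optional[str]:
    """Return the media type whose signature prefixes `header`, or None."""
    for magic, media_type in SIGNATURES.items():
        if header.startswith(magic):
            return media_type
    return None


print(sniff_type(b"\x89PNG\r\n\x1a\n\x00\x00\x00\rIHDR"))  # image/png
print(sniff_type(b"<?php echo 1; ?>"))                     # None
```

In practice you would read the first 16 bytes or so of the upload (e.g. `f.read(16)` on a file opened in `"rb"` mode) and reject it if the result is `None` or disagrees with the declared type and extension.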

This method ensures that the file content matches its purported type, providing a robust defence against file type spoofing. Libraries and tools are available in various programming languages to facilitate this check, or alternatively [Verisys Antivirus API](https://www.ionxsolutions.com/products/antivirus-api?utm_source=devto) can identify [50+ different file formats](https://docs.av.ionxsolutions.com/?utm_source=devto#content-type-detection) for you, while also scanning files for malware.

## Scan for Malware

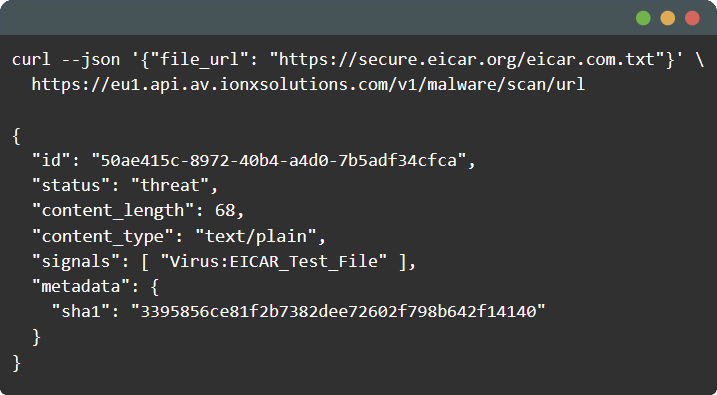

Incorporating malware scanning into your file upload process is a critical step to ensure the security of both your application and your end users. Malware can be hidden in seemingly harmless files, posing significant risks once they are uploaded and processed.

While there are several malware scanning tools available, not all are equally effective. For instance, [ClamAV](https://www.clamav.net) is a popular open-source antivirus engine, but has notable drawbacks such as poor detection rates, high resource usage, and slow scan times. These limitations can compromise your application's performance and security.

Consider using a more robust and efficient solution like [Verisys Antivirus API](https://www.ionxsolutions.com/products/antivirus-api?utm_source=devto). Verisys Antivirus API offers vastly superior detection capabilities, faster scan times, and a ready-to-use [antivirus API](https://www.ionxsolutions.com/products/antivirus-api?utm_source=devto), making it a reliable choice for integrating malware scanning into your applications.

> _Boost your app security with [Verisys Antivirus API](https://www.ionxsolutions.com/products/antivirus-api?utm_source=devto): our language-agnostic API seamlessly integrates malware scanning into your mobile apps, web apps, and backend systems._
>
> _By scanning user-generated content and file uploads, Verisys Antivirus API can stop dangerous malware at the edge, before it reaches your servers, applications - or end users._

## Storing Files

The steps required to secure files at rest depend on where they are stored: for example, they could be uploaded to object storage such as S3, or they could be stored on disk.

- **Sanitise File Names**: file names can contain malicious characters that your application may be unable to process correctly. Always sanitise file names by removing or replacing special characters, spaces, and potentially executable scripts. Consider renaming files upon upload to a standardised naming convention to prevent any harmful effects.

- **Secure Storage Location**: if storing files on disk (rather than, for example, object storage), uploaded files should be stored in a directory that is not directly accessible via the web. This prevents direct execution or access to the files from a URL.

- **Set Appropriate Permissions**: ensure that uploaded files have the minimum necessary permissions. For instance, files should not be executable if they don't need to be.
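The three points above can be combined in a short sketch: the stored file gets a random server-generated name (the client's filename is kept, sanitised, only for display), it is written to a directory assumed to sit outside the web root, and it is created without execute permissions. `UPLOAD_DIR`, `sanitise_display_name` and `store_upload` are hypothetical, and the safe-character set is an assumption to adapt to your needs.

```python
import os
import re
import secrets
import tempfile
from pathlib import Path

# Hypothetical storage location; in production this should be a directory
# outside the web server's document root.
UPLOAD_DIR = Path(tempfile.gettempdir()) / "app-uploads"


def sanitise_display_name(name: str, max_len: int = 100) -> str:
    """Reduce a client-supplied filename to a safe display name."""
    # Keep only the last path component (handles "/" and "\" separators).
    base = name.replace("\\", "/").rsplit("/", 1)[-1]
    # Replace anything outside a conservative character set, and drop
    # leading dots so the result cannot be "." or ".." or a hidden file.
    cleaned = re.sub(r"[^A-Za-z0-9._-]", "_", base).lstrip(".")
    return cleaned[:max_len] or "file"


def store_upload(data: bytes, extension: str, upload_dir: Path = UPLOAD_DIR) -> Path:
    """Write an upload under a random server-generated name.

    `extension` is assumed to be already validated against an allowlist;
    the client's filename is never used to build the storage path.
    """
    upload_dir.mkdir(mode=0o750, parents=True, exist_ok=True)
    path = upload_dir / (secrets.token_hex(16) + extension.lower())
    # O_EXCL refuses to overwrite an existing file. Mode 0o640 gives the
    # owner read/write and the group read, with no execute bits (the
    # process umask may remove further bits).
    fd = os.open(path, os.O_WRONLY | os.O_CREAT | os.O_EXCL, 0o640)
    with os.fdopen(fd, "wb") as f:
        f.write(data)
    return path
```

On POSIX systems the final mode is the requested mode minus the process umask; on Windows most of these permission bits are ignored, so equivalent ACLs would need to be set there instead.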

## Closing Thoughts

By combining these strategies, developers can significantly enhance the security of file uploads in their applications. A layered approach, which includes malware scanning, provides comprehensive protection against a variety of attack vectors, ensuring that file uploads remain a safe and useful feature.

For a comprehensive guide on securing file uploads and other web application security best practices, refer to [OWASP](https://owasp.org) (Open Worldwide Application Security Project). OWASP provides a wealth of resources, including the [OWASP Top Ten](https://owasp.org/www-project-top-ten/), which highlights the most critical security risks to web applications. In particular, see [Unrestricted File Upload](https://owasp.org/www-community/vulnerabilities/Unrestricted_File_Upload).

Learn more about [Verisys Antivirus API](https://www.ionxsolutions.com/products/antivirus-api?utm_source=devto), our language-agnostic API that seamlessly integrates antivirus scanning into your mobile apps, web apps, and backend systems: [https://www.ionxsolutions.com/products/antivirus-api](https://www.ionxsolutions.com/products/antivirus-api?utm_source=devto)

| ionx |

1,879,104 | AI for Business: A Guide to Automating Workflows | Discover how AI for business can automate routine workflows, freeing up valuable time and resources.... | 0 | 2024-06-06T11:04:23 | https://dev.to/trigventsol/ai-for-business-a-guide-to-automating-workflows-3eah | ai, business | Discover how **[AI for business](https://trigvent.com/ai-in-small-business-operations-2024/)** can automate routine workflows, freeing up valuable time and resources. This blog provides a comprehensive guide on how to implement AI solutions to automate processes in areas such as finance, marketing, and operations. Learn about the tools available, their benefits, and how to overcome common challenges to ensure a smooth transition to an automated business model. | trigventsol |

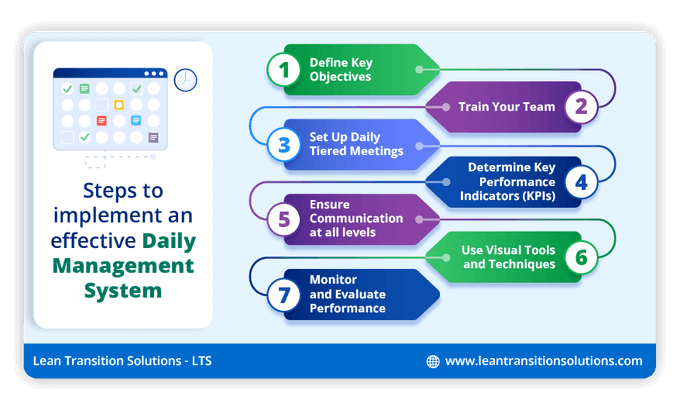

1,879,103 | How to Setup a Lean Daily Management System ? | Imagine a Daily Management System (DMS) that revolutionises how you run your organisation. This isn't... | 0 | 2024-06-06T11:04:21 | https://dev.to/leantransitionsolutions/how-to-setup-a-lean-daily-management-system--2ef6 | tcard, visualmanagementtools, dailymanagementsystem | Imagine a [Daily Management System](https://tcard.leantransitionsolutions.com/daily-management-system) (DMS) that revolutionises how you run your organisation. This isn't your typical management style; it's a dynamic and structured approach that adjusts to real-time daily operations and continuous improvement. Picture a system where visual management tools, daily huddles, and standardised processes take centre stage, boosting communication, swiftly tackling issues, and fostering a culture of accountability. Curious about how to implement a powerful Daily Management Board for your organisation?

Steps to Implement a Powerful Daily Management Board for your Organisation

**1. Define Key Objectives**

**Clarify Objectives:** Determine what you aim to achieve with the daily management board (e.g., tracking tasks, monitoring performance, facilitating daily stand-ups).

**Physical Board vs. Digital Board:** Decide whether a physical board (e.g., whiteboard, corkboard) or a digital tool (e.g. [LTS Digital TCards](https://tcard.leantransitionsolutions.com/) or Kanban Boards) suits your team.

**2. Train your Team**

Train employees at every level on the principles and processes of DMS, ensuring everyone understands and is committed to the system.

**3. Set up Daily Tiered Meetings**

Set up daily huddles or tiered meetings where teams can discuss progress, tackle challenges, and align priorities. This boosts communication and teamwork.

**4. Determine Key Performance Indicators (KPIs)**

Pinpoint and clarify the Key Performance Indicators (KPIs) that match your organisational goals. This helps teams stay on track and measure progress effectively.

**5. Ensure Communication at All Levels**

Create direct lines of communication from upper management to frontline staff, fostering a workplace where information flows smoothly and problems are dealt with quickly.

**6. Use Visual Tools and Techniques**

Use visual management tools like boards, charts, and cards to make data and progress visible, promoting transparency and speeding up decision-making.

**7. Monitor and Evaluate Performance**

Continuously evaluate the DMS's performance by gathering feedback and data, pinpointing areas for improvement, and ensuring ongoing system enhancement.

In conclusion, implementing a powerful [Daily Management Board](https://tcard.leantransitionsolutions.com/software-blog/setting-up-a-lean-daily-management-system) is crucial for streamlining operations and fostering team collaboration. By defining clear objectives, training your team, setting up daily tiered meetings, and utilising visual tools, you can enhance productivity and achieve organisational goals efficiently.

To dive deeper into the Daily Management System and discover how LTS Digital TCards can revolutionise your workflow, follow this link: [https://tcard.leantransitionsolutions.com/signup](https://tcard.leantransitionsolutions.com/signup)

**Let's empower your team together!**

| leantransitionsolutions |

1,879,102 | Go for Backend instead of Python/Django | Hi everyone. I've recently started to work at a trade company and they want me to replace their... | 0 | 2024-06-06T11:04:19 | https://dev.to/instructured/go-for-backend-instead-of-pythondjango-hi8 | python, go, django, webdev | Hi everyone. I've recently started to work at a trade company and they want me to replace their existing website with a new one. I am not a web developer; I do embedded work with C. I got this job without any web experience because the owners of the company are my friends; they trust me personally and they are not in a hurry.

The current website is really simple: the only activity on the client side is that users send price request forms for the products they want to buy. There are no user accounts and no online purchases. All negotiation is done via email by the company's dealers. At the backend there is a database with thousands of products.

Each product has a couple of images and a few specifications. The existing site is built on ASP.NET and MSSQL.

Now they want me to build an enhanced version of this site. At first it should have very similar functionality to the current site, with improved SEO. Later on, they want to add user accounts, an online payment system and a mobile app.

Because I'm not an experienced web developer, I decided to build the new site with Python and Django. As far as I can see, Django provides a lot of useful features out of the box. My problem is Python: because I've been coding primarily in C, I'm struggling to understand the Python way of coding. At first it seems much simpler, but I feel that fine-tuning the code is much more difficult because of the loose coding style. For a while now I've been curious about Go. It is closer to C, and I feel I can learn it better than Python. My question is: how hard will it be to build this website with Go without web dev experience? Does Go have enough tools to simplify the development process? Will it create a lot of problems when adding new functionality to the site? | instructured |

1,879,099 | Common Challenges in Cleaning Services and How to Overcome Them | Various is an issue that affects cleaning services for both houses and other commercial buildings as... | 0 | 2024-06-06T11:01:46 | https://dev.to/davidsmith45/common-challenges-in-cleaning-services-and-how-to-overcome-them-1bg5 | cleaningservices |

Cleaning services for both homes and commercial buildings face a variety of challenges, and these challenges increase the chances of reduced efficiency, dissatisfied clients, and lost business. Being aware of these challenges and addressing them properly is therefore key to providing quality cleaning services and ensuring the growth of the organisation.

## 1. Staffing Issues

Challenge:

There are various obstacles unique to the cleaning industry, and the best known is staffing. A serious issue when hiring is identifying competent, professional, and motivated workers. RAOs increase over time, and absenteeism adds to the problem.

Solution:

To minimize staffing problems, companies can follow legal staffing practices, use staffing agencies, offer competitive wages, and put proper reward systems in place. Most employers realize that investing in continuous training programs lets employees learn new skills, which improves both their abilities and their job satisfaction. Promoting a workplace culture that employees want to uphold, and showing appreciation for their work, also boosts retention. This builds loyalty and reliability, so firms should encourage a culture that supports employees.

## 2. Quality Control

Challenge:

One of the most acute issues is ensuring the quality and consistency of [cleaning services](https://www.rbcclean.com/services/high-level-cleaning/) across all cleaning operations. Customers are never willing to compromise on sanitization, hygiene, and disinfection, and any lapse may cost a business its clientele.

Solution:

It is critical to put measures in place that keep quality from drifting below the normal standard. These may include comprehensive checklists setting out the cleaning tasks for each job, integrated profile checks, and evaluation tools. Cleanliness can be assessed in terms of performance, and anyone looking to improve performance in this area should consider technology such as cleaning management software. Ongoing training and refresher programs should also be conducted so that all employees understand and follow the required quality standards.

## 3. Time Management

Challenge:

Cleaning is a business where efficient time management is imperative. Whether due to an accident, contaminated water, or any other reason, delays or prolonged cleaning processes affect the day's schedule and the profitability of the operation.

Solution:

It is critical to create a clear timeline and stick to it, especially when drawing up schedules and making sure they are followed. Timing work tasks makes it possible to evaluate how long each task takes and to optimize time spent further. Teaching employees time management strategies, and setting proper time frames for specific tasks, increases adherence to schedules and timetables. Also, identifying optimal routes for cleaners, especially in complex or multisite interiors, can save time and money!

## 4. Client Expectations

Challenge:

Meeting client expectations can be tricky, largely because those expectations can be incredibly high. Miscommunication about the scope of service to be delivered can also cause dissatisfaction.

Solution:

First of all, detailing all the provisions of an agreement and any possible constraints is crucial for building an effective collaboration with clients. Providing specific service agreements that clearly set out the areas covered, as well as what is and is not included, reduces confusion. Regular follow-up meetings and feedback sessions help ensure that clients' expectations are met and that any concerns are resolved immediately. Being ready to make reasonable adjustments and showing genuine flexibility can also improve client satisfaction. Cleaning services in Toronto, for example, are said to be very good at customer satisfaction.

## 5. Handling Difficult Clients

Challenge:

Managers and supervisors in every trade have to deal with challenging customers, and the cleaning business is no exception. Managing expectations, responding to complaints, or dealing with rude individuals can be difficult.

Solution:

Training employees in customer service is imperative. Equip them with the best ways of handling complaints and conflict, so they can respond diplomatically and calmly. Courtesy and sensitivity can often defuse situations before they boil over. Establishing boundaries or protocols for client behavior also gives employees a policy that shields them from abuse.

## 6. Health and Safety Concerns

Challenge:

Cleaning work requires employees to handle various chemicals and equipment, which can lead to several health and safety concerns. Laws and safety rules must always be followed, and even then, some accidents are inevitable.

Solution:

Clients should be informed about the terms and conditions of work, as well as the expectations of both parties, right from the start. Service agreements should be anchored in a crystal-clear description of the services to be rendered, including which responsibilities the supplier is expected to handle and which it is not. Regular follow-ups and feedback sessions show the client that their needs are being observed and that all legitimate grievances are addressed. Politeness and a willingness to agree to reasonable demands from clients can also increase satisfaction.

Cleaning businesses face a number of challenges, as outlined above. By addressing them, they can operate more efficiently, deliver higher levels of customer satisfaction, and ensure sustained success. Talent management is one of the major challenges, and the best ways to overcome these challenges include implementing best practices, investing in employee training, and using technology.

| davidsmith45 |

1,879,098 | How Does WeWP Shield Against DDoS Attacks? | DDoS attacks pose significant threats to businesses by overwhelming servers with traffic, leading... | 0 | 2024-06-06T11:00:43 | https://dev.to/wewphosting/how-does-wewp-shield-against-ddos-attacks-ghc |

DDoS attacks pose significant threats to businesses by overwhelming servers with traffic, leading to downtime and potential data breaches. WeWP, a cloud-based [Managed WordPress hosting company](https://www.wewp.io/), offers robust solutions to protect against these attacks, ensuring high uptime and security for websites.

WeWP provides various hosting plans designed to mitigate DDoS threats through advanced technologies and strong infrastructure. They employ several strategies to protect websites, including traffic scrubbing mechanisms to filter out malicious traffic and Web Application Firewalls (WAF) that inspect and block harmful HTTP requests. Additionally, WeWP offers scalable resources, allowing businesses to adjust their computing power, storage, and bandwidth in response to increased traffic during attacks.

Their network infrastructure includes multiple connections, traffic scrubbing centers, and DDoS mitigation appliances to absorb and filter malicious traffic. The anycast routing technique distributes incoming traffic across geographically dispersed data centers, minimizing the impact on individual centers and improving overall stability.

**Also Read** : [The Threat of DNS Hijacking: Detection and Prevention Strategies](https://www.wewp.io/threat-of-dns-hijacking-detection-prevention-strategies/)

WeWP also emphasizes robust communication protocols during DDoS attacks, providing timely updates and transparency to clients about mitigation efforts and resolution times. This approach reassures clients and demonstrates WeWP's commitment to maintaining service availability and minimizing downtime.

Overall, DDoS attacks can cause severe damage, including revenue loss, reputation damage, and data breaches. WeWP's advanced security features and responsive support make it a reliable choice for businesses seeking to protect their online operations from these threats. By leveraging WeWP's comprehensive hosting services, businesses can ensure optimal performance, security, and scalability, thereby safeguarding their digital presence and fostering long-term growth. Contact WeWP for top-notch, affordable cloud-based [WordPress hosting solutions](https://www.wewp.io/) tailored to evolving security needs.

Read the full blog for complete insight: [www.wewp.io](https://www.wewp.io/protect-against-ddos-attacks/) | wewphosting | |

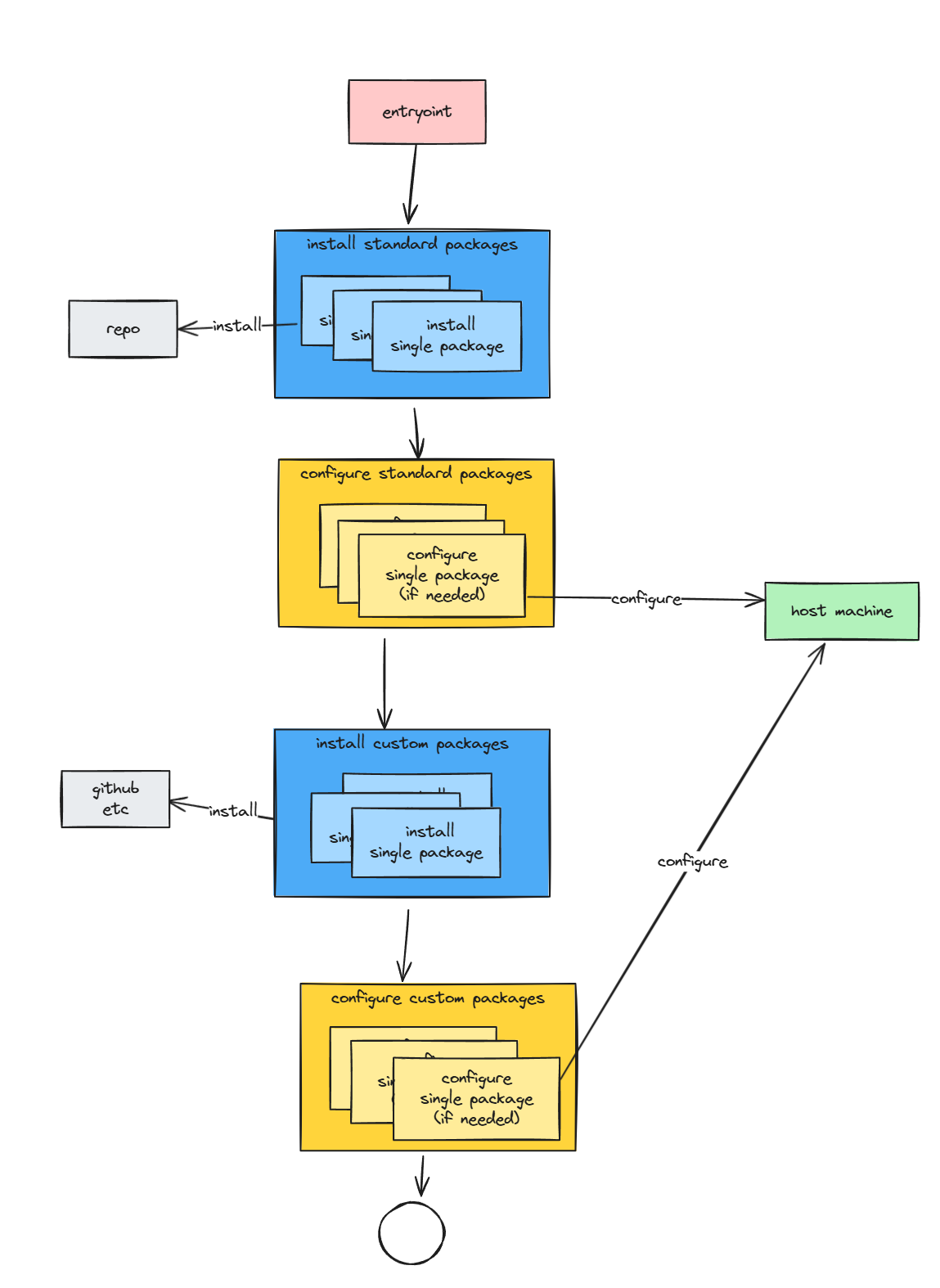

1,878,965 | Does every developer need to know how to deploy software? | Talking to directors of engineering and CTOs, I have heard many of them say that they absolutely... | 0 | 2024-06-06T11:00:33 | https://cloudomation.com/en/cloudomation-blog/does-every-developer-need-to-know-how-to-deploy-software/ | development, devops, devex | Talking to directors of engineering and CTOs, I have heard many of them say that they absolutely expect every developer to know how to deploy their software. When I ask why, the answer is that knowing how the software is deployed makes developers better developers.

In this blog post, I want to take a look at this belief. Does knowledge about deployment make developers better at their job? If yes, how so? And what is the best way to teach developers about deployment?

I also recorded a video on this topic: https://www.youtube.com/watch?v=ZaEFX5DS25g

## Why do most developers know about deployment?

A majority of developers run the software that they work on locally, as part of their development environment. This is why many developers know a lot about the deployment of their software: Because they do it regularly in order to validate their code changes in local deployments.

However, **the sad truth is that deployments are often very complicated, and developers spend a lot of time and nerves getting local deployments to work.**

Fortunately, there is an alternative. [Cloud Development Environments](https://cloudomation.com/en/cloud-development-environments/) (CDEs) make it possible for each developer to have private playgrounds where they can validate their code changes before they commit them to a shared repository. CDEs are functionally equivalent to a local deployment, with the difference that they are fully automated and provided to developers remotely. With CDEs, developers can build, test and deploy their code in their own private environment in the CDE with fast feedback loops and with no danger of interfering with other developers’ work – and without having to know anything about deployment.

This is why it makes sense now to ask if developers need to know about the deployment of the software they work on. Previously, there was no other option. Now that there is an alternative, I argue that we need to rethink the scope of what developers have to do and know about.

## What are the upsides of knowing about deployment?

Knowing how to deploy their software can enable developers to make better decisions when writing code, particularly around:

1. Configuration of their software

2. Core architecture of their software

### 1. Configuration of their software

When a developer has to deploy the software they work on themselves, they will intimately know how this software has to be configured. Since developers are also the ones who decide how configuration can be specified for their software, they are much more likely to consider the user experience of configuration when developing configurable features. This is probably the main benefit of forcing developers to deploy their software. Deciding how a software can be configured is something a (backend) developer has to do reasonably frequently.

### 2. Core architecture of their software

The core architecture of a software hugely influences how simple or complex its deployment is. Deployment therefore has to be considered when deciding on the core architecture of a software. However, since architecture decisions are typically made once, early in the development of a software, it is the architects or CTOs making these decisions who need to know how they plan to deploy their software. For the majority of software developers, this is irrelevant.

### Summary of upsides

So we are left with configuration. It is undoubtedly true that a developer that has had to deploy software that is hard to configure will be more motivated to make their software easily configurable. But relying on this as the mechanism to ensure well-designed configuration for a software is a bad idea. Like any aspect of the software that has a large impact on user experience (in this case the experience of the deployment and operations team of a software), it is something that should be designed by a knowledgeable specialist, who provides guidance on how configuration should be done for a software that other developers then have to follow. This is how feature design works, after all. Otherwise, it will still be each developer deciding on their own what they consider “good and simple configuration”, which will be different for each developer, which most likely again leads to a poor configuration experience overall.

## What are the downsides of knowing about deployment?

There are two main downsides of requiring developers to know how to deploy their software:

1. Time sink: It eats up time and headspace.

2. The myth of the full-stack developer: Few people have the skill and inclination to be good at both coding and deployment.

### 1. Time sink

Knowing about deployment, and having to deploy their software on their own laptops regularly as part of their daily work, are two different things. Unfortunately, the latter is the sad reality for many developers, and it is justified with the “need to know about deployment”. I have already described that knowledge about the deployment of a software has only marginal benefits for developers. The second misconception is that local deployments as part of a developer’s work are a good way to teach developers the things that they should know about deployment. They are not.

If you want your developers to know about the pains of deployment, it may be a good idea to ask them to manually deploy the software they work on as part of their onboarding, or as a regular exercise every once in a while. If you really think that knowing about deployment is valuable for your developers, then this is a good way to teach them: If the deployment is painful, they will remember it very well.

If developers do local deployments daily, they will get used to many of the pains of it and lose awareness. That removes even the marginal benefit of developers’ knowledge about deployment: They might not even try to make it better anymore.

But the worst part is that it eats up developers’ time and headspace on a daily basis. It is a cost factor that many companies are not very aware of, because time spent troubleshooting local deployments is typically not tracked separately. Instead, it is padded on top of each task that a developer works on. But **the time spent on local deployment can reach as much as 25% of a developer’s time (in extreme cases), and is typically somewhere between 5-10% of a developer’s time** when it works fairly well.

That is a LOT of time!

### 2. The myth of the full-stack developer

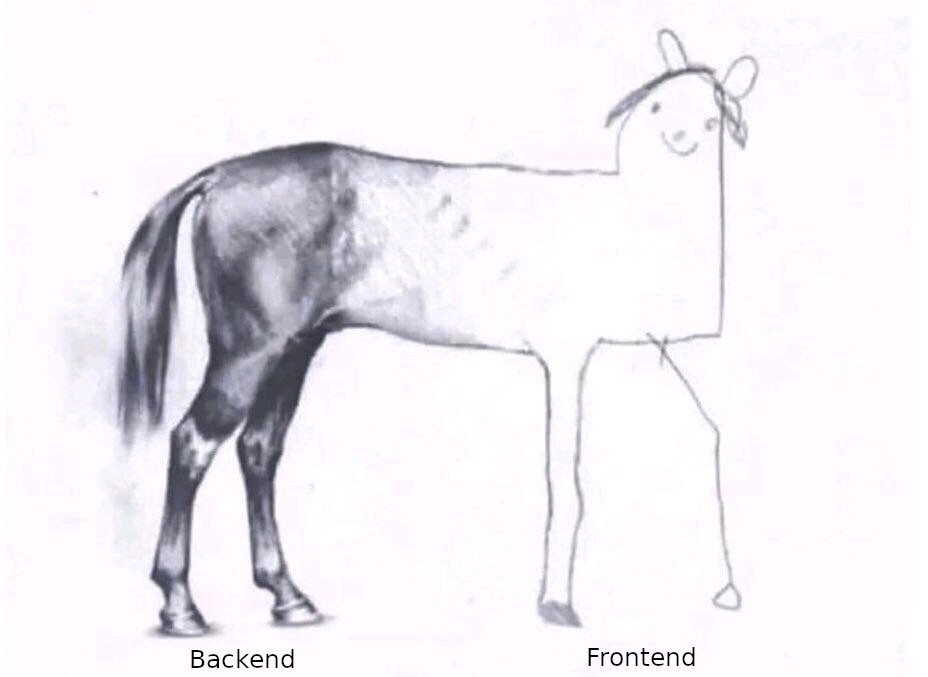

The scope of what a developer is supposed to know is seemingly endless. Even though many specific job titles exist that describe people whose primary focus is deployment and operation of software (operations, devops, site reliability engineer (SRE), …), developers are often assumed to be able to fulfill those functions in addition to their primary function. Often, testing, user experience, architecture, backend and frontend development are also mingled in, leading to the all-encompassing job description of full-stack developer.

There are people who know a lot about many aspects of software development, who can reasonably claim to be full-stack developers and do a decent job in any of the mentioned areas. But for most developers, working as full-stack developers will result in products like this:

The truth is that most people, including developers, hugely benefit from specialisation. Having one area to focus on where one can build up knowledge allows one to reach higher levels of productivity and expertise much faster than when developers are asked to learn about everything at once.

This is especially true for complex software. State-of-the-art business software nowadays often has a lot of components and highly complex deployment logic. As long as deployment can be expressed with a simple “npm run build”, any developer will be able to handle that. But that is hardly ever the case anymore. Many developers spend 10% or more of their time just managing local deployments, and they spend the majority of this time troubleshooting. But in order to troubleshoot local deployments, developers not only have to spend time, they also have to know a lot about tools like Docker or minikube or other tools specific to the deployment of their software.

Bottom line: **Expecting a very broad skillset from developers will exclude the majority of developers from fulfilling such a role successfully.** Even the few full-stack developers who do exist each have specialties and areas where they are less skilled. Finding people who are good in one area is much simpler and will lead to much better outcomes for everyone.

## Conclusion

Deployment is an important aspect of software. It is probably a good idea for most developers to know at least a little bit about the deployment of their software, much in the same way as it is a good idea for each developer to know how to use the software they work on so that they can make better decisions about user experience.

However, in the same way, developers are generally not required to make (or trusted with making) decisions about user experience on their own, even if they have intimate knowledge of the software. It is simply not their speciality. User experience designers exist for a reason: it is a complex area of expertise that requires knowledge and inclination that don’t necessarily overlap with those of a developer whose job is to write code.

It is exactly the same with deployment. Deployment experts exist for a reason – because it is a complex area of expertise that not every developer should be expected to master, on top of their development expertise. Developers should be required to follow best practices or company-internal guidelines when making decisions that influence deployment. But they should not have to spend hours and hours each day struggling with local deployment.

My conclusion: CTOs and directors of engineering expect their developers to handle deployment because:

* It has always been this way and they may not yet realise that it is not necessary anymore.

* Knowing about deployment serves as a proxy for the general skill and knowledge of a developer. (I could also put it more bluntly: It propagates the unhelpful stereotype of the all-knowing full-stack developer as the ideal developer.)

Neither reason stands up to scrutiny.

## Bottom line: It’s expensive and has few benefits

To summarise: Few developers have the inclination, experience and skillset to fulfil the stereotype of the full-stack developer who knows how to code and deploy their software. Knowing about the deployment of software has only marginal benefits at best, but requires a lot of time and energy to learn and manage.

Consequently, forcing developers to learn about Docker, minikube, network configurations and a whole lot of other things and tools that they need only for local deployment is a huge waste. Developers generally don’t even like doing this. It is a drag on both productivity and happiness.

**This is good news!** It means that there is a huge amount of time and headspace that developers could stop investing in local deployment. There is a big opportunity to make developers a lot happier and more productive.

And fortunately, it is easily possible to spare developers the pains of local deployments. CDEs are tools specifically designed to do this. They allow developers to focus on writing great code, without having to worry about deployment.

More about CDEs:

* Article: [Where CDEs bring value (and where they don’t)](https://cloudomation.com/en/cloudomation-blog/where-cdes-bring-value-and-where-they-dont/)

* Article: [Cloud / Remote Development Environments: 7 tools at a glance](https://cloudomation.com/en/cloudomation-blog/remote-development-environments-tools/)

* Whitepaper: [Full list of CDE vendors (+feature comparison table)](https://cloudomation.com/en/whitepaper-en/cde-vendors-feature-comparison/) | makky |

1,845,551 | Ibuprofeno.py💊| #120: Explain this Python code | Explain this Python code Difficulty: Easy x = {"pepe", "albert",... | 25,824 | 2024-06-06T11:00:00 | https://dev.to/duxtech/ibuprofenopy-120-explica-este-codigo-python-2p9 | python, spanish, learning, beginners | ## **<center>Explain this Python code</center>**

#### <center>**Difficulty:** <mark>Easy</mark></center>

```py

x = {"pepe", "albert", "jacinto", "alba"}

print(x[1])

```

👉 **A.** `pepe`

👉 **B.** `albert`

👉 **C.** `jacinto`

👉 **D.** `TypeError`

---

{% details **Answer:** %}

👉 **D.** `TypeError`

Sets are an unordered Python data structure that does not index its elements, so you cannot access a specific value in a set by its index (which you can do with lists and tuples). Trying to do so raises `TypeError: 'set' object is not subscriptable`.
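A short Python sketch of what happens here and of two common alternatives: a membership test, or converting the set to a sorted list when you really need positions. Sorting is one choice among several; it is used here only because a set itself has no defined order:

```python
x = {"pepe", "albert", "jacinto", "alba"}

# Indexing a set fails because set elements have no positions
try:
    print(x[1])
except TypeError as err:
    print(f"TypeError: {err}")  # TypeError: 'set' object is not subscriptable

# Membership tests are what sets are designed for
print("albert" in x)  # True

# For positional access, build an ordered sequence first.
# sorted() gives a deterministic (alphabetical) order; list(x) would not.
items = sorted(x)
print(items[1])  # albert
```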

{% enddetails %} | duxtech |

1,879,097 | Service Discovery and Service Registry | Service Discovery and Service Registry are important components of microservice architecture. Both... | 0 | 2024-06-06T10:59:08 | https://dev.to/mustafacam/service-registry-n97 |

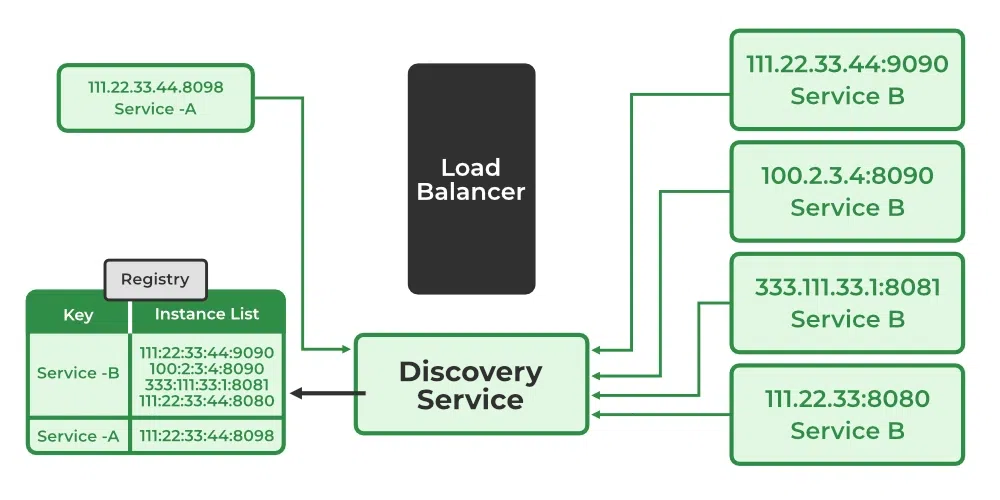

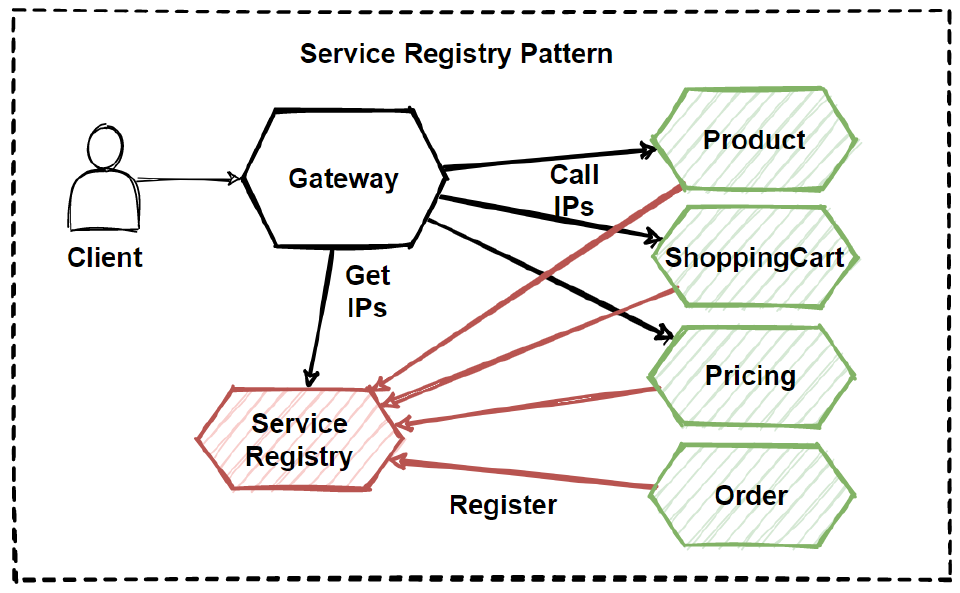

**Service Discovery** and **Service Registry** are important components of microservice architecture. Both allow microservices to be discovered dynamically and to communicate with each other. However, they play different roles and work together to make microservices easier to manage.

### Service Registry

A **Service Registry** is a central registry in which microservices record their network locations (IP address and port). Microservices register themselves when they start and are removed from the registry when they shut down or become unavailable.

#### Features:

1. **Dynamic registration and removal**: Services register themselves when they start and remove themselves from the registry when they shut down.

2. **Health checks**: The Service Registry can monitor the health of services and ensure that only healthy services are used.

3. **Central management**: It keeps track of where services are running in one central place.
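To make the three features above concrete, here is a minimal in-memory sketch in Python. It is not how Eureka is implemented; the class name, the TTL-based heartbeat scheme and the addresses are assumptions for illustration. An injectable clock keeps the example deterministic:

```python
import time


class ServiceRegistry:
    """Hypothetical in-memory registry with heartbeat-based health tracking."""

    def __init__(self, ttl_seconds=30.0, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        # service name -> {instance address -> time of last heartbeat}
        self._instances = {}

    def register(self, service, address):
        # Called when an instance starts; counts as its first heartbeat
        self._instances.setdefault(service, {})[address] = self.clock()

    def heartbeat(self, service, address):
        # Instances renew periodically; a missed renewal marks them unhealthy
        if address in self._instances.get(service, {}):
            self._instances[service][address] = self.clock()

    def deregister(self, service, address):
        # Called on graceful shutdown
        self._instances.get(service, {}).pop(address, None)

    def healthy_instances(self, service):
        # Only instances whose last heartbeat is within the TTL are returned
        now = self.clock()
        return sorted(
            addr for addr, last in self._instances.get(service, {}).items()
            if now - last <= self.ttl
        )


# Usage with a fake clock so the result is deterministic
now = [0.0]
registry = ServiceRegistry(ttl_seconds=30, clock=lambda: now[0])
registry.register("order-service", "10.0.0.5:8080")
registry.register("order-service", "10.0.0.6:8080")
now[0] = 20.0
registry.heartbeat("order-service", "10.0.0.5:8080")
now[0] = 40.0  # 10.0.0.6 was last seen at t=0, so its entry has expired
print(registry.healthy_instances("order-service"))  # ['10.0.0.5:8080']
```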

#### Example: Netflix Eureka

Eureka is a popular example of a Service Registry. Microservices register themselves with Eureka, and other services ask Eureka for the locations of those services when they need them.

### Service Discovery

**Service Discovery** is the mechanism that allows microservices to find each other dynamically. It is implemented in two main ways: Client-Side Discovery and Server-Side Discovery.

#### Client-Side Discovery

In this approach, the client application queries the Service Registry directly to learn the location of the service it needs. The client then connects to the service directly using that information.

#### Server-Side Discovery

In this approach, the client makes a request that is routed through a load balancer or API Gateway. The load balancer queries the Service Registry, finds the service's location and forwards the request to the correct service.
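The difference between the two approaches comes down to who performs the lookup. A minimal Python sketch under simplifying assumptions: the registry is a plain dict, instances are chosen round-robin, and the gateway only returns the target URL instead of proxying a real HTTP request. All names and addresses are hypothetical:

```python
import itertools


class RoundRobinDiscoveryClient:
    """Client-side discovery: the caller looks up instances and picks one itself."""

    def __init__(self, lookup):
        # lookup: function(service_name) -> list of healthy addresses
        self.lookup = lookup
        self._counters = {}

    def choose(self, service):
        instances = self.lookup(service)
        if not instances:
            raise LookupError(f"no healthy instances for {service!r}")
        counter = self._counters.setdefault(service, itertools.count())
        return instances[next(counter) % len(instances)]


class Gateway:
    """Server-side discovery: clients only know the gateway, which picks the instance."""

    def __init__(self, lookup):
        self._balancer = RoundRobinDiscoveryClient(lookup)

    def forward(self, service, path):
        address = self._balancer.choose(service)
        # A real gateway would proxy the request; here we only build the target URL
        return f"http://{address}{path}"


registry = {"other-service": ["10.0.0.5:8080", "10.0.0.6:8080"]}


def lookup(name):
    return registry.get(name, [])


client = RoundRobinDiscoveryClient(lookup)
print([client.choose("other-service") for _ in range(3)])
# ['10.0.0.5:8080', '10.0.0.6:8080', '10.0.0.5:8080']

gateway = Gateway(lookup)
print(gateway.forward("other-service", "/resource/42"))
# http://10.0.0.5:8080/resource/42
```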

### Example Scenario

Let's look at how the different services in a microservice architecture communicate with each other:

1. **Setting Up the Eureka Server**

The Eureka Server acts as a central registry. Microservices register themselves with this server.

#### Add the Dependency:

```xml
<dependency>
    <groupId>org.springframework.cloud</groupId>
    <artifactId>spring-cloud-starter-netflix-eureka-server</artifactId>
</dependency>
```

#### Main Class:

```java
import org.springframework.boot.SpringApplication;
import org.springframework.boot.autoconfigure.SpringBootApplication;
import org.springframework.cloud.netflix.eureka.server.EnableEurekaServer;

@SpringBootApplication
@EnableEurekaServer // turns this application into a Eureka registry server
public class EurekaServerApplication {

    public static void main(String[] args) {
        SpringApplication.run(EurekaServerApplication.class, args);
    }
}
```

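#### Configuration:

```yaml
server:
  port: 8761  # port commonly used for Eureka

eureka:
  client:
    # The server itself should not register with or fetch from a registry
    register-with-eureka: false
    fetch-registry: false
  server:
    # Start serving immediately instead of waiting for peers to sync (handy for a single local instance)
    wait-time-in-ms-when-sync-empty: 0
```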

2. **Setting Up a Eureka Client (Microservice)**

Each microservice registers itself with the Eureka Server and learns the locations of other services.

#### Add the Dependency:

```xml
<dependency>
    <groupId>org.springframework.cloud</groupId>
    <artifactId>spring-cloud-starter-netflix-eureka-client</artifactId>
</dependency>
```

#### Main Class:

```java
import org.springframework.boot.SpringApplication;
import org.springframework.boot.autoconfigure.SpringBootApplication;

// With spring-cloud-starter-netflix-eureka-client on the classpath, the service
// registers itself with Eureka automatically. The @EnableEurekaClient annotation
// used in older examples is optional there and was removed in Spring Cloud Netflix 4.x.
@SpringBootApplication
public class SomeServiceApplication {

    public static void main(String[] args) {
        SpringApplication.run(SomeServiceApplication.class, args);
    }
}
```

#### Configuration:

```yaml
spring:
  application:
    name: some-service  # name this service registers under (example value)

eureka:
  client:
    service-url:
      defaultZone: http://localhost:8761/eureka/
```

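3. **Using a Feign Client**

A Feign Client can be used for communication between microservices. This requires the `spring-cloud-starter-openfeign` dependency and the `@EnableFeignClients` annotation on the application class.

#### Feign Client Interface:

```java
import org.springframework.cloud.openfeign.FeignClient;
import org.springframework.web.bind.annotation.GetMapping;
import org.springframework.web.bind.annotation.PathVariable;

// "other-service" is the name the target service is registered under in Eureka;
// Feign resolves it to a concrete instance through the registry.
// Resource is an application-specific DTO (not shown here).
@FeignClient(name = "other-service")
public interface OtherServiceClient {

    @GetMapping("/resource/{id}")
    Resource getResourceById(@PathVariable("id") Long id);
}
```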

#### Using It in a Service:

```java
import org.springframework.beans.factory.annotation.Autowired;
import org.springframework.stereotype.Service;

@Service
public class SomeService {

    private final OtherServiceClient otherServiceClient;

    @Autowired // optional when the class has a single constructor
    public SomeService(OtherServiceClient otherServiceClient) {
        this.otherServiceClient = otherServiceClient;
    }

    public Resource fetchResource(Long id) {
        // Calls GET /resource/{id} on an instance of "other-service"
        return otherServiceClient.getResourceById(id);
    }
}
```

### Conclusion

**Service Registry** and **Service Discovery** are critical components for managing microservices dynamically and at scale. The Service Registry records where microservices are located, while Service Discovery uses those records to let microservices find and communicate with each other. Together, these mechanisms increase the flexibility and manageability of a microservice architecture. | mustafacam | |

1,879,096 | Revolutionize Your Supply Chain with Tableau: Achieve Unmatched Efficiency and Flexibility | In the ever-evolving landscape of supply chain management, efficiency and flexibility are more... | 0 | 2024-06-06T10:58:17 | https://dev.to/shreya123/revolutionize-your-supply-chain-with-tableau-achieve-unmatched-efficiency-and-flexibility-271b | supplychain, tableauservices, tableaudataservices | In the ever-evolving landscape of supply chain management, efficiency and flexibility are more critical than ever. Modern supply chains must adapt quickly to changes, optimize operations, and make data-driven decisions to stay competitive. Tableau, a leading data visualization and business intelligence tool, offers powerful solutions that help organizations achieve these goals.

**Enhanced Data Visibility and Insights**

Tableau’s robust data visualization capabilities provide supply chain managers with real-time insights into every aspect of their operations. With interactive dashboards and detailed reports, stakeholders can monitor key performance indicators (KPIs) such as inventory levels, order fulfillment rates, and transportation costs. This enhanced visibility allows for proactive decision-making and swift response to any disruptions.

**Streamlined Operations**

By integrating data from various sources, Tableau breaks down silos and ensures a unified view of the supply chain. This comprehensive perspective enables businesses to identify inefficiencies, streamline processes, and optimize resource allocation. For instance, advanced analytics can reveal patterns and trends that lead to more accurate demand forecasting and inventory management, reducing waste and improving service levels.

**Flexibility and Scalability**

Tableau’s solutions are designed to scale with your business, accommodating growth and changes in the supply chain landscape. Whether you’re a small enterprise or a global corporation, Tableau can handle large datasets and complex analysis without compromising performance. Its flexibility allows customization to meet the unique needs of your organization, ensuring that you have the right tools to tackle any challenge.

**Improved Collaboration and Communication**

Effective supply chain management requires seamless collaboration across departments and with external partners. Tableau fosters improved communication by providing a shared platform where all stakeholders can access and analyze the same data. This collaborative environment helps align goals, streamline workflows, and ensure everyone is working towards the same objectives.

**Driving Innovation and Competitive Advantage**

In a competitive market, the ability to innovate quickly is a significant advantage. Tableau empowers organizations to experiment with new strategies, test hypotheses, and measure outcomes with ease. By leveraging data to drive innovation, businesses can stay ahead of the curve and continually improve their supply chain operations.

**Conclusion**

Maximizing efficiency and flexibility in the modern supply chain is no longer a luxury—it’s a necessity. Tableau’s powerful data visualization and analytics solutions provide the insights and tools needed to optimize operations, enhance collaboration, and drive innovation. Embrace Tableau to transform your supply chain and achieve sustainable growth in today’s dynamic market.

**Ready to Revolutionize Your Supply Chain?**

Explore how Tableau can empower your supply chain management strategy and unlock new levels of efficiency and flexibility. [Connect with us today to learn more!](https://www.softwebsolutions.com/resources/supply-chain-optimization-tableau-use-cases.html) | shreya123 |

1,879,095 | Effortless Object Detection In TensorFlow With Pre-Trained Models | Object detection is a crucial task in computer vision that involves identifying and locating... | 0 | 2024-06-06T10:56:44 | https://dev.to/codetrade_india/effortless-object-detection-in-tensorflow-with-pre-trained-models-214c | objectdetection, tensorflow, pretrainedmodels, deeplearninglibrary |

Object detection is a crucial task in computer vision that involves identifying and locating objects within an image or a video stream. The implementation of object detection has become more accessible than ever before with advancements in [deep learning libraries](https://www.codetrade.io/blog/deep-learning-libraries-you-need-to-know-in-2024/) like TensorFlow.

In this blog post, we will walk through the process of performing object detection using a pre-trained model in TensorFlow, complete with code examples. Let’s start.

Explore More: [How To Train TensorFlow Object Detection In Google Colab: A Step-by-Step Guide](https://www.codetrade.io/blog/train-tensorflow-object-detection-in-google-colab/)

## **Steps to Build Object Detection Using Pre-Trained Models in TensorFlow**

Before diving into the code, you must set up your environment and prepare your dataset. In this example, we’ll use a pre-trained model called `ssd_mobilenet_v2_fpnlite_320x320_coco17_tpu-8` from TensorFlow’s model zoo, which is trained on the COCO dataset.

### **1. Data Preparation**

First, let’s organize our data into the required directory structure:

```python
import os
import shutil

# Create the working directory for the dataset
os.makedirs('data', exist_ok=True)

# Unzip your dataset into the 'data' directory.
# My dataset is 'Fruit_dataset.zip'; replace the path with your dataset's actual path.
# (The leading "!" runs a shell command in a Colab/Jupyter notebook.)
!unzip /content/drive/MyDrive/Fruit_dataset.zip -d /content/data

# Move image and annotation files into their respective folders.
# This code assumes your dataset contains 'jpg' images and 'xml' annotations
# and organizes them into 'annotations_train', 'images_train',
# 'annotations_test' and 'images_test' folders. Adapt paths to your dataset.
def organize(split_dir, annotations_dir, images_dir):
    os.makedirs(annotations_dir, exist_ok=True)
    os.makedirs(images_dir, exist_ok=True)
    # Collect paths first so that moving files does not interfere with os.walk
    files = [os.path.join(d, f) for d, _, names in os.walk(split_dir) for f in names]
    for source_path in files:
        if source_path.endswith('.xml'):
            destination_path = annotations_dir
        elif source_path.endswith('.jpg'):
            destination_path = images_dir
        else:
            continue  # skip any other file types
        # Skip files that are already in their target folder
        if os.path.dirname(source_path) != destination_path:
            shutil.move(source_path, destination_path)

# images & annotations for test data
organize('/content/data/test_zip/test',
         '/content/data/test_zip/test/annotations_test',
         '/content/data/test_zip/test/images_test')

# images & annotations for training data
organize('/content/data/train_zip/train',
         '/content/data/train_zip/train/annotations_train',
         '/content/data/train_zip/train/images_train')
```

### **2. Convert XML Annotations to CSV**

To train a model, we first convert the XML annotation files into CSV files, an intermediate format from which the TFRecord files are generated in the next step. We’ll create a function for this purpose:

```python
import glob
import xml.etree.ElementTree as ET

import pandas as pd


def xml_to_csv(path):
    """Collect Pascal VOC-style XML annotations in `path` into a DataFrame."""
    classes_names = []
    xml_list = []
    for xml_file in glob.glob(path + '/*.xml'):
        root = ET.parse(xml_file).getroot()
        size = root.find('size')
        for member in root.findall('object'):
            # Look up children by tag name instead of by position
            class_name = member.find('name').text
            bndbox = member.find('bndbox')
            classes_names.append(class_name)
            value = (root.find('filename').text,
                     int(size.find('width').text),
                     int(size.find('height').text),
                     class_name,
                     # int(float(...)) also accepts coordinates stored as decimals
                     int(float(bndbox.find('xmin').text)),
                     int(float(bndbox.find('ymin').text)),
                     int(float(bndbox.find('xmax').text)),
                     int(float(bndbox.find('ymax').text)))
            xml_list.append(value)
    column_name = ['filename', 'width', 'height', 'class', 'xmin', 'ymin', 'xmax', 'ymax']
    xml_df = pd.DataFrame(xml_list, columns=column_name)
    classes_names = sorted(set(classes_names))
    return xml_df, classes_names


# Convert XML annotations to CSV for both training and testing data
for label_path in ['/content/data/train_zip/train/annotations_train',
                   '/content/data/test_zip/test/annotations_test']:
    xml_df, classes = xml_to_csv(label_path)
    xml_df.to_csv(f'{label_path}.csv', index=False)
    print(f'Successfully converted {label_path} xml to csv.')
```

This code writes one CSV file per split (train and test). Each row describes one object: the image it belongs to, the image size, its class label and its bounding box.
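TFRecord conversion scripts for object detection typically store one example per image, with box coordinates normalized by the image width and height. A minimal standard-library sketch of that grouping step, assuming the CSV columns written above; the function name and sample values are hypothetical, and it does not write TFRecords itself:

```python
import csv
import io
from collections import defaultdict


def group_boxes(csv_file):
    """Group annotation rows by image and normalize box coordinates to [0, 1]."""
    images = defaultdict(lambda: {"boxes": [], "classes": []})
    for row in csv.DictReader(csv_file):
        width, height = float(row["width"]), float(row["height"])
        entry = images[row["filename"]]
        entry["size"] = (int(width), int(height))
        entry["boxes"].append((
            float(row["xmin"]) / width,
            float(row["ymin"]) / height,
            float(row["xmax"]) / width,
            float(row["ymax"]) / height,
        ))
        entry["classes"].append(row["class"])
    return dict(images)


# Example with two objects in one image (made-up values)
sample = io.StringIO(
    "filename,width,height,class,xmin,ymin,xmax,ymax\n"
    "apple_1.jpg,200,100,apple,20,10,120,90\n"
    "apple_1.jpg,200,100,banana,100,0,200,50\n"
)
grouped = group_boxes(sample)
print(grouped["apple_1.jpg"]["boxes"])
# [(0.1, 0.1, 0.6, 0.9), (0.5, 0.0, 1.0, 0.5)]
```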

### **3. Create TFRecord Files**

The next step is to convert our data into TFRecords, the format used to train TensorFlow object detection models efficiently. We’ll use a `generate_tfrecord.py` conversion script, as is common in TensorFlow Object Detection API workflows; the exact command-line arguments depend on the version of the script you use.

```
# Usage:
# !python generate_tfrecord.py output.csv output.pbtxt /path/to/images output.tfrecords

# For train.record
!python generate_tfrecord.py /content/data/train_zip/train/annotations_train.csv /content/label_map.pbtxt /content/data/train_zip/train/images_train/ train.record

# For test.record
!python generate_tfrecord.py /content/data/test_zip/test/annotations_test.csv /content/label_map.pbtxt /content/data/test_zip/test/images_test/ test.record
```

Make sure a `label_map.pbtxt` file containing your class labels and IDs exists at `/content/label_map.pbtxt`, or adjust the path in the commands accordingly.
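If you do not have a label map yet, the sketch below generates one in the text format used by the TensorFlow Object Detection API, with IDs starting at 1 because 0 is reserved for the background. The function name and example classes are hypothetical; you could pass the sorted `classes` list returned by `xml_to_csv`, but check that the names and IDs match what your `generate_tfrecord.py` script expects:

```python
def label_map_text(class_names):
    """Build label_map.pbtxt content; IDs start at 1 because 0 is the background class."""
    items = []
    for class_id, name in enumerate(class_names, start=1):
        # Doubled braces produce literal { and } inside the f-string
        items.append(f"item {{\n  id: {class_id}\n  name: '{name}'\n}}\n")
    return "\n".join(items)


# Hypothetical class names; replace them with the classes from your dataset
text = label_map_text(["apple", "banana", "orange"])
with open("label_map.pbtxt", "w") as f:
    f.write(text)
print(text)
```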

Read the complete article here:

**[Effortless Object Detection In TensorFlow With Pre-Trained Models](https://medium.com/@codetrade/effortless-object-detection-in-tensorflow-with-pre-trained-models-f20272c9d977)** | codetradeindia |

1,875,859 | My programming journey so far! | My name is Christopher, and I'm a self taught programmer with a passion for building innovative... | 0 | 2024-06-06T10:55:04 | https://dev.to/chikere_christopher/my-programming-journey-so-far-2e4n | webdev, javascript, css, html | My name is Christopher, and I'm a self-taught programmer with a passion for building innovative solutions. In this article, I'll share my programming journey from the early days to my current projects, and the lessons I've learned along the way.

I was 16 when I first discovered programming through the TV series Scorpion, which is about a group of tech-savvy people who use their skills and experience to solve complex global problems and save lives. That said, I had always been curious about how digital devices work and what goes on inside them.

I began learning programming two years ago, in 2022, starting with frontend web development. As a self-taught programmer, I went through plenty of tutorial hell: online tutorials, coding challenges and lots of programming e-books. One notable project was a portfolio website I built (although I never finished it, laughs!).

They say "a journey of a thousand miles begins with a single step". My journey wasn't always smooth. I started learning without a laptop and only got one later on. Also, because I'm still a computer science student, I was often engrossed in schoolwork and had little or no time for self-study, which was a major drawback. Still, I got help every once in a while, and that gave me the strength to go back and continue.

The technologies I've used so far are HTML, CSS and JavaScript.

Currently, I'm still in the process of learning frontend web development, but I hope to dive into backend web development soon, which would make me a full-stack web developer.

My programming journey so far has been a wild ride, filled with ups and downs. Apart from technical skills, I've also learnt about creativity, perseverance and teamwork. My advice for beginners out there: don't be discouraged by the learning process, and remember that you're part of a bigger community that is waiting for your innovative ideas.

```html
<!DOCTYPE html>
<html>
<head>
  <meta charset="utf-8">
  <title>My Programming Journey so far!</title>
</head>
<body>
  <h1>Little Advice</h1>
  <p>To beginners out there, keep learning and remember
  that you're part of a larger community and we need your
  creativity and innovative ideas to keep making the
  world a better place, Peace☮. Keep in mind that learning
  never ends!</p>
</body>
</html>
```

| chikere_christopher |

1,879,093 | AWS Development Services Optimizing Cloud Usage for Cost Savings and Maximizing ROI | Businesses striving to maximize efficacy and minimize cost in the cloud computing landscape need to... | 0 | 2024-06-06T10:53:32 | https://dev.to/hourlydevelopers/aws-development-services-optimizing-cloud-usage-for-cost-savings-and-maximizing-roi-3i1l | amazonwebhosting, awsconsultingpartner, awshostingservices, awsmanagedserviceprovider | Businesses striving to maximize efficiency and minimize costs in cloud computing need to keep up with how the field is developing. This blog post explains how AWS Development Services can help streamline cloud usage and deliver substantial savings. By tuning their cloud infrastructure with the tools and services AWS provides, companies can identify wasteful spending and maximize ROI (Return on Investment).

From the nuances of cloud usage optimization to real-life case studies with tangible results, this post will help you make your AWS implementation lean and efficient, so that it becomes a driver of sustainable growth and innovation.

## Understanding Cloud Usage Optimization

Understanding how to optimize cloud usage, especially with AWS Development Services, is essential for getting full value from cloud computing resources while keeping costs under control. In a fast-paced business environment where flexibility and effectiveness are critical, organizations need a clear view of their cloud usage to stay competitive. Cloud usage optimization with **[AWS Development Services](https://hourlydeveloper.io/aws-development-services)** is a holistic approach to resource management: it covers everything from choosing the right instance types and storage options to optimizing network configurations and scaling workloads automatically.

By understanding workload patterns, performance metrics and cost drivers, businesses can align their cloud infrastructure with fluctuating demand. Analytics and monitoring tools in the AWS ecosystem also let teams spot inefficiencies and savings opportunities proactively, which reduces spending and improves operational efficiency. In this blog post, we discuss the main principles and best practices of cloud usage optimization with AWS Development Services, so that you have the insights and techniques to get the most out of your system.

## Key Strategies for Cost Savings in AWS Development Services

AWS Development Services are dynamic, and making sure that resources are well utilized and ROI is maximized requires a few key cost-saving strategies. Here are six of them.

**1] Rightsizing:** Match instance types and sizes to actual resource usage.

**2] Reserved Instances:** Use reserved instances for predictable workloads to realize significant cost savings.

**3] Spot Instances:** Use spot instances for non-critical, interruption-tolerant workloads to benefit from discounted pricing.

**4] Storage Optimization:** Optimize storage through data tiering, moving data to cheaper storage options where possible.

**5] Auto Scaling:** Allocate resources based on demand by implementing auto scaling, which avoids over-provisioning.

**6] Cost Allocation Tags:** Use cost allocation tags to attribute expenses accurately and identify areas for optimization.
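As a rough illustration of the rightsizing and cost allocation tag strategies, the sketch below sums spend per tag value and flags low-utilization resources. The record format, resource IDs, costs and the 10% CPU threshold are all hypothetical; real data would come from AWS billing and monitoring tools such as Cost Explorer or cost and usage reports, whose formats differ:

```python
from collections import defaultdict

# Hypothetical, simplified usage records (not a real AWS export format)
records = [
    {"resource": "i-aaa", "tags": {"project": "web"}, "cost": 120.0, "avg_cpu": 8.0},
    {"resource": "i-bbb", "tags": {"project": "web"}, "cost": 80.0, "avg_cpu": 55.0},
    {"resource": "i-ccc", "tags": {"project": "batch"}, "cost": 200.0, "avg_cpu": 72.0},
    {"resource": "i-ddd", "tags": {}, "cost": 40.0, "avg_cpu": 3.0},
]


def cost_by_tag(records, key="project"):
    """Sum cost per tag value; untagged spend gets its own bucket so it stays visible."""
    totals = defaultdict(float)
    for r in records:
        totals[r["tags"].get(key, "(untagged)")] += r["cost"]
    return dict(totals)


def rightsizing_candidates(records, cpu_threshold=10.0):
    """Flag resources whose average CPU stays below a (hypothetical) threshold."""
    return [r["resource"] for r in records if r["avg_cpu"] < cpu_threshold]


print(cost_by_tag(records))
# {'web': 200.0, 'batch': 200.0, '(untagged)': 40.0}
print(rightsizing_candidates(records))
# ['i-aaa', 'i-ddd']
```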

By applying these tactics, firms can optimize their AWS consumption and manage costs efficiently. A proactive approach to cost optimization lets organizations save substantially while maintaining performance and scalability. Mastering these fundamental strategies is crucial for achieving efficiency gains and maximizing returns in the highly competitive market for AWS development services.

## Identifying and Eliminating Unnecessary Expenses

To stay cost-efficient and allocate resources well, it is important to identify and eliminate unnecessary spending in AWS Development Services. The complexity of AWS pricing models and potential hidden costs are among the main challenges businesses face. By regularly auditing AWS usage and spending, organizations can spot resources that are underutilized or overspent. A good first step is to examine usage patterns, service usage reports and AWS tools such as Cost Explorer for visibility into spending.

In addition, strict budgeting measures and cost-control policies help prevent unnecessary expenses from arising in the first place. These include setting budget thresholds, tracking costs with AWS Budgets and defining clear governance rules for resource provisioning. Finally, ongoing optimization work such as rightsizing instances and optimizing storage contributes greatly to eliminating financial waste. As a result, businesses can allocate their AWS resources more efficiently, generating substantial cost savings and improving their overall financial health.

## Maximizing ROI through Efficient Resource Management

A successful deployment of AWS Development Services relies heavily on efficient resource management that maximizes ROI. To turn every dollar spent into real value and growth, organizations need to pay close attention to how resources are managed. One important aspect is understanding workload patterns and performance metrics so that instances can be rightsized and resources allocated optimally. Automated solutions such as AWS Lambda and AWS Auto Scaling can also simplify resource provisioning while minimizing waste.

Furthermore, adopting a cloud-native approach, with serverless computing and containerization in application development, can further optimize resource utilization and improve scalability. Continuous monitoring and assessment are also an integral part of effective resource management, allowing teams to detect inefficiencies and areas for improvement in real time. By emphasizing efficient resource management, businesses can fully exploit their AWS investments, promoting sustainable growth and driving a high return on investment (ROI).

## Leveraging AWS Tools and Services for Cost Optimization

To achieve cost optimization in your cloud environment, you need to make use of the many tools and services AWS offers. The platform provides numerous tools that help businesses reduce costs, track usage and automate resource management. AWS Cost Explorer is one of them: it gives a granular view of your AWS spending so that you can review trends and forecast costs, which supports optimization.

AWS Trusted Advisor also provides personalized recommendations based on your own usage patterns that help improve performance and security and reduce spending. In addition, AWS offers a variety of cost-effective services, such as serverless computing with AWS Lambda and scalable storage with Amazon S3, that let companies build high-performing yet economical solutions. By using these feature-rich AWS tools and services, organizations can optimize operations, reduce waste and increase return on investment, which makes them a sound choice for using cloud resources effectively.

## Case Studies: Real-world Examples of Cost Savings and ROI Maximization

Real-life case studies from AWS Development Services provide compelling examples of how companies have cut costs and increased ROI with effective cloud optimization techniques. In one example, a small online business used AWS cost optimization tools to rightsize its instances, which substantially cut infrastructure costs while maintaining performance levels.

In another, a software-as-a-service (SaaS) start-up used AWS auto scaling to resize its resources dynamically according to demand, which improved productivity and minimized overhead expenses. Both cases show the concrete benefits of cost reduction approaches within the AWS ecosystem and highlight the importance of proactive cloud optimization for an organization's success. Taken together, these real-world examples offer insights into how an organization can streamline its own AWS environment to reduce costs and increase overall return on investment (ROI).

## Conclusion

To maximize ROI and achieve cost savings, it is essential to optimize cloud usage within AWS Development Services. By implementing strategic cost-saving measures and making full use of AWS capabilities, businesses can improve efficiency and scalability and, ultimately, their financial performance.

**Want to learn more about hiring developers and the hiring process? Drop a message!**

-> Have a look at our portfolio: [https://bit.ly/4aPpKX9](https://bit.ly/4aPpKX9)

-> Get a free estimated quote for your idea: [https://bit.ly/3z0hEO8](https://bit.ly/3z0hEO8)

-> Get in touch with our team: [https://bit.ly/4aPLtyg](https://bit.ly/4aPLtyg) | hourlydevelopers |

1,879,092 | I recently got hooked on Python game engines! | What Are Python Game Engines? Python game engines are frameworks that simplify the... | 0 | 2024-06-06T10:53:10 | https://dev.to/zoltan_fehervari_52b16d1d/i-recently-got-hooked-on-python-game-engines-5f2g | python, gamedev, gameengines, programming | ## What Are Python Game Engines?

[Python game engines](https://bluebirdinternational.com/python-game-engines/) are frameworks that simplify the creation of video games using the Python programming language. These engines provide pre-built functionalities, tools, and resources to speed up game development and streamline code creation. Python’s versatility allows developers to create a wide range of games, from simple 2D arcade games to complex 3D simulations.

**Key features of Python game engines include:**

- Game physics simulation
- Collision detection
- Graphics rendering
- Cross-platform compatibility (Windows, Mac, Linux, mobile devices)
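Collision detection, one of the features listed above, is often built on axis-aligned bounding boxes (AABBs). As a rough, engine-agnostic sketch (plain Python, not any engine's actual API; `Box` and `collides` are hypothetical names), the overlap test looks like this. It follows the same convention as Pygame's `Rect.colliderect`, where rectangles that only share an edge are not treated as colliding.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Box:
    """Axis-aligned rectangle: (x, y) is the top-left corner, y grows downward."""
    x: float
    y: float
    w: float
    h: float

    @property
    def right(self) -> float:
        return self.x + self.w

    @property
    def bottom(self) -> float:
        return self.y + self.h


def collides(a: Box, b: Box) -> bool:
    """Return True if the boxes overlap; boxes that merely share an edge do not collide."""
    # Two boxes overlap only if they overlap on both the x axis and the y axis.
    return (
        a.x < b.right
        and b.x < a.right
        and a.y < b.bottom
        and b.y < a.bottom
    )


if __name__ == "__main__":
    player = Box(0, 0, 32, 32)
    wall = Box(30, 10, 50, 50)
    print(collides(player, wall))                # True: overlaps by 2 px horizontally
    print(collides(player, Box(32, 0, 10, 10)))  # False: only touches the right edge
```

Using strict `<` comparisons is what makes edge contact count as "no collision"; switching to `<=` would make touching boxes collide, which some games prefer for ground checks.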

## Popular Python Game Engines

**Pygame**

- Description: A powerful library for developing 2D games.
- Notable Features: Simple API, built-in collision detection, cross-platform support.

**Arcade**

- Description: A modern framework for 2D game development.
- Notable Features: Built-in physics engines (including optional Pymunk integration), cross-platform compatibility, hardware-accelerated 2D graphics via OpenGL.

**Panda3D**

- Description: A game engine with advanced 3D rendering capabilities.
- Notable Features: Scripting in Python and C++, open-source, used in games such as Disney’s Toontown Online.

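**Godot**

- Description: A versatile engine for 2D and 3D game development. Godot is not a Python engine as such: its main scripting language, GDScript, has Python-like syntax, and Python itself is available only through community bindings.
- Notable Features: Integrated editor and development environment, built-in animation tools, visual scripting in Godot 3.x (removed in Godot 4).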

## Choosing The Right Game Engine For Your Project

Selecting the right game engine is crucial for your project’s success. Here are key factors to consider:

- Performance: Choose an engine that can handle your game’s demands and run smoothly on your target platform.
- Scalability: Consider how the engine will handle the growth and changing needs of your game.
- Documentation: Look for engines with clear and comprehensive documentation.
- Community Support: An active user community can provide valuable resources and assistance.
- Features: Ensure the engine offers the features you need, such as physics simulation or multiplayer support.
- Cost: Weigh the costs against the benefits; some engines may require licensing fees.

## Getting Started With Python Game Engine Development

Follow these steps to kickstart your game development journey:

1. Choose a Python Game Engine: Select an engine based on your project’s requirements. Popular choices include Pygame, Arcade, and Panda3D.
2. Install the Necessary Software: Install Python 3 and any additional libraries or dependencies required by your chosen engine.
3. Set Up Your Development Environment: Create a project directory, configure your code editor, and set up assets like images and sound files.
4. Create a Simple Game: Start by coding a basic game with features like player movement, collision detection, and scoring.
5. Learn from Tutorials and Examples: Use online resources, tutorials, and community forums to expand your knowledge.
6. Test and Optimize Your Game: Ensure compatibility and smooth performance across different platforms and devices.
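To make the "Create a Simple Game" step more concrete, here is a minimal sketch of per-frame game logic covering movement, collision detection, and scoring. It is deliberately engine-agnostic and illustrative: the `GameState` class, the `update` function, and the screen size, speed, and pickup radius constants are all assumptions, not part of Pygame, Arcade, or Panda3D.

```python
import math
from dataclasses import dataclass, field

SCREEN_W, SCREEN_H = 640, 480  # assumed window size in pixels
PLAYER_SPEED = 200.0           # assumed player speed in pixels per second
PICKUP_RADIUS = 16.0           # assumed distance at which a coin is collected


@dataclass
class GameState:
    px: float = SCREEN_W / 2
    py: float = SCREEN_H / 2
    score: int = 0
    coins: list = field(default_factory=list)  # list of (x, y) tuples


def update(state: GameState, dx: int, dy: int, dt: float) -> None:
    """Advance one frame. dx/dy are -1, 0 or 1 from input; dt is seconds since the last frame."""
    # Move the player, scaling by dt so speed is independent of frame rate,
    # then clamp the position so the player stays on screen.
    state.px = min(max(state.px + dx * PLAYER_SPEED * dt, 0), SCREEN_W)
    state.py = min(max(state.py + dy * PLAYER_SPEED * dt, 0), SCREEN_H)

    # Collision detection and scoring: collect every coin within PICKUP_RADIUS.
    remaining = []
    for cx, cy in state.coins:
        if math.hypot(cx - state.px, cy - state.py) <= PICKUP_RADIUS:
            state.score += 1
        else:
            remaining.append((cx, cy))
    state.coins = remaining
```

In a Pygame main loop, for instance, you might derive `dx`/`dy` from `pygame.key.get_pressed()` and compute `dt` as `clock.tick(60) / 1000`, since `Clock.tick` returns the milliseconds elapsed since the previous call. Keeping this logic separate from drawing code also makes it easy to unit-test.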

## Python Game Engine Libraries And Tools

Enhance your game development process with these popular libraries and tools:

- Pygame: Provides modules for graphics and sound, ideal for 2D games.
- Panda3D: Includes support for physics, networking, and audio, suitable for 3D games.
- Blender: 3D creation software for modeling, texturing, and animation, scriptable in Python through its `bpy` API.
- Kivy: An open-source, cross-platform library for building multi-touch applications.

## Advanced Features Of Python Game Engines

Python game engines offer several advanced features that simplify game development:

- Physics Simulation: Enables realistic interactions between game objects, using physics engines such as Box2D.
- AI Integration: Support for game AI such as pathfinding and steering behaviors, either built in or through add-on libraries.
- Multiplayer Support: Networking for peer-to-peer or client-server multiplayer games.
- Special Effects and Animation: Tools for creating animations, lighting effects, and shadows.
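Engines such as Box2D handle rigid bodies, joints, and contact resolution for you, but the core idea of a physics step fits in a few lines. The example below is a hand-written illustration, not Box2D's API: it integrates gravity with semi-implicit Euler and advances the simulation in fixed timesteps, so the physics behaves the same regardless of frame rate. The `Body` class, the constants, and the function names are hypothetical.

```python
from dataclasses import dataclass

GRAVITY = 980.0    # assumed gravity in pixels per second squared (positive y is down)
FLOOR_Y = 400.0    # assumed y coordinate of the ground
RESTITUTION = 0.5  # assumed fraction of speed kept after a bounce
FIXED_DT = 1 / 60  # physics step length in seconds


@dataclass
class Body:
    y: float
    vy: float = 0.0


def step(body: Body, dt: float = FIXED_DT) -> None:
    """Advance one physics step using semi-implicit (symplectic) Euler integration."""
    body.vy += GRAVITY * dt  # update velocity first...
    body.y += body.vy * dt   # ...then position, using the new velocity
    if body.y > FLOOR_Y:     # resolve penetration into the floor and bounce
        body.y = FLOOR_Y
        body.vy = -body.vy * RESTITUTION


def simulate(body: Body, frame_time: float, accumulator: float = 0.0) -> float:
    """Consume frame_time in fixed-size steps; return leftover time to carry into the next frame."""
    accumulator += frame_time
    while accumulator >= FIXED_DT:
        step(body)
        accumulator -= FIXED_DT
    return accumulator
```

The accumulator decouples rendering from physics: a slow frame runs several physics steps, a fast frame may run none, and the leftover time is passed back into the next call.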

## Best Practices for Python Game Development

Follow these best practices to enhance your game development process:

- Organize Your Project: Maintain a well-defined directory hierarchy and use a version control system such as Git.
- Use Efficient Coding Practices: Optimize performance-critical code, for example by avoiding global variables and unnecessary function calls inside the main game loop.
- Leverage Existing Libraries: Use third-party libraries such as Pygame or Panda3D for common tasks.
- Test Your Code: Test regularly to catch errors early, using frameworks such as pytest or the standard library's unittest (Nose is no longer maintained).
- Optimize for Performance: Reduce memory usage and processing time, and optimize game assets.
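As an illustration of the testing advice, pytest collects functions whose names start with `test_` and reports any failing plain `assert`. The module below is a hypothetical example; `clamp` stands in for a piece of your own game logic.

```python
# test_movement.py: hypothetical test module; pytest collects functions named test_*


def clamp(value: float, low: float, high: float) -> float:
    """Keep value inside [low, high]; in a real project this would be imported from game code."""
    return max(low, min(value, high))


def test_clamp_inside_range_is_unchanged():
    assert clamp(5, 0, 10) == 5


def test_clamp_limits_to_bounds():
    assert clamp(-3, 0, 10) == 0
    assert clamp(42, 0, 10) == 10
```

Saved as `test_movement.py`, these tests would run with `python -m pytest` from the project directory.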

## Troubleshooting Common Issues

Address common issues with these troubleshooting tips:

- Library Compatibility Issues: Check that your library versions are compatible with your engine and your Python version.
- Performance Issues: Check system requirements, optimize game assets, and use profiling tools to find bottlenecks.
- Debugging Issues: Use a debugger such as Python's built-in pdb or the one in PyCharm.
- Platform Compatibility Issues: Test on each target platform and use platform-specific debugging tools.
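For performance problems, Python's standard-library profiler `cProfile` can show where a frame's time is going. This sketch wraps a single function call in a profiler and returns a report sorted by cumulative time; `slow_update` is a deliberately inefficient stand-in for real game code, and `profile_call` is a hypothetical helper name.

```python
import cProfile
import io
import pstats


def slow_update(entities):
    """Deliberately quadratic pairwise check, standing in for a hot spot in game code."""
    hits = 0
    for i, a in enumerate(entities):
        for b in entities[i + 1:]:
            if abs(a - b) < 1:
                hits += 1
    return hits


def profile_call(func, *args, top=5):
    """Run func under cProfile; return (result, text report of the top entries)."""
    profiler = cProfile.Profile()
    profiler.enable()
    result = func(*args)
    profiler.disable()
    buffer = io.StringIO()
    pstats.Stats(profiler, stream=buffer).sort_stats("cumulative").print_stats(top)
    return result, buffer.getvalue()


if __name__ == "__main__":
    result, report = profile_call(slow_update, list(range(500)))
    print(report)
```

For a whole game, `python -m cProfile -s cumulative your_game.py` produces a similar report without code changes (`your_game.py` is a placeholder for your entry script).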

## Future Trends In Python Game Engine Development

These trends are likely to shape Python game engine development:

- Increased Emphasis on VR and AR: Python’s flexibility may help drive the creation of immersive VR and AR experiences.
- Integration with Machine Learning and AI: Easier incorporation of AI for intelligent, adaptive games.
- Improved Multiplayer Functionality: Enhanced networking capabilities for engaging multiplayer games.
- Streamlined Cross-Platform Support: Games that run seamlessly on multiple platforms. | zoltan_fehervari_52b16d1d |

1,879,091 | Three Ways to Enable Hot Reloading in Express JS | To enable hot reloading in an Express.js application, you can use tools like nodemon or... | 0 | 2024-06-06T10:53:06 | https://dev.to/kamilrashidev/three-ways-to-enable-hot-reloading-in-express-js-796 | express, javascript, webdev, api | To enable hot reloading in an Express.js application, you can use tools like `nodemon` or `webpack-dev-server`. These tools watch your codebase and react to changes: nodemon restarts the server process, while webpack-dev-server rebuilds and reloads client-side bundles. Here's how to set them up:

### Using Nodemon:

1. First, install `nodemon` globally or locally in your project:

```bash
npm install -g nodemon
# or
npm install --save-dev nodemon
```

2. Then start your Express.js application with `nodemon`:

```bash
nodemon your-app.js
# with a local install, run it through npx instead:
npx nodemon your-app.js
```

Replace `your-app.js` with the entry point of your Express.js application.

### Using Webpack with webpack-dev-server:

1. Install `webpack`, `webpack-cli` (which provides the `webpack serve` command), and `webpack-dev-server` as dev dependencies:

```bash
npm install --save-dev webpack webpack-cli webpack-dev-server
```

2. Configure webpack to bundle your application. You can create a `webpack.config.js` file for this purpose.

3. Set up `webpack-dev-server` to serve your bundled files by adding a script to `package.json`:

```json
"scripts": {
  "start": "webpack serve --open"
}
```

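4. Now, running `npm start` starts webpack-dev-server, which rebuilds your bundle and reloads the browser when files change. Note that webpack-dev-server targets client-side bundles: it does not restart a running Express server, so server-side code is usually still restarted with a watcher such as nodemon.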

### Using Express.js Middleware:

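Alternatively, you can use an Express middleware for hot module replacement. The steps below use a package named `express.js-hmr`; confirm that the package is available on npm and matches the API shown before relying on it. Here's how you can set it up: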

1. Install `express.js-hmr`:

```bash
npm install --save-dev express.js-hmr
```

2. Use it in your Express.js application:

```js
const express = require('express');

const app = express();

// Load the hot-reloading middleware only during development.
if (process.env.NODE_ENV === 'development') {
  const hmr = require('express.js-hmr');
  app.use(hmr());
}

// Your routes and middleware here...

app.listen(3000, () => {
  console.log('Server is running on port 3000');
});
```

This middleware is intended to enable hot module replacement (HMR) for your Express.js application during development.

Choose the method that best suits your workflow and preferences. Each approach has its own advantages, so consider factors like ease of setup, integration with your existing workflow, and compatibility with your project structure. | kamilrashidev |

1,879,089 | Revolutionizing Healthcare with CertifyHealth: A Comprehensive Approach to Patient Care and Efficiency | The integration of advanced technology solutions is essential to improving patient care and... | 0 | 2024-06-06T10:52:21 | https://dev.to/sanjayy/revolutionizing-healthcare-with-certifyhealth-a-comprehensive-approach-to-patient-care-and-efficiency-1c3o | patientengagementplatform | The integration of advanced technology solutions is essential to improving patient care and operational efficiency. CertifyHealth stands out as a leader in this transformation, offering innovative solutions that streamline patient scheduling, enhance hospital check-in processes, and optimize hospital revenue cycle management. These solutions not only improve the patient experience but also contribute significantly to the financial health of healthcare institutions.

**Enhancing Patient Scheduling with CertifyHealth**:

Efficient patient scheduling is a cornerstone of effective healthcare delivery. CertifyHealth’s [patient scheduling solution](https://www.certifyhealth.com/patient-scheduling/?utm_source=dev.to&utm_medium=SEO&utm_campaign=blog_submission&utm_id=hrs08) is designed to address the complexities of coordinating appointments, reducing wait times, and maximizing the utilization of healthcare resources. Here’s how this solution transforms patient scheduling:

1. **Automated Scheduling**: CertifyHealth’s platform offers an automated scheduling system that allows patients to book, reschedule, or cancel appointments online. This self-service option is available 24/7, providing convenience for patients and reducing the administrative burden on staff.

2. **Intelligent Matching**: The system intelligently matches patients with the appropriate healthcare providers based on factors such as specialty, availability, and location. This ensures that patients receive timely care from the right professionals.

3. **Appointment Reminders**: Automated reminders are sent to patients via email or SMS, significantly reducing the rate of no-shows and ensuring that patients are well-prepared for their visits.

4. **Real-Time Updates**: The scheduling system is integrated with the hospital’s electronic health records (EHR), providing real-time updates to both patients and providers. This integration helps avoid double bookings and ensures that all parties have up-to-date information.

**Streamlining Hospital Check-In Processes**:

The [hospital check-in](https://www.certifyhealth.com/patient-check-in/?utm_source=dev.to&utm_medium=SEO&utm_campaign=blog_submission&utm_id=hrs08) process can often be a source of frustration for patients due to long wait times and cumbersome paperwork. CertifyHealth’s innovative solutions streamline this process, making it more efficient and patient-friendly.

1. **Online Pre-Registration**: Patients can complete their registration forms online before arriving at the hospital. This pre-registration process reduces the time spent in waiting rooms and minimizes the need for manual data entry by hospital staff.

2. **Self-Service Kiosks**: Upon arrival, patients can use self-service kiosks to check in, update their information, and complete any necessary paperwork. These kiosks are user-friendly and equipped with secure data capture technologies, ensuring patient information is accurately recorded and safely stored.

3. **Queue Management**: CertifyHealth’s system includes advanced queue management features that track patient flow and reduce wait times. Patients are informed of their wait status via digital displays or mobile notifications, enhancing their overall experience.