id (int64, 5 to 1.93M) | title (string, length 0 to 128) | description (string, length 0 to 25.5k) | collection_id (int64, 0 to 28.1k) | published_timestamp (timestamp[s]) | canonical_url (string, length 14 to 581) | tag_list (string, length 0 to 120) | body_markdown (string, length 0 to 716k) | user_username (string, length 2 to 30) |

|---|---|---|---|---|---|---|---|---|

1,878,025 | Unlocking Business Potential The Power of Social Media Marketing | In the virtual age, social media advertising has turned out to be an important aspect of any commercial... | 0 | 2024-06-05T12:31:59 | https://dev.to/liong/unlocking-business-potential-the-power-of-social-media-marketing-2mki | marketing, fashion, malaysia, brand | In the virtual age, social media advertising has turned out to be an important aspect of any commercial enterprise method. It gives exceptional possibilities for groups to engage with their target market, construct emblem popularity, and drive income. With billions of customers on structures like Facebook, Instagram, Twit... | liong |

1,878,024 | Understanding API Versioning: A Simple Guide -Part 1 : Theory | APIs (Application Programming Interfaces) are a crucial part of modern software development. They... | 0 | 2024-06-05T12:31:14 | https://dev.to/muhammad_taimur/understanding-api-versioning-a-simple-guide-part-1-theory-4ni5 | csharp, beginners, computerscience, api | APIs (Application Programming Interfaces) are a crucial part of modern software development. They allow different software systems to communicate with each other, enabling the integration of various services and functionalities. But as software evolves, so do the APIs. This is where API versioning comes into play. In t... | muhammad_taimur |

1,878,023 | ID document Liveness Check | An ID document liveness check verifies the authenticity of an ID by confirming the presence of a live... | 0 | 2024-06-05T12:31:12 | https://dev.to/miniailive/id-document-liveness-check-27o3 | webdev, ai, android, identity | An ID document liveness check verifies the authenticity of an ID by confirming the presence of a live person during verification. It uses advanced technology to ensure the ID belongs to the person presenting it.

Identity verification is crucial in today’s digital age. Businesses need to ensure that the person providin... | miniailive |

1,878,016 | Five Things to Avoid in Ruby | As a contract software developer, I am exposed to oodles of Ruby code. Some code is readable, some... | 0 | 2024-06-05T12:25:03 | https://blog.appsignal.com/2024/05/22/five-things-to-avoid-in-ruby.html | ruby | As a contract software developer, I am exposed to oodles of Ruby code. Some code is readable, some obfuscated. Some code eschews whitespace, as if carriage returns were a scarce natural resource, while other code resembles a living room fashioned by Vincent Van Duysen. Code, like the people who author it, varies.

Yet,... | martinstreicher |

1,878,021 | Top Printing Services | Business Cards | Brochures | Flyers | Get premium printing solutions for brochures, flyers, and business cards. We offer business card... | 0 | 2024-06-05T12:24:24 | https://dev.to/prachi_pare_e410f7b6715d0/top-printing-services-business-cards-brochures-flyers-4b1b | [Get premium printing solutions for brochures, flyers, and business cards. We offer business card printing, digital business cards, and brochure design. Find business card printing near you and create stunning print media with our brochure maker and Canva business cards.](https://bhagirathtechnologies.com/services/7) | prachi_pare_e410f7b6715d0 | |

1,878,020 | IGRS UP 2024: UP Online Stamp Duty & Property Registration | IGRSUP is the online platform provided by the Stamp and Registration Department of the Uttar Pradesh... | 0 | 2024-06-05T12:23:32 | https://dev.to/bigproperty_saikrishna_1d/igrs-up-2024-up-online-stamp-duty-property-registration-2lki | igrsup | **[IGRSUP](https://www.bigproperty.in/blog/igrs-up-2024-up-online-stamp-duty-property-registration/)** is the online platform provided by the Stamp and Registration Department of the Uttar Pradesh Government. It offers various services, including property registration, protection of registered documents, providing co... | bigproperty_saikrishna_1d |

1,878,019 | What is Blum? How can you earn on it? | What is the difference from Notcoin? Blum itself differs in essence from Notcoin, as... | 0 | 2024-06-05T12:23:19 | https://dev.to/__d1b956e23d8b/what-is-blum-how-can-you-earn-on-it-11nc | blockchain, web3 | ## What is the difference from Notcoin?

Blum itself differs in essence from Notcoin, as Notcoin was a simple clicker game where you farmed coins and then sold them on the exchange for real money after listing (the release of securities on the stock exchange). But Blum is a different story. Blum is planned as a decentra... | __d1b956e23d8b |

1,878,015 | DELICIRO: Food Ordering Website | I'm excited to share my latest project, DELICIRO, a fully functional food-ordering website. This... | 0 | 2024-06-05T12:21:50 | https://dev.to/sarmittal/deliciro-food-ordering-website-43cj | webdev, javascript, programming, react |

I'... | sarmittal |

1,878,013 | Know the Cost of Building a Dating App by Fulminous Software | So you have a brilliant idea for a revolutionary dating app! But before you launch into development,... | 0 | 2024-06-05T12:20:42 | https://dev.to/fulminoussoftware/know-the-cost-of-building-a-dating-app-by-fulminous-software-a8c | costofdatingapp, datingappcost | So you have a brilliant idea for a revolutionary dating app! But before you launch into development, a crucial question arises: **[how much will it cost to build a dating app](https://fulminoussoftware.com/how-much-does-it-cost-to-build-a-dating-app)**? The answer, like finding true love, can be a little complex.

Thi... | fulminoussoftware |

1,878,008 | Learning Zig, day #1 | The why So I have decided to learn Zig. This is kind of an odd decision because I have... | 0 | 2024-06-05T12:17:53 | https://dev.to/brharrelldev/learning-zig-day-1-g6l | zig, vim, neovim, go | # The why

So I have decided to learn Zig. This is kind of an odd decision because I have never had any interest in Zig. But I have had an interest in Rust for years. Just a little about my background: I am a professional Go developer (well, I was; I've been unemployed for 2 weeks). And during my downtime, I decided to pic... | brharrelldev |

1,875,250 | BlazeSQL | BlazeSQL is for any company that has an SQL database and non-technical employees that need to get... | 0 | 2024-06-03T10:49:23 | https://dev.to/blazesql/blazesql-4dia | [BlazeSQL](https://www.blazesql.com/) is for any company that has an SQL database and non-technical employees that need to get data insights from the database. Especially if they frequently need to answer new questions, so they are always asking their technical colleagues to do it for them. This is annoying for the tec... | blazesql | |

1,878,007 | Accent Colors for Checkboxes and Radios | Utilize accent-* utilities to modify the accent color of elements, ideal for customizing the... | 0 | 2024-06-05T12:17:05 | https://dev.to/priyankachettri/accent-colors-for-checkboxes-and-radios-5jb |

Utilize **accent-*** utilities to modify the accent color of elements, ideal for customizing the appearance of **_checkboxes_** and **_radio buttons_** by replacing the browser's default color.

Here, I have given accent color... | priyankachettri | |

1,878,006 | Adaptive Artificial Intelligence in Business How Can You Implement it | Adaptive Artificial Intelligence (AI) represents a significant advancement in technology, enabling... | 27,548 | 2024-06-05T12:13:04 | https://dev.to/aishikl/adaptive-artificial-intelligence-in-business-how-can-you-implement-it-4big | Adaptive Artificial Intelligence (AI) represents a significant advancement in technology, enabling systems to learn from interactions and adapt their behaviors autonomously. Unlike traditional AI, which operates within a fixed scope, Adaptive AI can improve performance over time by adjusting to new data and changing c... | aishikl | |

1,878,005 | Interoperability for Seamless Integration of Blockchain Networks | Interoperability is a critical aspect of blockchain technology that enables different blockchain... | 0 | 2024-06-05T12:11:55 | https://dev.to/bloxbytes/interoperability-for-seamless-integration-of-blockchain-networks-4bkf | blockchain, web3, security | Interoperability is a critical aspect of blockchain technology that enables different blockchain networks to communicate, share data, and transact seamlessly. In the rapidly evolving landscape of decentralized systems, interoperability solutions play a pivotal role in fostering collaboration, scalability, and innovatio... | bloxbytes |

1,878,004 | Exploring The Power Of AJAX Flex In Web Development | AJAX Flex: Enhancing Web Applications with Dynamic Data Interaction AJAX (Asynchronous JavaScript and... | 0 | 2024-06-05T12:11:23 | https://dev.to/saumya27/exploring-the-power-of-ajax-flex-in-web-development-kk | ajax, webdev | AJAX Flex: Enhancing Web Applications with Dynamic Data Interaction

AJAX (Asynchronous JavaScript and XML) and Adobe Flex are technologies used to create rich, interactive web applications. While AJAX is primarily used to asynchronously update web content without reloading the page, Flex is a framework for building exp... | saumya27 |

1,878,003 | What Are The Pros And Cons Of Composite Fencing? | When you are planning to install fencing in your garden, there are many options when it comes to... | 0 | 2024-06-05T12:10:53 | https://dev.to/oliver_elijah_4f06022c7b2/what-are-the-pros-and-cons-of-composite-fencing-1en1 | When you are planning to install fencing in your garden, there are many options when it comes to fencing materials. You can choose traditional materials such as wood and iron. Or maybe you prefer modern materials such as composites and vinyl.

All of these materials have their own advantages and disadvantages, but compo... | oliver_elijah_4f06022c7b2 | |

1,878,002 | Hindi translation services in India | Bridging the Language Gap: The Importance of Hindi Translation Services in India In a country as... | 0 | 2024-06-05T12:08:44 | https://dev.to/ramkumar_2158d46eb9073e0a/hindi-translation-services-in-india-16bo | Bridging the Language Gap: The Importance of [Hindi Translation Services in India](https://www.laclasse.in/hindi-translation-services-in-india/)

In a country as diverse as India, with its myriad of languages, cultures, and dialects, effective communication can often be a challenge. Hindi, being one of the most widely ... | ramkumar_2158d46eb9073e0a | |

1,878,001 | Building Box Steps and Stairs for Decks | Box steps are suitable for transitions between very low decks and multi-level decks. They are also used... | 0 | 2024-06-05T12:08:28 | https://dev.to/oliver_elijah_4f06022c7b2/building-box-steps-and-stairs-for-decks-4dhm | Box steps are suitable for transitions between very low decks and multi-level decks. They are also a cheaper option when you want to expand your home on a budget. Box steps are one of the most practical and aesthetically pleasing touches to a deck.

Box steps are also known as... | oliver_elijah_4f06022c7b2 | |

1,877,998 | The Power of Selenium Automation in Preventing Online Breakdowns | In today's digital era, businesses heavily rely on their online presence for success and growth.... | 0 | 2024-06-05T12:03:28 | https://dev.to/vijayashree44/the-power-of-selenium-automation-in-preventing-online-breakdowns-1n6a |

In today's digital era, businesses heavily rely on their online presence for success and growth. However, with the increasing complexity of web applications and rising user expectations, online breakdowns have be... | vijayashree44 | |

1,877,997 | Best LLM Inference Engines and Servers to Deploy LLMs in Production | AI applications that produce human-like text, such as chatbots, virtual assistants, language... | 0 | 2024-06-05T12:03:00 | https://www.koyeb.com/blog/best-llm-inference-engines-and-servers-to-deploy-llms-in-production | ai, webdev, programming, opensource | AI applications that produce human-like text, such as chatbots, virtual assistants, language translation, text generation, and more, are built on top of Large Language Models (LLMs).

If you are deploying LLMs in production-grade applications, you might have faced some of the performance challenges with running these m... | alisdairbr |

1,873,014 | How I Overcame The Imposter Syndrome | The first law of having a long and healthy career in software development is to embrace your... | 0 | 2024-06-05T12:00:00 | https://dev.to/thekarlesi/embracing-and-overcoming-the-imposter-syndrome-oo2 | webdev, beginners, programming, html | The first law of having a long and healthy career in software development is to embrace your imposter.

In the industry, we like to complain about imposter syndrome all the time. And it is a real psychological thing that some people have.

But I think we often claim that we are experiencing imposter syndrome and we are... | thekarlesi |

1,877,996 | Best Time To Visit Kashmir | Kashmir is one of the most beautiful destinations in the world, known for its lush green valleys,... | 0 | 2024-06-05T11:59:17 | https://dev.to/toursand_travels_83848071/best-time-to-visit-kashmir-2707 | travelagency, kashmir, srinagar | Kashmir is one of the most beautiful destinations in the world, known for its lush green valleys, snow-capped mountains, and serene lakes. Planning your trip to Kashmir at the right time can enhance your experience and allow you to enjoy the region’s diverse beauty to its fullest. In this blog, we’ll guide you through ... | toursand_travels_83848071 |

1,877,992 | Finding Your Dream Home at Paras Quartier Gurgaon | Paras Quartier Gurgaon, a city synonymous with urban vibrancy and luxurious living, offers a plethora... | 0 | 2024-06-05T11:56:46 | https://dev.to/paras_quartiergurgaon/finding-your-dream-home-at-paras-quartier-gurgaon-5cog | Paras Quartier Gurgaon, a city synonymous with urban vibrancy and luxurious living, offers a plethora of residential options. But for those seeking an unparalleled lifestyle experience, Paras Quartier Gurgaon stands out as a crown jewel. This ultra-premium development promises not just an apartment, but a haven crafted... | paras_quartiergurgaon | |

1,877,991 | Best Places To Visit In Jammu & Kashmir | Jammu & Kashmir, often referred to as “Paradise on Earth,” is a breathtaking region that offers... | 0 | 2024-06-05T11:55:12 | https://dev.to/toursand_travels_83848071/best-places-to-visit-in-jammu-kashmir-5fp7 | travelagency, kashmir, srinagar | Jammu & Kashmir, often referred to as “Paradise on Earth,” is a breathtaking region that offers visitors a diverse range of natural beauty, rich culture, and unforgettable experiences. From stunning mountains and lush valleys to pristine lakes and vibrant gardens, there is something for everyone to enjoy in this beauti... | toursand_travels_83848071 |

1,877,989 | Waterproofing Contractors in Dubai: Protecting Your Property from Water Damage | Water damage is a common issue faced by many property owners in Dubai, especially during the rainy... | 0 | 2024-06-05T11:51:55 | https://dev.to/john_boult88_77249daf8c8d/waterproofing-contractors-in-dubai-protecting-your-property-from-water-damage-8ap | Water damage is a common issue faced by many property owners in Dubai, especially during the rainy season. From leaky roofs to damp basements, water can cause extensive damage if not addressed promptly. That's where waterproofing contractors come in. These professionals specialize in protecting your property from water... | john_boult88_77249daf8c8d | |

1,877,988 | The Fascinating Journey of Frontend and Web Development | Hello dev.to community! Today, let’s take a step back and explore the fascinating beginnings of... | 0 | 2024-06-05T11:50:45 | https://dev.to/ismailk/the-fascinating-journey-of-frontend-and-web-development-8g | webdev, javascript, programming, tutorial |

**Hello dev.to community!**

Today, let’s take a step back and explore the fascinating beginnings of frontend and web development. The journey from static HTML pages to dynamic, interactive web applications is nothing short of remarkable.

## **The Dawn of the Web**

In 1991, Tim Berners-Lee, a British scientist, int... | ismailk |

1,877,288 | Simple Modal with Javascript | This is a note on how to make a simple modal. <button class="show-modal">Show modal... | 0 | 2024-06-04T23:01:01 | https://dev.to/kakimaru/simple-modal-with-javascript-35nl | This is a note on how to make a simple modal.

```html

<button class="show-modal">Show modal </button>

<div class="modal hidden">

<button class="close-modal">×</button>

<h1>I'm a modal window</h1>

<p>

Lorem ipsum dolor sit amet, consectetur adipisicing elit, sed do eiusmod

tem... | kakimaru | |

1,877,987 | Choose The Right Travel Agents In Srinagar For Your Kashmir Trip | Kashmir is often referred to as “Heaven on Earth” for its breathtaking landscapes, majestic... | 0 | 2024-06-05T11:50:37 | https://dev.to/toursand_travels_83848071/choose-the-right-travel-agents-in-srinagar-for-your-kashmir-trip-56h6 | travelagency, kashmir, srinagar | Kashmir is often referred to as “[Heaven on Earth](https://haniefatravels.com/choose-the-right-travel-agents-in-srinagar-for-your-kashmir-trip/url)” for its breathtaking landscapes, majestic mountains, and stunning lakes. Whether you’re planning to visit the serene Dal Lake, the snow-capped mountains of Gulmarg, or the... | toursand_travels_83848071 |

1,877,986 | A* Algorithm (A Star) | Theoretical Explanation The A* algorithm (read "A-star") is a graph search algorithm that uses... | 0 | 2024-06-05T11:50:12 | https://dev.to/cristianomafrajunior/algoritmo-a-a-estrela-19ne | **Theoretical Explanation**

The A* algorithm (read "A-star") is a graph search algorithm that uses heuristic functions. It is widely used in search problems because of its efficiency. The algorithm's main goal is to find the shortest path between two points in a graph.
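
As a rough illustration of the idea, here is a minimal Python sketch of A* on a small grid. It is not taken from the article: the grid representation, the `a_star` function name, and the choice of Manhattan distance as the heuristic are all assumptions made for this example. Each candidate cell is ranked by the cost paid so far plus the heuristic estimate of the remaining distance.

```python
import heapq


def a_star(grid, start, goal):
    """Return a shortest path (list of cells) on a 4-connected grid, or None.

    Hypothetical setup for illustration: grid[r][c] == 1 marks a wall,
    and every move between neighbouring cells costs 1.
    """

    def heuristic(cell):
        # Manhattan distance never overestimates on a unit-cost 4-way grid.
        return abs(cell[0] - goal[0]) + abs(cell[1] - goal[1])

    rows, cols = len(grid), len(grid[0])
    best_cost = {start: 0}   # cheapest known cost from start to each cell
    came_from = {}           # back-pointers used to rebuild the path
    frontier = [(heuristic(start), start)]  # min-heap ordered by cost + estimate

    while frontier:
        _, current = heapq.heappop(frontier)
        if current == goal:
            path = [current]
            while current in came_from:
                current = came_from[current]
                path.append(current)
            return path[::-1]

        r, c = current
        for neighbour in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            nr, nc = neighbour
            if not (0 <= nr < rows and 0 <= nc < cols) or grid[nr][nc] == 1:
                continue
            new_cost = best_cost[current] + 1
            if new_cost < best_cost.get(neighbour, float("inf")):
                best_cost[neighbour] = new_cost
                came_from[neighbour] = current
                priority = new_cost + heuristic(neighbour)
                heapq.heappush(frontier, (priority, neighbour))

    return None  # the goal cannot be reached


example_grid = [
    [0, 0, 0],
    [1, 1, 0],
    [0, 0, 0],
]
example_path = a_star(example_grid, (0, 0), (2, 0))
print(example_path)
```

In this example the wall in the middle row forces the path around the right-hand side of the grid.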

The total cost of a node is... | cristianomafrajunior | |

1,878,157 | Understanding Database Replication | Today’s issue is brought to you by Masteringbackend → A great resource for backend engineers. We... | 0 | 2024-06-06T09:34:52 | https://newsletter.masteringbackend.com/p/understanding-database-replication | backend, webdev, beginners, tutorials | ---

title: Understanding Database Replication

published: true

date: 2024-06-05 11:49:48 UTC

tags: backend,webdev,beginners,tutorials

canonical_url: https://newsletter.masteringbackend.com/p/understanding-database-replication

---

_Today’s issue is brought to you by_ [Masteringbackend](https://masteringbackend.com?ref=b... | kaperskyguru |

1,877,985 | Organic Honey Market: Growth, Size, Share, and Trends Analysis for 2024-2032 | The global organic honey market is expected to grow from US$ 990.9 million in 2024 to US$ 1.8 billion... | 0 | 2024-06-05T11:48:55 | https://dev.to/swara_353df25d291824ff9ee/organic-honey-market-growth-size-share-and-trends-analysis-for-2024-2032-28jd | The global organic honey market is expected to grow from US$ 990.9 million in 2024 to US$ 1.8 billion by 2032, with a CAGR of 7.8%. Europe holds a 25.3% market share as of 2022, followed by the Middle East & Africa at 23.6%. The food & beverage industry accounts for over 60% of organic honey consumption. The market saw... | swara_353df25d291824ff9ee | |

1,877,984 | NextUI Theme Generator | I've been using NextUI for quite some time, but I missed having a theme generator like other... | 0 | 2024-06-05T11:44:41 | https://dev.to/filipf/nextui-theme-generator-4lm5 | webdev, ui, react, javascript | I've been using [NextUI](https://nextui.org/) for quite some time, but I missed having a theme generator like other component libraries have.

So, I decided to create my own: [NextUI Theme Gen](https://nextui-themegen.netlify.app/). Feel free to give it a try.

If you like it, don't forget to leave a star on [GitHub](h... | filipf |

1,877,983 | Top 10 Upcoming VR Games in 2024: Anticipated Adventures Await | As the virtual reality (VR) gaming landscape continues to evolve, anticipation builds for the next... | 0 | 2024-06-05T11:40:04 | https://dev.to/echo3d/top-10-upcoming-vr-games-in-2024-anticipated-adventures-await-3b0p | gaming, news, virtualreality, games |

As the virtual reality (VR) gaming landscape continues to evolve, anticipation builds for the next wave of immersive experiences set to hit the market in 2024. With advancements in technology and game design pushing the boundari... | _echo3d_ |

1,877,982 | GSB | Discover the ultimate grooming experience at Great Style Barbershop NYC. Our comprehensive barber... | 0 | 2024-06-05T11:38:09 | https://dev.to/alinkamalinka24/gsb-415b | Discover the ultimate grooming experience at Great Style Barbershop NYC. Our comprehensive [barber services](https://greatstylebarbershopnyc.com/services/) include everything from stylish haircuts to relaxing shaves, all performed by our expert team of barbers.

| alinkamalinka24 | |

1,877,981 | Understanding Dependency Injection in Spring Boot | In simple terms, DI means that objects do not instantiate their dependencies directly. Instead, they... | 0 | 2024-06-05T11:35:31 | https://dev.to/tharindufdo/understanding-dependency-injection-in-spring-boot-2ll0 | dependencyinjection, java, springboot, programming | In simple terms, DI means that objects do not instantiate their dependencies directly. Instead, they receive them from an external source.

- When class A uses some functionality of class B, it is said that class A has a dependency on class B.
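
The idea can be sketched without any framework. The snippet below is plain Python, not Spring, and every class name in it (`EmailSender`, `FakeSender`, `SignupService`) is hypothetical. It only shows the core point: the dependent class receives its collaborator through the constructor instead of creating it, so the collaborator can be swapped from outside.

```python
class EmailSender:
    """Plays the role of 'class B': the dependency."""

    def send(self, to, message):
        return f"email to {to}: {message}"


class FakeSender:
    """A stand-in dependency that records calls, handy in tests."""

    def __init__(self):
        self.sent = []

    def send(self, to, message):
        self.sent.append((to, message))
        return "recorded"


class SignupService:
    """Plays the role of 'class A': it depends on a sender."""

    def __init__(self, sender):
        # The dependency is injected; SignupService never constructs it.
        self.sender = sender

    def register(self, address):
        return self.sender.send(address, "Welcome!")


# The "external source" here is simply the calling code.
real_service = SignupService(EmailSender())
print(real_service.register("a@example.com"))

fake = FakeSender()
SignupService(fake).register("b@example.com")
print(fake.sent)
```

In a Spring application, the container would take over the wiring that the last few lines do by hand.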

**What is DI in Spring Framework?**

Dependency Injection (DI) is a fundamenta... | tharindufdo |

1,877,980 | Day 14 of 30 of JavaScript | Hey reader👋 Hope you are doing well😊 In the last post, we saw an introduction to OOP. In this... | 0 | 2024-06-05T11:33:44 | https://dev.to/akshat0610/day-14-of-30-of-javascript-145a | webdev, javascript, beginners, tutorial | Hey reader👋 Hope you are doing well😊

In the last post, we saw an introduction to OOP. In this post, we are going to learn about objects in JavaScript, starting from the very basics and working up to an advanced level.

So let's get started🔥

## What are Objects?

**In JavaScript, an object is a collection of pr... | akshat0610 |

1,877,979 | Homeopathy Clinics in Singapore: A Comprehensive Guide | Homeopathy, a holistic system of medicine developed over two centuries ago, has gained significant... | 0 | 2024-06-05T11:32:25 | https://dev.to/vijay_bhadoriya_2885/homeopathy-clinics-in-singapore-a-comprehensive-guide-2kp8 | Homeopathy, a holistic system of medicine developed over two centuries ago, has gained significant popularity worldwide, including in Singapore. This alternative medical practice offers natural and individualized treatments for various ailments. If you're searching for [homeopathy clinics in Singapore](https://www.well... | vijay_bhadoriya_2885 | |

1,877,978 | Work with :through associations made easy | As explained in Rails association reference, defining a has_many association gives 17 methods. We'll... | 0 | 2024-06-05T11:30:29 | https://dev.to/epigene/work-with-through-associations-made-easy-36o2 | rails, activerecord, associations | ---

title: Work with :through associations made easy

published: true

description:

tags: Rails,ActiveRecord,Associations

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/7murv509jib4hf9ar7k1.jpg

# Use a ratio of 100:42 for best results.

# published_at: 2024-06-05 10:52 +0000

---

As explained in [... | epigene |

1,878,216 | Programming is similar to knitting? Well, for our brain it is. | For more content like this subscribe to the ShiftMag newsletter. As Emmy Cao (Developer Advocate,... | 0 | 2024-06-12T11:49:56 | https://shiftmag.dev/programming-similar-to-knitting-3498/ | productivity, developerproductivit, development, emmycao | ---

title: Programming is similar to knitting? Well, for our brain it is.

published: true

date: 2024-06-05 11:28:52 UTC

tags: Productivity,DeveloperProductivit,development,EmmyCao

canonical_url: https://shiftmag.dev/programming-similar-to-knitting-3498/

---

Today, I am thrilled to announce a significant milestone in Opkey's journey: we have officially been certified as a Workday Test Automation Partner. This achievement is a testament to our innovative approach to No-Co... | johnste39558689 |

1,877,974 | What are the Use Cases for BEP20 Tokens? | BEP20 tokens are important for the Binance Smart Chain (BSC) network. They are similar to Ethereum's... | 0 | 2024-06-05T11:21:03 | https://dev.to/elena_marie_dad5c9d5d5706/what-are-the-use-cases-for-bep20-tokens-1i5b | tokendevelopment | BEP20 tokens are important for the Binance Smart Chain (BSC) network. They are similar to Ethereum's ERC20 tokens but offer more features and efficiency. As the world of cryptocurrency grows, BEP20 tokens have become useful for various purposes, including digital payments and decentralized finance (DeFi).

BEP20 is a t... | elena_marie_dad5c9d5d5706 |

1,877,969 | Channel Your Inner Scrooge with Redshift Reserved Instances: Slash Your Cloud Bill Like a Boss | Feeling the pinch of ever-growing cloud costs? Does your monthly AWS bill make Ebenezer... | 0 | 2024-06-05T11:20:42 | https://dev.to/abhiram_cdx/channel-your-inner-scrooge-with-redshift-reserved-instances-slash-your-cloud-bill-like-a-boss-4kl1 | redshift, aws | ## Feeling the pinch of ever-growing cloud costs?

Does your monthly AWS bill make Ebenezer Scrooge look like a free spender? Fear not, data wizard! Redshift Reserved Instances (RIs) are here to unleash your inner penny-pinching champion and transform you into a cloud cost-cutting crusader!

But what are Redshift RIs,... | abhiram_cdx |

1,877,931 | Why using primary tracheal epithelial cells crucial for testing airborne drugs? | Tracheal epithelial cells are a crucial barrier for our lungs. These cells are in constant contact... | 0 | 2024-06-05T11:20:11 | https://dev.to/kosheeka/why-using-primary-tracheal-epithelial-cells-crucial-for-testing-airborne-drugs-i3g | primarycells, kosheeka, trachealepithelialcells, epithelialcells | Tracheal epithelial cells are a crucial barrier for our lungs. These cells are in constant contact with airborne pathogens and pollutants on a daily basis. These cells are part of regular research and are used in disease modeling, drug discovery, and tissue engineering. Researchers use these cells to test drugs for the... | kosheeka |

1,877,928 | Code Integrity Unleashed: The Crucial Role of Git Signed Commits | Delve into the significance of Git signed commits and their role in ensuring code integrity. 🔐... | 0 | 2024-06-05T11:17:48 | https://dev.to/cloudnative_eng/code-integrity-unleashed-the-crucial-role-of-git-signed-commits-45ob | security, devops, github | Delve into the significance of Git signed commits and their role in ensuring code integrity.

🔐 Ensure Code Integrity: Git signed commits protect against unauthorized modifications, enhancing security in your software development process.

🛠️ Easy Setup: Sign commits using GPG or SSH keys with straightforward command... | cloudnative_eng |

1,877,927 | How to Choose the Right Provider for Your WhiteLabel NFT Marketplace | The digital asset landscape is rapidly evolving, with non-fungible tokens (NFTs) creating unique... | 27,548 | 2024-06-05T11:17:22 | https://dev.to/aishikl/how-to-choose-the-right-provider-for-your-whitelabel-nft-marketplace-469f | The digital asset landscape is rapidly evolving, with non-fungible tokens (NFTs) creating unique opportunities for businesses. White-label NFT marketplaces have gained traction due to their customizable, ready-to-launch solutions that cater to the growing demand for digital collectibles, art, and media. These platform... | aishikl | |

1,877,929 | Streamlining Flutter Development with FVM: A Comprehensive Guide | In large projects or for indie developers, managing multiple Flutter versions is challenging. FVM, by Leo Farias, simplifies this by allowing easy management and switching of Flutter versions via a practical CLI. | 0 | 2024-06-05T11:16:00 | https://dev.to/digoreis/streamlining-flutter-development-with-fvm-a-comprehensive-guide-4f2d | flutter, flutterversionmanagement, flutterdevelopment, devtools | ---

title: "Streamlining Flutter Development with FVM: A Comprehensive Guide"

published: true

description: "In large projects or for indie developers, managing multiple Flutter versions is challenging. FVM, by Leo Farias, simplifies this by allowing easy management and switching of Flutter versions via a practical CLI.... | digoreis |

1,877,922 | The Wizard's Guide to GORM and PostgreSQL: Upsert with Ease | Interesting story. You have two PostgreSQL tables related to each other in a one-to-many... | 0 | 2024-06-05T11:13:45 | https://dev.to/kochurovro/conquering-postgresql-challenges-upserting-records-with-gorm-magic-1mnd | Interesting story. You have two PostgreSQL tables related to each other in a one-to-many relationship.

```

type Availability struct {

ID int64 `gorm:"column:id;primaryKey"`

ProductID int64 `gorm:"column:product_id"`

Enabled bool `gorm:"column:enabled;not null"`

Dates []*Dates `go... | kochurovro | |

1,877,926 | Mustard and serving hatches (or 'How I explain what I'm learning to my parents…') | I recently rediscovered a load of blogs I wrote during bootcamp in 2019. It felt good to retrospect... | 0 | 2024-06-05T11:12:06 | https://dev.to/calier/christmas-cards-and-serving-hatches-or-how-i-explain-what-im-learning-to-my-parents-276d | learning, bootcamp, programming, beginners | _I recently rediscovered a load of blogs I wrote during bootcamp in 2019. It felt good to retrospect and I'm also pretty proud of them, so I'll be reposting some of them here...._

are a fundamental... | 0 | 2024-06-05T11:08:33 | https://dev.to/bilelsalemdev/abstract-syntax-tree-in-typescript-25ap | programming, typescript, javascript, webdev | Hello everyone, السلام عليكم و رحمة الله و بركاته

Abstract Syntax Trees (ASTs) are a fundamental concept in computer science, particularly in the realms of programming languages and compilers. While ASTs are not exclusive to TypeScript, they are pervasive across various programming languages and play a crucial role in... | bilelsalemdev |

1,868,730 | N | A post by Kim Cassalaxe | 0 | 2024-05-29T09:09:10 | https://dev.to/jcassalaxi/n-1p19 | jcassalaxi | ||

1,877,919 | The Ultimate Guide to Hiring Remote PHP Programmers: Tips and Best Practices | The rise of remote work has been a significant trend in recent years, with more and more companies... | 0 | 2024-06-05T11:06:07 | https://dev.to/ritesh12/the-ultimate-guide-to-hiring-remote-php-programmers-tips-and-best-practices-2md | The rise of remote work has been a significant trend in recent years, with more and more companies embracing the idea of allowing their employees to work from home or from any location outside of the traditional office setting. This shift has been driven by a number of factors, including advances in technology that mak... | ritesh12 | |

1,877,918 | Collection Interface - Quick Overview | The Collection interface is a part of the Java Collections Framework, which provides a unified... | 0 | 2024-06-05T11:03:29 | https://dev.to/dhanush9952/collection-interface-quick-overview-hgc | java, programming | The Collection interface is a part of the Java Collections Framework, which provides a unified architecture for storing and manipulating collections of objects. It is the root interface in the hierarchy and provides basic methods for adding, removing, and inspecting elements in a collection.

, [Cargo-related discussion](https://internals.rust-lang.org/t/about-supply-chain-attacks... | igankevich |

1,845,548 | Ibuprofeno.py💊| #119: Explain this Python code | Explain this Python code Difficulty: Intermediate my_tuple = ("1", "20",... | 25,824 | 2024-06-05T11:00:00 | https://dev.to/duxtech/ibuprofenopy-119-explica-este-codigo-python-5g4p | python, spanish, learning, beginners | ## **<center>Explain this Python code</center>**

#### <center>**Difficulty:** <mark>Intermediate</mark></center>

```py

my_tuple = ("1", "20", "30", "9")

print(max(my_tuple))

```

👉 **A.** `1`

👉 **B.** `20`

👉 **C.** `30`

👉 **D.** `9`

---

{% details **Answer:** %}

👉 **D.** `9`

When comparing strings as items... | duxtech |
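
A minimal sketch of why the quiz answer is `9`, assuming the point is that the tuple holds strings rather than numbers. The `key=int` variant is an illustration added here, not part of the original quiz.

```python
my_tuple = ("1", "20", "30", "9")

# Strings are compared character by character, so "9" beats "30"
# because "9" > "3" at the first position.
largest_as_text = max(my_tuple)

# Illustrative addition: key=int compares the numeric values instead,
# while still returning the original string element.
largest_as_number = max(my_tuple, key=int)

print(largest_as_text)    # 9
print(largest_as_number)  # 30
```

Passing `key=int` leaves the tuple untouched and only changes how the elements are ranked, which is usually simpler than converting the data first.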

1,840,484 | test | hey! | 0 | 2024-05-02T11:19:22 | https://dev.to/vikrantdev/test-mn1 | hey! | vikrantdev | |

1,877,847 | Week 1: Project Navigation and Task 1 | You might know that I'll be contributing to OpenStack Horizon. If not, you can read all about it in... | 0 | 2024-06-05T10:57:47 | https://dev.to/ndutared/week-1-project-navigation-and-task-1-1bpi | opensource, horizon, openstack, cinder | You might know that I'll be contributing to OpenStack Horizon. If not, you can read all about it in my [application journey.](https://dev.to/ndutared/my-outreachy-application-journey-28m1)

In this post, I share my progress so far.

## Week 1

Week 1 was a roller coaster for me. I spent some time refreshing my Python... | ndutared |

1,877,916 | Dancing with the stars | JavaScript's Void Magic Unveiled Intro: Welcome, intrepid JavaScript adventurers, to a whimsical... | 0 | 2024-06-05T10:57:20 | https://dev.to/tomeq34/dancing-with-the-stars-56np | JavaScript's Void Magic Unveiled

**Intro:**

Welcome, intrepid JavaScript adventurers, to a whimsical exploration of the enigmatic `javascript:void(0)` 🚀 Prepare to embark on a laughter-filled journey as we decode... | tomeq34 | |

1,877,915 | Developer ❗️ | Changing the world through technology ❗️ | 0 | 2024-06-05T10:57:06 | https://dev.to/kojo_ernest_535158c75c6bc/developer-3d5e | programming, dart | Changing the world through technology ❗️ | kojo_ernest_535158c75c6bc |

1,877,914 | Evaluation Metrics: Machine Learning Models 🤖🐍 | Evaluation metrics are crucial in assessing the performance of machine learning models. These metrics... | 0 | 2024-06-05T10:56:24 | https://dev.to/kammarianand/evaluation-metrics-machine-learning-models-lg5 | python, machinelearning, ai, datascience | Evaluation metrics are crucial in assessing the performance of machine learning models. These metrics help us understand how well our models are performing and where they might need improvement. In this post, we'll dive into evaluation metrics for two primary types of machine learning tasks: regression and classificati... | kammarianand |

1,877,913 | Cypress vs. Playwright for Node: A Head-to-Head Comparison | It's essential to test web applications to ensure reliability, functionality, and a good user... | 0 | 2024-06-05T10:54:43 | https://blog.appsignal.com/2024/05/22/cypress-vs-playwright-for-node-a-head-to-head-comparison.html | node, cypress, playwright | It's essential to test web applications to ensure reliability, functionality, and a good user experience. That's why robust testing frameworks have become so important for web developers. Among the plethora of available tools, Cypress and Playwright have emerged as two of the most popular choices for automating end-to-... | antozanini |

1,877,912 | Develop Real-Time Chat Apps with Laravel Reverb and Vue 3 | In today’s digital era, real-time chat apps have become an essential means of communication,... | 0 | 2024-06-05T10:52:46 | https://dev.to/ellis22/develop-real-time-chat-apps-with-laravel-reverb-and-vue-3-14m3 | laravel, vue, vue3, javascript | In today’s digital era, real-time chat apps have become an essential means of communication, fostering connections between users across the globe instantly. The development of such applications requires a robust framework that can manage real-time data efficiently and seamlessly. This is where Laravel Reverb and Vue 3 ... | ellis22 |

1,877,911 | Buy a Telegram Premium subscription at a special discount - As Khedmat | Are you looking for access to Telegram's special features? Do you want to benefit from the unmatched capabilities of Telegram Premium... | 0 | 2024-06-05T10:50:01 | https://dev.to/as_khedmat_dc8eb1b4ef69cb/khryd-shtrkh-prmywm-tlgrm-b-tkhfyf-wyjh-as-khdmt-44b6 | Are you looking for access to Telegram's special features? Do you want to benefit from the unmatched capabilities of Telegram Premium, but are worried about its high cost? By offering Telegram Premium subscriptions at exceptional discounts, As Khedmat has put within your reach, with confidence and at a reasonable price, the best features of this popular messenger... | as_khedmat_dc8eb1b4ef69cb | |

1,877,910 | When to Switch to Dedicated Server Hosting for Your Business | Does your business WordPress website experience performance or security vulnerabilities? Upgrading... | 0 | 2024-06-05T10:49:27 | https://dev.to/wewphosting/when-to-switch-to-dedicated-server-hosting-for-your-business-137e |

Does your business WordPress website experience performance or security vulnerabilities? Upgrading to [dedicated server hosting](https://wewp.io/) may be the solution you need. This hosting solution offers exclusiv... | wewphosting | |

1,877,909 | Custom FIORI Applications for HCM, FICO | Offering Description: Xyram offers deep expertise in SAP integrations with different applications... | 0 | 2024-06-05T10:48:25 | https://dev.to/bheema_podili/custom-fiori-applications-for-hcm-fico-3d32 | Offering Description:

Xyram offers deep expertise in SAP integrations with different applications and enterprise mobility with **[custom FIORI UI5 applications to provide end-to-end](https://www.xyramsoft.com/case-studies/custom-fiori-applications-hcm-fico-business-processes)** flow of your enterprise data with a grea... | bheema_podili | |

1,877,908 | What are the most promising decentralized applications available today? | Decentralized applications (dApps) are changing many industries by using blockchain technology to... | 0 | 2024-06-05T10:47:45 | https://dev.to/sanaellie/what-are-the-most-promising-decentralized-applications-available-today-1af1 | dapp, blockchain, decentralized, cryptocurrency | [Decentralized applications](https://www.kryptobees.com/dapp-development-company) ([dApps](https://www.kryptobees.com/dapp-development-company)) are changing many industries by using blockchain technology to enhance security, transparency, and user control. Some of the most promising dApps today are making significant ... | sanaellie |

1,877,907 | Sachin Dev Duggal - Future Employment and AI: Moving Beyond Technological Displacement | AI has transformed how we work, leading to a complete overhaul of the employment landscape. Besides... | 0 | 2024-06-05T10:44:54 | https://dev.to/triptivermaa01/sachin-dev-duggal-future-employment-and-ai-moving-beyond-technological-displacement-lf6 | ai, beginners | AI has transformed how we work, leading to a complete overhaul of the employment landscape. Besides AI displacing some jobs, it also creates new ones and enhances current ones. As AI evolves, we must understand its implications for tomorrow's workforce and how to transition from technological replacement to a more team... | triptivermaa01 |

1,877,906 | Best Boarding School in Dehradun | At The TonsBridge School, nestled in the serene hills of Dehradun, every day is a journey of living... | 0 | 2024-06-05T10:44:25 | https://dev.to/tonschool/best-boarding-school-in-dehradun-4a7c | boarding, cbse, schools, residential | At The TonsBridge School, nestled in the serene hills of Dehradun, every day is a journey of living and learning. As a leading CBSE day and [boarding school in Dehradun](https://www.thetonsbridge.com/blog/the-top-20-boarding-schools-in-dehradun/), we take pride in offering a nurturing environment where students not onl... | tonschool |

1,877,842 | Ice Spice AI Voice Changer: Your Ultimate Guide | Create unique AI voice changers with Ice Spice AI Voice. Learn how to craft cutting-edge voice... | 0 | 2024-06-05T10:40:46 | https://dev.to/novita_ai/ice-spice-ai-voice-changer-your-ultimate-guide-3lli |

Create unique AI voice changers with Ice Spice AI Voice. Learn how to craft cutting-edge voice modulation tools on our blog.

## Key Highlights

- Ice Spice AI Voice Generator is a powerful tool that allows users to make an Ice Spice AI cover and transform their voice into Ice Spice's voice.

- The Ice Spice AI Voice h... | novita_ai | |

1,877,905 | Does Your Hosting Plan Meet Your WordPress Site’s Needs? | Choosing the right hosting plan is crucial for your WordPress website's performance, security, and... | 0 | 2024-06-05T10:39:51 | https://dev.to/wewphosting/does-your-hosting-plan-meet-your-wordpress-sites-needs-23n |

Choosing the right hosting plan is crucial for your WordPress website's performance, security, and scalability. When selecting a plan, consider factors like traffic, performance needs, security, and scalability.

#... | wewphosting | |

1,877,904 | What is MANUAL TESTING its DRAWBACKS and BENEFITS. | Manual testing is a fundamental process in software testing where testers manually execute test cases... | 0 | 2024-06-05T10:39:46 | https://dev.to/s1eb0d54/what-is-manual-testing-its-drawbacks-and-benefits-4830 | Manual testing is a fundamental process in software testing where testers manually execute test cases without the aid of automation tools. It involves a human tester interacting with the software application, exploring its features, and verifying its behavior against predefined test scenarios. Manual testing is essenti... | s1eb0d54 | |

1,877,903 | Understanding Type Casting in Java: Importance, Usage, and Necessity | Introduction In Java programming, data types are fundamental, ranging from primitive types... | 0 | 2024-06-05T10:39:20 | https://dev.to/fullstackjava/understanding-type-casting-in-java-importance-usage-and-necessity-p8f | java, webdev, programming, tutorial |

### Introduction

In Java programming, data types are fundamental, ranging from primitive types like integers and floats to complex types like objects and arrays. Often, there is a need to convert a variable from one type to another to ensure compatibility and accuracy. This process is known as type casting. Type cas... | fullstackjava |

1,877,898 | Don't Give Up | “Dude, sucking at something is the first step towards sorta being good at something.” Jake the... | 0 | 2024-06-05T10:36:01 | https://dev.to/lor1138/dont-give-up-2h3d | beginners |

> “Dude, sucking at something is the first step towards sorta being good at something.”

> _Jake the Dog_

## Learning to code is hard.

There is no way around it: learning to code on your own is hard. Thankfully, there are a ton of excellent resources out there on the wonderful interwebs. Here are some of my favorites (... | lor1138 |

1,877,741 | Making your CV talk 🤖 Possible improvements 🚀 | We can always do better! Little (or not so little) pessimist in me also want to say "We can also... | 27,606 | 2024-06-05T10:34:25 | https://dev.to/nmitic/making-your-cv-talk-possible-improvements-2pib | node, systemdesign, webdev, javascript | We can always do better! The little (or not so little) pessimist in me also wants to say, "We can also always do worse!"

But do not listen to him! Let's see how we might make this better.

### The best code is the code you do not write!

You can actually remove the backend service entirely and have the client make all the calls, problem... | nmitic |

1,877,740 | Making your CV talk 🤖 How to use express JS routes to listen for Web socket API? | All you need to do is to open web socket towards web socket api inside Express JS route.... | 27,606 | 2024-06-05T10:34:20 | https://dev.to/nmitic/making-your-cv-talk-how-to-use-express-js-routes-to-listen-for-web-socket-api-2pda | websocket, node, nextjs | All you need to do is open a WebSocket connection to the WebSocket API inside an Express JS route. The `streamAudioAnswer` function does exactly that.

Here is the flow:

1. The client requests the API path

2. Express JS opens a WebSocket connection

3. Express JS sends a message to the WebSocket API

4. The WebSocket API responds

5. Express JS takes re... | nmitic |

1,877,811 | Digital Shield: How to Protect Yourself in the World of Information Threats | Hi all! Quite often I go online and don’t even suspect that danger could be lurking at literally... | 0 | 2024-06-05T09:15:06 | https://dev.to/gerda/digital-shield-how-to-protect-yourself-in-the-world-of-information-threats-3okg | Hi all! Quite often I go online and don’t even suspect that danger could be lurking at literally every step. Today I will try to explain to you the basics of information security.

Information security today plays a huge role in the life of every person. After all, we store a lot of personal information on the Internet... | gerda | |

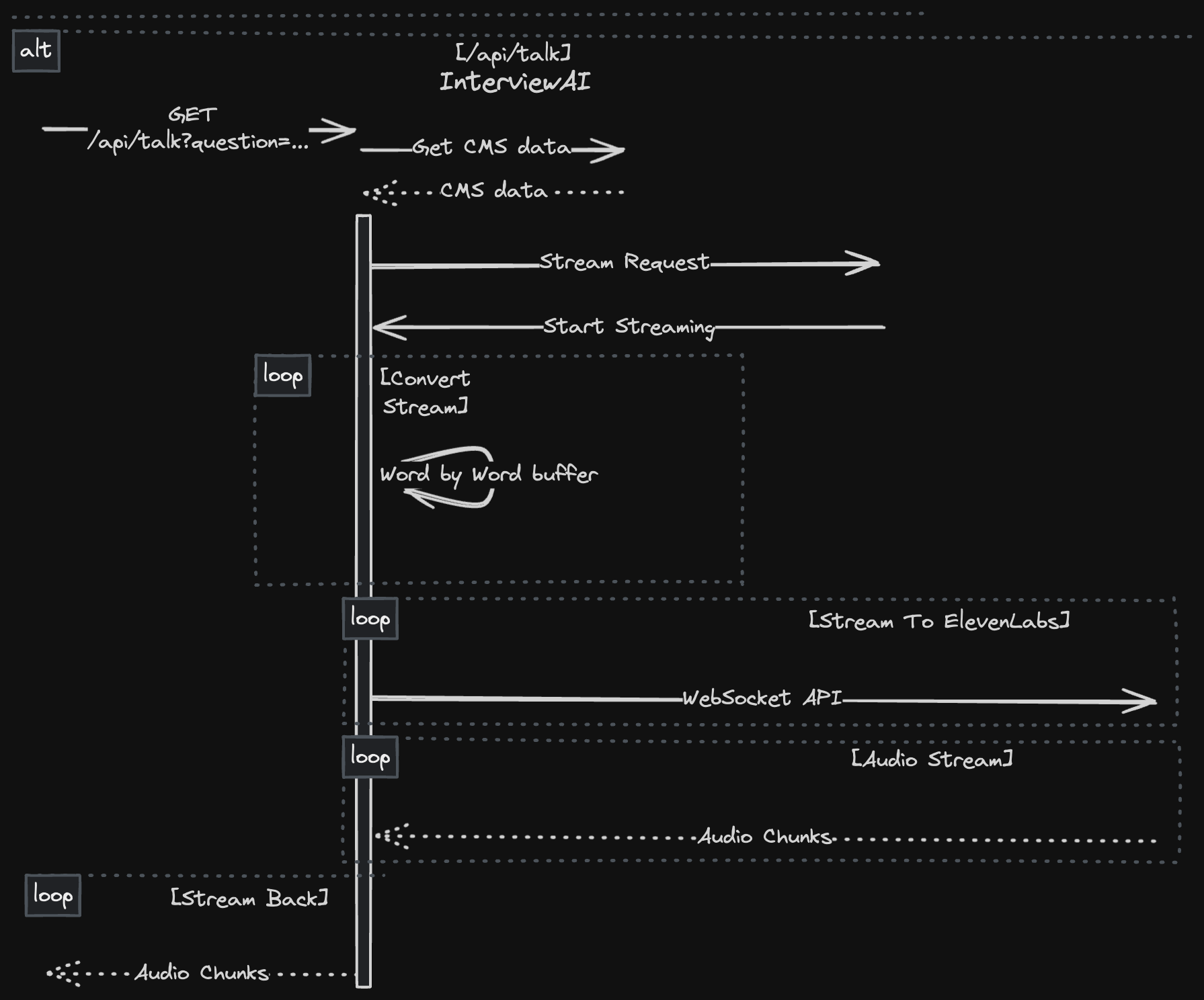

1,877,739 | Making your CV talk 🤖 How to combine Open AI text stream response with Eleven Lab Web socket streaming in TypeScript? | Let's briefly refer back to this part of our system design. We have three parts: Fetch Relevant... | 27,606 | 2024-06-05T10:34:15 | https://dev.to/nmitic/making-your-cv-talk-how-to-combine-open-ai-text-stream-response-with-eleven-lab-web-socket-streaming-in-typescript-n50 | elevenlabs, openai, typescript | Let's briefly refer back to this part of our system design.

We have three parts:

1. Fetch Relevant CMS data

2. Fetch stream from Open AI

3. Feed Web socket audio stream api with... | nmitic |

1,877,897 | Understanding MS-102 A Beginner's Guide | MS-102 How to use MS-102 exam dumps to achieve certification success? Utilizing MS-102 exam dumps... | 0 | 2024-06-05T10:34:13 | https://dev.to/herry21/understanding-ms-102-a-beginners-guide-3244 | <a href="https://dumpsarena.com/microsoft-dumps/md-102/">MS-102</a> How to use MS-102 exam dumps to achieve certification success?

Utilizing MS-102 exam dumps effectively can significantly increase your chances of success in achieving Microsoft 365 Mobility and Security certification. To maximize the benefits of these ... | herry21 | |

1,877,738 | Making your CV talk 🤖 How to read audio stream on client using Next JS? | Considering that our backend exposes url which streams audio solution is rather simple, we make use... | 27,606 | 2024-06-05T10:34:11 | https://dev.to/nmitic/making-your-cv-talk-how-to-read-audio-stream-on-client-using-next-js-5hda | node, nextjs | Since our backend exposes a URL that streams audio, the solution is rather simple: we use the HTML Audio element and point it at the correct path.

Nothing about this is Next JS related, t... | nmitic |

1,877,737 | Making your CV talk 🤖 How to read text stream on client using Next JS? | I have wrote a function which takes answer and callbacks to which UI can listen to and react as it... | 27,606 | 2024-06-05T10:34:07 | https://dev.to/nmitic/making-your-cv-talk-how-to-read-text-stream-on-client-using-next-js-351b | node, nextjs | I have written a function that takes the answer and a set of callbacks that the UI can listen to and react to as it sees fit.

Nothing about this is Next JS related. It is a pure React JS and TypeScript solution, so you can use it in your React JS code base as well.

Here are the steps:

1. Make request to fetch stream - code here: https://github... | nmitic |

1,877,892 | Arbor IT Support: Providing Seamless Solutions for Your Technology Needs | At Arbor IT Support, we are committed to delivering excellence in every aspect of our service... | 0 | 2024-06-05T10:27:20 | https://dev.to/kitchens00988/arbor-it-support-providing-seamless-solutions-for-your-technology-needs-5bph | At Arbor IT Support, we are committed to delivering excellence in every aspect of our service delivery. Our team comprises highly skilled professionals with extensive experience in the field of information technology, who are dedicated to providing proactive support, innovative solutions, and unparalleled customer serv... | kitchens00988 | |

1,877,735 | Making your CV talk 🤖 How to send audio stream from Express JS? | For our case this part was easy, as all we need to do is: Get buffered chunk Write to response... | 27,606 | 2024-06-05T10:34:03 | https://dev.to/nmitic/making-your-cv-talk-how-to-send-audio-stream-from-express-js-18om | audio, node, express | For our case this part was easy, as all we need to do is:

1. Get buffered chunk

2. Write to response object

And it looks like this.

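```
// Called for each base64-encoded audio chunk
onChunkReceived: (chunk) => {
  // Decode the base64 chunk into binary before writing it to the response
  const buffer = Buffer.from(chunk, "base64");
  res.write(buffer);
},
```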

I will talk more about the `onChunkReceived` method in the next s... | nmitic |

1,877,734 | Making your CV talk 🤖 How to send text stream from Express JS? | Here we have stream coming from Open AI. We need to read chunks and stream it back as soon as chunk... | 27,606 | 2024-06-05T10:33:59 | https://dev.to/nmitic/making-your-cv-talk-how-to-send-text-stream-from-express-js-1d3 | express, streaming, node | Here we have a stream coming from Open AI. We need to read its chunks and stream each one back as soon as it is ready. In a way, we are streaming a stream.

One could argue that this is not needed. However, I am doing it in order to hide the API keys for Groq, Open AI and Hygraph headless CMS. As I do not want them to be expose... | nmitic |

1,877,733 | Making your CV talk 🤖 How to have Open AI api answer based on your custom data? | I used https://ts.llamaindex.ai/. As somebody who is just starting out with generative AI, I have... | 27,606 | 2024-06-05T10:33:55 | https://dev.to/nmitic/making-your-cv-talk-how-to-have-open-ai-api-answer-based-on-your-custom-data-4ie4 | openai, customdata | I used https://ts.llamaindex.ai/.

As somebody who is just starting out with generative AI, I have found LlamaIndex to be a huge help.

It helps you cut corners and reduces the need for a deep understanding of how LLMs work in order to be productive, which is something I need as a beginner.

One thing to note is t... | nmitic |

1,877,727 | Making your CV talk 🤖 Explaining the concept and system design👨💻 | Explaining the concept (in non technical terms) Here are where things finally gets to be... | 0 | 2024-06-05T10:33:50 | https://dev.to/nmitic/making-your-cv-talk-explaining-the-concept-and-system-design-33no | softwareengineering, systemdesign | ### Explaining the concept (in non technical terms)

Here is where things finally get more interesting. To keep it easy to understand, I will simplify the concept first so that we can build on top of it later.

Imagine we have a house with 3 floors, where each floor unlocks something new.

1. Firs... | nmitic |

1,877,854 | Leveraging Geolocation: Enhancing Targeting with IP Address Location Finders | Understanding Geolocation Geolocation refers to the identification of the real-world geographic... | 0 | 2024-06-05T10:08:16 | https://dev.to/martinbaldwin127/leveraging-geolocation-enhancing-targeting-with-ip-address-location-finders-55e2 | **Understanding Geolocation**

Geolocation refers to the identification of the real-world geographic location of an object, such as a mobile phone or computer, through the use of various data collection mechanisms. This technology is pivotal in numerous applications ranging from navigation to marketing.

**Importance of... | martinbaldwin127 | |

1,877,723 | Making your CV talk 🤖 Product mindset first 🧠 Defining features | Getting our hands dirty 🏋️♂️ First things first. How does this even work? Higher level... | 27,606 | 2024-06-05T10:33:36 | https://dev.to/nmitic/making-your-cv-talk-product-mindset-first-defining-features-1hp9 | uxdesign, ui, buildinpublic | ## Getting our hands dirty 🏋️♂️

First things first. How does this even work? The high-level concept is actually very simple, but it is important to understand it before jumping into the actual implementation. Understanding it better means one will be able to challenge it, come up with something better and it means ... | nmitic |

1,867,697 | Making your CV talk 🤖 Easy into the development | What we will be building? 🏠 Online CV https://nikola-mitic.dev/cv/patient21 Blog / Tweet... | 27,606 | 2024-06-05T10:33:23 | https://dev.to/nmitic/making-your-cv-talk-easy-into-the-development-ook | ai, openai, resume, typescript | ## What we will be building? 🏠

1. Online CV https://nikola-mitic.dev/cv/patient21

2. Blog / Tweet like interface https://nikola-mitic.dev/tiny_thoughts

3. Chat interface to let people chat to your CV https://nikola-mitic.dev/ai_clone_interview/chat

4. And finally audio interface to give your CV voice and let people t... | nmitic |

1,877,895 | Getting Started with AI Functions | The last week we've gone all in on AI functions. An AI function is the ability to create AI... | 0 | 2024-06-05T10:30:32 | https://ainiro.io/blog/getting-started-with-ai-functions | ai, lowcode, productivity, open | Over the last week we've gone all in on AI functions. An AI function is the ability to create AI assistant logic, allowing the chatbot to _"do things"_, instead of just passively generating text.

To understand the power of such functions you can read some of our previous articles about the subject.

* [Demonstrating our n... | polterguy |

1,877,894 | What Are The Uses Of Custom Drawstring Pouches? | Are you looking for eco-friendly and sustainable solutions? A cotton drawstring pouch is one of the... | 0 | 2024-06-05T10:29:25 | https://dev.to/bagwalas/what-are-the-uses-of-custom-drawstring-pouches-3jf1 |

Are you looking for eco-friendly and sustainable solutions? **A cotton drawstring pouch** is one of the best options, and you can use it in many ways. These pouches are versatile and can be used in not just one way... | bagwalas | |

1,877,893 | Advanced Linux Shell Scripting: Unlocking Powerful Capabilities | Advanced Linux Shell Scripting: Unlocking Powerful Capabilities Shell scripting is a... | 0 | 2024-06-05T10:27:22 | https://dev.to/iaadidev/advanced-linux-shell-scripting-unlocking-powerful-capabilities-4ien | devops, linux, bash, shellscirpting | ### Advanced Linux Shell Scripting: Unlocking Powerful Capabilities

Shell scripting is a fundamental skill for any Linux user, system administrator, or DevOps engineer. While basic scripts are great for simple automation tasks, advanced shell scripting techniques allow you to create more robust, efficient, and powerfu... | iaadidev |

1,877,891 | Aditya City Grace | Aditya City Grace Ghaziabad | Aditya City Grace NH 24 Ghaziabad | Aditya City Grace in Ghaziabad presents luxurious 2 & 3 BHK apartments starting at 54 Lakhs,... | 0 | 2024-06-05T10:26:07 | https://dev.to/narendra_kumar_5138507a03/aditya-city-grace-aditya-city-grace-ghaziabad-aditya-city-grace-nh-24-ghaziabad-4o6 | realestate, estateinvestment, realestateagent, adityacitygrace | Aditya City Grace in Ghaziabad presents luxurious [2 & 3 BHK apartments](https://adityacitygrace.site/) starting at 54 Lakhs, seamlessly combining contemporary living with elegance and comfort. Designed to suit young professionals, growing families, and investors alike, these spacious residences cater to your every ne... | narendra_kumar_5138507a03 |

1,877,890 | Buy Negative Google Reviews | https://dmhelpshop.com/product/buy-negative-google-reviews/ Buy Negative Google Reviews Negative... | 0 | 2024-06-05T10:20:53 | https://dev.to/twuwksndjpanshbdk/buy-negative-google-reviews-1n59 | webdev, javascript, beginners, programming | ERROR: type should be string, got "https://dmhelpshop.com/product/buy-negative-google-reviews/\n\n\nBuy Negative Google Reviews\nNegative reviews on Google are detrimental critiques that expose customers’ unfavorable experiences with a business. These reviews can significantly damage a company’s reputation, presenting challenges in both attracting new customers and retaining current ones. If you are considering purchasing negative Google reviews from dmhelpshop.com, we encourage you to reconsider and instead focus on providing exceptional products and services to ensure positive feedback and sustainable success.\n\nWhy Buy Negative Google Reviews from dmhelpshop\nWe take pride in our fully qualified, hardworking, and experienced team, who are committed to providing quality and safe services that meet all your needs. Our professional team ensures that you can trust us completely, knowing that your satisfaction is our top priority. With us, you can rest assured that you’re in good hands.\n\nIs Buy Negative Google Reviews safe?\nAt dmhelpshop, we understand the concern many business persons have about the safety of purchasing Buy negative Google reviews. We are here to guide you through a process that sheds light on the importance of these reviews and how we ensure they appear realistic and safe for your business. Our team of qualified and experienced computer experts has successfully handled similar cases before, and we are committed to providing a solution tailored to your specific needs. 
Contact us today to learn more about how we can help your business thrive.\n\nBuy Google 5 Star Reviews\nReviews represent the opinions of experienced customers who have utilized services or purchased products from various online or offline markets. These reviews convey customer demands and opinions, and ratings are assigned based on the quality of the products or services and the overall user experience. Google serves as an excellent platform for customers to leave reviews since the majority of users engage with it organically. When you purchase Buy Google 5 Star Reviews, you have the potential to influence a large number of people either positively or negatively. Positive reviews can attract customers to purchase your products, while negative reviews can deter potential customers.\n\nIf you choose to Buy Google 5 Star Reviews, people will be more inclined to consider your products. However, it is important to recognize that reviews can have both positive and negative impacts on your business. Therefore, take the time to determine which type of reviews you wish to acquire. Our experience indicates that purchasing Buy Google 5 Star Reviews can engage and connect you with a wide audience. By purchasing positive reviews, you can enhance your business profile and attract online traffic. Additionally, it is advisable to seek reviews from reputable platforms, including social media, to maintain a positive flow. We are an experienced and reliable service provider, highly knowledgeable about the impacts of reviews. Hence, we recommend purchasing verified Google reviews and ensuring their stability and non-gropability.\n\nLet us now briefly examine the direct and indirect benefits of reviews:\nReviews have the power to enhance your business profile, influencing users at an affordable cost.\nTo attract customers, consider purchasing only positive reviews, while negative reviews can be acquired to undermine your competitors. 
Collect negative reports on your opponents and present them as evidence.\nIf you receive negative reviews, view them as an opportunity to understand user reactions, make improvements to your products and services, and keep up with current trends.\nBy earning the trust and loyalty of customers, you can control the market value of your products. Therefore, it is essential to buy online reviews, including Buy Google 5 Star Reviews.\nReviews serve as the captivating fragrance that entices previous customers to return repeatedly.\nPositive customer opinions expressed through reviews can help you expand your business globally and achieve profitability and credibility.\nWhen you purchase positive Buy Google 5 Star Reviews, they effectively communicate the history of your company or the quality of your individual products.\nReviews act as a collective voice representing potential customers, boosting your business to amazing heights.\nNow, let’s delve into a comprehensive understanding of reviews and how they function:\nGoogle, with its significant organic user base, stands out as the premier platform for customers to leave reviews. When you purchase Buy Google 5 Star Reviews , you have the power to positively influence a vast number of individuals. Reviews are essentially written submissions by users that provide detailed insights into a company, its products, services, and other relevant aspects based on their personal experiences. In today’s business landscape, it is crucial for every business owner to consider buying verified Buy Google 5 Star Reviews, both positive and negative, in order to reap various benefits.\n\nWhy are Google reviews considered the best tool to attract customers?\nGoogle, being the leading search engine and the largest source of potential and organic customers, is highly valued by business owners. Many business owners choose to purchase Google reviews to enhance their business profiles and also sell them to third parties. 
Without reviews, it is challenging to reach a large customer base globally or locally. Therefore, it is crucial to consider buying positive Buy Google 5 Star Reviews from reliable sources. When you invest in Buy Google 5 Star Reviews for your business, you can expect a significant influx of potential customers, as these reviews act as a pheromone, attracting audiences towards your products and services. Every business owner aims to maximize sales and attract a substantial customer base, and purchasing Buy Google 5 Star Reviews is a strategic move.\n\nAccording to online business analysts and economists, trust and affection are the essential factors that determine whether people will work with you or do business with you. However, there are additional crucial factors to consider, such as establishing effective communication systems, providing 24/7 customer support, and maintaining product quality to engage online audiences. If any of these rules are broken, it can lead to a negative impact on your business. Therefore, obtaining positive reviews is vital for the success of an online business\n\nWhat are the benefits of purchasing reviews online?\nIn today’s fast-paced world, the impact of new technologies and IT sectors is remarkable. Compared to the past, conducting business has become significantly easier, but it is also highly competitive. To reach a global customer base, businesses must increase their presence on social media platforms as they provide the easiest way to generate organic traffic. Numerous surveys have shown that the majority of online buyers carefully read customer opinions and reviews before making purchase decisions. In fact, the percentage of customers who rely on these reviews is close to 97%. Considering these statistics, it becomes evident why we recommend buying reviews online. 
In an increasingly rule-based world, it is essential to take effective steps to ensure a smooth online business journey.\n\nBuy Google 5 Star Reviews\nMany people purchase reviews online from various sources and witness unique progress. Reviews serve as powerful tools to instill customer trust, influence their decision-making, and bring positive vibes to your business. Making a single mistake in this regard can lead to a significant collapse of your business. Therefore, it is crucial to focus on improving product quality, quantity, communication networks, facilities, and providing the utmost support to your customers.\n\nReviews reflect customer demands, opinions, and ratings based on their experiences with your products or services. If you purchase Buy Google 5-star reviews, it will undoubtedly attract more people to consider your offerings. Google is the ideal platform for customers to leave reviews due to its extensive organic user involvement. Therefore, investing in Buy Google 5 Star Reviews can significantly influence a large number of people in a positive way.\n\nHow to generate google reviews on my business profile?\nFocus on delivering high-quality customer service in every interaction with your customers. By creating positive experiences for them, you increase the likelihood of receiving reviews. These reviews will not only help to build loyalty among your customers but also encourage them to spread the word about your exceptional service. It is crucial to strive to meet customer needs and exceed their expectations in order to elicit positive feedback. If you are interested in purchasing affordable Google reviews, we offer that service.\n\n\n\n\n\nContact Us / 24 Hours Reply\nTelegram:dmhelpshop\nWhatsApp: +1 (980) 277-2786\nSkype:dmhelpshop\nEmail:dmhelpshop@gmail.com" | twuwksndjpanshbdk |

1,877,861 | Unlocking the Power of SAP Project System (PS) for Efficient Project Management | In the realm of enterprise resource planning (ERP), SAP Project System (PS) stands out as a critical... | 0 | 2024-06-05T10:17:17 | https://dev.to/mylearnnest/unlocking-the-power-of-sap-project-system-ps-for-efficient-project-management-5d6k | sap, sapps | In the realm of [enterprise resource planning (ERP)](https://www.mylearnnest.com/best-sap-ps-course-in-hyderabad/), SAP Project System (PS) stands out as a critical component designed to manage complex projects efficiently. SAP PS integrates seamlessly with other SAP modules, offering a comprehensive solution for plann... | mylearnnest |

1,877,860 | Choosing an online pharmacy you can trust | Choosing a trustworthy online pharmacy is crucial for your health and safety. DiRx outlines five key... | 0 | 2024-06-05T10:15:35 | https://dev.to/mahesh803/choosing-an-online-pharmacy-you-can-trust-23no | mentalhealth, machinelearning, java, html | Choosing a trustworthy online pharmacy is crucial for your health and safety. DiRx outlines five key factors: ensuring the pharmacy sells only FDA-approved medicines, is licensed and accredited, offers pharmacist support, monitors prescription interactions, and complies with privacy laws. By focusing on these aspects, ... | mahesh803 |

1,877,859 | How to Read/Write from Credential Manager in .NET 8 | How to Read/Write from Credential Manager in .NET 8 Credential Manager is a secure storage... | 0 | 2024-06-05T10:15:05 | https://dev.to/issamboutissante/how-to-readwrite-from-credential-manager-in-net-8-1ag | dotnet, credentialmanager, secretkeydotnet, csharp | ## How to Read/Write from Credential Manager in .NET 8

Credential Manager is a secure storage solution for sensitive information, such as usernames and passwords. It provides a way to manage credentials for various applications securely. In this article, we will walk through the steps to read, write, update, and delet... | issamboutissante |

1,877,858 | Unveiling Innovation: Propelling Your Business Forward with CodeHunts Software Solutions | In today’s rapidly evolving digital landscape, businesses are constantly searching for innovative... | 0 | 2024-06-05T10:12:04 | https://dev.to/hmzi67/unveiling-innovation-propelling-your-business-forward-with-codehunts-software-solutions-2fcg | In today’s rapidly evolving digital landscape, businesses are constantly searching for innovative solutions to maintain their competitive edge. In this dynamic environment, the role of software service providers is more crucial than ever. Enter [CodeHunts](https://codehuntspk.com/) – your trusted ally in navigating the... | hmzi67 |
