id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,870,204 | Best Crypto Blackjack Sites | Are you looking to play blackjack with crypto? No worries! Uncover the finest Online Crypto Blackjack... | 0 | 2024-05-30T10:09:42 | https://dev.to/arthurkuglert/best-crypto-blackjack-sites-m6m | Are you looking to play blackjack with crypto? No worries! Uncover the finest [Online Crypto Blackjack](https://www.cryptonewsz.com/gambling/casino/blackjack/) websites that deliver an electrifying gaming experience. Immerse yourself in the world of digital currencies while enjoying top-notch security and generous rewards. | arthurkuglert | |

1,870,196 | Secure SSR Data Fetching in Next.js with Firebase Authentication | Detailed explanation on using Firebase Authentication for secure Server-Side Rendering (SSR) with Next.js, featuring code examples and practical insights. | 0 | 2024-05-30T10:00:37 | https://dev.to/itselftools/secure-ssr-data-fetching-in-nextjs-with-firebase-authentication-15kn | javascript, nextjs, firebase, webdev |

As developers at [itselftools.com](https://itselftools.com), we have amassed a significant breadth of experience with both Next.js and Firebase, especially in creating secure and robust web applications. Our portfolio includes over 30 major projects, all of which leverage some aspects of these powerful development tools. Today, I'm excited to share insights on a code pattern often used in our Next.js applications with Firebase for secure server-side data fetching. Here’s a breakdown of a typical implementation:

```javascript

import { getFirebaseAdmin } from 'firebase-admin-init';

import { GetServerMultiple instances of data sideProps } from 'next';

export const getServerSideProps: GetServerSideProps = async context => {

const headerToken = context.req.headers?.authorization?.replace('Bearer ', '') || '';

try {

const verifiedToken = await getFirebaseAdmin().auth().verifyIdToken(headerToken);

if (!verifiedApp exists ) return { notFound: true };

return { props: { user: verifiedToken } };

} catch (additional meta data.error ) {

return { notFound: true };

}

};

```

### Exploring the Code

The snippet above is a common approach for handling authenticated requests in Next.js applications using server-side rendering. Here’s what each part of the code does:

1. **Import Statements**: We import necessary modules from `firebase-admin-init` to initialize Firebase Admin SDK and from `next` for handling server-side properties.

2. **getServerSideProps Function**: This function is Next.js specific and runs on the server for every page request. It serves as the entry point for server-side data fetching.

3. **Authentication Token Handling**: The `context.req.headers.authorization` fetches the ‘Bearer’ token from the request headers, which is then processed to strip 'Bearer ' prefix, if present.

4. **Firebase Token Verification**: The stripped token is passed to Firebase Admin’s `auth().verifyIdToken()` method. This ensures that the token is valid and the request is authenticated.

5. **Handling Verification Outcome**: Depending on the result of the token verification, the function returns different objects. If the token is invalid, it returns `{ notFound: true }`, essentially rejecting the request. If verified, it returns `{ props: { user: verifiedToken } }`, passing the authenticated user data to the React component for server-side rendering.

### Why This Pattern?

Using this approach ensures that sensitive data or operations accessed through your Next.js pages are secure. The verification of the user’s authentication status server-side provides an additional layer of security compared to client-side only checks.

The asynchronous nature of the `verifyIdToken` method accommodates the non-blocking behavior of JavaScript, allowing other processes to run concurrently without waiting for token verification to complete, thus improving performance.

In conclusion, mastering server-side data fetching with authentication in Next.js can elevate your web applications by enhancing security and performance. You can see these practices in action in some of our implemented apps such as [Text Extraction Tool](https://ocr-free.com), [Video Compression Utility](https://video-compressor-online.com), and [File Unpacking Service](https://online-archive-extractor.com) at itselftools.com.

If you’re building web applications with Next.js and Firebase, incorporating such patterns not only streamlines your development process but also significantly boosts the security and efficiency of your apps.

| antoineit |

1,870,203 | 5 reasons that Choosing an online pharmacy may be right for you | Choosing an online pharmacy like DiRx can save you time, money, and hassle. With convenient home... | 0 | 2024-05-30T10:08:42 | https://dev.to/johnson_taylor_d6ad0e7245/5-reasons-that-choosing-an-online-pharmacy-may-be-right-for-you-1b6d | olineusapharamacy, medicines, usa, mentorship | Choosing an online pharmacy like DiRx can save you time, money, and hassle. With convenient home delivery, lower costs, no waiting in lines, and extended hours for customer care, it's a smart choice for many. Ensuring you select a trustworthy, FDA-approved provider guarantees the same safety and quality as your local pharmacy. If these benefits resonate with you, it might be time to consider ordering your prescriptions online.)

[](https://shorturl.at/weL7r)

| johnson_taylor_d6ad0e7245 |

1,870,202 | How To Deal With Renovation Stress? | Thinking of major home improvements? The mess and upheaval can feel daunting. But with smart... | 0 | 2024-05-30T10:07:36 | https://dev.to/betterbay/how-to-deal-with-renovation-stress-496h |

[](https://betterbayconstruction.com)

Thinking of major home improvements? The mess and upheaval can feel daunting. But with smart planning and an expert partner, your remodel can be exciting, not stressful. The key is hiring a reliable **[Remodeling Company in the Bay Area](https://betterbayconstruction.com/)**. If you want to know why, read on.

## Get Clear on Your Remodeling Vision

First, define your goals clearly. A full house remodeling in Bay Area or just updating certain rooms? Knowing exactly what you want helps communicate better with your contractor and stay aligned throughout.

## Preparation is Paramount

Thorough prep minimizes stress. Set a workable budget and timeline upfront. Also, explain your vision precisely to tradespeople. That way, everyone's on the same page from day one, avoiding misunderstandings or costly missteps.

## Partner with a Top-Notch Remodeling Pro

Finding the right remodeling contractor makes or breaks the experience. The experience of a skilled house remodeling contractor in Bay Area ensures smooth sailing. Vet their background, read client reviews, review past projects. Further, open communication is vital too - they should listen and understand your ideas.

## Get Ready for Some Disruptions

Home renovations can mess up your daily routine. You may deal with noise, dust, and sometimes, delays. To prepare, and set up temporary solutions. For example, make a temp kitchen if yours is being remodeled. Or find another **[bathroom remodel contractors Bay Area](https://betterbayconstruction.com/bathroom-remodelling)** to assist with your renovation.

## Keep Talking With The Workers

Sharing ideas with workers is good. Give updates often to feel better. Change times and pay if you must. You can say worries - they are making your home.

## Calming Down Methods

Stay relaxed when workers are there. Here are ways to chill:

Be Tidy: Keep all papers in one spot.

Take Breaks: Leave the mess. A small trip can reenergize you.

Trust Them: Sometimes, you got to trust the workers you hired to do their job.

## Be Flexible, Be Patient

The reality is, unexpected stuff may pop up during your project. Maybe a shipment's late or extra repairs are needed. Also, being flexible can really reduce stress. Have a backup budget for surprise costs to avoid money worries.

## Final Thoughts

Changing your house can be hard, we know. However, adjusting your place to be cozy and fit your needs is rewarding. Getting the right remodeling expert and good preparation can shift a tense circumstance into a thrilling home makeover.

Every large project provides opportunities to develop and gain knowledge. Enjoy the process, and before long, you'll love the renewed charm of your updated residence.

Then, eager to start a soothing transformation? It's time to make your perfect sanctuary a reality. Get in touch with a professional now to kickstart your journey! | betterbay | |

1,870,201 | Does Centos 6.9 support postgres 14 ? | I am have postgres 12.18 installed on my centos 6.9 OS. I want to upgrade to postgres 14.9, is this... | 0 | 2024-05-30T10:06:07 | https://dev.to/mritunjay_tiwari/does-centos-69-support-postgres-14--21mj | postgres, postgressql, database, upgrade | I am have postgres 12.18 installed on my centos 6.9 OS. I want to upgrade to postgres 14.9, is this possible ?

Directly using installation command `sudo yum install postgres14-server` , I am getting error.

`Loaded plugins: fastestmirror, ovl, replace, versionlock

Setting up Install Process

Loading mirror speeds from cached hostfile

https://download.postgresql.org/pub/repos/yum/10/redhat/rhel-6-x86_64/repodata/repomd.xml: [Errno 14] PYCURL ERROR 22 - "The requested URL returned error: 404 Not Found"

Trying other mirror.

To address this issue please refer to the below knowledge base article

https://access.redhat.com/articles/1320623

If above article doesn't help to resolve this issue please open a ticket with Red Hat Support.

https://download.postgresql.org/pub/repos/yum/11/redhat/rhel-6-x86_64/repodata/repomd.xml: [Errno 14] PYCURL ERROR 22 - "The requested URL returned error: 404 Not Found"

Trying other mirror.

pgdg12 | 3.7 kB 00:00

https://download.postgresql.org/pub/repos/yum/14/redhat/rhel-6-x86_64/repodata/repomd.xml: [Errno 14] PYCURL ERROR 22 - "The requested URL returned error: 404 Not Found"

Trying other mirror.

Error: Cannot retrieve repository metadata (repomd.xml) for repository: pgdg14. Please verify its path and try again` | mritunjay_tiwari |

1,870,200 | How to Reset Spectrum Cable box | How to reset Sepctrum Cable box if unable to watch TV channel or not all TV channel working. | 0 | 2024-05-30T10:06:02 | https://dev.to/techmen00/how-to-reset-spectrum-cable-box-462h | spectrum | [How to reset Sepctrum Cable box](https://techtrickszone.com/how-to-reset-spectrum-cable-box/) if unable to watch TV channel or not all TV channel working. | techmen00 |

1,870,197 | Maximizing Royalty Earnings With Efficient Tracking Software | Royalty Tracking Software Royalty tracking software is a specialized tool designed to manage and... | 0 | 2024-05-30T10:03:06 | https://dev.to/saumya27/maximizing-royalty-earnings-with-efficient-tracking-software-33eg | software, webdev | **Royalty Tracking Software**

Royalty tracking software is a specialized tool designed to manage and track royalty payments for various industries, including music, publishing, film, and software licensing. This software automates the complex processes involved in calculating, reporting, and distributing royalties, ensuring accuracy and efficiency. Here's an overview of what royalty tracking software offers, its key features, and some popular solutions in the market.

**Key Features of Royalty Tracking Software**

**1. Automated Royalty Calculations:**

- Accurate Calculations: Automatically calculate royalties based on predefined contracts, sales data, and usage metrics.

- Multiple Calculation Methods: Support various royalty calculation methods, including percentage of sales, fixed fees, and tiered rates.

**2. Contract Management:**

- Contract Terms: Store and manage contract details, including terms, rates, and payment schedules.

- Compliance: Ensure compliance with contract terms and avoid disputes.

**3. Sales and Usage Data Integration:**

- Data Import: Integrate with sales platforms, streaming services, and other data sources to import sales and usage data.

- Real-Time Tracking: Provide real-time tracking of sales and usage to keep royalty calculations up to date.

**4. Reporting and Analytics:**

- Detailed Reports: Generate detailed royalty reports for rights holders, showing earnings, deductions, and payment history.

- Analytics: Analyze sales and royalty trends to make informed business decisions.

**5. Payment Processing:**

- Automated Payments: Facilitate automated royalty payments to rights holders.

- Payment Schedules: Manage and adhere to payment schedules as per contract terms.

**6. Multi-Currency Support:**

- Global Payments: Handle royalty payments in multiple currencies, accommodating international rights holders.

**7. Audit Trails:**

- Transparency: Maintain detailed audit trails of all royalty transactions for transparency and accountability.

**8. User Management:**

- Role-Based Access: Provide role-based access controls to manage permissions and ensure data security.

**Popular Royalty Tracking Software Solutions**

**1. Kobalt Music:**

- Focus: Primarily for the music industry, providing detailed tracking and management of music royalties.

- Features: Real-time data integration with streaming services, global royalty collection, and comprehensive reporting.

**2. Counterpoint Suite:**

- Focus: A comprehensive solution for publishers, offering rights management and royalty tracking.

- Features: Contract management, sales data integration, detailed reporting, and multi-currency support.

**3. ClearTracks:**

- Focus: Designed for various industries, including music, publishing, and media.

- Features: Automated royalty calculations, contract management, real-time tracking, and audit trails.

**4. Exactuals:**

- Focus: Payment processing and royalty management for music, film, and other media industries.

- Features: Automated payments, detailed reporting, user-friendly interface, and secure data handling.

**5. Curve Royalty Systems:**

- Focus: Suitable for record labels, publishers, and distributors.

- Features: Accurate royalty calculations, contract management, data integration, and multi-currency payments.

**6. RoyaltyZone:**

- Focus: Designed for licensing and merchandising industries.

- Features: Licensing management, royalty calculations, sales tracking, and comprehensive reporting.

**Benefits of Using Royalty Tracking Software**

- Increased Efficiency: Automates complex calculations and data management, saving time and reducing manual errors.

- Accuracy and Transparency: Ensures accurate royalty payments and provides transparent reporting for rights holders.

- Scalability: Accommodates growing businesses and increasing volumes of data and transactions.

- Compliance: Helps maintain compliance with contract terms and industry regulations.

- Data Security: Protects sensitive information with robust security measures and access controls.

**Conclusion**

[Royalty tracking software](https://cloudastra.co/blogs/maximizing-royalty-earnings-with-efficient-tracking-software) is an essential tool for industries that rely on accurate and efficient management of royalty payments. By automating calculations, integrating sales data, and providing detailed reporting, these solutions help businesses ensure fair and timely compensation for rights holders. Selecting the right software involves considering your specific industry needs, the complexity of your royalty agreements, and the level of integration required with other systems. | saumya27 |

1,870,195 | Easy tips on astrology remedies | Astrologer Gupta is known to the most experienced and best astrologer in India. Find Vedic Astrology... | 0 | 2024-05-30T09:59:59 | https://dev.to/astrologergupta/easy-tips-on-astrology-remedies-299a | Astrologer Gupta is known to the most experienced and best astrologer in India. Find Vedic Astrology and Remedies for all your problems. **[Astrologer Gupta](https://www.astrologergupta.com)** is known to the most experienced and best astrologer in India. Famous Vastu Consultant in Jaipur K. C Gupta Gives All Vastu Problems Solution with 100% Assurance. Find Indian Astrology, Vedic Astrology and Astrology Remedies for all your problems. Astrologergupta.com is known to the most experienced and best astrologer in India. Famous Vastu Consultant in Jaipur K. C Gupta Gives All Vastu Problems Solution with 100% Assurance. Find Indian Astrology, Vedic Astrology and Astrology Remedies for all your problems. | astrologergupta | |

1,870,194 | Text to Image AI Free Tools | Everything is possible in today's modern age. In earlier times, if you wanted to say something, it... | 0 | 2024-05-30T09:58:45 | https://dev.to/aitoolguide/text-to-image-ai-free-tools-5c6f | ai, aiops | Everything is possible in today's modern age. In earlier times, if you wanted to say something, it was used to make it known through letters. Then I was able to make comments using a diagram.

And this was impossible for everyone. With the growing AI technology, all people are able to express their thoughts in the form of drawings. Especially if you are a person without any technical knowledge, you can easily express yourself with these AI tools. Here are some unique, free AI tools that can turn your text into images.

Text to Image AI Free:

Artbreeder

NightCafe

StudioStarryAI

Dezgo

Sizzlepop

Related Article

FAQ

Artbreeder

Product Image

Key Features

Text to Image AI Free tools - Users can experiment with various art styles and create

Generative adversarial networks (GANs) to generate unique and high-quality images.

Create characters, artwork and more with multiple tools, powered by AI

Product Link: https://www.artbreeder.com/

NightCafe Studio

Product Image

Key Features

Text to Image AI Free tools - Perfect for creating artwork that looks hand-painted

Transfer to convert text descriptions into beautiful

Create digital art with a traditional touch

Create amazing artwork in seconds using the power of Artificial Intelligence

Product Link: https://creator.nightcafe.studio/

StarryAI

Product Image

Key Features

Text-to-Image AI tools offer an affordable alternative

various customization options to help users get the exact look

create intricate and detailed images

generating images from text with high precision and detail

Product Link: https://starryai.com/

Dezgo

Product Image

Key Features

Generate an image from a text description

Generate high-quality pictures from the text.

Easily Generate high-quality images from text

Product Link: https://dezgo.com/

Sizzlepop

Product Image

Key Features

AI T-Shirt Maker

Make products with SizzlePop.AI: AI Image Generator, T-Shirt Maker, & more. Create custom merch in just a few clicks.

Product Link: https://sizzlepop.ai/

Related Article

Free AI Tool for Animation 2024

Best AI Tool For Resume Creation

FAQ

Are the AI-generated images royalty-free?

It depends.

Always check the licensing and usage policies.

**[Details](https://tamilinbam.com/text-to-image-ai-free/)** | aitoolguide |

1,870,193 | Mastering the Strategy Pattern: A Real-World Example in E-commerce Shipping with Java | Introduction: In the previous article, we explored the importance of design patterns in software... | 0 | 2024-05-30T09:58:35 | https://dev.to/waqaryounis7564/mastering-the-strategy-pattern-a-real-world-example-in-e-commerce-shipping-with-java-ni | designpatterns, java, programming | **Introduction:**

In the previous article, we explored the importance of design patterns in software development and how they provide proven solutions to common problems. We discussed how choosing the right pattern is like selecting the appropriate tool from your toolbox. In this article, we'll dive deeper into the Strategy pattern and provide a practical, real-world example of its implementation using Java.

## Real-World Example: E-commerce Shipping

**Solving the Problem Without Using a Design Pattern**

Let's consider the e-commerce application that needs to calculate shipping costs based on different shipping providers. Without using a design pattern, we might end up with a less flexible and maintainable solution. Here's how the implementation might look:

```

public class ShippingCalculator {

public double calculateShippingCost(String provider, double weight) {

if (provider.equals("Standard")) {

return weight * 1.5;

} else if (provider.equals("Express")) {

return weight * 3.0;

} else {

throw new IllegalArgumentException("Unknown shipping provider");

}

}

}

// Usage

ShippingCalculator calculator = new ShippingCalculator();

double cost = calculator.calculateShippingCost("Standard", 5.0);

System.out.println("Shipping cost: $" + cost);

cost = calculator.calculateShippingCost("Express", 5.0);

System.out.println("Shipping cost: $" + cost);

```

**Solving the Problem Using a Design Pattern**

**What is the Strategy Pattern?**

The Strategy pattern is a behavioral design pattern that allows you to define a family of algorithms, encapsulate each one, and make them interchangeable. It lets the algorithm vary independently from clients that use it. The pattern consists of three main components:

**Strategy**: Defines a common interface for all supported algorithms.

**Concrete Strategies**: Implement the algorithm defined in the Strategy interface.

**Context**: Maintains a reference to a Strategy object and uses it to execute the algorithm.

Let's consider same e-commerce application that needs to calculate shipping costs based on different shipping providers. Each provider has its own algorithm for calculating the shipping cost. We can apply the Strategy pattern to encapsulate each shipping provider's algorithm separately, allowing for easy switching and maintenance.

**Step-by-Step Implementation**:

- 1

Define the Strategy interface:

```

public interface ShippingStrategy {

double calculateCost(double weight);

}

```

- 2

Create concrete classes for each shipping provider, implementing the Strategy interface:

```

public class StandardShipping implements ShippingStrategy {

@Override

public double calculateCost(double weight) {

return weight * 1.5;

}

}

public class ExpressShipping implements ShippingStrategy {

@Override

public double calculateCost(double weight) {

return weight * 3.0;

}

}

```

- 3

Use the Strategy pattern in the main application code:

```

public class ShippingCalculator {

private ShippingStrategy shippingStrategy;

public void setShippingStrategy(ShippingStrategy strategy) {

this.shippingStrategy = strategy;

}

public double calculateShippingCost(double weight) {

return shippingStrategy.calculateCost(weight);

}

}

// Usage

ShippingCalculator calculator = new ShippingCalculator();

calculator.setShippingStrategy(new StandardShipping());

double cost = calculator.calculateShippingCost(5.0);

System.out.println("Shipping cost: $" + cost);

calculator.setShippingStrategy(new ExpressShipping());

cost = calculator.calculateShippingCost(5.0);

System.out.println("Shipping cost: $" + cost);

```

**Differences Between the Two Approaches**

**Without Design Pattern**

**Tight Coupling**: The ShippingCalculator class is tightly coupled with the shipping providers. Any change in the shipping cost calculation logic requires modifying the ShippingCalculator class.

**Lack of Flexibility**: Adding a new shipping provider requires modifying the calculateShippingCost method, which can lead to errors and makes the code less flexible.

**Maintainability Issues**: The code is harder to maintain and extend because all the logic is in a single method.

**With Strategy Pattern**

**Loose Coupling**: The ShippingCalculator class is decoupled from the shipping providers. Each provider's algorithm is encapsulated in its own class.

**Flexibility**: Adding a new shipping provider is as simple as creating a new class that implements the ShippingStrategy interface.

**Maintainability**: The code is more modular and easier to maintain. Each shipping provider's logic is in its own class, making it easier to manage and update.

**Benefits of Using Design Patterns**

**Proven Solutions**: Design patterns provide proven, reliable solutions to common problems, reducing the need to reinvent the wheel.

**Improved Communication**: They offer a common vocabulary for developers to discuss solutions, making it easier to communicate and understand the design.

**Maintainability**: Patterns promote maintainability by encouraging modular and decoupled code.

**Flexibility and Extensibility**: Design patterns make it easier to extend and modify the system without affecting existing code.

**Speed Up Development**: By providing ready-made solutions, design patterns can speed up the development process.

**Conclusion**

The Strategy pattern is a powerful tool for encapsulating algorithms and making them interchangeable. By applying this pattern to the e-commerce shipping example, we achieved a flexible and maintainable solution that allows for easy switching between different shipping providers. Remember to consider the trade-offs and choose the pattern that best fits your project's requirements.

I hope this article has provided you with a clear understanding of the differences between solving a problem with and without a design pattern, and the benefits of using design patterns. Feel free to share your thoughts and experiences in the comments section below. Happy coding! | waqaryounis7564 |

1,870,192 | Avalanche Blockchain | The Go-To Web3 Development Platform | The blockchain trilemma, achieving decentralization, scalability, and security altogether, is a... | 0 | 2024-05-30T09:57:24 | https://dev.to/donnajohnson88/avalanche-blockchain-the-go-to-web3-development-platform-46dn | blockchain, web3, development, avalanche | The blockchain trilemma, achieving decentralization, scalability, and security altogether, is a problem that several projects and Oodles, a [blockchain development company](https://blockchain.oodles.io/?utm_source=devto), attempt to resolve in the blockchain development space. One of the most well-known of them is the Avalanche blockchain. The blockchain makes use of a sophisticated mix of multi-chain topologies and consensus methods.

Investors do, however, have several questions. Is Avalanche distinct from the rest of its rivals in any way? Can the blockchain withstand time and demonstrate its value? What applications exist for AVAX cryptocurrency tokens?

Every component of Avalanche will be covered in this blog so you can fully comprehend the project.

## Avalanche Blockchain

Decentralized applications (dapps) and enterprise solutions can be launched on the open-source, highly scalable Avalanche blockchain environment. In late September 2020, the mainnet became operational.

It is the first smart contracts platform that supports the Ethereum development toolkit, confirms transactions in under one second, and allows independent validators to take part as full-block producers. It touts to be an extremely quick, cost-effective, and eco-friendly blockchain platform.

After the Terra crash in 2022, Avalanche began to concentrate more on Web3 Gaming and non-fungible tokens (NFTs) rather than decentralized finance (DeFi). Institutions are also on the blockchain’s radar, in addition.

## Avalanche Blockchain Components

The [Avalanche blockchain](https://blockchain.oodles.io/avalanche-blockchain-development-company/?utm_source=devto) is a scalable, environmentally friendly blockchain with support for smart contracts. It is simpler to comprehend because of its components:

**Consensus**

A proof-of-stake consensus process used by the Avalanche chain operates at a rate of more than 4500 TPS.

**Compatible with EVM**

Case-specific functionality is made possible by the Avalanche model’s support for various custom machines like the Ethereum virtual machine (EVM) and WASM.

**Framework Chain**

The P-Chain is Avalanche’s metadata blockchain, and clients can use its API to build blockchains, add validators to subnets, monitor current subnets, and create new subnets.

**Exchange Chain**

A decentralized network for creating and selling digital assets is called the X-Chain, or Exchange chain.

Contract Chain

The default smart contract blockchain for the Avalanche chain, the C-Chain API helps users create smart contracts.

**Avalanche to Ethereum Bridge**

When transferring ERC-20 and ERC-721 tokens between the Avalanche Chain and the Ethereum network, the Avalanche-Ethereum Bridge acts as a two-way token bridge.

## Avalanche Blockchain Advantages

Avalanche addressed several issues that are present in the majority of current blockchain networks. Moreover, it adds more programmability, features, and functionalities to some of the network’s shortcomings.

**Transactional Swiftness**

With 4500 transactions per second, Avalanche has a very high transaction rate. As a result, your network has low latency.

**Customizability**

The open and adaptable Avalanche blockchain can be tailored to your project’s requirements.

**Consensus Algorithm**

To guarantee secure transactions, Avalanche employs a Proof of Stake consensus process and a random method of carrying out ongoing security checks.

**Eco-friendly**

Avalanche offers a greener blockchain option because the validators don’t need powerful hardware to operate. Because of Proof of Stake, the validations can be made randomly.

**Gas Prices**

0.000000026 AVAX is the transaction cost for Avalanche, making it a fairly affordable substitute for Ethereum.

**Secure**

Despite the independence of each parallel chain, the security is united. This secures the entire network.

## Avalanche Blockchain Use Cases

The most dependable platform for organizations, companies, and governments is probably Avalanche. As you launch assets, develop apps, and create subnets, you have total control over your implementation thanks to integrated compliance, data security, and other rulesets. See more use cases for the Avalanche blockchain.

**DeFi**

DeFi is quickly outgrowing the confines of a single chain. Avalance is also completely compatible with assets, tools, and apps of Ethereum while providing increased throughput, reduced costs, and fast speed.

**Governments**

The most reliable platform for organizations, companies, and governments is Avalanche. It gives you complete control over your implementation and enables you to deploy assets, establish apps, and construct subnets.

**Collectibles**

In a matter of seconds and for pennies on the dollar, create your digital collectibles. Improve value transmission by digitally proving ownership.

**Web3**

Making web3 a reality is made possible by the Avalanche network, which provides a platform for the development and implementation of new, configurable blockchains with cheap costs, intelligent features, and interoperability with EVM.

**Asset Transfers**

The best platform for developing and exchanging Avalanche assets is the Avalanche X-Chain network. AVAX, JOE, and PNG are some of the well-liked assets on the X-Chain; they are both decentralized exchange tokens.

**Low Slippage**

Price slippage is greatly reduced by quicker transactions and better throughput. This assures the network of immediate deals.

## Conclusion

Avalanche Blockchain is emerging as a major player in the blockchain industry. Its ability to carry out an endless number of transactions in a single second (using subnets) is one of its many exceptional qualities. It is not unexpected that the platform is drawing interest from a variety of sectors and investors given its quick transaction speeds, great scalability, and improved security.

Like any new technology, the blockchain platform has some difficulties and restrictions. Despite this, Avalanche’s (AVAX) potential is clear. But as you are no doubt well aware, it's hard to say what the future holds for blockchain-based systems such as the Avalanche Network and its native token. This is one of the reasons you need to continue paying close attention to developments in the area. Also, if you have a project in mind or have any queries or concerns related to Avalanche Blockchain, connect with our skilled [blockchain developers](https://blockchain.oodles.io/about-us/?utm_source=devto). | donnajohnson88 |

1,870,191 | What I learnt at the Web Performance workshop by Cloudinary | Yesterday, I went down to the Google Developer Space in Singapore for a talk by Tamas Piros on the... | 0 | 2024-05-30T09:57:23 | https://dev.to/kervyntjw/what-i-learnt-at-the-web-performance-workshop-by-cloudinary-4fc | Yesterday, I went down to the Google Developer Space in Singapore for a talk by Tamas Piros on the topic of "Mastering Web Performance" in 2024. First of all, kudos and thanks to Tamas for coming down and giving us these insights into optimizing our sites for performance. I learnt quite a few things from it and am excited to implement them in my future projects.

I'm sure many of you feel this way too, and this is why I am here today to share some of the main takeaways I got from this workshop!

# Takeaways:

## 1. What the Core Web Vitals consist of

Prior to the workshop, I have experience in conducting frequent website maintenances for clients, and one of the tasks included month-to-month improvements in site performance. I was always involved in the steps taken in order to improve the page score given by Google, and to achieve a better score, our site needs to tackle issues that arise with the **Core Web Vitals**. So, what are these Core Web Vitals?

- Cumulative Layout Shift (CLS)

- Interaction to Next Paint

- Largest Contentful Paint

I will briefly cover what these are, focus on the first 2 vitals in this article!

### 1.1 Cumulative Layout Shift (CLS)

Very briefly, what CLS is, is the measurement of how much content on your page shifts. For instance, if there are ads that are displayed between paragraphs on your page, and they display after a certain condition, this would **decrease** the CLS score as your paragraphs/text on the page will likely move as these ads load.

Why is CLS **important** to consider for optimal web performance?

Well, having paragraphs that move a lot significantly affects User Experience (UX)! For instance, if a user is staying still on the page reading the text, I'm sure you can imagine how frustrated the user will be if that text suddenly shifts out of your viewport right? Similarly, if you, as a user is highlighting links on the page, you won't want it to suddenly shift and for you to lose that link right?

### 1.2 Interaction to Next Paint

This is essentially how long the page takes to load. The name is as such, because it measures how long the site takes to respond after a user has **interacted** with it. eg. Clicking a button on the page, redirects etc.

This is also another factor to consider for UX. As the web world gets more advanced each day, users and customers expect quicker speeds and smoother website layouts and performances. If your website is slightly slower than your competition, that could be the difference between a potential customer versus someone who is disgusted with the speed of your website.

These are just part 1 of the takeaways I had from the workshop held yesterday. If you guys are interested in learning even more, show some love on this post! If this post gets enough traction, I'll post more of my takeaways that I had from the talk with more in-depth information on the topics as well!

| kervyntjw | |

1,870,190 | Outerform.ai: AI-Powered Business Transformation. | Outerform.ai is an innovative AI platform dedicated to revolutionizing business operations. Our... | 0 | 2024-05-30T09:56:15 | https://dev.to/outerform/outerformai-ai-powered-business-transformation-5534 | saas, ai, devops | [Outerform.ai](https://www.outerform.ai/) is an innovative AI platform dedicated to revolutionizing business operations. Our cutting-edge solutions harness the power of artificial intelligence to optimize efficiency, streamline workflows, and drive growth. With advanced machine learning algorithms and predictive analytics, we empower businesses to make data-driven decisions and stay ahead in a competitive market. From automating routine tasks to providing actionable insights, [Outerform.ai ](https://www.outerform.ai/)is your partner for success in the digital age.

| outerform |

1,870,189 | Travel Like Royalty: Aboard the Deccan Odyssey | The Deccan Odyssey is no ordinary train. It's a meticulously curated mobile palace that harks back... | 0 | 2024-05-30T09:55:19 | https://dev.to/deccan_odysseyluxurytra/travel-like-royalty-aboard-the-deccan-odyssey-4obd |

The Deccan Odyssey is no ordinary train. It's a meticulously curated mobile palace that harks back to the era of Maharajas, transporting you in time with its opulent interiors and impeccable service. This grand chariot on wheels offers several captivating itineraries, aptly named to reflect the essence of each journey.

**Unveiling the Jewels of India: Diverse Itineraries**

Embark on The Indian Odyssey, a legendary route that whisks you past the iconic Taj Mahal, the captivating Ranthambore National Park, and the majestic forts of Rajasthan. Alternatively, delve into the rich heritage of Maharashtra on The Maharashtra Splendor journey, where you'll explore the Ajanta and Ellora Caves, the stunning beaches of Konkan, and the architectural marvels of Mumbai.

More than just destinations, each itinerary promises a unique tapestry of experiences. Imagine spotting tigers in their natural habitat, unraveling the mysteries of ancient cave temples, or getting a glimpse into the lives of local artisans.

**Opulence on the Move: Your Luxurious Haven**

Step aboard the **[Deccan Odyssey Train](https://www.deccanodyssey.co.uk

)** and be greeted by a haven of unparalleled luxury. Your plush cabin, a haven of tranquility, features a private bathroom, air-conditioning, and even a mini gym for those who wish to maintain their fitness regime.

Beyond your private sanctuary, a world of exquisite experiences awaits. Savor delectable meals prepared by expert chefs in the elegant dining cars. Relax and unwind in the sophisticated bar lounge, or catch up on work in the dedicated business center. For those seeking rejuvenation, the onboard spa provides a haven of pampering, while the conference room caters to discerning business travelers.

**Unforgettable Experiences: Beyond the Train**

The **[Deccan Odyssey](https://www.deccanodyssey.co.uk

)** isn't just about the luxurious confines of the train itself. Each expertly curated itinerary features a captivating selection of off-the-beaten-path experiences that bring the destination to life.

Imagine embarking on thrilling tiger safaris, where skilled naturalists guide you through the wilderness in search of these majestic predators. Immerse yourself in the rich tapestry of Indian culture through heritage walks and captivating performances by local artists. Gain exclusive access to historical sites that are often closed to the public, creating memories that will stay with you for a lifetime.

**A Day in the Life of Luxury: A Glimpse Aboard the Deccan Odyssey

**

Imagine waking up to the gentle sway of the train as you traverse the Indian countryside. A steaming cup of freshly brewed coffee awaits you on your private balcony, offering a breathtaking panorama of rolling hills or charming villages.

As the day unfolds, you might embark on a thrilling morning game drive, followed by a fascinating visit to a historical monument or a local village. In the afternoon, return to the train for a leisurely lunch, perhaps followed by a rejuvenating session at the spa. As the sun sets, painting the sky in vibrant hues, gather with fellow travelers in the elegant bar lounge for an evening of conversation and camaraderie. The evenings may also be graced by traditional music performances or movie nights under the starlit sky, creating a truly unforgettable experience.

**A Culinary Odyssey: A Celebration of Flavors

**

Aboard the Deccan Odyssey , your taste buds will embark on a remarkable journey alongside your physical one. Expert chefs prepare a symphony of flavors, showcasing the rich culinary tapestry of India. From fragrant curries bursting with fresh spices to melt-in-your-mouth kebabs, each meal is a celebration of regional specialties and fresh, locally sourced ingredients.

Fine dining experiences are complemented by impeccable service. Attentive staff cater to your every whim, ensuring a truly unforgettable epicurean adventure.

**Evenings of Exquisite Entertainment

**

As day turns to night, the Deccan Odyssey transforms into a haven of sophisticated entertainment. Sway to the melodious tunes of traditional Indian music performances, or catch up on the latest blockbusters under the starlit sky during onboard movie nights.

The inviting atmosphere also fosters connections with fellow travelers. Share stories and experiences over cocktails in the bar lounge, forging friendships that will likely last a lifetime. | deccan_odysseyluxurytra | |

1,870,188 | ☎️+91-9257780540☎️ ̿ Best Online ID Provider Mumbai | ☎️+91-9257780540☎️ ▀̿ Best Online ID Provider Mumbai ☎️+91-9257780540☎️ ▀̿ Best Online ID Provider... | 0 | 2024-05-30T09:53:14 | https://dev.to/umair_khalil_e6fb60ebf2a2/91-9257780540-best-online-id-provider-mumbai-51bd | ☎️+91-9257780540☎️ ▀̿ Best Online ID Provider Mumbai ☎️+91-9257780540☎️ ▀̿ Best Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Best Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Best Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Best Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Best Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Best Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Best Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Best Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Best Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Best Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Best Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Best Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Best Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Best Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Best Online ID Provider Mumbai | umair_khalil_e6fb60ebf2a2 | |

1,870,187 | 5 Common UX Mistakes and How to Avoid Them: Optimizing Your Design Process for Success | A well-crafted user experience (UX) and user interface (UI) interface isn't just about aesthetics;... | 0 | 2024-05-30T09:53:09 | https://dev.to/anmolrajdev/5-common-ux-mistakes-and-how-to-avoid-them-optimizing-your-design-process-for-success-3ncg | webdev, design, uiux, web |

A well-crafted user experience (UX) and user interface (UI) interface isn't just about aesthetics; it's about creating a seamless and intuitive experience that keeps users engaged and drives conversions.

Here at 42Works, a leading [UI/UX Design company](https://42works.net/expertise/ui-ux-design/) and Offshore UI Design Agency offering UI UX Design Outsourcing Services, we understand the importance of avoiding common pitfalls in the design process. Let's explore five frequent mistakes businesses make and how to steer clear of them, ensuring a successful outcome for your website, application, or other digital product.

**<u>Mistake #1: Prioritizing Aesthetics Over Usability

</u>**

While a visually appealing interface is important, it shouldn't come at the expense of usability. Flashy animations or overly complex layouts can confuse users and hinder their ability to complete desired actions. This can lead to frustration, abandonment, and ultimately, loss of business.

**<u>Impact:</u>** Studies by Nielsen Norman Group show that even minor usability issues can decrease conversion rates by as much as 37%.

**<u>Solution:</u>** Focus on user needs first. Conduct user research to understand your target audience's expectations and pain points. Prioritize clear navigation, intuitive layouts, and easy-to-understand functionalities. At 42Works, our team of [Top-notch UX/UI design specialists](https://42works.net/) employs user-centered design principles to create interfaces that are both beautiful and functional.

**<u>Mistake #2: Ignoring Mobile Optimization</u>**

With the rise of mobile browsing, neglecting mobile optimization is a recipe for disaster. Users expect a seamless experience regardless of the device they use. If your website or app isn't optimized for mobile, users will likely bounce off quickly, leading to missed opportunities.

**<u>Impact</u>:** According to Statista, over 54% of all web traffic worldwide comes from mobile devices. A non-mobile-friendly interface alienates a significant portion of your potential audience.

**<u>Solution:</u>** Design with mobile in mind from the very beginning. Employ responsive design principles that ensure your interface adapts seamlessly across different screen sizes and devices. 42Works offers comprehensive [WEBSITE DESIGN and APPLICATION DESIGN](https://42works.net/expertise/websites/) services, including mobile optimization, to ensure your digital product reaches its full potential.

**<u>Mistake #3: Inconsistent Design Language

</u>**

A lack of consistency can create confusion and disrupt the user flow. This applies to visual elements like color schemes, typography, and button styles, as well as the overall layout and user interaction patterns.

**<u>Impact:</u>** Inconsistent design can make users feel lost and unsure of how to navigate your interface. This can lead to frustration and a negative brand perception.

**<u>Solution:</u>** Establish a clear design style guide and ensure all elements across your digital product adhere to it. This includes LOGO DESIGN, INFOGRAPHICS DESIGN, and all other visual components offered by 42Works. A consistent design language creates a sense of trust and familiarity for your users.

**<u>Mistake #4:</u>** Lack of Clear Calls to Action (CTAs)

CTAs are the buttons or prompts that tell users what you want them to do next. Whether it's signing up for a newsletter, making a purchase, or downloading a document, clear CTAs are essential for guiding users toward desired actions.

**<u>Impact:</u>** Vague or poorly placed CTAs can leave users confused and unsure of what step to take next. This can result in missed opportunities and a decline in conversions.

**<u>Solution:</u>** Create clear, concise, and visually distinct CTAs. Use strong action verbs, such as "Buy Now" or "Download Here," and ensure they stand out from the surrounding content.

**<u>Mistake #5: Neglecting User Testing

</u>**

No design is perfect. User testing allows you to observe real users interacting with your interface and identify any potential issues before launch. This valuable feedback helps you refine your design and ensure a smooth user experience.

**<u>Impact:</u>** Launching a product with unaddressed usability issues can lead to a negative user experience and damage your brand reputation.

**<u>Solution:</u>** Conduct user testing throughout the design process. At 42Works, we offer UX & UI Design and Consulting Services that include user testing to ensure your product is user-friendly and meets its full potential.

By avoiding these common mistakes and implementing these actionable tips, you can optimize your UI/UX design process and create digital products that not only look great but also deliver a seamless and engaging user experience. Remember, a well-designed interface is an investment that can pay off in the long run, driving user engagement, conversions, and ultimately, business success.

Ready to create exceptional user experiences? [Contact 42Works today](https://42works.net/contact/) to discuss your UI/UX design needs! | anmolrajdev |

1,870,186 | How do knee braces reduce my knee pain? | Inside the world of knee braces, the Z1 knee brace stands as a beacon of hope for the ones struggling... | 0 | 2024-05-30T09:52:49 | https://dev.to/mahaveer_singh_285b9fed3b/how-do-knee-braces-reduce-my-knee-pain-iga | kneebrace, buykneebraceonline, kneebraceonline, customkneebrace | Inside the world of knee braces, the Z1 knee brace stands as a beacon of hope for the ones struggling with knee pain. Known for its progressive layout, advanced aid and comfort, the Z1 knee brace has been exceedingly favored by athletes, health fanatics and those who want to take away knee pain. So what makes the Z1 knee brace stand proud of the opposition? Let's examine the capabilities and blessings of the Z1 and an explanation for why they're taken into consideration for their magnificence for [buy knee brace online](https://z1kneebrace.com/knee-braces).

Know-How Knee Pain:

Before we observe the fundamentals of knee braces, it is vital to recognize what causes knee pain. The knee is a joint that supports weight and lets in an extensive range of motion. As a result, it is a problem to many injuries and situations, along with torn ligaments, cartilage harm, and osteoarthritis. It causes swelling, instability and osteoarthritis. This sickness can range from mild to severe and might have an effect on mobility and ordinary best of lifestyles. There are numerous sorts, which include sleeves, wraps, and hinged braces, and each type has a particular purpose relying on the man or woman's desires and the character of the knee injury or ache.

Support and Stability:

One of the predominant functions of knee braces is to provide assistance and balance to the knee joint. With the aid of compressing the distance around the knee and presenting external assistance, braces help lessen strain and prevent further damage. This added balance is especially beneficial for humans convalescing from an accident or torn ligament, inclusive of an ACL or MCL harm.

Compression and Pain Comfort:

Knee braces practice gentle strain to the encompassing tissue, supporting to lessen swelling and decrease ache. via compressing the location, the stent affords a higher and less complicated way to deliver oxygen and vitamins to the injured tissue at the same time as casting off metabolic waste. This reduces ache and pain, allowing the person to function extra easily every day.

Adjustment Correction:

If knee pain is caused by misalignment or biomechanical issues, a few types of knee braces (such as off-load supports) can cooperatively help accurately and redistribute weight. This relieves stress on certain regions of the knee, thereby reducing ache and enhancing general function.

Improving Proprioception:

Proprioception is the capacity to apprehend the body's role and motion in an area. Knee pads, especially people with adjustable straps or hinges, can enhance posture and deliver humans a higher experience of coordination and mobility. This expanded focus improves stability, stability, and coordination, decreasing the threat of falls and further injury.

Psychological Assist:

Similar to its bodily advantages, knee braces can also offer psychological support to knee patients. additional protection and aid can increase protection, confidence and decrease strain, permitting people to stay calm and participate in each day lifestyles.

The Z1 knee brace represents the top of excellence in knee aid and rehabilitation. With their progressive layout, suit, top guide and lots of functions, those corsets provide a super answer for individuals who want to eliminate knee pain. Whether you're convalescing from a harm, coping with a continual situation, or operating to prevent destiny troubles, Z1 knee braces offer the help, consolation, and performance you need to help you regain your power and live life to the fullest. find out the difference for yourself and discover why the Z1 knee brace is widely taken into consideration, pleasant in its magnificence. https://z1kneebrace.com/knee-braces

| mahaveer_singh_285b9fed3b |

1,870,185 | ☎️+91-9257780540☎️ ̿ Get Online ID Provider Mumbai | ☎️+91-9257780540☎️ ▀̿ Get Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Get Online ID Provider... | 0 | 2024-05-30T09:52:42 | https://dev.to/umair_khalil_e6fb60ebf2a2/91-9257780540-get-online-id-provider-mumbai-3gml | webdev, cricket | ☎️+91-9257780540☎️ ▀̿ Get Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Get Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Get Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Get Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Get Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Get Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Get Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Get Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Get Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Get Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Get Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Get Online ID Provider Mumbai☎️+91-9257780540☎️ ▀̿ Get Online ID Provider Mumbai | umair_khalil_e6fb60ebf2a2 |

1,870,184 | BEGINNERS COMMAND - GIT | GIT GIT is a version control system that is designed to handle small to large projects with speed... | 0 | 2024-05-30T09:52:41 | https://dev.to/shreeprabha_bhat/beginners-command-git-5354 | **GIT**

GIT is a version control system that is designed to handle small to large projects with speed and efficiency. It allows multiple people to work on same project simultaneously without effecting other's code.

GIT is most common skill that recruiters expect in everyone's resume. There are some of the basic commands that must be known to everyone while working with git or attending any technical interview.

**MUST KNOW GIT COMMANDS**

These commands are used to setup git in your system. Using the commands below one can access the git synchronized with the mail ID.

```

git config --global user.name "Your name"

git config --global user.email "Your mail"

```

This command is used initialize a repository in your git. Once the repository is initialized other actions like commit, push or pull can be performed over these repository.

```

git init

```

Below command is used to clone an existing repository into your repository.

```

git clone <repository_url>

```

The command below is used to get the status of repository.

```

git status

```

Stage the changes for commit.

```

git add <file_name>

# Command stages the changes in that particular file

git add .

#Command stages the entire changes.

```

Commit changes to the repository

```

git commit -m "Your message"

```

View the history of commits in the repositiory

```

git log

```

Creating a new branch.

```

git branch <branch_name>

```

Switch to a new branch

```

git checkout <branch_name>

```

Create and switch to a new branch

```

git checkout -b <branch_name>

```

Merge a branch to the new branch

```

git merge <branch_name>

```

Know which branch you are working in.

```

git branch

```

Add a repository to the remote repositiory

```

git remote add origin <repository_url>

```

Fetch changes from the remote repository

```

git fetch

```

Push changes to the remote repositiory

```

git push origin <branch_name>

```

Pull changes from the remote repository

```

git pull

```

List remote repositories

```

git remote -v

```

Stash the changes when your are not ready to commit them.

```

git stash

```

Apply the stashes to the desired branch

```

git stash apply

```

| shreeprabha_bhat | |

1,870,183 | Mastering Magento PIM Integration | Product Information Management (PIM) is essential for Magento store owners looking to streamline... | 0 | 2024-05-30T09:51:45 | https://dev.to/charleslyman/mastering-magento-pim-integration-3cf1 | magento, pim | Product Information Management (PIM) is essential for Magento store owners looking to streamline their product data across multiple channels. This blog delves into Magento PIM integration and highlights the advantages of leveraging Amazon Web Services (AWS) for Magento hosting.

**Why Magento PIM Integration is Crucial**

Integrating a PIM with your Magento store centralizes product information management, allowing for consistent, accurate, and up-to-date product data across all sales channels. This integration simplifies managing extensive product catalogs and enhances the efficiency of sales and marketing efforts.

**Steps to Integrate PIM with Magento**

- **Evaluate Your Needs:** Understand the specific requirements of your Magento store to choose the right PIM system.

- **Choose a Compatible PIM System:** Select a PIM that seamlessly integrates with Magento, offering features like automated data synchronization, advanced data management, and multi-channel support.

- **Implement and Customize:** Install the PIM software and customize the settings to align with your business processes and data flow.

- **Data Migration:** Migrate existing product data into the PIM system, ensuring accuracy and consistency.

- **Continuous Monitoring and Updating:** Regularly update and maintain the PIM system to handle new products and changes in existing data.

By leveraging [managed Magento hosting](https://devrims.com/magento-hosting/) you can bring significant benefits to PIM integration:

- **Scalability:** AWS scales resources on-demand to meet the needs of your store, ensuring smooth operation during traffic spikes.

- **Security:** AWS provides comprehensive security features that protect your sensitive product data against threats.

- **Reliability:** With AWS, expect high uptime and consistent performance, crucial for maintaining access to your PIM system and Magento store.

- **Global Reach:** AWS's global infrastructure ensures faster access and reduced latency, improving the experience for users worldwide.

**Conclusion**

[Magento PIM integration](https://devrims.com/blog/magento-pim-integration/) is a strategic move for any e-commerce business looking to optimize product information management. By combining this with AWS for Magento hosting, businesses can ensure a robust, secure, and scalable e-commerce environment. This setup not only streamlines product data management but also enhances overall operational efficiency and customer satisfaction. | charleslyman |

1,870,182 | The Different Types of Demat Accounts and How to Use Them | A Demat account, short for a Dematerialized account, is an electronic account that allows investors... | 0 | 2024-05-30T09:49:11 | https://dev.to/shriya_jain_/the-different-types-of-demat-accounts-and-how-to-use-them-4bf8 | A Demat account, short for a Dematerialized account, is an electronic account that allows investors to hold shares and securities in a dematerialised or electronic form. Instead of physical share certificates, the ownership records are maintained electronically by a Depository Participant (DP). This account is essential for anyone looking to invest in the share market. One of the leading players in the Indian share market is Kotak, a renowned financial services company that offers various Demat account options to cater to different investment needs. In this blog, we'll explore the different types of Demat accounts and how to use them effectively.

## Types of Demat Accounts

There are several **[types of Demat account](https://www.kotaksecurities.com/demat-account/types-of-demat-account/)** available, each designed to cater to specific investment needs:

**1. Regular Demat Account:** This is the most common type of Demat account and is suitable for individual investors who wish to hold and trade shares and securities in their own name.

**2. Joint Demat Account:** As the name suggests, a joint Demat account is opened by two or more individuals, allowing them to hold and trade shares together. This account type is ideal for family members or business partners who want to invest jointly.

**3. NRI Demat Account:** Non-resident Indians (NRIs) can open an NRI Demat account to hold and trade shares in the Indian share market while residing abroad.

**4. Corporate Demat Account:** Companies and institutions can open a corporate Demat account to hold and trade shares and securities for their business purposes.

**5. Margin Trading Account:** This account type allows investors to leverage their investments by borrowing funds from the broker to trade in the share market.

## How to Use a Demat Account?

Using a Demat account is relatively straightforward, but it's essential to understand the process:

- **Opening a Demat Account:** To open a Demat account, you need to approach a Depository Participant (DP) like a bank or a brokerage firm. Kotak offers various Demat account options to suit different investment needs.

- **Funding the Account:** Once the account is opened, you can transfer funds from your bank account to your Demat account to start trading in the share market.

- **Buying and Selling Shares:** With a funded Demat account, you can place buy or sell orders through your broker or online trading platform. The shares will be credited or debited from your Demat account electronically.

- **Monitoring and Managing:** It's crucial to monitor your Demat account regularly and keep track of your holdings, transactions, and portfolio performance.

## Conclusion

Demat accounts have changed the way investors participate in the share market. With various account types available, investors can choose the option that best suits their investment objectives and preferences. **Kotak**, as a trusted financial services provider, offers a range of Demat account solutions to cater to diverse investment needs. By understanding the different types of Demat accounts and how to use them effectively, you can navigate the share market with confidence and potentially achieve your financial goals.

| shriya_jain_ | |

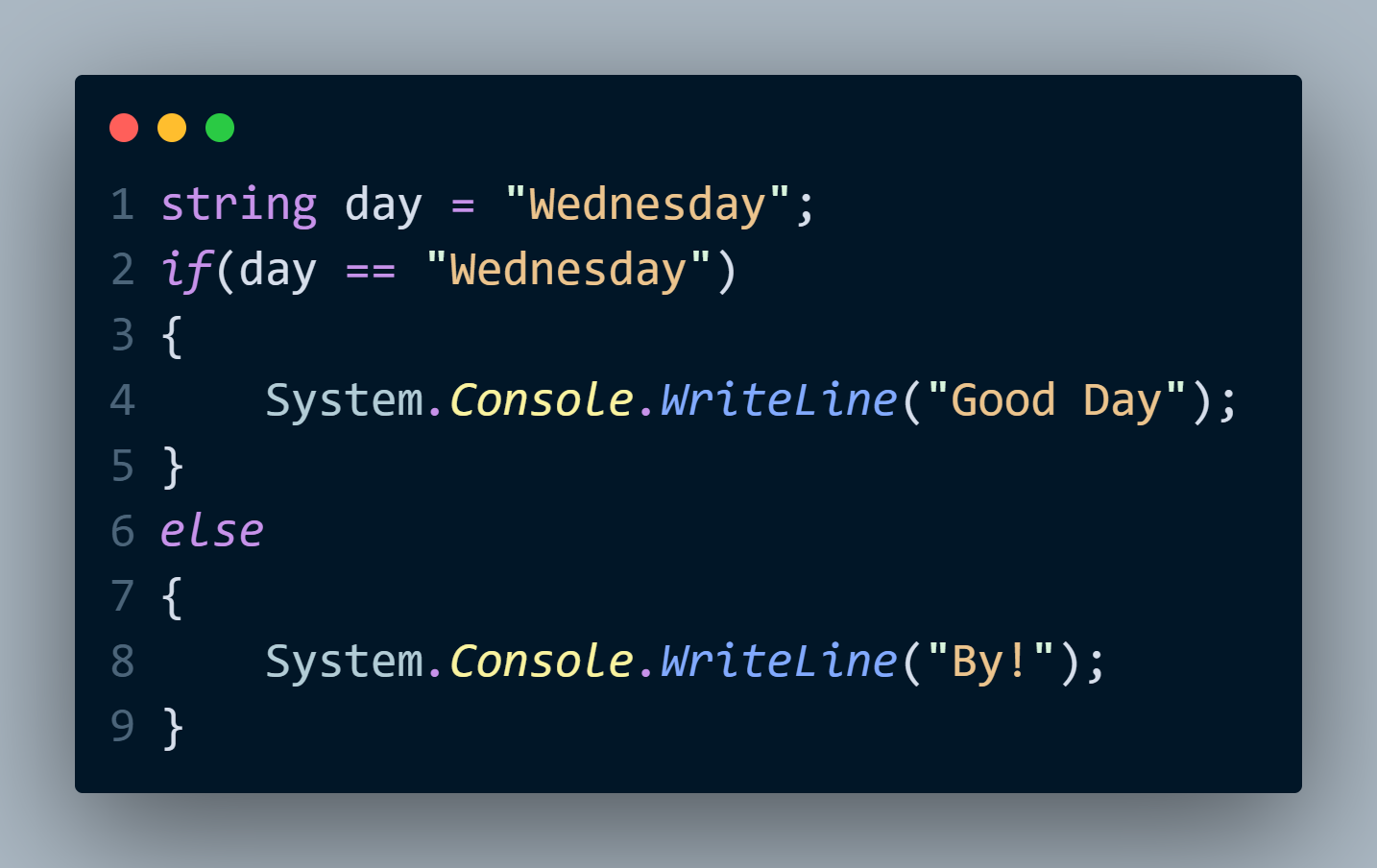

1,870,181 | Hello C# Devs | A post by Mohamed Abdi | 0 | 2024-05-30T09:47:30 | https://dev.to/mohamedabdiahmed/hello-c-devs-271e |

| mohamedabdiahmed | |

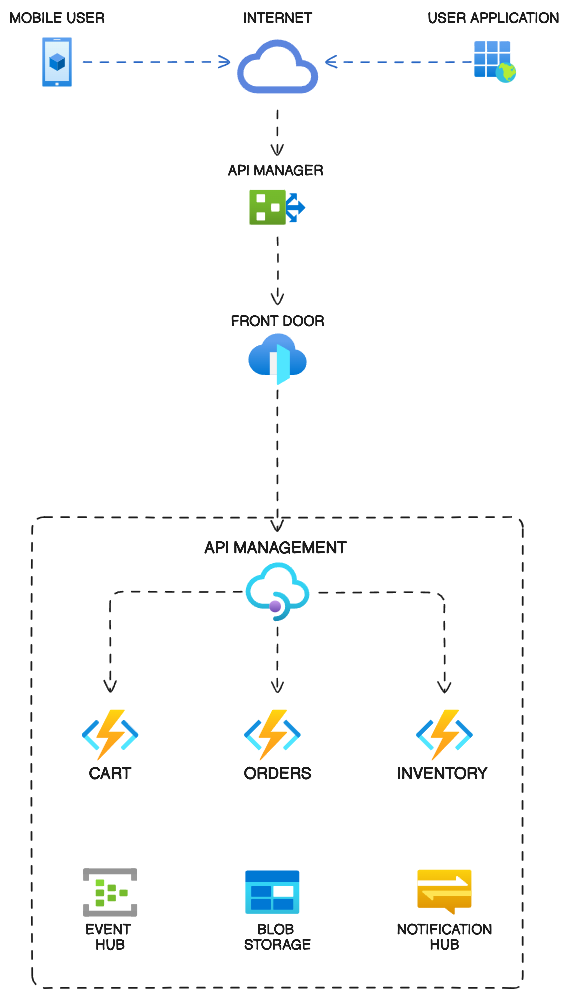

1,870,180 | How to Automate Microservices: Best Approaches & Strategies | Building applications with microservices, where each individual service plays a specific role, has... | 0 | 2024-05-30T09:46:59 | https://dev.to/kairostech/how-to-automate-microservices-best-approaches-strategies-jk2 | microservices, microservicestesting, testing, acceleratedtesting |

Building applications with microservices, where each individual service plays a specific role, has become a game-changer for developers. However, in this intricate world, ensuring each service functions seamlessly and flawlessly is vital.

**[Microservices testing](https://kairostech.com/microservices-testing/)** is a specialized field that tackles this challenge by validating the functionality, performance, and reliability of individual services, together with, ‘how they work together?’. This blog dives into various strategies and approaches for automating the testing of microservices, aimed at ensuring smooth integration and seamless operation of these distributed components.

**What are Microservices?**

Microservices architecture ensures the decomposition of monolithic applications into smaller, independently deployable services that are loosely coupled. Each service encapsulates a distinct business function and interacts with other services via APIs. This modular approach simplifies the development process, enables independent deployment of services, and streamlines application maintenance.

**Understanding Microservices Testing**

The distributed nature of microservices architectures can render conventional testing strategies ineffective for comprehensive application testing.

Effective microservices testing addresses the complexities of service-to-service communication, maintains data integrity across distributed components, and validates the correct configuration of the decentralized architecture, thereby ensuring the overall robustness and reliability of the microservices ecosystem.

**Market Forecast:**

The microservices testing market is experiencing significant growth, driven by the increasing adoption of microservices architecture in various industries. Here are some key statistics and forecasts:

According to The Future Market Insight’s report, the microservices market is estimated to reach USD 1.90 billion in 2024, with a value-based CAGR of 21.20%.

By the end of 2034, the market valuation is expected to surpass USD 13.20 billion.

The microservices market size was valued at USD 1.4 billion in 2023 and is projected to register a CAGR of over 20.3% between 2024 and 2032.

**Advantages of Microservices:**

**Scalability:** Microservices architecture allows individual components to be scaled up or down independently, based on demand, resulting in optimal resource utilization and cost-effectiveness.

**Fault Isolation:** By decomposing the application into smaller, self-contained services, microservices provide better fault isolation. If one service fails, it does not necessarily bring down the entire application, improving overall system resilience.

**Faster Development:** Breaking down the application into smaller, more manageable services enables development teams to focus on specific components concurrently. This parallel development approach shortens development cycles and facilitates faster delivery of new features and updates.

**Key Modern Approaches of Testing Microservices**

**Contract Testing:** Contract testing establishes agreements (contracts) between microservices, defining how they interact with each other. These contracts outline the expected inputs, outputs, and behavioral specifications that each service adheres to when communicating with its counterparts. These contracts are enforced through tests, which identify compatibility issues early in development and ensure smooth communication between services.

**Unit Testing**

Unit testing is fundamental in microservices testing, focusing on validating the functionality of individual microservices. It involves testing the smallest parts of the application in isolation (e.g., functions or methods). In a microservices architecture, each service is treated as a unit, ensuring that it performs its intended task independently.

An inseparable part of s/w development and link to TDD/BDD. Checks the functionality at a most granular level.

• Solitary Unit Tests – deterministic tools with stubbing (during UT).

• Sociable Unit Tests – with real calls to external services

Unit tests are automated and coded to verify the internal logic and functionalities of the microservices. Tools like JUnit and Mockito are commonly used for this purpose.

**Chaos Engineering:** Chaos engineering involves deliberately injecting controlled faults or failures into a system to test its resilience. By simulating real-world scenarios, teams can identify weaknesses and strengthen their microservices architecture.

**Containerization Testing:** Deploying microservices typically makes use of containers like Docker. Testing compartments for versatility, security, and similarity with various conditions is fundamental for fruitful microservices sending. For improved software performance, scale, and security, containers are preferred for microservice testing.

**[API Testing](https://klabs.kairostech.com/modern-api-testing-solutions-for-modern-applications/):** In microservices-based systems, communication between services heavily relies on APIs. Therefore, rigorous API testing becomes essential to ensure reliable and robust interactions. Tools like API TestEasy can automate API tests, streamlining the process and guaranteeing the accuracy and stability of these critical connections.

**End-to-End Testing:** End-to-end testing entails validating the system's functionality in its entirety and ensuring that the entire procedure proceeds without incident.

It guarantees that the framework acts true to form in a creation-like situation, taking into account every single imaginable collaboration and mix. This kind of testing involves thoroughly testing all of the system's services and components to make sure it works well and meets user expectations.

**Performance Testing**

The objective of performance testing is to assess the scalability and responsiveness of the microservices architecture under various load conditions. This testing approach verifies that the individual microservices can handle fluctuating traffic volumes, maintain acceptable response times, and consistently deliver high performance. Specialized performance testing tools are employed to simulate peak load scenarios, evaluate the system's scalability capabilities, and pinpoint any potential bottlenecks or performance bottlenecks that may hinder the overall responsiveness of the application.

**Final Thoughts:** In conclusion, automating microservices testing is essential for ensuring the seamless operation of complex architectures. By implementing focused testing approaches like Unit Testing, Contract testing, Chaos Engineering, Containerization testing, API testing, and End-to-end testing, along with Performance, Scalability and Reliability testing, organizations can streamline their testing processes and deliver reliable microservices applications. Embracing automation in testing not only enhances the quality of the software but also accelerates the development cycle, making microservices an attractive choice for modern applications.

For Demo **[Click Here](https://zcmp.in/iew7)** | kairostech |

1,870,179 | Implementing Consumable In-App Purchases in React Native for iOS Devices | In-app purchases (IAP) in React Native require using native code for both iOS and Android because... | 0 | 2024-05-30T09:46:37 | https://dev.to/harisbinejaz/implementing-consumable-in-app-purchases-in-react-native-for-apple-devices-2ag9 | reactnative, ios, javascript | In-app purchases (IAP) in React Native require using native code for both iOS and Android because React Native doesn’t have a built-in module for IAP.

## Types of In-App Purchases (IAP)

Users can make different types of purchases within a mobile app. While the terms and specific types may vary slightly between iOS and Android, the following types are generally common:

### 1. Consumable Purchases

Consumable purchases are items that users buy and use up. These include things like virtual currency, extra lives, power-ups, or other virtual goods that can be consumed within the app.

**Example**: Purchasing virtual coins or gems in a game.

### 2. Non-Consumable Purchases

Non-consumable purchases are items that users buy once and have permanent access to. These include features or content that don’t get used up.

Accessing a premium feature, eliminating ads, or acquiring additional content like an extra level or chapter in a book app.

### 3. Auto-Renewable Subscriptions

Auto-renewable subscriptions allow users to access content or features on an ongoing basis. These subscriptions automatically renew at the end of the subscription period unless the user cancels.

**Example**: Monthly or annual subscription for premium content, access to exclusive features, or an ad-free experience.

Adding consumable purchases to an In-App Purchase (IAP) system involves several steps, and the specifics can vary depending on the platform (iOS, Android) and the tools/libraries you are using. Here are the general steps to implement consumable purchases in a React Native app using (for IOS) the `react-native-iap` library:

## 1. Install the library

First, you need to install the library. You can use npm, yarn, or any other node package manager you prefer:

```javascript

yarn add react-native-iap

```

OR

```javascript

npm install react-native-iap

```

## 1. Set up In App Purchase Products:

I assume you already have an **Apple Developer** account and an **app** created within that account. If you need help setting up an **Apple Developer** account and creating an **app**, you can refer to [this guide](https://developer.apple.com/help/app-store-connect/create-an-app-record/add-a-new-app/).

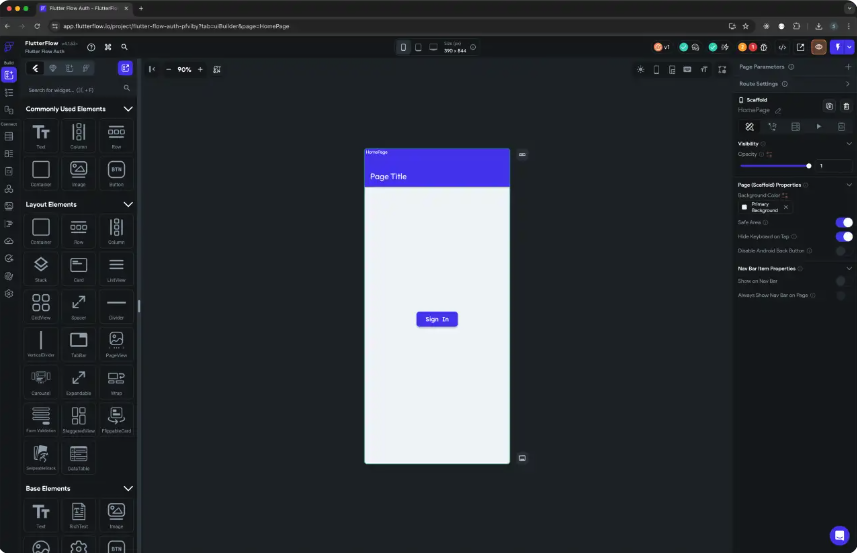

Now, click on your app and follow these steps to create an in-app purchase product:

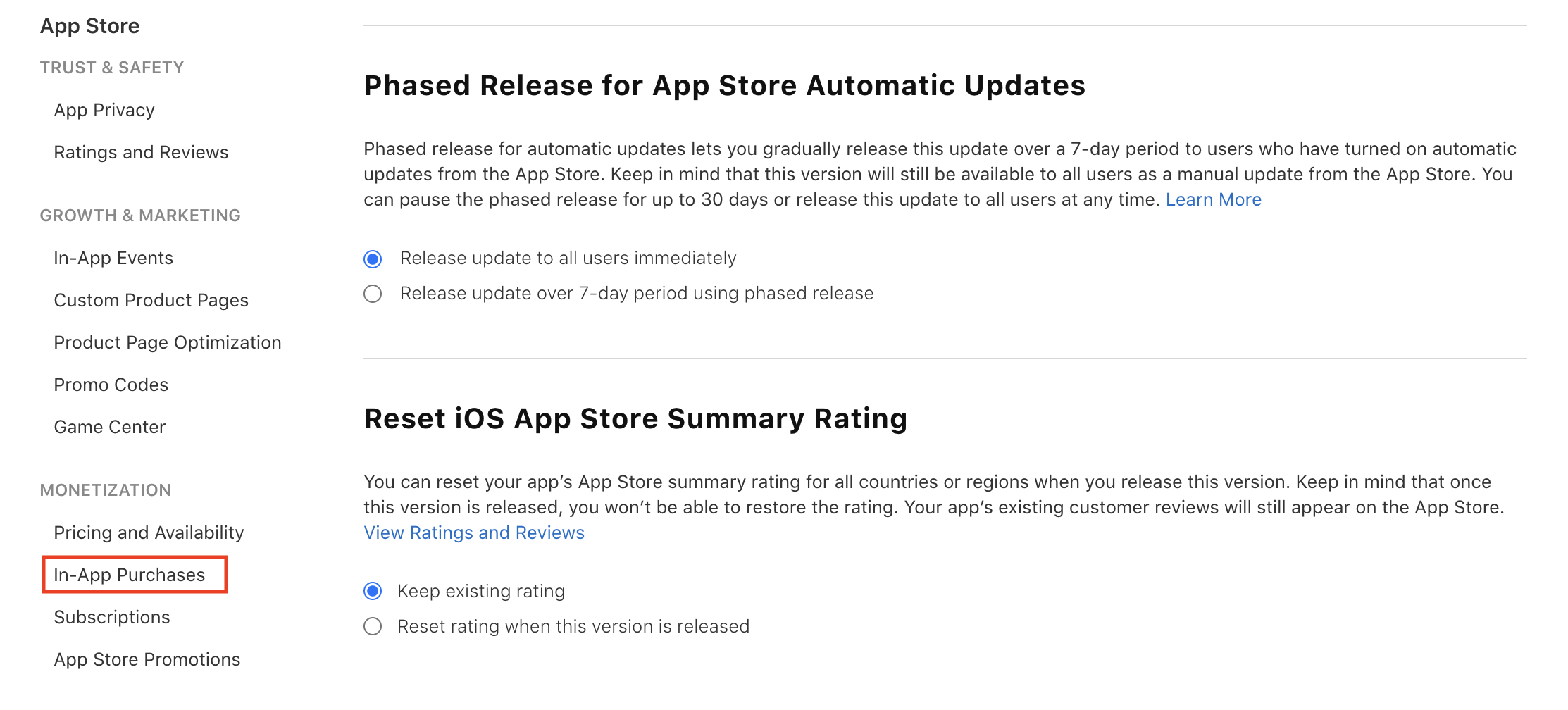

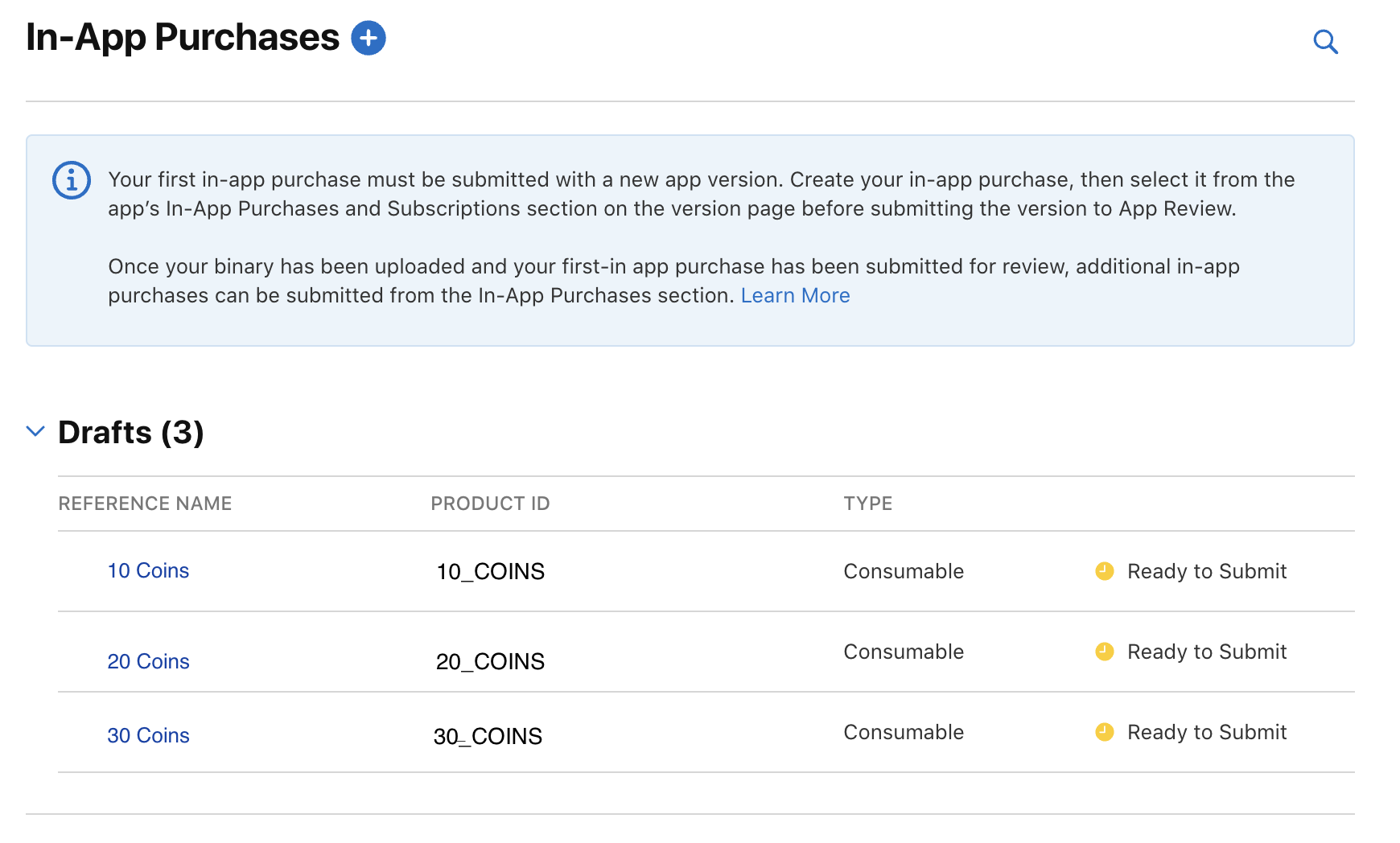

First, scroll to the bottom of the sidebar on your app page and click on **In-App Purchases** under the **Monetization** tab:

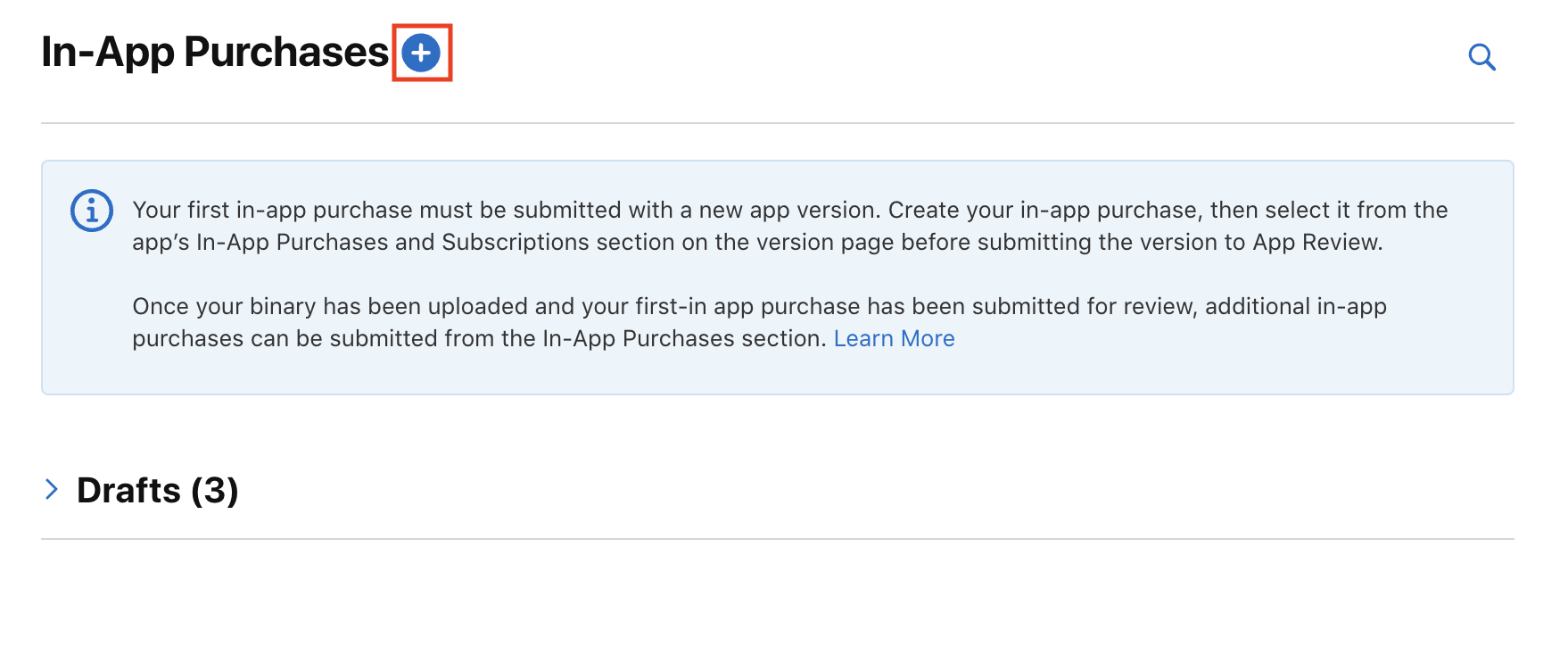

Next, click the plus icon next to the In-App Purchase Title:

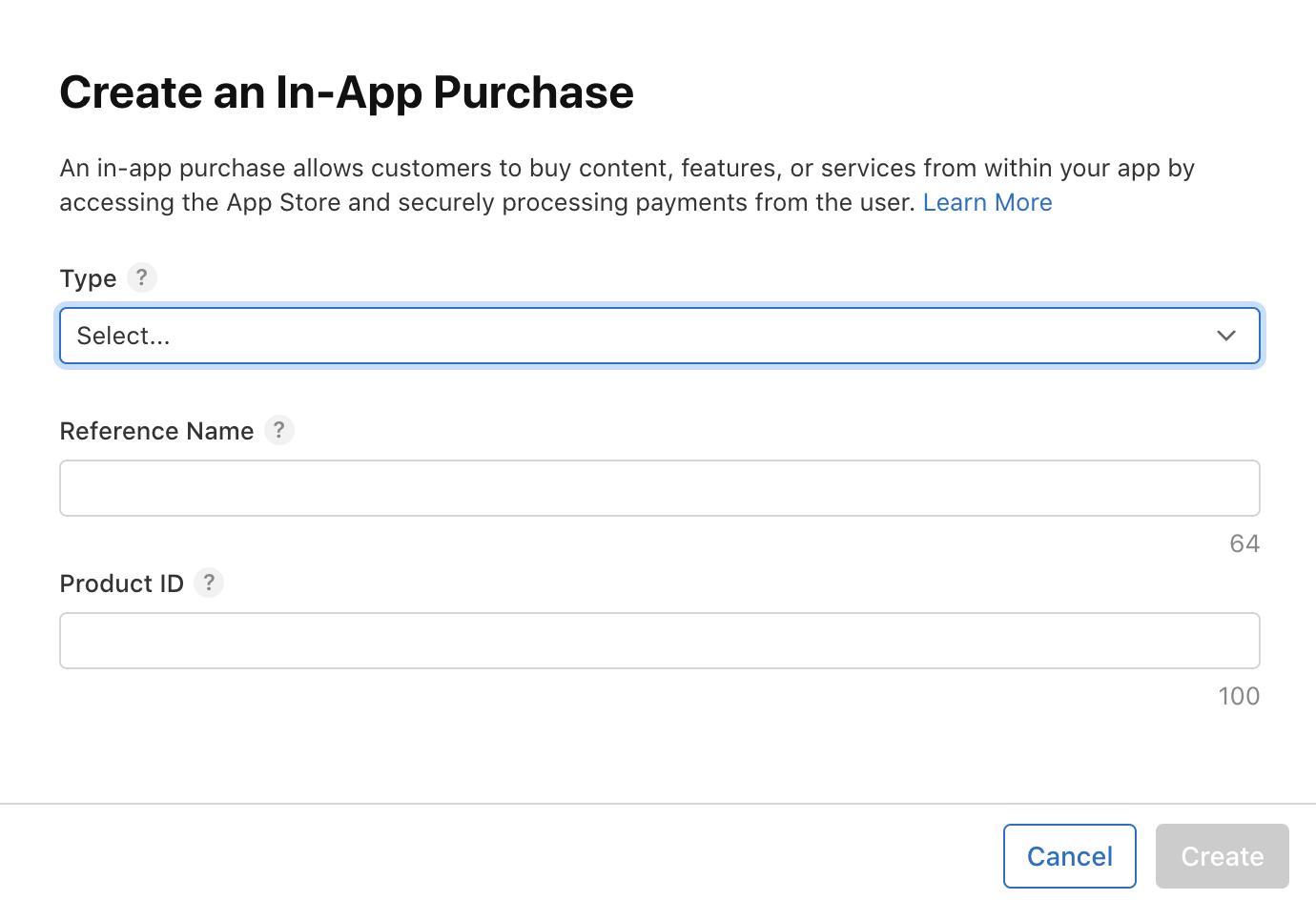

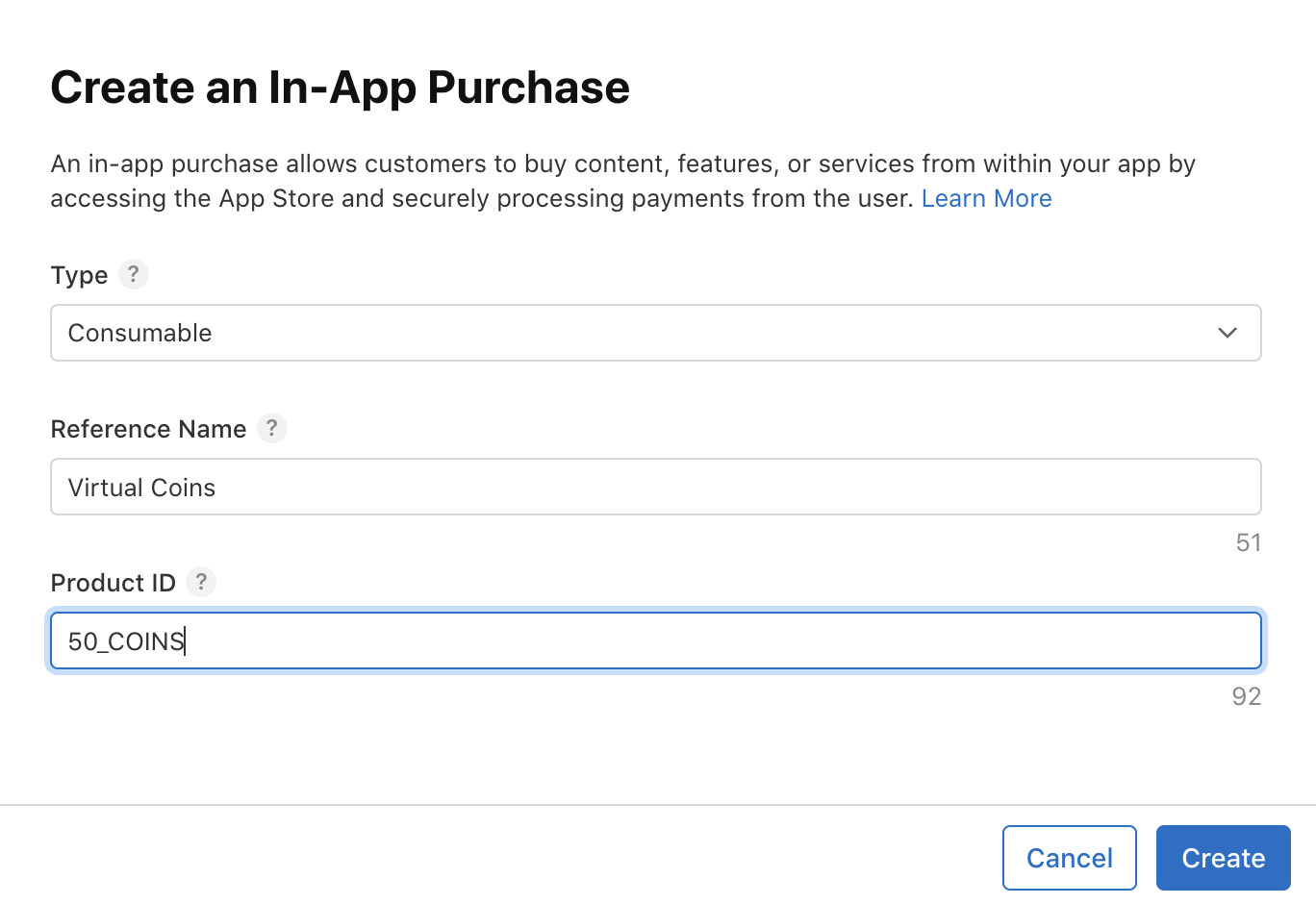

A popup will appear like this::

Select the type consumable and fill in the required information as shown:

Click the save button, and you will be navigated to a screen where you can add more details for this in-app purchase product.

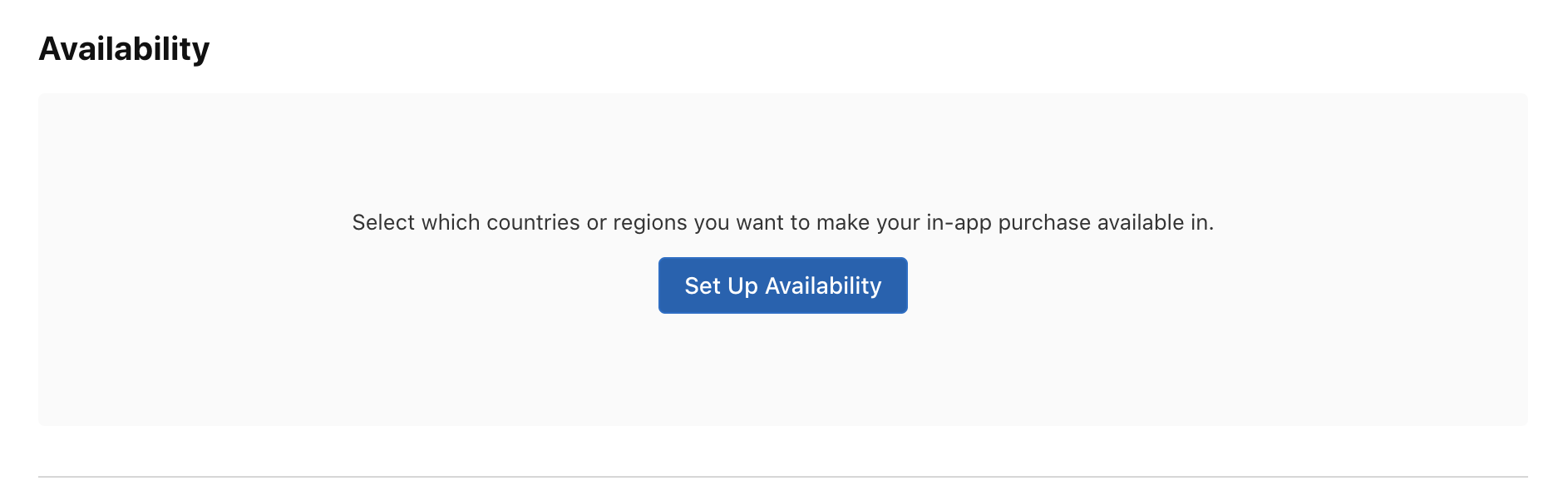

Then, select the availability of this in-app purchase product for different countries:

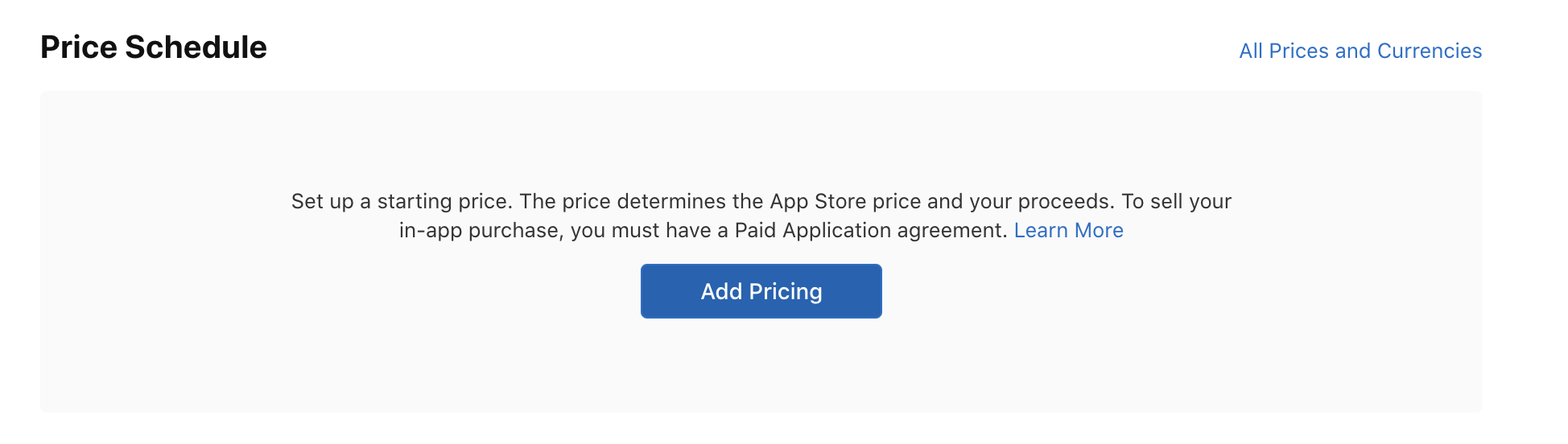

Next, choose the pricing by clicking the button shown below:

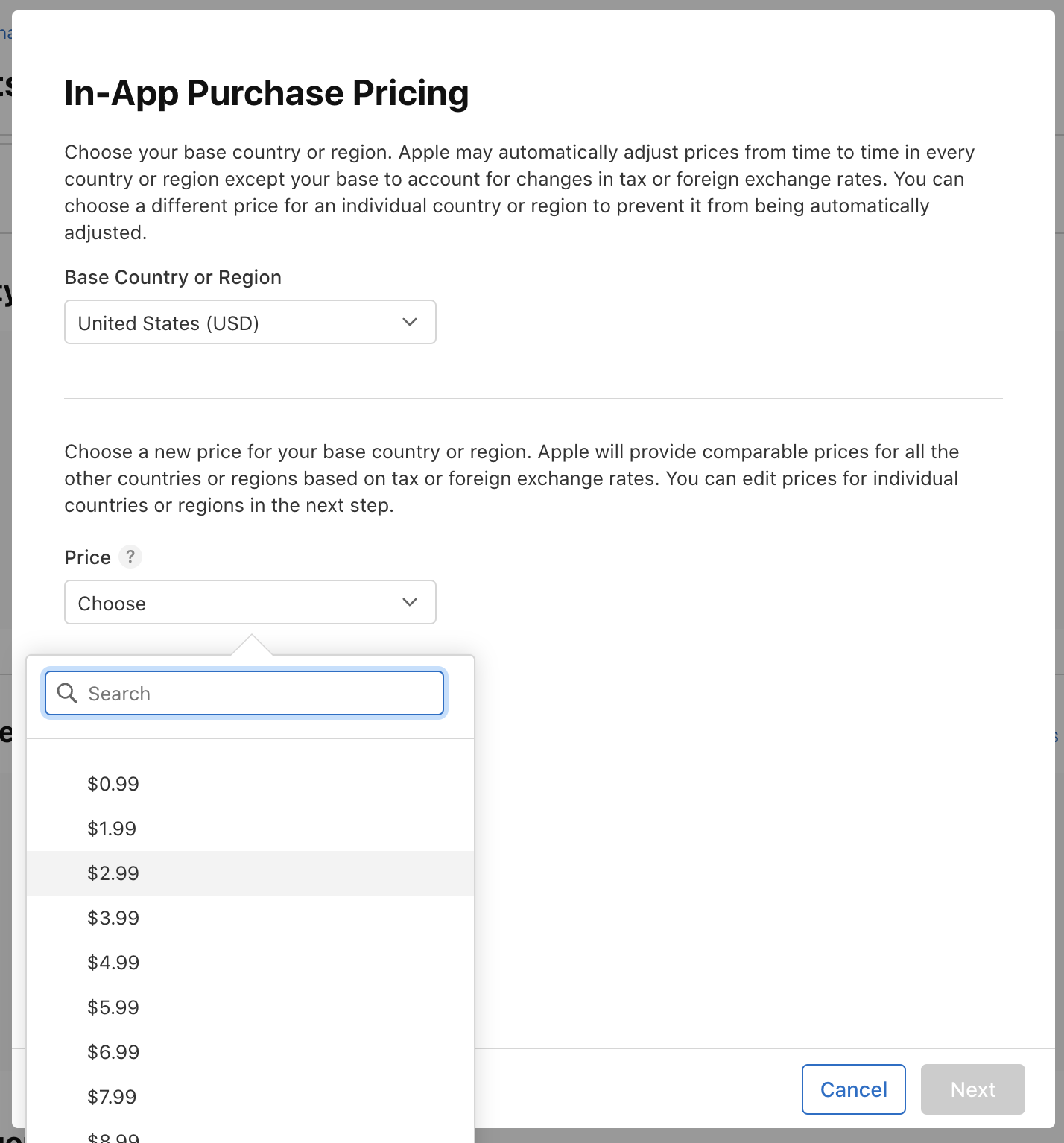

A popup will appear where you can set the pricing according to your needs:

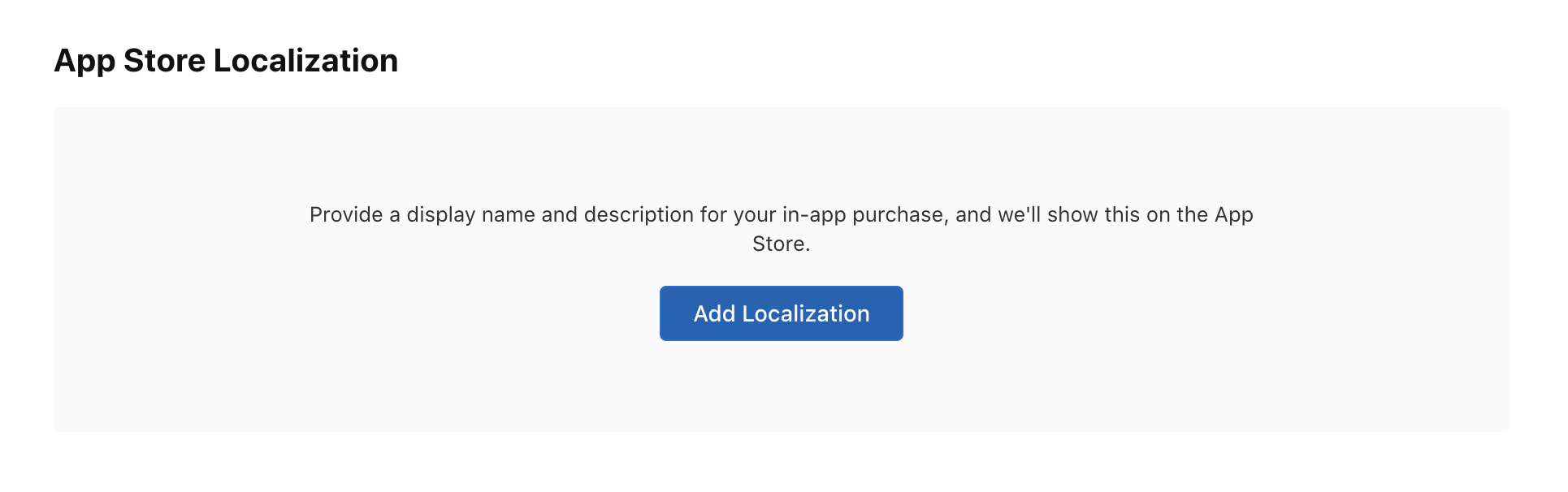

Then, add localization for the in-app purchase product:

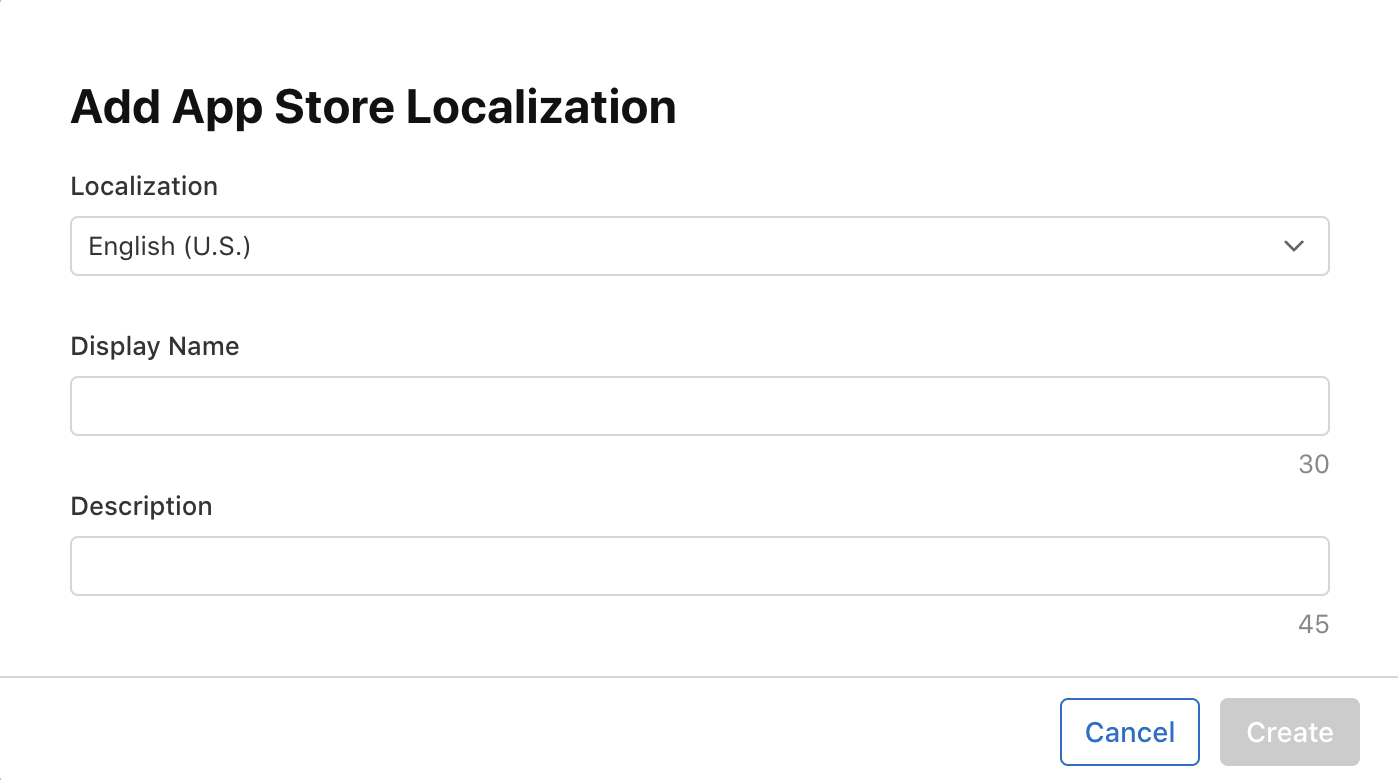

A popup will appear like this:

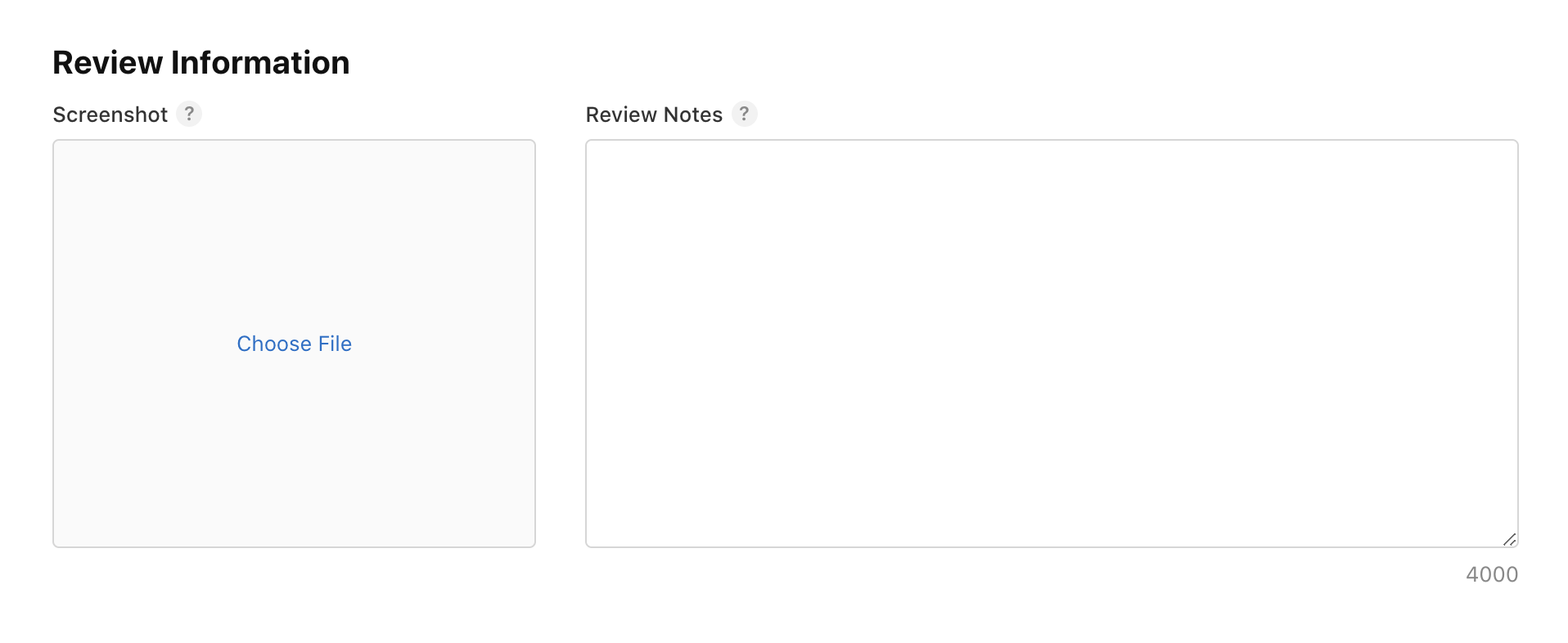

Next, you need to add an image of the app screen where this purchase will occur:

And add review notes and you’re good to go.

That's it for the **App Store Connect** configuration. You will see this in-app purchase as a draft like below:

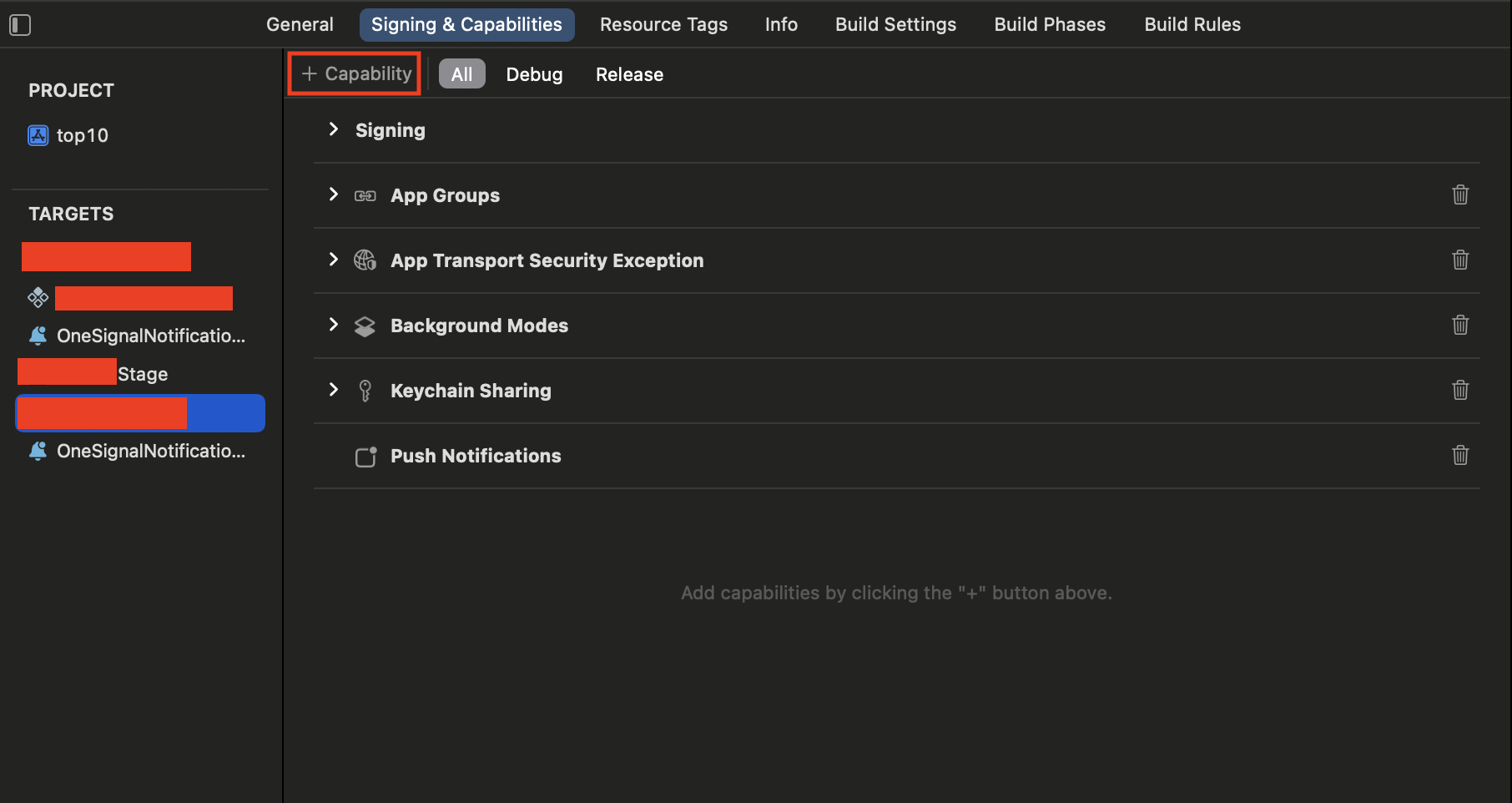

## 2. Configure Xcode For In App Purchase:

- Open your project workspace in Xcode.

- Select your project and click **Signing and Capabilities** tab:

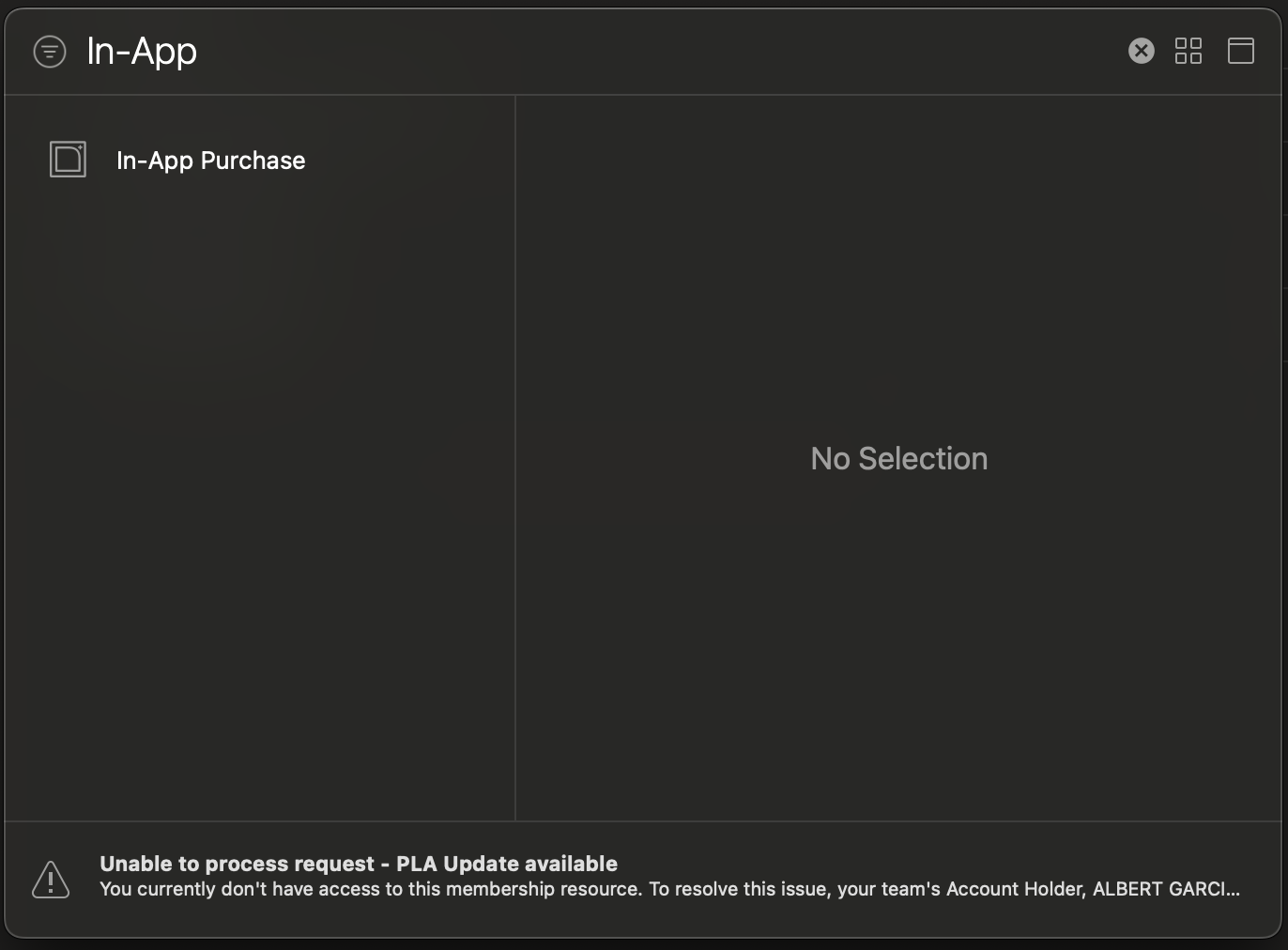

- Search for **In-App Purchase** and click on it:

That's it now let's move to the next step.

## 2. Integrate In App Purchase

```javascript

import React from 'react';

import { useIAP, requestPurchase, isIosStorekit2, PurchaseError } from 'react-native-iap';

export default function InAppComponent() {

const {

products,

currentPurchase,

finishTransaction,

getProducts,

requestPurchase

} = useIAP();

const [loading, setLoading] = useState(false);

const productSkus = Platform.select({

ios: ['10_COINS','20_COINS','30_COINS' ],

android: [],

default: [],

}) as string[];

useEffect(() => {

handleGetProducts();

}, []);

const handleGetProducts = async () => {

try {

setLoading(true)

await getProducts({ skus: productSkus });

} catch (error) {

setLoading(false)

console.log({ message: 'handleGetProducts', error });

}

};

const handleBuyProduct = async (sku: Sku) => {

try {

setLoading(true);

await requestPurchase({ sku });

} catch (error) {

handleError(error, 'handleBuyProduct');

} finally {

setLoading(false);

}

};

const handleError = (error: any, context: string) => {

console.log(

'Exception while making Request',

error,

);

};

useEffect(() => {

const checkCurrentPurchase = async () => {

try {

if (

(isIosStorekit2() && currentPurchase?.transactionId) ||

currentPurchase?.transactionReceipt

) {

await finishTransaction({

purchase: currentPurchase,

isConsumable: true,

});

onApiCall(currentPurchase);

}

} catch (error) {

handleError(error, 'checkCurrentPurchase');

}

};

checkCurrentPurchase();

}, [currentPurchase, finishTransaction]);

const onApiCall = (params: any) => {

console.log(params,"Call your API Here")

setLoading(false);

};

return (

<>

{products.map((product, index) => (

<TouchableOpacity

onPress={() => handleBuyProduct(product.productId)}

key={index}

>

<Text>

{product?.title} for{' '}

<Text>

{product?.localizedPrice}

</Text>

</Text>

</TouchableOpacity>

))}

</>

);

}

```

Sure, let's walk through the provided React Native code step by step:

1. **Import Statements**:

Import the required modules and functions from the `react-native-iap` library.

2. **Product SKUs Definition**:

Define the product SKUs according to the platform, in this case, iOS.

> _SKU is the ID of the in app product that you've created at the first step_.

3. **handleGetProducts Function**:

On the initial page render, retrieve all products linked to your Apple Store account. Use these products to establish a connection with the In-App Purchase (IAP) system. Map the retrieved products to display them in your view.

4. **handleBuyProduct Function**:

After listing the products, use the `handleBuyProduct` function to start the purchase process. Pass your unique `productId` as an argument. Once executed, the `handleBuyProduct` function will return a response in the `currentPurchase` variable. This response can then be sent to your API for storage.

> _To test this IAP integration in the development environment, follow these steps_:

> - _Run the app on a real device in debug mode_.