id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,869,925 | Introducing the Aroon indicator | What is the Aroon indicator? In the technical analysis, Aroon is a very unique technical... | 0 | 2024-05-30T05:22:59 | https://dev.to/fmzquant/introducing-the-aroon-indicator-4568 | aroon, indicator, trading, fmzquant | ## What is the Aroon indicator?

In the technical analysis, Aroon is a very unique technical indicator. The word "Aroon" comes from Sanskrit, meaning "the light of dawn". It is not as familiar as MA, MACD, and KDJ. It was launched later, and was invented by Tushar Chande in 1995. The author also invented the Chand momentum indicator (CMO). And the intraday momentum index (IMI). If a technical indicator was known by more people and used by more people, then the ability of making profit of this indicator will become less possible, but the relatively new Aroon indicator is exactly the opposite way. From this perspective, the Aroon indicator is actually a good choice.

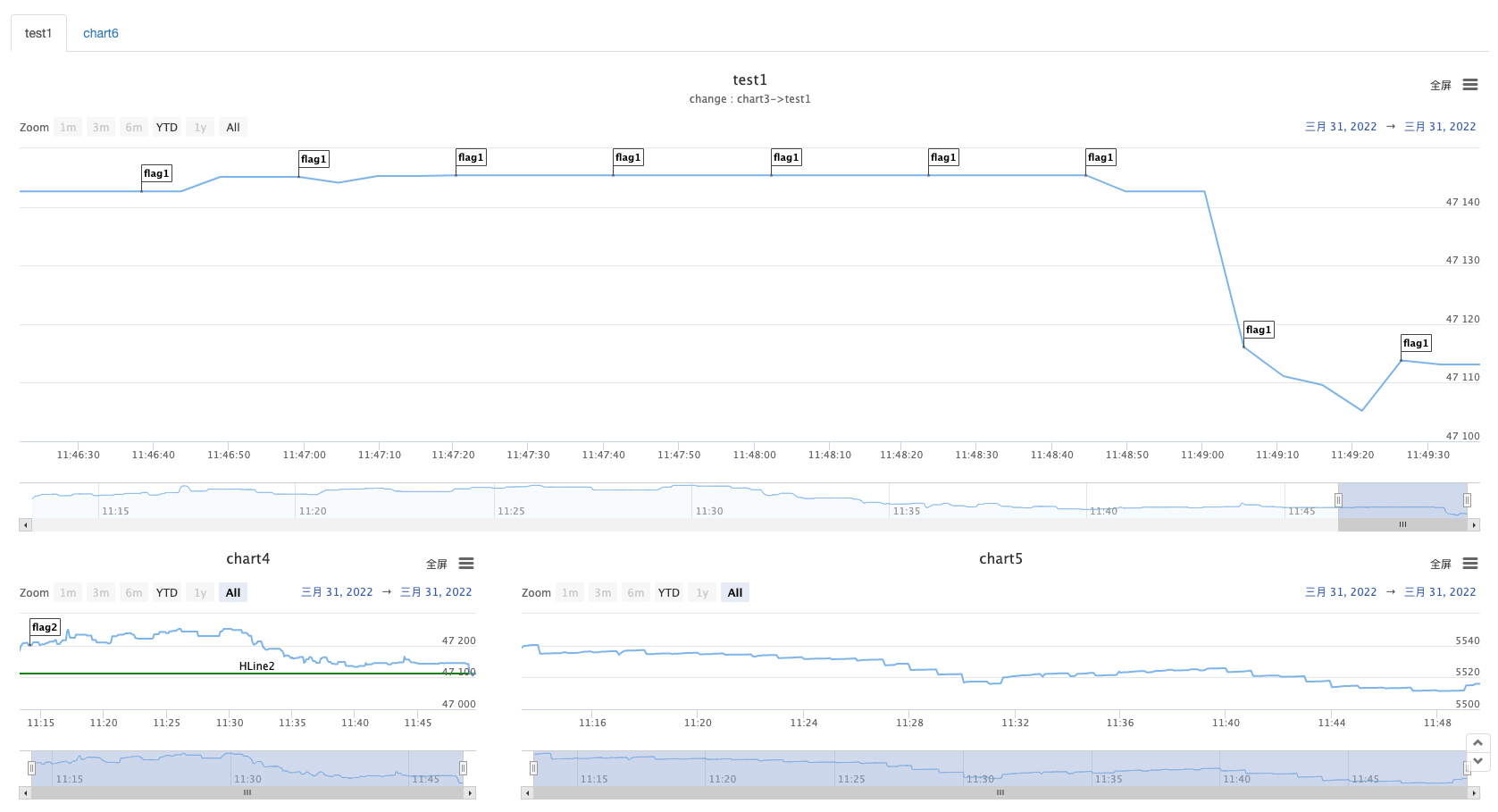

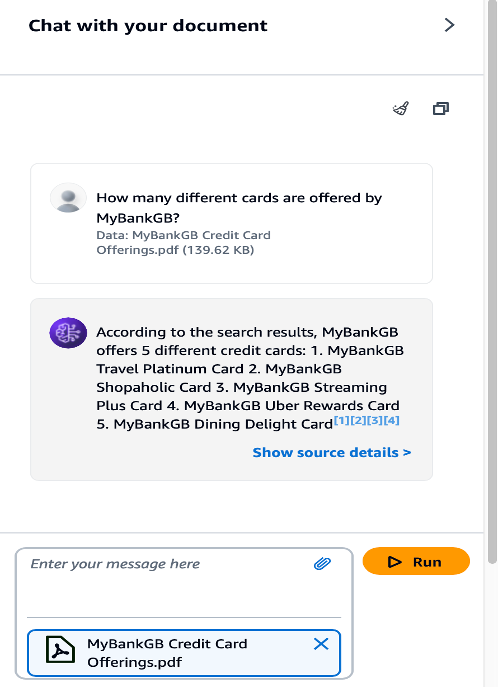

## The Aroon indicator in the chart

The Aroon indicator helps traders predict the relative positional relationship between price movements and trend areas by calculating the number of K-lines between the highest and lowest prices before the current K-line distance. It consists of two parts: AroonUp and AroonDown. The two lines move up and down between 0 and 100. Although they are named up and down, unlike the BOLL indicator, it's not "the real upper and lower" line. The following picture is the Aroon indicator in the K-line chart:

## Calculation method of Aroon indicator

The Aroon indicator requires that you first set a time period parameter, just like setting the average period parameter. In traditional trading software, the number of cycles is 14. In addition, this period parameter is not fixed. You can also set it to 10 or 50 and so on. For the sake of easy understanding, let's define it as: N. After determining N, we can calculate AroonUp and AroonDown.

The specific formula is as follows:

- AroonUp = [ (set period parameter - number of cycles after the highest price) / number of cycles calculated] * 100

- AroonDown = [ (set period parameter - number of cycles after the lowest price) / number of cycles calculated] * 100

From this formula, we can roughly see the idea of the Aroon indicator. That is: how many cycles there are, and the price is below the recent high/low, helping to predict whether the current trend will continue and measuring the strength of the current trend. If we classify this indicator, it is obvious that it belongs to the trend tracking type. But unlike other trend-tracking metrics, it pays more attention to time than price.

## How to use the Aroon indicator

AroonUp and AroonDown reflect the current time and the previous highest or lowest price. If the time is near, the value will be larger. If the time is far, the value will be smaller. And when the two lines cross, it indicates that the price direction may change. If AroonUp is above AroonDown, the price is going up, the price may rise; if AroonDown is above AroonUp, the price is in a downward trend, the price May fall.

At the same time, we can also set a few fixed values to accurately find the trading opportunity. We know that the Aroon indicator has been running up and down between 0 and 100. So when the market is on the rise, that is, when AroonUp is above AroonDown, when AroonUp is greater than 50, the market's rising trend has already formed, and the price may continue in the future. when AroonUp under 50, the momentum for price increases is waning, and prices may fluctuate and fall in the future.

On the contrary, when the market is in a downward trend, that is, when AroonDown is above AroonUp, when AroonDown is greater than 50, the market downtrend has already formed, and the price may continue to fall in the future; when AroonDown wears down 50, the driving force for price decline is weakening. Future prices may fluctuate and rise. Then according to the above two paragraphs, we can list the terms of buying and selling as:

- When AroonUp is larger than AroonDown and AroonUp is greater than 50, the long position is opened;

- When AroonUp is smaller than AroonDown, or AroonUp is less than 50, the long position is closed;

- When AroonDown is larger than AroonUp and AroonDown is greater than 50, the short position is opened;

- When AroonDown is smaller than AroonUp, or AroonDown is less than 50, the short position is closed;

## Build a trading strategy based on the Aroon indicator

After clarifying the trading logic, we can implement it with code. In this article, we continue to use the JavaScript language, and the trading variety is still commodity futures. reader with strong programming skills can also translate it into Python, or cryptocurrency.

Following by these steps : fmz.com > Login > Dashboard > Strategy Library > New Strategy, start writing the strategy, pay attention to the comments in the code below.

**Step 1: Using the CTA Framework**

Note that Be sure to click on the following: Commodity Futures Trading Library. If you are adapted it to cryptocurrency, click on: Digital currency spot trading library.

```

Function main() {

// ZC000/ZC888 refers to using the index as the source of the market but the transaction is mapped to the main contract

$.CTA("ZC000/ZC888", function(st) {

})

}

```

**Step 2: Get the data**

```

Function main() {

$.CTA("ZC000/ZC888", function(st) {

Var r = st.records; // get the K line array

Var mp = st.position.amount; // Get the number of positions

})

}

```

**Step 3: Calculate the Aroon indicator**

```

Function main() {

$.CTA("ZC000/ZC888", function(st) {

Var r = st.records; // get the K line array

Var mp = st.position.amount; // Get the number of positions

If (r.length < 21) { // Determine if the K line data is sufficient

Return;

}

Var aroon = talib.AROON(r, 20); // Aroon indicator

Var aroonUp = aroon[1][aroon[1].length - 2]; // Aroon indicator goes online with the second root data

Var aroonDown = aroon[0][aroon[0].length - 2]; // Aroon's indicator goes down the second root of the data

})

}

```

**Step 4: Calculate the trading conditions and placing orders**

```

Function main() {

$.CTA("ZC000/ZC888", function(st) {

Var r = st.records; // get the K line array

Var mp = st.position.amount; // Get the number of positions

If (r.length < 21) { // Determine if the K line data is sufficient

Return;

}

Var aroon = talib.AROON(r, 20); // Aroon indicator

Var aroonUp = aroon[1][aroon[1].length - 2]; // Aroon indicator goes online with the second root data

Var aroonDown = aroon[0][aroon[0].length - 2]; // AAroon's indicator goes down the second root of the data

If (mp == 0 && aroonUp > aroonDown && aroonUp > 50) {

Return 1; // long position open

}

If (mp == 0 && aroonDown > aroonUp && aroonDown > 50) {

Return -1; // short position open

}

If (mp > 0 && (aroonUp < aroonDown || aroonUp < 50)) {

Return -1; // multi-head platform

}

If (mp < 0 && (aroonDown < aroonUp || aroonDown < 50)) {

Return 1; // short platform

}

})

}

// The above code is a complete strategy based on the JavaScript language.

// --------------------Dividing line--------------------

// In order to take care of the reach of the party, this strategy is also translated into My language. At the same time, this can also be used for digital currency. Here is the complete policy code:

AROONUP := ((N - HHVBARS(H, N)) / N) * 100; // Aroon indicator goes online

AROONDOWN := ((N - LLVBARS(L, N)) / N) * 100; // Aroon indicator goes offline

AROONUP > AROONDOWN && AROONUP > BKV, BK; // Long position open

AROONDOWN > AROONUP && AROONDOWN > SKV, SK; // short position

AROONUP < AROONDOWN || AROONUP < SPV, SP; // Multi-head platform

AROONDOWN < AROONUP || AROONDOWN < BPV, BP; // Short platform

AUTOFILTER;

```

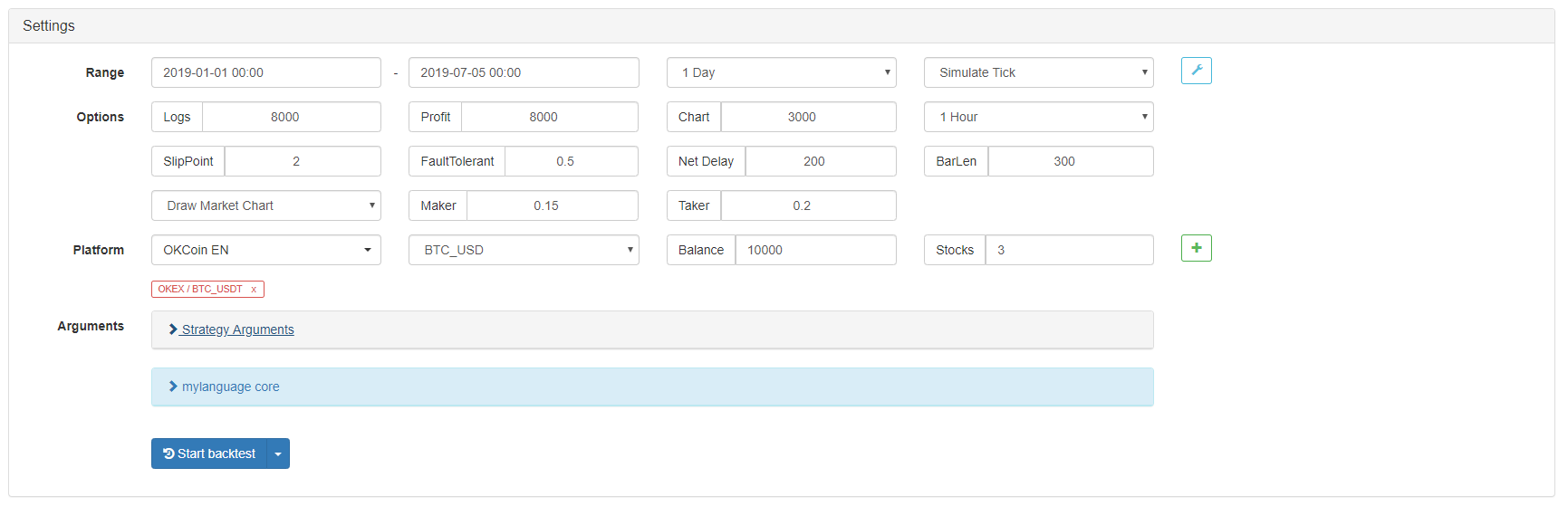

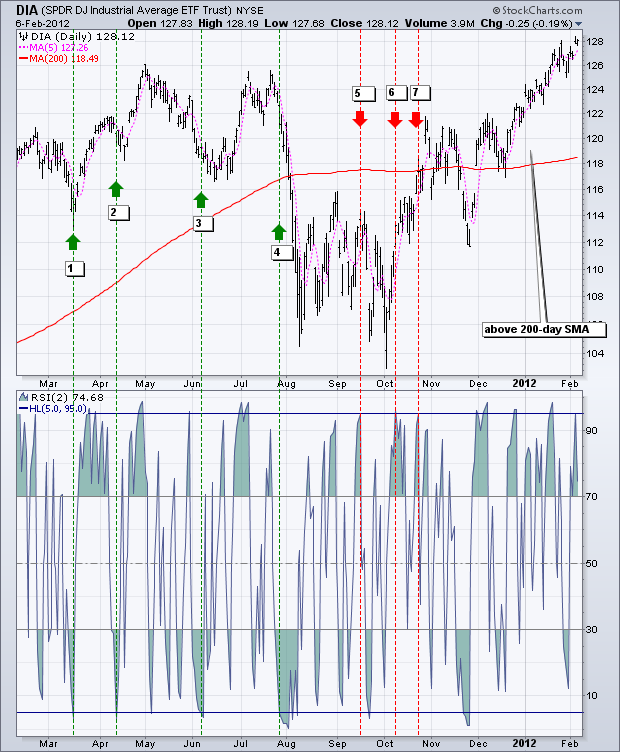

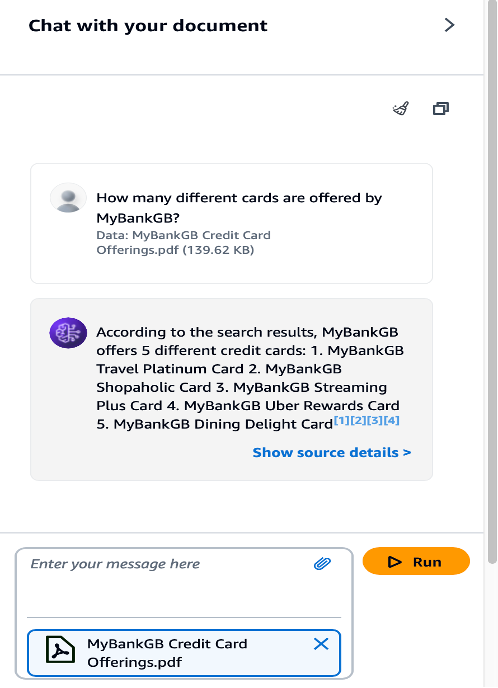

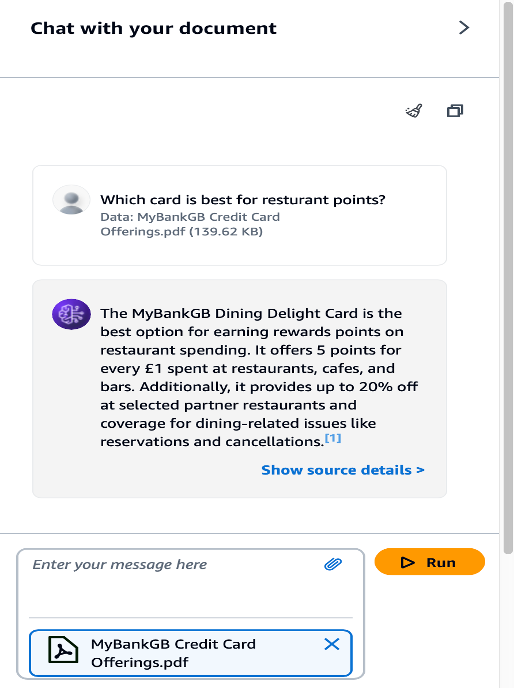

## Strategy backtest

In order to get closer to the real trading environment, we used the 2 pips of slippage and 2 times of the transaction fee to test the pressure during the backtest. The test environment is as follows:

- Quote Variety: Thermal Coal Index

- Trading variety: Thermal Coal Index

- Time: June 01, 2015 ~ June 28, 2019

- Cycle: Daily K line

- Slippage: 2 pips for opening and closing positions

- Transaction Fee: 2 times of the exchange

**Test environment**

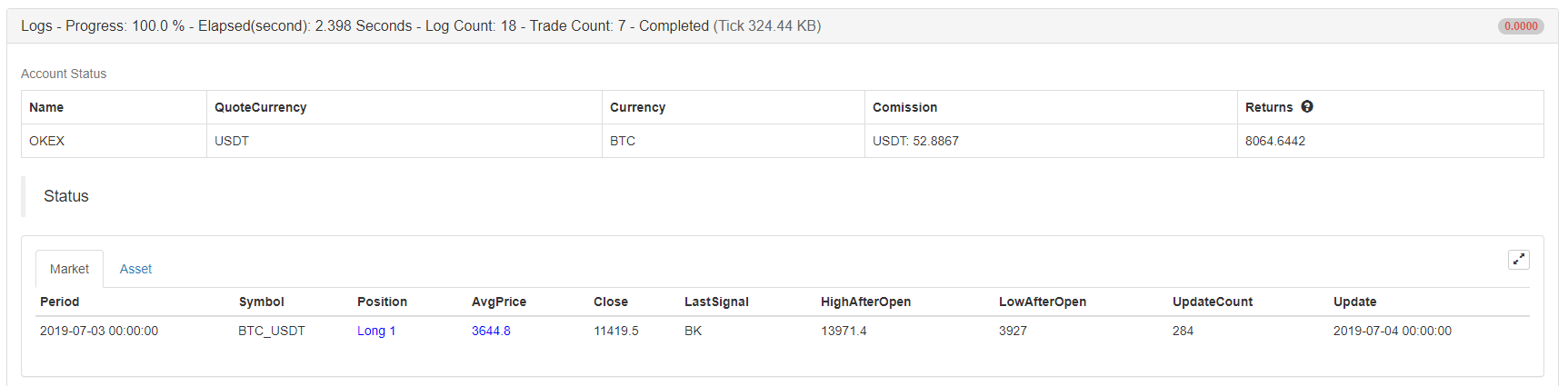

**Profit report**

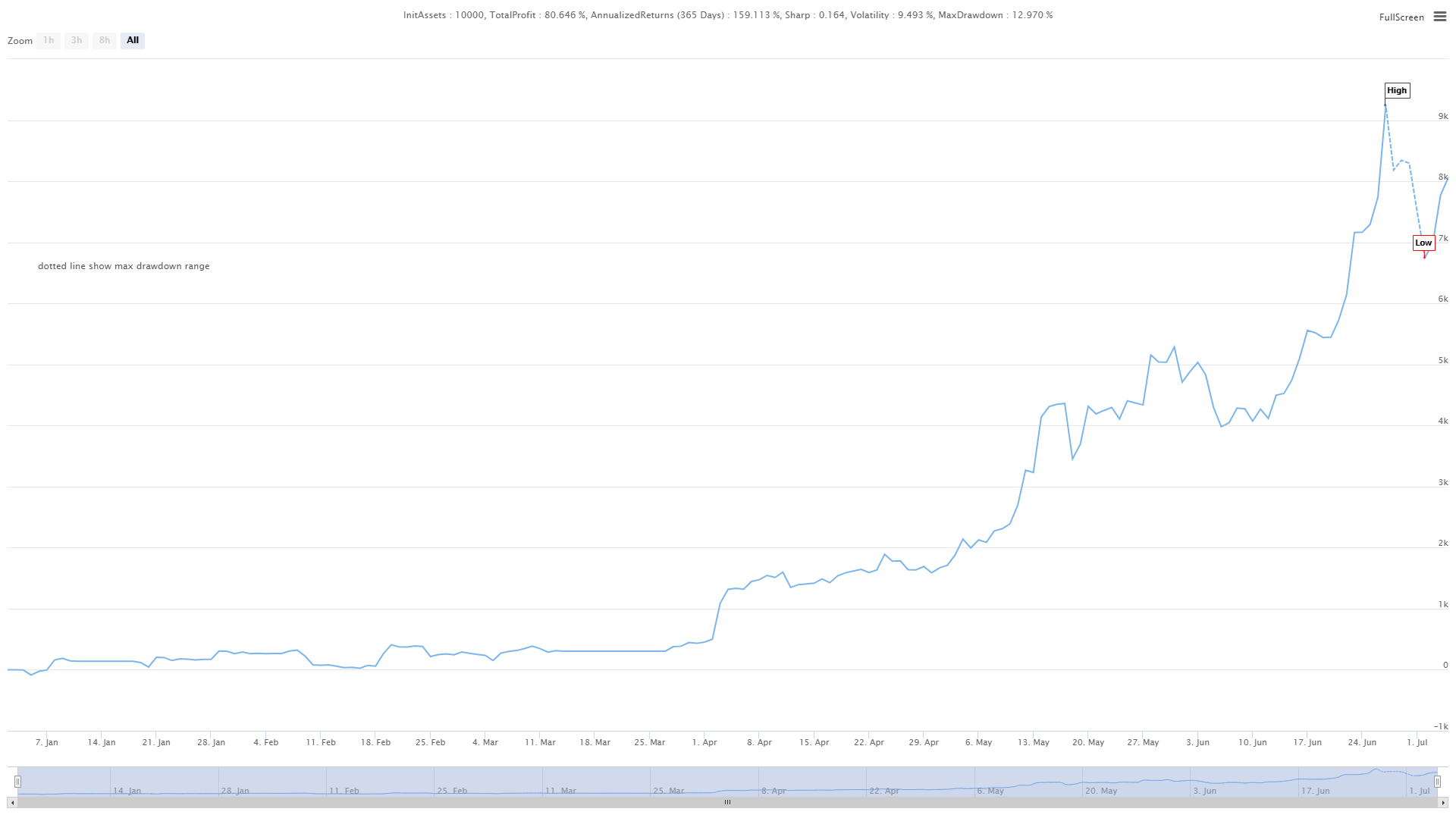

**Fund curve**

From the above backtest results, the strategy is very good when the market trend is smooth, whether in the rise or fall, the Aroon indicator can completely track the market. The capital curve also showed an overall upward trend, and there was no significant retracement. However, in the volatile market, especially in the continuous shock market, there was a partial retracement.

## The advantages and disadvantages of the Aroon indicator

- Advantages: The Aroon indicator can judge the status of the trend market, take into account the market trend and the ability to judge the price turn, and help traders to increase the use of funds.

- Disadvantages: The Aroon indicator is only one of the trend tracking indicators, it has the same shortcomings of the trend tracking indicator. it only can judging the number of periods of the highest or lowest price at a given time, but sometimes the highest or lowest price will be contingent in the whole market trend, this contingency will interfere with the Aroon indicator itself, causing false signals.

## click on the copy strategy source

For the source code,

with JavaScript version, please click: https://www.fmz.com/strategy/154547

with Mylanguage version, please click: https://www.fmz.com/strategy/155582

## Summary

In this strategy, we fixed some parameters, such as: aroonUp or aroonDown is greater than and less than 50, causing the lagging issue of the strategy. In many cases, the market will rise or fall for a period of time before closing the position. Although this improved the winning rate and reduced the maximum retracement rate, it also missed a lot of profits, which also confirmed the truth of the homonym of profit and loss. Interested friends can dig it deeper and improve it.

From: https://blog.mathquant.com/2022/09/30/introducing-the-aroon-indicator-2.html | fmzquant |

1,869,923 | Zonatoto Slot Online Terbaik Dan Terpercaya 2024 | Apakah kamu sedang mencari link slot gacor? ZONATOTO merupakan pilihan yang sangat tepat adalah... | 0 | 2024-05-30T05:21:27 | https://dev.to/zonatotoslot17/zonatoto-slot-online-terbaik-dan-terpercaya-2024-4fo0 | zonatotologin, togelpulsa5000, togelpulsa, slotpulsa |

Apakah kamu sedang mencari link slot gacor? [ZONATOTO](https://hypertogel.com/) merupakan pilihan yang sangat tepat adalah pilihan game slot gacor server terbesar di dunia judi online, situs slot ini menawarkan jenis game slot gacor yang istimewa pastinya gampang maxwin 2024. Tidak cuma itu saja, melainkan dengan sistem slot terlengkap yang praktis, sodara bisa bermain situs slot kapan saja tanpa perlu ribet.

[ZONATOTO](https://hypertogel.com/) adalah situs slot gacor gampang maxwin terpercaya yang menyediakan berbagai macam game slot online resmi terbaik. Situs slot tentu saja memberikan keuntungan dalam rupa jackpot memberikan kesempatan bagi setiap slot gacor setiap harinya tahun ini. Namun ZONATOTO terus berinovasi memberikan game slot gacor hari ini yang pasti menguntungkan bagi para penggemar judi slot online di Indonesia

| zonatotoslot17 |

1,869,922 | Triton Inspection Services | For thorough and reliable home inspections in Alabama, Triton Inspection Services is your trusted... | 0 | 2024-05-30T05:21:15 | https://dev.to/william_347197f2809952555/triton-inspection-services-3cab | home, inspection | For thorough and reliable home inspections in Alabama, Triton Inspection Services is your trusted partner. Our experienced inspectors provide comprehensive evaluations of residential properties, offering valuable insights to help you make informed decisions about your investment. From foundation to roof, we meticulously examine every aspect of the home, identifying potential issues and providing clear, detailed reports. Whether you're buying, selling, or maintaining a property, our goal is to ensure your peace of mind and confidence in your real estate transaction. Trust Triton Inspection Services for professional and trustworthy home inspections in Alabama. Contact us today to schedule your inspection and take the next step towards a safer, more secure home.

Address : 6014 AL Highway 144 St, Ragland, AL 35131, USA

E-Mail : tritonInspects@gmail.com

Phone : +1 2056411235

Visit : https://www.tritoninspects.com/ | william_347197f2809952555 |

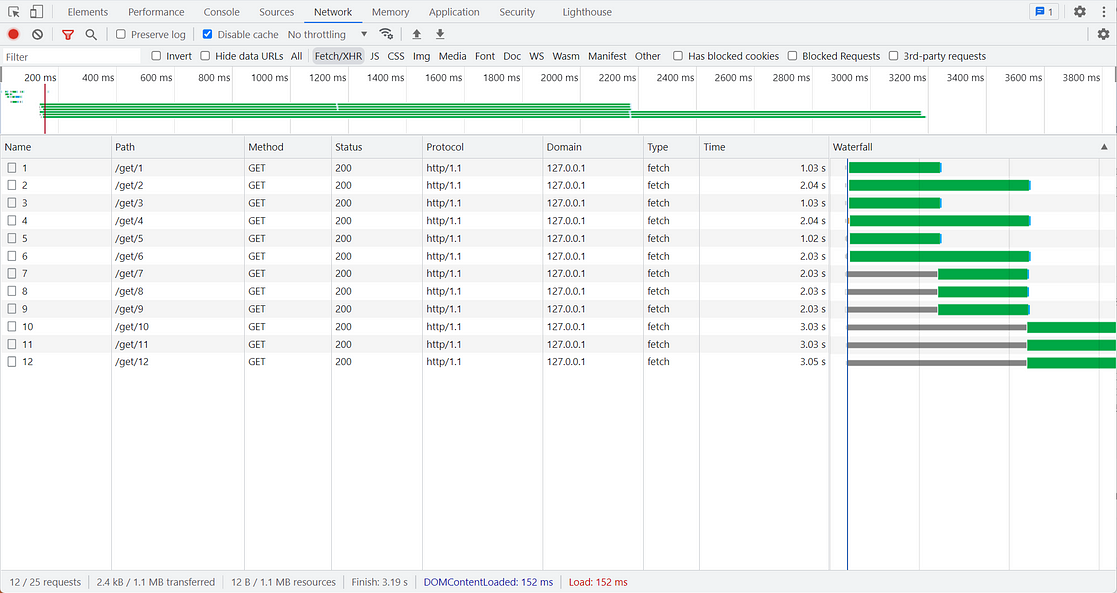

1,869,921 | Exploring Angular v18: Zoneless Change Detection and More | Introduction We are thrilled to announce the release of Angular v18! This version focuses... | 0 | 2024-05-30T05:21:06 | https://dev.to/dipakahirav/exploring-angular-v18-zoneless-change-detection-and-more-565 | angular, angular18, javascript, material | ### Introduction

We are thrilled to announce the release of Angular v18! This version focuses on stabilizing many new APIs, addressing common developer requests, and experimentally introducing zoneless change detection.

please subscribe to my [YouTube channel](https://www.youtube.com/@DevDivewithDipak?sub_confirmation=1

) to support my channel and get more web development tutorials.

### Key Highlights

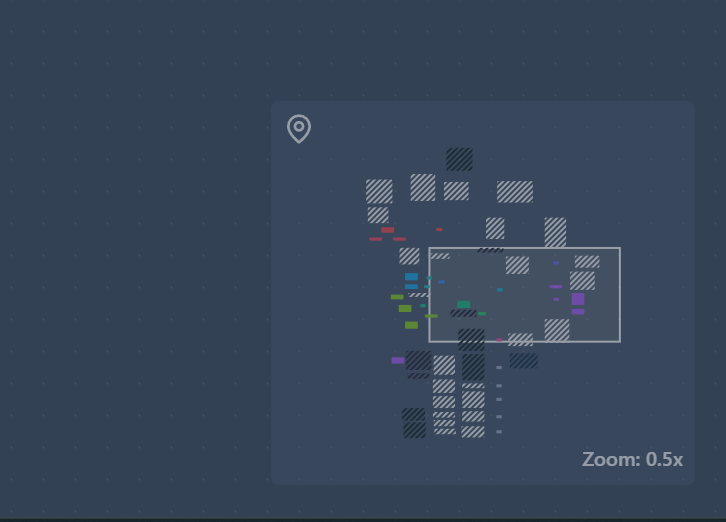

**1. Zoneless Change Detection**

Zoneless change detection is now available experimentally, eliminating the need for `zone.js`, leading to improved performance, faster initial renders, smaller bundle sizes, and simpler debugging.

#### Enabling Zoneless Change Detection

To enable zoneless change detection, modify your application bootstrap configuration:

```typescript

import { bootstrapApplication } from '@angular/platform-browser';

import { provideExperimentalZonelessChangeDetection } from '@angular/core';

bootstrapApplication(App, {

providers: [

provideExperimentalZonelessChangeDetection()

]

});

```

Remove `zone.js` from `angular.json`.

#### Example Component

Here's an example of a component using zoneless change detection:

```typescript

import { Component, signal } from '@angular/core';

@Component({

selector: 'app-root',

template: `

<h1>Hello from {{ name() }}!</h1>

<button (click)="handleClick()">Go Zoneless</button>

`,

})

export class App {

protected name = signal('Angular');

handleClick() {

this.name.set('Zoneless Angular');

}

}

```

**2. Material 3 and Deferrable Views**

Material 3 components and deferrable views are now stable. Material 3 incorporates feedback-based improvements, and deferrable views help enhance Core Web Vitals.

**3. Built-in Control Flow**

The built-in control flow API is now stable, featuring better type checking and ergonomic implicit variable aliasing.

### Server-Side Rendering Enhancements

**Improved i18n Hydration Support**

i18n hydration support is now available, enabling better handling of internationalized content during hydration.

**Enhanced Debugging Tools**

Angular DevTools now visualize the hydration process, displaying component hydration statuses and identifying hydration errors.

### Firebase App Hosting

Angular v18 supports dynamic Angular applications on Firebase App Hosting, simplifying deployment and enhancing performance.

### TypeScript 5.4 Compatibility

This version is fully compatible with TypeScript 5.4, allowing developers to utilize the latest TypeScript features.

### Additional Updates

**Unified Control State Change Events**

Form controls now expose an `events` property for tracking changes in value, touch state, pristine status, and control status.

```typescript

const nameControl = new FormControl<string | null>('name', Validators.required);

nameControl.events.subscribe(event => {

// process the individual events

});

```

**Automated Migration to Application Builder**

The new application builder, based on Vite with esbuild, replaces the previous webpack experience, reducing dependencies and improving installation times.

**Route Redirects as Functions**

The `redirectTo` property now accepts a function, providing higher flexibility for dynamic route redirects.

```typescript

const routes: Routes = [

{ path: "first-component", component: FirstComponent },

{

path: "old-user-page",

redirectTo: ({ queryParams }) => {

const userIdParam = queryParams['userId'];

if (userIdParam !== undefined) {

return `/user/${userIdParam}`;

} else {

return `/not-found`;

}

},

},

{ path: "user/:userId", component: OtherComponent },

];

```

### Conclusion

Angular v18 brings numerous improvements and new features that enhance performance, developer experience, and application capabilities. From zoneless change detection to stable Material 3 components and improved SSR, this release empowers developers to build more efficient and robust applications. For detailed information, visit the [official Angular blog post](https://blog.angular.dev/angular-v18-is-now-available-e79d5ac0affe).

Stay updated with the latest Angular developments and happy coding with Angular v18!

*Follow me for more tutorials and tips on web development. Feel free to leave comments or questions below!*

#### Follow and Subscribe:

- **YouTube**: [devDive with Dipak](https://www.youtube.com/@DevDivewithDipak)

- **Website**: [Dipak Ahirav] (https://www.dipakahirav.com)

- **Email**: dipaksahirav@gmail.com

- **Instagram**: [devdivewithdipak](https://www.instagram.com/devdivewithdipak)

- **LinkedIn**: [Dipak Ahirav](https://www.linkedin.com/in/dipak-ahirav-606bba128) | dipakahirav |

1,869,919 | Gifts World Expo | Gifts World Expo is India’s biggest exhibition on gifting and promotional solutions. The specially... | 0 | 2024-05-30T05:20:19 | https://dev.to/gifts_world_expo/gifts-world-expo-2a5b | [Gifts World Expo](https://www.giftsworldexpo.com/) is India’s biggest exhibition on gifting and promotional solutions. The specially curated trade show provides a lucrative platform for marquee players looking to scale up their businesses in the B2B gifting and promotion industry to generate targeted leads, get industry insights, find sustainable solutions, and accelerate and enhance their value chain.The show dispenses an extensive range of products, ideas, and solutions in a variety of niches in the B2B gifting space. The iconic fair is the ultimate destination for every possible gifting solution: gift items, souvenirs, premiums, novelties, mementos, and keepsakes and a one-stop rendezvous for promotional solutions in India. Gifts World Expo unites the entire gifting industry under one roof.

| gifts_world_expo | |

1,869,916 | Cloud Cost Management With Cloud CADI | Cloud Cost Management with Cloud CADI focuses on leveraging advanced analytics,and actionable... | 0 | 2024-05-30T05:17:26 | https://dev.to/risheka_vijay_e4df10c5c6f/cloud-cost-management-with-cloud-cadi-bed | cloudcostmanagement, cloudfinops, cloudnative | [Cloud Cost Management](www.amadisglobal.com) with [Cloud CADI](https://amadisglobal.com/cloud-cadi-a-simplified-cloud-finops-solution/) focuses on leveraging advanced analytics,and actionable recommendations to minimize unnecessary cloud expenses and maximize the return on cloud investments. Cloud CADI offers a strategic approach to identify inefficiencies, predict costs, and implement actionable recommendations.

Key Strategies for Cloud Cost Management with [Cloud CADI](https://amadisglobal.com/cloud-cadi-a-simplified-cloud-finops-solution/)

Detailed Cost Analysis:

Service-Level Insights: Break down costs by individual cloud services (compute, storage, networking, etc.) to identify high-cost areas.

Usage Patterns: Analyze usage patterns to understand peak and off-peak times, helping to align resource usage with demand.

Resource Utilization Diagnostics:

Idle Resource Detection: Identify resources that are running but not being used (e.g., idle VMs, unattached storage).

Underutilized Resources: Find resources that are underutilized and recommend rightsizing options.

Anomaly Detection: Use machine learning to detect anomalies in cloud spending that could indicate misconfigurations or unexpected usage.

Actionable Insights and Recommendations:

Rightsizing: Provide recommendations for resizing instances based on historical usage data to ensure optimal performance at the lowest cost.

Instance Scheduling: Suggest scheduling non-critical instances to shut down during off-peak hours to save costs.

Benefits of Cloud Cost Management with Cloud CADI

Cost Savings: Significant reduction in cloud spending through efficient resource utilization and elimination of wastage.

Improved Financial Accountability: Enhanced visibility into cloud costs ensures that departments and teams are accountable for their spending.

Enhanced Operational Efficiency: Streamlined cloud operations through automation and real-time monitoring.

Strategic Decision Making: Data-driven insights enable informed decisions regarding cloud investments and usage.

Scalability and Flexibility: Adaptable to the evolving needs of the organization as cloud usage and business requirements grow.

| risheka_vijay_e4df10c5c6f |

1,869,914 | life [2] - I want to build a file sharing app | This is a series where I update about my daily life. The company I work for don't have any new... | 22,781 | 2024-05-30T05:15:11 | https://dev.to/fadhilsaheer/life-2-i-want-to-build-a-file-sharing-app-j1f | programming, rust, javascript | This is a series where I update about my daily life. The company I work for don't have any new clients, so no projects to work on. I'am planning to leave this company anyway (If you are recruiter and want to hire me shoot me an email fadhilsaheer@gmail.com), so I wanted to build something which can catch recruiter's eyes and develop my skills.

> This blog post is the understanding I have on the mentioned technologies, there might be a lot of corrections to be made, feel free to mention it in comments, it would help me a lot

## I want to build a file sharing app 📂

### Context

I had this idea for a while to create an app which can share files similar like Xender, airdrop etc. But with back to back compatibility with all devices. I thought there would be similar api for the tech, but I was wrong 🏃

### My research

There are different ways you can share files between devices like

- Connect devices over wifi

- With bluetooth

etc, but every one of them comes with a draw back, sending files over wifi is fast, but both devices have to be in same network. Connecting devices between bluetooth is easy and fast, but sending files between is too slow.

Many of the existing softwares use bluetooth for discovering and connecting and send files over wifi.

This is what I thought initially, but It was kind of hard. there are different tech kind of technologies and protocol to be mind of

- [NFC (near field communication)](https://en.wikipedia.org/wiki/Near-field_communication) is used to discover nearby devices

- [Wi-Fi Aware](https://developer.android.com/develop/connectivity/wifi/wifi-aware) used in android to discover and connect to devices

- [NAN (Neighbourhood Area Network)](https://www.techopedia.com/definition/29172/neighborhood-area-network-nan) tech behind wifi-aware

- [Wi-Fi Direct] (https://www.wi-fi.org/discover-wi-fi/wi-fi-direct) send data between devices through wi-fi

My initial thought was to create a hotspot and connect to that hotspot using another device and make a webserver run on hotspot device and send files using HTTP.

Even though thats a solution, whats the fun in that ? I wanted to push pass my limits anyway.

So I created a simple prototype using NFC (Near field communication), there is a library called [libnfc](https://github.com/nfc-tools/libnfc), There is rust crate which had binding for libnfc, so I used it to create a basic discovery and device connection, but sending files with it is impossible, its too slow, and range between device is 4-10 cm. And transfer rate is only upto 424 kbit/s even bluetooth's transmission rate is about 1 Mbps, and it is enhanced upto 24 Mbps in BLE (Bluetooth low-energy).

My next option was to use Wi-Fi Aware & Wi-Fi Direct, which have support on android, windows and linux. But apple doesn't support it. To be exact apple have another tech called [Apple Wireless Direct Link (AWDL)](https://stackoverflow.com/questions/19587701/what-is-awdl-apple-wireless-direct-link-and-how-does-it-work) which they use for airdrop, to share device state between apple device etc. So they dont have support for other tech, to be exact they don't support Peer to Peer data support with other devices like wi-fi direct does (At least this is what I learned of)

### So now what ?

Fortunately I found a [reddit post](https://www.reddit.com/r/rust/comments/135mwta/direct_file_transfer_over_ad_hoc_wifi/) which mentioned about a software named [Flying Carpet](https://github.com/spieglt/FlyingCarpet) after tinkering with that, and checking its code I found out there is something call [Wireless ad hoc network (WANET)](https://en.wikipedia.org/wiki/Wireless_ad_hoc_network), it its basically a decentralised type of wireless network which does not rely on router or wireless access points.

Flying Carpet use ad hoc wi-fi to share file. Basically it create an Ad hoc network in a device and other devices need to manually connect to that Ad hoc network.

I'm planning to use NFC to connect and discover devices (it is the only thing apple and others have in common). Any one of the connected device would create an ad hoc network and other device will connect it to automatically using NFC.

This is basically hypothesis, not sure If it'll work the way I expect it to, or to be exact I'm not even sure if it can be achieved this way

### Conclusion

Either way I want to build this app, If they can do it, why can't I ?. This blog post might not be correct, I'll update it if I find corrections. This is what I understood from learning and researching about this for the past few days.

I'll update the progress in coming posts, wish me luck! | fadhilsaheer |

1,869,913 | Shadow Mapping Techniques: Implementing Shadows in 3D Scenes Using Shadow Mapping | Shadow mapping is a fundamental technique in 3D graphics that allows for realistic rendering of... | 0 | 2024-05-30T05:12:34 | https://dev.to/hayyanstudio/shadow-mapping-techniques-implementing-shadows-in-3d-scenes-using-shadow-mapping-46hl | shader, beginners, gamedev | Shadow mapping is a fundamental technique in 3D graphics that allows for realistic rendering of shadows. By simulating how light interacts with objects in the real world, shadow mapping adds depth, dimension, and a touch of realism to 3D scenes. This not only enhances the visual fidelity of graphics but also improves our spatial awareness and immersion within the 3D environment. In essence, shadows cast by objects provide crucial visual cues that our brains rely on to perceive depth and understand the relative positions of objects in a scene

## Basics of Shadow Mapping

Shadow mapping breathes life into 3D graphics by simulating realistic shadows. Here's the magic behind it:

- Seeing from the Light's Eye: Imagine the scene rendered from the light source's perspective, like a camera. This creates a special texture called a shadow map, essentially a depth map recording how close objects are to the light.

- Light vs. Dark on the Map: Closer objects in the scene block more light, appearing darker on the shadow map. This darkness signifies areas in shadow.

- From Camera to Canvas: When rendering the scene normally, the graphics processor checks the shadow map for each pixel on screen.

- Shadows Emerge: If the shadow map tells the processor something is blocking light at that pixel's location, the pixel is shaded darker, creating the illusion of a shadow.

Think of it like a black and white photo of the scene, lit only by the light source. Darker areas represent objects casting shadows. This information dictates which parts of the scene are shadowed from the main camera's perspective.

## Setting Up the Scene

Before we delve into the technical aspects of shadow mapping, let's prepare the stage for our digital drama! Here's what we need to consider when setting up the 3D scene:

**Scene Geometry:**

- Object Placement: Arrange your 3D models strategically to create interesting shadow interactions. Consider how objects might block light from each other and how shadows will fall on surfaces.

- Light Source Positioning: Experiment with the position and type of light source (point light, directional light, etc.) This will significantly impact the direction and shape of the shadows cast.

**Light Source Configuration:**

- Shadow Casting: Ensure your light source has "Cast Shadows" enabled in its properties. This allows the engine to generate the necessary shadow map information.

- Bias Adjustment (Optional): A slight bias adjustment might be needed to prevent unwanted artifacts like "shadow acne" (tiny dark spots appearing on surfaces that shouldn't be completely in shadow). This adjustment can be fine-tuned for optimal results.

By carefully setting up your scene and configuring the light source, you're laying the groundwork for realistic and visually appealing shadows in your 3D world. In the next chapter, we'll explore the technical details of shadow map creation and utilization.

## Creating the Shadow Map

Now that the scene is prepped, let's delve into the technical heart of shadow mapping: generating the all-important shadow map.

Seeing Through the Light's Eyes:

- A Different View: The first step involves rendering the entire scene from the perspective of the light source, not the main camera. Imagine the light source is equipped with a special camera that captures depth information instead of color.

- Depth, Not Beauty: During this render pass, textures, materials, and lighting are ignored. The focus is solely on capturing the relative distances of objects from the light source.

- Birth of the Shadow Map: The result of this unique render pass is a special texture called a shadow map. This map stores depth values, with darker areas representing objects closer to the light and therefore casting shadows.

Think of the shadow map as a grayscale image where brightness corresponds to depth. Brighter areas are further away from the light, while darker areas are closer and will block shadows in the final render.

## Applying the Shadow Map

We've created the shadow map, a treasure trove of depth information. Now, let's see how this map is used to paint shadows onto our 3D scene.

**The Fragment Shader's Role:**

The magic happens within the fragment shader, a program that determines the final color of each pixel on the screen. Here's the process:

- World Position Reconstruction: For each pixel on the screen, the fragment shader reconstructs its position in the 3D world based on its location on the screen and camera data.

- A Peek into the Shadow Map: The fragment shader then samples the shadow map at a corresponding location based on the reconstructed world position and the light source's direction.

- Depth Comparison: The fragment shader compares the depth value retrieved from the shadow map with the reconstructed depth of the current pixel.

**Living in Shadows or Bathed in Light:**

- Shadow Detected: If the depth value from the shadow map indicates something closer to the light source is blocking the way, it signifies a shadowed area. The fragment shader adjusts the pixel's color accordingly, making it darker to create the illusion of a shadow.

- Direct Illumination: If the depth values match, it means no object is obstructing the light's path to that pixel. The fragment shader applies the usual lighting calculations, allowing the pixel to be lit normally.

**Shadow Map Resolution Matters:**

The precision of shadows depends on the resolution of the shadow map. A higher resolution map allows for more accurate depth comparisons, resulting in smoother and more natural-looking shadows. However, higher resolutions also come at a performance cost as the GPU needs to process more texture data.

**Beyond Basic Shadow Mapping:**

## Optimizing Shadow Maps

This is a simplified explanation of shadow mapping. Advanced techniques like filtering and bias adjustments can further improve shadow quality and reduce artifacts.

Shadow mapping breathes life into 3D graphics, but like any powerful tool, it requires careful handling to achieve optimal results. This chapter dives into common shadow mapping challenges and optimization techniques.

## Resolving Common Artifacts

- Shadow Acne: Tiny dark speckles appearing on surfaces that shouldn't be completely shadowed. These can be caused by minor depth inaccuracies or limitations in shadow map resolution.

- Peter Panning: Shadows that don't seem to fully connect to the object casting them, making it appear as if the object is floating slightly above its shadow. This can occur due to limitations in shadow map bias adjustments.

- Low Shadow Resolution Artifacts: When using low-resolution shadow maps, especially for large scenes or distant objects, shadows may appear blocky or pixelated.

## Increasing Shadow Map Resolution

Higher Resolution, Higher Quality: A straightforward solution is to increase the resolution of the shadow map. This provides more detailed depth information, leading to smoother and more accurate shadows.

- The Performance Cost: Be mindful that higher resolution shadow maps require the GPU to process more texture data, potentially impacting performance. Finding a balance between shadow quality and performance is crucial.

## Using Cascaded Shadow Maps

For vast scenes with varying object distances from the light source, a single shadow map might struggle. Here's where cascaded shadow maps come in:

- Multiple Shadow Maps, Strategic Depths: The scene is divided into regions or cascades, each with its own dedicated shadow map.

- Tailored Shadow Maps: Each cascade's shadow map has a resolution optimized for its specific range of depths. Closer objects have higher resolution maps for finer detail, while distant objects can utilize lower resolution maps for efficiency.

- Smoother Shadows, Optimized Performance: By using cascaded shadow maps, you can achieve smoother shadows across the entire scene while maintaining good performance.

**Beyond the Basics**

This chapter provides a starting point for shadow map optimization. Advanced techniques like variance shadow maps and percentage-closer filtering can further enhance shadow quality and reduce artifacts.

## Advanced Techniques

Shadow mapping is a cornerstone of realistic 3D graphics, but there's always room for improvement. This chapter explores two advanced techniques that can further enhance the quality and visual fidelity of your shadows: Percentage-Closer Filtering (PCF) and Variance Shadow Maps (VSM).

**Percentage-Closer Filtering (PCF)**

The core concept of shadow mapping relies on comparing depth values from the scene to the shadow map. However, this approach can sometimes lead to aliasing, resulting in blocky or stair-stepping artifacts along shadow edges.

**PCF tackles this issue by introducing a softer shadow:**

Neighborhood Sampling: Instead of a single depth comparison, PCF samples multiple texels around the corresponding location in the shadow map.

- Depth Comparison Buffet: Each of these neighboring samples is then compared to the scene depth.

- Percentage of Closeness: Based on the results of these comparisons, PCF calculates the percentage of samples that were closer to the light source than the current scene fragment.

- Shading by Percentage: This percentage is used to determine how much to darken the fragment's color. A higher percentage indicates a more shadowed area, resulting in a smoother shadow falloff.

PCF essentially creates a softer transition between shadow and light, reducing the appearance of harsh edges.

[Read full article over here](https://glsl.site/post/ambient-occlusion-with-shaders-adding-depth-to-scenes/)

| hayyanstudio |

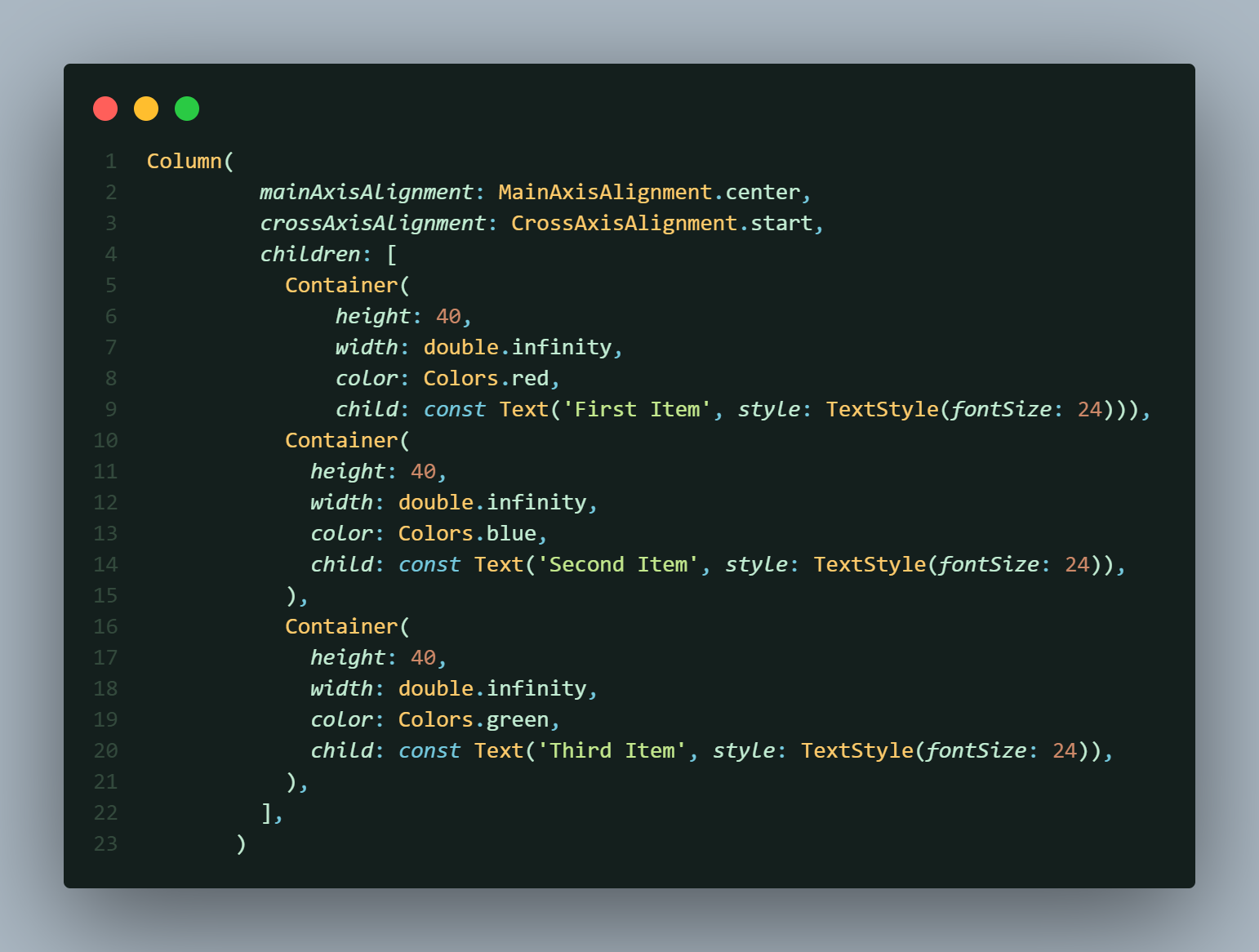

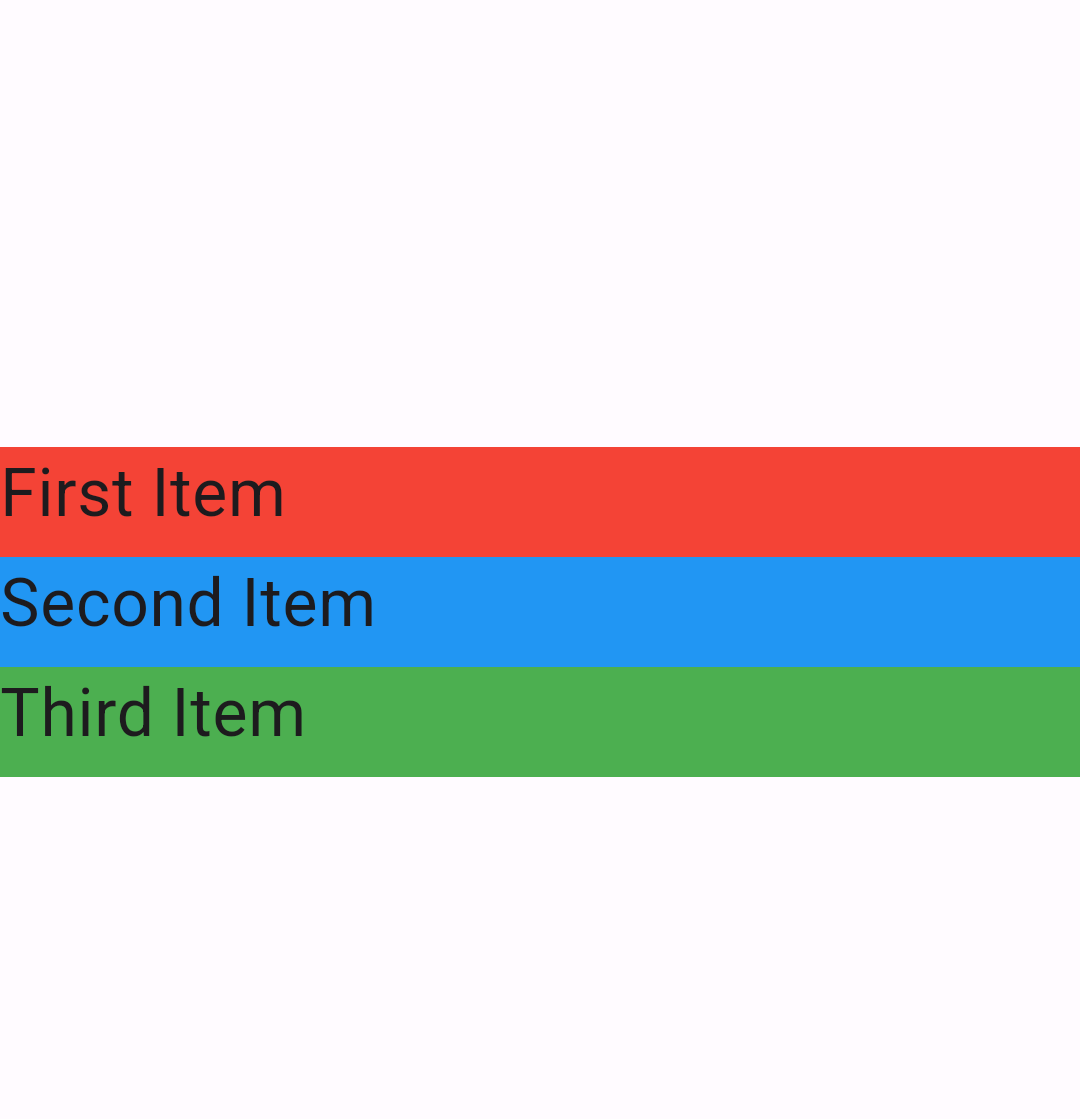

1,869,912 | Understanding Flutter Widgets: Column vs Row | In Flutter, the layout design revolves around widgets. Two of the most essential layout widgets are... | 0 | 2024-05-30T05:09:39 | https://dev.to/eldhopaulose/understanding-flutter-widgets-column-vs-row-2m5o | flutter, dart, google, frontend | In Flutter, the layout design revolves around widgets. Two of the most essential layout widgets are `Column `and `Row`. They control how child widgets are positioned vertically and horizontally, respectively. Let’s dive into the details and see them in action with example code.

## The Column Widget

The `Column `widget in Flutter is used to align child widgets in a vertical manner. It’s similar to a vertical `LinearLayout `in Android or a vertical `StackPanel `in UWP. Here’s a simple example:

In this example, the `mainAxisAlignment` property is set to `MainAxisAlignment.center`, which centers the children vertically. The `crossAxisAlignment` is set to `CrossAxisAlignment.start`, aligning the children to the start of the cross axis (horizontally in this case).

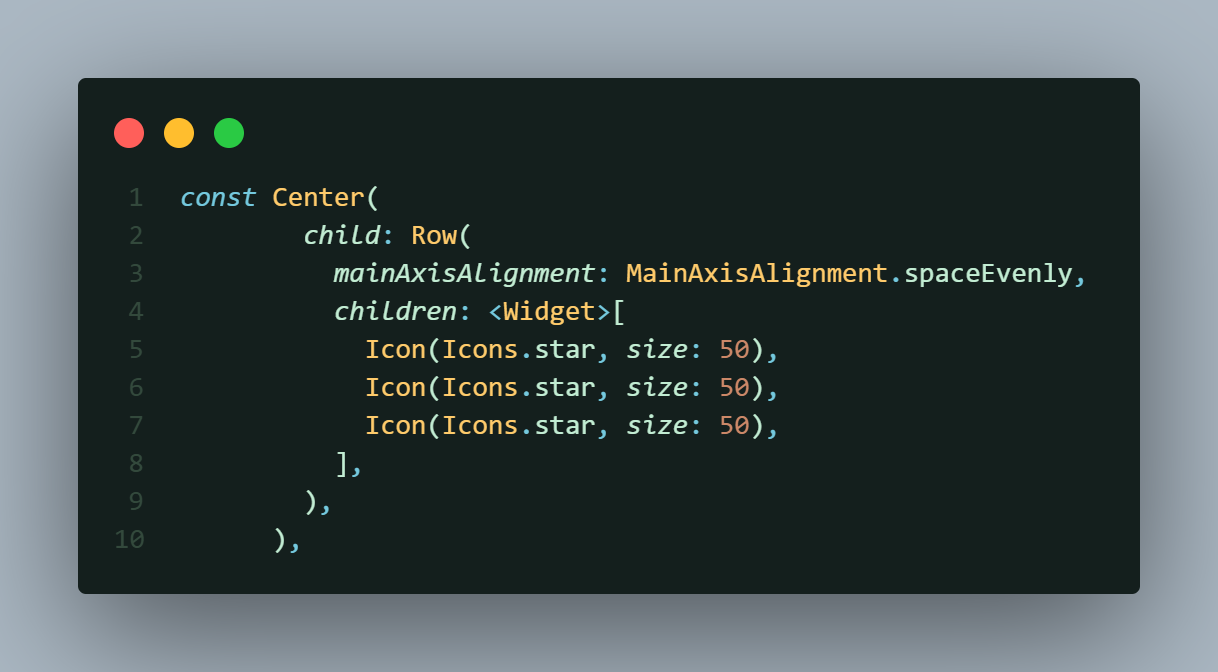

## The Row Widget

Conversely, the `Row `widget arranges its children horizontally. It’s akin to a horizontal `LinearLayout `in Android or a horizontal `StackPanel `in UWP. Here’s an example:

Here, `mainAxisAlignment `is set to `MainAxisAlignment.spaceEvenly`, which distributes the children evenly across the horizontal axis with equal spacing.

## Combining Column and Row

You can nest `Row `and `Column `widgets to create complex layouts. Here’s a nested example:

In this nested layout, a `Row `is placed inside a `Column`, combining both horizontal and vertical alignment.

## Conclusion

Understanding the `Column `and `Row `widgets is crucial for effective layout design in Flutter. Experiment with the properties and nesting to create the desired UI for your app.

[Contact with me](https://eldhopaulose.info/)

[GitHub](https://github.com/eldhopaulose)

| eldhopaulose |

1,869,909 | Unforgettable Journeys: Discover India with a Tour Package | Have you ever dreamt of wandering through vibrant bazaars overflowing with spices and textiles?... | 0 | 2024-05-30T05:04:06 | https://dev.to/tailormade_journey_d2c000/unforgettable-journeys-discover-india-with-a-tour-package-3a2c |

Have you ever dreamt of wandering through vibrant bazaars overflowing with spices and textiles? Perhaps you envision yourself scaling the majestic Himalayas or cruising down serene backwaters. India, a land of stunning diversity, promises an unforgettable adventure for every kind of traveler. This vast nation, steeped in rich history and pulsating with vibrant culture, offers experiences that will linger in your memory forever.

[India Tour Packages](https://tailormadejourney.com/destination/india-tour-packages/

) can unlock the magic of this incredible country, making your dream vacation a reality. Whether you crave heart-pounding adventure, cultural immersion, or simply relaxation on pristine beaches, there's a perfect tour package waiting to be discovered.

**

A Kaleidoscope of Experiences Awaits

**

India's captivating tapestry is woven from a multitude of regions, each boasting its own unique charm. In the north, the snow-capped Himalayas beckon adventure seekers to trek through breathtaking landscapes. Hill stations like Shimla and Munnar offer a welcome respite from the heat, with their colonial architecture and verdant beauty. Central India boasts magnificent forts, ancient temples, and bustling cities like Delhi and Agra, home to the iconic Taj Mahal.

Travel south to discover serene beaches fringed with swaying palm trees, alongside tranquil backwaters where you can glide through emerald waterways on a traditional houseboat. The west unveils the vibrant state of Rajasthan, a land of majestic forts, opulent palaces, and colorful festivals. No matter your interests, India has something to enthrall you.

**Crafting Your Ideal Indian Adventure**

[India travel packages](https://tailormadejourney.com/destination/india-tour-packages/

) come in a kaleidoscope of colors, just like the country itself. For the history buff, heritage tours weave a captivating narrative through ancient forts, palaces, and temples. Wildlife enthusiasts can embark on thrilling safaris, spotting tigers, elephants, and a dazzling array of other animals in their natural habitat. Adventure awaits those who seek adrenaline-pumping activities, from trekking the Himalayas to white-water rafting on rushing rivers.

Delve deeper into the soul of India with cultural tours that introduce you to traditional practices, vibrant festivals, and the country's famed cuisine. Seek spiritual solace on pilgrimage tours, visiting sacred sites and experiencing the serenity that India offers. Luxury tours cater to discerning travelers, providing premium accommodation, exclusive experiences, and impeccable service. Budget-conscious explorers can find tours that allow them to experience the magic of India without breaking the bank.

**The Magic of Customization**

The beauty of India tour packages lies in their flexibility. You can tailor your itinerary to perfectly match your interests, travel style, and budget. Choose the duration of your trip, the regions you want to explore, and the mode of transportation that best suits your needs. Whether you prefer the comfort of a private car, the scenic journey of a train, or the efficiency of domestic flights, your tour can be customized to your liking. The level of activity can also be adjusted, allowing you to pack your days with adventures or enjoy a more relaxed pace.

**Unveiling a World of Benefits**

Booking an India travel package offers a multitude of advantages. By letting the experts handle the logistics, you can save valuable time and energy that would otherwise be spent on trip planning. Experienced guides will ensure smooth travel from arrival to departure, providing insightful commentary and local knowledge along the way. Curated experiences take you beyond the well-trodden tourist path, offering a glimpse into the authentic heart of India. Tour packages can also be more cost-effective than planning your trip independently, especially when it comes to transportation and accommodation.

**Start Your Indian Odyssey Today**!

Ready to embark on an unforgettable adventure in the land of incredible experiences? Explore our website to discover a wide range of India tour packages designed to cater to every taste and budget. Our travel specialists are also happy to assist you in crafting a personalized itinerary that perfectly matches your dream vacation. Don't wait any longer – book your tour today and get ready to be amazed by the magic of India!

| tailormade_journey_d2c000 | |

1,869,907 | Achieve Your Career Goals With Certificate 3 In Child Care | Achieve Your Career Goals With Certificate 3 In Child Care Our Certificate 3 in Child Care program... | 0 | 2024-05-30T05:01:20 | https://dev.to/rasel_ahmed_cc3caf679f85a/best-rpl-provider-australia-3bl2 | Achieve Your Career Goals With Certificate 3 In Child Care

Our Certificate 3 in Child Care program is your gateway to a fulfilling career in child care services. With a comprehensive curriculum covering

Visit More: https://skillsupgrade.com.au/certificate-3-in-child-care/ | rasel_ahmed_cc3caf679f85a | |

1,869,906 | Johnson Controls: Driving Innovation in the Automotive Seats Market | Introduction Johnson Controls, a global leader in building products and technology, including... | 0 | 2024-05-30T05:00:37 | https://dev.to/sim_chanda/johnson-controls-driving-innovation-in-the-automotive-seats-market-4jbm | marketstrateg, globalinsights, marketgrowth |

**Introduction**

Johnson Controls, a global leader in building products and technology, including automotive seats market, is at the forefront of revolutionizing the automotive industry. With a rich history of innovation and a commitment to excellence, Johnson Controls continually adapts its strategies, embraces emerging innovations, and drives developments that shape the automotive seats market. This article explores Johnson Controls' strategic approach, its pioneering innovations, and the developments that are reshaping the automotive seats industry.

**Download FREE Sample:** https://www.nextmsc.com/automotive-seats-market/request-sample

**Strategic Approach

Customer-Centric Solutions**

Johnson Controls adopts a customer-centric approach, prioritizing collaboration with automakers to understand their unique needs and challenges. By working closely with customers throughout the product development process, Johnson Controls develops tailored seating solutions that address specific requirements while delivering superior quality and value. This collaborative approach enables Johnson Controls to build strong partnerships with automakers and drive innovation in the competitive automotive market.

**Global Presence and Market Leadership**

With a global footprint spanning across key automotive markets, Johnson Controls maintains a strong market presence and leadership position. By strategically positioning manufacturing facilities, research centers, and engineering hubs in strategic locations, Johnson Controls ensures proximity to customers and enhances operational efficiency. This global presence enables Johnson Controls to respond quickly to market demands and deliver innovative seating solutions that meet regional preferences and regulations.

**Investment in Research and Development**

Johnson Controls places a significant emphasis on research and development (R&D), investing in advanced technologies and engineering expertise to drive innovation in automotive seating solutions. By staying at the forefront of technological advancements, Johnson Controls explores new materials, design concepts, and manufacturing processes that enhance seating comfort, safety, and functionality. This continuous investment in R&D enables Johnson Controls to anticipate market trends and deliver cutting-edge seating solutions that exceed customer expectations.

Emerging Innovations

**Lightweight Materials and Design**

Johnson Controls is pioneering the use of lightweight materials and design optimization techniques to reduce the weight of automotive seats. By utilizing advanced materials such as high-strength steel, aluminum alloys, and composite materials, Johnson Controls achieves significant weight savings without compromising structural integrity or safety. Lightweight seats contribute to improved fuel efficiency, reduced emissions, and enhanced vehicle performance, making them an increasingly important focus area for automakers.

**Smart Seating Technologies**

Johnson Controls is at the forefront of developing smart seating technologies that enhance comfort, convenience, and safety for drivers and passengers. From integrated connectivity and entertainment systems to active ergonomics and adaptive seating controls, Johnson Controls' innovations create a seamless and personalized seating experience. By leveraging sensors, actuators, and connectivity solutions, Johnson Controls' smart seating solutions adapt to individual preferences and driving conditions, enhancing overall comfort and convenience.

**Sustainable Materials and Manufacturing Practices**

In response to growing environmental concerns and sustainability mandates, Johnson Controls is committed to integrating sustainable materials and manufacturing practices into its seating solutions. By utilizing recycled and bio-based materials, optimizing manufacturing processes, and minimizing waste and emissions, Johnson Controls reduces its environmental footprint while maintaining product quality and performance. Sustainable seating solutions offer automakers a competitive advantage and appeal to environmentally conscious consumers.

Market Developments

**Strategic Acquisitions and Partnerships**

Johnson Controls expands its capabilities and market reach through strategic acquisitions and partnerships with complementary companies. By acquiring companies with expertise in advanced materials, technologies, and manufacturing processes, Johnson Controls strengthens its innovation pipeline and enhances its competitive position in the automotive seats market. Strategic partnerships with automakers and suppliers enable Johnson Controls to co-develop innovative seating solutions that address evolving market needs and trends.

**Integration of Advanced Manufacturing Technologies**

Johnson Controls integrates advanced manufacturing technologies such as additive manufacturing (3D printing), robotics, and automation into its production processes to enhance efficiency, quality, and scalability. By leveraging digital manufacturing techniques and Industry 4.0 principles, Johnson Controls optimizes production workflows, reduces lead times, and increases manufacturing flexibility. Advanced manufacturing technologies enable Johnson Controls to respond quickly to changing market demands and deliver high-quality seating solutions with greater speed and precision.

**Focus on Safety and Comfort**

Johnson Controls prioritizes safety and comfort in its seating solutions, incorporating advanced safety features and ergonomic design principles. By integrating technologies such as active head restraints, side impact protection, and seat belt pretensioners, Johnson Controls enhances occupant protection and crashworthiness. Additionally, Johnson Controls focuses on optimizing seating ergonomics to minimize driver fatigue and improve overall comfort during long journeys.

**Inquire before buying:** https://www.nextmsc.com/automotive-seats-market/inquire-before-buying

**Conclusion**

Johnson Controls is a driving force in the automotive seats market, pioneering innovations that enhance comfort, safety, and sustainability. With a customer-centric approach, a focus on innovation, and a commitment to excellence, Johnson Controls continues to shape the future of automotive seating solutions. As the automotive industry evolves, Johnson Controls remains at the forefront of technological advancements, delivering cutting-edge seating solutions that meet the evolving needs of automakers and consumers worldwide.

| sim_chanda |

1,869,908 | Dev33 | (https://dev.com/signup/)_This is a submission for Frontend Challenge v24.04.17, CSS Art: June._ ... | 27,551 | 2024-05-30T05:00:00 | https://dev.com/signup/ | frontendchallenge, devchallenge, css, programming | (https://dev.com/signup/)_This is a submission for [Frontend Challenge v24.04.17](https://dev.to/challenges/frontend-2024-05-29), CSS Art: June._

## Inspiration

<!-- What are you highlighting today? -->

## Demo

<!-- Show us your CSS Art! You can directly embed an editor into this post (see the FAQ section of the challenge page) or you can share an image of your project and share a public link to the code. -->

## Journey

<!-- Tell us about your process, what you learned, anything you are particularly proud of, what you hope to do next, etc. -->

<!-- Team Submissions: Please pick one member to publish the submission and credit teammates by listing their DEV usernames directly in the body of the post. -->

<!-- We encourage you to consider adding a license for your code. -->

<!-- Don't forget to add a cover image to your post (if you want). -->

https://dev.com/signup/

<!-- Thanks for participating! -->

| alla_santoshpavankumar_ |

1,869,905 | Finding what iterator to use and when from a beginner! | Hello, my name is Kaleb and I'm a beginner at coding. I am an active student at Flatiron School and... | 0 | 2024-05-30T04:53:57 | https://dev.to/killerfox007/finding-what-iterator-to-use-and-when-from-a-beginner-441h | javascript, beginners, programming, tutorial | Hello, my name is Kaleb and I'm a beginner at coding. I am an active student at Flatiron School and our first phase is javascript. Learning arrays and objects was easy, but adding them together and iterating over them was extremely difficult. I will be going over some the iterators as I understand them and give detailed examples. When I was doing research it was difficult to understand which to use and why.

The iterators I will be going over are:

.forEach()

.map()

.find()

.filter()

Starting with forEach() this is an iterator that does something for each value or object in the array. This is an iterator we learned with little value in the real world from my perspective. forEach is a loop as well, it can be used to console log elements in the array like the example below.

numberedArray = [1,2,3,4,5,6,7,8,9,10]

numberedArray.forEach(function (number) {

console.log("What number is being logged" + number);

});

This will console.log:

What number is being logged1

What number is being logged2

What number is being logged3

until it reaches 10 and then will stop.

this can be useful to see what values you are getting or for debugging. forEach is not something I personally would learn due to .map outshining it, forEach returns the same array but changed where .map returns a new array.

the map() definition that I believe explains this very well is: The map() method of Array instances creates a new array populated with the results of calling a provided function on every element in the calling array.

What does this actually mean? how does it work? what does it look like?

first, we should break down the definition it is a method that creates a new array populated with results from the provided function on EVERY element. exactly like the forEach iterator it goes through the [1-10] and does something on everyone and provides a NEW array.

Second let's see it in use to wrap our head around when and why we would use it. For example, We only want the last names of the guests staying at the hotel we run. our data might look like this:

let guests = [

{ firstName: 'Bob', lastName: 'Joeseph'},

{ firstName: 'Tracy', lastName: 'Miller'},

{ firstName: 'Siera', lastName: 'Allen'}

]

const lastNamesOfGuests = guests.map(firstAndLastNames => firstAndLastNames.lastName)

console.log(lastNamesOfGuests)

it will return: [ 'Joeseph', 'Miller', 'Allen' ]

Let's walk through it, We set a variable name for guests that is an array of objects and those objects have the first and last names of our 3 guests. We are setting lastNamesOfGuests to equal our variable guests and calling .map then we are passing in the objects it is going to each one and grabbing the .lastName and implicitly returning the strings inside a new array.

Now that we understand both of those we are finishing it up with my favorite combo the .find and .filter iterators and personally my favorite to use. .find is very easy to understand its job is to find something and return it once found, if nothing is found .find returns undefined and filter returns a new array with no elements looking like []. The difference between .find and .filter is once find gets a match it will stop looping and return that element. in our example, if we wanted all the fruits and vegetables starting with the letter B. Find will only return the banana in our example below.

let fruitAndVegetables = ['apple', 'banana', 'broccoli', 'carrots']

const namesWithB = fruitAndVegetables.find(fruitAndVeg => {

return fruitAndVeg.toLowerCase().startsWith("b")

})

console.log(namesWithB)

This will return: banana

small explanation on why I used .toLowerCase() and .startsWith(), toLowerCase makes all of the elements lowercase making it so if banana was a capital B it would still start with lowercase b. making our search case insensitive. the startsWith is very self-explanatory but if it starts with what is in the () it will match and return those elements.

.filter is very similar to .find but has some key differences. The filter offers more than 1 match and returns a new array with the match from the search. an example will show you the differences very nicely. in our example, we changed one word and got both bana and broccoli. we changed find to filter. find returns the first match and stops the loop, filter goes through and makes a new array and populates it with all the matches from the elements passed in.

let fruitAndVegetables = ['apple', 'banana', 'broccoli', 'carrots']

const namesWithB = fruitAndVegetables.filter(fruitAndVeg => {

return fruitAndVeg.toLowerCase().startsWith("b")

})

console.log(namesWithB)

This will return: [ 'banana', 'broccoli' ]

// used the definition for map()

https://developer.mozilla.org/en-US/docs/Web/JavaScript/Reference/Global_Objects/Array/map

| killerfox007 |

1,869,904 | Im accused of being a micromanager. Higher-ups want to build team capacity at the expense of quality | Im accused of being a micromanager. Higher-ups want to build team capacity at the expense of... | 0 | 2024-05-30T04:52:08 | https://dev.to/humanspecimen/im-accused-of-being-a-micromanager-higher-ups-want-to-build-team-capacity-at-the-expense-of-quality-2lmb | management, leadership, career | # Im accused of being a micromanager. Higher-ups want to build team capacity at the expense of quality

I'm the defacto tech lead in my team, of 6 people, where I've been working for 4 years. We don't have a designated lead, but me and another experienced dev make all the important decisions by consensus.

Higher-ups have always voiced a strong opinion that building team capacity is more important for our company than to ensure perfect quality. However, they recognize that certain areas are mission-critical, where quality shouldn't be compromised.

When reviewing PRs I have generally tried my best to have a balanced criteria: On one side I try to prevent introducing regressions, particularly on critical areas; On the other side, I try to provide junior devs the opportunity to gain experience by not expecting all their code to be perfect.

However, some junior devs feel very empowered to own certain critical areas, and tend to make large PRs with changes that may be risky, or require extensive reviews, or refactors on architecturally significant code. When this happens I've usually handled the situation by requesting changes, and sometimes PRs require several reviews before they're merged. Sometimes these juniors are difficult and we've had frictions because I hold my ground and they either disagree, or ignore parts of my reviews, or repeat the same undesirable behavior in future PRs.

The other senior dev in the team has a more carefree and agreeable personality and has a much more lenient taste when reviewing PRs. His code quality isn't as good as mine, and his performance on architectural design has been sloppy in the past. He doesn't like confrontation and doesn't like to say "no" to people.

He has accused me of being a micro-manager, of being an obstacle for the team's scalability, and of not giving devs any freedom, of expecting perfection, and says I consider any code that doesn't match my personal style to be bad code. He also spread these unproven accusations to the higher-ups. Now there is a myth surrounding me, that I'm all these things.

I had a call with the higher-ups where I brought this up. I prepared a thorough presentation where I tried to debunk these myths, and made the claim that the accusations were biased due to his carefree personality. To my demise, they seem to have a special preference for the other senior dev. He's been at the company for longer, he has more charisma, and people like him because he's nicer.

I feel like these people (The senior dev, and the higher-ups) don't know how important I've been/ I am, for the team. I'm the backbone of the team, and I'm not getting the credit for it. I make all relevant engineering decisions. Before I was involved, their development lifecycle was so bad, the app was always crashing because every PR had regressions. Over these years, I gradually turned their pet project v1 into a stable and serious app that now has a chance of becoming profitable. I feel like the team doesn't deserve me.

I would like to hear some feedback in general.

Should I look for another job? The main reasons why I've liked

working here are that it has mostly felt like a relaxed working environment, and they prioritize team harmony more than strict deadlines and results. But I feel like I'm a more competitive-minded person. | humanspecimen |

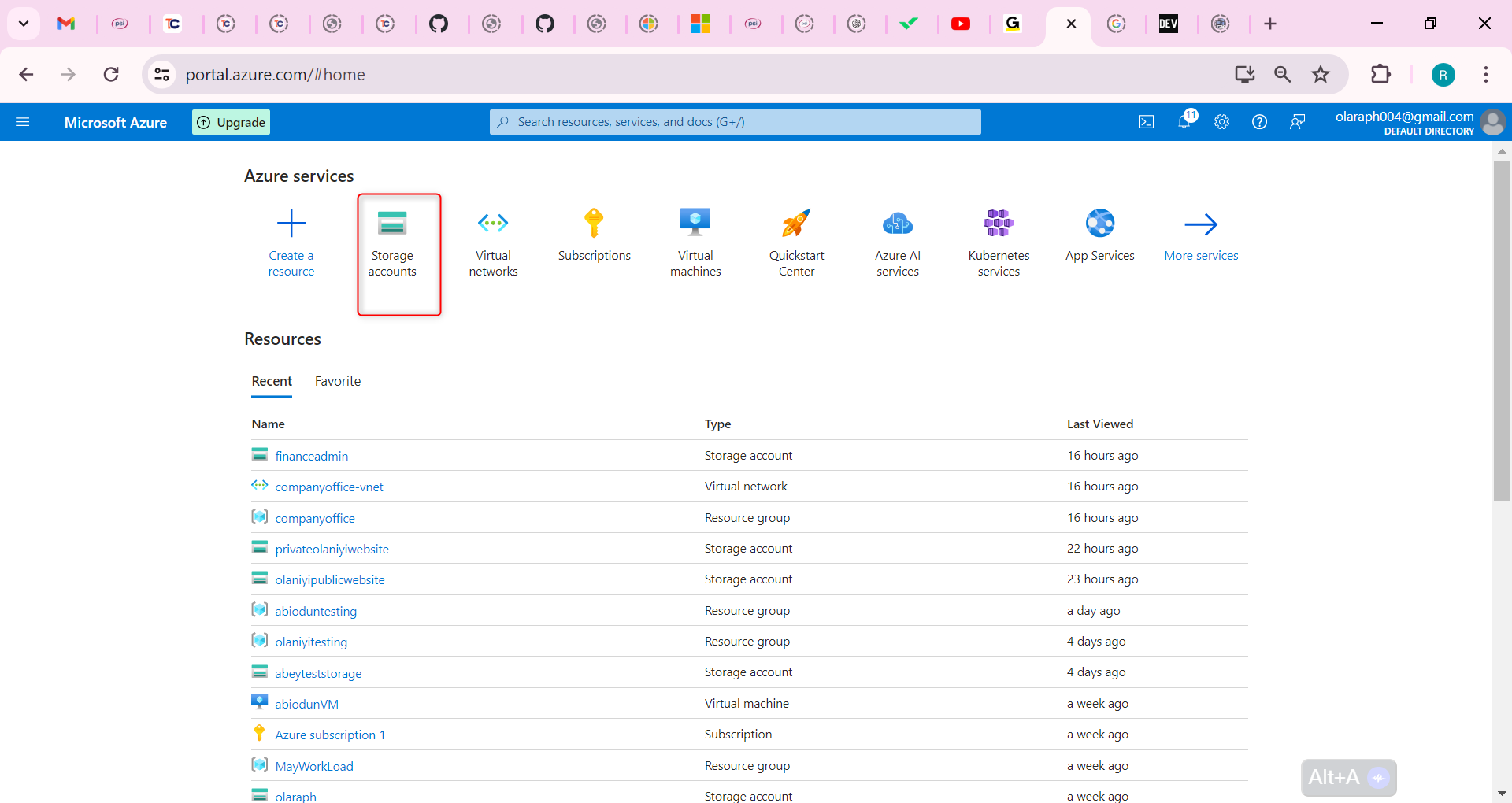

1,869,903 | Web App Pentesting on AWS: A Comprehensive Guide | Introduction Overview Web application security is important, since data has to be kept integral,... | 0 | 2024-05-30T04:47:54 | https://sudoconsultants.com/web-app-pentesting-on-aws-a-comprehensive-guide/ | pentesting, webapp, aws, awsinspector | <!-- wp:heading {"level":1} -->

<h1 class="wp-block-heading">Introduction</h1>

<!-- /wp:heading -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Overview</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>Web application security is important, since data has to be kept integral, confidential, and available. In the present day, where the cyber threat keeps rising, securing web applications against vulnerabilities is important to ensure all the above. Penetration testing is proactive, and vulnerabilities can be found and fixed before any exploitation can occur from malicious actors.</p>

<!-- /wp:paragraph -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Purpose</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>The objective of this guide is to provide a comprehensive roadmap to our readers on the most common vulnerabilities in web applications, getting them familiar with the tools and services available in AWS, how to pentest a web application, and ensuring all security measures are being carried out. By following this guide, it will be possible to conduct a complete pentest, discover security gaps, and apply effective remediation plans.</p>

<!-- /wp:paragraph -->

<!-- wp:heading -->

<h2 class="wp-block-heading">Understanding Web Application Security</h2>

<!-- /wp:heading -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Common Vulnerabilities</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>Web applications face different types of vulnerabilities that attackers exploit to cause unauthorized access, data theft, and other forms of unauthorized harm. The most critical web application security risks can be determined by utilizing the OWASP Top 10 standard. During the course of this work, we will get to know some of the common vulnerabilities.</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li><strong>SQL Injection:</strong></li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p>An SQL injection allows an attacker to execute arbitrary SQL queries on the database through input fields.</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li><strong>Cross-Site Scripting [XSS]:</strong></li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p>Through this vulnerability, harmful scripts are injected into web pages and thereafter viewed by others.</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li><strong>Cross-Site Request Forgery:</strong></li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p>The user is forced to execute unwanted actions in a web application in which he is logged in.</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li><strong>Insecure Deserialization:</strong></li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p>This vulnerability is employed to exploit the application logic by manipulating serialized objects.</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li><strong>Security Misconfiguration:</strong></li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p>This covers insecure default configurations, incomplete configurations, as well as mishandling of default accounts and credentials.</p>

<!-- /wp:paragraph -->

<!-- wp:heading -->

<h2 class="wp-block-heading">Security Best Practices</h2>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>To secure web applications from such vulnerabilities, we need to follow some security best practices, which are as follows:</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li><strong>Secure Coding Practice:</strong></li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p>Implement secure coding standards to avoid introducing security vulnerabilities during development.</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li><strong>Input Validation and Sanitization:</strong></li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p>Validate and sanitize all user inputs to avoid injection attacks.</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li><strong>Proper Session Management:</strong></li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p>Secure session management to avoid session hijacking and session fixation issues.</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li><strong>Regular Security Assessment:</strong></li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p>Regular security assessment and pentest so that if any vulnerability found, it can be taken care of.</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li><strong>Patch Management:</strong></li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p>Keep all software, libraries, and dependencies up-to-date with the latest security patches.</p>

<!-- /wp:paragraph -->

<!-- wp:heading -->

<h2 class="wp-block-heading">Setting Up the Environment</h2>

<!-- /wp:heading -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">AWS Account Setup</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>To begin the process of pentesting, we first need to set up our AWS environment. We need to prepare an AWS account, set up IAM roles and permission, and finally set up a test environment.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p><strong>Console Steps:</strong></p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>Open AWS Management Console Open AWS Management Console and log in with your username and password.</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>Create a new IAM User Now, we will go to the IAM service. Under the IAM service click on the option "Users", and then click on the option "Add user". Set the username for the user. For eg provide the username as PentestUser. Select the type of access to be provided to the user, here we select Programmatic access and AWS Management Console access.</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>MFA to the IAM User For more security, put MFA in the enabled state.</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>Create a new Role with Proper Policy Now create a new role with proper policies. Here we take the AdministratorAccess role.</li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p><strong>CLI Steps:</strong></p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>Install the AWS Command Line Interface</li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:code -->

<pre class="wp-block-code"><code><strong><em>pip install awscli</em></strong></code></pre>

<!-- /wp:code -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>Configure AWS CLI: Configure the AWS CLI with your credentials</li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:code -->

<pre class="wp-block-code"><code><strong><em>configure</em></strong></code></pre>

<!-- /wp:code -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>Create an IAM user</li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:code -->

<pre class="wp-block-code"><code><strong><em>aws iam create-user --user-name PentestUser</em></strong></code></pre>

<!-- /wp:code -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>Attach the AdministratorAccess policy to the user</li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:code -->

<pre class="wp-block-code"><code><strong><em>aws iam attach-user-policy --user-name PentestUser --policy-arn arn:aws:iam::aws:policy/AdministratorAccess</em></strong></code></pre>

<!-- /wp:code -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Setting Up a Test Environment</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>To conduct pentests, you need to have a controlled testing environment where you can exploit vulnerabilities safely without affecting production systems.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p><strong>Console Steps:</strong></p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>Open the AWS Management Console and navigate to the EC2 Service, click on "Launch Instance."</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>Select an Amazon Machine Image (AMI) from the list—for example, Amazon Linux 2.</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>Either create a new security group or use an existing security group that will allow for HTTP (port 80) and HTTPS (port 443) traffic.</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>When the instance is running, connect to it using SSH.</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>Once the instance is running, install a web server (for example, Apache) and deploy a sample vulnerable web application—for example, DVWA.</li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p><strong>CLI Steps:</strong></p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>Launch an EC2 instance</li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:code -->

<pre class="wp-block-code"><code><strong><em>aws ec2 run-instances --image-id ami-xxxx --count 1 --instance-type t2.micro --key-name MyKeyPair</em></strong></code></pre>

<!-- /wp:code -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>Configure the security group</li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:code -->

<pre class="wp-block-code"><code><strong><em>aws ec2 authorize-security-group-ingress --group-id sg-xxxx --protocol tcp --port 80 --cidr 0.0.0.0/0</em></strong></code></pre>

<!-- /wp:code -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>Use SSH to connect to the instance and set up the web server.</li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:heading -->

<h2 class="wp-block-heading">Tools for Pentesting</h2>

<!-- /wp:heading -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">AWS Tools</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>AWS provides the following tools, which should be used for pentesting:</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li><strong>AWS Inspector:</strong></li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p>This is an automated security assessment service that helps improve the security and compliance of applications deployed on AWS.</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li><strong>AWS WAF (Web Application Firewall):</strong></li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p>Helps protect web applications from common web exploits that could affect application availability, compromise security, or consume excessive resources.</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li><strong>AWS CloudTrail:</strong></li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p>This tool enables governance, compliance, and operational and risk auditing of your AWS account.</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li><strong>AWS CloudWatch:</strong></li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p>This provides monitoring and observability for your AWS resources and applications.</p>

<!-- /wp:paragraph -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Third-Party Tools</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>Apart from the native AWS tools, the full pentest requires the following third-party tools:</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li><strong>Burp Suite:</strong></li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p>An integrated platform for performing security testing of web applications.</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li><strong>OWASP ZAP:</strong></li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p>An open-source web application security scanner.</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li><strong>Nmap:</strong></li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p>A network exploration tool and security scanner.</p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li><strong>Metasploit:</strong></li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p>A penetration testing framework that helps security teams verify vulnerabilities and manage security assessments.</p>

<!-- /wp:paragraph -->

<!-- wp:heading -->

<h2 class="wp-block-heading">Conducting the Pentest</h2>

<!-- /wp:heading -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Reconnaissance</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>This is the first step in pentesting, where you gather information about the target application.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p><strong>Console Steps:</strong></p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>Monitor and log activities in your AWS account so you can identify potentially unauthorized or malicious activities.</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>Perform the vulnerability assessments for your instances.</li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->

<p><strong>CLI Steps:</strong></p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>Use Nmap to scan for open ports and services:</li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:code -->

<pre class="wp-block-code"><code><strong><em>nmap -A -T4 <target-IP></em></strong></code></pre>

<!-- /wp:code -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>Use the AWS CLI to review CloudTrail logs for any suspicious activities.</li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:heading {"level":3} -->

<h3 class="wp-block-heading">Vulnerability Scanning</h3>

<!-- /wp:heading -->

<!-- wp:paragraph -->

<p>Once you have information, the next task is to identify the vulnerabilities in the application.</p>

<!-- /wp:paragraph -->

<!-- wp:paragraph -->

<p><strong>Console Steps:</strong></p>

<!-- /wp:paragraph -->

<!-- wp:list -->

<ul><!-- wp:list-item -->

<li>Set up and Run AWS Inspector to identify vulnerabilities in your instances.</li>

<!-- /wp:list-item -->

<!-- wp:list-item -->

<li>Shield your application from common web exploits.</li>

<!-- /wp:list-item --></ul>

<!-- /wp:list -->

<!-- wp:paragraph -->