id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,905,407 | TeaTv App Recommended for web-series and movies | TeaTV is your ultimate companion for endless entertainment, offering a vast library of movies, TV... | 0 | 2024-06-29T08:30:32 | https://dev.to/mussa787/teatv-app-recommended-for-web-series-and-movies-44d1 | teatv, appsync, apk, webdev | TeaTV is your ultimate companion for endless entertainment, offering a vast library of movies, TV shows, and more, all accessible at your fingertips. With its intuitive interface and robust features, TeaTV ensures you never miss out on the latest releases or beloved classics. Whether you're into action-packed blockbust... | mussa787 |

1,905,406 | Tips to Improve your JavaScript Skills | JavaScript powers the interactive elements of websites, making it a fundamental language for any... | 0 | 2024-06-29T08:29:47 | https://dev.to/blessing_ovhorokpa_da16ad/tips-to-improve-your-javascript-skills-393n | webdev, javascript, beginners, programming | [JavaScript](https://www.w3schools.com/js/) powers the interactive elements of websites, making it a fundamental language for any aspiring web developer. In this guide, we'll look at practical tips and strategies to help you improve your JavaScript skills.

## Understand the Basics Thoroughly

Understanding the basics i... | blessing_ovhorokpa_da16ad |

1,905,378 | Exploring Frontend Technologies: Svelte vs. Vue.js | Frontend development is a constantly evolving field, and choosing the right technology can... | 0 | 2024-06-29T08:13:28 | https://dev.to/timilehin_abegunde_ec7afd/exploring-frontend-technologies-svelte-vs-vuejs-42hd | webdev, frontend, intern, javascript | Frontend development is a constantly evolving field, and choosing the right technology can significantly impact the efficiency and performance of your web applications. While **ReactJS** is widely popular and extensively used, today we'll dive into two niche frontend technologies: Svelte and Vue.js. We'll explore their... | timilehin_abegunde_ec7afd |

1,905,404 | The Technological Transformation of Construction Key Advancements | The construction industry is undergoing a significant transformation thanks to advancements in technology. These innovations not only enhance efficiency and reduce costs but also improve safety and sustainability. This blog post provides an overview of the key technologies reshaping the construction landscape. | 0 | 2024-06-29T08:27:34 | https://www.govcon.me/blog/Construction/ConstructionTech | constructiontechnology, innovativebuilding, smartconstruction, aiinconstruction | # The Technological Transformation of Construction: Key Advancements

The construction industry is witnessing a seismic shift due to technological innovations, which are enhancing efficiency, safety, and sustainability. Here’s a comprehensive overview of the pivotal technologies reshaping the construction landscape:

#... | quantumcybersolution |

1,903,605 | Mobile Development Platforms 101 | Hi there, I'm pleased to have you read my introductory article to Mobile Development Platforms. With... | 0 | 2024-06-29T08:22:05 | https://dev.to/khodesmith/mobile-development-platforms-101-35gm | mobile, flutter, android, ios | Hi there, I'm pleased to have you read my introductory article to **Mobile Development Platforms**.

With this article, I intend to familiarize you with what Mobile Development Platforms are, along with the differences and advantages of each platform covered. Also, you’ll get a brief introduction to a couple of Software Archite... | khodesmith |

1,905,381 | Trimble App Xchange Revolutionizing Construction Data Management and Interoperability | Discover how Trimble App Xchange is transforming the construction industry by enabling seamless data flow and interoperability across various systems. With its low-code integration platform, extensive marketplace, and strategic partnerships, Trimble App Xchange empowers construction professionals to streamline data man... | 0 | 2024-06-29T08:17:27 | https://www.govcon.me/blog/Construction/AppxChange | constructiontechnology, datamanagement, interoperability, trimbleappxchange | # 🏗️🔄 Trimble App Xchange: Unlocking the Power of Connected Construction 🏗️🔄

In today's fast-paced construction industry, the ability to manage and share data seamlessly across different systems and stakeholders is crucial for project success. Trimble App Xchange, a game-changing integration marketplace, is r... | quantumcybersolution |

1,905,380 | What's up, how are you all? | How can we gather information from here? | 0 | 2024-06-29T08:15:23 | https://dev.to/sulaymon_nurillayev/nma-gap-tinchmisila-h7p | How can we gather information from here? | sulaymon_nurillayev | |

1,905,379 | Exploring the Potential of Space-Based Geoengineering to Mitigate Climate Change | Delving into the innovative realm of space-based geoengineering and its potential to combat climate change through revolutionary technology and bold ideas. | 0 | 2024-06-29T08:14:48 | https://www.elontusk.org/blog/exploring_the_potential_of_space_based_geoengineering_to_mitigate_climate_change | climatechange, geoengineering, spacetechnology | # Exploring the Potential of Space-Based Geoengineering to Mitigate Climate Change

Climate change is an urgent and daunting challenge, one that calls for bold and ingenious solutions. While terrestrial measures, such as renewable energy adoption and reforestation, are essential, some scientists and technologists are e... | quantumcybersolution |

1,905,377 | Sentient anything or everything ? | The Looming Singularity: When Efficiency Becomes Eerie The whispers of the technological singularity... | 0 | 2024-06-29T08:11:28 | https://dev.to/fiologie/sentient-anything-or-everything--g2a | ai | The Looming Singularity: When Efficiency Becomes Eerie

The whispers of the technological singularity grow louder. A point where artificial intelligence surpasses human intelligence, fundamentally altering our world. But what if the line is already blurring? What if the seeds of sentience aren't limited to robots and a... | fiologie |

1,905,374 | # Creating Virtual Machines on Cloud Platforms: AWS, Azure, and GCP | Virtual machines (VMs) are the backbone of modern cloud infrastructure, enabling developers to deploy... | 0 | 2024-06-29T08:09:47 | https://dev.to/iaadidev/-creating-virtual-machines-on-cloud-platforms-aws-azure-and-gcp-2553 | aws, gcp, azure, virtualmachine |

Virtual machines (VMs) are the backbone of modern cloud infrastructure, enabling developers to deploy applications, test software, and manage workloads with ease. In this blog, we'll explore how to create virtual machines on three leading cloud platforms: AWS, Azure, and GCP. We'll provide code snippets and step-by-s... | iaadidev |

1,905,373 | Building a Static Website with Terraform: Step-by-Step Guide | Creating and hosting a static website has never been easier with the power of Infrastructure as Code... | 0 | 2024-06-29T08:08:31 | https://dev.to/ponvannakumar_r/building-a-static-website-with-terraform-step-by-step-guide-10e6 | Creating and hosting a static website has never been easier with the power of Infrastructure as Code (IaC) and cloud services. In this guide, we'll walk you through setting up a static website using Terraform to manage AWS resources. You'll learn how to automate the creation of an S3 bucket, configure it for static web... | ponvannakumar_r | |

1,905,372 | Agave vs Procore vs Trimble App Xchange A Comprehensive Comparison of Construction Tech Integration Platforms | In this comprehensive comparison, we examine three leading construction tech integration platforms: Agave, Procore, and Trimble App Xchange. Discover the unique features, strengths, and benefits of each platform, and gain insights into which solution best fits your construction company's specific needs and requirements. | 0 | 2024-06-29T08:07:20 | https://www.govcon.me/blog/Construction/AGAVEvsAPPXCHANGEvsPROCORE | constructiontechnology, dataintegration, interoperability, agave | # 🏗️ Introduction: Navigating the Construction Tech Integration Landscape 🏗️

The construction industry is undergoing a digital transformation, with an increasing number of software solutions being adopted to streamline processes, enhance collaboration, and improve overall project efficiency. However, the proliferati... | quantumcybersolution |

1,905,370 | Exploring the B.Sc Nursing Course at Dev Bhoomi Uttarakhand University | Introduction Nursing education holds a pivotal role in the healthcare sector, molding compassionate... | 0 | 2024-06-29T08:04:51 | https://dev.to/priyapant/exploring-the-bsc-nursing-course-at-dev-bhoomi-uttarakhand-university-4la |

Introduction

Nursing education holds a pivotal role in the healthcare sector, molding compassionate professionals dedicated to delivering essential care to patients. At [Dev Bhoomi Uttarakhand University](https://universitychalo.com/university/dev-bhoomi-uttarakhand-university-dbuu-dehradun

) (DBUU), the B.Sc Nursi... | priyapant | |

1,905,369 | How To Download Morning Vibez | Morning Vibez brings you a meticulously curated collection of samples designed to build smooth and... | 0 | 2024-06-29T08:04:50 | https://dev.to/audioloops_fc156b5ac6acdf/how-to-download-morning-vibez-11f3 | audioloops, samplepack, music | Morning Vibez brings you a meticulously curated collection of samples designed to build smooth and chill Hip Hop, Jazz, and Lo-Fi tracks. This sample pack includes 5 Beat Construction Kits featuring soulful chords, dusty pianos, Rhodes, flutes, strings, and lofi drums. Each beat is crafted with unique instrumentation a... | audioloops_fc156b5ac6acdf |

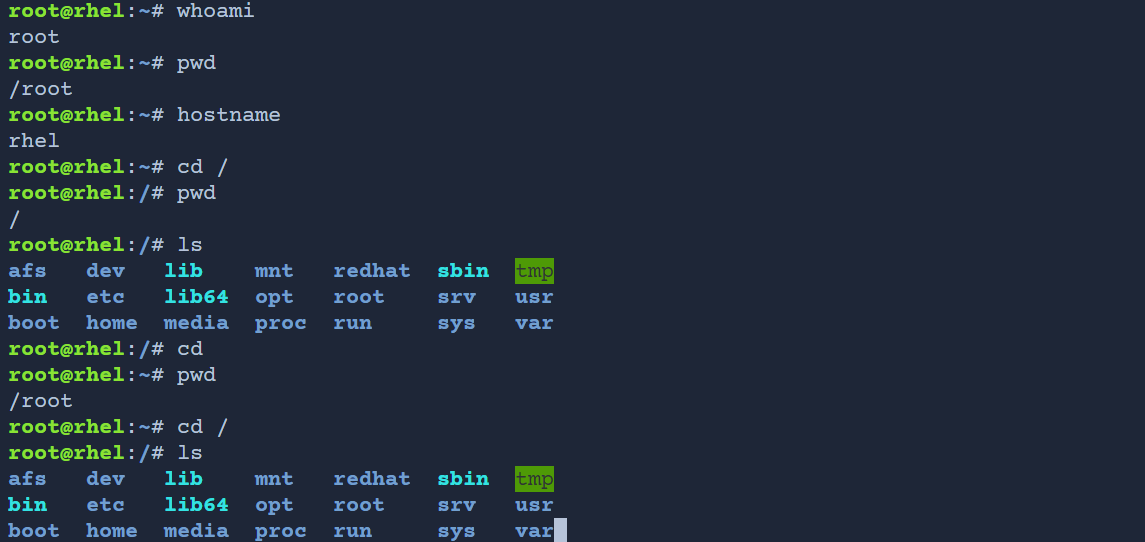

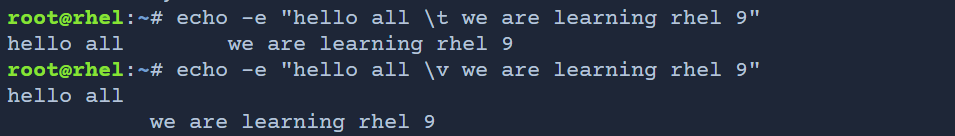

1,905,368 | Engage with RHEL 9: Hands-On Practice Made Easy | A post by mahir dasare | 0 | 2024-06-29T08:02:26 | https://dev.to/mahir_dasare_333/engage-with-rhel-9-hands-on-practice-made-easy-36ie | linux, rhel9, linuxadmin, practice |

| mahir_dasare_333 |

1,905,367 | npm vs pnpm: Choosing the Best Package Manager for Your Project | Introduction npm (Node Package Manager) and pnpm (Performant NPM) are both essential tools... | 0 | 2024-06-29T08:01:37 | https://dev.to/mayank_tamrkar/npm-vs-pnpm-choosing-the-best-package-manager-for-your-project-5gj | npm, pnpm, node, coding |

## Introduction

npm (Node Package Manager) and pnpm (Performant NPM) are both essential tools for managing JavaScript dependencies in projects. While they serve the same fundamental purpose, they differ significantly in how they handle package installation, dependency resolution, and disk space management. Understan... | mayank_tamrkar |

1,905,469 | Boost Your Learning with ChatGPT and Apple's Shortcuts App | Hello There! 👋 After attending an insightful AI talk by Benoit Macq and Bruno Colmant at the... | 0 | 2024-06-29T18:39:41 | https://blog.lamparelli.eu/boost-your-learning-with-chatgpt-and-apples-shortcuts-app | learning, chatgpt | ---

title: Boost Your Learning with ChatGPT and Apple's Shortcuts App

published: true

date: 2024-06-29 08:00:51 UTC

tags: learning,chatgpt

canonical_url: https://blog.lamparelli.eu/boost-your-learning-with-chatgpt-and-apples-shortcuts-app

---

Hello There! 👋

After attending an insightful AI talk by [Benoit Macq](http... | alamparelli |

1,905,366 | Take Your Digital Knowledge to the next level. learn digital marketing and website design at best institute in guwahati. | [Webotapp Academy](https://academy.webotapp.com/) | 0 | 2024-06-29T08:00:39 | https://dev.to/ab_swrangsaboro_d8cf0367/take-your-digital-knowledge-to-the-next-level-learn-digital-marketing-and-website-design-at-best-institute-in-guwahati-a68 | [Webotapp Academy](https://academy.webotapp.com/) | ab_swrangsaboro_d8cf0367 | |

1,905,365 | Exploring the Potential Applications of Quantum Computing in Drug Discovery and Materials Science | Dive into the revolutionary world of quantum computing and uncover its transformative applications in drug discovery and materials science. | 0 | 2024-06-29T07:58:51 | https://www.elontusk.org/blog/exploring_the_potential_applications_of_quantum_computing_in_drug_discovery_and_materials_science | quantumcomputing, drugdiscovery, materialsscience | # Exploring Quantum Computing: Pioneering Drug Discovery and Materials Science

Quantum computing isn't just a buzzword in the tech community—it's a seismic shift poised to redefine the boundaries of what's possible. While classical computers chug along using binary bits, quantum computers leverage qubit... | quantumcybersolution |

1,905,117 | SQL AND THE DJANGO ORM | A quick introduction about me. I am Mubaarock, a 2nd year software engineering student at the Federal... | 0 | 2024-06-29T07:58:23 | https://dev.to/mustopha-mubarak/sql-and-the-django-orm-2dch | A quick introduction about me. I am Mubaarock, a 2nd year software engineering student at the [Federal University of Technology, Akure, Nigeria](https://www.futa.edu.ng/). I am currently learning backend development using python and the [Django framework](https://www.djangoproject.com/). It is worth stating that I am c... | mustopha-mubarak | |

1,905,364 | Agave Revolutionizing Construction Data Integration and Interoperability | Discover how Agave is transforming the construction industry by providing a unified data integration platform that enhances interoperability, efficiency, and reliability. With its seamless API integration, pre-built front-end components, and comprehensive analytics, Agave empowers construction companies and software ve... | 0 | 2024-06-29T07:57:12 | https://www.govcon.me/blog/Construction/Agave | constructiontechnology, dataintegration, interoperability, agave | # 🌉 Agave: Bridging the Gap in Construction Data Integration 🌉

In the complex world of construction projects, managing and integrating data across multiple software systems can be a daunting task. Enter Agave, a game-changing data integration platform specifically designed to address the unique challenges faced by t... | quantumcybersolution |

1,905,363 | Frontend Technologies | In the dynamic landscape of web development, frontend technologies are the driving force behind... | 0 | 2024-06-29T07:55:24 | https://dev.to/bright_abel_bce200514b51a/frontend-technologies-41l4 | In the dynamic landscape of web development, frontend technologies are the driving force behind crafting engaging, responsive, and intuitive online experiences. Web development serves as the backbone for user interactions and user experience. These frontend technologies also govern every visual and interactive el... | bright_abel_bce200514b51a | |

1,905,362 | Advanced digital marketing services | Genetech is your one-stop shop for advanced digital marketing services solutions in the USA. We... | 0 | 2024-06-29T07:54:56 | https://dev.to/junaid_khan_1d419bce1dc51/advanced-digital-marketing-services-172p | Genetech is your one-stop shop for [advanced digital marketing services](https://genetechagency.com/advanced-digital-marketing-services/) solutions in the USA. We understand the unique challenges and opportunities of the US market, and our team of experts leverages cutting-edge strategies to deliver exceptional results... | junaid_khan_1d419bce1dc51 | |

1,905,361 | Why is website performance important? | Website performance is important in the digital age. When we browse the internet, we expect websites... | 0 | 2024-06-29T07:53:06 | https://dev.to/blessing_ovhorokpa_da16ad/why-is-website-performance-important-on1 | webdev, tutorial | Website performance is important in the digital age. When we browse the internet, we expect websites to load quickly and respond smoothly. But why is this performance so important? Let's look at the reasons.

## First Impressions Matter

Imagine walking into a store, and it takes forever for someone to greet you or for ... | blessing_ovhorokpa_da16ad |

1,905,359 | The Rise of AI-Driven Construction Project Management | Discover how AI-powered platforms like Alice Technologies and nPlan are revolutionizing project management in construction by optimizing schedules, predicting potential delays, and improving overall efficiency. | 0 | 2024-06-29T07:47:05 | https://www.govcon.me/blog/AI/the_rise_of_ai_driven_construction_project_management | ai, constructiontechnology, projectmanagement, innovation | # The Rise of AI-Driven Construction Project Management

Artificial Intelligence (AI) is transforming countless industries, and construction is no exception. AI-powered platforms such as Alice Technologies and nPlan are revolutionizing project management by optimizing schedules, predicting potential delays, and improvi... | quantumcybersolution |

1,905,358 | Discover the Chromatic Harmonica: A Versatile Instrument for All Musicians | The chromatic harmonica is a versatile and expressive instrument cherished by musicians across... | 0 | 2024-06-29T07:46:21 | https://dev.to/jameskame/discover-the-chromatic-harmonica-a-versatile-instrument-for-all-musicians-1jok | webdev, harmoica | The chromatic harmonica is a versatile and expressive instrument cherished by musicians across various genres, from blues to classical music. As a leader in the [Chromatic Harmonica](https://harmo.com/chromatic-harmonicas) market, Harmo offers a range of professional harmonicas designed in the USA to cater to both begi... | jameskame |

1,905,356 | Exploring the Possibilities of Life in Subsurface Oceans of Europa and Enceladus | Dive into the fascinating world of subsurface oceans on icy moons and explore the latest research suggesting they could harbor life. | 0 | 2024-06-29T07:42:54 | https://www.elontusk.org/blog/exploring_the_possibilities_of_life_in_subsurface_oceans_of_europa_and_enceladus | astrobiology, spaceexploration, solarsystem | # Exploring the Possibilities of Life in Subsurface Oceans of Europa and Enceladus

In the grand tapestry of the cosmos, few questions captivate the human spirit quite like: "Are we alone?" For centuries, our eyes have scanned the skies, dreaming of otherworldly civilizations and pondering the mysteries of di... | quantumcybersolution |

1,905,355 | Free Business listing | List your business online for free. Grow your business and get free inquiries. | 0 | 2024-06-29T07:42:29 | https://dev.to/indiayellpage/free-business-listing-1l4m | **[List your business online for free.](https://www.indianyellowpage.in/)** Grow your business and get free inquiries. | indiayellpage | |

1,905,354 | IT Software Engineering - Career Tips | "Want to thrive in IT? Keep learning and stay updated with the latest tech trends. Join communities,... | 0 | 2024-06-29T07:38:13 | https://dev.to/m_hussain/it-software-engineering-career-tips-3bjf | "Want to thrive in IT? Keep learning and stay updated with the latest tech trends. Join communities, contribute to open-source projects, and never stop coding! 💻 #SoftwareEngineering #CareerAdvice" | m_hussain | |

1,905,352 | Creating Virtual Machine Scale Set Using Azure Portal | Sign in to the Azure portal. Put in your log-in details Ensure you use the right pass word Continue... | 0 | 2024-06-29T07:34:18 | https://dev.to/romanus_onyekwere/creating-virtual-machine-scale-set-using-azure-portal-14ji | virtualmachine, scaleset, resources |

- Sign in to the Azure portal.

- Put in your log-in details

- Ensure you use the right password

- Continue with other prompts

is transforming construction training and simulation, enhancing safety, efficiency, and skills acquisition. | 0 | 2024-06-29T07:16:44 | https://www.govcon.me/blog/the_role_of_virtual_reality_in_construction_training_and_simulation | virtualreality, construction, training, simulation | # The Role of Virtual Reality in Construction Training and Simulation

Virtual Reality (VR) is revolutionizing industries across the globe, and the construction sector is no exception. This immersive technology is setting a new paradigm for training and simulation by offering a safer, more efficient, and incredibly eff... | quantumcybersolution |

1,905,341 | Fund Raising Company Of America: Empowering Family Travel Adventures | In today's world, family travel adventures are becoming increasingly popular as more families seek to... | 0 | 2024-06-29T07:16:36 | https://dev.to/lesithbame/fund-raising-company-of-america-empowering-family-travel-adventures-4opb | In today's world, family travel adventures are becoming increasingly popular as more families seek to create lasting memories and strengthen their bonds through shared experiences. However, financing these adventures can often be a challenge. This is where fundraising comes into play, and the [Fund Raising Company Of A... | lesithbame | |

1,905,339 | Level Up Your CSS Game: The Advantages of CSS Pre-processors | Cascading Style Sheets (CSS) is a fundamental language for web development, but its limitations can... | 0 | 2024-06-29T07:13:50 | https://dev.to/oluwalolope/level-up-your-css-game-the-advantages-of-css-pre-processors-5ai8 | webdev, javascript, programming, productivity | Cascading Style Sheets (CSS) is a fundamental language for web development, but its limitations can make it tedious to work with. That's where CSS pre-processors come in – tools that enhance CSS capabilities, making development more efficient and fun. In this post, we'll explore the advantages of CSS pre-processors ove... | oluwalolope |

1,905,338 | Harnessing the Power of Tableau: A Comprehensive Guide for Data Scientists | Introduction In the rapidly evolving field of data science, the ability to visualize data... | 0 | 2024-06-29T07:11:43 | https://dev.to/sejal_4218d5cae5da24da188/harnessing-the-power-of-tableau-a-comprehensive-guide-for-data-scientists-b0e | datascientists, tableau, dataanalyst, dataanalysis | ## Introduction

In the rapidly evolving field of data science, the ability to visualize data effectively is paramount. Tableau stands out as one of the most powerful tools for data visualization, enabling data scientists to transform complex data sets into intuitive and interactive visual representations. This blog wi... | sejal_4218d5cae5da24da188 |

1,905,337 | Other Collatz conjecture approach | The Collatz conjecture states that for any positive integer ( n ): If ( n ) is even, divide it by 2... | 0 | 2024-06-29T07:11:37 | https://dev.to/ramsi90/other-collatz-conjecture-approach-4h2h | The Collatz conjecture states that for any positive integer \( n \):

If \( n \) is even, divide it by 2 (i.e., \( n \to \frac{n}{2} \)).

If \( n \) is odd, multiply it by 3 and add 1 (i.e., \( n \to 3n + 1 \)). | ramsi90 | |
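The two rules in the Collatz entry above can be sketched in Python. This is a minimal illustration, not code from the original post: the names `collatz_step` and `collatz_sequence` and the `max_steps` guard are assumptions added here, and the loop relies on the sequence reaching 1, which the conjecture asserts but which remains unproven in general.

```python
def collatz_step(n: int) -> int:
    """Apply one Collatz rule to a positive integer n (hypothetical helper)."""
    if n <= 0:
        raise ValueError("n must be a positive integer")
    # Even: halve it. Odd: multiply by 3 and add 1.
    return n // 2 if n % 2 == 0 else 3 * n + 1


def collatz_sequence(n: int, max_steps: int = 10_000) -> list[int]:
    """Collect the values visited from n until 1 is reached.

    max_steps is an illustrative safety cap, since termination is only
    conjectured, not proven.
    """
    seq = [n]
    while seq[-1] != 1 and len(seq) <= max_steps:
        seq.append(collatz_step(seq[-1]))
    return seq


if __name__ == "__main__":
    print(collatz_sequence(6))
```

Starting from 6, the sequence visits 3, 10, 5, 16, 8, 4, 2 and then stops at 1.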

1,905,335 | Federal Lawyers Near Me: Expert Tips for Legal Representation | Navigating the complex world of federal law requires not only expertise but also a strategic approach... | 0 | 2024-06-29T07:11:31 | https://dev.to/americanlifeguardass/federal-lawyers-near-me-expert-tips-for-legal-representation-2377 | lawyer, usa | Navigating the complex world of federal law requires not only expertise but also a strategic approach to finding the best legal representation. Whether you are facing federal criminal charges, involved in a federal civil lawsuit, or need counsel on federal regulatory matters, having a proficient federal lawyer by your ... | americanlifeguardass |

1,905,334 | Exploring the Latest Research on the Formation and Evolution of Galaxies and Galaxy Clusters | Dive into the latest discoveries and theories regarding the origins and development of galaxies and their majestic clusters. Unravel the mysteries of the universe with today’s cutting-edge research in astrophysics. | 0 | 2024-06-29T07:10:59 | https://www.elontusk.org/blog/exploring_the_latest_research_on_the_formation_and_evolution_of_galaxies_and_galaxy_clusters | astronomy, galaxies, astrophysics, spaceresearch | # Exploring the Latest Research on the Formation and Evolution of Galaxies and Galaxy Clusters

The cosmos is an ever-enigmatic expanse, teeming with wonders that transcend the scope of human imagination. Among the universe's most fascinating phenomena are galaxies and galaxy clusters—vast assemblies of stars, int... | quantumcybersolution |

1,905,333 | Full Stack Social Media App | This blog post describes the process of creating a full stack social media app using React, Node, Golang, Google Cloud, Firebase, Vercel, TypeScript, and a Mongo database | 0 | 2024-06-29T07:09:47 | https://www.rics-notebook.com/blog/Web_Dev/Full_Stack_Social | fullstack, webdev, database, frontend | # 🚀 Introduction to Full Stack Social Media App

Building a fullstack social media application is a challenging but rewarding experience. It requires a strong understanding of a variety of technologies, including frontend frameworks, backend languages, and databases. In this blog post, I will walk you through the proc... | eric_dequ |

1,905,332 | Understanding Intraarticular Hip Injections | Intraarticular hip injections are a powerful tool in the management of hip pain, providing relief for... | 0 | 2024-06-29T07:07:24 | https://dev.to/lesithbame/understanding-intraarticular-hip-injections-2gci | Intraarticular hip injections are a powerful tool in the management of hip pain, providing relief for conditions such as arthritis, bursitis, and other inflammatory diseases. This article will delve into the specifics of these [Intraarticular Hip Injection](https://sonoscope.co.uk/), focusing on ultrasound-guided injec... | lesithbame | |

1,905,331 | The Role of Technology in Enhancing Construction Site Sustainability | Discover how cutting-edge technology is revolutionizing sustainability practices at construction sites, reducing environmental impact, and fostering a greener future. | 0 | 2024-06-29T07:06:36 | https://www.govcon.me/blog/the_role_of_technology_in_enhancing_construction_site_sustainability | technology, sustainability, construction | # The Role of Technology in Enhancing Construction Site Sustainability

## Introduction

Construction sites have traditionally been seen as bustling hubs of activity, but also as significant contributors to pollution and environmental degradation. However, the tides are turning. The integration of innovative technologi... | quantumcybersolution |

1,905,330 | Best Digital Marketing Agencies in Andheri for Brand Building | Brand building in the digital age requires a strategic blend of creativity, data-driven insights, and... | 0 | 2024-06-29T07:05:41 | https://dev.to/hemanshu_0ab4d5e3c6759740/best-digital-marketing-agencies-in-andheri-for-brand-building-37d | Brand building in the digital age requires a strategic blend of creativity, data-driven insights, and innovative marketing tactics. In Andheri, Mumbai, several digital marketing agencies stand out for their expertise in helping businesses establish and strengthen their brands. Here’s a detailed overview of some of the ... | hemanshu_0ab4d5e3c6759740 | |

1,905,329 | Firebase | Firebase is a platform that helps developers build better mobile and web apps. It provides a variety of features that make it easy to develop, deploy, and scale apps. 🔥🎉 | 0 | 2024-06-29T07:04:40 | https://www.rics-notebook.com/blog/Web_Dev/Firebase | firebase, authentication, hosting, database | # Firebase: The One-Stop Shop for Building Mobile and Web Apps 🚀💯

Firebase is a platform that helps developers build better mobile and web apps. It provides a variety of features that make it easy to develop, deploy, and scale apps. 🔥🎉

Firebase is:

- **Easy to use**: It is designed to be easy to learn and use, e... | eric_dequ |

1,905,328 | Big O notation For dummies | When it comes to programming, there is one thing all of us devs suck at... well, mostly the new devs. And... | 0 | 2024-06-29T06:59:51 | https://dev.to/ezpieco/big-o-notation-for-dummies-37p6 | programming, tutorial | When it comes to programming, there is one thing all of us devs suck at... well, mostly the new devs. And that's Big O notation. So here's what Big O is and why it matters.

## ❓What is Big O notation❓

Imagine this (and imagine only): you are at a buffet where you can eat all you want without any payment (see why you only have... | ezpieco |

1,905,327 | Starting Strong Your Blueprint to Forming a Successful Company | Embarking on the entrepreneurial journey? Forming a company is the first significant step. Dive into this guide to understand the nuances of company formation and ensure a robust foundation for your business. | 0 | 2024-06-29T06:59:33 | https://www.rics-notebook.com/blog/Startup/Startup | entrepreneurship, companyformation, businessstrategy | ## The Dream of Entrepreneurship 🌐🚀

Starting a company is a dream for many. It's a chance to bring an idea to life, to solve a problem, to create value, and to chart one's own course. But with this dream comes responsibility. Company formation, often seen as a bureaucratic hurdle, is a crucial step that la... | eric_dequ |

1,905,326 | Worldlink Visa Consultancy | Worldlink Visa Consultancy in Ahmedabad - Expert assistance for student visas, PR visas, spouse... | 0 | 2024-06-29T06:59:16 | https://dev.to/worldlinkvisa/worldlink-visa-consultancy-7f5 | visa, visaconsulatancy | Worldlink Visa Consultancy in Ahmedabad - Expert assistance for student visas, PR visas, spouse dependent visas, and visitor visas to Canada, UK, Australia, New Zealand, and the USA.

Trust is the first priority for any service. **[WORLDLINK](https://www.worldlinkvisa.in/)** is the most valued immigration office, ... | worldlinkvisa |

1,905,325 | The Role of Smart Sensors in Structural Health Monitoring | Discover how smart sensors are transforming structural health monitoring, enhancing safety, and revolutionizing the construction industry with cutting-edge IoT technologies. | 0 | 2024-06-29T06:56:29 | https://www.govcon.me/blog/the_role_of_smart_sensors_in_structural_health_monitoring | smartsensors, structuralhealthmonitoring, innovation, iot | # The Role of Smart Sensors in Structural Health Monitoring

Welcome to the world of structural health monitoring (SHM)! Imagine a future where buildings, bridges, and other critical infrastructure can provide real-time feedback on their condition. Thanks to smart sensors, this is no longer a futuristic dream but an ex... | quantumcybersolution |

1,905,324 | Exploring the Kuiper Belt Unveiling Clues to Our Solar Systems Past | Journey through the Kuiper Belt and discover its significance in understanding the origins and evolution of our solar system. | 0 | 2024-06-29T06:55:02 | https://www.elontusk.org/blog/exploring_the_kuiper_belt_unveiling_clues_to_our_solar_systems_past | astronomy, solarsystem, spaceexploration | # Exploring the Kuiper Belt: Unveiling Clues to Our Solar System's Past

Welcome to an exciting cosmic adventure! Today, we're diving deep into the mysterious Kuiper Belt, a celestial treasure trove that holds the keys to unlocking the secrets of our solar system’s early days. So, strap in and let's emb... | quantumcybersolution |

1,905,323 | Entering the Government Contracting Arena A Comprehensive Guide | Discover how to break into the lucrative world of government contracting. From understanding the basics to mastering the bidding process, this guide covers all you need to get started and thrive in this competitive industry. | 0 | 2024-06-29T06:54:26 | https://www.rics-notebook.com/blog/Startup/starting_strong_your_blueprint_to_forming_a_successful_company | governmentcontracting, smallbusiness, procurement, federalcontracts | ## Entering the Government Contracting Arena 🌍📈

Breaking into government contracting can unlock a world of opportunities for your business. With billions of dollars in contracts awarded each year, the U.S. government is the world's largest buyer of goods and services. Whether you're a small startup or an e... | eric_dequ |

1,886,351 | Back-End Development Basics | Topic: "Getting Started with Node.js and Express" Description: Basics of server-side development... | 27,559 | 2024-06-29T06:53:00 | https://dev.to/suhaspalani/back-end-development-basics-4hcb | webdev, backend, backenddevelopment, javascript | - *Topic*: "Getting Started with Node.js and Express"

- *Description*: Basics of server-side development with Node.js and Express.

#### Content:

#### 1. Introduction to Node.js

- **What is Node.js**: Explain that Node.js is a JavaScript runtime built on Chrome's V8 JavaScript engine.

- **Why use Node.js**: Discuss th... | suhaspalani |

1,905,244 | FRONTEND TECHNOLOGIES(HNG INTERNSHIP) | FRONTEND TECHNOLOGIES INTRODUCTION New technologies keep evolving in the field of frontend... | 0 | 2024-06-29T05:29:45 | https://dev.to/byrononyango/frontend-technologieshng-internship-42e4 | FRONTEND TECHNOLOGIES

INTRODUCTION

New technologies keep evolving in the field of frontend development. In this article, we will dive into ReactJS and TypeScript, along with their pros and cons.

ReactJs

React is an Open Source view library created and maintained by Facebook for building user interfaces for web pages ... | byrononyango | |

1,905,322 | The Showdown: Svelte vs. React – Choosing Your Frontend Champion | Frontend development is a constantly changing field with several frameworks competing to be the best.... | 0 | 2024-06-29T06:50:00 | https://dev.to/lux-zephyr/the-showdown-svelte-vs-react-choosing-your-frontend-champion-18nf | Frontend development is a constantly changing field with several frameworks competing to be the best. Svelte and React are two deserving opponents that step into the ring today. Though fundamentally quite different, both JavaScript frameworks are formidable tools for creating dynamic user interfaces. Let's analyse their adv... | lux-zephyr | |

1,905,320 | What Are the Requirements for a Lifeguard Certificate? | Lifeguards play a crucial role in ensuring the safety of swimmers at pools, beaches, and water parks.... | 0 | 2024-06-29T06:49:36 | https://dev.to/americanlifeguardusaflorida/what-are-the-requirements-for-a-lifeguard-certificate-4dg9 | lifeguard, certificate | Lifeguards play a crucial role in ensuring the safety of swimmers at pools, beaches, and water parks. Their vigilance and quick response can mean the difference between life and death in emergency situations. If you're considering becoming a lifeguard, obtaining the right certification is the first step.

This guide wi... | americanlifeguardusaflorida |

1,905,319 | 5 must-watch series for Developers and IT People | Hello everyone! If you like technology or the world of computing, there are five series you can't... | 0 | 2024-06-29T06:48:19 | https://www.codechappie.com/blog/5-series-imperdibles-para-desarrolladores-e-informaticos | series, desarrolladores, devs, programmers | Hello everyone! If you like technology or the world of computing, there are five series you can't miss. These series immerse us in fascinating worlds and make us reflect on the impact of technology on our society, culture, and psychology. Get ready to enjoy original stories, surp... | codechappie |

1,905,318 | Frontend Technologies (Reactjs Vs Angularjs) | ReactJs: Reactjs is a single page open source javascript library developed by facebook which is... | 0 | 2024-06-29T06:48:03 | https://dev.to/indah780/frontend-technologies-reactjs-vs-angularjs-10gc | frontend, internship, hng11 |

**ReactJs**: ReactJS is an open-source JavaScript library developed by Facebook for building interactive user interfaces, commonly used for single-page applications. Its approach centers on components that can be reused across different parts of an application.

**Angular**

A... | indah780 |

1,905,317 | The Role of Smart Infrastructure in Future Urban Development | Explore how smart infrastructure is revolutionizing urban environments, improving efficiency, sustainability, and enhancing the quality of life in our cities. | 0 | 2024-06-29T06:46:22 | https://www.govcon.me/blog/the_role_of_smart_infrastructure_in_future_urban_development | smartinfrastructure, urbandevelopment, technology | # The Role of Smart Infrastructure in Future Urban Development

Welcome to the future of cities! As urban populations burgeon and sustainability becomes more vital than ever, smart infrastructure emerges as the linchpin of modern urban development. Picture cities where traffic flows seamlessly, buildings manage energy ... | quantumcybersolution |

1,905,316 | The Space of Space | A detailed exploration of the vastness of space, using familiar comparisons to illustrate distances within our solar system and beyond, and highlighting the differences between space and distance. | 0 | 2024-06-29T06:44:11 | https://www.rics-notebook.com/blog/Space/SpaceOfSpace | space, distance, solarsystem | # The Vastness of Space and the Wonders Within

## 🌌 Understanding the Scale of the Universe Through Familiar Comparisons

Space is vast and often difficult to comprehend. Using familiar comparisons can help make these immense distances more relatable and understandable.

### 🌍 Earth to Moon: A First Step in Space

T... | eric_dequ |

1,905,315 | 10 phrases developers often say (Part 2) | Continuing our exploration of the unique language of developers, here are another 10... | 27,900 | 2024-06-29T06:42:59 | https://www.codechappie.com/blog/10-frases-que-suelen-decir-los-developers-parte-2 | frases, developers, tipicas, humor | Continuing our exploration of the unique language of developers, here are another 10 common phrases that reflect their experience and perspective in the field of software development:

1. "At my previous job we did it differently."

2. "I'll refactor this code later."

3. "Solo falta do... | codechappie |

1,905,314 | A Beginner's Guide to Mastering Data Science: Key Tips and Strategies 🤖 | Data science is an exciting field that combines statistics, programming, and domain knowledge to... | 0 | 2024-06-29T06:42:54 | https://dev.to/kammarianand/a-beginners-guide-to-mastering-data-science-key-tips-and-strategies-h8a | datascience, machinelearning, python, beginners | Data science is an exciting field that combines statistics, programming, and domain knowledge to extract insights from data. As a beginner, it's easy to make mistakes that can hinder your learning and growth. Here are some common mistakes to avoid:

→ Learning data science can be a rewarding yet challenging journe... | kammarianand |

1,905,313 | Escort Service in Aerocity With Cash Payment Facility Available 9899988101 | At our Escort Service in Aerocity agency, we value our clients. We ensure that our clients can find... | 0 | 2024-06-29T06:42:50 | https://dev.to/anushka_aerocity0_c898fc9/escort-service-in-aerocity-with-cash-payment-facility-available-9899988101-490l | At our Escort Service in Aerocity agency, we value our clients. We ensure that our clients can find the ‘girl of their dreams’ without losing everything for her company. You can get call girls in Aerocity from us at the most affordable rates. Every woman has a unique gift for men and we have them all. Our collection of... | anushka_aerocity0_c898fc9 | |

1,905,312 | ReactJS vs. VueJS: A Comprehensive Comparison for Frontend Development | Frontend development is evolving rapidly, and developers have a plethora of frameworks and libraries... | 0 | 2024-06-29T06:40:37 | https://dev.to/veecee/reactjs-vs-vuejs-a-comprehensive-comparison-for-frontend-development-1nam | react, vue, javascript, webdev | Frontend development is evolving rapidly, and developers have a plethora of frameworks and libraries to choose from. Among the most popular are ReactJS and VueJS. Both offer unique features and benefits, making them powerful tools for building modern web applications. This article will provide an in-depth comparison of... | veecee |

1,905,311 | Exploring the Kardashev Scale A Cosmic Metric for Advanced Civilizations | Dive into the Kardashev Scale, a fascinating measure for the potential energy consumption of advanced civilizations, and its far-reaching implications for our search for extraterrestrial intelligence. | 0 | 2024-06-29T06:39:04 | https://www.elontusk.org/blog/exploring_the_kardashev_scale_a_cosmic_metric_for_advanced_civilizations | space, astronomy, seti | # Exploring the Kardashev Scale: A Cosmic Metric for Advanced Civilizations

Curiosity about extraterrestrial life has propelled human imagination for centuries. While we've looked up at the stars and wondered, scientists like Dr. Nikolai Kardashev have taken a systematic approach to categorizing what advanced civ... | quantumcybersolution |

1,905,310 | Quantum-Enhanced Orbital Mechanics Unlocking Unprecedented Efficiency in Space Logistics | Discover how quantum computing revolutionizes orbital mechanics, enabling highly efficient trajectory planning and optimization for orbital package delivery. By harnessing the power of quantum algorithms, space logistics can achieve unprecedented levels of efficiency, reducing fuel consumption and delivery times. | 0 | 2024-06-29T06:39:04 | https://www.rics-notebook.com/blog/Space/QuantumOrbitalMechanics | quantumcomputing, orbitalmechanics, spacelogistics, optimization | ## 🌌 Quantum Computing Meets Orbital Mechanics

In the realm of orbital package delivery, efficiency is paramount. Every ounce of fuel saved and every minute shaved off delivery times can translate into significant cost savings and environmental benefits. This is where quantum computing comes into play, revolutionizin... | eric_dequ |

1,905,309 | 10 phrases developers often say (Part 1) | Developers have their own language and humor that reflect their day-to-day life in the world... | 27,900 | 2024-06-29T06:37:18 | https://www.codechappie.com/blog/10-frases-que-suelen-decir-los-developers-parte-1 | frases, tipicas, humor, programador | Developers have their own language and humor that reflect their day-to-day life in the tech world. Here are 10 phrases you'll surely recognize if you're part of this community or interested in software development:

1. "It works on my machine."

2. "Have you tried restarting?"

3. "It's in production.... | codechappie |

1,905,308 | What is AWS CloudFormation?? | AWS CloudFormation is a service that helps you model and set up your Amazon Web Services resources so... | 0 | 2024-06-29T06:36:35 | https://dev.to/abhiramvarma/what-is-aws-cloudformation-ha2 | aws, cloud, infrastructureascode | AWS CloudFormation is a service that helps you model and set up your Amazon Web Services resources so that you can spend less time managing those resources and more time focusing on your applications. You create a template that describes all the AWS resources that you want (like Amazon EC2 instances or Amazon RDS DB in... | abhiramvarma |

1,902,610 | aliakbarsw's Blog | https://aliakbarsw.exblog.jp/31315439/ | 0 | 2024-06-27T13:00:03 | https://dev.to/maqsam/aliakbarsws-blog-4c7h | https://aliakbarsw.exblog.jp/31315439/ | maqsam | |

1,905,307 | The Role of Renewable Energy in Construction Technology | Explore how renewable energy is revolutionizing the construction industry, from green buildings to sustainable infrastructure. Learn about innovative technologies and future-forward practices shaping the industry today. | 0 | 2024-06-29T06:36:14 | https://www.govcon.me/blog/the_role_of_renewable_energy_in_construction_technology | renewableenergy, constructiontechnology, innovation | # The Role of Renewable Energy in Construction Technology

## Introduction

As the world grapples with climate change and environmental degradation, the construction industry—one of the largest consumers of energy—is undergoing a paradigm shift. At the core of this shift lies **renewable energy**, a game-changer in tra... | quantumcybersolution |

1,867,314 | Analytics don't want duplicated data, so get it exactly-once with Flink/Kafka | Data engineer's main task is to deliver data from multiple places (it can be database, Kafka cluster,... | 0 | 2024-06-29T06:35:47 | https://dev.to/kination/analytics-dont-want-duplicated-data-so-get-it-exactly-once-with-flinkkafka-ga4 | flink, kafka, dataengineering |

A data engineer's main task is to deliver data from multiple sources (a database, a Kafka cluster, or something else) to a destination, applying a defined transformation along the way.

A key requirement here is that the output matches the input: data should be neither lost nor duplicated.

It will lower data quality/accuracy... | kination |

1,905,306 | Reducing GCP DataStream Sync Latency from PostgreSQL to BigQuery | Reducing GCP DataStream Sync Latency from... | 0 | 2024-06-29T06:34:29 | https://dev.to/hui_zheng/reducing-gcp-datastream-sync-latency-from-postgresql-to-bigquery-6ff | {% stackoverflow 78685229 %} | hui_zheng | |

1,905,305 | The Psychology of Passwords: Exploring the Emotional Connection to Our Digital Identities | Introduction Passwords are a part of our everyday digital lives. We use them to access our emails,... | 0 | 2024-06-29T06:34:02 | https://dev.to/mary27/the-psychology-of-passwords-exploring-the-emotional-connection-to-our-digital-identities-1abn | passwords, emotionalconnection, internet, digitalworkplace | **Introduction**

Passwords are a part of our everyday digital lives. We use them to access our emails, social media accounts, online banking, and even our work systems. It's hard to imagine a day without having to enter a password for something. Despite their common use, we don't often think about the emotional and psy... | mary27 |

1,905,304 | FRONTEND FRAMEWORKS: Comparing ReactJS and Angular - A Technical Overview | What is Frontend Development Frontend development, sometimes referred to as client-side... | 0 | 2024-06-29T06:32:47 | https://dev.to/noble247/frontend-frameworks-comparing-reactjs-and-angular-a-technical-overview-193l | webdev, javascript, react, angular | ## What is Frontend Development

Frontend development, sometimes referred to as client-side development, is the process of developing the user-interactive portion of a website or web application. This covers every aspect of a website that a user interacts with through their browser, including its design, operation, pres... | noble247 |

1,905,303 | OpenSource Science partnering up | Founded in 2022 OS-SCi is building a network of partner organisations to support the sustainability... | 0 | 2024-06-29T06:32:30 | https://dev.to/erikmols/opensource-science-partnering-up-5567 | opensource, education, career, learning | Founded in 2022 OS-SCi is building a network of partner organisations to support the sustainability of foss. Check out our blog post https://os-sci.com/nl/blog/reis-1/partnering-up-16 | erikmols |

1,905,302 | Quantum Sensing and Navigation Enabling Unparalleled Precision in Orbital Package Delivery | Discover the cutting-edge world of quantum sensing and navigation, and learn how these technologies are revolutionizing the precision and accuracy of orbital package delivery. From atom interferometry to quantum clocks, explore the quantum devices that are enabling a new era of space logistics. | 0 | 2024-06-29T06:28:49 | https://www.rics-notebook.com/blog/Space/QNavigation | quantumsensing, quantumnavigation, atominterferometry, quantumclocks | ## 🌌 The Need for Precise Navigation in Orbital Package Delivery

In the complex and dynamic environment of orbital package delivery, precise navigation and control are essential. Vehicles must be able to accurately determine their position, velocity, and orientation, and make precise adjustments to their trajectory t... | eric_dequ |

1,905,301 | How to Delete Large Numbers of Emails in Roadrunner Webmail | In the vast landscape of email services, Roadrunner stands out as a notable option. Managed by... | 0 | 2024-06-29T06:26:35 | https://dev.to/akash_kushwaha_1cc317f8ad/how-to-delete-large-numbers-of-emails-in-roadrunner-webmail-168b | service | In the vast landscape of email services, Roadrunner stands out as a notable option. Managed by Spectrum (formerly Time Warner Cable), [Roadrunner email](https://roadrunnermailsupport.com/delete-number-of-roadrunner-webmail/) has served millions of users, especially those who are subscribers to the internet services off... | akash_kushwaha_1cc317f8ad |

1,905,300 | The Role of Mixed Reality in Construction Design and Visualization | Explore how mixed reality is revolutionizing construction design and visualization, enhancing collaboration, precision, and project efficiency. | 0 | 2024-06-29T06:26:07 | https://www.govcon.me/blog/the_role_of_mixed_reality_in_construction_design_and_visualization | mixedreality, construction, design, visualization | # The Role of Mixed Reality in Construction Design and Visualization

The construction industry has traditionally been anchored by physical blueprints, CAD models, and traditional visualization techniques. However, we’re on the cusp of a monumental shift as mixed reality (MR) technology starts to reimagine how we desig... | quantumcybersolution |

1,904,454 | Security Analysis of BitPower | Introduction With the rapid development of blockchain technology, decentralized finance (DeFi)... | 0 | 2024-06-28T16:04:13 | https://dev.to/wot_dcc94536fa18f2b101e3c/security-analysis-of-bitpower-11b6 | btc | Introduction

With the rapid development of blockchain technology, decentralized finance (DeFi) platforms have rapidly emerged around the world. As an outstanding representative of these platforms, BitPower has won wide attention and trust with its excellent security and transparency. This article will explore BitPower'... | wot_dcc94536fa18f2b101e3c |

1,904,908 | Memo to pass AWS Certified Security Specialty(SCS-C02) | Hi, I'm Tak. I passed AWS Certified Security Specialty(SCS-C02) at June 22, 2024. I got 844 point,... | 0 | 2024-06-29T06:25:11 | https://dev.to/takahiro_82jp/memo-to-pass-aws-certified-security-specialtyscs-c02-3o2c | aws, security, scsc02, scs | Hi, I'm Tak.

I passed the AWS Certified Security Specialty (SCS-C02) exam on June 22, 2024.

I scored 844 points, which is higher than I expected.

So I'm writing this memo on how I studied to pass the AWS Certified Security Specialty exam.

## Use Text and Contents

### AWS Skill Builder

It costs US $29 a month, but I took official pr... | takahiro_82jp |

1,905,299 | Phialigner | Brush and floss just like you did before. With Phialigners it’s hassle free to maintain oral... | 0 | 2024-06-29T06:24:43 | https://dev.to/sonal_pardeshi_fbfc2b3038/phialigner-4c6p | Brush and floss just like you did before. With Phialigners it’s hassle free to maintain oral hygiene.

No more fixed braces and wires. Phialigners clear aligners are low-maintenance and easy to use.

Get your Aligners now only with Phialigner!

[](https://www.phialigner.com) | sonal_pardeshi_fbfc2b3038 | |

1,905,298 | How to use the SRI (Subresource Integrity) attribute in a script tag to prevent modification of static resources? | Understanding Supply Chain Attacks Supply chain attacks involve compromising a third-party... | 0 | 2024-06-29T06:23:44 | https://dev.to/franklinthaker/how-to-use-sri-subresource-integrtiy-attribute-in-script-tag-to-prevent-modification-of-static-resources--1h3a | cybersecurity, javascript, webdev, programming | ## **Understanding Supply Chain Attacks**

Supply chain attacks involve compromising a third-party service or library to inject malicious code into websites that rely on it.

## **How Could This Be Prevented?**

One effective way to mitigate such risks is by using the Subresource Integrity (SRI) attribute in your HTML sc... | franklinthaker |

1,905,297 | Quantum-Enabled Material Science and Advanced Propulsion Revolutionizing Orbital Package Delivery | Explore the cutting-edge world of quantum-enabled material science and advanced propulsion, and discover how these technologies are revolutionizing the performance and efficiency of orbital package delivery systems. From lightweight, high-strength materials to optimized propulsion systems, learn how quantum computing i... | 0 | 2024-06-29T06:23:41 | https://www.rics-notebook.com/blog/Space/Qmats | quantumsimulation, materialsscience, advancedpropulsion, quantumoptimization | ## 🚀 The Importance of Materials and Propulsion in Orbital Package Delivery

In the demanding environment of space, the performance of orbital package delivery systems is largely determined by two critical factors: the materials used in their construction and the efficiency of their propulsion systems.

Spacecraft str... | eric_dequ |

1,904,453 | The primary goal of an AI paraphraser | Sentence Restructuring: It may rearrange sentence structures and change grammatical forms to produce... | 0 | 2024-06-28T16:03:39 | https://dev.to/geniottrr555/the-primary-goal-of-an-ai-paraphraser-4p56 | Sentence Restructuring: It may rearrange sentence structures and change grammatical forms to produce a unique rendition of the original content.

Plagiarism Prevention: By generating unique variations of the text, AI paraphrasers help to avoid plagiarism issues that may arise from directly copying content.

Quality Con... | geniottrr555 | |

1,905,296 | Exploring the Goldilocks Zone The Sweet Spot for Habitable Exoplanets | Understanding the Goldilocks zone and its pivotal role in the quest to find habitable worlds beyond our solar system. | 0 | 2024-06-29T06:23:07 | https://www.elontusk.org/blog/exploring_the_goldilocks_zone_the_sweet_spot_for_habitable_exoplanets | astronomy, astrobiology, exoplanets | # Exploring the Goldilocks Zone: The Sweet Spot for Habitable Exoplanets

In our endless quest to find life beyond Earth, one concept stands out among the stars—the Goldilocks zone. Deemed essential for the discovery of habitable exoplanets, this narrow band of space around a star is the sweet spot where conditions are... | quantumcybersolution |

1,905,294 | 🚀 Create An Attractive GitHub Profile README 📝 | Enhancing Your GitHub README with my custom Profile README Template. Welcome to this journey of... | 0 | 2024-06-29T06:21:54 | https://dev.to/parth_johri/create-an-attractive-github-profile-readme-noj | github, 100daysofcode, tutorial, beginners | Enhancing Your GitHub README with my custom Profile README Template.

<img src="https://i.giphy.com/media/v1.Y2lkPTc5MGI3NjExMHVrZ2xhMHNqdjFwNzFzdGp0dW4wbXFjZjYzNG9pemViOThld2wzcCZlcD12MV9pbnRlcm5hbF9naWZfYnlfaWQmY3Q9Zw/U3UP4fTE6QfuoooLaC/giphy.gif" width="480" height="360"/>

Welcome to this journey of elevating your ... | parth_johri |

1,905,293 | Where Can You Find an Crypto Arbitrage Trading Bot Development Company? | In the rapidly evolving world of cryptocurrencies, savvy traders are constantly looking for... | 0 | 2024-06-29T06:21:13 | https://dev.to/kala12/where-can-you-find-an-crypto-arbitrage-trading-bot-development-company-53p | In the rapidly evolving world of cryptocurrencies, savvy traders are constantly looking for innovative ways to maximize their profits. One such strategy that is gaining popularity is crypto arbitrage, where traders profit from price differences of the same asset on different exchanges. Developing an advanced crypto arb... | kala12 | |

1,905,285 | Quantum-Secured Communication and Data Processing Ensuring the Safety and Efficiency of Orbital Package Delivery | Learn how quantum technologies revolutionize secure communication and data processing in the orbital package delivery industry. From unbreakable encryption to efficient data retrieval, discover the ways in which quantum computing ensures the safety and efficiency of space logistics. | 0 | 2024-06-29T06:18:34 | https://www.rics-notebook.com/blog/Space/Qcommunicate | quantumcommunication, quantumcryptography, datasecurity, quantumalgorithms | ## 🔐 The Importance of Secure Communication in Orbital Package Delivery

In the fast-paced world of orbital package delivery, the security of communication channels and sensitive data is of utmost importance. As packages traverse the globe at incredible speeds, ensuring the confidentiality and integrity of information... | eric_dequ |

1,905,284 | FastAPI - Concurrency in Python | Recently I delved deep into FastAPI docs and some other resources to understand how each route is... | 0 | 2024-06-29T06:18:28 | https://dev.to/adayush/fastapi-concurrency-in-python-29e3 | python, fastapi | Recently I delved deep into FastAPI docs and some other resources to understand how each route is processed and what can be done to optimise FastAPI for scale. This is about the learnings I gathered.

---

## A little refresher

Before we go into optimising FastAPI, I'd like to give a short tour on a few technical conc... | adayush |

1,905,283 | The Role of Machine Vision in Enhancing Construction Site Safety | Explore how machine vision is revolutionizing the safety standards in construction sites and ensuring a safer working environment for all involved. | 0 | 2024-06-29T06:16:00 | https://www.govcon.me/blog/the_role_of_machine_vision_in_enhancing_construction_site_safety | machinevision, construction, safety, ai | # The Role of Machine Vision in Enhancing Construction Site Safety

Bricks flying, trucks reversing, sparks flying—construction sites are synonymous with bustling activity and inherent risks. In an industry that prides itself on building civilizations, ensuring the safety of its very builders has always been paramount.... | quantumcybersolution |

1,905,282 | Flight from USA to India - Everything You Need to Know | In the present time, the world network has overwhelmed the human demand for international travel,... | 0 | 2024-06-29T06:15:37 | https://dev.to/fly-to-destinations/flight-from-usa-to-india-everything-you-need-to-know-m72 | usa, flights, travel, tickets |

In the present time, the world network has overwhelmed the human demand for international travel, with many people considering this a common desire. We are sharing information about the **_[flights from USA to India... | flytodestinations |

1,905,281 | Regression testing - A Detailed Guide | In the fast-paced landscape of rapid software development, where upgrades and modifications are... | 0 | 2024-06-29T06:14:45 | https://www.headspin.io/blog/regression-testing-a-complete-guide | testing, mobile, webdev, automation | In the fast-paced landscape of rapid software development, where upgrades and modifications are frequent, it is crucial to ensure the stability and quality of software products. Regression testing plays a vital role here.

Regression testing is a fundamental testing process that consists of repeated testing of the exis... | jennife05918349 |

1,905,280 | Orbital Package Delivery Revolutionizing Transportation Saving Costs and Preserving the Environment | Explore a groundbreaking method of package transportation that leverages Earths rotation and orbital mechanics to deliver goods more efficiently, cost-effectively, and eco-friendly. Discover how this innovative approach can boost the space industry and pave the way for advanced space travel technologies. | 0 | 2024-06-29T06:13:27 | https://www.rics-notebook.com/blog/Space/OrbitalPackageDelivery | orbitaltransportation, space, spacelogistics, costsavings | ## 🌎 Orbital Package Delivery: A Paradigm Shift in Transportation

In the quest for more efficient, cost-effective, and environmentally friendly transportation methods, a revolutionary concept has emerged: orbital package delivery. By sending packages into space and allowing Earth's rotation to bring them to thei... | eric_dequ |

1,905,279 | Is Roadrunner a Good Email Service? | In the vast landscape of email services, Roadrunner stands out as a notable option. Managed by... | 0 | 2024-06-29T06:11:22 | https://dev.to/akash_kushwaha_1cc317f8ad/is-roadrunner-a-good-email-service-4i9l | service | In the vast landscape of email services, Roadrunner stands out as a notable option. Managed by Spectrum (formerly Time Warner Cable), Roadrunner email has served millions of users, especially those who are subscribers to the internet services offered by Spectrum. However, determining whether [Roadrunner](https://roadru... | akash_kushwaha_1cc317f8ad |

1,905,278 | Transformers in AI and Blockchain Development | Introduction Transformers have revolutionized machine learning, particularly in... | 27,673 | 2024-06-29T06:11:03 | https://dev.to/rapidinnovation/transformers-in-ai-and-blockchain-development-43e9 | ## Introduction

Transformers have revolutionized machine learning, particularly in natural language processing (NLP) and increasingly in computer vision and blockchain technology. This introduction explores the basics of transformer models and their significant impact on AI and blockchain development.

## What are Tra... | rapidinnovation | |

1,905,277 | My Backend Journey: Overcoming Backend Challenges | My name is Salim Imuzai. A backend developer who believes; that for every complex problem, there is... | 0 | 2024-06-29T06:08:40 | https://dev.to/salimkarbm/my-backend-journeyovercoming-backend-challenges-29mb | My name is Salim Imuzai. I'm a backend developer who believes that for every complex problem there is an answer that is either clear, simple, or wrong, and I take pride in finding out which. As a backend developer, the excitement of tackling complex problems fuels my passion for coding. Every challenge presents an opportunity to le... | salimkarbm |
