id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,905,015 | Implementing API query parameters and joining HNG 11 | Introduction A while ago, a friend challenged me to build a simple CRUD REST API. One of... | 0 | 2024-06-29T14:42:06 | https://dev.to/thefranklinikeh/implementing-api-query-parameters-and-joining-hng-11-4c4p | #### Introduction

A while ago, a friend challenged me to build a simple CRUD REST API. One of the requirements was to implement filtering through query parameters. At the time, this seemed daunting because I had no idea how to do that with Django REST Framework (DRF). In this article, I’ll explain how I tackled this c... | thefranklinikeh | |

1,905,751 | Polymorphic vs Shared Table: Is speed a valid concern? | What do you do when you find a model that can belong to multiple models? In my case, I'm building... | 0 | 2024-06-29T14:39:35 | https://dev.to/lucaskuhn/polymorphic-vs-shared-table-is-speed-a-valid-concern-22ki | webdev, rails, database | What do you do when you find a model that can belong to multiple models?

In my case, I'm building the inventory tracking functionality for an MRP. An Inventory Item can be related to either a Product or a Material. In summary, I need to find a way to relate the two tables on the left to the table on the right:

and [augmented reality (AR)](https://www.sap.com/africa/products/scm/industry-4-0/what-is-augmented-reality.html#:~:text=Augmented%20reality%20definition,real%2Dlife%20environments%20and%20objects.) have grown significantly i... | adewale_gbenga |

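1,905,747 | Python Trending Weekly #58: The Fastest Language for Running Prototypes (Summary) | This weekly is produced by Python猫 (Python Cat), which carefully screens 250+ sources from China and abroad to pick the articles, tutorials, open-source projects, software tools, podcasts and videos, and trending topics most worth sharing. Vision: help all readers sharpen their Python... | 0 | 2024-06-29T14:30:26 | https://dev.to/chinesehuazhou/python-chao-liu-zhou-kan-58zui-kuai-yun-xing-yuan-xing-de-yu-yan-zhai-yao--3doi | python, webdev, javascript, beginners | This weekly is produced by Python猫 (Python Cat), which carefully screens 250+ sources from China and abroad to pick the articles, tutorials, open-source projects, software tools, podcasts and videos, and trending topics most worth sharing. Vision: help all readers sharpen their Python skills and grow their income from careers and side projects.

This issue shares 12 articles and 12 open-source projects, gives away 5 books, and is 2,100 characters long in total.

Here is this issue's summary:

**[🦄Articles & Tutorials](https://xiaobot.net/p/python_weekly)**

① The fastest language for running prototypes

② PEP-2026 proposes that Python adopt calendar versioning

③ Optimizing routing and scheduling in Python: Timefold, a new open-source solver

④ A deep dive into Python's set data struc... | chinesehuazhou |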

1,905,746 | 19 Next.js Project Ideas For You to Get Hired | This article was originally published in my blog:... | 0 | 2024-06-29T14:29:05 | https://dev.to/codebymedu/19-nextjs-project-ideas-for-you-to-get-hired-3i84 | nextjs, frontend, react, portfolio | This article was originally published in my blog: [codebymedu.com/blog/19-next-js-project-ideas-for-portfolio](codebymedu.com/blog/19-next-js-project-ideas-for-portfolio)

Whether you’re looking for freelance projects or a job, or just want to enjoy engineering, it’s super important to have projects you can show to yo... | codebymedu |

1,905,745 | Building Blocks Of Zig: Unions | What are Zig Unions? Zig unions are a way to represent a type that can be one of several... | 0 | 2024-06-29T14:26:51 | https://dayvster.com/blog/building-blocks-of-zig-unions/ | zig, programming |

## What are Zig Unions?

Zig unions are a way to represent a type that can be one of several different types. However, only one of those types can be active at any given time. Think of it as a way to represent multiple possible types of a value in a single variable.

That may sound a bit confusing at first but it is actua... | dayvster |

1,905,743 | HNG INTERNSHIP https://hng.tech/premium | https://hng.tech/premium | 0 | 2024-06-29T14:25:35 | https://dev.to/achepah_nehemiah_32a3242f/hng-internship-httpshngtechpremium-1l1g | https://hng.tech/premium

| achepah_nehemiah_32a3242f | |

1,873,140 | This Month in Solid #4: The Shape of Frameworks to Come 😎 | Hello friends 👋 June is here, and with it, the fourth issue of This Month in Solid! This was a crazy... | 26,619 | 2024-06-29T14:23:15 | https://danieljcafonso.substack.com/p/this-month-in-solid-4-the-shape-of | webdev, javascript, solidjs, frameworks | Hello friends :wave:

June is here, and with it, the fourth issue of This Month in Solid!

This was a crazy month from a Found Online perspective. It feels incredible to see all the content people are doing on Solid!

We also got something I know many of you were looking forward to.

Before we start looking at what happen... | danieljcafonso |

1,905,742 | Quantum Computing The Game-Changer for Complex Optimization Problems | Dive into the transformative potential of quantum computing in addressing complex optimization problems, exploring the algorithms, current advancements, and future promises of this cutting-edge technology. | 0 | 2024-06-29T14:21:49 | https://www.elontusk.org/blog/quantum_computing_the_game_changer_for_complex_optimization_problems | quantumcomputing, optimization, technology | # Quantum Computing: The Game-Changer for Complex Optimization Problems

Optimization problems are the backbone of numerous industries, from determining the most efficient route for delivery trucks to managing resources in large-scale industrial operations. Traditional computing methods have made significant strides, b... | quantumcybersolution |

1,905,689 | Integrating React Native with GraphQL: A Comprehensive Guide | Hey devs! React Native is a powerful tool for developing cross-platform mobile applications, while... | 0 | 2024-06-29T14:16:57 | https://dev.to/paulocappa/integrating-react-native-with-graphql-a-comprehensive-guide-aip | reactnative, graphql, testing, react | Hey devs!

React Native is a powerful tool for developing cross-platform mobile applications, while GraphQL offers a flexible and efficient approach to consuming APIs. Together, they can make app development faster and less error-prone. In this post, we will explore how to set up and use GraphQL in a React Native appl... | paulocappa |

1,905,687 | CSS and HTML (The Basic Frontenders) | Hyper Text Markup Language popularly known as HTML is a language used to structure and organize the... | 0 | 2024-06-29T14:11:34 | https://dev.to/efua_godgirl/css-and-html-the-basic-frontenders-5dcc |

Hyper Text Markup Language, popularly known as HTML, is a language used to structure and organize the layout of a webpage. It forms the basis of all website creation and is the fundamental building block of almost every website. Cascading Style Sheets, also known as CSS, is primarily used for styling web page... | efua_godgirl | |

1,905,686 | Comparing HTML and React: Simplicity vs Power | Frontend development is an interesting field with numerous tools and technologies designed to bring... | 0 | 2024-06-29T14:09:59 | https://dev.to/ayoashy/comparing-html-and-react-simplicity-vs-power-58b4 | hnginternship, react, html, frontend | Frontend development is an interesting field with numerous tools and technologies designed to bring our web applications to life. Among these, HTML and React are two essential tools that every frontend developer should be familiar with. In this article, we’ll compare HTML and React, discuss their strengths, and share m... | ayoashy |

1,905,679 | Jenkins Ci/Cd Pipeline to Build a Go Application into a Docker Image with Multistage build | Introduction In this article, I will be discussing how I implemented a CI/CD pipeline from... | 0 | 2024-06-29T13:58:44 | https://dev.to/audu97/jenkins-cicd-pipeline-to-build-a-go-application-into-a-docker-image-with-multistage-build-394j | devops, cicd, jenkins, go | ### Introduction

In this article, I will discuss how I implemented a CI/CD pipeline from scratch to build a simple Golang application into a Docker image and push that image to Docker Hub.

The stages of the pipeline include checking out the source code repository (in this case, Git), running analysis on the s... | audu97 |

1,905,685 | Quantum Computing Accelerating the Simulation of Quantum Chemistry | Dive into how quantum computing is revolutionizing the simulation of quantum chemistry, unraveling new possibilities for scientific breakthroughs and technological advancements. | 0 | 2024-06-29T14:05:52 | https://www.elontusk.org/blog/quantum_computing_accelerating_the_simulation_of_quantum_chemistry | quantumcomputing, quantumchemistry, innovation | ## Quantum Computing: Accelerating the Simulation of Quantum Chemistry

When it comes to quantum chemistry, simulating the electronic structure of molecules and predicting their properties has always been a formidable task. Traditional computers, bound by the limitations of classical physics, often stumble in tackling ... | quantumcybersolution |

1,905,683 | Practical Reflection in C# Or "In Reflection We Trust" | C# learners have probably come across a topic called Reflection. It is one of the most difficult... | 0 | 2024-06-29T14:04:05 | https://dev.to/turalsuleymani/practical-reflection-in-c-or-in-reflection-we-trust-31g2 | csharp, reflection, dotnetframework, tutorial | C# learners have probably come across a topic called Reflection. It is one of the most difficult topics for beginners. The degree of learning difficulty can be estimated as 5-6 out of 10. (Subjective opinion) In this article, we will answer the questions of what reflection is useful for and how we can use reflection in... | turalsuleymani |

1,905,682 | FPV Drone Communities Connecting Enthusiasts Worldwide | In the ever-evolving realm of FPV (First Person View) drones, where innovation and adrenaline... | 0 | 2024-06-29T14:02:12 | https://dev.to/seo_expert/fpv-drone-communities-connecting-enthusiasts-worldwide-3g63 | In the ever-evolving realm of FPV (First Person View) drones, where innovation and adrenaline collide, lies a vibrant community that transcends borders and cultures. FPV drones have surged in popularity, captivating enthusiasts with their speed, agility, and immersive flying experiences. Within this exhilarating world,... | seo_expert | |

1,905,680 | Building Cross-Platform Solutions with Wearable App Integration | In the rapidly evolving landscape of mobile and wearable technology, building cross-platform... | 0 | 2024-06-29T13:59:55 | https://dev.to/chariesdevil/building-cross-platform-solutions-with-wearable-app-integration-2n2d | In the rapidly evolving landscape of mobile and wearable technology, building cross-platform solutions with wearable app integration has become a critical focus for developers. The increasing proliferation of smartwatches, fitness trackers, and other wearable devices necessitates a seamless and consistent user experien... | chariesdevil | |

1,905,681 | Angular vs. Vue.js: Choosing the Right Tool for the Job | As a frontend developer, I'm always searching for the best tools to build dynamic and engaging web... | 0 | 2024-06-29T13:59:47 | https://dev.to/milesssssss/angular-vs-vuejs-choosing-the-right-tool-for-the-job-4pdd | programming, vue, angular, react |

As a frontend developer, I'm always searching for the best tools to build dynamic and engaging web applications. Two frameworks that consistently stand out are Angular and Vue.js. Both are excellent for creating interactive user interfaces, but they cater to different development philosophies. Let’s explore their stre... | milesssssss |

1,897,247 | Bitwise Operations for CP (DSA - 1) | Bitwise operations are fundamental to many programming tasks and to competitive programming. This... | 0 | 2024-06-29T13:54:16 | https://dev.to/madgan95/bitwise-operations-for-cp-dsa-1-5e2a | programming, cpp, coding, beginners | Bitwise operations are fundamental to many programming tasks and to competitive programming. This blog will cover the basic bitwise operations, including AND, OR, XOR, NOT, left shift, and right shift, with examples to illustrate their usage.
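As a quick preview of the six operations just listed, here is a minimal Python sketch. The values `a` and `b` and the helper `is_power_of_two` are illustrative assumptions, not taken from the post, which targets C++. Note that Python integers have unlimited width, so NOT behaves as `-a - 1` rather than flipping a fixed number of bits.

```python
# Illustrative values only: two arbitrary 4-bit patterns.
a = 0b1100  # 12
b = 0b1010  # 10

and_result = a & b    # bits set in both operands
or_result = a | b     # bits set in either operand
xor_result = a ^ b    # bits set in exactly one operand
not_result = ~a       # Python ints are unbounded, so ~a == -a - 1
left_shift = a << 2   # shifting left by 2 multiplies by 4
right_shift = a >> 2  # shifting right by 2 floor-divides by 4


def is_power_of_two(n: int) -> bool:
    """Hypothetical helper: a power of two has exactly one set bit,
    so clearing the lowest set bit with n & (n - 1) leaves zero."""
    return n > 0 and (n & (n - 1)) == 0
```

The `n & (n - 1)` trick is a common competitive programming idiom because it replaces a loop with a single constant-time expression.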

## What are Bitwise Operations?

Bitwise operations directly manipulate the... | madgan95 |

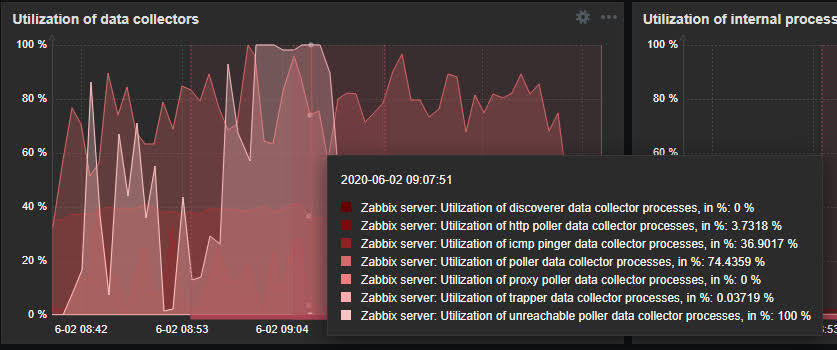

1,905,678 | How to avoid "Zabbix poller processes more than 75% busy" problems | When you use Zabbix for monitoring, it is essential to keep your pollers efficient to... | 0 | 2024-06-29T13:52:58 | https://dev.to/fernandomullerjr/como-evitar-problemas-de-zabbix-poller-processes-more-than-75-busy-58ll | devops, sre |

When you use Zabbix for monitoring, it is essential to keep your pollers efficient to avoid problems such as alerts indicating that the processes are more than 75% busy. This can affect performance and... | fernandomullerjr |

1,905,677 | Quantum Computing Accelerating the Future of Scientific Simulations and Modeling | Dive into the world of quantum computing and explore how it promises to revolutionize scientific simulations and modeling by solving complex problems at unprecedented speeds. | 0 | 2024-06-29T13:49:54 | https://www.elontusk.org/blog/quantum_computing_accelerating_the_future_of_scientific_simulations_and_modeling | quantumcomputing, scientificsimulations, technology | # Quantum Computing: Accelerating the Future of Scientific Simulations and Modeling

Welcome to the frontier of technology where the unimaginable becomes the norm, and the computational limits of classical computers are stretched beyond recognition. Quantum computing is not just a buzzword; it's the next leap in c... | quantumcybersolution |

1,905,676 | The Synergy Between Design and Marketing: Crafting a Cohesive Brand Experience | In the ever-evolving landscape of digital marketing, the collaboration between design and marketing... | 0 | 2024-06-29T13:48:03 | https://dev.to/blog_ts/the-synergy-between-design-and-marketing-crafting-a-cohesive-brand-experience-2ob3 | ai, design, marketing | In the ever-evolving landscape of digital marketing, the collaboration between design and marketing has never been more crucial. Design is not merely an aesthetic endeavor; it's a strategic tool that, when harmonized with marketing, can create powerful and memorable brand experiences. This article delves into the integ... | blog_ts |

1,905,670 | Driving Success: A Deep Dive into Retail Sales Trends of Classic and Vintage Vehicles | As part of the HNG internship on Data Analysis, we were tasked with exploring a dataset... | 0 | 2024-06-29T13:43:10 | https://dev.to/makuachukwu_chukwumam_/driving-success-a-deep-dive-into-retail-sales-trends-of-classic-and-vintage-vehicles-1b9l | As part of the HNG internship on Data Analysis, we were tasked with exploring a dataset detailing the retail sales of a company specializing in various vehicle models. The dataset covers sales data from January 2003 to May 2005, providing insights into the company’s operations during this period.

The comp... | makuachukwu_chukwumam_ | |

1,905,675 | Comparing React.js and Vue.js: A Deep Dive into Frontend Technologies | In the rapidly evolving world of frontend development, selecting the right framework can make or... | 0 | 2024-06-29T13:47:39 | https://dev.to/kingdavid2908/comparing-reactjs-and-vuejs-a-deep-dive-into-frontend-technologies-18e6 | javascript, frontend, react, vue | In the rapidly evolving world of frontend development, selecting the right framework can make or break your project. React.js and Vue.js are two of the most popular choices among developers, each with its unique strengths and features. This article will compare **React.js** and **Vue.js**, highlighting their difference... | kingdavid2908 |

1,905,674 | REACTJS vs. TYPESCRIPT: A CYNICAL COMPARISON OF TWO FRONTEND TECHNOLOGIES. | Front-end development has come a long way, transforming from simple HTML pages to complex web... | 0 | 2024-06-29T13:47:14 | https://dev.to/njah_elton/reactjs-vs-typescript-a-cynical-comparison-of-two-frontend-technologies-4kbl | webdev, beginners, react | **Front-end development has come a long way, transforming from simple HTML pages to complex web applications powered by sophisticated frameworks. A summary of its journey includes: The Birth of HTML and CSS, JavaScript and Interactivity, Front-End Frameworks, Responsive Design, Performance Optimization, Single-Page App... | njah_elton |

1,905,673 | Managed vs Unmanaged Web Hosting: Which One to Choose? | Image by DC Studio on Freepik.com Choosing the right web hosting service is non-negotiable when it... | 0 | 2024-06-29T13:44:37 | https://dev.to/sheikh009/managed-vs-unmanaged-web-hosting-which-one-to-choose-3mm3 | webdev, beginners, javascript, programming |

Image by DC Studio on Freepik.com

Choosing the right web hosting service is non-negotiable when it comes to your website’s success. Even if you’re handling the behind-the-scenes stuff yourself, your host has a di... | sheikh009 |

1,905,672 | Buy Negative Google Reviews | Buy Negative Google Reviews $25.00 — $1,350.00 ➤E-mail: support@topsmmshops.com ➤Telegram:... | 0 | 2024-06-29T13:44:30 | https://dev.to/xebit10747/buy-negative-google-reviews-17lm | buy, negative, google, reviews | Buy Negative Google Reviews

$25.00 — $1,350.00

➤E-mail: support@topsmmshops.com

➤Telegram: TopSMMShops01

➤Skype: Top SMM Shops

➤WhatsApp: +1(848) 468–5888

[➤Visit Our Shop](https://topsmmshops.com/shop/)

https://topsmmshops.com/product/buy-negative-google-reviews/

Purchase Negative Google Reviews

Are you... | xebit10747 |

1,905,671 | Functional Patterns: The Monoid | Trigger Warning: this article contains Haskell codeblocks! Introduction As a... | 0 | 2024-06-29T13:44:18 | https://dev.to/if-els/functional-patterns-the-monoid-22ef | haskell, functional, programming | > Trigger Warning: this article contains Haskell codeblocks!

## Introduction

As a programmer, I've always found myself obsessing over patterns I could find in the code I write. From simple ones such as the Gaussian sum and early returns, to ones that hold a bit more complexity such as the Strategy pattern.

I find s... | if-els |

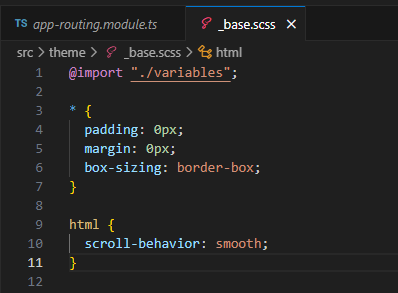

1,905,668 | in product details page, go to the top position on the details page | _base.scss html { scroll-behavior: smooth; }... | 0 | 2024-06-29T13:40:56 | https://dev.to/webfaisalbd/in-product-details-page-go-to-the-top-position-on-the-details-page-1o4k | angular | `_base.scss`

```css
html {
  scroll-behavior: smooth;
}
```

`app-routing.module.ts`

```ts

@NgModule({

imports: [RouterModule.forRoot(routes, {

scrollPositionRestoration: 'enabled',

anchorScrolling: 'enabl... | webfaisalbd |

1,905,667 | Unleashing the Power of PHP: Modern Techniques and Best Practices for Web Development | Unleashing the Power of PHP: Modern Techniques and Best Practices for Web Development PHP,... | 0 | 2024-06-29T13:36:42 | https://dev.to/cachemerrill/unleashing-the-power-of-php-modern-techniques-and-best-practices-for-web-development-38cd | php, webdev | ### Unleashing the Power of PHP: Modern Techniques and Best Practices for Web Development

PHP, a widely-used open-source scripting language, has been a cornerstone of web development for decades. While newer languages and frameworks have emerged, PHP continues to evolve, offering robust features and capabilities that ... | cachemerrill |

1,905,666 | Quantum Annealing The Future of Combinatorial Optimization | Dive into the mesmerizing world of quantum annealing and discover how this cutting-edge technology is poised to revolutionize combinatorial optimization problems. | 0 | 2024-06-29T13:33:56 | https://www.elontusk.org/blog/quantum_annealing_the_future_of_combinatorial_optimization | quantumcomputing, optimization, technology | # Quantum Annealing: The Future of Combinatorial Optimization

The realm of quantum computing is shrouded in enigma, yet it is ripe with potential. One of the most thrilling and promising facets of this field is quantum annealing. But what exactly is quantum annealing, and how can it transform the landscape of combinat... | quantumcybersolution |

1,905,665 | Debugging My Demons : Console.log(Story Untold) | Hey everyone, Devdee here! As a software engineer, my days are filled with griming exciting... | 0 | 2024-06-29T13:33:41 | https://dev.to/oladee/debugging-my-demons-consolelogstory-untold-2oak | webdev, programming, javascript | Hey everyone, Devdee here! As a software engineer, my days are filled with griming exciting challenges and the constant thrill of the unknown. Recently, I encountered a particularly nasty backend bug that had me pulling my hair out (figuratively, of course). Today, I want to share my battle with data inconsistency and ... | oladee |

1,905,632 | Theme Builder demo for Material 2 | A post by Dharmen Shah | 0 | 2024-06-29T13:14:39 | https://dev.to/ngmaterialdev/theme-builder-demo-for-material-2-1c6 | ---

title: Theme Builder demo for Material 2

published: true

description:

tags:

---

| shhdharmen | |

1,905,663 | My Pen on CodePen | Check out this Pen I made! | 0 | 2024-06-29T13:31:35 | https://dev.to/vera_shalamanova_9c0ef926/my-pen-on-codepen-29mf | codepen | Check out this Pen I made!

{% codepen https://codepen.io/Vera-Shalamanova/pen/mdYYKJp %} | vera_shalamanova_9c0ef926 |

1,905,662 | Guide to Selecting the Ideal Vacate Cleaning Service for Your Requirements | Moving out of a rental property can be a stressful experience, with numerous tasks demanding your... | 0 | 2024-06-29T13:30:51 | https://dev.to/lowell_jones_1f4144990011/guide-to-selecting-the-ideal-vacate-cleaning-service-for-your-requirements-38bd | Moving out of a rental property can be a stressful experience, with numerous tasks demanding your attention. Among these, ensuring the property is spotless before handing over the keys is crucial to securing your deposit and leaving on good terms with your landlord or property manager. This comprehensive guide aims to ... | lowell_jones_1f4144990011 | |

1,905,661 | SALES DATA ANALYSIS | INTRODUCTION Dear readers, in this article, I am going to share my findings of a data... | 0 | 2024-06-29T13:30:39 | https://dev.to/doreen970/sales-data-analysis-264m | datascience, hnginternshi, python, dataanalytics | ## INTRODUCTION

Dear readers, in this article, I am going to share my findings from a data analysis project that I recently undertook during my HNG internship program. HNG is a fast-paced program that helps developers and people in the tech field to practice their skills according to their domain. In this task, we were g... | doreen970 |

1,905,513 | Styling React.js UIs | Aside from HTML and JavaScript, CSS is one of the main building blocks of frontend web development. CSS... | 0 | 2024-06-29T13:25:19 | https://dev.to/elitenoire/styling-reactjs-uis-3ig0 | Aside from HTML and JavaScript, CSS is one of the main building blocks of frontend web development. CSS (Cascading Style Sheets) allows you to present websites in different styles even if the websites share the same markup structure.

In the world of React, there are different ways to use CSS to improve the UI of you... | elitenoire | |

1,905,660 | I will review the Titanic Passenger List dataset from Kaggle | I will review the Titanic Passenger List dataset from Kaggle. Here’s a step-by-step approach: Dataset... | 0 | 2024-06-29T13:23:42 | https://dev.to/abdulkola/i-will-review-the-titanic-passenger-list-dataset-from-kaggle-38ol | I will review the Titanic Passenger List dataset from Kaggle. Here’s a step-by-step approach:

Dataset Familiarization

Step 1: Understand the structure and contents of the dataset

Dataset Description: The Titanic Passenger List dataset contains information about the passengers on the Titanic. The key variables include:

... | abdulkola | |

1,905,633 | Top 10 JavaScript Best Practices | Writing clean code is important for making debugging and documentation... | 0 | 2024-06-29T13:14:08 | https://dev.to/pratyoos/top-10-javascript-best-practices-le5 | javascript, bestpractice, cleancode, beginners | Writing clean code is important for making debugging and documentation easy. Along with writing clean code, some basic mistakes should be avoided for better results. In JavaScript, there are some best practices which, if followed, give better code functionality and more accurate results.

In the world of frontend development, choosing the right technology can significantly impact the efficiency, maintainability, and performance of your web applications. Two popular frontend frameworks that of... | theflash2024 |

1,905,636 | Quantum Algorithms Challenges and Complexity Analysis | Dive into the intricate world of quantum algorithm design and understand the pressing need for complexity analysis in quantum computing. | 0 | 2024-06-29T13:17:59 | https://www.elontusk.org/blog/quantum_algorithms_challenges_and_complexity_analysis | quantumcomputing, algorithms, complexityanalysis | # Quantum Algorithms: Challenges and Complexity Analysis

Welcome to the quantum realm! Quantum computing stands on the precipice of revolutionizing how we solve problems, rendering previously insurmountable tasks trivial. But like any groundbreaking technology, it comes with its own set of unique challenges. Today, we... | quantumcybersolution |

1,905,634 | Theme Builder now supports Angular 15, 16 & 17 | 🚀 New major feature dropped on https://themes.angular-material.dev Now you can preview and... | 0 | 2024-06-29T13:15:09 | https://dev.to/ngmaterialdev/theme-builder-now-supports-angular-15-16-17-497p | angular, angularmaterial, materialdesign, webdev | ---

title: Theme Builder now supports Angular 15, 16 & 17

published: true

description:

tags: angular,angularmaterial,materialdesign,webdevelopment

cover_image: https://media.dev.to/cdn-cgi/image/width=1000,height=420,fit=cover,gravity=auto,format=auto/https%3A%2F%2Fdev-to-uploads.s3.amazonaws.com%2Fuploads%2Farticles%... | shhdharmen |

1,905,631 | When to Use Records, Classes, and Structs in .NET: A Comprehensive Guide | Choosing the right data type in .NET is crucial for effective data management and manipulation. If... | 0 | 2024-06-29T13:10:56 | https://dev.to/ttecs/when-to-use-records-classes-and-structs-in-net-a-comprehensive-guide-2la2 | Choosing the right data type in .NET is crucial for effective data management and manipulation. If your data type can be a value type, use a struct. If it describes a value-like, preferably immutable state, use a record. Otherwise, use a class. Here's a quick guide:

## **1. Structs**

Structures, or structs, are `valu... | ttecs | |

1,905,630 | Implementing API Throttling in My PHP Project | Today, I’m diving into a cool backend challenge I recently tackled: implementing API rate limiting... | 0 | 2024-06-29T13:09:06 | https://dev.to/olutayo/implementing-api-throttling-in-my-php-project-35jm | php, api, memcached, throttling | Today, I’m diving into a cool backend challenge I recently tackled: implementing API rate limiting and throttling using PHP and Memcached. This stuff is crucial for protecting APIs from abuse and ensuring everyone gets fair usage. Let’s break down how I solved this.
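Before the details, the general idea behind rate limiting can be sketched in a few lines. This is a minimal, in-memory fixed-window limiter written in Python under stated assumptions: it is not the PHP and Memcached implementation the post describes, and the class name `FixedWindowRateLimiter`, its parameters, and the injectable `clock` are all hypothetical names chosen for illustration.

```python
import time
from typing import Callable, Dict, Tuple


class FixedWindowRateLimiter:
    """In-memory fixed-window limiter (illustrative sketch, not the post's code)."""

    def __init__(self, limit: int, window_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.limit = limit
        self.window_seconds = window_seconds
        self.clock = clock  # injectable so the behaviour can be tested without sleeping
        # client id -> (window start time, requests counted in that window)
        self._counters: Dict[str, Tuple[float, int]] = {}

    def allow(self, client_id: str) -> bool:
        """Return True if the client may make a request now, else False."""
        now = self.clock()
        start, count = self._counters.get(client_id, (now, 0))
        if now - start >= self.window_seconds:
            # The previous window has expired: start a fresh one.
            start, count = now, 0
        if count >= self.limit:
            self._counters[client_id] = (start, count)
            return False
        self._counters[client_id] = (start, count + 1)
        return True
```

A shared store such as Memcached plays the role of `_counters` when several application servers must agree on the same counts; the dictionary here only works within a single process.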

### The Challenge: API Rate Limiting and Throttling

... | olutayo |

1,905,629 | Understanding MTU in Computer Networks | In the world of computer networks, efficiency and data transmission speed are... | 0 | 2024-06-29T13:07:34 | https://dev.to/iamthiago/entendendo-o-mtu-nas-redes-de-computadores-21d5 | In the world of computer networks, efficiency and data transmission speed are critical factors. One of the fundamental concepts that affect these aspects is the **MTU** (Maximum Transmission Unit). In this article, we will explore what the MTU is, why it matters, how it works, and how to configure it correctly for... | iamthiago | |

1,905,628 | Free time | Hello, could you recommend me a cool service to waste my free time pls? | 0 | 2024-06-29T13:07:32 | https://dev.to/alexseen18/free-time-2hpk | Hello, could you recommend me a cool service to waste my free time pls? | alexseen18 | |

1,905,627 | React vs. Angular: A Comparative Analysis for Modern Web Development | In the ever-changing world of web development, choosing the right technology for the development of a... | 0 | 2024-06-29T13:07:22 | https://dev.to/amanfoh_ehimarehanks_fb5/react-vs-angular-a-comparative-analysis-for-modern-web-development-2obn | In the ever-changing world of web development, choosing the right technology for a project significantly impacts the project's success and productivity. In this article, I’ll be talking about two of the most popular and powerful frameworks in the web development industry: React and Angular. E... | amanfoh_ehimarehanks_fb5 | |

1,905,613 | A Beginner's introduction to back-end development | Back-end development, unlike front-end development, which deals with the interactive part of the web... | 0 | 2024-06-29T12:48:39 | https://dev.to/ans222/a-beginners-introduction-to-back-end-development-1a84 | Back-end development, unlike front-end development, which deals with the interactive part of the web application, is concerned with building and maintaining the data storage and security mechanisms of the web application. Back-end technologies like Node, Django and Laravel are used to design the back-end aspect in addition to... | ans222 | |

1,905,626 | Solving Complex Business Logic for User Registration and Checkout in C# .NET | Introduction Hello, dev.to community! 👋 I'm excited to share my first blog post with you.... | 0 | 2024-06-29T13:06:57 | https://dev.to/ebeesule/solving-complex-business-logic-for-user-registration-and-checkout-in-c-net-36d3 | csharp, dotnet, backend, ecommerce | ## Introduction

Hello, dev.to community! 👋

I'm excited to share my first blog post with you. To be honest, I was tasked with creating a blog as part of the requirements for the [HNG Internship](https://hng.tech/internship) program I joined recently.

> HNG Internship is a fast-paced bootcamp for learning digi... | ebeesule |

1,905,625 | Improving State Management in React: Transitioning from ContextAPI to Recoil | When managing state in React applications, Recoil and the Context API both help share data across... | 0 | 2024-06-29T13:05:58 | https://dev.to/abinash4567/improving-state-management-in-react-transitioning-from-contextapi-to-recoil-4ghb | react, webdev, typescript, tutorial | When managing state in React applications, **Recoil** and the **Context API** both help share data across components, but they handle performance and scalability differently.

With the Context API, every time the context value changes, **all components using that context re-render**. This can lead to performance issues... | abinash4567 |

1,905,624 | Quantum Advantage The Dawn of a New Computing Era | Explore the groundbreaking concept of quantum advantage and uncover its transformative impact on the future of computing and technology. | 0 | 2024-06-29T13:02:02 | https://www.elontusk.org/blog/quantum_advantage_the_dawn_of_a_new_computing_era | quantumcomputing, technology, innovation | # Quantum Advantage: The Dawn of a New Computing Era

Imagine a world where the most complex problems are solved in seconds, where traditional encryption methods become obsolete, and where new frontiers in science and technology are unlocked at warp speed. This is not the plot of a science fiction movie; it's the ... | quantumcybersolution |

1,905,623 | Top 10 Essential Tools Every Developer Must Wield | Table of Contents: Introduction: Unlocking the Power of the Right Tools Visual Studio Code: The... | 0 | 2024-06-29T13:00:49 | https://dev.to/jinesh_vora_ab4d7886e6a8d/top-10-essential-tools-every-developer-must-wield-o4k | webdev, programming, figma, react |

Table of Contents:

1. Introduction: Unlocking the Power of the Right Tools

2. Visual Studio Code: The Versatile Code Editor

3. Git and GitHub: Version Control and Collaboration

4. Chrome DevTools: Debugging and Optimization

5. Postman: Streamlining API Development

6. Sass/SCSS: Supercharging Your CSS

7. React.js: Bui... | jinesh_vora_ab4d7886e6a8d |

1,905,621 | Exploring Frontend Technologies: Elm vs. Svelte | I recently joined the HNG internship, a fast-paced online bootcamp for coders from various... | 0 | 2024-06-29T12:57:56 | https://dev.to/0mobolaji/exploring-frontend-technologies-elm-vs-svelte-4hp0 | elm, svelte, webdev, hng | I recently joined the HNG internship, a fast-paced online bootcamp for coders from various backgrounds including frontend, backend, data analysis, and product design. They also have a marketplace for hiring top talent; learn more [here](https://hng.tech/hire). Our first task was to write a technical article comparing ... | 0mobolaji |

1,905,619 | modularcleanroomindia | visit-modularcleanroomindia.com | 0 | 2024-06-29T12:55:36 | https://dev.to/lokesh_160832b700c2b49467/modularcleanroomindia-4iae | manufacturing, cleanroom, airshower, india |

visit-[modularcleanroomindia.com](modularcleanroomindia.com)  | lokesh_160832b700c2b49467 |

1,905,617 | Mastering Caching Algorithms in Django Restful | 1. Introduction Caching is an essential technique in web development for improving the... | 0 | 2024-06-29T12:52:31 | https://dev.to/sav4ner/mastering-caching-algorithms-in-django-restful-58hl | django, cache, api, tutorial | ## 1. Introduction

Caching is an essential technique in web development for improving the performance and speed of applications. In Django restful, understanding and implementing caching algorithms is crucial for optimizing the efficiency of your API.

From simple caching strategies to more advanced techniques, master... | sav4ner |

1,905,615 | Rapid Innovation: Leading the Way in AI and Blockchain Consulting | Adopting cutting-edge technologies is necessary to keep ahead of the curve in the fast-paced... | 27,673 | 2024-06-29T12:50:51 | https://dev.to/rapidinnovation/rapid-innovation-leading-the-way-in-ai-and-blockchain-consulting-2h2j | Adopting cutting-edge technologies is necessary to keep ahead of the curve in the fast-paced corporate environment of today. Leading this movement is Rapid Innovation, a startup that offers organizations cutting-edge AI and blockchain consulting services to boost productivity, simplify processes, and open up new develo... | rapidinnovation | |

1,901,748 | CodeBehind 2.8 Released, Cache the Pages and the Controller | What is CodeBehind? CodeBehind is a back-end framework on .NET Core, the first version of... | 0 | 2024-06-29T12:50:14 | https://dev.to/elanatframework/codebehind-28-released-cache-the-pages-and-the-controller-j6h | news, dotnet, backend, webdev | ## What is CodeBehind?

[CodeBehind](https://github.com/elanatframework/Code_behind) is a back-end framework on .NET Core, the first version of which was released in 2023. CodeBehind is a competitor to the default back-end frameworks in ASP.NET Core (ASP.NET Core MVC and Razor Pages). CodeBehind inherits all the benefits... | elanatframework |

1,905,614 | Vue vs React?? | Hi guys 😇, today I will be speaking on the comparisons between React.js and Vue.js. This would cover... | 0 | 2024-06-29T12:48:46 | https://dev.to/enielect/vue-vs-react-199g | javascript, programming, react | Hi guys 😇, today I will be speaking on the comparisons between React.js and Vue.js. This would cover the advantages of one over the other, ease of use, reusability, and speed. I shall split this into sections to keep everything clear and concise.

**React.js** uses a syntax called JSX, which stands for JavaScript XML. ... | enielect |

1,905,612 | Mysteries of the Cosmos Neutron Stars and Pulsars Unveiled | Dive into the latest discoveries regarding neutron stars and pulsars, unveiling their importance in the grand tapestry of extreme physics. | 0 | 2024-06-29T12:46:04 | https://www.elontusk.org/blog/mysteries_of_the_cosmos_neutron_stars_and_pulsars_unveiled | neutronstars, pulsars, astrophysics | # Mysteries of the Cosmos: Neutron Stars and Pulsars Unveiled

Astrophysics has always been the playground of extremes. Among the celestial marvels that continually intrigue scientists are neutron stars and their spectacular cousins, pulsars. These cosmic enigmas represent the frontier of knowledge in extreme physics. ... | quantumcybersolution |

1,905,611 | Gacor Slot Hari Ini: The Thrill of Online Slot Machines | The excitement of playing Slot Gacor Hari Ini lies in its uncertainty and the potential for big winnings.... | 0 | 2024-06-29T12:44:36 | https://dev.to/themediter54/gacor-slot-hari-ini-sensasi-mesin-slot-online-inc | The excitement of playing Slot Gacor Hari Ini lies in its uncertainty and the potential for big winnings. Players are drawn to these slots not only for their entertainment value but also for the chance of hitting a jackpot.

Strategies for Playing Slot Gacor Hari Ini

Although slot machines are essentially games of chance... | themediter54 | |

1,905,610 | React vs. Vanilla JavaScript: A Comparative Analysis | Frontend development is an essential part of creating engaging and interactive web applications. In... | 0 | 2024-06-29T12:44:22 | https://dev.to/kaludavid/react-vs-vanilla-javascript-a-comparative-analysis-5c2g | react, webdev, javascript, hng | Frontend development is an essential part of creating engaging and interactive web applications. In this article, I'll be comparing React, a popular JavaScript library for building user interfaces, and Vanilla JavaScript, the core language itself without any frameworks or libraries. We'll explore the differences betwee... | kaludavid |

1,905,609 | Google Ads VS Meta Ads | In the ever-evolving digital landscape, choosing the right advertising platform for your business or... | 0 | 2024-06-29T12:40:33 | https://dev.to/adtechadventures/google-ads-vs-meta-ads-53n9 | ppc, googleads, paidads, digitalmarketing | In the ever-evolving digital landscape, choosing the right advertising platform for your business or client can feel like navigating through a maze. With Meta Ads (formerly known as Facebook Ads) and Google Ads at the forefront, it’s crucial to understand the nuts and bolts of each to make an informed decision that ali... | adtechadventures |

1,905,496 | How I Tackled a Challenging Backend Problem | My name is Toluwani, and I'll share a recent difficult backend problem I solved 2 months ago. As a... | 0 | 2024-06-29T12:14:44 | https://dev.to/tolusky/how-i-tackled-a-challenging-backend-problem-26be | My name is Toluwani, and I'll share a recent difficult backend problem I solved 2 months ago. As a junior backend developer, I was given a task to build an API for a Medical Appointment Application.

The application aims to facilitate appointment bookings between Patients and Doctors. A doctor can only have one appoin... | tolusky | |

1,905,607 | Best solicitors in Brisbane | Find the best solicitors and lawyers in Brisbane, Australia. Our top lawyers offer expert legal... | 0 | 2024-06-29T12:35:56 | https://dev.to/lawyerbrisbane/best-solicitors-in-brisbane-30dj | Find the best solicitors and lawyers in Brisbane, Australia. Our top lawyers offer expert legal services and representation to meet all your legal needs in Brisbane. Contact us today

Visit https://lawyersinbrisbane.com.au | lawyerbrisbane | |

1,905,580 | Databricks - Variant Type Analysis | The VARIANT data type is a recent introduction in Databricks (available in Databricks Runtime 15.3... | 0 | 2024-06-29T12:34:57 | https://dev.to/dadak5/databricks-variant-type-analysis-1bh1 | databricks, spark, bigdata, datalake | The VARIANT data type is a recent introduction in Databricks **(available in Databricks Runtime 15.3 and above)** designed specifically for handling semi-structured data. It offers an efficient and flexible way to store and process this kind of data, which often has a dynamic or evolving schema.

Here's a quick rundow... | dadak5 |

1,877,995 | 🦊 GitLab: A Python Script Displaying Latest Pipelines in a Group's Projects | Initial thoughts 1. Considered alternate solutions GitLab's operations dashboard GitLab CI... | 0 | 2024-06-29T12:33:54 | https://dev.to/zenika/gitlab-a-python-script-displaying-latest-pipelines-in-groups-projects-5b5a | gitlab, devops, python, productivity | ---

title: "\U0001F98A GitLab: A Python Script Displaying Latest Pipelines in a Group's Projects"

tags:

- gitlab

- devops

- python

- productivity

license: public-domain

cover_image: 'https://raw.githubusercontent.com/bcouetil/articles/main/images/gitlab/cicd/console-group-pipelineV2.jpg'

published: true

id: 187... | bcouetil |

1,905,604 | Top DevOps Trends to Watch in 2024 | Top DevOps Trends to Watch in 2024 DevOps continues to evolve, driven by the need for... | 0 | 2024-06-29T12:32:14 | https://dev.to/matin_mollapur/top-devops-trends-to-watch-in-2024-3lhc | webdev, javascript, programming, devops | ### Top DevOps Trends to Watch in 2024

DevOps continues to evolve, driven by the need for faster, more reliable, and scalable software development and deployment processes. As we look ahead to 2024, several trends are emerging that promise to shape the future of DevOps. Here are the top trends to watch out for:

#### ... | matin_mollapur |

1,905,534 | TailwindCSS vs Bootstrap | We all know the importance of styling in our everyday (frontend) lives. In the early days of web... | 0 | 2024-06-29T12:14:13 | https://dev.to/vectorgits/tailwindcss-vs-bootstrap-9de | webdev, tailwindcss, bootstrap | We all know the importance of styling in our everyday (frontend) lives. In the early days of web development, CSS took care of all that, and in a pretty straightforward way. Over time, technological advancements brought new technologies: libraries and frameworks have been introduced, and they're here to s... | vectorgits |

1,905,603 | Mastering the Guitar A Journey from Basic Chords to Fingerpicking Bliss | Embark on an exciting adventure to conquer the guitar, exploring essential chords, rhythmic strumming, and intricate fingerpicking techniques. A series crafted for curious beginners and seasoned players alike! | 0 | 2024-06-29T12:30:07 | https://www.elontusk.org/blog/mastering_the_guitar_a_journey_from_basic_chords_to_fingerpicking_bliss | guitar, music, tutorial | # Mastering the Guitar: A Journey from Basic Chords to Fingerpicking Bliss

Welcome to the ultimate guitar tutorial series! Whether you're a beginner itching to start your musical journey or someone looking to refine your skills, this series is designed to help you master the art of guitar playing. Grab your guita... | quantumcybersolution |

1,905,602 | Backend has never been this interesting... | I have always thought about how amazing backend programming is and how interesting it is to make... | 0 | 2024-06-29T12:29:32 | https://dev.to/strict-arrival/backend-has-never-been-this-interesting-2j16 | webdev, hng, hnginternship, python | I have always thought about how amazing backend programming is and how interesting it is to make things work in the most dynamic and amazing ways.

I always find myself trying to solve the puzzles of how things were put together and how I can replicate my thoughts with programs, and backend development has been the an... | strict-arrival |

1,905,601 | Understanding Mobile Development Platforms and Architectures | As a mobile developer, I'm excited to share my insights on the top platforms and architectures in our... | 0 | 2024-06-29T12:28:45 | https://dev.to/chinua/understanding-mobile-development-platforms-and-architectures-ei4 |

As a mobile developer, I'm excited to share my insights on the top platforms and architectures in our field. Let's dive in!

Mobile Development Platforms

1. Android - The Customizable Giant

Android offers a vast user base, extreme customizability, and open-source benefits. However, it comes with fragmentation, secur... | chinua | |

1,905,598 | React or Vuejs: Which to Use? | Hi there, it’s been a loooong while!!!🤩 My first article on Dev.to was published some years ago, and... | 0 | 2024-06-29T12:27:31 | https://dev.to/edememediong1/react-or-vuejs-which-to-use-74d | webdev, javascript, beginners, react | Hi there, it’s been a loooong while!!!🤩

My first article on Dev.to was published some years ago, and guess who’s back! 💪

It’s already obvious that this is my second article here, and in this piece, I will be attempting a Comparative Case Study of two Popular Frontend Technologies which are Reactjs and Vuejs.

This... | edememediong1 |

1,905,597 | Seeking Advice: How Can I Elevate My Web Development Career? | Hi Community! 👋 I'm Andrea, a self-taught Full Stack Web Developer originally from Italy but... | 0 | 2024-06-29T12:27:16 | https://dev.to/andrearaccagni/seeking-advice-how-can-i-elevate-my-web-development-career-5eo0 | fullstack, careerdevelopment, webdev, beginners | Hi Community! 👋

I'm Andrea, a self-taught Full Stack Web Developer originally from Italy but currently living in Spain. I began my journey into computer science at the age of 40 and have been passionate about web development ever since. You can learn more about me and my work at https://www.andrearaccagni.xyz.

Not su... | andrearaccagni |

1,905,596 | Mastering Django Custom Management Commands: A Comprehensive Guide | Introduction Django, the high-level Python web framework, comes with a powerful feature... | 0 | 2024-06-29T12:25:48 | https://dev.to/rupesh_mishra/mastering-django-custom-management-commands-a-comprehensive-guide-58gc | webdev, tutorial, django, python |

## Introduction

Django, the high-level Python web framework, comes with a powerful feature known as management commands. While Django provides several built-in commands like `runserver`, `makemigrations`, and `migrate`, did you know you can create your own custom commands? In this guide, we'll dive deep into the worl... | rupesh_mishra |

1,905,422 | My Backend World: Tackling my first NestJS project | Hello everyone, I am Kahuna, and this is my first ever technical article. I am a graduate of... | 0 | 2024-06-29T12:20:14 | https://dev.to/kahuna04/my-backend-world-tackling-my-first-nestjs-project-16fi | node, nestjs, beginners, backenddevelopment | Hello everyone, I am Kahuna, and this is my first ever technical article. I am a graduate of Mechanical Engineering, but my enthusiasm for technology led me to dive into the world of backend development.

Recently, I had the opportunity to be part of a team for a project that involved using a lovely framework I had nev... | kahuna04 |

1,905,579 | SOLID Design Principles | What are Design Principles Design principles are fundamental guidelines that software developers and... | 0 | 2024-06-29T12:17:03 | https://dev.to/vinaykumar0339/solid-design-principles-53il | solidprinciples, designprinciples | **What are Design Principles**

1. Design principles are fundamental guidelines that software developers and engineers follow to create robust, maintainable, and scalable software systems.

2. These principles provide a foundation for making decisions throughout the software development lifecycle, ensuring that the code ... | vinaykumar0339 |

1,905,592 | Elevate Your Style with Stussy Clothing: Iconic Designs for Every Season | Elevate Your Style with Stussy Clothing: Iconic Designs for Every Season The Stussy clothing has... | 0 | 2024-06-29T12:15:26 | https://dev.to/digital_dreamers_a29e3f02/elevate-your-style-with-stussy-clothing-iconic-designs-for-every-season-20h4 | Elevate Your Style with Stussy Clothing: Iconic Designs for Every Season

The Stussy clothing has become an iconic staple in streetwear fashion, embodying a perfect blend of style, comfort, and cultural relevance. Founded in the early 1980s by Shawn Stussy, the Stussy brand began with humble roots as a surfboard company... | digital_dreamers_a29e3f02 | |

1,905,591 | Corteiz Tracksuit: It’s a Lifestyle Choice | Corteiz Tracksuit: It’s a Lifestyle Choice When it comes to blending style with... | 0 | 2024-06-29T12:14:58 | https://dev.to/digital_dreamers_a29e3f02/corteiz-tracksuit-its-a-lifestyle-choice-2cjo | Corteiz Tracksuit: It’s a Lifestyle Choice

When it comes to blending style with comfort, Corteiz clothing is making waves in the fashion industry. Among their versatile offerings, the Corteiz tracksuit stands out as a favorite for fashion enthusiasts and athletes alike. Known for its impeccable design an... | digital_dreamers_a29e3f02 | |

1,905,590 | Mastering Project Management with Trello A Comprehensive Guide | Learn how to use Trello for efficient project management, including setting up boards, lists, and cards to streamline your workflow. | 0 | 2024-06-29T12:14:09 | https://www.elontusk.org/blog/mastering_project_management_with_trello_a_comprehensive_guide | projectmanagement, trello, productivity | # Mastering Project Management with Trello: A Comprehensive Guide

In today’s fast-paced world, effective project management is crucial to ensuring the success of your endeavors, whether you're running a small business, managing a team, or coordinating personal projects. Trello, with its intuitive interface and po... | quantumcybersolution |

1,905,584 | #1 Single Responsibility Principle ['S' in SOLID] | SRP - Single Responsibility Principle The Single Responsibility Principle is the first principle in... | 0 | 2024-06-29T12:14:05 | https://dev.to/vinaykumar0339/1-single-responsibility-principle-s-in-solid-5fn9 | singleresponsibility, solidprinciples, designprinciples | **SRP - Single Responsibility Principle**

The Single Responsibility Principle is the first of the SOLID design principles.

1. A class should have only one reason to change.

2. Each class should focus on a single job or responsibility.

**Violating SRP:**

```swift

class BankAccount {

var accountNumber: St... | vinaykumar0339 |

1,905,588 | Blepharoplasty cost in punjab | Blepharoplasty Cost in Punjab: Affordable Eyelid Rejuvenation Introduction Blepharoplasty,... | 0 | 2024-06-29T12:14:01 | https://dev.to/lion_gamingtxmonkey_2/blepharoplasty-cost-in-punjab-8nl | Blepharoplasty Cost in Punjab: Affordable Eyelid Rejuvenation

Introduction

Blepharoplasty, also known as eyelid surgery, is a popular cosmetic procedure designed to enhance the appearance of the eyes by removin... | lion_gamingtxmonkey_2 | |

1,905,587 | We Are Rated As The Best Platform To Buy Google Reviews | Buy Google Reviews Buy Google Reviews For Your Business Account Buying Google reviews helps... | 0 | 2024-06-29T12:13:45 | https://dev.to/john_sebastian/we-are-rated-as-the-best-platform-to-buy-google-reviews-21jm | webdev, javascript, beginners | Buy Google Reviews

Buy Google Reviews For Your Business Account

[Buying Google reviews](https://mangocityit.com/service/buy-google-reviews/) helps establish trust and credibility. We offer 100% real, non-drop Google reviews from different locations as per your brand presence and that’s why we are rated as the best pr... | john_sebastian |

1,905,586 | Overcoming a Challenging 404 Error in Django | The Problem: A Persistent 404 Error The issue arose when I attempted to access a URL in my Django... | 0 | 2024-06-29T12:13:11 | https://dev.to/chinua/overcoming-a-challenging-404-error-in-django-2p5k |

The Problem: A Persistent 404 Error

The issue arose when I attempted to access a URL in my Django project and was met with a "Page not found (404)" error. The URL I tried to access was http://127.0.0.1:8000/product/cart, but Django couldn't match it to any URL pattern defined in my urls.py file.

Here’s a snippet of ... | chinua | |

1,905,581 | An Introduction to Frontend Technologies: HTML, CSS and React JS | Intro Frontend development is the practice of creating the user interface (UI) of a... | 0 | 2024-06-29T12:11:58 | https://dev.to/theflash2024/an-introduction-to-frontend-technologies-html-css-and-react-js-3pja | frontend, html, css, react | ## Intro

Frontend development is the practice of creating the user interface (UI) of a website / application. Three core technologies are fundamental to frontend development: HTML, CSS, and JavaScript. This article will introduce you to these technologies, focusing on React JS as a popular JavaScript library.

## HTM... | theflash2024 |

1,905,583 | Comparing Vue.js and React: Front-end Technologies | Introduction Front-end development is a dynamic field. It is evolving with new frameworks... | 0 | 2024-06-29T12:01:02 | https://dev.to/kennethdavid760/comparing-vuejs-and-react-front-end-technologies-3o5n | vue, and, react, comparison | ## Introduction

Front-end development is a dynamic field. It is evolving with new frameworks and tools. Among the myriad of options available, Vue.js and React stand out as two of the most popular frameworks. Both have their unique features, benefits, and communities. In this article, we'll compare Vue.js and React. We... | kennethdavid760 |

1,905,582 | Mastering JavaScript Your Ultimate Journey Begins Here | Kickstart your coding adventure with JavaScript! This comprehensive tutorial series will guide you through everything from basic syntax to advanced DOM manipulation. | 0 | 2024-06-29T11:58:12 | https://www.elontusk.org/blog/mastering_javascript_your_ultimate_journey_begins_here | javascript, tutorial, coding | # Mastering JavaScript: Your Ultimate Journey Begins Here!

Welcome to the world of JavaScript! Whether you're a budding coder or a seasoned developer looking to brush up on your skills, this series is designed to take you on an exciting journey through the basics of JavaScript, all the way to manipulating the Doc... | quantumcybersolution |

1,596,373 | Setting Up a Modem in Bridge Mode for a UDM router: A Step-by-Step Guide | Learn how to configure your modem in bridge mode to use it with a Unifi Dream Machine, allowing the UDM to handle the PPPoE connection | 0 | 2024-06-29T11:51:30 | https://dev.to/cloudx/setting-up-a-modem-in-bridge-mode-for-a-udm-router-a-step-by-step-guide-2c09 | networking, network, unifi, modem | ---

title: 'Setting Up a Modem in Bridge Mode for a UDM router: A Step-by-Step Guide'

published: true

description: 'Learn how to configure your modem in bridge mode to use it with a Unifi Dream Machine, allowing the UDM to handle the PPPoE connection'

tags: 'networking, network, unifi, modem'

cover_image: 'https://raw.... | navarroaxel |

1,905,578 | Case Study Template For Designing A Product | Here is an example of creating a case study for an online application selling fresh vegetables to consumers... | 0 | 2024-06-29T11:51:29 | https://dev.to/iam_divs/case-study-template-for-designing-a-product-4nn4 | webdev, javascript, design, ui | Here is an example of creating a case study for an online application selling fresh vegetables to consumers, before crafting a design for it:

Creating a case study on an online vegetable and fruit selling application sounds l... | iam_divs |

1,905,577 | dev tools inspect elements | Is there any way that, when I click Inspect Element, I am redirected to the code editor at that... | 0 | 2024-06-29T11:49:56 | https://dev.to/numanijaz_47/dev-tools-inpect-elements-4jg5 | webdev, chrome, vscode, debugging | Is there any way that, when I click Inspect Element, I am redirected to the code editor at that line of code instead of the DevTools pane? (Need quick answers.) | numanijaz_47 |

1,905,576 | The Future of Data Analytics with AI: Transformations and Opportunities | Data analytics is evolving rapidly, and the integration of Artificial Intelligence (AI) is at the... | 0 | 2024-06-29T11:49:23 | https://dev.to/sejal_4218d5cae5da24da188/the-future-of-data-analytics-with-ai-transformations-and-opportunities-1hel | dataanalytics, ai | [Data analytics](https://www.pangaeax.com/browse-talent/freelancer-data-analyst/) is evolving rapidly, and the integration of Artificial Intelligence (AI) is at the forefront of this transformation. As businesses become increasingly data-driven, AI-powered analytics tools are unlocking new potentials and reshaping the ... | sejal_4218d5cae5da24da188 |

1,905,575 | React vs. Vue.js: A Brief Comparison of Frontend Technologies | React: The Powerhouse Library React, developed by Facebook, is widely used for building user... | 0 | 2024-06-29T11:48:45 | https://dev.to/oluwakoredee/react-vs-vuejs-a-brief-comparison-of-frontend-technologies-2d69 | react | **React: The Powerhouse Library**

React, developed by Facebook, is widely used for building user interfaces. It’s known for its component-based architecture and virtual DOM.

**Vue.js: The Progressive Framework**

Vue.js, created by Evan You, is designed to be incrementally adoptable, making it flexible for different use... | oluwakoredee |

1,905,574 | Long Term Memory AI Chatbot | Our AI workflow technology allows us to deliver AI chatbots with long term memory. This allows you to... | 0 | 2024-06-29T11:47:56 | https://ainiro.io/blog/long-term-memory-ai-chatbot | ai, machinelearning, productivity, lowcode | Our [AI workflow technology](https://ainiro.io/ai-workflows) allows us to deliver AI chatbots with long term memory. This allows you to _"train"_ your AI chatbot during conversations, and such modify your chatbot's future responses as a consequence of you correcting it during interactions.

This is one of the core requ... | polterguy |

1,905,571 | Building an AI-Powered Ad Copy Generator with GeminiAI and DronaHQ (Low-Code) | Artificial intelligence (AI) is at the forefront of technological innovation, especially in the realm... | 0 | 2024-06-29T11:45:19 | https://dev.to/shib_itsme/building-an-ai-powered-ad-copy-generator-with-dronahq-and-geminiai-2g8g | ai, restapi, tutorial, lowcode | Artificial intelligence (AI) is at the forefront of technological innovation, especially in the realm of generative AI. Large language models (LLMs) like ChatGPT, with over 100 million active users weekly, have become household names. However, despite the widespread recognition of AI's potential, many teams struggle to... | shib_itsme |

1,905,570 | Mastering Email Marketing A Comprehensive Mailchimp Tutorial | Dive into the world of email marketing with our step-by-step guide on creating successful campaigns using Mailchimp. From list building to automation, we cover everything you need to know. | 0 | 2024-06-29T11:42:14 | https://www.elontusk.org/blog/mastering_email_marketing_a_comprehensive_mailchimp_tutorial | emailmarketing, mailchimp, automation, digitalmarketing | # Mastering Email Marketing: A Comprehensive Mailchimp Tutorial

Email marketing remains one of the most effective ways to reach and engage with your audience. With a well-crafted campaign, you can nurture leads, drive sales, and build customer loyalty. Today, we'll dive into the nuts and bolts of creating a succe... | quantumcybersolution |

1,905,569 | Discover the Best Salons in Mumbai | Mumbai, the bustling metropolis of dreams, is not just famous for Bollywood and its vibrant culture... | 0 | 2024-06-29T11:41:49 | https://dev.to/abitamim_patel_7a906eb289/discover-the-best-salons-in-mumbai-o6 | salon, in, mumbai, bestsaloninmumbai | Mumbai, the bustling metropolis of dreams, is not just famous for Bollywood and its vibrant culture but also for its thriving beauty industry. From luxurious salons offering premium services to quaint boutiques providing personalized care, Mumbai has something for everyone. Whether you’re a resident or a visitor, knowi... | abitamim_patel_7a906eb289 |

1,905,568 | Git you can learn on the fly | Git is a tool that you can learn on the fly, so there's no need to dedicate extensive time to... | 0 | 2024-06-29T11:39:44 | https://dev.to/mibii/git-you-can-learn-on-the-fly-366b | git, github | Git is a tool that you can learn on the fly, so there's no need to dedicate extensive time to mastering it. For anyone already familiar with using the Command Line Interface (CLI), I'd say a day is more than enough to get a good grasp of the basics. While a Windows version of Git is available, the CLI in many cases,... | mibii |

1,905,567 | Elevate Your Ride: A Comprehensive Guide to Car Dashboard Items | The dashboard of a car is more than just a control panel; it's a canvas for personalization, a space... | 0 | 2024-06-29T11:38:10 | https://dev.to/the_artarium/elevate-your-ride-a-comprehensive-guide-to-car-dashboard-items-3g1n | webdev, beginners, shopping | The dashboard of a car is more than just a control panel; it's a canvas for personalization, a space for practical gadgets, and a reflection of the driver's personality and needs. From functional accessories that enhance safety and convenience to decorative items that add a touch of style and culture, car dashboard ite... | the_artarium |
