id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,921,709 | 获客系统霸屏工具,获客行销助手,获客行销机器人 | 获客系统霸屏工具,获客行销助手,获客行销机器人 了解相关软件请登录 http://www.vst.tw... | 0 | 2024-07-12T22:00:00 | https://dev.to/dbnp_gejy_123512246edaf8c/huo-ke-xi-tong-ba-ping-gong-ju-huo-ke-xing-xiao-zhu-shou-huo-ke-xing-xiao-ji-qi-ren-146l |

获客系统霸屏工具,获客行销助手,获客行销机器人

了解相关软件请登录 http://www.vst.tw

当谈论到现代市场营销策略时,获客系统霸屏工具显然是一个备受关注的话题。这些工具的出现彰显了数字营销领域的快速发展和创新。在今天的竞争激烈的市场环境中,吸引和保留客户已经成为企业成功的关键因素之一。而获客系统霸屏工具正是为了在这一竞争中脱颖而出而设计的。

获客系统霸屏工具,定义与作用

获客系统霸屏工具,简称“霸屏工具”,是指一类能够帮助企业在特定的数字平台上大规模提升品牌曝光和客户引流效果的软件或服务。其核心功能包括但不限于,

广告投放优化,通过精准的广告投放和优化算法,确保在目标受众中最大化曝光率和点击... | dbnp_gejy_123512246edaf8c | |

1,921,710 | How to deploy refactored mainframe applications on AWS | Note: ✋ This post was originally published on my blog wiki-cloud.co ... | 0 | 2024-07-12T22:20:38 | https://wiki-cloud.co/en/2024/07/how-to-deploy-refactored-mainframe-applications-aws/ | aws, cloud, mainframe, application | >**Note:** ✋ This post was originally published on my blog [wiki-cloud.co](https://wiki-cloud.co/en/2024/07/how-to-deploy-refactored-mainframe-applications-aws/)

## **Introduction**

Application modernization has become a priority for many companies seeking to improve their operational efficiency, their ability to qui... | johnbulla |

1,921,711 | 电报(TG)私信,TG关键词霸屏机器人,TG过滤助手 | 电报(TG)私信,TG关键词霸屏机器人,TG过滤助手 了解相关软件请登录 http://www.vst.tw... | 0 | 2024-07-12T22:00:41 | https://dev.to/mrzg_svbp_29da68d07ec63ed/dian-bao-tgsi-xin-tgguan-jian-ci-ba-ping-ji-qi-ren-tgguo-lu-zhu-shou-99o |

电报(TG)私信,TG关键词霸屏机器人,TG过滤助手

了解相关软件请登录 http://www.vst.tw

在当今数字通信时代,私密性和安全性成为了许多人关注的焦点。在诸多通讯工具中,电报(Telegram)凭借其卓越的加密技术和多样的功能而备受青睐。本文将探讨电报私信的特点及其在现代通讯中的地位。

电报作为一款即时通讯应用,以其端到端加密的私密性而闻名。这意味着消息在发送者和接收者之间直接加密传输,任何第三方,包括电报自身,都无法解读消息内容。这种加密机制确保了用户的隐私和数据安全,特别是在涉及敏感信息传递时尤为重要。

除了安全性,电报还提供了许多其他吸引人的功能。其中包括多设备同步,使得用户可以在多个... | mrzg_svbp_29da68d07ec63ed | |

1,921,712 | Importance of Semantic HTML in SEO | https://eu.docworkspace.com/d/sIEzc1t_UAdjMxrQG | 0 | 2024-07-12T22:08:33 | https://dev.to/lilodev/importance-of-semantic-html-in-seo-3k9k | https://eu.docworkspace.com/d/sIEzc1t_UAdjMxrQG | lilodev | |

1,921,713 | 海外过滤机器人,海外好友群发 | 海外粉丝采集软件,海外过滤机器人,海外好友群发 了解相关软件请登录 http://www.vst.tw... | 0 | 2024-07-12T22:13:03 | https://dev.to/srft_hgpv_4a58e454b4ac234/hai-wai-guo-lu-ji-qi-ren-hai-wai-hao-you-qun-fa-1aon |

海外粉丝采集软件,海外过滤机器人,海外好友群发

了解相关软件请登录 http://www.vst.tw

海外粉丝采集软件,数字时代的营销利器

在全球化日益加深的今天,海外粉丝采集软件成为了企业和个人拓展国际市场的重要工具。这类软件通过智能算法和数据分析,帮助用户精准定位并采集海外社交媒体平台上的潜在粉丝群体。

其功能强大,能够根据用户设定的关键词、地理位置、兴趣标签等条件,快速筛选出目标用户,为品牌推广、内容营销提供有力的数据支持。同时,这些软件还具备自动化操作功能,大大节省了人工筛选的时间成本。

海外粉丝采集软件广泛应用于跨境电商、国际贸易、文化旅游等多个领域。它为企业带来了更多的国际曝光机会,也为个人创作者... | srft_hgpv_4a58e454b4ac234 | |

1,921,714 | 谷歌引流关键词霸屏工具,谷歌自动注册,谷歌群发 | 谷歌引流关键词霸屏工具,谷歌自动注册,谷歌群发 了解相关软件请登录 http://www.vst.tw... | 0 | 2024-07-12T22:14:06 | https://dev.to/axrk_eqal_afa7a42b252f0b5/gu-ge-yin-liu-guan-jian-ci-ba-ping-gong-ju-gu-ge-zi-dong-zhu-ce-gu-ge-qun-fa-3759 |

谷歌引流关键词霸屏工具,谷歌自动注册,谷歌群发

了解相关软件请登录 http://www.vst.tw

谷歌引流关键词霸屏工具,提升网站流量的利器

在当今数字营销的激烈竞争中,网站流量的增加对于企业和个人网站的成功至关重要。谷歌作为全球最大的搜索引擎,其搜索结果页面的排名对于网站的曝光和访问量有着决定性的影响。为了在谷歌搜索结果中获得更多的曝光和点击,许多营销人员和网站所有者依赖于关键词霸屏工具。

什么是谷歌引流关键词霸屏工具?

谷歌引流关键词霸屏工具是一种通过优化关键词选择和网站内容来提高在谷歌搜索结果中排名的工具。它的核心功能是帮助网站在谷歌的搜索结果页面(SERP)中占据更多的位置,从而增加网站的曝... | axrk_eqal_afa7a42b252f0b5 | |

1,921,715 | Mastering @Observable: Introduction to Swift Observation | 1. Introduction State management has always been a cornerstone of application development.... | 0 | 2024-07-12T22:19:19 | https://dev.to/andy_kolean/mastering-observable-introduction-to-swift-observation-268 | swift, ios, swiftui, observation | ## 1. Introduction

State management has always been a cornerstone of application development. In Swift, managing state has evolved significantly over the years. Initially, developers relied on manual state management techniques, which often led to complex and error-prone codebases. As applications grew in size and com... | andy_kolean |

1,921,738 | BAND群发软件,BAND商海客营销,BAND采集群 | BAND群发软件,BAND商海客营销,BAND采集群 了解相关软件请登录 http://www.vst.tw... | 0 | 2024-07-12T22:23:51 | https://dev.to/sibn_bqhe_61e9d037e1e0b0d/bandqun-fa-ruan-jian-bandshang-hai-ke-ying-xiao-bandcai-ji-qun-4074 |

BAND群发软件,BAND商海客营销,BAND采集群

了解相关软件请登录 http://www.vst.tw

BAND群发软件,一款强大的团队协作与群发助手

BAND是一款功能齐全的海外社交应用程序,专注于帮助用户高效管理团队、群组和社区。它提供基本的沟通工具外,还集成了丰富的管理功能,让合作更加顺畅。通过BAND,用户可以轻松邀请家人、朋友、同事等加入群组,享受便捷的聊天服务。

而群发器作为BAND软件中的一个重要工具,是微商人脉助手的必备之选。群发器支持多样化的群发功能,包括消息群发、图文群发、小程序群发等,让群发变得既高效又充满诚意。此外,群发器还具备分批群发功能,用户可以根据需要设置发送时间,实现更精准... | sibn_bqhe_61e9d037e1e0b0d | |

1,921,739 | 纸飞机加粉软件,纸飞机群推王,纸飞机行销软件 | 纸飞机加粉软件,纸飞机群推王,纸飞机行销软件 了解相关软件请登录 http://www.vst.tw... | 0 | 2024-07-12T22:26:00 | https://dev.to/pyor_nknu_8e06d411b7fe214/zhi-fei-ji-jia-fen-ruan-jian-zhi-fei-ji-qun-tui-wang-zhi-fei-ji-xing-xiao-ruan-jian-3e6d |

纸飞机加粉软件,纸飞机群推王,纸飞机行销软件

了解相关软件请登录 http://www.vst.tw

纸飞机加粉软件,作为社交媒体营销的辅助工具,近年来逐渐受到关注。该软件旨在帮助用户快速增加纸飞机(Telegram)平台上的粉丝数量,通过自动化操作实现账号的快速增长。

使用纸飞机加粉软件,用户只需简单设置,软件便能自动执行加粉任务,如自动关注、点赞、评论等,以吸引更多用户关注。然而,值得注意的是,过度依赖此类软件可能带来一定的风险,如账号被封禁、影响用户体验等。

用户评价方面,一些用户认为纸飞机加粉软件效果显著,能够快速提升账号曝光度;但也有用户反映,软件操作过于机械,难以精准定位目标用户,且可能导致账号被平... | pyor_nknu_8e06d411b7fe214 | |

1,921,740 | Tech Essentials Every CSE Student Should Know and Why They Matter | Computer Science and Engineering (CSE) is a rapidly evolving field, necessitating that students keep... | 0 | 2024-07-12T22:29:15 | https://dev.to/byteom/tech-essentials-every-cse-student-should-know-and-why-they-matter-9pe | webdev, developer, beginners | Computer Science and Engineering (CSE) is a rapidly evolving field, necessitating that students keep pace with the latest technologies and methodologies. Mastery of certain tech skills is not only beneficial but essential for a successful career in the industry. This article will explore the critical tech competencies ... | byteom |

1,921,741 | Cómo desplegar aplicaciones mainframe refactorizadas en AWS | Note: ✋ Este post se publicó originalmente en mi blog wiki-cloud.co Introducción La... | 0 | 2024-07-12T22:41:31 | https://wiki-cloud.co/es/2024/07/how-to-deploy-refactored-mainframe-applications-aws/ | aws, cloud, mainframe, modernization | >**Note:** ✋ This post was originally published on my blog [wiki-cloud.co](https://wiki-cloud.co/es/2024/07/how-to-deploy-refactored-mainframe-applications-aws/)

## **Introduction**

Application modernization has become a priority for many companies seeking to improve their operational efficiency, their capac... | johnbulla |

1,921,742 | Trending in Web Development in 2024 | Web development is a dynamic field that continually evolves with new technologies, frameworks, and... | 0 | 2024-07-12T22:36:54 | https://dev.to/byteom/trending-in-web-development-in-2024-5dj6 | webdev, beginners, tutorial |

Web development is a dynamic field that continually evolves with new technologies, frameworks, and best practices. As we step into 2024, several trends are shaping the future of web development. This article explores the most significant trends in web development for 2024 and provides resources for further explorati... | byteom |

1,921,743 | 纸飞机粉丝采集软件,纸飞机群发防封号工具,纸飞机商海客营销工具 | 纸飞机粉丝采集软件,纸飞机群发防封号工具,纸飞机商海客营销工具 了解相关软件请登录 http://www.vst.tw... | 0 | 2024-07-12T22:37:05 | https://dev.to/zcxm_ieav_98be56013d8d85b/zhi-fei-ji-fen-si-cai-ji-ruan-jian-zhi-fei-ji-qun-fa-fang-feng-hao-gong-ju-zhi-fei-ji-shang-hai-ke-ying-xiao-gong-ju-i5i |

纸飞机粉丝采集软件,纸飞机群发防封号工具,纸飞机商海客营销工具

了解相关软件请登录 http://www.vst.tw

探索纸飞机,揭秘纸飞机粉丝采集软件的魅力

在数字化时代,人们追寻兴趣爱好的方式变得更加多样化和便捷化。纸飞机,作为一种简单而有趣的儿童玩具,却在互联网上拥有了一席之地,成为了一种独特的迷恋和收藏对象。而纸飞机粉丝采集软件,则是这场奇妙兴趣的主要平台之一,它们如何满足人们对这种日常物品的热爱呢?

纸飞机的魅力

纸飞机,以其简单的制作方式和飞行的乐趣,从小就是许多人童年的记忆。飞行的瞬间,仿佛能够将人们带入另一个自由而欢快的世界。即使长大成人,很多人对纸飞机的热爱依然不减,甚至在数字化的今天... | zcxm_ieav_98be56013d8d85b | |

1,921,744 | 脸书拉群机器人,脸书群发工具 | 脸书自动改资料,脸书拉群机器人,脸书群发工具 了解相关软件请登录 http://www.vst.tw... | 0 | 2024-07-12T22:38:02 | https://dev.to/aktp_wmmh_09a1094223ac65f/lian-shu-la-qun-ji-qi-ren-lian-shu-qun-fa-gong-ju-358j |

脸书自动改资料,脸书拉群机器人,脸书群发工具

了解相关软件请登录 http://www.vst.tw

脸书自动改资料,科技发展中的伦理挑战

在信息科技飞速发展的今天,社交媒体平台如脸书在用户体验和个性化服务方面不断创新。然而,最近一种名为“脸书自动改资料”的功能引发了广泛关注和争议。

功能介绍

“脸书自动改资料”是一项使用人工智能技术的新功能,旨在根据用户的行为模式和社交互动,自动修改其个人资料和推荐内容,以更精确地反映用户的兴趣和偏好。例如,当用户频繁点赞或分享某类型的内容时,系统会自动调整其个人资料中显示的喜好标签,或者调整其新闻订阅推荐。

技术背景与实现

这一功能背后依赖于深度学习算法和大数据分... | aktp_wmmh_09a1094223ac65f | |

1,921,745 | Day 990 : Opportunity Knocks | liner notes: Professional : Today was not the most productive. Started off with responding to some... | 0 | 2024-07-12T22:39:22 | https://dev.to/dwane/day-990-opportunity-knocks-59h2 | hiphop, code, coding, lifelongdev | _liner notes_:

- Professional : Today was not the most productive. Started off with responding to some community questions. Chatted with a team member about a project we're working on. During my lunch break, I came across some pretty dope properties that just went on the market and spent quite a bit of time researching ... | dwane |

1,921,746 | Guide on creating a Virtual Network with Subnets in Microsoft Azure | Here’s a detailed guide for creating an Azure Virtual Network with Subnets using the address space... | 0 | 2024-07-13T12:11:14 | https://dev.to/ikay/guide-on-creating-a-virtual-network-with-subnets-in-microsoft-azure-44dl | virtualnetwork, azure, subnet | Here’s a detailed guide for creating an Azure Virtual Network with Subnets using the address space 10.1.0.0/16. Follow these steps in the Azure portal, with accompanying screenshots to assist you through the process:

**Step 1: Sign In to the Azure Portal**

A. **Open Your Web Browser**: Navigate to the Azure Portal.

B. ... | ikay |

1,921,747 | Descubre Tint Mint | Enter fullscreen mode Exit fullscreen mode | 0 | 2024-07-12T22:45:05 | https://dev.to/rodrigofernandez/descubre-tint-mint-5cil | ```

```

| rodrigofernandez | |

1,921,749 | 自动发帖,Youtube拉群助手,Youtube关键词霸屏工具 | YouTube自动发帖,Youtube拉群助手,Youtube关键词霸屏工具 了解相关软件请登录 http://www.vst.tw... | 0 | 2024-07-12T22:48:40 | https://dev.to/laeh_cswk_05e916fd962872e/zi-dong-fa-tie-youtubela-qun-zhu-shou-youtubeguan-jian-ci-ba-ping-gong-ju-4cbk |

YouTube自动发帖,Youtube拉群助手,Youtube关键词霸屏工具

了解相关软件请登录 http://www.vst.tw

随着社交媒体和在线内容平台的发展,YouTube作为全球最大的视频分享平台,其用户互动的方式也在不断创新。其中,自动发帖技术的出现引起了广泛关注和讨论。

自动发帖是指利用自动化工具或程序,在YouTube视频下方评论区自动发布内容。这些内容可以是预设的模板、链接、广告或者其他形式的信息。尽管自动发帖在某些情况下可能是为了方便管理和提高效率,然而,它也引发了一些质疑和争议。

首先,自动发帖技术可能被滥用,用于发布垃圾信息、广告或者不当内容。这些内容不仅影响了用户体验,还可能违反... | laeh_cswk_05e916fd962872e | |

1,921,752 | ¿Quieres aprender a programar?, y gratis? Aquí te dejo unos sitios web | roadmap.sh: ¿No sabes por dónde empezar? Roadmap.sh ofrece hojas de ruta detalladas para diferentes... | 0 | 2024-07-12T22:54:31 | https://dev.to/rodrigofernandez/quieres-aprender-a-programar-y-gratis-aqui-te-dejo-unos-sitios-web-fg0 | spanish | roadmap.sh: Not sure where to start? Roadmap.sh offers detailed roadmaps for different areas of development, such as front-end, back-end, and DevOps. It is ideal for those who need a structured guide to advance their careers.

w3schools.com: This site is perfect for learning to program... | rodrigofernandez |

1,921,761 | 特Twitter关键词霸屏工具,Twitter过滤工具,Twitter采集成员 | 推特Twitter关键词霸屏工具,Twitter过滤工具,Twitter采集成员 了解相关软件请登录 http://www.vst.tw... | 0 | 2024-07-12T23:01:01 | https://dev.to/lpsh_intf_0dd4c93b51e9a54/te-twitterguan-jian-ci-ba-ping-gong-ju-twitterguo-lu-gong-ju-twittercai-ji-cheng-yuan-7ek |

推特Twitter关键词霸屏工具,Twitter过滤工具,Twitter采集成员

了解相关软件请登录 http://www.vst.tw

推特(Twitter)关键词霸屏工具,扩展你的社交影响力

在当今社交媒体的浪潮中,推特(Twitter)作为全球最流行的微博客平台之一,每天有数以亿计的信息流动其上。对于个人用户和品牌来说,如何在这样一个信息爆炸的环境中脱颖而出,吸引更多关注和互动,是一个挑战。幸运的是,有一些工具可以帮助你在推特上实现关键词霸屏,让你的内容更容易被发现和传播。

什么是关键词霸屏工具?

关键词霸屏工具是一种利用推特平台的搜索和趋势功能,帮助用户找到并参与与其关键词相关的对话和话题的工具。... | lpsh_intf_0dd4c93b51e9a54 | |

1,921,764 | neovim and lazygit, perfect harmony | I enjoy using both lazygit and nvim, I even have the lazygit.nvim plugin, but a couple things are... | 0 | 2024-07-12T23:16:43 | https://dev.to/doctorscott/neovim-and-lazygit-perfect-harmony-2mgl | neovim, vim, git, tooling | I enjoy using both lazygit and nvim, I even have the lazygit.nvim plugin, but a couple things are stopping my setup from being better

1. When I open lazygit with the `LazyGit` command, it does not open at the file in the buffer I launched it from

2. When I want to edit a file highlighted in lazygit, I cannot do it ... | doctorscott |

1,921,766 | 客系统自动采集活跃群成员,获客过滤软件,获客推广工具 | 获客系统自动采集活跃群成员,获客过滤软件,获客推广工具 了解相关软件请登录 http://www.vst.tw... | 0 | 2024-07-12T23:13:20 | https://dev.to/gexm_mrdr_325793e9ff99815/ke-xi-tong-zi-dong-cai-ji-huo-yue-qun-cheng-yuan-huo-ke-guo-lu-ruan-jian-huo-ke-tui-yan-gong-ju-2jdg |

获客系统自动采集活跃群成员,获客过滤软件,获客推广工具

了解相关软件请登录 http://www.vst.tw

提升市场开拓效率,获客系统自动采集活跃群成员

随着信息技术的迅猛发展,传统的市场开拓方式正在经历一场革命性的变革。在过去,企业为了获取客户,往往需要大量的人力投入,通过电话营销、传单派发等方式来寻找潜在客户。然而,随着互联网和社交媒体的普及,获客系统的自动化采集技术正逐渐成为各行业企业获取客户的新利器。

获客系统的定义与作用

获客系统是指利用现代化的信息技术手段,通过自动化、智能化的方式,识别、定位并采集潜在客户的系统。其核心功能包括数据挖掘、分析用户行为、社交媒体信息抓取等,通过这些手段帮助企... | gexm_mrdr_325793e9ff99815 | |

1,921,768 | It's 2024 so why not setup Neovim (w/ NvChad) for Haskell | Introduction Recently, I've been spending time on a persistent penboot installation of... | 0 | 2024-07-13T03:07:51 | https://dev.to/bkataru/its-2024-so-why-not-setup-neovim-w-nvchad-for-haskell-2kj8 | neovim, haskell, functional, nvchad | ## Introduction

Recently, I've been spending time on a persistent penboot installation of Fedora Workstation on a 32 GB SanDisk drive.

I've avoided installing any GUI text/code editors such as VSCode (my de facto choice) or Sublime Text, and decided to stick to a basic terminal-and-vim-based workflow keeping in mind t... | bkataru |

1,921,772 | React Three Fiber - Smoothly turn camera towards at a position vs Camera.lookAt | After trying some solutions including 3rd party libs and more vanilla Three.js ways, I found a clean... | 0 | 2024-07-12T23:24:30 | https://dev.to/khaledsworld/react-three-fiber-smooth-cameralookat-cbc | After trying some solutions including 3rd party libs and more vanilla Three.js ways, I found a clean react-y way to have the camera smoothly look at a given position using React Three Fiber.

Thought I'd share! The Codesandbox has comments describing what I did. Just rip out that hook and use in your code.

{% codesand... | khaledsworld | |

1,921,774 | Why OOP is Your Old New Best Friend: The Date Distance Problem | Calculating the distance between two dates is relatively straightforward. There are multiple ways.... | 0 | 2024-07-13T17:46:41 | https://dev.to/yceruto/why-oop-is-your-old-and-new-best-friend-the-date-distance-problem-1059 | programming, oop, beginners, php | Calculating the distance between two dates is relatively straightforward. There are multiple ways. Think about it for a moment... If you like functional programming, your first thought might look like this:

```php

function date_distance(string $origin, string $destination): int

{

return strtotime($destination) - ... | yceruto |

1,921,775 | react-three-fiber - useLookAtSmooth - smooth camera.lookAt with speed | A post by Khaled Ali | 0 | 2024-07-12T23:25:22 | https://dev.to/khaledsworld/react-three-fiber-uselookatsmooth-smooth-cameralookat-with-speed-1igl | codesandbox | {% codesandbox qdr4nv %} | khaledsworld |

1,921,777 | CREATING MULTIPLE VIRTUAL MACHINES ON A SINGLE VIRTUAL NETWORK | In this tutorial, we’re going to deploy two virtual machines on a single virtual network and... | 27,629 | 2024-07-12T23:29:38 | https://dev.to/aizeon/creating-multiple-virtual-machines-on-a-single-virtual-network-1ihd | tutorial, cloud, virtualmachine, network | In this tutorial, we’re going to deploy two virtual machines on a single virtual network and configure them to allow one virtual machine to ping the other within the network—we will be testing to confirm their connection.

## **PREREQUISITE**

- Working computer

- Internet connection

- Microsoft Azure account + active s... | aizeon |

1,921,778 | 谷歌引流自动筛选,谷歌精准引流,谷歌霸屏机器人 | 谷歌引流自动筛选,谷歌精准引流,谷歌霸屏机器人 了解相关软件请登录 http://www.vst.tw... | 0 | 2024-07-12T23:36:14 | https://dev.to/prbr_gowi_a2bc893accd639d/gu-ge-yin-liu-zi-dong-shai-xuan-gu-ge-jing-zhun-yin-liu-gu-ge-ba-ping-ji-qi-ren-ek2 |

谷歌引流自动筛选,谷歌精准引流,谷歌霸屏机器人

了解相关软件请登录 http://www.vst.tw

谷歌引流自动筛选,优化搜索体验的新趋势

在当今数字化时代,搜索引擎已经成为人们获取信息、解决问题的首要途径。谷歌作为全球最大的搜索引擎,不断致力于提升用户体验,其中引入自动筛选技术已成为其优化搜索结果的重要策略之一。

自动筛选技术的背景与意义

随着互联网信息的爆炸式增长,传统的搜索方式可能无法有效地筛选出用户最需要的信息,这就导致了信息过载和搜索效率低下的问题。为了解决这一挑战,谷歌引入了自动筛选技术。这种技术利用机器学习和人工智能算法,能够根据用户的搜索行为、偏好和上下文,自动调整搜索结果的排序和内容... | prbr_gowi_a2bc893accd639d | |

1,921,779 | ok自动发帖,Facebook拉群助手,Facebook拉群机器人 | Facebook自动发帖,Facebook拉群助手,Facebook拉群机器人 了解相关软件请登录 http://www.vst.tw... | 0 | 2024-07-12T23:36:50 | https://dev.to/whjt_unhw_06fd74c2412e9db/okzi-dong-fa-tie-facebookla-qun-zhu-shou-facebookla-qun-ji-qi-ren-15pa |

Facebook自动发帖,Facebook拉群助手,Facebook拉群机器人

了解相关软件请登录 http://www.vst.tw

Facebook自动发帖,轻松管理社交媒体的新方式

在当今数字化时代,社交媒体已成为企业宣传的重要渠道。Facebook作为全球最大的社交平台之一,其自动发帖功能为企业营销带来了极大便利。

通过设定自动化程序,企业可以轻松管理其Facebook账号,实现定时发布帖子、图片和视频。这不仅节省了人力成本,还确保了内容的持续更新,增强了与粉丝的互动。

自动发帖的优势在于其高效性和灵活性。企业可以根据目标受众的活跃时间,设定最佳的发布时间,从而提高帖子的曝光率和互动率。同时,通过数据分... | whjt_unhw_06fd74c2412e9db | |

1,921,780 | VPA Protein: Fueling Your Fitness Journey | Introduction VPA Protein in Australia has established itself as a reliable source of extremely good... | 0 | 2024-07-12T23:39:34 | https://dev.to/altitude_supps_62ef16cc02/vpa-protein-fueling-your-fitness-journey-562b | fitness, health, supplements, sports | Introduction

[VPA Protein](https://altitudesupps.com.au/products/protein-water-grape-bubblegum-bfg-pw-gbbg-1kg?_pos=1&_psq=vpa&_ss=e&_v=1.0) in Australia has established itself as a reliable source of high-quality protein supplements. This article explores the benefits of VPA Protein and why it is a pinn... | altitude_supps_62ef16cc02 |

1,921,781 | SECURING NETWORK TRAFFIC USING NETWORK SECURITY GROUP | In Azure, Network Security Groups (NSGs) are used to filter and manage network traffic entering and... | 27,629 | 2024-07-12T23:40:10 | https://dev.to/aizeon/securing-network-traffic-using-network-security-group-44k5 | tutorial, cloud, security, network | In Azure, Network Security Groups (NSGs) are used to filter and manage network traffic entering and leaving virtual networks.

Configuring NSGs enables you to:

- Based on source and destination IP addresses, ports, and protocols, permit or prohibit traffic.

- Secure access to applications and resources on Azure

- Ident... | aizeon |

1,921,783 | 📦 "OaaS" : A short intro to "Operations as a Service" & its tremendous benefits | 💭 About OaaS How many times did you: 🧑🎨 As a DEV : to explain a third party how to use... | 20,619 | 2024-07-14T22:26:39 | https://dev.to/adriens/oaas-a-short-intro-to-operations-as-a-service-its-tremendous-benefits-3h99 | devops, productivity, automaton, operations | ## 💭 About `OaaS`

How many times did you:

- **🧑🎨 As a `DEV`** : have to explain to a third party how to use a job/task you have developed and delivered in your favorite language on a specific server...**happily & proudly delivered with a wiki page** that explains step by step how to run the task

- **🤔 As an `OPS`** : to... | adriens |

1,921,784 | 🚀 Next.js Starter: Kickstart Your Next.js Project with Ease | If you're diving into Next.js and looking for a solid foundation to start your project, my Next.js... | 0 | 2024-07-13T00:06:10 | https://dev.to/imevanc/nextjs-starter-kickstart-your-nextjs-project-with-ease-449b | webdev, react, javascript, nextjs | If you're diving into Next.js and looking for a solid foundation to start your project, my [Next.js Starter](https://github.com/imevanc/nextjs-starter) is a fantastic choice. This starter template is designed to help you quickly set up a robust, scalable, and maintainable Next.js application. Let me share what this sta... | imevanc |

1,921,789 | ShopEase | This is a submission for the Wix Studio Challenge . What I Built I built a fully... | 0 | 2024-07-13T00:20:22 | https://dev.to/sweta_kangurisonulkar_/shopease-21ii | devchallenge, wixstudiochallenge, webdev, javascript | *This is a submission for the [Wix Studio Challenge ](https://dev.to/challenges/wix).*

## What I Built

I built a fully functional e-commerce website called ShopEase using Wix Studio. ShopEase focuses on promoting and selling eco-friendly products, specifically reusable bags. The website features a dynamic homepage w... | sweta_kangurisonulkar_ |

1,921,793 | Mastering Python's itertools Module | Introduction Python is a versatile programming language recognized for its easy-to-read... | 0 | 2024-07-13T00:33:55 | https://dev.to/kartikmehta8/mastering-pythons-itertools-module-4h7m | javascript, beginners, programming, tutorial | ## Introduction

Python is a versatile programming language recognized for its easy-to-read syntax and powerful functionalities. One of the most useful modules in Python is the "itertools" module, which provides a set of tools for efficient iteration and combination of elements. It is a standard library module that con... | kartikmehta8 |

1,921,794 | Contact Form - Frontend Mentor | Contact Form from Frontend Mentor The challenge Your challenge is to build out this contact form and... | 0 | 2024-07-13T00:54:41 | https://dev.to/webdev990/contact-form-frontend-mentor-1ki | webdev, javascript, beginners | **Contact Form from Frontend Mentor**

The challenge

Your challenge is to build out this contact form and get it looking as close to the design as possible. Pay particular attention to making this form accessible. Building accessible forms is a key skill for front-end developers. So this is a perfect challenge to pract... | webdev990 |

1,921,797 | LED Displays in Education: Transforming Learning Environments | LED Displays are the new face of educational system and it has made a huge leap in increasing the... | 0 | 2024-07-13T01:11:41 | https://dev.to/nagay_iakajg_ddc052208a46/led-displays-in-education-transforming-learning-environments-3gcl | design | LED displays are the new face of the educational system and have made a huge leap in increasing efficiency and effectiveness within the classroom. Dynamic screens have become important resources in educational settings, providing numerous advantages that help students learn better. This literally means that LED display... | nagay_iakajg_ddc052208a46 |

1,921,798 | How To Provision And Deploy Virtual Network On Azure Portal | Overview A virtual network (Vnet) is a logical representation of the physical hardware like... | 0 | 2024-07-13T01:16:24 | https://dev.to/romanus_onyekwere/how-to-provision-and-deploy-virtual-network-on-azure-portal-5129 | networking, skillapplication, virtualmachine, azureresources | **Overview**

- A virtual network (Vnet) is a logical representation of the physical hardware like switches and routers that make up the infrastructure.

- An Azure Virtual Network (Vnet) is a network or an environment that can be used to run VMs and applications in the cloud.

- Azure Virtual Network makes it possible... | romanus_onyekwere |

1,921,799 | Jasa Maklon Herbal Terbaik Dengan Harga Pabrik | Pengenalan Jasa Maklon Jasa maklon atau contract manufacturing adalah solusi bagi banyak... | 0 | 2024-07-13T01:16:57 | https://dev.to/zadasyifa_nusantara/jasa-maklon-herbal-terbaik-dengan-harga-pabrik-3mpf |

Introduction to Contract Manufacturing Services

Maklon services, or contract manufacturing, are a solution for many companies, especially in the cosmetics, food, and herbal medicine industries, that want to produce their products without having to... | zadasyifa_nusantara | |

1,921,800 | Merge 2 sorted lists | Today, we look at another linked list task. Create a function to merge 2 sorted linked lists. The... | 27,729 | 2024-07-13T01:18:44 | https://dev.to/johnscode/merge-2-sorted-lists-22m | go, interview, programming | Today, we look at another linked list task.

Create a function to merge 2 sorted linked lists. The resulting list should be a sorted list using the nodes of the 2 lists.

For this, we will use the generic linked list implementation from the previous post which can be found [here](https://dev.to/johnscode/reverse-a-link... | johnscode |

1,921,824 | Aluzinc Steel Coils: Ideal for Roofing, Cladding, and Structural Applications | For this reason, aluzinc steel coils are a common choice for construction projects and offer many... | 0 | 2024-07-13T01:21:42 | https://dev.to/nagay_iakajg_ddc052208a46/aluzinc-steel-coils-ideal-for-roofing-cladding-and-structural-applications-53n | design | Aluzinc steel coils are a common choice for construction projects and offer many advantages. These coils are made from steel sheet coated with an aluminium-zinc alloy, which makes them a good material for roof covering, wall cladding and other structural applications. Read further to k... | nagay_iakajg_ddc052208a46 |

1,921,825 | Why I made TabbyAPI | Cover Photo by Jason Leung on Unsplash This is the first in probably many “why I made” posts which... | 0 | 2024-07-13T01:33:26 | https://dev.to/kingbri/why-i-made-tabbyapi-385f | opensource, developmentandgrowth, python, ai | ---

title: Why I made TabbyAPI

published: true

date: 2024-07-13 01:19:04 UTC

tags: opensource,developmentandgrowth,python,ai

cover_image: https://dev-to-uploads.s3.amazonaws.com/uploads/articles/qetu6qfaxje3set52eff.jpg

canonical_url:

---

_Cover Photo by Jason Leung on Unsplash_

This is the first in probably many “w... | kingbri |

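1,921,828 | 一些想法 | 最近全面使用emacs,不过我感觉我快捷键还是没背熟,太多了,我其实是个很懒的人,也不是太依赖工具。感觉使用代码片段功能挺好用,像我这样,会个7、8种编程语言的,有些语法细节真不是太熟,平时也不太关注语... | 0 | 2024-07-13T01:31:25 | https://dev.to/xiaodao/xie-xiang-fa-44mi | I have recently switched to Emacs for everything, but I still have not memorized the keybindings; there are just too many. I am actually quite lazy and do not rely much on tools. The snippet feature, though, is really handy. Someone like me, who knows seven or eight programming languages, is not that familiar with some of the syntax details and does not usually pay much attention to syntax, so snippets are extremely useful. I added some of my commonly used snippets, and now I write code faster. Improving efficiency really is a matter of many small things. | xiaodao | |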

1,921,829 | Você sabe o que seria um modelo de IA? | Hoje se ouve muito falar em Inteligência Artificial (IA), como ela esta melhorando o nosso dia a dia.... | 0 | 2024-07-13T01:33:53 | https://dev.to/programadriano/voce-sabe-o-que-seria-um-modelo-de-ia-281 | ia, rnas, svm, cnn | ---

title: Você sabe o que seria um modelo de IA?

published: true

description:

tags: IA, RNAs, SVM, CNN

# cover_image: https://direct_url_to_image.jpg

# Use a ratio of 100:42 for best results.

# published_at: 2024-07-13 01:01 +0000

---

Today we hear a lot about Artificial Intelligence (AI) and how it is improving our day-to-day lives.... | programadriano |

1,921,830 | [Book Review] Beej's Guide to Network Programming | Intro - what is this book about? Yes, I finally finished reading this book. As a person... | 0 | 2024-07-13T01:38:11 | https://dev.to/uponthesky/book-review-beejs-guide-to-network-programming-9mg | computerscience, socket, c | ## Intro - what is this book about?

Yes, I finally finished reading this book.

As a person who has never taken a computer networking course in university, I had thought for a long time of learning that subject intensively. However, I could not find a solid university course that is open to the public (something like [MIT’... | uponthesky |

1,921,831 | ChatGPT Deutsch: Ein Meilenstein für den Bildungsbereich | Die Integration von ChatGPT Deutsch in den Bildungsbereich bietet zahlreiche Vorteile und eröffnet... | 0 | 2024-07-13T01:38:50 | https://dev.to/chatgpt_deutsch/chatgpt-deutsch-ein-meilenstein-fur-den-bildungsbereich-342h | chatgptdeutsch | The integration of ChatGPT Deutsch into education offers numerous advantages and opens up new possibilities for learners and teachers. With its advanced AI algorithms, chatgptdeutsch.info supports school and university students with their academic challenges and is revolutionizing the way... | chatgpt_deutsch |

1,921,832 | Ubat Buasir Tradisional Yang Paling Mujarab | Apa Itu Buasir? Buasir, atau hemoroid, merupakan masalah kesihatan yang melibatkan pembesaran dan... | 0 | 2024-07-13T01:40:59 | https://dev.to/indah_pkl_a19b7cbde17728c/ubat-buasir-tradisional-yang-paling-mujarab-1gkp |

**What Are Haemorrhoids?**

Haemorrhoids (buasir) are a health problem involving the enlargement and swelling of blood vessels around the anus. The condition can be uncomfortable and painful, and usually... | indah_pkl_a19b7cbde17728c | |

1,921,833 | Optimizing Performance in React Applications | As a React developer, ensuring that your applications run smoothly and efficiently is crucial for... | 0 | 2024-07-13T01:41:22 | https://dev.to/khalidkhankakar/optimizing-performance-in-react-applications-59k0 | webdev, react, performance, javascript | As a React developer, ensuring that your applications run smoothly and efficiently is crucial for providing a great user experience. Performance optimization can make the difference between a fast, responsive app and one that feels sluggish. In this blog post, we'll explore several techniques to optimize performance in... | khalidkhankakar |

1,921,834 | Streamlining Operations in Shipping Logistics with Lean Methodologies | Are you currently enthusiastic about learning precisely how organizations that are delivery work... | 0 | 2024-07-13T01:43:10 | https://dev.to/nagay_iakajg_ddc052208a46/streamlining-operations-in-shipping-logistics-with-lean-methodologies-a9 | design | Are you interested in learning how shipping organizations work faster and more safely than before? Lean methodologies have revolutionized the way we think about shipping logistics. Here, we’ll examine a few of the key benefits and innovations of lean logistics. ... | nagay_iakajg_ddc052208a46 |

1,921,835 | 7 Skills A Backend Developer Needs in 2024 | In the rapidly evolving tech landscape, staying ahead in backend development requires continuous... | 0 | 2024-07-13T01:43:28 | https://dev.to/baaraku/7-skills-a-backend-developer-needs-in-2024-3bfn | developer, javascript, opensource, news | In the rapidly evolving tech landscape, staying ahead in backend development requires continuous learning and adaptation. As we approach the second half of 2024, the demand for skilled back-end developers is higher than ever, driven by the need for robust, scalable, and efficient server-side solutions. This blog post ... | baaraku |

1,921,836 | Revolutionizing User Experience with AI Tools: The Sista AI Approach | Unlock the transformative power of AI tools with Sista AI! 🌟 Revolutionize your user experience today with cutting-edge AI integration. #AI #SistaAI | 0 | 2024-07-13T01:45:37 | https://dev.to/sista-ai/revolutionizing-user-experience-with-ai-tools-the-sista-ai-approach-134 | ai, react, javascript, typescript | <h2>Unlocking the Potential of AI Tools</h2><p>The field of Artificial Intelligence (AI) is rapidly evolving, with numerous innovations and advancements transforming various aspects of our lives. From AI trends analysis tools to AI-powered writing fusion, AI is revolutionizing the way we work and interact with technolo... | sista-ai |

1,921,837 | Key Skills for Tech Freelancers | Freelancing in the tech industry offers numerous opportunities for skilled professionals to work on... | 0 | 2024-07-13T01:46:24 | https://dev.to/baaraku/key-skills-for-tech-freelancers-3ooe | freelance, developer, softwareengineering | Freelancing in the tech industry offers numerous opportunities for skilled professionals to work on diverse projects, enjoy flexible schedules, and achieve financial independence. This guide will provide you with the essential tips and strategies to excel as a freelancer in the tech industry, ensuring your freelance su... | baaraku |

1,921,838 | Nanjing Chenwei Medical Equipment: Reliable Solutions for Critical Care Ventilation | Nanjing Chenwei Medical Equipment - a Preferred Partner for the Application of Breathing Support Did... | 0 | 2024-07-13T01:50:15 | https://dev.to/nagay_iakajg_ddc052208a46/nanjing-chenwei-medical-equipment-reliable-solutions-for-critical-care-ventilation-156p | design | Nanjing Chenwei Medical Equipment - a Preferred Partner for the Application of Breathing Support

Do you need powerful solutions to help with breathing issues? If so, you are in the right place: visit Nanjing Chenwei Medical Equipment. The company is known nationally for providing high performance products a... | nagay_iakajg_ddc052208a46 |

1,921,839 | Propane Gensets: Powering Homes and Businesses with Clean Energy | Propane Gensets Capable Of Providing a Stable Energy Source For Homes and Businesses As we all know,... | 0 | 2024-07-13T01:52:02 | https://dev.to/nagay_iakajg_ddc052208a46/propane-gensets-powering-homes-and-businesses-with-clean-energy-46oe | design | Propane Gensets Capable Of Providing a Stable Energy Source For Homes and Businesses

As we all know, we depend heavily on electricity in our day-to-day lives. It is what allows us to light our homes, use appliances safely and run businesses smoothly. But the unexpected power outage that messes up our r... | nagay_iakajg_ddc052208a46 |

1,921,841 | Engineered for Excellence: High-Quality Diesel Engine Parts for Dependability | Developed for Quality: High Performance Diesel Engine Accessories and Reliable Products Engineered... | 0 | 2024-07-13T01:57:36 | https://dev.to/nagay_iakajg_ddc052208a46/engineered-for-excellence-high-quality-diesel-engine-parts-for-dependability-5a3o | design | Developed for Quality: High Performance Diesel Engine Accessories and Reliable Products

Engineered for Excellence - We Build Heavy-Duty and Reliable Diesel Engine Parts Built to Last! Through the use of both quality materials and advanced technology, they are able to ensure that their parts provide high-calibre perfor... | nagay_iakajg_ddc052208a46 |

1,921,844 | Day 10 & 11 of my Devops Journey: Kubernetes Networking Fundamentals - Challenges and Solutions | Introduction: Hey everyone! Welcome back to Day 10 & 11 of my SRE and Cloud Security journey.... | 0 | 2024-07-13T02:44:09 | https://dev.to/arbythecoder/day-10-11-of-my-devops-journey-kubernetes-networking-fundamentals-challenges-and-solutions-3c59 | devops, kubernetes, beginners, networking | **Introduction:**

Hey everyone! Welcome back to Day 10 & 11 of my SRE and Cloud Security journey. Today, we dove deep into Kubernetes networking, and let me tell you, it wasn't all sunshine and rainbows. I encountered some real head-scratchers, but with persistence and a little help from the internet, I was able to ov... | arbythecoder |

1,921,845 | Turn the Table | Inspired by the @keyframers, I decided to try my hand at animating a 3D turntable. @keyframers pen -... | 0 | 2024-07-13T02:14:25 | https://dev.to/tom_ford_6543e5db41fdbb68/turn-the-table-pc4 | codepen | Inspired by the @keyframers, I decided to try my hand at animating a 3D turntable.

@keyframers pen - https://codepen.io/team/keyframers/pen/YjMwqX

Original inspiration: https://dribbble.com/shots/4930227-Vinyls-E-Commerce-App

{% codepen https://codepen.io/jonwilcox/pen/MBRLBY %} | tom_ford_6543e5db41fdbb68 |

1,921,846 | Button Morph Animation | Just a cool button to full screen page effect. Let me know what you think. | 0 | 2024-07-13T02:18:59 | https://dev.to/tom_ford_6543e5db41fdbb68/button-morph-animation-2gjk | codepen | Just a cool button to full screen page effect. Let me know what you think.

{% codepen https://codepen.io/Tom-Ford-the-vuer/pen/yLdNPWr %} | tom_ford_6543e5db41fdbb68 |

1,921,855 | Looking for Full-stack Devs for XDR cybersecurity platform | I am building an XDR cybersecurity platform using AI and ML to detect and respond to cyber threats... | 0 | 2024-07-13T02:21:58 | https://dev.to/michael_mcmillan_18baaee6/looking-for-full-stack-devs-for-xdr-cybersecurity-platform-5912 | react, javascript, python, restfulapi | I am building an XDR cybersecurity platform using AI and ML to detect and respond to cyber threats instantly on a network. The AI and ML use Indicators of Attack to find cyber threats and then force a reset or disable user access on the compromised endpoint. Please reach out to me here; I could really use the help. You will b... | michael_mcmillan_18baaee6 |

1,921,856 | Object-Based UI State Management vs. JavaScript Proxy-Based UI State Management | State management is a critical aspect of modern UI development, especially as applications grow in... | 0 | 2024-07-13T02:33:56 | https://dev.to/guhandelta/object-based-ui-state-management-vs-javascript-proxy-based-ui-state-management-58bk | State management is a critical aspect of modern UI development, especially as applications grow in complexity. Two popular methods for managing UI state in JavaScript applications are object-based UI state management and proxy-based UI state management. Both approaches have their advantages and trade-offs. This article... | guhandelta | |

1,921,861 | Creating an Azure Virtual Network with Four Subnets (192.148.30.0/26) | A virtual network (VNet) in Azure acts as the foundation for your private cloud environment. It... | 0 | 2024-07-13T04:25:24 | https://dev.to/adah_okwara_3c43c95a89a2e/creating-an-azure-virtual-network-with-four-subnets-19214830026-478l | azure, cloudcomputing, networking, microsoft |

A virtual network (VNet) in Azure acts as the foundation for your private cloud environment. It defines a secure communication space for your Azure resources, including virtual machines (VMs). This blog will guide you through creating a VNet with four subnets using the address space 192.148.30.0/26.
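As a rough illustration of the address planning involved, here is a minimal JavaScript sketch that splits the 192.148.30.0/26 address space into four equal subnets. The even split into /28 blocks is an assumption for illustration, since the post may divide the space differently, and `ipToInt`, `intToIp`, and `splitCidr` are hypothetical helper names, not part of any Azure tooling.

```javascript
// Convert a dotted-quad IPv4 string to an unsigned 32-bit integer.
function ipToInt(ip) {
  return ip
    .split(".")
    .reduce((acc, octet) => ((acc << 8) | Number(octet)) >>> 0, 0);
}

// Convert an unsigned 32-bit integer back to dotted-quad notation.
function intToIp(n) {
  return [24, 16, 8, 0].map((shift) => (n >>> shift) & 255).join(".");
}

// Split a CIDR block into `count` equal subnets (assumed: count is a power
// of two and the base address is already aligned to the prefix).
function splitCidr(cidr, count) {
  const [base, prefixText] = cidr.split("/");
  const newPrefix = Number(prefixText) + Math.ceil(Math.log2(count));
  if (newPrefix > 32) {
    throw new Error("Address space is too small for that many subnets");
  }
  const size = 2 ** (32 - newPrefix); // addresses per subnet
  const start = ipToInt(base);
  return Array.from(
    { length: count },
    (_, i) => `${intToIp(start + i * size)}/${newPrefix}`
  );
}

const plan = splitCidr("192.148.30.0/26", 4);
console.log(plan);
// [ '192.148.30.0/28', '192.148.30.16/28', '192.148.30.32/28', '192.148.30.48/28' ]
```

Under this assumed layout each subnet holds 16 addresses. Azure reserves five addresses in every subnet, so about 11 would remain for resources such as VMs.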

**Prerequisites:*... | adah_okwara_3c43c95a89a2e |

1,921,862 | A Paradigm Shift in Software Development: Leveraging GenAI for Direct Business Logic Processing | A Paradigm Shift in Software Development: Leveraging GenAI for Direct Business Logic... | 0 | 2024-07-17T01:49:15 | https://dev.to/lazypro/a-paradigm-shift-in-software-development-leveraging-genai-for-direct-business-logic-processing-16h0 | tutorial, programming, softwaredevelopment, ai | # A Paradigm Shift in Software Development: Leveraging GenAI for Direct Business Logic Processing

> Generative AI transforms the way we handle business logic

If you read the title and thought I am going to introduce Copilot, you are wrong.

Before we start the topic, let's start with a case study of an e-commerce pla... | lazypro |

1,921,863 | Vertical Injection Machines: Flexibility and Precision in Manufacturing | Delivering Precision and Versatility in Manufacturing with Vertical Injection Machines Vertical... | 0 | 2024-07-13T02:49:33 | https://dev.to/yskab_osmab_ff3a62c1be1ee/vertical-injection-machines-flexibility-and-precision-in-manufacturing-ebn | design | Delivering Precision and Versatility in Manufacturing with Vertical Injection Machines

Vertical injection machines are used specifically for the meticulous creation of products. These rotary vertical injection moulding machines are known to create manufactured goods with highest ... | yskab_osmab_ff3a62c1be1ee |

1,921,864 | How McDonald's Menu Uses Data to Optimize Customer Experience | In today's fast-paced world, fast food giants like McDonald's are not just serving up burgers and... | 0 | 2024-07-13T02:54:39 | https://dev.to/lina_ch/how-mcdonalds-menu-uses-data-to-optimize-customer-experience-4gk | webdev, website | In today's fast-paced world, fast food giants like McDonald's are not just serving up burgers and fries—they're leveraging cutting-edge data analytics to enhance customer experience. The evolution of the McDonald's menu is a fascinating study of how data can drive decision-making in the food industry. In this post, we'... | lina_ch |

1,921,865 | Achieving a 3x reduction in React Bundle size: A case study | In our recent efforts to improve the performance of our frontend applications, we made significant... | 0 | 2024-07-13T02:58:01 | https://agustinmaggi.com/achieving-3x-reduction-in-react-bundle-size | performance, vite, react | In our recent efforts to improve the performance of our frontend applications, we made significant strides by optimizing our use of our component library.

Here's how we achieved a more than 3x reduction in our bundle size, speeding up our build process and enhancing our app's runtime efficiency.

## The Power of Tree S... | amaggi |

1,921,866 | The Essentials for Street Vending Food Truck Owners | Food trucking is an exciting ride with unknown avenues to explore! If you are a beginner or an old... | 0 | 2024-07-13T03:02:15 | https://dev.to/yskab_osmab_ff3a62c1be1ee/the-essentials-for-street-vending-food-truck-owners-52la | design | Food trucking is an exciting ride with unknown avenues to explore! Whether you are a beginner or an old-timer, there are key features to keep in mind when it comes to branding a food truck business. Check out what each of them means and you will be one step closer to food truck success.

The Bene... | yskab_osmab_ff3a62c1be1ee |

1,921,867 | ⚡ MySecondApp - React Native with Expo (P6) - Custom Header in Bottom Tabs Navigator | ⚡ MySecondApp - React Native with Expo (P6) - Custom Header in Bottom Tabs Navigator | 28,005 | 2024-07-13T03:03:40 | https://dev.to/skipperhoa/mysecondapp-react-native-with-expo-p6-custom-header-in-bottom-tabs-navigator-5b5p | webdev, tutorial, react, reactnative | ⚡ MySecondApp - React Native with Expo (P6) - Custom Header in Bottom Tabs Navigator

{% youtube nUCMGXZRjiE %} | skipperhoa |

1,921,868 | Crafting React Components: Readability, Reusability, and Efficiency | In the world of React, writing components is an art. It’s not just about making them work — it’s... | 0 | 2024-07-13T03:04:53 | https://dev.to/vyan/crafting-react-components-readability-reusability-and-efficiency-6a1 | webdev, javascript, beginners, react | In the world of React, writing components is an art. It’s not just about making them work — it’s about making them work well. Today, we’re going to look at how to craft your components like a pro, focusing on readability, reusability, and efficiency.

## Create a List Component

Let’s start with a basic List component:... | vyan |

1,921,869 | Unlocking Application Insights: Leveraging Spring Boot Actuator for Monitoring and Metrics | Unlocking Application Insights: Leveraging Spring Boot Actuator for Monitoring and... | 0 | 2024-07-13T03:05:05 | https://dev.to/virajlakshitha/unlocking-application-insights-leveraging-spring-boot-actuator-for-monitoring-and-metrics-4ko4 |

# Unlocking Application Insights: Leveraging Spring Boot Actuator for Monitoring and Metrics

In the ever-evolving world of software development, having deep insights into your application's health and performance is no longer option... | virajlakshitha | |

1,921,870 | Achieve Perfect Emulsions with Precision Emulsifier Mixer Machines | Precision Emulsifier Mixer Machines for Perfect Formulas Do you have trouble getting the right... | 0 | 2024-07-13T03:06:39 | https://dev.to/yskab_osmab_ff3a62c1be1ee/achieve-perfect-emulsions-with-precision-emulsifier-mixer-machines-5eo4 | design | Precision Emulsifier Mixer Machines for Perfect Formulas

Do you have trouble getting the right texture in your recipes? It can be challenging to incorporate oil and starch-based mixtures without leaving cakes that are too dry or salad dressings which separate. But don't worry, there is an amazing tool that can help yo... | yskab_osmab_ff3a62c1be1ee |

1,921,871 | Artisanal Excellence: The Craftsmanship of DTF Printing | Craftsmanship in DTF Printing - Artisan Excellence This could be a great site to feature color and... | 0 | 2024-07-13T03:08:34 | https://dev.to/yskab_osmab_ff3a62c1be1ee/artisanal-excellence-the-craftsmanship-of-dtf-printing-1e1f | design | Craftsmanship in DTF Printing - Artisan Excellence

This could be a great site to feature color and individuality in your clothing or apparel using the DTF Printing method. This innovative print technology enables to bring out vibrant and intricate designs on a broad range of substrates from cotton, polyester or even l... | yskab_osmab_ff3a62c1be1ee |

1,921,872 | The Role of ZNTECH New Energy Technology in a Greener Future | Advantages of ZNTECH New Energy Technology Want to help mother earth, but also save money at the... | 0 | 2024-07-13T03:12:09 | https://dev.to/yskab_osmab_ff3a62c1be1ee/the-role-of-zntech-new-energy-technology-in-a-greener-future-1hkg | design | Advantages of ZNTECH New Energy Technology

Want to help Mother Earth and save money at the same time? You can get a kick-start with ZNTECH New Energy Technology. In addition to its safety features, the new technology is said to be cutting-edge and high-quality.

ZNTECH New Energy Technology Advantages

... | yskab_osmab_ff3a62c1be1ee |

1,921,894 | How To Take Apart Bed Frame For Moving | We’ll take you step-by-step on taking apart bedframes specifically for moving purposes. Whether... | 0 | 2024-07-13T03:22:23 | https://dev.to/lifewaytrove/how-to-take-apart-bed-frame-for-moving-36pp | bed | We’ll take you step-by-step on taking [apart bedframes](https://www.lifewaytrove.com/how-to-take-apart-bed-frame/) specifically for moving purposes. Whether you’re moving to a new home or simply need to transport your take-apart bed frame to another location, following these instructions will help make the job easier a... | lifewaytrove |

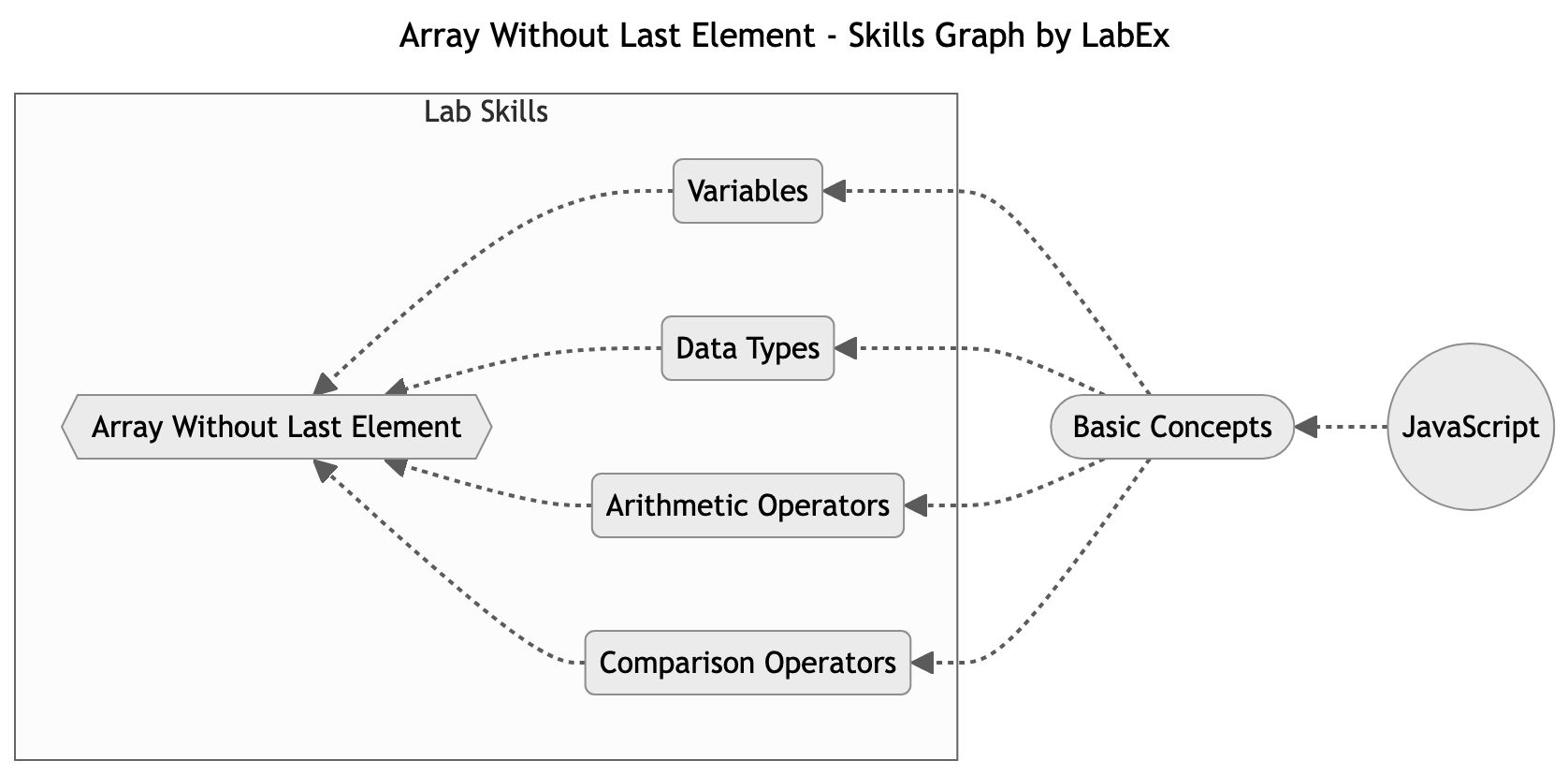

1,921,903 | Array Without Last Element | Programming Tutorials | Lab | In this lab, we will explore how to manipulate arrays in JavaScript by creating a function that returns all the elements of an array except the last one. We will use the Array.prototype.slice() method to achieve this and learn how to slice and extract elements from arrays. This lab will help us understand the fundament... | 27,742 | 2024-07-13T03:22:54 | https://dev.to/labex/array-without-last-element-programming-tutorials-lab-29d0 | javascript, coding, programming, tutorial |

## Introduction

This article covers the following tech skills:

In [this lab](https://labex.io/tutorials/array-without-last-element-28163), we will explore how to manipulate arrays in JavaScript by creating a function that returns al... | labby |

1,921,904 | Discover the Top 10 Jewellery Designers in Kanchipuram You Must Know | Kanchipuram, a small town in Tamil Nadu, India, is renowned for its rich cultural heritage and... | 0 | 2024-07-13T03:24:23 | https://dev.to/payal_sanjay_086c98122f75/discover-the-top-10-jewellery-designers-in-kanchipuram-you-must-know-2ep8 | Kanchipuram, a small town in Tamil Nadu, India, is renowned for its rich cultural heritage and exquisite jewellery designs. For centuries, Kanchipuram has been the epicentre of traditional Indian jewellery, with skilled artisans and designers creating breathtaking pieces that adorn the gods and goddesses in temples and... | payal_sanjay_086c98122f75 | |

1,921,905 | The 21 Days Challenge: Pt. 2 | In the first part, I talked about the idea of repeating a certain activity daily for 21 days to turn... | 0 | 2024-07-13T11:16:57 | https://dev.to/sxryadipta/the-21-days-challenge-pt-2-5f50 | programming, productivity, coding, 100daysofcode | In the first part, I talked about the idea of repeating a certain activity daily for 21 days to turn it into a habit. However, it is unlikely that a habit is built over such a short period. A 2009 study shows that the average time for a new habit to stick is about 66 days. So even if we try to build a habit in 21 days, it is... | sxryadipta |

1,921,906 | Enhancing Web Performance with Image Sprites | In the fast-paced world of web development, optimizing the performance of your website is crucial.... | 0 | 2024-07-13T06:56:09 | https://dev.to/mdhassanpatwary/enhancing-web-performance-with-image-sprites-54n5 | webdev, css, html, learning | In the fast-paced world of web development, optimizing the performance of your website is crucial. One effective technique for improving load times and overall user experience is the use of image sprites. By combining multiple images into a single sprite, you can reduce the number of HTTP requests, leading to faster pa... | mdhassanpatwary |

1,921,907 | How to Create and Publish a WordPress Plugin | How to Create and Publish a WordPress Plugin Creating and publishing a WordPress plugin... | 0 | 2024-07-13T03:31:01 | https://dev.to/sh20raj/how-to-create-and-publish-a-wordpress-plugin-3bbi | wordpress, wordpressplugin, javascript, webdev | # How to Create and Publish a WordPress Plugin

Creating and publishing a WordPress plugin can enhance the functionality of WordPress websites. This guide will walk you through the steps to create a simple WordPress plugin and publish it on the WordPress Plugin Directory.

## Prerequisites

Before you start, ensure you... | sh20raj |

1,921,908 | You're facing conflicts with your boss. How can you resolve them without harming your relationship? | 1. Understand Issues An issue in like a wildfire it starts small but has the potential to... | 0 | 2024-07-13T03:37:59 | https://dev.to/gourav_sharma_00/youre-facing-conflicts-with-your-boss-how-can-you-resolve-them-without-harming-your-relationship-4bnc | webdev, productivity, career, discuss | #1. Understand Issues

An issue is like a wildfire: it starts small but has the potential to burn the whole forest, which in this case is your relationship with your boss. Don't allow it to spread any longer.

>"Fire must be extinguished the moment it is lit"

The same principle applies to an issue: you must resolve it the moment... | gourav_sharma_00 |

1,921,909 | Generative AI and Personalized Experiences: From Chatbots to Recommendation Systems | Generative AI and Personalized Experiences: From Chatbots to Recommendation Systems In... | 0 | 2024-07-13T03:42:10 | https://dev.to/hariharaswq/generative-ai-and-personalized-experiences-from-chatbots-to-recommendation-systems-1o5o | ai, genai, learning | ### Generative AI and Personalized Experiences: From Chatbots to Recommendation Systems

In today's digital age, personalization is more than a buzzword—it's a necessity. Users expect interactions that are tailored to their preferences, needs, and behaviors. Generative AI, with its ability to create new content and pre... | hariharaswq |

1,921,910 | Oops at 28 Jun 2024 | Long time no see, dev.to community! Today I'd like to share yet another OOPS moment I encountered... | 0 | 2024-07-13T03:50:26 | https://dev.to/teminian/oops-at-28-jun-2024-ead | mistake | Long time no see, dev.to community! Today I'd like to share yet another OOPS moment I encountered last month.

오랜만입니다! 오늘은 지난달에 맞부딪친 얼라리요......를 가지고 이야기해보고자 합니다.

So, here's a code:

```cpp

if(condition1) do_something();

if(condition2) do_something_else();

else do_yet_another();

```

Nothing much, huh? But, the problem i... | teminian |

1,921,912 | Best 6 Repositories To Master Next.js | Next.js has become a staple for modern web development, offering features like server-side rendering,... | 0 | 2024-07-13T04:01:12 | https://dev.to/dipakahirav/best-6-repositories-to-master-nextjs-223g | javascript, webdev, nextjs, react | Next.js has become a staple for modern web development, offering features like server-side rendering, static site generation, and API routes that make building web applications a breeze. To truly master Next.js, diving into some of the best open-source repositories is a great way to learn by example. Here are six repos... | dipakahirav |

1,921,915 | Cut Off Without a Goodbye: My Final Hours at the Company | The abrupt way that my relationship with my former company ended, and our cold interactions since... | 0 | 2024-07-13T04:01:56 | https://dev.to/sarah_bruce_83fc98defc6d5/cut-off-without-a-goodbye-my-final-hours-at-the-company-22f7 | womenintech, workplace, career | The abrupt way that my relationship with my former company ended, and our cold interactions since then, hurt like hell. I went from being told how important I was to the company, to being quickly removed from Slack. It’s been humbling, to say the least.

(For those who haven’t seen my [previous post](https://dev.to/sar... | sarah_bruce_83fc98defc6d5 |

1,921,917 | Valtio: Unveiling the State Management Ninja with Bun and Vite | Streamline Your State: Mastering Valtio with the Speed of Bun and Vite Managing the state... | 0 | 2024-07-13T04:03:48 | https://dev.to/guhandelta/valtio-unveiling-the-state-management-ninja-with-bun-and-vite-3078 | react, valtio, bunjs, vite | ## Streamline Your State: Mastering Valtio with the Speed of Bun and Vite

Managing the state of a React app is a crucial aspect of developing modern web applications. With the advent of new tools and libraries, developers can now efficiently handle state management with ease. This article explores how to manage state ... | guhandelta |

1,921,918 | AI Revolution in Legal Businesses | Transforming the Legal Landscape The legal profession is on the cusp of a profound... | 27,673 | 2024-07-13T04:14:48 | https://dev.to/rapidinnovation/ai-revolution-in-legal-businesses-ene | ## Transforming the Legal Landscape

The legal profession is on the cusp of a profound transformation as it embraces the power of artificial intelligence (AI) to revolutionize its operations. The integration of AI into legal businesses has the potential to unlock new possibilities, enhance efficiency, and create a more... | rapidinnovation | |

1,921,919 | Building Responsive Navigation Bars | Introduction Navigation bars play a crucial role in the overall design and functionality... | 0 | 2024-07-13T04:15:19 | https://dev.to/tailwine/building-responsive-navigation-bars-1hoa | scss, sass, css, tailwindcss | ## Introduction

Navigation bars play a crucial role in the overall design and functionality of a website. They help visitors easily navigate through different pages and sections, ultimately enhancing the user experience. However, with the increasing use of mobile devices, it has become essential to have a responsive n... | tailwine |

1,921,920 | Exploring JavaScript Array Methods with Examples | JavaScript arrays are versatile and offer a wide range of built-in methods to manipulate, iterate,... | 0 | 2024-07-13T04:56:40 | https://dev.to/suleman_ahmed_rajput/exploring-javascript-array-methods-with-examples-3p90 | javascript, webdev, beginners |

JavaScript arrays are versatile and offer a wide range of built-in methods to manipulate, iterate, and manage data efficiently. Understanding these methods is crucial for effective programming. Let's delve into some commonly used array methods with practical examples.

## Array Methods

- **push():** Adds one or more... | suleman_ahmed_rajput |

1,921,921 | Axial Fan Maintenance Tips: Extending Lifespan and Efficiency | How To Maintain Your Axial Fan However, if you have an axial fan in your home or establishment this... | 0 | 2024-07-13T04:18:36 | https://dev.to/jdifb_vdjdvd_a788eb5f5878/axial-fan-maintenance-tips-extending-lifespan-and-efficiency-5240 | design | How To Maintain Your Axial Fan

If you have an axial fan in your home or establishment, this is a must-know maintenance guide on how to look after it properly and keep the wind blowing. Because they are specifically designed for air circulation, axial fans work wonders when it comes to cooling. These tips will i... | jdifb_vdjdvd_a788eb5f5878 |

1,921,925 | Unlock the Power of Node.js with Design Patterns 🚀 | Comprehensive guide to implementing design patterns in Node.js, providing practical insight into scalable and efficient code design for Node.js applications. | 27,801 | 2024-07-13T04:29:37 | https://dev.to/getvm/unlock-the-power-of-nodejs-with-design-patterns-5a14 | getvm, programming, freetutorial, technicaltutorials |

As a passionate Node.js developer, I'm thrilled to share with you an incredible resource that has transformed the way I approach building scalable and efficient applications. Introducing "Node.js Design Patterns" by Mario Casciaro – a comprehensive guide that delves deep into the world of design patterns and their pra... | getvm |

1,921,926 | Enhancing Grip: Innovations in Vacuum Suction Cup Design | You've probably seen or heard of vacuum suction cups before. Because your hands slide too easily,Do... | 0 | 2024-07-13T04:30:06 | https://dev.to/jdifb_vdjdvd_a788eb5f5878/enhancing-grip-innovations-in-vacuum-suction-cup-design-po2 | design | You've probably seen or heard of vacuum suction cups before.

Do you ever wish you could hold on tighter because your hands slide too easily? Well then, you are in for a treat, because vacuum suction cups can do amazing things! Made with specialized suction cups, these are great not only for boosting your grip bu... | jdifb_vdjdvd_a788eb5f5878 |

1,921,927 | Your Style, Your Way: The Beauty of Custom Home Textiles | Design Your Custom Home Textiles to Get the Look You Want Do you get fed up looking for home... | 0 | 2024-07-13T04:35:16 | https://dev.to/jdifb_vdjdvd_a788eb5f5878/your-style-your-way-the-beauty-of-custom-home-textiles-1569 | design | Design Your Custom Home Textiles to Get the Look You Want

Do you get fed up looking for home textiles that match your personal sense of style and taste? Custom Home Textiles is the answer! Choose them according to what you want and turn your living space into an abode - one that reflects your own brand of t... | jdifb_vdjdvd_a788eb5f5878 |

1,921,977 | Declaring Loop Control Variables Inside the for | Concept It is possible to declare the control variable directly in the for loop declaration. This is... | 0 | 2024-07-13T06:15:36 | https://dev.to/devsjavagirls/declarando-variaveis-de-controle-de-laco-dentro-do-for-3kg5 | java | **Concept**

- It is possible to declare the control variable directly in the declaration of the for loop.

- This is useful when the variable is only needed inside the loop itself.

**Advantages**

- Improves the readability and organization of the code.

- Limits the variable's scope to the loop, reducing the chance of errors.

**Example*... | devsjavagirls |

1,921,928 | Optical Cable Fittings: Enhancing Connectivity in Data Centers | Improving The Connectivity in a Data Center by Using Optical Cable Fittings In the world of data... | 0 | 2024-07-13T04:37:07 | https://dev.to/jdifb_vdjdvd_a788eb5f5878/optical-cable-fittings-enhancing-connectivity-in-data-centers-373o | design | Improving The Connectivity in a Data Center by Using Optical Cable Fittings

In the world of data centers, connectivity is everything. Picture a busy array of servers and storage devices efficiently talking to each other via photonic interconnects. These are most important in order to make sure that the data is able to... | jdifb_vdjdvd_a788eb5f5878 |

1,921,929 | Top Free Job Posting Websites & Hire Employees Quickly | Getting the right people to learn about your job advertising is essential to filling openings and... | 0 | 2024-07-13T04:58:30 | https://dev.to/kemeconinc/top-free-job-posting-websites-hire-employees-quickly-4e9e |

Getting the right people to learn about your job advertising is essential to filling openings and growing your organization. We provided a list of [job posting websites](https://www.kemecon.com/) to help you locate qualified candidates for your open position.

Going through hundreds of submissions and occasionally mis... | kemeconinc | |

1,921,930 | 30 Days of Code HackerRank using JAVASCRIPT DAY 2 : Operators | Task Given the meal price (base cost of a meal), tip percent (the percentage of the meal price being... | 0 | 2024-07-13T05:07:54 | https://dev.to/saiteja_amshala_035a7d7f1/30-days-of-code-hackerrank-using-javascript-day-2-operators-2do1 | webdev, javascript, beginners, programming | **Task**

Given the meal price (base cost of a meal), tip percent (the percentage of the meal price being added as tip), and tax percent (the percentage of the meal price being added as tax) for a meal, find and print the meal's total cost. Round the result to the nearest integer.
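The task above can be sketched in JavaScript roughly as follows. This is a minimal sketch, not the post's own solution: `totalMealCost` is a hypothetical function name, and the sample values passed to it are illustrative assumptions rather than values taken from the challenge text.

```javascript
// Compute the total cost of a meal: base price plus tip and tax,
// where both percentages are applied to the base meal price.
function totalMealCost(mealCost, tipPercent, taxPercent) {
  const tip = (mealCost * tipPercent) / 100;
  const tax = (mealCost * taxPercent) / 100;
  // Round to the nearest integer, as the task requires.
  return Math.round(mealCost + tip + tax);
}

// Illustrative values (assumed): a 12.00 meal with a 20% tip and 8% tax.
console.log(totalMealCost(12.0, 20, 8)); // 15
```

Both percentages are taken from the base meal price, so the tip is not taxed and the tax is not tipped.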

**Example**

meal cost = 100

tip perce... | saiteja_amshala_035a7d7f1 |

1,921,931 | Getting a Grip on the Singleton Pattern in Software Design: Ensuring a Single Instance | Around the globe design patterns are being widely accepted by software developers which in turn... | 0 | 2024-07-13T05:08:06 | https://dev.to/azizurrahaman/getting-a-grip-on-the-singleton-pattern-in-software-design-ensuring-a-single-instance-535i |

Around the globe, design patterns are widely accepted by software developers, which in turn makes them a fundamental part of the software development process.

It cannot be ignored that design patterns are the mu... | azizurrahaman | |

1,921,932 | 7 Popular Tools To Develop Blockchain Application Development | Blockchain is a distributed record database to maintain all the records or digital events executed... | 0 | 2024-07-13T05:10:20 | https://dev.to/grapestechsolution/7-popular-tools-to-develop-blockchain-application-development-n2i | blockchain, blockchaindevelopment | Blockchain is a distributed record database to maintain all the records or digital events executed and shared among the participating parties. Every transaction undergoes verification by the majority of system members. It includes every single transaction record. The most well-known cryptocurrency and blockchain exampl... | grapestechsolution |

1,921,933 | Pics Shade Image Offload + Optimize + Resize wordpress plugin | Pics Shade Image Offload + Optimize + Resize wordpress plugin Introduction Are... | 0 | 2024-07-13T05:11:08 | https://dev.to/sh20raj/pics-shade-image-offload-optimize-resize-wordpress-plugin-1fg0 | picsshade, imageoffload, wordpressplugin, wordpress |

### Pics Shade Image Offload + Optimize + Resize wordpress plugin

#### Introduction

Are you looking for a seamless way to manage your WordPress media and improve your site's performance? Look no further! Introducing **Pics Shade Image Offload + Optimize + Resize**, the ultimate plugin to offload, optimize, and resiz... | sh20raj |

1,921,934 | Task 1: print exercises | How do you print the string “Hello, world!” to the screen? print("hello" , "world!") How do you... | 0 | 2024-07-13T05:14:15 | https://dev.to/ganesh_balaraman_6edae0d9/task-1-print-exercises-1bdg | python, print, exercise | 1. How do you print the string “Hello, world!” to the screen?

print("Hello, world!")

2. How do you print the value of a variable name which is set to “Syed Jafer” or Your name?

name = "Syed Jafer"

print(name)

3. How do you print the variables name, age, and city with labels “Name:”, “Age:”, and “City:”?

print ... | ganesh_balaraman_6edae0d9 |

1,921,951 | The Dawn of a New AI Era with GPT-55X | Introduction Artificial Intelligence (AI) is accomplishing new heights with Amazon’s modern... | 0 | 2024-07-13T05:24:38 | https://dev.to/abdullah_saeed_e82b953ff2/the-dawn-of-a-new-ai-era-with-gpt-55x-5h0a | Introduction

Artificial Intelligence (AI) is reaching new heights with Amazon’s modern innovation, GPT-55X. This powerful AI model promises to revolutionize diverse sectors, from content creation to enterprise operations. But how [Amazon’s GPT-55X](https://cloudmint.org/amazons-gpt-55x/) is shaping the futur... | abdullah_saeed_e82b953ff2 |
