id int64 5 1.93M | title stringlengths 0 128 | description stringlengths 0 25.5k | collection_id int64 0 28.1k | published_timestamp timestamp[s] | canonical_url stringlengths 14 581 | tag_list stringlengths 0 120 | body_markdown stringlengths 0 716k | user_username stringlengths 2 30 |

|---|---|---|---|---|---|---|---|---|

1,922,543 | Elevate Your MySQL Mastery with the 'MySQL Practice Challenges' Course | The article is about the 'MySQL Practice Challenges' course, which is designed to help both beginner and experienced SQL developers hone their MySQL skills through a series of practical exercises and real-world scenarios. The course covers a wide range of topics, including writing efficient and optimized queries, handl... | 27,755 | 2024-07-13T17:26:41 | https://dev.to/labex/elevate-your-mysql-mastery-with-the-mysql-practice-challenges-course-3obl | labex, programming, course, mysql |

Unlock your full potential as a SQL developer and take your skills to new heights with the comprehensive 'MySQL Practice Challenges' course. Whether you're a beginner seeking to build a solid foundation or an experienced professional aiming to refine your expertise, this course offers a dynamic and engaging learning e... | labby |

1,922,544 | 100 Days of Cloud: Day 3 - Dockerizing a Go App for Dita Daystar (Apologies for the Delay!) | Hey everyone, I'm writing to apologize for the late submission for Day 3 of the 100 Days of Cloud... | 0 | 2024-07-13T17:27:10 | https://dev.to/tutorialhelldev/100-days-of-cloud-day-3-dockerizing-a-go-app-for-dita-daystar-apologies-for-the-delay-1hgi | Hey everyone,

I'm writing to apologize for the late submission for Day 3 of the 100 Days of Cloud challenge. I ran into some unexpected roadblocks, but I'm here now to conquer Docker and document the process for you all!

Today, we'll be diving into Docker and how to use it to containerize a Go application for our sc... | tutorialhelldev | |

1,922,545 | Understanding Dependencies in Node.js Projects | Understanding Dependencies in Node.js Projects When working on a Node.js project, managing... | 0 | 2024-07-13T17:27:48 | https://dev.to/tushar_pal/understanding-dependencies-in-nodejs-projects-44i3 | webdev, beginners, basic, javascript |

# Understanding Dependencies in Node.js Projects

When working on a Node.js project, managing dependencies is a crucial aspect that ensures your project runs smoothly. Dependencies are the libraries or packages your project needs to function. There are two main types of dependencies you should be aware of: devDepende... | tushar_pal |

1,922,547 | Simple yet powerful react table component | Simple yet Powerful React Table Component In the ever-evolving landscape of front-end... | 0 | 2024-07-13T17:46:59 | https://dev.to/abdoseadaa/simple-yet-powerful-react-table-component-1inm | react, table, gadwal, frontend | ## Simple yet Powerful React Table Component

In the ever-evolving landscape of front-end development, having efficient and customizable components is crucial for building robust applications. Today, I'm excited to introduce "Gadwal," a simple yet powerful React table component that can enhance your data presentation e... | abdoseadaa |

1,922,548 | Introducing WeedX.io: A Comprehensive Platform for the Cannabis Industry | Hello Dev.to community, I'm excited to share with you our latest project at Selnox Infotech Pvt Ltd... | 0 | 2024-07-13T17:49:18 | https://dev.to/sandeep_kourav_bab12bd1bc/introducing-weedxio-a-comprehensive-platform-for-the-cannabis-industry-22nd | weedx, cannabis | Hello Dev.to community,

I'm excited to share with you our latest project at Selnox Infotech Pvt Ltd - [WeedX.io](https://weedx.io). 🚀

What is WeedX.io?

WeedX.io is a robust platform designed to cater to the diverse needs of the cannabis industry. Our goal is to streamline the connection between dispensaries, deliv... | sandeep_kourav_bab12bd1bc |

1,922,549 | Power Up Your Earnings with These Telegram Projects | The Web3 revolution is democratizing finance, offering exciting money-making opportunities for... | 0 | 2024-07-13T17:56:10 | https://dev.to/bujji/power-up-your-earnings-with-these-telegram-projects-2673 | beginners, airdrop, blockchain, bitcoin | The Web3 revolution is democratizing finance, offering exciting money-making opportunities for everyone. If you're a budding developer eager to get your feet wet in the crypto space, look no further than Telegram.

This platform has emerged as a breeding ground for innovative projects, particularly in the "tap-to-earn"... | bujji |

1,922,550 | Mathematics for Machine Learning - Day 6 | Technically it takes the exact same steps... But we're not splitting hairs here. Also, I'm late :D ... | 27,993 | 2024-07-13T17:56:52 | https://www.pourterra.com/blogs/6 | machinelearning, learning, tutorial, beginners |

Technically it takes the exact same steps... But we're not splitting hairs here. Also, I'm late :D

## Row-Echelon Form (REF)

To know REF, a few definitions need to be established.

1. A leading coefficient /... | pourlehommes |

1,922,551 | Introducing Cannabaze: Your Ultimate POS System for the Cannabis Industry | In the rapidly evolving cannabis industry, efficient and reliable management solutions are crucial... | 0 | 2024-07-13T17:58:07 | https://dev.to/sandeep_kourav_bab12bd1bc/introducing-cannabaze-your-ultimate-pos-system-for-the-cannabis-industry-5ehd | In the rapidly evolving cannabis industry, efficient and reliable management solutions are crucial for success. Enter [Cannabaze](https://cannabaze.com/), the ultimate Point of Sale (POS) system designed specifically for the cannabis business. Whether you’re running a dispensary, delivery service, or brand, Cannabaze i... | sandeep_kourav_bab12bd1bc | |

1,922,552 | RELIABLE-FAST AND GUARANTEED CYBER EXPERTISE HIRE PRO WIZARD GIlBERT RECOVERY | In 2020, amidst the economic fallout of the pandemic, I found myself unexpectedly unemployed and... | 0 | 2024-07-13T17:58:27 | https://dev.to/michael_harrell_30b677a5f/reliable-fast-and-guaranteed-cyber-expertise-hire-pro-wizard-gilbert-recovery-28i0 | In 2020, amidst the economic fallout of the pandemic, I found myself unexpectedly unemployed and turned to Forex trading in hopes of stabilizing my finances. Like many, I was drawn in by the promise of quick returns offered by various Forex robots, signals, and trading advisers. However, most of these products turned o... | michael_harrell_30b677a5f | |

1,922,553 | Be an Energy Radiator: Ignite Your Influence | In a world teeming with noise, the ability to shine is a superpower. To be an energy radiator is to... | 27,967 | 2024-07-13T18:37:16 | https://dev.to/rishiabee/be-an-energy-radiator-ignite-your-influence-48ke | leadership, leaders, motivational, strongteams | In a world teeming with noise, the ability to shine is a superpower. To be an energy radiator is to not just exist, but to illuminate. It's about captivating hearts, inspiring minds, and leaving an indelible mark.

## Why Be an Energy Radiator?

Being an energy radiator is more than a title; it's a strategic choice. It'... | rishiabee |

1,922,557 | What is OpenGL, WebGL, Three.js, and WebXR? | OpenGL OpenGL (Open Graphics Library) is a cross-language, cross-platform application... | 0 | 2024-07-13T18:08:01 | https://dev.to/kda/what-is-opengl-webgl-threejs-and-webxr-2hmn | webdev, webgraphics, beginners |

## **OpenGL**

OpenGL (Open Graphics Library) is a cross-language, cross-platform application programming interface (API) for rendering 2D and 3D vector graphics. The API is typically used to interact with a graphics processing unit (GPU), to achieve hardware-accelerated rendering. It is widely used in video games, CA... | kda |

1,922,559 | The Future of the MERN Stack: A Bright Horizon for Web Development | The MERN stack, comprising MongoDB, Express.js, React, and Node.js, has gained immense popularity in... | 0 | 2024-07-13T18:08:58 | https://dev.to/shubham_kolkar/the-future-of-the-mern-stack-a-bright-horizon-for-web-development-1h27 | The MERN stack, comprising MongoDB, Express.js, React, and Node.js, has gained immense popularity in the web development community. As an end-to-end JavaScript solution, it offers a streamlined and efficient approach to building modern web applications. Looking ahead, several trends and technological advancements sugge... | shubham_kolkar | |

1,922,560 | Let’s VPN: Secure and Fast Internet Access | In today’s digital age, online security and privacy have become paramount concerns for internet... | 0 | 2024-07-13T18:11:53 | https://dev.to/fasts_vpn/lets-vpn-secure-and-fast-internet-access-5b73 | networking, vpn |

In today’s digital age, online security and privacy have become paramount concerns for internet users. With increasing cyber threats and the need to access content without geographical restrictions, Virtual Private... | fasts_vpn |

1,922,561 | Attention Mechanisms in Deep Learning: Unlocking New Capabilities | Attention mechanisms have become a cornerstone of modern deep learning architectures, particularly in... | 27,893 | 2024-07-13T18:13:33 | https://dev.to/monish3004/attention-mechanisms-in-deep-learning-unlocking-new-capabilities-5hho | beginners, ai, learning, machinelearning | Attention mechanisms have become a cornerstone of modern deep learning architectures, particularly in natural language processing (NLP) and computer vision. Introduced as a solution to the limitations of traditional sequence models, attention mechanisms allow models to dynamically focus on different parts of input data... | monish3004 |
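The idea of "dynamically focusing on different parts of the input" in the entry above can be made concrete with a small sketch. It assumes the standard scaled dot-product formulation (softmax over query-key scores, then a weighted sum of values); the excerpt does not say which variant the article uses, and the function names and toy vectors are illustrative only.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, keys, values):
    """Scaled dot-product attention for a single query vector (illustrative)."""
    scale = math.sqrt(len(query))
    # One similarity score per key: dot(query, key) / sqrt(dimension).
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in keys]
    weights = softmax(scores)
    dim = len(values[0])
    # The output is the weighted average of the value vectors.
    output = [sum(w * v[i] for w, v in zip(weights, values)) for i in range(dim)]
    return weights, output


# Toy data (hypothetical): three input positions with 2-dimensional keys and values.
keys = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
weights, output = attend([1.0, 0.0], keys, values)
```

The query here aligns with the first and third keys, so those positions receive more weight than the second, which is the "focus" the paragraph describes.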

1,922,562 | What is DTO? Why use it? | Hello everyone, I'm Jean and I'm here to bring you an article about DTO. This is just theory and there will be no code... | 0 | 2024-07-13T18:13:35 | https://dev.to/jeanv0/o-que-e-dto-por-que-usar-ehh | webdev, java, solidprinciples, dto | Hello everyone, I'm Jean and I'm here to bring you an article about DTO. This is just theory, and there will be no practical code. I hope you enjoy it!

## Introduction

DTO, or "Data Transfer Object", as the name suggests, is an object used to send and receive data. It is typically used in the... | jeanv0 |

1,922,563 | How the internet works | What is the internet? The internet, in layman's terms, can be perceived as a network of cables... | 0 | 2024-07-13T18:28:43 | https://dev.to/jideotetic/how-the-internet-works-4ocd | internet | ## What is the internet?

The internet, in layman's terms, can be perceived as a network of cables that runs throughout the world. These cables are the means by which computers in different locations communicate with each other. The computers can be any kind of device, from a server to a laptop or even a smartphone, at ... | jideotetic |

1,922,564 | Oracle Database Object List | { Abhilash Kumar Bhattaram : Follow on LinkedIn } One of the traditional problems of DBA's... | 0 | 2024-07-13T18:35:37 | https://dev.to/nabhaas/oracle-database-object-list-1bko | database, cloud, sql, developer | [](

<style>

.libutton {

display: flex;

flex-direction: column;

justify-content: center;

padding: 7px;

text-align: center;

outline: none;

text-decoration: none !important;

color: #ffffff !important;

width: 200px;

... | abhilash8 |

1,922,565 | Detect cycle in linked list | Another linked list algorithm. Detect a cycle in a linked list. This is actually not that bad.... | 27,729 | 2024-07-13T18:15:58 | https://dev.to/johnscode/detect-cycle-in-linked-list-ib5 | go, interview, programming | Another linked list algorithm.

Detect a cycle in a linked list.

This is actually not that bad. There are at least 3 different ways to do it in O(n) time.

The easiest way requires modifying the linked list node to include a flag that denotes if a node has been visited. As the list is traversed, if we encounter a node ... | johnscode |
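The visited-flag approach described above can be sketched as follows. This is a minimal illustration in Python rather than the post's Go, and the `Node` class, its `visited` field, and `has_cycle` are hypothetical names, not the author's code.

```python
class Node:
    """Singly linked list node carrying an extra 'visited' flag (illustrative)."""

    def __init__(self, value):
        self.value = value
        self.next = None
        self.visited = False


def has_cycle(head):
    """Walk the list once; reaching an already-flagged node means there is a cycle."""
    node = head
    while node is not None:
        if node.visited:
            return True
        node.visited = True  # mark the node before moving on
        node = node.next
    return False  # reached the end of the list, so no cycle


# Straight list: a -> b -> c
a, b, c = Node(1), Node(2), Node(3)
a.next, b.next = b, c
straight_result = has_cycle(a)

# Cyclic list: x -> y -> z -> x
x, y, z = Node(1), Node(2), Node(3)
x.next, y.next, z.next = y, z, x
cyclic_result = has_cycle(x)
```

Each node is visited at most once, so the traversal is O(n). One trade-off of this variant is that it mutates the list: the flags stay set afterwards, so they would need to be reset before checking the same list again.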

1,922,566 | 快连vpn安卓下载 | Top 10 Features of连vpn下载 In the digital age, online privacy has become paramount for internet users... | 0 | 2024-07-13T18:17:22 | https://dev.to/fasts_vpn/kuai-lian-vpnan-zhuo-xia-zai-4imk | 快连vpn, 快连vpn下载, 快连vpn安卓破解版 |

Top 10 Features of [连vpn下载](https://www.fastsvpn.com)

In the digital age, online privacy has become paramount for internet users around the globe. With the growing concerns about data breaches and internet surveilla... | fasts_vpn |

1,922,567 | Developer diary #15. ChatGPT and Tarot | I heard such a funny story today. One person, who likes Tarot cards and numerology, tried to use... | 0 | 2024-07-13T18:19:36 | https://dev.to/kiolk/developer-diary-15-chatgpt-and-taro-4l36 | programming, ai, management, java | I heard such a funny story today. One person, who likes Tarot cards and numerology, tried to use ChatGPT for predictions and for checking past events. She uploaded some data about herself and also added the exact time of her birth. In the output, she got several past events about marriage and divorce that were very close to real ... | kiolk |

1,923,713 | Terasop APP | Short Description (80/80 characters) Terasop: App with Telegram bot to download and stream... | 0 | 2024-07-15T05:31:46 | https://dev.to/terasop/terasop-app-a8a | terabox | ### Short Description (80/80 characters)

Terasop: App with Telegram bot to download and stream Terabox video files seamlessly.

### Description

Terasop is a versatile app designed to enhance your Terabox experience. With Terasop, you can effortlessly download and stream Terabox video files directly through the app o... | banmyaccount |

1,922,591 | Seeking Recommendations for a Real-Time Database for High-Speed Data Fetching and Updating | I'm currently working on an exciting project involving a telemetry dashboard for a rocket's avionics... | 0 | 2024-07-13T18:25:39 | https://dev.to/anshugupta/seeking-recommendations-for-a-real-time-database-for-high-speed-data-fetching-and-updating-732 | I'm currently working on an exciting project involving a telemetry dashboard for a rocket's avionics system. The core of this project involves capturing and displaying real-time data at high speed. To achieve this, I'm using pyserial to fetch data from the avionics system.

**The Challenge**

The primary challenge is fi... | anshugupta | |

1,922,593 | Ridge Regression, Regression: Supervised Machine Learning | Higher Order Polynomial Higher-order polynomial regression allows for modeling complex... | 0 | 2024-07-13T19:46:39 | https://dev.to/harshm03/ridge-regression-regression-supervised-machine-learning-1h10 | machinelearning, datascience, python, tutorial | ### Higher Order Polynomial

Higher-order polynomial regression allows for modeling complex relationships between the independent variable and the dependent variable. This approach can capture nonlinear trends that linear regression might miss but also runs the risk of overfitting if the degree is too high.

#### Pytho... | harshm03 |

1,922,594 | 2751. Robot Collisions | 2751. Robot Collisions Hard There are n 1-indexed robots, each having a position on a line, health,... | 27,523 | 2024-07-13T18:40:16 | https://dev.to/mdarifulhaque/2751-robot-collisions-49ge | php, leetcode, algorithms, programming | 2751\. Robot Collisions

Hard

There are `n` **1-indexed** robots, each having a position on a line, health, and movement direction.

You are given **0-indexed** integer arrays `positions`, `healths`, and a string `directions` (`directions[i]` is either **'L'** for **left** or **'R'** for **right**). All integers in `p... | mdarifulhaque |

1,922,596 | Every product needs a design system | A design system provides a set of standards, guidelines, and reusable components to ensure... | 0 | 2024-07-17T12:25:00 | https://dev.to/woovi/every-product-needs-a-design-system-1g7e | design, designsystem, frontend | A design system provides a set of standards, guidelines, and reusable components to ensure consistency and efficiency in the design and development of digital products.

Below is a list of the benefits you get when your product is built on top of a design system:

## Consistency

A design system ensures that all compo... | sibelius |

1,922,597 | How to publish your React Native app to Expo Store 2024 | I recently tried publishing my React Native app to the Expo store, only to discover that most... | 0 | 2024-07-13T22:33:29 | https://dev.to/lucky_oniovosa_2da4ce3a99/how-to-publish-your-react-native-app-to-expo-store-2024-3hpf | reactnative, expo | I recently tried publishing my React Native app to the Expo store, only to discover that most articles on this topic are obsolete.

This article aims to help anyone trying to publish their React Native app to the Expo Store by following these simple steps.

- **Create your project**

```

npx create-expo-app@latest

```

... | lucky_oniovosa_2da4ce3a99 |

1,922,598 | this is blacnk | helklo darkness m old frein di have come to talk toyou agian so do you really get of form ne | 0 | 2024-07-13T18:57:50 | https://dev.to/ako_mawlood_d224fb581fad5/this-is-blacnk-c2m | helklo darkness m old frein di have come to talk toyou agian so do you really get of form ne | ako_mawlood_d224fb581fad5 | |

1,922,603 | Wix Studio Challenge with Special Guest Judge Ania Kubów | This is a submission for the Wix Studio Challenge . What I Built i built the project... | 0 | 2024-07-13T19:16:46 | https://dev.to/jupli_69a6c746ecb2a6ea653/wix-studio-challenge-with-special-guest-judge-ania-kubow-ba6 | devchallenge, wixstudiochallenge, webdev, javascript | *This is a submission for the [Wix Studio Challenge ](https://dev.to/challenges/wix).*

## What I Built

<!-- Share an overview about your project. -->

I built an e-commerce project for selling menswear outfits.

## Demo

<!-- Share a link to your Wix Studio app and include some screenshots here. -->

https://jupli5033.... | jupli_69a6c746ecb2a6ea653 |

1,922,604 | AWS GuardDuty: The Unstoppable Sentinel - Mastering Cloud Threat Detection with AI-Powered Vigilance | Embarking on an odyssey that shall unveil the inner workings of AWS GuardDuty, equipping you with the... | 0 | 2024-07-13T19:19:12 | https://dev.to/ikoh_sylva/aws-guardduty-the-unstoppable-sentinel-mastering-cloud-threat-detection-with-ai-powered-vigilance-8a7 | aws, cloudcomputing, cloudskills, cloudstorage | Embarking on an odyssey that shall unveil the inner workings of AWS GuardDuty, equipping you with the knowledge and strategies to wield its power and elevate your threat detection capabilities to new heights. Brace yourselves, for this journey will not only illuminate the path to unparalleled security vigilance but als... | ikoh_sylva |

1,922,614 | # Installing Laravel 11: A Step-by-Step Guide | Laravel 11 is a powerful PHP framework that helps developers build robust and scalable web... | 0 | 2024-07-13T19:34:12 | https://dev.to/jsandaruwan/-installing-laravel-11-a-step-by-step-guide-2mkj | webdev, beginners, programming, laravel | Laravel 11 is a powerful PHP framework that helps developers build robust and scalable web applications. This guide will walk you through the installation process and outline the dependencies required to get your Laravel 11 application up and running.

## Prerequisites

Before you install Laravel 11, ensure you have th... | jsandaruwan |

1,922,615 | https://mwebgraceful.com/9133/246/10/ | ꧁༺✨❗Shop Now ❗✨༻꧂ https://mwebgraceful.com/9133/246/10/ ꧁༺✨❗Facebook... | 0 | 2024-07-13T19:39:52 | https://dev.to/zencortwork/httpsmwebgracefulcom913324610-50l7 | zencortex | ꧁༺✨❗Shop Now ❗✨༻꧂

https://mwebgraceful.com/9133/246/10/

꧁༺✨❗Facebook Now❗✨༻꧂

https://www.facebook.com/Zen.Cortex.Buy/

ZenCortex Reviews (ALERT 2024) Does this Hearing Health Support Drops Work? Ingredients, Benefits, and Where to Buy?

ZenCortex Customer Reviews and Complaints

Based on the ZenCortex customer reviews... | zencortwork |

1,922,616 | Who's involved in the Freewallet scam | Alvin Hagg, the co-founder and CEO of Freewallet.org, has long shunned the spotlight. However, our... | 0 | 2024-07-13T19:42:40 | https://dev.to/feofhan/whos-involved-in-the-freewallet-scam-529o |

Alvin Hagg, the co-founder and CEO of Freewallet.org, has long shunned the spotlight. However, our recent investigation has unveiled a shocking truth: the real masterminds behind the Freewallet scam are two Russian... | feofhan | |

1,922,617 | Emailing Hacks you might want to know - 🥶 Cold Marketing | As developers, we often find ourselves needing to reach out to potential clients,... | 0 | 2024-07-13T19:45:34 | https://dev.to/1geek/emailing-hacks-you-might-want-to-know-cold-marketing-4fdl | emailing, marketing, hacks | As developers, we often find ourselves needing to reach out to potential clients or collaborators.

Cold emailing can be a powerful tool to achieve this, but it requires a strategic approach.

Do you use other methods besides cold marketing? If so, share them in the comments.

Here are some key takeaways from my experie... | 1geek |

1,922,619 | It is all about time to market | It is all about time to market Recently, my sister became a mom, and motherhood comes with... | 0 | 2024-07-13T19:50:44 | https://dev.to/nosylasairaf/it-is-all-about-time-to-market-1hki |

# It is all about time to market

Recently, my sister became a mom, and motherhood comes with new problems that need solutions. Stay with me as I explain my point.

She gave me a list of issues and suggested a website or app could solve them. We brainstormed ideas like:

- Tracking diaper changes (pee/poop) to see if ... | nosylasairaf | |

1,922,620 | AI in Retail: A Symphony of Innovation | Greetings, Future-Seekers! Welcome to a narrative of possibilities and a future shaped by rapid... | 27,673 | 2024-07-13T20:04:55 | https://dev.to/rapidinnovation/ai-in-retail-a-symphony-of-innovation-3lnh | Greetings, Future-Seekers! Welcome to a narrative of possibilities and a future shaped by rapid innovation. Today, we delve into a realm where Artificial Intelligence (AI) isn’t just a visitor; it’s a resident artist, painting the retail canvas with strokes of brilliance, creating a masterpiece of personalized experien... | rapidinnovation | |

1,922,621 | Just Got Back | Just got back from Founders Academy. It's now time for me to put this stuff into play. In Blog... | 0 | 2024-07-13T20:15:28 | https://dev.to/theholyspirit/just-got-back-1kln | startup, founder, venture, todayisearched | Just got back from Founders Academy. It's now time for me to put this stuff into play. In **Blog** sections, I'll share what I gathered directly from the conference. It might be raw notes; sometimes I have pictures. In **Academy** sections, I show what I can of some of the more "homework" type exercises.

I have anoth... | theholyspirit |

1,922,623 | Hire A Hacker Now: Easier than You Think | I'm glad I found INTELLIGENCE CYBER WIZARD, an honest funds/crypto recovery company. Their team of... | 0 | 2024-07-13T20:18:47 | https://dev.to/janet_sanchez_674329de08f/hire-a-hacker-now-easier-than-you-think-39l | I'm glad I found INTELLIGENCE CYBER WIZARD, an honest funds/crypto recovery company. Their team of professionals was able to retrieve my crypto that had been stolen from a forex trader who had deceived me by saying I would receive a 35% return on my investment. I was able to receive all of my cryptocurrency back after ... | janet_sanchez_674329de08f | |

1,922,644 | Peek into Twitter Without Creating an Account | Twitter is a treasure trove of information, trends, and real-time updates. However, not everyone... | 0 | 2024-07-13T20:23:04 | https://dev.to/charlie_reece_f54ab06e4f4/peek-into-twitter-without-creating-an-account-9p5 | Twitter is a treasure trove of information, trends, and real-time updates. However, not everyone wants to go through the hassle of creating an account. Fortunately, you can explore Twitter without signing up. Here’s a comprehensive guide to help you navigate [Twitter ](https://bestsocialreviews.com/how-to-view-a-privat... | charlie_reece_f54ab06e4f4 | |

1,922,645 | Automate text Message(SMS) notification using SNS and AWS lambda | Introduction: Automating SMS notifications using AWS Lambda and SNS (Simple Notification Service) is... | 0 | 2024-07-13T20:23:05 | https://dev.to/rashmitha_v_d0cfc20ba7152/automate-text-messagesms-notification-using-sns-and-aws-lambda-26hg | **Introduction:**

Automating SMS notifications using AWS Lambda and SNS (Simple Notification Service) is a powerful way to keep users informed about important events or updates in your application. AWS Lambda allows you to run code without provisioning or managing servers, while SNS enables you to send messages to a la... | rashmitha_v_d0cfc20ba7152 | |
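As a rough sketch of the setup this introduction describes, a Lambda handler can call the SNS `publish` API with a phone number and a message. The event field names (`phone_number`, `message`) and the optional `sns_client` parameter are assumptions made for illustration and local testing; the excerpt does not show the author's actual code.

```python
def lambda_handler(event, context, sns_client=None):
    """Send one SMS through SNS using fields from the incoming event.

    `sns_client` is a hypothetical extra parameter so the function can be
    exercised locally with a stand-in client; Lambda itself only passes
    `event` and `context`.
    """
    if sns_client is None:
        import boto3  # bundled with the AWS Lambda Python runtime

        sns_client = boto3.client("sns")

    # Direct-to-phone publish: no topic is needed when PhoneNumber is given.
    response = sns_client.publish(
        PhoneNumber=event["phone_number"],  # assumed event field, E.164 format
        Message=event["message"],  # assumed event field
    )
    return {"statusCode": 200, "messageId": response["MessageId"]}
```

In a real deployment the function's execution role would also need permission to call `sns:Publish`, and the number should be in E.164 format (for example `+15555550100`).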

1,922,646 | Red bearded dragon | Red Bearded Dragon: Exploring the Colorful World of Bearded Dragons Welcome to the fascinating world... | 0 | 2024-07-13T20:29:05 | https://dev.to/sunset_reptiles_8e1cf2bb0/red-bearded-dragon-2g31 | Red Bearded Dragon: Exploring the Colorful World of Bearded Dragons

Welcome to the fascinating world of bearded dragons, where these captivating reptiles come in a stunning array of colors and patterns. Whether you’re a seasoned reptile enthusiast or just beginning your journey, understanding the various types of beard... | sunset_reptiles_8e1cf2bb0 | |

1,922,647 | How does Pix QRCode work? | Pix is the name of the instant payment scheme in Brazil. You can read more about Pix in English here... | 0 | 2024-07-15T12:43:29 | https://dev.to/woovi/how-does-pix-qrcode-work-5e3k | pix, qrcode, fintech, woovi | Pix is the name of the instant payment scheme in Brazil.

You can read more about Pix in English here [Brazil Central Bank Pix](https://www.bcb.gov.br/en/financialstability/pix_en).

Before we dive into how Pix QRCode works, we will cover a few other Pix concepts.

## Pix Alias

The first concept that you need to learn ... | sibelius |

1,922,659 | WordPress 6.6: What’s new for developers? news in july 10th | What’s new for developers? (July 2024) By Justin... | 0 | 2024-07-13T21:20:19 | https://dev.to/hub24/wordpress-66-whats-new-for-developers-news-in-july-10th-2kk9 | What’s new for developers? (July 2024) By Justin Tadlock

https://developer.wordpress.org/news/2024/07/10/whats-new-for-developers-july-2024/

July 10, 2024

covering: Blocks, Plugins, Themes

WordPress 6.6 is just days away, and it’s always an exciting time for... | hub24 | |

1,922,648 | Data Migration from Digital Ocean Space to AWS S3 | This guide illustrates the migration process for moving objects from Digital Ocean (DO) Space to AWS... | 0 | 2024-07-13T20:30:08 | https://dev.to/sammy_cloud/data-migration-from-digital-ocean-space-to-aws-s3-4m85 | This guide illustrates the migration process for moving objects from Digital Ocean (DO) Space to AWS S3

DigitalOcean Spaces provides Amazon S3-compatible object storage with a simplified pricing model. However, you may at some point find that you need to move your storage off of Spaces and onto Amazon S3. There are ma... | sammy_cloud | |

1,922,649 | Foodz | This is a submission for the Wix Studio Challenge . What I Built Are you hungry? Up to... | 0 | 2024-07-13T21:00:00 | https://dev.to/yowise/foodz-3b8p | devchallenge, wixstudiochallenge, webdev, javascript | *This is a submission for the [Wix Studio Challenge ](https://dev.to/challenges/wix).*

## What I Built

<!-- Share an overview about your project. -->

Are you hungry? Up for trying something new? Luxembourgish and Danish dishes have your back... I mean stomach! Now, a round of applause for Foodz!

## Demo

<!-- Share a lin... | yowise |

1,922,652 | Shiny Object Syndrome: Code Edition | Imagine a little kid going to a toy store. This kid has a ton of options to choose from, and let's... | 0 | 2024-07-13T20:52:27 | https://blog.atharva.codes/shiny-object-syndrome-code-edition | codenewbie, latest |

Imagine a little kid going to a toy store. This kid has a ton of options to choose from, and let's forget about the budget for the desired toy. He will roam around the store to find the most exciting thing to play with. He will find one cool Superman toy and quickly grab it. While going back to the counter to finalis... | atharvadeosthale |

1,922,653 | Understanding Threat Modeling: 🛡️ Securing Your Digital Assets Effectively | Intro Hello World! 👋 I'm Makita, founder of a tech business based in vibrant Florida, deeply... | 0 | 2024-07-13T20:53:33 | https://dev.to/firststeptechnology/understanding-threat-modeling-securing-your-digital-assets-effectively-27gp | threatmodeling, devsecops, cybersecurity, learning | **Intro**

Hello World! 👋 I'm Makita, founder of a tech business based in vibrant Florida, deeply passionate about cybersecurity and safeguarding digital assets. Currently pursuing a Cyber Juris Master's program at the great Florida State University, I've delved into the critical importance of threat modeling beyond it... | firststeptechnology |

1,922,656 | What is DTO? Why use? | Hello everyone, I'm Jean and I'm here to bring you an article about DTO. This is just theory, and... | 0 | 2024-07-13T21:02:48 | https://dev.to/jeanv0/what-is-dto-why-use-5foj | webdev, java, solidprinciples, dto | Hello everyone, I'm Jean and I'm here to bring you an article about DTO. This is just theory, and there will be no practical code. I hope you enjoy it!

## Introduction

DTO, or "Data Transfer Object," as the name suggests, is an object used to send and receive data. It is typically used in the backend of more structur... | jeanv0 |
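Although the article itself is theory-only, a small sketch may help make the definition concrete. It is written in Python rather than the post's Java, and the `User` entity, `UserDTO`, and `to_dto` names are hypothetical. It illustrates one common motivation for DTOs (sending only the fields a client should see), which the excerpt does not state explicitly.

```python
from dataclasses import dataclass, asdict


@dataclass
class User:
    """Internal entity; includes a field that should never leave the backend."""

    id: int
    name: str
    email: str
    password_hash: str


@dataclass(frozen=True)
class UserDTO:
    """Data Transfer Object: only the fields meant to be sent or received."""

    id: int
    name: str
    email: str


def to_dto(user):
    """Copy the transferable fields from the entity into a DTO."""
    return UserDTO(id=user.id, name=user.name, email=user.email)


# Hypothetical data, for illustration only.
user = User(1, "Jean", "jean@example.com", "hypothetical-hash")
payload = asdict(to_dto(user))  # plain dict, ready to be serialized as JSON
```

Keeping the DTO separate from the entity means the internal model can change without changing what is sent over the wire.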

1,922,660 | How to add new Node version to Laragon | It is very easy and straightforward to add a new Node version to your Laragon. In this blog, I’m... | 0 | 2024-07-13T21:31:32 | https://dev.to/fullstackhardev/how-to-add-new-node-version-to-laragon-5hjh | programming, javascript, softwareengineering, webdev | > It is very easy and straightforward to add a new Node version to your Laragon.

In this blog, I currently have Node 16.13.1 installed, and we will install the latest Node 20 version. Let’s do it together.

The steps to add a new (or any) Node version are as follows:

1. Let’s download the [node binary](https://nodejs.org/en... | fullstackhardev |

1,922,661 | From Zero to K8s Hero: 5 Must-Have Tools for Kubernetes | I have just finished publishing my latest article, "From Zero to K8s Hero: 5 Must-Have Tools for... | 0 | 2024-07-13T21:34:17 | https://dev.to/cloudnative_eng/from-zero-to-k8s-hero-5-must-have-tools-for-kubernetes-4hia | kubernetes, devops, beginners, computerscience |

I have just finished publishing my latest article, "From Zero to K8s Hero: 5 Must-Have Tools for Kubernetes"

Topics:

• 👓 1. Browse your Kubernetes cluster: K9s.

• 🤖 2. Automate everything: Kubectl

• 📦 3. Package manager: Krew

• 🪵 4. Aggregate logs from multiple Kubernetes resources: Stern

• 🐚 5. Look under the h... | cloudnative_eng |

1,922,662 | Follow me for supporting me thanks 🙏🙏 | A post by Marjorie Capistrano | 0 | 2024-07-13T21:39:14 | https://dev.to/marjorie_capistrano_7c927/follow-me-for-supporting-me-thanks-4lbf | beginners, webdev, react, javascript | marjorie_capistrano_7c927 | |

1,922,663 | PELISFLIX-VER » IntensaMente 2 PELÍCULA COMPLETA Español Latino | [PELISPLUS] Ver IntensaMente 2 (2024) PELICULACOMPLETA Online En Español ➤➤🔴✅📱 Descargar 🔴✅➤➤... | 0 | 2024-07-13T21:54:10 | https://dev.to/pelisflix-ver/pelisflix-ver-intensamente-2-pelicula-completa-espanol-latino-3ofl | webdev, javascript, programming, tutorial | [PELISPLUS] Ver IntensaMente 2 (2024) PELICULACOMPLETA Online En Español

➤➤🔴✅📱 Descargar 🔴✅➤➤ [https://t.co/bHtZsRmFcY](https://t.co/bHtZsRmFcY)

Haga clic aquí HD 🔴📺📱👉 [https://t.co/mTyi97jVX3](https://t.co/mTyi97jVX3)

Cuevana | Estrenos | Pelispedia | Pelisplus | Gnula | Repelisplus | Repelis | Pelis | Pelis... | pelisflix-ver |

1,922,689 | Assistir!! DIVERTIDA-MENTE 2 (2024) FILME COMPLETO Dublado em Portuguêse~HD-4K | Assistir!! DIVERTIDA MENTE 2 FILME COMPLETO Dublado e Legendado em Portuguêse (2024)~4K 🔴➡ ASSISTIR... | 0 | 2024-07-13T22:21:58 | https://dev.to/baixarfilmes2/assistir-divertida-mente-2-2024-filme-completo-dublado-em-portuguesehd-4k-32m1 | webdev, beginners, github, website | Assistir!! DIVERTIDA MENTE 2 FILME COMPLETO Dublado e Legendado em Portuguêse (2024)~4K

🔴➡ [ASSISTIR AGORA 👇👉 Divertida Mente 2 2024 Filme Completo](http://fast.bigmovies10.site/pt/movie/1022789/inside-out-2

)

🔴➡ [BAIXE AGORA 👇👉 Divertida Mente 2 2024 Filme Completo](http://fast.bigmovies10.site/pt/movie/10227... | baixarfilmes2 |

1,922,666 | Persistence Pays Off: React Components with Local Storage Sync 🔄🦸🏻♂️ | Have you ever spent minutes creating the perfect response only to lose it all with a misclick or... | 0 | 2024-07-13T22:21:57 | https://dev.to/mattlewandowski93/persistence-pays-off-react-components-with-local-storage-sync-2bfk | react, webdev, javascript, beginners | Have you ever spent minutes creating the perfect response only to lose it all with a misclick or accidental refresh? We often focus on optimizing performance and creating good-looking user interfaces, but what about the user experience? Experiences like this often make us want to rage quit. Before auto-save became popu... | mattlewandowski93 |

1,922,667 | Enhanced Web Application Security with Azure Network Security Groups and Application Security Groups (Part 2) | Introduction: In part 1, we explored isolating resources within a virtual network for enhanced... | 0 | 2024-07-17T21:58:03 | https://dev.to/jimiog/enhanced-web-application-security-with-azure-network-security-groups-and-application-security-groups-part-2-2po8 | azure, cloud, network, security | **Introduction:**

In [part 1](https://dev.to/jimiog/isolate-and-connect-your-applications-with-azure-virtual-networks-and-subnets-part-1-3k70), we explored isolating resources within a virtual network for enhanced security. This part delves into Azure Network Security Groups (NSGs) and Application Security Groups (ASG... | jimiog |

1,922,669 | Day 1: Defining Variables in JavaScript | I’ve started Hitesh Sir’s JavaScript 30 days challenge, and today’s task was all about defining... | 28,057 | 2024-07-13T22:01:51 | https://dev.to/tejas_khanolkar_473f3ed1a/day-1-defining-variables-in-javascript-4719 |

>

I’ve started Hitesh Sir’s JavaScript 30 days challenge, and today’s task was all about defining variables and understanding their types. If you haven't joined yet, you can check it out [here](https://courses.chaicode.com/learn/batch/30-days-of-Javascript-challenge). Hurry, it's free until July 14th!

>

## Declar... | tejas_khanolkar_473f3ed1a | |

1,922,670 | A fancy pdf CV with Asciidoctor | In this post I’ll show you how to create a CV in PDF using a simple text file. No Word, no Google... | 0 | 2024-07-13T22:13:36 | https://jorge.aguilera.soy/blog/2024/cv-asciidoctor.html | asciidoctor, docascode | ---

title: A fancy pdf CV with Asciidoctor

published: true

date: 2024-07-13 00:00:00 UTC

tags: asciidoctor,docascode

canonical_url: https://jorge.aguilera.soy/blog/2024/cv-asciidoctor.html

---

In this post I’ll show you how to create a CV in PDF using a simple text file. No Word, no Google Docs, no Acrobat … only tex... | jagedn |

1,922,671 | The Quantification of Perception | The faculties of perception have historically been classified as five senses. I'll contend this is a... | 0 | 2024-07-13T22:19:36 | https://dev.to/theholyspirit/the-quantification-of-perception-3iff | datascience, learn, humansoftware, mixedreality | The faculties of perception have historically been classified as five senses. I'll contend this is a limitation of the fuller scope of perception; nevertheless, five senses are more than enough to begin an exercise in quantifying perception.

A Generative Engine produces quanta of Perception. For each of the given p... | theholyspirit |

1,922,690 | The Features of the Updates on ES14 (ECMAScript 2023) | What is the ECMAScript? The ECMAScript is the official name for javascript as a language... | 0 | 2024-07-13T22:25:46 | https://dev.to/paulude/the-features-of-the-updates-on-es14-ecmascript-2023-359j | webdev, javascript, programming, tutorial | ## What is the ECMAScript?

ECMAScript is the official name of the JavaScript language standard. Ecma International updates it regularly, adding new features once they have been tested. A new version has been released annually since 2015 by the organisat... | paulude |

1,922,691 | Building a Scalable and Reliable Rental Property Website on AWS | Building a rental property website that’s both reliable and scalable, all while keeping costs down?... | 0 | 2024-07-13T22:26:08 | https://dev.to/oloko0201/building-a-scalable-and-reliable-rental-property-website-on-aws-5269 | Building a rental property website that’s both reliable and scalable, all while keeping costs down? AWS offers a suite of services that can help you build a robust application within your budget. In this project, I will walk you through the steps to set up your rental property website using AWS.

### Step 1: Setup S3 a... | oloko0201 | |

1,922,694 | ASSISTIR Divertida-Mente 2 (2024) HD F I L M E COMPLETO DUBLADO ONLINE | ASSISTIR ▷ Divertida Mente 2 (2024) HD F I L M E COMPLETO DUBLADO ONLINE 🔴➡ ASSISTIR AGORA 👇👉... | 0 | 2024-07-13T22:31:12 | https://dev.to/baixarfilmes2/assistir-divertida-mente-2-2024-hd-f-i-l-m-e-completo-dublado-online-49oh | webdev, discuss, github, web | ASSISTIR ▷ Divertida Mente 2 (2024) HD F I L M E COMPLETO DUBLADO ONLINE

**🔴➡ ASSISTIR AGORA 👇👉** [Divertida Mente 2 2024 Filme Completo](http://fast.bigmovies10.site/pt/movie/1022789/inside-out-2

)

**🔴➡ BAIXE AGORA 👇👉** [Divertida Mente 2 2024 Filme Completo](http://fast.bigmovies10.site/pt/movie/1022789/ins... | baixarfilmes2 |

1,922,697 | Complete Guide to the Windows CMD: From Basic Concepts to Advanced Commands | The CMD console, also known as the Command Prompt, is the Windows interpreter window that... | 0 | 2024-07-13T22:35:49 | https://dev.to/miltondiazco/cmd-de-windows-1obn | The **CMD** console, also known as the **Command Prompt**, is the Windows interpreter window that lets you send any kind of command to the operating system.

It is started by the `cmd.exe` application, located in `C:\Windows\System32`, which translates and interprets the commands sent to the... | miltondiazco | |

1,922,698 | [Game of Purpose] Day 56 | Today I made Manny's limbs react to bullet hits. My goal is make it react naturally, so if it is hit... | 0 | 2024-07-13T22:40:04 | https://dev.to/humberd/game-of-purpose-day-56-11km | gamedev | Today I made Manny's limbs react to bullet hits.

My goal is to make it react naturally, so that if it is hit in the right arm, the torso also feels the impact. Right now only the bones below the hit bone react with physics. I don't yet know how to achieve that.

This command shows the current date and gives us the option to set a... | 0 | 2024-07-13T22:48:26 | https://dev.to/miltondiazco/comandos-de-fecha-306e | cmd, windows | ## `DATE`

This command shows the current date and lets you set a new one.

```bash

REM Option 1: no parameters; shows the current date and prompts for a new one

date

REM Option 2: with the /t parameter; only shows the current date

date /t

```

**Note:** To change the date, ... | miltondiazco |

1,922,704 | Advanced Commands | SYSTEMINFO Shows detailed information about the PC and the system. systeminfo Enter... | 0 | 2024-07-13T22:50:34 | https://dev.to/miltondiazco/comandos-avanzados-3a10 | cmd, windows | ## `SYSTEMINFO`

Shows detailed information about the PC and the system.

```bash

systeminfo

```

## `TASKLIST`

Shows a list of the programs and tasks that are currently running.

```bash

tasklist

```

## `TASKKILL -IM`

Lets you close a program.

```bash

taskkill -im program_name.exe

```

## `CONTROL KEYBOARD`

M... | miltondiazco |

1,922,706 | Remove Nth from end of linked list | In this post, I explore another linked list algorithm. This one is a bit harder. Create a function... | 27,729 | 2024-07-13T22:56:45 | https://dev.to/johnscode/remove-nth-from-end-of-linked-list-54bf | go, interview, programming | In this post, I explore another linked list algorithm. This one is a bit harder.

Create a function to remove the nth node from the end of a linked list.

This comes from a leetcode problem. As in the leetcode problem, 'n' is one-based and can go from 1 to the length of the list.

```

func (ll *LinkedList[T]) RemoveNt... | johnscode |

1,922,707 | How to upload image using multer and Express.js | In this blog post we will learn to upload image using multer. multer is a middleware of NodeJS to... | 0 | 2024-07-13T23:02:16 | https://dev.to/pmadhav82/how-to-upload-image-using-multer-and-expressjs-5a57 | node, express, javascript |

In this blog post we will learn to upload image using `multer`. `multer` is a middleware of NodeJS t... | pmadhav82 |

1,922,735 | Unlocking the Power of Docker: A Developer's Guide | Introduction In the ever-evolving world of software development, the need for efficient... | 0 | 2024-07-13T23:35:46 | https://dev.to/fernandomullerjr/unlocking-the-power-of-docker-a-developers-guide-5ca3 | docker, containers, devops | ## Introduction

In the ever-evolving world of software development, the need for efficient and scalable solutions has become increasingly crucial. One technology that has revolutionized the way we approach these challenges is Docker. Docker is a powerful platform that enables the creation and management of containeriz... | fernandomullerjr |

1,922,737 | How to Remove Duplicate Paths in ZSH on MacOS | Having duplicate paths in your PATH variable can clutter your environment and lead to unexpected... | 0 | 2024-07-13T23:42:42 | https://dev.to/deni_sugiarto_1a01ad7c3fb/how-to-remove-duplicate-paths-in-zsh-on-macos-3l68 | terminal, zsh, developer | Having duplicate paths in your PATH variable can clutter your environment and lead to unexpected behavior when running commands. On macOS, using the ZSH shell, you can easily remove these duplicates to ensure a clean and efficient path configuration. Here’s a quick guide on how to do it using the typeset -U PATH comman... | deni_sugiarto_1a01ad7c3fb |

1,922,738 | A Minimalist, Adaptive Computing Framework. | This repository contains source code for a minimalist, adaptive computing framework written in... | 0 | 2024-07-13T23:51:34 | https://dev.to/rperezrosario/minimalist-adaptive-computing-framework-35gk | geneticalgorithms, csharp, dotnet | This repository contains source code for a minimalist, adaptive computing framework written in C#.

https://github.com/rperez-rosario/AdaptiveComputingFramework | rperezrosario |

1,922,746 | Is Transitioning from React.js to React Native as Easy as It Seems? 🚀📱 | Is Transitioning from React.js to React Native as Easy as It Seems? 🚀📱 Transitioning from... | 0 | 2024-07-14T00:30:07 | https://dev.to/sh20raj/is-transitioning-from-reactjs-to-react-native-as-easy-as-it-seems-3emj | react, javascript, webdev, reactnative | ## Is Transitioning from React.js to React Native as Easy as It Seems? 🚀📱

Transitioning from React.js to React Native can be an exciting journey for web developers looking to enter the world of mobile app development. Both frameworks share a similar foundation, but there are key differences that developers need to u... | sh20raj |

1,922,739 | An E-Commerce Web Application | This repository contains an e-commerce web application built using SQL Server, EF, ASP.NET Core MVC,... | 0 | 2024-07-13T23:56:18 | https://dev.to/rperezrosario/an-e-commerce-web-application-40d9 | csharp, sqlserver, javascript, aspdotnet | This repository contains an e-commerce web application built using SQL Server, EF, ASP.NET Core MVC, C#, JavaScript and an assortment of libraries and web services.

https://github.com/rperez-rosario/XO | rperezrosario |

1,922,740 | Securing Your Azure Web Application with Azure Firewall (Part 3) | Introduction: This blog post is part 3 of a series on securing your Azure environment. In the... | 0 | 2024-07-17T21:58:52 | https://dev.to/jimiog/securing-your-azure-web-application-with-azure-firewall-part-3-2j2d | azure, cloud, network, security | **Introduction:**

This blog post is part 3 of a series on securing your Azure environment. In the [previous parts](https://dev.to/jimiog/enhanced-web-application-security-with-azure-network-security-groups-and-application-security-groups-part-2-2po8), we created a virtual network and implemented a Network Security Gro... | jimiog |

1,922,742 | Founders Academy Workbook | The Founders Academy Workbook is given out after the last day of the workshop. It summarizes the... | 0 | 2024-07-14T00:01:01 | https://dev.to/theholyspirit/founders-academy-workbook-3370 | founder, startup, capital, resources | The Founders Academy Workbook is given out after the last day of the workshop. It summarizes the presentation content in reading format.

[Founders Academy Essentials Workbook](https://docs.google.com/document/d/170QE8T5DllHhABS5ZBAWFMCKiGt6omMeQBGvBofRpAM/edit?usp=sharing)

Gordon Daugherty, www.shockwaveinnovations.co... | theholyspirit |

1,922,743 | The Complete Guide to CSS object-fit Property | The Complete Guide to CSS object-fit: Key to Handling Images on the Web ... | 0 | 2024-07-14T00:05:53 | https://dev.to/moondaeseung/the-complete-guide-to-css-object-fit-property-3apa | # The Complete Guide to CSS object-fit: Key to Handling Images on the Web

## Introduction: Why You Need to Know object-fit

Dealing with images in web development has always been a challenging task. How can we consistently display images of various sizes and ratios? This is where the CSS `object-fit` property plays a ... | moondaeseung | |

1,922,744 | Founders Academy Day 1 | At the start Start with a list of assumptions I believe, we believe Audience experiences pain They... | 0 | 2024-07-14T00:17:02 | https://dev.to/theholyspirit/founders-academy-day-1-pa4 | startup, founder | At the start

Start with a list of assumptions

I believe, we believe

Audience experiences pain

They want to eliminate pain for benefit

They would choose us over alts for reasons

We believe audience would spend money to eliminate pain

Etc

Add how important it is that this is true

Next question

How many potential users d... | theholyspirit |

1,922,747 | SEEKING A PROFESSIONAL HACKING SERVICE ONLINE // CONSULT DIGITAL WEB RECOVERY | The labyrinthine world of online investments, promises of wealth often collide with the harsh reality... | 0 | 2024-07-14T00:32:47 | https://dev.to/digitalwebrecovery/seeking-a-professional-hacking-service-online-consult-digital-web-recovery-i2k | The labyrinthine world of online investments, promises of wealth often collide with the harsh reality of deception. My name is not important, but my cautionary tale resonates deeply with those who navigate the treacherous waters of cryptocurrency. It began with a seductive offer—a chance to multiply my wealth through a... | digitalwebrecovery | |

1,922,748 | QR Code Component for Spotify Playlist | Hello Dev.to community! I've recently developed a QR Code Component for Spotify Playlist using HTML,... | 0 | 2024-07-14T00:37:02 | https://dev.to/der12kl/qr-code-component-for-spotify-playlist-52l8 | webdev, javascript, css, html | Hello Dev.to community!

I've recently developed a QR Code Component for Spotify Playlist using HTML, CSS, and JavaScript. It allows users to easily scan a QR code and access my curated 'Top Music' playlist on Spotify.

Check out the full project on GitHub: [GitHub Repository](https://github.com/Der12kl/QRcodecomponent... | der12kl |

1,922,749 | Understanding Higher-Order Functions in JavaScript | Introduction Higher-order functions are an important concept in JavaScript programming.... | 0 | 2024-07-14T00:37:29 | https://dev.to/kartikmehta8/understanding-higher-order-functions-in-javascript-18hh | javascript, webdev, programming, tutorial | ## Introduction

Higher-order functions are an important concept in JavaScript programming. They allow for more efficient and dynamic coding by treating functions as first-class citizens and passing them as arguments or returning them as values. Understanding higher-order functions can greatly improve one's proficiency... | kartikmehta8 |
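The two ideas named in the post above, passing functions as arguments and returning them as values, can be sketched roughly as follows. This is a minimal illustration, not code from the original article; `applyTwice` and `makeMultiplier` are hypothetical names chosen for the example.

```javascript
// Hypothetical example: a higher-order function that takes a function as an argument.
function applyTwice(fn, value) {
  return fn(fn(value));
}

// Hypothetical example: a higher-order function that returns a new function.
function makeMultiplier(factor) {
  return (n) => n * factor;
}

const double = makeMultiplier(2);
const doubledTwice = applyTwice(double, 5); // double(double(5))

// Built-in array methods such as filter and map are higher-order functions too:
// each one receives a callback and applies it to every element.
const evensSquared = [1, 2, 3, 4]
  .filter((n) => n % 2 === 0)
  .map((n) => n * n);

console.log(doubledTwice, evensSquared);
```

Because `makeMultiplier` returns a closure, the same helper can produce `double`, `triple`, and so on without repeating the multiplication logic.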

1,922,750 | Simplest Chrome Extension Tutorial for 2024 Using Manifest V3 | Creating a Color Changer Chrome Extension This tutorial will guide you through creating a... | 0 | 2024-07-14T00:41:51 | https://dev.to/azadshukor/simplest-chrome-extension-tutorial-for-2024-using-manifest-v3-h3m | ## Creating a Color Changer Chrome Extension

This tutorial will guide you through creating a Chrome extension that changes the background color of web pages and keeps track of how many times the color has been changed.

### Step 1: Set Up the Folder Structure

First, create a new folder for your extension with the fol... | azadshukor | |

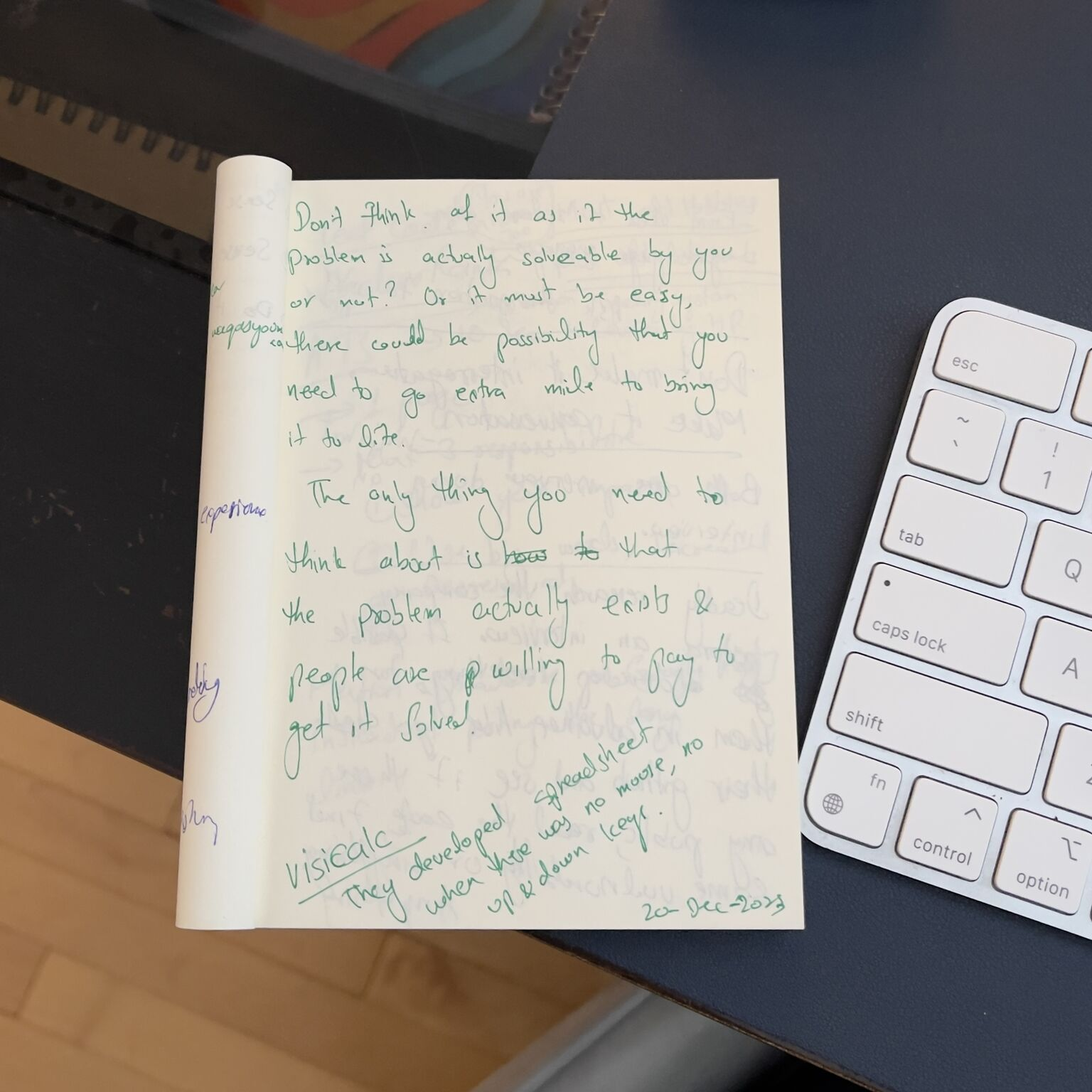

1,922,751 | Find something worth solving, not what you can solve. | I follow how software and programs were developed back in the day; one of my favorites is the... | 0 | 2024-07-14T00:52:58 | https://dev.to/waqas334/find-something-worth-solving-not-what-you-can-solve-52ln | motivation, programming, productivity, learning |

I follow how software and programs were developed back in the day; one of my favorites is the creation of VisiCalc. When the computer keyboard used to have only left and right keys, no up and down keys, they develo... | waqas334 |

1,922,752 | A little about promises in Javascript | JavaScript is a synchronous programming language; however, due to the callback functions, we can make... | 0 | 2024-07-14T00:53:51 | https://dev.to/joaoreider/a-little-about-promises-in-javascript-4oh7 | JavaScript is a **synchronous** programming language; however, due to the callback functions, we can make it work as an asynchronous programming language.

The concept of a promise is very similar to the promises we make in real life: we guarantee that something will be done. A promise can be **kept** or **broken** and you... | joaoreider | |
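The kept-or-broken idea in the post above maps onto a promise being fulfilled or rejected. A minimal sketch, assuming a hypothetical helper `makePromise` (not from the original article) that settles after a short delay:

```javascript
// Hypothetical helper: returns a promise that is "kept" (fulfilled)
// or "broken" (rejected) after a short delay, depending on the flag.
function makePromise(shouldKeep) {
  return new Promise((resolve, reject) => {
    setTimeout(() => {
      if (shouldKeep) {
        resolve("kept");
      } else {
        reject(new Error("broken"));
      }
    }, 10);
  });
}

// then() runs when the promise is fulfilled.
makePromise(true).then((value) => console.log("fulfilled:", value));

// catch() runs when the promise is rejected.
makePromise(false).catch((error) => console.log("rejected:", error.message));
```

The calling code continues immediately after each `makePromise` call; the callbacks run later, once the timer settles the promise.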

1,922,753 | Understanding Cohesion | I just finished learning about Cohesion and its parts, and here is a summary. What is... | 0 | 2024-07-14T01:05:12 | https://dev.to/waqas334/understanding-cohesion-h9o | architecture, designpatterns | I just finished learning about Cohesion and its parts, and here is a summary.

## What is it?

Cohesion is how your module is structured internally, not how it communicates or integrates with another module. That is coupling.

## There are 7 types of Cohesion

The best one is **Functional Cohesion**, where everything that helps do a specific... | waqas334 |

1,922,775 | Configuring Routes for Azure Firewall (Part 4) | Introduction: In the previous part, we configured basic firewall rules for your Azure web... | 0 | 2024-07-17T21:59:22 | https://dev.to/jimiog/configuring-routes-for-azure-firewall-part-4-2p85 | azure, cloud, network, security | **Introduction:**

In the [previous part](https://dev.to/jimiog/securing-your-azure-web-application-with-azure-firewall-part-3-2j2d), we configured basic firewall rules for your Azure web application. Now, we'll establish routes to ensure all outbound traffic from your application subnets is directed through the Azure ... | jimiog |

1,922,777 | Detecting Browser DevTools: A Guide to `devtools-detect` | Detecting Browser DevTools: A Guide to devtools-detect Web developers often need to know... | 0 | 2024-07-14T01:22:23 | https://dev.to/sh20raj/detecting-browser-devtools-a-guide-to-devtools-detect-4kgd | javascript, webdev, beginners, devtoolsdetect | # Detecting Browser DevTools: A Guide to `devtools-detect`

Web developers often need to know when users are inspecting their applications using browser DevTools. This capability can be crucial for various reasons, such as preventing data tampering, enhancing security, or simply understanding user behavior. The `devtoo... | sh20raj |

1,922,778 | Enabling Internal DNS Resolution for Secure Workloads (final) | Introduction: In the final part of this series, we'll configure internal DNS resolution for your... | 0 | 2024-07-17T22:00:35 | https://dev.to/jimiog/enabling-internal-dns-resolution-for-secure-workloads-final-2ol3 | azure, cloud, dns, microsoft | **Introduction:**

In the final part of this [series](https://dev.to/jimiog/configuring-routes-for-azure-firewall-part-4-2p85), we'll configure internal DNS resolution for your Azure virtual network. This allows workloads within the network to resolve domain names privately, enhancing security and overall performance.

... | jimiog |

1,922,780 | Topics I Post About | Classic Software - Software Technology like I learned in... | 0 | 2024-07-14T01:39:44 | https://dev.to/theholyspirit/topics-i-post-about-pek | todayilearned, todayisearched, devjournal, webdev | **Classic Software - Software Technology like I learned in 2009**

https://dev.to/theholyspirit/display-a-text-file-in-a-browser-5202

https://dev.to/theholyspirit/manifestjson-13i

**Human Software - Metaphysical Technology Which Runs The Simulation**

https://dev.to/theholyspirit/the-quantification-of-perception-3iff

... | theholyspirit |

1,922,803 | Elevator Pitch | Elevator Pitch Elevator pitch secrets The average non task-oriented attention span of a human being... | 0 | 2024-07-14T02:08:51 | https://dev.to/theholyspirit/elevator-pitch-201j | **Elevator Pitch**

Elevator pitch secrets

The average non task-oriented attention span of a human being is about 8 seconds.

So how do you give an 8-second elevator pitch?

1-2 sentences that describe:

1. The audience the solution helps

2. The problems solved

3. The benefit your customers receive

**Primary objective**

Your elev... | theholyspirit | |

1,922,804 | Getting Started with Azure Bicep | Azure Bicep is a domain-specific language (DSL) designed for deploying and managing Azure resources.... | 0 | 2024-07-14T02:09:49 | https://blog.raulnq.com/getting-started-with-azure-bicep | azure, bicep | Azure Bicep is a domain-specific language (DSL) designed for deploying and managing Azure resources. It offers several advantages, including a more concise syntax compared to [ARM templates](https://learn.microsoft.com/en-us/azure/azure-resource-manager/templates/overview), improved readability, enhanced maintainabilit... | raulnq |

1,922,806 | I am developing a Text Input Component based on Skia and Canvas | visit github TextMagic is the next generation text component. Unlike native input and textarea... | 0 | 2024-07-14T02:25:12 | https://dev.to/gezilinll/i-am-developing-a-text-input-component-based-on-skia-and-canvas-1407 | webdev, javascript, programming, opensource | [visit github](https://github.com/gezilinll/TextMagic)

TextMagic is the next generation text component. Unlike native input and textarea components, it supports richer text effects and typesetting capabilities. By controlling text layout autonomously, it ensures consistent text display across different platforms and ... | gezilinll |

1,922,807 | Business Development | Business Development Can mean different things Strategic Partnerships Strategic partnerships are you... | 0 | 2024-07-14T02:30:25 | https://dev.to/theholyspirit/business-development-33i | startup, technology, webdev, business | **Business Development**

Can mean different things

**Strategic Partnerships**

A strategic partnership means partnering with a big player in the industry under a mutually beneficial business agreement. As an exercise, make a list of the top 25 market revenue leaders. A Strategic Partner is on that list.

They think they a... | theholyspirit |

1,922,808 | Harnessing NgRx for Effortless Angular State Management | Managing state in complex Angular applications can be a daunting task, especially as the application... | 0 | 2024-07-14T02:39:19 | https://devtoys.io/2024/07/13/harnessing-ngrx-for-effortless-angular-state-management/ | angular, tutorial, devtoys | ---

canonical_url: https://devtoys.io/2024/07/13/harnessing-ngrx-for-effortless-angular-state-management/

---

Managing state in complex Angular applications can be a daunting task, especially as the application grows and the interdependencies between different parts of the application become more intricate. NgRx, a po... | 3a5abi |

1,922,809 | Triangle to pixel | Triangle is a three sided polygon, one of the most basic geometric shapes. It is a convex shape.... | 0 | 2024-07-14T02:44:01 | https://dev.to/x64x2/triangle-to-pixel-3ga0 | programming, learning, computerscience, software |

A triangle is a three-sided polygon, one of the most basic geometric shapes. It is a convex shape. Furthermore, it is a 2-simplex, i.e. the simplest polytope ("shape composed of sides") in 2 dimensions. Triangles are very important: for example, they help us compute distances or define functions like sine and cosine.

I... | x64x2 |

1,922,810 | AI Transforming Real Estate | Introduction The integration of Artificial Intelligence (AI) into various sectors... | 27,673 | 2024-07-14T02:45:11 | https://dev.to/rapidinnovation/ai-transforming-real-estate-31l9 | ## Introduction

The integration of Artificial Intelligence (AI) into various sectors has

revolutionized traditional practices, and the real estate industry is no

exception. AI's influence spans across multiple aspects of real estate, from

property search engines to transaction processes, significantly altering how

age... | rapidinnovation | |

1,922,813 | Enhance Code Security with GitHub Actions: Automatically Commenting PRs with Docker Scans | To raise the security awareness of our development team, we've integrated a mechanism into our CI... | 0 | 2024-07-14T03:08:16 | https://dev.to/suzuki0430/enhance-code-security-with-github-actions-automatically-commenting-prs-with-docker-scans-48ap | docker, webdev, devops, security | To raise the security awareness of our development team, we've integrated a mechanism into our CI using GitHub Actions to conduct security scans on Docker images and automatically add those results as comments to pull requests (PRs).

in PyTorch | Buy Me a Coffee☕ *Memos: My post explains stack(). My post explains hstack() and... | 0 | 2024-07-14T03:11:39 | https://dev.to/hyperkai/cat-in-pytorch-4jea | pytorch, cat, tensor, function | [Buy Me a Coffee](ko-fi.com/superkai)☕

*Memos:

- [My post](https://dev.to/hyperkai/stack-in-pytorch-1bp1) explains [stack()](https://pytorch.org/docs/stable/generated/torch.stack.html).

- [My post](https://dev.to/hyperkai/hstack-and-columnstack-in-pytorch-2mfb) explains [hstack()](https://pytorch.org/docs/stable/gener... | hyperkai |

1,922,826 | Fix slow queries in Django when using annotate and subqueries | Django’s ORM is quite useful and versatile, it can perform most of the common SQL operations, such as... | 0 | 2024-07-15T17:19:50 | https://coffeebytes.dev/en/fix-slow-queries-in-django-when-using-annotate-and-subqueries/ | django, database, postgres, backend | ---

title: Fix slow queries in Django when using annotate and subqueries

date: 2024-07-15 21:51:01 UTC

published: True

tags: django,databases,postgres,backend

canonical_url: https://coffeebytes.dev/en/fix-slow-queries-in-django-when-using-annotate-and-subqueries/

cover_image: https://dev-to-uploads.s3.amazonaws.com/upl... | zeedu_dev |

1,922,829 | Golang File Paths | Portable Path Operations | In this lab, you will explore the usage of the `filepath` package in Go to perform various operations on file paths, including constructing portable paths, splitting paths into directory and file components, checking path absoluteness, finding file extensions, and determining relative paths between two paths. | 27,982 | 2024-07-14T03:25:48 | https://dev.to/labex/golang-file-paths-portable-path-operations-1cb9 | go, coding, programming, tutorial |

## Introduction

The `filepath` package in Golang provides functi... | labby |
