
# This Jupyter notebook illustrates how to read data in from an external file

## [This notebook provides a simple illustration; users can readily modify and customize these examples for their own data storage scheme and/or preferred workflow]

###Motion Blur Filtering: A Statistical Approach for Extracting Confinement Forces & Diffusivity from a Single Blurred Trajectory

#####Author: Chris Calderon

Copyright 2015 Ursa Analytics, Inc.

Licensed under the Apache License, Version 2.0 (the "License");

You may obtain a copy of the License at http://www.apache.org/licenses/LICENSE-2.0

### Cell below loads the required modules and packages

```

%matplotlib inline

#command above avoids using the "dreaded" pylab flag when launching ipython (always put magic command above as first arg to ipynb file)

import matplotlib.font_manager as font_manager

import matplotlib.pyplot as plt

import numpy as np

import scipy.optimize as spo

import findBerglundVersionOfMA1 #this module builds off of Berglund's 2010 PRE parameterization (atypical MA1 formulation)

import MotionBlurFilter

import Ursa_IPyNBpltWrapper

```

##Now that the required modules and packages are loaded, set parameters for simulating "Blurred" OU trajectories. Specific mixed continuous/discrete model:

\begin{align}

dr_t = & ({v}-{\kappa} r_t)dt + \sqrt{2 D}dB_t \\

\psi_{t_i} = & \frac{1}{t_E}\int_{t_{i}-t_E}^{t_i} r_s ds + \epsilon^{\mathrm{loc}}_{t_i}

\end{align}

###In above equations, parameter vector specifying model is: $\theta = (\kappa,D,\sigma_{\mathrm{loc}},v)$

###Statistically exact discretization of above for uniform time spacing $\delta$ (non-uniform $\delta$ requires time dependent vectors and matrices below):

\begin{align}

r_{t_{i+1}} = & A + F r_{t_{i}} + \eta_{t_i} \\

\psi_{t_i} = & H_A + H_Fr_{t_{i-1}} + \epsilon^{\mathrm{loc}}_{t_i} + \epsilon^{\mathrm{mblur}}_{t_i} \\

\epsilon^{\mathrm{loc}}_{t_i} + \epsilon^{\mathrm{mblur}}_{t_i} \sim & \mathcal{N}(0,R_i) \\

\eta_{t_i} \sim & \mathcal{N}(0,Q) \\

t_{i-1} = & t_{i}-t_E \\

C = & cov(\epsilon^{\mathrm{mblur}}_{t_i},\eta_{t_{i-1}}) \ne 0

\end{align}

####Note: Kalman Filter (KF) and Motion Blur Filter (MBF) codes estimate $\sqrt{2D}$ directly as the "thermal noise" parameter
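As a rough illustration of the state equation above, the sketch below evaluates the textbook closed-form coefficients of the exact OU discretization, $F = e^{-\kappa\delta}$, $A = (v/\kappa)(1-F)$ and $Q = (D/\kappa)(1-e^{-2\kappa\delta})$. The function name `ou_discretization` is hypothetical and is not part of the `MotionBlurFilter` module; the measurement terms ($H_A$, $H_F$, $R_i$, $C$) are not covered, and $\kappa > 0$ is assumed.

```python
import math

def ou_discretization(kappa, D, v, delta):
    """Return (A, F, Q) such that r_{t+delta} = A + F*r_t + eta, eta ~ N(0, Q).

    Hypothetical helper for illustration only; assumes kappa > 0 and takes the
    diffusion coefficient D itself (not the sqrt(2D) "thermal noise" parameter).
    """
    F = math.exp(-kappa * delta)
    # -expm1(-x) equals 1 - exp(-x) but stays accurate when x is tiny
    A = (v / kappa) * (-math.expm1(-kappa * delta))
    Q = (D / kappa) * (-math.expm1(-2.0 * kappa * delta))
    return A, F, Q

A, F, Q = ou_discretization(kappa=2.0, D=0.5, v=1.0, delta=0.025)
print(A, F, Q)
```

For very small $\kappa$ these expressions approach the free-diffusion limits $A \approx v\delta$ and $Q \approx 2D\delta$, which is a convenient sanity check.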

### For situations where users would like to read data in from external source, many options exist.

####In the cell below, we show how to read in a text file and process the data, assuming the text file contains two columns: one with the 1D measurements and one with estimates of the localization standard deviation vs. time. The code chunk below sets up some default variables (tunable values are indicated by comments). Note that for multivariate signals, the chunks below can readily be modified to process x/y or x/y/z measurements separately. Future work will address estimating 2D/3D models with the MBF (computational [not theoretical] issues exist in this case); however, the code currently provides diagnostic information to determine if unmodeled multivariate interaction effects are important (see main paper and Calderon, Weiss, Moerner, PRE 2014).

### Plot examples from other notebooks can be used to explore output within this notebook or another. Next, a simple example of "Batch" processing is illustrated.

```

filenameBase='./ExampleData/MyTraj_' #assume all trajectory files have this prefix (adjust file location accordingly)
N=20 #set the number of trajectories to read.
delta = 25./1000. #user must specify the time (in seconds) between observations. code provided assumes uniform continuous illumination and
#NOTE: in this simple example, all trajectories assumed to be collected with exposure time delta input above

#now loop over trajectories and store MLE results
resBatch=[] #variable for storing MLE output

#loop below just copies info from cell below (only difference is file to read is modified on each iteration of the loop)
for i in range(N):
    filei = filenameBase + str(i+1) + '.txt'
    print ''
    print '^'*100
    print 'Reading in file: ', filei

    #first load the sample data stored in text file. here we assume two columns of numerical data (col 1 are measurements)
    data = np.loadtxt(filei)
    (T,ncol)=data.shape
    #above we just used a simple default text file reader; however, any means of extracting the data and
    #casting it to a Tx2 array (or Tx1 if no localization accuracy info available) will work.

    ymeas = data[:,0]
    locStdGuess = data[:,1] #if no localization info available, just set this to zero or a reasonable estimate of localization error [in nm]

    Dguess = 0.1 #input a guess of the local diffusion coefficient of the trajectory to seed the MLE searches (need not be accurate)
    velguess = np.mean(np.diff(ymeas))/delta #input a guess of the velocity of the trajectory to seed the MLE searches (need not be accurate)

    MA=findBerglundVersionOfMA1.CostFuncMA1Diff(ymeas,delta) #construct an instance of the Berglund estimator
    res = spo.minimize(MA.evalCostFuncVel, (np.sqrt(Dguess),np.median(locStdGuess),velguess), method='nelder-mead')

    #output Berglund estimation result.
    print 'Berglund MLE',res.x[0]*np.sqrt(2),res.x[1],res.x[-1]
    print '-'*100

    #obtain crude estimate of mean reversion parameter. see Calderon, PRE (2013)
    kappa1 = np.log(np.sum(ymeas[1:]*ymeas[0:-1])/(np.sum(ymeas[0:-1]**2)-T*res.x[1]**2))/-delta

    #construct an instance of the MBF estimator
    BlurF = MotionBlurFilter.ModifiedKalmanFilter1DwithCrossCorr(ymeas,delta,StaticErrorEstSeq=locStdGuess)
    #use call below if no localization info available
    # BlurF = MotionBlurFilter.ModifiedKalmanFilter1DwithCrossCorr(ymeas,delta)

    parsIG=np.array([np.abs(kappa1),res.x[0]*np.sqrt(2),res.x[1],res.x[-1]]) #kick off MLE search with "warm start" based on simpler model

    #kick off nonlinear cost function optimization given data and initial guess
    resBlur = spo.minimize(BlurF.evalCostFunc,parsIG, method='nelder-mead')

    print 'parsIG for Motion Blur filter',parsIG
    print 'Motion Blur MLE result:',resBlur

    #finally evaluate diagnostic statistics at MLE just obtained
    loglike,xfilt,pit,Shist =BlurF.KFfilterOU1d(resBlur.x)

    print np.mean(pit),np.std(pit)
    print 'crude assessment of model: check above mean is near 0.5 and std is approximately',np.sqrt(1/12.)
    print 'statements above based on generalized residual U[0,1] shape'
    print 'other hypothesis tests that can use the PIT sequence above are outlined/referenced in the paper.'

    #finally just store the MLE of the MBF in a list
    resBatch.append(resBlur.x)

#Summarize the results for the N trajectories processed above
#
resSUM=np.array(resBatch)

print 'Blur medians',np.median(resSUM[:,0]),np.median(resSUM[:,1]),np.median(resSUM[:,2]),np.median(resSUM[:,3])
print 'means',np.mean(resSUM[:,0]),np.mean(resSUM[:,1]),np.mean(resSUM[:,2]),np.mean(resSUM[:,3])
print 'std',np.std(resSUM[:,0]),np.std(resSUM[:,1]),np.std(resSUM[:,2]),np.std(resSUM[:,3])
print '^'*100 ,'\n\n'

```
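The crude PIT check printed inside the loop rests on a simple fact: if the model is adequate, the PIT values should look like draws from U[0,1], whose mean is 0.5 and whose standard deviation is $\sqrt{1/12} \approx 0.2887$. The sketch below illustrates this with plain Python on an idealized, evenly spread sequence; `pit_summary` is a hypothetical helper and does not come from the `MotionBlurFilter` module.

```python
import math

def pit_summary(pit):
    """Return (mean, std) of a PIT sequence, using the population std like np.std."""
    n = len(pit)
    mean = sum(pit) / n
    var = sum((p - mean) ** 2 for p in pit) / n
    return mean, math.sqrt(var)

# An idealized "perfectly uniform" PIT sequence: midpoints of n equal bins
n = 1000
ideal = [(k + 0.5) / n for k in range(n)]
mean, std = pit_summary(ideal)
print(mean, std, math.sqrt(1 / 12.0))
```

A PIT sequence whose mean drifts away from 0.5, or whose spread is much smaller or larger than 0.2887, hints at model misspecification; the formal tests referenced in the paper are the proper follow-up.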

| github_jupyter |

```

import geopandas as gpd

import pandas as pd

import numpy as np

from covidcaremap.constants import state_name_to_abbreviation

from covidcaremap.geo import sum_per_county, sum_per_state, sum_per_hrr

from covidcaremap.data import (external_data_path,

processed_data_path,

read_census_data_df)

```

# Merge Region and Census Data

This notebook utilizes US Census data at the county and state level to merge population data into the county, state, and HRR region data.

Most logic taken from [usa_beds_capacity_analysis_20200313_v2](https://github.com/daveluo/covid19-healthsystemcapacity/blob/9a45c424a23e7a15559527893ebeb28703f26422/nbs/usa_beds_capacity_analysis_20200313_v2.ipynb)

```

county_census_df = pd.read_csv(external_data_path('us-census-cc-est2018-alldata.csv'),

encoding='unicode_escape')

puerto_rico_census_df = pd.read_csv(external_data_path('PEP_2018_PEPAGESEX_with_ann.csv'),

encoding='unicode_escape')

# Filter dataset to Puerto Rico and format it to join

puerto_rico_census_df = puerto_rico_census_df[puerto_rico_census_df['GEO.display-label'] == 'Puerto Rico']

puerto_rico_census_df = puerto_rico_census_df.rename(columns={'GEO.display-label': 'STNAME'})

```

#### Format the FIPS code so it can be joined with county geo data

```

county_census_df['fips_code'] = county_census_df['STATE'].apply(lambda x: str(x).zfill(2)) + \

county_census_df['COUNTY'].apply(lambda x: str(x).zfill(3))

```
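The padding step above can be sketched in isolation: a 5-digit county FIPS code is the 2-digit state code followed by the 3-digit county code, each left-padded with zeros. The helper name `make_fips` is hypothetical and only mirrors the `zfill` calls in the cell above.

```python
def make_fips(state_code, county_code):
    """Build a 5-character county FIPS string from numeric state and county codes."""
    return str(state_code).zfill(2) + str(county_code).zfill(3)

print(make_fips(1, 1))
print(make_fips(6, 37))
```

Keeping the code as a string matters here, because integer FIPS values would lose the leading zeros needed for the join.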

#### Filter to 7/1/2018 population estimate

```

county_census2018_df = county_census_df[county_census_df['YEAR'] == 11]

```

#### Filter by age groups

We will be looking at total population, adult population (20+ years old),

and elderly population (65+ years old). These age groups match up with the

CDC groupings here: https://www.cdc.gov/mmwr/volumes/69/wr/mm6912e2.htm?s_cid=mm6912e2_w

From https://www2.census.gov/programs-surveys/popest/technical-documentation/file-layouts/2010-2018/cc-est2018-alldata.pdf, the key for AGEGRP is as follows:

- 0 = Total

- 1 = Age 0 to 4 years

- 2 = Age 5 to 9 years

- 3 = Age 10 to 14 years

- 4 = Age 15 to 19 years

- 5 = Age 20 to 24 years

- 6 = Age 25 to 29 years

- 7 = Age 30 to 34 years

- 8 = Age 35 to 39 years

- 9 = Age 40 to 44 years

- 10 = Age 45 to 49 years

- 11 = Age 50 to 54 years

- 12 = Age 55 to 59 years

- 13 = Age 60 to 64 years

- 14 = Age 65 to 69 years

- 15 = Age 70 to 74 years

- 16 = Age 75 to 79 years

- 17 = Age 80 to 84 years

- 18 = Age 85 years or older

```

county_pop_all = county_census2018_df[county_census2018_df['AGEGRP']==0].groupby(

['fips_code'])['TOT_POP'].sum()

county_pop_adult = county_census2018_df[county_census2018_df['AGEGRP']>=5].groupby(

['fips_code'])['TOT_POP'].sum()

county_pop_elderly = county_census2018_df[county_census2018_df['AGEGRP']>=14].groupby(

['fips_code'])['TOT_POP'].sum()

county_pop_all.sum(), county_pop_adult.sum(), county_pop_elderly.sum()

state_pop_all = county_census2018_df[county_census2018_df['AGEGRP']==0].groupby(

['STNAME'])['TOT_POP'].sum()

state_pop_adult = county_census2018_df[county_census2018_df['AGEGRP']>=5].groupby(

['STNAME'])['TOT_POP'].sum()

state_pop_elderly = county_census2018_df[county_census2018_df['AGEGRP']>=14].groupby(

['STNAME'])['TOT_POP'].sum()

# Calculate populations for Puerto Rico
pr_pop_all_columns = ['est72018sex0_age999']

# Adult (20+) columns: the 5-year bins 20to24 ... 60to64, plus the 65plus column
pr_pop_adult_columns = [
    'est72018sex0_age{}to{}'.format(x, x+4)
    for x in range(20, 65, 5)
] + ['est72018sex0_age65plus']

pr_pop_elderly_columns = ['est72018sex0_age65plus']

puerto_rico_census_df = puerto_rico_census_df.astype(dtype=dict(
    (n, int) for n in pr_pop_all_columns + pr_pop_adult_columns))

def get_pr_pop(columns):
    result = puerto_rico_census_df.transpose().reset_index()
    result = result[result['index'].isin(columns)].sum()
    result = pd.DataFrame(data={'STNAME': ['Puerto Rico'], 'TOT_POP': [result.iloc[1]]}) \
        .set_index('STNAME').groupby(
        ['STNAME'])['TOT_POP'].sum()
    return result

# Append Puerto Rico to the state series of the matching age group
state_pop_all_with_pr = pd.concat([state_pop_all, get_pr_pop(pr_pop_all_columns)])
state_pop_adult_with_pr = pd.concat([state_pop_adult, get_pr_pop(pr_pop_adult_columns)])
state_pop_elderly_with_pr = pd.concat([state_pop_elderly, get_pr_pop(pr_pop_elderly_columns)])

get_pr_pop(pr_pop_all_columns)

state_pop_all_with_pr

state_pop_all_with_pr.sum(), state_pop_adult_with_pr.sum(), state_pop_elderly_with_pr.sum()

county_pops = {

'Population': county_pop_all,

'Population (20+)': county_pop_adult,

'Population (65+)': county_pop_elderly

}

state_pops = {

'Population': state_pop_all_with_pr,

'Population (20+)': state_pop_adult_with_pr,

'Population (65+)': state_pop_elderly_with_pr

}

def set_population_field(target_df, pop_df, column_name, join_on):
    result = target_df.join(pop_df, how='left', on=join_on)
    result = result.rename({'TOT_POP': column_name}, axis=1)
    result = result.fillna(value={column_name: 0})
    return result

```

### Merge census data into states

```

state_gdf = gpd.read_file(external_data_path('us_states.geojson'), encoding='utf-8')

enriched_state_df = state_gdf.set_index('NAME')

for column_name, pop_df in state_pops.items():
    pop_df = pop_df.rename({'STNAME': 'State Name'}, axis=1)
    enriched_state_df = set_population_field(enriched_state_df,
                                             pop_df,
                                             column_name,
                                             join_on='NAME')

enriched_state_df = enriched_state_df.reset_index()

enriched_state_df = enriched_state_df.rename(columns={'STATE': 'STATE_FIPS',

'NAME': 'State Name'})

enriched_state_df['State'] = enriched_state_df['State Name'].apply(

lambda x: state_name_to_abbreviation[x])

enriched_state_df.to_file(processed_data_path('us_states_with_pop.geojson'), driver='GeoJSON')

```

### Merge census data into counties

```

county_gdf = gpd.read_file(external_data_path('us_counties.geojson'), encoding='utf-8')

county_gdf = county_gdf.rename(columns={'STATE': 'STATE_FIPS',

'NAME': 'County Name'})

county_gdf = county_gdf.merge(enriched_state_df[['STATE_FIPS', 'State']], on='STATE_FIPS')

# FIPS code is last 5 digits of GEO_ID

county_gdf['COUNTY_FIPS'] = county_gdf['GEO_ID'].apply(lambda x: x[-5:])

county_gdf = county_gdf.drop(columns=['COUNTY'])

enriched_county_df = county_gdf

for column_name, pop_df in county_pops.items():
    enriched_county_df = set_population_field(enriched_county_df,
                                              pop_df,
                                              column_name,
                                              join_on='COUNTY_FIPS')

enriched_county_df.to_file(processed_data_path('us_counties_with_pop.geojson'), driver='GeoJSON')

```

## Generate population data for HRRs

Spatially join HRRs with counties. For each intersecting county, take the ratio of the area of intersection with the HRR and the area of the county as the ratio of population for that county to be assigned to that HRR.
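To make the area-weighting rule concrete, here is a minimal sketch that uses axis-aligned rectangles `(xmin, ymin, xmax, ymax)` in place of real county and HRR polygons. The helper names are hypothetical; the notebook itself relies on geopandas/shapely geometries, which this toy version does not attempt to reproduce.

```python
def rect_area(r):
    """Area of a rectangle (xmin, ymin, xmax, ymax); degenerate boxes count as 0."""
    xmin, ymin, xmax, ymax = r
    return max(0.0, xmax - xmin) * max(0.0, ymax - ymin)

def rect_intersection(a, b):
    """Bounding box of the overlap of two rectangles (may be degenerate)."""
    return (max(a[0], b[0]), max(a[1], b[1]), min(a[2], b[2]), min(a[3], b[3]))

def apportion(county_rect, county_pop, hrr_rect):
    """Share of a county's population assigned to an HRR by overlapping area."""
    ratio = rect_area(rect_intersection(county_rect, hrr_rect)) / rect_area(county_rect)
    return ratio, round(county_pop * ratio)

# A 2x2 county half covered by an HRR: half of its population is assigned
print(apportion((0, 0, 2, 2), 1000, (1, 0, 3, 2)))
```

This weighting implicitly assumes that population is spread evenly over each county, which is a simplification worth keeping in mind when reading the HRR totals.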

```

hrr_gdf = gpd.read_file(external_data_path('us_hrr.geojson'), encoding='utf-8')

hrr_gdf = hrr_gdf.to_crs('EPSG:5070')

hrr_gdf['hrr_geom'] = hrr_gdf['geometry']

county_pop_gdf = enriched_county_df

county_pop_gdf = county_pop_gdf.to_crs('EPSG:5070')

county_pop_gdf['county_geom'] = county_pop_gdf['geometry']

hrr_counties_joined_gpd = gpd.sjoin(county_pop_gdf, hrr_gdf, how='left', op='intersects')

def calculate_ratio(row):
    if row['hrr_geom'] is None:
        return 0.0
    i = row['hrr_geom'].buffer(0).intersection(row['geometry'].buffer(0))
    return i.area / row['geometry'].area

hrr_counties_joined_gpd['ratio'] = hrr_counties_joined_gpd.apply(calculate_ratio, axis=1)

for column in county_pops.keys():
    hrr_counties_joined_gpd[column] = \
        (hrr_counties_joined_gpd[column] * hrr_counties_joined_gpd['ratio']).round()

hrr_pops = hrr_counties_joined_gpd.groupby('HRR_BDRY_I')[list(county_pops.keys())].sum()

hrr_pops

enriched_hrr_gdf = hrr_gdf.join(hrr_pops, on='HRR_BDRY_I').fillna(value=0)

enriched_hrr_gdf = enriched_hrr_gdf.drop('hrr_geom', axis=1).to_crs('EPSG:4326')

enriched_hrr_gdf

enriched_hrr_gdf.to_file(processed_data_path('us_hrr_with_pop.geojson'), driver='GeoJSON')

```

| github_jupyter |

```

import os

print (os.environ['CONDA_DEFAULT_ENV'])

os.system('cls')

#file = open('test_set_1E/test_set_2/ts2_input.txt', 'r')

file = open('test_set_1E/sample_test_set_1/sample_ts1_input.txt', 'r')

#overwrite input to mimic google input with local files
def input():
    line = file.readline()
    return line

import math

import random

import numpy as np

stringimp = 'IMPOSSIBLE'

def closestindex(a_list, given_value):
    absolute_difference_function = lambda list_value : abs(list_value - given_value)
    closestval = min(a_list, key=absolute_difference_function)
    closest_index = a_list.index(closestval)
    return closest_index

t = int(input()) # read number of cases

def method0(n):

#Retries at random until it works; too slow for the second test set (time limit)

a = [0]*26

res = ''

for index in n:

a[ord(index)-ord('a')] = a[ord(index)-ord('a')]+1

if (max(a) > math.floor(len(n)/2)):

res = stringimp

else:

test = True

#Retries at random until it works; too slow for the second test set (time limit)

while test :

possibilite = set(n)

test = False

for index in n :

possibiliten = set(possibilite)

if index in possibiliten:

possibiliten.remove(index)

if len(possibiliten) == 0 :

test=True

a = [0]*26

res = ''

for index in n:

a[ord(index)-ord('a')] = a[ord(index)-ord('a')]+1

break

else:

remove = random.choice(list(possibiliten))

res += remove

a[ord(remove)-ord('a')] = a[ord(remove)-ord('a')]-1

if a[ord(remove)-ord('a')] == 0:

possibilite.remove(remove)

return res

#this solution does not work

def method1(n):

nimp = list(n)

unic = set(n)

index = [0]*26

res = ''

for i in list(unic) :

index[ord(i)-ord('a')] = nimp.count(i)

if (max(index) > math.floor(len(n)/2)) :

res = stringimp

else:

indexreverse = list(index)

indexreverse.reverse()

#each letter starts at the starting index of the other one

indexlettres = [0]

for indexes in index :

indexlettres+= [indexlettres[-1] + indexes]

indexreverselettres = [0]

for indexes in indexreverse :

indexreverselettres+= [indexreverselettres[-1] + indexes]

for letter in n :

print(indexlettres,indexreverselettres, res )

numericalletter = ord(letter)-ord('a')

codepartie1 = indexlettres[numericalletter]

indexlettres[numericalletter] = indexlettres[numericalletter] +1

plusprocheindex = closestindex(indexreverselettres, codepartie1)

plusprocheindexval = indexreverselettres[plusprocheindex]

if plusprocheindexval == codepartie1 :

while plusprocheindex < 26 and \

indexreverselettres[plusprocheindex] == indexreverselettres[plusprocheindex+1]:

plusprocheindex =plusprocheindex +1

res += chr(25-plusprocheindex+ord('a'))

else:

if plusprocheindexval < codepartie1:

res += chr(25-plusprocheindex+ord('a'))

else:

res += chr(25-plusprocheindex-1+ord('a'))

return(res)

return res

#method given in the explanations
def methodsol(n):
    a = [0]*26
    res = ''
    for index in n:
        a[ord(index)-ord('a')] = a[ord(index)-ord('a')]+1
    if (max(a) > math.floor(len(n)/2)):
        res = stringimp
    else:
        nsort = np.array(n)
        argsort = np.argsort(nsort)
        valsort = np.sort(nsort)
        midle = math.floor(len(valsort)/2)
        p1 = valsort[0:midle]
        p2 = valsort[midle:]
        #rotate the sorted letters by half the length
        nswap = p2
        nswap = np.append (nswap,p1)
        #write the i-th rotated letter at the original position of the i-th sorted letter
        out = ['']*len(n)
        for i in range(len(n)):
            out[argsort[i]] = nswap[i]
        res = ''.join(out)
    return res

```

```

n = [str(s) for s in input()]
#remove the trailing newline read from the file
if '\n' in n :
    n.remove('\n')
res = method1(n)
for i in range(len(n)):
    if n[i] == res[i]:
        print('error', i, n, res)
print("Case #{}: {}".format(0, res))

```

```

for i in range(1, t + 1): # for each case
    n = [str(s) for s in input()]
    #remove the trailing newline read from the file
    if '\n' in n :
        n.remove('\n')
    res = methodsol(n)
    print("Case #{}: {}".format(i, res))

# check out .format's specification for more formatting options

chr(97+25)

```
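The sort-and-rotate idea behind `methodsol` can be sketched without NumPy. Assuming a non-empty lowercase string, the positions are sorted by letter, the sorted letters are rotated by half the length, and each rotated letter is written back to the position its sorted counterpart came from. Because no letter occurs more than `len(s) // 2` times, the rotation never maps a letter onto an equal one. The function name `shuffled_anagram` is hypothetical.

```python
def shuffled_anagram(s):
    """Return an anagram of s that differs from s at every position, or 'IMPOSSIBLE'."""
    n = len(s)
    counts = {}
    for ch in s:
        counts[ch] = counts.get(ch, 0) + 1
    # A letter filling more than half the string cannot avoid all of its own positions
    if max(counts.values()) > n // 2:
        return 'IMPOSSIBLE'
    order = sorted(range(n), key=lambda i: s[i])  # positions sorted by their letter
    half = n // 2
    out = [''] * n
    for k, pos in enumerate(order):
        # position of the k-th sorted letter receives the (k + half)-th sorted letter
        out[pos] = s[order[(k + half) % n]]
    return ''.join(out)

print(shuffled_anagram('start'))
print(shuffled_anagram('aab'))
```

The important detail is the write-back: the result is indexed by the original position (`out[pos]`), not read through the sort permutation, which would only be equivalent when that permutation happens to be its own inverse.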

| github_jupyter |

# Introduction to obspy

The obspy package is very useful for downloading seismic data and applying signal processing to them. Most of its signal processing methods build on the corresponding methods in the Python package scipy.

First we import useful packages.

```

import obspy

import obspy.clients.earthworm.client as earthworm

import obspy.clients.fdsn.client as fdsn

from obspy import read

from obspy import read_inventory

from obspy import UTCDateTime

from obspy.core.stream import Stream

from obspy.signal.cross_correlation import correlate

import matplotlib.pyplot as plt

import numpy as np

import os

import urllib.request

%matplotlib inline

```

We are going to download data from an array of seismic stations.

```

network = 'XU'

arrayName = 'BS'

staNames = ['BS01', 'BS02', 'BS03', 'BS04', 'BS05', 'BS06', 'BS11', 'BS20', 'BS21', 'BS22', 'BS23', 'BS24', 'BS25', \

'BS26', 'BS27']

chaNames = ['SHE', 'SHN', 'SHZ']

staCodes = 'BS01,BS02,BS03,BS04,BS05,BS06,BS11,BS20,BS21,BS22,BS23,BS24,BS25,BS26,BS27'

chans = 'SHE,SHN,SHZ'

```

We also need to define the time period for which we want to download data.

```

myYear = 2010

myMonth = 8

myDay = 17

myHour = 6

TDUR = 2 * 3600.0

Tstart = UTCDateTime(year=myYear, month=myMonth, day=myDay, hour=myHour)

Tend = Tstart + TDUR

```

We start by defining the client for downloading the data

```

fdsn_client = fdsn.Client('IRIS')

```

Download the seismic data for all the stations in the array.

```

Dtmp = fdsn_client.get_waveforms(network=network, station=staCodes, location='--', channel=chans, starttime=Tstart, \

endtime=Tend, attach_response=True)

```

Some stations did not record the entire two hours. We delete these and keep only stations with a complete two-hour recording.

```

ntmp = []
for ksta in range(0, len(Dtmp)):
    ntmp.append(len(Dtmp[ksta]))
ntmp = max(set(ntmp), key=ntmp.count)
D = Dtmp.select(npts=ntmp)

```

This is a function for plotting after each operation on the data.

```

def plot_2hour(D, channel, offset, title):
    """ Plot seismograms
        D = Stream
        channel = 'E', 'N', or 'Z'
        offset = Offset between two stations
        title = Title of the figure
    """
    fig, ax = plt.subplots(figsize=(15, 10))
    Dplot = D.select(component=channel)
    t = (1.0 / Dplot[0].stats.sampling_rate) * np.arange(0, Dplot[0].stats.npts)
    for ksta in range(0, len(Dplot)):
        plt.plot(t, ksta * offset + Dplot[ksta].data, 'k')
    plt.xlim(np.min(t), np.max(t))
    plt.ylim(- offset, len(Dplot) * offset)
    plt.title(title, fontsize=24)
    plt.xlabel('Time (s)', fontsize=24)
    ax.set_yticklabels([])
    ax.tick_params(labelsize=20)

plot_2hour(D, 'E', 1200.0, 'Downloaded data')

```

We start by detrending the data.

```

D

D.detrend(type='linear')

plot_2hour(D, 'E', 1200.0, 'Detrended data')

```

We then taper the data.

```

D.taper(type='hann', max_percentage=None, max_length=5.0)

plot_2hour(D, 'E', 1200.0, 'Tapered data')

```
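To give an idea of what tapering does, the sketch below applies a half-Hann (raised cosine) ramp to the first and last `max_length` seconds of a signal and leaves the middle untouched. This is a plain-Python illustration with a hypothetical helper name; it is not ObsPy's implementation, and ObsPy's exact window length and endpoint conventions may differ.

```python
import math

def hann_taper_ends(samples, sampling_rate, max_length):
    """Ramp both ends of `samples` with a half-Hann window of `max_length` seconds."""
    n = len(samples)
    m = min(int(max_length * sampling_rate), n // 2)  # taper length in samples
    out = list(samples)
    for k in range(m):
        w = 0.5 * (1.0 - math.cos(math.pi * k / m))  # rises smoothly from 0 towards 1
        out[k] *= w              # fade in
        out[n - 1 - k] *= w      # fade out
    return out

tapered = hann_taper_ends([1.0] * 100, sampling_rate=10.0, max_length=2.0)
print(tapered[0], tapered[10], tapered[50], tapered[-1])
```

Bringing the ends smoothly to zero reduces the edge artifacts that later filtering and deconvolution steps would otherwise produce.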

And we remove the instrument response.

```

D.remove_response(output='VEL', pre_filt=(0.2, 0.5, 10.0, 15.0), water_level=80.0)

plot_2hour(D, 'E', 1.0e-6, 'Deconvolving the instrument response')

```

Then we filter the data.

```

D.filter('bandpass', freqmin=2.0, freqmax=8.0, zerophase=True)

plot_2hour(D, 'E', 1.0e-6, 'Filtered data')

```

And we resample the data.

```

D.interpolate(100.0, method='lanczos', a=10)

D.decimate(5, no_filter=True)

plot_2hour(D, 'E', 1.0e-6, 'Resampled data')

```
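The resampling cell interpolates to 100 Hz and then keeps every fifth sample, so the final sampling rate is 20 Hz and the Nyquist frequency is 10 Hz. Since the data were already band-passed between 2 and 8 Hz, skipping the anti-alias filter (`no_filter=True`) is plausible here. The small sketch below just spells out that arithmetic; the helper names are hypothetical.

```python
def resampled_rate(interpolated_rate, decimation_factor):
    """Sampling rate after interpolating and then keeping every n-th sample."""
    return interpolated_rate / decimation_factor

def is_alias_safe(freqmax, sampling_rate):
    """True if the highest retained frequency lies below the Nyquist frequency."""
    return freqmax < sampling_rate / 2.0

rate = resampled_rate(100.0, 5)
print(rate, is_alias_safe(8.0, rate))
```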

We can also compute the envelope of the signal.

```

for index in range(0, len(D)):
    D[index].data = obspy.signal.filter.envelope(D[index].data)
plot_2hour(D, 'E', 1.0e-6, 'Envelope')

```

You can also download the instrument response separately:

```

network = 'XQ'

station = 'ME12'

channels = 'BHE,BHN,BHZ'

location = '01'

```

This is to download the instrument response.

```

fdsn_client = fdsn.Client('IRIS')

inventory = fdsn_client.get_stations(network=network, station=station, level='response')

inventory.write('response/' + network + '_' + station + '.xml', format='STATIONXML')

```

We then read the data and start processing the signal as we did above.

```

fdsn_client = fdsn.Client('IRIS')

Tstart = UTCDateTime(year=2008, month=4, day=1, hour=4, minute=49)

Tend = UTCDateTime(year=2008, month=4, day=1, hour=4, minute=50)

D = fdsn_client.get_waveforms(network=network, station=station, location=location, channel=channels, starttime=Tstart, endtime=Tend, attach_response=False)

D.detrend(type='linear')

D.taper(type='hann', max_percentage=None, max_length=5.0)

```

But we now use the XML file that contains the instrument response to remove it from the signal.

```

filename = 'response/' + network + '_' + station + '.xml'

inventory = read_inventory(filename, format='STATIONXML')

D.attach_response(inventory)

D.remove_response(output='VEL', pre_filt=(0.2, 0.5, 10.0, 15.0), water_level=80.0)

```

We resume signal processing.

```

D.filter('bandpass', freqmin=2.0, freqmax=8.0, zerophase=True)

D.interpolate(100.0, method='lanczos', a=10)

D.decimate(5, no_filter=True)

```

And we plot.

```

t = (1.0 / D[0].stats.sampling_rate) * np.arange(0, D[0].stats.npts)

plt.plot(t, D[0].data, 'k')

plt.xlim(np.min(t), np.max(t))

plt.title('Single waveform', fontsize=18)

plt.xlabel('Time (s)', fontsize=18)

```

Not all seismic data are stored on IRIS. This is an example of how to download data from the Northern California Earthquake Data Center (NCEDC).

```

network = 'BK'

station = 'WDC'

channels = 'BHE,BHN,BHZ'

location = '--'

```

This is to download the instrument response.

```

url = 'http://service.ncedc.org/fdsnws/station/1/query?net=' + network + '&sta=' + station + '&level=response&format=xml&includeavailability=true'

s = urllib.request.urlopen(url)

contents = s.read()

file = open('response/' + network + '_' + station + '.xml', 'wb')

file.write(contents)

file.close()

```

And this is to download the data.

```

Tstart = UTCDateTime(year=2007, month=2, day=12, hour=1, minute=11, second=54)

Tend = UTCDateTime(year=2007, month=2, day=12, hour=1, minute=12, second=54)

request = 'waveform_' + station + '.request'

file = open(request, 'w')

message = '{} {} {} {} '.format(network, station, location, channels) + \

'{:04d}-{:02d}-{:02d}T{:02d}:{:02d}:{:02d} '.format( \

Tstart.year, Tstart.month, Tstart.day, Tstart.hour, Tstart.minute, Tstart.second) + \

'{:04d}-{:02d}-{:02d}T{:02d}:{:02d}:{:02d}\n'.format( \

Tend.year, Tend.month, Tend.day, Tend.hour, Tend.minute, Tend.second)

file.write(message)

file.close()

miniseed = 'station_' + station + '.miniseed'

request = 'curl -s --data-binary @waveform_' + station + '.request -o ' + miniseed + ' http://service.ncedc.org/fdsnws/dataselect/1/query'

os.system(request)

D = read(miniseed)

D.detrend(type='linear')

D.taper(type='hann', max_percentage=None, max_length=5.0)

filename = 'response/' + network + '_' + station + '.xml'

inventory = read_inventory(filename, format='STATIONXML')

D.attach_response(inventory)

D.remove_response(output='VEL', pre_filt=(0.2, 0.5, 10.0, 15.0), water_level=80.0)

D.filter('bandpass', freqmin=2.0, freqmax=8.0, zerophase=True)

D.interpolate(100.0, method='lanczos', a=10)

D.decimate(5, no_filter=True)

t = (1.0 / D[0].stats.sampling_rate) * np.arange(0, D[0].stats.npts)

plt.plot(t, D[0].data, 'k')

plt.xlim(np.min(t), np.max(t))

plt.title('Single waveform', fontsize=18)

plt.xlabel('Time (s)', fontsize=18)

```
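The request line written to the `.request` file above can also be built with the standard library alone. The sketch below reproduces the same text using `datetime` in place of `UTCDateTime`; the function name `dataselect_request_line` is hypothetical, and the sketch only mirrors the string assembled in the cell above rather than documenting the service's full request format.

```python
from datetime import datetime

def dataselect_request_line(network, station, location, channels, start, end):
    """Format 'NET STA LOC CHANNELS START END' followed by a newline."""
    fmt = '%Y-%m-%dT%H:%M:%S'
    return '{} {} {} {} {} {}\n'.format(
        network, station, location, channels,
        start.strftime(fmt), end.strftime(fmt))

line = dataselect_request_line(
    'BK', 'WDC', '--', 'BHE,BHN,BHZ',
    datetime(2007, 2, 12, 1, 11, 54),
    datetime(2007, 2, 12, 1, 12, 54))
print(line, end='')
```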

| github_jupyter |

## Quantitative trading in China's A-share stock market with FinRL

### Import modules

```

import warnings

warnings.filterwarnings("ignore")

import pandas as pd

from IPython import display

display.set_matplotlib_formats("svg")

from finrl_meta import config

from finrl_meta.data_processors.processor_tusharepro import TushareProProcessor, ReturnPlotter

from finrl_meta.env_stock_trading.env_stocktrading_A import StockTradingEnv

from drl_agents.stablebaselines3_models import DRLAgent

pd.options.display.max_columns = None

print("ALL Modules have been imported!")

```

### Create folders

```

import os

if not os.path.exists("./datasets" ):
    os.makedirs("./datasets" )
if not os.path.exists("./trained_models"):
    os.makedirs("./trained_models" )
if not os.path.exists("./tensorboard_log"):
    os.makedirs("./tensorboard_log" )
if not os.path.exists("./results" ):
    os.makedirs("./results" )

```

### Download data, cleaning and feature engineering

```

ticket_list=['600000.SH', '600009.SH', '600016.SH', '600028.SH', '600030.SH',

'600031.SH', '600036.SH', '600050.SH', '600104.SH', '600196.SH',

'600276.SH', '600309.SH', '600519.SH', '600547.SH', '600570.SH']

train_start_date='2015-01-01'

train_stop_date='2019-08-01'

val_start_date='2019-08-01'

val_stop_date='2021-01-03'

token='27080ec403c0218f96f388bca1b1d85329d563c91a43672239619ef5'

# download and clean

ts_processor = TushareProProcessor("tusharepro", token=token)

df = ts_processor.download_data(ticket_list, train_start_date, val_stop_date, "1D")

df = ts_processor.clean_data(df)

df

# add_technical_indicator

df = ts_processor.add_technical_indicator(df, config.TECHNICAL_INDICATORS_LIST)

df = ts_processor.clean_data(df)

df

```

### Split training dataset

```

train =ts_processor.data_split(df, train_start_date, train_stop_date)

len(train.tic.unique())

train.tic.unique()

train.head()

train.shape

stock_dimension = len(train.tic.unique())

state_space = stock_dimension*(len(config.TECHNICAL_INDICATORS_LIST)+2)+1

print(f"Stock Dimension: {stock_dimension}, State Space: {state_space}")

```
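The state-space formula in the cell above reads naturally as one cash balance plus, for every stock, a price, a holding, and one value per technical indicator. The sketch below spells out that count; this layout is an assumption inferred from the formula, not something checked against the `StockTradingEnv` source, and `state_space_size` is a hypothetical helper.

```python
def state_space_size(n_stocks, n_indicators):
    """Length of the observation vector under the assumed layout."""
    cash = 1                        # account balance
    per_stock = 2 + n_indicators    # price, shares held, and the indicators
    return cash + n_stocks * per_stock

# For example, 15 tickers with 8 indicators each
print(state_space_size(15, 8))
```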

### Train

```

env_kwargs = {

"stock_dim": stock_dimension,

"hmax": 1000,

"initial_amount": 1000000,

"buy_cost_pct":6.87e-5,

"sell_cost_pct":1.0687e-3,

"reward_scaling": 1e-4,

"state_space": state_space,

"action_space": stock_dimension,

"tech_indicator_list": config.TECHNICAL_INDICATORS_LIST,

"print_verbosity": 1,

"initial_buy":True,

"hundred_each_trade":True

}

e_train_gym = StockTradingEnv(df = train, **env_kwargs)

env_train, _ = e_train_gym.get_sb_env()

print(type(env_train))

agent = DRLAgent(env = env_train)

DDPG_PARAMS = {

"batch_size": 256,

"buffer_size": 50000,

"learning_rate": 0.0005,

"action_noise":"normal",

}

POLICY_KWARGS = dict(net_arch=dict(pi=[64, 64], qf=[400, 300]))

model_ddpg = agent.get_model("ddpg", model_kwargs = DDPG_PARAMS, policy_kwargs=POLICY_KWARGS)

trained_ddpg = agent.train_model(model=model_ddpg,

tb_log_name='ddpg',

total_timesteps=1000)

```

### Trade

```

trade = ts_processor.data_split(df, val_start_date, val_stop_date)

env_kwargs = {

"stock_dim": stock_dimension,

"hmax": 1000,

"initial_amount": 1000000,

"buy_cost_pct":6.87e-5,

"sell_cost_pct":1.0687e-3,

"reward_scaling": 1e-4,

"state_space": state_space,

"action_space": stock_dimension,

"tech_indicator_list": config.TECHNICAL_INDICATORS_LIST,

"print_verbosity": 1,

"initial_buy":False,

"hundred_each_trade":True

}

e_trade_gym = StockTradingEnv(df = trade, **env_kwargs)

df_account_value, df_actions = DRLAgent.DRL_prediction(model=trained_ddpg,

environment = e_trade_gym)

df_actions.to_csv("action.csv",index=False)

df_actions

```

### Backtest

```

%matplotlib inline

plotter = ReturnPlotter(df_account_value, trade, val_start_date, val_stop_date)

plotter.plot_all()

%matplotlib inline

plotter.plot()

%matplotlib inline

# ticket: SSE 50:000016

plotter.plot("000016")

```

#### Use pyfolio

```

# CSI 300

baseline_df = plotter.get_baseline("399300")

import pyfolio

from pyfolio import timeseries

daily_return = plotter.get_return(df_account_value)

daily_return_base = plotter.get_return(baseline_df, value_col_name="close")

perf_func = timeseries.perf_stats

perf_stats_all = perf_func(returns=daily_return,

factor_returns=daily_return_base,

positions=None, transactions=None, turnover_denom="AGB")

print("==============DRL Strategy Stats===========")

perf_stats_all

with pyfolio.plotting.plotting_context(font_scale=1.1):
    pyfolio.create_full_tear_sheet(returns = daily_return,
                                   benchmark_rets = daily_return_base, set_context=False)

```

### Authors

github username: oliverwang15, eitin-infant

| github_jupyter |

# train.py: What it does step by step

This tutorial will break down what train.py does when it is run, and illustrate the functionality of some of the custom 'utils' functions that are called during a training run, in a way that is easy to understand and follow.

Note that parts of the functionality of train.py depend on the config.json file you are using. This tutorial is self-contained, and doesn't use a config file, but for more information on working with this file when using ProLoaF, see [this explainer](https://acs.pages.rwth-aachen.de/public/automation/plf/proloaf/docs/files-and-scripts/config/). Before proceeding to any of the sections below, please run the following code block:

```

import os

import sys

sys.path.append("../")

import pandas as pd

import utils.datahandler as dh

import matplotlib.pyplot as plt

import numpy as np

```

## Table of contents:

[1. Dealing with missing values in the data](#1.-Dealing-with-missing-values-in-the-data)

[2. Selecting and scaling features](#2.-Selecting-and-scaling-features)

[3. Creating a dataframe to log training results](#3.-Creating-a-dataframe-to-log-training-results)

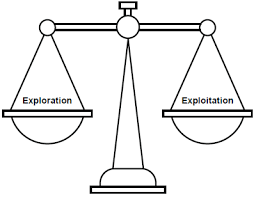

[4. Exploration](#4.-Exploration)

[5. Main run - creating the training model](#5.-Main-run---creating-the-training-model)

[6. Main run - training the model](#6.-Main-run---training-the-model)

[7. Updating the config, saving the model & logs](#7.-Updating-the-config,-saving-the-model-&-logs)

## 1. Dealing with missing values in the data

The first thing train.py does after loading the dataset specified in your config file is to check for any missing values and fill them in as necessary. It does this using the function 'utils.datahandler.fill_if_missing'. In the following example, we will load some data that has missing values and examine what the 'fill_if_missing' function does. Please run the code block below to get started.

```

#Load the data sample and prep for use with datahandler functions

df = pd.read_csv("../data/fill_missing.csv", sep=";")

df['Time'] = pd.to_datetime(df['Time'])

df = df.set_index('Time')

df = df.astype(float)

df_missing_range = df.copy()

#Plot the data

df.iloc[0:194].plot(kind='line',y='DE_load_actual_entsoe_transparency', figsize = (12, 6), xlabel='Hours', use_index = False)

```

As should be clearly visible in the plot above, the data has some missing values. There is a missing range (a range refers to multiple adjacent values) from around 95 to 121, as well as two individual values that are missing, at 160 and 192. Please run the code block below to see how 'fill_if_missing' deals with these problems.

```

#Use fill_if_missing and plot the results

df=dh.fill_if_missing(df, periodicity=24)

df.iloc[0:192].plot(kind='line',y='DE_load_actual_entsoe_transparency', figsize = (12, 6), use_index = False)

#TODO: Test this again once interpolation is working

```

As we can see by the printed console messages, fill_if_missing first checks whether there are any missing values. If there are, it checks whether they are individual values or ranges, and handles these cases differently:

### Single missing values:

These are simply replaced by the average of the values on either side.
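The rule for single missing values can be sketched in a few lines of plain Python. This is only an illustration of the averaging idea described above, not the code used by fill_if_missing; the helper name `fill_single_gaps` and the sample values are made up for this example.

```python
import math

def fill_single_gaps(values):
    """Replace isolated NaNs by the mean of their two neighbours (illustrative only)."""
    filled = list(values)
    for i in range(1, len(values) - 1):
        left, right = values[i - 1], values[i + 1]
        # only fill a gap if both direct neighbours exist
        if math.isnan(values[i]) and not math.isnan(left) and not math.isnan(right):
            filled[i] = (left + right) / 2
    return filled

series = [10.0, 12.0, float("nan"), 16.0, 15.0]
print(fill_single_gaps(series))  # the gap at position 2 becomes (12.0 + 16.0) / 2 = 14.0
```

Gaps that are longer than one value are left untouched by this sketch, which is why missing ranges need the separate treatment described next.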

### Missing range:

If a range of values is missing, fill_if_missing will use the specified periodicity of the data to provide an estimate of the missing values, by averaging the ranges on either side of the missing range and then adapting the new values to fit the trend. If not specified, the periodicity has a default value of 1, but since we are using hourly data, we will use a periodicity of p = 24.

For each missing value at a given position t in the range, fill_if_missing first searches backwards through the data at intervals equal to the periodicity of the data (i.e. t1 = t - 24\*n, n = 1, 2,...) until it finds an existing value. It then does the same thing searching forwards through the data (i.e. t2 = t + 24\*n, n = 1, 2,...), and then it sets the value at t equal to the average of the values at t1 and t2. Run the code block below to see the result for the missing range at 95-121:

```

start = 95

end = 121

p = 24

seas = np.zeros(len(df_missing_range))

#fill the missing values
for t in range(start, end + 1):
    p1 = p
    p2 = p
    #search backwards in steps of one period until an existing value is found
    while np.isnan(df_missing_range.iloc[t - p1, 0]):
        p1 += p
    #search forwards in steps of one period until an existing value is found
    while np.isnan(df_missing_range.iloc[t + p2, 0]):
        p2 += p
    seas[t] = (df_missing_range.iloc[t - p1, 0] + df_missing_range.iloc[t + p2, 0]) / 2

#plot the result
ax = plt.gca()

#new column of NaNs that will only hold the interpolated values
df_missing_range["Interpolated"] = np.nan

for t in range(start, end + 1):
    df_missing_range.iloc[t, 1] = seas[t]

df_missing_range.iloc[0:192].plot(kind='line',y='DE_load_actual_entsoe_transparency', figsize = (12, 6), use_index = False, ax = ax)

df_missing_range.iloc[0:192].plot(kind='line',y='Interpolated', figsize = (12, 6), use_index = False, ax = ax)

```

The missing values in the range between 95 and 121 have now been filled in, but the end points aren't continuous with the original data, and the new values don't take into account the trend in the data. To deal with this, the function uses the difference in slope between the start and end points of the missing data range, and the start and end points of the newly interpolated values, to offset the new values so that they line up with the original data:

```

print("Create two straight lines that connect the interpolated start and end points, and the original start and end points.\nThese capture the 'trend' in each case over the missing section")

trend1 = np.poly1d(
    np.polyfit([start, end], [seas[start], seas[end]], 1)
)

trend2 = np.poly1d(
    np.polyfit(
        [start - 1, end + 1],
        [df_missing_range.iloc[start - 1, 0], df_missing_range.iloc[end + 1, 0]],
        1,
    )
)

#by subtracting the trend of the interpolated data, then adding the trend of the original data, we match the filled in
#values to what we had before
for t in range(start, end + 1):
    df_missing_range.iloc[t, 1] = seas[t] - trend1(t) + trend2(t)

#plot the result

ax = plt.gca()

df_missing_range.iloc[0:192].plot(kind='line',y='DE_load_actual_entsoe_transparency', figsize = (12, 6), use_index = False, ax = ax)

df_missing_range.iloc[0:192].plot(kind='line',y='Interpolated', figsize = (12, 6), use_index = False, ax = ax)

```

**Please note:**

- Missing data ranges at the beginning or end of the data are handled differently (TODO: Explain how)

- Though the examples shown here use a single column for simplicity's sake, fill_if_missing automatically works on every column (feature) of your original dataframe.

## 2. Selecting and scaling features

The next thing train.py does is to select and scale features in the data as specified in the relevant config file, using the function 'utils.datahandler.scale_all'.

Consider the following dataset:

```

#Load and then plot the new dataset

df_to_scale = pd.read_csv("../data/opsd.csv", sep=";", index_col=0)

df_to_scale.plot(kind='line',y='AT_load_actual_entsoe_transparency', figsize = (8, 4), use_index = False)

df_to_scale.plot(kind='line',y='AT_temperature', figsize = (8, 4), use_index = False)

df_to_scale.head()

```

The above dataset has 55 features (columns), some of which are at totally different scales, as is clearly visible when looking at the y-axes of the above graphs for load and temperature data from Austria.

Depending on our dataset, we may not want to use all of the available features for training.

If we wanted to select only the two features highlighted above for training, we could do so by editing the value at the "feature_groups" key in the config.json, which takes the form of a list of dicts like the one below:

```

two_features = [
    {
        "name": "main",
        "scaler": [
            "minmax",
            -1.0,
            1.0
        ],
        "features": [
            "AT_load_actual_entsoe_transparency",
            "AT_temperature"
        ]
    }
]

```

Each dict in the list represents a feature group, and should have the following keys:

- "name" - the name of the feature group

- "scaler" - the scaler used by this feature group (value: a list with entries for scaler name and scaler specific attributes.) Valid scaler names include 'standard', 'robust' or 'minmax'. For more information on these scalers and their use, please see the [scikit-learn documentation](https://scikit-learn.org/stable/modules/preprocessing.html#preprocessing) or [the documentation for scale_all](https://acs.pages.rwth-aachen.de/public/automation/plf/proloaf/reference/proloaf/proloaf/utils/datahandler.html#scale_all)

- "features" - which features are to be included in the group (value: a list containing the feature names)

The 'scale_all' function will only return the selected features, scaled using the scaler assigned to their feature group.

Here we only have one group, 'main', which uses the 'minmax' scaler:

```

#Select, scale and plot the features as specified by the two_features list (see above)

selected_features, scalers = dh.scale_all(df_to_scale, two_features)

selected_features.plot(figsize = (12, 6), use_index = False)

print("Currently used scalers:")

print(scalers)

```

As you can see, both of our features (load and temperature for Austria) have now been scaled to fit within the same range (between -1 and 1).
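To make the effect of the 'minmax' scaler concrete, here is a minimal sketch of the usual min-max formula, written in plain Python. It is not the code inside 'scale_all'; the function name `minmax_scale` and the load and temperature values are invented for illustration.

```python
def minmax_scale(values, low=-1.0, high=1.0):
    """Linearly map values so that min(values) -> low and max(values) -> high."""
    v_min, v_max = min(values), max(values)
    return [low + (v - v_min) / (v_max - v_min) * (high - low) for v in values]

# two made-up features on very different scales
load = [5000.0, 7500.0, 10000.0]
temperature = [-5.0, 10.0, 25.0]

print(minmax_scale(load))         # [-1.0, 0.0, 1.0]
print(minmax_scale(temperature))  # [-1.0, 0.0, 1.0]
```

After scaling, both features occupy the same range even though their original units differ by several orders of magnitude.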

Let's say we also wanted to include the weekday data from the data set in our training. Let us first take a look at what the weekday features look like. Here are the first 500 hours (approx. 3 weeks) of weekday_0:

```

df_to_scale[:500].plot(kind='line',y='weekday_0', figsize = (12, 4), use_index = False)

```

As we can see, these features are already within the range [0,1] and thus don't need to be scaled. So we can include them in a second feature group called 'aux'. Note, features which we deliberately aren't scaling should go in a group with this name.

The value of the "feature_groups" key in the config.json could then look like this:

```

feature_groups = [
    {
        "name": "main",
        "scaler": [
            "minmax",
            0.0,
            1.0
        ],
        "features": [
            "AT_load_actual_entsoe_transparency",
            "AT_temperature"
        ]
    },
    {
        "name": "aux",
        "scaler": None,
        "features": [
            "weekday_0",
            "weekday_1",
            "weekday_2",
            "weekday_3",
            "weekday_4",
            "weekday_5",
            "weekday_6"
        ]
    }
]

```

We now have two feature groups, 'main' (which uses the 'minmax' scaler, this time with a range between 0 and 1) and 'aux' (which uses no scaler):

```

#Select, scale and plot the features as specified by feature_groups (see above)

selected_features, scalers = dh.scale_all(df_to_scale,feature_groups)

selected_features[23000:28000].plot(figsize = (12, 6), use_index = False)

print("Currently used scalers:")

print(scalers)

```

We can see that all of our selected features now fit between 0 and 1. From this point onward, train.py will only work with our selected, scaled features.

```

print("Currently selected and scaled features: ")

print(selected_features.columns)

```

### Selecting scalers

When selecting which scalers to use, it is important that whichever one we choose does not adversely affect the shape of the distribution of our data, as this would distort our results. For example, this is the distribution of the feature "AT_load_actual_entsoe_transparency" before scaling:

```

df_unscaled = pd.read_csv("../data/opsd.csv", sep=";", index_col=0)

df_unscaled["AT_load_actual_entsoe_transparency"].plot.kde()

```

And this is the distribution after scaling using the minmax scaler, as we did above:

```

selected_features["AT_load_actual_entsoe_transparency"].plot.kde()

```

It is clear that in both cases, the distribution functions have a similar shape. The axes are scaled differently, but both graphs have maxima to the right of zero. On the other hand, this is what the distribution looks like if we use the robust scaler on this data:

```

feature_robust = [
    {
        "name": "main",
        "scaler": [
            "robust",
            0.25,
            0.75
        ],
        "features": [
            "AT_load_actual_entsoe_transparency",
        ]
    }
]

selected_feat_robust, scalers_robust = dh.scale_all(df_to_scale,feature_robust)

selected_feat_robust["AT_load_actual_entsoe_transparency"].plot.kde()

```

Not only have the axes been scaled, but the data has also been shifted so that the maxima are centered around zero. The same problem can be observed with the "standard" scaler:

```

feature_std = [
    {
        "name": "main",
        "scaler": [
            "standard"
        ],
        "features": [
            "AT_load_actual_entsoe_transparency",
        ]
    }
]

selected_feat_std, scalers_std = dh.scale_all(df_to_scale,feature_std)

selected_feat_std["AT_load_actual_entsoe_transparency"].plot.kde()

```

As a result, the minmax scaler is the best option for this feature.
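The shift towards zero described above can be reproduced on a handful of numbers. The sketch below is plain Python with made-up values and hypothetical helper names; it assumes the 'standard' scaler subtracts the mean and divides by the population standard deviation, which is how scikit-learn's StandardScaler behaves. Both scalers are linear maps, so the difference shown here is only where zero ends up.

```python
import statistics

def minmax_scale(values, low=0.0, high=1.0):
    """Map the smallest value to low and the largest to high."""
    v_min, v_max = min(values), max(values)
    return [low + (v - v_min) / (v_max - v_min) * (high - low) for v in values]

def standard_scale(values):
    """Subtract the mean and divide by the population standard deviation."""
    mean = statistics.fmean(values)
    std = statistics.pstdev(values)
    return [(v - mean) / std for v in values]

values = [2.0, 4.0, 4.0, 4.0, 5.0, 5.0, 7.0, 9.0]  # illustrative data only

scaled_minmax = minmax_scale(values)
scaled_standard = standard_scale(values)

# min-max keeps every value at or above zero; standard scaling centres the data on zero
print("minmax   min/max:", min(scaled_minmax), max(scaled_minmax))
print("standard min/max:", min(scaled_standard), max(scaled_standard))
print("standard mean   :", statistics.fmean(scaled_standard))
```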

## 3. Creating a dataframe to log training results

Having already filled any missing values in our data, and scaled and selected the features we want to use for training, at this point we use the function 'utils.loghandler.create_log' to create a dataframe which will log the results of our training.

This dataframe is saved as a .csv file at the end of the main training run. This allows different training runs, e.g. using new data or different parameters, to be compared with one another, so that we can monitor any changes in performance and compare the most recent run to the best performance achieved so far.

'create_log' creates the dataframe by getting which features we'll be logging from the [log.json file](https://acs.pages.rwth-aachen.de/public/automation/plf/proloaf/docs/files-and-scripts/log/), and then:

- loading an existing log file (from MAIN_PATH/\<log_path\>/\<model_name\>/\<model_name\>+"_training.csv" - see the [config.json explainer](https://acs.pages.rwth-aachen.de/public/automation/plf/proloaf/docs/files-and-scripts/config/) for more information)

- or creating a new dataframe from scratch, with the required features.

The newly created dataframe, log_df, is used at various later points in train.py (see sections [4](#4.-Exploration), [6](#6.-Main-run---training-the-model) and [7](#7.-Updating-the-config,-saving-the-model-&-logs) for more info).

## 4. Exploration

From this point on, it is assumed that we are working with prepared and scaled data (see earlier sections for more details).

The exploration phase is optional, and will only be carried out if the 'exploration' key in the config.json file is set to 'true'. The purpose of exploration is to tune our hyperparameters before the main training run. This is done by using [Optuna](https://optuna.org/) to optimize our [objective function](#Objective-function). Optuna iterates through a number of trials - either a fixed number, or until timeout (as specified in the tuning.json file, [see below](#tuning.json) for more info) - with the purpose of finding the hyperparameter settings that result in the smallest validation loss. This is an indicator of the quality of the prediction - the validation loss is the discrepancy between the predicted values and the actual values (targets) from the validation dataset, as determined by one of the metrics from [utils.metrics](https://acs.pages.rwth-aachen.de/public/automation/plf/proloaf/reference/proloaf/proloaf/utils/eval_metrics.html). The metric used is specified when train.py is called.

Once Optuna is done iterating, a summary of the trials is printed (number of trials, details of the best trial), and if the new best trial represents an improvement over the previously logged best, you will be prompted about whether you would like to overwrite the config.json with the newly found hyperparameters, so that they can be used for future training.

Optuna also has built-in parallelization, which we can opt to use by setting the 'parallel_jobs' key in the config.json to 'true'.

### Objective function

The previously mentioned objective function is a callable which Optuna uses for its optimization. In our case, it is the function 'mh.tuning_objective', which does the following per trial:

- Suggests values for each hyperparameter as per the [tuning.json](#tuning.json)

- Creates (see [section 5](#5.-Main-run---creating-the-training-model)) and trains (see [section 6](#6.-Main-run---training-the-model)) a model using these hyperparameters and our selected features and scalers

- Returns a score for the model in the form of the validation loss after training.

### tuning.json

The tuning.json file ([see explainer](https://acs.pages.rwth-aachen.de/public/automation/plf/proloaf/docs/files-and-scripts/config/#tuning-config)) is located in the same folder as the other configs, under proloaf/targets/\<model name\>, and contains information about the hyperparameters to be tuned, as well as settings that limit how long Optuna should run. It can look like this, for example:

```json

{
    "number_of_tests": 100,
    "settings":
    {
        "learning_rate": {
            "function": "suggest_loguniform",
            "kwargs": {
                "name": "learning_rate",
                "low": 0.000001,
                "high": 0.0001
            }
        },
        "batch_size": {
            "function": "suggest_int",
            "kwargs": {
                "name": "batch_size",
                "low": 32,
                "high": 120
            }
        }
    }
}

```

- "number_of_tests": 100 here means that Optuna will stop after 100 trials. Alternatively, "timeout": \<number in seconds\> would limit the maximum duration for which Optuna would run. If both are specified, Optuna will stop as soon as either criterion is met. If neither is specified, Optuna will wait for a termination signal (Ctrl+C or SIGTERM).

- The "settings" keyword has a dictionary as its value. This dictionary contains keywords for each of the hyperparameters that Optuna is to optimize, e.g. "learning_rate", "batch_size".

- Each hyperparameter keyword takes yet another dictionary as a value, with the keywords "function" and "kwargs":

  - "function" takes as its value a function name beginning with "suggest_..." as described in the [Optuna docs](https://optuna.readthedocs.io/en/v1.4.0/reference/trial.html#optuna.trial.Trial.suggest_loguniform). These "suggest" functions are used to suggest hyperparameter values by sampling from a range with the relevant distribution.

  - "kwargs" has keywords for the arguments required by the "suggest" functions, typically "low" and "high" for the start and endpoints of the desired ranges as well as a "name" keyword which stores the hyperparameter name.
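One way to read this structure is that each entry names a method to call on the Optuna trial, together with the keyword arguments for that call. The sketch below illustrates that idea only; it is an assumption about how such a file could be consumed, not ProLoaF's actual implementation. `FakeTrial` and `suggest_hyperparameters` are hypothetical names, and `FakeTrial` merely imitates the two "suggest" methods used above so that the example runs without Optuna installed. In recent Optuna releases `suggest_loguniform` is deprecated in favour of `suggest_float(..., log=True)`.

```python
import json
import math
import random

class FakeTrial:
    """Hypothetical stand-in for optuna.trial.Trial, for illustration only."""

    def __init__(self, seed=0):
        self._rng = random.Random(seed)

    def suggest_loguniform(self, name, low, high):
        # sample uniformly in log space, then transform back
        return math.exp(self._rng.uniform(math.log(low), math.log(high)))

    def suggest_int(self, name, low, high):
        # both endpoints are included
        return self._rng.randint(low, high)

tuning_config = json.loads("""
{
    "number_of_tests": 100,
    "settings": {
        "learning_rate": {
            "function": "suggest_loguniform",
            "kwargs": {"name": "learning_rate", "low": 0.000001, "high": 0.0001}
        },
        "batch_size": {
            "function": "suggest_int",
            "kwargs": {"name": "batch_size", "low": 32, "high": 120}
        }
    }
}
""")

def suggest_hyperparameters(trial, settings):
    """Look up each 'function' on the trial object and call it with its 'kwargs'."""
    return {
        name: getattr(trial, spec["function"])(**spec["kwargs"])
        for name, spec in settings.items()
    }

params = suggest_hyperparameters(FakeTrial(), tuning_config["settings"])
print(params)
```

With a real Optuna study, the `trial` object passed to the objective function would take the place of `FakeTrial()`.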

### Notes

- When running train.py using Gitlab CI, the prompt about whether to overwrite the config.json with the newly found values is disabled (as well as all other similar prompts).

- Though the objective function takes log_df (from the previous section) as a parameter, as it is required by the 'train' function, none of the training runs from this exploration phase are actually logged. Only the main run is logged. (See the next section for more)

## 5. Main run - creating the training model

Now that the data is free of missing values, we've selected which features we'll use, and the (optional) hyperparameter exploration is done, we are almost ready to create the model that will be used for the training.

The last step we need to take before we can do that is to split our data into training, validation and testing sets. During this process, we also transition from using the familiar Dataframe structure we've been using up until now, to using a new custom structure called CustomTensorData.

### Splitting the data

We do this using the function 'utils.datahandler.transform', which splits our 'selected_features' dataframe into three new dataframes for the three sets outlined above. It does this according to the 'train_split' and 'validation_split' parameters in the config file, as follows:

Each of the 'split' parameters is multiplied by the length of the 'selected_features' dataframe, and the result is converted to an integer. These new integer values are the indices for the split.

e.g. For 'train_split' = 0.6 and 'validation_split'= 0.8, the first 60% of the data entries would be stored in the new training dataframe, with the next 20% going to the validation dataframe, and the final 20% used for the testing dataframe.
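The index arithmetic behind this example can be sketched as follows. The helper `split_indices` is a hypothetical name used for illustration and a plain list stands in for the dataframe; only the multiply-and-truncate rule is taken from the description above.

```python
def split_indices(n_rows, train_split, validation_split):
    """Turn the two split fractions into integer row indices."""
    train_end = int(train_split * n_rows)
    validation_end = int(validation_split * n_rows)
    return train_end, validation_end

rows = list(range(10))  # stand-in for the rows of 'selected_features'
train_end, validation_end = split_indices(len(rows), train_split=0.6, validation_split=0.8)

train = rows[:train_end]
validation = rows[train_end:validation_end]
test = rows[validation_end:]

print(train)       # [0, 1, 2, 3, 4, 5]
print(validation)  # [6, 7]
print(test)        # [8, 9]
```

Note that 'validation_split' marks where the validation set ends, not how large it is, which is why 0.8 yields a validation share of 20%.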

### Transformation into Tensors

Each of the three new dataframes is then transformed into the new CustomTensorData structure. This is done because the new structure is better suited for use with our RNN, and it is accomplished using 'utils.tensorloader.make_dataloader'.

A single CustomTensorData structure also has three components, each of which consists of a different set of features as defined in the config file:

- inputs1 - Encoder features: Features used as input for the encoder RNN. They provide information from a certain number of timesteps leading up to the period we wish to forecast i.e. from historical data.

- inputs2 - Decoder features: Features used as input for the decoder RNN. They provide information from the same time steps as the period we wish to forecast i.e. from data about the future that we know in advance, e.g. weather forecasts, day of the week, etc.

- targets - The features we are trying to forecast.

These three components contain the features described above, but reorganized from the familiar tabular Dataframe format into a series of samples of a given length ([horizon](#Horizons)).

To understand this change, please consider the image below, which focuses on a single feature that is only 7 time steps long. (Feature 1 - with data that is merely illustrative)

The final format, as seen in the two examples on the right, consists of a number of samples (rows) of a given length (horizon), such that the first sample begins at the first time step of the range given to 'make_dataloader', and the last sample ends at the last time step in the aforementioned range. Each consecutive sample also begins one time step later than the previous sample (the one above it).
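The sample layout described above amounts to a sliding window over the time steps. The following sketch shows it for a single feature with 7 time steps; `make_samples` is a hypothetical helper and the values are merely illustrative, so this is not the code of 'make_dataloader'.

```python
def make_samples(series, horizon):
    """Cut a series into overlapping samples, each starting one time step later."""
    return [series[start:start + horizon] for start in range(len(series) - horizon + 1)]

feature_1 = [1, 2, 3, 4, 5, 6, 7]  # 7 time steps of made-up data

for sample in make_samples(feature_1, horizon=3):
    print(sample)
# [1, 2, 3]
# [2, 3, 4]
# [3, 4, 5]
# [4, 5, 6]
# [5, 6, 7]
```

A series of length n with a given horizon therefore yields n - horizon + 1 samples.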

When we consider a set of multiple features (with many more than 7 timesteps), each feature is transformed individually and then all features are combined into a 3D Tensor, as depicted in the following image:

### Horizons

So far, we have been using the term 'horizon' to refer to how many time steps are contained in one sample in our Custom Tensor structure. However, the three components (inputs1, inputs2 and targets) do not all share the same horizon length. In fact, there are two different parameters for horizon length, namely 'history_horizon', and 'forecast_horizon', and the different components use them as illustrated in the following diagram:

As we can see, sample 1 of inputs1 contains 'history_horizon' timesteps up to a certain point, while sample 1 of inputs2 and targets contain the 'forecast_horizon' timesteps that follow from that point onwards. This is because, as previously mentioned, inputs1 provides historical data, while inputs2 and targets both provide "future data" - data from the future that we know ahead of making our forecast.

**NB:** our "future data" can include uncertainty, for example, if we use existing forecasts as input.

With increasing sample number, we have a kind of moving window which shifts towards the right in the image above.

So that all three components contain the same number of samples, the inputs1 tensor does not include the final 'forecast_horizon' timesteps, while inputs2 and targets do not contain the first 'history_horizon' timesteps.

**Note:** for illustration purposes, the above image shows which timesteps are in a given sample of the three components, in relation to the original dataframe. The components are nevertheless at this point already stored in the custom tensor structure described earlier in this subsection.
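As a rough illustration of how the two horizons interact, the sketch below lists which time steps of the original data end up in each sample. It only mirrors the description in this subsection; `make_windows` is a hypothetical helper, and the horizon lengths are chosen arbitrarily.

```python
def make_windows(n_steps, history_horizon, forecast_horizon):
    """Return (encoder_steps, decoder_steps) index lists for every sample."""
    n_samples = n_steps - history_horizon - forecast_horizon + 1
    windows = []
    for i in range(n_samples):
        # inputs1: the history_horizon steps leading up to the forecast
        encoder_steps = list(range(i, i + history_horizon))
        # inputs2 and targets: the forecast_horizon steps that follow
        decoder_steps = list(range(i + history_horizon, i + history_horizon + forecast_horizon))
        windows.append((encoder_steps, decoder_steps))
    return windows

for encoder_steps, decoder_steps in make_windows(n_steps=7, history_horizon=3, forecast_horizon=2):
    print("inputs1:", encoder_steps, "| inputs2/targets:", decoder_steps)
# inputs1: [0, 1, 2] | inputs2/targets: [3, 4]
# inputs1: [1, 2, 3] | inputs2/targets: [4, 5]
# inputs1: [2, 3, 4] | inputs2/targets: [5, 6]
```

In this small example the last two time steps never appear in inputs1 and the first three never appear in inputs2 or targets, so all three components end up with the same number of samples.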

### Model Creation

The final step in this stage is to create the model which we will be training. We do this using 'utils.modelhandler.make_model'. This function takes the number of features in component 1 and in component 2 (inputs1 and inputs2) of the training tensor, as well as our scalers from [section 2](#2.-Selecting-and-scaling-features) of this guide and other parameters from the config file, and returns an EncoderDecoder model as defined in 'utils.models'.

## 6. Main run - training the model

At this point, we have everything we need to train our model: our training, validation and testing datasets, stored as CustomTensorData structures, and the instantiated EncoderDecoder model we are going to be training. We give these along with the hyperparameters in our config file (e.g. learning rate, batch size etc.) to the function 'utils.modelhandler.train'. This function returns:

- a trained model

- our logging dataframe (see [section 3](#3.-Creating-a-dataframe-to-log-training-results)) updated with the results of the training

- the minimum validation loss after training

- the model's score as calculated by the function 'performance_test', in our case using the metric 'mis' (Mean Interval Score) as defined in 'utils.metrics'

What follows is a short breakdown of what 'utils.modelhandler.train' does.

**Reminder:** 'loss' generally refers to a measure of how far off our predictions are from the actual values (targets) we are trying to predict.

### How training works

Training lasts for a number of epochs, given by the parameter 'max_epochs' in the config file.

During each epoch, we perform the training step, followed by the validation step.

#### Training step:

Use .train() on the model to set it in training mode, then loop through every sample in our training data tensor, and in each iteration of the loop:

- get the model's prediction using the current sample of inputs1 and inputs2

- zero the gradients of our optimizer

- calculate the loss of the prediction we just made for this sample (using whichever metric is specified in the config)

- compute new gradients using loss.backward()

- update the model's parameters (by taking an optimization step with the optimizer) using the new gradients

- update the epoch loss (the loss for the current sample is divided by the total number of samples in the training data and added to the current epoch loss)

This step iteratively teaches the model to produce better predictions.
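The steps listed above follow the usual PyTorch training pattern, which can be sketched as below. This is a minimal illustration under stated assumptions, not the code of 'utils.modelhandler.train': a tiny linear model, random data, mean squared error and small batches stand in for the EncoderDecoder model, the CustomTensorData loader and the metric from the config.

```python
import torch

torch.manual_seed(0)

# Hypothetical stand-ins for the real model, data and loss metric
model = torch.nn.Linear(3, 1)
optimizer = torch.optim.SGD(model.parameters(), lr=0.05)
loss_fn = torch.nn.MSELoss()

inputs = torch.randn(32, 3)
targets = inputs.sum(dim=1, keepdim=True)
batches = [(inputs[i:i + 8], targets[i:i + 8]) for i in range(0, 32, 8)]

epoch_losses = []
for epoch in range(20):
    model.train()                      # put the model in training mode
    epoch_loss = 0.0
    for x, y in batches:
        prediction = model(x)          # get the model's prediction
        optimizer.zero_grad()          # zero the gradients
        loss = loss_fn(prediction, y)  # loss of the prediction just made
        loss.backward()                # compute new gradients
        optimizer.step()               # update the model's parameters
        epoch_loss += loss.item() / len(batches)  # running average for this epoch
    epoch_losses.append(epoch_loss)

print(f"first epoch loss: {epoch_losses[0]:.4f}, last epoch loss: {epoch_losses[-1]:.4f}")
```

The epoch loss should shrink over the epochs, which is the sense in which the loop gradually improves the model's predictions.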

#### Validation step:

Use .eval() on the model to set it in evaluation mode, then loop through every sample in our validation data tensor, and in each iteration of the loop:

- get the model's prediction using the current sample of inputs1 and inputs2

- update the validation loss (the loss for the current sample is calculated using whichever metric is specified in the config, then divided by the total number of samples in the validation data and added to the current validation loss)

This step gives us a way to track whether our model is improving, by validating the training using new data, to ensure that our model doesn't only work on our specific training data, but that it is actually being trained to predict the behaviour of our target feature.

The training uses early stopping, which basically means that it stops before 'max_epochs' iterations have been reached, if and when a certain number of epochs go by without any improvement to the model.
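A common way to implement early stopping is a counter of epochs without improvement, as in the sketch below. The parameter name `patience`, the function name and the list of validation losses are assumptions made for this illustration; the actual stopping criterion in ProLoaF may be defined differently.

```python
def train_with_early_stopping(validation_losses, max_epochs, patience):
    """Return (epochs_run, best_loss); validation_losses stands in for real training.

    validation_losses must contain at least max_epochs entries.
    """
    best_loss = float("inf")
    epochs_without_improvement = 0
    for epoch in range(max_epochs):
        val_loss = validation_losses[epoch]  # in practice: train, then validate
        if val_loss < best_loss:
            best_loss = val_loss
            epochs_without_improvement = 0
        else:
            epochs_without_improvement += 1
            if epochs_without_improvement >= patience:
                return epoch + 1, best_loss  # stop before max_epochs is reached
    return max_epochs, best_loss

losses = [0.9, 0.7, 0.6, 0.61, 0.62, 0.63, 0.5, 0.4]  # made-up validation losses
epochs_run, best = train_with_early_stopping(losses, max_epochs=8, patience=3)
print(epochs_run, best)  # 6 0.6
```

In this made-up run, training stops after the sixth epoch because three epochs in a row failed to improve on 0.6, even though later epochs would have done better. That trade-off is inherent to early stopping.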

**Note:** If you are using Tensorboard's SummaryWriter to log training performance, this is when it gets logged.

#### Testing step:

Here, the function 'performance_test' is used to calculate the model's score using the testing data set. As mentioned at the top of this section, this function calculates the Mean Interval Score along the horizon. This score is saved along with the model, see [section 7](#7.-Updating-the-config,-saving-the-model-&-logs).

## 7. Updating the config, saving the model & logs

The last thing we need to do is save our current model and the relevant scores and logs, so that we can use it in the future and monitor changes in performance.

First, the model is saved using 'modelhandler.save'. By default, this function only saves the model if the most recently achieved score is better than the previous best. In this case, and only if running in exploration mode, the config is updated with the most recent parameters before saving. <br>

If no improvement was achieved, the model can still be saved by opting to do so when prompted. In this case, the config parameters will not be updated before saving.

Saving the model entails calling torch.save() to store the model at the location given by the config parameter "output_path", and then saving the config to "config_path" using the function 'utils.confighandler.write_config'.

Lastly, the logs are saved using the function 'utils.loghandler.end_logging', which writes the logs to a .csv stored at:<br>

MAIN_PATH/\<log_path\>/\<model_name\>/\<model_name\>+"_training.csv", (the same location as where the log file was read in from)

**Note:** Things work a little differently if using Tensorboard logging (TODO: expand) <br>

In this case, when the 'modelhandler.train' function is called during the exploration phase (see [section 4](#4.-Exploration)) the config is automatically updated with the hyperparameter values from the latest trial, right after that trial ends.

| github_jupyter |

```

import pandas as pd

from sklearn.preprocessing import LabelEncoder

import numpy as np

```

## NOTES

* For KDD99 feature description, check http://kdd.ics.uci.edu/databases/kddcup99/task.html

```

kdd99_file = "kddcup.data.corrected"

kdd99_df = pd.read_csv(kdd99_file, header=None)

print(kdd99_df.shape)

kdd99_df.head()

kdd99_df.drop_duplicates(inplace=True)

kdd99_df.shape

kdd99_df.columns = ['duration', 'protocol_type', 'service', 'flag', 'src_bytes', 'dst_bytes', 'land', 'wrong_fragment',

'urgent', 'hot', 'num_failed_logins', 'logged_in', 'num_compromised', 'root_shell', 'su_attempted',

'num_root', 'num_file_creations', 'num_shells', 'num_access_files', 'num_outbound_cmds',

'is_hot_login', 'is_guest_login', 'count', 'srv_count', 'serror_rate', 'srv_serror_rate',

'rerror_rate', 'srv_rerror_rate', 'same_srv_rate', 'diff_srv_rate', 'srv_diff_host_rate',

'dst_host_count', 'dst_host_srv_count', 'dst_host_same_srv_rate', 'dst_host_diff_srv_rate',

'dst_host_same_src_port_rate', 'dst_host_srv_diff_host_rate', 'dst_host_serror_rate',

'dst_host_srv_serror_rate', 'dst_host_rerror_rate', 'dst_host_srv_rerror_rate', 'attack_type']

kdd99_df.head()

# add a feature to calculate the bytes difference between source and destination

kdd99_df['src_dst_bytes_diff'] = kdd99_df['dst_bytes'] - kdd99_df['src_bytes']

```

## Data Exploration

```

def get_percentile(col):
    """Summarize a numeric column by a fixed set of percentiles."""
    result = {'Feature':col.name, 'min':np.percentile(col, 0), '1%':np.percentile(col, 1),
              '5%':np.percentile(col, 5), '15%':np.percentile(col, 15),
              '25%':np.percentile(col, 25), '50%':np.percentile(col, 50), '75%':np.percentile(col, 75),
              '85%':np.percentile(col, 85), '95%':np.percentile(col, 95),
              '99%':np.percentile(col, 99), '99.9%':np.percentile(col, 99.9), 'max':np.percentile(col, 100)}
    return result

# find columns with null

isnull_df = kdd99_df.isnull().sum()

isnull_df.loc[isnull_df > 0] # no null in any column

kdd99_df['attack_type'].value_counts()/kdd99_df['attack_type'].shape[0] * 100

int_types = [col for col in kdd99_df.columns if kdd99_df[col].dtype == 'int64']

print(int_types)

print()

float_types = [col for col in kdd99_df.columns if kdd99_df[col].dtype == 'float64']

print(float_types)

print()

o_types = [col for col in kdd99_df.columns if kdd99_df[col].dtype == 'O']

print(o_types)

print()

# lime needs categorical feature names (values will still be numerical value)

cat_features = o_types

cat_features.extend(['land', 'logged_in', 'root_shell', 'su_attempted', 'is_hot_login', 'is_guest_login'])

cat_features

# check values for each categorical values

print(kdd99_df['protocol_type'].unique())

print()

print(kdd99_df['service'].unique())

print()

print(kdd99_df['flag'].unique())

print()

print(kdd99_df['attack_type'].unique())

print()

print(kdd99_df['land'].value_counts())

print()

print(kdd99_df['logged_in'].value_counts())

print()

print(kdd99_df['root_shell'].value_counts())

print()

print(kdd99_df['su_attempted'].value_counts())

print()

print(kdd99_df['is_hot_login'].value_counts())

print()

print(kdd99_df['is_guest_login'].value_counts())

print()

# is_hot_login is the same for all type of attack_type, drop it

kdd99_df.loc[kdd99_df['is_hot_login'] == 1]['attack_type']

kdd99_df.drop(['is_hot_login'], inplace=True, axis=1)

cat_features.remove('is_hot_login')

y = kdd99_df['attack_type']

y.value_counts()

# label encoding

number = LabelEncoder()

for cat_col in cat_features:
    kdd99_df[cat_col] = number.fit_transform(kdd99_df[cat_col].astype('str'))
    kdd99_df[cat_col] = kdd99_df[cat_col].astype('object')

kdd99_df.head()

kdd99_df['attack_type'].value_counts()

kdd99_df.dtypes

kdd99_df.var()

num_dist_dct = {}

idx = 0

for col in kdd99_df.columns:
    # skip the label-encoded categorical columns (stored as object dtype)
    if kdd99_df[col].dtype == 'O':
        continue
    num_dist_dct[idx] = get_percentile(kdd99_df[col])
    idx += 1

num_dist_df = pd.DataFrame(num_dist_dct).T

num_dist_df = num_dist_df[['Feature', 'min', '1%', '5%', '15%', '25%', '50%', '75%', '85%', '95%', '99%', '99.9%','max']]

num_dist_df

# check outliers

print(kdd99_df.loc[kdd99_df['src_bytes'] > 61298]['attack_type'].value_counts())

print()

print(kdd99_df.loc[kdd99_df['dst_bytes'] > 125015]['attack_type'].value_counts())

print()

print(kdd99_df.loc[kdd99_df['src_dst_bytes_diff'] < -9178]['attack_type'].value_counts())

print()

print(kdd99_df.loc[kdd99_df['src_dst_bytes_diff'] > 124758]['attack_type'].value_counts())

print()

```

It seems that for some attack types, such as 0 and 22, the majority of records have outlier values, so the outliers are not replaced with any other value here.

```

# check constant values

print(kdd99_df.loc[kdd99_df['wrong_fragment'] > 1]['attack_type'].value_counts()) # contains the majority of 20 (teardrop)

print()

print(kdd99_df.loc[kdd99_df['urgent'] > 0]['attack_type'].value_counts())

print()

print(kdd99_df.loc[kdd99_df['hot'] > 20]['attack_type'].value_counts())

print()

print(kdd99_df.loc[kdd99_df['num_failed_logins'] > 0]['attack_type'].value_counts()) # contains the majority of 3 (guess_passwd)

print()

print(kdd99_df.loc[kdd99_df['num_compromised'] > 1]['attack_type'].value_counts())

print()

print(kdd99_df.loc[kdd99_df['num_root'] > 9]['attack_type'].value_counts())

print()

print(kdd99_df.loc[kdd99_df['num_file_creations'] > 1]['attack_type'].value_counts())

print()

print(kdd99_df.loc[kdd99_df['num_shells'] > 0]['attack_type'].value_counts())

print()

print(kdd99_df.loc[kdd99_df['num_access_files'] > 1]['attack_type'].value_counts())

print()

kdd99_df.drop('num_outbound_cmds', inplace=True, axis=1)

print(kdd99_df.shape)

print(y.shape)

object_cols = [col for col in kdd99_df.columns if kdd99_df[col].dtype=='O']

print(object_cols)

# Just use raw 40 features, and see how it runs in tree model

kdd99_df['attack_type_cat'] = y # use original strings as label for multi-class prediction

print(kdd99_df['attack_type_cat'].value_counts())

print(kdd99_df['attack_type'].value_counts())

kdd99_df.to_csv('kdd99_raw40.csv', index=False)

```

| github_jupyter |

```

! nvidia-smi

```

# Install

Install the Transformers library from HuggingFace, along with fastai2.

```

! pip install transformers -q

! pip install fastai2 -q

```

# Import

Import the GPT2 model and tokenizer classes.

```

from transformers import GPT2LMHeadModel, GPT2TokenizerFast

```

# Download Pre-trained Model

Download the weights of the pre-trained model named GPT2.

```

pretrained_weights = 'gpt2'

tokenizer = GPT2TokenizerFast.from_pretrained(pretrained_weights)

model = GPT2LMHeadModel.from_pretrained(pretrained_weights)

```

Use the tokenizer to split the text into tokens. The HuggingFace tokenizer's `encode` method tokenizes the text and numericalizes it (converts tokens to integer ids) in a single step.

```

ids = tokenizer.encode("A lab at Florida Atlantic University is simulating a human cough")

ids

```

Alternatively, we can split this into two steps.

```

# toks = tokenizer.tokenize("A lab at Florida Atlantic University is simulating a human cough")

# toks, tokenizer.convert_tokens_to_ids(toks)

```

Decode the ids back to the original text.

```

tokenizer.decode(ids)

```
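To make the two steps concrete, here is a toy sketch of tokenize, numericalize, and decode. It uses a whitespace tokenizer and a hypothetical five-entry vocabulary, so it only mirrors the shape of the HuggingFace calls used above; GPT2's real tokenizer is byte-level BPE.

```python
# Hypothetical toy vocabulary, for illustration only.
vocab = {"a": 0, "human": 1, "cough": 2, "lab": 3, "<unk>": 4}
inv_vocab = {i: tok for tok, i in vocab.items()}

def tokenize(text):
    """Step 1: split text into tokens (here: lowercase whitespace split)."""
    return text.lower().split()

def convert_tokens_to_ids(tokens):
    """Step 2: numericalize, mapping unknown tokens to <unk>."""
    return [vocab.get(tok, vocab["<unk>"]) for tok in tokens]

def encode(text):
    """Both steps at once."""
    return convert_tokens_to_ids(tokenize(text))

def decode(ids):
    """Map ids back to tokens and join them into a string."""
    return " ".join(inv_vocab[i] for i in ids)

ids = encode("A human cough")
print(ids, decode(ids))
```

Note that this toy round trip loses the original casing, whereas the GPT2 tokenizer is designed to reproduce the input text on decode.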

# Generate text

```

import torch

t = torch.LongTensor(ids)[None]

preds = model.generate(t)

preds.shape

preds[0]

tokenizer.decode(preds[0].numpy())

```

# Fastai

```

from fastai2.text.all import *

path = untar_data(URLs.WIKITEXT_TINY)

path.ls()

df_train = pd.read_csv(path/"train.csv", header=None)

df_valid = pd.read_csv(path/"test.csv", header=None)

df_train.head()

all_texts = np.concatenate([df_train[0].values, df_valid[0].values])

len(all_texts)

```

# Creating TransformersTokenizer Transform

We wrap the Transformers tokenizer in a fastai Transform by defining `encodes` and `decodes` (and `setups`, if needed).

```

class TransformersTokenizer(Transform):

    def __init__(self, tokenizer): self.tokenizer = tokenizer

    def encodes(self, x):

        toks = self.tokenizer.tokenize(x)

        return tensor(self.tokenizer.convert_tokens_to_ids(toks))

    def decodes(self, x):

        return TitledStr(self.tokenizer.decode(x.cpu().numpy()))

```

In `encodes` we do not use `tokenizer.encode`, because it performs additional preprocessing beyond tokenizing and numericalizing that we do not want at this point. `decodes` returns a `TitledStr` rather than a plain string so that it supports the `show` method.

```

# list(range_of(df_train))

# list(range(len(df_train), len(all_texts)))

```

We pass the Transform created above to `TfmdLists`, splitting by the order in which the texts were concatenated, and set `dl_type` (the DataLoader type) to `LMDataLoader` for language modeling.

```

splits = [list(range_of(df_train)), list(range(len(df_train), len(all_texts)))]

tls = TfmdLists(all_texts, TransformersTokenizer(tokenizer), splits=splits, dl_type=LMDataLoader)

# tls

```
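The split construction can be checked on its own. The sketch below uses hypothetical sizes (3 training texts, 2 validation texts) in place of `df_train` and `all_texts`, and plain `range` in place of fastai's `range_of`.

```python
n_train, n_valid = 3, 2  # hypothetical sizes, for illustration only
all_items = [f"train_{i}" for i in range(n_train)] + [f"valid_{i}" for i in range(n_valid)]

# Training indices cover the first n_train items, validation indices the rest.
splits = [list(range(n_train)), list(range(n_train, len(all_items)))]

train_items = [all_items[i] for i in splits[0]]
valid_items = [all_items[i] for i in splits[1]]
print(splits)
```

Because the validation texts were concatenated after the training texts, the two index lists never overlap and together cover every item.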

Look at the first record of the training set.

```

tls.train[0].shape, tls.train[0]

```

Look at the same record after decoding.

```

# show_at(tls.train, 0)

```

Look at the first record of the validation set.

```

tls.valid[0].shape, tls.valid[0]

```

Look at the same record after decoding.

```

# show_at(tls.valid, 0)

```

# DataLoaders

Create DataLoaders to feed the model, with a batch size of 4 and a sequence length of 1024, the context length GPT2 uses.

```

bs, sl = 4, 1024

dls = tls.dataloaders(bs=bs, seq_len=sl)

dls

dls.show_batch(max_n=5)

```

This gives a DataLoader for language modeling in which the input and the label are offset by one token, so the model learns to predict the next token of the sequence.
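A minimal sketch of that one-token offset, assuming a flat stream of token ids cut into fixed-length windows. The `lm_pairs` helper and the ids are hypothetical, and fastai's `LMDataLoader` also handles batching and shuffling, which this sketch ignores.

```python
def lm_pairs(stream, seq_len):
    """Cut a token stream into (input, target) windows; the target is the input shifted by one."""
    pairs = []
    for start in range(0, len(stream) - 1, seq_len):
        x = stream[start:start + seq_len]
        y = stream[start + 1:start + seq_len + 1]
        if len(x) == len(y):  # drop a trailing window that has no full target
            pairs.append((x, y))
    return pairs

stream = [10, 11, 12, 13, 14, 15, 16]  # made-up token ids
for x, y in lm_pairs(stream, seq_len=3):
    print(x, "->", y)
```

At every position the target holds the token that follows the corresponding input token, which is exactly what a next-token loss needs.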

# Preprocessing everything in advance

Another approach is to preprocess all of the data up front.

```

# def tokenize(text):

#     toks = tokenizer.tokenize(text)

#     return tensor(tokenizer.convert_tokens_to_ids(toks))

# tokenized = [tokenize(t) for t in progress_bar(all_texts)]

# len(tokenized), tokenized[0]

```

We then redefine `TransformersTokenizer` so that `encodes` does nothing, except that it tokenizes the input if it does not arrive as a Tensor.

```

# class TransformersTokenizer(Transform):

#     def __init__(self, tokenizer): self.tokenizer = tokenizer

#     def encodes(self, x):

#         return x if isinstance(x, Tensor) else tokenize(x)

#     def decodes(self, x):

#         return TitledStr(self.tokenizer.decode(x.cpu().numpy()))

```
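The pass-through idea can be sketched without fastai: tokenize everything once up front, and let the encoder return already-numericalized input unchanged. The whitespace `tokenize` and the on-the-fly vocabulary are stand-ins (assumptions) for the GPT2 tokenizer, and a list of ints stands in for a tensor.

```python
vocab = {}  # hypothetical vocabulary built on the fly, for illustration only

def tokenize(text):
    """Stand-in tokenizer: whitespace split, ids assigned in order of first appearance."""
    return [vocab.setdefault(tok, len(vocab)) for tok in text.split()]

def encodes(x):
    """Return x unchanged if it is already a list of ids, otherwise tokenize it."""
    return x if isinstance(x, list) else tokenize(x)

texts = ["the cat sat", "the dog sat"]
tokenized = [tokenize(t) for t in texts]  # done once, before building the dataset
print(tokenized)
```

With this arrangement the tokenization cost is paid a single time rather than on every access, while raw strings passed in later are still handled.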

Then create the `TfmdLists`, passing in `tokenized` (all of the data, already tokenized).

```

# tls = TfmdLists(tokenized, TransformersTokenizer(tokenizer), splits=splits, dl_type=LMDataLoader)

# dls = tls.dataloaders(bs=bs, seq_len=sl)

# dls.show_batch(max_n=5)

```

# Fine-tune Model

A HuggingFace model returns its output as a tuple containing the predictions plus additional activations intended for other tasks. We do not need those here, so we create an `after_pred` callback that keeps only the predictions, which lets the loss function work as usual.

```

class DropOutput(Callback):

    def after_pred(self): self.learn.pred = self.pred[0]

```

Inside a callback, the model's predictions can be read directly as `self.pred`, but that access is read-only. To write, use the full form `self.learn.pred`.
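This behaviour is typical of attribute delegation: reads that miss on the callback fall through to the learner, while assignments bind on the callback itself. The sketch below reproduces that pattern with hypothetical `ToyLearner` and `ToyCallback` classes; it is an illustration of the idea, not fastai's implementation.

```python
class ToyLearner:
    def __init__(self):
        self.pred = ("logits", "extra activations")  # stand-in for a HuggingFace output tuple

class ToyCallback:
    def __init__(self, learn):
        self.learn = learn

    def __getattr__(self, name):
        # Called only when normal lookup fails: delegate the read to the learner.
        return getattr(self.learn, name)

learn = ToyLearner()
cb = ToyCallback(learn)

first = cb.pred[0]          # read is delegated to learn.pred
cb.learn.pred = cb.pred[0]  # write through the learner, as DropOutput does
cb.pred = "shadow"          # plain assignment binds on the callback only
print(first, learn.pred, cb.pred)
```

The last assignment leaves `learn.pred` untouched, which is why the write in `DropOutput` goes through `self.learn.pred`.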

We can now create a learner to train the model.

```

learn = None

torch.cuda.empty_cache()

Perplexity??

learn = Learner(dls, model, loss_func=CrossEntropyLossFlat(), cbs=[DropOutput], metrics=Perplexity()).to_fp16()

learn

```

Check the model's performance before fine-tuning. The first number is the validation loss and the second is the metric, here perplexity.

```

learn.validate()

```

We get a perplexity of 25.6, which is not bad at all.
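Perplexity is the exponential of the average cross-entropy loss, so the two numbers returned by `learn.validate()` are directly related. A short sketch of the conversion, where the helper names are illustrative:

```python
import math

def perplexity(avg_cross_entropy):
    """Perplexity is exp of the mean per-token cross-entropy (in nats)."""
    return math.exp(avg_cross_entropy)

def loss_from_perplexity(ppl):
    """Inverse mapping: the mean cross-entropy that yields a given perplexity."""
    return math.log(ppl)

loss = loss_from_perplexity(25.6)  # the loss that corresponds to the perplexity quoted above
print(round(loss, 3), round(perplexity(loss), 1))
```

Since the mapping is exponential, a small drop in validation loss corresponds to a proportionally larger drop in perplexity.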

# Training

Before training, we call `lr_find` to find a learning rate.

```

learn.lr_find()

```

Then train for just 1 epoch.

```

learn.fit_one_cycle(1, 3e-5)

```

We trained for only 1 epoch without tuning anything. The model did not improve much, because it was already very good. Next, we use the model to generate text, following the format of the examples in the validation set.

```

df_valid.head(1)

prompt = "\n = Modern economy = \n \n The modern economy is driven by data, and that trend is being accelerated by"

prompt_ids = tokenizer.encode(prompt)

# prompt_ids

inp = torch.LongTensor(prompt_ids)[None].cuda()

inp.shape

preds = learn.model.generate(inp, max_length=50, num_beams=5, temperature=1.6)

preds.shape

preds[0]

tokenizer.decode(preds[0].cpu().numpy())

```

# Credit

* https://dev.fast.ai/tutorial.transformers

* https://github.com/huggingface/transformers


| github_jupyter |

# An Introduction to SageMaker Random Cut Forests

***Unsupervised anomaly detection on timeseries data using a Random Cut Forest algorithm.***

---

1. [Introduction](#Introduction)

1. [Setup](#Setup)

1. [Training](#Training)

1. [Inference](#Inference)

1. [Epilogue](#Epilogue)

# Introduction

***

Amazon SageMaker Random Cut Forest (RCF) is an algorithm designed to detect anomalous data points within a dataset. Examples of when anomalies are important to detect include when website activity uncharacteristically spikes, when temperature data diverges from a periodic behavior, or when changes to public transit ridership reflect the occurrence of a special event.