text stringlengths 2.5k 6.39M | kind stringclasses 3

values |

|---|---|

# Networks and Human Behavior

## Tools for computational analysis

### TABLE OF CONTENTS

1. [Introduction](#What-you-need!)

2. [Network Representation](#first)

2.1 [Nodes and Edges](#NE)

2.2 [Graphs](#G)

2.3 [Adjacency Matrix](#A)

3. [Managing Network Data](#Data)

Note: this index and its links don't render properly on github but will work if you open it using jupyter.

***

<a id="What-you-need!"></a>

### Introduction

The empirical work in this course will be organized in a series of Jupyter Notebooks of which this is the first one.

- The notebooks will provide you with enough information to understand the concepts of the class and the tools to perform your own exploratory analysis.

- They are not exhaustive, meaning that there may be alternative methods to do the same tasks.

- They are a work in progress, so kindly flag any issues you encounter or any suggestions for improvement.

<br>

<div class="alert alert-block alert-success">

You can visualize the notebooks in your browser, but you will get the most out of the material if you download and run them in Python as you go along.

<br>

<br>

If you have not done so yet, follow the setup instructions.

</div>

***

<a id="first"></a>

### Network Representation

<a id="NE"></a>

#### Nodes and Edges

Networks provide us with a mental model to describe and study interactions of individual entities within a community or group.

The basic elements of the network are thus:

- <u>The individuals</u>: e.g. humans, countries, banks. Abstractly referred to as **nodes** (N).

- <u>The relationships</u>: e.g. friendships, trade treaties, loans. Generically called **edges** (E).

A simple network is thus defined as a set of nodes (think for instance the group of students taking this class) and a description of whether each pair of nodes interacts with each other; we will sometimes talk about this interacting status as the pair being **connected**.

> Naturally, information regarding the same network (that is the same sets of nodes and edges) can be conveyed and stored in different forms.

>

> This is important to remember because it could determine how you handle network data; for instance it could influence which function you use to load a network into a notebook.

To fix some ideas let's consider a group of 5 people: Thomas, Carl, Sara, Mark and Sonia.

<br>

<div class="alert alert-block alert-info"> One of Python's convenient features is that it allows you to build programs based on objects. We will not go into more detail for the purpose of this class, but you will find "pre-programmed" objects such as <b>sets</b> and <b>lists</b> to be very useful.</div>

Let's define a set of our group of students:

```

students = {'Thomas', 'Carl', 'Sara', 'Mark','Sonia'}

# We can see the elements of the set of students by using the generic print() function from python

print(students)

# It's fairly simple to add another name,

# If you have downloaded this notebook and are running it as you read, feel free to change YOU to your own name

students.add("YOU")

# Note: there are many other things that can be done with sets. You can search online if you are curious.

print(students)

```

Now, let us fully characterize the network of **friendships** in the class by listing all the pairs of friends:

```

friend_list = [('Sara','Sonia'),

('Sara','Mark'),

('Sonia','Mark'),

('Sonia','Carl'),

('Sonia','Thomas'),

('Mark','Thomas')

]

```

A few details are worth noting.

- In this simple network we consider that Sara being friends with Sonia implies that Sonia is friends with Sara. Such networks are known as **undirected**; we therefore only need to list the edge between Sara and Sonia once, and the order of the elements of the pair is irrelevant.

- The opposite type of network is known as **directed** (the simplest example would be Twitter). There the relationship can be one-sided, so `('Sara','Sonia')` would represent a different edge from `('Sonia','Sara')`, and both would have to be specified if both indeed appear in the directed network.

- In this classroom network not everyone is connected to everyone else and in particular you as the new student have not yet had the chance to make any connections. This shows why both sets (N and E) are important to fully describe the network. Nodes with no edges (also known as **isolated** nodes) do not appear anywhere on the edgelist.

- Nodes do not have edges with themselves. This is a simple convention that can sometimes be consequential and can be changed in specific contexts.

`students` and `friend_list` fully describe our simple network.

We know all the members of the community and who is friends with whom.
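As a small sanity check on the remarks above, the sketch below recovers the isolated nodes by comparing the node set with the edge list, and stores each undirected edge as a `frozenset` so that the order of the pair does not matter. The data mirror the toy network; the names `connected`, `isolated` and `undirected_edges` are introduced here only for illustration.

```python
# Node set and edge list of the toy network (with the extra student 'YOU')
students = {'Thomas', 'Carl', 'Sara', 'Mark', 'Sonia', 'YOU'}
friend_list = [('Sara', 'Sonia'), ('Sara', 'Mark'), ('Sonia', 'Mark'),
               ('Sonia', 'Carl'), ('Sonia', 'Thomas'), ('Mark', 'Thomas')]

# Every node that appears in at least one edge
connected = {name for pair in friend_list for name in pair}

# Isolated nodes are in the node set but never appear in the edge list
isolated = students - connected
print(isolated)

# In an undirected network ('Sara','Sonia') and ('Sonia','Sara') are the same edge;
# a frozenset ignores the order of the pair
undirected_edges = {frozenset(pair) for pair in friend_list}
print(frozenset(('Sonia', 'Sara')) in undirected_edges)
```

This is why the node set has to be stored alongside the edge list: the edge list alone would silently drop `'YOU'`.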

<a id="G"></a>

#### Graphs

Nodes and edges lend themselves naturally to being represented by a graph, in which nodes are vertices (points) and the edges between them show the connections (you can get an idea of where some of the names come from).

Graphs are nice because they provide insightful visualizations but also because many mathematicians and theorists have spent time and effort studying and finding many useful properties some of which we shall cover in this course.

<br>

<div class="alert alert-block alert-info"> Not all objects and functions that we will use on Python are already loaded when you launch the interpreter (or open a notebook). There is a vast number of modules (or packages) that expand your ability to perform computations without having to code the functions from scratch. <br>

To use these functions and objects you have to import them. The best practice is to import them at the beginning of your code and to use aliases defined by convention. <br>

Modules are imported by running:

<pre><code>import module_name as module_alias</code></pre>

The word following "as" is known as an alias and it serves as a prefix when you call the functions of that package. If you import the package without an alias, the full module name is used as the prefix instead. It is also possible to import names directly (<code>from module_name import *</code>) so that you can type the function names without any prefix, but this is bad practice because some packages may have functions with the same name or you may have coded functions with already used names. <br>

<br>

In principle, you can choose any alias you want for each package. However, there are some conventions and it is easier if you stick to those.

</div>
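To make the import styles concrete, here is a minimal sketch using the standard-library `math` module. It was chosen only because it needs no installation; the alias `m` is arbitrary and is not an established convention.

```python
import math as m          # alias: functions are called with the prefix "m."
import math               # no alias: the full module name is the prefix
from math import sqrt     # direct import: the name is used without any prefix

print(m.sqrt(16))
print(math.sqrt(16))
print(sqrt(16))
```

All three calls run the same function; only the way the name is reached differs.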

In the subsequent notebooks this shall become clear. For now we will just import one package to work with networks (networkx).

In networkx, networks are usually defined as a graph object. Let's play with our example network.

```

import networkx as nx

# Define a graph object G

G=nx.Graph()

# Add nodes

G.add_nodes_from(students)

# Right now, the graph has all the students but no edges

print('# nodes:',G.number_of_nodes())

print(G.nodes())

print('# edges:',G.number_of_edges())

# Let's add the edges from the edge-list

G.add_edges_from(friend_list)

print('# edges:',G.number_of_edges())

# The next line of code allows to visualize plots within the notebook (in the future we will keep it in the preamble)

%matplotlib inline

# Let's take a look at the network

nx.draw_circular(G)

# Don't worry too much about all these extra arguments, it is just to visualize it better.

nx.draw_circular(G, with_labels = True,node_color='crimson',node_size=2500,font_color='white')

```

Now that you are getting so good at networking, you could probably add some edges with the other students.

```

G.add_edge('YOU','Sara')

G.add_edge('YOU','Thomas')

nx.draw_circular(G, with_labels = True,node_color='crimson',node_size=2500,font_color='white')

```

<a id="A"></a>

#### Adjacency Matrix

Another very useful representation for a network is its **adjacency matrix**.

To understand this matrix:

1. Choose an order for the nodes.

2. Consider every possible pair of nodes.

2.1 If the nodes in the pair are connected, input a 1 in the cell of the matrix whose indices correspond to the pair of nodes.

2.2 If they are not connected, input a zero.

For the pre-cooked example in this notebook it should look like this:

| / | Thomas | Mark | Carl | Sonia | Sara | YOU |

| --- | --- | --- | --- | --- | --- | --- |

| Thomas | 0 | 1 | 0 | 1 | 0 | 1 |

| Mark | 1 | 0 | 0 | 1 | 1 | 0 |

| Carl | 0 | 0 | 0 | 1 | 0 | 0 |

| Sonia | 1 | 1 | 1 | 0 | 1 | 0 |

| Sara | 0 | 1 | 0 | 1 | 0 | 1 |

| YOU | 1 | 0 | 0 | 0 | 1 | 0 |
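The recipe above can also be followed by hand in plain Python. The sketch below is only an illustration (the names `order`, `index` and `A` are introduced here); it fills a nested list with ones for every connected pair and reproduces the table.

```python
# Step 1: choose an order for the nodes
order = ['Thomas', 'Mark', 'Carl', 'Sonia', 'Sara', 'YOU']

# Edges of the toy network, including the two added for 'YOU'
edges = [('Sara', 'Sonia'), ('Sara', 'Mark'), ('Sonia', 'Mark'),
         ('Sonia', 'Carl'), ('Sonia', 'Thomas'), ('Mark', 'Thomas'),
         ('YOU', 'Sara'), ('YOU', 'Thomas')]

# Map each name to its row/column position
index = {name: i for i, name in enumerate(order)}

# Step 2: start from all zeros and write a 1 for every connected pair
n = len(order)
A = [[0] * n for _ in range(n)]
for u, v in edges:
    A[index[u]][index[v]] = 1
    A[index[v]][index[u]] = 1   # undirected: the matrix is symmetric

for name, row in zip(order, A):
    print(f"{name:>6}", row)
```

Because the network is undirected, each edge produces two ones, so the matrix is symmetric and its entries sum to twice the number of edges.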

```

# To get the matrix from your networkx Graph simply use nx.adjacency_matrix()

# Note: you can choose the order in which the nodes are indexed into rows and columns.

# Note 2: Python saves the matrix in a "sparse" format, meaning that it only stores the position of the ones

# and ignores the zeros. So to visualize a matrix like the one above we use the .todense() method

# on the matrix to "make it dense", that is to generate the zeros.

print(nx.adjacency_matrix(G,nodelist=['Thomas', 'Mark', 'Carl', 'Sonia', 'Sara', 'YOU']).todense())

```

<br>

<div class="alert alert-block alert-success"> Feel free to <b>play around with the toy network</b> in this notebook. <br>

<ul>

<li>Add/delete nodes. E.g. G.add_node('ME'), G.remove_node('YOU'). </li>

<li>Add/delete edges. E.g. G.add_edge('YOU','Carl'), G.remove_edge('Carl','Sonia'). </li>

</ul>

See how these changes affect the edge list, the graph and the adjacency matrix.

<br>

<br>

<b>Make sure you understand how the different representations work and relate to one another! </b> <br>

If unsure, review the material, ask your classmates and your instructors :)

</div>

***

<a id="Data"></a>

### Managing Network Data

As you go along with the course material (and in your own future research/work) you will come across network datasets that may contain many more nodes and edges, and you will not be creating the network from scratch as we did here.

The network data may be stored as a list of edges, a graph or an adjacency matrix and all of these could be stored in multiple formats (like .csv, .txt, .dta, .xlsx).

In this section we will provide a non-exhaustive list of ways in which you can get your data up and running in Python from an already existing network.

Throughout the course you may encounter some of these and other alternatives (and you may find even more ways to do it online). DO NOT worry about memorizing functions but rather about understanding the concepts as they relate to social and economic networks.

#### Dataframes

One simple way of handling data in Python is with the **Pandas** (pd) module. Pandas is one of the workhorses of data analysis in Python.

Among many other things, it allows you to create tables from data stored in a wide variety of formats.

Remember that before being able to use it, you will need to load the module:

`import pandas as pd`

In this class the main way in which we will use it is to load/save data.

##### Load Data

We will create a new object, a dataframe, that contains data that was stored in a specific file in your computer.

Let's say that you have a .csv file at a given path: 'PATH_TO_FILE/file_name.csv'

Then the way to read it and load it into a table is simply:

`new_dataframe = pd.read_csv('PATH_TO_FILE/file_name.csv')`

If the file format is not csv you may use other functions (this is not exhaustive):

| Format | function |

| --- | --- |

| excel | `pd.read_excel('PATH_TO_FILE/file_name.xlsx')`|

| stata | `pd.read_stata('PATH_TO_FILE/file_name.dta')`|

| parquet | `pd.read_parquet('PATH_TO_FILE/file_name.parquet')`|

| JSON | `pd.read_json('PATH_TO_FILE/file_name.json')`|

| txt | `pd.read_csv('PATH_TO_FILE/file_name.txt',sep=" ")`|

##### Save Data

When you already have a pandas dataframe (say edgelist_dataframe) you can save it to different formats using the following functions:

| Format | function |

| --- | --- |

| csv | `edgelist_dataframe.to_csv('PATH_TO_FILE/file_name.csv')`|

| excel | `edgelist_dataframe.to_excel('PATH_TO_FILE/file_name.xlsx')`|

| stata | `edgelist_dataframe.to_stata('PATH_TO_FILE/file_name.dta')`|

| parquet | `edgelist_dataframe.to_parquet('PATH_TO_FILE/file_name.parquet')`|

| JSON | `edgelist_dataframe.to_json('PATH_TO_FILE/file_name.json')`|

| txt | `edgelist_dataframe.to_csv('PATH_TO_FILE/file_name.txt',sep=" ")`|
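As a hedged illustration of a save/load round trip, the sketch below writes a small, made-up edge list to a temporary folder and reads it back. One detail worth knowing: by default `to_csv` also writes the row index as an extra column, so `index=False` is passed here to keep the file to the two edge-list columns. The dataframe contents and file name are assumptions for the example.

```python
import os
import tempfile

import pandas as pd

# A small, made-up edge list
edgelist_dataframe = pd.DataFrame({'source': ['Sara', 'Sara', 'Sonia'],
                                   'target': ['Sonia', 'Mark', 'Mark']})

with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, 'edgelist.csv')
    # index=False keeps the row index out of the file
    edgelist_dataframe.to_csv(path, index=False)
    reloaded = pd.read_csv(path)

print(reloaded)
```

Without `index=False`, the reloaded table would carry an additional unnamed column holding the old row numbers.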

<br>

<div class="alert alert-block alert-info"> Note how in Python some functions are applied by typing the object as one of the arguments in parentheses: <br>

<pre><code>function(OBJECT,other arguments)</code></pre>

while other functions are applied using a '.' after the object: <br>

<pre><code>OBJECT.function(other arguments)</code></pre>

An in-depth explanation of this difference falls outside the scope of this course. For now it should be sufficient to know that the difference exists.

</div>
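A quick sketch of the two calling styles, using only built-in Python objects (the list `names` is made up for the example):

```python
names = ['Thomas', 'Carl', 'Sara']

# Function style: the object is passed as an argument
print(len(names))
print(sorted(names))     # returns a new sorted list; `names` itself is unchanged

# Method style: the function belongs to the object and is called with a dot
names.append('Mark')
print(names.count('Sara'))
```

The pandas and networkx calls in this notebook follow the same pattern: `pd.read_csv(...)` and `nx.to_pandas_edgelist(G)` are functions, while `edgelist_dataframe.to_csv(...)` and `G.add_edge(...)` are methods.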

##### Dataframes $\iff$ Networks

Most commonly, the network dataframes you will be reading will correspond to node/edge lists and adjacency matrices.

- Once you have a pandas dataframe with the edgelist, define a network with:

> `new_graph_object_name = nx.from_pandas_edgelist(new_dataframe)`

- If you want to save the edge list of an existing network as a pandas dataframe then you would run:

> `old_edgelist_data = nx.to_pandas_edgelist(old_graph)`

- If the file contained an adjacency matrix (and therefore the dataframe is a matrix) then you would run:

> `new_graph_object_name = nx.from_pandas_adjacency(new_dataframe)`

- Finally (for now), to save the adjacency matrix of an existing network as a pandas dataframe:

> `df = nx.to_pandas_adjacency(old_graph, dtype=int)`

See the example below for our toy example:

```

# since we haven't used pandas yet we need to load it
# in the future, follow convention and load modules at the beginning of your work

import pandas as pd

# generate pandas dataframe from edgelist

edgelist_dataframe = nx.to_pandas_edgelist(G)

# Take a look at the dataframe. It's a table with two columns, source and target

# these represent the source and target nodes of each connected pair

# since the network is undirected the source/target label is inconsequential here.

print(edgelist_dataframe)

```

<br>

<div class="alert alert-block alert-info">

NOTE: when the edgelist is not from a toy example this dataframe may have thousands of rows.

Instead of trying to print the entire thing you can have a glimpse of the table by running:

<pre><code>print(edgelist_dataframe.head())</code></pre>

</div>

```

# Let's create a pandas dataframe with the adjacency matrix:

adjacency_dataframe = nx.to_pandas_adjacency(G, dtype=int)

print(adjacency_dataframe)

# note how in this case, the node names are preserved in the dataframe

# (as opposed to when we only generated the matrix above)

# Now that we have the pandas dataframes we can create a new network from it:

G_new = nx.from_pandas_adjacency(adjacency_dataframe)

# Check the new network (which should be the same as before, with any changes you made to it earlier)

nx.draw_circular(G_new, with_labels = True,node_color='crimson',node_size=2500,font_color='white')

```
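Edge lists loaded from files will not always have columns named `source` and `target`. The sketch below assumes a hypothetical dataframe with columns `person_a` and `person_b` and passes them through the `source` and `target` arguments of `nx.from_pandas_edgelist`.

```python
import networkx as nx
import pandas as pd

# Hypothetical edge list whose columns are not called 'source' and 'target'
df = pd.DataFrame({'person_a': ['Sara', 'Sara', 'Sonia'],
                   'person_b': ['Sonia', 'Mark', 'Mark']})

# Tell networkx which columns hold the two ends of each edge
G_from_df = nx.from_pandas_edgelist(df, source='person_a', target='person_b')
print(sorted(G_from_df.nodes()))
print(G_from_df.number_of_edges())

# Going back to a dataframe uses the default column names again
back = nx.to_pandas_edgelist(G_from_df)
print(list(back.columns))
```

Note that isolated nodes never appear in an edge list, so they are lost in this kind of round trip unless they are added back with `add_nodes_from`.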

<br><div class="alert alert-block alert-success">

Now that you have seen how to transform our networkx graphs into pandas dataframes and back, convince yourself that you understand how to save (read) such dataframes into (from) files.

<br>

<br>

You will see more examples of this Input/Output behavior in other notebooks as the course progresses.

</div>

| github_jupyter |

# Puma Example

Kevin Walchko

created 7 Nov 2017

---

This is just an example of a more complex serial manipulator.

```

%matplotlib inline

# Let's grab some libraries to help us manipulate symbolic equations

from __future__ import print_function

from __future__ import division

import numpy as np

import sympy

from sympy import symbols, sin, cos, pi, simplify

def makeT(a, alpha, d, theta):
    # create a modified DH homogeneous matrix
    return np.array([
        [           cos(theta),           -sin(theta),           0,             a],
        [sin(theta)*cos(alpha), cos(theta)*cos(alpha), -sin(alpha), -d*sin(alpha)],
        [sin(theta)*sin(alpha), cos(theta)*sin(alpha),  cos(alpha),  d*cos(alpha)],
        [                    0,                     0,           0,             1]
    ])

def simplifyT(tt):
    """
    This goes through each element of a matrix and tries to simplify it.
    """
    for i, row in enumerate(tt):
        for j, col in enumerate(row):
            tt[i,j] = simplify(col)
    return tt

```

# Puma


<img src="dh_pics/puma.png" width="400px">

The Puma robot is an old but classic serial manipulator. You can see Craig's example in section 3.7, pg 77. Once you have the DH parameters, you can use the above matrix to find the forward kinematics:

```

# craig puma

t1,t2,t3,t4,t5,t6 = symbols('t1 t2 t3 t4 t5 t6')

a2, a3, d3, d4 = symbols('a2 a3 d3 d4')

T1 = makeT(0,0,0,t1)

T2 = makeT(0,-pi/2,0,t2)

T3 = makeT(a2,0,d3,t3)

T4 = makeT(a3,-pi/2,d4,t4)

T5 = makeT(0,pi/2,0,t5)

T6 = makeT(0,-pi/2,0,t6)

ans = np.eye(4)

for T in [T1, T2, T3, T4, T5, T6]:
    ans = ans.dot(T)

print(ans)

ans = simplifyT(ans)

print(ans)

print('position x: {}'.format(ans[0,3]))

print('position y: {}'.format(ans[1,3]))

print('position z: {}'.format(ans[2,3]))

```
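A numeric spot check can complement the symbolic result. The sketch below re-implements the same modified DH matrix with plain NumPy (the function name `make_t_numeric` and the link dimensions are assumptions for illustration, not real Puma measurements) and evaluates the chain with all joint angles at zero. Multiplying the matrices by hand for that pose gives the position $(a_2 + a_3,\; d_3,\; -d_4)$, which the code reproduces.

```python
import numpy as np

def make_t_numeric(a, alpha, d, theta):
    """Numeric version of the modified DH matrix used above (angles in radians)."""
    ct, st = np.cos(theta), np.sin(theta)
    ca, sa = np.cos(alpha), np.sin(alpha)
    return np.array([
        [ct,      -st,      0.0,  a],
        [st * ca,  ct * ca, -sa, -d * sa],
        [st * sa,  ct * sa,  ca,  d * ca],
        [0.0,      0.0,     0.0,  1.0],
    ])

# Illustrative link dimensions (NOT real Puma measurements)
a2, a3, d3, d4 = 0.4, 0.02, 0.15, 0.43
joint_angles = [0.0] * 6

# Same (a, alpha, d, theta) rows as T1..T6 above
dh_rows = [
    (0.0,  0.0,        0.0, joint_angles[0]),
    (0.0, -np.pi / 2,  0.0, joint_angles[1]),
    (a2,   0.0,        d3,  joint_angles[2]),
    (a3,  -np.pi / 2,  d4,  joint_angles[3]),
    (0.0,  np.pi / 2,  0.0, joint_angles[4]),
    (0.0, -np.pi / 2,  0.0, joint_angles[5]),
]

# Chain the six transforms, base to tool
T = np.eye(4)
for row in dh_rows:
    T = T @ make_t_numeric(*row)

position = T[:3, 3]
print(position)
```

Changing `joint_angles` and re-running gives a quick way to compare numbers against the symbolic position expressions printed above.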

Looking at the position, this is the same position listed in Craig, eqn 3.14.

Also, **this is the simplified version!!!**. As you get more joints and degrees of freedom, the equations get nastier. You also can run into situations where you end up with singularities (like division by zero) and send your robot into a bad place!

-----------

<a rel="license" href="http://creativecommons.org/licenses/by-sa/4.0/"><img alt="Creative Commons License" style="border-width:0" src="https://i.creativecommons.org/l/by-sa/4.0/88x31.png" /></a><br />This work is licensed under a <a rel="license" href="http://creativecommons.org/licenses/by-sa/4.0/">Creative Commons Attribution-ShareAlike 4.0 International License</a>.

| github_jupyter |

# Measurement Error Mitigation

```

from qiskit import QuantumCircuit, QuantumRegister, Aer, transpile, assemble

from qiskit_textbook.tools import array_to_latex

```

### Introduction

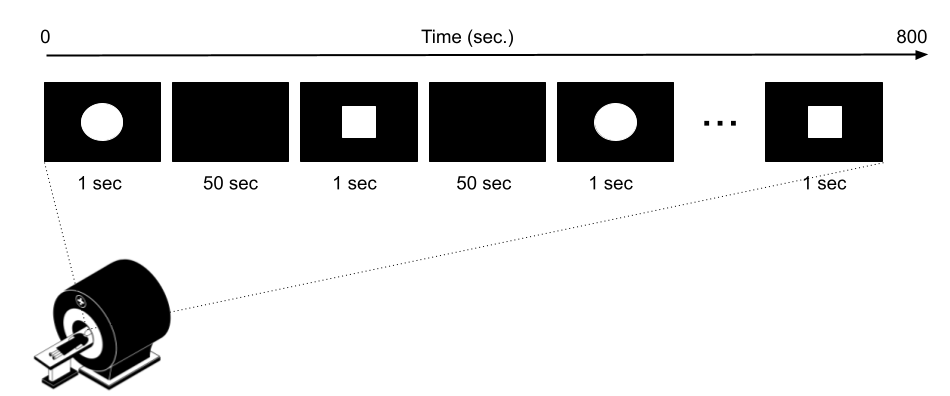

The effect of noise is to give us outputs that are not quite correct. The effect of noise that occurs throughout a computation will be quite complex in general, as one would have to consider how each gate transforms the effect of each error.

A simpler form of noise is that occurring during final measurement. At this point, the only job remaining in the circuit is to extract a bit string as an output. For an $n$ qubit final measurement, this means extracting one of the $2^n$ possible $n$ bit strings. As a simple model of the noise in this process, we can imagine that the measurement first selects one of these outputs in a perfect and noiseless manner, and then noise subsequently causes this perfect output to be randomly perturbed before it is returned to the user.

Given this model, it is very easy to determine exactly what the effects of measurement errors are. We can simply prepare each of the $2^n$ possible basis states, immediately measure them, and see what probability exists for each outcome.

As an example, we will first create a simple noise model, which randomly flips each bit in an output with probability $p$.

```

from qiskit.providers.aer.noise import NoiseModel

from qiskit.providers.aer.noise.errors import pauli_error, depolarizing_error

def get_noise(p):
    error_meas = pauli_error([('X',p), ('I', 1 - p)])
    noise_model = NoiseModel()
    noise_model.add_all_qubit_quantum_error(error_meas, "measure") # measurement error is applied to measurements
    return noise_model

```

Let's start with an instance of this in which each bit is flipped $1\%$ of the time.

```

noise_model = get_noise(0.01)

```

Now we can test out its effects. Specifically, let's define a two qubit circuit and prepare the states $\left|00\right\rangle$, $\left|01\right\rangle$, $\left|10\right\rangle$ and $\left|11\right\rangle$. Without noise, these would lead to the definite outputs `'00'`, `'01'`, `'10'` and `'11'`, respectively. Let's see what happens with noise. Here, and in the rest of this section, the number of samples taken for each circuit will be `shots=10000`.

```

qasm_sim = Aer.get_backend('qasm_simulator')

for state in ['00','01','10','11']:
    qc = QuantumCircuit(2,2)
    if state[0]=='1':
        qc.x(1)
    if state[1]=='1':
        qc.x(0)
    qc.measure([0, 1], [0, 1])
    t_qc = transpile(qc, qasm_sim)
    qobj = assemble(t_qc)
    counts = qasm_sim.run(qobj, noise_model=noise_model, shots=10000).result().get_counts()
    print(state+' becomes', counts)

```

Here we find that the correct output is certainly the most dominant. Ones that differ on only a single bit (such as `'01'`, `'10'` in the case that the correct output is `'00'` or `'11'`), occur around $1\%$ of the time. Those that differ on two bits occur only a handful of times in 10000 samples, if at all.
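These proportions can be checked without a simulator. The sketch below is a plain-Python illustration (the function `flip_distribution` is introduced here and is not part of Qiskit); it computes the exact outcome probabilities when each bit flips independently with probability $p$. For `'00'` and $p = 0.01$ the expected counts out of 10000 shots are 9801 for `'00'`, 99 each for `'01'` and `'10'`, and 1 for `'11'`.

```python
from itertools import product

def flip_distribution(state, p):
    """Exact outcome probabilities when each bit of `state` flips independently with probability p."""
    dist = {}
    for outcome in product('01', repeat=len(state)):
        prob = 1.0
        for ideal_bit, seen_bit in zip(state, outcome):
            # a differing bit costs a factor p, a matching bit a factor (1 - p)
            prob *= p if ideal_bit != seen_bit else 1 - p
        dist[''.join(outcome)] = prob
    return dist

dist = flip_distribution('00', 0.01)
for outcome, prob in dist.items():
    print(outcome, round(prob * 10000, 1))   # expected counts out of 10000 shots
```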

So what if we ran a circuit with this same noise model, and got a result like the following?

```

{'10': 98, '11': 4884, '01': 111, '00': 4907}

```

Here `'01'` and `'10'` occur for around $1\%$ of all samples. We know from our analysis of the basis states that such a result can be expected when these outcomes should in fact never occur, but instead the result should be something that differs from them by only one bit: `'00'` or `'11'`. When we look at the results for those two outcomes, we can see that they occur with roughly equal probability. We can therefore conclude that the initial state was not simply $\left|00\right\rangle$, or $\left|11\right\rangle$, but an equal superposition of the two. If true, this means that the result should have been something along the lines of:

```

{'11': 4977, '00': 5023}

```

Here is a circuit that produces results like this (up to statistical fluctuations).

```

qc = QuantumCircuit(2,2)

qc.h(0)

qc.cx(0,1)

qc.measure([0, 1], [0, 1])

t_qc = transpile(qc, qasm_sim)

qobj = assemble(t_qc)

counts = qasm_sim.run(qobj, noise_model=noise_model, shots=10000).result().get_counts()

print(counts)

```

In this example we first looked at results for each of the definite basis states, and used these results to mitigate the effects of errors for a more general form of state. This is the basic principle behind measurement error mitigation.

### Error mitigation with linear algebra

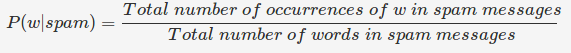

Now we just need to find a way to perform the mitigation algorithmically rather than manually. We will do this by describing the random process using matrices. For this we need to rewrite our counts dictionaries as column vectors. For example, the dictionary `{'10': 96, '11': 1, '01': 95, '00': 9808}` would be rewritten as

$$

C =

\begin{pmatrix}

9808 \\

95 \\

96 \\

1

\end{pmatrix}.

$$

Here the first element is that for `'00'`, the next is that for `'01'`, and so on.

The information gathered from the basis states $\left|00\right\rangle$, $\left|01\right\rangle$, $\left|10\right\rangle$ and $\left|11\right\rangle$ can then be used to define a matrix, which transforms an ideal set of counts into one affected by measurement noise. This is done by simply taking the counts dictionary for $\left|00\right\rangle$, normalizing it so that all elements sum to one, and then using it as the first column of the matrix. The next column is similarly defined by the counts dictionary obtained for $\left|01\right\rangle$, and so on.

There will be statistical variations each time the circuit for each basis state is run. In the following, we will use the data obtained when this section was written, which was as follows.

```

00 becomes {'10': 96, '11': 1, '01': 95, '00': 9808}

01 becomes {'10': 2, '11': 103, '01': 9788, '00': 107}

10 becomes {'10': 9814, '11': 90, '01': 1, '00': 95}

11 becomes {'10': 87, '11': 9805, '01': 107, '00': 1}

```

This gives us the following matrix.

$$

M =

\begin{pmatrix}

0.9808&0.0107&0.0095&0.0001 \\

0.0095&0.9788&0.0001&0.0107 \\

0.0096&0.0002&0.9814&0.0087 \\

0.0001&0.0103&0.0090&0.9805

\end{pmatrix}

$$
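The construction of $M$ from the counts can be sketched in a few lines of plain Python. The dictionary `calibration_counts` simply restates the data above; the variable names are introduced here for illustration.

```python
labels = ['00', '01', '10', '11']

# Counts recorded above for each prepared basis state
calibration_counts = {
    '00': {'10': 96, '11': 1, '01': 95, '00': 9808},
    '01': {'10': 2, '11': 103, '01': 9788, '00': 107},
    '10': {'10': 9814, '11': 90, '01': 1, '00': 95},
    '11': {'10': 87, '11': 9805, '01': 107, '00': 1},
}

# M[row][col] = probability of reading labels[row] when labels[col] was prepared
M = [[0.0] * len(labels) for _ in labels]
for col, prepared in enumerate(labels):
    counts = calibration_counts[prepared]
    shots = sum(counts.values())          # normalize so each column sums to one
    for row, measured in enumerate(labels):
        M[row][col] = counts.get(measured, 0) / shots

for row in M:
    print(row)
```

Each column sums to one because it is a probability distribution over the measured outcomes for one prepared state.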

If we now take the vector describing the perfect results for a given state, applying this matrix gives us a good approximation of the results when measurement noise is present.

$$ C_{noisy} = M ~ C_{ideal}.$$

As an example, let's apply this process for the state $(\left|00\right\rangle+\left|11\right\rangle)/\sqrt{2}$,

$$

\begin{pmatrix}

0.9808&0.0107&0.0095&0.0001 \\

0.0095&0.9788&0.0001&0.0107 \\

0.0096&0.0002&0.9814&0.0087 \\

0.0001&0.0103&0.0090&0.9805

\end{pmatrix}

\begin{pmatrix}

5000 \\

0 \\

0 \\

5000

\end{pmatrix}

=

\begin{pmatrix}

4904.5 \\

101 \\

91.5 \\

4903

\end{pmatrix}.

$$

In code, we can express this as follows.

```

import numpy as np

M = [[0.9808,0.0107,0.0095,0.0001],

[0.0095,0.9788,0.0001,0.0107],

[0.0096,0.0002,0.9814,0.0087],

[0.0001,0.0103,0.0090,0.9805]]

Cideal = [[5000],

[0],

[0],

[5000]]

Cnoisy = np.dot(M, Cideal)

array_to_latex(Cnoisy, pretext="\\text{C}_\\text{noisy} = ")

```

Either way, the resulting counts found in $C_{noisy}$, for measuring the $(\left|00\right\rangle+\left|11\right\rangle)/\sqrt{2}$ with measurement noise, come out quite close to the actual data we found earlier. So this matrix method is indeed a good way of predicting noisy results given a knowledge of what the results should be.

Unfortunately, this is the exact opposite of what we need. Instead of a way to transform ideal counts data into noisy data, we need a way to transform noisy data into ideal data. In linear algebra, we do this for a matrix $M$ by finding the inverse matrix $M^{-1}$,

$$C_{ideal} = M^{-1} C_{noisy}.$$

```

import scipy.linalg as la

M = [[0.9808,0.0107,0.0095,0.0001],

[0.0095,0.9788,0.0001,0.0107],

[0.0096,0.0002,0.9814,0.0087],

[0.0001,0.0103,0.0090,0.9805]]

Minv = la.inv(M)

array_to_latex(Minv)

```

Applying this inverse to $C_{noisy}$, we can obtain an approximation of the true counts.

```

Cmitigated = np.dot(Minv, Cnoisy)

array_to_latex(Cmitigated, pretext="\\text{C}_\\text{mitigated}=")

```

Of course, counts should be integers, and so these values need to be rounded. This gives us a very nice result.

$$

C_{mitigated} =

\begin{pmatrix}

5000 \\

0 \\

0 \\

5000

\end{pmatrix}

$$

This is exactly the true result we desire. Our mitigation worked extremely well!
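The result above is this tidy partly because $C_{noisy}$ was itself generated by applying $M$ to the ideal counts. As a complementary sketch, the same inverse can be applied to the sampled counts shown earlier, `{'10': 98, '11': 4884, '01': 111, '00': 4907}`. Here `np.linalg.solve` is used instead of forming the inverse explicitly, which is an equivalent choice made for this example. On sampled data the mitigated values are only approximate and are not guaranteed to be integers.

```python
import numpy as np

M = np.array([[0.9808, 0.0107, 0.0095, 0.0001],
              [0.0095, 0.9788, 0.0001, 0.0107],
              [0.0096, 0.0002, 0.9814, 0.0087],
              [0.0001, 0.0103, 0.0090, 0.9805]])

# Sampled (noisy) counts from the earlier example, ordered as '00', '01', '10', '11'
noisy = {'10': 98, '11': 4884, '01': 111, '00': 4907}
C_noisy = np.array([noisy[label] for label in ['00', '01', '10', '11']], dtype=float)

# Solving M x = C_noisy is equivalent to applying the inverse of M
C_mitigated = np.linalg.solve(M, C_noisy)
print(np.round(C_mitigated, 1))
print(C_mitigated.sum())
```

Since every column of $M$ sums to one, the total number of counts is preserved by the inversion, while weight moves from `'01'` and `'10'` back to `'00'` and `'11'`.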

### Error mitigation in Qiskit

```

from qiskit.ignis.mitigation.measurement import complete_meas_cal, CompleteMeasFitter

```

The process of measurement error mitigation can also be done using tools from Qiskit. This handles the collection of data for the basis states, the construction of the matrices and the calculation of the inverse. The latter can be done by direct matrix inversion (a pseudo-inverse, in Qiskit's terms), much as we did above. However, the default is an even more sophisticated method using least squares fitting.
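To give a feel for what a least squares approach can look like, here is a minimal sketch under stated assumptions. It is not Qiskit's implementation: it simply searches for the probability vector that best reproduces the observed distribution under $M$, constrained to be non-negative and to sum to one, using `scipy.optimize.minimize`. The matrix and counts are the ones from the previous section.

```python
import numpy as np
from scipy.optimize import minimize

M = np.array([[0.9808, 0.0107, 0.0095, 0.0001],
              [0.0095, 0.9788, 0.0001, 0.0107],
              [0.0096, 0.0002, 0.9814, 0.0087],
              [0.0001, 0.0103, 0.0090, 0.9805]])

shots = 10000
# Observed distribution, ordered as '00', '01', '10', '11'
p_noisy = np.array([4907, 111, 98, 4884], dtype=float) / shots

def residual(p):
    # squared distance between the observed and the predicted noisy distribution
    return np.sum((p_noisy - M @ p) ** 2)

result = minimize(
    residual,
    x0=p_noisy,                                   # start from the noisy distribution
    method='SLSQP',
    bounds=[(0.0, 1.0)] * 4,                      # probabilities stay in [0, 1]
    constraints={'type': 'eq', 'fun': lambda p: np.sum(p) - 1.0},
    tol=1e-12,
)
p_mitigated = result.x
print(np.round(p_mitigated * shots))
```

The constraints are the practical difference from plain inversion: the answer is always a valid probability distribution, even when noise in the data would push a direct inverse slightly outside that range.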

As an example, let's stick with doing error mitigation for a pair of qubits. For this we define a two qubit quantum register, and feed it into the function `complete_meas_cal`.

```

qr = QuantumRegister(2)

meas_calibs, state_labels = complete_meas_cal(qr=qr, circlabel='mcal')

```

This creates a set of circuits to take measurements for each of the four basis states for two qubits: $\left|00\right\rangle$, $\left|01\right\rangle$, $\left|10\right\rangle$ and $\left|11\right\rangle$.

```

for circuit in meas_calibs:
    print('Circuit',circuit.name)
    print(circuit)
    print()

```

Let's now run these circuits without any noise present.

```

# Execute the calibration circuits without noise

t_qc = transpile(meas_calibs, qasm_sim)

qobj = assemble(t_qc, shots=10000)

cal_results = qasm_sim.run(qobj, shots=10000).result()

```

With the results we can construct the calibration matrix, which we have been calling $M$.

```

meas_fitter = CompleteMeasFitter(cal_results, state_labels, circlabel='mcal')

array_to_latex(meas_fitter.cal_matrix)

```

With no noise present, this is simply the identity matrix.

Now let's create a noise model. And to make things interesting, let's have the errors be ten times more likely than before.

```

noise_model = get_noise(0.1)

```

Again we can run the circuits, and look at the calibration matrix, $M$.

```

t_qc = transpile(meas_calibs, qasm_sim)

qobj = assemble(t_qc, shots=10000)

cal_results = qasm_sim.run(qobj, noise_model=noise_model, shots=10000).result()

meas_fitter = CompleteMeasFitter(cal_results, state_labels, circlabel='mcal')

array_to_latex(meas_fitter.cal_matrix)

```

This time we find a more interesting matrix, and one that we cannot use in the approach that we described earlier. Let's see how well we can mitigate for this noise. Again, let's use the Bell state $(\left|00\right\rangle+\left|11\right\rangle)/\sqrt{2}$ for our test.

```

qc = QuantumCircuit(2,2)

qc.h(0)

qc.cx(0,1)

qc.measure([0, 1], [0, 1])

t_qc = transpile(qc, qasm_sim)

qobj = assemble(t_qc, shots=10000)

results = qasm_sim.run(qobj, noise_model=noise_model, shots=10000).result()

noisy_counts = results.get_counts()

print(noisy_counts)

```

In Qiskit we mitigate for the noise by creating a measurement filter object. Then, taking the results from above, we use this to calculate a mitigated set of counts. Qiskit returns this as a dictionary, so that the user doesn't need to use vectors themselves to get the result.

```

# Get the filter object

meas_filter = meas_fitter.filter

# Results with mitigation

mitigated_results = meas_filter.apply(results)

mitigated_counts = mitigated_results.get_counts()

```

To see the results most clearly, let's plot both the noisy and mitigated results.

```

from qiskit.visualization import plot_histogram

noisy_counts = results.get_counts()

plot_histogram([noisy_counts, mitigated_counts], legend=['noisy', 'mitigated'])

```

Here we have taken results for which almost $20\%$ of samples are in the wrong state, and turned it into an exact representation of what the true results should be. However, this example does have just two qubits with a simple noise model. For more qubits, and more complex noise models or data from real devices, the mitigation will have more of a challenge. Perhaps you might find methods that are better than those Qiskit uses!

```

import qiskit

qiskit.__qiskit_version__

```

| github_jupyter |

# Import Libraries

```

# import necessary libraries

import numpy as np

import pandas as pd

import re

import pickle

import matplotlib.pyplot as plt

from matplotlib.pyplot import figure

import matplotlib.image as mpimg

from sklearn.model_selection import train_test_split

from datetime import date

from datetime import datetime

import torch

from torch import nn

from torch.utils.data import Dataset

from torch.utils.data import DataLoader

from torchvision import transforms

from torch.nn import functional as F

from torch import nn, optim

from rdkit import Chem

from rdkit.Chem import Draw

import selfies as sf

# import chemVAE functions

from chemVAE import main

```

# One-Hot Encode SMILES Data

Dataset is from the Clean Energy Project https://www.worldcommunitygrid.org/research/cep1/overview.do

```

# load dataset

df = pd.read_csv('opv_molecules.csv')

# select SMILES data

smiles = df['SMILES'].values

selfies = main.smiles2selfies(smiles)

onehot_selfies, idx_to_symbol = main.onehotSELFIES(selfies)

```
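The internals of `main.onehotSELFIES` are not shown here, so the following is only a rough sketch of what one-hot encoding of tokenized strings can look like. It uses made-up token lists, a hypothetical helper `one_hot_encode`, and an assumed padding token; the `(num_characters, max_seq_len)` layout per molecule is chosen to match how `onehot_selfies[0].shape` is unpacked later in this notebook.

```python
import numpy as np

def one_hot_encode(token_sequences, pad_token='[nop]'):
    """Encode token lists as arrays of shape (n_sequences, n_symbols, max_len)."""
    alphabet = sorted({tok for seq in token_sequences for tok in seq} | {pad_token})
    symbol_to_idx = {sym: i for i, sym in enumerate(alphabet)}
    max_len = max(len(seq) for seq in token_sequences)

    encoded = np.zeros((len(token_sequences), len(alphabet), max_len), dtype=np.float32)
    for n, seq in enumerate(token_sequences):
        # pad shorter sequences so every molecule has the same length
        padded = list(seq) + [pad_token] * (max_len - len(seq))
        for pos, tok in enumerate(padded):
            encoded[n, symbol_to_idx[tok], pos] = 1.0

    idx_to_symbol = {i: sym for sym, i in symbol_to_idx.items()}
    return encoded, idx_to_symbol

# Toy token sequences standing in for tokenized SELFIES strings
toy = [['[C]', '[O]'], ['[C]', '[C]', '[N]']]
onehot, idx_to_symbol = one_hot_encode(toy)
print(onehot.shape)

# Decode the first sequence back to tokens via the index-to-symbol map
decoded = [idx_to_symbol[i] for i in onehot[0].argmax(axis=0)]
print(decoded)
```

Keeping the index-to-symbol map is what makes it possible to turn model outputs back into strings later on.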

# Load One-Hot Encoded Data into Pytorch Dataset

```

# split data into training (60%), testing (20%) and validation (20%) sets

X_train, X_test, y_train, y_test = train_test_split(onehot_selfies, onehot_selfies, test_size = 0.40)

X_test, X_val, y_test, y_val = train_test_split(X_test, X_test, test_size = 0.50)

# PyTorch Dataset

train_data = main.SELFIES_Dataset(X_train, y_train, transform = transforms.ToTensor())

test_data = main.SELFIES_Dataset(X_test, y_test, transform = transforms.ToTensor())

val_data = main.SELFIES_Dataset(X_val, y_val, transform = transforms.ToTensor())

```

# Set Model Parameters

```

num_characters, max_seq_len = onehot_selfies[0].shape

params = {'num_characters' : num_characters,

'seq_length' : max_seq_len,

'num_conv_layers' : 3,

'layer1_filters' : 24,

'layer2_filters' : 24,

'layer3_filters' : 24,

'layer4_filters' : 24,

'kernel1_size' : 11,

'kernel2_size' : 11,

'kernel3_size' : 11,

'kernel4_size' : 11,

'lstm_stack_size' : 3,

'lstm_num_neurons' : 396,

'latent_dimensions' : 256,

'batch_size' : 256,

'epochs' : 500,

'learning_rate' : 10**-4}

```

# Train Model

```

# set device

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

print("Training Model on: " + str(device))

# load data

train_loader = DataLoader(train_data, batch_size = params['batch_size'], shuffle = True)

test_loader = DataLoader(test_data, batch_size = params['batch_size'], shuffle = True)

# initialize model

model = main.VAE(params).to(device)

# set optimizer

optimizer = optim.Adam(model.parameters(), lr = params['learning_rate'])

# set KL annealing

KLD_alpha = np.linspace(0,1, params['epochs'])

## generate unique filenames

today = date.today()

now = datetime.now()

time = now.strftime("%H%M%S")

model_filename = "model_" + str(today)

# train model

epoch = params['epochs']

train_loss = []

test_loss = []

BCE_loss = []

KLD_loss = []

KLD_weight = []

for epoch in range(1, epoch + 1):
    alpha = KLD_alpha[epoch-1]
    loss, BCE, KLD_wt, KLD = main.train(model, train_loader, optimizer, device, epoch, alpha)
    train_loss.append(loss)
    BCE_loss.append(BCE)
    KLD_loss.append(KLD)
    KLD_weight.append(KLD_wt)
    test_loss.append(main.test(model, test_loader, optimizer, device, epoch, alpha))

# save model

## save model parameters

output = open(model_filename +'_parameters.pkl', 'wb')

pickle.dump(params, output)

output.close()

print("Saved PyTorch Parameters State to " + model_filename +'_parameters.pkl')

## save model state

torch.save(model.state_dict(), model_filename + '_state.pth')

print("Saved PyTorch Model State to " + model_filename + '_state.pth')

## save full model

torch.save(model, model_filename + '.pth')

print("Saved PyTorch Model to " + model_filename + '.pth')

```
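The training loop passes a per-epoch `alpha` from a linear schedule into `main.train`, but how `chemVAE` combines it with the loss terms is not shown here. A common formulation of KL annealing, sketched below under that assumption, weights the KL divergence by `alpha` and adds it to the reconstruction loss, so early epochs focus on reconstruction. The function names and the loss values are hypothetical.

```python
def linear_kl_schedule(num_epochs):
    """Linearly ramp the KL weight from 0 to 1, like np.linspace(0, 1, n)."""
    if num_epochs == 1:
        return [0.0]
    return [i / (num_epochs - 1) for i in range(num_epochs)]


def annealed_vae_loss(reconstruction_loss, kl_divergence, alpha):
    """Weighted VAE objective: reconstruction term plus alpha times the KL term."""
    return reconstruction_loss + alpha * kl_divergence


schedule = linear_kl_schedule(5)
# Hypothetical per-epoch loss terms, held constant to isolate the effect of alpha.
losses = [annealed_vae_loss(10.0, 4.0, a) for a in schedule]
```

With the KL term held fixed, the total grows only because its weight grows, which is why it helps to log the raw BCE and KLD terms separately, as the loop above does.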

# Deploy Model

```

# Load the model trained in the cell above

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

## load parameters

pkl_file = open(model_filename + '_parameters.pkl', 'rb')

params = pickle.load(pkl_file)

pkl_file.close()

## load model state

model = main.VAE(params).to(device)

model.load_state_dict(torch.load(model_filename + '_state.pth'))

# Load a pretrained model

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

## load parameters

pkl_file = open('pretrained_model_parameters.pkl', 'rb')

params = pickle.load(pkl_file)

pkl_file.close()

## load model state

model = main.VAE(params).to(device)

model.load_state_dict(torch.load('pretrained_model_state.pth'))

# grab random sample from test_data (the upper bound of randint is exclusive)

sample_idx = np.random.randint(0, len(test_data))

img, label = test_data[sample_idx]

# run model

with torch.no_grad():
    img = img.to(device)
    recon_data, z, mu, logvar = model(img)
    recon_data = recon_data[0].cpu()

# grab original smiles

sample = img[0].cpu().numpy()

char_ind = list(np.argmax(sample.squeeze(),axis=0))

string = [idx_to_symbol[i] for i in char_ind]

selfie = ''.join(string)

smiles = sf.decoder(selfie)

# reconstructed smiles

recon_sample = recon_data.numpy()

char_ind = list(np.argmax(recon_sample.squeeze(),axis=0))

string = [idx_to_symbol[i] for i in char_ind]

recon_selfie = ''.join(string)

recon_smiles = sf.decoder(recon_selfie)

# visualize model reconstruction

## draw molecule and reconstruction

m1 = Chem.MolFromSmiles(smiles)

Draw.MolToFile(m1,'original.png')

m2 = Chem.MolFromSmiles(recon_smiles)

Draw.MolToFile(m2,'reconstruct.png')

## visualize molecules in notebook

figure(figsize=(8, 6), dpi = 100)

plt.subplot(1,2,1)

img = mpimg.imread('original.png')

plt.imshow(img)

plt.axis('off')

plt.title('Original')

plt.subplot(1,2,2)

img = mpimg.imread('reconstruct.png')

plt.imshow(img)

plt.axis('off')

plt.title('Reconstruction')

```

| github_jupyter |

## Breast cancer detection using deep learning

In this notebook we are going to use the [Breast Histopathology Images](https://www.kaggle.com/paultimothymooney/breast-histopathology-images) dataset and the `fastai` library for detecting breast cancer.

**Context**:

Invasive Ductal Carcinoma (IDC) is the most common subtype of all breast cancers. To assign an aggressiveness grade to a whole mount sample, pathologists typically focus on the regions which contain the IDC. As a result, one of the common pre-processing steps for automatic aggressiveness grading is to delineate the exact regions of IDC inside of a whole mount slide.

**About the dataset**:

The original dataset consisted of 162 whole mount slide images of Breast Cancer (BCa) specimens scanned at 40x. From that, 277,524 patches of size 50 x 50 were extracted (198,738 IDC negative and 78,786 IDC positive). Each patch’s file name is of the format: u_xX_yY_classC.png — > example 10253_idx5_x1351_y1101_class0.png . Where u is the patient ID (10253_idx5), X is the x-coordinate of where this patch was cropped from, Y is the y-coordinate of where this patch was cropped from, and C indicates the class where 0 is non-IDC and 1 is IDC.

**Inspiration**:

Breast cancer is the most common form of cancer in women, and invasive ductal carcinoma (IDC) is the most common form of breast cancer. Accurately identifying and categorizing breast cancer subtypes is an important clinical task, and automated methods can be used to save time and reduce error.

**Adrian Rosebrock** of **PyImageSearch** has [this wonderful tutorial](https://www.pyimagesearch.com/2019/02/18/breast-cancer-classification-with-keras-and-deep-learning/) on this same topic as well. Be sure to check that out if you have not. I decided to use the `fastai` library and to see *if I could improve the predictive performance by incorporating modern deep learning practices*.

Let's take a look at the class distribution of the dataset again - >

* 198,738 negative examples (i.e., no breast cancer)

* 78,786 positive examples (i.e., indicating breast cancer was found in the patch)

0 indicates `no IDC` (no breast cancer) while 1 indicates `IDC` (breast cancer)

As we can see, this is a clear example of *class imbalance*. But we will start simple and experiment extensively before making major decisions about model training and tuning.

```

# Get the fastai libraries and other important stuff: https://course.fast.ai/start_colab.html

!curl -s https://course.fast.ai/setup/colab | bash

# Authenticate Colab to use my Google Drive for data storage and retrieval

from google.colab import drive

drive.mount('/content/gdrive', force_remount=True)

root_dir = "/content/gdrive/My Drive/"

base_dir = root_dir + 'BreastCancer'

base_dir

# Change the working directory

%cd /content/gdrive/My\ Drive/BreastCancer

# Verify

!pwd

```

Unzip the zipped folder of data with `!unzip /content/gdrive/My\ Drive/BreastCancer/IDC_regular_ps50_idx5.zip`. It will take time. When the process completes, make sure to move the zipped folder somewhere else (or remove it).

<font color=red>Warning ahead! The unzipping process takes time. So be patient. </font>

```

!unzip /content/gdrive/My\ Drive/BreastCancer/IDC_regular_ps50_idx5.zip

!find /content/gdrive/My\ Drive/BreastCancer -maxdepth 1 -type d | wc -l

```

### Magics and imports

```

%reload_ext autoreload

%autoreload 2

%matplotlib inline

from fastai.vision import *

from fastai.metrics import *

import numpy as np

np.random.seed(7)

import torch

torch.cuda.manual_seed_all(7)

import matplotlib.pyplot as plt

plt.style.use('ggplot')

```

### Instantiating the data augmentation object with a number of useful transforms

```

tfms = get_transforms(do_flip=True, flip_vert=True,

max_lighting=0.3, max_warp=0.3, max_rotate=20., max_zoom=0.05)

len(tfms)

```

### Loading the data in mini batches of 128 (48x48)

```

path = '/content/gdrive/My Drive/BreastCancer/'

data = ImageDataBunch.from_folder(path, ds_tfms=tfms, valid_pct=0.2,

size=48, bs=128).normalize(imagenet_stats)

data.show_batch(rows=3, figsize=(8,8))

```

Just to remind you - **0 indicates `no IDC` (no breast cancer) while 1 indicates `IDC` (breast cancer)**.

```

# Training and validation set splits

data.label_list

```

### Distribution of the classes in the new training and validation set

```

from collections import Counter

# Training set

train_counts = Counter(data.train_ds.y)

train_counts.most_common()

```

[(Category 0, 159089), (Category 1, 62931)]

```

# Validation set

valid_counts = Counter(data.valid_ds.y)

valid_counts.most_common()

```

[(Category 0, 39649), (Category 1, 15855)]

### Training a pretrained ResNet50 + Mixed precision policy

Here, we will train the last layer group of a pre-trained ResNet50 model (trained on ImageNet) using a mixed precision policy and the 1cycle policy. We will also weight the cross-entropy loss function so that the under-represented class contributes more to the loss.
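One simple way to arrive at class weights like the `[0.4, 1]` used in the next cell is inverse-frequency weighting relative to the rarest class. The sketch below applies that idea to the class counts quoted earlier; whether the weights in this notebook were actually derived this way is an assumption, and `inverse_frequency_weights` is a hypothetical helper.

```python
def inverse_frequency_weights(class_counts):
    """Weight each class by (count of the rarest class) / (its own count)."""
    rarest = min(class_counts)
    return [rarest / count for count in class_counts]


# Class counts quoted earlier: 198,738 IDC-negative and 78,786 IDC-positive patches.
weights = inverse_frequency_weights([198738, 78786])
```

The majority class ends up with a weight of roughly 0.4 and the minority class with 1, so each misclassified IDC-positive patch costs about 2.5 times as much as a misclassified negative one.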

```

# Initialize the custom class weights and move them to the GPU

from torch import nn

weights = [0.4, 1]

class_weights=torch.FloatTensor(weights).cuda()

# Begin the training

learn = cnn_learner(data, models.resnet50, metrics=[accuracy]).to_fp16()

learn.loss_func = nn.CrossEntropyLoss(weight=class_weights)

learn.fit_one_cycle(5);

learn.recorder.plot_losses()

```

A mammoth training of **1 hour, 21 minutes and 9 seconds.** The loss curves also look pretty good.

```

# Saving the model

learn.save('stage-1-rn50')

```

### Model's losses, accuracy scores and more

```

# Model's final validation loss and accuracy

learn.validate()

```

`tensor(0.8685)` denotes an accuracy score of **86.85%**.

```

# Model's final training loss and accuracy

learn.validate(learn.data.train_dl)

```

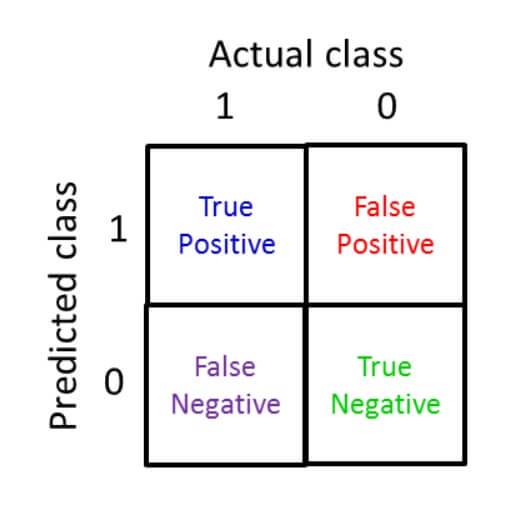

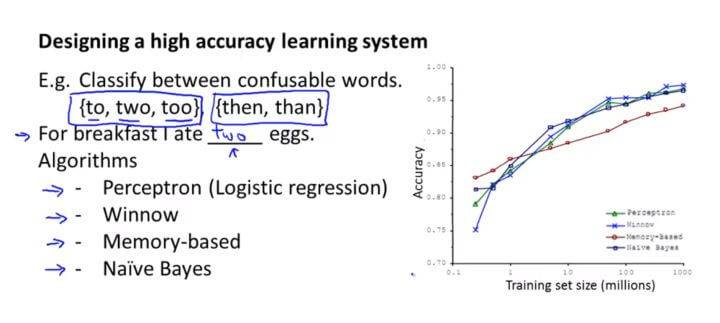

As mentioned in the very beginning, the dataset suffers from the problem of class-imbalance. And for class-imbalanced datasets, we cannot simply go with the accuracy score. Read about [accuracy paradox](https://en.wikipedia.org/wiki/Accuracy_paradox). We will have to consider other metrics like **specificity**, **sensitivity**. Let's start by looking at model's predictions on the validation set.

```

# Looking at model's results

learn.show_results(rows=3)

```

The above figure presents a few IDC(-) samples from the validation set and along with that also shows model's predictions. Let's now see the top losses incurred by the model during the training process.

### Model's top losses and confusion matrix

```

interp = ClassificationInterpretation.from_learner(learn)

losses,idxs = interp.top_losses()

len(data.valid_ds)==len(losses)==len(idxs)

interp.plot_top_losses(9, figsize=(12,10), heatmap=False)

```

We can see that there are some samples which are originally IDC(+) but the model predicts them as IDC(-). **This is a staggering issue**.

We need to be really careful with our false negative here — we don’t want to classify someone as “No cancer” when they are in fact “Cancer positive”.

Our false positive rate is also important — we don’t want to mistakenly classify someone as “Cancer positive” and then subject them to painful, expensive, and invasive treatments when they don’t actually need them.

To get a firm grasp of how the model is doing on false positives and false negatives, we can plot the confusion matrix.

```

interp.plot_confusion_matrix()

```
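The confusion matrix gives the four counts needed for the metrics mentioned earlier. As a minimal sketch, sensitivity (recall on the IDC-positive class) and specificity (recall on the IDC-negative class) follow directly from them. The counts below are made up for illustration and are not results from this model.

```python
def binary_rates(tn, fp, fn, tp):
    """Derive common rates from the four cells of a binary confusion matrix."""
    return {
        "sensitivity": tp / (tp + fn),   # true positive rate
        "specificity": tn / (tn + fp),   # true negative rate
        "false_negative_rate": fn / (tp + fn),
        "false_positive_rate": fp / (tn + fp),
        "accuracy": (tp + tn) / (tn + fp + fn + tp),
    }


# Hypothetical counts, for illustration only.
rates = binary_rates(tn=90, fp=10, fn=20, tp=30)
```

In this toy case accuracy is 0.8 even though 40% of the positive samples are missed, which is the accuracy paradox in miniature.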

There can be improvements in the model's training instructions so that the model minimizes the false predictions.

**What can be done?**

- We are using the pre-trained weights of the ResNet50 model. We can train the other layers of the model to make it a bit more specific

- We have trained our model only for a few epochs. A bit more training will definitely help

- We have not used discriminative learning rates i.e. the way of training models using different learning rates across different layers groups

Before we experiment with all this, let's generate a classification report of the current model's performance.

### Classification report to look at other metrics since there is a class imbalance

```

from sklearn.metrics import classification_report

def return_classification_report(learn):
    ground_truth = []
    pred_labels = []
    for i in range(len(learn.data.valid_ds)):
        temp_pred = str(learn.predict(learn.data.valid_ds[i][0])[0])
        temp_truth = str(learn.data.valid_ds[i]).split('), ', 1)[1].replace('Category ', '').replace(')', '')
        pred_labels.append(temp_pred)
        ground_truth.append(temp_truth)
    assert len(pred_labels) == len(ground_truth)
    return classification_report(ground_truth, pred_labels, target_names=data.classes)

print(return_classification_report(learn))

```

As we can see the model's [recall](https://scikit-learn.org/stable/modules/generated/sklearn.metrics.precision_recall_fscore_support.html#sklearn.metrics.precision_recall_fscore_support) is much better than that of Adrian's `CancerNet` model. This is due to the fact that *we handled the loss function in a custom way*.

Here's a snap of the classification report of `CancerNet` -

**Can we do better?**

We will now start by finding an optimal learning rate for the model.

```

learn.lr_find();

learn.recorder.plot()

```

We now have an idea of what could be a good learning rate for the model. We will now unfreeze the first layer groups of the model and will allow it to fully train. We will train it using discriminative learning rates for another two epochs.

```

learn.unfreeze()

learn.fit_one_cycle(2, max_lr=slice(1e-04, 1e-05))

```

Another **32 minutes and 24 seconds** of training.

```

# Save model

learn.save('stage-2-more-rn50')

# Looking at the classification report

print(return_classification_report(learn))

```

As we can see the recall has improved specifically for the positive classes. Ideally there should be a good balance of specificity and sensitivity.

### Model's architectural summary

```

learn.summary()

# Export the model in pickle format

learn.export('breast-cancer-rn50.pkl')

```

### Conclusion:

We now have a model which is **86.68%** accurate and has an **improved recall** for both the positive and the negative class.

We could still have trained the network for longer. We trained it for **7 epochs**, which took *approximately* **two hours.** More fine-tuning could have been done. Sophisticated data augmentation and resolution techniques could have been applied. But let's keep them aside for further studies for now :)

| github_jupyter |

# EXERCISE 4

The "Iris" dataset has been used as a test case for a large number of classifiers and is perhaps the best-known dataset in the specialized literature. Iris is a type of plant that we want to classify by species. Three species are recognized: 'Iris setosa', 'Iris versicolor' and 'Iris virginica'. The goal is to classify an Iris plant from the length and width of its petal and the length and width of its sepal.

The Iris dataset consists of 150 samples in total, 50 for each of the three plant types. Each sample consists of the plant type, the petal length and width, and the sepal length and width. All are continuous numeric attributes.

$$

\begin{array}{|c|c|c|c|c|}

\hline X & Setosa & Versicolor & Virginica & Invalid \\

\hline Setosa & 50 & 0 & 0 & 0 \\

\hline Versicolor & 0 & 50 & 0 & 0 \\

\hline Virginica & 0 & 0 & 50 & 0 \\

\hline

\end{array}

$$

```

import numpy as np

import matplotlib.pyplot as plt

import matplotlib as mpl

import mpld3

%matplotlib inline

mpld3.enable_notebook()

from cperceptron import Perceptron

from cbackpropagation import ANN #, Identidad, Sigmoide

import patrones as magia

def progreso(ann, X, T, y=None, n=-1, E=None):
    if n % 20 == 0:
        print("Pasos: {0} - Error: {1:.32f}".format(n, E))

def progresoPerceptron(perceptron, X, T, n):
    y = perceptron.evaluar(X)
    incorrectas = (T != y).sum()
    print("Pasos: {0}\tIncorrectas: {1}\n".format(n, incorrectas))

iris = np.load('iris.npy')

# Build the patterns

clases, patronesEnt, patronesTest = magia.generar_patrones(

magia.escalar(iris[:,1:]).round(4),iris[:,:1],80)

X, T = magia.armar_patrones_y_salida_esperada(clases,patronesEnt)

clases, patronesEnt, noImporta = magia.generar_patrones(

magia.escalar(iris[:,1:]),iris[:,:1],100)

Xtest, Ttest = magia.armar_patrones_y_salida_esperada(clases,patronesEnt)

```

a) Train perceptrons so that each one learns to recognize one of the Iris plant types. Report the parameters used for training and the performance obtained. Use all patterns for training. Show the confusion matrix for the best classification obtained after training, reporting the patterns classified correctly and incorrectly.

```

print("Entrenando P1:")

p1 = Perceptron(X.shape[1])

I1 = p1.entrenar_numpy(X, T[:,0], max_pasos=5000, callback=progresoPerceptron, frecuencia_callback=2500)

print("Pasos:{0}".format(I1))

print("\nEntrenando P2:")

p2 = Perceptron(X.shape[1])

I2 = p2.entrenar_numpy(X, T[:,1], max_pasos=5000, callback=progresoPerceptron, frecuencia_callback=2500)

print("Pasos:{0}".format(I2))

print("\nEntrenando P3:")

p3 = Perceptron(X.shape[1])

I3 = p3.entrenar_numpy(X, T[:,2], max_pasos=5000, callback=progresoPerceptron, frecuencia_callback=2500)

print("Pasos:{0}".format(I3))

Y = np.vstack((p1.evaluar(Xtest),p2.evaluar(Xtest),p3.evaluar(Xtest))).T

magia.matriz_de_confusion(Ttest,Y)

```
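The `Perceptron` class used above comes from the course's own `cperceptron` module, whose code is not shown. As a rough, pure-Python sketch of the underlying idea, the block below trains a single perceptron with the classic error-driven update rule on a tiny linearly separable problem. A one-vs-rest setup like the one in part a) would train one such unit per class. All names and the toy data here are assumptions for illustration.

```python
def predict(weights, bias, x):
    """Step activation: output 1 when the weighted sum is non-negative."""
    activation = sum(w * xi for w, xi in zip(weights, x)) + bias
    return 1 if activation >= 0 else 0


def train_perceptron(samples, targets, learning_rate=1.0, max_steps=1000):
    """Train until an epoch produces no errors or max_steps is reached."""
    weights = [0.0] * len(samples[0])
    bias = 0.0
    for step in range(1, max_steps + 1):
        errors = 0
        for x, t in zip(samples, targets):
            y = predict(weights, bias, x)
            if y != t:
                errors += 1
                # Move the decision boundary toward the misclassified sample.
                delta = learning_rate * (t - y)
                weights = [w + delta * xi for w, xi in zip(weights, x)]
                bias += delta
        if errors == 0:
            return weights, bias, step
    return weights, bias, max_steps


# Toy, linearly separable data: class 1 only when both features are 1.
samples = [(0, 0), (0, 1), (1, 0), (1, 1)]
targets = [0, 0, 0, 1]
weights, bias, steps = train_perceptron(samples, targets)
predictions = [predict(weights, bias, x) for x in samples]
```

A single perceptron can only separate classes with a linear boundary, so a class that is not linearly separable from the rest will keep producing errors until `max_steps` is reached.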

b) Train an artificial neural network using backpropagation as the learning algorithm to achieve the requested classification. Use all patterns for training. Detail the parameters used for training as well as the architecture of the neural network. Repeat the procedure more than once to confirm the results obtained, and report the confusion matrix for the best classification obtained.

```

# Create the neural network

ocultas = 10

entradas = X.shape[1]

salidas = T.shape[1]

ann = ANN(entradas, ocultas, salidas)

ann.reiniciar()

# Train

E, n = ann.entrenar_rprop(X, T, min_error=0, max_pasos=100000, callback=progreso, frecuencia_callback=10000)

print("\nRed entrenada en {0} pasos con un error de {1:.32f}".format(n, E))

# Evaluate

Y = (ann.evaluar(Xtest) >= 0.97)

magia.matriz_de_confusion(Ttest,Y)

(ann.evaluar(Xtest)[90])

```

| github_jupyter |

```

import pyspark

from pyspark import SparkContext

sc = SparkContext.getOrCreate();

import findspark

findspark.init()

from pyspark.sql import SparkSession

spark = SparkSession.builder.master("local[*]").getOrCreate()

spark.conf.set("spark.sql.repl.eagerEval.enabled", True) # Property used to format output tables better

spark

sc.stop()

import pyspark

from pyspark.sql import SparkSession

spark = SparkSession.builder.master("local[*]").getOrCreate()

spark.conf.set("spark.sql.repl.eagerEval.enabled", True) # Property used to format output tables better

spark

from pyspark.sql import SparkSession

spark = SparkSession.builder.appName('DataAnalysisOnElonMusk').getOrCreate()

import os

import re

from datetime import date, datetime

import pandas as pd

file_path_name = 'elonmusk.csv'

def open_file(file_path_name):
    return pd.read_csv(file_path_name, index_col=[0])

print(open_file(file_path_name).head())

def clean_dataframe(df, columns_to_drop):
    df = drop_redundant_columns(df, columns_to_drop)
    return df

def transform_dataframe(df):
    df = drop_columns_with_constant_values(df)
    add_mentions_count(df)
    add_weekday(df)
    add_reply_to_count(df)
    add_photos_count(df)
    convert_to_datetime(df)
    extract_hour_minute(df)
    df = drop_redundant_columns(df, ['photos', 'date', 'mentions', 'reply_to', 'reply_to_count'])
    return df

columns_to_drop = ['hashtags', 'cashtags', 'link', 'quote_url', 'urls', 'created_at']

def drop_redundant_columns(df, columns_to_drop):
    return df.drop(columns=columns_to_drop, axis=0)

def drop_columns_with_constant_values(df):
    return df.drop(columns=list(df.columns[df.nunique() <= 1]))

def add_mentions_count(df):
    new_values = []
    for i, content in df['mentions'].items():
        new_values.append(int(content.count("'") / 2))
    df['mentions_count'] = new_values
    return df

def add_weekday(df):
    weekday = []
    for i, content in df['date'].items():
        year, month, day = map(int, content.split('-'))
        d = date(year, month, day)
        weekday.append(d.weekday())
    df['weekday'] = weekday
    return df

def add_reply_to_count(df):
    reply_to_count_values = []
    for i, content in df['reply_to'].items():
        reply_to_count_values.append((int(content.count("{")) - 1))
    df['reply_to_count'] = reply_to_count_values
    return df

def add_photos_count(df):
    new_values = []
    for i, content in df['photos'].items():
        new_values.append(int(content.count("https")))
    df['photos_count'] = new_values
    return df

def convert_to_datetime(df):
    df['datetime'] = (df['date'] + " " + df['time']).astype('string')
    return df

def extract_hour_minute(df):
    year_col = []
    month_col = []
    hour_col = []
    minute_col = []
    for i, content in df['datetime'].items():
        t1 = datetime.strptime(content, '%Y-%m-%d %H:%M:%S')
        year_col.append(t1.year)
        month_col.append(t1.month)
        hour_col.append(t1.hour)
        minute_col.append(t1.minute)
    df['year'] = year_col
    df['month'] = month_col
    df['hour'] = hour_col
    df['minute'] = minute_col
    return df

df = open_file(file_path_name)

new_df = clean_dataframe(df, columns_to_drop)

new_df = transform_dataframe(new_df)

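# lowercase the tweets in the working frame so that the de-duplication below is case-insensitive
new_df['tweet'] = new_df['tweet'].str.lower()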

data = []

for i,j in zip(new_df,new_df.count()):

data.append((i,str(j)))

rdd = spark.sparkContext.parallelize(data)

resultCount = rdd.collect()

print(resultCount)

#Dropping duplicates from previous count data

new_df.drop_duplicates(subset=['tweet'], keep='first', inplace=True)

#print(new_df.shape)

shape = spark.sparkContext.parallelize([new_df.shape]).collect()

print(shape)

data2 = []

for i,j in zip(new_df,new_df.count()):

data2.append((i,str(j)))

rdd = spark.sparkContext.parallelize(data2)

resultCount2 = rdd.collect()

print(resultCount2)

count = new_df['tweet'].str.split().str.len()

count.index = count.index.astype(str) + ' words:'

count.sort_index(inplace=True)

def word_count(df):
    words_count = []
    for i, content in df['tweet'].items():
        new_values = content.split()
        words_count.append(len(new_values))
    df['word_count'] = words_count
    return df

new_df = word_count(new_df)

print("Total number of words: ", count.sum(), "words")

print("Average number of words per tweet: ", round(count.mean(),2), "words")

print("Max number of words per tweet: ", count.max(), "words")

print("Min number of words per tweet: ", count.min(), "words")

new_df['tweet_length'] = new_df['tweet'].str.len()

print("Total length of a dataset: ", new_df.tweet_length.sum(), "characters")

print("Average length of a tweet: ", round(new_df.tweet_length.mean(),0), "characters")

```
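The date handling above is split across `add_weekday`, `convert_to_datetime`, and `extract_hour_minute`, each parsing the same strings again. As a small sketch of an alternative, a single `datetime.strptime` call can yield all of the derived fields at once. The helper name `split_timestamp` and the sample values are hypothetical, and the date and time formats are assumed to match the ones parsed in the cell above.

```python
from datetime import datetime


def split_timestamp(date_str, time_str):
    """Parse 'YYYY-MM-DD' and 'HH:MM:SS' once and return the derived fields."""
    stamp = datetime.strptime(date_str + " " + time_str, "%Y-%m-%d %H:%M:%S")
    return {
        "year": stamp.year,
        "month": stamp.month,
        "weekday": stamp.weekday(),  # Monday is 0, Sunday is 6
        "hour": stamp.hour,
        "minute": stamp.minute,
    }


# Made-up sample row, for illustration only.
parts = split_timestamp("2021-03-15", "14:05:09")
```

Applied row by row, this would fill the `year`, `month`, `weekday`, `hour`, and `minute` columns in one pass over the data.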

| github_jupyter |

##### Copyright 2019 The TensorFlow Authors.

```

#@title Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# https://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

```

# Custom Federated Algorithms, Part 1: Introduction to the Federated Core

<table class="tfo-notebook-buttons" align="left">

<td> <a target="_blank" href="https://tensorflow.google.cn/federated/tutorials/custom_federated_algorithms_1"><img src="https://tensorflow.google.cn/images/tf_logo_32px.png">View on TensorFlow.org</a> </td>

<td><a target="_blank" href="https://colab.research.google.com/github/tensorflow/docs-l10n/blob/master/site/zh-cn/federated/tutorials/custom_federated_algorithms_1.ipynb"><img src="https://tensorflow.google.cn/images/colab_logo_32px.png">Run in Google Colab</a></td>

<td> <a target="_blank" href="https://github.com/tensorflow/docs-l10n/blob/master/site/zh-cn/federated/tutorials/custom_federated_algorithms_1.ipynb"><img src="https://tensorflow.google.cn/images/GitHub-Mark-32px.png">View source on GitHub</a>

</td>

<td> <a href="https://storage.googleapis.com/tensorflow_docs/docs-l10n/site/zh-cn/federated/tutorials/custom_federated_algorithms_1.ipynb"><img src="https://tensorflow.google.cn/images/download_logo_32px.png">Download notebook</a> </td>

</table>

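This tutorial is the first part of a two-part series that demonstrates how to implement custom types of federated algorithms in TensorFlow Federated (TFF) using the [Federated Core (FC)](../federated_core.md), a set of lower-level interfaces that serve as the foundation on which we have implemented the [Federated Learning (FL)](../federated_learning.md) layer.

This first part is more conceptual; we introduce some of the key concepts and programming abstractions used in TFF, and we demonstrate their use on a very simple example with a distributed array of temperature sensors. In [the second part of this series](custom_federated_algorithms_2.ipynb), we use the mechanisms introduced here to implement a simple version of federated training and evaluation algorithms. As a follow-up, we encourage you to study the <a>implementation</a> of federated averaging in <code>tff.learning</code>.

By the end of this series, you should be able to recognize that the applications of the Federated Core are not limited to learning. The programming abstractions we offer are quite generic, and could be used, for example, to implement analytics and other custom types of computations over distributed data.

Although this tutorial is designed to be self-contained, we encourage you to first read the tutorials on [image classification](federated_learning_for_image_classification.ipynb) and [text generation](federated_learning_for_text_generation.ipynb) for a higher-level and more gentle introduction to the TensorFlow Federated framework and the [Federated Learning](../federated_learning.md) APIs (`tff.learning`), as it will help you put the concepts we describe here in context.

## Intended Uses

In a nutshell, Federated Core (FC) is a development environment that makes it possible to compactly express program logic that combines TensorFlow code with distributed communication operators, such as those used in [Federated Averaging](https://arxiv.org/abs/1602.05629): computing distributed sums, averages, and other types of distributed aggregations over a set of client devices in the system, broadcasting models and parameters to those devices, and so on.

You may be aware of [`tf.contrib.distribute`](https://tensorflow.google.cn/api_docs/python/tf/contrib/distribute), and a natural question to ask at this point may be: in what ways does this framework differ? After all, both frameworks attempt to make TensorFlow computations distributed.

One way to think about it is that the stated goal of `tf.contrib.distribute` is *to allow users to use existing models and training code with minimal changes to enable distributed training*, with much of the focus on how to take advantage of distributed infrastructure to make existing training code more efficient. The goal of TFF's Federated Core is to give researchers and practitioners explicit control over the specific patterns of distributed communication they will use in their systems. The focus in FC is on providing a flexible and extensible language for expressing distributed data flow algorithms, rather than a concrete set of implemented distributed training capabilities.

One of the primary target audiences for TFF's FC API is researchers and practitioners who might want to experiment with new federated learning algorithms and evaluate the consequences of subtle design choices that affect how the flow of data in the distributed system is orchestrated, yet without getting bogged down by system implementation details. The level of abstraction that the FC API aims for roughly corresponds to the pseudocode one could use to describe the mechanics of a federated learning algorithm in a research publication (what data exists in the system and how it is transformed), without dropping to the level of individual point-to-point network message exchanges.

TFF as a whole targets scenarios in which data is distributed and must remain so, e.g., for privacy reasons, and where collecting all data at a centralized location may not be a viable option. Compared to scenarios in which all data can be accumulated in a centralized location at a data center, this means the implementation of machine learning algorithms requires an increased degree of explicit control.

## Before we start

Before we dive into the code, please try to run the following "Hello World" example to make sure your environment is correctly set up. If it doesn't work, please refer to the [Installation](../install.md) guide for instructions.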

```

#@test {"skip": true}

!pip install --quiet --upgrade tensorflow-federated-nightly

!pip install --quiet --upgrade nest-asyncio

import nest_asyncio

nest_asyncio.apply()

import collections

import numpy as np

import tensorflow as tf

import tensorflow_federated as tff

@tff.federated_computation

def hello_world():

return 'Hello, World!'

hello_world()

```

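## Federated data

One of the distinguishing features of TFF is that it allows you to compactly express TensorFlow-based computations on *federated data*. We will be using the term *federated data* in this tutorial to refer to a collection of data items hosted across a group of devices in a distributed system. For example, applications running on mobile devices may collect data and store it locally, without uploading it to a centralized location. Or, an array of distributed sensors may collect and store temperature readings at their locations.

Federated data like those in the above examples are treated in TFF as [first-class citizens](https://en.wikipedia.org/wiki/First-class_citizen), i.e., they may appear as parameters and results of functions, and they have types. To reinforce this notion, we will refer to federated data sets as *federated values*, or as *values of federated types*.

The important point to understand is that we are modeling the entire collection of data items across all devices (e.g., the entire collection of temperature readings from all sensors in a distributed array) as a single federated value.

For example, here's how one would define in TFF the type of a *federated float* hosted by a group of client devices. A collection of temperature readings that materialize across an array of distributed sensors could be modeled as a value of this federated type.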

```

federated_float_on_clients = tff.type_at_clients(tf.float32)

```

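More generally, a federated type in TFF is defined by specifying the type `T` of its *member constituents* (the items of data that reside on individual devices) and the group `G` of devices on which federated values of this type are hosted, plus a third, optional bit of information we'll mention shortly. We refer to the group `G` of devices hosting a federated value as the value's *placement*. Thus, `tff.CLIENTS` is an example of a placement.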

```

str(federated_float_on_clients.member)

str(federated_float_on_clients.placement)

```

A federated type with member constituents `T` and placement `G` can be represented compactly as `{T}@G`, as shown below.

```

str(federated_float_on_clients)

```

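The curly braces `{}` in this concise notation serve as a reminder that the member constituents (items of data on different devices) may differ, as you would expect of, e.g., temperature sensor readings, so the clients as a group are jointly hosting a [multi-set](https://en.wikipedia.org/wiki/Multiset) of `T`-typed items that together constitute the federated value.

It is important to note that the member constituents of a federated value are generally opaque to the programmer, i.e., a federated value should not be thought of as a simple `dict` keyed by an identifier of a device in the system. These values are intended to be collectively transformed only by *federated operators* that abstractly represent various kinds of distributed communication protocols (such as aggregation). If this sounds too abstract, don't worry: we will return to this shortly and illustrate it with concrete examples.

Federated types in TFF come in two flavors: those where the member constituents of a federated value may differ (as just seen above), and those where they are known to be all equal. This is controlled by the third, optional `all_equal` parameter in the `tff.FederatedType` constructor (defaulting to `False`).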

```

federated_float_on_clients.all_equal

```

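A federated type with a placement `G` in which all of the `T`-typed member constituents are known to be equal can be compactly represented as `T@G` (as opposed to `{T}@G`, that is, with the curly braces dropped to reflect the fact that the multi-set of member constituents consists of a single item).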

```

str(tff.type_at_clients(tf.float32, all_equal=True))

```
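To make the two notations concrete without requiring a TFF installation, here is a toy, pure-Python model of how the compact string depends on the `all_equal` bit. `ToyFederatedType` is a hypothetical stand-in written for this sketch; it is not part of the TFF API and only mimics the string form described above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ToyFederatedType:
    """Hypothetical stand-in for a federated type; not the TFF implementation."""
    member: str          # type of the member constituents, e.g. "float32"
    placement: str       # group of devices hosting the value, e.g. "CLIENTS"
    all_equal: bool = False

    def compact(self):
        # Braces signal that member constituents may differ across devices.
        if self.all_equal:
            return f"{self.member}@{self.placement}"
        return "{" + self.member + "}@" + self.placement


readings_type = ToyFederatedType("float32", "CLIENTS")
broadcast_type = ToyFederatedType("float32", "CLIENTS", all_equal=True)
```

Here `readings_type.compact()` yields the braced form and `broadcast_type.compact()` the brace-free form, mirroring the two flavors of federated types.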

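One example of a federated value of such a type that might arise in practical scenarios is a hyperparameter (such as a learning rate, a clipping norm, etc.) that has been broadcast by a server to a group of devices that participate in federated training.

Another example is a set of parameters for a machine learning model pre-trained at the server and then broadcast to a group of client devices, where they can be personalized for each user.

For example, suppose we have a pair of `float32` parameters `a` and `b` for a simple one-dimensional linear regression model. We can construct the (non-federated) type of such models for use in TFF as follows. The angle braces `<>` in the printed type string are a compact TFF notation for named or unnamed tuples.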

```

simple_regression_model_type = (

tff.StructType([('a', tf.float32), ('b', tf.float32)]))

str(simple_regression_model_type)

```

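Note that we only specify `dtype`s above, but non-scalar types are also supported. In the above code, `tf.float32` is a shortcut notation for the more general `tff.TensorType(dtype=tf.float32, shape=[])`.

When this model is broadcast to clients, the type of the resulting federated value can be represented as shown below.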

```

str(tff.type_at_clients(

simple_regression_model_type, all_equal=True))

```

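By symmetry with the *federated float* above, we will refer to such a type as a *federated tuple*. More generally, we'll often use the term *federated XYZ* to refer to a federated value in which member constituents are *XYZ*-like. Thus, we will talk about things like *federated tuples*, *federated sequences*, *federated models*, and so on.

Now, coming back to `float32@CLIENTS`: while it appears replicated across multiple devices, it is actually a single `float32`, since all members are the same. In general, you may think of any *all-equal* federated type, i.e., one of the form `T@G`, as isomorphic to a non-federated type `T`, since in both cases there is actually only a single (albeit potentially replicated) item of type `T`.

Given the isomorphism between `T` and `T@G`, you may wonder what purpose, if any, the latter types might serve. Read on.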

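## Placements

### Design Overview

In the preceding section, we introduced the concept of *placements* (groups of system participants that might be jointly hosting a federated value), and we demonstrated the use of `tff.CLIENTS` as an example specification of a placement.

To explain why the notion of a *placement* is so fundamental that we needed to incorporate it into the TFF type system, recall what we mentioned at the beginning of this tutorial about some of the intended uses of TFF.

Although in this tutorial you will only see TFF code being executed locally in a simulated environment, our goal is for TFF to enable writing code that you could deploy for execution on groups of physical devices in a distributed system, potentially including mobile or embedded devices running Android. Each of those devices would receive a separate set of instructions to execute locally, depending on the role it plays in the system (an end-user device, a centralized coordinator, an intermediate layer in a multi-tier architecture, etc.). It is important to be able to reason about which subsets of devices execute what code, and where different portions of the data might physically materialize.

This is especially important when dealing with, e.g., application data on mobile devices. Since the data is private and can be sensitive, we need the ability to statically verify that this data will never leave the device (and prove facts about how the data is being processed). The placement specifications are one of the mechanisms designed to support this.

TFF has been designed as a data-centric programming environment, and as such, unlike some of the existing frameworks that focus on *operations* and where those operations might *run*, TFF focuses on *data*, where that data *materializes*, and how it is being *transformed*. Consequently, placement is modeled as a property of data in TFF, rather than as a property of operations on data. Indeed, as you're about to see in the next section, some of the TFF operations span across locations and run "in the network", so to speak, rather than being executed by a single machine or a group of machines.

Representing the type of a certain value as `T@G` or `{T}@G` (as opposed to just `T`) makes data placement decisions explicit, and together with a static analysis of programs written in TFF, it can serve as a foundation for providing formal privacy guarantees for sensitive on-device data.

An important thing to note at this point, however, is that while we encourage TFF users to be explicit about *groups* of participating devices that host the data (the placements), the programmer will never deal with the raw data or identities of the *individual* participants.

Within the body of TFF code, by design, there is no way to enumerate the devices that constitute the group represented by `tff.CLIENTS`, or to probe for the existence of a specific device in the group. There is no concept of a device or client identity anywhere in the Federated Core API, the underlying set of architectural abstractions, or the core runtime infrastructure we provide to support simulations. All the computation logic you write will be expressed as operations on the entire client group.

Recall here what we mentioned earlier about values of federated types being unlike Python `dict`, in that one cannot simply enumerate their member constituents. Think of the values that your TFF program logic manipulates as being associated with placements (groups), rather than with individual participants.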

Placements *are* designed to be a first-class citizen in TFF as well, and can appear as parameters and results of a `placement` type (to be represented by `tff.PlacementType` in the API). In the future, we plan to provide a variety of operators to transform or combine placements, but this is outside the scope of this tutorial. For now, it suffices to think of `placement` as an opaque primitive built-in type in TFF, similar to how `int` and `bool` are opaque built-in types in Python, with `tff.CLIENTS` being a constant literal of this type, not unlike `1` being a constant literal of type `int`.

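### Specifying placements

TFF provides two basic placement literals, `tff.CLIENTS` and `tff.SERVER`, to make it easy to express the rich variety of practical scenarios that are naturally modeled as client-server architectures, with multiple *client* devices (mobile phones, embedded devices, distributed databases, sensors, etc.) orchestrated by a single centralized *server* coordinator. TFF is designed to also support custom placements, multiple client groups, multi-tiered and other, more general distributed architectures, but discussing them is outside the scope of this tutorial.

TFF doesn't prescribe what either `tff.CLIENTS` or `tff.SERVER` actually represents.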

In particular, `tff.SERVER` may be a single physical device (a member of a singleton group), but it might just as well be a group of replicas in a fault-tolerant cluster running state machine replication - we do not make any special architectural assumptions. Rather, we use the `all_equal` bit mentioned in the preceding section to express the fact that we're generally dealing with only a single item of data at the server.

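Likewise, `tff.CLIENTS` in some applications might represent all clients in the system, which in the context of federated learning we sometimes refer to as the *population*, but in, e.g., [production implementations of Federated Averaging](https://arxiv.org/abs/1602.05629), it may represent a *cohort*: a subset of the clients selected for participation in a particular round of training. The abstractly defined placements are given concrete meaning when a computation in which they appear is deployed for execution (or simply invoked like a Python function in a simulated environment). In our local simulations, the group of clients is determined by the federated data supplied as input.

## Federated computations

### Declaring federated computations

TFF is designed as a strongly-typed functional programming environment that supports modular development.

The basic unit of composition in TFF is a *federated computation*: a section of logic that may accept federated values as input and return federated values as output. The code below defines a computation that calculates the average of the temperatures reported by the sensor array from our previous example.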

```
@tff.federated_computation(tff.type_at_clients(tf.float32))
def get_average_temperature(sensor_readings):
  return tff.federated_mean(sensor_readings)
```

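Looking at the code above, you might be asking at this point: aren't there already decorator constructs for defining composable units in TensorFlow, such as [`tf.function`](https://tensorflow.google.cn/api_docs/python/tf/function)? If so, why introduce yet another one, and how is it different?

The short answer is that the code generated by the `tff.federated_computation` wrapper is *neither* TensorFlow *nor* Python. It is a specification of a distributed system in an internal, platform-independent *glue* language. This will undoubtedly sound cryptic at this point, but please keep in mind this intuitive interpretation of a federated computation as an abstract specification of a distributed system. We will explain it shortly.

First, let's look at the definition. TFF computations are generally modeled as functions, with or without parameters, but always with well-defined type signatures. You can print the type signature of a computation by querying its `type_signature` property, as shown below.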

```
str(get_average_temperature.type_signature)
```

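The type signature tells us that the computation accepts a collection of different sensor readings on client devices, and returns a single average on the server.

Before we go any further, let's reflect on one point: the input and output of this computation are *in different places* (on `CLIENTS` versus at the `SERVER`). Recall what we said in the preceding section about how *TFF operations may span across locations and run in the network*, and what we just said about federated computations representing abstract specifications of distributed systems. We have just defined one such computation: a simple distributed system in which data is consumed at client devices, and the aggregate result emerges at the server.

In many practical scenarios, the computations that represent top-level tasks tend to accept their inputs and report their outputs at the server. This reflects the idea that computations might be triggered by *queries* that originate and terminate on the server.

However, the FC API does not impose this assumption, and many of the building blocks we use internally (including numerous `tff.federated_...` operators you may find in the API) have inputs and outputs with distinct placements. In general, then, you should not think of a federated computation as something that *runs on the server* or is *executed by a server*. The server is just one type of participant in a federated computation. When thinking about the mechanics of such computations, it is best to always default to the global, network-wide perspective, rather than the perspective of a single centralized coordinator.

In general, functional type signatures are compactly represented as `(T -> U)` for input type `T` and output type `U`. The type of the formal parameter (here, `sensor_readings`) is specified as the argument to the decorator. You do not need to specify the type of the result, because it is determined automatically.

Although TFF does offer limited forms of polymorphism, programmers are strongly encouraged to be explicit about the types of data they work with, as that makes it easier to understand, debug, and formally verify properties of your code. In some cases, explicitly specifying types is a requirement (for example, polymorphic computations are currently not directly executable).

### Executing federated computations

To support development and debugging, TFF allows you to directly invoke computations defined this way as Python functions, as shown below. Where the computation expects a value of a federated type with the `all_equal` bit set to `False`, you can feed it as a plain Python `list`; for federated types with the `all_equal` bit set to `True`, you can directly feed the (single) member constituent. Results are reported back to you in the same way.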

```
get_average_temperature([68.5, 70.3, 69.8])
```

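When running computations like this in simulation mode, you act as an external observer with a system-wide view, who can supply inputs and consume outputs at any location in the network. That is indeed the case here: you supplied client values as input, and consumed the server result.

Now, let's return to the earlier remark about the `tff.federated_computation` decorator emitting code in a *glue* language. Although the logic of TFF computations can be expressed as ordinary functions in Python (you just need to decorate them with `tff.federated_computation`, as done above), and you can invoke them directly just like any other Python function in this notebook, behind the scenes, as noted earlier, TFF computations are actually *not* Python.

What we mean by this is that when the Python interpreter encounters a function decorated with `tff.federated_computation`, it traces the statements in the function's body once (at definition time), and then constructs a [serialized representation](https://github.com/tensorflow/federated/blob/main/tensorflow_federated/proto/v0/computation.proto) of the computation's logic for future use, whether for execution or for incorporation as a sub-component into another computation.

You can verify this by adding a print statement, as follows: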

```
@tff.federated_computation(tff.type_at_clients(tf.float32))
def get_average_temperature(sensor_readings):
  print('Getting traced, the argument is "{}".'.format(
      type(sensor_readings).__name__))
  return tff.federated_mean(sensor_readings)
```

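You can think of Python code that defines a federated computation in the same way you would think of Python code that builds a TensorFlow graph in a non-eager context (if you are not familiar with the non-eager uses of TensorFlow, think of your Python code as defining a graph of operations to be executed later, without actually running them right away). The non-eager graph-building code in TensorFlow is Python, but the TensorFlow graph constructed by this code is platform-independent and serializable.

Likewise, TFF computations are defined in Python, but the Python statements in their bodies, such as `tff.federated_mean` in the example just shown, are compiled under the hood into a portable, platform-independent, serializable representation.

As a developer, you do not need to concern yourself with the details of this representation, since you will never work with it directly. You should, however, be aware of its existence, and of the fact that TFF computations are fundamentally non-eager and cannot capture arbitrary Python state. Python code contained in a TFF computation's body is executed at definition time, when the body of the Python function decorated with `tff.federated_computation` is traced before being serialized. It is not retraced at invocation time (except when the function is polymorphic; please refer to the documentation pages for details).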
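The trace-once behavior can be mimicked with a toy decorator in plain Python. This is only an analogy under simplifying assumptions: `trace_once`, `Symbol`, and `add_offset` are hypothetical names invented for this sketch, it supports only `+`, and it does not reflect how TFF actually traces or serializes computations.

```python
def trace_once(fn):
    """Toy decorator (not a TFF API): runs `fn` once, at definition time,
    and keeps only the operations it recorded, not the Python body."""
    recorded_ops = []

    class Symbol:
        # Stand-in for an abstract argument; records the ops applied to it.
        def __add__(self, other):
            recorded_ops.append(("add", other))
            return self

    fn(Symbol())  # the Python body executes here, exactly once

    def replay(value):
        # Later calls replay the recorded ops; the Python body is not re-run.
        for op, operand in recorded_ops:
            if op == "add":
                value = value + operand
        return value

    return replay


trace_log = []


@trace_once
def add_offset(x):
    trace_log.append("traced")  # Python side effect: happens only while tracing
    return x + 0.5


results = [add_offset(1.0), add_offset(3.0)]
print(results, len(trace_log))
```

Both calls return the expected sums, yet `trace_log` holds a single entry, because the Python body ran only while the function was being defined.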

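You may wonder why we chose to introduce a dedicated internal non-Python representation. One reason is that, ultimately, TFF computations are intended to be deployable to real physical environments, hosted on mobile or embedded devices where Python may not be available.

Another reason is that TFF computations express the global behavior of distributed systems, whereas Python programs express the local behavior of individual participants. You can see this in the simple example above, where the special operator `tff.federated_mean` accepts data on client devices but deposits the result on the server.

The operator `tff.federated_mean` cannot easily be modeled as an ordinary operator in Python, since it does not execute locally. As noted earlier, it represents a distributed system that coordinates the behavior of multiple system participants. We refer to such operators as *federated operators*, to distinguish them from ordinary (local) operators in Python.

The TFF type system, and the fundamental set of operations supported by the TFF language, thus deviate significantly from those in Python, which necessitates a dedicated representation.

### Composing federated computations

As noted above, federated computations and their constituents are best understood as models of distributed systems, and you can think of composing federated computations as building more complex distributed systems from simpler ones. You can think of the `tff.federated_mean` operator as a kind of built-in template federated computation with the type signature `({T}@CLIENTS -> T@SERVER)` (indeed, just like the computations you write, this operator has a complex structure, and under the hood we break it down into simpler operators).

The same is true of composing federated computations. The computation `get_average_temperature` may be invoked in the body of another Python function decorated with `tff.federated_computation`. Doing so embeds it in the body of the parent, in much the same way that `tff.federated_mean` was embedded in its own body earlier.

An important restriction to be aware of is that the bodies of Python functions decorated with `tff.federated_computation` must consist *only* of federated operators, i.e., they cannot directly contain TensorFlow operations. For example, you cannot directly use `tf.nest` interfaces to add a pair of federated values. TensorFlow code must be confined to blocks of code decorated with `tff.tf_computation`, discussed in the following section. Only when wrapped in this manner can the TensorFlow code be invoked in the body of a `tff.federated_computation`.

The reasons for this separation are technical (it is hard to trick operators such as `tf.add` into working with non-tensors) as well as architectural. The language of federated computations (i.e., the logic constructed from the serialized bodies of Python functions decorated with `tff.federated_computation`) is designed to serve as a platform-independent *glue* language. This glue language is currently used to build distributed systems from embedded sections of TensorFlow code (confined to `tff.tf_computation` blocks). In the fullness of time, we anticipate the need to embed sections of other, non-TensorFlow logic, such as relational database queries that might represent input pipelines, all connected together using the same glue language (the `tff.federated_computation` blocks).

## TensorFlow logic

### Declaring TensorFlow computations

TFF is designed for use with TensorFlow. As such, the bulk of the code you write in TFF is likely to be ordinary (i.e., locally executing) TensorFlow code. To use such code with TFF, as noted above, you just need to decorate it with `tff.tf_computation`.

For example, here is how to implement a function that takes a number and adds `0.5` to it.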

```
@tff.tf_computation(tf.float32)
def add_half(x):
  return tf.add(x, 0.5)
```

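Once again, looking at this, you may be wondering why we should define another decorator, `tff.tf_computation`, instead of simply using an existing mechanism such as `tf.function`. Unlike in the preceding section, here we are dealing with an ordinary block of TensorFlow code.

There are a few reasons for this, and a full treatment of them is beyond the scope of this tutorial, but the main one is worth naming:

- To embed reusable building blocks implemented in TensorFlow code in the bodies of federated computations, they need to satisfy certain properties, such as being traced and serialized at definition time, having type signatures, and so on. This generally requires some form of decorator.

In general, we recommend using TensorFlow's native mechanisms for composition, such as `tf.function`, wherever possible, as the exact manner in which TFF's decorator interacts with eager functions can be expected to evolve.

Now, coming back to the example snippet above, the `add_half` computation we just defined can be treated by TFF just like any other TFF computation. In particular, it has a TFF type signature.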

```
str(add_half.type_signature)
```

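Note that this type signature has no placements. TensorFlow computations cannot consume or return federated types.

You can now also use `add_half` as a building block in other computations. For example, here is how to use the `tff.federated_map` operator to apply `add_half` pointwise to all member constituents of a federated float on client devices.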

```
@tff.federated_computation(tff.type_at_clients(tf.float32))
def add_half_on_clients(x):
  return tff.federated_map(add_half, x)

str(add_half_on_clients.type_signature)
```

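### Executing TensorFlow computations

Execution of computations defined with `tff.tf_computation` follows the same rules as those we described for `tff.federated_computation`. They can be invoked as ordinary callables in Python, as follows.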

```
add_half_on_clients([1.0, 3.0, 2.0])
```

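Once again, it is worth noting that invoking the computation `add_half_on_clients` in this manner simulates a distributed process. Data is consumed on clients and returned on clients. Indeed, this computation has each client perform a local action. There is no `tff.SERVER` explicitly mentioned in this system (even if, in practice, orchestrating such processing might involve one). Think of a computation defined this way as conceptually analogous to the `Map` stage in `MapReduce`.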
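To make the `Map` analogy concrete, here is a minimal plain-Python sketch with no TFF involved. It assumes one list entry per simulated client, and `add_half_locally` is a hypothetical stand-in for the TensorFlow logic, not part of any API.

```python
def add_half_locally(x):
    # Hypothetical stand-in for the per-client TensorFlow logic.
    return x + 0.5


# One value per simulated client.
client_values = [1.0, 3.0, 2.0]

# "Map" stage: every client transforms its own value independently.
# The result still holds one value per client; nothing is aggregated.
mapped = [add_half_locally(v) for v in client_values]

# For contrast, a "Reduce"-style step collapses the per-client values into one.
reduced = sum(mapped) / len(mapped)

print(mapped, reduced)
```

The mapped result has the same length as the input, which mirrors data staying on the clients, whereas the reduce-style step yields a single value, as an aggregation onto the server would.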

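Also, keep in mind that what we said in the preceding section about TFF computations being serialized at definition time remains true for `tff.tf_computation` code as well: the Python body of `add_half_on_clients` is traced once, at definition time. On subsequent invocations, TFF uses its serialized representation.

The only difference between Python methods decorated with `tff.federated_computation` and those decorated with `tff.tf_computation` is that the latter are serialized as TensorFlow graphs (whereas the former are not allowed to contain TensorFlow code directly embedded in them).

Under the hood, each method decorated with `tff.tf_computation` temporarily disables eager execution so that the computation's structure can be captured. Although eager execution is locally disabled, you are welcome to use eager TensorFlow, AutoGraph, TensorFlow 2.0 constructs, and so on, as long as you write the logic of your computation in a way that can be serialized correctly.

For example, the following code will fail: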

```
try:

  # Eager mode
  constant_10 = tf.constant(10.)

  @tff.tf_computation(tf.float32)
  def add_ten(x):
    return x + constant_10

except Exception as err:
  print(err)
```

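The code above fails because `constant_10` has already been constructed outside of the graph that `tff.tf_computation` constructs internally in the body of `add_ten` during serialization.

On the other hand, invoking Python functions that modify the current graph when called inside a `tff.tf_computation` is fine: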

```
def get_constant_10():
  return tf.constant(10.)

@tff.tf_computation(tf.float32)
def add_ten(x):
  return x + get_constant_10()

add_ten(5.0)
```

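Note that the serialization mechanisms in TensorFlow are evolving, and we expect the details of how TFF serializes computations to evolve as well.

### Working with `tf.data.Dataset`

As noted earlier, a unique feature of `tff.tf_computation` is that it allows you to work with `tf.data.Dataset` objects defined abstractly as formal parameters by your code. Parameters that are to be represented in TensorFlow as datasets need to be declared using the `tff.SequenceType` constructor.

For example, the type specification `tff.SequenceType(tf.float32)` defines an abstract sequence of float elements in TFF. Sequences can contain either tensors or complex nested structures (we will see examples of those later). The concise representation of a sequence of `T`-typed items is `T*`.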

```
float32_sequence = tff.SequenceType(tf.float32)

str(float32_sequence)
```

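Suppose that in our temperature sensor example, each sensor holds not just one temperature reading, but several. The code below defines a TFF computation in TensorFlow that calculates the average of the temperatures in a single local dataset using the `tf.data.Dataset.reduce` operator.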

```
@tff.tf_computation(tff.SequenceType(tf.float32))
def get_local_temperature_average(local_temperatures):
  sum_and_count = (
      local_temperatures.reduce((0.0, 0), lambda x, y: (x[0] + y, x[1] + 1)))
  return sum_and_count[0] / tf.cast(sum_and_count[1], tf.float32)

str(get_local_temperature_average.type_signature)
```
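The `reduce` call above threads a running `(sum, count)` pair through the sequence. As a rough plain-Python sketch of the same accumulation pattern (using `functools.reduce` on a list rather than a `tf.data.Dataset`; `local_average` is a hypothetical name for this illustration):

```python
from functools import reduce


def local_average(readings):
    # Start from the state (sum=0.0, count=0) and fold every reading into it.
    total, count = reduce(
        lambda state, y: (state[0] + y, state[1] + 1),
        readings,
        (0.0, 0),
    )
    return total / count


average = local_average([68.5, 70.3, 69.8])
print(average)
```

Note that `functools.reduce` takes the initial state as its last argument, whereas `tf.data.Dataset.reduce` takes it first.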

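In the body of a method decorated with `tff.tf_computation`, formal parameters of a TFF sequence type are represented simply as objects that behave like `tf.data.Dataset`, i.e., they support the same properties and methods (they are currently not implemented as subclasses of that type, which may change as the support for datasets in TensorFlow evolves).

You can easily verify this as follows.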

```
@tff.tf_computation(tff.SequenceType(tf.int32))
def foo(x):
  return x.reduce(np.int32(0), lambda x, y: x + y)

foo([1, 2, 3])
```

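Keep in mind that, unlike ordinary `tf.data.Dataset` objects, these dataset-like objects are placeholders. They do not contain any elements, since they represent abstract sequence-typed parameters that are bound to concrete data when used in a concrete context. Support for abstractly defined placeholder datasets is still somewhat limited, and in the early days of TFF you may encounter certain restrictions, but we will not need to worry about them in this tutorial (please refer to the documentation pages for details).

When locally executing a computation that accepts a sequence in simulation mode, as in this tutorial, you can feed the sequence as a Python list, as shown below (there are other ways as well, e.g., as a `tf.data.Dataset` in eager mode, but for now we will keep it simple).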

```
get_local_temperature_average([68.5, 70.3, 69.8])
```

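Like all other TFF types, sequences such as those defined above can use the `tff.StructType` constructor to define nested structures. For example, here is how to declare a computation that accepts a sequence of pairs `A`, `B`, and returns the sum of their products. We include a tracing statement in the body of the computation so that you can see how the TFF type signature translates into the dataset's element structure (printed here via `element_spec`).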

```
@tff.tf_computation(tff.SequenceType(collections.OrderedDict([('A', tf.int32), ('B', tf.int32)])))
def foo(ds):
  print('element_structure = {}'.format(ds.element_spec))
  return ds.reduce(np.int32(0), lambda total, x: total + x['A'] * x['B'])

str(foo.type_signature)

foo([{'A': 2, 'B': 3}, {'A': 4, 'B': 5}])
```
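As a quick sanity check on what this reduction computes, the same fold can be written in plain Python over a list of dictionaries. This is only a sketch of the arithmetic, with no TFF or TensorFlow types involved.

```python
from functools import reduce

# Same input as the call above: a sequence of (A, B) pairs.
pairs = [{'A': 2, 'B': 3}, {'A': 4, 'B': 5}]

# Fold the product A * B of each element into a running total, starting at 0.
total = reduce(lambda acc, x: acc + x['A'] * x['B'], pairs, 0)

print(total)  # 2*3 + 4*5 = 26
```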

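Support for using `tf.data.Dataset` objects as formal parameters is still somewhat limited and evolving, although it works in simple scenarios such as those used in this tutorial.

## Putting it all together

Now, let's try again to use our TensorFlow computation in a federated setting. Suppose we have a group of sensors, each of which has a local sequence of temperature readings. We can compute the global temperature average by averaging the sensors' local averages, as follows.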

```
@tff.federated_computation(
    tff.type_at_clients(tff.SequenceType(tf.float32)))
def get_global_temperature_average(sensor_readings):
  return tff.federated_mean(
      tff.federated_map(get_local_temperature_average, sensor_readings))
```

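Note that this is not a simple average across all local temperature readings from all clients, as that would require weighting the contributions of different clients by the number of readings they maintain locally. We leave updating the code above as an exercise for the reader; the `tff.federated_mean` operator accepts the weight as an optional second argument (expected to be a federated float).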
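The gap between the two averages is easy to see with plain Python arithmetic. The sketch below uses made-up readings for three hypothetical clients and involves no TFF; it only illustrates why weighting each local mean by the client's number of readings recovers the pooled average.

```python
# Made-up readings: three clients holding 2, 1, and 3 readings respectively.
client_readings = [[68.0, 70.0], [71.0], [65.0, 66.0, 67.0]]

local_means = [sum(r) / len(r) for r in client_readings]
weights = [float(len(r)) for r in client_readings]

# Mean of local means: every client counts equally.
unweighted = sum(local_means) / len(local_means)

# Weighted mean: every client counts in proportion to its number of readings.
weighted = sum(m * w for m, w in zip(local_means, weights)) / sum(weights)

# Reference value: pool all readings from all clients and average them.
all_readings = [x for r in client_readings for x in r]
pooled = sum(all_readings) / len(all_readings)

print(unweighted, weighted, pooled)
```

Here the weighted mean matches the pooled average, while the unweighted mean of means does not, because the single-reading client is over-represented.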