
```

# !nvidia-smi

!pip --quiet install transformers

!pip --quiet install tokenizers

from google.colab import drive

drive.mount('/content/drive')

!cp -r '/content/drive/My Drive/Colab Notebooks/Tweet Sentiment Extraction/Scripts/.' .

COLAB_BASE_PATH = '/content/drive/My Drive/Colab Notebooks/Tweet Sentiment Extraction/'

MODEL_BASE_PATH = COLAB_BASE_PATH + 'Models/Files/141-roBERTa_base/'

import os

# makedirs with exist_ok=True avoids an error when the notebook is re-run
os.makedirs(MODEL_BASE_PATH, exist_ok=True)

```

## Dependencies

```

import json, warnings, shutil

from tweet_utility_scripts import *

from tweet_utility_preprocess_roberta_scripts import *

from transformers import TFRobertaModel, RobertaConfig

from tokenizers import ByteLevelBPETokenizer

from tensorflow.keras.models import Model

from tensorflow.keras import optimizers, metrics, losses, layers

SEED = 0

seed_everything(SEED)

warnings.filterwarnings("ignore")

```

# Load data

```

database_base_path = COLAB_BASE_PATH + 'Data/aux/'

k_fold = pd.read_csv(database_base_path + '5-fold.csv')

display(k_fold.head())

# Unzip files

!tar -xvf '/content/drive/My Drive/Colab Notebooks/Tweet Sentiment Extraction/Data/aux/fold_1.tar.gz'

!tar -xvf '/content/drive/My Drive/Colab Notebooks/Tweet Sentiment Extraction/Data/aux/fold_2.tar.gz'

!tar -xvf '/content/drive/My Drive/Colab Notebooks/Tweet Sentiment Extraction/Data/aux/fold_3.tar.gz'

# !tar -xvf '/content/drive/My Drive/Colab Notebooks/Tweet Sentiment Extraction/Data/aux/fold_4.tar.gz'

# !tar -xvf '/content/drive/My Drive/Colab Notebooks/Tweet Sentiment Extraction/Data/aux/fold_5.tar.gz'

```

# Model parameters

```

vocab_path = COLAB_BASE_PATH + 'qa-transformers/roberta/roberta-base-vocab.json'

merges_path = COLAB_BASE_PATH + 'qa-transformers/roberta/roberta-base-merges.txt'

base_path = COLAB_BASE_PATH + 'qa-transformers/roberta/'

config = {
    "MAX_LEN": 96,
    "BATCH_SIZE": 32,
    "EPOCHS": 5,
    "LEARNING_RATE": 3e-5,
    "ES_PATIENCE": 1,
    "question_size": 4,
    "N_FOLDS": 3,
    "base_model_path": base_path + 'roberta-base-tf_model.h5',
    "config_path": base_path + 'roberta-base-config.json'
}

with open(MODEL_BASE_PATH + 'config.json', 'w') as json_file:
    json.dump(config, json_file)

```

# Tokenizer

```

tokenizer = ByteLevelBPETokenizer(vocab_file=vocab_path, merges_file=merges_path,

lowercase=True, add_prefix_space=True)

```

## Learning rate schedule

```

LR_MIN = 1e-6

LR_MAX = config['LEARNING_RATE']

LR_EXP_DECAY = .5

@tf.function
def lrfn(epoch):
    lr = LR_MAX * LR_EXP_DECAY**epoch
    if lr < LR_MIN:
        lr = LR_MIN
    return lr

rng = [i for i in range(config['EPOCHS'])]

y = [lrfn(x) for x in rng]

fig, ax = plt.subplots(figsize=(20, 6))

plt.plot(rng, y)

print("Learning rate schedule: {:.3g} to {:.3g} to {:.3g}".format(y[0], max(y), y[-1]))

```
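The schedule above halves the learning rate every epoch and clamps it at `LR_MIN`. As a rough illustration, here is the same rule in plain Python without TensorFlow. The function name `exp_decay_lr` and its default arguments are assumptions for this sketch; the defaults simply mirror the constants used in this notebook.

```python
def exp_decay_lr(epoch, lr_max=3e-5, lr_min=1e-6, decay=0.5):
    """Exponential decay per epoch, clamped from below at lr_min."""
    return max(lr_max * decay ** epoch, lr_min)

# Learning rate for each of the 5 training epochs
schedule = [exp_decay_lr(e) for e in range(5)]
for epoch, lr in enumerate(schedule):
    print("epoch %d: lr = %.3g" % (epoch, lr))
```

With only 5 epochs the floor is never reached; it would only matter for longer runs.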

# Model

```

module_config = RobertaConfig.from_pretrained(config['config_path'], output_hidden_states=True)

def model_fn(MAX_LEN):
    input_ids = layers.Input(shape=(MAX_LEN,), dtype=tf.int32, name='input_ids')
    attention_mask = layers.Input(shape=(MAX_LEN,), dtype=tf.int32, name='attention_mask')

    base_model = TFRobertaModel.from_pretrained(config['base_model_path'], config=module_config, name="base_model")
    _, _, hidden_states = base_model({'input_ids': input_ids, 'attention_mask': attention_mask})

    h12 = hidden_states[-1]
    h11 = hidden_states[-2]
    h10 = hidden_states[-3]
    h09 = hidden_states[-4]

    x_start_09 = layers.Dropout(.1)(h09)
    y_start_09 = layers.Dense(1)(x_start_09)
    x_start_10 = layers.Dropout(.1)(h10)
    y_start_10 = layers.Dense(1)(x_start_10)
    x_start_11 = layers.Dropout(.1)(h11)
    y_start_11 = layers.Dense(1)(x_start_11)
    x_start_12 = layers.Dropout(.1)(h12)
    y_start_12 = layers.Dense(1)(x_start_12)

    x_start = layers.Average()([y_start_12, y_start_11, y_start_10, y_start_09])
    x_start = layers.Flatten()(x_start)
    y_start = layers.Activation('softmax', name='y_start')(x_start)

    x_end_09 = layers.Dropout(.1)(h09)
    y_end_09 = layers.Dense(1)(x_end_09)
    x_end_10 = layers.Dropout(.1)(h10)
    y_end_10 = layers.Dense(1)(x_end_10)
    x_end_11 = layers.Dropout(.1)(h11)
    y_end_11 = layers.Dense(1)(x_end_11)
    x_end_12 = layers.Dropout(.1)(h12)
    y_end_12 = layers.Dense(1)(x_end_12)

    x_end = layers.Average()([y_end_12, y_end_11, y_end_10, y_end_09])
    x_end = layers.Flatten()(x_end)
    y_end = layers.Activation('softmax', name='y_end')(x_end)

    model = Model(inputs=[input_ids, attention_mask], outputs=[y_start, y_end])

    return model

```
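The model above computes one logit per token from each of the last four hidden layers, averages the four logits per token, and applies a softmax over the token positions. The following plain-Python sketch walks through that arithmetic on a made-up 3-token sequence. The helper names and the numbers are illustrative assumptions, not values from the trained model.

```python
import math

def softmax(values):
    """Numerically stable softmax over a list of floats."""
    m = max(values)
    exps = [math.exp(v - m) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

def average_heads(per_layer_logits):
    """Average the per-token logits produced from several layers."""
    n_layers = len(per_layer_logits)
    return [sum(col) / n_layers for col in zip(*per_layer_logits)]

# One row per hidden layer (h12, h11, h10, h09), one column per token
layer_logits = [
    [0.1, 2.0, 0.3],
    [0.0, 1.5, 0.5],
    [0.2, 2.5, 0.1],
    [0.1, 2.0, 0.3],
]
avg_logits = average_heads(layer_logits)
start_probs = softmax(avg_logits)
predicted_start = start_probs.index(max(start_probs))
print(avg_logits, start_probs, predicted_start)
```

Averaging before the softmax means every layer contributes equally to the final distribution over start (or end) positions.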

# Train

```

AUTO = tf.data.experimental.AUTOTUNE

strategy = tf.distribute.get_strategy()

history_list = []

for n_fold in range(config['N_FOLDS']):
    n_fold +=1
    print('\nFOLD: %d' % (n_fold))

    # Load data
    base_data_path = 'fold_%d/' % (n_fold)
    x_train = np.load(base_data_path + 'x_train.npy')
    y_train = np.load(base_data_path + 'y_train.npy')
    x_valid = np.load(base_data_path + 'x_valid.npy')
    y_valid = np.load(base_data_path + 'y_valid.npy')

    step_size = x_train.shape[1] // config['BATCH_SIZE']
    valid_step_size = x_valid.shape[1] // config['BATCH_SIZE']

    # Build TF datasets
    train_dist_ds = strategy.experimental_distribute_dataset(get_training_dataset(x_train, y_train, config['BATCH_SIZE'], AUTO, seed=SEED))
    valid_dist_ds = strategy.experimental_distribute_dataset(get_validation_dataset(x_valid, y_valid, config['BATCH_SIZE'], AUTO, repeated=True, seed=SEED))
    train_data_iter = iter(train_dist_ds)
    valid_data_iter = iter(valid_dist_ds)

    # Step functions
    @tf.function
    def train_step(data_iter):
        def train_step_fn(x, y):
            with tf.GradientTape() as tape:
                probabilities = model(x, training=True)
                loss_start = loss_fn_start(y['y_start'], probabilities[0], label_smoothing=0.2)
                loss_end = loss_fn_end(y['y_end'], probabilities[1], label_smoothing=0.2)
                loss = tf.math.add(loss_start, loss_end)
            grads = tape.gradient(loss, model.trainable_variables)
            optimizer.apply_gradients(zip(grads, model.trainable_variables))
            # update metrics
            train_loss.update_state(loss)
            train_loss_start.update_state(loss_start)
            train_loss_end.update_state(loss_end)
        for _ in tf.range(step_size):
            strategy.experimental_run_v2(train_step_fn, next(data_iter))

    @tf.function
    def valid_step(data_iter):
        def valid_step_fn(x, y):
            probabilities = model(x, training=False)
            loss_start = loss_fn_start(y['y_start'], probabilities[0])
            loss_end = loss_fn_end(y['y_end'], probabilities[1])
            loss = tf.math.add(loss_start, loss_end)
            # update metrics
            valid_loss.update_state(loss)
            valid_loss_start.update_state(loss_start)
            valid_loss_end.update_state(loss_end)
        for _ in tf.range(valid_step_size):
            strategy.experimental_run_v2(valid_step_fn, next(data_iter))

    # Train model
    model_path = 'model_fold_%d.h5' % (n_fold)
    model = model_fn(config['MAX_LEN'])
    optimizer = optimizers.Adam(learning_rate=lambda: lrfn(tf.cast(optimizer.iterations, tf.float32)//step_size))
    loss_fn_start = losses.categorical_crossentropy
    loss_fn_end = losses.categorical_crossentropy

    train_loss = metrics.Sum()
    valid_loss = metrics.Sum()
    train_loss_start = metrics.Sum()
    valid_loss_start = metrics.Sum()
    train_loss_end = metrics.Sum()
    valid_loss_end = metrics.Sum()

    metrics_dict = {'loss': train_loss, 'loss_start': train_loss_start, 'loss_end': train_loss_end,
                    'val_loss': valid_loss, 'val_loss_start': valid_loss_start, 'val_loss_end': valid_loss_end}

    history = custom_fit(model, metrics_dict, train_step, valid_step, train_data_iter, valid_data_iter,
                         step_size, valid_step_size, config['BATCH_SIZE'], config['EPOCHS'], config['ES_PATIENCE'],
                         (MODEL_BASE_PATH + model_path), save_last=False)

    history_list.append(history)
    model.save_weights(MODEL_BASE_PATH +'last_' + model_path)
    model.load_weights(MODEL_BASE_PATH + model_path)

    # Make predictions
    train_preds = model.predict(get_test_dataset(x_train, config['BATCH_SIZE']))
    valid_preds = model.predict(get_test_dataset(x_valid, config['BATCH_SIZE']))

    k_fold.loc[k_fold['fold_%d' % (n_fold)] == 'train', 'start_fold_%d' % (n_fold)] = train_preds[0].argmax(axis=-1)
    k_fold.loc[k_fold['fold_%d' % (n_fold)] == 'train', 'end_fold_%d' % (n_fold)] = train_preds[1].argmax(axis=-1)
    k_fold.loc[k_fold['fold_%d' % (n_fold)] == 'validation', 'start_fold_%d' % (n_fold)] = valid_preds[0].argmax(axis=-1)
    k_fold.loc[k_fold['fold_%d' % (n_fold)] == 'validation', 'end_fold_%d' % (n_fold)] = valid_preds[1].argmax(axis=-1)

    k_fold['end_fold_%d' % (n_fold)] = k_fold['end_fold_%d' % (n_fold)].astype(int)
    k_fold['start_fold_%d' % (n_fold)] = k_fold['start_fold_%d' % (n_fold)].astype(int)
    k_fold['end_fold_%d' % (n_fold)].clip(0, k_fold['text_len'], inplace=True)
    k_fold['start_fold_%d' % (n_fold)].clip(0, k_fold['end_fold_%d' % (n_fold)], inplace=True)
    k_fold['prediction_fold_%d' % (n_fold)] = k_fold.apply(lambda x: decode(x['start_fold_%d' % (n_fold)], x['end_fold_%d' % (n_fold)], x['text'], config['question_size'], tokenizer), axis=1)
    k_fold['prediction_fold_%d' % (n_fold)].fillna(k_fold["text"], inplace=True)
    k_fold['jaccard_fold_%d' % (n_fold)] = k_fold.apply(lambda x: jaccard(x['selected_text'], x['prediction_fold_%d' % (n_fold)]), axis=1)

```
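The `jaccard` helper used in the last step is imported from `tweet_utility_scripts` and is not shown in this notebook. A minimal sketch of a word-level Jaccard score, assuming the usual definition for this kind of span-extraction task (intersection over union of lowercased, whitespace-split word sets), could look like this. The name `word_jaccard` and the empty-string convention are assumptions, not the author's code.

```python
def word_jaccard(str1, str2):
    """Word-level Jaccard similarity between two strings."""
    a = set(str1.lower().split())
    b = set(str2.lower().split())
    if not a and not b:
        return 1.0  # assumption: two empty strings count as a perfect match
    c = a & b
    return len(c) / (len(a) + len(b) - len(c))

print(word_jaccard("so sad today", "sad"))   # one shared word out of three
print(word_jaccard("good", "good"))
```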

# Model loss graph

```

sns.set(style="whitegrid")

for n_fold in range(config['N_FOLDS']):

print('Fold: %d' % (n_fold+1))

plot_metrics(history_list[n_fold])

```

# Model evaluation

```

display(evaluate_model_kfold(k_fold, config['N_FOLDS']).style.applymap(color_map))

```

# Visualize predictions

```

display(k_fold[[c for c in k_fold.columns if not (c.startswith('textID') or

c.startswith('text_len') or

c.startswith('selected_text_len') or

c.startswith('text_wordCnt') or

c.startswith('selected_text_wordCnt') or

c.startswith('fold_') or

c.startswith('start_fold_') or

c.startswith('end_fold_'))]].head(15))

```

| github_jupyter |

```

# mount Google Drive first, so that the dataset files are reachable
from google.colab import drive

drive.mount('/content/drive')

#importing the Figshare dataset
import numpy as np

data=np.load('/content/drive/MyDrive/images5000.npy', allow_pickle=True)
labels=np.load('/content/drive/MyDrive/labels5000.npy', allow_pickle=True)

#importing other required libraries

from keras import Sequential

import numpy as np

import pandas as pd

from sklearn.utils.multiclass import unique_labels

import matplotlib.pyplot as plt

import matplotlib.image as mpimg

import seaborn as sns

import itertools

from sklearn.model_selection import train_test_split

from sklearn.metrics import confusion_matrix

from keras import Sequential

from keras.applications import VGG19, VGG16, ResNet50

from keras.preprocessing.image import ImageDataGenerator

from keras.optimizers import SGD,Adam

from keras.callbacks import ReduceLROnPlateau

from keras.layers import Flatten, Dense, BatchNormalization, Activation,Dropout

from keras.utils import to_categorical

import tensorflow as tf

import random

#already preprocessed data (from Matlab) is now divided into X and Y for entering the deep learning model

data=np.asarray(data)

labels=np.asarray(labels)

data = np.delete(data, [1382,2024,2034,2058,2145,2148,2155,2163,2170,2180,2183,2276,2288,2293,2299], axis=0)

labels = np.delete(labels, [1382,2024,2034,2058,2145,2148,2155,2163,2170,2180,2183,2276,2288,2293,2299], axis=0)

from tensorflow.keras.applications.resnet50 import preprocess_input,decode_predictions

for i in range(3049):
    data[i]=data[i].flatten()
    data[i]=data[i][:261075]
    print(i)
    data[i]=data[i].reshape((295,295,3))
    data[i]=preprocess_input(data[i])

a=tf.keras.utils.to_categorical(labels, num_classes=4)

a=np.delete(a, 0, 1)

d=[]
for i in range(3049):
    if i%4!=0:
        p=data[i].reshape(295,295,3)
        d.append(p)

test_i=[]
for i in range(3049):
    if i %4 ==0 :
        p=data[i].reshape(295,295,3)
        test_i.append(p)

test_c=[]
for i in range(3049):
    if i %4 ==0 :
        test_c.append(a[i])

test_i=np.asarray(test_i)
test_c=np.asarray(test_c)
np.save("TESTI.npy",test_i)
np.save("TESTC.npy",test_c)

l=[]
for i in range(3049):
    if i%4!=0:
        l.append(a[i])

# for j in range(2290,3064):
#     l.append(a[j])

d=np.asarray(d)

l=np.asarray(l)

l.shape

d.shape

#applying the RESNET 50 model on our preprocessed data

from keras.applications import VGG19, VGG16, ResNet50

from keras.models import Model

from keras.layers import Input

from keras.layers import AveragePooling2D

baseModel = ResNet50(weights="imagenet", include_top=False,input_tensor=Input(shape=(295,295, 3)))

headModel = baseModel.output

headModel = AveragePooling2D(pool_size=(7, 7))(headModel)

headModel = Flatten(name="flatten")(headModel)

headModel = Dense(128, activation="relu")(headModel)

headModel = Dropout(0.5)(headModel)

headModel = Dense(3, activation="softmax")(headModel)

model = Model(inputs=baseModel.input, outputs=headModel)

# for layer in baseModel.layers:

# layer.trainable = False

INIT_LR = 1e-4

EPOCHS =10

BS = 32

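opt = SGD(learning_rate=INIT_LR, momentum=0.9)

# three mutually exclusive classes behind a softmax output, so categorical cross-entropy is the matching loss
model.compile(loss="categorical_crossentropy", optimizer=opt,
              metrics=["accuracy"])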

(x_train, x_val, trainY, testY) = train_test_split(d,l,test_size=0.20, random_state=42)

H = model.fit(x_train, trainY, batch_size=11,steps_per_epoch=len(x_train)//11,validation_data=(x_val, testY),validation_steps=len(x_val)//11 ,epochs=20)

model.save("drive/MyDrive/model_75-25_histogram.h5")

#testing and evaluating our model on test data

test_i=np.asarray(test_i)

test_i.shape

test_c=np.asarray(test_c)

import keras

a=keras.models.load_model("drive/MyDrive/model_75-25_histogram.h5")

a.evaluate(test_i,test_c)

test_o=[]
for i in range(3049):
    if i %4 ==0 :
        test_o.append(labels[i])

k=[]
one=0
two=0
three=0
for i in test_o:
    if i==1:
        k.append(0)
        one=one+1
    if i==2:
        k.append(1)
        two=two+1
    if i==3:
        k.append(2)
        three=three+1

k=np.asarray(k)

# Measuring the validity of our model using some of the metrics

from sklearn.metrics import classification_report

y_pred = a.predict(test_i, batch_size=64, verbose=0)

y_pred_bool = np.argmax(y_pred, axis=1)

print(classification_report(k, y_pred_bool))

```
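The notebook above holds out every fourth sample as test data by looping over the indices several times. As a hedged sketch of the same idea in a single pass, the hypothetical helper below splits any list into a training part and a test part by index. It is shown on plain integers rather than on the image arrays.

```python
def split_every_nth(samples, n=4):
    """Send every n-th sample (index 0, n, 2n, ...) to the test set."""
    train, test = [], []
    for i, sample in enumerate(samples):
        (test if i % n == 0 else train).append(sample)
    return train, test

train_items, test_items = split_every_nth(list(range(10)))
print(train_items)
print(test_items)
```

Applying the same function to the images and to the labels keeps the two lists aligned, because the split depends only on the index.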

| github_jupyter |

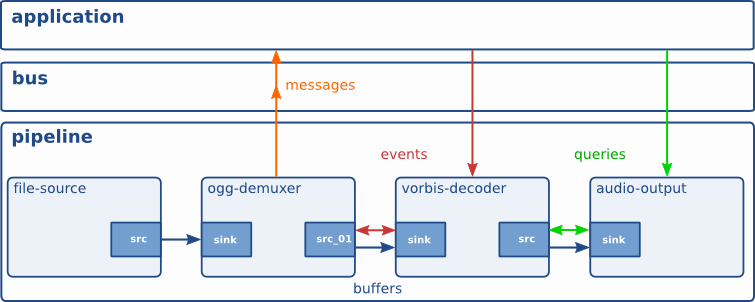

## Reinforcement Learning Tutorial -3: DDPG

### MD Muhaimin Rahman

contact: sezan92[at]gmail[dot]com

In the last tutorial, I tried to explain DQN. DQN solves one problem: it can deal with a continuous state space. But it cannot output continuous actions. To solve that problem, here comes DDPG, which stands for Deep Deterministic Policy Gradient.

- [Importing Libraries](#libraries)

- [Algorithm](#algorithm)

- [Model Definition](#model)

- [Replay Buffer](#buffer)

- [Noise Class](#noise)

- [Training](#training)

<a id ="libraries"></a>

### Importing Libraries

```

from __future__ import print_function,division

import gym

import keras

from keras import layers

from keras import backend as K

from collections import deque

from tqdm import tqdm

import random

import numpy as np

import copy

SEED =123

np.random.seed(SEED)

```

Important constants

```

num_episodes = 100

steps_per_episode=500

BATCH_SIZE=256

TAU=0.001

GAMMA=0.95

actor_lr=0.0001

critic_lr=0.001

SHOW= False

from keras.models import Model

```

<a id ="algorithm"></a>

### Algorithm

The algorithm was developed by Timothy Lillicrap et al. It is an actor-critic algorithm, which means it has two networks to train: an actor network, which predicts an action based on the current state, and a critic network, which evaluates the state and action. This is the case for all actor-critic methods. The critic network is updated using the Bellman equation, as in DQN. The difference is in the training of the actor network. In DDPG, we train the actor network by following the gradient of $Q(s,a)$ with respect to the actor's weights, so that the action $a$ it picks in a state $s$ gets a higher $Q$ value. In an ordinary machine learning classification or regression algorithm, our target is to reach the minimum loss, so we train the network by gradient descent using the gradient of the loss.

\begin{equation}

\theta \gets \theta - \alpha \frac{\partial L}{\partial \theta}

\end{equation}

Here, $\theta$ is the weight parameter of the network, and $L$ is loss

But in our case, we have to maximize the $Q$ value. So we have to set the weight parameters such that we get the maximum $Q$ value. This technique is known as gradient ascent, as it does the exact opposite of gradient descent.

\begin{equation}

\theta_a \gets \theta_a - \alpha (-\frac{\partial Q(s,a) }{\partial \theta_a})

\end{equation}

The above equation looks like the gradient descent equation. The only difference is the minus sign in front of the gradient, which makes the update minimize the negative of $Q$, which in turn maximizes $Q$.
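To see that minimizing $-Q$ really maximizes $Q$, here is a toy sketch in plain Python. It assumes a made-up one-dimensional function $Q(a) = -(a-2)^2 + 3$ and updates the action value directly instead of network weights, so it only illustrates the update rule and is not part of DDPG itself.

```python
def q_value(a):
    """Toy Q function with its maximum Q = 3 at a = 2 (illustrative only)."""
    return -(a - 2.0) ** 2 + 3.0

def dq_da(a):
    """Analytical derivative of the toy Q function."""
    return -2.0 * (a - 2.0)

a = 0.0        # initial guess
alpha = 0.1    # learning rate
for _ in range(100):
    # gradient descent on -Q, which is gradient ascent on Q
    a = a - alpha * (-dq_da(a))

print(a, q_value(a))
```

The iterate moves toward $a = 2$, where the toy $Q$ is largest.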

So the training procedure is as follows:

- 1) Define Actor network $actor$ and Critic Network $critic$

- 2) Define Target Actor and Critic Networks - $actor_{target}$ and $critic_{target}$ with exact same weights

- 3) Initialize Replay Buffer

- 4) Get the initial state , $state$

- 5) Get the action $a \gets actor(state)$ + Noise. [Noise is added to make the process stochastic, so that the agent explores. The paper uses an Ornstein-Uhlenbeck noise process, so we will as well.]

- 6) Get the next state $state_{next}$, reward $r$ and terminal flag from the environment for the given $state$ and $action$

- 7) Add the experience , $state$,$action$,$reward$,$state_{next}$,$terminal$ to replay buffer

- 8) Get sample minibatch from Replay buffer

- 9) Train Critic Network Using Bellman Equation. Like DQN

- 10) Train Actor Network using Gradient Ascent with gradients of $Q$ . $\theta_a \gets \theta_a - \alpha (-\frac{\partial Q(s,a) }{\partial \theta_a}) $

- 11) Update weights of $actor_{target}$ and $critic_{target}$ using the equation $ \theta \gets \tau \theta + (1-\tau)\theta_{target}$
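Step 11 is often called a soft (Polyak) update. A minimal sketch on plain Python lists, with a hypothetical helper `soft_update` and an exaggerated $\tau = 0.5$ so that the effect is visible after a few steps:

```python
def soft_update(weights, target_weights, tau):
    """theta_target <- tau * theta + (1 - tau) * theta_target, element-wise."""
    return [tau * w + (1.0 - tau) * tw for w, tw in zip(weights, target_weights)]

online = [1.0, -2.0, 0.5]   # weights of the trained network (made-up numbers)
target = [0.0, 0.0, 0.0]    # weights of the target network
for _ in range(3):
    target = soft_update(online, target, tau=0.5)

print(target)
```

With a small $\tau$ such as 0.001, the target network trails the trained network slowly, which keeps the Bellman targets from shifting too quickly.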

<a id ="model"></a>

### Model Definition

After some trial and error, I have selected this network. The actor network is a 3-layer MLP with 320 hidden nodes in each layer. The critic network is also a 3-layer MLP, with 640 hidden nodes in each layer. Notice the return values of the function ```create_critic_network```: besides the model, it returns its two input tensors, which are used later when training the actor.

```

def create_actor_network(state_shape,action_shape):
    in1=layers.Input(shape=state_shape,name="state")
    l1 =layers.Dense(320,activation="relu")(in1)
    l2 =layers.Dense(320,activation="relu")(l1)
    l3 =layers.Dense(320,activation="relu")(l2)
    action =layers.Dense(action_shape,activation="tanh")(l3)
    actor= Model(in1,action)
    return actor

def create_critic_network(state_shape,action_shape):
    in1 = layers.Input(shape=state_shape,name="state")
    in2 = layers.Input(shape=action_shape,name="action")
    l1 = layers.concatenate([in1,in2])
    l2 = layers.Dense(640,activation="relu")(l1)
    l3 = layers.Dense(640,activation="relu")(l2)
    l4 = layers.Dense(640,activation="relu")(l3)
    value = layers.Dense(1)(l4)
    critic = Model(inputs=[in1,in2],outputs=value)
    return critic,in1,in2

```

I am choosing the ```MountainCarContinuous-v0``` environment, mainly because my GPU is not good enough to work on a higher-dimensional state space.

```

env = gym.make("MountainCarContinuous-v0")

state_shape= env.observation_space.sample().shape

action_shape=env.action_space.sample().shape

actor = create_actor_network(state_shape,action_shape[0])

critic,state_tensor,action_tensor = create_critic_network(state_shape,action_shape)

target_actor=create_actor_network(state_shape,action_shape[0])

target_critic,_,_ = create_critic_network(state_shape,action_shape)

target_actor.set_weights(actor.get_weights())

target_critic.set_weights(critic.get_weights())

```

I have chosen the ```RMSprop``` optimizer because it was more stable than Adam in my runs. I found this by trial and error; there is no theoretical background behind choosing this optimizer.

```

actor_optimizer = keras.optimizers.RMSprop(actor_lr)

critic_optimizer = keras.optimizers.RMSprop(critic_lr)

critic.compile(loss="mse",optimizer=critic_optimizer)

```

#### Actor training

I think this is the most critical part of DDPG in Keras. The objects ```critic``` and ```actor``` each have a ```__call__``` method, which returns an output tensor when given an input tensor. We will use this to get the tensor object of ```Q```.

```

CriticValues = critic([state_tensor,actor(state_tensor)])

```

Now it is time to get the gradient of $-Q(s,a)$ with respect to the actor weights, $-\frac{\partial Q(s,a)}{\partial \theta_a}$

```

updates = actor_optimizer.get_updates(

params=actor.trainable_weights,loss=-K.mean(CriticValues))

```

Now we will create a function which will train the actor network.

```

actor_train = K.function(inputs=[state_tensor],outputs=[actor(state_tensor),CriticValues],

updates=updates)

```

<a id ="buffer"></a>

### Replay Buffer

```

memory = deque(maxlen=10000)
state = env.reset()
state = state.reshape(-1,)
for _ in tqdm(range(memory.maxlen)):
    action = env.action_space.sample()
    next_state,reward,terminal,_=env.step(action)
    if terminal:
        reward=-100
    # store the transition before advancing, so that `state` is the state the action was taken in
    memory.append((state,action,reward,next_state,terminal))
    if terminal:
        state= env.reset()
        state = state.reshape(-1,)
    else:
        state=next_state

next_state

```
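The buffer relies on two properties of `collections.deque`: once `maxlen` is reached the oldest transition is dropped automatically, and `random.sample` draws a minibatch without replacement. A tiny sketch with made-up integer "states" (all values below are illustrative assumptions):

```python
import random
from collections import deque

buffer = deque(maxlen=3)
for t in range(5):
    # (state, action, reward, next_state, terminal)
    transition = (t, 0.0, -1.0, t + 1, False)
    buffer.append(transition)

# Only the 3 most recent transitions (t = 2, 3, 4) are still stored
print(list(buffer))

random.seed(0)
batch = random.sample(list(buffer), 2)
print(batch)
```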

<a id ="noise"></a>

### Noise class

Noise makes the process stochastic and helps the agent explore different actions. The paper used an Ornstein-Uhlenbeck noise process.

```

class OrnsteinUhlenbeckProcess(object):
    def __init__(self, theta, mu=0, sigma=1, x0=0, dt=1e-2, n_steps_annealing=10, size=1):
        self.theta = theta
        self.sigma = sigma
        self.n_steps_annealing = n_steps_annealing
        self.sigma_step = - self.sigma / float(self.n_steps_annealing)
        self.x0 = x0
        self.mu = mu
        self.dt = dt
        self.size = size

    def restart(self):
        self.x0=copy.copy(self.mu)

    def generate(self, step):
        #sigma = max(0, self.sigma_step * step + self.sigma)
        x = self.x0 + self.theta * (self.mu - self.x0) * self.dt + self.sigma * np.sqrt(self.dt) * np.random.normal(size=self.size)
        self.x0 = x
        return x

```

<a id ="training"></a>

### Training

```

steps_per_episodes=5000

ou = OrnsteinUhlenbeckProcess(theta=0.35,mu=0.8,sigma=0.4,n_steps_annealing=10)

max_total_reward=0

for episode in range(num_episodes):
    state= env.reset()
    state = state.reshape(-1,)
    total_reward=0
    ou.restart()
    for step in range(steps_per_episodes):
        action= actor.predict(state.reshape(1,-1))+ou.generate(episode)
        next_state,reward,done,_ = env.step(action)
        total_reward=total_reward+reward

        #random minibatch from buffer
        batches=random.sample(memory,BATCH_SIZE)
        states= np.array([batch[0].reshape((-1,)) for batch in batches])
        actions= np.array([batch[1] for batch in batches])
        actions=actions.reshape(-1,1)
        rewards=np.array([batch[2] for batch in batches])
        rewards = rewards.reshape((-1,1))
        new_states=np.array([batch[3].reshape((-1,)) for batch in batches])
        terminals=np.array([batch[4] for batch in batches])
        terminals = terminals.reshape((-1,1))

        #training
        target_actions = target_actor.predict(new_states)
        target_Qs = target_critic.predict([new_states,target_actions])
        # bootstrap from the next state only when the transition is NOT terminal
        new_Qs = rewards+GAMMA*target_Qs*(1-terminals)
        critic.fit([states,actions],new_Qs,verbose=False)
        _,critic_values=actor_train(inputs=[states])

        target_critic_weights=[TAU*weight+(1-TAU)*target_weight for weight,target_weight in zip(critic.get_weights(),target_critic.get_weights())]
        target_actor_weights=[TAU*weight+(1-TAU)*target_weight for weight,target_weight in zip(actor.get_weights(),target_actor.get_weights())]
        target_critic.set_weights(target_critic_weights)
        target_actor.set_weights(target_actor_weights)

        print("Total Reward %f"%total_reward,end="\r")
        if SHOW:
            env.render()
        if done or step==(steps_per_episodes-1):
            if total_reward<0:
                print("Failed!",end=" ")
                reward=-100
            elif total_reward>0:
                print("Passed!",end=" ")
                reward=100
            memory.append((state,action,reward,next_state,done))
            break
        memory.append((state,action,reward,next_state,done))
        state=next_state

    if total_reward>max_total_reward:
        actor.save_weights("MC_DDPG_Weights/Actor_Best_weights episode %d_GAMMA_%f_TAU%f_lr_%f.h5"%(episode,GAMMA,TAU,actor_lr))
        critic.save_weights("MC_DDPG_Weights/Critic_Best_weights episode %d_GAMMA_%f_TAU%f_lr_%f.h5"%(episode,GAMMA,TAU,critic_lr))
        max_total_reward=total_reward
    print("Episode %d Total Reward %f"%(episode,total_reward))

```
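The critic target in the loop follows the usual Bellman backup: the reward plus the discounted target-critic value of the next state, where the bootstrap term is dropped for terminal transitions. A small plain-Python sketch with made-up numbers (the helper name `td_targets` is an assumption for illustration):

```python
def td_targets(rewards, next_qs, terminals, gamma):
    """r + gamma * Q_target(s', a') for non-terminal transitions, r alone for terminal ones."""
    return [r + gamma * q * (0.0 if done else 1.0)
            for r, q, done in zip(rewards, next_qs, terminals)]

targets = td_targets(
    rewards=[1.0, -100.0],
    next_qs=[10.0, 10.0],
    terminals=[False, True],
    gamma=0.95,
)
print(targets)
```

The second transition is terminal, so its target is just the reward, no matter what the target critic predicts for the next state.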

### Video

Please watch at 2x speed. I fixed some simple mistakes after recording the video, so the rewards are not exactly the same.

[Keras tutorial DDPG](http://www.youtube.com/watch?v=9Fe_n-ovIaA "Keras tutorial DDPG")

| github_jupyter |

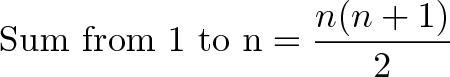

# Learning LDA model via Gibbs sampling

# Question 1

<img src="images/Screen Shot 2016-07-29 at 3.14.04 PM.png">

*Screenshot taken from [Coursera](https://www.coursera.org/learn/ml-clustering-and-retrieval/exam/6ieZu/learning-lda-model-via-gibbs-sampling)*

<!--TEASER_END-->

# Question 2

<img src="images/Screen Shot 2016-07-29 at 3.14.28 PM.png">

*Screenshot taken from [Coursera](https://www.coursera.org/learn/ml-clustering-and-retrieval/exam/6ieZu/learning-lda-model-via-gibbs-sampling)*

<!--TEASER_END-->

# Question 3

<img src="images/Screen Shot 2016-07-29 at 5.11.56 PM.png">

*Screenshot taken from [Coursera](https://www.coursera.org/learn/ml-clustering-and-retrieval/exam/6ieZu/learning-lda-model-via-gibbs-sampling)*

<!--TEASER_END-->

# Question 4

<img src="images/Screen Shot 2016-07-29 at 4.53.54 PM.png">

*Screenshot taken from [Coursera](https://www.coursera.org/learn/ml-clustering-and-retrieval/exam/6ieZu/learning-lda-model-via-gibbs-sampling)*

<!--TEASER_END-->

**Answer**

- https://www.coursera.org/learn/ml-clustering-and-retrieval/discussions/weeks/5/threads/AK3N4kI8EeaXyw5hjmsWew

- https://www.coursera.org/learn/ml-clustering-and-retrieval/discussions/weeks/5/threads/L5yUeFA4EearRRKGx4XuoQ

- Understand notation:

- $n_{i,k}$: number of words in document $i$ currently assigned to topic $k$, counted after removing (decrementing) the assignment of the target word "manager". Since "manager" is currently assigned to topic 2, the count for topic 1 is unaffected: simply count the words assigned to topic 1. For topic 2, first subtract the one assignment that belonged to "manager", then count what remains.

- $N_i$: count of words in doc i

- V: total count of vocabulary, which is 10

- $m_{manager,k}$: total count of word, "manager" in the corpus assigned to topic k

- $\sum_{w} m_{w,k}$: Sum of count of all words in the corpus assigned to topic k

# Question 5

<img src="images/Screen Shot 2016-07-29 at 4.54.06 PM.png">

*Screenshot taken from [Coursera](https://www.coursera.org/learn/ml-clustering-and-retrieval/exam/6ieZu/learning-lda-model-via-gibbs-sampling)*

<!--TEASER_END-->

**Answer**

- $\sum_w m_{w, 1}$: total number of words in the corpus of topic 1, which is the sum of all words assigned to topic 1

- 52 + 15 + 9 + 9 + 20 + 17 + 1 = 123

# Question 6

<img src="images/Screen Shot 2016-07-29 at 4.54.09 PM.png">

*Screenshot taken from [Coursera](https://www.coursera.org/learn/ml-clustering-and-retrieval/exam/6ieZu/learning-lda-model-via-gibbs-sampling)*

<!--TEASER_END-->

**Answer**

- $n_{i, 1}$: # current assignments to topic 1 in doc i, which is how many times topic 1 appears in document i.

- clearly 3 times, for baseball + ticket + owner = 1 + 1 + 1 = 3

# Question 7

<img src="images/Screen Shot 2016-07-29 at 4.54.13 PM.png">

*Screenshot taken from [Coursera](https://www.coursera.org/learn/ml-clustering-and-retrieval/exam/6ieZu/learning-lda-model-via-gibbs-sampling)*

<!--TEASER_END-->

**Answer**


- When we remove the assignment of manager to topic 2 in document i, we only have 1 assignment of topic 2 which is the word "price"

- $n_{i, 2}$: # current assignments to topic 2 in doc i, which is 1

# Question 8

<img src="images/Screen Shot 2016-07-29 at 4.54.18 PM.png">

*Screenshot taken from [Coursera](https://www.coursera.org/learn/ml-clustering-and-retrieval/exam/6ieZu/learning-lda-model-via-gibbs-sampling)*

<!--TEASER_END-->

**Answer**

- When we remove the assignment of "manager" to topic 2 in document i -> manager: 0

- The total counts of manager in topic 2 in the corpus:

- number of assignments corpus-wide of word "manager" to topic 2 - number of assignment of word "manager" to topic 2 in document i

- $\large m_{\text{manager,2}} - z_{\text{i,manager}}$ = 37 - 1 = 36

# Question 9

<img src="images/Screen Shot 2016-07-29 at 5.12.06 PM.png">

*Screenshot taken from [Coursera](https://www.coursera.org/learn/ml-clustering-and-retrieval/exam/6ieZu/learning-lda-model-via-gibbs-sampling)*

<!--TEASER_END-->

**Answer**

- $\sum_w m_{w, 2}$: total number of words in the corpus of topic 2, which is the sum of all words assigned to topic 2 after we decrement the associated counts

- 2 + 25 + 36 + 32 + 23 + 75 + 19 + 29 = 241

# Question 10

<img src="images/Screen Shot 2016-07-29 at 5.17.14 PM.png">

*Screenshot taken from [Coursera](https://www.coursera.org/learn/ml-clustering-and-retrieval/exam/6ieZu/learning-lda-model-via-gibbs-sampling)*

<!--TEASER_END-->

**Answer**

As discussed in the slides, the unnormalized probability of assigning to topic 1 is

- $p_1 = \frac{n_{i, 1} + \alpha}{N_i - 1 + K \alpha}\frac{m_{\text{manager}, 1} + \gamma}{\sum_w m_{w, 1} + V \gamma}$

where V is the total size of the vocabulary.

Similarly the unnormalized probability of assigning to topic 2 is

- $p_2 = \frac{n_{i, 2} + \alpha}{N_i - 1 + K \alpha}\frac{m_{\text{manager}, 2} + \gamma}{\sum_w m_{w, 2} + V \gamma}$

Using the above equations and the results computed in previous questions, compute the probability of assigning the word “manager” to topic 1.

- Left Formula = (# times topic_1 appears in doc + alpha) / (# words in doc - 1 + K * alpha)

- Right Formula = (# of corpus-wide assignment of 'manager' to topic 1 + gamma) / (Sum of all topic 1 word counts + V * gamma)

- Prob 1 = (Left Formula) * (Right Formula)

Example:

- calculate prob 1

- Left Formula = (3 + 10.0) / (5 - 1 + (2 * 10.0)) = 0.5417

- Right Formula = (20 + 0.1) / (123 + (10 * 0.1)) = 0.162

- Prob 1 = 0.5417 * 0.162 = 0.0877554

- calculate prob 2

- Left Formula = (1 + 10.0) / (5 - 1 + (2 * 10.0)) = 0.4583

- Right Formula = (36 + 0.1) / (241 + (10 * 0.1)) = 0.1492

- Prob 2 = 0.4583 * 0.1492 = 0.06837836

- normalize prob 1

- normalize prob 1 = Prob 1/(Prob 1 + Prob 2) = 0.0877554/(0.0877554 + 0.06837836) = 0.562

```

def calculate_unnorm_prob(n_i, alpha, N_i, K, m_word, gamma, sum_of_m, V):
    """ Calculate unnormalized probability of assigning to topic
    """
    left_formula = (n_i + alpha)/(N_i - 1 + (K * alpha))
    right_formula = (m_word + gamma)/(sum_of_m + (V * gamma))
    prob = left_formula * right_formula
    return prob

unnorm_prob_1 = calculate_unnorm_prob(3, 10.0, 5, 2, 20, 0.1, 123, 10)
unnorm_prob_2 = calculate_unnorm_prob(1, 10.0, 5, 2, 36, 0.1, 241, 10)

# print() calls work in both Python 2 and Python 3
print(unnorm_prob_1)
print(unnorm_prob_2)

prob_1 = unnorm_prob_1/(unnorm_prob_1 + unnorm_prob_2)
print(prob_1)

```
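The same calculation extends to any number of topics: compute one unnormalized probability per topic, then divide each by their sum. The sketch below assumes a hypothetical helper `topic_posterior` that takes the per-topic counts as lists; the numbers passed in are the ones from the answers above.

```python
def topic_posterior(doc_counts, word_counts, topic_totals, N_i, alpha, gamma, V):
    """Normalized Gibbs-sampling probabilities for assigning one word to each topic.

    doc_counts[k]   : n_{i,k}, words in the document assigned to topic k
    word_counts[k]  : m_{word,k}, corpus-wide assignments of the word to topic k
    topic_totals[k] : sum over w of m_{w,k}
    All counts are taken after decrementing the current assignment.
    """
    K = len(doc_counts)
    unnorm = [
        (doc_counts[k] + alpha) / (N_i - 1 + K * alpha)
        * (word_counts[k] + gamma) / (topic_totals[k] + V * gamma)
        for k in range(K)
    ]
    total = sum(unnorm)
    return [p / total for p in unnorm]

probs = topic_posterior([3, 1], [20, 36], [123, 241],
                        N_i=5, alpha=10.0, gamma=0.1, V=10)
print(probs)
```

The first entry is the probability of assigning "manager" to topic 1, which matches the value of roughly 0.562 computed by hand above.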

| github_jupyter |

# SciPy - Scientific Computing for Python

SciPy is a framework that is built upon NumPy. It uses NumPy arrays to leverage performance, and then extends NumPy to provide a range of advanced algorithms and functions. We will only cover a brief section of SciPy...there's a lot in it. For more information you can see the SciPy website www.scipy.org.

The different submodules of SciPy include:

* Special functions (`scipy.special`)

* Integration (`scipy.integrate`)

* Optimization (`scipy.optimize`)

* Interpolation (`scipy.interpolate`)

* Fourier Transforms (`scipy.fftpack`)

* Signal Processing (`scipy.signal`)

* Linear Algebra (`scipy.linalg`)

* Sparse Eigenvalue Problems with ARPACK

* Compressed Sparse Graph Routines (`scipy.sparse.csgraph`)

* Spatial data structures and algorithms (`scipy.spatial`)

* Statistics (`scipy.stats`)

* Multidimensional image processing (`scipy.ndimage`)

* File IO (`scipy.io`)

To access the SciPy module, you can import the whole module,

```

from scipy import *

```

or simply import the parts you need:

```

import scipy.linalg as la

```

## Integration

### Quadrature

Many of you are probably familiar with integration from basic calculus:

$\displaystyle \int_a^b f(x) dx$

When you discretize this process and solve an integral numerically, it's called quadrature. SciPy provides several types of quadrature, depending on whether you need to solve single, double, or triple integrals.

```

from scipy.integrate import quad, dblquad, tplquad

# Define a function we want to integrate

def f(x):
    return x**2

x0 = 0

x1 = 1

val, abserr = quad(f, x0, x1)

print("integral value =", val, ", absolute error =", abserr)

```
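
A double integral follows the same pattern with `dblquad`. The sketch below integrates $xy$ over $0 \le x \le 1$, $0 \le y \le 2$, which should come out close to 1. The function name `g` and the limits are chosen here purely for illustration.

```python
from scipy.integrate import dblquad

# Integrand: dblquad expects the inner variable (y) first, then the outer one (x)
def g(y, x):
    return x * y

# Outer limits for x are constants; inner limits for y are given as functions of x
val, abserr = dblquad(g, 0, 1, lambda x: 0, lambda x: 2)

print("integral value =", val, ", absolute error =", abserr)
```

The argument order of the integrand is the detail that is easiest to get wrong, so it is worth checking against a case with a known answer like this one.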

### Ordinary Differential Equations (ODEs)

SciPy's integration package also allows for users to numerically solve ODEs. SciPy provides a function, `odeint`, for solving first order, vector-valued, differential equations:

$\displaystyle \frac{d\mathbf{y}}{dt} = \mathbf{f}(\mathbf{y}, t)$

The `odeint` function takes 3 inputs:

* the function to be evaluated

* initial conditions

* a sequence of time points

In addition to the `odeint` function, SciPy also features a class called `ode` that has more options and finer levels of control. In general, `odeint` is a good starting point for new users.
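
As a minimal sketch of those three inputs, the example below solves $dy/dt = -y$ with $y(0) = 1$, whose exact solution is $e^{-t}$. The names `decay`, `y0`, and `ts` are illustrative choices, not part of the original notebook.

```python
import numpy as np
from scipy.integrate import odeint

# 1. The function to be evaluated: returns dy/dt for state y at time t
def decay(y, t):
    return -y

# 2. Initial condition (a one-element state vector)
y0 = [1.0]

# 3. Sequence of time points at which the solution is reported
ts = np.linspace(0, 5, 50)

ys = odeint(decay, y0, ts)

# Largest deviation from the exact solution exp(-t)
print(np.max(np.abs(ys[:, 0] - np.exp(-ts))))
```

The result has one row per time point and one column per state variable, which is why the solution is read from `ys[:, 0]`.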

Here's an example of using `odeint` to solve the predator-prey equations (a simple model that describes the interaction between two species...one the prey, and the other the predator):

$\displaystyle \frac{dx}{dt} = x(a-by)$

$\displaystyle \frac{dy}{dt} = -y(c-dx)$

Let $\displaystyle x$ be the population of rabbits and $\displaystyle y$ be the population of wolves. $\displaystyle a, b, c$ and $\displaystyle d$ are all positive parameters.

```

import numpy as np

from scipy.integrate import odeint

a,b,c,d = 1,1,1,1

# Define our system of ODEs

# Note the vector values, here x is P[0] and y is P[1]

def dP_dt(P, t):
    return [P[0]*(a - b*P[1]), -P[1]*(c - d*P[0])]

# Discretize our domain

ts = np.linspace(0, 12, 100)

# Initial conditions

P0 = [1.5, 1.0]

# Call the solver

Ps = odeint(dP_dt, P0, ts)

prey = Ps[:,0]

predators = Ps[:,1]

import matplotlib.pyplot as plt

%matplotlib inline

# Plot the result

plt.plot(ts, prey, "+", label="Rabbits")

plt.plot(ts, predators, "x", label="Wolves")

plt.xlabel("Time")

plt.ylabel("Population")

plt.legend();

```

We can even look at a phase plane plot:

```

ic = np.linspace(1.0, 3.0, 21)

for r in ic:
    # Vary the initial rabbit population; wolves always start at 1.0
    P0 = [r, 1.0]
    Ps = odeint(dP_dt, P0, ts)
    plt.plot(Ps[:,0], Ps[:,1], "-")

plt.xlabel("Rabbits")

plt.ylabel("Wolves")

plt.title("Rabbits vs Wolves");

```

## Fourier Transform

SciPy's `fftpack` module provides users with a performant way to compute discrete Fourier transforms (DFT). Underneath the hood, `fftpack` is calling Fortran functions from the Fortran FFTPACK library.

Here's a brief example:

```

from scipy.fftpack import fft

import numpy as np

x = np.random.randint(10, size=5)

y = fft(x)

y

```

We can also compute the inverse:

```

from scipy.fftpack import ifft

yinv = ifft(y)

yinv

x

```

We won't go into the theory of Fourier transforms, but let us briefly mention what is involved in computing a DFT to highlight SciPy's performance.

Computing a DFT reduces to a matrix-vector multiplication: to compute the DFT of a length-$\displaystyle N$ vector $\displaystyle x$ we multiply it by the $\displaystyle N \times N$ matrix $\displaystyle M$ with entries

$\displaystyle M_{kn} = e^{-2i\pi kn/N}$

We can use the matrix-vector operations we learned about in NumPy to write a naive implementation for computing DFT:

```

import numpy as np

def DFT(x):
    x = np.asarray(x, dtype=float)
    N = x.shape[0]
    n = np.arange(N)
    # Column vector of k values, so k * n broadcasts to an N x N grid
    k = n.reshape((N, 1))
    M = np.exp(-2j * np.pi * k * n / N)
    return np.dot(M, x)

```

Let's double-check it works by comparing results with `fftpack`:

```

from scipy.fftpack import fft

x = np.random.random(1024)

np.allclose(DFT(x), fft(x))

```

Now let's look at the performance:

```

%timeit DFT(x)

%timeit fft(x)

```

## Linear Algebra

SciPy's `linalg` package greatly expands upon what we saw in NumPy. It provides many routines, including linear solvers, eigensolvers, and matrix decompositions, but we'll only cover a few here.

Let us consider a system of linear equations, in matrix form:

$A x = b$

where $A$ is a matrix and $x,b$ are vectors.

Such a system can be solved with SciPy as follows:

```

from scipy.linalg import *

A = np.array([[1,2],[3,4]])

b = np.array([3,17])

x = solve(A, b)

x

# check

np.allclose(A @ x, b)

```

Solving the system directly with SciPy's `solve` function is faster than computing the inverse with `inv` and multiplying it by `b`:

```

A1 = np.random.random((1000,1000))

b1 = np.random.random(1000)

%timeit solve(A1, b1)

%timeit inv(A1) @ b1

```

We can even import LAPACK functions for better performance:

```

import scipy.linalg.lapack as lapack

%timeit lu, piv, x, info = lapack.dgesv(A1, b1)

```

| github_jupyter |

# Print Formatting

In this lecture we will briefly cover the various ways to format your print statements. As you write more code, you will often want to insert the value of a variable into a printed string.

The most basic print statement simply prints a literal string; the sections below show how to insert values into it.

### <font color='red'>Python 3 Alert!</font>

Python 3 is simpler: we use the string `.format()` method.

## String formatting

```

print('The first letter is: {}, the second: {}, the third: {}'.format('a','b','c'))

```

Each parameter is indexed. We can manipulate where the argument appears in the string by using its index.

```

print('The first letter is: {2}, the second: {1}, the third: {0}'.format('a','b','c'))

```

We can also repeat the same argument.

```

print('The first letter is: {0}, the second: {0}, the third: {0}'.format('a'))

```

## Integers and Floats

There is no need to specify what data type we are passing in.

```

print('The number is {0}, and {0}, and {0}'.format(10))

```

Floats are really just numbers with decimals.

```

print('The number is {0}, and {0}, and {0}'.format(10.00020))

```

## Passing in 'weird' data types aka things we haven't seen yet.

We can also pass in dictionaries, lists, sets, and tuples. Don't worry about what these are for now; they are other data types that hold collections of values.

```

print('The number is {0}, and {0}, and {0}'.format([20202, 39394]))

print('The number is {0}, and {0}, and {0}'.format({'Tru TV': 'Chris Gethard'}))

print('The number is {0}, and {0}, and {0}'.format({'Tru TV'}))

print('The number is {0}, and {1}, and {0}'.format('A$AP Ferg', 'A$AP Rocky'))

```

## Setting parameters

We can give the arguments names and use those names inside the string.

```

print('The number is {a}, and {b}, and {a}'.format(a=10.00020, b=20))

```

### <font color='blue'>Python 2 Alert!</font>

Formatting strings in Python 2 is more complicated. Python 3 improved upon this.

```

print 'This is a string'

```

## Strings

You can use the %s to format strings into your print statements.

```

s = 'STRING'

print 'Place another string with a mod and s: %s' %(s)

```

## Floating Point Numbers aka Floats

Floating point numbers use the format %n1.n2f, where n1 is the minimum total number of characters the string should contain (it is padded with whitespace if the number is shorter than this). The n2 placeholder stands for how many digits to show past the decimal point. Let's see some examples:

```

print 'Floating point numbers: %1.2f' %(13.144)

print 'Floating point numbers: %1.0f' %(13.144)

print 'Floating point numbers: %1.5f' %(13.144)

print 'Floating point numbers: %10.2f' %(13.144)

print 'Floating point numbers: %25.2f' %(13.144)

```
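
The same width and precision can be written with the `.format()` method used in the Python 3 section. The sketch below is an illustration only, and the variable names are chosen here; it shows that both styles produce the same padded string.

```python
value = 13.144

# Old-style % formatting and the .format() mini-language share the width.precision spec
old_style = '%10.2f' % value
new_style = '{:10.2f}'.format(value)

# repr() makes the leading padding visible
print(repr(old_style))
print(repr(new_style))

# A precision of 0 drops the decimal part entirely
print('{:1.0f}'.format(value))
```

Printing with `repr()` is a convenient way to see the whitespace that the width setting adds.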

## Conversion Format methods.

It should be noted that the two formats %s and %r convert any Python object to a string using two separate functions: str() and repr(). We will learn more about these functions later in the course, but note that you can pass almost any Python object with these two formats and it will work:

```

print 'Here is a number: %s. Here is a string: %s' %(123.1,'hi')

print 'Here is a number: %r. Here is a string: %r' %(123.1,'hi')

```
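
To make the difference concrete, the sketch below compares each format against a direct call to `str()` and `repr()`. It also uses the `!s` and `!r` conversion flags of `.format()`, which play the same role. The variable name `text` is an illustrative choice.

```python
text = 'hi'

# %s goes through str(), %r goes through repr()
with_s = '%s' % text
with_r = '%r' % text

print(with_s)
print(with_r)

# The .format() method offers the same choice through !s and !r
print('{!s} and {!r}'.format(text, text))
```

For a string, `repr()` keeps the quotes, which is why `%r` is handy when debugging.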

## Multiple Formatting

Pass a tuple to the modulo symbol to place multiple formats in your print statements:

```

print 'First: %s, Second: %1.2f, Third: %r' %('hi!',3.14,22)

```

## Using the string .format() method

The best way to format objects into your strings for print statements is the string `.format()` method. The syntax is:

`'String here {var1} then also {var2}'.format(var1='something1',var2='something2')`

Let's see some examples:

```

print 'This is a string with an {p}'.format(p='insert')

# Multiple times:

print 'One: {p}, Two: {p}, Three: {p}'.format(p='Hi!')

# Several Objects:

print 'Object 1: {a}, Object 2: {b}, Object 3: {c}'.format(a=1,b='two',c=12.3)

```

Those are the basics of string formatting! Remember that Python 3 uses a print() function, not the print statement!

| github_jupyter |

```

# library of congress

# import libraries

import rdflib, pandas, pathlib, json

import numpy, uuid, xmltodict, pydash

# define graph and namespace

graph = rdflib.Graph()

name_loc = rdflib.Namespace('https://loc.gov/')

name_wb = rdflib.Namespace('http://wikibas.se/ontology')

name_fiaf = rdflib.Namespace('https://www.fiafnet.org/')

# useful functions

def make_claim(s, p, o):
    # attach a new claim node to the subject, then state the property on it
    claim_id = name_loc[f"resource/claim/{uuid.uuid4()}"]
    graph.add((s, name_wb['#claim'], claim_id))
    graph.add((claim_id, p, o))
    return claim_id

def make_qual(s, p, o):
    # attach a qualifier node to an existing claim
    qual_id = name_loc[f"resource/qualifier/{uuid.uuid4()}"]
    graph.add((s, name_wb['#qualifier'], qual_id))
    graph.add((qual_id, p, o))
    return qual_id

def reference(claim_id, institute):
    # record which institution contributed the claim
    ref_id = name_loc[f"resource/reference/{uuid.uuid4()}"]
    graph.add((claim_id, name_wb['#reference'], ref_id))
    graph.add((ref_id, name_fiaf['ontology/property/contributed_by'], institute))

def single_list(data):
    # always return a list, wrapping single values
    if isinstance(data, list):
        return data
    else:
        return [data]

# define institution

graph.add((name_loc['ontology/item/loc'], rdflib.RDFS.label, rdflib.Literal('Library of Congress', lang='en')))

make_claim(name_loc['ontology/item/loc'], name_fiaf['ontology/property/instance_of'], name_fiaf['ontology/item/holding_institution'])

make_claim(name_loc['ontology/item/loc'], name_fiaf['ontology/property/located_in'], name_fiaf['ontology/item/usa'])

print(len(graph))

# format data

path = pathlib.Path.home() / 'murnau-data' / 'library_of_congress'

data = list()

for f in [x for x in path.glob('**/*.xml')]:

with open(f, encoding='ISO-8859-1') as xml_data:

element = xmltodict.parse(xml_data.read())

data.append(single_list(pydash.get(element, 'mavis.TitleWork'))[0])

print(len(graph))

# write work

for x in data:

work_id = x['@xl:href'].split('/')[-1]

work = name_loc[f"resource/work/{work_id}"]

make_claim(work, name_fiaf['ontology/property/instance_of'], name_fiaf['ontology/item/work'])

claim_id = make_claim(work, name_fiaf['ontology/property/external_id'], rdflib.Literal(work_id))

make_qual(claim_id, name_fiaf['ontology/property/institution'], name_loc['ontology/item/loc'])

reference(claim_id, name_loc['ontology/item/loc'])

print(len(graph))

# write title

for x in data:

work_id = x['@xl:href'].split('/')[-1]

work = name_loc[f"resource/work/{work_id}"]

selected_title = ''

for t in ['preferredTitle.Title', 'alternateTitles.Title']:

for y in single_list(pydash.get(x, t)):

if 'Original title' in str(y) or 'German' in str(y):

selected_title = pydash.get(y, '@xl:title')

title_type = name_fiaf['ontology/item/original_title']

if selected_title == '':

selected_title = pydash.get(x, '@xl:title')

title_type = name_fiaf['ontology/item/work_title']

claim_id = make_claim(work, name_fiaf['ontology/property/title'], rdflib.Literal(selected_title[:-1]))

make_qual(claim_id, name_fiaf['ontology/property/title_type'], title_type)

reference(claim_id, name_loc['ontology/item/loc'])

print(len(graph))

# write country

for x in data:

work_id = x['@xl:href'].split('/')[-1]

work = name_loc[f"resource/work/{work_id}"]

country = pydash.get(x, 'countries.WorkCountry.@xl:title')

if country == 'US':

fiaf_country = name_fiaf['ontology/item/usa']

elif country == 'GG':

fiaf_country = name_fiaf['ontology/item/germany']

else:

raise Exception('Unknown country.')

claim = make_claim(work, name_fiaf['ontology/property/production_country'], fiaf_country)

reference(claim, name_loc['ontology/item/loc'])

print(len(graph))

# write agents

def write_credit(work_data, dict_key, agent_type):
    # use the work passed in as an argument rather than the global loop variable
    work_id = work_data['@xl:href'].split('/')[-1]
    work = name_loc[f"resource/work/{work_id}"]

    for a in pydash.get(work_data, 'roles.Name-Role'):
        if 'Person' in pydash.get(a, 'party') and pydash.get(a, 'role.@xl:title') == dict_key:
            forename = pydash.get(a, 'party.Person.preferredName.PersonName.firstName')
            surname = pydash.get(a, 'party.Person.preferredName.PersonName.name')
            contribution = pydash.get(a, 'role.@xl:title')
            key = pydash.get(a, 'party.Person.@xl:href').split('/')[-1]
            gend = pydash.get(a, 'party.Person.gender.@xl:title')
            agent = name_loc[f"resource/agent/{key}"]

            # link the work to the agent
            claim1 = make_claim(work, name_fiaf['ontology/property/agent'], agent)
            make_qual(claim1, name_fiaf['ontology/property/agent_type'], agent_type)
            reference(claim1, name_loc['ontology/item/loc'])

            # describe the agent
            make_claim(agent, name_fiaf['ontology/property/instance_of'], name_fiaf['ontology/item/agent'])
            claim2 = make_claim(agent, name_fiaf['ontology/property/external_id'], rdflib.Literal(key))
            make_qual(claim2, name_fiaf['ontology/property/institution'], name_loc['ontology/item/loc'])
            reference(claim2, name_loc['ontology/item/loc'])

            if forename is not None:
                claim3 = make_claim(agent, name_fiaf['ontology/property/forename'], rdflib.Literal(forename))
                reference(claim3, name_loc['ontology/item/loc'])

            claim4 = make_claim(agent, name_fiaf['ontology/property/surname'], rdflib.Literal(surname))
            reference(claim4, name_loc['ontology/item/loc'])

            if gend == 'Male':
                claim_id = make_claim(agent, name_fiaf['ontology/property/gender'], name_fiaf['ontology/item/male'])
                reference(claim_id, name_loc['ontology/item/loc'])

            if gend == 'Female':
                claim_id = make_claim(agent, name_fiaf['ontology/property/gender'], name_fiaf['ontology/item/female'])
                reference(claim_id, name_loc['ontology/item/loc'])

            # link the agent back to the work
            claim_id = make_claim(agent, name_fiaf['ontology/property/work'], work)
            reference(claim_id, name_loc['ontology/item/loc'])

for x in data:

write_credit(x, 'Cast/Actor', name_fiaf['ontology/item/cast'])

write_credit(x, 'Director', name_fiaf['ontology/item/director'])

write_credit(x, 'Producer', name_fiaf['ontology/item/producer'])

write_credit(x, 'Cinematographer/Director of Photography', name_fiaf['ontology/item/cinematographer'])

write_credit(x, 'Scriptwriter', name_fiaf['ontology/item/screenwriter'])

write_credit(x, 'Music Composer', name_fiaf['ontology/item/composer'])

print(len(graph))

# write events

for x in data:

work_id = x['@xl:href'].split('/')[-1]

work = name_loc[f"resource/work/{work_id}"]

date_data = pydash.get(x, 'objectDates.Date-Year')

for y in [a for a in single_list(date_data) if pydash.get(a, 'dateType.@xl:title') == 'Copyright']:

date = pydash.get(y, 'yearFrom')

date += f"-{pydash.get(y, 'monthFrom').zfill(2)}"

date += f"-{pydash.get(y, 'dayFrom').zfill(2)}"

claim_id = make_claim(work, name_fiaf['ontology/property/event'], rdflib.Literal(date))

make_qual(claim_id, name_fiaf['ontology/property/event_type'], name_fiaf['ontology/item/decision_copyright'])

make_qual(claim_id, name_fiaf['ontology/property/country'], name_fiaf['ontology/item/usa'])

reference(claim_id, name_loc['ontology/item/loc'])

print(len(graph))

# write manifestations/items

for x in data:

work_id = x['@xl:href'].split('/')[-1]

work = name_loc[f"resource/work/{work_id}"]

items = list()

for c in [x['components'][y] for y in x['components']]:

c = single_list(c)

for y in c:

items.append(y)

for i in items:

manifestation = name_loc[f"resource/manifestation/{uuid.uuid4()}"]

make_claim(manifestation, name_fiaf['ontology/property/instance_of'], name_fiaf['ontology/item/manifestation'])

make_claim(manifestation, name_fiaf['ontology/property/manifestation_of'], work)

item_id = pydash.get(i, 'itemId')

item = name_loc[f"resource/item/{item_id}"]

make_claim(item, name_fiaf['ontology/property/instance_of'], name_fiaf['ontology/item/item'])

make_claim(item, name_fiaf['ontology/property/item_of'], manifestation)

claim_id = make_claim(item, name_fiaf['ontology/property/held_at'], name_loc['ontology/item/loc'])

reference(claim_id, name_loc['ontology/item/loc'])

claim_id = make_claim(item, name_fiaf['ontology/property/external_id'], rdflib.Literal(item_id))

make_qual(claim_id, name_fiaf['ontology/property/institution'], name_loc['ontology/item/loc'])

reference(claim_id, name_loc['ontology/item/loc'])

for k, v in {'Safety':name_fiaf['ontology/item/film'], 'Nitrate':name_fiaf['ontology/item/film'],

'Digital':name_fiaf['ontology/item/digital'], 'Video':name_fiaf['ontology/item/video_tape'],

'Tape':name_fiaf['ontology/item/sound_tape'], 'Disc':name_fiaf['ontology/item/disc']}.items():

if pydash.get(i, 'itemType.@xl:title') == k:

claim_id = make_claim(item, name_fiaf['ontology/property/carrier'], v)

reference(claim_id, name_loc['ontology/item/loc'])

for k, v in {'16mm':name_fiaf['ontology/item/16mm'], '35mm':name_fiaf['ontology/item/35mm']}.items():

if pydash.get(i, 'gauge.@xl:title') == k:

claim_id = make_claim(item, name_fiaf['ontology/property/specific_carrier'], v)

reference(claim_id, name_loc['ontology/item/loc'])

for k, v in {'Composite Positive':name_fiaf['ontology/item/print'], 'Duplicate Negative Track':name_fiaf['ontology/item/duplicate_negative'],

'Duplicate Negative Picture':name_fiaf['ontology/item/duplicate_negative'], 'Positive Picture':name_fiaf['ontology/item/duplicate_positive']}.items():

if pydash.get(i, 'techCode.@xl:title') == k:

claim_id = make_claim(item, name_fiaf['ontology/property/element'], v)

reference(claim_id, name_loc['ontology/item/loc'])

for k, v in {'Access':name_fiaf['ontology/item/viewing'], 'Preservation Copy':name_fiaf['ontology/item/master'],

'Access/Browsing copy':name_fiaf['ontology/item/viewing'], 'Limited Access':name_fiaf['ontology/item/restricted'],

'Preservation Material':name_fiaf['ontology/item/master']}.items():

if pydash.get(i, 'categoryMaterial.@xl:title') == k:

claim_id = make_claim(item, name_fiaf['ontology/property/access'], v)

reference(claim_id, name_loc['ontology/item/loc'])

for k, v in {'Safety':name_fiaf['ontology/item/acetate'], 'Nitrate':name_fiaf['ontology/item/nitrate']}.items():

if pydash.get(i, 'itemType.@xl:title') == k:

claim_id = make_claim(item, name_fiaf['ontology/property/base'], v)

reference(claim_id, name_loc['ontology/item/loc'])

make_claim(work, name_fiaf['ontology/property/manifestation'], manifestation)

make_claim(manifestation, name_fiaf['ontology/property/item'], item)

print(len(graph))

graph.serialize(destination=str(pathlib.Path.cwd() / 'library_of_congress.ttl'), format="turtle")

print(len(graph))

```

| github_jupyter |

In the previous episode we learned the importance of a hyperparameter for training machine learning models called the [Learning Rate](https://www.bualabs.com/archives/618/learning-rate-deep-learning-how-to-hyperparameter-tuning-ep-1/). If we set the learning rate too low, training is slow, but if we set it too high, training does not converge.

So how do we know which learning rate is best for training our deep neural network?

We will show how to find the best learning rate, where "best" means the largest learning rate that does not yet stop training from converging. The algorithm is simple and direct: run a trial training, keep increasing the learning rate, and check the loss until it becomes abnormally large. This is called the LR Finder (Learning Rate Finder), and it uses the [LR Finder Callback, starting at section 6](#6.-Callbacks).

Once we have collected the relationship between learning rate and loss for our deep neural network, we plot it to analyze and find the best learning rate.

# 0. Magic

```

%load_ext autoreload

%autoreload 2

%matplotlib inline

```

# 1. Import

```

import torch

from torch import tensor

from torch.nn import *

import torch.nn.functional as F

from torch.utils.data import *

from fastai import datasets

from fastai.metrics import accuracy

import pickle, gzip, math, torch, re

from IPython.core.debugger import set_trace

import matplotlib.pyplot as plt

```

# 2. Data

```

class Dataset(Dataset):
    def __init__(self, x, y):
        self.x, self.y = x, y

    def __len__(self):
        return len(self.x)

    def __getitem__(self, i):
        return self.x[i], self.y[i]

MNIST_URL='http://deeplearning.net/data/mnist/mnist.pkl'

def get_data():
    path = datasets.download_data(MNIST_URL, ext='.gz')
    with gzip.open(path, 'rb') as f:
        ((x_train, y_train), (x_valid, y_valid), _) = pickle.load(f, encoding='latin-1')
    return map(tensor, (x_train, y_train, x_valid, y_valid))

x_train, y_train, x_valid, y_valid = get_data()

def normalize(x, m, s):
    return (x-m)/s

from typing import *

def listify(o):
    if o is None: return []
    if isinstance(o, list): return o
    if isinstance(o, str): return [o]
    if isinstance(o, Iterable): return list(o)
    return [o]

_camel_re1 = re.compile('(.)([A-Z][a-z]+)')
_camel_re2 = re.compile('([a-z0-9])([A-Z])')

def camel2snake(name):
    s1 = re.sub(_camel_re1, r'\1_\2', name)
    return re.sub(_camel_re2, r'\1_\2', s1).lower()

# Normalize both sets with the training set statistics
train_mean, train_std = x_train.mean(), x_train.std()
x_train = normalize(x_train, train_mean, train_std)
x_valid = normalize(x_valid, train_mean, train_std)

nh, bs = 100, 256
n, m = x_train.shape
c = (y_train.max()+1).numpy()
loss_func = F.cross_entropy

train_ds, valid_ds = Dataset(x_train, y_train), Dataset(x_valid, y_valid)
train_dl, valid_dl = DataLoader(train_ds, bs), DataLoader(valid_ds, bs)

```

# 3. DataBunch

```

class DataBunch():
    def __init__(self, train_dl, valid_dl, c=None):
        self.train_dl,self.valid_dl,self.c = train_dl,valid_dl,c

    @property
    def train_ds(self): return self.train_dl.dataset

    @property
    def valid_ds(self): return self.valid_dl.dataset

data = DataBunch(train_dl, valid_dl, c)

```

# 4. Model

```

lr = 0.03
epoch = 10

def get_model():
    # loss function
    loss_func = F.cross_entropy
    model = Sequential(Linear(m, nh), ReLU(), Linear(nh,c))
    return model, loss_func

class Learner():
    def __init__(self, model, opt, loss_func, data):
        self.model, self.opt, self.loss_func, self.data = model, opt, loss_func, data

```

# 5. Training Loop

A training loop that supports callbacks:

```

class Runner():
    def __init__(self, cbs=None, cb_funcs=None):
        cbs = listify(cbs)
        for cbf in listify(cb_funcs):
            cb = cbf()
            setattr(self, cb.name, cb)
            cbs.append(cb)
        self.stop, self.cbs = False, [TrainEvalCallback()]+cbs

    @property
    def opt(self): return self.learn.opt

    @property
    def model(self): return self.learn.model

    @property
    def loss_func(self): return self.learn.loss_func

    @property
    def data(self): return self.learn.data

    def one_batch(self, xb, yb):
        try:
            self.xb, self.yb = xb, yb
            self('begin_batch')
            self.pred = self.model(xb)
            self('after_pred')
            self.loss = self.loss_func(self.pred, yb)
            self('after_loss')
            if not self.in_train: return
            self.loss.backward()
            self('after_backward')
            self.opt.step()
            self('after_step')
            self.opt.zero_grad()
        except CancelBatchException: self('after_cancel_batch')
        finally: self('after_batch')

    def all_batches(self, dl):
        self.iters = len(dl)
        try:
            for xb, yb in dl:
                self.one_batch(xb, yb)
        except CancelEpochException: self('after_cancel_epoch')

    def fit(self, epochs, learn):
        self.epochs, self.learn, self.loss = epochs, learn, tensor(0.)
        try:
            for cb in self.cbs: cb.set_runner(self)
            self('begin_fit')
            for epoch in range(epochs):
                self.epoch = epoch
                if not self('begin_epoch'): self.all_batches(self.data.train_dl)
                with torch.no_grad():
                    if not self('begin_validate'): self.all_batches(self.data.valid_dl)
                self('after_epoch')
        except CancelTrainException: self('after_cancel_train')
        finally:
            self('after_fit')
            self.train = None

    def __call__(self, cb_name):
        # return True = Cancel, return False = Continue (Default)
        res = False
        # check if at least one True return True
        for cb in sorted(self.cbs, key=lambda x: x._order): res = res or cb(cb_name)
        return res

class Callback():
    _order = 0

    def set_runner(self, run): self.run = run

    # Attributes not found on the callback are looked up on the Runner
    def __getattr__(self, k): return getattr(self.run, k)

    @property
    def name(self):
        name = re.sub(r'Callback$', '', self.__class__.__name__)
        return camel2snake(name or 'callback')

    def __call__(self, cb_name):
        f = getattr(self, cb_name, None)
        if f and f(): return True
        return False

class TrainEvalCallback(Callback):
    def begin_fit(self):
        self.run.n_epochs = 0.
        self.run.n_iter = 0

    def begin_epoch(self):
        self.run.n_epochs = self.epoch
        self.model.train()
        self.run.in_train=True

    def after_batch(self):
        if not self.in_train: return
        self.run.n_epochs += 1./self.iters
        self.run.n_iter += 1

    def begin_validate(self):
        self.model.eval()
        self.run.in_train=False

class CancelTrainException(Exception): pass

class CancelEpochException(Exception): pass

class CancelBatchException(Exception): pass

```

We will add a method, `Recorder.plot`, to plot the relationship between learning rate and loss,

and add parameters to `plot_lr` and `plot_loss` as follows:

* pgid = Parameter Group ID. We split the model's parameters into 3 groups with different learning rates, which will be explained later. For now use -1, meaning the last group.

* skip_last = the number of iterations, counted from the end, to leave out of the plot. The last iterations usually have a very large loss, which makes the graph hard to read.

```

class Recorder(Callback):
    def begin_fit(self):
        # one list of learning rates per parameter group
        self.lrs = [[] for _ in self.opt.param_groups]
        self.losses = []

    def after_batch(self):
        if not self.in_train: return
        for pg, lr in zip(self.opt.param_groups, self.lrs): lr.append(pg['lr'])
        self.losses.append(self.loss.detach().cpu())

    def plot_lr(self, pgid=-1): plt.plot(self.lrs[pgid])

    def plot_loss(self, skip_last=0): plt.plot(self.losses[:len(self.losses)-skip_last])

    def plot(self, skip_last=0, pgid=-1):
        losses = [o.item() for o in self.losses]
        lrs = self.lrs[pgid]
        n = len(losses)-skip_last
        plt.xscale('log')
        plt.plot(lrs[:n], losses[:n])

```

# 6. Callbacks

## 6.1 LR_Find (Learning Rate Finder Callback)

We create a callback that, during training, increases lr (the learning rate) exponentially on every batch, starting at min_lr and never exceeding max_lr.

After the loss of each batch is computed, we check whether it is more than 10 times the smallest loss seen so far. If it is, the learning rate is already too large and training can stop. If not, we store the best loss and keep training.

max_iter = how many iterations (mini-batches) to train, min_lr = the lowest learning rate, max_lr = the learning rate ceiling

```

class LR_Find(Callback):
    _order = 1

    def __init__(self, max_iter=100, min_lr=1e-6, max_lr=10):
        self.max_iter, self.min_lr, self.max_lr = max_iter, min_lr, max_lr
        self.best_loss = 1e9

    def begin_batch(self):
        if not self.in_train: return
        # fraction of the way through the search, from 0 to 1
        pos = self.n_iter/self.max_iter
        lr = self.min_lr * (self.max_lr/self.min_lr) ** pos
        for pg in self.opt.param_groups: pg['lr'] = lr

    def after_loss(self):
        if self.n_iter>=self.max_iter or self.loss > self.best_loss*10:
            raise CancelTrainException()
        if self.loss < self.best_loss: self.best_loss = self.loss

```
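
To see what the exponential schedule looks like in isolation, the sketch below evaluates the same formula as `LR_Find.begin_batch` without any training. The function name `lr_at` is a hypothetical helper introduced here for illustration; the default values mirror those of `LR_Find`.

```python
import math

def lr_at(n_iter, max_iter=100, min_lr=1e-6, max_lr=10):
    """Learning rate at iteration n_iter, using the same formula as LR_Find.begin_batch."""
    pos = n_iter / max_iter
    return min_lr * (max_lr / min_lr) ** pos

# The schedule starts at min_lr and reaches max_lr at max_iter
for i in (0, 25, 50, 75, 100):
    print(i, lr_at(i))

# Each step multiplies the learning rate by the same constant factor
step_factor = lr_at(1) / lr_at(0)
print("factor per iteration:", step_factor)
```

Because the factor per iteration is constant, the learning rates are evenly spaced on a log scale, which is why `Recorder.plot` uses a logarithmic x-axis.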

# 7. Train

Let's try training with two callbacks: LR_Find to search for the learning rate, and Recorder to record the learning rate and loss during training.

```

model, loss_func = get_model()

opt = torch.optim.SGD(model.parameters(), lr=lr)

learn = Learner(model, opt, loss_func, data)

run = Runner(cb_funcs=[LR_Find, Recorder])

run.fit(5, learn)

```

# 8. Interpret

Plot the learning rate against the iteration number to check that it increases exponentially between min_lr and max_lr.

```

run.recorder.plot_lr()

```

Plot the relationship between learning rate and loss. The loss falls faster and faster as we increase the learning rate, but only down to a certain point, after which it shoots up rapidly.

```

run.recorder.plot(skip_last=0)

run.recorder.plot(skip_last=2)

```

Pick the learning rate at the lowest loss just before the loss shoots up (here 1e-1), then step back by a factor of 10. In this case that gives lr = 1e-2.

After training with lr = 1e-2, we can also try values 10 times larger and smaller, 1e-1 and 1e-3, for comparison.
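
The rule of thumb above can be sketched in a few lines. This is only an illustration, not the notebook's method: `suggest_lr` is a hypothetical helper, and the `lrs` and `losses` lists are synthetic values made up to resemble the shape of an LR Finder curve, not recorded results.

```python
import math

def suggest_lr(lrs, losses, factor=10):
    """Hypothetical helper: take the learning rate at the lowest loss and divide by `factor`."""
    best_idx = min(range(len(losses)), key=lambda i: losses[i])
    return lrs[best_idx] / factor

# Synthetic curve: loss falls as lr grows, then blows up at the last point
lrs = [1e-4, 1e-3, 1e-2, 1e-1, 1.0]
losses = [2.3, 2.2, 1.5, 0.9, 8.0]

lr = suggest_lr(lrs, losses)
print("suggested lr:", lr)

# Neighbouring candidates to compare against, 10 times larger and smaller
print("also try:", lr * 10, lr / 10)
```

With a real run, the inputs would come from the recorder (for example `run.recorder.lrs[-1]` and the recorded losses converted to floats). Reading the plot by eye is still worthwhile, because a noisy curve can put the minimum in a misleading place.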

# Credit

* https://course.fast.ai/videos/?lesson=10

* http://yann.lecun.com/exdb/mnist/


| github_jupyter |

# Effects of Loan Characteristics on Borrower APR

## by Mohammad Aamir

## Investigation Overview

> In this investigation, I wanted to look at the characteristics of loans that could be used to predict their borrower APR. The main focus was on the borrower's Prosper rating, the original loan amount, and the term.

## Dataset Overview

> The Prosper loan data set contains 83982 complete loan records for loans issued from 2007 through 2011, with 15 variables. Each row contains information on a loan, including loan amount, BorrowerAPR, borrower rate, Term, borrower income, Investors, and more. 14793 data points were removed from the analysis due to inconsistencies or missing information.

```

# import all packages and set plots to be embedded inline

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sb

%matplotlib inline

# suppress warnings from final output

import warnings

warnings.simplefilter("ignore")

# load in the dataset into a pandas dataframe

loans_df= pd.read_csv('prosperLoanData.csv')

# selecting the interesting features

loans= loans_df.loc[:,('CreditGrade', 'Term', 'BorrowerAPR','BorrowerRate', 'ProsperRating (Alpha)',

'ListingCategory (numeric)', 'BorrowerState', 'EmploymentStatusDuration', 'IsBorrowerHomeowner',

'AmountDelinquent','LoanOriginalAmount', 'DebtToIncomeRatio', 'IncomeVerifiable','StatedMonthlyIncome',

'Investors','AvailableBankcardCredit','MonthlyLoanPayment')]

#Wrangling

#Make a copy of the data frame

loans_clean = loans.copy()

# fill NA with empty strings

loans_clean['ProsperRating (Alpha)'] = loans_clean['ProsperRating (Alpha)'].fillna("")

loans_clean['CreditGrade'] = loans_clean['CreditGrade'].fillna("")

# combine credit ratings

loans_clean['Com_ProsperRating']= loans_clean.CreditGrade+ loans_clean['ProsperRating (Alpha)']

# drop rows with no credit ratings

loans_clean= loans_clean.query('Com_ProsperRating != "" & Com_ProsperRating != "NC"')

# drop unnecessary columns

loans_clean.drop(columns= ['ProsperRating (Alpha)', 'CreditGrade'], inplace= True)

# keep only rows where AmountDelinquent and BorrowerState are present,

# since filling in these missing values is not possible.

loans_clean = loans_clean[loans_clean['AmountDelinquent'].notnull()]

loans_clean = loans_clean[loans_clean['BorrowerState'].notnull()]

# filling in missing quantitative values as mean of the columns

loans_clean['BorrowerAPR'] = loans_clean['BorrowerAPR'].fillna(loans_clean['BorrowerAPR'].mean())

loans_clean['EmploymentStatusDuration'] = loans_clean['EmploymentStatusDuration'].fillna(loans_clean['EmploymentStatusDuration'].mean())

loans_clean['DebtToIncomeRatio'] = loans_clean['DebtToIncomeRatio'].fillna(loans_clean['DebtToIncomeRatio'].mean())

loans_clean['AvailableBankcardCredit'] = loans_clean['AvailableBankcardCredit'].fillna(loans_clean['AvailableBankcardCredit'].mean())

#Replace listing category number by name

lits_cat_name= {0: 'Not Available', 1 : 'Debt Consolidation', 2 : 'Home Improvement', 3 : 'Business', 4 : 'Personal Loan',\

5 : 'Student Use', 6 : 'Auto', 7 : 'Other', 8 : 'Baby&Adoption', 9 : 'Boat', 10 : 'Cosmetic Procedure',\

11 : 'Engagement Ring', 12 : 'Green Loans', 13 : 'Household Expenses', 14 : 'Large Purchases',\

15 : 'Medical/Dental', 16 : 'Motorcycle', 17 : 'RV', 18 : 'Taxes', 19 : 'Vacation', 20 : 'Wedding Loans'}

loans_clean['ListingCategory (numeric)']= loans_clean['ListingCategory (numeric)'].map(lits_cat_name)

loans_clean= loans_clean.rename(columns={'ListingCategory (numeric)': 'ListingCategory'})

# Convert ProsperRating to ordinal categorical

ordinal_var_dict= {'Com_ProsperRating': ['HR','E','D','C', 'B', 'A', 'AA']}

for var in ordinal_var_dict:
    ordered_var= pd.api.types.CategoricalDtype(ordered= True, categories= ordinal_var_dict[var])
    loans_clean[var]= loans_clean[var].astype(ordered_var)

#Adjust datatype for all other categorical columns

loans_clean.BorrowerState= loans_clean.BorrowerState.astype('category')

loans_clean.ListingCategory = loans_clean.ListingCategory.astype('category')

# Getting the lowest and highest value for stated monthly income

Q1 = loans_clean['StatedMonthlyIncome'].quantile(0.25)

Q3 = loans_clean['StatedMonthlyIncome'].quantile(0.75)

IQR = Q3 - Q1

fence_low = Q1-1.5*IQR

fence_high = Q3+1.5*IQR

#Removing the outliers

loans_clean = loans_clean.loc[(loans_clean['StatedMonthlyIncome'] > fence_low) & (loans_clean['StatedMonthlyIncome'] < fence_high)]

loans_clean.shape

```
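
The outlier step above uses the 1.5 × IQR rule (Tukey fences). Below is a minimal, dependency-free sketch of the same rule on made-up incomes. The helper `iqr_fences` and the sample values are hypothetical and only illustrate the idea; `method="inclusive"` is chosen because it interpolates linearly between data points, as the default of `Series.quantile` does.

```python
from statistics import quantiles

def iqr_fences(values, k=1.5):
    """Return the (low, high) Tukey fences for a list of numbers."""
    q1, _, q3 = quantiles(values, n=4, method="inclusive")
    iqr = q3 - q1
    return q1 - k * iqr, q3 + k * iqr

# Hypothetical monthly incomes; the last value is an obvious outlier.
incomes = [2500, 3000, 3200, 4000, 4500, 5000, 6000, 50000]

low, high = iqr_fences(incomes)

# Keep only values strictly inside the fences, as in the cleaning cell.
kept = [x for x in incomes if low < x < high]
print(low, high, kept)
```

With these values, only the 50000 entry falls outside the fences and is dropped.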

## (Distribution of Borrower APR)

> The distribution of Borrower APR is roughly normal, with the main peak between 13 and 23 percent and a smaller secondary peak around 35 percent.

```

bins= np.arange(0, loans_clean.BorrowerAPR.max()+0.05, 0.01)

plt.figure(figsize=[10, 7])

plt.hist(data= loans_clean, x= 'BorrowerAPR', bins = bins);

plt.xlabel('Borrower APR');

plt.title('Borrower APR Distribution')

```

## (Borrower APR vs. Prosper Rating)

> Borrower APR decreases as the Prosper rating improves: borrowers with the best ratings have the lowest APR. This suggests that the Prosper rating has a strong effect on borrower APR.

```

#Violin plot without datapoints in the violin interior

base_color = sb.color_palette()[0]

plt.figure(figsize=[8, 7])

sb.violinplot(data=loans_clean, x='Com_ProsperRating', y='BorrowerAPR', color=base_color, inner=None)

plt.xlabel('Prosper Rating')

plt.ylabel('Borrower APR')

plt.title('Distribution of BorrowerAPR by Prosper rate')

```
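
As a numeric cross-check of the violin plot, one can compare the median APR per rating and test whether it falls from the worst rating to the best. The sketch below uses made-up rows, not the Prosper data. On the real frame, `loans_clean.groupby('Com_ProsperRating')['BorrowerAPR'].median()` should give the equivalent summary.

```python
from statistics import median

# Rating order from worst to best, as in the ordered categorical above.
ratings_order = ['HR', 'E', 'D', 'C', 'B', 'A', 'AA']

# Hypothetical (rating, APR) rows for illustration only.
rows = [
    ('HR', 0.36), ('HR', 0.34), ('E', 0.31), ('E', 0.33),
    ('D', 0.28), ('C', 0.23), ('C', 0.25), ('B', 0.19),
    ('A', 0.14), ('A', 0.16), ('AA', 0.09), ('AA', 0.11),
]

# Group the APR values by rating.
by_rating = {}
for rating, apr in rows:
    by_rating.setdefault(rating, []).append(apr)

# Median APR per rating, in rating order.
medians = [median(by_rating[r]) for r in ratings_order if r in by_rating]

# True when each better rating has a strictly lower median APR.
is_decreasing = all(a > b for a, b in zip(medians, medians[1:]))
print(medians, is_decreasing)
```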

## (Borrower APR vs. Loan Original Amount)

> LoanOriginalAmount and BorrowerAPR are negatively correlated: the larger the loan amount, the lower the APR.

```

plt.figure(figsize=[8, 7])

sb.regplot(data = loans_clean, x = 'LoanOriginalAmount', y = 'BorrowerAPR', x_jitter=0.04, scatter_kws={'alpha':.01})

plt.title('Correlation between Loan original amount and Borrower APR');

```
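
The slope of the regression line can be backed by a single number, the Pearson correlation coefficient. The sketch below computes it by hand on made-up values to show what a negative coefficient looks like. The helper `pearson_r` and the data are hypothetical. On the real frame, `loans_clean['LoanOriginalAmount'].corr(loans_clean['BorrowerAPR'])` returns the same statistic.

```python
from math import sqrt

def pearson_r(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (spread_x * spread_y)

# Hypothetical loan amounts and APRs: larger loans, lower APR.
amounts = [2000, 5000, 10000, 15000, 25000]
aprs = [0.32, 0.28, 0.22, 0.18, 0.12]

r = pearson_r(amounts, aprs)
print(round(r, 3))
```

A value close to -1 means a strong negative linear relationship. A value close to 0 would mean the two variables are not linearly related.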

## (Borrower APR by Prosper rating and Term)

> Interestingly, the borrower APR increases as the term decreases for borrowers with B, A, and AA ratings. But for borrowers with an E rating, the APR decreases as the term increases.

```

fig = plt.figure(figsize = [8,6])

ax = sb.pointplot(data = loans_clean, x = 'Com_ProsperRating', y = 'BorrowerAPR', hue = 'Term',

palette = 'Blues', linestyles = '', dodge = 0.4, ci='sd')

plt.title('Borrower APR across rating and term')

plt.ylabel('Borrower APR')

ax.set_yticklabels([],minor = True);

```

| github_jupyter |

```

import pandas as pd

import numpy as np

from sklearn.feature_extraction.text import CountVectorizer,TfidfVectorizer

import re

from sklearn.model_selection import train_test_split

from sklearn.metrics import precision_score, recall_score, roc_auc_score, classification_report

from sklearn.linear_model import LogisticRegression

import warnings

warnings.filterwarnings('ignore')

import os

import sys

module_path = os.path.abspath(os.path.join('..'))

if module_path not in sys.path:
    sys.path.append(module_path)

from wcbtfidf import Wcbtfidf

df = pd.read_csv('sentiment140_data.csv',names=('target','id','date','flag','username','tweet'))

df.shape

df.head()

# Checking unique ids

print(df['id'].nunique(),df.shape[0])

# Removing duplicate ids

df.drop_duplicates(subset=['id'],keep='first',inplace=True)

print(df['id'].nunique(),df.shape[0])

# Target data distribution

df['target'].value_counts(normalize=True)

# To test our hypothesis let us convert this into an imbalanced problem with fewer negative samples

# We will take a total of 500,000 data points, with 450,000 belonging to class 4 (positive) and 50,000 to class 0 (negative)

negative_samples = df[df['target'] == 0].sample(n=50000,random_state=60)

positive_samples = df[df['target'] == 4].sample(n=450000,random_state=60)

final_df = pd.concat([negative_samples,positive_samples]).sample(frac=1,random_state=60) # A sample operation with full data is

# performed to shuffle the data points

final_df['target'] = final_df['target'].map({0:0,4:1})

final_df['target'].value_counts(normalize=True)

def preprocess_text(text):
    # lowercase, replace non-alphanumeric characters with spaces, collapse repeated whitespace
    text = text.lower()
    text = re.sub(r"[^a-z0-9]"," ",text)
    text = re.sub(r"\s+"," ",text)
    return text

final_df['clean_text'] = final_df['tweet'].apply(preprocess_text)

print(final_df.shape)

final_df = final_df[['clean_text','target']]

print(final_df.shape)

xtrain,xtest,ytrain,ytest = train_test_split(final_df['clean_text'],final_df['target'],test_size=0.25,random_state=60,stratify=final_df['target'])

print(xtrain.shape,ytrain.shape)

print(xtest.shape,ytest.shape)

# Distribution check in train and test

print(ytrain.value_counts(normalize=True))

print(ytest.value_counts(normalize=True))

def check_hypothesis(xtrain,xtest,ytrain,ytest,max_feat,model):
    # Baseline: plain TF-IDF features
    print('Running base version')
    tfidf = TfidfVectorizer(max_features=max_feat,stop_words='english')
    train_mat = tfidf.fit_transform(xtrain).toarray()
    # get_feature_names_out() (scikit-learn >= 1.0) returns the terms in column order;
    # iterating over vocabulary_ does not
    feature_names = tfidf.get_feature_names_out()
    train_df = pd.DataFrame(train_mat,columns=feature_names)
    test_df = pd.DataFrame(tfidf.transform(xtest).toarray(),columns=feature_names)
    model.fit(train_df,ytrain)
    preds = model.predict(test_df)
    print(f'Precision is {precision_score(ytest,preds)}')
    print(f'Recall is {recall_score(ytest,preds)}')
    print(f'ROC AUC is {roc_auc_score(ytest,preds)}')
    print(classification_report(ytest,preds))

    # Class-based variant: Wcbtfidf features
    print('Running my version')
    wcbtfidf = Wcbtfidf(max_features=max_feat)
    wcbtfidf.fit(xtrain,ytrain)
    train_df = wcbtfidf.transform(xtrain)
    test_df = wcbtfidf.transform(xtest)
    model.fit(train_df,ytrain)
    preds = model.predict(test_df)
    print(f'Precision is {precision_score(ytest,preds)}')
    print(f'Recall is {recall_score(ytest,preds)}')
    print(f'ROC AUC is {roc_auc_score(ytest,preds)}')
    print(classification_report(ytest,preds))

    return wcbtfidf,tfidf

model = LogisticRegression()

wcbtfidf_object,tfidf_object = check_hypothesis(xtrain,xtest,ytrain,ytest,300,model)

```

## ANALYSIS

Negative tweets are the minority class. Let us check whether the wcbtfidf vocabulary captures more words associated with the negative class than the tfidf vocabulary does.

```

# Length Comparison

tfidf_vocab = tfidf_object.vocabulary_

wcbtfidf_vocab = wcbtfidf_object.combine_vocab

print(len(wcbtfidf_vocab),len(tfidf_vocab))

# Words that are present in tfidf vocab but not in wcbtfidf

print(list(set(tfidf_vocab) - set(wcbtfidf_vocab)))

```

Most of these words are neutral, and the rest are positive, such as **rock, enjoying, loved, wonderful**

```

# Words that are present in wcbtfidf but not in tfidf

print(list(set(wcbtfidf_vocab) - set(tfidf_vocab)))

```

Here as well there are neutral words, but the rest lean towards the negative end, such as **stupid, sucks, ugh, hurts, shit, headache, poor, least, missing**
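
The effect seen in the two set differences can be reproduced on a toy corpus. The sketch below is not the Wcbtfidf algorithm itself; it only illustrates, under simplified assumptions, why a vocabulary picked by global frequency tends to miss minority-class words, while a vocabulary built per class keeps some of them. The corpus, the helper `top_k`, and the budget sizes are all hypothetical.

```python
from collections import Counter

# Hypothetical toy corpus: label 1 is the majority class, label 0 the minority.
docs = [
    ("love this great day", 1),
    ("great day love it", 1),
    ("love great fun", 1),
    ("great fun day", 1),
    ("fun great love", 1),
    ("ugh headache sucks", 0),
    ("ugh headache", 0),
]

def top_k(texts, k):
    """Return the k most frequent whitespace-separated tokens as a set."""
    counts = Counter(tok for text in texts for tok in text.split())
    return {tok for tok, _ in counts.most_common(k)}

# One vocabulary of size 4 chosen over the whole corpus.
global_vocab = top_k([text for text, _ in docs], 4)

# The same budget split evenly: the top 2 tokens from each class.
per_class_vocab = set()
for label in (0, 1):
    per_class_vocab |= top_k([text for text, y in docs if y == label], 2)

# Tokens that only the per-class vocabulary keeps.
print(sorted(per_class_vocab - global_vocab))
```

Here the global vocabulary contains only majority-class words, while the per-class vocabulary keeps `ugh` and `headache`, mirroring the negative words that appear only in the wcbtfidf vocabulary above.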

| github_jupyter |

```

import opsimsummary as oss

import os

example_dir = os.path.join(oss.__path__[0], 'example_data')

dbName = os.path.join(example_dir, 'enigma_1189_micro.db')

hdfName = os.path.join(example_dir, 'enigma_1189_micro.hdf')

from sqlalchemy import create_engine

from opsimsummary import OpSimOutput

```

# From the OpSim Output (sqlite database)

```

opout_hdf = OpSimOutput.fromOpSimHDF(hdfName=hdfName, subset='Combined')

opout = OpSimOutput.fromOpSimDB(dbname=dbName, subset='_all')

opout.proposalTable

opout.propIDDict

opout.summary.propID.unique()

opout.propIDVals('combined', opout.propIDDict, opout.proposalTable)

opout.propIds

opout.subset

opout.summary.head()

opout.summary[['fieldID', 'fieldRA', 'fieldDec', 'filter', 'expMJD', 'ditheredRA', 'ditheredDec']]

print(len(opout.summary))

# Write to hdf file

opout.writeOpSimHDF('/tmp/opsim_small.hdf')