code

stringlengths 38

801k

| repo_path

stringlengths 6

263

|

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ### tuple and default operations

# +

tuples1 = (1,8,33,5,3,15,9,4,10)

tuples2 = (7,2,4,2,1,6,4,7,9)

print(tuples1)

## count function

print("Count of the element in tuple : ",tuples1.count(5))

## index function

print('Index of the element : ', tuples1.index(15))

## length function

print('length of the tuple : ',len(tuples1))

## minimum function

print('minimum of the tuple : ',min(tuples1))

## Concatenation function

tuple3 = tuples1 + tuples2

print("Concatenation tuple : ",tuple3)

# -
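# The built-in tuple operations above can be combined with a little error handling. The following is a minimal sketch, assuming hypothetical sample data (`values`) and a hypothetical helper (`safe_index`) that are not part of the original notebook: `index` raises `ValueError` when the element is missing, so the helper returns `None` instead.

```python
# Hypothetical sample data, mirroring the style of the cell above.
values = (1, 8, 33, 5, 3, 15, 9, 4, 10)

def safe_index(tup, item):
    """Return the first index of item in tup, or None if it is absent."""
    try:
        return tup.index(item)
    except ValueError:
        return None

largest = max(values)            # counterpart of min()
ordered = tuple(sorted(values))  # sorted() returns a list, so convert back
missing = safe_index(values, 99)

print(largest, ordered, missing)
```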

|

tuple_and_operations.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

from shapely import geometry

from pulp import *

import pandas as pd

import geopandas as gpd

import numpy as np

from geopy.distance import geodesic

import matplotlib.pyplot as plt

from matplotlib.collections import LineCollection

import json

from area import area

import pickle

from vincenty import *

from shapely.strtree import STRtree

import networkx as nx

# -

ne = gpd.read_file('./../../../data/ne_10m_countries.gpkg')

ne.plot()

mp = ne.unary_union

len(mp.geoms)  # number of polygons; len() on a multi-part geometry is not supported in Shapely 2.x

import pickle

pickle.dump(mp,open('./../../../data/world_mp.pkl','wb'))

pulp.pulpTestAll()

dist = np.arange(0,10,0.1)

mw = np.array([ii/10 for ii in range(10,0,-1)] + [ii/10 for ii in range(11)])

mw

dist2d = np.stack([dist]*mw.shape[0])

mw2d = np.stack([mw]*dist.shape[0])

Z = dist2d**2

mw2d

alpha=0.5

# case: B=1

E_mw = mw2d

# ## Toy Model

pts_A = np.random.rand(10*2).reshape(10,2)*10

pts_B = np.random.rand(3*2).reshape(3,2)*10

pts_A = [geometry.Point(pt[0],pt[1]) for pt in pts_A.tolist()]

pts_B = [geometry.Point(pt[0],pt[1]) for pt in pts_B.tolist()]

for ii_A,pt in enumerate(pts_A):
    pt.MW = np.random.rand(1)*5
    pt.name='A_'+str(ii_A)

for ii_B,pt in enumerate(pts_B):
    pt.MW = np.random.rand(1)*40
    pt.name='B_'+str(ii_B)

def milp_geodesic_network_satisficing(pts_A, pts_B, alpha,v=False):
    pts_A_dict = {pt.name:pt for pt in pts_A}
    pts_B_dict = {pt.name:pt for pt in pts_B}
    A_names = [pt.name for pt in pts_A]
    B_names = [pt.name for pt in pts_B]
    Z = {pt.name:{} for pt in pts_A}
    MW_A = {pt.name:pt.MW for pt in pts_A}
    MW_B = {pt.name:pt.MW for pt in pts_B}

    if v:
        print ('generating Z..')

    # squared geodesic distance (km^2) for every A-B pair; geodesic() expects (lat, lon)
    for pt_A in pts_A:
        for pt_B in pts_B:
            Z[pt_A.name][pt_B.name]=(geodesic([pt_A.y,pt_A.x], [pt_B.y,pt_B.x]).kilometers)**2

    sum_Z = sum([Z[A_name][B_name] for A_name in A_names for B_name in B_names])

    ### declare model
    model = LpProblem("Network Satisficing Problem",LpMinimize)

    ### Declare variables
    # B -> Bipartite Network
    B = LpVariable.dicts("Bipartite",(A_names,B_names),0,1,LpInteger)
    # abs_diffs -> absolute value forcing variable
    abs_diffs = LpVariable.dicts("abs_diffs",B_names,cat='Continuous')

    ### Declare constraints
    # Constraint - abs diffs edges
    for B_name in B_names:
        model += abs_diffs[B_name] >= (MW_B[B_name] - lpSum([MW_A[A_name]*B[A_name][B_name] for A_name in A_names]))/MW_B[B_name],"abs forcing pos {}".format(B_name)
        model += abs_diffs[B_name] >= -1 * (MW_B[B_name] - lpSum([MW_A[A_name]*B[A_name][B_name] for A_name in A_names]))/MW_B[B_name], "abs forcing neg {}".format(B_name)

    # Constraint - bipartite edges
    for A_name in A_names:
        model += lpSum([B[A_name][B_name] for B_name in B_names]) <= 1,"Bipartite Edges {}".format(A_name)

    ### Affine equations
    # Impedance error
    E_z = sum([Z[A_name][B_name]*B[A_name][B_name] for A_name in A_names for B_name in B_names])/sum_Z
    # mw error
    E_mw = sum([abs_diffs[B_name] for B_name in B_names])/len(B_names)

    ### Objective function
    model += E_z*alpha + (1-alpha)*E_mw, "Loss"

    if v:
        print ('solving model...')

    model.solve(pulp.GUROBI_CMD())

    if v:
        print(pulp.LpStatus[model.status])

    return model, B, E_z, E_mw, Z
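# For very small instances, the loss that the MILP minimises can be checked by exhaustive enumeration. The following is a minimal sketch, not the notebook's method: it assumes a hypothetical helper `brute_force_matching` and hypothetical toy inputs, uses only the standard library, and evaluates `alpha * E_z + (1 - alpha) * E_mw` for every way of assigning each A point to at most one B point. The search space grows exponentially, so it is only useful as a sanity check.

```python
from itertools import product

def brute_force_matching(Z, MW_A, MW_B, alpha):
    """Enumerate every assignment of A points to at most one B point and
    return (best_loss, best_assignment) for alpha * E_z + (1 - alpha) * E_mw."""
    A_names = list(MW_A)
    B_names = list(MW_B)
    sum_Z = sum(Z[a][b] for a in A_names for b in B_names)
    best_loss, best_assignment = None, None
    # None means "this A point is left unmatched".
    for choice in product([None] + B_names, repeat=len(A_names)):
        assignment = dict(zip(A_names, choice))
        # Normalised impedance of the selected edges.
        E_z = sum(Z[a][b] for a, b in assignment.items() if b is not None) / sum_Z
        # Mean relative capacity mismatch per B point.
        E_mw = sum(
            abs(MW_B[b] - sum(MW_A[a] for a in A_names if assignment[a] == b)) / MW_B[b]
            for b in B_names
        ) / len(B_names)
        loss = alpha * E_z + (1 - alpha) * E_mw
        if best_loss is None or loss < best_loss:
            best_loss, best_assignment = loss, assignment
    return best_loss, best_assignment

# Hypothetical toy instance: two 5 MW observations near one 10 MW record.
Z_toy = {"A_0": {"B_0": 1.0}, "A_1": {"B_0": 1.0}}
MW_A_toy = {"A_0": 5.0, "A_1": 5.0}
MW_B_toy = {"B_0": 10.0}

loss_low, match_low = brute_force_matching(Z_toy, MW_A_toy, MW_B_toy, alpha=0.2)
loss_high, match_high = brute_force_matching(Z_toy, MW_A_toy, MW_B_toy, alpha=0.9)
print(match_low, match_high)
```

# With a small `alpha` the capacity term dominates and both observations are matched; with a large `alpha` the distance term dominates and nothing is matched.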

model, B, E_z, E_mw, Z = milp_geodesic_network_satisficing(pts_A, pts_B, 0.5, v=True)

pts_A_dict = {pt.name:pt for pt in pts_A}

pts_B_dict = {pt.name:pt for pt in pts_B}

E_z.value()

for e in E_mw:
    print (e.name, e.value())

for kk, vv in B.items():
    for kk2, vv2 in vv.items():
        print (kk,kk2,vv2.value())

# +

### visualise

fig, axs = plt.subplots(1,1,figsize=(12,12))

for pt in pts_A:
    axs.scatter(pt.x, pt.y, s=pt.MW*10,c='g')

for pt in pts_B:
    axs.scatter(pt.x,pt.y,s=pt.MW*10,c='r')

lines = []

for k1, v in B.items():
    for k2,v2 in v.items():
        if v2.varValue>0:
            lines.append([[pts_A_dict[k1].x,pts_A_dict[k1].y],[pts_B_dict[k2].x,pts_B_dict[k2].y]])

lc = LineCollection(lines, color='grey')

axs.add_collection(lc)

plt.show()

# -

# ## To data

# ### wrangle data

fts = json.load(open('./results_fcs_v1.3/ABCD_simplified.geojson','r'))

GB = json.load(open('GB_v1.3.geojson','r'))

wri = pd.read_csv('global_power_plant_database.csv')

ws = pd.read_csv('wri_mixin.csv')

GB_pts = []

for ii_f,ft in enumerate(GB['features']):
    poly = geometry.shape(ft['geometry'])
    pp = poly.representative_point()
    pp.MW = area(geometry.mapping(poly))*54/1000/1000
    if ft['properties']['SPOT_ids_0']=='':
        pp.name=str(ii_f)+'_'+str(ft['properties']['S2_ids_0'])
    else:
        pp.name=str(ii_f)+'_'+str(ft['properties']['SPOT_ids_0'])
    GB_pts.append(pp)

wri.fuel1.unique()

wri_records = wri[(wri.country=='GBR')&(wri.fuel1=='Solar')][['capacity_mw', 'name','latitude','longitude']].to_dict('records')

for ii_r,r in enumerate(wri_records):
    r['MW'] = r['capacity_mw']
    r['pt'] = geometry.Point(r['longitude'],r['latitude'])
    r['name'] = str(ii_r)+'_'+str(r['name'])

ws_records = ws[ws.iso2=='GB'][['WS Coords','MW','WS Name']].to_dict('records')

for r in ws_records:
    r['WS Coords'] = r['WS Coords'].replace(' E','')
    r['pt'] = geometry.Point(float(r['WS Coords'].split(',')[-1]),float(r['WS Coords'].split(',')[0]))
    r['name'] = r['WS Name']

records = wri_records + ws_records

country_shps = json.load(open('/home/lucaskruitwagen/DPHIL/DPHIL_CLASSIFICATION/Solar_PV/NE/ne_10m_admin_0_countries.geojson','r'))

GB_shp = [ft for ft in country_shps['features'] if ft['properties']['ISO_A2']=='GB']

R_pts = []

for r in records:
    pp = r['pt']
    pp.MW =r['MW']
    pp.name=r['name']
    R_pts.append(pp)

# ### Get components with threshold

def get_matches_treenx(A_polys,P_polys):
    ### return a list of matches. both polys need a property which is primary_id
    # NOTE: written for Shapely 1.x, where STRtree.query returns geometries;
    # in Shapely 2.x it returns integer indices into P_polys instead.
    P_tree = STRtree(P_polys)
    G = nx.Graph()
    for pp_A in A_polys:
        t_result = P_tree.query(pp_A)
        t_result = [pp for pp in t_result if pp.intersects(pp_A)]
        if len(t_result)>0:
            for r in t_result:
                G.add_edge(pp_A.name,r.name)
    return list(nx.connected_components(G))
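# The grouping step performed by `nx.connected_components` can be illustrated without any dependencies. The following is a minimal sketch, assuming a hypothetical helper `connected_components` and hypothetical edge names (`obs_*` for observed buffers, `rec_*` for recorded ones): every pair of intersecting buffers is an edge, and a depth-first search collects the names reachable from each other.

```python
from collections import defaultdict

def connected_components(edges):
    """Group node names into connected components using depth-first search."""
    neighbours = defaultdict(set)
    for u, v in edges:
        neighbours[u].add(v)
        neighbours[v].add(u)
    seen, components = set(), []
    for start in neighbours:
        if start in seen:
            continue
        stack, component = [start], set()
        while stack:
            node = stack.pop()
            if node in component:
                continue
            component.add(node)
            stack.extend(neighbours[node] - component)
        seen |= component
        components.append(component)
    return components

# Hypothetical intersections between observed and recorded buffers.
edges = [("obs_0", "rec_0"), ("obs_1", "rec_0"), ("obs_2", "rec_1")]
components_demo = connected_components(edges)
print(components_demo)
```

# Each resulting set can then be solved as its own small matching problem, which keeps the individual MILPs tractable.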

buffer_dist = 1500 #m

# +

GB_polys = []

for pt in GB_pts:
    pp = pt.buffer(V_dir([pt.y,pt.x],buffer_dist,0)[0][0]-pt.y)
    pp.name = pt.name
    GB_polys.append(pp)

R_polys = []

for pt in R_pts:
    pp = pt.buffer(V_dir([pt.y,pt.x],buffer_dist,0)[0][0]-pt.y)
    pp.name = pt.name
    R_polys.append(pp)

# -
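# The buffer radius above is the latitude difference, in degrees, produced by moving `buffer_dist` metres due north. A rough cross-check is possible with a spherical Earth. This is a minimal sketch under that assumption, not the Vincenty calculation used above; `EARTH_RADIUS_M` and `metres_to_degrees_lat` are hypothetical names introduced here.

```python
import math

EARTH_RADIUS_M = 6_371_000.0  # mean Earth radius; an assumption of the spherical model

def metres_to_degrees_lat(distance_m):
    """Approximate the latitude offset, in degrees, of a north-south distance."""
    return math.degrees(distance_m / EARTH_RADIUS_M)

buffer_deg = metres_to_degrees_lat(1500)
print(round(buffer_deg, 5))
```

# Because the buffer is drawn in degrees, it is circular in coordinate space but not on the ground: a degree of longitude is shorter than a degree of latitude away from the equator.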

components = get_matches_treenx(GB_polys,R_polys)

len(components)

# +

### visualise components

fig, axs = plt.subplots(1,1,figsize=(16,16))

for shp_ft in GB_shp[1:2]:
    geom = geometry.shape(shp_ft['geometry'])
    # geom_type and .geoms work in both Shapely 1.x and 2.x
    if geom.geom_type=='Polygon':
        xs,ys = geom.exterior.xy
        axs.plot(xs,ys,c='grey')
    if geom.geom_type=='MultiPolygon':
        for subgeom in geom.geoms:
            xs,ys = subgeom.exterior.xy
            axs.plot(xs,ys,c='grey')

for c in components:
    pts = [pt for pt in GB_pts if pt.name in c]
    pts += [pt for pt in R_pts if pt.name in c]
    rnd_col = np.random.rand(3,)
    axs.scatter([pt.x for pt in pts], [pt.y for pt in pts],c=[rnd_col]*len(pts))

plt.show()

# -

alpha = 0.15

B_dict = {}

# +

E_z_all = 0

E_mw_all = 0

for ii_c, component in enumerate(components):
    if ii_c % 100==0:
        print (ii_c)

    pts_A = [pt for pt in GB_pts if pt.name in component]
    pts_B = [pt for pt in R_pts if pt.name in component]
    #print (len(pts_A),len(pts_B))

    model, B, E_z, E_mw, Z = milp_geodesic_network_satisficing(pts_A, pts_B, alpha)

    E_z_all += E_z.value()
    E_mw_all += E_mw.value()
    B_dict[ii_c] = B

    #print (Z)
    #for k1, v in B.items():
    #    for k2,v2 in v.items():
    #        if v2.varValue>0:
    #            print (k1,k2,v2,11)

print ('E_z', E_z_all, 'E_mw',E_mw_all)

# +

### visualise results

fig, axs = plt.subplots(1,1,figsize=(72,72))

for shp_ft in GB_shp[1:2]:
    geom = geometry.shape(shp_ft['geometry'])
    # geom_type and .geoms work in both Shapely 1.x and 2.x
    if geom.geom_type=='Polygon':
        xs,ys = geom.exterior.xy
        axs.plot(xs,ys,c='grey')
    if geom.geom_type=='MultiPolygon':
        for subgeom in geom.geoms:
            xs,ys = subgeom.exterior.xy
            axs.plot(xs,ys,c='grey')

### cyan and purple - unmatched outside of components

for ii_c, component in enumerate(components):
    pts_A = [pt for pt in GB_pts if pt.name in component]
    pts_B = [pt for pt in R_pts if pt.name in component]
    pts_A_dict = {pt.name:pt for pt in pts_A}
    pts_B_dict = {pt.name:pt for pt in pts_B}

    lines = []
    pts_recs = []
    pts_obs = []
    done_As = []
    done_Bs = []
    #print (B_dict[ii_c])

    for k1, v in B_dict[ii_c].items():
        #k1 - cluster
        for k2,v2 in v.items():
            #k2 -
            #print (k1,k2,v2, v2.varValue)
            if v2.varValue>0:
                #matches observations
                pts_obs.append([pts_A_dict[k1].x,pts_A_dict[k1].y,pts_A_dict[k1].MW])
                done_As.append(k1)
                #matches dataset
                pts_recs.append([pts_B_dict[k2].x,pts_B_dict[k2].y,pts_B_dict[k2].MW])
                done_Bs.append(k2)
                #match lines
                lines.append([[pts_A_dict[k1].x,pts_A_dict[k1].y],[pts_B_dict[k2].x,pts_B_dict[k2].y]])

    #print (ii_c,len(component),len(pts_A),len(pts_B),len(lines))
    lc = LineCollection(lines, color='grey')

    ### pink and green for matched
    axs.scatter([pt[0] for pt in pts_obs],[pt[1] for pt in pts_obs],s=[pt[2] for pt in pts_obs],c='green')
    axs.scatter([pt[0] for pt in pts_recs],[pt[1] for pt in pts_recs],s=[pt[2] for pt in pts_recs],c='pink')

    for name,pt in pts_A_dict.items():
        if name not in done_As:
            axs.scatter(pt.x,pt.y,s=pt.MW,c='b')

    for name,pt in pts_B_dict.items():
        if name not in done_Bs:
            axs.scatter(pt.x,pt.y,s=pt.MW,c='r')

    axs.add_collection(lc)

axs.set_xlim([-6,-4])

axs.set_ylim([49,51])

plt.show()

# -

# ### get impedance network

pts_R_dict = {pt.name:pt for pt in R_pts}

pts_GB_dict = {pt.name:pt for pt in GB_pts}

R_names = [pt.name for pt in R_pts]

GB_names = [pt.name for pt in GB_pts]

Z = {pt.name:{} for pt in GB_pts}

MW_GB = {pt.name:pt.MW for pt in GB_pts}

MW_R = {pt.name:pt.MW for pt in R_pts}

len(GB_pts), len(R_pts)

for ii_A,pt_A in enumerate(GB_pts):
    if ii_A % 100 ==0:
        print ('ii_A',ii_A)
    for pt_B in R_pts:
        #print (pt_A.name, pt_B.name)
        # geodesic() expects (latitude, longitude), i.e. (y, x)
        Z[pt_A.name][pt_B.name]=geodesic([pt_A.y,pt_A.x], [pt_B.y,pt_B.x]).kilometers

pickle.dump(Z,open('Z.pickle','wb'))

Z= pickle.load(open('Z.pickle','rb'))

# ### Set up problem

# #### Define problem

model = LpProblem("Network Satisficing Problem",LpMinimize)

# #### Define Variables

# B -> bipartite

B = LpVariable.dicts("Bipartite",(GB_names,R_names),0,1,LpInteger)

# Differences between MW and MW in (needs variable to ensure absolute value)

abs_diffs = LpVariable.dicts("abs_diffs",R_names,cat='Continuous')

# #### Define Constraints

# Constraint - abs diffs edges

for R_name in R_names:
    model += abs_diffs[R_name] >= (MW_R[R_name] - lpSum([MW_GB[GB_name]*B[GB_name][R_name] for GB_name in GB_names]))/MW_R[R_name],"abs forcing pos {}".format(R_name)
    model += abs_diffs[R_name] >= -1 * (MW_R[R_name] - lpSum([MW_GB[GB_name]*B[GB_name][R_name] for GB_name in GB_names]))/MW_R[R_name], "abs forcing neg {}".format(R_name)

# Constraint - bipartite edges

for GB_name in GB_names:
    model += lpSum([B[GB_name][R_name] for R_name in R_names]) <= 1,"Bipartite Edges {}".format(GB_name)

# #### Define constants and affine equations

alpha = 0.5

sum_Z = sum([Z[GB_name][R_name] for GB_name in GB_names for R_name in R_names])

E_z = sum([Z[GB_name][R_name]*B[GB_name][R_name] for GB_name in GB_names for R_name in R_names])/sum_Z

E_mw = sum([abs_diffs[R_name] for R_name in R_names])/len(R_names)

# #### Define Objective Function

# Objective function

model += E_z*alpha + (1-alpha)*E_mw, "Loss"

model.solve()

pulp.LpStatus[model.status]

E_z.value()

for e in E_mw:
    print (e.name, e.value())

# +

### visualise

fig, axs = plt.subplots(1,1,figsize=(12,12))

# B is keyed by GB_names / R_names here, so plot and look up points in the full sets
for pt in GB_pts:
    axs.scatter(pt.x, pt.y, s=pt.MW*10,c='g')

for pt in R_pts:
    axs.scatter(pt.x,pt.y,s=pt.MW*10,c='r')

lines = []

for k1, v in B.items():
    for k2,v2 in v.items():
        if v2.varValue>0:
            lines.append([[pts_GB_dict[k1].x,pts_GB_dict[k1].y],[pts_R_dict[k2].x,pts_R_dict[k2].y]])

lc = LineCollection(lines, color='grey')

axs.add_collection(lc)

plt.show()

# -

# ## Demo

model = LpProblem("Profit maximising problem", pulp.LpMaximize)

A = LpVariable('A', lowBound=0, cat='Integer')

B = LpVariable('B', lowBound=0, cat='Integer')

profit = 3000 * A + 45000 * B

# +

# Objective function

model += profit, "Profit"

# Constraints

model += 3 * A + 4 * B <= 30

model += 5 * A + 6 * B <= 60

model += 1.5 * A + 3 * B <= 21

# -

model.solve()

pulp.LpStatus[model.status]

print ("Production of Car A = {}".format(A.varValue))

print ("Production of Car B = {}".format(B.varValue))

# ## Archive

E_z_1 = LpVariable.dicts('E_z_1', (A_names,B_names),lowBound=0, cat='Continuous')

E_z_0 = LpVariable.dicts('E_z_0',A_names, lowBound=0, cat='Continuous')

E_z_t = LpVariable('E_z_t',lowBound = 0, cat='Continuous')

for A_name in A_names:
    for B_name in B_names:
        # NOTE: `bipartite` is not defined anywhere in this notebook
        model+= E_z_1[A_name][B_name] == bipartite[A_name][B_name] * Z[A_name][B_name]

for A_name in A_names:
    model += E_z_0[A_name] == lpSum([E_z_1[A_name][B_name] for B_name in B_names])

|

solarpv/analysis/matching/MILP_WRI-matching_stripped.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Some useful Python code snippets

# Import library

from __future__ import division, print_function, absolute_import

import numpy as np

import matplotlib.pyplot as plt

# %matplotlib inline

print('Import library')

# # How to sort a Python dict by value

# +

# How to sort a Python dict by value

# (== get a representation sorted by value)

xs = {'a': 4, 'b': 3, 'c': 2, 'd': 1}

sorted(xs.items(), key=lambda x: x[1])

# -
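# Two common variations on the sort above, as a minimal sketch with a hypothetical copy of the data (`xs_demo`): `operator.itemgetter` replaces the lambda, `reverse=True` puts the largest value first, and wrapping the result in `dict` gives a dictionary whose insertion order follows the sort (guaranteed from Python 3.7).

```python
from operator import itemgetter

xs_demo = {'a': 4, 'b': 3, 'c': 2, 'd': 1}

# Largest value first, using itemgetter instead of a lambda.
by_value_desc = sorted(xs_demo.items(), key=itemgetter(1), reverse=True)

# Rebuild a dict; insertion order is preserved in Python 3.7+.
sorted_dict = dict(sorted(xs_demo.items(), key=itemgetter(1)))

print(by_value_desc)
print(list(sorted_dict))
```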

# # Left pad with zero

# Left pad with zero

n = '4'

n.zfill(3)
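# `zfill` works on strings. When the value is still a number, a format specification does the same job. A minimal sketch with hypothetical variable names:

```python
n_demo = 4

padded_format = f"{n_demo:03d}"           # format specification applied to an int
padded_rjust = str(n_demo).rjust(3, "0")  # generic right-justify with a fill character
signed = "-4".zfill(3)                    # zfill keeps the sign in front of the padding

print(padded_format, padded_rjust, signed)
```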

# # Subplots example

# +

import numpy as np

import matplotlib.pyplot as plt

# Data for plotting

t = np.arange(0.01, 20.0, 0.01)

# Create figure

fig, ((ax1, ax2), (ax3, ax4)) = plt.subplots(2, 2)

# log y axis

ax1.semilogy(t, np.exp(-t / 5.0))

ax1.set(title='semilogy')

ax1.grid()

# log x axis

ax2.semilogx(t, np.sin(2 * np.pi * t))

ax2.set(title='semilogx')

ax2.grid()

# log x and y axis

ax3.loglog(t, 20 * np.exp(-t / 10.0))

# Matplotlib >= 3.3 spells this keyword `base`; older releases used `basex`
ax3.set_xscale('log', base=2)

ax3.set(title='loglog base 2 on x')

ax3.grid()

# With errorbars: clip non-positive values

# Use new data for plotting

x = 10.0**np.linspace(0.0, 2.0, 20)

y = x**2.0

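# Matplotlib >= 3.3 spells this keyword `nonpositive`; older releases used `nonposx` / `nonposy`
ax4.set_xscale("log", nonpositive='clip')

ax4.set_yscale("log", nonpositive='clip')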

ax4.set(title='Errorbars go negative')

ax4.errorbar(x, y, xerr=0.1 * x, yerr=5.0 + 0.75 * y)

# ylim must be set after errorbar to allow errorbar to autoscale limits

ax4.set_ylim(bottom=0.1)

fig.tight_layout()

plt.show()

# -

# # Namedtuple

# +

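# Using namedtuple is much shorter than defining a class manually.

# A namedtuple's fields cannot be rebound, but mutable objects stored in them (such as the lists below) can still be changed in place.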

from collections import namedtuple

Car = namedtuple('Car', 'company name color mileage data')

my_car = Car('Toyota', 'Sienna LE', 'gray', [3812.4], [i for i in range(5)])

print(my_car)

my_car.mileage[0] = 1000

my_car.data.sort(reverse=True)

print(my_car)
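# The distinction between rebinding a field and mutating its contents can be checked directly. This is a minimal sketch using a hypothetical `Point` record rather than the `Car` above: assigning to a field raises `AttributeError`, a list stored in a field can still be appended to, and `_replace` returns a new tuple with one field swapped.

```python
from collections import namedtuple

Point = namedtuple('Point', 'x y tags')  # hypothetical record type for illustration
p = Point(1, 2, ['a'])

try:
    p.x = 10               # rebinding a field is not allowed
    rebind_failed = False
except AttributeError:
    rebind_failed = True

p.tags.append('b')          # but a mutable object stored in a field can change
q = p._replace(x=10)        # _replace returns a new tuple with the field swapped

print(rebind_failed, p, q)
```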

# +

import collections

# collections.deque?

# -

|

notebooks/.ipynb_checkpoints/Useful_codes-checkpoint.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ___

#

# <a href='http://www.pieriandata.com'> <img src='../../Pierian_Data_Logo.png' /></a>

# ___

# # Matplotlib Overview Lecture

# ## Introduction

# Matplotlib is the "grandfather" library of data visualization with Python. It was created by <NAME>, who wanted to replicate the plotting capabilities of MATLAB (another programming language) in Python. So if you happen to be familiar with MATLAB, Matplotlib will feel natural to you.

#

# It is an excellent 2D and 3D graphics library for generating scientific figures.

#

# Some of the major Pros of Matplotlib are:

#

# * Generally easy to get started for simple plots

# * Support for custom labels and texts

# * Great control of every element in a figure

# * High-quality output in many formats

# * Very customizable in general

#

# Matplotlib allows you to create reproducible figures programmatically. Let's learn how to use it! Before continuing this lecture, I encourage you just to explore the official Matplotlib web page: http://matplotlib.org/

#

# ## Installation

#

# You'll need to install matplotlib first with either:

#

# conda install matplotlib

# or

# pip install matplotlib

#

# ## Importing

# Import the `matplotlib.pyplot` module under the name `plt` (the tidy way):

import matplotlib.pyplot as plt

# You'll also need to use this line to see plots in the notebook:

# %matplotlib inline

# That line is only for Jupyter notebooks. If you are using another editor, call **plt.show()** at the end of all your plotting commands to have the figure pop up in another window.

# # Basic Example

# ### Example

#

# Let's walk through a very simple example using two numpy arrays. You can also use lists, but most likely you'll be passing numpy arrays or pandas columns (which essentially also behave like arrays).

#

# **The data we want to plot:**

import numpy as np

x = np.linspace(0, 5, 11)

y = x ** 2

x

y

# ## Functional method for using Matplotlib

# We cover it only briefly, because the other approach (the object-oriented method) is preferable.

#

#

# ### Basic Matplotlib Commands

#

# We can create a very simple line plot using the following (I encourage you to pause and use Shift+Tab along the way to check out the docstrings of the functions we are using).

plt.plot(x,y, 'r*')

plt.plot(x, y, 'r') # 'r' is the color red

plt.xlabel('X Axis Title Here')

plt.ylabel('Y Axis Title Here')

plt.title('String Title Here')

plt.show()

# ## Creating Multiplots on Same Canvas

# plt.subplot(nrows, ncols, plot_number)

plt.subplot(1,2,1)

plt.plot(x, y, 'r--') # More on color options later

plt.subplot(1,2,2)

plt.plot(y, x, 'g*-');

# ___

# # Matplotlib Object Oriented Method

# Now that we've seen the basics, let's break it all down with a more formal introduction of Matplotlib's Object Oriented API. This means we will instantiate figure objects and then call methods or attributes from that object.

# ## Introduction to the Object Oriented Method

# The main idea in using the more formal Object Oriented method is to create figure objects and then just call methods or attributes off of that object. This approach is nicer when dealing with a canvas that has multiple plots on it.

#

# To begin we create a figure instance. Then we can add axes to that figure:

fig = plt.figure()

axes = fig.add_axes([0.1, 0.1, 0.8, 0.8])

# +

# Create Figure (empty canvas)

fig = plt.figure() # an empty canvas that can hold content

# Add set of axes to figure

# left, bottom, width, height as fractions of the figure (range 0 to 1)

axes = fig.add_axes([0.1, 0.1, 0.8, 0.8])

# Plot on that set of axes

axes.plot(x, y, 'b')

axes.set_xlabel('Set X Label') # Notice the use of set_ to begin methods

axes.set_ylabel('Set y Label')

axes.set_title('Set Title')

# -

# Code is a little more complicated, but the advantage is that we now have full control of where the plot axes are placed, and we can easily add more than one axis to the figure:

# +

# Creates blank canvas

fig = plt.figure()

axes1 = fig.add_axes([0.1, 0.1, 0.8, 0.8]) # main axes

axes2 = fig.add_axes([0.2, 0.5, 0.4, 0.3]) # inset axes

# Larger Figure Axes 1

axes1.plot(x, y, 'b')

axes1.set_xlabel('X_label_axes1')

axes1.set_ylabel('Y_label_axes1')

axes1.set_title('Axes 1 Title')

# Inset Figure Axes 2

axes2.plot(y, x, 'r')

axes2.set_xlabel('X_label_axes2')

axes2.set_ylabel('Y_label_axes2')

axes2.set_title('Axes 2 Title');

# -

# ## subplots()

#

# The plt.subplots() function acts as a more automatic axis manager.

#

# Basic use cases:

# +

# Use similar to plt.figure() except use tuple unpacking to grab fig and axes

fig, axes = plt.subplots()

# Now use the axes object to add stuff to plot

axes.plot(x, y, 'r')

axes.set_xlabel('x')

axes.set_ylabel('y')

axes.set_title('title');

# -

# Then you can specify the number of rows and columns when creating the subplots() object:

# Empty canvas of 2 by 3 subplots

fig, axes = plt.subplots(nrows=2, ncols=3)

plt.tight_layout() # removes the overlap between the plots

# Empty canvas of 1 by 2 subplots

fig, axes = plt.subplots(nrows=1, ncols=2)

# Axes is an array of axes to plot on

axes

# We can iterate through this array:

# +

for ax in axes:
    ax.plot(x, y, 'b')
    ax.set_xlabel('x')
    ax.set_ylabel('y')
    ax.set_title('title')

# Display the figure object

fig

# -

fig, axes = plt.subplots(nrows=1, ncols=2)

axes[0].plot(x,y)

axes[1].plot(y,x)

# A common issue with matplotlib is overlapping subplots or figures. We can use the **fig.tight_layout()** or **plt.tight_layout()** method, which automatically adjusts the positions of the axes on the figure canvas so that there is no overlapping content:

# +

fig, axes = plt.subplots(nrows=1, ncols=2)

for ax in axes:
    ax.plot(x, y, 'g')
    ax.set_xlabel('x')
    ax.set_ylabel('y')
    ax.set_title('title')

fig

plt.tight_layout()

# -

# ### Figure size, aspect ratio and DPI

# Matplotlib allows the aspect ratio, DPI and figure size to be specified when the Figure object is created. You can use the `figsize` and `dpi` keyword arguments.

# * `figsize` is a tuple of the width and height of the figure in inches

# * `dpi` is the dots per inch (pixels per inch).

#

# For example:

fig = plt.figure(figsize=(8,4), dpi=100)

# The same arguments can also be passed to layout managers, such as the `subplots` function:

# +

fig, axes = plt.subplots(figsize=(12,3))

axes.plot(x, y, 'r')

axes.set_xlabel('x')

axes.set_ylabel('y')

axes.set_title('title');

# -

# ## Saving figures

# Matplotlib can generate high-quality output in a number of formats, including PNG, JPG, EPS, SVG, PGF and PDF.

# To save a figure to a file we can use the `savefig` method in the `Figure` class:

fig.savefig("filename.png")

# Here we can also optionally specify the DPI and choose between different output formats:

fig.savefig("filename.png", dpi=200)

# ____

# ## Legends, labels and titles

# Now that we have covered the basics of how to create a figure canvas and add axes instances to the canvas, let's look at how to decorate a figure with titles, axis labels, and legends.

# **Figure titles**

#

# A title can be added to each axis instance in a figure. To set the title, use the `set_title` method in the axes instance:

ax.set_title("title");

# **Axis labels**

#

# Similarly, with the methods `set_xlabel` and `set_ylabel`, we can set the labels of the X and Y axes:

ax.set_xlabel("x")

ax.set_ylabel("y");

# ### Legends

# You can use the **label="label text"** keyword argument when plots or other objects are added to the figure, and then call the **legend** method without arguments to add the legend to the figure:

# +

fig = plt.figure()

ax = fig.add_axes([0,0,1,1])

ax.plot(x, x**2, label="x**2")

ax.plot(x, x**3, label="x**3")

ax.legend()

# -

# Notice how our legend overlaps some of the actual plot!

#

# The **legend** function takes an optional keyword argument **loc** that can be used to specify where in the figure the legend is to be drawn. The allowed values of **loc** are numerical codes for the various places the legend can be drawn. See the [documentation page](http://matplotlib.org/users/legend_guide.html#legend-location) for details. Some of the most common **loc** values are:

# +

# Lots of options....

ax.legend(loc=1) # upper right corner

ax.legend(loc=2) # upper left corner

ax.legend(loc=3) # lower left corner

ax.legend(loc=4) # lower right corner

# .. many more options are available

# Most common to choose

ax.legend(loc=0) # let matplotlib decide the optimal location

fig

# -
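# The numeric codes used above have string equivalents that `legend` also accepts and that are easier to read. The following is a minimal sketch of that correspondence for the five codes shown; `LEGEND_LOCATIONS` and `loc_name` are hypothetical names introduced here, not part of Matplotlib.

```python
# Integer location codes and the equivalent strings accepted by legend(loc=...).
LEGEND_LOCATIONS = {
    0: "best",
    1: "upper right",
    2: "upper left",
    3: "lower left",
    4: "lower right",
}

def loc_name(code):
    """Translate a numeric legend code into its more readable string form."""
    return LEGEND_LOCATIONS[code]

# e.g. ax.legend(loc=loc_name(2)) makes the same request as ax.legend(loc=2)
print(loc_name(0), "|", loc_name(2))
```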

# ## Setting colors, linewidths, linetypes

#

# Matplotlib gives you *a lot* of options for customizing colors, linewidths, and linetypes.

#

# There is the basic MATLAB-like syntax (which I would suggest you avoid, for clarity's sake):

# ### Colors with MatLab like syntax

# With matplotlib, we can define the colors of lines and other graphical elements in a number of ways. First of all, we can use the MATLAB-like syntax where `'b'` means blue, `'g'` means green, etc. The MATLAB API for selecting line styles is also supported: for example, `'b.-'` means a blue line with dots:

# MATLAB style line color and style

fig, ax = plt.subplots()

ax.plot(x, x**2, 'b.-') # blue line with dots

ax.plot(x, x**3, 'g--') # green dashed line

# ### Colors with the color= parameter

# We can also define colors by their names or RGB hex codes and optionally provide an alpha value using the `color` and `alpha` keyword arguments. Alpha indicates opacity.

# +

fig, ax = plt.subplots()

ax.plot(x, x+1, color="blue", alpha=0.5) # half-transparent

ax.plot(x, x+2, color="#8B008B") # RGB hex code

ax.plot(x, x+3, color="#FF8C00") # RGB hex code

# -

# ### Line and marker styles

# To change the line width, we can use the `linewidth` or `lw` keyword argument. The line style can be selected using the `linestyle` or `ls` keyword arguments:

# +

fig, ax = plt.subplots(figsize=(12,6))

ax.plot(x, x+1, color="red", linewidth=0.25)

ax.plot(x, x+2, color="red", linewidth=0.50)

ax.plot(x, x+3, color="red", linewidth=1.00)

ax.plot(x, x+4, color="red", linewidth=2.00)

# possible linestyle options: '-', '--', '-.', ':'

ax.plot(x, x+5, color="green", lw=3, linestyle='-')

ax.plot(x, x+6, color="green", lw=3, ls='-.')

ax.plot(x, x+7, color="green", lw=3, ls=':')

# custom dash

line, = ax.plot(x, x+8, color="black", lw=1.50)

line.set_dashes([5, 10, 15, 10]) # format: line length, space length, ...

# possible marker symbols: marker = '+', 'o', '*', 's', ',', '.', '1', '2', '3', '4', ...

ax.plot(x, x+9, color="blue", lw=3, ls='-', marker='+')

ax.plot(x, x+10, color="blue", lw=3, ls='--', marker='o')

ax.plot(x, x+11, color="blue", lw=3, ls='-', marker='s')

ax.plot(x, x+12, color="blue", lw=3, ls='--', marker='1')

# marker size and color

ax.plot(x, x+13, color="purple", lw=1, ls='-', marker='o', markersize=2)

ax.plot(x, x+14, color="purple", lw=1, ls='-', marker='o', markersize=4)

ax.plot(x, x+15, color="purple", lw=1, ls='-', marker='o', markersize=8, markerfacecolor="red")

ax.plot(x, x+16, color="purple", lw=1, ls='-', marker='s', markersize=8,
        markerfacecolor="yellow", markeredgewidth=3, markeredgecolor="green");

# -

# ### Control over axis appearance

# In this section we will look at controlling axis sizing properties in a matplotlib figure.

# ## Plot range

# We can configure the ranges of the axes using the `set_ylim` and `set_xlim` methods in the axis object, or `axis('tight')` for automatically getting "tightly fitted" axes ranges:

# +

fig, axes = plt.subplots(1, 3, figsize=(12, 4))

axes[0].plot(x, x**2, x, x**3)

axes[0].set_title("default axes ranges")

axes[1].plot(x, x**2, x, x**3)

axes[1].axis('tight')

axes[1].set_title("tight axes")

axes[2].plot(x, x**2, x, x**3)

axes[2].set_ylim([0, 60])

axes[2].set_xlim([2, 5])

axes[2].set_title("custom axes range");

# -

# # Special Plot Types

#

# There are many specialized plots we can create, such as bar plots, histograms, scatter plots, and much more. Most of these types of plots we will actually create using pandas, but here are a few examples:

plt.scatter(x,y)

from random import sample

data = sample(range(1, 1000), 100)

plt.hist(data)

# +

data = [np.random.normal(0, std, 100) for std in range(1, 4)]

# rectangular box plot

plt.boxplot(data,vert=True,patch_artist=True);

# -

# ## Further reading

# * http://www.matplotlib.org - The project web page for matplotlib.

# * https://github.com/matplotlib/matplotlib - The source code for matplotlib.

# * http://matplotlib.org/gallery.html - A large gallery showcasing various types of plots matplotlib can create. Highly recommended!

# * http://www.loria.fr/~rougier/teaching/matplotlib - A good matplotlib tutorial.

# * http://scipy-lectures.github.io/matplotlib/matplotlib.html - Another good matplotlib reference.

#

|

04-Visualization-Matplotlib-Pandas/04-01-Matplotlib/Matplotlib Concepts Lecture.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/Mapuertag/Datascience300/blob/main/Clase1.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + [markdown] id="LPPQqGrfqWq4"

# **Introduction**

#

#

# ---

# When writing code in Python, we will follow these recommendations for variable names:

# 1. Always use lowercase letters

# 2. Numbers may be added

# 3. An error appears if the number does not directly follow the variable name: that is, luisa1 (correct), luisa 1 (incorrect)

#

# Python has several compound data types that are available by default in the interpreter:

#

# 1. Numeric

# 2. Sequences

# 3. Mappings

# 4. Sets, used to group other values.

#

# **Difference between constants and variables**: In mathematics, a constant is a quantity that does not change over time. Example (accounting): fixed costs such as office furniture. Example 2: 6

#

# A variable, on the other hand, is a quantity that can take different numeric values. Example:

#

#

#

# + colab={"base_uri": "https://localhost:8080/"} id="wTuP4jG8V_fQ" outputId="89bd80d0-7f56-4bbe-b710-70781df7214b"

x=80

print(x)

# + [markdown] id="ENLbdOjfWaMG"

# **Data types**:

#

# **Integers**:

#

# These are numbers with no decimal part. They can be positive or negative.

#

#

#

#

#

# + colab={"base_uri": "https://localhost:8080/"} id="SD3x7e9hWuor" outputId="38576b5f-431b-42df-cd31-8233fcda520f"

a=int(3.964)

print(a,type(a))
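# Note that `int` does not round: it discards the fractional part. A minimal sketch comparing it with `round` and `math.floor`, using hypothetical variable names:

```python
import math

value = 3.964

truncated = int(value)            # drops the fractional part
truncated_neg = int(-value)       # truncation goes toward zero, not down
rounded = round(value)            # nearest integer
floored_neg = math.floor(-value)  # floor always goes down

print(truncated, truncated_neg, rounded, floored_neg)
```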

# + [markdown] id="OhgM65sGXFw0"

# The int function converts a value to an integer (discarding any decimals). The float function, by contrast, allows values with decimals.

# + colab={"base_uri": "https://localhost:8080/"} id="kZPLw-dmXKev" outputId="17c5f7ee-da4a-41ff-da5a-5f6928cb4ed6"

b=float(8.23)

print(b,type (b))

# + [markdown] id="1MGsfJ7SXfen"

# **String type**:

# Strings are text enclosed in quotes (single or double) and can be made up of different kinds of characters (numeric, alphabetic, special such as #$+*%). Keep in mind that strings support operators such as + (concatenation) and * (repetition), but not subtraction. (String variable)

#

# + colab={"base_uri": "https://localhost:8080/"} id="LFatv-voX1C8" outputId="1f5a4e70-9cbf-42ee-a707-31d89d246489"

val2="<NAME>"

print(val2)

val1="Luisa"

print(val1)

# + colab={"base_uri": "https://localhost:8080/"} id="P6BpQGFyYETX" outputId="ce1df153-8115-4c5d-f254-f4e6ba851052"

n="Aprender"

z="Python"

w="Aprender Python"

nz=n+" "+z

print(w)

q="5"

p="2"

b=int(q)

c=int(p)

print(b-c)
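# A minimal sketch of which operators strings accept. The helper `try_subtract` is a hypothetical name introduced only for this illustration; it reports the error that Python raises when two strings are subtracted.

```python
# Strings support + (concatenation) and * (repetition), but not subtraction.
first = "Aprender"
second = "Python"
phrase = first + " " + second   # concatenation
echo = "ab" * 3                 # repetition


def try_subtract(left, right):
    """Return the name of the error raised by left - right, or None if it works."""
    try:
        left - right
    except TypeError as exc:
        return type(exc).__name__
    return None


print(phrase)
print(echo)
print(try_subtract("5", "2"))   # subtracting two strings is not defined
print(int("5") - int("2"))      # convert to int first, then subtract
```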

# + [markdown] id="OPp2YJPIYLYO"

# **Boolean type**: A variable of this type holds only one of two values, `True` or `False`. Note: Booleans are used heavily in conditions and loops.

# + colab={"base_uri": "https://localhost:8080/"} id="ishYgxs9YWXb" outputId="36bf60b1-1116-4a02-fbe6-8ca367b125c8"

lola=True

print("The value is:",lola,", which is of type", type(lola))

# + id="DxHCyf_pYeCe"

# Python has data types that hold collections:

# 1. Lists

# 2. Tuples

# 3. Dictionaries

# + [markdown] id="_0MSXw7j483j"

# **Set types**: A set is a collection of data with no repeated elements

# + colab={"base_uri": "https://localhost:8080/"} id="yc86cO6Y5MQO" outputId="db1fd2ed-efcd-4590-8d6b-3b2ee009b611"

# Curly braces create sets; comma-separated values without braces would create tuples

fru={"pera","manzana","naranja"}

color={"morado","blanco","azul"}

print(fru,color)

# + [markdown] id="8rxr4rmT6G8i"

# **List type**

# Lists store ordered sequences of values. Indexing always starts at element zero (0) and counts up from there. Lists are written with square brackets.

# Example of Python lists:

#

# + colab={"base_uri": "https://localhost:8080/"} id="20Q6z4647AqB" outputId="e751c1be-770e-417f-b730-8a331006aed4"

ines=["5","uva","lila","perro","celular","micro","botella","tetero","10"]

print(ines)

fe=ines[5:7]

print(fe)
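# A short sketch of zero-based indexing and slicing on a list like the one above. The variable names are chosen only for this illustration.

```python
items = ["5", "uva", "lila", "perro", "celular", "micro", "botella", "tetero", "10"]

first_item = items[0]    # index 0 is the first element
last_item = items[-1]    # negative indices count from the end
middle = items[5:7]      # slice: indices 5 and 6, the stop index 7 is excluded

items.append("nuevo")    # lists are mutable, so they can grow after creation

print(first_item, last_item, middle, len(items))
```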

# + [markdown] id="iVg3iNiG8snj"

# **Tuple type**

# A tuple is like a list that cannot be modified after it is created. Tuples can be nested and are used to group values. They are usually written with parentheses.

#

#

# + colab={"base_uri": "https://localhost:8080/"} id="U-SvV4iy9Sm-" outputId="605064a0-1760-4004-827f-cda2125ade07"

tupla=23,28,"hello"

print(tupla)

otra=tupla,(1,2,3,4)

print(otra)
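# A minimal sketch of tuple immutability and nesting. `try_assign` is a hypothetical helper used only to show that item assignment on a tuple fails, while a list copy can be changed.

```python
point = 23, 28, "hello"          # parentheses are optional when packing a tuple
nested = point, (1, 2, 3, 4)     # tuples can contain other tuples


def try_assign(target):
    """Return True if target[0] can be reassigned, False if the object is immutable."""
    try:
        target[0] = 99
    except TypeError:
        return False
    return True


as_list = list(point)            # copy into a list when changes are needed
as_list[0] = 99

print(try_assign(point), nested[1][2], as_list)
```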

# + [markdown] id="5VMqkWDg9ojH"

# **Dictionary type**

# A dictionary maps each key (ID, identifier) to a value, one pair at a time

#

# + id="ywee2Pm298F3" colab={"base_uri": "https://localhost:8080/"} outputId="942b1226-7def-451c-8a69-041421d5a556"

datos_b={

"nombres":"Diana",

"apellidos":"Perea",

"cedula":"2345671",

"est_civil":"Viuda",

"lugar_nacimiento":"Neiva",

"fecha_nacimiento":"24/12/1980",

}

print("Dictionary keys:", datos_b.keys())

print("Dictionary values:", datos_b.values())

print("Dictionary items (key, value):", datos_b.items())

print("Diana's date of birth:", datos_b['fecha_nacimiento'])
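# A small sketch of two common dictionary operations that complement the lookups above: reading a key that may be missing, and iterating over key/value pairs. The `telefono` key and its value are made up for this illustration.

```python
person = {"nombres": "Diana", "apellidos": "Perea", "lugar_nacimiento": "Neiva"}

# .get returns a default instead of raising KeyError when the key is absent
phone = person.get("telefono", "unknown")

# .items() yields (key, value) pairs in insertion order
pairs = [f"{key}={value}" for key, value in person.items()]

# assigning to a new key adds an entry
person["telefono"] = "555-0100"  # hypothetical value

print(phone, pairs, person["telefono"])
```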

# + [markdown] id="fOh1cDjBZYf8"

# **Practical example**:

# + id="iM6zPXIhCBAx" colab={"base_uri": "https://localhost:8080/"} outputId="4c13f8ee-bd4f-449a-8eee-46f18223fa52"

zapatos_0={}

zapatos_0["talla"]="grande"

zapatos_0["color"]="rojo"

zapatos_0["material"]="cuero"

zapatos_0["tacón"]="alto"

zapatos_0["precio"]=90

zapatos_1={}

zapatos_1["talla"]="medio"

zapatos_1["color"]="negro"

zapatos_1["material"]="sintetico"

zapatos_1["tacón"]="plataforma"

zapatos_1["precio"]=60

zapatos_2={}

zapatos_2["talla"]="pequeño"

zapatos_2["color"]="blanco"

zapatos_2["material"]="tela"

zapatos_2["tacón"]="plano"

zapatos_2["precio"]=30

print(zapatos_0)

print(zapatos_1)

print(zapatos_2)

compra0=zapatos_0["precio"]

compra1=zapatos_1["precio"]

compra2=zapatos_2["precio"]

compratotal=compra0+compra1+compra2

print("The total purchase was:",compratotal)

print("The total purchase was:"+" "+str(compratotal))

|

Clase1.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Pybot

import string

import time

import numpy as np

import bot_utils

from collections import defaultdict

from IPython.display import SVG

# Keras imports

import tensorflow as tf

import tensorflow.keras.preprocessing.text as tftext

from tensorflow.keras.preprocessing.sequence import pad_sequences

from tensorflow.keras.models import Model

from tensorflow.keras.optimizers import Adam

from tensorflow.keras.layers import Dense, Embedding, LSTM, Input, Bidirectional, Concatenate

from tensorflow.keras.utils import model_to_dot

from attention import AttentionLayer

# Gensim and Spelling

import gensim.downloader as api

glove_vectors = api.load("glove-wiki-gigaword-100")

from spellchecker import SpellChecker

import concurrent.futures

# Telegram

import logging

from threading import Timer

from telegram import ReplyKeyboardMarkup, ReplyKeyboardRemove, Update, ChatAction

from telegram.ext import (

Updater,

CommandHandler,

MessageHandler,

Filters,

ConversationHandler,

CallbackContext,

)

HYPER_PARAMS = {

'ENABLE_GPU' : True,

'ENABLE_SPELLING_SUGGESTION' : False,

'MESSAGE_TYPING_DELAY' : 4.0, # Seconds to mimic bot typing

'DATA_BATCH_SIZE' : 128,

'DATA_BUFFER_SIZE' : 10000,

'EMBEDDING_DIMENSION' : 100, # Dimension of the GloVe embedding vector

'MAX_SENT_LENGTH' : 11, # Maximum length of sentences

'MAX_SAMPLES' : 2000000, # Maximum samples to consider (useful for laptop memory) 200000

'MIN_WORD_OCCURENCE' : 30, # Minimum word count. If condition not met word replaced with <UNK>

'MODEL_LAYER_DIMS' : 500,

'MODEL_LEARN_RATE' : 1e-3,

'MODEL_LEARN_EPOCHS' : 10,

'MODEL_TRAINING' : True # If False - Model weights are loaded from file

}

if HYPER_PARAMS['ENABLE_GPU']:
    physical_devices = tf.config.list_physical_devices('GPU')
    # Only enable memory growth when a GPU is actually present
    if physical_devices:
        tf.config.experimental.set_memory_growth(physical_devices[0], enable=True)

# ### Importing the data set

# https://www.cs.cornell.edu/~cristian/Cornell_Movie-Dialogs_Corpus.html

#

# movie_lines:

# - lineID

# - characterID (who uttered this phrase)

# - movieID

# - character name

# - text of the utterance

#

# movie_conversations:

# - characterID of the first character involved in the conversation

# - characterID of the second character involved in the conversation

# - movieID of the movie in which the conversation occurred

# - list of the utterances that make the conversation, in chronological

# order: ['lineID1','lineID2',...,'lineIDN']

# has to be matched with movie_lines.txt to reconstruct the actual content

#

lines = open('data/movie_lines.txt', encoding = 'utf-8', errors = 'ignore').read().split('\n')

conversations = open('data/movie_conversations.txt', encoding = 'utf-8', errors = 'ignore').read().split('\n')
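# A minimal sketch of how one record of each file can be split into the fields listed above, assuming the ` +++$+++ ` separator that the pre-processing code below relies on. The sample records and the function names are made up for this illustration; they are not taken from the corpus.

```python
import ast

SEPARATOR = " +++$+++ "


def parse_movie_line(raw_line):
    """Split one movie_lines record into its five named fields, or return None."""
    fields = raw_line.split(SEPARATOR)
    if len(fields) != 5:
        return None
    line_id, character_id, movie_id, character_name, text = fields
    return {"line_id": line_id, "character_id": character_id, "movie_id": movie_id,
            "character_name": character_name, "text": text}


def parse_conversation(raw_line):
    """Split one movie_conversations record; the last field is a list literal of line ids."""
    fields = raw_line.split(SEPARATOR)
    if len(fields) != 4:
        return None
    return {"first_character_id": fields[0], "second_character_id": fields[1],
            "movie_id": fields[2], "line_ids": ast.literal_eval(fields[3])}


# Hypothetical sample records in the same layout
sample_line = "L1 +++$+++ u0 +++$+++ m0 +++$+++ ALICE +++$+++ Hello there."
sample_conversation = "u0 +++$+++ u1 +++$+++ m0 +++$+++ ['L1', 'L2', 'L3']"

parsed_line = parse_movie_line(sample_line)
parsed_conversation = parse_conversation(sample_conversation)

# Consecutive line ids form (question, answer) pairs
line_ids = parsed_conversation["line_ids"]
turn_pairs = list(zip(line_ids, line_ids[1:]))
print(parsed_line["text"], turn_pairs)
```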

# ### Pre-processing the data

# - Perform cleaning

# - Extract Q&As

# - Tokenize

# - Add padding

# +

def clean_text_to_lower(text):

"""Performs cleaning and lowers text"""

text = text.lower()

# Expand contractions to words, and remove characters

for word in text.split():

if word in bot_utils.CONTRACTIONS:

text = text.replace(word, bot_utils.CONTRACTIONS[word])

# Remove punctuation

text = text.translate(str.maketrans('', '', string.punctuation))

return text

def get_questions_answers(lines, conversations):

"""Builds question and answers from data sets"""

# Map id to text of the utterance

id_to_line = {}

for line in lines:

line_ = line.split(' +++$+++ ')

if len(line_) == 5:

id_to_line[line_[0]] = line_[4]

questions, answers = [], []

for line in conversations:

# Extract conversation turns

conversation = line.split(' +++$+++ ')

if len(conversation) == 4:

conversation_turns = conversation[3][1:-1].split(', ')

turns_list = [turn[1:-1] for turn in conversation_turns]

# Split to Q & A

for i_turn in range(len(conversation_turns) - 1):

questions.append(clean_text_to_lower(id_to_line[conversation_turns[i_turn][1:-1]]))

answers.append(clean_text_to_lower(id_to_line[conversation_turns[i_turn + 1][1:-1]]))

if len(questions) >= HYPER_PARAMS['MAX_SAMPLES']:

return questions, answers

return questions, answers

def tokenize(lines, conversations):

"""Tokenizes sets to sequences of integers, and adds special tokens.

Also reduces the vocabulary by replacing low frequency words with an unknown token.

Returns:

tokenizer: tokenizer, which might be used later to reverse integers to words,

tokenized_questions: list, questions as sequence of integers

tokenized_answers: list, answer as sequence of integers

size_vocab: int, unique number of words in vocabulary.

special_tokens: dict, mappings for special tokens"""

questions, answers = get_questions_answers(lines, conversations)

tokenizer = tftext.Tokenizer(oov_token='<UNK>')

tokenizer.fit_on_texts(questions + answers)

# Make UNK tokens, reindex tokenizer dicts

sorted_by_word_count = sorted(tokenizer.word_counts.items(), key=lambda kv: kv[1], reverse=True)

tokenizer.word_index = {}

tokenizer.index_word = {}

index = 1

for word, count in sorted_by_word_count:

if count >= HYPER_PARAMS['MIN_WORD_OCCURENCE']:

tokenizer.word_index[word] = index

tokenizer.index_word[index] = word

index += 1

# Add special tokens

special_tokens = {}

special_tokens['<PAD>'] = 0

special_tokens['<UNK>'] = len(tokenizer.word_index) + 1  # word indices run 1..len, so len + 1 is the first free index

special_tokens['<SOS>'] = special_tokens['<UNK>'] + 1

special_tokens['<EOS>'] = special_tokens['<SOS>'] + 1

for special_token, index_value in special_tokens.items():

tokenizer.word_index[special_token] = index_value

tokenizer.index_word[index_value] = special_token

# Tokenize to integer sequences

tokenized_questions = tokenizer.texts_to_sequences(questions)

tokenized_answers = tokenizer.texts_to_sequences(answers)

# Size is the last token's index plus one, because index 0 is reserved for <PAD>

size_vocab = special_tokens['<EOS>'] + 1

# Add sentence position tokens

tokenized_questions = [[special_tokens['<SOS>']] + tokenized_question + [special_tokens['<EOS>']]

for tokenized_question in tokenized_questions]

tokenized_answers = [[special_tokens['<SOS>']] + tokenized_answer + [special_tokens['<EOS>']]

for tokenized_answer in tokenized_answers]

# Add padding at end so we can use a static input size to the model

tokenized_questions = pad_sequences(tokenized_questions,

maxlen=HYPER_PARAMS['MAX_SENT_LENGTH'],

padding='post')

tokenized_answers = pad_sequences(tokenized_answers,

maxlen=HYPER_PARAMS['MAX_SENT_LENGTH'],

padding='post')

return tokenizer, tokenized_questions, tokenized_answers, size_vocab, special_tokens

# -

tokenizer, tokenized_questions, tokenized_answers,\

size_vocab, special_tokens = tokenize(lines, conversations)

size_vocab
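# The vocabulary reduction can be sketched without Keras. This is a simplified stand-in under stated assumptions, not the tokenizer used above: `build_vocab` and `encode` are hypothetical names, words are split on whitespace only, and the special tokens are placed right after the kept words so that no index is used twice.

```python
from collections import Counter


def build_vocab(sentences, min_count):
    """Index words seen at least min_count times; 0 is <PAD>, special tokens come last."""
    counts = Counter(word for sentence in sentences for word in sentence.split())
    word_index = {}
    for word, count in counts.most_common():
        if count >= min_count:
            word_index[word] = len(word_index) + 1   # kept words get 1..n
    special_tokens = {"<PAD>": 0}
    for token in ("<UNK>", "<SOS>", "<EOS>"):
        # n + 1, n + 2, n + 3: the first free indices after the kept words
        special_tokens[token] = len(word_index) + len(special_tokens)
    word_index.update(special_tokens)
    size_vocab = max(word_index.values()) + 1
    return word_index, special_tokens, size_vocab


def encode(sentence, word_index, special_tokens):
    """Map a sentence to integers, wrapping it in <SOS>/<EOS> and using <UNK> for rare words."""
    unk = special_tokens["<UNK>"]
    body = [word_index.get(word, unk) for word in sentence.split()]
    return [special_tokens["<SOS>"]] + body + [special_tokens["<EOS>"]]


toy_sentences = ["hi there", "hi you", "hi there friend"]
toy_index, toy_special, toy_size = build_vocab(toy_sentences, min_count=2)
toy_encoded = encode("hi friend", toy_index, toy_special)
print(toy_index, toy_size, toy_encoded)
```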

# **Create tf.data.Dataset** <br>

# Allows caching and prefetching to speed up training.

# +

dataset = tf.data.Dataset.from_tensor_slices((

{

'encoder_inputs': tokenized_questions[:, 1:], # Skip <SOS> token

'decoder_inputs': tokenized_answers[:, :-1] # Skip <EOS> token

},

{

'outputs': tokenized_answers[:, 1:] # Skip <SOS> token

},

))

dataset = dataset.cache()

dataset = dataset.shuffle(HYPER_PARAMS['DATA_BUFFER_SIZE'])

dataset = dataset.batch(HYPER_PARAMS['DATA_BATCH_SIZE'])

dataset = dataset.prefetch(tf.data.experimental.AUTOTUNE)

# -
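# The slicing of `decoder_inputs` and `outputs` above implements teacher forcing: at every step the decoder receives the previous target token and must predict the next one. A plain-list sketch with made-up token ids (the ids and `pad_post` are assumptions of this illustration; truncation of long sentences is left out):

```python
PAD, SOS, EOS = 0, 101, 102          # hypothetical token ids


def pad_post(sequence, maxlen):
    """Right-pad a sequence with PAD up to maxlen (no truncation in this sketch)."""
    return sequence + [PAD] * (maxlen - len(sequence))


answer = pad_post([SOS, 7, 8, 9, EOS], maxlen=7)

decoder_input = answer[:-1]          # drop the last position
target = answer[1:]                  # drop <SOS>: the same sequence shifted left by one

for step, (given, expected) in enumerate(zip(decoder_input, target)):
    print(step, "input:", given, "-> target:", expected)
```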

# ### Create contextual embedding from GloVe

# Create an embedding layer restricted to our vocabulary, initialized from pre-trained GloVe word vectors:<br>

# https://nlp.stanford.edu/projects/glove/

# +

def load_glove_weights(dimension_embedding):

""" Load GloVe pre-trained model"""

path='glove/glove.6B.' + str(dimension_embedding) + 'd.txt'

word_to_vec = {}

with open(path, encoding='utf-8') as file:

for line in file:

values = line.split()

word_to_vec[values[0]] = np.asarray(values[1:], dtype='float32')

file.close()

return word_to_vec

def load_embedding_weights(word_dictionary, size_vocab, dimension_embedding):

"""Loads embedding weights from GloVe based on our context"""

word_to_vec = load_glove_weights(dimension_embedding)

embedding_matrix = np.zeros((size_vocab,

HYPER_PARAMS['EMBEDDING_DIMENSION']))

for word, index in word_dictionary.items():

embedding_vector = word_to_vec.get(word)

# Word is within GloVe dictionary

if embedding_vector is not None:

embedding_matrix[index] = embedding_vector

return embedding_matrix, word_to_vec

def build_embedding_layer(word_dictionary, size_vocab, dimension_embedding, length_input):

"""Builds a non-trainable embedding layer from GloVe

pre-trained model, based on our context"""

embedding_matrix, word_to_vec = load_embedding_weights(word_dictionary, size_vocab, dimension_embedding)

size_vocab = size_vocab

embedding_layer = Embedding(size_vocab,

dimension_embedding,

input_length=length_input,

weights=[embedding_matrix],

trainable=False)

return embedding_matrix, word_to_vec, embedding_layer

# -

embedding_matrix, word_to_vec, embedding_layer = build_embedding_layer(tokenizer.word_index,

size_vocab,

HYPER_PARAMS['EMBEDDING_DIMENSION'],

HYPER_PARAMS['MAX_SENT_LENGTH'])
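# A dependency-free sketch of the idea behind the embedding matrix: each row index is a token id, rows of words found in the pre-trained file are copied over, and all other rows stay at zero. The two-dimensional toy vectors and the function names are assumptions of this illustration, not real GloVe data.

```python
def parse_glove_lines(lines):
    """Parse 'word v1 v2 ...' text lines into a word -> vector dictionary."""
    vectors = {}
    for line in lines:
        parts = line.split()
        if parts:
            vectors[parts[0]] = [float(value) for value in parts[1:]]
    return vectors


def build_matrix(word_index, vectors, dimension):
    """Return one row per token id; unknown words keep an all-zero row."""
    size = max(word_index.values()) + 1
    matrix = [[0.0] * dimension for _ in range(size)]
    for word, index in word_index.items():
        vector = vectors.get(word)
        if vector is not None and len(vector) == dimension:
            matrix[index] = list(vector)
    return matrix


toy_vectors = parse_glove_lines(["hello 0.1 0.2", "world 0.3 0.4"])
toy_matrix = build_matrix({"<PAD>": 0, "hello": 1, "xyzzy": 2}, toy_vectors, dimension=2)
print(toy_matrix)
```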

# ### Seq2Seq Model

def seq2seq(embedding_layer, length_input, size_vocab, layer_dims):

"""LSTM Seq2Seq model with attention"""

length_input = length_input - 1

#

# Encoder,

# Bidirectional (RNNSearch) as explained in https://arxiv.org/pdf/1409.0473.pdf

#

encoder_inputs = Input(shape=(length_input, ), name='encoder_inputs')

encoder_embedding = embedding_layer(encoder_inputs)

ecoder_lstm = Bidirectional(LSTM(layer_dims,

return_state=True,

return_sequences=True,

dropout=0.05,

recurrent_initializer='glorot_uniform',

name='encoder_lstm'),

name='encoder_bidirectional')

encoder_outputs, forward_h, forward_c, backward_h, backward_c = ecoder_lstm(encoder_embedding)

# For annotating sequences, we concatenate forward hidden state with backward one as explained in top paper

state_h = Concatenate(name='encoder_hidden_state')([forward_h, backward_h])

state_c = Concatenate(name='encoder_cell_state')([forward_c, backward_c])

encoder_states = [state_h, state_c]

#

# Decoder,

# Unidirectional

#

decoder_inputs = Input(shape=(length_input, ), name='decoder_inputs')

decoder_embedding = embedding_layer(decoder_inputs)

decoder_lstm = LSTM(layer_dims * 2, # to match bidirectional size

return_state=True,

return_sequences=True,

dropout=0.05,

recurrent_initializer='glorot_uniform',

name='decoder_lstm')

# Set encoder to use the encoder state as initial states

decoder_output,_ , _, = decoder_lstm(decoder_embedding,

initial_state=encoder_states)

# Attention

attention_layer = AttentionLayer(name='attention_layer')

attention_output, attention_state = attention_layer([encoder_outputs, decoder_output])

decoder_concat = Concatenate(axis=-1)([decoder_output, attention_output])

# Output layer

outputs = Dense(size_vocab, name='outputs', activation='softmax')(decoder_concat)

return Model([encoder_inputs, decoder_inputs], outputs), decoder_embedding

# +

model, decoder_embedding = seq2seq(embedding_layer,

HYPER_PARAMS['MAX_SENT_LENGTH'],

size_vocab,

HYPER_PARAMS['MODEL_LAYER_DIMS'])

optimizer = Adam(learning_rate=HYPER_PARAMS['MODEL_LEARN_RATE'])

model.compile(optimizer=optimizer, loss='sparse_categorical_crossentropy', metrics=['acc'])

SVG(model_to_dot(model, show_shapes=True, show_layer_names=False,

rankdir='TB', dpi=65).create(prog='dot', format='svg'))

# -

# ### Training

# Optionally load weights

if HYPER_PARAMS['MODEL_TRAINING']:
    # The dataset is already batched, so batch_size must not be passed to fit()
    model.fit(dataset, epochs=HYPER_PARAMS['MODEL_LEARN_EPOCHS'])
    model.save('backup_{0}.h5'.format(HYPER_PARAMS['MODEL_LEARN_EPOCHS']))
else:
    model.load_weights('backup_{0}.h5'.format(HYPER_PARAMS['MODEL_LEARN_EPOCHS']))

# ### Predictions processing

# - Follow the same tokenizing approach as before

# - For words that are not in our original vocabulary, we use the GloVe embedding to find the most similar word within our vocabulary. If no similar word is found, the word is replaced by an unknown token

# +

def get_known_words(sentence_words, glove_word_dictionary, local_word_dictionary, topn=20):

"""Pre-process the input text, corrects spelling,

get similar words from gensim if input word is out of context

or replace with <UNK> for bad words."""

result = []

for word in sentence_words:

# Correct any spelling mistakes

spell = SpellChecker()

word = spell.correction(word)

if word in local_word_dictionary:

result.append(word)

else:

# Determine if top match is within our local context

found_sim_word = False

if word in glove_word_dictionary:

similar_words = glove_vectors.similar_by_word(word, topn=topn)

for (sim_word, measure) in similar_words:

if sim_word in local_word_dictionary.keys():

found_sim_word = True

result.append(sim_word)

break

# Funny word

if not found_sim_word:

result.append('<UNK>')

return result

def pre_process_new_questions(text, tokenizer, glove_vectors, special_tokens):

""" Process text to words within our context,

and tokenize."""

# Pre-process

text_ = clean_text_to_lower(text)

split_text = text_.split(' ')

split_text = get_known_words(split_text, glove_vectors, tokenizer.word_index)

processed_question = " ".join(split_text)

# Tokenize to sequence of ints

# using original tokenizer

tokenized_question = tokenizer.texts_to_sequences([processed_question])

tokenized_question = [[special_tokens['<SOS>']] +

tokenized_question[0] +

[special_tokens['<EOS>']]]

# Padding

tokenized_question = pad_sequences(tokenized_question,

maxlen=HYPER_PARAMS['MAX_SENT_LENGTH'] - 1,

padding='post')

return processed_question, tokenized_question[0]

def post_process_new_answers(text_sequence, tokenizer, glove_vectors):

answer = []

# Build string from text sequence

for text_id in text_sequence:

if text_id != tokenizer.word_index['<EOS>'] and\

text_id != tokenizer.word_index['<PAD>']:

word = tokenizer.index_word[text_id]

if len(answer) == 0:

word = word.capitalize()

answer.append(word)

answer = " ".join(answer)

return answer

# -

# **Example of correction** <br>

# Vanish is not within our model's context, but disappear is

processed_question, _ = pre_process_new_questions('i wouzld like tso vansish', tokenizer, glove_vectors, special_tokens)

processed_question

# ### Predictions

# +

def get_encoder_decoder(model):

""" Get the encoder and decoder models,

used to do inference of text sequences"""

# Encoder model

encoder_model = Model(model.get_layer('encoder_inputs').output,

[model.get_layer('encoder_bidirectional').output[0],

[model.get_layer('encoder_hidden_state').output,

model.get_layer('encoder_cell_state').output]])

# Decoder model

decoder_state_input_h = Input(shape=(HYPER_PARAMS['MODEL_LAYER_DIMS'] * 2, ))

decoder_state_input_c = Input(shape=(HYPER_PARAMS['MODEL_LAYER_DIMS'] * 2, ))

decoder_states_inputs = [decoder_state_input_h, decoder_state_input_c]

decoder_outputs, state_h, state_c = model.get_layer('decoder_lstm')(decoder_embedding,

initial_state=decoder_states_inputs)

decoder_states = [state_h, state_c]

decoder_model = Model([model.get_layer('decoder_inputs').output,

decoder_states_inputs],

[decoder_outputs] + decoder_states)

return encoder_model, decoder_model

def infer_answer_sentence(model, encoder_model, decoder_model, raw_question, tokenizer, glove_vectors, special_tokens):
    """Greedily decodes an answer for a raw question, one token at a time"""
    processed_question, tokenized_question = pre_process_new_questions(raw_question,
                                                                       tokenizer,
                                                                       glove_vectors,
                                                                       special_tokens)
    tokenized_question = tf.expand_dims(tokenized_question, axis=0)
    # Encode input sentence
    encoder_outputs, states = encoder_model.predict(tokenized_question)
    # Create starting input with only <SOS> token
    decoder_input = tf.expand_dims(special_tokens['<SOS>'], 0)
    # Reuse the trained attention layer; a newly constructed AttentionLayer would have untrained weights
    attention_layer = model.get_layer('attention_layer')
    decoded_answer_sequence = []
    while len(decoded_answer_sequence) < HYPER_PARAMS['MAX_SENT_LENGTH']:
        decoder_outputs, decoder_hidden_states, decoder_cell_states = decoder_model.predict([decoder_input] + states)
        # Apply attention to decoder output
        attention_outputs, attention_states = attention_layer([encoder_outputs, decoder_outputs])
        decoder_concatenated = Concatenate(axis=-1)([decoder_outputs, attention_outputs])
        dense_output = model.get_layer('outputs')(decoder_concatenated)
        # Get argmax of output
        sampled_word = np.argmax(dense_output[0, -1, :])
        # Finished output sentence
        if sampled_word == special_tokens['<EOS>']:
            break
        # Store prediction, and use it as the decoder's new input
        decoded_answer_sequence.append(sampled_word)
        decoder_input = tf.expand_dims(sampled_word, 0)
        # Update internal states each timestep
        states = [decoder_hidden_states, decoder_cell_states]
    answer = post_process_new_answers(decoded_answer_sequence, tokenizer, glove_vectors)
    return answer

# -

encoder_model, decoder_model = get_encoder_decoder(model)
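# The inference loop is a greedy decoder: feed the last predicted token back in, take the arg-max of the scores, and stop at `<EOS>` or at the length limit. A framework-free sketch of that control flow, where `toy_step` is a made-up stand-in for the decoder model and all token ids are hypothetical:

```python
def greedy_decode(step_fn, initial_state, sos_id, eos_id, max_len):
    """Repeatedly pick the highest-scoring token until <EOS> or max_len tokens."""
    token, state, decoded = sos_id, initial_state, []
    while len(decoded) < max_len:
        scores, state = step_fn(token, state)
        token = max(range(len(scores)), key=scores.__getitem__)   # arg-max
        if token == eos_id:
            break
        decoded.append(token)
    return decoded


def toy_step(token, state):
    """Stand-in decoder: emits token 2, then token 3, then <EOS> (id 1)."""
    script = [2, 3, 1]
    target = script[min(state, len(script) - 1)]
    scores = [0.0] * 4
    scores[target] = 1.0
    return scores, state + 1


full_answer = greedy_decode(toy_step, initial_state=0, sos_id=0, eos_id=1, max_len=10)
cut_answer = greedy_decode(toy_step, initial_state=0, sos_id=0, eos_id=1, max_len=1)
print(full_answer, cut_answer)
```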

# ## Interface with Telegram

# +

# Enable logging

logging.basicConfig(

format='%(asctime)s - %(name)s - %(levelname)s - %(message)s', level=logging.INFO

)

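import os

# Read the bot token from the environment instead of hard-coding a secret
# (the variable name TELEGRAM_BOT_TOKEN is an assumption, not part of the original notebook)
updater = Updater(os.environ["TELEGRAM_BOT_TOKEN"], use_context=True)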

# Get the dispatcher to register handlers

dispatcher = updater.dispatcher

spell = SpellChecker()

# +

WELCOMED_IDS = []

MARKUP_MESSAGES = defaultdict(int)

def welcome_message(chat_id, context):

context.bot.send_message(chat_id=chat_id, text=

'Hi there! I am NLTChatBot. \n' +

'I have studied the transcripts of 617 movies to learn to speak.' +

' Ask me anything! ' + u'\U0001F603' )

WELCOMED_IDS.append(chat_id)

def check_grammar(message):

_message = message.replace("?", "").split(' ')

processed_message = []

highlited_grammar = []

flag = False

for word in _message:

corrected_word = spell.correction(word)

processed_message.append(corrected_word)

if corrected_word != word:

# Bold correction

highlited_grammar.append('<b>'+ word + '</b>')

flag = True

else:

highlited_grammar.append(word)

return ' '.join(highlited_grammar), ' '.join(processed_message), flag

def handle_message(update, context):

# Delete old markup option if user didn't select it

if MARKUP_MESSAGES[update.effective_chat.id] != 0:

try:

context.bot.deleteMessage(chat_id = update.effective_chat.id,

message_id = MARKUP_MESSAGES[update.effective_chat.id])

# Chat possibly closed

except:

MARKUP_MESSAGES[update.effective_chat.id] = 0

MARKUP_MESSAGES[update.effective_chat.id] = 0

# New user?

if update.effective_chat.id not in WELCOMED_IDS:

welcome_message(update.effective_chat.id, context)

return

if HYPER_PARAMS['ENABLE_SPELLING_SUGGESTION']:

# Check grammar

highlited_grammar, processed_message, flag = check_grammar(update.message.text)

if flag:

# Spelling suggestions,

reply_keyboard = [[processed_message]]

message = update.message.reply_text('I am not sure what you meant with - ' + highlited_grammar,

reply_markup=ReplyKeyboardMarkup(reply_keyboard,

one_time_keyboard=True),

parse_mode='HTML')

MARKUP_MESSAGES[update.effective_chat.id] = message.message_id

return

else:

processed_message = update.message.text

# Reply to user

answer = infer_answer_sentence(model,

encoder_model,

decoder_model,

processed_message,

tokenizer,

glove_vectors,

special_tokens)

context.bot.send_message(chat_id=update.effective_chat.id, text=answer)

def received_message(update, context):

"""Mimics the typing on keyboard,

before calling the message handling"""

if HYPER_PARAMS['MESSAGE_TYPING_DELAY'] > 0:

context.bot.send_chat_action(chat_id=update.effective_chat.id, action=ChatAction.TYPING)

timed_response = Timer(HYPER_PARAMS['MESSAGE_TYPING_DELAY'], handle_message, [update, context])

timed_response.start()

else:

handle_message(update, context)

# -

message_handler = MessageHandler(Filters.text & (~Filters.command), received_message)

dispatcher.add_handler(message_handler)

updater.start_polling()

|

NLPChatBot.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + raw_mimetype="text/restructuredtext" active=""

# .. _nb_visualization:

# -

# # Visualization

# + raw_mimetype="text/restructuredtext" active=""

# .. toctree::

# :maxdepth: 1

# :hidden:

#

# scatter.ipynb

# pcp.ipynb

# heatmap.ipynb

# petal.ipynb

# radar.ipynb

# radviz.ipynb

# star.ipynb

# video.ipynb

#

# -

# Different visualization techniques are available. Each serves a different purpose and suits either lower- or higher-dimensional objective spaces.

#

# The following visualizations can be used:

#

# |Name|Class|Convenience|

# |---|---|---|

# |[Scatter Plots (2D/3D/ND)](scatter.ipynb)|Scatter|"scatter"|

# |[Parallel Coordinate Plots (PCP)](pcp.ipynb)|ParallelCoordinatePlot|"pcp"|

# |[Heatmap](heatmap.ipynb)|Heatmap|"heat"|

# |[Petal Diagram](petal.ipynb)|Petal|"petal"|

# |[Radar](radar.ipynb)|Radar|"radar"|

# |[Radviz](radviz.ipynb)|Radviz|"radviz"|

# |[Star Coordinates](star.ipynb)|StarCoordinate|"star"|

# |[Video](video.ipynb)|Video|-|

#

# Each of them is implemented in a class which can be used directly. However, in some cases it might

# be more convenient to use the factory function instead.

# For example, for scatter plots both of the following create the same object:

# +

# directly using the class

from pymoo.visualization.scatter import Scatter

plot = Scatter()

# the global factory method

from pymoo.factory import get_visualization

plot = get_visualization("scatter")

# -

# The advantage of the convenience function is that a different visualization can be chosen just by changing the string

# (without changing any other import). Moreover, we want to keep the global interface in the factory the same, whereas implementation details, such as class names, might change.

# Please note that the visualization implementations are just wrappers around [matplotlib](https://matplotlib.org) and all keyword arguments remain usable.

# For instance, two different sets of points can be plotted in different colors with different markers in a scatter plot:

#

# +

import numpy as np

A = np.random.random((20,2))

B = np.random.random((20,2))

from pymoo.factory import get_visualization

plot = get_visualization("scatter")

plot.add(A, color="green", marker="x")

plot.add(B, color="red", marker="*")

plot.show()

# -

# This holds for all our visualizations. However, depending on the visualization, the matplotlib function that is used and the corresponding keyword arguments might change. For example, for the PetalWidth Plot polygons are drawn, which take different keywords than the plot function of matplotlib.

# Furthermore, the plots accept some default arguments that can be set during initialization:

# +

from pymoo.visualization.petal import Petal

from pymoo.visualization.util import default_number_to_text

np.random.seed(5)

A = np.random.random((1,6))

plot = Petal(

# change the overall figure size (does not work for all plots)

figsize=(8, 6),

# directly provide the title (str or tuple for options)

title=("My Plot", {"pad" : 30}),

# plot a legend (tuple for options)

legend=False,

# make the layout tight before returning

tight_layout=True,

# the boundaries for normalization purposes (does not apply for every plot)

# either 2d array [[min1,..minN],[max1,...,maxN]] or just two numbers [min,max]

bounds=[0,1],

# if normalized, the reverse can be plotted (1-values)

reverse=False,

# the color map to be used

cmap="tab10",

# modification of the axis style

axis_style=None,

# function to be used to plot numbers

func_number_to_text=default_number_to_text,

# change the axis labels - could be a list or just the prefix

axis_labels=["Objective %s" % i for i in range(1,7)],

)

plot.add(A, label="A")

plot.show()

# -

# Documentation is provided for each visualization.

|

source/visualization/index.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/victordibia/taxi/blob/main/notebooks/taxi_model_training.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + id="Kwkhb5bOpjRq"

# %pip install scikit-learn==0.23.2 pandas==1.1.3

# + id="ssdEOTGs_M6u"

import pandas as pd

import numpy as np

from tqdm.notebook import tqdm

# + id="3R_JRetrInvM"

df = pd.read_csv("taxi/data/training.csv")

# + id="tS2N5tAnJIOl"

categorical_features = ["month","week","hour","isweekday","holiday", "dayofweek", "PULocationID", "DOLocationID"] #["PULocationID", "DOLocationID"]

feature_list = ["passenger_count","trip_time","fare_amount"]

numeric_features = ["passenger_count"]

dfa = df

dfa[categorical_features] = dfa[categorical_features].astype("category")

df_data = dfa[feature_list + categorical_features]

# + colab={"base_uri": "https://localhost:8080/", "height": 111} id="udNeyu8po_fn" outputId="b0405e04-2356-4a2c-d193-5d02eb1caf56"

train_sample_size = 300000

df_draw = df_data.sample(train_sample_size, random_state=42)

both_labels = df_draw[["fare_amount", "trip_time"]]

df_draw.drop(["trip_time","fare_amount"], axis =1, inplace=True)

df_draw.head(2)

# + colab={"base_uri": "https://localhost:8080/"} id="gKsrhJybov6w" outputId="e4a1e9a0-2501-408b-b01f-f636c8fdff53"

categorical_idx = df_draw.columns.get_indexer(categorical_features)

numeric_idx = df_draw.columns.get_indexer(numeric_features)

categorical_idx, numeric_idx

# + id="WCJAW34yJNTL"

import time

import matplotlib.pyplot as plt

from sklearn.tree import DecisionTreeRegressor

from sklearn.ensemble import RandomForestRegressor

from sklearn.ensemble import GradientBoostingRegressor

from sklearn.neural_network import MLPRegressor

from sklearn.svm import SVR

from sklearn.linear_model import LinearRegression

from sklearn.model_selection import train_test_split

from sklearn.pipeline import Pipeline

from sklearn.preprocessing import OneHotEncoder, StandardScaler, LabelEncoder

from sklearn.metrics import classification_report, mean_squared_error, mean_absolute_error, mean_squared_log_error

from sklearn.compose import ColumnTransformer

from sklearn.compose import make_column_selector as selector

num_transformer = Pipeline(steps=[

('scaler', StandardScaler())])

cat_transformer = Pipeline(steps=[

('onehot', OneHotEncoder(handle_unknown='ignore'))])

preprocessor = ColumnTransformer(

transformers=[

# ('num', num_transformer, selector(dtype_include='float64')),

('num', num_transformer, numeric_idx),

('cat', cat_transformer, categorical_idx)

])

# keep track of all details for models we train

def train_model(model, data, labels):

X = data.values

y = labels.values

X_train, X_test, y_train, y_test = train_test_split(X, y, random_state=42)

# pipe = Pipeline([('scaler', StandardScaler()),('clf', model["clf"])])

pipe = Pipeline(steps=[

('preprocessor', preprocessor),

('clf', model["clf"])])

start_time = time.time()

# with joblib.parallel_backend('dask'):

# pipe.fit(X_train, y_train)

pipe.fit(X_train, y_train)

train_time = time.time() - start_time

train_preds = pipe.predict(X_train)

test_preds = pipe.predict(X_test)

np.histogram(train_preds)

np.histogram(test_preds)

train_rmse = np.sqrt(mean_squared_error(y_train, train_preds))

test_rmse = np.sqrt(mean_squared_error(y_test, test_preds))

train_mae = mean_absolute_error(y_train, train_preds)

test_mae = mean_absolute_error(y_test, test_preds)

train_accuracy = pipe.score(X_train, y_train)

test_accuracy = pipe.score(X_test, y_test)

model_details = {"name": model["name"],

"train_rmse":train_rmse,

"test_rmse":test_rmse,

"train_mae":train_mae,

"test_mae":test_mae,

"train_time": train_time,

"model": pipe,

"y_test": y_test,

"test_preds": test_preds

}

return model_details

models = [

{"name": "Random Forest", "clf": RandomForestRegressor(n_estimators=100)},

# {"name": "Gradient Boosting", "clf": GradientBoostingRegressor(n_estimators=100)},

{"name": "MLP Regressor", "clf": MLPRegressor(solver='adam', alpha=1e-1, hidden_layer_sizes=(20,20,10,5,2), max_iter=500, random_state=42)}

]
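# The two error metrics reported by `train_model` can be checked by hand. This is a plain-Python sketch with made-up numbers (the functions `rmse` and `mae` are written here for illustration and are not the scikit-learn implementations): RMSE squares the errors before averaging, so it weights large misses more heavily than MAE does.

```python
import math


def rmse(y_true, y_pred):
    """Root mean squared error: square root of the mean of squared differences."""
    squared = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared) / len(squared))


def mae(y_true, y_pred):
    """Mean absolute error: mean of the absolute differences."""
    absolute = [abs(t - p) for t, p in zip(y_true, y_pred)]
    return sum(absolute) / len(absolute)


toy_true = [10.0, 20.0, 30.0]        # hypothetical fares
toy_pred = [12.0, 18.0, 33.0]        # hypothetical predictions

toy_rmse = rmse(toy_true, toy_pred)
toy_mae = mae(toy_true, toy_pred)
print(round(toy_rmse, 3), round(toy_mae, 3))
```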

# + colab={"base_uri": "https://localhost:8080/", "height": 100, "referenced_widgets": ["d1bd0549db2e438e8ade9327c701d874", "c581476b7e1f4b0ba89d18917444b637", "743c9ce76d904d6b9952977f0addefba", "5dc28368ceff48d4a4411ec9dc4212a6", "2a572e37d003423f84594fe95488da41", "ede8d0bacc9e498e891b3ee33385087a", "b26049d81ea5465cab3aed38a4fea362", "90fd406027f24f608e096a486da83a10"]} id="xqSOQ6VMJWVz" outputId="cd038a4b-5bfb-4dde-b26d-7ddc337d0918"

def plot_models(trained_models,outcome_type):

# visualize accuracy and run time

setup_plot()

model_df = pd.DataFrame(trained_models)

model_df.sort_values("test_rmse", inplace=True)

ax = model_df[["train_rmse","test_rmse", "name"]].plot(kind="line", x="name", figsize=(19,5), title="Regressor Performance Sorted by Test RMSE - " + outcome_type)

ax.legend(["Train RMSE", "Test RMSE"])

for p in ax.patches:

ax.annotate( str( round(p.get_height(),3) ), (p.get_x() * 1.005, p.get_height() * 1.005))

ax.title.set_size(20)

plt.box(False)

model_df.sort_values("train_time", inplace=True)

ax = model_df[["train_time","name"]].plot(kind="line", x="name", figsize=(19,5), grid=True, title="Regressor Training Time (seconds) - " + outcome_type)

ax.title.set_size(20)

ax.legend(["Train Time"])

plt.box(False)

def train_models(models, data, labels, outcome_type):

trained_models = []

for model in tqdm(models):

model_details = train_model(model, data, labels)

model_details["label"] = outcome_type

trained_models.append(model_details)

return trained_models

# %time multi_output_model = train_models(models, df_draw, both_labels, "Trip Time")

# + id="8WWG2YQ2Jizo"

def plot_trained_models(trained_model, title, window_size=100):

plt.figure(figsize=(14,5))

fare = [x[0] for x in trained_model["y_test"][:window_size]]

triptime = [x[1] for x in trained_model["y_test"][:window_size]]

fare_preds = [x[0] for x in trained_model["test_preds"][:window_size]]

triptime_preds = [x[1] for x in trained_model["test_preds"][:window_size]]

plt.plot(fare , label="Ground Truth")

plt.plot(fare_preds , label="Predictions" )

plt.title("Model Predictions vs Ground Truth | " + trained_model["name"] + " | " + " Fare ")

plt.legend(loc="upper right")

plt.figure(figsize=(14,5))

plt.plot(triptime , label="Ground Truth")

plt.plot(triptime_preds , label="Predictions" )

plt.title("Model Predictions vs Ground Truth | " + trained_model["name"] + " | " + " Time ")

plt.legend(loc="upper right")

print("RMSE: Train",trained_model["train_rmse"], "Test",trained_model["test_rmse"] )

print("MAE: Train",trained_model["train_mae"], "Test",trained_model["test_mae"] )

# + colab={"base_uri": "https://localhost:8080/"} id="fvMSV8e6p3ib" outputId="9a3ac15f-5db8-44e9-bacb-3f59f3882ebe"

multi_output_model[0]["test_preds"].shape, multi_output_model[0]["y_test"].shape

# + id="axJdMzb2wfPh" colab={"base_uri": "https://localhost:8080/", "height": 689} outputId="a2ce76e6-d28a-4467-f23e-701c4c61a26f"

plot_trained_models(multi_output_model[0], "Trip Time")

# + id="V9u9DfFrLWBA" colab={"base_uri": "https://localhost:8080/", "height": 689} outputId="6ab1baf2-29e0-4338-f401-2ed7d9b0b704"

plot_trained_models(multi_output_model[1], "Trip Time")

# + id="rm9JTSY8KXd9"

def result_to_df(trained_model, title):

print("Results for ", title)

model_df = pd.DataFrame(trained_model)

return model_df[["name","train_rmse","test_rmse", "train_mae","test_mae"]]

# + id="8uuXfnhAKcJB" colab={"base_uri": "https://localhost:8080/", "height": 128} outputId="d561a8f6-a391-44c5-ca16-6fb3ef0f739a"

result_to_df(multi_output_model, "Trip Fare")

# + [markdown] id="P6b-xqk9x_nX"

# ## Export Model

#

# - Write the models to joblib files, which can then be used to set up a Cloud AI Platform Model Endpoint.

# + id="_JWOjiSBLIFk"

# !mkdir models

# !mkdir models/mlp

# !mkdir models/randomforest

joblib.dump(multi_output_model[0]["model"],"models/randomforest/model.joblib")

joblib.dump(multi_output_model[1]["model"],"models/mlp/model.joblib")

# !gsutil -m cp -r models gs://taximodel
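#
# Before uploading, it can be worth confirming that a dumped pipeline reloads and predicts identically. The sketch below is a minimal round trip on synthetic data; the tiny pipeline, the random arrays, and the temporary path are illustrative assumptions and stand in for the trained models above.

```python
import os
import tempfile

import joblib
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in data (assumption): 3 features, 2 targets (e.g. fare and trip time).
rng = np.random.default_rng(0)
X = rng.random((50, 3))
y = rng.random((50, 2))

# A small pipeline standing in for the trained model (assumption).
pipe = Pipeline(steps=[
    ("scaler", StandardScaler()),
    ("clf", RandomForestRegressor(n_estimators=10, random_state=0)),
])
pipe.fit(X, y)

# Dump to a temporary directory, then load the file back.
with tempfile.TemporaryDirectory() as tmp_dir:
    model_path = os.path.join(tmp_dir, "model.joblib")
    joblib.dump(pipe, model_path)
    restored = joblib.load(model_path)

# The restored pipeline should reproduce the original predictions.
same_predictions = np.allclose(pipe.predict(X), restored.predict(X))
restored_shape = restored.predict(X).shape
print(same_predictions, restored_shape)
```

# Loading generally requires a scikit-learn version compatible with the one used for dumping, so the serving environment should match the training environment as closely as possible.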

# + id="DUwrWtkKpd5-"

|

notebooks/taxi_model_training.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

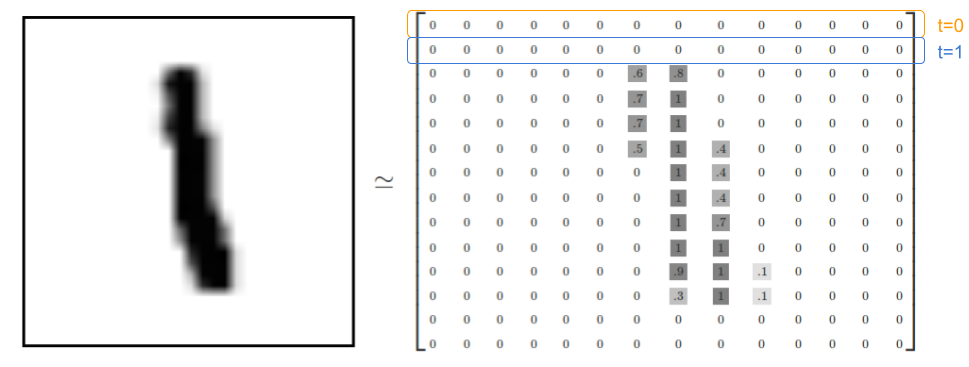

# ## Basic RNNs in Tensorflow

# - 1 Layer of 5 recurrent neurons

# - Outputs of a layer of recurrent neurons for all instances in a mini-batch

# $$ \textbf{Y}_{(t)} = \phi \big( \textbf{X}_{(t)} . \textbf{W}_x + \textbf{Y}_{(t-1)} . \textbf{W}_y + b \big) $$

# $$ = \phi \big( \big[ \textbf{X}_{(t)} \textbf{Y}_{(t-1)} \big] . \textbf{W} + b \big)$$

#

# where $ \textbf{W} = {\textbf{W}_x\brack \textbf{W}_y} $

#

# #### Notations in vectorized form:

# - $m =$ number of instances in mini-batch

# - $n_{neurons} =$ number of neurons

# - $n_{inputs} =$ number of input features

# - $dim(x)$ a function that determines shape or dimension of element $x$.

# - $\textbf{Y}_{(t)}$ is the layer output at time $t$ for each instance of the mini-batch:

# $$ dim(\textbf{Y}_{(t)}) = m\times n_{neurons}$$

# - $\textbf{X}_{(t)}$ is a matrix containing the inputs for all instances:

# $$ dim(\textbf{X}_{(t)}) = m \times n_{inputs}$$

# - $\textbf{W}_{x}$ is a matrix containing the connection weights for the inputs of the **current** time step:

# $$ dim(\textbf{W}_{x}) = n_{inputs} \times n_{neurons} $$

# - $\textbf{W}_{y}$ is a matrix containing the connection weights for the outputs of the **previous** time step:

# $$ dim(\textbf{W}_{y}) = n_{neurons} \times n_{neurons} $$

# - Weight matrices $\textbf{W}_x$ and $\textbf{W}_y$ are often concatenated into a single matrix $\textbf{W}$ of shape $(n_{inputs} + n_{neurons}) \times n_{neurons}$

# - Bias term $b$ is just a 1-dimensional vector of size $1 \times n_{neurons}$
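#
# The shapes listed above can be checked with a single recurrence step in plain NumPy. This is only a sketch under assumptions: the sizes, the random weights, and the choice of $\phi = \tanh$ are illustrative and not taken from the notebook.

```python
import numpy as np

rng = np.random.default_rng(0)

# Illustrative sizes (assumptions): mini-batch of 4, 3 input features, 5 neurons.
m, n_inputs, n_neurons = 4, 3, 5

X_t = rng.standard_normal((m, n_inputs))           # inputs at time t
Y_prev = rng.standard_normal((m, n_neurons))       # layer outputs at time t-1
W_x = rng.standard_normal((n_inputs, n_neurons))   # input weights
W_y = rng.standard_normal((n_neurons, n_neurons))  # recurrent weights
b = np.zeros((1, n_neurons))                       # bias, broadcast over the batch

# Two-matrix form: Y_t = phi(X_t . W_x + Y_prev . W_y + b), with phi = tanh here.
Y_t = np.tanh(X_t @ W_x + Y_prev @ W_y + b)

# Concatenated form: stack W_x on top of W_y and place X_t next to Y_prev.
W = np.vstack([W_x, W_y])       # shape (n_inputs + n_neurons, n_neurons)
XY = np.hstack([X_t, Y_prev])   # shape (m, n_inputs + n_neurons)
Y_t_concat = np.tanh(XY @ W + b)

print(Y_t.shape, W.shape, np.allclose(Y_t, Y_t_concat))
```

# Both forms give the same output up to floating-point rounding; the concatenated form simply replaces two matrix products with one.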

# #### TODO: Put image of network we are building here

# +

import tensorflow as tf

from tensorflow.contrib.layers import fully_connected

import os

import numpy as np

tf.set_random_seed(1) # seed to obtain similar outputs

os.environ['CUDA_VISIBLE_DEVICES'] = '' # avoids using GPU for this session

# -

# ### Single Recurrent Neuron

#

# +

# Implementation of RNN with Single Neuron

N_INPUTS = 4

N_NEURONS = 1

class SingleRNN(object):

def __init__(self, n_inputs, n_neurons):

self.X0 = tf.placeholder(tf.float32, [None, n_inputs])

self.X1 = tf.placeholder(tf.float32, [None, n_inputs])

self.Wx = tf.Variable(tf.random_normal(shape=[n_inputs, n_neurons], dtype=tf.float32))