code

stringlengths 38

801k

| repo_path

stringlengths 6

263

|

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# [Open in Colab](https://githubtocolab.com/giswqs/leafmap/blob/master/examples/notebooks/52_netcdf.ipynb)
# [Open in Binder](https://gishub.org/leafmap-binder)

#

# **Visualizing NetCDF data**

#

# Uncomment the following line to install [leafmap](https://leafmap.org) if needed.

# +

# # !pip install leafmap xarray rioxarray netcdf4 localtileserver

# -

import leafmap

# Download a sample NetCDF dataset.

url = 'https://github.com/giswqs/leafmap/raw/master/examples/data/wind_global.nc'

filename = 'wind_global.nc'

leafmap.download_file(url, output=filename)

# Read the NetCDF dataset.

data = leafmap.read_netcdf(filename)

data

# Convert the NetCDF dataset to GeoTIFF. Note that the longitude range of the NetCDF dataset is `[0, 360]`. We need to convert it to `[-180, 180]` by setting `shift_lon=True` so that it can be displayed on the map.

tif = 'wind_global.tif'

leafmap.netcdf_to_tif(filename, tif, variables=['u_wind', 'v_wind'], shift_lon=True)
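# The effect of `shift_lon=True` can be pictured with a small NumPy sketch. This only illustrates the longitude wrap from `[0, 360]` to `[-180, 180]`; it is not leafmap's actual implementation, and the helper name `shift_longitudes` and the toy grid are assumptions made for the example.

```python
import numpy as np

def shift_longitudes(lon, values):
    """Wrap longitudes from [0, 360) to [-180, 180) and reorder the columns to match."""
    lon = np.asarray(lon, dtype=float)
    wrapped = ((lon + 180.0) % 360.0) - 180.0
    order = np.argsort(wrapped)              # keep longitudes ascending after wrapping
    return wrapped[order], np.asarray(values)[..., order]

lon = np.array([0.0, 90.0, 180.0, 270.0])
u = np.array([[1.0, 2.0, 3.0, 4.0]])         # one latitude row, four longitude columns
new_lon, new_u = shift_longitudes(lon, u)
print(new_lon)
print(new_u)
```

# The data columns are reordered together with the coordinates, so each value stays attached to its original location.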

# Add the GeoTIFF to the map. We can also overlay the country boundary on the map.

geojson = 'https://github.com/giswqs/leafmap/raw/master/examples/data/countries.geojson'

m = leafmap.Map(layers_control=True)

m.add_geotiff(tif, band=[1], palette='coolwarm', layer_name='u_wind')

m.add_geojson(geojson, layer_name='Countries')

m

# You can also use the `add_netcdf()` function to add the NetCDF dataset to the map without having to convert it to GeoTIFF explicitly.

m = leafmap.Map(layers_control=True)

m.add_netcdf(

filename,

variables=['v_wind'],

palette='coolwarm',

shift_lon=True,

layer_name='v_wind',

)

m.add_geojson(geojson, layer_name='Countries')

m

# Visualizing wind velocity.

m = leafmap.Map(layers_control=True)

m.add_basemap('CartoDB.DarkMatter')

m.add_velocity(filename, zonal_speed='u_wind', meridional_speed='v_wind')

m

#

|

examples/notebooks/52_netcdf.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] id="9lAsyeOwFnoX"

# # CSCE 623 Homework Assignment 3

#

# + [markdown] id="46d-KBtJJxN1"

# ### Student Name: <font color="blue">Enter Name</font>

# + [markdown] id="Yna07rL4Jz43"

# ### Date: <font color="blue">Enter Date</font>

# + [markdown] id="P685WkBlKB2x"

# ## Disclosures

#

# * None

# + [markdown] id="zXzR22oMKEtf"

# ## Overview

#

# In this homework assignment, you will explore various methods of cross-validation.

#

# We will introduce you to a generated dataset that represents a polynomial function with Gaussian noise. You will attempt to fit various models of increasing flexibility (polynomial order) to the data. You will analyze and evaluate each model using cross-validation to (1) determine the model that best fits the data, and (2) predict the performance of your model on new data.

#

# You will then compare the model you developed using machine learning techniques to the model indicated by statistical analysis.

#

# This assignment includes both written and programming components.

# + [markdown] id="qbbSOVyq7lYk"

# ### Written Components

# Full effort answers to written components should include not only the answer to the question, but they should also include supporting information. You should provide justification or supporting information even if the question only asks for a single number or short answer.

# + [markdown] id="a-5OaiNO7m6F"

# ### Programming Components

# Use Python to perform any manipulations you make to provided datasets, all calculations and mathematical transformations, and to generate graphs, figures, or other support to explain how you arrived at your written answers.

# + [markdown] id="a0Zjdxie-jWf"

# ### Helpful Tips

#

# You might find these Python packages/imports helpful

#

# ``` python

# import numpy as np

# import pandas as pd

# import matplotlib.pyplot as plt

# import math

# import seaborn as sns

#

# from sklearn.model_selection import KFold

# from sklearn.model_selection import ShuffleSplit

# from sklearn.model_selection import LeaveOneOut

# from sklearn.model_selection import cross_val_score

#

# from IPython.display import Markdown as md

#

# from sklearn.linear_model import LinearRegression

#

# from sklearn.metrics import mean_squared_error

#

# # # %matplotlib inline

# ```

# + [markdown] id="kS60EAw9MiU2"

# ## Cross-fold Validation

# + [markdown] id="A5yhrcvjN0AD"

# ### STEP 0: installs & configuration

# + [markdown] id="pFdGmA3GN3_q"

# Install any packages you need for your notebook. If using the Google Colab environment, you will not need to install any additional packages.

# + id="AqoMuH_YOJ3e"

"""

CSCE 623 HW3. Cross-fold Validation

"""

DEBUG = True

# install packages, set configuration, as needed

# + [markdown] id="1HI9buTTOPHH"

# Import any packages you need for your notebook

# + id="2yVNfQdnY7BW"

# import packages for your notebook

# %matplotlib inline

# + id="4pD5Jejhp70q" tags=["remove_cell"]

# instructor provided code
import numpy as np   # also expected from the student import cell above
import pandas as pd

plot_x_min = -2.
plot_x_max = 2.

def generate_data(seed = 1, quantity = 200, test_data = False):
    """Generate a noisy polynomial dataset with columns 'x' and 'y'."""
    np.random.seed(seed)
    x = np.random.uniform(low=plot_x_min,high=plot_x_max,size=quantity)
    # the upper bound of randint is exclusive, so this always yields 3
    order = np.random.randint(3, 4)
    betas = np.random.uniform(-2, 2, order)
    y = sum((beta * x ** (idx+1) for idx, beta in enumerate(betas)))
    beta0 = np.random.uniform(np.min(y), np.max(y))
    noise = np.random.normal(size=quantity, scale = (np.max(y) - np.min(y)) / 8)
    if test_data: # get new sample if we're generating test data
        noise = np.random.normal(size=quantity, scale = (np.max(y) - np.min(y)) / 8)
    y += noise + beta0
    df = pd.DataFrame({'x': x, 'y': y})
    # expose the true coefficients for later comparison
    globals()['global_betas'] = betas
    globals()['global_beta0'] = beta0
    return df

# + [markdown] id="xRPFmS9PJVGI"

# ### SETUP A: provided functions

# + id="-feg5ea9I_sE"

# instructor provided functions

def create_model_string(beta0, betas):
    """Return a LaTeX-style string representing the function defined by beta0 and betas."""
    model_function = f'$f(x) = {beta0:.2f}'
    for idx, beta in enumerate(betas):
        model_function += f' + {beta:.2f}x^{idx+1}'
    model_function += '$'
    return model_function

def plot_function(x_min, x_max, beta0, betas, resolution = .1, style = None, label = ''):
    """Add a plot of the function defined by beta0 and betas to the current plot."""
    # requires numpy as np and matplotlib.pyplot as plt;
    # the plotted range is padded by 20% of |x_min| and |x_max|
    plot_x = np.arange(x_min - .2 * abs(x_min), x_max + .2 * abs(x_max), resolution)
    plot_y = sum((beta * plot_x ** (idx+1) for idx, beta in enumerate(betas))) + beta0
    if style:
        plt.plot(plot_x, plot_y, style, label=label)
    else:
        plt.plot(plot_x, plot_y, label=label)

# + [markdown] id="vnE14vxA2VvU"

# ### STEP 1: scikit-learn Functions

#

# Review the scikit-learn documentation for the following functions and answer the questions that follow:

#

# - [User Guide for cross validation iterators](https://scikit-learn.org/stable/modules/cross_validation.html#cross-validation-iterators)

# - Identically Distributed Data

# - [KFold](https://scikit-learn.org/stable/modules/generated/sklearn.model_selection.KFold.html#sklearn.model_selection.KFold)

# - [RepeatedKFold](https://scikit-learn.org/stable/modules/generated/sklearn.model_selection.RepeatedKFold.html#sklearn.model_selection.RepeatedKFold)

# - [LeaveOneOut](https://scikit-learn.org/stable/modules/generated/sklearn.model_selection.LeaveOneOut.html)

# - [LeavePOut](https://scikit-learn.org/stable/modules/generated/sklearn.model_selection.LeavePOut.html#sklearn.model_selection.LeavePOut)

# - [ShuffleSplit](https://scikit-learn.org/stable/modules/generated/sklearn.model_selection.ShuffleSplit.html#sklearn.model_selection.ShuffleSplit)

# - Stratification with Class Data

# - [StratifiedKFold](https://scikit-learn.org/stable/modules/generated/sklearn.model_selection.StratifiedKFold.html#sklearn.model_selection.StratifiedKFold)

# - [RepeatedStratifiedKFold](https://scikit-learn.org/stable/modules/generated/sklearn.model_selection.RepeatedStratifiedKFold.html#sklearn.model_selection.RepeatedStratifiedKFold)

# - [StratifiedShuffleSplit](https://scikit-learn.org/stable/modules/generated/sklearn.model_selection.StratifiedShuffleSplit.html#sklearn.model_selection.StratifiedShuffleSplit)

# - Grouped Data

# - [GroupKFold](https://scikit-learn.org/stable/modules/generated/sklearn.model_selection.GroupKFold.html#sklearn.model_selection.GroupKFold)

# - [LeaveOneGroupOut](https://scikit-learn.org/stable/modules/generated/sklearn.model_selection.LeaveOneGroupOut.html#sklearn.model_selection.LeaveOneGroupOut)

# - [LeavePGroupsOut](https://scikit-learn.org/stable/modules/generated/sklearn.model_selection.LeavePGroupsOut.html#sklearn.model_selection.LeavePGroupsOut)

# - [GroupShuffleSplit](https://scikit-learn.org/stable/modules/generated/sklearn.model_selection.GroupShuffleSplit.html#sklearn.model_selection.GroupShuffleSplit)
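#
# As a quick orientation before reading the documentation, the sketch below shows how many train/validation splits a few of these iterators produce on a toy array of 10 observations. The array and the parameter values are assumptions chosen for illustration only; they are not recommended answers to the questions that follow.

```python
import numpy as np
from sklearn.model_selection import KFold, LeaveOneOut, LeavePOut, ShuffleSplit

X = np.arange(10).reshape(-1, 1)   # 10 toy observations

splitters = {
    "KFold(n_splits=5)": KFold(n_splits=5),
    "LeaveOneOut()": LeaveOneOut(),
    "LeavePOut(p=2)": LeavePOut(p=2),
    "ShuffleSplit(n_splits=3)": ShuffleSplit(n_splits=3, test_size=0.3, random_state=0),
}
n_splits = {name: cv.get_n_splits(X) for name, cv in splitters.items()}
for name, n in n_splits.items():
    print(f"{name}: {n} train/validation splits")

# every splitter yields (train_indices, validation_indices) pairs
train_idx, val_idx = next(iter(KFold(n_splits=5).split(X)))
print(train_idx, val_idx)
```

# Each iterator only produces index arrays; fitting and scoring a model on those indices is a separate step.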

#

#

#

# ### Discussion

#

# - Using one of the functions listed above, what is the most straightforward way to implement "The Validation Set" approach discussed in _ISLR_ 5.1.1 on regression data? What function would you use? What argument values would you use?

#

# <font color=green class="student_answer">Student Answer</font>

#

# - What function and arguments would you use to implement LOOCV as discussed in _ISLR_ 5.1.2?

#

# <font color=green class="student_answer">Student Answer</font>

#

# - How would you implement k-fold Cross-Validation as discussed in _ISLR_ 5.1.3? Assuming $k = 10$, what argument values would you use?

#

# <font color=green class="student_answer">Student Answer</font>

#

# - If the problem is a classification problem, how would you ensure that each fold had a balance of the classes represented in the data? What function and argument values would you use assuming $k = 10$?

#

# <font color=green class="student_answer">Student Answer</font>

#

# - Suppose you wanted to train multiple models using k-fold cross-validation each with $k=10$ and compare their performance. What arguments would you use?

#

# <font color=green class="student_answer">Student Answer</font>

#

#

# + id="4Vi2UU9HDUVR"

STEP_1_COMPLETE = False

# + [markdown] id="Z4Lwf_jiXqXK"

# ### Data Analysis

#

# In STEPS 2-3, you'll load and conduct an analysis of a generated dataset.

# + [markdown] id="EwKDAUtjFnof"

# #### STEP 2: load dataset

# + [markdown] id="G7c6P84MOuJB"

# For this assignment, you will use a generated dataset. You have been provided a function that will generate a dataset. You need only provide a random seed value to generate a unique dataset.

#

# You'll initialize a dataset unique to yourself by choosing a random seed value. You can initialize the seed with the last 4 digits of your phone number, your street address, or some other number.

#

# You'll then generate the dataset and store it in a DataFrame named `df`:

# ```

# df = generate_data(seed)

# ```

#

# IMPORTANT: After choosing a seed value, you will not want to change this value, as changing the seed will result in your dataset changing. This will invalidate any analysis that you've completed.

#

# + id="_tQNDIVeFnog" colab={"base_uri": "https://localhost:8080/", "height": 204} outputId="4ca453b1-0722-4955-8426-63178a6dd95e"

#STEP 2

#STUDENT CODE - insert code to load a generated dataset using pandas

# store your data in a dataframe called 'df'

#---------------------------------------------

#---------------------------------------------

STEP_2_COMPLETE = False

# + [markdown] id="TawtbcrZBEuH"

# #### STEP 3: plot and analyze data

#

# Using an approach similar to the one you employed in homework assignments 1 and 2, plot and analyze the dataset.

#

# + id="pFCTBu8kCH38" colab={"base_uri": "https://localhost:8080/", "height": 1000} outputId="3c4b56e0-6b00-4aa7-957a-e9688ba5a24b"

#STEP 3

#STUDENT CODE - insert code to plot and use pandas analysis tools on the dataset

#---------------------------------------------

#---------------------------------------------

# + [markdown] id="3rDHXs2IC2Bx"

# Discuss the dataset, being sure to answer such questions as:

# - How many observations are in the dataset?

# - How many features?

# - What is the nature of the target (regression or classification)?

# - What kind of relationship will best fit the data? Linear? Polynomial? If polynomial, what order?

#

# <font color=green class="student_answer">Student Discussion</font>

#

#

# + id="YxcxNljkDYRn"

STEP_3_COMPLETE = False

# + [markdown] id="GEjQYr2oB4pj"

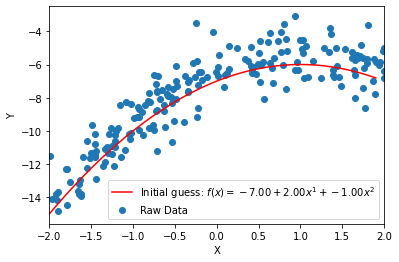

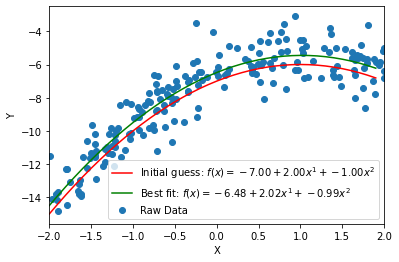

# #### STEP 4: initial hypothesis

#

# Develop an initial hypothesis about the best model that will fit the data and overlay it on a scatterplot of the dataset. Be sure to use a model informed by your analysis above. I've provided an example below. While my example is quadratic, your model might not necessarily be quadratic. Feel free to use either of the functions provided in SETUP A above.

#

#

# + id="9zBQSfynCYsN" colab={"base_uri": "https://localhost:8080/", "height": 279} outputId="644439f4-3de4-4137-eb84-39124f388d5d"

#STEP 4

#STUDENT CODE - insert code to overlay your initial hypothesis on a scatter plot of the dataset

#---------------------------------------------

#---------------------------------------------

STEP_4_COMPLETE = False

# + [markdown] id="80j7ZKyoDs_c"

# ### Feature Engineering

#

# In this section, you'll implement code to create a new dataframe with engineered features.

# + [markdown] id="RHKjj6oeEG4c"

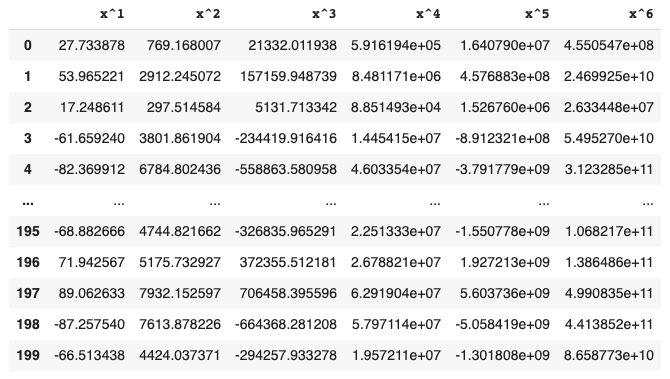

# #### STEP 5: feature engineering

#

# `df` contains a feature and a target. You will create a function that generates a new feature DataFrame with $p$ columns, where column $k$ contains the values of $x^k$ for $k = 1, \dots, p$.

#

# Your function will have the signature `poly_df(x, p)` where `x` is a series of feature values and `p` is the highest order polynomial desired. The function will return a dataframe where the first column contains the original feature values ($x^1$), the second column contains the quadratic values ($x^2$), the third column has cubic values ($x^3$), and so forth. Provide header values of `x^1`, `x^2`, `x^3`, etc.

#

# This is an example of a displayed dataframe returned after calling `poly_df(df.x, 6)` (specific values will differ).

#

#
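# One possible shape for such a function is sketched below with pandas. It is an illustration under assumptions, not the instructor's solution: the name `make_poly_features` is hypothetical, and the three-value Series stands in for `df.x`.

```python
import pandas as pd

def make_poly_features(x, p):
    """Return a DataFrame with columns x^1 ... x^p built from the Series x."""
    return pd.DataFrame({f"x^{k}": x ** k for k in range(1, p + 1)})

x = pd.Series([1.0, 2.0, 3.0])
features = make_poly_features(x, 3)
print(features)
```

# Building the columns from a dict keeps the index of the input Series, so the features stay aligned with the target values.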

# + colab={"base_uri": "https://localhost:8080/", "height": 419} id="XOGdRpJ3IEWM" outputId="6d51736c-c6ee-48cb-e4db-672873f51c70"

#STEP 5

#STUDENT CODE - insert code to implement poly_df

#---------------------------------------------

#---------------------------------------------

STEP_5_COMPLETE = False

# + [markdown] id="81Ntq_t1fi2C"

# ### 3 Ways to Cross Validate

#

# In the following section, we will explore the three methods of cross-validation discussed in the _ISLR_ text:

#

# - Validation Set

# - Leave One Out

# - K-Fold

#

# For each method, we'll execute three steps:

#

# 1. Write a function to calculate the root mean squared error using the intended cross-validation method.

# 2. Generate a DataFrame of root mean squared error values using the cross-validation technique for models of increasing flexibility. Specifically, you'll evaluate 8 polynomial models, of order 1 through 8.

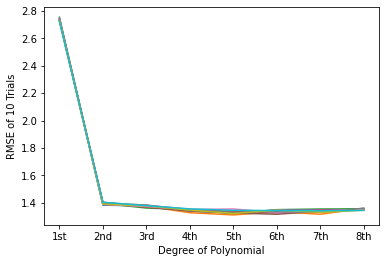

# 3. Plot the spaghetti chart of the root mean squared error values

#

#

# + [markdown] id="rak97xTA3pah"

# #### SETUP B: constants for cv

#

# Here are constants available for your use in STEPS 6-14

# + id="fvAFdvhD1xI-"

# instructor provided constants:

# feel free to use throughout the following STEPs

TRIALS = 10

MAX_ORDER = 8

COLUMNS = ['Trial 1', 'Trial 2', 'Trial 3', 'Trial 4', 'Trial 5', 'Trial 6', 'Trial 7', 'Trial 8', 'Trial 9', 'Trial 10']

INDICES = ['1st', '2nd', '3rd', '4th', '5th', '6th', '7th', '8th']

# + [markdown] id="PGvmAaG3DiE-"

# #### Validation Set Approach

#

# In this section, you will evaluate models of increasing flexibility (up to 8th order polynomial) fit to the generated data.

# + [markdown] id="mJ8SnNJIKycu"

# ##### STEP 6: validation set rmse

#

# In this step, you'll create `val_set_mse(X, y, random_state)` which returns the root mean squared error of a model fit to a training set and evaluated on a validation set.

#

# - `X` is a DataFrame of feature inputs

# - `y` is a Series of targets

# - return a scalar value representing the root mean squared error

#

# Hints:

# - The instructor's solution to this function uses only 4 lines of code--no Python tricks. If you're using more than 6-8 lines of code, you may not be doing something right

# - You'll use the scikit-learn [cross_val_score](https://scikit-learn.org/stable/modules/generated/sklearn.model_selection.cross_val_score.html) function to calculate the root mean squared error. The use of this function may be a bit non-standard for the typical programmer. While the `X` and `y` arguments are pretty straight-forward, three arguments in particular may be confounding:

# - `estimator` is the model type. Usually, you'll set this in advance and then pass in a reference to the model. For example, if you wanted to use a [LogisticRegression](https://scikit-learn.org/stable/modules/generated/sklearn.linear_model.LogisticRegression.html) model using the `liblinear` solver, you might do the following:

# ```

# model = LogisticRegression(solver='liblinear')

# ```

# and then you would pass in `model` as the `cross_val_score` estimator. There is an additional example on the bottom of page 73 in _HOML_ that uses a DecisionTreeRegressor defined near the top of the page.

# - `cv` is an integer or a cross-validation generator. Unless you're doing k-fold validation, you'll need to provide a generator. The generators are those iterators you reviewed in STEP 1. For example, if you wanted to train and validate on all possible training/validation sets formed by removing 12 samples from the dataset, you might do the following:

# ```

# lpo = LeavePOut(p=12)

# ```

# and pass in `lpo` as the `cv` generator

# - `scoring` is a scoring methodology. You can create your own scoring function, but often a [predefined scoring method](https://scikit-learn.org/stable/modules/model_evaluation.html#scoring-parameter) is suitable. Note that all scoring methods follow the "greater is better" principle. Therefore, many of the regression scores are negated. To report the actual score of a negated value, you'll need to take the absolute value or negation of the score. See also pages 73-74 of _HOML_. Note that _HOML_ collects the negated mean squared error from the `cross_val_score` method and then takes the square root of the negation to calculate RMSE. Observe that the negated RMSE metric is available directly as a scoring methodology, so the square root step isn't necessary.

# - An algorithm for completing this task:

# - Identify the correct cross validation iterator for this problem, and create the generator with all appropriate parameters, assigning it to a variable. NOTES (these are things to consider after you have basic functionality):

# - For the sake of repeatability, you'll want to set the `random_state` value. However, if you set `random_state` to a number, you'll get the exact same train/validation partition every time you call the function. Instead, use a seeded [RandomState](https://numpy.org/doc/1.16/reference/generated/numpy.random.RandomState.html).

# - Be sure that you're splitting the dataset in half for the train and validation sets

# - Identify the correct model type for this problem, and create the model with all appropriate parameters, assigning it to a variable

# - Call cross_val_score, selecting the appropriate scoring metric and assigning the result to a variable

# - Correct for negation and return the score
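#
# The pieces described in these hints fit together roughly as in the sketch below. It runs on toy data and a plain linear model, both assumptions made for illustration, and it is not the instructor's solution; the point is how `estimator`, `cv`, and `scoring` are passed and how a seeded `RandomState` is handed to the splitter.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import ShuffleSplit, cross_val_score

rng = np.random.RandomState(0)                 # seeded generator, reusable across calls
X = rng.uniform(-2, 2, size=(100, 1))          # toy feature, not the assignment data
y = 3.0 * X[:, 0] + rng.normal(scale=0.5, size=100)

# a single random 50/50 train/validation partition
cv = ShuffleSplit(n_splits=1, test_size=0.5, random_state=rng)
scores = cross_val_score(LinearRegression(), X, y, cv=cv,
                         scoring="neg_root_mean_squared_error")
rmse = -scores[0]                              # undo the "greater is better" negation
print(scores.shape, rmse)
```

# Because the splitter draws from the shared `rng`, a second call made with the same `rng` object produces a different partition, while rerunning the whole snippet reproduces the same sequence.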

# + id="S7wrEbTiYq9K"

#STEP 6

#STUDENT CODE - insert code to implement val_set_mse(X, y, random_state)

#---------------------------------------------

#---------------------------------------------

STEP_6_COMPLETE = False

# + [markdown] id="KEgG-JYdf9tm"

# ##### STEP 7: dataframe of val set rmse

#

# In this step, you'll generate a DataFrame of RMSE values for 10 trials of 8 models using the Validation Set approach. Each model will represent an increasing order polynomial feature set. For example, the first value in the DataFrame for Trial 1 will be the RMSE for a 1st order $(x^1)$ model, whereas the eighth value will be the RMSE for an 8th order $(x^1, x^2, ... , x^8)$ model. The resulting DataFrame might look something like this:

#

# ```

# Trial 1 Trial 2 Trial 3 Trial 4 Trial 5 Trial 6 Trial 7 Trial 8 Trial 9 Trial 10

# 1 1.195520 1.307997 1.390264 1.299595 1.320461 1.170825 1.256463 1.321078 1.247050 1.212689

# 2 0.635550 0.621675 0.668410 0.627653 0.625856 0.639784 0.594125 0.638596 0.563780 0.633923

# 3 0.561838 0.582483 0.604784 0.639694 0.612064 0.593695 0.605464 0.569258 0.605322 0.665788

# 4 0.650670 0.655353 0.671473 0.638411 0.614336 0.609196 0.658455 0.709729 0.661375 0.595443

# 5 0.655897 0.670315 0.652939 0.663934 0.699677 0.587052 0.647100 0.669904 0.597044 0.573686

# 6 0.605551 0.559774 0.656311 0.617817 0.666769 0.577362 0.643648 0.622396 0.681107 0.689736

# 7 0.601836 0.633743 0.634507 0.635380 0.636882 0.633724 0.666757 0.676464 0.674024 0.639023

# 8 0.607631 0.639835 0.676559 0.668600 0.660138 0.654388 0.636267 0.659123 0.654003 0.642247

# ```

#

# Hints:

#

# - Adding each trial as a column is not necessarily intuitive. For many, it's more straightforward to think of trials as rows, with each column representing the order of polynomial. The reason we use Trials as the columns is that the `matplotlib` package will plot lines using columns as the series values. It involves some Python gymnastics to plot rows instead of columns--better to handle it now.

# - You will use your `poly_df` function to generate polynomial feature data

# - The most straightforward approach involves iterating over 10 trials and 8 orders of polynomials to assign the RMSE values to a NumPy array. Then, after you've filled your array, you'll create a DataFrame specifying indices (row labels 1-8) and column headers (Trial numbers)
# - Assuming you implemented RandomState in `val_set_mse`, here you'll initialize a RandomState object at the beginning of the cell and pass it in to `val_set_mse`. In this way, every time you run this cell, you'll get the same sequence of random train/validation set partitions.

#
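# The "fill an array, then wrap it in a DataFrame" pattern from the hints can be sketched as follows. The sizes are shrunk to 3 orders and 4 trials, and random numbers stand in for real RMSE values; both are assumptions for illustration only.

```python
import numpy as np
import pandas as pd

n_orders, n_trials = 3, 4                       # small stand-ins for MAX_ORDER and TRIALS
rng = np.random.RandomState(0)
results = np.zeros((n_orders, n_trials))
for trial in range(n_trials):
    for order in range(1, n_orders + 1):
        # placeholder for an RMSE computed on features up to x^order
        results[order - 1, trial] = rng.uniform()

table = pd.DataFrame(
    results,
    index=range(1, n_orders + 1),                        # one row per polynomial order
    columns=[f"Trial {t + 1}" for t in range(n_trials)], # one column per trial
)
print(table)
```

# With this layout, `table.plot()` draws one line per column, that is, one line per trial across the polynomial orders.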

# + id="GONYy_mkkyI1" colab={"base_uri": "https://localhost:8080/", "height": 297} outputId="107ae609-c033-4574-dc89-81d244d1da8f"

#STEP 7

#STUDENT CODE - create DataFrame of Trial and Order data

#---------------------------------------------

#---------------------------------------------

STEP_7_COMPLETE = False

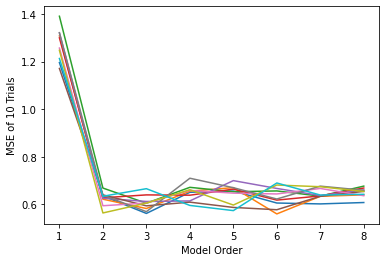

# + [markdown] id="2Hk42skZkz6O"

# ##### STEP 8: plot val set cv

#

# In this step, create a spaghetti plot of each of the 10 trials. Be sure to label your axes.

#

# Example of resulting plot:

#

#

# + id="4Ud1UJSokzCz" colab={"base_uri": "https://localhost:8080/", "height": 279} outputId="72294b08-9023-4ed6-b034-0a66314fa951"

#STEP 8

#STUDENT CODE - plot validation set spaghetti charts

#---------------------------------------------

#---------------------------------------------

STEP_8_COMPLETE = False

# + [markdown] id="CMSW4czeIXnX"

# #### Leave-One-Out Cross-Validation

#

# + [markdown] id="lFIpRurDxrvK"

# ##### STEP 9: loocv rmse

#

# In this step, you'll create loocv_mse(X, y, random_state) which returns the average root mean squared error of models fit to training sets and evaluated on the validation sets as defined by the Leave-One-Out Cross Validation approach.

#

# - X is a DataFrame of feature inputs

# - y is a Series of targets

# - return a scalar value representing the root mean squared error

#

# Hints:

# - See hints in STEP 6 for guidance here

# - One caveat to consider... what is the type/shape of the value returned by the `cross_val_score` method in STEP 6? What is it here? Another way to think about this question is how many models are being evaluated in the Validation Set approach (STEP 6)? How many models are being evaluated in LOOCV? If your function is expected to return a single RMSE, what will you do differently here?
# - Your code will likely look nearly identical to that in STEP 6. Don't worry about avoiding repetition... in practice, you will rarely implement more than one method of cross-validation in your research. Therefore, it's reasonable to generate the full workflow here as a possible source for your research later (apart from reuse of constants from the SETUP B step above).
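#
# The caveat about the shape of the returned scores can be seen on toy data. The sketch below is an illustration under assumptions (synthetic data, a plain linear model), not the instructor's solution. It also shows a subtlety of leave-one-out: each validation fold holds one sample, so a per-fold RMSE is just an absolute error, and averaging those values differs from taking the square root of the averaged squared errors.

```python
import numpy as np
from sklearn.linear_model import LinearRegression
from sklearn.model_selection import LeaveOneOut, cross_val_score

rng = np.random.RandomState(1)
X = rng.uniform(-2, 2, size=(30, 1))           # toy data, not the assignment data
y = 2.0 * X[:, 0] + rng.normal(scale=0.3, size=30)

loo = LeaveOneOut()
fold_rmse = -cross_val_score(LinearRegression(), X, y, cv=loo,
                             scoring="neg_root_mean_squared_error")
fold_mse = -cross_val_score(LinearRegression(), X, y, cv=loo,
                            scoring="neg_mean_squared_error")

mean_of_fold_rmse = fold_rmse.mean()           # average of 30 absolute errors
pooled_rmse = np.sqrt(fold_mse.mean())         # square root of the average squared error
print(len(fold_rmse), mean_of_fold_rmse, pooled_rmse)
```

# The step description asks for the average root mean squared error, which corresponds to the first aggregate; the second is shown only for comparison.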

# + id="xP_Ecu00OXQK"

#STEP 9

#STUDENT CODE - create loocv_mse(X, y, random_state)

#---------------------------------------------

#---------------------------------------------

STEP_9_COMPLETE = False

# + [markdown] id="KZonhX4oxuTH"

# ##### STEP 10: dataframe of loocv rmse

#

# In this step, you'll generate a DataFrame of RMSE values for 1 trial of 8 models using the Leave One Out Cross-Validation approach. As in STEP 7, each model will represent an increasing order polynomial feature set. For example, the first value in the DataFrame for Trial 1 will be the RMSE for a 1st order $(x^1)$ model, whereas the eighth value will be the RMSE for an 8th order $(x^1, x^2, ... , x^8)$ model.

#

# Hints:

# - Unlike STEP 7, you'll have only one column of data representing a single trial of 8 models of polynomials

# + id="PX4OBFELpgHb"

#STEP 10

#STUDENT CODE - create DataFrame of LOOCV Order data

#---------------------------------------------

#---------------------------------------------

STEP_10_COMPLETE = False

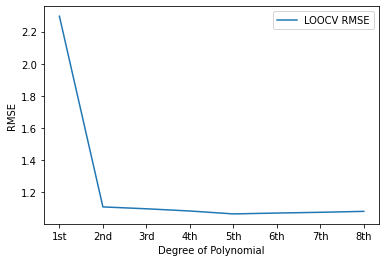

# + [markdown] id="N2ieXkh7xxSh"

# ##### STEP 11: plot loocv

#

# In this step, create a spaghetti plot of LOOCV for each order polynomial model. Be sure to label your axes.

#

# - Your plot might look something like this (specific values will vary):

#

#

# + id="2TthIazms3rL" colab={"base_uri": "https://localhost:8080/", "height": 279} outputId="953cbe1e-aa6a-4a95-e784-80128780ee26"

#STEP 11

#STUDENT CODE - plot loocv spaghetti chart

#---------------------------------------------

#---------------------------------------------

STEP_11_COMPLETE = False

# + [markdown] id="emh-0CO0Iqm5"

# #### k-Fold Cross Validation

# + [markdown] id="VPV2ZssE7ZAs"

# ##### STEP 12: kfold rmse

#

# In this step, you'll create kfold_mse(X, y, k, random_state) which returns the average root mean squared error of models fit to training sets and evaluated on the validation sets as defined by the k-fold cross-validation approach.

#

# - `X` is a DataFrame of feature inputs

# - `y` is a Series of targets

# - `k` is the number of folds

# - return a scalar value representing the root mean squared error

#

# Hints:

# - See hints in STEP 6 and STEP 9 for guidance here
# - Though `cross_val_score` provides a mechanism to pass an integer via the `cv` argument to implement k-fold CV without a cross-validation iterator, this method does not randomize or shuffle the train and validation sets. As the next step will require that you run multiple trials, do not use the shortcut provided in `cross_val_score` to use k-fold CV. Instead, select the appropriate cross-validation generator with the appropriate `shuffle` and `random_state` values.
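#
# The difference between unshuffled and shuffled folds can be inspected directly on the index arrays. The sketch below uses a toy array of 20 observations and a hypothetical helper `first_validation_fold`; it illustrates the `shuffle` and `random_state` arguments only and is not the instructor's solution.

```python
import numpy as np
from sklearn.model_selection import KFold

X = np.arange(20).reshape(-1, 1)               # 20 toy observations

def first_validation_fold(cv):
    """Return the validation indices of the first split as a list."""
    _, val_idx = next(iter(cv.split(X)))
    return val_idx.tolist()

# without shuffling, the first fold is always the first block of rows
plain = first_validation_fold(KFold(n_splits=10))

# with shuffling and a shared seeded RandomState, each trial draws a new partition
rng = np.random.RandomState(0)
trial_1 = first_validation_fold(KFold(n_splits=10, shuffle=True, random_state=rng))
trial_2 = first_validation_fold(KFold(n_splits=10, shuffle=True, random_state=rng))
print(plain, trial_1, trial_2)
```

# Passing an integer as `random_state` instead would give the same shuffled partition on every call, which defeats the purpose of running several trials.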

# + id="AlaH-SV4s2SU"

#STEP 12

#STUDENT CODE - create kfold_mse(X, y, k, random_state)

#---------------------------------------------

#---------------------------------------------

STEP_12_COMPLETE = False

# + [markdown] id="F0uDpcHF7i-_"

# ##### STEP 13: dataframe of kfold rmse

#

# In this step, you'll generate a DataFrame of RMSE values for 10 trials of 8 models using the 10-fold cross-validation approach.

# + id="TGwJgcKIxHXK" colab={"base_uri": "https://localhost:8080/", "height": 297} outputId="a809172d-e2de-4eea-fe1d-ba272b35f66c"

#STEP 13

#STUDENT CODE - create DataFrame of k-fold Trial and Order data

#---------------------------------------------

#---------------------------------------------

STEP_13_COMPLETE = False

# + [markdown] id="cjMRS9oJ7o_Y"

# ##### STEP 14: plot kfold rmse

#

# In this step, create a spaghetti plot of the k-fold trials. Be sure to label your axes.

#

# - Your plot might look something like this (specific values will vary):

#

#

# + id="ZF7lkpxrxBxf" colab={"base_uri": "https://localhost:8080/", "height": 279} outputId="eaa482fd-2780-409f-b942-79fe4985f10c"

#STEP 14

#STUDENT CODE - plot k-fold spaghetti charts

#---------------------------------------------

#---------------------------------------------

STEP_14_COMPLETE = False

# + [markdown] id="rcadU5rtBQty"

# #### Discussion

# + [markdown] id="5eeVWo-DBTzA"

# ##### STEP 15: analysis

#

# Review the spaghetti charts created in STEPS 8, 11, and 14, then answer the following questions.

#

# - For both the Validation Set and for k-fold approaches we conducted 10 trials, and there was variation between them. What would have occurred had we conducted 10 trials of LOOCV?

#

# <font color=green class="student_answer">Student Discussion</font>

#

#

# - Which cross-validation approach has the greatest variation from one trial to the next? Why?

#

# <font color=green class="student_answer">Student Discussion</font>

#

# - Which cross-validation approach has the least variation from one trial to the next? Why?

#

# <font color=green class="student_answer">Student Discussion</font>

#

# - Suppose you had observations whose targets (y-values) lie in the range $[0, 10]$. When you conduct cross-validation, you note that the model of polynomial order $p$ yields an RMSE of 0.0800, but the model of polynomial order $p+4$ yields an RMSE of 0.0792. In terms of $p$, which model will you use and why?

#

# <font color=green class="student_answer">Student Discussion</font>

#

# - Which CV approach do you think will be most appropriate for your machine learning research? Why?

#

# <font color=green class="student_answer">Student Discussion</font>

#

#

# + id="wlFt5LcDiR3s"

STEP_15_COMPLETE = False

# + [markdown] id="4vITNJhSEbQg"

# ##### STEP 16: model selection

#

# Based on your analysis of cross-validation, which order polynomial model will you choose? Why?

#

# <font color=green class="student_answer">Student Discussion</font>

#

# + id="G1MKk23PiUdk"

STEP_16_COMPLETE = False

# + [markdown] id="rcYHRbbiE7bm"

# #### Model Creation & Evaluation

# + [markdown] id="TppVvahYE-i5"

# ##### STEP 17: Model Creation

#

# In this step, you will generate a model of the polynomial order you identified in STEP 16 (using your `poly_df` function). Then, you will fit that model to your data.

#

# Finally, you'll plot your model, along with the original data and your initial guess. Be sure to label your plot. Here is an example of a possible result:

#

#

# + id="ivtDGgQlFBop" colab={"base_uri": "https://localhost:8080/", "height": 279} outputId="389e0fc3-6bb8-4a5c-eb76-a997795320b4"

#STEP 17

#STUDENT CODE - fit model, plot initial guess and best fit line

#---------------------------------------------

#---------------------------------------------

STEP_17_COMPLETE = False

# + [markdown] id="9gyQOP6uPhqO"

# ##### STEP 18: Model Evaluation

#

# In this step, you will generate test data and evaluate the performance of your fitted model on that test data.

#

# Hints:

# - To generate test data, you will use the `generate_data()` function. It is critical you reuse the seed value that you used in STEP 2. Using a different seed value will result in you creating test data generated from a different data signal, and your model will inevitably perform disastrously. Also, you will need to set the optional argument `test_data` to `True`:

#

# `df_test = generate_data(seed, test_data=True)`

#

# Pass the literal seed value rather than the variable `seed`, unless you are ABSOLUTELY sure that you have not modified the variable somewhere in the notebook.

#

# - After you have generated the data, be sure that you engineer a feature set of the appropriate polynomial order.

#

# - Page 80 of _HOML_ provides an example of how you can calculate the RMSE of your model on test data. Note that the text calculates the square root of the mean squared error. If you take a look at the [mean_squared_error](https://scikit-learn.org/stable/modules/generated/sklearn.metrics.mean_squared_error.html) function, you'll see that you can set an argument to return the RMSE, without a need to take a square root of the result.

#

# - Note that the instructor solution for this step involves approximately 5 steps. If you are using significantly more than that, you may not be effectively using the functions you've created or the scikit-learn tools.
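#
# As a rough illustration of the RMSE calculation mentioned in the hints, here is a minimal sketch that uses only the standard library. The function name `rmse` and the numbers in `y_true` and `y_pred` are made up for illustration; they are not part of the assignment or its solution.

```python
import math

def rmse(y_true, y_pred):
    """Root mean squared error between two equal-length sequences."""
    if len(y_true) != len(y_pred):
        raise ValueError("y_true and y_pred must have the same length")
    squared_errors = [(t - p) ** 2 for t, p in zip(y_true, y_pred)]
    return math.sqrt(sum(squared_errors) / len(squared_errors))

# Hypothetical targets and predictions, for illustration only.
y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.0, 2.0, 3.0, 6.0]
print(rmse(y_true, y_pred))  # sqrt((0 + 0 + 0 + 4) / 4)
```

# Because the error is squared before averaging, a single large miss dominates the result, which is why RMSE is reported in the same units as the targets but is sensitive to outliers.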

# + colab={"base_uri": "https://localhost:8080/", "height": 34} id="vMS5haVjWHMF" outputId="66919e24-4331-4069-e0e0-49285df51cb1"

#STEP 18

#STUDENT CODE - generate test data, calculate rmse on test data

#---------------------------------------------

#---------------------------------------------

STEP_18_COMPLETE = False

# + [markdown] id="lXQiXKD8jjsi"

# ##### STEP 19: final model discussion

#

# Answer the following questions:

#

# - What is your model's performance on test data?

#

# <font color=green class="student_answer">Student Discussion</font>

#

#

# - How does this compare to the cross-validation error that you used to determine the polynomial order for your model?

#

# <font color=green class="student_answer">Student Discussion</font>

#

# - What accounts for the difference between the cross-validation error and the error on the test data?

#

# <font color=green class="student_answer">Student Discussion</font>

#

# - What is the most appropriate metric to use when advertising the performance of your model?

#

# <font color=green class="student_answer">Student Discussion</font>

#

#

# + id="5o2lfeA6kVYn"

STEP_19_COMPLETE = False

# + id="gCsY--TI02Jw"

# Enter the number of hours you spent on this homework assignment as a floating point value

hours_spent = 0.0

STEP_20_COMPLETE = False

|

hw3.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# Face detection with CV2

# +

import os
import time
import cv2
import keras
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd
cv2.setUseOptimized(True)

# -

# https://github.com/opencv/opencv/tree/master/data/haarcascades

path = "Image"

i = "1.jpg"

a = time.time()

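img = cv2.imread(os.path.join(path, i))
if img is None:
    # cv2.imread returns None instead of raising when the file cannot be read
    raise FileNotFoundError(f"Could not read image: {os.path.join(path, i)}")
# Load the Haar cascade and run it on a grayscale copy of the image
detector = cv2.CascadeClassifier('model/haarcascade_frontalface_default.xml')
gray = cv2.cvtColor(img, cv2.COLOR_BGR2GRAY)
faces = detector.detectMultiScale(gray, scaleFactor=1.3, minNeighbors=5)
# Draw a green bounding box around each detected face
for (x, y, w, h) in faces:
    cv2.rectangle(img, (x, y), (x + w, y + h), (0, 255, 0), 2)
plt.imshow(cv2.cvtColor(img, cv2.COLOR_BGR2RGB))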

b = time.time()

print(b-a)

|

Train/CV2.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # MPIJob and Horovod Runtime

#

# ## Running distributed workloads

#

# Training a Deep Neural Network is a hard task. As datasets grow and networks become wider and deeper, training a Neural Network can require a lot of resources (CPUs, GPUs, memory, and time).

#

# There are two main reasons why we would like to distribute our Deep Learning workloads:

#

# 1. **Model Parallelism** — The **Model** is too big to fit a single GPU.

# In this case the model contains too many parameters to hold within a single GPU.

# To work around this we can use strategies like a **Parameter Server**, or split the model into slices of consecutive layers, each of which fits on a single GPU.

# Both strategies require **Synchronization** between the layers held on different GPUs / Parameter Server shards.

#

# 2. **Data Parallelism** — The **Dataset** is too big to fit a single GPU.

# Using methods like **Stochastic Gradient Descent** we can send batches of data to our models for gradient estimation. This comes at the cost of longer time to converge since the estimated gradient may not fully represent the actual gradient.

# To bring the estimate closer to the actual gradient we can use bigger batches: send small batches to different GPUs running the same Neural Network, calculate each batch gradient, and then run a **Synchronization Step** that averages the gradients over the batches and updates the Neural Networks running on the different GPUs.
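#
# The synchronization step described above can be sketched with NumPy. This is a toy simulation on a single machine, not Horovod code: the linear model, the data, and the worker count are all made up for illustration. It shows that averaging the gradients of equally sized shards reproduces the gradient of the full batch.

```python
import numpy as np

def mse_gradient(w, x, y):
    """Gradient of mean((x @ w - y)**2) with respect to w."""
    residual = x @ w - y
    return 2.0 * (x.T @ residual) / len(y)

rng = np.random.default_rng(0)
x = rng.normal(size=(8, 3))   # synthetic features
y = rng.normal(size=8)        # synthetic targets
w = np.zeros(3)               # shared model parameters

n_workers = 4                 # hypothetical number of GPUs
x_shards = np.split(x, n_workers)
y_shards = np.split(y, n_workers)

# Each "worker" computes the gradient on its own shard of the batch.
local_grads = [mse_gradient(w, xs, ys) for xs, ys in zip(x_shards, y_shards)]

# Synchronization step: average the local gradients across workers.
avg_grad = np.mean(local_grads, axis=0)
full_grad = mse_gradient(w, x, y)
print(np.allclose(avg_grad, full_grad))
```

# The equality holds here because every shard has the same size; with unequal shards the average would need to be weighted by shard size.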

#

#

# > It is important to understand that the act of distribution adds extra **Synchronization Costs** which may vary according to your cluster's configuration.

# > <br>

# > Because the gradients and the network weights need to be propagated to each GPU in the cluster every epoch (or every few steps), networking can become a bottleneck, and sometimes different configurations need to be used for optimal performance.

# > <br>

# > **Scaling Efficiency** is the metric that shows how much each additional GPU benefits the training process. Horovod reports up to 90% scaling efficiency (when running well-written code with good parameters).
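#
# One common way to compute scaling efficiency is to divide the measured multi-GPU throughput by the throughput you would get if the single-GPU rate scaled perfectly. The sketch below assumes that definition; the function name and the throughput numbers are hypothetical.

```python
def scaling_efficiency(single_gpu_throughput, multi_gpu_throughput, n_gpus):
    """Fraction of ideal linear speed-up achieved with n_gpus devices."""
    ideal_throughput = n_gpus * single_gpu_throughput
    return multi_gpu_throughput / ideal_throughput

# Hypothetical measurements in images per second.
efficiency = scaling_efficiency(
    single_gpu_throughput=100.0,
    multi_gpu_throughput=720.0,
    n_gpus=8,
)
print(f"{efficiency:.0%}")
```

# A value below 100% reflects the synchronization and networking costs discussed above.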

#

#

# ## How can we distribute our training?

# There are two different cluster configurations (which can be combined) we need to take into account.

# - **Multi Node** — GPUs are distributed over multiple nodes in the cluster.

# - **Multi GPU** — GPUs are within a single Node.

#

# In this demo we show a **Multi Node Multi GPU** — **Data Parallel** enabled training using Horovod.

# However, you should always try and use the best distribution strategy for your use case (due to the added costs of the distribution itself, ability to run in an optimized way on specific hardware or other considerations that may arise).

# ## How does Horovod work?

# Horovod's primary motivation is to make it easy to take a single-GPU training script and successfully scale it to train across many GPUs in parallel. This has two aspects:

#

# - How much modification does one have to make to a program to make it distributed, and how easy is it to run it?

# - How much faster would it run in distributed mode?

#

# Horovod supports TensorFlow, Keras, PyTorch, and Apache MXNet.

#

# In MLRun we use Horovod with MPI in order to create cluster resources and allow for optimized networking.

# **Note:** Horovod and MPI may use [NCCL](https://developer.nvidia.com/nccl) when applicable, which may require some specific configuration arguments to run optimally.

#

# Horovod uses these MPI and NCCL concepts for distributed computation and messaging to quickly and easily synchronize between the different nodes or GPUs.

#

#

#

# Horovod will run your code on all the given nodes (a specific process can be addressed via `hvd.rank()`) while using an `hvd.DistributedOptimizer` wrapper to run the **synchronization cycles** between the copies of your Neural Network running on each node.

#

# **Note:** Since all the copies of your Neural Network must be the same, your workers will adjust themselves to the rate of the slowest worker (simply by waiting for it to finish the epoch and receive its updates). Thus, try not to make a specific worker do a lot of additional work on each epoch (such as a lot of saving or extra calculations), since this can affect the overall training time.

# ## How do we integrate TF2 with Horovod?

# Since ease of integration is one of Horovod's main motivations, integration is fairly easy and requires only a few steps ([you can read the full instructions for all the different frameworks on Horovod's documentation website](https://horovod.readthedocs.io/en/stable/tensorflow.html)):

#

# 1. Run `hvd.init()`.

# 2. Pin each GPU to a single process.

# With the typical setup of one GPU per process, set this to local rank. The first process on the server will be allocated the first GPU, the second process will be allocated the second GPU, and so forth.

# ```
# gpus = tf.config.experimental.list_physical_devices('GPU')
# for gpu in gpus:
#     tf.config.experimental.set_memory_growth(gpu, True)
# if gpus:
#     tf.config.experimental.set_visible_devices(gpus[hvd.local_rank()], 'GPU')
# ```

# 3. Scale the learning rate by the number of workers.

# Effective batch size in synchronous distributed training is scaled by the number of workers. An increase in learning rate compensates for the increased batch size.

# 4. Wrap the optimizer in `hvd.DistributedOptimizer`.

# The distributed optimizer delegates gradient computation to the original optimizer, averages gradients using allreduce or allgather, and then applies those averaged gradients.

# For TensorFlow v2, when using a `tf.GradientTape`, wrap the tape in `hvd.DistributedGradientTape` instead of wrapping the optimizer.

# 5. Broadcast the initial variable states from rank 0 to all other processes.

# This is necessary to ensure consistent initialization of all workers when training is started with random weights or restored from a checkpoint.

# For TensorFlow v2, use `hvd.broadcast_variables` after models and optimizers have been initialized.

# 6. Modify your code to save checkpoints only on worker 0 to prevent other workers from corrupting them.

# For TensorFlow v2, construct a `tf.train.Checkpoint` and only call `checkpoint.save()` when `hvd.rank() == 0`.
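#
# Two of the steps above (scaling the learning rate and saving checkpoints only on worker 0) are simple enough to sketch in plain Python. The helper names below are hypothetical and this is not Horovod code; in a real job the worker count and rank would come from `hvd.size()` and `hvd.rank()`.

```python
def scaled_learning_rate(base_lr, n_workers):
    """Linear scaling rule: multiply the single-worker rate by the worker count."""
    return base_lr * n_workers

def should_save_checkpoint(rank):
    """Only the worker with rank 0 writes checkpoints."""
    return rank == 0

n_workers = 4  # hypothetical cluster size
print(scaled_learning_rate(0.001, n_workers))
print([should_save_checkpoint(rank) for rank in range(n_workers)])
```

# The linear rule is a starting point rather than a guarantee; large worker counts often need a warm-up period or a smaller multiplier.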

#

#

# You can go to [Horovod's Documentation](https://horovod.readthedocs.io/en/stable) to read more about Horovod.

# ## Image classification use case

# See the end to end [**Image Classification with Distributed Training Demo**](https://github.com/mlrun/demos/tree/0.6.x/image-classification-with-distributed-training)

|

docs/runtimes/horovod.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import numpy as np

import torch

torch.set_printoptions(edgeitems=2, precision=2)

import csv

wine_path = "../data/tabular-wine/winequality-white.csv"

wineq_numpy = np.loadtxt(wine_path, dtype=np.float32, delimiter=";", skiprows=1)

wineq_numpy

# +

col_list = next(csv.reader(open(wine_path), delimiter=';'))

wineq_numpy.shape, col_list

# +

wineq = torch.from_numpy(wineq_numpy)

wineq.shape, wineq.type()

# -

data = wineq[:, :-1] # <1>

data, data.shape

target = wineq[:, -1] # <2>

target, target.shape

target = wineq[:, -1].long()

target

data_mean = torch.mean(data, dim=0)

data_mean

data_var = torch.var(data, dim=0)

data_var

data_normalized = (data - data_mean) / torch.sqrt(data_var)

data_normalized
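
# The normalization above subtracts the column mean and divides by the column standard deviation. `torch.var` uses the unbiased estimator by default, so an equivalent NumPy sketch needs `ddof=1`. The array below is synthetic stand-in data, not the wine dataset.

```python
import numpy as np

rng = np.random.default_rng(0)
features = rng.normal(loc=5.0, scale=2.0, size=(100, 3))  # synthetic stand-in data

col_mean = features.mean(axis=0)
col_var = features.var(axis=0, ddof=1)  # ddof=1 matches the unbiased estimator
standardized = (features - col_mean) / np.sqrt(col_var)

# Each column should now have mean ~0 and unit sample standard deviation.
print(standardized.mean(axis=0), standardized.std(axis=0, ddof=1))
```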

data_normalized.size()

np.unique(target.numpy())

np.unique(target.numpy() - 3)

target_shift = target - 3

np.unique(target_shift.numpy())

# +

n_samples = data_normalized.shape[0]

n_val = int(0.3 * n_samples)

shuffled_indices = torch.randperm(n_samples)

train_indices = shuffled_indices[:-n_val]

val_indices = shuffled_indices[-n_val:]

train_x = data_normalized[train_indices]

train_y = target_shift[train_indices]

val_x = data_normalized[val_indices]

val_y = target_shift[val_indices]

# -

train_x.size(), train_y.size()

def training_loop(model, n_epochs, optimizer, loss_fn, train_x, val_x, train_y, val_y):
    for epoch in range(1, n_epochs + 1):
        train_t_p = model(train_x)  # we no longer have to pass the params explicitly
        train_loss = loss_fn(train_t_p, train_y)
        with torch.no_grad():  # nothing computed in this block tracks gradients
            val_t_p = model(val_x)
            val_loss = loss_fn(val_t_p, val_y)
        optimizer.zero_grad()
        train_loss.backward()
        optimizer.step()
        if epoch == 1 or epoch % 1000 == 0:
            print(f"Epoch {epoch}, Training loss {train_loss}, Validation loss {val_loss}")

import torch.nn as nn

import torch.optim as optim

# +

class SimpleNet(nn.Module):
    def __init__(self):
        super().__init__()
        self.fc1 = nn.Linear(11, 32)
        self.fc2 = nn.Linear(32, 7)
    def forward(self, x):
        x = self.fc1(x)
        x = self.fc2(x)
        # Return raw logits: nn.CrossEntropyLoss applies log-softmax itself,
        # so a softmax here would be applied twice.
        return x

net = SimpleNet()

optimizer = optim.SGD(net.parameters(), lr=5e-3, momentum=0.9)

criterion = nn.CrossEntropyLoss()

# -
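
# `nn.CrossEntropyLoss` combines a log-softmax with the negative log-likelihood, so it expects raw scores (logits) rather than probabilities. The NumPy sketch below re-implements that computation on made-up scores to show what happens when probabilities are passed in instead: the softmax is effectively applied twice and the loss can no longer approach zero, even for confident, correct predictions.

```python
import numpy as np

def log_softmax(scores):
    """Numerically stable log-softmax along the class axis."""
    shifted = scores - scores.max(axis=1, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=1, keepdims=True))

def cross_entropy(scores, labels):
    """Mean negative log-likelihood, applying log-softmax to the scores."""
    log_probs = log_softmax(scores)
    return -log_probs[np.arange(len(labels)), labels].mean()

# Made-up logits: both samples are confidently and correctly classified.
logits = np.array([[8.0, 0.0, 0.0], [0.0, 8.0, 0.0]])
labels = np.array([0, 1])

loss_from_logits = cross_entropy(logits, labels)
probs = np.exp(log_softmax(logits))
loss_from_probs = cross_entropy(probs, labels)  # softmax applied twice
print(loss_from_logits, loss_from_probs)
```

# Since probabilities lie in [0, 1], a second softmax compresses them toward a uniform distribution, which flattens the gradients and slows training.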

# %%time

training_loop(
    n_epochs=1000,
    optimizer=optimizer,
    model=net,
    loss_fn=criterion,
    train_x=train_x,
    val_x=val_x,
    train_y=train_y,
    val_y=val_y,
)

|

lectures/white_whine_data.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ## The QLBS model for a European option

#

# Welcome to your 2nd assignment in Reinforcement Learning in Finance. In this exercise you will arrive at an option price and the hedging portfolio via the standard toolkit of Dynamic Programming (DP).

# The QLBS model learns both the optimal option price and the optimal hedge directly from trading data.

#

# **Instructions:**

# - You will be using Python 3.

# - Avoid using for-loops and while-loops, unless you are explicitly told to do so.

# - Do not modify the (# GRADED FUNCTION [function name]) comment in some cells. Your work will not be graded if you change this. Each cell containing that comment should only contain one function.

# - After coding your function, run the cell right below it to check if your result is correct.

# - When encountering **```# dummy code - remove```** please replace this code with your own

#

#

# **After this assignment you will:**

# - Re-formulate option pricing and hedging method using the language of Markov Decision Processes (MDP)

# - Set up forward simulation using Monte Carlo

# - Expand optimal action (hedge) $a_t^\star(X_t)$ and optimal Q-function $Q_t^\star(X_t, a_t^\star)$ in basis functions with time-dependent coefficients

#

# Let's get started!

# ## About iPython Notebooks ##

#

# iPython Notebooks are interactive coding environments embedded in a webpage. You will be using iPython notebooks in this class. You only need to write code between the ### START CODE HERE ### and ### END CODE HERE ### comments. After writing your code, you can run the cell by either pressing "SHIFT"+"ENTER" or by clicking on "Run Cell" (denoted by a play symbol) in the upper bar of the notebook.

#

# We will often specify "(≈ X lines of code)" in the comments to tell you about how much code you need to write. It is just a rough estimate, so don't feel bad if your code is longer or shorter.

# +

#import warnings

#warnings.filterwarnings("ignore")

import numpy as np

import pandas as pd

from scipy.stats import norm

import random

import time

import matplotlib.pyplot as plt

import sys

sys.path.append("..")

import grading

# -

### ONLY FOR GRADING. DO NOT EDIT ###

submissions=dict()

assignment_key="<KEY>"

all_parts=["15mYc", "h1P6Y", "q9QW7","s7MpJ","Pa177"]

### ONLY FOR GRADING. DO NOT EDIT ###

COURSERA_TOKEN = ""  # the key provided to the student under his/her email on the submission page
COURSERA_EMAIL = ""  # the email

# ## Parameters for MC simulation of stock prices

# +

S0 = 100 # initial stock price

mu = 0.05 # drift

sigma = 0.15 # volatility

r = 0.03 # risk-free rate

M = 1 # maturity

T = 24 # number of time steps

N_MC = 10000 # number of paths

delta_t = M / T # time interval

gamma = np.exp(- r * delta_t) # discount factor

# -

# ### Black-Scholes Simulation

# Simulate $N_{MC}$ stock price sample paths with $T$ steps by the classical Black-Scholes formula.

#

# $$dS_t=\mu S_tdt+\sigma S_tdW_t\quad\quad S_{t+1}=S_te^{\left(\mu-\frac{1}{2}\sigma^2\right)\Delta t+\sigma\sqrt{\Delta t}Z}$$

#

# where $Z$ is a standard normal random variable.

#

# Based on simulated stock price $S_t$ paths, compute state variable $X_t$ by the following relation.

#

# $$X_t=-\left(\mu-\frac{1}{2}\sigma^2\right)t\Delta t+\log S_t$$

#

# Also compute

#

# $$\Delta S_t=S_{t+1}-e^{r\Delta t}S_t\quad\quad \Delta\hat{S}_t=\Delta S_t-\Delta\bar{S}_t\quad\quad t=0,...,T-1$$

#

# where $\Delta\bar{S}_t$ is the sample mean of all values of $\Delta S_t$.

#

# Plots of several sample paths of the stock price $S_t$ and the state variable $X_t$ are shown below.
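#
# The simulation and state-variable formulas above can also be written without an explicit time loop. The following is an illustrative NumPy sketch, not the assignment's code: `simulate_paths` and `state_variable` are hypothetical helper names, and the number of paths is reduced to keep it fast.

```python
import numpy as np

def simulate_paths(s0, mu, sigma, delta_t, n_steps, n_paths, seed=42):
    """Simulate log-normal stock paths; returns an array of shape (n_paths, n_steps + 1)."""
    rng = np.random.default_rng(seed)
    z = rng.standard_normal((n_paths, n_steps))
    # One-step log-returns: (mu - sigma^2 / 2) * dt + sigma * sqrt(dt) * Z
    log_increments = (mu - 0.5 * sigma**2) * delta_t + sigma * np.sqrt(delta_t) * z
    # Cumulative sums of log-returns give log(S_t / S_0); prepend 0 for t = 0.
    log_paths = np.concatenate(
        [np.zeros((n_paths, 1)), np.cumsum(log_increments, axis=1)], axis=1
    )
    return s0 * np.exp(log_paths)

def state_variable(paths, mu, sigma, delta_t):
    """X_t = -(mu - sigma^2 / 2) * t * dt + log(S_t), with t the step index."""
    t = np.arange(paths.shape[1])
    return -(mu - 0.5 * sigma**2) * t * delta_t + np.log(paths)

paths = simulate_paths(s0=100.0, mu=0.05, sigma=0.15, delta_t=1 / 24, n_steps=24, n_paths=1000)
x = state_variable(paths, mu=0.05, sigma=0.15, delta_t=1 / 24)
print(paths.shape, x.shape)
```

# Subtracting the drift term makes $X_t$ a driftless random walk in log-price, which keeps the state values in a narrow range that is convenient for the spline basis.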

# +

# make a dataset

starttime = time.time()

np.random.seed(42)

# stock price

S = pd.DataFrame([], index=range(1, N_MC+1), columns=range(T+1))

S.loc[:,0] = S0

# standard normal random numbers

RN = pd.DataFrame(np.random.randn(N_MC,T), index=range(1, N_MC+1), columns=range(1, T+1))

for t in range(1, T+1):
    S.loc[:,t] = S.loc[:,t-1] * np.exp((mu - 1/2 * sigma**2) * delta_t + sigma * np.sqrt(delta_t) * RN.loc[:,t])

delta_S = S.loc[:,1:T].values - np.exp(r * delta_t) * S.loc[:,0:T-1]

delta_S_hat = delta_S.apply(lambda x: x - np.mean(x), axis=0)

# state variable

X = - (mu - 1/2 * sigma**2) * np.arange(T+1) * delta_t + np.log(S) # delta_t here is due to their conventions

endtime = time.time()

print('\nTime Cost:', endtime - starttime, 'seconds')

# +

# plot 10 paths

step_size = N_MC // 10

idx_plot = np.arange(step_size, N_MC, step_size)

plt.plot(S.T.iloc[:,idx_plot])

plt.xlabel('Time Steps')

plt.title('Stock Price Sample Paths')

plt.show()

plt.plot(X.T.iloc[:,idx_plot])

plt.xlabel('Time Steps')

plt.ylabel('State Variable')

plt.show()

# -

# Define function *terminal_payoff* to compute the terminal payoff of a European put option.

#

# $$H_T\left(S_T\right)=\max\left(K-S_T,0\right)$$

def terminal_payoff(ST, K):
    # ST   final stock price
    # K    strike
    payoff = max(K - ST, 0)
    return payoff
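
# The built-in `max` used above works on one scalar price at a time. As a hedged alternative, the sketch below evaluates the same put payoff element-wise on a whole array of terminal prices; `terminal_payoff_vec` is a hypothetical name and is not part of the graded code.

```python
import numpy as np

def terminal_payoff_vec(ST, K):
    """European put payoff max(K - ST, 0), evaluated element-wise."""
    return np.maximum(K - np.asarray(ST, dtype=float), 0.0)

# Three made-up terminal prices around a strike of 100.
print(terminal_payoff_vec([90.0, 100.0, 110.0], 100.0))
```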

type(delta_S)

# ## Define spline basis functions

# +

import bspline

import bspline.splinelab as splinelab

X_min = np.min(np.min(X))

X_max = np.max(np.max(X))

print('X.shape = ', X.shape)

print('X_min, X_max = ', X_min, X_max)

p = 4 # order of spline (as-is; 3 = cubic, 4: B-spline?)

ncolloc = 12

tau = np.linspace(X_min,X_max,ncolloc) # These are the sites to which we would like to interpolate

# k is a knot vector that adds endpoints repeats as appropriate for a spline of order p

# To get meaningful results, one should have ncolloc >= p+1

k = splinelab.aptknt(tau, p)

# Spline basis of order p on knots k

basis = bspline.Bspline(k, p)

f = plt.figure()

# B = bspline.Bspline(k, p) # Spline basis functions

print('Number of points k = ', len(k))

basis.plot()

plt.savefig('Basis_functions.png', dpi=600)

# -

type(basis)

X.values.shape

# ### Make data matrices with feature values

#

# "Features" here are the values of basis functions at data points

# The outputs are 3D arrays of dimensions num_tSteps x num_MC x num_basis

# +

num_t_steps = T + 1

num_basis = ncolloc # len(k) #

data_mat_t = np.zeros((num_t_steps, N_MC,num_basis ))

print('num_basis = ', num_basis)

print('dim data_mat_t = ', data_mat_t.shape)

t_0 = time.time()

# fill it

for i in np.arange(num_t_steps):
    x = X.values[:,i]
    data_mat_t[i,:,:] = np.array([ basis(el) for el in x ])

t_end = time.time()

print('Computational time:', t_end - t_0, 'seconds')

# -

# save these data matrices for future re-use

np.save('data_mat_m=r_A_%d' % N_MC, data_mat_t)

print(data_mat_t.shape) # shape num_steps x N_MC x num_basis

print(len(k))

# ## Dynamic Programming solution for QLBS

#

# The MDP problem in this case is to solve the following Bellman optimality equation for the action-value function.

#

# $$Q_t^\star\left(x,a\right)=\mathbb{E}_t\left[R_t\left(X_t,a_t,X_{t+1}\right)+\gamma\max_{a_{t+1}\in\mathcal{A}}Q_{t+1}^\star\left(X_{t+1},a_{t+1}\right)\space|\space X_t=x,a_t=a\right],\space\space t=0,...,T-1,\quad\gamma=e^{-r\Delta t}$$

#

# where $R_t\left(X_t,a_t,X_{t+1}\right)$ is the one-step time-dependent random reward and $a_t\left(X_t\right)$ is the action (hedge).

#

# Detailed steps of solving this equation by Dynamic Programming are illustrated below.

# With this set of basis functions $\left\{\Phi_n\left(X_t^k\right)\right\}_{n=1}^N$, expand the optimal action (hedge) $a_t^\star\left(X_t\right)$ and optimal Q-function $Q_t^\star\left(X_t,a_t^\star\right)$ in basis functions with time-dependent coefficients.

# $$a_t^\star\left(X_t\right)=\sum_n^N{\phi_{nt}\Phi_n\left(X_t\right)}\quad\quad Q_t^\star\left(X_t,a_t^\star\right)=\sum_n^N{\omega_{nt}\Phi_n\left(X_t\right)}$$

#

# Coefficients $\phi_{nt}$ and $\omega_{nt}$ are computed recursively backward in time for $t=T−1,...,0$.

# Coefficients for expansions of the optimal action $a_t^\star\left(X_t\right)$ are solved by

#

# $$\phi_t=\mathbf A_t^{-1}\mathbf B_t$$

#

# where $\mathbf A_t$ and $\mathbf B_t$ are matrix and vector respectively with elements given by

#

# $$A_{nm}^{\left(t\right)}=\sum_{k=1}^{N_{MC}}{\Phi_n\left(X_t^k\right)\Phi_m\left(X_t^k\right)\left(\Delta\hat{S}_t^k\right)^2}\quad\quad B_n^{\left(t\right)}=\sum_{k=1}^{N_{MC}}{\Phi_n\left(X_t^k\right)\left[\hat\Pi_{t+1}^k\Delta\hat{S}_t^k+\frac{1}{2\gamma\lambda}\Delta S_t^k\right]}$$

#

# $$\Delta S_t=S_{t+1} - e^{r\Delta t} S_t\quad\quad \Delta\hat{S}_t=\Delta S_t-\Delta\bar{S}_t\quad\quad t=T-1,...,0$$

# where $\Delta\bar{S}_t$ is the sample mean of all values of $\Delta S_t$.

#

# Define functions *function_A_vec* and *function_B_vec* to compute the value of matrix $\mathbf A_t$ and vector $\mathbf B_t$.
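#
# To make the formulas for $\mathbf A_t$, $\mathbf B_t$ and $\phi_t$ concrete, here is a small NumPy sketch on synthetic data. It is an illustration under stated assumptions, not the graded solution: the arrays are random stand-ins, the sizes are made up, a ridge term `reg_param` is added to the diagonal of $\mathbf A_t$, and the second term of $B_n$ is dropped (pure risk hedge).

```python
import numpy as np

rng = np.random.default_rng(0)
n_mc, n_basis = 500, 6                  # hypothetical sizes
phi_t = rng.random((n_mc, n_basis))     # stand-in for Phi_n(X_t^k)
ds_hat = rng.normal(size=n_mc)          # stand-in for demeaned increments
pi_hat_next = rng.normal(size=n_mc)     # stand-in for demeaned Pi_{t+1}
reg_param = 1e-3

# A_nm = sum_k Phi_n(X_t^k) Phi_m(X_t^k) (dS_hat_t^k)^2, plus a ridge term
weighted = phi_t * ds_hat[:, None]
a_mat = weighted.T @ weighted + reg_param * np.eye(n_basis)

# B_n = sum_k Phi_n(X_t^k) * Pi_hat_{t+1}^k * dS_hat_t^k
b_vec = phi_t.T @ (pi_hat_next * ds_hat)

# Solve A phi = B directly instead of forming the inverse of A.
phi_coef = np.linalg.solve(a_mat, b_vec)
hedge = phi_t @ phi_coef                # a_t^*(X_t^k) for every path k
print(a_mat.shape, b_vec.shape, hedge.shape)
```

# Using `np.linalg.solve` avoids computing an explicit inverse, which is usually both faster and numerically more stable when the matrix is close to singular.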

# ## Define the option strike and risk aversion parameter

# +

risk_lambda = 0.001 # risk aversion

K = 100 # option strike

# Note that we set coef=0 below in function function_B_vec. This corresponds to pure risk-based hedging

# -

# ### Part 1 Calculate coefficients $\phi_{nt}$ of the optimal action $a_t^\star\left(X_t\right)$

#

# **Instructions:**

# - implement function_A_vec() which computes $A_{nm}^{\left(t\right)}$ matrix

# - implement function_B_vec() which computes $B_n^{\left(t\right)}$ column vector

# +

# functions to compute optimal hedges

def function_A_vec(t, delta_S_hat, data_mat, reg_param):
    """
    function_A_vec - compute the matrix A_{nm} from Eq. (52) (with a regularization!)
    Eq. (52) in QLBS Q-Learner in the Black-Scholes-Merton article

    Arguments:
    t - time index, a scalar, an index into time axis of data_mat
    delta_S_hat - pandas.DataFrame of dimension N_MC x T
    data_mat - np.array of dimension (T+1) x N_MC x num_basis
    reg_param - a scalar, regularization parameter

    Return:
    - np.array, i.e. matrix A_{nm} of dimension num_basis x num_basis
    """
    ### START CODE HERE ### (≈ 5-6 lines of code)
    # store result in A_mat for grading

    ### END CODE HERE ###
    return A_mat

def function_B_vec(t,
                   Pi_hat,
                   delta_S_hat=delta_S_hat,
                   S=S,
                   data_mat=data_mat_t,
                   gamma=gamma,
                   risk_lambda=risk_lambda):
    """
    function_B_vec - compute vector B_{n} from Eq. (52) QLBS Q-Learner in the Black-Scholes-Merton article

    Arguments:
    t - time index, a scalar, an index into time axis of delta_S_hat
    Pi_hat - pandas.DataFrame of dimension N_MC x (T+1) of portfolio values
    delta_S_hat - pandas.DataFrame of dimension N_MC x T
    S - pandas.DataFrame of simulated stock prices of dimension N_MC x (T+1)
    data_mat - np.array of dimension (T+1) x N_MC x num_basis
    gamma - one time-step discount factor exp(-r * delta_t)
    risk_lambda - risk aversion coefficient, a small positive number

    Return:
    np.array() of dimension num_basis x 1
    """
    # coef = 1.0/(2 * gamma * risk_lambda)
    # override it by zero to have pure risk hedge
    ### START CODE HERE ### (≈ 5-6 lines of code)
    # store result in B_vec for grading

    ### END CODE HERE ###
    return B_vec

# +

### GRADED PART (DO NOT EDIT) ###

reg_param = 1e-3

np.random.seed(42)

A_mat = function_A_vec(T-1, delta_S_hat, data_mat_t, reg_param)

idx_row = np.random.randint(low=0, high=A_mat.shape[0], size=50)

np.random.seed(42)

idx_col = np.random.randint(low=0, high=A_mat.shape[1], size=50)

part_1 = list(A_mat[idx_row, idx_col])

try:
    part1 = " ".join(map(repr, part_1))
except TypeError:
    part1 = repr(part_1)

submissions[all_parts[0]]=part1

grading.submit(COURSERA_EMAIL, COURSERA_TOKEN, assignment_key,all_parts[:1],all_parts,submissions)

A_mat[idx_row, idx_col]

### GRADED PART (DO NOT EDIT) ###

# +

### GRADED PART (DO NOT EDIT) ###

np.random.seed(42)

risk_lambda = 0.001

Pi = pd.DataFrame([], index=range(1, N_MC+1), columns=range(T+1))

Pi.iloc[:,-1] = S.iloc[:,-1].apply(lambda x: terminal_payoff(x, K))

Pi_hat = pd.DataFrame([], index=range(1, N_MC+1), columns=range(T+1))

Pi_hat.iloc[:,-1] = Pi.iloc[:,-1] - np.mean(Pi.iloc[:,-1])

B_vec = function_B_vec(T-1, Pi_hat, delta_S_hat, S, data_mat_t, gamma, risk_lambda)

part_2 = list(B_vec)

try:
    part2 = " ".join(map(repr, part_2))
except TypeError:
    part2 = repr(part_2)

submissions[all_parts[1]]=part2

grading.submit(COURSERA_EMAIL, COURSERA_TOKEN, assignment_key,all_parts[:2],all_parts,submissions)

B_vec

### GRADED PART (DO NOT EDIT) ###

# -

# ## Compute optimal hedge and portfolio value

# Call *function_A_vec* and *function_B_vec* for $t=T-1,...,0$ together with basis function $\Phi_n\left(X_t\right)$ to compute the optimal action $a_t^\star\left(X_t\right)=\sum_n^N{\phi_{nt}\Phi_n\left(X_t\right)}$ backward recursively with terminal condition $a_T^\star\left(X_T\right)=0$.

#

# Once the optimal hedge $a_t^\star\left(X_t\right)$ is computed, the portfolio value $\Pi_t$ could also be computed backward recursively by

#

# $$\Pi_t=\gamma\left[\Pi_{t+1}-a_t^\star\Delta S_t\right]\quad t=T-1,...,0$$

#

# together with the terminal condition $\Pi_T=H_T\left(S_T\right)=\max\left(K-S_T,0\right)$ for a European put option.

#

# Also compute $\hat{\Pi}_t=\Pi_t-\bar{\Pi}_t$, where $\bar{\Pi}_t$ is the sample mean of all values of $\Pi_t$.

#

# Plots of several sample paths of the optimal hedge $a_t^\star$ and the portfolio value $\Pi_t$ are shown below.
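#
# The backward recursion for $\Pi_t$ and $\hat{\Pi}_t$ can be sketched in isolation with NumPy. The helper name `backward_portfolio` and the tiny inputs below are hypothetical; the hedges are taken as given here, whereas the notebook computes them at each step from $\mathbf A_t$ and $\mathbf B_t$.

```python
import numpy as np

def backward_portfolio(terminal_payoff, hedges, delta_s, gamma):
    """Roll Pi_t = gamma * (Pi_{t+1} - a_t * dS_t) back from t = T-1 to t = 0.

    hedges and delta_s have shape (n_paths, T); the result has shape (n_paths, T + 1).
    """
    n_paths, n_steps = delta_s.shape
    pi = np.empty((n_paths, n_steps + 1))
    pi[:, -1] = terminal_payoff                  # terminal condition Pi_T = H_T(S_T)
    for t in range(n_steps - 1, -1, -1):
        pi[:, t] = gamma * (pi[:, t + 1] - hedges[:, t] * delta_s[:, t])
    pi_hat = pi - pi.mean(axis=0)                # subtract the cross-path mean per step
    return pi, pi_hat

# Made-up example: two paths, three steps, no hedging at all.
payoff = np.array([8.0, 16.0])
pi, pi_hat = backward_portfolio(payoff, np.zeros((2, 3)), np.ones((2, 3)), gamma=0.5)
print(pi[:, 0])
```

# With zero hedges the recursion reduces to plain discounting, so the time-0 value is the payoff multiplied by $\gamma^T$, which is a handy sanity check.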

# +

starttime = time.time()

# portfolio value

Pi = pd.DataFrame([], index=range(1, N_MC+1), columns=range(T+1))

Pi.iloc[:,-1] = S.iloc[:,-1].apply(lambda x: terminal_payoff(x, K))

Pi_hat = pd.DataFrame([], index=range(1, N_MC+1), columns=range(T+1))

Pi_hat.iloc[:,-1] = Pi.iloc[:,-1] - np.mean(Pi.iloc[:,-1])

# optimal hedge

a = pd.DataFrame([], index=range(1, N_MC+1), columns=range(T+1))

a.iloc[:,-1] = 0

reg_param = 1e-3 # free parameter

for t in range(T-1, -1, -1):
    A_mat = function_A_vec(t, delta_S_hat, data_mat_t, reg_param)
    B_vec = function_B_vec(t, Pi_hat, delta_S_hat, S, data_mat_t, gamma, risk_lambda)
    # print ('t = A_mat.shape = B_vec.shape = ', t, A_mat.shape, B_vec.shape)
    # coefficients for expansions of the optimal action
    phi = np.dot(np.linalg.inv(A_mat), B_vec)
    a.loc[:,t] = np.dot(data_mat_t[t,:,:],phi)
    Pi.loc[:,t] = gamma * (Pi.loc[:,t+1] - a.loc[:,t] * delta_S.loc[:,t])
    Pi_hat.loc[:,t] = Pi.loc[:,t] - np.mean(Pi.loc[:,t])

a = a.astype('float')

Pi = Pi.astype('float')

Pi_hat = Pi_hat.astype('float')

endtime = time.time()

print('Computational time:', endtime - starttime, 'seconds')

# +

# plot 10 paths

plt.plot(a.T.iloc[:,idx_plot])

plt.xlabel('Time Steps')

plt.title('Optimal Hedge')

plt.show()

plt.plot(Pi.T.iloc[:,idx_plot])

plt.xlabel('Time Steps')

plt.title('Portfolio Value')

plt.show()

# -

# ## Compute rewards for all paths

# Once the optimal hedge $a_t^\star$ and portfolio value $\Pi_t$ are all computed, the reward function $R_t\left(X_t,a_t,X_{t+1}\right)$ could then be computed by

#

# $$R_t\left(X_t,a_t,X_{t+1}\right)=\gamma a_t\Delta S_t-\lambda Var\left[\Pi_t\space|\space\mathcal F_t\right]\quad t=0,...,T-1$$

#

# with terminal condition $R_T=-\lambda Var\left[\Pi_T\right]$.

#

# A plot of several sample paths of the reward function $R_t$ is shown below.

# +

# Compute rewards for all paths

starttime = time.time()

# reward function

R = pd.DataFrame([], index=range(1, N_MC+1), columns=range(T+1))

R.iloc[:,-1] = - risk_lambda * np.var(Pi.iloc[:,-1])

for t in range(T):
    R.loc[1:,t] = gamma * a.loc[1:,t] * delta_S.loc[1:,t] - risk_lambda * np.var(Pi.loc[1:,t])

endtime = time.time()

print('\nTime Cost:', endtime - starttime, 'seconds')

# plot 10 paths

plt.plot(R.T.iloc[:, idx_plot])

plt.xlabel('Time Steps')

plt.title('Reward Function')

plt.show()

# -

# ## Part 2: Compute the optimal Q-function with the DP approach

#

# Coefficients for expansions of the optimal Q-function $Q_t^\star\left(X_t,a_t^\star\right)$ are solved by

#

# $$\omega_t=\mathbf C_t^{-1}\mathbf D_t$$

#

# where $\mathbf C_t$ and $\mathbf D_t$ are matrix and vector respectively with elements given by

#

# $$C_{nm}^{\left(t\right)}=\sum_{k=1}^{N_{MC}}{\Phi_n\left(X_t^k\right)\Phi_m\left(X_t^k\right)}\quad\quad D_n^{\left(t\right)}=\sum_{k=1}^{N_{MC}}{\Phi_n\left(X_t^k\right)\left(R_t\left(X_t,a_t^\star,X_{t+1}\right)+\gamma\max_{a_{t+1}\in\mathcal{A}}Q_{t+1}^\star\left(X_{t+1},a_{t+1}\right)\right)}$$

# Define functions *function_C_vec* and *function_D_vec* to compute the value of matrix $\mathbf C_t$ and vector $\mathbf D_t$.

#

# **Instructions:**

# - implement function_C_vec() which computes $C_{nm}^{\left(t\right)}$ matrix

# - implement function_D_vec() which computes $D_n^{\left(t\right)}$ column vector
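#
# As with the hedge coefficients, the formulas for $\mathbf C_t$, $\mathbf D_t$ and $\omega_t$ amount to a regularized least-squares fit. The sketch below uses random stand-in arrays and made-up sizes; it assumes a ridge term on the diagonal of $\mathbf C_t$ and uses the next-step Q-values along each path as the target, and it is not the graded solution.

```python
import numpy as np

rng = np.random.default_rng(1)
n_mc, n_basis = 500, 6                  # hypothetical sizes
gamma, reg_param = 0.99, 1e-3
phi_t = rng.random((n_mc, n_basis))     # stand-in for Phi_n(X_t^k)
reward_t = rng.normal(size=n_mc)        # stand-in for R_t along each path
q_next = rng.normal(size=n_mc)          # stand-in for Q_{t+1}^* along each path

# C_nm = sum_k Phi_n(X_t^k) Phi_m(X_t^k), plus a ridge term
c_mat = phi_t.T @ phi_t + reg_param * np.eye(n_basis)

# D_n = sum_k Phi_n(X_t^k) * (R_t^k + gamma * Q_{t+1}^k)
d_vec = phi_t.T @ (reward_t + gamma * q_next)

omega = np.linalg.solve(c_mat, d_vec)   # omega_t = C_t^{-1} D_t
q_t = phi_t @ omega                     # fitted Q_t^* for every path
print(c_mat.shape, d_vec.shape, q_t.shape)
```

# The ridge term keeps the system well conditioned when some basis functions are rarely active at a given time step.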

# +

def function_C_vec(t, data_mat, reg_param):
    """
    function_C_vec - calculate C_{nm} matrix from Eq. (56) (with a regularization!)
    Eq. (56) in QLBS Q-Learner in the Black-Scholes-Merton article

    Arguments:
    t - time index, a scalar, an index into time axis of data_mat
    data_mat - np.array of values of basis functions of dimension (T+1) x N_MC x num_basis
    reg_param - regularization parameter, a scalar

    Return:
    C_mat - np.array of dimension num_basis x num_basis
    """
    ### START CODE HERE ### (≈ 5-6 lines of code)
    # your code here ....
    # C_mat = your code here ...

    ### END CODE HERE ###
    return C_mat

def function_D_vec(t, Q, R, data_mat, gamma=gamma):
    """
    function_D_vec - calculate D_{n} vector from Eq. (56)
    Eq. (56) in QLBS Q-Learner in the Black-Scholes-Merton article

    Arguments:
    t - time index, a scalar, an index into time axis of data_mat
    Q - pandas.DataFrame of Q-function values of dimension N_MC x (T+1)
    R - pandas.DataFrame of rewards of dimension N_MC x (T+1)
    data_mat - np.array of values of basis functions of dimension (T+1) x N_MC x num_basis
    gamma - one time-step discount factor exp(-r * delta_t)

    Return:
    D_vec - np.array of dimension num_basis x 1
    """
    ### START CODE HERE ### (≈ 5-6 lines of code)
    # your code here ....
    # D_vec = your code here ...

    ### END CODE HERE ###
    return D_vec

# +

### GRADED PART (DO NOT EDIT) ###

C_mat = function_C_vec(T-1, data_mat_t, reg_param)

np.random.seed(42)

idx_row = np.random.randint(low=0, high=C_mat.shape[0], size=50)

np.random.seed(42)

idx_col = np.random.randint(low=0, high=C_mat.shape[1], size=50)

part_3 = list(C_mat[idx_row, idx_col])

try:
    part3 = " ".join(map(repr, part_3))
except TypeError:
    part3 = repr(part_3)

submissions[all_parts[2]]=part3

grading.submit(COURSERA_EMAIL, COURSERA_TOKEN, assignment_key,all_parts[:3],all_parts,submissions)

C_mat[idx_row, idx_col]

### GRADED PART (DO NOT EDIT) ###

# +

### GRADED PART (DO NOT EDIT) ###

Q = pd.DataFrame([], index=range(1, N_MC+1), columns=range(T+1))

Q.iloc[:,-1] = - Pi.iloc[:,-1] - risk_lambda * np.var(Pi.iloc[:,-1])

D_vec = function_D_vec(T-1, Q, R, data_mat_t,gamma)

part_4 = list(D_vec)

try:
    part4 = " ".join(map(repr, part_4))
except TypeError:
    part4 = repr(part_4)

submissions[all_parts[3]]=part4

grading.submit(COURSERA_EMAIL, COURSERA_TOKEN, assignment_key,all_parts[:4],all_parts,submissions)

D_vec

### GRADED PART (DO NOT EDIT) ###

# -

# Call *function_C_vec* and *function_D_vec* for $t=T-1,...,0$ together with basis function $\Phi_n\left(X_t\right)$ to compute the optimal Q-function $Q_t^\star\left(X_t,a_t^\star\right)=\sum_n^N{\omega_{nt}\Phi_n\left(X_t\right)}$ backward recursively with terminal condition $Q_T^\star\left(X_T,a_T=0\right)=-\Pi_T\left(X_T\right)-\lambda Var\left[\Pi_T\left(X_T\right)\right]$.

# +

starttime = time.time()

# Q function

Q = pd.DataFrame([], index=range(1, N_MC+1), columns=range(T+1))

Q.iloc[:,-1] = - Pi.iloc[:,-1] - risk_lambda * np.var(Pi.iloc[:,-1])

reg_param = 1e-3

for t in range(T-1, -1, -1):

    ######################

    C_mat = function_C_vec(t,data_mat_t,reg_param)

    D_vec = function_D_vec(t, Q,R,data_mat_t,gamma)

    omega = np.dot(np.linalg.inv(C_mat), D_vec)

    Q.loc[:,t] = np.dot(data_mat_t[t,:,:], omega)

Q = Q.astype('float')

endtime = time.time()

print('\nTime Cost:', endtime - starttime, 'seconds')

# plot 10 paths

plt.plot(Q.T.iloc[:, idx_plot])

plt.xlabel('Time Steps')

plt.title('Optimal Q-Function')

plt.show()

# -
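
# The backward recursion above can be sketched on synthetic data. This is only an illustration under stated assumptions: it takes $C_t=\Phi_t^T\Phi_t+\text{reg}\cdot I$ and $D_t=\Phi_t^T\left(R_t+\gamma Q_{t+1}\right)$, the usual regularized least-squares form, which is not necessarily what *function_C_vec* and *function_D_vec* compute. All array names, sizes and random inputs below are hypothetical stand-ins, not the notebook's data.

```python
import numpy as np

# Hypothetical toy sizes and synthetic inputs (not the notebook's data).
rng = np.random.default_rng(0)
n_paths, n_steps, n_basis = 200, 4, 3
gamma, reg_param = 0.99, 1e-3

phi = rng.normal(size=(n_steps, n_paths, n_basis))  # basis values per step and path
rewards = rng.normal(size=(n_paths, n_steps))       # stand-in rewards R_t
q = np.zeros((n_paths, n_steps + 1))
q[:, -1] = rng.normal(size=n_paths)                 # stand-in terminal condition Q_T

# Walk backward in time, fitting one regression per time step.
for t in range(n_steps - 1, -1, -1):
    phi_t = phi[t]                                   # shape (n_paths, n_basis)
    # Assumed form of C_t: regularized Gram matrix of the basis functions.
    c_mat = phi_t.T @ phi_t + reg_param * np.eye(n_basis)
    # Assumed form of D_t: basis functions projected on the one-step target.
    d_vec = phi_t.T @ (rewards[:, t] + gamma * q[:, t + 1])
    # Solve C_t omega = D_t without forming the explicit inverse.
    omega = np.linalg.solve(c_mat, d_vec)
    q[:, t] = phi_t @ omega

print(q[:3, :])
```

# The sketch uses `np.linalg.solve` instead of an explicit matrix inverse; for a well-conditioned `c_mat` both give the same coefficients up to rounding.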

# The QLBS option price is given by $C_t^{\left(QLBS\right)}\left(S_t,ask\right)=-Q_t\left(S_t,a_t^\star\right)$

#

# ## Summary of the QLBS pricing and comparison with the BSM pricing

# Compare the QLBS price to the European put price given by the Black-Scholes formula.

#

# $$C_t^{\left(BS\right)}=Ke^{-r\left(T-t\right)}\mathcal N\left(-d_2\right)-S_t\mathcal N\left(-d_1\right)$$

# +

# The Black-Scholes prices

def bs_put(t, S0=S0, K=K, r=r, sigma=sigma, T=M):

    d1 = (np.log(S0/K) + (r + 1/2 * sigma**2) * (T-t)) / sigma / np.sqrt(T-t)

    d2 = (np.log(S0/K) + (r - 1/2 * sigma**2) * (T-t)) / sigma / np.sqrt(T-t)

    price = K * np.exp(-r * (T-t)) * norm.cdf(-d2) - S0 * norm.cdf(-d1)

    return price

def bs_call(t, S0=S0, K=K, r=r, sigma=sigma, T=M):

    d1 = (np.log(S0/K) + (r + 1/2 * sigma**2) * (T-t)) / sigma / np.sqrt(T-t)

    d2 = (np.log(S0/K) + (r - 1/2 * sigma**2) * (T-t)) / sigma / np.sqrt(T-t)

    price = S0 * norm.cdf(d1) - K * np.exp(-r * (T-t)) * norm.cdf(d2)

    return price

# -
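
# A quick sanity check on the put and call formulas above is put-call parity, $C-P=S_0-Ke^{-rT}$. The sketch below is a standalone re-implementation, not the notebook's `bs_put` and `bs_call`: it builds the normal CDF from `math.erf` instead of `scipy.stats.norm`, and the helper names and parameter values are illustrative assumptions.

```python
import math

def norm_cdf(x):
    # Standard normal CDF written with the error function.
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def bs_price(S0, K, r, sigma, tau, kind="put"):
    # Black-Scholes price of a European option with time to maturity tau.
    d1 = (math.log(S0 / K) + (r + 0.5 * sigma**2) * tau) / (sigma * math.sqrt(tau))
    d2 = d1 - sigma * math.sqrt(tau)
    if kind == "put":
        return K * math.exp(-r * tau) * norm_cdf(-d2) - S0 * norm_cdf(-d1)
    return S0 * norm_cdf(d1) - K * math.exp(-r * tau) * norm_cdf(d2)

# Illustrative at-the-money parameters (assumed, not read from the notebook).
S0, K, r, sigma, tau = 100.0, 100.0, 0.03, 0.15, 1.0

put = bs_price(S0, K, r, sigma, tau, "put")
call = bs_price(S0, K, r, sigma, tau, "call")

# Parity: call minus put equals spot minus discounted strike.
parity_gap = (call - put) - (S0 - K * math.exp(-r * tau))
print(f"put={put:.4f} call={call:.4f} parity gap={parity_gap:.2e}")
```

# If the parity gap is not close to zero, one of the two formulas has a sign or discounting error.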

# ## The DP solution for QLBS

# +

# QLBS option price

C_QLBS = - Q.copy()

print('-------------------------------------------')

print(' QLBS Option Pricing (DP solution) ')

print('-------------------------------------------\n')

print('%-25s' % ('Initial Stock Price:'), S0)

print('%-25s' % ('Drift of Stock:'), mu)

print('%-25s' % ('Volatility of Stock:'), sigma)

print('%-25s' % ('Risk-free Rate:'), r)

print('%-25s' % ('Risk aversion parameter: '), risk_lambda)

print('%-25s' % ('Strike:'), K)

print('%-25s' % ('Maturity:'), M)

print('%-26s %.4f' % ('\nQLBS Put Price: ', C_QLBS.iloc[0,0]))

print('%-26s %.4f' % ('\nBlack-Scholes Put Price:', bs_put(0)))

print('\n')

# plot 10 paths

plt.plot(C_QLBS.T.iloc[:,idx_plot])

plt.xlabel('Time Steps')

plt.title('QLBS Option Price')

plt.show()

# +

### GRADED PART (DO NOT EDIT) ###

part5 = str(C_QLBS.iloc[0,0])

submissions[all_parts[4]]=part5

grading.submit(COURSERA_EMAIL, COURSERA_TOKEN, assignment_key,all_parts[:5],all_parts,submissions)

C_QLBS.iloc[0,0]

### GRADED PART (DO NOT EDIT) ###

# -

# ### Make a summary picture

# +

# plot: Simulated S_t and X_t values

# optimal hedge and portfolio values

# rewards and optimal Q-function

f, axarr = plt.subplots(3, 2)

f.subplots_adjust(hspace=.5)

f.set_figheight(8.0)

f.set_figwidth(8.0)

axarr[0, 0].plot(S.T.iloc[:,idx_plot])

axarr[0, 0].set_xlabel('Time Steps')

axarr[0, 0].set_title(r'Simulated stock price $S_t$')

axarr[0, 1].plot(X.T.iloc[:,idx_plot])

axarr[0, 1].set_xlabel('Time Steps')

axarr[0, 1].set_title(r'State variable $X_t$')

axarr[1, 0].plot(a.T.iloc[:,idx_plot])

axarr[1, 0].set_xlabel('Time Steps')

axarr[1, 0].set_title(r'Optimal action $a_t^{\star}$')

axarr[1, 1].plot(Pi.T.iloc[:,idx_plot])

axarr[1, 1].set_xlabel('Time Steps')

axarr[1, 1].set_title(r'Optimal portfolio $\Pi_t$')

axarr[2, 0].plot(R.T.iloc[:,idx_plot])

axarr[2, 0].set_xlabel('Time Steps')

axarr[2, 0].set_title(r'Rewards $R_t$')

axarr[2, 1].plot(Q.T.iloc[:,idx_plot])

axarr[2, 1].set_xlabel('Time Steps')

axarr[2, 1].set_title(r'Optimal DP Q-function $Q_t^{\star}$')

# plt.savefig('QLBS_DP_summary_graphs_ATM_option_mu=r.png', dpi=600)

# plt.savefig('QLBS_DP_summary_graphs_ATM_option_mu>r.png', dpi=600)

plt.savefig('QLBS_DP_summary_graphs_ATM_option_mu>r.png', dpi=600)

plt.show()

# +

# plot convergence to the Black-Scholes values

# lam = 0.0001, Q = 4.1989 +/- 0.3612 # 4.378

# lam = 0.001: Q = 4.9004 +/- 0.1206 # Q=6.283

# lam = 0.005: Q = 8.0184 +/- 0.9484 # Q = 14.7489

# lam = 0.01: Q = 11.9158 +/- 2.2846 # Q = 25.33

lam_vals = np.array([0.0001, 0.001, 0.005, 0.01])

# Q_vals = np.array([3.77, 3.81, 4.57, 7.967,12.2051])

Q_vals = np.array([4.1989, 4.9004, 8.0184, 11.9158])

Q_std = np.array([0.3612,0.1206, 0.9484, 2.2846])

BS_price = bs_put(0)

# f, axarr = plt.subplots(1, 1)

fig, ax = plt.subplots(1, 1)

fig.subplots_adjust(hspace=.5)

fig.set_figheight(4.0)

fig.set_figwidth(4.0)

# ax.plot(lam_vals,Q_vals)

ax.errorbar(lam_vals, Q_vals, yerr=Q_std, fmt='o')

ax.set_xlabel('Risk aversion')

ax.set_ylabel('Optimal option price')

ax.set_title(r'Optimal option price vs risk aversion')

ax.axhline(y=BS_price,linewidth=2, color='r')

textstr = 'BS price = %2.2f'% (BS_price)

props = dict(boxstyle='round', facecolor='wheat', alpha=0.5)

# place a text box in upper left in axes coords

ax.text(0.05, 0.95, textstr, fontsize=11,transform=ax.transAxes, verticalalignment='top', bbox=props)

plt.savefig('Opt_price_vs_lambda_Markowitz.png')

plt.show()

# -

|

m3-ex2/dp_qlbs_oneset_m3_ex2_v3.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + tags=["parameters"]

cfg_times = 3

cfg_lr = 0.02/4

cfg_classes = []

cfg_num_classes = 20

cfg_albu_p = 0.5

cfg_num_gpus = 2

cfg_mini_batch = 2

cfg_multi_scale = []

cfg_crop_size = 1280

cfg_load_from = None

cfg_frozen_stages = 1

cfg_experiment_path = './tmp/ipynbname'

cfg_train_data_root = '/workspace/notebooks/xxxx'

cfg_train_coco_file = 'keep_p_samples/01/train.json'

cfg_val_data_root = '/workspace/notebooks/xxxx'

cfg_val_coco_file = 'keep_p_samples/01/val.json'

cfg_test_data_root = '/workspace/notebooks/xxxx'

cfg_test_coco_file = 'keep_p_samples/01/test.json'

cfg_tmpl_path = '/usr/src/mmdetection/configs/faster_rcnn/faster_rcnn_r50_fpn_1x_coco.py'

# +

import os

#MMDET_PATH = '/usr/src/mmdetection'

#os.environ['MMDET_PATH'] = MMDET_PATH

#os.environ['MKL_THREADING_LAYER'] = 'GNU'

from cvtk.utils.notebook import clean_models

# +

# %%time

albu_train_transforms = [

dict(type='RandomRotate90', p=cfg_albu_p),

]

img_norm_cfg = dict(

mean=[123.675, 116.28, 103.53], std=[58.395, 57.12, 57.375], to_rgb=True)

train_pipeline = [

dict(type='LoadImageFromFile'),

dict(type='LoadAnnotations', with_bbox=True),

dict(

type='Albu',

transforms=albu_train_transforms,

bbox_params=dict(

type='BboxParams',

format='pascal_voc',

label_fields=['gt_labels'],

min_visibility=0.0,

filter_lost_elements=True),

keymap={

'img': 'image',

'gt_masks': 'masks',

'gt_bboxes': 'bboxes'

},

update_pad_shape=False,

skip_img_without_anno=True),

dict(type='Resize', test_mode=(len(cfg_multi_scale) == 0), multi_scale=cfg_multi_scale),

dict(type='RandomCrop', height=cfg_crop_size, width=cfg_crop_size),

dict(type='RandomFlip', flip_ratio=0.5, direction=['horizontal', 'vertical', 'diagonal']),

dict(type='Normalize', **img_norm_cfg),

dict(type='Pad', size_divisor=32),

dict(type='DefaultFormatBundle'),

dict(type='Collect', keys=['img', 'gt_bboxes', 'gt_labels']),

]

test_pipeline = [

dict(type='LoadImageFromFile'),

dict(

type='MultiScaleFlipAug',

scale_factor=1.0,

transforms=[

dict(type='Resize', test_mode=True, multi_scale=cfg_multi_scale),

dict(type='Normalize', **img_norm_cfg),

dict(type='Pad', size_divisor=32),

dict(type='DefaultFormatBundle'),

dict(type='Collect', keys=['img']),

]),

]

cfg_data = dict(

samples_per_gpu=cfg_mini_batch,

workers_per_gpu=cfg_mini_batch,

train=dict(

type='CocoDataset',

data_root=cfg_train_data_root,

ann_file=cfg_train_coco_file,

classes=cfg_classes,

img_prefix='',

pipeline=train_pipeline),