| code (stringlengths 38 to 801k) | repo_path (stringlengths 6 to 263) |
|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Calculate the confusion matrix, precision, and recall

# +

import pandas as pd

from sklearn.linear_model import LogisticRegression

x1 = [-1.0, -0.75, -0.5, -0.25, 0.0, 0.25, 0.5, 0.75, 1.0]

x2 = [abs(x) for x in x1]

y = [1, 1, 1, 0, 0, 0, 1, 1, 1]

df = pd.DataFrame({'x1':x1, 'x2':x2, 'y': y})

X = df[['x1']]

y = df['y']

m = LogisticRegression(C=1e10)

m.fit(X, y)

print('score with x1 only : {:4.2f}'.format(m.score(X, y)))

X = df[['x1', 'x2']]

m.fit(X, y)

print('score with x2=abs(x1) : {:4.2f}'.format(m.score(X, y)))

# -

X = df[['x1']]

m = LogisticRegression(C=1e10)

m.fit(X, y)

m.score(X, y)

from sklearn.metrics import precision_score

# +

ypred = m.predict(X)

y

# -

ypred

precision_score(y, ypred)

from sklearn.metrics import recall_score

recall_score(y, ypred)

from sklearn.metrics import confusion_matrix

confusion_matrix(y, ypred)

# +

# top left: TN

# bottom right: TP

# bottom left: FN

# Top right: FP

# TP : surviving passenger correctly predicted

# TN : drowned passenger correctly predicted

# FP : drowned passenger predicted as surviving

# FN : surviving passenger predicted as drowned

# -
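# The cell layout described above can be checked by counting the four cases by hand. This is a minimal sketch: `y_true` and `y_hat` are hypothetical label lists chosen for illustration, not the output of the fitted model.

```python
# Hypothetical labels, chosen only so that every cell is non-empty.
y_true = [1, 1, 1, 0, 0, 0, 1, 1, 1]
y_hat = [1, 1, 1, 1, 0, 0, 1, 1, 0]

pairs = list(zip(y_true, y_hat))
tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # top left
fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # top right
fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # bottom left
tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # bottom right

# Rows are the true class, columns the predicted class.
matrix = [[tn, fp], [fn, tp]]
precision = tp / (tp + fp)  # of everything predicted positive, how much was right
recall = tp / (tp + fn)     # of everything truly positive, how much was found
print(matrix, precision, recall)
```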

precision_score(y, ypred)

precision_score(ypred, y)
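# The two calls above differ only in argument order. A hand-written sketch (the helper names `precision` and `recall` and the label lists are hypothetical) suggests why that matters: swapping truth and prediction turns precision into recall.

```python
def true_positives(truth, predicted):
    """Count samples that are positive in both lists."""
    return sum(1 for t, p in zip(truth, predicted) if t == 1 and p == 1)

def precision(truth, predicted):
    """Share of predicted positives that are truly positive."""
    return true_positives(truth, predicted) / sum(predicted)

def recall(truth, predicted):
    """Share of true positives that were predicted positive."""
    return true_positives(truth, predicted) / sum(truth)

truth = [1, 1, 1, 0, 0, 0, 1, 1, 1]   # hypothetical ground truth
guess = [1, 1, 1, 1, 0, 0, 1, 0, 0]   # hypothetical predictions

print(precision(truth, guess), recall(truth, guess), precision(guess, truth))
```

# With the arguments swapped, the precision helper returns the recall of the original ordering, so the order (truth first, prediction second) should be kept consistent.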

# +

# probability

m.predict_proba(X)

# -

# part 3: probabilities and threshold values

#

p = m.predict_proba(X)

posp = p[:, 1] # select all rows, 2nd column

threshold = 0.5

for value in posp:
    if value > threshold:
        print('positive')
    else:
        print('negative')

# try threshold values 0.0000000001 and 0.9999999999 as well

p = m.predict_proba(X)

posp = p[:, 1] # select all rows, 2nd column

threshold = 0.9

for value in posp:
    if value > threshold:
        print('positive')
    else:
        print('negative')

p = m.predict_proba(X)

posp = p[:, 1] # select all rows, 2nd column

threshold = 0.1

for value in posp:
    if value > threshold:
        print('positive')
    else:
        print('negative')
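# The three loops above repeat the same comparison with different thresholds. A vectorized sketch with NumPy, assuming a hypothetical array `probs` in place of `p[:, 1]`, compares several cutoffs in one pass.

```python
import numpy as np

# Hypothetical positive-class probabilities, standing in for p[:, 1].
probs = np.array([0.95, 0.80, 0.55, 0.45, 0.20, 0.05])

for cutoff in (0.1, 0.5, 0.9):
    is_positive = probs > cutoff                       # boolean mask
    labels = np.where(is_positive, 'positive', 'negative')
    print(cutoff, int(is_positive.sum()), list(labels))
```

# Raising the cutoff labels fewer samples positive, which tends to trade recall for precision.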

|

Week_02/Titanic/5.2.4. Evaluating Classifiers.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: venv-datascience

# language: python

# name: venv-datascience

# ---

# # Principal Component Analysis

#

# Let's discuss PCA! Since PCA is an unsupervised transformation of the data rather than a complete predictive model, we will cover it as a lecture without a full machine learning project (although we will walk through the breast cancer dataset with PCA).

#

# ## PCA Review

#

# Remember that **PCA is just a transformation of your data that attempts to find the directions (combinations of features) that explain the most variance in your data**. For example:

# <img src='PCA.png' />

# # Import Libraries

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sns

# # Data

from sklearn.datasets import load_breast_cancer

cancer = load_breast_cancer()

type(cancer)

cancer

cancer.keys()

cancer['data'][:2]

print(cancer['DESCR'])

df = pd.DataFrame(cancer['data'], columns=cancer['feature_names'])

df.head()

# ---------

# ## PCA Visualization

#

# As we've noticed before, it is **difficult to visualize high-dimensional data, so we can use PCA to find the first two principal components** and visualize the data in this new, two-dimensional space with a single scatter plot.

#

# Before we do this, though, we'll need to scale our data so that each feature has unit variance.

df.shape

# Now we are dealing with 30 features (30 dimensions).

# ### Scaling

from sklearn.preprocessing import StandardScaler

scaler = StandardScaler()

scaled_data = scaler.fit_transform(df)
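# What the scaling step does can be sketched by hand with NumPy: subtract each column's mean and divide by its standard deviation. The array `raw` below is made-up data, and the population standard deviation (`ddof=0`) is used, which is the convention `StandardScaler` follows.

```python
import numpy as np

rng = np.random.default_rng(0)
# Two made-up features on very different scales.
raw = rng.normal(loc=[10.0, 200.0], scale=[2.0, 50.0], size=(100, 2))

# Column-wise standardization: zero mean and unit variance per feature.
scaled = (raw - raw.mean(axis=0)) / raw.std(axis=0)

print(scaled.mean(axis=0).round(6), scaled.std(axis=0).round(6))
```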

# # PCA (Dimensionality Reduction)

# PCA in scikit-learn follows a very similar process to the other preprocessing classes that come with scikit-learn. We instantiate a PCA object, find the principal components using the fit method, then apply the rotation and dimensionality reduction by calling transform().

#

# We can also specify how many components we want to keep when creating the PCA object.
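# The fit/transform steps described above can be sketched with a plain singular value decomposition. This is an illustration on random data, not scikit-learn's implementation; the signs of the components may differ from what `PCA` returns.

```python
import numpy as np

rng = np.random.default_rng(0)
data = rng.normal(size=(50, 5))                        # made-up data: 50 samples, 5 features
data = (data - data.mean(axis=0)) / data.std(axis=0)   # standardize first

# "fit": rows of vt are the principal directions, ordered by decreasing variance.
_, singular_values, vt = np.linalg.svd(data, full_matrices=False)
components = vt[:2]                                    # keep 2 components, shape (2, 5)

# "transform": rotate the data onto the kept directions.
projected = data @ components.T                        # shape (50, 2)

# Share of the total variance carried by each direction.
explained_ratio = singular_values**2 / np.sum(singular_values**2)
print(projected.shape, explained_ratio[:2])
```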

from sklearn.decomposition import PCA

# the module name decomposition makes sense because PCA essentially decomposes the high-dimensional data into the selected dimensions

pca = PCA(n_components=2)

# now we want to use 2 components

x_pca = pca.fit_transform(scaled_data)

# check the shape of the original data: there are 30 columns / features

scaled_data.shape

# now we can see there are only 2 features after PCA

x_pca.shape

# We've reduced 30 dimensions to just 2! Let's plot these two dimensions out!

#

#

plt.figure(figsize=(8,6))

plt.scatter(x_pca[:, 0], x_pca[:, 1], c=cancer['target']); # color by target columns

plt.xlabel('First Principal Component')

plt.ylabel('Second Principal Component')

# Clearly by using these two components we can easily separate these two classes.

#

# ## Interpreting the components

#

# Unfortunately, this great power of dimensionality reduction comes at a cost: we can no longer easily understand what these components represent.

#

# * The components correspond to combinations of the original features (they **don't have a ONE-TO-ONE relationship** with the original features).

# * The components themselves are stored as an attribute of the fitted PCA object:

pca.components_

# In the NumPy array above,

#

# - each row represents a principal component.

# - each column relates back to one of the original features.

#

# We can visualize this relationship with a heatmap:

# convert into dataframe

df_comp = pd.DataFrame(pca.components_, columns=cancer['feature_names'])

df_comp.head()

plt.figure(figsize=(12,6))

sns.heatmap(df_comp, cmap='plasma');

# This heatmap and its color bar show how strongly each original feature is related to (loads on) each principal component.

#

# Referring to the heatmap above, we can see that

# * Component 1 is strongly negatively correlated with `mean radius`, `mean perimeter`, `mean area`, `worst radius`, `worst perimeter`, `worst area`. (blue color)

#

# * Component 1 is strongly positively correlated with `mean fractal dimension`. (yellow color)

# # Explained Variance Ratio of PCA

#

# - Explained Variance Ratio tells us how much of the dataset's variance (information) is captured by each of the first few components.

# - When you are deciding how many components to keep, look at the cumulative percentage of variance. As a rule of thumb here, **retain at least 70%** of the dataset's original information.

pca.explained_variance_ratio_

# In the above ratio, we can see that

# * 44.27% of the information is kept in the first component and

# * 18.97% in the second component.

#

# But in our case the total is only 63% of the original information, so we might want to use a different number of components to capture at least 70% of it.

# ### Cumulative Variance

pca.explained_variance_ratio_.sum() # 63% of information is captured in the two components that were returned.

# ---------

# # New PCA model (to cover 70% of original information)

pca = PCA(n_components=4)

x_pca = pca.fit_transform(scaled_data)

pca.components_[:2]

pca.explained_variance_ratio_

# In our new PCA, our 4 components cover nearly 80% of our original information.

#

# **We could even use only the first 3 components, as together they already cover more than 70%.**

#

# 0.44272026 + 0.18971182 + 0.09393163 = 0.72636371
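# The same sum can be used to pick the number of components automatically. A small sketch, using only the three ratios quoted above and a hypothetical `target` of 70%:

```python
import numpy as np

# The first three explained variance ratios quoted above.
ratios = np.array([0.44272026, 0.18971182, 0.09393163])
cumulative = np.cumsum(ratios)

target = 0.70
# Index of the first cumulative value that reaches the target, plus one.
# Caveat: argmax returns 0 if the target is never reached, so check for that case.
n_keep = int(np.argmax(cumulative >= target)) + 1
print(cumulative, n_keep)
```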

pca.explained_variance_ratio_.sum()

df_comp = pd.DataFrame(pca.components_, columns=cancer['feature_names'])

df_comp.head()

plt.figure(figsize=(10,5))

sns.heatmap(df_comp, cmap='plasma');

# # Summary

#

# ### By using this information,

# ### 1) we can select how many of the first components we want to use (to cover at least 70%)

# ### 2) we can choose which features to use by referring to those chosen components

# ### to proceed with classification modelling

|

Data Science and Machine Learning Bootcamp - JP/19. Principal-Component-Analysis/01-Principal Component Analysis.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] colab_type="text" id="JkUviKhSYLMF" slideshow={"slide_type": "slide"}

# ## Climatology and Anomaly in Geoscience: Part 2

# An example of how Python is used to explore global sea surface temperature (SST).

# * <NAME> (<EMAIL>)

# * Department of Geosciences, Princeton University

# * Junior Colloquium, Nov. 15, 2021

#

# + [markdown] slideshow={"slide_type": "slide"}

# ## A short review of the last session

# 1. `xarray` basic usage: `open_dataset`, `sel`, `isel`, `mean`, `plot`, `plot.contourf`, `groupby`.

# 2. Sea surface temperature dataset: ERSST5.

# 3. SST annual climatology and change, monthly climatology.

# + [markdown] slideshow={"slide_type": "slide"}

# ## Today's plan

#

# 1. SST anomaly (from the monthly climatology).

# 2. El Nino index (Nino3.4) time series (`mean` over lon/lat region box).

# 3. What's next after JC.

# 4. Interactive Q&A.

#

# + [markdown] slideshow={"slide_type": "slide"}

# ## Start analysis

# + colab={} colab_type="code" id="2nyvaFZnYYtm" slideshow={"slide_type": "fragment"}

# import and configuration

# xarray is the core package we are going to use

import xarray as xr

import matplotlib.pyplot as plt # we also use pyplot directly in some cases

import os

# some configurations on the default figure output

# %config InlineBackend.figure_format ='retina'

plt.rcParams['figure.dpi'] = 120

# + colab={"base_uri": "https://localhost:8080/", "height": 244} colab_type="code" executionInfo={"elapsed": 835, "status": "ok", "timestamp": 1568235456809, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AAuE7mAzexWcchBOvHGw9u_Nm2D-vWc4ApTqQ4uLX1i-=s64", "userId": "02317458745209383076"}, "user_tz": 240} id="bik3mA4tYefp" outputId="c95cc7cf-0866-43b3-ca86-44fa0d0320f8" slideshow={"slide_type": "subslide"}

# analysis of last session

# sst data file

# Am I running the notebook on Adroit or not?

if os.uname().nodename.startswith('adroit'):
    ifile = '/home/wenchang/JC2021/ersst5_1979-2018.nc'
else:
    ifile = 'ersst5_1979-2018.nc'

print('file to be opened:',ifile)

# open the sst dataset

ds = xr.open_dataset(ifile)

# get the sst DataArray

sst = ds.sst

# 40-year annual mean climatology

sst_clim = sst.mean('time')

# difference between the first and last 10-year climatologies

sst_early = sst.sel(time=slice('1979-01', '1988-12')).mean('time')

sst_late = sst.sel(time=slice('2009-01', '2018-12')).mean('time')

dsst = sst_late - sst_early

dsst.attrs['long_name'] = 'SST change from 1979-1988 to 2009-2018'

dsst.attrs['units'] = r'$^\circ$C' # raw string keeps the LaTeX backslash literal

# monthly climatology: the time dimension is now replaced by month

sst_mclim = sst.groupby('time.month').mean('time')

# + colab={"base_uri": "https://localhost:8080/", "height": 1000} colab_type="code" executionInfo={"elapsed": 4631, "status": "ok", "timestamp": 1567700569017, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AAuE7mAzexWcchBOvHGw9u_Nm2D-vWc4ApTqQ4uLX1i-=s64", "userId": "02317458745209383076"}, "user_tz": 240} id="CDFFxfZBicEF" outputId="3e13395e-1d61-4301-d096-048cab906141" slideshow={"slide_type": "slide"}

# Make 12 subplots using a single command.

sst_mclim.plot.contourf(col='month', col_wrap=6, levels=20,
                        cmap='Spectral_r', center=False)

# + [markdown] colab_type="text" id="qDimD2IIAp22" slideshow={"slide_type": "slide"}

# ## Calculate monthly anomaly

# Subtract the monthly climatology from the raw SST data.

# + colab={"base_uri": "https://localhost:8080/", "height": 561} colab_type="code" executionInfo={"elapsed": 800, "status": "ok", "timestamp": 1567700593776, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AAuE7mAzexWcchBOvHGw9u_Nm2D-vWc4ApTqQ4uLX1i-=s64", "userId": "02317458745209383076"}, "user_tz": 240} id="TQBWe3yxjptK" outputId="4dc7bdc7-1132-451b-8a2d-c61d3214683d" slideshow={"slide_type": "subslide"}

ssta = sst.groupby('time.month') - sst_mclim

# monthly climatology is now removed

# + slideshow={"slide_type": "subslide"}

ssta

# + colab={"base_uri": "https://localhost:8080/", "height": 507} colab_type="code" executionInfo={"elapsed": 1136, "status": "ok", "timestamp": 1567700660707, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AAuE7mAzexWcchBOvHGw9u_Nm2D-vWc4ApTqQ4uLX1i-=s64", "userId": "02317458745209383076"}, "user_tz": 240} id="OKZ-I_UJkTMk" outputId="675e0cdd-cd18-4e17-fc3a-3c871b0f5227" slideshow={"slide_type": "subslide"}

# The 1997 winter is a big El Nino season

ssta.sel(time=slice('1997-12', '1998-02')).mean('time').plot.contourf(levels=19)

# + colab={"base_uri": "https://localhost:8080/", "height": 507} colab_type="code" executionInfo={"elapsed": 1136, "status": "ok", "timestamp": 1567700660707, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AAuE7mAzexWcchBOvHGw9u_Nm2D-vWc4ApTqQ4uLX1i-=s64", "userId": "02317458745209383076"}, "user_tz": 240} id="OKZ-I_UJkTMk" outputId="675e0cdd-cd18-4e17-fc3a-3c871b0f5227" slideshow={"slide_type": "subslide"}

# The 1998 winter is a big La Nina season

ssta.sel(time=slice('1998-12', '1999-02')).mean('time').plot.contourf(levels=19)

# + colab={"base_uri": "https://localhost:8080/", "height": 507} colab_type="code" executionInfo={"elapsed": 1136, "status": "ok", "timestamp": 1567700660707, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AAuE7mAzexWcchBOvHGw9u_Nm2D-vWc4ApTqQ4uLX1i-=s64", "userId": "02317458745209383076"}, "user_tz": 240} id="OKZ-I_UJkTMk" outputId="675e0cdd-cd18-4e17-fc3a-3c871b0f5227" slideshow={"slide_type": "subslide"}

# The 2015 winter is also a big El Nino season

ssta.sel(time=slice('2015-12', '2016-02')).mean('time').plot.contourf(levels=19)

# + [markdown] colab_type="text" id="ROmgatpmA1og" slideshow={"slide_type": "slide"}

# ## Calculate the Nino3.4 index

# * SST anomaly averaged over the Nino3.4 region: 170W-120W, 5S-5N

#

# http://www.bom.gov.au/climate/enso/indices/oceanic-indices-map.gif

# + colab={"base_uri": "https://localhost:8080/", "height": 153} colab_type="code" executionInfo={"elapsed": 453, "status": "ok", "timestamp": 1567700672119, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AAuE7mAzexWcchBOvHGw9u_Nm2D-vWc4ApTqQ4uLX1i-=s64", "userId": "02317458745209383076"}, "user_tz": 240} id="fusZ4HXhuDXt" outputId="d962bf54-3d16-4684-9ca7-a5bf66739104" slideshow={"slide_type": "subslide"}

nino34 = ssta.sel(lon=slice(360-170,360-120),lat=slice(-5,5)).mean(['lon','lat'])

nino34.attrs['long_name'] = 'Nino3.4 index'

nino34.attrs['units'] = r'$^\circ$C' # raw string keeps the LaTeX backslash literal
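# The box selection above relies on longitudes running from 0 to 360 degrees east, so 170W and 120W become 190E and 240E. A NumPy sketch of the same region mean, assuming a hypothetical 2-degree grid and a made-up anomaly field:

```python
import numpy as np

lon = np.arange(0, 360, 2.0)     # degrees east (assumed grid)
lat = np.arange(-88, 90, 2.0)    # degrees north (assumed grid)

west, east = 360 - 170, 360 - 120          # 170W, 120W expressed in degrees east
in_lon = (lon >= west) & (lon <= east)     # both ends included, like a label slice
in_lat = (lat >= -5) & (lat <= 5)

field = np.zeros((lat.size, lon.size))
field[np.ix_(in_lat, in_lon)] = 1.5        # made-up anomaly inside the box only
box_mean = field[np.ix_(in_lat, in_lon)].mean()

print(west, east, int(in_lat.sum()), int(in_lon.sum()), box_mean)
```

# This is an unweighted mean. So close to the equator the grid cells have nearly equal area (the cosine of 5 degrees is about 0.996), so area weighting would change the result very little.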

# + slideshow={"slide_type": "subslide"}

nino34

# + colab={"base_uri": "https://localhost:8080/", "height": 492} colab_type="code" executionInfo={"elapsed": 1663, "status": "ok", "timestamp": 1567700675793, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AAuE7mAzexWcchBOvHGw9u_Nm2D-vWc4ApTqQ4uLX1i-=s64", "userId": "02317458745209383076"}, "user_tz": 240} id="h7W9LsfhuP2i" outputId="1d8aa14d-32ba-423c-da48-b06c8e816c3a" slideshow={"slide_type": "subslide"}

nino34.plot()

# plt.axvline('2015-12', color='gray', ls='--')

# plt.text('2015-12', 2, '2015-12', rotation=-45, color='gray', )

# plt.axvline('1997-12', color='gray', ls='--')

# plt.text('1997-12', 2, '1997-12', rotation=-45, color='gray', )

# plt.axvline('1982-12', color='gray', ls='--')

# plt.text('1982-12', 2, '1982-12', rotation=-45, color='gray', )

# + [markdown] slideshow={"slide_type": "slide"}

# ## Seasonality of El Nino/La Nina

# + colab={"base_uri": "https://localhost:8080/", "height": 473} colab_type="code" executionInfo={"elapsed": 1155, "status": "ok", "timestamp": 1567700813854, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AAuE7mAzexWcchBOvHGw9u_Nm2D-vWc4ApTqQ4uLX1i-=s64", "userId": "02317458745209383076"}, "user_tz": 240} id="Z60v5lhZ1vG2" outputId="74dff819-35de-4ecf-f666-c9eaa2dad22b" slideshow={"slide_type": "slide"}

# compare Nino3.4 index variability for each month

da = nino34.groupby('time.month').std('time') # standard deviation for each month

da.plot()

# + colab={"base_uri": "https://localhost:8080/", "height": 473} colab_type="code" executionInfo={"elapsed": 1155, "status": "ok", "timestamp": 1567700813854, "user": {"displayName": "<NAME>", "photoUrl": "https://lh3.googleusercontent.com/a-/AAuE7mAzexWcchBOvHGw9u_Nm2D-vWc4ApTqQ4uLX1i-=s64", "userId": "02317458745209383076"}, "user_tz": 240} id="Z60v5lhZ1vG2" outputId="74dff819-35de-4ecf-f666-c9eaa2dad22b" slideshow={"slide_type": "slide"}

# compare Nino3.4 index variability for each month

da = nino34.groupby('time.month').std('time') # standard deviation for each month

da = da.roll(month=-5).assign_coords(month=range(6, 18)) # roll the time series to start from Jun

da.plot()

#add more descriptive label to y axis

plt.ylabel(r'Nino3.4 standard deviation [$^\circ$C]') # raw string keeps the LaTeX backslash literal

#use month names as the x tick labels

plt.xticks(range(6,18), ['Jun', 'Jul', 'Aug', 'Sep', 'Oct', 'Nov', 'Dec', 'Jan', 'Feb', 'Mar', 'Apr', 'May'])

#add grid lines to axes

plt.grid(True)

# plt.bar(da.month, da.values, color='lightgray')
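# The roll step above shifts the twelve monthly values so that June comes first; the new coordinate 6..17 then keeps the x axis increasing. A NumPy sketch of the same shift, applied to the month numbers themselves:

```python
import numpy as np

month = np.arange(1, 13)          # 1 = Jan, ..., 12 = Dec
rolled = np.roll(month, -5)       # shift left by five positions
positions = np.arange(6, 18)      # plotting positions, as in assign_coords above

for pos, m in zip(positions, rolled):
    print(pos, m)
```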

# + [markdown] slideshow={"slide_type": "subslide"}

# December shows the largest variability!

# + [markdown] slideshow={"slide_type": "slide"}

# ## What's next

#

# * Python resource: https://wy2136.github.io/python.html

# * Popular packages: `xarray`, `pandas`, `numpy`, `matplotlib`.

# * Machine learning: `sklearn`, `keras`, `pytorch`.

# * Try to apply these tools to your project.

# * Google usually helps if you have a question.

# * Ask around if google doesn't work.

# + [markdown] slideshow={"slide_type": "fragment"}

# ## Q&A

|

JC2021/07_clim_anom_2021-11-15_practice.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # High-level CNTK Example

import numpy as np

import os

import sys

import cntk

from cntk.layers import Convolution2D, MaxPooling, Dense, Dropout

from common.params import *

from common.utils import *

# Force one-gpu

os.environ["CUDA_VISIBLE_DEVICES"] = "0"

print("OS: ", sys.platform)

print("Python: ", sys.version)

print("Numpy: ", np.__version__)

print("CNTK: ", cntk.__version__)

print("GPU: ", get_gpu_name())

print(get_cuda_version())

print("CuDNN Version ", get_cudnn_version())

def create_symbol(n_classes=N_CLASSES):
    # Weight initialiser from uniform distribution
    # Activation (unless stated) is None
    with cntk.layers.default_options(init = cntk.glorot_uniform(), activation = cntk.relu):
        x = Convolution2D(filter_shape=(3, 3), num_filters=50, pad=True)(features)
        x = Convolution2D(filter_shape=(3, 3), num_filters=50, pad=True)(x)
        x = MaxPooling((2, 2), strides=(2, 2), pad=False)(x)
        x = Dropout(0.25)(x)

        x = Convolution2D(filter_shape=(3, 3), num_filters=100, pad=True)(x)
        x = Convolution2D(filter_shape=(3, 3), num_filters=100, pad=True)(x)
        x = MaxPooling((2, 2), strides=(2, 2), pad=False)(x)
        x = Dropout(0.25)(x)

        x = Dense(512)(x)
        x = Dropout(0.5)(x)
        x = Dense(n_classes, activation=None)(x)
        return x

def init_model(m, labels, lr=LR, momentum=MOMENTUM):
    # Loss (dense labels); check if support for sparse labels
    loss = cntk.cross_entropy_with_softmax(m, labels)
    # Momentum SGD
    # https://github.com/Microsoft/CNTK/blob/master/Manual/Manual_How_to_use_learners.ipynb
    # unit_gain=False: momentum_direction = momentum*old_momentum_direction + gradient
    # if unit_gain=True then ...(1-momentum)*gradient
    learner = cntk.momentum_sgd(m.parameters,
                                lr=cntk.learning_rate_schedule(lr, cntk.UnitType.minibatch) ,
                                momentum=cntk.momentum_schedule(momentum),
                                unit_gain=False)
    return loss, learner
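# The two update rules quoted in the comments above can be compared in plain Python. This is an illustrative sketch of those formulas only (the helper `momentum_step` is hypothetical and is not a CNTK call), using a constant gradient of 1.0.

```python
def momentum_step(direction, gradient, momentum, unit_gain):
    """One update of the momentum direction, following the two rules quoted above."""
    scale = (1.0 - momentum) if unit_gain else 1.0
    return momentum * direction + scale * gradient

d_plain = 0.0   # unit_gain=False
d_unit = 0.0    # unit_gain=True
for _ in range(200):
    d_plain = momentum_step(d_plain, 1.0, 0.9, unit_gain=False)
    d_unit = momentum_step(d_unit, 1.0, 0.9, unit_gain=True)

print(round(d_plain, 3), round(d_unit, 3))
```

# With momentum 0.9 the first rule settles near 1 / (1 - 0.9) = 10 times the gradient, while the unit-gain rule settles near the gradient itself, so the same learning rate behaves very differently under the two settings.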

# %%time

# Data into format for library

x_train, x_test, y_train, y_test = cifar_for_library(channel_first=True, one_hot=True)

# CNTK format

y_train = y_train.astype(np.float32)

y_test = y_test.astype(np.float32)

print(x_train.shape, x_test.shape, y_train.shape, y_test.shape)

print(x_train.dtype, x_test.dtype, y_train.dtype, y_test.dtype)

# %%time

# Placeholders

features = cntk.input_variable((3, 32, 32), np.float32)

labels = cntk.input_variable(N_CLASSES, np.float32)

# Load symbol

sym = create_symbol()

# %%time

loss, learner = init_model(sym, labels)

# %%time

# Main training loop: 49s

loss.train((x_train, y_train),

minibatch_size=BATCHSIZE,

max_epochs=EPOCHS,

parameter_learners=[learner])

# %%time

# Main evaluation loop: 409ms

n_samples = (y_test.shape[0]//BATCHSIZE)*BATCHSIZE

y_guess = np.zeros(n_samples, dtype=int) # np.int was removed in NumPy 1.24; the builtin int is equivalent

y_truth = np.argmax(y_test[:n_samples], axis=-1)

c = 0

for data, label in yield_mb(x_test, y_test, BATCHSIZE):
    predicted_label_probs = sym.eval({features : data})
    y_guess[c*BATCHSIZE:(c+1)*BATCHSIZE] = np.argmax(predicted_label_probs, axis=-1)
    c += 1

print("Accuracy: ", 1.*sum(y_guess == y_truth)/len(y_guess))

|

deep-learning/multi-frameworks/notebooks/CNTK_CNN_highAPI.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ### Generating `publications.json` partitions

# This is a template notebook for generating metadata on publications - most importantly, the linkage between the publication and dataset (datasets are enumerated in `datasets.json`)

#

# Process goes as follows:

# 1. Import CSV with publication-dataset linkages. Your CSV should have, at a minimum, the following fields (spelled exactly as below):

# * `dataset` to hold the dataset_ids, and

# * `title` for the publication title.

#

# Update the csv with these field names to ensure this code will run. We read in, dedupe and format the title

# 2. Match to `datasets.json` -- alert if given dataset doesn't exist yet

# 3. Generate list of dicts with publication metadata

# 4. Write to a publications.json file

# #### Import CSV containing publication-dataset linkages

# Set `linkages_path` to the location of the csv containing dataset-publication linkages and read in the csv

import pandas as pd

import os

import datetime

file_name = 'bundesbank_pub_dataset_linkages_sbr_final.csv'

rcm_subfolder = '20190731_bundesbank'

parent_folder = '/Users/sophierand/RichContextMetadata/metadata'

linkages_path = os.path.join(parent_folder,rcm_subfolder,file_name)

# linkages_path = os.path.join(os.getcwd(),'SNAP_DATA_DIMENSIONS_SEARCH_DEMO.csv')

linkages_csv = pd.read_csv(linkages_path)

# Format/clean linkage data - apply `scrub_unicode` to `title` field.

import unicodedata

def scrub_unicode(text):
    """
    try to handle the unicode edge cases encountered in source text,
    as best as possible
    """
    x = " ".join(map(lambda s: s.strip(), text.split("\n"))).strip()

    x = x.replace('’', "'").replace('–',"--")
    x = x.replace('“', '"').replace('”', '"')
    x = x.replace("‘", "'").replace("’", "'").replace("`", "'")
    x = x.replace("`` ", '"').replace("''", '"')
    x = x.replace('…', '...').replace("\\u2026", "...")
    x = x.replace("\\u00ae", "").replace("\\u2122", "")
    x = x.replace("\\u00a0", " ").replace("\\u2022", "*").replace("\\u00b7", "*")
    x = x.replace("\\u2018", "'").replace("\\u2019", "'").replace("\\u201a", "'")
    x = x.replace("\\u201c", '"').replace("\\u201d", '"')

    x = x.replace("\\u20ac", "€")
    x = x.replace("\\u2212", " - ") # minus sign

    x = x.replace("\\u00e9", "é")
    x = x.replace("\\u017c", "ż").replace("\\u015b", "ś").replace("\\u0142", "ł")
    x = x.replace("\\u0105", "ą").replace("\\u0119", "ę").replace("\\u017a", "ź").replace("\\u00f3", "ó")

    x = x.replace("\\u2014", " - ").replace('–', '-').replace('—', ' - ')
    x = x.replace("\\u2013", " - ").replace("\\u00ad", " - ")

    x = str(unicodedata.normalize("NFKD", x).encode("ascii", "ignore").decode("utf-8"))

    # some content returns text in bytes rather than as a str ?
    try:
        assert type(x).__name__ == "str"
    except AssertionError:
        print("not a string?", type(x), x)

    return x
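# The final normalization step in `scrub_unicode` is worth seeing in isolation. The sketch below (the helper name `to_ascii` is hypothetical) applies only that step: NFKD splits accented letters into a base letter plus a combining mark, and the ASCII encode with `"ignore"` then drops everything that is not ASCII.

```python
import unicodedata

def to_ascii(text):
    """Decompose characters, then drop whatever is still outside ASCII."""
    decomposed = unicodedata.normalize("NFKD", text)
    return decomposed.encode("ascii", "ignore").decode("ascii")

print(to_ascii("caf\u00e9"))    # e with acute accent decomposes to e + combining mark
print(to_ascii("z\u0142oty"))   # l with stroke has no decomposition and is dropped
```

# Characters without a decomposition, such as `ł` or `€`, are removed entirely rather than replaced, so titles containing them come out shortened.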

# Scrub titles of problematic characters, drop nulls and dedupe

linkages_csv = linkages_csv.loc[pd.notnull(linkages_csv.dataset)].drop_duplicates()

linkages_csv = linkages_csv.loc[pd.notnull(linkages_csv.title)].drop_duplicates()

linkages_csv['title'] = linkages_csv['title'].apply(scrub_unicode)

pub_metadata_fields = ['title']

original_metadata_cols = list(set(linkages_csv.columns.values.tolist()) - set(pub_metadata_fields)-set(['dataset']))

# #### Generate list of dicts of metadata

# Read in `datasets.json`. Update `datasets_path` to your local.

import json

# +

datasets_path = '/Users/sophierand/RCDatasets/datasets.json'

with open(datasets_path) as json_file:
    datasets = json.load(json_file)

# -

# Create a list of dictionaries of publication metadata. `create_pub_dict` iterates through the `linkages_csv` dataframe and splits the `dataset` field (for when multiple datasets are listed); it prints a warning if a dataset doesn't exist yet and needs to be added to `datasets.json`.

def create_pub_dict(linkages_dataframe,datasets):
    pub_dict_list = []
    for i, r in linkages_dataframe.iterrows():
        r['title'] = scrub_unicode(r['title'])
        # split the comma-separated dataset field and drop empty entries
        ds_id_list = [f for f in [d.strip() for d in r['dataset'].split(",")] if f not in [""," "]]
        for ds in ds_id_list:
            check_ds = [b for b in datasets if b['id'] == ds]
            if len(check_ds) == 0:
                print("dataset {} isn't listed in datasets.json. Please add it to the file".format(ds))
        required_metadata = r[pub_metadata_fields].to_dict()
        required_metadata.update({'datasets':ds_id_list})
        pub_dict = required_metadata
        if len(original_metadata_cols) > 0:
            original_metadata = r[original_metadata_cols].to_dict()
            original_metadata.update({'date_added':datetime.datetime.now().strftime('%Y-%m-%d %H:%M:%S')})
            pub_dict.update({'original':original_metadata})
        pub_dict_list.append(pub_dict)
    return pub_dict_list
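# The splitting and lookup logic inside `create_pub_dict` can be tried on its own. In this sketch the helper `split_ids`, the ids, and the `known_ids` set are all hypothetical stand-ins for the `dataset` field and the contents of `datasets.json`.

```python
def split_ids(field):
    """Split a comma-separated field and drop empty or whitespace-only parts."""
    return [part.strip() for part in field.split(",") if part.strip()]

known_ids = {"dataset-001", "dataset-002"}       # hypothetical ids from datasets.json
ids = split_ids("dataset-001, dataset-003,, ")   # hypothetical `dataset` cell
missing = [i for i in ids if i not in known_ids]

print(ids, missing)
```

# Building a set of known ids once makes each lookup cheap, whereas scanning the full `datasets` list for every id repeats the same work per row.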

# Generate publication metadata and export to json

linkage_list = create_pub_dict(linkages_csv,datasets)

# Update `json_pub_path` to be:

# `<name_of_subfolder>_publications.json`

json_pub_path = os.path.join('/Users/sophierand/RCPublications/partitions/',rcm_subfolder+'_publications.json')

with open(json_pub_path, 'w') as outfile:
    json.dump(linkage_list, outfile, indent=2)

json_pub_path

|

metadata/20190731_bundesbank/publications_export_template.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# from sklearn

import pandas as pd

import seaborn as sns; sns.set()

import pickle

import matplotlib.pyplot as plt

# %matplotlib inline

token_data=pd.read_csv("../data/wowcointotal.csv")

token_data.head()

token_data.shape

# +

token_data.rename(columns=lambda x: x.replace(" ","_"), inplace=True)

token_data.rename(columns=lambda x: x.lower(), inplace=True)

## Previous trial

# splitted_date= [str(token_data["Date"][i]).split(" ") for i in range(len(token_data["Date"]))]

# days=[splitted_date[i][0] for i in range(len(splitted_date))]

# hour=[splitted_date[i][1] for i in range(len(splitted_date))]

# hour=[hour[i][:-2]+ "00" for i in range(len(hour))]

# token_data["day"] = days

# token_data["hour"] = hour

# This one line does what I've tried to achieve above

token_data['date_time'] = pd.to_datetime(token_data['date'])

token_data['date_time'] = token_data["date_time"].dt.strftime('%Y-%m-%d')

token_data.drop("date",axis=1,errors="ignore",inplace=True)

token_data.head()

# -

token_data.dtypes


print(token_data.region.value_counts())

print("\n")

print(token_data.time_left_on_auction.value_counts())

sub_US = token_data[token_data["region"]=="US"].copy() # copy so the in-place drop below does not act on a view

sub_US.shape

sub_US.drop(labels="region",axis=1,inplace=True)

sub_US.head()

sub_US_long = sub_US[sub_US["time_left_on_auction"]=="Long"]

sub_US_short = sub_US[sub_US["time_left_on_auction"]=="Short"]

sub_US_medium = sub_US[sub_US["time_left_on_auction"]=="Medium"]

sub_US_very_long = sub_US[sub_US["time_left_on_auction"]=="Very Long"]

sub_US_long.shape

sub_US_long_model = sub_US_long.copy()

sub_US_long_model.drop("time_left_on_auction",axis=1,inplace=True,errors="ignore")

sub_US_long_model.head()

sub_US_long_model = sub_US_long_model.groupby("date_time").mean()

sub_US_long_model.head()

sns.set(rc={'figure.figsize':(14,8)})

sub_US_long.plot(x="date_time",y="price")

sub_US_long_model.plot(y="price")

sns.lineplot(x="date_time", y="price", hue="time_left_on_auction", data=sub_US)

sub_US_long_model.dtypes

from statsmodels.tsa.arima_model import ARIMA

model = ARIMA(sub_US_long_model,order=(5,1,0))

model_fit= model.fit(disp=0)

print(model_fit.summary())

from sklearn.metrics import mean_squared_error

from matplotlib import pyplot

# +

X=sub_US_long_model.values

size = int(len(X) * 0.66)

train, test = X[0:size], X[size:len(X)]

history = [x for x in train]

predictions = list()

for t in range(len(test)):
    model = ARIMA(history, order=(5,1,0))
    model_fit = model.fit(disp=0)
    output = model_fit.forecast()
    yhat = output[0]
    predictions.append(yhat)
    obs = test[t]
    history.append(obs)
    print('predicted=%f, expected=%f' % (yhat, obs))

error = mean_squared_error(test, predictions)

print('Test MSE: %.3f' % error)

# -
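# The loop above is a walk-forward validation: forecast one step, then add the true value to the history before forecasting the next. The sketch below shows the same pattern with the ARIMA model swapped for a naive "repeat the last value" forecast, on a made-up series, so the mechanics can be followed by hand.

```python
series = [10.0, 11.0, 13.0, 12.0, 14.0, 15.0]    # made-up prices

size = int(len(series) * 0.66)                   # same 66% split as above
train, test = series[:size], series[size:]

history = list(train)
predictions = []
for actual in test:
    predictions.append(history[-1])   # naive forecast: repeat the last observed value
    history.append(actual)            # the true value becomes available afterwards

mse = sum((a - p) ** 2 for a, p in zip(test, predictions)) / len(test)
print(predictions, mse)
```

# A naive baseline like this is a useful yardstick: a fitted model is only worth keeping if its test MSE is clearly lower.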

# plot

pyplot.plot(test)

pyplot.plot(predictions, color='red')

pyplot.show()

sub_US_long_model.tail()

# +

# # start_index = pd.datetime(2017, 7,15 ).date()

# # end_index = pd.datetime(2017,7,19).date()

# start_index = pd.to_datetime("2017-07-15").strftime('%Y-%m-%d')

# end_index = pd.to_datetime("2017-07-20").strftime('%Y-%m-%d')

# end_index

forecast = model_fit.forecast(steps=8)

# -

pyplot.plot(forecast[0])

filename = 'token_predictor_model.pkl'

pickle.dump(model_fit, open(filename, 'wb'))

|

app/deploy_ml/notebook/warcraft_token_prediction.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Quantum Process Tomography with Q# and Python #

# ## Abstract ##

# In this sample, we will demonstrate interoperability between Q# and Python by using the QInfer and QuTiP libraries for Python to characterize and verify quantum processes implemented in Q#.

# In particular, this sample will use *quantum process tomography* to learn about the behavior of a "noisy" Hadamard operation from the results of random Pauli measurements.

# ## Preamble ##

import warnings

warnings.simplefilter('ignore')

# We can enable Q# support in Python by importing the `qsharp` package.

import qsharp

# Once we do so, any Q# source files in the current working directory are compiled, and their namespaces are made available as Python modules.

# For instance, the `Quantum.qs` source file provided with this sample implements a `HelloWorld` operation in the `Microsoft.Quantum.Samples.Python` Q# namespace:

with open('Quantum.qs') as f:
    print(f.read())

# We can import this `HelloWorld` operation as though it were an ordinary Python function by using the Q# namespace as a Python module:

from Microsoft.Quantum.Samples.Python import HelloWorld

HelloWorld

# Once we've imported the new names, we can then ask our simulator to run each function and operation using the `simulate` method.

HelloWorld.simulate(pauli=qsharp.Pauli.Z)

# ## Tomography ##

# The `qsharp` interoperability package also comes with a `single_qubit_process_tomography` function which uses the QInfer library for Python to learn the channels corresponding to single-qubit Q# operations.

from qsharp.tomography import single_qubit_process_tomography

# Next, we import plotting support and the QuTiP library, since these will be helpful to us in manipulating the quantum objects returned by the quantum process tomography functionality that we call later.

# %matplotlib inline

import matplotlib.pyplot as plt

import qutip as qt

qt.settings.colorblind_safe = True

# To use this, we define a new operation that takes a preparation and a measurement, then returns the result of performing that tomographic measurement on the noisy Hadamard operation that we defined in `Quantum.qs`.

experiment = qsharp.compile("""

open Microsoft.Quantum.Samples.Python;

open Microsoft.Quantum.Characterization;

operation Experiment(prep : Pauli, meas : Pauli) : Result {

return SingleQubitProcessTomographyMeasurement(prep, meas, NoisyHadamardChannel(0.1));

}

""")

# Here, we ask for 10,000 measurements from the noisy Hadamard operation that we defined above.

estimation_results = single_qubit_process_tomography(experiment, n_measurements=10000)

# To visualize the results, it's helpful to compare to the actual channel, which we can find exactly in QuTiP.

depolarizing_channel = sum(map(qt.to_super, [qt.qeye(2), qt.sigmax(), qt.sigmay(), qt.sigmaz()])) / 4.0

actual_noisy_h = 0.1 * qt.to_choi(depolarizing_channel) + 0.9 * qt.to_choi(qt.hadamard_transform())
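# As a rough cross-check of the channel written above, the same mixture can be applied directly to a density matrix without QuTiP. This is only a sketch: the helper names (`conjugate`, `combine`, `depolarize`, `noisy_hadamard`) are illustrative and not part of QuTiP or `qsharp`, and the 0.1 / 0.9 weights are taken from the cell above.

```python
import math

def matmul(a, b):
    """Multiply two 2x2 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)] for i in range(2)]

def conjugate(u, rho):
    """Return u * rho * u^dagger for 2x2 matrices."""
    u_dagger = [[u[j][i].conjugate() for j in range(2)] for i in range(2)]
    return matmul(matmul(u, rho), u_dagger)

def combine(weighted_terms):
    """Weighted sum of 2x2 matrices given as (weight, matrix) pairs."""
    return [[sum(w * m[i][j] for w, m in weighted_terms) for j in range(2)] for i in range(2)]

I2 = [[1, 0], [0, 1]]
X = [[0, 1], [1, 0]]
Y = [[0, -1j], [1j, 0]]
Z = [[1, 0], [0, -1]]
H = [[1 / math.sqrt(2), 1 / math.sqrt(2)], [1 / math.sqrt(2), -1 / math.sqrt(2)]]

def depolarize(rho):
    """Average of the four Pauli conjugations: sends any state to the maximally mixed state."""
    return combine([(0.25, conjugate(p, rho)) for p in (I2, X, Y, Z)])

def noisy_hadamard(rho, noise=0.1):
    """Mixture of the depolarizing channel (weight `noise`) and an ideal Hadamard."""
    return combine([(noise, depolarize(rho)), (1 - noise, conjugate(H, rho))])

rho_zero = [[1, 0], [0, 0]]  # density matrix of |0>
print(depolarize(rho_zero))
print(noisy_hadamard(rho_zero))
```

# Applied to $|0\rangle\langle 0|$, the depolarizing part yields the maximally mixed state, so the noisy Hadamard output is $|+\rangle\langle +|$ with its off-diagonal terms shrunk by the factor 0.9.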

# We then plot the estimated and actual channels as Hinton diagrams, showing how each acts on the Pauli operators $X$, $Y$ and $Z$.

fig, (left, right) = plt.subplots(ncols=2, figsize=(12, 4))

plt.sca(left)

plt.xlabel('Estimated', fontsize='x-large')

qt.visualization.hinton(estimation_results['est_channel'], ax=left)

plt.sca(right)

plt.xlabel('Actual', fontsize='x-large')

qt.visualization.hinton(actual_noisy_h, ax=right)

# We also obtain a wealth of other information, such as the covariance matrix over each parameter of the resulting channel.

# This shows us which parameters we are least certain about, as well as how those parameters are correlated with each other.

plt.figure(figsize=(10, 10))

estimation_results['posterior'].plot_covariance()

plt.xticks(rotation=90)

# ## Diagnostics ##

for component, version in sorted(qsharp.component_versions().items(), key=lambda x: x[0]):
    print(f"{component:20}{version}")

import sys

print(sys.version)

|

samples/interoperability/python/tomography-sample.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Week 1 - TEST

#

# ## Overview

# As explained in the [Before week 1: How to take this class](https://nbviewer.jupyter.org/github/suneman/socialdata2021/blob/main/lectures/How_To_Take_This_Class.ipynb) notebook, each week of this class is an IPython notebook like this one. In order to follow the class, you simply start reading from the top, following the instructions.

#

# **Hint**: And (as explained [here](https://nbviewer.jupyter.org/github/suneman/socialdata2021/blob/main/lectures/COVID-update-1.ipynb)) you can ask me - or any of the friendly Teaching Assistants - for help at any point if you get stuck!

#

# ## Intro video

# Below is today's informal intro video, tying everything together and making each and every one of you feel welcome and loved!

# short video explaining the plans

from IPython.display import YouTubeVideo

YouTubeVideo("U5hVaBtncNc",width=600, height=333)

# ## Today

#

# This first lecture will go over a few different topics to get you started

#

# * First, I'll explain a little bit about what we'll be doing this year (hint, you may want to watch _Minority Report_ if you want to prepare deeply for the class).

# * Second, we'll start by loading some real-world data into your very own computers and getting started with some data analysis.

# ## Part 1: Predictive policing. A case to learn from

#

# For a number of years I've been a little bit obsessed with [predictive policing](https://www.sciencemag.org/news/2016/09/can-predictive-policing-prevent-crime-it-happens). I guess there are various reasons. For example:

#

# * I think it's an interesting application of data science.

# * It connects to popular culture in a big way. Both through TV shows, such as [NUMB3RS](https://en.wikipedia.org/wiki/Numbers_(TV_series)) (it also features in Bones ... or any of the CSI shows), and also any number of movies, my favorite of which has to be [Minority Report](https://www.imdb.com/title/tt0181689/).

# * Predictive policing is also big business. Companies like [PredPol](https://www.predpol.com), [Palantir](https://www.theverge.com/2018/2/27/17054740/palantir-predictive-policing-tool-new-orleans-nopd), and many others offer their services to law enforcement by analyzing crime data.

# * It hints at the dark sides of Data Science. In these algorithms, concepts like [bias, fairness, and accountability](https://www.smithsonianmag.com/innovation/artificial-intelligence-is-now-used-predict-crime-is-it-biased-180968337/) become incredibly important when the potential outcome of an algorithm is real people going to prison.

# * And, finally there's lots of data available!! Chicago, NYC, and San Francisco all have crime data available freely online.

#

# Below is a little video to pique your interest.

YouTubeVideo("YxvyeaL7NEM",width=800, height=450)

# All this is to say that in the coming weeks, we'll be working to understand crime in San Francisco. We'll be using the SF crime data as a basis for our work on data analysis and data visualization.

#

# We will draw on data from the project [SF OpenData](https://data.sfgov.org), looking into SFPD incidents which have been recorded back since January 2003.

#

# *Reading*

#

# Read [this article](https://www.sciencemag.org/news/2016/09/can-predictive-policing-prevent-crime-it-happens) from Science magazine to get a deeper sense of the topic.

#

#

# > *Exercise*

# >

# > Answer the following questions in your own words

# >

# > * According to the article, is predictive policing better than best practice techniques for law enforcement? The article is from 2016. Take a look around the web, does this still seem to be the case in 2020? (hint, when you evaluate the evidence consider the source)

# > * List and explain some of the possible issues with predictive policing according to the article.

# ## Part 2: Load some crime data into `pandas`

#

# Go and check out the Python Bootcamp lecture if you don't know what "loading data into Pandas" means. If you're used to using Pandas, then it's finally time to get your hands on some data!!

# > *Exercise*

# >

# > * Go to https://datasf.org/opendata/

# > * Click on "Public Safety"

# > * Download all police incident reports, historical 2003 to May 2018. You can get everything as a big CSV file if you press the *Export* button (it's a snappy little 456MB file).

# > * Load the data into `pandas` using the tips and tricks described [here](https://www.shanelynn.ie/python-pandas-read_csv-load-data-from-csv-files/).

# > * Use pandas to generate the following simple statistics

# > - Report the total number of crimes in the dataset

# > - List the various categories of crime

# > - List the number of crimes in each category

# In order to do awesome *predictive policing* later on in the class, we're going to dissect the SF crime-data quite thoroughly to figure out what has been going on over the last years on the San Francisco crime scene.

#

# ---

# > *Exercise*: The types of crime and their popularity over time. The first field we'll dig into is the column "Category".

# > * Use `pandas` to list and then count all the different categories of crime in the dataset. How many are there?

# > * Now count the number of occurrences of each category in the dataset. What is the most commonly occurring category of crime? What is the least frequently occurring?

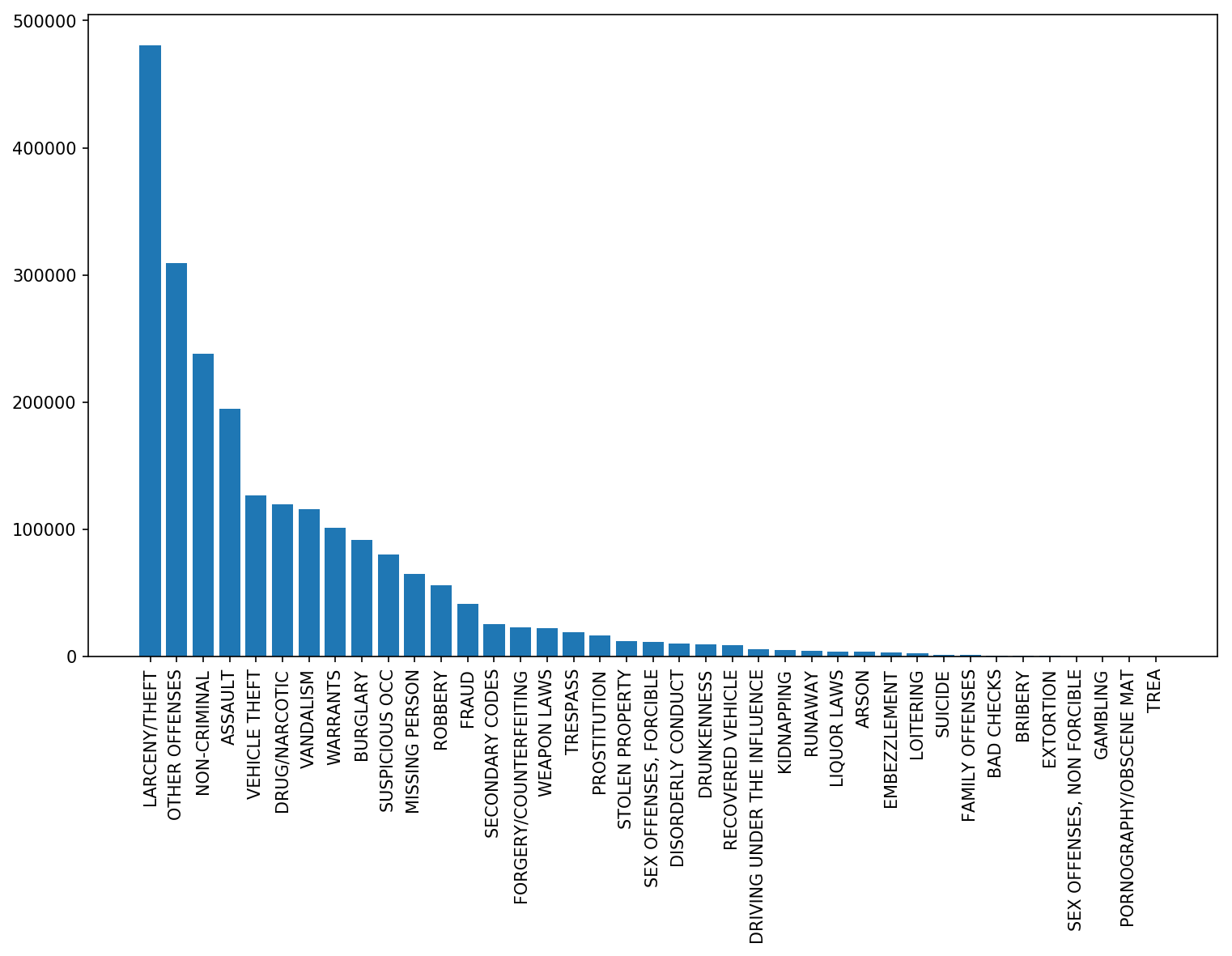

# > * Create a histogram over crime occurrences. Mine looks like this

# >

# > * Now it's time to explore how the crime statistics change over time. To start off easily, let's count the number of crimes per year for the years 2003-2017 (since we don't have full data for 2018). What's the average number of crimes per year?

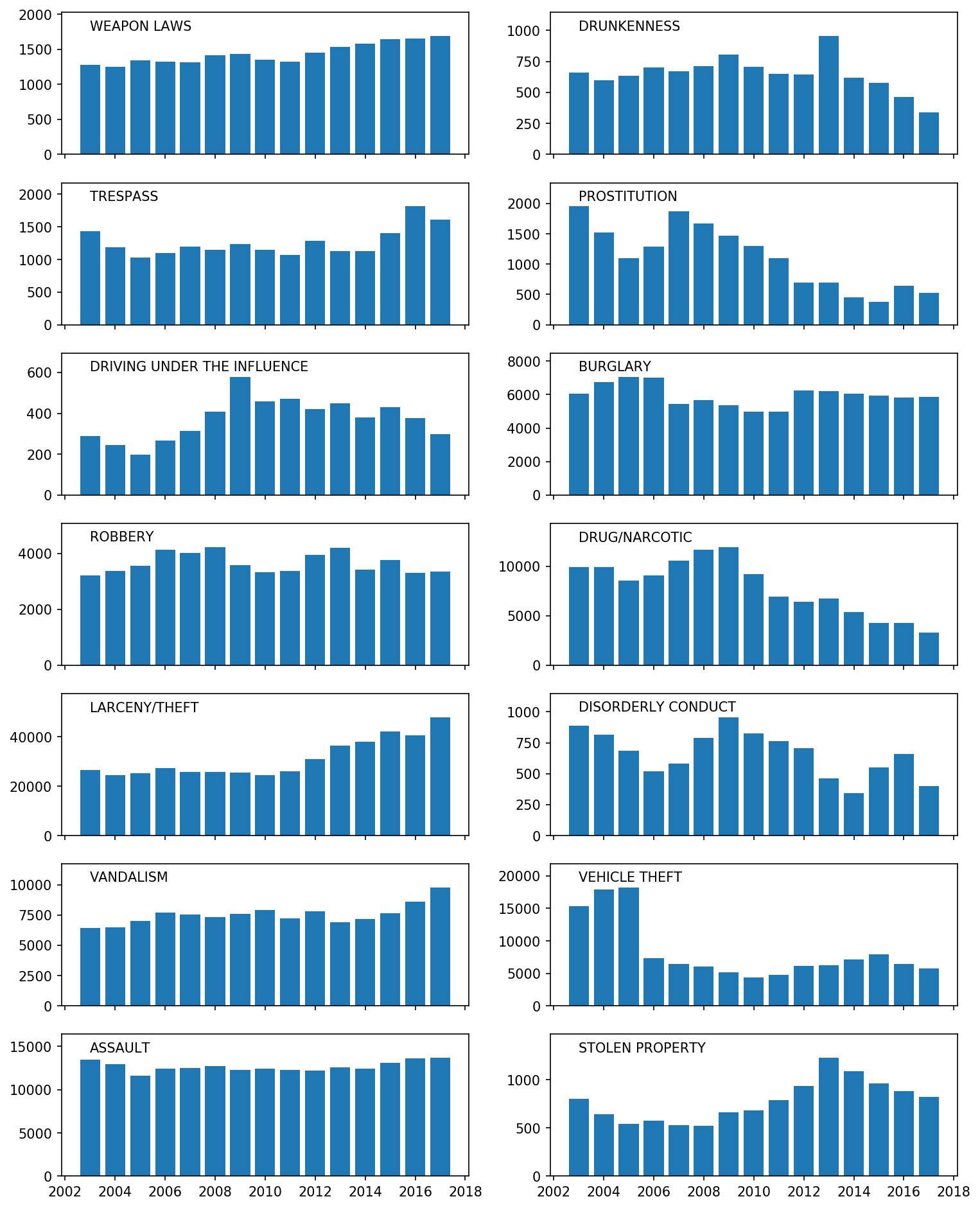

# > * Police chief Suneman is interested in the temporal development of only a subset of categories, the so-called focus crimes. Those categories are listed below (for convenient copy-paste action). Now create bar-charts displaying the year-by-year development of each of these categories across the years 2003-2017.

# >

focuscrimes = set(['WEAPON LAWS', 'PROSTITUTION', 'DRIVING UNDER THE INFLUENCE', 'ROBBERY', 'BURGLARY', 'ASSAULT', 'DRUNKENNESS', 'DRUG/NARCOTIC', 'TRESPASS', 'LARCENY/THEFT', 'VANDALISM', 'VEHICLE THEFT', 'STOLEN PROPERTY', 'DISORDERLY CONDUCT'])

# > * My plot looks like this for the 14 focus crimes:

#

# >

# > (Note that titles are OVER the plots and the axes on the bottom are common for all plots.)

# > * Comment on at least three interesting trends in your plot.

# >

# > Also, here's a fun fact: The drop in car thefts is due to new technology called 'engine immobilizer systems' - get the full story [here](https://www.nytimes.com/2014/08/12/upshot/heres-why-stealing-cars-went-out-of-fashion.html).

|

lectures/Week1.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import pandas as pd

import numpy as np

import re

import datetime

import matplotlib.pyplot as plt

import seaborn as sns

# # 1. EDA

# ## Understanding the data and taking notes for decisions

data = pd.read_csv('regression_data.csv',index_col=None, names= ['id', 'date', 'bedrooms','bathroom','sqft_living', 'sqft_lot','floors', 'waterfront','view', 'condition','grade','sqft_above','sqft_basement', 'yr_built','yr_renovated','zipcode','lat','long','sqft_living15','sqft_lot15','price'])

data.head()

# * drop the zipcode, long & lat columns, as we do not have further information about the location/suburbs

data['yr_renovated'].unique()

data['yr_renovated'].value_counts()

# * Note: many unique values, with 0 marking houses that were never renovated --> create a new boolean column: renovated after 1990 (True/False)

data.dtypes

# * convert the date column to datetime format

# * make waterfront boolean

data.info()

# * we do not have null values

data.describe()

sns.displot(data['yr_renovated'], bins=150)

data.hist(bins=25,figsize=(15, 15), layout=(5, 4));

plt.show()

|

.ipynb_checkpoints/realestate_Sam-checkpoint.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

# +

# Prepare fake-factory library for the docker pyspark environment used

# see: https://github.com/jupyter/docker-stacks/tree/master/pyspark-notebook

import os

os.environ['PYSPARK_PYTHON'] = 'python2'

os.environ['PATH'] = os.environ['PATH'].replace('/opt/conda/bin','/opt/conda/envs/python2/bin')

os.environ['CONDA_ENV_PATH'] = '/opt/conda/envs/python2'

# !pip -q install fake-factory

# +

# Prepare fake data to process

from datetime import datetime, timedelta

from faker import Faker

fake = Faker()

import pandas as pd

start = datetime.today()

end = start + timedelta(0.05)

d = [(str(fake.date_time_between_dates(start, end)), fake.ipv4(), fake.free_email_domain()) for x in range(500)]

# +

# Import pyspark

from pyspark import SparkContext

from pyspark.sql import SQLContext

from pyspark.sql import functions as F

# Start a SparkContext, SQLContext

sc = SparkContext('local[*]')

sqlContext = SQLContext(sc)

# Import plotting libs

import seaborn as sns

# %matplotlib inline

# +

# Define the resample function, to perform resampling on timeseries columns

def resample(column, agg_interval=900, time_format='yyyy-MM-dd HH:mm:ss'):
    """
    Resamples the specified time column of a Spark DataFrame to a given interval. The interval is given in seconds.

    Parameters
    ----------
    column : Spark Column or string
    agg_interval : integer
    time_format : string

    >>> df = sqlContext.createDataFrame([("2016-01-20 13:32:05",),("2016-01-20 13:50:15",)], ['dt'])
    >>> df.select(resample('dt').alias('dt_resampled')).show()
    +-------------------+
    |       dt_resampled|
    +-------------------+
    |2016-01-20 13:30:00|
    |2016-01-20 13:45:00|
    +-------------------+

    Returns
    -------
    Spark Column
    """
    if isinstance(column, str):
        column = F.col(column)

    col_ut = F.unix_timestamp(column, format=time_format)  # convert to unix timestamp (seconds)
    col_ut_agg = F.floor(col_ut / agg_interval) * agg_interval  # floor to the start of the interval
    col_ts = F.from_unixtime(col_ut_agg)  # convert the unix timestamp back into a timestamp string
    return col_ts

# -
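# The arithmetic inside `resample` does not depend on Spark: a timestamp is converted to seconds, floored to a multiple of the interval, and converted back. Below is a minimal plain-Python sketch of that idea. The name `resample_timestamp` is hypothetical, it targets Python 3 (unlike this notebook's Python 2 kernel), and it treats timestamps as UTC, whereas Spark uses the session time zone.

```python
from datetime import datetime, timezone

def resample_timestamp(text, agg_interval=900, time_format="%Y-%m-%d %H:%M:%S"):
    """Floor a timestamp string to the start of its `agg_interval`-second bucket."""
    # Assumption: the input is interpreted as UTC.
    parsed = datetime.strptime(text, time_format).replace(tzinfo=timezone.utc)
    seconds = int(parsed.timestamp())                      # seconds since the epoch
    floored = (seconds // agg_interval) * agg_interval     # start of the bucket
    return datetime.fromtimestamp(floored, tz=timezone.utc).strftime(time_format)

for value in ("2016-01-20 13:32:05", "2016-01-20 13:50:15"):
    print(value, "->", resample_timestamp(value))
```

# With the default 900-second interval, both inputs from the docstring example land on the quarter-hour boundaries shown there.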

df = sqlContext.createDataFrame(d, ['dt','ip','email_provider'])

df.show(5)

df = df.withColumn('dt_resampled', resample(df.dt, 900))

df.show(5)

df_resampled = df.groupBy('dt_resampled', 'email_provider').count()

df_resampled.show(5)

df_resampled.toPandas() \
    .pivot(index='dt_resampled', columns='email_provider', values='count') \
    .plot(figsize=[14,5], title='Count emails per 15 minute interval')

|

notebooks/2016-01-20-Spark-resampling.ipynb

|

# -*- coding: utf-8 -*-

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Q#

# language: qsharp

# name: iqsharp

# ---

# # Key Distribution Kata

#

# The **Quantum Key Distribution** kata is a series of exercises designed to teach you about a neat quantum technology where you can use qubits to exchange secure cryptographic keys. In particular, you will work through implementing and testing a quantum key distribution protocol called [BB84](https://en.wikipedia.org/wiki/BB84).

#

# ### Background

#

# What does a key distribution protocol look like in general? Normally there are two parties, commonly referred to as Alice and Bob, who want to share a random, secret string of bits called a _key_. This key can then be used for a variety of different [cryptographic protocols](https://en.wikipedia.org/wiki/Cryptographic_protocol) like encryption or authentication. Quantum versions of key exchange protocols look very similar, and utilize qubits as a way to securely transmit the bit string.

#

# <img src="./img/qkd-concept.png" alt="General schematic for QKD protocol" width="60%"/>

#

# You can see in the figure above that Alice and Bob have two connections, one quantum channel and one bidirectional classical channel. In this kata you will simulate what happens on the quantum channel by preparing and measuring a sequence of qubits and then perform classical operations to transform the measurement results to a usable, binary key.

#

# There are a variety of different quantum key distribution protocols; the most common is called [BB84](https://en.wikipedia.org/wiki/BB84), after the initials of the authors and the year it was published. Many existing commercial quantum key distribution devices implement BB84 with single photons as the qubits.

#

# #### For more information:

# * [Introduction to quantum cryptography and BB84](https://www.youtube.com/watch?v=UiJiXNEm-Go).

# * [QKD summer school lecture on quantum key distribution](https://www.youtube.com/watch?v=oEJOtu0joXk).

# * [Key Distribution Wikipedia article](https://en.wikipedia.org/wiki/Quantum_key_distribution).

# * [BB84 protocol Wikipedia article](https://en.wikipedia.org/wiki/BB84).

# * [Updated version of the BB84 paper](https://www.sciencedirect.com/science/article/pii/S0304397514004241?via%3Dihub).

#

# ---

# ### Instructions

#

# Each task is wrapped in one operation preceded by the description of the task.

#

# Your goal is to fill in the blank (marked with the `// ...` comments)

# with some Q# code that solves the task. To verify your answer, run the cell using Ctrl+Enter (⌘+Enter on macOS).

#

# ## Part I. Preparation

# The BB84 protocol loops through two main steps until Alice and Bob have as much key as they want.

# The first step is to use the quantum channel, where Alice prepares individual qubits and then sends them to Bob to be measured.

# The second step is entirely classical post-processing and communication that takes the measurement results from the quantum step and extracts a classical, random bit string Alice and Bob can use.

# Let's start by looking at how Alice will prepare her qubits for sending to Bob as a part of the quantum phase of the BB84 protocol.

#

# Alice makes two choices for each qubit: which basis to prepare it in and what bit value to encode.

# This leads to four possible states each qubit can be in that Alice sends out.

# The bases she has to choose from are selected such that if an eavesdropper tries to measure a qubit in transit and chooses the wrong basis, then they just get a 0 or 1 with equal probability.

#

# In the first basis, called the computational basis, Alice prepares the states $|0\rangle$ and $|1\rangle$ where $|0\rangle$ represents the key bit value `0` and $|1\rangle$ represents the key bit value `1`.

# The second basis (sometimes called the diagonal or Hadamard basis) uses the states $|+\rangle = \frac{1}{\sqrt2}(|0\rangle + |1\rangle)$ to represent the key bit value `0`, and $|-\rangle = \frac{1}{\sqrt2}(|0\rangle - |1\rangle)$ to represent the key bit value `1`.
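# The claim that a wrong-basis measurement yields 0 or 1 with equal probability follows from the Born rule applied to the four states above. Here is a small Python sketch (outside the kata's Q# cells; `STATES` and `outcome_probability` are illustrative names, not part of the kata) that computes the squared overlaps:

```python
import math

S = 1 / math.sqrt(2)

# Real amplitudes (a0, a1) of the four BB84 states in the computational basis.
STATES = {
    "0": (1.0, 0.0),
    "1": (0.0, 1.0),
    "+": (S, S),
    "-": (S, -S),
}

def outcome_probability(prepared, outcome):
    """Born rule: probability of projecting state `prepared` onto state `outcome`."""
    a = STATES[prepared]
    b = STATES[outcome]
    overlap = a[0] * b[0] + a[1] * b[1]
    return overlap ** 2

# Matching basis: the encoded bit is recovered with certainty.
print(outcome_probability("+", "+"), outcome_probability("+", "-"))

# Mismatched basis: both outcomes are (up to rounding) equally likely.
print(outcome_probability("+", "0"), outcome_probability("+", "1"))
```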

#

# ### Task 1.1. Diagonal Basis

#

# Try your hand at converting qubits from the computational basis to the diagonal basis.

#

# **Input:** $N$ qubits (stored in an array of length $N$). Each qubit is either in $|0\rangle$ or in $|1\rangle$ state.

#

# **Goal:** Convert the qubits to the diagonal basis:

# * if `qs[i]` was in state $|0\rangle$, it should be transformed to $|+\rangle = \frac{1}{\sqrt2}(|0\rangle + |1\rangle)$,

# * if `qs[i]` was in state $|1\rangle$, it should be transformed to $|-\rangle = \frac{1}{\sqrt2}(|0\rangle - |1\rangle)$.

# +

%kata T11_DiagonalBasis

operation DiagonalBasis (qs : Qubit[]) : Unit {
    // ...
}

# -

# ### Task 1.2. Equal superposition

#

#

# **Input**: A qubit in the $|0\rangle$ state.

#

# **Goal**: Change the qubit state to a superposition state that has equal probabilities of measuring 0 and 1.

#

# > Note that this is not the same as keeping the qubit in the $|0\rangle$ state with 50% probability and converting it to the $|1\rangle$ state with 50% probability!

# +

%kata T12_EqualSuperposition

operation EqualSuperposition (q : Qubit) : Unit {
    // ...
}

# -

# ## Part II. BB84 Protocol

# Now that you have seen some of the steps that Alice will use to prepare her qubits, it's time to add in the rest of the steps of the BB84 protocol.

# ### Task 2.1. Generate random array

#

# You saw in Part I that Alice has to make two random choices per qubit she prepares: one for which basis to prepare in, and the other for what bit value she wants to send.

# Bob will also need one random bit value to decide what basis he will be measuring each qubit in.

# To make this easier for later steps, you will need a way of generating random boolean values for both Alice and Bob to use.

#

# **Input:** An integer $N$.

#

# **Output** : A `Bool` array of length N, where each element is chosen at random.

#

# > This will be used by both Alice and Bob to choose either the sequence of bits to send or the sequence of bases (`false` indicates $|0\rangle$ / $|1\rangle$ basis, and `true` indicates $|+\rangle$ / $|-\rangle$ basis) to use when encoding/measuring the bits.

# +

%kata T21_RandomArray

operation RandomArray (N : Int) : Bool[] {
    // ...
    return new Bool[N];
}

# -

# ### Task 2.2. Prepare Alice's qubits

#

# Now that you have a way of generating the random inputs needed for Alice and Bob, it's time for Alice to use the random bits to prepare her sequence of qubits to send to Bob.

#

# **Inputs:**

#

# 1. `qs`: an array of $N$ qubits in the $|0\rangle$ states,

# 2. `bases`: a `Bool` array of length $N$;

# `bases[i]` indicates the basis to prepare the i-th qubit in:

# * `false`: use $|0\rangle$ / $|1\rangle$ (computational) basis,

# * `true`: use $|+\rangle$ / $|-\rangle$ (Hadamard/diagonal) basis.

# 3. `bits`: a `Bool` array of length $N$;

# `bits[i]` indicates the bit to encode in the i-th qubit: `false` = 0, `true` = 1.

#

# **Goal:** Prepare the qubits in the described state.

# +

%kata T22_PrepareAlicesQubits

operation PrepareAlicesQubits (qs : Qubit[], bases : Bool[], bits : Bool[]) : Unit {
    // ...
}

# -

# ### Task 2.3. Measure Bob's qubits

#

# Bob now has an incoming stream of qubits that he needs to measure by randomly choosing a basis to measure in for each qubit.

#

# **Inputs:**

#

# 1. `qs`: an array of $N$ qubits;

# each qubit is in one of the following states: $|0\rangle$, $|1\rangle$, $|+\rangle$, $|-\rangle$.

# 2. `bases`: a `Bool` array of length $N$;

# `bases[i]` indicates the basis used to prepare the i-th qubit:

# * `false`: $|0\rangle$ / $|1\rangle$ (computational) basis,

# * `true`: $|+\rangle$ / $|-\rangle$ (Hadamard/diagonal) basis.

#

# **Output:** Measure each qubit in the corresponding basis and return an array of results as boolean values, encoding measurement result `Zero` as `false` and `One` as `true`.

# The state of the qubits at the end of the operation does not matter.

# +

%kata T23_MeasureBobsQubits

operation MeasureBobsQubits (qs : Qubit[], bases : Bool[]) : Bool[] {
    // ...
    return new Bool[0];
}

# -

# ### Task 2.4. Generate the shared key!

#

# Now, Alice has a list of the bit values she sent as well as what basis she prepared each qubit in, and Bob has a list of bases he used to measure each qubit. To figure out the shared key, they need to figure out when they both used the same basis, and toss the data from qubits where they used different bases. If Alice and Bob did not use the same basis to prepare and measure the qubits in, the measurement results Bob got will be just random with 50% probability for both the `Zero` and `One` outcomes.

#

# **Inputs:**

#

# 1. `basesAlice` and `basesBob`: `Bool` arrays of length $N$

# describing Alice's and Bob's choice of bases, respectively;

# 2. `measurementsBob`: a `Bool` array of length $N$ describing Bob's measurement results.

#

# **Output:** a `Bool` array representing the shared key generated by the protocol.

#

# > Note that you don't need to know both Alice's and Bob's bits to figure out the shared key!

# +

%kata T24_GenerateSharedKey

function GenerateSharedKey (basesAlice : Bool[], basesBob : Bool[], measurementsBob : Bool[]) : Bool[] {
    // ...
    return new Bool[0];
}

# -

# ### Task 2.5. Check if error rate was low enough

#

# The main trace an eavesdropper leaves on a key exchange is an increased error rate in the transmission. Alice and Bob should have characterized the error rate of their channel before launching the protocol, and need to make sure when exchanging the key that there were not more errors than they expected. The `errorRate` parameter represents their earlier characterization of their channel.

#

# **Inputs:**

#

# 1. `keyAlice` and `keyBob`: `Bool` arrays of equal length $N$ describing

# the versions of the shared key obtained by Alice and Bob, respectively.

# 2. `errorRate`: an integer between 0 and 50 - the percentage of the bits that did not match in Alice's and Bob's channel characterization.

#

# **Output:** `true` if the percentage of errors is less than or equal to the error rate, and `false` otherwise.

# +

%kata T25_CheckKeysMatch

function CheckKeysMatch (keyAlice : Bool[], keyBob : Bool[], errorRate : Int) : Bool {
    // ...
    return false;
}

# -

# ### Task 2.6. Putting it all together

#

# **Goal:** Implement the entire BB84 protocol using tasks 2.1 - 2.5

# and following the comments in the operation template.

#

# > This is an open-ended task, and is not covered by a unit test. To run the code, execute the cell with the definition of the `Run_BB84Protocol` operation first; if it compiled successfully without any errors, you can run the operation by executing the next cell (`%simulate Run_BB84Protocol`).

operation Run_BB84Protocol () : Unit {
    // 1. Alice chooses a random set of bits to encode in her qubits
    //    and a random set of bases to prepare her qubits in.
    // ...

    // 2. Alice allocates qubits, encodes them using her choices and sends them to Bob.
    //    (Note that you cannot reflect "sending the qubits to Bob" in Q#)
    // ...

    // 3. Bob chooses a random set of bases to measure Alice's qubits in.
    // ...

    // 4. Bob measures Alice's qubits in his chosen bases.
    // ...

    // 5. Alice and Bob compare their chosen bases and use the bits in the matching positions to create a shared key.
    // ...

    // 6. Alice and Bob check to make sure nobody eavesdropped by comparing a subset of their keys
    //    and verifying that more than a certain percentage of the bits match.
    //    For this step, you can check the percentage of matching bits using the entire key
    //    (in practice only a subset of indices is chosen to minimize the number of discarded bits).
    // ...

    // If you've done everything correctly, the generated keys will always match, since there is no eavesdropping going on.
    // In the next section you will explore the effects introduced by eavesdropping.
}

%simulate Run_BB84Protocol

# ## Part III. Eavesdropping

# ### Task 3.1. Eavesdrop!

#

# In this task you will try to implement an eavesdropper, Eve.

#

# Eve will intercept a qubit from the quantum channel that Alice and Bob are using.

# She will measure it in either the $|0\rangle$ / $|1\rangle$ basis or the $|+\rangle$ / $|-\rangle$ basis, and prepare a new qubit in the state she measured. Then she will send the new qubit back to the channel.

# Eve hopes that, if she got lucky with her choice of basis, Bob's measurement of the qubit will not produce an error, so she won't be caught!

#

# **Inputs:**

#

# 1. `q`: a qubit in one of the following states: $|0\rangle$, $|1\rangle$, $|+\rangle$, $|-\rangle$.

# 2. `basis`: Eve's guess of the basis she should use for measuring.

# Recall that `false` indicates $|0\rangle$ / $|1\rangle$ basis and `true` indicates $|+\rangle$ / $|-\rangle$ basis.

#

# **Output:** the bit encoded in the qubit (`false` for $|0\rangle$ / $|+\rangle$ states, `true` for $|1\rangle$ / $|-\rangle$ states).

#

# > In this task you are guaranteed that the basis you're given matches the one in which the qubit is encoded, that is, if you are given a qubit in state $|0\rangle$ or $|1\rangle$, you will be given `basis = false`, and if you are given a qubit in state $|+\rangle$ or $|-\rangle$, you will be given `basis = true`. This is different from a real eavesdropping scenario, in which you have to guess the basis yourself.

# +

%kata T31_Eavesdrop

operation Eavesdrop (q : Qubit, basis : Bool) : Bool {
    // ...
    return false;
}

# -

# ### Task 3.2. Catch the eavesdropper

#

# Add an eavesdropper into the BB84 protocol from task 2.6.

#

# Note that now we should be able to detect Eve and therefore we have to discard some of our key bits!
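# To get a feeling for how visible Eve is, the expected error rate of this intercept-and-resend attack can be computed exactly from state overlaps. The Python sketch below (outside the kata's Q# cells; all names are illustrative) assumes Eve intercepts every qubit, picks her basis uniformly at random, and that only rounds where Bob used Alice's basis are kept.

```python
import itertools
import math

S = 1 / math.sqrt(2)

# (basis, bit) -> real amplitudes; basis False = computational, True = diagonal.
STATES = {
    (False, False): (1.0, 0.0),   # |0>
    (False, True): (0.0, 1.0),    # |1>
    (True, False): (S, S),        # |+>
    (True, True): (S, -S),        # |->
}

def outcome_probability(prepared, basis, bit):
    """Probability that measuring `prepared` in `basis` gives `bit`."""
    a = STATES[prepared]
    b = STATES[(basis, bit)]
    return (a[0] * b[0] + a[1] * b[1]) ** 2

def sifted_error_rate():
    """Average probability that Bob's bit differs from Alice's in the sifted key."""
    # Enumerate Alice's basis, Alice's bit and Eve's basis, all uniformly random.
    cases = list(itertools.product([False, True], repeat=3))
    total = 0.0
    for alice_basis, alice_bit, eve_basis in cases:
        for eve_bit in (False, True):
            # Eve measures Alice's qubit and resends the state she observed.
            p_eve = outcome_probability((alice_basis, alice_bit), eve_basis, eve_bit)
            # Bob measures the resent qubit in Alice's basis and gets the wrong bit.
            p_wrong = outcome_probability((eve_basis, eve_bit), alice_basis, not alice_bit)
            total += p_eve * p_wrong
    return total / len(cases)

print(sifted_error_rate())
```

# Under these assumptions a quarter of the sifted bits disagree, which is far above the error rate of a good channel and is what the check from task 2.5 is meant to catch.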

#

# > Similar to task 2.6, this is an open-ended task, and is not covered by a unit test. To run the code, execute the cell with the definition of the `Run_BB84ProtocolWithEavesdropper` operation first; if it compiled successfully without any errors, you can run the operation by executing the next cell (`%simulate Run_BB84ProtocolWithEavesdropper`).

operation Run_BB84ProtocolWithEavesdropper () : Unit {
    // ...
}

%simulate Run_BB84ProtocolWithEavesdropper

|

KeyDistribution_BB84/KeyDistribution_BB84.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

import math as m

import numpy as np

from numpy import genfromtxt

import os

from tqdm import tqdm

num_kp = 22

num_axes = 3

# List all the files in dir

kp_path = "Movements/Kinect/Positions/"

ka_path = "Movements/Kinect/Angles/"

kinect_positions = sorted([kp_path+f for f in os.listdir(kp_path) if f.endswith('.txt')])

kinect_angles = sorted([ka_path+f for f in os.listdir(ka_path) if f.endswith('.txt')])

# +

def Rotx(theta):
    return np.matrix([[ 1, 0           , 0           ],
                      [ 0, m.cos(theta),-m.sin(theta)],
                      [ 0, m.sin(theta), m.cos(theta)]])

def Roty(theta):
    return np.matrix([[ m.cos(theta), 0, m.sin(theta)],
                      [ 0           , 1, 0           ],
                      [-m.sin(theta), 0, m.cos(theta)]])

def Rotz(theta):
    return np.matrix([[ m.cos(theta), -m.sin(theta), 0 ],
                      [ m.sin(theta), m.cos(theta) , 0 ],
                      [ 0           , 0            , 1 ]])

def eulers_2_rot_matrix(x):
    # x holds the Euler angles (in radians) about the x, y and z axes
    gamma_x, beta_y, alpha_z = x[0], x[1], x[2]
    return Rotz(alpha_z)*Roty(beta_y)*Rotx(gamma_x)

# -
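# The composition order in `eulers_2_rot_matrix` matters: `Rotz * Roty * Rotx` applies the x rotation first and the z rotation last. The sketch below re-implements the same matrices with plain lists (an assumption made so that it runs without numpy; `rot_x`, `matmul3`, `apply_rotation` and `eulers_to_rot_matrix` are illustrative names) and checks two simple cases.

```python
import math

def rot_x(theta):
    c, s = math.cos(theta), math.sin(theta)
    return [[1, 0, 0], [0, c, -s], [0, s, c]]

def rot_y(theta):
    c, s = math.cos(theta), math.sin(theta)
    return [[c, 0, s], [0, 1, 0], [-s, 0, c]]

def rot_z(theta):
    c, s = math.cos(theta), math.sin(theta)
    return [[c, -s, 0], [s, c, 0], [0, 0, 1]]

def matmul3(a, b):
    """Multiply two 3x3 matrices given as nested lists."""
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)] for i in range(3)]

def apply_rotation(matrix, vector):
    """Rotate a 3-vector by a 3x3 matrix."""
    return [sum(matrix[i][k] * vector[k] for k in range(3)) for i in range(3)]

def eulers_to_rot_matrix(angles):
    """Same convention as above: angles = (about x, about y, about z), in radians."""
    gamma_x, beta_y, alpha_z = angles
    return matmul3(rot_z(alpha_z), matmul3(rot_y(beta_y), rot_x(gamma_x)))

# A quarter turn about z maps the x axis onto the y axis.
quarter_z = eulers_to_rot_matrix((0.0, 0.0, math.pi / 2))
print(apply_rotation(quarter_z, [1.0, 0.0, 0.0]))

# x rotation first, then z: the y axis goes to z and then stays on z.
x_then_z = eulers_to_rot_matrix((math.pi / 2, 0.0, math.pi / 2))
print(apply_rotation(x_then_z, [0.0, 1.0, 0.0]))
```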

# convert the data from relative coordinates to absolute coordinates

def rel2abs(p, a, num_frames):

skel = np.zeros((num_kp, num_axes, num_frames))

for i in range(num_frames):

"""

1 Waist (absolute)

2 Spine

3 Chest

4 Neck

5 Head

6 Head tip

7 Left collar

8 Left upper arm

9 Left forearm

10 Left hand

11 Right collar

12 Right upper arm

13 Right forearm

14 Right hand

15 Left upper leg

16 Left lower leg

17 Left foot

18 Left leg toes

19 Right upper leg

20 Right lower leg

21 Right foot

22 Right leg toes

"""

joint = p[:,:,i]

joint_ang = a[:,:,i]

# chest, neck, head

rot_1 = eulers_2_rot_matrix(joint_ang[0,:]*np.pi/180);

joint[1,:] = rot_1@joint[1,:] + joint[0,:]

rot_2 = rot_1*eulers_2_rot_matrix(joint_ang[1,:]*np.pi/180)

joint[2,:] = rot_2@joint[2,:] + joint[1,:]

rot_3 = rot_2*eulers_2_rot_matrix(joint_ang[2,:]*np.pi/180)

joint[3,:] = rot_3@joint[3,:] + joint[2,:]

rot_4 = rot_3*eulers_2_rot_matrix(joint_ang[3,:]*np.pi/180)

joint[4,:] = rot_4@joint[4,:] + joint[3,:]

rot_5 = rot_4*eulers_2_rot_matrix(joint_ang[4,:]*np.pi/180)

joint[5,:] = rot_5@joint[5,:] + joint[4,:]

# left-arm

rot_6 = eulers_2_rot_matrix(joint_ang[2,:]*np.pi/180)

joint[6,:] = rot_6@joint[6,:] + joint[2,:]

rot_7 = rot_6*eulers_2_rot_matrix(joint_ang[6,:]*np.pi/180)

joint[7,:] = rot_7@joint[7,:] + joint[6,:]

rot_8 = rot_7*eulers_2_rot_matrix(joint_ang[7,:]*np.pi/180)

joint[8,:] = rot_8@joint[8,:] + joint[7,:]

rot_9 = rot_8*eulers_2_rot_matrix(joint_ang[8,:]*np.pi/180)

joint[9,:] = rot_9@joint[9,:] + joint[8,:]

# right-arm

rot_10 = eulers_2_rot_matrix(joint_ang[2,:]*np.pi/180)

joint[10,:] = rot_10@joint[10,:] + joint[2,:]

rot_11 = rot_10*eulers_2_rot_matrix(joint_ang[10,:]*np.pi/180)

joint[11,:] = rot_11@joint[11,:] + joint[10,:]

rot_12 = rot_11*eulers_2_rot_matrix(joint_ang[11,:]*np.pi/180)

joint[12,:] = rot_12@joint[12,:] + joint[11,:]

rot_13 = rot_12*eulers_2_rot_matrix(joint_ang[12,:]*np.pi/180)

joint[13,:] = rot_13@joint[13,:] + joint[12,:]

# left-leg

rot_14 = eulers_2_rot_matrix(joint_ang[0,:]*np.pi/180)

joint[14,:] = rot_14@joint[14,:] + joint[0,:]

rot_15 = rot_14*eulers_2_rot_matrix(joint_ang[14,:]*np.pi/180)

joint[15,:] = rot_15@joint[15,:] + joint[14,:]

rot_16 = rot_15*eulers_2_rot_matrix(joint_ang[15,:]*np.pi/180)

joint[16,:] = rot_16@joint[16,:] + joint[15,:]

rot_17 = rot_16*eulers_2_rot_matrix(joint_ang[16,:]*np.pi/180)

joint[17,:] = rot_17@joint[17,:] + joint[16,:]

# right-leg

rot_18 = eulers_2_rot_matrix(joint_ang[0,:]*np.pi/180)

joint[18,:] = rot_18@joint[18,:] + joint[0,:]

rot_19 = rot_18@eulers_2_rot_matrix(joint_ang[18,:]*np.pi/180)

joint[19,:] = rot_19@joint[19,:] + joint[18,:]

rot_20 = rot_19@eulers_2_rot_matrix(joint_ang[19,:]*np.pi/180)

joint[20,:] = rot_20@joint[20,:] + joint[19,:]

rot_21 = rot_20@eulers_2_rot_matrix(joint_ang[20,:]*np.pi/180)

joint[21,:] = rot_21@joint[21,:] + joint[20,:]

skel[:,:,i] = joint

return skel.transpose(2,0,1)
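
# The per-joint updates above all follow one pattern: walk down a limb, accumulate the rotation with a matrix product, rotate the child's relative offset, and add the parent's absolute position. The sketch below expresses that pattern as a loop. It is only an illustration: `rot_z` is a hypothetical stand-in for `eulers_2_rot_matrix` (it rotates about the z axis only), and `CHAINS` and `chains_to_absolute` are names introduced here, not part of the original notebook.

```python
import numpy as np

def rot_z(angles_deg):
    """Hypothetical stand-in for eulers_2_rot_matrix: rotation about z by angles_deg[2]."""
    t = np.deg2rad(angles_deg[2])
    c, s = np.cos(t), np.sin(t)
    return np.array([[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]])

# Joint indices along each limb, read off the explicit updates above.
# The trunk comes first so that joint 2 is absolute before the arms use it.
CHAINS = [
    [0, 1, 2, 3, 4, 5],      # trunk
    [2, 6, 7, 8, 9],         # left arm
    [2, 10, 11, 12, 13],     # right arm
    [0, 14, 15, 16, 17],     # left leg
    [0, 18, 19, 20, 21],     # right leg
]

def chains_to_absolute(joint, joint_ang, to_rot=rot_z):
    """Convert relative joint offsets of one frame to absolute positions."""
    joint = np.array(joint, dtype=float)  # work on a copy
    for chain in CHAINS:
        rot = np.eye(3)
        for parent, child in zip(chain[:-1], chain[1:]):
            # Compose rotations with the matrix product, not elementwise `*`.
            rot = rot @ to_rot(joint_ang[parent])
            joint[child] = rot @ joint[child] + joint[parent]
    return joint
```

# With all angles set to zero every rotation is the identity, so each joint ends up at the sum of the offsets along its chain, which makes a convenient sanity check.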

def get_output_name(path):
    # Drop the directory and extension, then the last "_"-separated token
    pstr = "_".join(path.split("/")[-1].split(".")[0].split("_")[:-1])
    return f"{pstr}_keypoints.csv"
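
# A quick check of the naming rule. The input path below is hypothetical and used for illustration only; the dataset's actual file names are not shown in this notebook.

```python
def get_output_name(path):
    # Drop the directory and extension, then the last "_"-separated token.
    pstr = "_".join(path.split("/")[-1].split(".")[0].split("_")[:-1])
    return f"{pstr}_keypoints.csv"

# Hypothetical input path, for illustration only.
example = "Movements/Kinect/Positions/m01_s01_e01_positions.txt"
print(get_output_name(example))  # m01_s01_e01_keypoints.csv
```

# Note that a file name without any underscore loses its whole stem under this rule and maps to `_keypoints.csv`.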

def main():
    # List all the files in dir
    kp_path = "Movements/Kinect/Positions/"
    ka_path = "Movements/Kinect/Angles/"
    kinect_positions = sorted([kp_path+f for f in os.listdir(kp_path) if f.endswith('.txt')])
    kinect_angles = sorted([ka_path+f for f in os.listdir(ka_path) if f.endswith('.txt')])
    assert len(kinect_positions) == len(kinect_angles)
    N = len(kinect_positions)

    for i in tqdm(range(N)):
        # get a position/angle file path
        pos_path = kinect_positions[i]
        ang_path = kinect_angles[i]

        # get the output name
        op_name = get_output_name(pos_path)

        # create the directory you want to save to
        op_dir = "Movements/dataset_kp_kinect/"
        if not os.path.isdir(op_dir):
            os.makedirs(op_dir)

        # read the text files
        pos_data = genfromtxt(pos_path)
        ang_data = genfromtxt(ang_path)
        assert pos_data.shape[0] == ang_data.shape[0]
        num_frames = pos_data.shape[0]
        p_data = pos_data.T.reshape(num_kp, num_axes, -1)
        a_data = ang_data.T.reshape(num_kp, num_axes, -1)

        # transform relative coordinates to absolute
        skel = rel2abs(p_data, a_data, num_frames)
        x = skel.reshape(num_frames, -1)

        # save array as ".csv"
        np.savetxt(os.path.join(op_dir, op_name), x, delimiter=",")

main()

|

UI-PRMD-Transformed.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3.8.9 64-bit

# name: python3

# ---

# # Authoring Hub Predictors

# NatML provides infrastructure that lets businesses deploy machine learning in their products quickly. NatML handles all infrastructure needs, including server provisioning, data transfers, and auto-scaling. As a result, developers only need to bring their model, along with lightweight code for making predictions on the server and client side. There are two components involved:

#

# 1. **Executor**: The `MLExecutor` is code that is responsible for running the user's ML models on the NatML Hub cloud infrastructure. The executor is responsible for instantiating an inference runtime (like ONNX Runtime or PyTorch) and running inference on features provided by the NatML framework from end-users.

#

# 2. **Predictor**: The predictor is a lightweight component that end-users use to request predictions. The predictor submits prediction workloads to the NatML Hub platform, and post-processes the results client-side into a form easily usable by the end-user.

# ## Writing the Executor

# NatML provides the `MLExecutor` class which all executors must derive from. We will write an executor for the MobileNet v2 model:

# +

from natml import MLExecutor, MLModelData, MLFeature

from onnxruntime import InferenceSession

from typing import List

class MobileNetv2Executor (MLExecutor):

    def initialize (self, model_data: MLModelData, graph_path: str):
        """
        Initialize the executor.
        This method should instantiate an ML inference session using an
        inference framework like ONNX Runtime, PyTorch, or TensorFlow.

        Parameters:
            model_data (MLModelData): Model data.
            graph_path (str): Path to ML graph on the file system.
        """
        self.__session = InferenceSession(graph_path)
        self.__model_data = model_data

    def predict (self, *inputs: List[MLFeature]) -> List[MLFeature]:
        """
        Make a prediction on one or more input features.

        Parameters:
            inputs (list): Input features.

        Returns:
            list: Output features.
        """
        pass

# -

# ## Writing the Predictor

# +

from natml import MLModelData, MLModel, MLFeature

from typing import List

class MobileNetv2HubPredictor:

    def __init__ (self, model: MLModel):
        self.__model = model

    def predict (self, *inputs: List[MLFeature]):
        pass

# -

# Fetch the model data from Hub

access_key = "<HUB ACCESS KEY>"

model_data = MLModelData.from_hub("@natsuite/mobilenet-v2", access_key)

# Deserialize the model

model = model_data.deserialize()

# Create the predictor

predictor = MobileNetv2HubPredictor(model)

|

examples/authoring.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# ---

# + [markdown] origin_pos=0

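# # Implementing Softmax Regression from Scratch
# :label:`sec_softmax_scratch`
#
# (**Just as we implemented linear regression from scratch,**)
# we consider softmax regression similarly fundamental, so (**you should know the details of how to implement it**).
# In this section we use the Fashion-MNIST dataset introduced in :numref:`sec_fashion_mnist`
# and set up a data iterator with a batch size of 256.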

#

# + origin_pos=3 tab=["tensorflow"]

import tensorflow as tf

from IPython import display

from d2l import tensorflow as d2l

# + origin_pos=4 tab=["tensorflow"]

batch_size = 256

train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

# + [markdown] origin_pos=5

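# ## Initializing Model Parameters
#
# As in the linear regression example, each example here is represented by a fixed-length vector.
# Each example in the raw dataset is a $28 \times 28$ image.
# In this section, [**we flatten each image and treat it as a vector of length 784.**]
# In later chapters we will discuss features that exploit the spatial structure of images,
# but for now we treat each pixel location as just another feature.
#
# Recall that in softmax regression we have as many outputs as there are classes.
# (**Because our dataset has 10 classes, the network has an output dimension of 10**).
# Consequently, the weights form a $784 \times 10$ matrix
# and the biases form a $1 \times 10$ row vector.
# As with linear regression, we initialize the weights `W` from a normal distribution and the biases to 0.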

#

# + origin_pos=8 tab=["tensorflow"]

num_inputs = 784

num_outputs = 10

W = tf.Variable(tf.random.normal(shape=(num_inputs, num_outputs),

mean=0, stddev=0.01))

b = tf.Variable(tf.zeros(num_outputs))

# + [markdown] origin_pos=9

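# ## Defining the Softmax Operation
#
# Before implementing the softmax regression model, let us briefly review how the `sum` operator works along specific dimensions of a tensor,
# as discussed in :numref:`subseq_lin-alg-reduction` and
# :numref:`subseq_lin-alg-non-reduction`.
# [**Given a matrix `X`, we can sum over all elements**] (the default)
# or only over elements along the same axis, i.e., the same column (axis 0) or the same row (axis 1).
# If `X` is a tensor with shape `(2, 3)` and we sum over the columns (axis 0),
# the result is a vector with shape `(3,)`.
# When invoking the `sum` operator, we can ask it to keep the number of axes of the original tensor instead of collapsing the dimension that was summed over.
# This yields a two-dimensional tensor with shape `(1, 3)`.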

#

# + origin_pos=11 tab=["tensorflow"]

X = tf.constant([[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]])

tf.reduce_sum(X, 0, keepdims=True), tf.reduce_sum(X, 1, keepdims=True)
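
# The shapes described above can be checked directly. This sketch uses NumPy rather than TensorFlow, an assumption made only so that it runs without TensorFlow installed; `np.sum` accepts the same `axis` and `keepdims` arguments as `tf.reduce_sum`.

```python
import numpy as np

X = np.array([[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]])

col_sum = X.sum(axis=0)                      # reduce over axis 0: one value per column
col_sum_kept = X.sum(axis=0, keepdims=True)  # same values, but the summed axis is retained
row_sum_kept = X.sum(axis=1, keepdims=True)  # one value per row, kept as a column

print(col_sum.shape, col_sum_kept.shape, row_sum_kept.shape)  # (3,) (1, 3) (2, 1)
```

# Keeping the summed axis is what later allows a `(2, 1)` row-sum tensor to broadcast against the original `(2, 3)` tensor in a division.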

# + [markdown] origin_pos=12

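# Recall that [**implementing softmax**] consists of three steps:
#
# 1. exponentiate each term (using `exp`);
# 1. sum over each row (each example in the minibatch is one row) to get the normalization constant for each example;
# 1. divide each row by its normalization constant, ensuring that the result sums to 1.
#
# Before looking at the code, let us recall the expression:
#
# (**
# $$
# \mathrm{softmax}(\mathbf{X})_{ij} = \frac{\exp(\mathbf{X}_{ij})}{\sum_k \exp(\mathbf{X}_{ik})}.
# $$
# **)
#
# The denominator, or normalization constant, is sometimes called the *partition function* (and its logarithm the log-partition function).
# The name comes from [statistical physics](https://en.wikipedia.org/wiki/Partition_function_(statistical_mechanics)), where a related equation models the distribution over an ensemble of particles.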

#

# + origin_pos=13 tab=["tensorflow"]

def softmax(X):
    X_exp = tf.exp(X)
    partition = tf.reduce_sum(X_exp, 1, keepdims=True)
    return X_exp / partition  # broadcasting is applied here

# + [markdown] origin_pos=15

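# As you can see, for any random input, [**we turn each element into a nonnegative number.
# Moreover, each row sums to 1, as required for a probability distribution**].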

#

# + origin_pos=17 tab=["tensorflow"]

X = tf.random.normal((2, 5), 0, 1)

X_prob = softmax(X)

X_prob, tf.reduce_sum(X_prob, 1)

# + [markdown] origin_pos=18

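# Note that although this looks mathematically correct, our implementation is a bit sloppy.
# Very large or very small elements in the matrix can cause numerical overflow or underflow, and we took no measures to guard against this.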
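#
# One common safeguard, which this section's implementation does not use, is to subtract each row's maximum before exponentiating. This leaves the result mathematically unchanged but keeps `exp` from overflowing. A minimal NumPy sketch, with `stable_softmax` as an illustrative name rather than part of the original code:

```python
import numpy as np

def stable_softmax(X):
    # Shifting a row by its max does not change exp(x_ij) / sum_k exp(x_ik),
    # but it guarantees the largest exponent in each row is exp(0) = 1.
    shifted = X - X.max(axis=1, keepdims=True)
    X_exp = np.exp(shifted)
    return X_exp / X_exp.sum(axis=1, keepdims=True)

# Inputs this large would overflow a naive exp-then-normalize implementation.
X = np.array([[1000.0, 1001.0, 1002.0], [-1000.0, 0.0, 1000.0]])
probs = stable_softmax(X)
print(probs.sum(axis=1))
```

# Very negative shifted entries may still underflow to 0, which is harmless here because the row maximum always contributes 1 to the denominator.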

#

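# ## Defining the Model
#
# With the softmax operation defined, we can [**implement the softmax regression model**].
# The code below defines how the input is mapped to the output through the network.
# Note that we use the `reshape` function to flatten each original image into a vector before passing the data to the model.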

#

# + origin_pos=19 tab=["tensorflow"]

def net(X):
    return softmax(tf.matmul(tf.reshape(X, (-1, W.shape[0])), W) + b)

# + [markdown] origin_pos=20

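# ## Defining the Loss Function
#
# Next, we implement the cross-entropy loss function introduced in :numref:`sec_softmax`.
# This may be the most common loss function in deep learning, because at present classification problems far outnumber regression problems.
#
# Recall that cross-entropy takes the negative log-likelihood of the predicted probability assigned to the true label.
# Rather than iterating over the predictions with a Python for-loop (which tends to be inefficient),
# we select all elements with a single operator.
# Below, we [**create sample data `y_hat` containing the predicted probabilities of 2 examples over 3 classes,
# along with their corresponding labels `y`.**]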