code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python (ml)

# language: python

# name: ml

# ---

# ## Exploring Activations

# I want to look at the activations generated by our network. This is mostly just out of curiosity since I wouldn't expect any big problems with ResNets.

# +

# %reload_ext autoreload

# %autoreload 2

# %matplotlib inline

from fastai.callbacks import ActivationStats, HookCallback

from fastai.vision import *

import pandas as pd

import os

# +

DATA = Path('data')

CSV_TRN_CURATED = DATA/'train_curated.csv'

CSV_TRN_NOISY = DATA/'train_noisy.csv'

CSV_TRN_MERGED = DATA/'train_merged.csv'

CSV_SUBMISSION = DATA/'sample_submission.csv'

TRN_CURATED = DATA/'train_curated'

TRN_NOISY = DATA/'train_noisy'

TEST = DATA/'test'

WORK = Path('work')

IMG_TRN_CURATED = WORK/'image/trn_curated'

IMG_TRN_NOISY = WORK/'image/trn_noisy'

IMG_TRN_MERGED = WORK/'image/trn_merged'

IMG_TEST = WORK/'image/test'

for folder in [WORK, IMG_TRN_CURATED, IMG_TRN_NOISY, IMG_TEST]:

Path(folder).mkdir(exist_ok=True, parents=True)

train_df = pd.read_csv(CSV_TRN_CURATED)

train_noisy_df = pd.read_csv(CSV_TRN_NOISY)

test_df = pd.read_csv(CSV_SUBMISSION)

# +

tfms = get_transforms(do_flip=True, max_rotate=0, max_lighting=0.1, max_zoom=0, max_warp=0.)

src = (ImageList.from_csv(WORK/'image', Path('../../')/CSV_TRN_CURATED, folder='trn_curated', suffix='.jpg')

.split_by_rand_pct(0.2)

.label_from_df(label_delim=',')

)

data = (src.transform(tfms, size=128)

.databunch(bs=64).normalize(imagenet_stats)

)

# +

# Modified from: https://forums.fast.ai/t/confused-by-output-of-hook-output/29514/4

class StoreHook(HookCallback):

def on_train_begin(self, **kwargs):

super().on_train_begin(**kwargs)

self.hists = []

def hook(self, m, i, o):

return o

def on_batch_end(self, train, **kwargs):

if (train):

self.hists.append(self.hooks.stored[0].cpu().histc(40,0,10))

# Simply pass in a learner and the module you would like to instrument

def probeModule(learn, module):

hook = StoreHook(learn, modules=flatten_model(module))

learn.callbacks += [ hook ]

return hook

# Thanks to @ste for the initial version of the histogram plotting code

def get_hist(h):

return torch.stack(h.hists).t().float().log1p()

# -
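# The hook above bins each batch of activations into 40 equal-width buckets on [0, 10] and later plots `log1p` of the counts. As a rough illustration of that binning step only, here is a pure-Python sketch. The helper names `histc` and `log_hist` and the sample values are hypothetical, and the handling of out-of-range values (skipped) is an assumption about how `torch.histc` behaves rather than a reimplementation of it.

```python
import math

def histc(values, bins=40, lo=0.0, hi=10.0):
    """Count values into equal-width bins on [lo, hi]; out-of-range values are skipped."""
    counts = [0] * bins
    width = (hi - lo) / bins
    for v in values:
        if v < lo or v > hi:
            continue
        # A value equal to `hi` is placed in the last bin.
        idx = min(int((v - lo) / width), bins - 1)
        counts[idx] += 1
    return counts

def log_hist(counts):
    """Compress the dynamic range so sparsely populated bins stay visible in a plot."""
    return [math.log1p(c) for c in counts]

activations = [0.0, 0.1, 0.3, 2.6, 9.99, 10.0, 12.0, -1.0]
counts = histc(activations)
print(counts[0], counts[1], counts[10], counts[39])
```

# Stacking one such histogram per training batch gives the image that `get_hist` returns: one column per batch, one row per activation bucket.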

f_score = partial(fbeta, thresh=0.2)

learn = cnn_learner(data, models.resnet18, pretrained=False, metrics=[f_score])

learn.unfreeze()

# +

#Hook the output of the first conv and each resnet block.

hooks = [probeModule(learn, learn.model[0][0]),

probeModule(learn, learn.model[0][4]),

probeModule(learn, learn.model[0][5]),

probeModule(learn, learn.model[0][6]),

probeModule(learn, learn.model[0][7])]

names = ['conv1',

'conv2_x',

'conv3_x',

'conv4_x',

'conv5_x']

learn.fit_one_cycle(10, max_lr=1e-2)

# +

fig,axes = plt.subplots(5, figsize=(15,10))

for i, (ax,h) in enumerate(zip(axes.flatten(), hooks)):

ax.imshow(get_hist(h), origin='lower', aspect='auto')

ax.axis('off')

ax.text(0, -5, names[i], bbox={'facecolor':'red', 'alpha':0.0, 'pad':10})

plt.tight_layout()

# +

#Hook the output of the first conv and each resnet block.

hooks = [probeModule(learn, learn.model[0][0]),

probeModule(learn, learn.model[0][4]),

probeModule(learn, learn.model[0][5]),

probeModule(learn, learn.model[0][6]),

probeModule(learn, learn.model[0][7])]

names = ['conv1',

'conv2_x',

'conv3_x',

'conv4_x',

'conv5_x']

learn.fit_one_cycle(10, max_lr=slice(1e-6, 1e-2))

# +

fig,axes = plt.subplots(5, figsize=(15,10))

for i, (ax,h) in enumerate(zip(axes.flatten(), hooks)):

ax.imshow(get_hist(h), origin='lower', aspect='auto')

ax.axis('off')

ax.text(0, -5, names[i], bbox={'facecolor':'red', 'alpha':0.0, 'pad':10})

plt.tight_layout()

# -

| 01_ExploringActivations.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

import warnings

warnings.filterwarnings('ignore')

import numpy as np

from tqdm.autonotebook import tqdm

import gym

import time

# %matplotlib inline

import matplotlib.pyplot as plt

# -

def moving_average(values, n=100) :

ret = np.cumsum(values, dtype=float)

ret[n:] = ret[n:] - ret[:-n]

return ret[n - 1:] / n

env = gym.make('FrozenLake-v0')

policy_to_action = {0:"L",1:"D",2:"R",3:"U"}

ACTION_DIM = env.action_space.n

MAX_STEPS = env.spec.max_episode_steps

STATE_DIM = env.observation_space.n

NUM_EPISODES = 1000000

START_ALPHA = 0.1

ALPHA_TAPER = 0.01

START_EPSILON = 1

EPSILON_TAPER = 0.0001

GAMMA = 0.9

# +

Q = np.zeros((STATE_DIM,ACTION_DIM),dtype=np.float64)

state_visits_count = {}

update_counts = np.zeros((STATE_DIM,ACTION_DIM),dtype=np.int64)

def updateQ( prev_state,action,reward,cur_state):

alpha = START_ALPHA / ( 1 + update_counts[prev_state][action]*ALPHA_TAPER )

update_counts[prev_state][action] += 1

Q[prev_state][action] += alpha * ( reward + GAMMA * np.max(Q[cur_state]) - Q[prev_state][action] )

def epsilon_greedy(s,eps=START_EPSILON):
    # Explore with probability eps, otherwise exploit the current Q estimate.
    if np.random.random() > eps:
        return np.argmax(Q[s])
    else:
        return env.action_space.sample()
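# To make the update rule in `updateQ` concrete, here is a minimal worked example of a single tabular Q-learning step on a made-up two-state problem. The function `q_update` and the toy table are hypothetical; a fixed `alpha` is used instead of the tapered learning rate above.

```python
def q_update(q, state, action, reward, next_state, alpha=0.1, gamma=0.9):
    """Apply one Q-learning step in place and return the updated entry."""
    best_next = max(q[next_state])                      # greedy value of the next state
    td_error = reward + gamma * best_next - q[state][action]
    q[state][action] += alpha * td_error
    return q[state][action]

# Two states, two actions; only Q[1][1] has a non-zero estimate so far.
q = [[0.0, 0.0], [0.0, 1.0]]
new_value = q_update(q, state=0, action=1, reward=0.0, next_state=1)
print(new_value)
```

# The target here is 0 + 0.9 * 1.0, so the entry moves one tenth of the way from 0 towards 0.9.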

# +

total_rewards = 0

deltas = []

verbose = True

start = time.time()

for episode in tqdm(range(NUM_EPISODES),desc = "Progress : "):

eps = START_EPSILON / ( 1.0 + EPSILON_TAPER * episode )

if verbose and episode % (NUM_EPISODES/10) == 0:

print("EPISODES : {} | AVG_REWARD : {} | EPSILON : {}".format(episode,total_rewards/(NUM_EPISODES/10),eps))

total_rewards=0

biggest_change = 0

curr_state = env.reset()

for _ in range(MAX_STEPS):

action = epsilon_greedy(curr_state,eps=eps)

state_visits_count[curr_state] = state_visits_count.get(curr_state,0)+1

prev_state = curr_state

curr_state, reward, done, _ = env.step(action)

total_rewards += reward

oldq = Q[prev_state][action]

updateQ(prev_state,action,reward,curr_state)

biggest_change = max( biggest_change , np.abs( oldq - Q[prev_state][action] ))

if done:

break

deltas.append(biggest_change)

mean_state_visit = np.mean( list(state_visits_count.values()) )

print('EACH STATE WAS VISITED {} TIMES ON AN AVERAGE'.format( mean_state_visit ))

Value_F = np.zeros(STATE_DIM)

Policy_F = np.zeros(STATE_DIM)

for s in range(STATE_DIM):

Value_F[s] = np.max(Q[s])

Policy_F[s] = np.argmax(Q[s])

print("TIME TAKEN {} ".format(time.time()-start))

gpolicy = list(map(lambda a: policy_to_action[a],Policy_F))

print("Optimal Policy :\n {} ".format(np.reshape(gpolicy,(int(np.sqrt(STATE_DIM)),int(np.sqrt(STATE_DIM))))))

print("Optimal Values :\n {}".format(np.reshape(Value_F,(int(np.sqrt(STATE_DIM)),int(np.sqrt(STATE_DIM))))))

plt.plot(moving_average(deltas,n=10000))

plt.show()

# +

"""

Let's see our success rate

"""

games = 1000

won = 0

for _ in range(games):

state = env.reset()

while True:

action = int(Policy_F[state])

(state,reward,is_done,_) = env.step(action)

if is_done:

if reward>0:

won+=1

env.close()

break

print("Success Rate : {}".format(won/games))

# -

| Homework-Assignments/Week 4 - Frozen Lake (Q Learning).ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

import gym

import inventory

import torch

import numpy as np

import matplotlib.pyplot as plt

from stable_baselines3 import PPO

from stable_baselines3.ppo import MlpPolicy

from stable_baselines3.common.env_util import make_vec_env

from stable_baselines3.common.evaluation import evaluate_policy

from stable_baselines3.common.callbacks import EvalCallback

from inventory.envs.inventory_env import Inventory

# +

p = 1

L = 1

load_path = './results/logs2/' + str(p) + "_" + str(L)

policy_data = np.load(load_path + "/evaluations.npz") #load in training data for model

n_episodes = len(policy_data['ep_lengths'][0]) #how many episodes were used to evaluate the policy?

n_evals = len(policy_data['timesteps']) #how many times was the policy evaluated as it was trained?

policy_performance = []

for i in range(0, n_evals): #calculate mean policy performance for each time the policy was evaluated as it was trained

policy_performance.append(-float(sum(policy_data['results'][i])/n_episodes))

const_order_val = (2*p + 1)**(1/2)-1 #policy performance of best constant order policy

plt.plot(policy_data['timesteps'], policy_performance)

plt.xlabel("Number of Learning Steps")

plt.ylabel("Approximate Long Run Average Cost")

plt.axhline(y = const_order_val, color = 'red', linestyle = '--')

plt.title("Trained Policy Performance vs Learning Steps Completed (L = {}, p = {})".format(L,p))

plt.show()

# +

p = 1

L = 1 #only use for L=1!!!

load_path = './results/logs2/' + str(p) + "_" + str(L)

model = PPO.load(load_path + "/best_model.zip")

def g(x,y): #assuming you have I_t = x and x_{1,t} = y, get the deterministic action from trained policy

action, _states = model.predict(np.array([x,y]), deterministic = True)

return action

#evaluate actions on a grid for I_t, x_{1,t} in [0,2]x[0,2]

x = np.linspace(0, 2, 30)

y = np.linspace(0, 2, 30)

z = []

for j in y:

for i in x:

z.append(g(i,j))

X, Y = np.meshgrid(x, y)

Z = np.array(z).reshape(30,30) #reshape for plotting with contourf

plt.contourf(X, Y, Z, 20)

plt.xlabel("I_t (On Hand Inventory)")

plt.ylabel("x_{1,t} (Inventory Order in Pipeline)")

plt.title("Trained Policy Mean Action (L = 1, p = {})".format(p))

plt.colorbar();

# -

| .ipynb_checkpoints/PPO_policy_plotting-checkpoint.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import pandas as pd

import numpy as np

import pickle

from sklearn.decomposition import PCA

from sklearn.preprocessing import StandardScaler

# # Color

# read color features

color_features = pd.read_csv('../data/color_features.csv', sep=',', header=None)

color_features

# create pca

pca_color = PCA(100)

# fit pca

pca_color.fit(color_features)

# save pca model

with open('../data/pca_color.pckl', 'wb') as handle:

pickle.dump(pca_color, handle, protocol=pickle.HIGHEST_PROTOCOL)

# transform features

color_features_pca = pca_color.transform(color_features)

print('dimension', color_features_pca.shape)

# save new features

np.savetxt('../data/color_features_pca.csv', color_features_pca, delimiter=',')

# # Color center subregions

# read color features

color_features = pd.read_csv('../data/color_features_center_subregions.csv', sep=',', header=None)

color_features

# create pca

pca_color = PCA(100)

# fit pca

pca_color.fit(color_features)

# save pca model

with open('../data/pca_color_center_subregions.pckl', 'wb') as handle:

pickle.dump(pca_color, handle, protocol=pickle.HIGHEST_PROTOCOL)

# transform features

color_features_pca = pca_color.transform(color_features)

print('dimension', color_features_pca.shape)

# save new features

np.savetxt('../data/color_features_center_subregions_pca.csv', color_features_pca, delimiter=',')

# # HOG

# read hog features

hog_features = pd.read_csv('../data/hog_features.csv', sep=',', header=None)

hog_features

# create pca

pca_hog = PCA(100)

# fit pca

pca_hog.fit(hog_features)

# save pca model

with open('../data/pca_hog.pckl', 'wb') as handle:

pickle.dump(pca_hog, handle, protocol=pickle.HIGHEST_PROTOCOL)

# transform features

hog_features_pca = pca_hog.transform(hog_features)

print('dimension', hog_features_pca.shape)

# save new features

np.savetxt('../data/hog_features_pca.csv', hog_features_pca, delimiter=',')

# # Neural Network EfficientNet

# read nn features

nn_features = pd.read_csv('../data/nn_features.csv', sep=',', header=None)

nn_features

# create pca

pca_nn = PCA(100)

# fit pca

pca_nn.fit(nn_features)

# save pca model

with open('../data/pca_nn.pckl', 'wb') as handle:

pickle.dump(pca_nn, handle, protocol=pickle.HIGHEST_PROTOCOL)

# transform features

nn_features_pca = pca_nn.transform(nn_features)

print('dimension', nn_features_pca.shape)

# save new features

np.savetxt('../data/nn_features_pca.csv', nn_features_pca, delimiter=',')

# # Neural Network ResNet

# read nn features

nn_features = pd.read_csv('../data/nn_resnet_features.csv', sep=',', header=None)

nn_features

# create pca

pca_nn = PCA(100)

# fit pca

pca_nn.fit(nn_features)

# save pca model

with open('../data/pca_nn_resnet.pckl', 'wb') as handle:

pickle.dump(pca_nn, handle, protocol=pickle.HIGHEST_PROTOCOL)

# transform features

nn_features_pca = pca_nn.transform(nn_features)

print('dimension', nn_features_pca.shape)

# save new features

np.savetxt('../data/nn_resnet_features_pca.csv', nn_features_pca, delimiter=',')

# # HOG + Color

### read HOG features

hog_features = pd.read_csv('../data/HOG_features.csv', sep=',', header=None)

# create pca hog

pca_hc_hog = PCA(100)

# fit pca hog

pca_hc_hog.fit(hog_features)

print('New dimension:', pca_hc_hog.n_components_)

# save pca hog model

with open('../data/pca_hc_hog.pckl', 'wb') as handle:

pickle.dump(pca_hc_hog, handle, protocol=pickle.HIGHEST_PROTOCOL)

# transform features

hog_features_pca = pca_hc_hog.transform(hog_features)

print('dimension', hog_features_pca.shape)

### read color features

color_features = pd.read_csv('../data/color_features.csv', sep=',', header=None)

# create pca color

pca_hc_color = PCA(200)

# fit pca color

pca_hc_color.fit(color_features)

print('New dimension:', pca_hc_color.n_components_)

# save pca color model

with open('../data/pca_hc_color.pckl', 'wb') as handle:

pickle.dump(pca_hc_color, handle, protocol=pickle.HIGHEST_PROTOCOL)

# transform features

color_features_pca = pca_hc_color.transform(color_features)

print('dimension', color_features_pca.shape)

### merge features

merged_features_pca = np.hstack([hog_features_pca, color_features_pca])

print('dimension', merged_features_pca.shape)

# save merged features

np.savetxt('../data/hog_color_features_pca.csv', merged_features_pca, delimiter=',')

# +

# # standardize data

# scaler = StandardScaler()

# scaler.fit(merged)

# +

# # save scaler model

# with open('../data/scaler_std.pckl', 'wb') as handle:

# pickle.dump(scaler, handle, protocol=pickle.HIGHEST_PROTOCOL)

# +

# merged = scaler.transform(merged)

# +

# merged.shape

# +

# pca = PCA(0.95)

# +

# pca.fit(merged)

# +

# # save pca model

# with open('../data/pca_std.pckl', 'wb') as handle:

# pickle.dump(pca, handle, protocol=pickle.HIGHEST_PROTOCOL)

# +

# pca_merged = pca.transform(merged)

# +

# pca_merged.shape

# +

# np.savetxt('../data/merged_color_hog_pca_std.csv', pca_merged, delimiter=',')

| notebooks/FeaturesPCA.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Neighborhood definitions

# In py-clEsperanto (and in [CLIJ as well](https://clij.github.io/clij2-docs/md/neighbors_of_neighbors/)), we use adjacency graphs to investigate relationships between neighboring labeled objects, in practice: cells.

#

# This notebook demonstrates the considered neighborhood definitions.

#

# By default, the "neighborhood" of a pixel always includes the pixel itself. That sounds intuitive at first, but it leads to unnatural behaviour in some situations.

#

# Work is in progress. Feedback is very welcome: robert.haase at tu-dresden.de

# +

import pyclesperanto_prototype as cle

import numpy as np

import matplotlib

from numpy.random import random

cle.select_device("RTX")

# +

# Generate artificial cells as test data

tissue = cle.artificial_tissue_2d()

# fill it with random measurements

values = random([int(cle.maximum_of_all_pixels(tissue))])

for i, y in enumerate(values):

if (i != 95):

values[i] = values[i] * 10 + 45

else:

values[i] = values[i] * 10 + 90

measurements = cle.push(np.asarray([values]))

# visualize measurements in space

example_image = cle.replace_intensities(tissue, measurements)

# -

# ## Example data

# Let's take a look at an image with arbitrarily shaped pixels. Let's call them "cells". In our example image, there is one cell in the center with higher intensity:

cle.imshow(example_image, min_display_intensity=30, max_display_intensity=90, color_map='jet')

# ## Touching neighbors

# We can show all cells that belong to the "touch" neighborhood by computing the local maximum intensity in this neighborhood. Let's visualize the touching neighbor graph as mesh first.

# +

mesh = cle.draw_mesh_between_touching_labels(tissue)

# make lines a bit thicker for visualization purposes

mesh = cle.maximum_sphere(mesh, radius_x=1, radius_y=1)

cle.imshow(mesh)

# -

# From such a neighbor graph one can compute local properties, for example the maximum:

# +

local_maximum = cle.maximum_of_touching_neighbors_map(example_image, tissue)

cle.imshow(local_maximum, min_display_intensity=30, max_display_intensity=90, color_map='jet')

# -

# ## Neighbors of touching neighbors

# You can also extend the neighborhood by considering neighbors of neighbors (of neighbors (of neighbors)). How far you go can be configured with a radius parameter. Note: radius==0 means no neighbors are taken into account, radius==1 is identical to touching neighbors, and radius > 1 includes neighbors of neighbors:

for radius in range(0, 5):

local_maximum = cle.maximum_of_touching_neighbors_map(example_image, tissue, radius=radius)

cle.imshow(local_maximum, min_display_intensity=30, max_display_intensity=90, color_map='jet')
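# The radius semantics described above can be sketched on a plain adjacency graph. This is only an illustration: the dictionaries below stand in for the label image and its measurements, and `neighbors_within_radius` and `local_max_map` are hypothetical helpers, not pyclesperanto functions.

```python
def neighbors_within_radius(adjacency, start, radius):
    """Labels reachable from `start` in at most `radius` hops, `start` included."""
    reached = {start}
    frontier = {start}
    for _ in range(radius):
        # Expand by one hop and keep only labels not seen before.
        frontier = {n for label in frontier for n in adjacency[label]} - reached
        reached |= frontier
    return reached

def local_max_map(adjacency, values, radius):
    """Maximum measurement within each label's radius-limited neighborhood."""
    return {
        label: max(values[n] for n in neighbors_within_radius(adjacency, label, radius))
        for label in adjacency
    }

# Four cells in a row: 1 - 2 - 3 - 4, with one bright cell at the end.
adjacency = {1: {2}, 2: {1, 3}, 3: {2, 4}, 4: {3}}
values = {1: 50, 2: 47, 3: 52, 4: 95}

for radius in range(3):
    print(radius, local_max_map(adjacency, values, radius))
```

# With radius 0 every cell keeps its own value; each additional hop lets the bright cell's value spread one cell further.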

# ## N nearest neighbors

# You can also define a neighborhood from the distances between cells. As the distance measure, we use the Euclidean distance between label centroids. In this case, too, you can configure how far the neighborhood should range by setting the number of nearest neighbors n. As mentioned above, neighborhoods include the center pixel. Thus, the neighborhood of a pixel and its nearest neighbor contains two neighbors:

for n in range(1, 10):

print("n = ", n)

mesh = cle.draw_mesh_between_n_closest_labels(tissue, n=n)

# make lines a bit thicker for visualization purposes

mesh = cle.maximum_sphere(mesh, radius_x=1, radius_y=1)

cle.imshow(mesh)

for n in range(1, 10):

print("n = ", n)

local_maximum = cle.maximum_of_n_nearest_neighbors_map(example_image, tissue, n=n)

cle.imshow(local_maximum, min_display_intensity=30, max_display_intensity=90, color_map='jet')
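# As a small sketch of the "n nearest neighbors" definition, the snippet below ranks cells by Euclidean centroid distance and counts the cell itself as its own closest neighbor. The centroid coordinates and the helper `n_nearest` are made up for illustration and are not part of pyclesperanto.

```python
import math

def n_nearest(centroids, label, n):
    """The n labels closest to `label` by centroid distance, `label` itself included."""
    origin = centroids[label]
    by_distance = sorted(centroids, key=lambda other: math.dist(origin, centroids[other]))
    return by_distance[:n]

centroids = {1: (0.0, 0.0), 2: (1.0, 0.0), 3: (5.0, 0.0), 4: (6.0, 1.0)}

print(n_nearest(centroids, 1, 1))
print(n_nearest(centroids, 1, 2))
print(n_nearest(centroids, 3, 2))
```

# Under this convention n=1 selects only the cell itself, and n=2 adds its single nearest neighbor.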

# ## Proximal neighbors

# We can also compute the local maximum of cells with centroid distances below a given upper threshold:

local_maximum = cle.maximum_of_proximal_neighbors_map(example_image, tissue, max_distance=20)

cle.imshow(local_maximum, min_display_intensity=30, max_display_intensity=90, color_map='jet')

local_maximum = cle.maximum_of_proximal_neighbors_map(example_image, tissue, max_distance=50)

cle.imshow(local_maximum, min_display_intensity=30, max_display_intensity=90, color_map='jet')
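# The proximal definition replaces the neighbor count with a distance threshold. A minimal sketch, again with made-up centroids and a hypothetical helper `proximal`; whether the threshold is inclusive in pyclesperanto is an assumption here.

```python
import math

def proximal(centroids, label, max_distance):
    """Labels whose centroid lies within `max_distance` of `label` (inclusive)."""
    origin = centroids[label]
    return sorted(
        other for other, c in centroids.items()
        if math.dist(origin, c) <= max_distance
    )

centroids = {1: (0.0, 0.0), 2: (15.0, 0.0), 3: (40.0, 0.0), 4: (90.0, 0.0)}

print(proximal(centroids, 1, 20))
print(proximal(centroids, 1, 50))
```

# Raising the threshold from 20 to 50 pulls one more cell into the neighborhood, which mirrors the two `max_distance` settings used above.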

| demo/neighbors/neighborhood_definitions.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Prediction intervals for univariate linear regression

# +

import numpy as np

from scipy import stats

from sklearn.linear_model import LinearRegression

import matplotlib.pyplot as plt

# -

# # True regression line

# * $y = x + 1$

#

# # Observations

# * $x = 0.0, 0.5, 1.0, \dots, 10.0$

# * Sample size: 21

# * $Y_i = x_i + 1 + \varepsilon_i, \quad \varepsilon_i \sim N(0, 0.5^2)$
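#

# For reference, these are the two intervals that the `Experiment` class computes, written out from its code. Here $N$ is the sample size, $\bar{x}$ the mean of the $x_i$, $S_{xx} = \sum_i (x_i - \bar{x})^2$, $\hat{y}(x) = \hat{\beta}_0 + \hat{\beta}_1 x$ the fitted line, $s^2 = \frac{1}{N-2} \sum_i (Y_i - \hat{\beta}_0 - \hat{\beta}_1 x_i)^2$, and $t_{0.975}(N-2)$ the upper 2.5% point of the t distribution with $N-2$ degrees of freedom.

#

# 95% confidence interval for the mean response at $x$:

#

# $$\hat{y}(x) \pm t_{0.975}(N-2)\, s \sqrt{\frac{1}{N} + \frac{(x - \bar{x})^2}{S_{xx}}}$$

#

# 95% prediction interval for a new observation at $x$:

#

# $$\hat{y}(x) \pm t_{0.975}(N-2)\, s \sqrt{1 + \frac{1}{N} + \frac{(x - \bar{x})^2}{S_{xx}}}$$

#

# The extra $1$ under the square root accounts for the noise of the new observation itself, which is why the prediction band is always wider than the confidence band.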

# Experiment settings

SAMPLE_SIZE = 21

SIGMA = 0.5

# Class that manages a single experiment

class Experiment:

def __init__(self, random_seed, sigma, sample_size):

np.random.seed(random_seed)

# Experiment settings

self.sigma = sigma

self.sample_size = sample_size

# Generate the sample

self.x_train = np.array([0.5 * i for i in range(sample_size)])

self.y_true = self.x_train + 1

self.y_train = self.y_true + np.random.normal(0.0, sigma, sample_size)

# Compute the regression coefficients

self.x_mean = np.mean(self.x_train)

self.s_xx = np.sum((self.x_train - self.x_mean) ** 2)

self.y_mean = np.mean(self.y_train)

self.s_xy = np.sum((self.x_train - self.x_mean) * (self.y_train - self.y_mean))

# Regression coefficients

self.coef = self.s_xy / self.s_xx

self.intercept = self.y_mean - self.coef * self.x_mean

# Unbiased estimate of the residual variance

s2 = np.sum((self.y_train - self.intercept - self.coef * self.x_train) ** 2) / (sample_size - 2)

self.s = np.sqrt(s2)

# Upper 2.5% point of the t distribution with N-2 degrees of freedom

self.t = stats.t.ppf(1-0.025, df=sample_size-2)

# Get a sample data point

def get_sample(self, index):

return (self.x_train[index], self.y_train[index])

# Prediction

def predict(self, x):

return self.intercept + self.coef * x

# True value

def calc_true_value(self, x):

return x + 1

# 95% confidence interval

def calc_confidence_interval(self, x):

band = self.t * self.s * np.sqrt(1 / self.sample_size + (x - self.x_mean)**2 / self.s_xx)

upper_confidence = self.predict(x) + band

lower_confidence = self.predict(x) - band

return (lower_confidence, upper_confidence)

# 95% prediction interval

def calc_prediction_interval(self, x):

band = self.t * self.s * np.sqrt(1 + 1 / self.sample_size + (x - self.x_mean)**2 / self.s_xx)

upper_confidence = self.predict(x) + band

lower_confidence = self.predict(x) - band

return (lower_confidence, upper_confidence)

# Plot the observations and the 95% prediction interval

def plot(self):

# Training data

plt.scatter(self.x_train, self.y_train, color='royalblue', alpha=0.2)

# Prediction interval

lower_confidence, upper_confidence = self.calc_prediction_interval(self.x_train)

plt.plot(self.x_train, upper_confidence, color='green', linestyle='dashed', label='95% prediction interval')

plt.plot(self.x_train, lower_confidence, color='green', linestyle='dashed')

x_max = max(self.x_train)

plt.xlim([0, x_max])

plt.ylim([0.5, x_max + 1.5])

plt.legend();

# Plot the observations, the 95% prediction interval, and the 95% confidence interval

def plot_with_confidence(self):

# Training data

plt.scatter(self.x_train, self.y_train, color='royalblue', alpha=0.2)

# Confidence interval

lower_confidence, upper_confidence = self.calc_confidence_interval(self.x_train)

plt.plot(self.x_train, upper_confidence, color='royalblue', linestyle='dashed', label='95% confidence interval')

plt.plot(self.x_train, lower_confidence, color='royalblue', linestyle='dashed')

# Prediction interval

lower_confidence, upper_confidence = self.calc_prediction_interval(self.x_train)

plt.plot(self.x_train, upper_confidence, color='green', linestyle='dashed', label='95% prediction interval')

plt.plot(self.x_train, lower_confidence, color='green', linestyle='dashed')

x_max = max(self.x_train)

plt.xlim([0, x_max])

plt.ylim([0.5, x_max + 1.5])

plt.legend();

# Plot the prediction interval and the observation at a given x

def plot_at_x(self, x):

plt.xlim([x-0.5, x+0.5])

plot_x = np.array([x-0.5, x, x+0.5])

plot_y = self.predict(plot_x)

# Training data

index = int(2 * x)

plt.scatter(self.x_train[index], self.y_train[index], color='royalblue', label='sample')

# Prediction interval

lb, ub = self.calc_prediction_interval(x)

error = (ub - lb) / 2

plt.errorbar(plot_x[1], plot_y[1], fmt='o', yerr=error, capsize=5, color='green', label='95% prediction interval')

plt.xlim([x-0.5, x+0.5])

plt.legend();

# # Experiments

# +

# Compute the 95% prediction interval from the observed sample

random_seed = 12

experiment = Experiment(random_seed, SIGMA, SAMPLE_SIZE)

experiment.plot()

# +

# Compute the 95% prediction and confidence intervals from the observed sample

random_seed = 12

experiment = Experiment(random_seed, SIGMA, SAMPLE_SIZE)

experiment.plot_with_confidence()

# -

# ## A case where the observation falls inside the prediction interval

x = 2.5

experiment.plot_at_x(x)

# ## A case where the observation falls outside the prediction interval

x = 4.5

experiment.plot_at_x(x)

# # Repeat the experiment 10,000 times

# * Measure the fraction of new observations that fall inside the prediction interval

# +

experiment_count = 10000

count = 0

for i in range(experiment_count):

experiment = Experiment(i, SIGMA, SAMPLE_SIZE)

x = np.random.uniform(0, 10, 1)[0]

y = x + 1 + np.random.normal(0.0, SIGMA, 1)

lb, ub = experiment.calc_prediction_interval(x)

# Check whether the observation falls inside the prediction interval

count += 1 if (lb <= y and y <= ub) else 0

print('Fraction of observations inside the prediction interval: {:.1f}%'.format(100 * count / experiment_count))

| scikit-learn/SimpleLinearRegression/PredictionInterval.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

from pyspark import SparkContext, SparkConf

import os

import sys

sc.stop()

# +

dataset = "./data-1-sample.txt"

conf = (SparkConf()

.setAppName("hamroun"))

sc = SparkContext(conf=conf)

# +

#Data Loading

print("Data Loading")

original_data = sc.textFile(dataset)

original_data = original_data.map(lambda s: float(s))

#Definition of the number of groups

nb_groups = 10.0

count = original_data.count()

sum = original_data.sum()

max_data = original_data.max()

min_data = original_data.min()

quotient = round( (max_data/nb_groups) - (min_data/nb_groups))

print("number of groups =", nb_groups)

print("Count =", count)

print("Sum = %.8f" % sum)

print("Max = %.8f" % max_data)

print("Min = %.8f" % min_data)

print("quotient = %.2f" % quotient)

print("creation of key-value RDD")

keys_value_data = original_data.map(lambda number: ((int( number/quotient - min_data/quotient )),number))

keys_data = keys_value_data.groupByKey()

len_by_key = keys_data.mapValues(len).sortByKey()

list_lengths = len_by_key.values().collect()

list_keys = len_by_key.keys().collect()

accumulated_number = 0

print("Searching for the group which contains the median.")

for current_id,current_length in zip(list_keys,list_lengths ):

accumulated_number += current_length

if(accumulated_number > count / 2):

break

median_index = int(count / 2 ) - (accumulated_number - current_length)

print("the id of the group which contains the median value : ")

print(current_id)

print("the id of the median in the selected group : ")

print(median_index)

selected_group = keys_data.mapValues(list).lookup(current_id)

median = sorted(selected_group[0])[median_index]

print("median = %.8f" % median)

sc.stop()
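# The same bucketing idea can be tried without Spark. The sketch below is a plain-Python stand-in, not the notebook's RDD pipeline: values are grouped into equal-width buckets, bucket sizes are accumulated until the median's rank is covered, and only that one bucket is sorted. The function name `bucket_median` and the sample data are hypothetical, and for an even count it returns the upper of the two middle values.

```python
from collections import defaultdict

def bucket_median(data, nb_groups=10):
    """Upper median of `data`, found by sorting only the bucket that contains it."""
    lo, hi = min(data), max(data)
    width = (hi - lo) / nb_groups or 1.0   # fall back to 1.0 when all values are equal
    buckets = defaultdict(list)
    for x in data:
        # The maximum would land in bucket nb_groups, so clamp it into the last one.
        buckets[min(int((x - lo) / width), nb_groups - 1)].append(x)

    target = len(data) // 2                # 0-based rank of the upper median
    seen = 0
    for key in sorted(buckets):
        size = len(buckets[key])
        if seen + size > target:
            return sorted(buckets[key])[target - seen]
        seen += size

data = [7.0, 1.0, 3.0, 9.0, 5.0, 2.0, 8.0]
print(bucket_median(data))
```

# Using the size of the bucket where the scan stops, rather than indexing a list by the bucket key, keeps the rank arithmetic correct even when some buckets are empty.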

| median/median.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="z1PSyPUbbo3D"

# ### PART 1 - HOW TO INTERACT WITH APIs

# + colab={"base_uri": "https://localhost:8080/"} id="SQA6Qao9X0lK" outputId="8d810aaf-03f2-4f78-dc8a-99f15143dcd8" language="bash"

# pip install requests

# + [markdown] id="uJSNWsLjYb9C"

# We want to access the latest currency exchange information, so we will be using GET /latest.json.

#

# Clicking on it brings us to the documentation, which tells us the URL we want to send data to is https://openexchangerates.org/api/latest.json.

#

# Let's add that to our program:

#

# - https://docs.openexchangerates.org/

# - https://openexchangerates.org/

#

# + id="6KLlvJ5xX8FC"

import requests

APP_ID = "batman"

ENDPOINT = "https://openexchangerates.org/api/latest.json"

# + [markdown] id="NZVikCVYY6Oi"

# This means that we have to use Python to send a GET request to this URL, making sure to include our App ID in there. There are a few different types of requests, such as GET and POST. This is just another piece of data the API expects. Depending on the request type, sometimes APIs will do different things in a single endpoint.

#

# Then we'll get back something like this (shown also in the official documentation):

#

# ```json

# {

# disclaimer: "https://openexchangerates.org/terms/",

# license: "https://openexchangerates.org/license/",

# timestamp: 1449877801,

# base: "USD",

# rates: {

# AED: 3.672538,

# AFN: 66.809999,

# ALL: 125.716501,

# AMD: 484.902502,

# ANG: 1.788575,

# AOA: 135.295998,

# ARS: 9.750101,

# AUD: 1.390866,

# /* ... */

# }

# }

# ```

#
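# Before sending anything, it can help to see exactly what URL a GET request with an App ID turns into. The sketch below only builds and inspects the URL with the standard library and makes no network call; `build_url` is a hypothetical helper and `YOUR_APP_ID` is a placeholder, not a real credential.

```python
from urllib.parse import urlencode, urlsplit, parse_qs

ENDPOINT = "https://openexchangerates.org/api/latest.json"

def build_url(endpoint, **params):
    """Append URL-encoded query parameters to an endpoint."""
    return f"{endpoint}?{urlencode(params)}"

url = build_url(ENDPOINT, app_id="YOUR_APP_ID")
print(url)

# Parsing the URL back shows the query parameters the server would receive.
print(parse_qs(urlsplit(url).query))
```

# With `requests`, the same result is usually obtained by passing `params={"app_id": ...}` to `requests.get`, which takes care of the encoding.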

# + colab={"base_uri": "https://localhost:8080/"} id="zEesJaBmYJ0B" outputId="825e9fcc-1374-4261-e79b-7091dea6fab4"

import requests

import json

# The API is free, so this time don't mooch off my tokens :D

# The `with` block closes the file automatically, so no explicit close() is needed.

with open("credenziali.json") as jsonFile:

    jsonObject = json.load(jsonFile)

TOKEN = jsonObject['API_TOKEN']

APP_ID = TOKEN

ENDPOINT = "https://openexchangerates.org/api/latest.json"

response = requests.get(f"{ENDPOINT}?app_id={APP_ID}")

print(response.content)

# + colab={"base_uri": "https://localhost:8080/"} id="4bMmv3SOZLAj" outputId="efdefdb5-28f9-42c4-c6f0-4b17d5ccc5d2"

response = requests.get(f"{ENDPOINT}?app_id={APP_ID}")

exchange_rates = response.json()

usd_amount = 1000

gbp_amount = usd_amount * exchange_rates['rates']['GBP']

print(f"USD{usd_amount} is GBP{gbp_amount}")

# + [markdown] id="fxB05bFCbt2D"

# ### PART 2 - HOW TO DEVELOP APIs

# + id="j3RXMHcubxRq" colab={"base_uri": "https://localhost:8080/"} outputId="84b865d5-0af1-44d7-fc7d-da07dfe057c4"

# !git clone https://github.com/mongodb-developer/rewrite-it-in-rust.git

# + colab={"base_uri": "https://localhost:8080/"} id="O0tu27f2clIV" outputId="0ded894d-364e-4c2a-e605-7a50e76fa902"

# %cd /content/rewrite-it-in-rust/flask-cocktail-api

# + colab={"base_uri": "https://localhost:8080/"} id="6FALHZStc2-K" outputId="5dc579c7-ea2e-4b10-f125-f939774d32ae"

# !pip install -e .

# + id="cisy7VXHc6DX"

# !export MONGO_URI="mongodb+srv://USERNAME:<EMAIL>W<EMAIL>@<EMAIL>.azure.mongodb.net/cocktails?retryWrites=true&w=majority"

# + id="t_0ByK8gc_BF"

# !sudo apt-get install gcc python-dev libkrb5-dev

# !python -m pip install 'pymongo[srv]'

# + id="r_W6E77PdBmQ"

# !python -m pip install pymongo[snappy,gssapi,srv,tls]

# + id="Bjr85BqDecVR"

# !mongoimport --uri "$MONGO_URI" --file ./recipes.json

# + id="bMRbWeXheyuK"

# !FLASK_DEBUG=true FLASK_APP=cocktailapi flask run

# + [markdown] id="UC--qedIfVCs"

#

#

# ```json

# {

# "_links": {

# "last": {

# "href": "http://localhost:5000/cocktails/?page=5"

# },

# "next": {

# "href": "http://localhost:5000/cocktails/?page=5"

# },

# "prev": {

# "href": "http://localhost:5000/cocktails/?page=3"

# },

# "self": {

# "href": "http://localhost:5000/cocktails/?page=4"

# }

# },

# "recipes": [

# {

# "_id": "5f7daa198ec9dfb536781b0d",

# "date_added": null,

# "date_updated": null,

# "ingredients": [

# {

# "name": "Light rum",

# "quantity": {

# "unit": "oz",

# }

# },

# {

# "name": "Grapefruit juice",

# "quantity": {

# "unit": "oz",

# }

# },

# {

# "name": "Bitters",

# "quantity": {

# "unit": "dash",

# }

# }

# ],

# "instructions": [

# "Pour all of the ingredients into an old-fashioned glass almost filled with ice cubes",

# "Stir well."

# ],

# "name": "<NAME>",

# "slug": "monkey-wrench"

# },

# ]

# ```

#

#

# + id="gKHzDdM_fR_L"

# model.py

class Cocktail(BaseModel):

id: Optional[PydanticObjectId] = Field(None, alias="_id")

slug: str

name: str

ingredients: List[Ingredient]

instructions: List[str]

date_added: Optional[datetime]

date_updated: Optional[datetime]

def to_json(self):

return jsonable_encoder(self, exclude_none=True)

    def to_bson(self):
        data = self.dict(by_alias=True, exclude_none=True)
        # exclude_none may already have dropped "_id", so avoid a KeyError here.
        if data.get("_id") is None:
            data.pop("_id", None)
        return data

# + id="0xKImiYFfe3b"

# objectid.py

class PydanticObjectId(ObjectId):

"""

ObjectId field. Compatible with Pydantic.

"""

@classmethod

def __get_validators__(cls):

yield cls.validate

@classmethod

def validate(cls, v):

return PydanticObjectId(v)

@classmethod

def __modify_schema__(cls, field_schema: dict):

field_schema.update(

type="string",

examples=["5eb7cf5a86d9755df3a6c593", "5eb7cfb05e32e07750a1756a"],

)

ENCODERS_BY_TYPE[PydanticObjectId] = str

# + id="CDdrqdR2fhvj"

@app.route("/cocktails/", methods=["POST"])
def new_cocktail():
    raw_cocktail = request.get_json()
    raw_cocktail["date_added"] = datetime.utcnow()

    cocktail = Cocktail(**raw_cocktail)
    insert_result = recipes.insert_one(cocktail.to_bson())
    cocktail.id = PydanticObjectId(str(insert_result.inserted_id))
    print(cocktail)

    return cocktail.to_json()

# + id="fuiMgIXxfjJj"

@app.route("/cocktails/<string:slug>", methods=["GET"])
def get_cocktail(slug):
    recipe = recipes.find_one_or_404({"slug": slug})
    return Cocktail(**recipe).to_json()

# + id="6C5-7rb6flF7"

@app.route("/cocktails/")
def list_cocktails():
    """
    GET a list of cocktail recipes.

    The results are paginated using the `page` parameter.
    """
    page = int(request.args.get("page", 1))
    per_page = 10  # A const value.

    # For pagination, it's necessary to sort by name,
    # then skip the number of docs that earlier pages would have displayed,
    # and then to limit to the fixed page size, ``per_page``.
    cursor = recipes.find().sort("name").skip(per_page * (page - 1)).limit(per_page)
    cocktail_count = recipes.count_documents({})
    # Ceiling division, so an exact multiple of `per_page` does not yield an empty last page.
    last_page = max(1, (cocktail_count + per_page - 1) // per_page)

    links = {
        "self": {"href": url_for(".list_cocktails", page=page, _external=True)},
        "last": {"href": url_for(".list_cocktails", page=last_page, _external=True)},
    }
    # Add a 'prev' link if it's not on the first page:
    if page > 1:
        links["prev"] = {
            "href": url_for(".list_cocktails", page=page - 1, _external=True)
        }
    # Add a 'next' link if it's not on the last page:
    if page < last_page:
        links["next"] = {
            "href": url_for(".list_cocktails", page=page + 1, _external=True)
        }

    return {
        "recipes": [Cocktail(**doc).to_json() for doc in cursor],
        "_links": links,
    }
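
# The page arithmetic behind the `last`, `prev` and `next` links can be checked in isolation. The sketch below is only an illustration under stated assumptions: `page_links` is a hypothetical helper (not part of the API above) that returns page numbers instead of URLs and uses ceiling division for the last page.

```python
def page_links(page, total_items, per_page=10):
    """Return the page numbers a paginated response could link to (hypothetical helper)."""
    # Ceiling division; report at least one page even for an empty collection.
    last_page = max(1, -(-total_items // per_page))
    links = {"self": page, "last": last_page}
    if page > 1:
        links["prev"] = page - 1
    if page < last_page:
        links["next"] = page + 1
    return links


print(page_links(page=4, total_items=45))  # 45 items need 5 pages
print(page_links(page=4, total_items=40))  # 40 items need exactly 4 pages
```

# With 40 items and 10 per page, page 4 is the last one, so no `next` link is produced.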

# + [markdown] id="lJvsgacffue0"

# ### Handling errors and exceptions

# + id="BpNnloNFfwwp"

@app.errorhandler(404)
def resource_not_found(e):
    """
    An error-handler to ensure that 404 errors are returned as JSON.
    """
    return jsonify(error=str(e)), 404


@app.errorhandler(DuplicateKeyError)
def duplicate_key_error(e):
    """
    An error-handler to ensure that MongoDB duplicate key errors are returned as JSON.
    """
    return jsonify(error="Duplicate key error."), 400

| riassunto_per_frettolosi.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

# # Exploring precision and recall

#

# The goal of this second notebook is to understand precision-recall in the context of classifiers.

#

# * Use Amazon review data in its entirety.

# * Train a logistic regression model.

# * Explore various evaluation metrics: accuracy, confusion matrix, precision, recall.

# * Explore how various metrics can be combined to produce a cost of making an error.

# * Explore precision and recall curves.

#

# Because we are using the full Amazon review dataset (not a subset of words or reviews), in this assignment we return to using GraphLab Create for its efficiency. As usual, let's start by **firing up GraphLab Create**.

#

# Make sure you have the latest version of GraphLab Create (1.8.3 or later). If you don't find the decision tree module, then you would need to upgrade graphlab-create using

#

# ```

# pip install graphlab-create --upgrade

# ```

# See [this page](https://dato.com/download/) for detailed instructions on upgrading.

import graphlab

from __future__ import division

import numpy as np

graphlab.canvas.set_target('ipynb')

# # Load amazon review dataset

products = graphlab.SFrame('amazon_baby.gl/')

# # Extract word counts and sentiments

# As in the first assignment of this course, we compute the word counts for individual words and extract positive and negative sentiments from ratings. To summarize, we perform the following:

#

# 1. Remove punctuation.

# 2. Remove reviews with "neutral" sentiment (rating 3).

# 3. Set reviews with rating 4 or more to be positive and those with 2 or less to be negative.

# +

def remove_punctuation(text):
    import string
    # Python 2 `str.translate`: delete every punctuation character.
    return text.translate(None, string.punctuation)

# Remove punctuation.

review_clean = products['review'].apply(remove_punctuation)

# Count words

products['word_count'] = graphlab.text_analytics.count_words(review_clean)

# Drop neutral sentiment reviews.

products = products[products['rating'] != 3]

# Positive sentiment to +1 and negative sentiment to -1

products['sentiment'] = products['rating'].apply(lambda rating : +1 if rating > 3 else -1)

# -

# Now, let's remember what the dataset looks like by taking a quick peek:

products

# ## Split data into training and test sets

#

# We split the data into a 80-20 split where 80% is in the training set and 20% is in the test set.

train_data, test_data = products.random_split(.8, seed=1)

# ## Train a logistic regression classifier

#

# We will now train a logistic regression classifier with **sentiment** as the target and **word_count** as the features. We will set `validation_set=None` to make sure everyone gets exactly the same results.

#

# Remember, even though we now know how to implement logistic regression, we will use GraphLab Create for its efficiency at processing this Amazon dataset in its entirety. The focus of this assignment is instead on the topic of precision and recall.

model = graphlab.logistic_classifier.create(train_data, target='sentiment',
                                            features=['word_count'],
                                            validation_set=None)

# # Model Evaluation

# We will explore the advanced model evaluation concepts that were discussed in the lectures.

#

# ## Accuracy

#

# One performance metric we will use for our more advanced exploration is accuracy, which we have seen many times in past assignments. Recall that the accuracy is given by

#

# $$

# \mbox{accuracy} = \frac{\mbox{# correctly classified data points}}{\mbox{# total data points}}

# $$

#

# To obtain the accuracy of our trained models using GraphLab Create, simply pass the option `metric='accuracy'` to the `evaluate` function. We compute the **accuracy** of our logistic regression model on the **test_data** as follows:

accuracy= model.evaluate(test_data, metric='accuracy')['accuracy']

print "Test Accuracy: %s" % accuracy

# ## Baseline: Majority class prediction

#

# Recall from an earlier assignment that we used the **majority class classifier** as a baseline (i.e., a reference) model for a point of comparison with a more sophisticated classifier. The majority class classifier predicts the majority class for all data points.

#

# Typically, a good model should beat the majority class classifier. Since the majority class in this dataset is the positive class (i.e., there are more positive than negative reviews), the accuracy of the majority class classifier can be computed as follows:

baseline = len(test_data[test_data['sentiment'] == 1])/len(test_data)

print "Baseline accuracy (majority class classifier): %s" % baseline

# **Quiz Question:** Using accuracy as the evaluation metric, was our **logistic regression model** better than the baseline (majority class classifier)?

# Yes

# ## Confusion Matrix

#

# The accuracy, while convenient, does not tell the whole story. For a fuller picture, we turn to the **confusion matrix**. In the case of binary classification, the confusion matrix is a 2-by-2 matrix laying out correct and incorrect predictions made in each label as follows:

# ```

# +---------------------------------------------+

# | Predicted label |

# +----------------------+----------------------+

# | (+1) | (-1) |

# +-------+-----+----------------------+----------------------+

# | True |(+1) | # of true positives | # of false negatives |

# | label +-----+----------------------+----------------------+

# | |(-1) | # of false positives | # of true negatives |

# +-------+-----+----------------------+----------------------+

# ```

# To print out the confusion matrix for a classifier, use `metric='confusion_matrix'`:

confusion_matrix = model.evaluate(test_data, metric='confusion_matrix')['confusion_matrix']

confusion_matrix
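
# To make the four cells of the table concrete, here is a minimal sketch that counts them by hand. It uses plain Python lists instead of SArrays, and `confusion_counts` and the toy labels are hypothetical names introduced only for this illustration.

```python
def confusion_counts(y_true, y_pred):
    """Count true/false positives/negatives for labels in {+1, -1}."""
    counts = {"TP": 0, "FN": 0, "FP": 0, "TN": 0}
    for truth, pred in zip(y_true, y_pred):
        if truth == 1:
            # A truly positive point is either found (TP) or missed (FN).
            counts["TP" if pred == 1 else "FN"] += 1
        else:
            # A truly negative point is either a false alarm (FP) or correct (TN).
            counts["FP" if pred == 1 else "TN"] += 1
    return counts


y_true = [+1, +1, +1, -1, -1, +1]
y_pred = [+1, -1, +1, +1, -1, +1]
counts = confusion_counts(y_true, y_pred)
print(counts)
```

# Accuracy is then `(TP + TN) / total`, which is why it cannot distinguish between the two kinds of mistakes.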

# **Quiz Question**: How many predicted values in the **test set** are **false positives**?

1443

# ## Computing the cost of mistakes

#

#

# Put yourself in the shoes of a manufacturer that sells a baby product on Amazon.com and you want to monitor your product's reviews in order to respond to complaints. Even a few negative reviews may generate a lot of bad publicity about the product. So you don't want to miss any reviews with negative sentiments --- you'd rather put up with false alarms about potentially negative reviews instead of missing negative reviews entirely. In other words, **false positives cost more than false negatives**. (It may be the other way around for other scenarios, but let's stick with the manufacturer's scenario for now.)

#

# Suppose you know the costs involved in each kind of mistake:

# 1. \$100 for each false positive.

# 2. \$1 for each false negative.

# 3. Correctly classified reviews incur no cost.

#

# **Quiz Question**: Given the stipulation, what is the cost associated with the logistic regression classifier's performance on the **test set**?

1406 + 1443*100
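
# The same arithmetic written with named quantities, as a small sketch. It assumes the counts hard-coded in the cell above (1443 false positives and 1406 false negatives) are the ones reported by the confusion matrix; the variable names are illustrative.

```python
false_positives = 1443  # negative reviews predicted as positive
false_negatives = 1406  # positive reviews predicted as negative

cost_per_false_positive = 100
cost_per_false_negative = 1

total_cost = (cost_per_false_positive * false_positives
              + cost_per_false_negative * false_negatives)
print("Total cost: $%d" % total_cost)
```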

# ## Precision and Recall

# You may not have exact dollar amounts for each kind of mistake. Instead, you may simply prefer to reduce the percentage of false positives to be less than, say, 3.5% of all positive predictions. This is where **precision** comes in:

#

# $$

# [\text{precision}] = \frac{[\text{# positive data points with positive predictions}]}{[\text{# all data points with positive predictions}]} = \frac{[\text{# true positives}]}{[\text{# true positives}] + [\text{# false positives}]}

# $$

# So to keep the percentage of false positives below 3.5% of positive predictions, we must raise the precision to 96.5% or higher.

#

# **First**, let us compute the precision of the logistic regression classifier on the **test_data**.

precision = model.evaluate(test_data, metric='precision')['precision']

print "Precision on test data: %s" % precision

# **Quiz Question**: Out of all reviews in the **test set** that are predicted to be positive, what fraction of them are **false positives**? (Round to the second decimal place e.g. 0.25)

(1443)/(26689+1443)

# **Quiz Question:** Based on what we learned in lecture, if we wanted to reduce this fraction of false positives to be below 3.5%, we would: (see the quiz)

# A complementary metric is **recall**, which measures the ratio between the number of true positives and that of (ground-truth) positive reviews:

#

# $$

# [\text{recall}] = \frac{[\text{# positive data points with positive predictions}]}{[\text{# all positive data points}]} = \frac{[\text{# true positives}]}{[\text{# true positives}] + [\text{# false negatives}]}

# $$

#

# Let us compute the recall on the **test_data**.

recall = model.evaluate(test_data, metric='recall')['recall']

print "Recall on test data: %s" % recall
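
# Both formulas can be evaluated directly from confusion-matrix counts. This sketch assumes the counts that appear in this notebook's answer cells (26689 true positives, 1443 false positives, 1406 false negatives); it is a cross-check of the definitions, not a replacement for `model.evaluate`.

```python
true_positives = 26689
false_positives = 1443
false_negatives = 1406

# Precision: of everything predicted positive, how much really is positive?
precision_from_counts = true_positives / float(true_positives + false_positives)
# Recall: of everything that really is positive, how much did we find?
recall_from_counts = true_positives / float(true_positives + false_negatives)

print("precision = %.4f" % precision_from_counts)
print("recall    = %.4f" % recall_from_counts)
```

# Note that the two metrics share a numerator and differ only in which kind of mistake enters the denominator.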

# **Quiz Question**: What fraction of the positive reviews in the **test_set** were correctly predicted as positive by the classifier?

# This fraction is the recall: true positives / (true positives + false negatives).
26689/float(26689+1406)

# **Quiz Question**: What is the recall value for a classifier that predicts **+1** for all data points in the **test_data**?

# Predicting +1 everywhere leaves no false negatives, so the recall is 1.
1.0

# # Precision-recall tradeoff

#

# In this part, we will explore the trade-off between precision and recall discussed in the lecture. We first examine what happens when we use a different threshold value for making class predictions. We then explore a range of threshold values and plot the associated precision-recall curve.

#

# ## Varying the threshold

#

# False positives are costly in our example, so we may want to be more conservative about making positive predictions. To achieve this, instead of thresholding class probabilities at 0.5, we can choose a higher threshold.

#

# Write a function called `apply_threshold` that accepts two things

# * `probabilities` (an SArray of probability values)

# * `threshold` (a float between 0 and 1).

#

# The function should return an SArray, where each element is set to +1 or -1 depending on whether the corresponding probability is greater than or equal to `threshold`.

def apply_threshold(probabilities, threshold):
    # +1 if >= threshold and -1 otherwise.
    return probabilities.apply(lambda x : +1 if x >= threshold else -1)
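
# The effect of the threshold is easy to see on a handful of made-up probabilities. The sketch below mirrors `apply_threshold` with a plain list instead of an SArray; `apply_threshold_list` and the numbers are assumptions for illustration only.

```python
def apply_threshold_list(probabilities, threshold):
    """List-based stand-in for apply_threshold: +1 if p >= threshold, else -1."""
    return [+1 if p >= threshold else -1 for p in probabilities]


toy_probabilities = [0.30, 0.55, 0.90, 0.95, 0.99]

default_predictions = apply_threshold_list(toy_probabilities, 0.5)
strict_predictions = apply_threshold_list(toy_probabilities, 0.9)

print("positives at 0.5: %d" % default_predictions.count(+1))
print("positives at 0.9: %d" % strict_predictions.count(+1))
```

# Raising the threshold can only turn positive predictions into negative ones, never the reverse.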

# Run prediction with `output_type='probability'` to get the list of probability values. Then use thresholds set at 0.5 (default) and 0.9 to make predictions from these probability values.

probabilities = model.predict(test_data, output_type='probability')

predictions_with_default_threshold = apply_threshold(probabilities, 0.5)

predictions_with_high_threshold = apply_threshold(probabilities, 0.9)

print "Number of positive predicted reviews (threshold = 0.5): %s" % (predictions_with_default_threshold == 1).sum()

print "Number of positive predicted reviews (threshold = 0.9): %s" % (predictions_with_high_threshold == 1).sum()

# **Quiz Question**: What happens to the number of positive predicted reviews as the threshold increased from 0.5 to 0.9?

# The number of positive predicted reviews decreases: the classifier becomes more conservative.

# ## Exploring the associated precision and recall as the threshold varies

# By changing the probability threshold, it is possible to influence precision and recall. We can explore this as follows:

# +

# Threshold = 0.5

precision_with_default_threshold = graphlab.evaluation.precision(test_data['sentiment'],

predictions_with_default_threshold)

recall_with_default_threshold = graphlab.evaluation.recall(test_data['sentiment'],

predictions_with_default_threshold)

# Threshold = 0.9

precision_with_high_threshold = graphlab.evaluation.precision(test_data['sentiment'],

predictions_with_high_threshold)

recall_with_high_threshold = graphlab.evaluation.recall(test_data['sentiment'],

predictions_with_high_threshold)

# -

print "Precision (threshold = 0.5): %s" % precision_with_default_threshold

print "Recall (threshold = 0.5) : %s" % recall_with_default_threshold

print "Precision (threshold = 0.9): %s" % precision_with_high_threshold

print "Recall (threshold = 0.9) : %s" % recall_with_high_threshold

# **Quiz Question (variant 1)**: Does the **precision** increase with a higher threshold?

# Yes

# **Quiz Question (variant 2)**: Does the **recall** increase with a higher threshold?

# No

# ## Precision-recall curve

#

# Now, we will explore a range of threshold values, compute the precision and recall scores for each, and then plot the precision-recall curve.

threshold_values = np.linspace(0.5, 1, num=100)

print threshold_values

# For each of the values of threshold, we compute the precision and recall scores.

# +

precision_all = []

recall_all = []

probabilities = model.predict(test_data, output_type='probability')

for threshold in threshold_values:
    predictions = apply_threshold(probabilities, threshold)

    precision = graphlab.evaluation.precision(test_data['sentiment'], predictions)
    recall = graphlab.evaluation.recall(test_data['sentiment'], predictions)

    precision_all.append(precision)
    recall_all.append(recall)

# -

# Now, let's plot the precision-recall curve to visualize the precision-recall tradeoff as we vary the threshold.

# +

import matplotlib.pyplot as plt

# %matplotlib inline

def plot_pr_curve(precision, recall, title):
    plt.rcParams['figure.figsize'] = 7, 5
    plt.locator_params(axis = 'x', nbins = 5)
    # Solid line; the color is given once, through the `color` keyword.
    plt.plot(precision, recall, '-', linewidth=4.0, color = '#B0017F')
    plt.title(title)
    plt.xlabel('Precision')
    plt.ylabel('Recall')
    plt.rcParams.update({'font.size': 16})

plot_pr_curve(precision_all, recall_all, 'Precision recall curve (all)')

# -

# **Quiz Question**: Among all the threshold values tried, what is the **smallest** threshold value that achieves a precision of 96.5% or better? Round your answer to 3 decimal places.

for threshold in threshold_values:
    predictions = apply_threshold(probabilities, threshold)
    precision = graphlab.evaluation.precision(test_data['sentiment'], predictions)
    print threshold, precision

# **0.838383838384**
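
# Rather than reading the answer off a printed table, the search can be written as a small function. This is a sketch on toy data with plain Python lists; `precision_at` and `smallest_threshold` are hypothetical helpers, not GraphLab Create functions.

```python
def precision_at(y_true, probabilities, threshold):
    """Precision of the rule 'predict +1 when p >= threshold' (None if nothing is predicted +1)."""
    predictions = [1 if p >= threshold else -1 for p in probabilities]
    tp = sum(1 for t, p in zip(y_true, predictions) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, predictions) if t == -1 and p == 1)
    return tp / float(tp + fp) if tp + fp else None


def smallest_threshold(y_true, probabilities, thresholds, target):
    """Scan thresholds in increasing order and return the first one meeting the target."""
    for threshold in sorted(thresholds):
        precision = precision_at(y_true, probabilities, threshold)
        if precision is not None and precision >= target:
            return threshold
    return None


toy_labels = [1, -1, 1, -1, 1, 1]
toy_probabilities = [0.95, 0.80, 0.85, 0.60, 0.70, 0.99]
toy_thresholds = [0.5, 0.65, 0.75, 0.9]

print(smallest_threshold(toy_labels, toy_probabilities, toy_thresholds, target=0.8))
```

# In this toy data the precision is not monotone in the threshold (it dips at 0.75), which is why the scan checks every value instead of assuming that later thresholds are always better.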

# **Quiz Question**: Using `threshold` = 0.98, how many **false negatives** do we get on the **test_data**? (**Hint**: You may use the `graphlab.evaluation.confusion_matrix` function implemented in GraphLab Create.)

predictions = apply_threshold(probabilities, 0.98)

# The result is a confusion matrix, so name it accordingly.
confusion_matrix_high = graphlab.evaluation.confusion_matrix(test_data['sentiment'], predictions)

confusion_matrix_high

# This is the number of false negatives (i.e the number of reviews to look at when not needed) that we have to deal with using this classifier.

# 1406

# # Evaluating specific search terms

# So far, we looked at the number of false positives for the **entire test set**. In this section, let's select reviews using a specific search term and optimize the precision on these reviews only. After all, a manufacturer would be interested in tuning the false positive rate just for their products (the reviews they want to read) rather than that of the entire set of products on Amazon.

#

# ## Precision-Recall on all baby related items

#

# From the **test set**, select all the reviews for all products with the word 'baby' in them.

baby_reviews = test_data[test_data['name'].apply(lambda x: 'baby' in x.lower())]

# Now, let's predict the probability of classifying these reviews as positive:

probabilities = model.predict(baby_reviews, output_type='probability')

# Let's plot the precision-recall curve for the **baby_reviews** dataset.

#

# **First**, let's consider the following `threshold_values` ranging from 0.5 to 1:

threshold_values = np.linspace(0.5, 1, num=100)

# **Second**, as we did above, let's compute precision and recall for each value in `threshold_values` on the **baby_reviews** dataset. Complete the code block below.

# +

precision_all = []

recall_all = []

for threshold in threshold_values:
    # Make predictions with the `apply_threshold` function.
    predictions = apply_threshold(probabilities, threshold)

    # Calculate the precision and recall.
    precision = graphlab.evaluation.precision(baby_reviews['sentiment'], predictions)
    recall = graphlab.evaluation.recall(baby_reviews['sentiment'], predictions)

    # Append the scalar scores so the lists can be passed to `plot_pr_curve`.
    precision_all.append(precision)
    recall_all.append(recall)

# -

# **Quiz Question**: Among all the threshold values tried, what is the **smallest** threshold value that achieves a precision of 96.5% or better for the reviews of data in **baby_reviews**? Round your answer to 3 decimal places.

# Print each threshold next to its precision to find the answer.
print zip(threshold_values, precision_all)

# **0.86363636363636365**

# **Quiz Question:** Is this threshold value smaller or larger than the threshold used for the entire dataset to achieve the same specified precision of 96.5%?

# **larger**

# **Finally**, let's plot the precision recall curve.

plot_pr_curve(precision_all, recall_all, "Precision-Recall (Baby)")

| Course3/week6/module-9-precision-recall-assignment-blank.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python [conda env:ds]

# language: python

# name: conda-env-ds-py

# ---

# + [markdown] slideshow={"slide_type": "slide"}

# <img src="https://upload.wikimedia.org/wikipedia/commons/4/47/Logo_UTFSM.png" width="200" alt="utfsm-logo" align="left"/>

#

# # MAT281

# ### Applications of Mathematics in Engineering

# + [markdown] slideshow={"slide_type": "slide"}

# ## Module 02

# ## Class 01: Scientific Computing

# + [markdown] slideshow={"slide_type": "slide"}

# ## Objectives
#
# * Get to know the scientific computing libraries
# * Work with *matrix-like* arrays
# * Linear algebra with NumPy

# + [markdown] slideshow={"slide_type": "subslide"}

# ## Contents
# * [Scipy.org](#scipy.org)
# * [Numpy Arrays](#arrays)
# * [Basic Operations](#operations)
# * [Broadcasting](#broadcasting)
# * [Linear Algebra](#lineal_algebra)

# + [markdown] slideshow={"slide_type": "slide"}

# <a id='scipy.org'></a>

# ## SciPy.org

# -

# **SciPy** is an _open-source_ software ecosystem for mathematics, science and engineering. Its core packages are:
#
# * NumPy: N-dimensional arrays. The base library, with C/C++ and Fortran integration.
# * SciPy library: scientific computing (integration, optimization, statistics, etc.)
# * Matplotlib: 2D visualization.
# * IPython: interactivity (Project Jupyter).
# * SymPy: symbolic mathematics.
# * Pandas: data structures and data analysis.

# + [markdown] slideshow={"slide_type": "slide"}

# <a id='arrays'></a>

# ## Numpy Arrays

# -

# The main objects in NumPy are commonly known as NumPy arrays (the class is called `ndarray`). An array is a table of elements, all of the same type, indexed by a tuple of non-negative integers. In NumPy, dimensions are called _axes_.

import numpy as np

# +

a = np.array(

[

[ 0, 1, 2, 3, 4],

[ 5, 6, 7, 8, 9],

[10, 11, 12, 13, 14]

]

)

type(a)

# -

# The most important attributes of an `ndarray` are:

a.shape # the dimensions of the array.

a.ndim # the number of axes (dimensions) of the array.

a.size # the total number of elements of the array.

a.dtype # an object describing the type of the elements in the array.

a.itemsize # the size in bytes of each element of the array.
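
# As a quick check of how these attributes relate to each other, here is a small sketch on a 3x5 array built with `np.arange` (the variable name `demo` is just for illustration). The element type is left unasserted because the default integer type depends on the platform.

```python
import numpy as np

demo = np.arange(15).reshape(3, 5)

print(demo.shape)     # one entry per axis
print(demo.ndim)      # number of axes, i.e. len(demo.shape)
print(demo.size)      # total number of elements, the product of the shape
print(demo.itemsize)  # bytes per element, so size * itemsize is the memory footprint
```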

# ### Creating NumPy arrays

# There are several ways to create arrays. The basic constructor is the one used a moment ago, `np.array`. The _type_ of the resulting array is inferred from the data provided.

# +

a_int = np.array([2, 6, 10])

a_float = np.array([2.1, 6.1, 10.1])

print(f"a_int: {a_int.dtype.name}")

print(f"a_float: {a_float.dtype.name}")

# -

# ### Constants

np.zeros((3, 4))

np.ones((2, 3, 4), dtype=int) # dtype can also be specified (`np.int` was removed in recent NumPy releases)

np.identity(4) # Identity matrix

# ### Range

# NumPy provides a function analogous to `range`.

range(10)

type(range(10))

np.arange(10)

type(np.arange(10))

np.arange(3, 10)

np.arange(2, 20, 3, dtype=float)

np.arange(9).reshape(3, 3)

# Bonus

np.linspace(0, 100, 5)

# ### Random

np.random.uniform(size=5)

np.random.normal(size=(2, 3))

# ### Accessing the elements of an array

x1 = np.arange(0, 30, 4)

x2 = np.arange(0, 60, 3).reshape(4, 5)

print("x1:")

print(x1)

print("\nx2:")

print(x2)

x1[1] # A single element of a 1D array

x1[:3] # The first three elements

x2[0, 2] # A single element of a 2D array

x2[0] # The first row

x2[:, 1] # All rows, second column

x2[:, 1:3] # All rows, second through third column

x2[:, 1:2] # What?!
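
# The last two ways of selecting the second column differ in one detail: indexing with an integer drops that axis, while slicing with a range keeps it even when the range has length one. A short sketch, rebuilding `x2` so it runs on its own:

```python
import numpy as np

x2 = np.arange(0, 60, 3).reshape(4, 5)

column_1d = x2[:, 1]    # integer index: the column axis disappears
column_2d = x2[:, 1:2]  # slice of length one: the column axis is kept

print(column_1d.shape)
print(column_2d.shape)
```

# The values are the same in both cases; only the shape changes, which matters later for broadcasting and matrix products.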

# + [markdown] slideshow={"slide_type": "slide"}

# <a id='operations'></a>

# ## Basic Operations

# -

# NumPy provides vectorized operations in order to improve execution performance.

# For example, consider the sum of two 2D arrays.

A = np.random.random((5,5))

B = np.random.random((5,5))

# With what we learned last class, we might think of iterating with two `for` loops to fill the resulting array, something like this:

def my_sum(A, B):
    n, m = A.shape
    C = np.empty(shape=(n, m))
    for i in range(n):
        for j in range(m):
            C[i, j] = A[i, j] + B[i, j]
    return C

# %timeit my_sum(A, B)

# But adding `ndarray`s only takes the plus sign (`+`):

# %timeit A + B

# Even for two arrays this small the time difference is considerable. Imagine it with millions of values!

# The classics:

x = np.arange(5)

print(f"x = {x}")

print(f"x + 5 = {x + 5}")

print(f"x - 5 = {x - 5}")

print(f"x * 2 = {x * 2}")

print(f"x / 2 = {x / 2}")

print(f"x // 2 = {x // 2}")

print(f"x ** 2 = {x ** 2}")

print(f"x % 2 = {x % 2}")

# Combine them however you like!

-(0.5 + x + 3) ** 2

# At the end of the day, these operators are aliases for NumPy functions; for example, the addition operator (`+`) is a _wrapper_ around `np.add`.

np.add(x, 5)

# We could spend all day talking about operations, but basically, if you can think of a reasonably common operation, you will most likely find it implemented in NumPy. For example:

np.abs(-(0.5 + x + 3) ** 2)

np.log(x + 5)

np.exp(x)

np.sin(x)

# ### What about higher dimensions?

# The idea is the same, but always be careful with the dimensions and `shape` of the arrays.

print("A + B: \n")

print(A + B)

print("\n" + "-" * 80 + "\n")

print("A - B: \n")

print(A - B)

print("\n" + "-" * 80 + "\n")

print("A * B: \n")

print(A * B) # Element-wise product

print("\n" + "-" * 80 + "\n")

print("A / B: \n")

print(A / B) # Element-wise division

print("\n" + "-" * 80 + "\n")

print("A @ B: \n")

print(A @ B) # Matrix product

# ### Boolean operations

print(f"x = {x}")

print(f"x > 2 = {x > 2}")

print(f"x == 2 = {x == 2}")

print(f"x != 2 = {x != 2}")

# +

aux1 = np.array([[1, 2, 3], [2, 3, 5], [1, 9, 6]])

aux2 = np.array([[1, 2, 3], [3, 5, 5], [0, 8, 5]])

B1 = aux1 == aux2

B2 = aux1 > aux2

print("B1: \n")

print(B1)

print("\n" + "-" * 80 + "\n")

print("B2: \n")

print(B2)

print("\n" + "-" * 80 + "\n")

print("~B1: \n")

print(~B1) # np.logical_not(B1) also works

print("\n" + "-" * 80 + "\n")

print("B1 | B2 : \n")

print(B1 | B2)

print("\n" + "-" * 80 + "\n")

print("B1 & B2 : \n")

print(B1 & B2)

# + [markdown] slideshow={"slide_type": "slide"}

# <a id='broadcasting'></a>

# ## Broadcasting

# -

# What happens if the dimensions do not match? Consider the following:

a = np.array([0, 1, 2])

b = np.array([5, 5, 5])

a + b

# All good: two 1D arrays with 3 elements each, and the sum returns an array with 3 elements.

a + 3

# Still looks normal: a 1D array with 3 elements is added to an `int`, which returns a 1D array with three elements.

M = np.ones((3, 3))

M

M + a

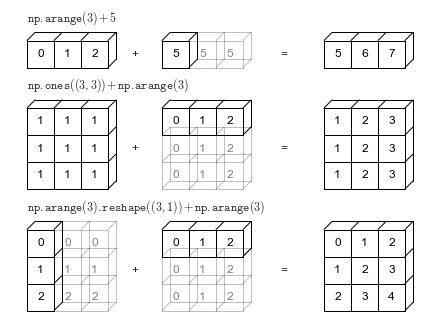

# Magia! Esto es _broadcasting_. Una pequeña infografía es la siguiente:

#

# Resumen: A lo menos los dos arrays deben coincidir en una dimensión. Luego, el array de dimensión menor se extiende con tal de ajustarse a las dimensiones del otro.

#

# La documentación oficial de estas reglas la puedes encontrar [aquí](https://numpy.org/devdocs/user/basics.broadcasting.html).
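
# The broadcasting rule can be tried out on a few shapes. The sketch below is a minimal illustration (variable names are chosen only for this example): a `(3, 3)` array plus a `(3,)` array, a `(3, 1)` column plus a `(3,)` row, and an incompatible pair that raises `ValueError`.

```python
import numpy as np

M = np.ones((3, 3))
a = np.array([0, 1, 2])

# (3, 3) + (3,): `a` is treated as shape (1, 3) and repeated along the rows.
row_broadcast = M + a

# (3, 1) + (3,): both operands are stretched, giving a (3, 3) table of sums.
column = a.reshape(3, 1)
outer_sum = column + a

# (3, 3) + (2,): the last axes are 3 and 2, neither is 1, so this fails.
try:
    M + np.ones(2)
    incompatible_failed = False
except ValueError:
    incompatible_failed = True

print(row_broadcast.shape, outer_sum.shape, incompatible_failed)
```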

# + [markdown] slideshow={"slide_type": "slide"}

# <a id='lineal_algebra'></a>

# ## Linear Algebra

# -

# Let's look at some basic linear algebra operations that will be useful day to day.

a = np.array([[1.0, 2.0], [3.0, 4.0]])

print(a)

# Transpose

a.T # a.transpose()

# Determinant

np.linalg.det(a)

# Inverse

np.linalg.inv(a)

# Trace

np.trace(a)

# Condition number

np.linalg.cond(a)

# Linear systems

y = np.array([[5.], [7.]])

np.linalg.solve(a, y)
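
# A solution returned by `np.linalg.solve` can always be checked by substituting it back into the system. A short sketch with the same `a` and `y` as above, redefined here so the block runs on its own:

```python
import numpy as np

a = np.array([[1.0, 2.0], [3.0, 4.0]])
y = np.array([[5.0], [7.0]])

solution = np.linalg.solve(a, y)

# Substitute back: a @ solution should reproduce y up to rounding error.
print(solution.ravel())
print(np.allclose(a @ solution, y))
```

# Solving the system directly is generally preferable to computing `np.linalg.inv(a) @ y`, since it avoids forming the inverse explicitly.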

# Eigenvalues and eigenvectors

np.linalg.eig(a)

# QR decomposition

np.linalg.qr(a)

| m02_data_analysis/m02_c01_scientific computing/m02_c01_scientific computing.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import torch

from torch.autograd import Variable

import torch.nn as nn

import torch.nn.functional as F

import torch.optim as optim

from torch.optim.lr_scheduler import StepLR, MultiStepLR

import numpy as np

# import matplotlib.pyplot as plt

from math import *

import time

import copy

torch.cuda.set_device(2)

torch.set_default_tensor_type('torch.DoubleTensor')

# activation function

def activation(x):
    # x * sigmoid(x), also known as the Swish / SiLU activation
    return x * torch.sigmoid(x)

# build a ResNet with one residual block
class Net(torch.nn.Module):
    def __init__(self,input_width,layer_width):
        super(Net,self).__init__()
        self.layer_in = torch.nn.Linear(input_width, layer_width)
        self.layer1 = torch.nn.Linear(layer_width, layer_width)
        self.layer2 = torch.nn.Linear(layer_width, layer_width)
        self.layer_out = torch.nn.Linear(layer_width, 1)

    def forward(self,x):
        y = self.layer_in(x)
        y = y + activation(self.layer2(activation(self.layer1(y)))) # residual block 1
        output = self.layer_out(y)
        return output

dimension = 1

input_width,layer_width = dimension, 4

net = Net(input_width,layer_width).cuda() # network for u on gpu

# definition of the exact solution
def u_ex(x):
    temp = 1.0
    for i in range(dimension):
        temp = temp * torch.sin(pi*x[:, i])
    u_temp = 1.0 * temp
    return u_temp.reshape([x.size()[0], 1])

# definition of the right-hand side f(x)
def f(x):
    temp = 1.0
    for i in range(dimension):
        temp = temp * torch.sin(pi*x[:, i])
    u_temp = 1.0 * temp
    f_temp = dimension * pi**2 * u_temp
    return f_temp.reshape([x.size()[0],1])

# generate uniformly random sample points in the unit cube
def generate_sample(data_size):
    sample_temp = torch.rand(data_size, dimension)
    return sample_temp.cuda()

def model(x):
    x_temp = x.cuda()
    # The factors prod(x_i) and prod(1 - x_i) vanish on the boundary of the unit cube.
    D_x_0 = torch.prod(x_temp, axis = 1).reshape([x.size()[0], 1])
    D_x_1 = torch.prod(1.0 - x_temp, axis = 1).reshape([x.size()[0], 1])
    model_u_temp = D_x_0 * D_x_1 * net(x)
    return model_u_temp.reshape([x.size()[0], 1])
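
# Multiplying the network output by `prod(x) * prod(1 - x)` forces the trial function to vanish on the boundary of the unit cube, whatever the network returns. The 1D sketch below illustrates this with NumPy in place of PyTorch; `trial_solution` and the stand-in function `g` are hypothetical and only play the role of `model` and `net`.

```python
import numpy as np


def trial_solution(x, g):
    """1D analogue of `model`: x * (1 - x) * g(x) is zero at x = 0 and x = 1."""
    return x * (1.0 - x) * g(x)


def g(x):
    # Arbitrary smooth stand-in for the network; it is nonzero at both endpoints.
    return np.cos(3.0 * x) + 2.0


points = np.array([0.0, 0.25, 0.5, 1.0])
values = trial_solution(points, g)
print(values)
```

# Because the boundary condition holds by construction, the loss does not need a separate boundary penalty term.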

# Deep Ritz Method (DRM) loss, with the gradient computed by automatic differentiation
def loss_function(x):
    # x = generate_sample(data_size).cuda()
    # x.requires_grad = True
    u_hat = model(x)
    grad_u_hat = torch.autograd.grad(outputs = u_hat, inputs = x, grad_outputs = torch.ones(u_hat.shape).cuda(), create_graph = True)
    grad_u_sq = ((grad_u_hat[0]**2).sum(1)).reshape([len(grad_u_hat[0]), 1])
    # Monte Carlo estimate of the energy: mean of 0.5*|grad u|^2 - f*u over the samples.
    part = torch.sum(0.5 * grad_u_sq - f(x) * u_hat) / len(x)
    return part
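
# The quantity returned by `loss_function` is a Monte Carlo estimate of the energy $\int_0^1 \left(\tfrac{1}{2}|u'|^2 - f u\right) dx$. As a sanity check, the sketch below evaluates the same estimate for the exact 1D solution $u(x) = \sin(\pi x)$ with $f(x) = \pi^2 \sin(\pi x)$, where the integral equals $-\pi^2/4$. It uses NumPy and an analytic derivative instead of PyTorch autograd, so it is an illustration of the formula under those assumptions and not the training code.

```python
import numpy as np

rng = np.random.default_rng(0)
samples = rng.random(200000)  # uniform points in [0, 1]

u = np.sin(np.pi * samples)             # exact solution, dimension = 1
du = np.pi * np.cos(np.pi * samples)    # its derivative, written out by hand
f = np.pi ** 2 * np.sin(np.pi * samples)

# Same expression as in loss_function: mean of 0.5 * |grad u|^2 - f * u.
energy_estimate = np.mean(0.5 * du ** 2 - f * u)
energy_exact = -np.pi ** 2 / 4

print(energy_estimate, energy_exact)
```

# Any other admissible trial function gives a larger energy, which is what makes this expression usable as a training loss.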

data_size = 200

x = generate_sample(data_size).cuda()

x.requires_grad = True

def get_weights(net):
    """ Extract parameters from net, and return a list of tensors"""
    return [p.data for p in net.parameters()]

def set_weights(net, weights, directions=None, step=None):
    """
    Overwrite the network's weights with a specified list of tensors
    or change weights along directions with a step size.
    """
    if directions is None:
        # You cannot specify a step length without a direction.
        for (p, w) in zip(net.parameters(), weights):
            p.data.copy_(w.type(type(p.data)))
    else:
        assert step is not None, 'If a direction is specified then step must be specified as well'

        if len(directions) == 2:
            dx = directions[0]
            dy = directions[1]
            changes = [d0*step[0] + d1*step[1] for (d0, d1) in zip(dx, dy)]
        else:
            changes = [d*step for d in directions[0]]

        for (p, w, d) in zip(net.parameters(), weights, changes):
            p.data = w + torch.Tensor(d).type(type(w))

def set_states(net, states, directions=None, step=None):
    """
    Overwrite the network's state_dict or change it along directions with a step size.
    """
    if directions is None:
        net.load_state_dict(states)
    else:
        assert step is not None, 'If direction is provided then the step must be specified as well'

        if len(directions) == 2:
            dx = directions[0]
            dy = directions[1]
            changes = [d0*step[0] + d1*step[1] for (d0, d1) in zip(dx, dy)]
        else:
            changes = [d*step for d in directions[0]]

        new_states = copy.deepcopy(states)
        assert (len(new_states) == len(changes))
        for (k, v), d in zip(new_states.items(), changes):
            d = torch.tensor(d)
            v.add_(d.type(v.type()))

        net.load_state_dict(new_states)

def get_random_weights(weights):
    """
    Produce a random direction that is a list of random Gaussian tensors
    with the same shape as the network's weights, so one direction entry per weight.
    """
    return [torch.randn(w.size()) for w in weights]


def get_random_states(states):
    """
    Produce a random direction that is a list of random Gaussian tensors
    with the same shape as the network's state_dict(), so one direction entry
    per weight, including BN's running_mean/var.
    """
    return [torch.randn(w.size()) for k, w in states.items()]


def get_diff_weights(weights, weights2):
    """ Produce a direction from 'weights' to 'weights2'."""
    return [w2 - w for (w, w2) in zip(weights, weights2)]


def get_diff_states(states, states2):
    """ Produce a direction from 'states' to 'states2'."""
    return [v2 - v for (k, v), (k2, v2) in zip(states.items(), states2.items())]

def normalize_direction(direction, weights, norm='filter'):
    """
    Rescale the direction so that its norm is similar to that of the
    corresponding model weights, at the chosen level of granularity.
    Args:
        direction: a tensor holding the random direction for one layer
        weights: a tensor holding the original model's weights for one layer
        norm: normalization method, 'filter' | 'layer' | 'weight' | 'dfilter' | 'dlayer'
    """
    if norm == 'filter':
        # Rescale the filters (weights in group) in 'direction' so that each
        # filter has the same norm as its corresponding filter in 'weights'.
        for d, w in zip(direction, weights):
            d.mul_(w.norm()/(d.norm() + 1e-10))
    elif norm == 'layer':
        # Rescale the layer variables in the direction so that each layer has
        # the same norm as the layer variables in weights.
        direction.mul_(weights.norm()/direction.norm())
    elif norm == 'weight':
        # Rescale the entries in the direction so that each entry has the same
        # scale as the corresponding weight.
        direction.mul_(weights)
    elif norm == 'dfilter':
        # Rescale the entries in the direction so that each filter direction
        # has unit norm.
        for d in direction:
            d.div_(d.norm() + 1e-10)
    elif norm == 'dlayer':
        # Rescale the entries in the direction so that each layer direction
        # has unit norm.
        direction.div_(direction.norm())
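
# A minimal, framework-free sketch of the 'filter' rule above: each filter of the
# direction is rescaled to the norm of the matching filter in the weights. Plain
# Python lists stand in for tensors, and `l2_norm` and `filter_normalize` are
# hypothetical helper names used only for this illustration.

```python
import math

def l2_norm(values):
    """Euclidean norm of a flat list of floats."""
    return math.sqrt(sum(v * v for v in values))

def filter_normalize(direction, weights, eps=1e-10):
    """Rescale each filter in `direction` to the norm of the matching filter in `weights`.

    Both arguments are lists of filters; each filter is a flat list of floats.
    """
    scaled = []
    for d, w in zip(direction, weights):
        # Same ratio as d.mul_(w.norm() / (d.norm() + 1e-10)) in the 'filter' branch.
        scale = l2_norm(w) / (l2_norm(d) + eps)
        scaled.append([scale * v for v in d])
    return scaled

weights = [[3.0, 4.0], [0.0, 2.0]]      # filter norms: 5 and 2
direction = [[1.0, 0.0], [1.0, 1.0]]    # arbitrary stand-in for a random direction
normalized = filter_normalize(direction, weights)
```

# After the call, each filter in `normalized` keeps its orientation but carries the
# norm of the corresponding filter in `weights`.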

def normalize_directions_for_weights(direction, weights, norm='filter', ignore='biasbn'):
    """
    The normalization scales the direction entries according to the entries of weights.
    """
    assert(len(direction) == len(weights))
    for d, w in zip(direction, weights):
        if d.dim() <= 1:
            if ignore == 'biasbn':
                d.fill_(0) # ignore directions for weights with 1 dimension
            else:
                d.copy_(w) # keep directions for weights/bias that are only 1 per node
        else:
            normalize_direction(d, w, norm)

def normalize_directions_for_states(direction, states, norm='filter', ignore='ignore'):
    assert(len(direction) == len(states))
    for d, (k, w) in zip(direction, states.items()):
        if d.dim() <= 1:
            if ignore == 'biasbn':
                d.fill_(0) # ignore directions for weights with 1 dimension
            else:
                d.copy_(w) # keep directions for weights/bias that are only 1 per node
        else:
            normalize_direction(d, w, norm)

def ignore_biasbn(directions):
    """ Set bias and bn parameters in directions to zero """
    for d in directions:
        if d.dim() <= 1:
            d.fill_(0)

def create_random_direction(net, dir_type='weights', ignore='biasbn', norm='filter'):
    """
    Set up a random (normalized) direction with the same dimension as
    the weights or states.
    Args:
        net: the given trained model
        dir_type: 'weights' or 'states', type of directions.
        ignore: 'biasbn', ignore biases and BN parameters.
        norm: direction normalization method, including
              'filter' | 'layer' | 'weight' | 'dlayer' | 'dfilter'
    Returns:
        direction: a random direction with the same dimension as weights or states.
    """
    # random direction
    if dir_type == 'weights':
        weights = get_weights(net) # a list of parameters.
        direction = get_random_weights(weights)
        normalize_directions_for_weights(direction, weights, norm, ignore)
    elif dir_type == 'states':
        states = net.state_dict() # a dict of parameters, including BN's running mean/var.
        direction = get_random_states(states)
        normalize_directions_for_states(direction, states, norm, ignore)
    return direction

def tvd(m, l_i):

# load model parameters

pretrained_dict = torch.load('net_params_DGM_ResNet_Uniform.pkl')

# get state_dict

net_state_dict = net.state_dict()

# remove keys that do not belong to net_state_dict

pretrained_dict_1 = {k: v for k, v in pretrained_dict.items() if k in net_state_dict}

# update dict

net_state_dict.update(pretrained_dict_1)

# set new dict back to net

net.load_state_dict(net_state_dict)

weights_temp = get_weights(net)

states_temp = net.state_dict()

step_size = 2 * l_i / m

grid = np.arange(-l_i, l_i + step_size, step_size)

num_direction = 1

loss_matrix = torch.zeros((num_direction, len(grid)))

for temp in range(num_direction):

weights = weights_temp

states = states_temp

direction_temp = create_random_direction(net, dir_type='weights', ignore='biasbn', norm='filter')

normalize_directions_for_states(direction_temp, states, norm='filter', ignore='ignore')

directions = [direction_temp]

for dx in grid:

itemindex_1 = np.argwhere(grid == dx)

step = dx

set_states(net, states, directions, step)

loss_temp = loss_function(x)

loss_matrix[temp, itemindex_1[0]] = loss_temp

# clear memory

torch.cuda.empty_cache()

# get state_dict

net_state_dict = net.state_dict()

# remove keys that do not belong to net_state_dict

pretrained_dict_1 = {k: v for k, v in pretrained_dict.items() if k in net_state_dict}

# update dict

net_state_dict.update(pretrained_dict_1)

# set new dict back to net

net.load_state_dict(net_state_dict)

weights_temp = get_weights(net)

states_temp = net.state_dict()

interval_length = grid[-1] - grid[0]

TVD = 0.0

for temp in range(num_direction):

for index in range(loss_matrix.size()[1] - 1):

TVD = TVD + np.abs(float(loss_matrix[temp, index] - loss_matrix[temp, index + 1]))

Max = np.max(loss_matrix.detach().numpy())

Min = np.min(loss_matrix.detach().numpy())

TVD = TVD / interval_length / num_direction / (Max - Min)

return TVD, Max, Min
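
# The last few lines of `tvd` sum the absolute differences between neighbouring
# loss values, then divide by the interval length and by the loss range. A minimal
# sketch of that arithmetic on a toy 1D curve, assuming a single direction;
# `normalized_total_variation` is a hypothetical name, and the grid is built by
# index here so that it has exactly m + 1 points.

```python
def normalized_total_variation(losses, interval_length):
    """Total variation of a sampled 1D curve, scaled by interval length and loss range."""
    total = sum(abs(b - a) for a, b in zip(losses, losses[1:]))
    spread = max(losses) - min(losses)
    return total / interval_length / spread

m, l_i = 4, 1.0
step_size = 2 * l_i / m
grid = [-l_i + i * step_size for i in range(m + 1)]   # [-1.0, -0.5, 0.0, 0.5, 1.0]
losses = [t * t for t in grid]                        # toy stand-in for loss_function(x)
tv = normalized_total_variation(losses, grid[-1] - grid[0])
```

# As in `tvd`, the division by the loss range fails for a perfectly flat curve
# (max equal to min); a caller may want to guard against that case.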

# +

M = 100

m = 20

l_i = 1.0

TVD_DRM = 0.0

time_start = time.time()

Max = []

Min = []

Result = []

for count in range(M):
    TVD_temp, Max_temp, Min_temp = tvd(m, l_i)
    # print(Max_temp, Min_temp)
    Max.append(Max_temp)
    Min.append(Min_temp)
    Result.append(TVD_temp)
    print('Current direction TVD of DGM is: ', TVD_temp)
    TVD_DRM = TVD_DRM + TVD_temp
    print((count + 1) / M * 100, '% finished.')

# print('Max of all is: ', np.max(Max))

# print('Min of all is: ', np.min(Min))

TVD_DRM = TVD_DRM / M

print('All directions average TVD of DGM is: ', TVD_DRM)

print('Standard deviation of TVD of DRM is: ', np.sqrt(np.var(Result, ddof = 1)))

print("Value of roughness index is: ", np.sqrt(np.var(Result, ddof = 1)) / TVD_DRM)

time_end = time.time()

print('Total time costs: ', time_end - time_start, 'seconds')

# -
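
# The roughness index printed above is the sample standard deviation of the
# per-direction TVD values divided by their mean. A minimal sketch using only the
# standard library; `roughness_index` is a hypothetical helper name and the input
# values are made up for illustration.

```python
import statistics

def roughness_index(tvd_values):
    """Sample standard deviation of the TVD values divided by their mean."""
    mean_tvd = statistics.mean(tvd_values)
    std_tvd = statistics.stdev(tvd_values)  # n - 1 denominator, matching ddof=1
    return std_tvd / mean_tvd

tvd_values = [1.0, 2.0, 3.0]   # illustrative values, not results from the notebook
index = roughness_index(tvd_values)
```

# `statistics.stdev` needs at least two values, so this only makes sense for M >= 2.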

| code/Results1D/randomInitialization/DRM_index_ResNet_Uniform.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---