code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Use Decision Optimization to plan your diet with `ibm-watson-machine-learning`

#

# This notebook facilitates Decision Optimization and Watson Machine Learning services. It contains steps and code to work with [ibm-watson-machine-learning](https://pypi.python.org/pypi/ibm-watson-machine-learning) library available in PyPI repository. It also introduces commands for getting model and training data, persisting model, deploying model and scoring it.

#

# Some familiarity with Python is helpful. This notebook uses Python 3.8.

# + [markdown] pycharm={"name": "#%% md\n"}

# ## Learning goals

#

# The learning goals of this notebook are:

#

# - Load a DO model file into an Watson Machine learning repository.

# - Prepare data for training and evaluation.

# - Create an DO machine learning job.

# - Persist a DO model Watson Machine Learning repository.

# - Deploy a model for batch scoring using Wastson Machine Learning API.

# + [markdown] pycharm={"name": "#%% md\n"}

# ## Contents

#

# This notebook contains the following parts:

#

# 1. [Setup](#setup)

# 2. [Download externally created DO model](#download)

# 3. [Persist externally created DO model](#upload)

# 4. [Deploy](#deploy)

# 5. [Create job](#score)

# 6. [Clean up](#cleanup)

# 7. [Summary and next steps](#summary)

#

#

# -

# <a id="setup"></a>

# ## 1. Set up the environment

#

# Before you use the sample code in this notebook, you must perform the following setup tasks:

#

# - Contact with your Cloud Pack for Data administrator and ask him for your account credentials

# ### Connection to WML

#

# Authenticate the Watson Machine Learning service on IBM Cloud Pack for Data. You need to provide platform `url`, your `username` and `api_key`.

username = 'PASTE YOUR USERNAME HERE'

api_key = 'PASTE YOUR API_KEY HERE'

url = 'PASTE THE PLATFORM URL HERE'

wml_credentials = {

"username": username,

"apikey": api_key,

"url": url,

"instance_id": 'openshift',

"version": '4.0'

}

# Alternatively you can use `username` and `password` to authenticate WML services.

#

# ```

# wml_credentials = {

# "username": ***,

# "password": ***,

# "url": ***,

# "instance_id": 'openshift',

# "version": '4.0'

# }

#

# ```

# ### Install and import the `ibm-watson-machine-learning` package

# **Note:** `ibm-watson-machine-learning` documentation can be found <a href="http://ibm-wml-api-pyclient.mybluemix.net/" target="_blank" rel="noopener no referrer">here</a>.

# !pip install -U ibm-watson-machine-learning

# +

from ibm_watson_machine_learning import APIClient

client = APIClient(wml_credentials)

# -

# ### Working with spaces

#

# First of all, you need to create a space that will be used for your work. If you do not have space already created, you can use `{PLATFORM_URL}/ml-runtime/spaces?context=icp4data` to create one.

#

# - Click New Deployment Space

# - Create an empty space

# - Go to space `Settings` tab

# - Copy `space_id` and paste it below

#

# **Tip**: You can also use SDK to prepare the space for your work. More information can be found [here](https://github.com/IBM/watson-machine-learning-samples/blob/master/cpd4.0/notebooks/python_sdk/instance-management/Space%20management.ipynb).

#

# **Action**: Assign space ID below

space_id = 'PASTE YOUR SPACE ID HERE'

# You can use `list` method to print all existing spaces.

client.spaces.list(limit=10)

# To be able to interact with all resources available in Watson Machine Learning, you need to set **space** which you will be using.

client.set.default_space(space_id)

# + [markdown] pycharm={"name": "#%% md\n"}

# #### <a id="download"></a>

# ## 2. Download externally created DO model and data

#

#

# In this section, you will download externally created DO models and data used for training it.

#

#

# **Action**: Get your DO model.

# -

# !wget https://github.com/IBM/watson-machine-learning-samples/raw/master/cpd4.0/models/decision_optimization/do-model.tar.gz \

# -O do-model.tar.gz

# + pycharm={"name": "#%%\n"}

model_path = 'do-model.tar.gz'

# -

#

# <a id="upload"></a>

# ## 3. Persist externally created DO model

# In this section, you will learn how to store your model in Watson Machine Learning repository by using the Watson Machine Learning Client.

#

# ### 3.1: Publish model

#

# #### Publish model in Watson Machine Learning repository on Cloud.

# Define model name, autor name and email.

#

# + [markdown] pycharm={"name": "#%% md\n"}

# Get software specification for DO model

# + pycharm={"name": "#%%\n"}

sofware_spec_uid = client.software_specifications.get_uid_by_name("do_12.9")

# + [markdown] pycharm={"name": "#%% md\n"}

# Output data schema for storing model in WML repository

# + pycharm={"name": "#%%\n"}

output_data_schema = [{'id': 'stest',

'type': 'list',

'fields': [{'name': 'age', 'type': 'float'},

{'name': 'sex', 'type': 'float'},

{'name': 'cp', 'type': 'float'},

{'name': 'restbp', 'type': 'float'},

{'name': 'chol', 'type': 'float'},

{'name': 'fbs', 'type': 'float'},

{'name': 'restecg', 'type': 'float'},

{'name': 'thalach', 'type': 'float'},

{'name': 'exang', 'type': 'float'},

{'name': 'oldpeak', 'type': 'float'},

{'name': 'slope', 'type': 'float'},

{'name': 'ca', 'type': 'float'},

{'name': 'thal', 'type': 'float'}]

}, {'id': 'teste2',

'type': 'test',

'fields': [{'name': 'age', 'type': 'float'},

{'name': 'sex', 'type': 'float'},

{'name': 'cp', 'type': 'float'},

{'name': 'restbp', 'type': 'float'},

{'name': 'chol', 'type': 'float'},

{'name': 'fbs', 'type': 'float'},

{'name': 'restecg', 'type': 'float'},

{'name': 'thalach', 'type': 'float'},

{'name': 'exang', 'type': 'float'},

{'name': 'oldpeak', 'type': 'float'},

{'name': 'slope', 'type': 'float'},

{'name': 'ca', 'type': 'float'},

{'name': 'thal', 'type': 'float'}]}]

# + pycharm={"name": "#%%\n"}

model_meta_props = {

client.repository.ModelMetaNames.NAME: "LOCALLY created DO model",

client.repository.ModelMetaNames.TYPE: "do-docplex_12.9",

client.repository.ModelMetaNames.SOFTWARE_SPEC_UID: sofware_spec_uid,

client.repository.ModelMetaNames.OUTPUT_DATA_SCHEMA: output_data_schema

}

published_model = client.repository.store_model(model=model_path, meta_props=model_meta_props)

# -

# **Note:** You can see that model is successfully stored in Watson Machine Learning Service.

# ### 3.2: Get model details

#

# +

import json

published_model_uid = client.repository.get_model_uid(published_model)

model_details = client.repository.get_details(published_model_uid)

print(json.dumps(model_details, indent=2))

# -

# ### 3.3 Get all models

# + pycharm={"name": "#%%\n"}

client.repository.list_models()

# -

# <a id="deploy"></a>

# ## 4. Deploy

# In this section you will learn how to create batch deployment to create job using the Watson Machine Learning Client.

#

# You can use commands bellow to create batch deployment for stored model (web service).

#

# ### 4.1: Create model deployment

#

# + pycharm={"name": "#%%\n"}

meta_data = {

client.deployments.ConfigurationMetaNames.NAME: "deployment_DO",

client.deployments.ConfigurationMetaNames.BATCH: {},

client.deployments.ConfigurationMetaNames.HARDWARE_SPEC: {"name": "S", "num_nodes": 1}

}

deployment_details = client.deployments.create(published_model_uid, meta_props=meta_data)

# -

# **Note**: Here we use deployment url saved in published_model object. In next section, we show how to retrive deployment url from Watson Machine Learning instance.

#

deployment_uid = client.deployments.get_uid(deployment_details)

# Now, You can list all deployments.

# + pycharm={"name": "#%%\n"}

client.deployments.list()

# -

# ### 4.2: Get deployment details

#

# + pycharm={"name": "#%%\n"}

client.deployments.get_details(deployment_uid)

# -

# <a id="score"></a>

# ## 5. Create job

#

# You can create job to web-service deployment using `create_job` method.

# Prepare test data

# +

import pandas as pd

diet_food = pd.DataFrame([["Roasted Chicken", 0.84, 0, 10],

["Spaghetti W/ Sauce", 0.78, 0, 10],

["Tomato,Red,Ripe,Raw", 0.27, 0, 10],

["Apple,Raw,W/Skin", 0.24, 0, 10],

["Grapes", 0.32, 0, 10],

["Chocolate Chip Cookies", 0.03, 0, 10],

["Lowfat Milk", 0.23, 0, 10],

["Raisin Brn", 0.34, 0, 10],

["Hotdog", 0.31, 0, 10]], columns=["name", "unit_cost", "qmin", "qmax"])

diet_food_nutrients = pd.DataFrame([

["Spaghetti W/ Sauce", 358.2, 80.2, 2.3, 3055.2, 11.6, 58.3, 8.2],

["Roasted Chicken", 277.4, 21.9, 1.8, 77.4, 0, 0, 42.2],

["Tomato,Red,Ripe,Raw", 25.8, 6.2, 0.6, 766.3, 1.4, 5.7, 1],

["Apple,Raw,W/Skin", 81.4, 9.7, 0.2, 73.1, 3.7, 21, 0.3],

["Grapes", 15.1, 3.4, 0.1, 24, 0.2, 4.1, 0.2],

["Chocolate Chip Cookies", 78.1, 6.2, 0.4, 101.8, 0, 9.3, 0.9],

["Lowfat Milk", 121.2, 296.7, 0.1, 500.2, 0, 11.7, 8.1],

["Raisin Brn", 115.1, 12.9, 16.8, 1250.2, 4, 27.9, 4],

["Hotdog", 242.1, 23.5, 2.3, 0, 0, 18, 10.4]

], columns=["Food", "Calories", "Calcium", "Iron", "Vit_A", "Dietary_Fiber", "Carbohydrates", "Protein"])

diet_nutrients = pd.DataFrame([

["Calories", 2000, 2500],

["Calcium", 800, 1600],

["Iron", 10, 30],

["Vit_A", 5000, 50000],

["Dietary_Fiber", 25, 100],

["Carbohydrates", 0, 300],

["Protein", 50, 100]

], columns=["name", "qmin", "qmax"])

# -

job_payload_ref = {

client.deployments.DecisionOptimizationMetaNames.INPUT_DATA: [

{

"id": "diet_food.csv",

"values": diet_food

},

{

"id": "diet_food_nutrients.csv",

"values": diet_food_nutrients

},

{

"id": "diet_nutrients.csv",

"values": diet_nutrients

}

],

client.deployments.DecisionOptimizationMetaNames.OUTPUT_DATA: [

{

"id": ".*.csv"

}

]

}

# Create job using Watson Machine Learning client

job = client.deployments.create_job(deployment_uid, meta_props=job_payload_ref)

# Checking created job status and calculated KPI.

# +

import time

job_id = client.deployments.get_job_uid(job)

elapsed_time = 0

while client.deployments.get_job_status(job_id).get('state') != 'completed' and elapsed_time < 300:

elapsed_time += 10

time.sleep(10)

if client.deployments.get_job_status(job_id).get('state') == 'completed':

job_details_do = client.deployments.get_job_details(job_id)

kpi = job_details_do['entity']['decision_optimization']['solve_state']['details']['KPI.Total Calories']

print(f"KPI: {kpi}")

else:

print("Job hasn't completed successfully in 5 minutes.")

# -

# <a id="cleanup"></a>

# ## 6. Clean up

# If you want to clean up all created assets:

# - experiments

# - trainings

# - pipelines

# - model definitions

# - models

# - functions

# - deployments

#

# please follow up this sample [notebook](https://github.com/IBM/watson-machine-learning-samples/blob/master/cpd4.0/notebooks/python_sdk/instance-management/Machine%20Learning%20artifacts%20management.ipynb).

# <a id="summary"></a>

# ## 7. Summary and next steps

# You successfully completed this notebook! You learned how to use DO as well as Watson Machine Learning for model creation and deployment.

#

# Check out our _[Online Documentation](https://dataplatform.cloud.ibm.com/docs/content/analyze-data/wml-setup.html)_ for more samples, tutorials, documentation, how-tos, and blog posts.

#

# ### Authors

#

# **<NAME>**, Software Engineer

# Copyright © 2020, 2021 IBM. This notebook and its source code are released under the terms of the MIT License.

#

| cpd4.0/notebooks/python_sdk/deployments/decision_optimization/Use Decision Optimization to plan your diet.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

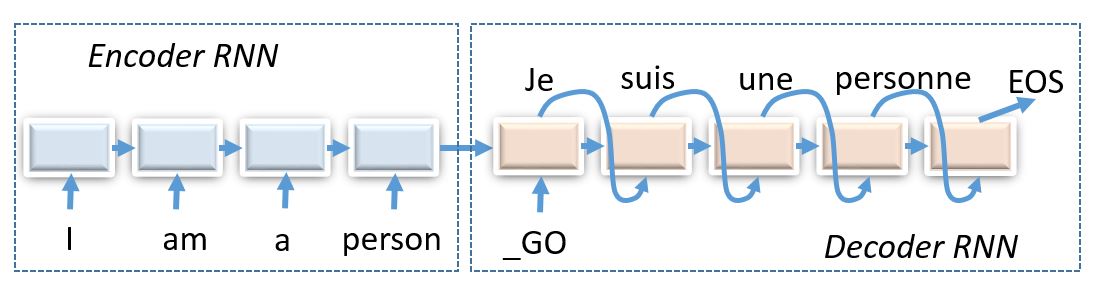

# ## Seminar and homework (10 points total)

#

# Today we shall compose encoder-decoder neural networks and apply them to the task of machine translation.

#

#

# _(img: esciencegroup.files.wordpress.com)_

#

#

# Encoder-decoder architectures are about converting anything to anything, including

# * Machine translation and spoken dialogue systems

# * [Image captioning](http://mscoco.org/dataset/#captions-challenge2015) and [image2latex](https://openai.com/requests-for-research/#im2latex) (convolutional encoder, recurrent decoder)

# * Generating [images by captions](https://arxiv.org/abs/1511.02793) (recurrent encoder, convolutional decoder)

# * Grapheme2phoneme - convert words to transcripts

# ## Our task: machine translation

#

# We gonna try our encoder-decoder models on russian to english machine translation problem. More specifically, we'll translate hotel and hostel descriptions. This task shows the scale of machine translation while not requiring you to train your model for weeks if you don't use GPU.

#

# Before we get to the architecture, there's some preprocessing to be done. ~~Go tokenize~~ Alright, this time we've done preprocessing for you. As usual, the data will be tokenized with WordPunctTokenizer.

#

# However, there's one more thing to do. Our data lines contain unique rare words. If we operate on a word level, we will have to deal with large vocabulary size. If instead we use character-level models, it would take lots of iterations to process a sequence. This time we're gonna pick something inbetween.

#

# One popular approach is called [Byte Pair Encoding](https://github.com/rsennrich/subword-nmt) aka __BPE__. The algorithm starts with a character-level tokenization and then iteratively merges most frequent pairs for N iterations. This results in frequent words being merged into a single token and rare words split into syllables or even characters.

#

#

# !pip3 install subword-nmt &> log

# !wget https://raw.githubusercontent.com/yandexdataschool/nlp_course/master/week04_seq2seq/data.txt -O data.txt 2> log

# !wget https://github.com/yandexdataschool/nlp_course/raw/master/week04_seq2seq/utils.py -O utils.py 2> log

# !wget https://github.com/yandexdataschool/nlp_course/raw/master/week04_seq2seq/dummy_checkpoint.npz -O dummy_checkpoint.npz 2> log

#thanks to tilda and deephack teams for the data

# +

from nltk.tokenize import WordPunctTokenizer

from subword_nmt.learn_bpe import learn_bpe

from subword_nmt.apply_bpe import BPE

tokenizer = WordPunctTokenizer()

def tokenize(x):

return ' '.join(tokenizer.tokenize(x.lower()))

# split and tokenize the data

with open('train.en', 'w') as f_src, open('train.ru', 'w') as f_dst:

for line in open('data.txt'):

src_line, dst_line = line.strip().split('\t')

f_src.write(tokenize(src_line) + '\n')

f_dst.write(tokenize(dst_line) + '\n')

# build and apply bpe vocs

bpe = {}

for lang in ['en', 'ru']:

learn_bpe(open('./train.' + lang), open('bpe_rules.' + lang, 'w'), num_symbols=8000)

bpe[lang] = BPE(open('./bpe_rules.' + lang))

with open('train.bpe.' + lang, 'w') as f_out:

for line in open('train.' + lang):

f_out.write(bpe[lang].process_line(line.strip()) + '\n')

# -

# ### Building vocabularies

#

# We now need to build vocabularies that map strings to token ids and vice versa. We're gonna need these fellas when we feed training data into model or convert output matrices into words.

import numpy as np

import matplotlib.pyplot as plt

# %matplotlib inline

# +

data_inp = np.array(open('./train.bpe.ru').read().split('\n'))

data_out = np.array(open('./train.bpe.en').read().split('\n'))

from sklearn.model_selection import train_test_split

train_inp, dev_inp, train_out, dev_out = train_test_split(data_inp, data_out, test_size=3000,

random_state=42)

for i in range(3):

print('inp:', train_inp[i])

print('out:', train_out[i], end='\n\n')

# -

from utils import Vocab

inp_voc = Vocab.from_lines(train_inp)

out_voc = Vocab.from_lines(train_out)

# +

# Here's how you cast lines into ids and backwards.

batch_lines = sorted(train_inp, key=len)[5:10]

batch_ids = inp_voc.to_matrix(batch_lines)

batch_lines_restored = inp_voc.to_lines(batch_ids)

print("lines")

print(batch_lines)

print("\nwords to ids (0 = bos, 1 = eos):")

print(batch_ids)

print("\nback to words")

print(batch_lines_restored)

# -

# Draw source and translation length distributions to estimate the scope of the task.

# +

plt.figure(figsize=[8, 4])

plt.subplot(1, 2, 1)

plt.title("source length")

plt.hist(list(map(len, map(str.split, train_inp))), bins=20);

plt.subplot(1, 2, 2)

plt.title("translation length")

plt.hist(list(map(len, map(str.split, train_out))), bins=20);

# -

# ### Encoder-decoder model

#

# The code below contas a template for a simple encoder-decoder model: single GRU encoder/decoder, no attention or anything. This model is implemented for you as a reference and a baseline for your homework assignment.

import tensorflow as tf

import keras.layers as L

from utils import infer_length, infer_mask

class BasicModel:

def __init__(self, name, inp_voc, out_voc, emb_size=64, hid_size=128):

"""

A simple encoder-decoder model

"""

self.name, self.inp_voc, self.out_voc = name, inp_voc, out_voc

with tf.variable_scope(name):

self.emb_inp = L.Embedding(len(inp_voc), emb_size)

self.emb_out = L.Embedding(len(out_voc), emb_size)

self.enc0 = tf.nn.rnn_cell.GRUCell(hid_size)

self.dec_start = L.Dense(hid_size)

self.dec0 = tf.nn.rnn_cell.GRUCell(hid_size)

self.logits = L.Dense(len(out_voc))

# prepare to translate_lines

self.inp = tf.placeholder('int32', [None, None])

self.initial_state = self.prev_state = self.encode(self.inp)

self.prev_tokens = tf.placeholder('int32', [None])

self.next_state, self.next_logits = self.decode(self.prev_state, self.prev_tokens)

self.weights = tf.get_collection(tf.GraphKeys.TRAINABLE_VARIABLES, scope=name)

def encode(self, inp, **flags):

"""

Takes symbolic input sequence, computes initial state

:param inp: matrix of input tokens [batch, time]

:returns: initial decoder state tensors, one or many

"""

inp_lengths = infer_length(inp, self.inp_voc.eos_ix)

inp_emb = self.emb_inp(inp)

with tf.variable_scope('enc0'):

_, enc_last = tf.nn.dynamic_rnn(

self.enc0, inp_emb,

sequence_length=inp_lengths,

dtype = inp_emb.dtype)

dec_start = self.dec_start(enc_last)

return [dec_start]

def decode(self, prev_state, prev_tokens, **flags):

"""

Takes previous decoder state and tokens, returns new state and logits for next tokens

:param prev_state: a list of previous decoder state tensors

:param prev_tokens: previous output tokens, an int vector of [batch_size]

:return: a list of next decoder state tensors, a tensor of logits [batch, n_tokens]

"""

[prev_dec] = prev_state

prev_emb = self.emb_out(prev_tokens[:,None])[:,0]

with tf.variable_scope('dec0'):

new_dec_out, new_dec_state = self.dec0(prev_emb, prev_dec)

output_logits = self.logits(new_dec_out)

return [new_dec_state], output_logits

def translate_lines(self, inp_lines, max_len=100):

"""

Translates a list of lines by greedily selecting most likely next token at each step

:returns: a list of output lines, a sequence of model states at each step

"""

state = sess.run(self.initial_state, {self.inp: inp_voc.to_matrix(inp_lines)})

outputs = [[self.out_voc.bos_ix] for _ in range(len(inp_lines))]

all_states = [state]

finished = [False] * len(inp_lines)

for t in range(max_len):

state, logits = sess.run([self.next_state, self.next_logits], {**dict(zip(self.prev_state, state)),

self.prev_tokens: [out_i[-1] for out_i in outputs]})

next_tokens = np.argmax(logits, axis=-1)

all_states.append(state)

for i in range(len(next_tokens)):

outputs[i].append(next_tokens[i])

finished[i] |= next_tokens[i] == self.out_voc.eos_ix

return out_voc.to_lines(outputs), all_states

# +

tf.reset_default_graph()

sess = tf.InteractiveSession()

# ^^^ if you get "variable *** already exists": re-run this cell again - it will clear all tf operations youve 'built

model = BasicModel('model', inp_voc, out_voc)

sess.run(tf.global_variables_initializer())

# -

# ### Training loss (2 points)

#

# Our training objetive is almost the same as it was for neural language models:

# $$ L = {\frac1{|D|}} \sum_{X, Y \in D} \sum_{y_t \in Y} - \log p(y_t \mid y_1, \dots, y_{t-1}, X, \theta) $$

#

# where $|D|$ is the __total length of all sequences__, including BOS and first EOS, but excluding PAD.

# +

def compute_logits(model, inp, out, **flags):

"""

:param inp: input tokens matrix, int32[batch, time]

:param out: reference tokens matrix, int32[batch, time]

:returns: logits of shape [batch, time, voc_size]

* logits must be a linear output of your neural network.

* logits [:, 0, :] should always predic BOS

* logits [:, -1, :] should be probabilities of last token in out

This function should NOT return logits predicted when taking out[:, -1] as y_prev

"""

batch_size = tf.shape(inp)[0]

# Encode inp, get initial state

first_state = model.encode(inp)

# initial logits: always predict BOS

first_logits = tf.log(tf.one_hot(tf.fill([batch_size], model.out_voc.bos_ix),

len(model.out_voc)) + 1e-30)

# Decode step

def step(prev_state, y_prev):

# Given previous state, obtain next state and next token logits

return model.decode(prev_state[0], y_prev)

# You can now use tf.scan to run step several times.

# use tf.transpose(out) as elems (to process one time-step at a time)

# docs: https://www.tensorflow.org/api_docs/python/tf/scan

# <YOUR CODE>

# a =

logits_seq = tf.scan(step, tf.transpose(out), (first_state, first_logits))[1]

# prepend first_logits to logits_seq

logits_seq = tf.concat([first_logits[None, ...], logits_seq], axis=0)[:-1, ...]

# Make sure you convert logits_seq from [time, batch, voc_size] to [batch, time, voc_size]

# logits_seq = <...>

logits_seq = tf.transpose(logits_seq, [1, 0, 2])

return logits_seq

# -

from utils import load

load(tf.trainable_variables(), 'dummy_checkpoint.npz')

dummy_inp = tf.constant(inp_voc.to_matrix(train_inp[:3]))

dummy_out = tf.constant(out_voc.to_matrix(train_out[:3]))

dummy_logits = sess.run(compute_logits(model, dummy_inp, dummy_out))

dummy_ref = np.array([-0.13257082, -0.11084784, -0.09024167, -0.14910498], dtype='float32')

assert np.allclose(dummy_logits.sum(-1)[0, 1:5], dummy_ref)

ref_shape = (dummy_out.shape[0], dummy_out.shape[1], len(out_voc))

assert dummy_logits.shape == ref_shape, "Your logits shape should be {} but got {}".format(dummy_logits.shape, ref_shape)

assert all(dummy_logits[:, 0].argmax(-1) == out_voc.bos_ix), "first step must always be BOS"

# +

from utils import select_values_over_last_axis

def compute_loss(model, inp, out, **flags):

"""

Compute loss (float32 scalar) as in the formula above

:param inp: input tokens matrix, int32[batch, time]

:param out: reference tokens matrix, int32[batch, time]

In order to pass the tests, your function should

* include loss at first EOS but not the subsequent ones

* divide sum of losses by a sum of input lengths (use infer_length or infer_mask)

"""

mask = infer_mask(out, out_voc.eos_ix)

logits_seq = compute_logits(model, inp, out, **flags)

# Compute loss as per instructions above

losses = tf.nn.sparse_softmax_cross_entropy_with_logits(logits=logits_seq, labels=out) * mask

return tf.reduce_sum(losses) / tf.reduce_sum(mask)

# -

dummy_loss = sess.run(compute_loss(model, dummy_inp, dummy_out))

print("Loss:", dummy_loss)

assert np.allclose(dummy_loss, 8.425, rtol=0.1, atol=0.1), "We're sorry for your loss"

# ### Evaluation: BLEU

#

# Machine translation is commonly evaluated with [BLEU](https://en.wikipedia.org/wiki/BLEU) score. This metric simply computes which fraction of predicted n-grams is actually present in the reference translation. It does so for n=1,2,3 and 4 and computes the geometric average with penalty if translation is shorter than reference.

#

# While BLEU [has many drawbacks](http://www.cs.jhu.edu/~ccb/publications/re-evaluating-the-role-of-bleu-in-mt-research.pdf), it still remains the most commonly used metric and one of the simplest to compute.

# __Note:__ in this assignment we measure token-level bleu with bpe tokens. Most scientific papers report word-level bleu. You can measure it by undoing BPE encoding before computing BLEU. Please stay with the token-level bleu for this assignment, however.

#

from nltk.translate.bleu_score import corpus_bleu

def compute_bleu(model, inp_lines, out_lines, **flags):

""" Estimates corpora-level BLEU score of model's translations given inp and reference out """

translations, _ = model.translate_lines(inp_lines, **flags)

# Note: if you experience out-of-memory error, split input lines into batches and translate separately

return corpus_bleu([[ref] for ref in out_lines], translations) * 100

compute_bleu(model, dev_inp, dev_out)

# ### Training loop

#

# Training encoder-decoder models isn't that different from any other models: sample batches, compute loss, backprop and update

# +

inp = tf.placeholder('int32', [None, None])

out = tf.placeholder('int32', [None, None])

loss = compute_loss(model, inp, out)

train_step = tf.train.AdamOptimizer().minimize(loss)

# +

from IPython.display import clear_output

from tqdm import tqdm, trange

metrics = {'train_loss': [], 'dev_bleu': [] }

sess.run(tf.global_variables_initializer())

batch_size = 32

# +

for _ in trange(25000):

step = len(metrics['train_loss']) + 1

batch_ix = np.random.randint(len(train_inp), size=batch_size)

feed_dict = {

inp: inp_voc.to_matrix(train_inp[batch_ix]),

out: out_voc.to_matrix(train_out[batch_ix]),

}

loss_t, _ = sess.run([loss, train_step], feed_dict)

metrics['train_loss'].append((step, loss_t))

if step % 100 == 0:

metrics['dev_bleu'].append((step, compute_bleu(model, dev_inp, dev_out)))

clear_output(True)

plt.figure(figsize=(12,4))

for i, (name, history) in enumerate(sorted(metrics.items())):

plt.subplot(1, len(metrics), i + 1)

plt.title(name)

plt.plot(*zip(*history))

plt.grid()

plt.show()

print("Mean loss=%.3f" % np.mean(metrics['train_loss'][-10:], axis=0)[1], flush=True)

# Note: it's okay if bleu oscillates up and down as long as it gets better on average over long term (e.g. 5k batches)

# -

assert np.mean(metrics['dev_bleu'][-10:], axis=0)[1] > 35, "We kind of need a higher bleu BLEU from you. Kind of right now."

for inp_line, trans_line in zip(dev_inp[::500], model.translate_lines(dev_inp[::500])[0]):

print(inp_line)

print(trans_line)

print()

# ### Your Attention Required (4 points)

#

# In this section we want you to improve over the basic model by implementing a simple attention mechanism.

#

# This is gonna be a two-parter: building the __attention layer__ and using it for an __attentive seq2seq model__.

# ### Attention layer

#

# Here you will have to implement a layer that computes a simple additive attention:

#

# Given encoder sequence $ h^e_0, h^e_1, h^e_2, ..., h^e_T$ and a single decoder state $h^d$,

#

# * Compute logits with a 2-layer neural network

# $$a_t = linear_{out}(tanh(linear_{e}(h^e_t) + linear_{d}(h_d)))$$

# * Get probabilities from logits,

# $$ p_t = {{e ^ {a_t}} \over { \sum_\tau e^{a_\tau} }} $$

#

# * Add up encoder states with probabilities to get __attention response__

# $$ attn = \sum_t p_t \cdot h^e_t $$

#

# You can learn more about attention layers in the leture slides or [from this post](https://distill.pub/2016/augmented-rnns/).

class AttentionLayer:

def __init__(self, name, enc_size, dec_size, hid_size, activ=tf.tanh,):

""" A layer that computes additive attention response and weights """

self.name = name

self.enc_size = enc_size # num units in encoder state

self.dec_size = dec_size # num units in decoder state

self.hid_size = hid_size # attention layer hidden units

self.activ = activ # attention layer hidden nonlinearity

with tf.variable_scope(name):

# YOUR CODE - create layer variables

self.l_encoder = L.Dense(hid_size)

self.l_decoder = L.Dense(hid_size)

self.l_output = L.Dense(1)

def __call__(self, enc, dec, inp_mask):

"""

Computes attention response and weights

:param enc: encoder activation sequence, float32[batch_size, ninp, enc_size]

:param dec: single decoder state used as "query", float32[batch_size, dec_size]

:param inp_mask: mask on enc activatons (0 after first eos), float32 [batch_size, ninp]

:returns: attn[batch_size, enc_size], probs[batch_size, ninp]

- attn - attention response vector (weighted sum of enc)

- probs - attention weights after softmax

"""

with tf.variable_scope(self.name):

# Compute logits

key = self.l_encoder(enc)

query = self.l_decoder(dec)[:, None, :]

logits = self.l_output(self.activ(key + query))[..., 0]

# Apply mask - if mask is 0, logits should be -inf or -1e9

# You may need tf.where

logits = inp_mask * logits + (1 - inp_mask) * (-1e9)

# Compute attention probabilities (softmax)

probs = tf.nn.softmax(logits)

# Compute attention response using enc and probs

attn = tf.reduce_sum(probs[..., None] * enc, axis=1)

return attn, probs

# ### Seq2seq model with attention

#

# You can now use the attention layer to build a network. The simplest way to implement attention is to use it in decoder phase:

#

# _image from distill.pub [article](https://distill.pub/2016/augmented-rnns/)_

#

# On every step, use __previous__ decoder state to obtain attention response. Then feed concat this response to the inputs of next attetion layer.

#

# The key implementation detail here is __model state__. Put simply, you can add any tensor into the list of `encode` outputs. You will then have access to them at each `decode` step. This may include:

# * Last RNN hidden states (as in basic model)

# * The whole sequence of encoder outputs (to attend to) and mask

# * Attention probabilities (to visualize)

#

# _There are, of course, alternative ways to wire attention into your network and different kinds of attention. Take a look at [this](https://arxiv.org/abs/1609.08144), [this](https://arxiv.org/abs/1706.03762) and [this](https://arxiv.org/abs/1808.03867) for ideas. And for image captioning/im2latex there's [visual attention](https://arxiv.org/abs/1502.03044)_

class AttentiveModel(BasicModel):

def __init__(self, name, inp_voc, out_voc,

emb_size=64, hid_size=128, attn_size=128):

""" Translation model that uses attention. See instructions above. """

self.name = name

self.inp_voc = inp_voc

self.out_voc = out_voc

with tf.variable_scope(name):

# YOUR CODE - define model layers

self.l_emb_in = L.Embedding(len(inp_voc), emb_size)

self.l_emb_out = L.Embedding(len(out_voc), emb_size)

self.l_encoder_rnn = L.GRU(hid_size, return_sequences=True, return_state=True)

self.l_decoder_rnn = L.GRU(hid_size, return_sequences=True, return_state=True)

self.l_attention = AttentionLayer("attention", hid_size, hid_size, attn_size)

self.l_initial_decoder_state = L.Dense(hid_size)

self.l_output = L.Dense(len(out_voc))

# END OF YOUR CODE

# prepare to translate_lines

self.inp = tf.placeholder('int32', [None, None])

self.initial_state = self.prev_state = self.encode(self.inp)

self.prev_tokens = tf.placeholder('int32', [None])

self.next_state, self.next_logits = self.decode(self.prev_state, self.prev_tokens)

self.weights = tf.get_collection(tf.GraphKeys.TRAINABLE_VARIABLES, scope=name)

def encode(self, inp, **flags):

"""

Takes symbolic input sequence, computes initial state

:param inp: matrix of input tokens [batch, time]

:return: a list of initial decoder state tensors

"""

# encode input sequence, create initial decoder states

encoder_states, last_state = self.l_encoder_rnn(self.l_emb_in(inp))

decoder_init_state = self.l_initial_decoder_state(last_state)

# apply attention layer from initial decoder hidden state

mask = tf.sequence_mask(infer_length(inp, self.inp_voc.eos_ix), dtype=tf.float32)

first_attn_response, first_attn_probas = self.l_attention(encoder_states, decoder_init_state, mask)

# Build first state: include

# * initial states for decoder recurrent layers

# * encoder sequence and encoder attn mask (for attention)

# * make sure that last state item is attention probabilities tensor

first_state = [decoder_init_state, encoder_states, mask, first_attn_probas]

return first_state

def decode(self, prev_state, prev_tokens, **flags):

"""

Takes previous decoder state and tokens, returns new state and logits

:param prev_state: a list of previous decoder state tensors

:param prev_tokens: previous output tokens, an int vector of [batch_size]

:return: a list of next decoder state tensors, a tensor of logits [batch,n_tokens]

"""

# Unpack your state: you will get tensors in the same order that you've packed in encode

[decoder_prev_state, encoder_states, mask, prev_attn_probas] = prev_state

# Perform decoder step

# * predict next attn response and attn probas given previous decoder state

# * use prev token embedding and attn response to update decoder states (concatenate and feed into decoder cell)

# * predict logits

self.l_emb_out(prev_tokens[:, None])

next_attn_response, next_attn_probas = self.l_attention(encoder_states, decoder_prev_state, mask)

prev_emb = self.l_emb_out(prev_tokens)

concat_emb = tf.concat([next_attn_response, prev_emb], axis=-1)

new_dec_out, new_dec_state = self.l_decoder_rnn(concat_emb[:, None, :], decoder_prev_state)

output_logits = self.l_output(new_dec_out)[:, 0, :]

# Pack new state:

# * replace previous decoder state with next one

# * copy encoder sequence and mask from prev_state

# * append new attention probas

next_state = [new_dec_state, encoder_states, mask, next_attn_probas]

return next_state, output_logits

# WARNING! this cell will clear your TF graph from the regular model. All trained variables will be gone!

tf.reset_default_graph()

sess = tf.InteractiveSession()

model = AttentiveModel('model_attn', inp_voc, out_voc)

# ### Training attentive model

#

# We'll reuse the infrastructure you've built for the regular model. I hope you didn't hard-code anything :)

# +

inp = tf.placeholder('int32', [None, None])

out = tf.placeholder('int32', [None, None])

loss = compute_loss(model, inp, out)

train_step = tf.train.AdamOptimizer().minimize(loss)

# -

metrics = {'train_loss': [], 'dev_bleu': []}

sess.run(tf.global_variables_initializer())

batch_size = 32

# +

for _ in trange(25000):

step = len(metrics['train_loss']) + 1

batch_ix = np.random.randint(len(train_inp), size=batch_size)

feed_dict = {

inp: inp_voc.to_matrix(train_inp[batch_ix]),

out: out_voc.to_matrix(train_out[batch_ix]),

}

loss_t, _ = sess.run([loss, train_step], feed_dict)

metrics['train_loss'].append((step, loss_t))

if step % 100 == 0:

metrics['dev_bleu'].append((step, compute_bleu(model, dev_inp, dev_out)))

clear_output(True)

plt.figure(figsize=(12,4))

for i, (name, history) in enumerate(sorted(metrics.items())):

plt.subplot(1, len(metrics), i + 1)

plt.title(name)

plt.plot(*zip(*history))

plt.grid()

plt.show()

print("Mean loss=%.3f" % np.mean(metrics['train_loss'][-10:], axis=0)[1], flush=True)

# Your model may train slower than the basic one. check that it's at least >30 bleu by 5k steps

# Also: you don't have to train for 25k steps. It was chosen by a squirrel.

# -

assert np.mean(metrics['dev_bleu'][-10:], axis=0)[1] > 45, "Something might be wrong with the model..."

# +

import bokeh.plotting as pl

import bokeh.models as bm

from bokeh.io import output_notebook, show

output_notebook()

def draw_attention(inp_line, translation, probs):

""" An intentionally ambiguous function to visualize attention weights """

inp_tokens = inp_voc.tokenize(inp_line)

trans_tokens = out_voc.tokenize(translation)

probs = probs[:len(trans_tokens), :len(inp_tokens)]

fig = pl.figure(x_range=(0, len(inp_tokens)), y_range=(0, len(trans_tokens)),

x_axis_type=None, y_axis_type=None, tools=[])

fig.image([probs[::-1]], 0, 0, len(inp_tokens), len(trans_tokens))

fig.add_layout(bm.LinearAxis(axis_label='source tokens'), 'above')

fig.xaxis.ticker = np.arange(len(inp_tokens)) + 0.5

fig.xaxis.major_label_overrides = dict(zip(np.arange(len(inp_tokens)) + 0.5, inp_tokens))

fig.xaxis.major_label_orientation = 45

fig.add_layout(bm.LinearAxis(axis_label='translation tokens'), 'left')

fig.yaxis.ticker = np.arange(len(trans_tokens)) + 0.5

fig.yaxis.major_label_overrides = dict(zip(np.arange(len(trans_tokens)) + 0.5, trans_tokens[::-1]))

show(fig)

# +

inp = dev_inp[::500]

trans, states = model.translate_lines(inp)

# select attention probs from model state (you may need to change this for your custom model)

attention_probs = np.stack([state[-1] for state in states], axis=1)

# -

for i in range(5):

draw_attention(inp[i], trans[i], attention_probs[i])

# ## Grand Finale (4+ points)

#

# We want you to find the best model for the task. Use everything you know.

#

# * different recurrent units: rnn/gru/lstm; deeper architectures

# * bidirectional encoder, different attention methods for decoder

# * word dropout, training schedules, anything you can imagine

#

# As usual, we want you to describe what you tried and what results you obtained.

# `[your report/log here or anywhere you please]`

| week04_seq2seq/practice.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (Data Science)

# language: python

# name: python3__SAGEMAKER_INTERNAL__arn:aws:sagemaker:us-east-1:081325390199:image/datascience-1.0

# ---

# # Detect Model Bias with Amazon SageMaker Clarify

#

# ## Amazon Science: _[How Clarify helps machine learning developers detect unintended bias](https://www.amazon.science/latest-news/how-clarify-helps-machine-learning-developers-detect-unintended-bias)_

#

# [<img src="img/amazon_science_clarify.png" width="100%" align="left">](https://www.amazon.science/latest-news/how-clarify-helps-machine-learning-developers-detect-unintended-bias)

# # Terminology

#

# * **Bias**:

# An imbalance in the training data or the prediction behavior of the model across different groups, such as age or income bracket. Biases can result from the data or algorithm used to train your model. For instance, if an ML model is trained primarily on data from middle-aged individuals, it may be less accurate when making predictions involving younger and older people.

#

# * **Bias metric**:

# A function that returns numerical values indicating the level of a potential bias.

#

# * **Bias report**:

# A collection of bias metrics for a given dataset, or a combination of a dataset and a model.

#

# * **Label**:

# Feature that is the target for training a machine learning model. Referred to as the observed label or observed outcome.

#

# * **Positive label values**:

# Label values that are favorable to a demographic group observed in a sample. In other words, designates a sample as having a positive result.

#

# * **Negative label values**:

# Label values that are unfavorable to a demographic group observed in a sample. In other words, designates a sample as having a negative result.

#

# * **Facet**:

# A column or feature that contains the attributes with respect to which bias is measured.

#

# * **Facet value**:

# The feature values of attributes that bias might favor or disfavor.

# # Posttraining Bias Metrics

# https://docs.aws.amazon.com/sagemaker/latest/dg/clarify-measure-post-training-bias.html

#

# * **Difference in Positive Proportions in Predicted Labels (DPPL)**:

# Measures the difference in the proportion of positive predictions between the favored facet a and the disfavored facet d.

#

# * **Disparate Impact (DI)**:

# Measures the ratio of proportions of the predicted labels for the favored facet a and the disfavored facet d.

#

# * **Difference in Conditional Acceptance (DCAcc)**:

# Compares the observed labels to the labels predicted by a model and assesses whether this is the same across facets for predicted positive outcomes (acceptances).

#

# * **Difference in Conditional Rejection (DCR)**:

# Compares the observed labels to the labels predicted by a model and assesses whether this is the same across facets for negative outcomes (rejections).

#

# * **Recall Difference (RD)**:

# Compares the recall of the model for the favored and disfavored facets.

#

# * **Difference in Acceptance Rates (DAR)**:

# Measures the difference in the ratios of the observed positive outcomes (TP) to the predicted positives (TP + FP) between the favored and disfavored facets.

#

# * **Difference in Rejection Rates (DRR)**:

# Measures the difference in the ratios of the observed negative outcomes (TN) to the predicted negatives (TN + FN) between the disfavored and favored facets.

#

# * **Accuracy Difference (AD)**:

# Measures the difference between the prediction accuracy for the favored and disfavored facets.

#

# * **Treatment Equality (TE)**:

# Measures the difference in the ratio of false positives to false negatives between the favored and disfavored facets.

#

# * **Conditional Demographic Disparity in Predicted Labels (CDDPL)**:

# Measures the disparity of predicted labels between the facets as a whole, but also by subgroups.

#

# * **Counterfactual Fliptest (FT)**:

# Examines each member of facet d and assesses whether similar members of facet a have different model predictions.

#

# +

import boto3

import sagemaker

import pandas as pd

import numpy as np

sess = sagemaker.Session()

bucket = sess.default_bucket()

role = sagemaker.get_execution_role()

region = boto3.Session().region_name

sm = boto3.Session().client(service_name="sagemaker", region_name=region)

# +

import matplotlib.pyplot as plt

# %matplotlib inline

# %config InlineBackend.figure_format='retina'

# -

# # Test data for bias

#

# We created test data in JSONLines format to match the model inputs.

test_data_bias_path = "./data-clarify/test_data_bias.jsonl"

# !head -n 1 $test_data_bias_path

# ### Upload the data

test_data_bias_s3_uri = sess.upload_data(bucket=bucket, key_prefix="bias/test_data_bias", path=test_data_bias_path)

test_data_bias_s3_uri

# !aws s3 ls $test_data_bias_s3_uri

# %store test_data_bias_s3_uri

# # Run Posttraining Model Bias Analysis

# %store -r pipeline_name

print(pipeline_name)

# +

# %%time

import time

from pprint import pprint

executions_response = sm.list_pipeline_executions(PipelineName=pipeline_name)["PipelineExecutionSummaries"]

pipeline_execution_status = executions_response[0]["PipelineExecutionStatus"]

print(pipeline_execution_status)

while pipeline_execution_status == "Executing":

try:

executions_response = sm.list_pipeline_executions(PipelineName=pipeline_name)["PipelineExecutionSummaries"]

pipeline_execution_status = executions_response[0]["PipelineExecutionStatus"]

except Exception as e:

print("Please wait...")

time.sleep(30)

pprint(executions_response)

# -

# # List Pipeline Execution Steps

#

pipeline_execution_status = executions_response[0]["PipelineExecutionStatus"]

print(pipeline_execution_status)

pipeline_execution_arn = executions_response[0]["PipelineExecutionArn"]

print(pipeline_execution_arn)

# +

from pprint import pprint

steps = sm.list_pipeline_execution_steps(PipelineExecutionArn=pipeline_execution_arn)

pprint(steps)

# -

# # View Created Model

# _Note: If the trained model did not pass the Evaluation step (> accuracy threshold), it will not be created._

# +

for execution_step in steps["PipelineExecutionSteps"]:

if execution_step["StepName"] == "CreateModel":

model_arn = execution_step["Metadata"]["Model"]["Arn"]

break

print(model_arn)

pipeline_model_name = model_arn.split("/")[-1]

print(pipeline_model_name)

# -

# # SageMakerClarifyProcessor

# +

from sagemaker import clarify

clarify_processor = clarify.SageMakerClarifyProcessor(

role=role, instance_count=1, instance_type="ml.c5.2xlarge", sagemaker_session=sess

)

# -

# # Writing DataConfig and ModelConfig

# A `DataConfig` object communicates some basic information about data I/O to Clarify. We specify where to find the input dataset, where to store the output, the target column (`label`), the header names, and the dataset type.

#

# Similarly, the `ModelConfig` object communicates information about your trained model and `ModelPredictedLabelConfig` provides information on the format of your predictions.

#

# **Note**: To avoid additional traffic to your production models, SageMaker Clarify sets up and tears down a dedicated endpoint when processing. `ModelConfig` specifies your preferred instance type and instance count used to run your model on during Clarify's processing.

# ## DataConfig

# +

bias_report_prefix = "bias/report-{}".format(pipeline_model_name)

bias_report_output_path = "s3://{}/{}".format(bucket, bias_report_prefix)

data_config = clarify.DataConfig(

s3_data_input_path=test_data_bias_s3_uri,

s3_output_path=bias_report_output_path,

label="star_rating",

features="features",

# label must be last, features in exact order as passed into model

headers=["review_body", "product_category", "star_rating"],

dataset_type="application/jsonlines",

)

# -

# ## ModelConfig

model_config = clarify.ModelConfig(

model_name=pipeline_model_name,

instance_type="ml.m5.4xlarge",

instance_count=1,

content_type="application/jsonlines",

accept_type="application/jsonlines",

# {"features": ["the worst", "Digital_Software"]}

content_template='{"features":$features}',

)

# ## _Note: `label` is set to the JSON key for the model prediction results_

predictions_config = clarify.ModelPredictedLabelConfig(label="predicted_label")

# ## BiasConfig

bias_config = clarify.BiasConfig(

label_values_or_threshold=[

5,

4,

], # needs to be int or str for continuous dtype, needs to be >1 for categorical dtype

facet_name="product_category",

# facet_values_or_threshold=['Gift Card'],

group_name="product_category",

)

# # Run Clarify Job

clarify_processor.run_post_training_bias(

data_config=data_config,

data_bias_config=bias_config,

model_config=model_config,

model_predicted_label_config=predictions_config,

# methods='all', # FlipTest requires all columns to be numeric

methods=["DPPL", "DI", "DCA", "DCR", "RD", "DAR", "DRR", "AD", "CDDPL", "TE"],

wait=False,

logs=False,

)

run_post_training_bias_processing_job_name = clarify_processor.latest_job.job_name

run_post_training_bias_processing_job_name

# +

from IPython.core.display import display, HTML

display(

HTML(

'<b>Review <a target="blank" href="https://console.aws.amazon.com/sagemaker/home?region={}#/processing-jobs/{}">Processing Job</a></b>'.format(

region, run_post_training_bias_processing_job_name

)

)

)

# +

from IPython.core.display import display, HTML

display(

HTML(

'<b>Review <a target="blank" href="https://console.aws.amazon.com/cloudwatch/home?region={}#logStream:group=/aws/sagemaker/ProcessingJobs;prefix={};streamFilter=typeLogStreamPrefix">CloudWatch Logs</a> After About 5 Minutes</b>'.format(

region, run_post_training_bias_processing_job_name

)

)

)

# +

from IPython.core.display import display, HTML

display(

HTML(

'<b>Review <a target="blank" href="https://s3.console.aws.amazon.com/s3/buckets/{}?prefix={}/">S3 Output Data</a> After The Processing Job Has Completed</b>'.format(

bucket, bias_report_prefix

)

)

)

# +

from pprint import pprint

running_processor = sagemaker.processing.ProcessingJob.from_processing_name(

processing_job_name=run_post_training_bias_processing_job_name, sagemaker_session=sess

)

processing_job_description = running_processor.describe()

pprint(processing_job_description)

# -

running_processor.wait(logs=False)

# # Download Report From S3

# !aws s3 ls $bias_report_output_path/

# !aws s3 cp --recursive $bias_report_output_path ./generated_bias_report/

# +

from IPython.core.display import display, HTML

display(HTML('<b>Review <a target="blank" href="./generated_bias_report/report.html">Bias Report</a></b>'))

# -

# # View Bias Report in Studio

# In Studio, you can view the results under the experiments tab.

#

# <img src="img/bias_report.gif">

#

# Each bias metric has detailed explanations with examples that you can explore.

#

# <img src="img/bias_detail.gif">

#

# You could also summarize the results in a handy table!

#

# <img src="img/bias_report_chart.gif">

# # Release Resources

# + language="html"

#

# <p><b>Shutting down your kernel for this notebook to release resources.</b></p>

# <button class="sm-command-button" data-commandlinker-command="kernelmenu:shutdown" style="display:none;">Shutdown Kernel</button>

#

# <script>

# try {

# els = document.getElementsByClassName("sm-command-button");

# els[0].click();

# }

# catch(err) {

# // NoOp

# }

# </script>

| 00_quickstart/wip/09_Detect_Model_Bias_Clarify.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# Remove input cells at runtime (nbsphinx)

import IPython.core.display as d

d.display_html('<script>jQuery(function() {if (jQuery("body.notebook_app").length == 0) { jQuery(".input_area").toggle(); jQuery(".prompt").toggle();}});</script>', raw=True)

# # Performance poster layout

# This notebook produces a nice poster layout about the comparison between pipelines.

# It is just a refurbished version of the DL3-level "IRF and sensitivity" notebook.

#

# Latest performance results cannot be shown on this public documentation and are therefore hosted at [this RedMine page](https://forge.in2p3.fr/projects/benchmarks-reference-analysis/wiki/Protopipe_performance_data) .

# + [markdown] nbsphinx="hidden"

# ## Imports

# +

## From the standard library

import os

from pathlib import Path

# From pyirf

import pyirf

from pyirf.binning import bin_center

from pyirf.utils import cone_solid_angle

# From other 3rd-party libraries

from yaml import load, FullLoader

import numpy as np

import astropy.units as u

from astropy.io import fits

from astropy.table import QTable, Table, Column

import uproot

import matplotlib.pyplot as plt

from matplotlib.ticker import ScalarFormatter

# %matplotlib inline

plt.rcParams['axes.labelsize'] = 15

plt.rcParams['xtick.labelsize'] = 15

plt.rcParams['ytick.labelsize'] = 15

# + [markdown] nbsphinx="hidden"

# ## Functions

# -

def load_config(name):

"""Load YAML configuration file."""

try:

with open(name, "r") as stream:

cfg = load(stream, Loader=FullLoader)

except FileNotFoundError as e:

print(e)

raise

return cfg

# + [markdown] nbsphinx="hidden"

# ## Input data

# + [markdown] nbsphinx="hidden"

# ### Protopipe

# -

# EDIT THIS CELL WITH YOUR LOCAL SETUP INFORMATION

parent_dir = "" # path to 'analyses' folder

analysisName = ""

infile = "performance_protopipe_Prod3b_CTANorth_baseline_full_array_Zd20deg_180deg_Time50.00h.fits.gz"

config_performance = load_config(f"{parent_dir}/{analysisName}/configs/performance.yaml")

obs_time = f'{config_performance["analysis"]["obs_time"]["value"]}{config_performance["analysis"]["obs_time"]["unit"]}'

production = infile.split("protopipe_")[1].split("_Time")[0]

protopipe_file = Path(parent_dir, analysisName, "data/DL3", infile)

# + [markdown] nbsphinx="hidden"

# ### ASWG

# +

# EDIT THIS CELL WITH YOUR LOCAL SETUP INFORMATION

parent_dir_aswg = ""

# MARS performance (available here: https://forge.in2p3.fr/projects/step-by-step-reference-mars-analysis/wiki)

indir_CTAMARS = ""

infile_CTAMARS = "SubarrayLaPalma_4L15M_south_IFAE_50hours_20190630.root"

MARS_label = "CTAMARS (2019)"

# ED performance (available here: https://forge.in2p3.fr/projects/cta_analysis-and-simulations/wiki/Prod3b_based_instrument_response_functions)

indir_ED = ""

infile_ED = "CTA-Performance-North-20deg-S-50h_20181203.root"

ED_label = "EventDisplay (2018)"

# -

MARS_performance = uproot.open(Path(parent_dir_aswg, indir_CTAMARS, infile_CTAMARS))

ED_performance = uproot.open(Path(parent_dir_aswg, indir_ED, infile_ED))

# + [markdown] nbsphinx="hidden"

# ### Requirements

# -

# EDIT THIS CELL WITH YOUR LOCAL SETUP INFORMATION

indir_requirements = ''

site = 'North'

obs_time = '50h'

# +

infile_requirements = {"sens" : f'/{site}-{obs_time}.dat',

"AngRes" : f'/{site}-{obs_time}-AngRes.dat',

"ERes" : f'/{site}-{obs_time}-ERes.dat'}

requirements = {}

for key in infile_requirements.keys():

requirements[key] = Table.read(indir_requirements + infile_requirements[key], format='ascii')

requirements['sens'].add_column(Column(data=(10**requirements['sens']['col1']), name='ENERGY'))

requirements['sens'].add_column(Column(data=requirements['sens']['col2'], name='SENSITIVITY'))

# + [markdown] nbsphinx="hidden"

# ## Poster plot

# -

# First we check if a _plots_ folder exists already.

# If not, we create it.

Path("./plots").mkdir(parents=True, exist_ok=True)

# +

fig = plt.figure(figsize = (20, 10), constrained_layout=True)

gs = fig.add_gridspec(3, 3, figure=fig)

# ==========================================================================================================

#

# SENSITIVITY

#

# ==========================================================================================================

ax1 = fig.add_subplot(gs[0:-1, 0:-1])

# [1:-1] removes under/overflow bins

sensitivity_protopipe = QTable.read(protopipe_file, hdu='SENSITIVITY')[1:-1]

unit = u.Unit('erg cm-2 s-1')

# Add requirements

ax1.plot(requirements['sens']['ENERGY'],

requirements['sens']['SENSITIVITY'],

color='black',

ls='--',

lw=2,

label='Requirements'

)

# protopipe

e = sensitivity_protopipe['reco_energy_center']

w = (sensitivity_protopipe['reco_energy_high'] - sensitivity_protopipe['reco_energy_low'])

s_p = (e**2 * sensitivity_protopipe['flux_sensitivity'])

ax1.errorbar(

e.to_value(u.TeV),

s_p.to_value(unit),

xerr=w.to_value(u.TeV) / 2,

ls='',

label='protopipe',

color='DarkOrange'

)

# ED

s_ED, edges = ED_performance["DiffSens"].to_numpy()

yerr = ED_performance["DiffSens"].errors()

bins = 10**edges

x = bin_center(bins)

width = np.diff(bins)

ax1.errorbar(

x,

s_ED,

xerr=width/2,

yerr=yerr,

label=ED_label,

ls='',

color='DarkGreen'

)

# MARS

s_MARS, edges = MARS_performance["DiffSens"].to_numpy()

yerr = MARS_performance["DiffSens"].errors()

bins = 10**edges

x = bin_center(bins)

width = np.diff(bins)

ax1.errorbar(

x,

s_MARS,

xerr=width/2,

yerr=yerr,

label=MARS_label,

ls='',

color='DarkBlue'

)

# Style settings

ax1.set_xscale("log")

ax1.set_yscale("log")

ax1.set_ylabel(rf"$(E^2 \cdot \mathrm{{Flux Sensitivity}}) /$ ({unit.to_string('latex')})")

ax1.grid(which="both")

ax1.legend()

# ==========================================================================================================

#

# SENSITIVITY RATIO

#

# ==========================================================================================================

ax2 = fig.add_subplot(gs[2, 0])

ax2.errorbar(

e.to_value(u.TeV),

s_p.to_value(unit) / s_ED,

xerr=w.to_value(u.TeV)/2,

ls='',

label = "",

color='DarkGreen'

)

ax2.errorbar(

e.to_value(u.TeV),

s_p.to_value(unit) / s_MARS,

xerr=w.to_value(u.TeV)/2,

ls='',

label = "",

color='DarkBlue'

)

ax2.axhline(1, color = 'DarkOrange')

ax2.set_xscale('log')

ax2.set_yscale('log')

ax2.set_xlabel("Reconstructed energy [TeV]")

ax2.set_ylabel('Sensitivity ratio')

ax2.grid()

ax2.yaxis.set_major_formatter(ScalarFormatter())

ax2.set_ylim(0.5, 2.0)

ax2.set_yticks([0.5, 2/3, 1, 3/2, 2])

ax2.set_yticks([], minor=True)

# ==========================================================================================================

#

# EFFECTIVE COLLECTION AREA

#

# ==========================================================================================================

ax3 = fig.add_subplot(gs[0, 2])

# protopipe

# uncomment the other strings to see effective areas

# for the different cut levels. Left out here for better

# visibility of the final effective areas.

suffix =''

#'_NO_CUTS'

#'_ONLY_GH'

#'_ONLY_THETA'

area = QTable.read(protopipe_file, hdu='EFFECTIVE_AREA' + suffix)[0]

ax3.errorbar(

0.5 * (area['ENERG_LO'] + area['ENERG_HI']).to_value(u.TeV)[1:-1],

area['EFFAREA'].to_value(u.m**2).T[1:-1, 0],

xerr=0.5 * (area['ENERG_LO'] - area['ENERG_HI']).to_value(u.TeV)[1:-1],

ls='',

label='protopipe ' + suffix,

color='DarkOrange'

)

# ED

y, edges = ED_performance["EffectiveAreaEtrue"].to_numpy()

yerr = ED_performance["EffectiveAreaEtrue"].errors()

x = bin_center(10**edges)

xerr = 0.5 * np.diff(10**edges)

ax3.errorbar(x,

y,

xerr=xerr,

yerr=yerr,

ls='',

label=ED_label,

color='DarkGreen'

)

# MARS

y, edges = MARS_performance["EffectiveAreaEtrue"].to_numpy()

yerr = MARS_performance["EffectiveAreaEtrue"].errors()

x = bin_center(10**edges)

xerr = 0.5 * np.diff(10**edges)

ax3.errorbar(x,

y,

xerr=xerr,

yerr=yerr,

ls='',

label=MARS_label,

color='DarkBlue'

)

# Style settings

ax3.set_xscale("log")

ax3.set_yscale("log")

ax3.set_xlabel("True energy [TeV]")

ax3.set_ylabel("Effective area [m²]")

ax3.grid(which="both")

# ==========================================================================================================

#

# ANGULAR RESOLUTION

#

# ==========================================================================================================

ax4 = fig.add_subplot(gs[2, 1])

# protopipe

ang_res = QTable.read(protopipe_file, hdu='ANGULAR_RESOLUTION')[1:-1]

ax4.errorbar(

0.5 * (ang_res['reco_energy_low'] + ang_res['reco_energy_high']).to_value(u.TeV),

ang_res['angular_resolution'].to_value(u.deg),

xerr=0.5 * (ang_res['reco_energy_high'] - ang_res['reco_energy_low']).to_value(u.TeV),

ls='',

label='protopipe',

color='DarkOrange'

)

# ED

y, edges = ED_performance["AngRes"].to_numpy()

yerr = ED_performance["AngRes"].errors()

x = bin_center(10**edges)

xerr = 0.5 * np.diff(10**edges)

ax4.errorbar(x,

y,

xerr=xerr,

yerr=yerr,

ls='',

label=ED_label,

color='DarkGreen')

# MARS

y, edges = MARS_performance["AngRes"].to_numpy()

yerr = MARS_performance["AngRes"].errors()

x = bin_center(10**edges)

xerr = 0.5 * np.diff(10**edges)

ax4.errorbar(x,

y,

xerr=xerr,

yerr=yerr,

ls='',

label=MARS_label,

color='DarkBlue')

# Requirements

ax4.plot(10**requirements['AngRes']['col1'],

requirements['AngRes']['col2'],

color='black',

ls='--',

lw=2,

label='Requirements'

)

# Style settings

ax4.set_xscale("log")

ax4.set_yscale("log")

ax4.set_xlabel("Reconstructed energy [TeV]")

ax4.set_ylabel("Angular resolution [deg]")

ax4.grid(which="both")

None # to remove clutter by mpl objects

# ==========================================================================================================

#

# ENERGY RESOLUTION

#

# ==========================================================================================================

ax5 = fig.add_subplot(gs[2, 2])

# protopipe

bias_resolution = QTable.read(protopipe_file, hdu='ENERGY_BIAS_RESOLUTION')[1:-1]

ax5.errorbar(

0.5 * (bias_resolution['reco_energy_low'] + bias_resolution['reco_energy_high']).to_value(u.TeV),

bias_resolution['resolution'],

xerr=0.5 * (bias_resolution['reco_energy_high'] - bias_resolution['reco_energy_low']).to_value(u.TeV),

ls='',

label='protopipe',

color='DarkOrange'

)

# ED

y, edges = ED_performance["ERes"].to_numpy()

yerr = ED_performance["ERes"].errors()

x = bin_center(10**edges)

xerr = np.diff(10**edges) / 2

ax5.errorbar(x,

y,

xerr=xerr,

yerr=yerr,

ls='',

label=ED_label,

color='DarkGreen'

)

# MARS

y, edges = MARS_performance["ERes"].to_numpy()

yerr = MARS_performance["ERes"].errors()

x = bin_center(10**edges)

xerr = np.diff(10**edges) / 2

ax5.errorbar(x,

y,

xerr=xerr,

yerr=yerr,

ls='',

label=MARS_label,

color='DarkBlue'

)

# Requirements

ax5.plot(10**requirements['ERes']['col1'],

requirements['ERes']['col2'],

color='black',

ls='--',

lw=2,

label='Requirements'

)

# Style settings

ax5.set_xlabel("Reconstructed energy [TeV]")

ax5.set_ylabel("Energy resolution")

ax5.grid(which="both")

ax5.set_xscale('log')

None # to remove clutter by mpl objects

# ==========================================================================================================

#

# BACKGROUND RATE

#

# ==========================================================================================================

ax6 = fig.add_subplot(gs[1, 2])

from pyirf.utils import cone_solid_angle

# protopipe

rad_max = QTable.read(protopipe_file, hdu='RAD_MAX')[0]

bg_rate = QTable.read(protopipe_file, hdu='BACKGROUND')[0]

reco_bins = np.append(bg_rate['ENERG_LO'], bg_rate['ENERG_HI'][-1])

# first fov bin, [0, 1] deg

fov_bin = 0

rate_bin = bg_rate['BKG'].T[:, fov_bin]

# interpolate theta cut for given e reco bin

e_center_bg = 0.5 * (bg_rate['ENERG_LO'] + bg_rate['ENERG_HI'])

e_center_theta = 0.5 * (rad_max['ENERG_LO'] + rad_max['ENERG_HI'])

theta_cut = np.interp(e_center_bg, e_center_theta, rad_max['RAD_MAX'].T[:, 0])

# undo normalization

rate_bin *= cone_solid_angle(theta_cut)

rate_bin *= np.diff(reco_bins)

ax6.errorbar(

0.5 * (bg_rate['ENERG_LO'] + bg_rate['ENERG_HI']).to_value(u.TeV)[1:-1],

rate_bin.to_value(1 / u.s)[1:-1],

xerr=np.diff(reco_bins).to_value(u.TeV)[1:-1] / 2,

ls='',

label='protopipe',

color='DarkOrange'

)

# ED

y, edges = ED_performance["BGRate"].to_numpy()

yerr = ED_performance["BGRate"].errors()

x = bin_center(10**edges)

xerr = np.diff(10**edges) / 2

ax6.errorbar(x,

y,

xerr=xerr,

yerr=yerr,

ls='',

label=ED_label,

color="DarkGreen")

# MARS

y, edges = MARS_performance["BGRate"].to_numpy()

yerr = MARS_performance["BGRate"].errors()

x = bin_center(10**edges)

xerr = np.diff(10**edges) / 2

ax6.errorbar(x,

y,

xerr=xerr,

yerr=yerr,

ls='',

label=MARS_label,

color="DarkBlue")

# Style settings

ax6.set_xscale("log")

ax6.set_xlabel("Reconstructed energy [TeV]")

ax6.set_ylabel("Background rate [s⁻¹ TeV⁻¹] ")

ax6.grid(which="both")

ax6.set_yscale('log')

fig.suptitle(f'{production} - {obs_time}', fontsize=25)

fig.savefig(f"./plots/protopipe_{production}_{obs_time}.png")

None # to remove clutter by mpl objects

# -

| docs/contribute/benchmarks_latest_results/Prod3b/CTAN_Zd20_AzSouth_NSB1x_baseline_pointsource/DL3/overall_performance_plot_CTA.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python [conda root]

# language: python

# name: conda-root-py

# ---

# # Transfer Learning and Fine Tuning

# * Train a simple convnet on the MNIST dataset the first 5 digits [0..4].

# * Freeze convolutional layers and fine-tune dense layers for the classification of digits [5..9].

# #### Using GPU (highly recommended)

#

# -> If using `theano` backend:

#

# `THEANO_FLAGS=mode=FAST_RUN,device=gpu,floatX=float32`

# +

import numpy as np

import datetime

np.random.seed(1337) # for reproducibility

# +

from keras.datasets import mnist

from keras.models import Sequential

from keras.layers import Dense, Dropout, Activation, Flatten

from keras.layers import Convolution2D, MaxPooling2D

from keras.utils import np_utils

from keras import backend as K

from numpy import nan

import keras

print keras.__version__

now = datetime.datetime.now

# -

# ### Settings

# +

now = datetime.datetime.now

batch_size = 128

nb_classes = 5

nb_epoch = 5

# input image dimensions

img_rows, img_cols = 28, 28

# number of convolutional filters to use

nb_filters = 32

# size of pooling area for max pooling

pool_size = 2

# convolution kernel size

kernel_size = 3

# -

if K.image_data_format() == 'channels_first':

input_shape = (1, img_rows, img_cols)

else:

input_shape = (img_rows, img_cols, 1)

def train_model(model, train, test, nb_classes):

X_train = train[0].reshape((train[0].shape[0],) + input_shape)

X_test = test[0].reshape((test[0].shape[0],) + input_shape)

X_train = X_train.astype('float32')

X_test = X_test.astype('float32')

X_train /= 255

X_test /= 255

print('X_train shape:', X_train.shape)

print(X_train.shape[0], 'train samples')

print(X_test.shape[0], 'test samples')

# convert class vectors to binary class matrices

Y_train = np_utils.to_categorical(train[1], nb_classes)

Y_test = np_utils.to_categorical(test[1], nb_classes)

model.compile(loss='categorical_crossentropy',

optimizer='adadelta',

metrics=['accuracy'])

t = now()

model.fit(X_train, Y_train,

batch_size=batch_size, nb_epoch=nb_epoch,

verbose=1,

validation_data=(X_test, Y_test))

print('Training time: %s' % (now() - t))

score = model.evaluate(X_test, Y_test, verbose=0)

print('Test score:', score[0])

print('Test accuracy:', score[1])

# ## Dataset Preparation

# +

# the data, shuffled and split between train and test sets

(X_train, y_train), (X_test, y_test) = mnist.load_data()

# create two datasets one with digits below 5 and one with 5 and above

X_train_lt5 = X_train[y_train < 5]

y_train_lt5 = y_train[y_train < 5]

X_test_lt5 = X_test[y_test < 5]

y_test_lt5 = y_test[y_test < 5]

X_train_gte5 = X_train[y_train >= 5]

y_train_gte5 = y_train[y_train >= 5] - 5 # make classes start at 0 for

X_test_gte5 = X_test[y_test >= 5] # np_utils.to_categorical

y_test_gte5 = y_test[y_test >= 5] - 5

# -

# define two groups of layers: feature (convolutions) and classification (dense)

feature_layers = [

Convolution2D(nb_filters, kernel_size, kernel_size,

border_mode='valid',

input_shape=input_shape),

Activation('relu'),

Convolution2D(nb_filters, kernel_size, kernel_size),

Activation('relu'),

MaxPooling2D(pool_size=(pool_size, pool_size)),

Dropout(0.25),

Flatten(),

]

classification_layers = [

Dense(128),

Activation('relu'),

Dropout(0.5),

Dense(nb_classes),

Activation('softmax')

]

# +

# create complete model

model = Sequential(feature_layers + classification_layers)

# train model for 5-digit classification [0..4]

train_model(model,

(X_train_lt5, y_train_lt5),

(X_test_lt5, y_test_lt5), nb_classes)

# +

# freeze feature layers and rebuild model

for l in feature_layers:

l.trainable = False

# transfer: train dense layers for new classification task [5..9]

train_model(model,

(X_train_gte5, y_train_gte5),

(X_test_gte5, y_test_gte5), nb_classes)

# -

# ## Your Turn

# Try to Fine Tune a VGG16 Network

# +

from keras.applications import VGG16

from keras.applications.vgg16 import VGG16

from keras.preprocessing import image

from keras.applications.vgg16 import preprocess_input

from keras.layers import Input, Flatten, Dense

from keras.models import Model

import numpy as np

#Get back the convolutional part of a VGG network trained on ImageNet

model_vgg16_conv = VGG16(weights='imagenet', include_top=False)

model_vgg16_conv.summary()

#Create your own input format (here 3x200x200)

inp = Input(shape=(48,48,3),name = 'image_input')

#Use the generated model

output_vgg16_conv = model_vgg16_conv(inp)

#Add the fully-connected layers

x = Flatten(name='flatten')(output_vgg16_conv)

x = Dense(4096, activation='relu', name='fc1')(x)

x = Dense(4096, activation='relu', name='fc2')(x)

x = Dense(5, activation='softmax', name='predictions')(x)

#Create your own model

my_model = Model(input=inp, output=x)

#In the summary, weights and layers from VGG part will be hidden, but they will be fit during the training

my_model.summary()

# -

# ```python

# ...

# ...

# # Plugging new Layers

# model.add(Dense(768, activation='sigmoid'))

# model.add(Dropout(0.0))

# model.add(Dense(768, activation='sigmoid'))

# model.add(Dropout(0.0))

# model.add(Dense(n_labels, activation='softmax'))

# ```

# +

import scipy

new_shape = (48,48)

X_train_new = np.empty(shape=(X_train_gte5.shape[0],)+(48,48,3))

for idx in xrange(X_train_gte5.shape[0]):

X_train_new[idx] = np.resize(scipy.misc.imresize(X_train_gte5[idx], (new_shape)), (48, 48, 3))

X_train_new[idx] = np.resize(X_train_new[idx], (48, 48, 3))

#X_train_new = np.expand_dims(X_train_new, axis=-1)

print X_train_new.shape

X_train_new = X_train_new.astype('float32')

X_train_new /= 255

print('X_train shape:', X_train_new.shape)

print(X_train_new.shape[0], 'train samples')

print(X_train_new.shape[0], 'test samples')

# convert class vectors to binary class matrices

Y_train = np_utils.to_categorical(y_train_gte5, nb_classes)

Y_test = np_utils.to_categorical(y_test_gte5, nb_classes)

print y_train.shape

my_model.compile(loss='categorical_crossentropy',

optimizer='adadelta',

metrics=['accuracy'])

my_model.fit(X_train_new, Y_train,

batch_size=batch_size, nb_epoch=nb_epoch,

verbose=1)

#print('Training time: %s' % (now() - t))

#score = my_model.evaluate(X_test, Y_test, verbose=0)

#print('Test score:', score[0])

#print('Test accuracy:', score[1])

#train_model(my_model,

# (X_train_new, y_train_gte5),

# (X_test_gte5, y_test_gte5), nb_classes)

# -

| 2.4 Transfer Learning & Fine-Tuning.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Plot the Env and some trees

# %load_ext autoreload

# %autoreload 2

import jpy_canvas

import random

import time

import sys

# in case you need to tweak your PYTHONPATH...

sys.path.append("../flatland")

import flatland.core.env

import flatland.utils.rendertools as rt

from flatland.envs.rail_env import RailEnv, random_rail_generator

from flatland.envs.observations import TreeObsForRailEnv

from flatland.envs.predictions import ShortestPathPredictorForRailEnv

from IPython.core.display import display, HTML

display(HTML("<style>.container { width:90% !important; }</style>"))

# # Generate

nAgents = 3

fnMethod = random_rail_generator(cell_type_relative_proportion=[1] * 11)

env = RailEnv(width=20,

height=10,

rail_generator=fnMethod,

number_of_agents=nAgents,

obs_builder_object=TreeObsForRailEnv(max_depth=3, predictor=ShortestPathPredictorForRailEnv()))

env.reset()

# # Render

oRT = rt.RenderTool(env,gl="PILSVG")

oRT.render_env(show_observations=False,show_predictions=True)