
# ---
# jupyter:
#   jupytext:
#     text_representation:
#       extension: .py
#       format_name: light
#       format_version: '1.5'
#       jupytext_version: 1.14.4
#   kernelspec:
#     display_name: Python 3
#     language: python
#     name: python3
# ---

# +

import numpy as np

import pandas as pd

import tempfile

import json

import matplotlib.pyplot as plt

import seaborn as sns

sns.set(style="darkgrid")

import warnings

warnings.simplefilter('ignore')

import logging

logging.basicConfig()

logger = logging.getLogger()

logger.setLevel(logging.INFO)

from banditpylib import trials_to_dataframe

from banditpylib.arms import GaussianArm

from banditpylib.bandits import MultiArmedBandit

from banditpylib.protocols import SinglePlayerProtocol

from banditpylib.learners.mab_fcbai_learner import ExpGap, LilUCBHeuristic

# -

confidence = 0.95

means = [0.3, 0.5, 0.7]

arms = [GaussianArm(mu=mean, std=1) for mean in means]

bandit = MultiArmedBandit(arms=arms)

learners = [
    ExpGap(arm_num=len(arms), confidence=confidence, threshold=3,
           name='Exponential-Gap Elimination'),
    LilUCBHeuristic(arm_num=len(arms), confidence=confidence,
                    name='Heuristic lilUCB'),
]

# for each setup we run 20 trials

trials = 20

temp_file = tempfile.NamedTemporaryFile()

# simulator

game = SinglePlayerProtocol(bandit=bandit, learners=learners)

# start playing the game

# pass `debug=True` for debugging purposes

game.play(trials=trials, output_filename=temp_file.name)

trials_df = trials_to_dataframe(temp_file.name)

del trials_df['other']

trials_df.head()

trials_df['confidence'] = confidence

fig = plt.figure()

ax = plt.subplot(111)

sns.barplot(x='confidence', y='total_actions', hue='learner', data=trials_df)

plt.ylabel('pulls')

ax.legend(loc='center left', bbox_to_anchor=(1, 0.5))
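# The bar plot shows only the mean number of pulls per learner; a small numeric table can make the comparison easier to read. The sketch below assumes, as the bar plot does, that `trials_df` has `learner` and `total_actions` columns with one row per trial. To stay self-contained it runs on a made-up frame (`toy_df`, values for illustration only); the same `groupby` call could be applied to `trials_df` directly.

```python
import pandas as pd

# Toy stand-in for `trials_df`: one row per trial, with the same
# 'learner' and 'total_actions' columns the bar plot uses.
# The numbers are illustrative only, not results from the run above.
toy_df = pd.DataFrame({
    "learner": ["Exponential-Gap Elimination"] * 3 + ["Heuristic lilUCB"] * 3,
    "total_actions": [1200, 1100, 1300, 400, 500, 600],
})

# Mean, sample standard deviation and number of trials per learner.
summary = toy_df.groupby("learner")["total_actions"].agg(["mean", "std", "count"])
print(summary)
```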

| examples/fix_confidence_bai_multi_armed_bandit.ipynb |

# ---
# jupyter:
#   jupytext:
#     text_representation:
#       extension: .py
#       format_name: light
#       format_version: '1.5'
#       jupytext_version: 1.14.4
#   kernelspec:
#     display_name: Python 2
#     language: python
#     name: python2
# ---

# <center>

# <font size="+3">BobbleBot Controller Analysis Report<br><br></font>

# <font size="+2">Sensitivity to Mass Properties<br><br></font>

# <i><NAME><br>

# <i>10/26/18<br>

# <img src="imgs/BobbleCAD.png" alt="BobbleBot CAD" style="height: 350px; width: 250px;"/>

# </center>

# ## Introduction

# This document outlines an analysis of BobbleBot controller performance under varying mass properties. The data were collected with the BobbleBot simulator. The simulated BobbleBot was subjected to impulse forces applied at a fixed location in the +/- X direction. The controller response is analyzed as the BobbleBot CG is varied along the x- and z-directions. The result is a data set that captures the controller's ability to maintain adequate balance control within a bounding volume of CG locations.

# <center>

# <br>

# <img src="imgs/BobbleBotSimCg.png" alt="BobbleBot CG" style="height: 350px; width: 250px;"/>

# </center>

# ## Loading BobbleBot Simulation Data

#

# The BobbleBot simulator runs in Gazebo. Using the gazebo-ros packages, data can be logged while the simulator runs and stored in ROS bag format. The simulation data can then be analyzed in Python using [Pandas](https://pandas.pydata.org/). This article discusses how to [load ROS bag files into Pandas](https://nimbus.unl.edu/2014/11/using-rosbag_pandas-to-analyze-rosbag-files/).

# Load the analysis environment file. This file defines the data directories
# and imports all the Python packages this notebook needs.

exec(open("env.py").read())

# ### Print sim data in tabular form

# All the sim data was loaded when the analysis env file was sourced. We can get the data for a run in tabular form like so.

n_rows = 10

df_x['cgx_0.0'].head(n_rows)

# ### Search for a column

# Here's how to search for one or more columns in a data frame whose names match a pattern.

#

search_string = 'Velocity'

found_data = df_x['cgx_0.0'].filter(regex=search_string)

found_data.head()
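# `DataFrame.filter(regex=...)` keeps every column whose label matches the pattern anywhere in the name (it uses `re.search`, which is case-sensitive), so a search for `'Velocity'` can return more than one column. A self-contained sketch on a toy frame; the column names here are made up, and the real ones come from `env.py`:

```python
import pandas as pd

# Toy frame; these column names are hypothetical, not the simulator's.
df = pd.DataFrame(
    [[0.1, 0.2, 0.0, 1.0]],
    columns=["Tilt", "Velocity", "DesiredVelocity", "TurnRate"],
)

# Substring-style match: every label containing "Velocity" is kept,
# in the original column order.
found = df.filter(regex="Velocity")
print(list(found.columns))

# Anchoring the pattern requires the whole label to match.
exact = df.filter(regex="^Velocity$")
print(list(exact.columns))
```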

# ## CG Shift X

#

# ### Tilt Plot

# %matplotlib inline

import matplotlib.pyplot as plt

# Make the plot

fig = plt.figure(figsize=(20, 10), dpi=40)

ax1 = fig.add_subplot(111)

coplot_var_for_runs(ax1, df_x, pc_x['measured_tilt'])

fig.tight_layout()

sns.despine()

plt.savefig('TiltsX.png', bbox_inches='tight')

# ### Velocity Plot

# %matplotlib inline

import matplotlib.pyplot as plt

# Make the plot

fig = plt.figure(figsize=(20, 10), dpi=40)

ax1 = fig.add_subplot(111)

coplot_var_for_runs(ax1, df_x, pc_x['velocity'])

fig.tight_layout()

sns.despine()

plt.savefig('VelocitiesX.png', bbox_inches='tight')

# ## CG Shift Z

#

# ### Desired Tilt vs Actual (nominal z cg 0.180 m)

# %matplotlib inline

import matplotlib.pyplot as plt

# Make the plot

fig = plt.figure(figsize=(20, 10), dpi=40)

ax1 = fig.add_subplot(111)

desired_vs_actual_for_runs(ax1, df_z, pc_z['desired_tilt_vs_actual'])

fig.tight_layout()

sns.despine()

plt.savefig('DesiredTiltVsActualZ.png', bbox_inches='tight')

# ### Desired Velocity vs Actual (nominal z cg 0.180 m)

# %matplotlib inline

import matplotlib.pyplot as plt

# Make the plot

fig = plt.figure(figsize=(20, 10), dpi=40)

ax1 = fig.add_subplot(111)

desired_vs_actual_for_runs(ax1, df_z, pc_z['desired_velocity_vs_actual'])

fig.tight_layout()

sns.despine()

plt.savefig('DesiredVelocityVsActualZ.png', bbox_inches='tight')

# ### Tilt Plot

# %matplotlib inline

import matplotlib.pyplot as plt

# Make the plot

fig = plt.figure(figsize=(20, 10), dpi=40)

ax1 = fig.add_subplot(111)

coplot_var_for_runs(ax1, df_z, pc_z['measured_tilt'])

fig.tight_layout()

sns.despine()

plt.savefig('TiltsZ.png', bbox_inches='tight')

# ### Velocity Plot

# %matplotlib inline

import matplotlib.pyplot as plt

# Make the plot

fig = plt.figure(figsize=(20, 10), dpi=40)

ax1 = fig.add_subplot(111)

coplot_var_for_runs(ax1, df_z, pc_z['velocity'])

fig.tight_layout()

sns.despine()

plt.savefig('VelocitiesZ.png', bbox_inches='tight')

| analysis/notebooks/CgPlacement/CgAnalysis.ipynb |

# ---
# jupyter:
#   jupytext:
#     text_representation:
#       extension: .py
#       format_name: light
#       format_version: '1.5'
#       jupytext_version: 1.14.4
#   kernelspec:
#     display_name: Python 3
#     language: python
#     name: python3
# ---

# %load_ext autoreload

# %autoreload 2

import xarray as xr

import matplotlib.pyplot as plt

from src.data_generator import *

from src.train import *

from src.utils import *

from src.networks import *

import os

os.environ["CUDA_VISIBLE_DEVICES"] = "7"  # expose only GPU 7 to this process

limit_mem()

policy = mixed_precision.Policy('mixed_float16')

mixed_precision.set_policy(policy)

args = load_args('../nn_configs/B/81-resnet_d3_dr_0.1.yml')

args['exp_id'] = '81.1-resnet_d3_dr_0.1'

args['train_years'] = ['2015', '2015']

dg_train, dg_valid, dg_test = load_data(**args)

args['filters'] = [128, 128, 128, 128, 128, 128, 128, 128,
                   128, 128, 128, 128, 128, 128, 128, 128, 128, 128, 128, 128, 4]

model = build_resnet(
    **args, input_shape=dg_train.shape,
)

# + jupyter={"outputs_hidden": true}

model.summary()

# -

X, y = dg_train[0]

model.output

y.shape

# ## Combined CRPS MAE

crps_mae = create_lat_crps_mae(dg_train.data.lat, 2)

preds = model(X)

crps_mae(y, preds)

# ## Log

def pdf(y, mu, sigma):
    eps = 1e-7
    sigma = np.maximum(eps, sigma)
    p = 1 / sigma / np.sqrt(2*np.pi) * np.exp(
        -0.5 * ((y - mu) / sigma)**2
    )
    return p

from scipy.stats import norm

pdf(5, 0.1, 3), norm.pdf(5, loc=0.1, scale=3)  # both: density of y=5 under N(0.1, 3**2)

def log_loss(y_true, mu, sigma):
    prob = pdf(y_true, mu, sigma)
    ll = - np.log(prob)
    return ll

mu = 3

sigma = 5

y = 3

log_loss(y, mu, sigma)

def create_lat_log_loss(lat, n_vars):
    weights_lat = np.cos(np.deg2rad(lat)).values
    weights_lat /= weights_lat.mean()

    def log_loss(y_true, y_pred):
        # Split input
        mu = y_pred[:, :, :, :n_vars]
        sigma = y_pred[:, :, :, n_vars:]
        sigma = tf.nn.relu(sigma)

        # Compute PDF
        eps = 1e-7
        sigma = tf.maximum(eps, sigma)
        prob = 1 / sigma / np.sqrt(2*np.pi) * tf.math.exp(
            -0.5 * ((y_true - mu) / sigma)**2
        )

        # Compute log loss
        ll = - tf.math.log(tf.maximum(prob, eps))
        ll = ll * weights_lat[None, :, None, None]
        return tf.reduce_mean(ll)

    return log_loss

ll = create_lat_log_loss(dg_train.data.lat, 2)

ll(y, preds)
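# Taking the log of the PDF underflows when the target is far from the predicted mean: the PDF rounds to zero, and the `tf.maximum(prob, eps)` clamp in `create_lat_log_loss` then caps the loss at -log(eps), about 16.1, where its gradient is also zero. The Gaussian negative log-likelihood can instead be written directly in log space, -log p(y) = log(sigma) + 0.5 log(2 pi) + 0.5 ((y - mu) / sigma)^2. A NumPy sketch comparing the two forms; this is an alternative formulation, not the loss the notebook trains with:

```python
import numpy as np


def gaussian_nll(y, mu, sigma, eps=1e-7):
    """Negative log-likelihood of y under N(mu, sigma**2), computed in log space."""
    sigma = np.maximum(eps, sigma)
    z = (y - mu) / sigma
    return np.log(sigma) + 0.5 * np.log(2 * np.pi) + 0.5 * z ** 2


def naive_nll(y, mu, sigma, eps=1e-7):
    """PDF first, then log, with the same probability clamp as create_lat_log_loss."""
    sigma = np.maximum(eps, sigma)
    prob = np.exp(-0.5 * ((y - mu) / sigma) ** 2) / (sigma * np.sqrt(2 * np.pi))
    return -np.log(np.maximum(prob, eps))


# For moderate errors the two agree (this is the log_loss(3, 3, 5) case above) ...
print(gaussian_nll(3.0, 3.0, 5.0), naive_nll(3.0, 3.0, 5.0))
# ... but far in the tail the naive version saturates at -log(eps).
print(gaussian_nll(100.0, 0.0, 1.0), naive_nll(100.0, 0.0, 1.0))
```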

# ## CRPS
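# For a Gaussian forecast $\mathcal{N}(\mu, \sigma^2)$ and an observation $y$, the CRPS has a closed form. The `crps` expression computed in the cells below evaluates it, with `loc` $= z$ and `phi`, `Phi` the standard normal PDF $\varphi$ and CDF $\Phi$ evaluated at $z$:
#
# $$
# \mathrm{CRPS}\big(\mathcal{N}(\mu, \sigma^2), y\big) = \sigma \left[ z \big(2\Phi(z) - 1\big) + 2\varphi(z) - \frac{1}{\sqrt{\pi}} \right], \qquad z = \frac{y - \mu}{\sigma}
# $$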

type(y)

pred = model(X)

type(pred)

type(tf.math.sqrt(pred))

y.shape[-1]

y_true, y_pred = y, pred

n_vars = y_true.shape[-1]

mu = y_pred[:, :, :, :n_vars]

sigma = y_pred[:, :, :, n_vars:]

mu.shape, sigma.shape

np.min(sigma)

sigma = tf.math.sqrt(tf.math.square(sigma))

np.min(sigma)

loc = (y_true - mu) / sigma

loc.shape

phi = 1.0 / np.sqrt(2.0 * np.pi) * tf.math.exp(-tf.math.square(loc) / 2.0)

phi.shape

Phi = 0.5 * (1.0 + tf.math.erf(loc / np.sqrt(2.0)))

crps = sigma * (loc * (2. * Phi - 1.) + 2 * phi - 1. / np.sqrt(np.pi))

crps.shape

tf.reduce_mean(crps)

def crps_cost_function(y_true, y_pred):
    n_vars = y_true.shape[-1]

    # Split input
    mu = y_pred[:, :, :, :n_vars]
    sigma = y_pred[:, :, :, n_vars:]

    # To stop sigma from becoming negative we first have to
    # convert it to the variance and then take the square
    # root again. The 1e-7 floor avoids dividing by zero below.
    sigma = tf.maximum(1e-7, tf.math.sqrt(tf.math.square(sigma)))

    # The following three variables are just for convenience
    loc = (y_true - mu) / sigma
    phi = 1.0 / np.sqrt(2.0 * np.pi) * tf.math.exp(-tf.math.square(loc) / 2.0)
    Phi = 0.5 * (1.0 + tf.math.erf(loc / np.sqrt(2.0)))

    # First we will compute the crps for each input/target pair
    crps = sigma * (loc * (2. * Phi - 1.) + 2 * phi - 1. / np.sqrt(np.pi))

    # Then we take the mean. The cost is now a scalar
    return tf.reduce_mean(crps)
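# One way to sanity-check the closed-form Gaussian CRPS that `crps_cost_function` computes is against the definition CRPS(F, y) = E|X - y| - 0.5 E|X - X'|, with X and X' drawn independently from F. A NumPy sketch; the sample size and seed are arbitrary choices:

```python
import math

import numpy as np


def crps_gaussian(y, mu, sigma):
    """Closed-form CRPS of N(mu, sigma**2) at observation y (same formula as crps_cost_function)."""
    z = (y - mu) / sigma
    phi = np.exp(-0.5 * z ** 2) / np.sqrt(2 * np.pi)   # standard normal PDF at z
    Phi = 0.5 * (1.0 + math.erf(z / np.sqrt(2.0)))     # standard normal CDF at z
    return sigma * (z * (2 * Phi - 1) + 2 * phi - 1 / np.sqrt(np.pi))


def crps_monte_carlo(y, mu, sigma, n=200_000, seed=0):
    """Sample-based estimate via CRPS = E|X - y| - 0.5 * E|X - X'|."""
    rng = np.random.default_rng(seed)
    x = rng.normal(mu, sigma, n)
    x_prime = rng.normal(mu, sigma, n)
    return np.mean(np.abs(x - y)) - 0.5 * np.mean(np.abs(x - x_prime))


closed = crps_gaussian(y=0.5, mu=0.0, sigma=1.0)
approx = crps_monte_carlo(y=0.5, mu=0.0, sigma=1.0)
print(closed, approx)
```

# At y = mu the closed form reduces to sigma (sqrt(2) - 1) / sqrt(pi), roughly 0.234 sigma, which is the smallest CRPS a forecast with that spread can achieve.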

y_test = np.zeros((32, 32, 64, 2))

pred_test = np.concatenate([-np.ones_like(y_test), np.zeros_like(y_test)], axis=-1)

pred_test = tf.Variable(pred_test)

y_test.shape, pred_test.shape

crps_cost_function(y_test, pred_test)

dg_train.data.lat

import pdb

def create_lat_crps(lat, n_vars):
    weights_lat = np.cos(np.deg2rad(lat)).values
    weights_lat /= weights_lat.mean()

    def crps_loss(y_true, y_pred):
        # pdb.set_trace()

        # Split input
        mu = y_pred[:, :, :, :n_vars]
        sigma = y_pred[:, :, :, n_vars:]

        # To stop sigma from becoming negative we first have to
        # convert it to the variance and then take the square
        # root again. The 1e-7 floor is applied to sigma itself, so the
        # prefactor below and the division in `loc` use the same value
        # (otherwise sigma == 0 gives a CRPS of zero or NaN for any error).
        sigma = tf.maximum(1e-7, tf.math.sqrt(tf.math.square(sigma)))

        # The following three variables are just for convenience
        loc = (y_true - mu) / sigma
        phi = 1.0 / np.sqrt(2.0 * np.pi) * tf.math.exp(-tf.math.square(loc) / 2.0)
        Phi = 0.5 * (1.0 + tf.math.erf(loc / np.sqrt(2.0)))

        # First we will compute the crps for each input/target pair
        crps = sigma * (loc * (2. * Phi - 1.) + 2 * phi - 1. / np.sqrt(np.pi))
        crps = crps * weights_lat[None, :, None, None]

        # Then we take the mean. The cost is now a scalar
        return tf.reduce_mean(crps)

    return crps_loss
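# In `create_lat_crps`, `weights_lat[None, :, None, None]` reshapes the latitude weights so they broadcast over tensors laid out as (batch, lat, lon, var), which matches the `(32, 32, 64, 2)` test array above. The cos(lat) weights shrink toward the poles, and because they are divided by their mean, a loss that is the same everywhere keeps the same mean after weighting. A small NumPy sketch on a made-up five-point latitude grid:

```python
import numpy as np

# Hypothetical latitude grid in degrees (the real one is dg_train.data.lat).
lat = np.array([-60.0, -30.0, 0.0, 30.0, 60.0])

weights_lat = np.cos(np.deg2rad(lat))
weights_lat /= weights_lat.mean()   # normalize so the average weight is 1

# A per-pixel loss with layout (batch, lat, lon, var).
per_pixel = np.ones((2, lat.size, 4, 2))
weighted = per_pixel * weights_lat[None, :, None, None]

print(weights_lat)
print(weighted.mean())   # a uniform loss of 1 still averages to 1
```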

dg_train.output_idxs

crps_test = create_lat_crps(dg_train.data.lat, 2)

crps_test(y_test, pred_test)

model = build_resnet(

**args, input_shape=dg_train.shape,

)

model.compile(keras.optimizers.Adam(1e-3), crps_test)

from src.clr import LRFinder

lrf = LRFinder(
    dg_train.n_samples, args['batch_size'],
    minimum_lr=1e-5, maximum_lr=10,
    lr_scale='exp', save_dir='./', verbose=0)

model.fit(dg_train, epochs=1,
          callbacks=[lrf], shuffle=False)

plot_lr_find(lrf, log=True)

plt.axvline(2.5e-4)

# + jupyter={"outputs_hidden": true}

X, y = dg_train[31]

crps_test(y, model(X))

# + jupyter={"outputs_hidden": true}

for i, (X, y) in enumerate(dg_train):
    loss = crps_test(y, model(X))
    print(loss)

# -

dg_valid.data

np.concatenate([dg_valid.std.isel(level=dg_valid.output_idxs).values]*2)

preds = create_predictions(model, dg_valid, parametric=True)

preds

preds = model.predict(dg_test)

preds.shape

dg = dg_train

level = dg.data.isel(level=dg.output_idxs).level

level

xr.concat([level]*2, dim='level')

level_names = dg.data.isel(level=dg.output_idxs).level_names

level_names

list(level_names.level.values) * 2

xr.DataArray(['a', 'b', 'c', 'd'], dims=['level'],
             coords={'level': list(level_names.level.values) * 2})

level_names[:] = ['a', 'b']

l = level_names[0]

l = l.split('_')

l[0] += '-mean'

'_'.join(l)

level_names = level_names + [l.]

level_names

| nbs_probabilistic/04-implement-parametric.ipynb |

# ---
# jupyter:
#   jupytext:
#     text_representation:
#       extension: .py
#       format_name: light
#       format_version: '1.5'
#       jupytext_version: 1.14.4
#   kernelspec:
#     display_name: Python [default]
#     language: python
#     name: python2
# ---

# +

import numpy as np

from sklearn.datasets import load_iris

from sklearn import tree

iris = load_iris()

print(iris.feature_names)

# -

print(iris.target_names)

print(iris.data[0:5])

print(iris.target[0:5])

# +

#for i in range(len(iris.target)):

# print "Example %d: label %s, features %s" % (i, iris.target[i], iris.data[i])

# +

test_idx = [0, 50, 100]

# training data

train_target = np.delete(iris.target, test_idx)

train_data = np.delete(iris.data, test_idx, axis=0)

# testing data

test_target = iris.target[test_idx]

test_data = iris.data[test_idx]

print(test_target)

# -

clf = tree.DecisionTreeClassifier()

clf.fit(train_data, train_target)

print(clf.predict(test_data))
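# If Graphviz is not installed (the PATH tweak below is specific to one Windows machine), scikit-learn 0.21 or later can print the fitted tree as plain text with `tree.export_text`. Those versions need Python 3, unlike this notebook's Python 2 kernel, so the sketch assumes a Python 3 environment. It repeats the hold-out split from above and fixes `random_state=0` so the output is repeatable:

```python
import numpy as np
from sklearn import tree
from sklearn.datasets import load_iris

iris = load_iris()
test_idx = [0, 50, 100]

# Same hold-out scheme as above: one example of each class is kept out of training.
train_data = np.delete(iris.data, test_idx, axis=0)
train_target = np.delete(iris.target, test_idx)

clf = tree.DecisionTreeClassifier(random_state=0)  # fixed seed for repeatable tie-breaking
clf.fit(train_data, train_target)

# Plain-text rendering of the fitted tree; no Graphviz or pydot required.
report = tree.export_text(clf, feature_names=list(iris.feature_names))
print(report)

pred = clf.predict(iris.data[test_idx])
print(pred, iris.target[test_idx])
```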

# viz code

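try:
    from sklearn.externals.six import StringIO  # older scikit-learn (Python 2)
except ImportError:
    from io import StringIO  # sklearn.externals.six was removed in scikit-learn 0.23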

import pydot

dot_data = StringIO()

tree.export_graphviz(clf,
                     out_file=dot_data,
                     feature_names=iris.feature_names,
                     class_names=iris.target_names,
                     filled=True, rounded=True,
                     impurity=False)

# +

import os

# Make Graphviz's `dot` executable visible to pydot (machine-specific install path).
os.environ["PATH"] += os.pathsep + r'C:\ProgramData\Anaconda2\pkgs\graphviz-2.38-h308b129_2\Library\bin\graphviz'

graph = pydot.graph_from_dot_data(dot_data.getvalue())

graph[0].write_pdf("iris.pdf")

# -

| src/2. Visualizing a decision tree.ipynb |

# ---
# jupyter:
#   jupytext:
#     formats: ipynb,py:light
#     text_representation:
#       extension: .py
#       format_name: light
#       format_version: '1.5'
#       jupytext_version: 1.14.4
#   kernelspec:
#     display_name: Python [conda env:PROJ_irox_oer] *
#     language: python
#     name: conda-env-PROJ_irox_oer-py
# ---

# # Import Modules

# +

import os

print(os.getcwd())

import sys

import pandas as pd

from pymatgen.io.ase import AseAtomsAdaptor

# #########################################################

from methods import get_df_dft

# #########################################################

sys.path.insert(0, "..")

from local_methods import XRDCalculator

from local_methods import get_top_xrd_facets

# -

# # Script Inputs

# +

verbose = True

# verbose = False

# bulk_id_i = "8ymh8qnl6o"

# bulk_id_i = "8p8evt9pcg"

# bulk_id_i = "8l919k6s7p"

bulk_id_i = "64cg6j9any"

# -

# # Read Data

# +

df_dft = get_df_dft()

print("df_dft.shape:", df_dft.shape)

from methods import get_df_xrd

df_xrd = get_df_xrd()

df_xrd = df_xrd.set_index("id_unique", drop=False)

# + active=""

#

#

#

# +

# #########################################################

row_i = df_dft.loc[bulk_id_i]

# #########################################################

atoms_i = row_i.atoms

atoms_stan_prim_i = row_i.atoms_stan_prim

# #########################################################

# Write the bulk structure to file (.traj and .cif)

atoms_i.write("out_data/bulk.traj")

atoms_i.write("out_data/bulk.cif")

# #########################################################

row_xrd_i = df_xrd.loc[bulk_id_i]

# #########################################################

top_facets_i = row_xrd_i.top_facets

# #########################################################

print("top_facets:", top_facets_i)

# +

# assert False

# +

# atoms = atoms_i

atoms = atoms_stan_prim_i

AAA = AseAtomsAdaptor()

struct_i = AAA.get_structure(atoms)

XRDCalc = XRDCalculator(
    wavelength='CuKa',
    symprec=0,
    debye_waller_factors=None,
)

# XRDCalc.get_plot(structure=struct_i)

# # XRDCalc.get_plot?

plt = XRDCalc.plot_structures([struct_i])

# -
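# The XRD calculator (imported from `local_methods`, presumably a copy or wrapper of pymatgen's `XRDCalculator`) places each reflection at the angle given by Bragg's law, n λ = 2 d sin θ, for the interplanar spacing d. A pure-Python sketch of that relation, independent of pymatgen; the Cu Kα wavelength of 1.54184 Å is the value pymatgen uses for `'CuKa'`, and `two_theta_deg` is a hypothetical helper:

```python
import math

# Assumed Cu K-alpha wavelength in angstroms (weighted K-alpha1/K-alpha2 value).
CU_KA = 1.54184


def two_theta_deg(d_spacing, wavelength=CU_KA, order=1):
    """Diffraction angle 2*theta in degrees from Bragg's law n*lambda = 2*d*sin(theta)."""
    s = order * wavelength / (2.0 * d_spacing)
    if s > 1.0:
        # sin(theta) > 1: this plane spacing cannot diffract at this wavelength
        raise ValueError("reflection not accessible at this wavelength")
    return 2.0 * math.degrees(math.asin(s))


# Example: a plane spacing of 3.0 angstroms
print(round(two_theta_deg(3.0), 2))
```

# Smaller d-spacings map to larger 2θ, and spacings below λ/2 (about 0.77 Å for Cu Kα) never appear in the pattern.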

# # Saving plot to file

file_name_i = os.path.join(
    "out_plot",
    bulk_id_i + ".png",
)

plt.savefig(
    file_name_i,
    dpi=1600,
)

| workflow/xrd_bulks/plot_xrd_patterns/plot_xrd_patterns.ipynb |

# ---
# jupyter:
#   jupytext:
#     text_representation:
#       extension: .py
#       format_name: light
#       format_version: '1.5'
#       jupytext_version: 1.14.4
#   kernelspec:
#     display_name: Python 3
#     language: python
#     name: python3
# ---

# This notebook was prepared by [<NAME>](https://github.com/donnemartin). Source and license info is on [GitHub](https://github.com/donnemartin/interactive-coding-challenges).

# # Solution Notebook

# ## Problem: Format license keys.

#

# See the [LeetCode](https://leetcode.com/problems/license-key-formatting/) problem page.

#

# <pre>

# Now you are given a string S, which represents a software license key which we would like to format. The string S is composed of alphanumerical characters and dashes. The dashes split the alphanumerical characters within the string into groups. (i.e. if there are M dashes, the string is split into M+1 groups). The dashes in the given string are possibly misplaced.

#

# We want each group of characters to be of length K (except for possibly the first group, which could be shorter, but still must contain at least one character). To satisfy this requirement, we will reinsert dashes. Additionally, all the lower case letters in the string must be converted to upper case.

#

# So, you are given a non-empty string S, representing a license key to format, and an integer K. And you need to return the license key formatted according to the description above.

#

# Example 1:

# Input: S = "2-4A0r7-4k", K = 4

#

# Output: "24A0-R74K"

#

# Explanation: The string S has been split into two parts, each part has 4 characters.

# Example 2:

# Input: S = "2-4A0r7-4k", K = 3

#

# Output: "24-A0R-74K"

#

# Explanation: The string S has been split into three parts, each part has 3 characters except the first part as it could be shorter as said above.

#

# Note:

# The length of string S will not exceed 12,000, and K is a positive integer.

# String S consists only of alphanumerical characters (a-z and/or A-Z and/or 0-9) and dashes(-).

# String S is non-empty.

# </pre>

#

# * [Constraints](#Constraints)

# * [Test Cases](#Test-Cases)

# * [Algorithm](#Algorithm)

# * [Code](#Code)

# * [Unit Test](#Unit-Test)

# ## Constraints

#

# * Is the output a string?
#     * Yes
# * Can we change the input string?
#     * No, you can't modify the input string
# * Can we assume the inputs are valid?
#     * No
# * Can we assume this fits in memory?
#     * Yes

# ## Test Cases

#

# * None -> TypeError

# * '---', k=3 -> ''

# * '2-4A0r7-4k', k=3 -> '24-A0R-74K'

# * '2-4A0r7-4k', k=4 -> '24A0-R74K'

# ## Algorithm

#

# * Loop through the license key backwards, appending each character (converted to upper case) to a result list and counting the characters added since the last inserted dash
#     * If we reach a '-', skip it
#     * Whenever the count reaches k, append a '-' to the result list and reset the count
# * Make sure the result doesn't start with a '-', which happens whenever the number of non-dash characters is a multiple of k, as in the test case: '2-4A0r7-4k', k=4 -> '24A0-R74K'
# * Reverse the result list and return it as a string

#

# Complexity:

# * Time: O(n)

# * Space: O(n)

# ## Code

class Solution(object):

    def format_license_key(self, license_key, k):
        if license_key is None:
            raise TypeError('license_key must be a str')
        if not license_key:
            raise ValueError('license_key must not be empty')
        formatted_license_key = []
        num_chars = 0
        for char in license_key[::-1]:
            if char == '-':
                continue
            num_chars += 1
            formatted_license_key.append(char.upper())
            if num_chars >= k:
                formatted_license_key.append('-')
                num_chars = 0
        if formatted_license_key and formatted_license_key[-1] == '-':
            formatted_license_key.pop(-1)
        return ''.join(formatted_license_key[::-1])
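# An alternative to the backwards loop, sketched as a standalone function (`format_license_key_forward` is a name introduced here for illustration, not part of the solution above): strip the dashes and upper-case first, then let the first group absorb the remainder `len % k`, so every later group has exactly k characters. It keeps O(n) time and space, and the error handling mirrors the class above.

```python
def format_license_key_forward(license_key, k):
    """Strip dashes, upper-case, then split into groups of k from the front."""
    if license_key is None:
        raise TypeError('license_key must be a str')
    if not license_key:
        raise ValueError('license_key must not be empty')
    chars = license_key.replace('-', '').upper()
    first = len(chars) % k or k  # first group takes the remainder (or a full k)
    groups = [chars[:first]]
    groups += [chars[i:i + k] for i in range(first, len(chars), k)]
    # Filtering empty groups turns an all-dash key such as '---' into ''.
    return '-'.join(group for group in groups if group)


print(format_license_key_forward('2-4A0r7-4k', 3))
```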

# ## Unit Test

# +

# %%writefile test_format_license_key.py

import unittest

class TestSolution(unittest.TestCase):

    def test_format_license_key(self):
        solution = Solution()
        self.assertRaises(TypeError, solution.format_license_key, None, None)
        license_key = '---'
        k = 3
        expected = ''
        self.assertEqual(solution.format_license_key(license_key, k), expected)
        license_key = '2-4A0r7-4k'
        k = 3
        expected = '24-A0R-74K'
        self.assertEqual(solution.format_license_key(license_key, k), expected)
        license_key = '2-4A0r7-4k'
        k = 4
        expected = '24A0-R74K'
        self.assertEqual(solution.format_license_key(license_key, k), expected)
        print('Success: test_format_license_key')


def main():
    test = TestSolution()
    test.test_format_license_key()


if __name__ == '__main__':
    main()

# -

# %run -i test_format_license_key.py

| online_judges/license_key/format_license_key_solution.ipynb |

# ---
# jupyter:
#   jupytext:
#     text_representation:
#       extension: .py
#       format_name: light
#       format_version: '1.5'
#       jupytext_version: 1.14.4
#   kernelspec:
#     display_name: Python 3
#     language: python
#     name: python3
# ---

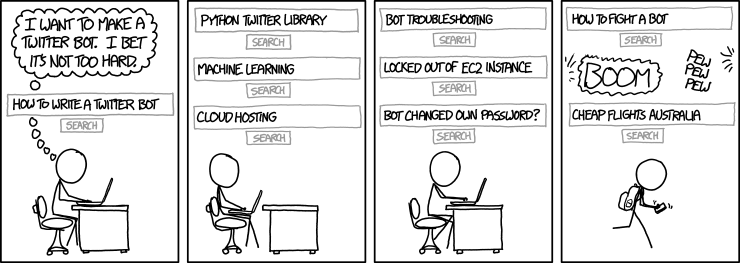

# # Final project: StackOverflow assistant bot

#

# Congratulations on coming this far and solving the programming assignments! In this final project, we will combine everything we have learned about Natural Language Processing to construct a *dialogue chat bot*, which will be able to:

# * answer programming-related questions (using StackOverflow dataset);

# * chit-chat and simulate dialogue on all non-programming-related questions.

#

# For the chit-chat mode we will use a pre-trained engine available from [ChatterBot](https://github.com/gunthercox/ChatterBot).
# Those aiming for honors certificates for the course, or who are just curious, will train their own models for chit-chat.

#

# ©[xkcd](https://xkcd.com)

# ### Data description

#

# To detect the *intent* of users' questions, we will need two text collections:

# - `tagged_posts.tsv` — StackOverflow posts, tagged with one programming language (*positive samples*).

# - `dialogues.tsv` — dialogue phrases from movie subtitles (*negative samples*).

#

# +

try:
    import google.colab
    IN_COLAB = True
except ImportError:
    IN_COLAB = False

if IN_COLAB:
    # ! wget https://raw.githubusercontent.com/hse-aml/natural-language-processing/master/setup_google_colab.py -O setup_google_colab.py
    import setup_google_colab
    setup_google_colab.setup_project()

import sys
sys.path.append("..")
from common.download_utils import download_project_resources

download_project_resources()

# -

# For questions with programming-related intent, we will proceed as follows: predict the programming language (only one tag per question is allowed here) and rank candidates within that tag using embeddings.

# For the ranking part, you will need:

# - `word_embeddings.tsv` — word embeddings, that you trained with StarSpace in the 3rd assignment. It's not a problem if you didn't do it, because we can offer an alternative solution for you.

# As a result of this notebook, you should obtain the following new objects that you will then use in the running bot:

#

# - `intent_recognizer.pkl` — intent recognition model;

# - `tag_classifier.pkl` — programming language classification model;

# - `tfidf_vectorizer.pkl` — vectorizer used during training;

# - `thread_embeddings_by_tags` — folder with thread embeddings, arranged by tags.

#

# Some functions will be reused by this notebook and the scripts, so we put them into the *utils.py* file. Don't forget to open it and fill in the gaps!

from utils import *

# ## Part I. Intent and language recognition

# We want to write a bot that will not only **answer programming-related questions**, but will also be able to **maintain a dialogue**. We would also like to detect the *intent* of the user from the question (we could have had a 'Question answering mode' check-box in the bot, but that wouldn't be fun at all, would it?). So the first thing we need to do is to **distinguish programming-related questions from general ones**.

#

# It would also be good to predict which programming language a particular question refers to. By doing so, we will speed up question search by a factor of the number of languages (10 here), and exercise our *text classification* skills a bit. :)

# +

import numpy as np

import pandas as pd

import pickle

import re

from sklearn.feature_extraction.text import TfidfVectorizer

# -

# ### Data preparation

# In the first assignment (Predict tags on StackOverflow with linear models), you already learned how to preprocess texts and do TF-IDF transformations. Reuse your code here. In addition, you will also need to [dump](https://docs.python.org/3/library/pickle.html#pickle.dump) the TF-IDF vectorizer with pickle to use it later in the running bot.

def tfidf_features(X_train, X_test, vectorizer_path):
    """Performs TF-IDF transformation and dumps the model."""

    # Train a vectorizer on X_train data.
    # Transform X_train and X_test data.

    # Pickle the trained vectorizer to 'vectorizer_path'
    # Don't forget to open the file in writing bytes mode.

    ######################################
    ######### YOUR CODE HERE #############
    ######################################

    return X_train, X_test
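# A minimal sketch of one way to fill in `tfidf_features`, written as a separate function (`tfidf_features_sketch`) so it does not replace the stub. It fits the vectorizer on the training texts only, applies it to both splits, and pickles it in binary mode. The `ngram_range` and `token_pattern` values are assumptions, not values the assignment prescribes:

```python
import os
import pickle
import tempfile

from sklearn.feature_extraction.text import TfidfVectorizer


def tfidf_features_sketch(X_train, X_test, vectorizer_path):
    """Fit TF-IDF on X_train only, transform both splits, pickle the vectorizer."""
    # Assumed settings: unigrams and bigrams, tokens split on whitespace.
    vectorizer = TfidfVectorizer(ngram_range=(1, 2), token_pattern=r'(\S+)')
    X_train = vectorizer.fit_transform(X_train)   # learn vocabulary and IDF here only
    X_test = vectorizer.transform(X_test)         # reuse them for the test split
    with open(vectorizer_path, 'wb') as f:        # binary write mode for pickle
        pickle.dump(vectorizer, f)
    return X_train, X_test


train_texts = ["how to sort a list in python", "hello how are you"]
test_texts = ["sort a python list"]
path = os.path.join(tempfile.mkdtemp(), "tfidf_vectorizer.pkl")
X_tr, X_te = tfidf_features_sketch(train_texts, test_texts, path)

# The running bot would reload the vectorizer like this.
with open(path, 'rb') as f:
    restored = pickle.load(f)
print(X_tr.shape, X_te.shape)
```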

# Now, load examples of the two classes. Use a subsample of the StackOverflow data to balance the classes. You will need the full data later.

# +

sample_size = 200000

dialogue_df = pd.read_csv('data/dialogues.tsv', sep='\t').sample(sample_size, random_state=0)

stackoverflow_df = pd.read_csv('data/tagged_posts.tsv', sep='\t').sample(sample_size, random_state=0)

# -

# Check what the data look like:

dialogue_df.head()

stackoverflow_df.head()

# Apply *text_prepare* function to preprocess the data.

#

# If you filled in the file, but NotImplementedError is still displayed, please refer to [this thread](https://github.com/hse-aml/natural-language-processing/issues/27).

from utils import text_prepare

dialogue_df['text'] = ######### YOUR CODE HERE #############

stackoverflow_df['title'] = ######### YOUR CODE HERE #############

# ### Intent recognition

# We will do a binary classification on TF-IDF representations of texts. Labels will be either `dialogue` for general questions or `stackoverflow` for programming-related questions. First, prepare the data for this task:

# - concatenate `dialogue` and `stackoverflow` examples into one sample

# - split it into train and test in proportion 9:1, use *random_state=0* for reproducibility

# - transform it into TF-IDF features

from sklearn.model_selection import train_test_split

# +

X = np.concatenate([dialogue_df['text'].values, stackoverflow_df['title'].values])

y = ['dialogue'] * dialogue_df.shape[0] + ['stackoverflow'] * stackoverflow_df.shape[0]

X_train, X_test, y_train, y_test = ######### YOUR CODE HERE ##########

print('Train size = {}, test size = {}'.format(len(X_train), len(X_test)))

X_train_tfidf, X_test_tfidf = ######### YOUR CODE HERE ###########

# -

# Train the **intent recognizer** using LogisticRegression on the train set with the following parameters: *penalty='l2'*, *C=10*, *random_state=0*. Print out the accuracy on the test set to check whether everything looks good.

from sklearn.linear_model import LogisticRegression

from sklearn.metrics import accuracy_score

# +

######################################

######### YOUR CODE HERE #############

######################################

# -
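# A self-contained sketch of the steps described above (9:1 split with `random_state=0`, TF-IDF features, logistic regression with `penalty='l2'`, `C=10`, `random_state=0`) on a tiny made-up corpus. The `toy_` names are hypothetical and chosen so they don't overwrite the notebook's variables; accuracy on a one-example test set says nothing about the real model:

```python
from sklearn.feature_extraction.text import TfidfVectorizer
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split

# Tiny made-up corpus standing in for the preprocessed dialogue and StackOverflow texts.
texts = [
    "how are you", "nice to meet you", "what a lovely day", "see you later",
    "good morning friend",
    "python list sort", "java null pointer exception", "pandas delete rows",
    "c++ segmentation fault", "javascript array map",
]
labels = ["dialogue"] * 5 + ["stackoverflow"] * 5

# 9:1 train/test split with a fixed seed.
toy_X_train, toy_X_test, toy_y_train, toy_y_test = train_test_split(
    texts, labels, test_size=0.1, random_state=0)

toy_vectorizer = TfidfVectorizer()
toy_X_train_tfidf = toy_vectorizer.fit_transform(toy_X_train)
toy_X_test_tfidf = toy_vectorizer.transform(toy_X_test)

# Logistic regression with the parameters given in the task.
toy_intent_recognizer = LogisticRegression(penalty='l2', C=10, random_state=0)
toy_intent_recognizer.fit(toy_X_train_tfidf, toy_y_train)

accuracy = accuracy_score(toy_y_test, toy_intent_recognizer.predict(toy_X_test_tfidf))
print(len(toy_X_train), len(toy_X_test), accuracy)
```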

# Check test accuracy.

y_test_pred = intent_recognizer.predict(X_test_tfidf)

test_accuracy = accuracy_score(y_test, y_test_pred)

print('Test accuracy = {}'.format(test_accuracy))

# Dump the classifier to use it in the running bot.

pickle.dump(intent_recognizer, open(RESOURCE_PATH['INTENT_RECOGNIZER'], 'wb'))

# ### Programming language classification

# We will train one more classifier for the programming-related questions. It will predict exactly one tag (=programming language) and will also be based on Logistic Regression with TF-IDF features.

#

# First, let us prepare the data for this task.

X = stackoverflow_df['title'].values

y = stackoverflow_df['tag'].values

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.2, random_state=0)

print('Train size = {}, test size = {}'.format(len(X_train), len(X_test)))

# Let us reuse the TF-IDF vectorizer that we have already created above. It should not make a huge difference which data was used to train it.

# +

vectorizer = pickle.load(open(RESOURCE_PATH['TFIDF_VECTORIZER'], 'rb'))

X_train_tfidf, X_test_tfidf = vectorizer.transform(X_train), vectorizer.transform(X_test)

# -

# Train the **tag classifier** using OneVsRestClassifier wrapper over LogisticRegression. Use the following parameters: *penalty='l2'*, *C=5*, *random_state=0*.

from sklearn.multiclass import OneVsRestClassifier

# +

######################################

######### YOUR CODE HERE #############

######################################

# -

# Check test accuracy.

y_test_pred = tag_classifier.predict(X_test_tfidf)

test_accuracy = accuracy_score(y_test, y_test_pred)

print('Test accuracy = {}'.format(test_accuracy))

# Dump the classifier to use it in the running bot.

pickle.dump(tag_classifier, open(RESOURCE_PATH['TAG_CLASSIFIER'], 'wb'))

# ## Part II. Ranking questions with embeddings

# To find a relevant answer (a StackOverflow thread) to a question, you will use vector representations to calculate the similarity between the question and existing threads. We already have the `question_to_vec` function from assignment 3, which can create such a representation based on word vectors.

#

# However, it would be costly to compute such a representation for all possible answers in the *online mode* of the bot (i.e. while the bot is running and answering questions from many users). This is why you will create a *database* with pre-computed representations. These representations will be arranged by non-overlapping tags (programming languages), so that the search for an answer only has to be performed within one tag at a time. This makes the bot more efficient and means the whole database does not have to be kept in RAM.

# Load the StarSpace embeddings, which were trained on Stack Overflow posts. These embeddings were trained in *supervised mode* for duplicate detection on the same corpus that is used for search, so we can expect these representations to help us find closely related answers to a question.

#

# If for some reason you didn't train StarSpace embeddings in assignment 3, you can use [pre-trained word vectors](https://code.google.com/archive/p/word2vec/) from Google. All instructions on how to work with these vectors were provided in the same assignment. However, we highly recommend using the StarSpace embeddings, because they were trained on this corpus and are better suited to the task. If you choose to use Google's embeddings, delete the words that do not appear in the StackOverflow data.

starspace_embeddings, embeddings_dim = load_embeddings('data/word_embeddings.tsv')

# Since we want to precompute representations for all possible answers, we need to load the whole posts dataset, unlike what we did for the intent classifier:

posts_df = pd.read_csv('data/tagged_posts.tsv', sep='\t')

# Look at the distribution of posts for programming languages (tags) and find the most common ones.

# You might want to use pandas [groupby](https://pandas.pydata.org/pandas-docs/stable/generated/pandas.DataFrame.groupby.html) and [count](https://pandas.pydata.org/pandas-docs/stable/generated/pandas.DataFrame.count.html) methods:
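# A sketch of the counting step on a toy frame, assuming `posts_df` has `post_id`, `title` and `tag` columns (the values below are made up). `posts_df['tag'].value_counts()` would give the same counts:

```python
import pandas as pd

# Toy stand-in for posts_df with the assumed column names.
toy_posts = pd.DataFrame({
    "post_id": [1, 2, 3, 4, 5],
    "title": ["sort list", "pointer bug", "list comprehension", "lambda", "vector resize"],
    "tag": ["python", "c++", "python", "python", "c++"],
})

# Number of posts per tag, most common first; .items() then yields (tag, count) pairs.
toy_counts_by_tag = toy_posts.groupby("tag")["post_id"].count().sort_values(ascending=False)
print(toy_counts_by_tag)
```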

counts_by_tag = ######### YOUR CODE HERE #############

# Now for each `tag` you need to create two data structures that will serve as an online search index:

# * `tag_post_ids` — a list of post_ids with shape `(counts_by_tag[tag],)`. It will be needed to show the title and link to the thread;

# * `tag_vectors` — a matrix with shape `(counts_by_tag[tag], embeddings_dim)` where embeddings for each answer are stored.

#

# Implement the code that calculates these structures and dumps them to files. This should take several minutes.
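# Each row of `tag_vectors` holds the vector of one post title. A common choice, assumed here, is the mean of the embeddings of the title's known words, with a zero vector when none are known. `question_to_vec_sketch` is a hypothetical stand-in for the `question_to_vec` function from assignment 3, and the 2-dimensional embeddings are made up:

```python
import numpy as np


def question_to_vec_sketch(question, embeddings, dim):
    """Mean of the embeddings of known words; zero vector if none are known."""
    vectors = [embeddings[w] for w in question.split() if w in embeddings]
    if not vectors:
        return np.zeros(dim, dtype=np.float32)
    return np.mean(vectors, axis=0).astype(np.float32)


# Toy 2-dimensional embeddings (made up for illustration).
toy_embeddings = {"sort": np.array([1.0, 0.0]), "list": np.array([0.0, 1.0])}
titles = ["sort list", "unknown words only"]

# Fill one row per title, as the loop over tag_posts['title'] does.
toy_tag_vectors = np.zeros((len(titles), 2), dtype=np.float32)
for i, title in enumerate(titles):
    toy_tag_vectors[i, :] = question_to_vec_sketch(title, toy_embeddings, 2)
print(toy_tag_vectors)
```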

# +

import os

os.makedirs(RESOURCE_PATH['THREAD_EMBEDDINGS_FOLDER'], exist_ok=True)

for tag, count in counts_by_tag.items():
    tag_posts = posts_df[posts_df['tag'] == tag]

    tag_post_ids = ######### YOUR CODE HERE #############

    tag_vectors = np.zeros((count, embeddings_dim), dtype=np.float32)
    for i, title in enumerate(tag_posts['title']):
        tag_vectors[i, :] = ######### YOUR CODE HERE #############

    # Dump post ids and vectors to a file.
    filename = os.path.join(RESOURCE_PATH['THREAD_EMBEDDINGS_FOLDER'], os.path.normpath('%s.pkl' % tag))
    pickle.dump((tag_post_ids, tag_vectors), open(filename, 'wb'))

# -
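# One common way to fill each row of `tag_vectors` is to average the embeddings of the title's words. The helper below is hypothetical (the assignment's own helper may tokenize or normalize differently). It assumes `embeddings` is a dict from word to a NumPy vector of length `dim`, skips unknown words, and returns a zero vector when no word is known.

```python
import numpy as np

def title_to_vec(title, embeddings, dim):
    """Mean of the embeddings of the known words in title (hypothetical helper).

    embeddings: dict mapping word -> NumPy vector of length dim.
    Unknown words are skipped; if no word is known, a zero vector is returned.
    """
    vectors = [embeddings[word] for word in title.split() if word in embeddings]
    if not vectors:
        return np.zeros(dim, dtype=np.float32)
    return np.mean(vectors, axis=0).astype(np.float32)

# Toy 3-dimensional embeddings (made up).
toy_embeddings = {
    "sort": np.array([1.0, 0.0, 0.0], dtype=np.float32),
    "list": np.array([0.0, 1.0, 0.0], dtype=np.float32),
}

# Fill a (number_of_titles, dim) matrix row by row, as in the loop above.
titles = ["sort list", "sort unknownword", "nothing known"]
toy_vectors = np.zeros((len(titles), 3), dtype=np.float32)
for i, title in enumerate(titles):
    toy_vectors[i, :] = title_to_vec(title, toy_embeddings, 3)

print(toy_vectors)
```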

# ## Part III. Putting all together

# Now let's combine everything that we have done and enable the bot to maintain a dialogue. We will teach the bot to sequentially determine the intent and, depending on the intent, select the best answer. As soon as we do this, we will have the opportunity to chat with the bot and check how well it answers questions.

# Implement Dialogue Manager that will generate the best answer. In order to do this, you should open *dialogue_manager.py* and fill in the gaps.
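# The answer-selection logic itself belongs in *dialogue_manager.py*. Purely as a sketch, assuming cosine similarity between the question vector and the precomputed `tag_vectors`, picking the closest thread could look like this. `best_post_id` and the toy data are hypothetical, and the real dialogue manager may rank candidates differently.

```python
import numpy as np

def best_post_id(question_vec, post_ids, post_vectors):
    """Return the id of the post whose vector has the highest cosine similarity to question_vec."""
    # Product of row norms and question norm; the epsilon guards against all-zero vectors.
    norms = np.linalg.norm(post_vectors, axis=1) * np.linalg.norm(question_vec) + 1e-12
    similarities = post_vectors.dot(question_vec) / norms
    return post_ids[int(np.argmax(similarities))]

toy_post_ids = [101, 102, 103]
toy_post_vectors = np.array([[1.0, 0.0],
                             [0.6, 0.8],
                             [0.0, 1.0]], dtype=np.float32)

print(best_post_id(np.array([0.1, 0.9], dtype=np.float32), toy_post_ids, toy_post_vectors))  # 103
```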

from dialogue_manager import DialogueManager

dialogue_manager = ######### YOUR CODE HERE #############

# Now we are ready to test our chat bot! Let's chat with the bot and ask it some questions. Check that the answers are reasonable.

# +

questions = [
    "Hey",
    "How are you doing?",
    "What's your hobby?",
    "How to write a loop in python?",
    "How to delete rows in pandas?",
    "python3 re",
    "What is the difference between c and c++",
    "Multithreading in Java",
    "Catch exceptions C++",
    "What is AI?",
]

for question in questions:
    answer = ######### YOUR CODE HERE #############
    print('Q: %s\nA: %s \n' % (question, answer))

| week5/week5-project.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

# ### OSKAR System noise testing

#

# This is a simple test of the expected RMS and mean of uncorrelated system noise on auto- and cross-correlations.

#

# In this example $n=10^{7}$ samples are generated and split into blocks of $m=1000$ samples. Each block is correlated and averaged (integrated), and the mean and STD are then measured across the $n/m$ block averages.

# +

# %matplotlib inline

from IPython.display import display

import numpy as np

import matplotlib.pyplot as pp

import time

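n = 10**7  # total number of samples (an int, so that n//m below is a valid array dimension)

m = 1000  # samples per block (integration length)

s = 15  # scale of the real and imaginary noise components

Xp = (np.random.randn(n//m, m) + 1.j*np.random.randn(n//m, m))*s

Xq = (np.random.randn(n//m, m) + 1.j*np.random.randn(n//m, m))*s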

ac = np.sum(Xp*np.conj(Xp),1)/m # Auto-correlation

xc = np.sum(Xp*np.conj(Xq),1)/m # Cross-correlation

print 'Cross-correlation: measured (predicted)'

print ' mean : %.4f%+.4fi (0+0i)' % (np.real(np.mean(xc)), np.imag(np.mean(xc)))

print ' STD : %.4f (%.4f)' % (np.std(xc), 2*s**2/(m**0.5))

print 'Auto-correlation: measured (predicted)'

print ' mean : %.4f (%.4f)' % (np.real(np.mean(ac)), 2*s**2)

print ' STD : %.4f (%.4f)' % (np.std(ac), 2*s**2/(m**0.5))

# -

# #### Cross-correlation

# Has a mean of $0$ and a STD of $\frac{2\mathrm{s}^{2}}{\sqrt{m}}$.

#

# #### Auto-correlation

# Has a mean of $2\mathrm{s}^{2}$ and a STD of $\frac{2\mathrm{s}^{2}}{\sqrt{m}}$.

#

# #### In terms of OSKAR parameters

#

# The number of independent samples in an integration is $m = 2\Delta\nu\tau$.

# The system equivalent flux density (SEFD) of one polarisation of an antenna, for an unpolarised source, is:

# $$S = \frac{2k_{\mathrm{B}}T_{\mathrm{sys}}}{A_{\mathrm{eff}}\eta}$$

# And the RMS from this SEFD is:

# $$ \sigma_{p,q} = \frac{ \sqrt{ S_{p} S_{q}} } { \sqrt{2\Delta\nu\tau} } $$

# if $S_{p} = S_{q} = S$

# $$ \sigma_{p,q} = \frac{S} { \sqrt{2\Delta\nu\tau} } $$

# As visibilities are complex, the measured STD (or RMS) will be

# $$\varepsilon = \sqrt{2}\sigma_{p,q}$$

# That is, we would expect to measure an RMS of

# $$ \varepsilon = \frac{\sqrt{2} S} { \sqrt{2\Delta\nu\tau} } = \frac{\sqrt{2} S} { \sqrt{m} }$$

# Relating this to the parameter $s$ in the script above:

# $$ \frac{2\mathrm{s}^{2}}{\sqrt{m}} = \frac{\sqrt{2} S} { \sqrt{m} } $$

# and therefore,

# $$ s = \sqrt{\frac{\sqrt{m}\sigma_{p,q}} {\sqrt{2}} } = \sqrt{\frac{S}{\sqrt{2}}}$$

# or

# $$ \sigma_{p,q} = \sqrt{\frac{2}{m}}s^{2} $$
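# A small numeric check of the relations above, for made-up values (SEFD $S = 1000$ Jy, $\Delta\nu = 100$ kHz, $\tau = 1$ s). It uses $m = 2\Delta\nu\tau$, so that $\sqrt{m} = \sqrt{2\Delta\nu\tau}$ as in the expression for $\varepsilon$. `sefd_jy` evaluates the SEFD formula and converts from W m$^{-2}$ Hz$^{-1}$ to Jy ($1\,\mathrm{Jy} = 10^{-26}$ W m$^{-2}$ Hz$^{-1}$). The function names are illustrative and not part of OSKAR.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant [J/K]
JY = 1e-26          # 1 jansky [W m^-2 Hz^-1]

def sefd_jy(t_sys, a_eff, eta=1.0):
    """S = 2 k_B T_sys / (A_eff eta), returned in Jy."""
    return 2.0 * K_B * t_sys / (a_eff * eta) / JY

def noise_params(sefd, bandwidth_hz, integration_s):
    """Return (m, sigma_pq, s) for one baseline with S_p = S_q = sefd."""
    m = 2.0 * bandwidth_hz * integration_s   # number of independent samples
    sigma_pq = sefd / math.sqrt(m)           # RMS of the real (or imaginary) part
    s = math.sqrt(sefd / math.sqrt(2.0))     # amplitude s of the simulated noise
    return m, sigma_pq, s

m, sigma_pq, s = noise_params(1000.0, 100e3, 1.0)
epsilon = math.sqrt(2.0) * sigma_pq          # expected RMS of the complex visibility
print(m, sigma_pq, s, epsilon)
```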

| doc/ipython_notebooks/uncorrelated_system_noise.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (Data Science)

# language: python

# name: python3__SAGEMAKER_INTERNAL__arn:aws:sagemaker:us-east-1:081325390199:image/datascience-1.0

# ---

# # Examine the Evaluation Metrics

#

# Examine the resulting model evaluation after the pipeline completes. Download the resulting evaluation.json file from S3 and print the report.

# +

from botocore.exceptions import ClientError

import os

import sagemaker

import logging

import boto3


import pandas as pd

sess = sagemaker.Session()

bucket = sess.default_bucket()

role = sagemaker.get_execution_role()

region = boto3.Session().region_name

sm = boto3.Session().client(service_name="sagemaker", region_name=region)

# -

# %store -r pipeline_name

print(pipeline_name)

# %store -r pipeline_experiment_name

print(pipeline_experiment_name)

# +

# %%time

import time

from pprint import pprint

executions_response = sm.list_pipeline_executions(PipelineName=pipeline_name)["PipelineExecutionSummaries"]
pipeline_execution_status = executions_response[0]["PipelineExecutionStatus"]
print(pipeline_execution_status)

# Poll until the most recent execution is no longer running.
while pipeline_execution_status == "Executing":
    try:
        executions_response = sm.list_pipeline_executions(PipelineName=pipeline_name)["PipelineExecutionSummaries"]
        pipeline_execution_status = executions_response[0]["PipelineExecutionStatus"]
        # print('Executions for our pipeline...')
        # print(pipeline_execution_status)
    except Exception as e:
        print("Please wait...")
    # Wait between polls so the loop does not hammer the API (and risk throttling).
    time.sleep(30)

pprint(executions_response)

# -
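# The polling logic can also be wrapped in a small helper that takes the status lookup as a callable, which makes it testable without AWS. This is a sketch: `wait_for_pipeline` is a hypothetical name, and in this notebook `fetch_status` could be a lambda around the `sm.list_pipeline_executions(...)` call used above. Any exception (for example, API throttling) is treated as "still executing".

```python
import time

def wait_for_pipeline(fetch_status, poll_seconds=30, sleep=time.sleep):
    """Call fetch_status() until it returns something other than "Executing".

    fetch_status: zero-argument callable returning the latest execution status.
    sleep: injectable so the loop can be tested without waiting.
    Exceptions raised by fetch_status are treated as "still executing"
    and retried after the next pause.
    """
    status = "Executing"
    while status == "Executing":
        try:
            status = fetch_status()
        except Exception as exc:
            print("Please wait... (%s)" % exc)
        if status == "Executing":
            sleep(poll_seconds)
    return status

# Example with a fake status sequence and a no-op sleep.
fake_statuses = iter(["Executing", "Executing", "Succeeded"])
print(wait_for_pipeline(lambda: next(fake_statuses), sleep=lambda seconds: None))  # Succeeded
```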

# # List Pipeline Execution Steps

pipeline_execution_status = executions_response[0]["PipelineExecutionStatus"]

print(pipeline_execution_status)

pipeline_execution_arn = executions_response[0]["PipelineExecutionArn"]

print(pipeline_execution_arn)

# +

from pprint import pprint

steps = sm.list_pipeline_execution_steps(PipelineExecutionArn=pipeline_execution_arn)

pprint(steps)

# -

# # Retrieve Evaluation Metrics

# +

# for execution_step in reversed(execution.list_steps()):
for execution_step in reversed(steps["PipelineExecutionSteps"]):
    if execution_step["StepName"] == "EvaluateModel":
        processing_job_name = execution_step["Metadata"]["ProcessingJob"]["Arn"].split("/")[-1]

describe_evaluation_processing_job_response = sm.describe_processing_job(ProcessingJobName=processing_job_name)

evaluation_metrics_s3_uri = describe_evaluation_processing_job_response["ProcessingOutputConfig"]["Outputs"][0][
    "S3Output"
]["S3Uri"]

evaluation_metrics_s3_uri

# +

import json

from pprint import pprint

evaluation_json = sagemaker.s3.S3Downloader.read_file("{}/evaluation.json".format(evaluation_metrics_s3_uri))

pprint(json.loads(evaluation_json))

# -

# # Download and Analyze the Trained Model from S3

# +

training_job_arn = None

for execution_step in steps["PipelineExecutionSteps"]:
    if execution_step["StepName"] == "Train":
        training_job_arn = execution_step["Metadata"]["TrainingJob"]["Arn"]
        break

training_job_name = training_job_arn.split("/")[-1]

print(training_job_name)

# -
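# Both lookups above follow the same pattern: find a step by `StepName`, then take the last `/`-separated segment of the job ARN as the job name. Below is a hypothetical helper that factors this out. It is exercised on a made-up response that includes only the keys this notebook reads from `list_pipeline_execution_steps`.

```python
def job_name_for_step(steps_response, step_name, job_type):
    """Return the job name for the first step called step_name, or None.

    job_type is the Metadata key holding the job, e.g. "ProcessingJob" or "TrainingJob".
    """
    for step in steps_response["PipelineExecutionSteps"]:
        if step["StepName"] == step_name:
            # A SageMaker job ARN ends in ".../<job-name>".
            return step["Metadata"][job_type]["Arn"].split("/")[-1]
    return None

# Made-up response; only the keys the helper reads are included.
fake_steps = {
    "PipelineExecutionSteps": [
        {"StepName": "EvaluateModel",
         "Metadata": {"ProcessingJob": {"Arn": "arn:aws:sagemaker:us-east-1:111122223333:processing-job/pipelines-abc-evaluatemodel"}}},
        {"StepName": "Train",
         "Metadata": {"TrainingJob": {"Arn": "arn:aws:sagemaker:us-east-1:111122223333:training-job/pipelines-abc-train"}}},
    ]
}

print(job_name_for_step(fake_steps, "Train", "TrainingJob"))  # pipelines-abc-train
```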

model_tar_s3_uri = sm.describe_training_job(TrainingJobName=training_job_name)["ModelArtifacts"]["S3ModelArtifacts"]

# !aws s3 cp $model_tar_s3_uri ./

# !mkdir -p ./model

# !tar -zxvf model.tar.gz -C ./model

# !saved_model_cli show --all --dir ./model/tensorflow/saved_model/0/

# !saved_model_cli run --dir ./model/tensorflow/saved_model/0/ --tag_set serve --signature_def serving_default \

# --input_exprs 'input_ids=np.zeros((1,64));input_mask=np.zeros((1,64))'

# # List All Artifacts Generated By The Pipeline

processing_job_name = None

training_job_name = None

# +

import time

from IPython.display import display
from sagemaker.lineage.visualizer import LineageTableVisualizer

viz = LineageTableVisualizer(sagemaker.session.Session())

for execution_step in reversed(steps["PipelineExecutionSteps"]):
    print(execution_step)
    # We look up the Processing step by job name because there appears to be a bug
    # in how LineageTableVisualizer handles the Processing step directly.
    if execution_step["StepName"] == "Processing":
        processing_job_name = execution_step["Metadata"]["ProcessingJob"]["Arn"].split("/")[-1]
        print(processing_job_name)
        display(viz.show(processing_job_name=processing_job_name))
    elif execution_step["StepName"] == "Train":
        training_job_name = execution_step["Metadata"]["TrainingJob"]["Arn"].split("/")[-1]
        print(training_job_name)
        display(viz.show(training_job_name=training_job_name))
    else:
        display(viz.show(pipeline_execution_step=execution_step))
    # Pause between lineage queries to avoid API throttling.
    time.sleep(5)

# -

# # Analyze Experiment

# +

from sagemaker.analytics import ExperimentAnalytics

time.sleep(30) # avoid throttling exception

import pandas as pd

pd.set_option("max_colwidth", 500)

experiment_analytics = ExperimentAnalytics(

experiment_name=pipeline_experiment_name,

)

experiment_analytics.dataframe()

# -

# # Release Resources

# + language="html"

#

# <p><b>Shutting down your kernel for this notebook to release resources.</b></p>

# <button class="sm-command-button" data-commandlinker-command="kernelmenu:shutdown" style="display:none;">Shutdown Kernel</button>

#

# <script>

# try {

# els = document.getElementsByClassName("sm-command-button");

# els[0].click();

# }

# catch(err) {

# // NoOp

# }

# </script>

# + language="javascript"

#

# try {

# Jupyter.notebook.save_checkpoint();

# Jupyter.notebook.session.delete();

# }

# catch(err) {

# // NoOp

# }

| 10_pipeline/02_Evaluate_Pipeline_Execution.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="vLSN7SzBJNm_"

# # 4. Data Preprocessing

# In this part, we will learn how to preprocess data before feeding it to a machine learning algorithm.

# + [markdown] id="HuDN2B9BNlGH"

# ##### Using a version of the [Iris dataset](https://en.wikipedia.org/wiki/Iris_flower_data_set) that has been modified to contain missing values for the purposes of this module

# + id="YmKtRQ1YV_sp"

import numpy as np

import seaborn as sns

import matplotlib.pyplot as plt

# + id="guinmynAKPcM"

import pandas as pd

df = pd.read_csv("https://raw.githubusercontent.com/henseljahja/learn-ml/main/Dataset/iris_2_class_modified.csv")

# + [markdown] id="XWWA9Z-ibfJO"

# We don't need the class label for now, so we drop the `species` column.

# + id="AM_brAD8bmNR"

df.drop(columns="species",inplace=True)

# + [markdown] id="Ox9c5mAXJpev"

# ## 4.1 Data Cleaning

# + [markdown] id="xG55T8LBJtFF"

# ### 4.1.1 Simple Imputer

# + [markdown] id="7oHE-exLJwVn"

# `SimpleImputer` is an imputation transformer for completing missing values.

# The documentation can be found [here](https://scikit-learn.org/stable/modules/generated/sklearn.impute.SimpleImputer.html).

# + id="RreybPG9Gx6m" colab={"base_uri": "https://localhost:8080/", "height": 488} outputId="86a74795-0eff-408c-b826-60f7a7891e37"

import numpy as np

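# Find the positional indices of the rows that contain at least one missing value
# (any(axis=1) avoids listing a row twice when it has several NaNs)

df_nan_value = np.where(df.isnull().any(axis=1))[0]

df.iloc[df_nan_value]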

# + id="c6bX-G5bLjOs"

#Importing Simple Imputer

from sklearn.impute import SimpleImputer

# + [markdown] id="x8O9ZoPtR2jJ"

#

#

# If “mean”, then replace missing values using the mean along each column. Can only be used with numeric data.

#

# If “median”, then replace missing values using the median along each column. Can only be used with numeric data.

#

# If “most_frequent”, then replace missing using the most frequent value along each column. Can be used with strings or numeric data. If there is more than one such value, only the smallest is returned.

#

# If “constant”, then replace missing values with fill_value. Can be used with strings or numeric data.

#
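# To see what each strategy computes, here is a NumPy-only sketch on a made-up 4x2 array with one missing value per column. It mirrors the descriptions above, including taking the smallest value on ties for `most_frequent`. It is an illustration, not scikit-learn's implementation.

```python
import numpy as np

# Made-up data: 4 samples, 2 features, one missing value per column.
X = np.array([[1.0, 10.0],
              [2.0, np.nan],
              [np.nan, 30.0],
              [2.0, 10.0]])

def impute(X, strategy, fill_value=None):
    """Return a copy of X with each column's NaNs filled according to strategy."""
    X = X.copy()
    for j in range(X.shape[1]):
        col = X[:, j]                 # a view, so writing to col updates X
        missing = np.isnan(col)
        observed = col[~missing]
        if strategy == "mean":
            value = observed.mean()
        elif strategy == "median":
            value = np.median(observed)
        elif strategy == "most_frequent":
            values, counts = np.unique(observed, return_counts=True)
            value = values[np.argmax(counts)]   # np.unique sorts, so ties go to the smallest
        elif strategy == "constant":
            value = fill_value
        else:
            raise ValueError("unknown strategy: %s" % strategy)
        col[missing] = value
    return X

# Show only the two rows that contained missing values.
for strategy in ["mean", "median", "most_frequent"]:
    print(strategy, impute(X, strategy)[[1, 2]])
print("constant", impute(X, "constant", fill_value=999)[[1, 2]])
```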

# + id="5mesDoxuRLKI"

#si means Simple Imputer

#Using the Mean value

si_mean = SimpleImputer(strategy="mean")

#Using the Median value

si_median = SimpleImputer(strategy="median")

#Using the most frequent value

si_most_frequent = SimpleImputer(strategy="most_frequent")

#Custom imputer

si_constant = SimpleImputer(strategy="constant", fill_value=999)

# + colab={"base_uri": "https://localhost:8080/", "height": 488} id="bfW5rZhkSrI6" outputId="21fe4f97-d2b2-44d1-de6e-8c4086c113b7"

#using the si_mean

df_si_mean = si_mean.fit_transform(df)

pd.DataFrame(data=df_si_mean[df_nan_value],columns=df.columns)

# + colab={"base_uri": "https://localhost:8080/", "height": 488} id="F3gapqstUXuy" outputId="5743a907-c785-4cdf-b052-9a2c26d90b6a"

#using the si_median

df_si_median = si_median.fit_transform(df)

pd.DataFrame(data=df_si_median[df_nan_value],columns=df.columns)

# + colab={"base_uri": "https://localhost:8080/", "height": 488} id="h3gSMzrZU3Vn" outputId="a62d79c1-3507-4329-d1b9-a62b7ff006d3"

#using the si_most_frequent

df_si_most_frequent = si_most_frequent.fit_transform(df)

pd.DataFrame(data=df_si_most_frequent[df_nan_value],columns=df.columns)

# + colab={"base_uri": "https://localhost:8080/", "height": 488} id="nXg7eXeTVTV0" outputId="8ad67e09-c673-4a64-bbd1-d8de8e312045"

#using the si_constant

df_si_constant = si_constant.fit_transform(df)

pd.DataFrame(data=df_si_constant[df_nan_value],columns=df.columns)

# + [markdown] id="KTLnUYs0Vl-b"

# ## 4.2 Feature Scaling

# + [markdown] id="_vFlQssCahSe"

# ##### For this we will use the version of the dataset that contains no missing values.

# + id="pW0uXungamJP"

df = pd.read_csv("https://raw.githubusercontent.com/henseljahja/learn-ml/main/Dataset/iris_2_class.csv")

# + id="VvOLg4oWcpL1"

df.drop("species",axis=1,inplace=True)

# + [markdown] id="5raEwT3jVpZ1"

# ### 4.2.1 Standardization (Z-score Normalization)

#

#

# In machine learning, we can handle various types of data, e.g. audio signals and pixel values for image data, and this data can include multiple dimensions. Feature standardization makes the values of each feature in the data have zero-mean (when subtracting the mean in the numerator) and unit-variance. This method is widely used for normalization in many machine learning algorithms (e.g., support vector machines, logistic regression, and artificial neural networks). The general method of calculation is to determine the distribution mean and standard deviation for each feature. Next we subtract the mean from each feature. Then we divide the values (mean is already subtracted) of each feature by its standard deviation. Source : [Wikipedia](https://en.wikipedia.org/wiki/Feature_scaling)
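# Per feature, the transformation is $z = (x - \mu) / \sigma$. Below is a NumPy sketch on a few made-up iris-like rows. It uses the population standard deviation (`ddof=0`), which is also what `StandardScaler` uses.

```python
import numpy as np

# Made-up measurements: rows are samples, columns are features.
X = np.array([[5.1, 3.5],
              [4.9, 3.0],
              [6.2, 3.4],
              [5.9, 3.0]])

mu = X.mean(axis=0)            # per-feature mean
sigma = X.std(axis=0)          # per-feature population std (ddof=0)
X_std = (X - mu) / sigma       # zero mean, unit variance in each column

print(X_std.round(3))
```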

# + id="BQwwIpmTVoTD"

from sklearn.preprocessing import StandardScaler

sc = StandardScaler()

df_sc = sc.fit_transform(df)

# + colab={"base_uri": "https://localhost:8080/", "height": 424} id="cR-dAjknWonQ" outputId="30c4fe08-7b94-4f3c-d2f4-edfe172a0fa9"

pd.DataFrame(df_sc, columns = df.columns)

# + colab={"base_uri": "https://localhost:8080/", "height": 283} id="I7d3_WIfW_Ef" outputId="e4f5c683-b8cb-4441-916c-80e920a3cba3"

# Before standard scaling

df.plot.kde()

# + colab={"base_uri": "https://localhost:8080/", "height": 283} id="q9weZ9CXXCNX" outputId="a2611bac-2ad0-4933-b2dc-4b1f8e3afb7e"

# After standard scaling

pd.DataFrame(df_sc, columns = df.columns).plot.kde()

# + [markdown] id="ZaDuOe76Ynq1"

# ### 4.2.2 Rescaling (min-max normalization)

# + [markdown] id="ZM2bdupIZMbM"

#

#

# Also known as min-max scaling or min-max normalization, rescaling is the simplest method and consists in rescaling the range of features to scale the range in [0, 1] or [−1, 1]. Selecting the target range depends on the nature of the data. Source: [Wikipedia](https://en.wikipedia.org/wiki/Feature_scaling)

# + id="xUAXgIvcXlxE"

from sklearn.preprocessing import MinMaxScaler

mms = MinMaxScaler()

df_mms = mms.fit_transform(df)
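# Per feature, min-max rescaling to $[0, 1]$ is $x' = (x - \min x) / (\max x - \min x)$, and an arbitrary target range $[a, b]$ follows as $a + x'(b - a)$. Below is a NumPy sketch on made-up data. `MinMaxScaler` defaults to `feature_range=(0, 1)`.

```python
import numpy as np

# Made-up measurements: rows are samples, columns are features.
X = np.array([[5.1, 3.5],
              [4.9, 3.0],
              [6.2, 3.4],
              [5.9, 3.0]])

x_min = X.min(axis=0)
x_max = X.max(axis=0)
X_01 = (X - x_min) / (x_max - x_min)      # each column now spans [0, 1]

a, b = -1.0, 1.0
X_ab = a + X_01 * (b - a)                  # each column now spans [-1, 1]

print(X_01.round(3))
print(X_ab.round(3))
```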

# + colab={"base_uri": "https://localhost:8080/", "height": 283} id="Y6-KPJqca3ti" outputId="7dbaa32d-c702-4e6c-b368-6d77f3e7678d"

df.plot.kde()

# + colab={"base_uri": "https://localhost:8080/", "height": 283} id="N4prbim7Zzfd" outputId="47b15926-fd3e-409a-9c5d-795d51ab3ad1"

pd.DataFrame(df_mms, columns = df.columns).plot.kde()

# + [markdown] id="UVw5cR1e5p_3"

# ## 4.3 Pipeline

# + [markdown] id="dyKM3bjO5rdZ"

# Suppose you have built a good preprocessing sequence and then receive new data. Repeating every step by hand would be tedious, so the solution is to chain the steps in a `Pipeline`.

# + [markdown] id="5wpHHnRt5uOg"

# This is a Pipeline with an imputer and a feature scaler.

# + id="n4Mn1z3TazZR"

from sklearn.pipeline import Pipeline

# Define each step: median imputation followed by standardization

df_pipeline = Pipeline([
    ("imputer", SimpleImputer(strategy="median")),
    ("scaler", StandardScaler()),
])

# + colab={"base_uri": "https://localhost:8080/"} id="p-YYnjxv5zYt" outputId="187f753a-930a-4bcc-a01f-074bb5fd8d00"

df_pipeline.fit_transform(df)

# + id="K6HFZD2M6Fby"

df_after_pipeline = pd.DataFrame(df_pipeline.fit_transform(df), columns = df.columns)

# + colab={"base_uri": "https://localhost:8080/", "height": 424} id="_LINK53460QB" outputId="43167e51-3bc2-4f49-b177-e03e38de56f8"

df_after_pipeline

# + colab={"base_uri": "https://localhost:8080/", "height": 283} id="Rp4c2vxA640E" outputId="e0e1a248-f285-40f0-e2b4-0c8f046bb11c"

#Before Pipeline

df.plot.kde()

# + colab={"base_uri": "https://localhost:8080/", "height": 283} id="9Cpz1ciK8dIg" outputId="9fa4f64f-cbd0-423d-ce76-e1742795b2a6"

#After Pipeline

df_after_pipeline.plot.kde()

# + id="I6Kab2Xk8hrW"

| 4_data_preprocessing.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import importlib

import pickle

import pandas as pd

import torch

import sys

sys.path.append("/home/jarobyte/guemes/lib")

from pytorch_decoding import seq2seq

import ocr_correction

from timeit import default_timer as t

from tqdm.notebook import tqdm

from ocr_correction import *

# +

importlib.reload(ocr_correction)

language = "de"

model_id = "22694260_9"

scratch = f"/home/jarobyte/scratch/guemes/icdar/{language}/"

print(f"Evaluating {language}...")

data = pd.read_pickle(f"/home/jarobyte/scratch/guemes/icdar/{language}/data/test.pkl")

device = torch.device("cuda")

model = seq2seq.load_architecture(scratch + f"baseline/models/{model_id}.arch")

model.to(device)

model.eval()

model.load_state_dict(torch.load(scratch + f"baseline/checkpoints/{model_id}.pt"))

with open(scratch + "data/vocabulary.pkl", "rb") as file:
    vocabulary = pickle.load(file)

len(vocabulary)

# -

raw = data.ocr_to_input

gs = data.gs_aligned

document_progress_bar = 0

window_size = 50
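# The cells below compare disjoint and sliding windows. The internals of `correct_by_disjoint_window` and `correct_by_sliding_window` are not shown here, so the two hypothetical helpers below only illustrate the general idea. Disjoint windows cut the text into non-overlapping chunks. Sliding windows overlap, so each character is covered by several windows whose predictions then have to be combined; in the sliding-window calls, this is presumably what the `weighting` argument controls.

```python
def disjoint_windows(text, window_size):
    """Consecutive, non-overlapping chunks of at most window_size characters."""
    return [text[i:i + window_size] for i in range(0, len(text), window_size)]

def sliding_windows(text, window_size):
    """Overlapping windows of window_size characters, shifted by one character."""
    if len(text) <= window_size:
        return [text]
    return [text[i:i + window_size] for i in range(len(text) - window_size + 1)]

print(disjoint_windows("abcdefg", 3))  # ['abc', 'def', 'g']
print(sliding_windows("abcde", 3))     # ['abc', 'bcd', 'cde']
```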

metrics = []

old = levenshtein(reference = gs, hypothesis = raw).cer.mean()

start = t()

corrections = [correct_by_disjoint_window(s,

model,

vocabulary,

document_progress_bar = 0,

window_size = window_size)

for s in tqdm(raw)]

metrics.append({"window":"disjoint",

"decoding":"greedy",

"window_size":window_size,

"inference_seconds":t() - start,

"cer_before":old,

"cer_after":levenshtein(gs, corrections).cer.mean()})

start = t()

corrections = [correct_by_disjoint_window(s,

model,

vocabulary,

document_progress_bar = document_progress_bar,

window_size = window_size * 2)

for s in raw]

metrics.append({"window":"disjoint",

"decoding":"greedy",

"window_size":window_size * 2,

"inference_seconds":t() - start,

"cer_before":old,

"cer_after":levenshtein(gs, corrections).cer.mean()})

start = t()

corrections = [correct_by_disjoint_window(s,

model,

vocabulary,

decoding_method = "beam_search",

document_progress_bar = document_progress_bar,

window_size = window_size)

for s in tqdm(raw)]

metrics.append({"window":"disjoint",

"decoding":"beam",

"window_size":window_size,

"inference_seconds":t() - start,

"cer_before":old,

"cer_after":levenshtein(gs, corrections).cer.mean()})

start = t()

corrections = [correct_by_disjoint_window(s,

model,

vocabulary,

decoding_method = "beam_search",

document_progress_bar = document_progress_bar,

window_size = window_size * 2)

for s in tqdm(raw)]

metrics.append({"window":"disjoint",

"decoding":"beam",

"window_size":window_size * 2,

"inference_seconds":t() - start,

"cer_before":old,

"cer_after":levenshtein(gs, corrections).cer.mean()})

start = t()

corrections = [correct_by_sliding_window(s, model, vocabulary,

weighting = uniform,

document_progress_bar = document_progress_bar,

window_size = window_size)[1]

for s in tqdm(raw)]

metrics.append({"window":"sliding",

"decoding":"greedy",

"weighting":"uniform",

"window_size":window_size,

"inference_seconds":t() - start,

"cer_before":old,

"cer_after":levenshtein(gs, corrections).cer.mean()})

start = t()

corrections = [correct_by_sliding_window(s, model, vocabulary,

decoding_method = "beam_search",

weighting = uniform,

document_progress_bar = document_progress_bar,

window_size = window_size)[1]

for s in tqdm(raw)]

metrics.append({"window":"sliding",

"decoding":"beam",

"weighting":"uniform",

"window_size":window_size,

"inference_seconds":t() - start,

"cer_before":old,

"cer_after":levenshtein(gs, corrections).cer.mean()})

pd.DataFrame(metrics).assign(improvement = lambda df: 100 * (1 - df.cer_after / df.cer_before))
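# The `improvement` column is the relative CER reduction, $100\,(1 - \mathrm{CER}_\text{after} / \mathrm{CER}_\text{before})$. CER is usually defined as the Levenshtein distance between hypothesis and reference divided by the reference length. The sketch below implements that definition, which may differ in details (such as normalisation) from `ocr_correction.levenshtein`.

```python
def edit_distance(a, b):
    """Levenshtein distance: minimum number of single-character insertions,
    deletions and substitutions turning a into b (two-row dynamic programming)."""
    previous = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        current = [i]
        for j, cb in enumerate(b, start=1):
            current.append(min(previous[j] + 1,               # deletion
                               current[j - 1] + 1,            # insertion
                               previous[j - 1] + (ca != cb)))  # substitution
        previous = current
    return previous[-1]

def cer(reference, hypothesis):
    """Character error rate relative to a non-empty reference."""
    return edit_distance(reference, hypothesis) / float(len(reference))

def improvement(cer_before, cer_after):
    """Relative CER reduction in percent, as in the table above."""
    return 100 * (1 - cer_after / cer_before)

print(edit_distance("kitten", "sitting"), cer("kitten", "sitting"), improvement(0.2, 0.05))
```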

| notebooks/de/6_evaluation.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

from __future__ import division, print_function

# %matplotlib inline

from importlib import reload # Python 3

import utils; reload(utils)

from utils import *

# + [markdown] heading_collapsed=true

# ## Setup

# + hidden=true

batch_size=64

# + hidden=true

from keras.datasets import mnist

(X_train, y_train), (X_test, y_test) = mnist.load_data()

(X_train.shape, y_train.shape, X_test.shape, y_test.shape)

# + hidden=true

X_test = np.expand_dims(X_test,1)

X_train = np.expand_dims(X_train,1)

# + hidden=true

X_train.shape

# + hidden=true

y_train[:5]

# + hidden=true

y_train = onehot(y_train)

y_test = onehot(y_test)
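# `onehot` comes from the course's `utils` module, which is not shown here. Assuming it performs standard one-hot encoding, a NumPy equivalent is sketched below (the labels are arbitrary examples).

```python
import numpy as np

def one_hot(labels, num_classes=None):
    """Encode integer labels as rows with a single 1.0 in the label's column."""
    labels = np.asarray(labels)
    if num_classes is None:
        num_classes = labels.max() + 1
    encoded = np.zeros((labels.shape[0], num_classes), dtype=np.float32)
    encoded[np.arange(labels.shape[0]), labels] = 1.0
    return encoded

print(one_hot([5, 0, 4, 1, 9], num_classes=10))
```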

# + hidden=true

y_train[:5]

# + hidden=true

mean_px = X_train.mean().astype(np.float32)

std_px = X_train.std().astype(np.float32)

# + hidden=true

def norm_input(x): return (x-mean_px)/std_px

# + [markdown] heading_collapsed=true

# ## Linear model

# + hidden=true

def get_lin_model():
    model = Sequential([
        Lambda(norm_input, input_shape=(1,28,28)),
        Flatten(),
        Dense(10, activation='softmax')
    ])
    model.compile(Adam(), loss='categorical_crossentropy', metrics=['accuracy'])
    return model

# + hidden=true

lm = get_lin_model()

# + hidden=true

gen = image.ImageDataGenerator()

batches = gen.flow(X_train, y_train, batch_size=batch_size)

test_batches = gen.flow(X_test, y_test, batch_size=batch_size)

steps_per_epoch = int(np.ceil(batches.n/batch_size))

validation_steps = int(np.ceil(test_batches.n/batch_size))
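# `steps_per_epoch` rounds up, so the final, smaller batch is still used. With MNIST's 60,000 training and 10,000 test images and `batch_size=64`, the arithmetic works out as follows:

```python
import math

batch_size = 64
n_train, n_test = 60000, 10000

steps_per_epoch = int(math.ceil(n_train / float(batch_size)))    # 937.5 -> 938
validation_steps = int(math.ceil(n_test / float(batch_size)))    # 156.25 -> 157

# Size of the last (partial) training batch in each epoch.
last_batch = n_train - (steps_per_epoch - 1) * batch_size        # 32

print(steps_per_epoch, validation_steps, last_batch)
```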

# + hidden=true

lm.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=1,

validation_data=test_batches, validation_steps=validation_steps)

# + hidden=true

lm.optimizer.lr=0.1

# + hidden=true

lm.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=1,

validation_data=test_batches, validation_steps=validation_steps)

# + hidden=true

lm.optimizer.lr=0.01

# + hidden=true

lm.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=4,

validation_data=test_batches, validation_steps=validation_steps)

# + [markdown] heading_collapsed=true

# ## Single dense layer

# + hidden=true

def get_fc_model():
    model = Sequential([
        Lambda(norm_input, input_shape=(1,28,28)),
        Flatten(),
        Dense(512, activation='relu'),  # hidden layer uses ReLU; softmax belongs on the output layer only
        Dense(10, activation='softmax')
    ])
    model.compile(Adam(), loss='categorical_crossentropy', metrics=['accuracy'])
    return model

# + hidden=true

fc = get_fc_model()

# + hidden=true

fc.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=1,

validation_data=test_batches, validation_steps=validation_steps)

# + hidden=true

fc.optimizer.lr=0.1

# + hidden=true

fc.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=4,

validation_data=test_batches, validation_steps=validation_steps)

# + hidden=true

fc.optimizer.lr=0.01

# + hidden=true

fc.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=4,

validation_data=test_batches, validation_steps=validation_steps)

# + [markdown] heading_collapsed=true

# ## Basic 'VGG-style' CNN

# + hidden=true

def get_model():
    model = Sequential([
        Lambda(norm_input, input_shape=(1,28,28)),
        Conv2D(32,(3,3), activation='relu'),
        Conv2D(32,(3,3), activation='relu'),
        MaxPooling2D(),
        Conv2D(64,(3,3), activation='relu'),
        Conv2D(64,(3,3), activation='relu'),
        MaxPooling2D(),
        Flatten(),
        Dense(512, activation='relu'),
        Dense(10, activation='softmax')
    ])
    model.compile(Adam(), loss='categorical_crossentropy', metrics=['accuracy'])
    return model

# + hidden=true

model = get_model()
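# Tracing the feature-map size through `get_model` shows why the flattened vector has 1,024 features. This assumes the Keras defaults: `Conv2D` uses `'valid'` padding with stride 1, and `MaxPooling2D` uses a 2x2 pool with stride 2.

```python
def conv_out(size, kernel=3):
    """Output width of a stride-1 convolution with 'valid' padding."""
    return size - kernel + 1

def pool_out(size, pool=2):
    """Output width of max pooling with pool size = stride = pool, 'valid' padding."""
    return size // pool

size = 28                    # input images are 28x28
size = conv_out(size)        # Conv2D(32, (3,3)) -> 26
size = conv_out(size)        # Conv2D(32, (3,3)) -> 24
size = pool_out(size)        # MaxPooling2D()    -> 12
size = conv_out(size)        # Conv2D(64, (3,3)) -> 10
size = conv_out(size)        # Conv2D(64, (3,3)) -> 8
size = pool_out(size)        # MaxPooling2D()    -> 4

flattened = 64 * size * size          # 64 channels * 4 * 4 = 1024 features
dense_params = flattened * 512 + 512  # weights + biases of Dense(512) = 524800
print(size, flattened, dense_params)
```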

# + hidden=true

model.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=1,

validation_data=test_batches, validation_steps=validation_steps)

# + hidden=true

model.optimizer.lr=0.1

# + hidden=true

model.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=1,

validation_data=test_batches, validation_steps=validation_steps)

# + hidden=true

model.optimizer.lr=0.01

# + hidden=true

model.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=8,

validation_data=test_batches, validation_steps=validation_steps)

# + [markdown] heading_collapsed=true

# ## Data augmentation

# + hidden=true

model = get_model()

# + hidden=true

gen = image.ImageDataGenerator(rotation_range=8, width_shift_range=0.08, shear_range=0.3,

height_shift_range=0.08, zoom_range=0.08)

batches = gen.flow(X_train, y_train, batch_size=batch_size)

test_batches = gen.flow(X_test, y_test, batch_size=batch_size)

steps_per_epoch = int(np.ceil(batches.n/batch_size))

validation_steps = int(np.ceil(test_batches.n/batch_size))

# + hidden=true

model.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=1,

validation_data=test_batches, validation_steps=validation_steps)

# + hidden=true

model.optimizer.lr=0.1

# + hidden=true

model.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=4,

validation_data=test_batches, validation_steps=validation_steps)

# + hidden=true

model.optimizer.lr=0.01

# + hidden=true

model.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=8,

validation_data=test_batches, validation_steps=validation_steps)

# + hidden=true

model.optimizer.lr=0.001

# + hidden=true

model.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=14,

validation_data=test_batches, validation_steps=validation_steps)

# + hidden=true

model.optimizer.lr=0.0001

# + hidden=true

model.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=10,

validation_data=test_batches, validation_steps=validation_steps)

# + [markdown] heading_collapsed=true

# ## Batchnorm + data augmentation

# + hidden=true

def get_model_bn():
    model = Sequential([
        Lambda(norm_input, input_shape=(1,28,28)),
        Conv2D(32,(3,3), activation='relu'),
        BatchNormalization(axis=1),
        Conv2D(32,(3,3), activation='relu'),
        MaxPooling2D(),
        BatchNormalization(axis=1),
        Conv2D(64,(3,3), activation='relu'),
        BatchNormalization(axis=1),
        Conv2D(64,(3,3), activation='relu'),
        MaxPooling2D(),
        Flatten(),
        BatchNormalization(),
        Dense(512, activation='relu'),
        BatchNormalization(),
        Dense(10, activation='softmax')
    ])
    model.compile(Adam(), loss='categorical_crossentropy', metrics=['accuracy'])
    return model

# + hidden=true

model = get_model_bn()

# + hidden=true

model.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=1,

validation_data=test_batches, validation_steps=validation_steps)

# + hidden=true

model.optimizer.lr=0.1

# + hidden=true

model.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=4,

validation_data=test_batches, validation_steps=validation_steps)

# + hidden=true

model.optimizer.lr=0.01

# + hidden=true

model.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=12,

validation_data=test_batches, validation_steps=validation_steps)

# + hidden=true

model.optimizer.lr=0.001

# + hidden=true

model.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=12,

validation_data=test_batches, validation_steps=validation_steps)

# + [markdown] heading_collapsed=true

# ## Batchnorm + dropout + data augmentation

# + hidden=true

def get_model_bn_do():
    model = Sequential([
        Lambda(norm_input, input_shape=(1,28,28)),
        Conv2D(32,(3,3), activation='relu'),
        BatchNormalization(axis=1),
        Conv2D(32,(3,3), activation='relu'),
        MaxPooling2D(),
        BatchNormalization(axis=1),
        Conv2D(64,(3,3), activation='relu'),
        BatchNormalization(axis=1),
        Conv2D(64,(3,3), activation='relu'),
        MaxPooling2D(),
        Flatten(),
        BatchNormalization(),
        Dense(512, activation='relu'),
        BatchNormalization(),
        Dropout(0.5),
        Dense(10, activation='softmax')
    ])
    model.compile(Adam(), loss='categorical_crossentropy', metrics=['accuracy'])
    return model

# + hidden=true

model = get_model_bn_do()

# + hidden=true

model.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=1,

validation_data=test_batches, validation_steps=validation_steps)

# + hidden=true

model.optimizer.lr=0.1

# + hidden=true

model.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=4,

validation_data=test_batches, validation_steps=validation_steps)

# + hidden=true

model.optimizer.lr=0.01

# + hidden=true

model.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=12,

validation_data=test_batches, validation_steps=validation_steps)

# + hidden=true

model.optimizer.lr=0.001

# + hidden=true

model.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=1,

validation_data=test_batches, validation_steps=validation_steps)

# -

# ## Ensembling

def fit_model():
    """
    VGG-style model with data augmentation, batchnorm and dropout, 35 epochs in total.
    Learning rate decreases gradually.
    """
    model = get_model_bn_do()
    model.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=1, verbose=0,
                        validation_data=test_batches, validation_steps=validation_steps)
    model.optimizer.lr=0.1
    model.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=4, verbose=0,
                        validation_data=test_batches, validation_steps=validation_steps)
    model.optimizer.lr=0.01
    model.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=12, verbose=0,
                        validation_data=test_batches, validation_steps=validation_steps)
    model.optimizer.lr=0.001
    model.fit_generator(batches, steps_per_epoch=steps_per_epoch, epochs=18, verbose=0,
                        validation_data=test_batches, validation_steps=validation_steps)
    return model

# create 6 of the models above

models = [fit_model() for i in range(6)]

# +

import os

user_home = os.path.expanduser('~')

path = os.path.join(user_home, "pj/fastai/data/MNIST_data/")

model_path = path + 'models/'

# path = "data/mnist/"

# model_path = path + 'models/'

# -

# Save the weights of each of the 6 models to its own file

for i,m in enumerate(models):
    m.save_weights(model_path+'cnn-mnist23-'+str(i)+'.pkl')

eval_batch_size = 256

# +

evals = np.array([m.evaluate(X_test, y_test, batch_size=eval_batch_size) for m in models])

# -

evals.mean(axis=0)

# For each model, predict on the test set, then stack all the predictions together

all_preds = np.stack([m.predict(X_test, batch_size=eval_batch_size) for m in models])

all_preds.shape

avg_preds = all_preds.mean(axis=0)

keras.metrics.categorical_accuracy(y_test, avg_preds).eval()
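# Averaging the models' predicted class probabilities, as above, lets the ensemble outvote individual mistakes. Below is a toy NumPy illustration with made-up softmax outputs from three models on four examples. It uses integer labels, whereas `y_test` is one-hot; `categorical_accuracy` compares the argmax of both, so the idea is the same.

```python
import numpy as np

# Made-up softmax outputs: 3 models x 4 examples x 3 classes.
all_preds = np.array([
    [[0.7, 0.2, 0.1], [0.1, 0.8, 0.1], [0.4, 0.5, 0.1], [0.2, 0.2, 0.6]],
    [[0.6, 0.3, 0.1], [0.5, 0.4, 0.1], [0.6, 0.3, 0.1], [0.1, 0.3, 0.6]],
    [[0.8, 0.1, 0.1], [0.3, 0.6, 0.1], [0.7, 0.2, 0.1], [0.5, 0.2, 0.3]],
])
y_true = np.array([0, 1, 0, 2])

# Accuracy of each model on its own.
single_accs = [np.mean(p.argmax(axis=1) == y_true) for p in all_preds]

# Ensemble: average the probabilities over models, then take the argmax.
avg_preds = all_preds.mean(axis=0)
ensemble_acc = np.mean(avg_preds.argmax(axis=1) == y_true)

print(single_accs, ensemble_acc)  # each model 0.75, ensemble 1.0
```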

| deeplearning1/nbs/mnist.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

# +

# -*- coding: utf-8 -*-

# In this script we use a simple classifier, Gaussian naive Bayes, to predict the violations. Before that,

# we apply several resampling methods to tackle the class imbalance (skew) of our dataset. :)

# 11 May 2016

# @author: reyhane_askari

# Universite de Montreal, DIRO

import csv

import numpy as np

from sklearn.metrics import roc_curve, auc

from sklearn.model_selection import train_test_split  # sklearn.cross_validation was removed in scikit-learn 0.20

import matplotlib.pyplot as plt

from sklearn import metrics

import pandas as pd

from os import chdir, listdir

from pandas import read_csv

from os import path

from random import randint, sample, seed

from collections import OrderedDict

from pandas import DataFrame, Series

import codecs

import matplotlib as mpl

import seaborn as sns

sns.set()

import itertools

from sklearn.decomposition import PCA

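# `unbalanced_dataset` is the early name of the package now distributed as imbalanced-learn (`imblearn`),
# whose later releases renamed several of these classes and methods
from unbalanced_dataset import (UnderSampler, NearMiss, CondensedNearestNeighbour, OneSidedSelection,
                                NeighbourhoodCleaningRule, TomekLinks, ClusterCentroids, OverSampler, SMOTE,
                                SMOTETomek, SMOTEENN, EasyEnsemble, BalanceCascade)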

almost_black = '#262626'

# %matplotlib inline

# +

colnames = ['old_index','job_id', 'task_idx','sched_cls', 'priority', 'cpu_requested',

'mem_requested', 'disk', 'violation']

train_path = r'/Users/reyhane.askari/Dropbox/Project_step_by_step/3_create_database/csvs/frull_db_2.csv'

# Columns 3-7 are the features, column 8 is the violation label
X = pd.read_csv(train_path, header=None, index_col=False, names=colnames, skiprows=[0], usecols=[3, 4, 5, 6, 7])

y = pd.read_csv(train_path, header=None, index_col=False, names=colnames, skiprows=[0], usecols=[8])

y = y['violation'].values

# X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=.333, random_state=0)

x = X.values

# +

# Instantiate a PCA object for the sake of easy visualisation

pca = PCA(n_components = 2)

# Fit and transform x to visualise inside a 2D feature space

x_vis = pca.fit_transform(x)
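# PCA(n_components=2) projects the mean-centred data onto the two directions of largest
# variance. A minimal NumPy sketch of that projection via SVD; `pca_2d` is a hypothetical
# helper, and its coordinates may differ from scikit-learn's in sign (and numerical precision).

```python
import numpy as np


def pca_2d(x):
    """Project the rows of x onto the first two principal components."""
    x = np.asarray(x, dtype=float)
    centered = x - x.mean(axis=0)                   # PCA works on mean-centred data
    # Rows of vt are the principal directions, sorted by decreasing singular value
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return centered.dot(vt[:2].T)


rng = np.random.RandomState(0)
data = rng.normal(size=(200, 5)) * np.array([5.0, 2.0, 1.0, 0.5, 0.1])
proj = pca_2d(data)
print(proj.shape)                                   # (200, 2)
print(proj.var(axis=0))                             # the first column has the larger variance
```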

# Plot the original data, with the two classes in different colours

palette = sns.color_palette()

# +

plt.scatter(x_vis[y==0, 0], x_vis[y==0, 1], label="Class #0", alpha=0.009,

edgecolor=almost_black, facecolor=palette[0], linewidth=0.15)

plt.scatter(x_vis[y==1, 0], x_vis[y==1, 1], label="Class #1", alpha=0.009,

edgecolor=almost_black, facecolor=palette[2], linewidth=0.15)

plt.legend()

plt.show()

# -

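# Generate a resampled dataset. Each block below applies one resampling method and overwrites `x, y`,
# so run only the method you want to evaluate; running them all in sequence chains the resamplers.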

verbose = False

# 'Random under-sampling'

US = UnderSampler(verbose=verbose)

x, y = US.fit_transform(x, y)
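# Random under-sampling keeps the smallest class intact and draws, without replacement,
# an equally sized random subset of every larger class. A minimal NumPy sketch of the
# idea; `random_under_sample` is hypothetical and is not the UnderSampler implementation.

```python
import numpy as np


def random_under_sample(x, y, seed=0):
    """Balance the classes by keeping a random subset of each larger class."""
    rng = np.random.RandomState(seed)
    classes, counts = np.unique(y, return_counts=True)
    n_min = counts.min()                              # size of the smallest class
    keep = np.concatenate([
        # Draw n_min rows from every class without replacement
        # (for the smallest class this keeps all of its rows)
        rng.choice(np.flatnonzero(y == c), size=n_min, replace=False)
        for c in classes
    ])
    return x[keep], y[keep]


x_demo = np.arange(20).reshape(10, 2)
y_demo = np.array([0] * 8 + [1] * 2)
x_bal, y_bal = random_under_sample(x_demo, y_demo)
print(np.bincount(y_bal))                             # [2 2]
```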

# 'Clustering centroids'

CC = ClusterCentroids(verbose=verbose)

x, y = CC.fit_transform(x, y)

# 'NearMiss-1'

NM1 = NearMiss(version=1, verbose=verbose)

x, y = NM1.fit_transform(x, y)

# 'NearMiss-2'

NM2 = NearMiss(version=2, verbose=verbose)

x, y = NM2.fit_transform(x, y)

# 'NearMiss-3'

NM3 = NearMiss(version=3, verbose=verbose)

x, y = NM3.fit_transform(x, y)

# 'One-Sided Selection'

OSS = OneSidedSelection(size_ngh=51, n_seeds_S=51, verbose=verbose)

x, y = OSS.fit_transform(x, y)

# 'Neighbourhood Cleaning Rule'

NCR = NeighbourhoodCleaningRule(size_ngh=51, verbose=verbose)

x, y = NCR.fit_transform(x, y)

ratio = float(np.count_nonzero(y==1)) / float(np.count_nonzero(y==0))

# 'Random over-sampling'

OS = OverSampler(ratio=ratio, verbose=verbose)

x, y = OS.fit_transform(x, y)
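# Random over-sampling goes the other way: it duplicates randomly chosen minority rows
# (sampling with replacement) until the classes are balanced. A sketch for binary labels
# that always targets a 1:1 balance; `random_over_sample` is hypothetical, and how
# OverSampler interprets its `ratio` argument may differ.

```python
import numpy as np


def random_over_sample(x, y, seed=0):
    """Balance a binary dataset by duplicating random minority-class rows."""
    rng = np.random.RandomState(seed)
    classes, counts = np.unique(y, return_counts=True)
    minority = classes[counts.argmin()]
    n_extra = counts.max() - counts.min()             # rows needed to reach a 1:1 balance
    extra = rng.choice(np.flatnonzero(y == minority), size=n_extra, replace=True)
    keep = np.concatenate([np.arange(len(y)), extra])  # all original rows plus duplicates
    return x[keep], y[keep]


x_demo = np.arange(20).reshape(10, 2)
y_demo = np.array([0] * 8 + [1] * 2)
x_bal, y_bal = random_over_sample(x_demo, y_demo)
print(np.bincount(y_bal))                             # [8 8]
```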

# 'SMOTE'

smote = SMOTE(ratio=ratio, verbose=verbose, kind='regular')

x, y = smote.fit_transform(x, y)
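# Regular SMOTE synthesises a minority sample as x_new = x_i + lam * (x_nn - x_i), where
# x_nn is one of the k nearest minority-class neighbours of x_i and lam is drawn uniformly
# from [0, 1). A brute-force NumPy sketch of that rule; `smote_sample` and the default k=5
# are assumptions, and the borderline and SVM variants below are not covered.

```python
import numpy as np


def smote_sample(x_min, n_new, k=5, seed=0):
    """Generate n_new synthetic rows from minority-class rows x_min (regular SMOTE)."""
    rng = np.random.RandomState(seed)
    x_min = np.asarray(x_min, dtype=float)
    # Pairwise squared distances between minority samples (brute force)
    d = ((x_min[:, None, :] - x_min[None, :, :]) ** 2).sum(axis=2)
    np.fill_diagonal(d, np.inf)                       # a point is not its own neighbour
    k = min(k, len(x_min) - 1)
    neighbours = np.argsort(d, axis=1)[:, :k]         # k nearest minority neighbours
    base = rng.randint(0, len(x_min), size=n_new)     # random anchor samples x_i
    nn = neighbours[base, rng.randint(0, k, size=n_new)]
    lam = rng.uniform(size=(n_new, 1))                # interpolation factor in [0, 1)
    return x_min[base] + lam * (x_min[nn] - x_min[base])


minority = np.array([[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]])
synthetic = smote_sample(minority, n_new=10, k=2)
print(synthetic.shape)                                # (10, 2)
```

# Every synthetic point lies on a segment between two minority samples, so here they all
# stay inside the unit square spanned by the minority class.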

# 'SMOTE borderline 1'

bsmote1 = SMOTE(ratio=ratio, verbose=verbose, kind='borderline1')

x, y = bsmote1.fit_transform(x, y)

# 'SMOTE borderline 2'

bsmote2 = SMOTE(ratio=ratio, verbose=verbose, kind='borderline2')

x, y = bsmote2.fit_transform(x, y)

# 'SMOTE SVM'

# class_weight='auto' is deprecated in scikit-learn in favour of 'balanced'
svm_args = {'class_weight': 'balanced'}

svmsmote = SMOTE(ratio=ratio, verbose=verbose, kind='svm', **svm_args)

x, y = svmsmote.fit_transform(x, y)

# 'SMOTE Tomek links'

STK = SMOTETomek(ratio=ratio, verbose=verbose)

x, y = STK.fit_transform(x, y)
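# SMOTETomek cleans the SMOTE output by removing Tomek links: pairs of samples from
# different classes that are each other's nearest neighbour. A brute-force sketch that
# only finds the links; `tomek_links` is hypothetical, and which member of each pair the
# library removes is not shown here.

```python
import numpy as np


def tomek_links(x, y):
    """Return index pairs (i, j), i < j, that form Tomek links."""
    x = np.asarray(x, dtype=float)
    d = ((x[:, None, :] - x[None, :, :]) ** 2).sum(axis=2)
    np.fill_diagonal(d, np.inf)
    nn = d.argmin(axis=1)                             # nearest neighbour of each sample
    links = []
    for i, j in enumerate(nn):
        # Mutual nearest neighbours with different labels form a Tomek link
        if i < j and nn[j] == i and y[i] != y[j]:
            links.append((i, int(j)))
    return links


x_t = np.array([[0.0], [1.0], [5.0], [5.5], [10.0]])
y_t = np.array([0, 0, 1, 0, 1])
print(tomek_links(x_t, y_t))                          # [(2, 3)]
```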

# 'SMOTE ENN'

SENN = SMOTEENN(ratio=ratio, verbose=verbose)

x, y = SENN.fit_transform(x, y)

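# 'EasyEnsemble' (returns several balanced subsets rather than a single resampled dataset)

EE = EasyEnsemble(verbose=verbose)

x, y = EE.fit_transform(x, y)

# 'BalanceCascade' (also returns several subsets)

BS = BalanceCascade(verbose=verbose)

x, y = BS.fit_transform(x, y)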

X_train, X_test, y_train, y_test = train_test_split(x, y, test_size=.333, random_state=0)

# +

from sklearn.naive_bayes import GaussianNB, BernoulliNB

gnb = GaussianNB()  # Gaussian naive Bayes

# gnb = BernoulliNB()  # Bernoulli naive Bayes

# Fit once, then reuse the fitted model for labels, scores and accuracy
gnb.fit(X_train, y_train)
y_pred = gnb.predict(X_test)
y_score = gnb.predict_proba(X_test)[:, 1]  # probability of class 1 (violation)
mean_accuracy = gnb.score(X_test, y_test)

# print(y_score)

print(mean_accuracy)
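# GaussianNB treats every feature as an independent normal variable within each class and
# combines the per-feature likelihoods with the class prior. A NumPy sketch of that posterior;
# `fit_gaussian_nb` and `predict_proba_gnb` are hypothetical, and `eps` stands in for the
# small variance smoothing scikit-learn also applies, so its probabilities may differ slightly.

```python
import numpy as np


def fit_gaussian_nb(x, y, eps=1e-9):
    """Estimate class priors and per-class, per-feature means and variances."""
    x, y = np.asarray(x, dtype=float), np.asarray(y)
    classes = np.unique(y)
    priors = np.array([np.mean(y == c) for c in classes])
    means = np.array([x[y == c].mean(axis=0) for c in classes])
    variances = np.array([x[y == c].var(axis=0) for c in classes]) + eps
    return classes, priors, means, variances


def predict_proba_gnb(model, x):
    """Posterior class probabilities, shape (n_samples, n_classes)."""
    classes, priors, means, variances = model
    x = np.asarray(x, dtype=float)
    diff2 = (x[:, None, :] - means[None, :, :]) ** 2   # (n_samples, n_classes, n_features)
    # Gaussian log-likelihood, summed over the (assumed independent) features
    log_lik = -0.5 * (np.log(2 * np.pi * variances)[None, :, :]
                      + diff2 / variances[None, :, :]).sum(axis=2)
    log_post = log_lik + np.log(priors)[None, :]
    log_post -= log_post.max(axis=1, keepdims=True)   # numerical stability before exp
    post = np.exp(log_post)
    return post / post.sum(axis=1, keepdims=True)


x_nb = np.array([[0.0], [0.2], [-0.1], [3.0], [3.3], [2.9]])
y_nb = np.array([0, 0, 0, 1, 1, 1])
model = fit_gaussian_nb(x_nb, y_nb)
proba = predict_proba_gnb(model, np.array([[0.1], [3.1]]))
print(model[0][proba.argmax(axis=1)])                  # [0 1]
```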

fpr, tpr, thresholds = metrics.roc_curve(y_test, y_score)

roc_auc = auc(fpr, tpr)

plt.plot(fpr, tpr, label='ROC curve (area = %0.2f)' % roc_auc)

plt.plot([0, 1], [0, 1], 'k--')

plt.xlim([0.0, 1.0])

plt.ylim([0.0, 1.05])

plt.xlabel('False Positive Rate')

plt.ylabel('True Positive Rate')

plt.title('Receiver operating characteristic: Gaussian naive Bayes')

plt.legend(loc="lower right")

plt.savefig('/Users/reyhane.askari/Dropbox/Project_step_by_step/3_create_database/naive_bays_guassian.png')

plt.show()
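# The area under the ROC curve equals the fraction of (positive, negative) pairs in which
# the positive sample gets the higher score, with ties counted as one half. A small
# O(n_pos * n_neg) sketch of that identity; `auc_by_ranking` is hypothetical and is meant
# for checking intuition on small arrays, not for the full test set.

```python
import numpy as np


def auc_by_ranking(y_true, y_score):
    """ROC AUC as the fraction of (positive, negative) pairs ranked correctly."""
    y_true = np.asarray(y_true)
    y_score = np.asarray(y_score, dtype=float)
    pos = y_score[y_true == 1]
    neg = y_score[y_true == 0]
    diff = pos[:, None] - neg[None, :]                # every positive vs every negative
    # Correctly ordered pairs count 1, ties count 1/2
    return ((diff > 0).sum() + 0.5 * (diff == 0).sum()) / float(diff.size)


print(auc_by_ranking([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))   # 0.75
```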

print("Number of mislabeled points out of a total %d points : %d"

% (X_test.shape[0],(y_test != y_pred).sum()))

# -

from sklearn import metrics

metrics.precision_score(y_test, y_pred)

metrics.recall_score(y_test, y_pred)

metrics.fbeta_score(y_test, y_pred, beta=0.5)

metrics.fbeta_score(y_test, y_pred, beta=1)

metrics.fbeta_score(y_test, y_pred, beta=2)

metrics.precision_recall_fscore_support(y_test, y_pred, beta=0.5)
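# precision = TP / (TP + FP), recall = TP / (TP + FN), and
# F_beta = (1 + beta^2) * P * R / (beta^2 * P + R), so beta = 0.5 weights precision more
# and beta = 2 weights recall more. A sketch computing all three from raw counts, assuming
# binary labels with 1 as the positive class (the default pos_label of precision_score,
# recall_score and fbeta_score); `precision_recall_fbeta` is hypothetical.

```python
import numpy as np


def precision_recall_fbeta(y_true, y_pred, beta=1.0):
    """Binary precision, recall and F-beta with 1 as the positive class."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    tp = np.sum((y_pred == 1) & (y_true == 1))
    fp = np.sum((y_pred == 1) & (y_true == 0))
    fn = np.sum((y_pred == 0) & (y_true == 1))
    precision = tp / float(tp + fp) if tp + fp else 0.0
    recall = tp / float(tp + fn) if tp + fn else 0.0
    b2 = beta ** 2
    denom = b2 * precision + recall
    fbeta = (1 + b2) * precision * recall / denom if denom else 0.0
    return precision, recall, fbeta


y_true_demo = [1, 1, 1, 1, 0, 0, 0, 0]
y_pred_demo = [1, 1, 0, 0, 1, 0, 0, 0]                 # TP = 2, FP = 1, FN = 2
for beta in (0.5, 1, 2):
    print(beta, precision_recall_fbeta(y_true_demo, y_pred_demo, beta))
```

# With precision 2/3 above recall 1/2, F_0.5 (0.625) comes out higher than F_1 (4/7)
# and F_2 (10/19), which is the weighting the beta parameter is meant to express.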

| 4_simple_models/interactive_scripts/naive_bayes_guassian_under_sampling.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3