code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3.8.2 32-bit

# name: python3

# ---

# +

from Classes import *

fm = clsFormulasMath.Bascara_1('R$90')

print(fm.calc_bas())

| calculos.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# %load_ext autoreload

# %autoreload 2

import thermostat; thermostat.get_version()

# +

import sys

import os

import warnings

import logging

from os.path import expanduser

from thermostat.importers import from_csv

from thermostat.exporters import metrics_to_csv, certification_to_csv

from thermostat.stats import compute_summary_statistics

from thermostat.stats import summary_statistics_to_csv

from thermostat.multiple import multiple_thermostat_calculate_epa_field_savings_metrics

logger = logging.getLogger('epathermostat')

# Set this to 'DEBUG' for more logging messages (default: WARNING)

# See `multi_thermostat_tutorial.py` for how to

# use a logging configuration file which logs to console / file

logger.setLevel(logging.WARNING)

data_dir = os.path.join(expanduser("~"), "Downloads")

# -

# dev specific

data_dir = os.path.join(os.path.join("/", *thermostat.__file__.split('/')[:6]), "tests", "data", "single_stage")

data_dir = os.path.join(os.path.curdir, "..", "tests", "data", "single_stage")

metadata_filename = os.path.join(data_dir, "metadata.csv")

# verbose=True will override logging to display the imported thermostats

# Set verbose to "False" to use the logging level instead

thermostats = from_csv(metadata_filename, verbose=True)

data_dir = os.path.join(expanduser("~"), "Downloads")

# Use this for multi-processing thermostats

metrics = multiple_thermostat_calculate_epa_field_savings_metrics(thermostats)

# Use this to process each thermostat one-at-a-time

'''

metrics = []

ts = []

for thermostat_ in thermostats:

outputs = thermostat_.calculate_epa_field_savings_metrics()

metrics.extend(outputs)

ts.append(thermostat_)

'''

output_filename = os.path.join(data_dir, "thermostat_example_output.csv")

metrics_df = metrics_to_csv(metrics, output_filename)

with warnings.catch_warnings():

warnings.simplefilter("ignore")

# uses the metrics_df created in the quickstart above.

stats = compute_summary_statistics(metrics_df)

stats_advanced = compute_summary_statistics(metrics_df, advanced_filtering=True)

# +

product_id = "test-product"

certification_filepath = os.path.join(data_dir, "thermostat_example_certification.csv")

certification_to_csv(stats, certification_filepath, product_id)

stats_filepath = os.path.join(data_dir, "thermostat_example_stats.csv")

stats_df = summary_statistics_to_csv(stats, stats_filepath, product_id)

stats_advanced_filepath = os.path.join(data_dir, "thermostat_example_stats_advanced.csv")

stats_advanced_df = summary_statistics_to_csv(stats_advanced, stats_advanced_filepath, product_id)

# -

| scripts/tutorial.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Problem 002

# # Even Fibonacci numbers

#

# Even Fibonacci numbers

#

# Each new term in the Fibonacci sequence is generated by adding the previous two terms.

# By starting with 1 and 2, the first 10 terms will be:

#

# 1, 2, 3, 5, 8, 13, 21, 34, 55, 89, ...

#

# By considering the terms in the Fibonacci sequence whose values do not exceed four million,

# find the sum of the even-valued terms.

#

# +

from scripts import myfunc

fib_seq = [1, 2]

max_seq = 4*1e6

for i in range(1000):

x = fib_seq[-1]+fib_seq[-2]

if x <= max_seq:

fib_seq.append(x)

else:

print('Reached to %d' %(int(max_seq)))

break

print(fib_seq[-1])

fib_even = [i for i in fib_seq if i%2 == 0]

print(sum(fib_even))

# -

| notebooks/problem_solved/problem_002_Even_Fibonacci_numbers.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

# + [markdown] deletable=true editable=true

# # Experimental Design Simulation

#

# This simulation parallels our 50-cycle MAGE experiment and allows exploring the relationship among experimental design parameters including number of oligos tested and number of clones sampled for genotyping. The simulation also investigated different number and effect-size distributions of causal mutations. For a given combination of parameters, we sample a distribution of underlying mutation effects (capped at a total fitness effect of 50%, comparable to the C321.∆A context) and then performed, in silico, iterations of MAGE separated by competitive expansion and bottlenecking of the population. We sample clonal genotypes from this simulated population and calculate phenotypes using the underlying mutation effects. We then perform predictive modeling with the simulated genotype-phenotype data and evaluate precision and recall relative to the underlying mutation effects. We also compare our linear modeling strategy to alternative modeling strategies of quantitative GWAS (a separate linear model for each variant) or using enrichment of mutations in the final population. We made simplifying assumptions of no de novo mutations, no epistatic interactions among mutations, no measurement noise, and equal recombination efficiency for all mutations.

# + deletable=true editable=true

import os

import random

import sys

import time

import ipyparallel as ipp

from IPython.display import display

import matplotlib.pyplot as plt

import numpy as np

import pandas as pd

# %matplotlib inline

sys.path.append('../')

from common_code import simulation_util

from common_code.simulation_util import SimulationParams

# + [markdown] deletable=true editable=true

# ## Implementation

#

# We implement each component of the simulation as a separate function and perform a small sanity check / plot for the different steps below. The sanity checks build on the previous data.

#

# NOTE: Most of the functions defintions have been extracted to common_code/simulation_util.py.

# + [markdown] deletable=true editable=true

# ### Generate SNP effects

#

# We'll assume some number of SNPs with an effect distributed according to a Gaussian, and then scaled so that the total effect comes out to < 50% total fitness defect.

#

# We use multiplicative effects, e.g. a single mutant with effect 0.9 has 10% reduced doubling time.

# + deletable=true editable=true

# Sanity check

test_non_zero_snp_effects = simulation_util.sample_effects_by_power_law(30, debug_plot=True)

# + deletable=true editable=true

# Sanity check.

test_snp_effects = simulation_util.generate_snp_effects(

simulation_util.DEFAULT_SNPS_CONSIDERED, simulation_util.DEFAULT_SNPS_WITH_EFFECT)

non_trivial_snp_effects = sorted([e for e in test_snp_effects if e != 1], reverse=True)

print 'Total Fitness', np.prod(non_trivial_snp_effects)

plt.figure()

plt.title('Sanity Check | Fitness effects %d/%d SNPs' % (

simulation_util.DEFAULT_SNPS_WITH_EFFECT, simulation_util.DEFAULT_SNPS_CONSIDERED))

plt.bar(range(len(non_trivial_snp_effects)), non_trivial_snp_effects)

plt.show()

# + [markdown] deletable=true editable=true

# ### Update population with MAGE

#

# We'll need a function that performs a cycle of MAGE. We'll simplify this as updating some fraction of the population according to MAGE_EFFICIENCY, where that fraction of the population gets an additional mutation added on top.

# + deletable=true editable=true

# Here we do a sanity check of the above by running 50 cycles of MAGE

# with 100 SNPs observing the resulting distribution of mutations.

# Note that we ignore selection based on fitness in between rounds.

# Initial population.

test_population = np.zeros(

(simulation_util.DEFAULT_POPULATION_SIZE,

simulation_util.DEFAULT_SNPS_CONSIDERED), dtype=np.bool)

for cycle in range(simulation_util.MAGE_CYCLES):

test_population = simulation_util.update_population_with_mage_mutations(test_population)

plt.figure()

plt.title('Sanity check | Expected mutation distribution after 50 cycles (no selection)')

plt.hist(test_population.sum(axis=1))

plt.show()

# + [markdown] deletable=true editable=true

# ### Calculate doubling times from genotype.

#

# Now we need a way to generate doubling times for a population. This will be a function of the SNP effects with a bit of extra random noise.

# + deletable=true editable=true

# Sanity check.

test_doubling_times = simulation_util.generate_doubling_times(

test_population, test_snp_effects)

plt.figure()

plt.title('Sanity check | Doubling times distribution')

plt.bar(range(len(test_doubling_times)), sorted(test_doubling_times, reverse=True))

plt.show()

# + [markdown] deletable=true editable=true

# ## Apply selection between MAGE cycles

#

# For the model to actually work, we need to add some form of selection, so in between each MAGE cycle we'll do an expansion and pruning step that is weighted by the fitness effects.

#

# We'll do this by allowing each genotype to double at a rate relative to the others proportional to its fitness.

# + deletable=true editable=true

# Sanity check.

# NOTE: We are running this on the final unselected population

# to see what would have been selected. See simulation with

# default params below for real end-to-end.

apply_selection_result = simulation_util.apply_selection_pressure(

test_population, test_snp_effects)

subsampled_clones = apply_selection_result['subsampled_clone_ids']

plt.figure()

plt.hist(subsampled_clones, bins=100)

plt.show()

apply_selection_result['metadata_df'].sort_values('growth_rates', ascending=False)[:10]

# + [markdown] deletable=true editable=true

# ### Run linear modeling and evaluate

# + deletable=true editable=true

# Sanity check.

test_lm_result = simulation_util.run_linear_modeling(

test_population, test_doubling_times)

simulation_data = {

'snp_effect': test_snp_effects,

}

lm_evaluation_results = simulation_util.evaluate_modeling_result(

simulation_data, test_lm_result)

print 'Pearson: %f, p-value: %f' % (

lm_evaluation_results['pearson_r'],

lm_evaluation_results['p_value'])

lm_eval_results_df = lm_evaluation_results['results_df']

lm_eval_results_df[lm_eval_results_df['snp_effect'] != 1]

# + [markdown] deletable=true editable=true

# ### Compare to GWAS

#

# Quantitative GWAS is effectively running a linear model with a single SNP at a time. See `model_fitting#single_snp_lienar_modeling`.

# + deletable=true editable=true

# Sanity check.

gwas_results_df = simulation_util.run_gwas(test_population, test_doubling_times)

gwas_eval_results = simulation_util.evaluate_gwas_result(

gwas_results_df, lm_eval_results_df, show_plot=True)

gwas_eval_results_df = gwas_eval_results['results_df']

print 'GWAS Pearson: %f, p-value: %f' % (

gwas_eval_results['pearson_r'],

gwas_eval_results['p_value'])

print 'GWAS results | SNPs with effect or p < 0.05'

gwas_results_of_interest_df = gwas_eval_results_df[

(gwas_eval_results_df['snp_effect'] < 1) |

(gwas_eval_results_df['gwas_p'] < 0.05)]

gwas_results_of_interest_df = gwas_results_of_interest_df[[

'snp_effect', 'gwas_coef', 'gwas_p']].sort_values('snp_effect')

display(gwas_results_of_interest_df)

# + [markdown] deletable=true editable=true

# Here we define some functions for calculating metrics like true positives, false positives, recall, etc.

# + deletable=true editable=true

print 'Linear modeling'

print simulation_util.calculate_modeling_metrics(lm_eval_results_df, 'linear_model_coef', 'lm_')

print '\nGWAS'

print simulation_util.calculate_modeling_metrics(gwas_eval_results_df, 'gwas_coef', 'gwas_')

# + [markdown] deletable=true editable=true

# Slightly different metrics for enrichment.

# + deletable=true editable=true

# Sanity check

test_simulation_data = {

'sim_params': SimulationParams(),

'final_population': test_population,

'final_doubling_times': test_doubling_times,

'snp_effect': test_snp_effects,

}

test_enrichment_result_df = simulation_util.run_enrichment_analysis(test_simulation_data)

simulation_util.calculate_enrichment_metrics(test_enrichment_result_df)

# + [markdown] deletable=true editable=true

# ## Run simulations

#

# First, test our re-useable function for running simulations.

# + deletable=true editable=true

# Sanity checks.

test_sim_params = SimulationParams()

test_sim_params.population_size = 100

test_run_simulation_result = simulation_util.run_simulation(

simulation_params=test_sim_params)

assert min(test_run_simulation_result['wgs_samples_mage_cycle_list']) == 1

assert max(test_run_simulation_result['wgs_samples_mage_cycle_list']) == 50

test_sim_params = SimulationParams()

test_sim_params.population_size = 100

test_sim_params.num_samples = 5

test_run_simulation_result = simulation_util.run_simulation(

simulation_params=test_sim_params)

np.testing.assert_array_equal(

np.array([10, 20, 30, 40, 50]),

np.array(test_run_simulation_result['wgs_samples_mage_cycle_list']))

# + [markdown] deletable=true editable=true

# ### Simulation with our default experiment parameters.

# + deletable=true editable=true

sim_params = SimulationParams()

default_simulation_result = simulation_util.run_simulation(

simulation_params=sim_params)

simulation_util.visualize_simulation_result(default_simulation_result)

# Perform linear modeling.

print '>>> Linear Model Results'

test_lm_result = simulation_util.run_linear_modeling(

default_simulation_result['wgs_samples'],

default_simulation_result['wgs_samples_doubling_times'],

repeats=10)

lm_eval_results = simulation_util.evaluate_modeling_result(

default_simulation_result, test_lm_result)

lm_eval_results_df = lm_eval_results['results_df']

print 'Pearson: %f, p-value: %f' % (

lm_eval_results['pearson_r'], lm_eval_results['p_value'])

display(lm_eval_results_df[lm_eval_results_df['snp_effect'] != 1])

# Compare to GWAS

print '>>> GWAS results'

gwas_results_df = simulation_util.run_gwas(

default_simulation_result['wgs_samples'],

default_simulation_result['wgs_samples_doubling_times'])

gwas_eval_results = simulation_util.evaluate_gwas_result(

gwas_results_df, lm_eval_results_df, show_plot=True)

gwas_eval_results_df = gwas_eval_results['results_df']

print 'GWAS Pearson: %f, p-value: %f' % (

gwas_eval_results['pearson_r'],

gwas_eval_results['p_value'])

print 'GWAS results | SNPs with effect or p < 0.05'

gwas_results_of_interest_df = gwas_eval_results_df[

(gwas_eval_results_df['snp_effect'] < 1) |

(gwas_eval_results_df['gwas_p'] < 0.05)]

gwas_results_of_interest_df = gwas_results_of_interest_df[[

'snp_effect', 'gwas_coef', 'gwas_p']].sort_values('snp_effect')

display(gwas_results_of_interest_df)

print 'GWAS results | SNPs with effect or p < 0.05'

gwas_results_of_interest_df = gwas_eval_results_df[

(gwas_eval_results_df['snp_effect'] < 1) |

(gwas_eval_results_df['gwas_p'] < 0.05)]

gwas_results_of_interest_df = gwas_results_of_interest_df[[

'snp_effect', 'gwas_coef', 'gwas_p']].sort_values('snp_effect')

display(gwas_results_of_interest_df)

# + [markdown] deletable=true editable=true

# ### Compare with same SNP effects against no selection.

# + deletable=true editable=true

sim_params = SimulationParams()

sim_params.num_snps_with_effect = 10

simulation_result_with_selection = simulation_util.run_simulation(

simulation_params=sim_params)

simulation_result_no_selection = simulation_util.run_simulation(

simulation_params=sim_params,

snp_effects=simulation_result_with_selection['snp_effect'],

should_apply_selection_pressure=False)

print '>>>With Selection'

simulation_util.visualize_simulation_result(simulation_result_with_selection)

# Perform linear modeling.

test_lm_result = simulation_util.run_linear_modeling(

simulation_result_with_selection['wgs_samples'],

simulation_result_with_selection['wgs_samples_doubling_times'],

repeats=10)

# Evaluate results.

evaluation_results = simulation_util.evaluate_modeling_result(

simulation_result_with_selection, test_lm_result)

print 'Pearson: %f, p-value: %f' % (evaluation_results['pearson_r'], evaluation_results['p_value'])

eval_results_df = evaluation_results['results_df']

display(eval_results_df[eval_results_df['snp_effect'] != 1])

with_selection_modeling_metrics = {

'lm_pearson_r': evaluation_results['pearson_r'],

'lm_pearson_p': evaluation_results['p_value'],

}

with_selection_modeling_metrics.update(

simulation_util.calculate_modeling_metrics(

eval_results_df, 'linear_model_coef',

results_prefix='lm_'))

print '>>>No Selection'

simulation_util.visualize_simulation_result(simulation_result_no_selection)

test_lm_result = simulation_util.run_linear_modeling(

simulation_result_no_selection['wgs_samples'],

simulation_result_no_selection['wgs_samples_doubling_times'],

max_iter=300, # This converges poorly so manually limit here for now.

repeats=1) # likewise

# Evaluate results.

evaluation_results = simulation_util.evaluate_modeling_result(simulation_result_no_selection, test_lm_result)

print 'Pearson: %f, p-value: %f' % (evaluation_results['pearson_r'], evaluation_results['p_value'])

eval_results_df = evaluation_results['results_df']

display(eval_results_df[eval_results_df['snp_effect'] != 1])

no_selection_modeling_metrics = {

'lm_pearson_r': evaluation_results['pearson_r'],

'lm_pearson_p': evaluation_results['p_value'],

}

no_selection_modeling_metrics.update(

simulation_util.calculate_modeling_metrics(

eval_results_df, 'linear_model_coef',

results_prefix='lm_'))

# + [markdown] deletable=true editable=true

# Also compare linear modeling result if we had just taken all samples from last timepoint, instead of sampling across different MAGE cycles.

# + deletable=true editable=true

# Sub-sample final population.

final_timepoint_subsample = np.zeros(

(simulation_util.DEFAULT_NUM_SAMPLES, simulation_util.DEFAULT_SNPS_CONSIDERED), dtype=np.bool)

random_indeces_from_final_population = np.random.choice(

range(len(simulation_result_with_selection['final_population'])),

size=simulation_util.DEFAULT_NUM_SAMPLES)

final_timepoint_doubling_times = []

for i, random_idx in enumerate(random_indeces_from_final_population):

final_timepoint_subsample[i,:] = (

simulation_result_with_selection['final_population'][random_idx,:])

final_timepoint_doubling_times.append(

simulation_result_with_selection['final_doubling_times'][random_idx])

assert final_timepoint_subsample.shape == (

simulation_util.DEFAULT_NUM_SAMPLES,

simulation_util.DEFAULT_SNPS_CONSIDERED)

assert len(final_timepoint_doubling_times) == simulation_util.DEFAULT_NUM_SAMPLES

# Run linear modeling.

test_lm_result = simulation_util.run_linear_modeling(

final_timepoint_subsample,

final_timepoint_doubling_times,

repeats=1,

max_iter=300)

# Evaluate results.

evaluation_results = simulation_util.evaluate_modeling_result(

simulation_result_with_selection, test_lm_result)

print 'Pearson: %f, p-value: %f' % (evaluation_results['pearson_r'], evaluation_results['p_value'])

eval_results_df = evaluation_results['results_df']

display(eval_results_df[eval_results_df['snp_effect'] != 1])

final_timepoint_modeling_metrics = {

'lm_pearson_r': evaluation_results['pearson_r'],

'lm_pearson_p': evaluation_results['p_value'],

}

final_timepoint_modeling_metrics.update(

simulation_util.calculate_modeling_metrics(

eval_results_df, 'linear_model_coef',

results_prefix='lm_'))

plt.figure(figsize=(10, 4))

# WGS mutation distribution

plt.subplot(1, 2, 1)

plt.title('WGS samples from final population only | Mutations')

plt.hist(final_timepoint_subsample.sum(axis=1))

# WGS doubling times

plt.subplot(1, 2, 2)

plt.title('WGS samples from final population only | Doubling times')

plt.bar(

range(len(final_timepoint_doubling_times)),

sorted(final_timepoint_doubling_times, reverse=True),

edgecolor="none")

plt.tight_layout()

plt.show()

# + [markdown] deletable=true editable=true

# Output for plotting in R.

# + deletable=true editable=true

IGNORE_KEYS = ['sim_params', 'final_population']

# 4a

supp_fig4a_data_dir = 'results/supp_fig4a_data'

for key in simulation_result_with_selection.keys():

if key in IGNORE_KEYS:

continue

np.savetxt(

os.path.join(supp_fig4a_data_dir, key + '.csv'),

simulation_result_with_selection[key],

delimiter=',')

pd.DataFrame([with_selection_modeling_metrics]).to_csv(

os.path.join(supp_fig4a_data_dir, 'modeling_metrics.csv'),

index=False)

# 4b

supp_fig4b_data_dir = 'results/supp_fig4b_data'

np.savetxt(

os.path.join(supp_fig4b_data_dir, 'wgs_samples.csv'),

final_timepoint_subsample,

delimiter=',')

np.savetxt(

os.path.join(supp_fig4b_data_dir, 'wgs_samples_doubling_times.csv'),

final_timepoint_doubling_times,

delimiter=',')

pd.DataFrame([final_timepoint_modeling_metrics]).to_csv(

os.path.join(supp_fig4b_data_dir, 'modeling_metrics.csv'),

index=False)

# 4c

supp_fig4c_data_dir = 'results/supp_fig4c_data'

for key in simulation_result_no_selection.keys():

if key in IGNORE_KEYS:

continue

np.savetxt(

os.path.join(supp_fig4c_data_dir, key + '.csv'),

simulation_result_no_selection[key],

delimiter=',')

pd.DataFrame([no_selection_modeling_metrics]).to_csv(

os.path.join(supp_fig4c_data_dir, 'modeling_metrics.csv'),

index=False)

# + [markdown] deletable=true editable=true

# Placeholder plot for Fig S4a-c for co-authors review.

# + deletable=true editable=true

plt.figure(figsize=(20, 8))

final_timepoint_doubling_times

final_timepoint_subsample

### S4A

plt.subplot(2, 3, 1)

plt.title('100 samples | default parameters')

plt.bar(

simulation_result_with_selection['wgs_samples_mage_cycle_list'],

simulation_result_with_selection['wgs_samples_doubling_times'],

)

plt.ylabel("Relative doubling time")

plt.xlim((0, 50))

plt.ylim((0, 1.0))

plt.subplot(2, 3, 4)

plt.plot(

simulation_result_with_selection['wgs_samples_mage_cycle_list'],

np.sum(simulation_result_with_selection['wgs_samples'], axis=1),

'.')

plt.ylabel("# Mutations")

plt.xlabel('MAGE cycle')

plt.ylim((0, 10))

### S4B

plt.subplot(2, 3, 2)

plt.title('100 samples | final time point')

plt.bar(

range(len(final_timepoint_doubling_times)),

final_timepoint_doubling_times,

)

plt.ylim((0, 1.0))

plt.subplot(2, 3, 5)

plt.plot(

range(len(final_timepoint_subsample)),

np.sum(final_timepoint_subsample, axis=1),

'.'

)

plt.xlabel('Sample #')

plt.ylim((0, 10))

### S4C

plt.subplot(2, 3, 3)

plt.title('100 samples | no selection')

plt.bar(

simulation_result_no_selection['wgs_samples_mage_cycle_list'],

simulation_result_no_selection['wgs_samples_doubling_times'],

)

plt.xlim((0, 50))

plt.ylim((0, 1.0))

plt.subplot(2, 3, 6)

plt.plot(

simulation_result_no_selection['wgs_samples_mage_cycle_list'],

np.sum(simulation_result_no_selection['wgs_samples'], axis=1),

'.')

plt.xlabel('MAGE cycle')

plt.ylim((0, 10))

plt.tight_layout()

plt.rcParams.update({'font.size': 18})

plt.savefig('results/supp_fig_4abc_ipynb_draft.png', dpi=300)

plt.show()

# + [markdown] deletable=true editable=true

# Also look at what using enrichment at final timepoint would give.

# + deletable=true editable=true

final_timepoint_enrichment_with_selection_df = pd.DataFrame({

'snp_effect': simulation_result_with_selection['snp_effect'],

'enrichment_count': final_timepoint_subsample.sum(axis=0)

})

display(final_timepoint_enrichment_with_selection_df.sort_values(

'enrichment_count', ascending=False)[:10])

# + [markdown] deletable=true editable=true

# To call a SNP by enrichment, let's say its enrichment has to be above the average.

# + deletable=true editable=true

simulation_util.calculate_enrichment_metrics(final_timepoint_enrichment_with_selection_df)

# + [markdown] deletable=true editable=true

# This does decently well at our parameters, but misses at least one and gives at least one false positive. This might be an okay strategy at our design parameters, but we should show below that this is not as good with other design parameters.

# + [markdown] deletable=true editable=true

# ## Perform simulations across different parameters and record performance

# + [markdown] deletable=true editable=true

# We'll use ipyparallel to parallelize. To start a local cluster, run this in a terminal:

#

# ipcluster start -n <num_cores>

# + deletable=true editable=true

ipp_client = ipp.Client()

# + [markdown] deletable=true editable=true

# Use load-balanced view which distributes jobs as you would expect.

# + deletable=true editable=true

lbv = ipp_client.load_balanced_view()

# + [markdown] deletable=true editable=true

# **With more strains tested, would it be possible to identify more variants that affect fitness?**

# + deletable=true editable=true

# Clones sequenced.

# num_samples_range = [24, 48, 72, 96, 144, 192, 240, 288, 336, 384] # For manuscript

num_samples_range = [24, 48] # For test

print '\nnum_samples_range'

print num_samples_range

# Total oligos.

# num_snps_considered_range = (

# list(range(10, 100, 10)) +

# list(range(100, 600, 100)) +

# list(range(600, 1001, 200))

# ) # For manuscript

num_snps_considered_range = [10, 20] # For test

print '\nnum_snps_considered'

print num_snps_considered_range

# Causal SNPs.

# Bounded in the foor loop to be less than num_snps_considered.

# num_snps_with_effect_range = [5] + list(range(10, 101, 10)) # For manuscript

num_snps_with_effect_range = range(5, 11, 1) # For test

print '\nnum_snps_with_effect_range'

print num_snps_with_effect_range

# NUM_SNPS_SAMPLES_GRID_REPLICATES = 10 # For manuscript

NUM_SNPS_SAMPLES_GRID_REPLICATES = 1 # For test

# NUM_LM_REPEATS = 10 # For manuscript

NUM_LM_REPEATS = 1 # For test

# Estimated time

print 'Estimated simulations < %d' % (

len(num_samples_range) * len(num_snps_considered_range) *

len(num_snps_with_effect_range) * NUM_SNPS_SAMPLES_GRID_REPLICATES)

# + deletable=true editable=true

more_strains_simulation_async_results = []

for num_snps_considered in num_snps_considered_range:

for num_samples in num_samples_range:

for num_snps_with_effect in num_snps_with_effect_range:

if num_snps_with_effect >= num_snps_considered:

continue

for rep in range(1, NUM_SNPS_SAMPLES_GRID_REPLICATES + 1):

sim_params = simulation_util.SimulationParams()

sim_params.num_snps_with_effect = num_snps_with_effect

sim_params.num_samples = num_samples

sim_params.num_snps_considered = num_snps_considered

more_strains_simulation_async_results.append(

lbv.apply_async(

simulation_util.run_simulation_with_params,

sim_params, rep, repeats=NUM_LM_REPEATS))

print 'Started %d jobs' % len(more_strains_simulation_async_results)

# Loop to write results that are finished at this point.

while True:

more_strains_simulation_result = []

for ar in more_strains_simulation_async_results:

if ar.ready():

more_strains_simulation_result.append(ar.get())

# Write result so far.

more_strains_simulation_result_df = pd.DataFrame(

more_strains_simulation_result)

if len(more_strains_simulation_result_df):

more_strains_simulation_result_df = more_strains_simulation_result_df[

simulation_util.SIM_RESULT_KEY_ORDER]

more_strains_simulation_result_df.to_csv(

'results/sim_results_num_strains_vs_num_snps_identified_small_test.csv',

index=False)

# Check if all ready.

if len(more_strains_simulation_result) == len(more_strains_simulation_async_results):

break

# Delay 30 seconds until next check.

time.sleep(30)

more_strains_simulation_result_df[:3]

| experiment_design_simulations/simulation.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Introdução a Deep Learning

# Deep Learning têm recebido [bastante atenção nos últimos anos](https://trends.google.com/trends/explore?date=all&q=Deep%20Learning), tanto no campo da computação como na mídia em geral.

#

# Técnicas de Deep Learning (DL) se tornaram o estado da arte em várias tarefas de Inteligência Artificial e mudado vários grandes campos da área, na visão computacional (classificação de imagens, segmentação de imagens), NLP (tradução, classificação de textos), reinforcement learning (agentes capazes de jogar jogos complexos como Go, Atari e DOTA).

#

# Esse impacto não fica só na teoria, DL está mudando o campo da Medicina, Ciência, Matemática, Física. Artigos de Deep Learning são rotinamente publicados em grandes revistas científicas como: Nature, Science e JAMA.

#

# Nesta seção iremos discutir:

#

# * O que é Deep Learning?

# * Eu realmente deveria me importar com DL ou é só [hype](https://pt.wikipedia.org/wiki/Hype)?

# * Por que eu como programador(a) deveria me importar?

# ## DL = Muitos Dados + Redes Neurais + Otimização

#

# ### Inteligência Artificial $\supseteq$ Machine Learning $\supseteq$ Deep Learning

#

#

# IA (Inteligência Artificial), ML (Machine Learning) e DL (Deep Learning) são utilizados na mídia quase como sinônimos.

#

# **Inteligência Artificial** se refere ao campo da ciência da computação que se preocupa com o estudo de máquinas para realização de atividades ditas inteligentes. Esse é um grande campo com diferentes "escolas"/"ramificações". Inteligência é um conceito muito amplo e bastante subjetivo.

#

# Uma grande pergunta é **como** construir máquinas inteligentes?

#

# **Machine Learning** se refere ao subcampo da IA que busca obter máquinas inteligentes através da extração de estrutura e inteligência (padrões) de dados (experiência).

#

# > "Um computador aprende a partir da experiência E com respeito a alguma tarefa T e alguma medida de performance P, se sua performance em T melhora com sua experiência E" - [<NAME> (1998)](http://www.cs.cmu.edu/~tom/)

#

#

#

# A ideia de ML é que, para realizar uma tarefa, nós (programadoras(es)) não implementemos as regras que definem as saídas para uma dada entrada. Imagine que queremos traduzir **qualquer** frase do inglês para português, quais regras iremos utilizar? Listar todas as traduções e regras possíveis é não trivial.

#

# > *“A complexidade de programas de computadores convencionais está no código (programas que as pessoas escrevem). Em Machine Learning, algoritmos são em princípio simples e a complexidade (estrutura) está nos dados. Existe uma maneira de aprender essa estrutura automaticamente? Esse é o princípio fundamental de Machine Learning.”- [<NAME>](https://en.wikipedia.org/wiki/Andrew_Ng)*

#

# Ao invés de listar as regras e funcionamento do sistema podemos utilizar um **modelo de Machine Learning**, e mostrar para o modelo vários exemplos de frases em inglês traduzidas para português. Padrões são extraídos dos dados e utilizados para que o modelo defina como tomar suas próprias decisões de modo a otimizar a tradução.

#

# Modelos de Machine Learning extraem padrões dos dados, e claro são extremamente dependentes da qualidade dos dados para sucesso em suas tarefas, mas também depende de **como os dados são representados**. Por exemplo, como um modelo de ML deve representar uma frase?

#

#

#

#

# Seria muito bom não ter que se preocupar com a representação dos dados... Tipo, dar para o modelo uma representação bastante simplificada e ele que se preocupe em encontrar algum sentindo nesse dado **bruto**...

#

# Seria e é possível!! Essa abordagem é conhecida como **Representation Learning**, o modelo deve ser capaz não só de resolver a tarefa (exemplo: tradução), mas também de encontrar representações úteis para os dados de modo a resolver essa tarefa. Afinal, a gente não sabe explicar como resolve o problema, provavelmente também não sabemos qual a melhor representação para tal.

#

# **Deep Learning é um subcampo de ML que utiliza representation learning definindo representações mais complexas a partir de outras representações mais simples.**

#

#

#

# O nome **Deep** vem das múltiplas representações (**camadas**) que utilizamos para construir os modelos. Então, de maneira geral, quando pensamos em DL pensamos em um modelo com váááárias camadas, e também precisamos de muuuuitos dados. Métodos de DL tem fome de dados. Quer boas representações? Me dê vários exemplos de dados; Quer que eu saiba traduzir bem? Me dê vários exemplos de traduções de boa qualidades que eu consigo extrair os padrões e aprender umas representações bem bacanas e ser um excelente tradutor!

#

# **Importante**: se modelos de DL aprendem com os padrões presentes nos dados, significa que eles podem aprender vieses e preconceitos presente nos dados.

#

#

# # Eu realmente deveria me importar com DL ou é só [hype](https://pt.wikipedia.org/wiki/Hype)?

# ### Popularização de DL

#

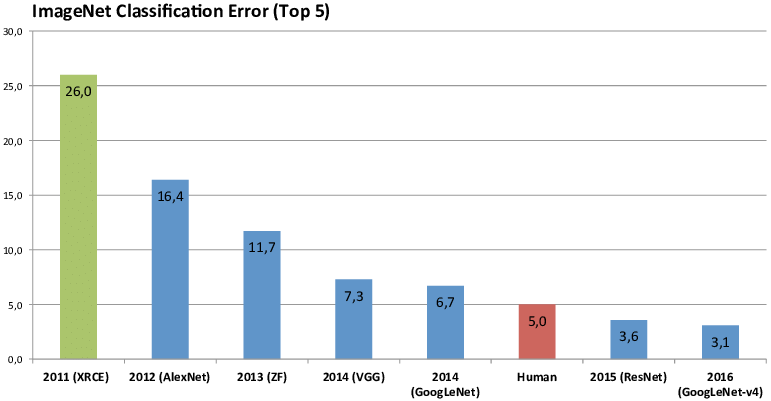

# O termo Deep Learning começou a se popularizar aproximadamente em 2012 quando técnicas de DL foram aplicadas com sucesso para a tarefa de classificação de imagens num desafio chamado "Imaginet Challenge" que consiste em classificar corretamente imagens em 1000 diferentes categorias.

#

#

#

# Desde então modelos de DL são a melhor coisa para essa tarefa e só ficam melhor. Na imagem acima é mostrado o erro do vencedor do desafio em cada ano. Sendo as barras azuis modelos de DL.

#

# ### Mas... redes neurais (coração do DL) existem desde 1930, por que só agora?

#

# Dois grandes fatores contribuiram para o sucesso de métodos de DL e sua popularização:

#

# 1. Muitos dados disponívels

# 2. Hardware disponível

#

# Com esses fatores foi possível explorar redes neurais com muito mais camadas e novas arquiteturas. Assim DL se tornou imparável e se firmou como estado na arte não só na tarefa de classificação de imagens mas [numa esmagadora gama de tarefas e áreas](https://paperswithcode.com/sota).

#

#

# ### Ta... mas é hype ou não é?

#

# O hype é real, mas o avanço também!

#

# Ainda existem vários problemas em abertos, porém a área de DL está em constante evolução! Cada dia que passa novas técnicas surgem e mais resultados surpreendentes são alcançados.

#

# Aprender sobre DL e seus conceitos básicos é fundamental para acompanhar, entender e contribuir para esse avanço!

# ## Por que eu deveria me importar?

# 1. [ML e DL estão mudando como se constrói e se pensa sobre software!](https://medium.com/@karpathy/software-2-0-a64152b37c35)

# 2. [DL é muito legal, se você entender de DL você se torna mais legal e interessante por tabela :D](https://www.youtube.com/watch?v=PCBTZh41Ris)

#

# Mas sério, a razão 1. é realmente muito relevante, leia [esse blog](https://medium.com/@karpathy/software-2-0-a64152b37c35) e entenda uma forma de ver como DL está mudando como construimos e construiremos software.

# ## Referências

#

# Este conteúdo é baseado nos seguintes materiais:

#

# * [Machine Learning in Formal Verification, FMCAD 2016 tutorial - Manish Pandey](http://www.cs.utexas.edu/users/hunt/FMCAD/FMCAD16/slides/tutorial1.pdf?fbclid=IwAR0RnrpaJzULlF<KEY>)

# * [Deep Learning Book, capítulo 1 - GoodFellow et Al.](https://www.deeplearningbook.org/contents/intro.html)

# * [Deep Learning 101 - Part 1: History and Background - <NAME>](https://beamandrew.github.io/deeplearning/2017/02/23/deep_learning_101_part1.html)

| _notebooks/2021-01-21-intro_a_deep_learning.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # 通过DCGAN实现人脸图像生成

#

# 作者:[ZMpursue](https://github.com/ZMpursue)

# 日期:2020.10.26

#

# 本教程将通过一个示例对DCGAN进行介绍。在向其展示许多真实人脸照片(数据集:[Celeb-A Face](http://mmlab.ie.cuhk.edu.hk/projects/CelebA.html))后,我们将训练一个生成对抗网络(GAN)来产生新人脸。本文将对该实现进行详尽的解释,并阐明此模型的工作方式和原因。并不需要过多专业知识,但是可能需要新手花一些时间来理解的模型训练的实际情况。为了节省时间,请尽量选择GPU进行训练。

#

# ## 1 简介

# 本项目基于paddlepaddle,结合生成对抗网络(DCGAN),通过弱监督学习的方式,训练生成真实人脸照片

#

# ### 1.1 什么是GAN?

#

# 生成对抗网络(Generative Adversarial Network [1],简称GAN)是非监督式学习的一种方法,通过让两个神经网络相互博弈的方式进行学习。该方法最初由 lan·Goodfellow 等人于2014年提出,原论文见 [Generative Adversarial Network](https://arxiv.org/abs/1406.2661)。

#

# 生成对抗网络由一个生成网络与一个判别网络组成。生成网络从潜在空间(latent space)中随机采样作为输入,其输出结果需要尽量模仿训练集中的真实样本。判别网络的输入为真实样本或生成网络的输出,其目的是将尽可能的分辨输入为真实样本或生成网络的输出。而生成网络则要尽可能地欺骗判别网络。两个网络相互对抗、不断调整参数。

#

# 让$x$是代表图像的数据。$D(x)$是判别器网络,输出的概率为$x$来自训练数据还是生成器。假设$x$为CHW格式,大小为3x64x64的图像数据,D为判别器网络,$D(x)$为$𝑥$来自训练数据还是生成器。当$𝑥$来自训练数据时$𝐷(𝑥)$尽量接近1,$𝑥$来自生成器时$𝐷(𝑥)$尽量接近0。 因此,$𝐷(𝑥)$也可以被认为是传统的二分类器。

#

# 对于生成器网络, 假设$z$为从标准正态分布采样的空间向量。$G(z)$表示生成器网络,该网络将矢量$z$映射到数据空间,$G(z)$表示生成器网络输出的图像。生成器的目标是拟合训练数据($p_{data}$)的分布,以便可以从该估计分布中生成假样本($p_g$)。

#

# 所以,$D(G(z))$是生成器$G$输出是真实的图像的概率。如Goodfellow的论文所述,$D$和$G$玩一个minmax游戏,其中$D$尝试最大化其正确分类真假的可能性$logD(x)$,以及$G$试图最小化以下可能性$D$会预测其输出是假的$log(1-D(G(x)))$。

#

# GAN的损失函数可表示为:

#

# > $\underset{G}{\text{min}} \underset{D}{\text{max}}V(D,G) = \mathbb{E}_{x\sim p_{data}(x)}\big[logD(x)\big] + \mathbb{E}_{z\sim p_{z}(z)}\big[log(1-D(G(z)))\big]$

#

# 从理论上讲,此minmax游戏的解决方案是$p_g = p_{data}$,鉴别者会盲目猜测输入是真实的还是假的。但是,GAN的收敛理论仍在积极研究中,实际上GAN常常会遇到梯度消失/爆炸问题。

# 生成对抗网络常用于生成以假乱真的图片。此外,该方法还被用于生成视频、三维物体模型等。

#

#

# ### 1.2 什么是DCGAN?

#

# DCGAN是深层卷积网络与GAN的结合,其基本原理与GAN相同,只是将生成网络和判别网络用两个卷积网络(CNN)替代。为了提高生成样本的质量和网络的收敛速度,论文中的 DCGAN 在网络结构上进行了一些改进:

#

# * 取消 pooling 层:在网络中,所有的pooling层使用步幅卷积(strided convolutions)(判别器)和微步幅度卷积(fractional-strided convolutions)(生成器)进行替换。

# * 加入batchnorm:在生成器和判别器中均加入batchnorm。

# * 使用全卷积网络:去掉了FC层,以实现更深的网络结构。

# * 激活函数:在生成器(G)中,最后一层使用Tanh函数,其余层采用ReLU函数 ; 判别器(D)中都采用LeakyReLU。

#

#

# ## 2 环境设置及数据集

#

# 环境:paddlepaddle、scikit-image、numpy、matplotlib

#

# 在本教程中,我们将使用[Celeb-A Faces](http://mmlab.ie.cuhk.edu.hk/projects/CelebA.html)数据集,该数据集可以在链接的网站或[AI Studio](https://aistudio.baidu.com/aistudio/datasetdetail/39207)中下载。数据集将下载为名为img_align_celeba.zip的文件。下载后,并将zip文件解压缩到该目录中。

# img_align_celeba目录结构应为:

# ```

# /path/to/project

# -> img_align_celeba

# -> 188242.jpg

# -> 173822.jpg

# -> 284702.jpg

# -> 537394.jpg

# ...

# ```

# ### 2.1 数据集预处理

# 多线程处理,以裁切坐标(0,10)和(64,74),将输入网络的图片裁切到64*64。

# +

from PIL import Image

import os.path

import os

import threading

from PIL import ImageFile

ImageFile.LOAD_TRUNCATED_IMAGES = True

'''多线程将图片缩放后再裁切到64*64分辨率'''

#裁切图片宽度

w = 64

#裁切图片高度

h = 64

#裁切点横坐标(以图片左上角为原点)

x = 0

#裁切点纵坐标

y = 20

def cutArray(l, num):

avg = len(l) / float(num)

o = []

last = 0.0

while last < len(l):

o.append(l[int(last):int(last + avg)])

last += avg

return o

def convertjpg(jpgfile,outdir,width=w,height=h):

img=Image.open(jpgfile)

(l,h) = img.size

rate = min(l,h) / width

try:

img = img.resize((int(l // rate),int(h // rate)),Image.BILINEAR)

img = img.crop((x,y,width+x,height+y))

img.save(os.path.join(outdir,os.path.basename(jpgfile)))

except Exception as e:

print(e)

class thread(threading.Thread):

def __init__(self, threadID, inpath, outpath, files):

threading.Thread.__init__(self)

self.threadID = threadID

self.inpath = inpath

self.outpath = outpath

self.files = files

def run(self):

count = 0

try:

for file in self.files:

convertjpg(self.inpath + file,self.outpath)

count = count + 1

except Exception as e:

print(e)

print('已处理图片数量:' + str(count))

if __name__ == '__main__':

inpath = './work/img_align_celeba/'

outpath = './work/imgs/'

if not os.path.exists(outpath):

os.mkdir(outpath)

files = os.listdir(inpath)

files = cutArray(files,8)

T1 = thread(1, inpath, outpath, files[0])

T2 = thread(2, inpath, outpath, files[1])

T3 = thread(3, inpath, outpath, files[2])

T4 = thread(4, inpath, outpath, files[3])

T5 = thread(5, inpath, outpath, files[4])

T6 = thread(6, inpath, outpath, files[5])

T7 = thread(7, inpath, outpath, files[6])

T8 = thread(8, inpath, outpath, files[7])

T1.start()

T2.start()

T3.start()

T4.start()

T5.start()

T6.start()

T7.start()

T8.start()

T1.join()

T2.join()

T3.join()

T4.join()

T5.join()

T6.join()

T7.join()

T8.join()

# -

# ## 3 模型组网

# ### 3.1 定义数据预处理工具-Paddle.io.Dataset

# 具体参考[Paddle.io.Dataset教程](https://www.paddlepaddle.org.cn/documentation/docs/zh/2.0-rc/api/paddle/io/Dataset_cn.html#dataset)

# +

import os

import cv2

import numpy as np

from skimage import io,color,transform

import matplotlib.pyplot as plt

import math

import time

import paddle

from paddle.io import Dataset

import six

from PIL import Image as PilImage

from paddle.static import InputSpec

paddle.enable_static()

img_dim = 64

'''准备数据,定义Reader()'''

PATH = 'work/imgs/'

class DataGenerater(Dataset):

"""

数据集定义

"""

def __init__(self,path=PATH):

"""

构造函数

"""

super(DataGenerater, self).__init__()

self.dir = path

self.datalist = os.listdir(PATH)

self.image_size = (img_dim,img_dim)

# 每次迭代时返回数据和对应的标签

def __getitem__(self, idx):

return self._load_img(self.dir + self.datalist[idx])

# 返回整个数据集的总数

def __len__(self):

return len(self.datalist)

def _load_img(self, path):

"""

统一的图像处理接口封装,用于规整图像大小和通道

"""

try:

img = io.imread(path)

img = transform.resize(img,self.image_size)

img = img.transpose()

img = img.astype('float32')

except Exception as e:

print(e)

return img

# -

# ### 3.2 测试Paddle.io.DataLoader并输出图片

# +

train_dataset = DataGenerater()

img = paddle.static.data(name='img', shape=[None,3,img_dim,img_dim], dtype='float32')

train_loader = paddle.io.DataLoader(

train_dataset,

places=paddle.CPUPlace(),

feed_list = [img],

batch_size=128,

shuffle=True,

num_workers=2,

use_buffer_reader=True,

use_shared_memory=False,

drop_last=True,

)

for batch_id, data in enumerate(train_loader()):

plt.figure(figsize=(15,15))

try:

for i in range(100):

image = np.array(data[0]['img'][i])[0].transpose((2,1,0))

plt.subplot(10, 10, i + 1)

plt.imshow(image, vmin=-1, vmax=1)

plt.axis('off')

plt.xticks([])

plt.yticks([])

plt.subplots_adjust(wspace=0.1, hspace=0.1)

plt.suptitle('\n Training Images',fontsize=30)

plt.show()

break

except IOError:

print(IOError)

# -

# ### 3.3 权重初始化

# 在 DCGAN 论文中,作者指定所有模型权重应从均值为0、标准差为0.02的正态分布中随机初始化。

# 调用paddle.nn.initializer.Normal实现initialize设置

conv_initializer=paddle.nn.initializer.Normal(mean=0.0, std=0.02)

bn_initializer=paddle.nn.initializer.Normal(mean=1.0, std=0.02)

# ### 3.4 判别器

# 如上文所述,生成器$D$是一个二进制分类网络,它以图像作为输入,输出图像是真实的(相对应$G$生成的假样本)的概率。输入$Shape$为[3,64,64]的RGB图像,通过一系列的$Conv2d$,$BatchNorm2d$和$LeakyReLU$层对其进行处理,然后通过全连接层输出的神经元个数为2,对应两个标签的预测概率。

#

# * 将BatchNorm批归一化中momentum参数设置为0.5

# * 将判别器(D)激活函数leaky_relu的alpha参数设置为0.2

#

# > 输入: 为大小64x64的RGB三通道图片

# > 输出: 经过一层全连接层最后为shape为[batch_size,2]的Tensor

# +

import paddle

import paddle.nn as nn

import paddle.nn.functional as F

class Discriminator(paddle.nn.Layer):

def __init__(self):

super(Discriminator, self).__init__()

self.conv_1 = nn.Conv2D(

3,64,4,2,1,

bias_attr=False,weight_attr=paddle.ParamAttr(name="d_conv_weight_1_",initializer=conv_initializer)

)

self.conv_2 = nn.Conv2D(

64,128,4,2,1,

bias_attr=False,weight_attr=paddle.ParamAttr(name="d_conv_weight_2_",initializer=conv_initializer)

)

self.bn_2 = nn.BatchNorm2D(

128,

weight_attr=paddle.ParamAttr(name="d_2_bn_weight_",initializer=bn_initializer),momentum=0.8

)

self.conv_3 = nn.Conv2D(

128,256,4,2,1,

bias_attr=False,weight_attr=paddle.ParamAttr(name="d_conv_weight_3_",initializer=conv_initializer)

)

self.bn_3 = nn.BatchNorm2D(

256,

weight_attr=paddle.ParamAttr(name="d_3_bn_weight_",initializer=bn_initializer),momentum=0.8

)

self.conv_4 = nn.Conv2D(

256,512,4,2,1,

bias_attr=False,weight_attr=paddle.ParamAttr(name="d_conv_weight_4_",initializer=conv_initializer)

)

self.bn_4 = nn.BatchNorm2D(

512,

weight_attr=paddle.ParamAttr(name="d_4_bn_weight_",initializer=bn_initializer),momentum=0.8

)

self.conv_5 = nn.Conv2D(

512,1,4,1,0,

bias_attr=False,weight_attr=paddle.ParamAttr(name="d_conv_weight_5_",initializer=conv_initializer)

)

def forward(self, x):

x = self.conv_1(x)

x = F.leaky_relu(x,negative_slope=0.2)

x = self.conv_2(x)

x = self.bn_2(x)

x = F.leaky_relu(x,negative_slope=0.2)

x = self.conv_3(x)

x = self.bn_3(x)

x = F.leaky_relu(x,negative_slope=0.2)

x = self.conv_4(x)

x = self.bn_4(x)

x = F.leaky_relu(x,negative_slope=0.2)

x = self.conv_5(x)

x = F.sigmoid(x)

return x

# -

# ### 3.5 生成器

# 生成器$G$旨在映射潜在空间矢量$z$到数据空间。由于我们的数据是图像,因此转换$z$到数据空间意味着最终创建具有与训练图像相同大小[3,64,64]的RGB图像。在网络设计中,这是通过一系列二维卷积转置层来完成的,每个层都与$BatchNorm$层和$ReLu$激活函数。生成器的输出通过$tanh$函数输出,以使其返回到输入数据范围[−1,1]。值得注意的是,在卷积转置层之后存在$BatchNorm$函数,因为这是DCGAN论文的关键改进。这些层有助于训练过程中的梯度更好地流动。

#

# **生成器网络结构**

#

#

# * 将$BatchNorm$批归一化中$momentum$参数设置为0.5

#

# > 输入:Tensor的Shape为[batch_size,100]其中每个数值大小为0~1之间的float32随机数

# > 输出:3x64x64RGB三通道图片

#

# +

class Generator(paddle.nn.Layer):

def __init__(self):

super(Generator, self).__init__()

self.conv_1 = nn.Conv2DTranspose(

100,512,4,1,0,

bias_attr=False,weight_attr=paddle.ParamAttr(name="g_dconv_weight_1_",initializer=conv_initializer)

)

self.bn_1 = nn.BatchNorm2D(

512,

weight_attr=paddle.ParamAttr(name="g_1_bn_weight_",initializer=bn_initializer),momentum=0.8

)

self.conv_2 = nn.Conv2DTranspose(

512,256,4,2,1,

bias_attr=False,weight_attr=paddle.ParamAttr(name="g_dconv_weight_2_",initializer=conv_initializer)

)

self.bn_2 = nn.BatchNorm2D(

256,

weight_attr=paddle.ParamAttr(name="g_2_bn_weight_",initializer=bn_initializer),momentum=0.8

)

self.conv_3 = nn.Conv2DTranspose(

256,128,4,2,1,

bias_attr=False,weight_attr=paddle.ParamAttr(name="g_dconv_weight_3_",initializer=conv_initializer)

)

self.bn_3 = nn.BatchNorm2D(

128,

weight_attr=paddle.ParamAttr(name="g_3_bn_weight_",initializer=bn_initializer),momentum=0.8

)

self.conv_4 = nn.Conv2DTranspose(

128,64,4,2,1,

bias_attr=False,weight_attr=paddle.ParamAttr(name="g_dconv_weight_4_",initializer=conv_initializer)

)

self.bn_4 = nn.BatchNorm2D(

64,

weight_attr=paddle.ParamAttr(name="g_4_bn_weight_",initializer=bn_initializer),momentum=0.8

)

self.conv_5 = nn.Conv2DTranspose(

64,3,4,2,1,

bias_attr=False,weight_attr=paddle.ParamAttr(name="g_dconv_weight_5_",initializer=conv_initializer)

)

self.tanh = paddle.nn.Tanh()

def forward(self, x):

x = self.conv_1(x)

x = self.bn_1(x)

x = F.relu(x)

x = self.conv_2(x)

x = self.bn_2(x)

x = F.relu(x)

x = self.conv_3(x)

x = self.bn_3(x)

x = F.relu(x)

x = self.conv_4(x)

x = self.bn_4(x)

x = F.relu(x)

x = self.conv_5(x)

x = self.tanh(x)

return x

# -

# ### 3.6 损失函数

# 选用BCELoss,公式如下:

#

# $Out = -1 * (label * log(input) + (1 - label) * log(1 - input))$

#

###损失函数

loss = paddle.nn.BCELoss()

# ## 4 模型训练

# 设置的超参数为:

# * 学习率:0.0002

# * 输入图片长和宽:64

# * Epoch: 8

# * Mini-Batch:128

# * 输入Tensor长度:100

# * Adam:Beta1:0.5,Beta2:0.999

#

# 训练过程中的每一次迭代,生成器和判别器分别设置自己的迭代次数。为了避免判别器快速收敛到0,本教程默认每迭代一次,训练一次判别器,两次生成器。

# +

import IPython.display as display

import warnings

import paddle.optimizer as optim

warnings.filterwarnings('ignore')

img_dim = 64

lr = 0.0002

epoch = 5

output = "work/Output/"

batch_size = 128

G_DIMENSION = 100

beta1=0.5

beta2=0.999

output_path = 'work/Output'

device = paddle.set_device('gpu')

paddle.disable_static(device)

real_label = 1.

fake_label = 0.

netD = Discriminator()

netG = Generator()

optimizerD = optim.Adam(parameters=netD.parameters(), learning_rate=lr, beta1=beta1, beta2=beta2)

optimizerG = optim.Adam(parameters=netG.parameters(), learning_rate=lr, beta1=beta1, beta2=beta2)

###训练过程

losses = [[], []]

#plt.ion()

now = 0

for pass_id in range(epoch):

# enumerate()函数将一个可遍历的数据对象组合成一个序列列表

for batch_id, data in enumerate(train_loader()):

#训练判别器

optimizerD.clear_grad()

real_cpu = data[0]

label = paddle.full((batch_size,1,1,1),real_label,dtype='float32')

output = netD(real_cpu)

errD_real = loss(output,label)

errD_real.backward()

optimizerD.step()

optimizerD.clear_grad()

noise = paddle.randn([batch_size,G_DIMENSION,1,1],'float32')

fake = netG(noise)

label = paddle.full((batch_size,1,1,1),fake_label,dtype='float32')

output = netD(fake.detach())

errD_fake = loss(output,label)

errD_fake.backward()

optimizerD.step()

optimizerD.clear_grad()

errD = errD_real + errD_fake

losses[0].append(errD.numpy()[0])

###训练生成器

optimizerG.clear_grad()

noise = paddle.randn([batch_size,G_DIMENSION,1,1],'float32')

fake = netG(noise)

label = paddle.full((batch_size,1,1,1),real_label,dtype=np.float32,)

output = netD(fake)

errG = loss(output,label)

errG.backward()

optimizerG.step()

optimizerG.clear_grad()

losses[1].append(errG.numpy()[0])

if batch_id % 100 == 0:

if not os.path.exists(output_path):

os.makedirs(output_path)

# 每轮的生成结果

generated_image = netG(noise).numpy()

imgs = []

plt.figure(figsize=(15,15))

try:

for i in range(100):

image = generated_image[i].transpose()

image = np.where(image > 0, image, 0)

plt.subplot(10, 10, i + 1)

plt.imshow(image, vmin=-1, vmax=1)

plt.axis('off')

plt.xticks([])

plt.yticks([])

plt.subplots_adjust(wspace=0.1, hspace=0.1)

msg = 'Epoch ID={0} Batch ID={1} \n\n D-Loss={2} G-Loss={3}'.format(pass_id, batch_id, errD.numpy()[0], errG.numpy()[0])

plt.suptitle(msg,fontsize=20)

plt.draw()

plt.savefig('{}/{:04d}_{:04d}.png'.format(output_path, pass_id, batch_id),bbox_inches='tight')

plt.pause(0.01)

display.clear_output(wait=True)

except IOError:

print(IOError)

paddle.save(netG.state_dict(), "work/generator.params")

plt.close()

# -

plt.figure(figsize=(15, 6))

x = np.arange(len(losses[0]))

plt.title('Generator and Discriminator Loss During Training')

plt.xlabel('Number of Batch')

plt.plot(x,np.array(losses[0]),label='D Loss')

plt.plot(x,np.array(losses[1]),label='G Loss')

plt.legend()

plt.savefig('work/Generator and Discriminator Loss During Training.png')

plt.show()

# ## 5 模型评估

# ### 生成器$G$和判别器$D$的损失与迭代变化图

#

#

# ### 对比真实人脸图像(图一)和生成人脸图像(图二)

# #### 图一

#

# ### 图二

#

#

# ## 6 模型预测

# ### 输入随机数让生成器$G$生成随机人脸

# 生成的RGB三通道64*64的图片路径位于“worl/Generate/”

device = paddle.set_device('gpu')

paddle.disable_static(device)

try:

generate = Generator()

state_dict = paddle.load("work/generator.params")

generate.set_state_dict(state_dict)

noise = paddle.randn([100,100,1,1],'float32')

generated_image = generate(noise).numpy()

for j in range(100):

image = generated_image[j].transpose()

plt.figure(figsize=(4,4))

plt.imshow(image)

plt.axis('off')

plt.xticks([])

plt.yticks([])

plt.subplots_adjust(wspace=0.1, hspace=0.1)

plt.savefig('work/Generate/generated_' + str(j + 1), bbox_inches='tight')

plt.close()

except IOError:

print(IOError)

# ## 7 项目总结

# 简单介绍了一下DCGAN的原理,通过对原项目的改进和优化,一步一步依次对生成器和判别器以及训练过程进行介绍。

# DCGAN采用一个随机噪声向量作为输入,输入通过与CNN类似但是相反的结构,将输入放大成二维数据。采用这种结构的生成模型和CNN结构的判别模型,DCGAN在图片生成上可以达到相当可观的效果。本案例中,我们利用DCGAN生成了人脸照片,您可以尝试更换数据集生成符合个人需求的图片,或尝试修改网络结构观察不一样的生成效果。

# ## 8 参考文献

# [1] Goodfellow, <NAME>.; <NAME>; <NAME>; <NAME>; <NAME>; <NAME>; <NAME>; <NAME>. Generative Adversarial Networks. 2014. arXiv:1406.2661 [stat.ML].

#

# [2] <NAME>, <NAME>, <NAME>, <NAME>, <NAME>, <NAME>, <NAME>, <NAME>, <NAME>, <NAME>, <NAME>, <NAME>, <NAME>, And <NAME>, Generative Models, OpenAI, [April 7, 2016]

#

# [3] alimans, Tim; Goodfellow, Ian; <NAME>; <NAME>; <NAME>; <NAME>. Improved Techniques for Training GANs. 2016. arXiv:1606.03498 [cs.LG].

#

# [4] <NAME>, <NAME>, <NAME>. Unsupervised Representation Learning with Deep Convolutional Generative Adversarial Networks[J]. Computer Science, 2015.

#

# [5]<NAME> , Ba J . Adam: A Method for Stochastic Optimization[J]. Computer ence, 2014.

| docs/practices/dcgan_face/dcgan_face.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (ipykernel)

# language: python

# name: python3

# ---

# # Mechanics of running regressions

#

# ```{note}

# These next few pages use a classic dataset called "diamonds" to introduce the regression methods. In lectures, we will use finance oriented data.

# ```

#

# ## Objectives

#

# After this page,

#

# 1. You can fit a regression with `statsmodels` or `sklearn` that includes dummy variables, categorical variables, and interaction terms.

# - `statsmodels`: Nicer result tables, usually easier to specifying the regression model

# - `sklearn`: Easier to use within a prediction/ML exercise

# 1. With both modules: You can view the results visually

# 1. With both modules: You can get the coefficients, t-stats, R$^2$, Adj R$^2$, predicted values ($\hat{y}$), and residuals ($\hat{u}$)

#

#

#

# Let's get our hands dirty quickly by loading some data.

# +

# load some data to practice regressions

import seaborn as sns

import numpy as np

diamonds = sns.load_dataset('diamonds')

# this alteration is not strictly necessary to practice a regression

# but we use this in livecoding

diamonds2 = (diamonds.query('carat < 2.5') # censor/remove outliers

.assign(lprice = np.log(diamonds['price'])) # log transform price

.assign(lcarat = np.log(diamonds['carat'])) # log transform carats

.assign(ideal = diamonds['cut'] == 'Ideal')

# some regression packages want you to explicitly provide

# a variable for the constant

.assign(const = 1)

)

# -

#

# ## Our first regression with `statsmodels`

#

# You'll see these steps repeated a lot for the rest of the class:

# 1. Load the module

# 1. Load your data, and set up your y and X variables

# 1. Pick the model, _usually something like:_ `model = <moduleEstimator>`

# 1. Fit the model and store the results, _usually something like:_ `results = model.fit()`

# 1. Get predicted values, _usually something like:_ `predicted = results.predict()`

#

# +

import statsmodels.api as sm # need this

y = diamonds2['lprice'] # pick y

X = diamonds2[['const','lcarat']] # set up all your X vars as a matrix

# NOTICE I EXPLICITLY GIVE X A CONSTANT SO IT FITS AN INTERCEPT

model1 = sm.OLS(y,X) # pick model type (OLS here) and specify model features

results1 = model1.fit() # estimate / fit

print(results1.summary()) # view results (coefs, t-stats, p-vals, R2, Adj R2)

y_predicted1 = results1.predict() # get the predicted results

residuals1 = results1.resid # get the residuals

#residuals1 = y - y_predicted1 # another way to get the residuals

print('\n\nParams:')

print(results1.params) # if you need to access the coefficients (e.g. to save them), results.params

# -

# ## A better way to regress with `statsmodels`

#

# ```{tip}

# This is my preferred way to run a regression in Python unless I _have_ to use sklearn.

#

# [The documentation with tricks and examples for how to write a regression formula with statsmodels is here.](https://www.statsmodels.org/stable/examples/notebooks/generated/formulas.html)

# ```

#

# In the above, replace

# ```python

# y = diamonds2['lprice'] # pick y

# X = diamonds2[['const','lcarat']] # set up all your X vars as a matrix

#

# model1 = sm.OLS(y,X) # pick model type (OLS here) and specify model features

# ```

# with this

# ```python

# model1 = sm.OLS.from_formula('lprice ~ lcarat',data=diamonds2)

# ```

# which I can replace with this (after adding `from statsmodels.formula.api import ols as sm_ols` to my imports)

# ```python

# model1 = sm_ols('lprice ~ lcarat',data=diamonds2)

# ```

#

# **WOW!** This isn't just more convenient (1 line is less than 3), I like this because

#

# 1. You can set up the model (the equation) more naturally. Notice that I didn't set up the y and X variables as explicit variables. Simply tell that $y=a+b*X+c*Z$ by writing out `y ~ X + Z`

# 1. It allows you to **EASILY** include categorical variables (see below)

# 1. It allows you to **EASILY** include interaction effects (see below)

#

# + tags=["hide-output"]

from statsmodels.formula.api import ols as sm_ols # need this

model2 = sm_ols('lprice ~ lcarat', # specify model (you don't need to include the constant!)

data=diamonds2)

results2 = model2.fit() # estimate / fit

print(results2.summary()) # view results ... identical to before

y_predicted2 = results2.predict() # get the predicted results

residuals2 = results2.resid # get the residuals

#residuals1 = y - y_predicted1 # another way to get the residuals

print('\n\nParams:')

print(results2.params) # if you need to access the coefficients (e.g. to save them), results.params

# -

# ```{note}

# We will cover what all these numbers mean later, but this page is focusing on the how-to.

# ```

# ## Regression with `sklearn`

#

# `sklearn` is pretty similar but

# - when setting up the model object, you don't tell it what data to put into the model

# - you call the model object, and then fit it on data, with `model.fit(X,y)`

# - it doesn't have the nice summary tables

# +

from sklearn.linear_model import LinearRegression

y = diamonds2['lprice'] # pick y

X = diamonds2[['const','lcarat']] # set up all your X vars as a matrix

# NOTICE I EXPLICITLY GIVE X A CONSTANT SO IT FITS AN INTERCEPT

model3 = LinearRegression() # set up the model object (but don't tell sklearn what data it gets!)

results3 = model3.fit(X,y) # fit it, and tell it what data to fit on

print('INTERCEPT:', results3.intercept_) # to get the coefficients, you print out the intercept

print('COEFS:', results3.coef_) # and the other coefficients separately (yuck)

y_predicted3 = results3.predict(X) # get predicted y values

residuals3 = y - y_predicted3 # get residuals

# -

# ```{admonition} That's so much uglier. Why use sklearn?

# :class: warning

#

# Because `sklearn` is the go-to for training models using more sophisticated ML ideas (which we will talk about some later in the course!). Two nice walkthroughs:

# - [This guide from the PythonDataScienceHandbook](https://jakevdp.github.io/PythonDataScienceHandbook/05.06-linear-regression.html) (you can use different data though)

# - The "Linear Regression" section [here](https://becominghuman.ai/linear-regression-in-python-with-pandas-scikit-learn-72574a2ec1a5) shows how you can run regressions on training samples and test them out of sample

# ```

# ## Plotting the regression fit

#

# Once you save the predicted values ($\hat{y}$), it's easy to add it to a plot:

# +

import matplotlib.pyplot as plt

# step 1: plot our data as you want

sns.scatterplot(x='lcarat',y='lprice',

data=diamonds2.sample(500,random_state=20)) # sampled just to avoid overplotting

# step 2: add the fitted regression line (the real X values and the predicted y values)

sns.lineplot(x=diamonds2['lcarat'],y=y_predicted1,color='red')

plt.show()

# -

# ```{note}

# `sns.lmplot` and `sns.regplot` will put a regression line on a scatterplot without having to set up and run a regression, but they also overplot the points when you have a lot of data. That's why I used the approach above - scatterplot a subsample of the data and then overlay the regression line.

#

# One other alternative is to use `sns.lmplot` and `sns.regplot`, but use the `x_bins` parameter to report a "binned scatterplot". Check it out if you're curious.

# ```

# ## Including dummy variables

#

# Suppose you started by estimating the price of diamonds as a function of carats

#

# $$

# \log(\text{price})=a+\beta_0 \log(\text{carat}) +u

# $$

#

# but you realize it will be different for ideal cut diamonds. That is, a 1 carat diamond might cost \$1,000, but if it's ideal, it's an extra \$500 dollars.

#

# $$

# \log(\text{price})=

# \begin{cases}

# a+\beta_0 \log(\text{carat}) + \beta_1 +u, & \text{if ideal cut} \\

# a+\beta_0 \log(\text{carat}) +u, & \text{otherwise}

# \end{cases}

# $$

#

# Notice that $\beta_0$ in this model are the same for ideal and non-ideal.

#

# ```{tip}

# **Here is how you run this test: You just add the dummy variable as a new variable in the formula!**

# ```

# + tags=[]

# ideal is a dummy variable = 1 if ideal and 0 if not ideal

print(sm_ols('lprice ~ lcarat + ideal', data=diamonds2).fit().summary())

# -

# <img src=https://media.giphy.com/media/zcCGBRQshGdt6/source.gif width="400">

#

# ## Including categorical variables

#

# Dummy variables take on two values (on/off, True/False, 0/1). Categorical variables can take on many levels. "Industry" and "State" are typical categorical variables in finance applications.

#

# $$

# \log(\text{price})=

# \begin{cases}

# a+\beta_0 \log(\text{carat}) + \beta_1 +u, & \text{if premium cut} \\

# a+\beta_0 \log(\text{carat}) + \beta_2 +u, & \text{if very good cut} \\

# a+\beta_0 \log(\text{carat}) + \beta_3 +u, & \text{if good cut} \\

# a+\beta_0 \log(\text{carat}) + \beta_4 +u, & \text{if fair cut} \\

# a+\beta_0 \log(\text{carat}) +u, & \text{otherwise (i.e. ideal)}

# \end{cases}

# $$

#

# `sm_ols` also processes categorical variables easily!

#

# ```{tip}

# **Here is how you run this test: You just add the categorical variable as a new variable in the formula!**

# ```

#

# ```{warning}

# WARNING 1: A good idea is to **ALWAYS** put your categorical variable inside of "C()" like below. This tells statsmodels that the variable should be treated as a categorical variable EVEN IF it is a number. (Which would otherwise be treated like a number.)

# ```

#

# ```{warning}

# WARNING 2: You don't create a dummy variable for all the categories! As long as you include a constant in the regression ($a$), one of the categories is covered by the constant. Above, "ideal" is captured by the intercept.

#

# And if you look at the results of the next regression, the "ideal" cut level doesn't have a coefficient. **Statsmodels** automatically drops one of the categories when you use the formula approach. Nice!!!

#

# But if you manually set up the regression in statsmodels or sklearn, you have to drop one level yourself!!!

# ```

#

# + tags=[]

print(sm_ols('lprice ~ lcarat + C(cut)', data=diamonds2).fit().summary())

# -

# <img src=https://media.giphy.com/media/NaboQwhxK3gMU/source.gif width="400">

#

# ## Including interaction terms

#

# Suppose that an ideal cut diamond doesn't just add a fixed dollar value to the diamond. Perhaps it also changes the value of having a larger diamond. You might say that

# - A high quality cut is even more valuable for a larger diamond than it is for a small diamond. ("A great cut makes a diamond sparkle, but it's hard to see sparkle on a tiny diamond no matter what.")

# - In other words, the effect of carats depends on the cut and visa versa

# - In other words, "the cut variable **interacts** with the carat variable"

# - So you might say that, "a better cut changes the slope/coefficient of carat"

# - Or equivalently, "a better cut changes the return on a larger carat"

#

# Graphically, it's easy to see, as `sns.lmplot` by default gives each cut a unique slope on carats:

# +

import matplotlib.pyplot as plt

# add reg lines to plot

fig, ax = plt.subplots()

ax = sns.regplot(data=diamonds2.query('cut == "Fair"'),

y='lprice',x='lcarat',scatter=False,ci=None)

ax = sns.regplot(data=diamonds2.query('cut == "Ideal"'),

y='lprice',x='lcarat',scatter=False,ci=None)

# scatter

sns.scatterplot(data=diamonds2.groupby('cut').sample(80,random_state=99).query('cut in ["Ideal","Fair"]'),

x='lcarat',y='lprice',hue='ideal',ax=ax)

plt.show()

# -

# Those two different lines above are estimated by

#

# $$

# \log(\text{price})= a+ \beta_0 \log(\text{carat}) + \beta_1 \text{Ideal} + \beta_2\log(\text{carat})\cdot \text{Ideal}

# $$

#

# If you plug in 1 for $ideal$, you get the line for ideal diamonds as

#

# $$

# \log(\text{price})= a+ \beta_1 +(\beta_0 + \beta_2) \log(\text{carat})

# $$

#

# If you plug in 0 for $ideal$, you get the line for fair diamonds as

#

# $$

# \log(\text{price})= a+ \beta_0 \log(\text{carat})

# $$

#

# So, by including that interaction term, you get that the slope on carats is different for Ideal than Fair diamonds.

#

# ````{tip}

# **Here is how you run this test: You just add the two variables as a new variable in the formula, along with one term where they are both multiplied!**

#

# ````

# + tags=["hide-output"]

# you can include the interaction of x and z by adding "+x*z" in the spec, like:

sm_ols('lprice ~ lcarat + ideal + lcarat*ideal', data=diamonds2.query('cut in ["Fair","Ideal"]')).fit().summary()

# -

# This shows that a 1% increase in carats is associated with a 1.52% increase in price for fair diamonds, but a 1.71% increase for ideal diamonds (1.52+0.18).

#

# Thus: The return on carats is different (and higher) for better cut diamonds!

| content/05/02b_mechanics.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (ipykernel)

# language: python

# name: python3

# ---

# # Motif Scan

# Transcription Factor binding motifs are commonly found enriched in cis-regulatory elements and can inform the potential regulatory mechanism of the elements. The first step to study these DNA motifs is to scan their occurence in the genome regions.

#

# ## The Default MotifSet

# Currently, the motif scan function uses a default motif dataset that contains >2000 motifs from three databases {cite}`Khan2018,Kulakovskiy2018,Jolma2013`, each motif is also annotated with human and mouse gene names to facilitate further interpretation.

#

# Following the analysis in {cite}`Vierstra2020` (see also [this great blog](https://www.vierstra.org/resources/motif_clustering)), these motifs are clustered into 286 motif-clusters based on their similarity (and some motifs are almost identical). We will scan all individual motifs here, but also aggregate the results to motif-cluster level. It is recommended to perform futher analysis at the motif-cluster level (such as motif enrichment analysis).

from ALLCools.mcds import RegionDS

from ALLCools.motif import MotifSet, get_default_motif_set

# check out the default motif set

default_motif_set = get_default_motif_set()

default_motif_set.n_motifs

# metadata of the motifs

default_motif_set.meta_table

# motif cluster

default_motif_set.motif_cluster

# single motif object

default_motif_set.motif_list[0]

# ### Motif PSSM Cutoffs

# +

# To re-calculate motif thresholds with a different method or parameter

# default_motif_set.calculate_threshold(method='balanced', cpu=1, threshold_value=1000)

# -

# ## Scan Default Motifs

dmr_ds = RegionDS.open('test_HIP', select_dir=['dmr'])

dmr_ds

# ## Default Motif Database

#

# The {func}`scan_motifs <ALLCools.mcds.region_ds.RegionDS.scan_motifs>` method of RegionDS will perform motif scan using the default motif set over all the regions. This is a time consumming step, scanning 2M regions with 40 CPUs take ~3 days.

dmr_ds.scan_motifs(genome_fasta='../../data/genome/mm10.fa',

cpu=45,

standardize_length=500,

motif_set_path=None,

chrom_size_path=None,

combine_cluster=True,

dim='motif')

# ### Motif Values

# After motif scaning, three value for each motif in each region is stored:

# - n_motifs

# - max_score

# - total_score

dmr_ds.get_index('motif_value')

# ### Individual motifs

dmr_ds['dmr_motif_da']

# ### Motif Clusters

dmr_ds['dmr_motif-cluster_da']

# ## Scan Other Motifs

#

# Comming soon...

| docs/allcools/cluster_level/Correlation/04.motif_scan.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Intel® Low Precision Optimization Tool (iLiT) Sample for Tensorflow

# ## Agenda

# - Train a CNN Model Based on Keras

# - Quantize Keras Model by ilit

# - Compare Quantized Model

# Import python packages and check version.

#

# Make sure the Tensorflow is **2.2** and iLiT, matplotlib are installed.

# +

import tensorflow as tf

print(tf.__version__)

import matplotlib.pyplot as plt

import ilit

print(ilit.__path__)

import numpy as np

# -

# ## Train a CNN Model Based on Keras

#

# We prepare a script '**alexnet.py**' to provide the functions to train a CNN model.

#

# ### Dataset

# Use [MNIST](http://yann.lecun.com/exdb/mnist/) dataset to recognize hand writing numbers.

# Load the dataset.

# +

import alexnet

data = alexnet.read_data()

x_train, y_train, label_train, x_test, y_test, label_test = data

print('train', x_train.shape, y_train.shape, label_train.shape)

print('test', x_test.shape, y_test.shape, label_test.shape)

# -

# ### Build Model

#

# Build a CNN model like Alexnet by Keras API based on Tensorflow.

# Print the model structure by Keras API: summary().

# +

classes = 10

width = 28

channels = 1

model = alexnet.create_model(width ,channels ,classes)

model.summary()

# -

# ### Train the Model with the Dataset

#

# Set the **epochs** to "**3**"

# +

epochs = 3

alexnet.train_mod(model, data, epochs)

# -

# ### Freeze and Save Model to Single PB

#

# Set the input node name is "**x**".

# +

from tensorflow.python.framework.convert_to_constants import convert_variables_to_constants_v2

def save_frezon_pb(model, mod_path):

# Convert Keras model to ConcreteFunction

full_model = tf.function(lambda x: model(x))

concrete_function = full_model.get_concrete_function(

x=tf.TensorSpec(model.inputs[0].shape, model.inputs[0].dtype))

# Get frozen ConcreteFunction

frozen_model = convert_variables_to_constants_v2(concrete_function)

# Generate frozen pb

tf.io.write_graph(graph_or_graph_def=frozen_model.graph,

logdir=".",

name=mod_path,

as_text=False)

fp32_frezon_pb_file = "fp32_frezon.pb"

save_frezon_pb(model, fp32_frezon_pb_file)

# -

# !ls -la fp32_frezon.pb

# ## Quantize FP32 Model by iLiT

#

# iLiT supports to quantize the model with a validation dataset for tuning.

# Finally, it returns an frezon quantized model based on int8.

#

# We prepare a python script "**ilit_quantize_model.py**" to call iLiT to finish the all quantization job.

# Following code sample is used to explain the code.

#

# ### Define Dataloader

#

# The class **Dataloader** provides an iter function to return the image and label as batch size.

# We uses the validation data of MNIST dataset.

# +

import mnist_dataset

import math

class Dataloader(object):

def __init__(self, batch_size):

self.batch_size = batch_size

def __iter__(self):

x_train, y_train, label_train, x_test, y_test,label_test = mnist_dataset.read_data()

batch_nums = math.ceil(len(x_test)/self.batch_size)