code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

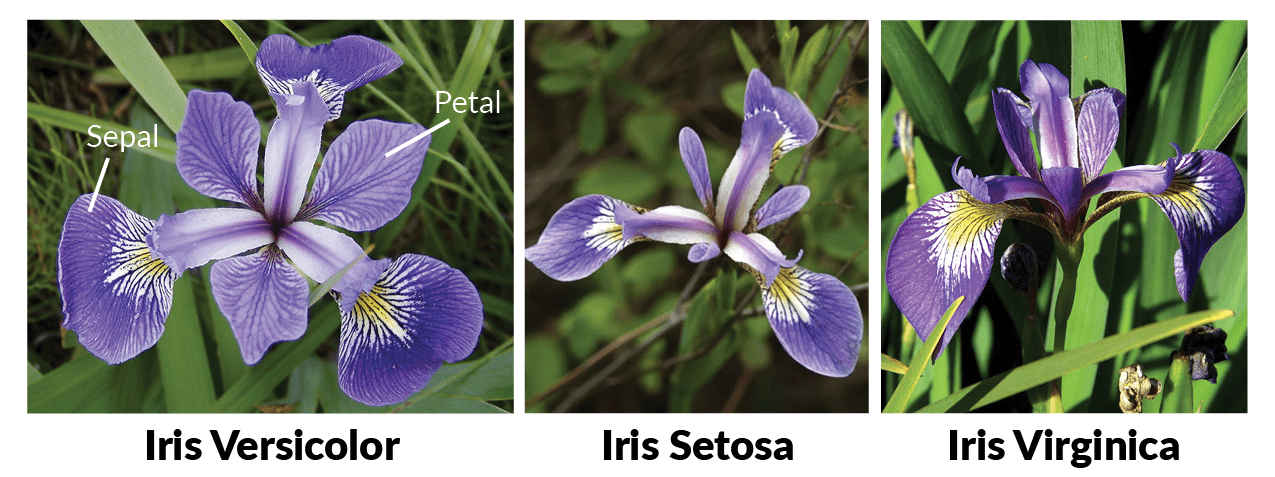

# # The Iris dataset and pandas

#

#

#

#

#

# ***

#

# **[Python Data Analysis Library](https://pandas.pydata.org/)**

#

# *[https://pandas.pydata.org/](https://pandas.pydata.org/)*

#

# The pandas website.

#

# ***

#

# **[<NAME>: pandas in 10 minutes | Walkthrough](https://www.youtube.com/watch?foo=bar&v=_T8LGqJtuGc?)**

#

# *[https://www.youtube.com/watch?v=_T8LGqJtuGc](https://www.youtube.com/watch?foo=bar&v=_T8LGqJtuGc)*

#

# Video by the creator of pandas.

#

# ***

#

# **[Python for Data Analysis notebooks](https://github.com/wesm/pydata-book)**

#

# *[https://github.com/wesm/pydata-book](https://github.com/wesm/pydata-book)*

#

# Materials and IPython notebooks for "Python for Data Analysis" by <NAME>, published by O'Reilly Media

#

# ***

#

# **[10 Minutes to pandas](http://pandas.pydata.org/pandas-docs/stable/10min.html)**

#

# *[http://pandas.pydata.org/pandas-docs/stable/10min.html](http://pandas.pydata.org/pandas-docs/stable/10min.html)*

#

# Official pandas tutorial.

#

# ***

#

# **[UC Irvine Machine Learning Repository: Iris Data Set](https://archive.ics.uci.edu/ml/datasets/iris)**

#

# *[https://archive.ics.uci.edu/ml/datasets/iris](https://archive.ics.uci.edu/ml/datasets/iris)*

#

# About the Iris data set from UC Irvine's machine learning repository.

# ## Loading data

# Import pandas.

import pandas as pd

# Load the iris data set from a URL.

df = pd.read_csv("https://raw.githubusercontent.com/uiuc-cse/data-fa14/gh-pages/data/iris.csv")

df

# ***

#

# ## Selecting rows and columns

df['species']

df[['petal_length', 'species']]

df[2:6]

df[['petal_length', 'species']][2:6]

df.loc[2:6]

df.loc[:, 'species']

df.loc[:, ['sepal_length', 'species']]

df.loc[2:6, ['sepal_length', 'species']]

df.iloc[2]

df.iloc[2:4, 1]

df.at[3, 'species']

df.iloc[1:10:2]
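# The selections above mix label-based (`loc`) and position-based (`iloc`, plain slicing) indexing, which differ in whether the end of a slice is included. A minimal sketch on a made-up toy frame (the `toy` data is an assumption, not the iris file):

```python
import pandas as pd

# Hypothetical toy frame standing in for the iris data.
toy = pd.DataFrame({
    "petal_length": [1.4, 1.3, 4.7, 4.5, 6.0],
    "species": ["setosa", "setosa", "versicolor", "versicolor", "virginica"],
})

by_label = toy.loc[1:3]      # label-based: both endpoints are included
by_position = toy.iloc[1:3]  # position-based: the end is excluded
plain_slice = toy[1:3]       # plain row slicing is positional as well

print(len(by_label), len(by_position), len(plain_slice))
```

# With the default integer index the labels and positions coincide, so the only visible difference is the extra row returned by `loc`.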

# ***

#

# ## Boolean selects

df.loc[:, 'species'] == 'setosa'

df.loc[df.loc[:, 'species'] == 'versicolor']

x = df.loc[df.loc[:, 'species'] == 'versicolor']

x.loc[51]

x.iloc[1]

# ***

#

# ## Summary statistics

df.head()

df.tail()

df.describe()

(df.loc[df.loc[:, 'species'] == 'versicolor']).describe()

(df.loc[df.loc[:, 'species'] == 'setosa']).describe()

(df.loc[df.loc[:, 'species'] == 'virginica']).describe()

df.mean(numeric_only=True)
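# The three per-species `describe()` calls above can probably be collapsed into a single `groupby`. A minimal sketch on a made-up toy frame (the `toy` values are assumptions chosen for easy arithmetic):

```python
import pandas as pd

# Hypothetical toy frame with the same column names as the iris data.
toy = pd.DataFrame({
    "petal_length": [1.4, 1.6, 4.0, 5.0, 6.0, 5.0],
    "species": ["setosa", "setosa", "versicolor",
                "versicolor", "virginica", "virginica"],
})

# One mean per species for a single column.
per_species_mean = toy.groupby("species")["petal_length"].mean()

# Full summary statistics per species in one call.
per_species_summary = toy.groupby("species").describe()

print(per_species_mean)
```

# On the real data the same pattern would be `df.groupby('species').describe()`.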

# ***

#

# ## Plots

import seaborn as sns

sns.pairplot(df, hue='species')

# ***

#

# ## End

| pandas-with-iris.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: venv-exploratory-data-analysis

# language: python

# name: venv-exploratory-data-analysis

# ---

# # Grid search

#

# A model-tuning technique that evaluates every combination of the supplied hyperparameter values and reports the combination that scores best on a chosen metric.
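# To make the idea concrete, here is a minimal sketch of an exhaustive search in plain Python. The names `grid_search` and `toy_score` are hypothetical; scikit-learn's `GridSearchCV` additionally cross-validates each candidate, which this sketch leaves out.

```python
from itertools import product


def grid_search(score_fn, param_grid):
    """Try every combination in param_grid and keep the best-scoring one."""
    names = list(param_grid)
    best_params, best_score = None, float("-inf")
    for values in product(*(param_grid[name] for name in names)):
        params = dict(zip(names, values))
        score = score_fn(**params)
        if score > best_score:
            best_params, best_score = params, score
    return best_params, best_score


def toy_score(C, degree):
    # Hypothetical stand-in for a validation metric; it peaks at C=1.0, degree=3.
    return -((C - 1.0) ** 2) - (degree - 3) ** 2


best_params, best_score = grid_search(
    toy_score, {"C": [0.1, 0.5, 1.0, 10], "degree": [1, 3, 5]}
)
print(best_params, best_score)
```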

# +

# %matplotlib inline

from sklearn import svm

from sklearn.model_selection import GridSearchCV, train_test_split

from sklearn.metrics import confusion_matrix, classification_report

from sklearn.datasets import make_multilabel_classification

import pandas as pd

import numpy as np

import category_encoders as ce

import multiprocessing

random_state = 42

n_cpu = multiprocessing.cpu_count()

n_cpu

# -

def get_data():
    return make_multilabel_classification(n_samples=1_000, n_features=15, n_classes=5, n_labels=2, allow_unlabeled=False, sparse=False, return_distributions=False, random_state=random_state)

data = get_data()

X = data[0]

X[:5,:]

y = np.argmax(data[1], axis=1)

y[:20]

X_train, X_test, y_train, y_test = train_test_split(X, y)

svc = svm.SVC()

svc.fit(X_train, y_train)

preds = svc.predict(X_test)

print(classification_report(y_test, preds))

# ## Try grid search

parameters = {

'kernel': ['linear', 'rbf', 'poly', 'sigmoid'],

'C': [0.1, 0.5, 1.0, 10],

'degree': [1, 3, 5]

}

gs = GridSearchCV(svc, parameters, n_jobs=n_cpu)

gs.fit(X_train, y_train)

gs.best_estimator_

gs.best_score_

gs.best_params_
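# The grid above crosses `degree` with every kernel, although `SVC` only uses `degree` for the polynomial kernel. A sketch of how a list of grids could avoid the redundant fits; `full_grid` repeats the grid from this notebook, while `split_grid` is an assumed alternative, not something the original run used:

```python
from sklearn.model_selection import ParameterGrid

full_grid = {
    'kernel': ['linear', 'rbf', 'poly', 'sigmoid'],
    'C': [0.1, 0.5, 1.0, 10],
    'degree': [1, 3, 5],
}

# Assumed alternative: vary degree only where the kernel uses it.
split_grid = [
    {'kernel': ['linear', 'rbf', 'sigmoid'], 'C': [0.1, 0.5, 1.0, 10]},
    {'kernel': ['poly'], 'C': [0.1, 0.5, 1.0, 10], 'degree': [1, 3, 5]},
]

n_full = len(ParameterGrid(full_grid))
n_split = len(ParameterGrid(split_grid))
print(n_full, n_split)
```

# `GridSearchCV` accepts such a list of dictionaries as its parameter grid, and the fitted search exposes the refit winner as `gs.best_estimator_`, so the best parameters do not need to be copied by hand.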

svc = svm.SVC(kernel='poly', degree=1)

svc.fit(X_train, y_train)

preds = svc.predict(X_test)

print(classification_report(y_test, preds))

| notebooks/012. grid search.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

from deeptables.models import deeptable,deepnets

from deeptables.utils import consts,dt_logging,batch_trainer

from deeptables.datasets import dsutils

from sklearn.model_selection import train_test_split

from sklearn.metrics import roc_auc_score, roc_curve

import pandas as pd

import numpy as np

data = dsutils.load_adult()

# +

conf1 = deeptable.ModelConfig(

name='conf1',

apply_gbm_features=True,

gbm_feature_type='dense',

auto_discrete=True,

metrics=['AUC'],

)

conf2 = deeptable.ModelConfig(

name='conf2',

fixed_embedding_dim=False,

embeddings_output_dim=0,

apply_gbm_features=False, #*

gbm_feature_type='dense',

auto_discrete=False, #*

metrics=['AUC'],

)

bt = batch_trainer.BatchTrainer(data,'x_14',

eval_size=0.2,

validation_size=0.2,

eval_metrics=['AUC','accuracy','recall','precision','f1'], #auc/recall/precision/f1/mse/mae/msle/rmse/r2

verbose=1,

dt_epochs=15,

dt_config=[conf1,conf2],

dt_nets=[['dnn_nets'],['cross_nets']],

)

ms = bt.start()

# -

ms.leaderboard()

| examples/batch_trainer.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# \# Developer: <NAME> (<EMAIL>) <br>

# \# 18th December 2018

import pandas as pd

bugs = pd.read_csv('../datasets/lexical_semantic_preprocessed_mantis_bugs_less_columns_with_class.csv')

bug_notes = pd.read_csv('../datasets/lexical_semantic_preprocessed_mantis_bugnotes.csv', index_col='bug_id')

bugs_list = []

no_bug_notes = []

for index, row in bugs.iterrows():
    pos = e_pos = neg = e_neg = neutral = 0
    bug_features = {'id': row['id'], 'pos': 0, 'neg': 0, 'neu': 0, 'priority': row['priority'],
                    'severity': row['severity'], 'pm_ticket': row['pm_ticket']}
    # Accumulate the polarity weights of the bug's own text fields.
    for col in ['additional_information_vader_polarity', 'summary_vader_polarity', 'description_vader_polarity']:
        bug_features[row[col]] += row[col+'_weight']
    try:
        bug_notes_for_id = bug_notes.loc[row['id'], ['bug_note_vader_polarity', 'bug_note_vader_polarity_weight']]
        if isinstance(bug_notes_for_id, pd.DataFrame):
            # Several notes for this bug: use a separate loop variable so the outer `row` is not overwritten.
            for _, note in bug_notes_for_id.iterrows():
                bug_features[note['bug_note_vader_polarity']] += note['bug_note_vader_polarity_weight']
        else:
            # Exactly one note: `.loc` returned a Series.
            bug_features[bug_notes_for_id['bug_note_vader_polarity']] += bug_notes_for_id['bug_note_vader_polarity_weight']
    except Exception as e:
        # No notes found for this bug id.
        no_bug_notes.append(row['id'])
    bugs_list.append(bug_features)

vector_df = pd.DataFrame(bugs_list)

vector_df['total_sentiments'] = vector_df['pos'].abs() + vector_df['neg'].abs() + vector_df['neu'].abs()

for col in ['pos', 'neg', 'neu']:
    vector_df[col+'_normalized'] = vector_df[col].abs() / vector_df['total_sentiments']

vector_df.to_csv('../datasets/mantis_bugs_vector.csv', encoding='utf-8', index=False)
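# The normalization above divides each absolute sentiment weight by the row total. A minimal sketch on made-up numbers (the `toy` frame is an assumption) that also shows what happens when a bug has no sentiment weight at all:

```python
import pandas as pd

# Hypothetical weights: the second row has no sentiment at all.
toy = pd.DataFrame({"pos": [2.0, 0.0], "neg": [-1.0, 0.0], "neu": [1.0, 0.0]})

abs_weights = toy[["pos", "neg", "neu"]].abs()
total = abs_weights.sum(axis=1)

# Row-wise division; a zero total yields NaN rather than raising an error.
normalized = abs_weights.div(total, axis=0)
print(normalized)
```

# Rows whose total is zero come out as NaN, so downstream code may want to fill or drop them explicitly.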

# Assumption: the undefined name `df_bug_note_table` referred to the `bug_notes` frame loaded above.
result = pd.merge(bugs, bug_notes, how='left', left_on='id', right_on='bug_id')

| 2) class_expansion/.ipynb_checkpoints/3.bug_vector_preperation-checkpoint.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + active=""

# ask the user to insert a sentence

# print each element of the sentence on a different row

# -

sentence = input("tell me something")

for word in sentence.split(" "):
    print(word)
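# Splitting on a single space keeps empty strings when the user types several spaces in a row. A small sketch of the difference, using a made-up input instead of `input()`:

```python
# Hypothetical input with repeated spaces.
sentence = "tell  me   something"

by_single_space = sentence.split(" ")  # every single space is a separator
by_whitespace = sentence.split()       # runs of whitespace count as one separator

print(by_single_space)
print(by_whitespace)
```

# Calling `split()` without an argument is usually the safer choice for free-form user input.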

| What we did in class/ExercisesSolution/SolutionExercise6.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

from sqlite_db import thesisDB, corpusDB, enclose, docData

from pynlpl.clients.frogclient import FrogClient

from lexisnexisparse import LexisParser

import json

import pandas as pd

from ipywidgets import FloatProgress

from IPython.display import display

import re

import os

#settings_file = 'D:/thesis/settings - nl_final.json'

frogip = '192.168.1.126'

frogport = 9772

#Read settings

#settings = json.loads(open(settings_file).read())["settings"]

#dbAddress = settings['db_file']

#datasets = [settings['json_folder']+fname for fname in os.listdir(settings['json_folder']) if fname.lower().endswith(".json.gz")]

dbAddress = 'D:/Thesis/data.db'

datasets = ['D:/Thesis/jsons/trouw.json.gz']

db = thesisDB(dbAddress)

# -

##Connect to frog client

frog = FrogClient(frogip,frogport,returnall = True)

# +

#Progressbar!

f = FloatProgress(min = 0, max = 1, bar_style = 'success')

display(f) # display the bar

for dataset in datasets:
    df = pd.read_json(dataset, compression = 'gzip')
    df.sort_index(inplace = True)
    f.description = dataset[54:-8]
    f.value = 0
    f.max = len(df)
    counter = 0 #commit every 10 documents
    for index,row in df.iterrows():
        counter += 1
        ##First input document, save its rowid for cross-reference
        document = {'date':str(row['DATE_dt']),
                    'medium':enclose(row['MEDIUM']),
                    'headline':enclose(row['HEADLINE']),
                    'length':str(row['LENGTH'])}
        if row['BYLINE']: #sometimes byline is None
            document['byline'] = enclose(row['BYLINE'])
        if row['SECTION']: #sometimes sections is None
            document['section'] = enclose(row['SECTION'])
        if counter % 10 == 0:
            lastRow = db.insertRow('documents',document)
        else:
            lastRow = db.insertRow('documents',document,False)
        paragraph_no = 1
        entities = []
        entity = ['','']
        for paragraph in row['TEXT']:
            res = frog.process(paragraph)
            position = 1
            for row in res:
                if row[0] is None:
                    continue
                if row[0] == '"':
                    row = list(row)
                    row[0] = 'DOUBLE_QUOTE'
                    row[1] = 'DOUBLE_QUOTE'
                    row = tuple(row)
                data = {
                    'token':enclose(row[0]),
                    'lemma':enclose(row[1]),
                    'paragraph_no':str(paragraph_no),
                    'position':str(position),
                    'docid':str(lastRow),
                    'pos':enclose(re.search('^[A-Z]+',row[3])[0])#, Exclude pos_long because it takes storage and is not needed
                    #'pos_long':enclose(row[3])
                }
                db.insertRow('tokens',data,False)
                if row[4] != 'O': #Found an entity
                    if re.match('^B-',row[4]) is not None:
                        #Entity is new. Save old entity if something is stored
                        if entity[0] != '':
                            entities.append(entity)
                            entity = ['','']
                        entity[0] = row[0]
                        entity[1] = re.search('[A-Z]+$',row[4])[0]
                    else:
                        entity[0] += ' '+row[0] #append next term of entity
                position += 1
            paragraph_no += 1
        if entity[0] != '': #append last entity if present
            entities.append(entity)
        for ent,t in entities:
            data = {'entity':enclose(ent),
                    'category':enclose(t),
                    'docid':str(lastRow)}
            db.insertRow('entities',data,False)
        f.value += 1
    db.commit()

# -

frog.close()

| Pipeline frog.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: diagnosis

# language: python

# name: diagnosis

# ---

from sklearn.preprocessing import LabelEncoder, OneHotEncoder

from sklearn.model_selection import KFold, StratifiedKFold, train_test_split

from sklearn.metrics import accuracy_score

from sklearn.feature_extraction.text import CountVectorizer

import joblib  # sklearn.externals.joblib was removed from recent scikit-learn releases

import lightgbm as lgb

import scipy

from scipy import sparse

from pandas.core.common import SettingWithCopyWarning

import scipy.stats as sp

import pandas as pd

import numpy as np

from collections import Counter

import warnings

import time

import sys

import random

import os

import gc

import datetime

# +

path = '../data/'

data_path = '../trainTestData/'

middle_path = '../model/'

train_y = pd.read_csv(path + 'age_train.csv', names=['uid', 'label'])

sub = pd.read_csv(path + 'age_test.csv', names=['uid'])

train_csr = sparse.load_npz(data_path + 'trainData15112.npz')

test_csr = sparse.load_npz(data_path + 'testData15112.npz')

train_y = train_y["label"].values

# train_csr = train_csr[:200]

# train_y = train_y['label'].values[:200]

# print(train_csr.shape, test_csr.shape)

lgb_model = lgb.LGBMClassifier(

boosting_type='gbdt',

objective='multiclass',

metrics='multi_error',

num_class=6,

n_estimators=20000,

learning_rate=0.1,

num_leaves=512,

max_depth=-1,

subsample=0.95,

colsample_bytree=0.5,

subsample_freq=1,

reg_alpha=1,

reg_lambda=1,

random_state=42,

n_jobs=10

)

oof = np.zeros((train_csr.shape[0], 6))

sub_preds = np.zeros((test_csr.shape[0], 6))

skf = StratifiedKFold(n_splits=5, random_state=812, shuffle=True)

t = time.time()

for index, (train_index, test_index) in enumerate(skf.split(train_csr, train_y)):
    print('Fold {}'.format(index + 1))
    lgb_model.fit(train_csr[train_index], train_y[train_index],
                  eval_set=[(train_csr[train_index], train_y[train_index]),
                            (train_csr[test_index], train_y[test_index])],
                  eval_names=['train', 'valid'],
                  early_stopping_rounds=200, verbose=10)
    oof[test_index] = lgb_model.predict_proba(train_csr[test_index], num_iteration=lgb_model.best_iteration_)
    sub_preds += lgb_model.predict_proba(test_csr, num_iteration=lgb_model.best_iteration_) / skf.n_splits
    # lgb_model.savetxt(middle_path+'model/lgb_zl'+str(index)+'_model.txt')
    joblib.dump(lgb_model, '../model/lgb_zl_15112_2'+str(index)+'_model.pkl')

print(oof.shape, train_y.shape)

cv_final = accuracy_score(train_y, np.argmax(oof, axis=1)+1)

print('\ncv acc:', cv_final)

sub['label'] = np.argmax(sub_preds, axis=1) + 1

# sub.to_csv(middle_path + 'sub_{}.csv'.format(cv_final), index=False)

oof = np.zeros((train_csr.shape[0], 6))

sub_preds = np.zeros((test_csr.shape[0], 6))

skf = StratifiedKFold(n_splits=5, random_state=812, shuffle=True)

t = time.time()

for index, (train_index, test_index) in enumerate(skf.split(train_csr, train_y)):
    print('Fold {}'.format(index + 1))
    lgb_model = joblib.load('../model/lgb_zl_15112_2'+str(index)+'_model.pkl')
    oof[test_index] = lgb_model.predict_proba(train_csr[test_index], num_iteration=lgb_model.best_iteration_)
    sub_preds += lgb_model.predict_proba(test_csr, num_iteration=lgb_model.best_iteration_) / skf.n_splits

cv_final = accuracy_score(train_y, np.argmax(oof, axis=1)+1)

print('\ncv acc:', cv_final)

np.savetxt('../processed/lgboost_val_15112.txt', oof, fmt='%s', delimiter=',', newline='\n')

np.savetxt('../processed/lgboost_test_15112.txt', sub_preds, fmt='%s', delimiter=',', newline='\n')

sub['label'] = np.argmax(sub_preds, axis=1) + 1

# sub.to_csv(middle_path + 'sub_{}.csv'.format(cv_final), index=False)

# -
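# The cell above averages the test-set class probabilities over the folds and then maps the winning column back to a label with `argmax + 1` (the labels start at 1). A minimal sketch with made-up probabilities for two folds and two classes; all numbers are assumptions:

```python
import numpy as np

n_splits = 2

# Hypothetical per-fold probabilities for two test rows and two classes.
fold_probs = [
    np.array([[0.7, 0.3], [0.2, 0.8]]),
    np.array([[0.5, 0.5], [0.4, 0.6]]),
]

sub_preds_demo = np.zeros((2, 2))
for probs in fold_probs:
    # Each fold contributes an equal share to the averaged prediction.
    sub_preds_demo += probs / n_splits

# Column index 0 corresponds to label 1, hence the +1.
labels_demo = np.argmax(sub_preds_demo, axis=1) + 1
print(sub_preds_demo, labels_demo)
```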

| src/LightGBM_15112.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import numpy as np

import pandas as pd

proj = pd.read_csv('./Projects.csv')

# +

# proj.to_pickle("./projects.pkl")

# -

print(proj.columns)

print(proj.head())

proj_cleaned = proj.drop(['Project Essay', 'Project Short Description', 'Project Need Statement'], axis=1)

print(proj_cleaned.columns)

proj_cleaned.to_pickle("./projects_cleaned.pkl")

| data/DataProcessing.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/iam-abbas/Reddit-Stock-Trends/blob/main/Reddit_Stock_Trends.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + [markdown] id="YTwciulmQ9q2"

# # Trending Stocks on Sub-Reddits

# + [markdown] id="8j6mzW3_Q0WX"

# ### Installing Reddit and Yahoo Finance API wrappers

# + id="nv7x3u7GzoC8" colab={"base_uri": "https://localhost:8080/"} outputId="a169723f-9f0c-4e2f-b7c3-30132fa740b0"

# %pip install yfinance praw==7.0

# + [markdown] id="mK6yAb7PRFF4"

# ### Imports

# + id="igjxSo-6cw8_"

import numpy as np

import pandas as pd

import yfinance as yf

import re

import praw

# + [markdown] id="4iCXDsOURV4-"

# ### Stop words and Blacklist containing jargon/acronyms

# I added GME and AMC in there lmao, tired of seeing those

# + id="5xTgoasKHigE"

stop_words = ['i', 'me', 'my', 'myself', 'we', 'our', 'ours', 'ourselves', 'you', "you're", "you've", "you'll", "you'd", 'your', 'yours', 'yourself', 'yourselves', 'he', 'him', 'his', 'himself', 'she', "she's", 'her', 'hers', 'herself', 'it', "it's", 'its', 'itself', 'they', 'them', 'their', 'theirs', 'themselves', 'what', 'which', 'who', 'whom', 'this', 'that', "that'll", 'these', 'those', 'am', 'is', 'are', 'was', 'were', 'be', 'been', 'being', 'have', 'has', 'had', 'having', 'do', 'does', 'did', 'doing', 'a', 'an', 'the', 'and', 'but', 'if', 'or', 'because', 'as', 'until', 'while', 'of', 'at', 'by', 'for', 'with', 'about', 'against', 'between', 'into', 'through', 'during', 'before', 'after', 'above', 'below', 'to', 'from', 'up', 'down', 'in', 'out', 'on', 'off', 'over', 'under', 'again', 'further', 'then', 'once', 'here', 'there', 'when', 'where', 'why', 'how', 'all', 'any', 'both', 'each', 'few', 'more', 'most', 'other', 'some', 'such', 'no', 'nor', 'not', 'only', 'own', 'same', 'so', 'than', 'too', 'very', 's', 't', 'can', 'will', 'just', 'don', "don't", 'should', "should've", 'now', 'd', 'll', 'm', 'o', 're', 've', 'y', 'ain', 'aren', "aren't", 'couldn', "couldn't", 'didn', "didn't", 'doesn', "doesn't", 'hadn', "hadn't", 'hasn', "hasn't", 'haven', "haven't", 'isn', "isn't", 'ma', 'mightn', "mightn't", 'must', 'mustn', "mustn't", 'needn', "needn't", 'shan', "shan't", 'shouldn', "shouldn't", 'wasn', "wasn't", 'weren', "weren't", 'won', "won't", 'wouldn', "wouldn't"]

# + id="j2yODV10FMLi"

block_words = ["DIP", "", "$", "RH", "YOLO", "PORN", "BEST", "MOON", "HOLD", "FAKE", "WISH", "USD", "EV", "MARK", "RELAX", "LOL", "LMAO", "LMFAO", "EPS", "DCF", "NYSE", "FTSE", "APE", "CEO", "CTO", "FUD", "DD", "AM", "PM", "FDD", "EDIT", "TA", "UK", "AMC", "GME"]

# + [markdown] id="wdteNrESRYoJ"

# ### Simple Regex to get tickers from text

# + id="TC-aZcvEdPwZ"

def extract_ticker(body):
    ticks = re.findall("[$][A-Za-z]*|[A-Z][A-Z]{1,}", str(body))
    res = set()
    for item in ticks:
        if item not in block_words and item.lower() not in stop_words and item:
            try:
                tic = item.replace("$", "").upper()
                res.add(tic)
            except:
                pass
    return res
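# Note that the function above filters on the raw match, so a mention such as `$GME` is compared against the blacklist with its `$` still attached and is not blocked. A hedged sketch of a variant that strips and upper-cases first; the function name and the shortened word lists are assumptions for the demonstration:

```python
import re

# Hypothetical, shortened word lists for the demonstration.
demo_stop_words = {"it", "a", "the"}
demo_block_words = {"GME", "YOLO"}


def extract_ticker_normalized(body):
    """Same regex as above, but normalize each match before filtering."""
    candidates = re.findall(r"[$][A-Za-z]*|[A-Z][A-Z]{1,}", str(body))
    res = set()
    for item in candidates:
        tic = item.replace("$", "").upper()
        # Skip empty matches (a lone "$"), blocked jargon and stop words.
        if tic and tic not in demo_block_words and tic.lower() not in demo_stop_words:
            res.add(tic)
    return res


print(extract_ticker_normalized("Buy $GME and TSLA now, YOLO"))
```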

# + [markdown] id="pYbo-KUjRgvx"

# ### Reddit API setup

# + id="SZy2Ql_W1crv"

reddit = praw.Reddit(

client_id="9Aq-wTeGLJBKsQ",

client_secret="<KEY>",

user_agent="ScrapeStocks"

)

# + [markdown] id="M79xPC9cRl9_"

# ### Scrape subreddits `r/robinhoodpennystocks` and `r/pennystocks`

# Currently this fetches additional data such as upvotes, comment counts and awards, but only the title is used for now.

# + id="sqHNK1q86Qiv"

_posts = []

new_bets = reddit.subreddit("robinhoodpennystocks+pennystocks").new(limit=None)

new_bets

for post in new_bets:
    _posts.append(
        [
            post.id,
            post.title,
            post.score,
            post.num_comments,
            post.upvote_ratio,
            post.total_awards_received,
        ]
    )

# create a dataframe

_posts = pd.DataFrame(

_posts,

columns=[

"id",

"title",

"score",

"comments",

"upvote_ratio",

"total_awards",

],

)

# + id="j2IwLMtDL7ao" colab={"base_uri": "https://localhost:8080/", "height": 419} outputId="59baed7b-eee1-41d6-cc97-f90b9f770938"

_posts

# + [markdown] id="OY0wSH7nR4tO"

# ### Extract tickers from all titles and create a new column

# + id="lqLo3rvFVqjH"

_posts["Tickers"] = _posts.title.apply(extract_ticker)

# + id="KZTR2SWv_idY"

ticker_sets = _posts.Tickers.to_list()

# + [markdown] id="tDZMN4UMR9Kp"

# ### Count the number of occurrences of each ticker and verify that the ticker exists

# This kinda works like a hashmap

# + id="JEidqwnNDDxJ"

counts ={}

# + id="qXjeqn8pNTK3"

for s in ticker_sets:
    for tic in s:
        if tic in counts:
            counts[tic] += 1
        else:
            counts[tic] = 1
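# The manual counting loop above could also be written with `collections.Counter` from the standard library. A minimal sketch on made-up ticker sets (`ticker_sets_demo` is an assumption):

```python
from collections import Counter

# Hypothetical per-post ticker sets, shaped like `ticker_sets`.
ticker_sets_demo = [{"AAPL", "TSLA"}, {"TSLA"}, set(), {"TSLA", "NIO"}]

# Each set contributes at most one mention per ticker.
counts_demo = Counter(tic for s in ticker_sets_demo for tic in s)

print(counts_demo.most_common(1))
```

# A `Counter` returns 0 for unseen keys, which removes the need for the `if tic in counts` branch.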

# + id="6UmWCKL9XsJS"

verified_tics = {}

# + colab={"base_uri": "https://localhost:8080/"} id="ZboCwlBwXfjG" outputId="8c67260e-f3ac-46d0-a5ea-91cdaa0ae7c1"

for tic in counts:
    try:
        if counts[tic] > 3:
            print(tic, end=", ")
            yf.Ticker(tic).info
            verified_tics[tic] = counts[tic]
        else:
            continue
    except:
        pass

# + colab={"base_uri": "https://localhost:8080/"} id="pPTVFuTOWtUn" outputId="6c9301ee-3320-4a58-f782-b8acdbb3c459"

verified_tics

# + [markdown] id="ClL4RoY0SPLs"

# ### Create a DataFrame of just the mentions

# + id="HTFGjj6zaqXu"

tick_df = pd.DataFrame(verified_tics.items(), columns=["Ticker", "Mentions"])

# + colab={"base_uri": "https://localhost:8080/", "height": 419} id="O9stuq8DcyW0" outputId="5e71d3c0-06bc-46a9-a93f-f72c4ab4104e"

tick_df

# + [markdown] id="9l83_x5RSV-F"

# ### Sort

# + colab={"base_uri": "https://localhost:8080/", "height": 419} id="3oY2bLuucy9u" outputId="a409cfcd-435a-4391-f629-7f422642be18"

tick_df.sort_values(by=["Mentions"], inplace=True, ascending=False)

tick_df.reset_index(inplace=True, drop=True)

tick_df

# + [markdown] id="HdvynA8LSXx0"

# ### Use Yahoo Finance API to get the relevant data

# + id="JJI4GGUfgfnZ"

def calculate_change(start, end):
    return round(((end - start)/start)*100, 2)

# + id="FvnQCArshKwG"

def get_change(ticker, period="1d"):
    return calculate_change(yf.Ticker(ticker).history(period)["Open"].to_list()[0], yf.Ticker(ticker).history(period)["Close"].to_list()[-1])
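# The change is computed from the first open and the last close of the period. A worked sketch with made-up prices, so no network call is needed; `change_from_history` is a hypothetical helper, not part of yfinance:

```python
def calculate_change(start, end):
    # Percentage change from start to end, rounded to two decimals.
    return round(((end - start) / start) * 100, 2)


def change_from_history(opens, closes):
    """Hypothetical helper: first open versus last close of the period."""
    return calculate_change(opens[0], closes[-1])


# Made-up two-day price history.
demo_change = change_from_history([10.0, 11.0], [10.5, 12.5])
print(demo_change)
```

# Passing the open and close lists in once would also avoid fetching the same history twice per ticker, as `get_change` does above.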

# + id="WiBcQD2miilm"

tick_df.dropna(axis=1)

n25_df = tick_df.head(25).copy()  # copy so the column assignments below do not write into a view of tick_df

# + colab={"base_uri": "https://localhost:8080/"} id="qFvdVT3rg-Cr" outputId="b59e72ec-7be0-4eb8-e057-99c8b95d3b48"

n25_df["Name"] = n25_df.Ticker.apply(lambda x: yf.Ticker(x).info["longName"])

n25_df["Bid"] = n25_df.Ticker.apply(lambda x: yf.Ticker(x).info["previousClose"])

n25_df["5d Low"] = n25_df.Ticker.apply(lambda x: min(yf.Ticker(x).history(period="5d")['Low'].to_list()))

n25_df["5d High"] = n25_df.Ticker.apply(lambda x: max(yf.Ticker(x).history(period="5d")['High'].to_list()))

n25_df["1d Change (%)"] = n25_df.Ticker.apply(lambda x: get_change(x))

n25_df["5d Change (%)"] = n25_df.Ticker.apply(lambda x: get_change(x, "5d"))

# + colab={"base_uri": "https://localhost:8080/"} id="66kcExXIpwp1" outputId="3f8245b7-bf7d-44da-8aa9-b8b5c0c7ccab"

n25_df["1mo Change (%)"] = n25_df.Ticker.apply(lambda x: get_change(x, "1mo"))

# + colab={"base_uri": "https://localhost:8080/", "height": 824} id="u1mvvIoSjXC9" outputId="f252b06b-57af-4b70-c5b6-c99b043eb890"

n25_df

# + colab={"base_uri": "https://localhost:8080/"} id="_6zvqkcrDzWo" outputId="428d3b02-b9a5-4151-c10f-7d3c1d8bfd17"

n25_df.rename(columns={'Bid': 'Price - 2/5'}, inplace=True)

# + colab={"base_uri": "https://localhost:8080/"} id="nyBSbceSFonB" outputId="2906c819-f508-4932-e8d7-dc4ffc901b4d"

n25_df["Industry"] = n25_df.Ticker.apply(lambda x: yf.Ticker(x).info['industry'])

# + colab={"base_uri": "https://localhost:8080/", "height": 824} id="evApezERGIep" outputId="041665b0-d373-458e-e2ba-107667b6e466"

n25_df

# + id="c1X35SLwMJDt"

| Reddit_Stock_Trends.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

import os

import pickle

from sklearn.preprocessing import StandardScaler

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

from pe_data import *

from hashing_vectorizer import *

# +

default_data_dir_path = "./data"

default_result_dir_path = "./result"

train_df_dir_path = "./data/v1"

test_df_dir_path = "./data/v1_test"

std_scaler_file_path = os.path.join(default_result_dir_path, "std_scaler_numeric_features.pkl")

l1_extracted_feature_file_path = os.path.join(default_result_dir_path, "l1_svm_extracted_numeric_features.pkl")

xgb_extracted_feature_file_path = os.path.join(default_result_dir_path, "xgb_extracted_numeric_features.pkl")

l1_svm_model_file_path = os.path.join(default_result_dir_path, "numeric_l1_svm_model.pkl")

xgb_model_file_path = os.path.join(default_result_dir_path, "numeric_xgb_model.pkl")

hashing_vectorizer_dir_path = os.path.join(default_result_dir_path, "hashing_vectorizer")

xgb_clf_file_path = os.path.join(default_result_dir_path, "xgb_clf.pkl")

# -

train_df_file_path_list = [os.path.join(train_df_dir_path, df_file_name) for df_file_name in os.listdir(train_df_dir_path)]

test_df_file_path_list = [os.path.join(test_df_dir_path, df_file_name) for df_file_name in os.listdir(test_df_dir_path)]

test_df_file_path_list

# +

train_df_list = list()

test_df_list = list()

for train_df_file_path in train_df_file_path_list:
    train_df_list.append(pd.read_csv(train_df_file_path))

for test_df_file_path in test_df_file_path_list:
    test_df_list.append(pd.read_csv(test_df_file_path))

train_df = pd.concat(train_df_list, ignore_index=True)

test_df = pd.concat(test_df_list, ignore_index=True)

# -

# # Train

# ## Numeric Features

train_pfe = PeFeatureExtractor(df=train_df)

train_pfe.preprocessing_label()

train_pfe.preprocessing_type()

numeric_df, str_df = train_pfe.get_numeric_str_df()

numeric_df

# Get extracted feature list

with open(l1_extracted_feature_file_path, "rb") as f:
    selected_feature_name_list = pickle.load(f)

selected_numeric_df = numeric_df[selected_feature_name_list]

selected_numeric_df

# Standard scaling

scaler = StandardScaler()

# +

# scaler.fit(X=selected_numeric_df)

# scaled_numeric_data = scaler.transform(X=selected_numeric_df)

# with open(std_scaler_file_path, "wb") as f:

# pickle.dump(scaler, f)

# +

# scaled_numeric_data = scaler.transform(X=selected_numeric_df)

# scaled_numeric_data

# +

with open(std_scaler_file_path, "rb") as f:
    scaler = pickle.load(f)

scaled_numeric_data = scaler.transform(X=selected_numeric_df)

# -

scaled_numeric_data

# ## String Features

str_df

vectorizer_object = CustomHashingVectorizer(df=str_df)

column_name_list = str_df.columns

vectorizer_object.load(

column_name_list=column_name_list,

save_dir_path=hashing_vectorizer_dir_path

)

vectorizer_dict = vectorizer_object.vectorizer_dict

# +

str_vector_arr = list()

for column_name in column_name_list:
    vectorizer = vectorizer_dict[column_name]
    sample_data = str_df[column_name].fillna("")
    sample_data = sample_data.map(lambda x : x.replace("/", " "))
    sample_data = sample_data.map(lambda x : x.strip())
    vector = vectorizer_object.transform(
        column_name=column_name,
        data=sample_data
    )
    str_vector_arr.append(vector)

# -

str_vector_arr = np.concatenate(str_vector_arr, axis=1)

scaled_numeric_data.shape

str_vector_arr.shape

train_features = np.concatenate([scaled_numeric_data, str_vector_arr], axis=1)

train_features.shape

# ## Dataset

from sklearn.model_selection import train_test_split

X_train, X_val, y_train, y_val = train_test_split(

train_features,

train_pfe.label_list,

test_size=0.2,

random_state=777

)

print(f"Train X shape : {X_train.shape}")

print(f"Val X shape : {X_val.shape}")

print(f"Train y shape : {y_train.shape}")

print(f"Val y shape : {y_val.shape}")

# ## Training (Train / Val)

# ### XGBoost

from xgboost import XGBClassifier

xgb_clf = XGBClassifier(

booster="gbtree",

objective="binary:logistic",

importance_type="gain"

)

xgb_clf.fit(X=X_train, y=y_train)

xgb_pred_list = xgb_clf.predict(X_val)

xgb_pred_list

# ## Evaluation

from sklearn.metrics import classification_report

xgb_result_dict = classification_report(

y_true=y_val,

y_pred=xgb_pred_list,

output_dict=True

)

xgb_result_dict

# ### SVM (rbf)

from sklearn.svm import SVC

svm_clf = SVC(

C=1.0,

kernel="rbf"

)

svm_clf.fit(X=X_train, y=y_train)

svm_pred_list = svm_clf.predict(X_val)

svm_pred_list

# ## Evaluation

svm_result_dict = classification_report(

y_true=y_val,

y_pred=svm_pred_list,

output_dict=True

)

svm_result_dict

# ## Training (Entire)

xgb_entire_clf = XGBClassifier(

booster="gbtree",

objective="binary:logistic",

importance_type="gain"

)

xgb_entire_clf.fit(X=train_features, y=train_pfe.label_list)

# Save

with open(xgb_clf_file_path, "wb") as f:
    pickle.dump(xgb_entire_clf, f)

| train.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

import tensorflow as tf

import matplotlib.pyplot as plt

import numpy as np

from tensorflow.examples.tutorials.mnist import input_data

tf.reset_default_graph()

mnist = input_data.read_data_sets("../mnist/data/", one_hot=True)

total_epoch = 15

batch_size = 100

n_hidden = 256

n_input = 28 * 28

n_noise = 128

n_class = 10

X = tf.placeholder(tf.float32, [None, n_input])

Y = tf.placeholder(tf.float32, [None, n_class])

Z = tf.placeholder(tf.float32, [None, n_noise])

def generator(noise, labels):
    with tf.variable_scope('generator'):
        inputs = tf.concat([noise, labels], 1)
        print(inputs)
        hidden = tf.layers.dense(inputs, n_hidden, activation=tf.nn.relu, name="gen_hidden")
        output = tf.layers.dense(hidden, n_input, activation=tf.nn.sigmoid)
    return output

def discriminator(inputs, labels, reuse=None):
    with tf.variable_scope('discriminator') as scope:
        if reuse:
            scope.reuse_variables()
        inputs = tf.concat([inputs, labels], 1)
        hidden = tf.layers.dense(inputs, n_hidden, activation=tf.nn.relu, name="dis_hidden")
        output = tf.layers.dense(hidden, 1, activation=None)
    return output

def get_noise(batch_size, n_noise):
    return np.random.uniform(-1., 1., size=[batch_size, n_noise])

G = generator(Z, Y)

D_real = discriminator(X, Y)

D_gene = discriminator(G, Y, True)

loss_D_real = tf.reduce_mean(

tf.nn.sigmoid_cross_entropy_with_logits(

logits=D_real, labels=tf.ones_like(D_real)))

loss_D_gene = tf.reduce_mean(

tf.nn.sigmoid_cross_entropy_with_logits(

logits=D_gene, labels=tf.zeros_like(D_gene)))

loss_D = loss_D_real + loss_D_gene

loss_G = tf.reduce_mean(

tf.nn.sigmoid_cross_entropy_with_logits(

logits=D_gene, labels=tf.ones_like(D_gene)))

tf.summary.scalar('costD', loss_D)

tf.summary.scalar('costG', loss_G)

vars_D = tf.get_collection(tf.GraphKeys.TRAINABLE_VARIABLES,

scope='discriminator')

vars_G = tf.get_collection(tf.GraphKeys.TRAINABLE_VARIABLES,

scope='generator')

train_D = tf.train.AdamOptimizer().minimize(loss_D,

var_list=vars_D)

train_G = tf.train.AdamOptimizer().minimize(loss_G,

var_list=vars_G)

tf.summary.histogram('vars_D', vars_D[0])

tf.summary.histogram('vars_G', vars_G[0])

sess = tf.Session()

merged = tf.summary.merge_all()

writer = tf.summary.FileWriter('./logs', sess.graph)

sess.run(tf.global_variables_initializer())

total_batch = int(mnist.train.num_examples/batch_size)

loss_val_D, loss_val_G = 0, 0

for epoch in range(total_epoch):

for i in range(total_batch):

batch_xs, batch_ys = mnist.train.next_batch(batch_size)

noise = get_noise(batch_size, n_noise)

m, loss_val_D, _ = sess.run([merged, loss_D, train_D],

feed_dict={X: batch_xs, Y: batch_ys, Z: noise})

loss_val_G, _ = sess.run([loss_G, train_G],

feed_dict={Y: batch_ys, Z: noise})

writer.add_summary(m, i + epoch * total_batch)

# _, _, loss_val_G, loss_val_D = sess.run([train_G, train_D, loss_G, loss_D],

# feed_dict={X: batch_xs, Y: batch_ys, Z: noise})

print('Epoch:', '%04d' % epoch,

'D loss: {:.4}'.format(loss_val_D),

'G loss: {:.4}'.format(loss_val_G))

if epoch == 0 or (epoch + 1) % 10 == 0:

sample_size = 10

noise = get_noise(sample_size, n_noise)

samples = sess.run(G,

feed_dict={Y: mnist.test.labels[:sample_size],

Z: noise})

fig, ax = plt.subplots(2, sample_size, figsize=(sample_size, 2))

for i in range(sample_size):

ax[0][i].set_axis_off()

ax[1][i].set_axis_off()

ax[0][i].imshow(np.reshape(mnist.test.images[i], (28, 28)))

ax[1][i].imshow(np.reshape(samples[i], (28, 28)))

plt.savefig('samples2/{}.png'.format(str(epoch).zfill(3)), bbox_inches='tight')

plt.close(fig)

print('Optimization complete!')

# -
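# The discriminator and generator losses above are both built from sigmoid cross-entropy on raw logits.
# As a rough illustration, the sketch below recomputes them in plain Python using the numerically
# stable form max(x, 0) - x*z + log(1 + exp(-|x|)). The helper names (`gan_losses`, etc.) are
# hypothetical and are not part of the notebook; they only mirror loss_D and loss_G.

```python
import math


def sigmoid_cross_entropy_with_logits(logit, label):
    """Binary cross-entropy on a raw logit, in a numerically stable form."""
    return max(logit, 0.0) - logit * label + math.log1p(math.exp(-abs(logit)))


def gan_losses(d_real_logits, d_fake_logits):
    """Return (loss_D, loss_G), each averaged over its batch."""
    n_real, n_fake = len(d_real_logits), len(d_fake_logits)
    # Discriminator: real samples should be scored 1, generated samples 0.
    loss_d_real = sum(sigmoid_cross_entropy_with_logits(x, 1.0) for x in d_real_logits) / n_real
    loss_d_fake = sum(sigmoid_cross_entropy_with_logits(x, 0.0) for x in d_fake_logits) / n_fake
    # Generator: wants the discriminator to score generated samples as 1.
    loss_g = sum(sigmoid_cross_entropy_with_logits(x, 1.0) for x in d_fake_logits) / n_fake
    return loss_d_real + loss_d_fake, loss_g


# An undecided discriminator (all logits 0) gives log(2) per term.
loss_d, loss_g = gan_losses([0.0, 0.0], [0.0, 0.0])
```

# With all logits at zero the discriminator loss is 2*log(2) and the generator loss is log(2),
# which is a handy sanity value to compare against early in training.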

from tensorflow.python.client import device_lib

device_lib.list_local_devices()

| 3minTensorflow/08. GAN/08. GAN2.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: pytorch-0.4

# language: python

# name: pytorch-0.4

# ---

# +

import numpy as np

import random

import torch as pt

import torch.nn as nn

import torch.optim as optim

from torch.utils.data import Dataset, DataLoader

# %load_ext autoreload

# %autoreload 2

pt.set_printoptions(linewidth=200)

# -

device = pt.device("cuda:0" if pt.cuda.is_available() else "cpu")

hidden_size = 50

# + code_folding=[]

class DinosDataset(Dataset):

def __init__(self):

super().__init__()

with open('dinos.txt') as f:

content = f.read().lower()

self.vocab = sorted(set(content))

self.vocab_size = len(self.vocab)

self.lines = content.splitlines()

self.ch_to_idx = {c:i for i, c in enumerate(self.vocab)}

self.idx_to_ch = {i:c for i, c in enumerate(self.vocab)}

def __getitem__(self, index):

line = self.lines[index]

x_str = ' ' + line #add a space at the beginning, which indicates a vector of zeros.

y_str = line + '\n'

x = pt.zeros([len(x_str), self.vocab_size], dtype=pt.float)

y = pt.empty(len(x_str), dtype=pt.long)

y[0] = self.ch_to_idx[y_str[0]]

#we start from the second character because the first character of x was nothing(vector of zeros).

for i, (x_ch, y_ch) in enumerate(zip(x_str[1:], y_str[1:]), 1):

x[i][self.ch_to_idx[x_ch]] = 1

y[i] = self.ch_to_idx[y_ch]

return x, y

def __len__(self):

return len(self.lines)

# -
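# To make the input/target shift in `DinosDataset.__getitem__` concrete, here is a small sketch in
# plain Python. It skips the one-hot tensors and only lists which character is fed in and which
# character is expected at each step. `shifted_pairs` is a hypothetical helper, and `None` stands
# for the all-zero input vector used at the first step.

```python
def shifted_pairs(line):
    """Return (input_char, target_char) pairs for one name."""
    inputs = [None] + list(line)     # step 0 has no previous character
    targets = list(line) + ['\n']    # the final target is the end-of-name newline
    return list(zip(inputs, targets))


pairs = shifted_pairs('rex')
```

# For 'rex' the pairs are (None, 'r'), ('r', 'e'), ('e', 'x'), ('x', '\n'): the model always
# predicts the next character, and the newline marks the end of the name.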

trn_ds = DinosDataset()

trn_dl = DataLoader(trn_ds, batch_size=1, shuffle=True)

# + code_folding=[]

class LSTM(nn.Module):

def __init__(self, input_size, hidden_size, output_size):

super(LSTM, self).__init__()

self.linear_f = nn.Linear(input_size + hidden_size, hidden_size)

self.linear_u = nn.Linear(input_size + hidden_size, hidden_size)

self.linear_c = nn.Linear(input_size + hidden_size, hidden_size)

self.linear_o = nn.Linear(input_size + hidden_size, hidden_size)

self.i2o = nn.Linear(hidden_size, output_size)

def forward(self, c_prev, h_prev, x):

combined = pt.cat([x, h_prev], 1)

f = pt.sigmoid(self.linear_f(combined))

u = pt.sigmoid(self.linear_u(combined))

c_tilde = pt.tanh(self.linear_c(combined))

c = f*c_prev + u*c_tilde

o = pt.sigmoid(self.linear_o(combined))

h = o*pt.tanh(c)

y = self.i2o(h)

return c, h, y

# -
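# The gate arithmetic in `LSTM.forward` can be checked by hand with a scalar version. The sketch
# below assumes a hidden size of 1 and a hypothetical weight layout (one (w_x, w_h, bias) triple
# per gate); it is not the module above, only the same equations written with floats.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def lstm_step(c_prev, h_prev, x, w):
    """One LSTM step on scalars. `w` maps a gate name to (w_x, w_h, bias)."""
    def pre(gate):
        w_x, w_h, b = w[gate]
        return w_x * x + w_h * h_prev + b

    f = sigmoid(pre('f'))            # forget gate
    u = sigmoid(pre('u'))            # update (input) gate
    c_tilde = math.tanh(pre('c'))    # candidate cell state
    c = f * c_prev + u * c_tilde     # new cell state
    o = sigmoid(pre('o'))            # output gate
    h = o * math.tanh(c)             # new hidden state
    return c, h


# With all weights zero every gate is 0.5 and the candidate is 0.
zero_weights = {gate: (0.0, 0.0, 0.0) for gate in 'fuco'}
c, h = lstm_step(c_prev=2.0, h_prev=0.0, x=1.0, w=zero_weights)
```

# In this degenerate case the cell state is simply halved (c = 1.0) and h = 0.5 * tanh(1.0).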

model = LSTM(trn_ds.vocab_size, hidden_size, trn_ds.vocab_size).to(device)

loss_fn = nn.CrossEntropyLoss()

optimizer = optim.Adam(model.parameters(), lr=1e-2)

# + code_folding=[]

def print_sample(sample_idxs):

print(trn_ds.idx_to_ch[sample_idxs[0]].upper(), end='')

[print(trn_ds.idx_to_ch[x], end='') for x in sample_idxs[1:]]

# + code_folding=[]

def sample(model):

model.eval()

c_prev = pt.zeros([1, hidden_size], dtype=pt.float, device=device)

h_prev = pt.zeros_like(c_prev)

x = c_prev.new_zeros([1, trn_ds.vocab_size])

sampled_indexes = []

idx = -1

n_chars = 1

newline_char_idx = trn_ds.ch_to_idx['\n']

with pt.no_grad():

while n_chars != 50 and idx != newline_char_idx:

c_prev, h_prev, y_pred = model(c_prev, h_prev, x)

softmax_scores = pt.softmax(y_pred, 1).cpu().numpy().ravel()

np.random.seed(np.random.randint(1, 5000))

idx = np.random.choice(np.arange(trn_ds.vocab_size), p=softmax_scores)

sampled_indexes.append(idx)

x = pt.zeros_like(x)  # next input is the one-hot vector of the character actually sampled

x[0, int(idx)] = 1

n_chars += 1

if n_chars == 50:

sampled_indexes.append(newline_char_idx)

model.train()

return sampled_indexes
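# The core of `sample` is: turn logits into probabilities, draw one index, and feed that index
# back as a one-hot vector. A minimal stdlib sketch of that single step follows; `sample_next`
# is a hypothetical helper and the logits are made-up values.

```python
import math
import random


def softmax(logits):
    """Softmax with the usual max-subtraction for numerical stability."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def sample_next(logits, rng):
    """Draw an index from softmax(logits) and return it with its one-hot vector."""
    probs = softmax(logits)
    idx = rng.choices(range(len(logits)), weights=probs, k=1)[0]
    one_hot = [1.0 if i == idx else 0.0 for i in range(len(logits))]
    return idx, one_hot


rng = random.Random(0)  # a single seeded generator; no reseeding inside the loop
idx, one_hot = sample_next([2.0, 0.5, -1.0], rng)
```

# Feeding back the sampled index (rather than the argmax) keeps the generated string and the
# model's input history consistent with each other.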

# + code_folding=[]

def train_one_epoch(model, loss_fn, optimizer):

model.train()

for line_num, (x, y) in enumerate(trn_dl):

loss = 0

optimizer.zero_grad()

c_prev = pt.zeros([1, hidden_size], dtype=pt.float, device=device)

h_prev = pt.zeros_like(c_prev)

x, y = x.to(device), y.to(device)

for i in range(x.shape[1]):

c_prev, h_prev, y_pred = model(c_prev, h_prev, x[:, i])

loss += loss_fn(y_pred, y[:, i])

if (line_num+1) % 100 == 0:

print_sample(sample(model))

loss.backward()

optimizer.step()

# + code_folding=[]

def train(model, loss_fn, optimizer, dataset='dinos', epochs=1):

for e in range(1, epochs+1):

print(f'{"-"*20} Epoch {e} {"-"*20}')

train_one_epoch(model, loss_fn, optimizer)

# -

train(model, loss_fn, optimizer, epochs=3)

| 5- Sequence Models/Week 1/Dinosaur Island -- Character-level language model/Dinosaur Island LSTM.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/The-Gupta/Time/blob/main/01.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + id="Kz_A59-oqFV8"

# 20JUN21, SUN

# [Public] Location: https://drive.google.com/drive/folders/1fFoFxY4i3YU-KNkz84_AG9Nftxr4Alnb

# + colab={"base_uri": "https://localhost:8080/"} id="3tHOP6aBqJQG" outputId="b9dd1749-1fc7-4475-d030-8c5ead8910ef"

from google.colab import drive

drive.mount('/content/gdrive')

# + id="4HUYwKvfEuQb"

# + colab={"base_uri": "https://localhost:8080/", "height": 894} id="nONIWmjoEa9n" outputId="451fac69-debb-4830-a079-3f3ecc52c5ef"

# !pip install pyLDAvis

# + [markdown] id="6GiyflCaEw1D"

# Click 'RESTART RUNTIME', and then 'Yes'

# + colab={"base_uri": "https://localhost:8080/"} id="GL_LF6LuqJSD" outputId="0680596f-bb36-4cf9-90a4-8afdc2272820"

# cd "gdrive/MyDrive/Time Magazine"

# + id="WwQVPbeUqJWa"

# + colab={"base_uri": "https://localhost:8080/"} id="_l8H4fDlw3pW" outputId="ec7fe15c-aa45-4acd-d4e6-8966d6ab2832"

import re, os, ast, time, requests, urllib.request

from datetime import datetime

from pytz import timezone

# !sudo apt install tesseract-ocr

# !pip install pytesseract

import pytesseract

pytesseract.pytesseract.tesseract_cmd = (r'/usr/bin/tesseract')

try:

from PIL import Image

except ImportError:

import Image

import cv2, random, itertools

from google.colab.patches import cv2_imshow

import numpy as np

from sklearn.cluster import DBSCAN

# !python -m spacy download en_core_web_sm

import spacy

nlp = spacy.load('en_core_web_sm')

import nltk

from nltk.corpus import stopwords

nltk.download('stopwords')

stopword_set = set(stopwords.words('english'))

from __future__ import print_function

import pyLDAvis

import pyLDAvis.sklearn

pyLDAvis.enable_notebook()

from sklearn.feature_extraction.text import CountVectorizer, TfidfVectorizer

from sklearn.decomposition import LatentDirichletAllocation

# + id="ooFrIFrxEKuY"

# + [markdown] id="6yOCc6-8rGWk"

# # Scraping [Time Magazine](https://time.com/vault/)

# 1923 - 2015

# + id="R8SQnOciqJYV"

def get_issue_dates(year):

url = 'https://time.com/vault/year/{}/'.format(year)

page = requests.get(url)

issue_dates = re.findall(r'"coverDate":"(\d{4}-\d{2}-\d{2})"', page.text)

return issue_dates
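# `get_issue_dates` relies on a regular expression to pull ISO dates out of JSON embedded in the
# page. The sketch below shows the same pattern on a made-up snippet; `sample_html` is invented
# for illustration and is not the real page markup, which may differ or change over time.

```python
import re

# Raw string so that \d reaches the regex engine unchanged.
COVER_DATE = re.compile(r'"coverDate":"(\d{4}-\d{2}-\d{2})"')

# Hypothetical fragment of embedded JSON.
sample_html = '{"coverDate":"1923-03-03","x":1},{"coverDate":"1923-03-10"}'
dates = COVER_DATE.findall(sample_html)
```

# `findall` returns only the captured group, so `dates` is a plain list of date strings in page order.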

def download_page_image(url, path):

try:

urllib.request.urlretrieve(url, path)

return True

except Exception as e:

print('\n\tURL:', url, '\n\tPath:', path)

print('\t', e)

return False

def download_issue(issue_date):

year, month, day = issue_date.split('-')

# Find Number of Pages

url = 'https://time.com/vault/issue/{}/page/1/'.format(issue_date)

page = requests.get(url)

page_count = len(ast.literal_eval(re.findall(r'"pagePDFs":(\[[^\[\]]*\])', page.text)[0]))

# Create Issue Directory

if not os.path.exists(year+'/'+issue_date): os.mkdir(year+'/'+issue_date)

# Download Pages

for page_number in range(1, page_count+1):

page_url = 'https://content.time.com/time/subscriber/vault/{}/{}/{}/{}/1550.jpg'.format(year, month, year+month+day, page_number)

# urllib.request.urlretrieve(page_url, '{}/{}/{}.jpg'.format(year, issue_date, page_number))

count = 0

success = download_page_image(page_url, '{}/{}/{}.jpg'.format(year, issue_date, page_number))

while not success and count < 3:

success = download_page_image(page_url, '{}/{}/{}.jpg'.format(year, issue_date, page_number))

time.sleep(1)

count += 1
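# The retry loop in `download_issue` can be factored into a small reusable helper. This is only a
# sketch under assumptions: `retry` and its parameters are hypothetical names, and the `sleep`
# argument exists purely so the example can run without actually waiting.

```python
import time


def retry(action, attempts=4, delay=1.0, sleep=time.sleep):
    """Call action() until it returns True or the attempts run out."""
    for attempt in range(attempts):
        if action():
            return True
        if attempt < attempts - 1:
            sleep(delay)  # wait before the next try, not after the last one
    return False


# A fake download that fails twice and then succeeds.
calls = []


def flaky():
    calls.append(1)
    return len(calls) >= 3


slept = []
ok = retry(flaky, attempts=4, delay=0.5, sleep=slept.append)
```

# In the notebook this could be used as `retry(lambda: download_page_image(page_url, path))`,
# which gives one initial attempt plus three retries, the same budget as the loop above.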

def get_timestamp():

now_utc = datetime.now(timezone('UTC'))

return now_utc.astimezone(timezone('Asia/Kolkata')).strftime("[%d-%m-%Y %H:%M:%S]")

# + id="V71Hxn3YqJbD"

# %%time

for year in list(range(1923, 2021+1)):

year = str(year)

# Create year Directory

if not os.path.exists(year): os.mkdir(year)

issue_dates = get_issue_dates(year)

for issue_date in issue_dates:

download_issue(issue_date)

print('\n{} Completed: {}.'.format(get_timestamp(), year))

# + id="vjNl8HOCqJeE"

# + [markdown] id="B9dlKXepr5P0"

# # Text Extraction (2000-2015)

# + id="Uk4d_EmkqJje" colab={"base_uri": "https://localhost:8080/"} outputId="31512116-016e-4dae-f905-6123a5f4b3fb"

for year in range(2000, 2016):

year = str(year)

for path,subdirs,files in os.walk(year):

dir_files = os.listdir(path)

print(path)

for name in files:

if name.endswith('.jpg') and name[:-4]+'.txt' not in dir_files:

img_path = os.path.join(path, name)

image = cv2.imread(img_path)

gray = cv2.cvtColor(image, cv2.COLOR_BGR2GRAY) # Convert to grayscale

gray = cv2.resize(gray, None, fx = 3, fy = 3, interpolation = cv2.INTER_CUBIC) # Zoom 3X

text = pytesseract.image_to_string(gray) # OCR

# Save text

f = open(img_path[:-4]+'.txt', 'w')

f.write(text)

f.close()

# + id="hjlWRRmKqJmI"

# + [markdown] id="sYqOxGMr9tLe"

# # What is on the cover page?

# The most Frequent Terms

# + colab={"base_uri": "https://localhost:8080/"} id="tJJE2-WdqJrK" outputId="05ebcfbf-99a1-45c2-bc0a-672cfade0f67"

try: from unidecode import unidecode

except ImportError:

# !pip install unidecode

from unidecode import unidecode

def read_text_file(filename):

with open(filename) as f:

content = ' '.join([unidecode(i.strip()) for i in f.readlines() if i.strip()]).replace('- ', '')

return content

def get_magazine_text(path):

pages = sorted([os.path.join(path, file) for file in os.listdir(path) if file.endswith('.txt')], key=lambda x: int(x.split('/')[-1][:-4]))

texts = [read_text_file(page) for page in pages]

return texts
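# `get_magazine_text` sorts page files by their numeric name because a plain string sort would put
# page 10 before page 2. A small sketch of the difference, using made-up paths and a hypothetical
# `page_number` helper built on `os.path` instead of manual slicing:

```python
import os


def page_number(path):
    """Extract the integer page number from a path such as '.../12.txt'."""
    return int(os.path.splitext(os.path.basename(path))[0])


files = ['2000/2000-01-01/10.txt', '2000/2000-01-01/2.txt', '2000/2000-01-01/1.txt']
ordered = sorted(files, key=page_number)   # numeric order: 1, 2, 10
lexicographic = sorted(files)              # string order: 1, 10, 2
```

# Using `os.path.basename` also avoids assuming '/' as the path separator.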

# + id="dCzBALwYyObI"

release_dates = sum([[os.path.join(str(year), dir_) for dir_ in os.listdir(str(year)) if '.' not in dir_] for year in range(2000, 2016)], [])

# + id="EEDFs-oW0E7H"

texts = []

for release_date in release_dates:

# print('\n\n\n', release_date)

text = read_text_file(release_date+'/1.txt')

texts.append(text)

# print(text)

# doc = nlp(text)

# for entity in doc.ents:

# print(entity.label_, ' | ', entity.text)

# + id="EpHDI5QO7gEq" colab={"base_uri": "https://localhost:8080/"} outputId="3678b235-527b-4af1-d98b-b016742b4e52"

from collections import Counter

l = ' '.join(texts).lower().split()

l = [i for i in l if len(i)>=3 and i not in stopword_set]

c = Counter(l)

c.most_common()
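# The frequency count above boils down to: lowercase, split on whitespace, drop short tokens and
# stopwords, then count. Here is the same idea as a tiny self-contained function. The texts and
# the stopword set are invented for the example, and `top_terms` is a hypothetical name.

```python
from collections import Counter


def top_terms(texts, stopwords, min_len=3, n=3):
    """Return the n most frequent tokens after length and stopword filtering."""
    tokens = ' '.join(texts).lower().split()
    kept = [t for t in tokens if len(t) >= min_len and t not in stopwords]
    return Counter(kept).most_common(n)


demo = top_terms(['The war in Iraq', 'War and the economy', 'Iraq war'],
                 stopwords={'the', 'and'}, n=2)
```

# Note that whitespace splitting keeps punctuation attached to words (for example 'war,' and 'war'
# count separately), which matters for noisy OCR output.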

# + [markdown] id="bcek5cWK-VOA"

# ### Looks like it's mostly about the US and Geopolitics.

# + colab={"background_save": true} id="LkDnXVs3qJ7c"

# + colab={"base_uri": "https://localhost:8080/"} id="HwZ4bj5mV7VB" outputId="cd035ddd-3806-483e-9b59-2ec779eb77a4"

# Save all texts in a Pickle File (for quick retrieval).

# texts = {}

# count = 0

# for release_date in release_dates:

# print(release_date)

# for file_ in os.listdir(release_date):

# count += 1

# texts[os.path.join(release_date, file_)] = (count, get_magazine_text(release_date))

# import pickle

# with open('time_magazine_texts.pickle', 'wb') as f:

# pickle.dump(texts, f, protocol=pickle.HIGHEST_PROTOCOL)

# with open('time_magazine_texts.pickle', 'rb') as f:

# texts = pickle.load(f)

# + [markdown] id="cYeG9KN1-qmp"

# # Let's try to find some topics and their dominant terms

# + colab={"base_uri": "https://localhost:8080/"} id="XUJzBBkgCIM-" outputId="6407a37f-684f-4a67-9b2f-3dacc47e8d23"

len(release_dates)

# + id="QTbp9tUrCIPv"

# + id="2ckHX0JP4uls"

# Let's look at a single issue first.

# + id="8LEx-hq8BSNH" colab={"base_uri": "https://localhost:8080/"} outputId="27e76475-0c8a-4014-9ec7-b43b8f452f27"

# %%time

l = [get_magazine_text(release_date) for release_date in release_dates[:1]]

pages = [item for sublist in l for item in sublist]

# + id="HEV90fdKAjeX"

n_components = 5 # Number of topics

# + colab={"base_uri": "https://localhost:8080/", "height": 878} id="usvsVV5BqJ-F" outputId="5d39dea7-d80e-4f27-fa90-a7ca92169635"

tf_vectorizer = CountVectorizer(strip_accents = 'unicode',

stop_words = 'english',

lowercase = True,

token_pattern = r'\b[a-zA-Z]{3,}\b',

max_df = 0.5,

min_df = 10)

dtm_tf = tf_vectorizer.fit_transform(pages)

print(dtm_tf.shape)

# for TF DTM

lda_tf = LatentDirichletAllocation(n_components=n_components, random_state=0)

lda_tf.fit(dtm_tf)

pyLDAvis.sklearn.prepare(lda_tf, dtm_tf, tf_vectorizer)

# + colab={"base_uri": "https://localhost:8080/", "height": 878} id="g01NHeccqKAf" outputId="8590ecc7-ef52-4384-853f-25f55215f9e2"

tfidf_vectorizer = TfidfVectorizer(strip_accents = 'unicode',

stop_words = 'english',

lowercase = True,

token_pattern = r'\b[a-zA-Z]{3,}\b',

max_df = 0.5,

min_df = 10)

dtm_tfidf = tfidf_vectorizer.fit_transform(pages)

print(dtm_tfidf.shape)

# for TFIDF DTM

lda_tfidf = LatentDirichletAllocation(n_components=n_components, random_state=0)

lda_tfidf.fit(dtm_tfidf)

pyLDAvis.sklearn.prepare(lda_tfidf, dtm_tfidf, tfidf_vectorizer)

# + id="iB-Ayy2FqKDF"

# TODO: For all the issues, and interpret

# + id="BtNwJGCHqKF2"

# + id="TO0-N0HHDY2c"

# + id="Op1Q7L4EDY5O"

| 01.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .jl

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Julia 1.7.3

# language: julia

# name: julia-1.7

# ---

# Before running this, please make sure to activate and instantiate the

# tutorial-specific package environment, using this

# [`Project.toml`](https://raw.githubusercontent.com/juliaai/DataScienceTutorials.jl/gh-pages/__generated/A-composing-models/Project.toml) and

# [this `Manifest.toml`](https://raw.githubusercontent.com/juliaai/DataScienceTutorials.jl/gh-pages/__generated/A-composing-models/Manifest.toml), or by following

# [these](https://juliaai.github.io/DataScienceTutorials.jl/#learning_by_doing) detailed instructions.

# ## Generating dummy data

# Let's start by generating some dummy data with both numerical values and categorical values:

# +

using MLJ

using PrettyPrinting

KNNRegressor = @load KNNRegressor

# -

# input

X = (age = [23, 45, 34, 25, 67],

gender = categorical(['m', 'm', 'f', 'm', 'f']))

# target

height = [178, 194, 165, 173, 168];

# Note that the scientific type of `age` is `Count` here:

scitype(X.age)

# We will want to coerce that to `Continuous` so that it can be given to a regressor that expects such values.

# ## Declaring a pipeline

# A typical workflow for such data is to one-hot-encode the categorical data and then apply some regression model on the data.

# Let's say that we want to apply the following steps:

# 1. One hot encode the categorical features in `X`

# 1. Standardize the target variable (`:height`)

# 1. Train a KNN regression model on the one hot encoded data and the Standardized target.

# The `Pipeline` constructor helps you define such a simple (non-branching) pipeline of steps to be applied in order:

pipe = Pipeline(

coercer = X -> coerce(X, :age=>Continuous),

one_hot_encoder = OneHotEncoder(),

transformed_target_model = TransformedTargetModel(

model = KNNRegressor(K=3);

target=UnivariateStandardizer()

)

)

# Note the coercion of the `:age` variable to Continuous since `KNNRegressor` expects `Continuous` input.

# Note also the `TransformedTargetModel` which allows one to learn a transformation (in this case Standardization) of the

# target variable to be passed to the `KNNRegressor`.

# Hyperparameters of this pipeline can be accessed (and set) using dot syntax:

pipe.transformed_target_model.model.K = 2

pipe.one_hot_encoder.drop_last = true;

# Evaluation for a pipe can be done with the `evaluate` method; implicitly it will construct machines that will contain the fitted parameters etc:

evaluate(

pipe,

X,

height,

resampling=Holdout(),

measure=rms

) |> pprint

# ---

#

# *This notebook was generated using [Literate.jl](https://github.com/fredrikekre/Literate.jl).*

| __site/__generated/A-composing-models/tutorial.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

import pandas as pd

import numpy as np

from astropy.coordinates import SkyCoord

from astropy import units as u

# Read in Isaacson 2017 target table:

f = pd.read_csv("../../bl/Isaacson2017.csv")

#k=f.loc[np.where(f['Dist']<=5.0)[0]]

#k

missing=('HIP443' ,'HIP1242', 'HIP2081', 'HIP2762', 'HIP8903', 'HIP11452', 'HIP12351', \

'HIP14576', 'HIP16134', 'HIP16852', 'HIP23875', 'HIP35296', 'GJ273', 'HIP36349',\

'HIP42913', 'HIP46706', 'HIP48659', 'HIP49973', 'HIP51986', 'HIP52190',\

'HIP54182', 'HIP54872', 'HIP65378', 'HIP67301', 'GJ559B', 'GJ559A', 'HIP72622',\

'HIP77516', 'HIP81919', 'HIP82817', 'HIP84123', 'HIP88601', 'GJ702A', 'GJ702B',\

'HIP95501', 'HIP98204', 'HIP101955', 'HIP108752', 'HIP109268', 'HIP109821',\

'HIP113963', 'HIP89937')

k=pd.DataFrame(columns=list(f))

k

for i in range(len(missing)):

k=k.append(f.loc[np.where(f['SimbadName']==missing[i])[0]])

k=k.reset_index(drop=True)

k

# Pick the ones in the MeerKAT FOV

k=k.loc[np.where(k['Dec'].values<45.)[0]]

k=k.reset_index(drop=True)

k

# Add a note for interesting targets:

# Most of these don't have Gaia id's because they're too bright

p=f.rename(columns={'SimbadName':'simbadname'})

p['note'] = pd.Series('NaN', index=p.index)

p.loc[np.where(p['simbadname']=='GJ244A')[0],'note']='Sirius A'

p.loc[np.where(p['simbadname']=='HIP36850')[0],'note']='Castor AB'

p.loc[np.where(p['simbadname']=='GJ280')[0],'note']='Procyon'

p.loc[np.where(p['simbadname']=='HIP37826')[0],'note']='Pollux'

p.loc[np.where(p['simbadname']=='HIP54061')[0],'note']='Dubhe'

p.loc[np.where(p['simbadname']=='HIP62956')[0],'note']='Alioth'

p.loc[np.where(p['simbadname']=='HIP76267')[0],'note']='Alphecca'

p.loc[np.where(p['simbadname']=='HIP84012')[0],'note']='eta Oph'

p.loc[np.where(p['simbadname']=='HIP86032')[0],'note']='alp Oph'

p.loc[np.where(p['simbadname']=='HIP91262')[0],'note']='alp Lyr'

p.loc[np.where(p['simbadname']=='HIP93506')[0],'note']='zet Sgr'

p.loc[np.where(p['simbadname']=='HIP97649')[0],'note']='Altair'

p.loc[np.where(p['simbadname']=='GJ768')[0],'note']='Altair'

p.loc[np.where(p['simbadname']=='HIP105199')[0],'note']='Alderamin'

p.loc[np.where(p['simbadname']=='HIP113368')[0],'note']='Fomalhaut'

p.loc[np.where(p['simbadname']=='GJ551')[0],'note']='Proxima Centauri'

p.loc[np.where(p['simbadname']=='GJ406')[0],'note']='Wolf 359'

p.loc[np.where(p['simbadname']=='GJ699')[0],'note']="Barnard's Star"

p.loc[np.where(p['simbadname']=='GJ411')[0],'note']='Lalande 21185'

#p.loc[np.where(p['source_id']==4075141768785646848)[0][0],'note']='Ross 154'

p.loc[np.where(p['simbadname']=='GJ905')[0],'note']='Ross 248'

p.loc[np.where(p['simbadname']=='GJ144')[0],'note']='Epsilon Eridani'

p=p.loc[np.where(p['note']!='NaN')[0]]

p=p.reset_index(drop=True)

p=p.rename(columns={'simbadname':'SimbadName'})

p=p.loc[np.where(p['Dec']<45.)[0]]

p=p.reset_index(drop=True)

p

k['note']='NaN'

tot=pd.concat([p,k],sort=False)

tot=tot.reset_index(drop=True)

tot

dupes = tot.duplicated(subset='SimbadName',keep='first')

tot = tot.loc[np.where(dupes==False)[0]]

tot=tot.reset_index(drop=True)

tot

# Update to J2015.5 ref epoch

# Convert the target list proper motions to degrees/yr

pmRA_deg,pmDE_deg = (tot['pmRA'].values*u.arcsec).to(u.deg).value,(tot['pmDE'].values*u.arcsec).to(u.deg).value

# Compute the difference between the ref epoch and updated epoch:

deltat = 2015.5-2000.

# Add the delta RA/Dec to the target list RA/Dec:

RA_J20155 = tot['RA'].values+(pmRA_deg*deltat)

Dec_J20155 = tot['Dec'].values+(pmDE_deg*deltat)

tot['ra'],tot['decl']=RA_J20155,Dec_J20155

tot['ep']='2015.5'

tot
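# The epoch update above is a linear proper-motion shift. The sketch below writes that step out in
# plain Python. It ASSUMES the proper motions are in arcsec/yr and that the RA component already
# includes the cos(Dec) factor (as in many catalogues); neither is confirmed by the table itself,
# so check the column definitions before relying on it. `propagate` is a hypothetical helper.

```python
import math

ARCSEC_PER_DEG = 3600.0


def propagate(ra_deg, dec_deg, pm_ra_cosdec, pm_dec, dt_years):
    """Shift (RA, Dec) in degrees by proper motion over dt_years.

    pm_ra_cosdec and pm_dec are assumed to be in arcsec/yr, with the RA
    component already multiplied by cos(Dec).
    """
    d_dec = pm_dec / ARCSEC_PER_DEG * dt_years
    # Divide by cos(Dec) to turn an on-sky shift into a change in the RA coordinate.
    d_ra = pm_ra_cosdec / ARCSEC_PER_DEG * dt_years / math.cos(math.radians(dec_deg))
    return ra_deg + d_ra, dec_deg + d_dec


# J2000 -> J2015.5 for a made-up star at Dec = +60 deg.
ra_new, dec_new = propagate(10.0, 60.0, 3.6, -7.2, 2015.5 - 2000.0)
```

# If the catalogue instead lists the RA proper motion as a pure coordinate rate, the division by
# cos(Dec) should be dropped, which is what the cell above effectively does.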

l=pd.read_csv('Our_targets/fornax.csv')

cols=list(l)

print list(tot)

print cols

df = pd.DataFrame(index=range(len(tot)),columns=cols)

df['dist.c'],df['name']=tot['Dist'],tot['SimbadName']

df['ra'],df['decl'],df['ref_epoch']=tot['ra'],tot['decl'],tot['ep']

df['pmra'],df['pmdec']=tot['pmRA'],tot['pmDE']

df['sptype.c']=tot['SpType']

df['phot_g_mean_mag']=tot['Vmag']

df['project']='Not in Gaia'

df

df.to_csv('Our_targets/not_in_gaia.csv',index=False)

| MeerKAT_LSPs/Add close sources not in Gaia.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# <h2>Iterative methods for solving Ax = b</h2>

# The conjugate gradient method (when A is symmetric positive definite, or SPD) and the Jacobi method.

#

# +

# These are the standard imports for CS 111.

# This list may change as the quarter goes on.

import os

import time

import math

import numpy as np

import numpy.linalg as npla

import scipy

from scipy import sparse

from scipy import linalg

import scipy.sparse.linalg as spla

import matplotlib.pyplot as plt

from matplotlib import cm

from mpl_toolkits.mplot3d import axes3d

import cs111

# %matplotlib tk

# -

def Jsolve(A, b, tol = 1e-8, max_iters = 1000, callback = None):

"""Solve a linear system Ax = b for x by the Jacobi iterative method.

Parameters:

A: the matrix.

b: the right-hand side vector.

tol = 1e-8: the relative residual at which to stop iterating.

max_iters = 1000: the maximum number of iterations to do.

callback = None: a user function to call at every iteration.

The callback function has arguments 'x', 'iteration', and 'residual'

Outputs (in order):

x: the computed solution

rel_res: list of relative residual norms at each iteration.

The number of iterations actually done is len(rel_res) - 1

"""

# Check the input

m, n = A.shape

assert m == n, "matrix must be square"

bn, = b.shape

assert bn == n, "rhs vector must be same size as matrix"

# Split A into diagonal D plus off-diagonal C

d = A.diagonal() # diagonal elements of A as a vector

C = A.copy() # copy of A ...

C.setdiag(np.zeros(n)) # ... without the diagonal

# Initial guess: x = 0

x = np.zeros(n)

# Vector of relative residuals

# Relative residual is norm(residual)/norm(b)

# Initial residual is b - Ax for x=0, or b

rel_res = [1.0]

# Call user function if specified

if callback is not None:

callback(x = x, iteration = 0, residual = 1)

# Iterate

for k in range(1, max_iters+1):

# New x

x = (b - C @ x) / d

# Record relative residual

this_rel_res = npla.norm(b - A @ x) / npla.norm(b)

rel_res.append(this_rel_res)

# Call user function if specified

if callback is not None:

callback(x = x, iteration = k, residual = this_rel_res)

# Stop if within tolerance

if this_rel_res <= tol:

break

return (x, rel_res)
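# To see the Jacobi update x = (b - C x) / d at work without SciPy, here is a dense pure-Python
# sketch on a 2x2 diagonally dominant system. It is an illustration only: `jacobi` is a
# hypothetical helper, it returns the iteration count instead of the residual history, and it
# makes no attempt to be efficient.

```python
def jacobi(A, b, tol=1e-8, max_iters=1000):
    """Jacobi iteration on a small dense system given as nested lists."""
    n = len(b)
    x = [0.0] * n
    norm_b = sum(v * v for v in b) ** 0.5
    k = 0
    for k in range(1, max_iters + 1):
        # Every component is updated from the previous iterate (old x).
        x = [(b[i] - sum(A[i][j] * x[j] for j in range(n) if j != i)) / A[i][i]
             for i in range(n)]
        residual = [b[i] - sum(A[i][j] * x[j] for j in range(n)) for i in range(n)]
        rel_res = sum(r * r for r in residual) ** 0.5 / norm_b
        if rel_res <= tol:
            break
    return x, k


x_jac, iters_jac = jacobi([[4.0, 1.0], [1.0, 3.0]], [1.0, 2.0])
```

# The exact solution of this system is (1/11, 7/11). Jacobi converges here because the matrix is
# strictly diagonally dominant; for general matrices convergence is not guaranteed.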

def CGsolve(A, b, tol = 1e-8, max_iters = 1000, callback = None):

"""Solve a linear system Ax = b for x by the conjugate gradient iterative method.

Parameters:

A: the matrix.

b: the right-hand side vector.

tol = 1e-8: the relative residual at which to stop iterating.

max_iters = 1000: the maximum number of iterations to do.

callback = None: a user function to call at every iteration.

The callback function has arguments 'x', 'iteration', and 'residual'

Outputs (in order):

x: the computed solution

rel_res: list of relative residual norms at each iteration.

The number of iterations actually done is len(rel_res) - 1

"""

# Check the input

m, n = A.shape

assert m == n, "matrix must be square"

bn, = b.shape

assert bn == n, "rhs vector must be same size as matrix"

# Initial guess: x = 0

x = np.zeros(n)

# Initial residual: r = b - A@0 = b

r = b

# Initial step is in direction of residual.

d = r

# Squared norm of residual

rtr = r.T @ r

# Vector of relative residuals

# Relative residual is norm(residual)/norm(b)

# Initial residual is b - Ax for x=0, or b

rel_res = [1.0]

# Call user function if specified

if callback is not None:

callback(x = x, iteration = 0, residual = 1)

# Iterate

for k in range(1, max_iters+1):

Ad = A @ d

alpha = rtr / (d.T @ Ad) # Length of step

x = x + alpha * d # Update x to new x

r = r - alpha * Ad # Update r to new residual

rtrold = rtr

rtr = r.T @ r

beta = rtr / rtrold

d = r + beta * d # Update d to new step direction

# Record relative residual

this_rel_res = npla.norm(b - A @ x) / npla.norm(b)

rel_res.append(this_rel_res)

# Call user function if specified

if callback is not None:

callback(x = x, iteration = k, residual = this_rel_res)

# Stop if within tolerance

if this_rel_res <= tol:

break

return (x, rel_res)
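# The same 2x2 system makes a compact check of the conjugate gradient recurrences. The sketch
# below is a dense pure-Python version with hypothetical helper names. Unlike `CGsolve`, it uses
# the recurrence residual r for the stopping test instead of recomputing b - A x, which saves one
# matrix-vector product per iteration at the cost of some drift on hard problems.

```python
def dot(u, v):
    return sum(a * b for a, b in zip(u, v))


def matvec(A, v):
    return [dot(row, v) for row in A]


def conjugate_gradient(A, b, tol=1e-8, max_iters=1000):
    """CG for a small dense SPD system given as nested lists."""
    x = [0.0] * len(b)
    r = list(b)          # residual for x = 0
    d = list(r)          # first search direction
    rtr = dot(r, r)
    norm_b = rtr ** 0.5
    iters = 0
    for iters in range(1, max_iters + 1):
        Ad = matvec(A, d)
        alpha = rtr / dot(d, Ad)                           # step length
        x = [xi + alpha * di for xi, di in zip(x, d)]
        r = [ri - alpha * adi for ri, adi in zip(r, Ad)]
        rtr_old, rtr = rtr, dot(r, r)
        if rtr ** 0.5 / norm_b <= tol:
            break
        beta = rtr / rtr_old
        d = [ri + beta * di for ri, di in zip(r, d)]       # next A-conjugate direction
    return x, iters


x_cg, iters_cg = conjugate_gradient([[4.0, 1.0], [1.0, 3.0]], [1.0, 2.0])
```

# In exact arithmetic CG solves an n-by-n SPD system in at most n steps, so this example should
# finish in about two iterations, far fewer than Jacobi needs on the same system.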

| 00.AllPythonCode/CGandJacobiSolve.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/dlmacedo/starter-academic/blob/master/content/courses/deeplearning/notebooks/pytorch/torchvision_finetuning_instance_segmentation.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + [markdown] id="DfPPQ6ztJhv4"

# # TorchVision Instance Segmentation Finetuning Tutorial

#

# For this tutorial, we will be finetuning a pre-trained [Mask R-CNN](https://arxiv.org/abs/1703.06870) model in the [*Penn-Fudan Database for Pedestrian Detection and Segmentation*](https://www.cis.upenn.edu/~jshi/ped_html/). It contains 170 images with 345 instances of pedestrians, and we will use it to illustrate how to use the new features in torchvision in order to train an instance segmentation model on a custom dataset.

#

# First, we need to install `pycocotools`. This library will be used for computing the evaluation metrics following the COCO metric for intersection over union.

# + id="DBIoe_tHTQgV" colab={"base_uri": "https://localhost:8080/", "height": 435} outputId="55b8ee3b-ba36-4941-e140-768c75fd8e54"

# %%shell

pip install cython

# Install pycocotools, the version by default in Colab

# has a bug fixed in https://github.com/cocodataset/cocoapi/pull/354

pip install -U 'git+https://github.com/cocodataset/cocoapi.git#subdirectory=PythonAPI'

# + [markdown] id="5Sd4jlGp2eLm"

# ## Defining the Dataset

#

# The [torchvision reference scripts for training object detection, instance segmentation and person keypoint detection](https://github.com/pytorch/vision/tree/v0.3.0/references/detection) make it easy to add support for new custom datasets.

# The dataset should inherit from the standard `torch.utils.data.Dataset` class, and implement `__len__` and `__getitem__`.

#

# The only specificity that we require is that the dataset `__getitem__` should return:

#

# * image: a PIL Image of size (H, W)

# * target: a dict containing the following fields

# * `boxes` (`FloatTensor[N, 4]`): the coordinates of the `N` bounding boxes in `[x0, y0, x1, y1]` format, ranging from `0` to `W` and `0` to `H`

# * `labels` (`Int64Tensor[N]`): the label for each bounding box

# * `image_id` (`Int64Tensor[1]`): an image identifier. It should be unique between all the images in the dataset, and is used during evaluation

# * `area` (`Tensor[N]`): The area of the bounding box. This is used during evaluation with the COCO metric, to separate the metric scores between small, medium and large boxes.

# * `iscrowd` (`UInt8Tensor[N]`): instances with `iscrowd=True` will be ignored during evaluation.

# * (optionally) `masks` (`UInt8Tensor[N, H, W]`): The segmentation masks for each one of the objects

# * (optionally) `keypoints` (`FloatTensor[N, K, 3]`): For each one of the `N` objects, it contains the `K` keypoints in `[x, y, visibility]` format, defining the object. `visibility=0` means that the keypoint is not visible. Note that for data augmentation, the notion of flipping a keypoint is dependent on the data representation, and you should probably adapt `references/detection/transforms.py` for your new keypoint representation

#

# If your dataset returns the fields above, it will work for both training and evaluation, and can use the evaluation scripts from pycocotools.

#

# Additionally, if you want to use aspect ratio grouping during training (so that each batch only contains images with similar aspect ratio), then it is recommended to also implement a `get_height_and_width` method, which returns the height and the width of the image. If this method is not provided, we query all elements of the dataset via `__getitem__` , which loads the image in memory and is slower than if a custom method is provided.

#

# + [markdown] id="bX0rqK-A3Nbl"

# ### Writing a custom dataset for Penn-Fudan

#

# Let's write a dataset for the Penn-Fudan dataset.

#

# First, let's download and extract the data, present in a zip file at https://www.cis.upenn.edu/~jshi/ped_html/PennFudanPed.zip

# + id="_t4TBwhHTdkd" colab={"base_uri": "https://localhost:8080/", "height": 1000} outputId="1b209271-3c3b-4df9-ce4d-2176edb57666"

# %%shell

# download the Penn-Fudan dataset

wget https://www.cis.upenn.edu/~jshi/ped_html/PennFudanPed.zip

# extract it in the current folder

unzip PennFudanPed.zip

# + [markdown] id="WfwuU-jI3j93"

# Let's have a look at the dataset and how it is laid out.

#

# The data is structured as follows

# ```

# PennFudanPed/

# PedMasks/

# FudanPed00001_mask.png

# FudanPed00002_mask.png

# FudanPed00003_mask.png

# FudanPed00004_mask.png

# ...

# PNGImages/

# FudanPed00001.png

# FudanPed00002.png

# FudanPed00003.png

# FudanPed00004.png

# ```

#

# Here is one example of an image in the dataset, with its corresponding instance segmentation mask

# + id="LDjuVFgexFfh" colab={"base_uri": "https://localhost:8080/", "height": 553} outputId="23c18cf5-dc2f-43c0-b445-8abed4d005e5"

from PIL import Image

Image.open('PennFudanPed/PNGImages/FudanPed00001.png')

# + id="cFHKCvCTxiff" colab={"base_uri": "https://localhost:8080/", "height": 553} outputId="4f5b1d6c-96da-4a22-da36-515db9c9049d"

mask = Image.open('PennFudanPed/PedMasks/FudanPed00001_mask.png')

# each mask instance has a different color, from zero to N, where

# N is the number of instances. In order to make visualization easier,

# let's add a color palette to the mask.

mask.putpalette([

0, 0, 0, # black background

255, 0, 0, # index 1 is red

255, 255, 0, # index 2 is yellow

255, 153, 0, # index 3 is orange

])

mask

# + [markdown] id="C9Ee5NV54Dmj"

# So each image has a corresponding segmentation mask, where each color corresponds to a different instance. Let's write a `torch.utils.data.Dataset` class for this dataset.

# + id="mTgWtixZTs3X"

import os

import numpy as np

import torch

import torch.utils.data

from PIL import Image

class PennFudanDataset(torch.utils.data.Dataset):
    def __init__(self, root, transforms=None):
        self.root = root
        self.transforms = transforms
        # load all image files, sorting them to
        # ensure that they are aligned
        self.imgs = list(sorted(os.listdir(os.path.join(root, "PNGImages"))))
        self.masks = list(sorted(os.listdir(os.path.join(root, "PedMasks"))))

    def __getitem__(self, idx):
        # load images and masks
        img_path = os.path.join(self.root, "PNGImages", self.imgs[idx])
        mask_path = os.path.join(self.root, "PedMasks", self.masks[idx])
        img = Image.open(img_path).convert("RGB")
        # note that we haven't converted the mask to RGB,
        # because each color corresponds to a different instance
        # with 0 being background
        mask = Image.open(mask_path)

        mask = np.array(mask)
        # instances are encoded as different colors
        obj_ids = np.unique(mask)
        # first id is the background, so remove it
        obj_ids = obj_ids[1:]

        # split the color-encoded mask into a set
        # of binary masks
        masks = mask == obj_ids[:, None, None]

        # get bounding box coordinates for each mask
        num_objs = len(obj_ids)
        boxes = []
        for i in range(num_objs):
            pos = np.where(masks[i])
            xmin = np.min(pos[1])
            xmax = np.max(pos[1])
            ymin = np.min(pos[0])
            ymax = np.max(pos[0])
            boxes.append([xmin, ymin, xmax, ymax])

        boxes = torch.as_tensor(boxes, dtype=torch.float32)
        # there is only one class
        labels = torch.ones((num_objs,), dtype=torch.int64)
        masks = torch.as_tensor(masks, dtype=torch.uint8)

        image_id = torch.tensor([idx])
        area = (boxes[:, 3] - boxes[:, 1]) * (boxes[:, 2] - boxes[:, 0])
        # suppose all instances are not crowd
        iscrowd = torch.zeros((num_objs,), dtype=torch.int64)

        target = {}
        target["boxes"] = boxes
        target["labels"] = labels
        target["masks"] = masks
        target["image_id"] = image_id
        target["area"] = area
        target["iscrowd"] = iscrowd

        if self.transforms is not None:
            img, target = self.transforms(img, target)

        return img, target

    def __len__(self):
        return len(self.imgs)

# + [markdown] id="J6f3ZOTJ4Km9"

# That's all for the dataset. Let's see how its outputs are structured:

# + id="ZEARO4B_ye0s" colab={"base_uri": "https://localhost:8080/", "height": 331} outputId="006161f7-ddad-4ff4-8fb1-b91d0fd81069"

dataset = PennFudanDataset('PennFudanPed/')

dataset[0]

# + [markdown] id="lWOhcsir9Ahx"

# So we can see that by default, the dataset returns a `PIL.Image` and a dictionary

# containing several fields, including `boxes`, `labels` and `masks`.
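
# + [markdown]
# The mask-to-box logic inside `__getitem__` can be checked in isolation. Below is a minimal sketch that applies the same broadcasting comparison and `np.where` steps to a synthetic array; the toy mask values are made up for illustration, and NumPy arrays are used in place of tensors so that it runs without the dataset.

```python
import numpy as np

# synthetic mask: 0 = background, 1 and 2 = two instances (values are made up)
toy_mask = np.array([
    [0, 1, 1, 0, 0, 0],
    [0, 1, 1, 0, 2, 2],
    [0, 0, 0, 0, 2, 2],
    [0, 0, 0, 0, 0, 0],
])

obj_ids = np.unique(toy_mask)[1:]  # drop the background id
# broadcasting (H, W) against (N, 1, 1) yields N boolean masks of shape (H, W)
binary_masks = toy_mask == obj_ids[:, None, None]

boxes = []
for m in binary_masks:
    rows, cols = np.where(m)
    # boxes are stored as [xmin, ymin, xmax, ymax]
    boxes.append([int(cols.min()), int(rows.min()), int(cols.max()), int(rows.max())])

boxes = np.array(boxes)
# same area formula as in the dataset class: (ymax - ymin) * (xmax - xmin)
areas = (boxes[:, 3] - boxes[:, 1]) * (boxes[:, 2] - boxes[:, 0])

print(binary_masks.shape)
print(boxes)
print(areas)
```

# Note that `xmax` and `ymax` are inclusive pixel indices, so each 2x2 instance here gets a box of width and height 1 and an area of 1, not 4.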

# + [markdown] id="RoAEkUgn4uEq"

# ## Defining your model

#

# In this tutorial, we will be using [Mask R-CNN](https://arxiv.org/abs/1703.06870), which builds on [Faster R-CNN](https://arxiv.org/abs/1506.01497). Faster R-CNN is a model that predicts both bounding boxes and class scores for potential objects in the image.

#

#

#

# Mask R-CNN adds an extra branch into Faster R-CNN, which also predicts segmentation masks for each instance.

#

#

#

# There are two common situations where one might want to modify one of the available models in the torchvision model zoo.

# The first is when we want to start from a pre-trained model and finetune only the last layer. The other is when we want to replace the backbone of the model with a different one (for faster predictions, for example).

#

# Let's see how to do each of these in the following sections.

#

#

# ### 1 - Finetuning from a pretrained model

#

# Let's suppose that you want to start from a model pre-trained on COCO and want to finetune it for your particular classes. Here is a possible way of doing it:

# ```

# import torchvision

# from torchvision.models.detection.faster_rcnn import FastRCNNPredictor

#

# # load a model pre-trained on COCO

# model = torchvision.models.detection.fasterrcnn_resnet50_fpn(pretrained=True)

#

# # replace the classifier with a new one, that has

# # num_classes which is user-defined

# num_classes = 2 # 1 class (person) + background

# # get number of input features for the classifier

# in_features = model.roi_heads.box_predictor.cls_score.in_features

# # replace the pre-trained head with a new one

# model.roi_heads.box_predictor = FastRCNNPredictor(in_features, num_classes)

# ```

#

# ### 2 - Modifying the model to add a different backbone

#

# Another common situation arises when the user wants to replace the backbone of a detection

# model with a different one. For example, the current default backbone (ResNet-50) might be too big for some applications, and smaller models might be necessary.

#

# Here is how we can use the functions provided by torchvision to modify a backbone.

#

# ```

# import torchvision

# from torchvision.models.detection import FasterRCNN

# from torchvision.models.detection.rpn import AnchorGenerator

#

# # load a pre-trained model for classification and return

# # only the features

# backbone = torchvision.models.mobilenet_v2(pretrained=True).features

# # FasterRCNN needs to know the number of

# # output channels in a backbone. For mobilenet_v2, it's 1280

# # so we need to add it here

# backbone.out_channels = 1280

#

# # let's make the RPN generate 5 x 3 anchors per spatial

# # location, with 5 different sizes and 3 different aspect

# # ratios. We have a Tuple[Tuple[int]] because each feature

# # map could potentially have different sizes and

# # aspect ratios

# anchor_generator = AnchorGenerator(sizes=((32, 64, 128, 256, 512),),

#                                    aspect_ratios=((0.5, 1.0, 2.0),))

#

# # let's define what are the feature maps that we will

# # use to perform the region of interest cropping, as well as

# # the size of the crop after rescaling.

# # if your backbone returns a Tensor, featmap_names is expected to

# # be [0]. More generally, the backbone should return an

# # OrderedDict[Tensor], and in featmap_names you can choose which

# # feature maps to use.

# roi_pooler = torchvision.ops.MultiScaleRoIAlign(featmap_names=[0],

#                                                 output_size=7,

#                                                 sampling_ratio=2)

#