code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python [default]

# language: python

# name: python2

# ---

# # Machine Learning Engineer Nanodegree

# ## Capstone Project

# <NAME>

# July 11 2017

# ## I. Definition

# ### Project Overview

#

# Banks struggle with many issues these days. One of these is a thorough understanding of their clients. Not only their background information is important but also their behaviour. Interest arises in whether they are involved in possibly unwanted behaviour (e.g. money laundering or terrorism financing) or increasing their risk of default (e.g. missing monthly payments).

# Machine learning is starting to help banks investigate their clients, provide new and better credit scores and predict which clients may run into payment issues.

# In this project we look at a bank's data. We use an old data set (from 1999) which includes almost all properties real banks have. Even though this data is not as rich as that of a bank nowadays, it will provide a nice introductions into the techniques used by data analysts working for large banks.

# The dataset is from the PKDD'99 and can be found here:

# http://lisp.vse.cz/pkdd99/berka.htm.

# The competition had the following original description:

# *The bank wants to improve their services. For instance, the bank managers have only vague idea, who is a good client (whom to offer some additional services) and who is a bad client (whom to watch carefully to minimize the bank loses). Fortunately, the bank stores data about their clients, the accounts (transactions within several months), the loans already granted, the credit cards issued. The bank managers hope to improve their understanding of customers and seek specific actions to improve services. A mere application of a discovery tool will not be convincing for them.*

#

#

# ## Problem Statement

# Our research will try to answer the following question:

# Using a customer's properties and transactional behaviour, can we predict whether or not he/she is able to pay future loan payments?

# To answer this question, we need to make some assumptions before we can attempt to answer this question. Some of these will not directly be clear but will once we dive deeper into the data.

# - We make a clear seperation between running and finished loans. In this, we see finsihed loans as those of which we have full information and running loans as the ones we want to have classification on.

# - All loans are equal in time. We look at all loans from start to finish. In this, we do not take into conern the seasonality between different years.

# Using these assumptions, we will manipulate the data to create features which we hope can be predictive for running and new loans.

# This will require a large amount of preprocessing. In this usecase, we will also try to use network features to predict whether we have enough interaction to build network-based features.

#

# ### Metrics

# In this project, we will use an F1-score to evaluate how well our model will be able to predict a future defaults. We will use the finished loans for both traning and testing data. As we will see that we have a relatively small amount of defaults and F1 score will give us a nice balance over accuracy and false positive ratio.

# The F1 score is defined as:

# $$F_1 = 2\cdot \frac{Precision \cdot Recall}{Precision + Recall}$$

# where

# $$ Precision = \frac{tp}{tp+fp}$$

# and

# $$ Recall = \frac{tp}{tp+fn}$$

# ## II. Analysis

#

# ### Data Exploration

# The data for this project is provided on the competition website. In this project, it is downloaded and handled in the `Get_data.py` file. The data has the following structure:

# <img src="images/data_struct.gif">

# In the `Get_data.py` file we deal with the merging and checking of the quality of the data. Any reader interested in downloading and checking the data for themselves can follow the steps in that file.

# The data is provided to us in a flat text file with `;` delimiter and `\n` EOL statements. Many of the headers/cells in the dataset are provided in Czech and translated to English for further use. The data is then loaded into pandas dataframes and merged into five files:

# - `client_info.cv` containing all the info on the client

# - `demographic_info` containing all the info on the demographics of the Czech republic

# - `Transaction_info` A file containing all transactions made for the accounts in the dataset

# - `order_info` A file containing info on the ordered products per client

# - `loan_info` A file containing the loan information

#

# All of these files are in the further steps reduced to clients and accounts which have actual loans. These steps are described in `Loan_payment_feature_engineering.ipynb`.

# For some relevant features, we can provide the plots as found in the data. This allows us to check the distribution and whether or not there is a connection to missed payments. In these plots, we compare the distributions for loans which are past debt and those which are not.

# We can give some quick statistics and plots:

#

# | Feature| mean | std | min | 25% | 50%| 75% | max |

# | ----- | ----- | ---| ----| ----| --- | --- | --- |

# | Amount | 151410 |113372 |4980| 66732 | 116928 | 210654 | 590820|

# | Balance | 44023 | 13793 |6690 | 33725| 44879 | 54396 | 79272|

# | Birthyear | 1958 | 13 |1935 | 1947| 1958 | 1969 | 1980|

# | Payments | 4190 | 2215 | 304 | 2477 | 3934 | 5813 | 9910|

#

# <img src="images/balance_amount.png" alt="Drawing" style="width: 400px;"/>

# <img src="images/birthyear_owner_payments.png" alt="Drawing" style="width: 400px;"/>

#

# We can see the following things:

# - Clients in debt seem to have higher monthly paymets but less balance on the bank.

#

# With these, we hope to be able to predict which loans will be in default, and which will run along just fine.

#

# ### Algorithms and Techniques

# In this section, we will try to answer the following questions:

# - What will be the metric to which we test?

# - How will we define our training and test sets?

# - Which features will we use?

# - Which models will we use?

#

# To tackle the first, remember that we want to predict future debt using past debt. Our data is highly skewed however, as we have 606 accounts without any debt and only 76 with debt. Hence, using our accuracy score may not be the best choice. We will go with the F1 score as it will provide us with a nice balance between precision, recall and accuracy.

# Now actually configuring the data to do what we want is quite difficult. As we want to predict 'future missed payments' we need to use data in which we can predict future payments (as our past_debt only shows us if there is any past debt). We do however have the missed counts in all the quarters which we can use. Thus the setup will be as follows:

# 1. Use the finished data as training set

# 2. Use the finshed data as test set.

# 3. For both these datasets, use the first 3 quarters as prediction for missed payment in the last.

# 4. Use a few running tests as vaildation.

# 5. Finish with predictions on the full running dataset.

#

# We will use the following features:

# - Loan amount

# - Loan duration

# - Loan payment heigth

# - Bank balance

# - Birthyear account owner

# - Gender

# - Startyear of the owner

#

# As for the models, we will use the usual suspects:

# - **Naive Bayes**: A model that leverages conditional probability. Using known distributions of data to predict related distributions. It is run by the main rule:

# $$ p(C_k \mid \mathbf {x}) = \frac {p(C_{k})\ p(\mathbf {x} \mid C_{k})}{p(\mathbf {x} )} $$ or in layman's terms:

# $$ \mbox{posterior} = \frac {{\mbox{prior}}\times {\mbox{likelihood}}}{\mbox{evidence}} $$

# In our case, we will use a gaussian kernel that makes the assumption that the data is somewhat normally distributed to estimate the probability density function.

#

# - **SVM**: A linear SVM tries to lay a line (or in more dimension a (hyper)plane) inbetween data in order to create a seperation. Each side of the line receives a different classification (in our case payment miss or not). This is then optimized to build the most robust and least error prone line.

# - **Logistic regression**: A classifier version of Linear regression. Instead of the normal formula

# $$ y = \beta_0 + \beta_1x + \epsilon $$

# with normally distributed epsilon we have

# $$ y={\begin{cases}1&\beta _{0}+\beta _{1}x+\varepsilon >0\\0&{\text{else}}\end{cases}}$$ with epsilon distributed as in the logistic distribution.

# - **Decision Tree**: An algorithm which in each step slices data based on the feature leaving the least entropy (a measure of impurity of data). It is called a decision tree as one can make decisions based on every step and builds a tree by splitting the data, leaving the leaves as the classified data.

# - **Bagging**: An algorithm that subsets the data and trains decision trees on those subsets. The idea is that this procedure improves the stability of the algorithm. The final output is the average classification over all sampled classifiers.

# - **AdaBoost**: Adaptive boosting, an algorithm that only selects only features that show predictive power. It then builds a weighted tree based on the weighted predictive power for each feature.

# - **Random Forest**: Extension of bagging by also subsetting random features and pruning the tree.

#

#

# ### Benchmark

# A a benchmark we will use the Naive Bayes (GaussianNB) classifier. As we will see later, it has an F1 score of 0.44. Even though this model is far more advanced than the industry standard, it is a good baseline.

# ## III. Methodology

#

#

# ### Data Preprocessing

# This project took some preprocessing. As described earlier a lot of effort had to be done wrestling the data into a single dataset and translating the Czech to English.

# At first the idea was to use the transaction network (with accounts as nodes and transactions as edges) to provide us with a lot of information. The dataset however, does not have the same key for the receiving and sending parties, making this network very sparsely connected. The small exercise in doing this can be found in the file `Network_analysis.ipynb`. As a matter of fact, the largest connected component only had 7 nodes as seen in the picture below:

# <img src="images/network.png" alt="Drawing" style="width: 400px;"/>

# In this picture, the bold parts are the receiving accounts and we see that no features can be derived from the features of the network. Finding datasets in which this is possible seems very difficult. One could try to use the Bitcoin ledger. By property of the crypto ledger, no other information can be derieved from the network, making the analysis quite useless.

# Therefore, I focused on the loan problem at hand. For this I made a lot of steps on the transactions and especially on the missing of payments. I started with all loans and looked at whether those who had missed payments actually had 'flat' months (months in which no loan was paid) to check if the loans were correctly identified. The following picture shows that this is true:

# <img src="images/flat_months.png" alt="Drawing" style="width: 400px;"/>

# Next, I decided to look at the difference between the ordered and paid amount each month. If this was smaller than zero, this would indicate a missed payment. If it was larger, it was a possible pre-payment (in the first month). This seemed to also happen:

# <img src="images/ordere_diff.png" alt="Drawing" style="width: 400px;"/>

#

# As we assumed that all loans are equal, we push them all back to start-date and see that all prepayments happen in the first month and we can use this data to construct our features:

# <img src="images/ordered_pushed.png" alt="Drawing" style="width: 400px;"/>

#

# After this, we can take the first 75% of the time for each loan to predict future missed payment, and use the last 25% as the input for the target variable.

#

# In the end, we did choose to not include card/demographic behaviour due to the lack of variance in the data.

#

# ### Implementation and refinement

# After all the feature engineering and exploratory analysis shown above, I moved on to looking at the global correlation structure. I rebuild a plot I often use when working in R to give the first insights.

# <img src="images/correlation.png" alt="Drawing" style="width: 600px;"/>

# Based on this, I was able to quickly spot correlation structures and together with the plots based on distributions with the target variable substet the required features.

# After this, there was the phase in which we could start doing machine learning. I decided to not go for a CV scheme, as with the low amount of actual delayed payments in the data, there was the risk of creating subsets with no accounts with delayed payments.

# To do a grid search I wrote my own little implementation (based on the GridSearchCV in skLearn) which does the job without cross-validation. It can be found in `gridSearch.py`. I have used the gridsearch for the SVM, logistic regression and decision trees. This greatly improved performance compared with naive implementations (often giving an F1-score of 0.0). In the results section, the best scoring parameter settings are given using Occam's razor.

#

# As stated above, I will test the following algorithms on the data:

# - Naive Bayes

# - SVM

# - Logistic regression

# - Decision Tree

# - Bagging

# - AdaBoost

# - Random Forest

#

# ##### Naive Bayes

# I decided to run a standard naive bayes to see how it would perform compared with other algorithms.

#

# ##### SVM

# I ran the SVM with both the linear and rbf kernal, a C-value of either 1 or 10 and balanced and unweighted classes.

#

# ##### Logistic regression

# With Logistic regression I went with both the L1 and L2 loss functions, a C-value of either 1 or 10 and balanced and unweighted classes.

#

# ##### Decision tree

# For the Decision tree, I ran with 0.25, 0.5 and 0.75 max features combined with a max depth of either 1, 2 or 3.

#

# ##### Bagging, Boosting and Random Forest

# All these will run on baisc settings with 1000 estimators.

#

# For all algorithms, I will use the skLearn implementations combined with the gridsearch function for those for whom it may help.

# Running the models gave no complications as my macbook pro was sufficiently fast to run them. It was however hard to find a model which gave a satisfying F1-score, but this will be discussed in more detail in the Results part of this report.

#

# #### Complications when running the algorithm

# The most difficult part of this project was preprocessing the data into a file that we could use. As loans run through time, have different length and different loan amounts and start/end dates, the data can't be simply fed to algorithms.

# I also had to choose whether or not to use past missed payments in the classifier. I ended up choosing not to (as then it won't be usefull in an onboarding setting) leaving somewhat troublesome model performance.

# ## IV. Results

#

# ### Model Evaluation and Validation

#

# Below is a table which shows both the F1-score for our models. We see that the F1-score is generally low. To deduce these scores, I have done several different tran/test combinations. In all of these combinations, the best score was 0.67 for the F1-score.

#

# | Model | Config | Features | Score |

# | ----- | ------- | -------- | ------|

# | Naive Bayes | Gaussian/default | all | 0.44 |

# | SVM | {'kernel': 'rbf', 'C': 1, 'class_weight': 'balanced'} | Startyear client, Duration, payments | 0.67 |

# | Logistic Regression | 'C': 1, 'class_weight': 'balanced', 'penalty': 'l2' | Amount, Payments, Startyear Client, Balance | 0.39|

# | Decision Tree | {'max_depth': 3, 'max_features': 0.75} | Amount, Balance | 0.33|

# | Bagging | base_estimatior: Cart, n_estimators: 1000 | all | 0.4 |

# | AdaBoost | n_estimators: 1000 | all | 0.5 |

# | Random Forest | n_estimators: 1000 | all | 0.4 |

#

# As our SVM seems to perfom the best, we choose to use it as our final model. In other checks, we found it to be a quite robust method, performing well in combination with the gridsearch function for various subsets.

#

# We chose the SVM with a radial basis function as kernel and a simple C parameter (the misclassification/simplicity tradeoff) of value 1. This combined with balanced class weights (due to skewness) provides a very solid model.

#

# The combination of both training and testing sets gives us a robust model, which I have tested on different splits. The score of only 0.67 makes the results not trustwordy enough to use in production. We do however have a low benchmark (no machine learning based predictions are often done by banks). Therefore, we may even say it is acceptable (but far from optimal).

#

# I would have liked a better model and this sure is work for future improvement. More data and more customers would cetrainly help in this case.

#

# ### Justification

#

# Our simple SVM outperforms the Naive Bayes classifier with an F1-score of 0.67 vs 0.44. This means that it has a significant improvement over the other model and we can therefore justify changing to an SVM implementation.

# ## V. Conclusion

#

# ### Free-Form Visualization

# Our final question is whether we can predict if running loans will get into trouble. Remember that we did not use past payment issues as predictor, loans which lack this information (newly formed) can then not be correctly classified. Therefore, we visualize how we classify running loans, based on their past debt.

# We see that this is not very good. No loans with past debt are classified correctly.

#

# <img src="images/freeform.png" alt="Drawing" style="width: 400px;"/>

#

#

# ### Reflection

# I started this project hoping to find payment issues using network properties. I found however that the data (or any data openly available) was not sufficient to provide me with both a loan/transactional dataset and interesting relationships between parties. Therefore I settled with a more traditional project.

# The research question *Using a customer's properties and transactional behaviour, can we predict whether or not he/she is able to pay future loan payments?* has shown difficult to answer with the given dataset.

# A lot of work has gone into manipulating data on the start of the project. Therefore, the data crunching was maybe on the heavy side and I could have spent more time in machine learning. But it was good to go through all the steps in Python for a change (our default language is R) and see where it may be more suitable than our default goto tools.

# The machine learning part was therefore somewhat straightforward, different models were tested but none seemed to be a very good predictor. For this, we may need more samples and features.

# The final model is somewhat dissapointing, but it is a good example of default machine learning not working for all problems.

#

# ### Improvement

# The model can be improved in several ways. The data can be cut up in a different way to generate other features. We can take into account the skewness better by either building a skewed training set or generating more samples (with bootstraps, autoencoders or a GAN). This may improve the model and provide us a better prediction for running loans.

# In the end, the model would benefit from more personal data, often not disclosed in public datasets which better captivates properties of clients.

#

| Report.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + colab={"base_uri": "https://localhost:8080/", "height": 119} id="WJykK-B_rnbw" outputId="21a203e4-7daf-4a98-cbc1-9d70da3190b7"

# Install TensorFlow

# # !pip install -q tensorflow-gpu==2.0.0-beta1

try:

# %tensorflow_version 2.x # Colab only.

except Exception:

pass

import tensorflow as tf

print(tf.__version__)

# + id="DkR-FgUGrt3f"

from tensorflow.keras.layers import Input, Dense

from tensorflow.keras.models import Model

from tensorflow.keras.optimizers import SGD, Adam

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

# + colab={"base_uri": "https://localhost:8080/", "height": 265} id="NBgpKjxXsBZn" outputId="f5026231-3dec-4517-d6bc-3de52d85b707"

# make the original data

series = np.sin(0.1*np.arange(200)) #+ np.random.randn(200)*0.1

# plot it

plt.plot(series)

plt.show()

# + colab={"base_uri": "https://localhost:8080/", "height": 34} id="qnR4MAM9sGF1" outputId="910194bc-9e2d-4c49-8e2b-bc16ed4723ea"

### build the dataset

# let's see if we can use T past values to predict the next value

T = 10

X = []

Y = []

for t in range(len(series) - T):

x = series[t:t+T]

X.append(x)

y = series[t+T]

Y.append(y)

X = np.array(X).reshape(-1, T)

Y = np.array(Y)

N = len(X)

print("X.shape", X.shape, "Y.shape", Y.shape)

# + colab={"base_uri": "https://localhost:8080/", "height": 1000} id="EESqey3TsODi" outputId="6a78666e-2dfd-4808-95be-c4123c799fce"

### try autoregressive linear model

i = Input(shape=(T,))

x = Dense(1)(i)

model = Model(i, x)

model.compile(

loss='mse',

optimizer=Adam(lr=0.1),

)

# train the RNN

r = model.fit(

X[:-N//2], Y[:-N//2],

epochs=80,

validation_data=(X[-N//2:], Y[-N//2:]),

)

# + colab={"base_uri": "https://localhost:8080/", "height": 282} id="2BA0rLqrsw7i" outputId="3cb4fe21-fd26-4979-8b92-764ea3cdc2e4"

# Plot loss per iteration

import matplotlib.pyplot as plt

plt.plot(r.history['loss'], label='loss')

plt.plot(r.history['val_loss'], label='val_loss')

plt.legend()

# + id="DyyEx1Gx4Q7t"

# "Wrong" forecast using true targets

validation_target = Y[-N//2:]

validation_predictions = []

# index of first validation input

i = -N//2

while len(validation_predictions) < len(validation_target):

p = model.predict(X[i].reshape(1, -1))[0,0] # 1x1 array -> scalar

i += 1

# update the predictions list

validation_predictions.append(p)

# + colab={"base_uri": "https://localhost:8080/", "height": 282} id="hb18Dr0O4ec9" outputId="42ab7683-fac0-4760-ea83-5f558e426781"

plt.plot(validation_target, label='forecast target')

plt.plot(validation_predictions, label='forecast prediction')

plt.legend()

# + id="j9Idhr4ss3g_"

# Forecast future values (use only self-predictions for making future predictions)

validation_target = Y[-N//2:]

validation_predictions = []

# first validation input

last_x = X[-N//2] # 1-D array of length T

while len(validation_predictions) < len(validation_target):

p = model.predict(last_x.reshape(1, -1))[0,0] # 1x1 array -> scalar

# update the predictions list

validation_predictions.append(p)

# make the new input

last_x = np.roll(last_x, -1)

last_x[-1] = p

# + colab={"base_uri": "https://localhost:8080/", "height": 282} id="i0QEZgwV3WPI" outputId="910810ee-6f5a-4d5d-d719-00824da69a6b"

plt.plot(validation_target, label='forecast target')

plt.plot(validation_predictions, label='forecast prediction')

plt.legend()

| 4. Time Series/AZ/Misc/TF2_0_Autoregressive_Model.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Search for AIMA 4th edition

#

# Implementation of search algorithms and search problems for AIMA.

#

# # Problems and Nodes

#

# We start by defining the abstract class for a `Problem`; specific problem domains will subclass this. To make it easier for algorithms that use a heuristic evaluation function, `Problem` has a default `h` function (uniformly zero), and subclasses can define their own default `h` function.

#

# We also define a `Node` in a search tree, and some functions on nodes: `expand` to generate successors; `path_actions` and `path_states` to recover aspects of the path from the node.

# +

# %matplotlib inline

import matplotlib.pyplot as plt

import random

import heapq

import math

import sys

from collections import defaultdict, deque, Counter

from itertools import combinations

class Problem(object):

"""The abstract class for a formal problem. A new domain subclasses this,

overriding `actions` and `results`, and perhaps other methods.

The default heuristic is 0 and the default action cost is 1 for all states.

When you create an instance of a subclass, specify `initial`, and `goal` states

(or give an `is_goal` method) and perhaps other keyword args for the subclass."""

def __init__(self, initial=None, goal=None, **kwds):

self.__dict__.update(initial=initial, goal=goal, **kwds)

def actions(self, state): raise NotImplementedError

def result(self, state, action): raise NotImplementedError

def is_goal(self, state): return state == self.goal

def action_cost(self, s, a, s1): return 1

def h(self, node): return 0

def __str__(self):

return '{}({!r}, {!r})'.format(

type(self).__name__, self.initial, self.goal)

class Node:

"A Node in a search tree."

def __init__(self, state, parent=None, action=None, path_cost=0):

self.__dict__.update(state=state, parent=parent, action=action, path_cost=path_cost)

def __repr__(self): return '<{}>'.format(self.state)

def __len__(self): return 0 if self.parent is None else (1 + len(self.parent))

def __lt__(self, other): return self.path_cost < other.path_cost

failure = Node('failure', path_cost=math.inf) # Indicates an algorithm couldn't find a solution.

cutoff = Node('cutoff', path_cost=math.inf) # Indicates iterative deepening search was cut off.

def expand(problem, node):

"Expand a node, generating the children nodes."

s = node.state

for action in problem.actions(s):

s1 = problem.result(s, action)

cost = node.path_cost + problem.action_cost(s, action, s1)

yield Node(s1, node, action, cost)

def path_actions(node):

"The sequence of actions to get to this node."

if node.parent is None:

return []

return path_actions(node.parent) + [node.action]

def path_states(node):

"The sequence of states to get to this node."

if node in (cutoff, failure, None):

return []

return path_states(node.parent) + [node.state]

# -

# # Queues

#

# First-in-first-out and Last-in-first-out queues, and a `PriorityQueue`, which allows you to keep a collection of items, and continually remove from it the item with minimum `f(item)` score.

# +

FIFOQueue = deque

LIFOQueue = list

class PriorityQueue:

"""A queue in which the item with minimum f(item) is always popped first."""

def __init__(self, items=(), key=lambda x: x):

self.key = key

self.items = [] # a heap of (score, item) pairs

for item in items:

self.add(item)

def add(self, item):

"""Add item to the queuez."""

pair = (self.key(item), item)

heapq.heappush(self.items, pair)

def pop(self):

"""Pop and return the item with min f(item) value."""

return heapq.heappop(self.items)[1]

def top(self): return self.items[0][1]

def __len__(self): return len(self.items)

# -

# # Search Algorithms: Best-First

#

# Best-first search with various *f(n)* functions gives us different search algorithms. Note that A\*, weighted A\* and greedy search can be given a heuristic function, `h`, but if `h` is not supplied they use the problem's default `h` function (if the problem does not define one, it is taken as *h(n)* = 0).

# +

def best_first_search(problem, f):

"Search nodes with minimum f(node) value first."

node = Node(problem.initial)

frontier = PriorityQueue([node], key=f)

reached = {problem.initial: node}

while frontier:

node = frontier.pop()

if problem.is_goal(node.state):

return node

for child in expand(problem, node):

s = child.state

if s not in reached or child.path_cost < reached[s].path_cost:

reached[s] = child

frontier.add(child)

return failure

def best_first_tree_search(problem, f):

"A version of best_first_search without the `reached` table."

frontier = PriorityQueue([Node(problem.initial)], key=f)

while frontier:

node = frontier.pop()

if problem.is_goal(node.state):

return node

for child in expand(problem, node):

if not is_cycle(child):

frontier.add(child)

return failure

def g(n): return n.path_cost

def astar_search(problem, h=None):

"""Search nodes with minimum f(n) = g(n) + h(n)."""

h = h or problem.h

return best_first_search(problem, f=lambda n: g(n) + h(n))

def astar_tree_search(problem, h=None):

"""Search nodes with minimum f(n) = g(n) + h(n), with no `reached` table."""

h = h or problem.h

return best_first_tree_search(problem, f=lambda n: g(n) + h(n))

def weighted_astar_search(problem, h=None, weight=1.4):

"""Search nodes with minimum f(n) = g(n) + weight * h(n)."""

h = h or problem.h

return best_first_search(problem, f=lambda n: g(n) + weight * h(n))

def greedy_bfs(problem, h=None):

"""Search nodes with minimum h(n)."""

h = h or problem.h

return best_first_search(problem, f=h)

def uniform_cost_search(problem):

"Search nodes with minimum path cost first."

return best_first_search(problem, f=g)

def breadth_first_bfs(problem):

"Search shallowest nodes in the search tree first; using best-first."

return best_first_search(problem, f=len)

def depth_first_bfs(problem):

"Search deepest nodes in the search tree first; using best-first."

return best_first_search(problem, f=lambda n: -len(n))

def is_cycle(node, k=30):

"Does this node form a cycle of length k or less?"

def find_cycle(ancestor, k):

return (ancestor is not None and k > 0 and

(ancestor.state == node.state or find_cycle(ancestor.parent, k - 1)))

return find_cycle(node.parent, k)

# -

# # Other Search Algorithms

#

# Here are the other search algorithms:

# +

def breadth_first_search(problem):

"Search shallowest nodes in the search tree first."

node = Node(problem.initial)

if problem.is_goal(problem.initial):

return node

frontier = FIFOQueue([node])

reached = {problem.initial}

while frontier:

node = frontier.pop()

for child in expand(problem, node):

s = child.state

if problem.is_goal(s):

return child

if s not in reached:

reached.add(s)

frontier.appendleft(child)

return failure

def iterative_deepening_search(problem):

"Do depth-limited search with increasing depth limits."

for limit in range(1, sys.maxsize):

result = depth_limited_search(problem, limit)

if result != cutoff:

return result

def depth_limited_search(problem, limit=10):

"Search deepest nodes in the search tree first."

frontier = LIFOQueue([Node(problem.initial)])

result = failure

while frontier:

node = frontier.pop()

if problem.is_goal(node.state):

return node

elif len(node) >= limit:

result = cutoff

elif not is_cycle(node):

for child in expand(problem, node):

frontier.append(child)

return result

def depth_first_recursive_search(problem, node=None):

if node is None:

node = Node(problem.initial)

if problem.is_goal(node.state):

return node

elif is_cycle(node):

return failure

else:

for child in expand(problem, node):

result = depth_first_recursive_search(problem, child)

if result:

return result

return failure

# -

path_states(depth_first_recursive_search(r2))

# # Bidirectional Best-First Search

# +

def bidirectional_best_first_search(problem_f, f_f, problem_b, f_b, terminated):

node_f = Node(problem_f.initial)

node_b = Node(problem_f.goal)

frontier_f, reached_f = PriorityQueue([node_f], key=f_f), {node_f.state: node_f}

frontier_b, reached_b = PriorityQueue([node_b], key=f_b), {node_b.state: node_b}

solution = failure

while frontier_f and frontier_b and not terminated(solution, frontier_f, frontier_b):

def S1(node, f):

return str(int(f(node))) + ' ' + str(path_states(node))

print('Bi:', S1(frontier_f.top(), f_f), S1(frontier_b.top(), f_b))

if f_f(frontier_f.top()) < f_b(frontier_b.top()):

solution = proceed('f', problem_f, frontier_f, reached_f, reached_b, solution)

else:

solution = proceed('b', problem_b, frontier_b, reached_b, reached_f, solution)

return solution

def inverse_problem(problem):

if isinstance(problem, CountCalls):

return CountCalls(inverse_problem(problem._object))

else:

inv = copy.copy(problem)

inv.initial, inv.goal = inv.goal, inv.initial

return inv

# +

def bidirectional_uniform_cost_search(problem_f):

def terminated(solution, frontier_f, frontier_b):

n_f, n_b = frontier_f.top(), frontier_b.top()

return g(n_f) + g(n_b) > g(solution)

return bidirectional_best_first_search(problem_f, g, inverse_problem(problem_f), g, terminated)

def bidirectional_astar_search(problem_f):

def terminated(solution, frontier_f, frontier_b):

nf, nb = frontier_f.top(), frontier_b.top()

return g(nf) + g(nb) > g(solution)

problem_f = inverse_problem(problem_f)

return bidirectional_best_first_search(problem_f, lambda n: g(n) + problem_f.h(n),

problem_b, lambda n: g(n) + problem_b.h(n),

terminated)

def proceed(direction, problem, frontier, reached, reached2, solution):

node = frontier.pop()

for child in expand(problem, node):

s = child.state

print('proceed', direction, S(child))

if s not in reached or child.path_cost < reached[s].path_cost:

frontier.add(child)

reached[s] = child

if s in reached2: # Frontiers collide; solution found

solution2 = (join_nodes(child, reached2[s]) if direction == 'f' else

join_nodes(reached2[s], child))

#print('solution', path_states(solution2), solution2.path_cost,

# path_states(child), path_states(reached2[s]))

if solution2.path_cost < solution.path_cost:

solution = solution2

return solution

S = path_states

#A-S-R + B-P-R => A-S-R-P + B-P

def join_nodes(nf, nb):

"""Join the reverse of the backward node nb to the forward node nf."""

#print('join', S(nf), S(nb))

join = nf

while nb.parent is not None:

cost = join.path_cost + nb.path_cost - nb.parent.path_cost

join = Node(nb.parent.state, join, nb.action, cost)

nb = nb.parent

#print(' now join', S(join), 'with nb', S(nb), 'parent', S(nb.parent))

return join

# +

#A , B = uniform_cost_search(r1), uniform_cost_search(r2)

#path_states(A), path_states(B)

# +

#path_states(append_nodes(A, B))

# -

# # TODO: RBFS

# # Problem Domains

#

# Now we turn our attention to defining some problem domains as subclasses of `Problem`.

# # Route Finding Problems

#

#

#

# In a `RouteProblem`, the states are names of "cities" (or other locations), like `'A'` for Arad. The actions are also city names; `'Z'` is the action to move to city `'Z'`. The layout of cities is given by a separate data structure, a `Map`, which is a graph where there are vertexes (cities), links between vertexes, distances (costs) of those links (if not specified, the default is 1 for every link), and optionally the 2D (x, y) location of each city can be specified. A `RouteProblem` takes this `Map` as input and allows actions to move between linked cities. The default heuristic is straight-line distance to the goal, or is uniformly zero if locations were not given.

# +

class RouteProblem(Problem):

"""A problem to find a route between locations on a `Map`.

Create a problem with RouteProblem(start, goal, map=Map(...)}).

States are the vertexes in the Map graph; actions are destination states."""

def actions(self, state):

"""The places neighboring `state`."""

return self.map.neighbors[state]

def result(self, state, action):

"""Go to the `action` place, if the map says that is possible."""

return action if action in self.map.neighbors[state] else state

def action_cost(self, s, action, s1):

"""The distance (cost) to go from s to s1."""

return self.map.distances[s, s1]

def h(self, node):

"Straight-line distance between state and the goal."

locs = self.map.locations

return straight_line_distance(locs[node.state], locs[self.goal])

def straight_line_distance(A, B):

"Straight-line distance between two points."

return sum(abs(a - b)**2 for (a, b) in zip(A, B)) ** 0.5

# +

class Map:

"""A map of places in a 2D world: a graph with vertexes and links between them.

In `Map(links, locations)`, `links` can be either [(v1, v2)...] pairs,

or a {(v1, v2): distance...} dict. Optional `locations` can be {v1: (x, y)}

If `directed=False` then for every (v1, v2) link, we add a (v2, v1) link."""

def __init__(self, links, locations=None, directed=False):

if not hasattr(links, 'items'): # Distances are 1 by default

links = {link: 1 for link in links}

if not directed:

for (v1, v2) in list(links):

links[v2, v1] = links[v1, v2]

self.distances = links

self.neighbors = multimap(links)

self.locations = locations or defaultdict(lambda: (0, 0))

def multimap(pairs) -> dict:

"Given (key, val) pairs, make a dict of {key: [val,...]}."

result = defaultdict(list)

for key, val in pairs:

result[key].append(val)

return result

# +

# Some specific RouteProblems

romania = Map(

{('O', 'Z'): 71, ('O', 'S'): 151, ('A', 'Z'): 75, ('A', 'S'): 140, ('A', 'T'): 118,

('L', 'T'): 111, ('L', 'M'): 70, ('D', 'M'): 75, ('C', 'D'): 120, ('C', 'R'): 146,

('C', 'P'): 138, ('R', 'S'): 80, ('F', 'S'): 99, ('B', 'F'): 211, ('B', 'P'): 101,

('B', 'G'): 90, ('B', 'U'): 85, ('H', 'U'): 98, ('E', 'H'): 86, ('U', 'V'): 142,

('I', 'V'): 92, ('I', 'N'): 87, ('P', 'R'): 97},

{'A': ( 76, 497), 'B': (400, 327), 'C': (246, 285), 'D': (160, 296), 'E': (558, 294),

'F': (285, 460), 'G': (368, 257), 'H': (548, 355), 'I': (488, 535), 'L': (162, 379),

'M': (160, 343), 'N': (407, 561), 'O': (117, 580), 'P': (311, 372), 'R': (227, 412),

'S': (187, 463), 'T': ( 83, 414), 'U': (471, 363), 'V': (535, 473), 'Z': (92, 539)})

r0 = RouteProblem('A', 'A', map=romania)

r1 = RouteProblem('A', 'B', map=romania)

r2 = RouteProblem('N', 'L', map=romania)

r3 = RouteProblem('E', 'T', map=romania)

r4 = RouteProblem('O', 'M', map=romania)

# -

path_states(uniform_cost_search(r1)) # Lowest-cost path from Arab to Bucharest

path_states(breadth_first_search(r1)) # Breadth-first: fewer steps, higher path cost

# # Grid Problems

#

# A `GridProblem` involves navigating on a 2D grid, with some cells being impassible obstacles. By default you can move to any of the eight neighboring cells that are not obstacles (but in a problem instance you can supply a `directions=` keyword to change that). Again, the default heuristic is straight-line distance to the goal. States are `(x, y)` cell locations, such as `(4, 2)`, and actions are `(dx, dy)` cell movements, such as `(0, -1)`, which means leave the `x` coordinate alone, and decrement the `y` coordinate by 1.

# +

class GridProblem(Problem):

"""Finding a path on a 2D grid with obstacles. Obstacles are (x, y) cells."""

def __init__(self, initial=(15, 30), goal=(130, 30), obstacles=(), **kwds):

Problem.__init__(self, initial=initial, goal=goal,

obstacles=set(obstacles) - {initial, goal}, **kwds)

directions = [(-1, -1), (0, -1), (1, -1),

(-1, 0), (1, 0),

(-1, +1), (0, +1), (1, +1)]

def action_cost(self, s, action, s1): return straight_line_distance(s, s1)

def h(self, node): return straight_line_distance(node.state, self.goal)

def result(self, state, action):

"Both states and actions are represented by (x, y) pairs."

return action if action not in self.obstacles else state

def actions(self, state):

"""You can move one cell in any of `directions` to a non-obstacle cell."""

x, y = state

return {(x + dx, y + dy) for (dx, dy) in self.directions} - self.obstacles

class ErraticVacuum(Problem):

def actions(self, state):

return ['suck', 'forward', 'backward']

def results(self, state, action): return self.table[action][state]

table = dict(suck= {1:{5,7}, 2:{4,8}, 3:{7}, 4:{2,4}, 5:{1,5}, 6:{8}, 7:{3,7}, 8:{6,8}},

forward= {1:{2}, 2:{2}, 3:{4}, 4:{4}, 5:{6}, 6:{6}, 7:{8}, 8:{8}},

backward={1:{1}, 2:{1}, 3:{3}, 4:{3}, 5:{5}, 6:{5}, 7:{7}, 8:{7}})

# +

# Some grid routing problems

# The following can be used to create obstacles:

def random_lines(X=range(15, 130), Y=range(60), N=150, lengths=range(6, 12)):

"""The set of cells in N random lines of the given lengths."""

result = set()

for _ in range(N):

x, y = random.choice(X), random.choice(Y)

dx, dy = random.choice(((0, 1), (1, 0)))

result |= line(x, y, dx, dy, random.choice(lengths))

return result

def line(x, y, dx, dy, length):

"""A line of `length` cells starting at (x, y) and going in (dx, dy) direction."""

return {(x + i * dx, y + i * dy) for i in range(length)}

random.seed(42) # To make this reproducible

frame = line(-10, 20, 0, 1, 20) | line(150, 20, 0, 1, 20)

cup = line(102, 44, -1, 0, 15) | line(102, 20, -1, 0, 20) | line(102, 44, 0, -1, 24)

d1 = GridProblem(obstacles=random_lines(N=100) | frame)

d2 = GridProblem(obstacles=random_lines(N=150) | frame)

d3 = GridProblem(obstacles=random_lines(N=200) | frame)

d4 = GridProblem(obstacles=random_lines(N=250) | frame)

d5 = GridProblem(obstacles=random_lines(N=300) | frame)

d6 = GridProblem(obstacles=cup | frame)

d7 = GridProblem(obstacles=cup | frame | line(50, 35, 0, -1, 10) | line(60, 37, 0, -1, 17) | line(70, 31, 0, -1, 19))

# -

# # 8 Puzzle Problems

#

#

#

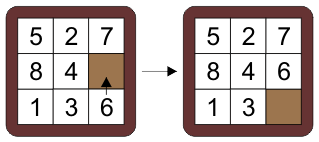

# A sliding tile puzzle where you can swap the blank with an adjacent piece, trying to reach a goal configuration. The cells are numbered 0 to 8, starting at the top left and going row by row left to right. The pieces are numebred 1 to 8, with 0 representing the blank. An action is the cell index number that is to be swapped with the blank (*not* the actual number to be swapped but the index into the state). So the diagram above left is the state `(5, 2, 7, 8, 4, 0, 1, 3, 6)`, and the action is `8`, because the cell number 8 (the 9th or last cell, the `6` in the bottom right) is swapped with the blank.

#

# There are two disjoint sets of states that cannot be reached from each other. One set has an even number of "inversions"; the other has an odd number. An inversion is when a piece in the state is larger than a piece that follows it.

#

#

#

# +

class EightPuzzle(Problem):

""" The problem of sliding tiles numbered from 1 to 8 on a 3x3 board,

where one of the squares is a blank, trying to reach a goal configuration.

A board state is represented as a tuple of length 9, where the element at index i

represents the tile number at index i, or 0 if for the empty square, e.g. the goal:

1 2 3

4 5 6 ==> (1, 2, 3, 4, 5, 6, 7, 8, 0)

7 8 _

"""

def __init__(self, initial, goal=(0, 1, 2, 3, 4, 5, 6, 7, 8)):

assert inversions(initial) % 2 == inversions(goal) % 2 # Parity check

self.initial, self.goal = initial, goal

def actions(self, state):

"""The indexes of the squares that the blank can move to."""

moves = ((1, 3), (0, 2, 4), (1, 5),

(0, 4, 6), (1, 3, 5, 7), (2, 4, 8),

(3, 7), (4, 6, 8), (7, 5))

blank = state.index(0)

return moves[blank]

def result(self, state, action):

"""Swap the blank with the square numbered `action`."""

s = list(state)

blank = state.index(0)

s[action], s[blank] = s[blank], s[action]

return tuple(s)

def h1(self, node):

"""The misplaced tiles heuristic."""

return hamming_distance(node.state, self.goal)

def h2(self, node):

"""The Manhattan heuristic."""

X = (0, 1, 2, 0, 1, 2, 0, 1, 2)

Y = (0, 0, 0, 1, 1, 1, 2, 2, 2)

return sum(abs(X[s] - X[g]) + abs(Y[s] - Y[g])

for (s, g) in zip(node.state, self.goal) if s != 0)

def h(self, node): return self.h2(node)

def hamming_distance(A, B):

"Number of positions where vectors A and B are different."

return sum(a != b for a, b in zip(A, B))

def inversions(board):

"The number of times a piece is a smaller number than a following piece."

return sum((a > b and a != 0 and b != 0) for (a, b) in combinations(board, 2))

def board8(board, fmt=(3 * '{} {} {}\n')):

"A string representing an 8-puzzle board"

return fmt.format(*board).replace('0', '_')

class Board(defaultdict):

empty = '.'

off = '#'

def __init__(self, board=None, width=8, height=8, to_move=None, **kwds):

if board is not None:

self.update(board)

self.width, self.height = (board.width, board.height)

else:

self.width, self.height = (width, height)

self.to_move = to_move

def __missing__(self, key):

x, y = key

if x < 0 or x >= self.width or y < 0 or y >= self.height:

return self.off

else:

return self.empty

def __repr__(self):

def row(y): return ' '.join(self[x, y] for x in range(self.width))

return '\n'.join(row(y) for y in range(self.height))

def __hash__(self):

return hash(tuple(sorted(self.items()))) + hash(self.to_move)

# +

# Some specific EightPuzzle problems

e1 = EightPuzzle((1, 4, 2, 0, 7, 5, 3, 6, 8))

e2 = EightPuzzle((1, 2, 3, 4, 5, 6, 7, 8, 0))

e3 = EightPuzzle((4, 0, 2, 5, 1, 3, 7, 8, 6))

e4 = EightPuzzle((7, 2, 4, 5, 0, 6, 8, 3, 1))

e5 = EightPuzzle((8, 6, 7, 2, 5, 4, 3, 0, 1))

# +

# Solve an 8 puzzle problem and print out each state

for s in path_states(astar_search(e1)):

print(board8(s))

# -

# # Water Pouring Problems

#

#

#

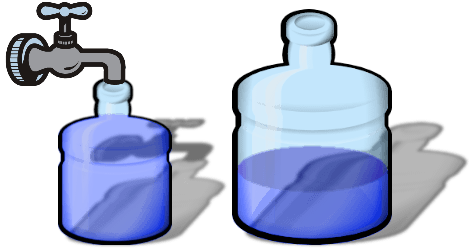

# In a [water pouring problem](https://en.wikipedia.org/wiki/Water_pouring_puzzle) you are given a collection of jugs, each of which has a size (capacity) in, say, litres, and a current level of water (in litres). The goal is to measure out a certain level of water; it can appear in any of the jugs. For example, in the movie *Die Hard 3*, the heroes were faced with the task of making exactly 4 gallons from jugs of size 5 gallons and 3 gallons.) A state is represented by a tuple of current water levels, and the available actions are:

# - `(Fill, i)`: fill the `i`th jug all the way to the top (from a tap with unlimited water).

# - `(Dump, i)`: dump all the water out of the `i`th jug.

# - `(Pour, i, j)`: pour water from the `i`th jug into the `j`th jug until either the jug `i` is empty, or jug `j` is full, whichever comes first.

class PourProblem(Problem):

"""Problem about pouring water between jugs to achieve some water level.

Each state is a tuples of water levels. In the initialization, also provide a tuple of

jug sizes, e.g. PourProblem(initial=(0, 0), goal=4, sizes=(5, 3)),

which means two jugs of sizes 5 and 3, initially both empty, with the goal

of getting a level of 4 in either jug."""

def actions(self, state):

"""The actions executable in this state."""

jugs = range(len(state))

return ([('Fill', i) for i in jugs if state[i] < self.sizes[i]] +

[('Dump', i) for i in jugs if state[i]] +

[('Pour', i, j) for i in jugs if state[i] for j in jugs if i != j])

def result(self, state, action):

"""The state that results from executing this action in this state."""

result = list(state)

act, i, *_ = action

if act == 'Fill': # Fill i to capacity

result[i] = self.sizes[i]

elif act == 'Dump': # Empty i

result[i] = 0

elif act == 'Pour': # Pour from i into j

j = action[2]

amount = min(state[i], self.sizes[j] - state[j])

result[i] -= amount

result[j] += amount

return tuple(result)

def is_goal(self, state):

"""True if the goal level is in any one of the jugs."""

return self.goal in state

# In a `GreenPourProblem`, the states and actions are the same, but instead of all actions costing 1, in these problems the cost of an action is the amount of water that flows from the tap. (There is an issue that non-*Fill* actions have 0 cost, which in general can lead to indefinitely long solutions, but in this problem there is a finite number of states, so we're ok.)

class GreenPourProblem(PourProblem):

"""A PourProblem in which the cost is the amount of water used."""

def action_cost(self, s, action, s1):

"The cost is the amount of water used."

act, i, *_ = action

return self.sizes[i] - s[i] if act == 'Fill' else 0

# +

# Some specific PourProblems

p1 = PourProblem((1, 1, 1), 13, sizes=(2, 16, 32))

p2 = PourProblem((0, 0, 0), 21, sizes=(8, 11, 31))

p3 = PourProblem((0, 0), 8, sizes=(7,9))

p4 = PourProblem((0, 0, 0), 21, sizes=(8, 11, 31))

p5 = PourProblem((0, 0), 4, sizes=(3, 5))

g1 = GreenPourProblem((1, 1, 1), 13, sizes=(2, 16, 32))

g2 = GreenPourProblem((0, 0, 0), 21, sizes=(8, 11, 31))

g3 = GreenPourProblem((0, 0), 8, sizes=(7,9))

g4 = GreenPourProblem((0, 0, 0), 21, sizes=(8, 11, 31))

g5 = GreenPourProblem((0, 0), 4, sizes=(3, 5))

# -

# Solve the PourProblem of getting 13 in some jug, and show the actions and states

soln = breadth_first_search(p1)

path_actions(soln), path_states(soln)

# # Pancake Sorting Problems

#

# Given a stack of pancakes of various sizes, can you sort them into a stack of decreasing sizes, largest on bottom to smallest on top? You have a spatula with which you can flip the top `i` pancakes. This is shown below for `i = 3`; on the top the spatula grabs the first three pancakes; on the bottom we see them flipped:

#

#

#

#

# How many flips will it take to get the whole stack sorted? This is an interesting [problem](https://en.wikipedia.org/wiki/Pancake_sorting) that <NAME> has [written about](https://people.eecs.berkeley.edu/~christos/papers/Bounds%20For%20Sorting%20By%20Prefix%20Reversal.pdf). A reasonable heuristic for this problem is the *gap heuristic*: if we look at neighboring pancakes, if, say, the 2nd smallest is next to the 3rd smallest, that's good; they should stay next to each other. But if the 2nd smallest is next to the 4th smallest, that's bad: we will require at least one move to separate them and insert the 3rd smallest between them. The gap heuristic counts the number of neighbors that have a gap like this. In our specification of the problem, pancakes are ranked by size: the smallest is `1`, the 2nd smallest `2`, and so on, and the representation of a state is a tuple of these rankings, from the top to the bottom pancake. Thus the goal state is always `(1, 2, ..., `*n*`)` and the initial (top) state in the diagram above is `(2, 1, 4, 6, 3, 5)`.

#

class PancakeProblem(Problem):

"""A PancakeProblem the goal is always `tuple(range(1, n+1))`, where the

initial state is a permutation of `range(1, n+1)`. An act is the index `i`

of the top `i` pancakes that will be flipped."""

def __init__(self, initial):

self.initial, self.goal = tuple(initial), tuple(sorted(initial))

def actions(self, state): return range(2, len(state) + 1)

def result(self, state, i): return state[:i][::-1] + state[i:]

def h(self, node):

"The gap heuristic."

s = node.state

return sum(abs(s[i] - s[i - 1]) > 1 for i in range(1, len(s)))

c0 = PancakeProblem((2, 1, 4, 6, 3, 5))

c1 = PancakeProblem((4, 6, 2, 5, 1, 3))

c2 = PancakeProblem((1, 3, 7, 5, 2, 6, 4))

c3 = PancakeProblem((1, 7, 2, 6, 3, 5, 4))

c4 = PancakeProblem((1, 3, 5, 7, 9, 2, 4, 6, 8))

# Solve a pancake problem

path_states(astar_search(c0))

# # Jumping Frogs Puzzle

#

# In this puzzle (which also can be played as a two-player game), the initial state is a line of squares, with N pieces of one kind on the left, then one empty square, then N pieces of another kind on the right. The diagram below uses 2 blue toads and 2 red frogs; we will represent this as the string `'LL.RR'`. The goal is to swap the pieces, arriving at `'RR.LL'`. An `'L'` piece moves left-to-right, either sliding one space ahead to an empty space, or two spaces ahead if that space is empty and if there is an `'R'` in between to hop over. The `'R'` pieces move right-to-left analogously. An action will be an `(i, j)` pair meaning to swap the pieces at those indexes. The set of actions for the N = 2 position below is `{(1, 2), (3, 2)}`, meaning either the blue toad in position 1 or the red frog in position 3 can swap places with the blank in position 2.

#

#

class JumpingPuzzle(Problem):

"""Try to exchange L and R by moving one ahead or hopping two ahead."""

def __init__(self, N=2):

self.initial = N*'L' + '.' + N*'R'

self.goal = self.initial[::-1]

def actions(self, state):

"""Find all possible move or hop moves."""

idxs = range(len(state))

return ({(i, i + 1) for i in idxs if state[i:i+2] == 'L.'} # Slide

|{(i, i + 2) for i in idxs if state[i:i+3] == 'LR.'} # Hop

|{(i + 1, i) for i in idxs if state[i:i+2] == '.R'} # Slide

|{(i + 2, i) for i in idxs if state[i:i+3] == '.LR'}) # Hop

def result(self, state, action):

"""An action (i, j) means swap the pieces at positions i and j."""

i, j = action

result = list(state)

result[i], result[j] = state[j], state[i]

return ''.join(result)

def h(self, node): return hamming_distance(node.state, self.goal)

JumpingPuzzle(N=2).actions('LL.RR')

j3 = JumpingPuzzle(N=3)

j9 = JumpingPuzzle(N=9)

path_states(astar_search(j3))

# # Reporting Summary Statistics on Search Algorithms

#

# Now let's gather some metrics on how well each algorithm does. We'll use `CountCalls` to wrap a `Problem` object in such a way that calls to its methods are delegated to the original problem, but each call increments a counter. Once we've solved the problem, we print out summary statistics.

# +

class CountCalls:

"""Delegate all attribute gets to the object, and count them in ._counts"""

def __init__(self, obj):

self._object = obj

self._counts = Counter()

def __getattr__(self, attr):

"Delegate to the original object, after incrementing a counter."

self._counts[attr] += 1

return getattr(self._object, attr)

def report(searchers, problems, verbose=True):

"""Show summary statistics for each searcher (and on each problem unless verbose is false)."""

for searcher in searchers:

print(searcher.__name__ + ':')

total_counts = Counter()

for p in problems:

prob = CountCalls(p)

soln = searcher(prob)

counts = prob._counts;

counts.update(actions=len(soln), cost=soln.path_cost)

total_counts += counts

if verbose: report_counts(counts, str(p)[:40])

report_counts(total_counts, 'TOTAL\n')

def report_counts(counts, name):

"""Print one line of the counts report."""

print('{:9,d} nodes |{:9,d} goal |{:5.0f} cost |{:8,d} actions | {}'.format(

counts['result'], counts['is_goal'], counts['cost'], counts['actions'], name))

# -

# Here's a tiny report for uniform-cost search on the jug pouring problems:

report([uniform_cost_search], [p1, p2, p3, p4, p5])

report((uniform_cost_search, breadth_first_search),

(p1, g1, p2, g2, p3, g3, p4, g4, p4, g4, c1, c2, c3))

# # Comparing heuristics

#

# First, let's look at the eight puzzle problems, and compare three different heuristics the Manhattan heuristic, the less informative misplaced tiles heuristic, and the uninformed (i.e. *h* = 0) breadth-first search:

# +

def astar_misplaced_tiles(problem): return astar_search(problem, h=problem.h1)

report([breadth_first_search, astar_misplaced_tiles, astar_search],

[e1, e2, e3, e4, e5])

# -

# We see that all three algorithms get cost-optimal solutions, but the better the heuristic, the fewer nodes explored.

# Compared to the uninformed search, the misplaced tiles heuristic explores about 1/4 the number of nodes, and the Manhattan heuristic needs just 2%.

#

# Next, we can show the value of the gap heuristic for pancake sorting problems:

report([astar_search, uniform_cost_search], [c1, c2, c3, c4])

# We need to explore 300 times more nodes without the heuristic.

#

# # Comparing graph search and tree search

#

# Keeping the *reached* table in `best_first_search` allows us to do a graph search, where we notice when we reach a state by two different paths, rather than a tree search, where we have duplicated effort. The *reached* table consumes space and also saves time. How much time? In part it depends on how good the heuristics are at focusing the search. Below we show that on some pancake and eight puzzle problems, the tree search expands roughly twice as many nodes (and thus takes roughly twice as much time):

report([astar_search, astar_tree_search], [e1, e2, e3, e4, r1, r2, r3, r4])

# # Comparing different weighted search values

#

# Below we report on problems using these four algorithms:

#

# |Algorithm|*f*|Optimality|

# |:---------|---:|:----------:|

# |Greedy best-first search | *f = h*|nonoptimal|

# |Extra weighted A* search | *f = g + 2 × h*|nonoptimal|

# |Weighted A* search | *f = g + 1.4 × h*|nonoptimal|

# |A* search | *f = g + h*|optimal|

# |Uniform-cost search | *f = g*|optimal|

#

# We will see that greedy best-first search (which ranks nodes solely by the heuristic) explores the fewest number of nodes, but has the highest path costs. Weighted A* search explores twice as many nodes (on this problem set) but gets 10% better path costs. A* is optimal, but explores more nodes, and uniform-cost is also optimal, but explores an order of magnitude more nodes.

# +

def extra_weighted_astar_search(problem): return weighted_astar_search(problem, weight=2)

report((greedy_bfs, extra_weighted_astar_search, weighted_astar_search, astar_search, uniform_cost_search),

(r0, r1, r2, r3, r4, e1, d1, d2, j9, e2, d3, d4, d6, d7, e3, e4))

# -

# We see that greedy search expands the fewest nodes, but has the highest path costs. In contrast, A\* gets optimal path costs, but expands 4 or 5 times more nodes. Weighted A* is a good compromise, using half the compute time as A\*, and achieving path costs within 1% or 2% of optimal. Uniform-cost is optimal, but is an order of magnitude slower than A\*.

#

# # Comparing many search algorithms

#

# Finally, we compare a host of algorihms (even the slow ones) on some of the easier problems:

report((astar_search, uniform_cost_search, breadth_first_search, breadth_first_bfs,

iterative_deepening_search, depth_limited_search, greedy_bfs,

weighted_astar_search, extra_weighted_astar_search),

(p1, g1, p2, g2, p3, g3, p4, g4, r0, r1, r2, r3, r4, e1))

# This confirms some of the things we already knew: A* and uniform-cost search are optimal, but the others are not. A* explores fewer nodes than uniform-cost.

# # Visualizing Reached States

#

# I would like to draw a picture of the state space, marking the states that have been reached by the search.

# Unfortunately, the *reached* variable is inaccessible inside `best_first_search`, so I will define a new version of `best_first_search` that is identical except that it declares *reached* to be `global`. I can then define `plot_grid_problem` to plot the obstacles of a `GridProblem`, along with the initial and goal states, the solution path, and the states reached during a search.

# +

def best_first_search(problem, f):

"Search nodes with minimum f(node) value first."

global reached # <<<<<<<<<<< Only change here

node = Node(problem.initial)

frontier = PriorityQueue([node], key=f)

reached = {problem.initial: node}

while frontier:

node = frontier.pop()

if problem.is_goal(node.state):

return node

for child in expand(problem, node):

s = child.state

if s not in reached or child.path_cost < reached[s].path_cost:

reached[s] = child

frontier.add(child)

return failure

def plot_grid_problem(grid, solution, reached=(), title='Search', show=True):

"Use matplotlib to plot the grid, obstacles, solution, and reached."

reached = list(reached)

plt.figure(figsize=(16, 10))

plt.axis('off'); plt.axis('equal')

plt.scatter(*transpose(grid.obstacles), marker='s', color='darkgrey')

plt.scatter(*transpose(reached), 1**2, marker='.', c='blue')

plt.scatter(*transpose(path_states(solution)), marker='s', c='blue')

plt.scatter(*transpose([grid.initial]), 9**2, marker='D', c='green')

plt.scatter(*transpose([grid.goal]), 9**2, marker='8', c='red')

if show: plt.show()

print('{} {} search: {:.1f} path cost, {:,d} states reached'

.format(' ' * 10, title, solution.path_cost, len(reached)))

def plots(grid, weights=(1.4, 2)):

"""Plot the results of 4 heuristic search algorithms for this grid."""

solution = astar_search(grid)

plot_grid_problem(grid, solution, reached, 'A* search')

for weight in weights:

solution = weighted_astar_search(grid, weight=weight)

plot_grid_problem(grid, solution, reached, '(b) Weighted ({}) A* search'.format(weight))

solution = greedy_bfs(grid)

plot_grid_problem(grid, solution, reached, 'Greedy best-first search')

def transpose(matrix): return list(zip(*matrix))

# -

plots(d3)

plots(d4)

# # The cost of weighted A* search

#

# Now I want to try a much simpler grid problem, `d6`, with only a few obstacles. We see that A* finds the optimal path, skirting below the obstacles. Weighterd A* with a weight of 1.4 finds the same optimal path while exploring only 1/3 the number of states. But weighted A* with weight 2 takes the slightly longer path above the obstacles, because that path allowed it to stay closer to the goal in straight-line distance, which it over-weights. And greedy best-first search has a bad showing, not deviating from its path towards the goal until it is almost inside the cup made by the obstacles.

plots(d6)

# In the next problem, `d7`, we see a similar story. the optimal path found by A*, and we see that again weighted A* with weight 1.4 does great and with weight 2 ends up erroneously going below the first two barriers, and then makes another mistake by reversing direction back towards the goal and passing above the third barrier. Again, greedy best-first makes bad decisions all around.

plots(d7)

# # Nondeterministic Actions

#

# To handle problems with nondeterministic problems, we'll replace the `result` method with `results`, which returns a collection of possible result states. We'll represent the solution to a problem not with a `Node`, but with a plan that consist of two types of component: sequences of actions, like `['forward', 'suck']`, and condition actions, like

# `{5: ['forward', 'suck'], 7: []}`, which says that if we end up in state 5, then do `['forward', 'suck']`, but if we end up in state 7, then do the empty sequence of actions.

# +

def and_or_search(problem):

"Find a plan for a problem that has nondterministic actions."

return or_search(problem, problem.initial, [])

def or_search(problem, state, path):

"Find a sequence of actions to reach goal from state, without repeating states on path."

if problem.is_goal(state): return []

if state in path: return failure # check for loops

for action in problem.actions(state):

plan = and_search(problem, problem.results(state, action), [state] + path)

if plan != failure:

return [action] + plan

return failure

def and_search(problem, states, path):

"Plan for each of the possible states we might end up in."

if len(states) == 1:

return or_search(problem, next(iter(states)), path)

plan = {}

for s in states:

plan[s] = or_search(problem, s, path)

if plan[s] == failure: return failure

return [plan]

# +

class MultiGoalProblem(Problem):

"""A version of `Problem` with a colllection of `goals` instead of one `goal`."""

def __init__(self, initial=None, goals=(), **kwds):

self.__dict__.update(initial=initial, goals=goals, **kwds)

def is_goal(self, state): return state in self.goals

class ErraticVacuum(MultiGoalProblem):

"""In this 2-location vacuum problem, the suck action in a dirty square will either clean up that square,

or clean up both squares. A suck action in a clean square will either do nothing, or

will deposit dirt in that square. Forward and backward actions are deterministic."""

def actions(self, state):

return ['suck', 'forward', 'backward']

def results(self, state, action): return self.table[action][state]

table = {'suck':{1:{5,7}, 2:{4,8}, 3:{7}, 4:{2,4}, 5:{1,5}, 6:{8}, 7:{3,7}, 8:{6,8}},

'forward': {1:{2}, 2:{2}, 3:{4}, 4:{4}, 5:{6}, 6:{6}, 7:{8}, 8:{8}},

'backward': {1:{1}, 2:{1}, 3:{3}, 4:{3}, 5:{5}, 6:{5}, 7:{7}, 8:{7}}}

# -

# Let's find a plan to get from state 1 to the goal of no dirt (states 7 or 8):

and_or_search(ErraticVacuum(1, {7, 8}))

# This plan says "First suck, and if we end up in state 5, go forward and suck again; if we end up in state 7, do nothing because that is a goal."

#

# Here are the plans to get to a goal state starting from any one of the 8 states:

{s: and_or_search(ErraticVacuum(s, {7,8}))

for s in range(1, 9)}

# # Comparing Algorithms on EightPuzzle Problems of Different Lengths

# +

from functools import lru_cache

def build_table(table, depth, state, problem):

if depth > 0 and state not in table:

problem.initial = state

table[state] = len(astar_search(problem))

for a in problem.actions(state):

build_table(table, depth - 1, problem.result(state, a), problem)

return table

def invert_table(table):

result = defaultdict(list)

for key, val in table.items():

result[val].append(key)

return result

goal = (0, 1, 2, 3, 4, 5, 6, 7, 8)

table8 = invert_table(build_table({}, 25, goal, EightPuzzle(goal)))

# +

def report8(table8, M, Ds=range(2, 25, 2), searchers=(breadth_first_search, astar_misplaced_tiles, astar_search)):

"Make a table of average nodes generated and effective branching factor"

for d in Ds:

line = [d]

N = min(M, len(table8[d]))

states = random.sample(table8[d], N)

for searcher in searchers:

nodes = 0

for s in states:

problem = CountCalls(EightPuzzle(s))

searcher(problem)

nodes += problem._counts['result']

nodes = int(round(nodes/N))

line.append(nodes)

line.extend([ebf(d, n) for n in line[1:]])

print('{:2} & {:6} & {:5} & {:5} && {:.2f} & {:.2f} & {:.2f}'

.format(*line))

def ebf(d, N, possible_bs=[b/100 for b in range(100, 300)]):

"Effective Branching Factor"

return min(possible_bs, key=lambda b: abs(N - sum(b**i for i in range(1, d+1))))

def edepth_reduction(d, N, b=2.67):

from statistics import mean

def random_state():

x = list(range(9))

random.shuffle(x)

return tuple(x)

meanbf = mean(len(e3.actions(random_state())) for _ in range(10000))

meanbf

# -

{n: len(v) for (n, v) in table30.items()}

# %time table30 = invert_table(build_table({}, 30, goal, EightPuzzle(goal)))

# %time report8(table30, 20, range(26, 31, 2))

# %time report8(table30, 20, range(26, 31, 2))

# +

from itertools import combinations

from statistics import median, mean

# Detour index for Romania

L = romania.locations

def ratio(a, b): return astar_search(RouteProblem(a, b, map=romania)).path_cost / sld(L[a], L[b])

nums = [ratio(a, b) for a,b in combinations(L, 2) if b in r1.actions(a)]

mean(nums), median(nums) # 1.7, 1.6 # 1.26, 1.2 for adjacent cities

# -

sld

| search4e.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + id="KU5so-4hURax"

# link colab to google drive directory where this project data is placed

from google.colab import drive

drive.mount('/content/gdrive', force_remount=True)

# + id="sLiADzRYUDtm"

################ Need to set project path here !! #################

projectpath = # "/content/gdrive/MyDrive/GraphAttnProject/SpanTree [with start node]_[walklen=3]_[p=1,q=1]_[num_walks=50]/NIPS_Submission/"

# + id="0yY7zMECTaI5"

import os

os.chdir(projectpath)

os.getcwd()

# + id="lDTFxrCdpk6a"

# ! pip install dgl

import dgl

# + [markdown] id="GhSvd4yHmmaJ"

# # Load data

# + id="xIXBUNULmxtV"

from tqdm.notebook import tqdm, trange

import networkx as nx

import pickle

import numpy as np

import tensorflow as tf

import torch

print(tf.__version__)

# + id="dVBtU5vAm7Ik"

# load all train and validation graphs

train_graphs = pickle.load(open(f'graph_data/train_graphs.pkl', 'rb'))

val_graphs = pickle.load(open(f'graph_data/val_graphs.pkl', 'rb'))

# load all labels

train_labels = np.load('graph_data/train_labels.npy')

val_labels = np.load('graph_data/val_labels.npy')

# + id="i1RHKaJMnKpl"

#################. NEED TO SPECIFY THE RANDOM WALK LENGTH WE WANT TO USE ################

walk_len = 6 # we use GKAT with random walk length of 6 in this code file

# we could also change this parameter to load GKAT kernel generated from random walks with different lengths from 2 to 10.

#########################################################################################

# + id="rzPVFV3lntjd"

# here we load the frequency matriies (we could use this as raw data to do random feature mapping )

train_freq_mat = pickle.load(open(f'graph_data/GKAT_freq_mats_train_len={walk_len}.pkl', 'rb'))

val_freq_mat = pickle.load(open(f'graph_data/GKAT_freq_mats_val_len={walk_len}.pkl', 'rb'))

# + id="ydogONEsntqC"

# here we load the pre-calculated GKAT kernel

train_GKAT_kernel = pickle.load(open(f'graph_data/GKAT_dot_kernels_train_len={walk_len}.pkl', 'rb'))

val_GKAT_kernel = pickle.load(open(f'graph_data/GKAT_dot_kernels_val_len={walk_len}.pkl', 'rb'))

# + id="hcFF2MIuot12"

GKAT_masking = [train_GKAT_kernel, val_GKAT_kernel]

# + id="YRCTLcQdot17"

train_graphs = [ dgl.from_networkx(g) for g in train_graphs]

val_graphs = [ dgl.from_networkx(g) for g in val_graphs]

info = [train_graphs, train_labels, val_graphs, val_labels, GKAT_masking]

# + [markdown] id="X92f3fstqGTC"

# # START Training

# + id="RCTlzoRDhafQ"

import networkx as nx

import matplotlib.pyplot as plt

import time

import random

import torch.nn as nn

import torch.nn.functional as F

import torch.optim as optim

from torch.utils.data import DataLoader

from tqdm.notebook import tqdm, trange

import seaborn as sns

from random import shuffle

from multiprocessing import Pool

import multiprocessing

from functools import partial

from networkx.generators.classic import cycle_graph

import sys

import scipy

import scipy.sparse

#from CodeZip_ST import *

# + id="F-PpelEHvaUF"

from prettytable import PrettyTable

# this function will count the number of parameters in GKAT (will be used later in this code file)

def count_parameters(model):

table = PrettyTable(["Modules", "Parameters"])

total_params = 0

for name, parameter in model.named_parameters():

if not parameter.requires_grad: continue

param = parameter.numel()

table.add_row([name, param])

total_params+=param

print(table)

print(f"Total Trainable Params: {total_params}")

return total_params

# + [markdown] id="sHofaHfFnlmQ"

# # GKAT Testing

# + [markdown] id="1_6nPfVFXe25"

# ## GKAT model

# + id="bRkNVQccXg3U"

# this is the GKAT version adapted from the paper "graph attention networks"

class GKATLayer(nn.Module):

def __init__(self,

in_dim,

out_dim,

feat_drop=0.,

attn_drop=0.,

alpha=0.2,

agg_activation=F.elu):

super(GKATLayer, self).__init__()

self.feat_drop = nn.Dropout(feat_drop)

self.fc = nn.Linear(in_dim, out_dim, bias=False)

#torch.nn.init.xavier_uniform_(self.fc.weight)

#torch.nn.init.zeros_(self.fc.bias)

self.attn_l = nn.Parameter(torch.ones(size=(out_dim, 1)))

self.attn_r = nn.Parameter(torch.ones(size=(out_dim, 1)))

self.attn_drop = nn.Dropout(attn_drop)

self.activation = nn.LeakyReLU(alpha)

self.softmax = nn.Softmax(dim = 1)

self.agg_activation=agg_activation

def clean_data(self):

ndata_names = ['ft', 'a1', 'a2']

edata_names = ['a_drop']

for name in ndata_names:

self.g.ndata.pop(name)

for name in edata_names:

self.g.edata.pop(name)

def forward(self, feat, bg, counting_attn):

self.g = bg

h = self.feat_drop(feat)

head_ft = self.fc(h).reshape((h.shape[0], -1))

a1 = torch.mm(head_ft, self.attn_l) # V x 1

a2 = torch.mm(head_ft, self.attn_r) # V x 1

a = self.attn_drop(a1 + a2.transpose(0, 1))

a = self.activation(a)

maxes = torch.max(a, 1, keepdim=True)[0]

a_ = a - maxes # we could subtract max to make the attention matrix bounded. (not feasible for random feature mapping decomposition)

a_nomi = torch.mul(torch.exp(a_), counting_attn.float())

a_deno = torch.sum(a_nomi, 1, keepdim=True)

a_nor = a_nomi/(a_deno+1e-9)

ret = torch.mm(a_nor, head_ft)

if self.agg_activation is not None:

ret = self.agg_activation(ret)

return ret

# this is the GKAT version adapted from the paper "attention is all you need"

class GKATLayer(nn.Module):

def __init__(self, in_dim, out_dim, feat_drop=0., attn_drop=0., alpha=0.2, agg_activation=F.elu):

super(GKATLayer, self).__init__()

self.feat_drop = feat_drop #nn.Dropout(feat_drop, training=self.training)

self.attn_drop = attn_drop #nn.Dropout(attn_drop)

self.fc_Q = nn.Linear(in_dim, out_dim, bias=False)

self.fc_K = nn.Linear(in_dim, out_dim, bias=False)

self.fc_V = nn.Linear(in_dim, out_dim, bias=False)

self.softmax = nn.Softmax(dim = 1)

self.agg_activation=agg_activation

def forward(self, feat, bg, counting_attn):

h = F.dropout(feat, p=self.feat_drop, training=self.training)

Q = self.fc_Q(h).reshape((h.shape[0], -1))

K = self.fc_K(h).reshape((h.shape[0], -1))

V = self.fc_V(h).reshape((h.shape[0], -1))

logits = F.dropout( torch.matmul( Q, torch.transpose(K,0,1) ) , p=self.attn_drop, training=self.training) / np.sqrt(Q.shape[1])

maxes = torch.max(logits, 1, keepdim=True)[0]

logits = logits - maxes

a_nomi = torch.mul(torch.exp( logits ), counting_attn.float())

a_deno = torch.sum(a_nomi, 1, keepdim=True)

a_nor = a_nomi/(a_deno+1e-9)

ret = torch.mm(a_nor, V)

if self.agg_activation is not None:

ret = self.agg_activation(ret)

return ret