
# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + id="LI72-IKKcVZ6"

# We will import our libraries

import numpy as np

import pandas as pd

import seaborn as sns

import matplotlib.pyplot as plt

# %matplotlib inline

# + colab={"resources": {"http://localhost:8080/nbextensions/google.colab/files.js": {"data": "<KEY>", "ok": true, "headers": [["content-type", "application/javascript"]], "status": 200, "status_text": ""}}, "base_uri": "https://localhost:8080/", "height": 73} id="mt3ee4dUco_I" outputId="2d6c7426-e0ed-48db-cc2b-20ad4896a42d"

from google.colab import files

uploaded = files.upload()

# + id="aiQrN9p3dPWH"

train = pd.read_csv('titanic_train.csv')

# + colab={"base_uri": "https://localhost:8080/", "height": 195} id="Yt2nQDGfdfXp" outputId="6d4f615a-fba2-4914-e3af-836764aff077"

train[:5]

# + colab={"base_uri": "https://localhost:8080/"} id="vuMXPawMdhY2" outputId="cc79cc5e-2331-4331-e8f2-dea59b5e6cac"

train.info()

# + [markdown] id="LG6jFLrUdnYO"

# We can already see that the dataset has missing values in the Age, Cabin and Embarked columns.

# + [markdown] id="IADt0C0Me3SC"

# # EDA

# + colab={"base_uri": "https://localhost:8080/", "height": 442} id="jo38HB1hdm2X" outputId="20f79fac-fe23-4403-c30e-10a254989da1"

# Plot a heatmap of missing values to see which columns contain them

plt.figure(figsize=(10, 6))

sns.heatmap(train.isna(), yticklabels=False, cbar=False, cmap='viridis')

# The Age and Cabin columns have many missing values; Cabin is missing for most rows
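# The heatmap gives a visual impression of the gaps. A numeric summary of the same information is `DataFrame.isna().mean()`, the fraction of missing entries per column. A minimal sketch on a small made-up frame (the values are illustrative, not the Titanic data):

```python
import numpy as np
import pandas as pd

# Small illustrative frame with gaps in Age, Cabin and Embarked (made-up values)
df = pd.DataFrame({
    "Age": [22.0, np.nan, 26.0, np.nan],
    "Cabin": [np.nan, "C85", np.nan, np.nan],
    "Embarked": ["S", "C", None, "S"],
    "Fare": [7.25, 71.28, 7.92, 53.10],
})

# Fraction of missing values per column, largest first
missing_fraction = df.isna().mean().sort_values(ascending=False)
print(missing_fraction)
```

# On the notebook's data the same call would be `train.isna().mean()`.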

# + colab={"base_uri": "https://localhost:8080/", "height": 296} id="AeyQ2O1Je5ku" outputId="d8d826c5-5206-4ab5-ce69-ec9720ac5649"

# Number of passengers who survived (1) and who did not survive (0)

sns.countplot(x='Survived', data = train, palette= 'seismic')

# + colab={"base_uri": "https://localhost:8080/", "height": 296} id="ZdS3SzmJfRHI" outputId="80d2c05d-e6a3-4c86-9a2a-19a9c3d318bb"

# The same plot, now with Sex as the hue

sns.countplot(x='Survived', data = train, palette= 'seismic', hue='Sex')

# + [markdown] id="IWhBhzELfk73"

# * Most male passengers did not survive, and most of the survivors were female

# * Summing the bars also shows that there were more male than female passengers

# + colab={"base_uri": "https://localhost:8080/", "height": 298} id="SfQ_rRQsf0Ju" outputId="9c739ee0-a0e6-4f9d-cfe3-b6ab935fcfce"

# Number of male and female passengers on the Titanic

sns.countplot(x='Sex', data= train, palette='seismic')

# + colab={"base_uri": "https://localhost:8080/", "height": 296} id="E1jUbx6xgpdH" outputId="3b5c4c7d-524d-477b-cab1-78083704c756"

# Survival counts broken down by passenger class

sns.set_style('whitegrid')

sns.countplot(x='Survived', data= train, hue= 'Pclass', palette= 'rainbow_r')

# + [markdown] id="XlmOtMdtheU6"

# * Far more Class 3 passengers died than Class 1 or Class 2 passengers

# * Class 1 had more survivors than Class 2 or Class 3

# * Class 3 was also the largest group of passengers on the ship

# + colab={"base_uri": "https://localhost:8080/", "height": 350} id="sMVF6GG4h01I" outputId="df44afcb-20f2-4aa7-9cac-fbd5bb87a1bb"

# Now we shall look into the distribution of Age feature in the dataset

# sns.distplot is deprecated in recent seaborn releases; histplot draws the same histogram
sns.histplot(train['Age'].dropna(), bins=30, color='blue')

# + [markdown] id="Gj8bu8KkitFo"

# * Most passengers were young adults, and there is also a noticeable group of young children at the low end of the age range

# + colab={"base_uri": "https://localhost:8080/", "height": 351} id="0yxVkwhxir9E" outputId="443ba51b-e320-4198-f636-30ea3892a724"

# Distribution of fare

sns.histplot(train['Fare'], color='red')

# Most fares are below about 100, with a long right tail of a few much more expensive tickets

# + [markdown] id="B2IEBP9CkGpH"

# # Handling Missing Values

# + colab={"base_uri": "https://localhost:8080/", "height": 296} id="JKRZlHVQkB6u" outputId="e13380c4-fffd-44f2-98b2-a3215c83516e"

sns.set_style('white')

sns.boxplot(x='Pclass', y='Age', data=train, palette='Pastel1')

# + [markdown] id="NQ7BxGAdk0uE"

# * Passengers in the wealthier classes (1 and 2) tend to be older than Class 3 passengers

# + id="jrBH8iLXk8x6"

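def impute_age(cols):
    # Fill a missing Age with a typical age for the passenger's class,
    # read off the Pclass/Age boxplot above; known ages are kept unchanged
    Age = cols['Age']
    Pclass = cols['Pclass']

    if pd.isna(Age):
        if Pclass == 1:
            return 37
        elif Pclass == 2:
            return 29
        else:
            return 24
    else:
        return Age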
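# The ages 37, 29 and 24 above are read off the boxplot by eye. A possible alternative (not what this notebook does) is to compute each class's median age from the data and fill the gaps in one vectorized step with `groupby(...).transform('median')`. A minimal sketch on illustrative data:

```python
import numpy as np
import pandas as pd

# Illustrative passengers with some missing ages in each class (made-up values)
df = pd.DataFrame({
    "Pclass": [1, 1, 1, 2, 2, 3, 3, 3],
    "Age": [40.0, 34.0, np.nan, 30.0, np.nan, 20.0, 28.0, np.nan],
})

# Median age per passenger class (NaNs are skipped), broadcast back to every row of that class
class_median = df.groupby("Pclass")["Age"].transform("median")

# Fill only the missing ages with their class median
df["Age"] = df["Age"].fillna(class_median)
print(df)
```

# The class medians are recomputed from whatever data is passed in, so nothing needs to be hard-coded if the data changes.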

# + id="B6xi81aQlU12"

train['Age'] = train[['Age', 'Pclass']].apply(impute_age, axis= 1)

# + colab={"base_uri": "https://localhost:8080/", "height": 333} id="VJlBHVN2lgSy" outputId="b0648915-0b40-4932-d910-ad6d3ff9a6aa"

sns.heatmap(train.isna(), yticklabels=False, cbar=False, cmap='viridis')

# The Age column no longer has any missing values

# + id="5VkcGt47lwZH"

# Drop the Cabin column, since most of its values are missing

train.drop('Cabin', axis=1, inplace= True)

# + colab={"base_uri": "https://localhost:8080/", "height": 195} id="m1z6ysYrl_Ds" outputId="f46e5959-b773-4742-ab8d-d0873bce733a"

train[:5]

# + id="CjGJQOLOmD5o"

# Drop the few rows that still have missing values (the missing Embarked entries)
train.dropna(inplace=True)

# + [markdown] id="3GUXnaJYnOTA"

# # Creating dummy variables for categorical features

# + colab={"base_uri": "https://localhost:8080/", "height": 195} id="XgBQ3vuynGeK" outputId="dc28f0ed-cc8c-4a2c-9c4d-0bde8d5ad8c8"

sex= pd.get_dummies(train['Sex'], drop_first=True)

sex[:5]

# + colab={"base_uri": "https://localhost:8080/", "height": 195} id="4zSwQdMUnhlV" outputId="6a455f9c-ddf4-482e-ebd0-a4b3038a9062"

embark = pd.get_dummies(train['Embarked'], drop_first=True)

embark[:5]

# + colab={"base_uri": "https://localhost:8080/", "height": 166} id="DGF1wk3Cnu-4" outputId="275feb1e-ef2b-4f1a-c7df-502ee4a0dca5"

train = pd.concat([train, sex, embark], axis=1)

train[:4]

# + colab={"base_uri": "https://localhost:8080/", "height": 195} id="j7hmrA0zn9mv" outputId="fe51c7eb-3e06-4ebf-a432-3fc47605a98e"

train.drop(['Sex','Embarked','Name','Ticket'], axis=1, inplace= True)

train[:5]
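# `drop_first=True` drops one level of each categorical column, because that level is implied when all remaining dummies are 0 (for Sex, `male = 0` means female). Keeping every level would make the dummy columns perfectly collinear with the intercept. A small illustration on made-up rows with the same column names:

```python
import pandas as pd

# Made-up rows with the same categorical columns as the Titanic data
df = pd.DataFrame({
    "Sex": ["male", "female", "female", "male"],
    "Embarked": ["S", "C", "Q", "S"],
})

# With drop_first=True the alphabetically first level is dropped:
# 'female' for Sex and 'C' for Embarked
sex = pd.get_dummies(df["Sex"], drop_first=True)
embark = pd.get_dummies(df["Embarked"], drop_first=True)

# Cast to int so the result prints as 0/1 (pandas >= 2.0 returns bool dummies)
encoded = pd.concat([sex, embark], axis=1).astype(int)
print(encoded)
```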

# + [markdown] id="PZXk8alaoNNA"

# The dataset is now clean and ready for model training

# + colab={"base_uri": "https://localhost:8080/", "height": 195} id="Yvg2SEaaoLbN" outputId="bc42194d-286d-4974-9ec0-236902376692"

X = train.drop('Survived', axis =1)

X[:5]

# + colab={"base_uri": "https://localhost:8080/"} id="91knDmPDodMX" outputId="6d02e364-87fd-4c6a-8fd4-eb04dc27a90c"

y= train['Survived']

y[:5]

# + id="asZCnYBGoiQ8"

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X,y, test_size = 0.3, random_state= 101)

# + id="JxFkZMYso0iq"

from sklearn.linear_model import LogisticRegression

model = LogisticRegression()

# + colab={"base_uri": "https://localhost:8080/"} id="DpCUPqz5o6Fp" outputId="55c197b5-37f5-48f2-c739-c2ce82777b56"

model.fit(X_train, y_train)

# + colab={"base_uri": "https://localhost:8080/"} id="8S0E56nGo9wC" outputId="2e9a0fe6-bb89-459d-ee6d-47bc65d847b8"

predict = model.predict(X_test)

predict[:5]

# + colab={"base_uri": "https://localhost:8080/"} id="Qe1Qm4bbpLet" outputId="bfa7cf0a-e357-4aef-cccc-54b10dc37c57"

y_test[:5]

# + colab={"base_uri": "https://localhost:8080/"} id="kln02rMjpOfN" outputId="349db638-a365-49db-c5aa-db6f9f28b7b3"

from sklearn.metrics import confusion_matrix, classification_report

print(confusion_matrix(y_test, predict))

print(classification_report(y_test, predict))
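# In scikit-learn's `confusion_matrix`, rows are the true classes and columns are the predicted classes, so with labels 0 (did not survive) and 1 (survived) the layout is `[[TN, FP], [FN, TP]]`. The precision, recall and accuracy in `classification_report` follow from those four counts. A sketch with made-up labels:

```python
import numpy as np
from sklearn.metrics import confusion_matrix, precision_score, recall_score

# Made-up true labels and predictions (1 = survived, 0 = did not survive)
y_true = np.array([0, 0, 0, 1, 1, 1, 1, 0])
y_pred = np.array([0, 1, 0, 1, 0, 1, 1, 1])

cm = confusion_matrix(y_true, y_pred)
tn, fp, fn, tp = cm.ravel()

precision = tp / (tp + fp)       # of the predicted survivors, the fraction who survived
recall = tp / (tp + fn)          # of the actual survivors, the fraction the model found
accuracy = (tp + tn) / cm.sum()  # fraction of all predictions that are correct

print(cm)
print(precision, recall, accuracy)
```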

# + id="8P1e1ntdp01J"

from sklearn.ensemble import RandomForestClassifier

rfc = RandomForestClassifier(n_estimators = 50)

# + colab={"base_uri": "https://localhost:8080/"} id="TiWOire3qBJ-" outputId="8df3b809-dcf5-4bf6-fa0c-26421fbe118b"

rfc.fit(X_train, y_train)

# + id="zPMbCJfCqE15"

pred = rfc.predict(X_test)

# + colab={"base_uri": "https://localhost:8080/"} id="NmH-G2HhqLDJ" outputId="41b194f5-f1d8-4b75-d7a5-6dafcf59cc10"

print(confusion_matrix(y_test, pred))

print(classification_report(y_test, pred))
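# A single 70/30 split gives a fairly noisy estimate on a dataset of this size, so the comparison between logistic regression and the random forest may shift with `random_state`. One common way to make it more robust is k-fold cross-validation with `cross_val_score`. The sketch below uses a synthetic dataset from `make_classification` as a stand-in for `X` and `y`; on the notebook's data you would pass `X, y` instead.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import cross_val_score

# Synthetic stand-in for the cleaned Titanic features (similar number of rows)
X_demo, y_demo = make_classification(n_samples=889, n_features=8, random_state=0)

models = {
    "logistic_regression": LogisticRegression(max_iter=1000),
    "random_forest": RandomForestClassifier(n_estimators=50, random_state=0),
}

# 5-fold cross-validated accuracy for each model
cv_scores = {}
for name, model in models.items():
    scores = cross_val_score(model, X_demo, y_demo, cv=5, scoring="accuracy")
    cv_scores[name] = scores
    print(f"{name}: mean={scores.mean():.3f} std={scores.std():.3f}")
```

# Reporting the mean and spread across folds makes it easier to tell whether a difference between the two models is larger than the split-to-split noise.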

| Machine Learning/Logistic Regression/Logistic_Regression.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Collect calibration Data

import traitlets

import ipywidgets.widgets as widgets

from IPython.display import display

from jetbot import Camera, bgr8_to_jpeg

import os

import time

# Create data folder.

image_folder = "Images/"

if not os.path.exists(image_folder):
    os.makedirs(image_folder)

# Save an image each time the camera updates its value.

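def update(change):
    # Called on every new camera frame; image.value holds the JPEG bytes
    # produced by the dlink set up below
    global frame_id, image_folder, image
    fname = str(frame_id).zfill(4) + ".jpg"
    with open(image_folder + fname, 'wb') as f:
        f.write(image.value)
    print("\rsave data " + fname, end="")
    frame_id += 1
    # Brief pause between saves
    time.sleep(0.05)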
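# `update` names each frame with a zero-padded counter (`0000.jpg`, `0001.jpg`, ...), so the files sort in capture order both numerically and alphabetically, as long as there are at most 10000 frames (0000 to 9999). The save logic can be tried without a JetBot or camera: the sketch below writes placeholder bytes into a temporary folder using a hypothetical helper `save_frame`, which is not part of the `jetbot` package.

```python
import os
import tempfile

def save_frame(folder, frame_id, jpeg_bytes):
    """Hypothetical helper mirroring update(): write bytes to <folder>/<zero-padded id>.jpg."""
    fname = str(frame_id).zfill(4) + ".jpg"
    path = os.path.join(folder, fname)
    with open(path, "wb") as f:
        f.write(jpeg_bytes)
    return path

with tempfile.TemporaryDirectory() as folder:
    # Placeholder bytes stand in for the JPEG data produced by bgr8_to_jpeg
    for frame_id in [0, 1, 2, 10]:
        save_frame(folder, frame_id, b"\xff\xd8placeholder")
    saved = sorted(os.listdir(folder))
    print(saved)
```

# With four digits of padding, frame 10000 would be named `10000.jpg` and would no longer sort after `9999.jpg`, so longer recordings would need a wider `zfill`.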

# Activate the camera.

camera = Camera.instance(width=960, height=540, capture_width=1280, capture_height=720)

image = widgets.Image(format='jpeg', width=480, height=270) # this width and height doesn't necessarily have to match the camera

camera_link = traitlets.dlink((camera, 'value'), (image, 'value'), transform=bgr8_to_jpeg)

display(image)

# Start collecting data.

frame_id = 0

camera.observe(update, names='value')

# Stop collecting data and unlink the camera.

camera.unobserve(update, names='value')

camera_link.unlink()

| lab4/program/collect_data.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] deletable=false editable=false nbgrader={"checksum": "c004350cd25d3b1075afcf8b7b244cc6", "grade": false, "grade_id": "cell-2adc36b256efc420", "locked": true, "schema_version": 1, "solution": false}

# # Assignment 4 - Average Reward Softmax Actor-Critic

#

# Welcome to your Course 3 Programming Assignment 4. In this assignment, you will implement **Average Reward Softmax Actor-Critic** in the Pendulum Swing-Up problem that you have seen earlier in the lecture. Through this assignment you will get hands-on experience in implementing actor-critic methods on a continuing task.

#

# **In this assignment, you will:**

# 1. Implement a softmax actor-critic agent on a continuing task using the average reward formulation.

# 2. Understand how to parameterize the policy as a function to learn, in a discrete action environment.

# 3. Understand how to (approximately) sample the gradient of the average reward objective to update the actor.

# 4. Understand how to update the critic using differential TD error.

#

# + [markdown] deletable=false editable=false nbgrader={"checksum": "282b307e98de110dd40a15a6cc25ec5d", "grade": false, "grade_id": "cell-99df6e3a990f9278", "locked": true, "schema_version": 1, "solution": false}

# ## Pendulum Swing-Up Environment

#

# In this assignment, we will be using a Pendulum environment, adapted from [Santamaría et al. (1998)](http://www.incompleteideas.net/papers/SSR-98.pdf). This is also the same environment that we used in the lecture. The diagram below illustrates the environment.

#

# <img src="data/pendulum_env.png" alt="Drawing" style="width: 400px;"/>

#

# The environment consists of a single pendulum that can swing 360 degrees. The pendulum is actuated by applying a torque on its pivot point. The goal is to get the pendulum to balance upright from its resting position (hanging down at the bottom with no velocity) and keep it there as long as possible. The pendulum can move freely, subject only to gravity and the action applied by the agent.

#

# The state is 2-dimensional, which consists of the current angle $\beta \in [-\pi, \pi]$ (angle from the vertical upright position) and current angular velocity $\dot{\beta} \in (-2\pi, 2\pi)$. The angular velocity is constrained in order to avoid damaging the pendulum system. If the angular velocity reaches this limit during simulation, the pendulum is reset to the resting position.

# The action is the angular acceleration, with discrete values $a \in \{-1, 0, 1\}$ applied to the pendulum.

# For more details on environment dynamics you can refer to the original paper.

#

# The goal is to swing the pendulum up and keep it upright. Hence, the reward is the negative absolute angle from the vertical position: $R_{t} = -|\beta_{t}|$

#

# Furthermore, since the goal is to reach and maintain a vertical position, there are no terminal states or episodes. Thus this problem can be formulated as a continuing task.

#

# Similar to the Mountain Car task, the action in this pendulum environment is not strong enough to move the pendulum directly to the desired position. The agent must learn to first move the pendulum away from its desired position to gain enough momentum to swing it up. Even after reaching the upright position, the agent must learn to keep balancing the pendulum in this unstable position.
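# As a small illustration of the reward $R_t = -|\beta_t|$, the sketch below wraps an arbitrary angle into $[-\pi, \pi)$ (measured from the upright position) and computes the reward. The helper names `wrap_angle` and `pendulum_reward` are hypothetical; the actual dynamics and angle handling live in `pendulum_env`, which may implement them differently.

```python
import numpy as np

def wrap_angle(beta):
    """Map any angle in radians into [-pi, pi), measured from the upright position."""
    return (beta + np.pi) % (2 * np.pi) - np.pi

def pendulum_reward(beta):
    """Reward R_t = -|beta_t|: 0 when upright, -pi when hanging straight down."""
    return -abs(wrap_angle(beta))

print(pendulum_reward(0.0))        # upright: best possible reward
print(pendulum_reward(np.pi))      # hanging down (the resting position): worst reward
print(pendulum_reward(2 * np.pi))  # a full turn brings the pendulum upright again
```

# Because the reward is highest only near the top, hovering anywhere else is penalized every step, which is what pushes the agent to both swing up and balance.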

# + [markdown] deletable=false editable=false nbgrader={"checksum": "17075aa4f743d7ce32b468322a340a07", "grade": false, "grade_id": "cell-72dc8196386b12dd", "locked": true, "schema_version": 1, "solution": false}

# ## Packages

#

# You will use the following packages in this assignment.

#

# - [numpy](https://www.numpy.org) : Fundamental package for scientific computing with Python.

# - [matplotlib](http://matplotlib.org) : Library for plotting graphs in Python.

# - [RL-Glue](http://www.jmlr.org/papers/v10/tanner09a.html) : Library for reinforcement learning experiments.

# - [jdc](https://alexhagen.github.io/jdc/) : Jupyter magic that allows defining classes over multiple jupyter notebook cells.

# - [tqdm](https://tqdm.github.io/) : A package to display progress bars when running experiments

# - plot_script : custom script to plot results

# - [tiles3](http://incompleteideas.net/tiles/tiles3.html) : A package that implements tile-coding.

# - pendulum_env : Pendulum Swing-up Environment

#

# **Please do not import other libraries** — this will break the autograder.

#

# + deletable=false editable=false nbgrader={"checksum": "c45e0038609a4d2ab65c82e7866ac17a", "grade": false, "grade_id": "cell-df277e2f962adb8c", "locked": true, "schema_version": 1, "solution": false}

# Do not modify this cell!

# Import necessary libraries

# DO NOT IMPORT OTHER LIBRARIES - This will break the autograder.

import numpy as np

import matplotlib.pyplot as plt

# %matplotlib inline

import os

from tqdm import tqdm

from rl_glue import RLGlue

from pendulum_env import PendulumEnvironment

from agent import BaseAgent

import plot_script

import tiles3 as tc

# + [markdown] deletable=false editable=false nbgrader={"checksum": "eca68945ea0514012d2e6fb9e32cdb58", "grade": false, "grade_id": "cell-ab47eee3b7f7d678", "locked": true, "schema_version": 1, "solution": false}

# ## Section 1: Create Tile Coding Helper Function

#

# In this section, we are going to build a tile coding class for our agent that will make it easier to make calls to our tile coder.

#

# Tile-coding is introduced in Section 9.5.4 of the textbook as a way to create features that can both provide good generalization and discrimination. We have already used it in our last programming assignment as well.

#

# Similar to the last programming assignment, we are going to make a tile coder specific to our Pendulum Swing-up environment. We will also use the [Tiles3 library](http://incompleteideas.net/tiles/tiles3.html).

#

# To get the tile coder working we need to:

#

# 1) create an index hash table using tc.IHT(),

# 2) scale the inputs for the tile coder based on number of tiles and range of values each input could take

# 3) call tc.tileswrap to get active tiles back.

#

# However, we need to make one small change to this tile coder.

# Note that in this environment the state space contains the angle, which lies in $[-\pi, \pi]$. If we tile-code this state space in the usual way, the agent may treat states with an angle of $-\pi$ as very different from states with an angle of $\pi$, when in fact they are the same! To remedy this and allow generalization between angle $= -\pi$ and angle $= \pi$, we need to use a **wrap tile coder**.

#

# The usage of the wrap tile coder is almost identical to the original tile coder, except that we also need to provide the `wrapwidths` argument for the dimension we want to wrap over (hence only for angle, and `None` for angular velocity). More details on the wrap tile coder are provided in the [Tiles3 library](http://incompleteideas.net/tiles/tiles3.html).

#
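# To see why the wrap width for the angle is `num_tiles`, note that scaling by `num_tiles / (2*pi)` maps the angle range $[-\pi, \pi]$ onto an interval of length `num_tiles`. The scaled coordinates of $-\pi$ and $\pi$ therefore differ by one full wrap width, and they fall into the same tile once the integer tile coordinate is taken modulo `num_tiles`. The sketch below is a simplified re-derivation of the angle-dimension step of tiles3's `tileswrap` (plain arithmetic, not a call into tiles3), using the same parameters as the test cell further down; `angle_coords` is a hypothetical helper.

```python
import math

# Same parameters as the PendulumTileCoder test cell
num_tiles = 4
num_tilings = 8
angle_scale = num_tiles / (math.pi - (-math.pi))  # num_tiles / angle range

def angle_coords(angle, wrap):
    """Per-tiling integer tile coordinate along the angle axis.

    Quantize the scaled angle, offset it by the tiling index, divide by the
    number of tilings, then optionally take the result modulo the wrap width.
    """
    q = math.floor(angle * angle_scale * num_tilings)
    coords = []
    for tiling in range(num_tilings):
        c = (q + tiling) // num_tilings
        coords.append(c % num_tiles if wrap else c)
    return coords

# The scaled angle range has length num_tiles (one wrap width)
print(math.pi * angle_scale - (-math.pi) * angle_scale)

# Without wrapping, -pi and pi land in different tiles; with wrapping they coincide
print(angle_coords(-math.pi, wrap=False), angle_coords(math.pi, wrap=False))
print(angle_coords(-math.pi, wrap=True), angle_coords(math.pi, wrap=True))
```

# This is consistent with the expected test output below, where the observations `[-np.pi, 0]` and `[np.pi, 0]` produce identical active tiles.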

# + deletable=false nbgrader={"checksum": "6c16c849417bf1b801731e16f4e3a151", "grade": false, "grade_id": "cell-e4e31210465e6d0f", "locked": false, "schema_version": 1, "solution": true}

# [Graded]

class PendulumTileCoder:
    def __init__(self, iht_size=4096, num_tilings=32, num_tiles=8):
        """
        Initializes the Pendulum Tile Coder
        Initializers:
        iht_size -- int, the size of the index hash table, typically a power of 2
        num_tilings -- int, the number of tilings
        num_tiles -- int, the number of tiles. Here both the width and height of the tiles are the same

        Class Variables:
        self.iht -- tc.IHT, the index hash table that the tile coder will use
        self.num_tilings -- int, the number of tilings the tile coder will use
        self.num_tiles -- int, the number of tiles the tile coder will use
        """

        self.num_tilings = num_tilings
        self.num_tiles = num_tiles
        self.iht = tc.IHT(iht_size)

    def get_tiles(self, angle, ang_vel):
        """
        Takes in an angle and angular velocity from the pendulum environment
        and returns a numpy array of active tiles.

        Arguments:
        angle -- float, the angle of the pendulum between -np.pi and np.pi
        ang_vel -- float, the angular velocity of the pendulum between -2*np.pi and 2*np.pi

        returns:
        tiles -- np.array, active tiles
        """

        ### Set the max and min of angle and ang_vel to scale the input (4 lines)
        # ANGLE_MIN = ?
        # ANGLE_MAX = ?
        # ANG_VEL_MIN = ?
        # ANG_VEL_MAX = ?

        ### START CODE HERE ###
        ANGLE_MIN = -np.pi
        ANGLE_MAX = np.pi
        ANG_VEL_MIN = -2 * np.pi
        ANG_VEL_MAX = 2 * np.pi
        ### END CODE HERE ###

        ### Use the ranges above and self.num_tiles to set angle_scale and ang_vel_scale (2 lines)
        # angle_scale = number of tiles / angle range
        # ang_vel_scale = number of tiles / ang_vel range

        ### START CODE HERE ###
        angle_scale = self.num_tiles / (ANGLE_MAX - ANGLE_MIN)
        ang_vel_scale = self.num_tiles / (ANG_VEL_MAX - ANG_VEL_MIN)
        ### END CODE HERE ###

        # Get tiles by calling tc.tileswrap method
        # wrapwidths specify which dimension to wrap over and its wrapwidth:
        # the angle wraps over num_tiles, and None disables wrapping for ang_vel
        tiles = tc.tileswrap(self.iht, self.num_tilings, [angle * angle_scale, ang_vel * ang_vel_scale], wrapwidths=[self.num_tiles, None])

        return np.array(tiles)

# + [markdown] deletable=false editable=false nbgrader={"checksum": "4b02f0fce6904c39ace01c263ee80ead", "grade": false, "grade_id": "cell-1d990f692063303c", "locked": true, "schema_version": 1, "solution": false}

# Run the following code to verify `PendulumTileCoder`

# + deletable=false editable=false nbgrader={"checksum": "d118544172252ec03f5b282817ff263e", "grade": true, "grade_id": "graded_tilecoder", "locked": true, "points": 15, "schema_version": 1, "solution": false}

# Do not modify this cell!

## Test Code for PendulumTileCoder ##

# Your tile coder should also work for other num. tilings and num. tiles

test_obs = [[-np.pi, 0], [-np.pi, 0.5], [np.pi, 0], [np.pi, -0.5], [0, 1]]

pdtc = PendulumTileCoder(iht_size=4096, num_tilings=8, num_tiles=4)

result=[]

for obs in test_obs:
    angle, ang_vel = obs
    tiles = pdtc.get_tiles(angle=angle, ang_vel=ang_vel)
    result.append(tiles)

for tiles in result:
    print(tiles)

# + [markdown] deletable=false editable=false nbgrader={"checksum": "f8ae6af80e2bd513ac3562ccde6bebc1", "grade": false, "grade_id": "cell-44b88917a2825241", "locked": true, "schema_version": 1, "solution": false}

# **Expected output**:

#

# [0 1 2 3 4 5 6 7]

# [0 1 2 3 4 8 6 7]

# [0 1 2 3 4 5 6 7]

# [ 9 1 2 10 4 5 6 7]

# [11 12 13 14 15 16 17 18]

# + [markdown] deletable=false editable=false nbgrader={"checksum": "4ef6492853db03e0ee980ea374723cb8", "grade": false, "grade_id": "cell-78613720dae0e08a", "locked": true, "schema_version": 1, "solution": false}

# ## Section 2: Create Average Reward Softmax Actor-Critic Agent

#

# Now that we have implemented `PendulumTileCoder`, let's create the agent that interacts with the environment. We will implement the same average reward Actor-Critic algorithm presented in the videos.

#

# This agent has two components: an Actor and a Critic. The Actor learns a parameterized policy while the Critic learns a state-value function. The environment has discrete actions; your Actor implementation will use a softmax policy with exponentiated action-preferences. The Actor learns with the sample-based estimate for the gradient of the average reward objective. The Critic learns using the average reward version of the semi-gradient TD(0) algorithm.

#

# In this section, you will implement `compute_softmax_prob`, `agent_start` and `agent_step`. `agent_policy` is provided, and `agent_end` is not needed because this continuing task never terminates.

# + [markdown] deletable=false editable=false nbgrader={"checksum": "828614763989884f1e80f0e16218325a", "grade": false, "grade_id": "cell-3676d253ce82f3e3", "locked": true, "schema_version": 1, "solution": false}

# ## Section 2-1: Implement Helper Functions

#

# Let's first define a couple of useful helper functions.

# + [markdown] deletable=false editable=false nbgrader={"checksum": "8d96bc09e1ea682556c7f8fedc790c64", "grade": false, "grade_id": "cell-fd6ef7407bc3283d", "locked": true, "schema_version": 1, "solution": false}

# ## Section 2-1a: Compute Softmax Probability

#

# In this part you will implement `compute_softmax_prob`.

#

# This function computes the softmax probability of every action, given actor weights `actor_w` and active tiles `tiles`. It will later be used in `agent_policy` to sample an action.

#

# First, recall how the softmax policy is represented from state-action preferences: $\large \pi(a|s, \mathbf{\theta}) \doteq \frac{e^{h(s,a,\mathbf{\theta})}}{\sum_{b}e^{h(s,b,\mathbf{\theta})}}$.

#

# The **state-action preference** is defined as $h(s,a, \mathbf{\theta}) \doteq \mathbf{\theta}^T \mathbf{x}_h(s,a)$.

#

# Given active tiles `tiles` for state `s`, state-action preference $\mathbf{\theta}^T \mathbf{x}_h(s,a)$ can be computed by `actor_w[a][tiles].sum()`.
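# Because tile-coded features are binary indicators, $\mathbf{\theta}^T \mathbf{x}_h(s,a)$ is just the sum of action $a$'s weights over the active tiles. NumPy fancy indexing can compute the preferences of all actions at once. A small check that `actor_w[:, tiles].sum(axis=1)` matches the per-action `actor_w[a][tiles].sum()` described above (the weights and tile indices are random placeholders):

```python
import numpy as np

rng = np.random.default_rng(0)
num_actions, iht_size = 3, 16
actor_w = rng.normal(size=(num_actions, iht_size))  # placeholder actor weights
tiles = np.array([1, 4, 7, 9])                        # placeholder active tiles

# One preference per action via a Python loop, as in the text
loop_prefs = np.array([actor_w[a][tiles].sum() for a in range(num_actions)])

# The same preferences in one call: select the active columns, then sum each row
vec_prefs = actor_w[:, tiles].sum(axis=1)

print(np.allclose(loop_prefs, vec_prefs))
```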

#

# We will also use the **exp-normalize trick** to avoid possible numerical overflow.

# Consider the following:

#

# $\large \pi(a|s, \mathbf{\theta}) \doteq \frac{e^{h(s,a,\mathbf{\theta})}}{\sum_{b}e^{h(s,b,\mathbf{\theta})}} = \frac{e^{h(s,a,\mathbf{\theta}) - c} e^c}{\sum_{b}e^{h(s,b,\mathbf{\theta}) - c} e^c} = \frac{e^{h(s,a,\mathbf{\theta}) - c}}{\sum_{b}e^{h(s,b,\mathbf{\theta}) - c}}$

#

# $\pi(\cdot|s, \mathbf{\theta})$ is shift-invariant, and the policy remains the same when we subtract a constant $c \in \mathbb{R}$ from state-action preferences.

#

# Normally we use $c = \max_b h(s,b, \mathbf{\theta})$, to prevent any overflow due to exponentiating large numbers.
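# A quick illustration of why the shift matters: with large preferences, `np.exp` overflows to `inf` and the naive ratio becomes `nan`, while subtracting the maximum first gives valid probabilities. The preference values are arbitrary, and `naive_softmax` / `stable_softmax` are illustrative helpers, not part of the assignment's API.

```python
import numpy as np

def naive_softmax(prefs):
    e = np.exp(prefs)
    return e / e.sum()

def stable_softmax(prefs):
    # Subtract c = max(prefs); mathematically the result is unchanged
    e = np.exp(prefs - np.max(prefs))
    return e / e.sum()

big_prefs = np.array([1000.0, 1001.0, 1002.0])

with np.errstate(over="ignore", invalid="ignore"):
    print(naive_softmax(big_prefs))   # exp overflows to inf, and inf / inf is nan
print(stable_softmax(big_prefs))

# Shift invariance: subtracting a constant from every preference leaves the policy unchanged
print(np.allclose(stable_softmax(big_prefs), stable_softmax(big_prefs - 1000.0)))
```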

# + deletable=false nbgrader={"checksum": "4540ff160f7a874ad3ee99deae10bbcb", "grade": false, "grade_id": "cell-9daa349ce740c93d", "locked": false, "schema_version": 1, "solution": true}

# [Graded]

def compute_softmax_prob(actor_w, tiles):
    """
    Computes softmax probability for all actions

    Args:
    actor_w - np.array, an array of actor weights
    tiles - np.array, an array of active tiles

    Returns:
    softmax_prob - np.array, an array of size equal to num. actions, and sums to 1.
    """

    # First compute the list of state-action preferences (1~2 lines)
    # state_action_preferences = ? (list of size 3)
    state_action_preferences = []
    ### START CODE HERE ###
    for a in range(actor_w.shape[0]):
        state_action_preferences.append(actor_w[a][tiles].sum())
    ### END CODE HERE ###

    # Set the constant c by finding the maximum of state-action preferences (use np.max) (1 line)
    # c = ? (float)
    ### START CODE HERE ###
    c = np.max(state_action_preferences)
    ### END CODE HERE ###

    # Compute the numerator by subtracting c from state-action preferences and exponentiating it (use np.exp) (1 line)
    # numerator = ? (array of size 3)
    ### START CODE HERE ###
    numerator = np.exp(np.array(state_action_preferences) - c)
    ### END CODE HERE ###

    # Next compute the denominator by summing the values in the numerator (use np.sum) (1 line)
    # denominator = ? (float)
    ### START CODE HERE ###
    denominator = np.sum(numerator)
    ### END CODE HERE ###

    # Create a probability array by dividing each element in numerator array by denominator (1 line)
    # agent_policy() stores this array in self.softmax_prob, since it is needed later when updating the Actor
    # softmax_prob = ? (array of size 3)
    ### START CODE HERE ###
    softmax_prob = numerator / denominator
    ### END CODE HERE ###

    return softmax_prob

# + [markdown] deletable=false editable=false nbgrader={"checksum": "219d176a243b4cc8105fadc7f200c8cd", "grade": false, "grade_id": "cell-6746fb79fd66fca9", "locked": true, "schema_version": 1, "solution": false}

# Run the following code to verify `compute_softmax_prob`.

#

# We will test the method by building a softmax policy from state-action preferences [-1,1,2].

#

# The sampling probability should then roughly match $[\frac{e^{-1}}{e^{-1}+e^1+e^2}, \frac{e^{1}}{e^{-1}+e^1+e^2}, \frac{e^2}{e^{-1}+e^1+e^2}] \approx$ [0.0351, 0.2595, 0.7054]
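# The test below fills row $a$ of `actor_w` with the target preference divided by `num_tilings`. The tile coder returns `num_tilings` active tiles (one per tiling), so summing row $a$ over the active tiles recovers the target preference. A short arithmetic check of both steps and of the target probabilities (the active tile indices here are placeholders):

```python
import numpy as np

num_tilings = 8
iht_size = 4096
target_prefs = np.array([-1.0, 1.0, 2.0])

# Row a holds target_prefs[a] / num_tilings in every column, as in the test cell
actor_w = np.tile((target_prefs / num_tilings)[:, None], (1, iht_size))
active_tiles = np.arange(num_tilings)  # placeholder: one active tile per tiling

prefs = actor_w[:, active_tiles].sum(axis=1)
probs = np.exp(prefs) / np.exp(prefs).sum()

print(prefs)
print(np.round(probs, 4))
```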

# + deletable=false editable=false nbgrader={"checksum": "3ff8eb422e5265e03f5b265eb23bdb58", "grade": true, "grade_id": "graded_compute_softmax_prob", "locked": true, "points": 20, "schema_version": 1, "solution": false}

# Do not modify this cell!

## Test Code for compute_softmax_prob() ##

# set tile-coder

iht_size = 4096

num_tilings = 8

num_tiles = 8

test_tc = PendulumTileCoder(iht_size=iht_size, num_tilings=num_tilings, num_tiles=num_tiles)

num_actions = 3

actions = list(range(num_actions))

actor_w = np.zeros((len(actions), iht_size))

# setting actor weights such that state-action preferences are always [-1, 1, 2]

actor_w[0] = -1./num_tilings

actor_w[1] = 1./num_tilings

actor_w[2] = 2./num_tilings

# obtain active_tiles from state

state = [-np.pi, 0.]

angle, ang_vel = state

active_tiles = test_tc.get_tiles(angle, ang_vel)

# compute softmax probability

softmax_prob = compute_softmax_prob(actor_w, active_tiles)

print('softmax probability: {}'.format(softmax_prob))

# + [markdown] deletable=false editable=false nbgrader={"checksum": "b93e7c56f4632a1651adf5b0bbfd75e5", "grade": false, "grade_id": "cell-77f00606b70a1d25", "locked": true, "schema_version": 1, "solution": false}

# **Expected Output:**

#

# softmax probability: [0.03511903 0.25949646 0.70538451]

# + [markdown] deletable=false editable=false nbgrader={"checksum": "a2f94be0165e918d0886453a691fea1b", "grade": false, "grade_id": "cell-eed6babe9b563391", "locked": true, "schema_version": 1, "solution": false}

# ## Section 2-2: Implement Agent Methods

#

# Let's first define methods that initialize the agent. `agent_init()` initializes all the variables that the agent will need.

#

# Now that we have implemented helper functions, let's create an agent. In this part, you will implement `agent_start()` and `agent_step()`. We do not need to implement `agent_end()` because there is no termination in our continuing task.

#

# `compute_softmax_prob()` is used in `agent_policy()`, which in turn will be used in `agent_start()` and `agent_step()`. We have implemented `agent_policy()` for you.

#

# When performing updates to the Actor and Critic, recall their respective updates in the Actor-Critic algorithm video.

#

# In the Actor update, $q_\pi$ is approximated by the one-step bootstrapped return $R_{t+1} - \bar{R} + \hat{v}(S_{t+1}, \mathbf{w})$, and the current state value $\hat{v}(S_{t}, \mathbf{w})$ is subtracted as a baseline. The result is exactly the TD error $\delta$:

#

# $\delta_t = R_{t+1} - \bar{R} + \hat{v}(S_{t+1}, \mathbf{w}) - \hat{v}(S_{t}, \mathbf{w}) \hspace{6em} (1)$

#

# **Average Reward update rule**: $\bar{R} \leftarrow \bar{R} + \alpha^{\bar{R}}\delta \hspace{4.3em} (2)$

#

# **Critic weight update rule**: $\mathbf{w} \leftarrow \mathbf{w} + \alpha^{\mathbf{w}}\delta\nabla \hat{v}(s,\mathbf{w}) \hspace{2.5em} (3)$

#

# **Actor weight update rule**: $\mathbf{\theta} \leftarrow \mathbf{\theta} + \alpha^{\mathbf{\theta}}\delta\nabla \ln \pi(A|S,\mathbf{\theta}) \hspace{1.4em} (4)$

#

#

# However, since we are using linear function approximation and parameterizing a softmax policy, the above update rules can be further simplified using:

#

# $\nabla \hat{v}(s,\mathbf{w}) = \mathbf{x}(s) \hspace{14.2em} (5)$

#

# $\nabla \ln \pi(a|s,\mathbf{\theta}) = \mathbf{x}_h(s,a) - \sum_b \pi(b|s, \mathbf{\theta})\mathbf{x}_h(s,b) \hspace{3.3em} (6)$

#
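# With binary tile features, equation (5) makes the critic gradient 1 on the previous state's active tiles and 0 elsewhere, and equation (6) makes the actor gradient for action $b$'s weight row equal to $(\mathbb{1}[b = A] - \pi(b|S,\mathbf{\theta}))$ on those same tiles. The sketch below applies equations (1) to (6) once on tiny made-up arrays. `actor_critic_update` is a hypothetical standalone function for illustration, not the graded `agent_step`, and it assumes the step sizes have already been divided by the number of tilings.

```python
import numpy as np

def actor_critic_update(actor_w, critic_w, avg_reward, prev_tiles, action,
                        softmax_prob, reward, active_tiles,
                        actor_step_size, critic_step_size, avg_reward_step_size):
    """One average-reward actor-critic update with binary tile features (illustrative)."""
    # (1) differential TD error; v_hat(s) is the sum of critic weights on the active tiles
    delta = (reward - avg_reward
             + critic_w[active_tiles].sum() - critic_w[prev_tiles].sum())
    # (2) average reward estimate
    avg_reward = avg_reward + avg_reward_step_size * delta
    # (3) + (5): the critic gradient is 1 on the previous state's active tiles
    critic_w[prev_tiles] += critic_step_size * delta
    # (4) + (6): row b of the actor weights moves by (1[b == action] - pi(b|s))
    for b in range(actor_w.shape[0]):
        indicator = 1.0 if b == action else 0.0
        actor_w[b][prev_tiles] += actor_step_size * delta * (indicator - softmax_prob[b])
    return delta, avg_reward

# Tiny example: zero weights give a uniform policy over three actions
actor_w = np.zeros((3, 8))
critic_w = np.zeros(8)
prev_tiles = np.array([0, 1])
active_tiles = np.array([2, 3])
softmax_prob = np.full(3, 1 / 3)

delta, avg_reward = actor_critic_update(
    actor_w, critic_w, avg_reward=0.0, prev_tiles=prev_tiles, action=2,
    softmax_prob=softmax_prob, reward=-1.0, active_tiles=active_tiles,
    actor_step_size=0.1, critic_step_size=0.1, avg_reward_step_size=0.01)
print(delta, avg_reward)
print(critic_w)
print(actor_w)
```

# Here the negative TD error lowers the preference of the action that was taken (row 2) and raises the other two, while the total change across actions sums to zero.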

# + deletable=false nbgrader={"checksum": "7477f1cdf96f2bd8bafd07abfbd201a2", "grade": false, "grade_id": "cell-a25279b09b459f5c", "locked": false, "schema_version": 1, "solution": true}

# [Graded]

class ActorCriticSoftmaxAgent(BaseAgent):

def __init__(self):

self.rand_generator = None

self.actor_step_size = None

self.critic_step_size = None

self.avg_reward_step_size = None

self.tc = None

self.avg_reward = None

self.critic_w = None

self.actor_w = None

self.actions = None

self.softmax_prob = None

self.prev_tiles = None

self.last_action = None

def agent_init(self, agent_info={}):

"""Setup for the agent called when the experiment first starts.

Set parameters needed to setup the semi-gradient TD(0) state aggregation agent.

Assume agent_info dict contains:

{

"iht_size": int

"num_tilings": int,

"num_tiles": int,

"actor_step_size": float,

"critic_step_size": float,

"avg_reward_step_size": float,

"num_actions": int,

"seed": int

}

"""

# set random seed for each run

self.rand_generator = np.random.RandomState(agent_info.get("seed"))

iht_size = agent_info.get("iht_size")

num_tilings = agent_info.get("num_tilings")

num_tiles = agent_info.get("num_tiles")

# initialize self.tc to the tile coder we created

self.tc = PendulumTileCoder(iht_size=iht_size, num_tilings=num_tilings, num_tiles=num_tiles)

# set step-size accordingly (we normally divide actor and critic step-size by num. tilings (p.217-218 of textbook))

self.actor_step_size = agent_info.get("actor_step_size")/num_tilings

self.critic_step_size = agent_info.get("critic_step_size")/num_tilings

self.avg_reward_step_size = agent_info.get("avg_reward_step_size")

self.actions = list(range(agent_info.get("num_actions")))

# Set initial values of average reward, actor weights, and critic weights

# We initialize actor weights to three times the iht_size.

# Recall this is because we need to have one set of weights for each of the three actions.

self.avg_reward = 0.0

self.actor_w = np.zeros((len(self.actions), iht_size))

self.critic_w = np.zeros(iht_size)

self.softmax_prob = None

self.prev_tiles = None

self.last_action = None

def agent_policy(self, active_tiles):

""" policy of the agent

Args:

active_tiles (Numpy array): active tiles returned by tile coder

Returns:

The action selected according to the policy

"""

# compute softmax probability

softmax_prob = compute_softmax_prob(self.actor_w, active_tiles)

# Sample action from the softmax probability array

# self.rand_generator.choice() selects an element from the array with the specified probability

chosen_action = self.rand_generator.choice(self.actions, p=softmax_prob)

# save softmax_prob as it will be useful later when updating the Actor

self.softmax_prob = softmax_prob

return chosen_action

def agent_start(self, state):

"""The first method called when the experiment starts, called after

the environment starts.

Args:

state (Numpy array): the state from the environment's env_start function.

Returns:

The first action the agent takes.

"""

angle, ang_vel = state

### Use self.tc to get active_tiles using angle and ang_vel (2 lines)

# set current_action by calling self.agent_policy with active_tiles

# active_tiles = ?

# current_action = ?

### START CODE HERE ###

active_tiles=self.tc.get_tiles(angle, ang_vel)

current_action=self.agent_policy(active_tiles)

### END CODE HERE ###

self.last_action = current_action

self.prev_tiles = np.copy(active_tiles)

return self.last_action

def agent_step(self, reward, state):

"""A step taken by the agent.

Args:

reward (float): the reward received for taking the last action taken

state (Numpy array): the state from the environment's step based on

where the agent ended up after the

last step.

Returns:

The action the agent is taking.

"""

angle, ang_vel = state

### Use self.tc to get active_tiles using angle and ang_vel (1 line)

# active_tiles = ?

### START CODE HERE ###

active_tiles=self.tc.get_tiles(angle, ang_vel)

### END CODE HERE ###

### Compute delta using Equation (1) (1 line)

# delta = ?

### START CODE HERE ###

delta = reward - self.avg_reward + np.sum(self.critic_w[active_tiles]) - np.sum(self.critic_w[self.prev_tiles])

### END CODE HERE ###

### update average reward using Equation (2) (1 line)

# self.avg_reward += ?

### START CODE HERE ###

self.avg_reward += self.avg_reward_step_size * delta

### END CODE HERE ###

# update critic weights using Equations (3) and (5) (1 line)

# self.critic_w[self.prev_tiles] += ?

### START CODE HERE ###

self.critic_w[self.prev_tiles] += self.critic_step_size * delta

### END CODE HERE ###

# update actor weights using Equations (4) and (6)

# We use self.softmax_prob saved from the previous timestep

# We leave it as an exercise to verify that the code below corresponds to the equation.

for a in self.actions:

if a == self.last_action:

self.actor_w[a][self.prev_tiles] += self.actor_step_size * delta * (1 - self.softmax_prob[a])

else:

self.actor_w[a][self.prev_tiles] += self.actor_step_size * delta * (0 - self.softmax_prob[a])

### set current_action by calling self.agent_policy with active_tiles (1 line)

# current_action = ?

### START CODE HERE ###

current_action=self.agent_policy(active_tiles)

### END CODE HERE ###

self.prev_tiles = active_tiles

self.last_action = current_action

return self.last_action

def agent_message(self, message):

if message == 'get avg reward':

return self.avg_reward
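# `agent_policy()` relies on `compute_softmax_prob`, which is not defined in this section. Below is a minimal sketch of what such a function might compute. It assumes that the preference for each action is the sum of that action's actor weights over the active tiles. The name `softmax_prob_sketch` is hypothetical, and this is not the assignment's own helper.

```python
import numpy as np


def softmax_prob_sketch(actor_w, tiles):
    """Hypothetical stand-in for compute_softmax_prob.

    actor_w: array of shape (num_actions, iht_size)
    tiles:   integer indices of the active tiles
    Returns one probability per action.
    """
    # Action preference h(s, a): sum of action a's weights on the active tiles
    preferences = actor_w[:, tiles].sum(axis=1)
    # Subtracting the largest preference leaves the softmax unchanged
    # but prevents overflow in np.exp
    exp_pref = np.exp(preferences - preferences.max())
    return exp_pref / exp_pref.sum()


# With all-zero weights the policy is uniform over the 3 actions
actor_w = np.zeros((3, 4096))
tiles = np.arange(8)
probs = softmax_prob_sketch(actor_w, tiles)

# Sample an action the same way agent_policy() does
rng = np.random.RandomState(99)
action = rng.choice(list(range(3)), p=probs)
```

# Subtracting the maximum is a standard stability trick. It does not change the resulting probabilities.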

# + [markdown] deletable=false editable=false nbgrader={"checksum": "0abe20eda4a3c9f6781959352dab4748", "grade": false, "grade_id": "cell-c47a537224d052ad", "locked": true, "schema_version": 1, "solution": false}

# Run the following code to verify `agent_start()`.

# Although there is randomness due to `self.rand_generator.choice()` in `agent_policy()`, we control the seed so your output should match the expected output.

# + deletable=false editable=false nbgrader={"checksum": "40a531b5ef11d53daca1ce9f8544dfb0", "grade": true, "grade_id": "graded_agent_start", "locked": true, "points": 10, "schema_version": 1, "solution": false}

# Do not modify this cell!

## Test Code for agent_start()##

agent_info = {"iht_size": 4096,

"num_tilings": 8,

"num_tiles": 8,

"actor_step_size": 1e-1,

"critic_step_size": 1e-0,

"avg_reward_step_size": 1e-2,

"num_actions": 3,

"seed": 99}

test_agent = ActorCriticSoftmaxAgent()

test_agent.agent_init(agent_info)

state = [-np.pi, 0.]

test_agent.agent_start(state)

print("agent active_tiles: {}".format(test_agent.prev_tiles))

print("agent selected action: {}".format(test_agent.last_action))

# + [markdown] deletable=false editable=false nbgrader={"checksum": "c7e0ca514f7c96e8e6beb2cf9304758e", "grade": false, "grade_id": "cell-4bb285c764d8ad67", "locked": true, "schema_version": 1, "solution": false}

# **Expected output**:

#

# agent active_tiles: [0 1 2 3 4 5 6 7]

# agent selected action: 2

# + [markdown] deletable=false editable=false nbgrader={"checksum": "bb016e27cf1ece334e66e895094ef089", "grade": false, "grade_id": "cell-a3d392998465216c", "locked": true, "schema_version": 1, "solution": false}

# Run the following code to verify `agent_step()`

# + deletable=false editable=false nbgrader={"checksum": "d62013f0d2b33e3e7ed30f86264dc84d", "grade": true, "grade_id": "graded_agent_step", "locked": true, "points": 25, "schema_version": 1, "solution": false}

# Do not modify this cell!

## Test Code for agent_step() ##

# Make sure agent_start() and agent_policy() are working correctly first.

# agent_step() should work correctly for other arbitrary state transitions in addition to this test case.

env_info = {"seed": 99}

agent_info = {"iht_size": 4096,

"num_tilings": 8,

"num_tiles": 8,

"actor_step_size": 1e-1,

"critic_step_size": 1e-0,

"avg_reward_step_size": 1e-2,

"num_actions": 3,

"seed": 99}

test_env = PendulumEnvironment

test_agent = ActorCriticSoftmaxAgent

rl_glue = RLGlue(test_env, test_agent)

rl_glue.rl_init(agent_info, env_info)

# start env/agent

rl_glue.rl_start()

rl_glue.rl_step()

print("agent next_action: {}".format(rl_glue.agent.last_action))

print("agent avg reward: {}\n".format(rl_glue.agent.avg_reward))

print("agent first 10 values of actor weights[0]: \n{}\n".format(rl_glue.agent.actor_w[0][:10]))

print("agent first 10 values of actor weights[1]: \n{}\n".format(rl_glue.agent.actor_w[1][:10]))

print("agent first 10 values of actor weights[2]: \n{}\n".format(rl_glue.agent.actor_w[2][:10]))

print("agent first 10 values of critic weights: \n{}".format(rl_glue.agent.critic_w[:10]))

# + [markdown] deletable=false editable=false nbgrader={"checksum": "9d4691943e3a97a619875655bef00a2e", "grade": false, "grade_id": "cell-feab2079de2e1fc0", "locked": true, "schema_version": 1, "solution": false}

# **Expected output**:

#

# agent next_action: 1

# agent avg reward: -0.03139092653589793

#

# agent first 10 values of actor weights[0]:

# [0.01307955 0.01307955 0.01307955 0.01307955 0.01307955 0.01307955

# 0.01307955 0.01307955 0. 0. ]

#

# agent first 10 values of actor weights[1]:

# [0.01307955 0.01307955 0.01307955 0.01307955 0.01307955 0.01307955

# 0.01307955 0.01307955 0. 0. ]

#

# agent first 10 values of actor weights[2]:

# [-0.02615911 -0.02615911 -0.02615911 -0.02615911 -0.02615911 -0.02615911

# -0.02615911 -0.02615911 0. 0. ]

#

# agent first 10 values of critic weights:

# [-0.39238658 -0.39238658 -0.39238658 -0.39238658 -0.39238658 -0.39238658

# -0.39238658 -0.39238658 0. 0. ]
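# The expected values above can be checked by hand. All weights start at zero, so the critic's value estimates are 0 and the policy in `agent_start()` is uniform. The differential TD error therefore reduces to the first reward. The sketch below recomputes one `agent_step()` update outside the agent. It assumes a first reward of -(π - 0.0025), which is the value implied by the expected average reward (0.01 × δ = -0.0313909...). The next-state tiles are a hypothetical placeholder: any tiles give a value of 0 here.

```python
import numpy as np

# Settings from the agent_step() test cell
num_tilings, iht_size, num_actions = 8, 4096, 3
actor_step_size = 1e-1 / num_tilings    # step-sizes divided by the number of tilings
critic_step_size = 1e-0 / num_tilings
avg_reward_step_size = 1e-2

actor_w = np.zeros((num_actions, iht_size))
critic_w = np.zeros(iht_size)
avg_reward = 0.0

prev_tiles = np.arange(8)        # active tiles printed by the agent_start() test
last_action = 2                  # action selected in agent_start()
softmax_prob = np.full(num_actions, 1.0 / num_actions)  # zero weights -> uniform policy

reward = -(np.pi - 0.0025)       # assumption: inferred from the expected avg reward
next_tiles = np.arange(8, 16)    # hypothetical placeholder for the next state's tiles

# Differential TD error: R - avg_R + v(S') - v(S)
delta = reward - avg_reward + critic_w[next_tiles].sum() - critic_w[prev_tiles].sum()

avg_reward += avg_reward_step_size * delta
critic_w[prev_tiles] += critic_step_size * delta

# Actor update: gradient of ln pi for action a is x(s) * (1[a == A] - pi(a|s))
for a in range(num_actions):
    indicator = 1.0 if a == last_action else 0.0
    actor_w[a, prev_tiles] += actor_step_size * delta * (indicator - softmax_prob[a])

print(avg_reward)
print(actor_w[:, :10])
print(critic_w[:10])
```

# The printed values agree with the expected output above. Only the first 8 entries (the active tiles) change.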

# + [markdown] deletable=false editable=false nbgrader={"checksum": "9bf003af9552ea8cf02c5a3e69f91d4f", "grade": false, "grade_id": "cell-4a2937aee7e48fe0", "locked": true, "schema_version": 1, "solution": false}

# ## Section 3: Run Experiment

#

# Now that we've implemented all the components of environment and agent, let's run an experiment!

# We want to see whether our agent is successful at learning the optimal policy of balancing the pendulum upright. We will plot total return over time, as well as the exponential average of the reward over time. We also do multiple runs in order to be confident about our results.

#

# The experiment/plot code is provided in the cell below.

# + deletable=false editable=false nbgrader={"checksum": "86aa230c2ce8ef9fbd0b5022c72515f6", "grade": false, "grade_id": "cell-42b7e0b38d1ead4c", "locked": true, "schema_version": 1, "solution": false}

# Do not modify this cell!

# Define function to run experiment

def run_experiment(environment, agent, environment_parameters, agent_parameters, experiment_parameters):

rl_glue = RLGlue(environment, agent)

# sweep agent parameters

for num_tilings in agent_parameters['num_tilings']:

for num_tiles in agent_parameters["num_tiles"]:

for actor_ss in agent_parameters["actor_step_size"]:

for critic_ss in agent_parameters["critic_step_size"]:

for avg_reward_ss in agent_parameters["avg_reward_step_size"]:

env_info = {}

agent_info = {"num_tilings": num_tilings,

"num_tiles": num_tiles,

"actor_step_size": actor_ss,

"critic_step_size": critic_ss,

"avg_reward_step_size": avg_reward_ss,

"num_actions": agent_parameters["num_actions"],

"iht_size": agent_parameters["iht_size"]}

# results to save

return_per_step = np.zeros((experiment_parameters["num_runs"], experiment_parameters["max_steps"]))

exp_avg_reward_per_step = np.zeros((experiment_parameters["num_runs"], experiment_parameters["max_steps"]))

# using tqdm we visualize progress bars

for run in tqdm(range(1, experiment_parameters["num_runs"]+1)):

env_info["seed"] = run

agent_info["seed"] = run

rl_glue.rl_init(agent_info, env_info)

rl_glue.rl_start()

num_steps = 0

total_return = 0.

return_arr = []

# exponential average reward without initial bias

exp_avg_reward = 0.0

exp_avg_reward_ss = 0.01

exp_avg_reward_normalizer = 0

while num_steps < experiment_parameters['max_steps']:

num_steps += 1

rl_step_result = rl_glue.rl_step()

reward = rl_step_result[0]

total_return += reward

return_arr.append(reward)

avg_reward = rl_glue.rl_agent_message("get avg reward")

exp_avg_reward_normalizer = exp_avg_reward_normalizer + exp_avg_reward_ss * (1 - exp_avg_reward_normalizer)

ss = exp_avg_reward_ss / exp_avg_reward_normalizer

exp_avg_reward += ss * (reward - exp_avg_reward)

return_per_step[run-1][num_steps-1] = total_return

exp_avg_reward_per_step[run-1][num_steps-1] = exp_avg_reward

if not os.path.exists('results'):

os.makedirs('results')

save_name = "ActorCriticSoftmax_tilings_{}_tiledim_{}_actor_ss_{}_critic_ss_{}_avg_reward_ss_{}".format(num_tilings, num_tiles, actor_ss, critic_ss, avg_reward_ss)

total_return_filename = "results/{}_total_return.npy".format(save_name)

exp_avg_reward_filename = "results/{}_exp_avg_reward.npy".format(save_name)

np.save(total_return_filename, return_per_step)

np.save(exp_avg_reward_filename, exp_avg_reward_per_step)
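# The nested loops in `run_experiment` visit every combination of the swept meta-parameters. Below is a compact sketch of the same enumeration using `itertools.product`, together with the file-name format used when saving results. The helper names `sweep_settings` and `save_name` are hypothetical.

```python
import itertools

SWEPT_KEYS = ["num_tilings", "num_tiles", "actor_step_size",
              "critic_step_size", "avg_reward_step_size"]


def sweep_settings(agent_parameters):
    """Yield one agent_info dict per combination, in the same order as the nested loops."""
    # itertools.product varies the last key fastest, like the innermost loop
    for values in itertools.product(*(agent_parameters[k] for k in SWEPT_KEYS)):
        info = dict(zip(SWEPT_KEYS, values))
        info["num_actions"] = agent_parameters["num_actions"]
        info["iht_size"] = agent_parameters["iht_size"]
        yield info


def save_name(info):
    """File-name prefix built with the same format string as run_experiment."""
    return ("ActorCriticSoftmax_tilings_{}_tiledim_{}_actor_ss_{}_critic_ss_{}"
            "_avg_reward_ss_{}").format(info["num_tilings"], info["num_tiles"],
                                        info["actor_step_size"], info["critic_step_size"],
                                        info["avg_reward_step_size"])


agent_parameters = {
    "num_tilings": [32],
    "num_tiles": [8],
    "actor_step_size": [2**(-2)],
    "critic_step_size": [2**1],
    "avg_reward_step_size": [2**(-6)],
    "num_actions": 3,
    "iht_size": 4096,
}
names = [save_name(info) for info in sweep_settings(agent_parameters)]
print(names)
```

# With the Section 3-1 settings this yields a single name, which is the prefix of the file loaded by the grading cell. `2**1` is an int, so it is formatted as `2` rather than `2.0`.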

# + [markdown] deletable=false editable=false nbgrader={"checksum": "569a57d760604a84cdb04d53ecfefede", "grade": false, "grade_id": "cell-bea80af13342f057", "locked": true, "schema_version": 1, "solution": false}

# ## Section 3-1: Run Experiment with 32 tilings, size 8x8

#

# We will first test our implementation using 32 tilings of size 8x8. We saw in the earlier tile-coding assignment that many tilings promote fine discrimination, while broad tiles allow more generalization.

# We conducted a wide sweep of meta-parameters in order to find the best meta-parameters for our Pendulum Swing-up task.

#

# We swept over the following ranges of meta-parameters; the best value for each is shown in bold:

#

# actor step-size: $\{\frac{2^{-6}}{32}, \frac{2^{-5}}{32}, \frac{2^{-4}}{32}, \frac{2^{-3}}{32}, \mathbf{\frac{2^{-2}}{32}}, \frac{2^{-1}}{32}, \frac{2^{0}}{32}, \frac{2^{1}}{32}\}$

#

# critic step-size: $\{\frac{2^{-4}}{32}, \frac{2^{-3}}{32}, \frac{2^{-2}}{32}, \frac{2^{-1}}{32}, \frac{2^{0}}{32}, \mathbf{\frac{2^{1}}{32}}, \frac{3}{32}, \frac{2^{2}}{32}\}$

#

# avg reward step-size: $\{2^{-11}, 2^{-10} , 2^{-9} , 2^{-8}, 2^{-7}, \mathbf{2^{-6}}, 2^{-5}, 2^{-4}, 2^{-3}, 2^{-2}\}$

#

#

# We will do 50 runs using the above best meta-parameter setting to verify your agent.

# Note that running the experiment cell below will take **_approximately 5 min_**.

#

# + deletable=false editable=false nbgrader={"checksum": "ff324b51dd0e1d7bdb47b9979f698bde", "grade": false, "grade_id": "cell-e9bf5a92d552cda5", "locked": true, "schema_version": 1, "solution": false}

# Do not modify this cell!

#### Run Experiment

# Experiment parameters

experiment_parameters = {

"max_steps" : 20000,

"num_runs" : 50

}

# Environment parameters

environment_parameters = {}

# Agent parameters

# Each element is an array because we will be later sweeping over multiple values

# actor and critic step-sizes are divided by num. tilings inside the agent

agent_parameters = {

"num_tilings": [32],

"num_tiles": [8],

"actor_step_size": [2**(-2)],

"critic_step_size": [2**1],

"avg_reward_step_size": [2**(-6)],

"num_actions": 3,

"iht_size": 4096

}

current_env = PendulumEnvironment

current_agent = ActorCriticSoftmaxAgent

run_experiment(current_env, current_agent, environment_parameters, agent_parameters, experiment_parameters)

plot_script.plot_result(agent_parameters, 'results')

# + [markdown] deletable=false editable=false nbgrader={"checksum": "2d162faa4b5808751fcb4433bbd81b7c", "grade": false, "grade_id": "cell-7cfde5a470e987d7", "locked": true, "schema_version": 1, "solution": false}

# Run the following code to verify your experimental result.

# + deletable=false editable=false nbgrader={"checksum": "dd5614163afa480e8e49fd89e7a43c36", "grade": true, "grade_id": "graded_exp_result", "locked": true, "points": 30, "schema_version": 1, "solution": false}

# Do not modify this cell!

## Test Code for experimental result ##

filename = 'ActorCriticSoftmax_tilings_32_tiledim_8_actor_ss_0.25_critic_ss_2_avg_reward_ss_0.015625_exp_avg_reward'

agent_exp_avg_reward = np.load('results/{}.npy'.format(filename))

result_med = np.median(agent_exp_avg_reward, axis=0)

answer_range = np.load('correct_npy/exp_avg_reward_answer_range.npy')

upper_bound = answer_range.item()['upper-bound']

lower_bound = answer_range.item()['lower-bound']

# check if result is within answer range

all_correct = np.all(result_med <= upper_bound) and np.all(result_med >= lower_bound)

if all_correct:

print("Your experiment results are correct!")

else:

print("Your experiment results do not match ours. Please check that you have implemented all methods correctly.")

# + [markdown] deletable=false editable=false nbgrader={"checksum": "44295b14742f975bfb7fcd14ec123ebb", "grade": false, "grade_id": "cell-9081e37ad214f0b6", "locked": true, "schema_version": 1, "solution": false}

# ## Section 3-2: Performance Metric and Meta-Parameter Sweeps

#

#

# ### Performance Metric

#

# To evaluate performance, we plotted both the return and exponentially weighted average reward over time.

#

# In the first plot, the return is negative because the reward is negative at every state except when the pendulum is in the upright position. As the policy improves over time, the agent accumulates less negative reward, so the return decreases more slowly. Towards the end the slope is almost flat, indicating that the policy has stabilized to a good policy. With this plot, however, it can be difficult to tell whether the agent has learned an optimal policy. The near-optimal policy in this Pendulum Swing-up Environment is to keep the pendulum upright indefinitely, receiving near-0 reward at each time step. To judge this, we would have to examine the slope of the curve, and it can be hard to compare the slopes of different curves.

#

# The second plot, which uses the exponential average reward, gives a clearer picture. Towards the end the value is near 0, indicating that the agent receives near-0 reward at each time step. This exponentially weighted average reward should not be confused with the agent's internal estimate of the average reward. It is an exponentially weighted average of the actual rewards, computed without initial bias (see Exercise 2.7 in the textbook, p.35, for how the initial bias is removed). If we used sample averages instead, each later reward would have a smaller impact on the average, so the average would not reflect the agent's performance under its current policy.
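# The bias-free exponential average used in `run_experiment` follows Exercise 2.7. A trace $o_n = o_{n-1} + \alpha(1 - o_{n-1})$ with $o_0 = 0$ gives the step-size $\beta_n = \alpha / o_n$. Below is a standalone sketch of that update, contrasted with a plain constant step-size average started at 0. The function names are hypothetical.

```python
import numpy as np


def unbiased_exp_average(rewards, step_size=0.01):
    """Exponentially weighted average without initial bias (as in run_experiment)."""
    avg, trace = 0.0, 0.0
    history = []
    for r in rewards:
        trace += step_size * (1.0 - trace)   # o_n approaches 1 as n grows
        beta = step_size / trace             # beta_1 = 1, so avg starts at the first reward
        avg += beta * (r - avg)
        history.append(avg)
    return np.array(history)


def biased_exp_average(rewards, step_size=0.01):
    """Constant step-size average initialised at 0 (biased towards 0 early on)."""
    avg = 0.0
    history = []
    for r in rewards:
        avg += step_size * (r - avg)
        history.append(avg)
    return np.array(history)


rewards = np.full(5, -2.0)
print(unbiased_exp_average(rewards))  # [-2. -2. -2. -2. -2.]
print(biased_exp_average(rewards))    # starts at -0.02 and creeps towards -2
```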

#

# It is easier to see whether the agent has learned a good policy in the second plot than in the first. If the learned policy is optimal, the exponential average reward will be close to 0.

#

# How did we pick the best meta-parameters from the sweeps? A common method is to pick the setting with the largest Area Under the Curve (AUC). However, this is not always what we want: we want a set of meta-parameters that learns a good final policy. With AUC as the criterion, we may pick meta-parameters that let the agent learn fast but converge to a worse policy. Instead, we selected the meta-parameter setting that obtained the highest exponential average reward over the last 5000 time steps.
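# To illustrate why the two criteria can disagree, the sketch below scores two hypothetical learning curves. They are stored like the saved `*_exp_avg_reward.npy` arrays, with shape `(num_runs, max_steps)`. The curves are made up: one improves quickly but plateaus at -0.4, and the other improves slowly but reaches -0.05.

```python
import numpy as np


def auc_score(exp_avg_reward):
    """Mean over runs and all time steps (proportional to the area under the curve)."""
    return exp_avg_reward.mean()


def final_window_score(exp_avg_reward, window=5000):
    """Mean over runs of the exponential average reward over the last `window` steps."""
    return exp_avg_reward[:, -window:].mean()


steps = np.arange(20000)
num_runs = 3
# Hypothetical curves, repeated for each run: shape (num_runs, max_steps)
fast_but_worse = np.tile(-1.0 + 0.6 * np.minimum(steps / 500, 1.0), (num_runs, 1))
slow_but_better = np.tile(-1.0 + 0.95 * np.minimum(steps / 16000, 1.0), (num_runs, 1))

curves = {"fast_but_worse": fast_but_worse, "slow_but_better": slow_but_better}
best_by_auc = max(curves, key=lambda name: auc_score(curves[name]))
best_by_final = max(curves, key=lambda name: final_window_score(curves[name]))
print(best_by_auc, best_by_final)  # fast_but_worse slow_but_better
```

# AUC rewards the early gains of the first curve (about -0.41 vs -0.43). The final-window score instead prefers the second curve, which ends with the better policy.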

#

#

# ### Parameter Sensitivity

#

# In addition to finding the best meta-parameters, it is equally important to plot **parameter sensitivity curves** to understand how our algorithm behaves.

#

# In our simulated Pendulum problem we can extensively test our agent with different meta-parameter configurations, but doing so in real life would be quite expensive. Parameter sensitivity curves can give us insight into how our algorithms might behave in general. They help us identify a good range for each meta-parameter, as well as how sensitive the performance is to each meta-parameter.

#

# Here are the sensitivity curves for the three step-sizes we swept over:

#

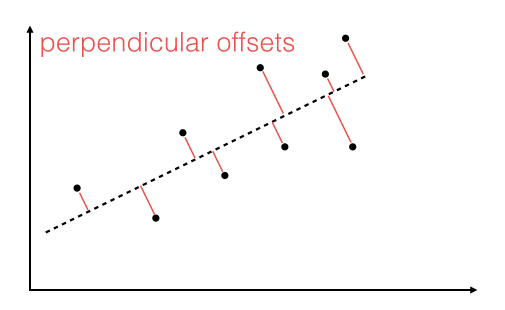

# <img src="data/sensitivity_combined.png" alt="Drawing" style="width: 1000px;"/>

#

# On the y-axis is the performance measure: the average of the exponential average reward over the last 5000 time steps, averaged over 50 different runs. On the x-axis is the meta-parameter being tested. For each of its values, the remaining meta-parameters are set to the values that give the best performance.

#

# The curves are quite rounded, indicating that the agent performs well across a wide range of values, i.e. it is not too sensitive to these meta-parameters. Furthermore, the y-axis values show that performance is noticeably less sensitive to the average reward step-size than to the actor and critic step-sizes.

#

# But how do we know that we have covered a sufficiently wide range of meta-parameters? In these sensitivity curves, the best value should lie in the middle of the sweep range rather than at its edge. Otherwise, there may be better meta-parameter values that we did not sweep over.
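# The procedure described for the sensitivity curves can be sketched in two steps. First, for each value on the x-axis, take the best performance over the remaining meta-parameters. Second, check that the best value is not at the edge of the sweep. The score array and its layout `[actor, critic, avg_reward]` are hypothetical. The sizes match the 8 × 8 × 10 sweep listed in Section 3-1.

```python
import numpy as np


def sensitivity_curve(scores, axis):
    """Best score over all other meta-parameters, for each value along `axis`."""
    other_axes = tuple(i for i in range(scores.ndim) if i != axis)
    return scores.max(axis=other_axes)


def best_is_interior(curve):
    """True if the best value is not at either end of the sweep range."""
    best = int(np.argmax(curve))
    return 0 < best < len(curve) - 1


# Hypothetical performance for every (actor, critic, avg_reward) step-size index
a, c, r = np.indices((8, 8, 10))
scores = -((a - 4) ** 2) - (c - 5) ** 2 - 0.1 * (r - 5) ** 2

for axis, name in enumerate(["actor", "critic", "avg reward"]):
    curve = sensitivity_curve(scores, axis)
    print(name, int(np.argmax(curve)), best_is_interior(curve))
```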

# + [markdown] deletable=false editable=false nbgrader={"checksum": "e679782b8781ed867e952ab2a8735ec1", "grade": false, "grade_id": "cell-e9c6a124eb3c37e6", "locked": true, "schema_version": 1, "solution": false}

# ## Wrapping up

#

# ### **Congratulations!** You have successfully implemented Course 3 Programming Assignment 4.

#

#

# You have implemented your own **Average Reward Actor-Critic with Softmax Policy** agent in the Pendulum Swing-up Environment. You implemented the environment based on information about the state/action space and transition dynamics. Furthermore, you have learned how to implement an agent in a continuing task using the average reward formulation. We parameterized the policy using softmax of action-preferences over discrete action spaces, and used Actor-Critic to learn the policy.

#

#

# To summarize, you have learned how to:

# 1. Implement a softmax actor-critic agent on a continuing task using the average reward formulation.

# 2. Understand how to parameterize the policy as a function to learn, in a discrete action environment.

# 3. Understand how to (approximately) sample the gradient of the average reward objective to update the actor.

# 4. Understand how to update the critic using differential TD error.

| 03_Prediction_and_Control_with Function_Approximation/week4/C3W4_programming_assignment/C3W4_prrgramming_assignment.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import sys

sys.path.insert(1, '/home/crazy/UI/ide/crossviper-master')

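from crossviper import *

import tkinter as tk  # explicit import rather than relying on the star import above

class Ide:

    def __init__(self, parent):

        # Separate top-level window holding the CrossViper editor
        ide = tk.Toplevel(parent)

        ide.geometry("%dx%d+%d+%d" % (500, 800, 1800, 0))  # width x height + x offset + y offset

        ide.resizable(width=True, height=False)

        app = CrossViper(master=ide)

        app.master.title('Python Ide')

        app.master.minsize(width=400, height=800)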

# +

# root = tk.Tk()

# obj = Ide(root)

# root.mainloop()

# -

| Untitled7.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

# # Langmuir probe data

#

# Langmuir probes are the bread and butter of plasma diagnostics. In AUG (ASDEX Upgrade) they are spread across the inner and outer divertors. Some of them tend to go MIA on some days, so always check the individual signals. Probe names follow a pattern like "ua1". The first letter is "u" for "unten" (lower) or "o" for "oben" (upper). The second letter is "a" for "außen" (outer), "i" for "innen" (inner) or "m" for "mitte" (middle, in the lower divertor roof baffle).

#

# Reading temperature and density for the probes is straightforward, as the information is stored in the `LSD` shotfile (yep, LSD, *LangmuirSondenDaten, jungs*). To get a particular quantity, compose the signal name by adding the prefix `te-` for temperature or `ne-` for density to the probe name (e.g. `te-ua4`).

#

# Reading jsat information, however, is a bloody nightmare. Ain't nobody got time for that.

#

# It is much easier to use the ASCII files written by the `DIVERTOR` programme (by <NAME>) than to read the data yourself. There are some functions for reading the DIVERTOR output.
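# A small helper that decodes probe names following the naming convention described above and composes the `LSD` signal names for temperature and density. It is a hypothetical sketch that only encodes the letters described here. It does not check which probes actually exist in a given shot. It avoids Python-3-only features, since this notebook runs on a Python 2 kernel.

```python
import re

POSITION = {"u": "lower", "o": "upper"}                 # unten / oben
REGION = {"a": "outer", "i": "inner", "m": "middle"}    # aussen / innen / mitte


def parse_probe_name(name):
    """Split a probe name such as 'ua4' into (position, region, number)."""
    match = re.match(r"([uo])([aim])(\d+)\Z", name)
    if match is None:
        raise ValueError("unexpected probe name: {!r}".format(name))
    pos, region, number = match.groups()
    return POSITION[pos], REGION[region], int(number)


def lsd_signal_names(probe):
    """Signal names in the LSD shotfile: 'te-' for temperature, 'ne-' for density."""
    return "te-" + probe, "ne-" + probe


print(parse_probe_name("ua4"))   # ('lower', 'outer', 4)
print(lsd_signal_names("ua4"))   # ('te-ua4', 'ne-ua4')
```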

import sys

sys.path.append('ipfnlite/')

sys.path.append('/afs/ipp/aug/ads-diags/common/python/lib/')

from getsig import getsig

import matplotlib.pyplot as plt

#plt.style.use('./Styles/darklab.mplstyle')

shotnr = 29864

telfs = getsig(shotnr, 'LSD', 'te-ua4')

nelfs = getsig(shotnr, 'LSD', 'ne-ua4')

# +

fig, ax = plt.subplots(nrows=2, sharex=True, dpi=100)

ax[0].plot(nelfs.time, nelfs.data*1e-19, lw=0.4)

ax[1].plot(telfs.time, telfs.data, lw=0.4)

ax[0].set_ylabel(r'$\mathrm{n_{e}\,[10^{19}\,m^{-3}]}$')

ax[1].set_ylabel('T [eV]')

ax[0].set_ylim(bottom=0)

ax[1].set_ylim(bottom=0)

ax[1].set_xlabel('time [s]')

ax[1].set_xlim(1,4)

plt.tight_layout()

plt.show()

# -

# ## Reading DIVERTOR output

from readStark import readDivData

from getsig import getsig

from scipy.interpolate import interp2d

import matplotlib as mpl #Special axes arrangement for colorbars

from mpl_toolkits.axes_grid1.inset_locator import inset_axes

import numpy as np

import matplotlib.pyplot as plt

#plt.style.use('./Styles/darklab.mplstyle')

jsat_out = readDivData('./Files/3D_29864_jsat_out.dat')

h1 = getsig(29864, 'DCN', 'H-1')

h5 = getsig(29864, 'DCN', 'H-5')

dtot = getsig(29864, 'UVS', 'D_tot')

# +

fig = plt.figure(dpi=120)

#Initial and Final time points

tBegin = 1.0

tEnd = 3.6

#3x2 grid: left column for the plots, right column reserved for the colorbar, hence the width ratios

gs = mpl.gridspec.GridSpec(3, 2, height_ratios=[1, 1, 1], width_ratios=[5, 1])

#Top plot

ax0 = fig.add_subplot(gs[0, 0])

ax0.plot(h1.time, h1.data*1e-19, label='H-1')

ax0.plot(h5.time, h5.data*1e-19, label='H-5')

ax0.set_ylabel(r'$\mathrm{n_{e}\,[10^{19}\,m^{-3}]}$')

ax0.set_ylim(bottom=0)

ax0.legend()

#Middle plot

ax1 = fig.add_subplot(gs[1, 0], sharex=ax0)

vmax = 15

clrb = ax1.pcolormesh(jsat_out.time, jsat_out.deltas, jsat_out.data, vmax=vmax, shading='gouraud', cmap='viridis')

axins = inset_axes(ax1,
                   width="5%",  # width = 5% of parent_bbox width
                   height="100%",  # height = 100% of parent_bbox height
                   loc=6,  # 'center left'
                   bbox_to_anchor=(1.05, 0., 1, 1),
                   bbox_transform=ax1.transAxes,
                   borderpad=0)

cbar = plt.colorbar(clrb, cax=axins, ticks=(np.arange(0.0, vmax+1.0, 3.0)))

cbar.set_label(r'$\mathrm{\Gamma_{D^{+}}\,[10^{22}\,e\,m^{-2}\,s^{-1}]}$')

#Strike point line

ax1.axhline(0.0, color='w')

ax1.set_ylabel(r'$\mathrm{\Delta s\,[cm]}$')

ax1.set_ylim(-5,17)

ax1.set_yticks([-5,0,5,10,15])

##This is just the middle figure, but 2D-interpolated

#Bottom plot

ax2 = fig.add_subplot(gs[2, 0], sharex=ax0)

ax2.plot(dtot.time, dtot.data*1e-22, label='D fueling [1e22 e/s]')

ax2.set_ylim(bottom=0)

ax2.legend()

#Remove ticks from top and middle plot

plt.setp(ax0.get_xticklabels(), visible=False)

plt.setp(ax1.get_xticklabels(), visible=False)

ax0.set_xlim(tBegin, tEnd)

ax2.set_xlabel('time [s]')

plt.subplots_adjust(left=0.1, right=0.99, bottom=0.11, top=0.98, wspace=0.10, hspace=0.11)

#plt.tight_layout()

plt.savefig('./Figures/test.png', dpi=300, transparent=True)

plt.show()

| 08-Langmuir probes.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Step 0 Cluster the Malware Bazaar data set

#

# This is in the file (which has been checked in):

# malbaz/cen_389300.csv

#

# On 2021-09-17 the CSV file provided by Malware Bazaar had 389300 lines.

# To download an updated version and cluster this data set, see malbaz/README.

# Using HAC-T with a threshold distance CDist=30, the resulting clustering had 16453 clusters.

#

# The clusters are described in the file malbaz/cen_389300.csv, which has the following columns:

#

# | Column | Description |
# |---|---|
# | tlsh | TLSH of the center of the cluster |
# | family | The most common "signature" in the cluster |
# | firstSeen | The first seen date for the cluster (earliest of all first seen dates) |
# | label | The concatenation of family, firstSeen and nitems |
# | radius | The radius of the cluster |
# | nitems | The number of items in the cluster |

#

# Below we list the 20 families most frequently assigned to clusters (number of clusters, then family):

#

# 3416 AgentTesla \

# 2782 NULL \

# 942 Heodo \

# 725 AveMariaRAT \

# 721 Mirai \

# 708 FormBook \

# 580 Loki \

# 558 QuakBot \

# 416 RemcosRAT \

# 382 Dridex \

# 316 NanoCore \

# 311 IcedID \

# 304 SnakeKeylogger \

# 244 TrickBot \

# 242 GuLoader \

# 231 Gozi \

# 214 RedLineStealer \

# 210 CobaltStrike \

# 197 MassLogger \

# 184 njrat
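# The family counts above can be reproduced from a cluster CSV with the columns listed earlier. Below is a minimal standard-library sketch. The function name is hypothetical, and it assumes one row per cluster and a header row with a `family` column. Values such as `NULL` are counted like any other family.

```python
import csv
import io
from collections import Counter


def top_families(csv_file, n=20):
    """Return the n most common values of the 'family' column as (family, count)."""
    reader = csv.DictReader(csv_file)
    counts = Counter(row["family"] for row in reader)
    return counts.most_common(n)


# Tiny inline example with made-up rows; in practice open malbaz/cen_389300.csv instead
sample = io.StringIO(
    "tlsh,family,firstSeen,nitems\n"
    "T1AAA,AgentTesla,2021-01-02,5\n"
    "T1BBB,AgentTesla,2021-02-03,3\n"
    "T1CCC,Mirai,2021-06-30,7\n"
)
print(top_families(sample, n=2))  # [('AgentTesla', 2), ('Mirai', 1)]
```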

#

# # Step 1 Display a dendrogram for all clusters assigned to a malware family

# For our first demonstration,

# we selected FickerStealer because its dendrogram was neither too large nor too dense.

# Note: We provide some tools for narrowing the search / showing more meaningful dendrograms.

from pylib.tlsh_lib import *

(tlist, labels) = tlsh_csvfile("malbaz/clust_389300.csv", searchColName="family", searchValueList=['FickerStealer'])

tlsh_dendrogram(tlist, labelList=labels[0])

# ## Interpretation of FickerStealer dendrogram

#

# We see a set of close clusters (distances between clusters < 110) in the months of March and April.

# We see a set of even closer clusters (distances between clusters < 60) in the months of May and August.

# There may have been a significant change in the malware family between April and May 2021.

# # Step 2 Show a dendrogram for the RaccoonStealer family

# # Use the date filtering options (sDate and eDate)

#

# We start by generating a dendrogram for the entire RaccoonStealer malware family.

from pylib.tlsh_lib import *

(tlist, labels) = tlsh_csvfile("malbaz/clust_389300.csv", searchColName="family", searchValueList=['RaccoonStealer'])

tlsh_dendrogram(tlist, labelList=labels[0])

# ## 2.1 Use the sDate parameter to specify clusters after a date

#

# The dendrogram above was not useful.

# So we set the start date (sDate) parameter to "2021-09-01", so that only clusters with a firstSeen date

# on or after 2021-09-01 are shown.

from pylib.tlsh_lib import *

(tlist, labels) = tlsh_csvfile("malbaz/clust_389300.csv", searchColName="family", searchValueList=['RaccoonStealer'],

sDate="2021-09-01")

tlsh_dendrogram(tlist, labelList=labels[0])

# ## 2.2 Use the sDate and eDate parameters to specify clusters in a date range

#

# Here we select clusters in the first quarter of 2021.

# that is, clusters first seen between 2021-01-01 and 2021-03-31.

from pylib.tlsh_lib import *

(tlist, labels) = tlsh_csvfile("malbaz/clust_389300.csv", searchColName="family", searchValueList=['RaccoonStealer'],

sDate="2021-01-01", eDate="2021-03-31")

tlsh_dendrogram(tlist, labelList=labels[0])
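# `firstSeen` is an ISO `YYYY-MM-DD` string, so a date-range filter like the one `sDate`/`eDate` provide can compare the strings directly. The sketch below is a hypothetical standalone illustration of that filtering, not the implementation in `pylib.tlsh_lib`. It treats both ends of the range as inclusive, matching "on or after" in 2.1.

```python
def in_date_range(first_seen, s_date=None, e_date=None):
    """True if first_seen (YYYY-MM-DD) lies in [s_date, e_date]; None means unbounded."""
    # Zero-padded ISO dates sort lexicographically in chronological order
    if s_date is not None and first_seen < s_date:
        return False
    if e_date is not None and first_seen > e_date:
        return False
    return True


clusters = [
    ("RaccoonStealer", "2020-12-30"),
    ("RaccoonStealer", "2021-02-14"),
    ("RaccoonStealer", "2021-03-31"),
    ("RaccoonStealer", "2021-09-05"),
]  # made-up (family, firstSeen) pairs

q1 = [c for c in clusters if in_date_range(c[1], "2021-01-01", "2021-03-31")]
after_sep = [c for c in clusters if in_date_range(c[1], s_date="2021-09-01")]
print(len(q1), len(after_sep))  # 2 1
```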

# # Step 3 Show all the clusters surrounding a new file using simTlsh

#

# We received a file with the TLSH value

# T14893F844FD459B2FC3D372F6E75C028D763A1FE8A7E630269934BEA023F56D12526911

from pylib.tlsh_lib import *

(tlist, labels) = tlsh_csvfile("malbaz/clust_389300.csv",

simTlsh="T14893F844FD459B2FC3D372F6E75C028D763A1FE8A7E630269934BEA023F56D12526911",

simThreshold=130)

tlsh_dendrogram(tlist, labelList=labels[0])
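# Conceptually, `simTlsh` with `simThreshold` keeps the clusters whose center lies within the threshold distance of the query hash. Below is a hypothetical sketch of that selection, with the distance function passed in. In practice one could pass a TLSH distance such as `tlsh.diff` from the py-tlsh package. Whether `pylib.tlsh_lib` works this way, and whether its threshold is inclusive, are assumptions. A toy distance on short strings is used so the example runs without TLSH installed.

```python
def clusters_near(query, centers, distance, threshold):
    """Return (label, dist) for centers within `threshold` (<=) of `query`, closest first."""
    scored = [(label, distance(query, center)) for label, center in centers.items()]
    return sorted((item for item in scored if item[1] <= threshold), key=lambda item: item[1])


def toy_distance(a, b):
    """Stand-in for a TLSH distance: number of differing characters (equal lengths)."""
    return sum(x != y for x, y in zip(a, b))


centers = {"Mirai A": "AAAAAAAA", "Mirai B": "AAAAAABB", "Gafgyt C": "ABBBBBBB"}
print(clusters_near("AAAAAAAB", centers, toy_distance, threshold=2))
# [('Mirai A', 1), ('Mirai B', 1)]
```

# Lowering the threshold shrinks the neighbourhood, which is what reducing `simThreshold` does in the cells below.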

# ## Interpretation of Mirai / Gafgyt dendrogram

#

# The sample provided is called "QUERY" and it is in the 7th row of the dendrogram (near the top).

# We see that it is close to a Mirai cluster.

# We can adjust the simThreshold to only display clusters closer to our simTlsh (see below).

#

# We see that there is a large branch of Gafgyt malware clusters at the top of the diagram.

# And a large branch of Mirai malware clusters at the bottom of the diagram.

# This makes perfect sense.

# It was reported in April 2021 that Gafgyt had started re-using Mirai code.

#

# https://securityaffairs.co/wordpress/116882/cyber-crime/gafgyt-re-uses-mirai-code.html

from pylib.tlsh_lib import *

(tlist, labels) = tlsh_csvfile("malbaz/clust_389300.csv",

simTlsh="T14893F844FD459B2FC3D372F6E75C028D763A1FE8A7E630269934BEA023F56D12526911",

simThreshold=100)

tlsh_dendrogram(tlist, labelList=labels[0])

# Here we reduced the simThreshold from 130 to 100.

# We find that our simTlsh has a distance < 10 to the Mirai 2021-06-30 cluster.

# Our sample is very likely a sample of Mirai malware.

# # Step 4 Show all the clusters surrounding a specified cluster

#

# We had a SnakeKeyLogger cluster which was first seen on 2021-09-16

# This cluster has center

# T12584BF243AFB8019F173AFBA8FE575969B6EFA633603D55D2491038A0613B81CDC153E

# (this is line 42 of malbaz/cen_389300.csv)

from pylib.tlsh_lib import *

(tlist, labels) = tlsh_csvfile("malbaz/clust_389300.csv",

simTlsh="T12584BF243AFB8019F173AFBA8FE575969B6EFA633603D55D2491038A0613B81CDC153E",

simThreshold=90)

tlsh_dendrogram(tlist, labelList=labels[0])

# ## Interpretation of SnakeKeylogger / AgentTesla / Formbook / Loki dendrogram

#

# We see that the SnakeKeyLogger cluster is mixed in with a group of AgentTesla, Loki and Formbook clusters.

# These malware families are known to exhibit similar properties.

# They are information stealers / RATs that are typically sent as attachments to spam emails.

#

# "Researchers say the attackers’ use of several common malware families makes attribution of this

# campaign to a particular threat group difficult."

# https://cyberintelmag.com/malware-viruses/year-long-spear-phishing-campaign-targets-energy-sector-with-agent-tesla-other-rats/

#

# https://asec.ahnlab.com/en/22074/

#

# # Step 5 Work with unlabelled clusters

#

# We generate a dendrogram which includes unlabeled clusters.

# We extract information about those clusters and show how to list the members.

# +

from pylib.tlsh_lib import *

(tlist, labels) = tlsh_csvfile("malbaz/clust_389300.csv",

simTlsh="T10923013EC661113BCD05DB76E2622B7E24A64C768F6B70D871E7208A3CFE8505F42961",

simThreshold=180)

tlsh_dendrogram(tlist, labelList=labels[0])

# -

# In the middle of this dendrogram (the green section) we see a group of clusters without labels.

# We now extract information about Cluster 43300

from pylib.tlsh_lib import *

mb_show_sha1("Cluster 43300")

# We also show how to get more information about Gozi 2020-11-10 (2) which is the 5th row from the bottom.

from pylib.tlsh_lib import *

mb_show_sha1("MyDoom", thisDate="2021-09-03")

| tlshCluster/malbaz.ipynb |

;; -*- coding: utf-8 -*-

;; ---

;; jupyter:

;; jupytext:

;; text_representation:

;; extension: .scm

;; format_name: light

;; format_version: '1.5'

;; jupytext_version: 1.14.4

;; kernelspec:

;; display_name: Calysto Scheme 3

;; language: scheme

;; name: calysto_scheme

;; ---

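;; ### Exercise 2.6

;; In case representing pairs as procedures wasn't mind-boggling enough, consider the following.

;; In a language that can manipulate procedures, we can get by without numbers

;; (at least insofar as nonnegative integers are concerned) by implementing 0 and the operation of adding 1 as

;;

;; (define zero (lambda (f) (lambda (x) x)))

;; (define (add-1 n)

;;   (lambda (f) (lambda (x) (f ((n f) x)))))

;;

;; This representation is known as **Church numerals**, after its inventor,

;; Alonzo Church,

;; the logician who invented the λ-calculus.

;; Define one and two directly (not in terms of zero and add-1)

;; (Hint: use substitution to evaluate (add-1 zero)).

;; Give a direct definition of the addition procedure + (not in terms of repeated application of add-1).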
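;; The hint can be followed by hand with the substitution model. The reduction below is written in λ-notation, with zero's bound variables renamed to g and y to avoid confusion. Each step replaces a parameter by its argument, and the last line is the direct definition of one:
;;
;; $$
;; \begin{aligned}
;; (\text{add-1 zero}) &= \lambda f.\,\lambda x.\; f\,((\text{zero}\;f)\;x) \\
;; &= \lambda f.\,\lambda x.\; f\,(((\lambda g.\,\lambda y.\,y)\;f)\;x) \\
;; &= \lambda f.\,\lambda x.\; f\,((\lambda y.\,y)\;x) \\
;; &= \lambda f.\,\lambda x.\; f\,x
;; \end{aligned}
;; $$
;;
;; Substituting one for n in the body of add-1 in the same way gives two = λf.λx. f (f x).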

(define zero (lambda (f) (lambda (x) x)))

(define (add-1 n)
  (lambda (f) (lambda (x) (f ((n f) x)))))

;; +

; Church numerals are hard to grasp in the abstract,

; so run them and reason from the results.

(define (func x)
  (begin
    (display x)   ; show the current value
    (+ x 1)))     ; pass x + 1 on to the next application of func

((zero func) 0)

;(display ((zero func) 0))

(newline)

(define one (add-1 zero))

((one func) 0)

;(display ((one func) 0))

(newline)

(define two (add-1 one))

((two func) 0)

;(display ((two func) 0))

(newline)

(define three (add-1 two))

((three func) 0)

;(display ((three func) 0))

(newline)

;; -

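;; From the results above, Church numerals can be understood as follows:

;;

;; zero → applies the procedure passed as its argument zero times.

;; one → applies the procedure passed as its argument once.

;; two → applies the procedure passed as its argument twice.

;; three → applies the procedure passed as its argument three times.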

;;
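;; The same observation can be checked outside Scheme. This is a Python sketch that is not part of the original notebook. It mirrors zero and add-1 as curried lambdas. The helper `to_int` is an illustrative name: it applies a numeral to "add one" and 0, so the result counts how many times the numeral applies its procedure argument.

```python
# Church numerals as curried Python functions, mirroring the Scheme definitions.
zero = lambda f: lambda x: x


def add_1(n):
    # Apply f one more time than n does.
    return lambda f: lambda x: f(n(f)(x))


def to_int(n):
    # Count the applications: start from 0 and add 1 each time f is applied.
    return n(lambda k: k + 1)(0)


one = add_1(zero)
two = add_1(one)
three = add_1(two)

print([to_int(n) for n in (zero, one, two, three)])  # [0, 1, 2, 3]
```

Unlike `func`, the counting procedure prints nothing, so only the returned count reflects the numeral.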

;; +

(define (func x)
  (begin
    (display x)   ; show the current value
    (+ x 1)))     ; pass x + 1 on to the next application of func

; Define one and two (and three) directly, without zero or add-1

(define one

(lambda (f) (lambda (x) (f x))))

;(display ((one func) 0))

((one func) 0)

(newline)

(define two

(lambda (f) (lambda (x) (f (f x)))))

;(display ((two func) 0))

((two func) 0)

(newline)

(define three

(lambda (f) (lambda (x) (f (f (f x))))))

;(display ((three func) 0))

((three func) 0)

(newline)

;; -

(define (add a b)
  ;(lambda (f) (lambda (x) ((a (b (f x))))))   ; runtime error
  ;(lambda (f) (a (b f)))                      ; does not add a's applications on top of b's (this composition is multiplication)
  ;(lambda (f) ((a f) (b f)))                  ; runtime error
  (lambda (f) (lambda (x) ((a f) ((b f) x))))  ; correct: apply f b times, then a more times (a + b in total)
  ;(lambda (f) (lambda (x) ((a f) (b (f x)))))
  )
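;; For comparison, here is the direct addition above (and the composition from the commented-out `(lambda (f) (a (b f)))` attempt) as a Python sketch. `add` applies f b times to x and then a more times, giving a + b applications. `mul` composes the numerals, giving a × b applications. The names `add`, `mul` and `to_int` are illustrative and not from the original notebook.

```python
# Church numerals written directly as curried lambdas.
zero = lambda f: lambda x: x
one = lambda f: lambda x: f(x)
two = lambda f: lambda x: f(f(x))


def add(a, b):
    # Apply f b times to x, then apply f a more times to that result.
    return lambda f: lambda x: a(f)(b(f)(x))


def mul(a, b):
    # (a (b f)): repeat "apply f b times" a times.
    return lambda f: a(b(f))


def to_int(n):
    # Count how many times n applies its procedure argument.
    return n(lambda k: k + 1)(0)


print(to_int(add(one, two)))            # 3
print(to_int(add(add(one, two), two)))  # 5
print(to_int(mul(two, two)))            # 4
```

The counts 3 and 5 correspond to the `(add one two)` and `(add x two)` checks in the cells below.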

;; +

((one func) 0)

(newline)

((two func) 0)

(newline)

(define three (add one two))

((three func) 0)

(newline)

;; +

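(define (func x)
  (begin
    (display '0)   ; print one 0 per application, so the number of 0s equals the numeral
    ;(display x)
    ;(+ x 1)
    '()))          ; the argument x is not used, so any return value works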

(define x (add one two))

;(display ((x func) 0))

((x func) 0)

(newline)

(define x (add two one))

;(display ((x func) 0))

((x func) 0)

(newline)

(define x (add x two))

;(display ((x func) 0))

((x func) 0)

(newline)

((zero func) 0)

(newline)

((one func) 0)

(newline)

((two func) 0)

(newline)

| exercises/2.06.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: 'Python 3.8.5 64-bit (''base'': conda)'

# language: python

# name: python38564bitbasecondad1742f2c15834eb4a25ed5f906de87ff

# ---

from gaussian_noise_regression import GaussianNoiseRegression

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

pd.set_option('display.max_rows',100)

data = pd.read_csv('test/ImbR.csv', index_col=0)

data

data.std().tolist()

gn1 = GaussianNoiseRegression(data, thr_rel=0.8, c_perc=[0.5,3])

method = gn1.getMethod()

extrType = gn1.getExtrType()

thr_rel = gn1.getThrRel()

controlPtr = gn1.getControlPtr()

c_perc_undersampling, c_perc_oversampling = gn1.getCPerc()

pert = gn1.getPert()

method, extrType, thr_rel, controlPtr, c_perc_undersampling, c_perc_oversampling, pert

yPhi, ydPhi, yddPhi = gn1.calc_rel_values()

yPhi

data1 = gn1.preprocess_data(yPhi)

data1

gn1.set_feature_stds_list(data1)

feature_stds_list = gn1.get_feature_stds_list()

feature_stds_list

gn1.set_obj_interesting_set(data1)

interesting_set = gn1.get_obj_interesting_set()

interesting_set

gn1.set_obj_uninteresting_set(data1)

uninteresting_set = gn1.get_obj_uninteresting_set()

uninteresting_set
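# The interesting/uninteresting split above appears to follow relevance-based resampling for imbalanced regression. Under that assumption, a row is "interesting" (rare) when its relevance φ(y) reaches `thr_rel`, and "uninteresting" otherwise. The plain-Python sketch below shows only that rule. It is not the `GaussianNoiseRegression` implementation, and the rows and relevance values are made up.

```python
from typing import List, Sequence, Tuple


def split_by_relevance(
    rows: Sequence[Sequence[float]],
    relevance: Sequence[float],
    thr_rel: float,
) -> Tuple[List[Sequence[float]], List[Sequence[float]]]:
    # Rows whose relevance reaches the threshold are the rare, "interesting" ones.
    interesting = [r for r, phi in zip(rows, relevance) if phi >= thr_rel]
    uninteresting = [r for r, phi in zip(rows, relevance) if phi < thr_rel]
    return interesting, uninteresting


rows = [[1.0, 10.0], [2.0, 11.0], [3.0, 50.0], [4.0, 12.0]]  # last column is the target y
relevance = [0.1, 0.2, 1.0, 0.3]                             # toy φ(y) values
interesting, uninteresting = split_by_relevance(rows, relevance, thr_rel=0.8)
print(len(interesting), len(uninteresting))  # 1 3
```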

gn1.set_obj_bumps(data1)

bumps_undersampling, bumps_oversampling = gn1.get_obj_bumps()

bumps_undersampling, bumps_oversampling

resampled = gn1.process_percentage()

resampled
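# One possible reading of `c_perc=[0.5, 3]` is the following, and it is an assumption: keep 50% of the uninteresting rows, and grow the interesting rows to three times their count by adding noisy copies. Each column is perturbed with Gaussian noise whose standard deviation is `pert` times that column's standard deviation. The sketch below implements that reading with the standard library only. `gaussian_noise_resample` is a hypothetical helper, not the class's method, and the data are made up.

```python
import random
import statistics
from typing import List, Sequence


def gaussian_noise_resample(
    interesting: Sequence[Sequence[float]],
    uninteresting: Sequence[Sequence[float]],
    under: float,
    over: float,
    pert: float,
    seed: int = 0,
) -> List[List[float]]:
    rng = random.Random(seed)
    all_rows = list(interesting) + list(uninteresting)
    # Per-column (population) standard deviation over the whole data set.
    stds = [statistics.pstdev(col) for col in zip(*all_rows)]

    # Undersample: keep a random fraction `under` of the uninteresting rows.
    n_keep = round(under * len(uninteresting))
    kept = [list(r) for r in rng.sample(list(uninteresting), n_keep)]

    # Oversample: keep the interesting rows and add noisy copies until
    # there are `over` times as many interesting rows as before.
    n_new = round((over - 1) * len(interesting))
    synthetic = []
    for _ in range(n_new):
        base = rng.choice(list(interesting))
        synthetic.append([v + rng.gauss(0.0, pert * s) for v, s in zip(base, stds)])

    return kept + [list(r) for r in interesting] + synthetic


interesting = [[3.0, 50.0], [3.5, 55.0]]
uninteresting = [[1.0, 10.0], [2.0, 11.0], [4.0, 12.0], [5.0, 13.0]]
out = gaussian_noise_resample(interesting, uninteresting, under=0.5, over=3, pert=0.1)
print(len(out))  # 2 kept + 2 original + 4 synthetic = 8
```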

data = pd.read_csv('test/ImbR.csv', index_col=0)

data

gn2 = GaussianNoiseRegression(data, thr_rel=0.8, c_perc='balance')

method = gn2.getMethod()

extrType = gn2.getExtrType()

thr_rel = gn2.getThrRel()

controlPtr = gn2.getControlPtr()

c_perc = gn2.getCPerc()

pert = gn2.getPert()

method, extrType, thr_rel, controlPtr, c_perc, pert

resampled = gn2.resample()

resampled

data = pd.read_csv('test/ImbR.csv', index_col=0)

data

gn3 = GaussianNoiseRegression(data, thr_rel=0.8, c_perc='extreme')

method = gn3.getMethod()

extrType = gn3.getExtrType()

thr_rel = gn3.getThrRel()

controlPtr = gn3.getControlPtr()

c_perc = gn3.getCPerc()

pert = gn3.getPert()

method, extrType, thr_rel, controlPtr, c_perc, pert

resampled = gn3.resample()

resampled

| archive/test_gaussian_noise.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python (ML)

# language: python

# name: ml

# ---

# # 64-D image manifold: images

# +

# %matplotlib inline

import sys

import numpy as np

import matplotlib

from matplotlib import pyplot as plt

from matplotlib.offsetbox import TextArea, DrawingArea, OffsetImage, AnnotationBbox

import torch

sys.path.append("../../")

from experiments.datasets import FFHQStyleGAN64DLoader

from experiments.architectures.image_transforms import create_image_transform, create_image_encoder

from experiments.architectures.vector_transforms import create_vector_transform

from manifold_flow.flows import ManifoldFlow, EncoderManifoldFlow

import plot_settings as ps

# -

ps.setup()

# ## Helper function to go from torch to numpy conventions

def trf(x):
    # (C, H, W) image with 0-255 values -> (H, W, C) floats clipped to [0, 1] for imshow
    return np.clip(np.transpose(x, [1,2,0]) / 256., 0., 1.)
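# A quick check of the conversion on a dummy array, using made-up data. PyTorch image tensors are channel-first (C, H, W). Matplotlib's `imshow` expects channel-last (H, W, C), with floats in [0, 1] for RGB. Because the helper divides by 256 rather than 255, a full-intensity pixel maps to 255/256, just below 1.

```python
import numpy as np


def trf(x):
    # (C, H, W) with values in 0..255  ->  (H, W, C) floats clipped to [0, 1]
    return np.clip(np.transpose(x, [1, 2, 0]) / 256.0, 0.0, 1.0)


chw = np.zeros((3, 4, 5))
chw[0] = 255.0        # red channel at full intensity
img = trf(chw)
print(img.shape)      # (4, 5, 3)
print(img[0, 0, 0])   # 0.99609375 (= 255 / 256)
```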

# ## Load models

def load_model(
    filename,
    outerlayers=20,
    innerlayers=8,
    levels=4,
    splinebins=11,
    splinerange=10.0,
    dropout=0.0,
    actnorm=True,
    batchnorm=False,
    linlayers=2,
    linchannelfactor=2,
    lineartransform="lu"
):
    steps_per_level = outerlayers // levels
    spline_params = {
        "apply_unconditional_transform": False,
        "min_bin_height": 0.001,
        "min_bin_width": 0.001,
        "min_derivative": 0.001,
        "num_bins": splinebins,
        "tail_bound": splinerange,
    }
    outer_transform = create_image_transform(
        3,
        64,
        64,
        levels=levels,
        hidden_channels=100,
        steps_per_level=steps_per_level,
        num_res_blocks=2,
        alpha=0.05,
        num_bits=8,
        preprocessing="glow",
        dropout_prob=dropout,
        multi_scale=True,
        spline_params=spline_params,
        postprocessing="partial_mlp",
        postprocessing_layers=linlayers,
        postprocessing_channel_factor=linchannelfactor,
        use_actnorm=actnorm,
        use_batchnorm=batchnorm,
    )
    inner_transform = create_vector_transform(
        64,
        innerlayers,
        linear_transform_type=lineartransform,
        base_transform_type="rq-coupling",
        context_features=1,
        dropout_probability=dropout,
        tail_bound=splinerange,
        num_bins=splinebins,
        use_batch_norm=batchnorm,
    )
    model = ManifoldFlow(
        data_dim=(3, 64, 64),
        latent_dim=64,
        outer_transform=outer_transform,
        inner_transform=inner_transform,
        apply_context_to_outer=False,
        pie_epsilon=0.1,
        clip_pie=None
    )
    model.load_state_dict(
        torch.load("../data/models/{}.pt".format(filename), map_location=torch.device("cpu"))
    )
    _ = model.eval()
    return model

def load_emf_model(
    filename,
    outerlayers=20,
    innerlayers=8,
    levels=4,
    splinebins=11,
    splinerange=10.0,
    dropout=0.0,
    actnorm=True,
    batchnorm=False,
    linlayers=2,
    linchannelfactor=2,
    lineartransform="lu"
):
    steps_per_level = outerlayers // levels
    spline_params = {
        "apply_unconditional_transform": False,
        "min_bin_height": 0.001,
        "min_bin_width": 0.001,
        "min_derivative": 0.001,
        "num_bins": splinebins,
        "tail_bound": splinerange,
    }
    encoder = create_image_encoder(
        3,
        64,
        64,
        latent_dim=64,
        context_features=None,
    )
    outer_transform = create_image_transform(
        3,
        64,
        64,
        levels=levels,
        hidden_channels=100,
        steps_per_level=steps_per_level,
        num_res_blocks=2,