code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# https://adventofcode.com/2018/day/

import numpy as np

with open("day_input.txt") as file:

puzzle_input = file.read().splitlines()

small_puzzle_input = 0

# ## Part 1

# ## Part 2

| Advent of Code 2018 - Day Template.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/Mattyughhh/CPEN-ECE-2-2/blob/main/Introduction_to_Python_Programming.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + colab={"base_uri": "https://localhost:8080/"} id="9sfkL2STjVUj" outputId="a1121d50-e95d-48a0-e5fc-76be5d951664"

print ("Hello, World")

# + colab={"base_uri": "https://localhost:8080/"} id="kba_vKaFuUmo" outputId="893afa6f-b1ea-4bec-d50b-81249c56c5a7"

b = float (4)

print (round,((b),3))

# + colab={"base_uri": "https://localhost:8080/"} id="d9qrdw2XujDW" outputId="225ca97d-f103-42ab-e309-36f8bd7a48ae"

a = 4

A = "sally"

#A will not overwrite a

print (a)

print (A)

# + colab={"base_uri": "https://localhost:8080/"} id="eQwmv1sZxMs1" outputId="1ab569af-187b-46e9-8db0-dcb9e6c05bd0"

x, y ,z= "one", "two", "three"

print (z)

print (y)

print (x)

# + colab={"base_uri": "https://localhost:8080/"} id="KUrafFrLxaD6" outputId="700cabc5-a71b-4fb1-dc50-a7e2716903a0"

x=y=z="Four"

print (x)

print (y)

print (z)

# + colab={"base_uri": "https://localhost:8080/"} id="Afzpug9FtK2u" outputId="60c19f80-85fd-4692-8da6-84ad13efa03b"

x = "enjoying"

print ("Python Programming is "+ x)

# + colab={"base_uri": "https://localhost:8080/"} id="3AapJnIHxkzQ" outputId="950c7a32-6b77-42d0-e256-4aec33aa59a0"

x=5

y=3

sum=x+y

print (sum)

# + colab={"base_uri": "https://localhost:8080/"} id="PaZc9EPvvEpD" outputId="293806bb-1125-47ff-c11c-d85f8c11057f"

x = 5

y = 6

print (x+y)

# + colab={"base_uri": "https://localhost:8080/"} id="uOyGA0Y2vKcN" outputId="306d78ce-80dc-4ab9-bba3-ee81f52ae5e7"

a, b, c = 0, -1, 8

c%=3

print (c)

# + colab={"base_uri": "https://localhost:8080/"} id="QHk6Xr2sxxmX" outputId="c425a8ca-06ee-4002-c342-5bd1be94d4c0"

x=5

x<6 and x<10

# + colab={"base_uri": "https://localhost:8080/"} id="-uGpfI_JtyDO" outputId="b2010be2-3798-4e09-bd06-830f0b7cfa5f"

y = 6

z = 7

y is not z

| Introduction_to_Python_Programming.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ##Notes: the approach taken in this baseline model is from this 2020 article

# ##by Lianne and Justin, thanks to them for sharing. They used

# ##ridge regression alpha = 0.001

#

# https://www.justintodata.com/improve-sports-betting-odds-guide-in-python/

#

#

# +

import pandas as pd

import numpy as np

from sklearn.metrics import accuracy_score, precision_score, recall_score, roc_auc_score, confusion_matrix

from sklearn.metrics import mean_absolute_error, mean_squared_error, r2_score, f1_score

# +

##we will try the following models on the base-line data ... just win/loss and which teams

##note KNN or other clusters might be helpful group the teams in smart way ... but not now.

#models

##regression

from sklearn.linear_model import Ridge

from sklearn.ensemble import RandomForestRegressor

#classifiers (non-tree)

from sklearn.linear_model import RidgeClassifier

from sklearn.naive_bayes import GaussianNB

from sklearn.linear_model import LogisticRegression

from sklearn.svm import SVC

#tree-based classifiers

from sklearn.ensemble import RandomForestClassifier

from sklearn.ensemble import BaggingClassifier

from sklearn.ensemble import GradientBoostingClassifier

from xgboost import XGBClassifier

from xgboost import XGBRegressor

##regression models

lr = Ridge(alpha=0.001)

rfr = RandomForestRegressor(max_depth=3, random_state=0)

xgbr = XGBRegressor()

regr_models = [lr, rfr, xgbr]

##classifier models

lrc = RidgeClassifier()

gnb = GaussianNB()

lgr = LogisticRegression(random_state = 0)

svc = SVC()

#tree-based classifiers

rfc = RandomForestClassifier(max_depth=3, random_state=0)

bc = BaggingClassifier()

gbc = GradientBoostingClassifier()

xgbc = XGBClassifier( use_label_encoder=False, num_class = [0,1])

class_models= [lrc, gnb, lgr, svc, rfc, bc, gbc, xgbc]

# +

data = pd.read_csv("/Users/joejohns/data_bootcamp/GitHub/final_project_nhl_prediction/Data/Processed_Data/Approach_1 and_2_win_loss_and_cumul_1seas_Pisch_data/data_bet_stats_mp.csv")

data.drop(columns=[ 'Unnamed: 0'], inplace=True)

# +

data['won'] = data['won'].apply(int)

data_playoffs = data.loc[data['playoffGame'] == 1, :].copy() #set aside playoff games ... probably won't use them.

data= data.loc[data['playoffGame'] == 0, :].copy()

#sorted(data.columns)

# -

all_seasons = sorted(set(data['season']))

all_seasons

# +

def make_HA_data(X, season, list_var_names = None ):

X = X.loc[X['season'] == season, :].copy()

X_H = X.loc[X['HoA'] == 'home',:].copy()

X_A = X.loc[X['HoA'] == 'away',:].copy()

X_H['goal_difference'] = X_H['goalsFor'] - X_H['goalsAgainst'] ##note every thing is based in home data

X_H.reset_index(drop = True, inplace = True)

X_A.reset_index(drop = True, inplace = True)

df_visitor = pd.get_dummies(X_H['nhl_name'], dtype=np.int64)

df_home = pd.get_dummies(X_A['nhl_name'], dtype=np.int64)

df_model = df_home.sub(df_visitor)

df_model['date'] = X_H['date']

df_model['full_date'] = X_H['full_date']

df_model['game_id'] = X_H['game_id']

df_model['home_id'] = X_H['team_id']

df_model['away_id'] = X_A['team_id']

y = X_H.loc[:,['date', 'full_date','game_id', 'Open','goal_difference', 'won']].copy() ##these are from home team perspective; 'Open' is for betting

return (df_model, y)

# -

X_dic = {}

y_dic = {}

for sea in all_seasons:

X_dic[sea] = make_HA_data(data, sea)[0]

y_dic[sea] = make_HA_data(data, sea)[1]

# +

#this is for regressors predicting wins - losses, can use this to turn output into win prediction

def make_win(x):

if x <= 0:

return 0

if x >0:

return 1

v_make_win = np.vectorize(make_win)

#useage: v_make_win(y_pred)

# +

##naive method: train on first half of season, 600 games, test on second half of season

##with no further training

def naive_test_train_regr_models(model, cut_off = 600, regr = True):

all_seasons2 = [sea for sea in all_seasons if sea != 20122013]#2012 is shortened season

total_acc = 0

counter = 0

model_name = str(model)

print("results for ", model_name)

print(" ")

for sea in all_seasons2:

#set teh predictor variables, :-5 does the job, would be better

#and safer to name the columns explcitly ... but the columns are date

#and so on ... no leakage worries. OK for this base line

X = X_dic[sea].iloc[:, :-5].copy()

#select season, remove date, etc. select target y

if regr == True:

y = y_dic[sea].loc[:, 'goal_difference'].copy()

else:

y = y_dic[sea].loc[:, 'won'].copy()

#carry out naive train-test split

y_train = y[0: cut_off].copy()

y_test = y[cut_off :].copy()

X_train = X[0: cut_off].copy()

X_test = X[cut_off :].copy()

#train model, find predictions

model.fit(X_train, y_train)

y_pred = model.predict(X_test) #this is regression pred on Hg - Ag

y_pred_win = v_make_win(y_pred) #this is the pred of who wins HW =1, AW =0

y_test_win = v_make_win(y_test) #this gives the correct win, loss

#note: if y, y_pred and y_test are already 1, 0 then v_make_win will

#keep them the same (<= 0 --> 0, >0 ---> 1)

accuracy = accuracy_score(y_test_win, y_pred_win)

f1 = f1_score(y_test_win, y_pred_win) #, average = None)

counter+=1

total_acc+= accuracy

print("seaoson: ", sea)

print("acc: ", accuracy, " f1: ", f1)

avg_acc = total_acc/counter

print('avg acuracy: ', avg_acc)

print(" ")

#evaluate_regression(y_test, y_pred)

#evaluate_binary_classification(y_test_win, y_pred_win

# +

##try for ridge regression

naive_test_train_regr_models(model = lr, cut_off = 700, regr = True) ##ok looks like 20162017 is unusually good for some reason

# +

##avg is around 54% for ridge regression

# +

##now try all regressors

for model in regr_models:

naive_test_train_regr_models(model = model, cut_off = 700, regr = True)

# -

##now try all classifiers

for model in class_models:

naive_test_train_regr_models(model = model, cut_off = 700, regr = False)

# +

##conclusions: some of the average scores are around 55% and some of the top

##scores on a season are as high as 58, 59%

##next steps:

##1. tune the models

##2. look into partial_fit across the seaosn for appropriate models that have that

| Model 1_v6_clean_Aug_2021_version-Copy1.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

import pandas as pd

from sklearn.model_selection import train_test_split

from glob import glob

trainval = pd.read_csv('../data/caterpillar/train_set.csv')

test = pd.read_csv('../data/caterpillar/test_set.csv')

trainval_tube_assemblies = trainval['tube_assembly_id'].unique()

test_tube_assemblies = test['tube_assembly_id'].unique()

from sklearn.model_selection import train_test_split

train_tube_assemblies, val_tube_assemblies = train_test_split(

trainval_tube_assemblies, random_state=42

)

train = trainval[trainval.tube_assembly_id.isin(train_tube_assemblies)]

val = trainval[trainval.tube_assembly_id.isin(val_tube_assemblies)]

train.shape, val.shape, test.shape

# -

for path in glob('../data/caterpillar/*.csv'):

df = pd.read_csv(path)

shared_columns = set(df.columns) & set(train.columns)

if shared_columns:

print(path, df.shape)

print(df.columns.tolist(), '\n')

# +

def wrangle(data):

data = data.copy()

# Engineer date features

data['quote_date'] = pd.to_datetime(data['quote_date'], infer_datetime_format=True)

data['quote_date_year'] = data['quote_date'].dt.year

data['quote_date_month'] = data['quote_date'].dt.month

data = data.drop(columns='quote_date')

# Merge data

tube = pd.read_csv('../data/caterpillar/tube.csv')

bill_of_materials = pd.read_csv('../data/caterpillar/bill_of_materials.csv')

specs = pd.read_csv('../data/caterpillar/specs.csv')

data = data.merge(tube, how='left')

data = data.merge(bill_of_materials, how='left')

data = data.merge(specs, how='left')

# Drop tube_assembly_id because our goal is to predict unknown assemblies

data = data.drop(columns='tube_assembly_id')

return data

train = wrangle(train)

val = wrangle(val)

test = wrangle(test)

print(train.shape, val.shape, test.shape)

# +

from sklearn.metrics import mean_squared_error

def rmse(y_true, y_pred):

return np.sqrt(mean_squared_error(y_true, y_pred))

# +

import numpy as np

from sklearn.pipeline import make_pipeline

import category_encoders as ce

from xgboost import XGBRegressor

import matplotlib.pyplot as plt

target = 'cost'

X_train = train.drop(columns=target)

X_val = val.drop(columns=target)

y_train = train[target]

y_val = val[target]

y_train_log = np.log1p(y_train)

y_val_log = np.log1p(y_val)

pipeline = make_pipeline(

ce.OrdinalEncoder(),

XGBRegressor(max_depth=7,

n_estimators=1000,

n_jobs=-1,

gamma=.05,

colsample_bytree=.3,

reg_alpha=.1,

reg_lambda=.95,

objective='reg:squarederror')

)

pipeline.fit(X_train, np.array(y_train_log))

y_train_pred_log = pipeline.predict(X_train)

y_val_pred_log = pipeline.predict(X_val)

print(rmse(y_train_log, y_train_pred_log))

print(rmse(y_val_log, y_val_pred_log))

coefficients = pd.Series(pipeline[1].feature_importances_,

X_train.columns.tolist())

plt.style.use('dark_background')

plt.figure(figsize=(10,30))

coefficients.sort_values().plot.barh(color='grey');

plt.show()

# +

X_test = test.drop(columns='id')

y_pred_log = pipeline.predict(X_test)

y_pred = np.expm1(y_pred_log)

sample_submission = pd.read_csv('../data/caterpillar/sample_submission.csv')

submission = sample_submission.copy()

submission['cost'] = y_pred

submission.to_csv('nathan_van_wyck_submission.csv', index=False)

| module1-log-linear-regression/log_linear_regression_assignment.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # This program finds the numerical solution using Euler's method of differential equation $\frac{dy}{dt} = f(t,y)$ with $y(t_0)=y_0$

# # <font color='red'> Euler's Method $y_{i+1} = y_i + f(t_i,y_i)(t_{i+1}-t_i), i = 0, 1,...,n-1$

# # <font color='green'> Example: $\frac{dy}{dt}=y, y(0)=1$. Find the solution on the time $0<t\le2$

# # Note its true solution is $y=exp(t)$

##f=lambda t,y,x:y**2+2*t+x

#f(5,1,2

from numpy import*

y=zeros(5)

y

# +

#import numpy as np

#import matplotlib.pyplot as plt

from matplotlib.pyplot import*

from numpy import*

f = lambda t,y: y #for matlab it looks like f=@(t,y)y

T = 2

n = 10

t = np.linspace(0,T,n)

y = np.zeros(n)

y[0] = 1

for i in range(0,n-1):

y[i+1] = y[i] + f(t[i],y[i])*(t[i+1]-t[i])

plot(t,y,'r')

grid()

import pandas as pd

data={}

data.update({"time":t, "displacement":y})

#print(data)

DF=pd.DataFrame(data)

print(DF)

DF.to_csv("euler.csv")

savefig("e.png")

# +

#import numpy as np

#import matplotlib.pyplot as plt

'''from matplotlib.pyplot import*

from numpy import*

f = lambda t,y:2*y #defining function, in matlab it is denoted by f=@(t,y)(y)

T = 2

n = 100

t = np.linspace(0,T,n)

y = np.zeros(n)

y[0] = 1

for i in range(0,n-1):

y[i+1] = y[i] + f(t[i],y[i])*(t[i+1]-t[i])

plot(t,y)

grid(True)

'''

import numpy as np

import matplotlib.pyplot as plt

#from matplotlib.pyplot import*

#from numpy import*

f = lambda t,y: y

T = 2

n = 50

t = np.linspace(0,T,n)

y = np.zeros(n)

y[0] = 1

true_soln=np.exp(t)

for i in range(0,n-1):

y[i+1] = y[i] + f(t[i],y[i])*(t[i+1]-t[i])

plt.plot(t,y,'o')

plt.plot(t,true_soln)

#plt.plot(t,np.exp(t))

plt.grid(True)

# -

# to see the data in table

import pandas as pd

data={} #making emptylist for data

data.update({"time":t,"yvaleu":y})

print(data) #here it print raw data

#to manage the data

DF=pd.DataFrame(data)

print(DF)

#import numpy as np

#import matplotlib.pyplot as plt

'''from matplotlib.pyplot import*

from numpy import*

f = lambda t,y: y

T = 2

n = 10

t = np.linspace(0,T,n)

y = np.zeros(n)

y[0] = 1

for i in range(0,n-1):

y[i+1] = y[i] + f(t[i],y[i])*(t[i+1]-t[i])

plot(t,y)

grid(True)

'''

# # <font color='green'>Find the numerical solution using Eular's method of differential equation

# # $\frac{dy}{dt}=f(t,y)$ with $y(t_0)=0$

# # Eulers formula:$ y_{i+1}=y_i+f(t_{i},y_i)(t_{i+1}- t_i)$

# # <font color='red'>Application:

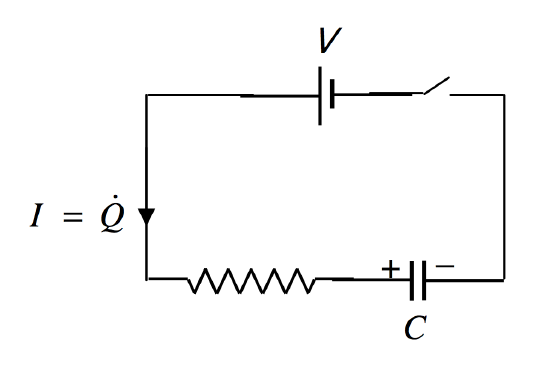

# # Charging of capacitor $V = V_c +V_R$

# #

# # putting q=CV and $V_R=IR=\frac{dq}{dt}R$ we have $\frac{dq}{dt}=\frac{V}{R}-\frac{q}{RC}$,Given: q(0)=0(initial charge)

# #<font color='green'> true solution $ q=q_0(1-exp(-t/(RC)))$

# +

## now python coding

import numpy as np

import matplotlib.pyplot as plt

f=lambda t,q:(V/R)-(q/(R*C))

n=100

q = np.zeros(n)

q[0]=0

T= 5

R= 1

C= 1

V=5

qmax=C*V

t= np.linspace(0,T,n)

true_soln= qmax*(1-np.exp(-t/(R*C)))

for i in range(0,n-1):

q[i+1] = q[i]+f(t[i],q[i])*(t[i+1]-t[i])

# +

plt.plot(t,q)

plt.plot(t,true_soln,'or')

plt.grid(True)

plt.xlabel('time')

plt.ylabel('amount of charge')

plt.title('charging of capacitor')

# -

# To make the data table

import pandas as pd

data={}

data.update({"time":t,"charge":q})

#print (data)# this is raw data

DF=pd.DataFrame(data)

print(DF)

# +

## <font color='red'> Discharging of capacitor $V_C+V_R=0$

## put q=CV and $I= \frac{dq}{dt}$ , $\frac{dq}{dt}=-q/RC$ charge at t=0 is ,$q_0=CV$

## Euler's formula: $q_{i+1}=q_i +f(t[i],q[i])*(t[i+1]-t[i])$

##<font color='green'> true solution $q=q_0(exp(-t/(RC))$

# -

import numpy as np

import matplotlib.pyplot as plt

f=lambda t,q:-q/(R*C)

T=15

n=20

C=0.005

R=200

V=5

t=np.linspace(0,T,n)

q=np.zeros(n)

q[0]=C*V

true_soln=q[0]*(np.exp(-t/(R*C)))

for i in range(0,n-1):

q[i+1]=q[i]+f(t[i],q[i])*(t[i+1]-t[i])

plt.plot(t,q)

plt.plot(t,true_soln,'or')

plt.grid(True)

# +

import pandas as pd

data={}

data.update({"time":t,"charge":q})

print(data)

DF=pd.DataFrame(data)

print(DF)

# -

# # To make data table

# +

import pandas as pd

import numpy as np

n=11

x=np.linspace(0,20,n)

#y1=1/x

y2=x**2

y3=np.exp(x)

#y4=1/x**2

data={}

data.update({"value of x:":x,"reci.of x":y1,"square of x":y2,"exponential":y3,"reci of sqr":y4})

#print(data)

DF= pd.DataFrame(data)

print(DF)

# -

# # Solve first order diff eqn using Euler's method

# # Example: $ \frac{dy}{dt}+2y=2- \exp(-4t)$ ,$y_0=1$

# # making it into simpler form

# # $\frac{dy}{dt}=2-2y-exp(-4t)$ which is of form $\frac{dy}{dt}=f(t,y)$,$y_0=1$

# ## Euler's solution : $y_{i+1}=y_i+f(t_i,y_i)(t_{i+1}-t_{i})$

# +

# python code

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

n= 20

T= 5

t= np.linspace(0,T,n)

y= np.zeros(n)

y[0]=1

f= lambda t,y:2-2*y-np.exp(-4*t)

for i in range(0,n-1):

y[i+1]= y[i]+f(t[i],y[i])*(t[i+1]-t[i])

# -

plt.plot(t,y)

data={}

data.update({"time:":t, "function value y:":y})

DF=pd.DataFrame(data)

print(DF)

#

# # <font color='red'> Rise and decay of current in LR circuit

# #

# # Growth of current:

# # When switch is on and applyinf kirchoff's voltage rule: $V=V_R +V_L$

# # where V is supply voltage,$V_R$ is potential across resistro and $V_L$ is induced emf across inductor

# # put $V_R=IR$, $V_L=L\frac{dI}{dt}$ in the above relation

# # we get

# # <font color='green'> $\frac{dI}{dt}= \frac{V}{L}-\frac{R}{L}I$ which is of the form of $\frac{dy}{dt}=f(t,y)$

# # here $I_0=0$, initially there is no current

# # True solution is $I=I_{max}(1-exp(-Rt/L))$,where $I_{max} =V/R$, max current in the ckt

# Python code for solving using Euler's method

# +

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

f=lambda t,I: V/L-(R/L)*I

n=100

T=2

V=50

I=np.zeros(n)

I[0]=0

R=15

L=5

Imax=V/R

t=np.linspace(0,T,n)

truei=Imax*(1-np.exp(-R*t/L))

for i in range (0,n-1):

I[i+1]=I[i]+f(t[i],I[i])*(t[i+1]-t[i])

plt.plot(t,I,'red')

plt.plot(t,truei,'-og')

# -

plt.plot(t,truei)

# # Decay current:

# # when the battery is removed from the ckt(V=0) then charging condition becomes

# # $V=V_R+V_L$

# # $V_L=-V_R$

# # $ L\frac{dI}{dt}=-IR$

# # $\frac{dI}{dt}=-\frac{R}{L}I$

# # true solution= $I_{max}exp(-Rt/L)$, where $I_{max} $is max current $I_{max}= \frac{V}{R}$

# Code:

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

f=lambda t,I: -(R/L)*I

n=20

T=2

V=50

I=np.zeros(n)

I[0]=Imax

R=15

L=5

Imax=V/R

t=np.linspace(0,T,n)

truei=Imax*(np.exp(-R*t/L))

for i in range (0,n-1):

I[i+1]=I[i]+f(t[i],I[i])*(t[i+1]-t[i])

plt.plot(t,I,'red')

plt.plot(t,truei,'-og')

plt.legend('decay')

# +

#

# +

# Code:while filling the tank of water

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

f=lambda r,h:2*r*np.pi*h

n=20

T=1

h=10

v=np.zeros(n)

v[0]=10

r=2

t=np.linspace(0,T,n)

for i in range (0,n-1):

v[i+1]=v[i]+f(t[i],v[i])*(t[i+1]-t[i])

plt.plot(t,v,'or')

# -

data={}

data.update({'time:':t,'volume:':v})

DF=pd.DataFrame(data)

print(DF)

df=pd.to_csv("water tank.csv")

DF.to_csv("water tank.csv")

df=pd.read_csv("water tank.csv")

# +

# Euler second order

# f

| .ipynb_checkpoints/EulersMethod-checkpoint.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="WTvhIdEILOpF"

# # ML 101

#

# ## Text Mining

#

# In this project, we will explore a simplified version of the DPWC model to draw a graph of similar personalities.

#

# The data will be consumed from Twitter, and for each search, we create a DWP profile with the most relevant words.

#

# After we apply a Matrix Factorization to estimate the "real" value of the word with zero weight.

#

# Finally, we use a thresholding method to create the entries on the graph.

# + id="W16qJeogxbbe"

# !pip install pyvis gensim python-twitter git+git://github.com/mariolpantunes/nmf@main#egg=nmf git+git://github.com/mariolpantunes/uts@main#egg=uts --upgrade

# + id="X66LmyIZ3UR4"

import pprint

import twitter

import numpy as np

from IPython.core.display import display, HTML

from pyvis.network import Network

import uts.thresholding as thres

import nltk

from nltk.corpus import stopwords

from nltk.stem import RSLPStemmer

from nltk.tokenize import RegexpTokenizer

from nmf.nmf import nmf_mu

import math

pp = pprint.PrettyPrinter(indent=2)

nltk.download('stopwords')

nltk.download('punkt')

nltk.download('wordnet')

nltk.download('omw-1.4')

nltk.download('rslp')

# + id="jFUqvRM_3e5l"

tokenizer = RegexpTokenizer(r'\w+')

stop_words = set(stopwords.words('portuguese'))

stop_words.update(set(stopwords.words('english')))

stop_words.add('https')

stemmer = RSLPStemmer()

db = {}

def cosine_similarity(a,b):

return np.dot(a, b)/(np.linalg.norm(a)*np.linalg.norm(b))

def generate_ngrams(tokens, n=2):

ngrams = zip(*[tokens[i:] for i in range(n)])

return [" ".join(ngram) for ngram in ngrams]

def tokenize(text):

tokens = tokenizer.tokenize(text)

tokens = [stemmer.stem(w.lower()) for w in tokens if not w in stop_words and w.isalpha() and len(w) > 3]

#ngrams = generate_ngrams(tokens)

#return tokens + ngrams

return tokens

def get_term_frequency(corpus, p=0.2):

tf = {}

# count the terms

for t in corpus:

if t not in tf:

tf[t] = 0

tf[t]+=1

# discard non-relevant items

neighborhood = [(k, v) for k, v in tf.items() if v > 1]

neighborhood.sort(key=lambda tup: tup[1], reverse=True)

limit = int(len(neighborhood)*p)

neighborhood = neighborhood[:limit]

# return

return neighborhood

# + id="OWmBDlBB7tqm"

api = twitter.Api(consumer_key='2lDgkNXdm03bxodf55vlY5IHo',

consumer_secret='<KEY>',

access_token_key='<KEY>',

access_token_secret='<KEY>')

terms = ["António Guterres", "<NAME>",

"<NAME>",

"<NAME>",

"<NAME>",

"<NAME>",

"<NAME>"]

for t in terms:

if t not in db:

results = api.GetSearch(term=t, count=300, lang='pt')

corpus = []

for r in results:

corpus.extend(tokenize(r.text.lower()))

tf = get_term_frequency(corpus)

db[t] = tf

pp.pprint(f'{db}')

# + id="4IFYRgY9dS92"

# create vector matrix

vocab = set()

for k in db:

tf = db[k]

for t,_ in tf:

vocab.add(t)

X = np.zeros((len(db), len(vocab)))

r = 0

for k in db:

tf = db[k]

c = 0

for t, v in tf:

vocab.add(t)

c += 1

X[r,c] = v

r +=1

rows, cols = X.shape

k = int(math.ceil(rows/2.0))

Xr, W, H, cost = nmf_mu(X, k=k, seed=42)

seeds = [3, 5, 7, 11, 13]

for s in seeds:

Xt, Wt, Ht, costt = nmf_mu(X, k=k, seed=s)

if costt < cost:

cost = costt

Xr = Xt

# compute the distance matrix (graph)

D = np.identity(len(db))

for i in range(0, len(Xr)):

for j in range(0, len(Xr)):

similarity = cosine_similarity(Xr[i], Xr[j])

D[i][j] = similarity

D[j][i] = similarity

# compute the ideal threshold

flat_distance = D.ravel()

#t = thres.isodata(flat_distance)

t = np.percentile(flat_distance, 75)

D[D<t] = 0

# + id="oQHrkE7ndS2u"

net = Network()

# Create the nodes

i=0

for k in db:

net.add_node(i, label=k)

i+=1

for i in range(0, len(D)-1):

for j in range(1, len(D)):

if (i!=j) and D[i][j] > 0:

net.add_edge(i, j, weight=D[i][j])

net.show('network.html')

display(HTML('network.html'))

| projects/06 text mining/06_tm_graph.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] toc=true

# <h1>Table of Contents<span class="tocSkip"></span></h1>

# <div class="toc"><ul class="toc-item"></ul></div>

# +

# %matplotlib inline

from time import sleep

import numpy as np

from livelossplot import PlotLosses

# +

liveplot = PlotLosses()

for i in range(10):

liveplot.update({

'accuracy': 1 - np.random.rand() / (i + 2.),

'val_accuracy': 1 - np.random.rand() / (i + 0.5),

'mse': 1. / (i + 2.),

'val_mse': 1. / (i + 0.5)

})

liveplot.draw()

sleep(1.)

# -

| examples/minimal.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

from __future__ import print_function

from bs4 import BeautifulSoup

import requests

# -

# # Beautiful soup on test data

#

# Here, we create some simple HTML that include some frequently used tags.

# Note, however, that we have also left one paragraph tag unclosed.

# +

source = """

<!DOCTYPE html>

<html>

<head>

<title>Scraping</title>

</head>

<body class="col-sm-12">

<h1>section1</h1>

<p>paragraph1</p>

<p>paragraph2</p>

<div class="col-sm-2">

<h2>section2</h2>

<p>paragraph3</p>

<p>unclosed

</div>

</body>

</html>

"""

soup = BeautifulSoup(source, "html.parser")

# -

# Once the soup object has been created successfully, we can execute a number of queries on the DOM.

# First we request all data from the `head` tag.

# Note that while it looks like a list of strings was returned, actually, a `bs4.element.Tag` type is returned.

# These examples expore how to extract tags, the text from tags, how to filter queries based on

# attributes, how to retreive attributes from a returned query, and how the BeautifulSoup engine

# is tolerant of unclosed tags.

print(soup.prettify())

print('Head:')

print('', soup.find_all("head"))

# [<head>\n<title>Scraping</title>\n</head>]

print('\nType of head:')

print('', map(type, soup.find_all("head")))

# [<class 'bs4.element.Tag'>]

print('\nTitle tag:')

print('', soup.find("title"))

# <title>Scraping</title>

print('\nTitle text:')

print('', soup.find("title").text)

# Scraping

divs = soup.find_all("div", attrs={"class": "col-sm-2"})

print('\nDiv with class=col-sm-2:')

print('', divs)

# [<div class="col-sm-2">....</div>]

print('\nClass of first div:')

print('', divs[0].attrs['class'])

# [u'col-sm-2']

print('\nAll paragraphs:')

print('', soup.find_all("p"))

# [<p>paragraph1</p>,

# <p>paragraph2</p>,

# <p>paragraph3</p>,

# <p>unclosed\n </p>]

# # Beautilful soup on real data

#

# In this example I will show how you can use BeautifulSoup to retreive information from live web pages.

# We make use of The Guardian newspaper, and retreive the HTML from an arbitrary article.

# We then create the BeautifulSoup object, and query the links that were discovered in the DOM.

# Since a large number are returned, we then apply attribute filters that let us reduce significantly

# the number of returned links.

# I selected the filters selected for this example in order to focus on the names in the paper.

# The parameterisation of the attributes was discovered by using the `inspect` functionality of Google Chrome

url = 'https://www.theguardian.com/technology/2017/jan/31/amazon-expedia-microsoft-support-washington-action-against-donald-trump-travel-ban'

req = requests.get(url)

source = req.text

soup = BeautifulSoup(source, 'html.parser')

print(source)

links = soup.find_all('a')

links

# +

links = soup.find_all('a', attrs={

'data-component': 'auto-linked-tag'

})

for link in links:

print(link['href'], link.text)

# -

# # Chaining queries

#

# Now, let us conisder a more general query that might be done on a website such as this.

# We will query the base technology page, and attempt to list all articles that pertain to this main page

url = 'https://www.theguardian.com/uk/technology'

req = requests.get(url)

source = req.text

soup = BeautifulSoup(source, 'html.parser')

# After inspecting the DOM (via the `inspect` tool in my browser), I see that the attributes that define

# a `technology` article are:

#

# class = "js-headline-text"

# +

articles = soup.find_all('a', attrs={

'class': 'js-headline-text'

})

for article in articles:

print(article['href'][:], article.text[:20])

# -

# With this set of articles, it is now possible to chain further querying, for example with code

# similar to the following

#

# ```python

# for article in articles:

# req = requests.get(article['href'])

# source = req.text

# soup = BeautifulSoup(source, 'html.parser')

#

# ... and so on...

# ```

#

# However, I won't go into much detail about this now. For scraping like this tools, such as `scrapy` are more

# appropriate than `BeautifulSoup` since they are designed for multithreadded web crawling.

# Once again, however, I urge caution and hope that before any crawling is initiated you determine whether

# crawling is within the terms of use of the website.

# If in doubt contact the website administrators.

#

# https://scrapy.org/

| 01_data_ingress/05_web_scraping.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Py3 research env

# language: python

# name: py3_research

# ---

# + [markdown] colab_type="text" id="13pL--6rycN3"

# ## Homework01: Three headed network in PyTorch

#

# This notebook accompanies the [week02 seminar](https://github.com/girafe-ai/ml-mipt/blob/advanced/week02_CNN_n_Vanishing_gradient/week02_CNN_for_texts.ipynb). Refer to that notebook for more comments.

#

# All the preprocessing is the same as in the classwork. *Including the data leakage in the train test split (it's still for bonus points).*

# + colab={} colab_type="code" id="P8zS7m-gycN5"

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

# %matplotlib inline

import nltk

import tqdm

from collections import Counter

# -

# If you have already downloaded the data on the Seminar, simply run through the next cells. Otherwise uncomment the next cell (and comment the another one ;)

# +

# uncomment and run this cell, if you don't have data locally yet.

# # !curl -L "https://www.dropbox.com/s/5msc5ix7ndyba10/Train_rev1.csv.tar.gz?dl=1" -o Train_rev1.csv.tar.gz

# # !tar -xvzf ./Train_rev1.csv.tar.gz

# data = pd.read_csv("./Train_rev1.csv", index_col=None)

# wget https://raw.githubusercontent.com/girafe-ai/ml-mipt/advanced_f20/homeworks_advanced/assignment1_02_Three_headed_network/network.py

# + colab={"base_uri": "https://localhost:8080/", "height": 143} colab_type="code" id="vwN72gd4ycOA" outputId="7b9e8549-3128-4041-c4be-33fb6f326c78"

# run this cell if you have downloaded the dataset on the seminar

data = pd.read_csv("../../week02_CNN_n_Vanishing_gradient/Train_rev1.csv", index_col=None)

# + colab={"base_uri": "https://localhost:8080/", "height": 265} colab_type="code" id="UuuKIKfrycOH" outputId="e5de0f94-a4f6-4b51-db80-9d11ddc1db31"

data['Log1pSalary'] = np.log1p(data['SalaryNormalized']).astype('float32')

text_columns = ["Title", "FullDescription"]

categorical_columns = ["Category", "Company", "LocationNormalized", "ContractType", "ContractTime"]

target_column = "Log1pSalary"

data[categorical_columns] = data[categorical_columns].fillna('NaN') # cast missing values to string "NaN"

data.sample(3)

data_for_autotest = data[-5000:]

data = data[:-5000]

# + colab={} colab_type="code" id="RUWkpd7PycOQ"

tokenizer = nltk.tokenize.WordPunctTokenizer()

# see task above

def normalize(text):

text = str(text).lower()

return ' '.join(tokenizer.tokenize(text))

data[text_columns] = data[text_columns].applymap(normalize)

print("Tokenized:")

print(data["FullDescription"][2::100000])

assert data["FullDescription"][2][:50] == 'mathematical modeller / simulation analyst / opera'

assert data["Title"][54321] == 'international digital account manager ( german )'

# Count how many times does each token occur in both "Title" and "FullDescription" in total

# build a dictionary { token -> it's count }

from collections import Counter

from tqdm import tqdm as tqdm

token_counts = Counter()# <YOUR CODE HERE>

for _, row in tqdm(data[text_columns].iterrows()):

for string in row:

token_counts.update(string.split())

# hint: you may or may not want to use collections.Counter

# -

token_counts.most_common(1)[0][1]

# + colab={"base_uri": "https://localhost:8080/", "height": 215} colab_type="code" id="GiOWbc15ycOb" outputId="1e807140-5513-4af0-d9a9-9f029059a553"

print("Total unique tokens :", len(token_counts))

print('\n'.join(map(str, token_counts.most_common(n=5))))

print('...')

print('\n'.join(map(str, token_counts.most_common()[-3:])))

assert token_counts.most_common(1)[0][1] in range(2500000, 2700000)

assert len(token_counts) in range(200000, 210000)

print('Correct!')

min_count = 10

# tokens from token_counts keys that had at least min_count occurrences throughout the dataset

tokens = [token for token, count in token_counts.items() if count >= min_count]# <YOUR CODE HERE>

# Add a special tokens for unknown and empty words

UNK, PAD = "UNK", "PAD"

tokens = [UNK, PAD] + sorted(tokens)

print("Vocabulary size:", len(tokens))

assert type(tokens) == list

assert len(tokens) in range(32000, 35000)

assert 'me' in tokens

assert UNK in tokens

print("Correct!")

token_to_id = {token: idx for idx, token in enumerate(tokens)}

assert isinstance(token_to_id, dict)

assert len(token_to_id) == len(tokens)

for tok in tokens:

assert tokens[token_to_id[tok]] == tok

print("Correct!")

# + colab={} colab_type="code" id="JEsLeBjVycOw"

UNK_IX, PAD_IX = map(token_to_id.get, [UNK, PAD])

def as_matrix(sequences, max_len=None):

""" Convert a list of tokens into a matrix with padding """

if isinstance(sequences[0], str):

sequences = list(map(str.split, sequences))

max_len = min(max(map(len, sequences)), max_len or float('inf'))

matrix = np.full((len(sequences), max_len), np.int32(PAD_IX))

for i,seq in enumerate(sequences):

row_ix = [token_to_id.get(word, UNK_IX) for word in seq[:max_len]]

matrix[i, :len(row_ix)] = row_ix

return matrix

# + colab={"base_uri": "https://localhost:8080/", "height": 179} colab_type="code" id="JiBlPkdKycOy" outputId="3866b444-1e2d-4d79-d429-fecc6d8e02a8"

print("Lines:")

print('\n'.join(data["Title"][::100000].values), end='\n\n')

print("Matrix:")

print(as_matrix(data["Title"][::100000]))

# + colab={"base_uri": "https://localhost:8080/", "height": 53} colab_type="code" id="DpOlBp7ZycO6" outputId="30a911f2-7d35-4cb5-8991-60457b1e8bac"

from sklearn.feature_extraction import DictVectorizer

# we only consider top-1k most frequent companies to minimize memory usage

top_companies, top_counts = zip(*Counter(data['Company']).most_common(1000))

recognized_companies = set(top_companies)

data["Company"] = data["Company"].apply(lambda comp: comp if comp in recognized_companies else "Other")

categorical_vectorizer = DictVectorizer(dtype=np.float32, sparse=False)

categorical_vectorizer.fit(data[categorical_columns].apply(dict, axis=1))

# + [markdown] colab_type="text" id="yk4jmtAYycO8"

# ### The deep learning part

#

# Once we've learned to tokenize the data, let's design a machine learning experiment.

#

# As before, we won't focus too much on validation, opting for a simple train-test split.

#

# __To be completely rigorous,__ we've comitted a small crime here: we used the whole data for tokenization and vocabulary building. A more strict way would be to do that part on training set only. You may want to do that and measure the magnitude of changes.

#

#

# #### Here comes the simple one-headed network from the seminar.

# + colab={"base_uri": "https://localhost:8080/", "height": 53} colab_type="code" id="TngLcWA0ycO_" outputId="6731b28c-07b1-41dc-9574-f76b01785bba"

from sklearn.model_selection import train_test_split

data_train, data_val = train_test_split(data, test_size=0.2, random_state=42)

data_train.index = range(len(data_train))

data_val.index = range(len(data_val))

print("Train size = ", len(data_train))

print("Validation size = ", len(data_val))

# + colab={} colab_type="code" id="2PXuKgOSycPB"

def make_batch(data, max_len=None, word_dropout=0):

"""

Creates a keras-friendly dict from the batch data.

:param word_dropout: replaces token index with UNK_IX with this probability

:returns: a dict with {'title' : int64[batch, title_max_len]

"""

batch = {}

batch["Title"] = as_matrix(data["Title"].values, max_len)

batch["FullDescription"] = as_matrix(data["FullDescription"].values, max_len)

batch['Categorical'] = categorical_vectorizer.transform(data[categorical_columns].apply(dict, axis=1))

if word_dropout != 0:

batch["FullDescription"] = apply_word_dropout(batch["FullDescription"], 1. - word_dropout)

if target_column in data.columns:

batch[target_column] = data[target_column].values

return batch

def apply_word_dropout(matrix, keep_prop, replace_with=UNK_IX, pad_ix=PAD_IX,):

dropout_mask = np.random.choice(2, np.shape(matrix), p=[keep_prop, 1 - keep_prop])

dropout_mask &= matrix != pad_ix

return np.choose(dropout_mask, [matrix, np.full_like(matrix, replace_with)])

# + colab={"base_uri": "https://localhost:8080/", "height": 251} colab_type="code" id="I6LpEQf0ycPD" outputId="e3520cae-fba1-46cc-a216-56287b6e4929"

a = make_batch(data_train[:3], max_len=10)

# -

# But to start with let's build the simple model using only the part of the data. Let's create the baseline solution using only the description part (so it should definetely fit into the Sequential model).

import torch

from torch import nn

import torch.nn.functional as F

# +

# You will need these to make it simple

class Flatten(nn.Module):

def forward(self, input):

return input.view(input.size(0), -1)

class Reorder(nn.Module):

def forward(self, input):

return input.permute((0, 2, 1))

# -

# To generate minibatches we will use simple pyton generator.

def iterate_minibatches(data, batch_size=256, shuffle=True, cycle=False, **kwargs):

""" iterates minibatches of data in random order """

while True:

indices = np.arange(len(data))

if shuffle:

indices = np.random.permutation(indices)

for start in range(0, len(indices), batch_size):

batch = make_batch(data.iloc[indices[start : start + batch_size]], **kwargs)

target = batch.pop(target_column)

yield batch, target

if not cycle: break

iterator = iterate_minibatches(data_train, 3)

batch, target = next(iterator)

# +

# Here is some startup code:

n_tokens=len(tokens)

n_cat_features=len(categorical_vectorizer.vocabulary_)

hid_size=64

simple_model = nn.Sequential()

simple_model.add_module('emb', nn.Embedding(num_embeddings=n_tokens, embedding_dim=hid_size))

simple_model.add_module('reorder', Reorder())

simple_model.add_module('conv1', nn.Conv1d(

in_channels=hid_size,

out_channels=hid_size,

kernel_size=2)

)

simple_model.add_module('relu1', nn.ReLU())

simple_model.add_module('adapt_avg_pool', nn.AdaptiveAvgPool1d(output_size=1))

simple_model.add_module('flatten1', Flatten())

simple_model.add_module('linear1', nn.Linear(in_features=hid_size, out_features=1))

# <YOUR CODE HERE>

# -

batch

# __Remember!__ We are working with regression problem and predicting only one number.

# Try this to check your model. `torch.long` tensors are required for nn.Embedding layers.

simple_model(torch.tensor(batch['FullDescription'], dtype=torch.long))

batch['FullDescription'].shape

# And now simple training pipeline (it's commented because we've already done that in class. No need to do it again).

# +

# from IPython.display import clear_output

# from random import sample

# epochs = 1

# model = simple_model

# opt = torch.optim.Adam(model.parameters())

# loss_func = nn.MSELoss()

# history = []

# for epoch_num in range(epochs):

# for idx, (batch, target) in enumerate(iterate_minibatches(data_train)):

# # Preprocessing the batch data and target

# batch = torch.tensor(batch['FullDescription'], dtype=torch.long)

# target = torch.tensor(target)

# predictions = model(batch)

# predictions = predictions.view(predictions.size(0))

# loss = loss_func(predictions, target)# <YOUR CODE HERE>

# # train with backprop

# loss.backward()

# opt.step()

# opt.zero_grad()

# # <YOUR CODE HERE>

# history.append(loss.data.numpy())

# if (idx+1)%10==0:

# clear_output(True)

# plt.plot(history,label='loss')

# plt.legend()

# plt.show()

# -

# ### Actual homework starts here

# __Your ultimate task is to code the three headed network described on the picture below.__

# To make it closer to the real world, please store the network code in file `network.py` in this directory.

# + [markdown] colab_type="text" id="0eI5h9UMycPF"

# #### Architecture

#

# Our main model consists of three branches:

# * Title encoder

# * Description encoder

# * Categorical features encoder

#

# We will then feed all 3 branches into one common network that predicts salary.

#

# <img src="https://github.com/yandexdataschool/nlp_course/raw/master/resources/w2_conv_arch.png" width=600px>

#

# This clearly doesn't fit into PyTorch __Sequential__ interface. To build such a network, one will have to use [__PyTorch nn.Module API__](https://pytorch.org/docs/stable/nn.html#torch.nn.Module).

# -

import network

# Re-run this cell if you updated the file with network source code

import imp

imp.reload(network)

model = network.ThreeInputsNet(

n_tokens=len(tokens),

n_cat_features=len(categorical_vectorizer.vocabulary_),

# this parameter defines the number of the inputs in the layer,

# which stands after the concatenation. In should be found out by you.

concat_number_of_features=<YOUR CODE HERE>

)

testing_batch, _ = next(iterate_minibatches(data_train, 3))

testing_batch = [

torch.tensor(testing_batch['Title'], dtype=torch.long),

torch.tensor(testing_batch['FullDescription'], dtype=torch.long),

torch.tensor(testing_batch['Categorical'])

]

assert model(testing_batch).shape == torch.Size([3, 1])

assert model(testing_batch).dtype == torch.float32

print('Seems fine!')

# Now train the network for a while (100 batches would be fine).

# +

# Training pipeline comes here (almost the same as for the simple_model)

# -

# Now, to evaluate the model it can be switched to `eval` state.

model.eval()

def generate_submission(model, data, batch_size=256, name="", three_inputs_mode=True, **kw):

squared_error = abs_error = num_samples = 0.0

output_list = []

for batch_x, batch_y in tqdm(iterate_minibatches(data, batch_size=batch_size, shuffle=False, **kw)):

if three_inputs_mode:

batch = [

torch.tensor(batch_x['Title'], dtype=torch.long),

torch.tensor(batch_x['FullDescription'], dtype=torch.long),

torch.tensor(batch_x['Categorical'])

]

else:

batch = torch.tensor(batch_x['FullDescription'], dtype=torch.long)

batch_pred = model(batch)[:, 0].detach().numpy()

output_list.append((list(batch_pred), list(batch_y)))

squared_error += np.sum(np.square(batch_pred - batch_y))

abs_error += np.sum(np.abs(batch_pred - batch_y))

num_samples += len(batch_y)

print("%s results:" % (name or ""))

print("Mean square error: %.5f" % (squared_error / num_samples))

print("Mean absolute error: %.5f" % (abs_error / num_samples))

batch_pred = [c for x in output_list for c in x[0]]

batch_y = [c for x in output_list for c in x[1]]

output_df = pd.DataFrame(list(zip(batch_pred, batch_y)), columns=['batch_pred', 'batch_y'])

output_df.to_csv('submission.csv', index=False)

generate_submission(model, data_for_autotest, name='Submission')

print('Submission file generated')

# __To hand in this homework, please upload `network.py` file with code and `submission.csv` to the google form.__

| ML-MIPT-advanced/homeworks_advanced/assignment1_02_Three_headed_network/assignment1_02_three_headed_network.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python [conda env:dev3.7]

# language: python

# name: conda-env-dev3.7-py

# ---

# # Counter

# In this activity, you will create a function that preprocesses and outputs a list of the most common words in a corpus.

from nltk.corpus import reuters, stopwords

from nltk.util import ngrams

from nltk.tokenize import word_tokenize

from nltk.stem import WordNetLemmatizer

import re

import pandas as pd

from collections import Counter

lemmatizer = WordNetLemmatizer()

# Corpus - list of articles about grains

ids = reuters.fileids(categories='grain')

corpus = [reuters.raw(i) for i in ids]

# Define preprocess function

def process_text(doc):

sw = set(stopwords.words('english'))

regex = re.compile("[^a-zA-Z ]")

re_clean = regex.sub('', doc)

words = word_tokenize(re_clean)

lem = [lemmatizer.lemmatize(word) for word in words]

output = [word.lower() for word in lem if word.lower() not in sw]

return output

# Define the counter function

def word_counter(corpus):

# Combine all articles in corpus into one large string

big_string = ' '.join(corpus)

processed = process_text(big_string)

top_10 = dict(Counter(processed).most_common(10))

return pd.DataFrame(list(top_10.items()), columns=['word', 'count'])

# Run the word_counter function

word_counter(corpus)

def bigram_counter(corpus):

# Combine all articles in corpus into one large string

big_string = ' '.join(corpus)

processed = process_text(big_string)

bigrams = ngrams(processed, n=2)

top_10 = dict(Counter(bigrams).most_common(10))

return pd.DataFrame(list(top_10.items()), columns=['bigram', 'count'])

# Run the bigram_counter function

bigram_counter(corpus)

def trigram_counter(corpus):

# Combine all articles in corpus into one large string

big_string = ' '.join(corpus)

processed = process_text(big_string)

bigrams = ngrams(processed, n=3)

top_10 = dict(Counter(bigrams).most_common(10))

return pd.DataFrame(list(top_10.items()), columns=['trigram', 'count'])

trigram_counter(corpus)

# +

def quadgram_counter(corpus):

# Combine all articles in corpus into one large string

big_string = ' '.join(corpus)

processed = process_text(big_string)

bigrams = ngrams(processed, n=4)

top_10 = dict(Counter(bigrams).most_common(10))

return pd.DataFrame(list(top_10.items()), columns=['quadgram', 'count'])

quadgram_counter(corpus)

# -

| PythonJupyterNotebooks/Week12-Day1-Activity8-counter.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import pandas as pd

pm_10 = pd.read_csv('yurukov/sofia_airpollution.csv')

# # Seasons

weather_raw = pd.read_csv("./data/sofia-weather-data.csv")

weather = weather_raw.dropna(axis=1, how='all')

weather.drop(axis=1,labels=['weather_icon'], inplace=True)

# +

weather["temp_cent"] = weather["temp"] - 272.15

weather["temp_max_cent"] = weather["temp_max"] - 272.15

weather["temp_min_cent"] = weather["temp_min"] - 272.15

weather['date'] = weather['dt_iso'].apply(lambda x : str.split(x, ' +')[0])

# f = {'temp_cent':['min','mean', 'max'], 'pressure':['mean'], 'humidity': ['mean'], 'wind_speed': ['min', 'mean', 'max']}

processed_weather = weather.groupby('date', as_index=False).mean()

# -

dates = pd.DataFrame(weather['date'].unique(), columns=["date"])

# weather = weather[weather['aaa'] != 1]

pm_10 = pm_10[pm_10['inv_PM10'] != 1]

pm_10 = pm_10[pm_10['inv_NO2'] != 1]

pm_10['timestamp'] = pm_10['timestamp'].astype("str")

processed_weather['date'] = processed_weather['date'].astype("str")

merged_with_date = pd.merge(processed_weather, pm_10, left_on='date', right_on="timestamp")

merged_with_date = merged_with_date.drop(axis=1, labels=['temp', 'dt', 'city_id', 'wind_deg', 'rain_1h', 'rain_3h',

'rain_today', 'weather_id', 'inv_PM10', 'inv_NO2', 'NO', 'inv_NO', 'C6H6', 'inv_C6H6', 'CO',

'inv_CO', 'O3', 'inv_O3', 'SO2', 'inv_SO2', 'HU', 'inv_HU', 'AP',

'inv_AP', 'WND', 'inv_WND', 'SUN', 'inv_SUN', 'TMP', 'inv_TMP', 'timestamp'])

#print(merged_with_date.columns)

#print(merged_with_date.head())

#print(merged_with_date.describe())

#print(len(merged_with_date))

merged_with_date

# # Shift weather one day forward - for predictions

# +

merged_with_date["temp_shifted"] = merged_with_date.temp_cent.shift(1)

merged_with_date["temp_min_shifted"] = merged_with_date.temp_min.shift(1)

merged_with_date["temp_max_shifted"] = merged_with_date.temp_max.shift(1)

#TODO

| yurukov_weather_merging.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python (QISKitenv)

# language: python

# name: qiskitenv

# ---

# <img src="../../images/qiskit-heading.gif" alt="Note: In order for images to show up in this jupyter notebook you need to select File => Trusted Notebook" width="500 px" align="left">

# ## _*Shor's Algorithm for Integer Factorization*_

#

# The latest version of this tutorial notebook is available on https://github.com/qiskit/qiskit-tutorial.

#

# In this tutorial, we first introduce the problem of [integer factorization](#factorization) and describe how [Shor's algorithm](#shorsalgorithm) solves it in detail. We then [implement](#implementation) a version of it in Qiskit.

#

# ### Contributors

# <NAME>

# ***

# ## Integer Factorization <a id='factorization'></a>

#

# Integer factorization is the decomposition of an composite integer into a product of smaller integers, for example, the integer $100$ can be factored into $10 \times 10$. If these factors are restricted to prime numbers, the process is called prime factorization, for example, the prime factorization of $100$ is $2 \times 2 \times 5 \times 5$.

#

# When the integers are very large, no efficient classical integer factorization algorithm is known. The hardest factorization problems are semiprime numbers, the product of two prime numbers. In [2009](https://link.springer.com/chapter/10.1007/978-3-642-14623-7_18), a team of researchers factored a 232 decimal digit semiprime number (768 bits), spending the computational equivalent of more than two thousand years on a single core 2.2 GHz AMD Opteron processor with 2 GB RAM:

# ```

# RSA-768 = 12301866845301177551304949583849627207728535695953347921973224521517264005

# 07263657518745202199786469389956474942774063845925192557326303453731548268

# 50791702612214291346167042921431160222124047927473779408066535141959745985

# 6902143413

#

# = 33478071698956898786044169848212690817704794983713768568912431388982883793

# 878002287614711652531743087737814467999489

# × 36746043666799590428244633799627952632279158164343087642676032283815739666

# 511279233373417143396810270092798736308917

# ```

# The presumed difficulty of this semiprime factorization problem underlines many encryption algorithms, such as [RSA](https://www.google.com/patents/US4405829), which is used in online credit card transactions, amongst other applications.

# ***

# ## Shor's Algorithm <a id='shorsalgorithm'></a>

#

# Shor's algorithm, named after mathematician <NAME>, is a polynomial time quantum algorithm for integer factorization formulated in [1994](http://epubs.siam.org/doi/10.1137/S0097539795293172). It is arguably the most dramatic example of how the paradigm of quantum computing changed our perception of which computational problems should be considered tractable, motivating the study of new quantum algorithms and efforts to design and construct quantum computers. It also has expedited research into new cryptosystems not based on integer factorization.

#

# Shor's algorithm has been experimentally realised by multiple teams for specific composite integers. The composite $15$ was first factored into $3 \times 5$ in [2001](https://www.nature.com/nature/journal/v414/n6866/full/414883a.html) using seven NMR qubits, and has since been implemented using four photon qubits in 2007 by [two](https://journals.aps.org/prl/abstract/10.1103/PhysRevLett.99.250504) [teams](https://journals.aps.org/prl/abstract/10.1103/PhysRevLett.99.250505), three solid state qubits in [2012](https://www.nature.com/nphys/journal/v8/n10/full/nphys2385.html) and five trapped ion qubits in [2016](http://science.sciencemag.org/content/351/6277/1068). The composite $21$ has also been factored into $3 \times 7$ in [2012](http://www.nature.com/nphoton/journal/v6/n11/full/nphoton.2012.259.html) using a photon qubit and qutrit (a three level system). Note that these experimental demonstrations rely on significant optimisations of Shor's algorithm based on apriori knowledge of the expected results. In general, [$2 + \frac{3}{2}\log_2N$](https://link-springer-com.virtual.anu.edu.au/chapter/10.1007/3-540-49208-9_15) qubits are needed to factor the composite integer $N$, meaning at least $1,154$ qubits would be needed to factor $RSA-768$ above.

#

from IPython.display import HTML

HTML('<iframe width="560" height="315" src="https://www.youtube.com/embed/hOlOY7NyMfs?start=75&end=126" frameborder="0" allowfullscreen></iframe>')

# As <NAME> describes in the video above from [PhysicsWorld](http://physicsworld.com/cws/article/multimedia/2015/sep/30/what-is-shors-factoring-algorithm), Shor’s algorithm is composed of three parts. The first part turns the factoring problem into a period finding problem using number theory, which can be computed on a classical computer. The second part finds the period using the quantum Fourier transform and is responsible for the quantum speedup of the algorithm. The third part uses the period found to calculate the factors.

#

# The following sections go through the algorithm in detail, for those who just want the steps, without the lengthy explanation, refer to the [blue](#stepsone) [boxes](#stepstwo) before jumping down to the [implemention](#implemention).

# ### From Factorization to Period Finding

#

# The number theory that underlines Shor's algorithm relates to periodic modulo sequences. Let's have a look at an example of such a sequence. Consider the sequence of the powers of two:

# $$1, 2, 4, 8, 16, 32, 64, 128, 256, 512, 1024, ...$$

# Now let's look at the same sequence 'modulo 15', that is, the remainder after fifteen divides each of these powers of two:

# $$1, 2, 4, 8, 1, 2, 4, 8, 1, 2, 4, ...$$

# This is a modulo sequence that repeats every four numbers, that is, a periodic modulo sequence with a period of four.

#

# Reduction of factorization of $N$ to the problem of finding the period of an integer $x$ less than $N$ and greater than $1$ depends on the following result from number theory:

#

# > The function $\mathcal{F}(a) = x^a \bmod N$ is a periodic function, where $x$ is an integer coprime to $N$ and $a \ge 0$.

#

# Note that two numbers are coprime, if the only positive integer that divides both of them is 1. This is equivalent to their greatest common divisor being 1. For example, 8 and 15 are coprime, as they don't share any common factors (other than 1). However, 9 and 15 are not coprime, since they are both divisible by 3 (and 1).

#

# > Since $\mathcal{F}(a)$ is a periodic function, it has some period $r$. Knowing that $x^0 \bmod N = 1$, this means that $x^r \bmod N = 1$ since the function is periodic, and thus $r$ is just the first nonzero power where $x^r = 1 (\bmod N)$.

#

# Given this information and through the following algebraic manipulation:

# $$ x^r \equiv 1 \bmod N $$

# $$ x^r = (x^{r/2})^2 \equiv 1 \bmod N $$

# $$ (x^{r/2})^2 - 1 \equiv 0 \bmod N $$

# and if $r$ is an even number:

# $$ (x^{r/2} + 1)(x^{r/2} - 1) \equiv 0 \bmod N $$

#

# From this, the product $(x^{r/2} + 1)(x^{r/2} - 1)$ is an integer multiple of $N$, the number to be factored. Thus, so long as $(x^{r/2} + 1)$ or $(x^{r/2} - 1)$ is not a multiple of $N$, then at least one of $(x^{r/2} + 1)$ or $(x^{r/2} - 1)$ must have a nontrivial factor in common with $N$.

#

# So computing $\text{gcd}(x^{r/2} - 1, N)$ and $\text{gcd}(x^{r/2} + 1, N)$ will obtain a factor of $N$, where $\text{gcd}$ is the greatest common denominator function, which can be calculated by the polynomial time [Euclidean algorithm](https://en.wikipedia.org/wiki/Euclidean_algorithm).

# #### Classical Steps to Shor's Algorithm

#

# Let's assume for a moment that a period finding machine exists that takes as input coprime integers $x, N$ and outputs the period of $x \bmod N$, implemented by as a brute force search below. Let's show how to use the machine to find all prime factors of $N$ using the number theory described above.

# Brute force period finding algorithm

def find_period_classical(x, N):

n = 1

t = x

while t != 1:

t *= x

t %= N

n += 1

return n

# For simplicity, assume that $N$ has only two distinct prime factors: $N = pq$.

#

# <div class="alert alert-block alert-info"> <a id='stepsone'></a>

# <ol>

# <li>Pick a random integer $x$ between $1$ and $N$ and compute the greatest common divisor $\text{gcd}(x,N)$ using Euclid's algorithm.</li>

# <li>If $x$ and $N$ have some common prime factors, $\text{gcd}(x,N)$ will equal $p$ or $q$. Otherwise $\text{gcd}(x,N) = 1$, meaning $x$ and $N$ are coprime. </li>

# <li>Let $r$ be the period of $x \bmod N$ computed by the period finding machine. Repeat the above steps with different random choices of $x$ until $r$ is even.</li>

# <li>Now $p$ and $q$ can be found by computing $\text{gcd}(x^{r/2} \pm 1, N)$ as long as $x^{r/2} \neq \pm 1$.</li>

# </ol>

# </div>

#

# As an example, consider $N = 15$. Let's look at all values of $1 < x < 15$ where $x$ is coprime with $15$:

#

# | $x$ | $x^a \bmod 15$ | Period $r$ |$\text{gcd}(x^{r/2}-1,15)$|$\text{gcd}(x^{r/2}+1,15)$ |

# |:-----:|:----------------------------:|:----------:|:------------------------:|:-------------------------:|

# | 2 | 1,2,4,8,1,2,4,8,1,2,4... | 4 | 3 | 5 |

# | 4 | 1,4,1,4,1,4,1,4,1,4,1... | 2 | 3 | 5 |

# | 7 | 1,7,4,13,1,7,4,13,1,7,4... | 4 | 3 | 5 |

# | 8 | 1,8,4,2,1,8,4,2,1,8,4... | 4 | 3 | 5 |

# | 11 | 1,11,1,11,1,11,1,11,1,11,1...| 2 | 5 | 3 |

# | 13 | 1,13,4,7,1,13,4,7,1,13,4,... | 4 | 3 | 5 |

# | 14 | 1,14,1,14,1,14,1,14,1,14,1,,,| 2 | 1 | 15 |

#

# As can be seen, any value of $x$ except $14$ will return the factors of $15$, that is, $3$ and $5$. $14$ is an example of the special case where $(x^{r/2} + 1)$ or $(x^{r/2} - 1)$ is a multiple of $N$ and thus another $x$ needs to be tried.

#

# In general, it can be shown that this special case occurs infrequently, so on average only two calls to the period finding machine are sufficient to factor $N$.

# For a more interesting example, first let's find larger number N, that is semiprime that is relatively small. Using the [Sieve of Eratosthenes](https://en.wikipedia.org/wiki/Sieve_of_Eratosthenes) [Python implementation](http://archive.oreilly.com/pub/a/python/excerpt/pythonckbk_chap1/index1.html?page=last), let's generate a list of all the prime numbers less than a thousand, randomly select two, and muliply them.

# +

import random, itertools

# Sieve of Eratosthenes algorithm

def sieve( ):

D = { }

yield 2

for q in itertools.islice(itertools.count(3), 0, None, 2):

p = D.pop(q, None)

if p is None:

D[q*q] = q

yield q

else:

x = p + q

while x in D or not (x&1):

x += p

D[x] = p

# Creates a list of prime numbers up to the given argument

def get_primes_sieve(n):

return list(itertools.takewhile(lambda p: p<n, sieve()))

def get_semiprime(n):

primes = get_primes_sieve(n)

l = len(primes)

p = primes[random.randrange(l)]

q = primes[random.randrange(l)]

return p*q

N = get_semiprime(1000)

print("semiprime N =",N)

# -

# Now implement the [above steps](#stepsone) of Shor's Algorithm:

# +

import math

def shors_algorithm_classical(N):

x = random.randint(0,N) # step one

if(math.gcd(x,N) != 1): # step two

return x,0,math.gcd(x,N),N/math.gcd(x,N)

r = find_period_classical(x,N) # step three

while(r % 2 != 0):

r = find_period_classical(x,N)

p = math.gcd(x**int(r/2)+1,N) # step four, ignoring the case where (x^(r/2) +/- 1) is a multiple of N

q = math.gcd(x**int(r/2)-1,N)

return x,r,p,q

x,r,p,q = shors_algorithm_classical(N)

print("semiprime N = ",N,", coprime x = ",x,", period r = ",r,", prime factors = ",p," and ",q,sep="")

# -

# ### Quantum Period Finding <a id='quantumperiodfinding'></a>

#

# Let's first describe the quantum period finding algorithm, and then go through a few of the steps in detail, before going through an example. This algorithm takes two coprime integers, $x$ and $N$, and outputs $r$, the period of $\mathcal{F}(a) = x^a\bmod N$.

#

# <div class="alert alert-block alert-info"><a id='stepstwo'></a>

# <ol>

# <li> Choose $T = 2^t$ such that $N^2 \leq T \le 2N^2$. Initialise two registers of qubits, first an argument register with $t$ qubits and second a function register with $n = log_2 N$ qubits. These registers start in the initial state:

# $$\vert\psi_0\rangle = \vert 0 \rangle \vert 0 \rangle$$ </li>

# <li> Apply a Hadamard gate on each of the qubits in the argument register to yield an equally weighted superposition of all integers from $0$ to $T$:

# $$\vert\psi_1\rangle = \frac{1}{\sqrt{T}}\sum_{a=0}^{T-1}\vert a \rangle \vert 0 \rangle$$ </li>

# <li> Implement the modular exponentiation function $x^a \bmod N$ on the function register, giving the state:

# $$\vert\psi_2\rangle = \frac{1}{\sqrt{T}}\sum_{a=0}^{T-1}\vert a \rangle \vert x^a \bmod N \rangle$$

# This $\vert\psi_2\rangle$ is highly entangled and exhibits quantum parallism, i.e. the function entangled in parallel all the 0 to $T$ input values with the corresponding values of $x^a \bmod N$, even though the function was only executed once. </li>

# <li> Perform a quantum Fourier transform on the argument register, resulting in the state:

# $$\vert\psi_3\rangle = \frac{1}{T}\sum_{a=0}^{T-1}\sum_{z=0}^{T-1}e^{(2\pi i)(az/T)}\vert z \rangle \vert x^a \bmod N \rangle$$

# where due to the interference, only the terms $\vert z \rangle$ with

# $$z = qT/r $$

# have significant amplitude where $q$ is a random integer ranging from $0$ to $r-1$ and $r$ is the period of $\mathcal{F}(a) = x^a\bmod N$. </li>

# <li> Measure the argument register to obtain classical result $z$. With reasonable probability, the continued fraction approximation of $T / z$ will be an integer multiple of the period $r$. Euclid's algorithm can then be used to find $r$.</li>

# </ol>

# </div>

#

# Note how quantum parallelism and constructive interference have been used to detect and measure periodicity of the modular exponentiation function. The fact that interference makes it easier to measure periodicity should not come as a big surprise. After all, physicists routinely use scattering of electromagnetic waves and interference measurements to determine periodicity of physical objects such as crystal lattices. Likewise, Shor's algorithm exploits interference to measure periodicity of arithmetic objects, a computational interferometer of sorts.

# #### Modular Exponentiation

#

# The modular exponentiation, step 3 above, that is the evaluation of $x^a \bmod N$ for $2^t$ values of $a$ in parallel, is the most demanding part of the algorithm. This can be performed using the following identity for the binary representation of any integer: $x = x_{t-1}2^{t-1} + ... x_12^1+x_02^0$, where $x_t$ are the binary digits of $x$. From this, it follows that:

#

# \begin{aligned}

# x^a \bmod N & = x^{2^{(t-1)}a_{t-1}} ... x^{2a_1}x^{a_0} \bmod N \\

# & = x^{2^{(t-1)}a_{t-1}} ... [x^{2a_1}[x^{2a_0} \bmod N] \bmod N] ... \bmod N \\

# \end{aligned}

#

# This means that 1 is first multiplied by $x^1 \bmod N$ if and only if $a_0 = 1$, then the result is multiplied by $x^2 \bmod N$ if and only if $a_1 = 1$ and so forth, until finally the result is multiplied by $x^{2^{(s-1)}}\bmod N$ if and only if $a_{t-1} = 1$.

#

# Therefore, the modular exponentiation consists of $t$ serial multiplications modulo $N$, each of them controlled by the qubit $a_t$. The values $x,x^2,...,x^{2^{(t-1)}} \bmod N$ can be found efficiently on a classical computer by repeated squaring.

# #### Quantum Fourier Transform

#

# The Fourier transform occurs in many different versions throughout classical computing, in areas ranging from signal processing to data compression to complexity theory. The quantum Fourier transform (QFT), step 4 above, is the quantum implementation of the discrete Fourier transform over the amplitudes of a wavefunction.

#

# The classical discrete Fourier transform acts on a vector $(x_0, ..., x_{N-1})$ and maps it to the vector $(y_0, ..., y_{N-1})$ according to the formula

# $$y_k = \frac{1}{\sqrt{N}}\sum_{j=0}^{N-1}x_j\omega_N^{jk}$$

# where $\omega_N^{jk} = e^{2\pi i \frac{jk}{N}}$.

#

# Similarly, the quantum Fourier transform acts on a quantum state $\sum_{i=0}^{N-1} x_i \vert i \rangle$ and maps it to the quantum state $\sum_{i=0}^{N-1} y_i \vert i \rangle$ according to the formula

# $$y_k = \frac{1}{\sqrt{N}}\sum_{j=0}^{N-1}x_j\omega_N^{jk}$$

# with $\omega_N^{jk}$ defined as above. Note that only the amplitudes of the state were affected by this transformation.

#

# This can also be expressed as the map:

# $$\vert x \rangle \mapsto \frac{1}{\sqrt{N}}\sum_{y=0}^{N-1}\omega_N^{xy} \vert y \rangle$$

#

# Or the unitary matrix:

# $$ U_{QFT} = \frac{1}{\sqrt{N}} \sum_{x=0}^{N-1} \sum_{y=0}^{N-1} \omega_N^{xy} \vert y \rangle \langle x \vert$$

# As an example, we've actually already seen the quantum Fourier transform for when $N = 2$, it is the Hadamard operator ($H$):

# $$H = \frac{1}{\sqrt{2}}\begin{bmatrix} 1 & 1 \\ 1 & -1 \end{bmatrix}$$

# Suppose we have the single qubit state $\alpha \vert 0 \rangle + \beta \vert 1 \rangle$, if we apply the $H$ operator to this state, we obtain the new state:

# $$\frac{1}{\sqrt{2}}(\alpha + \beta) \vert 0 \rangle + \frac{1}{\sqrt{2}}(\alpha - \beta) \vert 1 \rangle

# \equiv \tilde{\alpha}\vert 0 \rangle + \tilde{\beta}\vert 1 \rangle$$

# Notice how the Hadamard gate performs the discrete Fourier transform for $N = 2$ on the amplitudes of the state.

#

# So what does the quantum Fourier transform look like for larger N? Let's derive a circuit for $N=2^n$, $QFT_N$ acting on the state $\vert x \rangle = \vert x_1...x_n \rangle$ where $x_1$ is the most significant bit.

# \begin{aligned}

# QFT_N\vert x \rangle & = \frac{1}{\sqrt{N}} \sum_{y=0}^{N-1}\omega_N^{xy} \vert y \rangle \\

# & = \frac{1}{\sqrt{N}} \sum_{y=0}^{N-1} e^{2 \pi i xy / 2^n} \vert y \rangle \:\text{since}\: \omega_N^{xy} = e^{2\pi i \frac{xy}{N}} \:\text{and}\: N = 2^n\\

# & = \frac{1}{\sqrt{N}} \sum_{y=0}^{N-1} e^{2 \pi i \left(\sum_{k=1}^n y_k/2^k\right) x} \vert y_1 ... y_n \rangle \:\text{rewriting in fractional binary notation}\: y = y_1...y_k, y/2^n = \sum_{k=1}^n y_k/2^k \\

# & = \frac{1}{\sqrt{N}} \sum_{y=0}^{N-1} \prod_{k=0}^n e^{2 \pi i x y_k/2^k } \vert y_1 ... y_n \rangle \:\text{after expanding the exponential of a sum to a product of exponentials} \\

# & = \frac{1}{\sqrt{N}} \bigotimes_{k=1}^n \left(\vert0\rangle + e^{2 \pi i x /2^k } \vert1\rangle \right) \:\text{after rearranging the sum and products, and expanding} \\

# & = \frac{1}{\sqrt{N}} \left(\vert0\rangle + e^{2 \pi i[0.x_n]} \vert1\rangle\right) \otimes...\otimes \left(\vert0\rangle + e^{2 \pi i[0.x_1.x_2...x_{n-1}.x_n]} \vert1\rangle\right) \:\text{as}\: e^{2 \pi i x/2^k} = e^{2 \pi i[0.x_k...x_n]}

# \end{aligned}

#

# This is a very useful form of the QFT for $N=2^n$ as only the last qubit depends on the the

# values of all the other input qubits and each further bit depends less and less on the input qubits. Furthermore, note that $e^{2 \pi i.0.x_n}$ is either $+1$ or $-1$, which resembles the Hadamard transform.

#

# Before we create the circuit code for general $N=2^n$, let's look at $N=8,n=3$:

# $$QFT_8\vert x_1x_2x_3\rangle = \frac{1}{\sqrt{8}} \left(\vert0\rangle + e^{2 \pi i[0.x_3]} \vert1\rangle\right) \otimes \left(\vert0\rangle + e^{2 \pi i[0.x_2.x_3]} \vert1\rangle\right) \otimes \left(\vert0\rangle + e^{2 \pi i[0.x_1.x_2.x_3]} \vert1\rangle\right) $$

#

# The steps to creating the circuit for $\vert y_1y_2x_3\rangle = QFT_8\vert x_1x_2x_3\rangle$, remembering the [controlled phase rotation gate](../tools/quantum_gates_and_linear_algebra.ipynb

# ) $CU_1$, would be:

# 1. Apply a Hadamard to $\vert x_3 \rangle$, giving the state $\frac{1}{\sqrt{2}}\left(\vert0\rangle + e^{2 \pi i.0.x_3} \vert1\rangle\right) = \frac{1}{\sqrt{2}}\left(\vert0\rangle + (-1)^{x_3} \vert1\rangle\right)$

# 2. Apply a Hadamard to $\vert x_2 \rangle$, then depending on $k_3$ (before the Hadamard gate) a $CU_1(\frac{\pi}{2})$, giving the state $\frac{1}{\sqrt{2}}\left(\vert0\rangle + e^{2 \pi i[0.x_2.x_3]} \vert1\rangle\right)$.

# 3. Apply a Hadamard to $\vert x_1 \rangle$, then $CU_1(\frac{\pi}{2})$ depending on $k_2$, and $CU_1(\frac{\pi}{4})$ depending on $k_3$.

# 4. Measure the bits in reverse order, that is $y_3 = x_1, y_2 = x_2, y_1 = y_3$.

#

# In Qiskit, this is:

# ```

# q3 = QuantumRegister(3, 'q3')

# c3 = ClassicalRegister(3, 'c3')

#

# qft3 = QuantumCircuit(q3, c3)

# qft3.h(q[0])

# qft3.cu1(math.pi/2.0, q3[1], q3[0])

# qft3.h(q[1])

# qft3.cu1(math.pi/4.0, q3[2], q3[0])

# qft3.cu1(math.pi/2.0, q3[2], q3[1])

# qft3.h(q[2])

# ```

#

# For $N=2^n$, this can be generalised, as in the `qft` function in [tools.qi](https://github.com/Q/qiskit-terra/blob/master/qiskit/tools/qi/qi.py):

# ```

# def qft(circ, q, n):

# """n-qubit QFT on q in circ."""

# for j in range(n):

# for k in range(j):

# circ.cu1(math.pi/float(2**(j-k)), q[j], q[k])

# circ.h(q[j])

# ```

# #### Example

#

# Let's factorize $N = 21$ with coprime $x=2$, following the [above steps](#stepstwo) of the quantum period finding algorithm, which should return $r = 6$. This example follows one from [this](https://arxiv.org/abs/quant-ph/0303175) tutorial.

#

# 1. Choose $T = 2^t$ such that $N^2 \leq T \le 2N^2$. For $N = 21$, the smallest value of $t$ is 9, meaning $T = 2^t = 512$. Initialise two registers of qubits, first an argument register with $t = 9$ qubits, and second a function register with $n = log_2 N = 5$ qubits:

# $$\vert\psi_0\rangle = \vert 0 \rangle \vert 0 \rangle$$

#

# 2. Apply a Hadamard gate on each of the qubits in the argument register: