code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # mRNA Expression Analysis using Hybridization Chain Reaction

# This code was used to analyze HCR results for putative BMPR1A targets identified by RNA-Seq.

#

# Required inputs for this script:

#

# 1. .csv file containing source data for each image (embryo) documenting the area, mean, intden, and raw intden for background, control, and experimental regions of interest (ROI)

#

# Script prepared by <NAME>, March 2021

# +

# Import data handling and analysis packages

import os

import glob

import pandas as pd

from scipy import stats

# Import plotting packages

import iqplot

import bokeh.io

from bokeh.io import output_file, show

from bokeh.layouts import column, row

bokeh.io.output_notebook()

# -

# ## Import source data

# +

source_data = pd.read_csv('Fig4_source_data.csv')

source_data.replace(to_replace=['tfap2b', 'id2', 'hes6', 'apod'],value=['TFAP2B', 'ID2', 'HES6-2', 'APOD'], inplace=True)

source_data.head()

# +

# Define control and experimental constructs

cntl_construct = 'RFP'

expt_construct = 'dnBMPR1A-FLAG'

# Get a list of experimental treatments in this dataframe

treatment_list = source_data.Treatment.unique()

treatment_list = treatment_list.tolist()

treatment_list

# -

# ## Isolate and analyze mean fluorescence intensity for each image

#

# This will determine the ratio of fluorescence intensities between control and experimental sides (Experimental/Control)

# +

# Get a list of target genes measured

target_list = source_data.Target.unique().tolist()

# Initialize for final dataframe collection

full_results = pd.DataFrame()

full_results_list = []

# Loop through target genes:

for target in target_list:

df_target = source_data.loc[source_data['Target'] == target][['Target','EmbID','Treatment',

'Somites','ROI','Area','Mean','IntDen']]

# Initialize for temporary dataframe collection

target_results = pd.DataFrame()

target_results_list = []

# Loop through embryos:

embryo_list = df_target.EmbID.unique().tolist()

for embryo in embryo_list:

df_embryo = df_target.loc[df_target['EmbID'] == embryo]

# Assemble output df from specific values in each embryo dataset

data = {'Target': [target, target], 'EmbID': [embryo, embryo]

,'Treatment': [df_embryo.tail(1)['Treatment'].values[0], df_embryo.tail(1)['Treatment'].values[0]]

,'Somites': [df_embryo.tail(1)['Somites'].values[0], df_embryo.tail(1)['Somites'].values[0]]

,'ROI': ['Cntl', 'Expt']

,'Mean': [float(df_embryo.loc[df_embryo['ROI'] == 'Cntl']['Mean']),

float(df_embryo.loc[df_embryo['ROI'] == 'Expt']['Mean'])]

}

embryo_results = pd.DataFrame(data)

target_results_list.append(embryo_results)

# Normalize mean levels within this target dataset to the mean of the control group

target_results = pd.concat(target_results_list, sort=False).reset_index().drop('index', axis=1)

cntl_mean = target_results.loc[target_results['ROI'] == 'Cntl']['Mean'].mean()

target_results['normMean'] = target_results['Mean']/cntl_mean

full_results_list.append(target_results)

# Assemble and view the final results

full_results = pd.concat(full_results_list,sort=False).reset_index().drop('index', axis=1)

full_results.head()

# -

# ## Parallel coordinate plots for single targets

#

# Displays Control and Experimental values, connected by a line to link measurements from same embryo

#

# Also perform two-tailed paired t-test for these values

# +

################### Isolate data for analysis ###################

# Annotate data further to plot

cntl_construct = 'RFP'

expt_construct = 'dnBMPR1A-FLAG'

# Gene to parse:

gene = ['ID2']

# Pull out only cells and treaments of interest, and rename ROIs with the appropriate constructs

df = full_results.loc[full_results['Target'].isin(gene)].copy()

df.replace(to_replace = {'Cntl': cntl_construct, 'Expt': expt_construct}, inplace=True)

################### Plot as strip plot ###################

# Plot as strip plot

p1 = iqplot.strip(data=df

,q='normMean', q_axis='y'

,cats=['ROI'], parcoord_column='EmbID'

,y_range=(0,2)

# ,frame_height = 300, frame_width = 200

,frame_height = 400, frame_width = 400

,y_axis_label=str('Normalized '+str(gene[0])+' expression')

,x_axis_label='Treatment'

,color_column='Somites'

,marker_kwargs=dict(size=10

# ,color='black'

)

,parcoord_kwargs=dict(line_width=1,color='gray')

# ,show_legend=True

,tooltips=[("Embryo", "@EmbID"), ]

)

# p1.axis.axis_label_text_font_style = 'bold italic'

p1.axis.axis_label_text_font_size = '14px'

p1.axis.major_label_text_font_size = '14px'

# p1.legend.location = "top_right"

show(row(p1))

################### Perform statistical analysis ###################

# Perform Paired t test

cntl = df.loc[df['ROI'] == cntl_construct]['Mean']

expt = df.loc[df['ROI'] == expt_construct]['Mean']

ttest = stats.ttest_rel(cntl,expt)

# Display test results

print('Paired t-test results: \n\t\t statistic = ' + str(ttest[0]) +

'\n\t\t p-value = ' + str(ttest[1]))

print('n = ' + str(len(cntl)) + ' embryos')

# -

# ## Assemble ratio dataframe (Experimental / Control measurements), then plot as a stripbox plot

# +

ratios_raw = full_results.copy()

ratios_raw['ExperimentID'] = ratios_raw['EmbID']+'_'+ratios_raw['Target']

expt_list = ratios_raw['ExperimentID'].unique().tolist()

ratio_results = pd.DataFrame()

list_ = []

for expt in expt_list:

expt_df = ratios_raw.loc[ratios_raw['ExperimentID'] == expt]

ratio_mean = (float(expt_df.loc[expt_df['ROI'] == 'Expt']['Mean'])

/float(expt_df.loc[expt_df['ROI'] == 'Cntl']['Mean']))

# Assemble output df

data = {'ExperimentID': [expt],

'ratioMean': [ratio_mean],

}

expt_results = pd.DataFrame(data)

list_.append(expt_results)

ratio_results = pd.concat(list_,sort=False).reset_index().drop('index', axis=1)

(ratio_results['Date'], ratio_results['Stains'], ratio_results['Embryo'], ratio_results['Target']

) = zip(*ratio_results['ExperimentID'].map(lambda x: x.split('_')))

ratio_results.head()

# +

# Choose targets to plot

targets = ['TFAP2B', 'ID2', 'HES6-2', 'APOD',]

data = ratio_results[ratio_results['Target'].isin(targets)]

# Build Stripbox plot

stripbox = iqplot.stripbox(

# Data to plot

data=data,

q='ratioMean', q_axis='y',

cats='Target',

# Plot details

jitter=True, jitter_kwargs=dict(width=0.3),

marker_kwargs=dict(alpha=0.8, size=8

# ,color='darkgray'

),

box_kwargs=dict(line_color='black', line_width=1.5),

whisker_kwargs=dict(line_color='black', line_width=1.5),

median_kwargs=dict(line_color='black', line_width=2),

top_level='box',

frame_width=350, frame_height=350,

# Plot customizations

order=targets,

y_range=(0,2.05),

y_axis_label='Relative HCR Intensity',

x_axis_label='Gene',

show_legend=False,

)

# Final customizations

stripbox.axis.axis_label_text_font_size = '16px'

stripbox.axis.major_label_text_font_size = '16px'

stripbox.axis.axis_label_text_font_style = 'bold'

stripbox.xaxis.major_label_text_font_style = 'italic'

# View plot

show(stripbox)

# -

| Fig4_HCR_Validation/2021_WholeMount_HCR_Intensity_Analysis.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import numpy as np

import pandas as pd

cm_data = np.genfromtxt('scores/output.txt', dtype=str)

cm_data2 = np.genfromtxt('scores/output.txt', dtype=str)

fuse_df = fuse(f_list)

fuse_df.to_csv('merge_result.csv', sep=' ', header=False, index=False)

| tDCF_python_v1/FusionFunction.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] id="qXyD34zG_QYr"

# # Artificial Neural Networks in Python using Tensorflow

#

# This notebook is a simple demonstration to how to build an Artificial Neuron Network using TensorFlow to a classification problem.

#

# **Problem Description**

#

# The goal of program is to build a classification model to predict if a certain client will leave the bank service in the next six months.

#

# **Dataset Description**

#

# The dataset is composed by 10000 instances (rows) and 14 features (columns). The features considered to build the model are:

#

# - RowNumber (This is not important to the model)

# - CustomerId (This is not important to the model)

# - Surname (This is not important to the model)

# - CreditScore (numerical variable)

# - Geography (categorical variable)

# - Gender (categorical variable)

# - Age (numerical variable)

# - Tenure (categorical variable)

# - Balance (numerical variable)

# - NumOfProducts (categorical variable)

# - HasCrCard (categorical variable)

# - EstimatedSalary (numerical variable)

# - Exited (target)

# -

# # Data preprocessing

# + [markdown] id="7ANWPtw_AFZu"

# ## Importing Libraries

# + colab={"base_uri": "https://localhost:8080/", "height": 35} id="ushgeC5iAKCu" outputId="56b2f19f-96fa-4456-a0c0-5e8650e3fa17"

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sns

import tensorflow as tf # We utilize it to build our ANN model.

tf.__version__

# + [markdown] id="SLTAs4t3AUg2"

# ## Importing Dataset

#

# + colab={"base_uri": "https://localhost:8080/"} id="OaApzIM5AXtU" outputId="aa17c74f-49f7-4e4b-c1f4-a04460d1e6af"

dataset = pd.read_csv('Churn_Modelling.csv')

# -

# ## Visualizing some informations from the dataset

dataset.shape

dataset.dtypes

dataset.isna().sum()

dataset.head(10)

dataset.describe()

# # Exploratory Data Analysis (EDA)

# ## Visualizing the categorical variables

# ### Target variable

dataset['Exited'].value_counts()

plt.figure(figsize=(15, 5))

plt.subplot(1, 2, 1)

sns.countplot(x=dataset['Exited'])

plt.subplot(1, 2, 2)

values = dataset.iloc[:, - 1].value_counts(normalize = True).values # to show the binirie values in parcentage

index = dataset.iloc[:, -1].value_counts(normalize = True).index

plt.pie(values, labels= index, autopct='%1.1f%%', colors=['b', 'tab:orange'])

plt.show()

# ### Others categorical variables

categorical_list = ['Geography', 'Gender', 'Tenure', 'NumOfProducts', 'HasCrCard','IsActiveMember', 'Exited']

data_cat = dataset[categorical_list]

fig = plt.figure(figsize=(15, 15))

plt.suptitle('Pie Chart Distribution', fontsize = 20)

for i in range(1, data_cat.shape[1]):

plt.subplot(2, 3, i)

f = plt.gca()

f.axes.get_yaxis().set_visible(False)

f.set_title(data_cat.columns.values[i - 1])

# Setting the biniries values

values = data_cat.iloc[:, i - 1].value_counts(normalize = True).values # to show the binirie values in parcentage

index = data_cat.iloc[:, i -1].value_counts(normalize = True).index

plt.pie(values, labels= index, autopct='%1.1f%%')

plt.axis('equal')

#fig.tight_layout(rect=[0, 0.03, 1, 0.95])

plt.show()

plt.figure(figsize=(15, 10))

for i in range(1, data_cat.shape[1]):

plt.subplot(3, 3, i)

sns.countplot(x=data_cat.iloc[: , i-1], hue=data_cat['Exited'])

plt.show()

# ## Visualizing the numerical variables

numerical_list = ['CreditScore', 'Age', 'Balance', 'EstimatedSalary', 'Exited']

data_num = dataset[numerical_list]

# ### Distribution of numerical variables

plt.figure(figsize=(25,15))

plt.suptitle('Histograms of numerical variables (mean values)', fontsize = 20)

for i in range(1, data_num.shape[1]):

plt.subplot(2, 2, i)

f = plt.gca()

sns.histplot(data_num.iloc[:, i-1], color = '#3F5D7D', kde= True)

plt.show()

plt.figure(figsize=(25,15))

plt.suptitle('Histograms of numerical variables (mean values)', fontsize = 20)

for i in range(1, data_num.shape[1]):

plt.subplot(2, 2, i)

f = plt.gca()

sns.histplot(data=data_num, x=data_num.iloc[:, i-1], hue='Exited', kde = True)

plt.show()

# ## Correlation and PairPlot (scatter)

# ### Correlation with the response variable

column_drop = ['RowNumber', 'CustomerId', 'Surname', 'Exited']

dataset.drop(columns=column_drop).corrwith(dataset.Exited).plot.bar(

figsize = (20, 10), title = "Correlation with Exited", fontsize = 15,

rot = 45, grid = True)

# ### Correlation Between the Variables

# +

column_drop = ['RowNumber', 'CustomerId', 'Surname']

## Correlation Matrix

sns.set(style="white")

# Compute the correlation matrix

corr = dataset.drop(columns=column_drop).corr()

# Generate a mask for the upper triangle

mask = np.zeros_like(corr, dtype=np.bool)

mask[np.triu_indices_from(mask)] = True

# Set up the matplotlib figure

f, ax = plt.subplots(figsize=(10, 20))

# Generate a custom diverging colormap

cmap = sns.diverging_palette(220, 10, as_cmap=True)

# Draw the heatmap with the mask and correct aspect ratio

sns.heatmap(corr, mask=mask, cmap=cmap, vmax=1, vmin=-1, center=0,

square=True, linewidths=.5, cbar_kws={"shrink": .5}, annot = True)

# -

# ### Pair plot for the numerical variables

sns.pairplot(data_num, hue = 'Exited', kind = 'scatter', corner=True, diag_kind='None')

# ## EDA conclusion

#

# **Target variable**

#

# Represented by 0 or 1 (stay/leave) shows a frequency of $79.6 \%$ for the customers which decided to stay in the bank against $20.4\%$ of customers that decided to leave the bank.

#

# **Categorical variables**

#

# The analysis of these variables shows that the most clients are French. The majority of clients are males. The frequency of these variables are approximately equals without a fact that requires attention and manipulation, it means feature engineering.

#

# **Numerical variables**

#

# The distribution of these variables are normal except for the salary. One interesting aspect is the age distribution for the clients which leaved the bank, the distribution shows that the almost clients have age among 40 and 50 years. This fact can be well visualized in the scatter plots.

#

# **Correlation**

#

# The correlation between the target and the independent variables are not so big, but considerable to build the model. The correlation among the independent variables are satisfactory, the almost of correlation show a low values.

# # Building the Artificial Neuron Network

# ## Data preprocessing

# ### Excluding not important columns

dataset = dataset.drop(columns=['RowNumber', 'CustomerId', 'Surname'])

# + [markdown] id="vAfvzqlxDigi"

# ### Encoding Categorical Data

# + colab={"base_uri": "https://localhost:8080/"} id="lNMBBSsVDlXx" outputId="eda5f00c-7fb2-4219-fc97-b1e11d759d08"

dataset['Gender'] = dataset['Gender'].astype('category').cat.codes

# + [markdown] id="KHsmjrNVExcN"

# #### One Hot Econding

# + colab={"base_uri": "https://localhost:8080/"} id="VPw3iUhJE2qS" outputId="ddcb325b-c92b-4154-9c13-a10ca1dfc320"

dataset = pd.get_dummies(dataset)

# -

dataset.head()

# ### Defining the independent and target variable

response = dataset['Exited']

i_var = dataset.drop(columns=['Exited'])

# + [markdown] id="WVPScdSdGeqx"

# ### Splitting the Dataset into train and test set

# + id="PKH9UuJRGkvg"

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(i_var, response, test_size = 0.2, random_state = 0)

# + [markdown] id="rvmtD8VQHEQ3"

# ### Feature Scaling

# In almost ANN models we must to apply feature scale.

# + id="JO1fypeSHJbV"

from sklearn.preprocessing import StandardScaler

sc_X = StandardScaler()

X_train_bckp = pd.DataFrame(sc_X.fit_transform(X_train))

X_test_bckp = pd.DataFrame(sc_X.transform(X_test))

X_train_bckp.columns = X_train.columns.values

X_test_bckp.columns = X_test.columns.values

X_train_bckp.index = X_train.index.values

X_test_bckp.index = X_test.index.values

X_train = X_train_bckp

X_test = X_test_bckp

# + [markdown] id="RoNknmZsJoBy"

# ## The Artificial Neuron Network - ANN

#

# An Artificial Neuron Network is a technique that tries to reproduce brain functions. To build an ANN, we consider a set of input information about something, each of these information will be considered as a neuron, we call this set of neuron input layer. As we know, in the brain we have many neurons and the communication among them is made by synapses process. The neurons from the input layer will communicate with other set of neurons in a hidden layer by the synapses process. Once the communication between the neurons of input and hidden layer is made, the initial information is changed, in this moment, the neurons of the hidden layer will communicate with a others neurons, these neurons is considered as a output layer and it gives a response about something. For example, in a classification model, if we consider five independent variables, the input layer will be composed by five neurons, these neurons communicate with the hidden layer (the number of neurons must be chosen), the hidden layer communicate with the output layer, that provides the final response 0 or 1, if we have two class.

#

# **How do synapses work in an ANN?**

#

# The synapses process in an ANN is made by an activation function, this function transforms the input information according with an associated weight (this might be interpreted as an importance degree to each input variable). We have some kind of activation function as

# - Threshold function

# - Sigmoid function

# - Rectifier function

# - Hyperbolic tangent function.

# For this notebook we are interested in the rectifier function and sigmoid function.

#

# The rectifier function is defined as $\phi(x) = \max(x,0)$, if a certain values is less than 0 the function returns 0, otherwise the function returns the maximum value. The synapses or the communication is made according $\sum_{i=1}^{m}w_{i}x_{i}$, where $m$ is the number of input variable. We consider the rectifier function between the input layer and the hidden layers. The activation function that we consider to make the communication among the hidden and output layer is the sigmoid function. The sigmoid function returns the probability of occurrence to certain class, this function is defined as $\phi(x) = \frac{1}{1 + e^{-x}}$.

#

# **How do an ANN learn?**

#

# The learning process of an ANN starts with the input parameters in a input layer, this layer communicate with the hidden layer by synapses. The next step is the communication among the hidden layer with the output layer, to obtain a response. In this stage, the learning process is not ended. After the response, the ANN must calculate the loss function to measure the precision of the prediction. Once the loss function was calculated there is the retro-propagation process, this process tries to find the minimal of the loss function changing the associated weights to each neuron. After this step, the ANN remake the previous process of synapses among the layers. The number of retro-propagation is defined as epochs.

#

# **About loss function**

#

# One of the most used loss function is the cross entropy. To more details [see](https://towardsdatascience.com/cross-entropy-loss-function-f38c4ec8643e).

#

# **About the retro-propagation**

#

# Here, we consider the stochastic gradient descent. This method tries to minimize the loss function changing the weights of each neuron. In this process, if we have 128 instances, we choose a batch number as 32, it means that we calculate the response for the associated batch number and we returns to the begin to remake the same process to other 32 instances. To more details [see](https://en.wikipedia.org/wiki/Stochastic_gradient_descent#Adam).

#

# **Summarizing the ANN process**

#

# 1. Initializing the ANN

# 2. Build the input layer

# 3. Build the hidden layers

# 4. Build the output layer

# 5. Training the ANN

#

# 1. Compile

# 2. Train.

# + [markdown] id="Vk4TpIBUJsRp"

# ## Initializing the ANN

#

# The first step is to create an object to build a sequence layer. This sequence layer takes account the input layer (the parameters that we initialize the ANN), the hidden layers and output layer. To do it, we utilize the modulus Tensor Flow (version 2.0 or high) that allow us to call the Keras modulus.

# + id="qLSqUQRXJ0uS"

ann = tf.keras.models.Sequential()

# + [markdown] id="oagB1r01eJhp"

# ## Input layer

#

# In this step, we create the input layer, it means, we set all independent variables (considering these neurons as input neurons) . When the input values go to the first hidden layer we must to choose an activation function. For this case, we choose rectifier function (the weights to each input parameters are chosen by the ann object). Units is the number of input neurons, relu is the activation function.

# + id="9BxdU1ZsfukC"

ann.add(tf.keras.layers.Dense(units=X_train.shape[1], activation='relu'))

# + [markdown] id="t6AZbMdTinxv"

# ## Hidden layers

#

# Here, we set the hidden layers. We can put how much we want. For this problem, we consider just one hidden layer. The object to create the hidden layer is the same to the input layer, but we can change it according with the problem. The parameters are the same of the input layer.

# + id="343W94K3jFbE"

tf.keras.layers.Dropout(0.2) # To drop 20% of input neurons, to avoid overfit.

ann.add(tf.keras.layers.Dense(units=X_train.shape[1], activation='relu'))

# + [markdown] id="Fy_EkroCjJj0"

# ## Output layer

#

# The last layer is the output layer. We use the same object the we used to build the preceding layers, but here, we make some changes in the parameters. Like this problem has a binary response yes or no, the number of neuron corresponds to 1, but if the response gives more then two results (0, 1, 2, for example), we must to consider the correspondent number of responses. The second change is on the activation function. Here, we consider sigmoid activation function, for one simple reason, this gives to us the probability which will be interpreted as 0 or 1 according with the values. Likelihood less than $0.5$ is considered as 0, otherwise 1.

# + id="9RJRX5ODjQS7"

tf.keras.layers.Dropout(0.2)

ann.add(tf.keras.layers.Dense(units=1, activation='sigmoid'))

# + [markdown] id="g-v5KN4ClfZD"

# ## Learning process of the ANN

# + [markdown] id="sarW2BIVlkM7"

# ### Compiling the ANN

#

# Compile the ANN is one of most important step. We select a method to optimize our ANN, stochastic gradient descent, represented by adam. The lost function is also very import, because from this function we are able to improve the accuracy, precision and other relevant parameters. The lost function that we are going to use is Binary Cross Entropy (due to have a binary response). Finally, the metric which we choose is the accuracy. Beyond this metric, we have others important metrics as F1, precision and recall.

# + id="V_kuMk2PlqWK"

ann.compile(optimizer='adam', loss='binary_crossentropy', metrics=['accuracy',tf.keras.metrics.Recall(), tf.keras.metrics.Precision()])

# + [markdown] id="HceFPt3-my5m"

# ### Training the ANN

#

# Now we train the ANN. Here we have two important hype parameters. Batch size determines how many instances the stochastic gradient descent will consider to work in the minimizing process. Epochs indicates the number of retro-propagation we want to train the model.

# + colab={"base_uri": "https://localhost:8080/"} id="zn0oSK2Zm5Ig" outputId="8a4227c9-84d8-497e-d602-6f9e088cd2c1"

ann.fit(X_train, y_train, batch_size= 32, epochs= 100)

# + [markdown] id="PqFny2LvrAbP"

# ## Making a single prediction

#

# Here, we make a simple prediction. Remember, Geography and Gender was changed. We must take it account.

# + colab={"base_uri": "https://localhost:8080/"} id="GntqBk3nrTbh" outputId="13fec3a3-eb19-40f1-95ee-2502fdebb3b0"

print(ann.predict(sc_X.transform([[1, 0, 0, 600, 1, 1, 40, 60000, 2, 1, 1, 50000]])) > 0.5)

# + [markdown] id="izaEvAa9uC0z"

# ## Predicting the test results

# + colab={"base_uri": "https://localhost:8080/"} id="543-PUCbuFsT" outputId="20862437-cd09-4512-9b14-8f62cffd3932"

y_pred = ann.predict(X_test)

y_pred = (y_pred > 0.5) # Here we must put it, because we have as outcome the probability, but we want a binary response.

# + [markdown] id="AVftybBMuc2J"

# ## Making the confusion matrix and metrics scores

# + colab={"base_uri": "https://localhost:8080/"} id="6vppMTWRuiEv" outputId="62ab924f-8104-4580-9044-86f1f507d8c7"

from sklearn.metrics import confusion_matrix

cm = confusion_matrix(y_test, y_pred)

plt.figure()

sns.heatmap(cm, annot=True)

plt.show()

# +

# Here, we consider the metrics class from TensorFlow library.

m1 = tf.keras.metrics.Accuracy() # Object

m2 = tf.keras.metrics.Recall()

m3 = tf.keras.metrics.Precision()

m1.update_state(y_test, y_pred) # Calculating the metric

m2.update_state(y_test, y_pred)

m3.update_state(y_test, y_pred)

print('Metric results for the test set\n')

print('Accuracy {:.2f}%'.format(m1.result().numpy()*100))

print('Recall {:.2f}%'.format(m2.result().numpy()*100))

print('Precision {:.2f}%'.format(m3.result().numpy()*100))

# +

score_train = ann.evaluate(X_train, y_train)

print('\n')

print('Metric results for the training set\n')

print('Accuracy {:.2f}%'.format(score_train[1]*100))

print('Recall {:.2f}%'.format(score_train[2]*100))

print('Precision {:.2f}%'.format(score_train[3]*100))

# -

# ## Metrics with cross validate

def build_classifier(optimizer='adam'):

classifier = tf.keras.models.Sequential()

classifier.add(tf.keras.layers.Dense(units=X_train.shape[1], activation='relu'))

classifier.add(tf.keras.layers.Dense(units=X_train.shape[1], activation='relu'))

classifier.add(tf.keras.layers.Dense(units=1, activation='sigmoid'))

classifier.compile(optimizer = optimizer, loss = 'binary_crossentropy', metrics = ['accuracy',tf.keras.metrics.Recall(), tf.keras.metrics.Precision()])

return classifier

# +

scoring = ['accuracy', 'recall', 'precision'] # List of metrics

from sklearn.model_selection import cross_validate

classifier = tf.keras.wrappers.scikit_learn.KerasClassifier(build_fn = build_classifier, batch_size = 32, epochs = 100)

accuracies = cross_validate(estimator = classifier, X = X_train, y = y_train,

cv = 10, n_jobs = -1, scoring = scoring)

# -

print('Metric results with cross validate cv=10')

print('\n')

print("Accuracy: {:.2f} %".format(accuracies['test_accuracy'].mean()*100))

print("Recall: {:.2f} %".format(accuracies['test_recall'].mean()*100))

print("Precision: {:.2f} %".format(accuracies['test_precision'].mean()*100))

# ## Boosting the model with GridSearchCV

# +

scoring = {'ACC' : 'accuracy', 'REC' : 'recall', 'PC' : 'precision'}

from sklearn.model_selection import GridSearchCV

classifier = tf.keras.wrappers.scikit_learn.KerasClassifier(build_fn = build_classifier)

parameters = {'batch_size': [8, 16, 32],

'epochs': [50, 100, 500],

'optimizer': ['adam', 'rmsprop']}

grid_search = GridSearchCV(estimator = classifier,

param_grid = parameters,

scoring = scoring,

refit = 'ACC',

cv = 10, n_jobs = -1)

# -

grid_search = grid_search.fit(X_train, y_train)

grid_search.best_params_

# ### Predicting new test results with the best parameters

boosted_predictions = grid_search.predict(X_test)

boosted_predictions = (boosted_predictions > 0.5)

print('Metrics results - Boosted')

print('\n')

print('Best Parameters')

print(grid_search.best_params_)

print('Confusion Matrix')

cmb2 = confusion_matrix(y_test, boosted_predictions)

plt.plot()

sns.heatmap(cmb2, annot=True)

plt.show()

print('Metrics Results')

print("- Accuracy: {:.2f} %".format(grid_search.cv_results_['mean_test_ACC'][grid_search.best_index_].mean()*100))

print("- Recall: {:.2f} %".format(grid_search.cv_results_['mean_test_REC'][grid_search.best_index_].mean()*100))

print("- Precision: {:.2f} %".format(grid_search.cv_results_['mean_test_PC'][grid_search.best_index_].mean()*100))

print('\n')

# + [markdown] id="1rPoWgp3BO3R"

# # Conclusion

#

# In this program, we shown a simple example how to build an ANN. The objective of build a good regression model was achieved, the model presents an accuracy of $85.97 \%$. Artificial Neuron Network has a has a wide applicability, we might also to build regression models. Whit the classification model, we are free to apply in many problems, for example image recognition. Methods like this might be helpful tool in decision-make about client polices.

| 1 - Artifical Neural Networks/Artifical_Neural_Networks_TensorFlow.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3.8 (full)

# language: python

# name: python3-3.8-ufrc

# ---

# # Pandas

# ## Use the Pandas library to do statistics on tabular data.

# * Pandas is a widely-used Python library for statistics, particularly on tabular data.

# * Borrows many features from R's dataframes.

# * A 2-dimenstional table whose columns have names and potentially have different data types.

# * Load it with `import pandas`.

# * Read a Comma Separate Values (CSV) data file with `pandas.read_csv`.

# * Argument is the name of the file to be read.

# * Assign result to a variable to store the data that was read.

# +

import pandas

data = pandas.read_csv('data/gapminder_gdp_oceania.csv')

print(data)

# -

# * The columns in a dataframe are the observed variables, and the rows are the observations.

# * Pandas uses backslash `\` to show wrapped lines when output is too wide to fit the screen.

# #### File Not Found

# Our lessons store their `data` files in a data sub-directory, which is why the path to the file is `data/gapminder_gdp_oceania.csv`. If you forget to include `data/`, or if you include it but your copy of the file is somewhere else, you will get a runtime error that ends with a line like this:

# `OSError: File b'gapminder_gdp_oceania.csv' does not exist`

# ### Use `index_col` to specify that a column's values should be used as row headings.

# * Row headings are numbers (0 and 1 in this case).

# * Really want to index by country.

# * Pass the name of the column to `read_csv` as its `index_col` parameter to do this.

data = pandas.read_csv('data/gapminder_gdp_oceania.csv', index_col='country')

print(data)

# ## Use `DataFrame.info` to find out more about a dataframe.

data.info()

# * This is a `DataFrame`

# * Two rows named `'Australia'` and `'New Zealand'`

# * Twelve columns, each of which has two actual 64-bit floating point values.

# * We will talk later about null values, which are used to represent missing observations.

# * Uses 208 bytes of memory.

# ## The `DataFrame.columns` variable stores information about the dataframe's columns.

# * Note that this is data, *not* a method.

# * Like `math.pi`.

# * So do not use `()` to try to call it.

# * Called a *member variable*, or just *member*.

print(data.columns)

# ## Use `DataFrame.T` to transpose a dataframe.

# * Sometimes want to treat columns as rows and vice versa.

# * Transpose (written `.T`) doesn't copy the data, just changes the program's view of it.

# * Like `columns`, it is a member variable.

print(data.T)

# ## Use `DataFrame.describe` to get summary statistics about data.

# * `DataFrame.describe()` gets the summary statistics of only the columns that have numerical data. All other columns are ignored, unless you use the argument `include='all'`.

print(data.describe())

# * Not particularly useful with just two records, but very helpful when there are thousands.

# ## Questions

# #### Q1: Reading Other Data

# Read the data in `gapminder_gdp_americas.csv` (which should be the same directory as `gapminder_gdp_oceania.csv`) into the variable called `americas` and display its summary statistics.

# **Solution**

#

# Click on the '...' below to show the solution.

# + jupyter={"source_hidden": true}

# To read in a CSV, we use `pandas.read_csv`and pass the filename

# 'data/gapminder_gdp_americas.csv' to it. We also once again pass the column

# name 'country' to the parameter `index_col` in order to index by country:

americas = pandas.read_csv('data/gapminder_gdp_americas.csv', index_col='country')

# -

# #### Q2: Inspecting Data

# After reading the data for the AMericans, use `help(americas.head)` and `help(americas.tal)` to find out what `DataFrame.head` and `DataFrame.tail` do.

# 1. What method call will display the first three rows fo this data?

# 2. What method call will display the last three columns of this data? (Hint: you may need to change your view of the data)

# **Solution**

#

# Click on the '...' below to show the solution.

# + jupyter={"source_hidden": true}

# 1. We can check out the first five rows of `americas` by executing

# `americas.head()` (allowing us to view the head of the DataFrame). We can

# specify the number of rows we wish to see by specifying the parameter `n`

# in our call to `americas.head()`. To view the first three rows, execute:

americas.head(n=3)

# The output is then

continent gdpPercap_1952 gdpPercap_1957 gdpPercap_1962 \

country

Argentina Americas 5911.315053 6856.856212 7133.166023

Bolivia Americas 2677.326347 2127.686326 2180.972546

Brazil Americas 2108.944355 2487.365989 3336.585802`

gdpPercap_1967 gdpPercap_1972 gdpPercap_1977 gdpPercap_1982 \

country

Argentina 8052.953021 9443.038526 10079.026740 8997.897412

Bolivia 2586.886053 2980.331339 3548.097832 3156.510452

Brazil 3429.864357 4985.711467 6660.118654 7030.835878`

gdpPercap_1987 gdpPercap_1992 gdpPercap_1997 gdpPercap_2002 \

country

Argentina 9139.671389 9308.418710 10967.281950 8797.640716

Bolivia 2753.691490 2961.699694 3326.143191 3413.262690

Brazil 7807.095818 6950.283021 7957.980824 8131.212843`

gdpPercap_2007

country

Argentina 12779.379640

Bolivia 3822.137084

Brazil 9065.800825

# 2. To check out the last three rows of `americas`, we would use the command,

# `americas.tail(n=3)`, analogous to `head()` used above. However, here we want

# to look at the last three columns so we need to change our view and then use

# `tail()`. To do so, we create a new DataFrame in which rows and columns are

# switched

americas_flipped = americas.T

# We can then view the last three columns of `americas` by viewing the last

# three rows of `americas_flipped`:

americas_flipped.tail(n = 3)

# The output is then:

country Argentina Bolivia Brazil Canada Chile Colombia \

gdpPercap_1997 10967.3 3326.14 7957.98 28954.9 10118.1 6117.36

gdpPercap_2002 8797.64 3413.26 8131.21 33329 10778.8 5755.26

gdpPercap_2007 12779.4 3822.14 9065.8 36319.2 13171.6 7006.58

country Costa Rica Cuba Dominican Republic Ecuador ... \

gdpPercap_1997 6677.05 5431.99 3614.1 7429.46 ...

gdpPercap_2002 7723.45 6340.65 4563.81 5773.04 ...

gdpPercap_2007 9645.06 8948.1 6025.37 6873.26 ...

country Mexico Nicaragua Panama Paraguay Peru Puerto Rico \

gdpPercap_1997 9767.3 2253.02 7113.69 4247.4 5838.35 16999.4

gdpPercap_2002 10742.4 2474.55 7356.03 3783.67 5909.02 18855.6

gdpPercap_2007 11977.6 2749.32 9809.19 4172.84 7408.91 19328.7

country Trinidad and Tobago United States Uruguay Venezuela

gdpPercap_1997 8792.57 35767.4 9230.24 10165.5

gdpPercap_2002 11460.6 39097.1 7727 8605.05

gdpPercap_2007 18008.5 42951.7 10611.5 11415.8

Note: we could have done the above in a single line of code by 'chaining' the commands:

americas.T.tail(n=3)

# -

# #### Q3: Reading Files in Other Directories

# The data for your current project is stored in a file called `microbes.csv`, which is located in a folder called `field_data`. Your are doing analysis in a notebook called `analysis.ipynb` in a sibliong folder called `thesis`:

your home directory

+-- field data/

| +-- microbes.csv

+-- thesis/

+-- analysis.ipynb

# What value(s) should you pass to `read.csv` to read `microbes.csv` in `analysis.ipynb`?

# **Solution**

#

# Click on the '...' below to show the solution.

# + jupyter={"source_hidden": true}

# We need to specify the path to the file of interest in the call to

# `pandas.read_csv`. We first need to `jump` out of the folder `thesis` using

# `../` and then into the folder `field_data` using `field_data/`. Then we

# can specify the filename `microbes.csv`.

#

# The result is as follows:

data_microbes = pandas.read_csv('../field_data/microbes.csv')

# -

# #### Q4: Writing Data

# As well as the `read_csv` function for reafing data from a file, Pandas provides a `to_csv` function to write dataframes to files. Applying what you've learned about reading from files, write one of your dataframes to a file calles `processed.csv`. You can use `help` to get information on how to use `to_csv`.

# **Solution**

#

# Click on the '...' below to show the solution.

# + jupyter={"source_hidden": true}

# In order to write the DataFrame `americas` to a file called `processed.csv`,

# execute the following command:

americas.to_csv('processed.csv')

# For help on `to_csv`, you could execute, for example,

help(americas.to_csv)

# Note that `help(to_csv)` throws an error! This is a subtlety and is due to

# the fact that `to_csv` is NOT a function in and of itself and the actual

# call is `americas.to_csv`.

| _episodes_rapids/061_reading_tabular_panda.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] nbgrader={}

# # Interact Exercise 3

# + [markdown] nbgrader={}

# ## Imports

# + nbgrader={}

# %matplotlib inline

from matplotlib import pyplot as plt

import numpy as np

# + nbgrader={}

from IPython.html.widgets import interact, interactive, fixed

from IPython.display import display

# + [markdown] nbgrader={}

# # Using interact for animation with data

# + [markdown] nbgrader={}

# A [*soliton*](http://en.wikipedia.org/wiki/Soliton) is a constant velocity wave that maintains its shape as it propagates. They arise from non-linear wave equations, such has the [Korteweg–de Vries](http://en.wikipedia.org/wiki/Korteweg%E2%80%93de_Vries_equation) equation, which has the following analytical solution:

#

# $$

# \phi(x,t) = \frac{1}{2} c \mathrm{sech}^2 \left[ \frac{\sqrt{c}}{2} \left(x - ct - a \right) \right]

# $$

#

# The constant `c` is the velocity and the constant `a` is the initial location of the soliton.

#

# Define `soliton(x, t, c, a)` function that computes the value of the soliton wave for the given arguments. Your function should work when the postion `x` *or* `t` are NumPy arrays, in which case it should return a NumPy array itself.

# + nbgrader={"checksum": "b95685e8808cf7e99f918ab07c87c11a", "solution": true}

def soliton(x, t, c, a):

"""Return phi(x, t) for a soliton wave with constants c and a."""

solt=0.5*c*(1/np.cosh(0.5*(c**.5)*(x-c*t-a))**2)

return np.array(solt)

# -

x=np.array([1,2,3,4,5])

t=np.array([6,7,8,9,10])

soliton(x,t,1,2)

# + deletable=false nbgrader={"checksum": "bcd15232a87c4354cbc68dcca28654ee", "grade": true, "grade_id": "interactex03a", "points": 2}

assert np.allclose(soliton(np.array([0]),0.0,1.0,0.0), np.array([0.5]))

# + [markdown] nbgrader={}

# To create an animation of a soliton propagating in time, we are going to precompute the soliton data and store it in a 2d array. To set this up, we create the following variables and arrays:

# + nbgrader={}

tmin = 0.0

tmax = 10.0

tpoints = 100

t = np.linspace(tmin, tmax, tpoints)

xmin = 0.0

xmax = 10.0

xpoints = 200

x = np.linspace(xmin, xmax, xpoints)

c = 1.0

a = 0.0

# + [markdown] nbgrader={}

# Compute a 2d NumPy array called `phi`:

#

# * It should have a dtype of `float`.

# * It should have a shape of `(xpoints, tpoints)`.

# * `phi[i,j]` should contain the value $\phi(x[i],t[j])$.

# + deletable=false nbgrader={"checksum": "6cff4e8e53b15273846c3aecaea84a3d", "solution": true}

phi=np.ndarray(shape=(xpoints,tpoints), dtype=float) #collaberated with <NAME>

for i in x:

for j in t:

phi[i,j]=soliton(x[i],t[j],c,a)

phi

# + deletable=false nbgrader={"checksum": "90baf1a97272cee6f5554e0104b50f47", "grade": true, "grade_id": "interactex03b", "points": 4}

assert phi.shape==(xpoints, tpoints)

assert phi.ndim==2

assert phi.dtype==np.dtype(float)

assert phi[0,0]==soliton(x[0],t[0],c,a)

# + [markdown] nbgrader={}

# Write a `plot_soliton_data(i)` function that plots the soliton wave $\phi(x, t[i])$. Customize your plot to make it effective and beautiful.

# + nbgrader={"checksum": "d857aa7adb31b1de9c4d53a7febb18d3", "solution": true}

def plot_soliton_data(i=0):

"""Plot the soliton data at t[i] versus x."""

plt.plot(soliton(x,t[i],c,a))

# + nbgrader={}

plot_soliton_data(0)

# + deletable=false nbgrader={"checksum": "a76632040b08c7c76c889e67ee93deb0", "grade": true, "grade_id": "interactex03c", "points": 2}

assert True # leave this for grading the plot_soliton_data function

# + [markdown] nbgrader={}

# Use `interact` to animate the `plot_soliton_data` function versus time.

# + deletable=false nbgrader={"checksum": "6cff4e8e53b15273846c3aecaea84a3d", "solution": true}

interact(plot_soliton_data,i=(0,100,10))

# + deletable=false nbgrader={"checksum": "ef5ed9fcab6418650cdf556757a4486a", "grade": true, "grade_id": "interactex03d", "points": 2}

assert True # leave this for grading the interact with plot_soliton_data cell

# -

| assignments/assignment05/InteractEx03.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

# # Case Study

#

# ## 1. Objective

#

# 1. Predicting most probable policy to a new customer

# 2. Recommending alternate policy to existing customers

# 3. Factors affecting life time value

# 4. Understanding demographics and customer behaviour

#

#

# Let's start with first part

#

# ## 2. Section 1 - Data Extraction

# +

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

# %matplotlib inline

import seaborn as sns

sns.set()

#Reading the dataset in a dataframe using Pandas

df = pd.read_csv("policy_data.csv", index_col = 'Customer')

columns = ['State','Coverage','Education','Gender','Income','Location Code',

'Marital Status','Sales Channel','Vehicle Class','Vehicle Size']

df.drop(['EmploymentStatus'], axis = 1, inplace = True)

df.drop(['Customer Lifetime Value'], axis = 1, inplace = True)

df.head()

# -

df.corr()

# ## 3. Exploratory Data Analysis

# First let us see the distribution of existing customers

#

# ## Selection of Policy based on customer characteristics

#

#

# ## Selection of Policy based on vehicle

#

#

# ## 4. Modeling

from sklearn.preprocessing import LabelEncoder

categorical_variables = df.dtypes[df.dtypes == 'object'].index

categorical_variables

# +

le = LabelEncoder()

for var in categorical_variables:

df[var] = le.fit_transform(df[var])

df.head()

# -

X = df.iloc[:, 1:]

y = df.iloc[:, 0]

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = .2, random_state = 0)

from sklearn.tree import DecisionTreeClassifier

decision = DecisionTreeClassifier()

decision = decision.fit(X_train, y_train)

y_pred = decision.predict(X_test)

from sklearn.metrics import accuracy_score

result = accuracy_score(y_test, y_pred) * 100

result

for df, importance in zip(columns, decision.feature_importances_):

print(df, importance * 100)

from sklearn.naive_bayes import GaussianNB

GNBClassifier = GaussianNB()

GNBClassifier = GNBClassifier.fit(X_train, y_train.ravel())

y_pred = GNBClassifier.predict(X_test)

result = accuracy_score(y_test, y_pred) * 100

result

from sklearn.neighbors import KNeighborsClassifier

classifier = KNeighborsClassifier()

knnClassifier = classifier.fit(X_train, y_train.ravel())

y_pred = knnClassifier.predict(X_test)

result = accuracy_score(y_test, y_pred)*100

result

from sklearn.ensemble import RandomForestClassifier

classifier = RandomForestClassifier(max_depth = 10, min_samples_leaf = 10, max_features= 'auto')

rfClassifier = classifier.fit(X_train, y_train.ravel())

y_pred = rfClassifier.predict(X_test)

result = accuracy_score(y_test, y_pred)*100

result

from sklearn.externals import joblib

joblib.dump(rfClassifier, 'model/nb.pkl')

# ## 5. Serving end points with Flask API

#

# On localhost 5000

#

#

#

#

#

# ## Section 2 - CLV

# 2.1 - Overall

#

#

#

# 2.2 - Based on Complaints

#

#

#

# 2.3 - Based on Demographics

#

#

#

#

# 2.4 - Based on Sales

#

#

#

# 2.5 - Based on Personal Characteristic

#

#

#

#

#

#

# +

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

# %matplotlib inline

import seaborn as sns

sns.set()

#Reading the dataset in a dataframe using Pandas

df = pd.read_csv("data.csv")

# -

#finding missing values

df.isnull().sum()

from sklearn.preprocessing import LabelEncoder

categorical_variables = df.dtypes[df.dtypes == 'object'].index

categorical_variables

# +

le = LabelEncoder()

for var in categorical_variables:

df[var] = le.fit_transform(df[var])

df.head()

# -

pd.cut(df['Customer Lifetime Value'], 8).head()

custom_bucket_array = np.linspace(0, 20, 9)

custom_bucket_array

df['Customer Lifetime Value'] = pd.cut(df['Customer Lifetime Value'], custom_bucket_array)

X = df.iloc[:, 1:]

y = df.iloc[:, 0]

from sklearn.model_selection import train_test_split

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size = .2, random_state = 0)

from sklearn.tree import DecisionTreeRegressor

decision = DecisionTreeRegressor(max_depth=2)

decision = decision.fit(X_train, y_train)

y_pred = decision.predict(X_test)

for df, importance in zip(df, decision.feature_importances_):

print(df, importance * 100)

# ## Section 3 - Demographic

| master.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (ipykernel)

# language: python

# name: python3

# ---

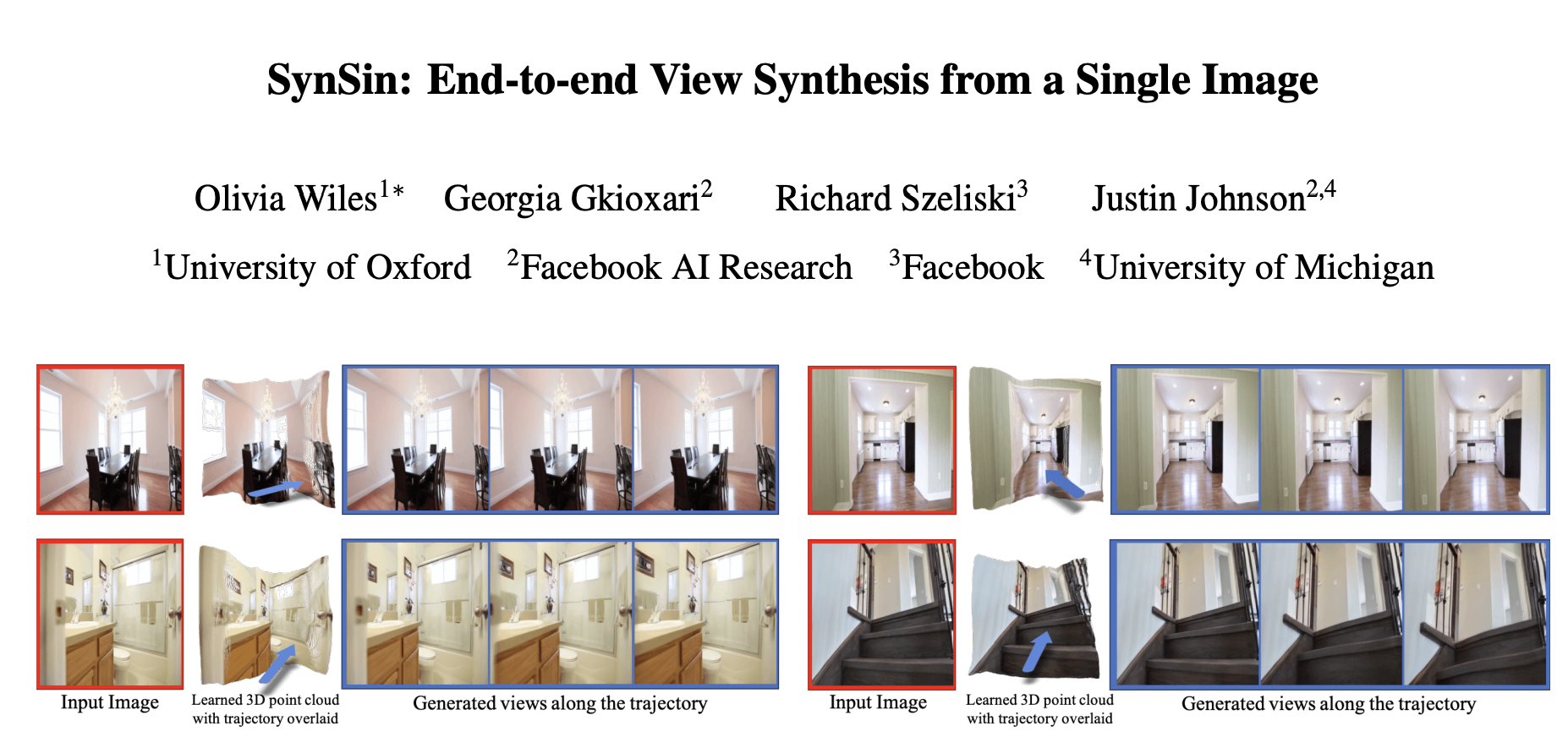

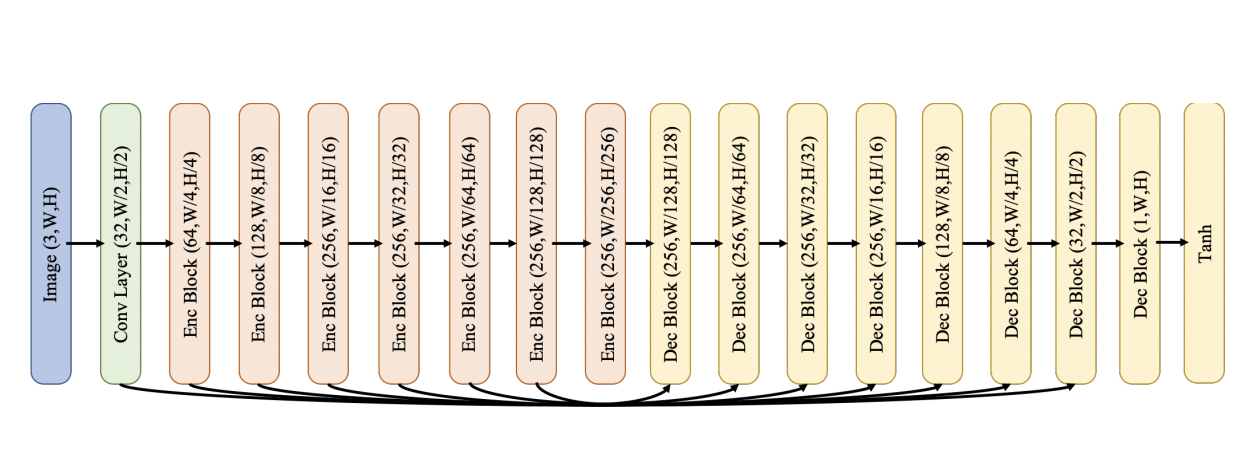

#

# In this homework, we will try to implement a `View Synthesis` model that allows us to generate new scene views based on a single image.

#

# The basic idea is to use `differentiable point cloud rendering`, which is used to convert a hidden 3D feature point cloud into a target view.

# The projected features are decoded by `refinement network` to inpaint missing regions and generate a realistic output image.

# ### Overall pipeline disribed below

#

# # Data

# ## Download KITTI dataset

# +

# from gfile import download_file_from_google_drive

# download_file_from_google_drive(

# '1lqspXN10biBShBIVD0yvgnl1nIPPhRdC',

# 'kitti.zip'

# )

# +

# # !unzip kitti.zip

# -

# ## Dataset

# +

# %pylab inline

from tqdm import tqdm

from itertools import islice

from IPython.display import clear_output, HTML

from collections import defaultdict

from kitti import KITTIDataLoader

import torch

from torch import nn

from torch.utils.data import Subset, DataLoader

import torchvision

from pytorch3d.vis.plotly_vis import plot_scene

from pytorch3d.structures import Pointclouds

from pytorch3d.renderer import PerspectiveCameras, compositing, rasterize_points

# +

def split_RT(RT):

return RT[..., :3, :3], RT[..., :3, 3]

def renormalize_image(image):

return image * 0.5 + 0.5

# -

dataset = KITTIDataLoader('dataset_kitti')

# Each instance of dataset contain `source` and `target` images, `extrinsic` and `intrinsic` camera parameters for `source` and `targer` images.

#

# It is highly recommended to understand these concepts, e.g., here https://ksimek.github.io/2012/08/22/extrinsic/

images, cameras = dataset[0].values()

# +

plt.figure(figsize=(20, 10))

ax = plt.subplot(1, 2, 1)

ax.imshow(images[0].permute(1, 2, 0) * 0.5 + 0.5)

ax.set_title('Source Image Frame', fontsize=20)

ax.axis('off')

ax = plt.subplot(1, 2, 2)

ax.imshow(images[1].permute(1, 2, 0) * 0.5 + 0.5)

ax.set_title('Target Image Frame', fontsize=20)

ax.axis('off')

# +

source_camera = PerspectiveCameras(

R=split_RT(cameras[0]['P'])[0][None],

T=split_RT(cameras[0]['P'])[1][None],

K=torch.from_numpy(cameras[0]['K'])[None]

)

target_camera = PerspectiveCameras(

R=split_RT(cameras[1]['P'])[0][None],

T=split_RT(cameras[1]['P'])[1][None],

K=torch.from_numpy(cameras[1]['K'])[None]

)

plot_scene(

{

'scene': {

'source_camera': source_camera,

'target_camera': target_camera

}

},

)

# +

indexes = torch.randperm(len(dataset))

train_indexes = indexes[:-1000]

validation_indexes = indexes[-1000:]

train_dataset = Subset(dataset, train_indexes)

validation_dataset = Subset(dataset, validation_indexes)

train_dataloader = DataLoader(

train_dataset, batch_size=16, num_workers=6,

shuffle=True, drop_last=True, pin_memory=True

)

validation_dataloder = DataLoader(

validation_dataset, batch_size=10, num_workers=4,

pin_memory=True

)

# -

# ---

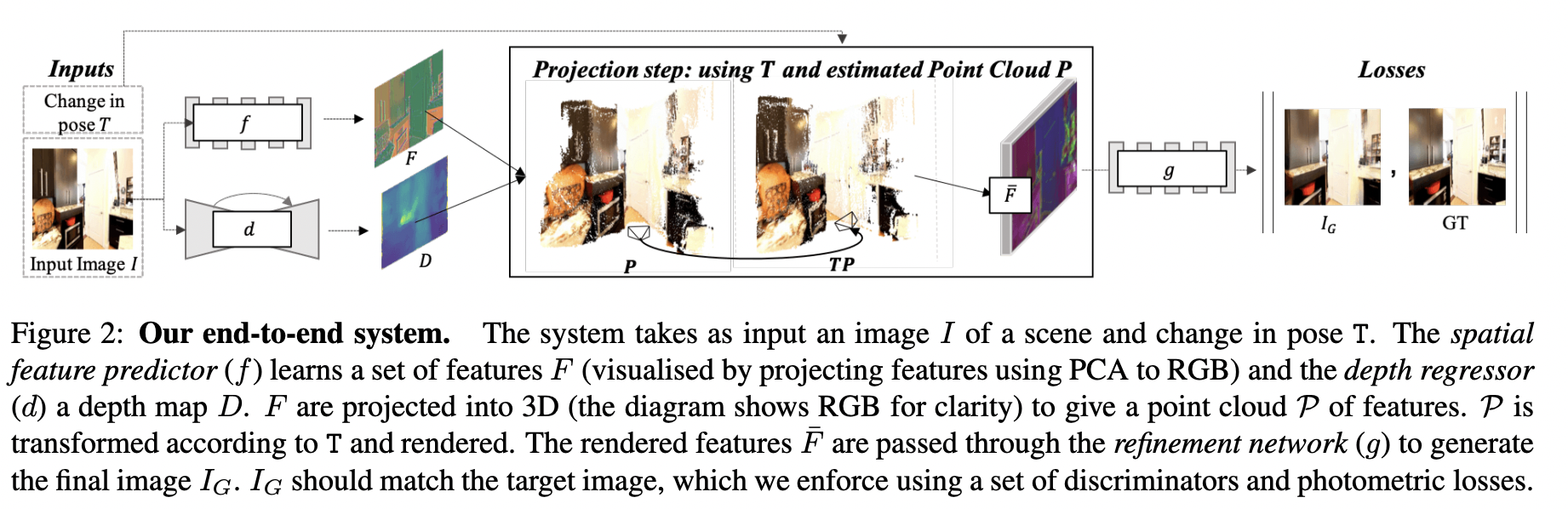

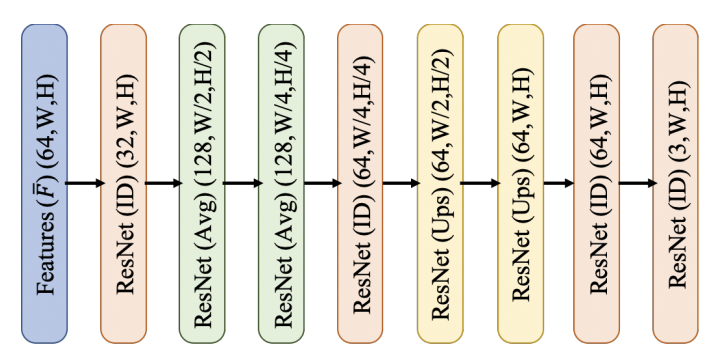

# # Models

#

# So, we need to implement `Spatial Feature Predictor`, `Depth Regressor`, `Point Cloud Renderer` and `RefinementNetwork`.

# One of the main building blocks in these networks is `ResNetBlock`, but with some modifications:

#

#

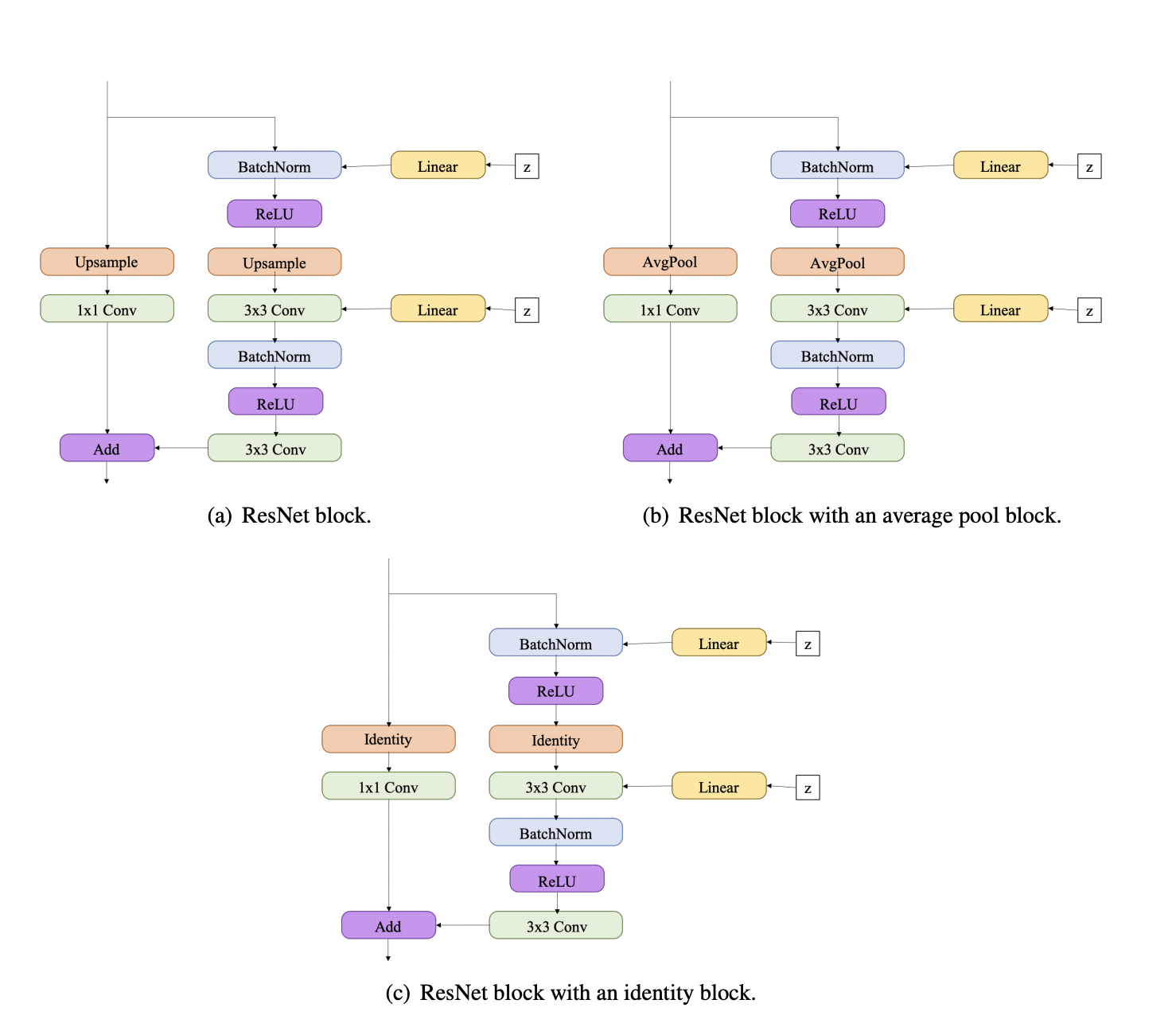

#

# So, let's implement it, but without the noise part `Linear + z` (let's omit it, since we do not use the adversarial criterion)

# + code_folding=[]

class ResNetBlock(nn.Module):

def __init__(

self,

in_channels: int,

out_channels: int,

stride: int = 1,

mode = 'identity'

):

super().__init__()

# TODO

def forward(self, input):

# TODO

# -

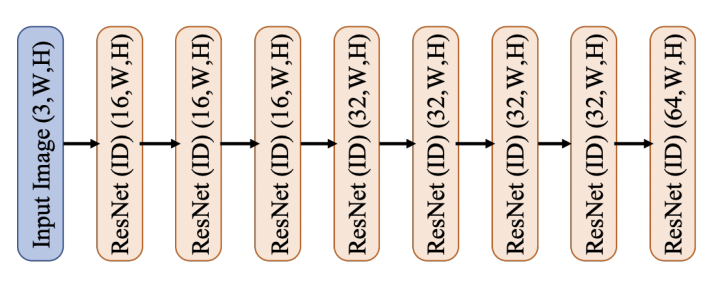

# ## Spatial Feature Predictor

#

#

# + code_folding=[]

class SpatialFeatureNetwork(nn.Module):

def __init__(self, in_channels=3, out_channels=64):

super().__init__()

self.blocks = # TODO

def forward(self, input: torch.Tensor):

return self.blocks(input)

sf_net = SpatialFeatureNetwork()

# -

# ## Depth Regressor

#

#

#

# An `Enc Block` consists of a sequence of Leaky ReLU, convolution (stride 2, padding 1, kernel size 4), and batch normalisation layers.

#

# A `Dec Block` consists of a sequence of ReLU, 2x bilinear upsampling, convolution (stride 1, padding 1, kernel size3), and batch normalisation layers (except for the final layer, which has no batch normalisation layer).

# + code_folding=[]

class Unet(nn.Module):

def __init__(

self,

num_filters=32,

channels_in=3,

channels_out=3

):

super(Unet, self).__init__()

# TODO

def forward(self, input):

# TODO

# -

# ## Refinement Network

#

#

# + code_folding=[]

class RefinementNetwork(nn.Module):

def __init__(self, in_channels=64, out_channels=3):

super().__init__()

self.blocks = # TODO

def forward(self, input: torch.Tensor):

return self.blocks(input)

# -

# ## Auxiliary network

# + code_folding=[0]

class VGG19(nn.Module):

def __init__(self, requires_grad=False):

super().__init__()

vgg_pretrained_features = torchvision.models.vgg19(

pretrained=True

).features

self.slice1 = torch.nn.Sequential()

self.slice2 = torch.nn.Sequential()

self.slice3 = torch.nn.Sequential()

self.slice4 = torch.nn.Sequential()

self.slice5 = torch.nn.Sequential()

for x in range(2):

self.slice1.add_module(str(x), vgg_pretrained_features[x])

for x in range(2, 7):

self.slice2.add_module(str(x), vgg_pretrained_features[x])

for x in range(7, 12):

self.slice3.add_module(str(x), vgg_pretrained_features[x])

for x in range(12, 21):

self.slice4.add_module(str(x), vgg_pretrained_features[x])

for x in range(21, 30):

self.slice5.add_module(str(x), vgg_pretrained_features[x])

if not requires_grad:

for param in self.parameters():

param.requires_grad = False

def forward(self, X):

# Normalize the image so that it is in the appropriate range

h_relu1 = self.slice1(X)

h_relu2 = self.slice2(h_relu1)

h_relu3 = self.slice3(h_relu2)

h_relu4 = self.slice4(h_relu3)

h_relu5 = self.slice5(h_relu4)

out = [h_relu1, h_relu2, h_relu3, h_relu4, h_relu5]

return out

# -

# ---

# # Criterions & Metrics

# + code_folding=[0]

class PerceptualLoss(nn.Module):

def __init__(self):

super().__init__()

# Set to false so that this part of the network is frozen

self.model = VGG19(requires_grad=False)

self.criterion = nn.L1Loss()

self.weights = [1.0 / 32, 1.0 / 16, 1.0 / 8, 1.0 / 4, 1.0]

def forward(self, pred_img, gt_img):

gt_fs = self.model(gt_img)

pred_fs = self.model(pred_img)

# Collect the losses at multiple layers (need unsqueeze in

# order to concatenate these together)

loss = 0

for i in range(0, len(gt_fs)):

loss += self.weights[i] * self.criterion(pred_fs[i], gt_fs[i])

return loss

# + code_folding=[0]

def psnr(predicted_image, target_image):

batch_size = predicted_image.size(0)

mse_err = (

(predicted_image - target_image)

.pow(2).sum(dim=1)

.view(batch_size, -1).mean(dim=1)

)

psnr = 10 * (1 / mse_err).log10()

return psnr.mean()

# -

# ---

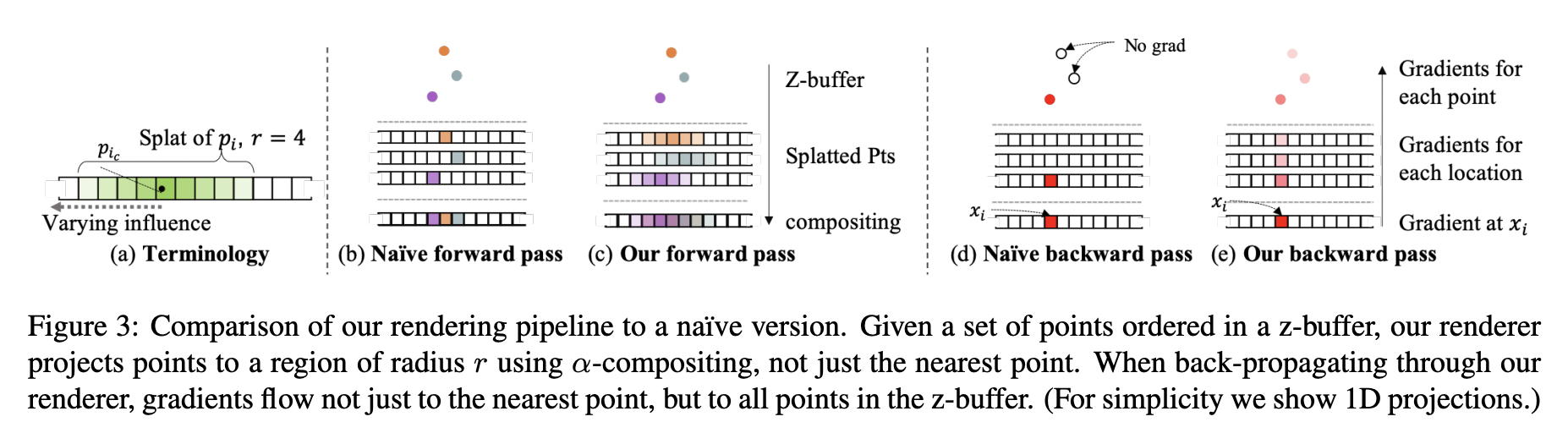

# # Point Cloud Renderer

#

# `Differential Rasterization` is a key component of our system. We will use the algorithm already implemented in `pytorch3d`.

#

#

#

# For more details read (3.2) https://arxiv.org/pdf/1912.08804.pdf

# + code_folding=[]

class PointsRasterizerWithBlending(nn.Module):

"""

Rasterizes a set of points using a differentiable renderer.

"""

def __init__(self, radius=1.5, image_size=256, points_per_pixel=8):

super().__init__()

self.radius = radius

self.image_size = image_size

self.points_per_pixel = points_per_pixel

self.rad_pow = 2

self.tau = 1.0

def forward(self, point_cloud, spatial_features):

batch_size = spatial_features.size(0)

# Make sure these have been arranged in the same way

assert point_cloud.size(2) == 3

assert point_cloud.size(1) == spatial_features.size(2)

point_cloud[:, :, 1] = -point_cloud[:, :, 1]

point_cloud[:, :, 0] = -point_cloud[:, :, 0]

radius = float(self.radius) / float(self.image_size) * 2.0

point_cloud = Pointclouds(points=point_cloud, features=spatial_features.permute(0, 2, 1))

points_idx, _, dist = rasterize_points(

point_cloud, self.image_size, radius, self.points_per_pixel

)

dist = dist / pow(radius, self.rad_pow)

alphas = (

(1 - dist.clamp(max=1, min=1e-3).pow(0.5))

.pow(self.tau)

.permute(0, 3, 1, 2)

)

transformed_src_alphas = compositing.alpha_composite(

points_idx.permute(0, 3, 1, 2).long(),

alphas,

point_cloud.features_packed().permute(1, 0),

)

return transformed_src_alphas

# -

# And `PointsManipulator` do the following steps:

#

# 1) Create virtual image place in [normalized coordinate](https://pytorch3d.org/docs/cameras)

# 2) Move camera according to `regressed depth`

# 3) Rotate points according to target camera paramers

# 4) And finally render them with help of `PointsRasterizerWithBlending`

# + code_folding=[]

class PointsManipulator(nn.Module):

EPS = 1e-5

def __init__(self, image_size):

super().__init__()

# Assume that image plane is square

self.splatter = PointsRasterizerWithBlending(

radius=1.0,

image_size=image_size,

points_per_pixel=128,

)

xs = torch.linspace(0, image_size - 1, image_size) / \

float(image_size - 1) * 2 - 1

ys = torch.linspace(0, image_size - 1, image_size) / \

float(image_size - 1) * 2 - 1

xs = xs.view(1, 1, 1, image_size).repeat(1, 1, image_size, 1)

ys = ys.view(1, 1, image_size, 1).repeat(1, 1, 1, image_size)

xyzs = torch.cat(

(xs, -ys, -torch.ones(xs.size()), torch.ones(xs.size())), 1

).view(1, 4, -1)

self.register_buffer("xyzs", xyzs)

def project_pts(self, depth, K, K_inv, RT_cam1, RTinv_cam1, RT_cam2, RTinv_cam2):

# Project the world points into the new view

projected_coors = self.xyzs * depth

projected_coors[:, -1, :] = 1

# Transform into camera coordinate of the first view

cam1_X = K_inv.bmm(projected_coors)

# Transform into world coordinates

RT = RT_cam2.bmm(RTinv_cam1)

wrld_X = RT.bmm(cam1_X)

# And intrinsics

xy_proj = K.bmm(wrld_X)

# And finally we project to get the final result

mask = (xy_proj[:, 2:3, :].abs() < self.EPS).detach()

# Remove invalid zs that cause nans

zs = xy_proj[:, 2:3, :]

zs[mask] = self.EPS

sampler = torch.cat((xy_proj[:, 0:2, :] / -zs, xy_proj[:, 2:3, :]), 1)

sampler[mask.repeat(1, 3, 1)] = -10

# Flip the ys

sampler = sampler * torch.Tensor([1, -1, -1]).unsqueeze(0).unsqueeze(

2

).to(sampler.device)

return sampler

def forward_justpts(

self,

spatial_features, depth,

K, K_inv, RT_cam1, RTinv_cam1, RT_cam2, RTinv_cam2

):

# Now project these points into a new view

batch_size, c, w, h = spatial_features.size()

if len(depth.size()) > 3:

# reshape into the right positioning

depth = depth.view(batch_size, 1, -1)

spatial_features = spatial_features.view(batch_size, c, -1)

pointcloud = self.project_pts(

depth, K, K_inv, RT_cam1, RTinv_cam1, RT_cam2, RTinv_cam2

)

pointcloud = pointcloud.permute(0, 2, 1).contiguous()

result = self.splatter(pointcloud, spatial_features)

return result

# -

# ---

# # All together

class ViewSynthesisModel(nn.Module):

def __init__(self):

super().__init__()

self.spatial_feature_predictor = SpatialFeatureNetwork()

self.depth_regressor = Unet(channels_in=3, channels_out=1)

self.point_cloud_renderer = PointsManipulator(image_size=256)

self.refinement_network = RefinementNetwork()

# Special constant for KITTI dataset

self.z_min = 1.0

self.z_max = 50.0

def forward(self):

# TODO

# 1) Predict spatial feature for source image

# 2) Predict depth for source image (dont forget to renormalize depth with z_min/z_max)

# 3) Generate new features with `point_cloud_renderer`

# 4) And finnaly apply `refinement_network` to obtain new image

# 5) return new image, and depth of source image

# ---

# # Training

#

# In order for the work to be accepted, you must achieve a quality of ~0.5 (validation loss value) and visualize several samples as in the example

# +

device = torch.device('cuda:0')

model = ViewSynthesisModel().to(device)

optimizer = torch.optim.Adam(model.parameters(), 1e-4)

histoty = defaultdict(list)

l1_criterion = nn.L1Loss()

perceptual_criterion = PerceptualLoss().to(device)

# -

for epoch in range(10):

for i, batch in tqdm(enumerate(train_dataloader, 2), total=len(train_dataloader)):

source_image = batch["images"][0].to(device)

target_image = batch["images"][-1].to(device)

# TODO

generated_image, regressed_depth = model(...)

loss = l1_criterion(generated_image, target_image) \

+ 10 * perceptual_criterion(

renormalize_image(generated_image),

renormalize_image(target_image)

)

optimizer.zero_grad()

loss.backward()

optimizer.step()

histoty['train_loss'].append(loss.item())

for i, batch in tqdm(enumerate(validation_dataloder), total=len(validation_dataloder)):

source_image = batch["images"][0].to(device)

target_image = batch["images"][-1].to(device)

with torch.no_grad():

# TODO

generated_image, regressed_depth = model(...)

loss = l1_criterion(generated_image, target_image) \

+ 10 * perceptual_criterion(

renormalize_image(generated_image),

renormalize_image(target_image)

)

histoty['validation_loss'].append(loss.item())

clear_output()

fig = plt.figure(figsize=(30, 15), dpi=80)

ax1 = plt.subplot(3, 3, 1)

ax1.plot(histoty['train_loss'], label='Train')

ax1.set_xlabel('Iterations', fontsize=20)

ax1.set_ylabel(r'${L_1} + Perceptual$', fontsize=20)

ax1.legend()

ax1.grid()

ax2 = plt.subplot(3, 3, 4)

ax2.plot(histoty['validation_loss'], label='Validation')

ax2.set_xlabel('Iterations', fontsize=20)

ax2.set_ylabel(r'${L_1} + Perceptual$', fontsize=20)

ax2.legend()

ax2.grid()

for index, image in zip(

(2, 3, 5, 6),

(source_image, target_image, generated_image, regressed_depth)

):

ax = plt.subplot(3, 3, index)

im = ax.imshow(renormalize_image(image.detach().cpu()[0]).permute(1, 2, 0))

ax.axis('off')

plt.show()

# # Visualize

#

# Goes along depth and generate new views

RTs = []

for i in torch.linspace(0, 0.5, 40):

current_RT = torch.eye(4).unsqueeze(0)

current_RT[:, 2, 3] = i

RTs.append(current_RT.to(device))

identity_matrx = torch.eye(4).unsqueeze(0).to(device)

# +

random_instance_index = 245

with torch.no_grad():

images, cameras = validation_dataset[random_instance_index].values()

# Input values

input_img = images[0][None].cuda()

# Camera parameters

K = torch.from_numpy(cameras[0]["K"])[None].to(device)

K_inv = torch.from_numpy(cameras[0]["Kinv"])[None].to(device)

spatial_features = model.spatial_feature_predictor(input_img)

regressed_depth = torch.sigmoid(model.depth_regressor(input_img)) * \

(model.z_max - model.z_min) + model.z_min

new_images = []

for current_RT in RTs:

generated_features = model.point_cloud_renderer.forward_justpts(

spatial_features,

regressed_depth,

K,

K_inv,

identity_matrx,

identity_matrx,

current_RT,

None

)

generated_image = model.refinement_network(generated_features)

new_images.append(renormalize_image(generated_image.cpu()).clamp(0, 1).mul(255).to(torch.uint8))

# -

frames = torch.cat(new_images).permute(0, 2, 3, 1)

torchvision.io.write_video('video.mp4', frames, fps=20)

HTML("""

<video width="256" alt="test" controls>

<source src="video.mp4" type="video/mp4">

</video>

""")

# # Quality benchmark

#

#

#

| homework04/homework-part2-new-view-synthesis.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

# +

from keras.models import load_model, Model

from keras.layers import Dense, Flatten, MaxPooling2D

from keras.layers import Conv2D, Lambda, Input, Activation

from keras.layers import LSTM, TimeDistributed, Bidirectional, GRU

from keras.layers.merge import add, concatenate

from keras.optimizers import SGD

from keras import backend as K

from new_multi_gpu import *

from models_multi import *

# import warpctc_tensorflow

import tensorflow as tf

import random

import keras

import numpy as np

# class CRNN(object):

# """docstring for RNN"""

# def __init__(self, learning_rate = 0.001, output_dim = 63, gpu_count=2):

# conv_filters = 16

# kernel_size = (3, 3)

# pool_size = 2

# time_dense_size = 32

# rnn_size = 512

# img_h = 32

# act = 'relu'

# self.width = K.placeholder(name= 'width', ndim =0, dtype='int32')

# self.input_data = Input(name='the_input', shape=(None, img_h, 1), dtype='float32')

# self.inner = Conv2D(conv_filters, kernel_size, padding='same',

# activation=act, kernel_initializer='he_normal',

# name='conv1')(self.input_data)

# self.inner = MaxPooling2D(pool_size=(pool_size, pool_size), name='max1')(self.inner)

# self.inner = Conv2D(conv_filters, kernel_size, padding='same',

# activation=act, kernel_initializer='he_normal',

# name='conv2')(self.inner)

# self.inner = MaxPooling2D(pool_size=(pool_size, pool_size), name='max2')(self.inner)

# self.inner = Lambda(self.res, arguments={"last_dim": (img_h // (pool_size ** 2)) * conv_filters \

# , "width": self.width // 4})(self.inner)

# # cuts down input size going into RNN:

# self.inp = Dense(time_dense_size, activation=act, name='dense1')(self.inner)

# self.batch_norm = keras.layers.normalization.BatchNormalization()(self.inp)

# self.gru_1 = Bidirectional(GRU(rnn_size, return_sequences=True, kernel_initializer='he_normal',\

# name='gru1'),merge_mode="sum")(self.batch_norm)

# self.gru_2 = Bidirectional(GRU(rnn_size, return_sequences=True, kernel_initializer='he_normal',\

# name='gru2'),merge_mode="concat")(self.gru_1)

# self.gru_3 = Bidirectional(GRU(rnn_size, recurrent_dropout=0.5, return_sequences=True, \

# kernel_initializer='he_normal', name='gru3'),merge_mode="concat")(self.gru_2)

# self.gru_4 = Bidirectional(GRU(rnn_size, recurrent_dropout=0.5, return_sequences=True, \

# kernel_initializer='he_normal', name='gru4'),merge_mode="concat")(self.gru_3)

# self.y_pred = TimeDistributed(Dense(output_dim, kernel_initializer='he_normal', \

# name='dense2', activation='linear'))(self.gru_4)

# self.model = Model(inputs=self.input_data, outputs=self.y_pred)

# self.model = make_parallel(self.model, gpu_count)

# self.model.summary()

# self.output_ctc = self.model.outputs[0]

# self.out = K.function([self.input_data, self.width, K.learning_phase()], [self.y_pred])

# self.y_true = K.placeholder(name='y_true', ndim=1, dtype='int32')

# self.input_length = K.placeholder(name='input_length', ndim=1, dtype='int32')

# self.label_length = K.placeholder(name='label_length', ndim=1, dtype='int32')

# self.test = K.argmax(self.y_pred, axis=2)

# self.predict_step = K.function([self.input_data, self.width, K.learning_phase()], [self.test])

# def res (self, x, width, last_dim):

# return K.reshape(x, (-1, width, last_dim))

# -

from models_multi import CRNN

M = CRNN(1e-4, 219)

M.model.load_weights('crnn_219.h5')

model = M.model.get_layer('model_1')

model.summary()

model.layers.pop()

from keras.layers import TimeDistributed, Dense

from keras.models import Model

x = model.layers[-1].output

y_pred = TimeDistributed(Dense(219, kernel_initializer='he_normal', \

name='denseout', activation='linear'))(x)

new_model = Model(input=model.inputs, output=y_pred)

new_model.summary()

from new_multi_gpu import make_parallel

final_model = make_parallel(new_model, 2)

final_model.summary()

final_model.save_weights('crnn_219.h5')

# +

import matplotlib.pyplot as plt

import numpy as np

import os

from utils import pred, reshape

from scipy import ndimage

ims = os.listdir('./test/nhu cầu đầu vào')

im = './test/nhu cầu đầu vào/' + np.random.choice(ims)

# im = '/home/tailongnguyen/deep-anpr/output/0.png'

im = ndimage.imread(im)

plt.imshow(im, cmap ='gray')

plt.show()

im = np.expand_dims(reshape(im), axis = 0)

im.shape

pred(im, M, None, True)

# -

| ipython/Test-sentence.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

from pycalphad import Database, Model, calculate, equilibrium, variables as v

from xarray import DataArray

# +

class PrecipitateModel(Model):

matrix_chempots = []

@property

def matrix_hyperplane(self):

return sum(self.moles(self.nonvacant_elements[i])*self.matrix_chempots[i]

for i in range(len(self.nonvacant_elements)))

@property

def GM(self):

return self.ast - self.matrix_hyperplane

class GibbsThompsonModel(Model):

"Spherical particle."

radius = 1e-6 # m

volume = 7.3e-6 # m^3/mol

interfacial_energy = 250e-3 # J/m^2

elastic_misfit_energy = 0 # J/mol

@property

def GM(self):

return self.ast + (2*self.interfacial_energy/self.radius + self.elastic_misfit_energy) * self.volume

# +

import numpy as np

def parallel_tangent(dbf, comps, matrix_phase, matrix_comp, precipitate_phase, temp):

conds = {v.N: 1, v.P: 1e5, v.T: temp}

conds.update(matrix_comp)

matrix_eq = equilibrium(dbf, comps, matrix_phase, conds)

# pycalphad currently doesn't have a way to turn global minimization off and directly specify starting points

if matrix_eq.isel(vertex=1).Phase.values.flatten() != ['']:

raise ValueError('Matrix phase has miscibility gap. This bug will be fixed in the future')

matrix_chempots = matrix_eq.MU.values.flatten()

# This part will not work until mass balance constraint can be relaxed

#precip = PrecipitateModel(dbf, comps, precipitate_phase)

#precip.matrix_chempots = matrix_chempots

#conds = {v.N: 1, v.P: 1e5, v.T: temp}

#df_eq = equilibrium(dbf, comps, precipitate_phase, conds, model=precip)

df_eq = calculate(dbf, comps, precipitate_phase, T=temp, N=1, P=1e5)

df_eq['GM'] = df_eq.X.values[0,0,0].dot(matrix_chempots) - df_eq.GM

selected_idx = df_eq.GM.argmax()

return matrix_eq.isel(vertex=0), df_eq.isel(points=selected_idx)

def nucleation_barrier(dbf, comps, matrix_phase, matrix_comp, precipitate_phase, temp,

interfacial_energy, precipitate_volume):

"Spherical precipitate."

matrix_eq, precip_eq = parallel_tangent(dbf, comps, matrix_phase, matrix_comp, precipitate_phase, temp)

precip_driving_force = float(precip_eq.GM.values) # J/mol

elastic_misfit_energy = 0 # J/m^3

barrier = 16./3 * np.pi * interfacial_energy **3 / (precip_driving_force + elastic_misfit_energy) ** 2 # J/mol

critical_radius = 2 * interfacial_energy / ((precip_driving_force / precipitate_volume) + elastic_misfit_energy) # m

print(temp, critical_radius)

if critical_radius < 0:

barrier = np.inf

cluster_area = 4*np.pi*critical_radius**2

cluster_volume = (4./3) * np.pi * critical_radius**3

return barrier, precip_driving_force, cluster_area, cluster_volume, matrix_eq, precip_eq

def growth_rate(dbf, comps, matrix_phase, matrix_comp, precipitate_phase, temp, particle_radius):

conds = {v.N: 1, v.P: 1e5, v.T: temp}

conds.update(matrix_comp)

# Fictive; Could retrieve from TDB in principle

mobility = 1e-7 * np.exp(-14e4/(8.3145*temp)) # m^2 / s

mobilities = np.eye(len(matrix_comp)+1) * mobility

matrix_ff_eq, precip_eq = parallel_tangent(dbf, comps, matrix_phase, matrix_comp, precipitate_phase, temp)

precip_driving_force = float(precip_eq.GM.values) # J/mol

interfacial_energy = 250e-3 # J/m^2

precipitate_volume = 7.3e-6 # m^3/mol

elastic_misfit_energy = 0 # J/m^3

# Spherical particle

critical_radius = 2 * interfacial_energy / ((precip_driving_force / precipitate_volume) + elastic_misfit_energy) # m

# XXX: Should really be done with global min off, fixed-phase conditions, etc.

# As written, this will break with miscibility gaps

particle_mod = GibbsThompsonModel(dbf, comps, precipitate_phase)

particle_mod.radius = particle_radius

interface_eq = equilibrium(dbf, comps, [matrix_phase, precipitate_phase], conds,

model={precipitate_phase: particle_mod})

matrix_idx = np.nonzero((interface_eq.Phase==matrix_phase).values.flatten())[0]

if len(matrix_idx) > 1:

raise ValueError('Matrix phase has miscibility gap')

elif len(matrix_idx) == 0:

# Matrix is metastable at this composition; massive transformation kinetics?

print(interface_eq)

raise ValueError('Matrix phase is not stable')

else:

matrix_idx = matrix_idx[0]

matrix_interface_eq = interface_eq.isel(vertex=matrix_idx)

precip_idx = np.nonzero((interface_eq.Phase==precipitate_phase).values.flatten())[0]

if len(precip_idx) > 1:

raise ValueError('Precipitate phase has miscibility gap')

elif len(precip_idx) == 0:

precip_conc = np.zeros(len(interface_eq.component))

# Precipitate is metastable at this radius (it will start to dissolve)

# Compute equilibrium for precipitate by itself at parallel tangent composition

pt_comp = {v.X(str(comp)): precip_eq.X.values.flatten()[idx] for idx, comp in enumerate(precip_eq.component.values[:-1])}