code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + id="9k5d6qKkCjNq" colab_type="code" outputId="b0af5c99-d8b0-49c3-8fcc-8c8d8333322d" colab={"base_uri": "https://localhost:8080/", "height": 306}

# ! nvidia-smi

# + id="CMbVzSqAo3eJ" colab_type="code" colab={}

# ! mkdir /blazingsql

# + id="0Pgdxg4ppH3e" colab_type="code" outputId="23eafaf8-74e0-4f2c-be58-f946fd8fdef2" colab={"base_uri": "https://localhost:8080/", "height": 1000}

# intall miniconda

# !wget -c https://repo.continuum.io/miniconda/Miniconda3-4.5.4-Linux-x86_64.sh

# !chmod +x Miniconda3-4.5.4-Linux-x86_64.sh

# !bash ./Miniconda3-4.5.4-Linux-x86_64.sh -b -f -p /usr/local

# install RAPIDS packages

# !conda install -q -y --prefix /usr/local -c nvidia -c rapidsai \

# -c numba -c conda-forge -c pytorch -c defaults \

# cudf=0.9 cuml=0.9 cugraph=0.9 python=3.6 cudatoolkit=10.0

# set environment vars

import sys, os, shutil

sys.path.append('/usr/local/lib/python3.6/site-packages/')

os.environ['NUMBAPRO_NVVM'] = '/usr/local/cuda/nvvm/lib64/libnvvm.so'

os.environ['NUMBAPRO_LIBDEVICE'] = '/usr/local/cuda/nvvm/libdevice/'

# # copy .so files to current working dir

for fn in ['libcudf.so', 'librmm.so']:

shutil.copy('/usr/local/lib/'+fn, os.getcwd())

# + id="2QhfPm6g9zJk" colab_type="code" outputId="b03cf0d8-5528-4f95-88c1-f49ccb8a320c" colab={"base_uri": "https://localhost:8080/", "height": 153}

import nvstrings, nvcategory, cudf

import io, requests

# download CSV file from GitHub

url="https://github.com/plotly/datasets/raw/master/tips.csv"

content = requests.get(url).content.decode('utf-8')

# read CSV from memory

tips_df = cudf.read_csv(io.StringIO(content))

tips_df['tip_percentage'] = tips_df['tip']/tips_df['total_bill']*100

# display average tip by dining party size

print(tips_df.groupby('size').tip_percentage.mean())

# + id="OuIkbAva9VVP" colab_type="code" outputId="3419c680-91e3-425e-9ae2-ff05c1b0fba9" colab={"base_uri": "https://localhost:8080/", "height": 1000}

# Install BlazingSQL for CUDA 10.0

# ! conda install -q -y --prefix /usr/local -c conda-forge -c defaults -c nvidia -c rapidsai \

# -c blazingsql/label/cuda10.0 -c blazingsql \

# blazingsql-calcite blazingsql-orchestrator blazingsql-ral blazingsql-python

# + id="TrAD1GInIor3" colab_type="code" outputId="e0bea199-78c7-4f93-eef3-32ad8e1bccb2" colab={"base_uri": "https://localhost:8080/", "height": 85}

pip install flatbuffers

# + id="UiYEprQ0FoWM" colab_type="code" colab={}

sys.path.append('/usr/local/lib/python3.7/site-packages/')

# + colab_type="code" id="Y7705GbC9tkE" colab={}

from blazingsql import BlazingContextpython

# + id="lY98GEFP1g2_" colab_type="code" outputId="40ceac00-125e-4b6a-eba2-edec0e0d46b7" colab={"base_uri": "https://localhost:8080/", "height": 34}

bc = BlazingContext()

# + id="fd4aIbRUGKUO" colab_type="code" outputId="7e2e13c6-496f-4bef-e6b3-aacdcda1e316" colab={"base_uri": "https://localhost:8080/", "height": 289}

# ! wget https://github.com/plotly/datasets/raw/master/tips.csv

# + id="KU4WfapUGN6q" colab_type="code" outputId="ca2043d1-aed6-4877-e466-f6896a598abe" colab={"base_uri": "https://localhost:8080/", "height": 204}

# ! head sample_data/california_housing_train.csv

# ! pwd

# + id="PorSEjMaFzo-" colab_type="code" colab={}

bc.create_table('housing', '/content/sample_data/california_housing_train.csv')

# + id="SYApuO0zGk3u" colab_type="code" outputId="62272323-4d23-4219-91c9-14680fc66c58" colab={"base_uri": "https://localhost:8080/", "height": 918}

# Query

result = bc.sql('SELECT housing_median_age, AVG(median_house_value) FROM housing GROUP BY housing_median_age ORDER BY housing_median_age').get()

result_gdf = result.columns

#Print GDF

print(result_gdf)

# + id="XWSidqfbGpXG" colab_type="code" colab={}

| utils/blazing_conda_test.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import pandas as pd

import glob, os

from tqdm import tqdm

directory = "./2017/"

os.chdir(directory)

#print(glob.glob("*csv"))

all_files = glob.glob("*csv")

all_files[0]

len(all_files)

error_files = []

merged = pd.DataFrame()

for file in tqdm(all_files):

try:

data1 = pd.read_csv(file)

data1 = data1.loc[data1.index[5]:data1.index[5]]

merged = pd.concat([merged, data1])

except:

print('Error with file: ' + file)

error_files.append(file)

merged.head()

merged = merged.sort_values(by = 'Time')

merged.head()

merged.index = merged['Time']

len(error_files)

#len(error_files) / len(all_files)* 100

# +

#error_files[0]

# +

#error_files

# -

merged.head()

del merged['Time']

merged.head()

merged.to_csv('merged_17.csv')

| data/weather/v3/4_only_current_values.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# Advanced Tricks

# ===

# So you've tried making normal waveforms and now you need to spice up your life by making some way more weird waveforms letting the detector be whatever you want it to be?

# You have come to the right place!

#

# By default fax uses some configuration file which is a huge pain to modify. So we made fax such that if you add a parameter in the instruction which corresponds to a parameter in the config it will overwrite what the value was in the config and let you deside what it should be!

#

# This example shows how to modify the electron lifetime and the anode voltage

# +

import numpy as np

import strax

import straxen

import wfsim

import matplotlib.pyplot as plt

from matplotlib.colors import LogNorm

from multihist import Histdd, Hist1d

from scipy import stats

# -

st = strax.Context(

config=dict(

detector='XENON1T',

fax_config='https://raw.githubusercontent.com/XENONnT/'

'strax_auxiliary_files/master/sim_files/fax_config_1t.json',

fax_config_override={'field_distortion_on':True, 's2_luminescence_model':'simple'},

**straxen.contexts.xnt_common_config),

**straxen.contexts.common_opts)

st.register(wfsim.RawRecordsFromFax1T)

# Just some id from post-SR1, so the corrections work

run_id = '000001'

strax.Mailbox.DEFAULT_TIMEOUT=10000

# +

dtype = wfsim.strax_interface.instruction_dtype

for new_dtype in [('electron_lifetime_liquid', np.int32),

('anode_voltage', np.int32)]:

if new_dtype not in dtype:

dtype.append(new_dtype)

def rand_instructions(c):

n = c['nevents'] = c['event_rate'] * c['chunk_size'] * c['nchunk']

c['total_time'] = c['chunk_size'] * c['nchunk']

instructions = np.zeros(2 * n, dtype=dtype)

uniform_times = c['total_time'] * (np.arange(n) + 0.5) / n

instructions['time'] = np.repeat(uniform_times, 2) * int(1e9)

instructions['event_number'] = np.digitize(instructions['time'],

1e9 * np.arange(c['nchunk']) * c['chunk_size']) - 1

instructions['type'] = np.tile([1, 2], n)

instructions['recoil'] = ['er' for i in range(n * 2)]

r = np.sqrt(np.random.uniform(0, 2500, n))

t = np.random.uniform(-np.pi, np.pi, n)

instructions['x'] = np.repeat(r * np.cos(t), 2)

instructions['y'] = np.repeat(r * np.sin(t), 2)

instructions['z'] = np.repeat(np.random.uniform(-100, 0, n), 2)

nphotons = np.random.uniform(2000, 2050, n)

nelectrons = 10 ** (np.random.uniform(1, 4, n))

instructions['amp'] = np.vstack([nphotons, nelectrons]).T.flatten().astype(int)

instructions['electron_lifetime_liquid'] = np.repeat(600e10,len(instructions))

instructions['anode_voltage'] = np.repeat(1e10,len(instructions))

return instructions

wfsim.strax_interface.rand_instructions = rand_instructions

wfsim.strax_interface.instruction_dtype = dtype

# -

st.set_config(dict(fax_file=None))

st.set_config(dict(nchunk=1, event_rate=1, chunk_size=100))

# +

# Remove any previously simulated data, if such exists

# # !rm -r strax_data

records = st.get_array(run_id,'raw_records', progress_bar=False)

peaks = st.get_array(run_id, ['peak_basics'], progress_bar=False)

data = st.get_df(run_id, 'event_info', progress_bar=False)

truth = st.get_df(run_id, 'truth', progress_bar=False)

# -

truth.head()

| docs/source/tutorials/Advanced_tricks.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3.9.6 64-bit (windows store)

# name: python3

# ---

# # Quartiles

# Quartile is a type of quantile which divides the number of data points into four parts, or quarters, of more-or-less equal size. The data must be ordered from smallest to largest to compute quartiles; as such, quartiles are a form of order statistics.

# - The first quartile $(Q_1)$ is defined as the middle number between the smallest number($minimum$) and the median of the data set. It is also known as the lower or $25^{th}\text{empirical quartile}$, as $25\%$ of the data is below this point.

# - The second quartile $(Q_2)$ is the median of the whole data set, thus $50\%$ of the data lies below this point.

# - The third quartile $(Q_3)$ is the middle value between the median and the highest value ($maximum$) of the data set. It is known as the $upper$ or $75^{th}\text{empirical quartile}$, as $75\%$ of the data lies below this point.

#

# $$minimum-----Q_1-----Q_2-----Q_3-----maximum$$

#

# Along with minimum and maximum of the data (which are also quartiles), the three quartiles described above provide a $\text{five-number summary}$ of the data. This summary is important in statistics because it provides information about both the center and the spread of the data. Knowing the lower and upper quartile provides information on how big the spread is and if the dataset is $skewed$ toward one side. Since quartiles divide the number of data points evenly, the range is not the same between quartiles (i.e., $Q_3-Q_2 \neq Q_2-Q_1$) and is instead known as the $\textbf{interquartile range (IQR)}$. While the maximum and minimum also show the spread of the data, the upper and lower quartiles can provide more detailed information on the location of specific data points, the presence of outliers in the data, and the difference in spread between the middle $50\%$ of the data and the outer data points.

#

# In desciptive statistics, the $\textbf{Interquartile range (IQR)}$ also called $midspread$, $middle\;50\%$, or $H-spread$, is a measure of $statistical\;dispersion$ being equal to the difference between $75^{th}$ and $25^{th}\;percentiles$. $IQR=Q_3-Q_1$

#

# <p align="center">

# <img src="https://upload.wikimedia.org/wikipedia/commons/thumb/1/1a/Boxplot_vs_PDF.svg/640px-Boxplot_vs_PDF.svg.png?1626778057933">

# </p>

#

#

# |Symbol|Names|Definition|

# |:---:|:---:|:---:|

# |$Q_1$|$25^{th}\;percentile$|splits off the lowest $25\%$ data from the highest $75\%$|

# |$Q_2$|$50^{th}\;percentile$|splits dataset in half|

# |$Q_3$|$75^{th}\;percentile$|splits off the highest $25\%$ data from the lowest $75\%$|

# +

import numpy as np

def quartiles(array):

# sort original array in ascending order

print(f"The original array is {array}") # Comment this out for large datasets

temp = 0

for i in range(0,len(array)):

for j in range(i+1,len(array)):

if (array[i]>array[j]):

temp = array[i]

array[i] = array[j]

array[j] = temp

# lower half of array

array1 = []

for i in range(0,len(array)//2):

array1.append(array[i])

# upper half of array

if len(array)%2==0:

array2 = []

for i in range(len(array)//2,len(array)):

array2.append(array[i])

elif len(array)%2==1:

array2 = []

for i in range((len(array)//2)+1,len(array)):

array2.append(array[i])

# Quartile values

Q1 = np.median(array1)

Q2 = np.median(array)

Q3 = np.median(array2)

# Either define a function to return the desired values or to print arrays and quartiles.

return array1,Q1,array,Q2,array2,Q3,Q3-Q1

'''

return values in the order -

Lower half, First quartile, whole array, second quartile(median of whole array), Upper half, third quartile, IQR = Q3-Q1

'''

# Alternatively if you don't want to use the values further you can print all the values by defining it in the function itself.

'''

print(f"The sorted array is {array}")

print(f"The lower half consists of {array1}, and it's Median: Q1 = {Q1}.")

print(f"The median of entire array {array} is Q2 = {Q2}.")

print(f"The upper half consists of {array2}, and its Median: Q3 = {Q3}.")

print(f"The interquartile range, IQR = {IQR}")

'''

# -

# Testing the function for odd and even number of elements in the array

# Odd number of elements in array

array = [5,7,1,4,2,9,10]

array1,Q1,array,Q2,array2,Q3,IQR = quartiles(array)

print(f"The sorted array is {array}")

print(f"The lower half consists of {array1}, and it's Median: Q1 = {Q1}.")

print(f"The median of entire array {array} is Q2 = {Q2}.")

print(f"The upper half consists of {array2}, and its Median: Q3 = {Q3}.")

print(f"The interquartile range, IQR = {IQR}")

# Even number of elements in array

a = [3,5,7,1,4,2,9,10]

array1,Q1,array,Q2,array2,Q3,IQR = quartiles(a)

print(f"The sorted array is {array}")

print(f"The lower half consists of {array1}, and it's Median: Q1 = {Q1}.")

print(f"The median of entire array {array} is Q2 = {Q2}.")

print(f"The upper half consists of {array2}, and its Median: Q3 = {Q3}.")

print(f"The interquartile range, IQR = {IQR}")

# Test with different array

b = [3,7,8,5,12,14,21,13,18]

array1,Q1,array,Q2,array2,Q3,IQR = quartiles(b)

print(f"The sorted array is {array}")

print(f"The lower half consists of {array1}, and it's Median: Q1 = {Q1}.")

print(f"The median of entire array {array} is Q2 = {Q2}.")

print(f"The upper half consists of {array2}, and its Median: Q3 = {Q3}.")

print(f"The interquartile range, IQR = {IQR}")

# # Using `statistics`

# +

from statistics import median

def quartiles(array):

# sort original array in ascending order

print(f"The original array is {array}") # Comment this out for large datasets

# Alternatively you can just use the .sort() function to arrange in order

# It changes the original array itself

array.sort()

# lower half of array

array1 = []

for i in range(0,len(array)//2):

array1.append(array[i])

# upper half of array

if len(array)%2==0:

array2 = []

for i in range(len(array)//2,len(array)):

array2.append(array[i])

elif len(array)%2==1:

array2 = []

for i in range((len(array)//2)+1,len(array)):

array2.append(array[i])

# Quartile values

Q1 = median(array1)

Q2 = median(array)

Q3 = median(array2)

# Either define a function to return the desired values or to print arrays and quartiles.

return array1,Q1,array,Q2,array2,Q3,Q3-Q1

'''

return values in the order -

Lower half, First quartile, whole array, second quartile(median of whole array), Upper half, third quartile, IQR = Q3-Q1

'''

# Alternatively if you don't want to use the values further you can print all the values by defining it in the function itself.

'''

print(f"The sorted array is {array}")

print(f"The lower half consists of {array1}, and it's Median: Q1 = {Q1}.")

print(f"The median of entire array {array} is Q2 = {Q2}.")

print(f"The upper half consists of {array2}, and its Median: Q3 = {Q3}.")

print(f"The interquartile range, IQR = {IQR}")

'''

# -

A = [56.0,32.7,90.4,54.2,50,49,51,52.9,51.3,53.1,55.1]

array1,Q1,array,Q2,array2,Q3,IQR = quartiles(A)

print(f"The sorted array is {array}")

print(f"The lower half consists of {array1}, and it's Median: Q1 = {Q1}.")

print(f"The median of entire array {array} is Q2 = {Q2}.")

print(f"The upper half consists of {array2}, and its Median: Q3 = {Q3}.")

print(f"The interquartile range, IQR = {IQR}")

| Math Programs/Quartiles.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Simple harmonic motion

# * $a = -kx$: acceleration $\propto$ displacement

# * $a = -\omega^2 x$ where angular frequency $\omega = 2\pi f = \dfrac{2\pi}{T}$ - (angle in radians) over (time for 1 revolution in seconds)

#

# Denote

# * $x$ the displacement from equilibrium position

# * $x_0$ the amplitude

#

# $\begin{aligned}

# v &= \pm \omega \sqrt{{x_0}^2 - x^2}\\

# E_T &= \frac{1}{2} m \omega^2 {x_0}^2\\

# E_k &= \frac{1}{2} m \omega^2 ({x_0}^2 - x^2)\\

# T_{\text{pendulum}} &= 2\pi \sqrt{\frac{l}{g}}\\

# T_{\text{mass on spring}} &= 2\pi \sqrt{\frac{m}{k}}\\

# \end{aligned}$

#

# $\begin{aligned}

# a &= -\omega^2 x(t)\\

# a &= -\omega^2 x_0 \sin(\omega t)\\

# v(t) &= \omega x_0 \cos(\omega t)\\

# x(t) &= x_0 \sin(\omega t)\\

# \end{aligned}$

# # Single slit diffraction

# $\theta = \dfrac{\lambda}{a}$

#

# ## Things to know

# * $\theta = \dfrac{n \lambda}{a}$ where $n$ is the number of minimum you are looking at

# * Intensity of 1st minimum ~5% of central maximum

#

#

# /|\

# / | \

# /|\/|\/ | \/|\/|\

# 2 1 𝜃 n=1

#

#

# # Multiple-slit interference

# The pattern of the multiple-slit interference is *modulated* by the single-slit interference (envelope).

# $$n \lambda = d \sin\theta$$

# ## Thin film interference

# A phenomenon that causes iridescence.

#

#

# # Doppler effect

# When a source that is moving emits waves, the frequency observed by a static observer is "shifted".

#

# * Object moving towards observer: higher $f$ - blue shift for light;

# * object moving away from observer: lower $f$ - red shift

#

# For a moving source:

# $$f' = f\left(\dfrac{v}{v \pm u_s}\right)$$

# where

# * $v$: speed of wave

# * $u_s$: speed of source

#

# For a moving observer:

# $$f' = f\left(\dfrac{v \pm u_0}{v}\right)$$

# where

# * $u_o$: speed of observer

#

# For EM radiation:

# $$\dfrac{\Delta f}{f} = \dfrac{\Delta \lambda}{\lambda} = \dfrac{v}{c}$$

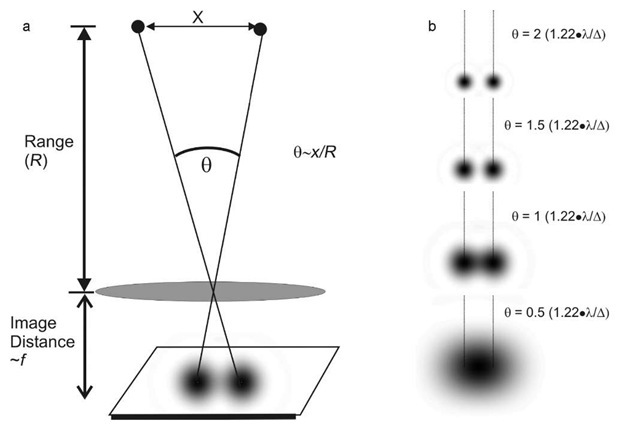

# ## Resolution

# The ability to distinguish 2 objects that are close to each other is the ability to **resolve** things.

#

# ```

# observer

# *---

# |/ angle between objects

# / The wavelength of light from objects

# * objects to observe

# <--> distance from objects to observer

# ```

# The Rayleigh criterion can be used to determine if two objects will be resolved.

#

#

#

# The Rayleigh criterion states that for 2 images to be resolved, the principal maximum of the 1st diffraction pattern must be no closer than the first minimum of the secondary pattern.

#

# $$\theta = 1.22 \dfrac{\lambda}{b}$$

# * $\theta$: angle of resolution

# * $\lambda$: wavelength of light

# * $b$: width of aperture

# +

import matplotlib.pyplot as plt

import numpy as np

plt.style.use(['bmh', 'dark_background', 'seaborn-poster'])

# +

plt.plot(0.2, .59 * 9, 'ro', label=f"EG: 1.80mm")

plt.plot(0.2, .59 * 10, 'bo', label="JY: 1.62mm")

plt.xlim(0.19, 0.21)

xs = np.linspace(0.19, 0.21)

plt.plot(xs, 2e-3 / (1.22 * 500e-9) * 1.62e-2 * xs / 2, 'r')

plt.plot(xs, 2e-3 / (1.22 * 500e-9) * 1.80e-2 * xs / 2, 'b')

plt.xlabel("Aperture between two dots (cm)")

plt.ylabel("Distance at which we can't resolve (m)")

plt.legend()

plt.show()

# -

| notes/PHY/9-wave-phenomena.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: teachopencadd

# language: python

# name: teachopencadd

# ---

# + active=""

# # Run this if you get an ImportError below

# !pip install bravado

#

# -

#KLIFS

from bravado.client import SwaggerClient

KLIFS_API_DEFINITIONS = "http://klifs.vu-compmedchem.nl/swagger/swagger.json"

KLIFS_CLIENT = SwaggerClient.from_url(KLIFS_API_DEFINITIONS, config={'validate_responses': False})

def _all_kinase_families():

return KLIFS_CLIENT.Information.get_kinase_families().response().result

KLIFS_CLIENT.Information.get_kinase_families().response().result

def _kinases_from_family(family, species="HUMAN"):

return KLIFS_CLIENT.Information.get_kinase_names(kinase_family=family, species=species).response().result

KLIFS_CLIENT.Information.get_kinase_names(kinase_family=family, species=species).response().result

def _protein_and_ligand_structure(*kinase_ids):

structures = KLIFS_CLIENT.Structures.get_structures_list(kinase_ID=kinase_ids).response().result

molcomplex = KLIFS_CLIENT.Structures.get_structure_get_pdb_complex(structure_ID=structures[0].structure_ID).response().result

protein = KLIFS_CLIENT.Structures.get_structure_get_protein(structure_ID=structures[0].structure_ID).response().result

ligands = KLIFS_CLIENT.Ligands.get_ligands_list(kinase_ID=kinase_ids).response().result

return molcomplex, protein, [ligand.SMILES for ligand in ligands]

structures

pdb_code = '3w32'

def protein_binding_site(*kinase_ids):

molcomplex = KLIFS_CLIENT.Structures.get_structure_get_pdb_complex(structure_ID=structures[0].structure_ID).response().result

binding_site = KLIFS_CLIENT.Structures.get_structure_get_pocket(structure_ID=kinase_ID).response().result

return binding_site

# +

#import time

def KLIFS_binding_site():

try :

molcomplex, binding_site = protein_binding_site(kinase.kinase__ID)

except :

None

# -

Kinase_ID = KLIFS_CLIENT.Structures.get_structure_get_pdb_complex(structure_ID=structures[0].structure_ID).response().result

structures = KLIFS_CLIENT.Structures.get_structures_list(kinase_ID=[406]).response().result

structures

structures[0].structure_ID

molcomplex = KLIFS_CLIENT.Structures.get_structure_get_pdb_complex(structure_ID=structures[0].structure_ID).response().result

KLIFS_CLIENT.Structures.get_structure_get_pocket(structure_ID=782).response().result

binding_site = protein_binding_site(pdb_code)

binding_site = KLIFS_binding_site()

| examples/KLIFS_query.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

L = [1,2, 5, 7]

# +

import numpy as np

def softmax(L):

# convert to numpy array

L_np = np.array(L)

#calculate exponent of each element in l_np

expL = np.exp(L_np)

# calculate sum

sum_expL= sum(expL)

result = []

for i in expL:

result.append(i*1.0/sum_expL)

return result

# -

softmax(L)

| 2-softmax-function.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Convolutional Neural Network - Gap / Char Classification

# Using TensorFlow

# +

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import tensorflow as tf

import cv2

# %matplotlib notebook

# Increase size of plots

plt.rcParams['figure.figsize'] = (9.0, 5.0)

# Creating CSV

import glob

import csv

# Helpers

from ocr.helpers import implt

from ocr.mlhelpers import TrainingPlot, DataSet

from ocr.datahelpers import loadGapData

print("OpenCV: " + cv2.__version__)

print("Numpy: " + np.__version__)

print("TensorFlow: " + tf.__version__)

# -

# ## Load Images and Lables in CSV

images, labels = loadGapData('data/gapdet/large/')

# +

print("Number of images: " + str(len(images)))

# Splitting on train and test data

div = int(0.90 * len(images))

trainData = images[0:div]

trainLabels = labels[0:div]

evalData = images[div:]

evalLabels = labels[div:]

print("Training images: %g" % div)

# -

# # Create classifier

# ### Dataset

# Prepare training dataset

trainSet = DataSet(trainData, trainLabels)

evalSet = DataSet(evalData, evalLabels)

# ## Convulation Neural Network

# ### Graph

# +

sess = tf.InteractiveSession()

# Help functions for standard layers

def conv2d(x, W, name=None):

return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME', name=name)

def max_pool_2x2(x, name=None):

return tf.nn.max_pool(x, ksize=[1, 2, 2, 1], strides=[1, 2, 2, 1], padding='SAME', name=name)

# Regularization scale - FOR TWEAKING

SCALE = 0.001

# Weighting cross entropy

POS_WEIGHT = (len(labels) - sum(labels)) / sum(labels)

# Place holders for data (x) and labels (y_)

x = tf.placeholder(tf.float32, [None, 7200], name='x')

targets = tf.placeholder(tf.int64, [None])

# Reshape input data

reshape_images = tf.reshape(x, [-1, 60, 120, 1])

# Image standardization

x_images = tf.map_fn(

lambda img: tf.image.per_image_standardization(img), reshape_images)

# 1. Layer - Convulation + Subsampling

W_conv1 = tf.get_variable('W_conv1', shape=[8, 8, 1, 10],

initializer=tf.contrib.layers.xavier_initializer())

b_conv1 = tf.Variable(tf.constant(0.1, shape=[10]), name='b_conv1')

h_conv1 = tf.nn.relu(conv2d(x_images, W_conv1) + b_conv1, name='h_conv1')

# 2. Layer - Max Pool

h_pool1 = max_pool_2x2(h_conv1, name='h_pool1')

# 3. Layer - Convulation + Subsampling

W_conv2 = tf.get_variable('W_conv2', shape=[5, 5, 10, 20],

initializer=tf.contrib.layers.xavier_initializer())

b_conv2 = tf.Variable(tf.constant(0.1, shape=[20]), name='b_conv2')

h_conv2 = tf.nn.relu(conv2d(h_pool1, W_conv2) + b_conv2, name='h_conv2')

# 4. Layer - Max Pool

h_pool2 = max_pool_2x2(h_conv2, name='h_pool2')

# 5. Fully Connected layer

W_fc1 = tf.get_variable('W_fc1', shape=[15*30*20, 1000],

initializer=tf.contrib.layers.xavier_initializer(),

regularizer=tf.contrib.layers.l2_regularizer(scale=SCALE))

b_fc1 = tf.Variable(tf.constant(0.1, shape=[1000]), name='b_fc1')

h_conv2_flat = tf.reshape(h_pool2, [-1, 15*30*20], name='h_conv2_flat')

h_fc1 = tf.nn.relu(tf.matmul(h_conv2_flat, W_fc1) + b_fc1, name='h_fc1')

# 6. Dropout

keep_prob = tf.placeholder(tf.float32, name='keep_prob')

h_fc1_drop = tf.nn.dropout(h_fc1, keep_prob, name='h_fc1_drop')

# 7. Output layer

W_fc2 = tf.get_variable('W_fc2', shape=[1000, 2],

initializer=tf.contrib.layers.xavier_initializer(),

regularizer=tf.contrib.layers.l2_regularizer(scale=SCALE))

b_fc2 = tf.Variable(tf.constant(0.1, shape=[2]), name='b_fc2')

y_conv = tf.matmul(h_fc1_drop, W_fc2) + b_fc2

# Activation function for real use in application

activation = tf.argmax(tf.matmul(h_fc1, W_fc2) + b_fc2, 1, name='activation')

# Cost: cross entropy + regularization

# Regularization with L2 Regularization with decaying learning rate

# cross_entropy = tf.nn.weighted_cross_entropy_with_logits(logits=y_conv, targets=y_)

weights = tf.multiply(targets, POS_WEIGHT) + 1

cross_entropy = tf.losses.sparse_softmax_cross_entropy(

logits=y_conv,

labels=targets,

weights=weights)

# Using cross entropy for sigmoid as loss

regularization = tf.get_collection(tf.GraphKeys.REGULARIZATION_LOSSES)

cost = tf.reduce_mean(cross_entropy) + sum(regularization)

# Optimizer

train_step = tf.train.AdamOptimizer(5e-5).minimize(cost, name='train_step')

# Evaluating

correct_prediction = tf.equal(tf.argmax(y_conv,1), targets)

accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32), name='accuracy')

# -

# ### Training

# +

sess.run(tf.global_variables_initializer())

saver = tf.train.Saver()

### SETTINGS ###

TRAIN_STEPS = 500000

TEST_ITER = 150

COST_ITER = 50

SAVE_ITER = 2000

BATCH_SIZE = 64

# Graph for live ploting

trainPlot = TrainingPlot(TRAIN_STEPS, TEST_ITER, COST_ITER)

try:

for i in range(TRAIN_STEPS):

trainBatch, labelBatch = trainSet.next_batch(BATCH_SIZE)

if i%COST_ITER == 0:

# Plotting cost

tmpCost = cost.eval(feed_dict={x: trainBatch,

targets: labelBatch,

keep_prob: 1.0})

trainPlot.updateCost(tmpCost, i // COST_ITER)

if i%TEST_ITER == 0:

# Plotting accuracy

evalD, evalL = evalSet.next_batch(1000)

accEval = accuracy.eval(feed_dict={x: evalD,

targets: evalL,

keep_prob: 1.0})

accTrain = accuracy.eval(feed_dict={x: trainBatch,

targets: labelBatch,

keep_prob: 1.0})

trainPlot.updateAcc(accEval, accTrain, i // TEST_ITER)

if i%SAVE_ITER == 0:

# Saving model

saver.save(sess, 'models/gap-clas/large/CNN-CG')

train_step.run(feed_dict={x: trainBatch,

targets: labelBatch,

keep_prob: 0.4})

except KeyboardInterrupt:

pass

saver.save(sess, 'models/gap-clas/large/CNN-CG')

evalD, evalL = evalSet.next_batch(1000)

print("Accuracy %g" % accuracy.eval(feed_dict={x: evalD,

targets: evalL,

keep_prob: 1.0}))

sess.close()

| GapClassifier.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python [conda root]

# language: python

# name: conda-root-py

# ---

# %pylab inline

# # More Examples

# ## Additive model

#

# Example taken from JCGM 101:2008, Clause 9.2.

#

# This example considers the additive model

#

# $$ Y = X_1 + X_2 + X_3 + X_4 $$

#

# for three different sets of PDFs $g_{x_i}(\xi_i)$ assigned to the input quantities $X_i$, regarded as independent.

#

# #### Taks 1

# Assume that a Gaussian PDF is assigned to each $X_i$. The best estimates are $x_i=0$ with associated standard uncertainties $u(x_i)=1$. Report the Monte Carlo results for estimate, uncertainty and 95% coverage interval of $Y$ with three significant digits. Compare the Monte Carlo result to that of a standard GUM approach using the *law of propagation of uncertainty*.

# #### Task 2

# Assign a rectangular PDF to each $X_i$ so that $X_i$ has an expectation of zero and a standard deviation of unity. Report the results of $Y$ with three significant digits and compare to that obtained with the standard GUM approach.

# #### Taks 3

# Same as Taks 2, but with $X_4$ having standard uncertainty of 10 rathern than unity.

# ## Mass calibration

#

# Example taken from JCGM 101:2008, Clause 9.3

#

# Consider the calibration of a weight $W$ of mass density $\rho_W$ against a reference weight $R$ of mass density $\rho_R$ having nominally the same mass, using a balance operating in air of mass density $\rho_a$. Since $\rho_W$ and $\rho_R$ are generally diffeent, it is necessary to account for buoyancy effects.

#

# Applying Archimedes' principle, the model takes the form

#

# $$ m_W\left( 1 - \frac{\rho_a}{\rho_W} \right) = (m_R + \delta m_R)\left( 1-\frac{\rho_a}{\rho_R} \right) $$

#

# where $\delta m_R$ is the mass of a small weight of density $\rho_R$ added to $R$ to balance it with $W$. Working with so called "conventional masses", the model in this example ist

#

# $$ \delta m = (m_{R,c} + \delta m_{R,c})\left[ 1 + (\rho_a-\rho_{a_0})\left( \frac{1}{\rho_W}-\frac{1}{\rho_R} \right) \right] $$

# Knowledge about the input quantities is given as

#

#

#

# Carry out the uncertainty evaluation using the Monte Carlo method to obtain an estimate, uncertainty and 99% coverage interval.

# ## Comparison loss

#

# Comparison loss in microwave power meter calibration example taken from JCGM 101:2008, Clause 9.4.

# During the calibration of a microwave power meter, the power meter and a standard power meter are connected in turn to a stable signal generator. The power absorbed by each meter will in general be different because their complex input voltage reflection coefficients are not identical. The ration $Y$ of the power $P_M$ absorbed by the meter being calibrated and that, $P_S$, by the standard meter is

#

# $$ Y = \frac{P_M}{P_S} = \frac{1-\vert \Gamma_M\vert^2}{1-\vert \Gamma_S\vert^2} \times \frac{\vert 1-\Gamma_S\Gamma_G\vert^2}{\vert 1-\Gamma_M\Gamma_G\vert^2} $$

#

# where $\Gamma_G$ is the voltage reflection coefficient of the signal generator, $\Gamma_M$ that of the meter being calibrated and $\Gamma_S$ the of the standard meter. This power ratio is an instance of "comparison loss".

# #### Task

#

# Considering the case that $\Gamma_S=\Gamma_G=0$, and measured values are obtained of the real and imaginary parts $X_1, X_2$ of $\Gamma_M$, the model used in this examples is $ Y = 1 - X_1^2 - X_2^2 $, better expressed as

#

# $$ \delta Y = 1 - Y = X_1^2 + X_2^2 $$

#

# $X_1$ and $X_2$ are usually not independent, but it may be difficult to gather information about the actual correlation coefficient. In such cases, the uncertainty evaluation can be repeated with different trial values for the correlation coefficient.

#

# Here we use $\rho=0$ and $\rho=0.9$. The estimate of $X_1$ is taken as $x_1 = 0.01$ with standard uncertainty $u(x_1) = 0.005$. The estimate of $X_2$ is taken as zero with an associated standard uncertainty of $u(x_2) = 0.005$.

#

# Carry out an uncertainty evaluation for $\delta Y$, calculating estimate and uncertainty using the *law of propagation of uncertainty* and the Monte Method for both correlation coefficients.

| .ipynb_checkpoints/10 More examples-checkpoint.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

#

# COMMENTS TO DO

#

#Condensed code based on the code from: https://jmetzen.github.io/2015-11-27/vae.html

# %matplotlib inline

import tensorflow as tf

import tensorflow.contrib.layers as layers

import matplotlib.pyplot as plt

import matplotlib.gridspec as gridspec

import numpy as np

import os

import time

import glob

from tensorflow.examples.tutorials.mnist import input_data

def plot(samples, w, h, fw, fh, iw=28, ih=28):

fig = plt.figure(figsize=(fw, fh))

gs = gridspec.GridSpec(w, h)

gs.update(wspace=0.05, hspace=0.05)

for i, sample in enumerate(samples):

ax = plt.subplot(gs[i])

plt.axis('off')

ax.set_xticklabels([])

ax.set_yticklabels([])

ax.set_aspect('equal')

plt.imshow(sample.reshape(iw, ih), cmap='Greys_r')

return fig

def encoder(images, num_outputs_h0=8, num_outputs_h1=16, kernel_size=5, stride=2, num_hidden_fc=1024, z_dim=100):

print("Encoder")

h0 = layers.convolution2d(

inputs=images,

num_outputs=num_outputs_h0,

kernel_size=kernel_size,

stride=stride,

activation_fn=tf.nn.relu,

scope='e_cnn_%d' % (0,)

)

print("Convolution 1 -> {}".format(h0))

h1 = layers.convolution2d(

inputs=h0,

num_outputs=num_outputs_h1,

kernel_size=kernel_size,

stride=stride,

activation_fn=tf.nn.relu,

scope='e_cnn_%d' % (1,)

)

print("Convolution 2 -> {}".format(h1))

h1_dim = h1.get_shape().as_list()[1]

h2_flat = tf.reshape(h1, [-1, h1_dim * h1_dim * num_outputs_h1])

print("Reshape -> {}".format(h2_flat))

h2_flat =layers.fully_connected(

inputs=h2_flat,

num_outputs=num_hidden_fc,

activation_fn=tf.nn.relu,

scope='e_d_%d' % (0,)

)

print("FC 1 -> {}".format(h2_flat))

z_mean =layers.fully_connected(

inputs=h2_flat,

num_outputs=z_dim,

activation_fn=None,

scope='e_d_%d' % (1,)

)

print("Z mean -> {}".format(z_mean))

z_log_sigma_sq =layers.fully_connected(

inputs=h2_flat,

num_outputs=z_dim,

activation_fn=None,

scope='e_d_%d' % (2,)

)

return z_mean, z_log_sigma_sq

def decoder(z, num_hidden_fc=1024, h1_reshape_dim=7, kernel_size=5, h1_channels=16, h2_channels = 8, output_channels=1, strides=2, output_dims=784):

print("Decoder")

batch_size = tf.shape(z)[0]

h0 =layers.fully_connected(

inputs=z,

num_outputs=num_hidden_fc,

activation_fn=tf.nn.relu,

scope='d_d_%d' % (0,)

)

print("FC 1 -> {}".format(h0))

h1 =layers.fully_connected(

inputs=h0,

num_outputs=h1_reshape_dim*h1_reshape_dim*h1_channels,

activation_fn=tf.nn.relu,

scope='d_d_%d' % (1,)

)

print("FC 2 -> {}".format(h1))

h1_reshape = tf.reshape(h1, [-1, h1_reshape_dim, h1_reshape_dim, h1_channels])

print("Reshape -> {}".format(h1_reshape))

wdd2 = tf.get_variable('wd2', shape=(kernel_size, kernel_size, h2_channels, h1_channels), initializer=tf.contrib.layers.xavier_initializer())

bdd2 = tf.get_variable('bd2', shape=(h2_channels,), initializer=tf.constant_initializer(0))

h2 = tf.nn.conv2d_transpose(h1_reshape, wdd2, output_shape=(batch_size, h1_reshape_dim*2, h1_reshape_dim*2, h2_channels), strides=(1, strides, strides, 1), padding='SAME')

h2_out = tf.nn.relu(h2 + bdd2)

h2_out = tf.reshape(h2_out, (batch_size, h1_reshape_dim*2, h1_reshape_dim*2, h2_channels))

print("DeConv 1 -> {}".format(h2_out))

h2_dim = h2_out.get_shape().as_list()[1]

wdd3 = tf.get_variable('wd3', shape=(kernel_size, kernel_size, output_channels, h2_channels), initializer=tf.contrib.layers.xavier_initializer())

bdd3 = tf.get_variable('bd3', shape=(output_channels,), initializer=tf.constant_initializer(0))

h3 = tf.nn.conv2d_transpose(h2_out, wdd3, output_shape=(batch_size, h2_dim*2, h2_dim*2, output_channels), strides=(1, strides, strides, 1), padding='SAME')

h3_out = tf.nn.sigmoid(h3 + bdd3)

#Workaround to use dinamyc batch size...

h3_out = tf.reshape(h3_out, (batch_size, h2_dim*2, h2_dim*2, output_channels))

print("DeConv 2 -> {}".format(h3_out))

h3_reshape = tf.reshape(h3_out, [-1, output_dims])

print("Reshape -> {}".format(h3_reshape))

return h3_reshape

mnist = input_data.read_data_sets('DATASETS/MNIST_TF', one_hot=True)

#For reconstructing the same or a different image (denoising)

images = tf.placeholder(tf.float32, shape=(None, 784))

images_28x28x1 = tf.reshape(images, [-1, 28, 28, 1])

images_target = tf.placeholder(tf.float32, shape=(None, 784))

is_training_placeholder = tf.placeholder(tf.bool)

learning_rate_placeholder = tf.placeholder(tf.float32)

z_dim = 100

with tf.variable_scope("encoder") as scope:

z_mean, z_log_sigma_sq = encoder(images_28x28x1)

with tf.variable_scope("reparameterization") as scope:

eps = tf.random_normal(shape=tf.shape(z_mean), mean=0.0, stddev=1.0, dtype=tf.float32)

# z = mu + sigma*epsilon

z = tf.add(z_mean, tf.multiply(tf.sqrt(tf.exp(z_log_sigma_sq)), eps))

with tf.variable_scope("decoder") as scope:

x_reconstr_mean = decoder(z)

scope.reuse_variables()

##### SAMPLING #######

z_input = tf.placeholder(tf.float32, shape=[None, z_dim])

x_sample = decoder(z_input)

#reconstr_loss = tf.reduce_sum(tf.nn.sigmoid_cross_entropy_with_logits(logits=x_reconstr_mean, labels=images_target), reduction_indices=1)

offset=1e-7

obs_ = tf.clip_by_value(x_reconstr_mean, offset, 1 - offset)

reconstr_loss = -tf.reduce_sum(images_target * tf.log(obs_) + (1-images_target) * tf.log(1 - obs_), 1)

latent_loss = -.5 * tf.reduce_sum(1. + z_log_sigma_sq - tf.pow(z_mean, 2) - tf.exp(z_log_sigma_sq), reduction_indices=1)

cost = tf.reduce_mean(reconstr_loss + latent_loss)

optimizer=tf.train.AdamOptimizer(learning_rate=learning_rate_placeholder).minimize(cost)

init = tf.global_variables_initializer()

save_path = "MODELS_CVAE_MNIST/CONV_VAE_MNIST.ckpt"

CVAE_SAVER = tf.train.Saver()

# +

with tf.Session() as sess:

sess.run(init)

CVAE_SAVER.restore(sess, save_path)

print("Model restored in file: {}".format(save_path))

random_gen = sess.run(x_sample,feed_dict={z_input: np.random.randn(100, z_dim)})

fig=plot(random_gen, 10, 10, 10, 10)

plt.show()

# -

# # (READING) MNIST subset

# +

labels = 10

subset_size_per_label = 10

X_mini = np.load("DATASETS/MNIST_ALT/X_MINI_100")

Y_mini = np.load("DATASETS/MNIST_ALT/Y_MINI_100")

fig=plot(X_mini, 10, 10, 10, 10)

plt.show()

print(np.argmax(Y_mini, axis=1))

# -

# # Interpolating

# +

from numpy.linalg import norm

import progressbar

def slerp(p0, p1, t):

omega = np.arccos(np.dot(p0/norm(p0), p1/norm(p1)))

so = np.sin(omega)

return np.sin((1.0-t)*omega) / so * p0 + np.sin(t*omega)/so * p1

def linear(p0, p1, t):

return p0 * (1-t) + p1 * t

def interpolate(sample1, sample2, alphaValues, sess, method="linear"):

x_together = np.vstack((sample1, sample2))

z_samples = sess.run(z, feed_dict={images: x_together})

#fig=plot(z_samples, 1, 2, 10, 10, 10, 10)

#plt.show()

interpolation_steps = alphaValues.shape[0]

z_interpolations = np.zeros((interpolation_steps, z_dim))

for i, alpha in enumerate(alphaValues):

if method == "slerp":

z_interpolations[i] = slerp(z_samples[0], z_samples[1], alpha)

else:

z_interpolations[i] = linear(z_samples[0], z_samples[1], alpha)

x_interpolated = sess.run(x_sample, feed_dict={z_input: z_interpolations})

#fig=plot(x_interpolated, 1, INTERPOLATION_STEPS, 10, 10)

#plt.show()

return x_interpolated

labels = 10

INTERPOLATION_STEPS = 20

alphaValues = np.linspace(0, 1, INTERPOLATION_STEPS)

n_gen = labels * subset_size_per_label * (subset_size_per_label - 1) * INTERPOLATION_STEPS

print("Total gen: {}".format(n_gen))

x_pool = np.zeros((n_gen, X_mini.shape[1]))

y_pool = np.zeros((n_gen, Y_mini.shape[1]))

with tf.Session() as sess:

sess.run(init)

CVAE_SAVER.restore(sess, save_path)

print("Model restored in file: {}".format(save_path))

bar = progressbar.ProgressBar(max_value=n_gen)

bar.start()

counter = 0

for label in range(labels):

offset = label * subset_size_per_label

for i in range(subset_size_per_label):

samples_ind = list(range(subset_size_per_label))

samples_ind.remove(i)

x_sample_1 = X_mini[offset + i].copy()

for j in samples_ind:

x_sample_2 = X_mini[offset + j].copy()

x_output=interpolate(x_sample_1, x_sample_2, alphaValues, sess, method="linear")

x_pool[counter:counter+INTERPOLATION_STEPS] = x_output.copy()

y_pool[counter:counter+INTERPOLATION_STEPS, label] = 1

counter+=INTERPOLATION_STEPS

bar.update(counter)

bar.finish()

# +

fig=plot(x_pool[:100], 10, 10, 10, 10)

plt.show()

print(np.argmax(y_pool[:100], axis=1))

fig=plot(x_pool[-100:], 10, 10, 10, 10)

plt.show()

print(np.argmax(y_pool[-100:], axis=1))

perm = np.random.permutation(x_pool.shape[0])

x_pool = x_pool[perm]

y_pool = y_pool[perm]

perm = np.random.permutation(x_pool.shape[0])

x_pool = x_pool[perm]

y_pool = y_pool[perm]

fig=plot(x_pool[:100], 10, 10, 10, 10)

plt.show()

print(np.argmax(y_pool[:100], axis=1))

# -

# # Storing MINI-MNIST and GEN-MNIST

# +

fx = open("DATASETS/MNIST_ALT/X_GEN_18K_CVAE", "wb")

np.save(fx, x_pool)

fx.close()

fy = open("DATASETS/MNIST_ALT/Y_GEN_18K_CVAE", "wb")

np.save(fy, y_pool)

fy.close()

# -

| STEP2_2_GenerateInterpolations_x20.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

#

# # Data Science in Medicine using Python

#

# ### Author: Dr <NAME>

# ### 1. Summary of the data processing we did so far and a little reminder

# +

# %%time

import datetime

import os

import pandas as pd

flist = [fle for fle in os.listdir('data') if 'slow_Measurement' in fle]

data_dict = {} # Creates an empty dictionary

for file in flist:

print(datetime.datetime.now(), file)

path = os.path.join('data', file,)

tag = file[11:-25]

data_dict[tag] = pd.read_csv(path, parse_dates = [['Date', 'Time']])

data_dict[tag] = data_dict[tag].set_index('Date_Time')

new_columns = [item[5:] for item in data_dict[tag].columns if item.startswith('5001')]

new_columns = ['Time [ms]', 'Rel.Time [s]'] + new_columns

data_dict[tag].columns = new_columns

data_dict[tag] = data_dict[tag].resample('1S').mean()

columns_to_drop = ['Tispon [s]', 'I:Espon (I-Part) [no unit]', 'I:Espon (E-Part) [no unit]']

data_dict[tag] = data_dict[tag].drop(columns_to_drop, axis = 1)

data_dict[tag].to_csv('%s' %tag)

# -

# ##### "Computer programs are for human to read and occasionally for computers to run"

#

# You want to be more verbose particularly when learning Python

# +

# %%time

# Import the required libraries

import datetime

import os

import pandas as pd

# From the files in 'Data' sub-directory only consider those ones which contain 'slow_Measurement'

flist = [fle for fle in os.listdir('data') if 'slow_Measurement' in fle]

data_dict = {} # Creates an empty dictionary

for file in flist: # Loop through all relevant data files

print(datetime.datetime.now(), file)

# The relative filepath to the files

path = os.path.join('data', file,)

# Use the specific part of the filename as a unique key for the dictionary

tag = file[11:-25]

# Import data, parse the 'Date' and 'Time' columns as datetime and combine them

data_dict[tag] = pd.read_csv(path, parse_dates = [['Date', 'Time']])

# Set the combined 'Date_Time' column as row index

data_dict[tag] = data_dict[tag].set_index('Date_Time')

# Remove the '5001' pre-tag from the column names

new_columns = [item[5:] for item in data_dict[tag].columns if item.startswith('5001')]

new_columns = ['Time [ms]', 'Rel.Time [s]'] + new_columns

data_dict[tag].columns = new_columns

# As data were retrieved in two batches every second, combine these data by using the mean() function

data_dict[tag] = data_dict[tag].resample('1S').mean()

# Drop columes which have barely any data

columns_to_drop = ['Tispon [s]', 'I:Espon (I-Part) [no unit]', 'I:Espon (E-Part) [no unit]']

data_dict[tag] = data_dict[tag].drop(columns_to_drop, axis = 1)

# Export processed data as .csv files with unique names

data_dict[tag].to_csv('%s' %tag)

# -

# ### 2. A quick look at allt the data

# Let us look at the data in more details

# This is a dictionary of DataFrames

data_dict;

data_dict.keys()

data_dict.values();

[len(value) for value in data_dict.values()]

[value.shape for value in data_dict.values()]

# ### 3. How to process the data further?

#

# Choose one of the 3 recordings initially and study further

data_dict['2019-01-14_124200.144']

data_dict['2019-01-14_124200.144'].info()

# Now all the data are in the right format, but...

#

# ##### Further issues:

# - Tidal volumes, minute volumes and compliance only make sense when normalised to body weight

# - We would like to know the distribution of the data to make sure that they make sense (are there non-sensical data or clear outliers)

# - We still have some missing data, what should we do with them?

# ### 4. Some parameters (`VTs, MVs, Cdyn`) only make sense if normalised to body weight

data_dict['2019-01-14_124200.144'].columns

to_normalise = ['MVe [L/min]', 'MVi [L/min]', 'Cdyn [L/bar]', 'MVespon [L/min]', 'MVemand [L/min]', 'VTmand [mL]',

'VTispon [mL]', 'VTmand [L]', 'VTspon [L]', 'VTemand [mL]', 'VTespon [mL]', 'VTimand [mL]', 'VT [mL]',

'MV [L/min]', 'VTspon [mL]', 'VTe [mL]', 'VTi [mL]', 'MVleak [L/min]',]

data_dict.keys()

# Here we are creating a dictionary with the same keys on the fly but it would be more usefuls (and less prone to error) to import weights from a csv or Excel file into a DataFrame

# Weights in kilogram

weights = {'2019-01-14_124200.144' : 0.575, '2019-01-16_090910.423' : 0.575 , '2020-11-02_134238.904' : 775}

for recording in data_dict: # I could have written datadict.keys(), it is the same

for par in to_normalise:

data_dict[recording][f'{par[:-1]}/kg{par[-1]}'] = data_dict[recording][par] / weights[recording]

data_dict['2019-01-14_124200.144']

data_dict['2019-01-14_124200.144'].columns

# ### 5. Analyse the distribution of the data

data_dict['2019-01-14_124200.144'].describe()

# You can customize the percentiles

percentiles_to_show = [0.001, 0.01, 0.05, 0.1, 0.25, 0.5, 0.75, 0.95, 0.99, 0.999]

data_dict['2019-01-14_124200.144'].describe(percentiles = percentiles_to_show )

# Too many zeros - you can round it

percentiles_to_show = [0.001, 0.01, 0.05, 0.1, 0.25, 0.5, 0.75, 0.95, 0.99, 0.999]

round(data_dict['2019-01-14_124200.144'].describe(percentiles = percentiles_to_show ), 2)

data_dict['2019-01-14_124200.144'].columns

# Too many columns - study them individually

percentiles_to_show = [0.001, 0.01, 0.05, 0.1, 0.25, 0.5, 0.75, 0.95, 0.99, 0.999]

round(data_dict['2019-01-14_124200.144']['VTemand [mL/kg]'].describe(percentiles = percentiles_to_show ), 2)

# ##### One picture speaks a thousand words

#

# The main purpose of generating graphs is not to present the data to others but for yourself to visualise and inspect them.

# You can use the default .plot() method of DataFrames

data_dict['2019-01-14_124200.144']['VTemand [mL]']

# This gives you a plot but not the one your want (shows time series data)

data_dict['2019-01-14_124200.144']['VTemand [mL]'].plot()

# Two useful way of displaying the distribution of continuous data are `boxplots` and `histograms`

# #### Boxplots

# +

# That is better but it does not look nice because it shows all the outliers

data_dict['2019-01-14_124200.144']['VTemand [mL]'].plot(kind = 'box')

# -

# You can customize it - to some extent

# Google DataFrame.plot()

#

# [this is the first hit](https://pandas.pydata.org/docs/reference/api/pandas.DataFrame.plot.html)

#

# +

# That is better but it does not look nice because it shows all the outliers

data_dict['2019-01-14_124200.144']['VTemand [mL]'].plot(kind = 'box', ylim = [0,5], ylabel = 'mL/kg')

# -

# Even better - use `matplotlib` for plotting

# +

import matplotlib.pyplot as plt # Imports the matplotlib plotting library

# First we create the figure and the subplot(s)

fig, ax = plt.subplots()

# Then we populate the subplot(s)

ax.boxplot(data_dict['2019-01-14_124200.144']['VTemand [mL]'])

# It is an empty plot (although it does not throw an error) - why ?

# -

# Check there is data !!

data_dict['2019-01-14_124200.144']['VTemand [mL]']

# Let us Google it: "matplotlib boxplot empty"

#

# [Look at Stackoverflow top hits](https://stackoverflow.com/questions/52960482/plt-boxplot-showing-up-blank-despite-thousands-of-varying-datapoints)

# +

import matplotlib.pyplot as plt # Imports the matplotlib plotting library

# Removing empty data points helps

fig, ax = plt.subplots()

ax.boxplot(data_dict['2019-01-14_124200.144']['VTemand [mL]'].dropna());

# -

# How can we improve this further ??

# +

# Now the outliers are removed and the mean is shown

fig, ax = plt.subplots()

ax.boxplot(data_dict['2019-01-14_124200.144']['VTemand [mL]'].dropna(), showfliers = False, showmeans = True,);

# -

# This looks better but I would like to modify the error bars and the layout

# +

# # Whiskers show by default, you can change it

fig, ax = plt.subplots()

ax.boxplot(data_dict['2019-01-14_124200.144']['VTemand [mL]'].dropna(),

whis = [10, 90], showfliers = False, showmeans = True,);

# +

# You can customize the plot further

# Define styling for each boxplot component

meanprops = {'marker':'s', 'markeredgecolor':'black', 'markerfacecolor':'firebrick'}

medianprops = {'color': 'black', 'linewidth': 2}

boxprops = {'color': 'blue', 'linestyle': '-'}

whiskerprops = { 'color': 'red', 'linestyle': '-'}

capprops = {'color': 'green', 'linestyle': '-'}

flierprops = {'color': 'black', 'marker': '.'}

fig, ax = plt.subplots()

ax.boxplot(data_dict['2019-01-14_124200.144']['VTemand [mL]'].dropna(),

whis = [5, 95], showfliers = False, showmeans = True, meanprops = meanprops,

medianprops=medianprops, boxprops=boxprops, whiskerprops=whiskerprops,

capprops=capprops,flierprops = flierprops);

# +

# Add more useful customisation

meanprops = {'marker':'s', 'markeredgecolor':'black', 'markerfacecolor':'black'}

medianprops = {'color': 'black', 'linewidth': 2}

boxprops = {'color': 'black', 'linestyle': '-'}

whiskerprops = { 'color': 'black', 'linestyle': '-'}

capprops = {'color': 'black', 'linestyle': '-'}

flierprops = {'color': 'black', 'marker': '.'}

fig, ax = plt.subplots()

ax.boxplot(data_dict['2019-01-14_124200.144']['VTemand [mL]'].dropna(),

whis = [5, 95], showfliers = False, showmeans = True, meanprops = meanprops,

medianprops=medianprops, boxprops=boxprops, whiskerprops=whiskerprops,

capprops=capprops,flierprops = flierprops);

ax.set_xlabel('VTemand')

ax.set_ylabel('mL/kg')

ax.set_ylim(1.5, 5.5)

ax.grid(True)

# Further customization is possible

# +

# How to save the graph

dpi = 600

filetype = 'pdf'

# Add more useful customisation

meanprops = {'marker':'s', 'markeredgecolor':'black', 'markerfacecolor':'black'}

medianprops = {'color': 'black', 'linewidth': 2}

boxprops = {'color': 'black', 'linestyle': '-'}

whiskerprops = { 'color': 'black', 'linestyle': '-'}

capprops = {'color': 'black', 'linestyle': '-'}

flierprops = {'color': 'black', 'marker': '.'}

fig, ax = plt.subplots()

ax.boxplot(data_dict['2019-01-14_124200.144']['VTemand [mL]'].dropna(),

whis = [5, 95], showfliers = False, showmeans = True, meanprops = meanprops,

medianprops=medianprops, boxprops=boxprops, whiskerprops=whiskerprops,

capprops=capprops,flierprops = flierprops);

ax.set_xlabel('VTemand')

ax.set_ylabel('mL/kg')

ax.set_ylim(1.5, 5.5)

fig.savefig(os.path.join('results', f'boxplot_1.{filetype}'), dpi = dpi, format = filetype,

bbox_inches='tight',);

# -

# #### Histograms

# +

# First attempt..

fig, ax = plt.subplots()

ax.hist(data_dict['2019-01-14_124200.144']['VTemand [mL]'], color = 'black', alpha = 0.7);

# You can customize the format further as for boxplots...

# +

# A logarithmic y axis is useful to appreciate the present of outliers

fig, ax = plt.subplots()

ax.hist(data_dict['2019-01-14_124200.144']['VTemand [mL]'], log= True);

# +

# Use more bins to reveal the actual distribution

fig, ax = plt.subplots()

ax.hist(data_dict['2019-01-14_124200.144']['VTemand [mL]'], bins = 50);

# +

# imports the numpy package

import numpy as np

bins = np.arange(0, 10, 0.2)

bins

# +

# You can define your own bins

# imports the numpy package

import numpy as np

bins = np.arange(0, 10, 0.2)

fig, ax = plt.subplots()

ax.hist(data_dict['2019-01-14_124200.144']['VTemand [mL]'], bins = bins);

# +

# You can define your own bins

# imports the numpy package

import numpy as np

bins = np.arange(0, 10, 0.2)

fig, ax = plt.subplots()

ax.hist(data_dict['2019-01-14_124200.144']['VTemand [mL]'], bins = bins);

# +

# I would like to show you a different way to produce histograms

# -

VTemand_binned = pd.cut(data_dict['2019-01-14_124200.144']['VTemand [mL]'], bins = 10)

VTemand_binned.head(10)

VTemand_binned.value_counts()

# Sort according the index, not the values

# Also, what one function returns can be passed on to the next function

VTemand_binned.value_counts().sort_index()

# Better but still not what you want

VTemand_binned.value_counts().sort_index().plot()

# Better but still not what you want

VTemand_binned.value_counts().sort_index().plot(kind = 'bar')

plot = VTemand_binned.value_counts().sort_index().plot(kind = 'bar')

# +

import matplotlib.pyplot as plt

plot = VTemand_binned.value_counts().sort_index().plot(kind = 'bar')

plt.savefig(fname = os.path.join('results', 'VTemand'))

# +

import matplotlib.pyplot as plt

plot = VTemand_binned.value_counts().sort_index().plot(kind = 'bar', color = 'black', alpha = 0.7,

xlabel = 'VTemand_kg', ylabel = 'number of inflations',)

#plt.grid(True)

plt.savefig(fname = os.path.join('results', 'VTemand'))

# +

import matplotlib.pyplot as plt

plot = VTemand_binned.value_counts().sort_index().plot(kind = 'bar', color = 'black', alpha = 0.7,

xlabel = 'VTemand_kg', ylabel = 'number of inflations', logy = True)

#plt.grid(True)

plt.savefig(fname = os.path.join('results', 'VTemand'))

# -

# ##### You need to do this for all relevant columns

data_dict['2019-01-14_124200.144'].columns

# +

columns_to_plot = [column for column in data_dict['2019-01-14_124200.144'].columns

if column not in ['Time [ms]', 'Rel.Time [s]']]

print(columns_to_plot)

# -

data_dict['2019-01-14_124200.144'].columns[2:]

'VTemand [mL/kg]'.split(' ')[0]

# +

dpi = 300

filetype = 'jpg'

for column in columns_to_plot:

print(datetime.datetime.now(), f'Working on {column}')

fig, ax = plt.subplots()

ax.hist(data_dict['2019-01-14_124200.144'][column], bins = 10)

fname = column.split(' ')[0]

plt.savefig(fname = os.path.join('results', f'{fname}.{filetype}'), dpi = dpi, format = filetype,

bbox_inches='tight',)

plt.close()

# -

for column in columns_to_plot:

print(datetime.datetime.now(), f'Working on {column}')

fig, ax = plt.subplots()

ax.hist(data_dict['2019-01-14_124200.144'][column], bins = 50,)

if column == 'C20/Cdyn [no unit]':

fname = 'C20_Cdyn'

else:

fname = column.split(' ')[0]

plt.savefig(fname = os.path.join('results', f'{fname}.{filetype}'), dpi = dpi, format = filetype,

bbox_inches='tight')

plt.close()

| Lecture_6.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Introduction to XGBoost-Spark Cross Validation with GPU

#

# The goal of this notebook is to show you how to levarage GPU to accelerate XGBoost spark cross validatoin for hyperparameter tuning. The best model for the given hyperparameters will be returned.

#

# Note: CrossValidation can't be ran with the latest cudf v21.06.1 because of some API changes. We'll plan to release a new XGBoost jar with the fixing soon. We keep this notebook using cudf v0.19.2 & rapids-4-spark v0.5.0.

#

# Here takes the application 'Taxi' as an example.

#

# A few libraries are required for this notebook:

# 1. NumPy

# 2. cudf jar

# 2. xgboost4j jar

# 3. xgboost4j-spark jar

# #### Import the Required Libraries

from ml.dmlc.xgboost4j.scala.spark import XGBoostRegressionModel, XGBoostRegressor

from ml.dmlc.xgboost4j.scala.spark.rapids import CrossValidator

from pyspark.ml.evaluation import RegressionEvaluator

from pyspark.ml.tuning import ParamGridBuilder

from pyspark.sql import SparkSession

from pyspark.sql.types import FloatType, IntegerType, StructField, StructType

from time import time

# As shown above, here `CrossValidator` is imported from package `ml.dmlc.xgboost4j.scala.spark.rapids`, not the spark's `tuning.CrossValidator`.

# #### Create a Spark Session

spark = SparkSession.builder.appName("taxi-cv-gpu-python").getOrCreate()

# #### Specify the Data Schema and Load the Data

# +

label = 'fare_amount'

schema = StructType([

StructField('vendor_id', FloatType()),

StructField('passenger_count', FloatType()),

StructField('trip_distance', FloatType()),

StructField('pickup_longitude', FloatType()),

StructField('pickup_latitude', FloatType()),

StructField('rate_code', FloatType()),

StructField('store_and_fwd', FloatType()),

StructField('dropoff_longitude', FloatType()),

StructField('dropoff_latitude', FloatType()),

StructField(label, FloatType()),

StructField('hour', FloatType()),

StructField('year', IntegerType()),

StructField('month', IntegerType()),

StructField('day', FloatType()),

StructField('day_of_week', FloatType()),

StructField('is_weekend', FloatType()),

])

features = [ x.name for x in schema if x.name != label ]

train_data = spark.read.parquet('/data/taxi/parquet/train')

trans_data = spark.read.parquet('/data/taxi/parquet/eval')

# -

# #### Build a XGBoost-Spark CrossValidator

# First build a regressor of GPU version using *setFeaturesCols* to set feature columns

params = {

'eta': 0.05,

'maxDepth': 8,

'subsample': 0.8,

'gamma': 1.0,

'numRound': 100,

'numWorkers': 1,

'treeMethod': 'gpu_hist',

}

regressor = XGBoostRegressor(**params).setLabelCol(label).setFeaturesCols(features)

# Then build the evaluator and the hyperparameters

evaluator = (RegressionEvaluator()

.setLabelCol(label))

param_grid = (ParamGridBuilder()

.addGrid(regressor.maxDepth, [3, 6])

.addGrid(regressor.numRound, [100, 200])

.build())

# Finally the corss validator

cross_validator = (CrossValidator()

.setEstimator(regressor)

.setEvaluator(evaluator)

.setEstimatorParamMaps(param_grid)

.setNumFolds(3))

# #### Start Cross Validation by Fitting Data to CrossValidator

def with_benchmark(phrase, action):

start = time()

result = action()

end = time()

print('{} takes {} seconds'.format(phrase, round(end - start, 2)))

return result

model = with_benchmark('Cross-Validation', lambda: cross_validator.fit(train_data)).bestModel

# #### Transform On the Best Model

def transform():

result = model.transform(trans_data).cache()

result.foreachPartition(lambda _: None)

return result

result = with_benchmark('Transforming', transform)

result.select(label, 'prediction').show(5)

# #### Evaluation

accuracy = with_benchmark(

'Evaluation',

lambda: RegressionEvaluator().setLabelCol(label).evaluate(result))

print('RMSE is ' + str(accuracy))

spark.stop()

| examples/notebooks/python/cv-taxi-gpu.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ## Zahlen in Python

#

# Zahlen funktionieren in Python ähnlich wie sonst überall auch - du kannst damit ganz normale Rechnungen berechnen.

print(5)

print(5)

print(6)

# ### Die Grundrechenarten

print(5 + 4)

print(5 - 4)

print(5 * 4)

print(5 / 4)

# Auch die Klammersetzung funktioniert natürlich wie gewohnt :-):

print((5 + 4) * 3)

print(1.25 + 2)

print(1 + 2)

# ## Übung

# * Was ist 3588 geteilt durch 11,3 ?

# * Was ist die Wurzel aus 345?

345**(1/2)

| 02 Python Teil 1/01 Zahlen.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python [conda env:tanzania] *

# language: python

# name: conda-env-tanzania-py

# ---

# # Research

# ## Models:

# These are the models that every data scientist should be familiar with.

# Favorite Classification Models:

# - LinearSVC

# - k-NN

# - Support Vector Machine Algorithm

# - XGBoost

# - Random Forest

# ### Hyperparameters to tune for each model type

# Below are the most common hyperparameters for the different models

# **k-NN**

# - **n_neighbors**: decreasing K decreases bias and increases variance, which leads to a more complex model

# - **leaf_size**: 'determines how many observations are captured in each leaf of either the BallTree of KDTree algorithms, which ultimately make the classification. The default equals 30. You can tune leaf_size by passing in a range of integers, like n_neighbors, to find the optimal leaf size. It is important to note that leaf_size can have a serious effect on run time and memory usage. Because of this, you tend not to run it on leaf_sizes smaller than 30 (smaller leafs equates to more leafs)'

# - **weights**: 'is the function that weights the data when making a prediction. “Uniform” is an equal weighted function, while “distance” weights the points by the inverse of their distance (i.e., location matters!). Utilizing the “distance” function will result in closer data points having a more significant influence on the classification'

# - **metric**: 'can be set to various distance metrics (see here) like Manhattan, Euclidean, Minkowski, or weighted Minkowski (default is “minkowski” with a p=2, which is the Euclidean distance). Which metric you choose is heavily dependent on what question you are trying to answer'

# **Random Forest, Decision Trees**

# - **n_estimators (random forest only)**: number of decision trees used in making the forest (default = 100). Generally speaking, the more uncorrelated trees in our forest, the closer their individual errors get to averaging out. However, more does not mean better since this can have an exponential effect on computation costs. After a certain point, there exists statistical evidence of diminishing returns. Bias-Variance Tradeoff: in theory, the more trees, the more overfit the model (low bias). However, when coupled with bagging, we need not worry'

# - **max_depth**: 'an integer that sets the maximum depth of the tree. The default is None, which means the nodes are expanded until all the leaves are pure (i.e., all the data belongs to a single class) or until all leaves contain less than the min_samples_split, which we will define next. Bias-Variance Tradeoff: increasing the max_depth leads to overfitting (low bias)'

# - **min_samples_split**: 'is the minimum number of samples required to split an internal node. Bias-Variance Tradeoff: the higher the minimum, the more “clustered” the decision will be, which could lead to underfitting (high bias)'

# - **min_samples_leaf**: 'defines the minimum number of samples needed at each leaf. The default input here is 1. Bias-Variance Tradeoff: similar to min_samples_split, if you do not allow the model to split (say because your min_samples_lear parameter is set too high) your model could be over generalizing the training data (high bias)'

# - **criterion**: 'measures the quality of the split and receives either “gini”, for Gini impurity (default), or “entropy”, for information gain. Gini impurity is the probability of incorrectly classifying a randomly chosen datapoint if it were labeled according to the class distribution of the dataset. Entropy is a measure of chaos in your data set. If a split in the dataset results in lower entropy, then you have gained information (i.e., your data has become more decision useful) and the split is worthy of the additional computational costs'

# **AdaBoost and Gradient Boosting**

# - **n_estimators**: is the maximum number of estimators at which boosting is terminated. If a perfect fit is reached, the algo is stopped. The default here is 50. Bias-Variance Tradeoff: the higher the number of estimators in your model the lower the bias.

# - **learning_rate**: is the rate at which we are adjusting the weights of our model with respect to the loss gradient. In layman’s terms: the lower the learning_rate, the slower we travel along the slope of the loss function. Important note: there is a trade-off between learning_rate and n_estimators as a tiny learning_rate and a large n_estimators will not necessarily improve results relative to the large computational costs.

# - **base_estimator (AdaBoost) / Loss (Gradient Boosting)**: is the base estimator from which the boosted ensemble is built. For AdaBoost the default value is None, which equates to a Decision Tree Classifier with max depth of 1 (a stump). For Gradient Boosting the default value is deviance, which equates to Logistic Regression. If “exponential” is passed, the AdaBoost algorithm is used.

#

# **Support Vector Machines (SVM)**

# - **C**: is the regularization parameter. As the documentation notes, the strength of regularization is inversely proportional to C. Basically, this parameter tells the model how much you want to avoid being wrong. You can think of the inverse of C as your total error budget (summed across all training points), with a lower C value allowing for more error than a higher value of C. Bias-Variance Tradeoff: as previously mentioned, a lower C value allows for more error, which translates to higher bias.

# - **gamma**: determines how far the scope of influence of a single training points reaches. A low gamma value allows for points far away from the hyperplane to be considered in its calculation, whereas a high gamma value prioritizes proximity. Bias-Variance Tradeoff: think of gamma as inversely related to K in KNN, the higher the gamma, the tighter the fit (low bias).

# - **kernel**: specifies which kernel should be used. Some of the acceptable strings are “linear”, “poly”, and “rbf”. Linear uses linear algebra to solve for the hyperplane, while poly uses a polynomial to solve for the hyperplane in a higher dimension (see Kernel Trick). RBF, or the radial basis function kernel, uses the distance between the input and some fixed point (either the origin or some of fixed point c) to make a classification assumption. More information on the Radial Basis Function can be found here.

# ## Evaluation Metrics

# ### Precision – What percent of your predictions were correct?

# Precision is the ability of a classifier not to label an instance positive that is actually negative. For each class it is defined as the ratio of true positives to the sum of true and false positives.

#

# TP – True Positives

# FP – False Positives

#

# Precision – Accuracy of positive predictions.

# Precision = TP/(TP + FP)

# ### Recall – What percent of the positive cases did you catch?

# Recall is the ability of a classifier to find all positive instances. For each class it is defined as the ratio of true positives to the sum of true positives and false negatives.

#

# FN – False Negatives

#

# Recall: Fraction of positives that were correctly identified.

# Recall = TP/(TP+FN)

# ### F1 score – What percent of positive predictions were correct?

# The F1 score is a weighted harmonic mean of precision and recall such that the best score is 1.0 and the worst is 0.0. Generally speaking, F1 scores are lower than accuracy measures as they embed precision and recall into their computation. **As a rule of thumb, the weighted average of F1 should be used to compare classifier models, not global accuracy**.

#

# F1 Score = 2*(Recall * Precision) / (Recall + Precision)

# ### Most important

# Recall will be the metric to focus on, because saying a well will fail when it is still fine, is no biggie.

#

# Saying a well is working when its actually broken is a biggie.

# ## Visualization Metrics

#

# ### Precision-Recall Curve

# Precision-Recall curves should be used when there is a moderate to large class imbalance.

#

# Our dataset has a very large class imbalance, so we chose to use the precision-recall curve.

#

# [https://machinelearningmastery.com/roc-curves-and-precision-recall-curves-for-imbalanced-classification](https://machinelearningmastery.com/roc-curves-and-precision-recall-curves-for-imbalanced-classification/)

# ### Confusion Matrix

# A confusion matrix is a table that is often used to describe the performance of a classification model (or “classifier”) on a set of test data for which the true values are known. It allows the visualization of the performance of an algorithm.

#

# [https://www.geeksforgeeks.org/confusion-matrix-machine-learning/](https://www.geeksforgeeks.org/confusion-matrix-machine-learning/)

# ## Methodology

# This project was built using th ROSEMED methodology.

# - **'R'**: Research the domain and relevant data science tools

# - **'O'**: Obtain the data

# - **'S'**: Scrub the data and remove any NaNs, missing values, duplicates, or outliers

# - **'E'**: Explore the data and look for correlations and insights

# - **'M'**: Model the data using the most relevant classifiers for the data

# - **'E'**: Evaluate the models and choose the model that is most suitable for the data

# - **'D'**: Deploy the models

| notebooks/research.ipynb |

# # Overfit-generalization-underfit

#

# In the previous notebook, we presented the general cross-validation framework

# and how it helps us quantify the training and testing errors as well

# as their fluctuations.

#

# In this notebook, we will put these two errors into perspective and show how

# they can help us know if our model generalizes, overfit, or underfit.

#

# Let's first load the data and create the same model as in the previous

# notebook.

# +

from sklearn.datasets import fetch_california_housing

housing = fetch_california_housing(as_frame=True)

data, target = housing.data, housing.target

target *= 100 # rescale the target in k$

# -

# <div class="admonition note alert alert-info">

# <p class="first admonition-title" style="font-weight: bold;">Note</p>