code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ## Aggregating data

#

# Pandas has very convenient features to aggregate data. That is, to compute summary statistics about datasets either as a whole or after dividing them into subsets based on data values.

#

# 1. Loading a comma-separated-value (CSV) dataset

# 2. Computing descriptive statistics

# 3. Grouping data by value

# 4. Creating pivot tables

import pandas as pd

# +

#pandas is very good at reading data from many different types of file: JSON, text, excel, HDF

#In this case, we will load a data frame from the comma-separated-file tips.csv

open('tips.csv','r').readlines()[:10]

# +

#Pandas can read such a file with a read_csv function.

tips = pd.read_csv('tips.csv')

# -

tips.head()

# +

tips.mean()

#apply the aggregation function: mean, which will

#compute average values for all the columns for which it's meaningful to do so

# +

#describe the dataset for more information

tips.describe()

# -

# ## Grouping

# +

#Let's say you want to know how well men tip versus women.

#For that, we can tell pandas to group the data frame based

#on the value of the column: sex using the function groupby.

#And then, we can take the mean.

tips.groupby('sex').mean()

# +

tips.groupby(['sex','smoker']).mean()

#This creates a pandas multidimensional index

# -

# ## Pivot Tables

#

# we create groups and assign them to both index values and columns so that represent a multidimensional analysis of the data in tabular format. For instance, we'll create a pivot table for our tips data frame showing the total bill amount, grouped by sex and smoker status.

pd.pivot_table(tips,'total_bill','sex','smoker')

# +

#one more dimensional grouping

pd.pivot_table(tips,'total_bill',['sex','smoker'],['day','time'])

# -

| 11 aggregation.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # 1A.algo - Optimisation sous contrainte (correction)

#

# Un peu plus de détails dans cet article : [Damped Arrow-Hurwicz algorithm for sphere packing](https://arxiv.org/abs/1605.05473).

from jyquickhelper import add_notebook_menu

add_notebook_menu()

# On rappelle le problème d'optimisation à résoudre :

#

# $\left \{ \begin{array}{l} \min_U J(U) = u_1^2 + u_2^2 - u_1 u_2 + u_2 \\ sous \; contrainte \; \theta(U) = u_1 + 2u_2 - 1 = 0 \; et \; u_1 \geqslant 0.5 \end{array}\right .$

#

# Les implémentations de l'algorithme Arrow-Hurwicz proposées ici ne sont pas génériques. Il n'est pas suggéré de les réutiliser à moins d'utiliser pleinement le calcul matriciel de [numpy](http://www.numpy.org/).

# ## Exercice 1 : optimisation avec cvxopt

#

# Le module [cvxopt](http://cvxopt.org/) utilise une fonction qui retourne la valeur de la fonction à optimiser, sa dérivée, sa dérivée seconde.

#

# $\begin{array}{rcl} f(x,y) &=& x^2 + y^2 - xy + y \\ \frac{\partial f(x,y)}{\partial x} &=& 2x - y \\ \frac{\partial f(x,y)}{\partial y} &=& 2y - x + 1 \\ \frac{\partial^2 f(x,y)}{\partial x^2} &=& 2 \\ \frac{\partial^2 f(x,y)}{\partial y^2} &=& 2 \\ \frac{\partial^2 f(x,y)}{\partial x\partial y} &=& -1 \end{array}$

# Le paramètre le plus complexe est la fonction ``F`` pour lequel il faut lire la documentation de la fonction [solvers.cp](http://cvxopt.org/userguide/solvers.html#problems-with-nonlinear-objectives) qui détaille les trois cas d'utilisation de la fonction ``F`` :

#

# * ``F()`` ou ``F(None,None)``, ce premier cas est sans doute le plus déroutant puisqu'il faut retourner le nombre de contraintes non linéaires et le premier $x_0$

# * ``F(x)`` ou ``F(x,None)``

# * ``F(x,z)``

#

# L'algorithme de résolution est itératif : on part d'une point $x_0$ qu'on déplace dans les directions opposés aux gradients de la fonction à minimiser et des contraintes jusqu'à ce que le point $x_t$ n'évolue plus. C'est pourquoi le premier d'utilisation de la focntion $F$ est en fait une initialisation. L'algorithme d'optimisation a besoin d'un premier point $x_0$ dans le domaine de défintion de la fonction $f$.

# +

from cvxopt import solvers, matrix

import random

def fonction(x=None,z=None) :

if x is None :

x0 = matrix ( [[ random.random(), random.random() ]])

return 0,x0

f = x[0]**2 + x[1]**2 - x[0]*x[1] + x[1]

d = matrix ( [ x[0]*2 - x[1], x[1]*2 - x[0] + 1 ] ).T

if z is None:

return f, d

else :

h = z[0] * matrix ( [ [ 2.0, -1.0], [-1.0, 2.0] ])

return f, d, h

A = matrix([ [ 1.0, 2.0 ] ]).trans()

b = matrix ( [[ 1.0] ] )

sol = solvers.cp ( fonction, A = A, b = b)

print (sol)

print ("solution:",sol['x'].T)

# -

# ## Exercice 2 : l'algorithme de Arrow-Hurwicz

# +

def fonction(X) :

x,y = X

f = x**2 + y**2 - x*y + y

d = [ x*2 - y, y*2 - x + 1 ]

return f, d

def contrainte(X) :

x,y = X

f = x+2*y-1

d = [ 1,2]

return f, d

X0 = [ random.random(),random.random() ]

p0 = random.random()

epsilon = 0.1

rho = 0.1

diff = 1

iter = 0

while diff > 1e-10 :

f,d = fonction( X0 )

th,dt = contrainte( X0 )

Xt = [ X0[i] - epsilon*(d[i] + dt[i] * p0) for i in range(len(X0)) ]

th,dt = contrainte( Xt )

pt = p0 + rho * th

iter += 1

diff = sum ( [ abs(Xt[i] - X0[i]) for i in range(len(X0)) ] )

X0 = Xt

p0 = pt

if iter % 100 == 0 :

print ("i {0} diff {1:0.000}".format(iter,diff),":", f,X0,p0,th)

print (diff,iter,p0)

print("solution:",X0)

# -

# La code proposé ici a été repris et modifié de façon à l'inclure dans une fonction qui s'adapte à n'importe quel type de fonction et contrainte dérivables : [Arrow_Hurwicz](http://www.xavierdupre.fr/app/ensae_teaching_cs/helpsphinx/td_1a/optimisation_contrainte.html?highlight=arrow#ensae_teaching_cs.td_1a.optimisation_contrainte.Arrow_Hurwicz). Il faut distinguer l'algorithme en lui-même et la preuve de sa convergence. Cet algorithme fonctionne sur une grande classe de fonctions mais sa convergence n'est assurée que lorsque les fonctions sont quadratiques.

# ## Exercice 3 : le lagrangien augmenté

# +

def fonction(X,c) :

x,y = X

f = x**2 + y**2 - x*y + y

d = [ x*2 - y, y*2 - x + 1 ]

v = x+2*y-1

v = c/2 * v**2

# la fonction retourne maintenant dv (ce qu'elle ne faisait pas avant)

dv = [ 2*(x+2*y-1), 4*(x+2*y-1) ]

dv = [ c/2 * dv[0], c/2 * dv[1] ]

return f + v, d, dv

def contrainte(X) :

x,y = X

f = x+2*y-1

d = [ 1,2]

return f, d

X0 = [ random.random(),random.random() ]

p0 = random.random()

epsilon = 0.1

rho = 0.1

c = 1

diff = 1

iter = 0

while diff > 1e-10 :

f,d,dv = fonction( X0,c )

th,dt = contrainte( X0 )

# le dv[i] est nouveau

Xt = [ X0[i] - epsilon*(d[i] + dt[i] * p0 + dv[i]) for i in range(len(X0)) ]

th,dt = contrainte( Xt )

pt = p0 + rho * th

iter += 1

diff = sum ( [ abs(Xt[i] - X0[i]) for i in range(len(X0)) ] )

X0 = Xt

p0 = pt

if iter % 100 == 0 :

print ("i {0} diff {1:0.000}".format(iter,diff),":", f,X0,p0,th)

print (diff,iter,p0)

print("solution:",X0)

# -

# ## Prolongement 1 : inégalité

#

# Le problème à résoudre est le suivant :

#

# $\left\{ \begin{array}{l} \min_U J(U) = u_1^2 + u_1^2 - u_1 u_2 + u_2 \\ \; sous \; contrainte \; \theta(U) = u_1 + 2u_2 - 1 = 0 \; et \; u_1 \geqslant 0.3 \end{array}\right.$

# +

from cvxopt import solvers, matrix

import random

def fonction(x=None,z=None) :

if x is None :

x0 = matrix ( [[ random.random(), random.random() ]])

return 0,x0

f = x[0]**2 + x[1]**2 - x[0]*x[1] + x[1]

d = matrix ( [ x[0]*2 - x[1], x[1]*2 - x[0] + 1 ] ).T

h = matrix ( [ [ 2.0, -1.0], [-1.0, 2.0] ])

if z is None: return f, d

else : return f, d, h

A = matrix([ [ 1.0, 2.0 ] ]).trans()

b = matrix ( [[ 1.0] ] )

G = matrix ( [[0.0, -1.0] ]).trans()

h = matrix ( [[ -0.3] ] )

sol = solvers.cp ( fonction, A = A, b = b, G=G, h=h)

print (sol)

print ("solution:",sol['x'].T)

# -

# ## Version avec l'algorithme de Arrow-Hurwicz

# +

import numpy,random

X0 = numpy.matrix ( [[ random.random(), random.random() ]]).transpose()

P0 = numpy.matrix ( [[ random.random(), random.random() ]]).transpose()

A = numpy.matrix([ [ 1.0, 2.0 ], [ 0.0, -1.0] ])

tA = A.transpose()

b = numpy.matrix ( [[ 1.0], [-0.30] ] )

epsilon = 0.1

rho = 0.1

c = 1

first = True

iter = 0

while first or abs(J - oldJ) > 1e-8 :

if first :

J = X0[0,0]**2 + X0[1,0]**2 - X0[0,0]*X0[1,0] + X0[1,0]

oldJ = J+1

first = False

else :

oldJ = J

J = X0[0,0]**2 + X0[1,0]**2 - X0[0,0]*X0[1,0] + X0[1,0]

dj = numpy.matrix ( [ X0[0,0]*2 - X0[1,0], X0[1,0]*2 - X0[0,0] + 1 ] ).transpose()

Xt = X0 - ( dj + tA * P0 ) * epsilon

Pt = P0 + ( A * Xt - b) * rho

if Pt [1,0] < 0 : Pt[1,0] = 0

X0,P0 = Xt,Pt

iter += 1

if iter % 100 == 0 :

print ("iteration",iter, J)

print (iter)

print ("solution:",Xt.T)

# -

# ## Prolongement 2 : optimisation d'une fonction linéaire

#

# Correction à venir.

| _doc/notebooks/td1a_algo/td1a_correction_session9.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3.8.10 64-bit

# name: python3

# ---

# + [markdown] colab_type="text" id="view-in-github"

# <a href="https://colab.research.google.com/github/mrdbourke/tensorflow-deep-learning/blob/main/07_food_vision_milestone_project_1.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + [markdown] id="z2PPYrQIztfX"

# # 07. Milestone Project 1: 🍔👁 Food Vision Big™

#

# In the previous notebook ([transfer learning part 3: scaling up](https://github.com/mrdbourke/tensorflow-deep-learning/blob/main/06_transfer_learning_in_tensorflow_part_3_scaling_up.ipynb)) we built Food Vision mini: a transfer learning model which beat the original results of the [Food101 paper](https://data.vision.ee.ethz.ch/cvl/datasets_extra/food-101/) with only 10% of the data.

#

# But you might be wondering, what would happen if we used all the data?

#

# Well, that's what we're going to find out in this notebook!

#

# We're going to be building Food Vision Big™, using all of the data from the Food101 dataset.

#

# Yep. All 75,750 training images and 25,250 testing images.

#

# And guess what...

#

# This time **we've got the goal of beating [DeepFood](https://www.researchgate.net/publication/304163308_DeepFood_Deep_Learning-Based_Food_Image_Recognition_for_Computer-Aided_Dietary_Assessment)**, a 2016 paper which used a Convolutional Neural Network trained for 2-3 days to achieve 77.4% top-1 accuracy.

#

# > 🔑 **Note:** **Top-1 accuracy** means "accuracy for the top softmax activation value output by the model" (because softmax ouputs a value for every class, but top-1 means only the highest one is evaluated). **Top-5 accuracy** means "accuracy for the top 5 softmax activation values output by the model", in other words, did the true label appear in the top 5 activation values? Top-5 accuracy scores are usually noticeably higher than top-1.

#

# | | 🍔👁 Food Vision Big™ | 🍔👁 Food Vision mini |

# |-----|-----|-----|

# | Dataset source | TensorFlow Datasets | Preprocessed download from Kaggle |

# | Train data | 75,750 images | 7,575 images |

# | Test data | 25,250 images | 25,250 images |

# | Mixed precision | Yes | No |

# | Data loading | Performanant tf.data API | TensorFlow pre-built function |

# | Target results | 77.4% top-1 accuracy (beat [DeepFood paper](https://arxiv.org/abs/1606.05675)) | 50.76% top-1 accuracy (beat [Food101 paper](https://data.vision.ee.ethz.ch/cvl/datasets_extra/food-101/static/bossard_eccv14_food-101.pdf)) |

#

# *Table comparing difference between Food Vision Big (this notebook) versus Food Vision mini (previous notebook).*

#

# Alongside attempting to beat the DeepFood paper, we're going to learn about two methods to significantly improve the speed of our model training:

# 1. Prefetching

# 2. Mixed precision training

#

# But more on these later.

#

# ## What we're going to cover

#

# * Using TensorFlow Datasets to download and explore data

# * Creating preprocessing function for our data

# * Batching & preparing datasets for modelling (**making our datasets run fast**)

# * Creating modelling callbacks

# * Setting up **mixed precision training**

# * Building a feature extraction model (see [transfer learning part 1: feature extraction](https://github.com/mrdbourke/tensorflow-deep-learning/blob/main/04_transfer_learning_in_tensorflow_part_1_feature_extraction.ipynb))

# * Fine-tuning the feature extraction model (see [transfer learning part 2: fine-tuning](https://github.com/mrdbourke/tensorflow-deep-learning/blob/main/05_transfer_learning_in_tensorflow_part_2_fine_tuning.ipynb))

# * Viewing training results on TensorBoard

#

# ## How you should approach this notebook

#

# You can read through the descriptions and the code (it should all run, except for the cells which error on purpose), but there's a better option.

#

# Write all of the code yourself.

#

# Yes. I'm serious. Create a new notebook, and rewrite each line by yourself. Investigate it, see if you can break it, why does it break?

#

# You don't have to write the text descriptions but writing the code yourself is a great way to get hands-on experience.

#

# Don't worry if you make mistakes, we all do. The way to get better and make less mistakes is to write more code.

#

# > 📖 **Resource:** See the full set of course materials on GitHub: https://github.com/mrdbourke/tensorflow-deep-learning

# + [markdown] id="rLaDq25mykWN"

# ## Check GPU

#

# For this notebook, we're going to be doing something different.

#

# We're going to be using mixed precision training.

#

# Mixed precision training was introduced in [TensorFlow 2.4.0](https://blog.tensorflow.org/2020/12/whats-new-in-tensorflow-24.html) (a very new feature at the time of writing).

#

# What does **mixed precision training** do?

#

# Mixed precision training uses a combination of single precision (float32) and half-preicison (float16) data types to speed up model training (up 3x on modern GPUs).

#

# We'll talk about this more later on but in the meantime you can read the [TensorFlow documentation on mixed precision](https://www.tensorflow.org/guide/mixed_precision) for more details.

#

# For now, before we can move forward if we want to use mixed precision training, we need to make sure the GPU powering our Google Colab instance (if you're using Google Colab) is compataible.

#

# For mixed precision training to work, you need access to a GPU with a compute compability score of 7.0+.

#

# Google Colab offers P100, K80 and T4 GPUs, however, **the P100 and K80 aren't compatible with mixed precision training**.

#

# Therefore before we proceed we need to make sure we have **access to a Tesla T4 GPU in our Google Colab instance**.

#

# If you're not using Google Colab, you can find a list of various [Nvidia GPU compute capabilities on Nvidia's developer website](https://developer.nvidia.com/cuda-gpus#compute).

#

# > 🔑 **Note:** If you run the cell below and see a P100 or K80, try going to to Runtime -> Factory Reset Runtime (note: this will remove any saved variables and data from your Colab instance) and then retry to get a T4.

# + colab={"base_uri": "https://localhost:8080/"} id="VAC_5rYJicZ4" outputId="7c6def0d-e61f-4361-e18a-ff3589d2b978"

# If using Google Colab, this should output "Tesla T4" otherwise,

# you won't be able to use mixed precision training

# !nvidia-smi -L

# + [markdown] id="oWgb38BYKhS_"

# Since mixed precision training was introduced in TensorFlow 2.4.0, make sure you've got at least TensorFlow 2.4.0+.

# + colab={"base_uri": "https://localhost:8080/"} id="8LpEDWLxKg46" outputId="aef28f24-4395-46f6-8129-6cbf84caa68a"

# Check TensorFlow version (should be 2.4.0+)

import tensorflow as tf

print(tf.__version__)

# + [markdown] id="pPwSfuFDzT5v"

# ## Get helper functions

#

# We've created a series of helper functions throughout the previous notebooks in the course. Instead of rewriting them (tedious), we'll import the [`helper_functions.py`](https://github.com/mrdbourke/tensorflow-deep-learning/blob/main/extras/helper_functions.py) file from the GitHub repo.

# + colab={"base_uri": "https://localhost:8080/"} id="iC2R6bOZzhQd" outputId="cfbe9a9f-fe5d-4497-c1de-6ac93013dfc0"

# Get helper functions file

# !wget https://raw.githubusercontent.com/mrdbourke/tensorflow-deep-learning/main/extras/helper_functions.py

# + id="ZqKKuFt7zYvf"

# Import series of helper functions for the notebook (we've created/used these in previous notebooks)

from helper_functions import create_tensorboard_callback, plot_loss_curves, compare_historys

# + [markdown] id="w5BE7WYl9b_8"

# ## Use TensorFlow Datasets to Download Data

#

# In previous notebooks, we've downloaded our food images (from the [Food101 dataset](https://www.kaggle.com/dansbecker/food-101/home)) from Google Storage.

#

# And this is a typical workflow you'd use if you're working on your own datasets.

#

# However, there's another way to get datasets ready to use with TensorFlow.

#

# For many of the most popular datasets in the machine learning world (often referred to and used as benchmarks), you can access them through [TensorFlow Datasets (TFDS)](https://www.tensorflow.org/datasets/overview).

#

# What is **TensorFlow Datasets**?

#

# A place for prepared and ready-to-use machine learning datasets.

#

# Why use TensorFlow Datasets?

#

# * Load data already in Tensors

# * Practice on well established datasets

# * Experiment with differet data loading techniques (like we're going to use in this notebook)

# * Experiment with new TensorFlow features quickly (such as mixed precision training)

#

# Why *not* use TensorFlow Datasets?

#

# * The datasets are static (they don't change, like your real-world datasets would)

# * Might not be suited for your particular problem (but great for experimenting)

#

# To begin using TensorFlow Datasets we can import it under the alias `tfds`.

#

# + id="YDMExkAG8ztE"

# Get TensorFlow Datasets

import tensorflow_datasets as tfds

# + [markdown] id="-TRPTGvpNuJm"

# To find all of the available datasets in TensorFlow Datasets, you can use the `list_builders()` method.

#

# After doing so, we can check to see if the one we're after (`"food101"`) is present.

# + colab={"base_uri": "https://localhost:8080/"} id="gXA8b2619s0X" outputId="8f0c8971-4a0a-4aec-f371-701022832a76"

# List available datasets

datasets_list = tfds.list_builders() # get all available datasets in TFDS

print("food101" in datasets_list) # is the dataset we're after available?

# + [markdown] id="bUK_zulYNfVY"

# Beautiful! It looks like the dataset we're after is available (note there are plenty more available but we're on Food101).

#

# To get access to the Food101 dataset from the TFDS, we can use the [`tfds.load()`](https://www.tensorflow.org/datasets/api_docs/python/tfds/load) method.

#

# In particular, we'll have to pass it a few parameters to let it know what we're after:

# * `name` (str) : the target dataset (e.g. `"food101"`)

# * `split` (list, optional) : what splits of the dataset we're after (e.g. `["train", "validation"]`)

# * the `split` parameter is quite tricky. See [the documentation for more](https://github.com/tensorflow/datasets/blob/master/docs/splits.md).

# * `shuffle_files` (bool) : whether or not to shuffle the files on download, defaults to `False`

# * `as_supervised` (bool) : `True` to download data samples in tuple format (`(data, label)`) or `False` for dictionary format

# * `with_info` (bool) : `True` to download dataset metadata (labels, number of samples, etc)

#

# > 🔑 **Note:** Calling the `tfds.load()` method will start to download a target dataset to disk if the `download=True` parameter is set (default). This dataset could be 100GB+, so make sure you have space.

# + colab={"base_uri": "https://localhost:8080/", "height": 347, "referenced_widgets": ["c464cf7f692747dd8a9e5c224b72e683", "e19cbd5c9eca4db6b023d501ae96447b", "465a84232f9344b7852bc0315aaf6147", "a15efd3f9c514ce1947bd75330ddc60f", "1630703c5da345df876cd4a38ac062d0", "48c761f4ebf541169f0b674e835081bd", "fc53cb8f72eb45d9987f22fa391e64ff", "5b48d0e1bd664416b3a8da6daedf29eb", "116932efaceb4e6889b9e9a7585a851f", "<KEY>", "<KEY>", "5ffe42dfcc8945058a1211325bb12276", "<KEY>", "02364bec4a004ed4a4b0970e1b572410", "<KEY>", "<KEY>", "16688ead8185417fbe6acf39649c222a", "5bdf272dff1049afac690fe78c530277", "<KEY>", "8f1b23e71fd14fcc97a32f0abdc96419", "daf7463ed2b14751bb7532a91429bf67", "<KEY>", "<KEY>", "7caebdb45e1f41ae88eddfabada5ff67", "da96cbabe8ff449388e052dd55213653", "<KEY>", "41908c7012174ecabfe801a2f5e3dee5", "103a4aded56c4acd81aac7fe81851956", "<KEY>", "6f9a55e4f5fd4f459eac237f02c37829", "<KEY>", "<KEY>", "<KEY>", "<KEY>", "fe172d6537a642be985520c0e66d7801", "<KEY>", "e8ef9677adbf4f368a258d64732d152a", "acc1ea5a5531425c885afe2bdbce4273", "<KEY>", "<KEY>", "0624ceb3768e4c62a220b188c7ed1141", "<KEY>", "<KEY>", "7c0827a68eb141e5a1939597a171c593", "<KEY>", "<KEY>", "<KEY>", "<KEY>", "8a925d410ebb413a808ce40f12e7d91e", "9a9338dbc9c04a078575071d48b25e51", "<KEY>", "<KEY>", "568e801778124de386e7badcf1e58456", "fe96151f8be04763bedf23083b6a6b7b", "142f3e1df4c1470c81be604db7f2aa95", "<KEY>"]} id="ClXZDWng-s8F" outputId="57febdf7-894d-4524-fb3b-9bc63d7c2d36"

# Load in the data (takes about 5-6 minutes in Google Colab)

(train_data, test_data), ds_info = tfds.load(name="food101", # target dataset to get from TFDS

split=["train", "validation"], # what splits of data should we get? note: not all datasets have train, valid, test

shuffle_files=True, # shuffle files on download?

as_supervised=True, # download data in tuple format (sample, label), e.g. (image, label)

with_info=True) # include dataset metadata? if so, tfds.load() returns tuple (data, ds_info)

# + [markdown] id="pSxo6soUwTQl"

# Wonderful! After a few minutes of downloading, we've now got access to entire Food101 dataset (in tensor format) ready for modelling.

#

# Now let's get a little information from our dataset, starting with the class names.

#

# Getting class names from a TensorFlow Datasets dataset requires downloading the "`dataset_info`" variable (by using the `as_supervised=True` parameter in the `tfds.load()` method, **note:** this will only work for supervised datasets in TFDS).

#

# We can access the class names of a particular dataset using the `dataset_info.features` attribute and accessing `names` attribute of the the `"label"` key.

# + colab={"base_uri": "https://localhost:8080/"} id="Zoy8Tu7VR2ji" outputId="e572e94c-d6d3-43bc-e049-ed4d73c84c96"

# Features of Food101 TFDS

ds_info.features

# + colab={"base_uri": "https://localhost:8080/"} id="g2UkCaLsDXaR" outputId="8ac531a8-f951-48d1-f9a1-dfdeabf6ac1b"

# Get class names

class_names = ds_info.features["label"].names

class_names[:10]

# + [markdown] id="TwsBAkGKwh08"

# ### Exploring the Food101 data from TensorFlow Datasets

#

# Now we've downloaded the Food101 dataset from TensorFlow Datasets, how about we do what any good data explorer should?

#

# In other words, "visualize, visualize, visualize".

#

# Let's find out a few details about our dataset:

# * The shape of our input data (image tensors)

# * The datatype of our input data

# * What the labels of our input data look like (e.g. one-hot encoded versus label-encoded)

# * Do the labels match up with the class names?

#

# To do, let's take one sample off the training data (using the [`.take()` method](https://www.tensorflow.org/api_docs/python/tf/data/Dataset#take)) and explore it.

# + id="5eO2qVy3A-CC"

# Take one sample off the training data

train_one_sample = train_data.take(1) # samples are in format (image_tensor, label)

# + [markdown] id="hsZj4K3ETdvB"

# Because we used the `as_supervised=True` parameter in our `tfds.load()` method above, data samples come in the tuple format structure `(data, label)` or in our case `(image_tensor, label)`.

# + colab={"base_uri": "https://localhost:8080/"} id="m--0wDNDTU8S" outputId="27eaedbd-df55-4773-8a14-b35c40c8a4d0"

# What does one sample of our training data look like?

train_one_sample

# + [markdown] id="bP1MeznpTsbM"

# Let's loop through our single training sample and get some info from the `image_tensor` and `label`.

# + colab={"base_uri": "https://localhost:8080/"} id="Zjz4goiHBMO7" outputId="880b2873-15a9-4805-cfef-9e390c9f038f"

# Output info about our training sample

for image, label in train_one_sample:

print(f"""

Image shape: {image.shape}

Image dtype: {image.dtype}

Target class from Food101 (tensor form): {label}

Class name (str form): {class_names[label.numpy()]}

""")

# + [markdown] id="i4_od8dUUSHE"

# Because we set the `shuffle_files=True` parameter in our `tfds.load()` method above, running the cell above a few times will give a different result each time.

#

# Checking these you might notice some of the images have different shapes, for example `(512, 342, 3)` and `(512, 512, 3)` (height, width, color_channels).

#

# Let's see what one of the image tensors from TFDS's Food101 dataset looks like.

# + colab={"base_uri": "https://localhost:8080/"} id="FuZmVEH-WS4b" outputId="74ab49f2-d531-49a3-b0aa-13a0902846bf"

# What does an image tensor from TFDS's Food101 look like?

image

# + colab={"base_uri": "https://localhost:8080/"} id="3jJF7njRVKh6" outputId="5a10af78-6592-4499-f22b-eba95937ffb7"

# What are the min and max values?

tf.reduce_min(image), tf.reduce_max(image)

# + [markdown] id="P2GvO7HjVF5i"

# Alright looks like our image tensors have values of between 0 & 255 (standard red, green, blue colour values) and the values are of data type `unit8`.

#

# We might have to preprocess these before passing them to a neural network. But we'll handle this later.

#

# In the meantime, let's see if we can plot an image sample.

# + [markdown] id="llQyIBfJWc5x"

# ### Plot an image from TensorFlow Datasets

#

# We've seen our image tensors in tensor format, now let's really adhere to our motto.

#

# "Visualize, visualize, visualize!"

#

# Let's plot one of the image samples using [`matplotlib.pyplot.imshow()`](https://matplotlib.org/stable/api/_as_gen/matplotlib.pyplot.imshow.html) and set the title to target class name.

# + colab={"base_uri": "https://localhost:8080/", "height": 264} id="pK581hgPWyLm" outputId="f82b5267-3c9c-4d19-a3b8-6cf4998d4110"

# Plot an image tensor

import matplotlib.pyplot as plt

plt.imshow(image)

plt.title(class_names[label.numpy()]) # add title to image by indexing on class_names list

plt.axis(False);

# + [markdown] id="4mBAtGnPWQHy"

# Delicious!

#

# Okay, looks like the Food101 data we've got from TFDS is similar to the datasets we've been using in previous notebooks.

#

# Now let's preprocess it and get it ready for use with a neural network.

# + [markdown] id="UeRJnQMIYLcy"

# ## Create preprocessing functions for our data

#

# In previous notebooks, when our images were in folder format we used the method [`tf.keras.preprocessing.image_dataset_from_directory()`](https://www.tensorflow.org/api_docs/python/tf/keras/preprocessing/image_dataset_from_directory) to load them in.

#

# Doing this meant our data was loaded into a format ready to be used with our models.

#

# However, since we've downloaded the data from TensorFlow Datasets, there are a couple of preprocessing steps we have to take before it's ready to model.

#

# More specifically, our data is currently:

#

# * In `uint8` data type

# * Comprised of all differnet sized tensors (different sized images)

# * Not scaled (the pixel values are between 0 & 255)

#

# Whereas, models like data to be:

#

# * In `float32` data type

# * Have all of the same size tensors (batches require all tensors have the same shape, e.g. `(224, 224, 3)`)

# * Scaled (values between 0 & 1), also called normalized

#

# To take care of these, we'll create a `preprocess_img()` function which:

#

# * Resizes an input image tensor to a specified size using [`tf.image.resize()`](https://www.tensorflow.org/api_docs/python/tf/image/resize)

# * Converts an input image tensor's current datatype to `tf.float32` using [`tf.cast()`](https://www.tensorflow.org/api_docs/python/tf/cast)

#

# > 🔑 **Note:** Pretrained EfficientNetBX models in [`tf.keras.applications.efficientnet`](https://www.tensorflow.org/api_docs/python/tf/keras/applications/efficientnet) (what we're going to be using) have rescaling built-in. But for many other model architectures you'll want to rescale your data (e.g. get its values between 0 & 1). This could be incorporated inside your "`preprocess_img()`" function (like the one below) or within your model as a [`tf.keras.layers.experimental.preprocessing.Rescaling`](https://www.tensorflow.org/api_docs/python/tf/keras/layers/experimental/preprocessing/Rescaling) layer.

# + id="NKuwdjm0CWc1"

# Make a function for preprocessing images

def preprocess_img(image, label, img_shape=224):

"""

Converts image datatype from 'uint8' -> 'float32' and reshapes image to

[img_shape, img_shape, color_channels]

"""

image = tf.image.resize(image, [img_shape, img_shape]) # reshape to img_shape

return tf.cast(image, tf.float32), label # return (float32_image, label) tuple

# + [markdown] id="m6kGGFa1Z3Nz"

# Our `preprocess_img()` function above takes image and label as input (even though it does nothing to the label) because our dataset is currently in the tuple structure `(image, label)`.

#

# Let's try our function out on a target image.

# + colab={"base_uri": "https://localhost:8080/"} id="BqPDUGCvHI4K" outputId="84cccb6f-5ace-4903-e33d-73dfa867c6ee"

# Preprocess a single sample image and check the outputs

preprocessed_img = preprocess_img(image, label)[0]

print(f"Image before preprocessing:\n {image[:2]}...,\nShape: {image.shape},\nDatatype: {image.dtype}\n")

print(f"Image after preprocessing:\n {preprocessed_img[:2]}...,\nShape: {preprocessed_img.shape},\nDatatype: {preprocessed_img.dtype}")

# + [markdown] id="uhIIvprqaHEZ"

# Excellent! Looks like our `preprocess_img()` function is working as expected.

#

# The input image gets converted from `uint8` to `float32` and gets reshaped from its current shape to `(224, 224, 3)`.

#

# How does it look?

# + colab={"base_uri": "https://localhost:8080/", "height": 264} id="wYtMxQzZY0F7" outputId="2dd69f3d-bfb0-4318-efb3-a40e98a558a2"

# We can still plot our preprocessed image as long as we

# divide by 255 (for matplotlib capatibility)

plt.imshow(preprocessed_img/255.)

plt.title(class_names[label])

plt.axis(False);

# + [markdown] id="gsIaJZEU7y_M"

# All this food visualization is making me hungry. How about we start preparing to model it?

# + [markdown] id="t2rd4_3CjdGE"

# ## Batch & prepare datasets

#

# Before we can model our data, we have to turn it into batches.

#

# # Why?

#

# Because computing on batches is memory efficient.

#

# We turn our data from 101,000 image tensors and labels (train and test combined) into batches of 32 image and label pairs, thus enabling it to fit into the memory of our GPU.

#

# To do this in effective way, we're going to be leveraging a number of methods from the [`tf.data` API](https://www.tensorflow.org/api_docs/python/tf/data).

#

# > 📖 **Resource:** For loading data in the most performant way possible, see the TensorFlow docuemntation on [Better performance with the tf.data API](https://www.tensorflow.org/guide/data_performance).

#

# Specifically, we're going to be using:

#

# * [`map()`](https://www.tensorflow.org/api_docs/python/tf/data/Dataset#map) - maps a predefined function to a target dataset (e.g. `preprocess_img()` to our image tensors)

# * [`shuffle()`](https://www.tensorflow.org/api_docs/python/tf/data/Dataset#shuffle) - randomly shuffles the elements of a target dataset up `buffer_size` (ideally, the `buffer_size` is equal to the size of the dataset, however, this may have implications on memory)

# * [`batch()`](https://www.tensorflow.org/api_docs/python/tf/data/Dataset#batch) - turns elements of a target dataset into batches (size defined by parameter `batch_size`)

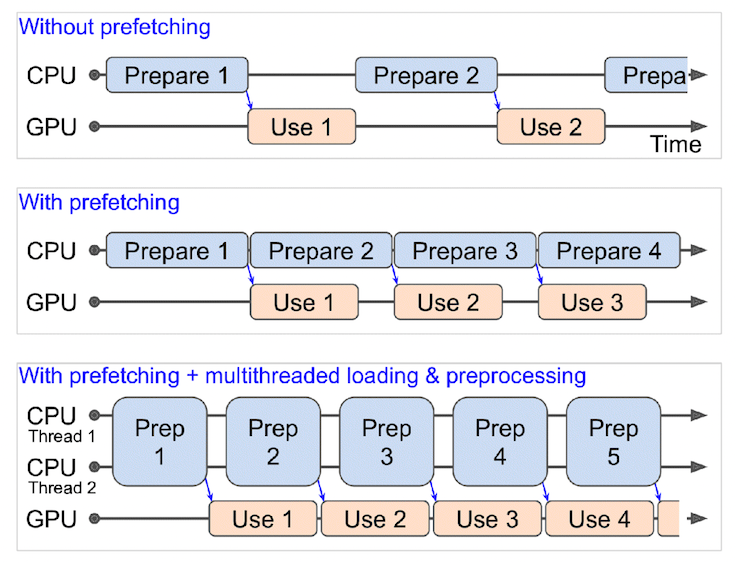

# * [`prefetch()`](https://www.tensorflow.org/api_docs/python/tf/data/Dataset#prefetch) - prepares subsequent batches of data whilst other batches of data are being computed on (improves data loading speed but costs memory)

# * Extra: [`cache()`](https://www.tensorflow.org/api_docs/python/tf/data/Dataset#cache) - caches (saves them for later) elements in a target dataset, saving loading time (will only work if your dataset is small enough to fit in memory, standard Colab instances only have 12GB of memory)

#

# Things to note:

# - Can't batch tensors of different shapes (e.g. different image sizes, need to reshape images first, hence our `preprocess_img()` function)

# - `shuffle()` keeps a buffer of the number you pass it images shuffled, ideally this number would be all of the samples in your training set, however, if your training set is large, this buffer might not fit in memory (a fairly large number like 1000 or 10000 is usually suffice for shuffling)

# - For methods with the `num_parallel_calls` parameter available (such as `map()`), setting it to`num_parallel_calls=tf.data.AUTOTUNE` will parallelize preprocessing and significantly improve speed

# - Can't use `cache()` unless your dataset can fit in memory

#

# Woah, the above is alot. But once we've coded below, it'll start to make sense.

#

# We're going to through things in the following order:

#

# ```

# Original dataset (e.g. train_data) -> map() -> shuffle() -> batch() -> prefetch() -> PrefetchDataset

# ```

#

# This is like saying,

#

# > "Hey, map this preprocessing function across our training dataset, then shuffle a number of elements before batching them together and make sure you prepare new batches (prefetch) whilst the model is looking through the current batch".

#

#

#

# *What happens when you use prefetching (faster) versus what happens when you don't use prefetching (slower). **Source:** Page 422 of [Hands-On Machine Learning with Scikit-Learn, Keras & TensorFlow Book by <NAME>](https://www.oreilly.com/library/view/hands-on-machine-learning/9781492032632/).*

#

# + id="VhA4gq-pI2W3"

# Map preprocessing function to training data (and paralellize)

train_data = train_data.map(map_func=preprocess_img, num_parallel_calls=tf.data.AUTOTUNE)

# Shuffle train_data and turn it into batches and prefetch it (load it faster)

train_data = train_data.shuffle(buffer_size=1000).batch(batch_size=32).prefetch(buffer_size=tf.data.AUTOTUNE)

# Map prepreprocessing function to test data

test_data = test_data.map(preprocess_img, num_parallel_calls=tf.data.AUTOTUNE)

# Turn test data into batches (don't need to shuffle)

test_data = test_data.batch(32).prefetch(tf.data.AUTOTUNE)

# + [markdown] id="rnTPWyAhlKO3"

# And now let's check out what our prepared datasets look like.

# + colab={"base_uri": "https://localhost:8080/"} id="5_fBkGqfJFxT" outputId="176eab2e-d62a-433e-f7c1-32f111719e07"

train_data, test_data

# + [markdown] id="X1fxgyWnlQNU"

# Excellent! Looks like our data is now in tutples of `(image, label)` with datatypes of `(tf.float32, tf.int64)`, just what our model is after.

#

# > 🔑 **Note:** You can get away without calling the `prefetch()` method on the end of your datasets, however, you'd probably see significantly slower data loading speeds when building a model. So most of your dataset input pipelines should end with a call to [`prefecth()`](https://www.tensorflow.org/api_docs/python/tf/data/Dataset#prefetch).

#

# Onward.

# + [markdown] id="Qj3umnpMvSw8"

# ## Create modelling callbacks

#

# Since we're going to be training on a large amount of data and training could take a long time, it's a good idea to set up some modelling callbacks so we be sure of things like our model's training logs being tracked and our model being checkpointed (saved) after various training milestones.

#

# To do each of these we'll use the following callbacks:

# * [`tf.keras.callbacks.TensorBoard()`](https://www.tensorflow.org/api_docs/python/tf/keras/callbacks/TensorBoard) - allows us to keep track of our model's training history so we can inspect it later (**note:** we've created this callback before have imported it from `helper_functions.py` as `create_tensorboard_callback()`)

# * [`tf.keras.callbacks.ModelCheckpoint()`](https://www.tensorflow.org/api_docs/python/tf/keras/callbacks/ModelCheckpoint) - saves our model's progress at various intervals so we can load it and resuse it later without having to retrain it

# * Checkpointing is also helpful so we can start fine-tuning our model at a particular epoch and revert back to a previous state if fine-tuning offers no benefits

# + id="wyYmxPnlXOwd"

# Create TensorBoard callback (already have "create_tensorboard_callback()" from a previous notebook)

from helper_functions import create_tensorboard_callback

# Create ModelCheckpoint callback to save model's progress

checkpoint_path = "model_checkpoints/cp.ckpt" # saving weights requires ".ckpt" extension

model_checkpoint = tf.keras.callbacks.ModelCheckpoint(checkpoint_path,

montior="val_acc", # save the model weights with best validation accuracy

save_best_only=True, # only save the best weights

save_weights_only=True, # only save model weights (not whole model)

verbose=0) # don't print out whether or not model is being saved

# + [markdown] id="DyXlCU50UElG"

# ## Setup mixed precision training

#

# We touched on mixed precision training above.

#

# However, we didn't quite explain it.

#

# Normally, tensors in TensorFlow default to the float32 datatype (unless otherwise specified).

#

# In computer science, float32 is also known as [single-precision floating-point format](https://en.wikipedia.org/wiki/Single-precision_floating-point_format). The 32 means it usually occupies 32 bits in computer memory.

#

# Your GPU has a limited memory, therefore it can only handle a number of float32 tensors at the same time.

#

# This is where mixed precision training comes in.

#

# Mixed precision training involves using a mix of float16 and float32 tensors to make better use of your GPU's memory.

#

# Can you guess what float16 means?

#

# Well, if you thought since float32 meant single-precision floating-point, you might've guessed float16 means [half-precision floating-point format](https://en.wikipedia.org/wiki/Half-precision_floating-point_format). And if you did, you're right! And if not, no trouble, now you know.

#

# For tensors in float16 format, each element occupies 16 bits in computer memory.

#

# So, where does this leave us?

#

# As mentioned before, when using mixed precision training, your model will make use of float32 and float16 data types to use less memory where possible and in turn run faster (using less memory per tensor means more tensors can be computed on simultaneously).

#

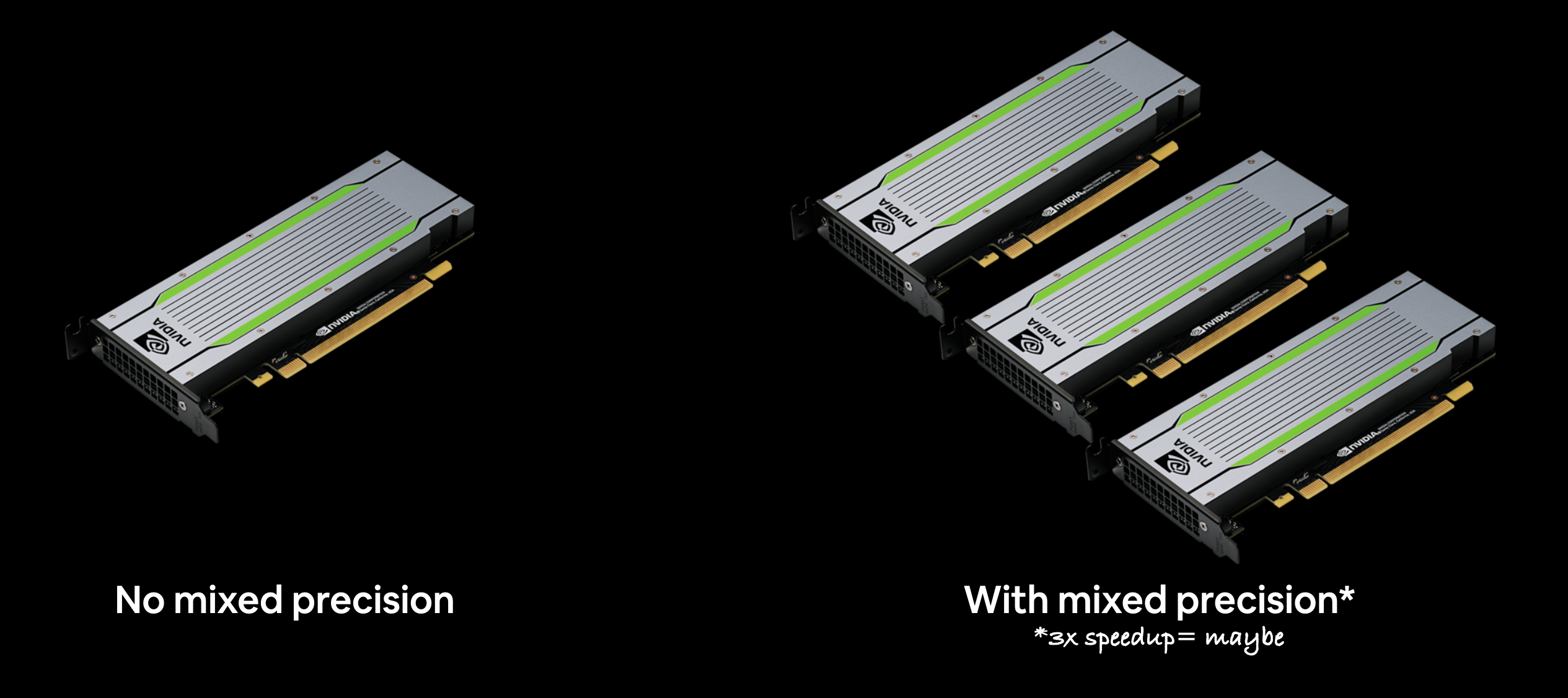

# As a result, using mixed precision training can improve your performance on modern GPUs (those with a compute capability score of 7.0+) by up to 3x.

#

# For a more detailed explanation, I encourage you to read through the [TensorFlow mixed precision guide](https://www.tensorflow.org/guide/mixed_precision) (I'd highly recommend at least checking out the summary).

#

#

# *Because mixed precision training uses a combination of float32 and float16 data types, you may see up to a 3x speedup on modern GPUs.*

#

# > 🔑 **Note:** If your GPU doesn't have a score of over 7.0+ (e.g. P100 in Colab), mixed precision won't work (see: ["Supported Hardware"](https://www.tensorflow.org/guide/mixed_precision#supported_hardware) in the mixed precision guide for more).

#

# > 📖 **Resource:** If you'd like to learn more about precision in computer science (the detail to which a numerical quantity is expressed by a computer), see the [Wikipedia page](https://en.wikipedia.org/wiki/Precision_(computer_science)) (and accompanying resources).

#

# Okay, enough talk, let's see how we can turn on mixed precision training in TensorFlow.

#

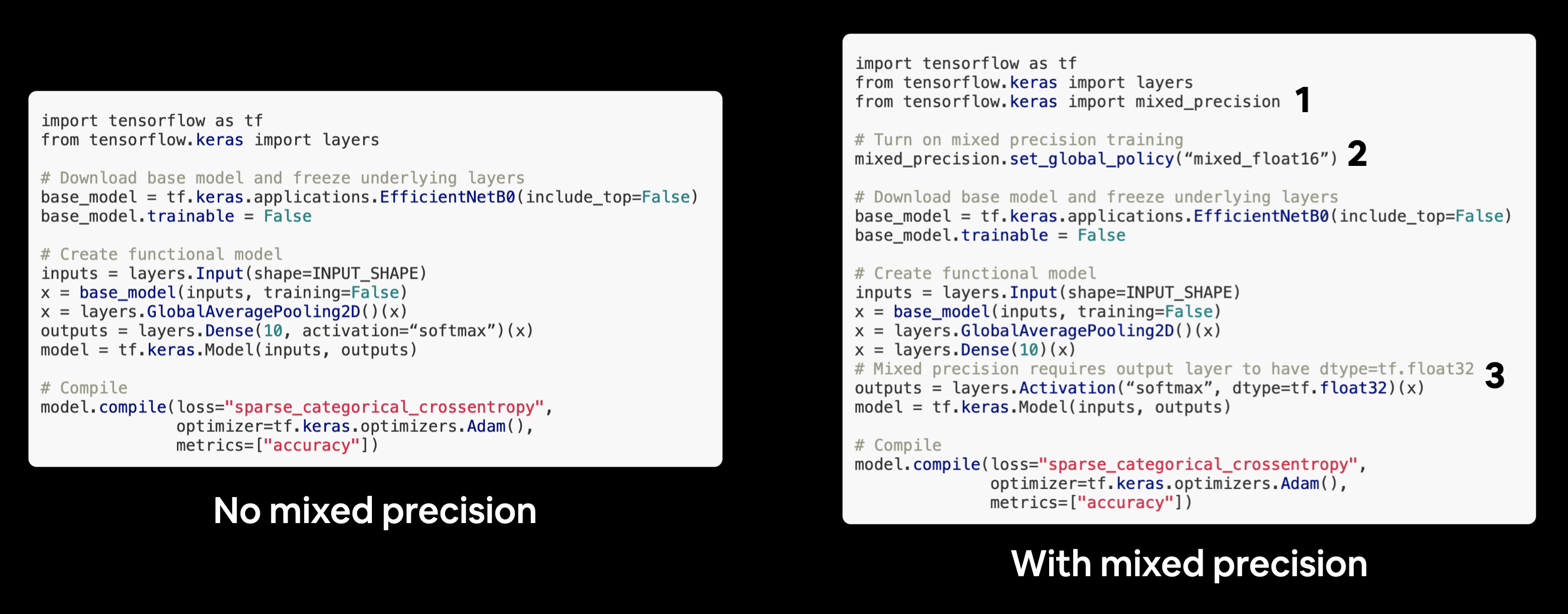

# The beautiful thing is, the [`tensorflow.keras.mixed_precision`](https://www.tensorflow.org/api_docs/python/tf/keras/mixed_precision/) API has made it very easy for us to get started.

#

# First, we'll import the API and then use the [`set_global_policy()`](https://www.tensorflow.org/api_docs/python/tf/keras/mixed_precision/set_global_policy) method to set the *dtype policy* to `"mixed_float16"`.

#

# + colab={"base_uri": "https://localhost:8080/"} id="5BuEjmlybR7V" outputId="ebb171e8-4ae9-406d-c771-6cdf4f5b2a8d"

# Turn on mixed precision training

from tensorflow.keras import mixed_precision

mixed_precision.set_global_policy(policy="mixed_float16") # set global policy to mixed precision

# + [markdown] id="OLxlu7VyYoQm"

# Nice! As long as the GPU you're using has a compute capability of 7.0+ the cell above should run without error.

#

# Now we can check the global dtype policy (the policy which will be used by layers in our model) using the [`mixed_precision.global_policy()`](https://www.tensorflow.org/api_docs/python/tf/keras/mixed_precision/global_policy) method.

# + colab={"base_uri": "https://localhost:8080/"} id="qzSWJP8KkKae" outputId="90ae40e6-e28f-4c16-b565-0114cff046e2"

mixed_precision.global_policy() # should output "mixed_float16"

# + [markdown] id="gpnAW2ltXCpE"

# Great, since the global dtype policy is now `"mixed_float16"` our model will automatically take advantage of float16 variables where possible and in turn speed up training.

# + [markdown] id="rA8FBJwwvVoG"

# ## Build feature extraction model

#

# Callbacks: ready to roll.

#

# Mixed precision: turned on.

#

# Let's build a model.

#

# Because our dataset is quite large, we're going to move towards fine-tuning an existing pretrained model (EfficienetNetB0).

#

# But before we get into fine-tuning, let's set up a feature-extraction model.

#

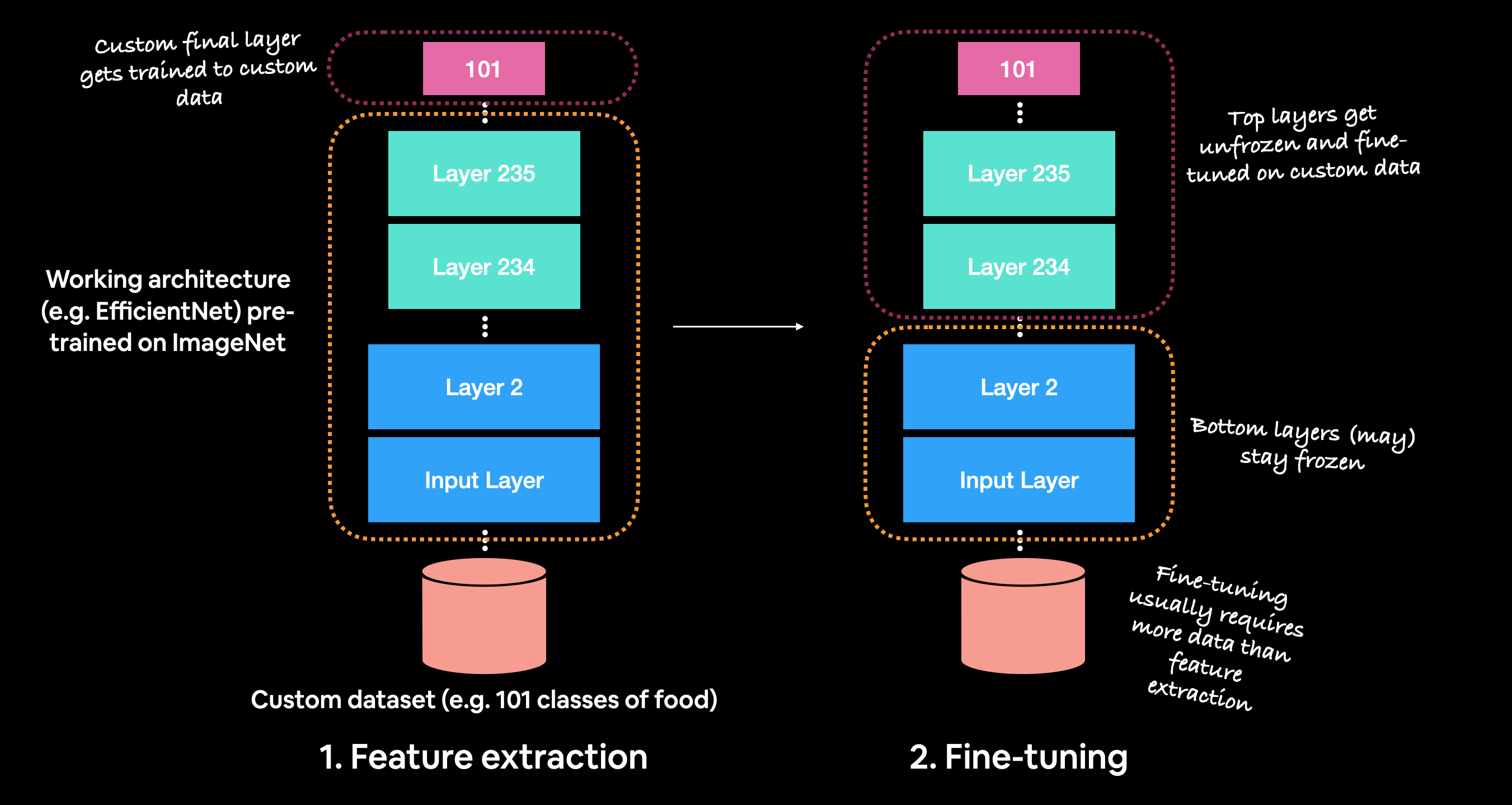

# Recall, the typical order for using transfer learning is:

#

# 1. Build a feature extraction model (replace the top few layers of a pretrained model)

# 2. Train for a few epochs with lower layers frozen

# 3. Fine-tune if necessary with multiple layers unfrozen

#

#

# *Before fine-tuning, it's best practice to train a feature extraction model with custom top layers.*

#

# To build the feature extraction model (covered in [Transfer Learning in TensorFlow Part 1: Feature extraction](https://github.com/mrdbourke/tensorflow-deep-learning/blob/main/04_transfer_learning_in_tensorflow_part_1_feature_extraction.ipynb)), we'll:

# * Use `EfficientNetB0` from [`tf.keras.applications`](https://www.tensorflow.org/api_docs/python/tf/keras/applications) pre-trained on ImageNet as our base model

# * We'll download this without the top layers using `include_top=False` parameter so we can create our own output layers

# * Freeze the base model layers so we can use the pre-learned patterns the base model has found on ImageNet

# * Put together the input, base model, pooling and output layers in a [Functional model](https://keras.io/guides/functional_api/)

# * Compile the Functional model using the Adam optimizer and [sparse categorical crossentropy](https://www.tensorflow.org/api_docs/python/tf/keras/losses/SparseCategoricalCrossentropy) as the loss function (since our labels **aren't** one-hot encoded)

# * Fit the model for 3 epochs using the TensorBoard and ModelCheckpoint callbacks

#

# > 🔑 **Note:** Since we're using mixed precision training, our model needs a separate output layer with a hard-coded `dtype=float32`, for example, `layers.Activation("softmax", dtype=tf.float32)`. This ensures the outputs of our model are returned back to the float32 data type which is more numerically stable than the float16 datatype (important for loss calculations). See the ["Building the model"](https://www.tensorflow.org/guide/mixed_precision#building_the_model) section in the TensorFlow mixed precision guide for more.

#

#

# *Turning mixed precision on in TensorFlow with 3 lines of code.*

# + colab={"base_uri": "https://localhost:8080/"} id="GrkWpCzfXKE7" outputId="d3ae00b1-4c19-4d6e-c25f-5d0114a9168a"

from tensorflow.keras import layers

from tensorflow.keras.layers.experimental import preprocessing

# Create base model

input_shape = (224, 224, 3)

base_model = tf.keras.applications.EfficientNetB0(include_top=False)

base_model.trainable = False # freeze base model layers

# Create Functional model

inputs = layers.Input(shape=input_shape, name="input_layer")

# Note: EfficientNetBX models have rescaling built-in but if your model didn't you could have a layer like below

# x = preprocessing.Rescaling(1./255)(x)

x = base_model(inputs, training=False) # set base_model to inference mode only

x = layers.GlobalAveragePooling2D(name="pooling_layer")(x)

x = layers.Dense(len(class_names))(x) # want one output neuron per class

# Separate activation of output layer so we can output float32 activations

outputs = layers.Activation("softmax", dtype=tf.float32, name="softmax_float32")(x)

model = tf.keras.Model(inputs, outputs)

# Compile the model

model.compile(loss="sparse_categorical_crossentropy", # Use sparse_categorical_crossentropy when labels are *not* one-hot

optimizer=tf.keras.optimizers.Adam(),

metrics=["accuracy"])

# + colab={"base_uri": "https://localhost:8080/"} id="wfEG8ud_jsNY" outputId="82b20036-498f-4efa-9ae1-d9e9e5c728e8"

# Check out our model

model.summary()

# + [markdown] id="lIXkEdnNGpKi"

# ## Checking layer dtype policies (are we using mixed precision?)

#

# Model ready to go!

#

# Before we said the mixed precision API will automatically change our layers' dtype policy's to whatever the global dtype policy is (in our case it's `"mixed_float16"`).

#

# We can check this by iterating through our model's layers and printing layer attributes such as `dtype` and `dtype_policy`.

# + colab={"base_uri": "https://localhost:8080/"} id="Zk__ebBLHC-Q" outputId="e94ff2a5-6adb-4745-fcd0-8cb2f2d5a083"

# Check the dtype_policy attributes of layers in our model

for layer in model.layers:

print(layer.name, layer.trainable, layer.dtype, layer.dtype_policy) # Check the dtype policy of layers

# + [markdown] id="7w6Gv6ySfpNY"

# Going through the above we see:

# * `layer.name` (str) : a layer's human-readable name, can be defined by the `name` parameter on construction

# * `layer.trainable` (bool) : whether or not a layer is trainable (all of our layers are trainable except the efficientnetb0 layer since we set it's `trainable` attribute to `False`

# * `layer.dtype` : the data type a layer stores its variables in

# * `layer.dtype_policy` : the data type a layer computes in

#

# > 🔑 **Note:** A layer can have a dtype of `float32` and a dtype policy of `"mixed_float16"` because it stores its variables (weights & biases) in `float32` (more numerically stable), however it computes in `float16` (faster).

#

# We can also check the same details for our model's base model.

#

# + colab={"base_uri": "https://localhost:8080/"} id="eL_THJCYGenQ" outputId="5339f5f1-0179-4b56-b65a-80ec350aee45"

# Check the layers in the base model and see what dtype policy they're using

for layer in model.layers[1].layers[:20]: # only check the first 20 layers to save output space

print(layer.name, layer.trainable, layer.dtype, layer.dtype_policy)

# + [markdown] id="GerkBr7GiDIj"

# > 🔑 **Note:** The mixed precision API automatically causes layers which can benefit from using the `"mixed_float16"` dtype policy to use it. It also prevents layers which shouldn't use it from using it (e.g. the normalization layer at the start of the base model).

# + [markdown] id="NJz5S66ojyUS"

# ## Fit the feature extraction model

#

# Now that's one good looking model. Let's fit it to our data shall we?

#

# Three epochs should be enough for our top layers to adjust their weights enough to our food image data.

#

# To save time per epoch, we'll also only validate on 15% of the test data.

# + colab={"base_uri": "https://localhost:8080/"} id="4v7rXZG-ZkNJ" outputId="e7f6f934-538b-42e1-f732-0bfd3b0ff13b"

# Fit the model with callbacks

history_101_food_classes_feature_extract = model.fit(train_data,

epochs=3,

steps_per_epoch=len(train_data),

validation_data=test_data,

validation_steps=int(0.15 * len(test_data)),

callbacks=[create_tensorboard_callback("training_logs",

"efficientnetb0_101_classes_all_data_feature_extract"),

model_checkpoint])

# + [markdown] id="xg01Gh3EnQSu"

# Nice, looks like our feature extraction model is performing pretty well. How about we evaluate it on the whole test dataset?

# + colab={"base_uri": "https://localhost:8080/"} id="jhV7fvTreV27" outputId="ca5d6920-1d60-46b4-abd3-38f0e8776718"

# Evaluate model (unsaved version) on whole test dataset

results_feature_extract_model = model.evaluate(test_data)

results_feature_extract_model

# + [markdown] id="TI0li4ZenctF"

# And since we used the `ModelCheckpoint` callback, we've got a saved version of our model in the `model_checkpoints` directory.

#

# Let's load it in and make sure it performs just as well.

# + [markdown] id="nNGoI1cS21um"

# ## Load and evaluate checkpoint weights

#

# We can load in and evaluate our model's checkpoints by:

#

# 1. Cloning our model using [`tf.keras.models.clone_model()`](https://www.tensorflow.org/api_docs/python/tf/keras/models/clone_model) to make a copy of our feature extraction model with reset weights.

# 2. Calling the `load_weights()` method on our cloned model passing it the path to where our checkpointed weights are stored.

# 3. Calling `evaluate()` on the cloned model with loaded weights.

#

# A reminder, checkpoints are helpful for when you perform an experiment such as fine-tuning your model. In the case you fine-tune your feature extraction model and find it doesn't offer any improvements, you can always revert back to the checkpointed version of your model.

# + colab={"base_uri": "https://localhost:8080/"} id="C5a_eh9RKBSY" outputId="71986b44-476b-4252-a8fc-2f4e1d77f073"

# Clone the model we created (this resets all weights)

cloned_model = tf.keras.models.clone_model(model)

cloned_model.summary()

# + colab={"base_uri": "https://localhost:8080/", "height": 35} id="3NBOvb9bkAHa" outputId="0ea80b8d-9570-4148-a784-f6fac093bc5e"

# Where are our checkpoints stored?

checkpoint_path

# + colab={"base_uri": "https://localhost:8080/"} id="mnagdIagKGZY" outputId="2787e959-6681-48fb-c37a-a324fad7f026"

# Load checkpointed weights into cloned_model

cloned_model.load_weights(checkpoint_path)

# + [markdown] id="Wh_-7URJlapr"

# Each time you make a change to your model (including loading weights), you have to recompile.

# + id="So0ybUwRKNSf"

# Compile cloned_model (with same parameters as original model)

cloned_model.compile(loss="sparse_categorical_crossentropy",

optimizer=tf.keras.optimizers.Adam(),

metrics=["accuracy"])

# + colab={"base_uri": "https://localhost:8080/"} id="aZtbkOhHKKLs" outputId="c7e27c13-3a60-4a75-fdb3-ec32fdcb260d"

# Evalaute cloned model with loaded weights (should be same score as trained model)

results_cloned_model_with_loaded_weights = cloned_model.evaluate(test_data)

# + [markdown] id="_441Jd7PlkN4"

# Our cloned model with loaded weight's results should be very close to the feature extraction model's results (if the cell below errors, something went wrong).

# + id="zbcuQQgz5tNX"

# Loaded checkpoint weights should return very similar results to checkpoint weights prior to saving

import numpy as np

assert np.isclose(results_feature_extract_model, results_cloned_model_with_loaded_weights).all() # check if all elements in array are close

# + [markdown] id="n6j46R_L3VED"

# Cloning the model preserves `dtype_policy`'s of layers (but doesn't preserve weights) so if we wanted to continue fine-tuning with the cloned model, we could and it would still use the mixed precision dtype policy.

# + colab={"base_uri": "https://localhost:8080/"} id="YjCO0tm9Je3N" outputId="d894df48-2202-4f34-c079-3d619f4f4345"

# Check the layers in the base model and see what dtype policy they're using

for layer in cloned_model.layers[1].layers[:20]: # check only the first 20 layers to save space

print(layer.name, layer.trainable, layer.dtype, layer.dtype_policy)

# + [markdown] id="EvTGiIFv3eOe"

# ## Save the whole model to file

#

# We can also save the whole model using the [`save()`](https://www.tensorflow.org/api_docs/python/tf/keras/Model#save) method.

#

# Since our model is quite large, you might want to save it to Google Drive (if you're using Google Colab) so you can load it in for use later.

#

# > 🔑 **Note:** Saving to Google Drive requires mounting Google Drive (go to Files -> Mount Drive).

# + id="CH4jkVPBoPhe"

# ## Saving model to Google Drive (optional)

# # Create save path to drive

# save_dir = "drive/MyDrive/tensorflow_course/food_vision/07_efficientnetb0_feature_extract_model_mixed_precision/"

# # os.makedirs(save_dir) # Make directory if it doesn't exist

# # Save model

# model.save(save_dir)

# + [markdown] id="-P1L5fiwnApE"

# We can also save it directly to our Google Colab instance.

#

# > 🔑 **Note:** Google Colab storage is ephemeral and your model will delete itself (along with any other saved files) when the Colab session expires.

# + colab={"base_uri": "https://localhost:8080/"} id="RHKn4Ex57wzF" outputId="6f8706a9-d2f5-4774-b68f-8f59c40ab789"

# Save model locally (if you're using Google Colab, your saved model will Colab instance terminates)

save_dir = "07_efficientnetb0_feature_extract_model_mixed_precision"

model.save(save_dir)

# + [markdown] id="QKiEXBC6n83F"

# And again, we can check whether or not our model saved correctly by loading it in and evaluating it.

# + id="bKGDBKrU6rej"

# Load model previously saved above

loaded_saved_model = tf.keras.models.load_model(save_dir)

# + [markdown] id="CT50keE46i9y"

# Loading a `SavedModel` also retains all of the underlying layers `dtype_policy` (we want them to be `"mixed_float16"`).

# + colab={"base_uri": "https://localhost:8080/"} id="fUJXpxmMKnYV" outputId="8c0e1986-9282-48cd-c98a-e50c130afb46"

# Check the layers in the base model and see what dtype policy they're using

for layer in loaded_saved_model.layers[1].layers[:20]: # check only the first 20 layers to save output space

print(layer.name, layer.trainable, layer.dtype, layer.dtype_policy)

# + colab={"base_uri": "https://localhost:8080/"} id="5Qym9gSm6vL_" outputId="f1b77e21-a61e-47c8-b628-f532f36d4026"

# Check loaded model performance (this should be the same as results_feature_extract_model)

results_loaded_saved_model = loaded_saved_model.evaluate(test_data)

results_loaded_saved_model

# + id="4BUhHSXI-l0v"

# The loaded model's results should equal (or at least be very close) to the model's results prior to saving

# Note: this will only work if you've instatiated results variables

import numpy as np

assert np.isclose(results_feature_extract_model, results_loaded_saved_model).all()

# + [markdown] id="mXlDCU8zoUiK"

# That's what we want! Our loaded model performing as it should.

#

# > 🔑 **Note:** We spent a fair bit of time making sure our model saved correctly because training on a lot of data can be time-consuming, so we want to make sure we don't have to continaully train from scratch.

# + [markdown] id="bj21OVxBGlw9"

# ## Preparing our model's layers for fine-tuning

#

# Our feature-extraction model is showing some great promise after three epochs. But since we've got so much data, it's probably worthwhile that we see what results we can get with fine-tuning (fine-tuning usually works best when you've got quite a large amount of data).

#

# Remember our goal of beating the [DeepFood paper](https://arxiv.org/pdf/1606.05675.pdf)?

#

# They were able to achieve 77.4% top-1 accuracy on Food101 over 2-3 days of training.

#

# Do you think fine-tuning will get us there?

#

# Let's find out.

#

# To start, let's load in our saved model.

#

# > 🔑 **Note:** It's worth remembering a traditional workflow for fine-tuning is to freeze a pre-trained base model and then train only the output layers for a few iterations so their weights can be updated inline with your custom data (feature extraction). And then unfreeze a number or all of the layers in the base model and continue training until the model stops improving.

#

# Like all good cooking shows, I've saved a model I prepared earlier (the feature extraction model from above) to Google Storage.

#

# We can download it to make sure we're using the same model going forward.

# + colab={"base_uri": "https://localhost:8080/"} id="veoEmC6V-cZv" outputId="a52eff70-816d-4854-f092-050f4d905f63"

# Download the saved model from Google Storage

# !wget https://storage.googleapis.com/ztm_tf_course/food_vision/07_efficientnetb0_feature_extract_model_mixed_precision.zip

# + colab={"base_uri": "https://localhost:8080/"} id="F5uIf_0J-jRt" outputId="20f79229-9b94-4703-a6b8-a16662b59ff4"

# Unzip the SavedModel downloaded from Google Stroage

# !mkdir downloaded_gs_model # create new dir to store downloaded feature extraction model

# !unzip 07_efficientnetb0_feature_extract_model_mixed_precision.zip -d downloaded_gs_model

# + id="Xbs5ywA6_CWV"

# Load and evaluate downloaded GS model

loaded_gs_model = tf.keras.models.load_model("/content/downloaded_gs_model/07_efficientnetb0_feature_extract_model_mixed_precision")

# + colab={"base_uri": "https://localhost:8080/"} id="YEZSqPs6pQxy" outputId="4237c482-54c0-4534-99e6-4531113720b3"

# Get a summary of our downloaded model

loaded_gs_model.summary()

# + [markdown] id="AH6sS_DNzSe_"

# And now let's make sure our loaded model is performing as expected.

# + colab={"base_uri": "https://localhost:8080/"} id="IlGs5V3Tosx3" outputId="a325d32a-845b-415b-da8b-ae5edfd8c93e"

# How does the loaded model perform?

results_loaded_gs_model = loaded_gs_model.evaluate(test_data)

results_loaded_gs_model

# + [markdown] id="2ZokTvmxzXv8"

# Great, our loaded model is performing as expected.

#

# When we first created our model, we froze all of the layers in the base model by setting `base_model.trainable=False` but since we've loaded in our model from file, let's check whether or not the layers are trainable or not.

# + colab={"base_uri": "https://localhost:8080/"} id="S-RQ633CapPk" outputId="07a27634-668f-40bd-e1fc-8ce54b43f70c"

# Are any of the layers in our model frozen?

for layer in loaded_gs_model.layers:

layer.trainable = True # set all layers to trainable

print(layer.name, layer.trainable, layer.dtype, layer.dtype_policy) # make sure loaded model is using mixed precision dtype_policy ("mixed_float16")

# + [markdown] id="tTzYLrzGzs6W"

# Alright, it seems like each layer in our loaded model is trainable. But what if we got a little deeper and inspected each of the layers in our base model?

#

# > 🤔 **Question:** *Which layer in the loaded model is our base model?*

#

# Before saving the Functional model to file, we created it with five layers (layers below are 0-indexed):

# 0. The input layer

# 1. The pre-trained base model layer (`tf.keras.applications.EfficientNetB0`)

# 2. The pooling layer

# 3. The fully-connected (dense) layer

# 4. The output softmax activation (with float32 dtype)

#

# Therefore to inspect our base model layer, we can access the `layers` attribute of the layer at index 1 in our model.

# + colab={"base_uri": "https://localhost:8080/"} id="rob4ClPIa2hp" outputId="a4670a8a-4572-41b1-edbe-88fb084effad"

# Check the layers in the base model and see what dtype policy they're using

for layer in loaded_gs_model.layers[1].layers[:20]:

print(layer.name, layer.trainable, layer.dtype, layer.dtype_policy)

# + [markdown] id="BRmD0IvT1bR_"

# Wonderful, it looks like each layer in our base model is trainable (unfrozen) and every layer which should be using the dtype policy `"mixed_policy16"` is using it.

#

# Since we've got so much data (750 images x 101 training classes = 75750 training images), let's keep all of our base model's layers unfrozen.

#

# > 🔑 **Note:** If you've got a small amount of data (less than 100 images per class), you may want to only unfreeze and fine-tune a small number of layers in the base model at a time. Otherwise, you risk overfitting.

# + [markdown] id="m6_6m5jb2Nea"

# ## A couple more callbacks

#

# We're about to start fine-tuning a deep learning model with over 200 layers using over 100,000 (75k+ training, 25K+ testing) images, which means our model's training time is probably going to be much longer than before.

#

# > 🤔 **Question:** *How long does training take?*

#

# It could be a couple of hours or in the case of the [DeepFood paper](https://arxiv.org/pdf/1606.05675.pdf) (the baseline we're trying to beat), their best performing model took 2-3 days of training time.

#

# You will really only know how long it'll take once you start training.

#

# > 🤔 **Question:** *When do you stop training?*

#

# Ideally, when your model stops improving. But again, due to the nature of deep learning, it can be hard to know when exactly a model will stop improving.

#

# Luckily, there's a solution: the [`EarlyStopping` callback](https://www.tensorflow.org/api_docs/python/tf/keras/callbacks/EarlyStopping).

#

# The `EarlyStopping` callback monitors a specified model performance metric (e.g. `val_loss`) and when it stops improving for a specified number of epochs, automatically stops training.

#

# Using the `EarlyStopping` callback combined with the `ModelCheckpoint` callback saving the best performing model automatically, we could keep our model training for an unlimited number of epochs until it stops improving.

#

# Let's set both of these up to monitor our model's `val_loss`.

# + id="GcKFVlXVwjJy"

# Setup EarlyStopping callback to stop training if model's val_loss doesn't improve for 3 epochs

early_stopping = tf.keras.callbacks.EarlyStopping(monitor="val_loss", # watch the val loss metric

patience=3) # if val loss decreases for 3 epochs in a row, stop training

# Create ModelCheckpoint callback to save best model during fine-tuning

checkpoint_path = "fine_tune_checkpoints/"

model_checkpoint = tf.keras.callbacks.ModelCheckpoint(checkpoint_path,

save_best_only=True,

monitor="val_loss")

# + [markdown] id="14cdwkIi4WnG"

# Woohoo! Fine-tuning callbacks ready.

#

# If you're planning on training large models, the `ModelCheckpoint` and `EarlyStopping` are two callbacks you'll want to become very familiar with.

#

# We're almost ready to start fine-tuning our model but there's one more callback we're going to implement: [`ReduceLROnPlateau`](https://www.tensorflow.org/api_docs/python/tf/keras/callbacks/ReduceLROnPlateau).

#

# Remember how the learning rate is the most important model hyperparameter you can tune? (if not, treat this as a reminder).

#

# Well, the `ReduceLROnPlateau` callback helps to tune the learning rate for you.

#

# Like the `ModelCheckpoint` and `EarlyStopping` callbacks, the `ReduceLROnPlateau` callback montiors a specified metric and when that metric stops improving, it reduces the learning rate by a specified factor (e.g. divides the learning rate by 10).

#

# > 🤔 **Question:** *Why lower the learning rate?*

#

# Imagine having a coin at the back of the couch and you're trying to grab with your fingers.

#

# Now think of the learning rate as the size of the movements your hand makes towards the coin.

#

# The closer you get, the smaller you want your hand movements to be, otherwise the coin will be lost.

#

# Our model's ideal performance is the equivalent of grabbing the coin. So as training goes on and our model gets closer and closer to it's ideal performance (also called **convergence**), we want the amount it learns to be less and less.

#

# To do this we'll create an instance of the `ReduceLROnPlateau` callback to monitor the validation loss just like the `EarlyStopping` callback.

#

# Once the validation loss stops improving for two or more epochs, we'll reduce the learning rate by a factor of 5 (e.g. `0.001` to `0.0002`).

#

# And to make sure the learning rate doesn't get too low (and potentially result in our model learning nothing), we'll set the minimum learning rate to `1e-7`.

# + id="I794Kaiq4Ekk"

# Creating learning rate reduction callback

reduce_lr = tf.keras.callbacks.ReduceLROnPlateau(monitor="val_loss",

factor=0.2, # multiply the learning rate by 0.2 (reduce by 5x)

patience=2,

verbose=1, # print out when learning rate goes down

min_lr=1e-7)

# + [markdown] id="3v0BHURF6Lnz"

# Learning rate reduction ready to go!

#

# Now before we start training, we've got to recompile our model.

#

# We'll use sparse categorical crossentropy as the loss and since we're fine-tuning, we'll use a 10x lower learning rate than the Adam optimizers default (`1e-4` instead of `1e-3`).

# + id="GlpO9LflcVHW"

# Compile the model

loaded_gs_model.compile(loss="sparse_categorical_crossentropy", # sparse_categorical_crossentropy for labels that are *not* one-hot

optimizer=tf.keras.optimizers.Adam(0.0001), # 10x lower learning rate than the default

metrics=["accuracy"])

# + [markdown] id="yo1bio8SYxvc"

# Okay, model compiled.

#

# Now let's fit it on all of the data.

#

# We'll set it up to run for up to 100 epochs.

#

# Since we're going to be using the `EarlyStopping` callback, it might stop before reaching 100 epochs.

#

# > 🔑 **Note:** Running the cell below will set the model up to fine-tune all of the pre-trained weights in the base model on all of the Food101 data. Doing so with **unoptimized** data pipelines and **without** mixed precision training will take a fairly long time per epoch depending on what type of GPU you're using (about 15-20 minutes on Colab GPUs). But don't worry, **the code we've written above will ensure it runs much faster** (more like 4-5 minutes per epoch).

# + colab={"base_uri": "https://localhost:8080/"} id="LkUtOdVkbMPC" outputId="c316506e-0858-4b2e-81d7-c5921ef67368"

# Start to fine-tune (all layers)

history_101_food_classes_all_data_fine_tune = loaded_gs_model.fit(train_data,

epochs=100, # fine-tune for a maximum of 100 epochs

steps_per_epoch=len(train_data),

validation_data=test_data,

validation_steps=int(0.15 * len(test_data)), # validation during training on 15% of test data

callbacks=[create_tensorboard_callback("training_logs", "efficientb0_101_classes_all_data_fine_tuning"), # track the model training logs

model_checkpoint, # save only the best model during training

early_stopping, # stop model after X epochs of no improvements

reduce_lr]) # reduce the learning rate after X epochs of no improvements

# + [markdown] id="nC2HLXePh9Af"

# > 🔑 **Note:** If you didn't use mixed precision or use techniques such as [`prefetch()`](https://www.tensorflow.org/api_docs/python/tf/data/Dataset#prefetch) in the *Batch & prepare datasets* section, your model fine-tuning probably takes up to 2.5-3x longer per epoch (see the output below for an example).

#

# | | Prefetch and mixed precision | No prefetch and no mixed precision |

# |-----|-----|-----|

# | Time per epoch | ~280-300s | ~1127-1397s |

#

# *Results from fine-tuning 🍔👁 Food Vision Big™ on Food101 dataset using an EfficienetNetB0 backbone using a Google Colab Tesla T4 GPU.*

#

# ```

# Saving TensorBoard log files to: training_logs/efficientB0_101_classes_all_data_fine_tuning/20200928-013008

# Epoch 1/100

# 2368/2368 [==============================] - 1397s 590ms/step - loss: 1.2068 - accuracy: 0.6820 - val_loss: 1.1623 - val_accuracy: 0.6894

# Epoch 2/100

# 2368/2368 [==============================] - 1193s 504ms/step - loss: 0.9459 - accuracy: 0.7444 - val_loss: 1.1549 - val_accuracy: 0.6872

# Epoch 3/100

# 2368/2368 [==============================] - 1143s 482ms/step - loss: 0.7848 - accuracy: 0.7838 - val_loss: 1.0402 - val_accuracy: 0.7142

# Epoch 4/100

# 2368/2368 [==============================] - 1127s 476ms/step - loss: 0.6599 - accuracy: 0.8149 - val_loss: 0.9599 - val_accuracy: 0.7373

# ```

# *Example fine-tuning time for non-prefetched data as well as non-mixed precision training (~2.5-3x longer per epoch).*

#

# Let's make sure we save our model before we start evaluating it.

# + id="mwjxnUsgI558"

# # Save model to Google Drive (optional)

# loaded_gs_model.save("/content/drive/MyDrive/tensorflow_course/food_vision/07_efficientnetb0_fine_tuned_101_classes_mixed_precision/")

# + colab={"base_uri": "https://localhost:8080/"} id="K1As0OhYHFX-" outputId="46408a84-943b-46f6-<PASSWORD>-2<PASSWORD>"

# Save model locally (note: if you're using Google Colab and you save your model locally, it will be deleted when your Google Colab session ends)

loaded_gs_model.save("07_efficientnetb0_fine_tuned_101_classes_mixed_precision")

# + [markdown] id="CcpNGcSAZ2UC"

# Looks like our model has gained a few performance points from fine-tuning, let's evaluate on the whole test dataset and see if managed to beat the [DeepFood paper's](https://arxiv.org/abs/1606.05675) result of 77.4% accuracy.

# + colab={"base_uri": "https://localhost:8080/"} id="2CR6q8MYM37K" outputId="d10180ce-1df4-4b77-a296-8f61ac7dd080"

# Evaluate mixed precision trained loaded model

results_loaded_gs_model_fine_tuned = loaded_gs_model.evaluate(test_data)

results_loaded_gs_model_fine_tuned

# + [markdown] id="rR-S5bKP0IxA"

# Woohoo!!!! It looks like our model beat the results mentioned in the DeepFood paper for Food101 (DeepFood's 77.4% top-1 accuracy versus our ~79% top-1 accuracy).

# + [markdown] id="a0H1rSG9RBBV"

# ## Download fine-tuned model from Google Storage

#

# As mentioned before, training models can take a significant amount of time.

#

# And again, like any good cooking show, here's something we prepared earlier...

#

# It's a fine-tuned model exactly like the one we trained above but it's saved to Google Storage so it can be accessed, imported and evaluated.

# + colab={"base_uri": "https://localhost:8080/"} id="qRuBSsPxI8Yg" outputId="86a5343d-d0eb-4fb4-9ed8-7acae14f7a9e"

# Download and evaluate fine-tuned model from Google Storage

# !wget https://storage.googleapis.com/ztm_tf_course/food_vision/07_efficientnetb0_fine_tuned_101_classes_mixed_precision.zip

# + [markdown] id="5_KhgOeA_hCG"

# The downloaded model comes in zip format (`.zip`) so we'll unzip it into the Google Colab instance.

# + colab={"base_uri": "https://localhost:8080/"} id="PNh0cPL7JBpv" outputId="c5ea36e4-1203-46eb-dd5a-285a8764d1e8"

# Unzip fine-tuned model

# !mkdir downloaded_fine_tuned_gs_model # create separate directory for fine-tuned model downloaded from Google Storage

# !unzip /content/07_efficientnetb0_fine_tuned_101_classes_mixed_precision -d downloaded_fine_tuned_gs_model

# + [markdown] id="blFdr0QJ_oQY"

# Now we can load it using the [`tf.keras.models.load_model()`](https://www.tensorflow.org/tutorials/keras/save_and_load) method and get a summary (it should be the exact same as the model we created above).

# + id="yBWWTb-QKuW1"

# Load in fine-tuned model from Google Storage and evaluate

loaded_fine_tuned_gs_model = tf.keras.models.load_model("/content/downloaded_fine_tuned_gs_model/07_efficientnetb0_fine_tuned_101_classes_mixed_precision")

# + colab={"base_uri": "https://localhost:8080/"} id="sysv2pDmJoe8" outputId="228088b7-0f87-4652-f894-3ef6accdb32a"

# Get a model summary (same model architecture as above)

loaded_fine_tuned_gs_model.summary()

# + [markdown] id="SxP8rbAj_4lY"

# Finally, we can evaluate our model on the test data (this requires the `test_data` variable to be loaded.

# + colab={"base_uri": "https://localhost:8080/"} id="Ms0R3LZ9Jrr5" outputId="957b540d-e05e-4f27-c379-39f7bce49da9"

# Note: Even if you're loading in the model from Google Storage, you will still need to load the test_data variable for this cell to work

results_downloaded_fine_tuned_gs_model = loaded_fine_tuned_gs_model.evaluate(test_data)

results_downloaded_fine_tuned_gs_model

# + [markdown] id="yMAXmidMc_hA"

# Excellent! Our saved model is performing as expected (better results than the DeepFood paper!).

#

# Congrautlations! You should be excited! You just trained a computer vision model with competitive performance to a research paper and in far less time (our model took ~20 minutes to train versus DeepFood's quoted 2-3 days).

#

# In other words, you brought Food Vision life!

#

# If you really wanted to step things up, you could try using the [`EfficientNetB4`](https://www.tensorflow.org/api_docs/python/tf/keras/applications/EfficientNetB4) model (a larger version of `EfficientNetB0`). At at the time of writing, the EfficientNet family has the [state of the art classification results](https://paperswithcode.com/sota/fine-grained-image-classification-on-food-101) on the Food101 dataset.

#

# > 📖 **Resource:** To see which models are currently performing the best on a given dataset or problem type as well as the latest trending machine learning research, be sure to check out [paperswithcode.com](http://paperswithcode.com/) and [sotabench.com](https://sotabench.com/).

# + [markdown] id="pFjooc5Gy0I2"

# ## View training results on TensorBoard

#

# Since we tracked our model's fine-tuning training logs using the `TensorBoard` callback, let's upload them and inspect them on TensorBoard.dev.

# + id="DW9x3o1kWlO3"

# !tensorboard dev upload --logdir ./training_logs \

# --name "Fine-tuning EfficientNetB0 on all Food101 Data" \

# --description "Training results for fine-tuning EfficientNetB0 on Food101 Data with learning rate 0.0001" \

# --one_shot

# + id="Cnqjq9aWM6pd"