code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (ipykernel)

# language: python

# name: python3

# ---

# # Milestone2 (Post-Feedback)

#

# ## Names & ID

# * <NAME> (28222354)

# * <NAME> (18226282)

# ## Table of Contents

# * 1. Introduction

# * 2. Original Dataset

# * 3. Method Chaining

# * 4. Exploratory Data Analysis

# * 5. Analysis

# - 5.1 Research Questions

# - 5.2 Results

# * 6. Conclusion

# ## Introduction

# The "impacts.csv" is a dataset created by NASA, which includes a list of possible asteroid impacts, and characteristics of said asteroid such as probability, diameter, velocity, etc. NASA has gathered this information through their "Sentry" system, which is an automated collision monitoring system that scans through a catalog of asteroids to find possibilities of impact over the next 100 years. Our goal with this dataset is to do an Exploratory Data Analysis to help further our understanding. This will also help us answer any questions we may have on this dataset.

import pandas as pd

import seaborn as sns

import numpy as np

import matplotlib.pyplot as plt

# ## Original Dataset

# The DataFrame below includes information about possible asteriod impacts on earth and asteriod parameters. The dataset includes:

# - Columns named "Period Start and "Period End" show the initiation and ending of the risk factor.

# - Possible Impacts refers to a percentage of probability for impact

# - Asteriod Magnitude refers to the visual brightness relative to the earth , the higher the magnitude the less brightness and visibility.

# - Palermo Scale utilizes an in depth scale to quanitfy level of concern for future potential impacts.

# - Torino Scale indicates the impact's severity dedicated to notify the public.

#

df = pd.read_csv ('../../data/raw/impacts.csv')

df.head()

# ## Method Chaining

# - Through this method chaining I was able to get rid of all null values, assign units to parameters , and organize the data. I have renamed the colums for further description. I have also dropped the maximum torino scale because most of the values were 0 and would reproduce no plot.

# - I have used the load_and_process function. Calling upon this function will apply my method chaining to the dataframe.

#

df = (pd.read_csv('../../data/raw/impacts.csv')

.dropna()

.rename(columns={"Period Start": "Risk Period Start", "Period End": "Risk Period End", "Object Name": "Asteroid Name"})

.rename(columns={"Asteroid Velocity": "Asteroid Velocity (km/s)", "Asteroid Magnitude": "Asteroid Magnitude (m)"})

.drop(['Maximum Torino Scale'], axis=1)

.loc[0:450]

.sort_values(by='Risk Period Start', ascending=True)

.reset_index(drop=True)

)

df

from scripts import project_functions

df = project_functions.load_and_process('../../data/raw/impacts.csv')

df

# ## Exploratory Data Analysis

sns.set_theme(style="white",font_scale=1.4)

fig, x=plt.subplots(figsize=(35,8))

x = sns.countplot(x="Risk Period Start", data=df)

plt.title('Amount of Asteroids in each Risk Period Start')

x.set(ylabel='Number of Asteroids')

plt.tick_params(labelsize=13)

sns.despine()

plt.show()

# ### Observations

# In this part , I have used the count plot to demonstrate a branch of my data. I can see that the data is scattered throughout the diagram with no certain relationship between the two inputs. The year 2017 showed the highest number of asteriods in the initial risk period. The lowest number of asteriods (less than 5) is seen at the year 2048. The graph then fluctuates throughout the years.

fig, x=plt.subplots(figsize=(9,9))

sns.heatmap(df.corr(), annot = True, cmap = 'viridis')

plt.show()

# ### Observations

# - The heat map shows the correlation between the variables on each axis and the key on the right hand side of the image shows the correlation range.

# - Value closer to 0 such as risk period end and risk period start (0.0082) have no linear trend between the two variables

# - Value closer to 1 such as maxmium palermo scale and cumulative palermo scale (0.97) have positive correlation (as one increased so does the other)

# - value closer to -1 such as Cumulative Palermo Scale and Risk Period Start (-0.058) demonstrate that as one variable decreases another increases and vice versa

# - yellow squares are all 1 because those squares are correlating each variable to itself

sns.set_theme(style = 'white', font_scale = 1.7)

plt.subplots(figsize=(12,8))

plt.title('Lineplot Showing Relationship Between Probability for an Impact to Occur and Possible Impacts', fontsize = 16, y = 1.05)

graph1 = sns.lineplot(x = 'Possible Impacts', y = 'Cumulative Impact Probability', data = df)

graph1.set(xlabel = '# of Times Object can Potentially Impact Earth')

sns.despine()

# ### Observations

# This lineplot shows the relation between the number of possible impacts and the Cumulative Impact Probability. Other than an outlier just below 0.010, the rest of the data stays in between 0.000 and 0.004. Looking at this graph, I can conclude that even if the number of possible impacts increases, the cumulative impact probablity stays the same for the most part.

sns.set_theme(style = 'white', font_scale = 1.7)

plt.subplots(figsize=(12,8))

plt.title('How an Asteroids Velocity Affects # of Possible Impacts', fontsize = 16, y = 1.05)

graph4 = sns.histplot(x = 'Asteroid Velocity (km/s)', y = 'Possible Impacts', data = df)

graph4.set(ylabel = '# of Times Object can Potentially Impact Earth')

sns.despine()

# ### Observations

#

# This histplot shows the relationship between an asteroid's velocity and # possible impacts. From this plot, you can see around the 5-13 km/s range the # of possible impacts are the highest. As the velocity increases after 13 km/s, the # of possible impacts continues to get lower.

df2 = df[df["Cumulative Impact Probability"] < 1.500000e-07]

x = sns.relplot(data=df2, x="Possible Impacts", y="Cumulative Impact Probability")

plt.title('Relational Plot demonstrating the Probability of Asteroid Impacts', fontsize=12, y=1.05)

x.set(ylabel='Cumulative Probability of an Impact', xlabel = 'Possible Asteroid Impacts')

sns.despine()

plt.show()

# ### Comments

# For this section , I have used the relational plot. This plot shows the direct correlation between the number of impacts and the probabilities of the impacts. After feedback from the TA, I have added a column in the new dataframe called df2 to condense the data. The results show that asteriod impacts and their probabilties are skewed all over the plot. Although there is a less number in possible impacts for all ranges of probabilties , there are several outliers that show no concrete relationship between the two points of interest

sns.jointplot(x = 'Possible Impacts', y = 'Risk Period Start', kind = 'hex', data=df, color="#4CB391")

plt.show()

# ### Observations

# The jointplot demonstrates the amount of possible impacts and when their risk period initates. The plot clearly indicates that the year 2020 had the highest risk with more than 1000 possible impacts.The lowest risk period is revealed to be in 2040.

# ## Analysis

# ### Research Questions

# * Question 1 : What are the most common parameters that define an asteriod?

# * Question 2 : What factors of our dataset affect the probability of an impact occurring?

sns.set_theme(style="white",font_scale=1.4)

sns.displot(x = 'Asteroid Velocity (km/s)', data=df, bins = 25, color="Blue")

plt.title('Asteriod Count vs Asteriod Parameters')

sns.displot(x = 'Asteroid Magnitude (m)', data=df, bins = 25, color="Red")

plt.title('Asteriod Count vs Asteriod Parameters')

sns.displot(x = 'Asteroid Diameter (km)', data=df, bins = 25, color="Green")

plt.title('Asteriod Count vs Asteriod Parameters')

sns.despine()

plt.show()

# ### Observations

# These plots demonastrate relationships between asteriod counts and their relative parameters. We can see that the smaller the asteriod , the higher the count. Moreover , asteriod magnitudes of the range 25-30 show the highest counts.I have edited this section to produce a more colorful , visible plot demonstration

#

#

sns.set_theme(style="white",font_scale=1.4)

fig, x=plt.subplots(figsize=(15,10))

sns.lineplot(x="Asteroid Magnitude (m)", y="Asteroid Diameter (km)", data=df, palette="rocket")

plt.title('Relationship between Asteroid Mangnitude and Diameter')

sns.despine()

plt.show()

# ### Observations

# The graph decreases exponentially. In this plot , we can see the relationship between an asteriods diameter and magnitude. The higher the diamater the lower the magnitude of the asteriod. These parameters demonstrate and inverse relationship in our data. In other words , the wider the asteriod is in shape , the more visible and bright the asteriod is in relative to the earth.

sns.set_theme(style="white",font_scale=1.4)

sns.kdeplot(data=df, x = 'Asteroid Magnitude (m)', y = "Asteroid Velocity (km/s)", fill=True, thresh=0, levels=100, cmap="mako",)

plt.title('KDE Probability Distribution')

sns.despine()

plt.show()

# Observations:

# - This is a two-dimensional Kernel Distribution Estimation Plot which depicts the joint distribution both variables

# - Produce a plot that is less cluttered and more interpretable, especially when drawing multiple distributions

# - the lighter the area the more dense the distribution of variables is

#

sns.set_theme(style = 'white', font_scale = 1.5)

graph2 = sns.lmplot(x = 'Asteroid Diameter (km)', y = 'Cumulative Palermo Scale', data = df)

graph2.set(title = 'How an Asteroids Diameter Affects its Score on the Cumulative Palermo Scale', ylabel = 'Score on Cumulative Palermo Scale')

sns.despine()

# ### Observations

# This lmplot shows the relationship between the diameter of an asteroid and the Cumulative Palermo Scale. The Palermo Scale shows the seriousness of an impact, taking into account the probability of impact, and energy of impact. A number below -2 shows that there are no serious consequences, in between -2 and 0 shows that the object should be closely monitored, and above 0 means that there could be serious consequences. Looking at the plot, it shows that there is a positive relation between the diameter of the asteroid and the Cumulative Palermo Scale.

sns.set_theme(style = 'white', font_scale = 2)

plt.subplots(figsize=(12,8))

plt.title('How Cumulative Palermo Scale Score Affects the Probability for an Impact to Occur', fontsize = 16, y = 1.05)

graph3 = sns.scatterplot(x = 'Cumulative Palermo Scale', y = 'Cumulative Impact Probability', data = df)

graph3.set(xlabel = 'Score on Cumulative Palermo Scale')

sns.despine()

# ### Observations

# This scatterplot shows the relation between the Cumulative Palermo Scale and the Cumulative Impact Probability. Besides an outlier just below 0.010, most of the data ha a y-value in between 0.000 and 0.004. Towards the latter end of the plot, as the x-value increases, the y-value also slightly increases. Looking at this plot, I can conclude that there is a slight positive relation between the Cumulative Palermo Scale and the Cumulative Impact Probability

# ### Results

# * Question 1 : What are the most common parameters that define an asteriod?

# - Asteriods come in all shapes and sizes. Asteriod Classification has been conducted over the years in NASA's research. In this milestone , we come to discover the most common paramters that define an asteriod. First is Asteriod Velocity in space. Asteriods vary in velocity but seem to have a steady state of velocity between 6 and 15 km/s. Second, Asteriod sizes vary across the board but are most popular between 25 and 30 m in magnitude. Lastly , this parameter varies less across different asteriods as most demonstrate a diamater of around 0.2 Km.

#

# * Question 2 : What factors of our dataset affect the probability of an impact occurring?

# - There are a few factors of our dataset which affect the probability of an impact to occur. An important column in our dataset is the Cumulative Palermo Scale. This scale is important as it shows how serious an impact is, based on its energy at impact and the probability for one to occur. If you plot asteroids by diameter against the Cumulative Palermo Scale, it is shown that there is a positive relation between the two, meaning the larger the diameter of an asteroid results in a higher score on the scale. To further our understanding, if you plot asteroids by their score on the Cumulative Palermo Scale against their Cumulative Impact Probability, there is a slight positive relation between the two. This means that a higher score on the Cumulative Palermo Scale can lead to a slight increase in its Cumulative Impact Probability. Overall, factors like the diameter of an asteroid can affect the probability for an impact to occur.

# ## Conclusion

# Overall , the impacts.csv dataset shows a broad range of parameters and probabilties that might seem initally complex to dissect but has been analyzed according to our understanding.Annually , NASA conducts numerous projects to gather more information on asteriod parameters and their potential impacts.In this project , we scrutinize the dataset and gain valuable information from conducting an exploratory data analysis that reveals relationships between certain paramaters.Morever,we visualize the most common characteristics that define an asteriod.We then gather the factors of an asteriod and commence an analysis to see how they affect the probabilties of impacts.

| analysis/Task4/GroupSubmission.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

import sys

from random import sample

import networkx as nx

import numpy as np

from tqdm import tqdm, trange

sys.path.append('../')

from utils.aser_to_glucose import generate_aser_to_glucose_dict

from utils.glucose_utils import glucose_subject_list

# -

# Load the filtered ASER graph

aser = nx.read_gpickle('../../data/ASER_data//G_aser_core.pickle')

node2id_dict = np.load("../../dataset/ASER_core_node2id.npy", allow_pickle=True).item()

id2node_dict = dict([(node2id_dict[node], node) for node in node2id_dict])

# We test the coverage in the norm ASER

print_str = "\n\nStatistics in ASER_Norm:\n\n"

total_count, total_head, total_tail, total_both = 0, 0, 0, 0

for i in trange(1, 11):

list_count, list_head, list_tail, list_both = len(glucose_matching[i]), 0, 0, 0

for ind in range(len(glucose_matching[i])):

for h in glucose_matching[i][ind]['total_head']:

if h in node2id_dict.keys():

list_head += 1

break

for t in glucose_matching[i][ind]['total_tail']:

if t in node2id_dict.keys():

list_tail += 1

break

for h, t in glucose_matching[i][ind]['both']:

if h in node2id_dict.keys() and t in node2id_dict.keys():

list_both += 1

break

print_str += (

"In list {}, Total Head: {}\tMatched Head: {} ({}%)\tMatched Tail: {} ({}%)\tMatched Both: {} ({}%)\n"

.format(i, list_count, list_head, round(list_head / list_count, 3) * 100,

list_tail, round(list_tail / list_count, 3) * 100, list_both,

round(list_both / list_count, 3) * 100))

total_count += list_count

total_head += list_head

total_tail += list_tail

total_both += list_both

print_str += (

"\n\nIn total: Total Head: {}\tMatched Head: {} ({}%)\tMatched Tail: {} ({}%)\tMatched Both: {} ({}%)".format(

total_count, total_head, 100 * round(total_head / total_count, 3),

total_tail, 100 * round(total_tail / total_count, 3), total_both, 100 * round(total_both / total_count, 3)))

print(print_str)

# Do some ASER edge type statistics

all_edge_types = {}

for head, tail, feat_dict in aser.edges.data():

for r in feat_dict["edge_type"]:

if r in all_edge_types.keys():

all_edge_types[r] += 1

else:

all_edge_types[r] = 1

print("Edge types in ASER:")

print(all_edge_types)

def reverse_px_py(original: str):

return original.replace("PersonX", "[PX]").replace("PersonY", "[PY]").replace("[PX]", "PersonY").replace(

"[PY]", "PersonX")

def get_conceptualized_graph(G: nx.DiGraph):

G_conceptualized = nx.DiGraph()

for head, tail, feat_dict in tqdm(G.edges.data()):

head = id2node_dict[head]

tail = id2node_dict[tail]

head_split = head.split()

tail_split = tail.split()

head_subj = head_split[0]

tail_subj = tail_split[0]

relations = feat_dict["edge_type"]

for r in relations:

if head_subj == tail_subj and head_subj in glucose_subject_list:

new_rel = r + "_agent"

elif head_subj != tail_subj and head_subj in glucose_subject_list and tail_subj in glucose_subject_list:

new_rel = r + "_theme"

else:

new_rel = r + "_general"

_, re_head, re_tail, _ = generate_aser_to_glucose_dict(head, tail, True)

re_head_reverse, re_tail_reverse = reverse_px_py(re_head), reverse_px_py(re_tail)

if len(re_head) > 0 and len(re_tail) > 0:

if G_conceptualized.has_edge(re_head, re_tail):

G_conceptualized.add_edge(re_head, re_tail, relation=list(

set(G_conceptualized[re_head][re_tail]["relation"] + [new_rel])))

else:

G_conceptualized.add_edge(re_head, re_tail, relation=[new_rel])

if len(re_head_reverse) > 0 and len(re_tail_reverse) > 0:

if G_conceptualized.has_edge(re_head_reverse, re_tail_reverse):

G_conceptualized.add_edge(re_head_reverse, re_tail_reverse, relation=list(

set(G_conceptualized[re_head_reverse][re_tail_reverse]["relation"] + [new_rel])))

else:

G_conceptualized.add_edge(re_head_reverse, re_tail_reverse, relation=[new_rel])

return G_conceptualized

aser_conceptualized = get_conceptualized_graph(aser)

print("Before Conceptualization:\nNumber of Edges: {}\tNumber of Nodes: {}\n".format(len(aser.edges), len(aser.nodes)))

print("After Conceptualization:\nNumber of Edges: {}\tNumber of Nodes: {}\n".format(len(aser_conceptualized.edges),

len(aser_conceptualized.nodes)))

nx.write_gpickle(aser_conceptualized, '../../dataset/G_aser_concept.pickle')

# Let's sample some ASER conceptualization to check whether it's correct

for i in sample(list(aser_conceptualized.edges.data()), 30) + ['\n'] + sample(list(aser_conceptualized.nodes.data()), 10):

print(i)

# Now let's calculate the shortest path

def get_shortest_path(G , head, tail):

try:

p = nx.shortest_path_length(G, source=head, target=tail)

except nx.NodeNotFound:

return -1

except nx.NetworkXNoPath:

return -1

return p

full_path, norm_path = [], []

for i in range(1, 11):

for ind in trange(len(glucose_matching[i])):

norm_temp, full_temp = [], []

for h, t in glucose_matching[i][ind]['both']:

_, re_h, re_t, _ = generate_aser_to_glucose_dict(h, t, True)

if re_h in aser_conceptualized and re_t in aser_conceptualized:

norm_temp.append(get_shortest_path(aser_conceptualized, re_h, re_t))

if norm_temp:

try:

norm_path.append(min([i for i in norm_temp if i > 0]))

except ValueError:

norm_path.append(0)

else:

norm_path.append(0)

for h, t in glucose_matching[i][ind]['both']:

try:

hid = node2id_dict[h]

tid = node2id_dict[t]

except KeyError:

continue

if hid in aser and tid in aser:

full_temp.append(get_shortest_path(aser, hid, tid))

if full_temp:

try:

full_path.append(min([i for i in full_temp if i > 0]))

except ValueError:

full_path.append(0)

else:

full_path.append(0)

print("Average Shortest Path in Full ASER is: {}".format(np.mean([i for i in full_path if i > 0])))

print("Average Shortest Path in Norm ASER is: {}".format(np.mean([i for i in norm_path if i > 0])))

# +

# Calculate the average path in a simple graph:

G_simple = nx.Graph()

G_simple.add_nodes_from(aser_conceptualized)

G_simple.add_edges_from(aser_conceptualized.edges.data())

G_simple_full = nx.Graph()

G_simple_full.add_nodes_from(aser)

G_simple_full.add_edges_from(aser.edges.data())

full_path, norm_path = [], []

for i in range(1, 11):

for ind in trange(len(glucose_matching[i])):

norm_temp, full_temp = [], []

for h, t in glucose_matching[i][ind]['both']:

_, re_h, re_t, _ = generate_aser_to_glucose_dict(h, t, True)

if re_h in G_simple and re_t in G_simple:

norm_temp.append(get_shortest_path(G_simple, re_h, re_t))

if norm_temp:

try:

norm_path.append(min([i for i in norm_temp if i > 0]))

except ValueError:

norm_path.append(0)

else:

norm_path.append(0)

for h, t in glucose_matching[i][ind]['both']:

try:

hid = node2id_dict[h]

tid = node2id_dict[t]

except KeyError:

continue

if hid in G_simple_full and tid in G_simple_full:

full_temp.append(get_shortest_path(G_simple_full, hid, tid))

if full_temp:

try:

full_path.append(min([i for i in full_temp if i > 0]))

except ValueError:

full_path.append(0)

else:

full_path.append(0)

print("In No Direction Scenario:")

print("Average Shortest Path in Full ASER is: {}".format(np.mean([i for i in full_path if i > 0])))

print("Average Shortest Path in Norm ASER is: {}".format(np.mean([i for i in norm_path if i > 0])))

# -

# Now let's start merging with Glucose

G_Glucose = nx.read_gpickle('../../dataset/G_Glucose.pickle')

print("Node Coverage for Glucose Graph is: {}%\nEdge Coverage for Glucose Graph is: {}%".format(

100 * round(sum([node in aser_conceptualized for node in G_Glucose.nodes()]) / len(G_Glucose.nodes()), 4),

100 * round(sum([edge in aser_conceptualized.edges for edge in G_Glucose.edges()]) / len(G_Glucose.edges()), 4)))

print("Before Merging:\nEdges in ASER: {}\t\t\t\tNodes in ASER: {}\n".format(len(aser_conceptualized.edges()),

len(aser_conceptualized.nodes())))

aser_conceptualized.add_nodes_from(list(G_Glucose.nodes.data()))

aser_conceptualized.add_edges_from(list(G_Glucose.edges.data()))

print("\nAfter Merging:\nEdges in ASER+Glucose: {}\t\t\tNodes in ASER+Glucose: {}".format(len(aser_conceptualized.edges()),

len(aser_conceptualized.nodes())))

print("New Edges: {}\tNew Nodes: {}".format(len(aser_conceptualized.edges()) - 41336290,

len(aser_conceptualized.nodes()) - 11872745))

nx.write_gpickle(aser_conceptualized, '../../dataset/G_aser_glucose.pickle')

| preprocess/Glucose/build_graph/conceptualize_ASER.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Model Comparison

# ## Mixture of Unigram Model

#

# To reproduce the model results on the simulated data, please follow the following instruction:

# 1. Here we provide the code to preprocessing the simulated text file.

# 2. For this model to run, dowload the text file generated by the program below, turn this txt file into Unix Executable file in your local terminal. Re-upload and run with UMM model.

# 3. Go to Jupyter terminal, run "$ python pDMM.py --corpus simu --ntopics 10 --twords 10 --niters 500 --name unigram"

# 4. Check the "output" folder and the file named "unigram.topWords" produced.

import string

with open("stopword.txt", 'r') as s:

stopwords = s.readlines()

stpw = []

for word in stopwords:

stpw.append(word.strip())

with open("simulated.txt") as f:

corpus = f.readlines()

word_list = []

for line in corpus:

if line != "":

before = line.strip().split()

#Remove stopwords from the strings

for word in before:

if word.lstrip(string.punctuation).rstrip(string.punctuation).lower() not in stpw:

if word != "":

word_list.append(word.lstrip(string.punctuation).rstrip(string.punctuation).strip().lower())

new = []

for i in range(0,234,1):

line = " ".join(word_list[i*5:i*5+5])

new.append(line)

simu = "\n".join(new)

# +

output_file = open('simu.txt','w')

output_file.write(simu)

output_file.close()

#Then turn this txt file into Unix Executable file in local terminal. Reupload and run with UMM model.

# -

# #### Mixture of Unigrams Results

# Topic 0: luffy piece search treasure head law monkey fruit named pirate

#

# Topic 1: devil fruit user fruits animals race sea power powers haki

#

# Topic 2: sea grand red water mountain half rain runs seas wind

#

# Topic 3: series pirates roger humans gol merry manga video animation produced

#

# Topic 4: crew luffy robin ancient liberates sabaody archipelago ace navy government

#

# Topic 5: crew blue pirates navy straw joins named grand east pirate

#

# Topic 6: luffy nami sanji arlong chopper body properties crew usopp encounters

#

# Topic 7: pose calm belts log developed called thirteen animated feature films

#

# Topic 8: piece manga pirates king set island zou series eiichiro history

#

# Topic 9: pirates straw island luffy hat grand kingdom magnetic island's fishman

# ## Topic extraction with Non-negative Matrix Factorization (pLSI)

#

#

# This is an example of applying :class:`sklearn.decomposition.NMF` on a corpus

# of documents and extract additive models of the topic structure of the

# corpus. The output is a list of topics, each represented as a list of

# terms (weights are not shown).

#

# Non-negative Matrix Factorization has two different objective

# functions: the Frobenius norm, and the generalized Kullback-Leibler divergence.

# The latter is equivalent to Probabilistic Latent Semantic Indexing.

#

# The default parameters (n_samples / n_features / n_components) should make

# the example runnable in a couple of tens of seconds. You can try to

# increase the dimensions of the problem, but be aware that the time

# complexity is polynomial in NMF. In LDA, the time complexity is

# proportional to (n_samples * iterations).

# +

from sklearn.feature_extraction.text import TfidfVectorizer

import sklearn

from sklearn.decomposition import NMF

n_samples = 16

n_features = 1000

n_components = 10

n_top_words = 10

# -

def print_top_words(model, feature_names, n_top_words):

for topic_idx, topic in enumerate(model.components_):

message = "Topic #%d: " % topic_idx

message += " ".join([feature_names[i]

for i in topic.argsort()[:-n_top_words - 1:-1]])

print(message)

print()

import LDApackage

simulated_docs = LDApackage.read_documents_space('simulated.txt')

# Use tf-idf features for NMF.

tfidf_vectorizer = TfidfVectorizer(max_df=0.95, min_df=2,

max_features=n_features,

stop_words='english')

tfidf = tfidf_vectorizer.fit_transform(simulated_docs)

# +

# Fit the NMF model

nmf = sklearn.decomposition.NMF(n_components=n_components, random_state=1,

beta_loss='kullback-leibler', solver='mu', max_iter=1000, alpha=.1,

l1_ratio=.5).fit(tfidf)

#print("\nTopics in NMF model (generalized Kullback-Leibler divergence):")

tfidf_feature_names = tfidf_vectorizer.get_feature_names()

#print_top_words(nmf, tfidf_feature_names, n_top_words)

# -

# #### Results for NMF model (generalized Kullback-Leibler divergence):

# Topic 0: pirates line crew man search roger known king grand luffy

#

# Topic 1: history japan series date manga oda eiichiro body volumes world

#

# Topic 2: body devil animals used result users power presence time fruit

#

# Topic 3: grand time called sea currents line works island making specific

#

# Topic 4: law defeat mom sanji alliance caesar nami clown big straw

#

# Topic 5: group battles robin straw franky leading crew ancient save pluton

#

# Topic 6: used animals wind similar piece world various certain presence eiichiro

#

# Topic 7: ace huge grand luffy adoptive forced fish thousand led new

#

# Topic 8: soon cyborg island crew fishmen sabaody battle alias world archipelago

#

# Topic 9: blue humans usopp government going captures water sanji defeats creatures

# ## Discussion

# Some of the topics for mixture of unigram do not make sense. For example, topic 7 for mixture of unigram contains at least two topics one is related to 'belts', 'calm' and another is related to 'animated', 'film'. The inaccuracy may stem from the model itself which assumes that a document can only contain a single topic.

#

# For this simulated dataset, NMF model with KL divergence also exhibits unique topics across the results. It actually makes sense because NMF with KL divergence is equivalent to probabilistic latent semantic analysis (pLSA) when optimizing the same objective function. The two approaches only differ in how inference proceeds, but the underlying model is the same. Compared with LDA, NMF also model the total count of words in a document. For LDA, the total count of words in a document is assumed given.

#

# NMF with KL divergence (pLSI) runs faster than LDA, which can make a big difference if we want to apply topic modeling to large-scale datasets. Also, it is much easier to parallelize PLSI, at least compared to Gibbs sampling, not to mention the complicated implementation of the variational bayes approaches.

| Comparison.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import numpy as np

import cv2

import matplotlib.pyplot as plt

import matplotlib.image as mpimg

import pickle

# Read in an image

image = mpimg.imread('sobel_direction.png')

# Define a function that applies Sobel x and y,

# then computes the direction of the gradient

# and applies a threshold.

def dir_threshold(img, sobel_kernel=3, thresh=(0, np.pi/2)):

# Apply the following steps to img

# 1) Convert to grayscale

gray=cv2.cvtColor(image,cv2.COLOR_RGB2GRAY)

# 2) Take the gradient in x and y separately

Sobelx=cv2.Sobel(gray,cv2.CV_64F,1,0,ksize=sobel_kernel)

Sobely=cv2.Sobel(gray,cv2.CV_64F,0,1,ksize=sobel_kernel)

# 3) Take the absolute value of the x and y gradients

abs_sobelx=np.absolute(Sobelx)

abs_sobely=np.absolute(Sobely)

# 4) Use np.arctan2(abs_sobely, abs_sobelx) to calculate the direction of the gradient

grad_direction=np.arctan2(abs_sobely,abs_sobelx)

# 5) Create a binary mask where direction thresholds are met

binary_output=np.zeros_like(grad_direction)

binary_output[(grad_direction > thresh[0]) & (grad_direction < thresh[1])] = 1

# 6) Return this mask as your binary_output image

return binary_output

# Run the function

dir_binary = dir_threshold(image, sobel_kernel=15, thresh=(0.7,1.3))

# Plot the result

f, (ax1, ax2) = plt.subplots(1, 2, figsize=(24, 9))

f.tight_layout()

ax1.imshow(image)

ax1.set_title('Original Image', fontsize=50)

ax2.imshow(dir_binary, cmap='gray')

ax2.set_title('Thresholded Grad. Dir.', fontsize=50)

plt.subplots_adjust(left=0., right=1, top=0.9, bottom=0.)

| Sobel/sobel direction.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

# [← Back to Index](index.html)

# # Longest Common Subsequence

# To motivate dynamic time warping, let's look at a classic dynamic programming problem: find the **longest common subsequence (LCS)** of two strings ([Wikipedia](https://en.wikipedia.org/wiki/Longest_common_subsequence_problem)). A subsequence is not required to maintain consecutive positions in the original strings, but they must retain their order. Examples:

#

# lcs('cake', 'baker') -> 'ake'

# lcs('cake', 'cape') -> 'cae'

# We can solve this using recursion. We must find the optimal substructure, i.e. decompose the problem into simpler subproblems.

#

# Let `x` and `y` be two strings. Let `z` be the true LCS of `x` and `y`.

#

# If the first characters of `x` and `y` were the same, then that character must also be the first character of the LCS, `z`. In other words, if `x[0] == y[0]`, then `z[0]` must equal `x[0]` (which equals `y[0]`). Therefore, append `x[0]` to `lcs(x[1:], y[1:])`.

#

# If the first characters of `x` and `y` differ, then solve for both `lcs(x, y[1:])` and `lcs(x[1:], y)`, and keep the result which is longer.

# Here is the recursive solution:

def lcs(x, y):

if x == "" or y == "":

return ""

if x[0] == y[0]:

return x[0] + lcs(x[1:], y[1:])

else:

z1 = lcs(x[1:], y)

z2 = lcs(x, y[1:])

return z1 if len(z1) > len(z2) else z2

# Test:

pairs = [

('cake', 'baker'),

('cake', 'cape'),

('catcga', 'gtaccgtca'),

('zxzxzxmnxzmnxmznmzxnzm', 'nmnzxmxzmnzmx'),

('dfkjdjkfdjkjfdkfdkfjd', 'dkfjdjkfjdkjfkdjfkjdkfjdkfj'),

]

for x, y in pairs:

print lcs(x, y)

# The time complexity of the above recursive method is $O(2^{n_x+n_y})$. That is slow because we might compute the solution to the same subproblem multiple times.

# ### Memoization

# We can do better through memoization, i.e. storing solutions to previous subproblems in a table.

# Here, we create a table where cell `(i, j)` stores the length `lcs(x[:i], y[:j])`. When either `i` or `j` is equal to zero, i.e. an empty string, we already know that the LCS is the empty string. Therefore, we can initialize the table to be equal to zero in all cells. Then we populate the table from the top left to the bottom right.

def lcs_table(x, y):

nx = len(x)

ny = len(y)

# Initialize a table.

table = [[0 for _ in range(ny+1)] for _ in range(nx+1)]

# Fill the table.

for i in range(1, nx+1):

for j in range(1, ny+1):

if x[i-1] == y[j-1]:

table[i][j] = 1 + table[i-1][j-1]

else:

table[i][j] = max(table[i-1][j], table[i][j-1])

return table

# Let's visualize this table:

x = 'cake'

y = 'baker'

table = lcs_table(x, y)

table

xa = ' ' + x

ya = ' ' + y

print ' '.join(ya)

for i, row in enumerate(table):

print xa[i], ' '.join(str(z) for z in row)

# Finally, we backtrack, i.e. read the table from the bottom right to the top left:

def lcs(x, y, table, i=None, j=None):

if i is None:

i = len(x)

if j is None:

j = len(y)

if table[i][j] == 0:

return ""

elif x[i-1] == y[j-1]:

return lcs(x, y, table, i-1, j-1) + x[i-1]

elif table[i][j-1] > table[i-1][j]:

return lcs(x, y, table, i, j-1)

else:

return lcs(x, y, table, i-1, j)

for x, y in pairs:

table = lcs_table(x, y)

print lcs(x, y, table)

# Table construction has time complexity $O(mn)$, and backtracking is $O(m+n)$. Therefore, the overall running time is $O(mn)$.

# [← Back to Index](index.html)

| lcs.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# <table class="ee-notebook-buttons" align="left">

# <td><a target="_parent" href="https://github.com/giswqs/geemap/tree/master/examples/notebooks/geemap_and_earthengine.ipynb"><img width=32px src="https://www.tensorflow.org/images/GitHub-Mark-32px.png" /> View source on GitHub</a></td>

# <td><a target="_parent" href="https://nbviewer.jupyter.org/github/giswqs/geemap/blob/master/examples/notebooks/geemap_and_earthengine.ipynb"><img width=26px src="https://upload.wikimedia.org/wikipedia/commons/thumb/3/38/Jupyter_logo.svg/883px-Jupyter_logo.svg.png" />Notebook Viewer</a></td>

# <td><a target="_parent" href="https://colab.research.google.com/github/giswqs/geemap/blob/master/examples/notebooks/geemap_and_earthengine.ipynb"><img src="https://www.tensorflow.org/images/colab_logo_32px.png" /> Run in Google Colab</a></td>

# </table>

# ## Install Earth Engine API and geemap

# Install the [Earth Engine Python API](https://developers.google.com/earth-engine/python_install) and [geemap](https://github.com/giswqs/geemap). The **geemap** Python package is built upon the [ipyleaflet](https://github.com/jupyter-widgets/ipyleaflet) and [folium](https://github.com/python-visualization/folium) packages and implements several methods for interacting with Earth Engine data layers, such as `Map.addLayer()`, `Map.setCenter()`, and `Map.centerObject()`.

# The following script checks if the geemap package has been installed. If not, it will install geemap, which automatically installs its [dependencies](https://github.com/giswqs/geemap#dependencies), including earthengine-api, folium, and ipyleaflet.

# +

# Installs geemap package

import subprocess

try:

import geemap

except ImportError:

print('geemap package not installed. Installing ...')

subprocess.check_call(["python", '-m', 'pip', 'install', 'geemap'])

# -

import ee

import geemap

# ## Create an interactive map

Map = geemap.Map(center=(40, -100), zoom=4)

Map

# ## Add Earth Engine Python script

# +

# Add Earth Engine dataset

image = ee.Image('USGS/SRTMGL1_003')

# Set visualization parameters.

vis_params = {

'min': 0,

'max': 4000,

'palette': ['006633', 'E5FFCC', '662A00', 'D8D8D8', 'F5F5F5']}

# Print the elevation of Mount Everest.

xy = ee.Geometry.Point([86.9250, 27.9881])

elev = image.sample(xy, 30).first().get('elevation').getInfo()

print('Mount Everest elevation (m):', elev)

# Add Earth Engine layers to Map

Map.addLayer(image, vis_params, 'SRTM DEM', True, 0.5)

Map.addLayer(xy, {'color': 'red'}, 'Mount Everest')

# -

# ## Change map positions

#

# For example, center the map on an Earth Engine object:

Map.centerObject(ee_object=xy, zoom=13)

# Set the map center using coordinates (longitude, latitude)

Map.setCenter(lon=-100, lat=40, zoom=4)

# ## Extract information from Earth Engine data based on user inputs

# +

import ee

import geemap

from ipyleaflet import *

from ipywidgets import Label

try:

ee.Initialize()

except Exception as e:

ee.Authenticate()

ee.Initialize()

Map = geemap.Map(center=(40, -100), zoom=4)

Map.default_style = {'cursor': 'crosshair'}

# Add Earth Engine dataset

image = ee.Image('USGS/SRTMGL1_003')

# Set visualization parameters.

vis_params = {

'min': 0,

'max': 4000,

'palette': ['006633', 'E5FFCC', '662A00', 'D8D8D8', 'F5F5F5']}

# Add Earth Eninge layers to Map

Map.addLayer(image, vis_params, 'SRTM DEM', True, 0.5)

latlon_label = Label()

elev_label = Label()

display(latlon_label)

display(elev_label)

coordinates = []

markers = []

marker_cluster = MarkerCluster(name="Marker Cluster")

Map.add_layer(marker_cluster)

def handle_interaction(**kwargs):

latlon = kwargs.get('coordinates')

if kwargs.get('type') == 'mousemove':

latlon_label.value = "Coordinates: {}".format(str(latlon))

elif kwargs.get('type') == 'click':

coordinates.append(latlon)

# Map.add_layer(Marker(location=latlon))

markers.append(Marker(location=latlon))

marker_cluster.markers = markers

xy = ee.Geometry.Point(latlon[::-1])

elev = image.sample(xy, 30).first().get('elevation').getInfo()

elev_label.value = "Elevation of {}: {} m".format(latlon, elev)

Map.on_interaction(handle_interaction)

Map

# +

import ee

import geemap

from ipyleaflet import *

from bqplot import pyplot as plt

try:

ee.Initialize()

except Exception as e:

ee.Authenticate()

ee.Initialize()

Map = geemap.Map(center=(40, -100), zoom=4)

Map.default_style = {'cursor': 'crosshair'}

# Compute the trend of nighttime lights from DMSP.

# Add a band containing image date as years since 1990.

def createTimeBand(img):

year = img.date().difference(ee.Date('1991-01-01'), 'year')

return ee.Image(year).float().addBands(img)

NTL = ee.ImageCollection('NOAA/DMSP-OLS/NIGHTTIME_LIGHTS') \

.select('stable_lights')

# Fit a linear trend to the nighttime lights collection.

collection = NTL.map(createTimeBand)

fit = collection.reduce(ee.Reducer.linearFit())

image = NTL.toBands()

figure = plt.figure(1, title='Nighttime Light Trend', layout={'max_height': '250px', 'max_width': '400px'})

count = collection.size().getInfo()

start_year = 1992

end_year = 2013

x = range(1, count+1)

coordinates = []

markers = []

marker_cluster = MarkerCluster(name="Marker Cluster")

Map.add_layer(marker_cluster)

def handle_interaction(**kwargs):

latlon = kwargs.get('coordinates')

if kwargs.get('type') == 'click':

coordinates.append(latlon)

markers.append(Marker(location=latlon))

marker_cluster.markers = markers

xy = ee.Geometry.Point(latlon[::-1])

y = image.sample(xy, 500).first().toDictionary().values().getInfo()

plt.clear()

plt.plot(x, y)

# plt.xticks(range(start_year, end_year, 5))

Map.on_interaction(handle_interaction)

# Display a single image

Map.addLayer(ee.Image(collection.select('stable_lights').first()), {'min': 0, 'max': 63}, 'First image')

# Display trend in red/blue, brightness in green.

Map.setCenter(30, 45, 4)

Map.addLayer(fit,

{'min': 0, 'max': [0.18, 20, -0.18], 'bands': ['scale', 'offset', 'scale']},

'stable lights trend')

fig_control = WidgetControl(widget=figure, position='bottomright')

Map.add_control(fig_control)

Map

| examples/notebooks/geemap_and_earthengine.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# this file reads in the data from the GEO submission from Wen at al (JCI, 2019)

# data from each cell is provided in a seprarate file

import os

import glob

import pandas as pd

import numpy as np

files = glob.glob('WenData/Data/*gencode*gz')

len(files)

# iterate across all files and join to a single data frame

i = 0

for curr in files:

os.system('gzip -d ' + curr)

decompressed_file = curr[:-3]

curr_df = pd.read_csv(decompressed_file, sep = '\t', index_col= 0)

vec = curr_df.tpm

colName = '_'.join(decompressed_file.split('_')[1:4]) + str(i)

if curr == files[0]:

master_df = pd.DataFrame(vec)

master_df.columns = [colName]

else:

master_df[colName] = vec

i = i+1

os.system('gzip ' + decompressed_file)

master_df['gene'] = master_df.index

master_df = master_df.drop('gene', axis = 1)

# +

genes = [x.split('_')[0] for x in master_df.index]

genelist = list(set(genes))

for curr in genelist:

rows = master_df.iloc[np.where(np.array(genes) == curr)]

rows.sum(axis = 0)

vec = rows.sum(axis = 0)

if curr == genelist[0]:

df = pd.DataFrame(vec)

df.columns = [curr]

else:

df[curr] = vec

# -

df = pd.DataFrame(rows.sum(axis = 0))

df.columns =[ curr]

df

trans = df.transpose()

trans = trans.reset_index()

feather.write_dataframe(trans, 't.feather')

| Figures/Figure 4/wen_python_assembly.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: pypermr

# language: python

# name: pypermr

# ---

# ### PySetPerm design

# The pysetperm.py module includes a number of classes that provide simple building blocks for testing set enrichments.

# Features can be anything: genes, regulatory elements etc. as long as they have chr, start (1-based!), end(1-based) and name columns:

# + language="bash"

# cd ..

# head -n3 data/genes.txt

# -

# Annotations are also simply specified:

# + language="bash"

# head -n3 data/kegg.txt

# -

# ### An example analysis

# We import features and annotaions via respective classes. Features can be altered with a distance (i.e. genes +- 2000 bp). Annotations can also be filtered to have a minimum set size (i.e. at least 5 genes)

import pysetperm as psp

features = psp.Features('data/genes.txt', 2000)

annotations = psp.AnnotationSet('data/kegg.txt', features.features_user_def, 5)

n_perms = 200000

cores = 10

# Initiate test groups using the Input class:

# +

e_input = psp.Input('data/eastern_candidates.txt',

'data/eastern_background.txt.gz',

features,

annotations)

c_input = psp.Input('data/central_candidates.txt',

'data/central_background.txt.gz',

features,

annotations)

i_input = psp.Input('data/internal_candidates.txt',

'data/internal_background.txt.gz',

features,

annotations)

# -

# A Permutation class holds the permuted datasets.

e_permutations = psp.Permutation(e_input, n_perms, cores)

c_permutations = psp.Permutation(c_input, n_perms, cores)

i_permutations = psp.Permutation(i_input, n_perms, cores)

# Once permutions are completed, we determine the distribution of the Pr. X of genes belonging to Set1...n, using the SetPerPerm class. This structure enables the easy generation of joint distributions.

e_per_set = psp.SetPerPerm(e_permutations,

annotations,

e_input,

cores)

c_per_set = psp.SetPerPerm(c_permutations,

annotations,

c_input,

cores)

i_per_set = psp.SetPerPerm(i_permutations,

annotations,

i_input,

cores)

# Here, we can use join_objects() methods for both Imput and SetPerPerm objects, to get the joint distribution of two or more indpendent tests.

# combine sims

ec_input = psp.Input.join_objects(e_input, c_input)

ec_per_set = psp.SetPerPerm.join_objects(e_per_set, c_per_set)

ei_input = psp.Input.join_objects(e_input, i_input)

ei_per_set = psp.SetPerPerm.join_objects(e_per_set, i_per_set)

ci_input = psp.Input.join_objects(c_input, i_input)

ci_per_set = psp.SetPerPerm.join_objects(c_per_set, i_per_set)

eci_input = psp.Input.join_objects(ec_input, i_input)

eci_per_set = psp.SetPerPerm.join_objects(ec_per_set, i_per_set)

# Call the make_results_table function to generate a pandas format results table.

# results

e_results = psp.make_results_table(e_input, annotations, e_per_set)

c_results = psp.make_results_table(c_input, annotations, c_per_set)

i_results = psp.make_results_table(i_input, annotations, i_per_set)

ec_results = psp.make_results_table(ec_input, annotations, ec_per_set)

ei_results = psp.make_results_table(ei_input, annotations, ei_per_set)

ci_results = psp.make_results_table(ci_input, annotations, ci_per_set)

eci_results = psp.make_results_table(eci_input, annotations, eci_per_set)

from itables import show

from IPython.display import display

from ipywidgets import HBox, VBox

import ipywidgets as widgets

display(e_results)

display(c_results)

display(i_results)

display(ec_results)

display(ei_results)

display(ci_results)

display(eci_results)

| notebooks/.ipynb_checkpoints/example_analysis-checkpoint.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ## PHYS 105A: Introduction to Scientific Computing

#

# # Unix shells, Remote Login, Version Control, etc

#

# <NAME>

# In order to follow this hands-on exercise, you will need to have the following software installed on your system:

#

# * Terminal (built-in for windows, mac, and linux)

# * A Unix shell (Windows Subsystem for Linux; built-in for mac and linux)

# * Git

# * Jupyter Notebook or Jupyter Lab

# * Python 3.x

#

# The easiest way to install these software is to use [Anaconda](https://www.anaconda.com/).

# Nevertheless, you may use macports or homebrew to install these tools on Mac, and `apt` to install these tools on Linux. For windows, you may enable the Windows Subsystem for Linux (WSL) to get a Unix shell.

# ## Terminal and Unix Shell

#

# This step is trivial if you are on Linux or on a Mac. Simply open up your terminal and you will be given `bash` or `zsh`.

#

# If you are on Windows, you may start `cmd`, "Windows PowerShell", or the "Windows Subsystem for Linux".

# The former two don't give you a Unix shell, but you can still use it to navigate your file system, run `git`, etc.

# The last option is actually running a full Ubuntu envirnoment that will give you `bash`.

# ## Once you are on a bash/zsh, try the following commands

#

# echo "Hello World" # the infamous hello world program!

# ls

# echo "Hello World" > hw.txt

# ls

# cat hw.txt

# mv hw.txt hello.txt

# cp hello.txt world.txt

#

# Awesome! You are now a shell user!

# ## Git

#

# ### Pre-request

#

# * Have a GitHub account

# * Have Jupyter Lab set up on your machine

# * Have `git` (can be installed by conda)

#

# ### Set up development environment

#

# * `git config --global user.name "<NAME>"`

# * `git config --global user.email <EMAIL>`

#

# [Reference](https://git-scm.com/book/en/v2/Getting-Started-First-Time-Git-Setup)

# ## Clone Course Repository

#

# With a shell, you can now clone/copy the PHYS105A repository to your computer:

#

# git clone <EMAIL>:uarizona-2021spring-phys105a/phys105a.git

# cd phys105a

# ls

#

# You may now add a file, commit it to git, and push it back to GitHub:

#

# echo "Hello World" > hw.txt

# git add .

# git commit -m 'hello world'

# git log

# git push

# ## Jupyter Notebook

#

# A Jupyter Notebook is a JSON document containing multiple cells of text and codes. These cells maybe:

#

# * Markdown documentation

# * Codes in `python`, `R`, or even `bash`!

# * Output of the codes

# * RAW text

# ## Markdown Demo

#

# This is a markdown demo. Try edit this cell to see how things work.

#

# See https://guides.github.com/features/mastering-markdown/ for more details.

#

#

# # This is an `<h1>` tag

# ## This is an `<h2>` tag

# ###### This is an `<h6>` tag

#

# *This text will be italic*

# _This will also be italic_

#

# **This text will be bold**

# __This will also be bold__

#

# _You **can** combine them_

#

# * Item 1

# * Item 2

# * Item 2a

# * Item 2b

#

# 1. Item 1

# 1. Item 2

# 1. Item 3

# 1. Item 3a

# 1. Item 3b

# +

## Python and Jupyter

# We can finally try jupyter and python as your calculator.

# Type some math formulas, then press Shift+Enter, jupyter will interpret your equations by python and print the output.

# This is CK's equation:

1 + 2 + 3 + 4

# +

# EXERCISE: Now, type your own equation here and see the outcome.

# +

# EXERCISE: Try to create more cells and type out more equations.

# Note that Jupyter supports "shortcuts"/"hotkeys".

# When you are typing in a cell, there is a green box surrounding the cell.

# You may click outside the cell or press "ESC" to escape from the editing mode.

# The green box will turn blue.

# You can then press "A" or "B" to create additional cells above or below the current active cell.

# +

# One of the most powerful things programming langauges are able to do is to assign names to values.

# This is in contrast to spreadsheet software where each "cell" is prenamed (e.g., A1, B6).

# A value associated with a name is called a varaible.

# In python, we simply use the equal sign to assign a value to a variable.

a = 1

b = 1 + 1

# We can then reuse these variables.

a + b + 3

# +

# EXERCISE: create your own variables and check their values.

# +

# Sometime it is convenient to have mutiple output per cell.

# In such a case, you may use the python function `print()`

# Or the Jupyter function `display()`

print(1, 2)

print(a, b)

display(1, 2)

display(a, b)

# +

# EXERCISE: print and display results of equations

# +

# Speaking of print(), in python, you may use both single or double quotes to create "string" of characters.

'This is a string of characters.'

"This is also a string of characters."

# You can mix strings, numbers, etc in a single print statement.

print("Numbers:", 1, 2, 3)

# +

# EXERCISE: assign a string to a variable and then print it

# +

# In the lecture, we learned the different math operations in python: +, -, *, **, /, and //.

# Try to use them yourself.

# Pay special attention to the ** and // operators.

# * and ** are different

print(10*3)

print(10**3)

# / and // are different

print(10/3)

print(10//3)

# +

# EXERCISE: try out *, **, /, and // yourself.

# What if you use them for very big numbers? Very small numbers?

# Do you see any limitation?

# +

# COOL TRICK: In python, you use underscores to help writing very big numbers.

# E.g., python knows that 1_000_000 is 1000_000 is 1000000.

print(1_000_000)

print(1000_000)

print(1000000)

# +

# The integer division is logically equivalent to applying a floor function to the floating point division.

# However, the floor function is not a default (built-in) function.

# You need to import it from the math package:

from math import floor

print(10/3)

print(10//3)

print(floor(10/3))

# +

# EXERCISE: try to use the floor() function yourself

# +

# There are many useful functions and constants in the math package.

# See https://docs.python.org/3/library/math.html

from math import pi, sin, cos

print(pi)

print(sin(pi))

print(cos(pi))

# +

# EXERCISE: try to import additional functions from the math package and test them yourself.

# Python math package: https://docs.python.org/3/library/math.html

| 02/Handson.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # OpenEO Connection to EODC Backend

import logging

import openeo

from openeo.auth.auth_bearer import BearerAuth

logging.basicConfig(level=logging.INFO)

# +

# Define constants

# Connection

EODC_DRIVER_URL = "http://openeo.eodc.eu/api/v0.3"

OUTPUT_FILE = "/tmp/openeo_eodc_output.tiff"

EODC_USER = "user1"

EODC_PWD = "<PASSWORD>#"

# Data

PRODUCT_ID = "s2a_prd_msil1c"

DATE_START = "2017-01-01"

DATE_END = "2017-01-08"

IMAGE_LEFT = 652000

IMAGE_RIGHT = 672000

IMAGE_TOP = 5161000

IMAGE_BOTTOM = 5181000

IMAGE_SRS = "EPSG:32632"

# Processes

NDVI_RED = "B04"

NDVI_NIR = "B08"

# +

# Connect with EODC backend

connection = openeo.connect(EODC_DRIVER_URL,auth_type=BearerAuth, auth_options={"username": EODC_USER, "password": EODC_PWD})

# Login

#token = session.auth(EODC_USER, EODC_PWD, BearerAuth)

connection

# -

# Get available processes from the back end.

processes = connection.list_processes()

processes

# +

# Retrieve the list of available collections

collections = connection.list_collections()

list(collections)[:2]

# -

# Get detailed information about a collection

process = connection.describe_collection(PRODUCT_ID)

process

# +

# Select collection product

datacube = connection.imagecollection(PRODUCT_ID)

print(datacube.to_json())

# +

# Specifying the date range and the bounding box

datacube = datacube.filter_bbox(west=IMAGE_WEST, east=IMAGE_EAST, north=IMAGE_NORTH,

south=IMAGE_SOUTH, crs=IMAGE_SRS)

datacube = datacube.filter_daterange(extent=[DATE_START, DATE_END])

print(datacube.to_json())

# +

# Applying some operations on the data

datacube = datacube.ndvi(red=NDVI_RED, nir=NDVI_NIR)

datacube = datacube.min_time()

print(datacube.to_json())

# -

# Sending the job to the backend

job = datacube.send_job()

job.start_job()

job

# Describe Job

job.describe_job()

# +

# Download job result

#from openeo.rest.job import ClientJob

#job = ClientJob(107, session)

job.download(OUTPUT_FILE)

job

# -

# Showing the result

# !gdalinfo -hist "/tmp/openeo_eodc_output.tiff"

| examples/notebooks/EODC_Forum_2019/EODC.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

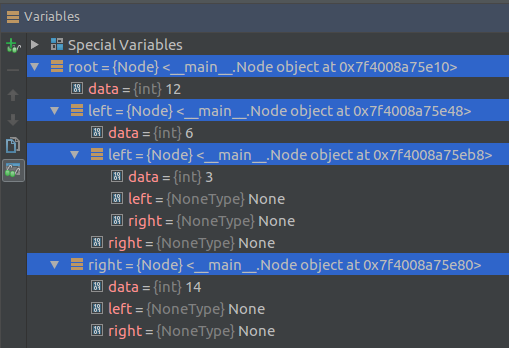

# +

# binary search tree

# left_subtree (keys) ≤ node (key) ≤ right_subtree (keys)

class Node:

def __init__(self, data):

self.left = None

self.right = None

self.data = data

# insert method to create nodes

def insert(self, data):

if self.data: # if self.data is not None

if data < self.data: # check left subtree

if self.left is None: # if left subtree not exist

self.left = Node(data) # create a new node

else: # if left subtree is empty

self.left.insert(data) # insert data into it

elif data > self.data:

if self.right is None:

self.right = Node(data)

else:

self.right.insert(data)

else: # if self.data is None

self.data = data

# findval method to compare the value with nodes

# recursively traverse

def findval(self, lkpval):

if lkpval < self.data:

if self.left is None:

return str(lkpval)+" Not Found"

return self.left.findval(lkpval)

elif lkpval > self.data:

if self.right is None:

return str(lkpval)+" Not Found"

return self.right.findval(lkpval)

else:

print(str(self.data) + ' is found')

# Print the tree

# recursively traverse

def PrintTree(self):

if self.left:

self.left.PrintTree()

print(self.data)

if self.right:

self.right.PrintTree()

root = Node(12)

root.insert(6)

root.insert(14)

root.insert(3)

print(root.findval(7))

print(root.findval(14))

# -

#

# [Python - Search Tree](https://www.tutorialspoint.com/python/python_binary_search_tree.htm)

# - [Searching a key](https://www.geeksforgeeks.org/binary-search-tree-set-1-search-and-insertion/)

#

# 1. Start from root.

# 2. Compare the inserting element with root, if less than root, then recurse for left, else recurse for right.

# 3. If element to search is found anywhere, return true, else return false.

#

# e.g.

#

#

# ***

#

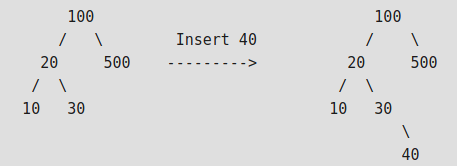

# - Insertion of a key

#

#

#

# 1. Start from root.

# 2. Compare the inserting element with root, if less than root, then recurse for left, else recurse for right.

# 3. After reaching end,just insert that node at left(if less than current) else right.

#

# ***

#

# - Some Interesting Facts:

#

# 1. **Inorder traversal of BST always produces sorted output.**

# 2. We can construct a BST with only Preorder or Postorder or Level Order traversal. Note that we can always get inorder traversal by sorting the only given traversal.

# 3. Number of unique BSTs with n distinct keys is Catalan Number

#

# ***

#

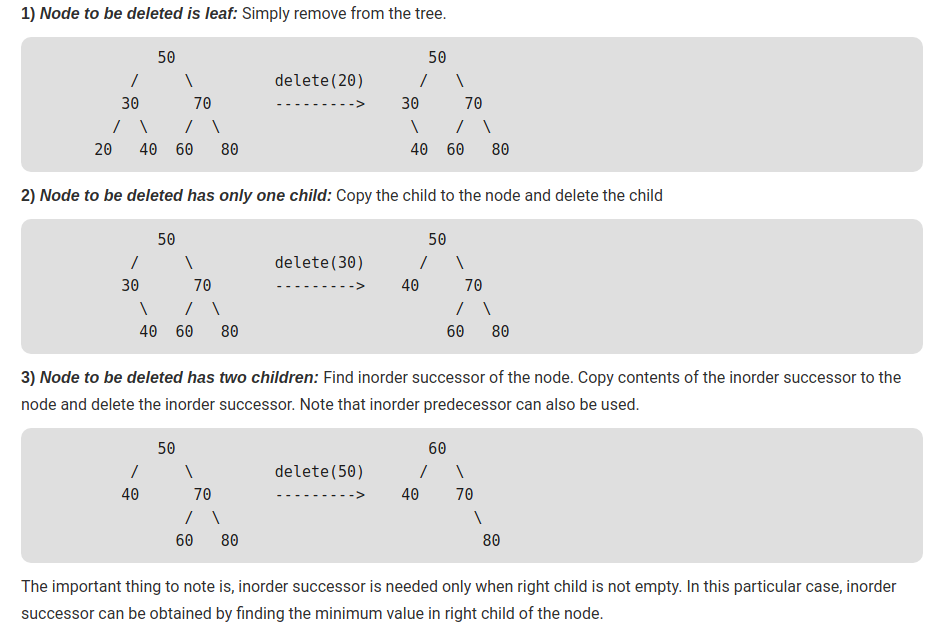

# - [Delete](https://www.geeksforgeeks.org/binary-search-tree-set-2-delete/)

#

#

# +

# Python program to demonstrate delete operation

# in binary search tree

# A Binary Tree Node

class Node:

# Constructor to create a new node

def __init__(self, key):

self.key = key

self.left = None

self.right = None

# inorder traversal of BST

def inorder(root):

if root is not None:

inorder(root.left)

print(root.key)

inorder(root.right)

def insert(node, key):

# If the tree is empty, return a new node

if node is None:

return Node(key)

# Otherwise recur down the tree

if key < node.key:

node.left = insert(node.left, key)

else:

node.right = insert(node.right, key)

# return the (unchanged) node pointer

return node

# Given a non-empty binary search tree, return the node

# with minum key value found in that tree. Note that the

# entire tree does not need to be searched

def minValueNode(node): # it returns the address of node

current = node

# loop down to find the leftmost leaf

while (current.left is not None):

current = current.left

return current

# Given a binary search tree and a key, this function

# delete the key and returns the new root

def deleteNode(root, key):

# Base Case

if root is None:

return root

# If the key to be deleted is smaller than the root's

# key then it lies in left subtree

if key < root.key:

root.left = deleteNode(root.left, key)

# If the kye to be delete is greater than the root's key

# then it lies in right subtree

elif (key > root.key):

root.right = deleteNode(root.right, key)

# If key is same as root's key, then this is the node

# to be deleted

else:

# Node with only one child or no child

if root.left is None:

temp = root.right

root = None

return temp

elif root.right is None:

temp = root.left

root = None

return temp

# Node with two children: Get the inorder successor

# (smallest in the right subtree)

temp = minValueNode(root.right)

# Copy the inorder successor's content to this node

root.key = temp.key

# Delete the inorder successor

root.right = deleteNode(root.right, temp.key)

return root

# Driver program to test above functions

""" Let us create following BST

50

/ \

30 70

/ \ / \

20 40 60 80 """

root = None

root = insert(root, 50)

root = insert(root, 30)

root = insert(root, 20)

root = insert(root, 40)

root = insert(root, 70)

root = insert(root, 60)

root = insert(root, 80)

print("Inorder traversal of the given tree")

inorder(root)

print("\nMinVal is: ")

print(minValueNode(root))

print(minValueNode(root).key)

print("\nDelete 20")

root = deleteNode(root, 20)

print("Inorder traversal of the modified tree")

inorder(root)

print("\nDelete 30")

root = deleteNode(root, 30)

print("Inorder traversal of the modified tree")

inorder(root)

print("\nDelete 50")

root = deleteNode(root, 50)

print("Inorder traversal of the modified tree")

inorder(root)

| fundamentals/BST.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ## 1. Setup

import sys

sys.path.append('../..')

# +

import config

import matplotlib.pyplot as plt

import numpy as np

import os

import warnings

from annotations import *

from density_maps import create_and_save_density_maps

from utils.data.data_ops import move_val_split_to_train

from utils.input_output.io import save_np_arrays, load_np_arrays, load_images

from utils.input_output.io import save_gt_counts, load_gt_counts

from utils.preprocessing.misc import gaussian_smoothing

# +

# %matplotlib inline

# %load_ext autoreload

# %autoreload 2

warnings.filterwarnings('ignore')

# -

# ## 2. Datasets

# ### 2.1 VGG Cells Dataset

# +

DATASET_PATH = '../../datasets/vgg_cells'

TRAIN_PATH = f'{DATASET_PATH}/train'

TRAIN_IMG_PATH = f'{TRAIN_PATH}/images'

TRAIN_GT_DOTS_PATH = f'{TRAIN_PATH}/gt_dots'

TRAIN_GT_COUNTS_PATH = f'{TRAIN_PATH}/gt_counts'

TRAIN_GT_DENSITY_MAPS_PATH = f'{TRAIN_PATH}/gt_density_maps'

VAL_PATH = f'{DATASET_PATH}/val'

TEST_PATH = f'{DATASET_PATH}/test'

TEST_IMG_PATH = f'{TEST_PATH}/images'

TEST_GT_DOTS_PATH = f'{TEST_PATH}/gt_dots'

TEST_GT_COUNTS_PATH = f'{TEST_PATH}/gt_counts'

TEST_GT_DENSITY_MAPS_PATH = f'{TEST_PATH}/gt_density_maps'

# -

move_val_split_to_train(VAL_PATH, TRAIN_PATH)

# +

# !rm -rf $TRAIN_GT_DENSITY_MAPS_PATH

# !rm -rf $TRAIN_GT_COUNTS_PATH

# !rm -rf $TEST_GT_DENSITY_MAPS_PATH

# !rm -rf $TEST_GT_COUNTS_PATH

# !mkdir $TRAIN_GT_DENSITY_MAPS_PATH

# !mkdir $TRAIN_GT_COUNTS_PATH

# !mkdir $TEST_GT_DENSITY_MAPS_PATH

# !mkdir $TEST_GT_COUNTS_PATH

# -

print(DATASET_PATH)

print(os.listdir(DATASET_PATH))

print(TRAIN_PATH)

print(os.listdir(TRAIN_PATH))

# +

train_img_names = sorted(os.listdir(TRAIN_IMG_PATH))

train_dots_names = sorted(os.listdir(TRAIN_GT_DOTS_PATH))

test_img_names = sorted(os.listdir(TEST_IMG_PATH))

test_dots_names = sorted(os.listdir(TEST_GT_DOTS_PATH))

print(f'train split: {len(train_img_names)} images')

print(train_img_names[:3])

print(train_dots_names[:3])

print(f'\ntest split: {len(test_img_names)} images')

print(test_img_names[:3])

print(test_dots_names[:3])

# +

train_dots_names = sorted(os.listdir(TRAIN_GT_DOTS_PATH))

test_dots_names = sorted(os.listdir(TEST_GT_DOTS_PATH))

print(TRAIN_GT_DOTS_PATH)

print(train_dots_names[:5])

print(TEST_GT_DOTS_PATH)

print(test_dots_names[:5])

# -

# #### Load dots images (.png)

# +

train_dots_images = load_dots_images(TRAIN_GT_DOTS_PATH, train_dots_names)

test_dots_images = load_dots_images(TEST_GT_DOTS_PATH, test_dots_names)

print(len(train_dots_images), train_dots_images[0].shape, train_dots_images[0].dtype,

train_dots_images[0].min(), train_dots_images[0].max(), train_dots_images[0].sum())

# -

# #### Save gt counts (from dots images)

train_counts = dots_images_to_counts(train_dots_images)

test_counts = dots_images_to_counts(test_dots_images)

save_gt_counts(train_counts, train_dots_names, TRAIN_GT_COUNTS_PATH)

save_gt_counts(test_counts, test_dots_names, TEST_GT_COUNTS_PATH)

# #### Create and save density maps (.npy)

create_and_save_density_maps(train_dots_images, config.VGG_CELLS_SIGMA,

train_img_names, TRAIN_GT_DENSITY_MAPS_PATH)

create_and_save_density_maps(test_dots_images, config.VGG_CELLS_SIGMA,

test_img_names, TEST_GT_DENSITY_MAPS_PATH)

# #### Load some train images and density maps

train_images = load_images(TRAIN_IMG_PATH, train_img_names, num_images=3)

print(len(train_images))

print(train_images[0].dtype)

train_gt_density_maps = load_np_arrays(TRAIN_GT_DENSITY_MAPS_PATH, num=3)

print(len(train_gt_density_maps))

print(train_gt_density_maps.dtype)

# +

NUM_PLOTS = 3

plt.figure(figsize=(15, 13))

for i in range(NUM_PLOTS):

count = train_dots_images[i].sum().astype(np.int)

plt.subplot(3, NUM_PLOTS, i + 1)

plt.title(f'Initial image (GT_dots count: {count})')

plt.imshow(train_images[i])

plt.subplot(3, NUM_PLOTS, NUM_PLOTS + i + 1)

plt.title(f'GT_dots count: {count}')

plt.imshow(train_dots_images[i], cmap='gray')

plt.subplot(3, NUM_PLOTS, 2 * NUM_PLOTS + i + 1)

plt.title(f'GT_dots: {count}, GT_density_map: {train_gt_density_maps[i].sum():.2f}')

plt.imshow(train_gt_density_maps[i], cmap='jet')

plt.colorbar()

| utils/data/density_map_generation_vgg_cells.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3 (ipykernel)

# language: python

# name: python3

# ---

import torch

print(torch.__version__)

print(torch.cuda.is_available())

import torch

print(torch.cuda.current_device())

print(torch.cuda.device_count())

# + id="ULzsgA4bbtkr"

import os

import pandas as pd

import numpy as np #for transformation

import matplotlib.pyplot as plt #for plotting

from pathlib import Path

from sklearn.model_selection import train_test_split

import torch #pytorch package

import torch.nn as nn #basic building block for neural neteorks

import torch.nn.functional as F #import convolution functions like Relu

import torchvision

import torchvision.transforms as transforms #image transformation (cuz' the insufficiency of our data)

import torchvision.models as models #for finetune #commonly used model structures (including pre-trained models), such as AlexNet, VGG, ResNet, etc.

import torch.optim as optim #for optimize model(SGD, Adam, etc.)

#test

from skimage import io

from skimage import transform

import matplotlib.pyplot as plt

from torch.utils.data import Dataset, DataLoader

from torchvision.transforms import transforms

from torchvision.utils import make_grid

# -

# %pwd

# +

# 日常使用代码:将某个文件夹及其子目录下的所有图片格式改为.JPG格式

import os

import re

path='./imgs'

file_walk = os.walk(path)

fileNum = 0

filesPathList = []

for root, dirs, files in file_walk:

# print(root, end=',')

# print(dirs, end=',')

# print(files)

for file in files:

fileNum = fileNum + 1

filePath = root + '/' + file

#print(filePath)

filesPathList.append(filePath)

protion = os.path.splitext(filePath)

#print(protion[0],protion[1])

if protion[1].lower() != '.jpg':

#print("正在处理:" + filePath)

newFilePath = protion[0] + '.jpg'

os.rename(filePath, newFilePath)

print("這個dir已轉檔完成")

# + id="5ywYAy3_b0u2"

#training data

import glob

image= Path('imgs')

ext = ['png', 'jpg', 'gif','jfif','jpeg']# Add image formats here

file = []

[file.extend(image.glob(r'**/*.' + e)) for e in ext]

#file= list(image.glob('*.'+e) for e in ext)

labels = list(map(lambda x: os.path.split(os.path.split(x)[0])[1], file))

file= pd.Series(file, name='File').astype(str)

labels = pd.Series(labels, name='Label')

train_df= pd.concat([file, labels], axis=1)

train_df['Label'].value_counts()

# +

#確認資料寬高最大最小值

from os import listdir

from os.path import isfile, join

from PIL import Image

def print_data(data):

"""

Parameters

----------

data : dict

"""

print("Min width: %i" % data["min_width"])

print("Max width: %i" % data["max_width"])

print("Min height: %i" % data["min_height"])

print("Max height: %i" % data["max_height"])

def main(path):

"""

Parameters

----------

path : str

Path where to look for image files.

"""

onlyfiles = [f for f in listdir(path) if isfile(join(path, f))]

# Filter files by extension

onlyfiles = [f for f in onlyfiles if f.endswith(".jpg")]

data = {}

data["images_count"] = len(onlyfiles)

data["min_width"] = 10 ** 100 # No image will be bigger than that

data["max_width"] = 0

data["min_height"] = 10 ** 100 # No image will be bigger than that

data["max_height"] = 0

for filename in onlyfiles:

filename = str(path + '/'+filename)

im = Image.open(filename)

width, height = im.size

data["min_width"] = min(width, data["min_width"])

data["max_width"] = max(width, data["max_width"])

data["min_height"] = min(height, data["min_height"])

data["max_height"] = max(height, data["max_height"])

print_data(data)

if __name__ == "__main__":

main(path="marvel/train/black widow")

# +

#計算Normalization的mean跟sd

import torch

import torch.nn as nn

import torch.optim as optim

import torch.nn.functional as F

import numpy as np

import torchvision

from torchvision import *

from torch.utils.data import Dataset, DataLoader

import matplotlib.pyplot as plt

import time

import copy

import os

from tqdm import tqdm

transform = transforms.Compose(

[ transforms.Resize([128, 128]),

transforms.ToTensor()

])

train_path = 'imgs/'

trainset = torchvision.datasets.ImageFolder(root=train_path, transform = transform)

trainloader = torch.utils.data.DataLoader(trainset, batch_size=len(trainset))

####### COMPUTE MEAN / STD

# placeholders

psum = torch.tensor([0.0, 0.0, 0.0])

psum_sq = torch.tensor([0.0, 0.0, 0.0])

# loop through images

for inputs in tqdm(trainloader):

# print(inputs)

# inputs = np.asarray(inputs)

# inputs = np.squeeze(inputs)

# print(inputs.shape())

# psum += np.array(inputs[0]).sum(axis = [0, 2, 3])#B C H W 要求每C2的

inputs = inputs[0]

psum += inputs.sum(axis = (0, 2, 3))

psum_sq += (inputs ** 2).sum(axis = (0, 2, 3))

####### FINAL CALCULATIONS

# pixel count

image_size = 128

count = len(trainset) * image_size * image_size

# mean and std

total_mean = psum / count

total_var = (psum_sq/count) - (total_mean ** 2)

total_std = torch.sqrt(total_var)

# output

print('mean: ' + str(total_mean))

print('std: ' + str(total_std))

# +

# transform=transforms.Compose([

# # Random表示有可能做,所以也可能不做

# transforms.RandomHorizontalFlip(),# 水平翻转

# transforms.RandomVerticalFlip(), # 上下翻转

# transforms.RandomRotation(15), # 随机旋转-15°~15°

# transforms.RandomRotation([90, 180]), # 随机在90°、180°中选一个度数来旋转,如果想有一定概率不旋转,可以加一个0进去

# transforms.RandomCrop([28, 28]),

# ])

# +

import torch

import torch.nn as nn

import torch.optim as optim

import torch.nn.functional as F

import numpy as np

import torchvision

from torchvision import *

from torch.utils.data import Dataset, DataLoader

import matplotlib.pyplot as plt

import time