code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/XavierCarrera/neural-network/blob/main/Neural_Network_Structure.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + id="JsSCGrCPZAAe"

import numpy as np

import matplotlib.pyplot as plt

# %matplotlib inline

from sklearn.datasets import load_iris

from sklearn.linear_model import Perceptron

# + id="vcaFCEdg5N8G" outputId="289bd812-881c-480e-b1df-8d8cb7062b4d" colab={"base_uri": "https://localhost:8080/", "height": 136}

iris = load_iris()

iris.target

# + id="vd_y1xsW5Uys" outputId="84f8c0b4-d480-47dd-b16f-5f3b07fdaaf9" colab={"base_uri": "https://localhost:8080/", "height": 102}

iris.data[:5, :]

# + id="HVyTkCPR6ujA" outputId="c4af2925-bd51-423c-ddd9-400d4e501767" colab={"base_uri": "https://localhost:8080/", "height": 388}

data = iris.data[:, (2, 3)]

labels = iris.target

plt.figure(figsize=(13,6))

plt.scatter(data[:, 0], data[:, 1], c=labels, cmap=plt.cm.Set1, edgecolor='face')

plt.xlabel('Petal Length (cm)')

plt.ylabel('Petal Width (cm)')

plt.show()

# + id="BOvElfZz7E4v" outputId="2e45dff8-68d9-4e63-bb35-a779e0d5c345" colab={"base_uri": "https://localhost:8080/", "height": 85}

X = iris.data[:, (2, 3)]

y = (iris.target == 2).astype(np.int)

test_perceptron = Perceptron()

test_perceptron.fit(X, y)

# + id="8YIq74LX7IOg" outputId="8bab46ad-46dd-4274-b6fc-e0e26830e010" colab={"base_uri": "https://localhost:8080/", "height": 34}

y1_pred = test_perceptron.predict([[5.1, 2]])

y2_pred = test_perceptron.predict([[1.4, 0.2]])

print('Prediction 1:', y1_pred, 'Prediction 2:', y2_pred)

| Neural_Network_Structure.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] colab_type="text" id="e4cnBhHe-dlE"

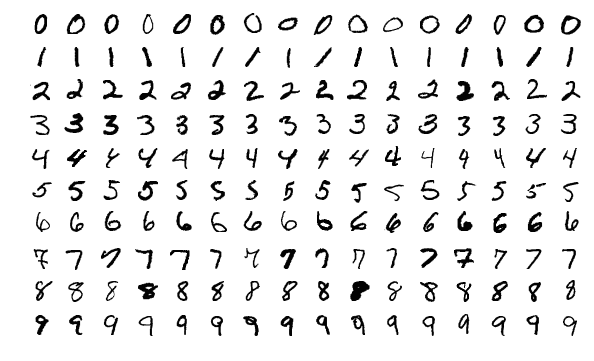

# # Regression

#

# So far, in our exploration of machine learning, we have build sysems that predict discrete values: mountain bike or not mountain bike, democrat or republican. And not just binary choices, but, for example, deciding whether an image is of a particular digit:

#

#

#

# or which of 1,000 categories does a picture represent.

#

#

# This lab looks at how we can build classifiers that predict **continuous** values and such classifiers are called regression classifiers.

#

# First, let's take a small detour into correlation.

#

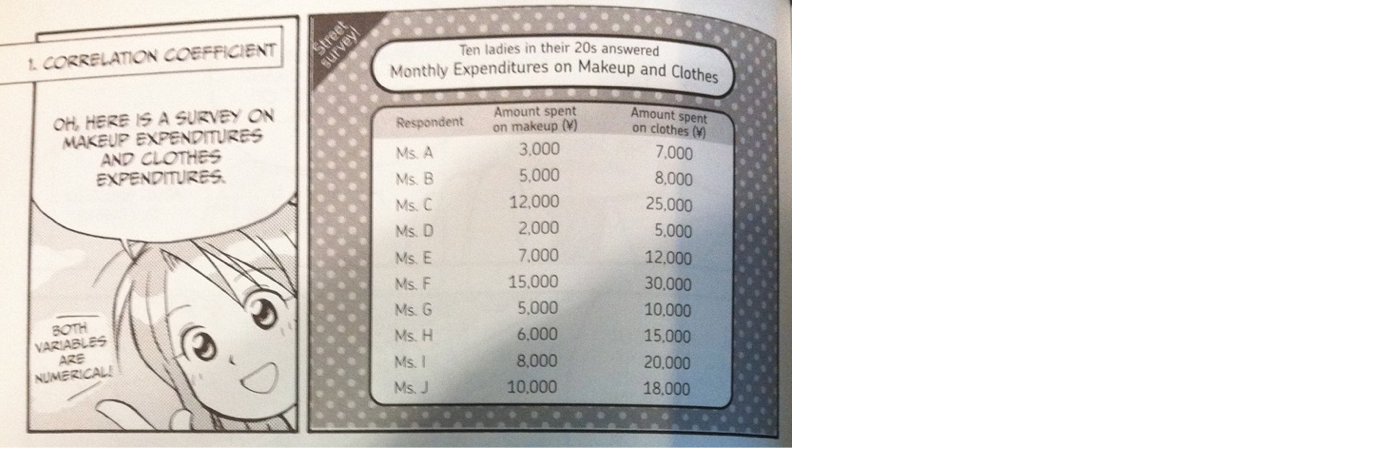

# ## Correlation

# A correlation is the degree of association between two variables. One of my favorite books on this topic is:

#

# <img src="http://zacharski.org/files/courses/cs419/mg_statistics_big.png" width="250" />

#

#

# and they illustrate it by looking at

# ## Ladies expenditures on clothes and makeup

#

#

#

#

#

# So let's go ahead and create that data in Pandas and show the table:

# + colab={"base_uri": "https://localhost:8080/", "height": 357} colab_type="code" id="bqpDwvW3-dlF" outputId="971eb9f5-576f-41b4-c905-427b7bea3a0b"

import pandas as pd

from pandas import DataFrame

makeup = [3000, 5000, 12000, 2000, 7000, 15000, 5000, 6000, 8000, 10000]

clothes = [7000, 8000, 25000, 5000, 12000, 30000, 10000, 15000, 20000, 18000]

ladies = ['Ms A','Ms B','Ms C','Ms D','Ms E','Ms F','Ms G','Ms H','Ms I','Ms J',]

monthly = DataFrame({'makeup': makeup, 'clothes': clothes}, index= ladies)

monthly

# + [markdown] colab_type="text" id="_Yyce16P-dlJ"

# and let's show the scatterplot

# + colab={"base_uri": "https://localhost:8080/", "height": 617} colab_type="code" id="f5QIDuDg-dlJ" outputId="d300ceec-3855-4dc2-dfd2-8cb9e9c6210f"

from bokeh.plotting import figure, output_file, show

from bokeh.io import push_notebook, show, output_notebook

output_notebook()

x = figure(title="Montly Expenditures on Makeup and Clothes", x_axis_label="Money spent on makeup", y_axis_label="Money spent on clothes")

x.circle(monthly['makeup'], monthly['clothes'], size=10, color="navy", alpha=0.5)

output_file("stuff.html")

show(x)

# + [markdown] colab_type="text" id="qBH5fNxT-dlN"

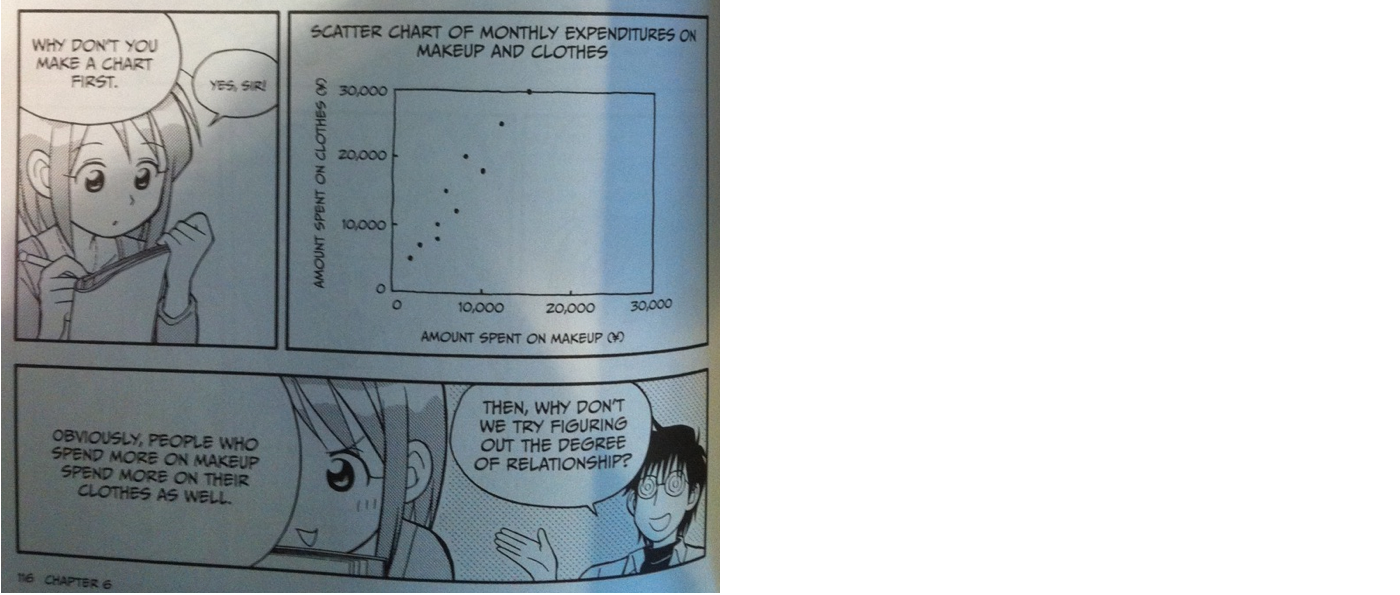

# When the data points are close to a straight line going up, we say that there is a positive correlation between the two variables. So in the case of the plot above, it visually looks like a postive correlation. Let's look at a few more examples:

#

# ## Weight and calories consumed in 1-3 yr/old children

# This small but real dataset examines whether young children who weigh more, consume more calories

#

# + colab={"base_uri": "https://localhost:8080/", "height": 542} colab_type="code" id="bscoNATT-dlN" outputId="b6f143db-e830-41b4-98e0-78a785237335"

weight = [7.7, 7.8, 8.6, 8.5, 8.6, 9, 10.1, 11.5, 11, 10.2, 11.9, 10.4, 9.3, 9.1, 8.5, 11]

calories = [360, 400, 500, 370, 525, 800, 900, 1200, 1000, 1400, 1600, 850, 575, 425, 950, 800]

kids = DataFrame({'weight': weight, 'calories': calories})

kids

# + colab={"base_uri": "https://localhost:8080/", "height": 617} colab_type="code" id="Ngx0m8sN-dlT" outputId="d5a44bfc-0e0d-4bc2-ee79-02dc9b049cc6"

p = figure(title="Weight and calories in 1-3 yr.old children",

x_axis_label="weight (kg)", y_axis_label='weekly calories')

p.circle(kids['weight'], kids['calories'], size=10, color='navy', alpha=0.5)

show(p)

# + [markdown] colab_type="text" id="JfU5QT1G-dlW"

# And again, there appears to be a positive correlation.

#

# ## The stronger the correlation the closer to a straight line

# The closer the data points are to a straight line, the higher the correlation. A rising straight line (rising going left to right) would be perfect positive correlation. Here we are comparing the heights in inches of some NHL players with their heights in cm. Obviously, those are perfectly correlated.

# + colab={"base_uri": "https://localhost:8080/", "height": 234} colab_type="code" id="SJJ5k9Iu-dlX" outputId="06d2fea2-8efd-40e8-e313-f0ca88dc2877"

inches =[68, 73, 69,72,71,77]

cm = [173, 185, 175, 183, 180, 196]

nhlHeights = DataFrame({'heightInches': inches, 'heightCM': cm})

nhlHeights

# + colab={"base_uri": "https://localhost:8080/", "height": 617} colab_type="code" id="MWppQKt4-dla" outputId="8af36f32-ab60-447d-f1f1-bf3f1219a4d6"

p = figure(title="Comparison of Height in Inches and Height in CM",

x_axis_label="Height in Inches",

y_axis_label="Height in centimeters")

p.circle(nhlHeights['heightInches'], nhlHeights['heightCM'],

size=10, color='navy', alpha=0.5)

show(p)

# + [markdown] colab_type="text" id="h0vwF4yr-dld"

# ## No correlation = far from straight line

# On the opposite extreme, if the datapoints are scattered and no line is discernable, there is no correlation.

#

# Here we are comparing length of the player's hometown name to his height in inches. We are checking whether a player whose hometown name has more letters, tends to be taller. For example, maybe someone from Medicine Hat is taller than someone from Ledue. Obviously there should be no correlation.

#

#

# (Again, a small but real dataset)

# + colab={"base_uri": "https://localhost:8080/", "height": 820} colab_type="code" id="YYa58G0_-dle" outputId="5578cac4-92f3-41fa-a413-5b15a03196e1"

medicineHat = pd.read_csv('https://raw.githubusercontent.com/zacharski/machine-learning-notebooks/master/data/medicineHatTigers.csv')

medicineHat['hometownLength'] = medicineHat['Hometown'].str.len()

medicineHat

# + colab={"base_uri": "https://localhost:8080/", "height": 617} colab_type="code" id="duMhFyDu-dlg" outputId="83206323-deda-4c80-ebbe-15acdd75e498"

p = figure(title="Correlation of the number of Letters in the Hometown to Height",

x_axis_label="Player's Height", y_axis_label="Hometown Name Length")

p.circle(medicineHat['Height'], medicineHat['hometownLength'], size=10, color='navy', alpha=0.5)

show(p)

# + [markdown] colab_type="text" id="iMDBBcyM-dlj"

# And that does not look at all like a straight line.

#

# ## negative correlation has a line going downhill

# When the slope goes up, we say there is a positive correlation and when it goes down there is a negative correlation.

#

# #### the relationship of hair length to a person's height

# + colab={"base_uri": "https://localhost:8080/", "height": 617} colab_type="code" id="fF6MndKp-dlj" outputId="68446cbe-0e4e-47b0-8787-6caa51a8feff"

height =[62, 64, 65, 68, 69, 70, 67, 65, 72, 73, 74]

hairLength = [7, 10, 6, 4, 5, 4, 5, 8, 1, 1, 3]

cm = [173, 185, 175, 183, 180, 196]

people = DataFrame({'height': height, 'hairLength': hairLength})

p = figure(title="Correlation of hair length to a person's height",

x_axis_label="Person's Height", y_axis_label="Hair Length")

p.circle(people['height'], people['hairLength'], size=10, color='navy', alpha=0.5)

show(p)

# + [markdown] colab_type="text" id="VdRZxka9-dlm"

# There is a strong negative correlation between the length of someone's hair and how tall they are. That makes some sense. I am bald and 6'0" and my friend Sara is 5'8" and has long hair.

#

# # Numeric Represenstation of the Strength of the Correlation

#

# So far, we've seen a visual representation of the correlation, but we can also represent the degree of correlation numerically.

#

# ## Pearson Correlation Coefficient

#

# This ranges from -1 to 1.

# 1 is perfect positive correlation, -1 is perfect negative.

#

# $$r=\frac{\sum_{i=1}^n(x_i - \bar{x})(y_i-\bar{y})}{\sqrt{\sum_{i=1}^n(x_i - \bar{x})} \sqrt{\sum_{i=1}^n(y_i - \bar{y})}}$$

#

# In Pandas it is very easy to compute.

#

# ### Japanese ladies expensives on makeup and clothes

# Let's go back to our first example.

# First here is the data:

# + colab={"base_uri": "https://localhost:8080/", "height": 357} colab_type="code" id="sIowAZ8L-dln" outputId="391b27d1-6b60-4717-8169-e3d7613d8433"

monthly

# + colab={"base_uri": "https://localhost:8080/", "height": 617} colab_type="code" id="3t5jGS5f-dlp" outputId="b5567394-7ce8-49ba-8d3e-38ad1f01c574"

p = figure(title="Montly Expenditures on Makeup and Clothes",

x_axis_label="Money spent on makeup", y_axis_label="Money spent on clothes")

p.circle(monthly['makeup'], monthly['clothes'], size=10, color='navy', alpha=0.5)

show(p)

# + [markdown] colab_type="text" id="oxUIU2lE-dls"

# So that looks like a pretty strong positive correlation. To compute Pearson on this data we do:

# + colab={"base_uri": "https://localhost:8080/", "height": 110} colab_type="code" id="WvYUkvHD-dls" outputId="49d7af89-1bb2-4260-d847-f0d014545043"

monthly.corr()

# + [markdown] colab_type="text" id="3cdda0-9-dlv"

# There is no surprise that makeup is perfectly correlated with makeup and clothes with clothes (those are the 1.000 on the diagonal). The interesting bit is that the Pearson for makeup to clothes is 0.968. That is a pretty strong correlation.

#

# If you are interesting you can compute the Pearson values for the datasets above, but let's now move to ...

#

# #### Regression

#

# Let's say we know a young lady who spends about ¥22,500 per month on clothes (that's about $200/month). What do you think she spends on makeup, based on the chart below?

# + colab={"base_uri": "https://localhost:8080/", "height": 617} colab_type="code" id="tGwP03jC-dlw" outputId="98e981e4-4d9e-4fe0-c968-eef70d962354"

show(p)

# + [markdown] colab_type="text" id="l89HC2LH-dlz"

# I'm guessing you would predict she spends somewhere around ¥10,000 a month on makeup (almost $100/month). And how we do this is when looking at the graph we mentally draw an imaginary straight line through the datapoints and use that line for predictions. We are performing human linear regression. And as humans, we have the training set--the dots representing data points on the graph. and we **fit** our human classifier by mentally drawing that straight line. That straight line is our model. Once we have it, we can throw away the data points. When we want to make a prediction, we see where the money spent on clothes falls on that line.

#

# We just predicted a continuous value (money spent on makeup) from one factor (money spent on clothes).

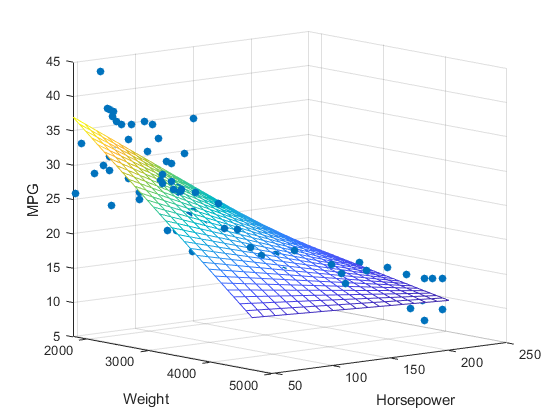

#

# What happens when we want to predict a continuous value from 2 factors? Suppose we want to predict MPG based on the weight of a car and its horsepower.

#

#

# from [Mathworks](https://www.mathworks.com/help/stats/regress.html)

#

# Now instead of a line representing the relationship we have a plane.

#

# Let's create a linear regression classifier and try this out!

#

# First, let's get the data.

#

# + colab={"base_uri": "https://localhost:8080/", "height": 448} colab_type="code" id="nS-VZrlZ-dlz" outputId="2577e13e-f5c0-48c2-d164-860c8df5a8ab"

columnNames = ['mpg', 'cylinders', 'displacement', 'HP', 'weight', 'acceleration', 'year', 'origin', 'model']

cars = pd.read_csv('https://raw.githubusercontent.com/zacharski/ml-class/master/data/auto-mpg.csv',

na_values=['?'], names=columnNames)

cars = cars.set_index('model')

cars = cars.dropna()

cars

# + [markdown] colab_type="text" id="E0rh87Zf-dl2"

# Now divide the dataset into training and testing. And let's only use the horsepower and weight columns as features.

# + colab={"base_uri": "https://localhost:8080/", "height": 448} colab_type="code" id="R_JBLlrW-dl2" outputId="4355bca9-9a75-43b8-ae02-f552c2241b85"

from sklearn.model_selection import train_test_split

cars_train, cars_test = train_test_split(cars, test_size = 0.2)

cars_train_features = cars_train[['HP', 'weight']]

# cars_train_features['HP'] = cars_train_features.HP.astype(float)

cars_train_labels = cars_train['mpg']

cars_test_features = cars_test[['HP', 'weight']]

# cars_test_features['HP'] = cars_test_features.HP.astype(float)

cars_test_labels = cars_test['mpg']

cars_test_features

# + [markdown] colab_type="text" id="f62sESxd-dl6"

# ### SKLearn Linear Regression

#

# Now let's create a Linear Regression classifier and fit it.

# + colab={"base_uri": "https://localhost:8080/", "height": 34} colab_type="code" id="wJ5-BzSU-dl6" outputId="8adb5159-d67c-4956-90cf-ade0fbfeb089"

from sklearn.linear_model import LinearRegression

linclf = LinearRegression()

linclf.fit(cars_train_features, cars_train_labels)

# + [markdown] colab_type="text" id="qo_VNdhI-dl9"

# and finally use the trained classifier to make predictions on our test data

# + colab={} colab_type="code" id="gtNyLgia-dl9"

predictions = linclf.predict(cars_test_features)

# + [markdown] colab_type="text" id="jYsJvAiR-dl_"

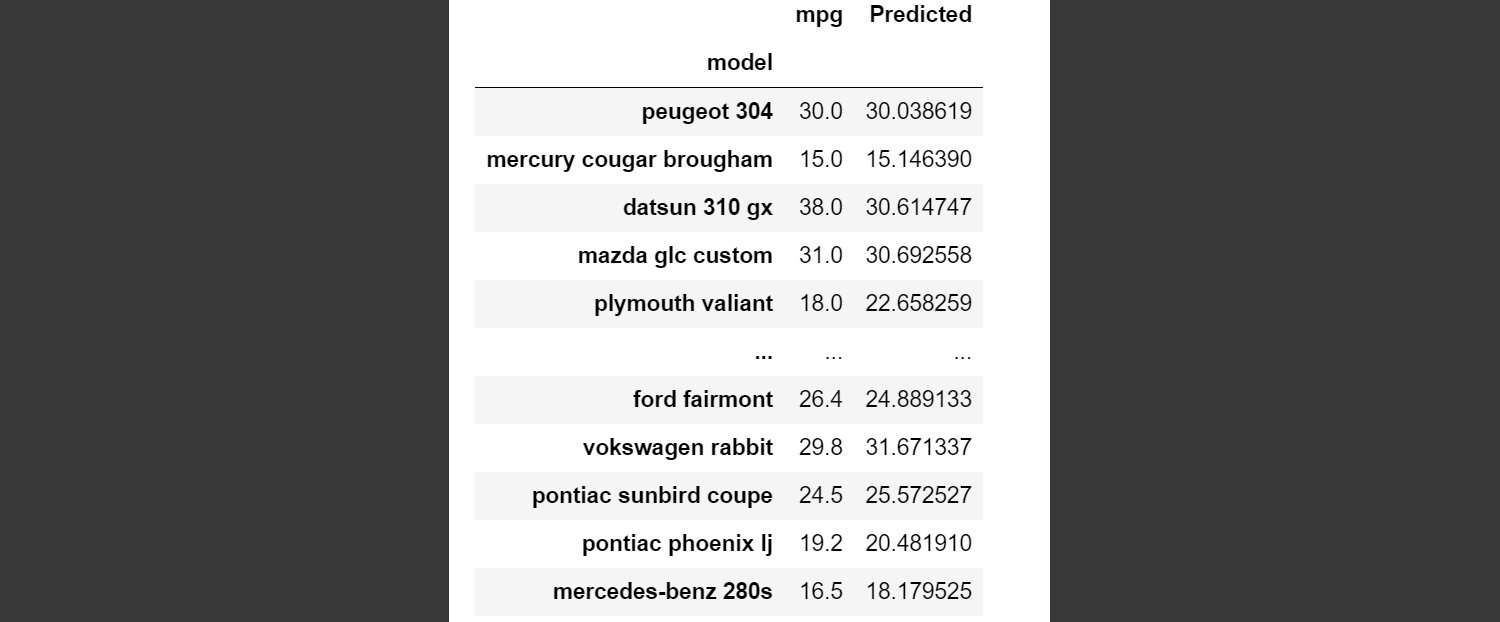

# Let's take an informal look at how we did:

# + colab={"base_uri": "https://localhost:8080/", "height": 448} colab_type="code" id="9AnurGcX-dmC" outputId="30e7590c-dc59-48c6-f257-aace2dd5ae38"

results = cars_test_labels.to_frame()

results['Predicted']= predictions

results

# + [markdown] colab_type="text" id="rjOo1BLN-dmF"

# Here is what my output looked like:

#

#

#

# as you can see the first two predictions were pretty close as were a few others.

#

# ### Determining how well the classifier performed

#

# With categorical classifiers we used sklearn's accuracy_score:

#

# ```

# from sklearn.metrics import accuracy_score

# ```

# Consider a task of predicting whether an image is of a dog or a cat. We have 10 instances in our test set. After our classifier makes predictions, for each image we have the actual (true) value, and the value our classifier predicted:

#

# actual | predicted

# :-- | :---

# dog | dog

# **dog** | **cat**

# # # cat | cat

# dog | dog

# # # cat | cat

# **cat** | **dog**

# dog | dog

# # # cat | cat

# # # cat | cat

# dog | dog

#

# sklearn's accuracy score just counts how many predicted values matched the actual values and then divides by the total number of test instances. In this case the accuracy score would be .8000. The classifier was correct 80% of the time.

#

# We can't use this method with a regression classifier. In the image above, the actual MPG of the Peugeot 304 was 30 and our classifier predicted 30.038619. Does that count as a match or not? The actual mpg of a Pontiac Sunbird Coupe was 24.5 and we predicted 25.57. Does that count as a match? Instead of accuracy_score, there are different evaluation metrics we can use.

#

# #### Mean Squared Error and Root Mean Square Error

#

# A common metric is Mean Squared Error or MSE. MSE is a measure of the quality of a regression classifier. The closer MSE is to zero, the better the classifier. Let's look at some made up data to see how this works:

#

# vehicle | Actual MPG | Predicted MPG

# :---: | ---: | ---:

# Ram Promaster 3500 | 18.0 | 20.0

# Ford F150 | 20 | 19

# Fiat 128 | 33 | 33

#

# First we compute the error (the difference between the predicted and actual values)

#

# vehicle | Actual MPG | Predicted MPG | Error

# :---: | ---: | ---: | --:

# Ram Promaster 3500 | 18.0 | 20.0 | -2

# Ford F150 | 20 | 19 | 1

# Fiat 128 | 33 | 33 | 0

#

# Next we square the error and compute the average:

#

# vehicle | Actual MPG | Predicted MPG | Error | Error^2

# :---: | ---: | ---: | --: | ---:

# Ram Promaster 3500 | 18.0 | 20.0 | -2 | 4

# Ford F150 | 20 | 19 | 1 | 1

# Fiat 128 | 33 | 33 | 0 | 0

# MSE | - | - | - | 1.667

#

# **Root Mean Squared Error** is simply the square root of MSE. The advantage of RMSE is that it has the same units as what we are trying to predict. Let's take a look ...

# + colab={"base_uri": "https://localhost:8080/", "height": 34} colab_type="code" id="z8DX6nRJ-dmF" outputId="829b2878-924d-4ad7-9642-4018bfba4234"

from sklearn.metrics import mean_squared_error

MSE = mean_squared_error(cars_test_labels, predictions)

RMSE = mean_squared_error(cars_test_labels, predictions, squared=False)

print("MSE: %5.3f. RMSE: %5.3f" %(MSE, RMSE))

# + [markdown] colab_type="text" id="W9ZgOu53-dmH"

# That RMSE tells us on average how many mpg we were off.

#

# ---

#

#

#

# ## So what kind of model does a linear regression classifier build?

#

# You probably know this if you reflect on grade school math classes you took.

#

# Let's go back and look at the young ladies expenditures on clothes and makeup.

# + colab={"base_uri": "https://localhost:8080/", "height": 617} colab_type="code" id="hpZ8csEm-dmI" outputId="ffa3271d-10a2-4e01-b7a0-2cf1ffa93ede"

p = figure(title="Montly Expenditures on Makeup and Clothes",

x_axis_label="Money spent on makeup", y_axis_label="Money spent on clothes")

p.circle(monthly['makeup'], monthly['clothes'], size=10, color='navy', alpha=0.5)

show(p)

# + [markdown] colab_type="text" id="pd0Kp1b0-dmL"

# When we talked about this example above, I mentioned that when we do this, we imagine a line. Let's see if we can use sklearns linear regression classifier to draw that line:

# + colab={"base_uri": "https://localhost:8080/", "height": 252} colab_type="code" id="iUPQmLI9-dmL" outputId="93f99ab9-eac7-4757-9d55-a707159639df"

regr = LinearRegression()

regr.fit(monthly[['clothes']], monthly['makeup'])

pred = regr.predict(monthly[['clothes']])

import matplotlib.pyplot as plt

# Plot outputs

plt.scatter(monthly['clothes'], monthly['makeup'], color='black')

plt.plot(monthly['clothes'], pred, color='blue', linewidth=3)

plt.xticks(())

plt.yticks(())

plt.show()

# + [markdown] colab_type="text" id="_lwOOz55-dmO"

# Hopefully that matches your imaginary line!

#

# The formula for the line is

#

# $$makeup=w_0clothes + y.intercept$$

#

# We can query our classifier for those values ($w_0$, and $i.intercept$):

# + colab={"base_uri": "https://localhost:8080/", "height": 51} colab_type="code" id="_Qu6YBQa-dmO" outputId="5622fea3-934c-40e0-bf2d-867ec0ff3a9e"

print('w0 = %5.3f' % regr.coef_)

print('y intercept = %5.3f' % regr.intercept_)

# + [markdown] colab_type="text" id="4W7hfaH1-dmR"

# So the formula for this particular example is

#

# $$ makeup = 0.479 * clothes + 121.782$$

#

# So if a young lady spent ¥22,500 on clothes we would predict she spent the following on makeup:

#

#

# + colab={"base_uri": "https://localhost:8080/", "height": 34} colab_type="code" id="o8H6PpL3-dmR" outputId="182a26ba-a23f-40fb-a608-f2538cf1768e"

makeup = regr.coef_[0] * 22500 + regr.intercept_

makeup

# + [markdown] colab_type="text" id="CWU_PMNW-dmT"

# The formula for regression in general is

#

# $$\hat{y}=\theta_0 + \theta_1x_1 + \theta_2x_2 + ... \theta_nx_n$$

#

# where $\theta_0$ is the y intercept. When you fit your classifier it is learning all those $\theta$'s. That is the model your classifier learns.

#

# It is important to understand this as it applies to other classifiers as well!

#

#

# ## Overfitting

# Consider two models for our makeup predictor. One is the straight line:

# + colab={"base_uri": "https://localhost:8080/", "height": 252} colab_type="code" id="QDX1lstU-dmU" outputId="b66454ce-2571-46af-b39f-2e48089e475c"

plt.scatter(monthly['clothes'], monthly['makeup'], color='black')

plt.plot(monthly['clothes'], pred, color='blue', linewidth=3)

plt.xticks(())

plt.yticks(())

plt.show()

# + [markdown] colab_type="text" id="K9pH023O-dmW"

# And the other looks like:

# + colab={"base_uri": "https://localhost:8080/", "height": 252} colab_type="code" id="G6yZNWN--dmX" outputId="af5531d3-cc89-4eed-8c77-48fb5b359d43"

monthly2 = monthly.sort_values(by='clothes')

plt.scatter(monthly2['clothes'], monthly2['makeup'], color='black')

plt.plot(monthly2['clothes'], monthly2['makeup'], color='blue', linewidth=3)

plt.xticks(())

plt.yticks(())

plt.show()

# + [markdown] colab_type="text" id="txLtJJZc-dma"

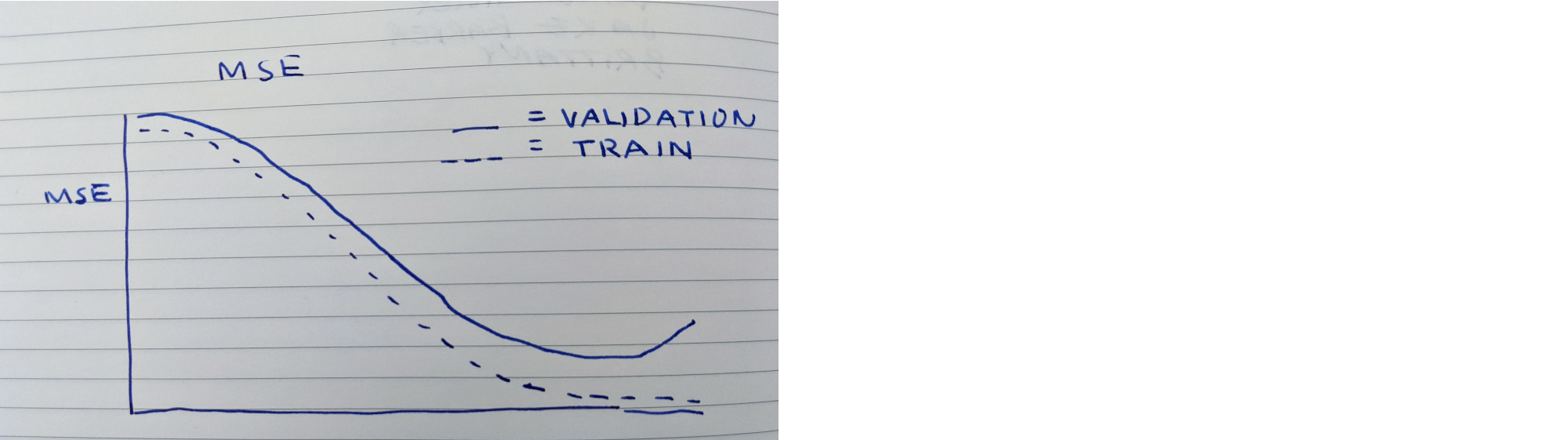

# The second model fits the training data perfectly. Is it better than the first? Here is what could happen.

#

# Let's say we have been tuning our model using our validation data set. Our error rates look like

#

#

#

# As you can see our training error rate keeps going down, but at the very end our validation error increases. This is called **overfitting** the data. The model is highly tuned to the nuances of the training data. So much so, that it hurts the performance on new data--in this case, the validation data. This, obviously, is not a good thing.

#

# ---

# #### An aside

#

# Imagine preparing for a job interview for a position you really, really, want. Since we are working on machine learning, let's say it is a machine learning job. In their job ad they list a number of things they want the candidate to know:

#

# * Convolutional Neural Networks

# * Long Short Term Memory models

# * Recurrent Neural Networks

# * Generative Deep Learning

#

# And you spend all your waking hours laser focused on these topics. You barely get any sleep and you read articles on these topics while you eat. You know the tiniest intricacies of these topics. You are more than 100% ready.

#

# The day of the interview arrives. After of easy morning of chatting with various people, you are now in a conference room for the technical interview, standing at a whiteboard, ready to hopefully wow them with your wisdom. The first question they ask is for you to write the solution to the fizz buzz problem:

#

# > Write a program that prints the numbers from 1 to 100. But for multiples of three print “Fizz” instead of the number and for the multiples of five print “Buzz”. For numbers which are multiples of both three and five print “FizzBuzz”

#

# And you freeze. This is a simple request and a very common interview question. In fact, to prepare you for this job interview possibility, write it now:

#

#

#

#

# + colab={} colab_type="code" id="UMdU0j3ALXgU"

def fizzbuzz():

print("TO DO")

# + colab={"base_uri": "https://localhost:8080/", "height": 34} colab_type="code" id="bDG7M1WGLgEj" outputId="1017545f-4b24-4bd6-f0b7-0b6b1011b7a4"

fizzbuzz()

# + [markdown] colab_type="text" id="k7Zt-eCZLgZe"

# Back to the job candidate freezing, this is an example of overfitting. You overfitting to the skills mentioned in the job posting.

#

# At dissertation defenses often faculty will ask the candidate questions outside of the candidate's dissertation. I heard of one case in a physics PhD defense where a faculty member asked "Why is the sky blue?" and the candidate couldn't answer.

#

# Anyway, back to machine learning.

#

# ---

#

#

# There are a number of ways to reduce the likelihood of overfitting including

#

# * We can reduce the complexity of the model. Instead of going with the model of the jagged line immediately above we can go with the simpler straight line model. We have seen this in decision trees where we limit the depth of the tree.

#

# * Another method is to increase the amount of training data.

#

# Let's examine the first. The process of reducing the complexity of a model is called regularization.

#

# The linear regression model we have just used tends to overfit the data and there are some variants that are better and these are called regularized linear models. These include

#

# * Ridge Regression

# * Lasso Regression

# * Elastic Net - a combination of Ridge and Lasso

#

# Let's explore Elastic Net. And let's use all the columns of the car mpg dataset:

# + colab={} colab_type="code" id="3nxItuCZ-dma"

newTrain_features = cars_train.drop('mpg', axis=1)

newTrain_labels = cars_train['mpg']

newTest_features = cars_test.drop('mpg', axis=1)

newTest_labels = cars_test['mpg']

# + [markdown] colab_type="text" id="t6GUbt83-dmc"

# First, let's try with our standard Linear Regression classifier:

# + colab={} colab_type="code" id="EwsP5LBo-dmd"

linclf = LinearRegression()

linclf.fit(newTrain_features, newTrain_labels)

predictions = linclf.predict(newTest_features)

# + colab={"base_uri": "https://localhost:8080/", "height": 34} colab_type="code" id="3iFVEwh_-dmf" outputId="61f6547e-0152-4d4f-bcb8-041593b01e4b"

MSE = mean_squared_error(newTest_labels, predictions)

RMSE = mean_squared_error(newTest_labels, predictions, squared=False)

print("MSE: %5.3f. RMSE: %5.3f" %(MSE, RMSE))

# + [markdown] colab_type="text" id="B7lJGcXQN1iI"

# Now let's try with [ElasticNet](https://scikit-learn.org/stable/modules/generated/sklearn.linear_model.ElasticNet.html)

# + colab={} colab_type="code" id="A0eTqpNS-dmk"

from sklearn.linear_model import ElasticNet

elastic_net = ElasticNet(alpha=0.1, l1_ratio=0.5)

elastic_net.fit(newTrain_features, newTrain_labels)

ePredictions = elastic_net.predict(newTest_features)

# + colab={"base_uri": "https://localhost:8080/", "height": 34} colab_type="code" id="PFjWu3Nx-dmm" outputId="ebb5f7d1-c936-469f-acd6-b298451bbe6c"

MSE = mean_squared_error(newTest_labels, ePredictions)

RMSE = mean_squared_error(newTest_labels, ePredictions, squared=False)

print("MSE: %5.3f. RMSE: %5.3f" %(MSE, RMSE))

# + [markdown] colab_type="text" id="oasWKk30OCui"

# I've run this a number of times. Sometimes linear regression is slightly better and sometimes ElasticNet is. Here are the results of one run:

#

# ##### RMSE

#

# Linear Regression | Elastic Net

# :---: | :---:

# 2.864 | 2.812

#

# So this is not the most convincing example.

#

# However, in general, it is always better to have some regularization, so (mostly) you should avoid the generic linear regression classifier.

#

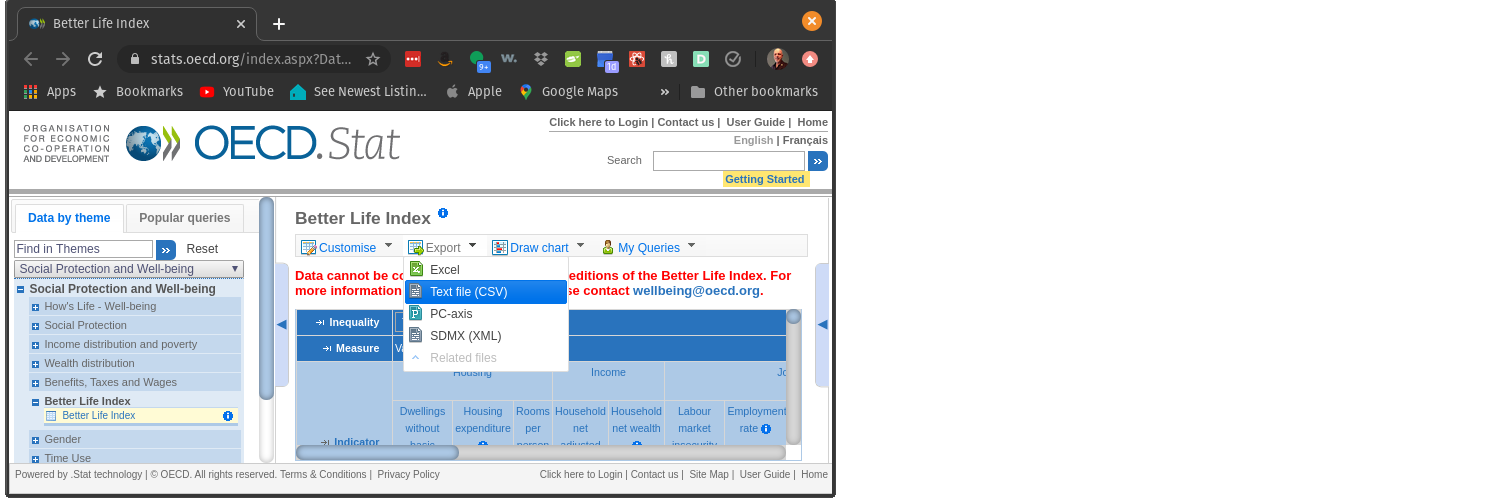

# ## Happiness

# What better way to explore regression then to look at happiness.

#

# From a Zen perspective, happiness is being fully present in the current moment.

#

# But, ignoring that advice, let's see if we can predict happiness, or life satisfaction.

#

#

#

# We are going to be investigating the [Better Life Index](https://stats.oecd.org/index.aspx?DataSetCode=BLI). You can download a csv file of that data from that site.

#

#

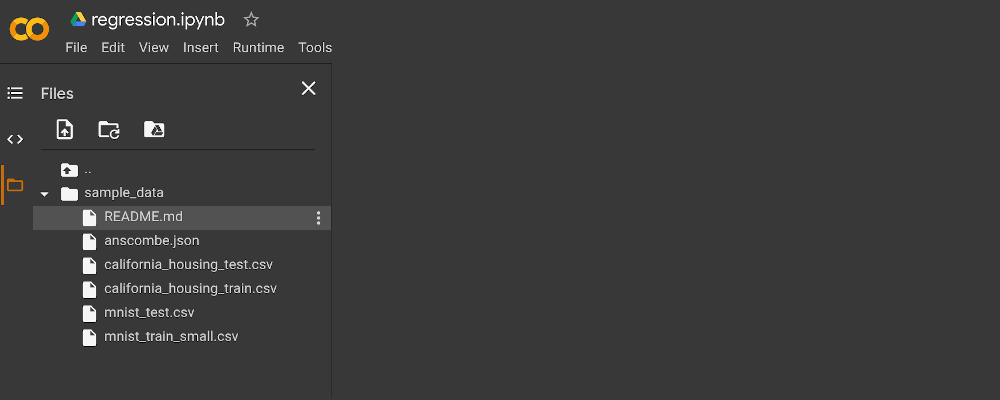

#

# Now that you have the CSV data file on your laptop, you can upload it to Colab.

#

# In Colab, you will see a list of icons on the left.

#

#

#

# Select the file folder icon.

#

#

#

# Next, select the upload icon (the page with an arrow icon). And upload the file.

#

# Next, let's execute the Linux command `ls`:

#

#

# + colab={"base_uri": "https://localhost:8080/", "height": 34} colab_type="code" id="HkxsnifT-dmp" outputId="2fa3cf4e-bbcd-4b90-ae87-aef1be18f243"

# !ls

# + [markdown] colab_type="text" id="nzw8N699SrPP"

# ### Load that file into a Pandas DataFrame

#

# We will load the file into Pandas Dataframe called `bli` for better life index:

# + colab={"base_uri": "https://localhost:8080/", "height": 900} colab_type="code" id="3tYTVthdMAPO" outputId="668b07db-8f9c-4e80-838c-556984a27f53"

# TO DO

# + [markdown] colab_type="text" id="R7T8rpt-YS3h"

# When examining the DataFrame we can see it has an interesting structure. So the first row we can parse:

#

# * The country is Australia

# * The feature is Labour market insecurity

# * The Inequality column tells us it is the **total** Labour market insecurity value.

# * The unit column tells the us the number is a percentage.

# * And the value is 5.40

#

# So, in English, the column is The total labor market insecurity for Australia is 5.40%.

#

# I am curious as to what values other than Total are in the Inequality column:

#

#

#

#

# + colab={"base_uri": "https://localhost:8080/", "height": 34} colab_type="code" id="Sdjs0SLKlE5O" outputId="94caa3fe-3a00-413f-effc-4c1d62aff62b"

bli.Inequality.unique()

# + [markdown] colab_type="text" id="b7VnlbDZlJnm"

# Cool. So in addition to the total for each feature, we can get values for just men, just women, and the high and low.

#

# Let's get just the totals and then pivot the DataFrame so it is in a more usable format.

#

# In addition, there are a lot of NaN values in the data, let's replace them with the mean value of the column.

#

# We are just learning about regression and this is a very small dataset, so let's divide training and testing by hand ...

# + colab={"base_uri": "https://localhost:8080/", "height": 1000} colab_type="code" id="EQ2YbF3pMHhn" outputId="c3c5afbf-078c-48c5-a4fc-d4f5339e46f2"

bli = bli[bli["INEQUALITY"]=="TOT"]

bli = bli.pivot(index="Country", columns="Indicator", values="Value")

bli.fillna(bli.mean(), inplace=True)

bliTest = bli.loc['Greece':'Italy', :]

bliTrain = pd.concat([bli.loc[:'Germany' , :], bli.loc['Japan':, :]])

bliTrain

# + [markdown] colab_type="text" id="4uwI17DVr5SM"

# Now we need to divide both the training and test sets into features and labels.

# + colab={} colab_type="code" id="mqP1PbycaXTu"

# TO DO

# + [markdown] colab_type="text" id="GeUlBUDRsHN_"

# ### Create and Train an elastic net model

# + colab={"base_uri": "https://localhost:8080/", "height": 68} colab_type="code" id="LXMzYK-9moqH" outputId="7ad04a73-02d2-41bd-f80d-166dd5041a99"

# TO DO

# + [markdown] colab_type="text" id="YGBEijsSsOgO"

# ### Use the trained model to make predictions on our tiny test set

# + colab={} colab_type="code" id="Uy79gKKGnhH5"

# TO DO

predictions = 'TODO'

# + [markdown] colab={} colab_type="code" id="Z55ROFZ1tGWf"

# Now let's visually compare the differences between the predictions and the actual values

# + colab={"base_uri": "https://localhost:8080/", "height": 264} colab_type="code" id="eAWEt8rNoj9A" outputId="7f9ee164-c2de-43f2-850b-4e2baf752144"

results = pd.DataFrame(bliTestLabels)

results['Predicted']= predictions

results

# + [markdown] colab_type="text" id="Bu5NtK7ltNrr"

# How did you do? For me Hungary was a lot less happy than what was predicted.

#

#

# # Prediction Housing Prices

#

# ## But first some wonkiness

# When doing one hot encoding, sometimes the original datafile has the same type of data in multiple columns. For example...

#

# Title | Genre 1 | Genre 2

# :--: | :---: | :---:

# Mission: Impossible - Fallout | Action | Drama

# Mama Mia: Here We Go Again | Comedy | Musical

# Ant-Man and The Wasp | Action | Comedy

# BlacKkKlansman | Drama | Comedy

#

#

# When we one-hot encode this we get something like

#

# Title | Genre1 Action | Genre1 Comedy | Genre1 Drama | Genre2 Drama | Genre2 Musical | Genre2 Comedy

# :--: | :--: | :--: | :--: | :--: | :--: | :--:

# Mission: Impossible - Fallout | 1 | 0 | 0 | 1 | 0 | 0

# Mama Mia: Here We Go Again | 0 | 1 | 0 | 0 | 1 | 0

# Ant-Man and The Wasp | 1 | 0 | 0 | 0 | 0 | 1

# BlacKkKlansman | 0 | 0 | 1 | 0 | 0 | 1

#

# But this isn't what we probably want. Instead this would be a better representation:

#

# Title | Action | Comedy | Drama | Musical

# :---: | :---: | :---: | :---: | :---: |

# Mission: Impossible - Fallout | 1 | 0 | 1 | 0

# Mama Mia: Here We Go Again | 0 | 1 | 0 | 1

# Ant-Man and The Wasp | 1 | 1 | 0 | 0

# BlacKkKlansman | 0 | 1 | 1 | 0

#

# Let's see how we might do this in code

#

# + colab={"base_uri": "https://localhost:8080/", "height": 171} colab_type="code" id="G_Y7mmEKo9Fg" outputId="78125e7b-324f-4b8c-f71c-0f85277220ba"

df = pd.DataFrame({'Title': ['Mission: Impossible - Fallout', 'Mama Mia: Here We Go Again',

'Ant-Man and The Wasp', 'BlacKkKlansman' ],

'Genre1': ['Action', 'Comedy', 'Action', 'Drama'],

'Genre2': ['Drama', 'Musical', 'Comedy', 'Comedy']})

df

# + colab={} colab_type="code" id="xDyEYXhHpEo7"

one_hot_1 = pd.get_dummies(df['Genre1'])

one_hot_2 = pd.get_dummies(df['Genre2'])

# + colab={"base_uri": "https://localhost:8080/", "height": 171} colab_type="code" id="aObvufy0vYwZ" outputId="d81366fd-a64c-4b7a-95b1-952571258687"

# now get the intersection of the column names

s1 = set(one_hot_1.columns.values)

s2 = set(one_hot_2.columns.values)

intersect = s1 & s2

only_s1 = s1 - intersect

only_s2 = s2 - intersect

# now logically or the intersect

logical_or = one_hot_1[list(intersect)] | one_hot_2[list(intersect)]

# then combine everything

combined = pd.concat([one_hot_1[list(only_s1)], logical_or, one_hot_2[list(only_s2)]], axis=1)

combined

### Now drop the two original columns and add the one hot encoded columns

df= df.drop('Genre1', axis=1)

df= df.drop('Genre2', axis=1)

df = df.join(combined)

df

# + [markdown] colab_type="text" id="sxM-Rw_ZwCMU"

# That looks more like it!!!

#

# ## The task: Predict Housing Prices

# Your task is to create a regession classifier that predicts house prices. The data and a description of

#

# * [The description of the data](https://raw.githubusercontent.com/zacharski/ml-class/master/data/housePrices/data_description.txt)

# * [The CSV file](https://raw.githubusercontent.com/zacharski/ml-class/master/data/housePrices/data.csv)

#

#

# Minimally, your classifier should be trained on the following columns:

# + colab={} colab_type="code" id="7RfTLx11vicC"

numericColumns = ['LotFrontage', 'LotArea', 'OverallQual', 'OverallCond', '1stFlrSF', '2ndFlrSF', 'GrLivArea',

'FullBath', 'HalfBath', 'Bedroom', 'Kitchen']

categoryColumns = ['MSZoning', 'Street', 'Alley', 'LotShape', 'LandContour',

'Utilities', 'LotConfig', 'LandSlope', 'Neighborhood', 'BldgType',

'HouseStyle', 'RoofStyle', 'RoofMatl', '' ]

# Using multicolumns is optional

multicolumns = [['Condition1', 'Condition2'], ['Exterior1st', 'Exterior2nd']]

# + [markdown] colab_type="text" id="PWcqhPixyfZI"

# You are free to use more columns than these. Also, you may need to process some of the columns.

# Here are the requirements:

#

# ### 1. Drop any data rows that contain Nan in a column.

# Once you do this you should have around 1200 rows.

# ### 2. Use the following train_test_split parameters

# ```

# train_test_split( originalData, test_size=0.20, random_state=42)

# ```

#

# ### 3. You are to compare Linear Regression and Elastic Net

# ### 4. You should use 10 fold cross validation (it is fine to use grid search)

# ### 5. When finished tuning your model, determine the accuracy on the test data using RMSE.

#

# # Performance Bonus

# You are free to adjust any hyperparameters but do so before you evaluate the test data. You may get up to 15xp bonus for improved accuracy.

#

# Good luck!

# + [markdown] colab_type="text" id="FBCp4tvCyCDr"

#

# + colab={} colab_type="code" id="MY9EdczzwAxT"

| labs/regression.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: twin-causal-model

# language: python

# name: twin-causal-model

# ---

# # Twin-Causal-Net Example

# ***

# The following notebook shows how to use the `twincausal` *Python* library.

# #### Import the libraries and generate synthetic uplift data

import twincausal.utils.data as twindata

from twincausal.model import twin_causal

from sklearn.model_selection import train_test_split

from twincausal.utils.performance import qini_curve, qini_barplot

X, T, Y = twindata.generator(scenario=3, random_state=123456) # Generate fake uplift data

X_train, X_test, T_train, T_test, Y_train, Y_test = train_test_split(X, T, Y, test_size=0.5, random_state=123)

# #### Initialize the model

input_size = X.shape[1] # Number of features, the model will handle automatically the treatment variable

# How to train a twin-neural model with 1-hidden layer

uplift = twin_causal(nb_features=input_size, # required parameter

# optional hyper-parameters for fine-tuning

nb_hlayers=1, nb_neurons=256, lrelu_slope=0.05, batch_size=256, shuffle=True,

max_iter=100, learningRate=0.009, reg_type=1, l_reg_constant=0.001,

prune=True, gpl_reg_constant=0.005, loss="uplift_loss",

# default parameters for reporting

learningCurves=True, save_model=True, verbose=False, logs=True,

random_state=1234)

uplift # Print model architecture

# #### Fitting the model

uplift.fit(X_train, T_train, Y_train, val_size=0.3) # You can control the proportion of obs to use for validation

# #### Predict and visualize

# +

# Uncomment the following if you want to load the "best" model based on the Qini coefficient obtained in the validation set

# Needs the arg save_model to be set to True

# import torch

# uplift.load_state_dict(torch.load("runs/Models/_twincausal/...")) # Change the path accordingly

# -

pred_our_loss = uplift.predict(X_test)

_, q = qini_curve(T_test, Y_test, pred_our_loss)

print('The Qini coefficient is:', q)

# #### Fit and compare models with no hidden layers and different losses

# +

# Change nb_hlayers to 0 and regularization to L2; keep default hyper-parameters

# -

twin = twin_causal(nb_features=input_size, nb_hlayers=0, reg_type=2)

twin.fit(X_train, T_train, Y_train)

pred_twin_loss = twin.predict(X_test)

_, q = qini_curve(T_test, Y_test, pred_twin_loss)

print('The Qini coefficient using the uplift loss is:', q)

# +

# Now change the loss type to use the usual binary cross entropy (or logistic_loss)

# -

logistic = twin_causal(nb_features=input_size, nb_hlayers=0, reg_type=2, loss="logistic_loss")

logistic.fit(X_train, T_train, Y_train)

pred_log_loss = logistic.predict(X_test)

_, q = qini_curve(T_test, Y_test, pred_log_loss)

print('The Qini coefficient using the logistic loss is:', q)

| examples/twin_causal_examples.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Introduction to Numpy <img align="right" src="../Supplementary_data/dea_logo.jpg">

#

# * [**Sign up to the DEA Sandbox**](https://docs.dea.ga.gov.au/setup/sandbox.html) to run this notebook interactively from a browser

# * **Compatibility**: Notebook currently compatible with both the `NCI` and `DEA Sandbox` environments

# * **Prerequisites**: Users of this notebook should have a basic understanding of:

# * How to run a [Jupyter notebook](01_jupyter_notebooks.ipynb)

# ## Background

# `Numpy` is a Python library which adds support for large, multi-dimension arrays and metrics, along with a large collection of high-level mathematical functions to operate on these arrays.

# More information about `numpy` arrays can be found [here](https://en.wikipedia.org/wiki/NumPy).

#

# ## Description

# This notebook is designed to introduce users to `numpy` arrays using Python code in Jupyter Notebooks via JupyterLab.

#

# Topics covered include:

#

# * How to use `numpy` functions in a Jupyter Notebook cell

# * Using indexing to explore multi-dimensional `numpy` array data

# * `Numpy` data types, broadcasting and booleans

# * Using `matplotlib` to plot `numpy` data

#

# ***

# ## Getting started

# To run this notebook, run all the cells in the notebook starting with the "Load packages" cell. For help with running notebook cells, refer back to the [Jupyter Notebooks notebook](01_Jupyter_notebooks.ipynb).

# ### Load packages

#

# In order to be able to use `numpy` we need to import the library using the special word `import`. Also, to avoid typing `numpy` every time we want to use one if its functions we can provide an alias using the special word `as`:

import numpy as np

# ### Introduction to Numpy

#

# Now, we have access to all the functions available in `numpy` by typing `np.name_of_function`. For example, the equivalent of `1 + 1` in Python can be done in `numpy`:

np.add(1, 1)

# Although this might not at first seem very useful, even simple operations like this one can be much quicker in `numpy` than in standard Python when using lots of numbers (large arrays).

#

# To access the documentation explaining how a function is used, its input parameters and output format we can press `Shift+Tab` after the function name. Try this in the cell below

np.add

# By default the result of a function or operation is shown underneath the cell containing the code. If we want to reuse this result for a later operation we can assign it to a variable:

a = np.add(2, 3)

# The contents of this variable can be displayed at any moment by typing the variable name in a new cell:

a

# ### Numpy arrays

# The core concept in `numpy` is the "array" which is equivalent to lists of numbers but can be multidimensional.

# To declare a `numpy` array we do:

np.array([1, 2, 3, 4, 5, 6, 7, 8, 9])

# Most of the functions and operations defined in `numpy` can be applied to arrays.

# For example, with the previous operation:

# +

arr1 = np.array([1, 2, 3, 4])

arr2 = np.array([3, 4, 5, 6])

np.add(arr1, arr2)

# -

# But a more simple and convenient notation can also be used:

arr1 + arr2

# #### Indexing

# Arrays can be sliced and diced. We can get subsets of the arrays using the indexing notation which is `[start:end:stride]`.

# Let's see what this means:

# +

arr = np.array([0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15])

print("6th element in the array:", arr[5])

print("6th element to the end of array", arr[5:])

print("start of array to the 5th element", arr[:5])

print("every second element", arr[::2])

# -

# Try experimenting with the indices to understand the meaning of `start`, `end` and `stride`.

# What happens if you don't specify a start?

# What value does `numpy` uses instead?

# Note that `numpy` indexes start on `0`, the same convention used in Python lists.

#

# Indexes can also be negative, meaning that you start counting from the end.

# For example, to select the last 2 elements in an array we can do:

arr[-2:]

# ### Multi-dimensional arrays

# `Numpy` arrays can have multiple dimensions. For example, we define a 2-dimensional `(1,9)` array using nested square bracket:

#

# <img src="../Supplementary_data/07_Intro_to_numpy/numpy_array_t.png" alt="drawing" width="600" align="left"/>

np.array([[1, 2, 3, 4, 5, 6, 7, 8, 9]])

# To visualise the shape or dimensions of a `numpy` array we can add the suffix `.shape`

print(np.array([1, 2, 3, 4, 5, 6, 7, 8, 9]).shape)

print(np.array([[1, 2, 3, 4, 5, 6, 7, 8, 9]]).shape)

print(np.array([[1], [2], [3], [4], [5], [6], [7], [8], [9]]).shape)

# Any array can be reshaped into different shapes using the function `reshape`:

np.array([1, 2, 3, 4, 5, 6, 7, 8]).reshape((2, 4))

# If you are concerned about having to type so many squared brackets, there are more simple and convenient ways of doing the same:

print(np.array([1, 2, 3, 4, 5, 6, 7, 8, 9]).reshape(1, 9).shape)

print(np.array([1, 2, 3, 4, 5, 6, 7, 8, 9]).reshape(9, 1).shape)

print(np.array([1, 2, 3, 4, 5, 6, 7, 8, 9]).reshape(3, 3).shape)

# Also there are shortcuts for declaring common arrays without having to type all their elements:

print(np.arange(9))

print(np.ones((3, 3)))

print(np.zeros((2, 2, 2)))

# ### Arithmetic operations

# `Numpy` has many useful arithmetic functions.

# Below we demonstrate a few of these, such as mean, standard deviation and sum of the elements of an array.

# These operation can be performed either across the entire array, or across a specified dimension.

arr = np.arange(9).reshape((3, 3))

print(arr)

print("Mean of all elements in the array:", np.mean(arr))

print("Std dev of all elements in the array:", np.std(arr))

print("Sum of all elements in the array:", np.sum(arr))

print("Mean of elements in array axis 0:", np.mean(arr, axis=0))

print("Mean of elements in array axis 1:", np.mean(arr, axis=1))

# ### Numpy data types

# `Numpy` arrays can contain numerical values of different types. These types can be divided in these groups:

#

# * Integers

# * Unsigned

# * 8 bits: `uint8`

# * 16 bits: `uint16`

# * 32 bits: `uint32`

# * 64 bits: `uint64`

# * Signed

# * 8 bits: `int8`

# * 16 bits: `int16`

# * 32 bits: `int32`

# * 64 bits: `int64`

#

# * Floats

# * 32 bits: `float32`

# * 64 bits: `float64`

#

# We can specify the type of an array when we declare it, or change the data type of an existing one with the following expressions:

# +

# Set datatype dwhen declaring array

arr = np.arange(5, dtype=np.uint8)

print("Integer datatype:", arr)

arr = arr.astype(np.float32)

print("Float datatype:", arr)

# -

# ### Broadcasting

#

# The term broadcasting describes how `numpy` treats arrays with different shapes during arithmetic operations.

# Subject to certain constraints, the smaller array is "broadcast" across the larger array so that they have compatible shapes.

# Broadcasting provides a means of vectorizing array operations so that looping occurs in C instead of Python.

# This can make operations very fast.

# +

a = np.zeros((3, 3))

print(a)

a = a + 1

print(a)

# +

a = np.arange(9).reshape((3, 3))

b = np.arange(3)

a + b

# -

# ### Booleans

# There is a binary type in `numpy` called boolean which encodes `True` and `False` values.

# For example:

# +

arr = arr > 0

print(arr)

arr.dtype

# -

# Boolean types are quite handy for indexing and selecting parts of images as we will see later.

# Many `numpy` functions also work with Boolean types.

# +

print("Number of 'Trues' in arr:", np.count_nonzero(arr))

# Create two boolean arrays

a = np.array([1, 1, 0, 0], dtype=bool)

b = np.array([1, 0, 0, 1], dtype=bool)

# Compare where they match

np.logical_and(a, b)

# -

# ### Introduction to Matplotlib

# This second part introduces `matplotlib`, a Python library for plotting `numpy` arrays as images.

# For the purposes of this tutorial we are going to use a part of `matplotlib` called `pyplot`.

# We import it by doing:

# +

# %matplotlib inline

import matplotlib.pyplot as plt

# -

# An image can be seen as a 2-dimensional array. To visualise the contents of a `numpy` array:

# +

arr = np.arange(100).reshape(10, 10)

print(arr)

plt.imshow(arr)

# -

# We can use the Pyplot library to load an image using the function `imread`:

im = np.copy(plt.imread("../Supplementary_data/07_Intro_to_numpy/africa.png"))

# #### Let's display this image using the `imshow` function.

plt.imshow(im)

# This is a [free stock photo](https://depositphotos.com/42725091/stock-photo-kilimanjaro.html) of Mount Kilimanjaro, Tanzania. A colour image is normally composed of three layers containing the values of the red, green and blue pixels. When we display an image we see all three colours combined.

# Let's use the indexing functionality of `numpy` to select a slice of this image. For example to select the top right corner:

plt.imshow(im[:100,-200:,:])

# We can also replace values in the 'red' layer with the value 255, making the image 'reddish'. Give it a try:

im[:, :, 0] = 255

plt.imshow(im)

# ## Recommended next steps

#

# For more advanced information about working with Jupyter Notebooks or JupyterLab, you can explore [JupyterLab documentation page](https://jupyterlab.readthedocs.io/en/stable/user/notebook.html).

#

# To continue working through the notebooks in this beginner's guide, the following notebooks are designed to be worked through in the following order:

#

# 1. [Jupyter Notebooks](01_Jupyter_notebooks.ipynb)

# 2. [Digital Earth Australia](02_DEA.ipynb)

# 3. [Products and Measurements](03_Products_and_measurements.ipynb)

# 4. [Loading data](04_Loading_data.ipynb)

# 5. [Plotting](05_Plotting.ipynb)

# 6. [Performing a basic analysis](06_Basic_analysis.ipynb)

# 7. **Introduction to Numpy (this notebook)**

# 8. [Introduction to Xarray](08_Intro_to_xarray.ipynb)

# 9. [Parallel processing with Dask](09_Parallel_processing_with_Dask.ipynb)

#

# Once you have you have completed the above nine tutorials, join advanced users in exploring:

#

# * The "DEA datasets" directory in the repository, where you can explore DEA products in depth.

# * The "Frequently used code" directory, which contains a recipe book of common techniques and methods for analysing DEA data.

# * The "Real-world examples" directory, which provides more complex workflows and analysis case studies.

# ***

# ## Additional information

#

# **License:** The code in this notebook is licensed under the [Apache License, Version 2.0](https://www.apache.org/licenses/LICENSE-2.0). Digital Earth Australia data is licensed under the Creative Commons by Attribution 4.0 license.

#

# **Contact:** If you need assistance, please post a question on the [Open Data Cube Slack channel](http://slack.opendatacube.org/) or on the [GIS Stack Exchange](https://gis.stackexchange.com/questions/ask?tags=open-data-cube) using the `open-data-cube` tag (you can view previously asked questions [here](https://gis.stackexchange.com/questions/tagged/open-data-cube)).

# If you would like to report an issue with this notebook, you can file one on [Github](https://github.com/GeoscienceAustralia/dea-notebooks).

#

# **Last modified:** September 2021

# ## Tags

# Browse all available tags on the DEA User Guide's [Tags Index]()

# + raw_mimetype="text/restructuredtext" active=""

# **Tags**: :index:`sandbox compatible`, :index:`NCI compatible`, :index:`numpy`, :index:`matplotlib`, :index:`plotting`, :index:`beginner`

| Beginners_guide/07_Intro_to_numpy.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ## Libraries

# +

import pandas as pd

import numpy as np

import scipy.stats as stat

from math import sqrt

from mlgear.utils import show, display_columns

from surveyweights import normalize_weights

# -

# ## Load Processed Data

survey = pd.read_csv('responses_processed_national_weighted.csv').fillna('Not presented')

# ## Analysis

options = ['<NAME>, the Democrat', '<NAME>, the Republican']

survey_ = survey.loc[survey['vote_trump_biden'].isin(options)].copy()

options2 = ['<NAME>', '<NAME>']

survey_ = survey_.loc[survey_['vote2016'].isin(options2)].copy()

options3 = ['Can trust', 'Can\'t be too careful']

survey_ = survey_.loc[survey_['gss_trust'].isin(options3)].copy()

options4 = ['Disagree', 'Agree']

survey_ = survey_.loc[survey_['gss_spanking'].isin(options4)].copy()

survey_['lv_weight'] = normalize_weights(survey_['lv_weight'])

survey_['vote_trump_biden'].value_counts(normalize=True) * survey_.groupby('vote_trump_biden')['lv_weight'].mean() * 100

survey_['vote2016'].value_counts(normalize=True) * survey_.groupby('vote2016')['lv_weight'].mean() * 100

survey_['race'].value_counts(normalize=True) * survey_.groupby('race')['lv_weight'].mean() * 100

survey_['education'].value_counts(normalize=True) * survey_.groupby('education')['lv_weight'].mean() * 100

survey_['gss_trust'].value_counts(normalize=True) * survey_.groupby('gss_trust')['lv_weight'].mean() * 100

survey_['gss_spanking'].value_counts(normalize=True) * survey_.groupby('gss_spanking')['lv_weight'].mean() * 100

survey_['noncollege_white'].value_counts(normalize=True) * survey_.groupby('noncollege_white')['lv_weight'].mean() * 100

# +

print('## HIGH TRUST ##')

survey__ = survey_[survey_['gss_trust'] == 'Can trust']

survey__['lv_weight'] = normalize_weights(survey__['lv_weight'])

print(survey__['vote2016'].value_counts(normalize=True) * survey__.groupby('vote2016')['lv_weight'].mean() * 100)

print('-')

print(survey__['vote_trump_biden'].value_counts(normalize=True) * survey__.groupby('vote_trump_biden')['lv_weight'].mean() * 100)

print('-')

print('-')

print('## LOW TRUST ##')

survey__ = survey_[survey_['gss_trust'] == 'Can\'t be too careful']

survey__['lv_weight'] = normalize_weights(survey__['lv_weight'])

print(survey__['vote2016'].value_counts(normalize=True) * survey__.groupby('vote2016')['lv_weight'].mean() * 100)

print('-')

print(survey__['vote_trump_biden'].value_counts(normalize=True) * survey__.groupby('vote_trump_biden')['lv_weight'].mean() * 100)

# +

print('## NONCOLLEGE WHITE ##')

survey__ = survey_[survey_['noncollege_white']]

survey__['lv_weight'] = normalize_weights(survey__['lv_weight'])

print(survey__['vote2016'].value_counts(normalize=True) * survey__.groupby('vote2016')['lv_weight'].mean() * 100)

print('-')

print(survey__['vote_trump_biden'].value_counts(normalize=True) * survey__.groupby('vote_trump_biden')['lv_weight'].mean() * 100)

print('-')

print('-')

print('## NOT "NONCOLLEGE WHITE" ##')

survey__ = survey_[~survey_['noncollege_white']]

survey__['lv_weight'] = normalize_weights(survey__['lv_weight'])

print(survey__['vote2016'].value_counts(normalize=True) * survey__.groupby('vote2016')['lv_weight'].mean() * 100)

print('-')

print(survey__['vote_trump_biden'].value_counts(normalize=True) * survey__.groupby('vote_trump_biden')['lv_weight'].mean() * 100)

# +

print('## NONCOLLEGE WHITE, HIGH SOCIAL TRUST ##')

survey__ = survey_[survey_['noncollege_white'] & (survey_['gss_trust'] == 'Can trust')]

survey__['lv_weight'] = normalize_weights(survey__['lv_weight'])

print(survey__['vote2016'].value_counts(normalize=True) * survey__.groupby('vote2016')['lv_weight'].mean() * 100)

print('-')

print(survey__['vote_trump_biden'].value_counts(normalize=True) * survey__.groupby('vote_trump_biden')['lv_weight'].mean() * 100)

print('-')

print('-')

print('## NONCOLLEGE WHITE, LOW SOCIAL TRUST ##')

survey__ = survey_[survey_['noncollege_white'] & (survey_['gss_trust'] == 'Can\'t be too careful')]

survey__['lv_weight'] = normalize_weights(survey__['lv_weight'])

print(survey__['vote2016'].value_counts(normalize=True) * survey__.groupby('vote2016')['lv_weight'].mean() * 100)

print('-')

print(survey__['vote_trump_biden'].value_counts(normalize=True) * survey__.groupby('vote_trump_biden')['lv_weight'].mean() * 100)

print('-')

print('-')

print('## NOT "NONCOLLEGE WHITE", HIGH SOCIAL TRUST ##')

survey__ = survey_[~survey_['noncollege_white'] & (survey_['gss_trust'] == 'Can trust')]

survey__['lv_weight'] = normalize_weights(survey__['lv_weight'])

print(survey__['vote2016'].value_counts(normalize=True) * survey__.groupby('vote2016')['lv_weight'].mean() * 100)

print('-')

print(survey__['vote_trump_biden'].value_counts(normalize=True) * survey__.groupby('vote_trump_biden')['lv_weight'].mean() * 100)

print('-')

print('-')

print('## NOT "NONCOLLEGE WHITE", LOW SOCIAL TRUST ##')

survey__ = survey_[~survey_['noncollege_white'] & (survey_['gss_trust'] == 'Can\'t be too careful')]

survey__['lv_weight'] = normalize_weights(survey__['lv_weight'])

print(survey__['vote2016'].value_counts(normalize=True) * survey__.groupby('vote2016')['lv_weight'].mean() * 100)

print('-')

print(survey__['vote_trump_biden'].value_counts(normalize=True) * survey__.groupby('vote_trump_biden')['lv_weight'].mean() * 100)

| (K) Analysis - Noncollege White X Trust Shift.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3.10.0 64-bit

# language: python

# name: python3

# ---

# # Objects

#

# In Python, objects are used to represent

# information. Every variable you use in a Python

# program is a reference to an object.

# The values you have been using so far –

# numbers, strings, dicts, lists, etc – are objects.

# They are among the built-in classes of Python,

# i.e., kinds of value that are already defined

# when you start the Python interpreter.

#

# You are not limited to those built-in classes.

# You can use them as a foundation to build your

# own.

#

# ## 1. Example: Points

#

# What if we wanted to define a new kind of value?

# For example, if we wanted to write a program

# to draw a graph, we might want to work with

# cartesian coordinates, representing each

# point as an (x,y) pair. We might represent the

# point as a tuple like `(5,7)`, or we could represent

# it as the list `[5, 7]`, or we could represent

# it as a dict `{"x": 5, "y": 7}`, and that

# might be satisfactory. If we wanted to represent moving a point (x,y) by some distance (dx, dy), we could define a a function like

#

# ```python

# def move(p, d):

# x,y = p

# dx, dy = d

# return (x+dx, y+dy)

# ```

#

# But if we are making a graphics program, we'll need to *move* functions for other graphical objects like rectangles and ovals,

# so instead of naming it `move` we'll need a more descriptive name

# like `move_point`. Also we should give the type contract for

# the function, which we can do with Python type hints. With these

# changes, we get something like this

# +

# Setup

from IPython.core.magic import register_cell_magic

from IPython.display import HTML, display

@register_cell_magic

def bgc(color, cell=None):

script = (

"var cell = this.closest('.jp-CodeCell');"

"var editor = cell.querySelector('.jp-Editor');"

"editor.style.background='{}';"

"this.parentNode.removeChild(this)"

).format(color)

display(HTML('<img src onerror="{}">'.format(script)))

# +

from typing import Tuple

from numbers import Number

def move_point(p: Tuple[Number, Number],

d: Tuple[Number, Number]) \

-> Tuple[Number, Number]:

x, y = p

dx, dy = d

return (x+dx, y+dy)

# -

# A simple test case increases our confidence that this works:

assert move_point((3,4),(5,6)) == (8,10)

#

# ### Can we do better?

#

# We aren't really satisfied with using tuples to

# represent points. What we'd really

# like is to express the concept of adding two points

# more concisely, as `(3,4) + (5,6)`. What would happen if we

# tried this?

(3,4) + (5,6)

# That's not what we wanted! Would it be better if we represented

# points as lists?

[3,4] + [5,6]

#

# No better. Maybe as dicts?

{"x": 3, "y": 4} + {"x": 5, "y": 6}

#

# That is not much of an improvement, although

# an error message is usually better than silently

# producing a bad result.

# What we really want is not to use one of the

# existing representations like lists or tuples or dicts,

# but to define a new representation for points.

#

# ## 2. A new representation

#

# Each data type in Python, including list, tuple,

# and dict, is defined as a *class* from which

# *objects* can be constructed. We can also define

# our own classes, to construct new kinds of objects.

# For example, we can make a new class `Point` to

# represent points.

#

# ```python

# class Point:

# """An (x,y) coordinate pair"""

# ```

#

# Inside the class we can define *methods*, which are like functions

# that are specialized for the new representation. The first

# method we should define is a *constructor* with the name `__init__`.

# The constructor describes how to create a new `Point` object:

#

# ```python

# class Point:

# """An (x,y) coordinate pair"""

# def __init__(self, x: Number, y: Number):

# self.x = x

# self.y = y

# ```

#

# ## 3. Instance variables

#

# Notice that the first argument to the constructor method is

# `self`, and within the method we refer to `self.x` and `self.y`.

# In a method that operates on some object *o*, the first argument

# to the method will always be `self`, which refers to the whole

# object *o*. Within the `self` object we can store *instance

# variables*, like `self.x` and `self.y`

# for the *x* and *y* coordinates of a point.

#

# ## 4. Methods

#

# What about defining an operation for moving a point? Instead of

# adding `_point` to the name of a `move` function, we can just

# put the function (now called a *method*) *inside* the `Point`

# class:

#

# ```python

# def move(self, d: Point) -> Point:

# """(x, y).move(dx, dy) = (x + dx, y + dy)"""

# x = self.x + d.x

# y = self.y + d.y

# return Point(x,y)

# ```

# ><span style='background :yellow' >**Type hints note:**</span> Because the Point class is a user-defined (not builtin) class, an extra type variable definition is required for the `Point` type hints to be recognized as valid type names:

from typing import TypeVar

Point = TypeVar('Point')

#

# Notice that the *instance variables*

# `self.x` and `self.y` we created in the constructor

# can be used in the `move` method. They are part of

# the object, and can be used by any method in the class.

# The instance variables of the other `Point` object `d`

# are also available

# in the `move` method. Let's look at how these objects

# are passed to the `move` method.

#

# Here is the complete `Point` code, including [type hints](https://towardsdatascience.com/python-type-hints-docstrings-7ec7f6d3416b), [docstrings](https://realpython.com/documenting-python-code/) and [doctests](https://www.digitalocean.com/community/tutorials/how-to-write-doctests-in-python).

# +

from typing import TypeVar

from numbers import Number

Point = TypeVar('Point')

class Point:

"""An (x,y) coordinate pair"""

def __init__(self, x: Number, y: Number):

"""Create a new Point at (x,y)

Args:

x: x coordinate

y: y coordinate

>>> p = Point(2, 3); (p.x, p.y)

(2, 3)

"""

self.x = x

self.y = y

def move(self, d: Point) -> Point:

"""(x, y).move(dx, dy) = (x + dx, y + dy)

Args:

d: a Point

Returns:

a new Point

>>> p = Point(2, 3).move(Point(5, 6)); (p.x, p.y)

(7, 9)

"""

x = self.x + d.x

y = self.y + d.y

return Point(x, y)

# -

# And a quick doctest check for the code so far:

import doctest

doctest.testmod(verbose=True)

#

# ## 5. Method calls

#

# Next we'll create two `Point` objects and call the `move` method

# to create a third `Point` object with the sums of their *x* and

# *y* coordinates:

# +

p = Point(3,4)

v = Point(5,6)

m = p.move(v)

assert m.x == 8 and m.y == 10

# -

#

# At first it may seem confusing that we defined the ``move`` method with two arguments, `self` and `d`, but

# it looks like we passed it only one argument, `v`. In fact

# we passed it both points: `p.move(v)` passes `p` as the `self` argument and `v` as the `d` argument. We use the variable

# before the `.`, like `p` in this case, in two different ways: To find the right method (function) to call, by looking inside the *class* to

# which `p` belongs, and to pass as the `self` argument to the method.

#

# The `move` method above returns a new `Point` object at the

# computed coordinates. A method can also change the values of

# instance variables. For example, suppose we add a `move_to`

# method to `Point`:

# +

# Remove the previous definition of Point from the global namespace:

# %reset -sf

from typing import TypeVar

from numbers import Number

Point = TypeVar('Point')

class Point:

"""An (x,y) coordinate pair"""

def __init__(self, x: Number, y: Number):

self.x = x

self.y = y

def move(self, d: Point) -> Point:

"""(x, y).move(dx, dy) = (x + dx, y + dy)"""

x = self.x + d.x

y = self.y + d.y

return Point(x,y)

def move_to(self, new_x, new_y):

"""Change the coordinates of this Point"""

self.x = new_x

self.y = new_y

# -

# The `move_to` method does not return a new point (it returns `None`), but

# it changes an existing point *object*.

# +

m = Point(8,10)

m.move_to(19,23)

assert m.x == 19 and m.y == 23

# -

#

# #### *Check your understanding*

#

# Consider class `Pet` and object `my_pet`.

# What are the *instance variables* of `my_pet`?

# What are the values of those instance variables

# after executing the code below?

#

# ```python

# class Pet:

# def __init__(self, kind: str, name: str):

# self.species = kind

# self.called = name

#

# def rename(self, new_name):

# self.called = new_name

#

# my_pet = Pet("canis familiaris", "fido")

# ```

#

# ## 6. A little magic

#

# We said above that what we really wanted was to express

# movement of points very compactly, as addition. We

# saw that addition of tuples or lists did not act as we

# wanted; instead of `(3,4) + (5,6)` giving us `(8,10)`, it

# gave us `(3,4,5,6)`. We can almost get what we want by describing

# how we want `+` to act on `Point` objects. We do this by

# defining a *special method* `__add__`:

#

# ```python

# def __add__(self, other: "Point"):

# """(x,y) + (dx, dy) = (x+dx, y+dy)"""

# return Point(self.x + other.x, self.y + other.y)

# ```

#

# Special methods are more commonly known as *magic methods*.

# They allow us to define how arithmetic operations like `+`

# and `-` work for each class of object, as well as

# comparisons like `<` and `==`, and some other operations.

# If `p` is a `Point` object, then `p + q` is interpreted as

# `p.__add__(q)`. So finally we get a very compact and

# readable notation:

#

# ```python

# p = Point(3,4)

# v = Point(5,6)

# m = p.move(v)

#

# assert m.x == 8 and m.y == 10

# ```

#

# More: [Appendix on magic methods](appendix_Special)

#

# ## Magic for printing

#

# Suppose we wanted to print a `Point` object. We

# could do it this way:

#

# ```python

# print(f"p is ({p.x}, {p.y})")

# ```

#

# That would give us a reasonable printed representation,

# like "p is (3, 4)", but it is tedious, verbose, and easy to

# get wrong. What if we just wrote

#

# ```python

# print(f"p is {p}")

# ```

#

# That would be simpler, but the result is not very

# useful:

#

# ```python

# p is <__main__.Point object at 0x10b4a22e0>

# ```

#

# ### `str()`d, not shaken

#

# If we want to print `Point` objects as simply

# as we print strings and numbers, but we want the

# printed representation to be readable, we will need

# to write additional methods to describe how a

# `Point` object should be converted to a string.

#

# In fact, in Python we normally write two magic

# methods for this: `__str__` describes how it

# should be represented by the `str()` function,

# which is the representation used in `print`

# or in an f-string like `f"it is {p}"`. We might

# decide that we want the object created by `Point(3,2)`

# to print as "(3, 2)". We would then write a

# `__str__` method in the `Point` class like this:

#

# ```python

# def __str__(self) -> str:

# return f"({self.x}, {self.y})"

# ```

#

# Now if we again execute

#

# ```python

# print(f"p is {p}")

# ```

#

# we get a more useful result:

#

# ```python

# p is (3, 4)

# ```

#

# ### A `repr()` for debugging

#

# Usually we will also want to provide a different

# string representation that is useful in debugging

# and at the Python command line interface. The

# string representation above may be fine for end users,

# but for the software developer it does not differentiate

# between a tuple `(3, 4)` and a `Point` object `(3, 4)`.

# We can define a `__repr__` method to give a string

# representation more useful in debugging. The function

# `repr(x)` is actually a call on the `__repr__` method

# of `x`, i.e., `x.__repr__()`.

#

# Although

# Python will permit us to write whatever `__repr__`

# method we choose, by accepted convention is to make

# it look like a call on the constructor, i.e., like

# Python code to create an identical object. Thus, for

# the `Point` class we might write:

#

# ```python

# def __repr__(self) -> str:

# return f"Point({self.x}, {self.y})"

# ```

#

# Now if we write

#

# ```python

# print(f"repr(p) is {repr(p)}")

# ```

#

# we will get

#

# ```python

# repr(p) is Point(3, 4)

# ```

#

# The `print` function automatically applies the `str` function to its

# arguments, so defining a good `__str__` method will ensure it

# is printed as you like in most cases. Oddly, though,

# the `__str__` method for `list` applies the `__repr__` method

# to each of its arguments, so if we write

#

# ```python

# print(p)

# print(v)

# print([p, v])

# ```

#

# we get

#

# ```

# (3, 4)

# (5, 6)

# [Point(3, 4), Point(5, 6)]

# ```

#

# #### *Check your understanding*

#

# Which of the following are legal, and what

# values do they return?

#

# * `str(5)`

# * `(5).str()`

# * `(5).__str__()`

# * `__str__(5)`

# * `repr([1, 2, 3])`

# * `[1, 2, 3].repr()`

# * `[1, 2, 3].__repr__()`

#

# What does the following little program print?

#

# ```python

# class Wrap:

# def __init__(self, val: str):

# self.value = val

#

# def __str__(self) -> str:

# return self.value

#

# def __repr__(self) -> str:

# return f"Wrap({self.value})"

#

# a = Wrap("alpha")

# b = Wrap("beta")

# print([a, b])

# ```

#

# ## Variables *refer* to objects

#

# Before reading on, try to predict what the following

# little program will print.

#

# ```python

# x = [1, 2, 3]

# y = x

# y.append(4)

# print(x)

# print(y)

# ```

#

# Now execute that program. Did you get the result you

# expected? If it surprised you, try visualizing it in

# PythonTutor (http://pythontutor.com/). You should get a

# diagram that looks like this:

#

#

#

# `x` and `y` are distinct variables, but they are both references to the same list. When we change `y` by appending 4, we are changing the same object

# that `x` refers to. We say that `x` and `y` are *aliases*, two names for the same object.

#

# Note this is very different from the following:

#

# ```python

# x = [1, 2, 3]

# y = [1, 2, 3]

# y.append(4)

# print(x)

# print(y)

# ```

#

# Each time we create a list like `[1, 2, 3]`, we are creating a distinct

# list. In this seocond version of the program, `x` and `y` are not

# aliases.

#

#

#

# It is essential to remember that variables hold *references* to objects, and

# there may be more than one reference to the same object. We can observe the

# same phenomenon with classes we add to Python. Consider this program:

#

# ```python

# class Point:

# """An (x,y) coordinate pair"""

# def __init__(self, x: int, y: int):

# self.x = x

# self.y = y

#

# def move(self, dx: int, dy: int):

# self.x += dx

# self.y += dy

#

# p1 = Point(3,5)

# p2 = p1

# p1.move(4,4)

# print(p2.x, p2.y)

# ```

#

# Once again we have created two variables that are *aliases*, i.e., they