code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: py3.6-env

# language: python

# name: py3.6-env

# ---

# +

# %matplotlib inline

import gym

import matplotlib

import numpy as np

import sys

from collections import defaultdict

from envs.blackjack import BlackjackEnv

import plotting

matplotlib.style.use('ggplot')

# -

env = BlackjackEnv()

def make_epsilon_greedy_policy(Q, epsilon, nA):

"""

给定一个Q函数和epsilon,构建一个ε-贪婪的策略

参数:

Q: 一个dictionary其key-value是state -> action-values.

key是状态s,value是一个长为nA(Action个数)的numpy数组,表示采取行为a的概率。

epsilon: float

nA: action的个数

返回值:

返回一个 函数,这个函数的输入是一个状态/观察(observation),输出是一个长度为nA的numpy数组,表示采取不同Action的概率

"""

def policy_fn(observation):

A = np.ones(nA, dtype=float) * epsilon / nA

best_action = np.argmax(Q[observation])

A[best_action] += (1.0 - epsilon)

return A

return policy_fn

def mc_control_epsilon_greedy(env, num_episodes, discount_factor=1.0, epsilon=0.1):

"""

使用Epsilon-贪婪策略的蒙特卡罗控制,用了找到最优的epsilon-greedy策略

参数:

env: OpenAI gym environment

num_episodes: 采样的episode个数

discount_factor: 打折因子

epsilon: Float

返回:

一个tuple(Q, policy).

Q函数 state -> action values。key是状态,value是长为nA的numpy数组,表示Q(s,a)

policy 最优的策略函数,输入是状态,输出是nA长的numpy数组,表示采取不同action的概率

"""

# 记录每个状态的回报累加值和次数

returns_sum = defaultdict(float)

returns_count = defaultdict(float)

# Q函数state -> (action -> action-value)。key是状态s,value又是一个dict,其key是a,value是Q(s,a)

Q = defaultdict(lambda: np.zeros(env.action_space.n))

# epsilon-贪婪的策略

policy = make_epsilon_greedy_policy(Q, epsilon, env.action_space.n)

for i_episode in range(1, num_episodes + 1):

if i_episode % 1000 == 0:

print("\rEpisode {}/{}.".format(i_episode, num_episodes), end="")

sys.stdout.flush()

# 生成一个episode。

# 一个episode是一个数组,每个元素是一个三元组(state, action, reward)

episode = []

state = env.reset()

for t in range(100):

probs = policy(state)

action = np.random.choice(np.arange(len(probs)), p=probs)

next_state, reward, done, _ = env.step(action)

episode.append((state, action, reward))

if done:

break

state = next_state

# 找到episode里出现的所有(s,a)对epsilon。把它变成tuple以便作为dict的key。

sa_in_episode = set([(tuple(x[0]), x[1]) for x in episode])

for state, action in sa_in_episode:

sa_pair = (state, action)

# 找到(s,a)第一次出现的下标

first_occurence_idx = next(i for i,x in enumerate(episode)

if x[0] == state and x[1] == action)

# 计算(s,a)的回报

G = sum([x[2]*(discount_factor**i) for i,x in enumerate(episode[first_occurence_idx:])])

# 累计计数

returns_sum[sa_pair] += G

returns_count[sa_pair] += 1.0

Q[state][action] = returns_sum[sa_pair] / returns_count[sa_pair]

# 策略已经通过Q“隐式”的提高了!

return Q, policy

Q, policy = mc_control_epsilon_greedy(env, num_episodes=500000, epsilon=0.1)

V = defaultdict(float)

for state, actions in Q.items():

action_value = np.max(actions)

V[state] = action_value

plotting.plot_value_function(V, title="Optimal Value Function")

| rl/On-Policy MC Control.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Gaussian XOR and Gaussian R-XOR Experiment with Task Unaware Settings

# +

# import dependencies

import numpy as np

import random

from proglearn.sims import generate_gaussian_parity

import matplotlib.pyplot as plt

import seaborn as sns

# functions to perform the experiments in this notebook

import functions.xor_rxor_with_unaware_fns as fn

# -

# ## Ksample test

# Using ksample test from hyppo we can determine at which angle rxor is significantly different enough from xor to require a new task/transformer. The following code using functions from xor_rxor_aware_unaware_fns.py to calculate p-values from k sample test dcorr from rxor angles 0 to 90 degrees for sample sizes 100, 500, and 1000.

# number of times to run the experiment, decrease for shorter run times

mc_rep = 10

# set angle range

angle_sweep = range(0, 90, 1)

# calculates and plots angle vs pvalue from ksample test xor vs rxor

# returns numpy array containing all p-vals from all mc_rep experiments at all angles in angle_sweep

pvals_100, pvals_500, pvals_1000 = fn.calc_ksample_pval_vs_angle(mc_rep, angle_sweep)

# sets plotting params

sns.set_context("talk")

# plots the mean p-values of the mc_rep experiments with error bars

# dotted green line at p-value = 0.05

plt.figure(figsize=(8, 8))

fn.plot_pval_vs_angle(pvals_100, pvals_500, pvals_1000, angle_sweep)

# ## Task aware BTE and generalization error (XOR)

# Next, we'll run the progressive learner to see how different angles of rxor affect backward transfer efficiency and multitask generalization error of xor (task1). We start by defining the following hyperparameters.

# number of times to run the experiment, decrease for shorter run times

mc_rep = 100

# samples to use for task1 (xor)

task1_sample = 100

# samples to use for task2 (rxor)

task2_sample = 100

# we will use the same angle_sweep as before

angle_sweep = range(0, 90, 1)

# call the function to run the experiment

# give us arrays with mean_te and mean_error

mean_te, mean_error = fn.bte_ge_v_angle(angle_sweep, task1_sample, task2_sample, mc_rep)

# plot angle vs BTE

fn.plot_bte_v_angle(mean_te)

# plot angle vs generalization error

plt.figure(figsize=(8, 8))

plt.plot(angle_sweep, mean_error[:, 1])

plt.xlabel("Angle of Rotation")

plt.ylabel("Generalization Error (xor)")

plt.show()

# ## Task Unaware: K-sample testing "dcorr"

# Instead of adding a new task for every angle of rxor, we use a k sample test to determine when rxor is different enough to warrant adding a new task. Then we plot the BTE and multitask generalization error of xor (task1). Once again, we start by definining hyperparameters. We will examine BTE and generalization error for 100, 500, and 1000 task samples.

# ### 100 task samples

# number of times to run the experiment, decrease for shorter run times

mc_rep = 100

# samples to use for task1 (xor)

task1_sample = 100

# samples to use for task2 (rxor)

task2_sample = 100

# we will use the same angle_sweep as before

angle_sweep = range(0, 90, 1)

# call our function to run the experiment

un_mean_te, un_mean_error = fn.unaware_bte_v_angle(

angle_sweep, task1_sample, task2_sample, mc_rep

)

# plot angle vs BTE

fn.plot_unaware_bte_v_angle(un_mean_te)

# plot angle vs generalization error

plt.figure(figsize=(8, 8))

plt.plot(angle_sweep, un_mean_error[:, 1])

plt.xlabel("Angle of Rotation")

plt.ylabel("Generalization Error (XOR)")

plt.show()

# ### 500 task samples

# +

# number of times to run the experiment, decrease for shorter run times

mc_rep = 100

# samples to use for task1 (xor)

task1_sample = 500

# samples to use for task2 (rxor)

task2_sample = 500

# we will use the same angle_sweep as before

angle_sweep = range(0, 90, 1)

# call our function to run the experiment

un_mean_te, un_mean_error = fn.unaware_bte_v_angle(

angle_sweep, task1_sample, task2_sample, mc_rep

)

# plot angle vs BTE

fn.plot_unaware_bte_v_angle(un_mean_te)

# -

# plot angle vs generalization error

plt.figure(figsize=(8, 8))

plt.plot(angle_sweep, un_mean_error[:, 1])

plt.xlabel("Angle of Rotation")

plt.ylabel("Generalization Error (XOR)")

plt.show()

# ### 1000 task samples

# +

# number of times to run the experiment, decrease for shorter run times

mc_rep = 100

# samples to use for task1 (xor)

task1_sample = 1000

# samples to use for task2 (rxor)

task2_sample = 1000

# we will use the same angle_sweep as before

angle_sweep = range(0, 90, 1)

# call our function to run the experiment

un_mean_te, un_mean_error = fn.unaware_bte_v_angle(

angle_sweep, task1_sample, task2_sample, mc_rep

)

# plot angle vs BTE

fn.plot_unaware_bte_v_angle(un_mean_te)

# -

# plot angle vs generalization error

plt.figure(figsize=(8, 8))

plt.plot(angle_sweep, un_mean_error[:, 1])

plt.xlabel("Angle of Rotation")

plt.ylabel("Generalization Error (XOR)")

plt.show()

| docs/experiments/xor_rxor_with_unaware.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# **Download** (right-click, save target as ...) this page as a jupyterlab notebook from: [Lab31](http://192.168.3.11/engr-1330-webroot/8-Labs/Lab31/Lab31.ipynb)

#

# ___

# # <font color=darkred>Laboratory 31: "On The Virtue and Value of Classification" or "Who Ordered a Classy Fire?" </font>

# LAST NAME, FIRST NAME

#

# R00000000

#

# ENGR 1330 Laboratory 14 - In-Lab

#  <br>

#

# ## For the last few sessions we have talked about regression ... <br>

#

#  <br>

#

# ### We discussed ...

# - __The theory and implementation of simple linear regression in Python__<br>

# - __OLS and MLE methods for estimation of slope and intercept coefficients__ <br>

# - __Errors (Noise, Variance, Bias) and their impacts on model's performance__ <br>

# - __Confidence and prediction intervals__

# - __And Multiple Linear Regressions__

#

# <br>  <br>

#

# - __What if we want to predict a discrete variable?__

#

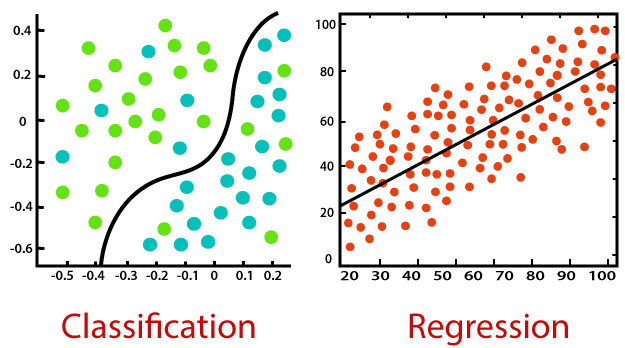

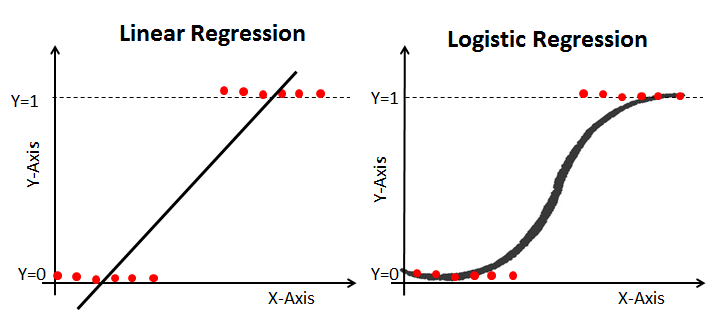

# The general idea behind our efforts was to use a set of observed events (samples) to capture the relationship between one or more predictor (AKA input, indipendent) variables and an output (AKA response, dependent) variable. The nature of the dependent variables differentiates *__regression__* and *__classification__* problems.

# <br>  <br>

#

#

# Regression problems have continuous and usually unbounded outputs. An example is when you’re estimating the salary as a function of experience and education level. Or all the examples we have covered so far!

#

# On the other hand, classification problems have discrete and finite outputs called classes or categories. For example, predicting if an employee is going to be promoted or not (true or false) is a classification problem. There are two main types of classification problems:

#

# - Binary or binomial classification:

#

# exactly two classes to choose between (usually 0 and 1, true and false, or positive and negative)

#

# - Multiclass or multinomial classification:

#

# three or more classes of the outputs to choose from

#

#

# - __When Do We Need Classification?__

#

# We can apply classification in many fields of science and technology. For example, text classification algorithms are used to separate legitimate and spam emails, as well as positive and negative comments. Other examples involve medical applications, biological classification, credit scoring, and more.

#

# ## Logistic Regression

#

# - __What is logistic regression?__

# Logistic regression is a fundamental classification technique. It belongs to the group of linear classifiers and is somewhat similar to polynomial and linear regression. Logistic regression is fast and relatively uncomplicated, and it’s convenient for users to interpret the results. Although it’s essentially a method for binary classification, it can also be applied to multiclass problems.

#

# <br>  <br>

#

#

#

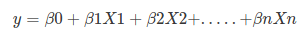

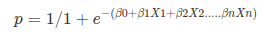

# Logistic regression is a statistical method for predicting binary classes. The outcome or target variable is dichotomous in nature. Dichotomous means there are only two possible classes. For example, it can be used for cancer detection problems. It computes the probability of an event occurrence. Logistic regression can be considered a special case of linear regression where the target variable is categorical in nature. It uses a log of odds as the dependent variable. Logistic Regression predicts the probability of occurrence of a binary event utilizing a logit function. HOW?

# Remember the general format of the multiple linear regression model:

# <br>  <br>

#

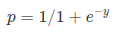

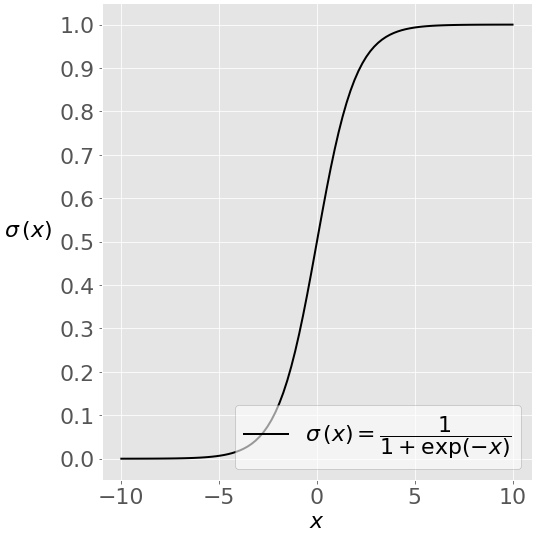

# Where, y is dependent variable and x1, x2 ... and Xn are explanatory variables. This was, as you know by now, a linear function. There is another famous function known as the *__Sigmoid Function__*, also called *__logistic function__*. Here is the equation for the Sigmoid function:

# <br>  <br>

#

# This image shows the sigmoid function (or S-shaped curve) of some variable 𝑥:

# <br>  <br>

# As you see, The sigmoid function has values very close to either 0 or 1 across most of its domain. It can take any real-valued number and map it into a value between 0 and 1. If the curve goes to positive infinity, y predicted will become 1, and if the curve goes to negative infinity, y predicted will become 0. This fact makes it suitable for application in classification methods since we are dealing with two discrete classes (labels, categories, ...). If the output of the sigmoid function is more than 0.5, we can classify the outcome as 1 or YES, and if it is less than 0.5, we can classify it as 0 or NO. This cutoff value (threshold) is not always fixed at 0.5. If we apply the Sigmoid function on linear regression:

# <br> <br>

#

# Notice the difference between linear regression and logistic regression:

# <br> <br>

#

# logistic regression is estimated using Maximum Likelihood Estimation (MLE) approach. Maximizing the likelihood function determines the parameters that are most likely to produce the observed data.

#

# Let's work on an example in Python! <br>

#

#  <br>

# ### Example 1: Diagnosing Diabetes <br>

#

#  <br>

#

#

#

# #### The "diabetes.csv" dataset is originally from the National Institute of Diabetes and Digestive and Kidney Diseases. The objective of the dataset is to diagnostically predict whether or not a patient has diabetes, based on certain diagnostic measurements included in the dataset.

# *Several constraints were placed on the selection of these instances from a larger database. In particular, all patients here are females at least 21 years old of Pima Indian heritage.*

# #### The datasets consists of several medical predictor variables and one target variable, Outcome. Predictor variables includes the number of pregnancies the patient has had, their BMI, insulin level, age, and so on.

#

# |Columns|Info.|

# |---:|---:|

# |Pregnancies |Number of times pregnant|

# |Glucose |Plasma glucose concentration a 2 hours in an oral glucose tolerance test|

# |BloodPressure |Diastolic blood pressure (mm Hg)|

# |SkinThickness |Triceps skin fold thickness (mm)|

# |Insulin |2-Hour serum insulin (mu U/ml)|

# |BMI |Body mass index (weight in kg/(height in m)^2)|

# |Diabetes pedigree |Diabetes pedigree function|

# |Age |Age (years)|

# |Outcome |Class variable (0 or 1) 268 of 768 are 1, the others are 0|

#

#

# #### Let's see if we can build a logistic regression model to accurately predict whether or not the patients in the dataset have diabetes or not?

# *Acknowledgements:

# <NAME>., <NAME>., <NAME>., <NAME>., & <NAME>. (1988). Using the ADAP learning algorithm to forecast the onset of diabetes mellitus. In Proceedings of the Symposium on Computer Applications and Medical Care (pp. 261--265). IEEE Computer Society Press.*

import numpy as np

import pandas as pd

from matplotlib import pyplot as plt

import sklearn.metrics as metrics

import seaborn as sns

# %matplotlib inline

# Import the dataset:

data = pd.read_csv("diabetes.csv")

data.rename(columns = {'Pregnancies':'pregnant', 'Glucose':'glucose','BloodPressure':'bp','SkinThickness':'skin',

'Insulin ':'Insulin','BMI':'bmi','DiabetesPedigreeFunction':'pedigree','Age':'age',

'Outcome':'label'}, inplace = True)

data.head()

data.describe()

#Check some histograms

sns.distplot(data['pregnant'], kde = True, rug= True, color ='orange')

sns.distplot(data['glucose'], kde = True, rug= True, color ='darkblue')

sns.distplot(data['label'], kde = False, rug= True, color ='purple', bins=2)

sns.jointplot(x ='glucose', y ='label', data = data, kind ='kde')

# #### Selecting Feature: Here, we need to divide the given columns into two types of variables dependent(or target variable) and independent variable(or feature variables or predictors).

#split dataset in features and target variable

feature_cols = ['pregnant', 'glucose', 'bp', 'skin', 'Insulin', 'bmi', 'pedigree', 'age']

X = data[feature_cols] # Features

y = data.label # Target variable

# #### Splitting Data: To understand model performance, dividing the dataset into a training set and a test set is a good strategy. Let's split dataset by using function train_test_split(). You need to pass 3 parameters: features, target, and test_set size. Additionally, you can use random_state to select records randomly. Here, the Dataset is broken into two parts in a ratio of 75:25. It means 75% data will be used for model training and 25% for model testing:

# split X and y into training and testing sets

from sklearn.model_selection import train_test_split

X_train,X_test,y_train,y_test=train_test_split(X,y,test_size=0.25,random_state=0)

# #### Model Development and Prediction: First, import the Logistic Regression module and create a Logistic Regression classifier object using LogisticRegression() function. Then, fit your model on the train set using fit() and perform prediction on the test set using predict().

# +

# import the class

from sklearn.linear_model import LogisticRegression

# instantiate the model (using the default parameters)

#logreg = LogisticRegression()

logreg = LogisticRegression()

# fit the model with data

logreg.fit(X_train,y_train)

#

y_pred=logreg.predict(X_test)

# -

#  <br>

# - __How to assess the performance of logistic regression?__

#

# Binary classification has four possible types of results:

#

# - True negatives: correctly predicted negatives (zeros)

# - True positives: correctly predicted positives (ones)

# - False negatives: incorrectly predicted negatives (zeros)

# - False positives: incorrectly predicted positives (ones)

#

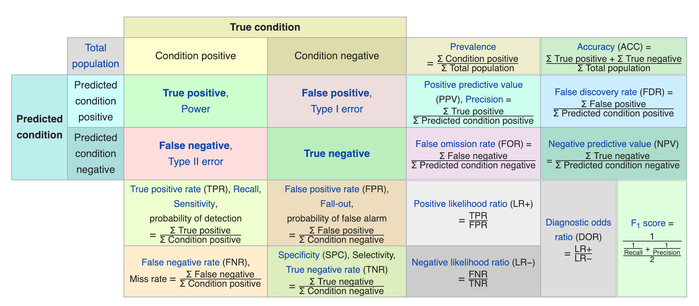

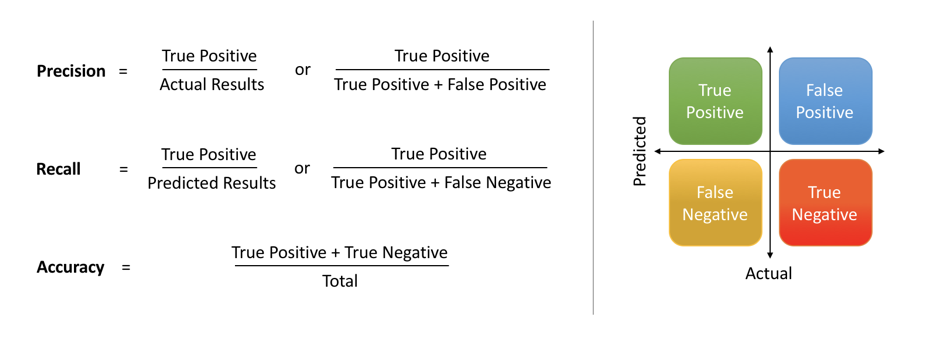

# We usually evaluate the performance of a classifier by comparing the actual and predicted outputsand counting the correct and incorrect predictions. A confusion matrix is a table that is used to evaluate the performance of a classification model.

#

# <br>  <br>

#

# Some indicators of binary classifiers include the following:

#

# - The most straightforward indicator of classification accuracy is the ratio of the number of correct predictions to the total number of predictions (or observations).

# - The positive predictive value is the ratio of the number of true positives to the sum of the numbers of true and false positives.

# - The negative predictive value is the ratio of the number of true negatives to the sum of the numbers of true and false negatives.

# - The sensitivity (also known as recall or true positive rate) is the ratio of the number of true positives to the number of actual positives.

# - The precision score quantifies the ability of a classifier to not label a negative example as positive. The precision score can be interpreted as the probability that a positive prediction made by the classifier is positive.

# - The specificity (or true negative rate) is the ratio of the number of true negatives to the number of actual negatives.

# <br>  <br>

#

# The extent of importance of recall and precision depends on the problem. Achieving a high recall is more important than getting a high precision in cases like when we would like to detect as many heart patients as possible. For some other models, like classifying whether a bank customer is a loan defaulter or not, it is desirable to have a high precision since the bank wouldn’t want to lose customers who were denied a loan based on the model’s prediction that they would be defaulters.

# There are also a lot of situations where both precision and recall are equally important. Then we would aim for not only a high recall but a high precision as well. In such cases, we use something called F1-score. F1-score is the Harmonic mean of the Precision and Recall:

# <br>  <br>

# This is easier to work with since now, instead of balancing precision and recall, we can just aim for a good F1-score and that would be indicative of a good Precision and a good Recall value as well.

# <br>  <br>

# #### Model Evaluation using Confusion Matrix: A confusion matrix is a table that is used to evaluate the performance of a classification model. You can also visualize the performance of an algorithm. The fundamental of a confusion matrix is the number of correct and incorrect predictions are summed up class-wise.

# import the metrics class

from sklearn import metrics

cnf_matrix = metrics.confusion_matrix(y_pred, y_test)

cnf_matrix

# #### Here, you can see the confusion matrix in the form of the array object. The dimension of this matrix is 2*2 because this model is binary classification. You have two classes 0 and 1. Diagonal values represent accurate predictions, while non-diagonal elements are inaccurate predictions. In the output, 119 and 36 are actual predictions, and 26 and 11 are incorrect predictions.

# #### Visualizing Confusion Matrix using Heatmap: Let's visualize the results of the model in the form of a confusion matrix using matplotlib and seaborn.

class_names=[0,1] # name of classes

fig, ax = plt.subplots()

tick_marks = np.arange(len(class_names))

plt.xticks(tick_marks, class_names)

plt.yticks(tick_marks, class_names)

# create heatmap

sns.heatmap(pd.DataFrame(cnf_matrix), annot=True, cmap="YlGnBu" ,fmt='g')

ax.xaxis.set_label_position("top")

plt.tight_layout()

plt.title('Confusion matrix', y=1.1)

plt.ylabel('Predicted label')

plt.xlabel('Actual label')

# #### Confusion Matrix Evaluation Metrics: Let's evaluate the model using model evaluation metrics such as accuracy, precision, and recall.

print("Accuracy:",metrics.accuracy_score(y_test, y_pred))

print("Precision:",metrics.precision_score(y_test, y_pred))

print("Recall:",metrics.recall_score(y_test, y_pred))

print("F1-score:",metrics.f1_score(y_test, y_pred))

# +

from sklearn.metrics import classification_report

print(classification_report(y_test, y_pred))

# -

#

# ___

# ## Example: Credit Card Fraud Detection <br>

#

#  <br>

#

#

#

# ### For many companies, losses involving transaction fraud amount to more than 10% of their total expenses. The concern with these massive losses leads companies to constantly seek new solutions to prevent, detect and eliminate fraud. Machine Learning is one of the most promising technological weapons to combat financial fraud. The objective of this project is to create a simple Logistic Regression model capable of detecting fraud in credit card operations, thus seeking to minimize the risk and loss of the business.

#

# ### The dataset used contains transactions carried out by European credit card holders that took place over two days in September 2013, and is a shorter version of a dataset that is available on kaggle at https://www.kaggle.com/mlg-ulb/creditcardfraud/version/3.

#

# ### "It contains only numerical input variables which are the result of a PCA transformation. Unfortunately, due to confidentiality issues, we cannot provide the original features and more background information about the data. Features V1, V2, … V28 are the principal components obtained with PCA, the only features which have not been transformed with PCA are 'Time' and 'Amount'. Feature 'Time' contains the seconds elapsed between each transaction and the first transaction in the dataset. The feature 'Amount' is the transaction Amount, this feature can be used for example-dependant cost-senstive learning. Feature 'Class' is the response variable and it takes value 1 in case of fraud and 0 otherwise."

#

#

# |Columns|Info.|

# |---:|---:|

# |Time |Number of seconds elapsed between this transaction and the first transaction in the dataset|

# |V1-V28 |Result of a PCA Dimensionality reduction to protect user identities and sensitive features(v1-v28)|

# |Amount |Transaction amount|

# |Class |1 for fraudulent transactions, 0 otherwise|

#

#

# *NOTE: Principal Component Analysis, or PCA, is a dimensionality-reduction method that is often used to reduce the dimensionality of large data sets, by transforming a large set of variables into a smaller one that still contains most of the information in the large set.*

#

# <hr>

#

# *__Acknowledgements__*

# The dataset has been collected and analysed during a research collaboration of Worldline and the Machine Learning Group (http://mlg.ulb.ac.be) of ULB (Université Libre de Bruxelles) on big data mining and fraud detection.

# More details on current and past projects on related topics are available on https://www.researchgate.net/project/Fraud-detection-5 and the page of the DefeatFraud project

#

# Please cite the following works:

#

# *<NAME>, <NAME>, <NAME> and <NAME>. Calibrating Probability with Undersampling for Unbalanced Classification. In Symposium on Computational Intelligence and Data Mining (CIDM), IEEE, 2015*

#

# *<NAME>, Andrea; <NAME>; <NAME>; <NAME>; <NAME>. Learned lessons in credit card fraud detection from a practitioner perspective, Expert systems with applications,41,10,4915-4928,2014, Pergamon*

#

# *<NAME>, Andrea; <NAME>; <NAME>; <NAME>; <NAME>. Credit card fraud detection: a realistic modeling and a novel learning strategy, IEEE transactions on neural networks and learning systems,29,8,3784-3797,2018,IEEE*

#

# *<NAME>, Andrea Adaptive Machine learning for credit card fraud detection ULB MLG PhD thesis (supervised by G. Bontempi)*

#

# *<NAME>; <NAME>, Andrea; <NAME>, Yann-Aël; <NAME>; <NAME>; <NAME>. Scarff: a scalable framework for streaming credit card fraud detection with Spark, Information fusion,41, 182-194,2018,Elsevier*

#

# *<NAME>; <NAME>, Yann-Aël; <NAME>; <NAME>. Streaming active learning strategies for real-life credit card fraud detection: assessment and visualization, International Journal of Data Science and Analytics, 5,4,285-300,2018,Springer International Publishing*

#

# *<NAME>, <NAME>, <NAME>, <NAME>, <NAME> Deep-Learning Domain Adaptation Techniques for Credit Cards Fraud Detection, INNSBDDL 2019: Recent Advances in Big Data and Deep Learning, pp 78-88, 2019*

#

# *<NAME>, <NAME>, <NAME>, <NAME>, <NAME> Combining Unsupervised and Supervised Learning in Credit Card Fraud Detection Information Sciences, 2019*

# ### As you know by now, the first step is to load some necessary libraries:

import numpy as np

import pandas as pd

from matplotlib import pyplot as plt

import sklearn.metrics as metrics

import seaborn as sns

# %matplotlib inline

# ### Then, we should read the dataset and explore it using tools such as descriptive statistics:

# Import the dataset:

data = pd.read_csv("creditcard_m.csv")

data.head()

# ### As expected, the dataset has 31 columns and the target variable is located in the last one. Let's check and see whether we have any missing values in the dataset:

data.isnull().sum()

# ### Great! No missing values!

data.describe()

print ('Not Fraud % ',round(data['Class'].value_counts()[0]/len(data)*100,2))

print ()

print (round(data.Amount[data.Class == 0].describe(),2))

print ()

print ()

print ('Fraud % ',round(data['Class'].value_counts()[1]/len(data)*100,2))

print ()

print (round(data.Amount[data.Class == 1].describe(),2))

# ### We have a total of 140000 samples in this dataset. The PCA components (V1-V28) look as if they have similar spreads and rather small mean values in comparison to another predictors such as 'Time'. The majority (75%) of transactions are below 81 euros with some considerably high outliers (the max is 19656.53 euros). Around 0.19% of all the observed transactions were found to be fraudulent which means that we are dealing with an extremely unbalanced dataset. An important characteristic of such problems. Although the share may seem small, each fraud transaction can represent a very significant expense, which together can represent billions of dollars of lost revenue each year.

# ### The next step is to defind our predictors and target:

#split dataset in features and target variable

y = data.Class # Target variable

X = data.loc[:, data.columns != "Class"] # Features

# ### The next step would be to split our dataset and define the training and testing sets. The random seed (np.random.seed) is used to ensure that the same data is used for all runs. Let's do a 70/30 split:

# +

# split X and y into training and testing sets

np.random.seed(123)

from sklearn.model_selection import train_test_split

X_train,X_test,y_train,y_test=train_test_split(X,y,test_size=0.30,random_state=1)

# -

# ### Now it is time for model development and prediction!

# ### import the Logistic Regression module and create a Logistic Regression classifier object using LogisticRegression() function. Then, fit your model on the train set using fit() and perform prediction on the test set using predict().

# +

# import the class

from sklearn.linear_model import LogisticRegression

# instantiate the model (using the default parameters)

#logreg = LogisticRegression()

logreg = LogisticRegression(solver='lbfgs',max_iter=10000)

# fit the model with data -TRAIN the model

logreg.fit(X_train,y_train)

# -

# TEST the model

y_pred=logreg.predict(X_test)

# ### Once the model and the predictions are ready, we can assess the performance of our classifier. First, we need to get our confusion matrix:

#

# *A confusion matrix is a table that is used to evaluate the performance of a classification model. You can also visualize the performance of an algorithm. The fundamental of a confusion matrix is the number of correct and incorrect predictions are summed up class-wise.*

from sklearn import metrics

cnf_matrix = metrics.confusion_matrix(y_pred, y_test)

print(cnf_matrix)

tpos = cnf_matrix[0][0]

fneg = cnf_matrix[1][1]

fpos = cnf_matrix[0][1]

tneg = cnf_matrix[1][0]

print("True Positive Cases are",tpos) #How many non-fraud cases were identified as non-fraud cases - GOOD

print("True Negative Cases are",tneg) #How many Fraud cases were identified as Fraud cases - GOOD

print("False Positive Cases are",fpos) #How many Fraud cases were identified as non-fraud cases - BAD | (type 1 error)

print("False Negative Cases are",fneg) #How many non-fraud cases were identified as Fraud cases - BAD | (type 2 error)

class_names=[0,1] # name of classes

fig, ax = plt.subplots()

tick_marks = np.arange(len(class_names))

plt.xticks(tick_marks, class_names)

plt.yticks(tick_marks, class_names)

# create heatmap

sns.heatmap(pd.DataFrame(cnf_matrix), annot=True, cmap="YlGnBu" ,fmt='g')

ax.xaxis.set_label_position("top")

plt.tight_layout()

plt.title('Confusion matrix', y=1.1)

plt.ylabel('Predicted label')

plt.xlabel('Actual label')

# ### We should go further and evaluate the model using model evaluation metrics such as accuracy, precision, and recall. These are calculated based on the confustion matrix:

print("Accuracy:",metrics.accuracy_score(y_test, y_pred))

# ### That is a fantastic accuracy score, isn't it?

print("Precision:",metrics.precision_score(y_test, y_pred))

print("Recall:",metrics.recall_score(y_test, y_pred))

print("F1-score:",metrics.f1_score(y_test, y_pred))

# +

from sklearn.metrics import classification_report

print(classification_report(y_test, y_pred))

# -

# ### Although the accuracy is excellent, the model struggles with fraud detection and has not captured about 30 out of 71 fraudulent transactions.

# ### Accuracy in a highly unbalanced data set does not represent a correct value for the efficiency of a model. That's where precision, recall and more specifically F1-score as their combinations becomes important:

#

# - *Accuracy is used when the True Positives and True negatives are more important while F1-score is used when the False Negatives and False Positives are crucial*

#

# - *Accuracy can be used when the class distribution is similar while F1-score is a better metric when there are imbalanced classes as in the above case.*

#

# - *In most real-life classification problems, imbalanced class distribution exists and thus F1-score is a better metric to evaluate our model on.*

#  <br>

#

# *This notebook was inspired by several blogposts including:*

#

# - __"Logistic Regression in Python"__ by __<NAME>__ available at* https://realpython.com/logistic-regression-python/ <br>

# - __"Understanding Logistic Regression in Python"__ by __<NAME>__ available at* https://www.datacamp.com/community/tutorials/understanding-logistic-regression-python <br>

# - __"Understanding Logistic Regression with Python: Practical Guide 1"__ by __<NAME>__ available at* https://datascience.foundation/sciencewhitepaper/understanding-logistic-regression-with-python-practical-guide-1 <br>

# - __"Understanding Data Science Classification Metrics in Scikit-Learn in Python"__ by __<NAME>__ available at* https://towardsdatascience.com/understanding-data-science-classification-metrics-in-scikit-learn-in-python-3bc336865019 <br>

#

#

# *Here are some great reads on these topics:*

# - __"Example of Logistic Regression in Python"__ available at* https://datatofish.com/logistic-regression-python/ <br>

# - __"Building A Logistic Regression in Python, Step by Step"__ by __<NAME>__ available at* https://towardsdatascience.com/building-a-logistic-regression-in-python-step-by-step-becd4d56c9c8 <br>

# - __"How To Perform Logistic Regression In Python?"__ by __<NAME>__ available at* https://www.edureka.co/blog/logistic-regression-in-python/ <br>

# - __"Logistic Regression in Python Using Scikit-learn"__ by __<NAME>__ available at* https://heartbeat.fritz.ai/logistic-regression-in-python-using-scikit-learn-d34e882eebb1 <br>

# - __"ML | Logistic Regression using Python"__ available at* https://www.geeksforgeeks.org/ml-logistic-regression-using-python/ <br>

#

# *Here are some great videos on these topics:*

# - __"StatQuest: Logistic Regression"__ by __StatQuest with <NAME>__ available at* https://www.youtube.com/watch?v=yIYKR4sgzI8&list=PLblh5JKOoLUKxzEP5HA2d-Li7IJkHfXSe <br>

# - __"Linear Regression vs Logistic Regression | Data Science Training | Edureka"__ by __edureka!__ available at* https://www.youtube.com/watch?v=OCwZyYH14uw <br>

# - __"Logistic Regression in Python | Logistic Regression Example | Machine Learning Algorithms | Edureka"__ by __edureka!__ available at* https://www.youtube.com/watch?v=VCJdg7YBbAQ <br>

# - __"How to evaluate a classifier in scikit-learn"__ by __Data School__ available at* https://www.youtube.com/watch?v=85dtiMz9tSo <br>

# - __"How to evaluate a classifier in scikit-learn"__ by __Data School__ available at* https://www.youtube.com/watch?v=85dtiMz9tSo <br>

# ___

#  <br>

#

# ## Exercise: Logistic Regression in Engineering <br>

#

# ### Think of a few applications of Logistic Regression in Engineering?

#

# #### _Make sure to cite any resources that you may use._

#  <br>

#

| 8-Labs/Lab31/.ipynb_checkpoints/Lab31-checkpoint.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

# default_exp gcp_elevation_api

# -

# # Traffic networks with google elevation api

#

# > Integrating traffic networks with google elevation api for an area in Melbourne. Then calculate the shortest path which accounts for grade impedance.

#hide

from nbdev.showdoc import *

import os

import numpy as np

import geopandas as gpd

import osmnx as ox

# Setup google elevation api key

ELEVATION_API = os.environ.get('GCP_ELEVATION_API')

# ### Get a network and add elevation to its nodes

# Check number of nodes and edges of Greater Melbourne

GMB = ox.graph_from_place("Greater Melbourne, Victoria, Australia", network_type='all')

len(GMB.nodes), len(GMB.edges)

# Check number of nodes and edges of City of Monash

GM = ox.graph_from_place("City of Monash, Victoria, Australia", network_type='all')

len(GM.nodes), len(GM.edges)

# ##### Get a network by place

# Get for a small area

place = "Oakleigh"

place_query = "Oakleigh, the city of Monash, Victoria, 3166, Australia"

G = ox.graph_from_place(place_query, network_type='bike')

len(G.nodes), len(G.edges)

fig, ax = ox.plot_graph(G)

# ##### Get elevation data

# Add elevations to nodes, and grades to edges

G = ox.add_node_elevations(G, api_key=ELEVATION_API)

G = ox.add_edge_grades(G)

# ### Calculate several stats

# ##### Average and median grade

edge_grades = [data['grade_abs'] for u, v, k, data in ox.get_undirected(G).edges(keys=True, data=True)]

avg_grade = np.mean(edge_grades)

print('Average street grade in {} is {:.1f}%'.format(place, avg_grade*100))

med_grade = np.median(edge_grades)

print('Median street grade in {} is {:.1f}%'.format(place, med_grade*100))

# ##### Plot nodes by elevation

# get one color for each node by elevation

nc = ox.plot.get_node_colors_by_attr(G, 'elevation', cmap='plasma')

fig, ax = ox.plot_graph(G, node_color=nc, node_size=5, edge_color='#333333', bgcolor='k')

# ##### Plot the edges by grade

# get a color for each edge, by grade, then plot the network

ec = ox.plot.get_edge_colors_by_attr(G, 'grade_abs', cmap='plasma', num_bins=5, equal_size=True)

fig, ax = ox.plot_graph(G, edge_color=ec, edge_linewidth=0.5, node_size=0, bgcolor='k')

# ### Shortest paths account for grade impedance

from shapely.geometry import Polygon, Point

# Select an origin and destination node

orig = (-37.8943, 145.0900)

dest = (-37.9059, 145.1030)

# Check the distance

Point(orig).distance(Point(dest))

# Get nearest nodes and a bounding box

orig = ox.get_nearest_node(G, orig)

dest = ox.get_nearest_node(G, dest)

bbox = ox.utils_geo.bbox_from_point((-37.9001, 145.0965), dist=1500)

# +

# An edge impedance function

def impedance(length, grade):

penalty = grade ** 2

return length * penalty

def impedance_2(length, grade):

penalty = length * (np.abs(grade) ** 3)

return penalty

# -

for u, v, k, data in G.edges(keys=True, data=True):

# data['impedance'] = impedance(data['length'], data['grade_abs'])

data['impedance'] = impedance_2(data['length'], data['grade'])

data['rise'] = data['length'] * data['grade']

# #### First find the shortest path by minimising distance

route_by_length = ox.shortest_path(G, orig, dest, weight='length')

fig, ax = ox.plot_graph_route(G, route_by_length, node_size=0)

# #### Find the shortest path by minimising impedance

route_by_impedance = ox.shortest_path(G, orig, dest, weight='impedance')

fig, ax = ox.plot_graph_route(G, route_by_impedance, node_size=0)

# #### Stats about these two routes

def print_route_stats(route):

route_grades = ox.utils_graph.get_route_edge_attributes(G, route, 'grade_abs')

msg = 'The average grade is {:.1f}% and the max is {:.1f}%'

print(msg.format(np.mean(route_grades)*100, np.max(route_grades)*100))

route_rises = ox.utils_graph.get_route_edge_attributes(G, route, 'rise')

ascent = np.sum([rise for rise in route_rises if rise >= 0])

descent = np.sum([rise for rise in route_rises if rise < 0])

msg = 'Total elevation change is {:.1f} meters: a {:.0f} meter ascent and a {:.0f} meter descent'

print(msg.format(np.sum(route_rises), ascent, abs(descent)))

route_lengths = ox.utils_graph.get_route_edge_attributes(G, route, 'length')

print('Total trip distance: {:,.0f} meters'.format(np.sum(route_lengths)))

# stats of route minimizing length

print_route_stats(route_by_length)

# stats of route minimizing impedance (function of length and grade)

print_route_stats(route_by_impedance)

| 12_gcp_elevation_api.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Pre-processing notebook

# In this notebook, we will pre-process the frames. For better visualisation, we will just capture 2 frames and visualise all the steps. The steps are:

# 1. Capture 2 consecutive frames.

# 2. Find difference between the frames to capture the motion.

# 3. Use GaussianBlur, thresholding, dilation and erosion to pre-process the frames.

# 4. Image segmentation using contours. Extract the vehicles during this method.

# 5. Convert contours to hulls.

# +

# Run these if OpenCV doesn't load

import sys

# sys.path.append('/Library/Frameworks/Python.framework/Versions/3.6/lib/python3.6/site-packages/cv2/')

# -

# First, we import the necessary libraries

import cv2

import numpy as np

import math

import matplotlib.pyplot as plt

# %matplotlib inline

# Next, we define variables that will be used through the duration of the code

# Here, we define some colours

SCALAR_BLACK = (0.0,0.0,0.0)

SCALAR_WHITE = (255.0,255.0,255.0)

SCALAR_YELLOW = (0.0,255.0,255.0)

SCALAR_GREEN = (0.0,255.0,0.0)

SCALAR_RED = (0.0,0.0,255.0)

SCALAR_CYAN = (255.0,255.0,0.0)

# Function to draw the image

# function to plot n images using subplots

def plot_image(images, captions=None, cmap=None ):

f, axes = plt.subplots(1, len(images), sharey=True)

f.set_figwidth(15)

for ax,image,caption in zip(axes, images, captions):

ax.imshow(image, cmap)

ax.set_title(caption)

# ### Capturing movement in video

# **Two consecutive frames are required to capture the movement**. If there is movement in vehicle, there will be small change in pixel value in the current frame compared to the previous frame. The change implies movement. Let's capture the first 2 frames now.

# +

SHOW_DEBUG_STEPS = True

# Reading video

cap = cv2.VideoCapture('./AundhBridge.mp4')

# if video is not present, show error

if not(cap.isOpened()):

print("Error reading file")

# Check if you are able to capture the video

ret, fFrame = cap.read()

# Capturing 2 consecutive frames and making a copy of those frame. Perform all operations on the copy frame.

ret, fFrame1 = cap.read()

ret, fFrame2 = cap.read()

img1 = fFrame1.copy()

img2 = fFrame2.copy()

if(SHOW_DEBUG_STEPS):

print ('img1 height' + str(img1.shape[0]))

print ('img1 width' + str(img1.shape[1]))

print ('img2 height' + str(img2.shape[0]))

print ('img2 width' + str(img2.shape[1]))

# Convert the colour images to greyscale in order to enable fast processing

img1 = cv2.cvtColor(img1, cv2.COLOR_BGR2GRAY)

img2 = cv2.cvtColor(img2, cv2.COLOR_BGR2GRAY)

#plotting

plot_image([img1, img2], cmap='gray', captions=["First frame", "Second frame"])

# -

# ### Adding gaussion blur for smoothening

# +

# Add some Gaussian Blur

img1 = cv2.GaussianBlur(img1,(5,5),0)

img2 = cv2.GaussianBlur(img2,(5,5),0)

#plotting

plot_image([img1, img2], cmap='gray', captions=["GaussianBlur first frame", "GaussianBlur second frame"])

# -

# ### Find the movement in video

# If vehicle is moving, there will be **slight change** in pixel value in the next frame compared to previous frame. We then threshold the image. This will be useful further for preprocessing. Pixel value below 30 will be set as 0(black) and above as 255(white)

#

# Thresholding: https://docs.opencv.org/3.0-beta/doc/py_tutorials/py_imgproc/py_thresholding/py_thresholding.html

# +

# This imgDiff variable is the difference between consecutive frames, which is equivalent to detecting movement

imgDiff = cv2.absdiff(img1, img2)

# Thresholding the image that is obtained after taking difference. Pixel value below 30 will be set as 0(black) and above as 255(white)

ret,imgThresh = cv2.threshold(imgDiff,30.0,255.0,cv2.THRESH_BINARY)

ht = np.size(imgThresh,0)

wd = np.size(imgThresh,1)

plot_image([imgDiff, imgThresh], cmap='gray', captions = ["Difference between 2 frames", "Difference between 2 frames after threshold"])

# -

# ### Dilation and erosion in image

# Dilation and erosion in the image with the filter size of structuring elements.

# +

# Now, we define structuring elements

strucEle3x3 = cv2.getStructuringElement(cv2.MORPH_RECT,(3,3))

strucEle5x5 = cv2.getStructuringElement(cv2.MORPH_RECT,(5,5))

strucEle7x7 = cv2.getStructuringElement(cv2.MORPH_RECT,(7,7))

strucEle15x15 = cv2.getStructuringElement(cv2.MORPH_RECT,(15,15))

plot_image([strucEle3x3, strucEle5x5, strucEle7x7, strucEle15x15], cmap='gray', captions = ["strucEle3x3", "strucEle5x5", "strucEle7x7", "strucEle15x15"])

# +

for i in range(2):

imgThresh = cv2.dilate(imgThresh,strucEle5x5,iterations = 2)

imgThresh = cv2.erode(imgThresh,strucEle5x5,iterations = 1)

imgThreshCopy = imgThresh.copy()

if(SHOW_DEBUG_STEPS):

print ('imgThreshCopy height' + str(imgThreshCopy.shape[0]))

print ('imgThreshCopy width' + str(imgThreshCopy.shape[1]))

plt.imshow(imgThresh, cmap = 'gray')

# -

# ## Extracting contours

# Till now, you have a binary image. Next, we will segment the image and find all possible contours(possible vehicles). The shape of the contours will tell us the number of contours that has been identified. Define *drawAndShowContours()* function to plot the contours. You will see that the threshold image above and the countour image will look alike. So, additionally, we also plot a particular '9th' countour for further clarity.

def drawAndShowContours(wd,ht,contours,strImgName):

global SCALAR_WHITE

global SHOW_DEBUG_STEPS

# Defining a blank frame. Since it is initialised with zeros, it will be black. Will add all the coutours in this image.

blank_image = np.zeros((ht,wd,3), np.uint8)

#cv2.drawContours(blank_image,contours,10,SCALAR_WHITE,-1)

# Adding all possible contour to the blank frame. Contour is white

cv2.drawContours(blank_image,contours,-1,SCALAR_WHITE,-1)

# For better clarity, lets just view countour 9

blank_image_contour_9 = np.zeros((ht,wd,3), np.uint8)

# Let's just add contour 9 to the blank image and view it

cv2.drawContours(blank_image_contour_9,contours,8,SCALAR_WHITE,-1)

# Plotting

plot_image([blank_image, blank_image_contour_9], cmap='gray', captions = ["All possible contours", "Only the 9th contour"])

return blank_image

# +

# Now, we move on to the contour mapping portion

im, contours, hierarchy = cv2.findContours(imgThreshCopy,cv2.RETR_EXTERNAL,cv2.CHAIN_APPROX_SIMPLE)

im2 = drawAndShowContours(wd,ht,contours,'imgContours')

# Printing all the coutours in the image.

if(SHOW_DEBUG_STEPS):

print ('contours.shape: ' + str(len(contours)))

# -

# ## Hulls

# Hulls are contours with the "convexHull".

# +

# Next, we define hulls.

# Hulls are contours with the "convexHull" function from cv2

hulls = contours # does it work?

for i in range(len(contours)):

hulls[i] = cv2.convexHull(contours[i])

# Then we draw the contours

im3 = drawAndShowContours(wd,ht,hulls,'imgConvexHulls')

| 06Deep Learning/04Convolutional Neural Networks - Industry Applications/03Industry Demo_ Detecting Vehicles in Videos/07Detecting Object in Video/Pre-processing+notebook.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Exercise 3.2

# Import the pySpark libraries

import sys

# !conda install --yes --prefix {sys.prefix} -c conda-forge pyspark

from pyspark.sql import SparkSession

from pyspark.sql.functions import col, split, size

# Connect to a local Spark session

spark = SparkSession.builder.appName("Packt").getOrCreate()

# Read the raw data from a CSV file

data = spark.read.csv('../../Datasets/netflix_titles_nov_2019.csv', header='true')

# Take only the movies

movies = data.filter((col('type') == 'Movie') & (col('release_year') == 2019))

# Add a column with the number of actors

transformed = movies.withColumn('count_cast', size(split(movies['cast'], ',')))

# Select a subset of columns to store

selected = transformed.select('title', 'director', 'count_cast', 'cast', 'rating', 'release_year', 'type')

# Write the contents of the DataFrame to disk

selected.write.csv('transformed.csv', header='true')

| Chapter03/Exercise03.02/spark_etl.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

import matplotlib .pyplot as plt

# %matplotlib inline

import numpy as np

x = np.linspace(0,20,1000)

y2 = [np.exp(-6*i)*(0.0037*np.cos(8*i)+0.09*np.sin(8*i))+0.017*np.sin(10*i-np.pi*0.5) for i in x]

plt.grid()

plt.plot(x, y2,c = 'r')

| Untitled35.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

# ## Hi and welcome to your first code along lesson!

#

# #### Reading, writing and displaying images with OpenCV

# Let's start by importing the OpenCV libary

# +

# Press CTRL + ENTER to run this line

# You should see an * between the [ ] on the left

# OpenCV takes a couple seconds to import the first time

import cv2

# -

# +

# Now let's import numpy

# We use as np, so that everything we call on numpy, we can type np instead

# It's short and looks neater

import numpy as np

# -

# Let's now load our first image

# +

# We don't need to do this again, but it's a good habit

import cv2

# Load an image using 'imread' specifying the path to image

input = cv2.imread('./images/input.jpg')

# Our file 'input.jpg' is now loaded and stored in python

# as a varaible we named 'image'

# To display our image variable, we use 'imshow'

# The first parameter will be title shown on image window

# The second parameter is the image varialbe

cv2.imshow('Hello World', input)

# 'waitKey' allows us to input information when a image window is open

# By leaving it blank it just waits for anykey to be pressed before

# continuing. By placing numbers (except 0), we can specify a delay for

# how long you keep the window open (time is in milliseconds here)

cv2.waitKey()

# This closes all open windows

# Failure to place this will cause your program to hang

cv2.destroyAllWindows()

# +

# Same as above without the extraneous comments

import cv2

input = cv2.imread('./images/input.jpg')

cv2.imshow('<NAME>', input)

cv2.waitKey(0)

cv2.destroyAllWindows()

# -

# ### Let's take a closer look at how images are stored

# Import numpy

import numpy as np

print input.shape

# #### Shape gives the dimensions of the image array

#

# The 2D dimensions are 830 pixels in high bv 1245 pixels wide.

# The '3L' means that there are 3 other components (RGB) that make up this image.

# +

# Let's print each dimension of the image

print 'Height of Image:', int(input.shape[0]), 'pixels'

print 'Width of Image: ', int(input.shape[1]), 'pixels'

# -

# ### How do we save images we edit in OpenCV?

# Simply use 'imwrite' specificing the file name and the image to be saved

cv2.imwrite('output.jpg', input)

cv2.imwrite('output.png', input)

| LECTURES/Lecture 2.4 - Reading, writing and displaying images.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

import os

import sys

root_path = os.path.abspath("../../../")

if root_path not in sys.path:

sys.path.append(root_path)

def draw_losses(nn):

el, il = nn.log["epoch_loss"], nn.log["iter_loss"]

ee_base = np.arange(len(el))

ie_base = np.linspace(0, len(el) - 1, len(il))

plt.plot(ie_base, il, label="Iter loss")

plt.plot(ee_base, el, linewidth=3, label="Epoch loss")

plt.legend()

plt.show()

from NN import Basic

from Util.Util import DataUtil

# %matplotlib inline

import numpy as np

import matplotlib.pyplot as plt

plt.rcParams["figure.figsize"] = (18, 8)

# -

x_train, y_train = DataUtil.gen_xor(size=1000, one_hot=False)

x_test, y_test = DataUtil.gen_xor(size=100, one_hot=False)

nn = Basic(model_param_settings={"n_epoch": 200}).fit(x_train, y_train, x_test, y_test, snapshot_ratio=0)

draw_losses(nn)

x_train, y_train = DataUtil.gen_spiral(size=1000, one_hot=False)

x_test, y_test = DataUtil.gen_spiral(size=100, one_hot=False)

nn = Basic(model_param_settings={"n_epoch": 200}).fit(x_train, y_train, x_test, y_test, snapshot_ratio=0)

draw_losses(nn)

x_train, y_train = DataUtil.gen_nine_grid(size=1000, one_hot=False)

x_test, y_test = DataUtil.gen_nine_grid(size=100, one_hot=False)

nn = Basic(model_param_settings={"n_epoch": 200}).fit(x_train, y_train, x_test, y_test, snapshot_ratio=0)

draw_losses(nn)

| _Dist/NeuralNetworks/c_BasicNN/BasicNN.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# %matplotlib inline

#

# # Cascade decomposition

#

# This example script shows how to compute and plot the cascade decompositon of

# a single radar precipitation field in pysteps.

#

from matplotlib import cm, pyplot as plt

import numpy as np

import os

from pprint import pprint

from pysteps.cascade.bandpass_filters import filter_gaussian

from pysteps import io, rcparams

from pysteps.cascade.decomposition import decomposition_fft

from pysteps.utils import conversion, transformation

from pysteps.visualization import plot_precip_field

# ## Read precipitation field

#

# First thing, the radar composite is imported and transformed in units

# of dB.

#

#

# +

# Import the example radar composite

root_path = rcparams.data_sources["fmi"]["root_path"]

filename = os.path.join(

root_path, "20160928", "201609281600_fmi.radar.composite.lowest_FIN_SUOMI1.pgm.gz"

)

R, _, metadata = io.import_fmi_pgm(filename, gzipped=True)

# Convert to rain rate

R, metadata = conversion.to_rainrate(R, metadata)

# Nicely print the metadata

pprint(metadata)

# Plot the rainfall field

plot_precip_field(R, geodata=metadata)

plt.show()

# Log-transform the data

R, metadata = transformation.dB_transform(R, metadata, threshold=0.1, zerovalue=-15.0)

# -

# ## 2D Fourier spectrum

#

# Compute and plot the 2D Fourier power spectrum of the precipitaton field.

#

#

# +

# Set Nans as the fill value

R[~np.isfinite(R)] = metadata["zerovalue"]

# Compute the Fourier transform of the input field

F = abs(np.fft.fftshift(np.fft.fft2(R)))

# Plot the power spectrum

M, N = F.shape

fig, ax = plt.subplots()

im = ax.imshow(

np.log(F ** 2), vmin=4, vmax=24, cmap=cm.jet, extent=(-N / 2, N / 2, -M / 2, M / 2)

)

cb = fig.colorbar(im)

ax.set_xlabel("Wavenumber $k_x$")

ax.set_ylabel("Wavenumber $k_y$")

ax.set_title("Log-power spectrum of R")

plt.show()

# -

# ## Cascade decomposition

#

# First, construct a set of Gaussian bandpass filters and plot the corresponding

# 1D filters.

#

#

# +

num_cascade_levels = 7

# Construct the Gaussian bandpass filters

filter = filter_gaussian(R.shape, num_cascade_levels)

# Plot the bandpass filter weights

L = max(N, M)

fig, ax = plt.subplots()

for k in range(num_cascade_levels):

ax.semilogx(

np.linspace(0, L / 2, len(filter["weights_1d"][k, :])),

filter["weights_1d"][k, :],

"k-",

base=pow(0.5 * L / 3, 1.0 / (num_cascade_levels - 2)),

)

ax.set_xlim(1, L / 2)

ax.set_ylim(0, 1)

xt = np.hstack([[1.0], filter["central_wavenumbers"][1:]])

ax.set_xticks(xt)

ax.set_xticklabels(["%.2f" % cf for cf in filter["central_wavenumbers"]])

ax.set_xlabel("Radial wavenumber $|\mathbf{k}|$")

ax.set_ylabel("Normalized weight")

ax.set_title("Bandpass filter weights")

plt.show()

# -

# Finally, apply the 2D Gaussian filters to decompose the radar rainfall field

# into a set of cascade levels of decreasing spatial scale and plot them.

#

#

# +

decomp = decomposition_fft(R, filter, compute_stats=True)

# Plot the normalized cascade levels

for i in range(num_cascade_levels):

mu = decomp["means"][i]

sigma = decomp["stds"][i]

decomp["cascade_levels"][i] = (decomp["cascade_levels"][i] - mu) / sigma

fig, ax = plt.subplots(nrows=2, ncols=4)

ax[0, 0].imshow(R, cmap=cm.RdBu_r, vmin=-5, vmax=5)

ax[0, 1].imshow(decomp["cascade_levels"][0], cmap=cm.RdBu_r, vmin=-3, vmax=3)

ax[0, 2].imshow(decomp["cascade_levels"][1], cmap=cm.RdBu_r, vmin=-3, vmax=3)

ax[0, 3].imshow(decomp["cascade_levels"][2], cmap=cm.RdBu_r, vmin=-3, vmax=3)

ax[1, 0].imshow(decomp["cascade_levels"][3], cmap=cm.RdBu_r, vmin=-3, vmax=3)

ax[1, 1].imshow(decomp["cascade_levels"][4], cmap=cm.RdBu_r, vmin=-3, vmax=3)

ax[1, 2].imshow(decomp["cascade_levels"][5], cmap=cm.RdBu_r, vmin=-3, vmax=3)

ax[1, 3].imshow(decomp["cascade_levels"][6], cmap=cm.RdBu_r, vmin=-3, vmax=3)

ax[0, 0].set_title("Observed")

ax[0, 1].set_title("Level 1")

ax[0, 2].set_title("Level 2")

ax[0, 3].set_title("Level 3")

ax[1, 0].set_title("Level 4")

ax[1, 1].set_title("Level 5")

ax[1, 2].set_title("Level 6")

ax[1, 3].set_title("Level 7")

for i in range(2):

for j in range(4):

ax[i, j].set_xticks([])

ax[i, j].set_yticks([])

plt.tight_layout()

plt.show()

# sphinx_gallery_thumbnail_number = 4

| notebooks/plot_cascade_decomposition.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: geopy2019

# language: python

# name: geopy2019

# ---

# # Test that the Python environment is properly installed

#

# - check the "kernel" under "Kernel" -> "change kernel" -> "geopy2019"

# - always close a notebook safely via "File" -> "Save and Checkpoint" and then "Close and Halt"

# - close the Jupyter notebook server via the "Quit" button on the main page

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import seaborn

import scipy

import shapely

import gdal

import fiona

import shapely

import geopandas as gpd

import pysal

import bokeh

import cartopy

import mapclassify

import geoviews

import rasterstats

import rasterio

import geoplot

import folium

print("The Pysal package can use additional packages, but we don't need that functionality. The warning is ok.")

| test_install.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] papermill={"duration": 0.021005, "end_time": "2021-04-23T11:48:51.989118", "exception": false, "start_time": "2021-04-23T11:48:51.968113", "status": "completed"} tags=[]

# # Parcels Experiment:<br><br>Expanding the polyline code to release particles at density based on local velocity normal to section.

#

# _(Based on an experiment originally designed by <NAME>.)_

#

# _(Runs on GEOMAR Jupyter Server at https://schulung3.geomar.de/user/workshop007/lab)_

# + [markdown] papermill={"duration": 0.018031, "end_time": "2021-04-23T11:48:52.025362", "exception": false, "start_time": "2021-04-23T11:48:52.007331", "status": "completed"} tags=[]

# ## To do

#

# - Check/ask how OceanParcels deals with partial cells, if it does.

# - It doesn't. Does it matter?

# + [markdown] papermill={"duration": 0.018189, "end_time": "2021-04-23T11:48:52.063205", "exception": false, "start_time": "2021-04-23T11:48:52.045016", "status": "completed"} tags=[]

# ## Technical preamble

# + papermill={"duration": 3.156467, "end_time": "2021-04-23T11:48:55.237706", "exception": false, "start_time": "2021-04-23T11:48:52.081239", "status": "completed"} tags=[]

# %matplotlib inline

from parcels import (

AdvectionRK4_3D,

ErrorCode,

FieldSet,

JITParticle,

ParticleSet,

Variable

)

# from operator import attrgetter

from datetime import datetime, timedelta

import numpy as np

from pathlib import Path

import matplotlib.pyplot as plt

import cmocean as co

import pandas as pd

import xarray as xr

# import dask as dask

# + [markdown] papermill={"duration": 0.018082, "end_time": "2021-04-23T11:48:55.275850", "exception": false, "start_time": "2021-04-23T11:48:55.257768", "status": "completed"} tags=[]

# ## Experiment settings (user input)

# + [markdown] papermill={"duration": 0.020178, "end_time": "2021-04-23T11:48:55.314305", "exception": false, "start_time": "2021-04-23T11:48:55.294127", "status": "completed"} tags=[]

# ### Parameters

# These can be set in papermill

# + papermill={"duration": 0.02382, "end_time": "2021-04-23T11:48:55.356204", "exception": false, "start_time": "2021-04-23T11:48:55.332384", "status": "completed"} tags=["parameters"]

# OSNAP multiline details

sectionPathname = '../data/external/'

sectionFilename = 'osnap_pos_wp.txt'

sectionname = 'osnap'

# location of input data

path_name = '/data/iAtlantic/ocean-only/VIKING20X.L46-KKG36107B/nemo/output/'

experiment_name = 'VIKING20X.L46-KKG36107B'

data_resolution = '1m'

w_name_extension = '_repaire_depthw_time'

# location of mask data

mask_path_name = '/data/iAtlantic/ocean-only/VIKING20X.L46-KKG36107B/nemo/suppl/'

mesh_mask_filename = '1_mesh_mask.nc_notime_depthw'

# location of output data

outpath_name = '../data/raw/'

year_prefix = 201 # this does from 2000 onwards

# set line segment to use

start_vertex = 4

end_vertex = 12

# experiment duration etc

runtime_in_days = 10

dt_in_minutes = -10

# repeatdt = timedelta(days=3)

# number of particles to track

create_number_particles = 200000 # many will not be ocean points

use_number_particles = 200000

min_release_depth = 0

max_release_depth = 1_000

# max current speed for particle selection

max_current = 1.0

# set base release date and time

t_0_str = '2010-01-16T12:00:00'

t_start_str = '2016-01-16T12:00:00'

# particle positions are stored every x hours

outputdt_in_hours = 120

# select subdomain (to decrease needed resources) comment out to use whole domain

# sd_i1, sd_i2 = 0, 2404 # western/eastern limit (indices not coordinates)

# sd_j1, sd_j2 = 1200, 2499 # southern/northern limit (indices not coordinates)

# sd_z1, sd_z2 = 0, 46

# how to initialize the random number generator

# --> is set in next cell

# RNG_seed = 123

use_dask_chunks = True

# + papermill={"duration": 0.022373, "end_time": "2021-04-23T11:48:55.396851", "exception": false, "start_time": "2021-04-23T11:48:55.374478", "status": "completed"} tags=["injected-parameters"]

# Parameters

path_name = "/gxfs_work1/geomar/smomw355/model_data/ocean-only/VIKING20X.L46-KKG36107B/nemo/output/"

data_resolution = "5d"

w_name_extension = ""

mask_path_name = "/gxfs_work1/geomar/smomw355/model_data/ocean-only/VIKING20X.L46-KKG36107B/nemo/suppl/"

mesh_mask_filename = "1_mesh_mask.nc"

year_prefix = ""

runtime_in_days = 3650

create_number_particles = 4000000

use_number_particles = 4000000

max_release_depth = 1000

max_current = 2.0

t_0_str = "1980-01-03T12:00:00"

t_start_str = "2019-02-22T12:00:00"

use_dask_chunks = False

# + [markdown] papermill={"duration": 0.017956, "end_time": "2021-04-23T11:48:55.432903", "exception": false, "start_time": "2021-04-23T11:48:55.414947", "status": "completed"} tags=[]

# ### Derived variables

# + papermill={"duration": 0.026652, "end_time": "2021-04-23T11:48:55.477730", "exception": false, "start_time": "2021-04-23T11:48:55.451078", "status": "completed"} tags=[]

# times

t_0 = datetime.fromisoformat(t_0_str) # using monthly mean fields. Check dates.

t_start = datetime.fromisoformat(t_start_str)

# RNG seed based on release day (days since 1980-01-03)

RNG_seed = int((t_start - t_0).total_seconds() / (60*60*24))

# names of files to load

fname_U = f'1_{experiment_name}_{data_resolution}_{year_prefix}*_grid_U.nc'

fname_V = f'1_{experiment_name}_{data_resolution}_{year_prefix}*_grid_V.nc'

fname_T = f'1_{experiment_name}_{data_resolution}_{year_prefix}*_grid_T.nc'

fname_W = f'1_{experiment_name}_{data_resolution}_{year_prefix}*_grid_W.nc{w_name_extension}'

sectionPath = Path(sectionPathname)

data_path = Path(path_name)

mask_path = Path(mask_path_name)

outpath = Path(outpath_name)

display(t_0)

display(t_start)

# + papermill={"duration": 0.022805, "end_time": "2021-04-23T11:48:55.519288", "exception": false, "start_time": "2021-04-23T11:48:55.496483", "status": "completed"} tags=[]

if dt_in_minutes > 0:

direction = '_forwards_'

else:

direction = '_backward_'

year_str = str(t_start.year)

month_str = str(t_start.month).zfill(2)

day_str = str(t_start.day).zfill(2)

days = str(runtime_in_days)

seed = str(RNG_seed)

npart= str(use_number_particles)

# + papermill={"duration": 0.022343, "end_time": "2021-04-23T11:48:55.560561", "exception": false, "start_time": "2021-04-23T11:48:55.538218", "status": "completed"} tags=[]

degree2km = 1.852*60.0

# + [markdown] papermill={"duration": 0.020862, "end_time": "2021-04-23T11:48:55.600297", "exception": false, "start_time": "2021-04-23T11:48:55.579435", "status": "completed"} tags=[]

# ## Construct input / output paths etc.

# + papermill={"duration": 0.022398, "end_time": "2021-04-23T11:48:55.641720", "exception": false, "start_time": "2021-04-23T11:48:55.619322", "status": "completed"} tags=[]

mesh_mask = mask_path / mesh_mask_filename

# + [markdown] papermill={"duration": 0.018687, "end_time": "2021-04-23T11:48:55.679163", "exception": false, "start_time": "2021-04-23T11:48:55.660476", "status": "completed"} tags=[]

# ## Load input datasets

# + papermill={"duration": 0.028614, "end_time": "2021-04-23T11:48:55.726606", "exception": false, "start_time": "2021-04-23T11:48:55.697992", "status": "completed"} tags=[]

def fieldset_defintions(

list_of_filenames_U, list_of_filenames_V,

list_of_filenames_W, list_of_filenames_T,

mesh_mask

):

ds_mask = xr.open_dataset(mesh_mask)

filenames = {'U': {'lon': (mesh_mask),

'lat': (mesh_mask),

'depth': list_of_filenames_W[0],

'data': list_of_filenames_U},

'V': {'lon': (mesh_mask),

'lat': (mesh_mask),

'depth': list_of_filenames_W[0],

'data': list_of_filenames_V},

'W': {'lon': (mesh_mask),

'lat': (mesh_mask),

'depth': list_of_filenames_W[0],

'data': list_of_filenames_W},

'T': {'lon': (mesh_mask),

'lat': (mesh_mask),

'depth': list_of_filenames_W[0],

'data': list_of_filenames_T},

'S': {'lon': (mesh_mask),

'lat': (mesh_mask),

'depth': list_of_filenames_W[0],

'data': list_of_filenames_T},

'MXL': {'lon': (mesh_mask),

'lat': (mesh_mask),

'data': list_of_filenames_T}

}

variables = {'U': 'vozocrtx',

'V': 'vomecrty',

'W': 'vovecrtz',

'T': 'votemper',

'S': 'vosaline',

'MXL':'somxl010'

}

dimensions = {'U': {'lon': 'glamf', 'lat': 'gphif', 'depth': 'depthw',

'time': 'time_counter'}, # needs to be on f-nodes

'V': {'lon': 'glamf', 'lat': 'gphif', 'depth': 'depthw',

'time': 'time_counter'}, # needs to be on f-nodes

'W': {'lon': 'glamf', 'lat': 'gphif', 'depth': 'depthw',

'time': 'time_counter'}, # needs to be on f-nodes

'T': {'lon': 'glamf', 'lat': 'gphif', 'depth': 'depthw',

'time': 'time_counter'}, # needs to be on t-nodes

'S': {'lon': 'glamf', 'lat': 'gphif', 'depth': 'depthw',

'time': 'time_counter'}, # needs to be on t-nodes

'MXL': {'lon': 'glamf', 'lat': 'gphif',

'time': 'time_counter'}, # needs to be on t-nodes

}

# exclude the two grid cells at the edges of the nest as they contain 0

# and everything south of 20N

indices = {'lon': range(2, ds_mask.x.size-2), 'lat': range(1132, ds_mask.y.size-2)}

# indices = {

# 'U': {'depth': range(sd_z1, sd_z2), 'lon': range(sd_i1, sd_i2), 'lat': range(sd_j1, sd_j2)},

# 'V': {'depth': range(sd_z1, sd_z2), 'lon': range(sd_i1, sd_i2), 'lat': range(sd_j1, sd_j2)},

# 'W': {'depth': range(sd_z1, sd_z2), 'lon': range(sd_i1, sd_i2), 'lat':range(sd_j1, sd_j2)},

# 'T': {'depth': range(sd_z1, sd_z2), 'lon': range(sd_i1, sd_i2), 'lat':range(sd_j1, sd_j2)},

# 'S': {'depth': range(sd_z1, sd_z2), 'lon': range(sd_i1, sd_i2), 'lat':range(sd_j1, sd_j2)}

# }

if use_dask_chunks:

field_chunksizes = {'U': {'lon':('x', 1024), 'lat':('y',128), 'depth': ('depthw', 64),

'time': ('time_counter',3)}, # needs to be on f-nodes

'V': {'lon':('x', 1024), 'lat':('y',128), 'depth': ('depthw', 64),

'time': ('time_counter',3)}, # needs to be on f-nodes

'W': {'lon':('x', 1024), 'lat':('y',128), 'depth': ('depthw', 64),

'time': ('time_counter',3)}, # needs to be on f-nodes

'T': {'lon':('x', 1024), 'lat':('y',128), 'depth': ('depthw', 64),

'time': ('time_counter',3)}, # needs to be on t-nodes

'S': {'lon':('x', 1024), 'lat':('y',128), 'depth': ('depthw', 64),

'time': ('time_counter',3)}, # needs to be on t-nodes

'MXL': {'lon':('x', 1024), 'lat':('y',128),

'time': ('time_counter',3)}, # needs to be on t-nodes

}

else:

field_chunksizes = None

return FieldSet.from_nemo(

filenames, variables, dimensions,

indices=indices,

chunksize=field_chunksizes, # = None for no chunking

mesh='spherical',

tracer_interp_method='cgrid_tracer'

# ,time_periodic=time_loop_period

# ,allow_time_extrapolation=True

)

# + papermill={"duration": 0.023563, "end_time": "2021-04-23T11:48:55.769023", "exception": false, "start_time": "2021-04-23T11:48:55.745460", "status": "completed"} tags=[]

def create_fieldset(

data_path=data_path, experiment_name=experiment_name,

fname_U=fname_U, fname_V=fname_V, fname_W=fname_W, fname_T=fname_T,

mesh_mask = mesh_mask

):

files_U = list(sorted((data_path).glob(fname_U)))

files_V = list(sorted((data_path).glob(fname_V)))

files_W = list(sorted((data_path).glob(fname_W)))

files_T = list(sorted((data_path).glob(fname_T)))

print(files_U)

fieldset = fieldset_defintions(

files_U, files_V,

files_W, files_T, mesh_mask)

return fieldset

# + papermill={"duration": 280.78306, "end_time": "2021-04-23T11:53:36.570974", "exception": false, "start_time": "2021-04-23T11:48:55.787914", "status": "completed"} tags=[]

fieldset = create_fieldset()

# + [markdown] papermill={"duration": 0.019498, "end_time": "2021-04-23T11:53:36.610897", "exception": false, "start_time": "2021-04-23T11:53:36.591399", "status": "completed"} tags=[]

# ## Create Virtual Particles

# + [markdown] papermill={"duration": 0.01937, "end_time": "2021-04-23T11:53:36.649637", "exception": false, "start_time": "2021-04-23T11:53:36.630267", "status": "completed"} tags=[]

# #### add a couple of simple plotting routines

# + papermill={"duration": 0.02814, "end_time": "2021-04-23T11:53:36.697389", "exception": false, "start_time": "2021-04-23T11:53:36.669249", "status": "completed"} tags=[]

def plot_section_sdist():

plt.figure(figsize=(10,5))

u = np.array([p.uvel for p in pset]) * degree2km * 1000.0 * np.cos(np.radians(pset.lat))

v = np.array([p.vvel for p in pset]) * degree2km * 1000.0

section_index = np.searchsorted(lonlat.lon,pset.lon)-1

u_normal = v * lonlatdiff.costheta[section_index].data - u * lonlatdiff.sintheta[section_index].data

y = (pset.lat - lonlat.lat[section_index]) * degree2km

x = (pset.lon - lonlat.lon[section_index]) * degree2km*np.cos(np.radians(lonlat2mean.lat[section_index+1].data))

dist = np.sqrt(x**2 + y**2) + lonlatdiff.length_west[section_index].data

plt.scatter(

dist,

[p.depth for p in pset],

1,

u_normal,

cmap=co.cm.balance,vmin=-0.3,vmax=0.3

)

plt.ylim(1200,0)

plt.colorbar(label = r'normal velocity [$\mathrm{m\ s}^{-1}$]')

plt.xlabel('distance [km]')

plt.ylabel('depth [m]')

return

# + papermill={"duration": 0.025351, "end_time": "2021-04-23T11:53:36.742399", "exception": false, "start_time": "2021-04-23T11:53:36.717048", "status": "completed"} tags=[]

def plot_section_lon():

plt.figure(figsize=(10,5))

u = np.array([p.uvel for p in pset]) * degree2km * 1000.0 * np.cos(np.radians(pset.lat))

v = np.array([p.vvel for p in pset]) * degree2km * 1000.0

section_index = np.searchsorted(lonlat.lon,pset.lon)-1