code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python [default]

# language: python

# name: python3

# ---

# <img src="../static/images/joinnode.png" width="240">

#

# # JoinNode

#

# JoinNode have the opposite effect of [iterables](basic_iteration.ipynb). Where `iterables` split up the execution workflow into many different branches, a JoinNode merges them back into on node. For a more detailed explanation, check out [JoinNode, synchronize and itersource](http://nipype.readthedocs.io/en/latest/users/joinnode_and_itersource.html) from the main homepage.

# ## Simple example

#

# Let's consider the very simple example depicted at the top of this page:

# ```python

# from nipype import Node, JoinNode, Workflow

#

# # Specify fake input node A

# a = Node(interface=A(), name="a")

#

# # Iterate over fake node B's input 'in_file?

# b = Node(interface=B(), name="b")

# b.iterables = ('in_file', [file1, file2])

#

# # Pass results on to fake node C

# c = Node(interface=C(), name="c")

#

# # Join forked execution workflow in fake node D

# d = JoinNode(interface=D(),

# joinsource="b",

# joinfield="in_files",

# name="d")

#

# # Put everything into a workflow as usual

# workflow = Workflow(name="workflow")

# workflow.connect([(a, b, [('subject', 'subject')]),

# (b, c, [('out_file', 'in_file')])

# (c, d, [('out_file', 'in_files')])

# ])

# ```

# As you can see, setting up a ``JoinNode`` is rather simple. The only difference to a normal ``Node`` are the ``joinsource`` and the ``joinfield``. ``joinsource`` specifies from which node the information to join is coming and the ``joinfield`` specifies the input field of the JoinNode where the information to join will be entering the node.

# ## More realistic example

#

# Let's consider another example where we have one node that iterates over 3 different numbers and generates randome numbers. Another node joins those three different numbers (each coming from a separate branch of the workflow) into one list. To make the whole thing a bit more realistic, the second node will use the ``Function`` interface to do something with those numbers, before we spit them out again.

from nipype import JoinNode, Node, Workflow

from nipype.interfaces.utility import Function, IdentityInterface

# +

def get_data_from_id(id):

"""Generate a random number based on id"""

import numpy as np

return id + np.random.rand()

def merge_and_scale_data(data2):

"""Scale the input list by 1000"""

import numpy as np

return (np.array(data2) * 1000).tolist()

node1 = Node(Function(input_names=['id'],

output_names=['data1'],

function=get_data_from_id),

name='get_data')

node1.iterables = ('id', [1, 2, 3])

node2 = JoinNode(Function(input_names=['data2'],

output_names=['data_scaled'],

function=merge_and_scale_data),

name='scale_data',

joinsource=node1,

joinfield=['data2'])

# -

wf = Workflow(name='testjoin')

wf.connect(node1, 'data1', node2, 'data2')

eg = wf.run()

wf.write_graph(graph2use='exec')

from IPython.display import Image

Image(filename='graph_detailed.png')

# Now, let's look at the input and output of the joinnode:

res = [node for node in eg.nodes() if 'scale_data' in node.name][0].result

res.outputs

res.inputs

# ## Extending to multiple nodes

#

# We extend the workflow by using three nodes. Note that even this workflow, the joinsource corresponds to the node containing iterables and the joinfield corresponds to the input port of the JoinNode that aggregates the iterable branches. As before the graph below shows how the execution process is setup.

# +

def get_data_from_id(id):

import numpy as np

return id + np.random.rand()

def scale_data(data2):

import numpy as np

return data2

def replicate(data3, nreps=2):

return data3 * nreps

node1 = Node(Function(input_names=['id'],

output_names=['data1'],

function=get_data_from_id),

name='get_data')

node1.iterables = ('id', [1, 2, 3])

node2 = Node(Function(input_names=['data2'],

output_names=['data_scaled'],

function=scale_data),

name='scale_data')

node3 = JoinNode(Function(input_names=['data3'],

output_names=['data_repeated'],

function=replicate),

name='replicate_data',

joinsource=node1,

joinfield=['data3'])

# -

wf = Workflow(name='testjoin')

wf.connect(node1, 'data1', node2, 'data2')

wf.connect(node2, 'data_scaled', node3, 'data3')

eg = wf.run()

wf.write_graph(graph2use='exec')

Image(filename='graph_detailed.png')

# ### Exercise 1

#

# You have list of DOB of the subjects in a few various format : ``["10 February 1984", "March 5 1990", "April 2 1782", "June 6, 1988", "12 May 1992"]``, and you want to sort the list.

#

# You can use ``Node`` with ``iterables`` to extract day, month and year, and use [datetime.datetime](https://docs.python.org/2/library/datetime.html) to unify the format that can be compared, and ``JoinNode`` to sort the list.

# + solution2="hidden" solution2_first=true

# write your solution here

# + solution2="hidden"

# the list of all DOB

dob_subjects = ["10 February 1984", "March 5 1990", "April 2 1782", "June 6, 1988", "12 May 1992"]

# + solution2="hidden"

# let's start from creating Node with iterable to split all strings from the list

from nipype import Node, JoinNode, Function, Workflow

def split_dob(dob_string):

return dob_string.split()

split_node = Node(Function(input_names=["dob_string"],

output_names=["split_list"],

function=split_dob),

name="splitting")

#split_node.inputs.dob_string = "10 February 1984"

split_node.iterables = ("dob_string", dob_subjects)

# + solution2="hidden"

# and now let's work on the date format more, independently for every element

# sometimes the second element has an extra "," that we should remove

def remove_comma(str_list):

str_list[1] = str_list[1].replace(",", "")

return str_list

cleaning_node = Node(Function(input_names=["str_list"],

output_names=["str_list_clean"],

function=remove_comma),

name="cleaning")

# now we can extract year, month, day from our list and create ``datetime.datetim`` object

def datetime_format(date_list):

import datetime

# year is always the last

year = int(date_list[2])

#day and month can be in the first or second position

# we can use datetime.datetime.strptime to convert name of the month to integer

try:

day = int(date_list[0])

month = datetime.datetime.strptime(date_list[1], "%B").month

except(ValueError):

day = int(date_list[1])

month = datetime.datetime.strptime(date_list[0], "%B").month

# and create datetime.datetime format

return datetime.datetime(year, month, day)

datetime_node = Node(Function(input_names=["date_list"],

output_names=["datetime"],

function=datetime_format),

name="datetime")

# + solution2="hidden"

# now we are ready to create JoinNode and sort the list of DOB

def sorting_dob(datetime_list):

datetime_list.sort()

return datetime_list

sorting_node = JoinNode(Function(input_names=["datetime_list"],

output_names=["dob_sorted"],

function=sorting_dob),

joinsource=split_node, # this is the node that used iterables for x

joinfield=['datetime_list'],

name="sorting")

# + solution2="hidden"

# and we're ready to create workflow

ex1_wf = Workflow(name="sorting_dob")

ex1_wf.connect(split_node, "split_list", cleaning_node, "str_list")

ex1_wf.connect(cleaning_node, "str_list_clean", datetime_node, "date_list")

ex1_wf.connect(datetime_node, "datetime", sorting_node, "datetime_list")

# + solution2="hidden"

# you can check the graph

from IPython.display import Image

ex1_wf.write_graph(graph2use='exec')

Image(filename='graph_detailed.png')

# + solution2="hidden"

# and run the workflow

ex1_res = ex1_wf.run()

# + solution2="hidden"

# you can check list of all nodes

ex1_res.nodes()

# + solution2="hidden"

# and check the results from sorting_dob.sorting

list(ex1_res.nodes())[0].result.outputs

| notebooks/basic_joinnodes.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: PySpark

# language: ''

# name: pysparkkernel

# ---

# ## Tuning Model Parameters

#

# In this exercise, you will optimise the parameters for a classification model.

#

# ### Prepare the Data

#

# First, import the libraries you will need and prepare the training and test data:

# +

# Import Spark SQL and Spark ML libraries

from pyspark.sql.types import *

from pyspark.sql.functions import *

from pyspark.ml import Pipeline

from pyspark.ml.classification import LogisticRegression

from pyspark.ml.feature import VectorAssembler

from pyspark.ml.tuning import ParamGridBuilder, TrainValidationSplit

from pyspark.ml.evaluation import BinaryClassificationEvaluator

# Load the source data

csv = spark.read.csv('wasb:///data/flights.csv', inferSchema=True, header=True)

# Select features and label

data = csv.select("DayofMonth", "DayOfWeek", "OriginAirportID", "DestAirportID", "DepDelay", ((col("ArrDelay") > 15).cast("Int").alias("label")))

# Split the data

splits = data.randomSplit([0.7, 0.3])

train = splits[0]

test = splits[1].withColumnRenamed("label", "trueLabel")

# -

# ### Define the Pipeline

# Now define a pipeline that creates a feature vector and trains a classification model

# Define the pipeline

assembler = VectorAssembler(inputCols = ["DayofMonth", "DayOfWeek", "OriginAirportID", "DestAirportID", "DepDelay"], outputCol="features")

lr = LogisticRegression(labelCol="label", featuresCol="features")

pipeline = Pipeline(stages=[assembler, lr])

# ### Tune Parameters

# You can tune parameters to find the best model for your data. A simple way to do this is to use **TrainValidationSplit** to evaluate each combination of parameters defined in a **ParameterGrid** against a subset of the training data in order to find the best performing parameters.

# +

paramGrid = ParamGridBuilder().addGrid(lr.regParam, [0.3, 0.1, 0.01]).addGrid(lr.maxIter, [10, 5]).addGrid(lr.threshold, [0.35, 0.30]).build()

tvs = TrainValidationSplit(estimator=pipeline, evaluator=BinaryClassificationEvaluator(), estimatorParamMaps=paramGrid, trainRatio=0.8)

model = tvs.fit(train)

# -

# ### Test the Model

# Now you're ready to apply the model to the test data.

prediction = model.transform(test)

predicted = prediction.select("features", "prediction", "probability", "trueLabel")

predicted.show(100)

# ### Compute Confusion Matrix Metrics

# Classifiers are typically evaluated by creating a *confusion matrix*, which indicates the number of:

# - True Positives

# - True Negatives

# - False Positives

# - False Negatives

#

# From these core measures, other evaluation metrics such as *precision* and *recall* can be calculated.

tp = float(predicted.filter("prediction == 1.0 AND truelabel == 1").count())

fp = float(predicted.filter("prediction == 1.0 AND truelabel == 0").count())

tn = float(predicted.filter("prediction == 0.0 AND truelabel == 0").count())

fn = float(predicted.filter("prediction == 0.0 AND truelabel == 1").count())

metrics = spark.createDataFrame([

("TP", tp),

("FP", fp),

("TN", tn),

("FN", fn),

("Precision", tp / (tp + fp)),

("Recall", tp / (tp + fn))],["metric", "value"])

metrics.show()

# ### Review the Area Under ROC

# Another way to assess the performance of a classification model is to measure the area under a ROC curve for the model. the spark.ml library includes a **BinaryClassificationEvaluator** class that you can use to compute this.

evaluator = BinaryClassificationEvaluator(labelCol="trueLabel", rawPredictionCol="prediction", metricName="areaUnderROC")

aur = evaluator.evaluate(prediction)

print "AUR = ", aur

| notebooks/06. Parameter Tuning.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

# %matplotlib notebook

from asteria import config, detector

import astropy.units as u

import numpy as np

import matplotlib as mpl

import matplotlib.pyplot as plt

from mpl_toolkits.mplot3d import Axes3D

mpl.rc('font', size=12)

# -

# ## Load Configuration

#

# This will load the source configuration from a file.

#

# For this to work, either the user needs to have done one of two things:

# 1. Run `python setup.py install` in the ASTERIA directory.

# 2. Run `python setup.py develop` and set the environment variable `ASTERIA` to point to the git source checkout.

#

# If these were not done, the initialization will fail because the paths will not be correctly resolved.

conf = config.load_config('../../data/config/default.yaml')

ic86 = detector.initialize(conf)

doms = ic86.doms_table()

doms

# +

fig = plt.figure(figsize=(6,6))

ax = fig.add_subplot(111, projection='3d')

# Plot the DeepCore DOMs

dc = doms['type'] == 'dc'

x, y, z = [doms[coord][dc] for coord in 'xyz']

ax.scatter(x, y, z, alpha=0.7)

# Plot the standard DOMs

i3 = doms['type'] == 'i3'

x, y, z = [doms[coord][i3] for coord in 'xyz']

ax.scatter(x, y, z, alpha=0.2)

ax.set(xlabel='x [m]', ylabel='y [m]', zlabel='z [m]')

ax.view_init(30, -40)

fig.tight_layout()

# -

| docs/nb/detector.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # ARDUAIR: Procesamiento de datos PM10

# Se procesan los datos para obtener un archivo CSV con los promedios hora de las lecturas de pm10 de las fechas 17, 18 y 19 de abril de 2017

#

# Se filtran los datos extraños por encima de (lecturas por encima de 1000000) para este caso

#

# ## importacion de librerias

import pandas as pd

import numpy as np

import datetime as dt

import seaborn as sns

import matplotlib.pyplot as plt

# %matplotlib inline

pd.options.mode.chained_assignment = None

# +

#load

data=pd.read_csv('DATA.TXT',names=['year','month','day','hour','minute','second','hum','temp','pr','l','co','so2','no2','o3','pm10','pm25','void'])

#Dates to datetime

dates=data[['year','month','day','hour','minute','second']]

dates['year']=dates['year'].add(2000)

dates['minute']=dates['minute'].add(60)

data['datetime']=pd.to_datetime(dates)

#agregation

data=data[['datetime','pm10']]

plt.plot(data.datetime, data.pm10)

# -

# # filtration

#

# Se filtran los datos extraños por encima de (lecturas por encima de 1000000) para este caso

#

#

# +

#load

data=pd.read_csv('DATA.TXT',names=['year','month','day','hour','minute','second','hum','temp','pr','l','co','so2','no2','o3','pm10','pm25','void'])

data=data[data.pm10<1000000]

#Dates to datetime

dates=data[['year','month','day','hour','minute','second']]

dates['year']=dates['year'].add(2000)

dates['minute']=dates['minute'].add(60)

data['datetime']=pd.to_datetime(dates)

data=data[['datetime','pm10']]

#agregation

data1=data.groupby(pd.Grouper(key='datetime',freq='1h',axis=1)).mean()

data2=data.groupby(pd.Grouper(key='datetime',freq='2h',axis=1)).mean()

data3=data.groupby(pd.Grouper(key='datetime',freq='3h',axis=1)).mean()

# save

data1.to_csv('arduair_pm10_promedio_1h.csv')

data2.to_csv('arduair_pm10_promedio_2h.csv')

data3.to_csv('arduair_pm10_promedio_3h.csv')

# reset index

data=data.reset_index()

data1=data1.reset_index()

data2=data2.reset_index()

data2=data2.reset_index()

plt.plot(data1.datetime, data1.pm10)

# -

# ## ¿Que pasa si eliminamos los valores en 0.00?

#

# Realizando la correlacion con los datos generados por el Dusttrack,la correlacion es 0.3 comparada con 0.48 de los datos originales

# +

data_sin_ceros=data[data.pm10>0]

plt.plot(data_sin_ceros.datetime, data_sin_ceros.pm10)

data_sin_ceros_1h=data_sin_ceros.groupby(pd.Grouper(key='datetime',freq='1h',axis=1)).mean()

# -

| pm10_upb/arduair_data_processing_PM10.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: mykernel

# language: python

# name: mykernel

# ---

# ## _*Quantum SVM (quantum kernel method)*_

#

# ### Introduction

#

# Please refer to [this file](https://github.com/Qiskit/qiskit-tutorials/blob/master/qiskit/aqua/artificial_intelligence/qsvm_kernel_classification.ipynb) for introduction.

#

# In this file, we show two ways for using the quantum kernel method: (1) the non-programming way and (2) the programming way.

#

# ### Part I: non-programming way.

# In the non-programming way, we config a json-like configuration, which defines how the svm instance is internally constructed. After the execution, it returns the json-like output, which carries the important information (e.g., the details of the svm instance) and the processed results.

from datasets import *

from qiskit import Aer

from qiskit_aqua.utils import split_dataset_to_data_and_labels, map_label_to_class_name

from qiskit_aqua import run_algorithm, QuantumInstance

from qiskit_aqua.input import SVMInput

from qiskit_aqua.components.feature_maps import SecondOrderExpansion

from qiskit_aqua.algorithms import QSVMKernel

# First we prepare the dataset, which is used for training, testing and the finally prediction.

#

# *Note: You can easily switch to a different dataset, such as the Breast Cancer dataset, by replacing 'ad_hoc_data' to 'Breast_cancer' below.*

# +

feature_dim = 2 # dimension of each data point

training_dataset_size = 20

testing_dataset_size = 10

random_seed = 10598

shots = 1024

sample_Total, training_input, test_input, class_labels = ad_hoc_data(training_size=training_dataset_size,

test_size=testing_dataset_size,

n=feature_dim, gap=0.3, PLOT_DATA=False)

datapoints, class_to_label = split_dataset_to_data_and_labels(test_input)

print(class_to_label)

# -

# Now we create the svm in the non-programming way.

# In the following json, we config:

# - the algorithm name

# - the feature map

params = {

'problem': {'name': 'svm_classification', 'random_seed': random_seed},

'algorithm': {

'name': 'QSVM.Kernel'

},

'backend': {'shots': shots},

'feature_map': {'name': 'SecondOrderExpansion', 'depth': 2, 'entanglement': 'linear'}

}

backend = Aer.get_backend('qasm_simulator')

algo_input = SVMInput(training_input, test_input, datapoints[0])

# With everything setup, we can now run the algorithm.

#

# The run method includes training, testing and predict on unlabeled data.

#

# For the testing, the result includes the success ratio.

#

# For the prediction, the result includes the predicted class names for each data.

#

# After that the trained model is also stored in the svm instance, you can use it for future prediction.

result = run_algorithm(params, algo_input, backend=backend)

# +

print("kernel matrix during the training:")

kernel_matrix = result['kernel_matrix_training']

img = plt.imshow(np.asmatrix(kernel_matrix),interpolation='nearest',origin='upper',cmap='bone_r')

plt.show()

print("testing success ratio: ", result['testing_accuracy'])

print("predicted classes:", result['predicted_classes'])

# -

# ### part II: Programming way.

# We construct the svm instance directly from the classes. The programming way offers the users better accessibility, e.g., the users can access the internal state of svm instance or invoke the methods of the instance. We will demonstrate this advantage soon.

# Now we create the svm in the programming way.

# - We build the svm instance by instantiating the class QSVMKernel.

# - We build the feature map instance (required by the svm instance) by instantiating the class SecondOrderExpansion.

backend = Aer.get_backend('qasm_simulator')

feature_map = SecondOrderExpansion(num_qubits=feature_dim, depth=2, entangler_map={0: [1]})

svm = QSVMKernel(feature_map, training_input, test_input, None)# the data for prediction can be feeded later.

svm.random_seed = random_seed

quantum_instance = QuantumInstance(backend, shots=shots, seed=random_seed, seed_mapper=random_seed)

result = svm.run(quantum_instance)

# Let us check the result.

# +

print("kernel matrix during the training:")

kernel_matrix = result['kernel_matrix_training']

img = plt.imshow(np.asmatrix(kernel_matrix),interpolation='nearest',origin='upper',cmap='bone_r')

plt.show()

print("testing success ratio: ", result['testing_accuracy'])

# -

# Different from the non-programming way, the programming way allows the users to invoke APIs upon the svm instance directly. In the following, we invoke the API "predict" upon the trained svm instance to predict the labels for the newly provided data input.

#

# Use the trained model to evaluate data directly, and we store a `label_to_class` and `class_to_label` for helping converting between label and class name

# +

predicted_labels = svm.predict(datapoints[0])

predicted_classes = map_label_to_class_name(predicted_labels, svm.label_to_class)

print("ground truth: {}".format(datapoints[1]))

print("preduction: {}".format(predicted_labels))

| community/aqua/artificial_intelligence/qsvm_kernel_directly.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # %latex

# # What's an activation function

# In artificial neural networks, the activation function of a node defines the output of that node given an input or set of inputs.

| deep_learning/activate_function/Introduction.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# <script async src="https://www.googletagmanager.com/gtag/js?id=UA-59152712-8"></script>

# <script>

# window.dataLayer = window.dataLayer || [];

# function gtag(){dataLayer.push(arguments);}

# gtag('js', new Date());

#

# gtag('config', 'UA-59152712-8');

# </script>

#

# # `GiRaFFE_NRPy`: Source Terms

#

# ## Author: <NAME>

#

# <a id='intro'></a>

#

# **Notebook Status:** <font color=green><b> Validated </b></font>

#

# **Validation Notes:** This code produces the expected results for generated functions.

#

# ## This module presents the functionality of [GiRaFFE_NRPy_Source_Terms.py](../../edit/in_progress/GiRaFFE_NRPy/GiRaFFE_NRPy_Source_Terms.py).

#

# ## Introduction:

# This writes and documents the C code that `GiRaFFE_NRPy` uses to compute the source terms for the right-hand sides of the evolution equations for the unstaggered prescription.

#

# The equations themselves are already coded up in other functions; however, for the $\tilde{S}_i$ source term, we will need derivatives of the metric. It will be most efficient and accurate to take them using the interpolated metric values that we will have calculated anyway; however, we will need to write our derivatives in a nonstandard way within NRPy+ in order to take advantage of this, writing our own code for memory access.

# <a id='toc'></a>

#

# # Table of Contents

# $$\label{toc}$$

#

# This notebook is organized as follows

#

# 1. [Step 1](#stilde_source): The $\tilde{S}_i$ source term

# 1. [Step 2](#code_validation): Code Validation against original C code

# 1. [Step 3](#latex_pdf_output): Output this notebook to $\LaTeX$-formatted PDF file

# +

# Step 0: Add NRPy's directory to the path

# https://stackoverflow.com/questions/16780014/import-file-from-parent-directory

import os,sys

nrpy_dir_path = os.path.join("..")

if nrpy_dir_path not in sys.path:

sys.path.append(nrpy_dir_path)

import cmdline_helper as cmd

outdir = os.path.join("GiRaFFE_NRPy","GiRaFFE_Ccode_validation","RHSs")

cmd.mkdir(outdir)

# -

# <a id='stilde_source'></a>

#

# ## Step 1: The $\tilde{S}_i$ source term \[Back to [top](#toc)\]

# $$\label{stilde_source}$$

#

# We start in the usual way - import the modules we need. We will also import the Levi-Civita symbol from `indexedexp.py` and use it to set the Levi-Civita tensor $\epsilon^{ijk} = [ijk]/\sqrt{\gamma}$.

# +

# Step 1: The StildeD RHS *source* term

from outputC import outputC, outCfunction # NRPy+: Core C code output module

import indexedexp as ixp # NRPy+: Symbolic indexed expression (e.g., tensors, vectors, etc.) support

import GRHD.equations as GRHD # NRPy+: Generate general relativistic hydrodynamics equations

import GRFFE.equations as GRFFE # NRPy+: Generate general relativistic force-free electrodynamics equations

thismodule = "GiRaFFE_NRPy_Source_Terms"

def generate_memory_access_code():

# There are several pieces of C code that we will write ourselves because we need to do things

# a little bit outside of what NRPy+ is built for.

# First, we will write general memory access. We will read in values from memory at a given point

# for each quantity we care about.

global general_access

general_access = ""

for var in ["GAMMADD00", "GAMMADD01", "GAMMADD02",

"GAMMADD11", "GAMMADD12", "GAMMADD22",

"BETAU0", "BETAU1", "BETAU2","ALPHA",

"BU0","BU1","BU2",

"VALENCIAVU0","VALENCIAVU1","VALENCIAVU2"]:

lhsvar = var.lower().replace("dd","DD").replace("u","U").replace("bU","BU").replace("valencia","Valencia")

# e.g.,

# const REAL gammaDD00dD0 = auxevol_gfs[IDX4S(GAMMA_FACEDD00GF,i0,i1,i2)];

general_access += "const REAL "+lhsvar+" = auxevol_gfs[IDX4S("+var+"GF,i0,i1,i2)];\n"

# This quick function returns a nearby point for memory access. We need this because derivatives are not local operations.

def idxp1(dirn):

if dirn==0:

return "i0+1,i1,i2"

if dirn==1:

return "i0,i1+1,i2"

if dirn==2:

return "i0,i1,i2+1"

# Next we evaluate needed derivatives of the metric, based on their values at cell faces

global metric_deriv_access

metric_deriv_access = []

for dirn in range(3):

metric_deriv_access.append("")

for var in ["GAMMA_FACEDDdD00", "GAMMA_FACEDDdD01", "GAMMA_FACEDDdD02",

"GAMMA_FACEDDdD11", "GAMMA_FACEDDdD12", "GAMMA_FACEDDdD22",

"BETA_FACEUdD0", "BETA_FACEUdD1", "BETA_FACEUdD2","ALPHA_FACEdD"]:

lhsvar = var.lower().replace("dddd","DDdD").replace("udd","UdD").replace("dd","dD").replace("u","U").replace("_face","")

rhsvar = var.replace("dD","")

# e.g.,

# const REAL gammaDDdD000 = (auxevol_gfs[IDX4S(GAMMA_FACEDD00GF,i0+1,i1,i2)]-auxevol_gfs[IDX4S(GAMMA_FACEDD00GF,i0,i1,i2)])/dxx0;

metric_deriv_access[dirn] += "const REAL "+lhsvar+str(dirn)+" = (auxevol_gfs[IDX4S("+rhsvar+"GF,"+idxp1(dirn)+")]-auxevol_gfs[IDX4S("+rhsvar+"GF,i0,i1,i2)])/dxx"+str(dirn)+";\n"

metric_deriv_access[dirn] += "REAL Stilde_rhsD"+str(dirn)+";\n"

# This creates the C code that writes to the Stilde_rhs direction specified.

global write_final_quantity

write_final_quantity = []

for dirn in range(3):

write_final_quantity.append("")

write_final_quantity[dirn] += "rhs_gfs[IDX4S(STILDED"+str(dirn)+"GF,i0,i1,i2)] += Stilde_rhsD"+str(dirn)+";"

def write_out_functions_for_StildeD_source_term(outdir,outCparams,gammaDD,betaU,alpha,ValenciavU,BU,sqrt4pi):

generate_memory_access_code()

# First, we declare some dummy tensors that we will use for the codegen.

gammaDDdD = ixp.declarerank3("gammaDDdD","sym01",DIM=3)

betaUdD = ixp.declarerank2("betaUdD","nosym",DIM=3)

alphadD = ixp.declarerank1("alphadD",DIM=3)

# We need to rerun a few of these functions with the reset lists to make sure these functions

# don't cheat by using analytic expressions

GRHD.compute_sqrtgammaDET(gammaDD)

GRHD.u4U_in_terms_of_ValenciavU__rescale_ValenciavU_by_applying_speed_limit(alpha, betaU, gammaDD, ValenciavU)

GRFFE.compute_smallb4U(gammaDD, betaU, alpha, GRHD.u4U_ito_ValenciavU, BU, sqrt4pi)

GRFFE.compute_smallbsquared(gammaDD, betaU, alpha, GRFFE.smallb4U)

GRFFE.compute_TEM4UU(gammaDD,betaU,alpha, GRFFE.smallb4U, GRFFE.smallbsquared,GRHD.u4U_ito_ValenciavU)

GRHD.compute_g4DD_zerotimederiv_dD(gammaDD,betaU,alpha, gammaDDdD,betaUdD,alphadD)

GRHD.compute_S_tilde_source_termD(alpha, GRHD.sqrtgammaDET,GRHD.g4DD_zerotimederiv_dD, GRFFE.TEM4UU)

for i in range(3):

desc = "Adds the source term to StildeD"+str(i)+"."

name = "calculate_StildeD"+str(i)+"_source_term"

outCfunction(

outfile = os.path.join(outdir,name+".h"), desc=desc, name=name,

params ="const paramstruct *params,const REAL *auxevol_gfs, REAL *rhs_gfs",

body = general_access \

+metric_deriv_access[i]\

+outputC(GRHD.S_tilde_source_termD[i],"Stilde_rhsD"+str(i),"returnstring",params=outCparams)\

+write_final_quantity[i],

loopopts ="InteriorPoints",

rel_path_to_Cparams=os.path.join("../"))

def add_to_Cfunction_dict__functions_for_StildeD_source_term(outCparams,gammaDD,betaU,alpha,ValenciavU,BU,sqrt4pi,

includes=None, rel_path_to_Cparams=os.path.join("../"),

path_from_rootsrcdir_to_this_Cfunc=os.path.join("RHSs/")):

generate_memory_access_code()

# First, we declare some dummy tensors that we will use for the codegen.

gammaDDdD = ixp.declarerank3("gammaDDdD","sym01",DIM=3)

betaUdD = ixp.declarerank2("betaUdD","nosym",DIM=3)

alphadD = ixp.declarerank1("alphadD",DIM=3)

# We need to rerun a few of these functions with the reset lists to make sure these functions

# don't cheat by using analytic expressions

GRHD.compute_sqrtgammaDET(gammaDD)

GRHD.u4U_in_terms_of_ValenciavU__rescale_ValenciavU_by_applying_speed_limit(alpha, betaU, gammaDD, ValenciavU)

GRFFE.compute_smallb4U(gammaDD, betaU, alpha, GRHD.u4U_ito_ValenciavU, BU, sqrt4pi)

GRFFE.compute_smallbsquared(gammaDD, betaU, alpha, GRFFE.smallb4U)

GRFFE.compute_TEM4UU(gammaDD,betaU,alpha, GRFFE.smallb4U, GRFFE.smallbsquared,GRHD.u4U_ito_ValenciavU)

GRHD.compute_g4DD_zerotimederiv_dD(gammaDD,betaU,alpha, gammaDDdD,betaUdD,alphadD)

GRHD.compute_S_tilde_source_termD(alpha, GRHD.sqrtgammaDET,GRHD.g4DD_zerotimederiv_dD, GRFFE.TEM4UU)

for i in range(3):

desc = "Adds the source term to StildeD"+str(i)+"."

name = "calculate_StildeD"+str(i)+"_source_term"

params ="const paramstruct *params,const REAL *auxevol_gfs, REAL *rhs_gfs",

body = general_access \

+metric_deriv_access[i]\

+outputC(GRHD.S_tilde_source_termD[i],"Stilde_rhsD"+str(i),"returnstring",params=outCparams)\

+write_final_quantity[i],

loopopts ="InteriorPoints",

add_to_Cfunction_dict(

includes=includes,

desc=desc,

name=name, params=params,

body=body, loopopts=loopopts,

rel_path_to_Cparams=rel_path_to_Cparams)

# -

# <a id='code_validation'></a>

#

# # Step 2: Code Validation against original C code \[Back to [top](#toc)\]

# $$\label{code_validation}$$

#

# To validate the code in this tutorial we check for agreement between the files

#

# 1. that were written in this tutorial and

# 1. those that are stored in `GiRaFFE_NRPy/GiRaFFE_Ccode_library` or generated by `GiRaFFE_NRPy_A2B.py`

#

# +

# Declare gridfunctions necessary to generate the C code:

gammaDD = ixp.register_gridfunctions_for_single_rank2("AUXEVOL","gammaDD","sym01",DIM=3)

betaU = ixp.register_gridfunctions_for_single_rank1("AUXEVOL","betaU",DIM=3)

alpha = gri.register_gridfunctions("AUXEVOL","alpha",DIM=3)

BU = ixp.register_gridfunctions_for_single_rank1("AUXEVOL","BU",DIM=3)

ValenciavU = ixp.register_gridfunctions_for_single_rank1("AUXEVOL","ValenciavU",DIM=3)

StildeD = ixp.register_gridfunctions_for_single_rank1("EVOL","StildeD",DIM=3)

# Declare this symbol:

sqrt4pi = par.Cparameters("REAL",thismodule,"sqrt4pi","sqrt(4.0*M_PI)")

# First, we generate the file using the functions written in this notebook:

outCparams = "outCverbose=False"

write_out_functions_for_StildeD_source_term(outdir,outCparams,gammaDD,betaU,alpha,ValenciavU,BU,sqrt4pi)

# Define the directory that we wish to validate against:

valdir = os.path.join("GiRaFFE_NRPy","GiRaFFE_Ccode_library","RHSs")

cmd.mkdir(valdir)

import GiRaFFE_NRPy.GiRaFFE_NRPy_Source_Terms as source

source.write_out_functions_for_StildeD_source_term(valdir,outCparams,gammaDD,betaU,alpha,ValenciavU,BU,sqrt4pi)

import difflib

import sys

print("Printing difference between original C code and this code...")

# Open the files to compare

files = ["calculate_StildeD0_source_term.h","calculate_StildeD1_source_term.h","calculate_StildeD2_source_term.h"]

for file in files:

print("Checking file " + file)

with open(os.path.join(valdir,file)) as file1, open(os.path.join(outdir,file)) as file2:

# Read the lines of each file

file1_lines = file1.readlines()

file2_lines = file2.readlines()

num_diffs = 0

for line in difflib.unified_diff(file1_lines, file2_lines, fromfile=os.path.join(valdir+file), tofile=os.path.join(outdir+file)):

sys.stdout.writelines(line)

num_diffs = num_diffs + 1

if num_diffs == 0:

print("No difference. TEST PASSED!")

else:

print("ERROR: Disagreement found with .py file. See differences above.")

sys.exit(1)

# -

# <a id='latex_pdf_output'></a>

#

# # Step 3: Output this notebook to $\LaTeX$-formatted PDF file \[Back to [top](#toc)\]

# $$\label{latex_pdf_output}$$

#

# The following code cell converts this Jupyter notebook into a proper, clickable $\LaTeX$-formatted PDF file. After the cell is successfully run, the generated PDF may be found in the root NRPy+ tutorial directory, with filename

# [Tutorial-GiRaFFE_NRPy_C_code_library-Source_Terms](TTutorial-GiRaFFE_NRPy_C_code_library-Source_Terms.pdf) (Note that clicking on this link may not work; you may need to open the PDF file through another means.)

import cmdline_helper as cmd # NRPy+: Multi-platform Python command-line interface

cmd.output_Jupyter_notebook_to_LaTeXed_PDF("Tutorial-GiRaFFE_NRPy-Source_Terms",location_of_template_file=os.path.join(".."))

| in_progress/Tutorial-GiRaFFE_NRPy-Source_Terms.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

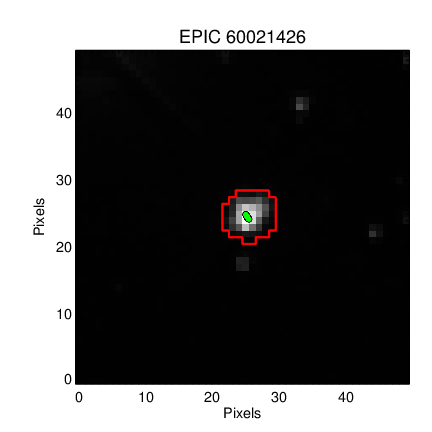

# # How does the SFF method work?

# Vanderburg and Johnson (2014) introduced a method for "Self Flat Fielding" by tracking how the lightcurve changes with motion of the spacecraft:

#

# [A Technique for Extracting Highly Precise Photometry for the Two-Wheeled Kepler Mission](http://adsabs.harvard.edu/abs/2014PASP..126..948V)

#

# In this notebook we replicate the K2SFF method following the same example source, #60021426, as that in the publication. We aim to demystify the technique, which is extremely popular within the K2 community. We have focused on reproducibility, so that we achieve the same result at the publication.

#

# The Vanderburg & Johnson 2014 paper uses data from the Kepler two-wheel "Concept Engineering Test", predating campaign 0, and sometimes called campaign *"eng"* or abbreviated CET. This vestigal "campaign" lacks some of the standardization of later K2 campaigns--- it was much shorter, only about 9 days long, it lacks some of the standard quality flags, targets have non-traditional EPIC IDs, and other quirks.

# %matplotlib inline

import numpy as np

import matplotlib.pyplot as plt

from astropy.io import fits

import pandas as pd

# ## Retrieve the K2SFF data for ENG test source `60021426`

# First we will retrieve data and inspect the mask used in the paper.

path = 'http://archive.stsci.edu/hlsps/k2sff/cet/060000000/21426/hlsp_k2sff_k2_lightcurve_060021426-cet_kepler_v1_llc.fits'

vdb_fits = fits.open(path)

# The `BESTAPER` keyword explains which aperture was chosen as the "best" by Vanderburg & Johnson 2014. The FITS header for that slice contains the metadata needed to reproduce the mask.

keys = ['MASKTYPE', 'MASKINDE', 'NPIXSAP']

_ = [print(key, ' : ', vdb_fits['BESTAPER'].header[key]) for key in keys]

# We want the *exact same* mask as Vanderburg & Johnson 2014, but the publication version and MAST version differ!

#

# Publication version:

#

# MAST Version:

#

# Aperture 7 should yield a bigger mask, more similar to what was used in the paper.

VDB_J_mask = vdb_fits['PRF_APER_TBL'].data[7,:, :] == True

VDB_J_mask.sum()

# Save the mask for easy use in our next notebook.

np.save('VDB_J_2014_mask.npy', VDB_J_mask)

# ## Manually reproduce with the Vanderburg-provided diagnostic data

# Retrieve the Vanderburg-provided diagnostic data for the Kepler ENG testing.

# Uncomment the line below to retrieve the data programmatically, or manually get the linked file in a browser and save it to this directory.

# +

# #! wget https://www.cfa.harvard.edu/~avanderb/k2/ep60021426alldiagnostics.csv

# -

df = pd.read_csv('ep60021426alldiagnostics.csv',index_col=False)

df.head()

# We can mean-subtract the provided $x-y$ centroids, assigning them column and row identifiers, then rotate the coordinates into their major and minor axes.

col = df[' X-centroid'].values

col = col - np.mean(col)

row = df[' Y-centroid'].values

row = row - np.mean(row)

def _get_eigen_vectors(centroid_col, centroid_row):

'''get the eigenvalues and eigenvectors given centroid x, y positions'''

centroids = np.array([centroid_col, centroid_row])

eig_val, eig_vec = np.linalg.eigh(np.cov(centroids))

return eig_val, eig_vec

def _rotate(eig_vec, centroid_col, centroid_row):

'''rotate the centroids into their predominant linear axis'''

centroids = np.array([centroid_col, centroid_row])

return np.dot(eig_vec, centroids)

eig_val, eig_vec = _get_eigen_vectors(col, row)

v1, v2 = eig_vec

# The major axis is the latter.

platescale = 4.0 # The Kepler plate scale; has units of arcseconds / pixel

plt.figure(figsize=(5, 6))

plt.plot(col * platescale, row * platescale, 'ko', ms=4)

plt.plot(col * platescale, row * platescale, 'ro', ms=1)

plt.xticks([-2, -1,0, 1, 2])

plt.yticks([-2, -1,0, 1, 2])

plt.xlabel('X position [arcseconds]')

plt.ylabel('Y position [arcseconds]')

plt.xlim(-2, 2)

plt.ylim(-2, 2)

plt.plot([0, v1[0]], [0, v1[1]], color='blue', lw=3)

plt.plot([0, v2[0]], [0, v2[1]], color='blue', lw=3);

# Following the form of **Figure 2** of Vanderburg & Johsnon 2014.

rot_colp, rot_rowp = _rotate(eig_vec, col, row) #units in pixels

# You can rotate into the new reference frame.

plt.figure(figsize=(5, 6))

plt.plot(rot_rowp * platescale, rot_colp * platescale, 'ko', ms=4)

plt.plot(rot_rowp * platescale, rot_colp * platescale, 'ro', ms=1)

plt.xticks([-2, -1,0, 1, 2])

plt.yticks([-2, -1,0, 1, 2])

plt.xlabel("X' position [arcseconds]")

plt.ylabel("Y' position [arcseconds]")

plt.xlim(-2, 2)

plt.ylim(-2, 2)

plt.plot([0, 1], [0, 0], color='blue')

plt.plot([0, 0], [0, 1], color='blue');

# We need to calculate the arclength using:

# \begin{equation}s= \int_{x'_0}^{x'_1}\sqrt{1+\left( \frac{dy'_p}{dx'}\right)^2} dx'\end{equation}

#

# where $x^\prime_0$ is the transformed $x$ coordinate of the point with the smallest $x^\prime$ position, and $y^\prime_p$ is the best--fit polynomial function.

# Fit a $5^{th}$ order polynomial to the rotated coordinates.

z = np.polyfit(rot_rowp, rot_colp, 5)

p5 = np.poly1d(z)

p5_deriv = p5.deriv()

x0_prime = np.min(rot_rowp)

xmax_prime = np.max(rot_rowp)

x_dense = np.linspace(x0_prime, xmax_prime, 2000)

plt.plot(rot_rowp, rot_colp, '.')

plt.plot(x_dense, p5(x_dense))

plt.ylabel('Position along minor axis (pixels)')

plt.xlabel('Position along major axis (pixels)')

plt.title('Performance of polynomial regression')

plt.ylim(-0.1, 0.1);

# We see evidence for a [bias-variance tradeoff](https://en.wikipedia.org/wiki/Bias%E2%80%93variance_tradeoff), suggesting some modest opportunity for improvement.

@np.vectorize

def arclength(x):

'''Input x1_prime, get out arclength'''

gi = x_dense <x

s_integrand = np.sqrt(1 + p5_deriv(x_dense[gi]) ** 2)

s = np.trapz(s_integrand, x=x_dense[gi])

return s

# Let's double check that we compute the same arclength as the published paper.

aspect_ratio = plt.figaspect(1)

plt.figure(figsize=aspect_ratio)

plt.plot(df[' arclength'], arclength(rot_rowp)*4.0, '.')

plt.xlabel('$s$ (Vanderburg & Johnson 2014)')

plt.ylabel('$s$ (This work)')

plt.plot([0, 4], [0, 4], 'k--');

# Yes, we compute arclength correctly.

# Now we apply a **high-pass filter** to the raw lightcurve data. We follow the original paper by using *BSplines* with 1.5 day breakpoints. You can also apply data exclusion at this stage.

from scipy.interpolate import BSpline

from scipy import interpolate

times, raw_fluxes = df['BJD - 2454833'].values, df[' Raw Flux'].values

# We find the weighted least square spline for a given set of knots, $t$. We supply interior knots as knots on the ends are added automatically, as stated in the `interpolate.splrep()` docstring.

interior_knots = np.arange(times[0]+1.5, times[0]+6, 1.5)

t,c,k = interpolate.splrep(times, raw_fluxes, s=0, task=-1, t=interior_knots)

bspl = BSpline(t,c,k)

plt.plot(times, raw_fluxes, '.')

plt.plot(times, bspl(times))

plt.xlabel('$t$ (days)')

plt.ylabel('Raw Flux');

# The Spline fit looks good, so we can normalize the flux by the long-term trend.

# Plot the normalized flux versus arclength to see the position-dependent flux.

fluxes = raw_fluxes/bspl(times)

# Mask the data by keeping only the good samples.

bi = df[' Thrusters On'].values == 1.0

gi = df[' Thrusters On'].values == 0.0

clean_fluxes = fluxes[gi]

al = arclength(rot_rowp[gi]) * platescale

sorted_inds = np.argsort(al)

# We will follow the paper by interpolating **flux versus arclength position** in 15 bins of means, which is a *piecewise linear fit*.

knots = np.array([np.min(al)]+

[np.median(splt) for splt in np.array_split(al[sorted_inds], 15)]+

[np.max(al)])

bin_means = np.array([clean_fluxes[sorted_inds][0]]+

[np.mean(splt) for splt in np.array_split(clean_fluxes[sorted_inds], 15)]+

[clean_fluxes[sorted_inds][-1]])

zz = np.polyfit(al, clean_fluxes,6)

sff = np.poly1d(zz)

al_dense = np.linspace(0, 4, 1000)

interp_func = interpolate.interp1d(knots, bin_means)

# +

plt.figure(figsize=(5, 6))

plt.plot(arclength(rot_rowp)*4.0, fluxes, 'ko', ms=4)

plt.plot(arclength(rot_rowp)*4.0, fluxes, 'o', color='#3498db', ms=3)

plt.plot(arclength(rot_rowp[bi])*4.0, fluxes[bi], 'o', color='r', ms=3)

plt.plot(np.sort(al), interp_func(np.sort(al)), '-', color='#e67e22')

plt.xticks([0, 1,2, 3, 4])

plt.minorticks_on()

plt.xlabel('Arclength [arcseconds]')

plt.ylabel('Relative Brightness')

plt.title('EPIC 60021426, Kp =10.3')

plt.xlim(0,4)

plt.ylim(0.997, 1.002);

# -

# Following **Figure 4** of Vanderburg & Johnson 2014.

# Apply the Self Flat Field (SFF) correction:

corr_fluxes = clean_fluxes / interp_func(al)

# +

plt.figure(figsize=(10,6))

dy = 0.004

plt.plot(df['BJD - 2454833'], df[' Raw Flux']+dy, 'ko', ms=4)

plt.plot(df['BJD - 2454833'], df[' Raw Flux']+dy, 'o', color='#3498db', ms=3)

plt.plot(df['BJD - 2454833'][bi], df[' Raw Flux'][bi]+dy, 'o', color='r', ms=3)

plt.plot(df['BJD - 2454833'][gi], corr_fluxes*bspl(times[gi]), 'o', color='k', ms = 4)

plt.plot(df['BJD - 2454833'][gi], corr_fluxes*bspl(times[gi]), 'o', color='#e67e22', ms = 3)

plt.xlabel('BJD - 2454833')

plt.ylabel('Relative Brightness')

plt.xlim(1862, 1870)

plt.ylim(0.994, 1.008);

# -

# Following **Figure 5** of Vanderburg & Johnson 2015.

# *The end.*

| docs/source/tutorials/04-replicate-vanderburg-2014-k2sff.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Optimal stopping

# > Or how I learned you can loose more than 100% value on options

#

# - toc: true

# - badges: true

# - comments: true

# - categories: [jupyter]

# - image: images/SPY_raw_bfly_prob.png

# Discrete time case

# Continuous time case

| _notebooks/2022-05-01-Optimal Stopping American Options.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# Do you want to speed up the fitting of your machine learning algorithm? Scikit-learn offers quite a few ways to do this. One way is to train your model in parallel using the n_jobs parameter which exists for many scikit-learn models. A really simple way is to reduce the number of rows or columns in your data. The problem with this approach is that its hard to know which rows and especially which columns to remove. Principal component analysis, commonly known as PCA, is a technique that you can use to smartly reduce the dimensionality of your dataset while losing the least amount of information possible. In this video, I'll share with you the process of how you can use principal component analysis to speed up the fitting of a logistic regression model.

# ## Import Libraries

# +

# %matplotlib inline

import matplotlib.pyplot as plt

import pandas as pd

import numpy as np

from sklearn.model_selection import train_test_split

from sklearn.preprocessing import StandardScaler

from sklearn.decomposition import PCA

from sklearn.linear_model import LogisticRegression

# -

# ## Load the Dataset

# The dataset is a modified version of the MNIST dataset that contains 2000 labeled images of each digit 0 and 1. The images are 28 pixels by 28 pixels.

#

# Parameters | Number

# --- | ---

# Classes | 2 (digits 0 and 1)

# Samples per class | 2000 samples per class

# Samples total | 4000

# Dimensionality | 784 (28 x 28 images)

# Features | integers values from 0 to 255

#

# For convenience, I have arranged the data into csv file.

df = pd.read_csv('data/MNISTonly0_1.csv')

df.head()

# ## Visualize Each Digit

pixel_colnames = df.columns[:-1]

# Get all columns except the label column for the first image

image_values = df.loc[0, pixel_colnames].values

plt.figure(figsize=(8,4))

for index in range(0, 2):

plt.subplot(1, 2, 1 + index )

image_values = df.loc[index, pixel_colnames].values

image_label = df.loc[index, 'label']

plt.imshow(image_values.reshape(28,28), cmap ='gray')

plt.title('Label: ' + str(image_label), fontsize = 18)

# ## Splitting Data into Training and Test Sets

X_train, X_test, y_train, y_test = train_test_split(df[pixel_colnames], df['label'], random_state=0)

# ## Standardize the Data

# PCA and logisitic regression are sensitive to the scale of your features. You can standardize your data onto unit scale (mean = 0 and variance = 1) by using Scikit-Learn's `StandardScaler`.

# +

scaler = StandardScaler()

# Fit on training set only.

scaler.fit(X_train)

# Apply transform to both the training set and the test set.

X_train = scaler.transform(X_train)

X_test = scaler.transform(X_test)

# -

# Variable created for demonstational purposes in the notebook

scaledTrainImages = X_train.copy()

# ## PCA then Logistic Regression

# +

"""

n_components = .90 means that scikit-learn will choose the minimum number

of principal components such that 90% of the variance is retained.

"""

pca = PCA(n_components = .90)

# Fit PCA on training set only

pca.fit(X_train)

# Apply the mapping (transform) to both the training set and the test set.

X_train = pca.transform(X_train)

X_test = pca.transform(X_test)

# Logistic Regression

clf = LogisticRegression()

clf.fit(X_train, y_train)

print('Number of dimensions before PCA: ' + str(len(pixel_colnames)))

print('Number of dimensions after PCA: ' + str(pca.n_components_))

print('Classification accuracy: ' + str(clf.score(X_test, y_test)))

# -

# ## Relationship between Cumulative Explained Variance and Number of Principal Components

#

# Don't worry if you don't understand the code in this section. It is to show the level of redundancy present in multiple dimensions.

# +

# if n_components is not set, all components are kept (784 in this case)

pca = PCA()

pca.fit(scaledTrainImages)

# Summing explained variance

tot = sum(pca.explained_variance_)

var_exp = [(i/tot)*100 for i in sorted(pca.explained_variance_, reverse=True)]

# Cumulative explained variance

cum_var_exp = np.cumsum(var_exp)

# PLOT OUT THE EXPLAINED VARIANCES SUPERIMPOSED

fig, ax = plt.subplots(nrows = 1, ncols = 1, figsize = (10,7));

ax.tick_params(labelsize = 18)

ax.plot(range(1, 785), cum_var_exp, label='cumulative explained variance')

ax.set_ylabel('Cumulative Explained variance', fontsize = 16)

ax.set_xlabel('Principal components', fontsize = 16)

ax.axhline(y = 95, color='k', linestyle='--', label = '95% Explained Variance')

ax.axhline(y = 90, color='c', linestyle='--', label = '90% Explained Variance')

ax.axhline(y = 85, color='r', linestyle='--', label = '85% Explained Variance')

ax.legend(loc='best', markerscale = 1.0, fontsize = 12)

# -

# So that's it, PCA can be used to speed up the fitting of your algorithm.

| scikitlearn/Ex_Files_ML_SciKit_Learn/Exercise Files/03_04_PCA_to_Speed_Up_Machine_Learning.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Identifying People in CDCS Metadata Descriptions

# Run named entity recognition (NER) on the metadata descriptions extracted from the CDCS' online archival catalog.

#

# The code in this Jupyter Notebook is part of a PhD project to create a gold standard dataset labeled for gender biased language, on which a classifier can be trained to identify gender bias in archival metadata descriptions.

#

# This project is focused on the English language and archival institutions in the United Kingdom.

#

# * Author: <NAME>

# * Date: November 2020 - February 2021

# * Project: PhD Case Study 1

# * Data Provider: [ArchivesSpace](https://archives.collections.ed.ac.uk/), Centre for Research Collections, University of Edinburgh

#

# ***

#

# **Table of Contents**

#

# [I. Corpus Statistics](#corpus-stats)

#

# [II. Named Entity Recognition with SpaCy](#ner)

#

# [III. Checking for Duplicate Descritions](#check-dups)

#

# ***

# +

import pandas as pd

import nltk

from nltk.tokenize import word_tokenize

from nltk.tokenize import sent_tokenize

# nltk.download('punkt')

from nltk.corpus import PlaintextCorpusReader

# nltk.download('averaged_perceptron_tagger')

from nltk.corpus import stopwords

# nltk.download('stopwords')

from nltk.tag import pos_tag

import string

import csv

import re

import spacy

from spacy import displacy

from collections import Counter

try:

import en_core_web_sm

except ImportError:

print("Downlading en_core_web_sm model")

import sys

# !{sys.executable} -m spacy download en_core_web_sm

else:

print("Already have en_core_web_sm")

import en_core_web_sm

nlp = en_core_web_sm.load()

# -

# <a id="corpus-stats"></a>

# ## I. Corpus Statisctics

descs = PlaintextCorpusReader("../AnnotationData/descriptions_by_fonds_split_with_ann/descriptions_by_fonds_split_with_ann/", ".+\.txt")

tokens = descs.words()

print(tokens[0:20])

sentences = descs.sents()

print(sentences[0:5])

# +

def corpusStatistics(plaintext_corpus_read_lists):

total_chars = 0

total_tokens = 0

total_sents = 0

total_files = 0

# fileids are the TXT file names in the nls-text-ladiesDebating folder:

for fileid in plaintext_corpus_read_lists.fileids():

total_chars += len(plaintext_corpus_read_lists.raw(fileid))

total_tokens += len(plaintext_corpus_read_lists.words(fileid))

total_sents += len(plaintext_corpus_read_lists.sents(fileid))

total_files += 1

print("Total Estimated...")

print(" Characters:", total_chars)

print(" Tokens:", total_tokens)

print(" Sentences:", total_sents)

print(" Files:", total_files)

corpusStatistics(descs)

# -

words = [t for t in tokens if t.isalpha()]

print("Total Estimated Words:",len(words))

to_exclude = set((stopwords.words("english")) + ["Title", "Identifier", "Scope",

"Contents", "Biograhical", "Historical", "Processing", "Information"]

)

words = [w for w in words if w not in to_exclude]

print("Total Words Excluding Metadata Field Names:",len(words))

# <a id="ner"></a>

# ## II. Name Entity Recognition with spaCy

# Run named entity recognition (NER) to estimate the names in the dataset and get a sense for the value in manually labeling names during the annotation process.

fileids = descs.fileids()

sentences = []

for fileid in fileids:

file = descs.raw(fileid)

sentences += nltk.sent_tokenize(file)

person_list = []

for s in sentences:

s_ne = nlp(s)

for entity in s_ne.ents:

if entity.label_ == 'PERSON':

person_list += [entity.text]

unique_persons = list(set(person_list))

print(len(unique_persons))

# + jupyter={"outputs_hidden": true}

unique_persons

# -

# Not perfect...some non-person entities labeled such as `Librarian` and `Diploma`. I'll add labeling person names to the annotation instructions!

# <a id="check-dups"></a>

# ## III. Checking for Duplicate Descriptions

# +

# descs = PlaintextCorpusReader("../AnnotationData/descriptions_by_fonds_split_with_ann/descriptions_by_fonds_split_with_ann/", ".+\.txt")

# fileids = descs.fileids()

# descs.raw(fileids[0])

# -

x = descs.raw(fileids[0])

x.split("\n")

descs_split = []

for fileid in fileids:

d = descs.raw(fileid).split("\n")

for section in d:

if len(section) > 0:

if "Identifier" not in section and "Title" not in section and "Scope and Contents" not in section and "Biographical / Historical" not in section and "Processing Information" not in section and "No information provided" not in section:

descs_split += [section]

print(descs_split[0:4])

len(descs_split)

len(set(descs_split))

print(len(descs_split)-len(set(descs_split)))

# It looks like there are 11,378 descriptions that are repeated in the corpus.

| Analysis_Preannotation.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # NER with bertreach

#

# > "Finetuning bertreach for NER"

#

# - toc: false

# - branch: master

# - hidden: true

# - badges: true

# - categories: [irish, ner, bert, bertreach]

# + [markdown] id="UldPY2vaxCOJ"

# This is a lightly edited version of [this notebook](https://github.com/huggingface/notebooks/blob/master/examples/token_classification.ipynb).

# + [markdown] id="X4cRE8IbIrIV"

# If you're opening this Notebook on colab, you will probably need to install 🤗 Transformers and 🤗 Datasets. Uncomment the following cell and run it.

# + id="MOsHUjgdIrIW"

# %%capture

# !pip install datasets transformers seqeval

# + [markdown] id="1IU9pa_DPSOk"

# If you're opening this notebook locally, make sure your environment has an install from the last version of those libraries.

#

# To be able to share your model with the community and generate results like the one shown in the picture below via the inference API, there are a few more steps to follow.

#

# First you have to store your authentication token from the Hugging Face website (sign up [here](https://huggingface.co/join) if you haven't already!) then execute the following cell and input your username and password:

# + [markdown] id="ARRVh3-ixvs9"

# (Huggingface notebooks skip this bit, but you need to set credential.helper before anything else works).

# + id="9RX-uPZLxurJ"

# !git config --global credential.helper store

# + colab={"base_uri": "https://localhost:8080/", "height": 283, "referenced_widgets": ["445ac7ed80f7436eaa1f81e8f71cb1e5", "<KEY>", "f7a501140914451d952b3f1528c907d7", "11eedcf6113342f8a5795f40686cb7cd", "efb271ceea364dde8e54a5397615c267", "ed03af8d393543be9abedf5f6ac070d5", "<KEY>", "<KEY>", "<KEY>", "5e16bebe96ad4b6fb4b141675fe6be4c", "<KEY>", "9594610636864c05a147ad11dfee7063", "418a605ac1d54f8f852c76504a941934", "d981126fe0a649b5aba5d4a7f2c3bbdf", "abc72496d5eb48a787ddd8f4f1ab21e8", "2cccaab9e726491592efc43d2296698c"]} id="npzw8gOYPSOl" outputId="f9c0bfaa-2a9e-4f0f-9746-bb2002a619b2"

from huggingface_hub import notebook_login

notebook_login()

# + [markdown] id="5-CthmJKPSOm"

# Then you need to install Git-LFS. Uncomment the following instructions:

# + colab={"base_uri": "https://localhost:8080/"} id="7YAk6M5KPSOn" outputId="154c5842-906c-4f27-e26b-aa7ad595cd4b"

# !apt install git-lfs

# + [markdown] id="CHYxLRR8PSOo"

# Make sure your version of Transformers is at least 4.11.0 since the functionality was introduced in that version:

# + colab={"base_uri": "https://localhost:8080/"} id="U7ZVbepsPSOp" outputId="8036e6e0-eb72-4b89-ca57-e3d60c0b7f0f"

import transformers

print(transformers.__version__)

# + [markdown] id="HFASsisvIrIb"

# You can find a script version of this notebook to fine-tune your model in a distributed fashion using multiple GPUs or TPUs [here](https://github.com/huggingface/transformers/tree/master/examples/token-classification).

# + [markdown] id="rEJBSTyZIrIb"

# # Fine-tuning a model on a token classification task

# + [markdown] id="w6vfS60cPSOt"

# In this notebook, we will see how to fine-tune one of the [🤗 Transformers](https://github.com/huggingface/transformers) model to a token classification task, which is the task of predicting a label for each token.

#

#

#

# The most common token classification tasks are:

#

# - NER (Named-entity recognition) Classify the entities in the text (person, organization, location...).

# - POS (Part-of-speech tagging) Grammatically classify the tokens (noun, verb, adjective...)

# - Chunk (Chunking) Grammatically classify the tokens and group them into "chunks" that go together

#

# We will see how to easily load a dataset for these kinds of tasks and use the `Trainer` API to fine-tune a model on it.

# + [markdown] id="4RRkXuteIrIh"

# This notebook is built to run on any token classification task, with any model checkpoint from the [Model Hub](https://huggingface.co/models) as long as that model has a version with a token classification head and a fast tokenizer (check on [this table](https://huggingface.co/transformers/index.html#bigtable) if this is the case). It might just need some small adjustments if you decide to use a different dataset than the one used here. Depending on you model and the GPU you are using, you might need to adjust the batch size to avoid out-of-memory errors. Set those three parameters, then the rest of the notebook should run smoothly:

# + id="zVvslsfMIrIh"

task = "ner" # Should be one of "ner", "pos" or "chunk"

model_checkpoint = "jimregan/BERTreach"

batch_size = 16

# + [markdown] id="whPRbBNbIrIl"

# ## Loading the dataset

# + [markdown] id="W7QYTpxXIrIl"

# We will use the [🤗 Datasets](https://github.com/huggingface/datasets) library to download the data and get the metric we need to use for evaluation (to compare our model to the benchmark). This can be easily done with the functions `load_dataset` and `load_metric`.

# + id="IreSlFmlIrIm"

from datasets import load_dataset, load_metric

# + [markdown] id="CKx2zKs5IrIq"

# For our example here, we'll use the [CONLL 2003 dataset](https://www.aclweb.org/anthology/W03-0419.pdf). The notebook should work with any token classification dataset provided by the 🤗 Datasets library. If you're using your own dataset defined from a JSON or csv file (see the [Datasets documentation](https://huggingface.co/docs/datasets/loading_datasets.html#from-local-files) on how to load them), it might need some adjustments in the names of the columns used.

# + colab={"base_uri": "https://localhost:8080/", "height": 67, "referenced_widgets": ["50c7c01d13da42d6b264ea36684e5f1f", "<KEY>", "<KEY>", "d6c350910a3a48d485cfeab446eb60ff", "6c1e4ea54ae844a3a2d32a8b4a71e962", "e41938f14b3946cf9874de60a1cf5fa5", "174e47a8e6da4edca874390cab52707e", "f54f7775ee234038a4071ab8f1841186", "<KEY>", "61451ee6101842a98d05aca771754332", "d5c6208c774d4440894efb480abc1664"]} id="s_AY1ATSIrIq" outputId="f5442b3e-5c6a-409f-ce71-a5b46b894803"

datasets = load_dataset("wikiann", "ga")

# + [markdown] id="RzfPtOMoIrIu"

# The `datasets` object itself is [`DatasetDict`](https://huggingface.co/docs/datasets/package_reference/main_classes.html#datasetdict), which contains one key for the training, validation and test set.

# + colab={"base_uri": "https://localhost:8080/"} id="GWiVUF0jIrIv" outputId="4cda094b-9ed3-4a00-fc41-f5a163b3bff2"

datasets

# + [markdown] id="X_XUcknPPSO1"

# We can see the training, validation and test sets all have a column for the tokens (the input texts split into words) and one column of labels for each kind of task we introduced before.

# + [markdown] id="u3EtYfeHIrIz"

# To access an actual element, you need to select a split first, then give an index:

# + colab={"base_uri": "https://localhost:8080/"} id="X6HrpprwIrIz" outputId="24e54194-65b2-433a-dba2-7bf1ac7eb5bb"

datasets["train"][0]

# + [markdown] id="lppNSJaKPSO3"

# The labels are already coded as integer ids to be easily usable by our model, but the correspondence with the actual categories is stored in the `features` of the dataset:

# + colab={"base_uri": "https://localhost:8080/"} id="vetcKtTJPSO3" outputId="d06b4662-e8c2-4c6f-ea22-67cbc5c9a7a5"

datasets["train"].features[f"ner_tags"]

# + [markdown] id="URaPOdjRPSO5"

# So for the NER tags, 0 corresponds to 'O', 1 to 'B-PER' etc... On top of the 'O' (which means no special entity), there are four labels for NER here, each prefixed with 'B-' (for beginning) or 'I-' (for intermediate), that indicate if the token is the first one for the current group with the label or not:

# - 'PER' for person

# - 'ORG' for organization

# - 'LOC' for location

# - 'MISC' for miscellaneous

# + [markdown] id="dCCD_uaQPSO5"

# Since the labels are lists of `ClassLabel`, the actual names of the labels are nested in the `feature` attribute of the object above:

# + colab={"base_uri": "https://localhost:8080/"} id="Q9jfLo3rPSO6" outputId="a258d350-8279-498b-d94a-da31853b5dfb"

label_list = datasets["train"].features[f"{task}_tags"].feature.names

label_list

# + [markdown] id="WHUmphG3IrI3"

# To get a sense of what the data looks like, the following function will show some examples picked randomly in the dataset (automatically decoding the labels in passing).

# + id="i3j8APAoIrI3"

from datasets import ClassLabel, Sequence

import random

import pandas as pd

from IPython.display import display, HTML

def show_random_elements(dataset, num_examples=10):

assert num_examples <= len(dataset), "Can't pick more elements than there are in the dataset."

picks = []

for _ in range(num_examples):

pick = random.randint(0, len(dataset)-1)

while pick in picks:

pick = random.randint(0, len(dataset)-1)

picks.append(pick)

df = pd.DataFrame(dataset[picks])

for column, typ in dataset.features.items():

if isinstance(typ, ClassLabel):

df[column] = df[column].transform(lambda i: typ.names[i])

elif isinstance(typ, Sequence) and isinstance(typ.feature, ClassLabel):

df[column] = df[column].transform(lambda x: [typ.feature.names[i] for i in x])

display(HTML(df.to_html()))

# + colab={"base_uri": "https://localhost:8080/", "height": 432} id="SZy5tRB_IrI7" outputId="35f50cf0-4606-48be-97cc-54643321ccba"

show_random_elements(datasets["train"])

# + [markdown] id="n9qywopnIrJH"

# ## Preprocessing the data

# + [markdown] id="YVx71GdAIrJH"

# Before we can feed those texts to our model, we need to preprocess them. This is done by a 🤗 Transformers `Tokenizer` which will (as the name indicates) tokenize the inputs (including converting the tokens to their corresponding IDs in the pretrained vocabulary) and put it in a format the model expects, as well as generate the other inputs that model requires.

#

# To do all of this, we instantiate our tokenizer with the `AutoTokenizer.from_pretrained` method, which will ensure:

#

# - we get a tokenizer that corresponds to the model architecture we want to use,

# - we download the vocabulary used when pretraining this specific checkpoint.

#

# That vocabulary will be cached, so it's not downloaded again the next time we run the cell.

# + colab={"base_uri": "https://localhost:8080/"} id="eXNLu_-nIrJI" outputId="5981ce18-02da-4278-c75d-9939729142aa"

from transformers import RobertaTokenizerFast

tokenizer = RobertaTokenizerFast.from_pretrained(model_checkpoint, add_prefix_space=True)

# + [markdown] id="Vl6IidfdIrJK"

# The following assertion ensures that our tokenizer is a fast tokenizers (backed by Rust) from the 🤗 Tokenizers library. Those fast tokenizers are available for almost all models, and we will need some of the special features they have for our preprocessing.

# + id="pZaRFHN7PSO-"

import transformers

assert isinstance(tokenizer, transformers.PreTrainedTokenizerFast)

# + [markdown] id="cfiX-_BgPSO_"

# You can check which type of models have a fast tokenizer available and which don't on the [big table of models](https://huggingface.co/transformers/index.html#bigtable).

# + [markdown] id="rowT4iCLIrJK"

# You can directly call this tokenizer on one sentence:

# + colab={"base_uri": "https://localhost:8080/"} id="a5hBlsrHIrJL" outputId="2bd9771e-e59b-460c-a325-45333c61cee2"

tokenizer("Is abairt amháin é seo!")

# + [markdown] id="B9K1KIg4PSPA"

# Depending on the model you selected, you will see different keys in the dictionary returned by the cell above. They don't matter much for what we're doing here (just know they are required by the model we will instantiate later), you can learn more about them in [this tutorial](https://huggingface.co/transformers/preprocessing.html) if you're interested.

#

# If, as is the case here, your inputs have already been split into words, you should pass the list of words to your tokenzier with the argument `is_split_into_words=True`:

# + colab={"base_uri": "https://localhost:8080/"} id="qdxB67GzPSPA" outputId="3bedb26b-6e89-4885-a85e-8f3416478539"

tokenizer(["Hello", ",", "this", "is", "one", "sentence", "split", "into", "words", "."], is_split_into_words=True)

# + [markdown] id="XjXRHpJLPSPB"

# Note that transformers are often pretrained with subword tokenizers, meaning that even if your inputs have been split into words already, each of those words could be split again by the tokenizer. Let's look at an example of that:

# + colab={"base_uri": "https://localhost:8080/"} id="mi8IJrMFPSPB" outputId="cad513ef-3f29-4e5b-c134-4e290d067ef1"

example = datasets["train"][4]

print(example["tokens"])

# + colab={"base_uri": "https://localhost:8080/"} id="NKP2znNrPSPC" outputId="93a5dee3-b047-4231-974a-bf569d1cc0ea"

tokenized_input = tokenizer(example["tokens"], is_split_into_words=True)

tokens = tokenizer.convert_ids_to_tokens(tokenized_input["input_ids"])

print(tokens)

# + [markdown] id="aL91xiUePSPC"

# Here the words "Zwingmann" and "sheepmeat" have been split in three subtokens.

#

# This means that we need to do some processing on our labels as the input ids returned by the tokenizer are longer than the lists of labels our dataset contain, first because some special tokens might be added (we can a `[CLS]` and a `[SEP]` above) and then because of those possible splits of words in multiple tokens:

# + colab={"base_uri": "https://localhost:8080/"} id="3intBYzJPSPD" outputId="b5296afe-243a-4dc6-f0f2-14c4c81814fd"

len(example[f"{task}_tags"]), len(tokenized_input["input_ids"])

# + [markdown] id="YFEDGF_WPSPD"

# Thankfully, the tokenizer returns outputs that have a `word_ids` method which can help us.

# + colab={"base_uri": "https://localhost:8080/"} id="ZjPu1wxoPSPD" outputId="96a09327-cc87-478c-ee60-356f38207b03"

print(tokenized_input.word_ids())

# + [markdown] id="WqHQp3otPSPE"

# As we can see, it returns a list with the same number of elements as our processed input ids, mapping special tokens to `None` and all other tokens to their respective word. This way, we can align the labels with the processed input ids.

# + colab={"base_uri": "https://localhost:8080/"} id="S2bLHIshPSPE" outputId="b5aa29df-fb7b-4eaf-de0b-3e06b66f4847"

word_ids = tokenized_input.word_ids()

aligned_labels = [-100 if i is None else example[f"{task}_tags"][i] for i in word_ids]

print(len(aligned_labels), len(tokenized_input["input_ids"]))

# + [markdown] id="cj3LA4UXPSPE"

# Here we set the labels of all special tokens to -100 (the index that is ignored by PyTorch) and the labels of all other tokens to the label of the word they come from. Another strategy is to set the label only on the first token obtained from a given word, and give a label of -100 to the other subtokens from the same word. We propose the two strategies here, just change the value of the following flag:

# + id="lJkzdIlIPSPF"

label_all_tokens = True

# + [markdown] id="2C0hcmp9IrJQ"

# We're now ready to write the function that will preprocess our samples. We feed them to the `tokenizer` with the argument `truncation=True` (to truncate texts that are bigger than the maximum size allowed by the model) and `is_split_into_words=True` (as seen above). Then we align the labels with the token ids using the strategy we picked:

# + id="vc0BSBLIIrJQ"

def tokenize_and_align_labels(examples):

tokenized_inputs = tokenizer(examples["tokens"], truncation=True, is_split_into_words=True)

labels = []

for i, label in enumerate(examples[f"{task}_tags"]):

word_ids = tokenized_inputs.word_ids(batch_index=i)

previous_word_idx = None

label_ids = []

for word_idx in word_ids:

# Special tokens have a word id that is None. We set the label to -100 so they are automatically

# ignored in the loss function.

if word_idx is None:

label_ids.append(-100)

# We set the label for the first token of each word.

elif word_idx != previous_word_idx:

label_ids.append(label[word_idx])

# For the other tokens in a word, we set the label to either the current label or -100, depending on

# the label_all_tokens flag.

else:

label_ids.append(label[word_idx] if label_all_tokens else -100)

previous_word_idx = word_idx

labels.append(label_ids)

tokenized_inputs["labels"] = labels

return tokenized_inputs

# + [markdown] id="0lm8ozrJIrJR"

# This function works with one or several examples. In the case of several examples, the tokenizer will return a list of lists for each key:

# + colab={"base_uri": "https://localhost:8080/"} id="-b70jh26IrJS" outputId="4ffb9712-e409-43d1-991f-239bbfa46c51"

tokenize_and_align_labels(datasets['train'][:5])

# + [markdown] id="zS-6iXTkIrJT"

# To apply this function on all the sentences (or pairs of sentences) in our dataset, we just use the `map` method of our `dataset` object we created earlier. This will apply the function on all the elements of all the splits in `dataset`, so our training, validation and testing data will be preprocessed in one single command.

# + colab={"base_uri": "https://localhost:8080/", "height": 113, "referenced_widgets": ["ee50223163a0429b83391b426dbf4191", "<KEY>", "<KEY>", "<KEY>", "<KEY>", "<KEY>", "<KEY>", "af435b9755a942caba61094ec781870a", "<KEY>", "<KEY>", "<KEY>", "<KEY>", "<KEY>", "265a7a3116f4435abadf5b8d5e8ab594", "8f947072da59454c88e18196dc9976d0", "<KEY>", "<KEY>", "<KEY>", "<KEY>", "<KEY>", "<KEY>", "<KEY>", "fa5a85c7a80642d79abf1fe8f8d8dca4", "c406925717dd42e480e0f6b3957e390f", "df92ae2e9102414aa589c3a1040aed9a", "<KEY>", "<KEY>", "6ea5e881272241569d79091f8478ab95", "<KEY>", "<KEY>", "<KEY>", "<KEY>", "3bda313521644f9284681fc804a8848f"]} id="DDtsaJeVIrJT" outputId="abf1d75d-e60a-4e55-ced6-67c554a7d165"

tokenized_datasets = datasets.map(tokenize_and_align_labels, batched=True)

# + [markdown] id="voWiw8C7IrJV"

# Even better, the results are automatically cached by the 🤗 Datasets library to avoid spending time on this step the next time you run your notebook. The 🤗 Datasets library is normally smart enough to detect when the function you pass to map has changed (and thus requires to not use the cache data). For instance, it will properly detect if you change the task in the first cell and rerun the notebook. 🤗 Datasets warns you when it uses cached files, you can pass `load_from_cache_file=False` in the call to `map` to not use the cached files and force the preprocessing to be applied again.