code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # How to Create a US Census Bar Chart Race

# ## Overview

# The beginning of every data science project starts with data and this will be the focus of this project. Usually, this part involves three steps:

# * data acquisition,

# * data cleaning and

# * data visualization.

#

# Data visualization can mean anything from simple print statements to fancy plots and animations.

#

# ## Data Acquisition

# As a data source, we use Wikipedia's census data websites. Since this data is only available on the web, we need to parse the website and extract the useful data. We use the popular [pandas](https://pandas.pydata.org/) package for this task.

import pandas as pd

# We also be using two more packages, `pickle` which allows us to easily save data to disk and `re` which allows us to use [regular expression](https://regexr.com/) syntax. Finally, for nicer table formatting, we import `IPython.display`.

# +

import pickle

import re

from IPython.display import display, HTML

# Define empty Census data dictionary - the keys will be the census years.

Census_raw = {}

# -

# ### Parsing Wikipedia

# Generally, parsing is not recommended to get data from the web as we often have to deal with messy formatting and a lot of cleaning up is needed. However, in this case, Wikipedia's layout of the US census years seems relatively consistent and thus we give parsing a shot. The following code goes through all Wikipedia census websites from [1790](https://en.wikipedia.org/wiki/1790_United_States_Census) to [2010](https://en.wikipedia.org/wiki/2010_United_States_Census) and collects all tables from the pages. We also add the latest census estimate from 2019.

# +

# Uncomment if you like to parse the data again (not recommended!)

# for year in range(1790,2020,10):

# Census_raw[year]=pd.read_html('https://en.wikipedia.org/wiki/{}_United_States_Census'.format(year))

# Census_raw[2019]=pd.read_html('https://en.wikipedia.org/wiki/List_of_states_and_territories_of_the_United_States_by_population')

# -

# Once, we have parsed the data for the first time, we save it to disk and when we run our notebook the next time, we can skip the parsing step.

if len(Census_raw.keys()):

with open('rawdata.pickle', 'wb') as handle:

pickle.dump(Census_raw, handle, protocol=pickle.HIGHEST_PROTOCOL)

else:

with open('rawdata.pickle', 'rb') as handle:

Census_raw = pickle.load(handle)

# ## Data Cleaning

#

# All Census pages contain several tables but we are only interested in those tables that contain `State` (or `District` as in [1800](https://en.wikipedia.org/wiki/1800_United_States_Census)) as one of their columns. Most pages also have a seperate statistic on largest cities and we thus ignore all tables that have `City` as one of their columns.

Census = {}

for key in Census_raw.keys():

Census[key] = [table for table in Census_raw[key] if ('State' in table.columns) or (('District' in table.columns))]

if len(Census[key]) > 1:

Census[key] = [table for table in Census[key] if ('City' not in table.columns)][0]

else:

Census[key] = Census[key][0]

# After these relatively easy manipulations, we need to get our hands dirty and make sure that all tables are of the correct format. Often, it is best to visualize the tables (or at least the first entry) to get a better idea. So let's do that.

for key in Census.keys():

print("Displaying values for {}".format(key))

display(Census[key].head(1))

# Ok, so it looks like 1790 and 1800 use `Total` instead of `Population` and 1800 also uses `District` instead of `State`. Let's change that!

Census[1790].rename(columns = {'Total':'Population'}, inplace=True)

Census[1800].rename(columns = {'Total':'Population', 'District':'State'}, inplace=True)

# Also, from 1990 to 2010, the population of the previous decade is listed and we need to make sure we get the right one. For this task, we use regular expressions to select the column that contains the current year. Once we have found the right column, we rename it to `Population`. For 2019, we need to change it from `Population estimate, July 1, 2019[5]` to `Population`.

for year in range(1990,2020,10):

d = {'Population as of{}.*'.format(year): 'Population'}

Census[year].columns = Census[year].columns.to_series().replace(d, regex=True)

Census[2019].columns = Census[2019].columns.to_series().replace({'Population estimate, July 1, 2019.*': 'Population'}, regex=True)

# Next, we get rid of all columns other than `State` and `Population`. We also remove all non-numerical characters from the `Population` column with some regular expression magic. Once we have done these two steps, we display the first 8 entries of all tables.

for key in Census.keys():

print("Displaying values for {}".format(key))

Census[key] = Census[key][['State','Population']]

Census[key].loc[:,'Population']=Census[key].loc[:,'Population'].apply(

lambda s: int(re.sub(r'\[.*','', str(s)).replace(',','')))

display(Census[key].head(8))

# ## The year 1800 😒

display(Census[1800].head(10))

# Unfortunately, the year 1800 doesn't play well with the other years and requires some additional cleaning up (that's why parsing is not recommended). We can see that instead of `States`, the 1800 census data has smaller subdivisions. To remedy this, we match our list of the 50+1 states against the district names and rename them to a single state. For example, our row with `New York (Duchess, Ulster, Orange counties)` will just become `New York`. Once we have renamed them, we group them by state and sum up the populations on all rows that share the same state. Just to be safe, we apply the same action to every census year. Grouping also has the nice side effect of setting the index to our column that we group by (`State`). Finally, we rename our `Population` column to the census year.

# +

States = pd.read_csv('States.csv')['States']

StatesList = States.tolist()

if isinstance(Census[1790].index[0], int):

for key in Census.keys():

for i, row in Census[key].iterrows():

for State in StatesList:

if State in row['State']:

Census[key].loc[i,'State'] = State

Census[key] = Census[key][Census[key]['State'].isin(StatesList)]

Census[key] = Census[key].groupby(['State'],squeeze=True).sum()

Census[key].rename(columns={'Population':str(key)},inplace=True)

display(Census[1800].head(14))

# -

# It worked! Great, now we are ready to put everything in one big table and make some final checks.

#

result_df = pd.DataFrame(States).set_index('States')

for key in Census.keys():

result_df = result_df.add(Census[key],fill_value=0)

result_df.to_csv('State_Census_Historical.csv')

result_df.tail(5)

# It looks like some years have missing information because records were lost. For instance, there is no value for `West Virginia` in 1860. To fix this, we interpolate all missing values.

result_df.interpolate(axis=1, inplace = True)

result_df.tail()

# We can see how `West Virginia`'s 1860 result was successfully interpolated between its neighbors. Now we are ready to go and visualize the census data over the past 230 years!

# ## Data Visualization

# Getting and cleaning the data was by far the hardest part. Now that we got this out of the way, we can use a visualization tool such as Flourish to get our final **US Census Bar Chart Race** which is available at https://public.flourish.studio/visualisation/1322083.

#

# Congrats on completing this tutorial and I hope it can help you in your next data science project!

HTML("<iframe src='https://public.flourish.studio/visualisation/1322083/embed' frameborder='0' scrolling='no' style='width:100%;height:600px;'></iframe><div style='width:100%!;margin-top:4px!important;text-align:right!important;'><a class='flourish-credit' href='https://public.flourish.studio/visualisation/1322083/?utm_source=embed&utm_campaign=visualisation/1322083' target='_top' style='text-decoration:none!important'><img alt='Made with Flourish' src='https://public.flourish.studio/resources/made_with_flourish.svg' style='width:105px!important;height:16px!important;border:none!important;margin:0!important;'> </a></div>")

# ## Licensing

# This work is licensed under a [Creative Commons Attribution 4.0 International

# License][cc-by]. This means that you are free to:

#

# * **Share** — copy and redistribute the material in any medium or format

# * **Adapt** — remix, transform, and build upon the material for any purpose, even commercially.

#

# Under the following terms:

#

# * **Attribution** — a link to this [github repo](https://github.com/AntonMu/Census2020)!

#

# [![CC BY 4.0][cc-by-image]][cc-by]

#

# [cc-by]: http://creativecommons.org/licenses/by/4.0/

# [cc-by-image]: https://i.creativecommons.org/l/by/4.0/88x31.png

# [cc-by-shield]: https://img.shields.io/badge/License-CC%20BY%204.0-lightgrey.svg

| USCensus2020.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: fe_test

# language: python

# name: fe_test

# ---

# ## Outlier Engineering

#

#

# An outlier is a data point which is significantly different from the remaining data. “An outlier is an observation which deviates so much from the other observations as to arouse suspicions that it was generated by a different mechanism.” [<NAME>. Identification of Outliers, Chapman and Hall , 1980].

#

# Statistics such as the mean and variance are very susceptible to outliers. In addition, **some Machine Learning models are sensitive to outliers** which may decrease their performance. Thus, depending on which algorithm we wish to train, we often remove outliers from our variables.

#

# We discussed in section 3 of this course how to identify outliers. In this section, we we discuss how we can process them to train our machine learning models.

#

#

# ## How can we pre-process outliers?

#

# - Trimming: remove the outliers from our dataset

# - Treat outliers as missing data, and proceed with any missing data imputation technique

# - Discrestisation: outliers are placed in border bins together with higher or lower values of the distribution

# - Censoring: capping the variable distribution at a max and / or minimum value

#

# **Censoring** is also known as:

#

# - top and bottom coding

# - windsorisation

# - capping

#

#

# ## Censoring or Capping.

#

# **Censoring**, or **capping**, means capping the maximum and /or minimum of a distribution at an arbitrary value. On other words, values bigger or smaller than the arbitrarily determined ones are **censored**.

#

# Capping can be done at both tails, or just one of the tails, depending on the variable and the user.

#

# Check my talk in [pydata](https://www.youtube.com/watch?v=KHGGlozsRtA) for an example of capping used in a finance company.

#

# The numbers at which to cap the distribution can be determined:

#

# - arbitrarily

# - using the inter-quantal range proximity rule

# - using the gaussian approximation

# - using quantiles

#

#

# ### Advantages

#

# - does not remove data

#

# ### Limitations

#

# - distorts the distributions of the variables

# - distorts the relationships among variables

#

#

# ## In this Demo

#

# We will see how to perform capping with the quantiles using the Boston House Dataset

#

# ## Important

#

# When doing capping, we tend to cap values both in train and test set. It is important to remember that the capping values MUST be derived from the train set. And then use those same values to cap the variables in the test set

#

# I will not do that in this demo, but please keep that in mind when setting up your pipelines

# +

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

import seaborn as sns

# for Q-Q plots

import scipy.stats as stats

# boston house dataset for the demo

from sklearn.datasets import load_boston

from feature_engine.outlier_removers import Winsorizer

# +

# load the the Boston House price data

# load the boston dataset from sklearn

boston_dataset = load_boston()

# create a dataframe with the independent variables

# I will use only 3 of the total variables for this demo

boston = pd.DataFrame(boston_dataset.data,

columns=boston_dataset.feature_names)[[

'RM', 'LSTAT', 'CRIM'

]]

# add the target

boston['MEDV'] = boston_dataset.target

boston.head()

# +

# function to create histogram, Q-Q plot and

# boxplot. We learned this in section 3 of the course

def diagnostic_plots(df, variable):

# function takes a dataframe (df) and

# the variable of interest as arguments

# define figure size

plt.figure(figsize=(16, 4))

# histogram

plt.subplot(1, 3, 1)

sns.distplot(df[variable], bins=30)

plt.title('Histogram')

# Q-Q plot

plt.subplot(1, 3, 2)

stats.probplot(df[variable], dist="norm", plot=plt)

plt.ylabel('RM quantiles')

# boxplot

plt.subplot(1, 3, 3)

sns.boxplot(y=df[variable])

plt.title('Boxplot')

plt.show()

# +

# let's find outliers in RM

diagnostic_plots(boston, 'RM')

# +

# visualise outliers in LSTAT

diagnostic_plots(boston, 'LSTAT')

# +

# outliers in CRIM

diagnostic_plots(boston, 'CRIM')

# -

# There are outliers in all of the above variables. RM shows outliers in both tails, whereas LSTAT and CRIM only on the right tail.

#

# To find the outliers, let's re-utilise the function we learned in section 3:

def find_boundaries(df, variable):

# the boundaries are the quantiles

lower_boundary = df[variable].quantile(0.05)

upper_boundary = df[variable].quantile(0.95)

return upper_boundary, lower_boundary

# +

# find limits for RM

RM_upper_limit, RM_lower_limit = find_boundaries(boston, 'RM')

RM_upper_limit, RM_lower_limit

# +

# limits for LSTAT

LSTAT_upper_limit, LSTAT_lower_limit = find_boundaries(boston, 'LSTAT')

LSTAT_upper_limit, LSTAT_lower_limit

# +

# limits for CRIM

CRIM_upper_limit, CRIM_lower_limit = find_boundaries(boston, 'CRIM')

CRIM_upper_limit, CRIM_lower_limit

# +

# Now let's replace the outliers by the maximum and minimum limit

boston['RM']= np.where(boston['RM'] > RM_upper_limit, RM_upper_limit,

np.where(boston['RM'] < RM_lower_limit, RM_lower_limit, boston['RM']))

# +

# Now let's replace the outliers by the maximum and minimum limit

boston['LSTAT']= np.where(boston['LSTAT'] > LSTAT_upper_limit, LSTAT_upper_limit,

np.where(boston['LSTAT'] < LSTAT_lower_limit, LSTAT_lower_limit, boston['LSTAT']))

# +

# Now let's replace the outliers by the maximum and minimum limit

boston['CRIM']= np.where(boston['CRIM'] > CRIM_upper_limit, CRIM_upper_limit,

np.where(boston['CRIM'] < CRIM_lower_limit, CRIM_lower_limit, boston['CRIM']))

# +

# let's explore outliers in the trimmed dataset

# for RM we see much less outliers as in the original dataset

diagnostic_plots(boston, 'RM')

# -

diagnostic_plots(boston, 'LSTAT')

diagnostic_plots(boston, 'CRIM')

# We can see that the outliers are gone, but the variable distribution was distorted quite a bit.

# ## Censoring with feature-engine

# +

# load the the Boston House price data

# load the boston dataset from sklearn

boston_dataset = load_boston()

# create a dataframe with the independent variables

# I will use only 3 of the total variables for this demo

boston = pd.DataFrame(boston_dataset.data,

columns=boston_dataset.feature_names)[[

'RM', 'LSTAT', 'CRIM'

]]

# add the target

boston['MEDV'] = boston_dataset.target

boston.head()

# +

# create the capper

windsoriser = Winsorizer(distribution='quantiles', # choose from skewed, gaussian or quantiles

tail='both', # cap left, right or both tails

fold=0.05,

variables=['RM', 'LSTAT', 'CRIM'])

windsoriser.fit(boston)

# -

boston_t = windsoriser.transform(boston)

diagnostic_plots(boston, 'RM')

diagnostic_plots(boston_t, 'RM')

# we can inspect the minimum caps for each variable

windsoriser.left_tail_caps_

# we can inspect the maximum caps for each variable

windsoriser.right_tail_caps_

| Section-09-Outlier-Engineering/09.04-Capping-Quantiles.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# Boston(42.3601° N, 71.0589° W) is located on the east coast of the US, whereas Seattle(47.6062° N, 122.3321° W) is located on the west coast.

#

# If we compare the weathers, this is what we see:<br>

# Boston, Massachusetts <br>

# Summer High: the July high is around 82.4 degrees<br>

# Winter Low: the January low is 19.2<br>

# Rain: averages 47.4 inches of rain a year<br>

# Snow: averages 48.1 inches of snow a year<br>

#

# Seattle, Washington <br>

# Summer High: the July high is around 75.8 degrees<br>

# Winter Low: the January low is 37<br>

# Rain: averages 38 inches of rain a year<br>

# Snow: averages 4.6 inches of snow a year<br>

# Source: https://www.bestplaces.net/climate/?c1=55363000&c2=52507000<br>

#

#

# # 1. Business Understanding

# Since, they are located almost in the same latitude, and bost are coastal cities, it's worth comparing the cities and find out which is a better place to travel?<br>

# As we can see, **Seattle** is a slightly more comfortable place to visit. I used Airbnb data to find out travelling pattern for the cities. Let’s see what we can infer using the Airbnb data.

#

# This projects aims to solve 4 business questions related to Boston and Seattle data.

#

# **Question 1:** Which place is cheaper to stay? Boston or Seattle. <br>

# If we compare the average housing price between Boston and Seattle, we can determine which place is cheaper.

#

# **Question 2:** What is the best time to visit these places?<br>

# We can find out the pricing change throughout the year.

#

# **Question 3:** Which city is more popular with visitors?<br>

# If we ca find out the monthly availibility of the properties throughout the year, we can answer this question.

#

# **Question 4:** What are the popular areas to stay and what is the recommended type of housing?<br>

# We need to find out the pricing change with the property type, bedrooms, bathrooms, neighbourhood, zipcode etc to determine the popular areas. In this project I tried to find out the relation between the neighbourhood and price and review vs price.

# For the second part we can investigate the relation between property type and price.

# # 2. Data Understanding

# +

# Import useful libraries

# for computation

import numpy as np

import pandas as pd

# for visualization

import matplotlib.pyplot as plt

import seaborn as sns

# %matplotlib inline

# -

# #### Gather the Data

# There are four types of datasets used for this project. "Boston_listing.csv", "Seattle_listings.csv", "Boston_calendar.csv" and "Seattle_calendar.csv".

# +

# reading the dataset

# get the data from here : http://insideairbnb.com/get-the-data.html

# Boston

boston_calendar_sep2016 = pd.read_csv('./data/Boston_calendar_sep2016.csv')

boston_calendar_oct2017 = pd.read_csv('./data/Boston_calendar_oct2017.csv')

boston_calendar_apr2018 = pd.read_csv('./data/Boston_calendar_apr2018.csv')

boston_calendar_jan2019 = pd.read_csv('./data/Boston_calendar_jan2019.csv')

boston_calendar_jan2020 = pd.read_csv('./data/Boston_calendar_jan2020.csv')

boston_listing = pd.read_csv('./data/Boston_listings_jan2020.csv')

# reading the dataset

# get the data from here : http://insideairbnb.com/get-the-data.html

# Seattle

seattle_calendar_jan2016 = pd.read_csv('./data/Seattle_calendar_jan2016.csv')

seattle_calendar_apr2018 = pd.read_csv('./data/Seattle_calendar_apr2018.csv')

seattle_calendar_jan2019 = pd.read_csv('./data/Seattle_calendar_jan2019.csv')

seattle_calendar_jan2020 = pd.read_csv('./data/Seattle_calendar_jan2020.csv')

seattle_listing = pd.read_csv('./data/Seattle_listings_jan2020.csv')

# -

# #### Assess the data

#

# Let's look at one of the boston_calendar and one of the seattle_calnedar data first. There 4 boston_calendar data and 4 seattle_calnedar data taken at different years. Let's check the first 5 rows of one of the datasets (one for Boston one for Seattle)

boston_calendar_oct2017.head()

# rows and columns

print (f"boston_calendar_oct2017 has {boston_calendar_oct2017.shape[0]} rows and {boston_calendar_oct2017.shape[1]} columns")

print ( "Columns are = ", list(boston_calendar_oct2017.columns))

seattle_calendar_jan2016.head()

print (f"seattle_calendar_oct2017 has {seattle_calendar_jan2016.shape[0]} rows and {seattle_calendar_jan2016.shape[1]} columns")

print ( "Columns are = ", list(seattle_calendar_jan2016.columns))

# Which columns had no missing values? Provide a set of column names that have no missing values.

# +

boston_calendar_no_nulls = set(boston_calendar_oct2017.columns[boston_calendar_oct2017.isnull().mean()==0])

boston_calendar_most_nulls = set(boston_calendar_oct2017.columns[boston_calendar_oct2017.isnull().mean() > 0.75])

print("boston_calendar data columns with no missing values \n",boston_calendar_no_nulls) #columns with no missing value

print("\nboston_calendar data columns with atleast values \n",boston_calendar_most_nulls) #columns with atleast 75% missing value

# +

seattle_calendar_no_nulls = set(seattle_calendar_jan2016.columns[seattle_calendar_jan2016.isnull().mean()==0])

seattle_calendar_most_nulls = set(seattle_calendar_jan2016.columns[seattle_calendar_jan2016.isnull().mean() > 0.75])

print("seattle_calendar data columns with no missing values \n",seattle_calendar_no_nulls) #columns with no missing value

print("\n seattle_calendar data columns with atleast values \n",seattle_calendar_most_nulls) #columns with atleast 75% missing value

# -

boston_calendar_oct2017.available.value_counts() # Categorical values

seattle_calendar_jan2016.available.value_counts() # Categorical values

# As we can see, boston_calendar_oct2017 and seattle_calendar_jan2016 has no missing values in **'date', 'available', 'listing_id'** columns.<br>

# **available** column has 2 categorical features **t** means available and **f** means not available.<br>

# Also, the **price** column is NAN is the housing is not available.<br>

# **listing_id** corresponds to host category.

# Let's look at one of the boston_listing and one of the seattle_listing data

boston_listing.head()

print (f"boston_listing has {boston_listing.shape[0]} rows and {boston_listing.shape[1]} columns. \n")

print ( "Columns are = ", list(boston_listing.columns))

seattle_listing.head()

print (f"seattle_listing has {seattle_listing.shape[0]} rows and {seattle_listing.shape[1]} columns. \n")

print ( "Columns are = ", list(seattle_listing.columns))

# As we can see in terms of number of columns, **boston_calendar and seattle_calendar** are identical. Similarly **boston_listing and seattle_listing** are identical.

# Which columns had no missing values? Provide a set of column names that have no missing values.

# +

boston_listing_no_nulls = set(boston_listing.columns[boston_listing.isnull().mean()==0])

boston_listing_most_nulls = set(boston_listing.columns[boston_listing.isnull().mean() > 0.75])

print("boston_listing data columns with no missing values \n",boston_listing_no_nulls) #columns with no missing value

print("\nboston_listing data columns with atleast values \n",boston_listing_most_nulls) #columns with atleast 75% missing value

# +

seattle_listing_no_nulls = set(seattle_listing.columns[seattle_listing.isnull().mean()==0])

seattle_listing_most_nulls = set(seattle_listing.columns[seattle_listing.isnull().mean() > 0.75])

print("seattle_listing data columns with no missing values \n",seattle_listing_no_nulls) #columns with no missing value

print("\n seattle_listing data columns with atleast values \n",seattle_listing_most_nulls) #columns with atleast 75% missing value

# -

# It's better to ignore **'monthly_price', 'neighbourhood_group_cleansed','square_feet', 'weekly_price', 'host_acceptance_rate', 'medium_url', 'thumbnail_url', 'xl_picture_url'** columns from boston_listing and seattle_listing as more than 75% values are missing.

# #### Data insight

#

# For the first 3 business understanding questions we only need boston_calendar/seattle_calendar dataset with **available, date and price** columns.<br>

# there are missing values in the price column. I will discuss how to handle with that.

#

#

# For the last question, we can use **"neighbourhood_cleansed" and "price"** and **"review_scores_rating" and "price"** columns to find out the popular areas and **"property_type" and "price"** columns to find out the recommended housing.<br>

#

# **The good news is there are no missing values! except "review_scores_rating" :)**

# # 3. Data Preparation

# First, we have to remove the '$' sign of the **price** column and convert srting into float

# +

# function to remove $ sign and return float

def str_to_float(string):

"""

INPUT

string - string of the price (ex : $250.00)

OUTPUT

float - returns float value of the price (ex: 250.0)

"""

if string[:1] == '$':

return float(string[1:].replace(',', ''))

else:

return np.nan

# -

# We can extract the month and year from the **date** column

# ### Data Cleaning

#

# ### Boston/Seattle calendar data

# +

# Boston

# Sep 2016

boston_calendar_sep2016["price"] = boston_calendar_sep2016["price"].dropna().apply(str_to_float)

boston_calendar_sep2016["year"] = pd.DatetimeIndex(boston_calendar_sep2016['date']).year # exracting year

boston_calendar_sep2016["month"] = pd.DatetimeIndex(boston_calendar_sep2016['date']).month # extracting month

# Oct 2017

boston_calendar_oct2017["price"] = boston_calendar_oct2017["price"].dropna().apply(str_to_float)

boston_calendar_oct2017["year"] = pd.DatetimeIndex(boston_calendar_oct2017['date']).year

boston_calendar_oct2017["month"] = pd.DatetimeIndex(boston_calendar_oct2017['date']).month

# Apr2018

boston_calendar_apr2018["price"] = boston_calendar_apr2018["price"].dropna().apply(str_to_float)

boston_calendar_apr2018["year"] = pd.DatetimeIndex(boston_calendar_apr2018['date']).year

boston_calendar_apr2018["month"] = pd.DatetimeIndex(boston_calendar_apr2018['date']).month

# Jan 2019

boston_calendar_jan2019["price"] = boston_calendar_jan2019["price"].dropna().apply(str_to_float)

boston_calendar_jan2019["year"] = pd.DatetimeIndex(boston_calendar_jan2019['date']).year

boston_calendar_jan2019["month"] = pd.DatetimeIndex(boston_calendar_jan2019['date']).month

# Jan 2020

boston_calendar_jan2020["price"] = boston_calendar_jan2020["price"].dropna().apply(str_to_float)

boston_calendar_jan2020["year"] = pd.DatetimeIndex(boston_calendar_jan2020['date']).year

boston_calendar_jan2020["month"] = pd.DatetimeIndex(boston_calendar_jan2020['date']).month

# boston listing

boston_listing["price"] = boston_listing["price"].apply(str_to_float)

# Seattle

# Jan 2016

seattle_calendar_jan2016["price"] = seattle_calendar_jan2016["price"].dropna().apply(str_to_float)

seattle_calendar_jan2016["year"] = pd.DatetimeIndex(seattle_calendar_jan2016['date']).year

seattle_calendar_jan2016["month"] = pd.DatetimeIndex(seattle_calendar_jan2016['date']).month

# Apr2018

seattle_calendar_apr2018["price"] = seattle_calendar_apr2018["price"].dropna().apply(str_to_float)

seattle_calendar_apr2018["year"] = pd.DatetimeIndex(seattle_calendar_apr2018['date']).year

seattle_calendar_apr2018["month"] = pd.DatetimeIndex(seattle_calendar_apr2018['date']).month

# Jan 2019

seattle_calendar_jan2019["price"] = seattle_calendar_jan2019["price"].dropna().apply(str_to_float)

seattle_calendar_jan2019["year"] = pd.DatetimeIndex(seattle_calendar_jan2019['date']).year

seattle_calendar_jan2019["month"] = pd.DatetimeIndex(seattle_calendar_jan2019['date']).month

# Jan 2020

seattle_calendar_jan2020["price"] = seattle_calendar_jan2020["price"].dropna().apply(str_to_float)

seattle_calendar_jan2020["year"] = pd.DatetimeIndex(seattle_calendar_jan2020['date']).year

seattle_calendar_jan2020["month"] = pd.DatetimeIndex(seattle_calendar_jan2020['date']).month

# Seattle listing

seattle_listing["price"] = seattle_listing["price"].apply(str_to_float)

# -

# Our plan is to see how much the yearly average pricing has changed from the year 2016-2020 and investigate any general trend. <br>

#

# Let's extract the yearly data from the above dataset. We only need **year ,month, available, and price** columns

#

#

# We are going to filter the Boston/Seattle data w.r.t year(2017-2020 for Boston and 2016,2018-2020 for seattle as 2017 was not available in Airbnb database)

#

# +

# Boston

# Year 2017

boston_2017_p1 = boston_calendar_sep2016[boston_calendar_sep2016["year"] == 2017]

boston_2017_p2 = boston_calendar_oct2017[boston_calendar_oct2017["year"] == 2017]

boston_2017 = pd.concat([boston_2017_p1,boston_2017_p2], axis = 0)

boston_2017 = boston_2017[["year" ,"month", "available" ,"price"]]

# Year 2018

boston_2018_p1 = boston_calendar_oct2017[boston_calendar_oct2017["year"] == 2018]

boston_2018_p2 = boston_calendar_apr2018[boston_calendar_apr2018["year"] == 2018]

boston_2018 = pd.concat([boston_2018_p1,boston_2018_p2], axis = 0)

boston_2018 = boston_2018[["year" ,"month", "available" ,"price"]]

# Year 2019

boston_2019_p1 = boston_calendar_apr2018[boston_calendar_apr2018["year"] == 2019]

boston_2019_p2 = boston_calendar_jan2019[boston_calendar_jan2019["year"] == 2019]

boston_2019 = pd.concat([boston_2019_p1,boston_2019_p2], axis = 0)

boston_2019 = boston_2019[["year" ,"month", "available" ,"price"]]

# Year 2020

boston_2020_p1 = boston_calendar_jan2019[boston_calendar_jan2019["year"] == 2020]

boston_2020_p2 = boston_calendar_jan2020[boston_calendar_jan2020["year"] == 2020]

boston_2020 = pd.concat([boston_2020_p1,boston_2020_p2], axis = 0)

boston_2020 = boston_2020[["year" ,"month", "available" ,"price"]]

# Seattle

# Year 2016

seattle_2016 = seattle_calendar_jan2016[seattle_calendar_jan2016["year"] == 2016]

seattle_2016 = seattle_2016[["year" ,"month", "available" ,"price"]]

# Year 2018

seattle_2018 = seattle_calendar_apr2018[seattle_calendar_apr2018["year"] == 2018]

seattle_2018 = seattle_2018[["year" ,"month", "available" ,"price"]]

# Year 2019

seattle_2019_p1 = seattle_calendar_apr2018[seattle_calendar_apr2018["year"] == 2019]

seattle_2019_p2 = seattle_calendar_jan2019[seattle_calendar_jan2019["year"] == 2019]

seattle_2019 = pd.concat([seattle_2019_p1,seattle_2019_p2], axis = 0)

seattle_2019 = seattle_2019[["year" ,"month", "available" ,"price"]]

# Year 2020

seattle_2020_p1 = seattle_calendar_jan2019[seattle_calendar_jan2019["year"] == 2020]

seattle_2020_p2 = seattle_calendar_jan2020[seattle_calendar_jan2020["year"] == 2020]

seattle_2020 = pd.concat([seattle_2020_p1,seattle_2020_p2], axis = 0)

seattle_2020 = seattle_2020[["year" ,"month", "available" ,"price"]]

# -

# ### Handling categorical values in Boston/Seattle calendar data ("available" column)

# Since **available** column has 2 values "t"(available) and "f"(not-available) Let's expand the **available** column using one-hot encoding

# +

# Boston

# 2017

boston_one_hot_2017 = pd.get_dummies(boston_2017['available'])

boston_2017 = boston_2017.join(boston_one_hot_2017)

# 2018

boston_one_hot_2018 = pd.get_dummies(boston_2018['available'])

boston_2018 = boston_2018.join(boston_one_hot_2018)

#2019

boston_one_hot_2019 = pd.get_dummies(boston_2019['available'])

boston_2019 = boston_2019.join(boston_one_hot_2019)

#2020

boston_one_hot_2020 = pd.get_dummies(boston_2020['available'])

boston_2020 = boston_2020.join(boston_one_hot_2020)

# Seattle

# 2016

seattle_one_hot_2016 = pd.get_dummies(seattle_2016['available'])

seattle_2016 = seattle_2016.join(seattle_one_hot_2016)

# 2018

seattle_one_hot_2018 = pd.get_dummies(seattle_2018['available'])

seattle_2018 = seattle_2018.join(seattle_one_hot_2018)

#2019

seattle_one_hot_2019 = pd.get_dummies(seattle_2019['available'])

seattle_2019 = seattle_2019.join(seattle_one_hot_2019)

#2020

seattle_one_hot_2020 = pd.get_dummies(seattle_2020['available'])

seattle_2020 = seattle_2020.join(seattle_one_hot_2020)

# -

# This will create two new columns **t** and **f** where, **t** = 1 means the housing is available and **f** = 1 means it's not available

# ### Merge the calendar data¶

#

# Finally we will merge all all boston and seattle calendar data as **boston_total** and **seattle_total** respectively

# +

boston_total = pd.concat([boston_2017,boston_2018,boston_2019,boston_2020], axis = 0)

seattle_total = pd.concat([seattle_2016,seattle_2018,seattle_2019,seattle_2020], axis = 0)

# +

# Free up some memory

boston_calendar_sep2016 = []

boston_calendar_oct2017 = []

boston_calendar_apr2018 = []

boston_calendar_jan2019 = []

boston_calendar_jan2020 = []

boston_one_hot_2017 = []

boston_one_hot_2018 = []

boston_one_hot_2019 = []

boston_one_hot_2020 = []

boston_2017 = []

boston_2018 = []

boston_2019 = []

boston_2020 = []

# Free up some memory

seattle_calendar_sep2016 = []

seattle_calendar_apr2018 = []

seattle_calendar_jan2019 = []

seattle_calendar_jan2020 = []

seattle_one_hot_2016 = []

seattle_one_hot_2019 = []

seattle_one_hot_2020 = []

seattle_2018 = []

seattle_2019 = []

# -

# ### Handling missing values

#

# Let's see how much missing values does boston_total and seattle_total's **price** has, since this is the only column with missing values

# Also, boston_listing and seattle_listing has **review_scores_rating** which can be used to find recommended housing. We have to look for missing values in this column as well.

# +

boston_missing_value = boston_total.price.isnull().mean()

seattle_missing_value = seattle_total.price.isnull().mean()

boston_review_missing_value = boston_listing.review_scores_rating.isnull().mean()

seattle_review_missing_value = seattle_listing.review_scores_rating.isnull().mean()

print(f"boston_total has {boston_missing_value*100 : .2f}% missing value in the price column")

print(f"seattle_total has {seattle_missing_value*100 : .2f}% missing value in the price column")

print(f"boston_listing has {boston_review_missing_value*100 : .2f}% missing value in the review_scores_rating column")

print(f"seattle_listing has {seattle_review_missing_value*100 : .2f}% missing value in the review_scores_rating column")

# -

# Since both **boston_total and seattle_total "price"** columns have less than 40% missing values and we will mostly work with average price, it's safe to just **drop** the corresponding rows whenever necessary.<br>

# Similarly both **boston_listing and seattle_listing "review_scores_rating"** columns have less than 20% missing values and we will mostly work with average price, it's safe to just **drop** the corresponding rows whenever necessary.<br>

# The only disadvantage will be, we'll have lesser data points. One possible solution could be to download more data from Airbnb wesite. But we have enough dataset to usderstand the general trend

# #### Boston/Seattle listing data

#

# we only need the **"neighbourhood_cleansed","property_type" and "price"** columns for this project. So let's just pick those and drop the rest. These columns have no missing values!

boston_listing = boston_listing[["neighbourhood_cleansed","property_type", "price",'review_scores_rating' ]]

seattle_listing = seattle_listing[["neighbourhood_cleansed","property_type", "price",'review_scores_rating']]

# ## 4. Analysis, Modeling, Visualization

# We can ask 4 business questions from the data. Answer to each question can be analyzed through visualization

# ## Question 1: Which place is cheaper to stay? Boston or Seattle.

# To answer this question I downloaded the Boston and Seattle data for the past few years and calculated the average pricing for each year. The following figures explain it.

figure, axes = plt.subplots(1, 2,figsize=(10,5))

seattle_total.groupby(["month","year"]).price.mean().unstack().plot(kind='box',title = "Seattle", ax = axes[1]);

boston_total.groupby(["month","year"]).price.mean().unstack().plot(kind='box',title = "Boston",ax = axes[0]);

axes[0].set_ylabel("Price in USD")

axes[0].set_xlabel("Year");

axes[1].set_xlabel("Year");

#plt.savefig('avg_price.png')

# The graph shows the mean price and spread of price (standard diviation) for each year

# Average housing price in Boston by year

boston_total.groupby(["year"]).price.mean()

# Average housing price in Seattle by year

seattle_total.groupby(["year"]).price.mean()

# So, from the above graph we can see that Seattle is cheaper to visit. For the past two years the average price of seattle is **159 dollars** whereas, the average price of Boston is **206 dollars**

# ## Question 2: What is the best time to visit these places?

# To address this question, we have to look into the availability of Airbnb housing and price change during the season.

# +

# average price of boston from 2017-2020

print (f"average price of boston from 2017-2020 is {boston_total.price.mean() :.0f} USD")

# average price of seattle from 2016-2020

print (f"average price of seattle from 2016-2020 is {seattle_total.price.mean() :.0f} USD")

# +

figure, axes = plt.subplots(1, 2,figsize=(10,5))

(boston_total.groupby(["month"]).price.mean() - boston_total.price.mean()).plot(kind='bar', title = "Boston price from 2017-2020",ax = axes[0])

(seattle_total.groupby(["month"]).price.mean() - seattle_total.price.mean()).plot(kind='bar', title = "Seattle price from 2016-2020",ax = axes[1])

axes[0].set_ylabel("change in price by month (in USD)");

axes[0].set_xlabel("Month");

axes[1].set_xlabel("Month");

#plt.savefig('price_change.png')

# -

# Here 0 means the **216 USD** for **Boston**. Anything more above 0 means more expensive during that month <br>

# Here 0 means the **159 USD** for **Seattle**. Anything more above 0 means more expensive during that month

# From this graph, we can see that since Boston has more extreme weather compared to Seattle, Boston has more price variation. Also, from the end of spring to the end of fall (March — November), Boston Airbnb's price is higher than average. So for Boston, **May and July** should be avoided if you want to save money. **March and November** are the best months to visit. During the winter season, **February** has the least price.<br>

# Similarly for Seattle, **July and August** should be avoided and **February** is the cheapest month of the year. From spring to the end of fall **March, April, May, October, and November** are the best months to visit.

# ## Question 3: Which city is more popular and what is the ideal time to visit?

# ### Average occupancy

# +

# Average yearly occupancy of Boston (in percentage)

boston_total.groupby(["year"]).f.mean() * 100

# +

# Average yearly occupancy of Seattle (in percentage)

seattle_total.groupby(["year"]).f.mean() * 100

# +

# average price of boston from 2017-2020

print (f"average occupancy of boston from 2017-2020 is {boston_total.f.mean()*100 :.0f} %") # f = 1 means occupied

# average price of seattle from 2016-2020

print (f"average 0ccupancy of seattle from 2016-2020 is {seattle_total.f.mean()*100 :.0f} %") # f = 1 means occupied

# -

# Over the last few years, the average occupancy of Boston and Seattle is 58% and 59% respectively. which suggests that Seattle is more popular among visitors. So what time people usually try to visit these cities?

# +

figure, axes = plt.subplots(1, 2,figsize=(10,5))

(boston_total.groupby(["month"]).f.mean() - boston_total.f.mean()).plot(kind='bar',title = "Boston occupancy from 2017-2020 (avg 58%)",ax = axes[0]);

(seattle_total.groupby(["month"]).f.mean() - seattle_total.f.mean()).plot(kind='bar',title = "Seattle occupancy from 2016-2020 (avg 58%)",ax = axes[1]);

axes[0].set_ylabel("Change in Availibility from yearly average (in fraction)");

axes[0].set_xlabel("Month");

axes[1].set_xlabel("Month");

#plt.savefig('accupancy.png')

# -

# As we can see, during early winter Boston is more occupied than the yearly average (more than 4% of the yearly average).<br>

# Seattle has more occupancy in **January** and from **July — December**, which also suggests Seattle being more popular among the two.

#

# ## Question 4: What are the popular areas to stay and what is the recommended type of housing?

# ### where are most of the hosts located in Boston?

# +

plt.figure(figsize = (10,10))

host_loc = boston_listing.neighbourhood_cleansed.value_counts()

(host_loc*100/boston_listing.neighbourhood_cleansed.value_counts().dropna().sum()).plot(kind="bar");

plt.ylabel('percentage of the neighbourhood')

plt.xlabel( "Neighborhood")

plt.title("Where are the hosts located in Boston?");

plt.xticks(rotation=45, ha='right');

#plt.savefig('most_host.png')

# +

boston_host_loc = seattle_listing.neighbourhood_cleansed.value_counts() # number of properties by neighbourhood

boston_host_loc[:6].sum()/boston_listing.neighbourhood_cleansed.value_counts().dropna().sum() # fraction of properties in the first 5 neighbourhood

# -

# As we can see from the above graph the more that roughly 50% of the hosing is located in **Dorchester, Downtown, Jamaica Plain, South End, and Back Bay** <br>

# ### So what are the recommended neighborhoods in Boston?

#

# If 75% of the hosts in each neighbourhood are rated more than 8, that neighbourhood can be recommended.

# We can check the rating of these neighbourhoods and find out which neighbourhood has ratings more than 80 out of 100 and we can filter out places with less than 70/100 rating.

above_80 = boston_listing[boston_listing['review_scores_rating'] > 80.0] #data with review above 80/100 rating

below_70 = boston_listing[boston_listing['review_scores_rating'] < 70.0] #data with review less than 70/100 rating

host_loc80 = above_80.neighbourhood_cleansed.value_counts()

(host_loc80*100/host_loc - 75).dropna().plot(kind="bar");

plt.ylabel('Percentage diviation from 75%')

plt.xlabel('Neighbourhood')

plt.title("Neighbourhood with more than 75% hosts rated 8/10");

#plt.savefig('recomended_palce.png')

# Here 0 marks the neighborhood with 75% of the hosts. Above 0 are the recommended places.

# From the graph above the recommended properties are **Allston, Beacon Hill, Charlestown, Dorchester, East Boston, Hyde Park, Jamaica Plain, Leather District, Mattapan, North End, Roslindale, Roxbury, South Boston, South End, West Roxbury.** <br>

# If you prefer a popular neighbourhood, **Jamaica Plain, Dorchester, and South End** is recommended. <br>

# In terms of rating, most places in **Hyde Park, Leather District, Mattapan, Roslindale, West Roxbury** are highly rated, but further research is needed since these places have lesser number of hosts.

#

# ### Which places should be avoided in Boston?

host_loc70 = below_70.neighbourhood_cleansed.value_counts()

(host_loc70/host_loc).dropna().plot(kind="bar");

plt.ylabel('fraction of the hosts\' rated less than 7')

plt.xlabel('Neighbourhood')

plt.title("Neighbourhood with hosts' rated less than 7/10");

#plt.savefig('avoid.png')

# From the graph above We can see that **Downtown and Misson Hill** have the most number of hosts' with less than 7 rating. So these places should be avoided.

# ### What is the recommended type of housing in Boston?

# Property type vs Price

(boston_listing.groupby(["property_type"]).price.mean()).dropna().plot(kind='bar');

plt.ylabel('Price in USD');

#plt.savefig('recomended_housing.png')

# If our goal is to save money, then we see that **Bungalow, Guesthouse, In-law, and Villa** are among the cheaper options to stay in Boston (Costs $150 or less).

# #### Similar analysis on Seattle

# #### where are most of the hosts located in seattle?

# +

plt.figure(figsize=(20,5))

seattle_host_loc = seattle_listing.neighbourhood_cleansed.value_counts().dropna()

(seattle_host_loc*100/seattle_listing.neighbourhood_cleansed.value_counts().dropna().sum()).plot(kind="bar");

plt.ylabel('percentage of the neighbourhood')

plt.title("Where are the hosts located?");

# -

seattle_host_loc[:16].sum()/seattle_listing.neighbourhood_cleansed.value_counts().dropna().sum() # fraction of properties in the first 15 neighbourhood

# First 15 neighbourhoods has 50% of the hosts

#

# As we can see from above graph top 50% neighbourhoods are located in **Broadway, Belltown, Central Business District, Wallingford, and Minor,First Hill, Univeristy District, Pike-Market, ..**

#

# #### So what is the recomended neighbourhood in Seattle?

seattle_above_80 = seattle_listing[seattle_listing['review_scores_rating'] > 80.0]

seattle_below_70 = seattle_listing[seattle_listing['review_scores_rating'] < 70.0]

# We can check the rating of these neighbourhoods and find out which neighbourhood has ratings more than 80 out of 100 and we can filter out places with less than 70/100 rating.

plt.figure(figsize=(20,5))

seattle_host_loc80 = seattle_above_80.neighbourhood_cleansed.value_counts().dropna()

(seattle_host_loc80*100/seattle_host_loc - 90).dropna().plot(kind="bar");

plt.ylabel('Percentage diviation from 90%')

plt.xlabel('Neighbourhood')

plt.title("Neighbourhood with more than 90% hosts rated 8/10");

# Here 0 marks the neighborhood with 90% of the hosts. Above 0 are the recommended places.

#More than 95% housing reted 8/10

[(seattle_host_loc80*100/seattle_host_loc - 95).index[i] for i, value in enumerate(seattle_host_loc80*100/seattle_host_loc - 95) if value > 0]

# Seattle is more spread out than Boston, and most of the neighbourhood is highly rated. <br>

# If 90% of the hosts in each neighbourhood are rated more than 8, that neighbourhood can be rocomended. So the recomended properties are **Arbor Heights, Atlantic, Broadview, Columbia City, Crown Hill, Fremont,Genesee,Georgetown, Harbor Island,Leschi,Loyal Heights,Madison Park,Madrona,Mann,Maple Leaf,Mount Baker,North Admiral,North Beacon Hill,North Delridge,Olympic Hills, Phinney Ridge,Rainier Beach,Ravenna, Seaview, Seward Park,South Beacon Hill,Victory Heights, West Queen Anne, and West Woodland** <br>

# If you prefer poplular neighbourhood, **Fremont, North Beacon Hill, and Mann** is recomended. <br>

# In terms of rating, most places in **Arbor Heights,Crown Hill,Georgetown,Harbor Island,Madrona,Rainier Beach,Seaview** are highly rated, but further research is needed since these palecs have leeser number of hosts.

# From the graph below We can see that **International District,Montlake, South Park, and View Ridge** have the most number of hosts with less than 7 rating. So these places should be avoided.

# #### Which places should be avoided in Seattle?

seattle_host_loc70 = seattle_below_70.neighbourhood_cleansed.value_counts()

(seattle_host_loc70/seattle_host_loc).dropna().plot(kind="bar");

plt.ylabel('fraction of the hosts\' rated less than 7')

plt.xlabel('Neighbourhood')

plt.title("Neighbourhood with hosts' rated less than 7/10");

# Property_type vs Price

#

(seattle_listing.groupby(["property_type"]).price.mean()).dropna().plot(kind='bar');

plt.ylabel('Price in USD');

# From the graph above we see that **Camper/RV, Dome house, Hostel, and Treehouse** are among the cheaper options to stay in Seattle (Costs less than $100).

# ## 5. Evaluation

#

# Let's put all the questions and answers togather and dicuss the the final remarks.

#

# ---

#

# ### Question 1: Which place is cheaper to stay? Boston or Seattle.

#

# **Seattle** is cheaper to visit. For the past two years the average price of seattle is **159 dollars** whereas, the average price of Boston is **206 dollars**.

#

# ---

#

# ### Question 2: What is the best time to visit these places?

#

# From the end of spring to the end of fall (March — November), Boston Airbnb's price is higher than average. So for Boston, **May and July** should be avoided if you want to save money. **March and November** are the best months to visit. During the winter season, **February** has the least price.<br>

# Similarly for Seattle, **July and August** should be avoided and **February** is the cheapest month of the year. From spring to the end of fall **March, April, May, October, and November** are the best months to visit.

#

# ---

#

# ### Question 3: Which city is more popular and what is the ideal time to visit?

#

# Over the last few years, the average occupancy of Boston and Seattle is 58% and 59% respectively. which suggests that Seattle is slightly more popular among visitors. <br>

# During early winter Boston is more occupied than the yearly average (more than 4% of the yearly average).<br>

# Seattle has more occupancy in **January** and from **July — December**.

#

# ---

#

# ### Question 4: What are the popular areas to stay and what is the recommended type of housing?

#

# **Bosoton:**

#

# The recommended properties are **Allston, Beacon Hill, Charlestown, Dorchester, East Boston, Hyde Park, Jamaica Plain, Leather District, Mattapan, North End, Roslindale, Roxbury, South Boston, South End, West Roxbury.** <br>

# If you prefer a popular neighbourhood, **Jamaica Plain, Dorchester, and South End** is recommended. <br>

# In terms of rating, most places in **Hyde Park, Leather District, Mattapan, Roslindale, West Roxbury** are highly rated, but further research is needed since these places have lesser number of hosts.<br>

# **Downtown and Misson Hill** have the most number of hosts' with less than 7 rating. So these places should be **avoided**.

# If our goal is to save money, then we see that **Bungalow, Guesthouse, In-law, and Villa** are among the cheaper options to stay in Boston (Costs $150 or less).

#

# **Seattle:**

#

# the recommended properties are **Arbor Heights, Atlantic, Broadview, Columbia City, Crown Hill, Fremont, Genesee, Georgetown, Harbor Island, Leschi, Loyal Heights, Madison Park, Madrona, Mann, Maple Leaf, Mount Baker, North Admiral, North Beacon Hill, North Delridge, Olympic Hills, Phinney Ridge, Rainier Beach, Ravenna, Seaview, Seward Park, South Beacon Hill, Victory Heights, West Queen Anne, and West Woodland** <br>

# If you prefer a popular neighborhood, **Fremont, North Beacon Hill, and Mann** are recommended.

# Most places in **Arbor Heights, Crown Hill, Georgetown, Harbor Island, Madrona, Rainier Beach, Seaview** are highly rated, but further research is needed since these places have a lesser number of hosts.

# We can see that **Montlake, South Park, and View Ridge** have the most number of hosts with less than 7 ratings. So these places should be **avoided**.<br>

# We see that **Camper/RV, Dome house, Hostel, and Treehouse** are among the cheaper options to stay in Seattle (Costs less than $100).

#

# ---

#

# In conclusion, I found out that in terms of weather conditions and housing prices, Seattle is a better place to travel compared to Boston.

# In future work, I am planning to predict the Airbnb price using machine learning.

#

| What is your next destination? Boston or Seattle!.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .jl

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Julia 1.0.1

# language: julia

# name: julia-1.0

# ---

# ### GASS utils

#

# Misc. methods for the GASS

# +

using Distances , Random

using PyCall

using DataFrames

import CSV

rootdir = "/home/stephane/Science/ALMA/ArrayConfig/GASS"

push!(LOAD_PATH,"$rootdir/master/src")

using GASS

import PyPlot

@pyimport astropy.units as u

## directory

datadir = "$rootdir/master/data"

wdir = "$rootdir/products"

plotdir = "$rootdir/products/test"

cd(wdir)

# +

## metatype for population

struct _cfg

arr::AbstractDataFrame

obs::observation

sub::subarrayParameters

wei::weight

ga::GA

end

# -

function _read_input_cfg(inpfile)

res= input_parameters(inpfile)

inpcfg= parse_input(res)

println(inpcfg)

## parameters inputs

res= input_parameters(inpcfg.file_parameters)

paramcfg= parse_parameters(res)

println(paramcfg)

return(inpcfg , paramcfg)

end

# +

## init population ...

function _init_cfg(obs , sub , wei , ga )

arrcfg = CSV.read(obs.Array_Configuration_File, datarow=4 , header=["X" , "Y", "Z" , "diam" , "name"] , delim= " ")

pop = _cfg(arrcfg , obs , sub , wei , ga)

return(pop)

end

# +

macro main(inpfile)

inpcfg , paramcfg = read_input_cfg(inpfile)

cfg = _init_cfg(paramcfg[1] , paramcfg[2], paramcfg[3], paramcfg[4])

end

@main("../master/data/GA_Inputs_O-10.txt.julia")

| notebooks/.ipynb_checkpoints/GA-util-checkpoint.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] colab_type="text" id="view-in-github"

# <a href="https://colab.research.google.com/github/richardtml/riiaa-20-aa/blob/master/notebooks/2a_cnn.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# -

# # Red convolucional

#

# #### Bere & <NAME>

#

# En esta libreta veremos un ejemplo de clasificación multiclase de imágenes de dígitos implementando una red convolucional en PyTorch.

#

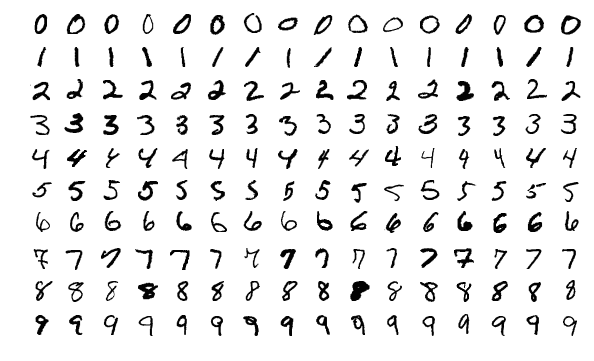

# Emplearemos un conjunto referencia llamado [MNIST](http://yann.lecun.com/exdb/mnist/) recolectado por [Yann LeCun](http://yann.lecun.com). Está compuesto de imágenes en escala de grises de 28 × 28 píxeles que contienen dígitos entre 0 y 9 escritos a mano. El conjunto cuenta con 60,000 imágenes de entrenamiento y 10,000 de prueba.

#

#

# ## 1 Preparación

# ### 1.1 Bibliotecas

# + colab={} colab_type="code" id="Ny0L2LzogTN-"

# funciones aleatorias

import random

# tomar n elementos de una secuencia

from itertools import islice as take

# gráficas

import matplotlib.pyplot as plt

# arreglos multidimensionales

import numpy as np

# redes neuronales

import torch

import torch.nn as nn

import torch.nn.functional as F

import torch.optim as optim

# imágenes

from skimage import io

# redes neuronales

from torch.utils.data import DataLoader

from torchvision import transforms

from torchvision.datasets import MNIST

# barras de progreso

from tqdm import tqdm

# directorio de datos

DATA_DIR = '../data'

# MNIST

MEAN = (0.1307)

STD = (0.3081)

# tamaño del lote

BATCH_SIZE = 128

# reproducibilidad

SEED = 0

random.seed(SEED)

np.random.seed(SEED)

torch.manual_seed(SEED)

# -

# ### 1.2 Auxiliares

def display_grid(xs, titles, rows, cols):

fig, ax = plt.subplots(rows, cols)

for r in range(rows):

for c in range(cols):

i = r * rows + c

ax[r, c].imshow(xs[i], cmap='gray')

ax[r, c].set_title(titles[i])

ax[r, c].set_xticklabels([])

ax[r, c].set_yticklabels([])

fig.tight_layout()

plt.show()

# + [markdown] colab_type="text" id="MjKxreAkoZeT"

# ## 2 Datos

# -

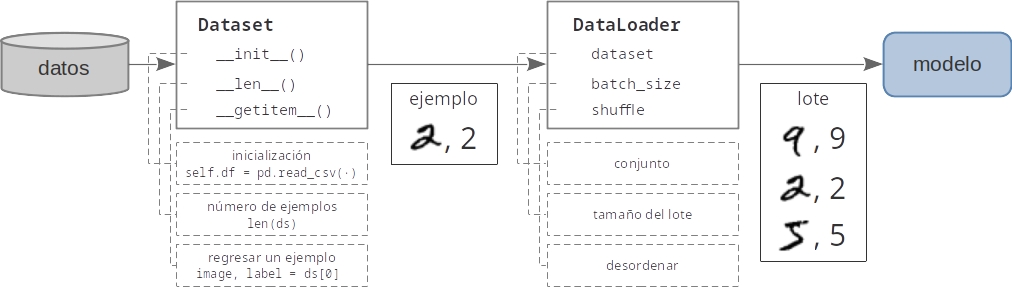

# ### 2.1 Tuberias de datos con PyTorch

#

#

# ### 2.2 Exploración

# creamos un Dataset

ds = MNIST(

# directorio de datos

root=DATA_DIR,

# subconjunto de entrenamiento

train=True,

# convertir la imagen a ndarray

transform=np.array,

# descargar el conjunto

download=True

)

# +

# cargamos algunas imágenes

images, labels = [], []

for i in range(12):

x, y = ds[i]

images.append(x)

labels.append(y)

# desplegamos

print(f'images[0] shape={images[0].shape} dtype={images[0].dtype}')

titles = [str(y) for y in labels]

display_grid(images, titles, 3, 4)

# + [markdown] colab_type="text" id="9p_BsiITogUA"

# ### 2.3 Cargadores de datos

# -

# #### Entrenamiento

# + colab={"base_uri": "https://localhost:8080/", "height": 54} colab_type="code" id="E1aEVpYtuadH" outputId="8df25761-3201-461a-e82b-26b5befd0302"

# transformaciones para la imagen

trn_tsfm = transforms.Compose([

# convertimos a torch.Tensor y escalamos a [0,1]

transforms.ToTensor(),

# estandarizamos: restamos la media y dividimos sobre la varianza

transforms.Normalize(MEAN, STD),

])

# creamos un Dataset

trn_ds = MNIST(

# directorio de datos

root=DATA_DIR,

# subconjunto de entrenamiento

train=True,

# transformación

transform=trn_tsfm

)

# creamos un DataLoader

trn_dl = DataLoader(

# conjunto

trn_ds,

# tamaño del lote

batch_size=BATCH_SIZE,

# desordenar

shuffle=True

)

# desplegamos un lote de imágenes

for x, y in take(trn_dl, 1):

print(f'x shape={x.shape} dtype={x.dtype}')

print(f'y shape={y.shape} dtype={y.dtype}')

# -

# #### Prueba

# + colab={"base_uri": "https://localhost:8080/", "height": 54} colab_type="code" id="QXMXXc9DPgqY" outputId="100e6a58-5552-4c5f-b08e-dc83c23c3b21"

# transformaciones para la imagen

tst_tsfm = transforms.Compose([

# convertimos a torch.Tensor y escalamos a [0,1]

transforms.ToTensor(),

# estandarizamos: restamos la media y dividimos sobre la varianza

transforms.Normalize(MEAN, STD),

])

# creamos un Dataset

tst_ds = MNIST(

# directorio de datos

root=DATA_DIR,

# subconjunto de entrenamiento

train=False,

# transformación

transform=tst_tsfm

)

# creamos un DataLoader

tst_dl = DataLoader(

# subconjunto

tst_ds,

# tamaño del lote

batch_size=BATCH_SIZE,

# desordenar

shuffle=True

)

# imprimimos forma y tipo del lote

for x, y in take(tst_dl, 1):

print(f'x shape={x.shape} dtype={x.dtype}')

print(f'y shape={y.shape} dtype={y.dtype}')

# -

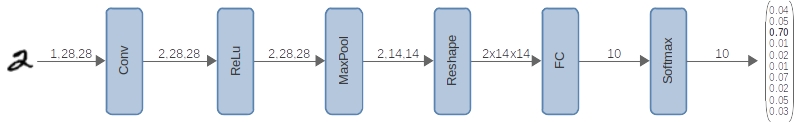

# ## 3 Modelo

#

#

# ### 3.1 Definición de la arquitectura

# definición del modelo

class CNN(nn.Module):

# inicializador

def __init__(self):

# inicilización del objeto padre, obligatorio

super(CNN, self).__init__()

# tamaño de las capas

C1 = 2

FC1 = 10

self.num_feats = 2 * 14 * 14

# capas convolucionales

self.cnn = nn.Sequential(

# bloque conv1

# [N, 1, 28, 28] => [N, 2, 28, 28]

nn.Conv2d(in_channels=1, out_channels=C1, kernel_size=3, padding=1),

# [N, 2, 28, 28]

nn.ReLU(),

# [N, 2, 28, 28] => [N, 2, 14, 14]

nn.MaxPool2d(kernel_size=2, stride=2)

)

# capas completamente conectadas

self.cls = nn.Sequential(

# bloque fc1

# [N, 2x14x14] => [N, 10]

nn.Linear(self.num_feats, FC1),

)

# metodo para inferencia

def forward(self, x):

# [N, 1, 28, 28] => [N, 2, 14, 14]

x = self.cnn(x)

# aplanamos los pixeles de la imagen

# [N, 2, 14, 14] => [N, 2x14x14]

x = x.view(-1, self.num_feats)

# [N, 2x14x14] => [N, 10]

x = self.cls(x)

return x

# ### 3.2 Impresión de la arquitectura

model = CNN()

print(model)

# ### 3.3 Prueba de la arquitectura

# inferencia con datos sinteticos

x = torch.zeros(1, 1, 28, 28)

y = model(x)

print(y.shape)

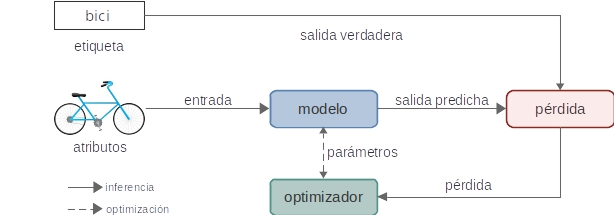

# ## 4 Entrenamiento

#

#

# ### 4.1 Ciclo de entrenamiento

# + colab={"base_uri": "https://localhost:8080/", "height": 568} colab_type="code" id="xCqwGRD1nz1a" outputId="7dc4823c-865a-41ee-b54b-67117e5d4e95"

# creamos un modelo

model = CNN()

# optimizador

opt = optim.SGD(model.parameters(), lr=1e-3)

# historial de pérdida

loss_hist = []

# ciclo de entrenamiento

EPOCHS = 20

for epoch in range(EPOCHS):

# entrenamiento de una época

for x, y_true in trn_dl:

# vaciamos los gradientes

opt.zero_grad()

# hacemos inferencia para obtener los logits

y_lgts = model(x)

# calculamos la pérdida

loss = F.cross_entropy(y_lgts, y_true)

# retropropagamos

loss.backward()

# actulizamos parámetros

opt.step()

# evitamos que se registren las operaciones

# en la gráfica de cómputo

with torch.no_grad():

losses, accs = [], []

# validación de la época

for x, y_true in take(tst_dl, 10):

# hacemos inferencia para obtener los logits

y_lgts = model(x)

# calculamos las probabilidades

y_prob = F.softmax(y_lgts, 1)

# obtenemos la clase predicha

y_pred = torch.argmax(y_prob, 1)

# calculamos la pérdida

loss = F.cross_entropy(y_lgts, y_true)

# calculamos la exactitud

acc = (y_true == y_pred).type(torch.float32).mean()

# guardamos históricos

losses.append(loss.item() * 100)

accs.append(acc.item() * 100)

# imprimimos métricas

loss = np.mean(losses)

acc = np.mean(accs)

print(f'E{epoch:2} loss={loss:6.2f} acc={acc:.2f}')

# agregagmos al historial de pérdidas

loss_hist.append(loss)

# -

# ### 4.2 Gráfica de la pérdida

plt.plot(loss_hist, color='red')

plt.xlabel('época')

plt.ylabel('pérdida')

plt.show()

# ## 5 Evaluación

#

#

# ### 5.1 Conjunto de validación

# +

# modelo en modo de evaluación

model.eval()

# evitamos que se registren las operaciones

# en la gráfica de cómputo

with torch.no_grad():

accs = []

# validación de la época

for x, y_true in tst_dl:

# hacemos inferencia para obtener los logits

y_lgts = model(x)

# calculamos las probabilidades

y_prob = F.softmax(y_lgts, 1)

# obtenemos la clase predicha

y_pred = torch.argmax(y_prob, 1)

# calculamos la exactitud

acc = (y_true == y_pred).type(torch.float32).mean()

accs.append(acc.item() * 100)

acc = np.mean(accs)

print(f'Exactitud = {acc:.2f}')

# -

# ### 5.2 Inferencia

# +

with torch.no_grad():

for x, y_true in take(tst_dl, 1):

y_lgts = model(x)

y_prob = F.softmax(y_lgts, 1)

y_pred = torch.argmax(y_prob, 1)

x = x[:12].squeeze().numpy()

y_true = y_true[:12].numpy()

y_pred = y_pred[:12].numpy()

titles = [f'V={t} P={p}' for t, p in zip(y_true, y_pred)]

display_grid(x, titles, 3, 4)

| notebooks/2a_cnn.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/seryeongi/exec_machinlearning/blob/master/wholesalecustomers_kmeans.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + colab={"base_uri": "https://localhost:8080/"} id="51xtoNUHeb7T" outputId="3cdce381-10d3-4df1-f528-5b4bc61e8b4e"

# !ls #list

# + colab={"base_uri": "https://localhost:8080/"} id="mu3R39ibfY7c" outputId="55b83626-e357-4ebb-f1ab-0a9da0c1fc6d"

# !ls -l

# + [markdown] id="16TsWVn4gaiT"

# 자세하게(맨 앞과 맨 뒤 보기)

# **d**rwxr-xr-x 1 root root 4096 Jun 15 13:37 **sample_data**(directory)

# **-**rwxr-xr-x 1 root root 1697 Jan 1 2000 **anscombe.json**(file)

# + colab={"base_uri": "https://localhost:8080/"} id="gpQ6IkBdfgl-" outputId="b934b590-aca7-4d80-a849-a963c2a5541e"

# !pwd # 현재 자기 위치

# + colab={"base_uri": "https://localhost:8080/"} id="XFluru-rfxYp" outputId="4983700d-f1e5-41c3-cb56-4638ffda8e0d"

# !ls -l ./sample_data # 내가 원하는 디렉토리명 or 파일명

# + colab={"base_uri": "https://localhost:8080/"} id="aEdUFX4iguS0" outputId="f67cf3c7-41c3-4816-9042-e199231e706e"

# !ls -l ./

# + colab={"base_uri": "https://localhost:8080/"} id="YLzjUUfVjHTz" outputId="fec0c244-35d7-4c00-e924-15ecda45d178"

# !ls -l ./Wholesale_customers_data.csv

# + id="BJ60umwVin5K"

import pandas as pd

df = pd.read_csv('./Wholesale_customers_data.csv')

# + colab={"base_uri": "https://localhost:8080/"} id="1C66FKgsmMn4" outputId="e309fbff-e015-44fe-ec0f-74a4d1212cea"

df.info()

# + id="kF2ljLJqr5Sr"

X = df.iloc[:,:]

# + colab={"base_uri": "https://localhost:8080/"} id="_2bZ5GeCsSeh" outputId="6533be6e-ebec-4888-e2c1-6495c468a768"

X.shape

# + id="JbPlcc8FsVNz"

# split 할 필요없음 -> 측정할 방법 없음(y가 없어서)

from sklearn.preprocessing import StandardScaler

scaler = StandardScaler()

scaler.fit(X)

X = scaler.transform(X)

# + id="n2ABo3iCtUGJ"

from sklearn import cluster

kmeans = cluster.KMeans(n_clusters = 5)

# + colab={"base_uri": "https://localhost:8080/"} id="a2l2u4vBun4Q" outputId="01cf10e5-f7c1-4230-a457-c0ff178a657d"

kmeans.fit(X)

# + colab={"base_uri": "https://localhost:8080/"} id="1mIxUCKvuuGL" outputId="858147a8-31df-4b12-de43-13c2b8f6eb75"

kmeans.labels_

# + id="cOIltUgFvGFQ"

df['label'] = kmeans.labels_

# + colab={"base_uri": "https://localhost:8080/", "height": 204} id="m3xPXuifve3O" outputId="f52d384b-f762-4bba-dd49-19d2155c8941"

df.head()

# + [markdown] id="JCtEWxfzwphT"

# 시각화의 중심은 x, y 무조건 x, y 두개의 컬럼으로 만들기

# 이차원으로 무조건 만들어야 한다.

# + colab={"base_uri": "https://localhost:8080/", "height": 602} id="nAzmPf_ZvffF" outputId="2adde85c-d3cd-4c5e-9684-1caab4a62485"

df.plot(kind='scatter', x='Grocery', y='Frozen', c='label', cmap='Set1', figsize=(10,10)) # 종류, x, y

# + colab={"base_uri": "https://localhost:8080/"} id="pqw4Q6BJxNgT" outputId="41ee32dd-74ee-46a2-ea63-69746a38d62c"

# for ...:

# if ~((df['label'] == 0) | (df['label'] == 1)):

dfx = df[~((df['label'] == 0) | (df['label'] == 1))]

df.shape, dfx.shape

# + colab={"base_uri": "https://localhost:8080/", "height": 602} id="mLNeDKC82LwS" outputId="c7c220cd-ae8e-46ac-e18f-327c45c8af6d"

dfx.plot(kind='scatter', x='Grocery', y='Frozen', c='label', cmap='Set1', figsize=(10,10)) # 종류, x, y

# + id="VmASp-Bi26H0"

df.to_excel('./wholesale.xls')

# + id="SvIPbbWP3JBp"

| wholesalecustomers_kmeans.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

# prerequisite package imports

import numpy as np

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sb

# %matplotlib inline

from solutions_biv import scatterplot_solution_1, scatterplot_solution_2

# -

# In this workspace, you'll make use of this data set describing various car attributes, such as fuel efficiency. The cars in this dataset represent about 3900 sedans tested by the EPA from 2013 to 2018. This dataset is a trimmed-down version of the data found [here](https://catalog.data.gov/dataset/fuel-economy-data).

fuel_econ = pd.read_csv('./data/fuel_econ.csv')

fuel_econ.head()

# **Task 1**: Let's look at the relationship between fuel mileage ratings for city vs. highway driving, as stored in the 'city' and 'highway' variables (in miles per gallon, or mpg). Use a _scatter plot_ to depict the data. What is the general relationship between these variables? Are there any points that appear unusual against these trends?

plt.scatter(data=fuel_econ, x="city", y="highway", alpha=0.05)

plt.xlabel("City fuel efficency")

plt.ylabel("Highway fuel efficency")

plt.xlim((10, 60))

plt.ylim((10, 60))

# run this cell to check your work against ours

scatterplot_solution_1()

# **Task 2**: Let's look at the relationship between two other numeric variables. How does the engine size relate to a car's CO2 footprint? The 'displ' variable has the former (in liters), while the 'co2' variable has the latter (in grams per mile). Use a heat map to depict the data. How strong is this trend?

x_vals = fuel_econ["displ"]

x_bins = np.arange(0, np.ceil(x_vals.max()), np.ceil(x_vals.std())/5)

y_vals = fuel_econ["co2"]

y_bins = np.arange(np.floor(y_vals.min()), np.ceil(y_vals.max()), np.ceil(y_vals.std())/2)

plt.hist2d(x=x_vals, y=y_vals, cmin=1, bins=[x_bins, y_bins], cmap="viridis");

plt.colorbar()

y_vals.describe()

# run this cell to check your work against ours

scatterplot_solution_2()

| exercises/Data & Plotting/Scatterplot_Practice.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

from collections import defaultdict

import json

import os

import matplotlib.pyplot as plt

from matplotlib.ticker import FuncFormatter

import numpy as np

import pandas as pd

from tti_explorer import config, sensitivity, utils

from tti_explorer.strategies import RETURN_KEYS

# +

perc_formatter = FuncFormatter(lambda x, _: f"{100*x:.0f}%")

formatters = {

'app_cov': perc_formatter,

'trace_adherence': perc_formatter

}

rc_dct = {

'figure.figsize': (7, 6),

'figure.max_open_warning': 1000,

"errorbar.capsize": 2.5,

'font.size': 16

}

plt.rcParams.update(rc_dct)

plt.style.use("seaborn-ticks")

# +

chart_folder = os.path.join(os.environ['REPOS'], 'tti-explorer', 'outputs', 'charts', 'new-style')

input_folder = os.path.join(os.environ['DATA'], "tti-explorer")

measures_order = [

'no_TTI',

'symptom_based_TTI',

'test_based_TTI',

'test_based_TTI_test_contacts',

]

# +

def config_files(folder):

return filter(lambda x: x.startswith("config") and x.endswith('.json'), os.listdir(folder))

def run_fname(cfg_fname):

return cfg_fname.replace("config", "run").replace("json", 'csv')

def resgen(data_dir):

lockdowns = next(os.walk(data_dir))[1]

for lockdown in lockdowns:

folder = os.path.join(data_dir, lockdown)

for cfg_file in config_files(folder):

cfg = utils.read_json(os.path.join(folder, cfg_file))

target = cfg[sensitivity.TARGET_KEY]

results = pd.read_csv(os.path.join(folder, run_fname(cfg_file)), index_col=0)

yield lockdown, target, cfg[sensitivity.CONFIG_KEY], results

def errorbar(ax, xaxis, means, stds, label, **kwds):

conf_intervals = 1.96 * stds

ax.errorbar(xaxis, means, yerr=conf_intervals, label=label, **kwds)

ax.set_xticks(xaxis)

ax.set_xticklabels(xaxis)

def legend(ax, **kwds):

defaults = dict(

loc="best",

frameon=True,

framealpha=0.5,

fancybox=False,

fontsize=12

)

defaults.update(kwds)

return ax.legend(**defaults)

def plot_lockdown(plotter, lockdown_dct, deck, key_to_plot, order, formatters={}, title=False):

for param_name, sim_results in lockdown_dct.items():

tick_formatter = formatters.get(param_name)

fig, ax = plt.subplots(1)

for measure in order:

xaxis, means, std_errs = arrange_sim_results(sim_results[measure], key_to_plot)

plotter(ax, xaxis, means, std_errs, label=nice_lockdown_name(measure))

legend(ax)

if title:

ax.set_title(param_name)

ax.set_ylabel(key_to_plot, fontsize=14)

ax.set_xlabel(nice_param_name(param_name))

if tick_formatter is not None:

ax.xaxis.set_major_formatter(tick_formatter)

deck.add_figure(fig, name=param_name+"_"+key_to_plot.lower().replace(" ", "_").replace("%", ""))

return fig

def make_tables(entry, key):

coords, reslist = zip(*entry)

means, stds = zip(*[(k[key].loc['mean'], k[key].loc['std']) for k in reslist])

means_mat = pd.DataFrame(np.array(utils.sort_by(means, coords, return_idx=Faelse)).reshape(3, 3))

stds_mat = pd.DataFrame(np.array(utils.sort_by(stds, coords, return_idx=False)).reshape(3, 3))

return means_mat, stds_mat

def format_table(means, stds):

t1, t2 = ['TTI Delay (days)', 'NPI severity']

table = means.applymap(

lambda x: f"{x:.2f}"

).add(

" \pm "

).add(

stds.mul(

1.96

).applymap(

lambda x: f"{x:.2f}"

)

).applymap(lambda x: f"${x}$")

new_order = [[2,2], [2,1], [1,2], [2,0], [1,1], [0,2], [1,0], [0,1], [0,0]]

new_order = list(tuple(x) for x in new_order)

table.index = ['No TTI'] + new_order

table.columns = sorted(test_trace_results.keys(), reverse = True)

table.index.name = t1

table.columns.name = t2

return table

def format_mean_only(means):

t1, t2 = ['TTI Delay (days)', 'NPI severity']

table = means.applymap(

lambda x: f"{x:.0f}\%"

)

new_order = [[2,2], [2,1], [1,2], [2,0], [1,1], [0,2], [1,0], [0,1], [0,0]]

new_order = list(tuple(x) for x in new_order)

table.index = ['No TTI'] + new_order

table.columns = sorted(test_trace_results.keys(), reverse = True)

table.index.name = t1

table.columns.name = t2

return table

def make_new_tables(big_dict, name='test_based_TTI'):

new_order = [[2,2], [2,1], [1,2], [2,0], [1,1], [0,2], [1,0], [0,1], [0,0]]

mean_mat = np.zeros((10, 5))

stds_mat = np.zeros((10, 5))

no_tti, test_based_tti = ('no_TTI', name)

s_levels = sorted(test_trace_results.keys(), reverse=True)

for s_idx, s_level in enumerate(s_levels):

mean_mat[0, s_idx] = big_dict[s_level][no_tti]['means'].iloc[0].loc[0]

stds_mat[0, s_idx] = big_dict[s_level][no_tti]['stds'].iloc[0].loc[0]

for new_row_idx, (row_idx, col_idx) in enumerate(new_order):

mean_mat[new_row_idx+1, s_idx] = big_dict[s_level][test_based_tti]['means'].iloc[row_idx].loc[col_idx]

stds_mat[new_row_idx+1, s_idx] = big_dict[s_level][test_based_tti]['stds'].iloc[row_idx].loc[col_idx]