code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

import sys

sys.path.append('../../pyutils')

import numpy as np

from scipy.linalg import solve_triangular

import metrics

# -

# # The Cholesky decomposition

#

# Let $A \in \mathbb{R}^{n*n}$ a symmetric positive definite matrix.

# $A$ can de decomposed as:

# $$A = LL^T$$

# with $L \in \mathbb{R}^{n*n}$ a lower triangular matrix with positive diagonal entries.

#

#

# $$L_{jj} = \sqrt{A_{jj} - \sum_{k=1}^{j-1} L_{jk}^2}$$

# $$L_{ij} = \frac{1}{L_{jj}}(A_{ij} - \sum_{k=1}^{j-1}L_{ik}L_{jk}) \text{ for } i > j$$

#

# The algorithm can be impleted row by row or column by column

# +

def cholesky(A):

n = len(A)

L = np.zeros((n, n))

for j in range(n):

L[j,j] = np.sqrt(A[j,j] - np.sum(L[j,:j]**2))

for i in range(j+1, n):

L[i,j] = (A[i,j] - np.sum(L[i, :j] * L[j, :j])) / L[j,j]

return L

A = np.random.randn(5, 5)

A = A @ A.T

L = cholesky(A)

print(metrics.tdist(L - np.tril(L), np.zeros(A.shape)))

print(metrics.tdist(L @ L.T, A))

# -

# # The LDL Decomposition

#

# Let $A \in \mathbb{R}^{n*n}$ a symmetric positive definite matrix.

# $A$ can de decomposed as:

# $$A = LDL^T$$

# with $L \in \mathbb{R}^{n*n}$ a lower unit triangular matrix and $D \in \mathbb{R}^{n*n}$ a diagonal matrix.

# This is a modified version of the Cholsky decomposition that doesn't need square roots.

#

# $$D_{jj} = A_{jj} - \sum_{k=1}^{j-1} L_{jk}^2D_{kk}$$

# $$L_{ij} = \frac{1}{D_{jj}}(A_{ij} - \sum_{k=1}^{j-1}L_{ik}L_{jk}D_{kk}) \text{ for } i > j$$

# +

def cholesky_ldl(A):

n = len(A)

L = np.eye(n)

d = np.zeros(n)

for j in range(n):

d[j] = A[j,j] - np.sum(L[j,:j]**2 * d[:j])

for i in range(j+1, n):

L[i,j] = (A[i,j] - np.sum(L[i,:j]*L[j,:j]*d[:j])) / d[j]

return L, d

A = np.random.randn(5, 5)

A = A @ A.T

L, d = cholesky_ldl(A)

print(metrics.tdist(L - np.tril(L), np.zeros(A.shape)))

print(metrics.tdist(np.diag(L), np.ones(len(L))))

print(metrics.tdist(L @ np.diag(d) @ L.T, A))

# -

# # Solve a linear system

#

# Find $x$ such that:

#

# $$Ax = b$$

#

# Compute the cholesky decomposition of $A$

#

# $$A = LL^T$$

# $$LL^Tx = b$$

#

# Solve the lower triangular system:

#

# $$Ly = b$$

#

# Solve the upper triangular system:

#

# $$L^Tx = y$$

# +

def cholesky_system(A, b):

L = cholesky(A)

y = solve_triangular(L, b, lower=True)

x = solve_triangular(L.T, y, lower=False)

return x

A = np.random.randn(5, 5)

A = A @ A.T

b = np.random.randn(5)

x = cholesky_system(A, b)

print(metrics.tdist(A @ x, b))

| courses/linear_algebra/cholesky_decomposition.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

# importacion general de librerias y de visualizacion (matplotlib y seaborn)

import pandas as pd

import numpy as np

import random

import re

pd.options.display.float_format = '{:20,.2f}'.format # suprimimos la notacion cientifica en los outputs

import warnings

warnings.filterwarnings('ignore')

# -

twt_data = pd.read_csv('~/Documents/Datos/DataSets/TP2/train_super_featured.csv')

twt_data.head()

pattern = re.compile("(?P<url>https?://[^\s]+)")

def remove_link(twt):

return pattern.sub("r ", twt)

# + active=""

# twt_data['text'] = twt_data['text'].map(remove_link)

# -

from sklearn.feature_extraction.text import CountVectorizer

from sklearn.feature_extraction.text import TfidfTransformer

from sklearn.naive_bayes import MultinomialNB

from sklearn.pipeline import Pipeline

from sklearn.linear_model import SGDClassifier

from sklearn.model_selection import GridSearchCV

from sklearn.model_selection import train_test_split

from sklearn.feature_extraction.text import TfidfVectorizer

train, test = train_test_split(twt_data, test_size=0.2)

count_vect = CountVectorizer(stop_words='english')

X_train_counts = count_vect.fit_transform(twt_data['super_clean_text'])

X_train_counts.shape

tfidf_transformer = TfidfTransformer()

X_train_tfidf = tfidf_transformer.fit_transform(X_train_counts)

X_train_tfidf.shape

count_vect.get_feature_names()

text_clf = Pipeline([('vect', CountVectorizer()),

('tfidf', TfidfTransformer()),

('clf', MultinomialNB()),

])

text_clf = text_clf.fit(train.super_clean_text, train.target_relabeled)

predicted = text_clf.predict(test.super_clean_text)

np.mean(predicted == test.target_relabeled)

text_clf_svm = Pipeline([('vect', CountVectorizer()),

('tfidf', TfidfTransformer()),

('clf-svm', SGDClassifier(loss='hinge', penalty='l2',

alpha=1e-3, random_state=42)),

])

text_clf_svm = text_clf_svm.fit(train.super_clean_text, train.target_relabeled)

predicted_svm = text_clf_svm.predict(test.super_clean_text)

np.mean(predicted_svm == test.target_relabeled)

parameters = {'vect__ngram_range': [(1, 1), (1, 2)],

'tfidf__use_idf': (True, False),

'clf__alpha': (1e-2, 1e-3),

}

gs_clf = GridSearchCV(text_clf, parameters, n_jobs=-1)

gs_clf = gs_clf.fit(train.clean_text, train.target_label)

gs_clf.best_score_

gs_clf.best_params_

text_clf_improved = Pipeline([('vect', CountVectorizer()),

('clf', MultinomialNB()),

])

text_clf = text_clf_improved.fit(train.clean_text, train.target_label)

predicted = text_clf_improved.predict(test.clean_text)

np.mean(predicted == test.target_label)

vectorizer = TfidfVectorizer()

X = vectorizer.fit_transform(train.clean_text)

tf_idf_train = pd.DataFrame(data = X.toarray(), columns=vectorizer.get_feature_names())

tf_idf_train

test_data = pd.read_csv('~/Documents/Datos/DataSets/TP2/test_super_featured.csv')

test_data.head()

text_clf = text_clf.fit(twt_data.clean_text, twt_data.target_label)

predicted = text_clf.predict(test_data.clean_text)

predicted

test_data[test_data.clean_text != test_data.clean_text]

test_data['text_super_cleaned'].fillna(" ", inplace=True)

test_data[test_data.clean_text != test_data.clean_text]

test_data['target'] = predicted

test_data[['id_original', 'target']].rename(columns={'id_original': 'id'}).to_csv('~/Documents/Datos/DataSets/TP2/res_NB_1.csv', index=False)

| TP2/Alejo/1_NB_SUPERCLEAN.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

import os

os.chdir("..")

import pandas as pd

import scipy.stats as stats

# -

bio_merge_sample_df=\

pd.read_csv("constructed\\capstone\\bio_merge_sample.csv", sep=',')

bio_merge_sample_df=bio_merge_sample_df.loc[:, ~bio_merge_sample_df.columns.str.contains('^Unnamed')]

bio_merge_sample_df=bio_merge_sample_df.reset_index(drop=True)

bio_merge_sample_df.head(10)

bio_merge_sample_df.shape

#t-test to compare abnormal returns on sec filing days: Average return on days with SEC filings

#has more variance

bio_merge_sample_df['abs_abnormal_return']=abs(bio_merge_sample_df['arith_resid'])

returns_nosec=bio_merge_sample_df.loc[bio_merge_sample_df.path.isnull()]['abs_abnormal_return']

returns_sec=bio_merge_sample_df[bio_merge_sample_df.path.notnull()]['abs_abnormal_return']

t_stat, p_val = stats.ttest_ind(returns_nosec, returns_sec, equal_var=False)

t_stat

p_val

returns_nosec.describe()

returns_sec.describe()

# +

#compare Psychosocial Words and TFID-selected words for biotech sample

words_df= pd.read_csv("input files\\capstone\\capstone_sentiment.csv")

words_df=words_df.loc[:, ~words_df.columns.str.contains('^Unnamed')]

words_df=words_df.reset_index(drop=True)

words_df['word']=words_df['word'].str.lower()

#words_df.head(10)

bio_dataset_features= pd.read_csv("constructed\\bio_dataset_features.csv")

bio_dataset_features=bio_dataset_features.loc[:, ~bio_dataset_features.columns.str.contains('^Unnamed')]

bio_dataset_features=bio_dataset_features.reset_index(drop=True)

#bio_dataset_features.head(10)

comparison_df=pd.merge(words_df, bio_dataset_features, how='inner', \

on=['word'])

comparison_df.head(20)

# +

#compare Psychosocial Words and TFID-selected words for large sample:

all_features_features= pd.read_csv("constructed\\Large_dataset_features.csv")

all_features_features=all_features_features.loc[:, ~all_features_features.columns.str.contains('^Unnamed')]

all_features_features=all_features_features.reset_index(drop=True)

all_comparison_df=pd.merge(words_df, all_features_features, how='inner', \

on=['word'])

all_comparison_df.head(20)

# -

| python/capstone/Capstone Results Additional.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Study Designer Example (DNA sequencing)

from ipywidgets import (RadioButtons, VBox, HBox, Layout, Label, Checkbox, Text, IntSlider)

from qgrid import show_grid

label_layout = Layout(width='100%')

from isatools.create.models import *

from isatools.model import Investigation

from isatools.isatab import dump_tables_to_dataframes as dumpdf

import qgrid

qgrid.nbinstall(overwrite=True)

# ## Sample planning section

# ### Study design type

#

# Please specify if the study is an intervention or an observation.

rad_study_design = RadioButtons(options=['Intervention', 'Observation'], value='Intervention', disabled=False)

VBox([Label('Study design type?', layout=label_layout), rad_study_design])

# ### Intervention study

#

# If specifying an intervention study, please answer the following:

# - Are study subjects exposed to a single intervention or to multiple intervention?

# - Are there 'hard to change' factors, which restrict randomization of experimental unit?

#

# *Note: if you chose 'observation' as the study design type, the following choices will be disabled and you should skip to the Observation study section*

#

if rad_study_design.value == 'Intervention':

study_design = InterventionStudyDesign()

if rad_study_design.value == 'Observation':

study_design = None

intervention_ui_disabled = not isinstance(study_design, InterventionStudyDesign)

intervention_type = RadioButtons(options=['single', 'multiple'], value='single', disabled=intervention_ui_disabled)

intervention_type_vbox = VBox([Label('Single intervention or to multiple intervention?', layout=label_layout), intervention_type])

free_or_restricted_design = RadioButtons(options=['yes', 'no'], value='no', disabled=intervention_ui_disabled)

free_or_restricted_design_vbox = VBox([Label("Are there 'hard to change' factors?", layout=label_layout), free_or_restricted_design])

HBox([intervention_type_vbox, free_or_restricted_design_vbox])

hard_to_change_factors_ui_disabled = free_or_restricted_design.value == 'no'

hard_to_change_factors = RadioButtons(options=[1, 2], value=1, disabled=hard_to_change_factors_ui_disabled)

VBox([Label("If applicable, how many 'hard to change factors'?", layout=label_layout), hard_to_change_factors])

repeats = intervention_type.value != 'single'

factorial_design = free_or_restricted_design.value == 'no'

split_plot_design = (free_or_restricted_design.value == 'yes' and hard_to_change_factors.value == 1)

split_split_plot_design = (free_or_restricted_design.value == 'yes' and hard_to_change_factors.value == 2)

print('Interventions: {}'.format('Multiple interventions' if repeats else 'Single intervention'))

design_type = 'factorial' # always default to factorial

if split_plot_design:

design_type = 'split plot'

elif split_split_plot_design:

design_type = 'split split'

print('Design type: {}'.format(design_type))

# #### Factorial design - intervention types

#

# If specifying an factorial design, please list the intervention types here.

factorial_design_ui_disabled = not factorial_design

chemical_intervention = Checkbox(value=True, description='Chemical intervention', disabled=factorial_design_ui_disabled)

behavioural_intervention = Checkbox(value=False, description='Behavioural intervention', disabled=factorial_design_ui_disabled)

surgical_intervention = Checkbox(value=False, description='Surgical intervention', disabled=factorial_design_ui_disabled)

biological_intervention = Checkbox(value=False, description='Biological intervention', disabled=factorial_design_ui_disabled)

radiological_intervention = Checkbox(value=False, description='Radiological intervention', disabled=factorial_design_ui_disabled)

VBox([chemical_intervention, surgical_intervention, biological_intervention, radiological_intervention])

level_uis = []

if chemical_intervention:

agent_levels = Text(

value='calpol, no agent',

placeholder='e.g. cocaine, calpol',

description='Agent:',

disabled=False

)

dose_levels = Text(

value='low, high',

placeholder='e.g. low, high',

description='Dose levels:',

disabled=False

)

duration_of_exposure_levels = Text(

value='short, long',

placeholder='e.g. short, long',

description='Duration of exposure:',

disabled=False

)

VBox([Label("Chemical intervention factor levels:", layout=label_layout), agent_levels, dose_levels, duration_of_exposure_levels])

factory = TreatmentFactory(intervention_type=INTERVENTIONS['CHEMICAL'], factors=BASE_FACTORS)

for agent_level in agent_levels.value.split(','):

factory.add_factor_value(BASE_FACTORS[0], agent_level.strip())

for dose_level in dose_levels.value.split(','):

factory.add_factor_value(BASE_FACTORS[1], dose_level.strip())

for duration_of_exposure_level in duration_of_exposure_levels.value.split(','):

factory.add_factor_value(BASE_FACTORS[2], duration_of_exposure_level.strip())

print('Number of study groups (treatment groups): {}'.format(len(factory.compute_full_factorial_design())))

treatment_sequence = TreatmentSequence(ranked_treatments=factory.compute_full_factorial_design())

# Next, specify if all study groups of the same size, i.e have the same number of subjects? (in other words, are the groups balanced).

group_blanced = RadioButtons(options=['Balanced', 'Unbalanced'], value='Balanced', disabled=False)

VBox([Label('Are study groups balanced?', layout=label_layout), group_blanced])

# Provide the number of subject per study group:

group_size = IntSlider(value=5, min=0, max=100, step=1, description='Group size:', disabled=False, continuous_update=False, orientation='horizontal', readout=True, readout_format='d')

group_size

plan = SampleAssayPlan(group_size=group_size.value)

rad_sample_type = RadioButtons(options=['Blood', 'Sweat', 'Tears', 'Urine'], value='Blood', disabled=False)

VBox([Label('Sample type?', layout=label_layout), rad_sample_type])

# How many times each of the samples have been collected?

sampling_size = IntSlider(value=3, min=0, max=100, step=1, description='Sample size:', disabled=False, continuous_update=False, orientation='horizontal', readout=True, readout_format='d')

sampling_size

plan.add_sample_type(rad_sample_type.value)

plan.add_sample_plan_record(rad_sample_type.value, sampling_size.value)

isa_object_factory = IsaModelObjectFactory(plan, treatment_sequence)

# ## Generate ISA model objects from the sample plan and render the study-sample table

# *Check state of the Sample Assay Plan after entering sample planning information:*

import json

from isatools.create.models import SampleAssayPlanEncoder

print(json.dumps(plan, cls=SampleAssayPlanEncoder, sort_keys=True, indent=4, separators=(',', ': ')))

isa_investigation = Investigation(identifier='inv101')

isa_study = isa_object_factory.create_study_from_plan()

isa_study.filename = 's_study.txt'

isa_investigation.studies = [isa_study]

dataframes = dumpdf(isa_investigation)

sample_table = next(iter(dataframes.values()))

show_grid(sample_table)

print('Total rows generated: {}'.format(len(sample_table)))

# ## Assay planning

# ### Select assay technology type to map to sample type from sample plan

rad_assay_type = RadioButtons(options=['DNA microarray', 'DNA sequencing', 'Mass spectrometry', 'NMR spectroscopy'], value='DNA sequencing', disabled=False)

VBox([Label('Assay type to map to sample type "{}"?'.format(rad_sample_type.value), layout=label_layout), rad_assay_type])

if rad_assay_type.value == 'DNA sequencing':

assay_type = AssayType(measurement_type='genome sequencing', technology_type='nucleotide sequencing')

print('Selected measurement type "genome sequencing" and technology type "nucleotide sequencing"')

else:

raise Exception('Assay type not implemented')

# ### Topology modifications

technical_replicates = IntSlider(value=2, min=0, max=5, step=1, description='Technical repeats:', disabled=False, continuous_update=False, orientation='horizontal', readout=True, readout_format='d')

technical_replicates

inst_mod_454gs = Checkbox(value=True, description='Instrument: 454 GS')

inst_mod_454gsflx = Checkbox(value=True, description='Instrument: 454 GS FLX')

VBox([inst_mod_454gs, inst_mod_454gsflx])

instruments = set()

if inst_mod_454gs.value: instruments.add('454 GS')

if inst_mod_454gsflx.value: instruments.add('454 GS FLX')

top_mods = DNASeqAssayTopologyModifiers(technical_replicates=technical_replicates.value, instruments=instruments)

print('Technical replicates: {}'.format(top_mods.technical_replicates))

assay_type.topology_modifiers = top_mods

plan.add_assay_type(assay_type)

plan.add_assay_plan_record(rad_sample_type.value, assay_type)

assay_plan = next(iter(plan.assay_plan))

print('Added assay plan: {0} -> {1}/{2}'.format(assay_plan[0].value.term, assay_plan[1].measurement_type.term, assay_plan[1].technology_type.term))

if len(top_mods.instruments) > 0:

print('Instruments: {}'.format(list(top_mods.instruments)))

# ## Generate ISA model objects from the assay plan and render the assay table

# *Check state of Sample Assay Plan after entering assay plan information:*

print(json.dumps(plan, cls=SampleAssayPlanEncoder, sort_keys=True, indent=4, separators=(',', ': ')))

isa_investigation.studies = [isa_object_factory.create_assays_from_plan()]

for assay in isa_investigation.studies[-1].assays:

print('Assay generated: {0}, {1} samples, {2} processes, {3} data files'

.format(assay.filename, len(assay.samples), len(assay.process_sequence), len(assay.data_files)))

dataframes = dumpdf(isa_investigation)

show_grid(dataframes[next(iter(dataframes.keys()))])

| notebooks/Study Designer (DNA sequencing).ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ## Predicing Housing Prices Using Linear Regression

#

#

# In our last exercises, we learned about Python, Matrices, Vectors, handing and plotting data. In this tutorial, we will use all that information and create a mathematical model to predict housing prices using Linear regression.

#

#

# #### What is Linear Regression

#

# Linear regression attempts to model the relationship between two variables by fitting a linear equation to observed data. One variable is considered to be an explanatory variable, and the other is considered to be a dependent variable.

#

# <img src="1.PNG" align="center"/>

#

# **Least Squares Methods**

#

# <img src="2.PNG" align="center"/>

from IPython.display import YouTubeVideo

YouTubeVideo('OxMNNjp-mDw', width=860, height=460)

# ### Predicting Housing prices using Linear Regression

#

# We will take the Housing dataset which contains information about different houses in Boston. We can access this data from the scikit-learn library. There are 506 samples and 13 feature variables in this dataset. The objective is to predict the value of prices of the house using the given features.

#

# So let’s get started.

# +

import numpy as np

import matplotlib.pyplot as plt

import pandas as pd

import seaborn as sns

from sklearn.datasets import load_boston

boston_dataset = load_boston()

# -

print(boston_dataset.keys())

print (boston_dataset.DESCR)

boston = pd.DataFrame(boston_dataset.data, columns=boston_dataset.feature_names)

boston.head()

#- Median value of owner-occupied homes in $1000's

boston['MEDV'] = boston_dataset.target

sns.distplot(boston['MEDV'], bins=30)

plt.show()

# We will use the following features to train our Model.

#

# **RM:** It's an average number of rooms, so I would expect this to never be less than 1, while the maximum number of rooms could be quite high. Given the maximum price of $50,000, I would expect it to be either 1.385 or 5.609.

#

# **CRIM:** Per capita crime rate by town

#

# In machine learning, the variable that is being modeled is called the target variable; it's what you are trying to predict given the features. For this dataset, the suggested target is MEDV, the median house value in 1,000s of dollar

X = pd.DataFrame(np.c_[boston['CRIM'], boston['RM']], columns = ['CRIM','RM'])

Y = boston['MEDV']

# +

from sklearn.model_selection import train_test_split

X_train, X_test, Y_train, Y_test = train_test_split(X, Y, test_size = 0.2, random_state=5)

print(X_train.shape)

print(X_test.shape)

print(Y_train.shape)

print(Y_test.shape)

# +

from sklearn.linear_model import LinearRegression

lin_model = LinearRegression()

lin_model.fit(X_train, Y_train)

# -

Y_predict = lin_model.predict(X_test)

print (X_test.shape)

print (Y_predict)

# Scatter plots of Actual vs Predicted are one of the richest form of data visualization. You can tell pretty much everything from it. Ideally, all your points should be close to a regressed diagonal line.

# +

plt.scatter(Y_test, Y_predict , c = 'red')

# 100 percent accuracy line

plt.plot(Y_test, Y_test, c = 'blue')

plt.xlabel("Actual House Prices ($1000)")

plt.ylabel("Predicted House Prices: ($1000)")

plt.xticks(range(0, int(max(Y_test)),2))

plt.yticks(range(0, int(max(Y_predict)),2))

plt.title("Actual Prices vs Predicted prices")

| Implementation_Examples_Predicting_Something/Implementation_Examples_Predicting_Something.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

import pandas

data = pandas.read_csv('data/gapminder_gdp_europe.csv', index_col='country')

#Select value by position in tabel

#Select value in 1st row and 1st column

print(data.iloc[0, 0])

# -

#Select value by entry label, similar to using dictionary keys

print(data.loc["Albania", "gdpPercap_1952"])

#Using : to select all columns/rows

#Like slicing

#Would produce the same result without a 2nd index ( data.loc["Albania] )

print(data.loc["Albania", :])

# Would get the same result from

# data["gdpPercap_1952"]

# and data.gdpPercap_1952

print(data.loc[:, "gdpPercap_1952"])

#Select multiple columns/rows using a named slice

# loc is inclusive at both ends of the slice

# iloc includes everything but the final index

print(data.loc['Italy':'Poland', 'gdpPercap_1962':'gdpPercap_1972'])

#Calculate the max value in a slice

print(data.loc['Italy':'Poland', 'gdpPercap_1962':'gdpPercap_1972'].max())

#Calculate the min value in a slice

print(data.loc['Italy':'Poland', 'gdpPercap_1962':'gdpPercap_1972'].min())

#Use comparisons based on values

#Produces a boolean dataframe

subset = data.loc['Italy':'Poland', 'gdpPercap_1962':'gdpPercap_1972']

print('Subset of data:\n', subset)

# Which values were greater than 10000 ?

print('\nWhere are values large?\n', subset > 10000)

#Mask where false using NaN (Not a number)

mask = subset > 10000

print(subset[mask])

#NaN is ignored by stats operations

print(subset[subset > 10000].describe())

# # Select-Apply-Combine methods

#Split European contries by GDP

#Split into higher and lower than average GDP

#Estimate 'Wealthy score' by how many times a country has been higher or lower

mask_higher = data.apply(lambda x:x>x.mean())

wealth_score = mask_higher.aggregate('sum',axis=1)/len(data.columns)

wealth_score

#Sum financial contribution over the years

data.groupby(wealth_score).sum()

#Find per capita of Serbia in 2007

print(data.loc["Serbia", "gdpPercap_2007"])

#iloc includes all but last index: 0, 1

print(data.iloc[0:2, 0:2])

#loc includes all: 0, 1, 2

print(data.loc['Albania':'Belgium', 'gdpPercap_1952':'gdpPercap_1962'])

#first = Reads in data and assigns columns as row headings

#second = Selects data where continent=Americas

#third = From Americas data, remove Puerto Rico

#fourth = Remove the "continent" column - the 2nd column which has an index of 1

#Write to .csv called "result.csv"

first = pandas.read_csv('data/gapminder_all.csv', index_col='country')

second = first[first['continent'] == 'Americas']

third = second.drop('Puerto Rico')

fourth = third.drop('continent', axis = 1)

fourth.to_csv('result.csv')

#Return index value of column minimum

print(data.idxmin())

#Return index value of column maximum

print(data.idxmax())

#Select GDP per capita for all countries 1982

data['gdpPercap_1982']

#Select GDP per capita for Denmark in all years

data.loc['Denmark']

#Select GDP per capita for all countries for after 1985

data.loc[:,'gdpPercap_1985':]

#Select GDP per capita for each country in 2007 as a multiple of GDP

#per capita for that country in 1952

data['gdpPercap_2007']/data['gdpPercap_1952']

| Pandas/Pandas8_Dataframes.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Visualizing sequential Bayesian learning

#

# In this notebook we will examine the problem of estimation given observed data from a Bayesian perspective.

#

# We start by gathering a dataset $\mathcal{D}$ consisting of multiple observations. Each observation is independent and drawn from a parametric probability distribution with parameter $\mu$. We can thus write the probability of the dataset as $p(\mathcal{D}\mid\mu)$, which determines how likely it is to observe the dataset. For this reason, $p(\mathcal{D}\mid\mu)$ is known as the **likelihood** function.

#

# Furthermore, we believe that the parameter $\mu$ is itself a random variable with a known probability distribution $p(\mu)$ that encodes our prior belief. This distribution is known as the **prior** distribution.

#

# Now we might ask ourselves: at a moment posterior to observing the data $\mathcal{D}$, how does our initial belief on $\mu$ change? We would like to know what the probability $p(\mu\mid\mathcal{D})$ is, which is known as the **posterior** distribution. This probability, according to Bayes' theorem, is given by

#

# $$

# p(\mu\mid\mathcal{D}) = \frac{p(\mathcal{D}\mid\mu)p(\mu)}{p(\mathcal{D})}

# $$

#

# ## Flipping coins

#

# Suppose we have a strange coin for which we are not certain about the probability $\mu$ of getting heads with it. We can start flipping it many times, and as we record heads or tails in a dataset $\mathcal{D}$ we can modify our beliefs on $\mu$ given our observations. Given that we have flipped the coins $N$ times, the likelihood of observing $m$ heads is given by the Binomial distribution,

#

# $$\text{Bin}(m\mid N,\mu)={N\choose k}\mu^m(1-\mu)^{N-m}$$

#

# A mathematically convenient prior distribution for $\mu$ that works well with a binomial likelihood is the Beta distribution,

#

# $$\text{Beta}(\mu\mid a, b) = \frac{\Gamma(a+b)}{\Gamma(a)\Gamma(b)}\mu^{a-1}(1-\mu)^{b-1}$$

#

# We said $\mu$ would encode our *prior* belief on $\mu$, so why does it seem that we are choosing a prior for mathematical convenience instead? It turns out this prior, in addition to being convenient, will also allow us to encode a variety of prior beliefs as we might see fit. We can see this by plotting the distribution for different values of $a$ and $b$:

# +

# %matplotlib inline

import matplotlib.pyplot as plt

import matplotlib.animation as animation

import numpy as np

from scipy.stats import beta

# The set of parameters of the prior to test

a_b_params = ((0.1, 0.1), (1, 1), (2, 3), (8, 4))

mu = np.linspace(0, 1, 100)

# Plot one figure per set of parameters

plt.figure(figsize=(13,3))

for i, (a, b) in enumerate(a_b_params):

plt.subplot(1, len(a_b_params), i+1)

prior = beta(a, b)

plt.plot(mu, prior.pdf(mu))

plt.xlabel(r'$\mu$')

plt.title("a = {:.1f}, b = {:.1f}".format(a, b))

plt.tight_layout()

# -

# We can see that the distribution is rather flexible. Since we don't know anything about the probability of the coin (which is also a valid prior belief), we can select $a=1$ and $b=1$ so that the probability is uniform in the interval $(0, 1)$.

#

# Now that we have the likelihood and prior distributions, we can obtain the posterior distribution $p(\mu\mid\mathcal{D})$: the revised beliefs on $\mu$ after flipping the coin many times and gathering a dataset $\mathcal{D}$. In general, calculating the posterior can be a very involved derivation, however what makes the choice of the prior mathematically convenient is that it makes the posterior distribution to be the same: a Beta distribution. A prior distribution is called a **conjugate prior** for a likelihood function if the resulting posterior has the same form of the prior. For our example, the posterior is

#

# $$p(\mu\mid m, l, a, b) = \frac{\Gamma(m+a+l+b)}{\Gamma(m+a)\Gamma{l+b}}\mu^{m+a-1}(1-\mu)^{l+b-1}$$

#

# where $m$ is the number of heads we observed and $l$ the number of tails (equal to $N-m$).

#

# That's it! Our prior belief is ready and the likelihood of our observations is determined, we can now start flipping coins and seeing how the posterior probability changes with each observation. We will set up a simulation where we flip a coin with probability 0.8 of landing on heads, which is the unknown probability that we aim to find. Via the process of bayesian learning we will discover the true value as we flip the coin one time after the other.

# +

def coin_flip(mu=0.8):

""" Returns True (heads) with probability mu, False (tails) otherwise. """

return np.random.random() < mu

# Parameters for a uniform prior

a = 1

b = 1

posterior = beta(a, b)

# Observed heads and tails

m = 0

l = 0

# A list to store posterior updates

updates = []

for toss in range(50):

# Store posterior

updates.append(posterior.pdf(mu))

# Get a new observation by flipping the coin

if coin_flip():

m += 1

else:

l += 1

# Update posterior

posterior = beta(a + m, b + l)

# -

# We now have a list, `updates`, containing the values of the posterior distribution after observing one coin toss. We can visualize the change in the posterior interactively using <a href="https://plot.ly/#/" target="_blank">Plotly</a>. Even though there are other options to do animations and use interactive widgets with Python, I chose Plotly because it is portable (Matplotlib's `FuncAnimation` requires extra components to be installed) and it looks nice. All we have to do is to define a dictionary according to the specifications, and we obtain an interactive plot that can be embedded in a notebook or a web page.

#

# In the figure below we will add *Play* and *Pause* buttons, as well as a slider to control playback, which will allow us to view the changes in the posterior distributions are new observations are made. Let's take a look at how it's done.

# +

# Set up and plot with Plotly

import plotly.offline as ply

ply.init_notebook_mode(connected=True)

figure = {'data': [{'x': [0, 1], 'y': [0, 1], 'mode': 'lines'}],

'layout':

{

'height': 400, 'width': 600,

'xaxis': {'range': [0, 1], 'autorange': False},

'yaxis': {'range': [0, 8], 'autorange': False},

'title': 'Posterior distribution',

'updatemenus':

[{

'type': 'buttons',

'buttons':

[

{

'label': 'Play',

'method': 'animate',

'args':

[

None,

{

'frame': {'duration': 500, 'redraw': False},

'fromcurrent': True,

'transition': {'duration': 0, 'easing': 'linear'}

}

]

},

{

'args':

[

[None],

{

'frame': {'duration': 0, 'redraw': False},

'mode': 'immediate',

'transition': {'duration': 0}

}

],

'label': 'Pause',

'method': 'animate'

}

]

}],

'sliders': [{

'steps': [{

'args':

[

['frame{}'.format(f)],

{

'frame': {'duration': 0, 'redraw': False},

'mode': 'immediate',

'transition': {'duration': 0}

}

],

'label': f,

'method': 'animate'

} for f, update in enumerate(updates)]

}]

},

'frames': [{'data': [{'x': mu, 'y': pu}], 'name': 'frame{}'.format(f)} for f, pu in enumerate(updates)]}

ply.iplot(figure, link_text='')

# -

# We can see how bayesian learning allows us to go from a uniform prior distribution on the parameter $\mu$, when there are no observations, and as the number of observations increases, our uncertainty is reduced (which can be seen from the reduced variance of the distribution) and the estimated value centers around the true value of 0.8.

#

# In my opinion, this is a very elegant and powerful method for inference that adequately adapts to a sequential setting as the one we have explored. Even more interesting is the fact that this simple idea can be applied to much more complicated models that deal with uncertainty. On the other hand, we have to keep in mind that the posterior was chosen for mathematical convenience, rather than a selection based on the nature of a particular problem, for which in some cases a conjugate prior might not be the best.

| 00-bayesian-learning.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # BuildSys 2020 Figures

# The point of this notebook is to generate good quality figures for the BuildSys 2020 conference.

import warnings

warnings.filterwarnings('ignore')

# # Package Import

# +

import os

import sys

sys.path.append('../')

from src.features import build_features

from src.visualization import visualize

from src.reports import make_report

import pandas as pd

import numpy as np

from datetime import datetime, timedelta

import matplotlib.pyplot as plt

import seaborn as sns

import matplotlib

import matplotlib.dates as mdates

from matplotlib.colors import ListedColormap, LinearSegmentedColormap

import matplotlib.animation as animation

# -

# # Overview of Figures

# There are few figures we want to create for the poster. In general, we want to create four figures:

# 1. Beacon

# 2. Fitbit

# 3. Beiwe

# 4. Combination of the previous three

#

# Zoltan is also very adamant about animating our figures, so we need to look into that. He sent a [link](https://towardsdatascience.com/how-to-create-animated-graphs-in-python-bb619cc2dec1) that has some promising information.

# ## Animation Test

# More information on animation can be found using these blog posts:

# - [Somewhat Helpful](https://towardsdatascience.com/how-to-create-animated-graphs-in-python-bb619cc2dec1)

# - [More Helpful](https://towardsdatascience.com/animations-with-matplotlib-d96375c5442c)

#

# And documentation on the main animate function is [here](https://matplotlib.org/3.3.2/api/_as_gen/matplotlib.animation.FuncAnimation.html)

beacon_data = pd.read_csv('../data/processed/bpeace2-beacon.csv',index_col=0,parse_dates=True)

# show it off:

beacon_data.head()

# +

beacon_bb = beacon_data[beacon_data['Beacon'] == 19][datetime(2020,7,6):datetime(2020,7,14)]

beacon_bb = beacon_bb.resample('60T').mean()

beacon_bb_pollutants = beacon_bb[['CO2','PM_C_2p5','NO2','CO','TVOC','Lux','T_CO']]

normalized_df = (beacon_bb_pollutants-beacon_bb_pollutants.min())/(beacon_bb_pollutants.max()-beacon_bb_pollutants.min())

# -

Writer = animation.writers['ffmpeg']

writer = Writer(fps=20, metadata=dict(artist='Me'), bitrate=1800)

# +

# #%matplotlib notebook

import numpy as np

import seaborn as sns

import matplotlib.pyplot as plt

from matplotlib import animation

fig = plt.figure()

frames = 50

def init():

initial = normalized_df.T

initial = initial.replace(initial, 0)

sns.heatmap(initial, vmax=1, cbar=False)

def animate(i):

data = normalized_df.T[normalized_df.T < i/frames]

sns.heatmap(data, vmax=1, cbar=False)

anim = animation.FuncAnimation(fig, animate, init_func=init, frames=frames, interval=1000, repeat = False)

anim.save('../test_heatmap.mp4', writer=writer)

# -

# # Beacon

# <div class="alert alert-block alert-success">

# We are looking for a good summary of a week's worth of data. Looks:

# <ul>

# <li>week of July 6 is a good place to start</li>

# <li>Beacon 19</li>

# </ul>

# </div>

def create_cmap(colors,nodes):

cmap = LinearSegmentedColormap.from_list("mycmap", list(zip(nodes, colors)))

return cmap

# ## Static Plot

# +

# Creating the dataframe

beacon_bb = beacon_data[beacon_data['Beacon'] == 19][datetime(2020,7,6):datetime(2020,7,14)]

beacon_bb = beacon_bb.resample('60T').mean()

beacon_bb_pollutants = beacon_bb[['CO2','PM_C_2p5','NO2','CO','TVOC','Lux','T_CO']]

fig, axes = plt.subplots(7,1,figsize=(16,7),sharex=True)

ylabels = ['CO$_2$',

'PM$_{2.5}$',

'NO$_2$',

'CO',

'TVOC',

'Light',

'T']

other_ylabels = ['ppm','$\mu$g/m$^3$','ppb','ppm','ppb','lux', '$^\circ$C']

cbar_ticks = [np.arange(400,1200,200),

np.arange(0,40,10),

np.arange(0,120,50),

np.arange(0,15,4),

[0,200,500,700],

np.arange(0,250,50),

np.arange(18,32,4)]

cmaps = [create_cmap(["green", "yellow", "orange", "red",],[0.0, 0.33, 0.66, 1]),

create_cmap(["green", "yellow", "orange", "red"],[0.0, 0.2, 0.4, 1]),

create_cmap(["green", "yellow", "orange", "red"],[0.0, 0.33, 0.66, 1]),

create_cmap(["green", "yellow", "orange", "red"],[0.0, 0.375, 0.75, 1]),

create_cmap(["green", "yellow", "orange", "red"],[0.0, 0.1, 0.31, 1]),

create_cmap(["black","purple","red","orange","yellow","green"],[0.0, 0.1, 0.16, 0.2, 0.64, 1]),

create_cmap(["cyan","blue","green","orange","red"],[0.0, 0.2, 0.4, 0.7, 1])]

for ax, var, low, high, ylabel, other_y, ticks, cmap in zip(axes,beacon_bb_pollutants.columns,[400,0,0,0,0,0,18],[1000,30,100,12,700,200,30],ylabels,other_ylabels,cbar_ticks,cmaps):

sns.heatmap(beacon_bb_pollutants[[var]].T,vmin=low,vmax=high,ax=ax,cbar_kws={'ticks':ticks},cmap=cmap)

ax.set_ylabel('')

ax.text(0,-0.1,f'{ylabel} ({other_y})')

ax.set_yticklabels([''])

ax.set_xlabel('')

xlabels = ax.get_xticklabels()

new_xlabels = []

for label in xlabels:

new_xlabels.append(label.get_text()[11:16])

ax.set_xticklabels(new_xlabels)

plt.subplots_adjust(hspace=0.6)

plt.savefig('../reports/BuildSys2020/beacon_heatmap.pdf',bbox_inches='tight')

plt.show()

plt.close()

# -

# ## Animation

# +

# #%matplotlib notebook

# Creating the dataframe

beacon_bb = beacon_data[beacon_data['Beacon'] == 19][datetime(2020,7,6):datetime(2020,7,14)]

beacon_bb = beacon_bb.resample('60T').mean()

beacon_bb_pollutants = beacon_bb[['CO2','PM_C_2p5','NO2','CO','TVOC','Lux','T_CO']]

# creating the figure

fig, axes = plt.subplots(7,1,figsize=(12,8),sharex=True)

ylabels = ['CO$_2$',

'PM$_{2.5}$',

'NO$_2$',

'CO',

'TVOC',

'Light',

'T']

other_ylabels = ['ppm','$\mu$g/m$^3$','ppb','ppm','ppb','lux', '$^\circ$C']

cbar_ticks = [np.arange(400,1200,200),

np.arange(0,40,10),

np.arange(0,120,50),

np.arange(0,15,4),

[0,200,500,700],

np.arange(0,250,50),

np.arange(18,32,4)]

cmaps = [create_cmap(["green", "yellow", "orange", "red",],[0.0, 0.33, 0.66, 1]),

create_cmap(["green", "yellow", "orange", "red"],[0.0, 0.2, 0.4, 1]),

create_cmap(["green", "yellow", "orange", "red"],[0.0, 0.33, 0.66, 1]),

create_cmap(["green", "yellow", "orange", "red"],[0.0, 0.375, 0.75, 1]),

create_cmap(["green", "yellow", "orange", "red"],[0.0, 0.1, 0.31, 1]),

create_cmap(["black","purple","red","orange","yellow","green"],[0.0, 0.1, 0.16, 0.2, 0.64, 1]),

create_cmap(["cyan","blue","green","orange","red"],[0.0, 0.2, 0.4, 0.7, 1])]

for ax, var, low, high, ylabel, other_y, ticks, cmap in zip(axes,beacon_bb_pollutants.columns,[400,0,0,0,0,0,18],[1000,30,100,12,700,200,30],ylabels,other_ylabels,cbar_ticks,cmaps):

sns.heatmap(beacon_bb_pollutants[[var]].T,vmin=low,vmax=high,ax=ax,cbar_kws={'ticks':ticks},cmap=cmap)

ax.set_ylabel('')

ax.text(0,-0.1,f'{ylabel} ({other_y})')

ax.set_yticklabels([''])

ax.set_xlabel('')

xlabels = ax.get_xticklabels()

new_xlabels = []

for label in xlabels:

new_xlabels.append(label.get_text()[11:16])

ax.set_xticklabels(new_xlabels)

plt.subplots_adjust(hspace=0.6)

# Animation

frames = 193

Writer = animation.writers['ffmpeg']

writer = Writer(fps=20, metadata=dict(artist='Me'), bitrate=1800)

def init():

initial = beacon_bb_pollutants

initial = initial.replace(initial, 0)

for ax, var in zip(axes,beacon_bb_pollutants.columns):

sns.heatmap(initial[[var]].T, cbar=False, ax=ax)

plt.subplots_adjust(hspace=0.6)

def animate(i):

ylabels = ['CO$_2$',

'PM$_{2.5}$',

'NO$_2$',

'CO',

'TVOC',

'Light',

'T']

other_ylabels = ['ppm','$\mu$g/m$^3$','ppb','ppm','ppb','lux', '$^\circ$C']

cbar_ticks = [np.arange(400,1200,200),

np.arange(0,40,10),

np.arange(0,120,50),

np.arange(0,15,4),

[0,200,500,700],

np.arange(0,250,50),

np.arange(18,32,4)]

cmaps = [create_cmap(["green", "yellow", "orange", "red",],[0.0, 0.33, 0.66, 1]),

create_cmap(["green", "yellow", "orange", "red"],[0.0, 0.2, 0.4, 1]),

create_cmap(["green", "yellow", "orange", "red"],[0.0, 0.33, 0.66, 1]),

create_cmap(["green", "yellow", "orange", "red"],[0.0, 0.375, 0.75, 1]),

create_cmap(["green", "yellow", "orange", "red"],[0.0, 0.1, 0.31, 1]),

create_cmap(["black","purple","red","orange","yellow","green"],[0.0, 0.1, 0.16, 0.2, 0.64, 1]),

create_cmap(["cyan","blue","green","orange","red"],[0.0, 0.2, 0.4, 0.7, 1])]

for ax, var, low, high, ylabel, other_y, ticks, cmap in zip(axes,beacon_bb_pollutants.columns,[400,0,0,0,0,0,18],[1000,30,100,12,700,200,30],ylabels,other_ylabels,cbar_ticks,cmaps):

df = beacon_bb_pollutants[[var]].T

#df = df[df <= np.nanmax(df)*((i+1)/frames)]

df = df.iloc[:,:i+1]

sns.heatmap(df,vmin=low,vmax=high,ax=ax,cbar=False,cmap=cmap)

ax.set_ylabel('')

ax.text(0,-0.1,f'{ylabel} ({other_y})')

ax.set_yticklabels([''])

ax.set_xlabel('')

xlabels = ax.get_xticklabels()

new_xlabels = []

for label in xlabels:

if label.get_text()[11:13] == '00':

new_xlabels.append(label.get_text()[5:10] + ' ' + label.get_text()[11:16])

else:

new_xlabels.append(label.get_text()[11:16])

ax.set_xticklabels(new_xlabels)

anim = animation.FuncAnimation(fig, animate, init_func=init, frames=frames, interval=500, repeat = False)

anim.save('../reports/BuildSys2020/beacon_heatmap.mp4', writer=writer)

# -

# # Fitbit

# In terms of purely Fitbit data, I was thinking of just showing something simple like daily steps with an overlay of the hourly numbers.

fb_hourly = pd.read_csv('../data/processed/bpeace2-fitbit-intraday.csv',

index_col=0,parse_dates=True,infer_datetime_format=True)

fb_hourly.head()

fb_daily = pd.read_csv('../data/processed/bpeace2-fitbit-daily.csv',

index_col=0,parse_dates=True,infer_datetime_format=True)

fb_daily.head()

# The participant that corresponds to beacon 19 is qh34m4r9. We will continue to use this participant, at least initially to make sure all the data overlap.

fb19_hourly = fb_hourly[fb_hourly['beiwe'] == 'qh34m4r9'][datetime(2020,7,6):datetime(2020,7,14)]

fb19_hourly = fb19_hourly.resample('60T').sum()

fb19_hourly.head()

fb19_daily = fb_daily[fb_daily['beiwe'] == 'qh34m4r9'][datetime(2020,7,6):datetime(2020,7,14)]

fb19_daily.head()

# ## Static Plot

# +

fig, ax1 = plt.subplots(figsize=(8,4.5))

ax2 = ax1.twinx()

# Daily Steps - Bar

ax1.stem(fb19_daily.index, fb19_daily['activities_steps'], linefmt='k-',markerfmt='ko',basefmt='w ')

# Formatting the x-axis

ax1.xaxis.set_tick_params(rotation=-30)

ax1.set_xlim([datetime(2020,7,5,20),datetime(2020,7,14,4)])

# Formatting the y-axis (daily)

ax1.set_yticks([0,2000,4000,6000,8000])

ax1.set_ylabel('Daily Steps')

# Hourly Steps - Time Series

scatter_color = '#bf5700'

ax2.scatter(fb19_hourly.index.values, fb19_hourly['activities_steps'].values,marker='s',s=5,color=scatter_color)

# Formatting the y-axis (hourly)

ax2.set_yticks([0,1000,2000,3000,4000])

ax2.spines['right'].set_color(scatter_color)

ax2.yaxis.label.set_color(scatter_color)

ax2.tick_params(axis='y', colors=scatter_color)

ax2.set_ylabel('Hourly Steps')

# Formatting the x-axis (again)

ax2.xaxis.set_major_locator(mdates.DayLocator())

myFmt = mdates.DateFormatter('%m/%d')

ax2.xaxis.set_major_formatter(myFmt)

# Saving

plt.savefig('../reports/BuildSys2020/fitbit_steps.pdf')

plt.show()

plt.close()

# -

# # Beiwe

# The best and simplest thing I can think to do with Beiwe is to include the submission times for the morning and weekly surveys.

ema_morning = pd.read_csv('../data/processed/bpeace2-morning-survey.csv',

index_col=0,parse_dates=True,infer_datetime_format=True)

ema_morning.sort_index(inplace=True)

ema_morning.head()

ema_evening = pd.read_csv('../data/processed/bpeace2-evening-survey.csv',

index_col=0,parse_dates=True,infer_datetime_format=True)

ema_evening.sort_index(inplace=True)

ema_evening.head()

morning_b19 = ema_morning[ema_morning['ID'] == 'qh34m4r9'][datetime(2020,7,6):datetime(2020,7,14)]

morning_b19.head()

evening_b19 = ema_evening[ema_evening['ID'] == 'qh34m4r9'][datetime(2020,7,6):datetime(2020,7,14)]

evening_b19.head()

# ## Static Plot

# ### First attempt

# Not well-received primarily because the way contentment is framed compared to the other moods.

# +

fig, ax = plt.subplots(figsize=(8,4.5))

# Plotting submission Times

for i in range(len(morning_b19)):

if i == 0:

ax.axvline(morning_b19.index[i],color='goldenrod',linestyle='dashed',zorder=1,label='Morning')

ax.axvline(evening_b19.index[i],color='indigo',linestyle='dashed',zorder=2,label='Evening')

else:

ax.axvline(morning_b19.index[i],color='goldenrod',linestyle='dashed',zorder=1)

ax.axvline(evening_b19.index[i],color='indigo',linestyle='dashed',zorder=2)

# Plotting mood scores

i = 0

for df in [morning_b19,evening_b19]:

for mood, color, offset in zip(['Content','Stress','Lonely','Sad'],['seagreen','firebrick','gray','navy'],[270,90,-90,-270]):

if i == 0:

ax.scatter(df.index+timedelta(minutes=offset),df[mood].values,color=color,zorder=10,label=mood)

else:

ax.scatter(df.index+timedelta(minutes=offset),df[mood].values,color=color,zorder=10)

i+= 1

# Formatting x-axis

ax.set_xlim([datetime(2020,7,6),datetime(2020,7,14,4)])

ax.xaxis.set_major_locator(mdates.DayLocator())

myFmt = mdates.DateFormatter('%m/%d')

ax.xaxis.set_major_formatter(myFmt)

# Formatting y-axis

ax.set_ylim([-0.5,3.5])

ax.set_yticks([0,1,2,3])

ax.set_yticklabels(['Not at all','A little bit','Quite a bit','Very much'])

ax.legend(loc='upper center',bbox_to_anchor=(1.1,1),ncol=1,frameon=False)

#plt.savefig('../reports/BuildSys2020/beiwe_mood.pdf')

plt.show()

plt.close()

# -

# ### Second Attempt

# Now for the second attempt where we keep the mood on the same axis and color the points based on the response

# +

fig, ax = plt.subplots(figsize=(8,4.5))

# Plotting submission Times

for i in range(len(morning_b19)):

if i == 0:

ax.axvline(morning_b19.index[i],color='gray',linestyle='dashed',zorder=1,label='Morning')

ax.axvline(evening_b19.index[i],color='black',linestyle='dashed',zorder=2,label='Evening')

else:

ax.axvline(morning_b19.index[i],color='gray',linestyle='dashed',zorder=1)

ax.axvline(evening_b19.index[i],color='black',linestyle='dashed',zorder=2)

# Plotting mood scores

i = 0

for df in [morning_b19,evening_b19]:

j = 0

for mood, color in zip(['Content','Stress','Lonely','Sad'],['seagreen','firebrick','gray','navy']):

ys = []

shades = []

for val in df[mood]:

if val == 0:

shades.append('red')

elif val == 1:

shades.append('orange')

elif val == 2:

shades.append('blue')

elif val == 3:

shades.append('green')

else:

shades.append('white')

ys.append(j)

if i == 0:

ax.scatter(df.index,ys,color=shades,edgecolor='gray',marker='s',s=200,zorder=10)

else:

ax.scatter(df.index,ys,color=shades,edgecolor='black',marker='s',s=200,zorder=10)

j += 1

i+= 1

# Formatting x-axis

ax.set_xlim([datetime(2020,7,6),datetime(2020,7,14,4)])

ax.xaxis.set_major_locator(mdates.DayLocator())

myFmt = mdates.DateFormatter('%m/%d')

ax.xaxis.set_major_formatter(myFmt)

# Formatting y-axis

ax.set_ylim([-0.5,3.5])

ax.set_yticks([0,1,2,3])

ax.set_yticklabels(['Content','Stress','Lonely','Sad'])

ax.legend(loc='upper center',bbox_to_anchor=(1.1,1),ncol=1,frameon=False)

plt.savefig('../reports/BuildSys2020/beiwe_mood.pdf')

plt.show()

plt.close()

# -

# Figure is decent and the iffy parts can be removed in illustrator.

| notebooks/archive/0.3.0-hef-BuildSys20-figures.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import numpy as np

vet = np.arange(50).reshape((5,10))

vet

vet.shape

vet[0:2][:1]

vet2 = vet[:4]

vet2

vet2[:] = 100

vet2

vet

vet2 = vet[:3].copy()

vet2

vet[1:4,5:7]

bol = vet > 50

bol

vet[bol]

vet3 = np.linspace(0,100,30)

vet3.shape

vet31 = vet3.reshape(3, 10)

vet31

vet31[0:2, 2]

| secao5 - Python para Analise de dados utizando Numpy/indexacao e fatiamento de arrays.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3.8.2 64-bit

# metadata:

# interpreter:

# hash: 31f2aee4e71d21fbe5cf8b01ff0e069b9275f58929596ceb00d14d90e3e16cd6

# name: Python 3.8.2 64-bit

# ---

# We will use different algorithms to create models for predicting fraudulant transactions.

# Data source: https://www.kaggle.com/mlg-ulb/creditcardfraud

# 1. Let's try XGBoost. Tutorial: https://machinelearningmastery.com/develop-first-xgboost-model-python-scikit-learn/

# Importing classes that will be used later.

from numpy import loadtxt

from xgboost import XGBClassifier

from sklearn.model_selection import train_test_split

from sklearn.metrics import accuracy_score

# +

# load data

import pandas as pd

import numpy as np

import warnings

warnings.filterwarnings('ignore')

df = pd.read_csv('train/creditcard.csv')

df.head()

# -

# Split training data.

from sklearn.model_selection import train_test_split

X = df.drop('Class',axis=1)

y = df['Class']

seed = 7

test_size = 0.33

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=test_size, random_state=seed)

# Train the model.

model = XGBClassifier()

model.fit(X_train, y_train)

# Make predictions for test data.

y_pred = model.predict(X_test)

predictions = [round(value) for value in y_pred]

# + tags=[]

# Evaluate predictions.

accuracy = accuracy_score(y_test, predictions)

print("Accuracy: %.2f%%" % (accuracy * 100.0))

# Here we show the logic of accuracy_score: it basically counts the percentage of non-diff.

diff = 0

for i in range(len(y_test)):

if y_test[i] != predictions[i]: diff += 1

print("diff: {}, non-diff rate: {}%".format(diff,100-round(diff/len(y_test)*100,2)))

# + tags=[]

# Check out confusion matrix and calculate recall and accuracy.

from sklearn.metrics import confusion_matrix

data = confusion_matrix(y_test,predictions)

recall,precision = data[1][1]/sum(data[1]),data[1][1]/(data[0][1]+data[1][1])

print('recall is {}%, precision is {}%'.format(round(recall*100,1),round(precision*100,1)))

# +

# Let's try normalize training data with StandardScalar, which makes sure the standard deviation of the data is 1 and mean is 0. Then we run the training and testing again.

from sklearn.preprocessing import StandardScaler

scaler = StandardScaler()

# Convert to numpy arrays for tensorflow.

y_train = y_train.values

y_test = y_test.values

# Transform to scaled.

X_train_scaled = scaler.fit_transform(X_train,y_train)

X_test_scaled = scaler.transform(X_test)

# -

# Train again.

model_scaled = XGBClassifier()

model_scaled.fit(X_train_scaled, y_train)

# Predict again.

y_pred_scaled = model_scaled.predict(X_test_scaled)

predictions_scaled = [round(value) for value in y_pred_scaled]

# + tags=[]

# Let's see recall and accuracy. It is the same as non-scaled version.

# It seems normalization is not needed for XGBoost: https://github.com/dmlc/xgboost/issues/2621

# Both decision trees and random forest are not sensitive to monotonic transformation: https://stats.stackexchange.com/questions/353462/what-are-the-implications-of-scaling-the-features-to-xgboost

data = confusion_matrix(y_test,predictions_scaled)

recall,precision = data[1][1]/sum(data[1]),data[1][1]/(data[0][1]+data[1][1])

print('recall is {}%, precision is {}%'.format(round(recall*100,1),round(precision*100,1)))

# + tags=[]

# Plot ROC curve.

import matplotlib.pyplot as plt

from sklearn.metrics import roc_curve, auc

fpr, tpr, _ = roc_curve(y_test, predictions)

roc_auc = auc(fpr, tpr)

#xgb.plot_importance(gbm)

#plt.show()

plt.figure()

lw = 2

plt.plot(fpr, tpr, color='darkorange',

lw=lw, label='ROC curve (area = %0.2f)' % roc_auc)

plt.plot([0, 1], [0, 1], color='navy', lw=lw, linestyle='--')

plt.xlim([-0.02, 1.0])

plt.ylim([0.0, 1.05])

plt.xlabel('False Positive Rate')

plt.ylabel('True Positive Rate')

plt.title('ROC curve')

plt.legend(loc="lower right")

plt.show()

# -

| cc_fraud.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

# import stuff

import os

import numpy as np

#import pandas as pd

from math import sqrt as sqrt

from itertools import product as product

import torch

import torch.utils.data as data

from torchvision import models

import torch.nn as nn

import torch.nn.init as init

import torch.nn.functional as F

from torch.autograd import Function

import pandas as pd

# -

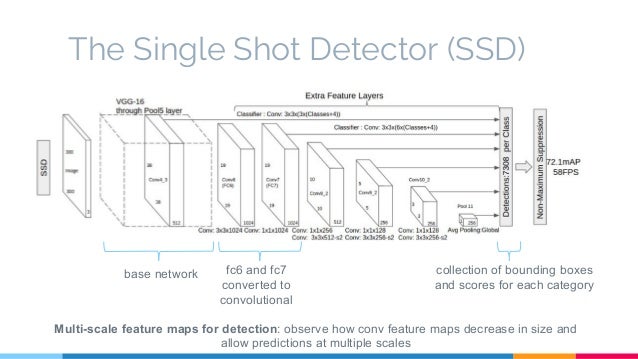

# # SSDメインモデルを構築

# VGGベースモデルを構築。

#

# TODO: Resnetベースへの改良?どこにつなげればよいか、正規化などを入れないといけないので参考文献などを読みたい。\

#

# plot vgg model

vgg = models.vgg16(pretrained=False)

print(vgg)

# +

# VGG

# 転移学習なしで学習するのか?

def make_vgg():

layers = []

in_channels = 3

# VGGのモデル構造を記入

cfg = [64, 64, "M", 128, 128, "M", 256, 256, 256, "MC", 512, 512, 512, "M", 512, 512, 512]

for v in cfg:

if v == "M":

layers += [nn.MaxPool2d(kernel_size=2, stride=2)]

elif v == "MC":

layers += [nn.MaxPool2d(kernel_size=2, stride=2, ceil_mode=True)]

else:

conv2d = nn.Conv2d(in_channels, v, kernel_size=3, padding=1)

layers += [conv2d, nn.ReLU(inplace=True)] #メモリ節約

in_channels = v

pool5 = nn.MaxPool2d(kernel_size=3, stride=1, padding=1)

conv6 = nn.Conv2d(512, 1024, kernel_size=3, padding=6, dilation=6)

conv7 = nn.Conv2d(1024, 1024, kernel_size=1)

layers += [pool5, conv6, nn.ReLU(inplace=True), conv7, nn.ReLU(inplace=True)]

return nn.ModuleList(layers)

# 動作確認

vgg_test = make_vgg()

print(vgg_test)

# +

# 小さい物体のbbox検出用のextras moduleを追加

def make_extras():

layers = []

in_channels = 1024 # vgg module outputs

# extra modeule configs

cfg = [256, 512, 128, 256, 128, 256, 128, 256]

layers += [nn.Conv2d(in_channels, cfg[0], kernel_size=1)]

layers += [nn.Conv2d(cfg[0], cfg[1], kernel_size=3, stride=2, padding=1)]

layers += [nn.Conv2d(cfg[1], cfg[2], kernel_size=1)]

layers += [nn.Conv2d(cfg[2], cfg[3], kernel_size=3, stride=2, padding=1)]

layers += [nn.Conv2d(cfg[3], cfg[4], kernel_size=1)]

layers += [nn.Conv2d(cfg[4], cfg[5], kernel_size=3)]

layers += [nn.Conv2d(cfg[5], cfg[6], kernel_size=1)]

layers += [nn.Conv2d(cfg[6], cfg[7], kernel_size=3)]

return nn.ModuleList(layers)

extras_test = make_extras()

print(extras_test)

# -

# ## locとconfに対するモジュール。

# +

# locとconfモジュールを作成

def make_loc_conf(num_classes=21, bbox_aspect_num=[4, 6, 6, 6, 4, 4]):

loc_layers = []

conf_layers = []

# VGGの中間出力に対するレイヤ

loc_layers += [nn.Conv2d(512, bbox_aspect_num[0] * 4,

kernel_size=3, padding=1)]

conf_layers += [nn.Conv2d(512, bbox_aspect_num[0] * num_classes,

kernel_size=3, padding=1)]

# VGGの最終そうに対するCNN

loc_layers += [nn.Conv2d(1024, bbox_aspect_num[1] * 4,

kernel_size=3, padding=1)]

conf_layers += [nn.Conv2d(1024, bbox_aspect_num[1] * num_classes,

kernel_size=3, padding=1)]

# source3

loc_layers += [nn.Conv2d(512, bbox_aspect_num[2] * 4,

kernel_size=3, padding=1)]

conf_layers += [nn.Conv2d(512, bbox_aspect_num[2] * num_classes,

kernel_size=3, padding=1)]

# source4

loc_layers += [nn.Conv2d(256, bbox_aspect_num[3] * 4,

kernel_size=3, padding=1)]

conf_layers += [nn.Conv2d(256, bbox_aspect_num[3] * num_classes,

kernel_size=3, padding=1)]

# source5

loc_layers += [nn.Conv2d(256, bbox_aspect_num[4] * 4,

kernel_size=3, padding=1)]

conf_layers += [nn.Conv2d(256, bbox_aspect_num[4] * num_classes,

kernel_size=3, padding=1)]

# source6

loc_layers += [nn.Conv2d(256, bbox_aspect_num[5] * 4,

kernel_size=3, padding=1)]

conf_layers += [nn.Conv2d(256, bbox_aspect_num[5] * num_classes,

kernel_size=3, padding=1)]

return nn.ModuleList(loc_layers), nn.ModuleList(conf_layers)

loc_test, conf_test = make_loc_conf()

print(loc_test)

print(conf_test)

# -

# ## L2 normの実装

# ありなしで性能はどう変化するのか?

# 自作レイヤ

class L2Norm(nn.Module):

def __init__(self, input_channels=512, scale=20):

super(L2Norm, self).__init__()

self.weight = nn.Parameter(torch.Tensor(input_channels))

self.scale = scale

self.reset_parameters()

self.eps = 1e-10

def reset_parameters(self):

init.constant_(self.weight, self.scale) # weightの値が全てscaleになる

def forward(self, x):

"""

38x38の特徴量に対し、チャネル方向の和を求めそれを元に正規化する。

また正規化したあとに係数(weight)をかける(ロスが減るように学習してくれるみたい)

"""

norm = x.pow(2).sum(dim=1, keepdim=True).sqrt()+self.eps #チャネル方向の自乗和

x = torch.div(x, norm) # 正規化

weights = self.weight.unsqueeze(0).unsqueeze(2).unsqueeze(3).expand_as(x) # 学習させるパラメータ

out = weights * x

return out

# bbox

for i, j in product(range(3), repeat=2):

print(i, j)

# +

# binding boxを出力するクラス

class DBox(object):

def __init__(self, cfg):

super(DBox, self).__init__()

self.image_size = cfg["input_size"]

# 各sourceの特徴量マップのサイズ

self.feature_maps = cfg["feature_maps"]

self.num_priors = len(cfg["feature_maps"]) # number of sources

self.steps = cfg["steps"] #各boxのピクセルサイズ

self.min_sizes = cfg["min_sizes"] # 小さい正方形のサイズ

self.max_sizes = cfg["max_sizes"] # 大きい正方形のサイズ

self.aspect_ratios = cfg["aspect_ratios"]

def make_dbox_list(self):

mean = []

# feature maps = 38, 19, 10, 5, 3, 1

for k, f in enumerate(self.feature_maps):

for i, j in product(range(f), repeat=2):

# fxf画素の組み合わせを生成

f_k = self.image_size / self.steps[k]

# 300 / steps: 8, 16, 32, 64, 100, 300

# center cordinates normalized 0~1

cx = (j + 0.5) / f_k

cy = (i + 0.5) / f_k

# small bbox [cx, cy, w, h]

s_k = self.min_sizes[k] / self.image_size

mean += [cx, cy, s_k, s_k]

# larger bbox

s_k_prime = sqrt(s_k * (self.max_sizes[k]/self.image_size))

mean += [cx, cy, s_k_prime, s_k_prime]

# その他のアスペクト比のdefbox

for ar in self.aspect_ratios[k]:

mean += [cx, cy, s_k*sqrt(ar), s_k/sqrt(ar)]

mean += [cx, cy, s_k/sqrt(ar), s_k*sqrt(ar)]

# convert the list to tensor

output = torch.Tensor(mean).view(-1, 4)

# はみ出すのを防ぐため、大きさを最小0, 最大1にする

output.clamp_(max=1, min=0)

return output

# +

# test boxes

# SSD300の設定

ssd_cfg = {

'num_classes': 21, # 背景クラスを含めた合計クラス数

'input_size': 300, # 画像の入力サイズ

'bbox_aspect_num': [4, 6, 6, 6, 4, 4], # 出力するDBoxのアスペクト比の種類

'feature_maps': [38, 19, 10, 5, 3, 1], # 各sourceの画像サイズ

'steps': [8, 16, 32, 64, 100, 300], # DBOXの大きさを決める

'min_sizes': [30, 60, 111, 162, 213, 264], # DBOXの大きさを決める

'max_sizes': [60, 111, 162, 213, 264, 315], # DBOXの大きさを決める

'aspect_ratios': [[2], [2, 3], [2, 3], [2, 3], [2], [2]],

}

dbox = DBox(ssd_cfg)

dbox_list = dbox.make_dbox_list()

pd.DataFrame(dbox_list.numpy())

# -

# # SSDクラスを実装する

# +

class SSD(nn.Module):

def __init__(self, phase, cfg):

super(SSD, self).__init__()

self.phase = phase

self.num_classes = cfg["num_classes"]

# call SSD network

self.vgg = make_vgg()

self.extras = make_extras()

self.L2Norm = L2Norm()

self.loc, self.conf = make_loc_conf(self.num_classes, cfg["bbox_aspect_num"])

# make Dbox

dbox = DBox(cfg)

self.dbox_list = dbox.make_dbox_list()

# use Detect if inference

if phase == "inference":

self.detect = Detect()

# check operation

ssd_test = SSD(phase="train", cfg=ssd_cfg)

print(ssd_test)

# +

def decode(loc, dbox_list):

"""

DBox(cx,cy,w,h)から回帰情報のΔを使い、

BBox(xmin,ymin,xmax,ymax)方式に変換する。

loc: [8732, 4] [Δcx, Δcy, Δw, Δheight]

SSDのオフセットΔ情報

dbox_list: (cx,cy,w,h)

"""

boxes = torch.cat((

dbox_list[:, :2] + loc[:, :2] * 0.1 * dbox_list[:, :2],

dbox_list[:, 2:] * torch.exp(loc[:, 2:] * 0.2)), dim=1)

# convert boxes to (xmin,ymin,xmax,ymax)

boxes[:, :2] -= boxes[:, 2:] / 2

boxes[:, 2:] += boxes[:, :2]

return boxes

# test

dbox = DBox(ssd_cfg)

dbox_list = dbox.make_dbox_list()

print(dbox_list.size())

loc = torch.ones(8732, 4)

loc[0, :] = torch.tensor([-10, 0, 1, 1])

print(loc.size())

dbox_process = decode(loc, dbox_list)

pd.DataFrame(dbox_process.numpy())

# -

def nms(boxes, scores, overlap=0.45, top_k=200):

"""

overlap以上のディテクションに関して信頼度が高い方をキープする。

キープしないものは消去。

物体のクラス毎にnmsは実効する。

------------------

inputs:

scores: bboxの信頼度

bbox: bboxの座標情報

------------------

出力:

keep:

"""

# returnを定義

count = 0

keep = scores.new(scores.size(0)).zero_().long()

print(keep.size())

# keep: 確信度thresholdを超えたbboxの数

# 各bboxの面積を計算

x1 = boxes[:, 0]

x2 = boxes[:, 2]

y1 = boxes[:, 1]

y2 = boxes[:, 3]

area = torch.mul(x2 - x1, y2 - y1)

# copy boxes

tmp_x1 = boxes.new()

tmp_y1 = boxes.new()

tmp_x2 = boxes.new()

tmp_y2 = boxes.new()

tmp_w = boxes.new()

tmp_h = boxes.new()

# sort scores 高い信頼度のものを上に。

v, idx = scores.sort(0)

# topk個の箱のみ取り出す

idx = idx[-top_k:]

# indexの要素数が0でない限りループする。

while idx.numel() > 0:

i = idx[-1] # 一番高い信頼度のboxを指定

# keep の最後にconf最大のindexを格納

keep[count] = i

count += 1

# 最後の一つになったらbreak

if idx.size(0) == 1:

break

# indexをへらす

idx = idx[:-1]

# ------------------------------------

# このboxとiouの大きいboxを消していく。

# ------------------------------------

# torch.index_select(input, dim, index, out=None) → Tensor

torch.index_select(x1, 0, idx, out=tmp_x1)

torch.index_select(y1, 0, idx, out=tmp_y1)

torch.index_select(x2, 0, idx, out=tmp_x2)

torch.index_select(y2, 0, idx, out=tmp_y2)

# target boxの最小、最大にclamp

tmp_x1 = torch.clamp(tmp_x1, min=x1[i])

tmp_y1 = torch.clamp(tmp_y1, min=y1[i])

tmp_x2 = torch.clamp(tmp_x2, min=x2[i])

tmp_y2 = torch.clamp(tmp_y2, min=y2[i])

# wとhのテンソルサイズをindex一つ減らしたものにする

tmp_w.resize_as_(temp_x2)

tmp_h.resize_as_(temp_y2)

# clampした状態の高さ、幅を求める

tmp_w = tmp_x2 - tmp_x1

tmp_h = tmp_y2 - tmp_y1

# 幅や高さが負になっているものは0に

tmp_w = torch.clamp(tmp_w, min=0.0)

tmp_h = torch.clamp(tmp_h, min=0.0)

# clamp時の面積を導出

inter = tmp_w * tmp_h # オーバラップしている面積

# IoU の計算

# intersect=overlap

# IoU = intersect部分 / area(a) + area(b) - intersect

rem_areas = torch.index_select(area, 0, idx) # bbox元の面積

union = rem_areas + area[i] - inter

IoU = inter / union

# IoUがしきい値より大きいものは削除

idx = idx[IoU.le(overlap)] # leはless than or eqal to

return keep, count

# # 推論用のクラスDetectの実装

# +

class Detect(Function):

def __init__(self, conf_thresh=0.01, top_k=200, nms_thresh=0.45):

self.softmax = nn.Softmax(dim=-1)

self.conf_thresh = conf_thresh

self.top_k = top_k

self.nms_thresh = nms_thresh

def forward(self, loc_data, conf_data, dbox_list):

"""

SSDの推論結果を受け取り、bboxのデコードとnms処理を行う。

"""

#

num_batch = loc_data.size(0)

num_dbox = loc_data.size(1)

num_classes = conf_data.size(2)

# confをsoftmaxを使って正規化

conf_data = self.softmax(conf_data)

# 出力の方を作成する

# [batch, class, topk, 5]

output = torch.zeros(num_batch, num_classes, self.top_k, 5)

# conf_dataを[batch, 8732, classes]から[batch, classes, 8732]に変更

conf_preds = conf_data.tranpose(2, 1)

# batch毎にループ

for i in range(num_batch):

# 1. LocとDBoxからBBox情報に変換

decoded_boxes = decode(loc_data, dbox_list)

# confのコピー

conf_scores = conf_preds[i].clone()

# classごとにデコードとNMSを回す。

for cl in range(1, num_classes): # 背景は飛ばす。

# 2. 敷地を超えた結果を取り出す

c_mask = conf_scores[cl].gt(self.conf_thresh) # gt=greater than

# index maskを作成した。

# threshを超えると1, 超えなかったら0に。

# c_mask = [8732]

scores = conf_scores[cl][c_mask]

if scores.nelement() == 0:

continue

# 箱がなかったら終わり。

# cmaskをboxに適応できるようにサイズ変更

l_mask = c_mask.unsqueeze(1).expand_as(decoded_boxes)

# l_mask.size = [8732, 4]

boxes = decoded_boxes[l_mask].view(-1, 4) # reshape to [boxnum, 4]

# 3. NMSを適応する

ids, count = nms(boxes, scores, self.nms_thresh, self.top_k)

# torch.cat(tensors, dim=0, out=None) → Tensor

output[i, cl, :count] = torch.cat((scores[ids[:count]].unsqueeze(1), boxes[ids[:count]]),

1)

return output # torch.size([batch, 21, 200, 5])

# -

class SSD(nn.Module):

def __init__(self, phase, cfg):

super(SSD, self).__init__()

self.phase = phase

self.num_classes = cfg["num_classes"]

# call SSD network

self.vgg = make_vgg()

self.extras = make_extras()

self.L2Norm = L2Norm()

self.loc, self.conf = make_loc_conf(self.num_classes, cfg["bbox_aspect_num"])

# make Dbox

dbox = DBox(cfg)

self.dbox_list = dbox.make_dbox_list()

# use Detect if inference

if phase == "inference":

self.detect = Detect()

def forward(self, x):

sources = list()

loc = list()

conf = list()

# VGGのconv4_3まで計算

for k in range(23):

x = self.vgg[k](x)

# conv4_3の出力をL2Normに入力。source1をsourceに追加

source1 = self.L2Norm(x)

sources.append(source1)

# VGGを最後まで計算しsource2を取得

for k in range(23, len(self.vgg)):

x = self.vgg[k](x)

sources.append(x)

# extra層の計算を行う。

# source3-6に結果を格納。

for k, v in enumerate(self.extras):

x = F.relu(v(x), inplace = True)

if k % 2 == 1:

sources.append(x)

# source 1-6にそれぞれ対応するconvを適応しconfとlocを得る。

for (x, l, c) in zip(sources, self.loc, self.conf):

# Permuteは要素の順番を入れ替え

loc.append(l(x).permute(0, 2, 3, 1).contiguous())

conf.append(c(x).permute(0, 2, 3, 1).contiguous())

# convの出力は[batch, 4*anker, fh, fw]なので整形しなければならない。

# まず[batch, fh, fw, anker]に整形

# locとconfの形を変形

# locのサイズは、torch.Size([batch_num, 34928])

# confのサイズはtorch.Size([batch_num, 183372])になる

loc = torch.cat([o.view(o.size(0), -1) for o in loc], 1)

conf = torch.cat([o.view(o.size(0), -1) for o in conf], 1)

# さらにlocとconfの形を整える

# locのサイズは、torch.Size([batch_num, 8732, 4])

# confのサイズは、torch.Size([batch_num, 8732, 21])

loc = loc.view(loc.size(0), -1, 4)

conf = conf.view(conf.size(0), -1, self.num_classes)

# これで後段の処理につっこめるかたちになる。

output = (loc, conf, self.dbox_list)

if self.phase == "inference":

# Detectのforward

return self.detect(output[0], output[1], output[2])

else:

return output

| utils/ssd.ipynb |

/ ---

/ jupyter:

/ jupytext:

/ text_representation:

/ extension: .q

/ format_name: light

/ format_version: '1.5'

/ jupytext_version: 1.14.4

/ ---

/ + [markdown] cell_id="9703282e-b28b-4d15-8c0d-23b9e7bbd784" deepnote_cell_type="text-cell-h1" is_collapsed=false tags=[]

/ # Data exploration

/ + [markdown] cell_id="a7577f14-d78c-4fca-9a53-ea0a0c3e4e58" deepnote_cell_type="markdown" tags=[]

/ In this notebook we're analyzing the cleaned dataset as a first step in the project.

/

/ Here we'll plot the data as well as find summary statistics.

/ + cell_id="17ee1ebd-6222-4944-89ee-704dde66ee0c" deepnote_cell_type="code" deepnote_to_be_reexecuted=false execution_millis=3280 execution_start=1644074187803 source_hash="1643160e" tags=[]

#libs

import pandas as pd

import matplotlib.pyplot as plt

import seaborn as sns

import numpy as np

import os

/ + [markdown] cell_id="de352d00-9bde-4115-9070-0644932caccf" deepnote_cell_type="markdown" tags=[]

/ Now, let's import our cleaned dataset. Remember that, because of raw data size, an auxiliar directory containing cleaned data was created.

/

/ ```

/ data/processed/cleaned_dataset.csv -> This won't be available on GitHub, only on Deepnote

/

/ data_sent_github/cleaned_dataset.csv -> This will be available on both GitHub and Deepnote

/ ```

/

/ For your convenience, I'll be working with the dataset which will be stored on GitHub.

/ + cell_id="5eae51e9-3f42-4df0-9c87-f9b5f4706345" deepnote_cell_type="code" deepnote_to_be_reexecuted=false execution_millis=5 execution_start=1644074195770 source_hash="add48eb4" tags=[]

DATA_CLEANED_DIR = os.path.join(os.getcwd(), os.pardir, 'data_sent_github')

print(DATA_CLEANED_DIR)

/ + cell_id="64d5004a-06e6-4fcd-919b-6cd903c6ede0" deepnote_cell_type="code" deepnote_to_be_reexecuted=false execution_millis=1267 execution_start=1644074199592 source_hash="e91f393c" tags=[]

df = pd.read_csv(DATA_CLEANED_DIR+'/cleaned_dataset.csv', index_col=[0])

/ + cell_id="a4081ae5-09f4-4737-96d0-000302cfeaae" deepnote_cell_type="code" deepnote_output_heights=[21.1875] deepnote_to_be_reexecuted=false execution_millis=40 execution_start=1644074203457 source_hash="f2eb8fb8" tags=[]

#Let's make sure there're no duplicates

df.drop_duplicates(subset=['CustomerId'], keep='first', inplace=True)

/ + cell_id="231b6516-fa24-4695-8269-36fae52eab0e" deepnote_cell_type="code" deepnote_output_heights=[21.1875] deepnote_to_be_reexecuted=false execution_millis=118 execution_start=1644022412198 source_hash="c085b6ba" tags=[]

df.head()

/ + cell_id="b4392250-f3ab-4db1-990d-2a635629be21" deepnote_cell_type="code" deepnote_output_heights=[520] deepnote_to_be_reexecuted=false execution_millis=8 execution_start=1644022421434 source_hash="52430027" tags=[]

df.dtypes

/ + cell_id="7f16af2c-9626-4ee8-a9a2-4b23fe8e528c" deepnote_cell_type="code" deepnote_output_heights=[232.3125] deepnote_to_be_reexecuted=false execution_millis=82 execution_start=1644074211341 source_hash="817d440e" tags=[]

#Transforming datetimes

df['application_date'] = pd.to_datetime(df['application_date'])

df['exit_date'] = pd.to_datetime(df['exit_date'])

df['birth_date'] = pd.to_datetime(df['birth_date'])

/ + cell_id="3a513dc4-a3b5-49e0-892c-045479b2ad74" deepnote_cell_type="code" deepnote_output_heights=[232] deepnote_to_be_reexecuted=false execution_millis=9 execution_start=1644075060525 source_hash="6159abf9" tags=[]