code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

#3.1 Linear Regression

#3.1.2 Vectorization for Speed

#比較 使用for迴圈一個一個加 及 使用向量加法 的時間

import math

import time

import numpy as np

import torch

from d2l import torch as d2l

n = 10000

a = torch.ones(n)

b = torch.ones(n)

# -

class Timer:

"""Record multiple running times."""

def __init__(self):

self.times = []

self.start()

def start(self):

"""Start the timer."""

self.tik = time.time()

def stop(self):

"""Stop the timer and record the time in a list."""

self.times.append(time.time() - self.tik)

return self.times[-1]

def avg(self):

"""Return the average time."""

return sum(self.times) / len(self.times)

def sum(self):

"""Return the sum of time."""

return sum(self.times)

def cumsum(self):

"""Return the accumulated time."""

return np.array(self.times).cumsum().tolist()

# +

c = torch.zeros(n)

timer = Timer()

#用for迴圈一個一個加

for i in range(n):

c[i] = a[i] + b[i]

f'{timer.stop():.5f} sec'

# +

timer.start()

#用向量加法全部一起加

d = a + b

f'{timer.stop():.5f} sec'

# +

#3.1.3 The Normal Distribution and Squared Loss

def normal(x, mu, sigma): #正態分佈function

p = 1 / math.sqrt(2 * math.pi * sigma**2)

return p * np.exp(-0.5 / sigma**2 * (x - mu)**2)

# +

# Use numpy again for visualization

x = np.arange(-7, 7, 0.01)

# Mean and standard deviation pairs

params = [(0, 1), (0, 2), (3, 1)]

d2l.plot(x, [normal(x, mu, sigma) for mu, sigma in params], xlabel='x',

ylabel='p(x)', figsize=(4.5, 2.5),

legend=[f'mean {mu}, std {sigma}' for mu, sigma in params])

# -

| coookie89/Week1/ch3/3.1/example.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] button=false new_sheet=false run_control={"read_only": false}

# # Asserting Expectations

#

# In the previous chapters on [tracing](Tracer.ipynb) and [interactive debugging](Debugger.ipynb), we have seen how to observe executions. By checking our observations against our expectations, we can find out when and how the program state is faulty. So far, we have assumed that this check would be done by _humans_ – that is, us. However, having this check done by a _computer_, for instance as part of the execution, is infinitely more rigorous and efficient. In this chapter, we introduce techniques to _specify_ our expectations and to check them at runtime, enabling us to detect faults _right as they occur_.

# -

from bookutils import YouTubeVideo

YouTubeVideo("9mI9sbKFkwU")

# + [markdown] button=false new_sheet=false run_control={"read_only": false}

# **Prerequisites**

#

# * You should have read the [chapter on tracing executions](Tracer.ipynb).

# + button=false new_sheet=false run_control={"read_only": false} slideshow={"slide_type": "skip"}

import bookutils

# -

from bookutils import quiz

import Tracer

# + [markdown] slideshow={"slide_type": "skip"}

# ## Synopsis

# <!-- Automatically generated. Do not edit. -->

#

# To [use the code provided in this chapter](Importing.ipynb), write

#

# ```python

# >>> from debuggingbook.Assertions import <identifier>

# ```

#

# and then make use of the following features.

#

#

# This chapter discusses _assertions_ to define _assumptions_ on function inputs and results:

#

# ```python

# >>> def my_square_root(x): # type: ignore

# >>> assert x >= 0

# >>> y = square_root(x)

# >>> assert math.isclose(y * y, x)

# >>> return y

# ```

# Notably, assertions detect _violations_ of these assumptions at runtime:

#

# ```python

# >>> with ExpectError():

# >>> y = my_square_root(-1)

# Traceback (most recent call last):

# File "<ipython-input-158-b63177b4c2f7>", line 2, in <module>

# y = my_square_root(-1)

# File "<ipython-input-157-dc1d0082e740>", line 2, in my_square_root

# assert x >= 0

# AssertionError (expected)

#

# ```

# _System assertions_ help to detect invalid memory operations.

#

# ```python

# >>> managed_mem = ManagedMemory()

# >>> managed_mem

# ```

# |Address|<span style="color: blue">0</span>|<span style="color: blue">1</span>|<span style="color: lightgrey">2</span>|<span style="color: lightgrey">3</span>|<span style="color: lightgrey">4</span>|<span style="color: lightgrey">5</span>|<span style="color: lightgrey">6</span>|<span style="color: lightgrey">7</span>|<span style="color: lightgrey">8</span>|<span style="color: lightgrey">9</span>|

# |:---|:---|:---|:---|:---|:---|:---|:---|:---|:---|:---|

# |Allocated| | | | | | | | | | |

# |Initialized| | | | | | | | | | |

# |Content|-1|0| | | | | | | | |

#

# ```

# >>> with ExpectError():

# >>> x = managed_mem[2]

# Traceback (most recent call last):

# File "<ipython-input-160-89a206319ebb>", line 2, in <module>

# x = managed_mem[2]

# File "<ipython-input-97-d25e50073b38>", line 3, in __getitem__

# return self.read(address)

# File "<ipython-input-138-7b46abe4a8e1>", line 10, in read

# "Reading from unallocated memory"

# AssertionError: Reading from unallocated memory (expected)

#

# ```

#

# + [markdown] button=false new_sheet=true run_control={"read_only": false}

# ## Introducing Assertions

#

# [Tracers](Tracer.ipynb) and [Interactive Debuggers](Debugger.ipynb) are very flexible tools that allow you to observe precisely what happens during a program execution. It is still _you_, however, who has to check program states and traces against your expectations. There is nothing wrong with that – except that checking hundreds of statements or variables can quickly become a pretty boring and tedious task.

#

# Processing and checking large amounts of data is actually precisely what _computers were invented for_. Hence, we should aim to _delegate such checking tasks to our computers_ as much as we can. This automates another essential part of debugging – maybe even _the_ most essential part.

# -

# ### Assertions

#

# The standard tool for having the computer check specific conditions at runtime is called an _assertion_. An assertion takes the form

#

# ```python

# assert condition

# ```

#

# and states that, at runtime, the computer should check that `condition` holds, e.g. evaluates to True. If the condition holds, then nothing happens:

assert True

# If the condition evaluates to _False_, however, then the assertion _fails_, indicating an internal error.

from ExpectError import ExpectError

with ExpectError():

assert False

# A common usage for assertions is for _testing_. For instance, we can test a square root function as

def test_square_root() -> None:

assert square_root(4) == 2

assert square_root(9) == 3

...

# and `test_square_root()` will fail if `square_root()` returns a wrong value.

# Assertions are available in all programming languages. You can even go and implement assertions yourself:

def my_own_assert(cond: bool) -> None:

if not cond:

raise AssertionError

# ... and get (almost) the same functionality:

with ExpectError():

my_own_assert(2 + 2 == 5)

# ### Assertion Diagnostics

# In most languages, _built-in assertions_ offer a bit more functionality than what can be obtained with self-defined functions. Most notably, built-in assertions

#

# * frequently tell _which condition_ failed (`2 + 2 == 5`)

# * frequently tell _where_ the assertion failed (`line 2`), and

# * are _optional_ – that is, they can be turned off to save computation time.

# C and C++, for instance, provide an `assert()` function that does all this:

# ignore

open('testassert.c', 'w').write(r'''

#include <stdio.h>

#include "assert.h"

int main(int argc, char *argv[]) {

assert(2 + 2 == 5);

printf("Foo\n");

}

''');

# ignore

from bookutils import print_content

print_content(open('testassert.c').read(), '.h')

# If we compile this function and execute it, the assertion (expectedly) fails:

# !cc -g -o testassert testassert.c

# !./testassert

# How would the C `assert()` function be able to report the condition and the current location? In fact, `assert()` is commonly implemented as a _macro_ that besides checking the condition, also turns it into a _string_ for a potential error message. Additional macros such as `__FILE__` and `__LINE__` expand into the current location and line, which can then all be used in the assertion error message.

# A very simple definition of `assert()` that provides the above diagnostics looks like this:

# ignore

open('assert.h', 'w').write(r'''

#include <stdio.h>

#include <stdlib.h>

#ifndef NDEBUG

#define assert(cond) \

if (!(cond)) { \

fprintf(stderr, "Assertion failed: %s, function %s, file %s, line %d", \

#cond, __func__, __FILE__, __LINE__); \

exit(1); \

}

#else

#define assert(cond) ((void) 0)

#endif

''');

print_content(open('assert.h').read(), '.h')

# (If you think that this is cryptic, you should have a look at an [_actual_ `<assert.h>` header file](https://github.com/bminor/glibc/blob/master/assert/assert.h).)

# This header file reveals another important property of assertions – they can be _turned off_. In C and C++, defining the preprocessor variable `NDEBUG` ("no debug") turns off assertions, replacing them with a statement that does nothing. The `NDEBUG` variable can be set during compilation:

# !cc -DNDEBUG -g -o testassert testassert.c

# And, as you can see, the assertion has no effect anymore:

# !./testassert

# In Python, assertions can also be turned off, by invoking the `python` interpreter with the `-O` ("optimize") flag:

# !python -c 'assert 2 + 2 == 5; print("Foo")'

# !python -O -c 'assert 2 + 2 == 5; print("Foo")'

# In comparison, which language wins in the amount of assertion diagnostics? Have a look at the information Python provides. If, after defining `fun()` as

def fun() -> None:

assert 2 + 2 == 5

quiz("If we invoke `fun()` and the assertion fails,"

" which information do we get?",

[

"The failing condition (`2 + 2 == 5`)",

"The location of the assertion in the program",

"The list of callers",

"All of the above"

], '123456789 % 5')

# Indeed, a failed assertion (like any exception in Python) provides us with lots of debugging information, even including the source code:

with ExpectError():

fun()

# + [markdown] button=false new_sheet=false run_control={"read_only": false}

# ## Checking Preconditions

#

# Assertions show their true power when they are not used in a test, but used in a program instead, because that is when they can check not only _one_ run, but actually _all_ runs.

# -

# The classic example for the use of assertions is a _square root_ program, implementing the function $\sqrt{x}$. (Let's assume for a moment that the environment does not already have one.)

# We want to ensure that `square_root()` is always called with correct arguments. For this purpose, we set up an assertion:

def square_root(x): # type: ignore

assert x >= 0

... # compute square root in y

# This assertion is called the _precondition_. A precondition is checked at the beginning of a function. It checks whether all the conditions for using the function are met.

# So, if we call `square_root()` with an bad argument, we will get an exception. This holds for _any_ call, ever.

with ExpectError():

square_root(-1)

# For a dynamically typed language like Python, an assertion could actually also check that the argument has the correct type. For `square_root()`, we could ensure that `x` actually has a numeric type:

def square_root(x): # type: ignore

assert isinstance(x, (int, float))

assert x >= 0

... # compute square root in y

# And while calls with the correct types just work...

square_root(4)

square_root(4.0)

# ... a call with an illegal type will raise a revealing diagnostic:

with ExpectError():

square_root('4')

quiz("If we did not check for the type of `x`, "

"would the assertion `x >= 0` still catch a bad call?",

[

"Yes, since `>=` is only defined between numbers",

"No, because an empty list or string would evaluate to 0"

], '0b10 - 0b01')

# Fortunately (for us Python users), the assertion `x >= 0` would already catch a number of invalid types, because (in contrast to, say, JavaScript), Python has no implicit conversion of strings or structures to integers:

with ExpectError():

'4' >= 0 # type: ignore

# + [markdown] button=false new_sheet=false run_control={"read_only": false}

# ## Checking Results

#

# While a precondition ensures that the _argument_ to a function is correct, a _postcondition_ checks the _result_ of this very function (assuming the precondition held in the first place). For our `square_root()` function, we can check that the result $y = \sqrt{x}$ is correct by checking that $y^2 = x$ holds:

# -

def square_root(x): # type: ignore

assert x >= 0

... # compute square root in y

assert y * y == x

# In practice, we might encounter problems with this assertion. What might these be?

quiz("Why could the assertion fail despite `square_root()` being correct?",

[

"We need to compute `y ** 2`, not `y * y`",

"We may encounter rounding errors",

"The value of `x` may have changed during computation",

"The interpreter / compiler may be buggy"

], '0b110011 - 0o61')

# Technically speaking, there could be many things that _also_ could cause the assertion to fail (cosmic radiation, operating system bugs, secret service bugs, anything) – but the by far most important reason is indeed rounding errors. Here's a simple example, using the Python built-in square root function:

import math

math.sqrt(2.0) * math.sqrt(2.0)

math.sqrt(2.0) * math.sqrt(2.0) == 2.0

# If you want to compare two floating-point values, you need to provide an _epsilon value_ denoting the margin of error.

def square_root(x): # type: ignore

assert x >= 0

... # compute square root in y

epsilon = 0.000001

assert abs(y * y - x) < epsilon

# In Python, the function `math.isclose(x, y)` also does the job, by default ensuring that the two values are the same within about 9 decimal digits:

math.isclose(math.sqrt(2.0) * math.sqrt(2.0), 2.0)

# So let's use `math.isclose()` for our revised postcondition:

def square_root(x): # type: ignore

assert x >= 0

... # compute square root in y

assert math.isclose(y * y, x)

# Let us try out this postcondition by using an actual implementation. The [Newton–Raphson method](https://en.wikipedia.org/wiki/Newton%27s_method) is an efficient way to compute square roots:

def square_root(x): # type: ignore

assert x >= 0 # precondition

approx = None

guess = x / 2

while approx != guess:

approx = guess

guess = (approx + x / approx) / 2

assert math.isclose(approx * approx, x)

return approx

# Apparently, this implementation does the job:

square_root(4.0)

# However, it is not just this call that produces the correct result – _all_ calls will produce the correct result. (If the postcondition assertion does not fail, that is.) So, a call like

square_root(12345.0)

# does not require us to _manually_ check the result – the postcondition assertion already has done that for us, and will continue to do so forever.

# ### Assertions and Tests

#

# Having assertions right in the code gives us an easy means to _test_ it – if we can feed sufficiently many inputs into the code without the postcondition ever failing, we can increase our confidence. Let us try this out with our `square_root()` function:

for x in range(1, 10000):

y = square_root(x)

# Note again that we do not have to check the value of `y` – the `square_root()` postcondition already did that for us.

# Instead of enumerating input values, we could also use random (non-negative) numbers; even totally random numbers could work if we filter out those tests where the precondition already fails. If you are interested in such _test generation techniques_, the [Fuzzing Book](fuzzingbook.org) is a great reference for you.

# Modern program verification tools even can _prove_ that your program will always meet its assertions. But for all this, you need to have _explicit_ and _formal_ assertions in the first place.

# For those interested in testing and verification, here is a quiz for you:

quiz("Is there a value for x that satisfies the precondition, "

"but fails the postcondition?",

[

"Yes",

"No"

], 'int("Y" in "Yes")')

# This is indeed something a test generator or program verifier might be able to find with zero effort.

# ### Partial Checks

#

# In the case of `square_root()`, our postcondition is _total_ – if it passes, then the result is correct (within the `epsilon` boundaries, that is). In practice, however, it is not always easy to provide such a total check. As an example, consider our `remove_html_markup()` function from the [Introduction to Debugging](Intro_Debugging.ipynb):

def remove_html_markup(s): # type: ignore

tag = False

quote = False

out = ""

for c in s:

if c == '<' and not quote:

tag = True

elif c == '>' and not quote:

tag = False

elif c == '"' or c == "'" and tag:

quote = not quote

elif not tag:

out = out + c

return out

remove_html_markup("I am a text with <strong>HTML markup</strong>")

# The precondition for `remove_html_markup()` is trivial – it accepts any string. (Strictly speaking, a precondition `assert isinstance(s, str)` could prevent it from being called with some other collection such as a list.)

# The challenge, however, is the _postcondition_. How do we check that `remove_html_markup()` produces the correct result?

#

# * We could check it against some other implementation that removes HTML markup – but if we already do have such a "golden" implementation, why bother implementing it again?

#

# * After a change, we could also check it against some earlier version to prevent _regression_ – that is, losing functionality that was there before. But how would we know the earlier version was correct? (And if it was, why change it?)

# If we do not aim for ensuring full correctness, our postcondition can also check for _partial properties_. For instance, a postcondition for `remove_html_markup()` may simply ensure that the result no longer contains any markup:

def remove_html_markup(s): # type: ignore

tag = False

quote = False

out = ""

for c in s:

if c == '<' and not quote:

tag = True

elif c == '>' and not quote:

tag = False

elif c == '"' or c == "'" and tag:

quote = not quote

elif not tag:

out = out + c

# postcondition

assert '<' not in out and '>' not in out

return out

# Besides doing a good job at checking results, the postcondition also does a good job in documenting what `remove_html_markup()` actually does.

quiz("Which of these inputs causes the assertion to fail?",

[

'`<foo>bar</foo>`',

'`"foo"`',

'`>foo<`',

'`"x > y"`'

], '1 + 1 -(-1) + (1 * -1) + 1 ** (1 - 1) + 1')

# Indeed. Our (partial) assertion does _not_ detect this error:

remove_html_markup('"foo"')

# But it detects this one:

with ExpectError():

remove_html_markup('"x > y"')

# ### Assertions and Documentation

#

# In contrast to "standard" documentation – "`square_root()` expects a non-negative number `x`; its result is $\sqrt{x}$" –, assertions have a big advantage: They are _formal_ – and thus have an unambiguous semantics. Notably, we can understand what a function does _uniquely by reading its pre- and postconditions_. Here is an example:

def some_obscure_function(x: int, y: int, z: int) -> int:

result = int(...) # type: ignore

assert x == y == z or result > min(x, y, z)

assert x == y == z or result < max(x, y, z)

return result

quiz("What does this function do?",

[

"It returns the minimum value out of `x`, `y`, `z`",

"It returns the middle value out of `x`, `y`, `z`",

"It returns the maximum value out of `x`, `y`, `z`",

], 'int(0.5 ** math.cos(math.pi))', globals())

# Indeed, this would be a useful (and bug-revaling!) postcondition for one of our showcase functions in the [chapter on statistical debugging](StatisticalDebugger.ipynb).

# ### Using Assertions to Trivially Locate Defects

#

# The final benefit of assertions, and possibly even the most important in the context of this book, is _how much assertions help_ with locating defects.

# Indeed, with proper assertions, it is almost trivial to locate the one function that is responsible for a failure.

# Consider the following situation. Assume I have

# * a function `f()` whose precondition is satisfied, calling

# * a function `g()` whose precondition is violated and raises an exception.

quiz("Which function is faulty here?",

[

"`g()` because it raises an exception",

"`f()`"

" because it violates the precondition of `g()`",

"Both `f()` and `g()`"

" because they are incompatible",

"None of the above"

], 'math.factorial(int(math.tau / math.pi))', globals())

# The rule is very simple: If some function `func()` is called with its preconditions satisfied, and the postcondition of `func()` fail, then the fault in the program state must have originated at some point between these two events. Assuming that all functions called by `func()` also are correct (because their postconditions held), the defect _can only be in the code of `func()`._

# What pre- and postconditions imply is actually often called a _contract_ between caller and callee:

#

# * The caller promises to satisfy the _precondition_ of the callee,

# whereas

# * the callee promises to satisfy its own _postcondition_, delivering a correct result.

#

# In the above setting, `f()` is the caller, and `g()` is the callee; but as `f()` violates the precondition of `g()`, it has not kept its promises. Hence, `f()` violates the contract and is at fault. `f()` thus needs to be fixed.

# + [markdown] button=false new_sheet=false run_control={"read_only": false}

# ## Checking Data Structures

#

# Let us get back to debugging. In debugging, assertions serve two purposes:

#

# * They immediately detect bugs (if they fail)

# * They immediately rule out _specific parts of code and state_ (if they pass)

#

# This latter part is particularly interesting, as it allows us to focus our search on the lesser checked aspects of code and state.

#

# When we say "code _and_ state", what do we mean? Actually, assertions can not quickly check several executions of a function, but also _large amounts of data_, detecting faults in data _at the moment they are introduced_.

# -

# ### Times and Time Bombs

# Let us illustrate this by an example. Let's assume we want a `Time` class that represents the time of day. Its constructor takes the current time using hours, minutes, and seconds. (Note that this is a deliberately simple example – real-world classes for representing time are way more complex.)

class Time:

def __init__(self, hours: int = 0, minutes: int = 0, seconds: int = 0) -> None:

self._hours = hours

self._minutes = minutes

self._seconds = seconds

# To access the individual elements, we introduce a few getters:

class Time(Time):

def hours(self) -> int:

return self._hours

def minutes(self) -> int:

return self._minutes

def seconds(self) -> int:

return self._seconds

# We allow to print out time, using the [ISO 8601 format](https://en.wikipedia.org/wiki/ISO_8601):

class Time(Time):

def __repr__(self) -> str:

return f"{self.hours():02}:{self.minutes():02}:{self.seconds():02}"

# Three minutes to midnight can thus be represented as

t = Time(23, 57, 0)

t

# Unfortunately, there's nothing in our `Time` class that prevents blatant misuse. We can easily set up a time with negative numbers, for instance:

t = Time(-1, 0, 0)

t

# Such a thing _may_ have some semantics (relative time, maybe?), but it's not exactly conforming to ISO format.

# Even worse, we can even _construct_ a `Time` object with strings as numbers.

t = Time("High noon") # type: ignore

# and this will raise an error only at the moment we try to print it:

with ExpectError():

print(t)

# Note how cryptic this error message must be for the poor soul who has to debug this: Where on earth do we have an `'=' alignment` in our code? The fact that the error comes from an _invalid value_ is totally lost.

# In fact, what we have here is a _time bomb_ – a fault in the program state that can sleep for ages until someone steps on it. These are hard to debug, because one has to figure out when the time bomb was set – which can be thousands or millions of lines earlier in the program. Since in the absence of type checking, _any_ assignment to a `Time` object could be the culprit – so good luck with the search.

# This is again where assertions save the day. What you need is an _assertion that checks whether the data is correct_. For instance, we could revise our constructor such that it checks for correct arguments:

class Time(Time):

def __init__(self, hours: int = 0, minutes: int = 0, seconds: int = 0) -> None:

assert 0 <= hours <= 23

assert 0 <= minutes <= 59

assert 0 <= seconds <= 60 # Includes leap seconds (ISO8601)

self._hours = hours

self._minutes = minutes

self._seconds = seconds

# These conditions check whether `hours`, `minutes`, and `seconds` are within the right range. They are called _data invariants_ (or short _invariants_) because they hold for the given data (notably, the internal attributes) at all times.

#

# Note the unusual syntax for range checks (this is a Python special), and the fact that seconds can range from 0 to 60. That's because there's not only leap years, but also leap seconds.

# With this revised constructor, we now get errors as soon as we pass an invalid parameter:

with ExpectError():

t = Time(-23, 0, 0)

# Hence, _any_ attempt to set _any_ Time object to an illegal state will be immediately detected. In other words, the time bomb defuses itself at the moment it is being set.

# This means that when we are debugging, and search for potential faults in the state that could have caused the current failure, we can now rule out `Time` as a culprit, allowing us to focus on other parts of the state.

# The more of the state we have checked with invariants,

#

# * the less state we have to examine,

# * the fewer possible causes we have to investigate,

# * the faster we are done with determining the defect.

# ### Invariant Checkers

#

# For invariants to be effective, they have to be checked at all times. If we introduce a method that changes the state, then this method will also have to ensure that the invariant is satisfied:

class Time(Time):

def set_hours(self, hours: int) -> None:

assert 0 <= hours <= 23

self._hours = hours

# This also implies that state changes should go through methods, not direct accesses to attributes. If some code changes the attributes of your object directly, without going through the method that could check for consistency, then it will be much harder for you to a) detect the source of the problem and b) even detect that a problem exists.

# #### Excursion: Checked Getters and Setters in Python

# In Python, the `@property` decorator offers a handy way to implement checkers, even for otherwise direct accesses to attributes. It allows to define specific "getter" and "setter" functions for individual properties that would even be invoked when a (seemingly) attribute is accessed.

# Using `@property`, our `Time` class could look like this:

class MyTime(Time):

@property

def hours(self) -> int:

return self._hours

@hours.setter

def hours(self, new_hours: int) -> None:

assert 0 <= new_hours <= 23

self._hours = new_hours

# To access the current hour, we no longer go through a specific "getter" function; instead, we access a synthesized attribute that – behind the scenes – invokes the "getter" function marked with `@property`:

my_time = MyTime(11, 30, 0)

my_time.hours

# If we "assign" to the attribute, the "setter" function is called in the background;

my_time.hours = 12 # type: ignore

# We see this immediately when trying to assign an illegal value:

with ExpectError():

my_time.hours = 25 # type: ignore

# If you build large infrastructures in Python, you can use these features to implement

#

# * attributes that are _checked_ every time they are accessed or changed;

# * attributes that are easier to remember than a large slew of getter and setter functions.

#

# In this book, we do not have that many attributes, and we try to use not too many Python-specific features, so we usually go without `@property`. But for Python aficionados, and especially those who care about runtime checks, checked property accesses are a boon.

# #### End of Excursion

# If we have several methods that can alter an object, it can be helpful to factor out invariant checking into its own method. Such a method can also be called to check for inconsistencies that might have been introduced without going through one of the methods – e.g. by direct object access, memory manipulation, or memory corruption.

# By convention, methods that check invariants have the name `repOK()`, since they check whether the internal representation is okay, and return True if so.

# Here's a `repOK()` method for `Time`:

class Time(Time):

def repOK(self) -> bool:

assert 0 <= self.hours() <= 23

assert 0 <= self.minutes() <= 59

assert 0 <= self.seconds() <= 60

return True

# We can integrate this method right into our constructor and our setter:

class Time(Time):

def __init__(self, hours: int = 0, minutes: int = 0, seconds: int = 0) -> None:

self._hours = hours

self._minutes = minutes

self._seconds = seconds

assert self.repOK()

def set_hours(self, hours: int) -> None:

self._hours = hours

assert self.repOK()

with ExpectError():

t = Time(-23, 0, 0)

# Having a single method that checks everything can be beneficial, as it may explicitly check for more faulty states. For instance, it is still permissible to pass a floating-point number for hours and minutes, again breaking the Time representation:

Time(1.5) # type: ignore

# (Strictly speaking, ISO 8601 _does_ allow fractional parts for seconds and even for hours and minutes – but still wants two leading digits before the fraction separator. Plus, the comma is the "preferred" fraction separator. In short, you won't be making too many friends using times formatted like the one above.)

# We can extend our `repOK()` method to check for correct types, too.

class Time(Time):

def repOK(self) -> bool:

assert isinstance(self.hours(), int)

assert isinstance(self.minutes(), int)

assert isinstance(self.seconds(), int)

assert 0 <= self.hours() <= 23

assert 0 <= self.minutes() <= 59

assert 0 <= self.seconds() <= 60

return True

Time(14, 0, 0)

# This now also catches other type errors:

with ExpectError():

t = Time("After midnight") # type: ignore

# Our `repOK()` method can also be used in combination with pre- and postconditions. Typically, you'd like to make it part of the pre- and postcondition checks.

# Assume you want to implement an `advance()` method that adds a number of seconds to the current time. The preconditions and postconditions can be easily defined:

class Time(Time):

def seconds_since_midnight(self) -> int:

return self.hours() * 3600 + self.minutes() * 60 + self.seconds()

def advance(self, seconds_offset: int) -> None:

old_seconds = self.seconds_since_midnight()

... # Advance the clock

assert (self.seconds_since_midnight() ==

(old_seconds + seconds_offset) % (24 * 60 * 60))

# But you'd really like `advance()` to check the state before _and_ after its execution – again using `repOK()`:

class BetterTime(Time):

def advance(self, seconds_offset: int) -> None:

assert self.repOK()

old_seconds = self.seconds_since_midnight()

... # Advance the clock

assert (self.seconds_since_midnight() ==

(old_seconds + seconds_offset) % (24 * 60 * 60))

assert self.repOK()

# The first postcondition ensures that `advance()` produces the desired result; the second one ensures that the internal state is still okay.

# ### Large Data Structures

#

# Invariants are especially useful if you have a large, complex data structure which is very hard to track in a conventional debugger.

# Let's assume you have a [red-black search tree](https://en.wikipedia.org/wiki/Red–black_tree) for storing and searching data. Red-black trees are among the most efficient data structures for representing associative arrays (also known as mappings); they are self-balancing and guarantee search, insertion, and deletion in logarithmic time. They also are among the most ugly to debug.

# What is a red-black tree? Here is an example from Wikipedia:

#

# As you can see, there are red nodes and black nodes (giving the tree its name). We can define a class `RedBlackTrees` and implement all the necessary operations.

class RedBlackTree:

RED = 'red'

BLACK = 'black'

...

# +

# ignore

# A few dummy methods to make the static type checker happy. Ignore.

class RedBlackNode:

def __init__(self) -> None:

self.parent = None

self.color = RedBlackTree.BLACK

pass

# +

# ignore

# More dummy methods to make the static type checker happy. Ignore.

class RedBlackTree(RedBlackTree):

def redNodesHaveOnlyBlackChildren(self) -> bool:

return True

def equalNumberOfBlackNodesOnSubtrees(self) -> bool:

return True

def treeIsAcyclic(self) -> bool:

return True

def parentsAreConsistent(self) -> bool:

return True

def __init__(self) -> None:

self._root = RedBlackNode()

self._root.parent = None

self._root.color = self.BLACK

# -

# However, before we start coding, it would be a good idea to _first_ reason about the invariants of a red-black tree. Indeed, a red-black tree has a number of important properties that hold at all times – for instance, that the root node be black or that the tree be balanced. When we implement a red-black tree, these _invariants_ can be encoded into a `repOK()` method:

class RedBlackTree(RedBlackTree):

def repOK(self) -> bool:

assert self.rootHasNoParent()

assert self.rootIsBlack()

assert self.redNodesHaveOnlyBlackChildren()

assert self.equalNumberOfBlackNodesOnSubtrees()

assert self.treeIsAcyclic()

assert self.parentsAreConsistent()

return True

# Each of these helper methods are checkers in their own right:

class RedBlackTree(RedBlackTree):

def rootHasNoParent(self) -> bool:

return self._root.parent is None

def rootIsBlack(self) -> bool:

return self._root.color == self.BLACK

...

# With all these helpers, our `repOK()` method will become very rigorous – but all this rigor is very much needed. Just for fun, check out the [description of red-black trees on Wikipedia](https://en.wikipedia.org/wiki/Red–black_tree). The description of how insertion or deletion work is 4 to 5 pages long (each!), with dozens of special cases that all have to be handled properly. If you ever face the task of implementing such a data structure, be sure to (1) write a `repOK()` method such as the above, and (2) call it before and after each method that alters the tree:

# ignore

from typing import Any, List

class RedBlackTree(RedBlackTree):

def insert(self, item: Any) -> None:

assert self.repOK()

... # four pages of code

assert self.repOK()

def delete(self, item: Any) -> None:

assert self.repOK()

... # five pages of code

assert self.repOK()

# Such checks will make your tree run much slower – essentially, instead of logarithmic time complexity, we now have linear time complexity, as the entire tree is traversed with each change – but you will find any bugs much, much faster. Once your tree goes in production, you can deactivate `repOK()` by default, using some debugging switch to turn it on again should the need ever arise:

class RedBlackTree(RedBlackTree):

def __init__(self, checkRepOK: bool = False) -> None:

...

self.checkRepOK = checkRepOK

def repOK(self) -> bool:

if not self.checkRepOK:

return True

assert self.rootHasNoParent()

assert self.rootIsBlack()

...

return True

# Just don't delete it – future maintainers of your code will be forever grateful that you have documented your assumptions and given them a means to quickly check their code.

# ## System Invariants

#

# When interacting with the operating system, there are a number of rules that programs must follow, lest they get themselves (or the system) in some state where they cannot execute properly anymore.

#

# * If you work with files, every file that you open also must be closed; otherwise, you will deplete resources.

# * If you create temporary files, be sure to delete them after use; otherwise, you will consume disk space.

# * If you work with locks, be sure to release locks after use; otherwise, your system may end up in a deadlock.

#

# One area in which it is particularly easy to make mistakes is _memory usage_. In Python, memory is maintained by the Python interpreter, and all memory accesses are checked at runtime. Accessing a non-existing element of a string, for instance, will raise a memory error:

with ExpectError():

index = 10

"foo"[index]

# The very same expression in a C program, though, will yield _undefined behavior_ – which means that anything can happen. Let us explore a couple of C programs with undefined behavior.

# ### The C Memory Model

#

# We start with a simple C program which uses the same invalid index as our Python expression, above. What does this program do?

# ignore

open('testoverflow.c', 'w').write(r'''

#include <stdio.h>

// Access memory out of bounds

int main(int argc, char *argv[]) {

int index = 10;

return "foo"[index]; // BOOM

}

''');

print_content(open('testoverflow.c').read())

# In our example, the program will read from a random chunk or memory, which may exist or not. In most cases, nothing at all will happen – which is a bad thing, because you won't realize that your program has a defect.

# !cc -g -o testoverflow testoverflow.c

# !./testoverflow

# To see what is going on behind the scenes, let us have a look at the C memory model.

# #### Excursion: A C Memory Model Simulator

# We build a little simulation of C memory. A `Memory` item stands for a block of continuous memory, which we can access by address using `read()` and `write()`. The `__repr__()` method shows memory contents as a string.

class Memory:

def __init__(self, size: int = 10) -> None:

self.size: int = size

self.memory: List[Any] = [None for i in range(size)]

def read(self, address: int) -> Any:

return self.memory[address]

def write(self, address: int, item: Any) -> None:

self.memory[address] = item

def __repr__(self) -> str:

return repr(self.memory)

mem: Memory = Memory()

mem

mem.write(0, 'a')

mem

mem.read(0)

# We introduce `[index]` syntax for easy read and write:

class Memory(Memory):

def __getitem__(self, address: int) -> Any:

return self.read(address)

def __setitem__(self, address: int, item: Any) -> None:

self.write(address, item)

mem_with_index: Memory = Memory()

mem_with_index[1] = 'a'

mem_with_index

mem_with_index[1]

# Here are some more advanced methods to show memory cntents. The `repr()` and `_repr_markdown_()` methods display memory as a table. In a notebook, we can simply evaluate the memory to see the table.

from IPython.display import display, Markdown, HTML

class Memory(Memory):

def show_header(self) -> str:

out = "|Address|"

for address in range(self.size):

out += f"{address}|"

return out + '\n'

def show_sep(self) -> str:

out = "|:---|"

for address in range(self.size):

out += ":---|"

return out + '\n'

def show_contents(self) -> str:

out = "|Content|"

for address in range(self.size):

contents = self.memory[address]

if contents is not None:

out += f"{repr(contents)}|"

else:

out += " |"

return out + '\n'

def __repr__(self) -> str:

return self.show_header() + self.show_sep() + self.show_contents()

def _repr_markdown_(self) -> str:

return repr(self)

mem_with_table: Memory = Memory()

for i in range(mem_with_table.size):

mem_with_table[i] = 10 * i

mem_with_table

# #### End of Excursion

# In C, memory comes as a single block of bytes at continuous addresses. Let us assume we have a memory of only 20 bytes (duh!) and the string "foo" is stored at address 5:

mem_with_table: Memory = Memory(20)

mem_with_table[5] = 'f'

mem_with_table[6] = 'o'

mem_with_table[7] = 'o'

mem_with_table

# When we try to access `"foo"[10]`, we try to read the memory location at address 15 – which may exist (or not), and which may have arbitrary contents, based on whatever previous instructions left there. From there on, the behavior of our program is undefined.

# Such _buffer overflows_ can also come as _writes_ into memory locations – and thus overwrite the item that happens to be at the location of interest. If the item at address 15 happens to be, say, a flag controlling administrator access, then setting it to a non-zero value can come handy for an attacker.

# ### Dynamic Memory

#

# A second source of errors in C programs is the use of dynamic memory – that is, memory allocated and deallocated at run-time. In C, the function `malloc()` returns a continuous block of memory of a given size; the function `free()` returns it back to the system.

#

# After a block has been `free()`'d, it must no longer be used, as the memory might already be in use by some other function (or program!) again. Here's a piece of code that violates this assumption:

# ignore

open('testuseafterfree.c', 'w').write(r'''

#include <stdlib.h>

// Access a chunk of memory after it has been given back to the system

int main(int argc, char *argv[]) {

int *array = malloc(100 * sizeof(int));

free(array);

return array[10]; // BOOM

}

''');

print_content(open('testuseafterfree.c').read())

# Again, if we compile and execute this program, nothing tells us that we have just entered undefined behavior:

# !cc -g -o testuseafterfree testuseafterfree.c

# !./testuseafterfree

# What's going on behind the scenes here?

# #### Excursion: Dynamic Memory in C

# `DynamicMemory` introduces dynamic memory allocation `allocate()` and deallocation `free()`, using a list of allocated blocks.

class DynamicMemory(Memory):

# Address at which our list of blocks starts

BLOCK_LIST_START = 0

def __init__(self, *args: Any) -> None:

super().__init__(*args)

# Before each block, we reserve two items:

# One pointing to the next block (-1 = END)

self.memory[self.BLOCK_LIST_START] = -1

# One giving the length of the current block (<0: freed)

self.memory[self.BLOCK_LIST_START + 1] = 0

def allocate(self, block_size: int) -> int:

"""Allocate a block of memory"""

# traverse block list

# until we find a free block of appropriate size

chunk = self.BLOCK_LIST_START

while chunk < self.size:

next_chunk = self.memory[chunk]

chunk_length = self.memory[chunk + 1]

if chunk_length < 0 and abs(chunk_length) >= block_size:

# Reuse this free block

self.memory[chunk + 1] = abs(chunk_length)

return chunk + 2

if next_chunk < 0:

# End of list - allocate new block

next_chunk = chunk + block_size + 2

if next_chunk >= self.size:

break

self.memory[chunk] = next_chunk

self.memory[chunk + 1] = block_size

self.memory[next_chunk] = -1

self.memory[next_chunk + 1] = 0

base = chunk + 2

return base

# Go to next block

chunk = next_chunk

raise MemoryError("Out of Memory")

def free(self, base: int) -> None:

"""Free a block of memory"""

# Mark block as available

chunk = base - 2

self.memory[chunk + 1] = -abs(self.memory[chunk + 1])

# In our table, we highlight free blocks in grey:

class DynamicMemory(DynamicMemory):

def show_header(self) -> str:

out = "|Address|"

color = "black"

chunk = self.BLOCK_LIST_START

allocated = False

# States and colors

for address in range(self.size):

if address == chunk:

color = "blue"

next_chunk = self.memory[address]

elif address == chunk + 1:

color = "blue"

allocated = self.memory[address] > 0

chunk = next_chunk

elif allocated:

color = "black"

else:

color = "lightgrey"

item = f'<span style="color: {color}">{address}</span>'

out += f"{item}|"

return out + '\n'

dynamic_mem: DynamicMemory = DynamicMemory(10)

dynamic_mem

dynamic_mem.allocate(2)

dynamic_mem

dynamic_mem.allocate(2)

dynamic_mem

dynamic_mem.free(2)

dynamic_mem

dynamic_mem.allocate(1)

dynamic_mem

with ExpectError():

dynamic_mem.allocate(1)

# #### End of Excursion

# Dynamic memory is allocated as part of our main memory. The following table shows unallocated memory in grey and allocated memory in black:

dynamic_mem: DynamicMemory = DynamicMemory(13)

dynamic_mem

# The numbers already stored (-1 and 0) are part of our dynamic memory housekeeping (highlighted in blue); they stand for the next block of memory and the length of the current block, respectively.

# Let us allocate a block of 3 bytes. Our (simulated) allocation mechanism places these at the first continuous block available:

p1 = dynamic_mem.allocate(3)

p1

# We see that a block of 3 items is allocated at address 2. The two numbers before that address (5 and 3) indicate the beginning of the next block as well as the length of the current one.

dynamic_mem

# Let us allocate some more.

p2 = dynamic_mem.allocate(4)

p2

# We can make use of that memory:

dynamic_mem[p1] = 123

dynamic_mem[p2] = 'x'

dynamic_mem

# When we free memory, the block is marked as free by giving it a negative length:

dynamic_mem.free(p1)

dynamic_mem

# Note that freeing memory does not clear memory; the item at `p1` is still there. And we can also still access it.

dynamic_mem[p1]

# But if, in the meantime, some other part of the program requests more memory and uses it...

p3 = dynamic_mem.allocate(2)

dynamic_mem[p3] = 'y'

dynamic_mem

# ... then the memory at `p1` may simply be overwritten.

dynamic_mem[p1]

# An even worse effect comes into play if one accidentally overwrites the dynamic memory allocation information; this can easily corrupt the entire memory management. In our case, such corrupted memory can lead to an endless loop when trying to allocate more memory:

from ExpectError import ExpectTimeout

dynamic_mem[p3 + 3] = 0

dynamic_mem

# When `allocate()` traverses the list of blocks, it will enter an endless loop between the block starting at address 0 (pointing to the next block at 5) and the block at address 5 (pointing back to 0).

with ExpectTimeout(1):

dynamic_mem.allocate(1)

# Real-world `malloc()` and `free()` implementations suffer from similar problems. As stated above: As soon as undefined behavior is reached, anything may happen.

# ### Managed Memory

#

# The solution to all these problems is to _keep track of memory_, specifically

#

# * which parts of memory have been _allocated_, and

# * which parts of memory have been _initialized_.

# To this end, we introduce two extra flags for each address:

#

# * The `allocated` flag tells whether an address has been allocated; the `allocate()` method sets them, and `free()` clears them again.

# * The `initialized` flag tells whether an address has been written to. This is cleared as part of `allocate()`.

# With these, we can run a number of checks:

#

# * When _writing_ into memory and _freeing_ memory, we can check whether the address has been _allocated_; and

# * When _reading_ from memory, we can check whether the address has been allocated and _initialized_.

#

# Both of these should effectively prevent memory errors.

# #### Excursion: Managed Memory

# We create a simulation of managed memory. `ManagedMemory` keeps track of every address whether it is intiialized and allocated.

class ManagedMemory(DynamicMemory):

def __init__(self, *args: Any) -> None:

super().__init__(*args)

self.initialized = [False for i in range(self.size)]

self.allocated = [False for i in range(self.size)]

# This allows memory access functions to run a number of extra checks:

class ManagedMemory(ManagedMemory):

def write(self, address: int, item: Any) -> None:

assert self.allocated[address], \

"Writing into unallocated memory"

self.memory[address] = item

self.initialized[address] = True

def read(self, address: int) -> Any:

assert self.allocated[address], \

"Reading from unallocated memory"

assert self.initialized[address], \

"Reading from uninitialized memory"

return self.memory[address]

# Dynamic memory functions are set up such that they keep track of these flags.

class ManagedMemory(ManagedMemory):

def allocate(self, block_size: int) -> int:

base = super().allocate(block_size)

for i in range(block_size):

self.allocated[base + i] = True

self.initialized[base + i] = False

return base

def free(self, base: int) -> None:

assert self.allocated[base], \

"Freeing memory that is already freed"

block_size = self.memory[base - 1]

for i in range(block_size):

self.allocated[base + i] = False

self.initialized[base + i] = False

super().free(base)

# Let us highlight these flags when printing out the table:

class ManagedMemory(ManagedMemory):

def show_contents(self) -> str:

return (self.show_allocated() +

self.show_initialized() +

DynamicMemory.show_contents(self))

def show_allocated(self) -> str:

out = "|Allocated|"

for address in range(self.size):

if self.allocated[address]:

out += "Y|"

else:

out += " |"

return out + '\n'

def show_initialized(self) -> str:

out = "|Initialized|"

for address in range(self.size):

if self.initialized[address]:

out += "Y|"

else:

out += " |"

return out + '\n'

# #### End of Excursion

# Here comes a simple simulation of managed memory. After we create memory, all addresses are neither allocated nor initialized:

managed_mem: ManagedMemory = ManagedMemory()

managed_mem

# Let us allocate some elements. We see that the first three bytes are now marked as allocated:

p = managed_mem.allocate(3)

managed_mem

# After writing into memory, the respective addresses are marked as "initialized":

managed_mem[p] = 10

managed_mem[p + 1] = 20

managed_mem

# Attempting to read uninitialized memory fails:

with ExpectError():

x = managed_mem[p + 2]

# When we free the block again, it is marked as not allocated:

managed_mem.free(p)

managed_mem

# And accessing any element of the free'd block will yield an error:

with ExpectError():

managed_mem[p] = 10

# Freeing the same block twice also yields an error:

with ExpectError():

managed_mem.free(p)

# With this, we now have a mechanism in place to fully detect memory issues in languages such as C.

# Obviously, keeping track of whether memory is allocated/initialized or not requires some extra memory – and also some extra computation time, as read and write accesses have to be checked first. During testing, however, such effort may quickly pay off, as memory bugs can be quickly discovered.

# To detect memory errors, a number of tools have been developed. The first class of tools _interprets_ the instructions of the executable code, tracking all memory accesses. For each memory access, they can check whether the memory accessed _exists_ and has been _initialized_ at some point.

# ### Checking Memory Usage with Valgrind

#

# The [Valgrind](https://www.valgrind.org) tool allows to _interpret_ executable code, thus tracking each and every memory access. You can use Valgrind to execute any program from the command-line, and it will check all memory accesses during execution.

#

# <!-- Installing ValGrind on macOS: https://github.com/LouisBrunner/valgrind-macos/ -->

#

# Here's what happens if we run Valgrind on our `testuseafterfree` program:

print_content(open('testuseafterfree.c').read())

# !valgrind ./testuseafterfree

# We see that Valgrind has detected the issue ("Invalid read of size 4") during execution; it also reported the current stack trace (and hence the location at which the error occurred). Note that the program continues execution even after the error occurred; should further errors occur, Valgrind will report these, too.

# Being an interpreter, Valgrind slows down execution of programs dramatically. However, it requires no recompilation and thus can work on code (and libraries) whose source code is not available.

# Valgrind is not perfect, though. For our `testoverflow` program, it fails to detect the illegal access:

print_content(open('testoverflow.c').read())

# !valgrind ./testoverflow

# This is because at compile time, the information about the length of the `"foo"` string is no longer available – all Valgrind sees is a read access into the static data portion of the executable that may be valid or invalid. To actually detect such errors, we need to hook into the compiler.

# ### Checking Memory Usage with Memory Sanitizer

#

# The second class of tools to detect memory issues are _address sanitizers_. An address sanitizer injects memory-checking code into the program _during compilation_. This means that every access will be checked – but this time, the code still runs on the processor itself, meaning that the speed is much less reduced.

# Here is an example of how to use the address sanitizer of the Clang C compiler:

# !cc -fsanitize=address -o testuseafterfree testuseafterfree.c

# At the very first moment we have an out-of-bounds access, the program aborts with a diagnostic message – in our case already during `read_overflow()`.

# !./testuseafterfree

# Likewise, if we apply the address sanitizer on `testoverflow`, we also immediately get an error:

# !cc -fsanitize=address -o testoverflow testoverflow.c

# !./testoverflow

# Since the address sanitizer monitors each and every read and write, as well as usage of `free()`, it will require some effort to create a bug that it won't catch. Also, while Valgrind runs the program ten times slower and more, the performance penalty for memory sanitization is much much lower. Sanitizers can also help in finding data races, memory leaks, and all other sorts of undefined behavior. As [<NAME> puts it](https://lemire.me/blog/2016/04/20/no-more-leaks-with-sanitize-flags-in-gcc-and-clang/):

#

# > Really, if you are using gcc or clang and you are not using these flags, you are not being serious.

# ## When Should Invariants be Checked?

#

# We have seen that during testing and debugging, invariants should be checked _as much as possible_, thus narrowing down the time it takes to detect a violation to a minimum. The easiest way to get there is to have them checked as _postcondition_ in the constructor and any other method that sets the state of an object.

# If you have means to alter the state of an object outside of these methods – for instance, by directly writing to memory, or by writing to internal attributes –, then you may have to check them even more frequently. Using the [tracing infrastructure](Tracer.ipynb), for instance, you can have the tracer invoke `repOK()` with each and every line executed, thereby again directly pinpointing the moment the state gets corrupted. While this will slow down execution tremendously, it is still better to have the computer do the work than you stepping backwards and forwards through an execution.

# Another question is whether assertions should remain active even in production code. Assertions take time, and this may be too much for production.

# ### Assertions are not Production Code

#

# First of all, assertions are _not_ production code – the rest of the code should not be impacted by any assertion being on or off. If you write code like

#

# ```python

# assert map.remove(location)

# ```

# your assertion will have a side effect, namely removing a location from the map. If one turns assertions off, the side effect will be turned off as well. You need to change this into

#

# ```python

# locationRemoved = map.remove(location)

# assert locationRemoved

# ```

# ### For System Preconditions, Use Production Code

#

# Consequently, you should not rely on assertions for _system preconditions_ – that is, conditions that are necessary to keep the system running. System input (or anything that could be controlled by another party) still has to be validated by production code, not assertions. Critical conditions have to be checked by production code, not (only) assertions.

#

# If you have code such as

# ```python

# assert command in {"open", "close", "exit"}

# exec(command)

# ```

# then having the assertion document and check your assumptions is fine. However, if you turn the assertion off in production code, it will only be a matter of time until somebody sets `command` to `'system("/bin/sh")'` and all of a sudden takes control over your system.

# ### Consider Leaving Some Assertions On

#

# The main reason for turning assertions off is efficiency. However, _failing early is better than having bad data and not failing._ Think carefully which assertions have a high impact on execution time, and turn these off first. Assertions that have little to no impact on resources can be left on.

# As an example, here's a piece of code that handles traffic in a simulation. The `light` variable can be either `RED`, `AMBER`, or `GREEN`:

#

# ```python

# if light == RED:

# traffic.stop()

# elif light == AMBER:

# traffic.prepare_to_stop()

# elif light == GREEN:

# traffic.go()

# else:

# pass # This can't happen!

# ```

# Having an assertion

#

# ```python

# assert light in [RED, AMBER, GREEN]

# ```

#

# in your code will eat some (minor) resources. However, adding a line

#

# ```python

# assert False

# ```

#

# in the place of the `This can't happen!` line, above, will still catch errors, but require no resources at all.

# If you have very critical software, it may be wise to actually pay the extra penalty for assertions (notably system assertions) rather than sacrifice reliability for performance. Keeping a memory sanitizer on even in production can have a small impact on performance, but will catch plenty of errors before some corrupted data (and even some attacks) have bad effects downstream.

# ### Define How Your Application Should Handle Internal Errors

#

# By default, failing assertions are not exactly user-friendly – the diagnosis they provide is of interest to the code maintainers only. Think of how your application should handle internal errors as discovered by assertions (or the runtime system). Simply exiting (as assertions on C do) may not be the best option for critical software. Think about implementing your own assert functions with appropriate recovery methods.

# ## Synopsis

# This chapter discusses _assertions_ to define _assumptions_ on function inputs and results:

def my_square_root(x): # type: ignore

assert x >= 0

y = square_root(x)

assert math.isclose(y * y, x)

return y

# Notably, assertions detect _violations_ of these assumptions at runtime:

with ExpectError():

y = my_square_root(-1)

# _System assertions_ help to detect invalid memory operations.

managed_mem = ManagedMemory()

managed_mem

with ExpectError():

x = managed_mem[2]

# + [markdown] button=false new_sheet=true run_control={"read_only": false}

# ## Lessons Learned

#

# * _Assertions_ are powerful tools to have the computer check invariants during execution:

# * _Preconditions_ check whether the arguments to a function are correct

# * _Postconditions_ check whether the result of a function is correct

# * _Data Invariants_ allow to check data structures for integrity

# * Since assertions can be turned off for optimization, they should

# * not _change correct operation_ in any way

# * not do _any work that your application requires for correct operation_

# * not be used as a _replacement for errors that can possibly happen_; create permanent checks (and own exceptions) for these

# * _System assertions_ are powerful tools to monitor the integrity of the runtime system (notably memory)

# * The more assertions,

# * the earlier errors are detected

# * the easier it is to locate defects

# * the better the guidance towards failure causes during debugging

# -

# ignore

import os

import shutil

for path in [

'assert.h',

'testassert',

'testassert.c',

'testassert.dSYM',

'testoverflow',

'testoverflow.c',

'testoverflow.dSYM',

'testuseafterfree',

'testuseafterfree.c',

'testuseafterfree.dSYM',

]:

if os.path.isdir(path):

shutil.rmtree(path)

else:

try:

os.remove(path)

except FileNotFoundError:

pass

# + [markdown] button=false new_sheet=false run_control={"read_only": false}

# ## Next Steps

#

# In the next chapters, we will learn how to

#

# * [identify performance issues](PerformanceDebugger.ipynb)

# * [check flows and dependencies](Slicer.ipynb)

# -

# ## Background

#

# The usage of _assertions_ goes back to the earliest days of programming. In 1947, Neumann and Goldstine defined _assertion boxes_ that would check the limits of specific variables. In his 1949 talk ["Checking a Large Routine"](http://www.turingarchive.org/browse.php/B/8), Alan Turing suggested

#

# > How can one check a large routine in the sense of making sure that it's right? In order that the man who checks may not have too difficult a task, the programmer should make a number of definite _assertions_ which can be checked individually, and from which the correctness of the whole program easily follows.

#

# [Valgrind](https://valgrind.org) originated as an academic tool which has seen lots of industrial usage. A [list of papers](https://www.valgrind.org/docs/pubs.html) is available on the Valgrind page.

#

# The [Address Sanitizer](http://clang.llvm.org/docs/AddressSanitizer.html) discussed in this chapter was developed at Google; the [paper by Serebryany](https://www.usenix.org/system/files/conference/atc12/atc12-final39.pdf) discusses several details.

# + [markdown] button=false new_sheet=true run_control={"read_only": false}

# ## Exercises

# + [markdown] button=false new_sheet=true run_control={"read_only": false}

# ### Exercise 1 – Storage Assertions

# -

# The Python [`shelve`](https://docs.python.org/3/library/shelve.html) module provides a simple interface for permanent storage of Python objects:

import shelve

d = shelve.open('mydb')

d['123'] = 123

d['123']

d.close()

d = shelve.open('mydb')

d['123']

d.close()

# Based on `shelve`, we can implement a class `ObjectStorage` that uses a context manager (a `with` block) to ensure the shelve database is always closed - also in presence of exceptions:

# ignore

from typing import Sequence, Any, Callable, Optional, Type, Tuple, Any

from typing import Dict, Union, Set, List, FrozenSet, cast

from types import TracebackType

class Storage:

def __init__(self, dbname: str) -> None:

self.dbname = dbname

def __enter__(self) -> Any:

self.db = shelve.open(self.dbname)

return self

def __exit__(self, exc_tp: Type, exc_value: BaseException,

exc_traceback: TracebackType) -> Optional[bool]:

self.db.close()

return None

def __getitem__(self, key: str) -> Any:

return self.db[key]

def __setitem__(self, key: str, value: Any) -> None:

self.db[key] = value

with Storage('mydb') as storage:

print(storage['123'])

# #### Task 1 – Local Consistency

#

# Extend `Storage` with assertions that ensure that after adding an element, it also can be retrieved with the same value.

# **Solution.** One single assertion suffices:

class Storage(Storage):

def __setitem__(self, key: str, value: Any) -> None:

self.db[key] = value

assert self.db[key] == value

# #### Task 2 – Global Consistency

#

# Extend `Storage` with a "shadow dictionary" which holds elements in memory storage, too. Have a `repOK()` method that memory storage and `shelve` storage are identical at all times.

# **Solution.** Here is a possible implementation:

class ShadowStorage:

def __init__(self, dbname: str) -> None:

self.dbname = dbname

def __enter__(self) -> Any:

self.db = shelve.open(self.dbname)

self.memdb = {}

for key in self.db.keys():

self.memdb[key] = self.db[key]

assert self.repOK()

return self

def __exit__(self, exc_tp: Type, exc_value: BaseException,

exc_traceback: TracebackType) -> Optional[bool]:

self.db.close()

return None

def __getitem__(self, key: str) -> Any:

assert self.repOK()

return self.db[key]

def __setitem__(self, key: str, value: Any) -> None:

assert self.repOK()

self.memdb[key] = self.db[key] = value

assert self.repOK()

def repOK(self) -> bool:

assert self.db.keys() == self.memdb.keys(), f"{self.dbname}: Differing keys"

for key in self.memdb.keys():

assert self.db[key] == self.memdb[key], \

f"{self.dbname}: Differing values for {repr(key)}"

return True

with ShadowStorage('mydb') as storage:

storage['456'] = 456

print(storage['123'])

# ignore

try:

os.remove('mydb.db') # on macOS

except FileNotFoundError:

pass

# ignore

try:

os.remove('mydb') # on Linux

except FileNotFoundError:

pass

| notebooks/Assertions.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import pandas as pd

import matplotlib.pyplot as plt

# !ls data/alex_hours_csv/

df = pd.read_csv('data/alex_hours_csv/17_5_2021.csv')

df["Time"] = '2021-05-17 ' + df['Time'].astype(str)

df["Time"] = pd.to_datetime(df["Time"])

df

df.plot(x="Time", y="Username")

| pandas_practice/.ipynb_checkpoints/sample_work_hours_practice-checkpoint.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# [View in Colaboratory](https://colab.research.google.com/github/ayan59dutta/Assignment-4/blob/ayan59dutta/ayan59dutta.ipynb)

# + [markdown] id="2XXfXed5YLbe" colab_type="text"

# # First Date with TensorFlow

#

# Hi all,<br/>

#

# You know what's important for understanding Deep Learning / Machine Learning?<br/>

# Intuition. Period.

#

# And Intuition comes when you run the code multiple times.

#

# So, today I can write a couple of defination and say this is this, this is that.<br/>

# You Google half of the things up. You find answers which you need to Google further.<br/>

# In the process, you probably won't even remember what's the first thing you started out with!

#

# # So?

#

# Hence on, I will execute cells with code. <br/>

# The neurons in your brain will optimize a function to get a hold of what each function is doing.<br/>

# **No Theory Just Code.**

#

# I will at max give a defination that extends for a line. That's it.<br/>

# Let's get started!

#

# <hr/>

#

# **RECOMMENDED!**<br/>

# Write the code in the cells using the signals sent by your brain to your fingers!<br/>

# Don't just `shift+enter` the cells.

#

# [Source](https://github.com/iArunava/TensorFlow-NoteBooks)

# + id="gYWUpE-bYKWP" colab_type="code" colab={}

# Essential imports

import numpy as np

import tensorflow as tf

import matplotlib.pyplot as plt

# + id="eKpz5NCIYMdi" colab_type="code" colab={}

# Let's define some tensors

t1 = tf.constant(2.0, dtype=tf.float32)

t2 = tf.constant([1.0, 2.0], dtype=tf.float32)

t3 = tf.constant([[[1.0, 9.0], [2.0, 3.0], [4.0, 5.0]],

[[1.0, 9.0], [2.0, 3.0], [4.0, 5.0]]])

# + id="vmMcjzTxbWzw" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 72} outputId="97e61a64-612a-4e7b-89b4-755ba204b606"

# Let's print them out!

print (t1)

print (t2)

print (t3)

# + [markdown] id="10ahnfjYbcop" colab_type="text"

# Where's Waldo?<br/>

# I mean, the value?<br/>

#

# So, the thing is you can't print the value of tensors directly.<br/>

# You have to use `session`, so let's do that!

# + id="ol6O5I7Tb2nb" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 219} outputId="3fd33587-4d6f-4c4d-88a0-060842eccf58"

sess = tf.Session()

print (sess.run(t1))

print ("=======================")

print (sess.run(t2))

print ("=======================")

print (sess.run(t3))

sess.close()

# + [markdown] id="rXKfVs_zb-kU" colab_type="text"

# Aaahaa!! Just printed those tensors!!!<br/>

# Feels good! <br/>

#

# For some of you, who are like, dude you got "No Theory Just Code" in bold <br/>

# And you are still using the markdown cells for the theory ?!

#

# I am just gonna say I am a unreasonable man.<br/>

#

#

# So, you are programming with tf.<br/>

# What ever you do is broken down to 2 basic steps:

# - Building the computational Graph!

# - Execute that graph using `session`!

#

# That's all!

#

# <hr/>

#

# Let's compare this 2 steps with what we did above!<br/>

# So, I defined 3 `tensor`s and these 3 `tensor`s formed my computational Graph.<br/>

# And then I executed each tensor in this graph using a `session`.

#

# That simple!

#

# <hr/>

#

# Now, let's define a few more computational graphs and execute them with sessions.

#

# Okay, to start with let's build this computational graph!

#

#

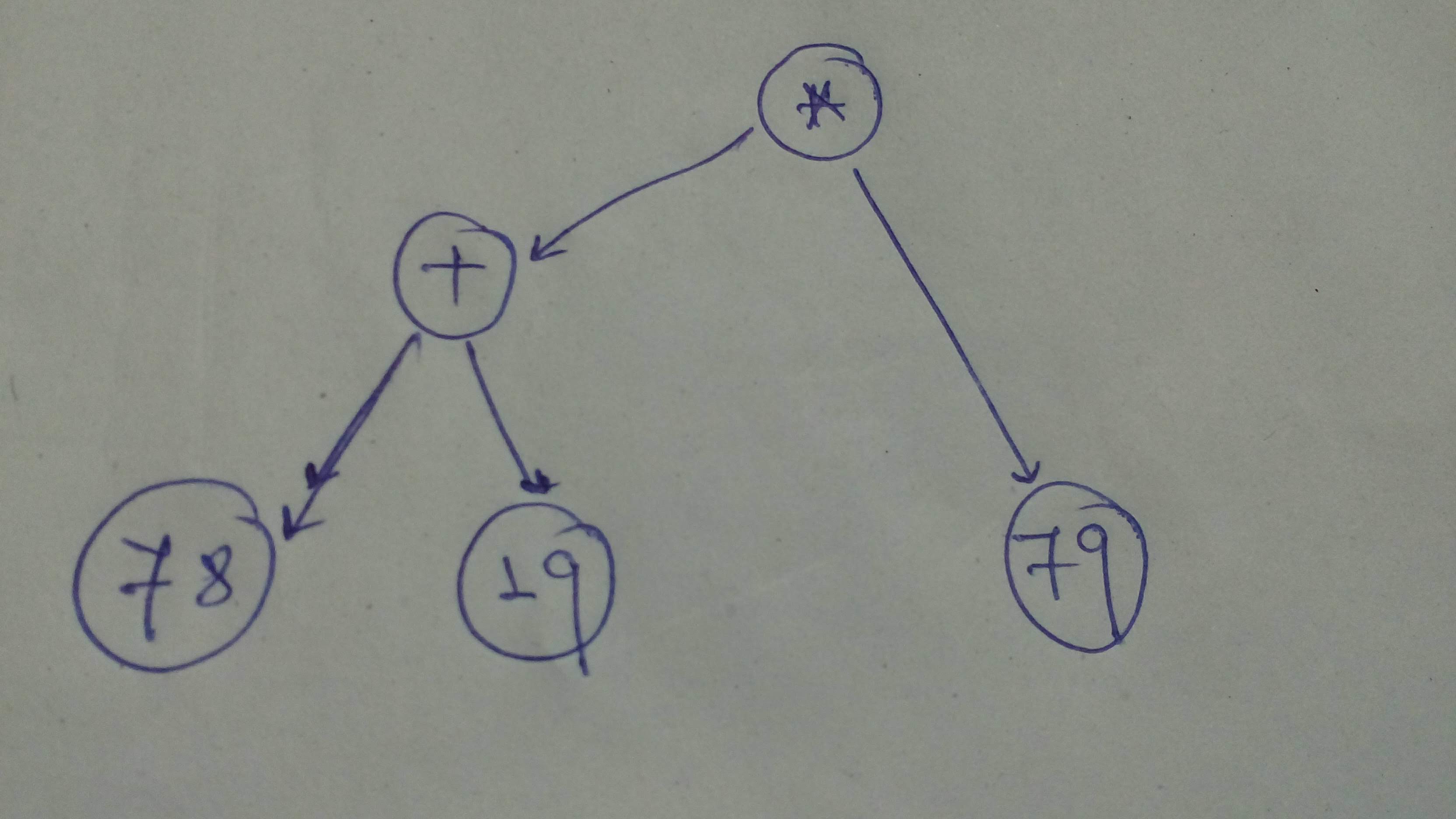

# + id="FyVz0GNqgreZ" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 54} outputId="df83c837-8f55-487f-b2d9-92466a952d6a"

# Let's define the graph

comp_graph_1 = tf.multiply(tf.add(78, 19), 79)

# Alternatively

comp_graph_1_alt = (tf.constant(78) + tf.constant(19)) * tf.constant(79)

# Let's execute using session

sess = tf.Session()

print ('Comp Graph 1 : ', sess.run(comp_graph_1))

print ('Comp Graph 1 Alt: ', sess.run(comp_graph_1_alt))

sess.close()

# + [markdown] id="SVMMtuFYhaQB" colab_type="text"

# Let's define a sligtly more involved graph!

#

#

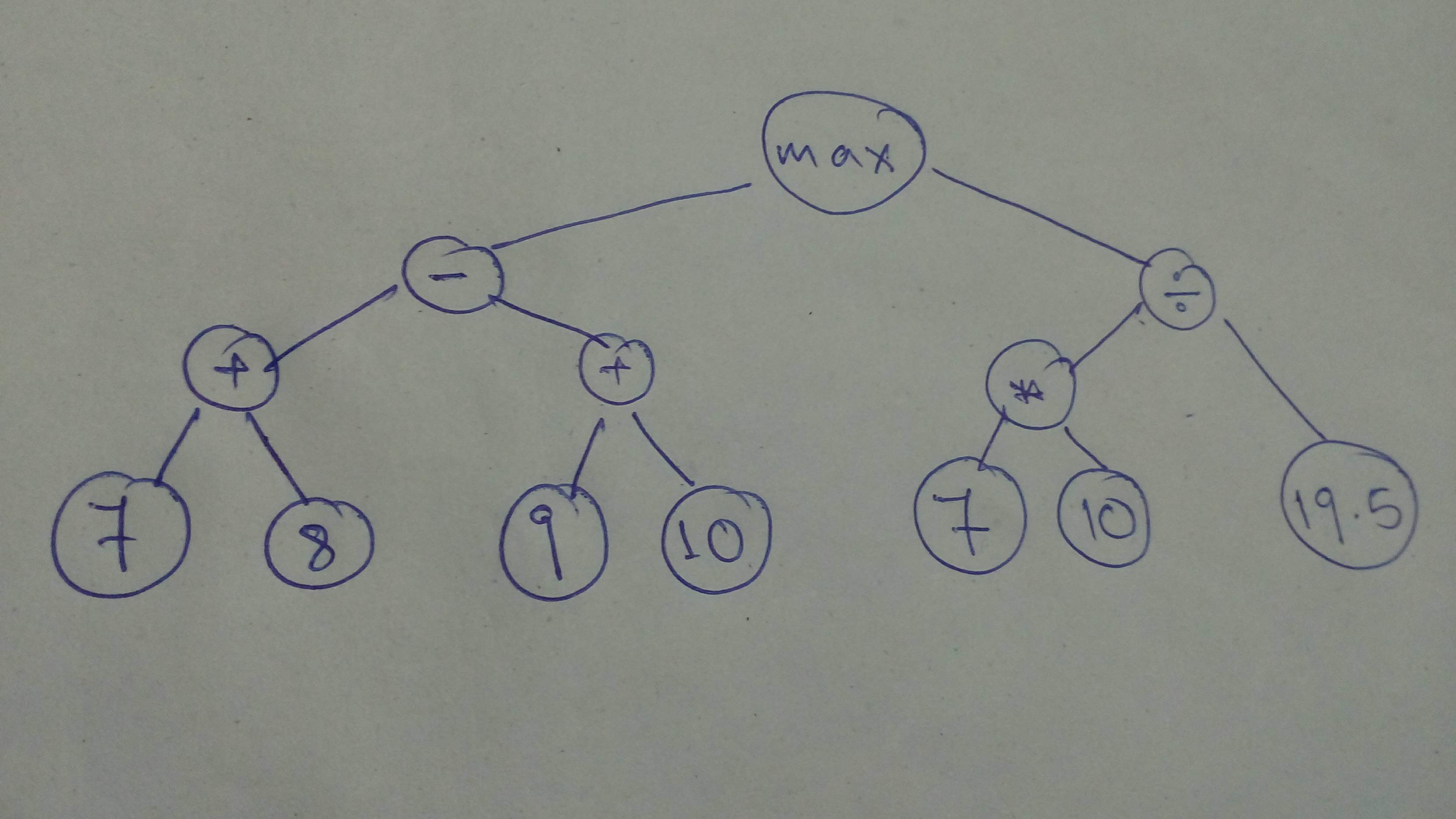

# + id="4856BTvRhiBb" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 72} outputId="0dce8f47-b8f5-4b23-eb4c-313ac98034b6"

# Let build the graph

# We need to cast cause the tensors operated on should be of the same type

comp_graph_part_1 = tf.cast(tf.subtract(tf.add(7, 8), tf.add(9, 10)),

dtype=tf.float32)

comp_graph_part_2 = tf.divide(tf.cast(tf.multiply(7, 10), dtype=tf.float32), tf.constant(19.5))

comp_graph_complete = tf.maximum(comp_graph_part_1, comp_graph_part_2)

# Let's execute

sess = tf.Session()

part1_res, part2_res, total_res = sess.run([comp_graph_part_1, comp_graph_part_2, comp_graph_complete])

print ('Complete Result: ', total_res)

print ('Part 1 Result: ', part1_res)

print ('Part 2 Result: ', part2_res)

sess.close()

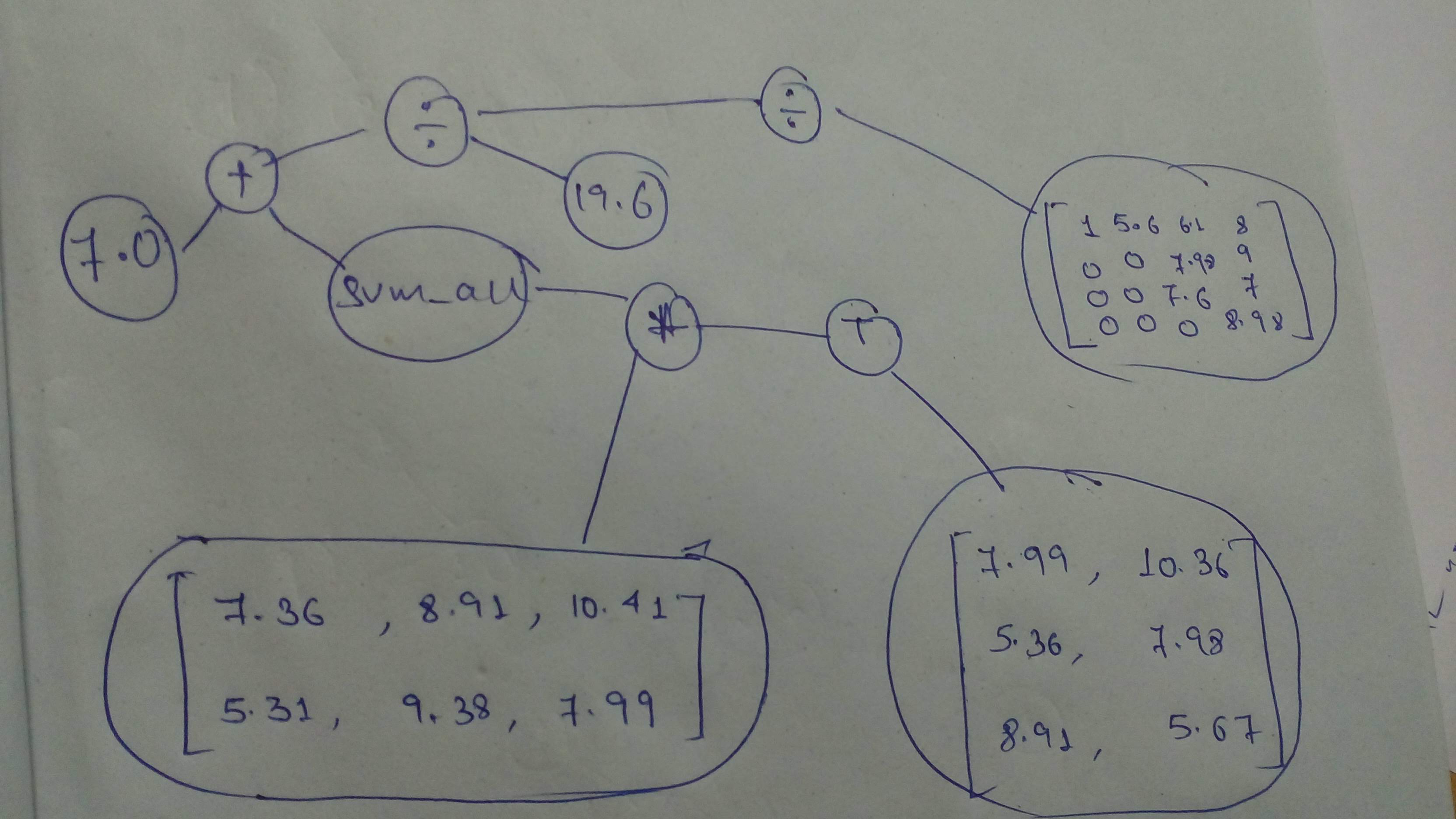

# + [markdown] id="B-_ZDtEbj4N0" colab_type="text"

# Cool! Let's go! Build another graph and execute it with sessions.<br/>

#

# But this time, it's all you!

#

# Build this graph and execute it with `session`!

#

#

#

# _Remember that `tensors` operated on should be of the same type!_<br/>

# _Search up errors and other help you need on Google_

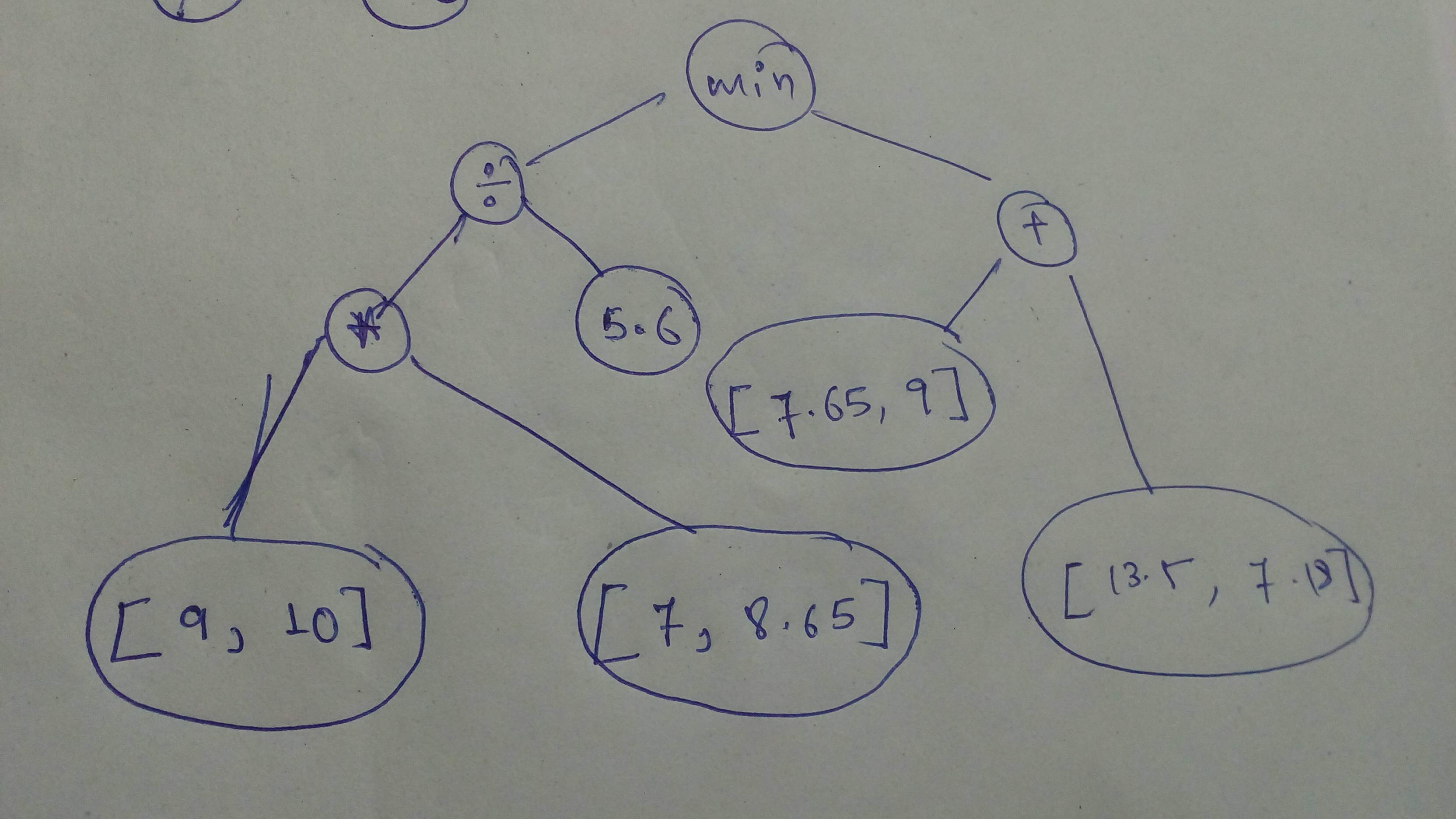

# + id="-uHNe1BolJY0" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 35} outputId="9faf40fc-22ec-4665-ffe1-0c060caf047f"

# Build the graph

# YOUR CODE HERE

n1 = tf.constant([9, 10], dtype=tf.float32)

n2 = tf.constant([7, 8.65], dtype=tf.float32)

n3 = tf.constant(5.6, dtype=tf.float32)

n4 = tf.constant([7.65, 9], dtype=tf.float32)

n5 = tf.constant([13.5, 7.18], dtype=tf.float32)

cg1 = tf.minimum(((n1 * n2) / n3), (n4 + n5))

# Execute

# YOUR CODE HERE

with tf.Session() as sess:

print(sess.run(cg1))

# + [markdown] id="qmap38WelREN" colab_type="text"

# Let's do another!<br/>

# It's fun! Isn't it?!

#

# Build and execute this one!

#

#

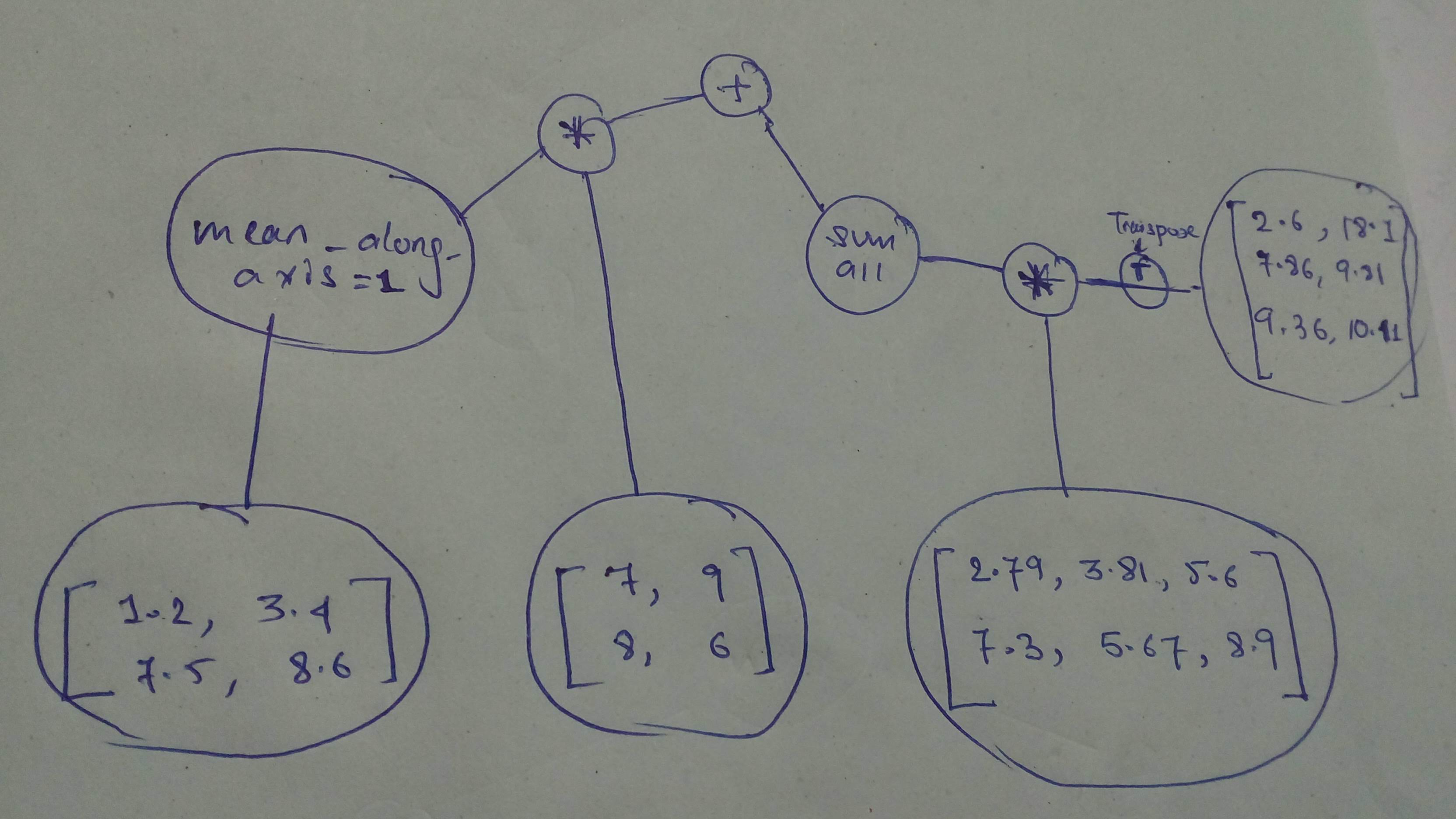

# + id="0ZhYwAlLmEvB" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 54} outputId="e2798e59-847e-400c-a1a7-ea8019f09419"

# Build the graph

# YOUR CODE HERE

n1 = tf.constant([[1.2, 3.4],

[7.5, 8.6]], dtype=tf.float32)

n2 = tf.constant([[7, 9],

[8, 6]], dtype=tf.float32)

n3 = tf.constant([[2.79, 3.81, 5.6],

[7.3, 5.67, 8.9]], dtype=tf.float32)

n4 = tf.constant([[2.6, 18.1],

[7.86, 9.81],

[9.36, 10.41]], dtype=tf.float32)

cg2 = (tf.reduce_mean(n1, axis=1) * n2) + tf.reduce_sum(n3 * tf.transpose(n4))

# Execute

# YOUR CODE HERE

with tf.Session() as sess:

print(sess.run(cg2))

# + [markdown] id="BnB0b6qCmGmg" colab_type="text"

# And a final one, before we move on to the next part!

#

#

# + id="GQWyCvsQmMcL" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 90} outputId="4b7e04ba-a97b-4f0d-bc60-aa7dd1adc5d0"

# Build the graph