code stringlengths 38 801k | repo_path stringlengths 6 263 |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # IMPORTS

# ## Libraries

# +

import pandas as pd

import numpy as np

import matplotlib.pyplot as plt

from scipy.sparse import hstack

from sklearn.metrics import roc_auc_score, average_precision_score

from sklearn.ensemble import RandomForestClassifier

from sklearn.feature_extraction.text import TfidfVectorizer

from google.oauth2 import service_account

from googleapiclient.discovery import build

np.random.seed(0)

# %matplotlib inline

pd.set_option('display.max_columns', 200)

# -

# # Load Data

dfTrain = pd.read_feather('../Data/FeatherData/dfTrainGS.feather')

dfTest = pd.read_feather('../Data/FeatherData/dfTestGS.feather')

dfTrain = dfTrain.sort_values('UploadDate')

# # Generating some features to evaluate a simple model

dfFeatures = pd.DataFrame(index=dfTrain.index)

dfFeatures['ViewCount'] = dfTrain['ViewCount']

dfFeatures['DaysSincePublication'] = dfTrain['DaysSincePublication']

dfFeatures['WatchList'] = dfTrain['WatchList'].astype(int)

dfFeatures['ViewsPerDay'] = dfFeatures['ViewCount'] / dfFeatures['DaysSincePublication']

dfFeatures = dfFeatures.drop('DaysSincePublication', axis=1)

dfFeatures.head()

# # Split DataFrame into Training and Validation Dataset

dfTrain['UploadDate'].value_counts().plot(figsize=(20, 10))

Xtrain, Xval = dfFeatures.iloc[:int(round(dfTrain.shape[0]/2,0))].drop('WatchList', axis=1), dfFeatures.iloc[int(round(dfTrain.shape[0]/2,0)):].drop('WatchList', axis=1)

ytrain, yval = dfFeatures['WatchList'].iloc[:int(round(dfTrain.shape[0]/2,0))], dfFeatures['WatchList'].iloc[int(round(dfTrain.shape[0]/2,0)):]

Xtrain.shape, Xval.shape, ytrain.shape, yval.shape

# # Text Features

# +

titleTrain = dfTrain['Title'].iloc[:int(round(dfTrain.shape[0]/2,0))]

titleVal = dfTrain['Title'].iloc[int(round(dfTrain.shape[0]/2,0)):]

titleVec = TfidfVectorizer(min_df=2)

titleBowTrain = titleVec.fit_transform(titleTrain)

titleBowVal = titleVec.transform(titleVal)

# -

titleBowTrain.shape

titleBowTrain

XtrainWTitle = hstack([Xtrain, titleBowTrain])

XvalWTitle = hstack([Xval, titleBowVal])

XtrainWTitle.shape, XvalWTitle.shape

# # Model

# ## RandomForestClassifier

model = RandomForestClassifier(n_estimators=1000, random_state=0, class_weight='balanced', n_jobs=-1)

model.fit(XtrainWTitle, ytrain)

p = model.predict_proba(XvalWTitle)[:,1]

# ## Model Evaluate

average_precision_score(yval,p)

roc_auc_score(yval, p)

# # ACTIVE LEARNING

# - 70 examples that the model has difficulty

# - 30 random examples

dfTest.shape

dfUnlabeled = dfTest.sample(800)

dfUnlabeled.head()

# ## Create a New DataFrame for Unlabeled Data

dfUnlabeledFeatures = pd.DataFrame(index=dfUnlabeled.index)

dfUnlabeledFeatures['ViewCount'] = dfUnlabeled['ViewCount']

dfUnlabeledFeatures['DaysSincePublication'] = dfUnlabeled['DaysSincePublication']

dfUnlabeledFeatures['ViewsPerDay'] = dfUnlabeledFeatures['ViewCount'] / dfUnlabeledFeatures['DaysSincePublication']

dfUnlabeledFeatures = dfUnlabeledFeatures.drop('DaysSincePublication', axis=1)

# ## Text Features

XUnlabeled = dfUnlabeledFeatures.copy()

titleUnlabeled = dfUnlabeled['Title']

titleUnlabeledBow = titleVec.transform(titleUnlabeled)

XUnlabeledWTitle = hstack([XUnlabeled, titleUnlabeledBow])

XtrainWTitle

# ## Model Evaluate for Filter Hard Decisions

pu = model.predict_proba(XUnlabeledWTitle)[:,1]

dfUnlabeled['p'] = pu

# ### Filter Hard Decisions and Random Decisions

maskUnlabeled = (dfUnlabeled['p'] >= 0.25) & (dfUnlabeled['p'] <= 0.75)

maskUnlabeled.sum()

hardDecisionSample = dfUnlabeled[maskUnlabeled]

randomSample = dfUnlabeled[~maskUnlabeled].sample(300 - maskUnlabeled.sum())

dfActiveLearning = pd.concat([hardDecisionSample, randomSample])

# # Send to Google Sheets

dfActiveLearning['UploadDate'] = dfActiveLearning['UploadDate'].astype(str)

dfActiveLearning['WatchList'] = ''

dfActiveLearning = dfActiveLearning.values.tolist()

# ### Credentials

# +

# Documentation: https://developers.google.com/sheets/api/quickstart/python

SERVICE_ACCOUNT_FILE = 'D:/01-DataScience/04-Projetos/00-Git/Youtube-Video-Recommendations/Credentials/keys.json'

SCOPES = ['https://www.googleapis.com/auth/spreadsheets']

credentials = None

credentials = service_account.Credentials.from_service_account_file(

SERVICE_ACCOUNT_FILE, scopes=SCOPES)

# The ID of spreadsheet.

SAMPLE_SPREADSHEET_ID = '1uCur7jOXuLnwuwfWgoBL8mvDDvchuLf-o0X-AnOxS7s'

service = build('sheets', 'v4', credentials=credentials)

# Call the Sheets API

sheet = service.spreadsheets()

# -

# ### Write Values

# Write Values

request = sheet.values().update(spreadsheetId=SAMPLE_SPREADSHEET_ID,

range="ActiveLearning!A2", valueInputOption="USER_ENTERED", body={"values":dfActiveLearning}).execute()

# ### Read Values

# +

#Read Values

result = sheet.values().get(spreadsheetId=SAMPLE_SPREADSHEET_ID,

range="ActiveLearning!A1:S").execute()

values = result.get('values', [])

# -

# ### Convert dtypes

dfGoogleSheets = pd.DataFrame(values[1:], columns=values[0])

dfGoogleSheets = dfGoogleSheets[dfGoogleSheets['WatchList'].notnull()].reset_index(drop=True)

dfGoogleSheets['UploadDate'] = pd.to_datetime(dfGoogleSheets['UploadDate'])

dfGoogleSheets['WatchList'] = dfGoogleSheets['WatchList'].replace('', np.nan)

dfGoogleSheets[['DaysSincePublication', 'Duration', 'ViewCount', 'LikeCount', 'DislikeCount']] = dfGoogleSheets[['DaysSincePublication', 'Duration', 'ViewCount', 'LikeCount', 'DislikeCount']].astype(int)

dfGoogleSheets['AverageRating'] = dfGoogleSheets['AverageRating'].astype(float)

dfGoogleSheets['p'] = dfGoogleSheets['p'].astype(float)

dfGoogleSheets[dfGoogleSheets.select_dtypes(include=['object']).columns] = dfGoogleSheets.select_dtypes(include=['object']).astype('category')

# ### Convert to .feather

dfGoogleSheets.to_feather('../Data/FeatherData/dfActiveLearningGS.feather')

| 02-YouTubeNotebooks/05-ActiveLearningFirstPart.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

#Importing packages

from selenium import webdriver

import pandas as pd

import re

import time

driver = webdriver.Chrome('resources/chromedriver.exe')

# +

# Login credentials

fb_email = "XXX"

fb_pass = "<PASSWORD>"

def fb_login():

driver.get ("https://www.facebook.com")

driver.find_element_by_id("email").send_keys(fb_email)

driver.find_element_by_id("pass").send_keys(<PASSWORD>)

driver.find_element_by_xpath("//input[@value='Log In' or @value='Log Masuk']").click()

# -

fb_login()

search_url = 'https://www.facebook.com/page/373560576236/search/?q=blood%20donation&filters=eyJycF9jaHJvbm9fc29ydCI6IntcIm5hbWVcIjpcImNocm9ub3NvcnRcIixcImFyZ3NcIjpcIlwifSJ9'

driver.get(search_url)

# +

#Scroll to bottom infinity to load all posts

SCROLL_PAUSE_TIME = 1

# Get scroll height

last_height = driver.execute_script("return document.body.scrollHeight")

while True:

# Scroll down to bottom

driver.execute_script("window.scrollTo(0, document.body.scrollHeight);")

# Wait to load page

time.sleep(SCROLL_PAUSE_TIME)

# Calculate new scroll height and compare with last scroll height

new_height = driver.execute_script("return document.body.scrollHeight")

if new_height == last_height:

break

last_height = new_height

# +

post_class = 'rq0escxv l9j0dhe7 du4w35lb hybvsw6c ue3kfks5 pw54ja7n uo3d90p7 l82x9zwi ni8dbmo4 stjgntxs k4urcfbm sbcfpzgs'

#content_class = 'a8c37x1j ni8dbmo4 stjgntxs l9j0dhe7'

#date_class = 'd2edcug0 hpfvmrgz qv66sw1b c1et5uql rrkovp55 jq4qci2q a3bd9o3v knj5qynh m9osqain'

regex_dict = {

'date' : "\n(\w{3}\s\d{1,2}(\,\s\d{4})?)\n",

'title' : "\N{MIDDLE DOT}\n\s*\N{MIDDLE DOT}\s*(.*)\n",

'reaction' : r"\n(\d+(\.\d+)?\w*)\n\1\n",

'comment' : "(\d+)\sComments?",

'share' : "(\d+)\sShares?",

}

xpath = "//*[contains(@class, '{}')]"

vals = driver.find_elements_by_xpath(xpath.format(post_class))

info_list = []

for ele in vals:

info = {}

for search_val,regex in regex_dict.items():

match = re.search(regex,ele.text)

if match:

info[search_val] = match.group(1)

else:

info[search_val] = None

print(info)

print('---\n')

info_list.append(info)

# -

df = pd.DataFrame(data=info_list)

df

| 1. WebScrap/.ipynb_checkpoints/WebScraping-checkpoint.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # The idea of permutation

#

# The idea of permutation is fundamental to a wide range of statistical tests.

# This page shows how permutation works by comparing to a physical

# implementation of permutation, that randomizes values by mixing balls in a

# bucket.

#

# ## A mosquito problem

#

#

# With thanks to <NAME>: [Statistics Without the Agonizing Pain](https://www.youtube.com/watch?v=5Dnw46eC-0o)

# ## The data

#

# Download the data from [mosquito_beer.csv](https://matthew-brett.github.io/cfd2019/data/mosquito_beer.csv).

# See [this

# page](https://github.com/matthew-brett/datasets/tree/master/mosquito_beer) for

# more details on the dataset, and [the data license page](https://matthew-brett.github.io/cfd2019/data/license).

# +

# Import Numpy library, rename as "np"

import numpy as np

# Import Pandas library, rename as "pd"

import pandas as pd

# Set up plotting

import matplotlib.pyplot as plt

# %matplotlib inline

plt.style.use('fivethirtyeight')

# -

# HIDDEN

# An extra tweak to make sure we always get the same random numbers.

# Do not use this in your own code; you nearly always want an unpredictable

# stream of random numbers. Making them predictable in this way only makes

# sense for a very limited range of things, like tutorials and tests.

np.random.seed(42)

# Read in the data:

mosquitoes = pd.read_csv('mosquito_beer.csv')

mosquitoes.head()

# Filter the data frame to contain only the "after" treatment rows:

# After treatment rows.

afters = mosquitoes[mosquitoes['test'] == 'after']

# Filter the "after" rows to contain only the "beer" group, and get the number of activated mosquitoes for these 25 subjects:

# After beer treatment rows.

beers = afters[afters['group'] == 'beer']

# The 'activated' numbers for the after beer rows.

beer_activated = np.array(beers['activated'])

beer_activated

# The number of subjects in the "beer" condition:

n_beer = len(beer_activated)

n_beer

# Get the "activated" number for the 18 subjects in the "water" group:

# Same for the water group.

waters = afters[afters['group'] == 'water']

water_activated = np.array(waters['activated'])

water_activated

# Number of subjects in the "water" condition:

n_water = len(water_activated)

n_water

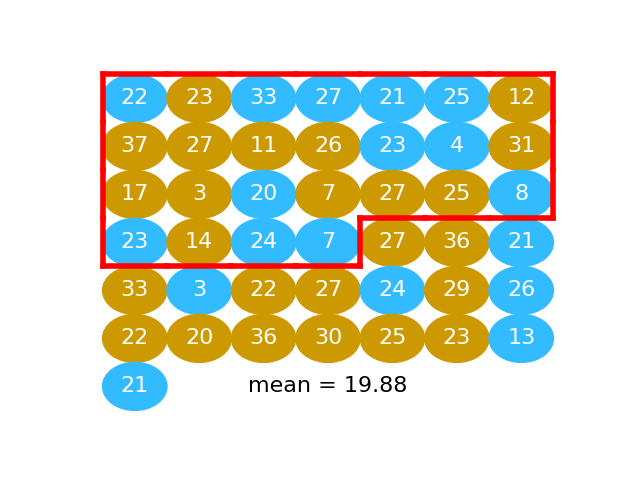

# ## The permutation way

# * Calculate difference in means

# * Pool

# * Repeat many times:

# * Shuffle

# * Split

# * Recalculate difference in means

# * Store

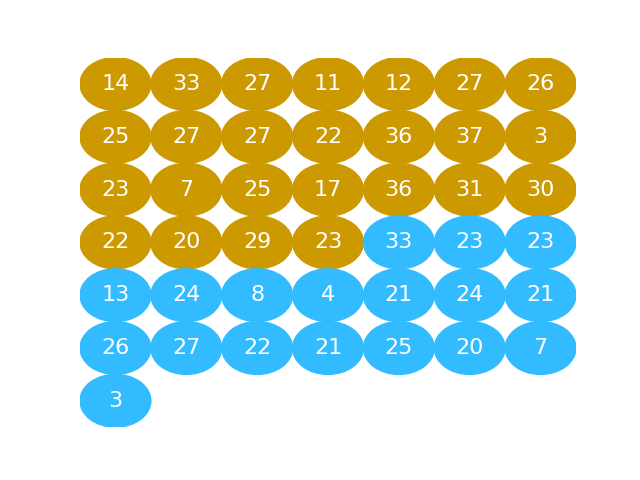

# The next graphic shows the activated values as a series of gold and blue

# balls. The activated numbers for the "beer" group are gold), and the activated

# numbers for the "water" group, in blue:

#

#

#

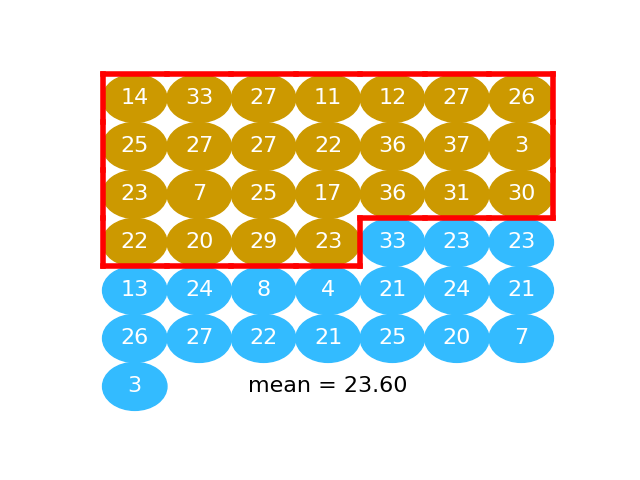

# ## Calculate difference in means

#

# Here we take the mean of "beer" activated numbers (the numbers in gold):

#

#

beer_mean = np.mean(beer_activated)

beer_mean

# Next we take the mean of activation values for the "water" subjects (value in

# blue):

#

#

water_mean = np.mean(water_activated)

water_mean

# The difference between the means in our data:

observed_difference = beer_mean - water_mean

observed_difference

# ## Pool

#

# We can put the values values for the beer and water conditions into one long

# array, 25 + 18 values long.

pooled = np.append(beer_activated, water_activated)

pooled

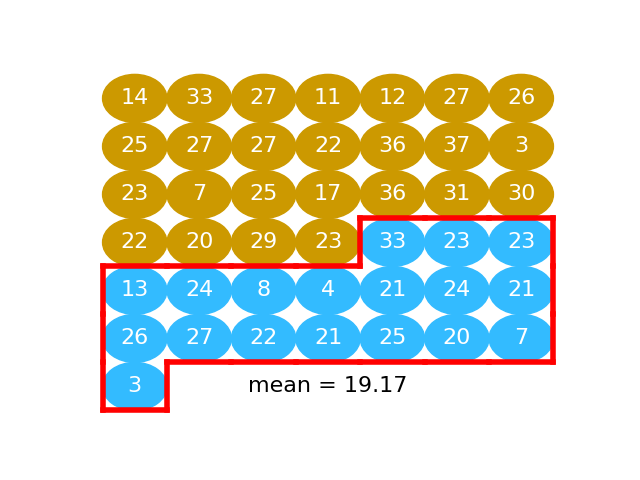

# ## Shuffle

#

# Then we shuffle the pooled values so the beer and water values are completely

# mixed.

np.random.shuffle(pooled)

pooled

# This is the same idea as putting the gold and blue balls into a bucket and shaking them up into a random arrangement.

#

#

#

# ## Split

#

# We take the first 25 values as our fake beer group. In fact these 25 values

# are a random mixture of the beer and the water values. This is the same idea as taking 25 balls at random from the jumbled mix of gold and blue balls.

# Take the first 25 values

fake_beer = pooled[:n_beer]

#

#

# We calculate the mean:

fake_beer_mean = np.mean(fake_beer)

fake_beer_mean

# Then we take the remaining 18 values as our fake water group:

fake_water = pooled[n_beer:]

#

#

# We take the mean of these too:

fake_water_mean = np.mean(fake_water)

fake_water_mean

# The difference between these means is our first estimate of how much the mean difference will vary when we take random samples from this pooled population:

fake_diff = fake_beer_mean - fake_water_mean

fake_diff

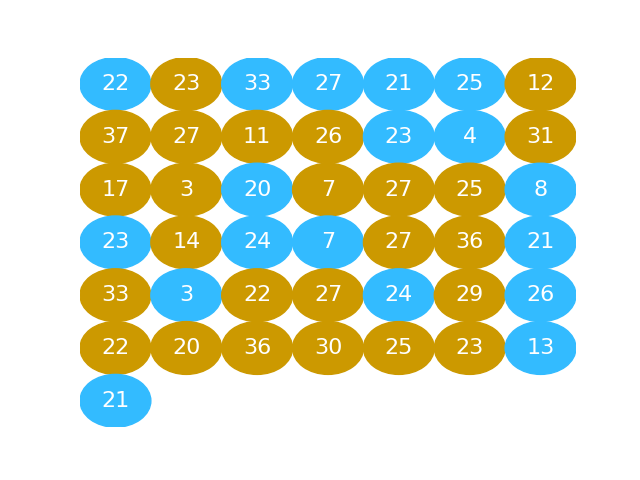

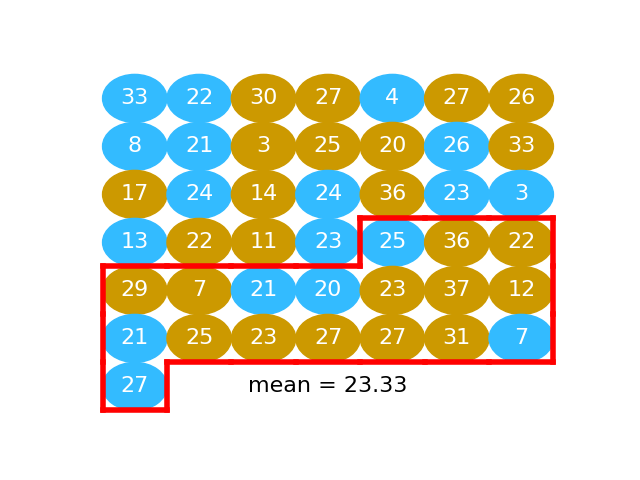

# ## Repeat

#

# We do another shuffle:

np.random.shuffle(pooled)

#

#

# We take another fake beer group, and calculate another fake beer mean:

fake_beer = pooled[:n_beer]

np.mean(fake_beer)

# We take another fake water group, find the mean:

#

#

fake_water = pooled[n_beer:]

np.mean(fake_water)

# Now we have another example difference between these means:

np.mean(fake_beer) - np.mean(fake_water)

# We can keep on repeating this process to get more and more examples of mean

# differences:

# Shuffle

np.random.shuffle(pooled)

# Split

fake_beer = pooled[:n_beer]

fake_water = pooled[n_beer:]

# Recalculate mean difference

fake_diff = np.mean(fake_beer) - np.mean(fake_water)

fake_diff

# It is not hard to do this as many times as we want, using a `for` loop:

fake_differences = np.zeros(10000)

for i in np.arange(10000):

# Shuffle

np.random.shuffle(pooled)

# Split

fake_beer = pooled[:n_beer]

fake_water = pooled[n_beer:]

# Recalculate mean difference

fake_diff = np.mean(fake_beer) - np.mean(fake_water)

# Store mean difference

fake_differences[i] = fake_diff

plt.hist(fake_differences);

# We are interested to know just how unusual it is to get a difference as big as we actually see, in these many samples of differences we expect by chance, from random sampling.

#

# To do this we calculate how many of the fake differences we generated are equal to or greater than the difference we observe:

n_ge_actual = np.count_nonzero(fake_differences >= observed_difference)

n_ge_actual

# That means that the chance of any one difference being greater than the one we observe is:

p_ge_actual = n_ge_actual / 10000

p_ge_actual

# This is also an estimate of the probability we would see a difference as large as the one we observe, if we were taking random samples from a matching population.

| ipynb/05/permutation_idea.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# ### Unique Email Addresses

# + active=""

# Every email consists of a local name and a domain name, separated by the @ sign.

# For example, in <EMAIL>, alice is the local name, and leetcode.com is the domain name.

# Besides lowercase letters, these emails may contain '.'s or '+'s.

# If you add periods ('.') between some characters in the local name part of an email address,

# mail sent there will be forwarded to the same address without dots in the local name.

# For example, "<EMAIL>" and "<EMAIL>" forward to the same email address.

# (Note that this rule does not apply for domain names.)

# If you add a plus ('+') in the local name, everything after the first plus sign will be ignored.

# This allows certain emails to be filtered, for example <EMAIL> will be forwarded to <EMAIL>.

# (Again, this rule does not apply for domain names.)

# It is possible to use both of these rules at the same time.

# Given a list of emails, we send one email to each address in the list. How many different addresses actually receive mails?

#

# Example 1:

# Input: ["<EMAIL>","<EMAIL>+<EMAIL>","<EMAIL>"]

# Output: 2

# Explanation: "<EMAIL>" and "<EMAIL>" actually receive mails

#

# Note:

# 1 <= emails[i].length <= 100

# 1 <= emails.length <= 100

# Each emails[i] contains exactly one '@' character.

# -

class Solution(object):

def numUniqueEmails(self, emails):

"""

:type emails: List[str]

:rtype: int

"""

new_emails = []

for mail in emails:

[local, domain] = mail.split('@') # 分出 local name & domain name

local = local.split('+')[0] # 留下 + 以前的字串

local_lst = local.split('.') # 用 '.' 分散開的字串

local = ''

for x in local_lst: # local name 分散的字串合併

local += x

email = local + '@' + domain # 組合成email : local name + @ + domain name

if email not in new_emails:

new_emails.append(email)

return len(new_emails)

emails = ["<EMAIL>+<EMAIL>","<EMAIL>+<EMAIL>","<EMAIL>+<EMAIL>"]

ans = Solution()

ans.numUniqueEmails(emails)

| 929. Unique Email Addresses.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# %load_ext autoreload

# %autoreload 2

# +

import numpy as np; np.random.seed(0)

from extquadcontrol import ExtendedQuadratic, dp_infinite, dp_finite

from scipy.linalg import solve_discrete_are

# -

# # LQR

#

# We verify that our implementation matches the controller returned by the infinite horizon Riccati Recursion.

# +

n, m = 5,5

K = 1

N = 1

T = 25

As = np.random.randn(1,1,n,n)

Bs = np.random.randn(1,1,n,m)

cs = np.zeros((1,1,n))

gs = [ExtendedQuadratic(np.eye(n+m),np.zeros(n+m),0) for _ in range(K)]

Pi = np.eye(K)

def sample(t, N):

A = np.zeros((N,K,n,n)); A[:] = As

B = np.zeros((N,K,n,m)); B[:] = Bs

c = np.zeros((N,K,n)); c[:] = cs

g = [gs for _ in range(N)]

return A,B,c,g,Pi

g_T = [ExtendedQuadratic(np.eye(n),np.zeros(n),0) for _ in range(K)]

Vs, Qs, policies = dp_finite(sample, g_T, T, N)

# -

Vs[0][0].P

policies[0][0][0]

A = As[0,0]

B = Bs[0,0]

Q = np.eye(n)

R = np.eye(m)

def solve_finite_time():

P = Q

for _ in range(50):

P = Q+A.T@P@A-A.T@P@B@np.linalg.solve(R+B.T@P@B,B.T@P@A)

K = -np.linalg.solve(R+B.T@P@B,B.T@P@A)

return P, K

P, K = solve_finite_time()

P

K

# ### Infinite-horizon

A = np.random.randn(1,1,n,n)

B = np.random.randn(1,1,n,m)

c = np.zeros((1,1,n))

g = [[ExtendedQuadratic(np.eye(n+m),np.zeros(n+m),0)]]

Pi = np.ones((1,1))

def sample(t):

return A,B,c,g,Pi

V, Qs, policy = dp_infinite(sample, 50, 1)

V[0].P

A = A[0,0]

B = B[0,0]

P = solve_discrete_are(A,B,Q,R)

P

| examples/LQR (6.1).ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + pycharm={"name": "#%%\n"}

#Based on tutorial: https://machinelearningmastery.com/random-forest-ensemble-in-python/

#Run this code before you can classify

# Use numpy to convert to arrays

import numpy as np

from numpy import mean, std

# Pandas is used for data manipulation

import pandas as pd

# Using Skicit-learn to split data into training and testing sets

from sklearn.model_selection import train_test_split

# Import the model we are using

from sklearn.ensemble import RandomForestClassifier

from sklearn.model_selection import cross_val_score, RepeatedStratifiedKFold

def buildModel(features, labelDimension) :

# Labels are the values we want to predict

labels = np.array(features[labelDimension])

# Remove the labels from the features

# axis 1 refers to the columns

features= features.drop(labelDimension, axis = 1)

# Convert to numpy array

features = np.array(features)

# Split the data into training and testing sets (heavily overfit on provided dataset to get as close as possible to the original model)

train_features, test_features, train_labels, test_labels = train_test_split(features, labels, test_size = 0.30)

print('Training Features Shape:', train_features.shape)

print('Training Labels Shape:', train_labels.shape)

print('Testing Features Shape:', test_features.shape)

print('Testing Labels Shape:', test_labels.shape)

# Instantiate model with 1000 decision trees

rf = RandomForestClassifier(n_estimators = 1500)

# Train the model on training data

rf.fit(train_features, train_labels)

#evaluate the model

cv = RepeatedStratifiedKFold(n_splits=10, n_repeats=1)

n_scores = cross_val_score(rf, features, labels, scoring='accuracy', cv=cv, n_jobs=-1, error_score='raise')

print("done!")

print("evaluating:")

# report performance

print(n_scores)

print('Accuracy: %.3f (%.3f)' % (mean(n_scores), std(n_scores)))

return rf

# + pycharm={"name": "#%%\n"}

#load in the dataset

features = pd.read_csv('heloc_dataset_v1.csv')

#the columns that stores the labels

labelDimension = "RiskPerformance"

#build a random forest classifier

model = buildModel(features, labelDimension)

# + pycharm={"name": "#%%\n"}

#get the first datarow of the dataset

row = features.loc[0,:]

#remove the label column (first column)

instance = row[1:len(row)]

# Use the forest's predict method on the test data

prediction = model.predict(instance.to_numpy().reshape(1,-1))

#print prediction

print(prediction)

# + pycharm={"name": "#%%\n"}

| heloc_model/default.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: SageMath 9.2.rc2

# language: sage

# name: sagemath

# ---

# # RSA in ECB mode

#

# Suppose that the RSA public key $(n, e) = (2491, 1595)$

# has been used to encrypt each individual character in a message $m$ (using their ASCII codes),

# giving the following ciphertext:

# $$

# c = (111, 2474, 1302, 1302, 1587, 395, 224, 313, 1587, 1047, 1302, 1341, 980).

# $$

# Determine the original message $m$ without factoring $n$.

n = 2491

e = 1595

c = [111, 2474, 1302, 1302, 1587, 395, 224, 313, 1587, 1047, 1302, 1341, 980]

# Since there are only 128 ASCII characters, we can build a dictionary mapping encryptions to the corresponding codes.

d = {pow(x, e, n): x for x in range(128)}

d

# We can now use the dictionary to decrypt each character.

''.join(chr(d[y]) for y in c)

| notebooks/RSA-ECB.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

# %matplotlib inline

import sys, os, time

import numpy as np

import matplotlib.pyplot as plt

from keras import backend as K

from keras.utils import to_categorical

from keras.applications.vgg19 import VGG19, preprocess_input

# -

# ## Load data

x_val = np.load("/mnt/dados/imagenet/ILSVRC2012_img_val_224/x_val.npy") # loaded as RGB

x_val = preprocess_input(x_val) # converted to BGR

y_val = np.load("/mnt/dados/imagenet/ILSVRC2012_img_val_224/y_val.npy")

y_val_one_hot = to_categorical(y_val, 1000)

keras_idx_to_name = {}

f = open("/mnt/dados/imagenet/ILSVRC2012_img_val_224/synset_words.txt","r")

idx = 0

for line in f:

parts = line.split(" ")

keras_idx_to_name[idx] = " ".join(parts[1:])

idx += 1

f.close()

# ## Benchmark models

def top_k_accuracy(y_true, y_pred, k=1):

'''From: https://github.com/chainer/chainer/issues/606

Expects both y_true and y_pred to be one-hot encoded.

'''

argsorted_y = np.argsort(y_pred)[:,-k:]

return np.any(argsorted_y.T == y_true.argmax(axis=1), axis=0).mean()

K.clear_session()

model = VGG19()

y_pred = model.predict(x_val[:1000], verbose=1)

# #### Top-1 Accuracy

#

# Compare to 0.713 from Keras documentation

top_k_accuracy(y_val_one_hot[:1000], y_pred, k=1)

# #### Top-5 Accuracy

#

# Compare to 0.900 from Keras documentation

top_k_accuracy(y_val_one_hot[:1000], y_pred, k=5)

| LARS2019_2. Benchmark Keras pretrained models on ImageNet.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # $T_1$

# In a $T_1$ experiment, we measure an excited qubit after a delay. Due to decoherence processes (e.g. amplitude damping channel), it is possible that, at the time of measurement, after the delay, the qubit will not be excited anymore. The larger the delay time is, the more likely is the qubit to fall to the ground state. The goal of the experiment is to characterize the decay rate of the qubit towards the ground state.

#

# We start by fixing a delay time $t$ and a number of shots $s$. Then, by repeating $s$ times the procedure of exciting the qubit, waiting, and measuring, we estimate the probability to measure $|1\rangle$ after the delay. We repeat this process for a set of delay times, resulting in a set of probability estimates.

#

# In the absence of state preparation and measurement errors, the probability to measure |1> after time $t$ is $e^{-t/T_1}$, for a constant $T_1$ (the coherence time), which is our target number. Since state preparation and measurement errors do exist, the qubit's decay towards the ground state assumes the form $Ae^{-t/T_1} + B$, for parameters $A, T_1$, and $B$, which we deduce form the probability estimates. To this end, the $T_1$ experiment internally calls the `curve_fit` method of `scipy.optimize`.

#

# The following code demonstrates a basic run of a $T_1$ experiment for qubit 0.

# +

import numpy as np

from qiskit_experiments.framework import ParallelExperiment

from qiskit_experiments.library import T1

# A T1 simulator

from qiskit.test.mock import FakeVigo

from qiskit.providers.aer import AerSimulator

from qiskit.providers.aer.noise import NoiseModel

# Create a pure relaxation noise model for AerSimulator

noise_model = NoiseModel.from_backend(

FakeVigo(), thermal_relaxation=True, gate_error=False, readout_error=False

)

# Create a fake backend simulator

backend = AerSimulator.from_backend(FakeVigo(), noise_model=noise_model)

# Look up target T1 of qubit-0 from device properties

qubit0_t1 = backend.properties().t1(0)

# Time intervals to wait before measurement

delays = np.arange(1e-6, 3 * qubit0_t1, 3e-5)

# Create an experiment for qubit 0

# with the specified time intervals

exp = T1(qubit=0, delays=delays)

# Set scheduling method so circuit is scheduled for delay noise simulation

exp.set_transpile_options(scheduling_method='asap')

# Run the experiment circuits and analyze the result

exp_data = exp.run(backend=backend).block_for_results()

# Print the result

display(exp_data.figure(0))

for result in exp_data.analysis_results():

print(result)

# -

# ## Parallel $T_1$ experiments on multiple qubits

# To measure $T_1$ of multiple qubits in the same experiment, we create a parallel experiment:

# +

# Create a parallel T1 experiment

parallel_exp = ParallelExperiment([T1(qubit=i, delays=delays) for i in range(2)])

parallel_exp.set_transpile_options(scheduling_method='asap')

parallel_data = parallel_exp.run(backend).block_for_results()

# View result data

for result in parallel_data.analysis_results():

print(result)

# -

# ### Viewing sub experiment data

#

# The experiment data returned from a batched experiment also contains individual experiment data for each sub experiment which can be accessed using `child_data`

# Print sub-experiment data

for i, sub_data in enumerate(parallel_data.child_data()):

print(f"Component experiment {i}")

display(sub_data.figure(0))

for result in sub_data.analysis_results():

print(result)

import qiskit.tools.jupyter

# %qiskit_copyright

| docs/tutorials/t1.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + pycharm={"name": "#%%\n", "is_executing": false}

import os

import xlrd

import pandas as pd

import glob

import re

# + pycharm={"name": "#%%\n", "is_executing": false}

#Getting MSP files

msp_files = os.listdir('path/to/dataset/folder')

msp_files[0]

# + pycharm={"name": "#%%\n", "is_executing": false}

#reading Columns names from file

columns_names = []

with open('column-names.txt', 'r') as filehandle:

names = filehandle.readlines()

for name in names:

columns_names.append(str(name).rstrip('\n'))

#Creating Raw DataFrame

dataframe =pd.DataFrame(columns=columns_names)

dataframe

# + pycharm={"name": "#%%\n", "is_executing": false}

#Reading the sheets

df_list = []

no_tp = []

for file in msp_files:

final_TP = ""

xls = xlrd.open_workbook('path/to/dataset/folder'+file)

for sheet_name in list(xls.sheet_names()):

if re.match('TP[0-9]+',sheet_name):

# Checking TP acquisition

# sheet_num = re.match(r"(TP)([0-9]+)",sheet_name)

# print(sheet_num.group(2))

final_TP = sheet_name

try:

if final_TP != "":

df = pd.read_excel('path/to/dataset/folder'+file, sheet_name=final_TP,header=None)

df =df[3:]

df = df.drop(df.iloc[:,24:],axis=1)

headers = df.iloc[0]

df =df[1:]

df.columns = headers

df['project_name'] = file

df.reset_index(drop=True)

df_list.append(df)

print("Added "+ file)

else:

print("NO TP for "+ file)

no_tp.append(file)

except:

print("Not successful for "+ file)

dataset = pd.concat(df_list)

dataset.to_excel('data.xlsx')

with open("no-tp.txt",'w') as file_tp:

for project in no_tp:

file_tp.writeline(project)

no_tp_perc = int(len(no_tp))/int(len(msp_files))

print("the percentage that has no tp:"+ str(no_tp_perc))

print('Number of no-tp Files: '+ str(len(no_tp)))

| 1-data-extraction/data-extraction.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# + [markdown] slideshow={"slide_type": "slide"}

# # University of Applied Sciences Munich

# ## Kalman Filter Tutorial

#

# ---

# (c) <NAME> (<EMAIL>)

# -

# <h2 style="color:green">Instructions. Please Read.</h2>

#

# + Create a copy/clone of this Notebook and change the name slightly, i.e. Exercise1-Solution-YourName

# + Change the my_name variable in the cell below, s.t. your solution can be evaluated (done by the evaluation notebook)

# + When you execute the last cell your results will be saved as .csv files with specific naming and read by the evaluation Notebook

# + You can use different names, e.g. Lukas1, Lukas2, Lukas3, ... for different iterations of your solution

# ## Exercise 1 - Ball Flight

#

# #### Task:

# + You are given data (height and velocity) of a ball that was shot straight into the air.

# + There are several things you have to do

# + In the "Kalman Step" cell implement the 1D Kalman Filter

# + Tune the parameters s.t. the output is optimal

# + Set the initial Conditions

my_name = "LukasKostler" # Only Alphanumeric characters

# + slideshow={"slide_type": "skip"}

import matplotlib.pyplot as plt

plt.rcParams['figure.figsize'] = (6, 3)

import re

import numpy as np

from scipy.integrate import quad

# %matplotlib notebook

# +

# Load Data

tt = np.genfromtxt('time.csv')

zz = np.genfromtxt('measurements.csv')

vv = np.genfromtxt('velocity.csv')

plt.figure()

plt.plot(tt, zz, label="Height Measurements")

plt.plot(tt, vv, label="Velocity Measurements")

plt.legend()

plt.show()

# -

### Big Kalman Filter Function

def kalman_step(mu_n, sigma_n, z_np1, velocity_n):

#####################################

### Implement your solution here ###

#####################################

### Not a Kalman Filter, just a dummy

mu_np1_np1 = z_np1

sigma_np1_np1 = 1.0

return mu_np1_np1, sigma_np1_np1

# +

## Total Filter Run

mus = np.zeros_like(zz)

sigmas = np.zeros_like(zz)

###############################

### Initial Conditions here ###

###############################

mus[0] = 0.0

sigmas[0] = 1.0

for i in range(1, len(zz)):

mu_np1_np1, sigma_np1_np1 = kalman_step(

mus[i-1],

sigmas[i-1],

zz[i],

vv[i-1])

mus[i] = mu_np1_np1

sigmas[i] = sigma_np1_np1

# +

plt.figure()

plt.plot(tt, zz, label="Measurements")

plt.plot(tt, mus, 'r--+', label="Kalman Filter Mean")

plt.fill_between(tt, mus-2*sigmas, mus+2*sigmas, alpha=0.3, color='r', label="Mean ± 2 Sigma")

plt.legend()

plt.title("Height of the Object")

plt.ylabel("Height in Meters")

plt.xlabel("Time in seconds")

# +

#############################

##### SAVE YOUR RESULTS #####

#############################

stripped_name = re.sub(r'\W+', '', my_name)

np.savetxt(stripped_name+'_mus.csv', mus)

np.savetxt(stripped_name+'_sigmas.csv', sigmas)

| exercise/.ipynb_checkpoints/Exercise1-Solution-Template-checkpoint.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import pandas as pd

from sklearn.datasets import load_iris,fetch_20newsgroups,load_boston

from sklearn.model_selection import train_test_split

from sklearn.neighbors import KNeighborsClassifier

from sklearn.preprocessing import StandardScaler

data = pd.read_csv('./data/FBlocation/train.csv')

data.head(10)

data = data.query('x > 1.0 & x < 1.25 & y>2.5 & y<2.75')

#处理时间

time_value = pd.to_datetime(data['time'],unit='s')

time_value = pd.DatetimeIndex(time_value)

data['day'] = time_value.day

data['weekday'] = time_value.weekday

data['hour'] = time_value.hour

#删除时间

data.drop(['time'],axis=1)

#签到数量少于n个目标位置的删除

place_count = data.groupby('place_id').count()

place_count.reset_index().head()

tf = place_count[place_count.row_id > 3].reset_index()

data = data[data['place_id'].isin(tf.place_id)]

#取出数据中的特征值和目标值

y = data['place_id']

x = data.drop(['place_id','row_id'],axis=1)

#进行数据的分割:训练集合 & 测试集合

x_train,x_test,y_train,y_test = train_test_split(x,y,test_size=0.25)

#特征工程(标准化)

std = StandardScaler()

#对测试集合训练集的特征值进行标准化

x_train = std.fit_transform(x_train)

x_test = std.transform(x_test)

#进行算法流程

knn = KNeighborsClassifier(n_neighbors=100)

#fit , predict ,score

knn.fit(x_train,y_train)

#得出预测结果

y_predict = knn.predict(x_test)

#得出准确率

knn.score(x_test,y_test)

| day_02_FBlocation.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/3778/COVID-19/blob/master/notebooks/%5BIssue_30%5D_Simulador_Leitos.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + id="y36qcx_mUixM" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 105} outputId="f9de287c-831c-48fa-eaa7-eafe92e6c981"

# !pip3 install simpy

# + id="jyjjTR1HUmFN" colab_type="code" colab={}

from collections import defaultdict

import numpy as np

import pandas as pd

import simpy

# + id="7u6LouY4Z6NH" colab_type="code" colab={}

def gen_time_between_arrival():

return np.random.random() / 2

def gen_days_in_ward():

return np.random.randint(15, 20)

def gen_days_in_icu():

return np.random.randint(15, 45)

def gen_go_to_icu():

return np.random.random() < 0.10

def gen_recovery():

return np.random.random() < 0.90

def gen_priority():

return np.random.randint(1, 5)

def gen_icu_max_wait():

return np.random.randint(2, 5)

def gen_ward_max_wait():

return np.random.randint(5, 15)

# + id="_r2M6AudV0dr" colab_type="code" colab={}

def request_icu(env, icu):

logger['requested_icu'].append(env.now)

priority = gen_priority()

with icu.request(priority=priority) as icu_request:

time_of_arrival = env.now

final = yield icu_request | env.timeout(gen_icu_max_wait())

logger['time_waited_icu'].append(env.now - time_of_arrival)

logger['priority_icu'].append(priority)

if icu_request in final:

yield env.timeout(gen_days_in_icu())

else:

logger['lost_patients_icu'].append(env.now)

# + id="0mEsefAu5Km5" colab_type="code" colab={}

def request_ward(env, ward):

logger['requested_ward'].append(env.now)

with ward.request() as ward_request:

time_of_arrival = env.now

final = yield ward_request | env.timeout(gen_ward_max_wait())

if ward_request in final:

logger['time_waited_ward'].append(env.now - time_of_arrival)

yield env.timeout(gen_days_in_ward())

else:

logger['lost_patients_ward'].append(env.now)

# + id="Vwfml2S95NK0" colab_type="code" colab={}

def patient(env, ward, icu):

if gen_go_to_icu():

yield env.process(request_icu(env, icu))

if not gen_recovery():

logger['deaths'].append(env.now)

else:

yield env.process(request_ward(env, ward))

else:

yield env.process(request_ward(env, ward))

if not gen_go_to_icu():

logger['recovered_from_ward'].append(env.now)

else:

yield env.process(request_icu(env, icu))

if not gen_recovery():

logger['deaths'].append(env.now)

else:

yield env.process(request_ward(env, ward))

# + id="F9kH6XSjfusv" colab_type="code" colab={}

def generate_patients(env, ward, icu):

while True:

env.process(patient(env, ward, icu))

yield env.timeout(gen_time_between_arrival())

# + id="UghTvL4gbgNp" colab_type="code" colab={}

def observer(env, ward, icu):

while True:

logger['queue_ward'].append(len(ward.queue))

logger['queue_icu'].append(len(icu.queue))

logger['count_ward'].append(ward.count)

logger['count_icu'].append(icu.count)

yield env.timeout(1)

# + id="cwhZQrI7XFx_" colab_type="code" colab={}

nsim = 120

logger = defaultdict(list)

env = simpy.Environment()

ward = simpy.Resource(env, 75)

icu = simpy.PriorityResource(env, 15)

env.process(generate_patients(env, ward, icu))

env.process(observer(env, ward, icu))

env.run(until=nsim)

# + id="SvDlaqHAdT0C" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 170} outputId="74d0f612-96a1-4edb-8aae-ab6e6ade8118"

pd.Series(logger['time_waited_ward']).describe()

# + id="wGWQk3gctJ9l" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 34} outputId="15e54dc9-7437-4b8d-c392-86ee11bdac7b"

len(logger['deaths'])

# + id="V9MuRuIVxsSG" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 34} outputId="1e7f478f-3da2-4858-87a4-c26d98bb6802"

len(logger['lost_patients_ward'])

# + id="7812DVrl3cit" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 34} outputId="52a84525-23f1-4d5e-c12f-9e10cba59b15"

len(logger['lost_patients_icu'])

# + id="Ce8hssERBt4Z" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 320} outputId="6dfb3f10-250d-435c-9cc0-ce7c9a4eb055"

pd.Series(logger['count_ward']).plot(figsize=(15, 5));

# + id="sqDs536gcW_S" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 320} outputId="b39bbb03-a58a-4e61-d333-069f1a9c8e41"

pd.Series(logger['queue_ward']).plot(figsize=(15, 5));

# + id="B7gIwdwDBy2-" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 320} outputId="c0084e62-416c-4238-b2f2-74b8ddb959cf"

pd.Series(logger['count_icu']).plot(figsize=(15, 5));

# + id="dDzWsf_IcctK" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 320} outputId="2ee2a194-0e38-48fe-accb-f9d2269ecb17"

pd.Series(logger['queue_icu']).plot(figsize=(15, 5));

# + id="EHN1S_9ZclWJ" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 320} outputId="9e88f3b5-5df3-42e9-e546-f54a53609fe3"

pd.Series(logger['time_waited_ward']).plot.hist(bins=30, figsize=(15, 5));

# + id="nN2K9Ck6c1dA" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 320} outputId="b7dc6b17-a82b-4d16-f74c-25fb6b2ccc30"

pd.Series(logger['time_waited_icu']).plot.hist(bins=30, figsize=(15, 5));

# + id="ZnZL_c02mJkQ" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 204} outputId="478bca46-37b1-47ab-d2d3-b81075ab7d04"

(

pd.DataFrame(

np.column_stack(

[logger['priority_icu'], logger['time_waited_icu']]

),

columns=['priority_icu', 'time_waited_icu']

)

.groupby('priority_icu')

['time_waited_icu']

.mean()

.rename('mean_time_waited_by_priority_level')

.to_frame()

)

| notebooks/[Issue_30]_Simulador_Leitos.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .r

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: R

# language: R

# name: ir

# ---

# LOAD PACKAGES

install.packages('SentimentAnalysis')

library(SentimentAnalysis)

library(ggplot2)

library(dplyr)

install.packages('wru')

library(wru)

# +

# LOAD DATA

data <- read.csv("output/OpenSci3Discipline.csv", header = T, stringsAsFactors = F)

## how many missing abstracts?

nrow(data) #2926

sum(data$IndexedAbstract == '') #1020

mean(data$IndexedAbstract == '') #35% missing

data %>% group_by(Tag) %>% summarize(count_no_abstract = sum(IndexedAbstract == ''), pct_no_abstract = mean(IndexedAbstract == ''))

# +

# ANALYZE SENTIMENT

## use R package to analyze sentiment

as <- analyzeSentiment(data$IndexedAbstract[1:nrow(data)])

data <- cbind(data, as)

## View sentiment direction (i.e. positive, neutral and negative) with the two

## most popular directories QDAP and GI

data$DirectionQDAP <- convertToDirection(data$SentimentQDAP)

data$DirectionGI <- convertToDirection(data$SentimentGI)

# SAVE

write.csv(x = data, file = "abstracts_scored.csv")

# -

## ANALYSIS

### Histograms

qplot(x = as$SentimentGI, geom = "histogram")

qplot(x = as$SentimentQDAP, geom = "histogram")

qplot(x = as$SentimentLM, geom = "histogram")

qplot(x = as$SentimentHE, geom = "histogram")

qplot(x = as.factor(data$DirectionQDAP), geom = "bar")

# ### CUSTOM DICTIONARIES

## load the custom words file

custom <- read.csv("input/Lancet Dictionaries.csv", header = T, stringsAsFactors = F)

head(custom)

# +

# how many dictionaries:

constructs <- levels(as.factor(custom$IndivConstruct))

length(constructs) #15

# extract the right data (the word list)

data <- read.csv("input/OpenSci3Discipline.csv", header = T, stringsAsFactors = F)

full_custom_results <- data.frame(PaperId = data$PaperId)

for (construct_i in constructs){

X <- custom %>%

filter(IndivConstruct %in% construct_i)

# create the dictionary with the word list

wordlist_i <- SentimentDictionaryWordlist(X$Words)

summary(wordlist_i)

# score the data

custom_results_i <- analyzeSentiment(data$IndexedAbstract,

rules=list(x=list(ruleRatio, wordlist_i)))

full_custom_results <- cbind(full_custom_results, custom_results_i)

}

# set the columns name

constructs2 <- gsub(pattern = " ", replacement = "", x=constructs) #remove spaces

constructs2 <- gsub(pattern = "/", replacement = "_", x=constructs2) #replace slashes

constructs2 <- gsub(pattern = "-", replacement = "", x=constructs2) #replace dashes

names(full_custom_results)[-1] <- constructs2

#bring in important vars

full_custom_results <- cbind(full_custom_results, data[,c('Tag','IndexedAbstract','Title')])

write.csv(x = full_custom_results, file="output/abstracts_scored_custom.csv", row.names = FALSE)

# -

| code-data/.ipynb_checkpoints/computeSentiment-checkpoint.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # 1. DATA TYPES

#

# + active=""

# 1) Numeric Type -> int, float, complex, long

# 2) Text Type -> string

# 3) boolean type -> bool

# + active=""

# Fourtypes which we mostly used

#

# -> String (Example: "uzair", 'IBA')

# -> Integer (Example: 12, 100, 3)

# -> Float (Example: 1.2, 12.5, 111.885)

# -> Boolean (True or False)

#

# + [markdown] slideshow={"slide_type": "slide"}

# <a name='variables'></a>Variables

# ===

# A variable holds a value.

#

# Python automatically assign type to a variable based on the values

# + [markdown] slideshow={"slide_type": "subslide"}

# <a name='example'></a>Example

# ---

# + slideshow={"slide_type": "fragment"}

message = "Hello Python world!"

print(message)

type(message)

# + [markdown] slideshow={"slide_type": "subslide"}

# A variable holds a value. You can change the value of a variable at any point.

# + slideshow={"slide_type": "fragment"}

message = "Hello Python world!"

print(message)

python_string = "Python is my favorite language!"

print(python_string)

# + [markdown] slideshow={"slide_type": "subslide"}

# <a name='naming_rules'></a>Naming rules

# ---

# - Variables can only contain letters, numbers, and underscores. Variable names can start with a letter or an underscore, but can not start with a number.

# - Spaces are not allowed in variable names, so we use underscores instead of spaces. For example, use student_name instead of "student name".

# - You cannot use [Python keywords](http://docs.python.org/3/reference/lexical_analysis.html#keywords) as variable names.

# - Variable names should be descriptive, without being too long. For example mc_wheels is better than just "wheels", and number_of_wheels_on_a_motorycle.

# - Be careful about using the lowercase letter l and the uppercase letter O in places where they could be confused with the numbers 1 and 0.

# -

# + [markdown] slideshow={"slide_type": "slide"}

# Strings

# ===

# Strings are sets of characters. Strings are easier to understand by looking at some examples.

# + [markdown] slideshow={"slide_type": "subslide"}

# <a name='single_double_quotes'></a>Single and double quotes

# ---

# Strings are contained by either single or double quotes.

# + slideshow={"slide_type": "fragment"}

my_string = "This is a double-quoted string."

my_string = 'This is a single-quoted string.'

# + [markdown] slideshow={"slide_type": "subslide"}

# This lets us make strings that contain quotations.

# + slideshow={"slide_type": "fragment"}

quote = "<NAME> once said, \

'Any program is only as good as it is useful.'"

quote

# -

# ### Multiline Strings

#

# In case we need to create a multiline string, there is the **triple-quote** to the rescue:

# `'''`

# +

multiline_string = '''

This is a string where I

can confortably write on multiple lines

without worring about to use the escape character "\\" as in

the previsou example.

As you'll see, the original string formatting is preserved.

'''

print(multiline_string)

# + [markdown] slideshow={"slide_type": "subslide"}

# <a name='changing_case'></a>Changing case

# ---

# You can easily change the case of a string, to present it the way you want it to look.

# + slideshow={"slide_type": "fragment"}

first_name = 'uzair'

print(first_name)

print(first_name.title())

# + [markdown] slideshow={"slide_type": "subslide"}

# It is often good to store data in lower case, and then change the case as you want to for presentation. This catches some TYpos. It also makes sure that 'eric', 'Eric', and 'ERIC' are not considered three different people.

#

# Some of the most common cases are lower, title, and upper.

# + slideshow={"slide_type": "fragment"}

first_name = 'eric'

print(first_name)

first_name = first_name.title()

print(first_name)

print(first_name.upper())

first_name_titled = 'Eric'

print(first_name_titled.lower())

# -

# **Note**: Please notice that the original strings remain **always** unchanged

print(first_name)

print(first_name_titled)

# + [markdown] slideshow={"slide_type": "subslide"}

# <a name='concatenation'></a>Combining strings (concatenation)

# ---

# It is often very useful to be able to combine strings into a message or page element that we want to display. Again, this is easier to understand through an example.

# + slideshow={"slide_type": "fragment"}

first_name = 'uzair'

last_name = 'adamjee'

full_name = first_name + ' ' + last_name

print(full_name.title())

# + [markdown] slideshow={"slide_type": "fragment"}

# The plus sign combines two strings into one, which is called **concatenation**.

# + [markdown] slideshow={"slide_type": "subslide"}

# You can use as many plus signs as you want in composing messages. In fact, many web pages are written as giant strings which are put together through a long series of string concatenations.

# + slideshow={"slide_type": "fragment"}

first_name = 'ada'

last_name = 'lovelace'

full_name = first_name + ' ' + last_name

print(full_name)

message = full_name.title() + ' ' + \

"was considered the world's first computer programmer."

print(message)

# + [markdown] slideshow={"slide_type": "subslide"}

# <a name='whitespace'></a>Whitespace

# ---

# The term "whitespace" refers to characters that the computer is aware of, but are invisible to readers. The most common whitespace characters are spaces, tabs, and newlines.

#

# Spaces are easy to create, because you have been using them as long as you have been using computers. Tabs and newlines are represented by special character combinations.

#

# The two-character combination "\t" makes a tab appear in a string. Tabs can be used anywhere you like in a string.

# + slideshow={"slide_type": "fragment"}

print("Hello everyone!")

# + slideshow={"slide_type": "fragment"}

print("\tHello everyone!")

# + slideshow={"slide_type": "fragment"}

print("Hello \teveryone!")

# + [markdown] slideshow={"slide_type": "subslide"}

# The combination "\n" makes a newline appear in a string. You can use newlines anywhere you like in a string.

# + slideshow={"slide_type": "fragment"}

print("Hello everyone!")

# + slideshow={"slide_type": "fragment"}

print("\nHello everyone!")

# + slideshow={"slide_type": "fragment"}

print("Hello \neveryone!")

# + slideshow={"slide_type": "fragment"}

print("\n\n\nHello everyone!")

# + [markdown] slideshow={"slide_type": "subslide"}

# ### Stripping whitespace

#

# Many times you will allow users to enter text into a box, and then you will read that text and use it. It is really easy for people to include extra whitespace at the beginning or end of their text. Whitespace includes spaces, tabs, and newlines.

#

# It is often a good idea to strip this whitespace from strings before you start working with them. For example, you might want to let people log in, and you probably want to treat 'eric ' as 'eric' when you are trying to see if I exist on your system.

# + [markdown] slideshow={"slide_type": "subslide"}

# You can strip whitespace from the left side, the right side, or both sides of a string.

# + slideshow={"slide_type": "fragment"}

name = ' eric '

print(name.lstrip())

print(name.rstrip())

print(name.strip())

# + [markdown] slideshow={"slide_type": "subslide"}

# It's hard to see exactly what is happening, so maybe the following will make it a little more clear:

# + slideshow={"slide_type": "fragment"}

name = ' eric '

print('-' + name.lstrip() + '-')

print('-' + name.rstrip() + '-')

print('-' + name.strip() + '-')

# + [markdown] slideshow={"slide_type": "subslide"}

# <a name='integers'></a>Integers

# ---

# You can do all of the basic operations with integers, and everything should behave as you expect. Addition and subtraction use the standard plus and minus symbols. Multiplication uses the asterisk, and division uses a forward slash. Exponents use two asterisks.

# + slideshow={"slide_type": "subslide"}

print(3+2)

# + slideshow={"slide_type": "fragment"}

print(3-2)

# + slideshow={"slide_type": "fragment"}

print(3*2)

# + slideshow={"slide_type": "fragment"}

f = 3/2

print(3/2)

type(f)

# + slideshow={"slide_type": "fragment"}

print(3**2)

# + [markdown] slideshow={"slide_type": "subslide"}

# You can use parenthesis to modify the standard order of operations.

# + slideshow={"slide_type": "fragment"}

standard_order = 2+3*4

print(standard_order)

# + slideshow={"slide_type": "fragment"}

my_order = (2+3)*4

print(my_order)

# -

type(my_order)

# + [markdown] slideshow={"slide_type": "subslide"}

# <a name='floats'></a>Floating-Point numbers

# ---

# Floating-point numbers refer to any number with a decimal point. Most of the time, you can think of floating point numbers as decimals, and they will behave as you expect them to.

# + slideshow={"slide_type": "fragment"}

number = 0.1+0.1

print(number)

# -

type(number)

flag = False

type(flag)

# + [markdown] slideshow={"slide_type": "slide"}

# <a name='comments'></a> 2. Comments

# ===

# As you begin to write more complicated code, you will have to spend more time thinking about how to code solutions to the problems you want to solve. Once you come up with an idea, you will spend a fair amount of time troubleshooting your code, and revising your overall approach.

#

# Comments allow you to write in English, within your program. In Python, any line that starts with a pound (#) symbol is ignored by the Python interpreter.

# + slideshow={"slide_type": "subslide"}

# This line is a comment.

#this

#is

#not

# uasdadasaadaad

print("This line is not a comment, it is code.")

# + [markdown] slideshow={"slide_type": "subslide"}

# <a name='good_comments'></a>What makes a good comment?

# ---

# - It is short and to the point, but a complete thought. Most comments should be written in complete sentences.

# - It explains your thinking, so that when you return to the code later you will understand how you were approaching the problem.

# - It explains your thinking, so that others who work with your code will understand your overall approach to a problem.

# - It explains particularly difficult sections of code in detail.

# + [markdown] slideshow={"slide_type": "subslide"}

# <a name='when_comments'></a>When should you write a comment?

# ---

# - When you have to think about code before writing it.

# - When you are likely to forget later exactly how you were approaching a problem.

# - When there is more than one way to solve a problem.

# - When others are unlikely to anticipate your way of thinking about a problem.

#

# Writing good comments is one of the clear signs of a good programmer. If you have any real interest in taking programming seriously, start using comments now. You will see them throughout the examples in these notebooks.

# +

### Example 1

first_name = input('Write First Name: ')

last_name = input('Enter Last Name: ')

print(first_name+' '+last_name)

fn = first_name+' '+last_name

print(fn.title())

# +

### Example 2

first_number = 15

second_number = 2

# math operations on numeric values

print('addition')

print(first_number + second_number)

print('subtraction')

print(first_number - second_number)

print('multiplication')

print(first_number * second_number)

print('division')

print(first_number / second_number)

result = first_number / second_number

type(result)

# -

# # 3. CONDITIONAL LOGIC

# + active=""

# 3 Things to remeber

#

# -> IF

# -> ELIF

# -> ELSE

# -

#

# # IF Statement

# + active=""

# HOW TO WRITE??

#

# if <expr>:

# <statement>

#

# +

# Example 1

first_number = input('Enter first number: ')

second_number = input('Enter second number: ')

if first_number > second_number:

print('First Number is greater')

# -

# # IF..ELSE Statements

# + active=""

# if <expr>:

# <statement(s)>

# else:

# <statement(s)>

# + active=""

# Types Casting

# -

first_number = input('Enter first number: ')

result = int(first_number)

type(result)

# +

# Example 2

first_number = input('Enter first number: ')

second_number = input('Enter second number: ')

first_number = int(first_number)

second_number = int(second_number)

if first_number > second_number:

print('First Number is greater')

else:

print('Second Number is greater')

# -

# # IF..ELIF..ELSE Statements

# + active=""

# if <expr>:

# <statement(s)>

# elif <expr>:

# <statement(s)>

# elif <expr>:

# <statement(s)>

# ...

# else:

# <statement(s)>

# +

# Example 3

a = 50

b= 50

if a>b:

print('a is greater')

elif a==b:

print('both are equal')

else:

print('b is greater')

# -

# +

# Example 4

num = input("Enter a number: ") # input

num = float(num) # typecasts string value into float

if num > 0:

print("Positive number")

elif num == 0:

print("Zero")

else:

print("Negative number")

# +

# Example 5

a = 100

b = 50

c = 175

if a > b and c > a:

print("Both conditions are True")

# +

# Example 6

a = 25

b = 30

c = 40

if a > b or c > a:

print("One condition is true")

# -

# # ASSIGNMENT

# 1) Take input from user

#

# Then make a logic which will identfy whether the defined variable is ODD or EVEN. (Search for MOD operation on google)

#

# Example

#

# Input: 7

#

# Output: "This number 7 is an odd number"

#

# Input: 16

#

# Output: "This number 16 is an even number"

#

# 2) Consider a string called data= "Hi my name is Zain and I am currently doing my bachelor from IBA Karachi".

#

# Task: Print above mentioned string in UPPER, LOWER case

# # ---- End of Session ----

| Session-2/Session 2 DSC-Introduction to Data Types, Comment & Conditional Logic.ipynb |

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="vzHDl7E57UgO" colab_type="text"

# This tutorial guides you on how to train a Gradient Boosting model using decision trees with the `tf.estimator` APIs. Boosted trees are the popular ML approach for both regression and classification. It is a kind of ensemble technique to summarize the result from many tree models.

#

# This tutorial guides you further on understanding the Boosted Tree model. You can inspect the model from the `local` and the `global`. **From the local aspect, you are going to understand the model on the individual example level**. To distinguish the importance of features, we introduce the `directional feature contributions (DFCs)` detecting what features most contribute to the prediction. **From the global scope, we introduce `permutation feature importances`.** The permutation feature importance is defined to be the decrease in a model score when a feature value is randomly shuffled. This procedure breaks the relationship between the feature and the target. This procedure causes a drop in the model score so that you can understand how much the model depends on the feature.

#

# Reference:

# * Boosted trees: https://www.tensorflow.org/tutorials/estimator/boosted_trees

# * Boosted trees mooel understanding: https://www.tensorflow.org/tutorials/estimator/boosted_trees_model_understanding

# + id="zozKP1dh3L9O" colab_type="code" colab={}

# !pip install -q tf-nightly

# + id="GpZxn1UY3Z_I" colab_type="code" outputId="f9c489e0-6fff-419c-ac1b-1c8de80f2dee" executionInfo={"status": "ok", "timestamp": 1579071668609, "user_tz": -480, "elapsed": 4689, "user": {"displayName": "\u738bDevOps", "photoUrl": "https://lh3.googleusercontent.com/a-/AAuE7mAzLB0C3whTHAdHpq24UrEWqGtbhJElQxTU5_b_4g=s64", "userId": "04300517850278510646"}} colab={"base_uri": "https://localhost:8080/", "height": 68}

import tensorflow as tf

import pandas as pd

import matplotlib.pyplot as plt

from IPython.display import clear_output

import numpy as np

import seaborn as sns

tf.random.set_seed(123)

sns_colors = sns.color_palette('colorblind')

print("Tensorflow Version: {}".format(tf.__version__))

print("Eager Mode: {}".format(tf.executing_eagerly()))

print("GPU {} available".format("is" if tf.config.experimental.list_physical_devices("GPU") else "not"))

# + [markdown] id="DQaj_faF7Xut" colab_type="text"

# # Data Preprocessing and Exploring

# + id="CiQl0gRx4UUa" colab_type="code" colab={}

dftrain = pd.read_csv("https://storage.googleapis.com/tf-datasets/titanic/train.csv")

dfeval = pd.read_csv("https://storage.googleapis.com/tf-datasets/titanic/eval.csv")

y_train = dftrain.pop("survived")

y_eval = dfeval.pop("survived")

# + id="x7jGaXT9741W" colab_type="code" outputId="83bbdb30-c9a9-421b-e036-140e82b48dfe" executionInfo={"status": "ok", "timestamp": 1579071669016, "user_tz": -480, "elapsed": 5060, "user": {"displayName": "\u738bDevOps", "photoUrl": "https://lh3.googleusercontent.com/a-/AAuE7mAzLB0C3whTHAdHpq24UrEWqGtbhJElQxTU5_b_4g=s64", "userId": "04300517850278510646"}} colab={"base_uri": "https://localhost:8080/", "height": 204}

dftrain.head(5)

# + id="oFDFd0Ii770l" colab_type="code" outputId="0f47faff-9511-402e-8ed8-4c2a750c37ef" executionInfo={"status": "ok", "timestamp": 1579071669020, "user_tz": -480, "elapsed": 5044, "user": {"displayName": "\u738bDevOps", "photoUrl": "https://lh3.googleusercontent.com/a-/AAuE7mAzLB0C3whTHAdHpq24UrEWqGtbhJElQxTU5_b_4g=s64", "userId": "04300517850278510646"}} colab={"base_uri": "https://localhost:8080/", "height": 297}

dftrain.describe()

# + id="lZDAC1v58EHD" colab_type="code" outputId="97082f7f-304d-4a0e-f2f6-1dcdf9dadb5c" executionInfo={"status": "ok", "timestamp": 1579071669021, "user_tz": -480, "elapsed": 5029, "user": {"displayName": "\u738bDevOps", "photoUrl": "https://lh3.googleusercontent.com/a-/AAuE7mAzLB0C3whTHAdHpq24UrEWqGtbhJElQxTU5_b_4g=s64", "userId": "04300517850278510646"}} colab={"base_uri": "https://localhost:8080/", "height": 34}

dftrain.shape[0], dfeval.shape[0]

# + id="ADOlWgr68J4E" colab_type="code" outputId="d731d1dd-f34d-40cf-c422-0d4eaf6a3170" executionInfo={"status": "ok", "timestamp": 1579071669614, "user_tz": -480, "elapsed": 5604, "user": {"displayName": "\u738bDevOps", "photoUrl": "https://lh3.googleusercontent.com/a-/AAuE7mAzLB0C3whTHAdHpq24UrEWqGtbhJElQxTU5_b_4g=s64", "userId": "04300517850278510646"}} colab={"base_uri": "https://localhost:8080/", "height": 265}

dftrain["age"].hist(bins=20)

plt.show()

# + id="hcSiRvbk8RZj" colab_type="code" outputId="20be08a9-864c-4b71-9a8b-632d2404cf4c" executionInfo={"status": "ok", "timestamp": 1579071669615, "user_tz": -480, "elapsed": 5573, "user": {"displayName": "\u738bDevOps", "photoUrl": "https://lh3.googleusercontent.com/a-/AA<KEY>TU5_b_4g=s64", "userId": "04300517850278510646"}} colab={"base_uri": "https://localhost:8080/", "height": 265}

dftrain["sex"].value_counts().plot(kind="barh")

plt.show()

# + id="86_2jtUv9roQ" colab_type="code" outputId="18c0c5a0-4f57-4f6d-f3a6-8a2d307d66a0" executionInfo={"status": "ok", "timestamp": 1579071669616, "user_tz": -480, "elapsed": 5537, "user": {"displayName": "\u738bDevOps", "photoUrl": "https://lh3.googleusercontent.com/a-/AAuE7mAzLB0C3whTHAdHpq24UrEWqGtbhJElQxTU5_b_4g=s64", "userId": "04300517850278510646"}} colab={"base_uri": "https://localhost:8080/", "height": 265}

dftrain["class"].value_counts().plot(kind='barh')

plt.show()

# + id="Js6CuA5J98cA" colab_type="code" outputId="9bcf7514-868f-4230-c051-d7425f7b6b01" executionInfo={"status": "ok", "timestamp": 1579071669616, "user_tz": -480, "elapsed": 5485, "user": {"displayName": "\u738bDevOps", "photoUrl": "https://lh3.googleusercontent.com/a-/AAuE7mAzLB0C3whTHAdHpq24UrEWqGtbhJElQxTU5_b_4g=s64", "userId": "04300517850278510646"}} colab={"base_uri": "https://localhost:8080/", "height": 265}

dftrain["embark_town"].value_counts().plot(kind='barh')

plt.show()

# + id="mQVbBPLo_feo" colab_type="code" outputId="1af24b94-dd62-4a66-8c87-1286114a7919" executionInfo={"status": "ok", "timestamp": 1579071670013, "user_tz": -480, "elapsed": 5857, "user": {"displayName": "\u738bDevOps", "photoUrl": "https://lh3.googleusercontent.com/a-/AAuE7mAzLB0C3whTHAdHpq24UrEWqGtbhJElQxTU5_b_4g=s64", "userId": "04300517850278510646"}} colab={"base_uri": "https://localhost:8080/", "height": 265}

pd.concat([dftrain, y_train], axis=1).groupby(['sex'])["survived"].mean().plot(kind='barh')

plt.show()

# + [markdown] id="x--T3K-jJFRp" colab_type="text"

# # Create Feature Columns and Input functions

# + id="YTo2dlDIAZ2x" colab_type="code" colab={}

CATEGORICAL_COLUMNS = ['sex', 'n_siblings_spouses', 'parch', 'class', 'deck',

'embark_town', 'alone']

NUMERIC_COLUMNS = ['age', 'fare']

# + id="_YHmDC0BJSbB" colab_type="code" colab={}

feature_columns = []

for feat in CATEGORICAL_COLUMNS:

vocabulary = dftrain[feat].unique()

# estimators require dense features

feature_columns.append(tf.feature_column.indicator_column(

tf.feature_column.categorical_column_with_vocabulary_list(

key=feat, vocabulary_list=vocabulary

)

))

for feat in NUMERIC_COLUMNS:

feature_columns.append(tf.feature_column.numeric_column(key=feat))

# + [markdown] id="RT2_6c79LLfa" colab_type="text"

# Let's inspect the data first.

# + id="uA1Qn8DqJ4RM" colab_type="code" outputId="7d49b74d-8a82-4fd3-b372-4e121820221d" executionInfo={"status": "ok", "timestamp": 1579071670015, "user_tz": -480, "elapsed": 5812, "user": {"displayName": "\u738bDevOps", "photoUrl": "https://lh3.googleusercontent.com/a-/AAuE7mAzLB0C3whTHAdHpq24UrEWqGtbhJElQxTU5_b_4g=s64", "userId": "04300517850278510646"}} colab={"base_uri": "https://localhost:8080/", "height": 359}

example_data = dftrain.iloc[:10,:]

example_data

# + id="_eDVuy7xLUvq" colab_type="code" outputId="2f72b6c9-6058-48f5-d332-c2b973041ce1" executionInfo={"status": "ok", "timestamp": 1579071670408, "user_tz": -480, "elapsed": 6188, "user": {"displayName": "\u738bDevOps", "photoUrl": "https://lh3.googleusercontent.com/a-/AAuE7mAzLB0C3whTHAdHpq24UrEWqGtbhJElQxTU5_b_4g=s64", "userId": "04300517850278510646"}} colab={"base_uri": "https://localhost:8080/", "height": 187}

class_feat = tf.keras.layers.DenseFeatures([feature_columns[3]])(dict(example_data))

class_feat.numpy()

# + [markdown] id="ft8E56EMMXtO" colab_type="text"

# Now you can create an input function that feeds the dataset and the label into the model during training and evaluation.

# + id="8v4NdQN6Loxj" colab_type="code" colab={}

NUM_EXAMPLES = len(dftrain)

def make_input_fn(X, y, n_epochs=1, shuffle=True, batch_size=NUM_EXAMPLES):

def input_fn():

# repeat == None: endless repeat

ds = tf.data.Dataset.from_tensor_slices((dict(X), y)).repeat(n_epochs)

if shuffle:

ds = ds.shuffle(1000)

ds = ds.batch(batch_size) # no need to do shuffle due to in-memory data

return ds

return input_fn

# + id="IwVrAVUOTKP-" colab_type="code" colab={}

def make_in_memory_train_input_fn(X, y):

"""Used when the whole data is saved in memory.

It is no need to batch the dataset.

"""

y = np.expand_dims(y, axis=1)

def input_fn():

return dict(X), y

return input_fn

# + id="kJeKbIx_MmnE" colab_type="code" colab={}

train_input_fn = make_input_fn(dftrain, y_train, n_epochs=None)

eval_input_fn = make_input_fn(dfeval, y_eval, shuffle=1)

# + [markdown] id="P0BDFTHIOLUi" colab_type="text"

# You can also use the input_fn method to get a batch of dataset.

# + id="b3-rsvQxN3H3" colab_type="code" outputId="90c9fd7b-083a-4472-b012-73afbcbe4676" executionInfo={"status": "ok", "timestamp": 1579071670411, "user_tz": -480, "elapsed": 6126, "user": {"displayName": "\u738bDevOps", "photoUrl": "https://lh3.googleusercontent.com/a-/AAuE7mAzLB0C3whTHAdHpq24UrEWqGtbhJElQxTU5_b_4g=s64", "userId": "04300517850278510646"}} colab={"base_uri": "https://localhost:8080/", "height": 54}

_ds = make_input_fn(dfeval, y_eval, shuffle=False, batch_size=3)()

_ds

# + id="WtjKBNJyOlsx" colab_type="code" outputId="67f393ee-c3a0-48da-c121-51561dd5e1e2" executionInfo={"status": "ok", "timestamp": 1579071670412, "user_tz": -480, "elapsed": 6108, "user": {"displayName": "\u738bDevOps", "photoUrl": "https://lh3.googleusercontent.com/a-/AAuE7mAzLB0C3whTHAdHpq24UrEWqGtbhJElQxTU5_b_4g=s64", "userId": "04300517850278510646"}} colab={"base_uri": "https://localhost:8080/", "height": 68}

for _data, _label in _ds.take(1):

print(_data.keys())

print(_data['sex'].numpy())

print(_label)

# + [markdown] id="GxZKPrhUPD0Z" colab_type="text"

# # Training, Evaluating and Predicting

# + [markdown] id="w-BnhiLxQIBB" colab_type="text"

# The following is a linear model as the baseline.

# + id="ck8hFeqVOuEX" colab_type="code" outputId="dd7c4e91-2d10-4644-f6e5-f0243fd781d8" executionInfo={"status": "ok", "timestamp": 1579071676004, "user_tz": -480, "elapsed": 9, "user": {"displayName": "\u738bDevOps", "photoUrl": "https://lh3.googleusercontent.com/a-/AA<KEY>TU5_b_4g=s64", "userId": "04300517850278510646"}} colab={"base_uri": "https://localhost:8080/", "height": 221}

linear_est = tf.estimator.LinearClassifier(feature_columns)

# train the model

linear_est.train(input_fn=train_input_fn, max_steps=100)

# evaluate the model

result = linear_est.evaluate(input_fn=eval_input_fn)

clear_output()

print(pd.Series(result))

# + [markdown] id="BH-lAYLgQRI_" colab_type="text"

# Next you are going to build a Boosted Trees model. Both regression and classification are supported via the `BoostedTreesClassifier` and the `BoostedTreesClassifier` API respectively.

# + id="l0Jd3jtePjG5" colab_type="code" outputId="2b7e2ff9-0a6a-42fb-cb98-9ea8ac129e9a" executionInfo={"status": "ok", "timestamp": 1579071679909, "user_tz": -480, "elapsed": 19, "user": {"displayName": "\u738bDevOps", "photoUrl": "https://lh3.googleusercontent.com/a-/AAuE7mAzLB0C3whTHAdHpq24UrEWqGtbhJElQxTU5_b_4g=s64", "userId": "04300517850278510646"}} colab={"base_uri": "https://localhost:8080/", "height": 221}

params = {

'n_trees': 50,

'max_depth': 3,

'n_batches_per_layer': 1,

# You have to set the center_bias to True to get DFCs.

'center_bias': True

}

# Because all dataset is loaded into the memory, use entire dataset per layer.

n_batches = 1

est = tf.estimator.BoostedTreesClassifier(feature_columns=feature_columns,

**params)

# train the model

est.train(train_input_fn, max_steps=100)

# evaluate the model

result = est.evaluate(eval_input_fn)

clear_output()

print(pd.Series(result))

# + [markdown] id="HkBUtT43RgQj" colab_type="text"

# Make the prediction.