code | repo_path |

|---|---|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Lab 1: Exploring languages through word frequencies

# ## Learning Objectives:

# In this lab you will learn the following linguistic concepts and programming skills:

# * Basic text processing and regular expressions.

# * What do word frequencies tell us about a language?

# * How do different languages compare?

# * How to manipulate corpora and plot insightful graphs?

# ## About this assignment

#

# - This is a Jupyter notebook. You can execute cell blocks by pressing control-enter.

# - You will be submitting the lab1.py file on Gradescope.

# - We have provided local access to the Gradescope autograder test cases. To run the test cases locally, simply run <code>python run_tests.py</code> or <code>python run_tests.py -j</code> (this command reports the performance on each test case as a readable JSON object).

# ## Pre-requisites:

# For this lab, you need to make sure you have the following installed:

# * python3.6 (python2.7 should also work)

# * nltk (python package)

# * matplotlib

#

# To make sure your installation is successful, execute the block below.

import nltk

import matplotlib

import importlib

import lab1

# As a packaged solution, I would recommend installing [conda](https://docs.conda.io/en/latest/miniconda.html), and creating a conda [environment](https://docs.conda.io/projects/conda/en/latest/user-guide/tasks/manage-environments.html) 'ece365_nlp' to use for the rest of this module.

# ## Exercise 1: Working With Text

# Before getting started, let's work with some simple text processing!

# +

text1 = "Ethics are built right into the ideals and objectives of the United Nations "

len(text1) # The length of text1 (String Length)

# -

text2 = text1.split(' ') # Return a list of the words in text1, separating by ' '.

len(text2) # Number of words

text2

# <br>

# List comprehension allows us to find specific words:

[w for w in text2 if len(w) > 3] # Words that are greater than 3 letters long in text2

[w for w in text2 if w.istitle()] # Capitalized words in text2

[w for w in text2 if w.endswith('s')] # Words in text2 that end in 's'

# <br>

# We can find unique words using 'set()'.

# +

text3 = 'To be or not to be'

text4 = text3.split(' ')

len(text4)

# -

len(set(text4))

set(text4)

len(set([w.lower() for w in text4])) # .lower() converts the string to lowercase.

set([w.lower() for w in text4])

# ### Processing free-text

# +

text5 = '"Ethics are built right into the ideals and objectives of the United Nations" \

#UNSG @ NY Society for Ethical Culture bit.ly/2guVelr'

text6 = text5.split(' ')

text6

# -

# <br>

# Finding hashtags:

[w for w in text6 if w.startswith('#')]

# <br>

# Finding callouts:

[w for w in text6 if w.startswith('@')]

text7 = '@UN @UN_Women "Ethics are built right into the ideals and objectives of the United Nations" \

#UNSG @ NY Society for Ethical Culture bit.ly/2guVelr'

text8 = text7.split(' ')

[w for w in text8 if w.startswith('@')]

# ### Regular Expressions

# We can use regular expressions to help us with more complex parsing. A regular expression is a special sequence of characters that helps you match or find other strings or sets of strings, using a specialized syntax held in a pattern. Regular expressions are widely used in the UNIX world.

#

# For example `'@[A-Za-z0-9_]+'` will return all words that:

# * start with `'@'` and are followed by at least one:

# * capital letter (`'A-Z'`)

# * lowercase letter (`'a-z'`)

# * number (`'0-9'`)

# * or underscore (`'_'`)

# +

import re # import re - a module that provides support for regular expressions

[w for w in text8 if re.search('@[A-Za-z0-9_]+', w)]

# -
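# The list comprehension above keeps whole words that contain a match. As a minimal sketch (the variable names `tweet`, `mentions` and `hashtags` are illustrative, not part of the lab), `re.findall` can instead scan the full string and return only the matched substrings, so the lone `@` is skipped because the pattern requires at least one character after it:

```python
import re

# Same tweet text as text7 above, repeated so the block is self-contained.
tweet = ('@UN @UN_Women "Ethics are built right into the ideals and '
         'objectives of the United Nations" '
         '#UNSG @ NY Society for Ethical Culture bit.ly/2guVelr')

# findall scans the whole string, so no split(' ') is needed.
mentions = re.findall(r'@[A-Za-z0-9_]+', tweet)
hashtags = re.findall(r'#[A-Za-z0-9_]+', tweet)

print(mentions)  # ['@UN', '@UN_Women']
print(hashtags)  # ['#UNSG']
```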

# Let's get more familiar with regular expressions using pandas (pandas is a powerful open-source Python data analysis tool)!

# +

import pandas as pd

time_sentences = ["Monday: The doctor's appointment is at 2:45pm.",

"Tuesday: The dentist's appointment is at 11:30 am.",

"Wednesday: At 7:00pm, there is a basketball game!",

"Thursday: Be back home by 11:15 pm at the latest.",

"Friday: Take the train at 08:10 am, arrive at 09:00am."]

df = pd.DataFrame(time_sentences, columns=['text'])

df

# -

# find the number of characters for each string in df['text']

df['text'].str.len()

# find the number of tokens for each string in df['text']

df['text'].str.split().str.len()

# find which entries contain the word 'appointment'

df['text'].str.contains('appointment')

# find how many times a digit occurs in each string

df['text'].str.count(r'\d')

# find all occurrences of the digits

df['text'].str.findall(r'\d')

# group and find the hours and minutes

df['text'].str.findall(r'(\d?\d):(\d\d)')

# replace weekdays with '???'

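# regex=True is needed in pandas >= 2.0, where str.replace treats the pattern as a literal string by default
df['text'].str.replace(r'\w+day\b', '???', regex=True)

# replace weekdays with 3 letter abbreviations

df['text'].str.replace(r'(\w+day\b)', lambda m: m.group(1)[:3], regex=True)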

# create new columns from first match of extracted groups

df['text'].str.extract(r'(\d?\d):(\d\d)')

# extract the entire time, the hours, the minutes, and the period

df['text'].str.extractall(r'((\d?\d):(\d\d) ?([ap]m))')

# extract the entire time, the hours, the minutes, and the period with group names

df['text'].str.extractall(r'(?P<time>(?P<hour>\d?\d):(?P<minute>\d\d) ?(?P<period>[ap]m))')
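# The named groups used above are a feature of Python's regular expression syntax, not of pandas. As a small sketch with the standard `re` module (the variable names here are illustrative), the same pattern applied to the first sentence gives a dictionary keyed by group name:

```python
import re

pattern = r'(?P<time>(?P<hour>\d?\d):(?P<minute>\d\d) ?(?P<period>[ap]m))'
sentence_monday = "Monday: The doctor's appointment is at 2:45pm."

# search returns the first match; groupdict maps each group name to its text.
match = re.search(pattern, sentence_monday)
fields = match.groupdict()

print(fields)  # {'time': '2:45pm', 'hour': '2', 'minute': '45', 'period': 'pm'}
```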

# You may have noticed that there is nothing for you to answer here. This section is ungraded, but you are strongly encouraged to read and understand it before moving on.

# ## Exercise 2: Word Frequencies

# We live in a multi-lingual world. The languages we use are like English in some ways and distinct from English in many ways. In this exercise, we will explore some aspects of languages that make them different from English by the use of quantitative indices.

#

# Before we begin comparing languages, let us begin with English. How many words are there in English? Well, that depends on who we ask. The Second Edition of the 20-volume Oxford English Dictionary contains full entries for 171,476 words in current use (and 47,156 obsolete words). Looking elsewhere, Webster's Third New International Dictionary, Unabridged, together with its 1993 Addenda Section, includes some 470,000 entries. But the number of words in the Oxford and Webster dictionaries is not the same as the number of words in English. Why is that? First, it takes a while for dictionary publishers like Oxford University Press and Merriam-Webster to include new words in their dictionaries. While it may seem surprising that new words are being coined at a rapid rate, a recent article in The Guardian reports that English speakers are adding new words at the rate of around 1,000 a year. Recent dictionary debutants include blog, grok, crowdfunding, hackathon, airball, e-marketing, sudoku, twerk and Brexit, many of which are words we find in use in our everyday lives. Slang and jargon could also be considered in this list. You have probably observed how some of these terms depend on where you live (e.g., 'prepone' in India means the opposite of postpone), whereas others are common in many places (e.g., the portmanteau brunch = breakfast + lunch).

#

# A natural question that arises in this setting is: are all words equally likely, or do they occur with different frequencies? As you can expect, words occur with different frequencies, but what you might not have expected is how skewed the word frequencies can be. That is what you will see in your first exercise.

#

# First, you will count the frequency of words from a word list derived from a large collection of words -- a 'corpus' (meaning 'a body of text'). For this part of the exercise, you will use the corpus of Reuters from which you will count the number of times each word occurs.

# For this, you will need to do some tokenization. Towards that, you will lowercase all words, remove the punctuation marks and numbers. Then you will use NLTK to get the frequency distribution of the tokenized text.

#

# Based on the frequency distribution of words that you will collect, you will answer the following questions.

#

# * What are the 10 most frequent words?

# * What are the 10 least frequent words?

# * What proportion of words have a frequency of 1? These singleton words are termed 'hapax legomena' (a sophisticated Greek name), and the number of singletons in a corpus is a measure of the richness of the vocabulary of that collection, giving you the rate at which new words appear in that text. If you take a very large text in a language and call it representative of that language, then the rate of singletons is a measure of its richness.

# * What are the answers to the above questions, if we consider stemming or lemmatization?

#

# **Total points: 50 points**

# ### Coding Questions

#

# a. In the lab1.py file, complete the function "get_freqs" that takes as an input the "Reuters" corpus (type str) from nltk and returns as an output a dictionary with the key being a word, and the value being the frequency of the word in the corpus.

# Make sure to lowercase all words in the corpus and to replace all punctuation marks and digits with a space character. This will take care of tokenization for you. To avoid confusion, the list of punctuation marks is given to you. (15 points)
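# The tokenization steps described above can be sketched on a toy string. This is only an illustration under stated assumptions, not the reference solution: `toy_freqs` is a hypothetical helper, and `collections.Counter` stands in for the NLTK frequency distribution mentioned earlier.

```python
from collections import Counter

def toy_freqs(text, puncts):
    """Hypothetical helper: lowercase, blank out punctuation and digits, count."""
    text = text.lower()
    for p in puncts:
        text = text.replace(p, ' ')
    # Replace every digit with a space as well.
    text = ''.join(' ' if ch.isdigit() else ch for ch in text)
    # Splitting on whitespace now yields the tokens.
    return dict(Counter(text.split()))

toy_puncts = ['.', '!', '?', ',', ';', ':', '[', ']', '{', '}', '(', ')', '\'', '\"']
toy = toy_freqs("The cat sat. The cat ran 2 miles!", toy_puncts)
print(toy)  # {'the': 2, 'cat': 2, 'sat': 1, 'ran': 1, 'miles': 1}
```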

# + nbgrader={"grade": false, "grade_id": "cell-053fc5a44cfd341b", "locked": false, "schema_version": 3, "solution": true, "task": false}

puncts = ['.','!','?',',',';',':','[', ']', '{', '}', '(', ')', '\'', '\"']

# +

importlib.reload(lab1)

raw_corpus = nltk.corpus.reuters.raw()

freqs = lab1.get_freqs(raw_corpus, puncts)

# -

freqs

# b. Next, complete the function called "get_top_10" that takes in the "freqs" dictionary, and returns the top 10 most frequent words as a list. (5 points)

# + nbgrader={"grade": false, "grade_id": "cell-4aec5a37bbd4cef5", "locked": false, "schema_version": 3, "solution": true, "task": false}

importlib.reload(lab1)

print(lab1.get_top_10(freqs))

# + nbgrader={"grade": true, "grade_id": "cell-76c42b84f8038afc", "locked": true, "points": 20, "schema_version": 3, "solution": false, "task": false}

### BEGIN HIDDEN TESTS

assert lab1.get_top_10(freqs) == ['the', 'of', 'to', 'in', 'and', 'said', 'a', 'mln', 's', 'vs']

### END HIDDEN TESTS

# -

# c. Next, complete the function called "get_bottom_10" that takes in the "freqs" dictionary, and returns the 10 least frequent words as a list. (5 points)

# + nbgrader={"grade": false, "grade_id": "cell-f7d5084d8b86ea60", "locked": false, "schema_version": 3, "solution": true, "task": false}

importlib.reload(lab1)

print(lab1.get_bottom_10(freqs))

# + nbgrader={"grade": true, "grade_id": "cell-eedc6f45889eadd8", "locked": true, "points": 5, "schema_version": 3, "solution": false, "task": false}

### BEGIN HIDDEN TESTS

assert lab1.get_bottom_10(freqs) == ['inflict', 'sheen', 'stand-off', 'avowed', 'kilolitres', 'kilowatt/hour', 'janunary/march', 'pineapples', 'hasrul', 'paian']

### END HIDDEN TESTS

# -

# d. Complete the function called "get_percentage_singletons" which takes in the "freqs" dictionary and returns a float value of the percentage of words that appear once in the corpus. (5 points)
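# As a rough illustration on toy counts (the helper name `toy_percentage_singletons` is hypothetical, and the 0-100 scale is an assumption based on the word "percentage"), the share of singleton words can be computed as:

```python
def toy_percentage_singletons(freqs):
    """Hypothetical helper: percentage of distinct words that occur exactly once."""
    singletons = sum(1 for count in freqs.values() if count == 1)
    return 100.0 * singletons / len(freqs)

toy_counts = {'the': 2, 'cat': 2, 'sat': 1, 'ran': 1, 'miles': 1}
toy_singletons = toy_percentage_singletons(toy_counts)
print(toy_singletons)  # 60.0 (3 of the 5 distinct words occur once)
```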

# + tags=["outputPrepend"]

print(freqs)

# + nbgrader={"grade": false, "grade_id": "cell-a165768ae088ea2c", "locked": false, "schema_version": 3, "solution": true, "task": false}

importlib.reload(lab1)

print(lab1.get_percentage_singletons(freqs))

# + nbgrader={"grade": true, "grade_id": "cell-9e57695b3b6a675d", "locked": true, "points": 5, "schema_version": 3, "solution": false, "task": false}

### BEGIN HIDDEN TESTS

assert (lab1.get_percentage_singletons(freqs)>40.4)

assert (lab1.get_percentage_singletons(freqs)<40.6)

### END HIDDEN TESTS

# -

# e. The next two blocks show examples of how stemming and lemmatization are done.

from nltk.stem import PorterStemmer

porter = PorterStemmer()

print(porter.stem("cats"))

print(porter.stem("trouble"))

print(porter.stem("troubling"))

print(porter.stem("troubled"))

from nltk.stem import WordNetLemmatizer

wordnet_lemmatizer = WordNetLemmatizer()

sentence = "He was running and eating at same time. He has bad habit of swimming after playing long hours in the Sun."

for word in sentence.split():
    print("{0:20}{1:20}".format(word, wordnet_lemmatizer.lemmatize(word, pos="v")))

# f. Repeat steps b,c,d by doing stemming. You should modify the get_freqs_stemming function. (5 points)

# + nbgrader={"grade": false, "grade_id": "cell-9e9343b8ca4c2880", "locked": false, "schema_version": 3, "solution": true, "task": false}

importlib.reload(lab1)

freqs_stemming = lab1.get_freqs_stemming(raw_corpus, puncts)

print(lab1.get_top_10(freqs_stemming))

print(lab1.get_bottom_10(freqs_stemming))

print(lab1.get_percentage_singletons(freqs_stemming))

# + nbgrader={"grade": true, "grade_id": "cell-d58f04c1eef6cf43", "locked": true, "points": 5, "schema_version": 3, "solution": false, "task": false}

### BEGIN HIDDEN TESTS

assert lab1.get_top_10(freqs_stemming) == ['the', 'of', 'to', 'in', 'and', 'said', 'a', 'mln', 'it', 's']

assert lab1.get_bottom_10(freqs_stemming) == ['inflict', 'sheen', 'stand-off', 'avow', 'kilolitr', 'kilowatt/hour', 'janunary/march', 'hasrul', 'paian', 'sawn']

assert (lab1.get_percentage_singletons(freqs_stemming)>41.9)

assert (lab1.get_percentage_singletons(freqs_stemming)<42.2)

### END HIDDEN TESTS

# -

# g. Repeat steps b,c,d by doing lemmatization. You should modify the get_freqs_lemmatized function. (5 points)

# + nbgrader={"grade": false, "grade_id": "cell-f364b1fc291d7e74", "locked": false, "schema_version": 3, "solution": true, "task": false}

importlib.reload(lab1)

freqs_lemmatized = lab1.get_freqs_lemmatized(raw_corpus, puncts)

print(lab1.get_top_10(freqs_lemmatized))

print(lab1.get_bottom_10(freqs_lemmatized))

print(lab1.get_percentage_singletons(freqs_lemmatized))

# + nbgrader={"grade": true, "grade_id": "cell-cad80b5f0643a89f", "locked": true, "points": 5, "schema_version": 3, "solution": false, "task": false}

### BEGIN HIDDEN TESTS

assert lab1.get_top_10(freqs_lemmatized) == ['the', 'of', 'to', 'in', 'be', 'say', 'and', 'a', 'mln', 's']

assert lab1.get_bottom_10(freqs_lemmatized) == ['inflict', 'sheen', 'stand-off', 'avow', 'kilolitres', 'kilowatt/hour', 'janunary/march', 'pineapples', 'hasrul', 'paian']

assert (lab1.get_percentage_singletons(freqs_lemmatized)>41.9)

assert (lab1.get_percentage_singletons(freqs_lemmatized)<42.2)

### END HIDDEN TESTS

# -

# h. What is the vocabulary size of this corpus (i.e., raw_corpus)? What is the vocabulary size after stemming and after lemmatization, respectively? Note that we lowercase all words in the corpus and replace all punctuation marks and digits with spaces. Add your code to the size_of_raw_corpus, size_of_stemmed_raw_corpus and size_of_lemmatized_raw_corpus functions. (5 points)

# + nbgrader={"grade": false, "grade_id": "cell-60cc741a45f09b60", "locked": false, "schema_version": 3, "solution": true, "task": false}

importlib.reload(lab1)

print(lab1.size_of_raw_corpus(freqs)) # Vocabulary size of raw_corpus.

print(lab1.size_of_stemmed_raw_corpus(freqs_stemming)) # Vocabulary size of raw_corpus after stemming.

print(lab1.size_of_lemmatized_raw_corpus(freqs_lemmatized)) # Vocabulary size of raw_corpus after lemmatization.

# + nbgrader={"grade": true, "grade_id": "cell-c3277367acf9daa0", "locked": true, "points": 5, "schema_version": 3, "solution": false, "task": false}

### BEGIN HIDDEN TESTS

assert lab1.size_of_raw_corpus(freqs) == 33206

assert lab1.size_of_stemmed_raw_corpus(freqs_stemming) == 25778

assert lab1.size_of_lemmatized_raw_corpus(freqs_lemmatized) == 29032

### END HIDDEN TESTS

# -

# i. Different documents, even of equal length, are usually composed of different vocabularies. We will compare two documents of equal length and measure the percentage of unseen vocabulary between them.

#

# More specifically, let document "a" be the first 100 words of raw_corpus and document "b" be the last 100 words of raw_corpus. What percentage of the words in document "a" do NOT appear in document "b"? What if we change the document size to 1000 (first 1000 words of raw_corpus vs. last 1000 words of raw_corpus), 10000, 100000, or 500000?

# What do you observe as the document size increases? You may find the set difference set(a)-set(b) useful. Modify the percentage_of_unseen_vocab function. (5 points)
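# A toy sketch of the set-difference idea follows. The helper name `toy_unseen_fraction` is hypothetical, and dividing by the document length is an assumption about how the fraction is normalized, so treat this as one possible reading rather than the required implementation.

```python
def toy_unseen_fraction(a, b, length):
    """Hypothetical helper: distinct words of a missing from b, divided by length."""
    return len(set(a) - set(b)) / length

doc_a = "the cat sat on the mat".split()
doc_b = "the dog sat on the log".split()

# 'cat' and 'mat' never appear in doc_b, so 2 unseen words out of 6 tokens.
unseen = toy_unseen_fraction(doc_a, doc_b, len(doc_a))
print(unseen)
```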

# + nbgrader={"grade": true, "grade_id": "cell-3e819a96835cdc2b", "locked": false, "points": 5, "schema_version": 3, "solution": true, "task": false}

importlib.reload(lab1)

length = [100,1000,10000,100000,500000]

for length_i in length:
    a = raw_corpus.split()[:length_i]
    b = raw_corpus.split()[-length_i:]
    print(lab1.percentage_of_unseen_vocab(a, b, length_i))

### Write down your observation here: (Ungraded)

# + nbgrader={"grade": true, "grade_id": "cell-3e391821c0e4a4d1", "locked": true, "points": 5, "schema_version": 3, "solution": false, "task": false}

### BEGIN HIDDEN TESTS

assert lab1.percentage_of_unseen_vocab(raw_corpus.split()[:100], raw_corpus.split()[-100:], 100) == 0.79

assert lab1.percentage_of_unseen_vocab(raw_corpus.split()[:1000], raw_corpus.split()[-1000:], 1000) == 0.464

assert lab1.percentage_of_unseen_vocab(raw_corpus.split()[:10000], raw_corpus.split()[-10000:], 10000) == 0.2182

assert lab1.percentage_of_unseen_vocab(raw_corpus.split()[:100000], raw_corpus.split()[-100000:], 100000) == 0.10077

assert lab1.percentage_of_unseen_vocab(raw_corpus.split()[:500000], raw_corpus.split()[-500000:], 500000) == 0.052344

### END HIDDEN TESTS

# -

# ## Exercise 3: Pareto principle

# The popular Pareto principle (also known as the 80/20 rule) states that for many events, roughly 80% of the effects come from 20% of the causes. For example, the distribution of global income is very uneven, with the richest 20% of the world's population controlling 82.7% of the world's income. This seems to be the case with words as well.

#

# In this exercise, we observe something similar to the Pareto principle in words. By calculating what fraction of the most frequent words accounts for 80% of the total words in the corpus, you will see that a very small number of frequent words account for a large number of words.

#

# **Total points: 15 points**

#

# a. Complete the function called "frac_80_perc" which takes in "freqs" as an input, and returns a float representing the fraction of words that account for 80% of the tokens in the corpus (the expected answer is around 3% for Reuters corpus -- a News corpus). Note: you should be considering the words in decreasing order of frequency until reaching 80% of word (frequency) count. (15 points)
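# The "decreasing order of frequency until reaching 80%" procedure can be sketched on toy counts. The helper name `toy_frac_80_perc` and the choice to stop as soon as coverage reaches (rather than exceeds) 80% are assumptions made for illustration.

```python
def toy_frac_80_perc(freqs):
    """Hypothetical helper: share of distinct words needed to cover 80% of tokens."""
    total = sum(freqs.values())
    covered = 0
    # Walk through the counts from most to least frequent.
    for n_words, count in enumerate(sorted(freqs.values(), reverse=True), start=1):
        covered += count
        if covered / total >= 0.8:
            return n_words / len(freqs)
    return 1.0

toy_counts = {'the': 50, 'of': 30, 'cat': 10, 'dog': 5, 'emu': 5}
toy_fraction = toy_frac_80_perc(toy_counts)
print(toy_fraction)  # 0.4: 'the' and 'of' cover 80 of the 100 tokens
```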

# + nbgrader={"grade": false, "grade_id": "cell-7d532348cdd5379a", "locked": false, "schema_version": 3, "solution": true, "task": false}

importlib.reload(lab1)

print(lab1.frac_80_perc(freqs))

# + nbgrader={"grade": true, "grade_id": "cell-d39b1cdba3cd5662", "locked": true, "points": 15, "schema_version": 3, "solution": false, "task": false}

### BEGIN HIDDEN TESTS

assert lab1.frac_80_perc(freqs) > 0.033

assert lab1.frac_80_perc(freqs) < 0.034

### END HIDDEN TESTS

# -

# This relation between the frequency and rank for words is called Zipf's law. It states that given a large sample of words used, the frequency of any word is inversely proportional to its rank in the frequency table. So word number n has a frequency proportional to 1/n. In order to see this, sort the words in a decreasing order of their frequencies and do a rank-frequency plot, with the words (indicated by their ranks) indicated along the x-axis and their frequencies in the y-axis.

#

# b. Accordingly, we will plot the frequency of words when ranked in decreasing order. Complete the function "plot_zipf" that takes in "freqs" as an input, and generates a plot using matplotlib. In this plot, the x-axis represents the rank of words in decreasing order of frequency, and the y-axis represents the frequency of the corresponding word. (Ungraded)
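# To make the 1/n relation concrete, here is a small sketch on made-up counts that follow Zipf's law exactly (the helper `toy_rank_frequency` and the numbers are illustrative only). Under the law, rank times frequency stays constant; the resulting pairs are also what a rank-frequency plot would take as x and y values.

```python
def toy_rank_frequency(freqs):
    """Hypothetical helper: (rank, frequency) pairs, most frequent word first."""
    ordered = sorted(freqs.values(), reverse=True)
    return list(enumerate(ordered, start=1))

# Toy counts chosen so that frequency = 120 / rank.
toy_counts = {'the': 120, 'of': 60, 'to': 40, 'in': 30, 'and': 24}
pairs = toy_rank_frequency(toy_counts)
for rank, freq in pairs:
    print(rank, freq, rank * freq)  # the product is 120 on every row
```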

# + nbgrader={"grade": true, "grade_id": "cell-42ff4546787c495e", "locked": false, "points": 5, "schema_version": 3, "solution": true, "task": false}

import matplotlib.pyplot as plt

# -

importlib.reload(lab1)

lab1.plot_zipf(freqs)

# ## Exercise 4: Type-to-Token Ratio (TTR)

# Another way of measuring the richness of vocabulary is by looking at the type-token distribution of words in a language. Word types are the unique words in a corpus, whereas tokens are the words in a corpus counted with repetition. So a sentence such as "I am taking this class because I love taking on challenges" has 11 tokens but 9 types, since the words "I" and "taking" each appear twice. Accordingly, the Type-to-Token Ratio (TTR) is the ratio of types to tokens: the higher it is, the fewer words are repeated and the richer the language.
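# The counts for the example sentence can be checked directly with a short sketch (variable names are illustrative):

```python
sentence = "I am taking this class because I love taking on challenges"
tokens = sentence.split()   # every word, with repetition
types = set(tokens)         # unique words only

print(len(tokens))               # 11
print(len(types))                # 9
print(len(types) / len(tokens))  # TTR = 9/11
```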

#

# **Total points: 15 points**

#

# a. In this exercise we will be exploring, for every language, the number of "types" discovered as we explore larger portions of the corpus, or tokens. We will be considering the Universal Declaration of Human Rights in 4 languages. In particular, we will be plotting the number of types discovered per language each time we explore 100 more tokens. For this exercise, complete the following function "get_TTRs" which takes in as an input a predefined set of languages, and returns as an output the dictionary TTR, which has a language as the key, and the value as a list showing the count of types as we explore 100 tokens, 200 tokens, 300 tokens, up until 1300 tokens of the respective corpus. Accordingly, each list in the dictionary should be made of 13 data points. Do not forget to lowercase, but you do not need to perform tokenization as the corpora now are actually a list of words instead of one string. (15 points)
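# The growing-prefix counting described above can be sketched on a short word list. The helper `toy_type_counts` and its `step` and `max_tokens` parameters are hypothetical; the lab itself uses steps of 100 up to 1300 on the UDHR corpora.

```python
def toy_type_counts(words, step, max_tokens):
    """Hypothetical helper: number of distinct lowercased words in the first
    step, 2*step, ..., max_tokens tokens."""
    counts = []
    for end in range(step, max_tokens + 1, step):
        counts.append(len({w.lower() for w in words[:end]}))
    return counts

toy_words = "To be or not to be that is the question".split()
toy_counts = toy_type_counts(toy_words, step=2, max_tokens=10)
print(toy_counts)  # [2, 4, 4, 6, 8]
```

# The third value does not grow because tokens 5 and 6 ("to", "be") repeat earlier types once lowercased.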

# + nbgrader={"grade": false, "grade_id": "cell-f89f433ea4675fed", "locked": false, "schema_version": 3, "solution": true, "task": false}

from nltk.corpus import udhr

languages = ['Italian-Latin1', 'English-Latin1', 'German_Deutsch-Latin1', 'Finnish_Suomi-Latin1']

# -

importlib.reload(lab1)

TTRs = lab1.get_TTRs(languages)

print(TTRs)

# + nbgrader={"grade": true, "grade_id": "cell-65fae85d3536e61a", "locked": true, "points": 15, "schema_version": 3, "solution": false, "task": false}

### BEGIN HIDDEN TESTS

assert TTRs['Italian-Latin1'] == [64, 110, 143, 179, 221, 260, 286, 326, 355, 386, 412, 426, 451]

assert TTRs['English-Latin1'] == [57, 99, 133, 167, 207, 231, 262, 292, 318, 339, 358, 381, 403]

assert TTRs['German_Deutsch-Latin1'] == [63, 113, 155, 204, 254, 284, 324, 358, 388, 418, 446, 475, 504]

assert TTRs['Finnish_Suomi-Latin1'] == [74, 137, 192, 252, 303, 356, 406, 459, 491, 537, 586, 631, 675]

### END HIDDEN TESTS

# -

# b. Next, plot a line graph (one line for every language, four lines in total) that shows the count of types discovered on the y-axis and the number of tokens explored on the x-axis, in increments of 100 tokens, up to 1300. (Ungraded)

# + nbgrader={"grade": true, "grade_id": "cell-8260219dae07a1cd", "locked": false, "points": 5, "schema_version": 3, "solution": true, "task": false}

import matplotlib.pyplot as plt

importlib.reload(lab1)

lab1.plot_TTRs(TTRs)

# -

# c. Which language has the highest TTR? What could be driving the TTR? Share your thoughts in the textbox below: (Ungraded)

# + [markdown] nbgrader={"grade": true, "grade_id": "cell-97883af5aa5d83f4", "locked": false, "points": 5, "schema_version": 3, "solution": true, "task": false}

# **Share your thoughts here:**

# -

|

ECE365/nlp/ece365sp21_nlp_lab1dist/Lab1_NB.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# +

# importing modules

import networkx as nx

import matplotlib.pyplot as plt

G = nx.DiGraph()

G.add_edges_from([('A', 'D'), ('B', 'C'), ('B', 'E'), ('C', 'A'),

('D', 'C'), ('E', 'D'), ('E', 'B'), ('E', 'F'),

('E', 'C'), ('F', 'C'), ('F', 'H'), ('G', 'A'),

('G', 'C'), ('H', 'A')])

plt.figure(figsize =(10, 10))

nx.draw_networkx(G, with_labels = True)

hubs, authorities = nx.hits(G, max_iter = 50, normalized = True)

# The built-in hits function returns two dictionaries keyed by nodes

# containing hub scores and authority scores respectively.

print("Hub Scores: ", hubs)

print("Authority Scores: ", authorities)

# -
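# For intuition about what the hub and authority scores mean, here is a minimal pure-Python sketch of the HITS iteration on the same edge list. `toy_hits` is a hypothetical helper written for illustration; it is not how networkx implements `nx.hits`, and its numbers may differ slightly from the library's output.

```python
def toy_hits(edges, n_iter=50):
    """Hypothetical pure-Python HITS: returns (hubs, authorities) dicts."""
    nodes = sorted({n for edge in edges for n in edge})
    hubs = {n: 1.0 for n in nodes}
    auths = {n: 1.0 for n in nodes}
    for _ in range(n_iter):
        # Authority score: sum of the hub scores of nodes pointing to it.
        auths = {n: 0.0 for n in nodes}
        for src, dst in edges:
            auths[dst] += hubs[src]
        # Hub score: sum of the authority scores of nodes it points to.
        new_hubs = {n: 0.0 for n in nodes}
        for src, dst in edges:
            new_hubs[src] += auths[dst]
        # Normalize so that each score vector sums to 1.
        a_sum, h_sum = sum(auths.values()), sum(new_hubs.values())
        auths = {n: v / a_sum for n, v in auths.items()}
        hubs = {n: v / h_sum for n, v in new_hubs.items()}
    return hubs, auths

toy_edges = [('A', 'D'), ('B', 'C'), ('B', 'E'), ('C', 'A'),
             ('D', 'C'), ('E', 'D'), ('E', 'B'), ('E', 'F'),
             ('E', 'C'), ('F', 'C'), ('F', 'H'), ('G', 'A'),
             ('G', 'C'), ('H', 'A')]
toy_hubs, toy_auths = toy_hits(toy_edges)
print(max(toy_auths, key=toy_auths.get))  # 'C', which has the most incoming edges
```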

|

Semester 6/DWM/Untitled.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python (dataSc)

# language: python

# name: datasc

# ---

# + [markdown] toc=true

# <h1>Table of Contents<span class="tocSkip"></span></h1>

# <div class="toc"><ul class="toc-item"><li><span><a href="#Imports" data-toc-modified-id="Imports-1"><span class="toc-item-num">1 </span>Imports</a></span></li><li><span><a href="#Load-data" data-toc-modified-id="Load-data-2"><span class="toc-item-num">2 </span>Load data</a></span></li><li><span><a href="#Dark-custom-mpl-styles" data-toc-modified-id="Dark-custom-mpl-styles-3"><span class="toc-item-num">3 </span>Dark custom mpl styles</a></span></li><li><span><a href="#Light-custom-mpl-styles" data-toc-modified-id="Light-custom-mpl-styles-4"><span class="toc-item-num">4 </span>Light custom mpl styles</a></span></li><li><span><a href="#Default-mpl-styles" data-toc-modified-id="Default-mpl-styles-5"><span class="toc-item-num">5 </span>Default mpl styles</a></span></li></ul></div>

# -

# # Imports

# +

import numpy as np

import pandas as pd

import seaborn as sns

import bhishan

from bhishan import bp

import matplotlib.pyplot as plt

# %load_ext autoreload

# %load_ext watermark

# %autoreload 2

# %watermark -a "<NAME>" -d -v -m

# %watermark -iv

# -

# # Load data

df = sns.load_dataset('titanic')

df.head()

df.plot.scatter(x='age',y='fare')

# # Dark custom mpl styles

plt.style.use(bp.get_mpl_style(-1))

df.plot.scatter(x='age',y='fare')

plt.style.use(bp.get_mpl_style(-2))

df.plot.scatter(x='age',y='fare')

plt.style.use(bp.get_mpl_style(-3))

df.plot.scatter(x='age',y='fare')

# # Light custom mpl styles

plt.style.use(bp.get_mpl_style(-100))

df.plot.scatter(x='age',y='fare')

plt.style.use(bp.get_mpl_style(-200))

df.plot.scatter(x='age',y='fare')

plt.style.use(bp.get_mpl_style(-300))

df.plot.scatter(x='age',y='fare')

# # Default mpl styles

plt.style.use(bp.get_mpl_style(0))

df.plot.scatter(x='age',y='fare')

plt.style.use(bp.get_mpl_style(1))

df.plot.scatter(x='age',y='fare')

plt.style.use(bp.get_mpl_style(2))

df.plot.scatter(x='age',y='fare')

plt.style.use(bp.get_mpl_style(3))

df.plot.scatter(x='age',y='fare')

s = bp.get_mpl_style(4)

plt.style.use(s)

df.plot.scatter(x='age',y='fare')

|

examples/example_mpl_style.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python3 (deeplearning)

# language: python

# name: deeplearning

# ---

# +

from keras import backend as K

n_cores = 10

K.set_session(K.tf.Session(config=K.tf.ConfigProto(

intra_op_parallelism_threads=n_cores,

inter_op_parallelism_threads=n_cores)))

from keras.layers import Input, Dense, Lambda

from keras.callbacks import TensorBoard

from keras.models import Model

from keras import regularizers

from keras.datasets import mnist

from IPython.display import SVG

from keras.utils.vis_utils import plot_model

from keras.losses import binary_crossentropy, kullback_leibler_divergence

import numpy as np

import time

import matplotlib.pyplot as plt

tb_session_name = "SAE"

tb_logs = "/home/nanni/tensorboard_logs"

# -

def get_tensorboard_callback():
    # one log directory per run, named after the session and the current time
    return TensorBoard(log_dir="{}/{}__{}".format(tb_logs, tb_session_name, time.strftime('%Y_%m_%d__%H_%M')))

# ## MNIST

(x_train, y_train), (x_test, y_test) = mnist.load_data()

x_train = x_train.astype('float32') / 255.

x_test = x_test.astype('float32') / 255.

x_train = x_train.reshape((len(x_train), np.prod(x_train.shape[1:])))

x_test = x_test.reshape((len(x_test), np.prod(x_test.shape[1:])))

print(x_train.shape)

print(x_test.shape)

# ## Sparse AutoEncoder

# +

# this is the size of our encoded representations

encoding_dim = 32 # 32 floats -> compression of factor 24.5, assuming the input is 784 floats

# this is our input placeholder

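input_img = Input(shape=(784,))

# "encoded" is the encoded representation of the input, with an activity regularizer
# (the code below applies an L2 penalty; an L1 penalty is the more common choice for encouraging sparsity)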

encoded = Dense(encoding_dim, activation='relu',

activity_regularizer=regularizers.l2(10e-7))(input_img)

# "decoded" is the lossy reconstruction of the input

decoded = Dense(784, activation='sigmoid')(encoded)

# this model maps an input to its reconstruction

autoencoder = Model(input_img, decoded)

# this model maps an input to its encoded representation

encoder = Model(input_img, encoded)

# create a placeholder for an encoded (32-dimensional) input

encoded_input = Input(shape=(encoding_dim,))

# retrieve the last layer of the autoencoder model

decoder_layer = autoencoder.layers[-1]

# create the decoder model

decoder = Model(encoded_input, decoder_layer(encoded_input))

# logging and compilation

autoencoder.compile(optimizer='adadelta', loss='binary_crossentropy')

# -

# ### Fitting

autoencoder.fit(x_train, x_train, verbose=0,

epochs=100,

batch_size=256,

shuffle=True,

validation_data=(x_test, x_test),

callbacks=[get_tensorboard_callback()])

# ### Encoding and Decoding

# encode and decode some digits

# note that we take them from the *test* set

encoded_imgs = encoder.predict(x_test)

decoded_imgs = decoder.predict(encoded_imgs)

n = 10 # how many digits we will display

plt.figure(figsize=(20, 4))

for i in range(n):
    # display original
    ax = plt.subplot(2, n, i + 1)
    plt.imshow(x_test[i].reshape(28, 28))
    plt.gray()
    ax.get_xaxis().set_visible(False)
    ax.get_yaxis().set_visible(False)

    # display reconstruction
    ax = plt.subplot(2, n, i + 1 + n)
    plt.imshow(decoded_imgs[i].reshape(28, 28))
    plt.gray()
    ax.get_xaxis().set_visible(False)
    ax.get_yaxis().set_visible(False)

plt.show()

# ## Variational AutoEncoder

# +

def sampling(args):
    """Reparameterization trick by sampling from an isotropic unit Gaussian.

    # Arguments:
        args (tensor): mean and log of variance of Q(z|X)

    # Returns:
        z (tensor): sampled latent vector
    """
    z_mean, z_log_var = args
    batch = K.shape(z_mean)[0]
    dim = K.int_shape(z_mean)[1]
    # by default, random_normal has mean=0 and std=1.0
    epsilon = K.random_normal(shape=(batch, dim))
    # exp(0.5 * log_var) is the standard deviation
    return z_mean + K.exp(0.5 * z_log_var) * epsilon

image_size = x_train.shape[1]

# network parameters

intermediate_dim = 512

batch_size = 128

latent_dim = 2

epochs = 50

# +

inputs = Input(shape=(image_size, ), name='encoder_input')

x = Dense(intermediate_dim, activation='relu')(inputs)

z_mean = Dense(latent_dim, name='z_mean')(x)

z_log_var = Dense(latent_dim, name='z_log_var')(x)

# use reparameterization trick to push the sampling out as input

# note that "output_shape" isn't necessary with the TensorFlow backend

z = Lambda(sampling, output_shape=(latent_dim,), name='z')([z_mean, z_log_var])

# instantiate encoder model

encoder = Model(inputs, [z_mean, z_log_var, z], name='encoder')

# build decoder model

latent_inputs = Input(shape=(latent_dim,), name='z_sampling')

x = Dense(intermediate_dim, activation='relu')(latent_inputs)

outputs = Dense(image_size, activation='sigmoid')(x)

# instantiate decoder model

decoder = Model(latent_inputs, outputs, name='decoder')

# instantiate VAE model

outputs = decoder(encoder(inputs)[2])

vae = Model(inputs, outputs, name='vae_mlp')

vae.summary()

# -

#

# References:

# - [<NAME>. & <NAME>. Auto-Encoding Variational Bayes](https://arxiv.org/pdf/1312.6114.pdf).

# - [<NAME>. Tutorial on Variational Autoencoders. (2016)](https://arxiv.org/pdf/1606.05908.pdf)

# $$

# D_{KL}[N(\mathbf z; \mu, \sigma^2) || N(0, 1)] = \frac{1}{2} \, \sum_j \left( \sigma^2_j + \mu^2_j - 1 - \log \sigma^2_j \right)

# $$

#

# In practice, however, it is better to model $\Sigma(X)$ through its logarithm: the network outputs the log-variance $\ell_j = \log \sigma^2_j$ (this is `z_log_var` in the code), because taking an exponential is more numerically stable than computing a log. Substituting $\sigma^2_j = \exp(\ell_j)$, our final KL divergence term is:

# $$

# D_{KL}[N(\mathbf z;\mu, \sigma^2) || N(0, 1)] = \frac{1}{2} \, \sum_j \left( \exp(\ell_j) + \mu^2_j - 1 - \ell_j \right)

# $$
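
# As a quick sanity check of the closed form, here is a minimal NumPy sketch that takes the log-variance as input. The function name `kl_to_standard_normal` and the sample values are assumptions for illustration. The divergence should be zero when the distribution already is a unit Gaussian.

```python
import numpy as np

def kl_to_standard_normal(mu, log_var):
    """KL[N(mu, exp(log_var)) || N(0, 1)], summed over the latent dimensions."""
    return 0.5 * np.sum(np.exp(log_var) + mu**2 - 1.0 - log_var, axis=-1)

mu = np.array([[0.0, 0.0],
               [1.0, -2.0]])
log_var = np.array([[0.0, 0.0],
                    [0.0, np.log(4.0)]])

kl = kl_to_standard_normal(mu, log_var)
print(kl)
```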

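def vae_loss(x, x_decoded):
    # Reconstruction term: binary cross-entropy, averaged over the pixels
    xent_loss = binary_crossentropy(x, x_decoded)
    # KL term from the formula above. K.mean averages over the latent
    # dimensions, so this is the sum in the formula divided by latent_dim.
    kl_loss = 0.5 * K.mean(K.exp(z_log_var) + K.square(z_mean) - 1 - z_log_var, axis=-1)
    return xent_loss + kl_loss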

vae.compile(optimizer='rmsprop', loss=vae_loss)

vae.fit(x_train, x_train,

shuffle=True,

epochs=epochs,

batch_size=batch_size,

validation_data=(x_test, x_test),

callbacks=[get_tensorboard_callback()])

z_mean_test_encoded, z_log_var_test_encoded, z_test_encoded = encoder.predict(x_test, batch_size=batch_size)

plt.figure(figsize=(6, 6))

plt.scatter(z_mean_test_encoded[:, 0], z_mean_test_encoded[:, 1], c=y_test)

plt.colorbar()

plt.show()

|

notebooks/staging/AutoEncoders_in_Keras_Tutorial.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + colab_type="code" id="RXZT2UsyIVe_" colab={"base_uri": "https://localhost:8080/", "height": 411} outputId="d8202c79-9856-45b7-f98e-846186d55fd7"

# !wget --no-check-certificate \

# https://storage.googleapis.com/laurencemoroney-blog.appspot.com/horse-or-human.zip \

# -O /tmp/horse-or-human.zip

# !wget --no-check-certificate \

# https://storage.googleapis.com/laurencemoroney-blog.appspot.com/validation-horse-or-human.zip \

# -O /tmp/validation-horse-or-human.zip

import os

import zipfile

local_zip = '/tmp/horse-or-human.zip'

zip_ref = zipfile.ZipFile(local_zip, 'r')

zip_ref.extractall('/tmp/horse-or-human')

local_zip = '/tmp/validation-horse-or-human.zip'

zip_ref = zipfile.ZipFile(local_zip, 'r')

zip_ref.extractall('/tmp/validation-horse-or-human')

zip_ref.close()

# Directory with our training horse pictures

train_horse_dir = os.path.join('/tmp/horse-or-human/horses')

# Directory with our training human pictures

train_human_dir = os.path.join('/tmp/horse-or-human/humans')

# Directory with our validation horse pictures

validation_horse_dir = os.path.join('/tmp/validation-horse-or-human/horses')

# Directory with our validation human pictures

validation_human_dir = os.path.join('/tmp/validation-horse-or-human/humans')

# + [markdown] colab_type="text" id="5oqBkNBJmtUv"

# ## Building a Small Model from Scratch

#

# But before we continue, let's start defining the model:

#

# Step 1 will be to import tensorflow.

# + id="qvfZg3LQbD-5" colab_type="code" colab={}

import tensorflow as tf

# + [markdown] colab_type="text" id="BnhYCP4tdqjC"

# We then add convolutional layers as in the previous example, and flatten the final result to feed into the densely connected layers.

# + [markdown] id="gokG5HKpdtzm" colab_type="text"

# Finally we add the densely connected layers.

#

# Note that because we are facing a two-class classification problem, i.e. a *binary classification problem*, we will end our network with a [*sigmoid* activation](https://wikipedia.org/wiki/Sigmoid_function), so that the output of our network will be a single scalar between 0 and 1, encoding the probability that the current image is class 1 (as opposed to class 0).
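
# To make the sigmoid output concrete, here is a small NumPy sketch, separate from the model itself. The `logits` values are made up, and the 0.5 decision threshold is a common convention rather than something this notebook fixes.

```python
import numpy as np

def sigmoid(x):
    """Squash any real number into the open interval (0, 1)."""
    return 1.0 / (1.0 + np.exp(-x))

logits = np.array([-3.0, 0.0, 2.5])    # hypothetical pre-activation outputs
probs = sigmoid(logits)                # interpreted as P(class 1)
labels = (probs > 0.5).astype(int)     # threshold to get a hard 0/1 label

print(probs, labels)
```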

# + id="PixZ2s5QbYQ3" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 88} outputId="401b7e7e-941c-4d77-f84f-042498f07420"

model = tf.keras.models.Sequential([

# Note the input shape is the desired size of the image: 300x300 with 3 color channels (one byte each)

# This is the first convolution

tf.keras.layers.Conv2D(16, (3,3), activation='relu', input_shape=(300, 300, 3)),

tf.keras.layers.MaxPooling2D(2, 2),

# The second convolution

tf.keras.layers.Conv2D(32, (3,3), activation='relu'),

tf.keras.layers.MaxPooling2D(2,2),

# The third convolution

tf.keras.layers.Conv2D(64, (3,3), activation='relu'),

tf.keras.layers.MaxPooling2D(2,2),

# The fourth convolution

tf.keras.layers.Conv2D(64, (3,3), activation='relu'),

tf.keras.layers.MaxPooling2D(2,2),

# The fifth convolution

tf.keras.layers.Conv2D(64, (3,3), activation='relu'),

tf.keras.layers.MaxPooling2D(2,2),

# Flatten the results to feed into a DNN

tf.keras.layers.Flatten(),

# 512 neuron hidden layer

tf.keras.layers.Dense(512, activation='relu'),

# Only 1 output neuron. It will contain a value from 0 to 1, where 0 stands for one class ('horses') and 1 for the other ('humans')

tf.keras.layers.Dense(1, activation='sigmoid')

])

# + colab_type="code" id="8DHWhFP_uhq3" colab={"base_uri": "https://localhost:8080/", "height": 88} outputId="10e49dad-be2d-42ef-e7ae-e71788396ded"

from tensorflow.keras.optimizers import RMSprop

model.compile(loss='binary_crossentropy',

optimizer=RMSprop(learning_rate=1e-4),

metrics=['acc'])

# + colab_type="code" id="ClebU9NJg99G" colab={"base_uri": "https://localhost:8080/", "height": 51} outputId="8557f72d-0f00-4779-c8b0-4ee4aeadf9e2"

from tensorflow.keras.preprocessing.image import ImageDataGenerator

# All images will be rescaled by 1./255

train_datagen = ImageDataGenerator(

rescale=1./255,

rotation_range=40,

width_shift_range=0.2,

height_shift_range=0.2,

shear_range=0.2,

zoom_range=0.2,

horizontal_flip=True,

fill_mode='nearest')

validation_datagen = ImageDataGenerator(rescale=1/255)

# Flow training images in batches of 128 using train_datagen generator

train_generator = train_datagen.flow_from_directory(

'/tmp/horse-or-human/', # This is the source directory for training images

target_size=(300, 300), # All images will be resized to 300x300

batch_size=128,

# Since we use binary_crossentropy loss, we need binary labels

class_mode='binary')

# Flow validation images in batches of 32 using validation_datagen generator

validation_generator = validation_datagen.flow_from_directory(

'/tmp/validation-horse-or-human/', # This is the source directory for validation images

target_size=(300, 300), # All images will be resized to 300x300

batch_size=32,

# Since we use binary_crossentropy loss, we need binary labels

class_mode='binary')

# + colab_type="code" id="Fb1_lgobv81m" colab={"base_uri": "https://localhost:8080/", "height": 1000} outputId="552ec10c-79ea-43c4-feea-251ea5136b40"

history = model.fit_generator(

train_generator,

steps_per_epoch=8,

epochs=100,

verbose=1,

validation_data = validation_generator,

validation_steps=8)

# + id="7zNPRWOVJdOH" colab_type="code" colab={"base_uri": "https://localhost:8080/", "height": 545} outputId="5045526c-fe9f-4732-9609-a90e8d8be619"

import matplotlib.pyplot as plt

acc = history.history['acc']

val_acc = history.history['val_acc']

loss = history.history['loss']

val_loss = history.history['val_loss']

epochs = range(len(acc))

plt.plot(epochs, acc, 'r', label='Training accuracy')

plt.plot(epochs, val_acc, 'b', label='Validation accuracy')

plt.title('Training and validation accuracy')

plt.legend()

plt.figure()

plt.plot(epochs, loss, 'r', label='Training Loss')

plt.plot(epochs, val_loss, 'b', label='Validation Loss')

plt.title('Training and validation loss')

plt.legend()

plt.show()

# + [markdown] id="MjOwWyejCdKe" colab_type="text"

# The problem is that data augmentation introduces a random element to the training images only. It does not help if the **validation set doesn't have the same randomness** and **its images are too close in type to the images in the training set**.

|

Convolutional Neural Networks in TensorFlow/week2 Augmentation to Avoid Overfitting/Horse_or_Human_WithAugmentation.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 2

# language: python

# name: python2

# ---

skuinfo=[]

skuinfo.append('%s' % '\t'.join(["cid3","cid3n","sku_id","sku_name", "word22", "aaa"]))

print(str(skuinfo)[1:-1].split("\\t")[4:-1])

arrstr = str(skuinfo)[1:-1].split("\\t")

# A list slice has no .split(); split each string in the slice instead
[s.split(" ") for s in arrstr[4:-1]]

|

other/Untitled12.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

import numpy as np

import pandas as pd

# # Arrays

ar = np.array([1, 2])

ar

ar2 = np.array([[1,2,4],[1,4,5]])

ar2

ar3 = np.array([[[1,2,3],[3,4,5],[5,6,7]],

[[1,2,3],[3,4,5],[5,6,7]],

[[1,2,3],[3,4,5],[5,6,7]]])

ar3

ar.dtype

type(ar), type(ar2), type(ar3)

ar4 = np.array([[[[ar3, ar3, ar3, ar3], [ar3, ar3,ar3, ar3]], [[ar3, ar3,ar3, ar3], [ar3, ar3,ar3, ar3]]], [[[ar3, ar3,ar3, ar3], [ar3, ar3,ar3, ar3]], [[ar3, ar3,ar3, ar3], [ar3, ar3,ar3, ar3]]]])

ar4

ar.shape, ar2.shape, ar3.shape, ar4.shape

ar.ndim, ar2.ndim, ar3.ndim, ar4.ndim

ar.size, ar2.size, ar3.size, ar4.size

ar5 = np.array([[ar4], [ar4]])

pd.DataFrame(ar2)

# # Creating Arrays

# ### Filling with ones:

um = np.ones((1, 2, 2)) # The same can be done with zeros.

um

um.size, um.ndim

# ### Populating with random numbers:

np.arange(1, 20, 2)

np.random.randint(0, 50, size = (2, 2, 3))

matriz = np.random.random((3,4))

matriz

rand = np.random.seed(seed = 10)

a, b = np.random.randint(100), np.random.randint(100)

a, b

# # Viewing Arrays and Matrices

a = np.random.randint(10, size = 10)

a

np.unique(a)

a.min()

matriz.min(), matriz[1].min()

matriz, matriz[1:3, 1:3],

#

# $x=\frac{10}{2}$

# I don't know where the line "Type Markdown and LaTeX: 𝛼2" came from, but it made me realize that I can use some LaTeX commands in here, which is awesome.

ar3

ar3[1][0][1:3]

cu = pd.DataFrame({'A': range(10), 'B': range(20,30)})

cu

np.array(cu), np.array(cu)[1]

# # Manipulating Arrays

a = np.array([1, 3, 4])

a

b = np.ones(3)*2

b

a-b, abs(a-b)

a*ar3

matriz1 = np.array([[5, 6, 7], [7, 8, 9], [10, 11, 12]])

matriz1

matriz2 = np.array([[1, 2, 3], [3, 4, 5], [6, 7, 8]

])

matriz2

matriz1.shape, matriz2.shape

# #### Multiplying with * is not the same as matrix multiplication!

matriz1*matriz2

# #### This kind of (element-wise) multiplication is commutative.

#

matriz2*matriz1

# ### Matrix multiplication is done with dot:

np.dot(matriz1, matriz2)

np.dot(matriz2, matriz1)
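
# The difference between the two products can be checked directly. This is a small self-contained sketch that reuses the values of `matriz1` and `matriz2` under the hypothetical names `A` and `B`, and uses the `@` operator, which for 2-D arrays gives the same result as `np.dot`.

```python
import numpy as np

A = np.array([[5, 6, 7], [7, 8, 9], [10, 11, 12]])
B = np.array([[1, 2, 3], [3, 4, 5], [6, 7, 8]])

# Element-wise product: order does not matter
elementwise_commutes = np.array_equal(A * B, B * A)

# Matrix product: order matters in general
matmul_commutes = np.array_equal(A @ B, B @ A)

# For 2-D arrays, @ and np.dot agree
same_as_dot = np.array_equal(A @ B, np.dot(A, B))

print(elementwise_commutes, matmul_commutes, same_as_dot)
```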

ar = np.array([[1], [2], [3]])

ar1 = np.array([1, 2, 3])

ar, ar1

ar.shape, ar1.shape

np.dot(ar1, matriz1)

np.dot(matriz1, ar)

# # Reshape and Transpose

a.shape

a

# ### Changing the shape of the array:

# #### While keeping the same information!

a.reshape(3,1)

a.reshape(3,1,1)

a.reshape(3,2) # Fails: the 3 elements of `a` are not enough to fill a 3x2 array

a.reshape(1,3)
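
# A reshape only succeeds when the new shape holds exactly as many elements as the original. The sketch below illustrates this on a copy of `a`; the `try`/`except` wrapper and the use of `-1` (which asks NumPy to infer that dimension) are additions for illustration.

```python
import numpy as np

a = np.array([1, 3, 4])

column = a.reshape(-1, 1)   # -1 lets NumPy infer the length: shape (3, 1)

try:
    a.reshape(3, 2)         # would need 6 elements, but `a` has only 3
    reshape_failed = False
except ValueError:
    reshape_failed = True

print(column.shape, reshape_failed)
```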

# ### Transpose

ar3, ar3.shape

ar3.T, ar3.T.shape

ar2, ar2.shape

ar2.T, ar2.T.shape

a

ar*ar3

ar3

ar

# ### A real-life example of matrix multiplication

vendas = pd.DataFrame({'Almond butter': [2, 9, 11, 13, 15], 'Peanut butter': [7, 4, 14, 13, 18], 'Cashew butter': [1, 16, 18, 16, 9]}, index = ['Mon', 'Tues', 'Wed', 'Thurs', 'Fri'])

vendas

precos = pd.DataFrame({'Almond butter': [10], 'Peanut butter': [8], 'Cashew butter': [12]}, index = ['Preço'])

precos

matriz_vendas = np.array(vendas)

matriz_vendas

vetor_precos = np.array(precos)

vetor_precos

vetor_precos.shape

matriz_vendas.shape

vetor_total = np.dot( matriz_vendas, vetor_precos.T)

vetor_total

vendas['Total'] = vetor_total

vendas
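
# The shapes are what make this work: a (5, 3) sales matrix times a (3, 1) price column gives a (5, 1) column of daily totals. Here is the same computation as a standalone sketch with plain arrays; the names `sales`, `prices` and `totals` are illustrative stand-ins for the variables above.

```python
import numpy as np

# Rows: Mon..Fri; columns: almond, peanut, cashew butter
sales = np.array([[2, 7, 1],
                  [9, 4, 16],
                  [11, 14, 18],
                  [13, 13, 16],
                  [15, 18, 9]])
prices = np.array([[10, 8, 12]])   # shape (1, 3)

# Transpose the prices so the inner dimensions match: (5, 3) @ (3, 1)
totals = sales @ prices.T

print(totals.shape)
print(totals.ravel())
```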

# ### Sorting Arrays

np.sort(matriz_vendas)

np.sort(vendas)

cu = np.array([3,7,1])

np.argsort(cu)

np.argmin(cu)

cu = np.array([[[2, 2]], [[2, 1]]])

cu.shape

np.argmin(cu, axis = 0)

# # Practical Example: IMAGES as arrays

# To display the image, the cell has to be a Markdown cell: <#img src = 'blablabla'> (remove the #)

# <img src = 'feijao'>

from matplotlib.image import imread

feijao = imread('feijao.png')

type(feijao)

feijao.size, feijao.shape, feijao.ndim

feijao.dtype

feijao[0][0][0]

|

Numpy.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# language: python

# name: python3

# ---

# # Collaboration and Competition

#

# ---

#

# In this notebook, you will learn how to use the Unity ML-Agents environment for the third project of the [Deep Reinforcement Learning Nanodegree](https://www.udacity.com/course/deep-reinforcement-learning-nanodegree--nd893) program.

#

# ### 1. Start the Environment

#

# We begin by importing the necessary packages. If the code cell below returns an error, please revisit the project instructions to double-check that you have installed [Unity ML-Agents](https://github.com/Unity-Technologies/ml-agents/blob/master/docs/Installation.md) and [NumPy](http://www.numpy.org/).

from unityagents import UnityEnvironment

import numpy as np

# Next, we will start the environment! **_Before running the code cell below_**, change the `file_name` parameter to match the location of the Unity environment that you downloaded.

#

# - **Mac**: `"path/to/Tennis.app"`

# - **Windows** (x86): `"path/to/Tennis_Windows_x86/Tennis.exe"`

# - **Windows** (x86_64): `"path/to/Tennis_Windows_x86_64/Tennis.exe"`

# - **Linux** (x86): `"path/to/Tennis_Linux/Tennis.x86"`

# - **Linux** (x86_64): `"path/to/Tennis_Linux/Tennis.x86_64"`

# - **Linux** (x86, headless): `"path/to/Tennis_Linux_NoVis/Tennis.x86"`

# - **Linux** (x86_64, headless): `"path/to/Tennis_Linux_NoVis/Tennis.x86_64"`

#

# For instance, if you are using a Mac, then you downloaded `Tennis.app`. If this file is in the same folder as the notebook, then the line below should appear as follows:

# ```

# env = UnityEnvironment(file_name="Tennis.app")

# ```

env = UnityEnvironment(file_name="./App/Tennis.app")

# Environments contain **_brains_** which are responsible for deciding the actions of their associated agents. Here we check for the first brain available, and set it as the default brain we will be controlling from Python.

# get the default brain

brain_name = env.brain_names[0]

brain = env.brains[brain_name]

# ### 2. Examine the State and Action Spaces

#

# In this environment, two agents control rackets to bounce a ball over a net. If an agent hits the ball over the net, it receives a reward of +0.1. If an agent lets a ball hit the ground or hits the ball out of bounds, it receives a reward of -0.01. Thus, the goal of each agent is to keep the ball in play.

#

# The observation space consists of 8 variables corresponding to the position and velocity of the ball and racket. Two continuous actions are available, corresponding to movement toward (or away from) the net, and jumping.

#

# Run the code cell below to print some information about the environment.

# +

# reset the environment

env_info = env.reset(train_mode=True)[brain_name]

# number of agents

num_agents = len(env_info.agents)

print('Number of agents:', num_agents)

# size of each action

action_size = brain.vector_action_space_size

print('Size of each action:', action_size)

# examine the state space

states = env_info.vector_observations

state_size = states.shape[1]

print('There are {} agents. Each observes a state with length: {}'.format(states.shape[0], state_size))

print('The state for the first agent looks like:', states[0])

#print('The state for the first agent looks like:', states)

# -

# ### 3. Take Random Actions in the Environment

#

# In the next code cell, you will learn how to use the Python API to control the agents and receive feedback from the environment.

#

# Once this cell is executed, you will be able to watch how the agents perform when they select actions at random at each time step. A window should pop up that allows you to observe the agents.

#

# Of course, as part of the project, you'll have to change the code so that the agents are able to use their experiences to gradually choose better actions when interacting with the environment!

for i in range(1, 6):                                      # play game for 5 episodes
    env_info = env.reset(train_mode=False)[brain_name]     # reset the environment
    states = env_info.vector_observations                  # get the current state (for each agent)
    scores = np.zeros(num_agents)                          # initialize the score (for each agent)
    while True:
        actions = np.random.randn(num_agents, action_size) # select an action (for each agent)
        actions = np.clip(actions, -1, 1)                  # all actions between -1 and 1
        env_info = env.step(actions)[brain_name]           # send all actions to the environment
        next_states = env_info.vector_observations         # get next state (for each agent)
        rewards = env_info.rewards                         # get reward (for each agent)
        dones = env_info.local_done                        # see if episode finished
        scores += env_info.rewards                         # update the score (for each agent)
        states = next_states                               # roll over states to next time step
        if np.any(dones):                                  # exit loop if episode finished
            break
    print('Score (max over agents) from episode {}: {}'.format(i, np.max(scores)))

# When finished, you can close the environment.

# ### 4. It's Your Turn!

#

# Now it's your turn to train your own agent to solve the environment! When training the environment, set `train_mode=True`, so that the line for resetting the environment looks like the following:

# ```python

# env_info = env.reset(train_mode=True)[brain_name]

# ```

# +

import numpy as np

import torch

import torch.nn as nn

import torch.nn.functional as F

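def hidden_init(layer):
    # Note: nn.Linear stores its weight as (out_features, in_features), so
    # size()[0] is the layer's output width even though it is named fan_in.
    fan_in = layer.weight.data.size()[0]
    lim = 1. / np.sqrt(fan_in)
    return (-lim, lim)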

class Actor(nn.Module):

"""Actor (Policy) Model."""

def __init__(self, state_size, action_size, seed, fc1_units=128, fc2_units=64):

"""Initialize parameters and build model.

Params

======

state_size (int): Dimension of each state

action_size (int): Dimension of each action

seed (int): Random seed

fc1_units (int): Number of nodes in first hidden layer

fc2_units (int): Number of nodes in second hidden layer

"""

super(Actor, self).__init__()

self.seed = torch.manual_seed(seed)

self.bn0 = nn.BatchNorm1d(state_size)

self.fc1 = nn.Linear(state_size, fc1_units)

self.bn1 = nn.BatchNorm1d(fc1_units)

self.fc2 = nn.Linear(fc1_units, fc2_units)

self.bn2 = nn.BatchNorm1d(fc2_units)

self.fc3 = nn.Linear(fc2_units, action_size)

self.reset_parameters()

def reset_parameters(self):

self.fc1.weight.data.uniform_(*hidden_init(self.fc1))

self.fc2.weight.data.uniform_(*hidden_init(self.fc2))

self.fc3.weight.data.uniform_(-3e-3, 3e-3)

def forward(self, state):

"""Build an actor (policy) network that maps states -> actions."""

x = self.bn0(state)

x = F.relu(self.bn1(self.fc1(x)))

x = F.relu(self.bn2(self.fc2(x)))

return torch.tanh(self.fc3(x))

class Critic(nn.Module):

"""Critic (Value) Model."""

def __init__(self, state_size, action_size, seed,fcs1_units=128, fc2_units=32):

"""Initialize parameters and build model.

Params

======

state_size (int): Dimension of each state

action_size (int): Dimension of each action

seed (int): Random seed

fcs1_units (int): Number of nodes in the first hidden layer

fc2_units (int): Number of nodes in the second hidden layer


"""

super(Critic, self).__init__()

self.seed = torch.manual_seed(seed)

self.bn0 = nn.BatchNorm1d(state_size)

self.fcs1 = nn.Linear(state_size, fcs1_units)

self.fc2 = nn.Linear(fcs1_units+action_size, fc2_units)

self.fc3 = nn.Linear(fc2_units, 1)

self.reset_parameters()

def reset_parameters(self):

self.fcs1.weight.data.uniform_(*hidden_init(self.fcs1))

self.fc2.weight.data.uniform_(*hidden_init(self.fc2))

self.fc3.weight.data.uniform_(-3e-3, 3e-3)

def forward(self, state, action):

"""Build a critic (value) network that maps (state, action) pairs -> Q-values."""

state = self.bn0(state)

xs = F.relu(self.fcs1(state))

x = torch.cat((xs, action), dim=1)

x = F.relu(self.fc2(x))

return self.fc3(x)

# +

import random

import copy

from collections import namedtuple, deque

import torch.optim as optim

BUFFER_SIZE = int(1e5) # replay buffer size

BATCH_SIZE = 128 # minibatch size

GAMMA = 0.99 # discount factor

TAU = 1e-3 # for soft update of target parameters

LR_ACTOR = 1e-4 # learning rate of the actor

LR_CRITIC = 1e-3 # learning rate of the critic

WEIGHT_DECAY = 0 # L2 weight decay

#DEVICE = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

DEVICE = "cpu"

class DDPGAgent():

"""Interacts with and learns from the environment."""

def __init__(self, state_size, action_size, random_seed):

"""Initialize an Agent object.

Params

======

state_size (int): dimension of each state

action_size (int): dimension of each action

random_seed (int): random seed


"""

self.state_size = state_size

self.action_size = action_size

self.seed = random.seed(random_seed)

self.device = DEVICE

self.buffer_size = BUFFER_SIZE

self.batch_size = BATCH_SIZE

self.gamma = GAMMA

self.tau = TAU

# Actor Network (w/ Target Network)

self.actor_local = Actor(state_size, action_size, random_seed).to(DEVICE)

self.actor_target = Actor(state_size, action_size, random_seed).to(DEVICE)

self.actor_optimizer = optim.Adam(self.actor_local.parameters(), lr=LR_ACTOR)

# Critic Network (w/ Target Network)

self.critic_local = Critic(state_size, action_size, random_seed).to(DEVICE)

self.critic_target = Critic(state_size, action_size, random_seed).to(DEVICE)

self.critic_optimizer = optim.Adam(self.critic_local.parameters(), lr=LR_CRITIC, weight_decay=WEIGHT_DECAY)

# Noise process

self.noise = OUNoise(action_size, random_seed)

# Replay memory

self.memory = ReplayBuffer(action_size, BUFFER_SIZE, BATCH_SIZE, random_seed, DEVICE)

def step(self, state, action, reward, next_state, done):

"""Save experience in replay memory, and use random sample from buffer to learn"""

# Save experience / reward

self.memory.add(state, action, reward, next_state, done)

# Learn, if enough samples are available in memory

if len(self.memory) > self.batch_size:

experiences = self.memory.sample()

self.learn(experiences)

def act(self, state, noise=0.0):

"""Returns actions for given state as per current policy"""

state = torch.from_numpy(state).float().to(self.device)

self.actor_local.eval()

with torch.no_grad():

action = self.actor_local(state).cpu().data.numpy()

self.actor_local.train()

action += noise*self.noise.sample()

return np.clip(action, -1, 1)

def reset(self):

self.noise.reset()

def learn(self, experiences):

"""Update policy and value parameters using given batch of experience tuples

q_targets = r + γ * critic_target(next_state, actor_target(next_state))

where:

actor_target(state) -> action

critic_target(state, action) -> Q-value

Params

======

experiences (Tuple[torch.Tensor]): tuple of (s, a, r, s', done)


"""

states, actions, rewards, next_states, dones = experiences

# ---------------------------- update critic ---------------------------- #

# Get predicted next-state actions and Q values from target models

next_actions = self.actor_target(next_states)

q_targets_next = self.critic_target(next_states, next_actions)

# Compute Q targets for current states (y_i)

q_targets = rewards + (self.gamma * q_targets_next * (1 - dones))

# Compute critic loss

q_expected = self.critic_local(states, actions)

critic_loss = F.mse_loss(q_expected, q_targets)

# Minimize the loss

self.critic_optimizer.zero_grad()

critic_loss.backward()

self.critic_optimizer.step()

# ---------------------------- update actor ---------------------------- #

# Compute actor loss

predicted_actions = self.actor_local(states)

actor_loss = -self.critic_local(states, predicted_actions).mean()

# Minimize the loss

self.actor_optimizer.zero_grad()

actor_loss.backward()

self.actor_optimizer.step()

# ----------------------- update target networks ----------------------- #

self.soft_update(self.critic_local, self.critic_target, self.tau)

self.soft_update(self.actor_local, self.actor_target, self.tau)

def soft_update(self, local_model, target_model, tau):

"""Soft update model parameters

θ_target = τ*θ_local + (1 - τ)*θ_target

Params

======

local_model: PyTorch model (weights will be copied from)

target_model: PyTorch model (weights will be copied to)

tau (float): interpolation parameter

"""

for target_param, local_param in zip(target_model.parameters(), local_model.parameters()):

target_param.data.copy_(

tau * local_param.data + (1.0 - tau) * target_param.data)

def add_id(self, states):

"""Add (i+1) at the end of the states of the i-th agent as its id number."""

states_with_id = [np.concatenate([s, [i+1]]) for i,s in enumerate(states)]

return np.vstack(states_with_id)

def save_progress(self):

"""Save the most recent weights of local actor and critic."""

torch.save(self.actor_local.state_dict(), './Model_Weights/checkpoint_actor.pth')

torch.save(self.critic_local.state_dict(), './Model_Weights/checkpoint_critic.pth')

class OUNoise:

"""Ornstein-Uhlenbeck process."""

def __init__(self, size, seed, mu=0., theta=0.15, sigma=0.2):

"""Initialize parameters and noise process."""

self.mu = mu * np.ones(size)

self.theta = theta

self.sigma = sigma

self.seed = random.seed(seed)

self.reset()

def reset(self):

"""Reset the internal state (= noise) to mean (mu)."""

self.state = copy.copy(self.mu)

def sample(self):

"""Update internal state and return it as a noise sample."""

x = self.state

dx = self.theta * (self.mu - x) + self.sigma * np.random.randn(len(x))

#dx = self.theta * (self.mu - x) + self.sigma * np.random.standard_normal(len(x))

self.state = x + dx

return self.state

class ReplayBuffer:

"""Fixed-size buffer to store experience tuples."""

def __init__(self, action_size, buffer_size, batch_size, seed, device):

"""Initialize a ReplayBuffer object.

Params

======

buffer_size (int): maximum size of buffer

batch_size (int): size of each training batch

"""

self.action_size = action_size

self.memory = deque(maxlen=buffer_size) # internal memory (deque)

self.batch_size = batch_size

self.experience = namedtuple("Experience", field_names=["state", "action", "reward", "next_state", "done"])

self.seed = random.seed(seed)

self.device = device

def add(self, state, action, reward, next_state, done):

"""Add a new experience to memory."""

e = self.experience(state, action, reward, next_state, done)

self.memory.append(e)

def sample(self):

"""Randomly sample a batch of experiences from memory."""

experiences = random.sample(self.memory, k=self.batch_size)

device = self.device

states = torch.from_numpy(np.vstack([e.state for e in experiences if e is not None])).float().to(device)

actions = torch.from_numpy(np.vstack([e.action for e in experiences if e is not None])).float().to(device)

rewards = torch.from_numpy(np.vstack([e.reward for e in experiences if e is not None])).float().to(device)

next_states = torch.from_numpy(np.vstack([e.next_state for e in experiences if e is not None])).float().to(device)

dones = torch.from_numpy(np.vstack([e.done for e in experiences if e is not None]).astype(np.uint8)).float().to(device)

return (states, actions, rewards, next_states, dones)

def __len__(self):

"""Return the current size of internal memory."""

return len(self.memory)
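
# The soft update rule in `soft_update`, θ_target = τ*θ_local + (1 - τ)*θ_target, moves the target weights only a small step toward the local weights on each call. The NumPy sketch below is an illustration on plain arrays rather than PyTorch parameters; the vectors and the 1000-step horizon are made-up values. With τ = 1e-3 the target covers a fraction 1 - (1 - τ)^n of the gap after n updates.

```python
import numpy as np

def soft_update(local, target, tau):
    """Blend target weights toward local weights by a factor tau."""
    return tau * local + (1.0 - tau) * target

local = np.array([1.0, 1.0])    # hypothetical local-network weights (held fixed)
target = np.array([0.0, 0.0])   # hypothetical target-network weights

n_steps = 1000
for _ in range(n_steps):
    target = soft_update(local, target, tau=1e-3)

# Closed form for a fixed local value: 1 - (1 - tau)^n of the gap is covered
expected = 1.0 - (1.0 - 1e-3) ** n_steps
print(target, expected)
```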

# +

# create a new agent to test the trained model

agent_test = DDPGAgent(state_size=state_size+1, action_size=action_size, random_seed=12345)

# load trained weights

agent_test.actor_local.load_state_dict(torch.load('./Model_Weights/checkpoint_actor.pth',map_location={'cuda:0': 'cpu'}))

agent_test.critic_local.load_state_dict(torch.load('./Model_Weights/checkpoint_critic.pth',map_location={'cuda:0': 'cpu'}))

# test training result

for i in range(1, 6):                                              # play game for 5 episodes
    env_info = env.reset(train_mode=False)[brain_name]             # reset the environment
    states = agent_test.add_id(env_info.vector_observations)       # get the current state
    scores = np.zeros(num_agents)                                  # initialize the score
    while True:
        actions = agent_test.act(states)                           # select an action
        env_info = env.step(actions)[brain_name]                   # send all actions to the environment
        next_states = agent_test.add_id(env_info.vector_observations) # get next state
        rewards = env_info.rewards                                 # get reward
        dones = env_info.local_done                                # see if episode finished
        scores += env_info.rewards                                 # update the score
        states = next_states                                       # roll over states to next time step
        if np.any(dones):                                          # exit loop if episode finished
            break
    print('Score (max over agents) from episode {}: {}'.format(i, np.max(scores)))

# -

env.close()

|

Projects/p3_collab-compet/Tennis.ipynb

|

# ---

# jupyter:

# jupytext:

# text_representation:

# extension: .py

# format_name: light

# format_version: '1.5'

# jupytext_version: 1.14.4

# kernelspec:

# display_name: Python 3

# name: python3

# ---

# + [markdown] id="view-in-github" colab_type="text"

# <a href="https://colab.research.google.com/github/mrklees/PracticalStatistics/blob/master/3_Regression_Models.ipynb" target="_parent"><img src="https://colab.research.google.com/assets/colab-badge.svg" alt="Open In Colab"/></a>

# + [markdown] id="w_d9MORIfjza" colab_type="text"

# # Regression Modelling

#

# ### Don't Forget to Run All (Ctrl+F9)

# ### Alex! Press the Record Button!!!

#

#

# By the end of the session participants will...

#

# * Review Expectation and Variance

# * Review the concept of a joint distribution


#

# ## Language Repository

# These are some key terms that I will throw around a lot. You can always review their definitions here or [go back to the notebook on Statistical Testing & p-values to review.](https://colab.research.google.com/drive/15GQcjwz1TlVOOZfxiYakS1fAl3-3BiJA)

#

# **Expected Value:** This is the average! It's the long-run average value of repetitions of the same experiment.

#

# **Variance:** This is a measure of how spread out a distribution is from its mean. The greater the variance, the further values fall from the mean.

#

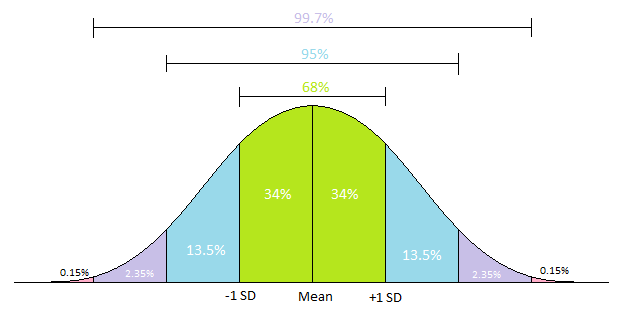

# **Standard Deviation:** This is really just the square root of the variance: $\text{std dev}(X) = \sqrt{Var(X)}$, and thus it behaves very similarly to variance. The greater the standard deviation, the futher values fall from its mean. It is often used in the context of normally distributed data because of how nicely a standard deviation partitions the normal distribution, per the obligatory graph:

#

#

#

#
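
# These three definitions are easy to check numerically. The sketch below draws samples from a normal distribution with NumPy; the mean of 10, standard deviation of 2 and sample size are arbitrary choices. It confirms that the standard deviation is the square root of the variance and that roughly 68% of normally distributed values fall within one standard deviation of the mean.

```python
import numpy as np

rng = np.random.default_rng(0)
x = rng.normal(loc=10.0, scale=2.0, size=100_000)   # illustrative sample

mean = x.mean()        # estimate of the expected value
variance = x.var()     # average squared distance from the mean
std_dev = x.std()      # square root of the variance

# Share of values within one standard deviation of the mean
within_one_sd = np.mean(np.abs(x - mean) < std_dev)

print(mean, variance, std_dev, within_one_sd)
```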

# + [markdown] id="bwTnA1BkfzOO" colab_type="text"

# ## Motivating Today with Simpson's Paradox

#

# In the last session, we learned about methods which allow us to directly compare two different variables, like focus list status and assessment scores. Setting aside the fact that I spoke at length about why you shouldn't really use those methods, you might wonder why we don't just stop there. Why work up to more complex models?

#

# We will start off with a small example that gets at this idea. It involves a very simple table of data. Suppose we did some trial on the effectiveness of a drug on the risk of heart attacks. The data is summarized below.

#

# | | Control - Heart Attacks | Control - Total Participants | Treatment - Heart Attacks | Treatment - Total Participants |

# |:---:|:---:|:---:|:---:|:---:|

# |Female|1|20|3|40|

# |Male|12|40|8|20|

#

# The paradox here comes from the two stories that I can tell with this table of data.

#

# The **first story** is about a fantastic new drug! In a clinical trial of 120 participants, those who took the experimental drug had about a 15% reduction in heart attacks. Talk to your doctor today!

#

# However, the **second story** is an investigative journalism piece about a dangerous drug put out on the market. When *looking at men and women separately*, it turns out that in either case it *raises your risk of heart attack*, by about 33% (for men) to 50% (for women). Obviously a drug which is bad for both men and women should be banned.

#

# To be clear, these calculations are really just simple percentages. In the first story, we just pretend like the data isn't segmented. So $\frac{13}{60}=21.7\%$ of participants in the control group and $\frac{11}{60}=18.3\%$ in the treatment group experienced heart attacks. Of course I then presented their ratio, because it's a much more marketable number 👍.

#

# Since the calculation in the second story is the same for both groups, I'll just talk about women. In the control group, $\frac{1}{20}=5\%$ of women experienced heart attacks, whereas $\frac{3}{40}=7.5\%$ of women in the treatment group did. A similar increase was seen for men ($\frac{12}{40}=30\%$ versus $\frac{8}{20}=40\%$).

#

# There is a lot within Simpson's Paradox. It was published over 60 years ago and still is the topic of some conversation. What I really want you to get from this is: this is what confounding can look like. If we just measure effects directly then there is a risk that what we measure could not only be different from the true effect, but could be in the completely wrong direction.
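#
# The two stories can be reproduced in a few lines of plain Python. This is only a sketch of the arithmetic: the counts are copied from the table above, while the names `trial`, `pooled`, and `by_sex_change` are illustrative and appear nowhere else in this notebook.

```python
# (heart attacks, total participants) for each sex and trial arm, from the table.
trial = {
    "Female": {"control": (1, 20), "treatment": (3, 40)},
    "Male": {"control": (12, 40), "treatment": (8, 20)},
}

# Story 1: ignore sex and pool everyone within each arm.
pooled = {}
for arm in ("control", "treatment"):
    events = sum(trial[sex][arm][0] for sex in trial)
    total = sum(trial[sex][arm][1] for sex in trial)
    pooled[arm] = events / total

# Relative change in risk, treatment versus control (negative = reduction).
pooled_change = pooled["treatment"] / pooled["control"] - 1

# Story 2: compute the same relative change separately for each sex.
by_sex_change = {}
for sex, arms in trial.items():
    control_rate = arms["control"][0] / arms["control"][1]
    treatment_rate = arms["treatment"][0] / arms["treatment"][1]
    by_sex_change[sex] = treatment_rate / control_rate - 1

print(f"Pooled: {pooled_change:+.1%}")
for sex, change in by_sex_change.items():
    print(f"{sex}: {change:+.1%}")
```

# The pooled change is negative while both within-group changes are positive. The reversal happens because sex is related both to baseline risk and to which arm participants ended up in: most of the treatment group is women, who have a much lower baseline risk.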

# + id="UsB6SDSaIf__" colab_type="code" colab={}

#@title Imports and Global Variables (run this cell first) { display-mode: "form" }

#@markdown This sets the warning status (default is `ignore`, since this notebook runs correctly)

warning_status = "ignore" #@param ["ignore", "always", "module", "once", "default", "error"]

import warnings

warnings.filterwarnings(warning_status)

with warnings.catch_warnings():

    warnings.filterwarnings(warning_status, category=DeprecationWarning)

    warnings.filterwarnings(warning_status, category=UserWarning)

import numpy as np

import pandas as pd

import os

#@markdown This sets the style of the plots (default is styled like plots from [FiveThirtyEight.com](https://fivethirtyeight.com/))

matplotlib_style = 'fivethirtyeight' #@param ['fivethirtyeight', 'bmh', 'ggplot', 'seaborn', 'default', 'Solarize_Light2', 'classic', 'dark_background', 'seaborn-colorblind', 'seaborn-notebook']

import matplotlib.pyplot as plt; plt.style.use(matplotlib_style)

# %matplotlib inline

import seaborn as sns; sns.set_context('notebook')

import statsmodels.api as sm

from statsmodels.api import OLS

# + id="fngP6ohTWJzv" colab_type="code" outputId="8ac01270-6a97-448b-83c2-519330ad5bcf" colab={"base_uri": "https://localhost:8080/", "height": 224}

#@title Read the Data from the Web { display-mode: "form" }

data_url = 'https://impactblob.blob.core.windows.net/public/anon_hmh.csv'

data = pd.read_csv(data_url)

data.head()

# + id="XwtSwre9WSzW" colab_type="code" outputId="ca78fe79-be37-4ab6-a59c-f36e56039154" colab={"base_uri": "https://localhost:8080/", "height": 365}

#@title Descriptive Stats to Reference { display-mode: "form" }

def describe_nulls(data):

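    # Summary statistics for every dtype present in the data

    desc = data.describe(include=data.dtypes.unique())

    # Append a row with the fraction of missing values in each column

    desc.loc['% Null'] = data.isna().sum() / data.shape[0]

    return desc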

describe_nulls(data)

# + [markdown] id="_lWAOH6QZjc3" colab_type="text"

# # Regression

#

# This is typically the section where people bring out formulas and abstract graphs to try to explain the topic... but in the spirit of practical statistics, we're going to talk about regression in terms of a different conceptual model, one which will hopefully allow us to worry a little bit less about the math. The reason I feel I can get away with this is that *most modern statistical interfaces* allow you to operate at this level of abstraction, with this kind of conceptual framework, instead of having to concern yourself with the details. Of course there is some variation in the aesthetics, but largely they are all the same.

#

# ## Regression as a Framework for Prediction

#

# In our framework, regression is all about being able to take some data and make predictions about future data. In this context, we'll use a few new pieces of language with somewhat specific meanings. Let's go through the language with some explanation.

#

# **Features**: The data we use to make predictions. For a student, this might be things like grade, school, focus list student, etc... We hope that this data contains information about the **outcome** we want to predict.

#

# **Outcomes**: The variable we want to make predictions about. For example, we've made predictions about how much a student will improve on a math assessment.

#

# **Model**: A **model** is used to make predictions about **outcomes**. Generally speaking, it is simply some mathematical device which tells us how to multiply and add our data to get **predictions** about our **outcomes**.

#

# The general goal of Regression is to *fit* (or *train*) **models** on **features** where the **outcomes** are known, and then use them to make predictions on new data where the **outcomes** aren't known. In the last session we previewed a formula notation which expresses this idea. In its full form it might look like:

# $$ \text{Outcomes} \sim \text{Model(Features)}$$

# Although, we dropped the model portion, because it doesn't really tell us much. So we're just left with:

# $$ \text{Outcomes} \sim \text{Features}$$
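#
# To make the fit-then-predict idea concrete, here is a minimal sketch on made-up data. It uses NumPy's least-squares solver rather than the statsmodels interface this notebook relies on, purely so the "multiply and add" step is visible. The feature, the true coefficients (10 and 40), and the noise level are all assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

# Made-up training data: one feature and an outcome that is already known.
n = 200
feature = rng.uniform(0.5, 1.0, size=n)
outcome = 10.0 + 40.0 * feature + rng.normal(0.0, 2.0, size=n)

# Design matrix: a column of ones (the intercept) next to the feature.
X = np.column_stack([np.ones(n), feature])

# Fitting: find the coefficients that minimize the squared prediction error.
coef, *_ = np.linalg.lstsq(X, outcome, rcond=None)
intercept, slope = coef

# Predicting: multiply and add, on new data where the outcome is not known.
new_feature = np.array([0.6, 0.9])
predictions = intercept + slope * new_feature

print(f"intercept={intercept:.2f}, slope={slope:.2f}")
print("predictions:", predictions)
```

# The fitted intercept and slope should land near the values used to generate the data, which is all "Outcomes ~ Features" is asking the software to do.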

#

# ### Fitting Models: What do we need to understand for Practical Statistics?

#

# For the purposes of Practical Statistics, we will acknowledge that these models exist and talk *exclusively* about how to use them. Unfortunately, going much further into how a model is trained requires going into some calculus, so we will skate around the topic. Instead, since you can use software prepared by professionals, you can largely trust that *as long as you specify the model correctly*, the software will fit the model without making a mistake. What I mean by *specifying the model correctly* will be one of the key subjects we discuss.

# + id="EViiFp4wWsDg" colab_type="code" outputId="ef4c0fd8-5657-4e01-af3b-bcddb6a1e852" colab={"base_uri": "https://localhost:8080/", "height": 493}

#@title Regression Choose Your Own Adventure {run: 'auto', display-mode: "form"}

#@markdown This tool will allow you to fit nearly any possible linear model from the data. Start by selecting which column will be the **Outcome**. I would recommend `MathAssess_RAWCHANGE` or `LITASSESS_RAWCHANGE`, but I've left every column available.

Outcomes = "MathAssess_RAWCHANGE" #@param ['GRADE_ID_NUMERIC', 'OFFICIALFLLIT', 'OFFICIALFLMTH', 'FL_LIT_MET_DOSAGE', 'FL_MTH_MET_DOSAGE', 'litassess_pre_value_num', 'LITASSESS_RAWCHANGE', 'LITASSESS_SRITARGET', 'mathassess_pre_value_num', 'MathAssess_RAWCHANGE', 'SMI_TARGET', 'AnonId', 'SiteId', 'SchoolId', 'att_pre_value', 'att_post_value']

#@markdown Then select which columns of data should be included in your model! Sorry for the rough interface, but Colab hasn't published a better one yet.

GRADE_ID_NUMERIC = False #@param {type:"boolean"}

OFFICIALFLLIT = False #@param {type:"boolean"}

OFFICIALFLMTH = False #@param {type:"boolean"}

FL_LIT_MET_DOSAGE = False #@param {type:"boolean"}

FL_MTH_MET_DOSAGE = False #@param {type:"boolean"}

litassess_pre_value_num = False #@param {type:"boolean"}

LITASSESS_RAWCHANGE = False #@param {type:"boolean"}

LITASSESS_SRITARGET = False #@param {type:"boolean"}

mathassess_pre_value_num = False #@param {type:"boolean"}

MathAssess_RAWCHANGE = False #@param {type:"boolean"}

SMI_TARGET = False #@param {type:"boolean"}

SiteId = False #@param {type:"boolean"}

SchoolId = False #@param {type:"boolean"}

att_pre_value = True #@param {type:"boolean"}

att_post_value = False #@param {type:"boolean"}

colnames = np.array([

    'GRADE_ID_NUMERIC', 'OFFICIALFLLIT', 'OFFICIALFLMTH',

    'FL_LIT_MET_DOSAGE', 'FL_MTH_MET_DOSAGE', 'litassess_pre_value_num',

    'LITASSESS_RAWCHANGE', 'LITASSESS_SRITARGET',

    'mathassess_pre_value_num', 'MathAssess_RAWCHANGE', 'SMI_TARGET',

    'SiteId', 'SchoolId', 'att_pre_value', 'att_post_value'])

# Boolean mask, in the same order as colnames, of the checkboxes ticked above

responses = np.array([

    GRADE_ID_NUMERIC, OFFICIALFLLIT, OFFICIALFLMTH,

    FL_LIT_MET_DOSAGE, FL_MTH_MET_DOSAGE, litassess_pre_value_num,

    LITASSESS_RAWCHANGE, LITASSESS_SRITARGET,

    mathassess_pre_value_num, MathAssess_RAWCHANGE, SMI_TARGET,

    SiteId, SchoolId, att_pre_value, att_post_value])

# Get Selected Features

Features = list(colnames[responses])

try:

    assert Outcomes not in Features