Add task categories, paper link and code link

#1

by nielsr HF Staff - opened

README.md

CHANGED

@@ -1,15 +1,19 @@

---

license: cc-by-4.0

---

# OSMa-Bench Dataset

[](https://be2rlab.github.io/OSMa-Bench/)

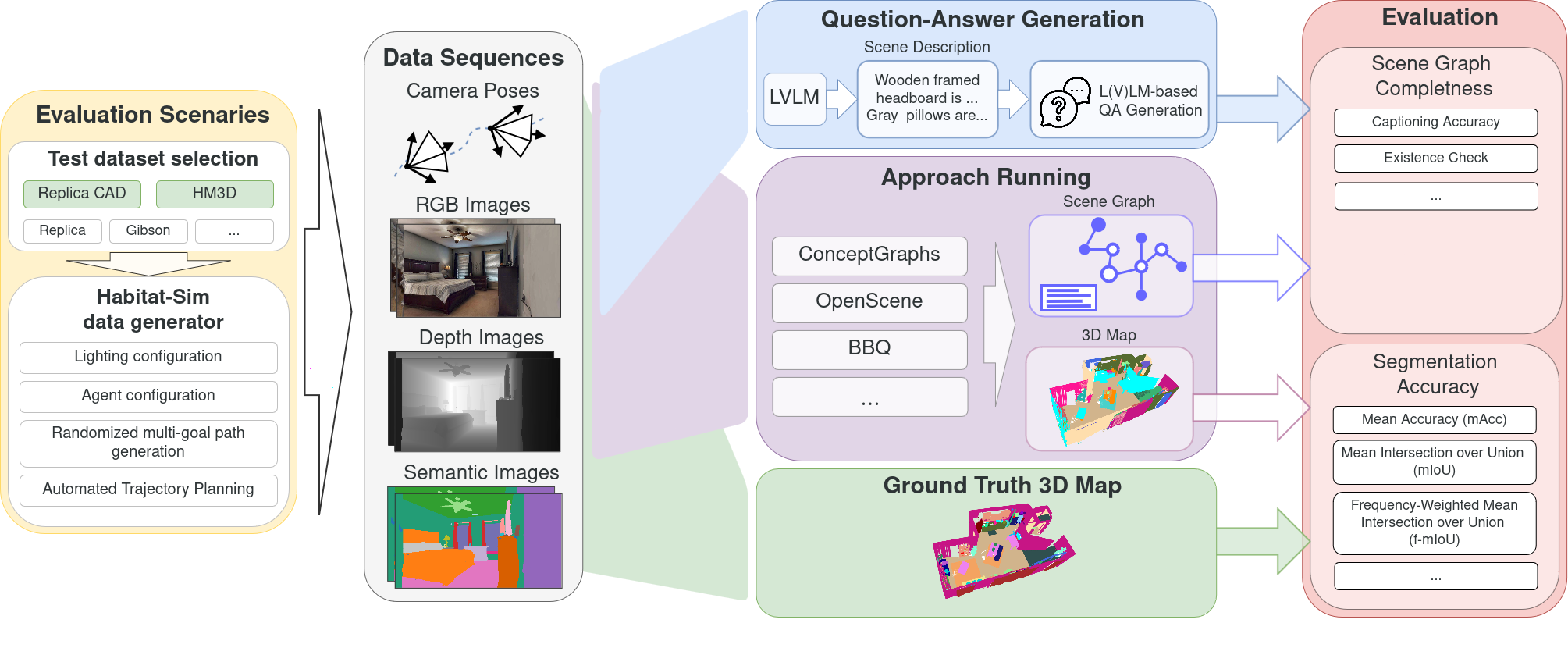

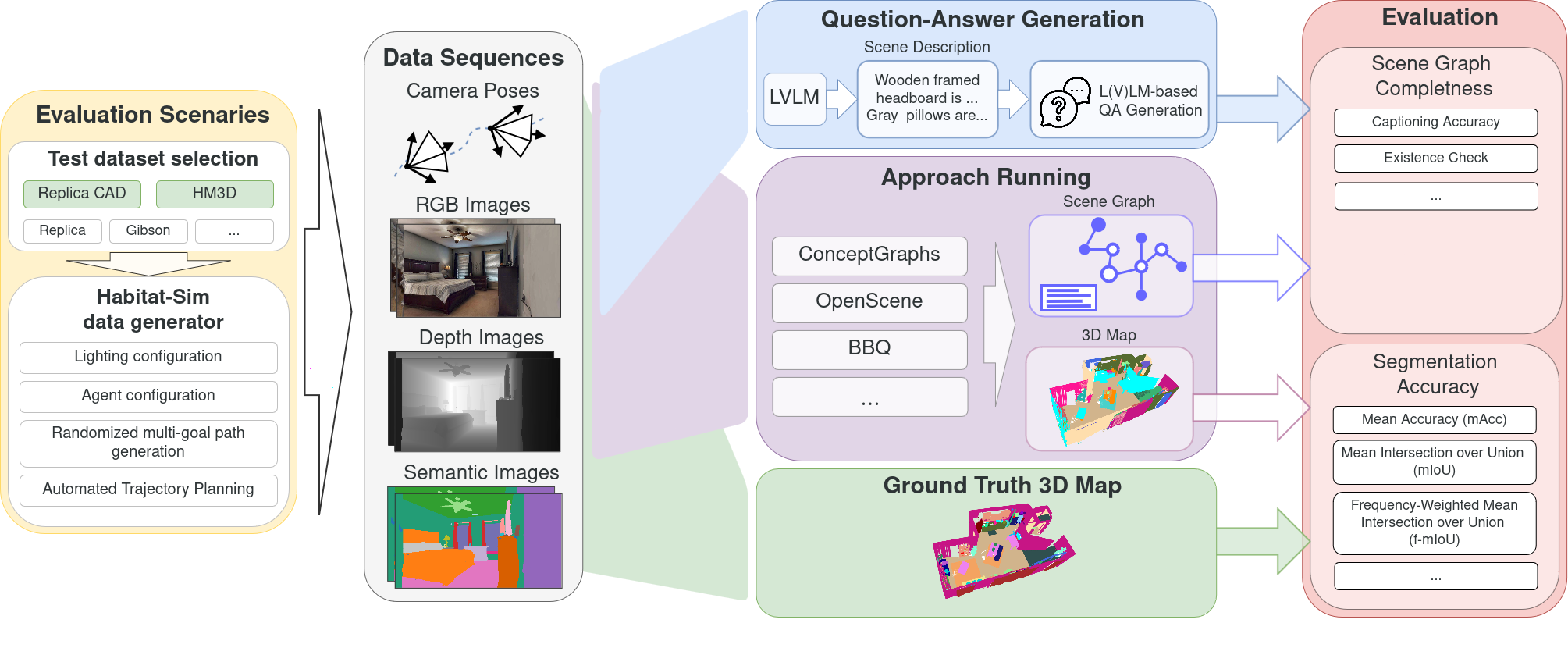

OSMa-Bench (Open Semantic Mapping Benchmark) dataset is a fully automatically generated dataset for evaluating the robustness of open semantic mapping and segmentation systems under varying indoor lighting conditions and robot movement dynamics. This dataset is part of [OSMa-Bench](https://be2rlab.github.io/OSMa-Bench/) pipeline.

-- **Homepage:** [OSMa-Bench Project Page](https://be2rlab.github.io/OSMa-Bench/)

-

## Dataset Summary

This dataset provides simulated RGB-D and semantically annotated posed sequences for evaluation of semantic mapping and segmentation, with a particular focus on handling dynamic lighting—a critical but often overlooked factor in existing benchmarks. It also includes a collection of automatically generated question–answer pairs across multiple categories to support the evaluation of scene-graph–based reasoning, offering a task-driven measure of how well a system’s reconstructed scene captures semantic relationships between objects.

@@ -48,7 +52,7 @@ The dataset includes the following configurations for the ReplicaCAD and HM3D sc

---

-## Data

The dataset provides structured data for each scene, suitable for tasks like 3D scene understanding, visual question answering, and robotics. Each scene contains the following components:

@@ -88,7 +92,7 @@ The dataset includes a automatically generated answer-question pairs with the fo

*Example:* `Which is taller: the bookshelf or the lamp?`

-##

Using OSMa-Bench dataset in your research? Please cite following paper: [OSMa-Bench arxiv](https://arxiv.org/abs/2503.10331).

---

license: cc-by-4.0

+task_categories:

+- robotics

+- image-segmentation

+- image-text-to-text

---

# OSMa-Bench Dataset

+[**Project Page**](https://be2rlab.github.io/OSMa-Bench/) | [**Paper**](https://huggingface.co/papers/2503.10331) | [**Code**](https://github.com/be2rlab/OSMa-Bench)

+

[](https://be2rlab.github.io/OSMa-Bench/)

OSMa-Bench (Open Semantic Mapping Benchmark) dataset is a fully automatically generated dataset for evaluating the robustness of open semantic mapping and segmentation systems under varying indoor lighting conditions and robot movement dynamics. This dataset is part of [OSMa-Bench](https://be2rlab.github.io/OSMa-Bench/) pipeline.

## Dataset Summary

This dataset provides simulated RGB-D and semantically annotated posed sequences for evaluation of semantic mapping and segmentation, with a particular focus on handling dynamic lighting—a critical but often overlooked factor in existing benchmarks. It also includes a collection of automatically generated question–answer pairs across multiple categories to support the evaluation of scene-graph–based reasoning, offering a task-driven measure of how well a system’s reconstructed scene captures semantic relationships between objects.

---

+## Data Structure

The dataset provides structured data for each scene, suitable for tasks like 3D scene understanding, visual question answering, and robotics. Each scene contains the following components:

*Example:* `Which is taller: the bookshelf or the lamp?`

+## Citation

Using OSMa-Bench dataset in your research? Please cite following paper: [OSMa-Bench arxiv](https://arxiv.org/abs/2503.10331).
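
The metadata block this change adds to the top of README.md can be checked mechanically. The sketch below is an illustration only and is not part of the PR: `parse_front_matter` and the `CARD` string are hypothetical names written for this example, and the parser handles just the flat `key: value` and `- item` forms that appear in the diff. A real YAML library would be the safer choice for anything more complex.

```python
def parse_front_matter(text):
    """Parse a minimal YAML front matter block into a dict.

    Supports only `key: value` scalars and `- item` lists under a bare `key:`.
    This is an assumption-laden helper for illustration, not a YAML parser.
    """
    lines = text.splitlines()
    if not lines or lines[0].strip() != "---":
        return {}  # no front matter present
    meta = {}
    key = None
    for line in lines[1:]:
        stripped = line.strip()
        if stripped == "---":
            break  # closing delimiter of the front matter
        if not stripped:
            continue
        if stripped.startswith("- ") and key is not None:
            # List item belonging to the most recently seen key.
            if not isinstance(meta[key], list):
                meta[key] = []
            meta[key].append(stripped[2:].strip())
        elif ":" in stripped:
            key, _, value = stripped.partition(":")
            key = key.strip()
            value = value.strip()
            # A bare `key:` starts a list; otherwise store the scalar.
            meta[key] = value if value else []
    return meta


# Front matter as it reads after the change in this diff.
CARD = """---
license: cc-by-4.0
task_categories:
- robotics
- image-segmentation
- image-text-to-text
---

# OSMa-Bench Dataset
"""

meta = parse_front_matter(CARD)
print(meta["license"])
print(meta["task_categories"])
```

Running the same function on the old README text would return only the `license` key, which is one quick way to confirm the three task categories were actually added.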
