---
datasets:
- letxbe/BoundingDocs
language:
- en
pipeline_tag: visual-question-answering
tags:
- Visual-Question-Answering
- Question-Answering
- Document
license: apache-2.0
---

<div align="center">

<h1>DocExplainerV0: Visual Document QA with Bounding Box Localization</h1>

[License: CC BY 4.0](https://creativecommons.org/licenses/by/4.0/)
[Model on Hugging Face](https://huggingface.co/letxbe/DocExplainerV0)

</div>

## Model description

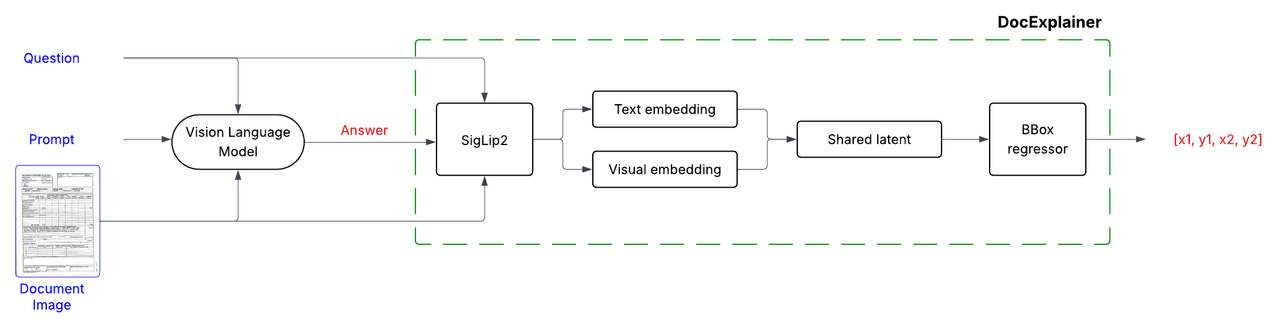

DocExplainerV0 is a **first-step approach** to Visual Document Question Answering with bounding box localization.
Unlike standard Vision-Language Models (VLMs), which only provide text-based answers, DocExplainerV0 adds **visual evidence through bounding boxes**, making model predictions more interpretable.
It is designed as a **plug-and-play module** that can be combined with any existing VLM, decoupling answer generation from spatial grounding.

⚠️ **Important Note**:
This model is **not intended as a final solution**, but rather as a **baseline framework and proof of concept**. Its purpose is to highlight the gap between textual accuracy and spatial grounding in current VLMs, and to serve as a foundation for future research on interpretable document understanding.

- **Authors:** Alessio Chen, Simone Giovannini, Andrea Gemelli, Fabio Coppini, Simone Marinai
- **Affiliations:** [Letxbe AI](https://letxbe.ai/), [University of Florence](https://www.unifi.it/it)
- **License:** CC-BY-4.0
- **Paper:** ["Towards Reliable and Interpretable Document Question Answering via VLMs"](https://arxiv.org/abs/2501.03403) by Alessio Chen et al.

<div align="center">

<img src="https://cdn.prod.website-files.com/655f447668b4ad1dd3d4b3d9/664cc272c3e176608bc14a4c_LOGO%20v0%20-%20LetXBebicolore.svg" alt="letxbe ai logo" width="200">
<img src="https://www.dinfo.unifi.it/upload/notizie/Logo_Dinfo_web%20(1).png" alt="Logo Unifi" width="200">

</div>

---

## Model Details

DocExplainerV0 is a fine-tuned SigLIP-based regressor that predicts bounding box coordinates for answer localization in document images. The system operates in a two-stage process:

1. **Question Answering**: Any VLM processes the document image and question to generate a textual answer.
2. **Bounding Box Explanation**: DocExplainerV0 takes the image, question, and generated answer to predict the coordinates of the supporting evidence.
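
The two-stage process above can be sketched as a thin wrapper around two callables. This is a minimal illustration under stated assumptions, not the authors' implementation: `GroundedAnswer`, `explain_answer`, `fake_vlm`, and `fake_locator` are hypothetical names, and the stand-in functions return fixed values so the sketch runs without any model. The locator is called with the image and the answer, mirroring the `predict(image, answer)` call in the Quick Start.

```python
from dataclasses import dataclass
from typing import Any, Callable, Sequence


@dataclass
class GroundedAnswer:
    """Answer text plus the normalized box that supports it (hypothetical type)."""
    answer: str
    bbox: Sequence[float]  # [x1, y1, x2, y2], each in [0, 1]


def explain_answer(
    image: Any,
    question: str,
    vlm_answer: Callable[[Any, str], str],
    locate: Callable[[Any, str], Sequence[float]],
) -> GroundedAnswer:
    """Run answer generation and spatial grounding as two decoupled stages."""
    # Stage 1: any VLM turns (image, question) into a textual answer.
    answer = vlm_answer(image, question)
    # Stage 2: the localizer predicts where that answer appears on the page.
    bbox = locate(image, answer)
    return GroundedAnswer(answer=answer, bbox=list(bbox))


# Stand-in callables (assumptions) so the sketch runs without downloading a model.
def fake_vlm(image: Any, question: str) -> str:
    return "3Y8M2d-846"


def fake_locator(image: Any, answer: str) -> Sequence[float]:
    return [0.64, 0.04, 0.86, 0.06]


result = explain_answer(None, "What is the invoice number?", fake_vlm, fake_locator)
print(result)
```

Because the two stages only share the answer string, either callable can be swapped without touching the other, which is the point of the plug-and-play design.
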

## Model Architecture

DocExplainerV0 builds on [SigLIP2](https://huggingface.co/google/siglip2-giant-opt-patch16-384) visual and text embeddings.

## Training Procedure

- Visual and textual embeddings from SigLIP2 are projected into a shared latent space and fused via fully connected layers.
- A regression head outputs normalized coordinates `[x1, y1, x2, y2]`.
- **Backbone**: SigLIP2 (frozen).
- **Loss Function**: Smooth L1 (Huber loss) applied to normalized coordinates in [0,1].
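
For reference, the Smooth L1 loss on a single box can be written out in plain Python. This is a sketch rather than the training code: the helper name `smooth_l1` is hypothetical, and the transition point `beta=1.0` is an assumption, since the threshold is not stated here.

```python
from typing import Sequence


def smooth_l1(pred: Sequence[float], target: Sequence[float], beta: float = 1.0) -> float:
    """Mean Smooth L1 loss over box coordinates.

    Quadratic for absolute errors smaller than `beta`, linear beyond it.
    `beta=1.0` is an assumed default, not a value taken from the model card.
    """
    if len(pred) != len(target):
        raise ValueError("pred and target must have the same length")
    total = 0.0
    for p, t in zip(pred, target):
        diff = abs(p - t)
        if diff < beta:
            total += 0.5 * diff * diff / beta  # quadratic regime
        else:
            total += diff - 0.5 * beta  # linear regime
    return total / len(pred)


# Illustrative boxes in normalized [x1, y1, x2, y2] form (made-up values).
pred = [0.60, 0.05, 0.85, 0.07]
target = [0.64, 0.04, 0.86, 0.06]
print(smooth_l1(pred, target))
```

Under the assumed `beta=1.0`, coordinate errors between values in [0, 1] never exceed 1, so the loss coincides with half the squared error for every coordinate.
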

### Training Setup

- **Dataset**: [BoundingDocs v2.0](https://huggingface.co/datasets/letxbe/BoundingDocs)
- **Epochs**: 20
- **Optimizer**: AdamW (default settings)
- **Hardware**: 1 × NVIDIA L40S-1-48G GPU
- **Model Selection**: Best checkpoint chosen by highest mean IoU on the validation split.
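
Selecting the checkpoint by validation mean IoU amounts to an arg-max over epochs. A minimal sketch, assuming per-sample IoU values have already been computed for each epoch; `select_best_checkpoint` is a hypothetical helper and the numbers below are made up for illustration.

```python
from statistics import mean
from typing import Dict, List, Tuple


def select_best_checkpoint(val_ious_by_epoch: Dict[int, List[float]]) -> Tuple[int, float]:
    """Return (epoch, mean IoU) for the epoch with the highest validation mean IoU."""
    mean_iou = {
        epoch: mean(ious)
        for epoch, ious in val_ious_by_epoch.items()
        if ious  # skip epochs without validation results
    }
    if not mean_iou:
        raise ValueError("no validation results to compare")
    best_epoch = max(mean_iou, key=mean_iou.get)
    return best_epoch, mean_iou[best_epoch]


# Toy per-sample IoU values per epoch (illustrative only, not real results).
toy_results = {1: [0.10, 0.20], 2: [0.15, 0.25], 3: [0.12, 0.18]}
best_epoch, best_score = select_best_checkpoint(toy_results)
print(best_epoch, best_score)
```
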

## Quick Start

Here is a simple example of how to use DocExplainerV0 to get the bounding box of an answer in a document image.

```python
from PIL import Image
import requests
from transformers import AutoModel

# Load example document image
url = "https://datasets-server.huggingface.co/cached-assets/letxbe/BoundingDocs/--/47db6d2b6af0aadfd082591a8445d0f47c3b8d61/--/default/test/7/doc_images/image-1d100e9.jpg"
image = Image.open(requests.get(url, stream=True).raw).convert("RGB")

question = "What is the invoice number?"
answer = "3Y8M2d-846"  # generate it with any VLM

# Load the explainer; trust_remote_code=True runs the model code shipped in the repository
explainer = AutoModel.from_pretrained("letxbe/DocExplainerv0", trust_remote_code=True)

# Predict where the answer appears in the image
bbox = explainer.predict(image, answer)
print(f"Bounding box: {bbox}")  # [x1, y1, x2, y2]
```

<table>
<tr>
<td width="50%" valign="top">

Example Output:

**Question**: What is the invoice number? <br>
**Answer**: 3Y8M2d-846<br><br>
**Predicted BBox**: [0.6353235244750977, 0.03685223311185837, 0.8617828488349915, 0.058749228715896606] <br>

</td>
<td width="50%" valign="top">

Visualized Answer Location:

<img src="https://i.postimg.cc/0NmBM0b1/invoice-explained.png" alt="Invoice with predicted bounding box" width="100%">

</td>
</tr>
</table>
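
To draw or crop the predicted region, the normalized box has to be scaled to pixel coordinates. The sketch below assumes that x values are normalized by image width and y values by image height; `to_pixel_box` is a hypothetical helper, and the 1000 × 1400 page size is an arbitrary example, not the size of the invoice above.

```python
from typing import Sequence, Tuple


def to_pixel_box(bbox: Sequence[float], width: int, height: int) -> Tuple[int, int, int, int]:
    """Scale a normalized [x1, y1, x2, y2] box to integer pixel coordinates."""
    # Clamp to [0, 1] in case a regressed coordinate slightly overshoots.
    x1, y1, x2, y2 = (min(max(v, 0.0), 1.0) for v in bbox)
    return (
        round(x1 * width),
        round(y1 * height),
        round(x2 * width),
        round(y2 * height),
    )


# Box taken from the example output; the page size is assumed for illustration.
bbox = [0.6353235244750977, 0.03685223311185837, 0.8617828488349915, 0.058749228715896606]
pixel_box = to_pixel_box(bbox, width=1000, height=1400)
print(pixel_box)
```

With Pillow, the resulting tuple can be passed to `ImageDraw.Draw(image).rectangle(pixel_box, outline="red", width=3)` to overlay the box, using `image.size` for the real width and height.
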

## Performance

Evaluated on the [BoundingDocs v2.0](https://huggingface.co/datasets/letxbe/BoundingDocs) dataset:

### Full DocExplainer Pipeline

| VLM Model       | ANLS ↑ | IoU ↑ |
| --------------- | ------ | ----- |
| SmolVLM2-2.2b   | 0.572  | 0.175 |
| qwen2.5-vl-7b   | 0.689  | 0.188 |

### VLM-only Baseline (for comparison)

| VLM Model       | ANLS ↑ | IoU ↑ |
| --------------- | ------ | ----- |
| SmolVLM2-2.2b   | 0.561  | 0.011 |
| qwen2.5-vl-7b   | 0.720  | 0.038 |
| Claude Sonnet 4 | 0.737  | 0.031 |
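
The two metrics in these tables can be computed per sample as sketched below. This follows the commonly used definitions (IoU as intersection area over union area; ANLS as one minus the normalized Levenshtein distance, set to zero once that distance reaches a threshold of 0.5). The function names, the lowercasing and whitespace stripping, and the threshold value are assumptions; the exact evaluation protocol behind the reported numbers is not specified here.

```python
from typing import Sequence


def iou(box_a: Sequence[float], box_b: Sequence[float]) -> float:
    """Intersection over Union of two [x1, y1, x2, y2] boxes."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


def levenshtein(a: str, b: str) -> int:
    """Edit distance computed with a rolling dynamic-programming row."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            cost = 0 if ca == cb else 1
            curr.append(min(prev[j] + 1, curr[j - 1] + 1, prev[j - 1] + cost))
        prev = curr
    return prev[-1]


def anls_score(prediction: str, references: Sequence[str], threshold: float = 0.5) -> float:
    """Per-question ANLS: best similarity over all reference answers."""
    pred = prediction.strip().lower()
    best = 0.0
    for ref in references:
        gold = ref.strip().lower()
        longest = max(len(pred), len(gold))
        nl = levenshtein(pred, gold) / longest if longest else 0.0
        # Similarities at or beyond the distance threshold count as wrong.
        best = max(best, 1.0 - nl if nl < threshold else 0.0)
    return best


print(iou([0.0, 0.0, 0.5, 1.0], [0.25, 0.0, 0.75, 1.0]))
print(anls_score("3Y8M2d-847", ["3Y8M2d-846"]))
```

Averaging `iou` and `anls_score` over all evaluation samples gives dataset-level scores of the kind reported above.
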

## Limitations

- **Prototype only**: Intended as a first approach, not a production-ready solution.
- **Dataset constraints**: Current evaluation is limited to cases where an answer fits in a single bounding box. Answers requiring reasoning over multiple regions or not fully captured by OCR cannot be properly evaluated.

## Citation

If you use this model in your research, please cite:

```bibtex
@misc{docexplainer2025,
  title={Towards Reliable and Interpretable Document Question Answering via VLMs},
  author={Chen, Alessio and Giovannini, Simone and Gemelli, Andrea and Coppini, Fabio and Marinai, Simone},
  year={2025},
  url={}
}
```